# Monte Carlo Tree Search Boosts Reasoning via Iterative Preference Learning

> Correspondence to: Yuxi Xie ( ) and Anirudh Goyal ( ).

## Abstract

We introduce an approach aimed at enhancing the reasoning capabilities of Large Language Models (LLMs) through an iterative preference learning process inspired by the successful strategy employed by AlphaZero. Our work leverages Monte Carlo Tree Search (MCTS) to iteratively collect preference data, utilizing its look-ahead ability to break down instance-level rewards into more granular step-level signals. To enhance consistency in intermediate steps, we combine outcome validation and stepwise self-evaluation, continually updating the quality assessment of newly generated data. The proposed algorithm employs Direct Preference Optimization (DPO) to update the LLM policy using this newly generated step-level preference data. Theoretical analysis reveals the importance of using on-policy sampled data for successful self-improving. Extensive evaluations on various arithmetic and commonsense reasoning tasks demonstrate remarkable performance improvements over existing models. For instance, our approach outperforms the Mistral-7B Supervised Fine-Tuning (SFT) baseline on GSM8K, MATH, and ARC-C, with substantial increases in accuracy to $81.8\$ (+ $5.9\$ ), $34.7\$ (+ $5.8\$ ), and $76.4\$ (+ $15.8\$ ), respectively. Additionally, our research delves into the training and inference compute tradeoff, providing insights into how our method effectively maximizes performance gains. Our code is publicly available at https://github.com/YuxiXie/MCTS-DPO.

## 1 Introduction

Development of Large Language Models (LLMs), has seen a pivotal shift towards aligning these models more closely with human values and preferences (Stiennon et al., 2020; Ouyang et al., 2022; Bai et al., 2022a). A critical aspect of this process involves the utilization of preference data. There are two prevailing methodologies for incorporating this data: the first entails the construction of a reward model based on preferences, which is then integrated into a Reinforcement Learning (RL) framework to update the policy (Christiano et al., 2017; Bai et al., 2022b); the second, more stable and scalable method, directly applies preferences to update the model’s policy (Rafailov et al., 2023).

In this context, the concept of “iterative” development is a key, especially when contrasted with the conventional Reinforcement Learning from Human Feedback (RLHF) paradigm (Christiano et al., 2017; Stiennon et al., 2020; Ouyang et al., 2022; Bai et al., 2022a), where the reward model is often trained offline and remains static. An iterative approach proposes a dynamic and continuous refinement process (Zelikman et al., 2022; Gülçehre et al., 2023; Huang et al., 2023; Yuan et al., 2024). It involves a cycle that begins with the current policy, progresses through the collection and analysis of data to generate new preference data, and uses this data to update the policy. This approach underlines the importance of ongoing adaptation in LLMs, highlighting the potential for these models to become more attuned to the complexities of human decision-making and reasoning.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Iterative Policy Tuning via Monte Carlo Tree Search and Preference Learning

### Overview

The image is a technical flowchart illustrating an iterative machine learning process for policy optimization. The system uses a Monte Carlo Tree Search (MCTS) to explore a prompt data pool, generates step-level preferences from the search results, and uses these preferences to update a policy model. The process is cyclical, with the updated policy from one iteration becoming the input for the next.

### Components/Axes

The diagram is divided into two main sections: a left panel detailing the search process and a right panel showing the policy update loop.

**Left Panel - Search Process:**

* **Prompt Data Pool:** A rounded rectangle at the top-left, labeled "Prompt Data Pool". An arrow points downward from it into the main search box.

* **Monte Carlo Tree Search Box:** A large, rounded rectangle containing a tree structure. It is labeled "Monte Carlo Tree Search" in the top-right corner of the box.

* **Tree Structure:** A hierarchical tree with a root node at the top and multiple levels of child nodes. Nodes are represented by rounded rectangles.

* **Node Color Legend:** Located at the bottom-left of the image. It is a horizontal gradient bar labeled "Q Values". The left end is dark purple, labeled "low". The right end is dark green, labeled "high". The gradient transitions through shades of purple, light purple, light green, to green.

* **Output Arrow:** An arrow exits the bottom-right of the MCTS box, pointing to the right towards the "Step-Level Preferences" element.

**Right Panel - Policy Update Loop:**

* **Policy from Last Iteration:** Located at the top-right. It is represented by a network graph icon (nodes and edges) and labeled "Policy from Last Iteration". The mathematical notation `π_θ(i-1)` is written above the icon. An arrow points downward from this element.

* **Preference Learning:** A rounded rectangle in the center-right, labeled "Preference Learning". It receives two inputs: one from "Policy from Last Iteration" (from above) and one from "Step-Level Preferences" (from below).

* **Updated Policy:** To the right of "Preference Learning". It is represented by an identical network graph icon and labeled "Updated Policy". The notation `π_θ(i)` is written above it. An arrow points from "Preference Learning" to this element.

* **Step-Level Preferences:** Located at the bottom-center. It is represented by a stack of three colored rectangles (two green, one purple) and labeled "Step-Level Preferences `D_i`". An arrow points upward from this element to "Preference Learning".

* **Iteration Label:** A gray box in the bottom-right corner, labeled "Policy Tuning at Iteration `i`".

### Detailed Analysis

**1. Monte Carlo Tree Search (MCTS) Component:**

* The tree originates from a single root node (light gray).

* The root has four direct child nodes. From left to right, their approximate colors (based on the Q-Value legend) are: light green, dark green, purple, light green.

* The tree expands to a maximum of four levels deep (root, level 1, level 2, level 3).

* **Node Color Distribution:** The nodes exhibit a range of colors from the Q-Value spectrum.

* **High Q-Value (Green) Nodes:** Several nodes are dark or medium green, indicating high estimated value. Notable examples include a level-1 node (second from left) and a level-3 node (far right).

* **Low Q-Value (Purple) Nodes:** Several nodes are dark or medium purple, indicating low estimated value. Notable examples include a level-1 node (third from left) and a level-2 node (far left).

* **Medium Q-Value Nodes:** Many nodes are light green or light purple, representing intermediate values.

* **Spatial Grounding:** The legend is positioned at the bottom-left, clearly mapping the color gradient to the Q-Value scale from low (purple) to high (green).

**2. Policy Update Loop:**

* The flow is cyclical and iterative, as indicated by the notation `i` and `i-1`.

* The process at iteration `i` takes the policy from the previous iteration (`π_θ(i-1)`) and the newly generated preferences (`D_i`) as inputs to the "Preference Learning" module.

* The output is an updated policy for the current iteration (`π_θ(i)`).

* The "Step-Level Preferences `D_i`" are generated from the results of the Monte Carlo Tree Search, as shown by the connecting arrow.

### Key Observations

1. **Color-Coded Value Assessment:** The MCTS visualization uses a continuous color gradient (purple to green) to represent the estimated quality (Q-Value) of different search paths or states, allowing for a quick visual assessment of promising vs. poor branches.

2. **Hierarchical Exploration:** The tree structure shows a systematic exploration of possibilities from a prompt, branching out into multiple potential sequences of steps or decisions.

3. **Closed-Loop Learning:** The system forms a feedback loop where the policy's performance (evaluated via MCTS and preference learning) is used to directly improve the policy itself for the next cycle.

4. **Data Flow:** The diagram clearly traces the flow of information: from a static data pool, through an active search process, to the generation of preference data, and finally into a learning module that updates the core policy model.

### Interpretation

This diagram depicts a sophisticated reinforcement learning or AI alignment technique. The core idea is to use a planning algorithm (Monte Carlo Tree Search) to explore a vast space of possible actions or responses stemming from a given prompt. The search doesn't just find a single best answer; it generates a rich set of trajectories with associated value estimates (the Q-values shown by color).

These search results are distilled into "Step-Level Preferences" (`D_i`). This likely means the system compares different paths found by the MCTS and creates data that says, "Given this state, path A is preferred over path B." This preference data is more stable and informative for learning than simple reward signals.

The "Preference Learning" module then uses this curated data to adjust the parameters (`θ`) of the policy model (`π`), moving it towards behaviors that are preferred according to the search-based evaluation. By iterating this process (`i-1` to `i`), the policy progressively improves, becoming better at generating high-value responses that are consistent with the preferences extracted from its own simulated search.

The system essentially uses look-ahead planning (MCTS) to create its own training signal (preferences), enabling iterative self-improvement without requiring an external reward model for every step. This is a powerful paradigm for complex decision-making or generation tasks where direct supervision is scarce.

</details>

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Line Chart: Accuracy on ARC-C vs. Training Data Percentage

### Overview

The image is a line chart comparing the performance of three different methods ("Online", "Offline", and "SFT Baseline") on the ARC-C benchmark as a function of the percentage of training data used. The chart demonstrates how accuracy changes with increasing data for each method.

### Components/Axes

* **Chart Type:** Line chart with three data series.

* **Y-Axis:**

* **Label:** "Accuracy on ARC-C"

* **Scale:** Linear, ranging from 60 to 90.

* **Major Tick Marks:** 60, 65, 70, 75, 80, 85, 90.

* **X-Axis:**

* **Label:** "Training Data %"

* **Scale:** Linear, ranging from 0 to 60.

* **Major Tick Marks:** 0, 10, 20, 30, 40, 50, 60.

* **Legend:**

* **Position:** Top-right corner of the plot area.

* **Items:**

1. **Online:** Represented by a solid green line.

2. **Offline:** Represented by a solid blue line.

3. **SFT Baseline:** Represented by a solid pink/magenta line.

### Detailed Analysis

**1. Online (Green Line):**

* **Trend:** Shows a rapid, steep increase in accuracy from 0% to approximately 10% training data, followed by a more gradual, steady increase that plateaus around 35-40% data. The line remains relatively flat with minor fluctuations from 40% to 60%.

* **Approximate Data Points:**

* At 0%: ~60

* At 10%: ~73

* At 20%: ~75

* At 30%: ~75

* At 40%: ~76 (Peak)

* At 50%: ~75

* At 60%: ~76

**2. Offline (Blue Line):**

* **Trend:** Also increases sharply from 0% to about 10% data, but then exhibits significant volatility. It peaks around 20% data, dips sharply around 30%, recovers slightly around 40%, dips again around 50%, and shows a slight upturn at 60%.

* **Approximate Data Points:**

* At 0%: ~60

* At 10%: ~69

* At 20%: ~71 (Peak)

* At 30%: ~66 (Local minimum)

* At 40%: ~68

* At 50%: ~64 (Global minimum after initial rise)

* At 60%: ~66

**3. SFT Baseline (Pink Line):**

* **Trend:** A perfectly horizontal line, indicating constant performance regardless of the training data percentage shown.

* **Approximate Data Point:** Constant at ~60 across the entire x-axis (0% to 60%).

### Key Observations

1. **Performance Hierarchy:** The "Online" method consistently achieves the highest accuracy after the initial training phase (~5% data onward). The "Offline" method performs worse than "Online" but better than the baseline for most data points, except at its lowest dips. The "SFT Baseline" shows no improvement.

2. **Data Efficiency:** Both "Online" and "Offline" methods show their most significant gains with the first 10-20% of training data.

3. **Stability:** The "Online" method demonstrates stable, monotonic improvement after the initial phase. The "Offline" method is highly unstable, with large swings in accuracy as more data is added, suggesting potential issues with optimization or data utilization.

4. **Baseline Ceiling:** The flat baseline at ~60 suggests that the starting model (SFT) has a fixed performance ceiling on this task that is not broken by simply adding more of the same training data without the "Online" or "Offline" adaptation strategies.

### Interpretation

This chart likely illustrates the results of an experiment in machine learning, specifically in adapting a pre-trained model (the SFT Baseline) to a new task (ARC-C). The key finding is that **active adaptation strategies ("Online" and "Offline") are crucial for improving performance beyond the baseline**, and that the **"Online" strategy is significantly more effective and stable** than the "Offline" one.

The "Online" method's curve is characteristic of successful learning: rapid initial gain followed by diminishing returns as it approaches an asymptotic performance limit. The "Offline" method's erratic behavior could indicate problems such as catastrophic forgetting, unstable training dynamics, or poor handling of data distribution shifts as the training set grows. The experiment demonstrates that not all adaptation methods are equal, and the choice between "Online" and "Offline" processing has a major impact on final model accuracy and reliability. The fact that both methods start at the same point as the baseline (60% at 0% data) confirms they are being evaluated from the same starting model.

</details>

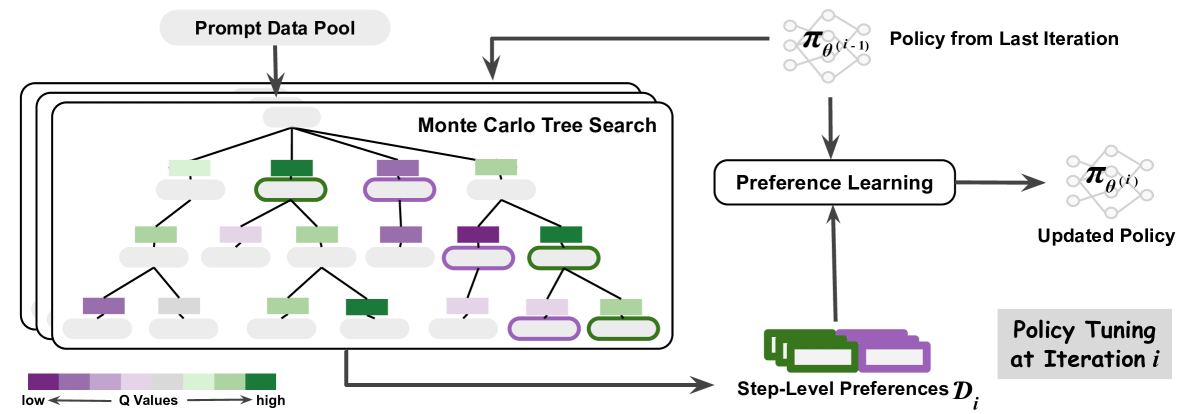

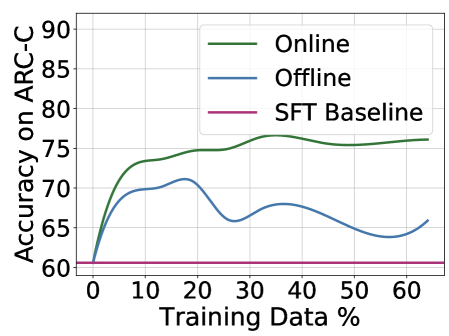

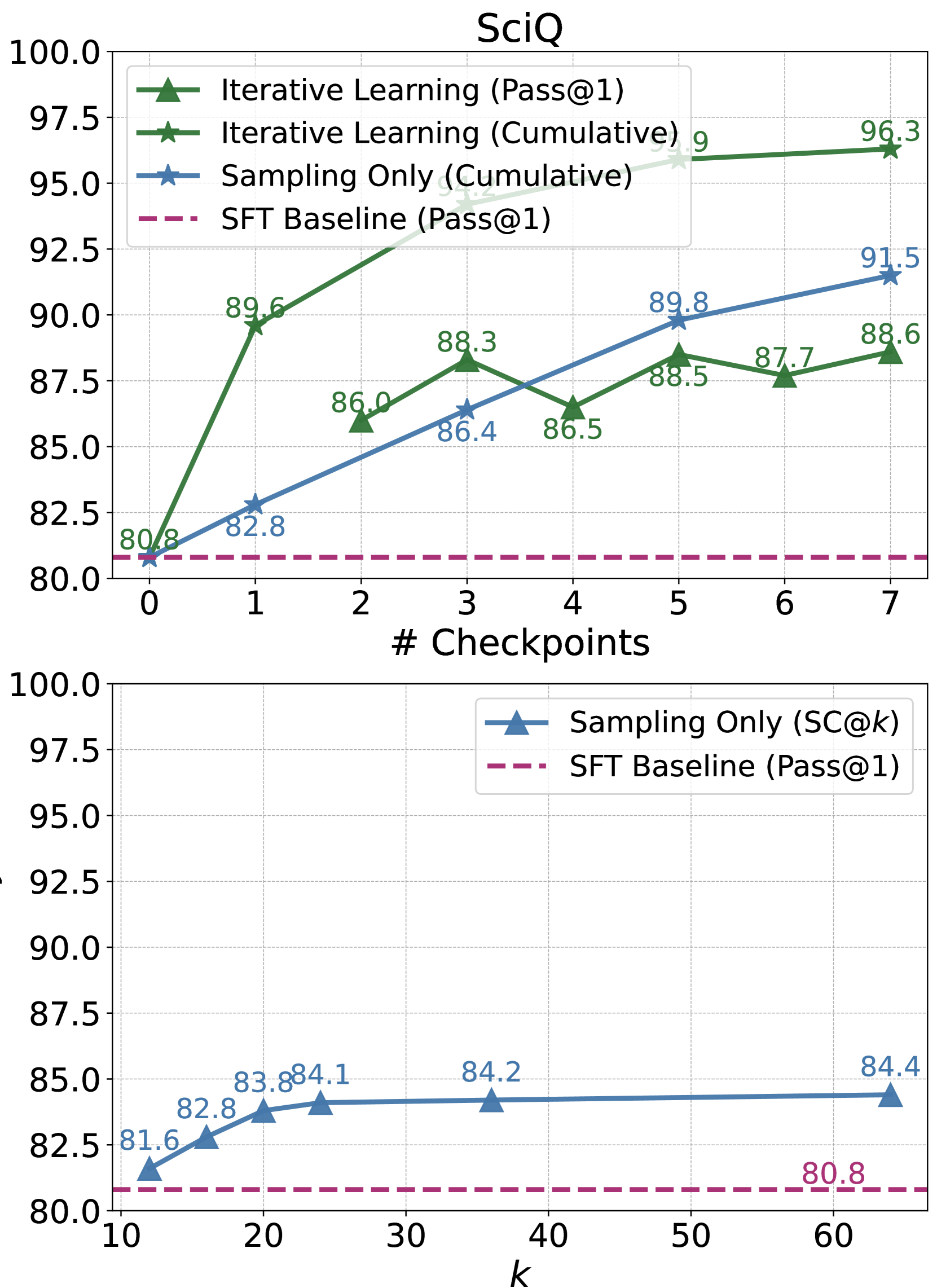

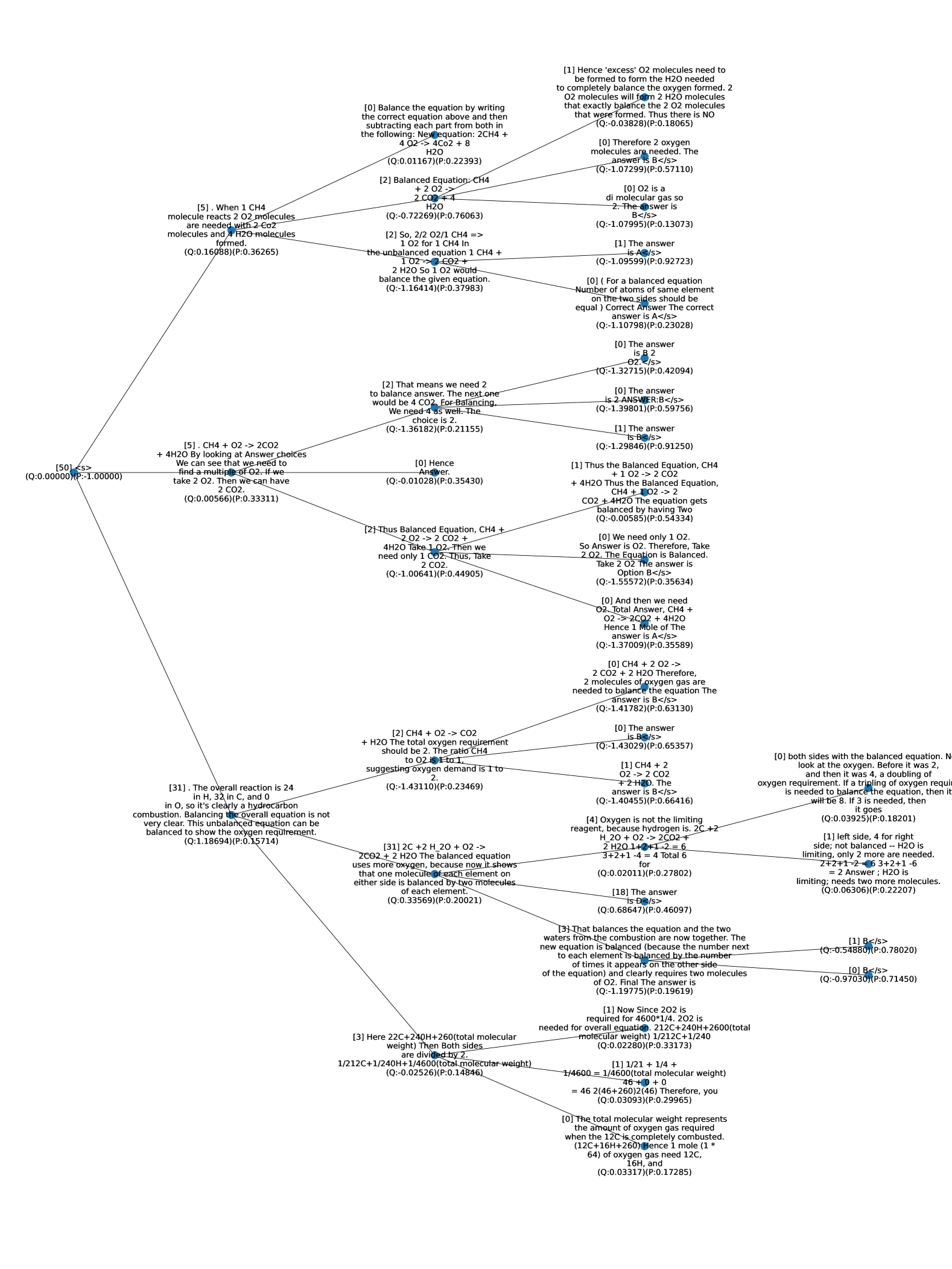

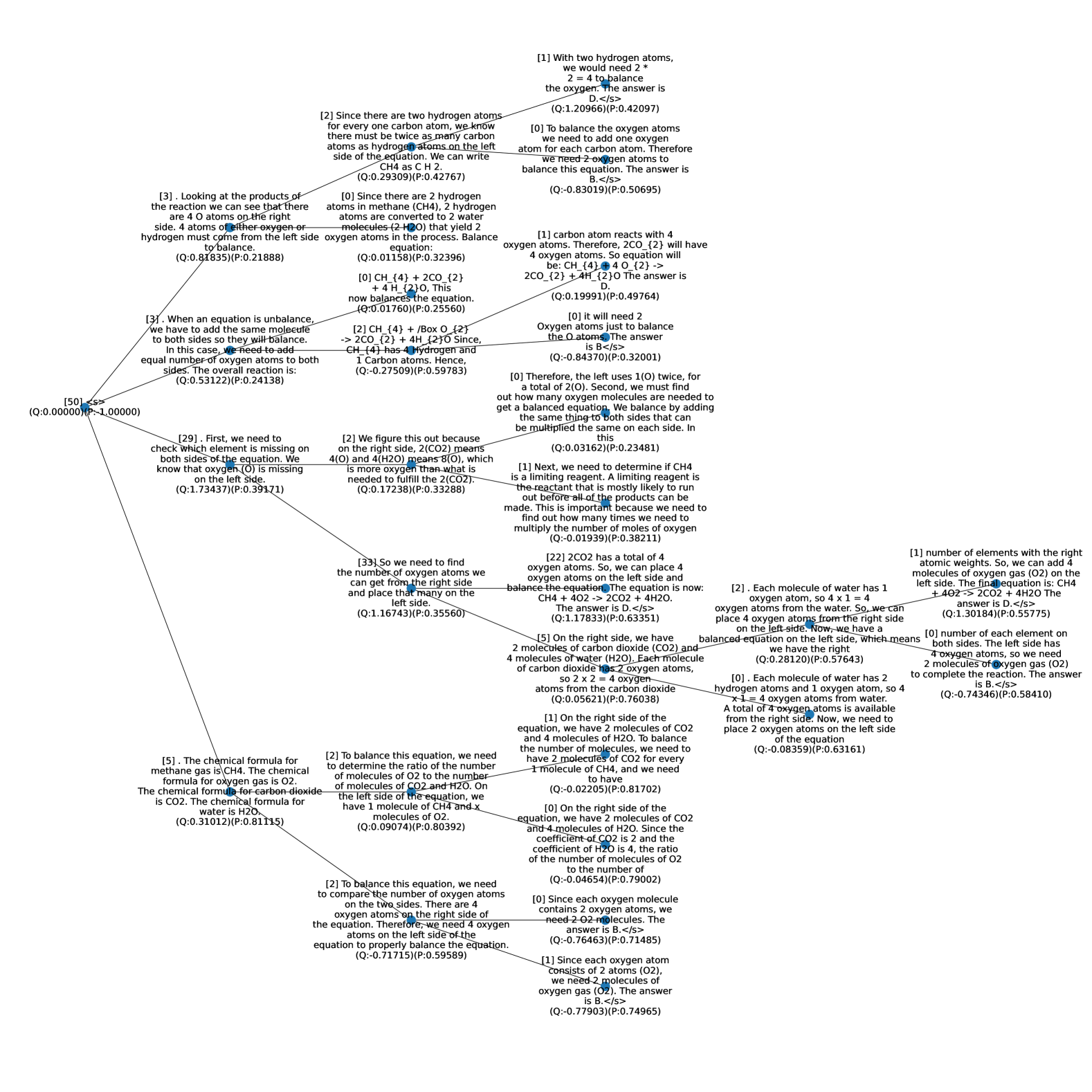

Figure 1: Monte Carlo Tree Search (MCTS) boosts model performance via iterative preference learning. Each iteration of our framework (on the left) consists of two stages: MCTS to collect step-level preferences and preference learning to update the policy. Specifically, we use action values $Q$ estimated by MCTS to assign the preferences, where steps of higher and lower $Q$ values will be labeled as positive and negative data, respectively. The scale of $Q$ is visualized in the colormap. We show the advantage of the online manner in our iterative learning framework using the validation accuracy curves as training progresses on the right. The performance of ARC-C validation illustrates the effectiveness and efficiency of our proposed method compared to its offline variant.

A compelling illustration of the success of such an iterative approach can be seen in the case of AlphaZero (Silver et al., 2017) for its superhuman performance across various domains, which combines the strengths of neural networks, RL techniques, and Monte Carlo Tree Search (MCTS) (Coulom, 2006; Kocsis and Szepesvári, 2006). The integration of MCTS as a policy improvement operator that transforms the current policy into an improved policy (Grill et al., 2020). The effectiveness of AlphaZero underscores the potential of combining these advanced techniques in LLMs. By integrating MCTS into the iterative process of policy development, it is plausible to achieve significant strides in LLMs, particularly in the realm of reasoning and decision-making aligned with human-like preferences (Zhu et al., 2023; Hao et al., 2023).

The integration of MCTS in collecting preference data to improve the current policy iteratively is nuanced and demands careful consideration. One primary challenge lies in determining the appropriate granularity for applying MCTS. Conventionally, preference data is collected at the instance level. The instance-level approach employs sparse supervision, which can lose important information and may not optimally leverage the potential of MCTS in improving the LLMs (Wu et al., 2023). Another challenge is the reliance of MCTS on a critic or a learned reward function. This function is crucial for providing meaningful feedback on different rollouts generated by MCTS, thus guiding the policy improvement process (Liu et al., 2023a).

Addressing this granularity issue, evidence from LLM research indicates the superiority of process-level or stepwise evaluations over instance-level ones (Lightman et al., 2023; Li et al., 2023; Xie et al., 2023; Yao et al., 2023). Our approach utilizes MCTS rollouts for step-level guidance, aligning with a more granular application of MCTS. Moreover, we employ self-evaluation, where the model assesses its outputs, fostering a more efficient policy improvement pipeline by acting as both policy and critic (Kadavath et al., 2022; Xie et al., 2023). This method streamlines the process and ensures more cohesive policy updates, aligning with the iterative nature of policy enhancement and potentially leading to more robust and aligned LLMs.

To summarize, we propose an algorithm based on Monte Carlo Tree Search (MCTS) that breaks down the instance-level preference signals into step-level. MCTS allows us to use the current LLM policy to generate preference data instead of a predetermined set of human preference data, enabling the LLM to receive real-time training signals. During training, we generate sequences of text on the fly and label the preference via MCTS based on feedback from self-evaluation (Figure 1). To update the LLM policy using the preference data, we use Direct Preference Optimization (DPO) (Rafailov et al., 2023). We extensively evaluate the proposed approach on various arithmetic and commonsense reasoning tasks and observe significant performance improvements. For instance, the proposed approach outperforms the Mistral-7B SFT baseline by $81.8\$ (+ $5.9\$ ), $34.7\$ (+ $5.8\$ ), and $76.4\$ (+ $15.8\$ ) on GSM8K, MATH, and SciQ, respectively. Further analysis of the training and test compute tradeoff shows that our method can effectively push the performance gains in a more efficient way compared to sampling-only approaches.

## 2 MCTS-Enhanced Iterative Preference Learning

In this paper, we introduce an approach for improving LLM reasoning, centered around an iterative preference learning process. The proposed method begins with an initial policy $π_θ^(0)$ , and a dataset of prompts $D_P$ . Each iteration $i$ involves selecting a batch of prompts from $D_P$ , from which the model, guided by its current policy $π_θ^(i-1)$ , generates potential responses for each prompt. We then apply a set of dynamically evolving reward criteria to extract preference data $D_i$ from these responses. The model’s policy is subsequently tuned using this preference data, leading to an updated policy $π_θ^(i)$ , for the next iteration. This cycle of sampling, response generation, preference extraction, and policy tuning is repeated, allowing for continuous self-improvement and alignment with evolving preferences. In addressing the critical aspects of this methodology, two key challenges emerge: the effective collection of preference data and the process of updating the policy post-collection.

We draw upon the concept that MCTS can act as an approximate policy improvement operator, transforming the current policy into an improved one. Our work leverages MCTS to iteratively collect preference data, utilizing its look-ahead ability to break down instance-level rewards into more granular step-level signals. To enhance consistency in intermediate steps, we incorporate stepwise self-evaluation, continually updating the quality assessment of newly generated data. This process, as depicted in Figure 1, enables MCTS to balance quality exploitation and diversity exploration during preference data sampling at each iteration. Detailed in section 2.1, our approach utilizes MCTS for step-level preference data collection. Once this data is collected, the policy is updated using DPO, as outlined in section 2.2. Our method can be viewed as an online version of DPO, where the updated policy is iteratively employed to collect preferences via MCTS. Our methodology, thus, not only addresses the challenges in preference data collection and policy updating but also introduces a dynamic, iterative framework that significantly enhances LLM reasoning.

[tb] . MCTS-Enhanced Iterative Preference Learning. Given an initial policy $π_θ^(0)=π_sft$ , our algorithm iteratively conducts step-level preference data sampling via MCTS and preference learning via DPO to update the policy. Input: $D_P$ : prompt dataset; $q(·\mid x)$ : MCTS sampling strategy that constructs a tree-structured set of possible responses given a prompt $x$ , where $q_π$ represents that the strategy is based on the policy $π$ for both response generation and self-evaluation; $\ell_i(x,y_w,y_l;θ)$ : loss function of preference learning at the $i$ -th iteration, where the corresponding sampling policy is $π^(i)$ ; $M$ : number of iterations; $B$ : number of samples per iteration; $T$ : average number of steps per sample Train $π_θ$ on $D_P$ using step-level preference learning. $i=1$ to $M$ $π^(i)←π_θ←π_θ^(i-1)$ Sample a batch of $B$ samples from $D_P$ as $D_P^(i)$ . MCTS for Step-Level Preference Data Collection For each $x∈D_P^(i)$ , elicit a search tree of depth $T$ via $q_π_{θ}(·\mid x)$ . Collect a batch of preferences $D_i=\{$ $\{(x^j,y_l^(j,t),y_l^(j,t))|_t=1^T\}|_j=1^B$ s.t. $x^j∼D_P^(i),y_w^(j,t)≠ y_w^(j,t)∼ q _π_{θ}(·\mid x^j)$ $\}$ , where $y_w^(j,t)$ and $y_l^(j,t)$ is the nodes at depth $t$ , with the highest and lowest $Q$ values, respectively, among all the children nodes of their parent node. Preference Learning for Policy Improvement Optimize $θ$ by minimizing $J(θ)=E_(x,y_{w,y_l)∼D_i}\ell_i(x,y_w,y_l ;θ)$ . Obtain the updated policy $π_θ^(i)$ $π_θ←π_θ^(M)$ Output: Policy $π_θ$

### 2.1 MCTS for Step-Level Preference

To transform instance-level rewards into granular, step-level signals, we dissect the reasoning process into discrete steps, each represented by a token sequence. We define the state at step $t$ , $s_t$ , as the prefix of the reasoning chain, with the addition of a new reasoning step $a$ transitioning the state to $s_t+1$ , where $s_t+1$ is the concatenation of $s_t$ and $a$ . Utilizing the model’s current policy $π_θ$ , we sample candidate steps from its probability distribution $π_θ(a\mid x,s_t)$ For tasks (e.g., MATH) where the initial policy performs poorly, we also include the ground-truth reasoning steps for training. We detail the step definition for different tasks with examples in Appendices C and D., with $x$ being the task’s input prompt. MCTS serves as an approximate policy improvement operator by leveraging its look-ahead capability to predict the expected future reward. This prediction is refined through stepwise self-evaluation (Kadavath et al., 2022; Xie et al., 2023), enhancing process consistency and decision accuracy. The tree-structured search supports a balance between exploring diverse possibilities and exploiting promising paths, essential for navigating the vast search space in LLM reasoning.

The MCTS process begins from a root node, $s_0$ , as the sentence start or incomplete response, and unfolds in three iterative stages: selection, expansion, and backup, which we detail further.

#### Select.

The objective of this phase is to identify nodes that balance search quality and computational efficiency. The selection is guided by two key variables: $Q(s_t,a)$ , the value of taking action $a$ in state $s_t$ , and $N(s_t)$ , the visitation frequency of state $s_t$ . These variables are crucial for updating the search strategy, as explained in the backup section. To navigate the trade-off between exploring new nodes and exploiting visited ones, we employ the Predictor + Upper Confidence bounds applied to Trees (PUCT) (Rosin, 2011). At node $s_t$ , the choice of the subsequent node follows the formula:

$$

\displaystyle{s_t+1}^* \displaystyle=\arg\max_s_{t}\Bigl{[}Q(s_t,a)+c_puct· p(a

\mid s_t)\frac{√{N(s_t)}}{1+N(s_t+1)}\Bigl{]} \tag{1}

$$

where $p(a\mid s_t)=π_θ(a\mid x,s_t)/|a|^λ$ denotes the policy $π_θ$ ’s probability distribution for generating a step $a$ , adjusted by a $λ$ -weighted length penalty to prevent overly long reasoning chains.

#### Expand.

Expansion occurs at a leaf node during the selection process to integrate new nodes and assess rewards. The reward $r(s_t,a)$ for executing step $a$ in state $s_t$ is quantified by the reward difference between states $R(s_t)$ and $R(s_t+1)$ , highlighting the advantage of action $a$ at $s_t$ . As defined in Eq. (2), reward computation merges outcome correctness $O$ with self-evaluation $C$ . We assign the outcome correctness to be $1$ , $-1$ , and $0 0$ for correct terminal, incorrect terminal, and unfinished intermediate states, respectively. Following Xie et al. (2023), we define self-evaluation as Eq. (3), where $A$ denotes the confidence score in token-level probability for the option indicating correctness We show an example of evaluation prompt in Table 6.. Future rewards are anticipated by simulating upcoming scenarios through roll-outs, following the selection and expansion process until reaching a terminal state The terminal state is reached when the whole response is complete or exceeds the maximum length..

$$

R(s_t)=O(s_t)+C(s_t) \tag{2}

$$

$$

C(s_t)=π_θ(A\mid{prompt}_eval

,x,s_t) \tag{3}

$$

#### Backup.

Once a terminal state is reached, we carry out a bottom-up update from the terminal node back to the root. We update the visit count $N$ , the state value $V$ , and the transition value $Q$ :

$$

Q(s_t,a)← r(s_t,a)+γ V(s_t+1) \tag{4}

$$

$$

V(s_t)←∑_aN(s_t+1)Q(s_t,a)/∑_aN(s_t+1) \tag{5}

$$

$$

N(s_t)← N(s_t)+1 \tag{6}

$$

where $γ$ is the discount for future state values.

For each step in the response generation, we conduct $K$ iterations of MCTS to construct the search tree while updating $Q$ values and visit counts $N$ . To balance the diversity, quality, and efficiency of the tree construction, we initialize the search breadth as $b_1$ and anneal it to be a smaller $b_2<b_1$ for the subsequent steps. We use the result $Q$ value corresponding to each candidate step to label its preference, where higher $Q$ values indicate preferred next steps. For a result search tree of depth $T$ , we obtain $T$ pairs of step-level preference data. Specifically, we select the candidate steps of highest and lowest $Q$ values as positive and negative samples at each tree depth, respectively. The parent node selected at each tree depth has the highest value calculated by multiplying its visit count and the range of its children nodes’ visit counts, indicating both the quality and diversity of the generations.

### 2.2 Iterative Preference Learning

Given the step-level preferences collected via MCTS, we tune the policy via DPO (Rafailov et al., 2023). Considering the noise in the preference labels determined by $Q$ values, we employ the conservative version of DPO (Mitchell, 2023) and use the visit counts simulated in MCTS to apply adaptive label smoothing on each preference pair. Using the shorthand $h_π_{θ}^y_w,y_l=\log\frac{π_θ(y_w\mid x)}{π_ ref(y_w\mid x)}-\log\frac{π_θ(y_l\mid x)}{π_ ref(y_l\mid x)}$ , at the $i$ -th iteration, given a batch of preference data $D_i$ sampled with the latest policy $π_θ^(i-1)$ , we denote the policy objective $\ell_i(θ)$ as follows:

$$

\displaystyle\ell_i(θ)= \displaystyle-E_(x,y_{w,y_l)∼D_i}\Bigr{[}(1-α

_x,y_{w,y_l})\logσ(β≤ft.h_π_{θ}^y_w,y_l\right)+ \displaystyleα_x,y_{w,y_l}\logσ(-β h_π_{θ}^y_w,y

_l)\Bigr{]} \tag{7}

$$

where $y_w$ and $y_l$ represent the step-level preferred and dispreferred responses, respectively, and the hyperparameter $β$ scales the KL constraint. Here, $α_x,y_{w,y_l}$ is a label smoothing variable calculated using the visit counts at the corresponding states of the preference data $y_w$ , $y_l$ in the search tree:

$$

α_x,y_{w,y_l}=\frac{1}{N(x,y_w)/N(x,y_l)+1} \tag{8}

$$

where $N(x,y_w)$ and $N(x,y_l)$ represent the states taking the actions of generating $y_w$ and $y_l$ , respectively, from their previous state as input $x$ .

After optimization, we obtain the updated policy $π_θ^(i)$ and repeat the data collection process in Section 2.1 to iteratively update the LLM policy. We outline the full algorithm of our MCTS-enhanced Iterative Preference Learning in Algorithm 2.

## 3 Theoretical Analysis

Our approach can be viewed as an online version of DPO, where we iteratively use the updated policy to sample preferences via MCTS. In this section, we provide theoretical analysis to interpret the advantages of our online learning framework compared to the conventional alignment techniques that critically depend on offline preference data. We review the typical RLHF and DPO paradigms in Appendix B.

We now consider the following abstract formulation for clean theoretical insights to analyze our online setting of preference learning. Given a prompt $x$ , there exist $n$ possible suboptimal responses $\{\bar{y}_1,\dots,\bar{y}_n\}=Y$ and an optimal outcome $y^*$ . As specified in Equation 7, at the $i$ -th iteration, a pair of responses $(y,{y^\prime})$ are sampled from some sampling policy $π^(i)$ without replacement so that $y≠{y^\prime}$ as $y∼π^(i)(·\mid x)$ and ${y^\prime}∼π^(i)(·\mid x,y)$ . Then, these are labeled to be $y_w$ and $y_l$ according to the preference. Define $Θ$ be a set of all global optimizers of the preference loss for all $M$ iterations, i.e., for any $θ∈Θ$ , $\ell_i(θ)=0$ for all $i∈\{1,2,⋯,M\}$ . Similarly, let ${θ^(i)}$ be a parameter vector such that $\ell_j(θ^(i))=0$ for all $j∈\{1,2,⋯,i-1\}$ for $i≥ 1$ whereas $θ^(0)$ is the initial parameter vector.

This abstract formulation covers both the offline and online settings. The offline setting in previous works is obtained by setting $π^(i)=π$ for some fixed distribution $π$ . The online setting is obtained by setting $π^(i)=π_θ^(i-1)$ where $π_θ^(i-1)$ is the latest policy at beginning of the $i$ -th iteration.

The following theorem shows that the offline setting can fail with high probability if the sampling policy $π^(i)$ differs too much from the current policy $π_θ^(i-1)$ :

**Theorem 3.1 (Offline setting can fail with high probability)**

*Let $π$ be any distribution for which there exists $\bar{y}∈ Y$ such that $π(\bar{y}\mid x),π(\bar{y}\mid x,y)≤ε$ for all $y∈(Y∖\bar{y})∪\{y^*\}$ and $π_θ^(i-1)(\bar{y}\mid x)≥ c$ for some $i∈\{1,2,⋯,M\}$ . Set $π^(i)=π$ for all $i∈\{1,2,⋯,M\}$ . Then, there exists $θ∈Θ$ such that with probability at least $1-2ε M$ (over the samples of $π^(i)=π$ ), the following holds: $π_θ(y^*\mid x)≤ 1-c$ .*

If the current policy and the sampling policy differ too much, it is possible that $ε=0$ and $c≈ 1.0$ , for which Theorem 3.1 can conclude $π_θ(y^*\mid x)≈ 0$ with probability $1$ for any number of steps $M$ . When $ε≠ 0$ , the lower bound of the failure probability decreases towards zero as we increase $M$ . Thus, it is important to make sure that $ε≠ 0$ and $ε$ is not too low. This is achieved by using the online setting, i.e., $π^(i)=π_θ^(i)$ . Therefore, Theorem 3.1 motivates us to use the online setting. Theorem 3.2 confirms that we can indeed avoid this failure case in the online setting.

**Theorem 3.2 (Online setting can avoid offline failure case)**

*Let $π^(i)=π_θ^(i-1)$ . Then, for any $θ∈Θ$ , it holds that $π_θ(y^*\mid x)=1$ if $M≥ n+1$ .*

See Appendix B for the proofs of Theorems 3.1 and 3.2. As suggested by the theorems, a better sampling policy is to use both the latest policy and the optimal policy for preference sampling. However, since we cannot access the optimal policy $π^*$ in practice, we adopt online DPO via sampling from the latest policy $π_θ^(i-1)$ . The key insight of our iterative preference learning approach is that online DPO is proven to enable us to converge to an optimal policy even if it is inaccessible to sample outputs. We provide further discussion and additional insights in Appendix B.

## 4 Experiments

We evaluate the effectiveness of MCTS-enhanced iterative preference learning on arithmetic and commonsense reasoning tasks. We employ Mistral-7B (Jiang et al., 2023) as the base pre-trained model. We conduct supervised training using Arithmo https://huggingface.co/datasets/akjindal53244/Arithmo-Data which comprises approximately $540$ K mathematical and coding problems. Detailed information regarding the task formats, specific implementation procedures, and parameter settings of our experiments can be found in Appendix C.

#### Datasets.

We aim to demonstrate the effectiveness and versatility of our approach by focusing on two types of reasoning: arithmetic and commonsense reasoning. For arithmetic reasoning, we utilize two datasets: GSM8K (Cobbe et al., 2021), which consists of grade school math word problems, and MATH (Hendrycks et al., 2021), featuring challenging competition math problems. Specifically, in the GSM8K dataset, we assess both chain-of-thought (CoT) and program-of-thought (PoT) reasoning abilities. We integrate the training data from GSM8K and MATH to construct the prompt data for our preference learning framework, aligning with a subset of the Arithmo data used for Supervised Fine-Tuning (SFT). This approach allows us to evaluate whether our method enhances reasoning abilities on specific arithmetic tasks. For commonsense reasoning, we use four multiple-choice datasets: ARC (easy and challenge splits) (Clark et al., 2018), focusing on science exams; AI2Science (elementary and middle splits) (Clark et al., 2018), containing science questions from student assessments; OpenBookQA (OBQA) (Mihaylov et al., 2018), which involves open book exams requiring broad common knowledge; and CommonSenseQA (CSQA) (Talmor et al., 2019), featuring commonsense questions necessitating prior world knowledge. The diversity of these datasets, with different splits representing various grade levels, enables a comprehensive assessment of our method’s generalizability in learning various reasoning tasks through self-distillation. Performance evaluation is conducted using the corresponding validation sets of each dataset. Furthermore, we employ an unseen evaluation using the validation set of an additional dataset, SciQ (Welbl et al., 2017), following the approach of Liu et al. (2023b), to test our model’s ability to generalize to novel reasoning contexts.

Baselines. Our study involves a comparative evaluation of our method against several prominent approaches and fair comparison against variants including instance-level iterative preference learning and offline MCTS-enhanced learning. We use instance-level sampling as a counterpart of step-level preference collection via MCTS. For a fair comparison, we also apply self-evaluation and correctness assessment and control the number of samples under a comparable compute budget with MCTS in instance-level sampling. The offline version uses the initial policy for sampling rather than the updated one at each iteration.

We contrast our approach with the Self-Taught Reasoner (STaR) (Zelikman et al., 2022), an iterated learning model based on instance-level rationale generation, and Crystal (Liu et al., 2023b), an RL-tuned model with a focus on knowledge introspection in commonsense reasoning. Considering the variation in base models used by these methods, we include comparisons with Direct Tuning, which entails fine-tuning base models directly bypassing chain-of-thought reasoning. In the context of arithmetic reasoning tasks, our analysis includes Language Model Self-Improvement (LMSI) (Huang et al., 2023), a self-training method using self-consistency to gather positive data, and Math-Shepherd (Wang et al., 2023a), which integrates process supervision within Proximal Policy Optimization (PPO). To account for differences in base models and experimental setups across these methods, we also present result performance of SFT models as baselines for each respective approach.

Table 1: Result comparison (accuracy $\$ ) on arithmetic tasks. We supervised fine-tune the base model Mistral-7B on Arithmo data, while Math-Shepherd (Wang et al., 2023a) use MetaMATH (Yu et al., 2023b) for SFT. We highlight the advantages of our approach via conceptual comparison with other methods, where NR, OG, OF, and NS represent “w/o Reward Model”, “On-policy Generation”, “Online Feedback”, and “w/ Negative Samples”.

| Approach | Base Model | Conceptual Comparison | GSM8K | MATH | | | |

| --- | --- | --- | --- | --- | --- | --- | --- |

| NR | OG | OF | NS | | | | |

| LMSI | PaLM-540B | ✓ | ✓ | ✗ | ✗ | $73.5$ | $-$ |

| SFT (MetaMath) | Mistral-7B | $-$ | $-$ | $-$ | $-$ | $77.7$ | $28.2$ |

| Math-Shepherd | ✗ | ✓ | ✗ | ✓ | $84.1$ | $33.0$ | |

| SFT (Arithmo) | Mistral-7B | $-$ | $-$ | $-$ | $-$ | $75.9$ | $28.9$ |

| MCTS Offline-DPO | ✓ | ✗ | ✗ | ✓ | $79.9$ | $31.9$ | |

| Instance-level Online-DPO | ✓ | ✓ | ✓ | ✓ | $79.7$ | $32.9$ | |

| Ours | ✓ | ✓ | ✓ | ✓ | $80.7$ | $32.2$ | |

| Ours (w/ G.T.) | | ✓ | ✓ | ✓ | ✓ | $81.8$ | $34.7$ |

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: ARC-C Accuracy vs. Training Data Percentage

### Overview

The image is a line chart titled "ARC-C" that plots the performance (Accuracy) of three different training methods and one baseline against the percentage of training data used. The chart compares "Step-Level (Online)", "Instance-Level (Online)", and "Step-Level (Offline)" methods against a fixed "SFT Baseline".

### Components/Axes

* **Title:** "ARC-C" (centered at the top).

* **Y-Axis:** Labeled "Accuracy". The scale runs from 55 to 75, with major gridlines at intervals of 5 (55, 60, 65, 70, 75).

* **X-Axis:** Labeled "Training Data %". The scale runs from approximately 5 to 55, with labeled tick marks at 10, 20, 30, 40, and 50.

* **Legend:** Located in the bottom-left quadrant of the plot area. It contains four entries:

1. **Step-Level (Online):** Represented by a solid green line with star (★) markers.

2. **Instance-Level (Online):** Represented by a solid blue line with upward-pointing triangle (▲) markers.

3. **Step-Level (Offline):** Represented by a solid yellow/gold line with star (★) markers.

4. **SFT Baseline:** Represented by a dashed pink/magenta horizontal line.

### Detailed Analysis

**Data Series and Exact Values:**

1. **Step-Level (Online) - Green Line with Stars:**

* Trend: Shows a steady upward trend from 10% to 30% training data, peaks at 30%, then slightly declines and plateaus.

* Data Points:

* At 10%: Accuracy = 72.2

* At 20%: Accuracy = 74.7

* At 30%: Accuracy = 76.4 (Peak)

* At 40%: Accuracy = 75.6

* At 50%: Accuracy = 75.8

2. **Instance-Level (Online) - Blue Line with Triangles:**

* Trend: Shows a strong upward trend from 10% to 40%, then a noticeable drop at 50%.

* Data Points:

* At 10%: Accuracy = 66.5

* At 20%: Accuracy = 72.2

* At 30%: Accuracy = 73.3

* At 40%: Accuracy = 75.2 (Peak)

* At 50%: Accuracy = 73.4

3. **Step-Level (Offline) - Yellow Line with Stars:**

* Trend: Increases slightly from 10% to 20%, then shows a consistent downward trend as training data increases beyond 20%.

* Data Points:

* At 10%: Accuracy = 69.2

* At 20%: Accuracy = 70.8 (Peak)

* At 30%: Accuracy = 67.3

* At 40%: Accuracy = 66.5

* (No data point is plotted for 50%).

4. **SFT Baseline - Dashed Pink Line:**

* Trend: Constant horizontal line, indicating a fixed performance level independent of the training data percentage shown.

* Value: Accuracy = 60.6 (labeled on the right side of the chart).

### Key Observations

* **Performance Hierarchy:** The "Step-Level (Online)" method consistently achieves the highest accuracy across all training data percentages shown.

* **Online vs. Offline:** Both online methods (Step-Level and Instance-Level) significantly outperform the offline method ("Step-Level (Offline)") and the SFT Baseline at all data points.

* **Diminishing Returns/Overfitting:** The "Step-Level (Online)" method's performance plateaus after 30% data. The "Instance-Level (Online)" method's performance drops after 40% data, suggesting potential overfitting or diminishing returns with more data for this method.

* **Offline Method Decline:** The "Step-Level (Offline)" method shows a clear negative correlation between training data percentage and accuracy beyond the 20% mark.

* **Baseline Comparison:** All three experimental methods provide a substantial improvement over the "SFT Baseline" of 60.6 accuracy.

### Interpretation

This chart demonstrates the comparative effectiveness of different training paradigms on the ARC-C benchmark. The data suggests that **online training methods (both Step-Level and Instance-Level) are superior to the offline method and the standard SFT baseline** for this task, yielding accuracy gains of approximately 10-16 percentage points.

The **"Step-Level (Online)" approach appears to be the most robust and effective**, maintaining high performance even as training data increases. The peak performance for this method occurs with 30% of the training data, after which additional data provides minimal benefit.

The **decline in performance for the "Instance-Level (Online)" method at 50% data and the consistent decline for the "Step-Level (Offline)" method** are critical anomalies. They indicate that simply adding more data is not universally beneficial and can be detrimental depending on the training strategy. This could point to issues like overfitting, noise in the additional data, or a mismatch between the training objective and the evaluation metric when data scales.

In summary, the chart argues for the efficacy of online, step-level training for maximizing accuracy on ARC-C, while cautioning that the benefits of increased data are not automatic and are highly dependent on the specific training methodology employed.

</details>

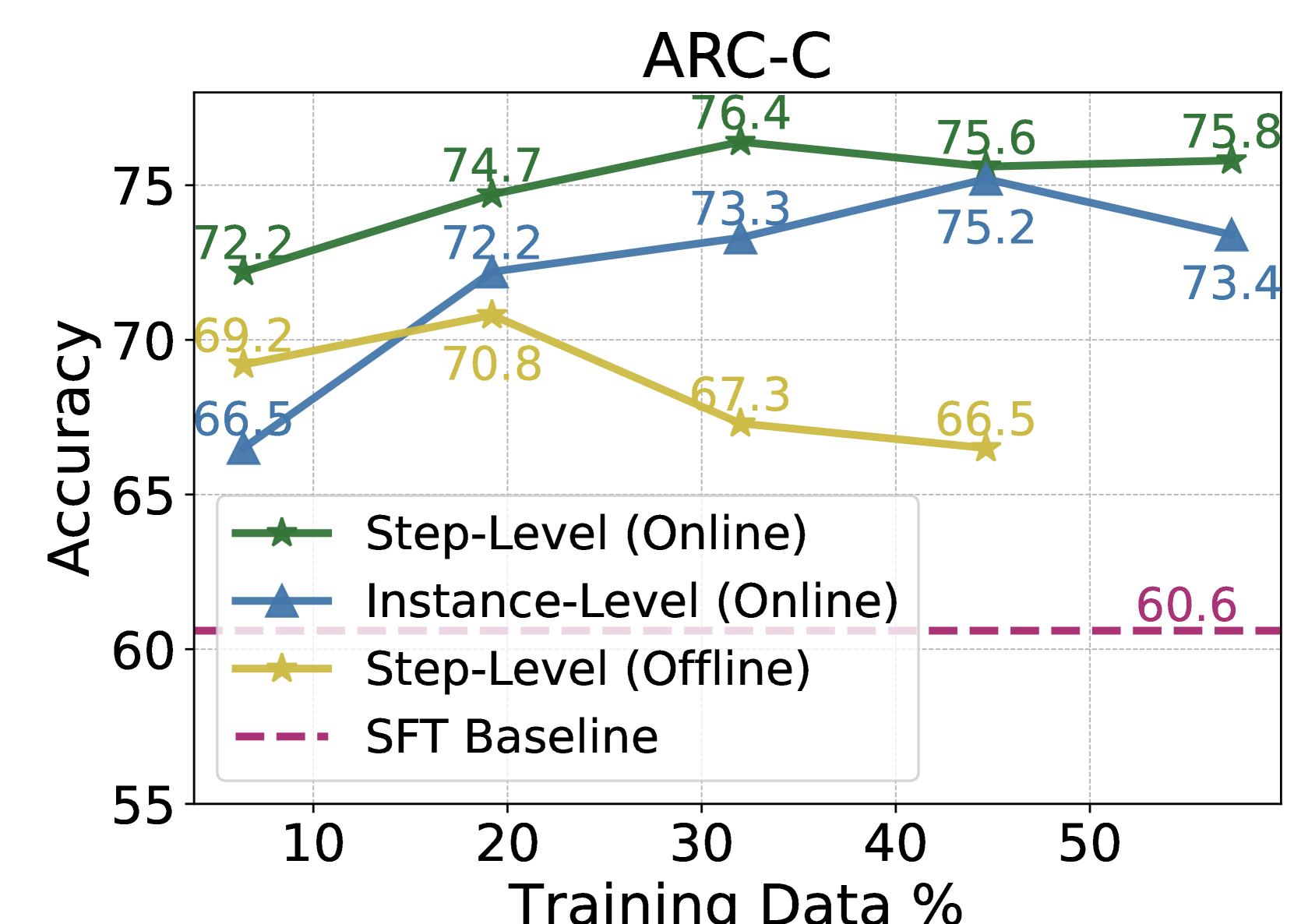

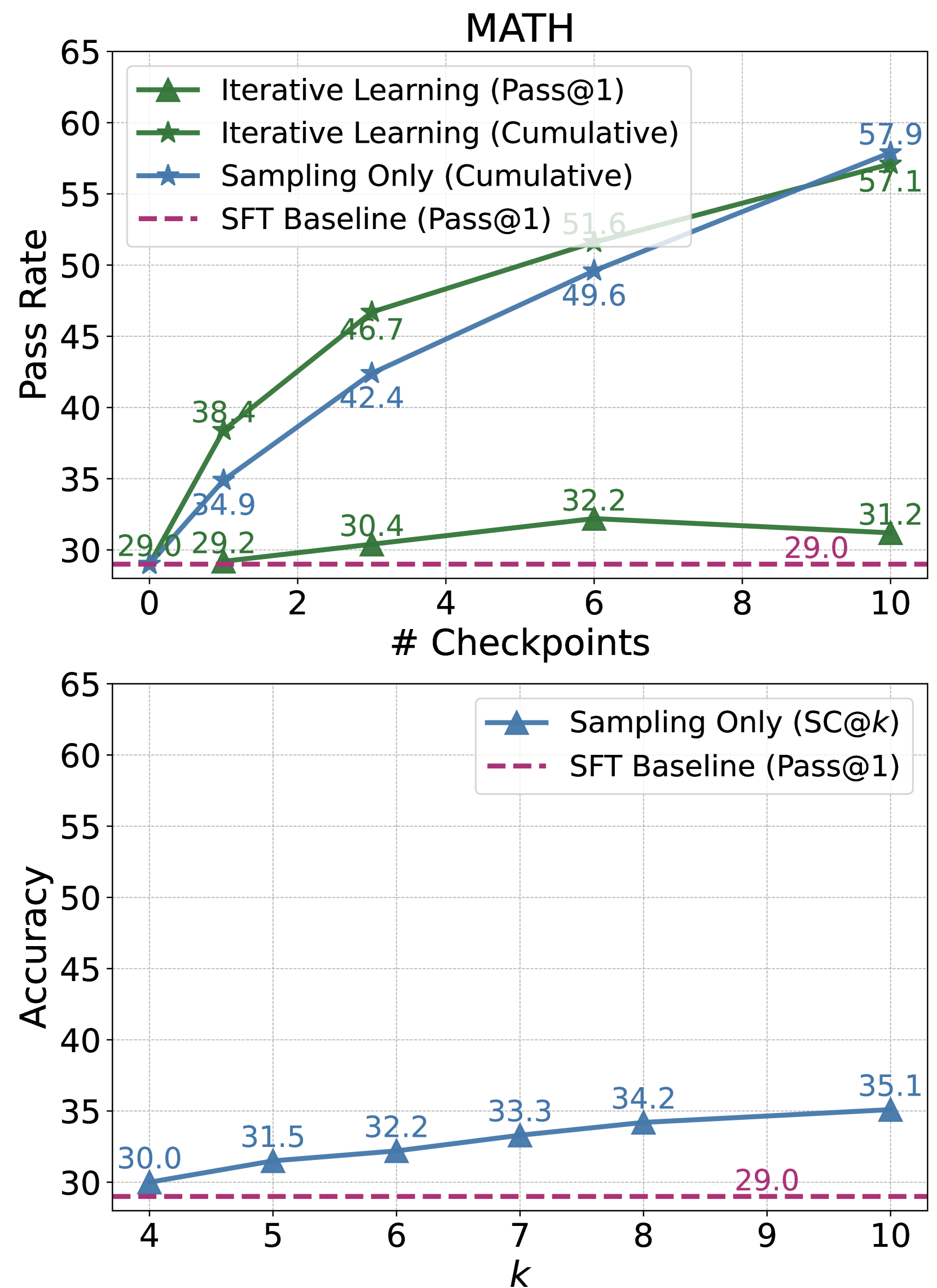

Figure 2: Performance on the validation set of ARC-C via training with different settings.

Table 2: Result comparisons (accuracy $\$ ) on commonsense reasoning tasks. The results based on GPT-3-curie (Brown et al., 2020) and T5 (Raffel et al., 2020) are reported from Liu et al. (2023b). For CSQA, we also include the GPT-J (Wang and Komatsuzaki, 2021) results reported by Zelikman et al. (2022). We follow Liu et al. (2023b) to combine the training data of ARC, AI2Sci, OBQA, and CSQA for training , while STaR (Zelikman et al., 2022) only use CSQA for training.

| Approach | Base Model | Conceptual Comparison | ARC-c | AI2Sci-m | CSQA | SciQ | Train Data Used ( $\$ ) | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| NR | OG | OF | NS | | | | | | | |

| CoT Tuning | GPT-3-curie (6.7B) | ✓ | ✗ | ✗ | ✗ | $-$ | $-$ | $56.8$ | $-$ | $100$ |

| Direct Tuning | GPT-J (6B) | ✓ | ✗ | ✗ | ✗ | $-$ | $-$ | $60.0$ | $-$ | $100$ |

| STaR | ✓ | ✓ | ✓ | ✗ | $-$ | $-$ | $72.5$ | $-$ | $86.7$ | |

| Direct TUning | T5-11B | ✓ | ✗ | ✗ | ✗ | $72.9$ | $84.0$ | $82.0$ | $83.2$ | $100$ |

| Crystal | ✗ | ✓ | ✓ | ✓ | $73.2$ | $84.8$ | $82.3$ | $85.3$ | $100$ | |

| SFT Base (Arithmo) | Mistral-7B | $-$ | $-$ | $-$ | $-$ | $60.6$ | $70.9$ | $54.1$ | $80.8$ | $-$ |

| Direct Tuning | ✓ | ✗ | ✗ | ✗ | $73.9$ | $85.2$ | $79.3$ | $86.4$ | $100$ | |

| MCTS Offline-DPO | ✓ | ✗ | ✗ | ✓ | $70.8$ | $82.6$ | $68.5$ | $87.4$ | $19.2$ | |

| Instance-level Online-DPO | ✓ | ✓ | ✓ | ✓ | $75.3$ | $87.3$ | $63.1$ | $87.6$ | $45.6$ | |

| Ours | ✓ | ✓ | ✓ | ✓ | $76.4$ | $88.2$ | $74.8$ | $88.5$ | $47.8$ | |

### 4.1 Main Results

Arithmetic Reasoning. In Table 1, we present a comparative analysis of performance gains in arithmetic reasoning tasks. Our method demonstrates substantial improvements, notably on GSM8K, increasing from $75.9\$ , and on MATH, enhancing from $28.9\$ . When compared to Math-Shepherd, which also utilizes process supervision in preference learning, our approach achieves similar performance enhancements without the necessity of training separate reward or value networks. This suggests the potential of integrating trained reward model signals into our MCTS stage to further augment performance. Furthermore, we observe significant performance gain on MATH when incorporating the ground-truth solutions in the MCTS process for preference data collection, illustrating an effective way to refine the preference data quality with G.T. guidance.

<details>

<summary>x4.png Details</summary>

### Visual Description

## [Line Charts]: ARC-C Performance Metrics

### Overview

The image contains two line charts stacked vertically, both titled "ARC-C". They display performance metrics (Pass Rate and Accuracy) for different machine learning training/evaluation methods across varying parameters (Checkpoints and k). The charts compare "Iterative Learning", "Sampling Only", and an "SFT Baseline".

### Components/Axes

**Top Chart:**

* **Title:** ARC-C

* **Y-axis:** Label: "Pass Rate". Scale: 60 to 95, with major ticks every 5 units.

* **X-axis:** Label: "# Checkpoints". Scale: 0 to 7, with integer ticks.

* **Legend (Center-Right):**

* Green line with upward-pointing triangle markers: "Iterative Learning (Pass@1)"

* Green line with star markers: "Iterative Learning (Cumulative)"

* Blue line with star markers: "Sampling Only (Cumulative)"

* Pink dashed line: "SFT Baseline (Pass@1)"

**Bottom Chart:**

* **Y-axis:** Label: "Accuracy". Scale: 60 to 95, with major ticks every 5 units.

* **X-axis:** Label: "k". Scale: 10 to 60, with major ticks at 10, 20, 30, 40, 50, 60.

* **Legend (Top-Right):**

* Blue line with upward-pointing triangle markers: "Sampling Only (SC@k)"

* Pink dashed line: "SFT Baseline (Pass@1)"

### Detailed Analysis

**Top Chart - Data Points & Trends:**

* **Iterative Learning (Pass@1) [Green Triangles]:** Starts at 60.6 (k=0). Increases sharply to 72.2 (k=1), then rises more gradually: 73.6 (k=2), 74.7 (k=3), 75.1 (k=4), 76.4 (k=5), 75.8 (k=6), 76.2 (k=7). **Trend:** Steep initial rise, followed by a plateau around 76.

* **Iterative Learning (Cumulative) [Green Stars]:** Starts at 60.6 (k=0). Increases sharply to 79.7 (k=1), then continues a strong upward trend: 86.9 (k=2), 90.0 (k=3), 91.3 (k=4), 92.4 (k=5), 93.3 (k=6), 94.1 (k=7). **Trend:** Consistent, strong upward slope, approaching 95.

* **Sampling Only (Cumulative) [Blue Stars]:** Starts at 60.6 (k=0). Increases to 71.9 (k=1), then follows a steady upward curve: 80.6 (k=2), 86.6 (k=3), 89.3 (k=4), 91.7 (k=5), 92.9 (k=6), 93.5 (k=7). **Trend:** Steady upward slope, consistently below the Iterative Learning (Cumulative) line but converging towards it at higher checkpoints.

* **SFT Baseline (Pass@1) [Pink Dashed Line]:** Constant horizontal line at approximately 60.6 across all checkpoints.

**Bottom Chart - Data Points & Trends:**

* **Sampling Only (SC@k) [Blue Triangles]:** Data points at specific k values: 61.9 (k=1), 70.0 (k=8), 72.2 (k=16), 73.4 (k=32), 74.1 (k=64). **Trend:** Increases with k, but the rate of improvement diminishes significantly after k=16, showing a logarithmic-like growth curve.

* **SFT Baseline (Pass@1) [Pink Dashed Line]:** Constant horizontal line at 60.6 across all k values.

### Key Observations

1. **Performance Hierarchy:** In the top chart, "Iterative Learning (Cumulative)" achieves the highest Pass Rate, followed closely by "Sampling Only (Cumulative)". "Iterative Learning (Pass@1)" performs significantly lower than the cumulative methods but still well above the baseline.

2. **Baseline Comparison:** All active learning/sampling methods substantially outperform the static "SFT Baseline" of 60.6.

3. **Diminishing Returns:** Both charts show diminishing returns. In the top chart, the rate of improvement for all lines slows after Checkpoint 3. In the bottom chart, increasing `k` beyond 16 yields only marginal gains in Accuracy.

4. **Method Comparison:** The "Iterative Learning (Cumulative)" method shows a clear advantage over "Sampling Only (Cumulative)" at every checkpoint, though the gap narrows slightly at the highest values.

### Interpretation

These charts likely evaluate techniques for improving a language model's reasoning or problem-solving capabilities on the ARC (Abstraction and Reasoning Corpus) benchmark, specifically the "C" (likely "Challenge") subset.

* **What the data suggests:** The data demonstrates that both iterative learning and sampling-based methods are highly effective at improving model performance beyond a standard supervised fine-tuning (SFT) baseline. The cumulative metrics (which likely aggregate success across multiple attempts or steps) show that the model's *potential* to solve problems is much higher than its single-attempt (Pass@1) performance.

* **How elements relate:** The top chart shows the learning trajectory over training iterations (checkpoints). The bottom chart isolates the effect of the sampling parameter `k` (likely the number of samples generated per problem) on accuracy for the "Sampling Only" method. The consistent SFT baseline in both provides a fixed reference point.

* **Notable trends/anomalies:** The most significant trend is the superiority of cumulative evaluation over single-pass evaluation, highlighting the model's ability to self-correct or explore solution spaces when given multiple chances. The plateau in the "Iterative Learning (Pass@1)" line suggests a limit to the model's single-shot reasoning capability under this training regime, even as its cumulative capability continues to grow. The clear, consistent ordering of the methods provides strong evidence for the efficacy of iterative learning approaches over pure sampling for this task.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: SciQ Dataset Performance Comparison

### Overview

The image contains two line charts stacked vertically, both titled "SciQ". They compare the performance of different learning or sampling methods on the SciQ question-answering dataset. The top chart tracks performance across training checkpoints, while the bottom chart examines performance as a function of a parameter `k`.

### Components/Axes

**Top Chart:**

* **Title:** SciQ

* **X-axis:** Label: "# Checkpoints". Scale: Linear, from 0 to 7, with integer markers.

* **Y-axis:** Unlabeled, but represents a performance metric (likely accuracy percentage). Scale: Linear, from 80.0 to 100.0, with increments of 2.5.

* **Legend (Top-Left):**

* `Iterative Learning (Pass@1)`: Green line with upward-pointing triangle markers.

* `Iterative Learning (Cumulative)`: Dark green line with star markers.

* `Sampling Only (Cumulative)`: Blue line with star markers.

* `SFT Baseline (Pass@1)`: Magenta dashed line.

**Bottom Chart:**

* **X-axis:** Label: "k". Scale: Linear, from 10 to 60, with increments of 10.

* **Y-axis:** Unlabeled, same scale as top chart (80.0 to 100.0).

* **Legend (Top-Right):**

* `Sampling Only (SC@k)`: Blue line with upward-pointing triangle markers.

* `SFT Baseline (Pass@1)`: Magenta dashed line.

### Detailed Analysis

**Top Chart - Performance vs. Checkpoints:**

1. **Iterative Learning (Cumulative) [Dark Green, Star]:**

* **Trend:** Strong, consistent upward trend. Starts at the baseline and ends as the highest-performing method.

* **Data Points:**

* Checkpoint 0: 80.8

* Checkpoint 1: 89.6

* Checkpoint 2: 93.2 (approximate, label partially obscured)

* Checkpoint 3: 94.9 (approximate, label partially obscured)

* Checkpoint 4: 95.9 (approximate, label partially obscured)

* Checkpoint 5: 96.3

2. **Sampling Only (Cumulative) [Blue, Star]:**

* **Trend:** Steady, linear upward trend. Consistently outperforms the SFT baseline and the Pass@1 methods after the first checkpoint.

* **Data Points:**

* Checkpoint 0: 80.8

* Checkpoint 1: 82.8

* Checkpoint 2: 84.5 (approximate, interpolated between labeled points)

* Checkpoint 3: 86.4

* Checkpoint 4: 88.1 (approximate, interpolated)

* Checkpoint 5: 89.8

* Checkpoint 6: 90.7 (approximate, interpolated)

* Checkpoint 7: 91.5

3. **Iterative Learning (Pass@1) [Green, Triangle]:**

* **Trend:** Volatile. Shows an initial sharp increase, then fluctuates with a slight overall upward trend, but remains below the cumulative methods.

* **Data Points:**

* Checkpoint 0: 80.8

* Checkpoint 1: 89.6

* Checkpoint 2: 86.0

* Checkpoint 3: 88.3

* Checkpoint 4: 86.5

* Checkpoint 5: 88.5

* Checkpoint 6: 87.7

* Checkpoint 7: 88.6

4. **SFT Baseline (Pass@1) [Magenta, Dashed]:**

* **Trend:** Flat horizontal line, indicating constant performance.

* **Data Point:** Constant at 80.8 across all checkpoints.

**Bottom Chart - Performance vs. k:**

1. **Sampling Only (SC@k) [Blue, Triangle]:**

* **Trend:** Logarithmic-like growth. Performance increases rapidly for low `k` values and then plateaus, showing diminishing returns.

* **Data Points:**

* k=10: 81.6

* k=15: 82.8

* k=20: 83.8

* k=25: 84.1

* k=40: 84.2

* k=60: 84.4

2. **SFT Baseline (Pass@1) [Magenta, Dashed]:**

* **Trend:** Flat horizontal line.

* **Data Point:** Constant at 80.8.

### Key Observations

* **Cumulative Superiority:** Both "Cumulative" methods (Iterative Learning and Sampling Only) significantly and consistently outperform their "Pass@1" counterparts and the SFT baseline as training progresses.

* **Iterative Learning Peak:** The "Iterative Learning (Cumulative)" method achieves the highest overall performance (96.3 at checkpoint 5).

* **Baseline Performance:** The SFT Baseline is static at 80.8, serving as a fixed reference point.

* **Diminishing Returns on k:** The bottom chart shows that increasing `k` beyond ~25 yields minimal performance gains for the "Sampling Only (SC@k)" method.

* **Volatility in Pass@1:** The "Iterative Learning (Pass@1)" metric shows significant checkpoint-to-checkpoint variance, unlike the smoother cumulative curves.

### Interpretation

The data demonstrates the effectiveness of iterative and cumulative learning strategies over simple supervised fine-tuning (SFT) and single-pass (Pass@1) evaluation on the SciQ benchmark.

1. **Methodological Insight:** The stark difference between the "Cumulative" and "Pass@1" lines for the same underlying method (Iterative Learning) suggests that the model's ability to generate multiple correct answers (captured by cumulative metrics) improves more reliably and dramatically than its top-1 accuracy during training. This highlights the value of methods that leverage multiple samples or iterations.

2. **Training Progression:** The top chart shows that performance gains are not linear. The most substantial improvements for the best method occur in the first few checkpoints (0 to 1), after which gains continue but at a slower rate.

3. **Resource vs. Performance Trade-off:** The bottom chart provides a practical guide for the `k` parameter in self-consistency (SC) decoding. It suggests that using a `k` value between 20 and 40 offers a good balance, capturing most of the performance benefit without the computational cost of sampling a very large number of answers (e.g., k=60).

4. **Overall Conclusion:** For maximizing performance on SciQ, an iterative learning approach evaluated with a cumulative metric is most effective. If using sampling-based methods (like SC@k), a moderate `k` value is sufficient, and these methods also reliably surpass the static SFT baseline.

</details>

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: MATH Performance Metrics

### Overview

The image displays two line charts under the main title "MATH". The top chart plots "Pass Rate" against the number of checkpoints ("# Checkpoints"). The bottom chart plots "Accuracy" against a variable "k". Both charts compare different learning or sampling methods against a baseline.

### Components/Axes

**Top Chart:**

* **Title:** MATH

* **Y-axis:** Label: "Pass Rate". Scale: 30 to 65, with increments of 5.

* **X-axis:** Label: "# Checkpoints". Scale: 0 to 10, with increments of 2.

* **Legend (Top-Left):**

* `Iterative Learning (Pass@1)`: Green line with upward-pointing triangle markers.

* `Iterative Learning (Cumulative)`: Green line with star markers.

* `Sampling Only (Cumulative)`: Blue line with star markers.

* `SFT Baseline (Pass@1)`: Magenta dashed line.

**Bottom Chart:**

* **Y-axis:** Label: "Accuracy". Scale: 30 to 65, with increments of 5.

* **X-axis:** Label: "k". Scale: 4 to 10, with increments of 1.

* **Legend (Top-Right):**

* `Sampling Only (SC@k)`: Blue line with upward-pointing triangle markers.

* `SFT Baseline (Pass@1)`: Magenta dashed line.

### Detailed Analysis

**Top Chart Data Points & Trends:**

1. **Iterative Learning (Pass@1) [Green, Triangles]:** The line shows a slight upward trend, then plateaus.

* Checkpoint 0: ~29.0

* Checkpoint 1: 29.2

* Checkpoint 3: 30.4

* Checkpoint 6: 32.2

* Checkpoint 10: 31.2

2. **Iterative Learning (Cumulative) [Green, Stars]:** The line shows a strong, consistent upward trend.

* Checkpoint 0: ~29.0

* Checkpoint 1: 38.4

* Checkpoint 3: 46.7

* Checkpoint 6: 51.6

* Checkpoint 10: 57.1

3. **Sampling Only (Cumulative) [Blue, Stars]:** The line shows a strong, consistent upward trend, closely following but slightly below the Iterative Learning (Cumulative) line.

* Checkpoint 0: ~29.0

* Checkpoint 1: 34.9

* Checkpoint 3: 42.4

* Checkpoint 6: 49.6

* Checkpoint 10: 57.9

4. **SFT Baseline (Pass@1) [Magenta, Dashed]:** A flat, horizontal line indicating a constant baseline performance.

* All Checkpoints: 29.0

**Bottom Chart Data Points & Trends:**

1. **Sampling Only (SC@k) [Blue, Triangles]:** The line shows a steady, linear upward trend as k increases.

* k=4: 30.0

* k=5: 31.5

* k=6: 32.2

* k=7: 33.3

* k=8: 34.2

* k=10: 35.1

2. **SFT Baseline (Pass@1) [Magenta, Dashed]:** A flat, horizontal line indicating a constant baseline performance.

* All k values: 29.0

### Key Observations

* **Cumulative vs. Pass@1:** For Iterative Learning, the "Cumulative" metric shows dramatically higher improvement (from ~29 to 57.1) compared to the "Pass@1" metric (from ~29 to 31.2).

* **Method Comparison:** At the final checkpoint (10), "Sampling Only (Cumulative)" achieves the highest Pass Rate (57.9), slightly surpassing "Iterative Learning (Cumulative)" (57.1). Both significantly outperform the baseline.

* **Baseline:** The SFT Baseline remains constant at 29.0 across all checkpoints and k values in both charts.

* **SC@k Trend:** The "Sampling Only (SC@k)" accuracy in the bottom chart improves linearly with k, but the gains are modest (from 30.0 to 35.1 over k=4 to 10).

### Interpretation

The data demonstrates the effectiveness of iterative learning and sampling strategies over a static supervised fine-tuning (SFT) baseline on the MATH benchmark. The key insight is the power of **cumulative evaluation**. While the single-attempt performance (Pass@1) of Iterative Learning improves only marginally, its cumulative performance—likely measuring success across multiple attempts or steps—shows substantial gains. This suggests the model's ability to arrive at correct solutions improves significantly when allowed multiple opportunities or when building upon previous steps.

The "Sampling Only (Cumulative)" method performs comparably to the iterative approach, indicating that repeated sampling without an explicit iterative learning loop can also yield high cumulative success rates. The bottom chart shows that increasing the number of samples (k) for the "Sampling Only" method leads to a predictable, linear increase in accuracy (SC@k), but the absolute improvement per unit of k is relatively small. This implies that while more samples help, the most significant performance leap comes from switching from a single-attempt to a cumulative evaluation framework, as seen in the top chart. The consistent 29.0 baseline provides a clear reference point, highlighting the magnitude of improvement achieved by the other methods.

</details>

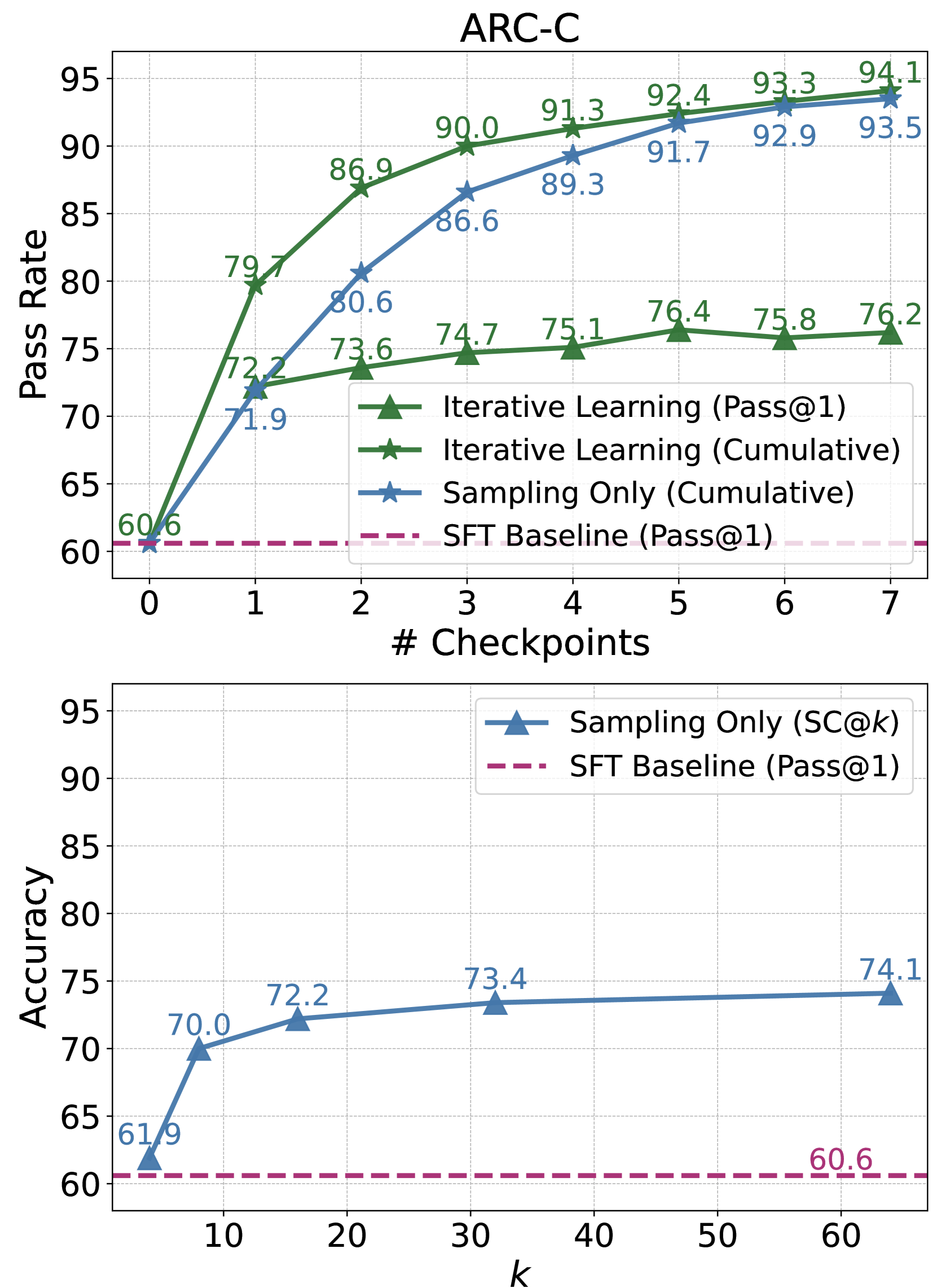

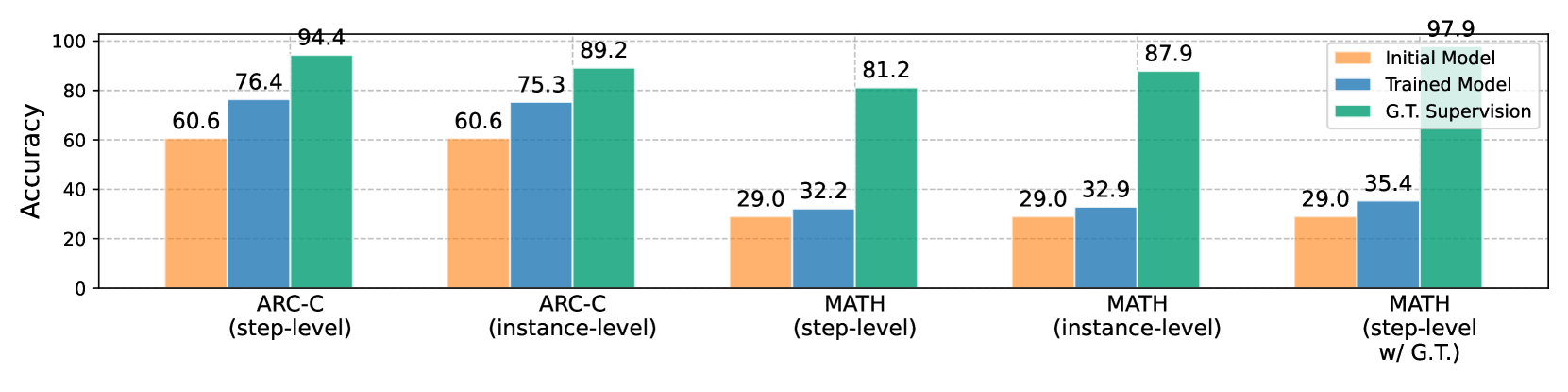

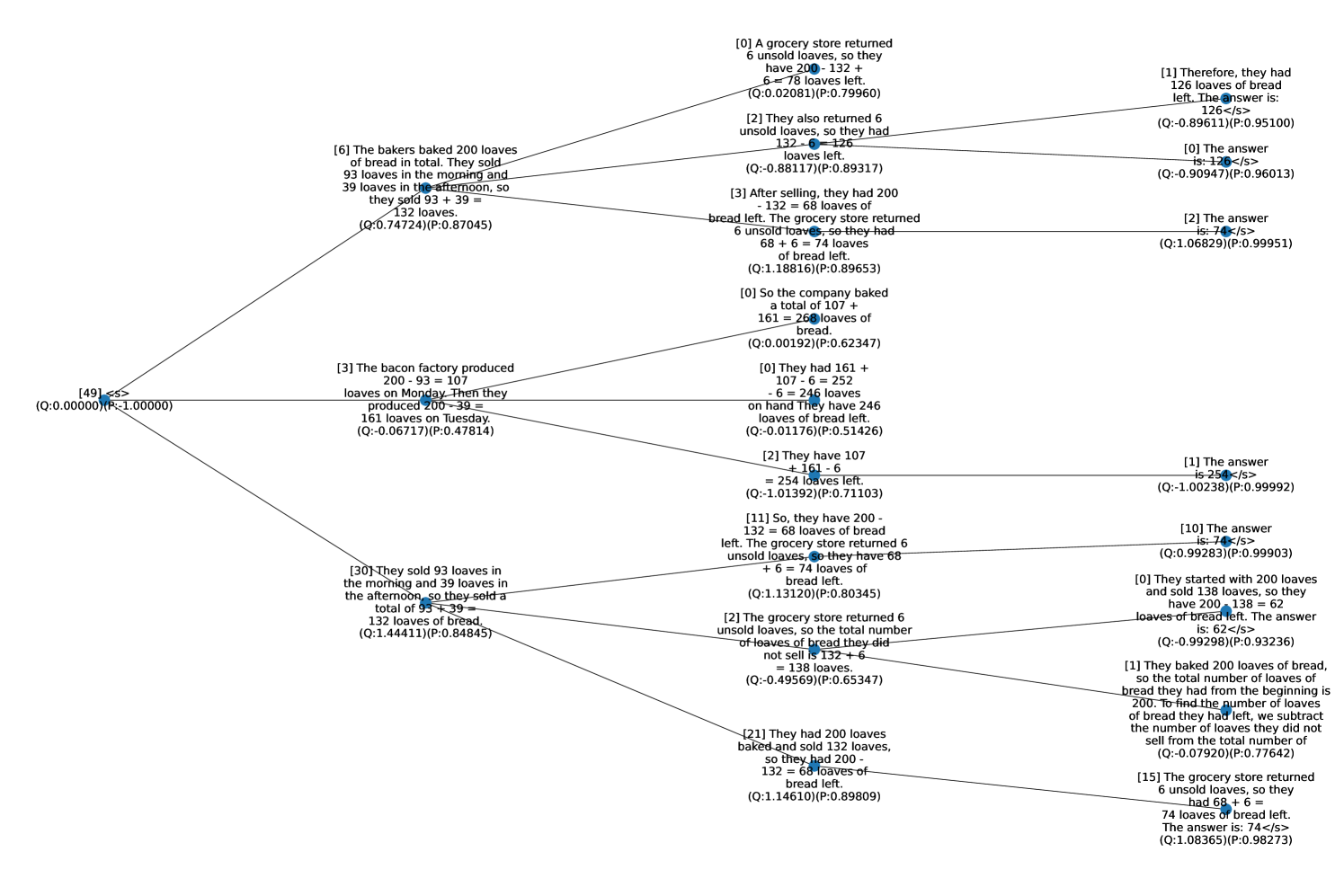

Figure 3: Training- vs. Test- Time Compute Scaling on ARC-C, SciQ, and MATH evaluation sets. The cumulative pass rate of our iterative learning method can be seen as the pass rate of an ensemble of different model checkpoints. We use greedy decoding to obtain the inference time performance of our method of iterative learning.

Table 3: Ablation of “EXAMPLE ANSWER” in self-evaluation on GSM8K, MATH, and ARC-C. We report AUC and accuracy ( $\$ ) to compare the discriminative abilities of self-evaluation scores.

| w/ example answer-w/o example answer | $74.7$ $62.0$ | $72.5$ $69.5$ | $76.6$ $48.1$ | $48.8$ $42.3$ | $65.2$ $55.8$ | $57.5$ $48.4$ |

| --- | --- | --- | --- | --- | --- | --- |

Commonsense Reasoning. In Table 2, we report the performance on commonsense reasoning tasks, where our method shows consistent improvements. Notably, we achieve absolute accuracy increases of $2.5\$ , $3.0\$ , and $2.1\$ on ARC-Challenge (ARC-C), AI2Sci-Middle (AI2Sci-M), and SciQ, respectively, surpassing the results of direct tuning. However, in tasks like OBQA and CSQA, our method, focusing on intermediate reasoning refinement, is less efficient compared to direct tuning. Despite significant improvements over the Supervised Fine-Tuning (SFT) baseline (for instance, from $59.8\$ to $79.2\$ on OBQA, and from $54.1\$ to $74.8\$ on CSQA), the gains are modest relative to direct tuning. This discrepancy could be attributed to the base model’s lack of specific knowledge, where eliciting intermediate reasoning chains may introduce increased uncertainty in model generations, leading to incorrect predictions. We delve deeper into this issue of hallucination and its implications in our qualitative analysis, as detailed in Section 4.2.

### 4.2 Further Analysis

Training- vs. Test- Time Compute Scaling. Our method integrates MCTS with preference learning, aiming to enhance both preference quality and policy reasoning via step-level alignment. We analyze the impact of training-time compute scaling versus increased inference-time sampling.

We measure success by the pass rate, indicating the percentage of correctly elicited answers. Figure 3 displays the cumulative pass rate at each checkpoint, aggregating the pass rates up to that point. For test-time scaling, we increase the number of sampled reasoning chains. Additionally, we compare the inference performance of our checkpoints with a sampling-only method, self-consistency, to assess their potential performance ceilings. The pass rate curves on ARC-C, SciQ, and MATH datasets reveal that our MCTS-enhanced approach yields a higher training compute scaling exponent. This effect is particularly pronounced on the unseen SciQ dataset, highlighting our method’s efficiency and effectiveness in enhancing specific reasoning abilities with broad applicability. Inference-time performance analysis shows higher performance upper bounds of our method on ARC-C and SciQ. For instance, while self-consistency on SciQ plateaus at around $84\$ , our framework pushes performance to $88.6\$ . However, on MATH, the sampling-only approach outperforms training compute scaling: more sampling consistently enhances performance beyond $35\$ , whereas post-training performance hovers around $32.2\$ . This observation suggests that in-domain SFT already aligns the model well with task-specific requirements.

#### Functions of Self-Evaluation Mechanism.

As illustrated in Section 2.1, the self-evaluation score inherently revises the $Q$ value estimation for subsequent preference data collection. In practice, we find that the ground-truth information, i.e., the “EXAMPLE ANSWER” in Table 6, is crucial to ensure the reliability of self-evaluation. We now compare the score distribution and discriminative abilities when including v.s. excluding this ground-truth information in Table 3. With this information , the accuracy of self-evaluation significantly improves across GSM8K, MATH, and ARC-C datasets.

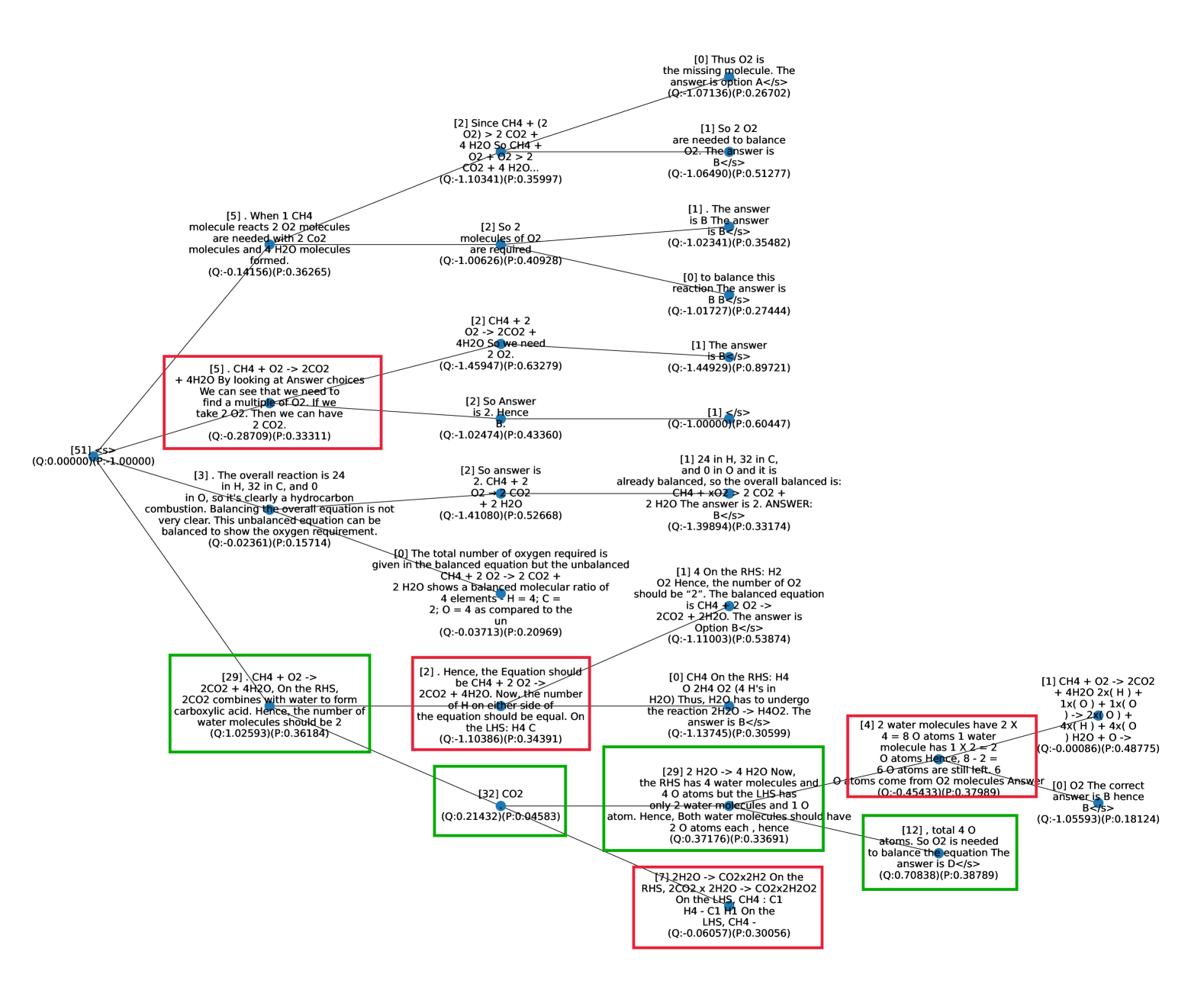

#### Ablation Study.

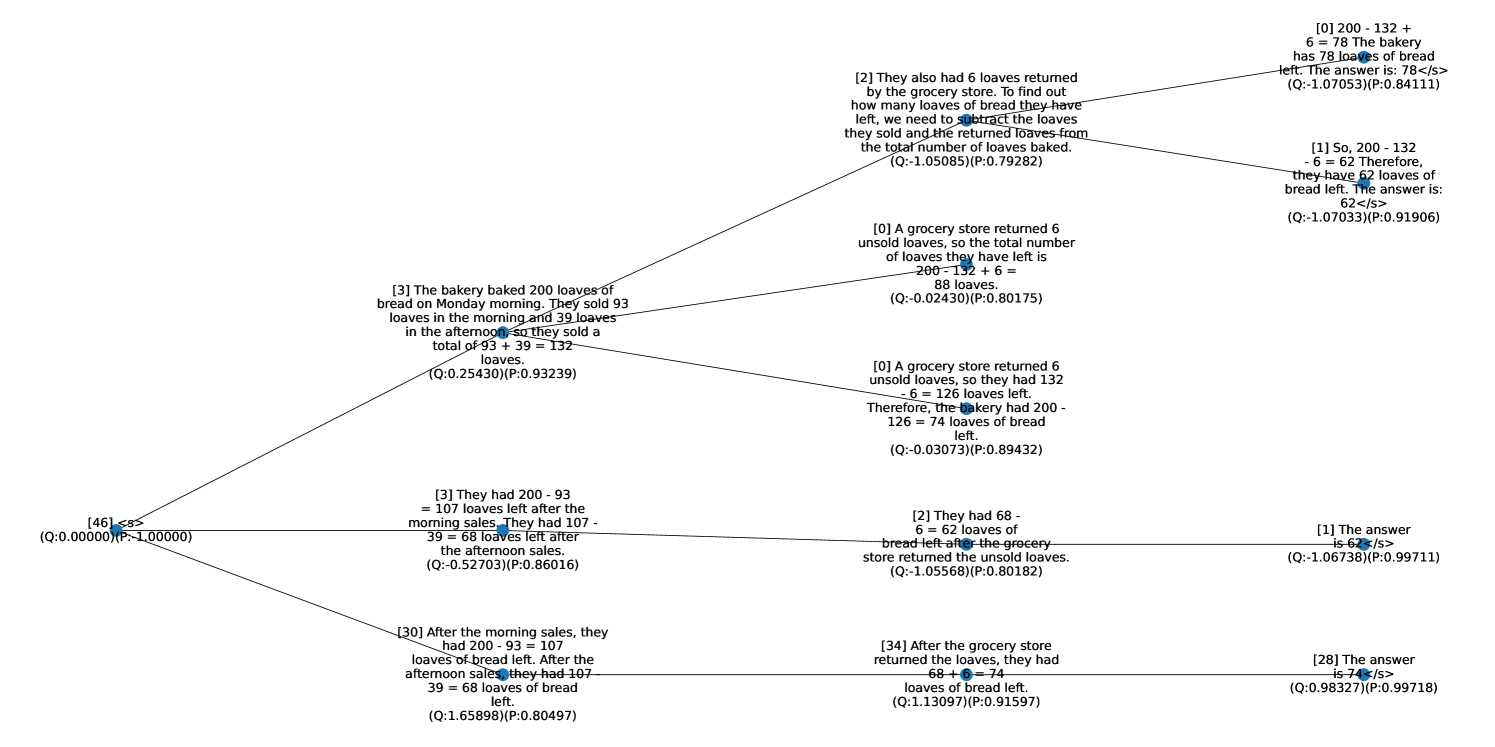

We ablate the impact of step-level supervision signals and the online learning aspect of our MCTS-based approach. Tables 1 and 2 shows performance comparisons across commonsense and arithmetic reasoning tasks under different settings. Our method, focusing on step-level online preference learning, consistently outperforms both instance-level and offline approaches in commonsense reasoning. For example, we achieve $76.4\$ on ARC-C and $88.5\$ on SciQ, surpassing $70.8\$ and $87.4\$ of the offline variant, and $75.3\$ and $87.6\$ of the instance-level approach.

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Grouped Bar Chart: Model Accuracy Comparison on ARC-C and MATH Tasks

### Overview

The image displays a grouped bar chart comparing the accuracy percentages of three different model types ("Initial Model," "Trained Model," and "G.T. Supervision") across five distinct evaluation settings related to the ARC-C and MATH benchmarks. The chart clearly demonstrates the performance hierarchy and the impact of training and ground truth supervision.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis:** Labeled "Accuracy." The scale runs from 0 to 100 in increments of 20, with horizontal grid lines at these intervals.

* **X-Axis:** Contains five categorical groups representing different evaluation tasks:

1. ARC-C (step-level)

2. ARC-C (instance-level)

3. MATH (step-level)

4. MATH (instance-level)

5. MATH (step-level w/ G.T.)

* **Legend:** Positioned in the top-right corner of the chart area. It defines the three data series:

* **Initial Model:** Represented by orange bars.

* **Trained Model:** Represented by blue bars.

* **G.T. Supervision:** Represented by green bars. ("G.T." likely stands for "Ground Truth").

### Detailed Analysis

The chart presents the following specific accuracy values for each model in each task category:

**1. ARC-C (step-level)**

* Initial Model (Orange): 60.6

* Trained Model (Blue): 76.4

* G.T. Supervision (Green): 94.4

**2. ARC-C (instance-level)**

* Initial Model (Orange): 60.6

* Trained Model (Blue): 75.3

* G.T. Supervision (Green): 89.2

**3. MATH (step-level)**

* Initial Model (Orange): 29.0

* Trained Model (Blue): 32.2

* G.T. Supervision (Green): 81.2

**4. MATH (instance-level)**

* Initial Model (Orange): 29.0

* Trained Model (Blue): 32.9

* G.T. Supervision (Green): 87.9

**5. MATH (step-level w/ G.T.)**

* Initial Model (Orange): 29.0

* Trained Model (Blue): 35.4

* G.T. Supervision (Green): 97.9

### Key Observations

1. **Consistent Performance Hierarchy:** In every single task category, the "G.T. Supervision" model (green) achieves the highest accuracy, followed by the "Trained Model" (blue), with the "Initial Model" (orange) performing the worst.

2. **Task Difficulty Disparity:** There is a stark contrast in baseline performance between ARC-C and MATH tasks. The "Initial Model" scores ~60.6% on ARC-C tasks but only 29.0% on all MATH tasks, indicating MATH is a significantly more challenging benchmark for the base model.

3. **Impact of Training:** Training ("Trained Model") provides a substantial accuracy boost over the "Initial Model" on ARC-C tasks (+15.8 and +14.7 percentage points). The improvement on MATH tasks is much smaller (+3.2, +3.9, and +6.4 percentage points).

4. **Dominance of Ground Truth Supervision:** The "G.T. Supervision" model shows dramatic performance gains, especially on the difficult MATH tasks. Its accuracy on "MATH (step-level w/ G.T.)" reaches 97.9%, nearly perfect performance.

5. **Instance vs. Step-level:** For ARC-C, step-level evaluation yields slightly higher accuracy for G.T. Supervision (94.4 vs. 89.2). For MATH, the pattern is less clear, with instance-level (87.9) outperforming step-level (81.2) for G.T. Supervision, but step-level with G.T. (97.9) being the highest overall.

### Interpretation

This chart illustrates a clear narrative about model capability and the value of supervision. The data suggests that:

* **The core reasoning ability (Initial Model) is insufficient for complex mathematical problems,** as evidenced by the low 29% baseline on MATH versus ~60% on ARC-C.

* **Standard training provides moderate improvements,** but is particularly limited in elevating performance on the hardest tasks (MATH).

* **Access to ground truth (G.T. Supervision) is the most critical factor for high performance,** enabling near-perfect scores even on the most difficult task variant. This implies that the model's underlying architecture may be capable, but it relies heavily on correct guidance or supervision to apply that capability effectively.

* The **"MATH (step-level w/ G.T.)"** result (97.9%) is a key outlier, demonstrating a ceiling effect when the model is provided with perfect intermediate steps. This highlights a potential pathway for improving model performance: not just training on final answers, but on the correctness of the reasoning process itself.

The chart effectively argues that while training helps, the provision of ground truth supervision—particularly at the step-level for complex problems—is the dominant driver of high accuracy in these evaluations.

</details>

Figure 4: Abltation study on step- vs. instance-level supervision, online vs. offline learning, and w/ vs. w/o G.T. supervision. We also compare the accuracy of the training signals collected via MCTS or instance-level sampling.

Table 4: Qualitative Analysis of model outputs on OBQA, AI2Sci, and MATH. We highlight correct and incorrect keywords. The predicted answers on MATH are boxed, where our method solves the complex problem while SFT baseline failed to elicit a complete reasoning chain.

$$

f(x)=x^7+ax^3+cx f(5)=3, f(-5) f(5)=5^7+5a+5c=3. f(-5), (-5)^7+(-5a) +(-5c). (-5)^7=-5^7=-3, f(-5)=-3-5a-5c. f(-5)=-3-5(-3)-5c=-3+15-5c=\boxed{{\color[rgb]{0.609375,0.37890625,0.71875}12-

5c}}. f(5)=5^7+5a+5c=3. f(x) f(-5)=(-5)^7-5a-5c=\boxed{{\color[rgb]{0,0.88,0}-3}}. f(c)=\frac{3}{2c-3} \frac{kn^2}{lm} f^-1(c)× c× f(c) \frac{kc+l}{mc+n} k,l,m, and n f^-1(c) f(c)=x c \frac{3}{2c-3}=x 2c-3=\frac{3}{x} 2c=\frac{3}{x}+3 c=\frac{\frac{3}{x}+3}{2} f^-1(c)=\frac{\frac{3}{c}+3}{2c} f^-1(c) f^-1(c)× c× f(c) \frac{\frac{3}{c}+3}{2c}× c×\frac{3}{2c-3} \frac{3+3c}{2c}×\frac{3}{2c-3} \frac{9+9c}{4c^2-6c} \frac{9+9c}{4c(c-1)} \frac{kc+l}{mc+n} k,l,m, n \frac{9+9c}{4c(c-1)}=\frac{kc+l}{mc+n} 9+9c=4c(c-1)(kc+l) 9+9c=4c^2k-4ck-4cl+l 4c^2k-4 f(f^-1(c))=c c f^-1 f(f^-1(c))=f(c) c f^-1 f(f^-1(c))=f(c) f(c)=c c f^-1 f(c)=\frac{3}{2c-3} f(c)=c c f^-1 2c-3=c c f^-1 c=3 c f^-1 f^-1(c)=3 c f^-1 f^-1(c)=3 f^-1(c)× c× f(c) 3× 3×\frac{3}{2(3)-3} 9×\frac{3}{3}=9 \frac{kn^2}{lm}=\boxed{{\color[rgb]{0,0.88,0}9}} \tag{5}

$$

In arithmetic reasoning, performance differences among settings are less pronounced for challenging task such as MATH without the incorporation of ground-truth solutions (e.g., $32.2\$ for our method (w/o G.T.) vs. $31.9\$ and $32.9\$ for offline and instance-level on MATH). The comparable performance of offline learning aligns with our theoretical analysis that offline approaches can be effective when the initial policy is already well-tuned with high-quality, in-domain data. We further interpret how G.T. guidance integration to enhance the effectiveness of our framework in Figure 4. With G.T. supervision, the accuracy of training signals improve significantly from $81.2\$ to $97.9\$ , leading to substantial performance gain on model performance. This also explains the similar performance (w/o G.T.) between corresponding using step- and instance-level supervision, where our step-level approach shows effectiveness in narrowing the gap between accuracies of corresponding supervisions.

#### Training Dynamics in Iterative Learning.

As shown in Figure 2, online learning exhibits cyclic performance fluctuations, with validation performance peaking before dipping. We conduct theoretical analysis on this in Appendix B and shows that continuous policy updates with the latest models can lead to periodic knowledge loss due to insufficient optimization in iterative updates. We further probe these phenomena qualitatively next.

#### Qualitative Analysis.

Our qualitative analysis in Table 4 examines the impact of step-level supervision on intermediate reasoning correctness across different tasks. In OBQA, the implementation of MCTS, as discussed in Section 4.1, often leads to longer reasoning chains. This can introduce errors in commonsense reasoning tasks, as seen in our OBQA example, where an extended chain results in an incorrect final prediction. Conversely, in the MATH dataset, our approach successfully guides the model to rectify mistakes and formulates accurate, extended reasoning chains, demonstrating its effectiveness in complex math word problems. This analysis underscores the need to balance reasoning chain length and logical coherence, particularly in tasks with higher uncertainty, such as commonsense reasoning.

## 5 Related Work

Various studies focus on self-improvement to exploit the model’s capability. One line of work focuses on collecting high-quality positive data from model generations guided by static reward heuristic (Zelikman et al., 2022; Gülçehre et al., 2023; Polu et al., 2023). Recently, Yuan et al. (2024) utilize the continuously updated LLM self-rewarding to collect both positive and negative data for preference learning. Fu et al. (2023) adopt exploration strategy via rejection sampling to do online data collection for iterative preference learning. Different from prior works at instance-level alignment, we leverage MCTS as a policy improvement operator to iteratively facilitate step-level preference learning. We discuss additional related work in Appendix A.

## 6 Conclusion

In this paper, we propose MCTS-enhanced iterative preference learning, utilizing MCTS as a policy improvement operator to enhance LLM alignment via step-level preference learning. MCTS balances quality exploitation and diversity exploration to produce high-quality training data, efficiently pushing the ceiling performance of the LLM on various reasoning tasks. Theoretical analysis shows that online sampling in our iterative learning framework is key to improving the LLM policy toward optimal alignment. We hope our proposed approach can inspire future research on LLM alignment from both data-centric and algorithm-improving aspects: to explore searching strategies and utilization of history data and policies to augment and diversify training examples; to strategically employ a tradeoff between offline and online learning to address the problem of cyclic performance change of the online learning framework as discussed in our theoretical analysis.

## Acknowledgments and Disclosure of Funding

The computational work for this article was partially performed on resources of the National Supercomputing Centre (NSCC), Singapore https://www.nscc.sg/.

## References

- Anthony et al. (2017) Thomas Anthony, Zheng Tian, and David Barber. 2017. Thinking fast and slow with deep learning and tree search. In Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems 2017, December 4-9, 2017, Long Beach, CA, USA, pages 5360–5370.

- Azar et al. (2023) Mohammad Gheshlaghi Azar, Mark Rowland, Bilal Piot, Daniel Guo, Daniele Calandriello, Michal Valko, and Rémi Munos. 2023. A general theoretical paradigm to understand learning from human preferences. CoRR, abs/2310.12036.

- Bai et al. (2022a) Yuntao Bai, Andy Jones, Kamal Ndousse, Amanda Askell, Anna Chen, Nova DasSarma, Dawn Drain, Stanislav Fort, Deep Ganguli, Tom Henighan, Nicholas Joseph, Saurav Kadavath, Jackson Kernion, Tom Conerly, Sheer El Showk, Nelson Elhage, Zac Hatfield-Dodds, Danny Hernandez, Tristan Hume, Scott Johnston, Shauna Kravec, Liane Lovitt, Neel Nanda, Catherine Olsson, Dario Amodei, Tom B. Brown, Jack Clark, Sam McCandlish, Chris Olah, Benjamin Mann, and Jared Kaplan. 2022a. Training a helpful and harmless assistant with reinforcement learning from human feedback. CoRR, abs/2204.05862.

- Bai et al. (2022b) Yuntao Bai, Saurav Kadavath, Sandipan Kundu, Amanda Askell, Jackson Kernion, Andy Jones, Anna Chen, Anna Goldie, Azalia Mirhoseini, Cameron McKinnon, et al. 2022b. Constitutional ai: Harmlessness from ai feedback. arXiv preprint arXiv:2212.08073.

- Bradley and Terry (1952) Ralph Allan Bradley and Milton E Terry. 1952. Rank analysis of incomplete block designs: I. the method of paired comparisons. Biometrika, 39(3/4):324–345.