# Self-Reflection in LLM Agents: Effects on Problem-Solving Performance

**Authors**:

- Matthew Renze (Johns Hopkins University)

- Erhan Guven (Johns Hopkins University)

## Abstract

In this study, we investigated the effects of self-reflection in large language models (LLMs) on problem-solving performance. We instructed nine popular LLMs to answer a series of multiple-choice questions to provide a performance baseline. For each incorrectly answered question, we instructed eight types of self-reflecting LLM agents to reflect on their mistakes and provide themselves with guidance to improve problem-solving. Then, using this guidance, each self-reflecting agent attempted to re-answer the same questions. Our results indicate that LLM agents are able to significantly improve their problem-solving performance through self-reflection ($p<0.001$). In addition, we compared the various types of self-reflection to determine their individual contribution to performance. All code and data are available on GitHub at https://github.com/matthewrenze/self-reflection.

## 1 Introduction

### 1.1 Background

Self-reflection is a process in which a person thinks about their thoughts, feelings, and behaviors. In the context of problem-solving, self-reflection allows us to inspect the thought process leading to our solution. This type of self-reflection aims to avoid making similar errors when confronted with similar problems in the future.

Like humans, large language model (LLM) agents can be instructed to produce a chain of thought (CoT) before answering a question. CoT prompting has been shown to significantly improve LLM performance on a variety of problem-solving tasks [1, 2, 3]. However, LLMs still often make mistakes in their CoT, such as logic errors, mathematical errors, and hallucinations [4, 5, 6, 7, 8, 9].

Also similar to humans, LLM agents can be instructed to reflect on their own CoT. This allows them to identify errors, explain the cause of these errors, and generate advice to avoid making similar types of errors in the future [10, 11, 12, 13, 14, 15].

Our research investigates the use of self-reflection in LLM agents to improve their problem-solving capabilities.

### 1.2 Prior Literature

Over the past few years, we’ve seen the emergence of AI agents based on LLM architectures [16, 17]. These agents have demonstrated impressive capabilities in solving multi-step problems [18, 19, 10]. In addition, they’ve been observed successfully using tools, including web browsers, search engines, code interpreters, etc. [20, 19, 10, 21].

However, these LLM agents have several limitations. They have limited knowledge, make errors in reasoning, hallucinate output, and get stuck in unproductive loops [4, 5, 6, 7, 8, 9].

To improve their performance, we can provide them with a series of cognitive capabilities. For example, we can provide them with a CoT [1, 2, 3], access to external memory [22, 23, 24, 25], and the ability to learn from feedback [18, 10, 19].

Learning from feedback can be decomposed into several components. These components include the source of the feedback, the type of feedback, and the strategy used to learn from feedback [11]. There are two sources of feedback (i.e., internal or external feedback) and two main types of feedback (i.e., scalar values or natural language) [11, 12].

There are also several strategies for learning from feedback. These strategies depend on where they occur in the LLM’s output-generation process. They can occur at model-training time, output-generation time, or after the output has been generated. Within each of these three phases, there are various techniques available (e.g., model fine-tuning, output re-ranking, and self-correction) [11].

In terms of learning from self-correction, various methods are currently being investigated. These include iterative refinement, multi-model debate, and self-reflection [11].

Self-reflection in LLM agents is a metacognitive strategy also known as introspection [13, 14]. Some studies indicate that LLMs using self-reflection are able to identify and correct their mistakes [12, 10, 8, 15]. Others indicate that LLMs cannot identify errors in their own reasoning but may still be able to correct them when given external feedback [7, 26].

### 1.3 Contribution

Our research builds upon the prior literature by determining which aspects of self-reflection are most beneficial in improving an LLM agent’s performance on problem-solving tasks. It decomposes the process of self-reflection into several components and identifies how each component contributes to the agent’s overall increase in performance.

In addition, it provides insight into which types of LLMs and problem domains benefit most from each type of self-reflection. These include LLMs such as GPT-4, Llama 2 70B, and Gemini 1.5 Pro, and problem domains such as math, science, and medicine.

This information is useful to AI engineers attempting to build LLM agents with self-reflection capabilities. In addition, it is valuable to AI researchers studying metacognition in LLM agents.

## 2 Methods

### 2.1 Data

Our test dataset consists of a set of multiple-choice question-and-answer (MCQA) problems derived from popular LLM benchmarks. These benchmarks include ARC, AGIEval, HellaSwag, MedMCQA, etc. [27, 28, 29, 30, 31, 32].

We preprocessed and converted these datasets into a standardized format. Then, we randomly selected 100 questions from each of the ten datasets to create a multi-domain exam consisting of 1,000 problems.
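The sampling step can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `build_exam`, the dictionary-of-lists input, and the `seed` parameter are assumptions (the paper does not say how the random selection was seeded).

```python
import random
from typing import Dict, List, TypeVar

T = TypeVar("T")


def build_exam(datasets: Dict[str, List[T]], per_dataset: int = 100,
               seed: int = 0) -> List[T]:
    """Draw `per_dataset` problems, without replacement, from each dataset.

    `build_exam` and `seed` are hypothetical; the seed only makes this
    illustration reproducible.
    """
    rng = random.Random(seed)
    exam: List[T] = []
    for name in sorted(datasets):  # fixed iteration order keeps draws reproducible
        problems = datasets[name]
        if len(problems) < per_dataset:
            raise ValueError(f"{name} has only {len(problems)} problems")
        exam.extend(rng.sample(problems, per_dataset))
    return exam


# Ten toy datasets of 300 problems each yield a 1,000-problem exam.
toy = {f"set{i}": [(f"set{i}", j) for j in range(300)] for i in range(10)}
exam = build_exam(toy)
print(len(exam))  # 1000
```

Sampling per dataset, rather than from the pooled problems, guarantees that every domain contributes exactly 100 questions regardless of how large its source set is.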

For a complete list of the source problem sets used to create the MCQA exam, see Table 1. For a sample of an MCQA problem, see Figure 5 in the appendix.

Table 1: Problem sets used to create the 1,000-question multi-domain MCQA exam.

| Problem Set | Benchmark | Domain | Questions | License | Source |
| --- | --- | --- | --- | --- | --- |
| ARC Challenge Test | ARC | Science | 1,173 | CC BY-SA | [27] |
| AQUA-RAT | AGIEval | Math | 254 | Apache v2.0 | [30] |
| HellaSwag Val | HellaSwag | Common Sense Reasoning | 10,042 | MIT | [28] |
| LogiQA (English) | AGIEval | Logic | 651 | GitHub | [30, 31] |
| LSAT-AR | AGIEval | Law (Analytic Reasoning) | 230 | MIT | [30, 32] |
| LSAT-LR | AGIEval | Law (Logical Reasoning) | 510 | MIT | [30, 32] |
| LSAT-RC | AGIEval | Law (Reading Comprehension) | 260 | MIT | [30, 32] |
| MedMCQA Valid | MedMCQA | Medicine | 6,150 | MIT | [29] |
| SAT-English | AGIEval | English | 206 | MIT | [30] |
| SAT-Math | AGIEval | Math | 220 | MIT | [30] |

Note: The GitHub repository for LogiQA does not include a license file. However, both the paper and the readme.md file state that "The dataset is freely available."

### 2.2 Models

We evaluated our agents using nine popular LLMs, including GPT-4, Llama 2 70B, Gemini 1.5 Pro, etc. [33, 34, 35, 36, 37, 38, 39, 40, 41, 42, 43, 44, 45, 46, 47]. All models were accessed via cloud-based APIs hosted by Microsoft, Anthropic, and Google.

Each of these LLMs has its own strengths and weaknesses. For example, GPT-4, Gemini 1.5 Pro, and Claude 3 Opus are powerful LLMs with a large number of parameters [44, 40, 34]. However, they have a significantly higher cost per token than smaller models like GPT-3.5 and Llama 2 7B [42, 46].

For a complete list of LLMs used in our experiment, see Table 2.

Table 2: LLMs used in the experiment.

| Model | Vendor | Released | License | Source |
| --- | --- | --- | --- | --- |
| Claude 3 Opus | Anthropic | 2024-03-04 | Closed | [33, 34] |
| Command R+ | Cohere | 2024-04-04 | Open | [35, 36] |
| Gemini 1.0 Pro | Google | 2023-12-06 | Closed | [37, 38] |
| Gemini 1.5 Pro (Preview) | Google | 2024-02-15 | Closed | [39, 40] |
| GPT-3.5 Turbo | OpenAI | 2022-11-30 | Closed | [41, 42] |
| GPT-4 | OpenAI | 2023-03-14 | Closed | [43, 44] |
| Llama 2 7B Chat | Meta | 2023-07-18 | Open | [45, 46] |
| Llama 2 70B Chat | Meta | 2023-07-18 | Open | [45, 46] |
| Mistral Large | Mistral AI | 2024-02-26 | Open | [47] |

### 2.3 Agents

We investigated eight types of self-reflecting LLM agents. These agents reflect upon their own CoT and then generate self-reflections to use when attempting to re-answer questions. Each of these agents uses a unique type of self-reflection to assist it. We also included a single non-reflecting (i.e., Baseline) agent as our control.

Listed below are the various types of agents and the type of self-reflection they generate and use to re-answer questions:

- Baseline - no self-reflection capabilities.

- Retry - informed that it answered incorrectly and simply tries again.

- Keywords - a list of keywords for each type of error.

- Advice - a list of general advice for improvement.

- Explanation - an explanation of why it made an error.

- Instructions - an ordered list of instructions for how to solve the problem.

- Solution - a step-by-step solution to the problem.

- Composite - all six types of self-reflection combined.

- Unredacted - all six types of self-reflection, without the answers redacted.
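One way to picture how the agents differ is as a mapping from agent type to the reflection sections it receives. The sketch below rests on assumptions not stated in the paper: that each reflection is split into the five labeled sections shown in Figure 1 (consistent with the "Separate the reflections by type" step in Algorithm 1), that Composite and Unredacted concatenate all of them, and that Retry injects no reflection text. `SECTIONS`, `AGENT_SECTIONS`, and `reflection_for` are hypothetical names.

```python
from typing import Dict, List

# Section names follow the reflection output shown in Figure 1.
SECTIONS: List[str] = ["Explanation", "Keywords", "Solution", "Instructions", "Advice"]

# Hypothetical mapping from agent type to the reflection sections it injects.
AGENT_SECTIONS: Dict[str, List[str]] = {
    "Baseline": [],      # control: never reflects or re-answers
    "Retry": [],         # only told that its previous answer was wrong
    "Keywords": ["Keywords"],
    "Advice": ["Advice"],
    "Explanation": ["Explanation"],
    "Instructions": ["Instructions"],
    "Solution": ["Solution"],
    "Composite": SECTIONS,   # every reflection section, redacted
    "Unredacted": SECTIONS,  # every reflection section, answers left in
}


def reflection_for(agent: str, redacted: Dict[str, str], raw: Dict[str, str]) -> str:
    """Assemble the reflection text injected into an agent's re-answer prompt."""
    source = raw if agent == "Unredacted" else redacted
    return "\n".join(f"{name}: {source[name]}" for name in AGENT_SECTIONS[agent])
```

Under this framing, Composite and Unredacted differ only in which copy of the reflection they read, which is what makes Unredacted usable as an upper bound.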

The Baseline agent is our control for the experiment and a lower bound for the scores. It informs us how well the base model answers the question without using any self-reflection. The Baseline agent used standard prompt-engineering techniques, including domain expertise, CoT, conciseness, and few-shot prompting [48, 49, 1, 2, 3]. The sampling temperature was set to 0.0 for all LLMs to improve reproducibility [50]. See Figure 6 in the appendix for an example of the Baseline answer prompt.

The self-reflecting agents used the same prompt-engineering techniques as the Baseline agent to re-answer questions. However, they also reflected upon their mistakes before attempting to re-answer. While re-answering, the self-reflection was injected into the re-answer prompt to allow the agent to learn from its mistakes. See Figures 7 and 8 in the appendix for examples of the self-reflection prompt and the re-answer prompt.
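The answer, reflection, and re-answer prompts can be sketched as plain string templates. The wording below is an assumption loosely modeled on the text in Figure 1 (the real prompts appear in Figures 6 to 8), and `Problem`, `answer_prompt`, `reflection_prompt`, and `reanswer_prompt` are hypothetical names. Only the 0.0 sampling temperature comes from the paper; sending the prompts to a model is out of scope here.

```python
from dataclasses import dataclass
from typing import List

TEMPERATURE = 0.0  # sampling temperature used for every LLM in the paper


@dataclass
class Problem:
    question: str
    choices: List[str]  # e.g. ["A) Baltimore", "B) Des Moines", "C) Las Vegas"]


def format_problem(p: Problem) -> str:
    return "\n".join([f"Question: {p.question}", *p.choices])


def answer_prompt(p: Problem) -> str:
    # Hypothetical wording; the actual Baseline prompt is shown in Figure 6.
    return ("Answer the following question. Think step by step, then end with "
            "'Answer: <letter>'.\n\n" + format_problem(p))


def reflection_prompt(p: Problem, previous_output: str, correct_label: str) -> str:
    # Hypothetical wording; the actual self-reflection prompt is shown in Figure 7.
    return "\n".join([
        format_problem(p), "", "Your previous solution:", previous_output, "",
        f"The correct answer is {correct_label}.",
        "Reflect on your incorrect solution. Write sections titled "
        "Explanation, Keywords, Solution, Instructions, and Advice.",
    ])


def reanswer_prompt(p: Problem, reflection: str) -> str:
    # Hypothetical wording; the actual re-answer prompt is shown in Figure 8.
    lines = ["You previously answered this question incorrectly."]
    if reflection:  # the Retry agent has no reflection text to inject
        lines += ["Your reflection:", reflection, "",
                  "Given your previous reflection, answer the question."]
    else:
        lines.append("Try to answer the question again.")
    # Re-answering reuses the Baseline prompt, as described above.
    lines += ["", answer_prompt(p)]
    return "\n".join(lines)
```

Reusing `answer_prompt` inside `reanswer_prompt` mirrors the statement that the self-reflecting agents keep the Baseline agent's prompt-engineering techniques and differ only in the injected reflection.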

We redacted all of the answer labels (e.g., "A", "B", "C") and answer descriptions (e.g., "Baltimore", "Des Moines", "Las Vegas") from the agents’ self-reflections. However, the Unredacted agent retains this information. This agent is only used to provide an upper bound for the scores. Essentially, the Unredacted agent tells us how accurately the LLM could answer the questions when given the correct answer in its self-reflection.

### 2.4 Process

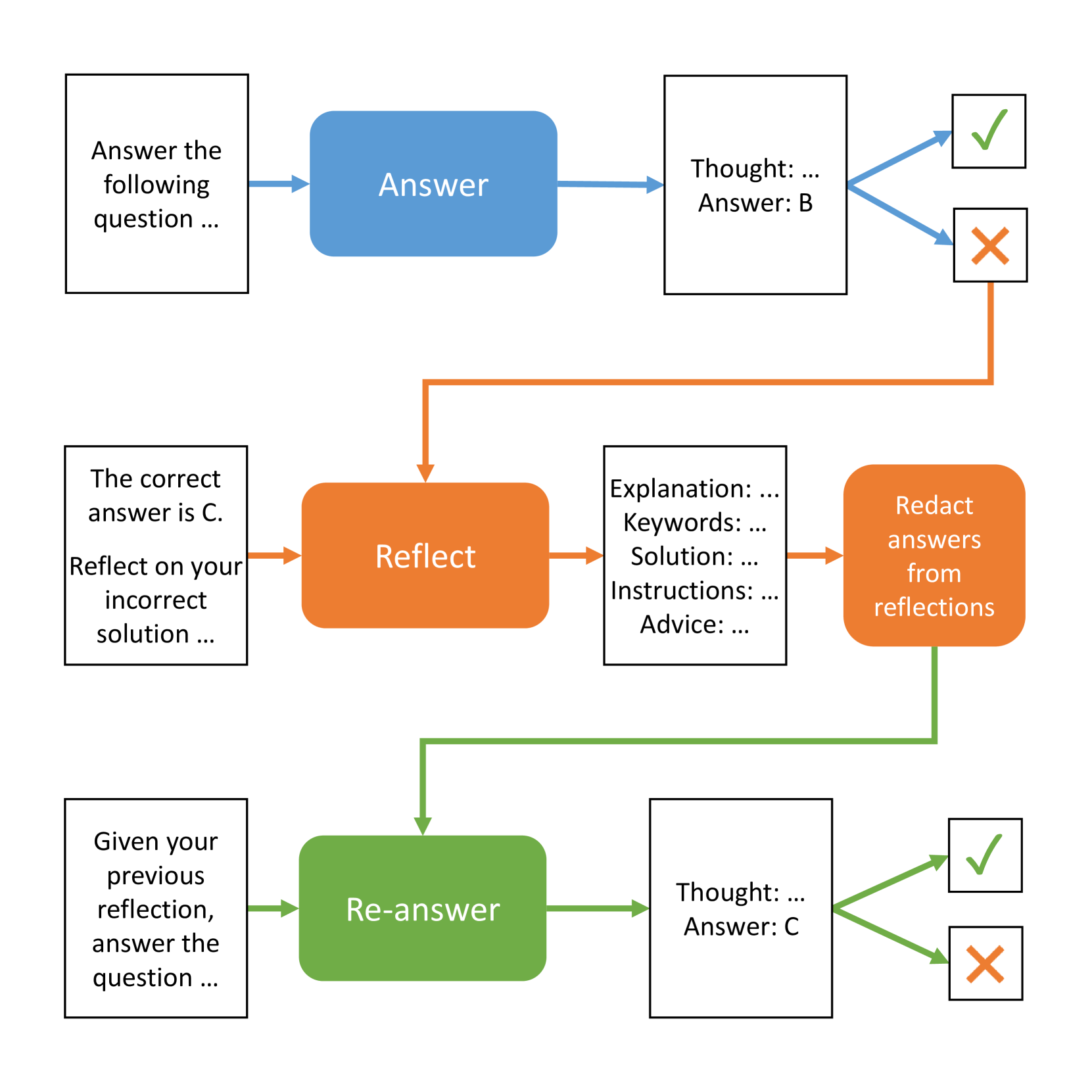

First, the Baseline agent answered all 1,000 questions. If a question was answered correctly, it was added to the Baseline agent’s score. If it was answered incorrectly, it was added to a queue of incorrectly answered questions to be reflected upon (see Figure 1).

Next, for each incorrectly answered question, the self-reflecting agents reflected upon the problem, their incorrect solution, and the correct answer. Using the correct answer as an external feedback signal, they each generated one of the eight types of self-reflection feedback described above.

Then, a find-and-replace operation was performed on the text of each self-reflection to redact the answer labels and answer descriptions. For example, we replaced answer labels (e.g., "A", "B", "C") and answer descriptions (e.g., "Baltimore", "Des Moines", "Las Vegas") with the text "[REDACTED]". Redaction was applied to every self-reflecting agent except the Unredacted agent to prevent answer leakage in the self-reflections. Our redaction process was greedy: it often removed additional text that did not leak the answer. However, we felt it necessary to err on the side of caution by eliminating any possible answer leakage.

It is important to note that the self-reflections generated by the Explanation, Instructions, and Solution agents indirectly leak information about the correct answer without directly specifying the correct or incorrect answers. However, the agents generated this information on their own, having been given nothing more than the correct answer during the self-reflection process.
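A greedy find-and-replace redaction of this kind might look like the sketch below. The regular-expression rules, the `redact` name, and the ordering of replacements are assumptions rather than the authors' implementation; the point is that greedy matching trades some over-redaction for stronger assurance that no exact label or description survives.

```python
import re
from typing import Iterable

TOKEN = "[REDACTED]"


def redact(text: str, labels: Iterable[str], descriptions: Iterable[str]) -> str:
    """Greedily replace answer labels and answer descriptions with TOKEN.

    Assumed matching rules (not the authors' code): descriptions match
    case-insensitively; labels match as standalone, case-sensitive tokens,
    so a sentence-initial article "A" is redacted too. Over-redaction of this
    kind is accepted in exchange for removing possible answer leakage.
    """
    # Replace longer descriptions first so overlapping names are removed whole.
    for desc in sorted({d for d in descriptions if d}, key=len, reverse=True):
        text = re.sub(re.escape(desc), TOKEN, text, flags=re.IGNORECASE)
    for label in labels:
        text = re.sub(rf"\b{re.escape(label)}\b", TOKEN, text)
    return text


reflection = "The answer is B (Des Moines), not A (Baltimore)."
print(redact(reflection, ["A", "B", "C"], ["Baltimore", "Des Moines", "Las Vegas"]))
# The answer is [REDACTED] ([REDACTED]), not [REDACTED] ([REDACTED]).
```

Word boundaries keep a label such as "C" from matching inside "Choose", while still redacting any standalone capital letter that happens to equal a label, which is the greedy behavior described above.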

Finally, for each incorrectly answered question, the self-reflecting agents used their specific self-reflection text to assist them in re-answering the question. We calculated the scores for all agents and compared them to the Baseline agent for analysis.

While LLM agents typically operate over a series of iterative steps, the code for this experiment was implemented as batch operations to save time and cost. So, each step in the self-reflection process occurred in one of four batch phases described above. Conceptually, the experiment represented virtual multi-step agents. However, the technical implementation of the experiment was actually a series of batch operations (see Algorithm 1).

<details>

<summary>x1.png Details</summary>

### Visual Description


## Diagram: Iterative Answer Correction Process Flowchart

### Overview

The image displays a three-stage flowchart illustrating a process for answering questions, receiving feedback on incorrect answers, reflecting on the error, and then re-answering. The process is color-coded by stage: blue for the initial answer, orange for reflection, and green for the re-answer. The flow is primarily left-to-right and top-to-bottom, with a branch that routes an incorrect outcome to the reflection stage.

### Components/Axes

The diagram is composed of rectangular boxes (some with rounded corners) connected by directional arrows. The boxes contain textual instructions or outputs. The arrows indicate the flow of the process.

**Color Legend (Implied by Stage):**

* **Blue:** Initial Answer Stage

* **Orange:** Reflection Stage

* **Green:** Re-answer Stage

* **Green Checkmark (✓):** Symbol for a correct outcome.

* **Red Cross (✗):** Symbol for an incorrect outcome.

**Spatial Layout:**

* The diagram is divided into three horizontal bands or "swimlanes," one for each major stage.

* The **Initial Answer (Blue)** stage occupies the top band.

* The **Reflection (Orange)** stage occupies the middle band.

* The **Re-answer (Green)** stage occupies the bottom band.

* A feedback arrow (orange) connects the incorrect outcome (✗) of the first stage to the input of the Reflection stage.

### Detailed Analysis

**Stage 1: Initial Answer (Top Band, Blue Flow)**

1. **Input Box (Top-Left):** A standard rectangle containing the text: `Answer the following question ...`

2. **Process Box (Top-Center):** A blue, rounded rectangle labeled `Answer`.

3. **Output Box (Top-Right):** A standard rectangle containing the text:

`Thought: ...`

`Answer: B`

4. **Decision Point:** Two arrows branch from the Output Box:

* An arrow points to a small square containing a **green checkmark (✓)**, representing a correct answer.

* An arrow points to a small square containing a **red cross (✗)**, representing an incorrect answer.

**Stage 2: Reflection (Middle Band, Orange Flow)**

* **Trigger:** An orange arrow originates from the **red cross (✗)** in Stage 1 and points to the input of this stage.

1. **Input Box (Middle-Left):** A standard rectangle containing the text:

`The correct answer is C.`

`Reflect on your incorrect solution ...`

2. **Process Box (Middle-Center):** An orange, rounded rectangle labeled `Reflect`.

3. **Output Box (Middle-Right):** A standard rectangle containing a structured list:

`Explanation: ...`

`Keywords: ...`

`Solution: ...`

`Instructions: ...`

`Advice: ...`

4. **Process Box (Far-Right):** An orange, rounded rectangle labeled `Redact answers from reflections`.

**Stage 3: Re-answer (Bottom Band, Green Flow)**

* **Trigger:** A green arrow originates from the "Redact answers from reflections" box in Stage 2 and points to the input of this stage.

1. **Input Box (Bottom-Left):** A standard rectangle containing the text: `Given your previous reflection, answer the question ...`

2. **Process Box (Bottom-Center):** A green, rounded rectangle labeled `Re-answer`.

3. **Output Box (Bottom-Right):** A standard rectangle containing the text:

`Thought: ...`

`Answer: C`

4. **Decision Point:** Two arrows branch from this Output Box:

* An arrow points to a small square containing a **green checkmark (✓)**.

* An arrow points to a small square containing a **red cross (✗)**.

### Key Observations

1. **Single Correction Pass:** An incorrect answer (✗) does not end the process; it routes the question to a dedicated reflection phase followed by one re-answer attempt.

2. **Structured Reflection:** The reflection output is not free-form; it is categorized into specific components (Explanation, Keywords, Solution, Instructions, Advice), suggesting a methodical analysis of the error.

3. **Redaction Step:** The "Redact answers from reflections" step removes answer labels and answer descriptions from the reflection. Because the reflection is generated after the model is told the correct answer (`The correct answer is C.`), this step prevents the correct answer from leaking into the re-answer prompt.

4. **Color-Coded State Tracking:** The consistent use of blue, orange, and green for arrows and process boxes provides a clear visual cue for which phase of the process is active.

5. **Identical Structure, Different Content:** The input/output boxes for Stage 1 and Stage 3 are structurally identical, but the content differs. Stage 3's input explicitly references the prior reflection, and its output shows a corrected answer (`Answer: C`), demonstrating the intended outcome of the process.

### Interpretation

This flowchart models the self-reflection experiment for LLM agents. It inserts a structured reflection step between a failed answer and a second attempt.

* **What it demonstrates:** The process explicitly separates *answering*, *error diagnosis*, and *corrected answering*. It moves beyond simple trial-and-error by instituting a structured reflection phase upon failure. The redaction step is essential because the reflection is produced after the model has been told the correct answer, so answer labels and descriptions must be removed before the re-answer to avoid leaking the solution.

* **How elements relate:** The flow is causal and conditional. The initial answer's correctness determines the path. The reflection stage is entirely dependent on an incorrect outcome. The re-answer stage is dependent on the processed output of the reflection. This creates a closed-loop system for improvement.

* **Notable implications:** The diagram operationalizes the concept of "learning from mistakes" across separate answer, reflection, and re-answer calls. The green checkmark (✓) in the Re-answer stage represents the goal: reaching the correct answer (`C`) on the second attempt through a structured process of error and correction. The red cross (✗) in the final stage acknowledges that the correction may fail; as drawn, the process performs only one round of reflection.

</details>

Figure 1: Diagram of the self-reflection experiment.

Algorithm 1 Self-reflection Experiment (Batch)

1: for each model, exam, and problem do

2: Create the answer prompt

3: Answer the question

4: if the answer is incorrect then

5: Add the problem to the incorrect list

6: end if

7: end for

8: Calculate the Baseline agent scores

9:

10: for each model, exam, and incorrectly answered problem do

11: Reflect upon the incorrect solution

12: Generate the self-reflections

13: if not the Unredacted agent then

14: Redact the answers

15: end if

16: Separate the reflections by type

17: end for

18:

19: for each model, agent, exam, and incorrectly answered problem do

20: Create the re-answer prompt

21: Inject the agent’s reflection

22: Re-answer the question

23: end for

24: Calculate the self-reflecting agent scores
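Algorithm 1 can be made concrete as a small runnable Python sketch. It is an illustration under stated assumptions, not the authors' code: the model call is a hypothetical `ask(model, prompt)` callable (no real API is used), the exam loop is flattened into one problem list, answers are parsed from an assumed `Answer: <letter>` line, and `build_reflection` is a hypothetical hook that selects each agent's section(s) and redacts answers for every agent except Unredacted.

```python
from typing import Callable, Dict, List, Tuple

Ask = Callable[[str, str], str]  # hypothetical: (model, prompt) -> reply text
Problem = Dict[str, str]         # assumed keys: "id", "prompt", "answer"


def parse_answer(reply: str) -> str:
    """Return the letter after the last 'Answer:' marker (assumed format)."""
    idx = reply.rfind("Answer:")
    return reply[idx + len("Answer:"):].strip()[:1] if idx >= 0 else ""


def run_batch(ask: Ask, models: List[str], problems: List[Problem],
              agents: List[str],
              build_reflection: Callable[[str, str], str]) -> Dict[Tuple[str, str], int]:
    """Run the three batch phases; return correct counts per (model, agent)."""
    scores: Dict[Tuple[str, str], int] = {}
    missed: Dict[str, List[Tuple[Problem, str]]] = {m: [] for m in models}

    # Phase 1: the Baseline agent answers every problem.
    for m in models:
        correct = 0
        for p in problems:
            reply = ask(m, p["prompt"])
            if parse_answer(reply) == p["answer"]:
                correct += 1
            else:
                missed[m].append((p, reply))
        scores[(m, "Baseline")] = correct

    # Phase 2: one reflection per missed problem, given the correct answer.
    reflections: Dict[Tuple[str, str], str] = {}
    for m in models:
        for p, reply in missed[m]:
            prompt = (f"{p['prompt']}\n\nYour solution:\n{reply}\n\n"
                      f"The correct answer is {p['answer']}. "
                      "Reflect on your incorrect solution.")
            reflections[(m, p["id"])] = ask(m, prompt)

    # Phase 3: each agent re-answers only the problems the Baseline missed.
    for m in models:
        for agent in agents:
            fixed = 0
            for p, _ in missed[m]:
                reflection = build_reflection(agent, reflections[(m, p["id"])])
                prompt = (f"Your reflection:\n{reflection}\n\n"
                          "Given your previous reflection, answer the question.\n\n"
                          f"{p['prompt']}")
                if parse_answer(ask(m, prompt)) == p["answer"]:
                    fixed += 1
            # Equation 1: Baseline correct answers plus corrected re-answers.
            scores[(m, agent)] = scores[(m, "Baseline")] + fixed
    return scores
```

Because each phase depends only on the outputs of the previous one, every phase can be issued as a single batch of independent requests, which is the time and cost saving the batch design aims for.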

### 2.5 Metrics

We used correct-answer accuracy as our primary metric to measure the performance of the agents. Accuracy is calculated by dividing the number of correctly answered questions by the total number of questions.

However, to reduce the cost of running our experiment, we did not have the self-reflecting agents re-answer all of the questions that were correctly answered by the Baseline agent. Rather, the self-reflecting agents only re-answered the incorrectly answered questions. We then added the self-reflecting agent’s correct re-answer score to the Baseline agent’s score to create a new total score for the self-reflecting agent.

The calculations for accuracy used in our experiment are listed in Equation 1. In these equations, the subscript base refers to the Baseline agent's correct-answer score, and the subscript ref refers to the self-reflecting agent's correct re-answer score.

$$
\mathrm{Accuracy}_{\mathrm{base}}=\frac{\mathrm{Correct}_{\mathrm{base}}}{\mathrm{Total}_{\mathrm{base}}} \qquad \mathrm{Accuracy}_{\mathrm{ref}}=\frac{\mathrm{Correct}_{\mathrm{base}}+\mathrm{Correct}_{\mathrm{ref}}}{\mathrm{Total}_{\mathrm{base}}} \tag{1}
$$
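Equation 1 translates directly into code. The function names and the counts in the usage line are hypothetical (they are not results from the paper); the range check encodes the design choice described above, that self-reflecting agents only re-answer the questions the Baseline agent missed.

```python
def accuracy_base(correct_base: int, total_base: int) -> float:
    """Baseline accuracy: correct answers over all questions."""
    return correct_base / total_base


def accuracy_ref(correct_base: int, correct_ref: int, total_base: int) -> float:
    """Self-reflecting accuracy per Equation 1.

    Only the Baseline's incorrect answers are re-answered, so correct_ref can
    be at most total_base - correct_base.
    """
    if not 0 <= correct_ref <= total_base - correct_base:
        raise ValueError("correct_ref must fit within the Baseline's misses")
    return (correct_base + correct_ref) / total_base


# Made-up counts: 790 of 1,000 correct at baseline, 140 of the 210 misses fixed.
print(accuracy_base(790, 1000), accuracy_ref(790, 140, 1000))  # 0.79 0.93
```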

### 2.6 Analysis

When comparing the scores of the self-reflecting agents to the Baseline agent, we performed the McNemar test to determine statistical significance and report p-values. This test was specifically chosen because our analysis compared two series of binary outcomes (i.e., correct or incorrect answers). These outcomes were paired question-by-question across both the Baseline agent and self-reflecting agent being compared.

The McNemar test compares the number of discordant pairs in the two sets of paired outcomes. To compute the test statistic, we create a $2 \times 2$ contingency table of the outcomes. Cell $a$ contains the number of cases where both agents answered incorrectly, and cell $d$ contains the cases where both answered correctly. Cell $b$ contains incorrect-correct answer pairs, and cell $c$ contains correct-incorrect answer pairs [51]. In our case, $c$ is always zero, because the self-reflecting agents re-answer only the questions the Baseline agent answered incorrectly and inherit its correct answers.

McNemar's test statistic is calculated as:

$$
\chi^2=\frac{(b-c)^2}{b+c}, \quad \text{where } b \text{ and } c \text{ are the discordant pairs in } \begin{bmatrix} a & b \\ c & d \end{bmatrix}
$$
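The contingency table and statistic above can be computed in a few lines of standard-library Python. This sketch implements the formula exactly as written (no continuity correction) and uses the identity that, for a chi-squared variable with one degree of freedom, $P(X > x) = \operatorname{erfc}(\sqrt{x/2})$. The function names are hypothetical, and the paper does not say which implementation it used; `statsmodels.stats.contingency_tables.mcnemar` is an established alternative that also offers an exact binomial version. When $c = 0$, as in this experiment, the statistic reduces to $b$.

```python
import math
from typing import Sequence, Tuple


def contingency(base: Sequence[bool], ref: Sequence[bool]) -> Tuple[int, int, int, int]:
    """Cells (a, b, c, d) as defined above, from paired per-question outcomes."""
    if len(base) != len(ref):
        raise ValueError("outcomes must be paired question-by-question")
    pairs = list(zip(base, ref))
    a = sum(1 for x, y in pairs if not x and not y)  # both incorrect
    b = sum(1 for x, y in pairs if not x and y)      # incorrect -> correct
    c = sum(1 for x, y in pairs if x and not y)      # correct -> incorrect
    d = sum(1 for x, y in pairs if x and y)          # both correct
    return a, b, c, d


def mcnemar(b: int, c: int) -> Tuple[float, float]:
    """Chi-squared statistic (no continuity correction) and p-value, df = 1."""
    if b + c == 0:
        return 0.0, 1.0  # no discordant pairs: no evidence of a difference
    chi2 = (b - c) ** 2 / (b + c)
    p = math.erfc(math.sqrt(chi2 / 2))  # upper tail of chi-squared with df = 1
    return chi2, p


# Toy paired outcomes (True = correct): reflection fixes 3 of 5 misses.
base = [True] * 5 + [False] * 5
ref = [True] * 5 + [True] * 3 + [False] * 2
print(contingency(base, ref))  # (2, 3, 0, 5)
```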

## 3 Results

### 3.1 Performance by Agent

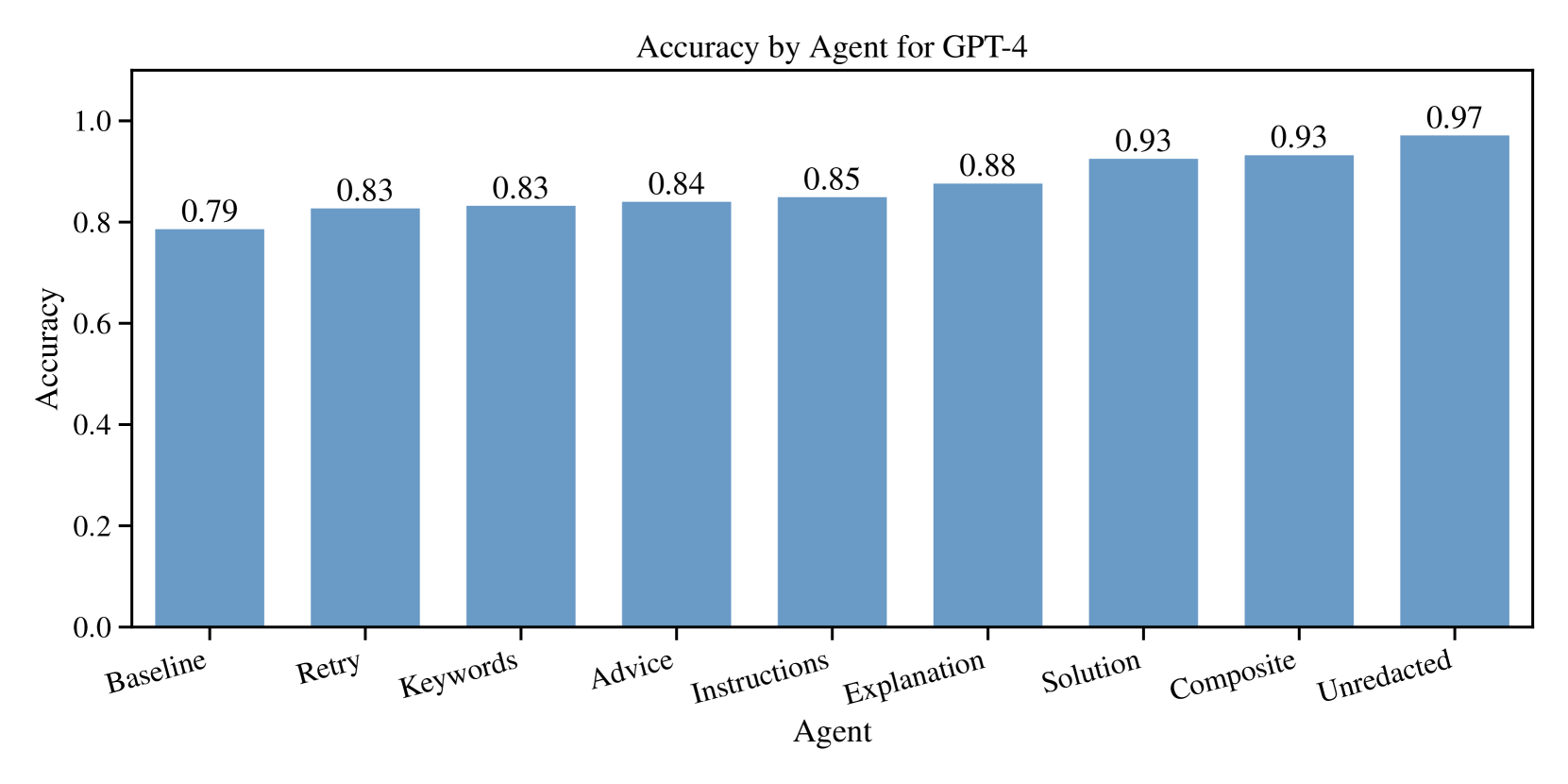

Our analysis revealed that agents using various types of self-reflection outperformed our Baseline agent. The increase in performance was statistically significant ( $p<0.001$ ) for all types of self-reflection across all LLMs. We can use GPT-4 as an example case. In Figure 2, we can see that all types of self-reflection improve the accuracy of the agent in solving MCQA problems. See Table 3 in the appendix for a numerical analysis of the results for GPT-4.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart: Accuracy by Agent for GPT-4

### Overview

The image is a vertical bar chart titled "Accuracy by Agent for GPT-4". It displays the performance accuracy of nine different "Agent" types when used with the GPT-4 model. The chart shows a clear, generally increasing trend in accuracy from left to right across the agent categories.

### Components/Axes

* **Chart Title:** "Accuracy by Agent for GPT-4" (centered at the top).

* **Y-Axis (Vertical):**

* **Label:** "Accuracy" (rotated 90 degrees).

* **Scale:** Linear scale from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis (Horizontal):**

* **Label:** "Agent".

* **Categories (from left to right):** Baseline, Retry, Keywords, Advice, Instructions, Explanation, Solution, Composite, Unredacted.

* **Data Series:** A single series represented by nine blue bars. Each bar's height corresponds to its accuracy value, which is explicitly labeled above each bar.

* **Legend:** Not present. Each data point is directly labeled with its category on the x-axis and its value above the bar.

### Detailed Analysis

The chart presents the following data points for each agent type, listed in order from left to right:

1. **Baseline:** Accuracy = 0.79

2. **Retry:** Accuracy = 0.83

3. **Keywords:** Accuracy = 0.83

4. **Advice:** Accuracy = 0.84

5. **Instructions:** Accuracy = 0.85

6. **Explanation:** Accuracy = 0.88

7. **Solution:** Accuracy = 0.93

8. **Composite:** Accuracy = 0.93

9. **Unredacted:** Accuracy = 0.97

**Trend Verification:** The visual trend is a steady, step-wise increase in bar height from left to right. The "Baseline" agent has the lowest accuracy. Accuracy increases gradually through "Retry", "Keywords", "Advice", and "Instructions". A more noticeable jump occurs at "Explanation". The trend then plateaus between "Solution" and "Composite" (both at 0.93) before reaching the highest point with "Unredacted".

### Key Observations

* **Highest Accuracy:** The "Unredacted" agent achieves the highest accuracy at 0.97.

* **Lowest Accuracy:** The "Baseline" agent has the lowest accuracy at 0.79.

* **Steady Progression:** There is a consistent, monotonic increase in accuracy across the agent types as presented on the x-axis.

* **Plateau:** The "Solution" and "Composite" agents show identical performance (0.93).

* **Magnitude of Improvement:** The difference between the lowest (Baseline: 0.79) and highest (Unredacted: 0.97) accuracy is 0.18, a relative improvement of roughly 23%.

### Interpretation

The data suggests a clear hierarchy in the effectiveness of different prompting or agent strategies for GPT-4 on the evaluated task. The "Baseline" represents a starting point, and each subsequent agent type appears to incorporate more sophisticated or comprehensive information, leading to improved accuracy.

The progression implies that providing the model with more detailed self-reflection (moving from simple retries or keywords towards explanations, solutions, and composite reflections) enhances its performance. The peak performance of the "Unredacted" agent should be read as an upper bound rather than a better strategy: its self-reflection still contains the correct answer, which the other agents have redacted.

The plateau between "Solution" and "Composite" suggests that, for this specific task, combining methods (Composite) did not yield an advantage over providing a direct solution (Solution). The overall trend strongly supports the hypothesis that more informative and complete agent designs lead to higher accuracy for GPT-4 in this context.

</details>

Figure 2: All self-reflection types improved the accuracy of GPT-4 agents.

### 3.2 Performance by Model

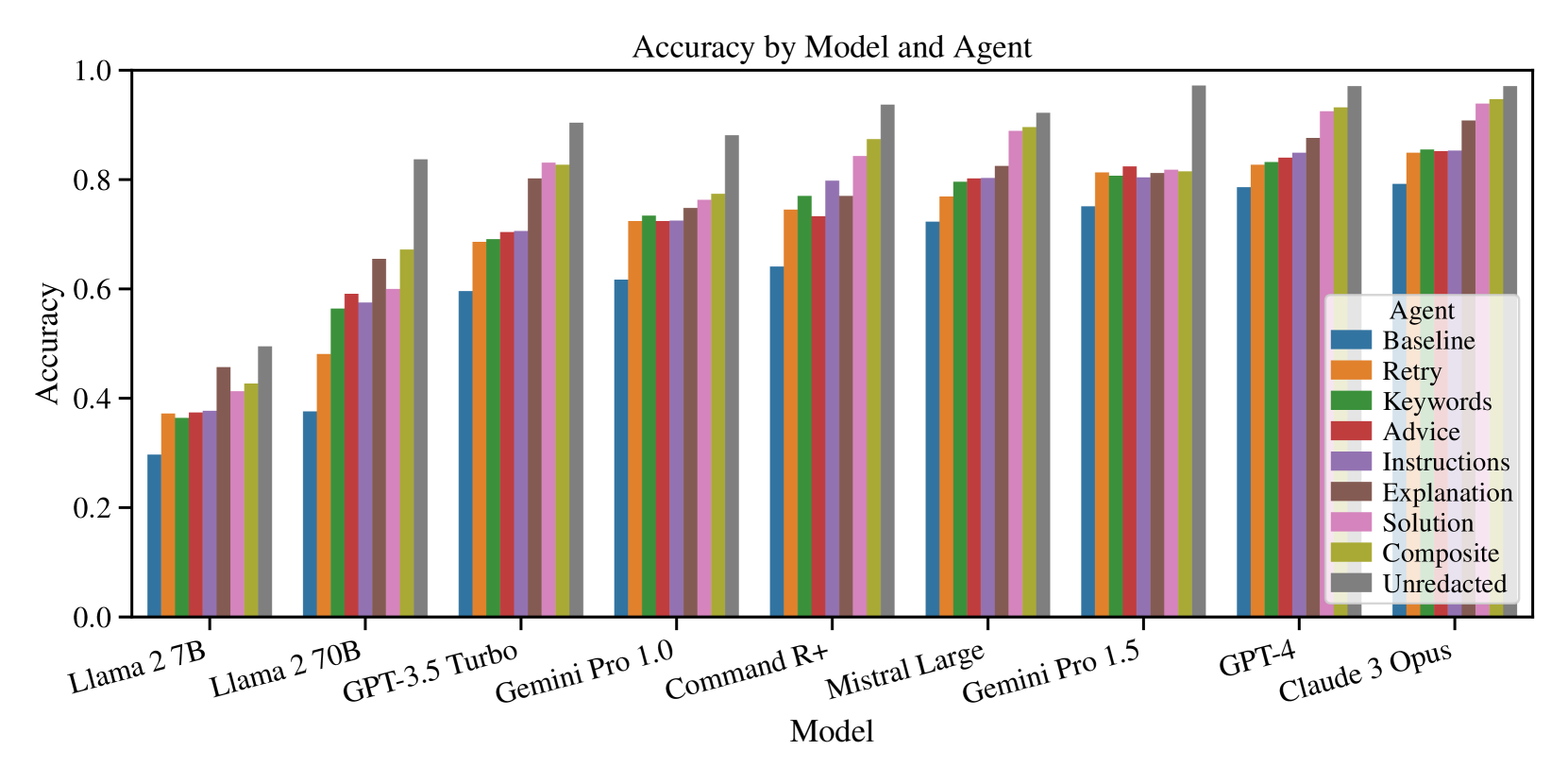

In terms of performance by model, every LLM that we tested demonstrated similar increases in accuracy across all types of self-reflection. In all cases, the improvement in performance was statistically significant ( $p<0.001$ ). See Figure 3 for a plot of accuracy by model and agent. See Table 4 for a numerical analysis of accuracy across all models.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Grouped Bar Chart: Accuracy by Model and Agent

### Overview

This is a grouped bar chart comparing the performance accuracy of nine different large language models (LLMs) across nine distinct "agent" configurations or prompting strategies. The chart visualizes how accuracy varies both by model and by the agent method applied.

### Components/Axes

* **Title:** "Accuracy by Model and Agent"

* **Y-Axis:** Labeled "Accuracy". Scale ranges from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Labeled "Model". Lists nine distinct models:

1. Llama 2 7B

2. Llama 2 70B

3. GPT-3.5 Turbo

4. Gemini Pro 1.0

5. Command R+

6. Mistral Large

7. Gemini Pro 1.5

8. GPT-4

9. Claude 3 Opus

* **Legend:** Positioned in the bottom-right quadrant, overlapping the bars for the last two models. It defines the "Agent" types by color:

* **Baseline:** Blue

* **Retry:** Orange

* **Keywords:** Green

* **Advice:** Red

* **Instructions:** Purple

* **Explanation:** Brown

* **Solution:** Pink

* **Composite:** Olive/Yellow-Green

* **Unredacted:** Gray

### Detailed Analysis

Below are the approximate accuracy values for each agent within each model group, derived from visual inspection of the bar heights. Values are estimated to the nearest 0.01.

**1. Llama 2 7B**

* **Trend:** Generally low accuracy, with a gradual increase from Baseline to Unredacted.

* **Values:** Baseline (~0.30), Retry (~0.37), Keywords (~0.36), Advice (~0.37), Instructions (~0.38), Explanation (~0.45), Solution (~0.41), Composite (~0.42), Unredacted (~0.50).

**2. Llama 2 70B**

* **Trend:** Significant improvement over the 7B model. A clear upward trend from Baseline to Unredacted, with a notable jump for the Unredacted agent.

* **Values:** Baseline (~0.38), Retry (~0.48), Keywords (~0.56), Advice (~0.59), Instructions (~0.57), Explanation (~0.65), Solution (~0.60), Composite (~0.67), Unredacted (~0.84).

**3. GPT-3.5 Turbo**

* **Trend:** Higher overall accuracy. A steady, step-wise increase across agents, with Unredacted performing best.

* **Values:** Baseline (~0.60), Retry (~0.69), Keywords (~0.69), Advice (~0.70), Instructions (~0.71), Explanation (~0.80), Solution (~0.83), Composite (~0.82), Unredacted (~0.90).

**4. Gemini Pro 1.0**

* **Trend:** Similar pattern to GPT-3.5 Turbo but with slightly lower peak accuracy for Unredacted.

* **Values:** Baseline (~0.61), Retry (~0.72), Keywords (~0.73), Advice (~0.72), Instructions (~0.72), Explanation (~0.75), Solution (~0.77), Composite (~0.78), Unredacted (~0.88).

**5. Command R+**

* **Trend:** Strong performance, with a pronounced peak for the Unredacted agent.

* **Values:** Baseline (~0.64), Retry (~0.74), Keywords (~0.77), Advice (~0.73), Instructions (~0.80), Explanation (~0.77), Solution (~0.84), Composite (~0.87), Unredacted (~0.94).

**6. Mistral Large**

* **Trend:** High and relatively flat performance across most agents, with Unredacted and Composite leading.

* **Values:** Baseline (~0.72), Retry (~0.77), Keywords (~0.79), Advice (~0.80), Instructions (~0.80), Explanation (~0.82), Solution (~0.89), Composite (~0.90), Unredacted (~0.92).

**7. Gemini Pro 1.5**

* **Trend:** Very high accuracy, with most agents clustering above 0.80. Unredacted is the clear outlier at the top.

* **Values:** Baseline (~0.75), Retry (~0.81), Keywords (~0.81), Advice (~0.82), Instructions (~0.81), Explanation (~0.81), Solution (~0.81), Composite (~0.81), Unredacted (~0.97).

**8. GPT-4**

* **Trend:** Consistently high accuracy across all agents, with a gradual increase towards the rightmost agents.

* **Values:** Baseline (~0.79), Retry (~0.83), Keywords (~0.83), Advice (~0.84), Instructions (~0.85), Explanation (~0.88), Solution (~0.93), Composite (~0.93), Unredacted (~0.97).

**9. Claude 3 Opus**

* **Trend:** The highest-performing model overall. Every self-reflecting agent scores above 0.80, with a tight cluster at the top end.

* **Values:** Baseline (~0.79), Retry (~0.85), Keywords (~0.85), Advice (~0.85), Instructions (~0.85), Explanation (~0.91), Solution (~0.94), Composite (~0.95), Unredacted (~0.97).

### Key Observations

1. **Model Performance Hierarchy:** There is a clear progression in overall accuracy from left to right on the x-axis. Llama 2 7B is the lowest-performing model, while Claude 3 Opus and GPT-4 are the highest.

2. **Agent Effect:** Within every single model group, the **Unredacted** agent (gray bar) achieves the highest accuracy. The **Baseline** agent (blue bar) is consistently the lowest or among the lowest.

3. **Performance Clustering:** For the top-performing models (Gemini Pro 1.5, GPT-4, Claude 3 Opus), the accuracy scores for many agents (Retry, Keywords, Advice, Instructions) are very similar, forming a plateau. The major differentiators at the top are the Explanation, Solution, Composite, and especially Unredacted agents.

4. **Non-Linear Improvement:** The jump in accuracy from Llama 2 7B to Llama 2 70B is substantial, particularly for the more advanced agents (Explanation, Composite, Unredacted), suggesting that model scale may amplify the benefits of these self-reflection types.

### Interpretation

This chart illustrates two key findings:

1. **Model Capability is Foundational:** The base capability of the model (represented by its position on the x-axis) sets the primary ceiling for performance. In this chart, no agent lifts a weaker model (e.g., Llama 2 7B) to the level of a stronger one (e.g., Claude 3 Opus).

2. **Agent Strategies Unlock Potential:** The choice of agent has a large and consistent effect on accuracy *within* a given model. The "Unredacted" agent yields the best results for every model, but it re-answers using its self-reflection without redaction, which can include the correct answer; it is therefore best read as an upper bound rather than a practical strategy. The "Baseline" agent, which is the model's first attempt before any self-reflection, is the lowest for every model.

The data suggests that the best practical results come from using the most capable model available **and** an information-rich self-reflection such as "Composite" or "Solution." The shrinking gaps between agents for the top models suggest they are approaching a performance ceiling on these exams, where further gains would require either better base models or fundamentally different approaches.

</details>

Figure 3: All LLMs we tested showed a similar pattern of improvement across self-reflection agents.

### 3.3 Performance by Exam

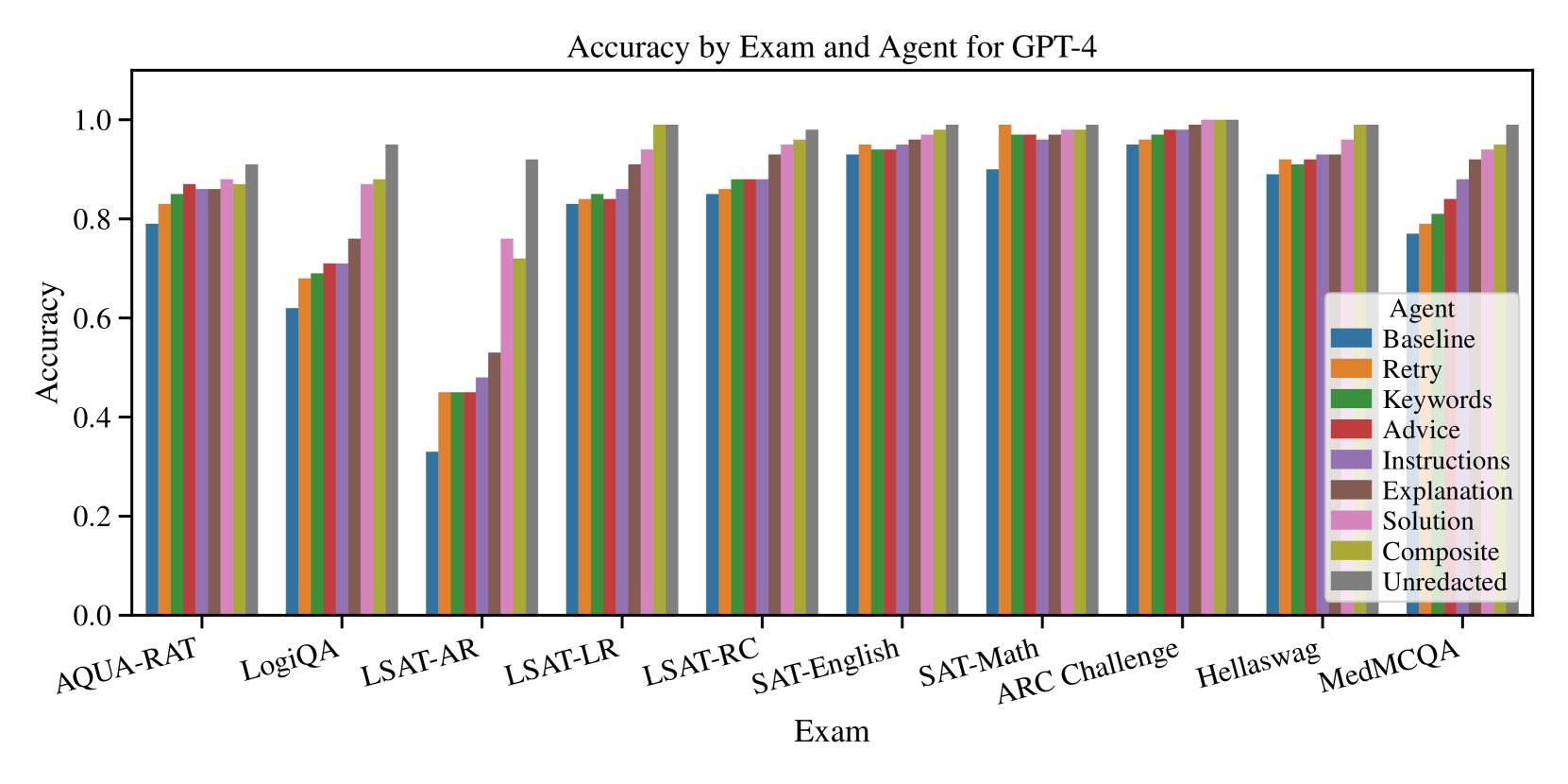

In terms of performance by exam, self-reflection substantially increased performance in some problem domains, while other domains were less affected. The largest improvement was on the LSAT-AR (Analytical Reasoning) exam; for GPT-4, accuracy rose from 0.33 at baseline to 0.76 with the Solution agent. Other exams, such as SAT English, showed much smaller effects (from 0.93 to 0.98 with the Composite agent), in part because their baseline accuracy was already close to 100%. See Figure 4 for a plot of accuracy by exam and agent for GPT-4, and Table 5 in the appendix for a numerical analysis of the results.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Grouped Bar Chart: Accuracy by Exam and Agent for GPT-4

### Overview

This is a grouped bar chart comparing the accuracy of GPT-4 across ten exams, using nine agents: a baseline and eight self-reflection variants. The chart shows how each type of self-reflection affects performance on different types of tasks.

### Components/Axes

* **Chart Title:** "Accuracy by Exam and Agent for GPT-4"

* **Y-Axis:** Labeled "Accuracy". The scale runs from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Labeled "Exam". It lists ten distinct exam/benchmark categories.

* **Legend:** Located in the bottom-right quadrant of the chart area. It is titled "Agent" and defines the color coding for the nine agents.

* **Baseline:** Blue

* **Retry:** Orange

* **Keywords:** Green

* **Advice:** Red

* **Instructions:** Purple

* **Explanation:** Brown

* **Solution:** Pink

* **Composite:** Olive/Yellow-Green

* **Unredacted:** Gray

### Detailed Analysis

Data is presented as clusters of nine bars (one per Agent) for each Exam. Values are approximate based on visual alignment with the y-axis.

**1. AQUA-RAT**

* **Trend:** General upward trend from Baseline to Unredacted, with a slight dip for Instructions.

* **Approximate Values:** Baseline ~0.79, Retry ~0.83, Keywords ~0.85, Advice ~0.87, Instructions ~0.86, Explanation ~0.86, Solution ~0.88, Composite ~0.87, Unredacted ~0.91.

**2. LogiQA**

* **Trend:** Steady, consistent increase from Baseline to Unredacted.

* **Approximate Values:** Baseline ~0.62, Retry ~0.68, Keywords ~0.69, Advice ~0.71, Instructions ~0.71, Explanation ~0.76, Solution ~0.87, Composite ~0.88, Unredacted ~0.95.

**3. LSAT-AR**

* **Trend:** Significant overall increase. Baseline is notably low. A large jump occurs between Instructions and Solution.

* **Approximate Values:** Baseline ~0.33, Retry ~0.45, Keywords ~0.45, Advice ~0.45, Instructions ~0.48, Explanation ~0.53, Solution ~0.76, Composite ~0.72, Unredacted ~0.92.

**4. LSAT-LR**

* **Trend:** Small gains through Instructions, followed by larger jumps at Explanation and Composite.

* **Approximate Values:** Baseline ~0.83, Retry ~0.84, Keywords ~0.85, Advice ~0.84, Instructions ~0.86, Explanation ~0.91, Solution ~0.94, Composite ~0.99, Unredacted ~0.99.

**5. LSAT-RC**

* **Trend:** Steady increase, with all agents performing above 0.8.

* **Approximate Values:** Baseline ~0.85, Retry ~0.86, Keywords ~0.88, Advice ~0.88, Instructions ~0.88, Explanation ~0.93, Solution ~0.95, Composite ~0.96, Unredacted ~0.98.

**6. SAT-English**

* **Trend:** High baseline performance with a slight, steady increase. All values are above 0.9.

* **Approximate Values:** Baseline ~0.93, Retry ~0.95, Keywords ~0.94, Advice ~0.94, Instructions ~0.95, Explanation ~0.96, Solution ~0.97, Composite ~0.98, Unredacted ~0.99.

**7. SAT-Math**

* **Trend:** Very high performance across the board. A slight dip for Instructions relative to adjacent bars.

* **Approximate Values:** Baseline ~0.90, Retry ~0.99, Keywords ~0.97, Advice ~0.97, Instructions ~0.96, Explanation ~0.97, Solution ~0.98, Composite ~0.98, Unredacted ~0.99.

**8. ARC Challenge**

* **Trend:** Extremely high and consistent performance. All bars are near or at 1.0.

* **Approximate Values:** Baseline ~0.95, Retry ~0.96, Keywords ~0.97, Advice ~0.98, Instructions ~0.98, Explanation ~0.99, Solution ~1.00, Composite ~1.00, Unredacted ~1.00.

**9. Hellaswag**

* **Trend:** High baseline with a gradual increase. Composite and Unredacted are near perfect.

* **Approximate Values:** Baseline ~0.89, Retry ~0.92, Keywords ~0.91, Advice ~0.92, Instructions ~0.93, Explanation ~0.93, Solution ~0.96, Composite ~0.99, Unredacted ~0.99.

**10. MedMCQA**

* **Trend:** Clear, strong upward trend from Baseline to Unredacted.

* **Approximate Values:** Baseline ~0.77, Retry ~0.79, Keywords ~0.81, Advice ~0.84, Instructions ~0.88, Explanation ~0.92, Solution ~0.94, Composite ~0.95, Unredacted ~0.99.

### Key Observations

1. **Agent Performance Hierarchy:** On every exam, the "Unredacted" agent (gray bar) achieves the highest or tied-for-highest accuracy. "Composite" (olive) is usually second-best, although "Solution" (pink) edges it out on AQUA-RAT and LSAT-AR. "Baseline" (blue) is always the lowest.

2. **Exam Difficulty Spectrum:** The exams show a wide range of baseline difficulty for GPT-4. LSAT-AR appears the hardest (baseline ~0.33), while ARC Challenge appears the easiest (baseline ~0.95).

3. **Impact of Self-Reflection:** The improvement from "Baseline" to "Unredacted" is dramatic on harder exams (e.g., LSAT-AR: +~0.59) but marginal on easier ones (e.g., ARC Challenge: +~0.05).

4. **Non-Linear Gains:** Performance does not always improve linearly with each agent. Large jumps often occur at the "Explanation", "Solution", or "Composite" agents, suggesting that these types of self-reflection are particularly effective.

5. **SAT-Math Anomaly:** The "Retry" agent (orange) shows a very high accuracy (~0.99) on SAT-Math, nearly matching the top performers, which is unusual compared to its performance on other exams.

### Interpretation

This chart shows that self-reflection can substantially improve GPT-4's accuracy. The "Baseline" agent (a single attempt with no self-reflection) leaves considerable headroom, especially on complex, structured reasoning tasks like the LSAT Analytical Reasoning section.

The lead of the "Unredacted" agent partly reflects its access to the full self-reflection, which can include the correct answer. The strong showing of the "Composite" and "Solution" agents suggests that richer guidance improves accuracy. The smaller gains on easier benchmarks (like ARC Challenge) indicate a performance ceiling where the type of self-reflection matters less because the task is well within the model's base capabilities.

The outlier on SAT-Math, where "Retry" performs exceptionally well, might indicate that for certain types of mathematical problems, simply retrying is highly effective, possibly by allowing the model to catch and correct computational or simple logical errors on a second attempt.

Overall, the chart supports the finding that self-reflection improves problem-solving performance across diverse domains, with the largest benefits on the hardest exams.

</details>

Figure 4: The increase in performance from self-reflection was larger for some exams and smaller for others.
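For a concrete view of the exam-level differences, the Python sketch below ranks the exams by the absolute gain of the Composite agent over Baseline, using the GPT-4 accuracies from Table 5. Comparing against Composite is an illustrative choice; any other agent's row could be substituted.

```python
# GPT-4 accuracies per exam, copied from Table 5 (Baseline and Composite rows).
BASELINE = {
    "AQUA-RAT": 0.79, "ARC Challenge": 0.95, "Hellaswag": 0.89,
    "LSAT-AR": 0.33, "LSAT-LR": 0.83, "LSAT-RC": 0.85, "LogiQA": 0.62,
    "MedMCQA": 0.77, "SAT-English": 0.93, "SAT-Math": 0.90,
}
COMPOSITE = {
    "AQUA-RAT": 0.87, "ARC Challenge": 1.00, "Hellaswag": 0.99,
    "LSAT-AR": 0.72, "LSAT-LR": 0.99, "LSAT-RC": 0.96, "LogiQA": 0.88,
    "MedMCQA": 0.95, "SAT-English": 0.98, "SAT-Math": 0.98,
}


def gains_by_exam(before: dict[str, float], after: dict[str, float]) -> list[tuple[str, float]]:
    """Return (exam, absolute accuracy gain) pairs, largest gain first."""
    # Round to two decimals to match the table's precision and hide float noise.
    gains = [(exam, round(after[exam] - before[exam], 2)) for exam in before]
    return sorted(gains, key=lambda pair: pair[1], reverse=True)


for exam, gain in gains_by_exam(BASELINE, COMPOSITE):
    print(f"{exam:<14} +{gain:.2f}")
```

With these values, LSAT-AR shows the largest gain (+0.39), while ARC Challenge and SAT-English show the smallest (+0.05).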

## 4 Discussion

### 4.1 Interpretation

Based on these results, all types of self-reflection improve the performance of LLM agents, and these effects were observed across every LLM we tested. Self-reflections that contain more information (e.g., Instructions, Explanation, and Solution) generally outperform types of self-reflection with limited information (e.g., Retry, Keywords, and Advice).

The gap in accuracy between the self-reflecting agents and the Unredacted agent indicates that we effectively eliminated direct answer leakage from the self-reflections. However, the feedback generated by the Instructions, Explanation, Solution, and Composite agents clearly provides indirect guidance toward the correct answer without directly giving the answer away.
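This section does not describe how the self-reflections were redacted. Purely as a hypothetical sketch of what "direct answer leakage" means, the Python function below masks literal mentions of the correct choice (its text, and its letter when it follows an answer cue) with the `[REDACTED]` marker used in the re-answer prompt of Figure 8. The function name `redact_answer` and its matching rules are assumptions, not the authors' procedure.

```python
import re

REDACTED = "[REDACTED]"


def redact_answer(reflection: str, letter: str, choice_text: str) -> str:
    """Mask literal mentions of the correct answer in a self-reflection (hypothetical helper)."""
    # 1. Mask the text of the correct choice, ignoring case and any trailing period.
    core = choice_text.strip().rstrip(".")
    masked = re.sub(re.escape(core), REDACTED, reflection, flags=re.IGNORECASE)
    # 2. Mask the choice letter only where it follows an answer cue, such as
    #    "Correct Answer: C" or the action format Answer("C").
    #    Cue words are case-insensitive; the letter itself is case-sensitive so
    #    that an article such as "a" is not mistaken for choice "A".
    cue = re.compile(
        r"((?i:answer|choice|option)\s*(?:(?i:is)|:)?\s*\(?\s*[\"']?)"
        + re.escape(letter)
        + r"\b"
    )
    return cue.sub(lambda m: m.group(1) + REDACTED, masked)


reflection = 'Correct Answer: C. Planetary days will become shorter, so Answer("C") is right.'
print(redact_answer(reflection, "C", "Planetary days will become shorter."))
# Correct Answer: [REDACTED]. [REDACTED], so Answer("[REDACTED]") is right.
```

Because a filter like this only catches literal mentions, paraphrased hints pass through untouched, which is the kind of indirect guidance described above.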

Interestingly, the Retry agent significantly improved performance across all LLMs. This suggests that merely knowing it previously made a mistake improves an agent's performance when re-answering the question. We hypothesize that this is because the agent is either more diligent in its second attempt or chooses the next most likely answer based on its re-answer CoT. Further investigation is needed to distinguish between these explanations.

### 4.2 Limitations

First, the LLM agent we created for this experiment only solved single-step problems. The real value of LLM agents lies in their ability to solve complex multi-step problems by iteratively choosing actions that lead them toward their goal. As a result, this experiment does not fully demonstrate the potential of self-reflecting LLM agents.

Second, API response errors may have introduced a small amount of error into our results. These errors typically occurred when the questions being asked triggered content-safety filters. In most cases, the resulting error in an agent's reported accuracy was likely less than 1%; however, for the Gemini 1.0 Pro and Mistral Large models, it could be as high as 2.8%.

Third, the top-performing LLMs scored above 90% accuracy on most exams. As a result, their score increases were compressed near the upper limit of 100% (i.e., a perfect score), which makes it difficult to accurately assess the size of the improvement. Our analysis would therefore benefit from exams with a higher level of difficulty.

Finally, for all models and agents, the LSAT-AR (Analytical Reasoning) exam was the most difficult and also benefited the most from self-reflection. This large increase on a single exam could skew the aggregate results across all exams. Using a set of exams with more uniform difficulty would reduce this skew.
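To gauge how much a single exam can move the aggregate, the Python sketch below recomputes GPT-4's mean Baseline-to-Composite gain from the per-exam values in Table 5, with and without LSAT-AR. It assumes each exam carries equal weight, which is consistent with Table 3 (the Baseline row of Table 5 averages to 0.786).

```python
from statistics import mean

# GPT-4 per-exam accuracies from Table 5 (Baseline and Composite rows).
BASELINE = {
    "AQUA-RAT": 0.79, "ARC Challenge": 0.95, "Hellaswag": 0.89,
    "LSAT-AR": 0.33, "LSAT-LR": 0.83, "LSAT-RC": 0.85, "LogiQA": 0.62,
    "MedMCQA": 0.77, "SAT-English": 0.93, "SAT-Math": 0.90,
}
COMPOSITE = {
    "AQUA-RAT": 0.87, "ARC Challenge": 1.00, "Hellaswag": 0.99,
    "LSAT-AR": 0.72, "LSAT-LR": 0.99, "LSAT-RC": 0.96, "LogiQA": 0.88,
    "MedMCQA": 0.95, "SAT-English": 0.98, "SAT-Math": 0.98,
}


def mean_gain(before: dict[str, float], after: dict[str, float], exclude: set[str] = frozenset()) -> float:
    """Equal-weight mean accuracy after minus before, skipping excluded exams."""
    exams = [exam for exam in before if exam not in exclude]
    return mean(after[e] for e in exams) - mean(before[e] for e in exams)


all_exams = mean_gain(BASELINE, COMPOSITE)
without_ar = mean_gain(BASELINE, COMPOSITE, exclude={"LSAT-AR"})
print(f"All exams:       +{all_exams:.3f}")
print(f"Without LSAT-AR: +{without_ar:.3f}")
```

On these numbers, excluding LSAT-AR lowers the mean gain from about 0.146 to about 0.119.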

### 4.3 Implications

Our research builds upon prior work on LLM agents and self-reflection.

It has practical implications for AI engineers who are building agentic LLM systems. Agents that self-reflect on their own mistakes, based on error signals from the environment, may learn to avoid similar mistakes in the future. This could also help prevent the common issue of agents getting stuck in unproductive loops in which they repeat the same mistake indefinitely.
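As a rough illustration of this practical implication, the Python sketch below wires the answer, reflect, and re-answer steps into a loop driven by an environment's error signal. The `ReflectiveAgent` class and its `answer`, `reflect`, and `is_correct` hooks are hypothetical stand-ins for LLM calls and an environment check; the `max_attempts` cap is one simple guard against an agent repeating itself indefinitely, not something this study evaluated.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Attempt:
    answer: str
    reflection: Optional[str] = None  # None when the attempt was correct


@dataclass
class ReflectiveAgent:
    # Hypothetical hooks; in a real agent these would wrap LLM calls and an environment check.
    answer: Callable[[str, list[str]], str]             # (problem, reflections) -> answer
    reflect: Callable[[str, str, str], str]             # (problem, wrong answer, feedback) -> reflection
    is_correct: Callable[[str, str], tuple[bool, str]]  # (problem, answer) -> (ok, feedback)
    max_attempts: int = 3                               # hard cap so the agent cannot loop forever
    history: list[Attempt] = field(default_factory=list)

    def solve(self, problem: str) -> tuple[str, bool]:
        reflections: list[str] = []
        proposed = ""
        for _ in range(self.max_attempts):
            proposed = self.answer(problem, reflections)
            ok, feedback = self.is_correct(problem, proposed)
            if ok:
                self.history.append(Attempt(proposed))
                return proposed, True
            # Reflect on the failed attempt and carry the guidance into the next try.
            note = self.reflect(problem, proposed, feedback)
            reflections.append(note)
            self.history.append(Attempt(proposed, note))
        return proposed, False


# Toy usage: a fake "model" that avoids any choice a previous reflection ruled out.
CHOICES = ["A", "B", "C", "D"]

def toy_answer(problem: str, reflections: list[str]) -> str:
    ruled_out = {note.split()[-1] for note in reflections}
    return next(c for c in CHOICES if c not in ruled_out)

def toy_reflect(problem: str, wrong: str, feedback: str) -> str:
    return f"{feedback}; avoid {wrong}"

def toy_check(problem: str, proposed: str) -> tuple[bool, str]:
    return proposed == "C", "incorrect"

agent = ReflectiveAgent(toy_answer, toy_reflect, toy_check)
print(agent.solve("toy problem"))  # ('C', True)
```

The toy model's reflections are deliberately trivial; the point is the control flow, where each failure produces guidance that the next attempt can use and the loop stops after a bounded number of tries.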

In addition, our research has theoretical implications for AI researchers studying metacognition in LLMs. If LLMs are able to self-reflect on their own CoT, other similar metacognitive processes may also be leveraged to improve their performance.

### 4.4 Future Research

First, we recommend repeating this experiment using a more complex set of problems. Using problems as difficult as, or more difficult than, the LSAT-AR exam would better measure the performance improvement from self-reflection by avoiding compression of the scores near 100% accuracy.

Second, we recommend performing an experiment using multi-step problems. This would allow the agents to receive feedback from their environment after each step and use it as an external signal for error correction. It would also demonstrate the potential of self-reflection on long-horizon problems.

Third, we recommend repeating this experiment while providing the agents with access to external tools. This would allow us to see how error signals from the tools benefit self-reflection. For example, we could observe how an agent adapts to error messages from a Python interpreter or to low-ranked results from a search engine.
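To illustrate the kind of tool error signal meant here, the Python sketch below runs a candidate program in a fresh interpreter process and, if it fails, folds the interpreter's error message into a reflection request. `run_candidate` and `build_reflection_request` are hypothetical helpers, and the prompt wording is an assumption; only the `subprocess` and `sys` calls are standard library.

```python
import subprocess
import sys


def run_candidate(code: str, timeout: float = 10.0) -> tuple[bool, str]:
    """Run `code` in a separate Python interpreter; return (succeeded, stderr)."""
    try:
        result = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return False, f"TimeoutExpired: program ran longer than {timeout} seconds"
    return result.returncode == 0, result.stderr


def build_reflection_request(task: str, code: str, error: str) -> str:
    """Hypothetical prompt asking the agent to reflect on a tool error."""
    return (
        f"Task: {task}\n"
        f"Your code:\n{code}\n"
        f"The Python interpreter reported:\n{error.strip()}\n"
        "Explain why the code failed and give advice to avoid this error in the future."
    )


candidate = "print(1 / 0)"
ok, err = run_candidate(candidate)
if not ok:
    print(build_reflection_request("Compute the ratio safely.", candidate, err))
```

Running the candidate in a separate process keeps a crash or hang in generated code from taking down the agent itself, and the captured traceback becomes the external error signal for self-reflection.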

Fourth, we recommend repeating this experiment with agents that possess external memory. Having an agent re-answer the same questions using its self-reflections is useful only as an experimental setup. Real-world agents need to store self-reflections and retrieve them (e.g., using Retrieval Augmented Generation) when encountering similar, but not necessarily identical, problems.
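A minimal sketch of such a memory, assuming a bag-of-words cosine similarity in place of the embedding model and vector store that a real retrieval-augmented pipeline would typically use. The `ReflectionMemory` class, its methods, and the `min_score` threshold are hypothetical.

```python
import math
import re
from collections import Counter


def _vectorize(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ReflectionMemory:
    def __init__(self) -> None:
        self._entries: list[tuple[Counter, str]] = []

    def store(self, problem: str, reflection: str) -> None:
        """Save a self-reflection keyed by the problem that triggered it."""
        self._entries.append((_vectorize(problem), reflection))

    def retrieve(self, problem: str, k: int = 1, min_score: float = 0.2) -> list[str]:
        """Return up to k reflections whose source problems are most similar."""
        query = _vectorize(problem)
        scored = sorted(
            ((_cosine(query, vec), text) for vec, text in self._entries),
            key=lambda pair: pair[0],
            reverse=True,
        )
        return [text for score, text in scored[:k] if score >= min_score]


memory = ReflectionMemory()
memory.store(
    "Multiply the number of letters in the city and state where Iowa State University is located.",
    "Double-check multiplication; the product of two numbers is not their sum.",
)
memory.store(
    "Which is the most likely effect of faster planetary rotation?",
    "Relate rotation speed to the length of a day.",
)
print(memory.retrieve(
    "Multiply the number of letters in the city and state where Ohio State University is located."
))
```

The similar-but-not-identical query retrieves the arithmetic reflection, while the `min_score` threshold keeps unrelated reflections out of the prompt.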

Finally, we recommend a survey of self-reflection across a wider set of LLMs, agent types, and problem domains. This would help us better characterize the effects of self-reflection and provide further empirical evidence for the potential benefits of self-reflecting LLM agents.

## 5 Conclusion

In this study, we investigated the effects of self-reflection in LLM agents on problem-solving tasks. Our results indicate that LLMs are able to reflect upon their own CoT and produce guidance that can significantly improve problem-solving performance. These performance improvements were observed across multiple LLMs, self-reflection types, and problem domains. This research has practical implications for AI engineers building agentic AI systems as well as theoretical implications for AI researchers studying metacognition in LLMs.

## 6 Acknowledgements

Funding for this research was provided by Microsoft and the Renze AI Research Institute.

## References

- [1] T. Kojima, S. S. Gu, M. Reid, Y. Matsuo, and Y. Iwasawa, “Large language models are zero-shot reasoners,” in Advances in Neural Information Processing Systems, vol. 35, 5 2022, pp. 22199–22213. [Online]. Available: https://arxiv.org/abs/2205.11916

- [2] J. Wei, X. Wang, D. Schuurmans, M. Bosma, B. Ichter, F. Xia, E. Chi, Q. Le, and D. Zhou, “Chain-of-thought prompting elicits reasoning in large language models,” arXiv, 1 2022. [Online]. Available: https://arxiv.org/abs/2201.11903

- [3] Y. Zhou, A. I. Muresanu, Z. Han, K. Paster, S. Pitis, H. Chan, and J. Ba, “Large language models are human-level prompt engineers,” The Eleventh International Conference on Learning Representations, 11 2023. [Online]. Available: https://arxiv.org/abs/2211.01910

- [4] Z. Ji, N. Lee, R. Frieske, T. Yu, D. Su, Y. Xu, E. Ishii, Y. Bang, D. Chen, H. S. Chan, W. Dai, A. Madotto, and P. Fung, “Survey of hallucination in natural language generation,” ACM Computing Surveys, vol. 55, 2 2022. [Online]. Available: http://dx.doi.org/10.1145/3571730

- [5] M. U. Hadi, Q. Al-Tashi, R. Qureshi, A. Shah, A. Muneer, M. Irfan, A. Zafar, M. B. Shaikh, N. Akhtar, J. Wu, and S. Mirjalili, “A survey on large language models: Applications, challenges, limitations, and practical usage,” Authorea Preprints, 10 2023. [Online]. Available: https://doi.org/10.36227/techrxiv.23589741.v1

- [6] A. Payandeh, D. Pluth, J. Hosier, X. Xiao, and V. K. Gurbani, “How susceptible are LLMs to logical fallacies?” arXiv, 8 2023. [Online]. Available: https://arxiv.org/abs/2308.09853v1

- [7] J. Huang, X. Chen, S. Mishra, H. S. Zheng, A. W. Yu, X. Song, and D. Zhou, “Large language models cannot self-correct reasoning yet,” arXiv, 10 2023. [Online]. Available: https://arxiv.org/abs/2310.01798

- [8] Z. Ji, T. Yu, Y. Xu, N. Lee, E. Ishii, and P. Fung, “Towards mitigating hallucination in large language models via self-reflection,” arXiv, 10 2023. [Online]. Available: https://arxiv.org/abs/2310.06271

- [9] S. Minaee, T. Mikolov, N. Nikzad, M. Chenaghlu, R. Socher, X. Amatriain, and J. Gao, “Large language models: A survey,” arXiv, 2 2024. [Online]. Available: https://arxiv.org/abs/2402.06196

- [10] N. Shinn, F. Cassano, E. Berman, A. Gopinath, K. Narasimhan, and S. Yao, “Reflexion: Language agents with verbal reinforcement learning,” arXiv, 3 2023. [Online]. Available: https://arxiv.org/abs/2303.11366

- [11] L. Pan, M. Saxon, W. Xu, D. Nathani, X. Wang, and W. Y. Wang, “Automatically correcting large language models: Surveying the landscape of diverse self-correction strategies,” arXiv, 8 2023. [Online]. Available: https://arxiv.org/abs/2308.03188

- [12] A. Madaan, N. Tandon, P. Gupta, S. Hallinan, L. Gao, S. Wiegreffe, U. Alon, N. Dziri, S. Prabhumoye, Y. Yang, S. Gupta, B. P. Majumder, K. Hermann, S. Welleck, A. Yazdanbakhsh, and P. Clark, “Self-Refine: Iterative refinement with self-feedback,” arXiv, 3 2023. [Online]. Available: https://arxiv.org/abs/2303.17651v2

- [13] J. Toy, J. MacAdam, and P. Tabor, “Metacognition is all you need? Using introspection in generative agents to improve goal-directed behavior,” arXiv, 1 2024. [Online]. Available: https://arxiv.org/abs/2401.10910

- [14] Y. Wang and Y. Zhao, “Metacognitive prompting improves understanding in large language models,” arXiv, 8 2023. [Online]. Available: https://arxiv.org/abs/2308.05342

- [15] A. Asai, Z. Wu, Y. Wang, A. Sil, and H. Hajishirzi, “Self-RAG: Learning to retrieve, generate, and critique through self-reflection,” arXiv, 10 2023. [Online]. Available: https://arxiv.org/abs/2310.11511v1

- [16] G. Wang, Y. Xie, Y. Jiang, A. Mandlekar, C. Xiao, Y. Zhu, L. Fan, and A. Anandkumar, “Voyager: An open-ended embodied agent with large language models,” arXiv, 5 2023. [Online]. Available: https://arxiv.org/abs/2305.16291v2

- [17] Z. Xi, W. Chen, X. Guo, W. He, Y. Ding, B. Hong, M. Zhang, J. Wang, S. Jin, E. Zhou, R. Zheng, X. Fan, X. Wang, L. Xiong, Y. Zhou, W. Wang, C. Jiang, Y. Zou, X. Liu, Z. Yin, S. Dou, R. Weng, W. Cheng, Q. Zhang, W. Qin, Y. Zheng, X. Qiu, X. Huang, and T. Gui, “The rise and potential of large language model based agents: A survey,” arXiv, 9 2023. [Online]. Available: https://arxiv.org/abs/2309.07864v3

- [18] S. Yao, J. Zhao, D. Yu, N. Du, I. Shafran, K. Narasimhan, and Y. Cao, “ReAct: Synergizing reasoning and acting in language models,” arXiv, 10 2022. [Online]. Available: https://arxiv.org/abs/2210.03629

- [19] N. Miao, Y. W. Teh, and T. Rainforth, “SelfCheck: Using LLMs to zero-shot check their own step-by-step reasoning,” arXiv, 8 2023. [Online]. Available: https://arxiv.org/abs/2308.00436v3

- [20] R. Nakano, J. Hilton, S. Balaji, J. Wu, L. Ouyang, C. Kim, C. Hesse, S. Jain, V. Kosaraju, W. Saunders, X. Jiang, K. Cobbe, T. Eloundou, G. Krueger, K. Button, M. Knight, B. Chess, and J. Schulman, “WebGPT: Browser-assisted question-answering with human feedback,” arXiv, 12 2021. [Online]. Available: https://arxiv.org/abs/2112.09332v3

- [21] T. Schick, J. Dwivedi-Yu, R. Dessì, R. Raileanu, M. Lomeli, L. Zettlemoyer, N. Cancedda, and T. Scialom, “Toolformer: Language models can teach themselves to use tools,” arXiv, 2 2023. [Online]. Available: https://arxiv.org/abs/2302.04761v1

- [22] P. Lewis et al., “Retrieval-augmented generation for knowledge-intensive NLP tasks,” arXiv, 5 2020. [Online]. Available: https://arxiv.org/abs/2005.11401

- [23] Y. Gao, Y. Xiong, X. Gao, K. Jia, J. Pan, Y. Bi, Y. Dai, J. Sun, M. Wang, and H. Wang, “Retrieval-augmented generation for large language models: A survey,” arXiv, 12 2023. [Online]. Available: https://arxiv.org/abs/2312.10997v5

- [24] W. Zhong, L. Guo, Q. Gao, H. Ye, and Y. Wang, “MemoryBank: Enhancing large language models with long-term memory,” Proceedings of the AAAI Conference on Artificial Intelligence, vol. 38, pp. 19724–19731, 5 2023. [Online]. Available: https://arxiv.org/abs/2305.10250v3

- [25] Z. Wang, A. Liu, H. Lin, J. Li, X. Ma, and Y. Liang, “RAT: Retrieval augmented thoughts elicit context-aware reasoning in long-horizon generation,” arXiv, 3 2024. [Online]. Available: https://arxiv.org/abs/2403.05313

- [26] G. Tyen, H. Mansoor, V. Cărbune, P. Chen, and T. Mak, “LLMs cannot find reasoning errors, but can correct them!” arXiv, 11 2023. [Online]. Available: https://arxiv.org/abs/2311.08516v2

- [27] P. Clark, I. Cowhey, O. Etzioni, T. Khot, A. Sabharwal, C. Schoenick, and O. Tafjord, “Think you have solved question answering? Try ARC, the AI2 Reasoning Challenge,” arXiv, 3 2018. [Online]. Available: https://arxiv.org/abs/1803.05457

- [28] R. Zellers, A. Holtzman, Y. Bisk, A. Farhadi, and Y. Choi, “HellaSwag: Can a machine really finish your sentence?” in Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, 2019. [Online]. Available: https://arxiv.org/abs/1905.07830

- [29] A. Pal, L. K. Umapathi, and M. Sankarasubbu, “MedMCQA: A large-scale multi-subject multi-choice dataset for medical domain question answering,” in Proceedings of the Conference on Health, Inference, and Learning. PMLR, 2022, pp. 248–260. [Online]. Available: https://proceedings.mlr.press/v174/pal22a.html

- [30] W. Zhong, R. Cui, Y. Guo, Y. Liang, S. Lu, Y. Wang, A. Saied, W. Chen, and N. Duan, “AGIEval: A human-centric benchmark for evaluating foundation models,” arXiv, 4 2023. [Online]. Available: https://arxiv.org/abs/2304.06364

- [31] J. Liu, L. Cui, H. Liu, D. Huang, Y. Wang, and Y. Zhang, “LogiQA: A challenge dataset for machine reading comprehension with logical reasoning,” in International Joint Conference on Artificial Intelligence, 2020. [Online]. Available: https://arxiv.org/abs/2007.08124

- [32] S. Wang, Z. Liu, W. Zhong, M. Zhou, Z. Wei, Z. Chen, and N. Duan, “From LSAT: The progress and challenges of complex reasoning,” IEEE/ACM Transactions on Audio, Speech and Language Processing, vol. 30, pp. 2201–2216, 8 2021. [Online]. Available: https://doi.org/10.1109/TASLP.2022.3164218

- [33] Anthropic, “Introducing the next generation of Claude,” 2024. [Online]. Available: https://www.anthropic.com/news/claude-3-family

- [34] Anthropic, “The Claude 3 model family: Opus, Sonnet, Haiku,” 2024. [Online]. Available: https://www.anthropic.com/claude-3-model-card

- [35] Cohere, “Command R+,” 2024. [Online]. Available: https://docs.cohere.com/docs/command-r-plus

- [36] Cohere, “Model card for C4AI Command R+,” 2024. [Online]. Available: https://huggingface.co/CohereForAI/c4ai-command-r-plus

- [37] S. Pichai and D. Hassabis, “Introducing Gemini: Google’s most capable AI model yet,” 2023. [Online]. Available: https://blog.google/technology/ai/google-gemini-ai/

- [38] Gemini-Team, “Gemini: A family of highly capable multimodal models,” arXiv, 12 2023.

- [39] S. Pichai and D. Hassabis, “Introducing Gemini 1.5, Google’s next-generation AI model,” 2024. [Online]. Available: https://blog.google/technology/ai/google-gemini-next-generation-model-february-2024/

- [40] Gemini-Team, “Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context,” 2024. [Online]. Available: https://arxiv.org/abs/2403.05530

- [41] OpenAI, “Introducing ChatGPT,” 11 2022. [Online]. Available: https://openai.com/blog/chatgpt

- [42] OpenAI, “Models - OpenAI API.” [Online]. Available: https://platform.openai.com/docs/models/gpt-3-5-turbo

- [43] OpenAI, “GPT-4,” 3 2023. [Online]. Available: https://openai.com/research/gpt-4

- [44] OpenAI, “GPT-4 technical report,” arXiv, 3 2023. [Online]. Available: https://arxiv.org/abs/2303.08774

- [45] Meta, “Meta and Microsoft introduce the next generation of Llama,” 2023. [Online]. Available: https://about.meta.com/news/2023/07/llama-2/

- [46] H. Touvron, L. Martin, K. Stone, P. Albert, A. Almahairi, Y. Babaei, N. Bashlykov, S. Batra, P. Bhargava, S. Bhosale, D. Bikel, L. Blecher, C. C. Ferrer, M. Chen, G. Cucurull, D. Esiobu, J. Fernandes, J. Fu, W. Fu, B. Fuller, C. Gao, V. Goswami, N. Goyal, A. Hartshorn, S. Hosseini, R. Hou, H. Inan, M. Kardas, V. Kerkez, M. Khabsa, I. Kloumann, A. Korenev, P. S. Koura, M.-A. Lachaux, T. Lavril, J. Lee, D. Liskovich, Y. Lu, Y. Mao, X. Martinet, T. Mihaylov, P. Mishra, I. Molybog, Y. Nie, A. Poulton, J. Reizenstein, R. Rungta, K. Saladi, A. Schelten, R. Silva, E. M. Smith, R. Subramanian, X. E. Tan, B. Tang, R. Taylor, A. Williams, J. X. Kuan, P. Xu, Z. Yan, I. Zarov, Y. Zhang, A. Fan, M. Kambadur, S. Narang, A. Rodriguez, R. Stojnic, S. Edunov, and T. Scialom, “Llama 2: Open foundation and fine-tuned chat models,” arXiv, 7 2023. [Online]. Available: https://arxiv.org/abs/2307.09288

- [47] Mistral-AI-Team, “Au Large,” 2024. [Online]. Available: https://mistral.ai/news/mistral-large/

- [48] S. M. Bsharat, A. Myrzakhan, and Z. Shen, “Principled instructions are all you need for questioning LLaMA-1/2, GPT-3.5/4,” arXiv, 12 2023. [Online]. Available: https://arxiv.org/abs/2312.16171

- [49] M. Renze and E. Guven, “The benefits of a concise chain of thought on problem-solving in large language models,” arXiv, 1 2024. [Online]. Available: https://arxiv.org/abs/2401.05618v1

- [50] M. Renze and E. Guven, “The effect of sampling temperature on problem solving in large language models,” arXiv, 2 2024. [Online]. Available: https://arxiv.org/abs/2402.05201v1

- [51] Q. McNemar, “Note on the sampling error of the difference between correlated proportions or percentages,” Psychometrika, vol. 12, pp. 153–157, 6 1947. [Online]. Available: https://doi.org/10.1007/BF02295996

## Appendix A Appendix

### A.1 Results

Table 3: Comparison of accuracy by agent for GPT-4

| Agent | Accuracy | Difference | Statistic | p-value |
| --- | --- | --- | --- | --- |
| Baseline | 0.786 | N/A | N/A | N/A |
| Retry | 0.827 | 0.041 | 39.024 | $<0.001$ |
| Keywords | 0.832 | 0.046 | 44.022 | $<0.001$ |
| Advice | 0.840 | 0.054 | 52.019 | $<0.001$ |
| Instructions | 0.849 | 0.063 | 61.016 | $<0.001$ |
| Explanation | 0.876 | 0.090 | 88.011 | $<0.001$ |
| Solution | 0.925 | 0.139 | 137.007 | $<0.001$ |
| Composite | 0.932 | 0.146 | 144.007 | $<0.001$ |
| Unredacted | 0.971 | 0.185 | 183.005 | $<0.001$ |
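The Statistic column of Table 3 is consistent with McNemar's test [51] with continuity correction, under two assumptions inferred from the tables rather than stated here: the GPT-4 run covers 1,000 questions (so a 0.041 gain means 41 newly correct answers), and no question answered correctly at baseline became incorrect (c = 0), since only incorrectly answered questions were re-attempted. The Python sketch below reproduces the column under those assumptions; `mcnemar_cc` is a hypothetical helper name.

```python
import math


def mcnemar_cc(b: int, c: int) -> tuple[float, float]:
    """McNemar's chi-square test with continuity correction.

    b: questions wrong at baseline but right after self-reflection
    c: questions right at baseline but wrong after self-reflection
    Returns (statistic, p-value) for a chi-square distribution with 1 degree of freedom.
    """
    statistic = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of chi-square with 1 df: P(X > x) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value


N_QUESTIONS = 1000  # assumption inferred from Table 3 (gain of 0.041 <-> 41 questions)
GAINS = {
    "Retry": 0.041, "Keywords": 0.046, "Advice": 0.054, "Instructions": 0.063,
    "Explanation": 0.090, "Solution": 0.139, "Composite": 0.146, "Unredacted": 0.185,
}

for agent, gain in GAINS.items():
    b = round(gain * N_QUESTIONS)
    statistic, p_value = mcnemar_cc(b, c=0)  # c = 0: only wrong answers were re-attempted
    print(f"{agent:<12} b={b:<4} statistic={statistic:.3f} p={p_value:.1e}")
```

With c = 0 the statistic reduces to (b - 1)^2 / b, which is why each value sits just below the number of newly corrected questions.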

Table 4: Accuracy by model and agent

| Model | Baseline | Retry | Keywords | Advice | Instructions | Explanation | Solution | Composite | Unredacted |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Claude 3 Opus | 0.792 | 0.849 | 0.855 | 0.852 | 0.853 | 0.908 | 0.939 | 0.947 | 0.971 |
| Cohere Command R+ | 0.641 | 0.745 | 0.770 | 0.733 | 0.798 | 0.770 | 0.843 | 0.874 | 0.937 |
| Gemini 1.0 Pro | 0.617 | 0.724 | 0.734 | 0.724 | 0.725 | 0.748 | 0.763 | 0.774 | 0.881 |
| Gemini 1.5 Pro | 0.751 | 0.813 | 0.807 | 0.824 | 0.804 | 0.812 | 0.818 | 0.815 | 0.972 |
| GPT-3.5 Turbo | 0.596 | 0.686 | 0.691 | 0.704 | 0.706 | 0.802 | 0.831 | 0.827 | 0.904 |
| GPT-4 | 0.786 | 0.827 | 0.832 | 0.840 | 0.849 | 0.876 | 0.925 | 0.932 | 0.971 |
| Llama 2 70B | 0.376 | 0.481 | 0.564 | 0.591 | 0.575 | 0.655 | 0.600 | 0.672 | 0.837 |
| Llama 2 7B | 0.297 | 0.372 | 0.364 | 0.374 | 0.377 | 0.457 | 0.413 | 0.427 | 0.495 |
| Mistral Large | 0.723 | 0.769 | 0.796 | 0.802 | 0.803 | 0.825 | 0.889 | 0.896 | 0.922 |

Table 5: Accuracy by agent and exam for GPT-4

| Agent | AQUA-RAT | ARC Challenge | Hellaswag | LSAT-AR | LSAT-LR | LSAT-RC | LogiQA | MedMCQA | SAT-English | SAT-Math |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Baseline | 0.79 | 0.95 | 0.89 | 0.33 | 0.83 | 0.85 | 0.62 | 0.77 | 0.93 | 0.90 |
| Retry | 0.83 | 0.96 | 0.92 | 0.45 | 0.84 | 0.86 | 0.68 | 0.79 | 0.95 | 0.99 |
| Keywords | 0.85 | 0.97 | 0.91 | 0.45 | 0.85 | 0.88 | 0.69 | 0.81 | 0.94 | 0.97 |
| Advice | 0.87 | 0.98 | 0.92 | 0.45 | 0.84 | 0.88 | 0.71 | 0.84 | 0.94 | 0.97 |
| Instructions | 0.86 | 0.98 | 0.93 | 0.48 | 0.86 | 0.88 | 0.71 | 0.88 | 0.95 | 0.96 |
| Explanation | 0.86 | 0.99 | 0.93 | 0.53 | 0.91 | 0.93 | 0.76 | 0.92 | 0.96 | 0.97 |
| Solution | 0.88 | 1.00 | 0.96 | 0.76 | 0.94 | 0.95 | 0.87 | 0.94 | 0.97 | 0.98 |
| Composite | 0.87 | 1.00 | 0.99 | 0.72 | 0.99 | 0.96 | 0.88 | 0.95 | 0.98 | 0.98 |
| Unredacted | 0.91 | 1.00 | 0.99 | 0.92 | 0.99 | 0.98 | 0.95 | 0.99 | 0.99 | 0.99 |

### A.2 Data

{ "source": "arc/arc-challenge-test", "source_id": 1, "topic": "Science", "context": "", "question": "An astronomer observes that a planet rotates faster after a meteorite impact. Which is the most likely effect of this increase in rotation?", "choices": { "A": "Planetary density will decrease.", "B": "Planetary years will become longer.", "C": "Planetary days will become shorter.", "D": "Planetary gravity will become stronger." }, "answer": "C", "solution":"" }

Figure 5: Sample of an MCQA problem in JSON-L format – with whitespace added for readability.

[System Prompt] You are an expert in {{topic}}. Your task is to answer the following multiple-choice questions. Think step-by-step to ensure you have the correct answer. Then, answer the question using the following format 'Action: Answer("[choice]")' The parameter [choice] is the letter or number of the answer you want to select (e.g. "A", "B", "C", or "D") For example, 'Answer("C")' will select the choice "C" as the best answer. You MUST select one of the available choices; the answer CANNOT be "None of the Above". Be concise in your response but include any essential information.

[Example Problem] Topic: Geography Question: What is the capital of the state where Johns Hopkins University is located? Choices: A: Baltimore B: Annapolis C: Des Moines D: Las Vegas

[Example Solution] Thought: Johns Hopkins University is located in Baltimore, Maryland. The capital of Maryland is Annapolis. Action: Answer("B")

Figure 6: Sample of the answer prompt used by the baseline agent to solve MCQA problems.

[System Prompt] You are an expert in {{topic}}. You have incorrectly answered the following multiple-choice question. Your task is to reflect on the problem, your solution, and the correct answer. You will then use this information help you answer the same question in the future. First, explain why you answered the question incorrectly. Second, list the keywords that describe the type of your errors from most general to most specific. Third, solve the problem again, step-by-step, based on your knowledge of the correct answer. Fourth, create a list of detailed instructions to help you correctly solve this problem in the future. Finally, create a list of general advice to help you solve similar types of problems in the future. Be concise in your response; however, capture all of the essential information. For guidance, I will provide you with a single generic example problem and reflection (below).

[Example Input] Topic: Geography and Math Question: What is the product of the number of letters contained in the name of the city where Iowa State University is located multiplied by the number of letters contained in the name of the state? Choices: A: 16 B: 20 C: 24 D: 32 Thought: Iowa State University is located in the city of Ames ISU is located in the state of Iowa. Action: Answer("D") --- Correct Answer: A

[Example Output]

Explanation: I miscalculated the product of the number of letters in the city and state names. The gap in my knowledge was not in geography but in basic arithmetic. I knew the correct city and state but made a calculation error.

Error Keywords:

- Calculation error
- Arithmetic error
- Multiplication error

Solution: Iowa State University is located in the city of Ames Iowa State University is located in the state of Iowa. The city name "Ames" contains 4 letters. The state name "Iowa" contains 4 letters. The product of 4*4 is 16.

Instructions:

1. Identify the city where the university is located.
2. Identify the state where the university is located.
3. Count the number of letters in the name of the city.
4. Count the number of letters in the name of the state.
5. Multiply the number of letters in the city by the number of letters in the state.
6. Work step-by-step through your mathematical calculations.
7. Double-check your calculations to ensure accuracy.
8. Choose the answer that matches your calculated result.

Advice:

- Always read the question carefully and understand the problem.
- Always decompose complex problems into multiple simple steps.
- Always think through each subproblem step-by-step.
- Never skip any steps; be explicit in each step of your reasoning.
- Always double-check your calculations and final answer.
- Remember that the product of two numbers is the result of multiplying them together, not adding them.

Figure 7: Sample of the self-reflection prompt used to reflect on incorrectly answered MCQA problems.

[System Prompt (same)] [Example Problem (same)] [Example Solution (same)]

[Reflection Prompt] Reflection: You previously answered this question incorrectly. Then you reflected on the problem, your solution, and the correct answer. Use your self-reflection (below) to help you answer this question. Any information that you are not allowed to see will be marked [REDACTED]. {{reflection}}

Figure 8: Sample of the re-answer prompt used by the self-reflecting agents. The system prompt, example problem, and example solution are identical to the answer prompt and thus omitted for clarity.
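The following Python sketch shows how a record in the Figure 5 format could be loaded and rendered into the Topic/Question/Choices layout of the example problem in Figure 6, and how an `Action: Answer("C")` response could be parsed. The helper names `format_problem` and `parse_answer`, and the exact rendering, are assumptions for illustration, not the authors' code (which is available in the GitHub repository linked in the abstract).

```python
import json
import re
from typing import Optional

# The Figure 5 record, as a single JSON-L line.
RECORD = (
    '{"source": "arc/arc-challenge-test", "source_id": 1, "topic": "Science", "context": "", '
    '"question": "An astronomer observes that a planet rotates faster after a meteorite impact. '
    'Which is the most likely effect of this increase in rotation?", '
    '"choices": {"A": "Planetary density will decrease.", "B": "Planetary years will become longer.", '
    '"C": "Planetary days will become shorter.", "D": "Planetary gravity will become stronger."}, '
    '"answer": "C", "solution": ""}'
)


def format_problem(record: dict) -> str:
    """Render one MCQA record in the Topic / Question / Choices layout."""
    lines = [f"Topic: {record['topic']}", f"Question: {record['question']}", "Choices:"]
    lines += [f"{key}: {text}" for key, text in record["choices"].items()]
    return "\n".join(lines)


# Matches the action format Answer("C") (single or double quotes).
ANSWER_PATTERN = re.compile(r"""Answer\(\s*["']([^"']+)["']\s*\)""")


def parse_answer(response: str) -> Optional[str]:
    """Return the choice from the last Answer(...) action, or None if there is none."""
    matches = ANSWER_PATTERN.findall(response)
    return matches[-1] if matches else None


record = json.loads(RECORD)
print(format_problem(record))
reply = 'Thought: Faster rotation means each day is shorter.\nAction: Answer("C")'
print(parse_answer(reply) == record["answer"])  # True
```

Taking the last match is a defensive choice, since a response might echo the answer format in its reasoning before committing to a final action.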