# Chapter 0 Statistical Mechanics and Artificial Neural Networks: Principles, Models, and Applications

Abstract

The field of neuroscience and the development of artificial neural networks (ANNs) have mutually influenced each other, drawing from and contributing to many concepts initially developed in statistical mechanics. Notably, Hopfield networks and Boltzmann machines are versions of the Ising model, a model extensively studied in statistical mechanics for over a century. In the first part of this chapter, we provide an overview of the principles, models, and applications of ANNs, highlighting their connections to statistical mechanics and statistical learning theory.

Artificial neural networks can be seen as high-dimensional mathematical functions, and understanding the geometric properties of their loss landscapes (i.e., the high-dimensional space on which one wishes to find extrema or saddles) can provide valuable insights into their optimization behavior, generalization abilities, and overall performance. Visualizing these functions can help us design better optimization methods and improve their generalization abilities. Thus, the second part of this chapter focuses on quantifying geometric properties and visualizing loss functions associated with deep ANNs.

\body Contents

1. 1 Introduction

1. 1 The McCulloch–Pitts calculus and Hebbian learning

1. 2 The Perceptron

1. 3 Objective Bayes, entropy, and information theory

1. 4 Connections to the Ising model

1. 2 Hopfield network

1. 3 Boltzmann machine learning

1. 1 Boltzmann machines

1. 2 Restricted Boltzmann machines

1. 3 Derivation of the learning algorithm

1. 4 Loss landscapes of artificial neural networks

1. 1 Principal curvature in random projections

1. 2 Extracting curvature information

1. 3 Hessian directions

1. 5 Conclusions

1 Introduction

There has been a long tradition in studying learning systems within statistical mechanics. [1, 2, 3] Some versions of artificial neural networks (ANNs) were, in fact, inspired by the Ising model that has been proposed in 1920 by Wilhelm Lenz as a model for ferromagnetism and solved in one dimension by his doctoral student, Ernst Ising, in 1924. [4, 5, 6] In the language of machine learning, the Ising model can be considered as a non-learning recurrent neural network (RNN).

Contributions to ANN research extend beyond statistical mechanics, however, requiring a broad range of scientific disciplines. Historically, these efforts have been marked by eras of interdisciplinary collaboration referred to as cybernetics [7, 8], connectionism [9, 10], artificial intelligence [11, 12], and machine learning [13, 14]. To emphasize the importance of multidisciplinary contributions in ANN research, we will often highlight the research disciplines of individuals who have played pivotal roles in the advancement of ANNs.

1 The McCulloch–Pitts calculus and Hebbian learning

An influential paper by the neuroscientist Warren S. McCulloch and logician Walter Pitts published in 1943 is often regarded as inaugurating neural network research, [15] but in fact there was an active research community at that time working on the mathematics of neural activity. [16] McCulloch and Pitts’s pivotal contribution was to join propositional logic and Alan Turing’s mathematical notion of computation [17] to propose a calculus for creating compositional representations of neural behavior. Their work has later on been picked up by the mathematician Stephen C. Kleene [18], who made some of their work more accessible. Kleene comments on the work by McCulloch and Pitts in his 1956 article [18]: “The present memorandum is partly an exposition of the McCulloch–Pitts results; but we found the part of their paper which treats of arbitrary nets obscure; so we have proceeded independently here.”

Contemporaneously, the physchologist Donald O. Hebb formulated in his 1949 book “The Organization of Behavior” [19] a neurophysiological postulate, which is known today as “Hebb’s rule” or “Hebbian learning”: \MakeFramed \FrameRestore Let us assume then that the persistence or repetition of a reverberatory activity (or “trace”) tends to induce lasting cellular changes that add to its stability. … When an axon of cell $A$ is near enough to excite a cell $B$ and repeatedly or persistently takes part in firing it, some growth process or metabolic change takes place in one or both cells such that $A$ ’s efficiency, as one of the cells firing $B$ , is increased. Donald O. Hebb in “The Organization of Behavior” (1949) \endMakeFramed In the modern literature, Hebbian learning is often summarized by the slogan “neurons wire together if they fire together”. [20]

2 The Perceptron

In 1958, the psychologist Frank Rosenblatt developed the perceptron [21, 22], which is essentially a binary classifier that is based on a single artificial neuron and a Hebbian-type learning rule. The artificial neuron of this type is sometimes referred to as “McCulloch–Pitts neuron”, owing to its connection to the foundational work of McCulloch and Pitts [15]. Some authors distinguish McCulloch–Pitts neurons from perceptrons by noting that the former handle binary signals, while the latter can process real-valued inputs. In parallel to Rosenblatt’s work, the electrical engineers Bernard Widrow and Ted Hoff developed a similar ANN named ADALINE (Adaptive Linear Neuron). [23]

We show a schematic of a perceptron in Fig. 1. It receives inputs $x_{1},x_{2},...,x_{n}$ that are weighted with $w_{1},w_{2},...,w_{n}$ . Within the summer (indicated by a $\Sigma$ symbol), the artificial neuron computes $\sum_{i}w_{i}x_{i}+b$ , where $b$ is a bias term. This quantity is then used as input of a step activation function (indicated by a step symbol). The output of this function is $y$ . Such simple artificial neurons can be used to implement logical functions including AND, OR, and NOT. For example, consider a perceptron with Heaviside activation, two binary inputs $x_{1},x_{2}∈\{0,1\}$ , weights $w_{1},w_{1}=1$ and bias $b=-1.5$ . We can readily verify that $y=x_{1}\wedge x_{2}$ . An example of a logical function that cannot be represented by a perceptron is XOR. This was shown by the computer scientists Marvin L. Minsky and Seymour A. Papert in their 1969 book “Perceptrons: An Introduction to Computational Geometry” [11]. Based on their analysis of perceptrons they considered the extension of research on this topic to be “sterile”.

Problems like the one associated with representing XOR using a single perceptron can be overcome by employing multilayer perceptrons (MLPs) or, in a broader sense, multilayer feedforward networks equipped with various types of activation functions. [24, 25, 26] One effective way to train such multilayered structures is reverse mode automatic differentiation (i.e., backpropagation). [27, 28, 29, 30, 31]

Notice that perceptrons and other ANNs predominantly operate on real-valued data. Nevertheless, there is an expanding body of research focused on ANNs that are designed to handle complex-valued and, in some cases, quaternion-based information. [32, 33, 34, 35, 36, 37, 38]

$x_{1}$

$x_{2}$

$...$

$x_{n}$ $\Sigma$ $w_{1}$ $w_{2}$ $...$ $w_{n}$ $b$ $y$

Figure 1: A schematic of a perceptron with output $y$ , inputs $x_{1},x_{2},...,x_{n}$ , corresponding weights $w_{1},w_{2},...,w_{n}$ , and a bias term $b$ . Traditionally, the inputs and outputs of perceptrons were considered to be binary (e.g., $\{-1,1\}$ or $\{0,1\}$ ), while weights and biases are generally represented as real numbers. The symbol $\Sigma$ signifies the summation process wherein the artificial neuron computes $\sum_{i}w_{i}x_{i}+b$ . This computed value then becomes the input for a step activation function, denoted by a step symbol. We denote the final output as $y$ . While the original perceptron employed a step activation function, contemporary applications frequently utilize a variety of functions including sigmoid, $\tanh$ , and rectified linear unit (ReLU).

3 Objective Bayes, entropy, and information theory

Over the same period of time, seminal work in probability and statistics appeared that would later prove crucial to the development of ANNs. In the early 20th century, a renewed interest in inverse “Bayesian“ inference [39, 40] met strong resistance from the emerging frequentist school of statistics led by Jerzy Neyman, Karl Pearson, and especially Ronald Fisher, who advocated his own fiducial inference as a better alternative to Bayesian inference [41, 42]. In response to criticism that Bayesian methods were irredeemably subjective, Harold Jeffreys presented a methodology for “objectively” assigning prior probabilities based on symmetry principles and invariance properties. [43]

Edwin Jaynes, building on Jeffreys’s ideas for objective Bayesian priors and Shannon’s quantification of uncertainty [44], proposed the Maximum Entropy (MaxEnt) principle as a rationality criteria for assigning prior probabilities that are at once consistent with known information while remaining maximally uncommitted about what remains uncertain [45, 46, 47]. Jaynes envisioned the MaxEnt principle as the basis for a “logic of science” [48]. For instance, he proposed a reinterpretation of the principles of thermodynamics in information-theoretic terms where macro-states of a physical system are understood through the partial information they provide about the possible underlying microstates, showing how the original Boltzmann–Gibbs interpretation of entropy could be replaced by a more general single framework based on Shannon entropy. Objective Bayesianism does not remove subjective judgment [49], MaxEnt is far from a universal principle for encoding prior information [50], and the terms ‘objective’ and ‘subjective’ are outdated. [51, 52]

For example, the MaxEnt principle can be used to select the least biased distribution from many common exponential family distributions by imposing constraints that match the expectation values of their sufficient statistics. That is, from constraints on the expected values of some function $h(x)$ on data parameterized by $\theta$ , applying the MaxEnt principle to find the probability distribution that maximizes entropy under those constraints often takes the form of an exponential family distribution

$$

p(x\mid\theta)=h(x)\,\exp\!\left(\theta^{\top}T(x)-A(\theta)\right)\,, \tag{1}

$$

where $h(x)$ is the underlying base measure, $\theta$ is the natural parameter of the distribution, $A(\theta)$ is the log normalizer, and $T(x)$ is the sufficient statistic determined by MaxEnt under the given constraints. MaxEnt derived distributions depend on contingencies of the data and the availability of the right constraints to ensure the derivation goes through, however–contingencies that undermine viewing MaxEnt as a fundamental principle of rational inference.

Nevertheless, Jaynes’s notion of treating probability as an extension of logic [48, 48, 53] and probabilistic inference as governed by coherence conditions on information states [54, 55] has proved useful in optimization (e.g., cross-entropy loss [56]), entropy-based regularization of ANNs [57], and Bayesian neural networks. [58]

4 Connections to the Ising model

Returning to the Ising model, a modified version of it has been endowed with a learning algorithm by the mathematical neuroscientist Shun’ichi Amari in 1972 and has been employed for learning patterns and sequences of patterns. [59] Amari’s self-organizing ANN is an early model of associative memory that has later been popularized by the physicist John Hopfield. [60] Despite its limited representational capacity and use in practical applications, the study of Hopfield-type learning systems is still an active research area. [61, 36, 62, 63]

The Hopfield network shares the Hamiltonian with the Ising model. Its learning algorithm is deterministic and aims at minimizing the “energy” of the system. One problem with the deterministic learning algorithm of Hopfield networks is that it cannot escape local minima. A solution to this problem is to employ a stochastic learning rule akin to Monte-Carlo methods that are used to generate Ising configurations. [64] In 1985, David H. Ackley, Geoffrey E. Hinton, and Terrence J. Sejnowski proposed such a learning algorithm for an Ising-type ANN which they called “Boltzmann machine” (BM). [65, 66, 67, 68] In 1986, Smolensky introduced the concept of “Harmonium” [69], which later evolved into restricted Boltzmann machines (RBMs). In contrast to Boltzmann machines, RBMs benefit from more efficient training algorithms that were developed in the early 2000s. [70, 71] Since then, RBMs have been applied in various context such as to reduce dimensionality of datasets [72], study phase transitions [73, 74, 3, 75], represent wave functions [76, 77].

Our historical overview of ANNs aims at emphasizing the strong interplay between statistical mechanics and related fields in the investigation of learning systems. Given the extensive span of over eight decades since the pioneering work of McCulloch and Pitts, summarizing the history of ANNs within a few pages cannot do justice to its depth and significance. We therefore refer the reader to the excellent review by Jürgen Schmidhuber (see Ref. 78) for a more detailed overview of the history of deep learning in ANNs.

As computing power continues to increase, deep (i.e., multilayer) ANNs have, over the past decade, become pervasive across various scientific domains. [79] Different established and newly developed ANN architectures have not only been employed to tackle scientific problems but have also found extensive applications in tasks such as image classification and natural language processing. [80, 81, 82, 83]

Finally, we would also like to emphasize an area of research that has significantly advanced due to the availability of automatic differentiation [84] and the utilization of ANNs as universal function approximators. [85, 86, 87] This area resides at the intersection of non-linear dynamics [88, 89], control theory [90, 91, 92, 93, 94, 95, 96, 97, 98], and dynamics-informed learning, where researchers often aim to integrate ANNs and their associated learning algorithms with domain-specific knowledge. [99, 100, 101, 102, 103, 104] This integration introduces an inductive bias that facilitates effective learning.

The outline of this chapter is as follows. To better illustrate the connections between the Ising model and ANNs, we devote Secs. 2 and 3 to the Hopfield model and Boltzmann machines, respectively. In Sec. 4, we discuss how mathematical tools from high-dimensional probability and differential geometry can help us study the loss landscape of deep ANNs. [105] We conclude this chapter in Sec. 5.

2 Hopfield network

A Hopfield network is a complete (i.e., fully connected) undirected graph in which each node is an artificial neuron of McCulloch–Pitts type (see Fig. 1). We show an example of a Hopfield network with $n=5$ neurons in Fig. 2. We use $x_{i}∈\{-1,1\}$ to denote the state of neuron $i$ ( $i∈\{1,...,n\}$ ). Because the underlying graph is fully connected, neuron $i$ receives $n-1$ inputs $x_{j}$ ( $j≠ i$ ). The inputs $x_{j}$ associated with neuron $i$ are assigned weights $w_{ij}∈\mathbb{R}$ . In a Hopfield network, weights are assumed to be symmetric (i.e., $w_{ij}=w_{ji}$ ), and self-weights are considered to be absent (i.e., $w_{ii}=0$ ). $x_{1}$ $x_{2}$ $x_{3}$ $x_{4}$ $x_{5}$ $w_{12}$ $w_{15}$

Figure 2: An example of a Hopfield network with $n=5$ neurons. Each neuron is connected to all other neurons through black edges, representing both inputs and outputs. (Adapted from 106.)

With these definitions in place, the activation of neuron $i$ is given by

$$

a_{i}=\sum_{j}w_{ij}x_{j}+b_{i}\,, \tag{2}

$$

where $b_{i}$ is the bias term of neuron $i$ .

Regarding connections between the Ising model and Hopfield networks, the states of artificial neurons align with the binary values employed in the Ising model to model the orientations of elementary magnets, often referred to as classical “spins”. The weights in a Hopfield network represent the potentially diverse couplings between different elementary magnets. Lastly, the bias terms assume the function of external magnetic fields that act on these elementary magnets. Given these structural similarities between the Ising model and Hopfield networks, it is natural to assign them the Ising-type energy function

$$

E=-\frac{1}{2}\sum_{i,j}w_{ij}x_{i}x_{j}-\sum_{i}b_{i}x_{i}\,. \tag{3}

$$

Hopfield networks are not just a collection of interconnected artificial neurons, but they are dynamical systems whose states $x_{i}$ evolve in discrete time according to

$$

x_{i}\leftarrow\begin{cases}1\,,&\text{if }a_{i}\geq 0\\

-1\,,&\text{otherwise}\,,\end{cases} \tag{4}

$$

where $a_{i}$ is the activation of neuron $i$ [see Eq. (2)].

For asynchronous updates in which the state of one neuron is updated at a time, the update rule (4) has an interesting property: Under this update rule, the energy $E$ [see Eq. (3)] of a Hopfield network never increases.

The proof of this statement is straightforward. We are interested in the cases where an update of $x_{i}$ causes this quantity to change its sign. Otherwise the energy will stay constant. Consider the two cases (i) $a_{i}=\sum_{j}w_{ij}x_{j}+b_{i}<0$ with $x_{i}=1$ , and (ii) $a_{i}≥ 0$ with $x_{i}=-1$ . In the first case, the energy difference is

$$

\Delta E_{i}=E(x_{i}=-1)-E(x_{i}=1)=2\Bigl{(}b_{i}+\sum_{j}w_{ij}x_{j}\Bigr{)}=2a_{i}\,. \tag{5}

$$

Notice that $\Delta E_{i}<0$ because $a_{i}=\sum_{j}w_{ij}x_{j}+b_{i}<0$ .

In the second case, we have

$$

\Delta E_{i}=E(x_{i}=1)-E(x_{i}=-1)=-2\Bigl{(}b_{i}+\sum_{j}w_{ij}x_{j}\Bigr{)}=-2a_{i}\,. \tag{6}

$$

The energy difference satisfies $\Delta E_{i}≤ 0$ because $a_{i}≥ 0$ . In summary, we have shown that $\Delta E_{i}≤ 0$ for all changes of the state variable $x_{i}$ according to update rule (4).

Equations (5) and (6) also show that the energy difference associated with a change of sign in $x_{i}$ is $2a_{i}$ and $-2a_{i}$ for $a_{i}<0$ and $a_{i}≥ 0$ , respectively. Absorbing the bias term $b_{i}$ in the weights $w_{ij}$ by associating an extra active unit to every node in the network yields for the corresponding energy differences

$$

\Delta E_{i}=\pm 2\sum_{j}w_{ij}x_{j}\,. \tag{7}

$$

One potential application of Hopfield networks is to store and retrieve information in local minima by adjusting their weights. For instance, consider the task of storing $N$ binary (black and white) patterns in a Hopfield network. Let these $N$ patterns be represented by the set $\{p_{i}^{\nu}=± 1|1≤ i≤ n\}$ with $\nu∈\{1,...,N\}$ .

To learn the weights that represent our binary patterns, we can apply the Hebbian-type rule

$$

w_{ij}=w_{ji}=\frac{1}{N}\sum_{\nu=1}^{N}p_{i}^{\nu}p_{j}^{\nu}\,. \tag{8}

$$

In Hopfield networks with a large number of neurons, the capacity for storing and retrieving patterns is limited to approximately 14% of the total number of neurons. [107] However, it is possible to enhance this capacity through the use of modified versions of the learning rule (8). [108]

After applying the Hebbian learning rule to adjust the weights of a given Hopfield network, we may be interested in studying the evolution of different initial configurations $\{x_{i}\}$ according to update rule (4). A Hopfield network accurately represents a pattern $\{p_{i}^{\nu}=± 1|1≤ i≤ n\}$ if $x_{i}$ remains equal to $p_{i}^{\nu}$ both before and after an update for all $i$ (meaning $\{p_{i}^{\nu}\}$ is a fixed point of the system). Depending on the number of stored patterns and the chosen initial configuration, the Hopfield network may converge to a local minimum that does not align with the desired pattern. To mitigate this behavior, one can employ a stochastic update rule instead of the deterministic rule (4). This will be the topic that we are going to discuss in the following section.

3 Boltzmann machine learning

In Hopfield networks, if we start with an initial configuration that is close enough to a desired local energy minimum, we can recover the corresponding pattern $\{p_{i}^{\nu}\}$ . However, for certain applications, relying solely on the deterministic update rule (4) may not be enough as it never accepts transitions that are associated with an increase in energy. In constraint satisfaction tasks, we often require learning algorithms to have the capability to occasionally accept transitions to configurations of higher energy and move the system under consideration away from local minima towards a global minimum. A method that is commonly used in this context is the M(RT) 2 algorithm introduced by Nicholas Metropolis, Arianna W. Rosenbluth, Marshall N. Rosenbluth, Augusta H. Teller, and Edward Teller in their seminal 1953 paper “Equation of State Calculations by Fast Computing Machines”. [109, 110] This algorithm became the basis of many optimization methods, such as simulated annealing. [111]

In the 1980s, the M(RT) 2 algorithm has been adopted to equip Hopfield-type systems with a stochastic update rule, in which neuron $i$ is activated (set to $1$ ) regardless of its current state, with probability

$$

\sigma_{i}\equiv\sigma(\Delta E_{i}/T)=\sigma(2a_{i}/T)=\frac{1}{1+\exp(-\Delta E_{i}/T)}\,. \tag{9}

$$

Otherwise, it is set to $-1$ . The corresponding ANNs were dubbed “’Boltzmann machines”. [65, 66, 67, 68] $-10$ $-8$ $-6$ $-4$ $-2$ $0$ $2$ $4$ $6$ $8$ $10$ $0$ $0.2$ $0.4$ $0.6$ $0.8$ $1$ $\Delta E_{i}$ $\sigma_{i}$ $T=0.5$ $T=1$ $T=2$

Figure 3: The sigmoid function $\sigma_{i}\equiv\sigma(\Delta E_{i}/T)$ [see Eq. (9)] as function of $\Delta E_{i}$ for $T=0.5,1,2$ .

In Eq. (9), the quantity $\Delta E_{i}=2a_{i}$ is the energy difference between an inactive neuron $i$ and an active one [see Eq. (5)]. We use the convention employed in Refs. 67, 68. The authors of Ref. 66, use the convention that $\Delta E_{i}=-2a_{i}$ and $\sigma_{i}\equiv\sigma(\Delta E_{i}/T)=1/(1+\exp(\Delta E_{i}/T))$ . The function $\sigma(x)=1/\left(1+\exp(-x)\right)$ represents the sigmoid function, and the parameter $T$ serves as an equivalent to temperature. In the limit $T→ 0$ , we recover the deterministic update rule (4). In Fig. 3, we show $\sigma(\Delta E_{i}/T)$ as a function of $\Delta E_{i}$ for $T=0.5,1,2$ .

Examining Eqs. (3) and (9), we notice that we are simulating a system akin to the Ising model with Glauber (heat bath) dynamics. [112, 64] Because Glauber dynamics satisfy the detailed balance condition, Boltzmann machines will eventually reach thermal equilibrium. The corresponding probabilities $p_{\mathrm{eq}}(X)$ and $p_{\mathrm{eq}}(Y)$ for the ANN to be in states $X$ and $Y$ , respectively, will satisfy We set the Boltzmann constant $k_{B}$ to 1.

$$

\frac{p_{\mathrm{eq}}(Y)}{p_{\mathrm{eq}}(X)}=\exp\left(-\frac{E(Y)-E(X)}{T}\right)\,. \tag{10}

$$

In other words, the Boltzmann distribution provides the relative probability $p_{\mathrm{eq}}(Y)/p_{\mathrm{eq}}(X)$ associated with the states $X$ and $Y$ of a “thermalized” Boltzmann machine. Regardless of the initial configuration, at a given temperature $T$ , the stochastic update rule in which neurons are activated with probability $\sigma_{i}$ always leads to a thermal equilibrium configuration that is solely determined by its energy.

1 Boltzmann machines

Unlike Hopfield networks, Boltzmann machines have two types of nodes: visible units and hidden units. We denote the corresponding sets of visible and hidden units by $V$ and $H$ , respectively. Notice that the set $H$ may be empty. In Fig. 4, we show an example of a Boltzmann machine with five visible units and three hidden units.

During the training of a Boltzmann machine, the visible units are “clamped” to the environment, which means they are set to binary vectors drawn from an empirical distribution. Hidden units may be used to account for constraints involving more than two visible units. $h_{1}$ $h_{2}$ $h_{3}$ $v_{1}$ $v_{2}$ $v_{3}$ $v_{4}$ $v_{5}$

Figure 4: Boltzmann machines consist of visible units (blue) and hidden units (red). In the shown example, there are five visible units $\left\{v_{i}\right\}$ $({i∈\{1,...,5\}})$ and three hidden units $\left\{h_{j}\right\}$ $(j∈\{1,2,3\})$ . Similar to Hopfield networks, the network architecture in a Boltzmann machine is complete.

We denote the probability distribution of all configurations of visible units $\{\nu\}$ in a freely running network as $P^{\prime}\left(\{\nu\}\right)$ . Here, “freely running” means that no external inputs are fixed (or “clamped”) to the visible units. We derive the distribution $P^{\prime}\left(\{\nu\}\right)$ by summing (i.e., marginalizing) over the corresponding joint probability distribution. That is,

$$

P^{\prime}\left(\{\nu\}\right)=\sum_{\{h\}}P^{\prime}\left(\{\nu\},\{h\}\right)\,, \tag{11}

$$

where the summation is performed over all possible configurations of hidden units $\{h\}$ .

Our objective is now to devise a method such that $P^{\prime}\left(\{\nu\}\right)$ converges to the unknown environment (i.e., data) distribution $P\left(\{\nu\}\right)$ . To do so, we quantity the disparity between $P^{\prime}\left(\{\nu\}\right)$ and $P\left(\{\nu\}\right)$ using the Kullback–Leibler (KL) divergence (or relative entropy)

$$

G(P,P^{\prime})=\sum_{\{\nu\}}P\left(\{\nu\}\right)\ln\left[\frac{P\left(\{\nu\}\right)}{P^{\prime}\left(\{\nu\}\right)}\right]\,. \tag{12}

$$

To minimize the KL divergence $G(P,P^{\prime})$ , we perform a gradient descent according to

$$

\frac{\partial G}{\partial w_{ij}}=-\frac{1}{T}\left(p_{ij}-p^{\prime}_{ij}\right)\,, \tag{13}

$$

where $p_{ij}$ represents the probability of both units $i$ and $j$ being active when the environment dictates the states of the visible units, and $p^{\prime}_{ij}$ is the corresponding probability in a freely running network without any connection to the environment. [66, 67, 68] We derive Eq. (13) in Sec. 3.

Both probabilities $p_{ij}$ and $p_{ij}^{\prime}$ are measured once the Boltzmann machine has reached thermal equilibrium. Subsequently, the weights $w_{ij}$ of the network are then updated according to

$$

\Delta w_{ij}=\epsilon\left(p_{ij}-p^{\prime}_{ij}\right)\,, \tag{14}

$$

where $\epsilon$ is the learning rate. To reach thermal equilibrium, the states of the visible and hidden units are updated using the update probability (9).

In summary, the steps relevant for training a Boltzmann machine are as follows. 1.

Clamp the input data (environment distribution) to the visible units. 2.

Update the state of all hidden units according to Eq. (9) until the system reaches thermal equilibrium and then compute $p_{ij}$ . 3.

Unclamp the input data from the visible units. 4.

Update the state of all neurons according to Eq. (9) until the system reaches thermal equilibrium and then compute $p_{ij}^{\prime}$ . 5.

Update all weights according to Eq. (14) and return to step 2 or stop if the weight updates are sufficiently small. After training a Boltzmann machine, we can unclamp the visible units from the environment and generate samples to evaluate their quality. To do this, we use various initial configurations and activate neurons according to Eq. (9) until thermal equilibrium is reached. If the Boltzmann machine was trained successfully, the distribution of states for the unclamped visible units should align with the environment distribution.

2 Restricted Boltzmann machines

Boltzmann machines are not widely used in general learning tasks. Their impracticality arises from the significant computational burden associated with achieving thermal equilibrium, especially in instances involving large system sizes. $h_{1}$ $h_{2}$ $h_{3}$ $h_{4}$ $v_{1}$ $v_{2}$ $v_{3}$ $v_{4}$ $v_{5}$ $v_{6}$

Figure 5: Restricted Boltzmann machines consist of a visible layer (blue) and a hidden layer (red). In the shown example, the respective layers comprise six visible units $\left\{v_{i}\right\}$ $(i∈\{1,...,6\})$ and four hidden units $\left\{h_{j}\right\}$ $(j∈\{1,...,4\})$ . The network structure of an RBM is bipartite and undirected.

Restricted Boltzmann machines provide an ANN structure that can be trained more efficiently by omitting connections between the hidden and visible units (see Fig. 5). Because of these missing intra-layer connections, the network architecture of an RBM is bipartite.

In RBMs, updates for visible and hidden units are performed alternately. Because there are no connections within each layer, we can update all units within each layer in parallel. Specifically, visible unit $v_{i}$ is activated with conditional probability

$$

p(v_{i}=1|\{h_{j}\})=\sigma\Bigl{(}\sum_{j}w_{ij}h_{j}+b_{i}\Bigr{)}\,, \tag{15}

$$

where $b_{i}$ is the bias of visible unit $v_{i}$ and $\{h_{j}\}$ is a given configuration of hidden units. We then activate all hidden units based on their conditional probabilities

$$

p(h_{j}=1|\{v_{i}\})=\sigma\Bigl{(}\sum_{i}w_{ij}v_{i}+c_{j}\Bigr{)}\,, \tag{16}

$$

where $h_{j}$ represents hidden unit $j$ , $c_{j}$ is its associated bias, and $\{v_{i}\}$ denotes a specific configuration of visible units. This technique of sampling is referred to as “block Gibbs sampling”.

Training an RBM shares similarities with training a BM. The key difference lies in the need to consider the bipartite network structure in the weight update equation (14). For an RBM, the weight updates are

$$

\Delta w_{ij}=\epsilon(\langle\nu_{i}h_{j}\rangle_{\text{data}}-\langle\nu_{i}h_{j}\rangle_{\text{model}})\,. \tag{17}

$$

Instead of sampling configurations to compute $\langle\nu_{i}h_{j}\rangle_{\text{data}}$ and $\langle\nu_{i}h_{j}\rangle_{\text{model}}$ at thermal equilibrium, we can instead employ a few relaxation steps. This approach is known as “contrastive divergence”. [70, 113] The corresponding weight updates are

$$

\Delta w_{ij}^{\text{CD}}=\epsilon(\langle\nu_{i}h_{j}\rangle_{\text{data}}-\langle\nu_{i}h_{j}\rangle_{\text{model}}^{k})\,. \tag{18}

$$

The superscript $k$ indicates the number of block Gibbs updates performed. For a more detailed discussion on the contrastive divergence training of RBMs, see Ref. 114.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Heatmap: Correlation Analysis at Different Temperatures

### Overview

The image presents a 2x3 grid of heatmaps illustrating the correlation between two variables, M(RT)² and RBM, at three different temperature regimes: T < Tc, T ≈ Tc, and T > Tc. Each cell in the grid represents a heatmap, with darker squares indicating a stronger correlation. The heatmaps appear to be based on a square grid of data points.

### Components/Axes

* **Y-axis:** M(RT)² (top row) and RBM (bottom row). These are the two variables being correlated.

* **X-axis:** Temperature regimes: T < Tc, T ≈ Tc, and T > Tc. Tc represents a critical temperature.

* **Heatmap Color:** Black squares represent high correlation, while white squares represent low or no correlation. Shades of gray indicate intermediate correlation levels.

* **Grid Structure:** The image is organized as a 2x3 grid, with the rows representing the variables (M(RT)² and RBM) and the columns representing the temperature regimes.

### Detailed Analysis

The heatmaps show a clear trend in correlation strength as temperature increases.

* **T < Tc (Left Column):** Both heatmaps (M(RT)² and RBM) show very sparse black squares, indicating a very weak correlation between the variables at temperatures below the critical temperature. The black squares are clustered towards the bottom-left corner of each heatmap.

* **T ≈ Tc (Middle Column):** The correlation increases significantly at temperatures around the critical temperature. The heatmaps show a moderate density of black squares, distributed more randomly than in the T < Tc case. There are some visible clusters of black squares.

* **T > Tc (Right Column):** The correlation is strongest at temperatures above the critical temperature. The heatmaps are almost entirely filled with black squares, indicating a strong correlation between the variables. The distribution of black squares appears more uniform and random than in the other two cases.

It is difficult to extract precise numerical values from the heatmaps without the underlying data. However, we can qualitatively assess the correlation strength based on the density of black squares.

### Key Observations

* The correlation between M(RT)² and RBM increases dramatically as the temperature approaches and exceeds the critical temperature Tc.

* At temperatures below Tc, the correlation is minimal.

* The distribution of correlation changes from clustered (T < Tc) to more random (T ≈ Tc and T > Tc) as the temperature increases.

### Interpretation

The data suggests a phase transition occurring at the critical temperature Tc. Below Tc, the variables M(RT)² and RBM are largely uncorrelated, indicating that they behave independently. As the temperature approaches and exceeds Tc, a strong correlation emerges, suggesting that the two variables become strongly coupled. This could indicate a change in the underlying system's behavior, such as the emergence of long-range order or a collective phenomenon. The increasing randomness of the correlation pattern at higher temperatures might indicate a more disordered state. The heatmaps visually demonstrate how the relationship between these two variables changes drastically with temperature, highlighting the importance of the critical temperature Tc. The data suggests that the system undergoes a transition from a weakly correlated state to a strongly correlated state at Tc.

</details>

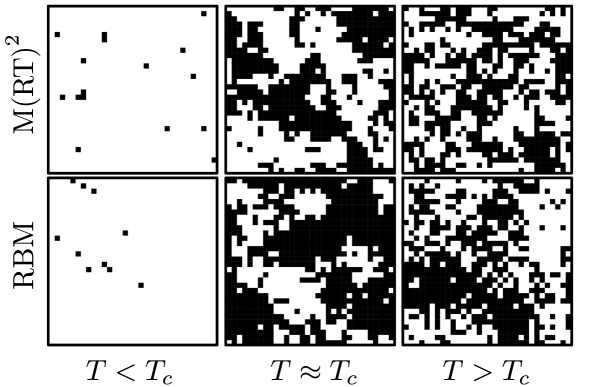

Figure 6: Snapshots of $32× 32$ Ising configurations are shown for $T∈\{1.5,2.5,4\}$ . These configurations are derived from both M(RT) 2 and RBM samples. The quantity $T_{c}=2/\ln(1+\sqrt{2})≈ 2.269$ is the critical temperature of the two-dimensional Ising model.

Restricted Boltzmann machines have been employed in diverse contexts, including dimensionality reduction of datasets [72], the study of phase transitions [73, 74, 3, 75], and the representation of wave functions [76, 77]. In Fig. 6, we show three snapshots of Ising configurations, generated using both M(RT) 2 sampling and RBMs, each comprising $32× 32$ spins. The RBM was trained using $20× 10^{4}$ realizations of Ising configurations sampled at various temperatures. [75]

3 Derivation of the learning algorithm

To derive Eq. (13), we follow the approach outlined in Ref. 68. Notice that the environment distribution $P\left(\{\nu\}\right)$ does not depend $w_{ij}$ . Hence, we have

$$

\frac{\partial G}{\partial w_{ij}}=-\sum_{\{\nu\}}\frac{P\left(\{\nu\}\right)}{P^{\prime}\left(\{\nu\}\right)}\frac{\partial P^{\prime}\left(\{\nu\}\right)}{\partial w_{ij}}\,. \tag{19}

$$

Next, we wish to compute the gradient $∂ P^{\prime}(\{\nu\})/∂ w_{ij}$ . In a freely running BM, the equilibrium distribution of the visible units follows a Boltzmann distribution. That is,

$$

P^{\prime}(\{\nu\})=\sum_{\{h\}}P^{\prime}\left(\{\nu\},\{h\}\right)=\frac{\sum_{\{h\}}e^{-E\left({\{\nu,h\}}\right)/T}}{\sum_{\{\nu,h\}}e^{-E\left({\{\nu,h\}}\right)/T}}\,. \tag{20}

$$

Here, the quantity

$$

E\left({\{\nu,h\}}\right)=-\frac{1}{2}\sum_{i,j}w_{ij}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}} \tag{21}

$$

is the Ising-type energy function in which field (or bias) terms are absorbed in the weights $w_{ij}$ [see Eqs. (3) and (7)]. For a BM in state ${\{\nu,h\}}$ , we denote the state of neuron $i$ by $x_{i}^{\{\nu,h\}}$ . Using

$$

\frac{\partial e^{-E\left({\{\nu,h\}}\right)/T}}{\partial w_{ij}}=\frac{1}{T}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}e^{-E\left({\{\nu,h\}}\right)/T} \tag{22}

$$

yields

$$

\displaystyle\begin{split}\frac{\partial P^{\prime}\left(\{\nu\}\right)}{\partial w_{ij}}&=\frac{\frac{1}{T}\sum_{\{h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}e^{-E\left({\{\nu,h\}}\right)/T}}{\sum_{\{\nu,h\}}e^{-E\left({\{\nu,h\}}\right)/T}}\\

&-\frac{\sum_{\{h\}}e^{-E\left({\{\nu,h\}}\right)/T}\frac{1}{T}\sum_{\{\nu,h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}e^{-E\left({\{\nu,h\}}\right)/T}}{\left(\sum_{\{\nu,h\}}e^{-E\left({\{\nu,h\}}\right)/T}\right)^{2}}\\

&=\frac{1}{T}\left[\sum_{\{h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}P^{\prime}\left(\{\nu\},\{h\}\right)\right.\\

&\hskip 28.45274pt\left.-P^{\prime}(\{\nu\})\sum_{\{\nu,h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}P^{\prime}\left(\{\nu\},\{h\}\right)\right]\,.\end{split} \tag{23}

$$

We will now substitute this result into Eq. (19) to obtain

$$

\displaystyle\begin{split}\frac{\partial G}{\partial w_{ij}}&=-\sum_{\{\nu\}}\frac{P\left(\{\nu\}\right)}{P^{\prime}\left(\{\nu\}\right)}\frac{1}{T}\left[\sum_{\{h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}P^{\prime}\left(\{\nu\},\{h\}\right)\right.\\

&\hskip 91.04872pt\left.-P^{\prime}\left(\{\nu\}\right)\sum_{\{\nu,h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}P^{\prime}\left(\{\nu\},\{h\}\right)\right]\\

&=-\frac{1}{T}\left[\sum_{\{\nu,h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}P\left(\{\nu\},\{h\}\right)-\sum_{\{\nu,h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}P^{\prime}\left(\{\nu\},\{h\}\right)\right]\,,\end{split} \tag{24}

$$

where we used that $\sum_{\{\nu\}}P\left(\{\nu\}\right)=1$ and $P\left(\{\nu\}\right)/P^{\prime}\left(\{\nu\}\right)P^{\prime}\left(\{\nu\},\{h\}\right)=P\left(\{\nu\},\{h\}\right)\,.$ The latter equation follows from the definition of joint probability distributions

$$

P\left(\{\nu\},\{h\}\right)=P\left(\{h\}|\{\nu\}\right)P\left(\{\nu\}\right) \tag{25}

$$

and

$$

P^{\prime}\left(\{\nu\},\{h\}\right)=P^{\prime}\left(\{h\}|\{\nu\}\right)P^{\prime}\left(\{\nu\}\right)\,, \tag{26}

$$

with $P\left(\{h\}|\{\nu\}\right)=P^{\prime}\left(\{h\}|\{\nu\}\right)$ . The conditional distributions $P(\{h\}|\{\nu\})$ and $P^{\prime}(\{h\}|\{\nu\})$ are identical, as the probability of observing a certain hidden state given a visible one is independent of the origin of the visible state in an equilibrated system. In other words, for the conditional distributions in an equilibrated system, it is irrelevant whether the visible state is provided by the environment or generated by a freely running machine. Defining

$$

p_{ij}\coloneqq\sum_{\{\nu,h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}P\left(\{\nu\},\{h\}\right) \tag{27}

$$

and

$$

p_{ij}^{\prime}\coloneqq\sum_{\{\nu,h\}}x_{i}^{\{\nu,h\}}x_{j}^{\{\nu,h\}}P^{\prime}\left(\{\nu\},\{h\}\right)\,, \tag{28}

$$

we finally obtain Eq. (13).

4 Loss landscapes of artificial neural networks

Training an ANN involves minimizing a given loss function such as the KL divergence [see Eq. (12)]. The loss landscapes of ANNs are affected by several factors, including structural properties [86, 87, 115] and a range of implementation attributes [116, 117, 118]. Knowledge of their precise effects on learning performance, however, remains incomplete.

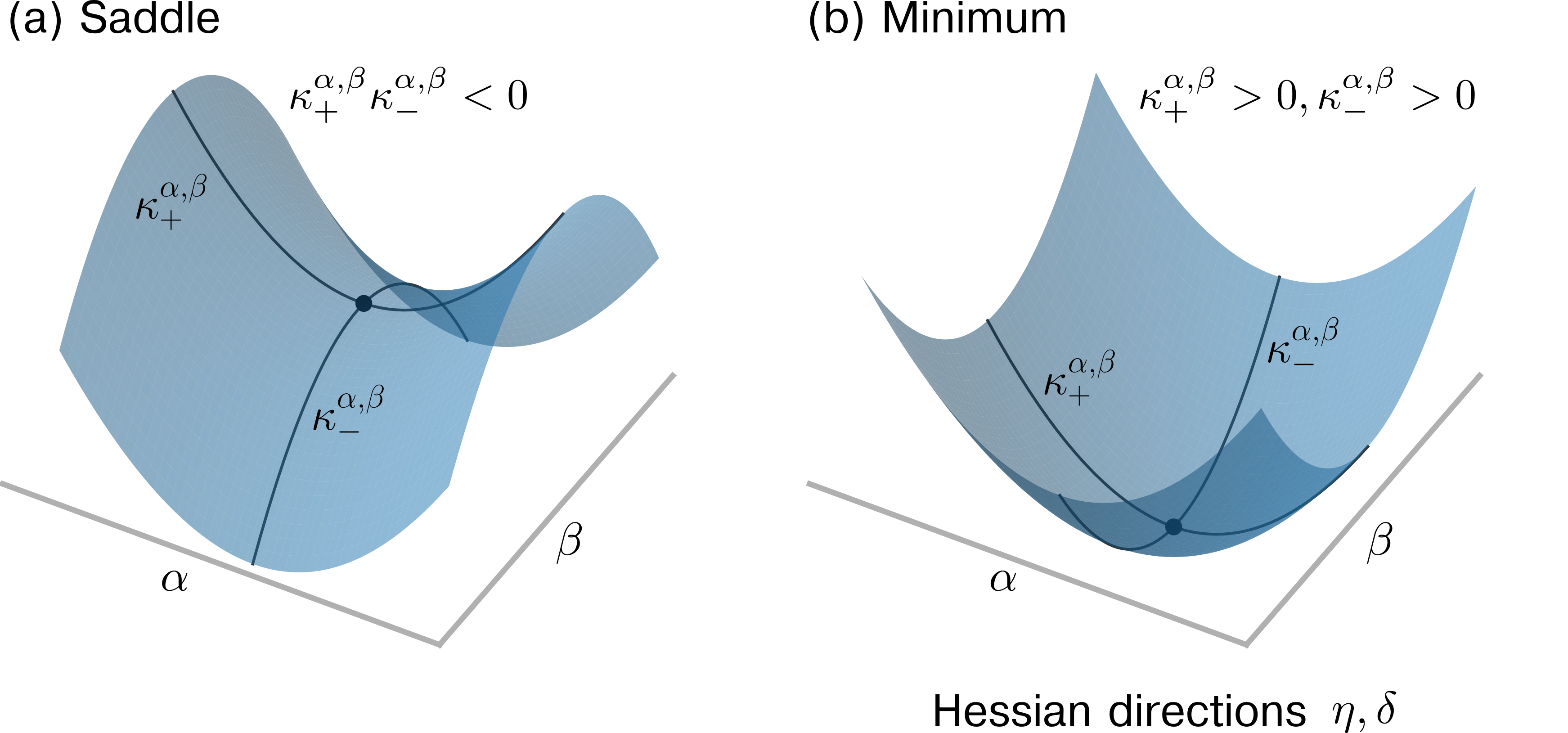

One path to a better understanding of the relationships between ANN structure, implementation attributes, and learning performance is through a more in-depth analysis of the geometric properties of loss landscapes. For instance, Keskar et al. [119] analyze the local curvature around candidate minimizers via the spectrum of the underlying Hessian to characterize the flatness and sharpness of loss minima, and Dinh et al. [120] demonstrate that reparameterizations can render flat minima sharp without affecting generalization properties. Even so, one challenge to the study of geometric properties of loss landscapes is high dimensionality. To meet that challenge, some propose to visualize the curvature around a given point by projecting curvature properties of high-dimensional loss functions to a lower-dimensional (and often random) projection of two or three dimensions [121, 122, 123, 124, 125]. Horoi et al. [125], building on this approach, pursue improvements in learning by dynamically sampling points in projected low-loss regions surrounding local minima during training.

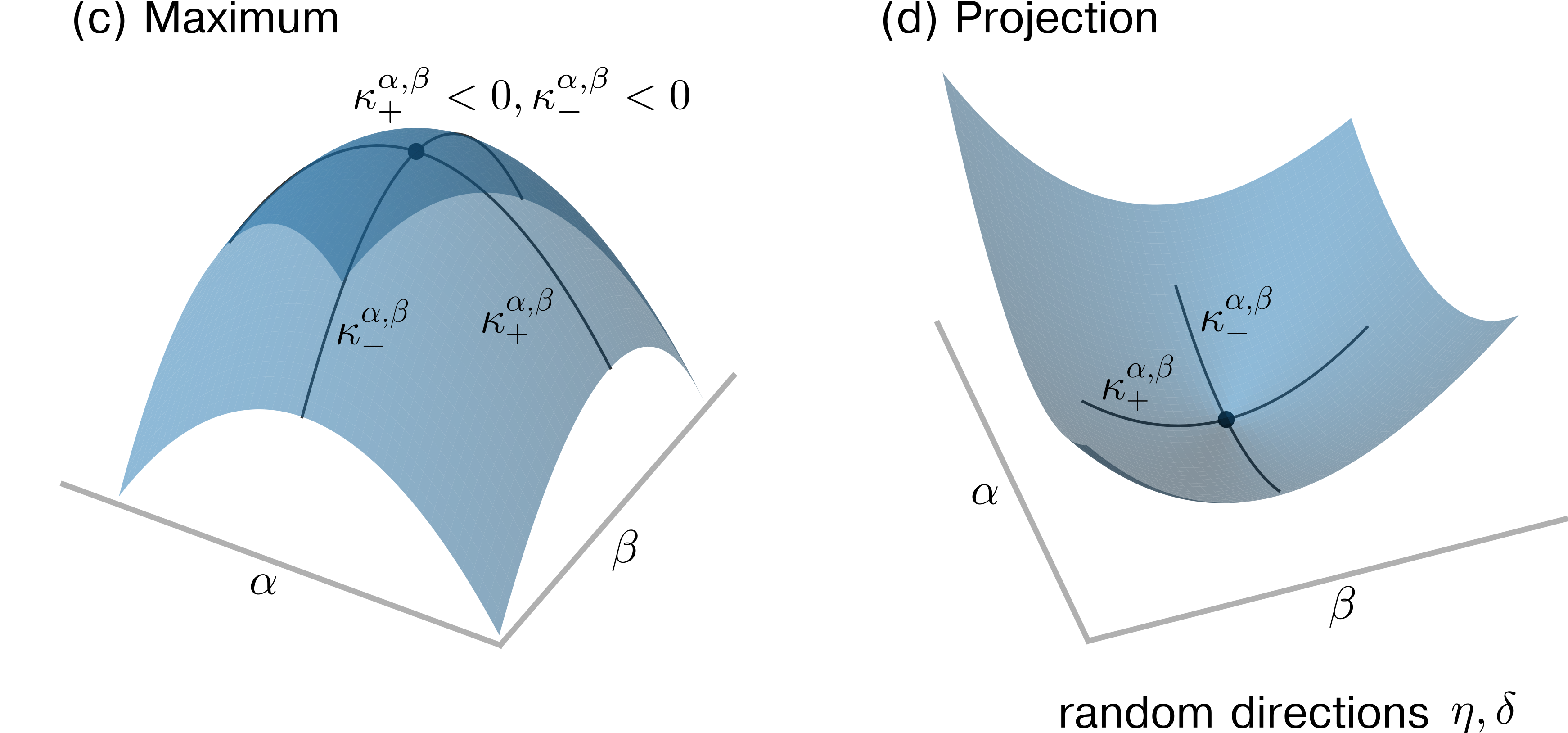

However, visualizing high-dimensional loss landscape curvature relies on accurate projections of curvature properties to lower dimensions. Unfortunately, random projections do not preserve curvature information, thus do not afford accurate low-dimensional representations of curvature information. This argument is given in Sec. 1 and illustrated with a simulation example in Sec. 2. Principal curvatures in a low-dimensional projection are nevertheless given by functions of weighted ensemble means of the Hessian elements in the original, high-dimensional space, a result also established in Sec. 1. Instead of using random projections to visualize loss functions, we propose to analyze projections along dominant Hessian directions associated with the largest-magnitude positive and negative principal curvatures.

1 Principal curvature in random projections

To describe the connection between the principal curvature of a loss function $L(\theta)$ with ANN parameters $\theta∈\mathbb{R}^{N}$ and that associated with a lower-dimensional, random projection, we provide in Sec. 1 an overview of concepts from differential geometry [126, 127, 128] that are useful to mathematically describe curvature in high-dimensional spaces. In Secs. 1 and 1, we show the relationship between principal curvature and random projections. Finally, in Sec. 1, we highlight a relationship between measures of curvature presented in Sec. 1 and Hessian trace estimates, which affords an alternative to Hutchinson’s method for computing unbiased Hessian trace estimates.

Differential and information geometry concepts

In differential geometry, the principal curvatures are the eigenvalues of the shape operator (or Weingarten map Some authors distinguish between the shape operator and the Weingarten map depending on if the change of the underlying tangent vector is described in the original manifold or in Euclidean space (see, e.g., chapter 3 in [129]).), a linear endomorphism defined on the tangent space $T_{p}$ of $L$ at a point $p$ . For the original high-dimensional space, we have $(\theta,L(\theta))⊂eq\mathbb{R}^{N+1}$ and there are $N$ principal curvatures $\kappa_{1}^{\theta}≥\kappa_{2}^{\theta}≥...≥\kappa_{N}^{\theta}$ . At a non-degenerate critical point $\theta^{*}$ where the gradient $∇_{\theta}L$ vanishes, the matrix of the shape parameter is given by the Hessian $H_{\theta}$ with elements $(H_{\theta})_{ij}=∂^{2}L/(∂\theta_{i}∂\theta_{j})$ ( $i,j∈\{1,...,N\}$ ). A critical point is degenerate if the Hessian $H_{\theta}$ at this point is singular (i.e., $\mathrm{det}(H_{\theta})=0$ ). At degenerate critical points, one cannot use the eigenvalues of $H_{\theta}$ to determine if the critical point is a minimum (positive definite $H_{\theta}$ ) or a maximum (negative definite $H_{\theta}$ ). Geometrically, at a degenerate critical point, a quadratic approximation fails to capture the local behavior of the function that one wishes to study. Some works refer to the Hessian as the “curvature matrix” [130] or use it to characterize the curvature properties of $L(\theta)$ [122]. In the vicinity of a non-degenerate critical point $\theta^{*}$ , the eigenvalues of the Hessian $H_{\theta}$ are the principal curvatures and describe the loss function in the eigenbasis of $H_{\theta}$ according to

$$

L(\theta^{*}+\Delta\theta)=L(\theta^{*})+\frac{1}{2}\sum_{i=1}^{N}\kappa_{i}^{\theta}\Delta\theta_{i}^{2}\,. \tag{29}

$$

The Morse lemma states that, if a critical point $\theta^{*}$ of $L(\theta)$ is non-degenerate, then there exists a chart $(\tilde{\theta}_{1},...,\tilde{\theta}_{N})$ in a neighborhood of $\theta^{*}$ such that

$$

L(\tilde{\theta})=-\tilde{\theta}^{2}_{1}-\cdots-\tilde{\theta}^{2}_{i}+\tilde{\theta}^{2}_{i+1}+\cdots+\tilde{\theta}^{2}_{N}+L(\theta^{*})\,, \tag{30}

$$

where $\tilde{\theta}_{i}(\theta)=0$ for $i∈\{1,...,N\}$ . The loss function $L(\tilde{\theta})$ in Eq. (30) is decreasing along $i$ directions and increasing along the remaining $i+1$ to $N$ directions. Further, the index $i$ of a critical point $\theta^{*}$ is the number of negative eigenvalues of the Hessian $H_{\theta}$ at that point.

In the standard basis, the Hessian is

$$

H_{\theta}\coloneqq\nabla_{\theta}\nabla_{\theta}L(\theta)=\begin{pmatrix}\frac{\partial^{2}L}{\partial\theta_{1}^{2}}&\cdots&\frac{\partial^{2}L}{\partial\theta_{1}\partial\theta_{N}}\\

\vdots&\ddots&\vdots\\

\frac{\partial^{2}L}{\partial\theta_{N}\partial\theta_{1}}&\cdots&\frac{\partial^{2}L}{\partial\theta_{N}^{2}}\end{pmatrix}\,. \tag{31}

$$

Random projections

To graphically explore an $N$ -dimensional loss function $L$ around a critical point $\theta^{*}$ , one may wish to work in a lower-dimensional representation. For example, a two-dimensional projection of $L$ around $\theta^{*}$ is provided by

$$

L(\theta^{*}+\alpha\eta+\beta\delta)\,, \tag{32}

$$

where the parameters $\alpha,\beta∈\mathbb{R}$ scale the directions $\eta,\delta∈\mathbb{R}^{N}$ . The corresponding graph representation is $(\alpha,\beta,L(\alpha,\beta))⊂eq\mathbb{R}^{3}$ .

In high-dimensional spaces, there exist vastly many more almost-orthogonal than orthogonal directions. In fact, if the dimension of our space is large enough, with high probability, random vectors will be sufficiently close to orthogonal [131]. Following this result, many related works [122, 124, 125] use random Gaussian directions with independent and identically distributed vector elements $\eta_{i},\delta_{i}\sim\mathcal{N}(0,1)$ ( $i∈\{1,...,N\}$ ).

The scalar product of random Gaussian vectors $\eta,\delta$ is a sum of the difference between two chi-squared distributed random variables because

$$

\sum_{i=1}^{N}\eta_{i}\delta_{i}=\sum_{i=1}^{N}\frac{1}{4}(\eta_{i}+\delta_{i})^{2}-\frac{1}{4}(\eta_{i}-\delta_{i})^{2}=\sum_{i=1}^{N}\frac{1}{2}X_{i}^{2}-\frac{1}{2}Y_{i}^{2}\,, \tag{33}

$$

where $X_{i},Y_{i}\sim\mathcal{N}(0,1)$ .

Notice that $\eta,\delta$ are almost orthogonal, which can be proved using a concentration inequality for chi-squared distributed random variables. For further details, see Ref. 105.

Principal curvature

With the form of random Gaussian projections in hand, we now analyze the principal curvatures in both the original and lower-dimensional spaces. The Hessian associated with the two-dimensional loss projection (32) is

$$

\displaystyle\begin{split}H_{\alpha,\beta}&=\begin{pmatrix}\frac{\partial^{2}L}{\partial\alpha^{2}}&\frac{\partial^{2}L}{\partial\alpha\partial\beta}\\

\frac{\partial^{2}L}{\partial\beta\partial\alpha}&\frac{\partial^{2}L}{\partial\beta^{2}}\\

\end{pmatrix}\\

&=\begin{pmatrix}\sum_{i,j}\eta_{i}\eta_{j}\frac{\partial^{2}L}{\partial\theta_{i}\theta_{j}}&\sum_{i,j}\eta_{i}\delta_{j}\frac{\partial^{2}L}{\partial\theta_{i}\theta_{j}}\\

\sum_{i,j}\eta_{i}\delta_{j}\frac{\partial^{2}L}{\partial\theta_{i}\theta_{j}}&\sum_{i,j}\delta_{i}\delta_{j}\frac{\partial^{2}L}{\partial\theta_{i}\theta_{j}}\\

\end{pmatrix}\\

&=\begin{pmatrix}(H_{\theta})_{ij}\eta^{i}\eta^{j}&(H_{\theta})_{ij}\eta^{i}\delta^{j}\\

(H_{\theta})_{ij}\eta^{i}\delta^{j}&(H_{\theta})_{ij}\delta^{i}\delta^{j}\\

\end{pmatrix}\,,\end{split} \tag{34}

$$

where we use Einstein notation in the last equality.

Because the elements of $\delta,\eta$ are distributed according to a standard normal distribution, the second derivatives of the loss function $L$ in Eq. (34) have prefactors that are products of standard normal variables and, hence, can be expressed as sums of chi-squared distributed random variables as in Eq. (33). To summarize, elements of $H_{\alpha,\beta}$ are sums of second derivatives of $L$ in the original space weighted with chi-squared distributed prefactors.

The principal curvatures $\kappa_{±}^{\alpha,\beta}$ (i.e., the eigenvalues of $H_{\alpha,\beta}$ ) are

$$

\kappa_{\pm}^{\alpha,\beta}=\frac{1}{2}\left(A+C\pm\sqrt{4B^{2}+(A-C)^{2}}\right)\,, \tag{35}

$$

where $A=(H_{\theta})_{ij}\eta^{i}\eta^{j}$ , $B=(H_{\theta})_{ij}\eta^{i}\delta^{j}$ , and $C=(H_{\theta})_{ij}\delta^{i}\delta^{j}$ . To the best of our knowledge, a closed, analytic expression for the distribution of the quantities $A,B,C$ is not yet known [132, 133, 134, 135].

Returning to principal curvature, since $\sum_{i,j}a_{ij}\eta^{i}\eta^{j}=\sum_{i}a_{ii}\eta^{i}\eta^{i}+\sum_{i≠ j}a_{ij}\eta^{i}\eta^{j}$ ( $a_{ij}∈\mathbb{R}$ ), we find that $\mathds{E}[A]=\mathds{E}[C]={(H_{\theta})^{i}}_{i}$ and $\mathds{E}[B]=0$ where ${(H_{\theta})^{i}}_{i}\equiv\mathrm{tr}(H_{\theta})=\sum_{i=1}^{N}\kappa_{i}^{\theta}$ . The expected values of the quantities $A$ , $B$ , and $C$ correspond to ensemble means (43) in the limit $S→∞$ , where $S$ is the number of independent realizations of the underlying random variable. To show that the expected values of $a_{ij}\eta^{i}\eta^{j}$ ( $i≠ j$ ) or $a_{ij}\eta^{i}\delta^{j}$ vanish, one can either invoke independence of $\eta^{i},\eta^{j}$ ( $i≠ j$ ) and $\eta^{i},\delta^{j}$ or transform both products into corresponding differences of two chi-squared random variables with the same mean [see Eq. (33)].

Hence, the expected, dimension-reduced Hessian (34) is

$$

\mathds{E}[H_{\alpha,\beta}]=\begin{pmatrix}{(H_{\theta})^{i}}_{i}&0\\

0&{(H_{\theta})^{i}}_{i}\\

\end{pmatrix}\,. \tag{36}

$$

The corresponding eigenvalue (or principal curvature) $\bar{\kappa}^{\alpha,\beta}$ is therefore given by the sum over all principal curvatures in the original space (i.e., $\bar{\kappa}^{\alpha,\beta}=\sum_{i=1}^{N}\kappa_{i}^{\theta}$ ). Hence, the value of the principal curvature $\bar{\kappa}^{\alpha,\beta}$ in the expected dimension-reduced space will be either positive (if the positive principal curvatures in the original space dominate), negative (if the negative principal curvatures in the original space dominate), or close to zero (if positive and negative principal curvatures in the original space cancel out each other). As a result, saddle points will not appear as such in the expected random projection.

In addition to the connection between $\bar{\kappa}^{\alpha,\beta}$ and the principal curvatures $\kappa_{i}^{\theta}$ , we now provide an overview of additional mathematical relations between different curvature measures that are useful to quantify curvature properties of high-dimensional loss functions and their two-dimensional random projections.

\tbl

Quantities to characterize curvature. Symbol Definition $H_{\theta}∈\mathbb{R}^{N× N}$ Hessian in original loss space $\kappa_{i}^{\theta}∈\mathbb{R}$ principal curvatures in original loss space with $i∈\{1,...,N\}$ (i.e., the eigenvalues of $H_{\theta}$ ) $H_{\alpha,\beta}∈\mathbb{R}^{2× 2}$ Hessian in a two-dimensional projection of an $N$ -dimensional loss function $\kappa_{±}^{\alpha,\beta}∈\mathbb{R}$ principal curvatures in a two-dimensional loss projection (i.e., the eigenvalues of $H_{\alpha,\beta}$ ) $\bar{\kappa}^{\alpha,\beta}∈\mathbb{R}$ principal curvature in expected, two-dimensional loss projection (i.e., the eigenvalues of $\mathbb{E}[H_{\alpha,\beta}]$ ) $H∈\mathbb{R}$ mean curvature (i.e., $\sum_{i=1}^{N}\kappa_{i}^{\theta}/N=\bar{\kappa}^{\alpha,\beta}/N$ )

Invoking Eq. (35), we can relate $\bar{\kappa}^{\alpha,\beta}$ to $\mathrm{tr}(H_{\theta})$ and $\kappa_{±}^{\alpha,\beta}$ . Because $\kappa_{+}^{\alpha,\beta}+\kappa_{-}^{\alpha,\beta}=A+C$ , we have

$$

\mathrm{tr}(H_{\theta})=\bar{\kappa}^{\alpha,\beta}=\sum_{i=1}^{N}\kappa_{i}^{\theta}=\frac{1}{2}\left(\mathbb{E}[\kappa_{-}^{\alpha,\beta}]+\mathbb{E}[\kappa_{+}^{\alpha,\beta}]\right)\,. \tag{37}

$$

The mean curvature $H$ in the original space is related to $\bar{\kappa}^{\alpha,\beta}$ via

$$

H=\frac{1}{N}\bar{\kappa}^{\alpha,\beta}=\frac{1}{N}\sum_{i=1}^{N}\kappa_{i}^{\theta}\,. \tag{38}

$$

We summarize the definitions of the employed Hessians and curvature measures in Tab. 1.

The appeal of random projections is that pairwise distances between points in a high-dimensional space can be nearly preserved by a lower-dimensional linear embedding, affording a low-dimensional representation of mean and variance information with minimal distortion [136]. The relationship between random Gaussian directions and principal curvature is less straightforward. Our results show that the principal curvatures $\kappa_{±}^{\alpha,\beta}$ in a two-dimensional loss projection are weighted averages of the Hessian elements $(H_{\theta})_{ij}$ in the original space, not weighted averages of the principal curvatures $\kappa_{i}^{\theta}$ as claimed by Ref. 122. Similar arguments apply to projections with dimension larger than 2.

Hessian trace estimates

Finally, we point to a connection between curvature measures and Hessian trace estimates. A common way of estimating $\mathrm{tr}(H_{\theta})$ without explicitly computing all eigenvalues of $H_{\theta}$ is based on Hutchinson’s method [137] and random numerical linear algebra [138, 139]. The basic idea behind this approach is to (i) use a random vector $z∈\mathbb{R}^{N}$ with elements $z_{i}$ that are distributed according to a distribution function with zero mean and unit variance (e.g., a Rademacher distribution with $\Pr\left(z_{i}=± 1\right)=1/2$ ), and (ii) compute $z^{→p}H_{\theta}z$ , an unbiased estimator of $\mathrm{tr}(H_{\theta})$ . That is,

$$

\mathrm{tr}(H_{\theta})=\mathbb{E}[z^{\top}H_{\theta}z]\,. \tag{39}

$$

Recall that Eq. (37) shows that the principal curvature of the expected random loss projection, $\bar{\kappa}^{\alpha,\beta}$ , is equal to $\mathrm{tr}(H_{\theta})$ . Instead of estimating $\mathrm{tr}(H_{\theta})$ using Hutchinson’s method (39), an alternative Hutchinson-type estimate of this quantity is provided by the mean of the expected values of $\kappa_{-}^{\alpha,\beta}$ and $\kappa_{+}^{\alpha,\beta}$ [see Eq. (37)].

2 Extracting curvature information

We now study two examples that will help build intuitions. In the first example presented in Sec. 2, we study a critical point $\theta^{*}$ of an $N$ -dimensional loss function $L(\theta)$ for which (i) all principal curvatures have the same magnitude and (ii) the number of positive curvature directions is equal to the number of negative curvature directions. In this example, saddles can be correctly detected by ensemble means but success depends on the averaging process used. In the second example presented in Sec. 2, we use a loss function associated with an unequal number of negative and positive curvature directions. For the different curvature measures derived in Sec. 1, we find that random projections cannot identify the underlying saddle point. In Sec. 2, we will use the two example loss functions to discuss how curvature-based Hessian trace estimates relate to those obtained with the original Hutchinson’s method.

Equal number of curvature directions

The loss function of our first example is

$$

L(\theta)=\frac{1}{2}\theta_{2n+1}\left(\sum_{i=1}^{n}\theta_{i}^{2}-\theta_{i+n}^{2}\right)\,,\quad n\in\mathbb{Z}_{+}\,, \tag{40}

$$

where we set $N=2n+1$ . A critical point $\theta^{*}$ of the loss function (40) satisfies

$$

(\nabla_{\theta}L)(\theta^{*})=\left(\begin{array}[]{c}\theta^{*}_{1}\theta^{*}_{2n+1}\\

\vdots\\

\theta^{*}_{n}\theta^{*}_{2n+1}\\

-\theta^{*}_{n+1}\theta^{*}_{2n+1}\\

\vdots\\

-\theta^{*}_{2n}\theta^{*}_{2n+1}\\

\frac{1}{2}\left(\sum_{i=1}^{n}{\theta_{i}^{{*\textsuperscript{2}}}}-{\theta_{i+n}^{{*\textsuperscript{2}}}}\right)\end{array}\right)=0\,. \tag{41}

$$

The Hessian at the critical point $\theta^{*}=(\theta^{*}_{1},...,\theta^{*}_{2n},\theta^{*}_{2n+1})$ $=(0,...,0,1)$ is

$$

H_{\theta}=\mathrm{diag}(\underbrace{1,\dots,1}_{n~\mathrm{times}},\underbrace{-1,\dots,-1}_{n~\mathrm{times}},0)\,. \tag{42}

$$

<details>

<summary>hessian_alpha_beta_left.png Details</summary>

### Visual Description

## Chart: Difference in Entropy Estimates vs. Number of Samples

### Overview

The image presents two line charts (labeled (a) and (c)) displaying the difference between the sample entropy estimate and the expected entropy estimate, denoted as `((Hα,β)ij) - E[(Hα,β)ij]`, plotted against the number of samples, `S`, on a logarithmic scale. Each chart contains four data series, distinguished by line style and color, representing different index pairs (i, j).

### Components/Axes

* **Y-axis:** `((Hα,β)ij) - E[(Hα,β)ij]` ranging from -20 to 20.

* **X-axis:** `number of samples S` on a logarithmic scale, ranging from 10⁰ to 10⁴.

* **Legend:** Located in the top-right corner of each chart.

* `(i, j) = (1, 1)`: Solid black line.

* `(i, j) = (2, 2)`: Dashed blue line.

* `(i, j) = (1, 2), (i, j) = (2, 1)`: Dashed red line.

* **Chart Labels:**

* (a) is positioned in the top-left corner.

* (c) is positioned in the bottom-left corner.

### Detailed Analysis or Content Details

**Chart (a):**

* **(i, j) = (1, 1) (Black Line):** The line starts at approximately 10, decreases to around -5 at S=10, fluctuates between -10 and 10, and then gradually approaches 0 as S increases towards 10⁴. There are significant oscillations between S=10 and S=100.

* **(i, j) = (2, 2) (Blue Dashed Line):** Starts at approximately 5, rapidly decreases to around -15 at S=10, then oscillates between -15 and 5, and approaches 0 as S increases. The initial drop is steeper than the black line.

* **(i, j) = (1, 2), (i, j) = (2, 1) (Red Dashed Line):** Starts at approximately -10, increases to around 10 at S=10, then oscillates between -15 and 10, and approaches 0 as S increases. This line exhibits the most pronounced oscillations.

**Chart (c):**

* **(i, j) = (1, 1) (Black Line):** Starts at approximately 10, decreases to around -5 at S=10, fluctuates between -10 and 5, and then gradually approaches 0 as S increases towards 10⁴. Similar to chart (a), but with slightly different oscillation patterns.

* **(i, j) = (2, 2) (Blue Dashed Line):** Starts at approximately 15, rapidly decreases to around -10 at S=10, then oscillates between -15 and 5, and approaches 0 as S increases. The initial drop is steep.

* **(i, j) = (1, 2), (i, j) = (2, 1) (Red Dashed Line):** Starts at approximately -15, increases to around 5 at S=10, then oscillates between -15 and 10, and approaches 0 as S increases. This line exhibits significant oscillations.

### Key Observations

* All four data series in both charts converge towards 0 as the number of samples (S) increases. This suggests that the difference between the sample entropy estimate and the expected entropy estimate decreases with increasing sample size.

* The initial difference is most pronounced for the (2, 2) index pair (blue dashed line), indicating that this pair requires a larger sample size to achieve a stable entropy estimate.

* The (1, 2) and (2, 1) index pairs (red dashed line) exhibit the most significant oscillations, suggesting that their entropy estimates are more sensitive to sample variations.

* The charts (a) and (c) show similar trends, but with different initial values and oscillation amplitudes.

### Interpretation

The charts demonstrate the convergence of entropy estimates as the number of samples increases. The difference between the sample estimate and the expected value represents the error in the estimation. As S grows, this error diminishes, indicating that the sample entropy estimate becomes a more reliable approximation of the true entropy.

The varying convergence rates for different index pairs (i, j) suggest that the complexity of the underlying system or the relationship between the variables represented by i and j influences the required sample size for accurate entropy estimation. The (2,2) pair appears to be more sensitive to sample size, requiring more data to stabilize. The oscillations in the (1,2) and (2,1) pairs suggest a more complex relationship or higher variability in the data associated with those indices.

The fact that all lines converge to zero implies that, given enough data, the entropy estimates will become consistent and accurate, regardless of the initial index pair. The differences between the charts (a) and (c) could be due to different underlying data sets or experimental conditions. Further investigation would be needed to determine the specific reasons for these variations.

</details>

<details>

<summary>hessian_alpha_beta_right.png Details</summary>

### Visual Description

\n

## Chart: Principal Curvatures vs. Number of Samples

### Overview

The image presents two line graphs, labeled (b) and (d), displaying the relationship between principal curvatures and the number of samples (S). Both graphs share a logarithmic scale for the x-axis (number of samples) and display two distinct curves representing different curvature measurements.

### Components/Axes

* **X-axis:** Number of samples (S), labeled at the bottom of both charts. The scale is logarithmic, ranging from 10⁰ to 10⁴.

* **Y-axis (Top Chart (b)):** Principal curvatures, labeled on the left side, ranging from -50 to 75.

* **Y-axis (Bottom Chart (d)):** Principal curvatures, labeled on the left side, ranging from 550 to 700.

* **Legend (Top-right of both charts):**

* Solid Black Line: `<κ⁺ₐ,β>`

* Dashed Red Line: `<κ⁻ₐ,β>`

### Detailed Analysis

**Chart (b):**

* **Solid Black Line (<κ⁺ₐ,β>):** The line starts at approximately 20 at S=10⁰, rises to a peak of around 60 at S=10¹, then gradually decreases, fluctuating between 20 and 30 as S increases from 10² to 10⁴.

* **Dashed Red Line (<κ⁻ₐ,β>):** The line begins at approximately 25 at S=10⁰, rapidly decreases to a minimum of around -25 at S=10¹, and then slowly rises, stabilizing around 10 as S approaches 10⁴.

**Chart (d):**

* **Solid Black Line (<κ⁺ₐ,β>):** The line starts at approximately 640 at S=10⁰, quickly drops to around 560 at S=10¹, and remains relatively constant, fluctuating between 560 and 580, as S increases from 10² to 10⁴.

* **Dashed Red Line (<κ⁻ₐ,β>):** The line begins at approximately 670 at S=10⁰, decreases to around 610 at S=10¹, and then slowly rises, stabilizing around 630 as S approaches 10⁴.

### Key Observations

* In both charts, the solid black line represents a curvature that is generally positive in chart (b) and relatively low in chart (d).

* The dashed red line represents a curvature that is initially positive in chart (b) and high in chart (d), but becomes negative and stabilizes as the number of samples increases.

* The scales of the y-axes are significantly different between the two charts, indicating different magnitudes of curvature being measured.

* Both charts show a trend of curvature values stabilizing as the number of samples increases, suggesting convergence.

### Interpretation

The data suggests an analysis of principal curvatures as a function of sample size. The two charts likely represent different aspects or scales of the same underlying phenomenon. The initial fluctuations and subsequent stabilization of the curves indicate that the curvature measurements are sensitive to sample size at lower sample counts, but become more consistent as more samples are included.

The difference in the y-axis scales and the overall curvature values between the two charts suggests that `<κ⁺ₐ,β>` and `<κ⁻ₐ,β>` represent different types of curvature, potentially related to different geometric properties of the analyzed surface or object. The negative curvature observed in the dashed red line in chart (b) could indicate saddle-like features or regions of negative Gaussian curvature. The stabilization of both curves at higher sample sizes suggests that the curvature measurements are converging towards a stable value, indicating that the sample size is sufficient to accurately characterize the underlying geometry.

The logarithmic scale on the x-axis is important because it shows the rate of change in curvature as the number of samples increases. The rapid changes observed at lower sample counts suggest that adding more samples initially has a significant impact on the curvature measurements, while adding more samples at higher counts has a diminishing effect.

</details>

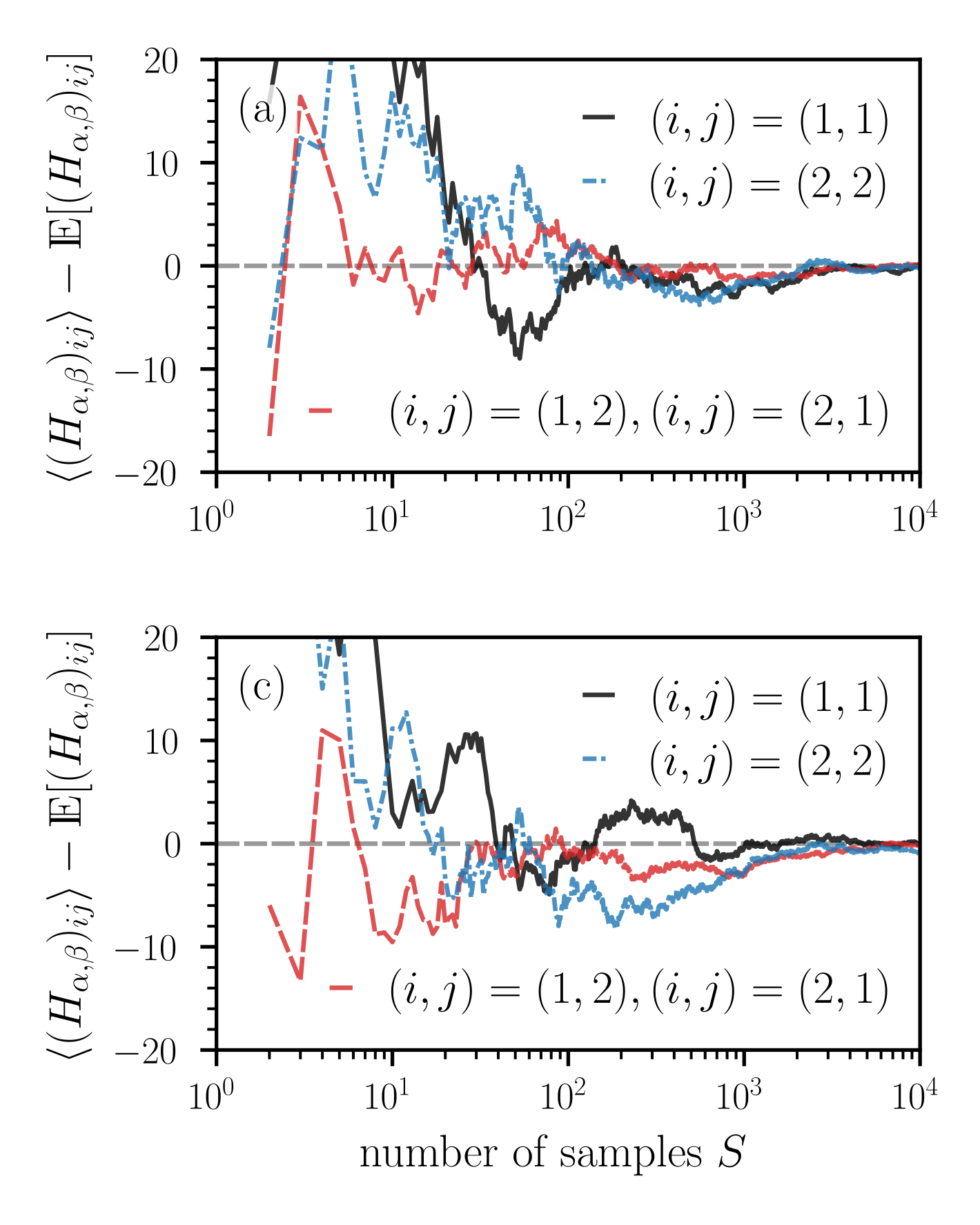

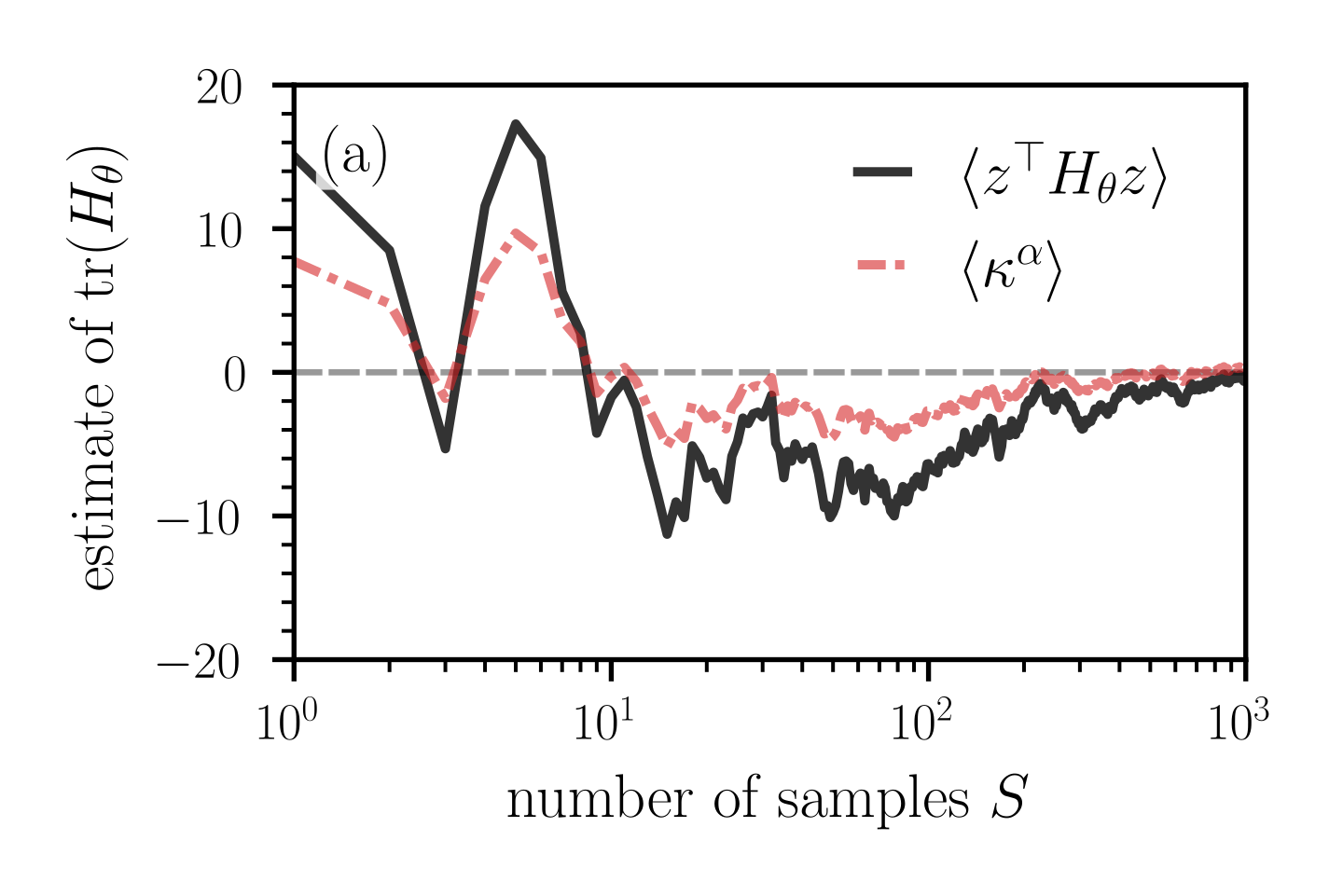

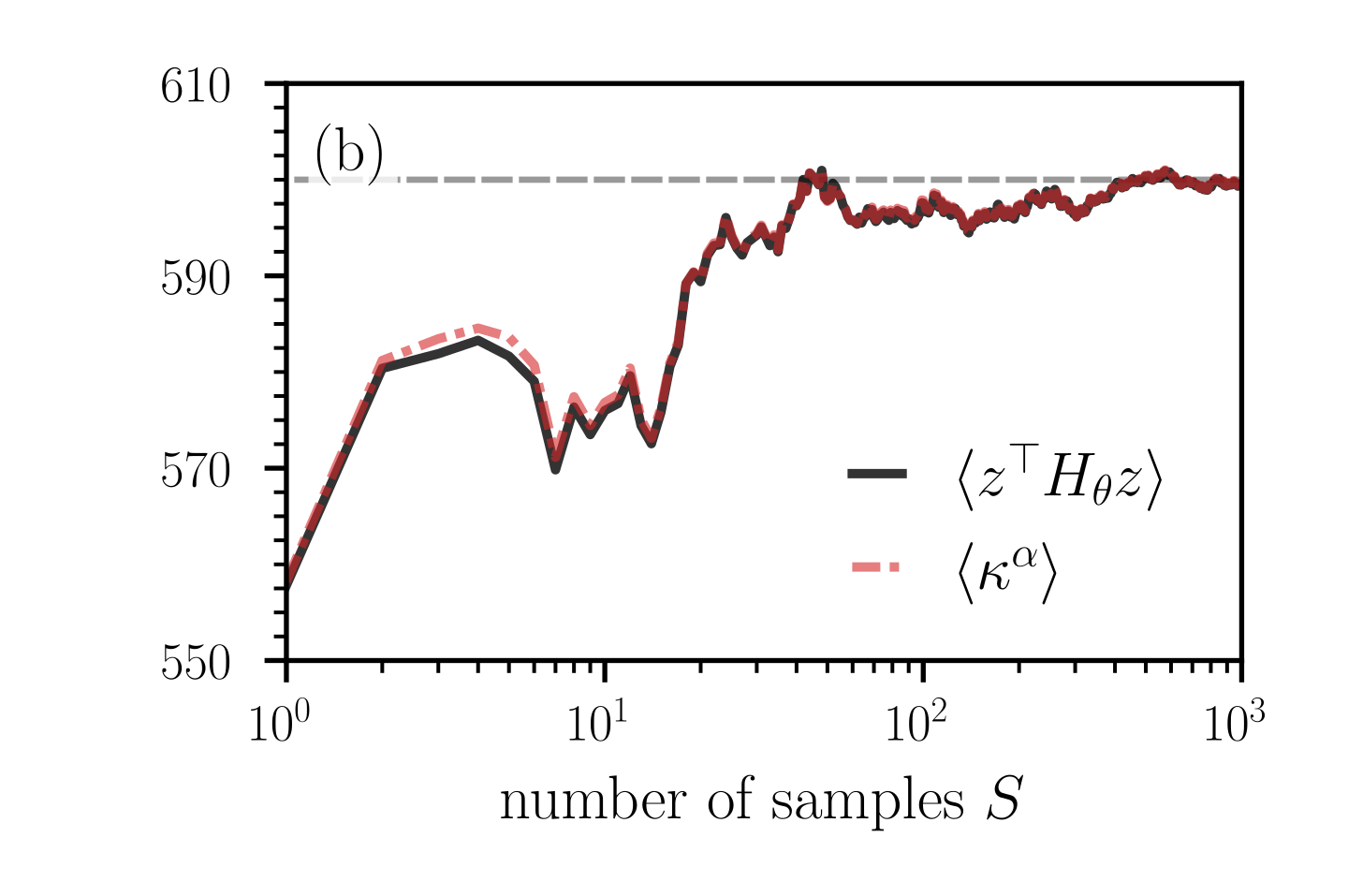

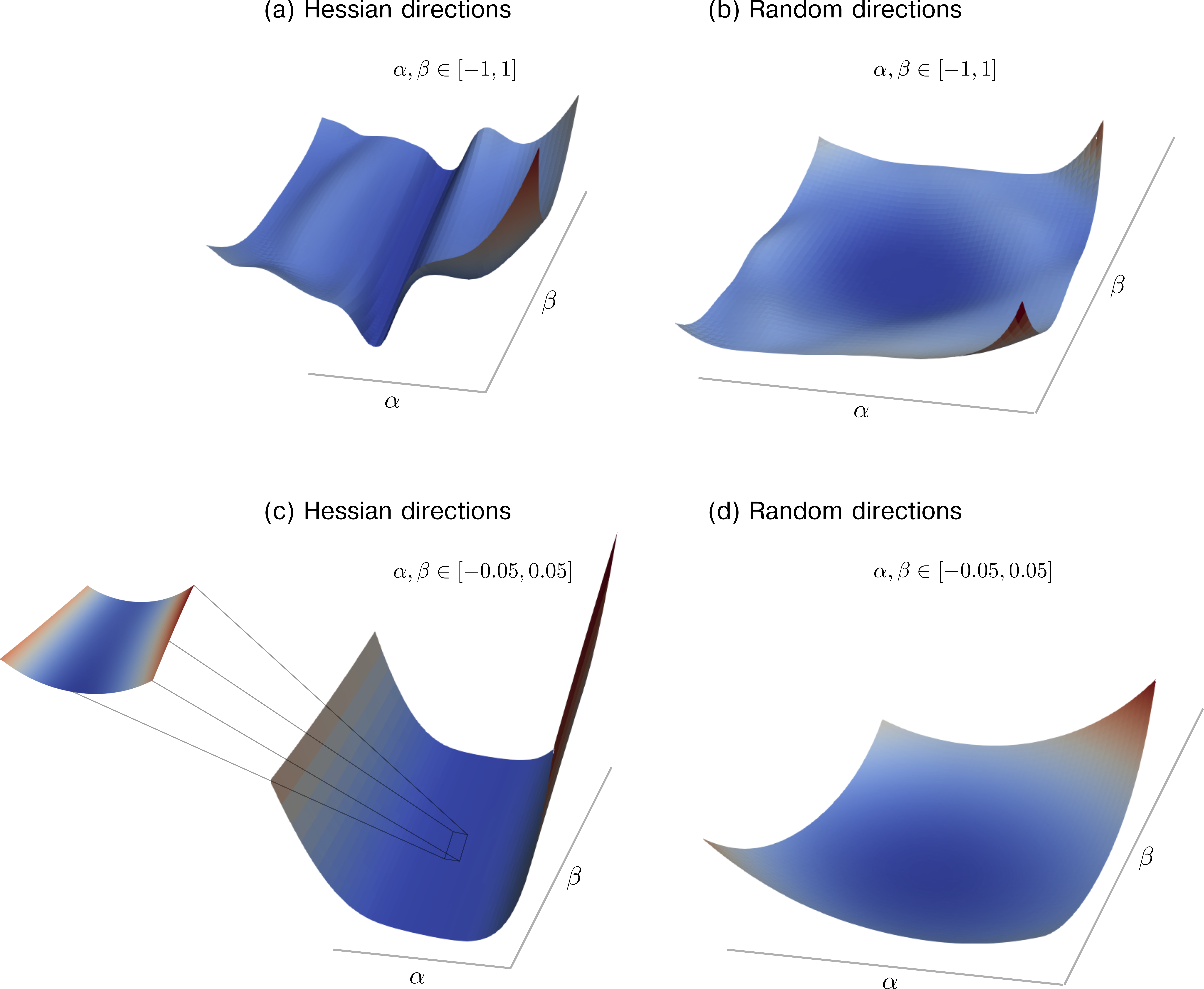

Figure 7: Convergence of the ensemble mean (43) of Hessian elements and curvatures measures as a function of the number of random projections $S$ . (a,c) The deviation of the ensemble means $\langle(H_{\alpha,\beta})_{ij}\rangle$ ( ${i,j∈\{1,2\}}$ ) of Hessian elements from the corresponding expected values as a function of $S$ . Notice that the expected value of the diagonal elements $(H_{\alpha,\beta})_{11}$ and $(H_{\alpha,\beta})_{22}$ is equal to $\bar{\kappa}^{\alpha,\beta}$ (i.e., to the sum of principal curvatures in the original space) [see Eqs. (36) and (37)]. A relatively large number of random projections between $10^{3}$ and $10^{4}$ is required to keep the deviations at values smaller than about 2–4. (b,d) The ensemble means $\langle\kappa^{\alpha,\beta}_{±}\rangle$ [see Eq. (35)] and $\langle\tilde{\kappa}^{\alpha,\beta}_{±}\rangle$ [see Eq. (44)] as a function of $S$ . Dashed grey lines represent $\bar{\kappa}^{\alpha,\beta}=\mathrm{tr}(H_{\theta})$ . In panels (a,b) and (c,d), the $N$ -dimensional loss functions are given by Eqs. (40) and (45), respectively. We evaluate the corresponding Hessians (42) and (46) at the saddle point $\theta^{*}=(\theta^{*}_{1},...,\theta^{*}_{2n},\theta^{*}_{2n+1})=(0,...,0,1)$ . In both loss functions, we set $n=500$ and in loss function (45) we set $\tilde{n}=800$ .

Because $H_{\theta}$ has positive and negative eigenvalues, the critical point is a saddle. The corresponding principal curvatures are $\kappa_{i}^{\theta}∈\{-1,1\}$ ( $i∈\{1,...,N-1\}$ ) and $\kappa^{\theta}_{N}=0$ . In this example, the mean curvature $H$ , as defined in Eq. (38), is equal to 0. According to Eq. (36), the principal curvature, $\bar{\kappa}^{\alpha,\beta}$ , associated with the expected, dimension-reduced Hessian $H_{\alpha,\beta}$ is also equal to 0, erroneously indicating an apparently flat loss landscape if one would use $\bar{\kappa}^{\alpha,\beta}$ as the main measure of curvature. To compare the convergence of different curvature measures as a function of the number of loss projections $S$ , we will now study the ensemble mean

$$

\langle X\rangle=\frac{1}{S}\sum_{k=1}^{S}X^{(k)} \tag{43}

$$

of different quantities of interest $X$ such as Hessian elements and principal curvature measures in dimension-reduced space. Here, $X^{(k)}$ is the $k$ -th realization (or sample) of $X$ .

<details>

<summary>curvature_pdfs_left.png Details</summary>

### Visual Description

\n

## Chart: Principal Curvature Distribution

### Overview

The image presents a histogram showing the probability density function (PDF) of principal curvatures, denoted as κ±α,β. Two distributions are overlaid: one representing negative principal curvatures (κ−α,β) and the other representing positive principal curvatures (κ+α,β). The distributions appear approximately normal, but with some skewness.

### Components/Axes

* **X-axis:** "principal curvatures κ±α,β". Scale ranges from -150 to 150, with tick marks every 50 units.

* **Y-axis:** "PDF" (Probability Density Function). Scale ranges from 0.0 to 1.5, with tick marks every 0.5 units.

* **Legend:** Located at the top-right of the chart.

* Red dashed line: κ−α,β

* Black solid line: κ+α,β

* **Label (a):** Located at the top-left of the chart.

### Detailed Analysis

The chart displays two overlapping histograms.

**κ−α,β (Red Dashed Line):**

The distribution is centered around approximately -30 to -40. The PDF rises from near 0 at -150, reaches a peak around -30, and then declines back to near 0 at 150. The peak PDF value is approximately 1.05. The distribution is right-skewed.

**κ+α,β (Black Solid Line):**

The distribution is centered around approximately 40 to 50. The PDF rises from near 0 at -150, reaches a peak around 40, and then declines back to near 0 at 150. The peak PDF value is approximately 0.95. The distribution is left-skewed.

The two distributions overlap significantly between approximately -20 and 80.

### Key Observations

* The negative principal curvatures (κ−α,β) are more spread out and have a higher peak PDF value than the positive principal curvatures (κ+α,β).

* The distributions are not symmetrical around zero, indicating an asymmetry in the principal curvatures.

* There is a noticeable overlap between the two distributions, suggesting that both positive and negative curvatures are present in the data.

### Interpretation

The chart suggests that the principal curvatures are not evenly distributed around zero. The negative curvatures are more prevalent and have a wider range of values than the positive curvatures. This asymmetry could indicate a directional bias or a specific geometric property of the surface or object from which these curvatures were derived. The overlap between the distributions suggests that the surface exhibits both concave and convex regions. The data suggests that the surface is more likely to have negative curvature than positive curvature. The distributions are not perfectly normal, indicating that the curvature values may not follow a simple Gaussian distribution. This could be due to the presence of outliers or a more complex underlying process generating the curvatures.

</details>

<details>

<summary>curvature_pdfs_right.png Details</summary>

### Visual Description

\n

## Chart: Distribution of Principal Curvatures

### Overview

The image presents a chart displaying the distribution of two sets of principal curvatures, denoted as κ<sup>α,β</sup><sub>-</sub> and κ<sup>α,β</sup><sub>+</sub>. The chart appears to be a histogram with overlaid curves representing the distribution.

### Components/Axes

* **X-axis:** Labeled "principal curvatures κ<sup>α,β</sup><sub>±</sub>". The scale ranges from approximately 450 to 750, with tick marks at intervals of 50.

* **Y-axis:** Represents frequency or probability density, ranging from 0.0 to 1.5, with tick marks at intervals of 0.5.

* **Legend:** Located in the top-left corner.

* Red dashed line: κ<sup>α,β</sup><sub>-</sub>

* Black solid line: κ<sup>α,β</sup><sub>+</sub>

* **Title:** "(b)" in the top-left corner. This likely indicates this is a sub-figure within a larger figure.

### Detailed Analysis

The chart shows two overlapping distributions.

**κ<sup>α,β</sup><sub>-</sub> (Red Dashed Line):**

The distribution is approximately normal, peaking around 550. The curve rises from a value of approximately 0.1 at 450, reaches a maximum of approximately 1.05 at around 550, and then declines to approximately 0.1 at 750. The histogram bars closely follow the curve.

**κ<sup>α,β</sup><sub>+</sub> (Black Solid Line):**

This distribution is also approximately normal, but is shifted to the right compared to the red distribution. It peaks around 650. The curve rises from a value of approximately 0.1 at 450, reaches a maximum of approximately 1.0 at around 650, and then declines to approximately 0.1 at 750. The histogram bars closely follow the curve.

The two distributions overlap significantly between approximately 600 and 700.

### Key Observations

* The two distributions have different means. The red distribution (κ<sup>α,β</sup><sub>-</sub>) is centered around 550, while the black distribution (κ<sup>α,β</sup><sub>+</sub>) is centered around 650.

* Both distributions have similar shapes and spreads.

* There is a noticeable overlap between the two distributions, indicating that some values of principal curvatures are common to both.

* The distributions are not perfectly symmetrical.

### Interpretation

The chart suggests that the principal curvatures κ<sup>α,β</sup><sub>-</sub> and κ<sup>α,β</sup><sub>+</sub> are not identically distributed. The difference in their means indicates that there is a systematic difference in the curvature properties being measured. The overlap between the distributions suggests that there is some correlation between the two curvature measures.

The fact that both distributions are approximately normal suggests that the underlying processes generating these curvatures are well-behaved. The slight asymmetry in the distributions might indicate the presence of some skewness or outliers in the data.

Without further context, it is difficult to determine the specific meaning of these principal curvatures. However, the chart provides valuable information about their statistical properties and relationships. The label "principal curvatures" suggests that these values are related to the geometry of a surface or object, and the differences between the two distributions might reflect different aspects of its shape.

</details>

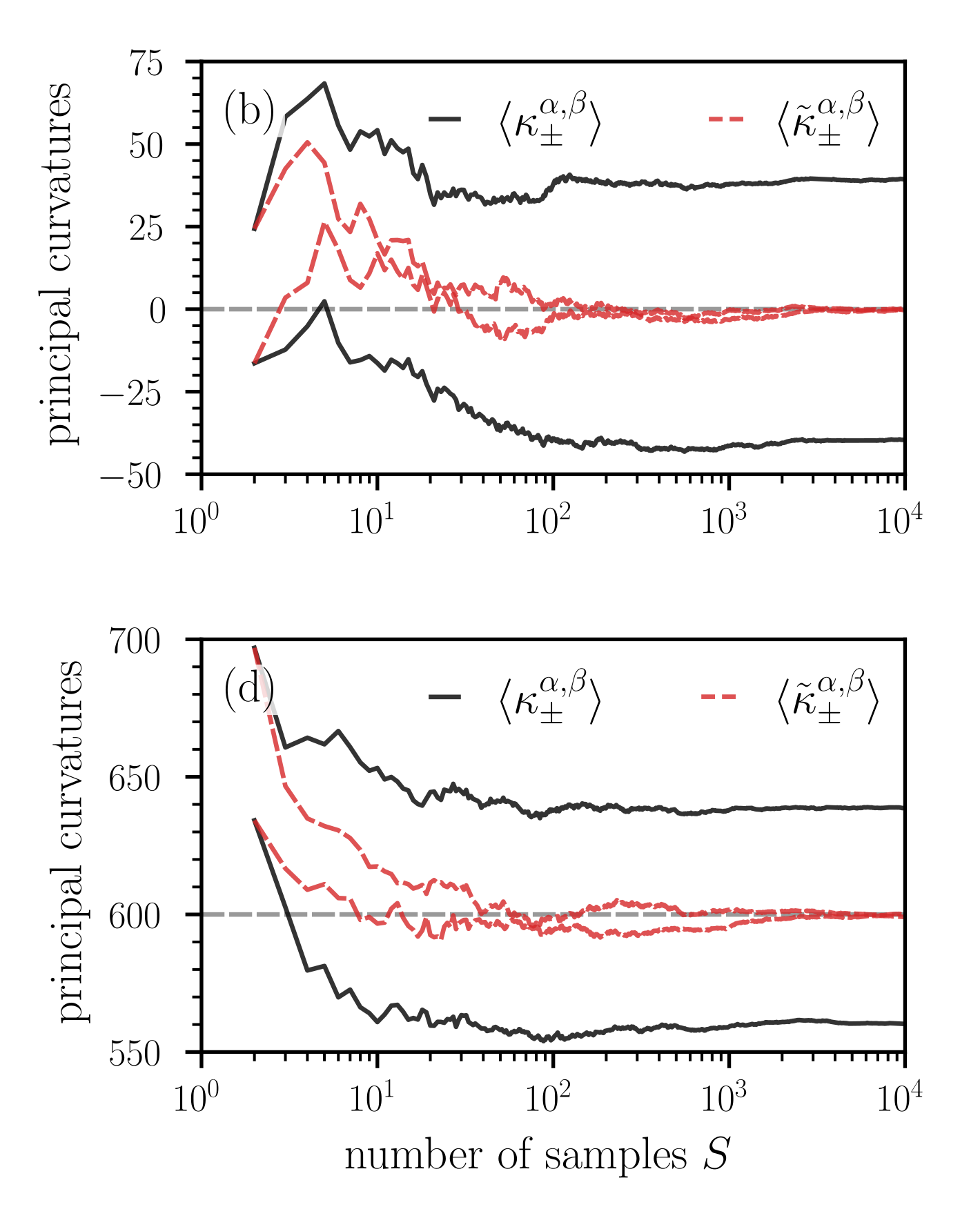

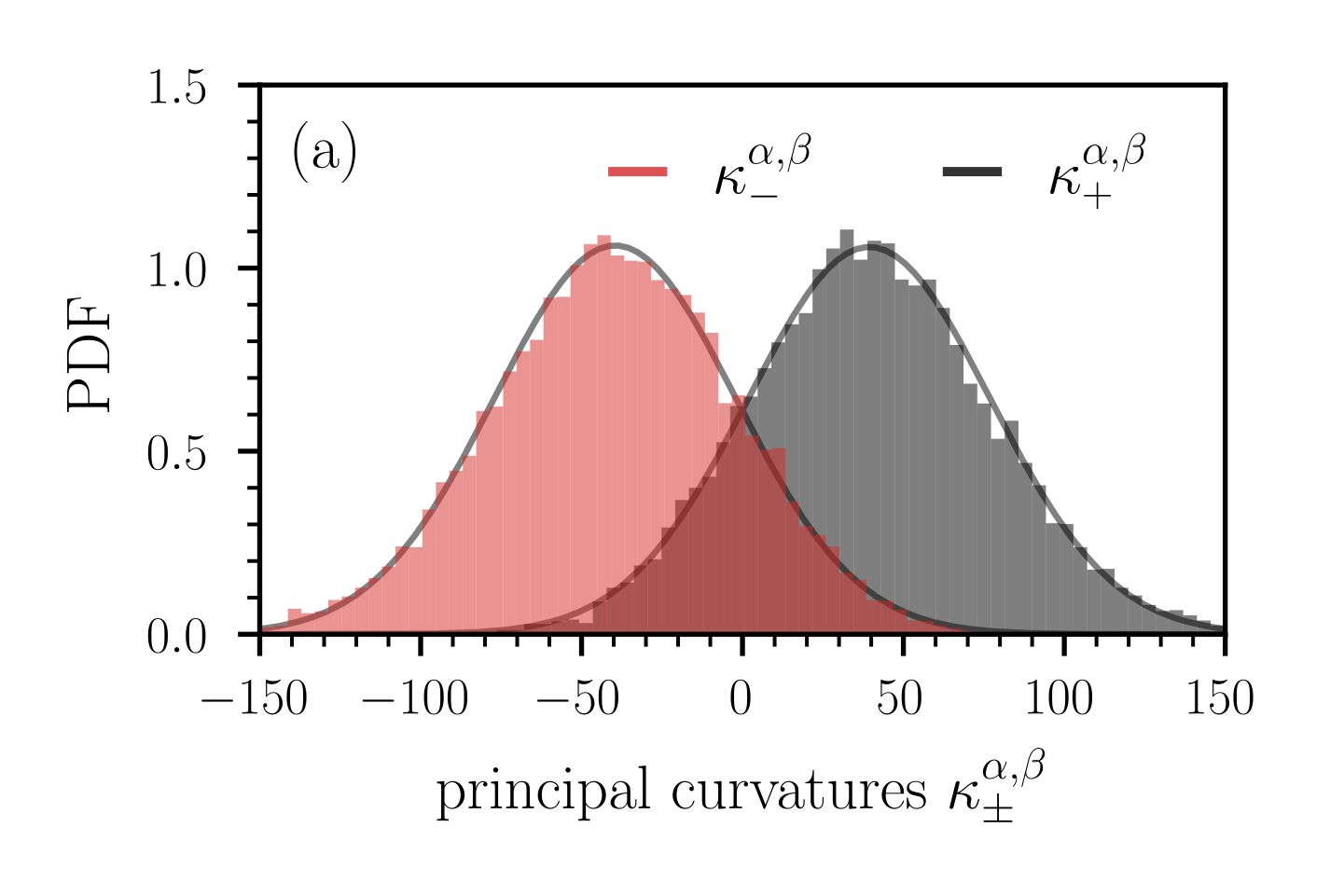

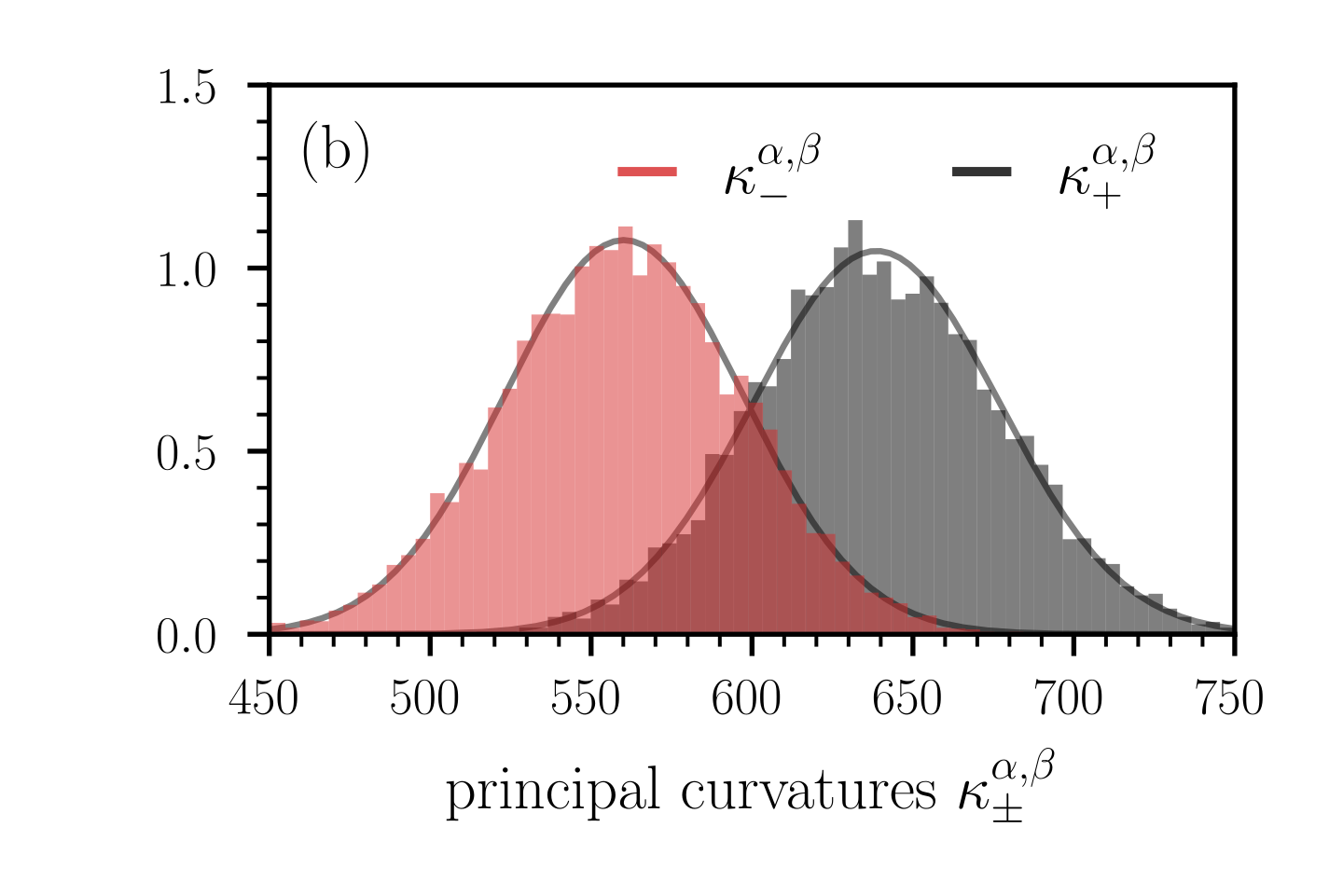

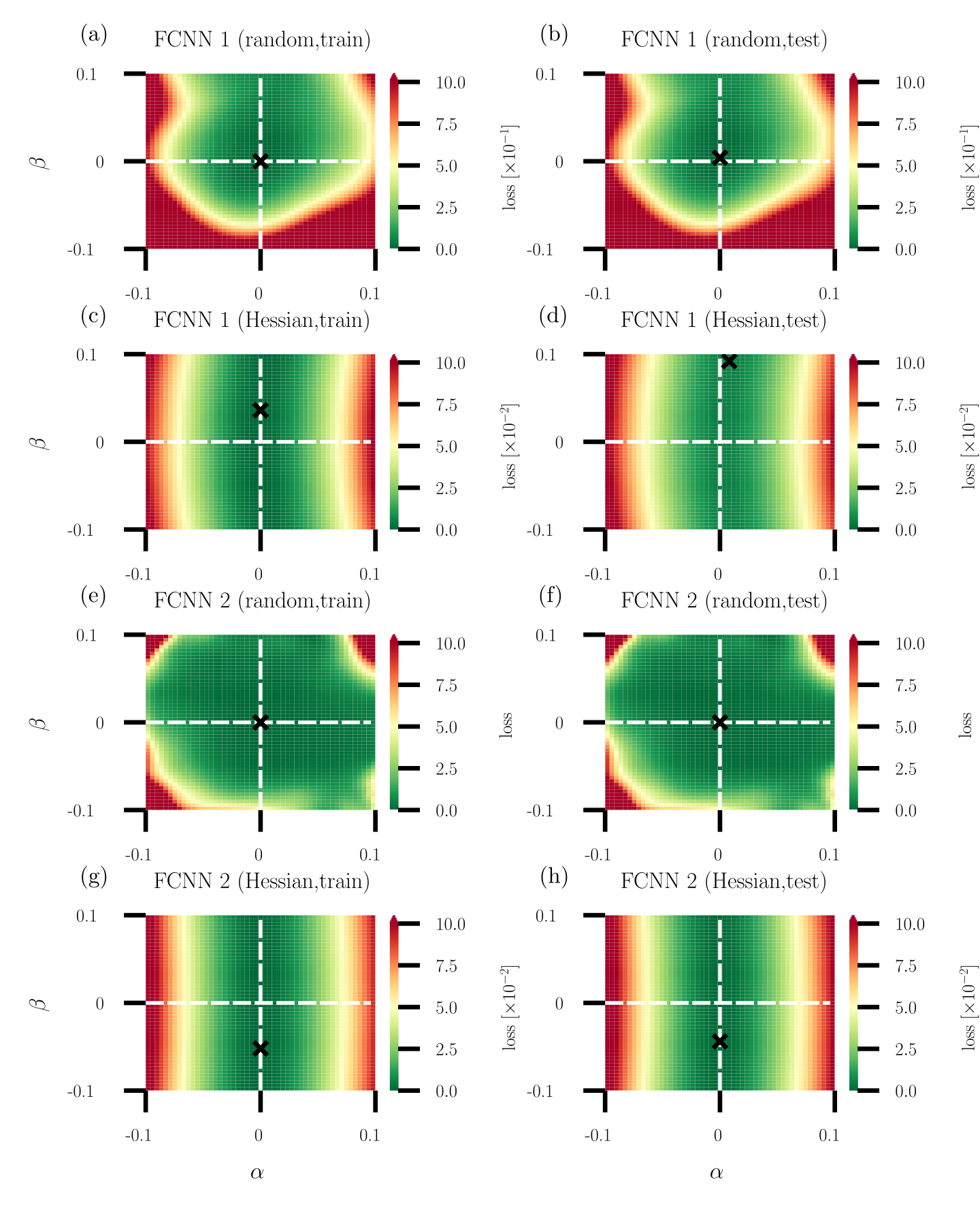

Figure 8: Distribution of principal curvatures $\kappa_{-}^{\alpha,\beta}$ (red bars) and $\kappa_{+}^{\alpha,\beta}$ (black bars). In panels (a) and (b), the loss functions are given by Eqs. (40) and (45), respectively. We evaluate the corresponding Hessians (42) and (46) at the saddle point $\theta^{*}=(\theta^{*}_{1},...,\theta^{*}_{2n},\theta^{*}_{2n+1})=(0,...,0,1)$ . In both loss functions, we set $n=500$ and in loss function (45) we set $\tilde{n}=800$ . In panel (a), the probability $\Pr(\kappa_{+}^{\alpha,\beta}\kappa_{-}^{\alpha,\beta}>0)$ that the critical point in the lower-dimensional, random projection does not appear as a saddle is about 0.3, and it is 1 in panel (b). Histograms are based on 10,000 random projections used to compute $\kappa_{±}^{\alpha,\beta}$ . Solid grey lines are Gaussian approximations of the empirical distributions.

We first study the dependence of ensemble means $\langle(H_{\alpha,\beta})_{ij}\rangle$ ( $i,j∈\{1,2\}$ ) of elements of the dimension-reduced Hessian $H_{\alpha,\beta}$ on the number of samples $S$ . According to Eq. (36), the diagonal elements of $\mathds{E}[H_{\alpha,\beta}]$ are proportional to the mean curvature of the original high-dimensional space and are thus useful to examine curvature properties of high-dimensional loss functions. Figure 7 (a) shows the convergence of the ensemble means $\langle(H_{\alpha,\beta})_{ij}\rangle$ toward the expected values $\mathds{E}[(H_{\alpha,\beta})_{ij}]$ as a function of $S$ . Note that $\mathds{E}[(H_{\alpha,\beta})_{ij}]=0$ for all $i,j$ . For a few dozen loss projections, the deviations of some of the ensemble means from the corresponding expected values reach values larger than 20. A relatively large number of loss projections $S$ between $10^{3}$ – $10^{4}$ is required to keep these deviations at values that are smaller than about 2–4. The solid black and red lines in Fig. 7 (b), respectively, show the ensemble means $\langle\kappa_{±}^{\alpha,\beta}\rangle$ and

$$

\langle\tilde{\kappa}_{\pm}^{\alpha,\beta}\rangle=\frac{1}{2}\left(\langle A\rangle+\langle C\rangle\pm\sqrt{4\langle B\rangle^{2}+(\langle A\rangle-\langle C\rangle)^{2}}\right) \tag{44}

$$

as a function of $S$ . Since $\mathds{E}[A]=\mathds{E}[C]={(H_{\theta})^{i}}_{i}$ and $\mathds{E}[B]=0$ [see Eq. (36)], we have that $\langle\tilde{\kappa}_{±}^{\alpha,\beta}\rangle=\bar{\kappa}^{\alpha,\beta}$ in the limit $S→∞$ . In the current example, the ensemble means $\langle\tilde{\kappa}_{±}^{\alpha,\beta}\rangle$ thus approach $\bar{\kappa}^{\alpha,\beta}=0$ for large numbers of samples $S$ , represented by the dashed red lines in Fig. 7 (b). The ensemble means $\langle\kappa_{±}^{\alpha,\beta}\rangle$ converge towards values of opposite sign, indicating a saddle point.

For a sample size of $S=10^{4}$ , we show the distribution of the principal curvatures $\kappa_{±}^{\alpha,\beta}$ in Fig. 8 (a). We observe that the distributions are plausibly Gaussian. We also calculate the probability $\Pr(\kappa_{+}^{\alpha,\beta}\kappa_{-}^{\alpha,\beta}>0)$ that the critical point in the lower-dimensional, random projection does not appear as a saddle (i.e., $\kappa_{+}^{\alpha,\beta}\kappa_{-}^{\alpha,\beta}>0$ ). For the example shown in Fig. 8 (a), we find that $\Pr(\kappa_{+}^{\alpha,\beta}\kappa_{-}^{\alpha,\beta}>0)≈ 0.3$ . That is, in about 30% of the simulated projections, the lower-dimensional loss landscape wrongly indicates that it does not correspond to a saddle.

Our first example, which is based on the loss function (40), shows that the principal curvatures in the lower-dimensional representation of $L(\theta)$ may capture the saddle behavior in the original space if one computes ensemble means $\langle\kappa_{±}^{\alpha,\beta}\rangle$ in the lower-dimensional space [see Fig. 7 (b)]. However, if one first calculates ensemble means of the elements of the dimension-reduced Hessian $H_{\alpha,\beta}$ to infer $\langle\tilde{\kappa}_{±}^{\alpha,\beta}\rangle$ , the loss landscape appears to be flat in this example. We thus conclude that different ways of computing ensemble means (either before or after calculating the principal curvatures) may lead to different results with respect to the “flatness” of a dimension-reduced loss landscape.

Unequal number of curvature directions

In the next example, we will show that random projections cannot identify certain saddle points regardless of the underlying averaging process. We consider the loss function

$$

L(\theta)=\frac{1}{2}\theta_{2n+1}\left(\sum_{i=1}^{\tilde{n}}\theta_{i}^{2}-\sum_{i=\tilde{n}+1}^{2n}\theta_{i}^{2}\right)\,,\quad n\in\mathbb{Z}_{+}\,,n<\tilde{n}\leq 2n\,, \tag{45}

$$

where we use the convention $\sum_{i=a}^{b}(·)=0$ if $a>b$ . The Hessian at the critical point $(\theta^{*}_{1},...,\theta^{*}_{2n},\theta^{*}_{2n+1})=(0,...,0,1)$ is

$$

H_{\theta}=\mathrm{diag}(\underbrace{1,\dots,1}_{\tilde{n}~\mathrm{times}},\underbrace{-1,\dots,-1}_{2n-\tilde{n}~\mathrm{times}},0)\,. \tag{46}

$$