# Base of RoPE Bounds Context Length

**Authors**:

- Xin Men (Baichuan Inc.)

- &Mingyu Xu (Baichuan Inc.)

- &Bingning Wang (Baichuan Inc.)

- \ANDQingyu Zhang

- &Hongyu Lin

- &Xianpei Han

- \AND Weipeng Chen

- Baichuan Inc

> Equal contributionCorresponding author,daniel@baichuan-inc.com

Abstract

Position embedding is a core component of current Large Language Models (LLMs). Rotary position embedding (RoPE), a technique that encodes the position information with a rotation matrix, has been the de facto choice for position embedding in many LLMs, such as the Llama series. RoPE has been further utilized to extend long context capability, which is roughly based on adjusting the base parameter of RoPE to mitigate out-of-distribution (OOD) problems in position embedding. However, in this paper, we find that LLMs may obtain a superficial long-context ability based on the OOD theory. We revisit the role of RoPE in LLMs and propose a novel property of long-term decay, we derive that the base of RoPE bounds context length: there is an absolute lower bound for the base value to obtain certain context length capability. Our work reveals the relationship between context length and RoPE base both theoretically and empirically, which may shed light on future long context training.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: RoPE Base Lower Bound Analysis

## 1. Image Overview

This image is a technical line-and-scatter plot on a log-log scale. It illustrates the relationship between the "Context length" of a model and the "Lower bound of RoPE's base." The chart includes a series of discrete data points and a fitted regression line with an associated mathematical formula.

## 2. Component Isolation

### A. Header / Legend Area

* **Location:** Top-left quadrant of the chart area.

* **Legend Item:** A solid red line segment followed by the mathematical expression: $y = 0.0424x^{1.628}$.

* **Visual Confirmation:** The red line in the legend matches the solid red trend line passing through the data points.

### B. Main Chart Area (Data Series)

* **Grid:** Light gray dashed grid lines for both major X and Y axes.

* **Data Points (Scatter):** Represented by dark blue triangles ($\black upward \text{ triangles}$).

* **Trend Line:** A solid red line.

* **Trend Verification:** The data points follow a consistent upward linear path on this log-log scale, indicating a power-law relationship. The red line acts as a "best fit" for these points.

### C. Axis Definitions

* **X-Axis (Horizontal):**

* **Label:** "Context length"

* **Scale:** Logarithmic (Base 10).

* **Markers:** $10^3, 10^4, 10^5, 10^6$.

* **Y-Axis (Vertical):**

* **Label:** "Lower bound of RoPE's base"

* **Scale:** Logarithmic (Base 10).

* **Markers:** $10^4, 10^5, 10^6, 10^7, 10^8$.

## 3. Data Extraction and Reconstructed Table

Based on the log-log scale, the following data points (blue triangles) are estimated from their spatial positioning relative to the grid:

| Context length (x) | Lower bound of RoPE's base (y) | Notes |

| :--- | :--- | :--- |

| $\approx 10^3$ | $\approx 4 \times 10^3$ | First data point |

| $\approx 2 \times 10^3$ | $\approx 1.5 \times 10^4$ | Slightly above the $10^4$ line |

| $\approx 4 \times 10^3$ | $\approx 3 \times 10^4$ | |

| $\approx 8 \times 10^3$ | $\approx 8 \times 10^4$ | Just below the $10^5$ line |

| $\approx 1.5 \times 10^4$ | $\approx 3 \times 10^5$ | |

| $\approx 3 \times 10^4$ | $\approx 6 \times 10^5$ | |

| $\approx 6 \times 10^4$ | $\approx 2 \times 10^6$ | |

| $\approx 1.2 \times 10^5$ | $\approx 8 \times 10^6$ | Just below the $10^7$ line |

| $\approx 2.5 \times 10^5$ | $\approx 4 \times 10^7$ | |

| $\approx 5 \times 10^5$ | $\approx 7 \times 10^7$ | |

| $\approx 10^6$ | $\approx 4 \times 10^8$ | Final data point |

## 4. Key Trends and Mathematical Findings

* **Relationship Type:** The data exhibits a strong power-law relationship, evidenced by the straight-line fit on a log-log plot.

* **Regression Formula:** The relationship is defined by the equation:

$$y = 0.0424x^{1.628}$$

* **Growth Rate:** The exponent of $1.628$ indicates that the lower bound of the RoPE (Rotary Positional Embedding) base grows super-linearly with respect to the context length. Specifically, as the context length increases by a factor of 10, the lower bound increases by a factor of approximately $10^{1.628} \approx 42.46$.

* **Observation:** The blue triangle data points show minor variance (noise) around the red regression line but maintain a very high correlation with the predicted model.

</details>

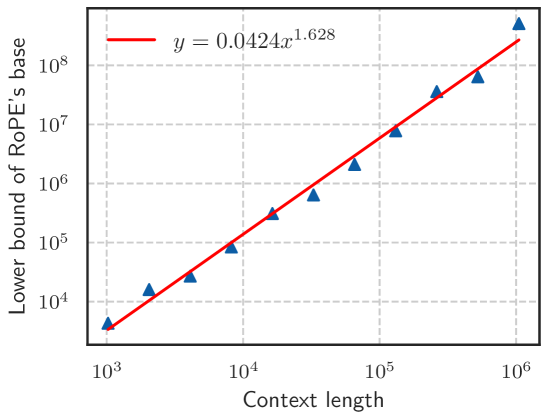

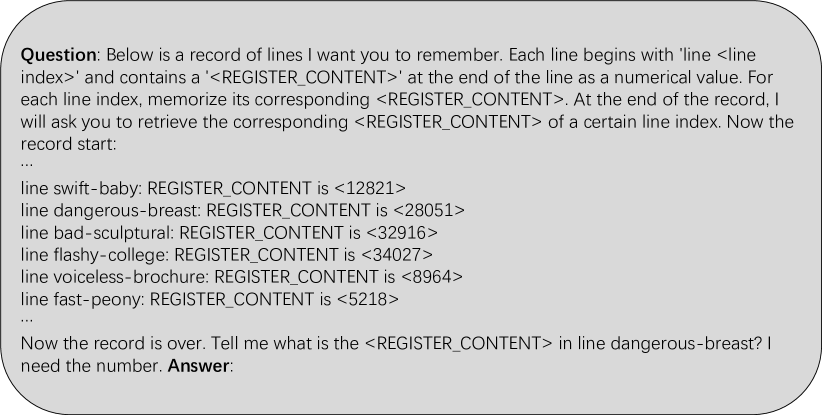

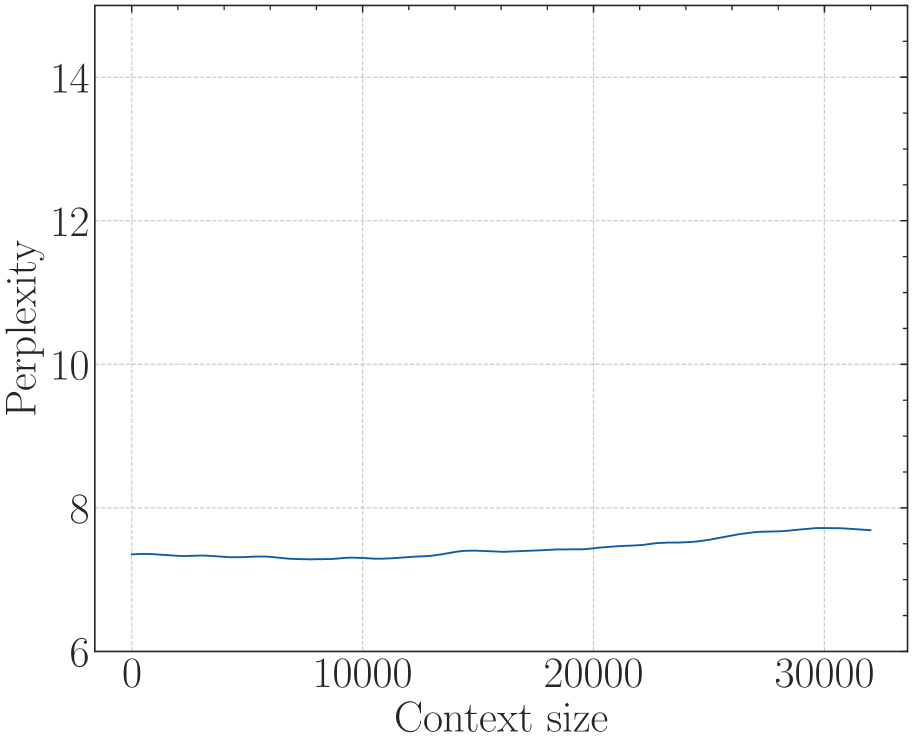

Figure 1: Context length and its corresponding lower bound of RoPE’s base value.

1 Introduction

In the past few years, large language models have demonstrated surprising capabilities and undergone rapid development. By now, LLMs have been widely applied across various domains, including chatbots, intelligent agents, and code assistants (Achiam et al., 2023; Jiang et al., 2023b). The Transformer (Vaswani et al., 2017), based on the attention mechanism, has been the most popular backbone of LLMs due to its good performance and scaling properties (Tay et al., 2022). One of the key component modules in the Transformer is position embedding, which is introduced to embed positional information that is vital for processing sequential data. Rotary position embedding (RoPE), which encodes relative distance information in the form of absolute position embedding (Su et al., 2024), has been a popular choice and applied in many LLMs (Touvron et al., 2023a; Yang et al., 2023; Bai et al., 2023).

RoPE introduces no training parameters and shows improvement in language modeling and many other tasks (Su et al., 2024; Heo et al., 2024). One reason that RoPE is widely used is its ability for context length extrapolation (Peng et al., 2023b; Chen et al., 2023), which extends the context length of a trained LLM without expensive retraining. In practice, many works (Touvron et al., 2023a; Liu et al., 2024a; Young et al., 2024) have successfully extended the window length by simply increasing base value, the only one hyper-parameter in RoPE, and fine-tuning on long texts.

The reasons behind the success of these long context extensions are often explained as avoiding out-of-distribution (OOD) rotation angles (Liu et al., 2024b; Han et al., 2023) in RoPE, meaning the extended context length (OOD) can be mapped to the in-distribution context length that has been properly trained. Based on the OOD theory, a recent study (Liu et al., 2024b) finds that a smaller base can mitigate OOD and is beneficial for the model’s ability to process long contexts, which inspires us to further study the relationship between the base of RoPE and the length of context the model can process.

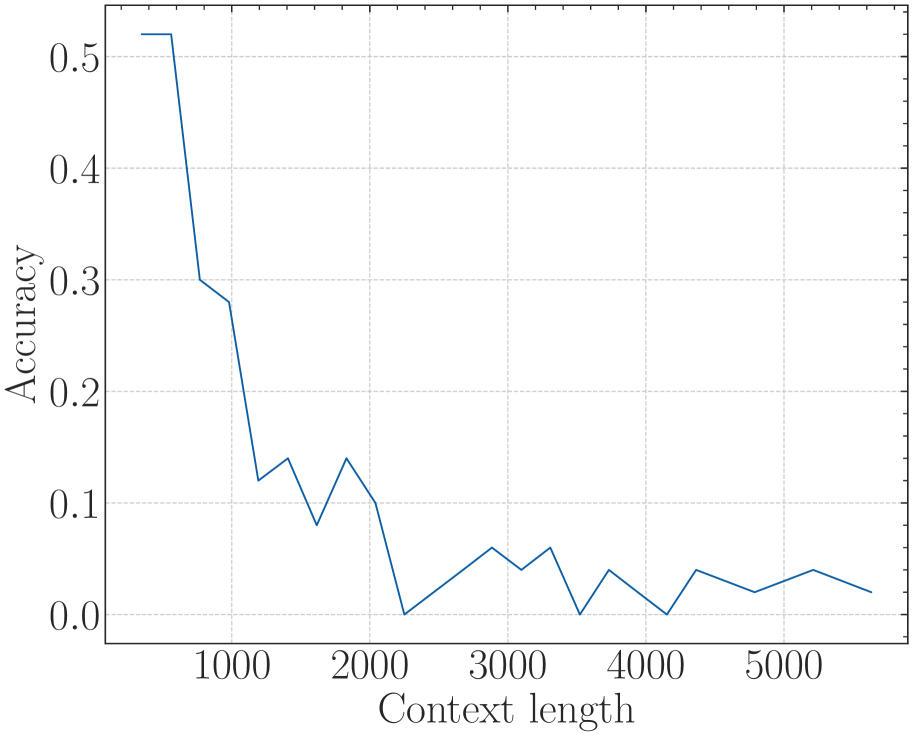

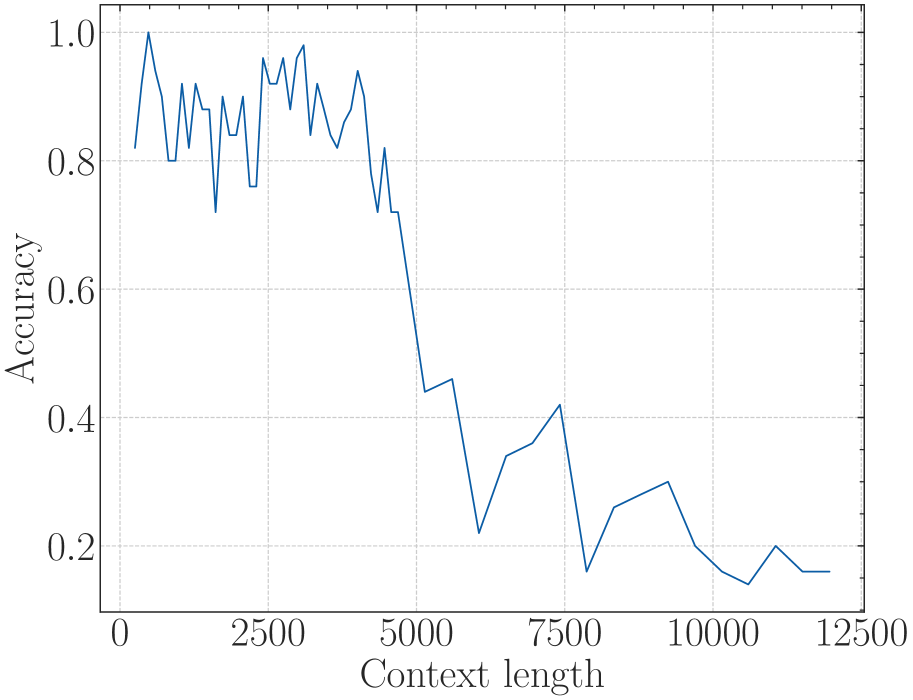

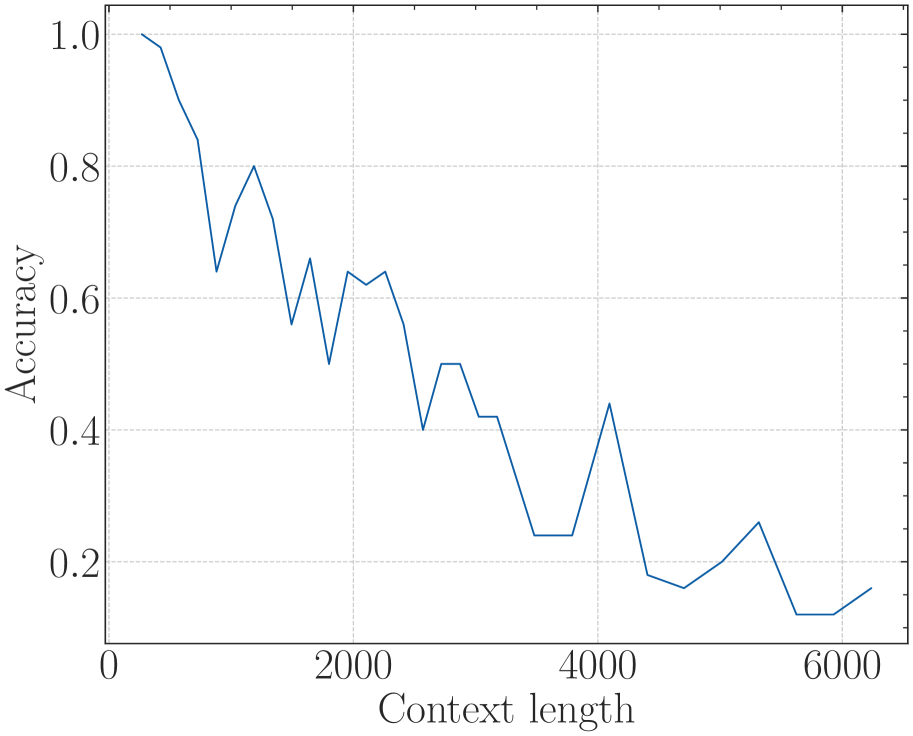

In this paper, we find that the model may show superficial long context capability with an inappropriate RoPE base value, in which case the model can only preserve low perplexity but loses the ability to retrieve long context information. We also show that the out-of-distribution (OOD) theory in position embedding, which motivates most length extrapolation works (Peng et al., 2023b; Chen et al., 2023; Liu et al., 2024b), is insufficient to fully reflect the model’s ability to process long contexts. Therefore, we revisit the role of RoPE in LLMs and derive a novel property of long-term decay in RoPE: the ability to attend more attention to similar tokens than random tokens decays as the relative distance increases. While previous long context works often focus on the relative scale of the RoPE base, based on our theory, we derive an absolute lower bound for the base value of RoPE to obtain a certain context length ability, as shown in Figure 1. To verify our theory, we conducted thorough experiments on various LLMs such as Llama2-7B (Touvron et al., 2023b), Baichuan2-7B (Yang et al., 2023) and a 2-billion model we trained from scratch, demonstrating that this lower bound holds not only in the fine-tuning stage but also in the pre-training stage.

We summarize the contributions of the paper as follows:

- Theoretical perspective: we derive a novel property of long-term decay in RoPE, indicating the model’s ability to attend more to similar tokens than random tokens, which is a new perspective to study the long context capability of the LLMs.

- Lower Bound of RoPE’s Base: to achieve the expected context length capability, we derive an absolute lower bound for RoPE’s base according to our theory. In short, the base of RoPE bounds context length.

- Superficial Capability: we reveal that if the RoPE’s base is smaller than a lower bound, the model may obtain superficial long context capability, which can preserve low perplexity but lose the ability to retrieve information from long context.

2 Background

In this section, we first introduce the Transformer and RoPE, which are most commonly used in current LLMs. Then we discuss long context methods based on the OOD of rotation angle theory.

2.1 Attention and RoPE

The LLMs in current are primarily based on the Transformer (Vaswani et al., 2017). The core component of it is the calculation of the attention mechanism. The naive attention can be written as:

$$

\displaystyle A_{ij} \displaystyle=q_{i}^{T}k_{j} \displaystyle\text{ATTN}(X) \displaystyle=\text{softmax}(A/\sqrt{d})\ v, \tag{1}

$$

where $A∈ R^{L× L}$ $q,k,v∈ R^{d}$ . Position embedding is introduced to make use of the order of the sequence in attention.

RoPE (Su et al., 2024) implements relative position embedding through absolute position embedding, which applies rotation matrix into the calculation of the attention score in Eq. 1, which can be written as:

$$

\displaystyle A_{ij}=(R_{i,\theta}q_{i})^{T}(R_{j,\theta}k_{i})=q_{i}^{T}R_{j-i,\theta}k_{j}=q_{i}^{T}R_{m,\theta}k_{j}, \tag{3}

$$

where $m=j-i$ is the relative distance of $i$ and $j$ , $R_{m,\theta}$ is a rotation matrix denoted as:

$$

\displaystyle\left[\begin{array}[]{ccccccc}cos(m\theta_{0})&-sin(m\theta_{0})&0&0&\cdots&0&0\\

sin(m\theta_{0})&cos(m\theta_{0})&0&0&\cdots&0&0\\

0&0&cos(m\theta_{1})&-sin(m\theta_{1})&\cdots&0&0\\

0&0&sin(m\theta_{1})&cos(m\theta_{1})&\cdots&0&0\\

\vdots&\vdots&\vdots&\vdots&\ddots&\vdots&\vdots\\

0&0&0&0&\cdots&cos(m\theta_{d/2-1})&-sin(m\theta_{d/2-1})\\

0&0&0&0&\cdots&sin(m\theta_{d/2-1})&cos(m\theta_{d/2-1})\end{array}\right] \tag{11}

$$

Generally, the selection of rotation angles satisfies $\theta_{i}=base^{-2i/d}$ , the typical base value for current LLMs is 10,000, and the base of RoPE in LLMs is shown in Table 1.

Table 1: The setting of RoPE’s base and context length in various LLMs.

| Base | 10,000 | 10,000 | 500,000 | 1,000,000 | 10,000 |

| --- | --- | --- | --- | --- | --- |

| Length | 2,048 | 4,096 | 8,192 | 32,768 | 4,096 |

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Document Extraction: RoPE vs. Context-size Analysis

## 1. Image Overview

This image is a line graph illustrating the relationship between "RoPE" (Rotary Positional Embedding) values and "Context-size" in a machine learning context. The graph highlights the behavior of the embedding as the context window extends beyond its original training limits.

## 2. Component Isolation

### A. Header/Title

* No explicit title is present at the top of the image.

### B. Main Chart Area

* **Type:** 2D Line Plot with shaded background regions.

* **X-Axis (Horizontal):**

* **Label:** "Context-size"

* **Major Tick Markers:** 0, 10000, 20000, 30000.

* **Minor Tick Markers:** Increments of 2000 (e.g., 2000, 4000, 6000, 8000).

* **Y-Axis (Vertical):**

* **Label:** "RoPE"

* **Major Tick Markers:** -1.0, -0.5, 0.0, 0.5, 1.0.

* **Minor Tick Markers:** Increments of 0.1.

* **Gridlines:** Horizontal grey lines are present at y-values: -1.0, -0.5, 0.0, 0.5, 1.0.

### C. Shaded Regions (Background)

* **Light Blue Region:** Extends from x = 0 to approximately x = 4000. This represents the "standard" or "original" context window.

* **Light Red/Pink Region:** Extends from approximately x = 4000 to the end of the x-axis (approx. 33000).

* **Embedded Text:** "Extended context" is centered within this region at roughly [x=23000, y=0.05].

## 3. Data Series Analysis

### Trend Verification

* **Series:** Single dark blue solid line.

* **Visual Trend:** The line follows a sinusoidal (specifically, a cosine-like) curve. It starts at its maximum value at x=0, slopes downward through the x-axis, reaches a minimum, and begins to slope upward again at the far right of the chart.

### Data Point Extraction (Estimated)

| Context-size (x) | RoPE Value (y) | Region |

| :--- | :--- | :--- |

| 0 | 1.0 | Standard (Blue) |

| 4000 | ~0.9 | Boundary |

| 10000 | ~0.5 | Extended (Red) |

| 14000 | 0.0 | Extended (Red) |

| 20000 | ~-0.6 | Extended (Red) |

| 27000 | -1.0 (Local Minimum) | Extended (Red) |

| 33000 | ~-0.8 | Extended (Red) |

## 4. Technical Summary

The chart visualizes how a specific dimension of a Rotary Positional Embedding (RoPE) oscillates as the sequence length (Context-size) increases.

1. **Standard Context:** Within the first 4,000 tokens (blue area), the RoPE value remains high (between 1.0 and 0.9), indicating high correlation or specific positional encoding for short-range dependencies.

2. **Extended Context:** As the context size enters the "Extended context" zone (red area), the value crosses zero at approximately 14,000 tokens and reaches a full phase inversion (-1.0) at approximately 27,000 tokens.

This visualization is typically used to demonstrate the "out-of-distribution" behavior of positional embeddings when a model is pushed beyond the sequence length it was originally trained on, showing the periodic nature of the encoding mechanism.

</details>

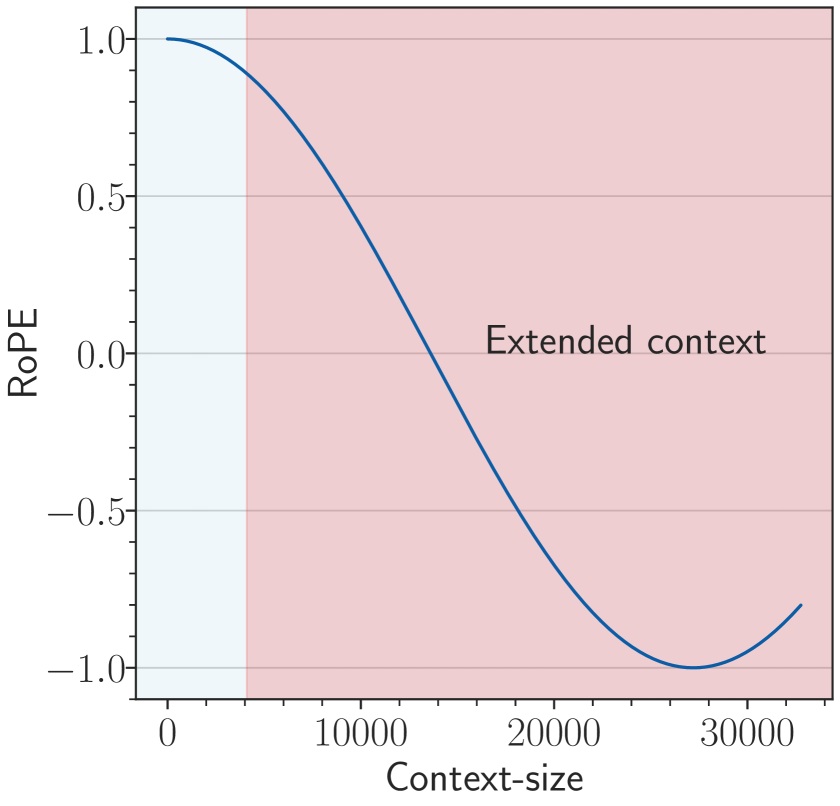

(a) base=1e4

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Document Extraction: Context-Size Periodic Waveform Analysis

## 1. Component Isolation

The image is a technical line chart plotted on a Cartesian coordinate system. It is segmented into two distinct background regions to differentiate operational phases.

* **Header:** None present.

* **Main Chart Area:** Contains a single continuous blue oscillating line, two colored background regions, and one text annotation.

* **Footer:** Contains the X-axis label and numerical scale.

## 2. Axis and Label Extraction

### X-Axis (Horizontal)

* **Label:** `Context-size`

* **Scale Range:** 0 to approximately 33,000.

* **Major Tick Markers:** `0`, `10000`, `20000`, `30000`.

* **Minor Tick Markers:** Present at intervals of 1,000 units.

### Y-Axis (Vertical)

* **Label:** None explicitly stated (represents amplitude).

* **Scale Range:** -1.0 to 1.0.

* **Major Tick Markers:** `-1.0`, `-0.5`, `0.0`, `0.5`, `1.0`.

* **Minor Tick Markers:** Present at intervals of 0.1 units.

* **Gridlines:** Horizontal grey lines are present at every major Y-axis tick marker.

## 3. Data Series Analysis

### Series 1: Blue Oscillating Line

* **Color:** Dark Blue.

* **Trend Verification:** The line follows a consistent, undamped sinusoidal (cosine-like) pattern. It begins at a peak of 1.0 at $x=0$, descends to a trough of -1.0, and repeats this cycle with a constant frequency and amplitude across the entire X-axis range.

* **Key Data Points:**

* **Start Point:** [0, 1.0]

* **Amplitude:** 1.0 (Peak-to-peak amplitude of 2.0).

* **Periodicity:** There are approximately 11 full cycles shown between 0 and 33,000. This suggests a period of roughly 3,000 units per cycle.

## 4. Regional Segmentation and Annotations

The chart area is divided into two vertical background zones:

| Region | X-Axis Range (Approx) | Background Color | Description |

| :--- | :--- | :--- | :--- |

| **Training/Base Context** | 0 to 4,000 | Light Blue | Represents the initial context window. |

| **Extended Context** | 4,000 to 33,000+ | Light Red/Pink | Represents the expansion of the context window. |

### Embedded Text

* **Content:** `Extended context`

* **Spatial Grounding:** Located centrally within the red background region, approximately at coordinates [16000, 0.05].

* **Significance:** This label identifies the red-shaded area as the "Extended context" zone, indicating that the periodic signal (likely representing positional embeddings or attention patterns) maintains stability even when the context size exceeds the initial training window.

## 5. Summary of Technical Information

This chart visualizes the stability of a periodic function (likely a Rotary Positional Embedding or similar mechanism in a Transformer model) as the **Context-size** increases. The data demonstrates that the signal's frequency and amplitude remain perfectly consistent when transitioning from the base context (0 - 4,000, blue zone) into the **Extended context** (4,000 - 33,000, red zone), suggesting successful extrapolation or scaling of the underlying mechanism.

</details>

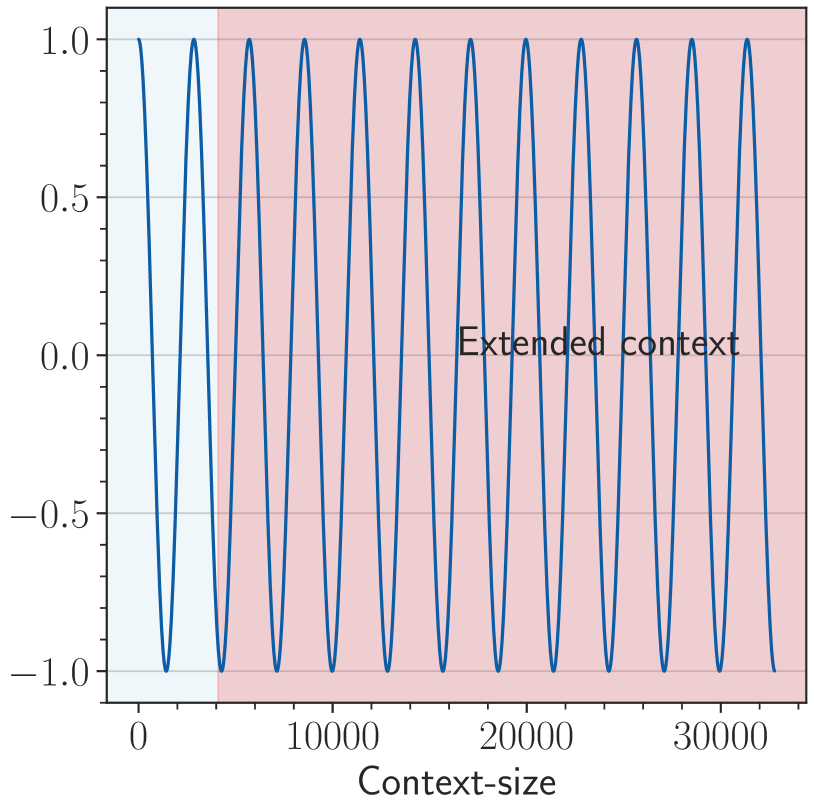

(b) base=500

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Document Extraction: Context-size Performance Chart

## 1. Component Isolation

* **Header:** None present.

* **Main Chart Area:** A line graph plotting a performance metric (Y-axis) against "Context-size" (X-axis). The background is divided into two distinct colored regions.

* **Footer:** Contains the X-axis label and major tick marks.

## 2. Axis and Labels

* **X-axis Title:** `Context-size`

* **X-axis Scale:** Linear, ranging from 0 to approximately 33,000.

* **Major Ticks:** 0, 10000, 20000, 30000.

* **Minor Ticks:** Present at intervals of 1,000 and 5,000.

* **Y-axis Title:** None explicitly labeled, but represents a normalized value or probability.

* **Y-axis Scale:** Linear, ranging from 0.88 to 1.00.

* **Major Ticks:** 0.90, 0.92, 0.94, 0.96, 0.98, 1.00.

* **Gridlines:** Horizontal grey lines correspond to each major Y-axis tick.

* **Embedded Text:** "Extended context" is located in the center-right of the plot area.

## 3. Background Regions (Spatial Grounding)

The chart area is divided vertically into two shaded regions:

1. **Light Blue Region (Left):** Extends from X = 0 to approximately X = 4,000. This represents the standard or baseline context window.

2. **Light Red Region (Right):** Extends from approximately X = 4,000 to the end of the X-axis (approx. 33,000). This region is associated with the "Extended context" label.

## 4. Data Series Analysis

### Series 1: Standard Performance (Solid Blue Line)

* **Visual Trend:** The line starts at (0, 1.00) and exhibits a very sharp, steep downward slope.

* **Key Data Points:**

* **Start:** [0, 1.00]

* **End:** Terminates abruptly at approximately [4000, 0.89].

* **Observation:** This series represents a rapid degradation in performance as the context size increases beyond a very small threshold, failing completely shortly after the 4,000 mark.

### Series 2: Extended Performance (Dash-Dot Red Line)

* **Visual Trend:** The line starts at (0, 1.00) and exhibits a much more gradual, concave downward slope.

* **Key Data Points:**

* **Start:** [0, 1.00]

* **Mid-point (approx):** [16000, 0.97]

* **End:** [32768 (approx), 0.89]

* **Observation:** This series maintains significantly higher performance over a much larger context window compared to the blue series. It reaches the same degradation level (0.89) at ~32k that the blue series reached at ~4k.

## 5. Summary of Findings

The chart illustrates the effectiveness of an "Extended context" method (Red Dash-Dot line) compared to a baseline method (Blue Solid line).

* **Baseline:** Performance drops precipitously, losing ~11% of its value within the first 4,000 units of context size.

* **Extended:** Performance is preserved much longer, maintaining >95% of its value up to approximately 22,000 units and only reaching the 11% degradation point at roughly 32,000 units.

* **Conclusion:** The extended context method provides approximately an 8x increase in usable context size for the same level of performance degradation.

</details>

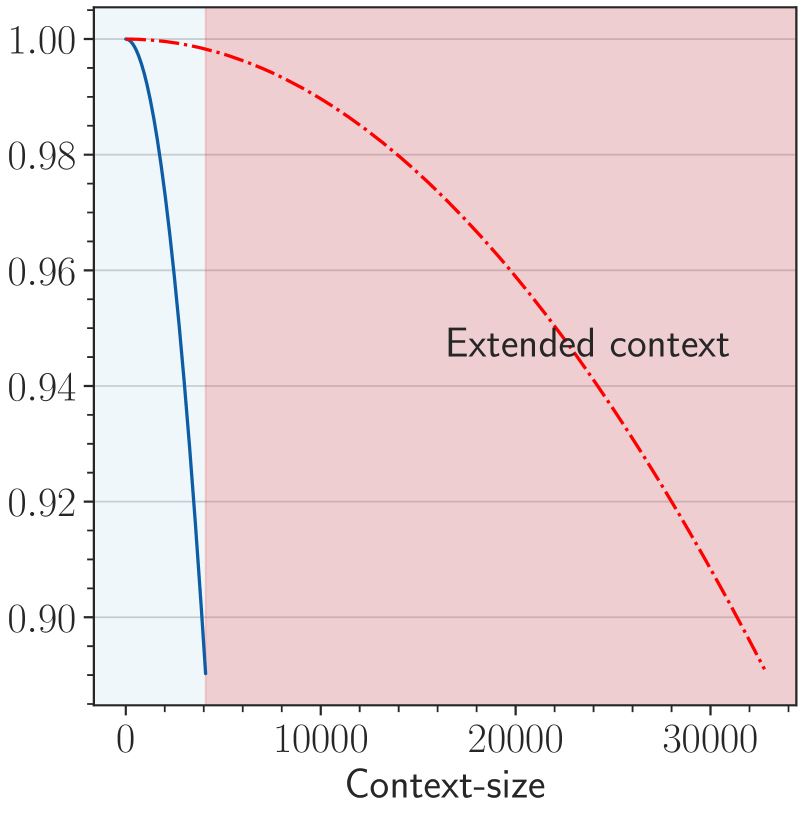

(c) base= $b· s^{\frac{d}{d-2}}$

Figure 2: An illustration of OOD in RoPE when we extend context length from 4k to 32k, and two solutions to avoid the OOD. We show the last dimension as it is the lowest frequency part of RoPE, which suffers OOD mostly in extrapolation. (a) For a 4k context-length model with base value as 1e4, when we extend the context length to 32k without changing the base value, the context length from 4k to 32k is OOD for RoPE (red area in the figure). (b) OOD can be avoided with a small base value like 500 (Liu et al., 2024b), since the full period has been fitted during fine-tuning stage. (c) We set base as $b· s^{\frac{d}{d-2}}$ from NTK (Peng et al., 2023b).The blue line denotes the pre-training stage (base=1e4) and the red dashed line denotes the fine-tuning stage (base= $b· s^{\frac{d}{d-2}}$ ), we can observe that the RoPE’s rotation angle of extended positions is in-distribution.

2.2 OOD theory of relative rotation angle

Based on RoPE, researchers have proposed various methods to extend the long context ability of LLMs, among which representatives are PI (Chen et al., 2023) and NTK-series (NTK-aware (bloc97, 2023), YaRN (Peng et al., 2023b), and Dynamical-NTK (emozilla, 2023)). Those methods depend on the relative scale $s=T_{\text{new}}/T_{\text{origin}}$ , where $T_{\text{origin}}$ is the training length of the original pre-trained model and $T_{\text{new}}$ is the training length in long-context fine-tuning.

PI

PI directly interpolates the position embedding, and the calculation of $A_{ij}$ becomes:

$$

\displaystyle A_{ij}=(R_{i/s}q_{i})^{T}(R_{j/s}k_{i})=q_{i}^{T}R_{(j-i)/s}k_{j}=q_{i}^{T}R_{m/s}k_{j}, \tag{12}

$$

In other words, the position embedding of the token at position $i$ in pre-training becomes $i/s$ in fine-tuning, ensuring the position embedding range of the longer context remains the same as before.

NTK-series

The idea is that neural networks are difficult to learn high-frequency features, and direct interpolation can affect the high-frequency parts. Therefore, the NTK-aware method achieves high-frequency extrapolation and low-frequency interpolation by modifying the base value of RoPE. Specifically, it modifies the base $b$ of the RoPE to:

$$

\displaystyle b_{\text{new}}=b\ s^{\frac{d}{d-2}}. \tag{13}

$$

The derivation of this expression is derived from $T_{\text{new}}b_{\text{new}}^{-\frac{d-2}{d}}=T_{\text{origin}}b^{-\frac{d-2}{d}}$ to ensure that the lowest frequency part being interpolated.

A recent study (Liu et al., 2024b) proposes to set a much smaller base (e.g. 500), in which case $\theta_{i}=base^{-\frac{2i}{d}}$ is small enough and typical training length (say 4,096) fully covers the period of $\cos(t-s)\theta_{i}$ , so the model can obtain longer context capabilities.

One perspective to explain current extrapolation methods is the OOD of rotation angle (Liu et al., 2024b; Han et al., 2023). If all possible values of $\cos(t-s)\theta_{i}$ have been fitted during the pre-training stage, OOD would be avoided when processing longer context. Figure 2 demonstrates how these methods avoid OOD of RoPE.

3 Motivation

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Perplexity vs. Context Size Chart

## 1. Component Isolation

* **Header/Legend Region:** Located in the top-right quadrant of the plot area.

* **Main Chart Area:** A line graph with a grid background, plotting Perplexity against Context Size.

* **Axes:** Y-axis (left) representing Perplexity; X-axis (bottom) representing Context size.

---

## 2. Metadata and Labels

* **Y-Axis Title:** Perplexity

* **X-Axis Title:** Context size

* **Y-Axis Markers:** 20, 40, 60, 80, 100

* **X-Axis Markers:** 0, 25000, 50000, 75000, 100000, 125000

* **Legend (Spatial Placement: Top-Right [x≈0.6, y≈0.8]):**

* **Blue Line:** `finetune on 32k(base=500)`

* **Red Line:** `Llama2-7B-Baseline`

---

## 3. Data Series Analysis and Trend Verification

### Series 1: Llama2-7B-Baseline (Red Line)

* **Trend Description:** The line starts at a low perplexity (below 10) at a context size of 0. It remains stable for a very short duration and then exhibits a near-vertical upward spike, exiting the top of the chart (Perplexity > 100) before reaching a context size of approximately 4,000.

* **Key Data Points:**

* Context 0: Perplexity ≈ 8

* Context ~2,500: Perplexity begins sharp ascent.

* Context ~4,000: Perplexity > 100 (Off-chart).

### Series 2: finetune on 32k(base=500) (Blue Line)

* **Trend Description:** This line starts at a similar low perplexity (below 10) and maintains a very gradual, slightly oscillating upward slope across the entire horizontal range of the chart. It demonstrates extreme stability compared to the baseline.

* **Key Data Points:**

* Context 0: Perplexity ≈ 8

* Context 32,000: Perplexity ≈ 8-9

* Context 62,500: Perplexity ≈ 9

* Context 100,000: Perplexity ≈ 10

* Context 125,000: Perplexity ≈ 11

---

## 4. Comparative Summary

The chart illustrates the performance of two Large Language Models (LLMs) in terms of perplexity (where lower is better) as the input context window increases.

1. **Llama2-7B-Baseline:** This model fails rapidly as the context size exceeds its training window. Its perplexity explodes (goes to infinity/off-chart) almost immediately after the 2,000-4,000 token mark.

2. **finetune on 32k(base=500):** This model shows significant architectural or fine-tuning improvements. Despite being "finetuned on 32k," it maintains a low and stable perplexity (under 12) even when the context size is extended to 125,000 tokens, which is nearly 4x its stated fine-tuning length.

---

## 5. Grid and Scale Details

* **Grid:** Light gray dashed lines.

* **X-axis Major Ticks:** Every 25,000 units.

* **Y-axis Major Ticks:** Every 20 units.

* **Language:** English (No other languages detected).

</details>

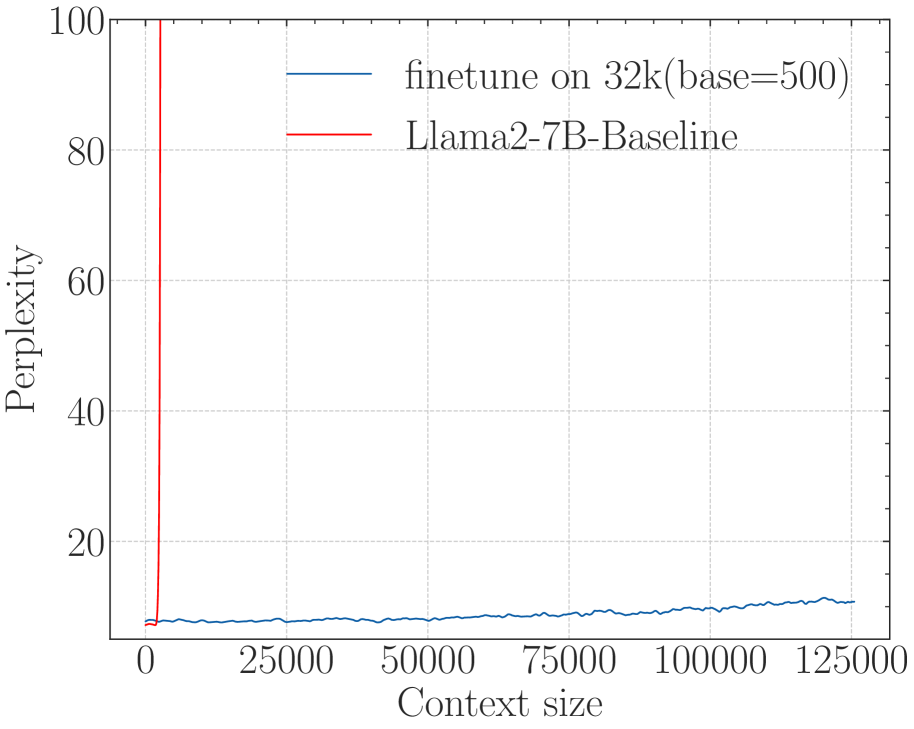

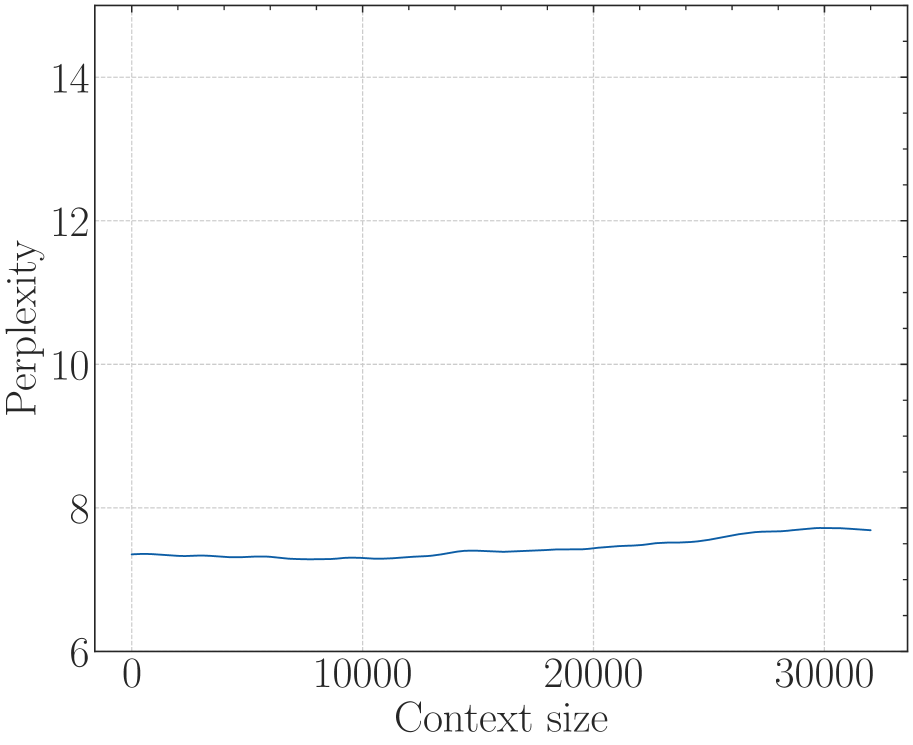

(a) Perplexity

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Data Extraction: Model Accuracy vs. Context Length

## 1. Component Isolation

* **Header:** None present.

* **Main Chart Area:** A 2D line graph plotted on a Cartesian coordinate system with a light-gray dashed grid.

* **Legend:** Located in the center-right of the plot area.

* **Axes:** Y-axis (left) representing "Accuracy" and X-axis (bottom) representing "Context length".

---

## 2. Axis Labels and Markers

### Y-Axis (Vertical)

* **Label:** Accuracy

* **Scale:** Linear, ranging from 0.0 to 1.0.

* **Major Tick Markers:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

### X-Axis (Horizontal)

* **Label:** Context length

* **Scale:** Linear, ranging from approximately 500 to 5500.

* **Major Tick Markers:** 1000, 2000, 3000, 4000, 5000.

---

## 3. Legend and Data Series Identification

The legend contains two entries, which correspond to the two lines plotted:

1. **Blue Line (Dark Blue/Teal):** `finetune on 32k(base=500)`

2. **Red Line:** `Llama2-7B-Baseline`

---

## 4. Trend Verification and Data Extraction

### Series 1: Llama2-7B-Baseline (Red Line)

* **Visual Trend:** The line starts at near-perfect accuracy (~0.98) at the shortest context length. It maintains high performance (fluctuating between 0.85 and 1.0) until a context length of approximately 3800. At the 4000 mark, the line exhibits a catastrophic "cliff-edge" drop, falling vertically to 0.0 and remaining at 0.0 for all subsequent context lengths.

* **Key Data Points (Estimated):**

| Context Length | Accuracy |

| :--- | :--- |

| ~500 | 0.98 |

| 1000 | 0.98 |

| 2000 | 1.0 |

| 2200 | 0.84 |

| 3800 | 0.80 |

| 4000 | 0.0 |

| 5000+ | 0.0 |

### Series 2: finetune on 32k(base=500) (Blue Line)

* **Visual Trend:** This line starts high (~0.98) but immediately begins a steep decline as context length increases. By a context length of 1000, accuracy has dropped significantly. It continues to trend downward with high volatility (zig-zagging) between 0.0 and 0.4. Unlike the baseline, it does not hit a hard zero at 4000, but its overall performance is significantly lower than the baseline in the 500-3800 range.

* **Key Data Points (Estimated):**

| Context Length | Accuracy |

| :--- | :--- |

| ~500 | 0.98 |

| 1000 | 0.26 |

| 1500 | 0.10 |

| 2000 | 0.36 |

| 3000 | 0.10 |

| 4000 | 0.02 |

| 4500 | 0.18 |

| 5500 | 0.12 |

---

## 5. Summary of Findings

The chart compares the performance of a baseline Llama2-7B model against a version finetuned on 32k context with a base of 500.

* **The Baseline (Red)** is highly effective within its native context window (up to ~3800-4000 tokens) but fails completely and immediately once that limit is exceeded.

* **The Finetuned Model (Blue)** shows a significant degradation in accuracy even at relatively short context lengths (starting at 1000). While it technically "survives" past the 4000-token limit where the baseline fails, its accuracy remains very low (generally below 0.2) and unstable across the entire extended range.

</details>

(b) Long-eval (Li* et al., 2023)

<details>

<summary>x7.png Details</summary>

### Visual Description

# Technical Data Extraction: Heatmap Analysis

## 1. Document Overview

This image is a technical heatmap visualization representing the relationship between "Token Limit" and "Context length" relative to a "Score" metric. The chart uses a color gradient to represent numerical values across a two-dimensional grid.

## 2. Component Isolation

### A. Header/Axes Labels

- **Y-Axis Label (Left):** "Context length"

- **X-Axis Label (Bottom):** "Token Limit"

- **Legend Label (Right):** "Score"

### B. Main Chart Area (Data Grid)

The chart consists of a grid where the X-axis represents discrete token intervals and the Y-axis represents context length percentages.

### C. Legend (Color Scale)

- **Location:** Right side of the image.

- **Scale Range:** 0 to 10.

- **Color Mapping:**

- **Teal/Bright Green (Value 10):** High performance/score.

- **Yellow/Light Green (Value 5-7):** Moderate performance/score.

- **Orange/Red (Value 0-3):** Low performance/score.

---

## 3. Axis Markers and Categories

### X-Axis: Token Limit

The axis contains 41 discrete markers ranging from 0 to 4000 in increments of 100:

`0, 100, 200, 300, 400, 500, 600, 700, 800, 900, 1000, 1100, 1200, 1300, 1400, 1500, 1600, 1700, 1800, 1900, 2000, 2100, 2200, 2300, 2400, 2500, 2600, 2700, 2800, 2900, 3000, 3100, 3200, 3300, 3400, 3500, 3600, 3700, 3800, 3900, 4000`

### Y-Axis: Context length

The axis contains 10 markers representing percentages or relative lengths:

`0.0, 11.0, 22.0, 33.0, 44.0, 56.0, 67.0, 78.0, 89.0, 100.0`

---

## 4. Trend Verification and Data Extraction

### General Trends

1. **High Performance Zone (Left):** There is a consistent block of high scores (Teal, ~10) for Token Limits between 0 and 600 across almost all context lengths.

2. **Performance Degradation (Middle-Right):** As the Token Limit increases beyond 900, the scores generally drop into the low range (Red/Orange, ~0-3), with sporadic "islands" of moderate performance.

3. **The "100.0" Context Length Exception:** The bottom-most row (Context length 100.0) maintains a high score (Teal, ~10) across almost the entire Token Limit range, regardless of the horizontal value.

4. **Vertical Strips:** There are vertical bands of consistent color, suggesting that for certain Token Limits (e.g., 1300, 1700-1900, 3100-3700), the score is consistently low regardless of context length (excluding the 100.0 row).

### Specific Data Point Observations (Logic-Check)

* **Token Limit 0-600:** Predominantly Teal (Score 9-10).

* **Token Limit 700-800:** Transition zone, predominantly Light Green (Score 7-8).

* **Token Limit 1300:** Vertical strip of Red (Score ~1-2).

* **Token Limit 2000:** Shows a vertical strip of Yellow/Green (Score ~6-7) amidst a lower-scoring region.

* **Token Limit 3800:** Shows a vertical strip of Yellow (Score ~5-6).

* **Token Limit 4000:** The final column shows a slight improvement to Orange/Yellow compared to the deep Red of the 3100-3700 range.

---

## 5. Reconstructed Data Table (Summary)

| Context Length | Token Limit 0-600 | Token Limit 900-1200 | Token Limit 1300 | Token Limit 3100-3700 | Token Limit 4000 |

| :--- | :--- | :--- | :--- | :--- | :--- |

| **0.0 - 89.0** | High (9-10) | Low-Mid (3-5) | Very Low (1-2) | Very Low (1-2) | Low-Mid (3-5) |

| **100.0** | High (10) | High (10) | High (10) | High (10) | High (10) |

---

## 6. Final Technical Summary

The heatmap illustrates a "short-context" or "low-token" bias where performance is optimal at Token Limits below 700. A significant anomaly exists at the maximum Context Length (100.0), where performance remains high across the entire tested Token Limit spectrum. Conversely, the region between Token Limits 3100 and 3700 represents the lowest performance area for all context lengths except the maximum.

</details>

(c) Needle in Haystack (G, 2023)

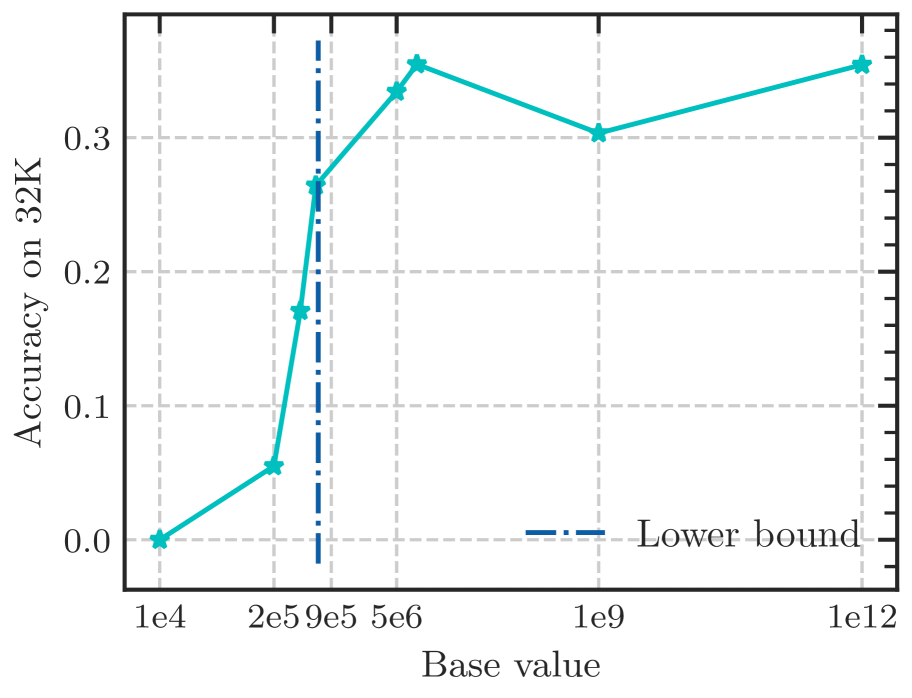

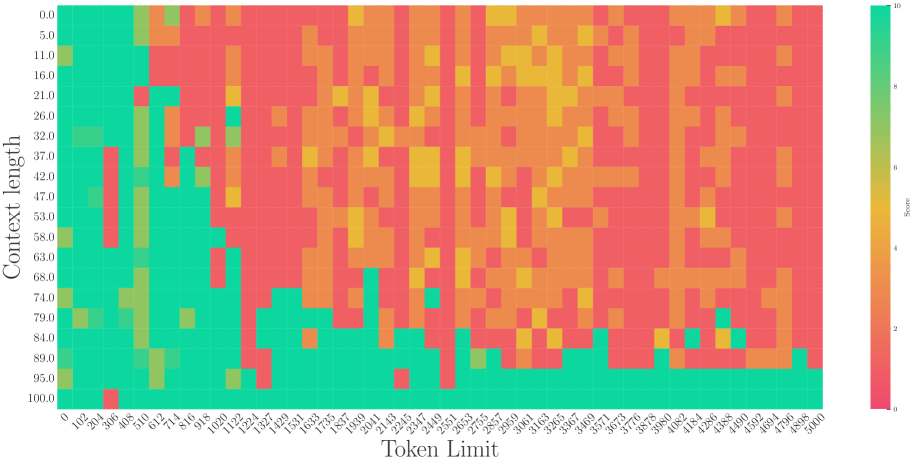

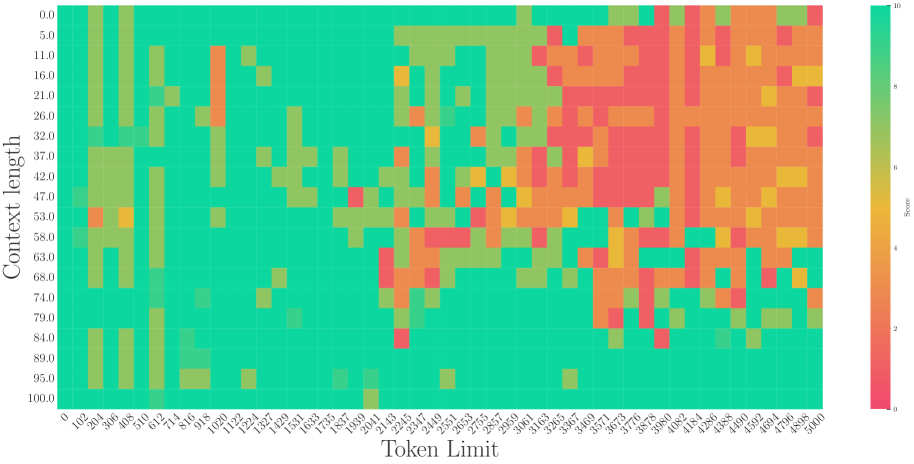

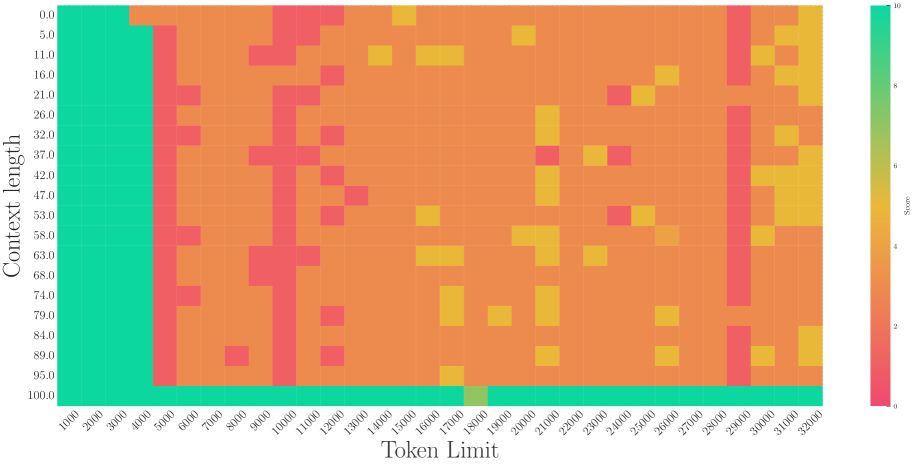

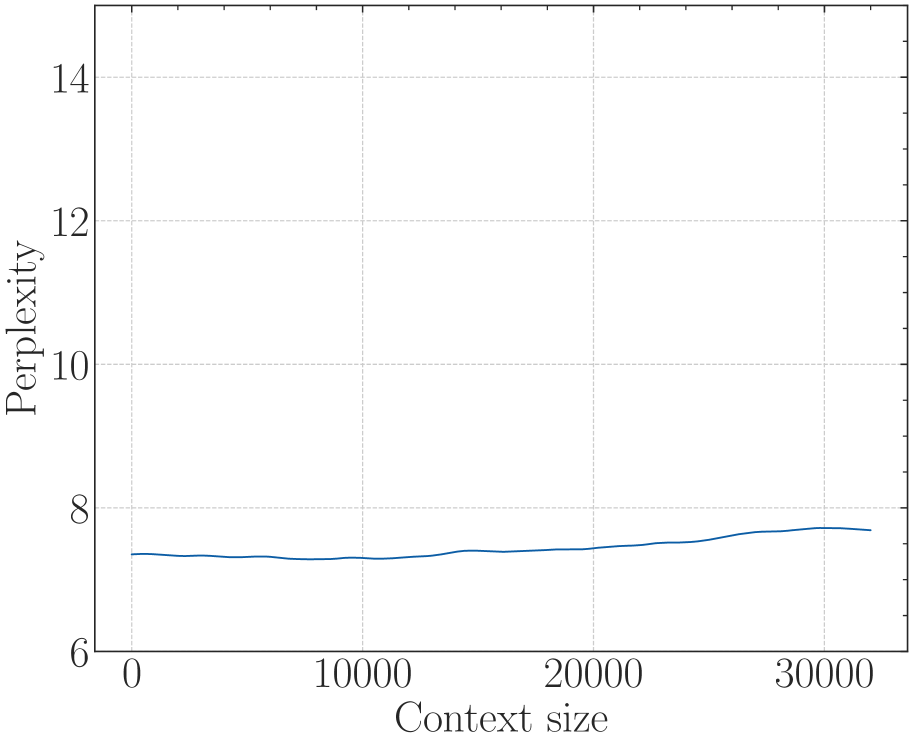

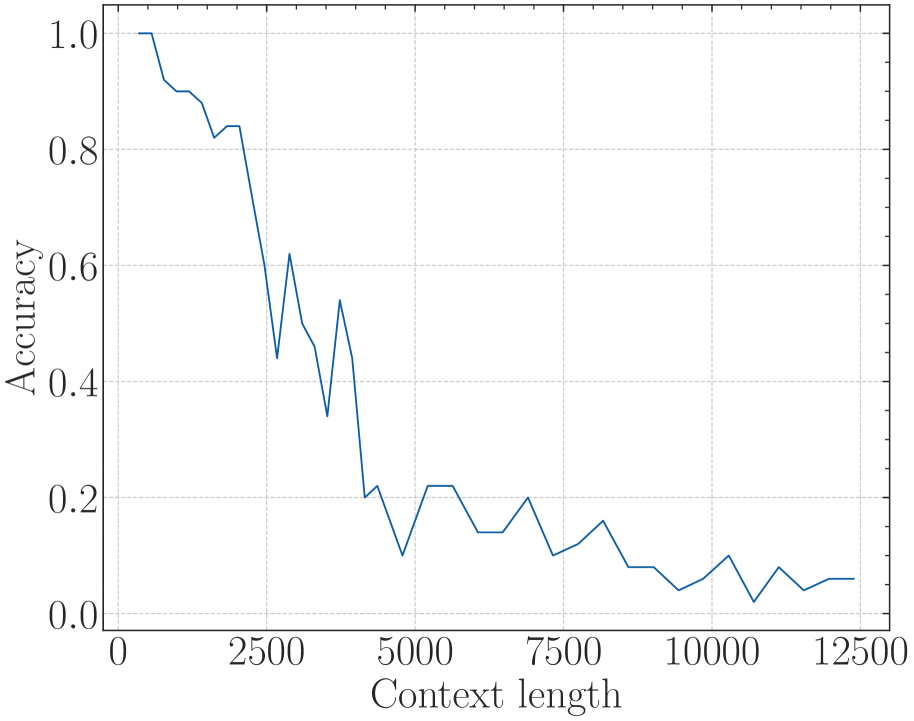

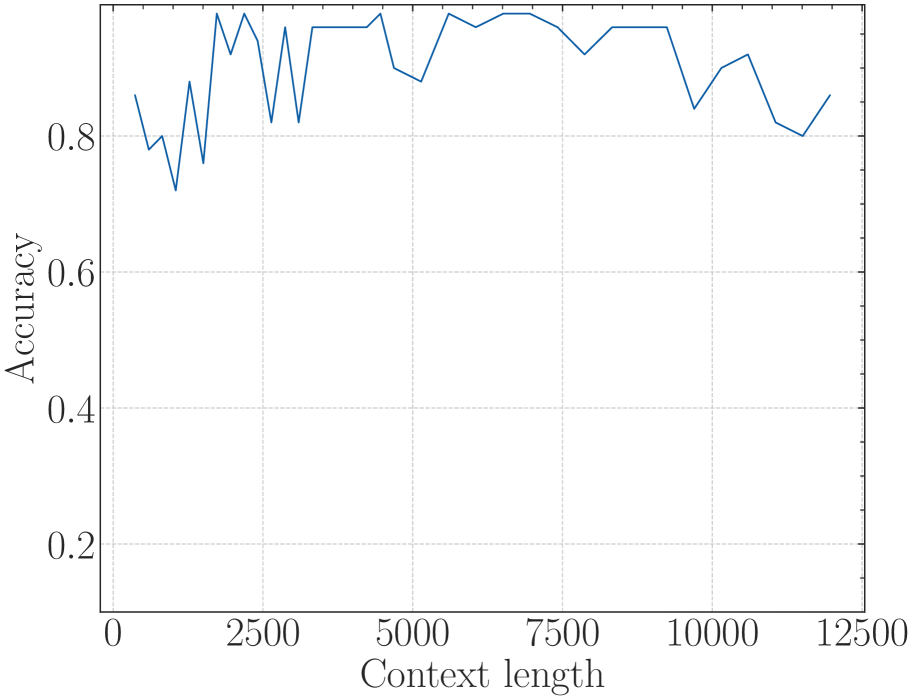

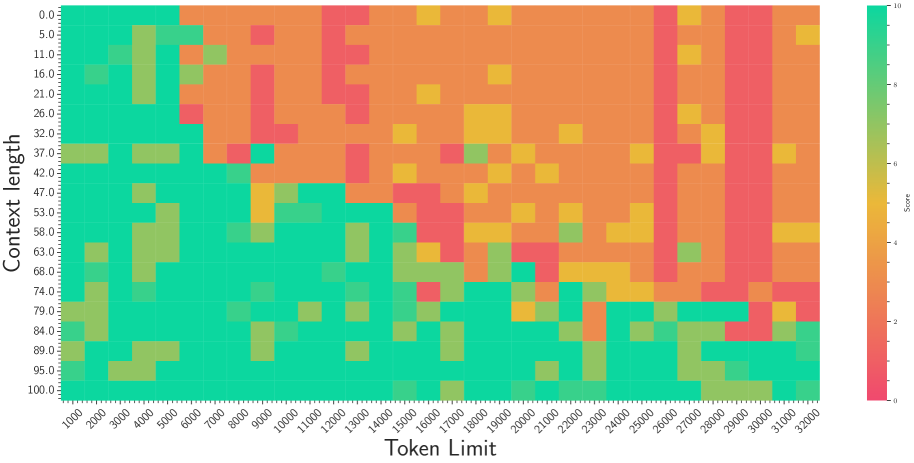

Figure 3: The superficial long context capability of avoiding OOD by the smaller base. Following the recent work (Liu et al., 2024b), we fine-tune Llama2-7B with a small base (500) to a context length of 32k.

NTK-based methods are widely adopted in long-context extension (Touvron et al., 2023a; Liu et al., 2024a; Young et al., 2024). To obtain better long-context capability, however, practitioners often adopt a much larger base than the original NTK-aware method suggested. This leads to speculation that there is another bound of RoPE’s base determined by context length.

On the other hand, a recent work (Liu et al., 2024b) proposes to set a much smaller base for RoPE to extend the context length. However, we find it may be a superficial long-context capability as shown in Figure 3. This method can obtain a low perplexity even at 128k context length, which can be explained by the OOD theory as explained above, but the model could not retrieve related information for context length as short as 1k, even much shorter than the model’s pre-trained length. Our findings support previous research (Hu et al., 2024) on the limitations of perplexity in evaluating long-context abilities. To delve deep into this phenomenon, we do the theoretical exploration in the next section.

4 Theory Perspective

For attention mechanism in language modeling, we have the following desiderata:

**Desiderata 1**

*The closer token gets more attention: the current token tends to pay more attention to the token that has a smaller relative distance.*

**Desiderata 2**

*The similar token gets more attention: the token tends to pay more attention to the token whose key value is more similar to the query value of the current token.*

Then we examine the desiderata when we apply RoPE to the attention mechanism in LLMs.

4.1 Long-term Decay of Upper Bound of Attention Score

For Desiderata 1, the property of RoPE makes the model attend more to closer tokens. This kind of long-term decay has been thoroughly discussed in previous work (Su et al., 2024; Sun et al., 2022). It comes from the upper bound of attention score calculation, which can be written as:

$$

\displaystyle|A_{ij}|=|q_{i}^{T}R_{m}k_{j}| \displaystyle\leq\max_{l}(|h_{l}-h_{l+1}|)\sum_{n=1}^{d/2}|S_{n}| \displaystyle=\max_{l}(|h_{l}-h_{l+1}|)\sum_{n=1}^{d/2}|\sum_{l=0}^{n-1}e^{(j-i)\theta_{l}\sqrt{-1}}|, \tag{14}

$$

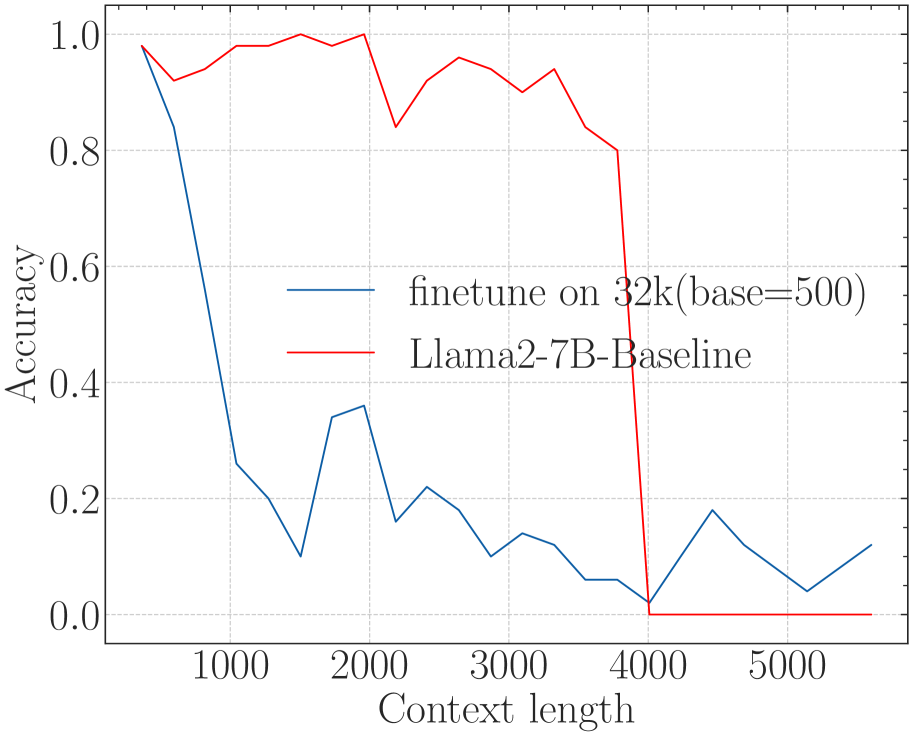

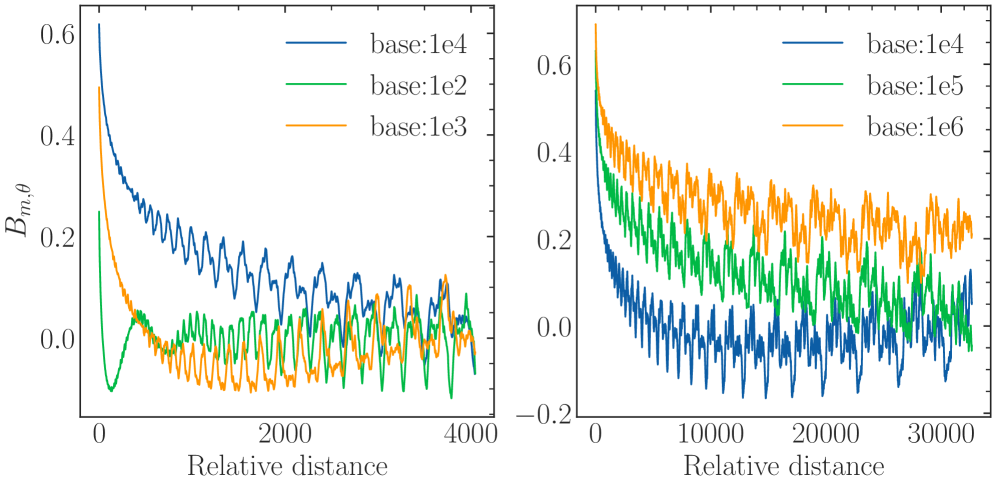

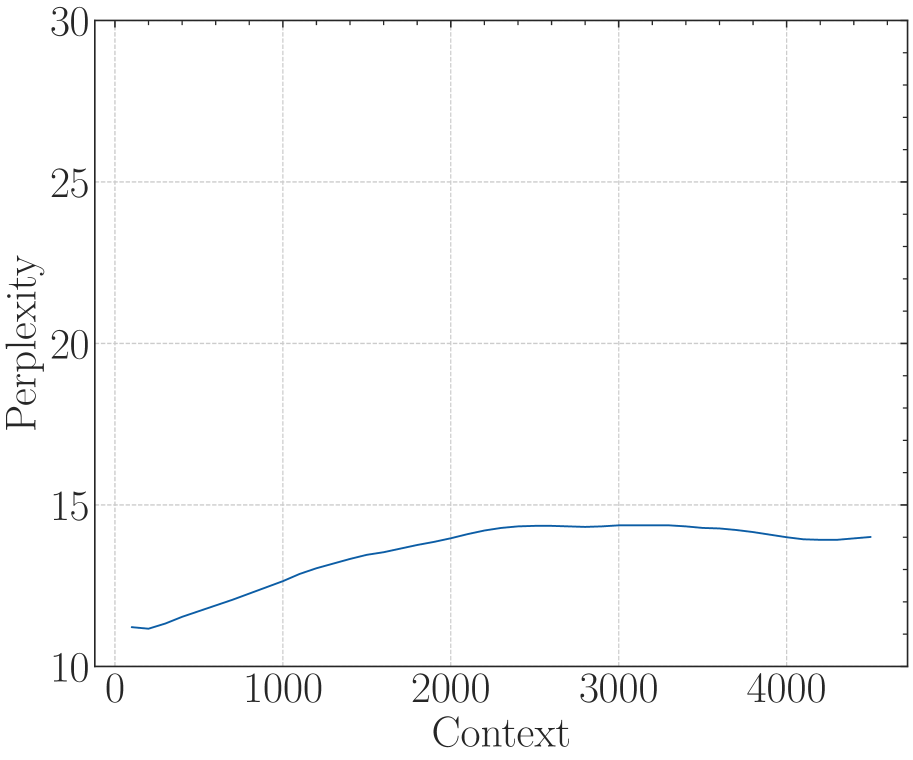

where $h_{l}=q_{i}^{T}[2l:l2+1]k_{j}[2l:2l+1]$ . Equation 4.1 indicates that the upper bound of the attention score $|A_{ij}|$ decays as the relative distance increases. Figure 5 shows the long-term decay curve of this upper bound, which is in accordance with previous findings (Su et al., 2024; Sun et al., 2022).

4.2 Long-term Decay of the Ability to Attend More to Similar Tokens than Random Tokens

In addition to the attention score’s upper bound, we also find there exists another long-term decay property in RoPE: the ability to attend more to similar tokens than random tokens decays as the relative distance increases. We define the ability to attend more to similar tokens than random tokens as:

$$

\displaystyle\mathbb{E}_{q,k^{*}}\left[q^{T}R_{m,\theta}k^{*}\right]-\mathbb{E}_{q,k}\left[q^{T}R_{m,\theta}k\right], \tag{15}

$$

where $q∈ R^{d}$ is the query vector for the current token, $k^{*}=q+\epsilon$ is the key value of a similar token, where $\epsilon$ is a small random variable, $k∈ R^{d}$ is the key vector of a random token, $R_{m,\theta}$ is the rotation matrix in RoPE. The first term in Eq. 15 is the attention score of $q$ and a similar token $k^{*}$ , the second term in Eq. 15 is the attention score of $q$ and random token $k$ . Then we derive the following theorem:

**Theorem 1**

*Assuming that the components of query $q∈ R^{d}$ and key $k∈ R^{d}$ are independent and identically distributed, their standard deviations are denoted as $\sigma∈ R$ . The key $k^{*}=q+\epsilon$ is a token similar to the query, where $\epsilon$ is a random variable with a mean of 0. Then we have:

$$

\displaystyle\frac{1}{2\sigma^{2}}(\mathbb{E}_{q,k^{*}}\left[q^{T}R_{m,\theta}k^{*}\right]-\mathbb{E}_{q,k}\left[q^{T}R_{m,\theta}k\right])=\sum_{i=0}^{d/2-1}\cos(m\theta_{i}) \tag{16}

$$*

<details>

<summary>x8.png Details</summary>

### Visual Description

# Technical Data Extraction: Relative Upper Bound Analysis

This document provides a comprehensive extraction of the data and trends presented in the provided image, which consists of two side-by-side line charts comparing "Relative upper bound" against "Relative distance" for different "base" configurations.

## 1. General Metadata

* **Image Type:** Two-panel line graph.

* **Language:** English.

* **Primary Metrics:**

* **Y-Axis:** Relative upper bound (Linear scale).

* **X-Axis:** Relative distance (Linear scale).

* **Common Legend Format:** `base:1eN` (where N is an integer).

---

## 2. Left Chart Analysis (Short Range)

### Component Isolation

* **Header/Legend:** Located in the top-right quadrant.

* **X-Axis Range:** 0 to 4000. Markers at [0, 2000, 4000].

* **Y-Axis Range:** 2.5 to 15.0. Markers at [2.5, 5.0, 7.5, 10.0, 12.5, 15.0].

### Data Series & Trends

| Series Label | Color | Initial Value (approx.) | Visual Trend Description | Convergence/Steady State |

| :--- | :--- | :--- | :--- | :--- |

| **base:1e4** | Blue | ~16.0 | Sharp exponential decay from 0 to 1000, then stabilizes into a high-frequency oscillation. | Oscillates between ~4.0 and ~6.0. |

| **base:1e2** | Green | ~10.0 | Extremely rapid drop to a floor within the first 100 units of distance. | Oscillates between ~4.0 and ~5.5. |

| **base:1e3** | Orange | ~13.0 | Rapid decay, intersecting the green line at distance ~500. Exhibits the highest amplitude oscillations. | Oscillates between ~3.0 and ~6.5. |

---

## 3. Right Chart Analysis (Long Range)

### Component Isolation

* **Header/Legend:** Located in the top-right quadrant.

* **X-Axis Range:** 0 to 30000+. Markers at [0, 10000, 20000, 30000].

* **Y-Axis Range:** 5 to 15+. Markers at [5, 10, 15].

### Data Series & Trends

| Series Label | Color | Initial Value (approx.) | Visual Trend Description | Convergence/Steady State |

| :--- | :--- | :--- | :--- | :--- |

| **base:1e4** | Blue | ~18.0 | Rapid decay. In this wider view, it maintains the lowest baseline of the three series. | Oscillates between ~3.5 and ~6.0. |

| **base:1e5** | Green | ~15.0 | Moderate decay. Stays consistently between the blue and orange lines. | Oscillates between ~4.0 and ~7.0. |

| **base:1e6** | Orange | ~18.0 | Slowest decay relative to the others. Maintains the highest average bound across the distance. | Oscillates between ~5.0 and ~8.0. |

---

## 4. Comparative Summary and Key Findings

### Trend Verification

1. **Initial Penalty:** All configurations start with a high "Relative upper bound" at distance 0, which decays as distance increases.

2. **Base Value Correlation:**

* In the **Left Chart** (lower base values), the `base:1e4` (highest in that set) has the slowest decay and highest initial bound.

* In the **Right Chart** (higher base values), the `base:1e6` (highest in that set) has the slowest decay and maintains the highest bound over long distances.

* **Conclusion:** Increasing the "base" value generally increases the relative upper bound and slows the rate of decay toward the steady-state oscillation.

3. **Oscillatory Behavior:** All series transition from a smooth decay curve into a "noisy" or oscillatory steady state. The amplitude of these oscillations appears more pronounced in the `base:1e3` (Left) and `base:1e6` (Right) configurations.

### Spatial Grounding of Legends

* **Left Plot Legend [x~0.7, y~0.8]:** Correctly identifies Blue (1e4), Green (1e2), and Orange (1e3).

* **Right Plot Legend [x~0.7, y~0.8]:** Correctly identifies Blue (1e4), Green (1e5), and Orange (1e6). Note that Blue (1e4) is the common reference point between both charts.

</details>

Figure 4: The upper bound of attention score with respect to the relative distance.

<details>

<summary>x9.png Details</summary>

### Visual Description

# Technical Data Extraction: Rotary Positional Embedding (RoPE) Decay Analysis

This document provides a detailed technical extraction of the data presented in the two-panel line chart. The charts illustrate the relationship between "Relative distance" and a metric labeled $B_{m, \theta}$, likely representing the decay of basis functions or attention scores in a transformer model using different "base" values for positional encoding.

---

## 1. Global Metadata and Layout

* **Image Type:** Two-panel line plot (Left and Right).

* **Primary Language:** English.

* **Y-Axis Label (Shared):** $B_{m, \theta}$

* **X-Axis Label (Shared):** Relative distance

* **Visual Style:** Scientific plot with LaTeX-style font rendering.

---

## 2. Left Panel Analysis (Short Range)

### Axis Scales

* **X-Axis Range:** 0 to 4000. Major ticks at 0, 2000, 4000.

* **Y-Axis Range:** -0.2 to 0.6. Major ticks at 0.0, 0.2, 0.4, 0.6.

### Legend and Data Series [Spatial Grounding: Top Right Quadrant]

| Series Color | Label | Trend Description |

| :--- | :--- | :--- |

| **Blue** | `base:1e4` | Starts highest (~0.62). Slopes downward with high-frequency oscillations. Remains above other series until ~3500. |

| **Orange** | `base:1e3` | Starts at ~0.5. Rapidly decays to near 0.0 by distance 1000, then oscillates around the 0.0 axis. |

| **Green** | `base:1e2` | Starts lowest (~0.25). Drops sharply into negative values (~ -0.1) before distance 500, then oscillates around 0.0. |

### Key Observations

* Higher base values (1e4) maintain a higher $B_{m, \theta}$ value over longer relative distances.

* Lower base values (1e2, 1e3) exhibit much faster initial decay and settle into a zero-centered oscillation pattern much earlier.

---

## 3. Right Panel Analysis (Long Range)

### Axis Scales

* **X-Axis Range:** 0 to 30,000+. Major ticks at 0, 10000, 20000, 30000.

* **Y-Axis Range:** -0.2 to 0.6 (consistent with left panel).

### Legend and Data Series [Spatial Grounding: Top Right Quadrant]

| Series Color | Label | Trend Description |

| :--- | :--- | :--- |

| **Orange** | `base:1e6` | Starts highest (~0.65). Slopes downward gradually, maintaining the highest mean value (~0.25) at distance 30,000. |

| **Green** | `base:1e5` | Starts middle (~0.55). Slopes downward, maintaining a mean value around 0.1 at distance 30,000. |

| **Blue** | `base:1e4` | Starts lowest (~0.45 on this scale). Slopes downward rapidly, oscillating around 0.0 by distance 10,000. |

### Key Observations

* This panel focuses on much larger relative distances (up to 32k).

* The `base:1e4` series, which was the "high" performer in the left plot, is the "low" performer here, showing that base values must scale with the intended context window to prevent the metric from decaying to zero.

* All series exhibit a "fuzzy" appearance caused by high-frequency oscillations superimposed on the general downward decay curve.

---

## 4. Comparative Summary Table

| Base Value | Initial Value ($B_{m, \theta}$) | Effective Range (before mean $\approx$ 0) |

| :--- | :--- | :--- |

| **1e2** | ~0.25 | < 500 |

| **1e3** | ~0.50 | ~1,000 |

| **1e4** | ~0.62 | ~8,000 - 10,000 |

| **1e5** | ~0.55 | > 32,000 (Mean remains > 0) |

| **1e6** | ~0.65 | > 32,000 (Mean remains ~0.2) |

**Conclusion:** Increasing the `base` parameter significantly extends the distance over which the $B_{m, \theta}$ metric remains positive and significant, effectively "stretching" the positional encoding's reach.

</details>

Figure 5: The ability to attend more to similar tokens than random tokens.

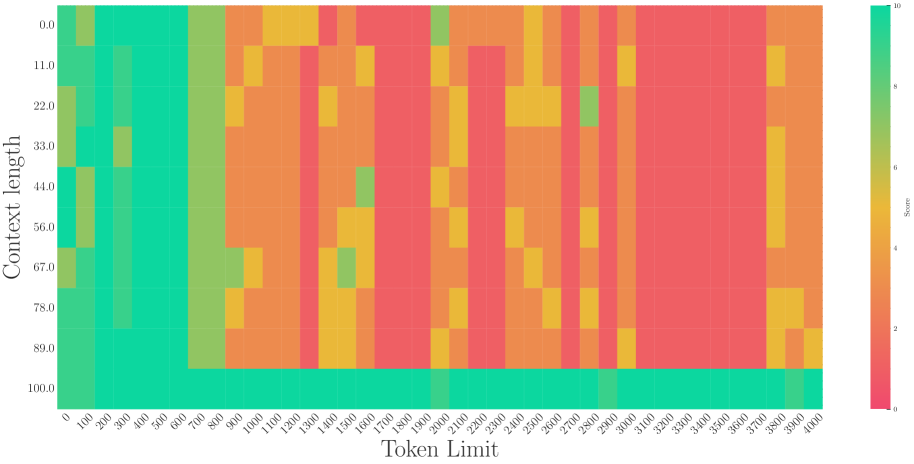

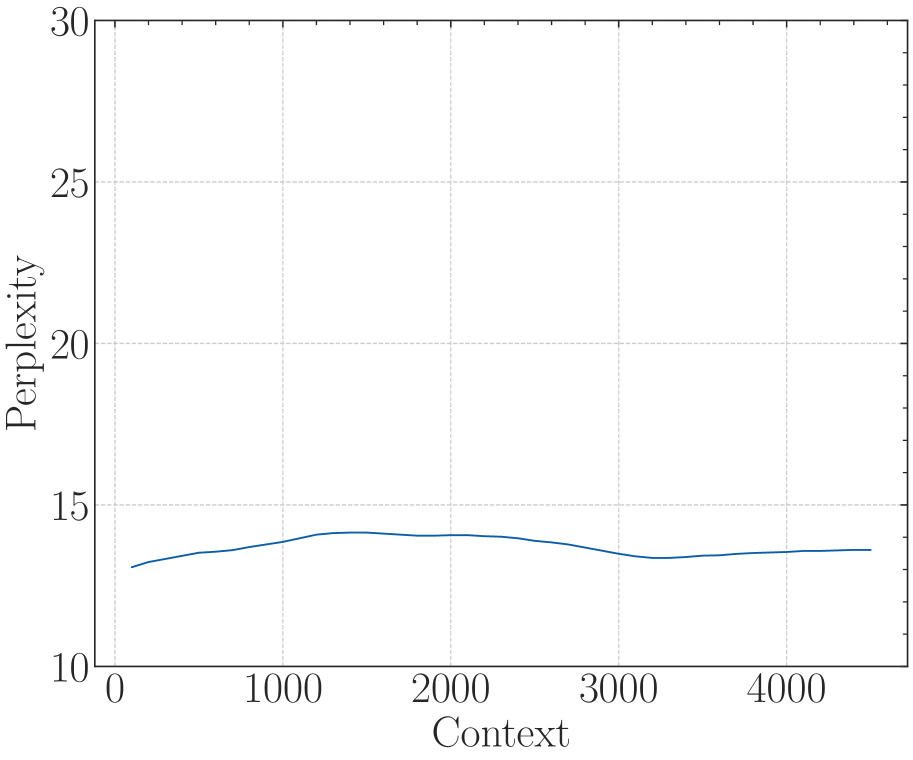

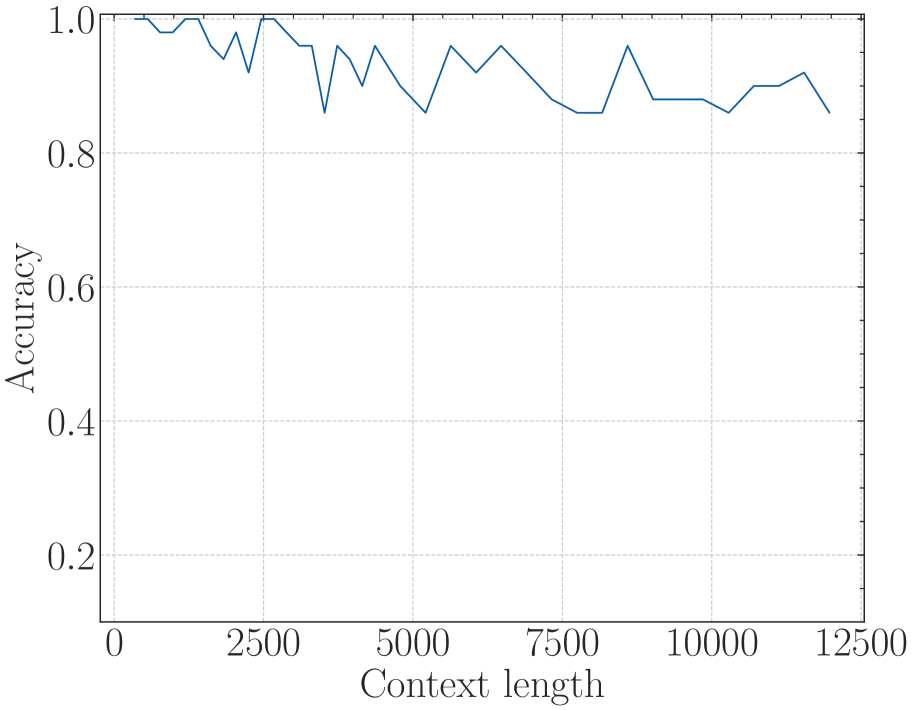

The proof is shown in Appendix A. We denote $\sum_{i=0}^{d/2-1}\cos(m\theta_{i})$ as $B_{m,\theta}$ , and according to Theorem 1, $B_{m,\theta}$ measures the ability to give more attention to similar tokens than random tokens, which decreases as the relative distance $m$ increases, as shown in Figure 5. For a very small base value, we can observe that the $B_{m,\theta}$ is even below zero at a certain distance, meaning the random tokens have larger attention scores than the similar tokens, which may be problematic for long context modeling.

4.3 Base of RoPE Bounds the Context Length

To satisfy the Desiderata 2, we will get $\mathbb{E}_{q,k^{*}}\left[q^{T}R_{m,\theta}k^{*}\right]≥\mathbb{E}_{q,k}\left[q^{T}R_{m,\theta}k\right]$ . According to Theorem 1, $B_{m,\theta}$ needs to be larger than zero. Given the $\theta$ in RoPE, the context length $L_{\theta}$ that can be truly obtained satisfies:

$$

\displaystyle L_{\theta}=\sup\{L|B_{m,\theta}\geq 0,\forall m\in[0,1,...,L]\} \tag{17}

$$

In other word, if we follow the setting that $\theta_{i}=base^{-2i/d}$ , in order to get the expected context length $L$ , there is a lower bound of the base value $base_{L}$ :

$$

\displaystyle base_{L}=\inf\{base|B_{m,\theta}\geq 0,\forall m\in[0,1,...,L]\} \tag{18}

$$

In summary, the RoPE’s base determines the upper bound of context length the model can truly obtain. Although there exists the absolute lower bound, Eq. 16 and Eq. 18 are hard to get the closed-form solution since $B_{m,\theta}$ is a summation of many cosine functions. Therefore, in this paper, we get the numerical solution. Table 2 shows this lower bound for context length ranging from 1,000 to one million. In Figure 1, we plot the context length and corresponding lower bound, we can observe that as the context length increases, the required base also increases.

Table 2: Context length and its corresponding lower bound of RoPE’s base.

| Context Len. Lower Bound | 1k 4.3e3 | 2k 1.6e4 | 4k 2.7e4 | 8k 8.4e4 | 16k 3.1e5 | 32k 6.4e5 | 64k 2.1e6 | 128k 7.8e6 | 256k 3.6e7 | 512k 6.4e7 | 1M 5.1e8 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

Note: this boundary is not very strict because the stacking of layers in LLMs allows the model to extract information beyond the single layers’ range, which may increase the context length in Eq. 17 and decrease the base in Eq. 18. Notwithstanding, in Section 5 we find that the derived bound approximates the real context length in practice.

Long-term decay from different perspectives. The long-term decay in section 4.1 and section 4.2 are from different perspectives. The former refers to the long-term decay of the attention score as the relative distance increases. This ensures that current tokens tend to pay more attention to the tokens closer to them. The latter indicates that with the introduction of the rotation matrix in attention, the ability to discriminate the relevant tokens from irrelevant tokens decreases as the relative distance increases. Therefore, a large $B_{m,\theta}$ , corresponding to a large base value, is important to keep the model’s discrimination ability in long context modeling.

5 Experiment

In this section, we conduct thorough experiments. The empirical result can be summarized in Table 3, the details are in the following sections.

Table 3: In Section 5, we aim to answer the following questions.

| Q: Does RoPE’s base bounds the context length during the fine-tuning stage? | Yes. When the base is small, it is difficult to get extrapolation for specific context length. |

| --- | --- |

| Q: Does RoPE’s base bounds the context length during the pre-training stage? | Yes. Our proposed lower bound for RoPE’s base also applies to pre-training. If we train a model from scratch with a small base but the context length is large (larger than the bounded length), the resulting model has very limited the context length capabilities, meaning some of context in pre-training is wasted. |

| Q: What happened when base is set smaller than the lower bound? | The model will get the superficial long context capability. The model can keep perplexity low, but can’t retrieve useful information from long context. |

5.1 Experiments Setup

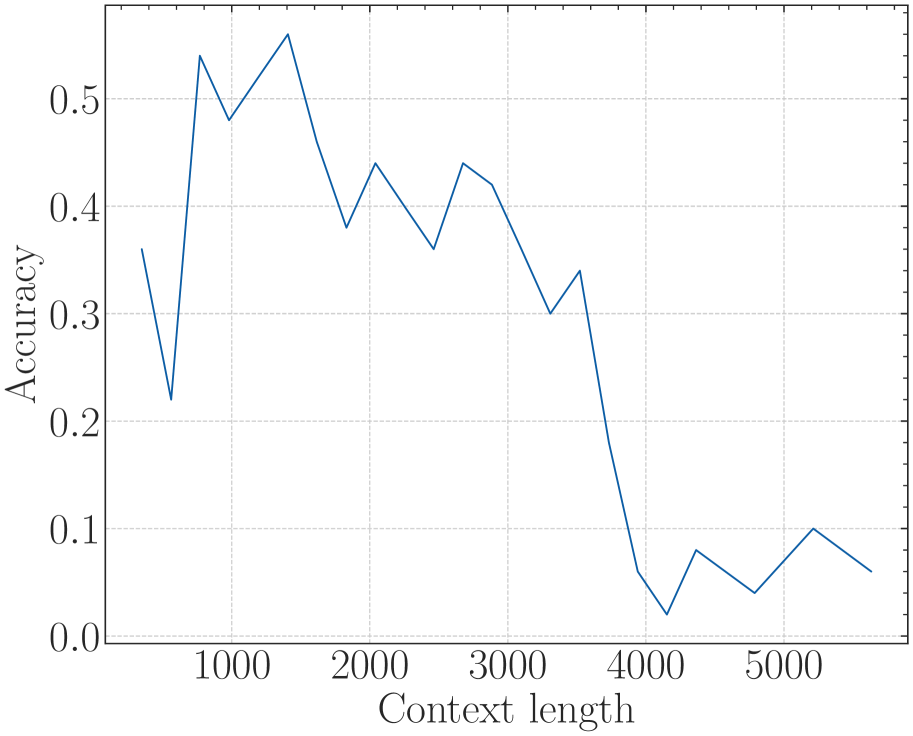

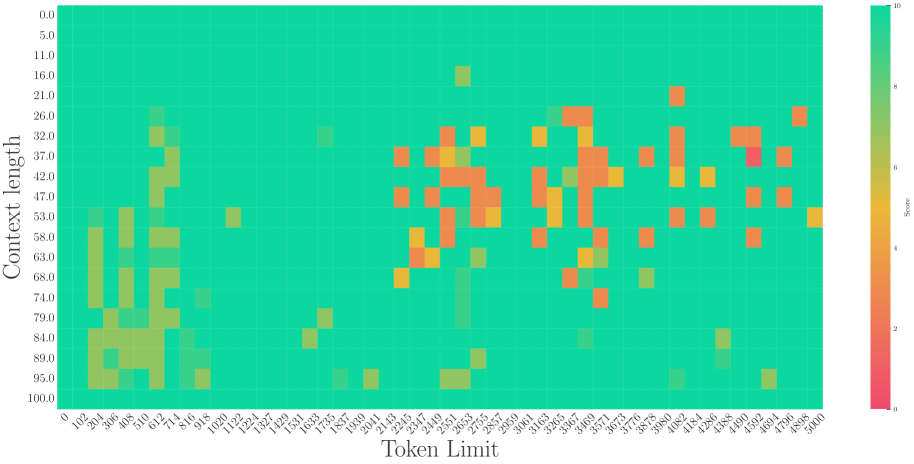

For fine-tuning, we utilized Llama2-7B (Touvron et al., 2023a) and Baichuan2-7B (Yang et al., 2023), both of which are popular open-source models employing RoPE with a base of $1e4$ . We utilized a fixed learning rate of 2e-5 and a global batch size of 128 and fine-tuning for 1000 steps. For pre-training, we trained a Llama-like 2B model from scratch for a total of 1 trillion tokens. We set the learning rate to 1e-4 and adopted a cosine decay schedule, with models trained on a total of 1T tokens. The dataset we used is a subset of RedPajama (Computer, 2023). More details of the experimental setup are provided in Appendix B.

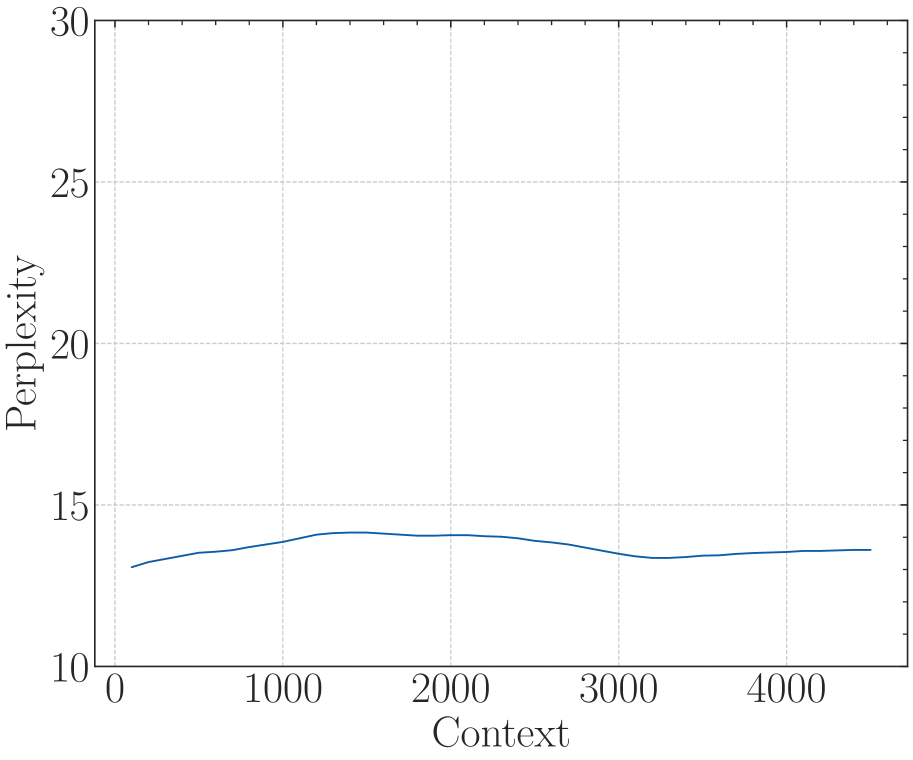

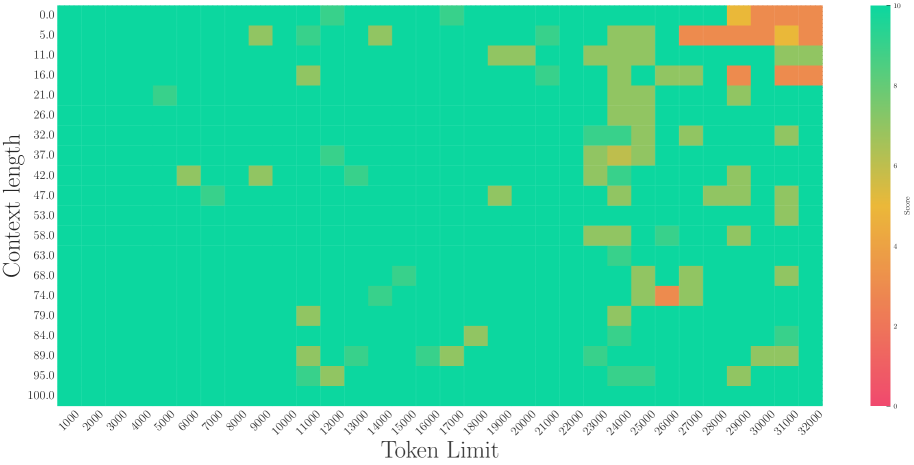

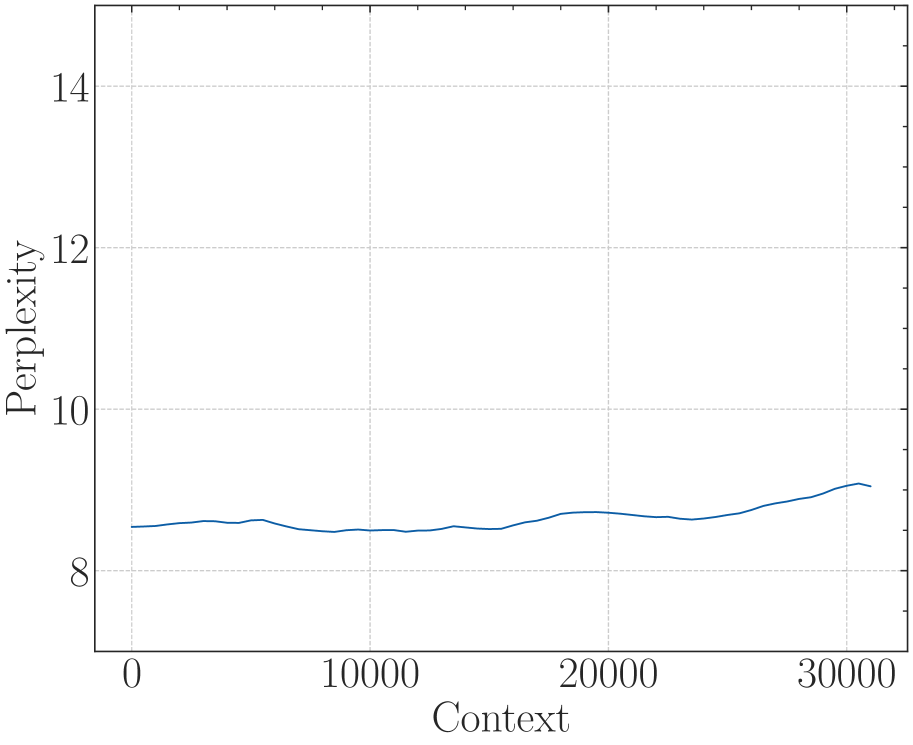

Our evaluation focused on two aspects: (1) Perplexity: we use PG19 dataset (Rae et al., 2019) which are often used in long context evaluation; (2) Retrieval: in addition to perplexity, we also adopt retrieval since it represents the real long-context understanding ability of LLMs. We choose a) Long-eval benchmark from (Li* et al., 2023) and b) needle in a haystack (NIH) (G, 2023). The Long-eval benchmark generates numerous random similar sentences and asks the model to answer questions based on a specific sentence within the context, while the NIH requires the model to retrieve information from various positions in the long context.

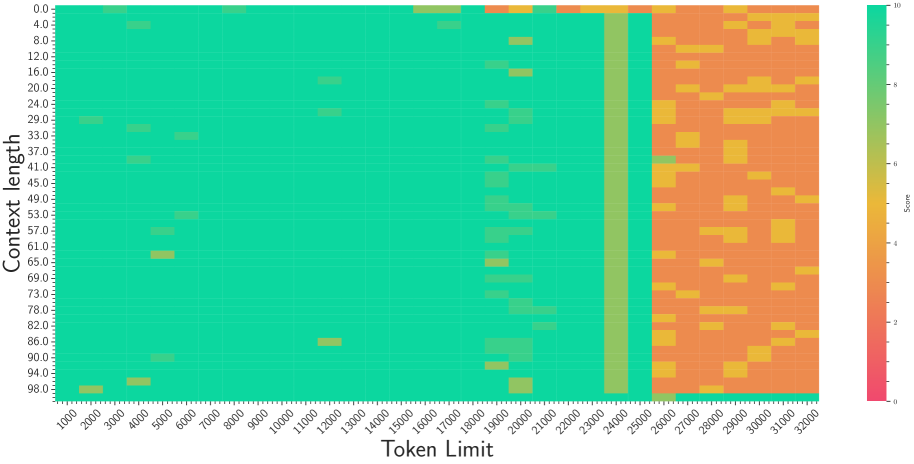

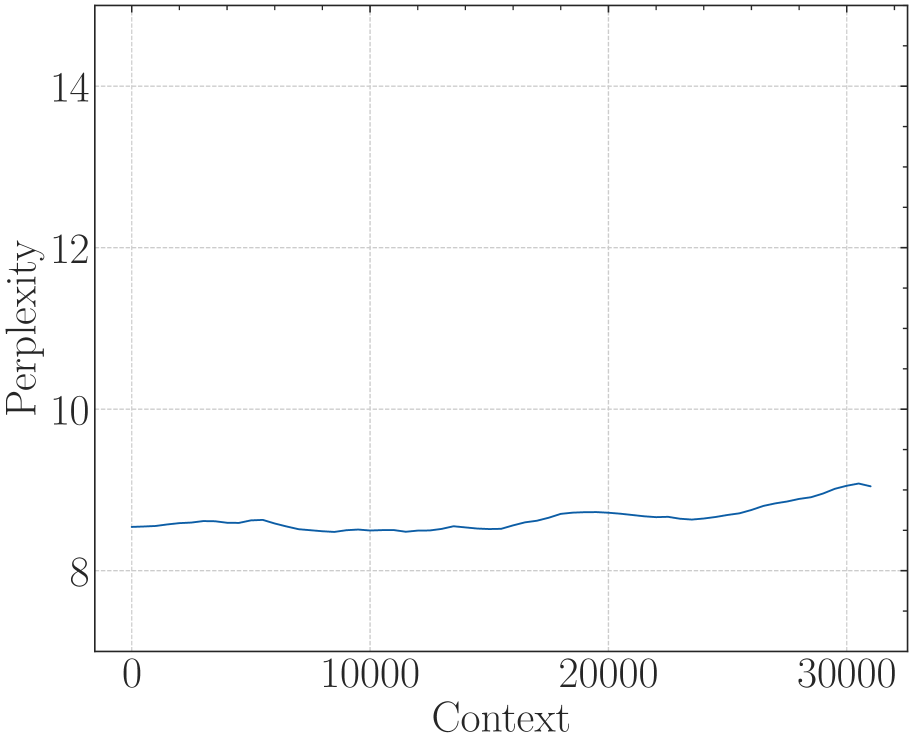

5.2 Base of RoPE bounds context length in fine-tuning stages

<details>

<summary>x10.png Details</summary>

### Visual Description

# Technical Data Extraction: Perplexity vs. Context Length Chart

## 1. Image Overview

This image is a line graph illustrating the relationship between **Context** (x-axis) and **Perplexity** (y-axis) for various configurations of a language model. The chart compares a baseline model with a 4K context window against several models configured with a 32K context window using different "base" parameters.

## 2. Component Isolation

### A. Header/Metadata

* **Language:** English.

* **Content:** No explicit title is present within the image frame.

### B. Main Chart Area

* **Y-Axis Label:** Perplexity

* **Y-Axis Scale:** Linear, ranging from 5 to 20. Major tick marks are placed at intervals of 2 (6, 8, 10, 12, 14, 16, 18, 20).

* **X-Axis Label:** Context

* **X-Axis Scale:** Linear, ranging from 5,000 to 30,000. Major tick marks are placed at intervals of 5,000 (5000, 10000, 15000, 20000, 25000, 30000).

* **Grid:** Horizontal and vertical dashed light-gray grid lines corresponding to the major tick marks.

### C. Legend (Spatial Grounding: Top-Right Quadrant)

The legend is located at approximately `[x=0.65 to 0.95, y=0.05 to 0.50]` in normalized coordinates from the top-left. It contains seven entries:

1. **Blue line:** `32K-base:1e4`

2. **Green line:** `32K-base:2e5`

3. **Orange line:** `32K-base:9e5`

4. **Red line:** `32K-base:5e6`

5. **Purple line:** `32K-base:1e9`

6. **Dark Gray/Black line:** `32K-base:1e12`

7. **Light Gray line:** `4K-Baseline`

---

## 3. Trend Verification and Data Extraction

### Series 1: 4K-Baseline (Light Gray)

* **Trend:** Sharp exponential upward slope. The perplexity explodes almost immediately after the 5,000 context mark.

* **Data Points:**

* At Context 5,000: ~8.2

* At Context 6,000: ~10.2

* At Context 7,500: Exceeds 20.0 (off-chart).

### Series 2: 32K-base Group (Multiple Colors)

* **Trend:** All 32K-base models follow a nearly identical, stable horizontal trend. They maintain low perplexity (between 8 and 10) across the entire context range shown (5,000 to 31,000). There is a very slight, gradual increase in perplexity as context increases, with a small "hump" or local peak around Context 19,000.

* **Detailed Comparison:**

* **32K-base:1e4 (Blue):** Generally the highest perplexity among the 32K group, ending near 9.5 at Context 31,000.

* **32K-base:1e12 (Dark Gray):** Closely follows the blue line.

* **32K-base:5e6 (Red):** Generally the lowest perplexity among the 32K group, ending near 9.0 at Context 31,000.

* **Representative Data Points (Approximate Average of Group):**

* Context 5,000: ~8.1

* Context 10,000: ~8.3

* Context 15,000: ~8.2

* Context 19,000: ~8.8 (Local peak)

* Context 25,000: ~8.7

* Context 31,000: ~9.1 to 9.5

---

## 4. Summary Table of Extracted Data

| Context Length | 4K-Baseline Perplexity | 32K-base (Group Avg) Perplexity |

| :--- | :--- | :--- |

| 5,000 | ~8.2 | ~8.1 |

| 7,500 | > 20.0 | ~8.4 |

| 10,000 | N/A (Off-chart) | ~8.3 |

| 15,000 | N/A | ~8.2 |

| 20,000 | N/A | ~8.7 |

| 25,000 | N/A | ~8.7 |

| 30,000 | N/A | ~9.2 |

## 5. Technical Conclusion

The chart demonstrates that the **4K-Baseline** model fails to generalize beyond its training window, as evidenced by the vertical spike in perplexity after 5,000 context tokens. Conversely, all **32K-base** variants (ranging from 1e4 to 1e12) successfully maintain performance (low perplexity) up to at least 31,000 context tokens, with only marginal differences between the specific base configurations.

</details>

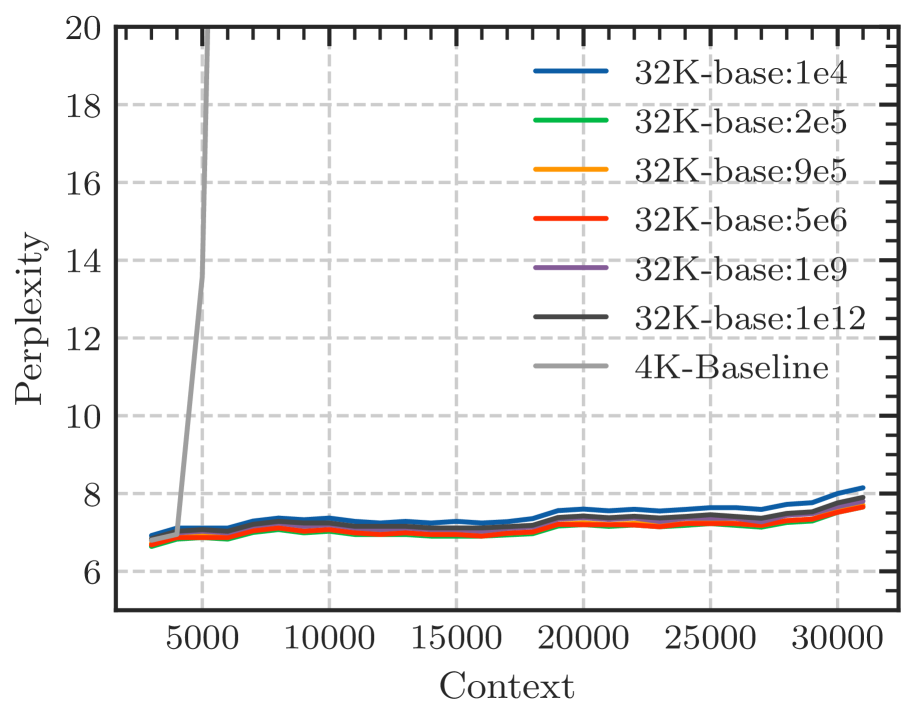

(a) Perplexity

<details>

<summary>x11.png Details</summary>

### Visual Description

# Technical Data Extraction: Accuracy vs. Base Value Chart

## 1. Component Isolation

* **Header:** None present.

* **Main Chart Area:** A 2D line plot with a grid, featuring one data series (teal line with star markers) and one reference line (dark blue dash-dotted vertical line).

* **Legend:** Located in the bottom-right quadrant.

* **Axes:** X-axis (Base value) and Y-axis (Accuracy on 32K).

## 2. Axis Specifications

* **Y-Axis (Vertical):**

* **Label:** "Accuracy on 32K"

* **Scale:** Linear, ranging from 0.0 to 0.4 (topmost visible tick is 0.3, but the grid extends higher).

* **Major Markers:** 0.0, 0.1, 0.2, 0.3.

* **X-Axis (Horizontal):**

* **Label:** "Base value"

* **Scale:** Logarithmic/Non-linear (based on scientific notation labels).

* **Major Markers:** 1e4, 2e5, 9e5, 5e6, 1e9, 1e12.

## 3. Legend and Reference Lines

* **Legend Location:** [x=0.7, y=0.1] (Bottom-right).

* **Legend Item:**

* **Symbol:** Dark blue dash-dotted line (`- . -`).

* **Label:** "Lower bound".

* **Reference Line Placement:** A vertical dark blue dash-dotted line is positioned exactly at the x-axis value of **9e5**.

## 4. Data Series Analysis (Teal Line)

* **Color:** Teal / Cyan.

* **Marker:** 5-point star.

* **Trend Verification:** The line shows a sharp upward slope from 1e4 to 5e6, reaching a peak. It then experiences a slight dip at 1e9 before recovering to a high plateau at 1e12.

### Extracted Data Points (Estimated from Grid)

| Base value (X) | Accuracy on 32K (Y) | Notes |

| :--- | :--- | :--- |

| 1e4 | ~0.00 | Starting point at origin. |

| 2e5 | ~0.06 | Initial slow growth. |

| ~5e5 | ~0.17 | Sharp increase (unlabeled tick). |

| 9e5 | ~0.27 | Intersects the "Lower bound" vertical line. |

| ~2e6 | ~0.33 | Continued sharp growth. |

| 5e6 | ~0.35 | Local maximum/peak. |

| 1e9 | ~0.30 | Notable performance dip. |

| 1e12 | ~0.35 | Recovery to peak performance level. |

## 5. Summary of Findings

The chart illustrates the relationship between a "Base value" and "Accuracy on 32K". Performance is negligible at low base values (1e4) but improves rapidly as the value approaches **9e5**, which is explicitly marked as a "Lower bound." The accuracy peaks around **5e6**, remains relatively stable despite a minor fluctuation at **1e9**, and finishes at its highest recorded accuracy (approx. 0.35) at a base value of **1e12**.

</details>

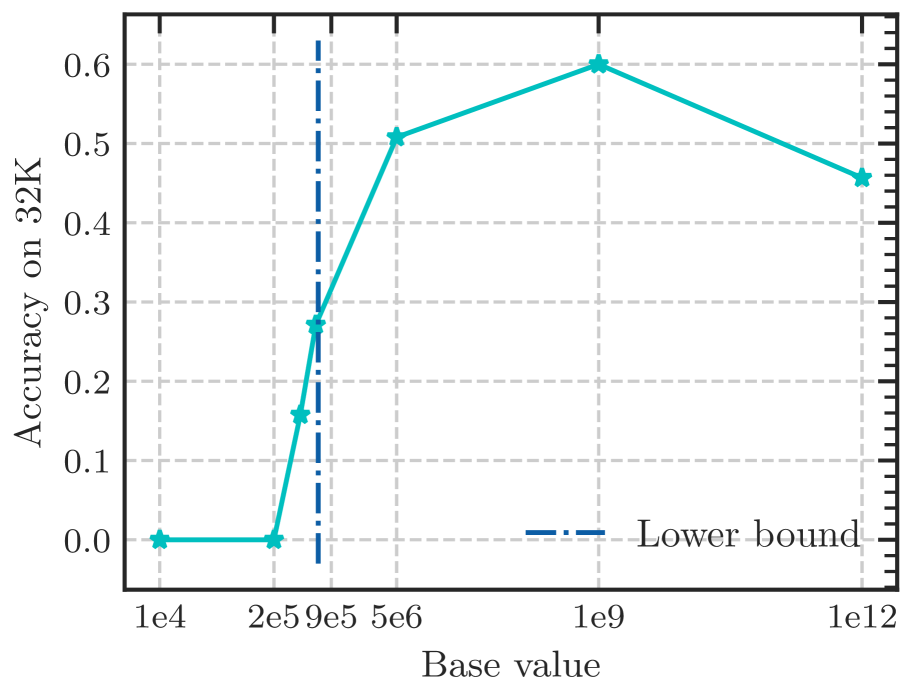

(b) Long-eval 32k

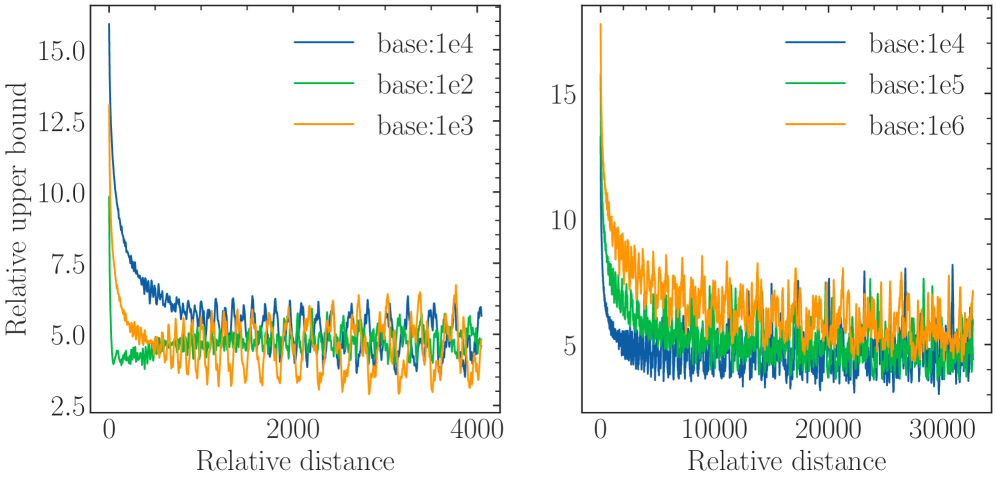

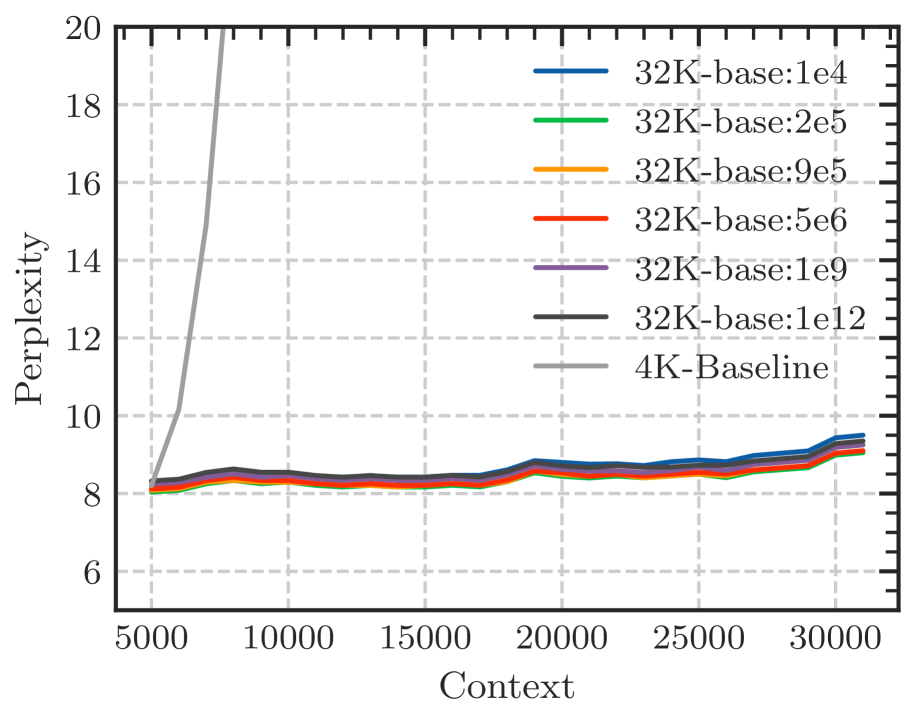

Figure 6: Fine-tuning Llama2-7B-Base on 32k context length with varying RoPE’s base. Although the perplexity remains low with varying bases, the Long-eval accuracy reveals a discernible bound for the base value, below which the Long-eval accuracy declines significantly. The dotted line denotes the lower bound derived from Eq. 18.

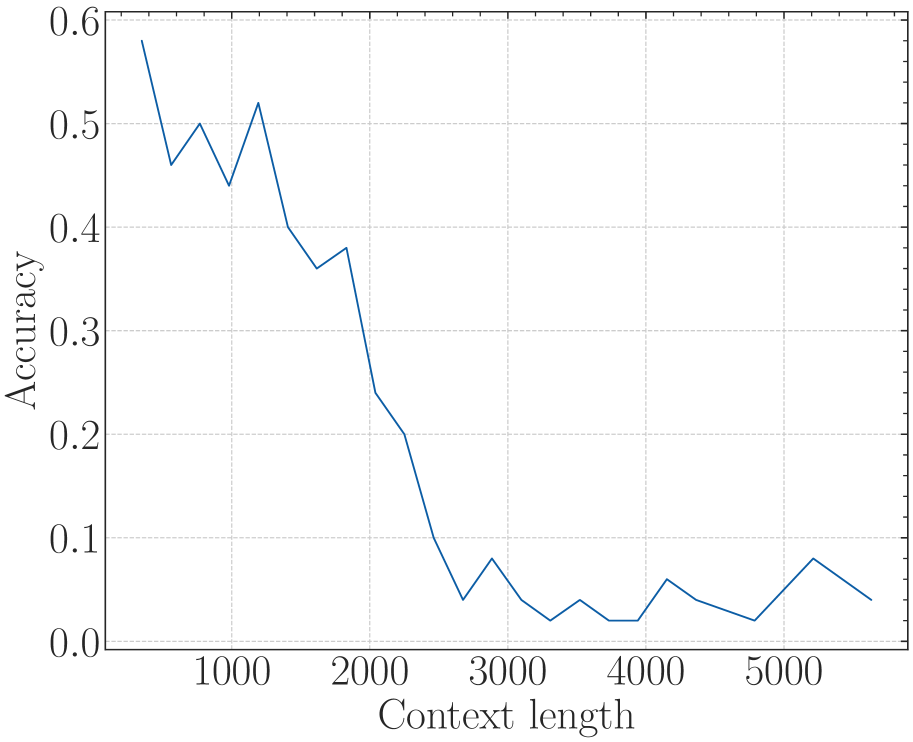

According to Eq. 18, there is a lower bound of RoPE’s base determined by expected context length. We fine-tune Llama2-7b-Base on 32k context with varying bases. As depicted in Figure 6, although the difference in perplexity between different bases is negligible, the accuracy of Long-eval varies significantly. In Figure 6(b), the dotted line denotes the lower bound derived from Eq. 18, below which the Long-eval accuracy declines significantly. Additional results are provided in Appendix C. Notably, this empirically observed lower bound closely aligns with our theoretical derivation. On the other hand, we can see that $base=2e5$ achieves the best perplexity, but the accuracy of Long-eval is very low, which indicates the limitations of perplexity in evaluating long context capabilities.

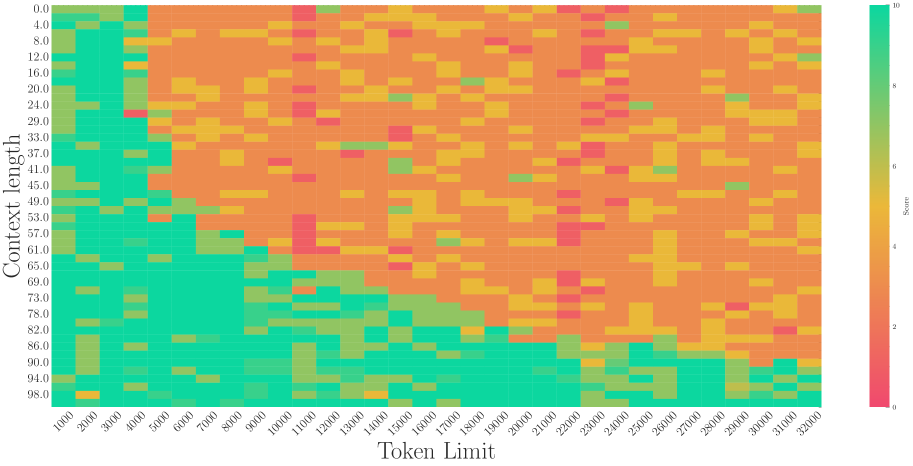

5.3 The Base of RoPE bounds context length in pre-training stages

<details>

<summary>x12.png Details</summary>

### Visual Description

# Technical Document Extraction: Perplexity vs. Context Chart

## 1. Image Classification and Overview

This image is a line chart depicting the relationship between "Context" (independent variable) and "Perplexity" (dependent variable). The chart is presented in a clean, academic style with a grid background.

## 2. Component Isolation

### Header/Title

* **Content:** None present.

### Main Chart Area

* **Background:** White with a light gray dashed grid.

* **Grid Lines:** Vertical and horizontal dashed lines corresponding to major axis ticks.

* **Data Series:** A single solid line in a dark blue/teal color.

### Axis Labels and Markers

* **Y-Axis (Vertical):**

* **Label:** "Perplexity" (oriented vertically).

* **Range:** 10 to 30.

* **Major Ticks:** 10, 15, 20, 25, 30.

* **Minor Ticks:** Present between major ticks (unlabeled).

* **X-Axis (Horizontal):**

* **Label:** "Context".

* **Range:** 0 to approximately 4500.

* **Major Ticks:** 0, 1000, 2000, 3000, 4000.

* **Minor Ticks:** Present at intervals of 500 (unlabeled).

### Legend

* **Location:** None present. As there is only one data series, the line color is the primary identifier.

---

## 3. Data Extraction and Trend Analysis

### Trend Verification

The data series (Dark Blue Line) exhibits a non-linear trend:

1. **Initial Phase (0 - 200):** Starts at approximately 11.2, dips slightly, then begins to rise.

2. **Growth Phase (200 - 2500):** The line slopes upward steadily, showing a positive correlation between Context and Perplexity.

3. **Plateau Phase (2500 - 3500):** The line flattens out, reaching its peak value.

4. **Slight Decline/Stabilization (3500 - 4500):** The line shows a very slight downward trend before leveling off at the end of the recorded range.

### Estimated Data Points

Based on the visual alignment with the grid and axis markers:

| Context (X) | Perplexity (Y) | Notes |

| :--- | :--- | :--- |

| ~100 | ~11.2 | Starting point |

| 500 | ~11.8 | Steady climb |

| 1000 | ~12.7 | |

| 1500 | ~13.5 | |

| 2000 | ~14.1 | Approaching plateau |

| 2500 | ~14.4 | Peak region |

| 3000 | ~14.4 | Peak region |

| 3500 | ~14.3 | Slight dip |

| 4000 | ~14.1 | |

| 4500 | ~14.1 | Final data point |

---

## 4. Summary of Information

The chart illustrates that as the **Context** increases from 0 to roughly 2500, the **Perplexity** increases from approximately 11 to 14.4. Beyond a Context value of 2500, the Perplexity stabilizes and remains relatively constant between 14.0 and 14.5 through to the Context value of 4500. This suggests a "saturation" point where additional context no longer significantly impacts the perplexity metric in the same upward trajectory.

</details>

<details>

<summary>x13.png Details</summary>

### Visual Description

# Technical Data Extraction: Accuracy vs. Context Length

## 1. Image Classification

This image is a **line chart** depicting the relationship between a model's performance (Accuracy) and the length of the input context (Context length).

## 2. Component Isolation

### Header/Metadata

* **Title:** None present.

* **Language:** English.

### Main Chart Area

* **X-Axis Label:** "Context length"

* **Y-Axis Label:** "Accuracy"

* **Grid:** Light gray dashed grid lines for both major x and y intervals.

* **Data Series:** A single solid blue line.

### Legend

* **Presence:** No legend is present as there is only one data series.

---

## 3. Axis Scales and Markers

### X-Axis (Context length)

* **Range:** Approximately 400 to 5800.

* **Major Tick Labels:** 1000, 2000, 3000, 4000, 5000.

* **Minor Ticks:** Present between major labels, indicating intervals of 200 units.

### Y-Axis (Accuracy)

* **Range:** 0.0 to 0.55.

* **Major Tick Labels:** 0.0, 0.1, 0.2, 0.3, 0.4, 0.5.

* **Minor Ticks:** Present at intervals of 0.025.

---

## 4. Trend Verification and Data Extraction

### Visual Trend Analysis

The blue line exhibits a **sharp negative correlation** initially, followed by a **volatile plateau** at low values.

1. **Initial Phase (400 - 1200):** The line starts at its peak and drops precipitously.

2. **Transition Phase (1200 - 2200):** The line fluctuates with a downward bias, showing small "sawtooth" peaks.

3. **Degraded Phase (2200 - 5600):** The line remains near the baseline (0.0 to 0.06), oscillating frequently and hitting 0.0 at multiple points.

### Estimated Data Points

Based on the grid intersections and axis markers:

| Context length (approx.) | Accuracy (approx.) | Notes |

| :--- | :--- | :--- |

| 400 | 0.52 | Peak performance |

| 600 | 0.52 | Performance holds |

| 800 | 0.30 | Sharp drop |

| 1000 | 0.28 | Continued decline |

| 1200 | 0.12 | Local minimum |

| 1400 | 0.14 | Small recovery |

| 1600 | 0.08 | Local minimum |

| 1800 | 0.14 | Local peak |

| 2000 | 0.10 | Decline |

| 2200 | 0.00 | First zero-accuracy point |

| 2800 | 0.06 | Small recovery |

| 3000 | 0.04 | Decline |

| 3200 | 0.06 | Small recovery |

| 3500 | 0.00 | Second zero-accuracy point |

| 3700 | 0.04 | Small recovery |

| 4100 | 0.00 | Third zero-accuracy point |

| 4300 | 0.04 | Small recovery |

| 4800 | 0.02 | Decline |

| 5200 | 0.04 | Small recovery |

| 5600 | 0.02 | Final data point |

---

## 5. Summary of Findings

The chart demonstrates a significant degradation in model accuracy as the context length increases. The model maintains its highest accuracy (~0.52) only for very short contexts (under 600 units). By the time the context length reaches 2200 units, the accuracy effectively collapses, frequently hitting 0.0 and never recovering above 0.06 for the remainder of the tested range (up to 5600 units).

</details>

<details>

<summary>x14.png Details</summary>

### Visual Description

# Technical Data Extraction: Performance Heatmap

## 1. Document Overview

This image is a technical heatmap visualization representing the relationship between "Context length" and "Token Limit" on a numerical "Score." The chart uses a color gradient to represent performance metrics, where green indicates high scores and red indicates low scores.

## 2. Component Isolation

### A. Header / Metadata

* **Language:** English.

* **Content:** No explicit title text is present at the top of the image.

### B. Main Chart (Heatmap)

* **Type:** 2D Heatmap / Grid.

* **Y-Axis Label:** Context length (Vertical, left side).

* **X-Axis Label:** Token Limit (Horizontal, bottom).

* **Color Scale (Legend):** Located on the far right [x=950, y=500 approx.]. It is a vertical gradient bar labeled "Score".

### C. Axis Scales and Markers

#### Y-Axis (Context length)

The axis represents a percentage or ratio from 0.0 to 100.0, with markers every ~5.0 to 6.0 units:

`0.0, 5.0, 11.0, 16.0, 21.0, 26.0, 32.0, 37.0, 42.0, 47.0, 53.0, 58.0, 63.0, 68.0, 74.0, 79.0, 84.0, 89.0, 95.0, 100.0`

#### X-Axis (Token Limit)

The axis represents a count from 0 to 5000, with markers at irregular intervals (roughly every 102 units):

`0, 102, 204, 306, 408, 510, 612, 714, 816, 918, 1020, 1122, 1224, 1327, 1429, 1531, 1633, 1735, 1837, 1939, 2041, 2143, 2245, 2347, 2449, 2551, 2653, 2755, 2857, 2959, 3061, 3163, 3265, 3367, 3469, 3571, 3673, 3776, 3878, 3980, 4082, 4184, 4286, 4388, 4490, 4592, 4694, 4796, 4898, 5000`

## 3. Legend and Color Mapping

* **Score 10 (Bright Green):** Optimal performance.

* **Score 5-7 (Yellow/Orange):** Moderate/Degraded performance.

* **Score 0 (Red/Pink):** Failure or lowest performance.

## 4. Trend Verification and Data Analysis

### Visual Trend Analysis

1. **High Performance Zone (Green):** Concentrated in the bottom-left corner and along the bottom edge. As "Context length" increases (moving down the Y-axis), the system maintains high scores for longer "Token Limits."

2. **Degradation Zone (Red/Orange):** Concentrated in the top-right quadrant. As "Token Limit" increases while "Context length" remains low (top of the chart), performance drops significantly.

3. **The "Diagonal" Boundary:** There is a visible diagonal threshold. When `Context length` is low (e.g., 0.0 - 21.0), performance drops to red almost immediately after a Token Limit of ~510. When `Context length` is high (e.g., 95.0 - 100.0), performance remains green across almost the entire Token Limit range (0 to 5000).

### Key Data Observations

* **Low Context Length (0.0 - 16.0):** Performance is high (Green) only for very small Token Limits (< 510). Beyond 612, the score drops to Red (0-2) with scattered Orange (4-5) noise.

* **Mid Context Length (47.0 - 68.0):** Performance stays high (Green) up to a Token Limit of approximately 1020. Beyond this, it transitions into a "noisy" field of Red and Orange.

* **High Context Length (89.0 - 100.0):** Performance is consistently high (Green) across nearly the entire X-axis. There are very few "failure" pixels (Red), notably one at `Context length 100.0 / Token Limit 306`.

* **The "Noise" Pattern:** In the upper-right region (Token Limit > 2000, Context length < 50), the data is not solid red but "speckled" with orange and yellow, suggesting inconsistent or stochastic performance rather than total failure.

## 5. Summary of Findings

The chart demonstrates an inverse relationship between the two variables regarding performance stability. The system performs best when the **Context length** is high (near 100.0), regardless of the **Token Limit**. Conversely, when the Context length is low, the system can only handle very small Token Limits before the Score degrades to near zero.

</details>

<details>

<summary>x15.png Details</summary>

### Visual Description

# Technical Document Extraction: Perplexity vs. Context Chart

## 1. Component Isolation

* **Header:** None present.

* **Main Chart Area:** A line graph plotted on a Cartesian coordinate system with a light-gray dashed grid.

* **Footer/Axes:** Contains the X-axis label "Context" and the Y-axis label "Perplexity".

## 2. Axis Identification and Markers

* **Y-Axis (Vertical):**

* **Label:** Perplexity

* **Range:** 10 to 30

* **Major Tick Marks:** 10, 15, 20, 25, 30

* **Orientation:** Text is rotated 90 degrees counter-clockwise.

* **X-Axis (Horizontal):**

* **Label:** Context

* **Range:** 0 to approximately 4500+

* **Major Tick Marks:** 0, 1000, 2000, 3000, 4000

* **Minor Tick Marks:** Present at intervals of 500 (unlabeled).

## 3. Data Series Analysis

The chart contains a single data series represented by a solid dark blue line.

### Trend Verification

* **Initial Phase (0 - 1500 Context):** The line shows a steady upward slope, starting slightly above 13 and peaking near 14.2.

* **Middle Phase (1500 - 3200 Context):** The line exhibits a gradual decline, dipping back toward the 13.5 mark.

* **Final Phase (3200 - 4500 Context):** The line stabilizes and shows a very slight upward recovery, ending just below the 14 mark.

* **Overall Stability:** The data remains remarkably stable within a narrow band between 13 and 14.5 perplexity across the entire context window.

### Extracted Data Points (Approximate)

Based on the visual alignment with the grid:

| Context (X) | Perplexity (Y) |

| :--- | :--- |

| 100 | ~13.1 |

| 500 | ~13.5 |

| 1000 | ~13.8 |

| 1500 | ~14.2 (Peak) |

| 2000 | ~14.1 |

| 2500 | ~13.9 |

| 3200 | ~13.4 (Local Minimum) |

| 4000 | ~13.6 |

| 4500 | ~13.7 |

## 4. Visual Style and Metadata

* **Grid:** Light gray dashed lines for both major and minor increments.

* **Font:** Serif typeface used for all labels and numbers.

* **Legend:** No legend is present, as there is only one data series.

* **Language:** English.

## 5. Summary of Information

This technical chart illustrates the relationship between "Context" and "Perplexity." In the field of natural language processing, this typically represents how a model's predictive performance (Perplexity) changes as the input sequence length (Context) increases. The data indicates that the model maintains a consistent perplexity level (between 13 and 14.5) regardless of context length up to 4500 units, suggesting high stability and sustained performance over long sequences.

</details>

<details>

<summary>x16.png Details</summary>

### Visual Description

# Technical Document Extraction: Accuracy vs. Context Length Chart

## 1. Component Isolation

* **Header:** None present.

* **Main Chart Area:** A line graph plotted on a Cartesian coordinate system with a light gray dashed grid.

* **Footer/Axes:** Contains the X-axis label "Context length" and the Y-axis label "Accuracy".

## 2. Axis and Label Extraction

* **Y-Axis (Vertical):**

* **Label:** Accuracy

* **Scale:** 0.0 to 0.6

* **Major Tick Markers:** 0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6

* **X-Axis (Horizontal):**

* **Label:** Context length

* **Scale:** Approximately 400 to 5600

* **Major Tick Markers:** 1000, 2000, 3000, 4000, 5000

## 3. Data Series Analysis

### Trend Verification

The data series is represented by a single solid blue line.

* **Initial Phase (400 - 1200):** The line shows high volatility but maintains a relatively high accuracy between 0.45 and 0.58.

* **Degradation Phase (1200 - 2600):** The line shows a sharp, consistent downward slope, indicating a significant loss in accuracy as context length increases.

* **Baseline Phase (2600 - 5600):** The line flattens out, oscillating at a very low accuracy level (near 0.0 to 0.08), suggesting the model has reached a floor or "noise" level.

### Data Point Extraction (Estimated)

Based on the grid intersections and axis markers, the following data points are extracted:

| Context Length (X) | Accuracy (Y) |

| :--- | :--- |

| ~400 | 0.58 |

| ~600 | 0.46 |

| ~800 | 0.50 |

| ~1000 | 0.44 |

| ~1200 | 0.52 |

| ~1400 | 0.40 |

| ~1600 | 0.36 |

| ~1800 | 0.38 |

| ~2000 | 0.24 |

| ~2200 | 0.20 |

| ~2400 | 0.10 |

| ~2600 | 0.04 |

| ~2800 | 0.08 |

| ~3000 | 0.04 |

| ~3200 | 0.02 |

| ~3400 | 0.04 |

| ~3600 | 0.02 |

| ~3800 | 0.02 |

| ~4000 | 0.06 |

| ~4200 | 0.04 |

| ~4400 | 0.03 |

| ~4600 | 0.02 |

| ~4800 | 0.05 |

| ~5000 | 0.08 |

| ~5200 | 0.06 |

| ~5400 | 0.04 |

## 4. Summary of Findings

The chart illustrates a strong inverse relationship between "Context length" and "Accuracy". The performance of the system/model is highest at short context lengths (under 1200 units). A critical performance "cliff" occurs between context lengths of 1800 and 2600, where accuracy drops from approximately 38% to nearly 4%. Beyond a context length of 3000, the accuracy remains consistently low, failing to recover significantly.

</details>

<details>

<summary>x17.png Details</summary>

### Visual Description

# Technical Data Extraction: Performance Heatmap Analysis

## 1. Document Overview

This image is a technical heatmap visualizing the relationship between two variables—**Context length** and **Token Limit**—and their impact on a numerical **Score**. The chart uses a color gradient to represent performance levels across a grid of data points.

## 2. Component Isolation

### A. Header/Axes Labels

* **Y-Axis Label (Left):** "Context length"

* **X-Axis Label (Bottom):** "Token Limit"

* **Legend Label (Right):** "Score"

### B. Legend and Scale (Spatial Grounding: [x=right, y=center])

The legend is a vertical color bar on the right side of the chart.

* **Scale Range:** 0 to 10.

* **Color Mapping:**

* **10 (Top - Teal/Green):** Represents the highest score/optimal performance.

* **5-7 (Middle - Yellow/Light Green):** Represents moderate performance.

* **0 (Bottom - Red/Pink):** Represents the lowest score/poor performance.

### C. Axis Markers (Data Categories)

* **Y-Axis (Context length):** 20 intervals ranging from **0.0** to **100.0** (increments of approximately 5.3 units).

* Values: 0.0, 5.0, 11.0, 16.0, 21.0, 26.0, 32.0, 37.0, 42.0, 47.0, 53.0, 58.0, 63.0, 68.0, 74.0, 79.0, 84.0, 89.0, 95.0, 100.0.

* **X-Axis (Token Limit):** 50 intervals ranging from **0** to **5000** (increments of 102 units).

* Key markers: 0, 102, 204, 306, 408, 510, 612, 714, 816, 918, 1020, 1122, 1224, 1327, 1429, 1531, 1633, 1735, 1837, 1939, 2041, 2143, 2245, 2347, 2449, 2551, 2653, 2755, 2857, 2959, 3061, 3163, 3265, 3367, 3469, 3571, 3673, 3776, 3878, 3980, 4082, 4184, 4286, 4388, 4490, 4592, 4694, 4796, 4898, 5000.

---

## 3. Trend Verification and Data Analysis

### Visual Trend Description

The heatmap shows a distinct performance degradation (shifting from teal to red) as both the **Token Limit** and **Context length** increase, though the Token Limit appears to be the primary driver of failure.

1. **High Performance Zone (Teal):** Dominates the left side of the chart (Token Limit < 2000) and the bottom-left quadrant.

2. **Transition Zone (Yellow/Orange):** Appears as a diagonal "noise" pattern starting around Token Limit 2143.

3. **Low Performance Zone (Red/Pink):** Concentrated in the upper-right quadrant, specifically where Token Limit > 3000 and Context length < 50.0.

### Key Data Observations

* **Stability at Low Token Limits:** For Token Limits between 0 and 2041, the score remains consistently high (Teal, Score ~10), regardless of Context length, with only minor isolated fluctuations (e.g., at Token Limit 1020, Context length 21.0-26.0).

* **The "Failure Wall":** A significant drop in scores begins at **Token Limit 2143**. From this point forward, the top half of the chart (Context length 0.0 to 53.0) shows a high density of red and orange cells.