# Federated Model Heterogeneous Matryoshka Representation Learning

Abstract

Model heterogeneous federated learning (MHeteroFL) enables FL clients to collaboratively train models with heterogeneous structures in a distributed fashion. However, existing MHeteroFL methods rely on training loss to transfer knowledge between the client model and the server model, resulting in limited knowledge exchange. To address this limitation, we propose the Fed erated model heterogeneous M atryoshka R epresentation L earning (FedMRL) approach for supervised learning tasks. It adds an auxiliary small homogeneous model shared by clients with heterogeneous local models. (1) The generalized and personalized representations extracted by the two models’ feature extractors are fused by a personalized lightweight representation projector. This step enables representation fusion to adapt to local data distribution. (2) The fused representation is then used to construct Matryoshka representations with multi-dimensional and multi-granular embedded representations learned by the global homogeneous model header and the local heterogeneous model header. This step facilitates multi-perspective representation learning and improves model learning capability. Theoretical analysis shows that FedMRL achieves a $\mathcal{O}(1/T)$ non-convex convergence rate. Extensive experiments on benchmark datasets demonstrate its superior model accuracy with low communication and computational costs compared to seven state-of-the-art baselines. It achieves up to $8.48\%$ and $24.94\%$ accuracy improvement compared with the state-of-the-art and the best same-category baseline, respectively.

1 Introduction

Traditional federated learning (FL) [29] often relies on a central FL server to coordinate multiple data owners (a.k.a., FL clients) to train a global shared model without exposing local data. In each communication round, the server broadcasts the global model to the clients. A client trains it on its local data and sends the updated local model to the FL server. The server aggregates local models to produce a new global model. These steps are repeated until the global model converges.

However, the above design cannot handle the following heterogeneity challenges [49] commonly found in practical FL applications: (1) Data heterogeneity [40, 45, 44, 47, 39, 55]: FL clients’ local data often follow non-independent and identically distributions (non-IID). A single global model produced by aggregating local models trained on non-IID data might not perform well on all clients. (2) System heterogeneity [11, 46, 48]: FL clients can have diverse system configurations in terms of computing power and network bandwidth. Training the same model structure among such clients means that the global model size must accommodate the weakest device, leading to sub-optimal performance on other more powerful clients. (3) Model heterogeneity [41]: When FL clients are enterprises, they might have heterogeneous proprietary models which cannot be directly shared with others during FL training due to intellectual property (IP) protection concerns.

To address these challenges, the field of model heterogeneous federated learning (MHeteroFL) [52, 49, 53, 54, 51, 50] has emerged. It enables FL clients to train local models with tailored structures suitable for local system resources and local data distributions. Existing MHeteroFL methods [38, 43] are limited in terms of knowledge transfer capabilities as they commonly leverage the training loss between server and client models for this purpose. This design leads to model performance bottlenecks, incurs high communication and computation costs, and risks exposing private local model structures and data.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Matryoshka Representation Learning

### Overview

The image is a diagram illustrating a machine learning model architecture, specifically focusing on a "Matryoshka Reps" approach. The diagram shows how an input `x` is processed through a feature extractor, then split into multiple representations, each leading to a separate header and loss calculation, which are then aggregated.

### Components/Axes

* **Input:** `x` (input data)

* **Feature Extractor:** A teal trapezoid labeled "Feature Extractor"

* **Matryoshka Reps:** A dashed green box containing a dashed yellow box, a dashed pink box, and a Matryoshka doll image.

* **Headers:** Three colored rectangles (pink, yellow, green) labeled "Headers" below the green rectangle.

* **Outputs:** `ŷ₁`, `ŷ₂`, `ŷ₃` (predicted outputs)

* **Losses:** `ℓ₁`, `ℓ₂`, `ℓ₃` (individual losses)

* **Aggregated Loss:** `ℓ` (total loss)

* **Aggregation Function:** A blue circle with a plus sign inside, representing the aggregation of individual losses.

### Detailed Analysis

1. **Input `x`:** The input data `x` enters the system from the left.

2. **Feature Extractor:** The input `x` is fed into a "Feature Extractor," which is represented as a teal trapezoid.

3. **Matryoshka Reps:** The output of the feature extractor goes into a "Matryoshka Reps" block. This block contains nested representations, visually represented by nested dashed rectangles (pink inside yellow inside green) and a Matryoshka doll.

4. **Headers:** The output of the "Matryoshka Reps" block is split into three branches, each leading to a different colored "Header":

* Top branch: Pink header leading to `ŷ₁` and `ℓ₁`

* Middle branch: Yellow header leading to `ŷ₂` and `ℓ₂`

* Bottom branch: Green header leading to `ŷ₃` and `ℓ₃`

5. **Outputs and Losses:** Each header produces an output (`ŷ₁`, `ŷ₂`, `ŷ₃`) and a corresponding loss (`ℓ₁`, `ℓ₂`, `ℓ₃`).

6. **Aggregation:** The individual losses (`ℓ₁`, `ℓ₂`, `ℓ₃`) are aggregated using an aggregation function (blue circle with a plus sign) to produce the total loss `ℓ`.

### Key Observations

* The "Matryoshka Reps" block suggests a hierarchical representation learning approach, where different levels of abstraction are captured.

* The use of multiple headers implies that the model is trained to produce multiple outputs or predictions, each associated with a specific representation level.

* The aggregation of individual losses into a total loss indicates that the model is optimized to minimize the overall error across all representation levels.

### Interpretation

The diagram illustrates a machine learning model that leverages hierarchical representations, inspired by the nested structure of Matryoshka dolls. The model extracts features from the input data and then creates multiple representations at different levels of abstraction. Each representation is associated with a separate header, which produces an output and a corresponding loss. The individual losses are then aggregated to train the model. This approach allows the model to learn more robust and generalizable representations by capturing different aspects of the input data. The nested representations within the "Matryoshka Reps" block likely correspond to different levels of granularity or abstraction, enabling the model to capture both fine-grained details and high-level concepts.

</details>

<details>

<summary>x2.png Details</summary>

### Visual Description

## Neural Network Diagram: Feature Extraction and Header

### Overview

The image presents a block diagram of a neural network architecture, illustrating the flow of data through different layers. The network consists of a "Feature Extractor" block, a "Rep" (Representation) block, and a "Header" block. The diagram shows the sequence of operations from input *x* to output *ŷ*.

### Components/Axes

* **Input:** *x* (located on the left side of the diagram)

* **Feature Extractor:** A block containing Conv1, Conv2, FC1, and FC2 layers. The block is enclosed in a light blue box with a dashed black border.

* **Conv1:** Convolutional Layer 1

* **Conv2:** Convolutional Layer 2

* **FC1:** Fully Connected Layer 1

* **FC2:** Fully Connected Layer 2

* **Rep:** Representation block, a vertical rectangle in light gray with a dashed black border.

* **Header:** A block containing FC3 layer. The block is enclosed in a light pink box with a dashed black border.

* **FC3:** Fully Connected Layer 3

* **Output:** *ŷ* (located on the right side of the diagram)

* **Arrows:** Arrows indicate the direction of data flow between the layers.

### Detailed Analysis

The diagram shows the following sequence of operations:

1. Input *x* enters the "Feature Extractor" block.

2. The input passes through Conv1, Conv2, FC1, and FC2 layers sequentially.

3. The output of the "Feature Extractor" is fed into the "Rep" block.

4. The output of the "Rep" block is fed into the "Header" block.

5. Inside the "Header" block, the data passes through the FC3 layer.

6. The output of the FC3 layer is the final output *ŷ*.

### Key Observations

* The "Feature Extractor" block consists of two convolutional layers (Conv1 and Conv2) followed by two fully connected layers (FC1 and FC2).

* The "Rep" block acts as a representation layer between the feature extractor and the header.

* The "Header" block consists of a single fully connected layer (FC3).

* The diagram illustrates a typical feedforward neural network architecture.

### Interpretation

The diagram represents a neural network designed for a task where feature extraction is crucial. The convolutional layers (Conv1 and Conv2) likely extract spatial features from the input, while the fully connected layers (FC1, FC2, and FC3) perform classification or regression based on these features. The "Rep" block likely transforms the extracted features into a suitable representation for the header. The overall architecture suggests a hierarchical feature learning approach, where the network learns increasingly complex features as data flows through the layers.

</details>

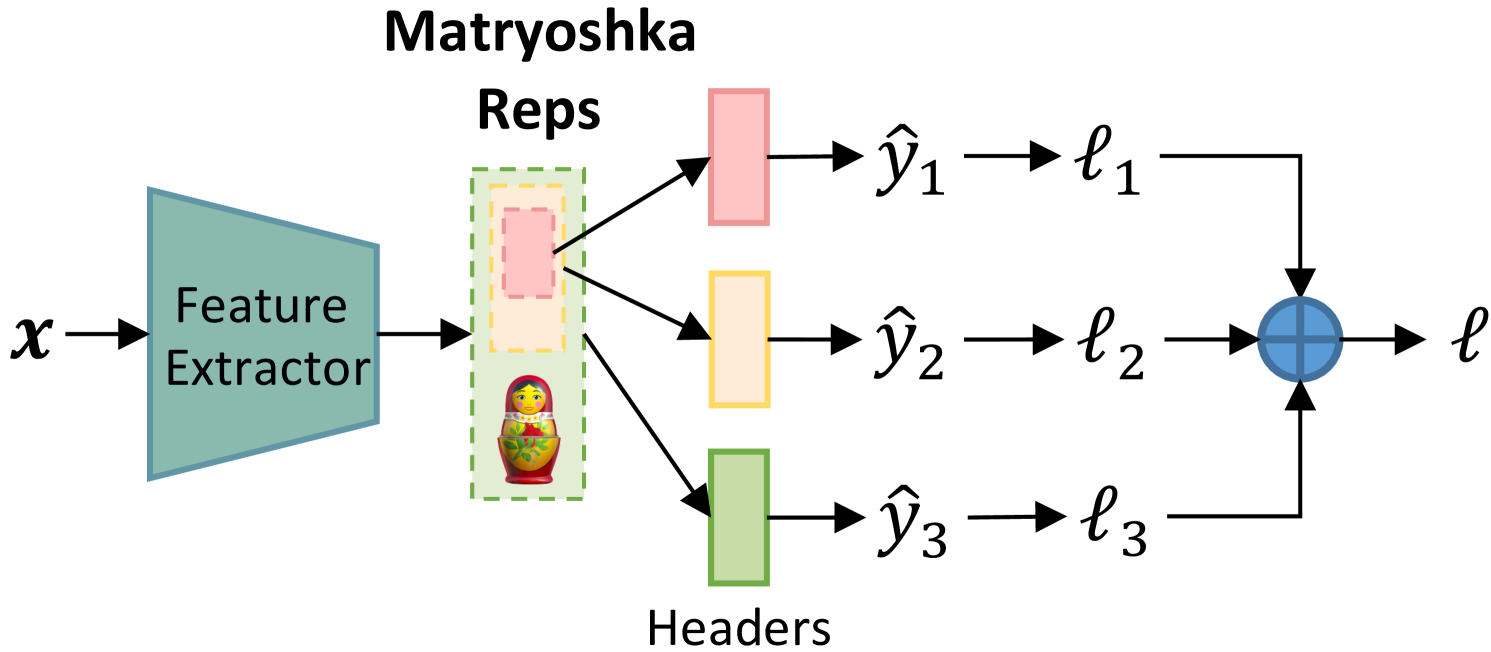

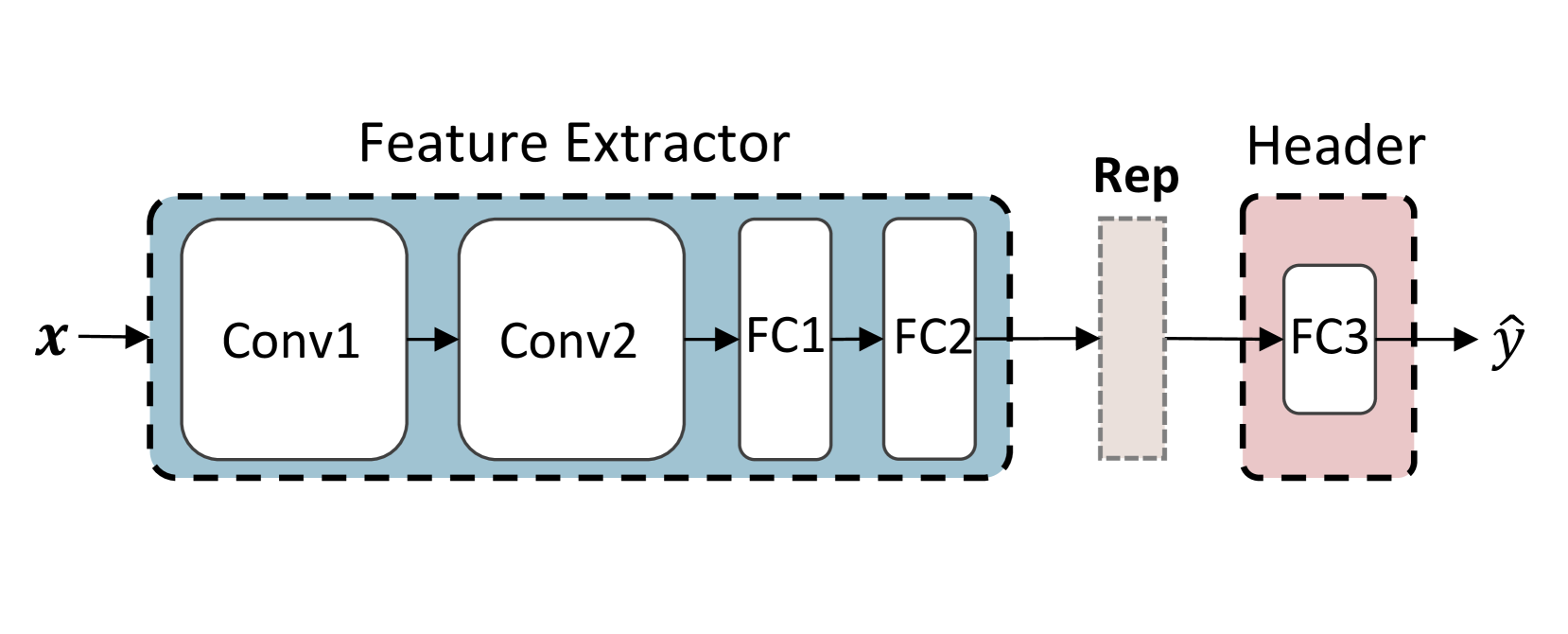

Figure 1: Left: Matryoshka Representation Learning. Right: Feature extractor and prediction header.

Recently, Matryoshka Representation Learning (MRL) [21] has emerged to tailor representation dimensions based on the computational and storage costs required by downstream tasks to achieve a near-optimal trade-off between model performance and inference costs. As shown in Figure 1 (left), the representation extracted by the feature extractor is constructed to form Matryoshka Representations involving a series of embedded representations ranging from low-to-high dimensions and coarse-to-fine granularities. Each of them is processed by a single output layer for calculating loss, and the sum of losses from all branches is used to update model parameters. This design is inspired by the insight that people often first perceive the coarse aspect of a target before observing the details, with multi-perspective observations enhancing understanding.

Inspired by MRL, we address the aforementioned limitations of MHeteroFL by proposing the Fed erated model heterogeneous M atryoshka R epresentation L earning (FedMRL) approach for supervised learning tasks. For each client, a shared global auxiliary homogeneous small model is added to interact with its heterogeneous local model. Both two models consist of a feature extractor and a prediction header, as depicted in Figure 1 (right). FedMRL has two key design innovations. (1) Adaptive Representation Fusion: for each local data sample, the feature extractors of the two local models extract generalized and personalized representations, respectively. The two representations are spliced and then mapped to a fused representation by a lightweight personalized representation projector adapting to local non-IID data. (2) Multi-Granularity Representation Learning: the fused representation is used to construct Matryoshka Representations involving multi-dimension and multi-granularity embedded representations, which are processed by the prediction headers of the two models, respectively. The sum of their losses is used to update all models, which enhances the model learning capability owing to multi-perspective representation learning.

The personalized multi-granularity MRL enhances representation knowledge interaction between the homogeneous global model and the heterogeneous client local model. Each client’s local model and data are not exposed during training for privacy-preservation. The server and clients only transmit the small homogeneous models, thereby incurring low communication costs. Each client only trains a small homogeneous model and a lightweight representation projector in addition, incurring low extra computational costs. We theoretically derive the $\mathcal{O}(1/T)$ non-convex convergence rate of FedMRL and verify that it can converge over time. Experiments on benchmark datasets comparing FedMRL against seven state-of-the-art baselines demonstrate its superiority. It improves model accuracy by up to $8.48\%$ and $24.94\%$ over the best baseline and the best same-category baseline, while incurring lower communication and computation costs.

2 Related Work

Existing MHeteroFL works can be divided into the following four categories.

MHeteroFL with Adaptive Subnets. These methods [3, 4, 5, 11, 14, 56, 64] construct heterogeneous local subnets of the global model by parameter pruning or special designs to match with each client’s local system resources. The server aggregates heterogeneous local subnets wise parameters to generate a new global model. In cases where clients hold black-box local models with heterogeneous structures not derived from a common global model, the server is unable to aggregate them.

MHeteroFL with Knowledge Distillation. These methods [6, 8, 9, 15, 16, 17, 22, 23, 25, 27, 30, 32, 35, 36, 42, 57, 59] often perform knowledge distillation on heterogeneous client models by leveraging a public dataset with the same data distribution as the learning task. In practice, such a suitable public dataset can be hard to find. Others [12, 60, 61, 63] train a generator to synthesize a shared dataset to deal with this issue. However, this incurs high training costs. The rest (FD [19], FedProto [41] and others [1, 2, 13, 49, 58]) share the intermediate information of client local data for knowledge fusion.

MHeteroFL with Model Split. These methods split models into feature extractors and predictors. Some [7, 10, 31, 33] share homogeneous feature extractors across clients and personalize predictors, while others (LG-FedAvg [24] and [18, 26]) do the opposite. Such methods expose part of the local model structures, which might not be acceptable if the models are proprietary IPs of the clients.

MHeteroFL with Mutual Learning. These methods (FedAPEN [34], FML [38], FedKD [43] and others [28]) add a shared global homogeneous small model on top of each client’s heterogeneous local model. For each local data sample, the distance of the outputs from these two models is used as the mutual loss to update model parameters. Nevertheless, the mutual loss only transfers limited knowledge between the two models, resulting in model performance bottlenecks.

The proposed FedMRL approach further optimizes mutual learning-based MHeteroFL by enhancing the knowledge transfer between the server and client models. It achieves personalized adaptive representation fusion and multi-perspective representation learning, thereby facilitating more knowledge interaction across the two models and improving model performance.

3 The Proposed FedMRL Approach

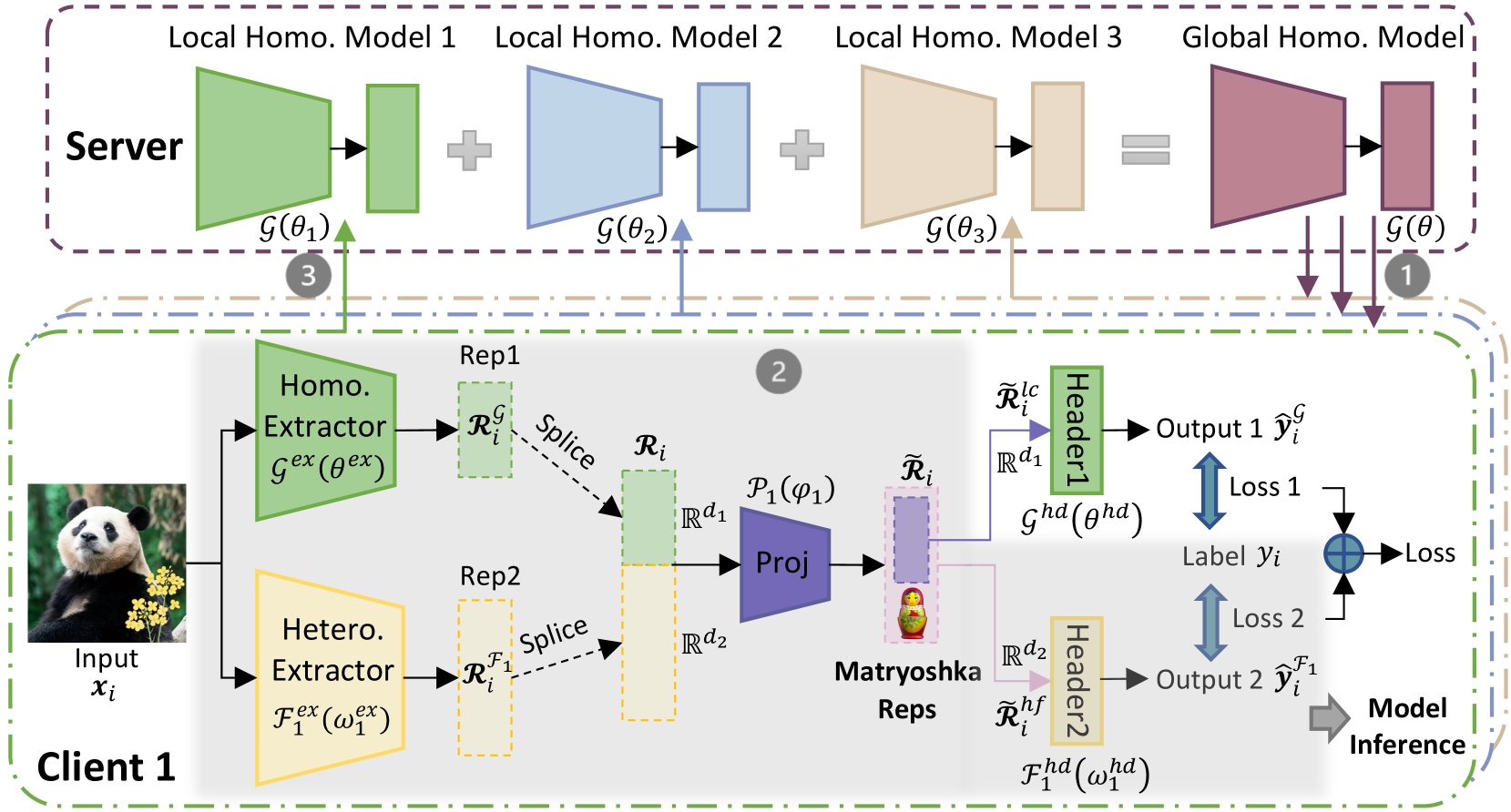

FedMRL aims to tackle data, system, and model heterogeneity in supervised learning tasks, where a central FL server coordinates $N$ FL clients to train heterogeneous local models. The server maintains a global homogeneous small model $\mathcal{G}(\theta)$ shared by all clients. Figure 2 depicts its workflow Algorithm 1 in Appendix A describes the FedMRL algorithm.:

1. In each communication round, $K$ clients participate in FL (i.e., the client participant rate $C=K/N$ ). The global homogeneous small model $\mathcal{G}(\theta)$ is broadcast to them.

1. Each client $k$ holds a heterogeneous local model $\mathcal{F}_{k}(\omega_{k})$ ( $\mathcal{F}_{k}(·)$ is the heterogeneous model structure, and $\omega_{k}$ are personalized model parameters). Client $k$ simultaneously trains the heterogeneous local model and the global homogeneous small model on local non-IID data $D_{k}$ ( $D_{k}$ follows the non-IID distribution $P_{k}$ ) via personalized Matryoshka Representations Learning with a personalized representation projector $\mathcal{P}_{k}(\varphi_{k})$ .

1. The updated homogeneous small models are uploaded to the server for aggregation to produce a new global model for knowledge fusion across heterogeneous clients.

The objective of FedMRL is to minimize the sum of the loss from the combined models ( $\mathcal{W}_{k}(w_{k})=(\mathcal{G}(\theta)\circ\mathcal{F}_{k}(\omega_{k})|%

\mathcal{P}_{k}(\varphi_{k}))$ ) on all clients, i.e.,

$$

\min_{\theta,\omega_{0,\ldots,N-1}}\sum_{k=0}^{N-1}\ell\left(\mathcal{W}_{k}%

\left(D_{k};\left(\theta\circ\omega_{k}\mid\varphi_{k}\right)\right)\right). \tag{1}

$$

These steps repeat until each client’s model converges. After FL training, a client uses its local combined model without the global header for inference. Appendix C.3 provides experimental evidence for inference model selection.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Neural Network Diagram: Federated Learning with Matryoshka Representations

### Overview

The image presents a diagram of a federated learning system, specifically focusing on the use of "Matryoshka Representations." The system involves a server and a client, with the client performing local feature extraction and the server aggregating information from multiple clients (in this case, represented by Local Homo. Models). The diagram highlights the flow of data and parameters between the client and server, as well as the internal processing steps within each.

### Components/Axes

**Regions:**

* **Server (Top):** Enclosed in a dashed purple box.

* **Client 1 (Bottom):** Enclosed in a dashed green box.

**Server Components:**

* **Local Homo. Model 1:** Represented by a green trapezoid and a green rectangle, labeled as G(θ1).

* **Local Homo. Model 2:** Represented by a blue trapezoid and a blue rectangle, labeled as G(θ2).

* **Local Homo. Model 3:** Represented by a tan trapezoid and a tan rectangle, labeled as G(θ3).

* **Global Homo. Model:** Represented by a maroon trapezoid and a maroon rectangle, labeled as G(θ).

**Client 1 Components:**

* **Input:** An image of a panda, labeled as "Input xi".

* **Homo. Extractor:** A green trapezoid labeled as "Gex (θex)".

* **Hetero. Extractor:** A tan trapezoid labeled as "Fex (ω1ex)".

* **Rep1:** A dashed green rectangle labeled as "RiG".

* **Rep2:** A dashed tan rectangle labeled as "RiF1".

* **Proj:** A blue trapezoid labeled as "P1(φ1)".

* **Matryoshka Reps:** A purple rectangle containing a Matryoshka doll.

* **Header1:** A green rectangle labeled as "Header1".

* **Header2:** A tan rectangle labeled as "Header2".

* **Output 1:** Labeled as "Output 1 ŷiG".

* **Output 2:** Labeled as "Output 2 ŷiF1".

* **Label:** Labeled as "Label yi".

**Arrows and Labels:**

* Arrows indicate the flow of data and parameters.

* "Splice" labels indicate the combination of representations.

* "Loss 1" and "Loss 2" indicate loss calculations.

* "Model Inference" indicates the final output.

* Rd1 and Rd2 indicate the dimensionality of the representations.

* R̃i lc and R̃i hf are representations after the Matryoshka Reps.

**Numerical Indicators:**

* (1), (2), and (3) in gray circles indicate different stages or steps in the process.

### Detailed Analysis

**Server Side:**

* The server aggregates information from three "Local Homo. Models." Each model consists of a trapezoidal shape followed by a rectangular shape.

* The outputs of the local models are combined (indicated by "+" signs) to form a "Global Homo. Model."

**Client Side:**

* The client takes an image as input and extracts features using two extractors: "Homo. Extractor" and "Hetero. Extractor."

* The outputs of the extractors are represented as "RiG" and "RiF1" respectively.

* These representations are spliced and projected using "Proj."

* The projected representation is then processed by "Matryoshka Reps."

* The outputs of the Matryoshka Reps are fed into "Header1" and "Header2" to produce "Output 1" and "Output 2."

* Losses are calculated based on the difference between the outputs and the "Label yi."

**Data Flow:**

* The "Global Homo. Model" parameters are sent to the client (indicated by arrow 1).

* The client processes the input and sends information back to the server (indicated by arrow 2).

* The server updates its local models based on the information received from the client (indicated by arrow 3).

### Key Observations

* The diagram illustrates a federated learning setup where the client performs local feature extraction and the server aggregates information from multiple clients.

* The use of "Matryoshka Representations" suggests a hierarchical or multi-scale approach to feature learning.

* The presence of two extractors ("Homo." and "Hetero.") indicates that the system is designed to capture different types of features.

### Interpretation

The diagram depicts a federated learning system that leverages "Matryoshka Representations" for feature learning. The system aims to learn a global model by aggregating information from multiple clients while preserving data privacy. The use of two extractors on the client side suggests that the system is designed to capture both homogeneous and heterogeneous features from the input data. The "Matryoshka Reps" likely play a role in creating a more robust and generalizable representation of the data. The diagram highlights the key components and data flow within the system, providing a high-level overview of its architecture and functionality. The system appears to be designed for a scenario where data is distributed across multiple clients, and it is not feasible or desirable to centralize the data for training.

</details>

Figure 2: The workflow of FedMRL.

3.1 Adaptive Representation Fusion

We denote client $k$ ’s heterogeneous local model feature extractor as $\mathcal{F}_{k}^{ex}(\omega_{k}^{ex})$ , and prediction header as $\mathcal{F}_{k}^{hd}(\omega_{k}^{hd})$ . We denote the homogeneous global model feature extractor as $\mathcal{G}^{ex}(\theta^{ex})$ and prediction header as $\mathcal{G}^{hd}(\theta^{hd})$ . Client $k$ ’s local personalized representation projector is denoted as $\mathcal{P}_{k}(\varphi_{k})$ . In the $t$ -th communication round, client $k$ inputs its local data sample $(\boldsymbol{x}_{i},y_{i})∈ D_{k}$ into the two feature extractors to extract generalized and personalized representations as:

$$

\boldsymbol{\mathcal{R}}_{i}^{\mathcal{G}}=\ \mathcal{G}^{ex}({\boldsymbol{x}_%

{i};\theta}^{ex,t-1}),\boldsymbol{\mathcal{R}}_{i}^{\mathcal{F}_{k}}=\ %

\mathcal{F}_{k}^{ex}(\boldsymbol{x}_{i};\omega_{k}^{ex,t-1}). \tag{2}

$$

The two extracted representations $\boldsymbol{\mathcal{R}}_{i}^{\mathcal{G}}∈\mathbb{R}^{d_{1}}$ and $\boldsymbol{\mathcal{R}}_{i}^{\mathcal{F}_{k}}∈\mathbb{R}^{d_{2}}$ are spliced as:

$$

\boldsymbol{\mathcal{R}}_{i}=\boldsymbol{\mathcal{R}}_{i}^{\mathcal{G}}\circ%

\boldsymbol{\mathcal{R}}_{i}^{\mathcal{F}_{k}}. \tag{3}

$$

Then, the spliced representation is mapped into a fused representation by the lightweight representation projector $\mathcal{P}_{k}(\varphi_{k}^{t-1})$ as:

$$

{\widetilde{\boldsymbol{\mathcal{R}}}}_{i}=\mathcal{P}_{k}(\boldsymbol{%

\mathcal{R}}_{i}{;\varphi}_{k}^{t-1}), \tag{4}

$$

where the projector can be a one-layer linear model or multi-layer perceptron. The fused representation ${\widetilde{\boldsymbol{\mathcal{R}}}}_{i}$ contains both generalized and personalized feature information. It has the same dimension as the client’s local heterogeneous model representation $\mathbb{R}^{d_{2}}$ , which ensures the representation dimension $\mathbb{R}^{d_{2}}$ and the client local heterogeneous model header parameter dimension $\mathbb{R}^{d_{2}× L}$ ( $L$ is the label dimension) match.

The representation projector can be updated as the two models are being trained on local non-IID data. Hence, it achieves personalized representation fusion adaptive to local data distributions. Splicing the representations extracted by two feature extractors can keep the relative semantic space positions of the generalized and personalized representations, benefiting the construction of multi-granularity Matryoshka Representations. Owing to representation splicing, the representation dimensions of the two feature extractors can be different (i.e., $d_{1}≤ d_{2}$ ). Therefore, we can vary the representation dimension of the small homogeneous global model to improve the trade-off among model performance, storage requirement and communication costs.

In addition, each client’s local model is treated as a black box by the FL server. When the server broadcasts the global homogeneous small model to the clients, each client can adjust the linear layer dimension of the representation projector to align it with the dimension of the spliced representation. In this way, different clients may hold different representation projectors. When a new model-agnostic client joins in FedMRL, it can adjust its representation projector structure for local model training. Therefore, FedMRL can accommodate FL clients owning local models with diverse structures.

3.2 Multi-Granular Representation Learning

To construct multi-dimensional and multi-granular Matryoshka Representations, we further extract a low-dimension coarse-granularity representation ${\widetilde{\boldsymbol{\mathcal{R}}}}_{i}^{lc}$ and a high-dimension fine-granularity representation ${\widetilde{\boldsymbol{\mathcal{R}}}}_{i}^{hf}$ from the fused representation ${\widetilde{\boldsymbol{\mathcal{R}}}}_{i}$ . They align with the representation dimensions $\{\mathbb{R}^{d_{1}},\mathbb{R}^{d_{2}}\}$ of two feature extractors for matching the parameter dimensions $\{\mathbb{R}^{d_{1}× L},\mathbb{R}^{d_{2}× L}\}$ of the two prediction headers,

$$

{\widetilde{\boldsymbol{\mathcal{R}}}}_{i}^{lc}={{\widetilde{\boldsymbol{%

\mathcal{R}}}}_{i}}^{1:d_{1}},{\widetilde{\boldsymbol{\mathcal{R}}}}_{i}^{hf}=%

{{\widetilde{\boldsymbol{\mathcal{R}}}}_{i}}^{1:d_{2}}. \tag{5}

$$

The embedded low-dimension coarse-granularity representation ${\widetilde{\boldsymbol{\mathcal{R}}}}_{i}^{lc}∈\mathbb{R}^{d_{1}}$ incorporates coarse generalized and personalized feature information. It is learned by the global homogeneous model header $\mathcal{G}^{hd}(\theta^{hd,t-1})$ (parameter space: $\mathbb{R}^{d_{1}× L}$ ) with generalized prediction information to produce:

$$

{\hat{{y}}}_{i}^{\mathcal{G}}=\mathcal{G}^{hd}({\widetilde{\boldsymbol{%

\mathcal{R}}}}_{i}^{lc};\theta^{hd,t-1}). \tag{6}

$$

The embedded high-dimension fine-granularity representation ${\widetilde{\boldsymbol{\mathcal{R}}}}_{i}^{hf}∈\mathbb{R}^{d_{2}}$ carries finer generalized and personalized feature information, which is further processed by the heterogeneous local model header $\mathcal{F}_{k}^{hd}(\omega_{k}^{hd,t-1})$ (parameter space: $\mathbb{R}^{d_{2}× L}$ ) with personalized prediction information to generate:

$$

{\hat{{y}}}_{i}^{\mathcal{F}_{k}}=\mathcal{F}_{k}^{hd}({\widetilde{\boldsymbol%

{\mathcal{R}}}}_{i}^{hf};\omega_{k}^{hd,t-1}). \tag{7}

$$

We compute the losses $\ell$ (e.g., cross-entropy loss [62]) between the two outputs and the label $y_{i}$ as:

$$

\ell_{i}^{\mathcal{G}}=\ell({\hat{{y}}}_{i}^{\mathcal{G}},y_{i}),\ \ell_{i}^{%

\mathcal{F}_{k}}=\ell({\hat{{y}}}_{i}^{\mathcal{F}_{k}},y_{i}). \tag{8}

$$

Then, the losses of the two branches are weighted by their importance $m_{i}^{\mathcal{G}}$ and $m_{i}^{\mathcal{F}_{k}}$ and summed as:

$$

\ell_{i}=m_{i}^{\mathcal{G}}\cdot\ell_{i}^{\mathcal{G}}+m_{i}^{\mathcal{F}_{k}%

}\cdot\ell_{i}^{\mathcal{F}_{k}}. \tag{9}

$$

We set $m_{i}^{\mathcal{G}}=m_{i}^{\mathcal{F}_{k}}=1$ by default to make the two models contribute equally to model performance. The complete loss $\ell_{i}$ is used to simultaneously update the homogeneous global small model, the heterogeneous client local model, and the representation projector via gradient descent:

$$

\displaystyle\theta_{k}^{t} \displaystyle\leftarrow\theta^{t-1}-\eta_{\theta}\nabla\ell_{i}, \displaystyle\omega_{k}^{t} \displaystyle\leftarrow\omega_{k}^{t-1}-\eta_{\omega}\nabla\ell_{i}, \displaystyle\varphi_{k}^{t} \displaystyle\leftarrow\varphi_{k}^{t-1}-\eta_{\varphi}\nabla\ell_{i}, \tag{10}

$$

where $\eta_{\theta},\eta_{\omega},\ \eta_{\varphi}$ are the learning rates of the homogeneous global small model, the heterogeneous local model and the representation projector. We set $\eta_{\theta}=\eta_{\omega}=\ \eta_{\varphi}$ by default to ensure stable model convergence. In this way, the generalized and personalized fused representation is learned from multiple perspectives, thereby improving model learning capability.

4 Convergence Analysis

Based on notations, assumptions and proofs in Appendix B, we analyse the convergence of FedMRL.

**Lemma 1**

*Local Training. Given Assumptions 1 and 2, the loss of an arbitrary client’s local model $w$ in local training round $(t+1)$ is bounded by:

$$

\mathbb{E}[\mathcal{L}_{(t+1)E}]\leq\mathcal{L}_{tE+0}+(\frac{L_{1}\eta^{2}}{2%

}-\eta)\sum_{e=0}^{E}\|\nabla\mathcal{L}_{tE+e}\|_{2}^{2}+\frac{L_{1}E\eta^{2}%

\sigma^{2}}{2}. \tag{11}

$$*

**Lemma 2**

*Model Aggregation. Given Assumptions 2 and 3, after local training round $(t+1)$ , a client’s loss before and after receiving the updated global homogeneous small models is bounded by:

$$

\mathbb{E}[\mathcal{L}_{(t+1)E+0}]\leq\mathbb{E}[\mathcal{L}_{tE+1}]+{\eta%

\delta}^{2}. \tag{12}

$$*

**Theorem 1**

*One Complete Round of FL. Given the above lemmas, for any client, after receiving the updated global homogeneous small model, we have:

$$

\mathbb{E}[\mathcal{L}_{(t+1)E+0}]\leq\mathcal{L}_{tE+0}+(\frac{L_{1}\eta^{2}}%

{2}-\eta)\sum_{e=0}^{E}\|\nabla\mathcal{L}_{tE+e}\|_{2}^{2}+\frac{L_{1}E\eta^{%

2}\sigma^{2}}{2}+\eta\delta^{2}. \tag{13}

$$*

**Theorem 2**

*Non-convex Convergence Rate of FedMRL. Given Theorem 1, for any client and an arbitrary constant $\epsilon>0$ , the following holds:

$$

\displaystyle\frac{1}{T}\sum_{t=0}^{T-1}\sum_{e=0}^{E-1}\|\nabla\mathcal{L}_{%

tE+e}\|_{2}^{2} \displaystyle\leq\frac{\frac{1}{T}\sum_{t=0}^{T-1}[\mathcal{L}_{tE+0}-\mathbb{%

E}[\mathcal{L}_{(t+1)E+0}]]+\frac{L_{1}E\eta^{2}\sigma^{2}}{2}+\eta\delta^{2}}%

{\eta-\frac{L_{1}\eta^{2}}{2}}<\epsilon, \displaystyle s.t. \displaystyle\eta<\frac{2(\epsilon-\delta^{2})}{L_{1}(\epsilon+E\sigma^{2})}. \tag{14}

$$*

Therefore, we conclude that any client’s local model can converge at a non-convex rate of $\epsilon\sim\mathcal{O}(1/T)$ in FedMRL if the learning rates of the homogeneous small model, the client local heterogeneous model and the personalized representation projector satisfy the above conditions.

5 Experimental Evaluation

We implement FedMRL on Pytorch, and compare it with seven state-of-the-art MHeteroFL methods. The experiments are carried out over two benchmark supervised image classification datasets on $4$ NVIDIA GeForce 3090 GPUs (24GB Memory). Codes are available in supplemental materials.

5.1 Experiment Setup

Datasets. The benchmark datasets adopted are CIFAR-10 and CIFAR-100 https://www.cs.toronto.edu/%7Ekriz/cifar.html [20], which are commonly used in FL image classification tasks for the evaluating existing MHeteroFL algorithms. CIFAR-10 has $60,000$ $32× 32$ colour images across $10$ classes, with $50,000$ for training and $10,000$ for testing. CIFAR-100 has $60,000$ $32× 32$ colour images across $100$ classes, with $50,000$ for training and $10,000$ for testing. We follow [37] and [34] to construct two types of non-IID datasets. Each client’s non-IID data are further divided into a training set and a testing set with a ratio of $8:2$ .

- Non-IID (Class): For CIFAR-10 with $10$ classes, we randomly assign $2$ classes to each FL client. For CIFAR-100 with $100$ classes, we randomly assign $10$ classes to each FL client. The fewer classes each client possesses, the higher the non-IIDness.

- Non-IID (Dirichlet): To produce more sophisticated non-IID data settings, for each class of CIFAR-10/CIFAR-100, we use a Dirichlet( $\alpha$ ) function to adjust the ratio between the number of FL clients and the assigned data. A smaller $\alpha$ indicates more pronounced non-IIDness.

Models. We evaluate MHeteroFL algorithms under model-homogeneous and heterogeneous FL scenarios. FedMRL ’s representation projector is a one-layer linear model (parameter space: $\mathbb{R}^{d2×(d_{1}+d_{2})}$ ).

- Model-Homogeneous FL: All clients train CNN-1 in Table 2 (Appendix C.1). The homogeneous global small models in FML and FedKD are also CNN-1. The extra homogeneous global small model in FedMRL is CNN-1 with a smaller representation dimension $d_{1}$ (i.e., the penultimate linear layer dimension) than the CNN-1 model’s representation dimension $d_{2}$ , $d_{1}≤ d_{2}$ .

- Model-Heterogeneous FL: The $5$ heterogeneous models {CNN-1, $...$ , CNN-5} in Table 2 (Appendix C.1) are evenly distributed among FL clients. The homogeneous global small models in FML and FedKD are the smallest CNN-5 models. The homogeneous global small model in FedMRL is the smallest CNN-5 with a reduced representation dimension $d_{1}$ compared with the CNN-5 model representation dimension $d_{2}$ , i.e., $d_{1}≤ d_{2}$ .

Comparison Baselines. We compare FedMRL with state-of-the-art algorithms belonging to the following three categories of MHeteroFL methods:

- Standalone. Each client trains its heterogeneous local model only with its local data.

- Knowledge Distillation Without Public Data: FD [19] and FedProto [41].

- Model Split: LG-FedAvg [24].

- Mutual Learning: FML [38], FedKD [43] and FedAPEN [34].

Evaluation Metrics. We evaluate MHeteroFL algorithms from the following three aspects:

- Model Accuracy. We record the test accuracy of each client’s model in each round, and compute the average test accuracy.

- Communication Cost. We compute the number of parameters sent between the server and one client in one communication round, and record the required rounds for reaching the target average accuracy. The overall communication cost of one client for target average accuracy is the product between the cost per round and the number of rounds.

- Computation Overhead. We compute the computation FLOPs of one client in one communication round, and record the required communication rounds for reaching the target average accuracy. The overall computation overall for one client achieving the target average accuracy is the product between the FLOPs per round and the number of rounds.

Training Strategy. We search optimal FL hyperparameters and unique hyperparameters for all MHeteroFL algorithms. For FL hyperparameters, we test MHeteroFL algorithms with a $\{64,128,256,512\}$ batch size, $\{1,10\}$ epochs, $T=\{100,500\}$ communication rounds and an SGD optimizer with a $0.01$ learning rate. The unique hyperparameter of FedMRL is the representation dimension $d_{1}$ of the homogeneous global small model, we vary $d_{1}=\{100,\ 150,...,500\}$ to obtain the best-performing FedMRL.

5.2 Results and Discussion

We design three FL settings with different numbers of clients ( $N$ ) and client participation rates ( $C$ ): ( $N=10,C=100\%$ ), ( $N=50,C=20\%$ ), ( $N=100,C=10\%$ ) for both model-homogeneous and model-heterogeneous FL scenarios.

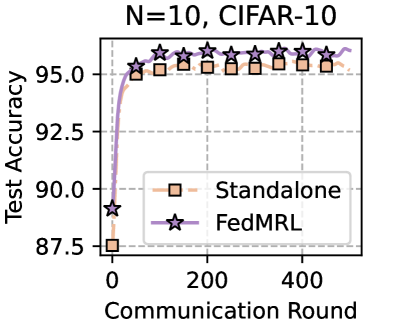

5.2.1 Average Test Accuracy

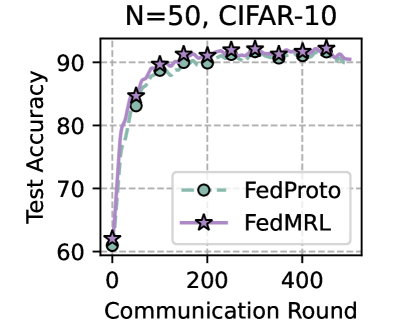

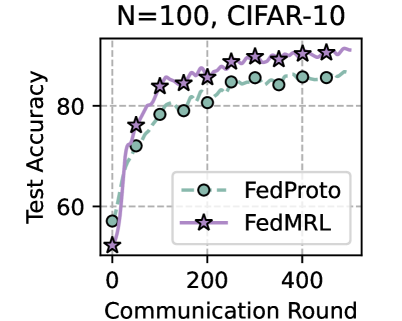

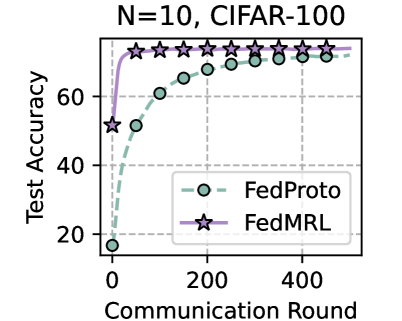

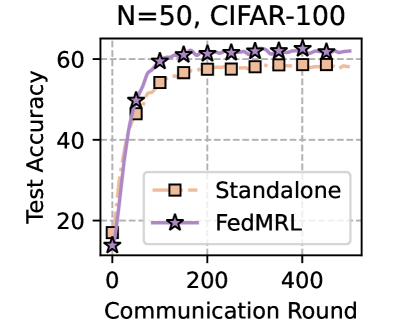

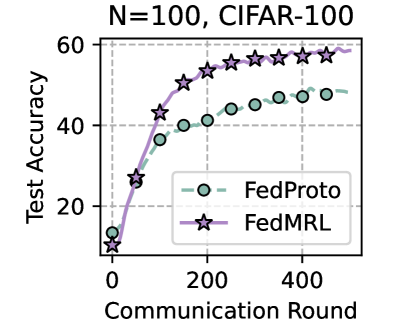

Table 1 and Table 3 (Appendix C.2) show that FedMRL consistently outperforms all baselines under both model-heterogeneous or homogeneous settings. It achieves up to a $8.48\%$ improvement in average test accuracy compared with the best baseline under each setting. Furthermore, it achieves up to a $24.94\%$ average test accuracy improvement than the best same-category (i.e., mutual learning-based MHeteroFL) baseline under each setting. These results demonstrate the superiority of FedMRL in model performance owing to its adaptive personalized representation fusion and multi-granularity representation learning capabilities. Figure 3 (left six) shows that FedMRL consistently achieves faster convergence speed and higher average test accuracy than the best baseline under each setting.

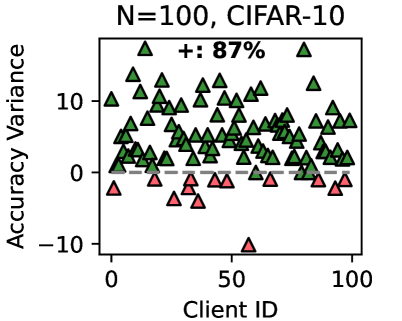

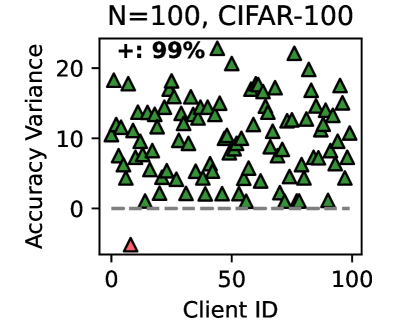

5.2.2 Individual Client Test Accuracy

Figure 3 (right two) shows the difference between the test accuracy achieved by FedMRL vs. the best-performing baseline FedProto (i.e., FedMRL - FedProto) under ( $N=100,C=10\%$ ) for each individual client. It can be observed that $87\%$ and $99\%$ of all clients achieve better performance under FedMRL than under FedProto on CIFAR-10 and CIFAR-100, respectively. This demonstrates that FedMRL possesses stronger personalization capability than FedProto owing to its adaptive personalized multi-granularity representation learning design.

Table 1: Average test accuracy (%) in model-heterogeneous FL.

| FL Setting Method Standalone | N=10, C=100% CIFAR-10 96.53 | N=50, C=20% CIFAR-100 72.53 | N=100, C=10% CIFAR-10 95.14 | CIFAR-100 62.71 | CIFAR-10 91.97 | CIFAR-100 53.04 |

| --- | --- | --- | --- | --- | --- | --- |

| LG-FedAvg [24] | 96.30 | 72.20 | 94.83 | 60.95 | 91.27 | 45.83 |

| FD [19] | 96.21 | - | - | - | - | - |

| FedProto [41] | 96.51 | 72.59 | 95.48 | 62.69 | 92.49 | 53.67 |

| FML [38] | 30.48 | 16.84 | - | 21.96 | - | 15.21 |

| FedKD [43] | 80.20 | 53.23 | 77.37 | 44.27 | 73.21 | 37.21 |

| FedAPEN [34] | - | - | - | - | - | - |

| FedMRL | 96.63 | 74.37 | 95.70 | 66.04 | 95.85 | 62.15 |

| FedMRL -Best B. | 0.10 | 1.78 | 0.22 | 3.33 | 3.36 | 8.48 |

| FedMRL -Best S.C.B. | 16.43 | 21.14 | 18.33 | 21.77 | 22.64 | 24.94 |

“-”: failing to converge. “ ”: the best MHeteroFL method. “ Best B.”: the best baseline. “ Best S.C.B.”: the best same-category (mutual learning-based MHeteroFL) baseline. The underscored values denote the largest accuracy improvement of FedMRL across $6$ settings.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Chart: Test Accuracy vs. Communication Round

### Overview

The image is a line chart comparing the test accuracy of two machine learning models, "Standalone" and "FedMRL," over a number of communication rounds. The chart is titled "N=10, CIFAR-10". The x-axis represents the communication round, and the y-axis represents the test accuracy.

### Components/Axes

* **Title:** N=10, CIFAR-10

* **X-axis:** Communication Round

* Scale: 0 to 500, with visible markers at 0, 200, and 400.

* **Y-axis:** Test Accuracy

* Scale: 87.5 to 95.0, with markers at 87.5, 90.0, 92.5, and 95.0.

* **Legend:** Located in the center-right of the chart.

* Standalone (peach dashed line with square markers)

* FedMRL (lavender solid line with star markers)

### Detailed Analysis

* **Standalone:**

* Trend: The peach dashed line starts at approximately 87.5% accuracy at round 0, quickly increases to approximately 95% by round 50, and then plateaus around 95.5% with slight fluctuations.

* Data Points:

* Round 0: ~87.5%

* Round 50: ~95%

* Round 200: ~95.5%

* Round 400: ~95.5%

* Round 500: ~95.5%

* **FedMRL:**

* Trend: The lavender solid line starts at approximately 89% accuracy at round 0, quickly increases to approximately 95% by round 50, and then plateaus around 96% with slight fluctuations.

* Data Points:

* Round 0: ~89%

* Round 50: ~95%

* Round 200: ~96%

* Round 400: ~96%

* Round 500: ~96%

### Key Observations

* Both models show a rapid increase in test accuracy in the initial communication rounds.

* FedMRL consistently outperforms Standalone, achieving a slightly higher test accuracy after the initial rounds.

* Both models plateau after approximately 50 communication rounds, with minimal improvement in accuracy.

### Interpretation

The chart demonstrates the performance of two machine learning models, Standalone and FedMRL, on the CIFAR-10 dataset with N=10. The FedMRL model exhibits a slightly better performance compared to the Standalone model, achieving a higher test accuracy. The rapid increase in accuracy during the initial communication rounds suggests that both models learn quickly. The plateauing of accuracy after a certain number of rounds indicates that further communication rounds do not significantly improve the model's performance. This could be due to the models reaching their maximum potential on the given dataset or the need for further optimization techniques.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Test Accuracy vs. Communication Round for FedProto and FedMRL

### Overview

The image is a line chart comparing the test accuracy of two federated learning algorithms, FedProto and FedMRL, over a number of communication rounds. The chart shows the performance of these algorithms on the CIFAR-10 dataset with N=50.

### Components/Axes

* **Title:** N=50, CIFAR-10

* **X-axis:** Communication Round, with markers at 0, 200, and 400.

* **Y-axis:** Test Accuracy, ranging from 60 to 90, with gridlines at intervals of 10.

* **Legend:** Located on the right side of the chart.

* FedProto: Light green dashed line with circle markers.

* FedMRL: Light purple solid line with star markers.

### Detailed Analysis

* **FedProto (Light Green, Dashed Line, Circle Markers):**

* The line starts at approximately 61% accuracy at communication round 0.

* It increases rapidly to approximately 89% by round 100.

* It plateaus around 91-92% for the remaining communication rounds (200-500).

* **FedMRL (Light Purple, Solid Line, Star Markers):**

* The line starts at approximately 63% accuracy at communication round 0.

* It increases rapidly to approximately 90% by round 100.

* It plateaus around 92-93% for the remaining communication rounds (200-500).

### Key Observations

* Both algorithms show a significant increase in test accuracy during the initial communication rounds.

* FedMRL consistently outperforms FedProto by a small margin after the initial increase.

* Both algorithms plateau in performance after approximately 200 communication rounds.

### Interpretation

The chart demonstrates that both FedProto and FedMRL algorithms are effective in improving test accuracy over communication rounds on the CIFAR-10 dataset. The initial rapid increase in accuracy suggests that the models quickly learn from the data. The plateau indicates that the models reach a point of diminishing returns, where further communication rounds do not significantly improve performance. FedMRL's slightly higher accuracy suggests that it may be a more effective algorithm for this particular task and dataset.

</details>

<details>

<summary>x6.png Details</summary>

### Visual Description

## Chart: Test Accuracy vs. Communication Round for FedProto and FedMRL

### Overview

The image is a line chart comparing the test accuracy of two federated learning algorithms, FedProto and FedMRL, over a number of communication rounds. The chart displays the performance of these algorithms on the CIFAR-10 dataset with N=100.

### Components/Axes

* **Title:** N=100, CIFAR-10

* **X-axis:** Communication Round, with ticks at 0, 200, 400. The x-axis represents the number of communication rounds.

* **Y-axis:** Test Accuracy, with ticks at 60, 80. The y-axis represents the test accuracy in percentage.

* **Legend:** Located in the bottom-right of the chart.

* FedProto: Represented by a dashed light green line with circle markers.

* FedMRL: Represented by a solid light purple line with star markers.

* **Grid:** The chart has a light gray dashed grid.

### Detailed Analysis

* **FedProto (light green, dashed line with circle markers):**

* The line starts at approximately 55% accuracy at communication round 0.

* The line increases sharply until approximately round 100, reaching around 78% accuracy.

* From round 100 to 500, the line continues to increase, but at a slower rate, reaching approximately 85% accuracy.

* **FedMRL (light purple, solid line with star markers):**

* The line starts at approximately 50% accuracy at communication round 0.

* The line increases sharply until approximately round 100, reaching around 83% accuracy.

* From round 100 to 500, the line continues to increase, but at a slower rate, reaching approximately 90% accuracy.

### Key Observations

* Both algorithms show a significant increase in test accuracy during the initial communication rounds.

* FedMRL consistently outperforms FedProto throughout the communication rounds.

* The rate of improvement in test accuracy decreases as the number of communication rounds increases for both algorithms.

* The final test accuracy for FedProto is around 85%, while for FedMRL it is around 90%.

### Interpretation

The chart demonstrates that both FedProto and FedMRL are effective federated learning algorithms for the CIFAR-10 dataset. However, FedMRL achieves higher test accuracy compared to FedProto, suggesting it may be a more efficient or better-suited algorithm for this particular task and dataset. The diminishing returns in accuracy with increasing communication rounds suggest that there is a point beyond which further training provides only marginal improvements. The initial rapid increase in accuracy highlights the importance of the early communication rounds in establishing a good model.

</details>

<details>

<summary>x7.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy Variance vs. Client ID for N=100, CIFAR-10

### Overview

The image is a scatter plot showing the accuracy variance for different clients (Client ID) in a system trained on the CIFAR-10 dataset with N=100. The plot displays the variance in accuracy for each client, with data points represented as triangles. Green triangles indicate positive variance, while red triangles indicate negative variance. A dashed gray line represents the zero-variance baseline. The plot also indicates that 87% of the clients have positive accuracy variance.

### Components/Axes

* **Title:** N=100, CIFAR-10

* **X-axis:** Client ID, ranging from 0 to 100.

* **Y-axis:** Accuracy Variance, ranging from -10 to 10.

* **Data Points:** Green triangles (positive variance) and red triangles (negative variance).

* **Zero-Variance Baseline:** Dashed gray line at y=0.

* **Percentage of Positive Variance:** "+: 87%" located at the top of the plot.

### Detailed Analysis

* **X-Axis (Client ID):** The x-axis represents the Client ID, ranging from 0 to 100 in increments of 50.

* **Y-Axis (Accuracy Variance):** The y-axis represents the Accuracy Variance, ranging from -10 to 10 in increments of 10.

* **Data Point Distribution:**

* The majority of data points are green triangles, indicating positive accuracy variance.

* A smaller number of data points are red triangles, indicating negative accuracy variance.

* The data points are scattered across the range of Client IDs.

* **Zero-Variance Baseline:** The dashed gray line at y=0 serves as a reference point to easily distinguish between positive and negative accuracy variance.

* **Percentage of Positive Variance:** The "+: 87%" indicates that 87% of the clients have positive accuracy variance.

### Key Observations

* **Dominance of Positive Variance:** The plot visually confirms that a significant majority of clients have positive accuracy variance, as indicated by the "+: 87%".

* **Variance Range:** The accuracy variance ranges from approximately -10 to 13.

* **Outliers:** There is one notable outlier, a red triangle (negative variance) at Client ID ~55 with an accuracy variance of approximately -10.

### Interpretation

The scatter plot illustrates the distribution of accuracy variance across different clients in a system trained on the CIFAR-10 dataset. The dominance of green triangles (positive variance) suggests that, overall, the system performs well across most clients. The "+: 87%" reinforces this observation, indicating that 87% of the clients experience positive accuracy variance.

The presence of red triangles (negative variance) indicates that some clients perform worse than average. The outlier at Client ID ~55 with an accuracy variance of approximately -10 suggests a particularly poor performance for that specific client.

The plot highlights the variability in performance across different clients and suggests that further investigation may be needed to understand the factors contributing to the negative variance observed in some clients. This could involve analyzing the data distribution for those clients, the model's performance on specific classes, or other client-specific characteristics.

</details>

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Test Accuracy vs. Communication Round for FedProto and FedMRL

### Overview

The image is a line chart comparing the test accuracy of two federated learning algorithms, FedProto and FedMRL, over a number of communication rounds. The chart displays the performance of these algorithms on the CIFAR-100 dataset with N=10.

### Components/Axes

* **Title:** N=10, CIFAR-100

* **X-axis:** Communication Round, with ticks at 0, 200, and 400.

* **Y-axis:** Test Accuracy, ranging from 20 to 60, with ticks at 20, 40, and 60.

* **Legend:** Located on the right side of the chart.

* FedProto: Represented by a dashed teal line with circle markers.

* FedMRL: Represented by a solid purple line with star markers.

### Detailed Analysis

* **FedProto (Dashed Teal Line with Circle Markers):**

* The line starts at approximately 17% accuracy at communication round 0.

* The accuracy increases rapidly until around communication round 200, reaching approximately 65%.

* After round 200, the accuracy continues to increase, but at a slower rate, reaching approximately 70% at round 500.

* Data points: (0, ~17), (100, ~52), (200, ~65), (300, ~68), (400, ~69), (500, ~70)

* **FedMRL (Solid Purple Line with Star Markers):**

* The line starts at approximately 50% accuracy at communication round 0.

* The accuracy increases rapidly and plateaus around 75% before communication round 100.

* The accuracy remains relatively stable around 75% for the rest of the communication rounds.

* Data points: (0, ~50), (50, ~73), (100, ~75), (200, ~75), (300, ~75), (400, ~75), (500, ~75)

### Key Observations

* FedMRL starts with a significantly higher initial accuracy than FedProto.

* FedMRL converges much faster than FedProto, reaching a stable accuracy level early on.

* FedMRL achieves a higher final accuracy compared to FedProto.

### Interpretation

The chart demonstrates that FedMRL outperforms FedProto in terms of both initial accuracy and convergence speed on the CIFAR-100 dataset with N=10. FedMRL's rapid convergence suggests it may be more efficient in scenarios where communication rounds are limited. The higher final accuracy of FedMRL indicates a better overall performance in this specific setting. The data suggests that FedMRL is a more effective federated learning algorithm for this particular task and dataset configuration.

</details>

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Chart: Test Accuracy vs. Communication Round

### Overview

The image is a line chart comparing the test accuracy of two methods, "Standalone" and "FedMRL," over a number of communication rounds. The chart displays the performance of these methods on the CIFAR-100 dataset with N=50.

### Components/Axes

* **Title:** N=50, CIFAR-100

* **X-axis:** Communication Round

* Scale: 0 to 500, with visible markers at 0, 200, and 400.

* **Y-axis:** Test Accuracy

* Scale: 0 to 60, with visible markers at 20, 40, and 60.

* **Legend:** Located in the center-right of the chart.

* Standalone: Light orange line with square markers.

* FedMRL: Purple line with star markers.

* Gridlines: Dashed gray lines at intervals of 20 on the Y-axis.

### Detailed Analysis

* **Standalone:**

* Trend: The light orange line with square markers represents the "Standalone" method. It starts at approximately 15% accuracy at round 0, rises sharply to about 55% by round 100, and then plateaus around 58-60% for the remaining rounds.

* Data Points:

* Round 0: ~15%

* Round 100: ~55%

* Round 200: ~58%

* Round 300: ~59%

* Round 400: ~59%

* Round 500: ~58%

* **FedMRL:**

* Trend: The purple line with star markers represents the "FedMRL" method. It starts at approximately 14% accuracy at round 0, rises sharply to about 50% by round 50, and then continues to increase, surpassing the "Standalone" method. It plateaus around 62-64% for the remaining rounds.

* Data Points:

* Round 0: ~14%

* Round 50: ~50%

* Round 100: ~59%

* Round 200: ~62%

* Round 300: ~63%

* Round 400: ~63%

* Round 500: ~62%

### Key Observations

* Both methods show a rapid increase in test accuracy in the initial communication rounds.

* FedMRL consistently outperforms Standalone after approximately 100 communication rounds.

* Both methods plateau in accuracy after a certain number of rounds, indicating diminishing returns from further communication.

### Interpretation

The chart demonstrates that the FedMRL method achieves higher test accuracy compared to the Standalone method when trained on the CIFAR-100 dataset with N=50. The initial rapid increase in accuracy for both methods suggests that the models quickly learn from the data. The plateauing effect indicates that the models eventually reach a point where further communication rounds do not significantly improve performance. The FedMRL method's superior performance suggests that it is a more effective approach for this particular task and dataset.

</details>

<details>

<summary>x10.png Details</summary>

### Visual Description

## Chart: Test Accuracy vs Communication Round for FedProto and FedMRL

### Overview

The image is a line chart comparing the test accuracy of two federated learning algorithms, FedProto and FedMRL, over a number of communication rounds. The chart displays the performance of these algorithms on the CIFAR-100 dataset with N=100.

### Components/Axes

* **Title:** N=100, CIFAR-100

* **X-axis:** Communication Round

* Scale: 0 to 500, with visible ticks at 0, 200, and 400.

* **Y-axis:** Test Accuracy

* Scale: 0 to 60, with visible ticks at 0, 20, 40, and 60.

* **Legend:** Located in the bottom-right of the chart.

* FedProto: Represented by a dashed light green line with circle markers.

* FedMRL: Represented by a solid purple line with star markers.

* **Grid:** The chart has a light gray dashed grid.

### Detailed Analysis

* **FedProto (light green, dashed line, circle markers):**

* Trend: The line slopes upward, indicating increasing test accuracy with more communication rounds, and then plateaus.

* Data Points:

* Round 0: Accuracy ~13

* Round 100: Accuracy ~40

* Round 200: Accuracy ~40

* Round 300: Accuracy ~44

* Round 400: Accuracy ~47

* Round 500: Accuracy ~47

* **FedMRL (purple, solid line, star markers):**

* Trend: The line slopes upward, indicating increasing test accuracy with more communication rounds, and then plateaus.

* Data Points:

* Round 0: Accuracy ~10

* Round 100: Accuracy ~43

* Round 200: Accuracy ~52

* Round 300: Accuracy ~56

* Round 400: Accuracy ~56

* Round 500: Accuracy ~58

### Key Observations

* Both algorithms show an increase in test accuracy as the number of communication rounds increases.

* FedMRL consistently outperforms FedProto in terms of test accuracy across all communication rounds.

* Both algorithms appear to plateau in performance after approximately 300 communication rounds.

### Interpretation

The chart demonstrates the performance of two federated learning algorithms on the CIFAR-100 dataset. FedMRL achieves higher test accuracy compared to FedProto, suggesting it is a more effective algorithm for this particular task and dataset. The plateauing of both algorithms indicates a point of diminishing returns, where further communication rounds do not significantly improve the test accuracy. This information is valuable for optimizing the training process and selecting the most efficient algorithm for federated learning tasks.

</details>

<details>

<summary>x11.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy Variance vs. Client ID

### Overview

The image is a scatter plot showing the accuracy variance for different clients. The x-axis represents the Client ID, ranging from 0 to 100. The y-axis represents the Accuracy Variance, ranging from 0 to 20. Most data points are green triangles, with one red triangle outlier. A dashed gray line is present at y=0. The plot is labeled "N=100, CIFAR-100" and "+: 99%".

### Components/Axes

* **Title:** N=100, CIFAR-100

* **Additional Label:** +: 99%

* **X-axis:**

* Label: Client ID

* Scale: 0 to 100

* Ticks: 0, 50, 100

* **Y-axis:**

* Label: Accuracy Variance

* Scale: 0 to 20

* Ticks: 0, 10, 20

* **Data Points:**

* Green triangles: Represent the accuracy variance for most clients.

* Red triangle: Represents an outlier with a significantly lower accuracy variance.

* **Horizontal Line:** Dashed gray line at Accuracy Variance = 0.

### Detailed Analysis

* **Green Triangles:** The green triangles are scattered across the plot, indicating varying accuracy variance among the clients. The majority of the green triangles are above the y=0 line, with accuracy variance values ranging approximately from 2 to 20.

* **Red Triangle:** There is one red triangle located near Client ID = 0 and Accuracy Variance = -5. This point is a clear outlier, indicating a client with a significantly lower accuracy variance compared to the others.

* **Trend:** There is no clear trend in the distribution of the green triangles. The accuracy variance appears to be randomly distributed across the client IDs.

### Key Observations

* Most clients have a positive accuracy variance.

* One client (Client ID ~ 0) has a significantly negative accuracy variance, making it an outlier.

* The accuracy variance does not appear to be correlated with the Client ID.

### Interpretation

The scatter plot visualizes the accuracy variance across a set of 100 clients using the CIFAR-100 dataset. The "N=100" likely refers to the number of clients. The "+: 99%" label is unclear without additional context, but it may refer to a confidence interval or a percentage related to the overall accuracy. The presence of one outlier (the red triangle) suggests that there may be an issue with that particular client's data or model. The lack of a clear trend indicates that the accuracy variance is not systematically related to the client ID. Further investigation would be needed to understand the cause of the outlier and the factors contributing to the variance in accuracy across the clients.

</details>

Figure 3: Left six: average test accuracy vs. communication rounds. Right two: individual clients’ test accuracy (%) differences (FedMRL - FedProto).

<details>

<summary>x12.png Details</summary>

### Visual Description

## Bar Chart: Communication Rounds for FedProto and FedMRL

### Overview

The image is a bar chart comparing the number of communication rounds required by two federated learning algorithms, FedProto and FedMRL, on two datasets, CIFAR-10 and CIFAR-100. The chart displays the number of communication rounds on the y-axis and the datasets on the x-axis. Each dataset has two bars representing the communication rounds for FedProto and FedMRL.

### Components/Axes

* **Y-axis:** "Communication Rounds", with scale markers at 0, 100, 200, and 300.

* **X-axis:** Datasets: CIFAR-10 and CIFAR-100.

* **Legend:** Located at the top-right of the chart.

* FedProto: Represented by a light yellow bar with a black outline.

* FedMRL: Represented by a dark blue-gray bar with a black outline.

### Detailed Analysis

* **CIFAR-10:**

* FedProto: Approximately 330 communication rounds.

* FedMRL: Approximately 190 communication rounds.

* **CIFAR-100:**

* FedProto: Approximately 240 communication rounds.

* FedMRL: Approximately 130 communication rounds.

### Key Observations

* For both datasets, FedProto requires significantly more communication rounds than FedMRL.

* Both algorithms require fewer communication rounds on CIFAR-100 compared to CIFAR-10.

### Interpretation

The chart suggests that FedMRL is more communication-efficient than FedProto for both CIFAR-10 and CIFAR-100 datasets. The difference in communication rounds is substantial, indicating a potential advantage of using FedMRL in communication-constrained federated learning scenarios. The fact that both algorithms require fewer rounds on CIFAR-100 might be due to the specific characteristics of the dataset or the model's performance on it.

</details>

<details>

<summary>x13.png Details</summary>

### Visual Description

## Bar Chart: Number of Communication Parameters for FedProto and FedMRL on CIFAR-10 and CIFAR-100

### Overview

The image is a bar chart comparing the number of communication parameters (in scientific notation) for two methods, FedProto and FedMRL, across two datasets, CIFAR-10 and CIFAR-100.

### Components/Axes

* **Y-axis:** "Num. of Comm. Paras." (Number of Communication Parameters). The scale is in scientific notation, with "1e8" at the top, and values marked at 0.0, 0.5, and 1.0. This indicates the y-axis values are scaled by 10^8.

* **X-axis:** Categorical axis with two categories: "CIFAR-10" and "CIFAR-100".

* **Legend:** Located at the top-center of the chart.

* "FedProto" is represented by a light yellow bar with a black outline.

* "FedMRL" is represented by a dark blue-gray bar with a black outline.

### Detailed Analysis

* **CIFAR-10:**

* FedProto: The bar is very small, close to 0.0 on the y-axis. Approximate value: 0.02 * 10^8 = 2,000,000.

* FedMRL: The bar reaches approximately 0.85 on the y-axis. Approximate value: 0.85 * 10^8 = 85,000,000.

* **CIFAR-100:**

* FedProto: The bar is very small, close to 0.0 on the y-axis. Approximate value: 0.04 * 10^8 = 4,000,000.

* FedMRL: The bar reaches approximately 0.95 on the y-axis. Approximate value: 0.95 * 10^8 = 95,000,000.

### Key Observations

* For both CIFAR-10 and CIFAR-100, FedMRL has significantly more communication parameters than FedProto.

* The number of communication parameters for FedMRL is higher for CIFAR-100 than for CIFAR-10.

* The number of communication parameters for FedProto is very low for both datasets.

### Interpretation

The chart suggests that FedMRL requires a much larger number of communication parameters compared to FedProto for both CIFAR-10 and CIFAR-100 datasets. This could indicate that FedMRL has a more complex model or a different communication strategy that necessitates more parameter exchange during training or inference. The slight increase in communication parameters for FedMRL when moving from CIFAR-10 to CIFAR-100 might be due to the increased complexity of the CIFAR-100 dataset, which has more classes. FedProto's consistently low communication parameters suggest a more efficient or lightweight approach.

</details>

<details>

<summary>x14.png Details</summary>

### Visual Description

## Bar Chart: Computation FLOPS Comparison

### Overview

The image is a bar chart comparing the computation FLOPS (Floating Point Operations Per Second) of two methods, FedProto and FedMRL, on two datasets, CIFAR-10 and CIFAR-100. The y-axis represents computation FLOPS in units of 1e9, and the x-axis represents the datasets.

### Components/Axes

* **Title:** Implicitly, "Computation FLOPS Comparison"

* **X-axis:** Datasets: CIFAR-10, CIFAR-100

* **Y-axis:** Computation FLOPS (1e9). Scale: 0 to 4, with an additional tick at the top labeled "1e9", implying the scale is in billions of FLOPS.

* **Legend:** Located at the top-right of the chart.

* FedProto: Represented by light yellow bars with black outlines.

* FedMRL: Represented by dark blue-gray bars with black outlines.

### Detailed Analysis

* **CIFAR-10:**

* FedProto: Approximately 4.8e9 FLOPS.

* FedMRL: Approximately 2.4e9 FLOPS.

* **CIFAR-100:**

* FedProto: Approximately 3.5e9 FLOPS.

* FedMRL: Approximately 1.7e9 FLOPS.

### Key Observations

* For both datasets, FedProto requires significantly more computation FLOPS than FedMRL.

* Both FedProto and FedMRL require fewer FLOPS on CIFAR-100 than on CIFAR-10.

### Interpretation

The chart suggests that FedMRL is more computationally efficient than FedProto for both CIFAR-10 and CIFAR-100 datasets. The difference in FLOPS between the two methods is more pronounced for CIFAR-10. The lower FLOPS required for CIFAR-100 compared to CIFAR-10 for both methods could be due to differences in the complexity or structure of the datasets, or the way the algorithms handle them.

</details>

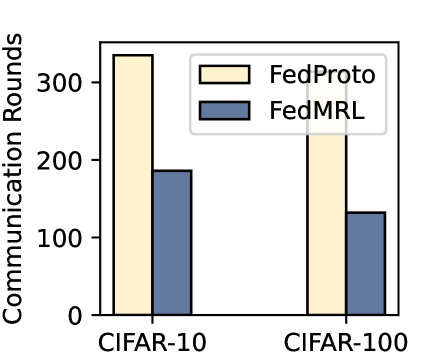

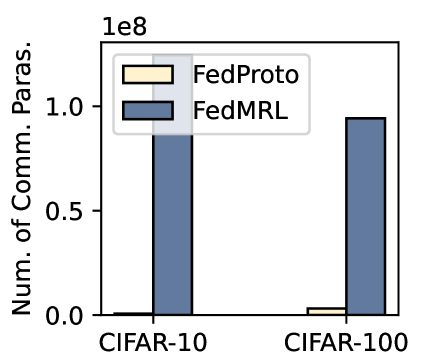

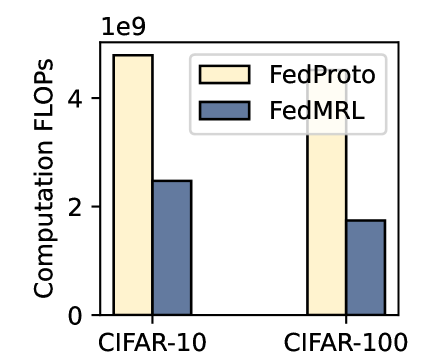

Figure 4: Communication rounds, number of communicated parameters, and computation FLOPs required to reach $90\%$ and $50\%$ average test accuracy targets on CIFAR-10 and CIFAR-100.

5.2.3 Communication Cost

We record the communication rounds and the number of parameters sent per client to achieve $90\%$ and $50\%$ target test average accuracy on CIFAR-10 and CIFAR-100, respectively. Figure 4 (left) shows that FedMRL requires fewer rounds and achieves faster convergence than FedProto. Figure 4 (middle) shows that FedMRL incurs higher communication costs than FedProto as it transmits the full homogeneous small model, while FedProto only transmits each local seen-class average representation between the server and the client. Nevertheless, FedMRL with an optional smaller representation dimension ( $d_{1}$ ) of the homogeneous small model still achieves higher communication efficiency than same-category mutual learning-based MHeteroFL baselines (FML, FedKD, FedAPEN) with a larger representation dimension.

5.2.4 Computation Overhead

We also calculate the computation FLOPs consumed per client to reach $90\%$ and $50\%$ target average test accuracy on CIFAR-10 and CIFAR-100, respectively. Figure 4 (right) shows that FedMRL incurs lower computation costs than FedProto, owing to its faster convergence (i.e., fewer rounds) even with higher computation overhead per round due to the need to train an additional homogeneous small model and a linear representation projector.

5.3 Case Studies

5.3.1 Robustness to Non-IIDness (Class)

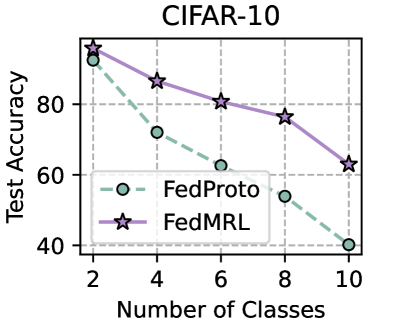

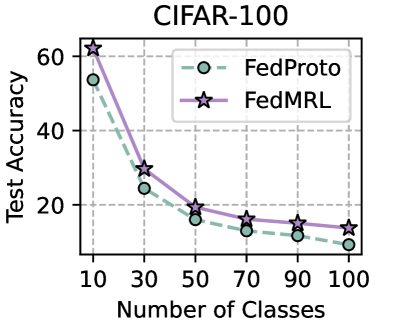

We evaluate the robustness of FedMRL to different non-IIDnesses as a result of the number of classes assigned to each client under the ( $N=100,C=10\%$ ) setting. The fewer classes assigned to each client, the higher the non-IIDness. For CIFAR-10, we assign $\{2,4,...,10\}$ classes out of total $10$ classes to each client. For CIFAR-100, we assign $\{10,30,...,100\}$ classes out of total $100$ classes to each client. Figure 5 (left two) shows that FedMRL consistently achieves higher average test accuracy than the best-performing baseline - FedProto on both datasets, demonstrating its robustness to non-IIDness by class.

<details>

<summary>x15.png Details</summary>

### Visual Description

## Line Chart: CIFAR-10 Test Accuracy vs. Number of Classes

### Overview

The image is a line chart comparing the test accuracy of two models, FedProto and FedMRL, across different numbers of classes. The x-axis represents the number of classes, ranging from 2 to 10. The y-axis represents the test accuracy, ranging from 40 to 100.

### Components/Axes

* **Title:** CIFAR-10

* **X-axis Title:** Number of Classes

* **X-axis Markers:** 2, 4, 6, 8, 10

* **Y-axis Title:** Test Accuracy

* **Y-axis Markers:** 40, 60, 80

* **Legend:** Located in the center-left of the chart.

* **FedProto:** Represented by a dashed light green line with circle markers.

* **FedMRL:** Represented by a solid light purple line with star markers.

### Detailed Analysis

* **FedProto:** The dashed light green line represents the performance of the FedProto model.

* At 2 classes, the test accuracy is approximately 94%.

* At 4 classes, the test accuracy is approximately 72%.

* At 6 classes, the test accuracy is approximately 62%.

* At 8 classes, the test accuracy is approximately 54%.

* At 10 classes, the test accuracy is approximately 40%.

* **Trend:** The test accuracy of FedProto decreases as the number of classes increases.

* **FedMRL:** The solid light purple line represents the performance of the FedMRL model.

* At 2 classes, the test accuracy is approximately 94%.

* At 4 classes, the test accuracy is approximately 86%.

* At 6 classes, the test accuracy is approximately 80%.

* At 8 classes, the test accuracy is approximately 76%.

* At 10 classes, the test accuracy is approximately 63%.

* **Trend:** The test accuracy of FedMRL also decreases as the number of classes increases, but at a slower rate than FedProto.

### Key Observations

* Both models exhibit decreasing test accuracy as the number of classes increases.

* FedMRL consistently outperforms FedProto across all tested numbers of classes.

* The performance gap between FedMRL and FedProto widens as the number of classes increases.

### Interpretation

The chart demonstrates that both FedProto and FedMRL models experience a decline in test accuracy when faced with a larger number of classes in the CIFAR-10 dataset. However, FedMRL maintains a higher level of accuracy compared to FedProto, suggesting it is more robust to the challenge of increasing class complexity. The widening performance gap indicates that FedMRL's advantage becomes more pronounced as the classification task becomes more difficult. This could be due to FedMRL's architecture or training methodology being better suited for handling a larger number of distinct classes.

</details>

<details>

<summary>x16.png Details</summary>

### Visual Description

## Line Chart: CIFAR-100 Test Accuracy vs. Number of Classes

### Overview

The image is a line chart comparing the test accuracy of two models, FedProto and FedMRL, across varying numbers of classes in the CIFAR-100 dataset. The x-axis represents the number of classes, and the y-axis represents the test accuracy.

### Components/Axes

* **Title:** CIFAR-100

* **X-axis:** Number of Classes, with tick marks at 10, 30, 50, 70, 90, and 100.

* **Y-axis:** Test Accuracy, with tick marks at 20, 40, and 60.

* **Legend:** Located in the top-right corner.

* FedProto: Represented by a dashed light green line with circle markers.

* FedMRL: Represented by a solid light purple line with star markers.

* **Grid:** Dashed gray lines provide a visual grid.

### Detailed Analysis

**FedProto (Dashed Light Green Line with Circle Markers):**

* **Trend:** The test accuracy decreases as the number of classes increases.

* **Data Points:**

* 10 Classes: Approximately 54%

* 30 Classes: Approximately 24%

* 50 Classes: Approximately 16%

* 70 Classes: Approximately 13%

* 90 Classes: Approximately 11%

* 100 Classes: Approximately 8%

**FedMRL (Solid Light Purple Line with Star Markers):**

* **Trend:** The test accuracy decreases as the number of classes increases.

* **Data Points:**

* 10 Classes: Approximately 62%

* 30 Classes: Approximately 31%

* 50 Classes: Approximately 19%

* 70 Classes: Approximately 16%

* 90 Classes: Approximately 14%

* 100 Classes: Approximately 13%

### Key Observations

* Both FedProto and FedMRL models exhibit a decline in test accuracy as the number of classes increases.

* FedMRL consistently outperforms FedProto across all tested numbers of classes.

* The most significant drop in accuracy for both models occurs between 10 and 30 classes.

* The rate of accuracy decrease slows down as the number of classes increases beyond 50.

### Interpretation

The chart illustrates the performance of two federated learning models, FedProto and FedMRL, on the CIFAR-100 dataset. The decreasing test accuracy with an increasing number of classes suggests that both models struggle to maintain performance as the classification task becomes more complex. FedMRL's consistently higher accuracy indicates that it is a more robust model for this particular task and dataset. The steep initial decline in accuracy highlights the challenge of distinguishing between a larger number of classes, while the subsequent plateau suggests a limit to the performance degradation as the number of classes continues to increase.

</details>

<details>

<summary>x17.png Details</summary>

### Visual Description

## Line Chart: CIFAR-10 Test Accuracy vs. Alpha

### Overview

The image is a line chart comparing the test accuracy of two federated learning algorithms, FedProto and FedMRL, on the CIFAR-10 dataset. The x-axis represents the parameter alpha (α), and the y-axis represents the test accuracy.

### Components/Axes

* **Title:** CIFAR-10

* **X-axis:**

* Label: α

* Scale: 0.1, 0.2, 0.3, 0.4, 0.5

* **Y-axis:**

* Label: Test Accuracy

* Scale: 40, 50, 60

* **Legend:** Located in the center of the chart.

* FedProto: Light green dashed line with circle markers.

* FedMRL: Purple solid line with star markers.

### Detailed Analysis

* **FedProto (Light Green Dashed Line with Circle Markers):**

* Trend: The test accuracy generally decreases as alpha increases.

* Data Points:

* α = 0.1, Test Accuracy ≈ 44

* α = 0.2, Test Accuracy ≈ 42

* α = 0.3, Test Accuracy ≈ 42.5

* α = 0.4, Test Accuracy ≈ 40

* α = 0.5, Test Accuracy ≈ 40

* **FedMRL (Purple Solid Line with Star Markers):**

* Trend: The test accuracy decreases as alpha increases, but the decrease is less pronounced than FedProto.

* Data Points:

* α = 0.1, Test Accuracy ≈ 65

* α = 0.2, Test Accuracy ≈ 64

* α = 0.3, Test Accuracy ≈ 62

* α = 0.4, Test Accuracy ≈ 62

* α = 0.5, Test Accuracy ≈ 62

### Key Observations

* FedMRL consistently outperforms FedProto across all values of alpha.

* The performance of FedProto is more sensitive to changes in alpha than FedMRL.

* Both algorithms show a slight decrease in test accuracy as alpha increases.

### Interpretation

The chart suggests that FedMRL is a more robust algorithm for the CIFAR-10 dataset compared to FedProto, as it maintains higher accuracy across different values of alpha. The decrease in accuracy for both algorithms as alpha increases could indicate that a higher alpha value negatively impacts the learning process, potentially due to increased noise or instability in the federated learning environment. The choice of alpha appears to be more critical for FedProto, as its performance degrades more noticeably with increasing alpha.

</details>

<details>

<summary>x18.png Details</summary>

### Visual Description

## Line Chart: CIFAR-100 Test Accuracy vs. Alpha

### Overview

The image is a line chart comparing the test accuracy of two models, FedProto and FedMRL, on the CIFAR-100 dataset, with respect to the parameter alpha (α). The x-axis represents the value of alpha, ranging from 0.1 to 0.5. The y-axis represents the test accuracy, ranging from 10 to 16.

### Components/Axes

* **Title:** CIFAR-100

* **X-axis:**

* Label: α

* Scale: 0.1, 0.2, 0.3, 0.4, 0.5

* **Y-axis:**

* Label: Test Accuracy

* Scale: 10, 12, 14

* **Legend:** Located in the top-right corner.

* FedProto: Represented by a dashed light green line with circle markers.

* FedMRL: Represented by a solid light purple line with star markers.

* **Grid:** The chart has a light gray dashed grid.

### Detailed Analysis

* **FedProto (light green, dashed line, circle markers):**

* Trend: The test accuracy generally decreases as alpha increases.

* Data Points:

* α = 0.1, Test Accuracy ≈ 12.4

* α = 0.2, Test Accuracy ≈ 11.2

* α = 0.3, Test Accuracy ≈ 10.0

* α = 0.4, Test Accuracy ≈ 9.3

* α = 0.5, Test Accuracy ≈ 9.6

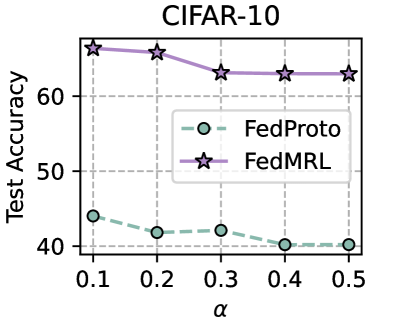

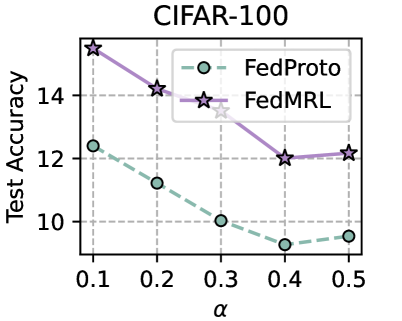

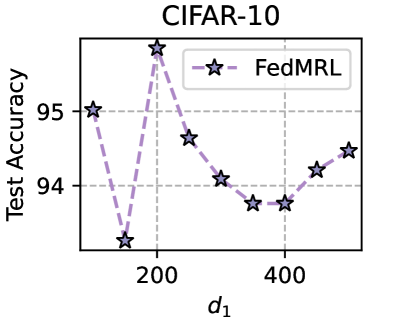

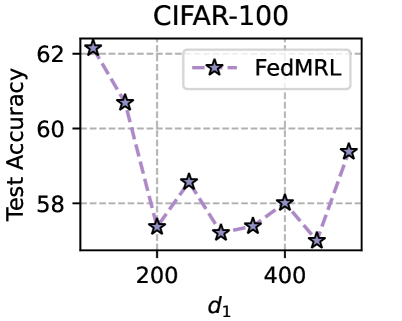

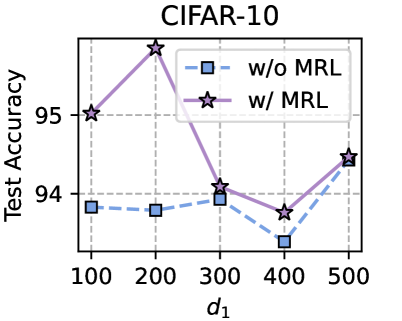

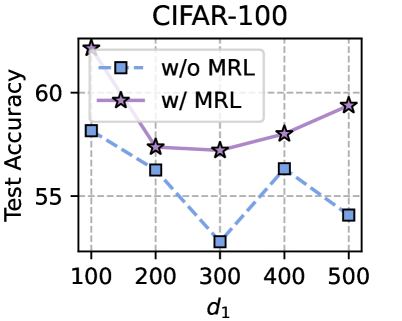

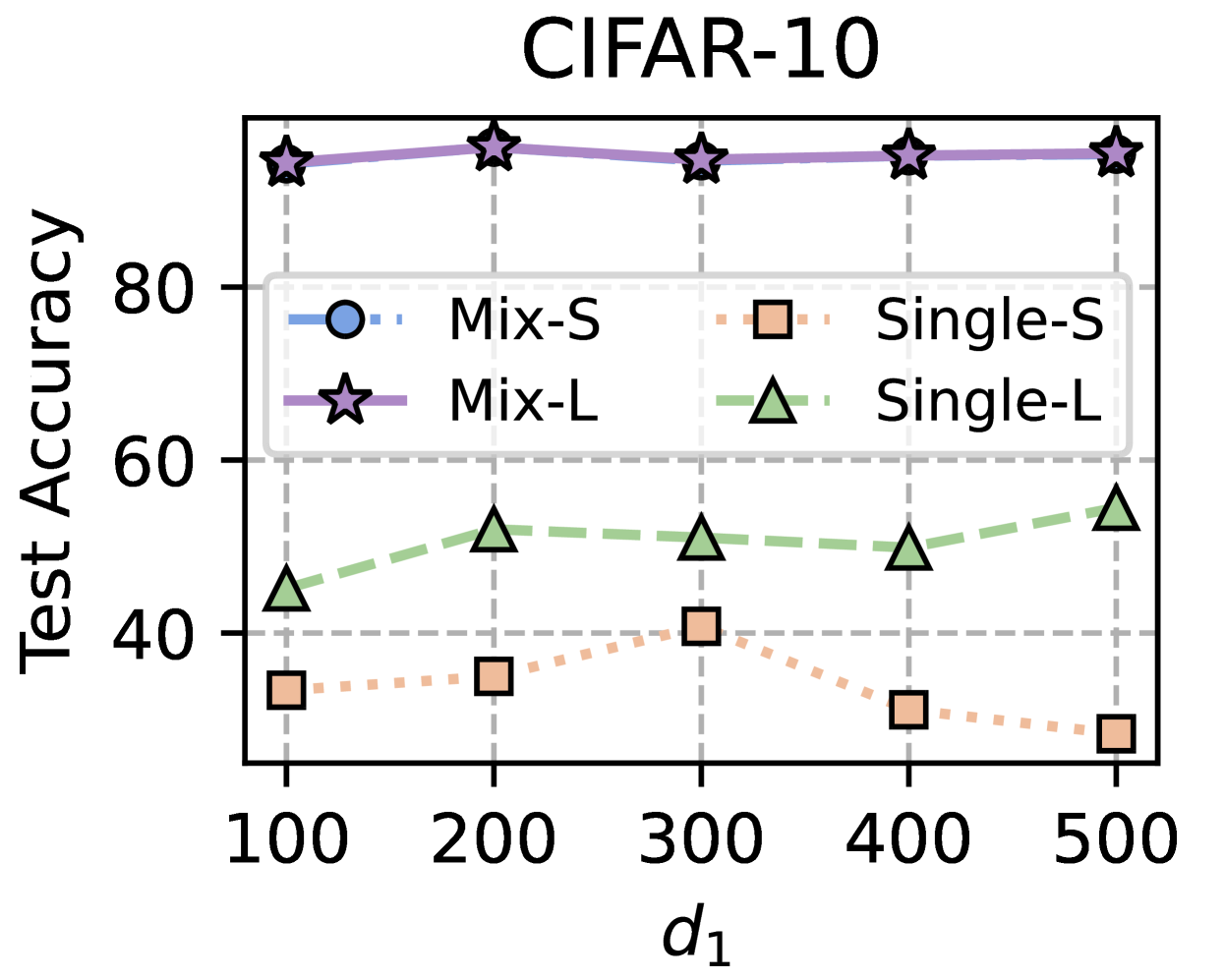

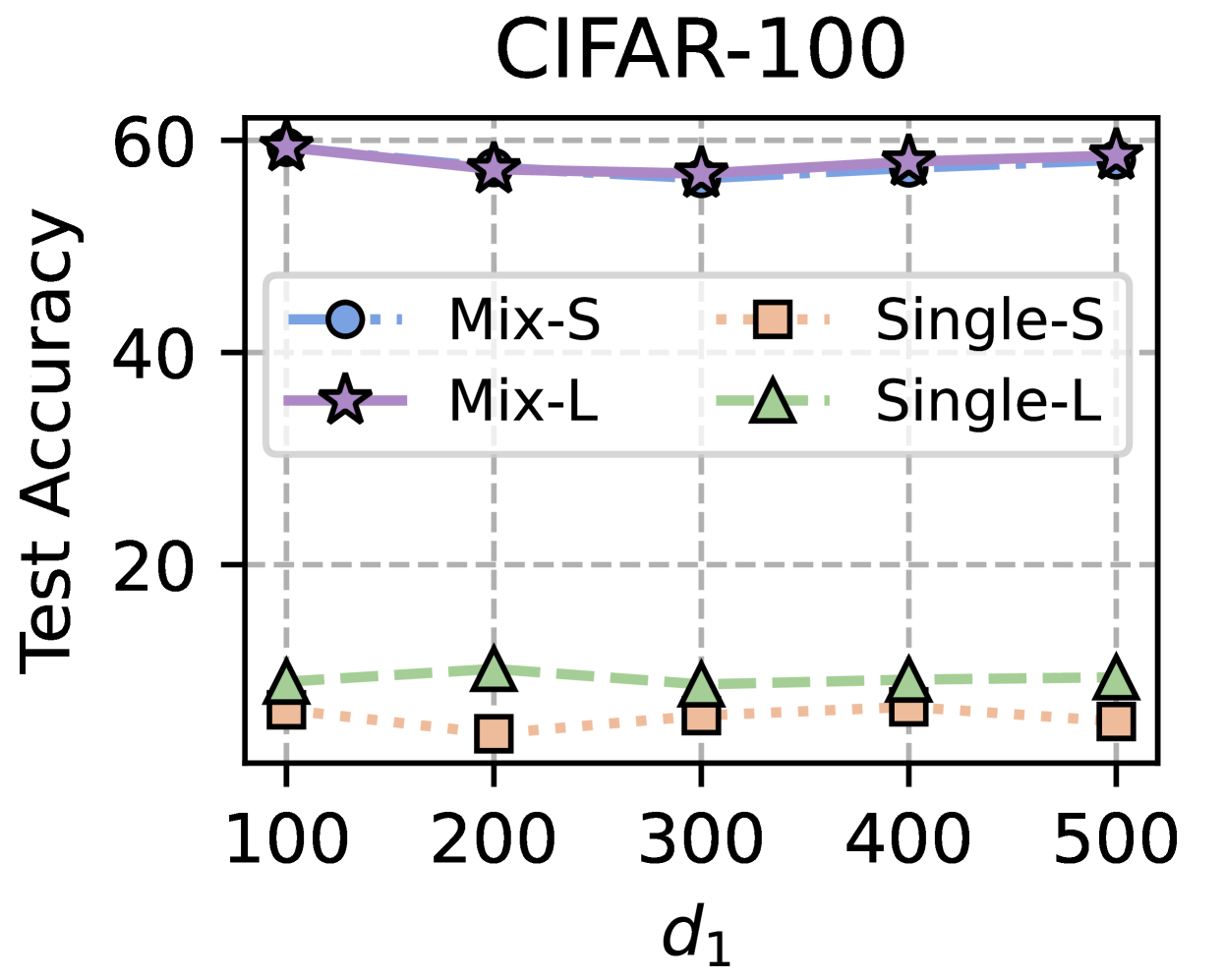

* **FedMRL (light purple, solid line, star markers):**