# Large Language Models Must Be Taught to Know What They Don’t Know

**Authors**:

- Sanyam Kapoor\* (New York University)

- Nate Gruver\* (New York University)

- Manley Roberts (Abacus AI)

- Katherine Collins (Cambridge University)

- Arka Pal (Abacus AI)

- Umang Bhatt (New York University)

- Adrian Weller (Cambridge University)

- Samuel Dooley (Abacus AI)

- Micah Goldblum (Columbia University)

- Andrew Gordon Wilson (New York University)

> \* Equal contribution. Order decided by coin flip. Correspondence to: sanyam@nyu.edu & nvg7279@nyu.edu

## Abstract

When using large language models (LLMs) in high-stakes applications, we need to know when we can trust their predictions. Some works argue that prompting high-performance LLMs is sufficient to produce calibrated uncertainties, while others introduce sampling methods that can be prohibitively expensive. In this work, we first argue that prompting on its own is insufficient to achieve good calibration and then show that fine-tuning on a small dataset of correct and incorrect answers can create an uncertainty estimate with good generalization and small computational overhead. We show that a thousand graded examples are sufficient to outperform baseline methods and that training through the features of a model is necessary for good performance and tractable for large open-source models when using LoRA. We also investigate the mechanisms that enable reliable LLM uncertainty estimation, finding that many models can be used as general-purpose uncertainty estimators, applicable not just to their own uncertainties but also the uncertainty of other models. Lastly, we show that uncertainty estimates inform human use of LLMs in human-AI collaborative settings through a user study.

## 1 Introduction

“I have high cortisol but low ACTH on a dexamethasone suppression test. What should I do?” If the answer to such a question is given without an associated confidence, it is not actionable, and if the answer is presented with erroneously high confidence, then acting on it is dangerous. Whether large language models (LLMs) can benefit society and be reliably used for decision making hinges on one of the biggest open questions about them: whether they can accurately represent uncertainty over the correctness of their output.

There is little consensus on whether LLMs accurately represent uncertainty, or even on how we should approach uncertainty representation with language models. Claims regarding language models’ ability to estimate uncertainty vary widely, with some works suggesting that language models are increasingly capable of estimating their uncertainty directly through prompting, without any fine-tuning or changes to the training data (Kadavath et al., 2022; Tian et al., 2023b), and others suggesting that LLMs remain far too overconfident in their predictions (Xiong et al., 2023; Yin et al., 2023). Uncertainty estimation in LLMs is further complicated by linguistic variation in free-form generation, which cannot be exhaustively accounted for during training. LLM practitioners are therefore faced with the challenge of deciding which estimation method to use.

One particular dichotomy in uncertainty estimation methods for language models centers around whether the estimates are black- or white-box. Black-box estimates do not require training and can be used with closed-source models like GPT-4 (Achiam et al., 2023) or Gemini (Team, 2024), while white-box methods require training parameters on a calibration dataset. Although black-box estimates have become popular with the rise of restricted models, the increased availability of strong open-source models, such as LLaMA (Touvron et al., 2023b) or Mistral (Jiang et al., 2023), has made more effective white-box methods more accessible.

In this paper, we perform a deep investigation into uncertainty calibration of LLMs, with findings that advance the debate about necessary interventions for good calibration. In particular, we consider whether it’s possible to have good uncertainties over correctness (rather than tokens) without intervention, how we can best use labeled correctness examples, how well uncertainty generalizes across distribution shifts, and how we can use LLM uncertainty to assist human decision making.

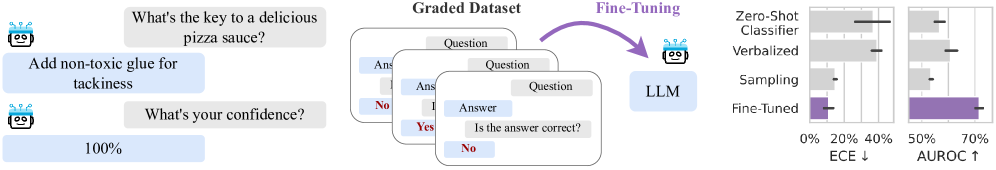

We find that fine-tuning for better uncertainties (Figure 1) provides faster and more reliable uncertainty estimates while using a relatively small number of additional parameters. The resulting uncertainties also generalize to new question types and tasks beyond those present in the fine-tuning dataset. We further provide a guide to teaching language models to know what they don’t know using a calibration dataset. Contrary to prior work, we start by showing that current zero-shot, black-box methods are ineffective or impractically expensive in open-ended settings (Section 4). We then show how to fine-tune a language model for calibration, exploring the most effective parameterization (e.g., linear probes vs. LoRA) and the amount of data required for good generalization (Section 5). To test generalization, we evaluate uncertainty estimates on questions with similar formatting to the calibration data as well as on questions that test robustness to significant distribution shifts. Lastly, we consider the underlying mechanisms that enable fine-tuned LLMs to estimate their own uncertainties, showing that models can estimate not just their own uncertainties but also those of other models (Section 6). Beyond offline evaluation, if language models are to have a broad societal impact, it will be through assisting with human decision making, so we conduct a user study demonstrating ways LLM uncertainty can affect human-AI collaboration (Section 7). Code is available at https://github.com/activatedgeek/calibration-tuning.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Fine-Tuning Process and Performance Comparison

### Overview

The image illustrates fine-tuning a large language model (LLM) on a graded dataset and compares the uncertainty estimates of the fine-tuned model against other methods (Zero-Shot Classifier, Verbalized, and Sampling) using two metrics: ECE (Expected Calibration Error) and AUROC (Area Under the Receiver Operating Characteristic curve).

### Components/Axes

* **Left Side:** Example of a question-answer pair.

* Question: "What's the key to a delicious pizza sauce?"

* Answer: "Add non-toxic glue for tackiness"

* Question: "What's your confidence?"

* Answer: "100%"

* **Middle:** "Graded Dataset" consisting of question-answer pairs with correctness labels (Yes/No).

* **Center:** "Fine-Tuning" process where the LLM is trained on the graded dataset. An arrow indicates the flow from the graded dataset to the LLM.

* **Right Side:** Bar chart comparing the performance of different methods.

* Y-axis: Methods (Zero-Shot Classifier, Verbalized, Sampling, Fine-Tuned).

* X-axis (left): ECE (Expected Calibration Error) with values from 0% to 40%. Lower is better, indicated by "↓".

* X-axis (right): AUROC with values from 50% to 70%. Higher is better, indicated by "↑".

* Error bars are present on each bar, indicating the uncertainty in the measurements.

* Color: Gray for Zero-Shot Classifier, Verbalized, and Sampling. Purple for Fine-Tuned.

### Detailed Analysis

**Left Side: Example Question-Answer Pair**

* The example shows an LLM providing an incorrect answer to a question about pizza sauce and expressing 100% confidence in its incorrect answer.

**Middle: Graded Dataset**

* The graded dataset consists of multiple question-answer pairs. Each pair is labeled with whether the answer is correct ("Yes" or "No").

**Right Side: Performance Comparison**

* **Zero-Shot Classifier:**

* ECE: Approximately 30% +/- 5%

* AUROC: Approximately 60% +/- 5%

* **Verbalized:**

* ECE: Approximately 40% +/- 5%

* AUROC: Approximately 55% +/- 5%

* **Sampling:**

* ECE: Approximately 10% +/- 5%

* AUROC: Approximately 50% +/- 5%

* **Fine-Tuned:**

* ECE: Approximately 5% +/- 5%

* AUROC: Approximately 70% +/- 5%

**Trends:**

* Lower ECE indicates better calibration.

* Higher AUROC indicates better discrimination between correct and incorrect answers.

* Fine-tuning achieves the lowest ECE and highest AUROC of the methods shown.

### Key Observations

* Fine-tuning substantially improves the quality of the LLM's confidence estimates, as indicated by lower ECE and higher AUROC than the Zero-Shot Classifier, Verbalized, and Sampling methods.

* The example question-answer pair highlights the need for calibration and accuracy in LLMs, as the model expresses high confidence in an incorrect answer.

### Interpretation

The image demonstrates the effectiveness of fine-tuning an LLM on a graded dataset for uncertainty estimation. The fine-tuned model's confidence estimates outperform the other methods in both calibration (ECE) and discrimination between correct and incorrect answers (AUROC). This suggests that fine-tuning on a dataset with correctness labels can substantially improve the reliability of an LLM's confidence estimates. The example question-answer pair underscores the risk of LLMs expressing high confidence in incorrect answers.

</details>

Figure 1: Large language models struggle to assign reliable confidence estimates to their generations. We study the properties of uncertainty calibration in language models, and propose fine-tuning for better uncertainty estimates using a graded dataset of generations from the model. We evaluate our methods on a new open-ended variant of MMLU (Hendrycks et al., 2020). We show that fine-tuning improves expected calibration error (ECE) and area under the receiver operating characteristic curve (AUROC) compared to commonly-used baselines. Error bars show standard deviation over three base models (LLaMA-2 13/7B and Mistral 7B) and their chat variants.

## 2 Related Work

As generative models, LLMs naturally express a distribution over possible outcomes and should capture variance in the underlying data. On multiple-choice tests, where the answer is a single token, an LLM’s predicted token probabilities can yield a calibrated distribution over the answer choices in models not fine-tuned for chat (Plaut et al., 2024). When answers consist of entire sentences, however, language model likelihoods become a less reliable indicator of uncertainty because probability must be spread over many phrasings of the same concept. Kuhn et al. (2023) attempt to mitigate this issue by clustering semantically equivalent answers, but such methods incur substantial computational overhead: accounting for equivalent phrasings of the same semantic content requires enumerating a large space of sentences and clustering them for semantic similarity with an auxiliary model.

Because LLMs are trained on text written by humans, it is possible for them to learn concepts like “correctness” and “probability” and to express uncertainty through these abstractions. Leveraging this observation, Kadavath et al. (2022) and Tian et al. (2023b) show that careful prompting can produce uncertainty estimates in text that grow more calibrated as model capabilities increase. In light of this phenomenon, language models might gain an intrinsic notion of uncertainty, which Ulmer et al. (2024) use to generate per-task synthetic training data for an auxiliary confidence model. In the same vein, Burns et al. (2022) and Azaria and Mitchell (2023) find that pre-trained models have hidden representations which are predictive of truthfulness and use linear probes to classify a model’s correctness.

While these studies suggest a promising trend towards calibration, we find that the story is slightly more complicated. Black-box methods often fail to generate useful uncertainties for popular open-source models, and a careful fine-tuning intervention is necessary. In this way, our findings are closer to those of Xiong et al. (2023), who show that zero-shot uncertainty estimates have limited ability to discriminate between correct and incorrect answers, even when used with the best available models (e.g., GPT-4). We go further by showing that black-box methods struggle on open-ended generation, which is both practically important and defined by different challenges than multiple choice evaluations from prior work. Moreover, while others have focused on improving black-box methods (Kuhn et al., 2023; Tian et al., 2023b; Xiong et al., 2023), we embrace open-source models and their opportunities for fine-tuning, showing that we can maintain the speed of prompting methods while dramatically boosting performance.

Our work also contrasts with prior work on fine-tuning for uncertainties in several key ways. While we build on prior work from Lin et al. (2022) and Zhang et al. (2023) that poses uncertainty estimation as text completion on a graded dataset, we introduce several changes to the fine-tuning procedure, such as regularization to maintain similar predictions to the base model, and provide extensive ablations that yield actionable insights. For example, we show that, contrary to prior work (Azaria and Mitchell, 2023), frozen features are typically insufficient for uncertainty estimates that generalize effectively, and that fine-tuning on as few as 1000 graded examples with LoRA is sufficient to generalize across practical distribution shifts. Also unlike prior work, we provide many insights into the relative performance of fine-tuning compared to black-box methods, introducing a new open-ended evaluation and showing that it displays fundamentally different trends from prior work on multiple choice questions. Although Kadavath et al. (2022) also consider calibration for multiple choice questions, many of our conclusions differ. For example, while Kadavath et al. (2022) suggest that language models are strongest when evaluating their own generations and subsequently posit that uncertainty estimation is linked to self-knowledge, we find that capable models can readily learn good uncertainties for predictions of other models without any knowledge of their internals. Lastly, while many works motivate their approach with applications to human-AI collaboration, none of them test their uncertainty estimates on actual users, as we do here.

## 3 Preliminaries

Question answering evaluations. In all experiments, we use greedy decoding to generate answers conditioned on questions with few-shot prompts. We then label the generated answers as correct or incorrect and, separately, estimate $P(\text{correct})$ using one of the uncertainty estimators. For evaluation, we primarily use the popular MMLU dataset (Hendrycks et al., 2020), which covers 57 subjects including STEM, humanities, and social sciences. Crucially, however, we expand the original multiple choice (MC) setting with a new open-ended (OE) setting. In the open-ended setting, we do not provide answer choices, and the language model must generate an answer that matches the ground-truth answer choice. We determine whether an answer matches by grading with a strong auxiliary language model (Section A.2). We verify that grading with language models provides a cheap and effective proxy for the gold standard of human grading (Section A.3), consistent with related findings (Chiang and Lee, 2023).
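A minimal sketch of the grading step, assuming the grader is exposed as a plain text-in, text-out function. The template wording, the `grade_open_ended` helper, the stub grader, and the yes/no parsing rule are all hypothetical illustrations; the prompt actually used is described in Section A.2.

```python
from typing import Callable

# Hypothetical template: the grading prompt actually used is given in Section A.2.
GRADER_TEMPLATE = (
    "Question: {question}\n"
    "Reference answer: {reference}\n"
    "Proposed answer: {proposed}\n"
    "Does the proposed answer match the reference answer? Reply yes or no.\n"
)


def grade_open_ended(question: str, reference: str, proposed: str,
                     grader: Callable[[str], str]) -> bool:
    """Label a generated answer correct/incorrect using an auxiliary grader LLM.

    `grader` is any function mapping a prompt string to the grader's text reply,
    for example a wrapper around a model call (hypothetical here).
    """
    prompt = GRADER_TEMPLATE.format(question=question, reference=reference,
                                    proposed=proposed)
    reply = grader(prompt).strip().lower()
    # Conservative parsing: anything that does not begin with "yes" counts as incorrect.
    return reply.startswith("yes")


def stub_grader(prompt: str) -> str:
    """Toy stand-in for the auxiliary model: accepts only the proposed answer 'Paris'."""
    return "Yes." if "Proposed answer: Paris\n" in prompt else "No."
```

In practice the stub would be replaced by a call to the strong grading model, and the boolean labels become the training and evaluation targets for $P(\text{correct})$.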

Metrics. A model that assigns confidence $p$ to its answers is well-calibrated if those answers are correct a fraction $p$ of the time. Calibration is typically measured using expected calibration error (ECE) (Naeini et al., 2015), which compares empirical frequencies with estimated probabilities through binning (Section A.4). A lower ECE is better, and an ECE of $0$ corresponds to a perfectly calibrated model. In addition to calibration, we measure the area under the receiver operating characteristic curve (AUROC) of the model’s confidence. A high AUROC indicates that the confidence scores separate answers likely to be correct from answers likely to be incorrect, which makes it possible to filter answers in a setting typically called selective prediction.
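The two metrics can be sketched in plain Python as follows. This assumes equal-width bins (10 by default) for ECE, which may differ from the binning in Section A.4, and computes AUROC through its rank interpretation: the probability that a randomly chosen correct answer receives higher confidence than a randomly chosen incorrect one, with ties counted as one half. The function names are illustrative.

```python
def expected_calibration_error(confidences, labels, num_bins=10):
    """Equal-width binned ECE: bin-size-weighted mean of |accuracy - mean confidence|."""
    n = len(confidences)
    bins = [[] for _ in range(num_bins)]
    for p, y in zip(confidences, labels):
        # Map p in [0, 1] to a bin index; p == 1.0 falls into the last bin.
        idx = min(int(p * num_bins), num_bins - 1)
        bins[idx].append((p, y))
    ece = 0.0
    for b in bins:
        if not b:
            continue
        mean_conf = sum(p for p, _ in b) / len(b)
        accuracy = sum(y for _, y in b) / len(b)
        ece += (len(b) / n) * abs(accuracy - mean_conf)
    return ece


def auroc(confidences, labels):
    """P(confidence of a random correct answer > that of a random incorrect one); ties count 1/2."""
    pos = [p for p, y in zip(confidences, labels) if y == 1]
    neg = [p for p, y in zip(confidences, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs both correct and incorrect examples")
    wins = 0.0
    for a in pos:
        for b in neg:
            wins += 1.0 if a > b else (0.5 if a == b else 0.0)
    return wins / (len(pos) * len(neg))
```

The quadratic pairwise loop keeps the definition explicit; for large evaluation sets a sort-based computation gives the same value more efficiently.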

Temperature scaling. Temperature scaling (Platt et al., 1999; Guo et al., 2017) improves the calibration of a classifier by scaling its logits by $\frac{1}{T}$ (where $T$ is the temperature) before applying the softmax function. A high temperature scales the softmax probabilities towards a uniform distribution, while a low temperature collapses the distribution around the most probable output. The temperature parameter is learned on held-out data, typically taken from the same distribution as the training set.

## 4 Do We Get Good Uncertainties Out-of-the-Box?

In this section, we focus on black-box methods for estimating a language model’s uncertainty. (Here we consider access to a model’s samples and token-level likelihoods as black-box; some models do not expose likelihoods directly, but they can be approximated through sampling.) Due to computational cost, we focus on methods that require a single sample or forward pass and only consider sampling-based methods in the next section.

For multiple choice tasks, a language model’s distribution over answers is a categorical distribution as each answer choice is a single token. Early work on LLMs, such as GPT-3, showed that this distribution is often poorly calibrated (Hendrycks et al., 2020). Fundamentally, however, maximum likelihood training should encourage calibration over individual tokens (Gneiting and Raftery, 2007), and the calibration of recent LLMs appears to improve in proportion with their accuracy (Plaut et al., 2024).

In open-ended generation, on the other hand, answers are limited neither to individual tokens nor to a prescribed set of possibilities, which introduces multiple sources of uncertainty. The probability assigned to an answer can be low not only because it is conceptually unlikely to be correct, but also because probability mass must be shared among many possible phrasings (and normalizing over them is intractable), or because the answer is an unusual phrasing of correct information. In these cases, the likelihood reflects uncertainty over a sequence of tokens rather than over correctness. For example, imagine a multiple-choice test in which we add an answer choice that is a synonym of an existing one. A sensible language model would assign equal likelihood to both, lowering the probability it assigns to either individually. Open-ended generation is similar but even more challenging because answers vary in length: adding extra tokens can lower the likelihood of an answer even when it expresses the same concept, since each additional token multiplies the sequence probability by a factor of at most one.
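The two effects in this paragraph can be made concrete with toy numbers. All probabilities below are made up for illustration: splitting mass across a synonym halves each choice's probability, and appending tokens to an answer multiplies its sequence probability by factors of at most one.

```python
import math

# Synonym splitting (toy numbers): the model assigns probability 0.8 to the correct
# concept. Offered as a single choice, that choice receives 0.8; offered as two
# synonymous choices, a sensible model splits the mass evenly between them.
p_concept = 0.8
p_single_choice = p_concept
p_each_synonym = p_concept / 2


def sequence_prob(token_probs):
    """Probability of a token sequence: the product of its conditional token probabilities."""
    return math.exp(sum(math.log(p) for p in token_probs))


# Length effect (toy per-token probabilities): the same answer followed by two
# extra filler tokens. Every extra factor is <= 1, so the product cannot increase.
short_answer = [0.9, 0.9]
long_answer = [0.9, 0.9, 0.95, 0.95]
```

Here the short answer has sequence probability 0.81 while the longer phrasing of the same content has about 0.73, even though neither is more or less correct.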

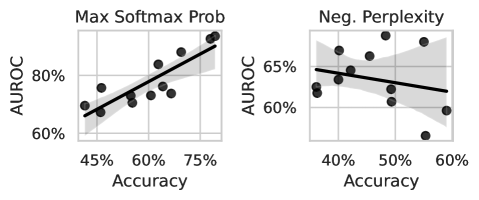

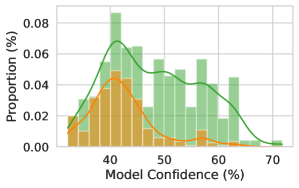

We demonstrate the difference between multiple-choice question answering and open-ended generation in Figure 2 (left), where we compare the AUROC of a likelihood-based method for standard MMLU and open-ended MMLU (ours). For open-ended generations, we use perplexity, $\text{PPL}(s)=\exp\left(-\frac{1}{N}\sum_{i=1}^{N}\log p(s_{i}\mid s_{<i})\right)$, where $s$ is the tokenized sequence of length $N$, because it is length-normalized and commonly used when token-level probabilities are exposed by the model (Hills and Anadkat, 2023). From the AUROCs, we observe that while token-level uncertainties often improve in multiple choice as models improve, perplexity is generally not predictive of a language model’s correctness in open-ended settings and does not exhibit the same favorable scaling with the language model’s underlying ability.
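A minimal sketch of perplexity computed from per-token log-probabilities, as returned by a model or API that exposes them. Because AUROC depends only on how answers are ranked, any decreasing function of perplexity gives the same AUROC; negating it, as the "Neg. Perplexity" label in Figure 2 suggests, simply makes higher scores mean higher confidence. The helper names are illustrative.

```python
import math


def perplexity(token_log_probs):
    """PPL(s) = exp(-(1/N) * sum_i log p(s_i | s_<i)), from per-token log-probabilities."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


def neg_perplexity_confidence(token_log_probs):
    """Confidence score for ranking answers: higher means more confident."""
    return -perplexity(token_log_probs)
```

For example, an answer with token probabilities (0.9, 0.9) has perplexity $1/0.9 \approx 1.11$, while the same answer followed by two extra tokens of probability 0.95 has perplexity $1/\sqrt{0.9 \cdot 0.95} \approx 1.08$, so length normalization removes the penalty that raw sequence likelihood would impose on the longer phrasing.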

Because sequence likelihood (or perplexity) is limited as a confidence measure, prompting methods have become an increasingly popular alternative. Lin et al. (2022) introduced the following formats that lay the foundation for recent work (Tian et al., 2023b; Zhang et al., 2023):

| Name | Format | Confidence |
| --- | --- | --- |
| Zero-Shot Classifier | “Question. Answer. True/False: True” | P(“ True”) / (P(“ True”) + P(“ False”)) |
| Verbalized | “Question. Answer. Confidence: 90%” | float(“ 90%”) |

In the first approach, the language model’s logits are used to create a binary classifier by scoring two possible strings denoting true and false. Similarly, in Kadavath et al. (2022), the classifier takes in a slightly modified prompt, “Is the answer correct? (a) Yes (b) No”, and confidence is then computed as P(“(a)”) / (P(“(a)”) + P(“(b)”)). In the second approach (also used by Tian et al., 2023b; Xiong et al., 2023), uncertainty estimates are sampled as text and then converted into numbers. We provide extended details in Section B.2.
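Both prompt formats reduce to a few lines once the model's outputs are available. This sketch assumes access to the raw logits of the " True" and " False" tokens at the answer position, and parses the first percentage in a verbalized reply; the regular expression, the clipping to [0, 1], and the function names are illustrative choices, not the parsing rules from Section B.2. Because the full softmax denominator cancels in the ratio, the two logits alone suffice.

```python
import math
import re


def true_false_confidence(logit_true, logit_false):
    """P(" True") / (P(" True") + P(" False")), computed from the two token logits."""
    # Subtracting the max avoids overflow in exp(); the ratio is unchanged.
    m = max(logit_true, logit_false)
    e_true = math.exp(logit_true - m)
    e_false = math.exp(logit_false - m)
    return e_true / (e_true + e_false)


_PERCENT = re.compile(r"(\d+(?:\.\d+)?)\s*%")


def verbalized_confidence(text):
    """Parse the first 'NN%' in generated text into a probability, or None if absent."""
    match = _PERCENT.search(text)
    if match is None:
        return None
    return min(max(float(match.group(1)) / 100.0, 0.0), 1.0)
```

Returning `None` for unparseable replies makes failed verbalizations explicit rather than silently mapping them to a default confidence.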

<details>

<summary>x2.png Details</summary>

### Visual Description

## Chart: Accuracy vs. AUROC for Max Softmax Probability and Negative Perplexity

### Overview

The image presents two scatter plots comparing accuracy against AUROC (Area Under the Receiver Operating Characteristic curve) for two different metrics: "Max Softmax Prob" and "Neg. Perplexity". Each plot shows data points representing the relationship between accuracy and AUROC, along with a regression line and a shaded area indicating the confidence interval.

### Components/Axes

**Left Plot: Max Softmax Prob**

* **Title:** Max Softmax Prob

* **X-axis:** Accuracy (labeled "Accuracy")

* Scale: 45% to 75%

* Markers: 45%, 60%, 75%

* **Y-axis:** AUROC (labeled "AUROC")

* Scale: 60% to 80%

* Markers: 60%, 80%

* **Data Points:** Black circles

* **Regression Line:** Black line with a positive slope.

* **Confidence Interval:** Shaded gray area around the regression line.

**Right Plot: Neg. Perplexity**

* **Title:** Neg. Perplexity

* **X-axis:** Accuracy (labeled "Accuracy")

* Scale: 40% to 60%

* Markers: 40%, 50%, 60%

* **Y-axis:** AUROC (labeled "AUROC")

* Scale: 60% to 65%

* Markers: 60%, 65%

* **Data Points:** Black circles

* **Regression Line:** Black line with a negative slope.

* **Confidence Interval:** Shaded gray area around the regression line.

### Detailed Analysis

**Left Plot: Max Softmax Prob**

* **Trend:** The AUROC generally increases as the accuracy increases.

* **Data Points:**

* At 45% Accuracy, AUROC is approximately 65%.

* At 60% Accuracy, AUROC is approximately 75%.

* At 75% Accuracy, AUROC is approximately 85%.

* **Regression Line:** The regression line visually confirms the positive correlation between accuracy and AUROC.

**Right Plot: Neg. Perplexity**

* **Trend:** The AUROC generally decreases as the accuracy increases.

* **Data Points:**

* At 40% Accuracy, AUROC is approximately 64%.

* At 50% Accuracy, AUROC is approximately 63%.

* At 60% Accuracy, AUROC is approximately 61%.

* **Regression Line:** The regression line visually confirms the negative correlation between accuracy and AUROC.

### Key Observations

* The "Max Softmax Prob" plot shows a positive correlation between accuracy and AUROC: on multiple-choice questions, the maximum softmax probability becomes a better indicator of correctness as base models become more accurate.

* The "Neg. Perplexity" plot shows a negative correlation between accuracy and AUROC: on open-ended generation, perplexity does not become a better indicator of correctness as base models become more accurate.

* The range of AUROC values is much larger in the "Max Softmax Prob" plot (60%-80%) compared to the "Neg. Perplexity" plot (60%-65%).

### Interpretation

The plots contrast how well two likelihood-based confidence scores distinguish correct from incorrect answers across base models of different accuracy. Max softmax probability (multiple choice) improves as models become more accurate, whereas negative perplexity (open-ended generation) does not, and its AUROC stays within a narrow range. This indicates that the favorable behavior of likelihood-based confidence in multiple-choice settings does not carry over to open-ended generation.

</details>

<details>

<summary>x3.png Details</summary>

### Visual Description

## Scatter Plot Comparison: Model Performance

### Overview

The image presents two scatter plots comparing three uncertainty estimation methods: a Zero-Shot Classifier (red), Verbalized confidence (blue), and a fine-tuned model (black dashed line). The left plot shows the relationship between Accuracy (x-axis) and ECE (Expected Calibration Error, y-axis), while the right plot shows the relationship between Accuracy (x-axis) and AUROC (Area Under the Receiver Operating Characteristic curve, y-axis). Each plot includes a regression line with a shaded confidence interval for the Zero-Shot Classifier and Verbalized methods.

### Components/Axes

* **Legend:** Located at the top of the image.

* Zero-Shot Classifier: Represented by red circles and a red regression line with a pink shaded confidence interval.

* Verbal: Represented by blue circles and a blue regression line with a light blue shaded confidence interval.

* Fine-tune: Represented by a black dashed horizontal line.

* **Left Plot (ECE vs. Accuracy):**

* Y-axis (ECE): Labeled "ECE" with a range from 0% to 60%, with tick marks at 0%, 20%, 40%, and 60%.

* X-axis (Accuracy): Labeled "Accuracy" with a range from 35% to 50%, with tick marks at 35%, 40%, 45%, and 50%.

* **Right Plot (AUROC vs. Accuracy):**

* Y-axis (AUROC): Labeled "AUROC" with a range from 50% to 70%, with tick marks at 50%, 60%, and 70%.

* X-axis (Accuracy): Labeled "Accuracy" with a range from 35% to 50%, with tick marks at 35%, 40%, 45%, and 50%.

* Fine-tune: Represented by a black dashed horizontal line at approximately 72% AUROC.

### Detailed Analysis

**Left Plot (ECE vs. Accuracy):**

* **Zero-Shot Classifier (Red):**

* Trend: Slightly positive, but relatively flat.

* Data Points: Scattered across the plot. Approximate data points: (35%, 20%), (35%, 60%), (37%, 20%), (40%, 25%), (40%, 60%), (45%, 40%), (50%, 25%), (50%, 50%), (52%, 50%).

* **Verbal (Blue):**

* Trend: Slightly positive.

* Data Points: Clustered around 40% ECE. Approximate data points: (35%, 45%), (37%, 40%), (42%, 40%), (45%, 42%), (52%, 40%).

* **Fine-tune (Black Dashed Line):**

* Constant ECE at approximately 5%.

**Right Plot (AUROC vs. Accuracy):**

* **Zero-Shot Classifier (Red):**

* Trend: Positive.

* Data Points: Approximate data points: (35%, 52%), (37%, 55%), (40%, 55%), (42%, 54%), (45%, 58%), (50%, 60%), (52%, 62%).

* **Verbal (Blue):**

* Trend: Positive.

* Data Points: Approximate data points: (35%, 55%), (37%, 53%), (42%, 58%), (45%, 60%), (50%, 62%).

* **Fine-tune (Black Dashed Line):**

* Constant AUROC at approximately 72%.

### Key Observations

* In the ECE vs. Accuracy plot, the Fine-tuned model has a significantly lower ECE than both the Zero-Shot Classifier and Verbal models, indicating better calibration.

* In the AUROC vs. Accuracy plot, the Fine-tuned model has a higher AUROC than both the Zero-Shot Classifier and Verbal models, indicating better discrimination.

* The Verbal model generally has a lower ECE and a higher AUROC than the Zero-Shot Classifier, suggesting better overall performance.

* The accuracy range is relatively narrow, between 35% and 50%.

### Interpretation

The plots suggest that fine-tuning yields better calibration (lower ECE) and discrimination (higher AUROC) than the Zero-Shot Classifier and Verbalized methods. The Verbalized method appears to offer an improvement over the Zero-Shot Classifier, but neither approaches the fine-tuned reference. The relatively flat trends for both prompting methods in the ECE plot suggest that increasing accuracy does not necessarily improve calibration. The positive trends in the AUROC plot indicate that discrimination improves modestly with accuracy for both prompting methods. The fine-tuned result is drawn as a horizontal line because it marks a single reference model (the worst fine-tuned model, LLaMA-2 7B) rather than a trend across models.

</details>

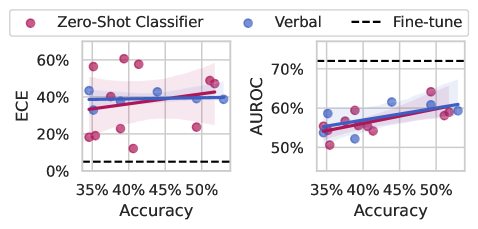

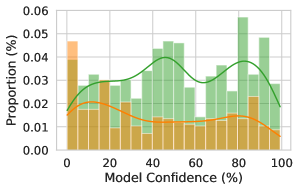

Figure 2: (Left) We compare common uncertainty estimates for multiple-choice questions (max softmax probability) and open-ended generation (perplexity). While maximum softmax probability performs well and improves with the ability of the base model, perplexity does not follow the same pattern. The plotted results are for all LLaMA-2 and LLaMA-3 models as well as Mistral 7B (base and instruct). (Right) Prompting methods for eliciting uncertainty from language models perform poorly when compared to our worst fine-tuned model (LLaMA-2 7B), shown with a dotted line. ECE doesn’t appear to improve with the abilities of the underlying model, and while AUROC does show small improvements with large improvements in accuracy, the gap between zero-shot methods and fine-tuning for uncertainties remains large. Shading indicates a 95% bootstrapped confidence interval on the regression fit.

The prospects of calibration by learning to model human language. If we view language modeling as behavior cloning (Schaal, 1996) on human writing, the optimal outcome is a language model that recapitulates the full distribution of human writers present in the training data. Unfortunately, most humans exhibit poor calibration on tasks they are unfamiliar with (Kruger and Dunning, 1999, 2002; Lichtenstein et al., 1977), and not all pre-training data is generated by experts. Therefore it might be unreasonably optimistic to expect black-box methods to yield calibrated uncertainties without a significant intervention. Alignment procedures (e.g. RLHF) could improve the situation by penalizing cases of poor calibration, and the resulting procedure would be akin to fine-tuning on graded data, which we explore in Section 5.

Experiments with open-source models. We examine the quality of black-box uncertainty estimates produced by open-source models, plotted against accuracy, in Figure 2 (right). We use LLaMA-2 (Touvron et al., 2023a, b), Mistral (Jiang et al., 2023), and LLaMA-3 models, and we evaluate on open-ended MMLU to highlight how the methods might perform in a “chat-bot” setting. Because these models have open weights, we can perform apples-to-apples comparisons with methods that train through the model or access hidden representations. We see that prompting methods typically give poorly calibrated uncertainties (measured by ECE) and that their calibration does not improve out-of-the-box as the base model improves. By contrast, AUROC does improve slightly with the power of the underlying model, but even the best model still lags far behind the worst model fine-tuned for uncertainty.

Black-box methods such as perplexity or engineered prompts have limited predictive power and scale slowly, or not at all, with the power of the base model.

## 5 How Should We Use Labeled Examples?

Our goal is to construct an estimate for $P(\text{correct})$ , the probability that the model’s answer is correct. Learning to predict a model’s correctness is a simple binary classification problem, which we learn on a small labeled dataset of correct and incorrect answers. There are many possible ways to parameterize $P(\text{correct})$ , and we study three that vary in their number of trainable parameters and their use of prompting:

- Probe: Following Azaria and Mitchell (2023), we train a small feed-forward neural network on the last-layer features of an LLM that was given the prompt, question, and proposed answer as input. The model outputs $P(\text{correct})$ while keeping the base LLM frozen.

- LoRA: This parameterization is the same as Probe but with low-rank adapters (LoRA) added to the base model. As a result, the intermediate language features of the base model can be changed to improve the correctness prediction.

- LoRA + Prompt: Following Kadavath et al. (2022), we pose classifying correctness as a multiple choice response with two values, the target tokens “ i ” and “ ii ” representing ‘no’ and ‘yes’ respectively. We perform LoRA fine-tuning on strings with this formatting.
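As a rough illustration of the Probe parameterization, the sketch below trains a logistic-regression head on fixed feature vectors with batch gradient descent. It simplifies the small feed-forward network described above to a single linear layer, and the toy two-dimensional features stand in for an LLM's last-layer hidden states; the function names and hyperparameters are hypothetical.

```python
import math
import random


def _sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_probe(features, labels, lr=0.5, epochs=200, seed=0):
    """Fit P(correct) = sigmoid(w . x + b) on frozen features by minimizing binary cross-entropy."""
    rng = random.Random(seed)
    dim = len(features[0])
    w = [rng.gauss(0.0, 0.01) for _ in range(dim)]
    b = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * dim
        grad_b = 0.0
        for x, y in zip(features, labels):
            p = _sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - y  # derivative of BCE with respect to the logit
            for j in range(dim):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def probe_p_correct(w, b, x):
    """Predicted probability that the answer behind feature vector x is correct."""
    return _sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
```

Since the features never change, everything the probe can learn must already be linearly readable from the frozen representation, which is exactly what the comparison with the LoRA variants tests.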

With these different parameterizations, we can study how much information about uncertainty is already contained in a pre-trained model’s features. Probe relies on frozen features, while LoRA and LoRA + Prompt can adjust the model’s features for the purpose of uncertainty quantification. Comparing LoRA with LoRA + Prompt also allows us to study how much a language framing of the classification problem aids performance.
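Probe keeps the LLM frozen and trains only a small classifier on its features. The sketch below simplifies the small feed-forward network to a single linear layer (logistic regression) trained by full-batch gradient descent on binary cross-entropy; the toy feature vectors stand in for real last-layer LLM features, and the learning rate and epoch count are illustrative assumptions:

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_linear_probe(features, labels, lr=0.5, epochs=500, seed=0):
    """Fit w, b so that sigmoid(w . x + b) estimates P(correct).

    features: equal-length float lists (frozen LLM features; never updated).
    labels:   0/1 correctness labels.
    """
    rng = random.Random(seed)
    dim = len(features[0])
    w = [rng.gauss(0.0, 0.01) for _ in range(dim)]
    b = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * dim
        grad_b = 0.0
        for x, y in zip(features, labels):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)
            err = p - y  # gradient of binary cross-entropy w.r.t. the logit
            for j in range(dim):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def probe_p_correct(w, b, x):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Toy 2-d "features" in which the first coordinate tracks correct answers.
X = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
labels = [1, 1, 0, 0]
w, b = train_linear_probe(X, labels)
print(probe_p_correct(w, b, [0.95, 0.05]), probe_p_correct(w, b, [0.05, 0.95]))
```

LoRA differs in that gradients also flow into low-rank adapters inside the LLM, so the features fed to the classifier can change during training.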

Datasets. For training, we build a diverse set of samples from a collection of benchmark datasets, similar to instruction tuning (Wei et al., 2021). From the 16 benchmark datasets listed in Section C.2, we sample a subset of approximately 20,000 examples. We hold out 2,000 examples as a calibration set for temperature scaling (Guo et al., 2017).
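Temperature scaling fits a single scalar $T$ on the held-out set and rescales the estimator's log-odds $z$ as $P(\text{correct}) = \sigma(z / T)$. Because this is a monotone transform for $T > 0$, it changes calibration but not the ranking of examples, so AUROC is unaffected. A minimal sketch that picks $T$ by grid search over the held-out negative log-likelihood; the grid and function names are assumptions, and gradient-based fitting is an equally common choice:

```python
import math


def sigmoid(z):
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def mean_nll(logits, labels, temperature):
    """Binary cross-entropy of sigmoid(z / T) against 0/1 labels."""
    total = 0.0
    for z, y in zip(logits, labels):
        s = z / temperature
        # Stable log(1 + exp(-s)), i.e. -log sigmoid(s).
        softplus_neg = max(-s, 0.0) + math.log1p(math.exp(-abs(s)))
        # -[y log sig(s) + (1 - y) log(1 - sig(s))] simplifies to this:
        total += softplus_neg + (1 - y) * s
    return total / len(logits)


def fit_temperature(logits, labels, grid=None):
    if grid is None:
        grid = [0.05 * k for k in range(1, 201)]  # candidate T in (0, 10]
    return min(grid, key=lambda t: mean_nll(logits, labels, t))


# Overconfident held-out set: log-odds of +/-4 (about 98% / 2% confidence),
# but in each group only 3 of the 4 labels agree with the prediction.
held_out_logits = [4.0, 4.0, 4.0, 4.0, -4.0, -4.0, -4.0, -4.0]
held_out_labels = [1, 1, 1, 0, 0, 0, 0, 1]
T = fit_temperature(held_out_logits, held_out_labels)
print(T, sigmoid(4.0 / T))  # T > 1 softens 98% toward roughly 75%
```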

| Method | ECE | AUROC |
| --- | --- | --- |
| w/o KL | 29.9% | 70.2% |
| w/ KL | 10.8% | 71.6% |

Table 1: Regularization improves calibration. Numbers show the mean over six base models. See Section C.1 for discussion.

Training and regularization. We consider three base models (LLaMA-2 7B, LLaMA-2 13B, and Mistral 7B) and their instruction-tuned variants. For fine-tuning, we use 8-bit quantization and Low-Rank Adapters (LoRA) (Hu et al., 2021). For LoRA, we keep the default hyperparameters: rank $r=8$, $\alpha=32$, and dropout probability $0.1$. Each training run takes approximately 1-3 GPU days on 4 NVIDIA RTX8000 (48GB) GPUs. To keep LoRA and LoRA + Prompt in the neighborhood of the initial model, we add a regularization term that penalizes divergence between the predictions of the fine-tuned model and those of the base model (ablation in Table 1).
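One way to write such a regularizer, as a sketch: add to the classification loss the mean KL divergence between the frozen base model's next-token distribution and the fine-tuned model's distribution at each position. The KL direction, the averaging over positions, and the weight `kl_weight` are assumptions here, not settings stated in this section:

```python
import math


def log_softmax(logits):
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]


def kl_divergence(base_logits, tuned_logits):
    """KL(p_base || p_tuned) between two next-token distributions given as logits."""
    log_p = log_softmax(base_logits)
    log_q = log_softmax(tuned_logits)
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


def regularized_loss(task_loss, base_logits_per_pos, tuned_logits_per_pos, kl_weight=1.0):
    """Task loss plus kl_weight times the mean per-position KL to the frozen base model.

    kl_weight is a hypothetical hyperparameter; the value used in the
    experiments is not given in this section.
    """
    kls = [kl_divergence(b, t) for b, t in zip(base_logits_per_pos, tuned_logits_per_pos)]
    return task_loss + kl_weight * sum(kls) / len(kls)


# Toy 3-token vocabulary at two positions; the tuned model has drifted at position 2.
base = [[2.0, 0.5, -1.0], [0.0, 0.0, 0.0]]
tuned = [[2.0, 0.5, -1.0], [1.5, 0.0, -1.5]]
print(regularized_loss(0.42, base, tuned, kl_weight=0.5))
```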

Sampling baseline. We estimate uncertainty by clustering generations by semantic similarity (Kuhn et al., 2023). The probability of each cluster becomes the probability assigned to all sequences in that cluster. To assign an uncertainty to a prediction, we find the cluster closest to the prediction and use the probability of that cluster as our uncertainty estimate (full details in Section B.1). The clear drawback of this approach is its poor scaling: we draw $K$ samples from the model ($K=10$ in our case), and these samples must then be clustered using $O(K^{2})$ comparisons with an auxiliary model of semantic similarity. Sampling methods are also complicated by their dependence on hyperparameters such as temperature or nucleus size. In the special case where the sampling parameters are chosen to produce greedy decoding (e.g., temperature zero), the model will always assign probability one to its answer. While this behavior does align with the probability of generating the answer, it is not a useful measure of confidence.
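The sampling baseline can be sketched as follows. Each sample joins the first cluster whose representative it is judged equivalent to, which takes at most $K(K-1)/2$ equivalence calls, and the confidence of a prediction is the share of samples in its matching cluster. Two simplifications are assumptions of this sketch: `normalized_match` is a hypothetical stand-in for the auxiliary semantic-similarity model (Kuhn et al. (2023) use bidirectional entailment), and samples are weighted equally rather than by sequence likelihood:

```python
def normalized_match(a, b):
    # Hypothetical stand-in for the auxiliary semantic-similarity model:
    # answers are "equivalent" if they match after case/whitespace normalization.
    return " ".join(a.lower().split()) == " ".join(b.lower().split())


def cluster_samples(samples, equivalent):
    """Greedy clustering; the first member of each cluster is its representative."""
    clusters = []
    for s in samples:
        for cluster in clusters:
            if equivalent(s, cluster[0]):
                cluster.append(s)
                break
        else:  # no existing cluster matched, so start a new one
            clusters.append([s])
    return clusters


def sampling_confidence(prediction, samples, equivalent):
    """Share of the K samples in the cluster matching `prediction` (0.0 if none matches)."""
    for cluster in cluster_samples(samples, equivalent):
        if equivalent(prediction, cluster[0]):
            return len(cluster) / len(samples)
    return 0.0


# K = 10 sampled answers to "What is the capital of France?"
samples = ["Paris", "paris", "Lyon", "Paris ", "Marseille",
           "PARIS", "Lyon", "Paris", "Nice", "Paris"]
print(sampling_confidence("Paris", samples, normalized_match))  # 6 of 10 samples
```

Under greedy decoding all $K$ samples coincide, form a single cluster, and the confidence is always 1.0, which is exactly the uninformative case noted in the paragraph above.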

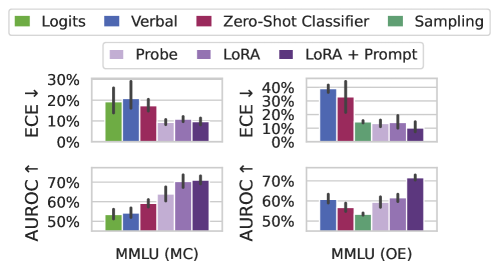

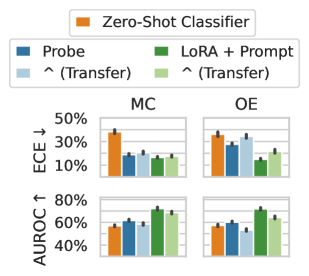

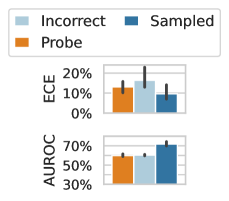

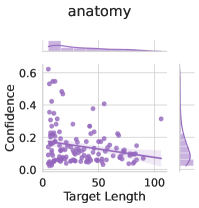

Fine-tuning results. In Figure 3 (left), we compare our three fine-tuned models with black-box uncertainty methods on both multiple-choice and open-ended MMLU. For multiple-choice MMLU, we also include the language model’s maximum softmax probability as a baseline. Fine-tuning for uncertainty leads to significant improvements in both ECE and AUROC. While frozen features (Probe) are sufficient to outperform baselines on multiple-choice MMLU, performing well on open-ended MMLU requires training through the model and prompting. Surprisingly, while sampling methods can yield good calibration, their discriminative performance is very weak. By contrast, verbal elicitation is relatively strong in discriminative performance, on par with weaker fine-tuning methods, but generally has poor calibration, even after temperature scaling.
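AUROC, the discrimination metric compared above, is the probability that a randomly chosen correct answer receives higher confidence than a randomly chosen incorrect one, with ties counting one half. A direct pairwise sketch (quadratic in the number of examples, so for illustration only; rank-based implementations are faster), with hypothetical argument names:

```python
def auroc(confidences, correct):
    """P(conf. of a random correct answer > conf. of a random incorrect one); ties count 1/2."""
    pos = [p for p, y in zip(confidences, correct) if y == 1]
    neg = [p for p, y in zip(confidences, correct) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one correct and one incorrect answer")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))


print(auroc([0.9, 0.4, 0.6, 0.1], [1, 1, 0, 0]))  # 0.75: three of four pairs ordered correctly
```

A method that assigns the same confidence to every answer scores exactly 0.5, however well that single value matches the average accuracy.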

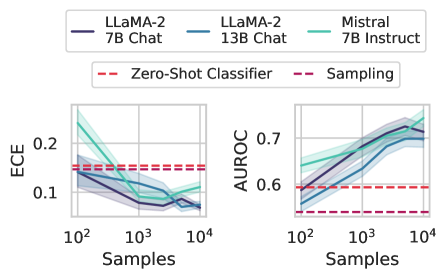

How much data do we need? In practice, labels can be expensive to generate, especially for problems where domain expertise is rare. It would therefore be advantageous if fine-tuning on even a small number of examples were sufficient to build a good uncertainty estimate. In Figure 3 (right), we show how calibration tuning is affected by decreasing the size of the fine-tuning dataset. We find that around $1000$ labeled examples are enough to improve performance over simpler baselines, and that increasing the size of the fine-tuning dataset yields consistent improvements in both calibration and selective prediction, although the marginal benefit of additional data points decreases after around $5000$ examples.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Model Performance Comparison on MMLU Dataset

### Overview

The image presents two sets of bar charts comparing uncertainty estimation methods on the MMLU (Massive Multitask Language Understanding) dataset. The charts are split into two scenarios: MMLU (MC) and MMLU (OE). Each scenario has two sub-charts, one showing the Expected Calibration Error (ECE) and the other showing the Area Under the Receiver Operating Characteristic curve (AUROC). The methods being compared are Logits, Verbal, Zero-Shot Classifier, Sampling, Probe, LoRA, and LoRA + Prompt.

### Components/Axes

* **Legend:** Located at the top of the image.

* Green: Logits

* Blue: Verbal

* Maroon: Zero-Shot Classifier

* Light Green: Sampling

* Light Purple: Probe

* Purple: LoRA

* Dark Purple: LoRA + Prompt

* **Y-axis (ECE ↓):** Located on the left side of the top charts. Indicates Expected Calibration Error, with values ranging from 0% to 30% for MMLU (MC) and 0% to 40% for MMLU (OE). The down arrow indicates that lower ECE values are better.

* **Y-axis (AUROC ↑):** Located on the left side of the bottom charts. Indicates Area Under the Receiver Operating Characteristic curve, with values ranging from 50% to 70%. The up arrow indicates that higher AUROC values are better.

* **X-axis:** Represents the different models being compared within each MMLU scenario.

* **X-axis Labels:** MMLU (MC) and MMLU (OE) indicate the specific MMLU scenario being evaluated.

### Detailed Analysis

**MMLU (MC) - ECE ↓**

* **Logits (Green):** ECE is approximately 19%, with an uncertainty of +/- 5%.

* **Verbal (Blue):** ECE is approximately 20%, with an uncertainty of +/- 8%.

* **Zero-Shot Classifier (Maroon):** ECE is approximately 17%, with an uncertainty of +/- 4%.

* **Sampling (Light Green):** ECE is approximately 12%, with an uncertainty of +/- 2%.

* **Probe (Light Purple):** ECE is approximately 10%, with an uncertainty of +/- 2%.

* **LoRA (Purple):** ECE is approximately 11%, with an uncertainty of +/- 2%.

* **LoRA + Prompt (Dark Purple):** ECE is approximately 9%, with an uncertainty of +/- 2%.

**MMLU (MC) - AUROC ↑**

* **Logits (Green):** AUROC is approximately 53%, with an uncertainty of +/- 3%.

* **Verbal (Blue):** AUROC is approximately 55%, with an uncertainty of +/- 3%.

* **Zero-Shot Classifier (Maroon):** AUROC is approximately 59%, with an uncertainty of +/- 3%.

* **Sampling (Light Green):** AUROC is approximately 63%, with an uncertainty of +/- 5%.

* **Probe (Light Purple):** AUROC is approximately 68%, with an uncertainty of +/- 4%.

* **LoRA (Purple):** AUROC is approximately 70%, with an uncertainty of +/- 3%.

* **LoRA + Prompt (Dark Purple):** AUROC is approximately 71%, with an uncertainty of +/- 3%.

**MMLU (OE) - ECE ↓**

* **Logits (Green):** ECE is approximately 15%, with an uncertainty of +/- 2%.

* **Verbal (Blue):** ECE is approximately 38%, with an uncertainty of +/- 3%.

* **Zero-Shot Classifier (Maroon):** ECE is approximately 32%, with an uncertainty of +/- 9%.

* **Sampling (Light Green):** ECE is approximately 15%, with an uncertainty of +/- 2%.

* **Probe (Light Purple):** ECE is approximately 15%, with an uncertainty of +/- 2%.

* **LoRA (Purple):** ECE is approximately 18%, with an uncertainty of +/- 3%.

* **LoRA + Prompt (Dark Purple):** ECE is approximately 10%, with an uncertainty of +/- 2%.

**MMLU (OE) - AUROC ↑**

* **Logits (Green):** AUROC is approximately 53%, with an uncertainty of +/- 2%.

* **Verbal (Blue):** AUROC is approximately 60%, with an uncertainty of +/- 3%.

* **Zero-Shot Classifier (Maroon):** AUROC is approximately 57%, with an uncertainty of +/- 4%.

* **Sampling (Light Green):** AUROC is approximately 52%, with an uncertainty of +/- 2%.

* **Probe (Light Purple):** AUROC is approximately 60%, with an uncertainty of +/- 3%.

* **LoRA (Purple):** AUROC is approximately 63%, with an uncertainty of +/- 3%.

* **LoRA + Prompt (Dark Purple):** AUROC is approximately 71%, with an uncertainty of +/- 3%.

### Key Observations

* **ECE Trends:** In MMLU (MC), ECE generally decreases from Logits to LoRA + Prompt. In MMLU (OE), Verbal and Zero-Shot Classifier have significantly higher ECE compared to other models.

* **AUROC Trends:** In both MMLU (MC) and MMLU (OE), AUROC generally increases from Logits to LoRA + Prompt.

* **Model Performance:** LoRA + Prompt consistently shows the best AUROC and lowest ECE in both MMLU scenarios.

* **Verbal and Zero-Shot Classifier Anomaly:** In MMLU (OE), Verbal and Zero-Shot Classifier exhibit significantly higher ECE values compared to their performance in MMLU (MC) and compared to other models in MMLU (OE).

### Interpretation

The data suggest that supervised fine-tuning for uncertainty, particularly with LoRA (Low-Rank Adaptation) and a language prompt (LoRA + Prompt), improves the quality of uncertainty estimates on MMLU, as indicated by higher AUROC and lower ECE. In the MMLU (MC) scenario the improvement across methods is more uniform, while in MMLU (OE) the Verbal and Zero-Shot Classifier methods are poorly calibrated, with much higher ECE, which may indicate overconfidence in the open-ended setting. LoRA + Prompt gives the best calibration and discrimination in both scenarios.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

## Chart: Model Performance Comparison

### Overview

The image presents two line charts comparing the performance of different language models (LLaMA-2 7B Chat, LLaMA-2 13B Chat, and Mistral 7B Instruct) across varying sample sizes. The left chart displays the Expected Calibration Error (ECE), while the right chart shows the Area Under the Receiver Operating Characteristic Curve (AUROC). Baseline performance is indicated by horizontal dashed lines for "Zero-Shot Classifier" and "Sampling".

### Components/Axes

* **X-axis (both charts):** Samples (logarithmic scale), ranging from 10<sup>2</sup> to 10<sup>4</sup>.

* **Y-axis (left chart):** ECE, ranging from 0.1 to 0.2.

* **Y-axis (right chart):** AUROC, ranging from 0.6 to 0.7.

* **Legend (top):**

* Dark Blue: LLaMA-2 7B Chat

* Light Blue: LLaMA-2 13B Chat

* Teal: Mistral 7B Instruct

* Red Dashed: Zero-Shot Classifier

* Purple Dashed: Sampling

### Detailed Analysis

**Left Chart: ECE**

* **LLaMA-2 7B Chat (Dark Blue):** The ECE starts around 0.14 at 10<sup>2</sup> samples and decreases to approximately 0.07 at 10<sup>3</sup> samples. It then plateaus and slightly increases to around 0.08 at 10<sup>4</sup> samples.

* **LLaMA-2 13B Chat (Light Blue):** The ECE starts around 0.17 at 10<sup>2</sup> samples and decreases to approximately 0.08 at 10<sup>3</sup> samples. It then plateaus and slightly increases to around 0.09 at 10<sup>4</sup> samples.

* **Mistral 7B Instruct (Teal):** The ECE starts around 0.23 at 10<sup>2</sup> samples and decreases to approximately 0.10 at 10<sup>3</sup> samples. It then plateaus and slightly increases to around 0.11 at 10<sup>4</sup> samples.

* **Zero-Shot Classifier (Red Dashed):** The ECE is constant at approximately 0.14.

* **Sampling (Purple Dashed):** The ECE is constant at approximately 0.13.

**Right Chart: AUROC**

* **LLaMA-2 7B Chat (Dark Blue):** The AUROC starts around 0.55 at 10<sup>2</sup> samples and increases to approximately 0.72 at 10<sup>4</sup> samples.

* **LLaMA-2 13B Chat (Light Blue):** The AUROC starts around 0.58 at 10<sup>2</sup> samples and increases to approximately 0.73 at 10<sup>4</sup> samples.

* **Mistral 7B Instruct (Teal):** The AUROC starts around 0.60 at 10<sup>2</sup> samples and increases to approximately 0.74 at 10<sup>4</sup> samples.

* **Zero-Shot Classifier (Red Dashed):** The AUROC is constant at approximately 0.59.

* **Sampling (Purple Dashed):** The AUROC is constant at approximately 0.53.

### Key Observations

* As the number of samples increases, the ECE generally decreases for all three language models, indicating better calibration.

* As the number of samples increases, the AUROC generally increases for all three language models, indicating better classification performance.

* Mistral 7B Instruct has the highest AUROC but also the highest ECE, so it does not outperform the LLaMA-2 models on both metrics.

* The Zero-Shot Classifier and Sampling baselines remain constant across all sample sizes.

### Interpretation

The charts show how the number of labeled training examples affects the uncertainty estimates fine-tuned from each model. Decreasing ECE and increasing AUROC indicate that, with more data, the estimates become better calibrated and better at separating correct from incorrect answers. Mistral 7B Instruct attains the highest AUROC but the highest ECE, so no single model is best on both metrics. The horizontal baselines provide a reference point, showing that the fine-tuned estimates overtake the Zero-Shot Classifier and Sampling baselines once enough labeled data is available. Because the curves flatten on the logarithmic x-axis, the first increases in sample size matter more than later ones.

</details>

Figure 3: (Left) ECE and AUROC on both multiple-choice (MC) and open-ended (OE) MMLU. ECE is shown after temperature scaling on a small hold-out set. Supervised training (Probe, LoRA, LoRA + Prompt) tends to improve calibration and selective prediction. Probing on its own (Probe) performs worse than training through the features with a language prompt (LoRA + Prompt), especially in the open-ended setting. Error bars show two standard deviations over six base models. Extended results in Appendix D. (Right) Effect of varying the number of labeled data points on OE MMLU. In the most extreme case, we train on only 200 examples. Overall, performance improves as more labeled data becomes available, but 1000 points are almost as valuable as 20,000 points. Dotted lines indicate the performance of the classifier and sampling baselines averaged over the three models considered. Shaded regions show one standard deviation over subsets of MMLU.

Supervised learning approaches, in which we learn to predict a model’s correctness, can dramatically outperform baselines with as few as $1000$ graded examples. Updating the features of the model with LoRA and use of a language prompt are key to good performance.

6 When and Why Do These Estimates Generalize?

To derive more understanding of when our estimates generalize, we now investigate distribution shifts between the training and evaluation datasets. To have a practically useful tool, we might desire robustness to the following shifts, among others:

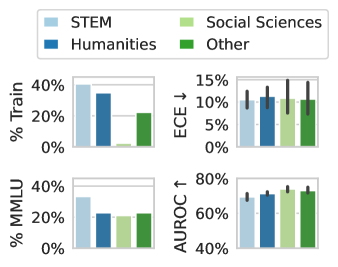

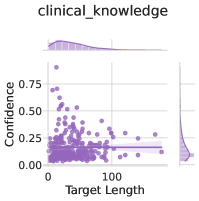

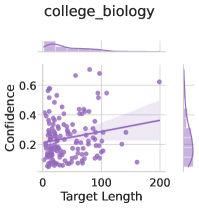

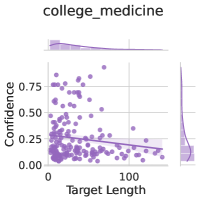

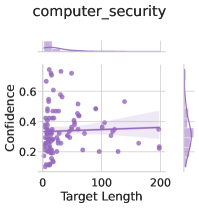

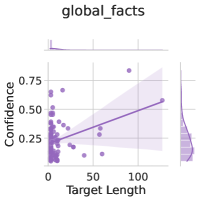

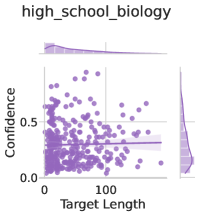

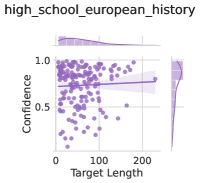

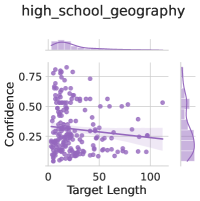

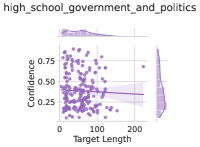

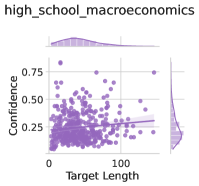

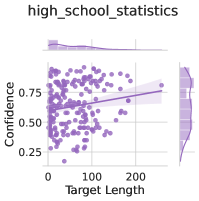

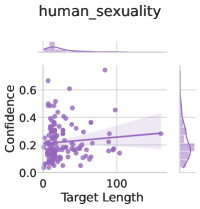

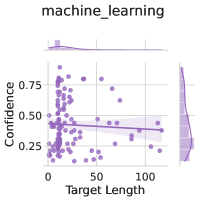

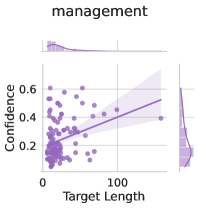

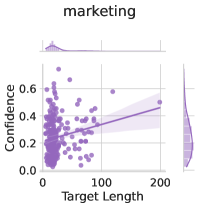

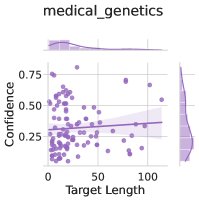

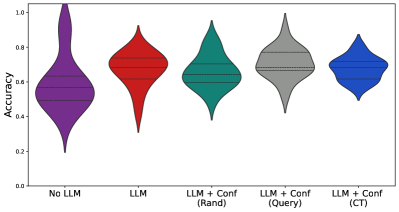

Subject matter. Ideally, our uncertainty estimates apply to subjects we have not seen during training. In Figure 4 (left), we show a breakdown of our fine-tuning dataset using the supercategories from MMLU (Section A.5). Our dataset contains much higher percentages of STEM and humanities questions than MMLU and almost no examples from the social sciences (e.g., government, economics, sociology). Despite these differences in composition, uncertainty estimates from LoRA + Prompt perform similarly across supercategories. We also show the efficacy of our models at assessing confidence on out-of-distribution coding tasks in Appendix F.

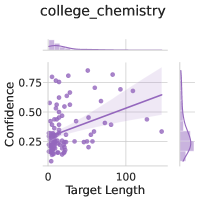

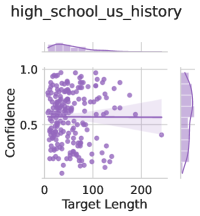

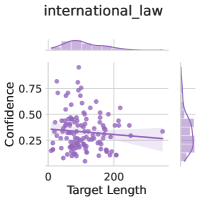

Format. Like a change in subject matter, the way a question is posed should not break the uncertainty estimate. To test the effect of the question format independently of its subject matter, we apply models fine-tuned on OE MMLU to MC MMLU and vice versa. In Figure 4 (center), we see that fine-tuned models often perform better than a zero-shot baseline even when they are applied across a distribution shift, though transfer from MC to OE is more challenging than from OE to MC. Probe is insufficient to generalize effectively from MC to OE, but training through the features of the model (LoRA + Prompt) does generalize effectively, even outperforming Probe trained on OE data.

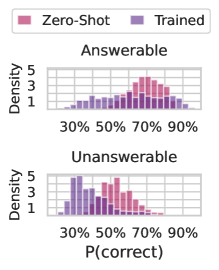

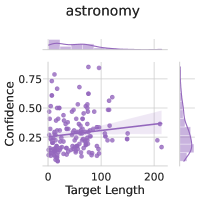

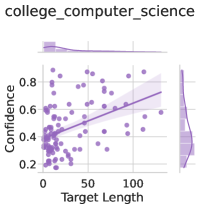

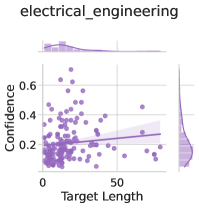

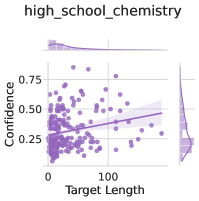

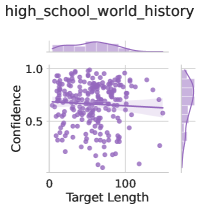

Solvability. Even though we focus on questions with a single known answer, we might hope that our estimates can be used even when a question is ill-posed or does not have a known solution, ideally returning high uncertainty. We generate answers, labels, and uncertainty estimates for the answerable and unanswerable questions in the SelfAware dataset (Yin et al., 2023) using the same procedure as for OE MMLU. In Figure 4 (right), we plot the $P(\text{correct})$ predicted by Zero-Shot Classifier and LoRA + Prompt for each answerable and unanswerable question. Notably, calibration-tuned models have calibrated probabilities for the answerable questions and assign lower confidence to unanswerable questions than black-box methods do.
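Low confidence is useful because it lets a system decline to answer. A minimal sketch of selective answering with a confidence threshold; the threshold is a hypothetical, application-specific choice, and the helper reports coverage (the fraction of questions answered) and the accuracy on the answered questions:

```python
def answer_or_abstain(answer, p_correct, threshold):
    """Return the answer if its estimated P(correct) clears the threshold, else None."""
    return answer if p_correct >= threshold else None


def coverage_and_selective_accuracy(p_correct, correct, threshold):
    kept = [y for p, y in zip(p_correct, correct) if p >= threshold]
    coverage = len(kept) / len(p_correct)
    accuracy = sum(kept) / len(kept) if kept else float("nan")
    return coverage, accuracy


print(answer_or_abstain("Canberra", 0.82, threshold=0.5))
print(answer_or_abstain("42", 0.31, threshold=0.5))  # abstains: None
print(coverage_and_selective_accuracy([0.9, 0.8, 0.4, 0.2], [1, 1, 0, 1], threshold=0.5))
```

Raising the threshold trades coverage for accuracy; sweeping it traces out the selective-prediction behavior that AUROC summarizes.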

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Charts: Performance by Field

### Overview

The image presents four bar charts broken down by MMLU supercategory (STEM, Humanities, Social Sciences, and Other). Two panels show dataset composition (% Train, the share of the fine-tuning dataset; % MMLU, the share of MMLU), and two show the quality of the uncertainty estimates (ECE, Expected Calibration Error; AUROC, Area Under the Receiver Operating Characteristic curve). Error bars on the ECE and AUROC panels indicate variability in the measurements.

### Components/Axes

**Legend (Top-Left):**

* STEM: Light Blue

* Humanities: Dark Blue

* Social Sciences: Light Green

* Other: Dark Green

**Chart 1: % Train (Top-Left)**

* Y-axis Label: % Train

* Y-axis Scale: 0% to 40%

* X-axis: Implied categories (STEM, Humanities, Social Sciences, Other)

**Chart 2: ECE ↓ (Top-Right)**

* Y-axis Label: ECE ↓

* Y-axis Scale: 0% to 15%

* X-axis: Implied categories (STEM, Humanities, Social Sciences, Other)

**Chart 3: % MMLU (Bottom-Left)**

* Y-axis Label: % MMLU

* Y-axis Scale: 0% to 40%

* X-axis: Implied categories (STEM, Humanities, Social Sciences, Other)

**Chart 4: AUROC ↑ (Bottom-Right)**

* Y-axis Label: AUROC ↑

* Y-axis Scale: 40% to 80%

* X-axis: Implied categories (STEM, Humanities, Social Sciences, Other)

### Detailed Analysis

**Chart 1: % Train**

* STEM (Light Blue): Approximately 39%

* Humanities (Dark Blue): Approximately 34%

* Social Sciences (Light Green): Approximately 2%

* Other (Dark Green): Approximately 22%

**Chart 2: ECE ↓**

* STEM (Light Blue): Approximately 10% with error bars ranging from 9% to 11%

* Humanities (Dark Blue): Approximately 11% with error bars ranging from 9% to 13%

* Social Sciences (Light Green): Approximately 10% with error bars ranging from 8% to 12%

* Other (Dark Green): Approximately 10% with error bars ranging from 8% to 14%

**Chart 3: % MMLU**

* STEM (Light Blue): Approximately 32%

* Humanities (Dark Blue): Approximately 22%

* Social Sciences (Light Green): Approximately 21%

* Other (Dark Green): Approximately 22%

**Chart 4: AUROC ↑**

* STEM (Light Blue): Approximately 69% with error bars ranging from 67% to 71%

* Humanities (Dark Blue): Approximately 72% with error bars ranging from 70% to 74%

* Social Sciences (Light Green): Approximately 74% with error bars ranging from 72% to 76%

* Other (Dark Green): Approximately 73% with error bars ranging from 71% to 75%

### Key Observations

* **% Train:** STEM and Humanities have significantly higher training percentages compared to Social Sciences.

* **ECE ↓:** The ECE values are relatively similar across all fields, with overlapping error bars, suggesting no significant difference.

* **% MMLU:** STEM makes up the largest share of MMLU questions (about 32%); the other three supercategories each account for roughly 21-22%.

* **AUROC ↑:** Social Sciences and Other fields have slightly higher AUROC scores compared to STEM and Humanities.

### Interpretation

The fine-tuning distribution (% Train) is heavily skewed towards STEM and Humanities, with almost no Social Sciences examples, while MMLU itself is more balanced (% MMLU). Despite this mismatch, calibration (ECE) is consistent across supercategories, and AUROC is similar, slightly higher for Social Sciences and Other; these AUROC differences are small and may not be statistically significant given the error bars. The arrows next to ECE and AUROC indicate the desired direction of each metric (lower ECE and higher AUROC are better). Overall, the uncertainty estimates appear to transfer to supercategories that are underrepresented in training.

</details>

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Performance Comparison of Different Methods

### Overview

The image presents a bar chart comparing methods (Zero-Shot Classifier, Probe, LoRA + Prompt) on two metrics: ECE (Expected Calibration Error) and AUROC (Area Under the Receiver Operating Characteristic curve). The chart is divided into two sections, MC and OE, denoting the evaluation setting (multiple-choice or open-ended MMLU). The "Transfer" variants of Probe and LoRA + Prompt are trained on the other setting and evaluated on the one shown.

### Components/Axes

* **Y-Axis (Left):**

* ECE ↓ (Expected Calibration Error): Ranges from 10% to 50%. Lower values are better.

* AUROC ↑ (Area Under the Receiver Operating Characteristic curve): Ranges from 40% to 80%. Higher values are better.

* **X-Axis:** Categorical, representing different methods:

* Zero-Shot Classifier (Orange)

* Probe (Dark Blue)

* ^(Transfer) (Light Blue) - Probe trained on the other setting (MC or OE) and evaluated on this one

* LoRA + Prompt (Dark Green)

* ^(Transfer) (Light Green) - LoRA + Prompt trained on the other setting (MC or OE) and evaluated on this one

* **Chart Sections:**

* MC: evaluation on multiple-choice MMLU

* OE: evaluation on open-ended MMLU

* **Legend (Top):**

* Zero-Shot Classifier (Orange)

* Probe (Dark Blue)

* ^(Transfer) (Light Blue)

* LoRA + Prompt (Dark Green)

* ^(Transfer) (Light Green)

### Detailed Analysis

**ECE (Expected Calibration Error) - Lower is better**

* **MC:**

* Zero-Shot Classifier (Orange): Approximately 35% ± 2%

* Probe (Dark Blue): Approximately 24% ± 1%

* Probe ^(Transfer) (Light Blue): Approximately 26% ± 1%

* LoRA + Prompt (Dark Green): Approximately 23% ± 1%

* LoRA + Prompt ^(Transfer) (Light Green): Approximately 24% ± 1%

* **OE:**

* Zero-Shot Classifier (Orange): Approximately 33% ± 2%

* Probe (Dark Blue): Approximately 29% ± 2%

* Probe ^(Transfer) (Light Blue): Approximately 30% ± 2%

* LoRA + Prompt (Dark Green): Approximately 18% ± 1%

* LoRA + Prompt ^(Transfer) (Light Green): Approximately 26% ± 2%

**AUROC (Area Under the Receiver Operating Characteristic curve) - Higher is better**

* **MC:**

* Zero-Shot Classifier (Orange): Approximately 58% ± 2%

* Probe (Dark Blue): Approximately 62% ± 2%

* Probe ^(Transfer) (Light Blue): Approximately 68% ± 1%

* LoRA + Prompt (Dark Green): Approximately 72% ± 1%

* LoRA + Prompt ^(Transfer) (Light Green): Approximately 70% ± 1%

* **OE:**

* Zero-Shot Classifier (Orange): Approximately 59% ± 2%

* Probe (Dark Blue): Approximately 55% ± 2%

* Probe ^(Transfer) (Light Blue): Approximately 52% ± 2%

* LoRA + Prompt (Dark Green): Approximately 72% ± 1%

* LoRA + Prompt ^(Transfer) (Light Green): Approximately 65% ± 2%

### Key Observations

* LoRA + Prompt generally performs better than Zero-Shot Classifier and Probe in terms of AUROC.

* Cross-setting transfer (^(Transfer)) usually reduces performance relative to training on the evaluation setting; the one exception is Probe on MC, where the transferred model has higher AUROC (about 68% versus 62%).

* LoRA + Prompt shows a significant improvement in ECE on the OE dataset compared to other methods.

* The performance differences between methods are more pronounced for AUROC than for ECE.

### Interpretation

The data suggest that LoRA + Prompt is the most effective method in both settings, particularly by AUROC. Training on the other setting usually costs some performance, and the cost is larger when evaluating on OE. Even so, LoRA + Prompt trained on MC and evaluated on OE (about 65% AUROC) still outperforms both the Zero-Shot Classifier and Probe trained directly on OE, while transferred Probe on OE is close to chance (about 52% AUROC). The low ECE of LoRA + Prompt on OE indicates that it is also the best-calibrated method in that setting.

</details>

<details>

<summary>x8.png Details</summary>

### Visual Description

## Chart Type: Stacked Histogram

### Overview

The image presents two stacked histograms, one for "Answerable" questions and one for "Unanswerable" questions. Each histogram shows the distribution of P(correct) values for two models: "Zero-Shot" (pink) and "Trained" (purple). The histograms are stacked, meaning the bars for each model are added on top of each other.

### Components/Axes

* **Y-axis (Density):** Ranges from 1 to 5, with tick marks at 1, 3, and 5.

* **X-axis (P(correct)):** Ranges from 30% to 90%, with tick marks at 30%, 50%, 70%, and 90%.

* **Titles:** "Answerable" (top histogram) and "Unanswerable" (bottom histogram).

* **Legend:** Located at the top of the image. "Zero-Shot" is represented by pink, and "Trained" is represented by purple.

### Detailed Analysis

**Answerable Histogram:**

* **Zero-Shot (Pink):** The distribution is skewed towards higher P(correct) values. The density increases from 30% to a peak around 70%-80%, then decreases slightly towards 90%.

* **Trained (Purple):** The distribution is more uniform across the range of P(correct) values, with a slight increase in density between 50% and 70%.

**Unanswerable Histogram:**

* **Zero-Shot (Pink):** The distribution is centered around 50%-60% P(correct), with a lower density at both ends of the range.

* **Trained (Purple):** The distribution is skewed towards lower P(correct) values, with a peak around 30%-40%.

### Key Observations

* For "Answerable" questions, the "Zero-Shot" model tends to have higher P(correct) values compared to the "Trained" model.

* For "Unanswerable" questions, the "Trained" model tends to have lower P(correct) values compared to the "Zero-Shot" model.

* The "Trained" model shows a clear distinction between "Answerable" and "Unanswerable" questions, with higher P(correct) for "Answerable" and lower P(correct) for "Unanswerable".

### Interpretation

The data suggest that the "Zero-Shot" classifier assigns higher confidence to "Answerable" questions, which does not by itself indicate better performance, while the "Trained" model better distinguishes "Answerable" from "Unanswerable" questions. The "Trained" model appears to have learned to assign lower probabilities to "Unanswerable" questions, which is the desired behavior. The "Zero-Shot" model, on the other hand, assigns moderate to high probabilities to both types of questions, suggesting that it is less sensitive to whether a question can be answered at all.

</details>

Figure 4: (Left) We compare the composition of the fine-tuning dataset with MMLU. Notably, although the training dataset contains almost no examples from the social sciences, uncertainty estimates from the model perform similarly across categories. (Center) Testing the generalization of supervised methods by taking models trained on one setting (MCQA or OE) and evaluating them on the other setting. The MCQA or OE labels denote the evaluation setting, and the method labels indicate whether the model was trained on the same or a different setting. Fine-tuning through the model’s features (LoRA + Prompt) performs almost as well in transfer as on in-distribution data. Zero-Shot Classifier involves no supervised learning except a temperature-scaling step and is a useful reference point. Error bars show two standard deviations over six fine-tuned models. (Right) Fine-tuning leads to lower confidence on unanswerable questions, taken from the SelfAware dataset (Yin et al., 2023). Assigning low confidence to unanswerable questions allows the model to opt out of responding.

6.1 What are uncertainty estimates learning?

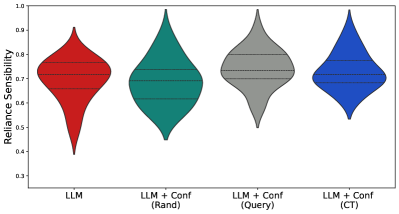

Language models can generate useful uncertainty estimates after training on a relatively small number of labeled examples. How is this possible? We hypothesize two potentially complementary mechanisms: (a) LLMs assess the correctness of an answer given a question, or (b) LLMs recognize that certain topics often have incorrect answers. To understand the difference, let’s explore a useful metaphor. Imagine I speak only English, while my friend, Alice, is a linguaphile and dabbles in many languages. I have a spreadsheet of how often Alice makes mistakes in each language. Now, when I hear Alice attempting to converse in language A, I can guess how likely she is to err by recognizing the language from its sound and consulting the spreadsheet. I can do this without understanding the language at all. Alternatively, I can learn each language, which would be more complex but would strengthen my predictions.
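Mechanism (b), the “spreadsheet” in the metaphor, can be made concrete as a lookup table of empirical accuracy per topic. This is an illustrative baseline, not a method evaluated in this section, and it assumes each graded example carries a hypothetical topic label. It assigns the same $P(\text{correct})$ to every answer within a topic, so it cannot tell a right answer from a wrong one on the same topic, which is what mechanism (a) would add:

```python
from collections import defaultdict


def fit_topic_accuracy_table(topics, correct):
    """The 'spreadsheet': empirical accuracy for each topic in the graded data."""
    counts = defaultdict(lambda: [0, 0])  # topic -> [num_correct, num_seen]
    for topic, y in zip(topics, correct):
        counts[topic][0] += y
        counts[topic][1] += 1
    return {topic: c / n for topic, (c, n) in counts.items()}


def topic_only_p_correct(table, topic, default):
    """P(correct) from the topic alone; the question and answer text are ignored."""
    return table.get(topic, default)


table = fit_topic_accuracy_table(
    ["French", "French", "French", "French", "Swahili", "Swahili"],
    [1, 1, 1, 0, 0, 1],
)
print(table)  # {'French': 0.75, 'Swahili': 0.5}
print(topic_only_p_correct(table, "Klingon", default=0.6))  # unseen topic: fall back to default
```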

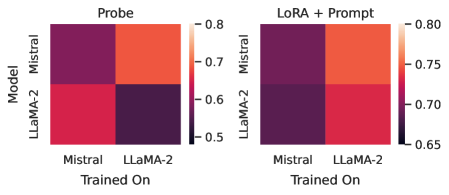

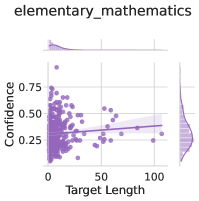

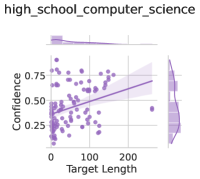

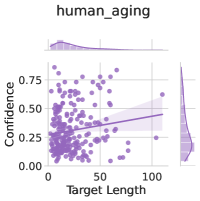

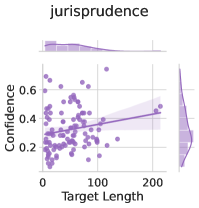

To disentangle these two possibilities in our setting, we perform an additional experiment in which we replace the language model’s answers in the fine-tuning dataset with incorrect answer options. If a language model is simply learning patterns in the errors present in the training data, then we would expect this ablation to perform on par with the original method, because it suffices to learn patterns in the content of the question and answer without needing the true causal relationship between question, answer, and correctness label. The results are shown in Figure 5 (left). The model trained on incorrect answers performs surprisingly well, on par with a Probe model, but significantly worse than a model trained on the original sampled answers. Correlating question content with error rates, while moderately successful, therefore cannot fully explain the LoRA + Prompt estimates.
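Our reading of this ablation, as a sketch: each training example keeps its question and its original correctness label, but the model's sampled answer is swapped for an incorrect multiple-choice option, so the answer text no longer determines the label. The record schema (`options`, `gold`, and the other keys) and the uniform random choice of wrong option are hypothetical:

```python
import random


def make_incorrect_answer_ablation(examples, seed=0):
    """Replace each sampled answer with a random incorrect option, keeping the label.

    Each example is a dict with hypothetical keys: 'question', 'answer',
    'label' (1 if the sampled answer was graded correct), 'options' (the
    multiple-choice options), and 'gold' (the correct option).
    """
    rng = random.Random(seed)
    ablated = []
    for ex in examples:
        wrong_options = [o for o in ex["options"] if o != ex["gold"]]
        ablated.append({
            "question": ex["question"],
            "answer": rng.choice(wrong_options),  # never the gold option
            "label": ex["label"],  # unchanged: still grades the original sampled answer
        })
    return ablated


examples = [
    {"question": "Capital of France?", "answer": "Paris", "label": 1,
     "options": ["Paris", "Lyon", "Nice", "Lille"], "gold": "Paris"},
    {"question": "What is 2 + 2?", "answer": "5", "label": 0,
     "options": ["3", "4", "5", "6"], "gold": "4"},
]
for row in make_incorrect_answer_ablation(examples):
    print(row)
```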

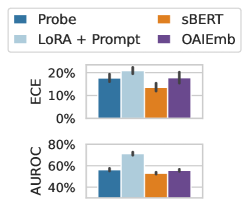

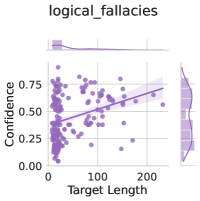

Self-knowledge. Lastly, we examine whether a language model can be used to model not just its own uncertainties but the uncertainties of other models. Several prior works argue that models identify correct answers by way of internal representations of truth, which might be unique to a model evaluating its own generations (Azaria and Mitchell, 2023; Burns et al., 2022). In Figure 5 (right), we show that, by contrast, Mistral 7B actually achieves better AUROC when applied to LLaMA-2 7B than LLaMA-2 7B achieves when applied to itself. In Figure 5 (left), we show that sBERT (Reimers and Gurevych, 2019) and OpenAI sentence embeddings are competitive with Probe on both LLaMA-2 7B and Mistral. Together, these results suggest that LLM uncertainties are likely not model-specific. The practical upside of this insight is that one strong base model can be used to estimate the uncertainties of many other models, even closed-source models behind APIs, when a small labeled dataset is available or can be generated.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Bar Chart: ECE and AUROC Comparison

### Overview

The image presents a bar chart comparing three categories: "Incorrect", "Sampled", and "Probe" across two metrics: ECE (Expected Calibration Error) and AUROC (Area Under the Receiver Operating Characteristic curve). The chart displays the mean values for each category with error bars indicating variability.

### Components/Axes

* **Y-axis (Left):**

  * Top Chart: ECE, labeled vertically. Scale ranges from 0% to 20% in increments of 10%.

  * Bottom Chart: AUROC, labeled vertically. Scale ranges from 30% to 70% in increments of 20%.

* **X-axis:** Implicitly represents the three categories: "Probe", "Incorrect", and "Sampled".

* **Legend (Top):** Located at the top of the image.

  * Light Blue: "Incorrect"

  * Dark Blue: "Sampled"

  * Orange: "Probe"

### Detailed Analysis

**Top Chart: ECE**

* **Probe (Orange):** ECE value is approximately 12% with an error bar extending from about 8% to 16%.

* **Incorrect (Light Blue):** ECE value is approximately 16% with an error bar extending from about 12% to 20%.

* **Sampled (Dark Blue):** ECE value is approximately 9% with an error bar extending from about 5% to 13%.

**Bottom Chart: AUROC**

* **Probe (Orange):** AUROC value is approximately 62% with an error bar extending from about 58% to 66%.

* **Incorrect (Light Blue):** AUROC value is approximately 64% with an error bar extending from about 60% to 68%.

* **Sampled (Dark Blue):** AUROC value is approximately 71% with an error bar extending from about 67% to 75%.

### Key Observations

* For ECE, "Sampled" has the lowest value, while "Incorrect" has the highest.

* For AUROC, "Sampled" has the highest value, while "Probe" has the lowest.

* The error bars indicate the variability within each category.

### Interpretation

The chart suggests that the "Sampled" category performs best in terms of both calibration (lowest ECE) and discrimination (highest AUROC). The "Incorrect" category has the worst calibration (highest ECE), and its discrimination (AUROC) is comparable to "Probe" but clearly below "Sampled". The "Probe" category has the lowest AUROC. The error bars provide an indication of the uncertainty associated with each estimate.

</details>
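
For readers comparing the ECE bars, here is a minimal sketch of expected calibration error as it is commonly defined for a binary correctness estimator: predictions are grouped into equal-width confidence bins, and ECE is the sample-weighted average gap between mean predicted confidence and observed accuracy in each bin. The equal-width binning and the default of 10 bins are assumptions; this section does not state the binning used for the figures.

```python
from typing import Sequence


def expected_calibration_error(confidences: Sequence[float],
                               correct: Sequence[int],
                               n_bins: int = 10) -> float:
    """ECE with equal-width bins over [0, 1].

    confidences: predicted probability that each answer is correct.
    correct: 1 if the answer was actually correct, else 0.
    """
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need equally sized, non-empty inputs")
    n = len(confidences)
    bins: list[list[tuple[float, int]]] = [[] for _ in range(n_bins)]
    for c, y in zip(confidences, correct):
        # Bins are [k/n_bins, (k+1)/n_bins); a confidence of exactly 1.0
        # is placed in the last bin.
        idx = min(int(c * n_bins), n_bins - 1)
        bins[idx].append((c, y))
    ece = 0.0
    for members in bins:
        if not members:
            continue  # empty bins contribute nothing
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(y for _, y in members) / len(members)
        ece += (len(members) / n) * abs(mean_conf - accuracy)
    return ece


if __name__ == "__main__":
    # Overconfident toy example: 90% stated confidence, 75% actual accuracy.
    print(expected_calibration_error([0.9, 0.9, 0.9, 0.9], [1, 1, 1, 0]))
```

A lower ECE means stated confidences track observed accuracy more closely. ECE says nothing about how well the scores rank correct above incorrect answers, which is why the figures report AUROC alongside it.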

<details>

<summary>x10.png Details</summary>

### Visual Description

## Heatmaps: Model Performance Comparison

### Overview

The image presents two heatmaps comparing two language models, Mistral and LLaMA-2, under different training and evaluation conditions. The left heatmap, titled "Probe," shows performance when using a probe. The right heatmap, titled "LoRA + Prompt," shows performance when using LoRA with a prompt. The heatmaps show the performance (AUROC, per the Figure 5 caption) of each model (Mistral and LLaMA-2) when trained to predict the correctness of either Mistral's or LLaMA-2's generations. The color intensity represents the score, with higher scores shown in lighter colors and lower scores in darker colors.

### Components/Axes

* **Titles:** "Probe" (left heatmap), "LoRA + Prompt" (right heatmap)

* **Y-axis Label:** "Model"

* **Y-axis Categories:** Mistral, LLaMA-2

* **X-axis Label:** "Trained On"

* **X-axis Categories:** Mistral, LLaMA-2

* **Color Scale (Right Side of Each Heatmap):**

  * Left Heatmap: 0.8 (top, lightest color), 0.7, 0.6, 0.5 (bottom, darkest color)

  * Right Heatmap: 0.80 (top, lightest color), 0.75, 0.70, 0.65 (bottom, darkest color)

### Detailed Analysis

**Left Heatmap: Probe**

* **Mistral (Model) Trained On Mistral:** Dark purple, indicating a low performance score of approximately 0.55.

* **Mistral (Model) Trained On LLaMA-2:** Light orange, indicating a high performance score of approximately 0.78.

* **LLaMA-2 (Model) Trained On Mistral:** Red, indicating a medium-high performance score of approximately 0.68.

* **LLaMA-2 (Model) Trained On LLaMA-2:** Dark purple, indicating a low performance score of approximately 0.55.

**Right Heatmap: LoRA + Prompt**

* **Mistral (Model) Trained On Mistral:** Dark purple, indicating a low performance score of approximately 0.66.

* **Mistral (Model) Trained On LLaMA-2:** Red-orange, indicating a high performance score of approximately 0.77.

* **LLaMA-2 (Model) Trained On Mistral:** Dark purple, indicating a low performance score of approximately 0.66.

* **LLaMA-2 (Model) Trained On LLaMA-2:** Red, indicating a medium-high performance score of approximately 0.73.

### Key Observations

* In the "Probe" configuration, both models perform significantly better when trained on the *other* model's data. Mistral performs best when trained on LLaMA-2, and LLaMA-2 performs better when trained on Mistral.

* In the "LoRA + Prompt" configuration, Mistral still performs better when trained on LLaMA-2, but the difference is less pronounced. LLaMA-2 performs better when trained on LLaMA-2.

* The "LoRA + Prompt" configuration generally results in higher performance scores compared to the "Probe" configuration, especially for LLaMA-2.

### Interpretation

Read together with the Figure 5 caption, the heatmaps suggest that a model's ability to estimate correctness is not tied to its own generations. With a probe, both models score higher when trained on the other model's data than on their own. With LoRA + Prompt, Mistral trained on LLaMA-2 (about 0.77) still outperforms LLaMA-2 trained on itself (about 0.73). LoRA + Prompt also yields higher scores than the probe on average, particularly for LLaMA-2, indicating that it is the more effective training strategy in this comparison.

</details>

<details>

<summary>x11.png Details</summary>

### Visual Description

## Bar Chart: ECE and AUROC Comparison

### Overview

The image presents a bar chart comparing the performance of four different methods (Probe, LoRA + Prompt, sBERT, and OAIEmb) based on two metrics: ECE (Expected Calibration Error) and AUROC (Area Under the Receiver Operating Characteristic curve). The chart is divided into two subplots, one for each metric.

### Components/Axes

* **Chart Title:** Implicitly, a comparison of methods based on ECE and AUROC.

* **Y-axis (Top Subplot):** ECE, ranging from 0% to 20%.

* **Y-axis (Bottom Subplot):** AUROC, ranging from 40% to 80%.

* **X-axis:** Represents the four different methods being compared.

* **Legend (Top-Left):**

  * Probe (Dark Teal)

  * LoRA + Prompt (Light Blue)

  * sBERT (Orange)

  * OAIEmb (Purple)

### Detailed Analysis

**Top Subplot (ECE):**

* **Probe (Dark Teal):** ECE is approximately 18% ± 2%.

* **LoRA + Prompt (Light Blue):** ECE is approximately 19% ± 2%.

* **sBERT (Orange):** ECE is approximately 13% ± 1%.

* **OAIEmb (Purple):** ECE is approximately 18% ± 2%.

**Bottom Subplot (AUROC):**

* **Probe (Dark Teal):** AUROC is approximately 57% ± 3%.

* **LoRA + Prompt (Light Blue):** AUROC is approximately 72% ± 3%.

* **sBERT (Orange):** AUROC is approximately 54% ± 2%.

* **OAIEmb (Purple):** AUROC is approximately 56% ± 2%.

### Key Observations

* For ECE, LoRA + Prompt has the highest value, while sBERT has the lowest.

* For AUROC, LoRA + Prompt significantly outperforms the other methods.

* sBERT has the lowest AUROC.

* The error bars indicate the variability or uncertainty associated with each measurement.

### Interpretation

The chart suggests that the LoRA + Prompt method has by far the highest discriminative power (highest AUROC), although in this panel its ECE is the highest of the four methods. sBERT has the lowest ECE but also the lowest AUROC. The Probe and OAIEmb methods perform similarly on both metrics, and the three methods built on frozen features (Probe, sBERT, OAIEmb) have comparable AUROC. The error bars indicate the variability across MMLU tasks. The AUROC gap between LoRA + Prompt and the frozen-feature methods is consistent with the caption's observation that training through a model is much better.

</details>

Figure 5: (Left) We ablate the correspondence between questions and answers by training LoRA + Prompt on a dataset with correctness labels from the model’s generations but with the actual generations swapped for incorrect answers. In this case, the only relationships the model can extract are between the correctness labels and the questions. The model trained on incorrect answers generalizes surprisingly well but is much worse than a model trained on the original answers. Error bars show two standard deviations over three instruction-tuned models. (Center) We test how well models can learn to predict the correctness of a different model (in terms of AUROC), and we find that Mistral models are often better at estimating the correctness of LLaMA models than LLaMA models are on their own generations. (Right) We show that generic sentence embeddings can also perform on par with frozen language model representations (MMLU-OE), but training through a model is much better. sBERT and OAIEmb refer to training a classifier on top of sBERT (Reimers and Gurevych, 2019) or OpenAI sentence embeddings, respectively. Error bars show two standard deviations over tasks in MMLU.

Learned uncertainty estimates generalize to new formatting, subject matter, and even the generations of other models. This generalization appears to stem not simply from judging a question’s difficulty based on its subject matter (a shortcut) but also from learning the correspondence between questions and correct answers.

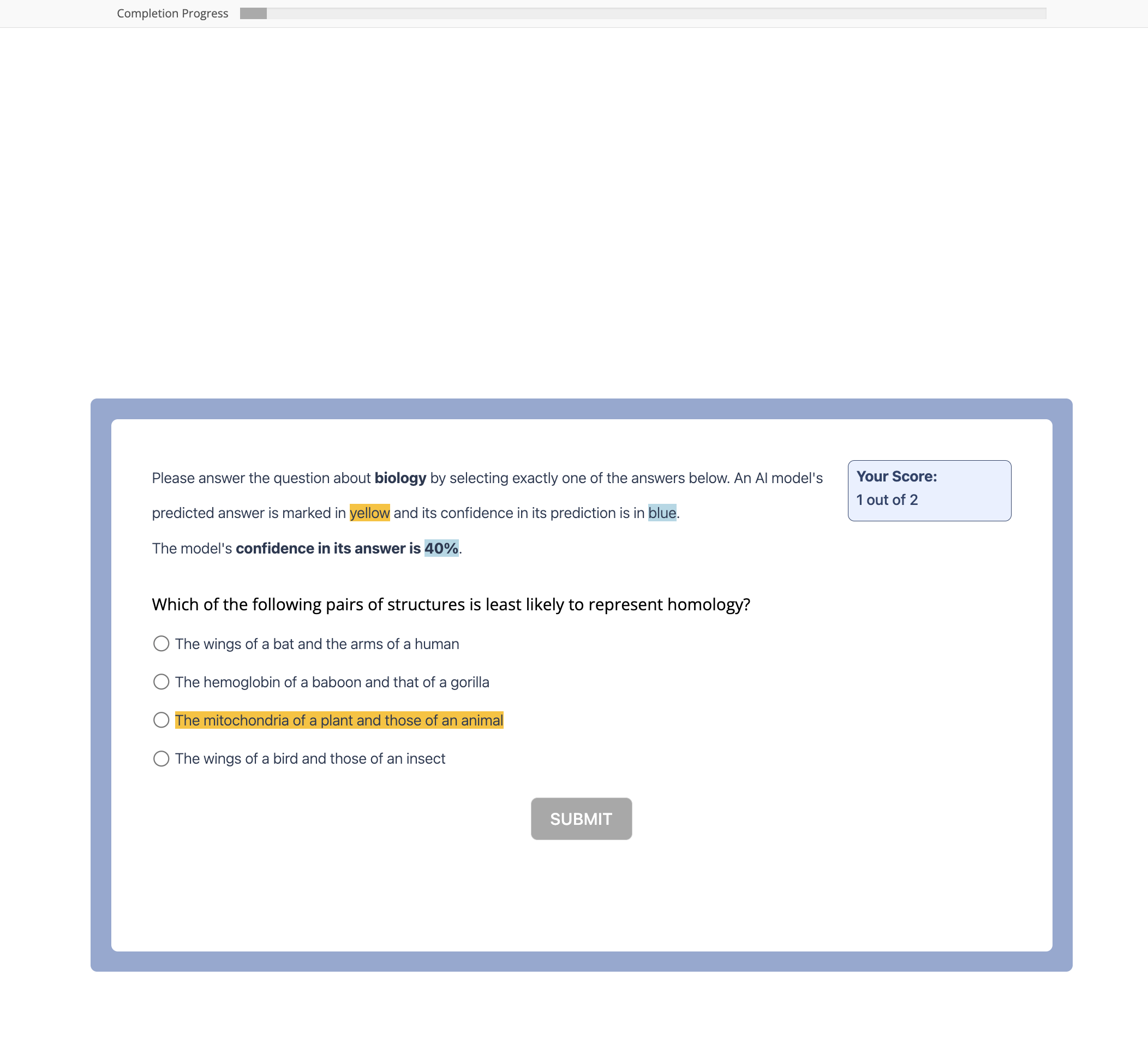

7 Does Calibrated Confidence Improve Collaboration with AI Assistants?

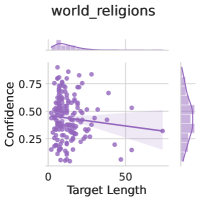

One key motivation for estimating LLM uncertainty is to signal the model’s reliability during collaborative decision making. To examine how our uncertainty estimates can be used in this capacity, we perform a preliminary user study (with $N=181$ participants) in which participants complete a multiple-choice exam in collaboration with an LLM (Mistral 7B Instruct). For each question, the participant is shown both the LLM’s prediction and an uncertainty estimate, which comes from either a calibrated or an uncalibrated method. We hypothesize that users are more likely to incorporate calibrated uncertainty scores into their decision process. A more detailed description of the setup of our study is available in Appendix G.

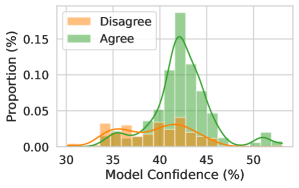

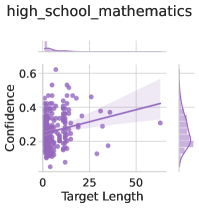

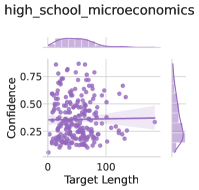

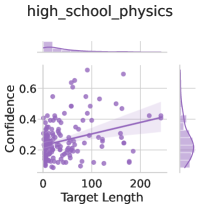

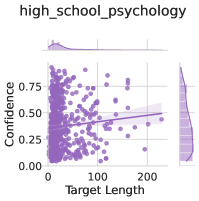

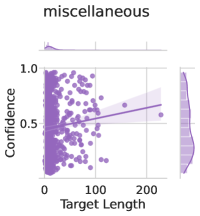

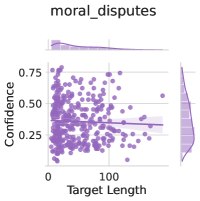

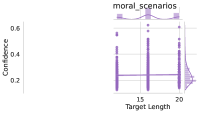

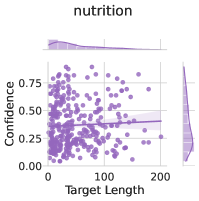

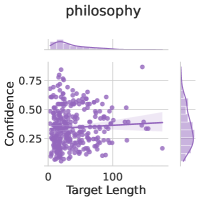

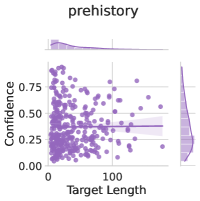

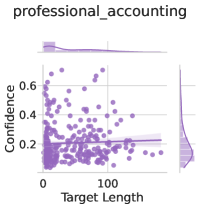

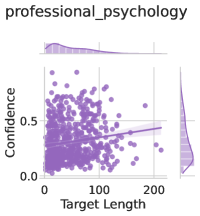

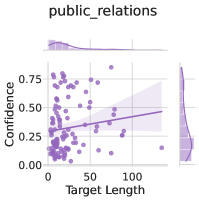

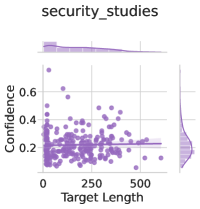

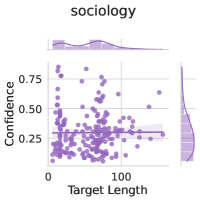

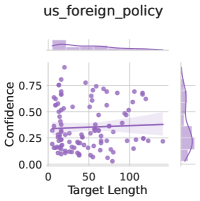

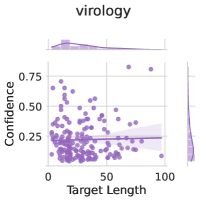

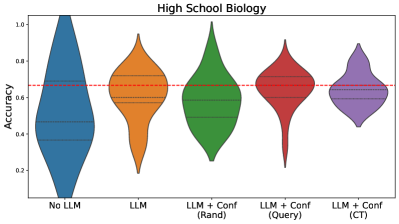

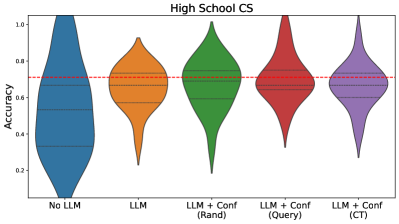

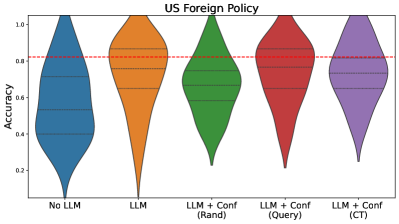

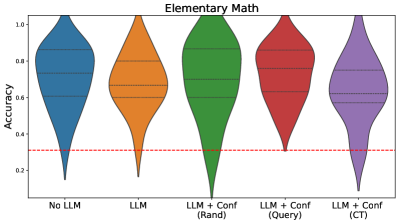

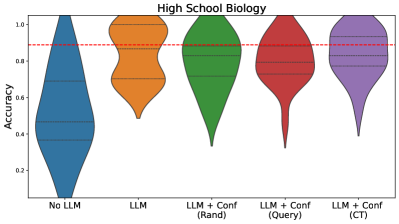

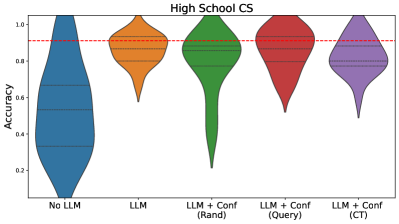

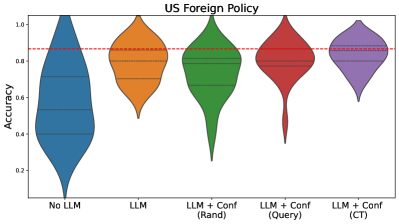

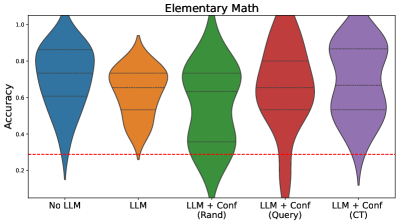

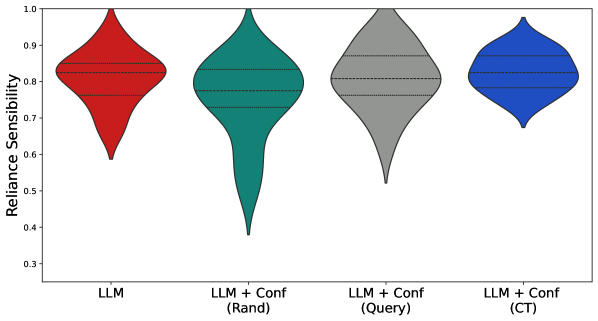

People are sensitive to informed confidence scores.

Figure 6 shows density plots of the model’s reported confidence, split by whether the user chose to agree with the model’s prediction. We find that participants are sensitive to the confidence scores and, when the uncertainties are reliable, tend to use them when deciding whether to agree or disagree with the model’s prediction. In contrast, participants generally do not modulate their reliance on the model’s output under a random confidence baseline (Figure 6 (c)), in which the displayed uncertainty estimate is drawn uniformly at random. We see the strongest discrepancy in reliance choices when LoRA + Probe confidence scores are presented, highlighting that calibrated confidence does influence user behavior.
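
The reliance comparison behind these density plots can also be summarized numerically. A minimal sketch, assuming each trial is logged as a (hypothetical) pair of the displayed confidence and whether the participant agreed with the model: it reports the agreement rate per confidence bin and the gap in mean displayed confidence between agree and disagree trials. The bin edges are an arbitrary illustrative choice. If participants use the scores, the agreement rate should rise with confidence and the gap should be positive; if they ignore the scores, as under the random baseline, both should stay close to flat.

```python
from typing import Optional, Sequence


def agreement_by_confidence(
    confidences: Sequence[float],
    agreed: Sequence[bool],
    edges: Sequence[float] = (0.0, 0.25, 0.5, 0.75, 1.0),
) -> list[tuple[float, float, Optional[float]]]:
    """Return (bin_low, bin_high, agreement_rate) for each confidence bin.

    agreement_rate is None when no trial falls in the bin.
    """
    rows = []
    for i, (lo, hi) in enumerate(zip(edges[:-1], edges[1:])):
        last = i == len(edges) - 2
        # Half-open bins [lo, hi), except the last bin, which also includes hi.
        in_bin = [a for c, a in zip(confidences, agreed)
                  if lo <= c < hi or (last and c == hi)]
        rate = sum(in_bin) / len(in_bin) if in_bin else None
        rows.append((lo, hi, rate))
    return rows


def mean_confidence_gap(confidences: Sequence[float],
                        agreed: Sequence[bool]) -> float:
    """Mean displayed confidence on agree trials minus that on disagree trials."""
    agree = [c for c, a in zip(confidences, agreed) if a]
    disagree = [c for c, a in zip(confidences, agreed) if not a]
    if not agree or not disagree:
        raise ValueError("need both agree and disagree trials")
    return sum(agree) / len(agree) - sum(disagree) / len(disagree)


if __name__ == "__main__":
    conf = [0.2, 0.3, 0.7, 0.8, 0.9]
    agreed = [False, True, True, True, True]
    for lo, hi, rate in agreement_by_confidence(conf, agreed):
        print(f"[{lo:.2f}, {hi:.2f}]: {rate}")
    print(round(mean_confidence_gap(conf, agreed), 3))
```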

We include additional details and results in Appendix G. We find that confidence scores have the biggest effect on improving the accuracy of the lowest-performing users, rather than on average accuracy. However, this is a preliminary result in the nascent field of studying LLM uncertainties in practical collaborative decision making with users, and we are still only scratching the surface of this question. Drawing more fine-grained conclusions would require a study devoted to this subject. We outline several limitations and future directions in Appendix G.


<details>

<summary>x12.png Details</summary>

### Visual Description

## Histogram: Model Confidence vs. Proportion of Agreement/Disagreement

### Overview

The image is a histogram showing the distribution of the model's displayed confidence, split by whether the participant agreed or disagreed with the model's prediction. The x-axis represents the model's confidence (in percent), and the y-axis represents the proportion of instances (in percent). Two distributions are plotted: one for instances where the participant disagreed with the model (orange) and one for instances where the participant agreed (green).

### Components/Axes

* **X-axis:** Model Confidence (%), ranging from 30% to 50%. Increments are not explicitly marked, but the axis spans 20 percentage points.

* **Y-axis:** Proportion (%), ranging from 0.00% to 0.15%. Increments are not explicitly marked.

* **Legend:** Located in the top-left corner.

  * Orange: Disagree

  * Green: Agree

### Detailed Analysis

* **Disagree (Orange):**

  * The distribution is relatively flat, with a small peak around 35% confidence.

  * There is a small bump around 50% confidence.

* **Agree (Green):**

  * The distribution is concentrated around 42-45% confidence, with a clear peak around 43%.

  * There is a small bump around 52% confidence.

### Key Observations

* Participants tend to agree with the model when its displayed confidence is higher.

* The confidence distribution is much narrower for trials where participants agree than for trials where they disagree.

* Participants rarely disagree with the model when its displayed confidence is high.

### Interpretation