# Semantic Structure-Mapping in LLM and Human Analogical Reasoning

**Authors**: Sam Musker, Alex Duchnowski, Raphaël Millière, Ellie Pavlick

## Abstract

Analogical reasoning is considered core to human learning and cognition. Recent studies have compared the analogical reasoning abilities of human subjects and Large Language Models (LLMs) on abstract symbol manipulation tasks, such as letter string analogies. However, these studies largely neglect analogical reasoning over semantically meaningful symbols, such as natural language words. This ability to draw analogies that link language to non-linguistic domains, which we term semantic structure-mapping, is thought to play a crucial role in language acquisition and broader cognitive development. We test human subjects and LLMs on analogical reasoning tasks that require the transfer of semantic structure and content from one domain to another. Advanced LLMs match human performance across many task variations. However, humans and LLMs respond differently to certain task variations and semantic distractors. Overall, our data suggest that LLMs are approaching human-level performance on these important cognitive tasks, but are not yet entirely human-like.

Keywords: language models, analogies, structure-mapping

Brown University, Department of Computer Science, Providence, RI 02912, USA

Macquarie University, Department of Philosophy, Sydney, NSW 2109, Australia

## 1 Introduction

The recent advances of large language models (LLMs) have raised the question of whether LLMs can serve as useful cognitive models in the study of various aspects of human learning, cognition, and behavior [1, 2, 3]. One such recent debate has focused on whether LLMs acquire the ability to perform analogical reasoning as a by-product of their self-supervised learning objective [4, 5, 6, 7]. Analogical reasoning—the ability to align abstract structures between a source and target domain—is posited to play a central role in human learning and generalization, for example, our ability to reason efficiently in unfamiliar domains [8, 9]. Thus, the question of whether LLMs can reason analogically in a human-like way directly bears on their ability to serve as computational models of human behavior beyond just next-word prediction.

Recent work has focused on the ability of advanced LLMs to match human analogical reasoning performance on tasks that involve recognition of spatial and logical transformations in matrices [4] or detecting patterns in strings of letters or numbers [7]. For example, Mitchell [7] uses analogy tasks such as abcd:abce::ijkl:?? in order to test the extent to which LLMs and humans can recognize and generalize abstract structures and operations (in this example, ordered sequences and successor functions). Such studies have produced mixed results, with evidence suggesting that advanced LLMs achieve the same performance and even produce similar error patterns to those observed in humans [4, 6], but with doubts remaining about the robustness of LLMs’ abilities, particularly with respect to increasingly abstract and challenging domains [10].

Previous work has focused almost exclusively on analogies using abstract and arbitrary symbols, where structures are derived from symbols’ spatial positions in the text prompt, but the symbols themselves are unimportant. This leaves out questions about reasoning analogically over semantically meaningful symbols, such as words in natural language. This type of analogical reasoning, which we call semantic structure-mapping, requires mapping between semantic structure in one domain (e.g., the relationship between a dog and a puppy, or that a dog has four legs) and non-semantic (arbitrary) structure in the other domain (e.g., spatial position in the text prompt). This type of mapping is thought to play a crucial role in human cognition and development, such as in the language-analogical reasoning feedback loop proposed by Structure-Mapping Theory (SMT) [11]. Moreover, if LLMs are to provide insight into how humans perform certain cognitive functions, it will likely involve the role of distributional semantic learning [12, 13, 14] in the acquisition or representation of those functions. Therefore, we focus on investigating how humans and LLMs compare in tasks requiring semantic structure-mapping and assessing whether patterns differ from those observed on tasks involving only arbitrary symbols.

We design two experiments, focused respectively on the mapping of semantic structure (i.e., semantic relationships between symbols, such as relating the symbol dog to the symbol puppy) and semantic content (i.e., information attached to a symbol, such as the knowledge that a dog has four legs). In each experiment, the subject (human or LLM) is presented with a set of left-hand terms (the source domain) and a corresponding set of right-hand terms (the target domain), with the final right-hand term omitted. The subject is asked to fill in this blank. An exact copy of our prompt and an example question are shown in Figure 1. We construct multiple variants of such questions to probe structure-mapping that involves semantic structure and semantic content, respectively. We additionally design a series of control and distractor conditions (e.g., interleaving informative mappings such as square => C C C with uninformative ones such as lime => X X X) in order to expose differences in the underlying mechanism.

Overall, the most advanced LLMs we tested match human performance across our primary conditions, even producing human-like error patterns. However, significant differences emerge in several control settings. Even the most advanced LLMs show more sensitivity than humans to information presentation order and struggle to ignore irrelevant semantic information that humans readily dismiss. Thus, our results contribute to the ongoing debate about analogical reasoning, corroborating both work arguing for impressive LLM performance [4, 15] and work highlighting important mechanistic differences between humans and LLMs [10, 16]. Code and data are available at https://github.com/AnonymousReview123/Semantic_Structure_Mapping_Anon. By presenting data on the unique role of semantic structure and content in analogical reasoning, we suggest differences remain in how LLMs and humans represent and map semantic structure, although this gap may be closing as models increase in size and incorporate more diverse training signals. We argue that this has important implications for studying cognitive development and the role of LLMs in this research going forward.

## 2 Methods

### 2.1 Experiment Details

#### 2.1.1 Semantic Structure

Each subject was presented with a quiz, which is a sequence of four such questions generated using four sets of base domains and four sets of target domains selected such that a participant sees each base and target domain exactly once. Eight variants of the task were devised to investigate the influence of task variations as described above.

Questions are introduced with the prompt “We are conducting an experiment on general reasoning abilities. Below we will show you various words and drawings of each, after which you will need to complete the last drawing. Respond as concisely as possible with only the last drawing.” We use the term “drawings” to describe the elements in the target domain because it loosely encapsulates the idea of mapping between the source and target domains. In a similar way to how drawings serve as partial structurally isomorphic representations that depict a subject with varying degrees of abstraction [17], the elements in our target domains establish a space of relations that are isomorphic to those in the source domain. In some cases the term “drawing” is straightforwardly applicable, as when the capitalization of characters corresponds to the term for a mature animal. In other cases the use is strained, as when capitalization instead corresponds to a shape being symmetrical. This transparently liberal use of the term “drawing” is intended to prime subjects to reason creatively while attending to the correspondence between source and target domains. The prompt deliberately makes no reference to analogical reasoning, so as to elicit pre-theoretic responses to the extent possible. For the same purpose, the experiment is introduced to human subjects and LLMs as studying “general reasoning abilities.”

#### 2.1.2 Semantic Content

Each condition (described in Table 4) contains two quizzes, with four questions per quiz. Unless otherwise stated, methodological details of the Semantic Content experiment match those of the Semantic Structure experiment.

The four conditions are divided into those that require numeric reasoning and those that do not. Within the numeric and non-numeric conditions respectively, one condition utilizes only one dimension of variation (referred to as “single-attribute”) whereas another adds a second dimension of variation (“multi-attribute”). This allows for comparing the relative performance of human subjects and models when the task is made to require compositional reasoning over layered transformations.

Questions were formatted like the following example:

| horse => * * * * |
| --- |
| cat => * * * * |
| ant => ! ! ! ! ! ! |
| bee => ! ! ! ! ! ! |
| chicken => ! ! |
| spider => ! ! ! ! ! ! ! ! |
| dog => * * * * |
| human => |

In this example, the number of symbols corresponds to a number-of-legs feature, and the usage of exclamation marks and asterisks corresponds to an egg-laying feature (or, alternatively, a mammal feature). The right-hand sequences of characters thereby encode properties of the entities denoted by the left-hand words. Given that humans are two-legged mammals, the correct answer here would be * *. In order to solve this task, the participant must understand both aspects of the information encoded in the right-hand terms and then construct the answer by generalizing to a new example.
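The encoding in this example can be sketched in a few lines of Python. This is only an illustration of the mapping described above, not the authors' generation code: the `Animal` class and the `encode` and `format_question` helpers are hypothetical names, and the egg-laying reading of the symbol choice is one of the two interpretations mentioned in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Animal:
    name: str
    legs: int
    lays_eggs: bool


def encode(animal: Animal) -> str:
    """Build the right-hand term: one symbol per leg, '!' for egg-layers, '*' otherwise."""
    symbol = "!" if animal.lays_eggs else "*"
    return " ".join([symbol] * animal.legs)


def format_question(examples: list[Animal], query: Animal) -> str:
    """Render completed rows followed by the incomplete query row."""
    lines = [f"{a.name} => {encode(a)}" for a in examples]
    lines.append(f"{query.name} =>")
    return "\n".join(lines)


# Rows taken from the example question above.
EXAMPLES = [
    Animal("horse", 4, False),
    Animal("cat", 4, False),
    Animal("ant", 6, True),
    Animal("bee", 6, True),
    Animal("chicken", 2, True),
    Animal("spider", 8, True),
    Animal("dog", 4, False),
]
HUMAN = Animal("human", 2, False)

if __name__ == "__main__":
    print(format_question(EXAMPLES, HUMAN))
    print("expected answer:", encode(HUMAN))
```

Solving the question amounts to inverting `encode`: the subject has to infer both the leg-count rule and the symbol rule from the completed rows, then apply them to the unseen word.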

### 2.2 Participants

#### 2.2.1 LLMs

We run our experiments on the following LLMs: GPT-3 [18], GPT-4 [19], Pythia-12B [20], Claude 2 [21], Claude 3 Opus [22], and Falcon-40B [23]. All of the above are transformer-based LLMs trained primarily on a next-word prediction objective.

GPT-3 consists of a 175B parameter model trained on text completion and finetuned to produce more coherent answers. The details of GPT-4 are not publicly known, but it is considered by some sources to be a mixture-of-experts (MoE) model consisting of numerous GPT-3-scale language models [24]. GPT-4, unlike GPT-3, supplements text-completion pretraining and finetuning with reinforcement learning from human feedback (RLHF) in order to better align model outputs with the expectations of a human user. The training of Claude 2 also includes RLHF, but its performance falls short of GPT-4. The more recent Claude 3 (in our case, the most advanced Opus version) is considered to approximately match GPT-4 performance in general. GPT-3 and -4 are developed by OpenAI, whereas Claude 2 and 3 are developed by Anthropic. Pythia-12B and Falcon-40B are open-weights LLMs trained on a text-completion objective and consist of 12B and 40B parameters respectively. Neither undergoes RLHF. Pythia-12B is developed by EleutherAI, and Falcon-40B is developed by the Technology Innovation Institute.

#### 2.2.2 Human Subjects

We also test human participants on our experiments. Reported in the main text are results obtained from 194 (mostly undergraduate) University-Name University students (132 in the Semantic Structure experiment, and 62 in the Semantic Content experiment). The split of participants between experiments approximately matches the 9:4 ratio of experiment conditions. The numbers of participants by condition are as follows: Defaults 18, Distracted 18, Only RHS 18, Permuted Pairs 17, Permuted Questions 17, Random Finals 15, Random Permuted Pairs 6, Randoms 8, Relational 15, Categorial 16, Multi Attribute 16, Numeric 16, Numeric Multi Attribute 14. The Relational, Categorial, Multi Attribute, Numeric, and Numeric Multi Attribute conditions each have two quizzes, while the remaining conditions each have four quizzes. Subjects were assigned randomly to a single quiz from one condition, without re-use of subjects. Roughly the same number of participants were assigned to each condition, with the exception of the Randoms and Random Permuted Pairs conditions. Because of their similarity, these two conditions were together assigned roughly the number of subjects expected for a single condition.
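As a quick consistency check, the per-condition counts can be summed and compared with the reported totals. The split of conditions between the two experiments used below is an assumption inferred from the 132/62 totals and the 9:4 ratio. It is not stated explicitly in this section.

```python
# Assumed grouping: nine Semantic Structure conditions, four Semantic Content conditions.
SEMANTIC_STRUCTURE = {
    "Defaults": 18,
    "Distracted": 18,
    "Only RHS": 18,
    "Permuted Pairs": 17,
    "Permuted Questions": 17,
    "Random Finals": 15,
    "Random Permuted Pairs": 6,
    "Randoms": 8,
    "Relational": 15,
}
SEMANTIC_CONTENT = {
    "Categorial": 16,
    "Multi Attribute": 16,
    "Numeric": 16,
    "Numeric Multi Attribute": 14,
}

structure_total = sum(SEMANTIC_STRUCTURE.values())
content_total = sum(SEMANTIC_CONTENT.values())

if __name__ == "__main__":
    print("Semantic Structure:", structure_total)
    print("Semantic Content:", content_total)
    print("All participants:", structure_total + content_total)
    print("Condition ratio:", f"{len(SEMANTIC_STRUCTURE)}:{len(SEMANTIC_CONTENT)}")
```

Under this grouping the counts reproduce the reported totals, and the two random-word conditions together (8 + 6) are close to the size of a single typical condition.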

The subjects were recruited through email advertisements and offered $10 in compensation. Earlier results obtained for the Semantic Structure experiment from an online sample of participants recruited through Prolific are reported in Figure 11 of the Appendix.

We ensure that humans and LLMs are given comparable information in our prompting design. A given human participant sees one quiz with four questions, with questions revealed one at a time with the answer shown following each response. LLMs are prompted with the first question of a quiz, then the second question with the first question and its (correct) answer accumulating in the prompt, and so forth for the four questions in a quiz. This prompt accumulation mimics the availability in the memory of human subjects of previous answers within a quiz.
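The prompt accumulation described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `build_prompts` helper, the "Question N:" separator layout, and the second (animal) question are hypothetical, and the exact way the correct answer is appended to earlier questions is not specified in the text.

```python
INSTRUCTIONS = (
    "We are conducting an experiment on general reasoning abilities. "
    "Below we will show you various words and drawings of each, after which "
    "you will need to complete the last drawing. Respond as concisely as "
    "possible with only the last drawing."
)


def build_prompts(instructions: str, questions: list[tuple[str, str]]) -> list[str]:
    """Return one prompt per question; each prompt contains all earlier
    questions together with their correct answers.

    `questions` holds (question_text, correct_answer) pairs, where the
    question text ends with the incomplete row (e.g. 'oval =>').
    """
    prompts = []
    history = instructions
    for number, (text, answer) in enumerate(questions, start=1):
        prompt = f"{history}\n\nQuestion {number}:\n{text}"
        prompts.append(prompt)
        # The correct answer (not the model's response) is carried forward.
        history = f"{prompt} {answer}"
    return prompts


QUIZ = [
    ("square => C C C\nrectangle => c c c\ncircle => C C\noval =>", "c c"),
    # Hypothetical second question, for illustration only.
    ("dog => A A\npuppy => a a\ncat => B B\nkitten =>", "b b"),
]

if __name__ == "__main__":
    for prompt in build_prompts(INSTRUCTIONS, QUIZ):
        print(prompt)
        print("-" * 40)
```

Carrying forward the correct answer rather than the model's own response mirrors the human procedure, in which the answer is shown after each response.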

### 2.3 Statistical testing

In each experiment, we are interested in the relative performance of human subjects and the best-performing models and how this depends on the particular experiment conditions. Differences between most models and human subjects are large and do not require statistical analysis, and so we focus our statistical analysis on the performance of GPT-4 relative to human subjects and Claude 3 relative to human subjects.

For each experiment and pair of subjects (human subjects and GPT-4, or human subjects and Claude 3) we fit a logistic model to the data with and without interactions between the subject type and the experiment condition. In all cases, the outcome variable is the un-aggregated per-question score achieved by a subject (either a 0 or 1), and the predictor variables are experiment condition (e.g. “Defaults” or “Permuted Pairs”) and subject type (e.g. “human subjects” or “GPT-4”). We use four likelihood ratio tests to assess whether the interaction between subject type and experiment condition is significant for a given pair of subjects within a particular experiment, as motivated by Glover [25]. In all four cases the interaction is significant, and so we use simple effects analysis to investigate the direction and significance of the effect of subject type within particular conditions.
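One way to write the two nested models and the test statistic is given below. The notation is an assumption introduced here for illustration: $y_i$ is the per-question score, $s_i$ indicates subject type (1 for the LLM, 0 for human subjects), $c_i$ is the condition of observation $i$, $c_0$ is the reference condition, and $C$ is the number of conditions in the experiment.

```latex
% Model without interaction (M_0)
\operatorname{logit} \Pr(y_i = 1)
  = \beta_0 + \beta_s s_i + \sum_{c \neq c_0} \beta_c \,\mathbb{1}[c_i = c]

% Model with interaction (M_1)
\operatorname{logit} \Pr(y_i = 1)
  = \beta_0 + \beta_s s_i + \sum_{c \neq c_0} \beta_c \,\mathbb{1}[c_i = c]
  + \sum_{c \neq c_0} \gamma_c \, s_i \,\mathbb{1}[c_i = c]

% Likelihood ratio statistic, with \ell the maximized log-likelihood
\Lambda = 2\,\bigl[\ell(M_1) - \ell(M_0)\bigr],
\qquad
\Lambda \;\overset{\text{approx.}}{\sim}\; \chi^2_{C-1}
\quad \text{under } H_0\colon \gamma_c = 0 \text{ for all } c \neq c_0
```

A significant $\Lambda$ indicates that the gap between the LLM and human subjects varies by condition, which is what motivates following up with simple effects analysis within individual conditions.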

For the semantic content experiment, we additionally perform a logistic simple effects analysis comparing the performance of a single subject type (human, GPT-4, or Claude 3) in compositional versus non-compositional conditions for the numeric and non-numeric cases respectively with the non-compositional condition as reference. For example, we assess the effect of the condition being Multi Attribute with Categorial as the reference condition for only the subject type Claude 3 (and likewise for the other two examined subject types).

Further details are provided in Sections A.1 and A.2 of the Appendix.

## 3 Results

### 3.1 Mapping Semantic Structure

We first design a set of experiments investigating the ability of LLMs and human subjects to map semantic structure in the source domain onto arbitrary, non-semantic structure in the target domain. In this set of experiments, our source domain (left-hand side) is a set of words which are assumed to possess some relational structure, and our target domain (right-hand side) is a set of strings related via non-linguistic string operations.

| We are conducting an experiment on general reasoning abilities. Below we will show you various words and drawings of each, after which you will need to complete the last drawing. Respond as concisely as possible with only the last drawing. |
| --- |
| Question 1: |
| square => C C C |
| rectangle => c c c |
| circle => C C |
| oval => |

[Diagram of the structure-mapping solution: the source-domain words square, rectangle, circle, and oval map to the target-domain strings C C C, c c c, C C, and the inferred c c.]

Figure 1: An example question (from the Defaults condition of the Semantic Structure experiment) with a representation of the structure-mapping solution below. The source domain is in blue and the target domain is in orange (for the provided elements) and yellow (for the inferred element).

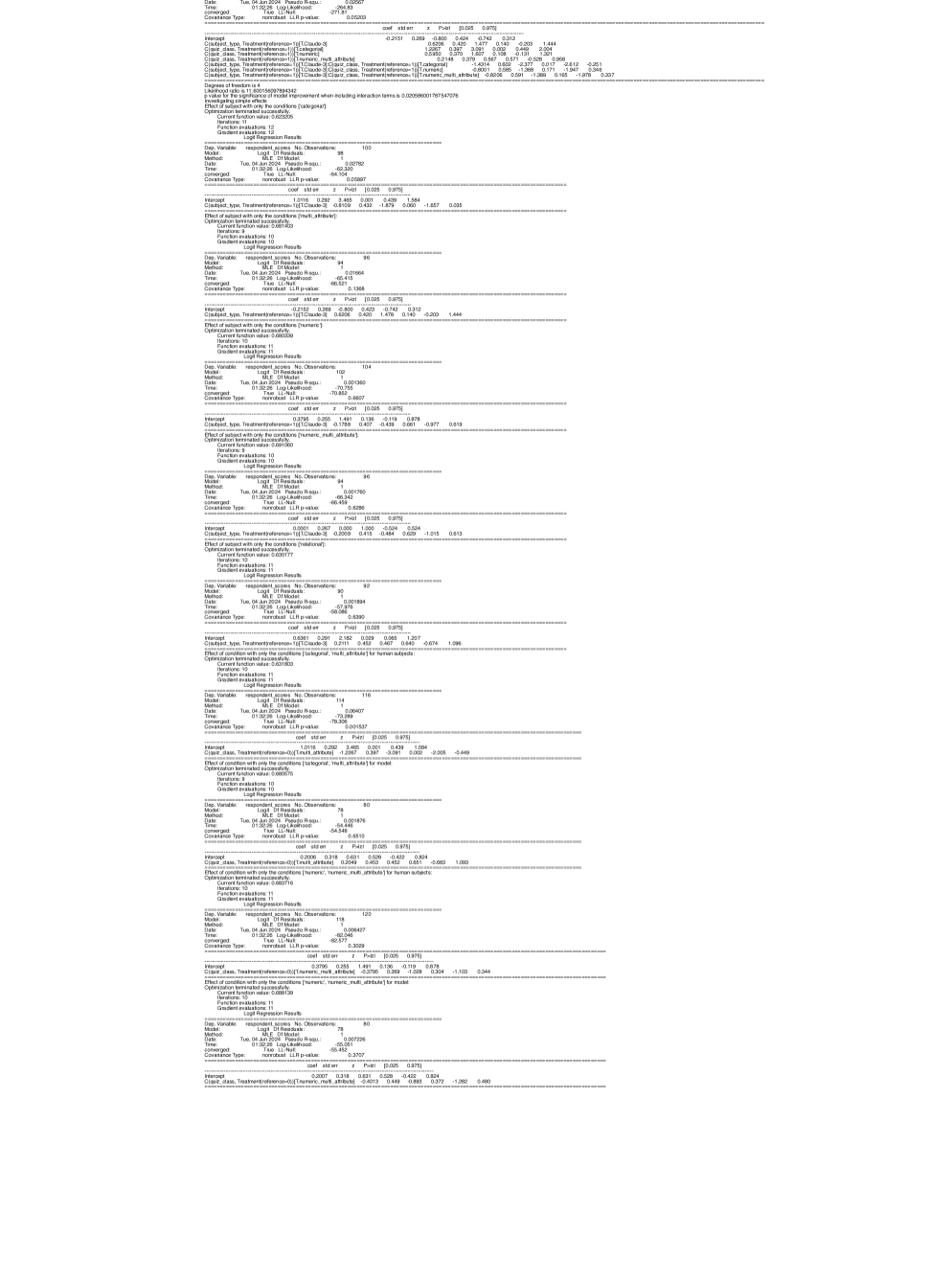

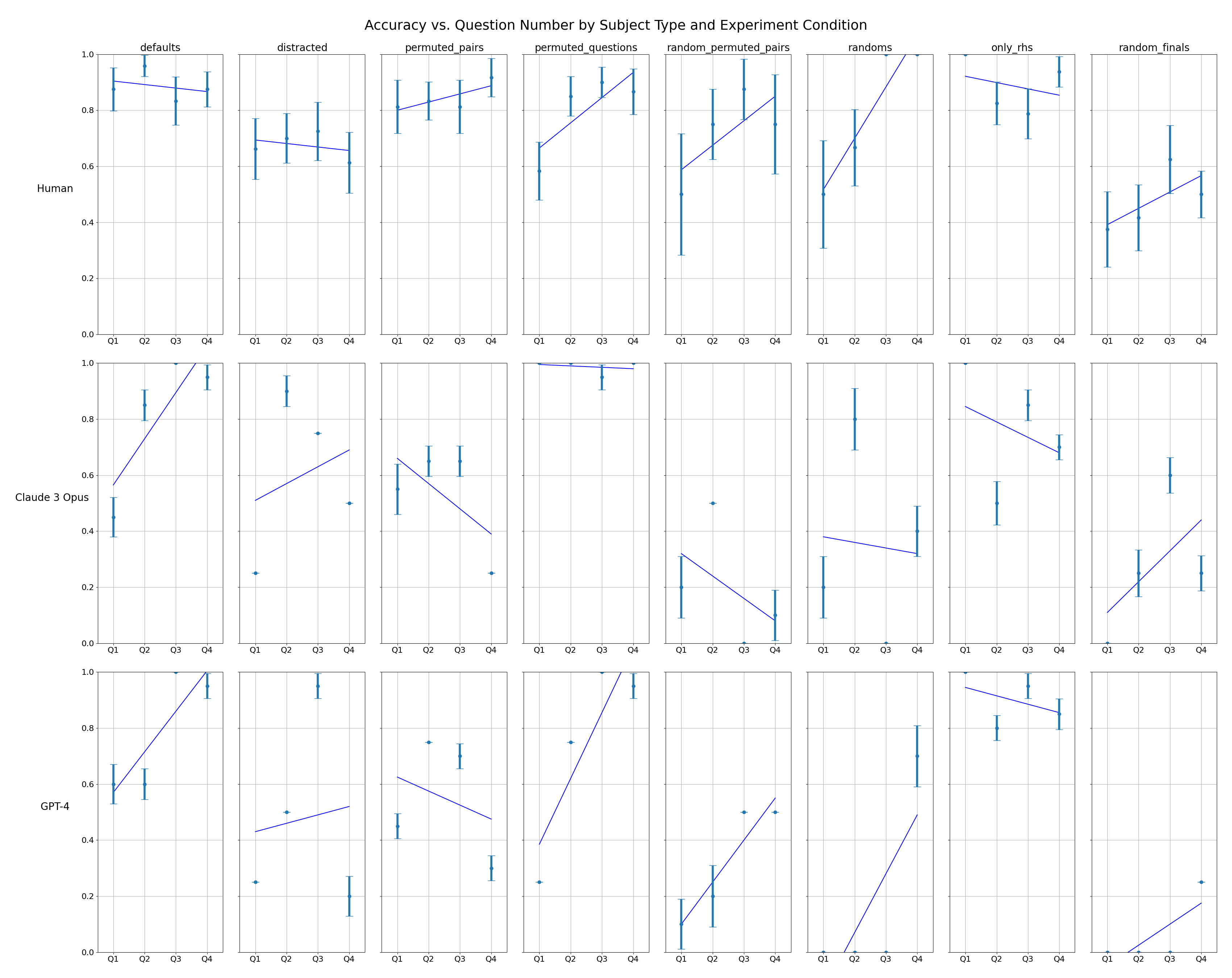

#### 3.1.1 Overall Performance

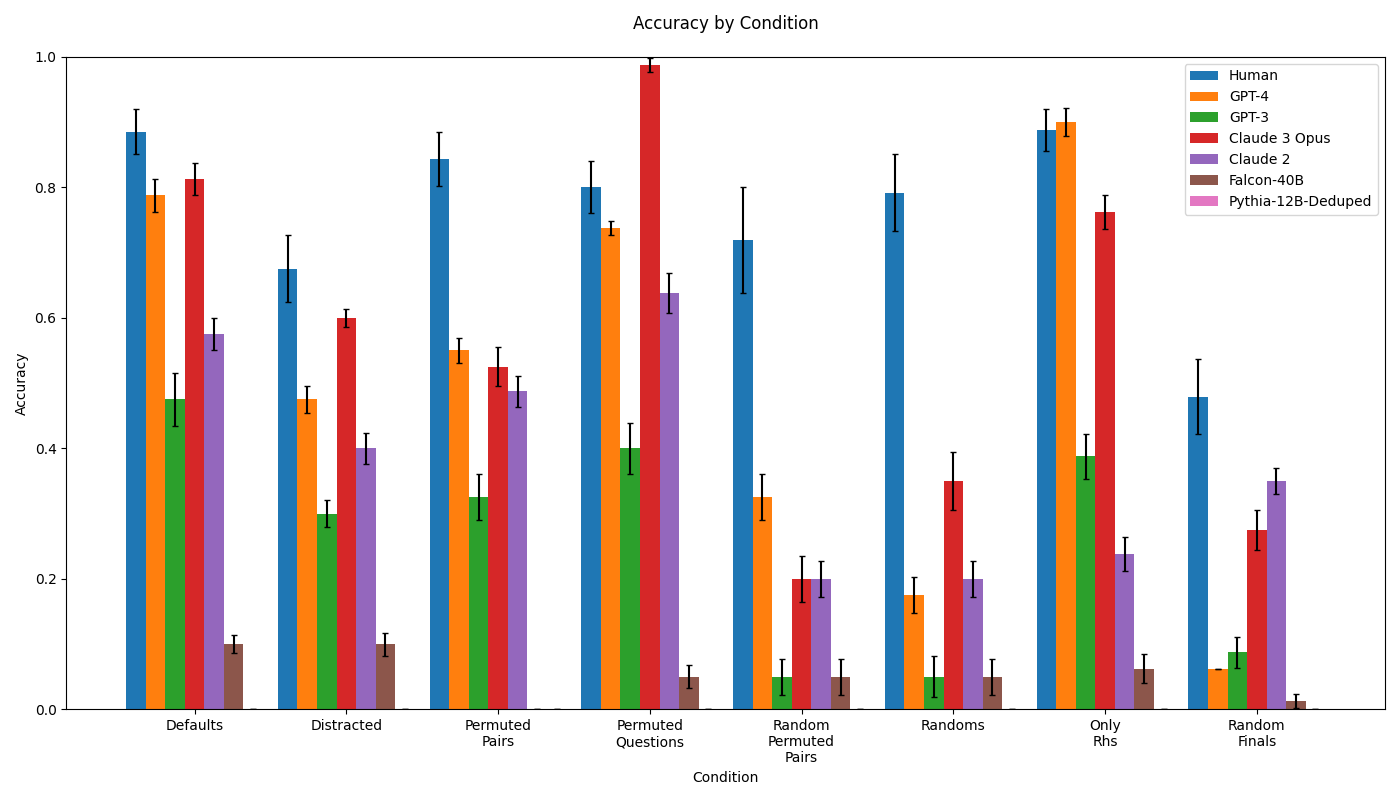

Human subjects perform well overall, obtaining accuracy between 0.4 and 0.9 across the various conditions. The most advanced LLMs that we test attain accuracies in the range 0.1-0.95 across conditions. This performance range is comparable to prior work on analogical reasoning over arbitrary symbols. For example, the results of human subjects on the “zero-generalization setting” studied by both Webb et al. [4] and Mitchell et al. [10] range from 0.2-0.8 in the former study and from 0.5-1.0 in the latter study. Similarly, results for LLMs (GPT-3, GPT-3.5, and GPT-4) across those conditions range from 0.1-1.0 in the two studies. Thus, our data suggest that analogies involving semantic structure-mapping are not inherently easier or harder than those which make use of arbitrary symbols.

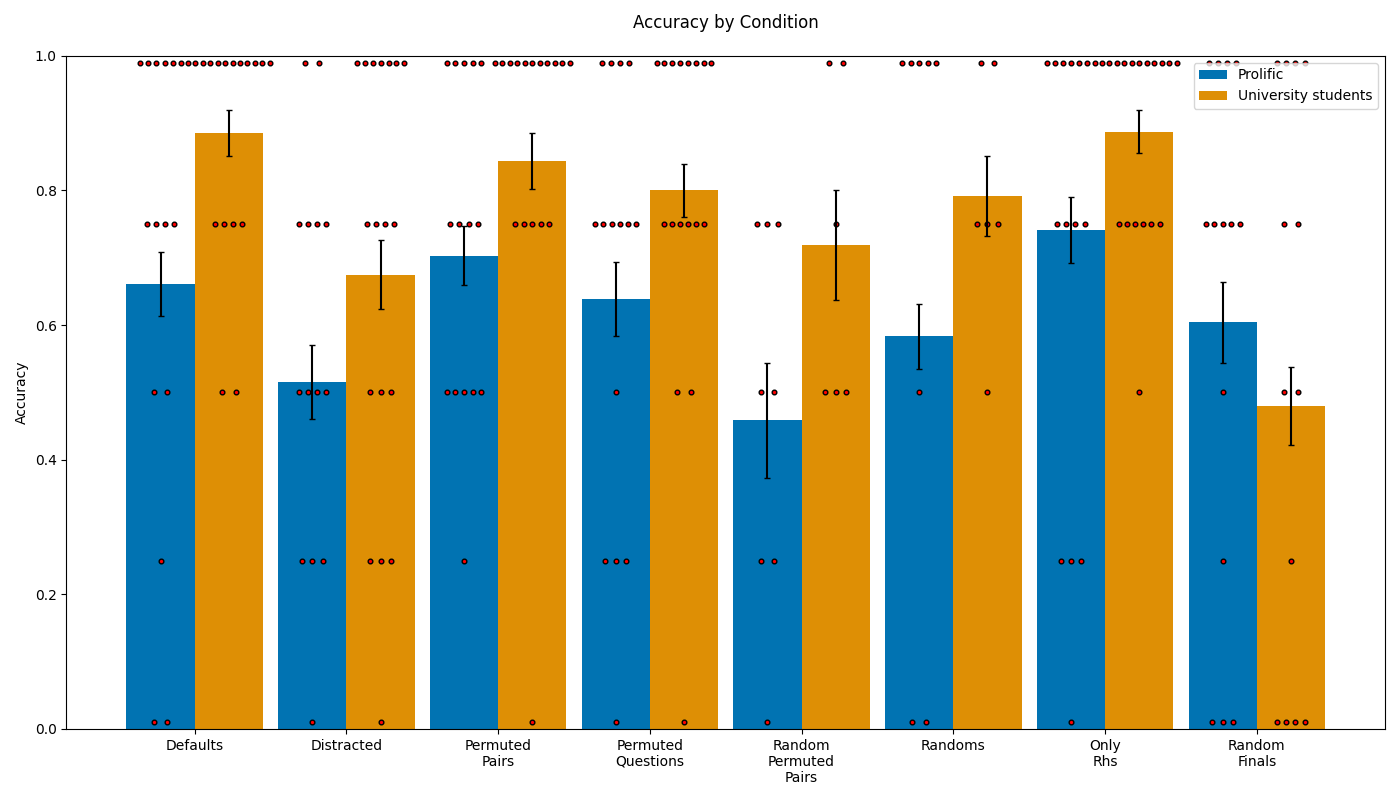

Our Defaults condition consists of lexical items as a source domain and one of several string operation relations as a target domain. To investigate the robustness of performance metrics, we introduce three control conditions: (1) Permuted Questions, in which we present unaltered versions of the core task with varied question ordering; (2) Permuted Pairs, in which we alter the order in which the lines of the analogy are presented; and (3) Distracted, in which we interleave unrelated mappings between the lines of the target analogy. These conditions are shown in Table 1. We do not expect Permuted Questions to materially alter the task, but might see some effect of the Permuted Pairs and Distracted conditions, as they could make the relevant relations less transparent: see, for example, work on the blocking advantage in humans [26] and in LLMs [27].

| Condition | Description | Example |
| --- | --- | --- |
| Defaults | Basic test of semantic structure-mapping | square => C C C<br>rectangle => c c c<br>circle => C C<br>oval => |
| Permuted Pairs | Like Defaults, but with row order permuted | rectangle => c c c<br>circle => C C<br>square => C C C<br>oval => |
| Distracted | Like Defaults, but with a distractor row added | square => C C C<br>rectangle => c c c<br>pillow => A P<br>circle => C C<br>oval => |

Table 1: Defaults and control conditions used to measure ability of humans and LLMs to perform analogical reasoning tasks that involve semantic structure-mapping. The Permuted Questions condition (not shown) is identical to Defaults, but with question order permuted.
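The control conditions in Table 1 can be derived mechanically from a Defaults question. The sketch below is illustrative only: `render`, `permuted_pairs`, and `distracted` are hypothetical helper names, and it assumes that the incomplete query row always stays last and that the permutation is drawn at random, which matches the table's examples but is not stated as the authors' procedure.

```python
import random

# A Defaults question as (word, drawing) rows; None marks the row to complete.
DEFAULTS = [
    ("square", "C C C"),
    ("rectangle", "c c c"),
    ("circle", "C C"),
    ("oval", None),
]


def render(rows):
    """Format rows as 'word => drawing', leaving the query row open."""
    return "\n".join(
        f"{word} =>" if drawing is None else f"{word} => {drawing}"
        for word, drawing in rows
    )


def permuted_pairs(rows, rng):
    """Shuffle the completed rows; the query row stays in final position."""
    *given, query = rows
    shuffled = list(given)
    rng.shuffle(shuffled)
    return shuffled + [query]


def distracted(rows, distractor, position):
    """Insert an unrelated mapping among the completed rows."""
    *given, query = rows
    return given[:position] + [distractor] + given[position:] + [query]


if __name__ == "__main__":
    print(render(DEFAULTS), end="\n\n")
    print(render(permuted_pairs(DEFAULTS, random.Random(0))), end="\n\n")
    print(render(distracted(DEFAULTS, ("pillow", "A P"), 2)))
```

Keeping the transformations separate from the base question makes it clear that Permuted Pairs and Distracted leave the underlying mapping unchanged and alter only how it is presented.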

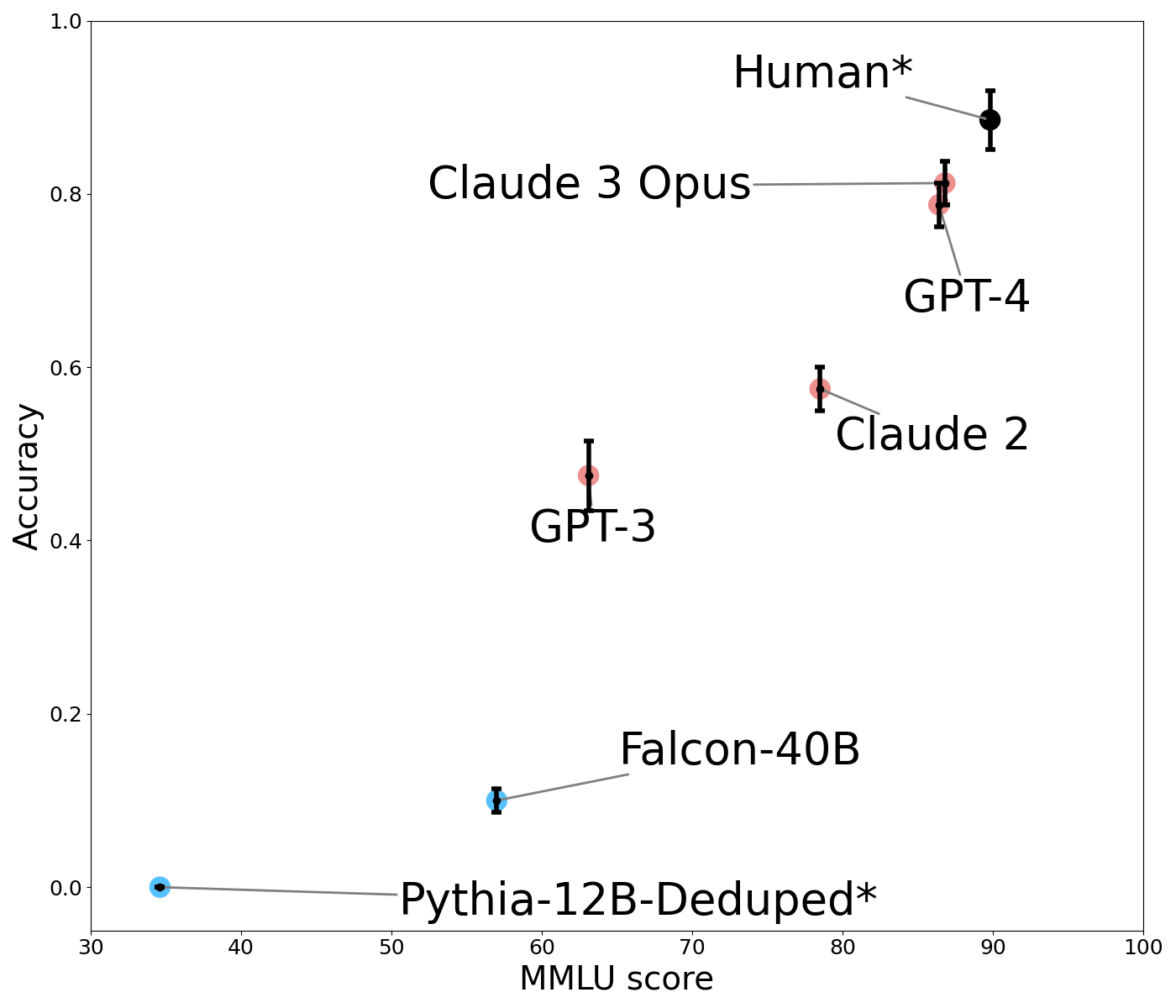

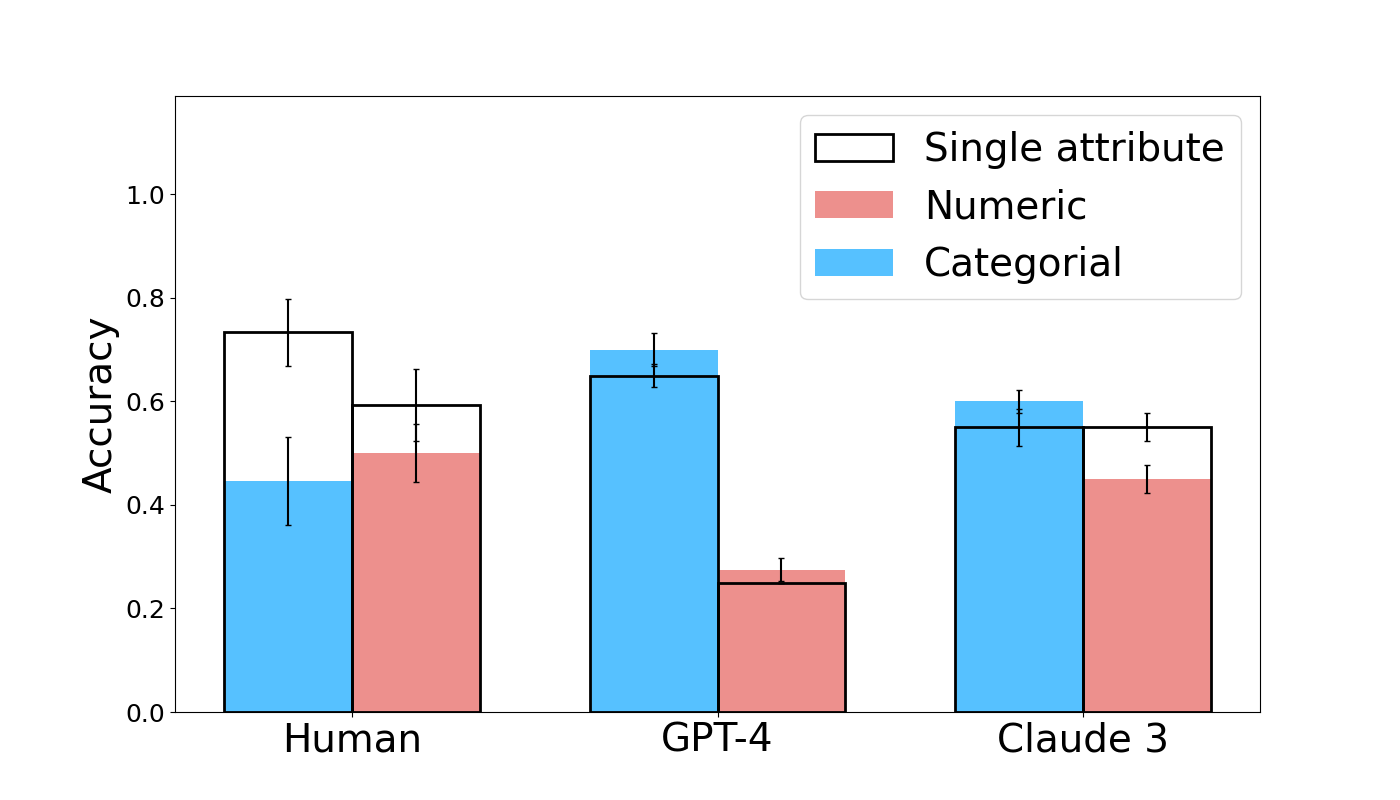

Figure 2 shows the performance of humans and LLMs in the Defaults condition as a function of their performance on MMLU, a widely-used language competency benchmark. (MMLU scores are few-shot for GPT-4 and 5-shot for other models. The reported human baseline is the estimate for human experts given by Hendrycks et al. [28]. The score for Pythia-12B could not be found, and so we use the reported value for Pythia 6.9B Tulu.) Increasing MMLU score is associated with higher accuracy on the Defaults condition. Smaller models do not perform competitively (Pythia-12B obtains an accuracy of 0.0, Falcon-40B 0.1, GPT-3 0.5, and Claude 2 0.6). This steadily increasing performance is presumed to correlate with the scale of model parameters and training data [29]. We focus our remaining analysis on comparing human subjects to GPT-4 and Claude 3. In the Defaults condition, neither GPT-4 (coef=-0.7696, z=-1.659, p=0.097) nor Claude 3 (coef=-0.6131, z=-1.299, p=0.194) performs significantly worse than human subjects.

<details>

<summary>extracted/5679376/Images/Comparison_Default_MMLU.png Details</summary>

### Visual Description

## Scatter Plot with Error Bars: AI Model Performance vs. Human Benchmark on MMLU

### Overview

The image is a scatter plot comparing the performance of various large language models (LLMs) and a human benchmark on two metrics: "MMLU score" (x-axis) and "Accuracy" (y-axis). Each data point represents a model or human performance, accompanied by error bars indicating uncertainty. The plot demonstrates a positive correlation between MMLU score and accuracy.

### Components/Axes

* **X-Axis:** Labeled "MMLU score". Scale ranges from 30 to 100, with major tick marks at 30, 40, 50, 60, 70, 80, 90, and 100.

* **Y-Axis:** Labeled "Accuracy". Scale ranges from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Data Series & Labels:** Seven distinct data points are plotted, each labeled directly on the chart. The labels are connected to their respective points by thin gray lines.

* **Pythia-12B-Deduped\*** (Blue point, bottom-left)

* **Falcon-40B** (Blue point, lower-middle)

* **GPT-3** (Red point, center)

* **Claude 2** (Red point, upper-middle right)

* **GPT-4** (Red point, upper-right)

* **Claude 3 Opus** (Red point, upper-right, slightly above GPT-4)

* **Human\*** (Black point, top-right)

* **Error Bars:** Vertical black bars extend above and below each data point, representing the range of uncertainty or variance for the "Accuracy" metric.

### Detailed Analysis

**Data Point Extraction (Approximate Values):**

| Model / Entity | MMLU Score (X) | Accuracy (Y) | Error Bar Range (Accuracy) | Point Color |

| :--- | :--- | :--- | :--- | :--- |

| Pythia-12B-Deduped* | ~35 | ~0.00 | Very small, near zero | Blue |

| Falcon-40B | ~57 | ~0.10 | ~0.08 to ~0.12 | Blue |

| GPT-3 | ~62 | ~0.47 | ~0.42 to ~0.52 | Red |

| Claude 2 | ~78 | ~0.57 | ~0.55 to ~0.60 | Red |

| GPT-4 | ~86 | ~0.79 | ~0.77 to ~0.82 | Red |

| Claude 3 Opus | ~87 | ~0.81 | ~0.79 to ~0.83 | Red |

| Human* | ~90 | ~0.88 | ~0.85 to ~0.91 | Black |

**Trend Verification:**

* The overall visual trend is a clear upward slope from the bottom-left to the top-right. As the MMLU score increases, the Accuracy score also increases.

* **Pythia-12B-Deduped*:** Positioned at the extreme bottom-left, showing near-zero accuracy with a low MMLU score.

* **Falcon-40B:** Shows a modest increase in both metrics compared to Pythia.

* **GPT-3:** Represents a significant jump in accuracy from the previous models.

* **Claude 2:** Continues the upward trend, positioned higher and to the right of GPT-3.

* **GPT-4 & Claude 3 Opus:** Clustered closely together in the upper-right quadrant, indicating high performance on both metrics. Claude 3 Opus is marginally higher in accuracy and MMLU score.

* **Human*:** Positioned as the top-right outlier, achieving the highest scores on both axes.

### Key Observations

1. **Performance Hierarchy:** The plot establishes a clear performance hierarchy: Human* > Claude 3 Opus ≈ GPT-4 > Claude 2 > GPT-3 > Falcon-40B > Pythia-12B-Deduped*.

2. **Model Generations:** The data visually groups models by generation or capability tier. The latest models (Claude 3 Opus, GPT-4) are clustered near human performance, while older models (GPT-3, Falcon-40B) are significantly lower.

3. **Error Bar Variance:** The length of the error bars varies. GPT-3 has a notably wider error bar in accuracy compared to others like Claude 2 or GPT-4, suggesting greater uncertainty or variance in its performance on this specific evaluation.

4. **Color Coding:** Models are color-coded. The two lowest-performing models (Pythia, Falcon) are blue. The mid-to-high performing AI models (GPT-3, Claude 2, GPT-4, Claude 3 Opus) are red. The human benchmark is uniquely black.

### Interpretation

This chart illustrates the rapid advancement of AI language models on the MMLU (Massive Multitask Language Understanding) benchmark, a standard test of broad knowledge and problem-solving. The strong positive correlation between MMLU score and Accuracy suggests that performance on this benchmark is a reliable predictor of general accuracy in the evaluated tasks.

The key takeaway is the closing gap between top-tier AI models and human performance. Claude 3 Opus and GPT-4 operate at approximately 90% of the human accuracy level shown here, marking a significant milestone. The asterisk (*) on "Human*" and "Pythia-12B-Deduped*" likely denotes a specific condition or footnote not visible in the image (e.g., "Human" may represent an average of expert performance, and "Deduped" refers to a data deduplication process for the Pythia model).

The plot serves as a snapshot of the competitive landscape in AI development as of the data's collection, highlighting both the achievements of leading models and the remaining margin to reach and surpass human-level performance on this comprehensive benchmark.

</details>

Figure 2: Human and LLM accuracy in the Defaults condition, relative to performance on the MMLU benchmark. Models in blue are not instruction-tuned while models in orange are. Error bars show standard errors.

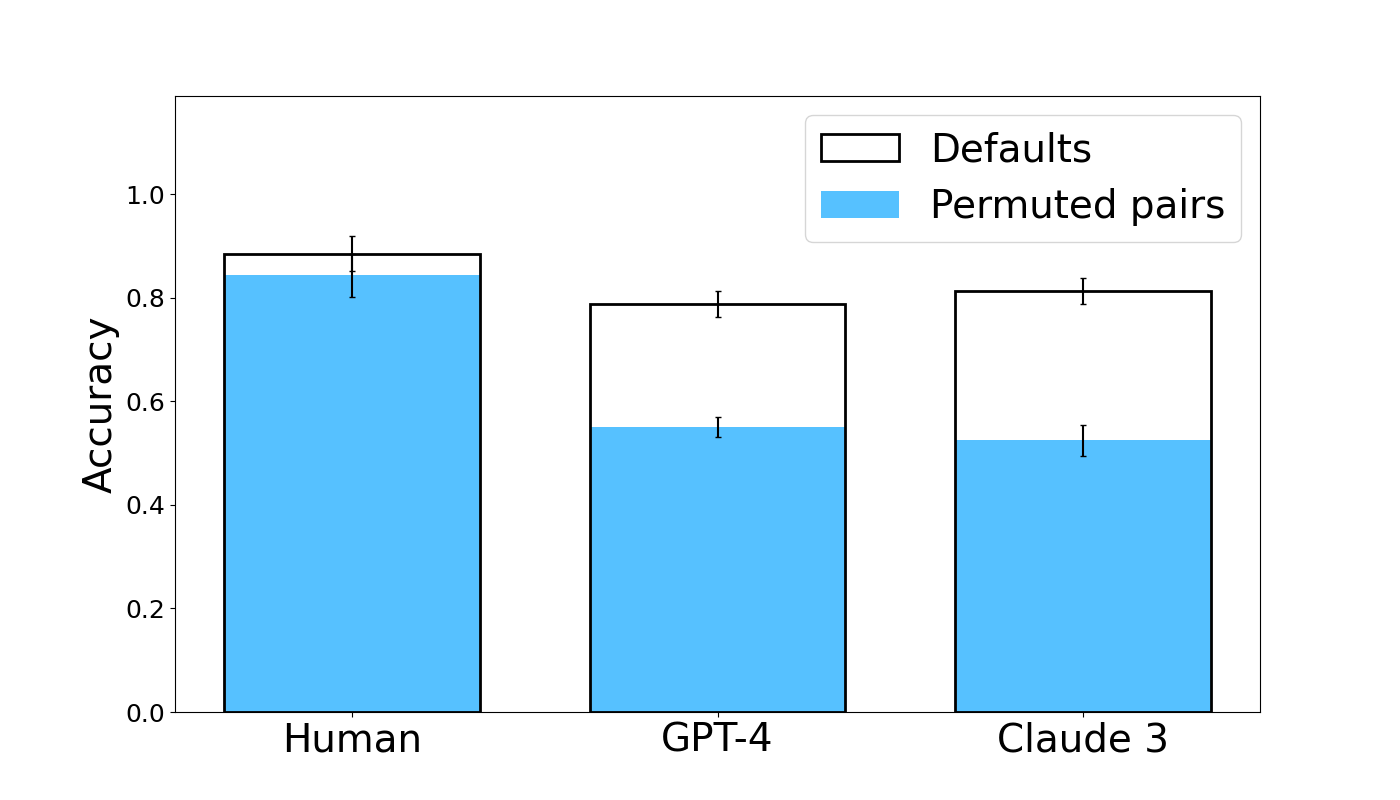

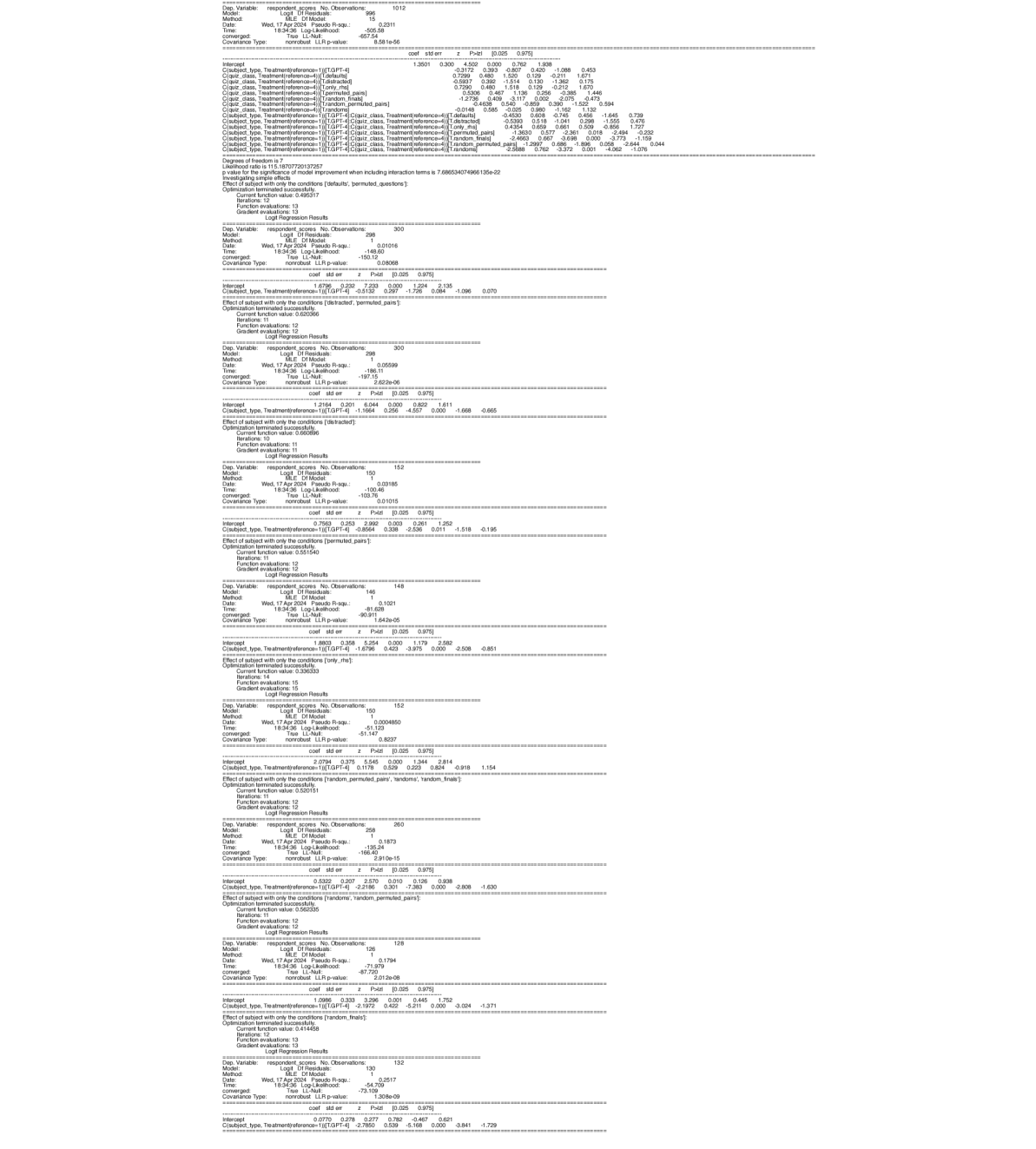

Figure 3 compares humans to high-performing LLMs in the Defaults and Permuted Pairs conditions. LLM performance drops in the Permuted Pairs condition, while humans seem equally able to infer the mapping regardless of word presentation order. This effect is significant for both Claude 3 (coef = -1.7802, z = -4.217, p < 0.001) and GPT-4 (coef = -1.6796, z = -3.975, p < 0.001). This suggests that, while the overall performance is comparable, there are likely meaningful mechanistic differences in how the analogy is processed in humans versus LLMs. The remaining control conditions and data for all tested models are shown in Figure 15 of the Appendix. In these conditions, we find that humans and models are roughly equally affected. For example, accuracy in the Distracted condition drops by approximately 0.25 for all three subject types.

<details>

<summary>extracted/5679376/Images/fig3_new.png Details</summary>

### Visual Description

## Bar Chart: Accuracy Comparison of Human and AI Models Under Default and Permuted Pair Conditions

### Overview

The image is a grouped bar chart comparing the accuracy of three entities—Human, GPT-4, and Claude 3—under two experimental conditions: "Defaults" and "Permuted pairs." The chart visually demonstrates a performance gap between the two conditions for each entity, with a notably larger drop for the AI models.

### Components/Axes

* **Chart Type:** Grouped bar chart with error bars.

* **Y-Axis:** Labeled "Accuracy." The scale runs from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Contains three categorical groups: "Human," "GPT-4," and "Claude 3."

* **Legend:** Located in the top-right corner of the plot area.

* A white bar with a black outline represents the "Defaults" condition.

* A solid light blue bar represents the "Permuted pairs" condition.

* **Data Series:** Each of the three x-axis categories has two adjacent bars corresponding to the legend conditions. Each bar is topped with a vertical error bar (black line with horizontal caps).

### Detailed Analysis

**1. Human Group (Leftmost)**

* **Defaults (White Bar):** The top of the bar aligns with an accuracy of approximately **0.88**. The error bar extends from roughly 0.85 to 0.91.

* **Permuted pairs (Blue Bar):** The top of the bar aligns with an accuracy of approximately **0.84**. The error bar extends from roughly 0.81 to 0.87.

* **Trend:** The accuracy for "Permuted pairs" is slightly lower than for "Defaults," but the difference is small, and the error bars overlap significantly.

**2. GPT-4 Group (Center)**

* **Defaults (White Bar):** The top of the bar aligns with an accuracy of approximately **0.79**. The error bar extends from roughly 0.77 to 0.81.

* **Permuted pairs (Blue Bar):** The top of the bar aligns with an accuracy of approximately **0.55**. The error bar extends from roughly 0.53 to 0.57.

* **Trend:** There is a substantial drop in accuracy from the "Defaults" to the "Permuted pairs" condition. The error bars for the two conditions do not overlap.

**3. Claude 3 Group (Rightmost)**

* **Defaults (White Bar):** The top of the bar aligns with an accuracy of approximately **0.81**. The error bar extends from roughly 0.79 to 0.83.

* **Permuted pairs (Blue Bar):** The top of the bar aligns with an accuracy of approximately **0.52**. The error bar extends from roughly 0.50 to 0.54.

* **Trend:** Similar to GPT-4, there is a large drop in accuracy from "Defaults" to "Permuted pairs." The error bars for the two conditions do not overlap.

### Key Observations

1. **Condition Effect:** For all three entities, accuracy is higher in the "Defaults" condition than in the "Permuted pairs" condition.

2. **Magnitude of Effect:** The negative impact of permutation is dramatically larger for the AI models (GPT-4 and Claude 3) than for Humans. The drop for Humans is approximately 0.04 accuracy points, while the drops for GPT-4 and Claude 3 are approximately 0.24 and 0.29 points, respectively.

3. **AI Model Comparison:** Under the "Defaults" condition, Claude 3 (≈0.81) performs slightly better than GPT-4 (≈0.79). Under the "Permuted pairs" condition, their performance is very similar (GPT-4 ≈0.55, Claude 3 ≈0.52).

4. **Human Performance:** Human accuracy remains high and relatively stable across both conditions, staying above 0.8.

### Interpretation

This chart presents evidence from an experiment testing robustness to presentation order. The "Defaults" condition presents the rows of each analogy in their standard order, while the "Permuted pairs" condition presents the same word-to-string rows in a permuted order.

The data suggests that **human performance is largely resilient to this permutation**, indicating that human task completion does not rely heavily on the order in which the rows are presented. In contrast, **both GPT-4 and Claude 3 show a substantial performance degradation when the row order is permuted**. This implies that these AI models may depend on the presentation order of the examples to a much greater degree.

The key takeaway is a qualitative difference in processing: humans appear to use a more flexible, conceptual understanding that survives recombination, while the AI models' performance is more contingent on the specific, learned configurations of the input data. This has implications for evaluating the robustness and generalization capabilities of large language models.

</details>

Figure 3: Human and LLM accuracy in the Defaults and Permuted Pairs conditions. Error bars show standard errors.

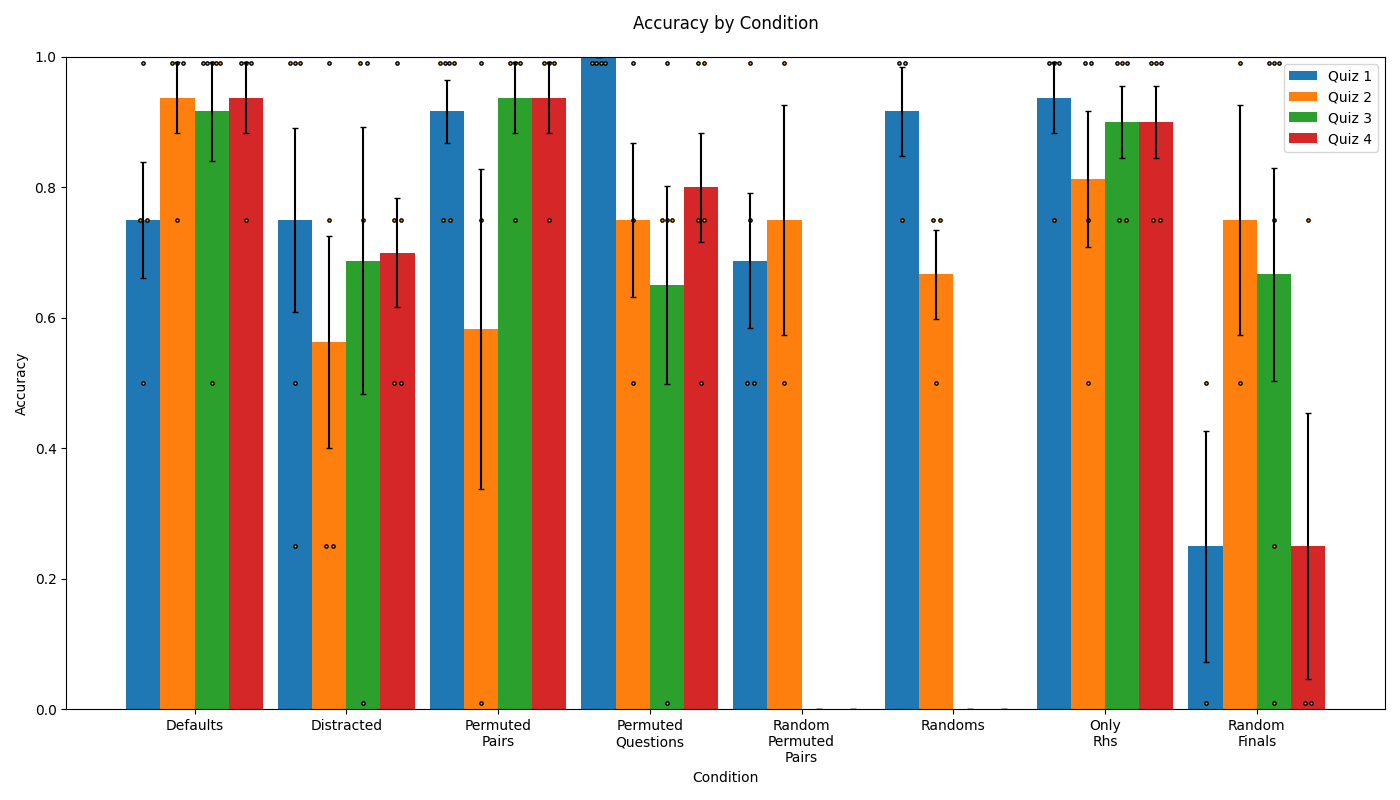

#### 3.1.2 Effect of Semantic Structure on Reasoning

We next investigate more directly the extent to which humans and LLMs leverage semantic structure in order to complete our analogy tasks. To do this, we design three variants of our Defaults analogy task (see Table 2). First, the Only RHS condition removes the source domain entirely. High performance in this condition thus indicates that a subject is able to complete the questions based only on the evident pattern in the target domain. We then introduce two variants which make the semantic structure in the source domain less coherent: the Randoms condition uses unrelated words, while the Random Finals condition uses three related words followed by one random word. We thus take the performance difference between the Only RHS condition and either the Randoms or Random Finals condition to be a measure of the subject’s bias toward using the semantic structure of the source domain. That is, if the subject is capable of solving the task by simply ignoring the left hand side (the Only RHS condition), then poor performance in the other conditions indicates that the subject was misled by the presence of the altered left hand side.

| Condition | Description | Example |
| --- | --- | --- |
| Only RHS | Test of how well the answer can be inferred without using any structure-mapping | C C C c c c C C |
| Randoms | Variant of Defaults in which there is no semantic structure relating the words on the left hand side | banana => C C C fireplace => c c c bean => C C plug => |
| Random Last | Variant of Defaults in which the final term is not semantically related to the preceding terms | square => C C C rectangle => c c c circle => C C lime => |

Table 2: Conditions involving alteration or omission of the source domain. The Random Permuted Pairs condition (not shown) is identical to Randoms, but with the order of elements within questions permuted.
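The three variants can be pictured as simple transformations of a Defaults item. The sketch below is illustrative only: the `word => target` line format follows Table 2, but the function names, the Defaults item (in particular the final word `oval`), and the pool of replacement words are assumptions made for this example, not the authors' stimulus-generation code.

```python
import random

# Hypothetical Defaults item: related source words paired with target strings.
# "oval" is an assumed final word; the source does not give it in this section.
SOURCE = ["square", "rectangle", "circle", "oval"]
TARGETS = ["C C C", "c c c", "C C"]  # the final target is left for the subject


def render(words, targets):
    """Format an item as 'word => target' lines, leaving the last target blank."""
    lines = [f"{w} => {t}" for w, t in zip(words, targets)]
    lines.append(f"{words[len(targets)]} =>")
    return "\n".join(lines)


def only_rhs(targets):
    """Only RHS: drop the source domain and show the target strings alone."""
    return "\n".join(targets)


def randoms(targets, pool, rng):
    """Randoms: replace every source word with an unrelated word from the pool."""
    words = rng.sample(pool, len(targets) + 1)
    return render(words, targets)


def random_finals(words, targets, pool, rng):
    """Random Finals: keep the related words but swap in a random final word."""
    final = rng.choice(pool)
    return render(words[: len(targets)] + [final], targets)


if __name__ == "__main__":
    rng = random.Random(0)
    pool = ["banana", "fireplace", "bean", "plug", "lime"]  # assumed unrelated words
    print(only_rhs(TARGETS), end="\n\n")
    print(randoms(TARGETS, pool, rng), end="\n\n")
    print(random_finals(SOURCE, TARGETS, pool, rng))
```

Because every variant shares the same target strings, any accuracy difference between Only RHS and the other two variants can be attributed to what appears on the left hand side.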

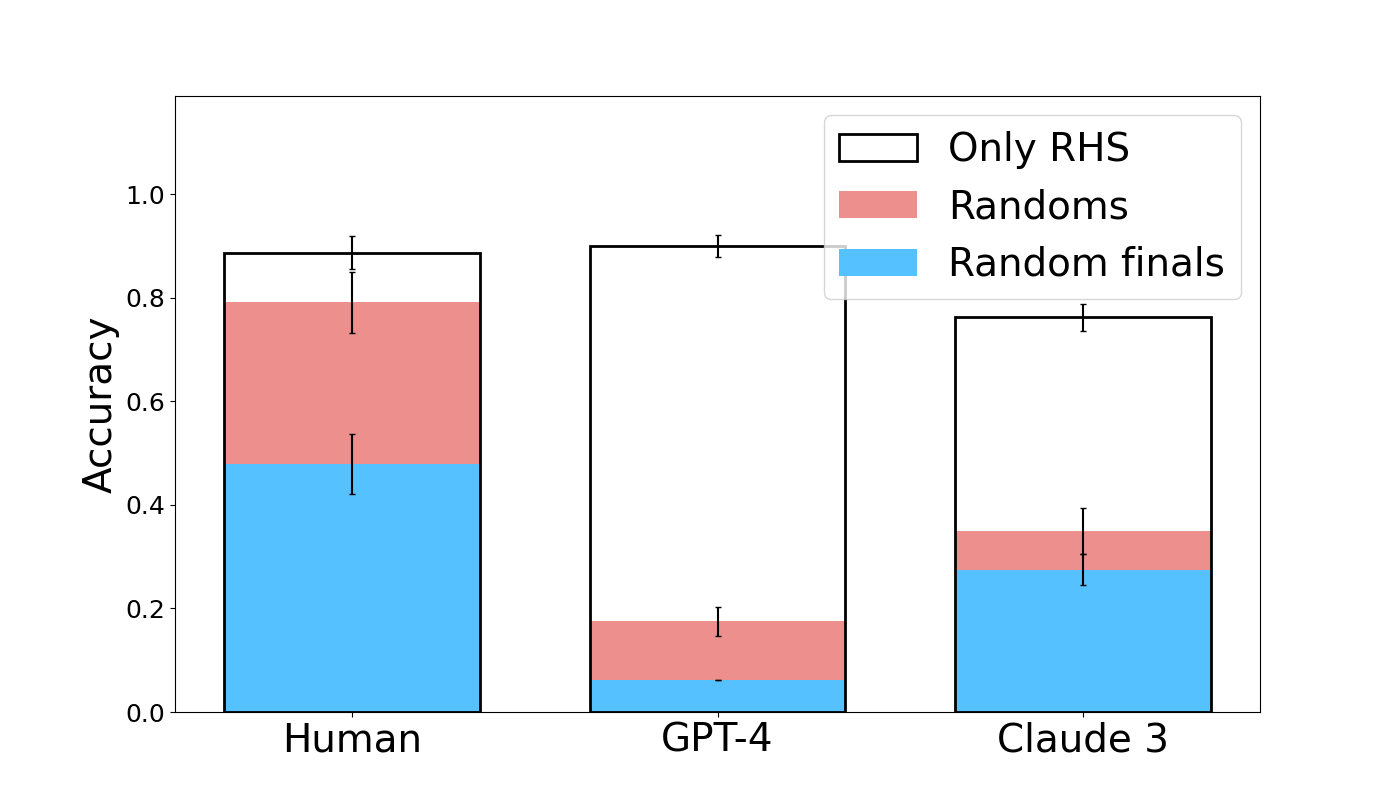

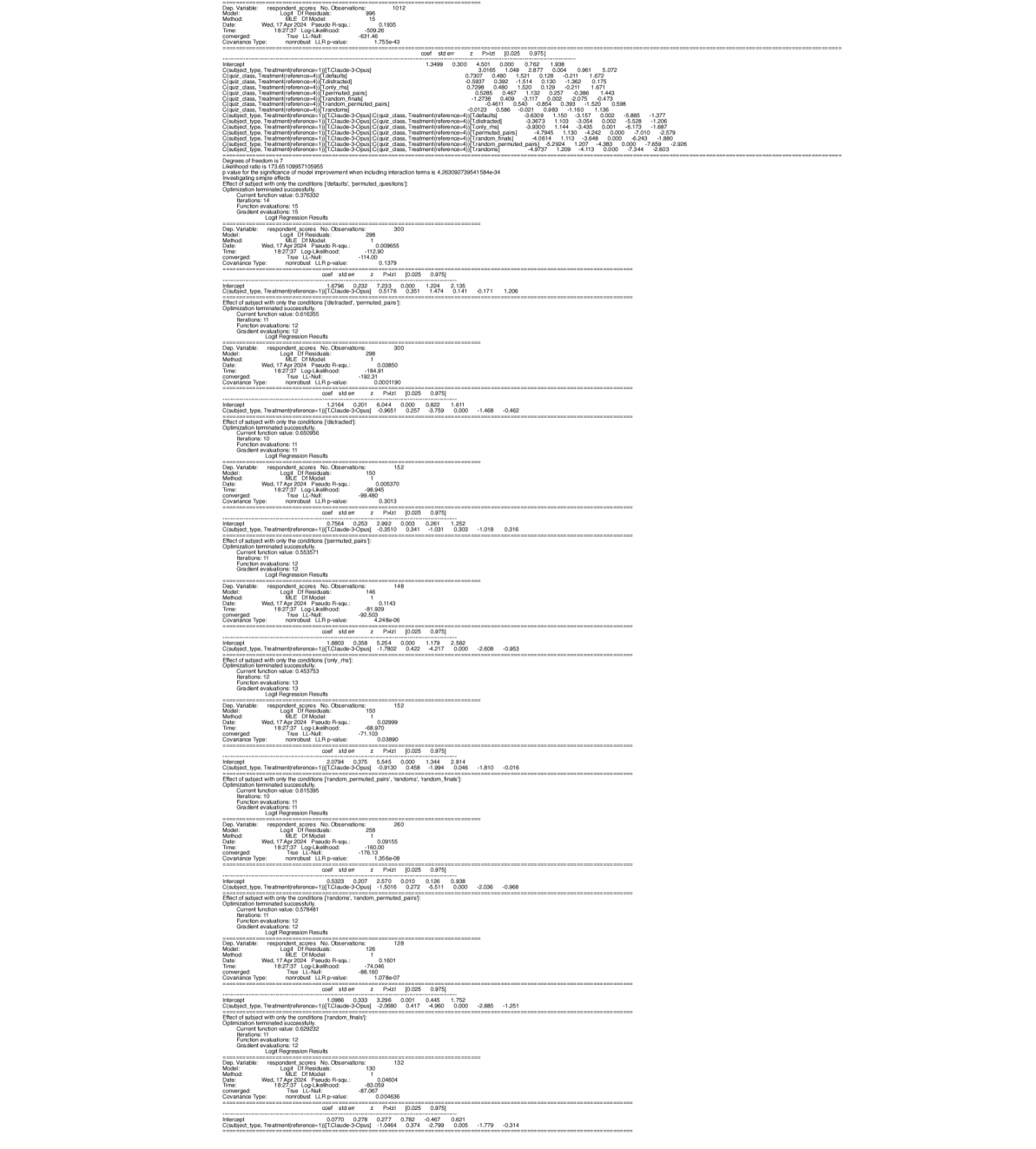

Both humans and models competently complete the Only RHS condition (see Figure 4). Accuracy is approximately 0.8 for Claude 3, with human subjects and GPT-4 slightly higher at approximately 0.9. GPT-4 is not significantly different from humans in this condition (coef = 0.1178, z = 0.223, p = 0.824), and Claude 3 is worse than humans by a barely significant margin (coef = -0.9130, z = -1.994, p = 0.046). Thus, both humans and LLMs are able to complete the task without the guidance of the left hand side. Given this, we examine the performance degradation associated with encountering incoherent semantic structure on the left hand side. Humans exhibit a modest decrease in accuracy of about 0.15 in the Randoms and Random Permuted Pairs conditions relative to Defaults. Claude 3 and GPT-4, however, exhibit much larger drops: Claude 3 decreases by approximately 0.5 relative to Defaults, while GPT-4 decreases by approximately 0.6 and 0.4 in the Randoms and Random Permuted Pairs conditions, respectively. Across these two conditions, both GPT-4 (coef = -2.1972, z = -5.211, p < 0.001) and Claude 3 (coef = -2.0680, z = -4.960, p < 0.001) perform significantly worse than humans.
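As a reading aid for the statistics above, the following sketch converts a reported z statistic into a two-sided p-value. It assumes the z values are Wald statistics referred to a standard normal distribution, which the reporting style suggests but this section does not state; the function name is ours. Under that assumption the computed values agree with the reported p-values to within rounding.

```python
import math


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a z statistic under a standard normal reference."""
    # P(|Z| >= |z|) = erfc(|z| / sqrt(2)) for Z ~ N(0, 1).
    return math.erfc(abs(z) / math.sqrt(2))


if __name__ == "__main__":
    # (comparison, z) pairs copied from the text; labels are for display only.
    reported = [
        ("GPT-4 vs. humans, Only RHS", 0.223),
        ("Claude 3 vs. humans, Only RHS", -1.994),
        ("GPT-4 vs. humans, random conditions", -5.211),
    ]
    for label, z in reported:
        print(f"{label}: z = {z:+.3f}, p = {two_sided_p(z):.3g}")
```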

<details>

<summary>extracted/5679376/Images/fig4_new.png Details</summary>

### Visual Description

## Overlaid Bar Chart: Accuracy by Condition for Humans and LLMs

### Overview

The image displays a bar chart comparing the accuracy of three subject groups (Human, GPT-4, and Claude 3) in three conditions: "Only RHS," "Randoms," and "Random finals." For each group the three bars appear to be drawn on top of one another from a common baseline, so the top edge of each colored region marks that condition's accuracy. The regions are not additive components of a total; this reading matches the accuracies reported in the accompanying text.

### Components/Axes

* **Y-Axis:** Labeled "Accuracy," with a linear scale ranging from 0.0 to 1.0. Major tick marks are present at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Categorical, listing three groups: "Human," "GPT-4," and "Claude 3."

* **Legend:** Positioned in the top-right corner of the chart area. It defines three conditions:

* **Only RHS:** Represented by a white bar with a black outline.

* **Randoms:** Represented by a pink/salmon-colored bar.

* **Random finals:** Represented by a light blue bar.

* **Error Bars:** Each bar has a vertical black error bar extending above and below its top edge. The figure caption states that these show standard errors.

### Detailed Analysis

The chart presents the following approximate accuracy values, estimated from the visual scale.

**1. Human**

* **Only RHS (White):** ~0.89

* **Randoms (Pink):** ~0.79

* **Random finals (Blue):** ~0.48

**2. GPT-4**

* **Only RHS (White):** ~0.90

* **Randoms (Pink):** ~0.18

* **Random finals (Blue):** ~0.06

**3. Claude 3**

* **Only RHS (White):** ~0.77

* **Randoms (Pink):** ~0.35

* **Random finals (Blue):** ~0.28

### Key Observations

1. **Only RHS:** All three groups are accurate when the source domain is removed, with GPT-4 (~0.90) and Human (~0.89) nearly tied and Claude 3 (~0.77) somewhat lower.

2. **Randoms:** Human accuracy falls only slightly relative to Only RHS (by ~0.10), whereas GPT-4 (by ~0.72) and Claude 3 (by ~0.42) fall sharply.

3. **Random finals:** All three groups fall substantially relative to Only RHS: Human by ~0.41, GPT-4 by ~0.84, and Claude 3 by ~0.49.

4. **Error Bar Variability:** The error bars are most pronounced on the "Only RHS" bars for Human and GPT-4. The error bars on the "Randoms" and "Random finals" bars for GPT-4 are relatively tight.

### Interpretation

In the accompanying text, "Only RHS" is the condition in which the source domain (left hand side) is removed, "Randoms" replaces the source words with unrelated words, and "Random finals" keeps related source words but ends with one random word. The gap between the Only RHS bar and the other two bars therefore reflects how much a subject is misled by an uninformative or partly misleading left hand side.

The chart is consistent with the text's conclusion: humans largely ignore an entirely random left hand side but are misled when only the final word is random, while GPT-4 and Claude 3 are misled in both cases even though they can complete the pattern when no left hand side is present.

</details>

Figure 4: Human and LLM accuracy in the Only RHS, Randoms, and Random Finals conditions. Data from the Random Permuted Pairs condition is shown in Figure 15 of the Appendix. Error bars show standard errors.

From this we conclude that human subjects are able to easily identify when the left hand side contains no useful semantic structure to leverage. When there is none, they are able to employ a strategy that only relies on the right hand side. By contrast, models do not seem capable of easily identifying the lack of informativeness of the left hand side in these conditions, as they do not use the strategy of only attending to the right hand side, even though they show their capability of using this strategy when no left hand side is present. This suggests mechanistic differences between how human subjects and models process this task.

Although the performance of human subjects does not drop notably in the Randoms condition compared to the Only RHS condition, it does drop by a wide margin in the Random Finals condition. In this condition, accuracy is approximately 0.5 lower than in the Only RHS condition. This further suggests that the semantic relatedness of the left hand side affects the strategy of human subjects: when the left hand side is clearly unrelated, the information it provides is discarded, but when much of the left hand side appears related, the information is not discarded and the random final word of the source domain prompts an incorrect answer from human subjects. Models also show a large drop in performance in the Random Finals condition relative to Only RHS, with Claude 3 dropping by 0.5 and GPT-4 dropping by 0.8. Simple effects analysis shows that both Claude 3 (coef = -1.0464, z = -2.799, p = 0.005) and GPT-4 (coef = -2.7850, z = -5.168, p < 0.001) are significantly worse than humans in the Random Finals condition. However, we consider this difference less informative than the fact that both models drop in performance across all the random conditions relative to their own performance in the Only RHS condition.

#### 3.1.3 Other Observations

We additionally analyze the extent to which human subjects and models improve by question (Figure 14 of the Appendix), and the extent to which the errors made by humans and models follow the same distribution across questions grouped by target domain and across qualitative error types (Figure 13 and Table 5 of the Appendix). We find that humans and models alike improve over subsequent questions, adding to a body of evidence about in-context learning [30, 31, 32]. Humans and models show similar error distributions by target domain, but qualitative error types reveal a closer correspondence between human and GPT-4 errors than between human and Claude 3 errors.

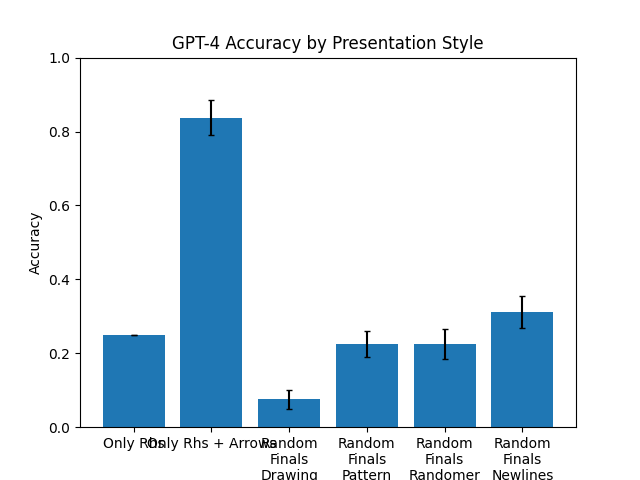

#### 3.1.4 Diagnosing the Use of an RHS-Only Heuristic

To clarify whether subjects actually make use of left-right relations or only complete right-side patterns in the Semantic Structure experiment, we design the Relational condition, a $2 \times n$ variant of the Defaults condition which cannot be solved (consistently) using only the right-hand terms (see the example in Table 3).

| Example |
| --- |
| pants => H # H |
| glove => X # X |
| torso => V |
| foot => Z |
| head => M |
| shirt => V # V |
| hat => |

Table 3: An example from the Relational variant of the Defaults task, used to diagnose subjects’ tendency to rely on RHS-only heuristics to solve the task.
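To make the logic of this diagnostic concrete, the sketch below contrasts a right-hand-only view of the Table 3 item with a relational one. Several things here are assumptions for illustration rather than content from the paper: the `WORN_ON` lookup (standing in for world knowledge about garments and body parts), the `S # S` answer template inferred from the garment rows, the function names, and the resulting answer `M # M`, which is our reading of the item and is not stated in this section.

```python
# Relational item from Table 3 as (source word, target string) pairs.
ITEM = [
    ("pants", "H # H"),
    ("glove", "X # X"),
    ("torso", "V"),
    ("foot", "Z"),
    ("head", "M"),
    ("shirt", "V # V"),
]
QUERY = "hat"

# Hypothetical world knowledge: the body part each garment is worn on.
WORN_ON = {"pants": "legs", "glove": "hand", "shirt": "torso", "hat": "head"}


def rhs_only_candidates(item):
    """Answers a right-hand-only strategy cannot tell apart.

    Looking only at target strings, any symbol seen so far could fill the
    assumed 'S # S' template, so the item is ambiguous.
    """
    symbols = sorted({tok for _, rhs in item for tok in rhs.split() if tok != "#"})
    return [f"{s} # {s}" for s in symbols]


def relational_answer(item, query):
    """Use the left-hand relation (garment -> body part) to pick the symbol."""
    lookup = dict(item)
    symbol = lookup[WORN_ON[query]]  # hat -> head -> symbol paired with "head"
    return f"{symbol} # {symbol}"


if __name__ == "__main__":
    print("RHS-only candidates:", rhs_only_candidates(ITEM))
    print("Relational answer:  ", relational_answer(ITEM, QUERY))
```

The right-hand-only view leaves several equally plausible completions, while the relational view selects exactly one, which is why accuracy in this condition separates the two strategies.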

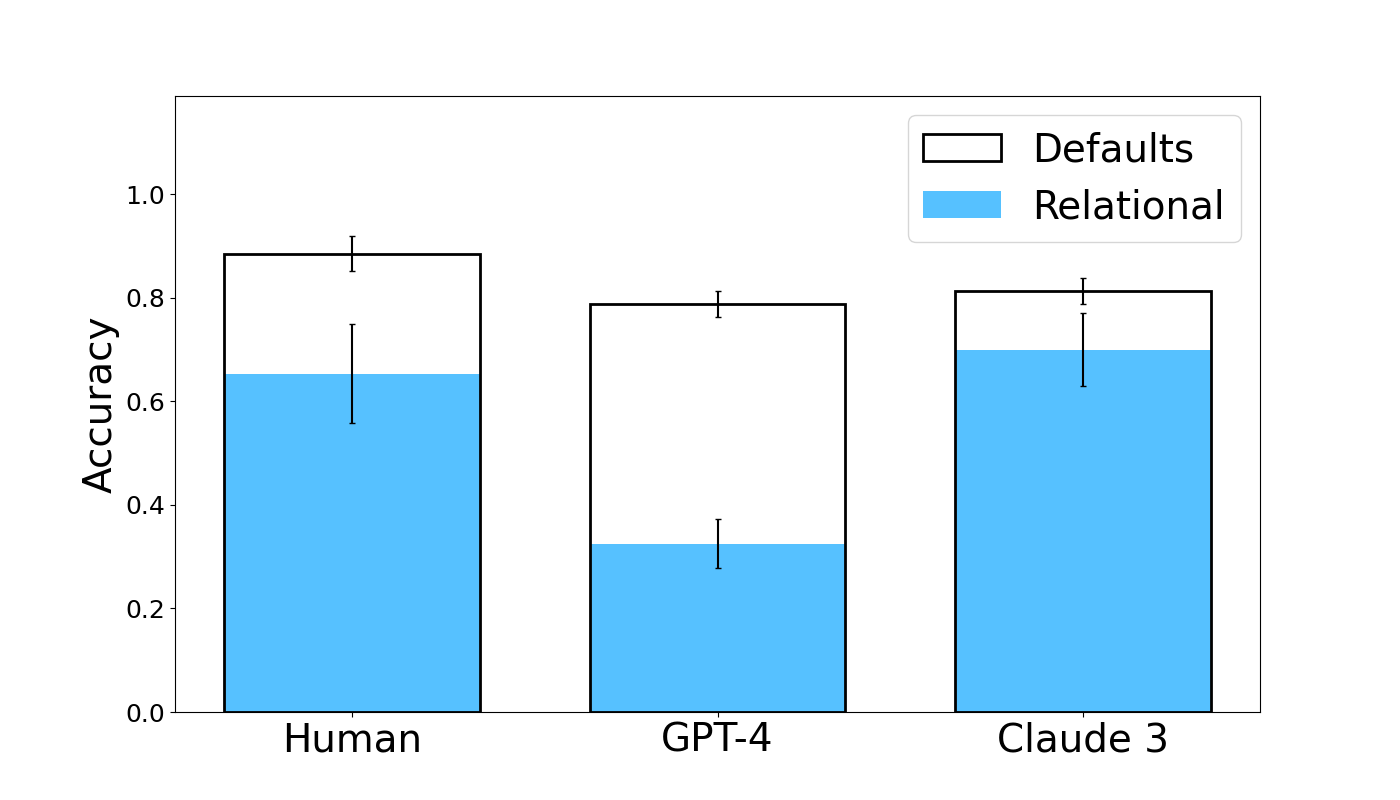

Results are shown in Figure 5. Human subjects and Claude 3 exhibit similar performance, with accuracies of approximately 0.7. GPT-4, however, attains a much lower accuracy of approximately 0.35. Simple effects analysis shows that GPT-4 obtains significantly worse accuracy than human subjects (coef = -1.3669, z = -3.065, p = 0.002), while the accuracy of Claude 3 does not differ significantly from that of human subjects (coef = 0.2111, z = 0.467, p = 0.640).

<details>

<summary>extracted/5679376/Images/fig5_new.png Details</summary>

### Visual Description

## Bar Chart: Accuracy Comparison of Defaults vs. Relational Tasks

### Overview

The image is a grouped bar chart comparing the accuracy of three entities—Human, GPT-4, and Claude 3—on two types of tasks: "Defaults" and "Relational." The chart includes error bars for each data point, indicating variability or confidence intervals. The overall visual suggests a performance comparison between human and AI model capabilities on different cognitive task types.

### Components/Axes

* **Y-Axis:** Labeled "Accuracy." The scale runs from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Categorical, listing three entities: "Human," "GPT-4," and "Claude 3."

* **Legend:** Located in the top-right corner of the chart area.

* **Defaults:** Represented by a white bar with a black outline.

* **Relational:** Represented by a solid light blue bar.

* **Data Series:** For each entity on the x-axis, there are two adjacent bars: a white "Defaults" bar and a blue "Relational" bar. Each bar has a vertical error bar extending above and below its top edge.

### Detailed Analysis

**1. Human:**

* **Defaults (White Bar):** The bar height is approximately **0.88**. The error bar extends from roughly **0.85 to 0.92**.

* **Relational (Blue Bar):** The bar height is approximately **0.65**. The error bar is larger, extending from roughly **0.56 to 0.75**.

* **Trend:** Human accuracy is significantly higher on Defaults tasks than on Relational tasks.

**2. GPT-4:**

* **Defaults (White Bar):** The bar height is approximately **0.79**. The error bar is relatively small, extending from roughly **0.77 to 0.81**.

* **Relational (Blue Bar):** The bar height is approximately **0.32**. The error bar extends from roughly **0.28 to 0.37**.

* **Trend:** GPT-4 shows a very large performance drop from Defaults to Relational tasks, with Relational accuracy being less than half of its Defaults accuracy.

**3. Claude 3:**

* **Defaults (White Bar):** The bar height is approximately **0.81**. The error bar extends from roughly **0.79 to 0.83**.

* **Relational (Blue Bar):** The bar height is approximately **0.70**. The error bar extends from roughly **0.63 to 0.77**.

* **Trend:** Claude 3 also performs better on Defaults than Relational tasks, but the gap is smaller than that observed for GPT-4.

### Key Observations

1. **Universal Performance Gap:** All three entities (Human, GPT-4, Claude 3) achieve higher accuracy on "Defaults" tasks compared to "Relational" tasks.

2. **Magnitude of Gap Varies:** The performance gap between task types is most extreme for GPT-4, moderate for Humans, and smallest for Claude 3.

3. **Relative Performance:**

* On **Defaults** tasks, Humans (~0.88) have the highest accuracy, followed closely by Claude 3 (~0.81) and then GPT-4 (~0.79).

* On **Relational** tasks, Claude 3 (~0.70) has the highest accuracy, followed by Humans (~0.65), with GPT-4 (~0.32) performing substantially worse.

4. **Error Bar Variability:** The error bars for "Relational" tasks are generally larger than those for "Defaults" tasks, particularly for Human and Claude 3, suggesting greater variability or uncertainty in performance on relational reasoning.

### Interpretation

In the accompanying text, "Defaults" is the base analogy task and "Relational" is a $2 \times n$ variant of it that cannot be solved consistently using only the right-hand terms. The gap between the two bars therefore indicates how far each subject's Defaults performance depends on a right-hand-only strategy.

* **Human Performance:** Humans lose roughly 0.2 in accuracy when the right-hand-only shortcut is unavailable, and the larger error bar on the Relational bar indicates more variable performance in that condition.

* **GPT-4:** GPT-4 is close to humans on Defaults but falls to roughly 0.32 on Relational, which the text reports as significantly below human accuracy. This pattern is consistent with heavier reliance on right-hand patterns.

* **Claude 3:** Claude 3 shows the smallest gap between conditions. Its Relational accuracy (~0.70) is nominally above the human value (~0.65), but the text reports that this difference is not statistically significant.

* **Overall Implication:** Similar Defaults accuracy can hide different strategies. The Relational condition separates subjects that map from the source domain from those that mainly complete right-hand patterns.

</details>

Figure 5: Human and LLM accuracy in the Relational condition followup, with Defaults condition performance for reference. Error bars show standard errors.

#### 3.1.5 Takeaways

Despite weak performance from many models on our analogical reasoning tasks, GPT-4 and Claude 3 perform well, showing similar patterns to humans in leveraging the semantic structure of corresponding domains to solve analogies. However, differences do remain in how they handle semantic structure in the source domain. Humans prefer leveraging semantic structure when a clear pattern exists (evidenced by the Defaults and Random Finals conditions) but can ignore words when structure is lacking (Randoms condition). Models show the former bias but not the latter ability, appearing distracted by random lexical items. Nevertheless, model results increasingly resemble those of human subjects, suggesting larger models may close this gap.

Furthermore, qualitative differences exist even between the best models. GPT-4 and Claude 3 match human performance in the Defaults condition, but when the structure is generalized from $2 \times 2$ to $2 \times n$ in the Relational followup, making a right-hand-only strategy unworkable, Claude 3 maintains human-level performance while GPT-4 drops significantly. Although little public information about these models is available, it is notable that models presumably produced using similar approaches can exhibit meaningfully different behavioral patterns.

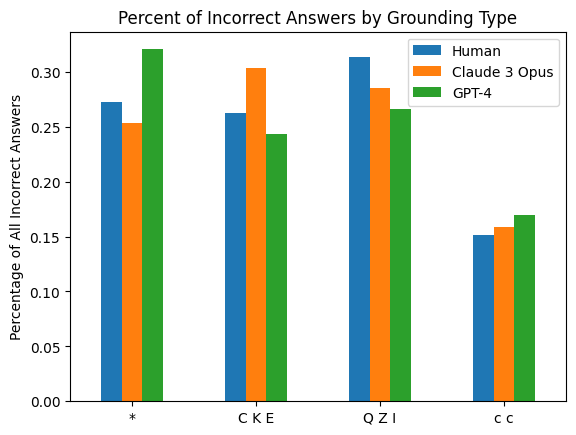

### 3.2 Mapping Semantic Content

The Semantic Structure experiment, which presented subjects with source and target domains with corresponding semantic structure (i.e., with corresponding relations between terms), provides insight into the relative bias of human subjects and models to transfer this structure across domains. The Semantic Content experiment modifies the tasks to investigate the extent to which human subjects and models can transfer elements of the linguistic meaning of terms from one domain to another.

To achieve this, we ensure that elements of the target domain directly depend on properties of corresponding source domain elements, requiring knowledge of the source domain terms’ meaning for perfect performance. As in the Semantic Structure experiment, source and target domains are paired such that patterns in the target domain mirror those in the source domain. Together, these experiments compare the subject’s ability and tendency to use a structure-mapping approach. Four tasks are generated, encoding either one or two dimensions of variation and either involving or not involving numeric reasoning (see Table 4).

| Condition | Example |
| --- | --- |
| Categorial: Right-hand terms are single characters corresponding to a categorial property of the left-hand terms. | chicken => ! spider => ! cat => * horse => * ant => ! dog => * bee => ! human => |
| Multi-Attribute: Right-hand terms are a sequence of several characters that vary according to two properties of the left-hand terms. | grandfather => ! grandmother => * mother => * * father => ! ! brother => ! ! ! sister => |
| Numeric: Right-hand terms are a sequence of a single repeated character, with the number of repetitions corresponding to a numeric property of the left-hand terms. | chicken => * * human => * * dog => * * * * spider => * * * * * * * * cat => * * * * horse => * * * * bee => |
| Numeric Multi-Attribute: Right-hand terms are a sequence of a repeated character, with the number of repetitions corresponding to a numeric property of the left-hand terms and the character corresponding to a categorial property. | horse => * * * * cat => * * * * ant => ! ! ! ! ! ! bee => ! ! ! ! ! ! chicken => ! ! spider => ! ! ! ! ! ! ! ! dog => * * * * human => |

Table 4: The conditions of the Semantic Content experiment.
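The animal-based rows of Table 4 can be read as encodings of two properties of each source word. The sketch below spells out one such reading. It assumes, based only on the examples in the table, that the numeric property is the number of legs and the categorial property is whether the animal is a mammal; the `ANIMALS` table and the function names are hypothetical and are not taken from the authors' materials.

```python
# Hypothetical property table: word -> (number of legs, is a mammal).
# Both properties are this sketch's reading of the examples in Table 4.
ANIMALS = {
    "chicken": (2, False),
    "spider": (8, False),
    "cat": (4, True),
    "horse": (4, True),
    "ant": (6, False),
    "dog": (4, True),
    "bee": (6, False),
    "human": (2, True),
}


def categorial(word):
    """Categorial: one character chosen by the categorial property."""
    _, mammal = ANIMALS[word]
    return "*" if mammal else "!"


def numeric(word):
    """Numeric: '*' repeated once per leg, separated by spaces."""
    legs, _ = ANIMALS[word]
    return " ".join("*" * legs)


def numeric_multi_attribute(word):
    """Numeric Multi-Attribute: character from the category, count from the legs."""
    legs, _ = ANIMALS[word]
    return " ".join(categorial(word) * legs)


if __name__ == "__main__":
    for word in ANIMALS:
        print(f"{word:8s} {categorial(word):3s} {numeric(word):17s} "
              f"{numeric_multi_attribute(word)}")
```

Under this reading, the completed examples in the table are reproduced exactly, and answering the final item in each row requires knowing the relevant property of the query word rather than only the pattern on the right.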

<details>

<summary>extracted/5679376/Images/exp2_new.png Details</summary>

### Visual Description

## Grouped Bar Chart: Accuracy Comparison of Human and AI Models on Attribute Tasks

### Overview

The image is a grouped bar chart comparing the accuracy of three entities—Human, GPT-4, and Claude 3—on tasks involving different attribute types. The chart measures performance on "Single attribute," "Numeric," and "Categorical" tasks, with accuracy plotted on the y-axis. Error bars are included for each data point, indicating variability or confidence intervals.

### Components/Axes

- **Chart Type**: Grouped bar chart with error bars.

- **Y-Axis**: Labeled "Accuracy," with a linear scale from 0.0 to 1.0, marked at intervals of 0.2 (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

- **X-Axis**: Three categorical groups: "Human," "GPT-4," and "Claude 3."

- **Legend**: Located in the top-right corner of the chart area. It defines three bar types:

- **Single attribute**: White bar with a black outline.

- **Numeric**: Solid pink/salmon-colored bar.

- **Categorical**: Solid light blue bar.

- **Data Series**: Each group (Human, GPT-4, Claude 3) contains two or three bars, representing the attribute types. The "Single attribute" bar appears only for Human and Claude 3, not for GPT-4.

### Detailed Analysis

**Human Group (Leftmost Cluster):**

- **Categorical (Blue Bar)**: Height is approximately 0.45. The error bar extends from roughly 0.36 to 0.53.

- **Numeric (Pink Bar)**: Height is approximately 0.50. The error bar extends from roughly 0.44 to 0.56.

- **Single attribute (White Bar)**: Height is approximately 0.73. The error bar extends from roughly 0.67 to 0.80.

**GPT-4 Group (Center Cluster):**

- **Categorical (Blue Bar)**: Height is approximately 0.70. The error bar extends from roughly 0.63 to 0.74.

- **Numeric (Pink Bar)**: Height is approximately 0.25. The error bar extends from roughly 0.22 to 0.29.

- **Single attribute**: No bar is present for this category.

**Claude 3 Group (Rightmost Cluster):**

- **Categorical (Blue Bar)**: Height is approximately 0.60. The error bar extends from roughly 0.52 to 0.62.

- **Numeric (Pink Bar)**: Height is approximately 0.45. The error bar extends from roughly 0.42 to 0.47.

- **Single attribute (White Bar)**: Height is approximately 0.55. The error bar extends from roughly 0.51 to 0.58.

### Key Observations

1. **Performance Disparity by Task Type**: There is a clear divergence in performance between Numeric and Categorical tasks for the AI models. GPT-4 shows the largest gap, with high Categorical accuracy (~0.70) but very low Numeric accuracy (~0.25). Humans show a smaller gap, with Numeric (~0.50) slightly outperforming Categorical (~0.45).

2. **Human Superiority on Single Attribute Tasks**: The "Single attribute" task, which appears to be a composite or different benchmark, shows Humans achieving the highest overall accuracy (~0.73) on the chart. Claude 3's performance on this task (~0.55) is notably lower.

3. **Model Comparison**: GPT-4 leads in Categorical accuracy among the AI models. Claude 3 shows more balanced performance between Numeric and Categorical tasks compared to GPT-4, but its accuracy in both is moderate.

4. **Error Bar Variability**: The error bars for Human performance on Categorical tasks and GPT-4 performance on Numeric tasks appear relatively large, suggesting higher uncertainty or variability in those measurements. Claude 3's error bars are comparatively tighter.

### Interpretation

This chart suggests a fundamental difference in how humans and current large language models (LLMs) process different types of information. Humans demonstrate a more balanced and robust capability across numeric and categorical reasoning, with a particular strength in integrated "single attribute" tasks.

The LLMs, however, show a pronounced specialization or weakness. GPT-4's profile indicates a strong capability for categorical reasoning (e.g., classifying, sorting) but a significant deficit in numeric reasoning (e.g., arithmetic, quantitative comparison). Claude 3 mitigates this weakness somewhat, achieving a more even performance profile, but at the cost of lower peak accuracy in its stronger category compared to GPT-4.

The absence of a "Single attribute" bar for GPT-4 in this description should not be read as a missing measurement: the accompanying text reports GPT-4 results for all four conditions (Categorial, Multi-Attribute, Numeric, and Numeric Multi-Attribute). According to the reported statistics, Claude 3 does not differ significantly from human subjects in any condition, while GPT-4 is significantly worse than humans only in the two numeric conditions. The values in this description are visual estimates and should be checked against the text where the two disagree.

</details>

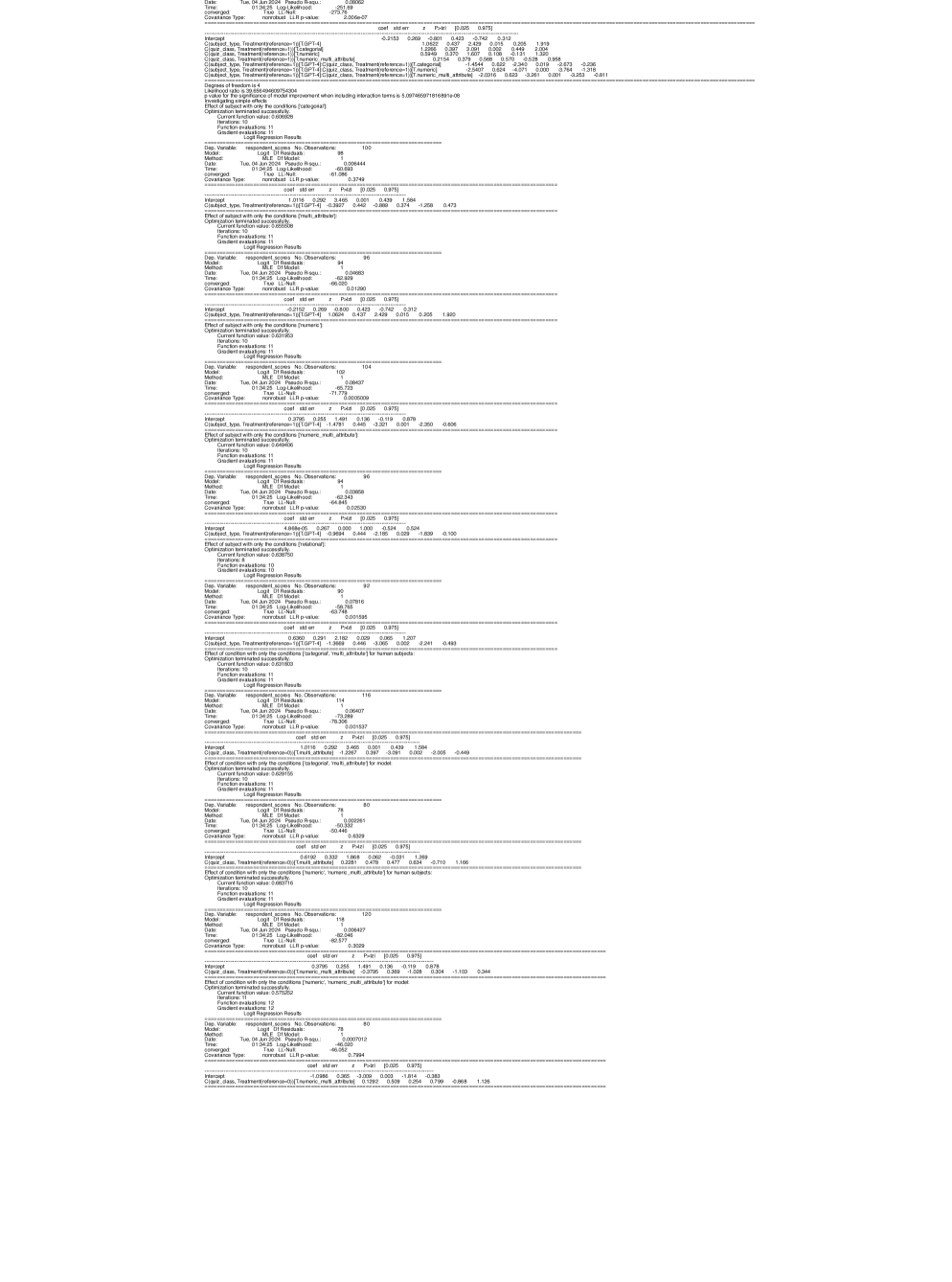

Figure 6: Human and model accuracy by condition in the Semantic Content experiment. Error bars show standard errors.

Results for human subjects, GPT-4, and Claude 3 are shown in Figure 6 (as before, the other tested models attain much lower accuracy).

#### 3.2.1 Human Performance Continues to be Robust

Human subjects perform robustly and consistently, as in the previous experiment. Human accuracy ranges from 0.4 to 0.8 across conditions, comparable to the earlier Semantic Structure experiment. As expected, subjects generally describe their strategy as relating properties of the left-hand terms to their representations on the right-hand side.

#### 3.2.2 Claude 3 Matches Human Performance Stably Across Conditions

Claude 3 matches human performance stably across the different conditions of the Semantic Content experiment, with its accuracy falling into a comparable range of 0.4 to 0.7. The model exhibits marginally better performance in the Multi-Attribute condition and marginally worse performance in the remaining three. None of these differences is statistically significant: Categorial (coef = -0.8109, z = -1.879, p = 0.060), Multi-Attribute (coef = 0.6206, z = 1.478, p = 0.140), Numeric (coef = -0.1788, z = -0.439, p = 0.661), and Numeric Multi-Attribute (coef = -0.2009, z = -0.484, p = 0.629). We therefore find no evidence that Claude 3 performs differently from human subjects in any condition of this experiment.

#### 3.2.3 GPT-4 Lags Human Subjects on Numeric Reasoning

GPT-4 achieves good results in the Categorial and Multi-Attribute conditions, with mean accuracies of approximately 0.7 in both (compared to 0.7 and 0.4 respectively for human subjects). GPT-4 is not significantly worse than humans in the Categorial condition (coef = -0.3927, z = -0.889, p = 0.374). GPT-4 significantly outperforms human subjects in the Multi-Attribute condition (coef = 1.0624, z = 2.429, p = 0.015). However, its accuracy drops to 0.2-0.3 in the remaining conditions and we find that GPT-4 is significantly worse than humans in both the Numeric (coef = -1.4781, z = -3.321, p = 0.001) and Numeric Multi-Attribute conditions (coef = -0.9694, z = -2.185, p = 0.029).

In these conditions, GPT-4 fails to correctly relate the number of characters in a response to the numeric property of the object (see Table 7 for an illustrative example). GPT-4’s failure to reason about the number of characters in the expected way is further observed in the sanity check shown in Table 8 of the Appendix, even when the model is not required to relate a property of a word to its representation.

#### 3.2.4 Human Performance Drops in Compositional Conditions, But Models Remain Constant

When comparing the performance of a subject in a non-compositional (single-attribute) condition to the corresponding compositional (multi-attribute) version, we observe some decrease in performance for human subjects but not for models (note that this surprising result is subject to alternative explanations, addressed in the discussion below). The accuracy of human subjects drops from approximately 0.7 to approximately 0.4 when comparing the Categorial condition to the corresponding compositional version (the Multi-Attribute condition). A simple effects analysis confirms that this decline is significant (coef = -1.2267, z = -3.091, p = 0.002). We see a non-significant decrease in accuracy for human subjects when comparing the Numeric condition to its compositional counterpart, with performance dropping from approximately 0.6 to approximately 0.5 (coef = -0.3795, z = -1.028, p = 0.304).
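The reported coefficients and the approximate accuracies can be checked against each other under one assumption: that the coefficients are differences in log-odds, as in a logistic-regression-style model. This section does not name the model, so the sketch below is a plausibility check rather than a reconstruction of the authors' analysis, and the function names are ours.

```python
import math


def logit(p: float) -> float:
    """Log-odds of a probability p in (0, 1)."""
    return math.log(p / (1.0 - p))


def inv_logit(x: float) -> float:
    """Inverse of logit: map log-odds back to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def shifted_accuracy(baseline: float, coef: float) -> float:
    """Accuracy implied by adding a log-odds coefficient to a baseline accuracy."""
    return inv_logit(logit(baseline) + coef)


if __name__ == "__main__":
    # Baselines and coefficients copied from the text (human subjects).
    print("Categorial -> Multi-Attribute:",
          round(shifted_accuracy(0.7, -1.2267), 2))
    print("Numeric -> Numeric Multi-Attribute:",
          round(shifted_accuracy(0.6, -0.3795), 2))
```

Under this assumption, the implied accuracies land close to the approximate values of 0.4 and 0.5 quoted in the text, which suggests the coefficients and the descriptive accuracies are mutually consistent.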

By contrast, we do not find either model to be significantly worse in compositional conditions than in non-compositional ones. In fact, GPT-4 exhibits a slight improvement in the compositional conditions, though this change is not statistically significant either for the Multi-Attribute condition relative to the Categorial condition (coef = 0.2281, z = 0.477, p = 0.634) or for the Numeric Multi-Attribute condition relative to the Numeric condition (coef = 0.1292, z = 0.254, p = 0.799). For Claude 3 we similarly find no significant difference for the Multi-Attribute condition relative to the Categorial condition (coef = 0.2049, z = 0.452, p = 0.651) or for the Numeric Multi-Attribute condition relative to the Numeric condition (coef = -0.4013, z = -0.893, p = 0.372).

#### 3.2.5 Takeaways

The Semantic Content experiment confirms that human subjects perform robustly and flexibly across diverse task variations. Claude 3 matches human performance in all conditions, indicating it shares humans’ tendency to use the source domain’s semantic content when completing target domains. While GPT-4’s poor performance in numeric conditions is notable, it reflects a failure in numeric reasoning rather than a difference in analogical reasoning.

We find evidence of decreased human performance, but not model performance, in compositional conditions, contrasting with some existing research [33]. However, other factors may be at play. Models’ negative compositionality effect may be masked by a positive effect, such as increased available information: when the target domain represents two source domain properties, models may more easily recognize the encoding of source domain properties. Human subjects may benefit less from this competing effect if they do not struggle to observe this information encoding.

## 4 Discussion

Our results show that the best-performing LLMs are able to successfully complete many analogical reasoning tasks with human-level accuracy using novel stimuli not present in their training data. They also show that there remain meaningful differences in how such analogies are processed, evidenced by differences in how humans and models respond to distracting or misleading information. However, we observe a clear trend: more recent models come increasingly close to matching human performance across our tasks. In particular, Claude 3, the most recently released model we test, exhibits impressively robust performance across most task variations, even closing the gap with humans in some test conditions in which the earlier GPT-4 exhibited limitations (such as the Relational task version, in which mapping from the source domain must be used for success). Together, these results raise questions about the ability of LLMs and similar models to serve as candidate cognitive models, which we discuss briefly below.

### 4.1 Evaluating the Competence of LLMs

The breadth of Claude 3’s success in our tasks is noteworthy. It suggests that state-of-the-art LLMs can broadly match human performance not only in formal analogical reasoning tasks, as suggested by Webb et al. [4], but also in tasks that require mapping semantic information across linguistic and non-linguistic domains. As such, our results weigh against a long-standing view in cognitive science, according to which connectionist models without a built-in symbolic component are constitutively limited in their ability to robustly handle analogical reasoning tasks [7]. They also inform discussions of whether LLMs possess “functional” linguistic competence, in addition to “formal” linguistic competence [3]. Further work is needed to characterize the precise mechanism that LLMs are using to solve these tasks; it is possible–though increasingly unlikely given the robustness of the behavioral results–that success is due to a myriad of heuristics rather than a systematic analogical reasoning process. Even so, evidence of LLMs completing analogical reasoning tasks in domains designed to involve linguistic structure-mapping, in addition to tasks over abstract symbols, runs counter to the claim that LLMs are capable of formal but not functional linguistic competence.

There remain examples of LLMs performing much worse than humans on analogical reasoning tasks [10], which must be reconciled with our results. Here the competence-performance distinction, originally introduced by Noam Chomsky [34], can be usefully applied to the evaluation of LLMs [35, 2, 36]. This distinction allows researchers to theorize about the abstract computational principles governing cognition separately from the “noise” introduced by performance factors. In humans, it is generally assumed that there is a double dissociation between performance and competence: neither success nor failure on a task designed to measure a particular capacity can always be taken as conclusive evidence that subjects have or lack that capacity, due to auxiliary factors affecting task performance. When it comes to LLMs, by contrast, the distinction is typically applied in a single direction: human-like performance on benchmarks is often explained away by reliance on shallow heuristics [37] and/or lack of construct validity [38], while sub-human performance is often taken as reliable evidence of lack of competence. However, LLM performance can also be negatively affected by strong auxiliary task demands [39] and mismatched conditions in comparisons with human subjects [40]. These are compelling reasons to apply the dissociation in both directions to LLMs as well.

From this perspective, our results offer evidence to support both sides of the present debate about whether LLMs possess human-level analogical reasoning (see Webb et al. [15], Lewis and Mitchell [10], and Hodel and West [16]). Supporting the argument of Webb et al. [15] that deficiencies in capabilities other than analogical reasoning can explain poor model performance in some tasks, we find that GPT-4’s failure in the numeric conditions of our Semantic Content experiment may be due to a deficiency in counting ability. However, contrary to Webb et al. [4], who report impressive analogical reasoning in both GPT-3 and GPT-4, we do find a qualitative difference in the performance of these two models, with GPT-3 performing quite poorly on our tasks. Among the models tested, only GPT-4 and Claude 3 produce results that merit detailed comparison with human subjects. This suggests that claims of human-level performance of LLMs on analogical reasoning tasks may have been premature and might have relied on insufficiently challenging tasks.

However, other differences we observe between human subjects and LLMs across task variations are not subject to an auxiliary task demand explanation and suggest that the underlying mechanisms of analogical reasoning in these systems may differ from those in humans. Importantly, these differences persist even in our best-performing model, Claude 3. For instance, Claude 3 responds differently from human subjects when some or all words in the target domain are replaced with random words, indicating that the model and humans may use distinct strategies for identifying and leveraging relational similarities between source and target domains. Furthermore, Claude 3 remains more sensitive than human subjects to the ordering of elements within domains, which is difficult to explain if LLMs are using a generalizable symbolic working memory approach.

Collectively, these patterns bear on the larger question of how we should arbitrate disputes about competence in machine-human comparisons. On the one hand, it seems reasonable to assume that any system that can reliably achieve success at or above human level on experiments like ours, without relying on memorization and other confounds, should be considered competent at analogical reasoning through structure-mapping. On the other hand, we should be open to the possibility that such competence may be implemented differently in LLMs and humans.

The question of whether we require human-likeness of the mechanism to declare human-level “competence” is ultimately not empirical, but rather demands philosophical consensus among the scientific community around our ultimate goals and metrics for achieving them.

### 4.2 Analogy in Human(-like) Learning and Bootstrapping

Unlike previous research comparing analogical reasoning in human subjects and LLMs, our tasks involve transferring semantic structure and content from source to target domains, rather than reasoning over abstract symbols. Our experiments thus investigate whether LLMs’ analogical reasoning resembles that of human subjects in a manner pertinent to its purportedly central role in broader cognition. Following Gentner [11], emphasis has been placed on relational similarity, rather than just feature similarity, in mapping from a familiar source to a foreign target domain during analogical reasoning to allow for the flexible transfer of knowledge [41, 42, 43]. This conception allows analogical reasoning to play a fundamental role in human cognition, supporting the emergence of diverse cognitive abilities via “bootstrapping” [44, 45, 46]. In bootstrapping, two cognitive processes mutually support each other’s development. In Gentner’s Structure-Mapping Theory (SMT), language development and structure-mapping-based analogical reasoning are hypothesized to co-develop, with structure-mapping developing the necessary relational reasoning to model language-world relations, and language acquisition in turn developing symbolic reasoning capacities that amplify structure-mapping abilities. Consequently, analogical reasoning is seen as a central cognitive phenomenon of interest.

The success of some LLMs in many of our tasks suggests that the most advanced models may be capable of employing a structure-mapping-based approach to analogical reasoning, in which relations in the source domain are used to constrain and guide reasoning about relations in the target domain. This raises the possibility that a bootstrapping cycle between language development and analogical reasoning in humans, as proposed by Gentner [44], may be paralleled in language models. The emergence of such competence from training primarily on text prediction would yield new hypotheses about the emergence of analogical reasoning as a central cognitive faculty from generic learning mechanisms (possibly combined with the unique pressures of language acquisition). However, the mixed success of LLMs and the significant differences from humans in certain conditions underscore the need for continued research to test the robustness of any conclusion that analogical reasoning in LLMs closely matches that of human subjects. As LLM outputs continue to converge toward human responses, an expected product of the language modelling objective, it is crucial to develop novel tasks that examine analogical reasoning ability and are not attested in the training data. While our task allows for clear discrimination between human performance and that of most models prior to Claude 3, further differences in analogical reasoning patterns between humans and Claude 3 likely exist beyond those revealed by our tests. More granular testing would help clarify the extent of the remaining discrepancies between humans and the most advanced LLMs, and much further work is required to verify the hypothesis that language models parallel the bootstrapping cycle between language development and analogical reasoning in humans.

The proprietary nature of leading LLMs like Claude 3 unfortunately limits our ability to directly investigate the features that may explain the emergence of a response pattern largely mirroring that of human subjects. However, increasingly sophisticated open-weights models are being released, which may allow interpretability work to analyze a model’s internals and shed light on the mechanisms that enable advanced LLMs to exhibit impressive analogical reasoning abilities in many tasks.

## 5 Acknowledgments

This work was supported in part by NIH NIGMS COBRE grant #5P20GM10364510.

## References

- [1] A. Srivastava, A. Rastogi, A. Rao, A. A. M. Shoeb, et al., Beyond the imitation game: Quantifying and extrapolating the capabilities of language models (2023). arXiv:2206.04615.

- [2] E. Pavlick, Symbols and grounding in large language models, Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences 381 (2251) (Jun. 2023). doi:10.1098/rsta.2022.0041. URL http://dx.doi.org/10.1098/rsta.2022.0041

- [3] K. Mahowald, A. A. Ivanova, I. A. Blank, N. Kanwisher, J. B. Tenenbaum, E. Fedorenko, Dissociating language and thought in large language models (2023). arXiv:2301.06627.

- [4] T. Webb, K. J. Holyoak, H. Lu, Emergent analogical reasoning in large language models, Nature Human Behaviour 7 (9) (2023) 1526–1541.

- [5] S. J. Han, K. Ransom, A. Perfors, C. Kemp, Inductive reasoning in humans and large language models (2023). arXiv:2306.06548.

- [6] X. Hu, S. Storks, R. L. Lewis, J. Chai, In-context analogical reasoning with pre-trained language models (2023). arXiv:2305.17626.

- [7] M. Mitchell, Abstraction and analogy-making in artificial intelligence, Annals of the New York Academy of Sciences 1505 (1) (2021) 79–101. doi:10.1111/nyas.14619. URL https://nyaspubs.onlinelibrary.wiley.com/doi/abs/10.1111/nyas.14619

- [8] K. J. Holyoak, D. Gentner, B. N. Kokinov, Introduction: The Place of Analogy in Cognition, in: The Analogical Mind: Perspectives from Cognitive Science, The MIT Press, 2001. doi:10.7551/mitpress/1251.003.0003. URL https://doi.org/10.7551/mitpress/1251.003.0003

- [9] D. R. Hofstadter, Epilogue: Analogy as the Core of Cognition, in: The Analogical Mind: Perspectives from Cognitive Science, The MIT Press, 2001. doi:10.7551/mitpress/1251.003.0020. URL https://doi.org/10.7551/mitpress/1251.003.0020

- [10] M. Lewis, M. Mitchell, Using counterfactual tasks to evaluate the generality of analogical reasoning in large language models (2024). arXiv:2402.08955.

- [11] D. Gentner, Structure-mapping: A theoretical framework for analogy, Cognitive Science 7 (2) (1983) 155–170. doi:10.1207/s15516709cog0702_3. URL https://onlinelibrary.wiley.com/doi/abs/10.1207/s15516709cog0702_3

- [12] K. Erk, Towards a semantics for distributional representations, in: A. Koller, K. Erk (Eds.), Proceedings of the 10th International Conference on Computational Semantics (IWCS 2013) – Long Papers, Association for Computational Linguistics, Potsdam, Germany, 2013, pp. 95–106. URL https://aclanthology.org/W13-0109

- [13] G. Boleda, Distributional semantics and linguistic theory, CoRR abs/1905.01896 (2019). arXiv:1905.01896. URL http://arxiv.org/abs/1905.01896

- [14] L. Gleitman, C. Fisher, Universal aspects of word learning, in: J. A. McGilvray (Ed.), The Cambridge Companion to Chomsky, Cambridge University Press, 2005, p. 123.

- [15] T. Webb, K. J. Holyoak, H. Lu, Evidence from counterfactual tasks supports emergent analogical reasoning in large language models (2024). arXiv:2404.13070.

- [16] D. Hodel, J. West, Response: Emergent analogical reasoning in large language models (2024). arXiv:2308.16118.

- [17] S. French, A model-theoretic account of representation (or, I don’t know much about art…but I know it involves isomorphism), Philosophy of Science 70 (5) (2003) 1472–1483. doi:10.1086/377423.

- [18] T. B. Brown, B. Mann, N. Ryder, M. Subbiah, J. Kaplan, P. Dhariwal, A. Neelakantan, P. Shyam, G. Sastry, A. Askell, S. Agarwal, A. Herbert-Voss, G. Krueger, T. Henighan, R. Child, A. Ramesh, D. M. Ziegler, J. Wu, C. Winter, C. Hesse, M. Chen, E. Sigler, M. Litwin, S. Gray, B. Chess, J. Clark, C. Berner, S. McCandlish, A. Radford, I. Sutskever, D. Amodei, Language models are few-shot learners (2020). arXiv:2005.14165.