# MR-Ben: A Meta-Reasoning Benchmark for Evaluating System-2 Thinking in LLMs

> 1Chinese University of Hong Kong2University of Cambridge3University of Edinburgh4City University of Hong Kong5Tsinghua University6University of Texas at Austin7University of Hong Kong8Nanyang Technological University9Massachusetts Institute of Technology

Abstract

Large language models (LLMs) have shown increasing capability in problem-solving and decision-making, largely based on the step-by-step chain-of-thought reasoning processes. However, evaluating these reasoning abilities has become increasingly challenging. Existing outcome-based benchmarks are beginning to saturate, becoming less effective in tracking meaningful progress. To address this, we present a process-based benchmark MR-Ben that demands a meta-reasoning skill, where LMs are asked to locate and analyse potential errors in automatically generated reasoning steps. Our meta-reasoning paradigm is especially suited for system-2 slow thinking, mirroring the human cognitive process of carefully examining assumptions, conditions, calculations, and logic to identify mistakes. MR-Ben comprises 5,975 questions curated by human experts across a wide range of subjects, including physics, chemistry, logic, coding, and more. Through our designed metrics for assessing meta-reasoning on this benchmark, we identify interesting limitations and weaknesses of current LLMs (open-source and closed-source models). For example, with models like the o1 series from OpenAI demonstrating strong performance by effectively scrutinizing the solution space, many other state-of-the-art models fall significantly behind on MR-Ben, exposing potential shortcomings in their training strategies and inference methodologies Our dataset and codes are available on https://randolph-zeng.github.io/Mr-Ben.github.io.. footnotetext: Correspondence to: Zhijiang Guo (zg283@cam.ac.uk) and Jiaya Jia (leojia@cse.cuhk.edu.hk).

1 Introduction

Reasoning, the cognitive process of using evidence, arguments, and logic to reach conclusions, is crucial for problem-solving, decision-making, and critical thinking [65, 19]. With the rapid advancement of Large Language Models (LLMs), there is an increasing interest in exploring their reasoning capabilities [30, 57]. Consequently, evaluating reasoning in LLMs reliably becomes paramount. Current evaluation methodologies primarily focus on the final result [16, 28, 22, 60], disregarding the intricacies of the reasoning process. While effective to some extent, such evaluation practices may conceal underlying issues like logical errors or unnecessary steps that compromise the accuracy and efficiency of reasoning [68, 41].

Therefore, it is important to complement outcome-based evaluation with an intrinsic evaluation of the quality of the reasoning process. However, current benchmarks for evaluating LLMs’ reasoning capabilities have certain limitations in terms of their scope and size. For instance, PRM800K [38] categorizes each reasoning step as positive, negative, or neutral. Similarly, BIG-Bench Mistake [64] focuses on identifying errors in step-level answers. We follow the same meta-reasoning paradigm as MR-GSM8K [77] and MR-Math [68], which go a step further by providing the error reason for the first negative step in the reasoning chain. However, these benchmarks are limited to a narrower task scope—MR-GSM8K and MR-Math focus solely on mathematical reasoning, while BIG-Bench Mistake mainly assesses logical reasoning. To ensure a comprehensive evaluation of reasoning abilities, it is crucial to identify reasoning errors and assess the LLMs’ capacity to elucidate them across wider domains.

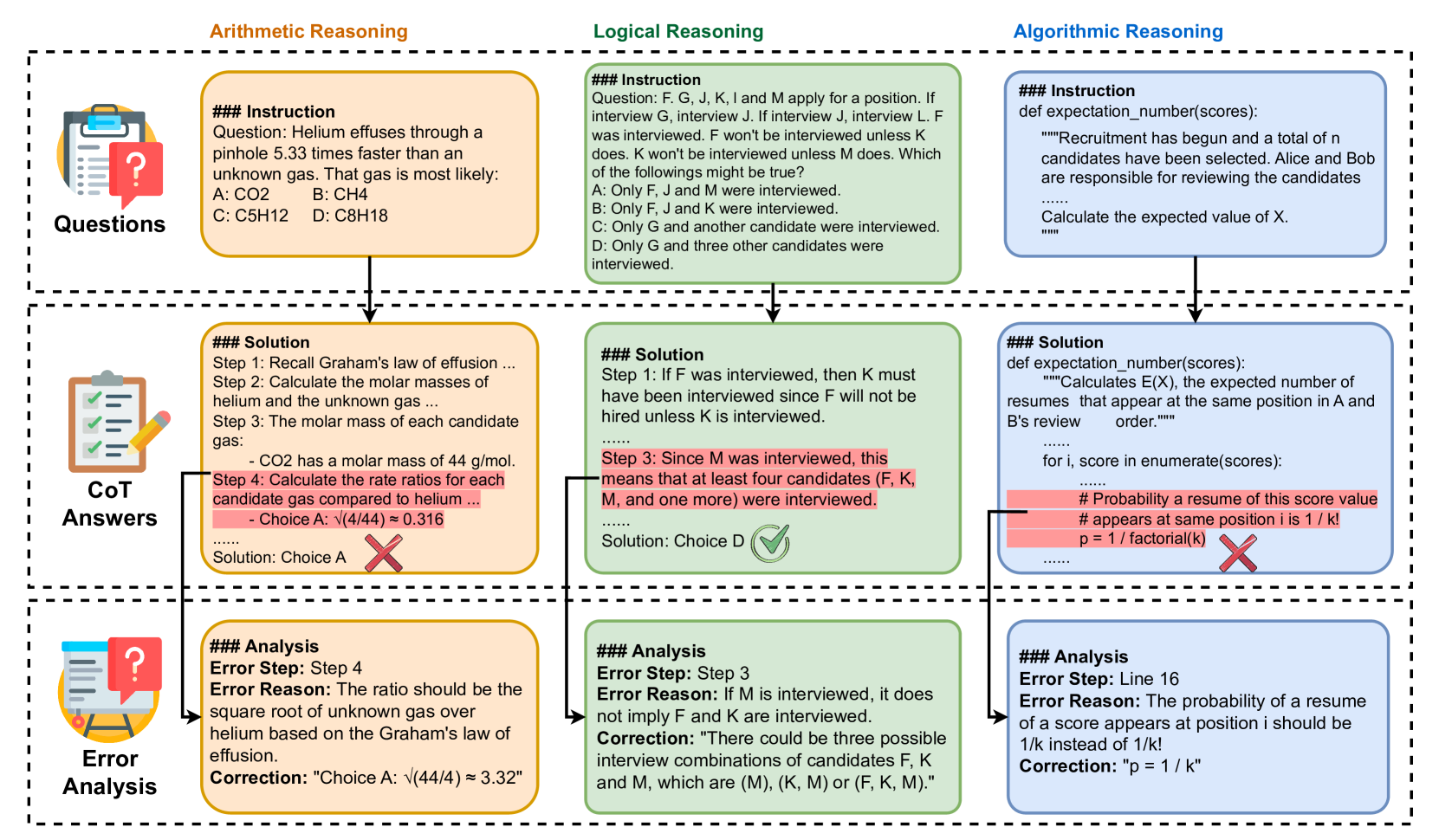

To bridge this gap, we construct a comprehensive benchmark MR-Ben comprising 6k questions covering a wide range of subjects, including natural sciences like math, biology, and physics, as well as coding and logic. One unique aspect of MR-Ben is its meta-reasoning paradigm, which involves challenging LLMs to reason about different forms of reasoning. In this paradigm, LLMs take on the role of a teacher, evaluating the reasoning process by assessing correctness, analyzing potential errors, and providing corrections, as depicted in Figure 1.

Our analysis of various LLMs [50, 51, 5, 33, 47] uncovers distinct limitations and previously unidentified weaknesses in their reasoning abilities. While many LLMs are capable of generating correct answers, they often struggle to identify errors within their reasoning processes and explain the underlying rationale. To excel under our meta-reasoning paradigm, models must meticulously scrutinize assumptions, conditions, calculations, and logical steps, even inferring step outcomes counterfactually. These requirements align with the characteristics of “System-2” slow thinking [35, 9], which we believe remains underdeveloped in most of the state-of-the-art models we evaluated.

We suspect that a key reason for this gap lies in current fine-tuning paradigms, which prioritize correct solutions and limit effective exploration of the broader solution space. Echoing this hypothesis, we observed that models like o1-preview [52], which reportedly incorporate effective search and disambiguation techniques across trajectories in the solution space, outperform other models by a large margin. Moreover, we found that leveraging high-quality and diverse synthetic data [1] significantly mitigates this issue, offering a promising path to enhance performance regardless of model size. Additionally, our results indicate that different LLMs excel in distinct reasoning paradigms, challenging the notion that domain-specific enhancements necessarily yield broad cognitive improvements. We hope that MR-Ben will guide researchers in comprehensively evaluating their models’ capabilities and foster the development of more robust AI reasoning frameworks.

Our key contributions are summarized as follows:

- We introduced MR-Ben, which includes around 6k questions across a wide range of subjects, from natural sciences to coding and logic, and employs a unique meta-reasoning paradigm.

- We conduct an extensive analysis of various LLMs on MR-Ben, revealing various limitations and previously unidentified weaknesses in their reasoning abilities.

- We offer potential pathways for enhancing the reasoning abilities of LLMs and challenge the assumption that domain-specific enhancements necessarily lead to broad improvements.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Reasoning Types and Error Analysis Workflow

### Overview

The image is a structured flowchart diagram illustrating a three-stage process applied to three distinct domains of problem-solving. It demonstrates how a system (likely a Large Language Model) is presented with a prompt, generates a step-by-step "Chain of Thought" (CoT) answer that contains a specific logical or factual error, and subsequently undergoes an "Error Analysis" to identify, explain, and correct that specific flaw.

### Components and Layout

The diagram is organized into a grid format:

* **Columns (Domains):**

* **Left Column (Orange):** Arithmetic Reasoning

* **Center Column (Green):** Logical Reasoning

* **Right Column (Blue):** Algorithmic Reasoning

* **Rows (Stages):**

* **Top Row:** "Questions" (Indicated by a clipboard icon with a question mark on the far left).

* **Middle Row:** "CoT Answers" (Indicated by a clipboard icon with checkmarks and a pencil).

* **Bottom Row:** "Error Analysis" (Indicated by a clipboard icon with a question mark and an easel).

* **Flow Indicators:** Black downward-pointing arrows connect the boxes from top to bottom within each column. Additionally, specific lines connect red-highlighted text in the middle row directly to the analysis boxes in the bottom row.

---

### Content Details (Transcription and Spatial Grounding)

#### Column 1: Arithmetic Reasoning (Left, Orange)

* **Top Box (Questions):**

```text

### Instruction

Question: Helium effuses through a pinhole 5.33 times faster than an unknown gas. That gas is most likely:

A: CO2 B: CH4

C: C5H12 D: C8H18

```

* **Middle Box (CoT Answers):**

```text

### Solution

Step 1: Recall Graham's law of effusion ...

Step 2: Calculate the molar masses of helium and the unknown gas ...

Step 3: The molar mass of each candidate gas:

- CO2 has a molar mass of 44 g/mol.

[HIGHLIGHTED IN RED]: Step 4: Calculate the rate ratios for each candidate gas compared to helium ...

- Choice A: √(4/44) ≈ 0.316

......

Solution: Choice A [Large Red 'X' icon]

```

*Visual Note:* A line connects the red highlighted text in Step 4 down to the Error Analysis box.

* **Bottom Box (Error Analysis):**

```text

### Analysis

Error Step: Step 4

Error Reason: The ratio should be the square root of unknown gas over helium based on the Graham's law of effusion.

Correction: "Choice A: √(44/4) ≈ 3.32"

```

#### Column 2: Logical Reasoning (Center, Green)

* **Top Box (Questions):**

```text

### Instruction

Question: F. G, J, K, I and M apply for a position. If interview G, interview J. If interview J, interview L. F was interviewed. F won't be interviewed unless K does. K won't be interviewed unless M does. Which of the followings might be true?

A: Only F, J and M were interviewed.

B: Only F, J and K were interviewed.

C: Only G and another candidate were interviewed.

D: Only G and three other candidates were interviewed.

```

* **Middle Box (CoT Answers):**

```text

### Solution

Step 1: If F was interviewed, then K must have been interviewed since F will not be hired unless K is interviewed.

......

[HIGHLIGHTED IN RED]: Step 3: Since M was interviewed, this means that at least four candidates (F, K, M, and one more) were interviewed.

......

Solution: Choice D [Large Green Checkmark icon]

```

*Visual Note:* A line connects the red highlighted text in Step 3 down to the Error Analysis box.

* **Bottom Box (Error Analysis):**

```text

### Analysis

Error Step: Step 3

Error Reason: If M is interviewed, it does not imply F and K are interviewed.

Correction: "There could be three possible interview combinations of candidates F, K and M, which are (M), (K, M) or (F, K, M)."

```

#### Column 3: Algorithmic Reasoning (Right, Blue)

* **Top Box (Questions):**

```python

### Instruction

def expectation_number(scores):

"""Recruitment has begun and a total of n candidates have been selected. Alice and Bob are responsible for reviewing the candidates

......

Calculate the expected value of X.

"""

```

* **Middle Box (CoT Answers):**

```python

### Solution

def expectation_number(scores):

"""Calculates E(X), the expected number of resumes that appear at the same position in A and B's review order."""

......

for i, score in enumerate(scores):

......

[HIGHLIGHTED IN RED]: # Probability a resume of this score value

# appears at same position i is 1 / k!

p = 1 / factorial(k) [Large Red 'X' icon]

......

```

*Visual Note:* A line connects the red highlighted code block down to the Error Analysis box.

* **Bottom Box (Error Analysis):**

```text

### Analysis

Error Step: Line 16

Error Reason: The probability of a resume of a score appears at position i should be 1/k instead of 1/k!

Correction: "p = 1 / k"

```

---

### Key Observations

1. **Structured Error Targeting:** The diagram explicitly isolates the exact point of failure within a multi-step reasoning process. It does not just mark the final answer wrong; it highlights the specific flawed step (Step 4, Step 3, or Line 16).

2. **Standardized Correction Format:** Every Error Analysis box follows a strict tripartite structure: `Error Step`, `Error Reason`, and `Correction`.

3. **The "Right Answer, Wrong Reason" Anomaly:** In the Center Column (Logical Reasoning), the final solution ("Choice D") is marked with a Green Checkmark, indicating it is the correct multiple-choice option. However, "Step 3" of the reasoning used to arrive at that answer is highlighted in red as an error.

### Interpretation

This diagram outlines a sophisticated data structure or training methodology for Artificial Intelligence, specifically Large Language Models (LLMs).

* **What the data demonstrates:** It shows a framework for "process supervision" rather than just "outcome supervision." Instead of merely training a model to output the correct final answer (A, B, C, D, or a final number), this framework evaluates the *Chain of Thought* (CoT).

* **Reading between the lines (The Peircean view):** The most revealing part of this diagram is the Logical Reasoning column. The fact that a green checkmark (correct final answer) is paired with a red-highlighted error in the steps proves that this system is designed to detect and correct *hallucinated logic* or *lucky guesses*. It enforces that the model must not only be right, but it must be right for the correct reasons.

* **Purpose:** This image is likely taken from an academic paper or technical documentation introducing a new dataset or fine-tuning method. By providing models with examples of common reasoning errors alongside structured explanations of *why* they are wrong and *how* to fix them, developers can train AI to self-correct, debug code more effectively, and produce more reliable, faithful step-by-step logic.

</details>

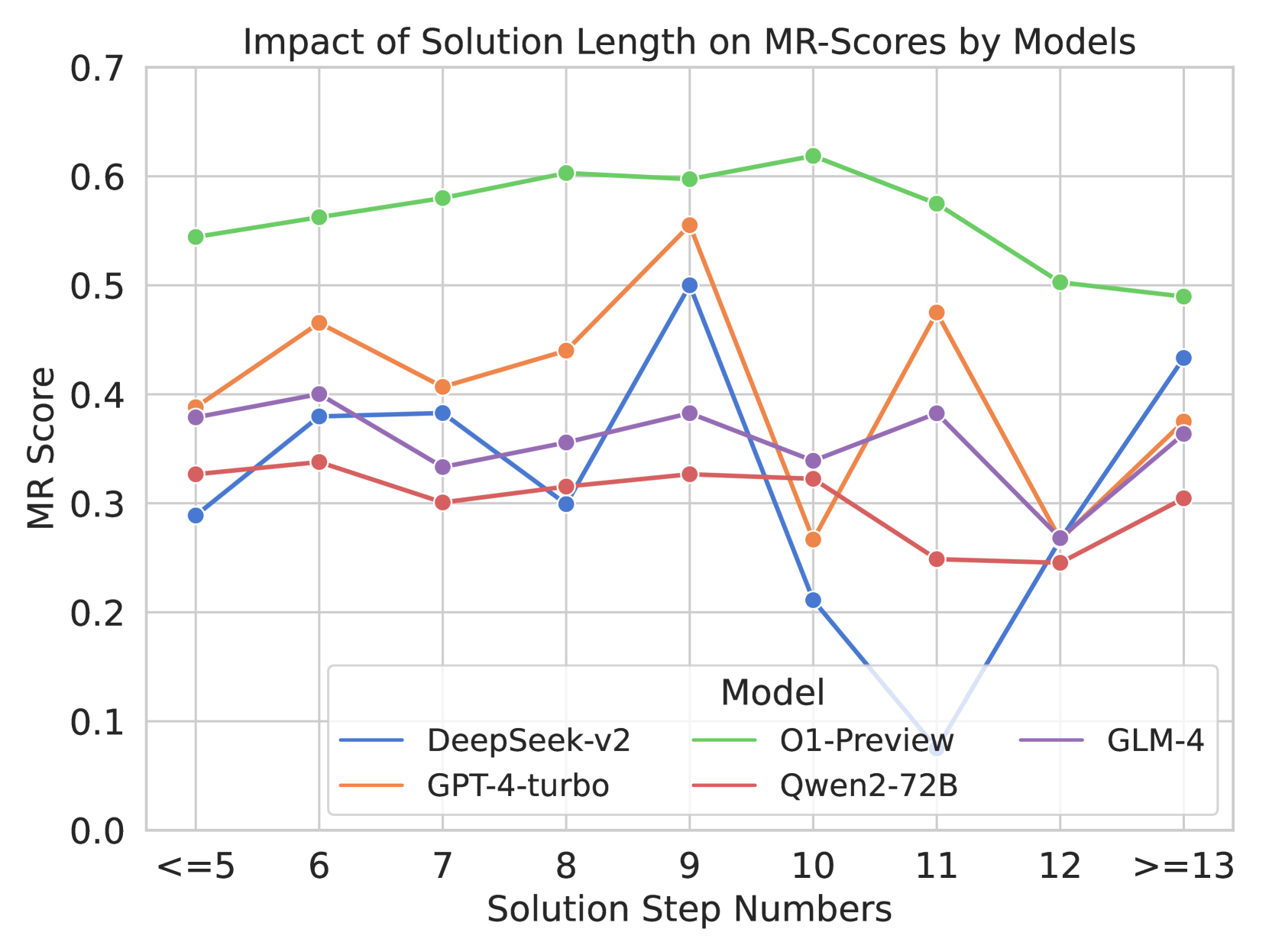

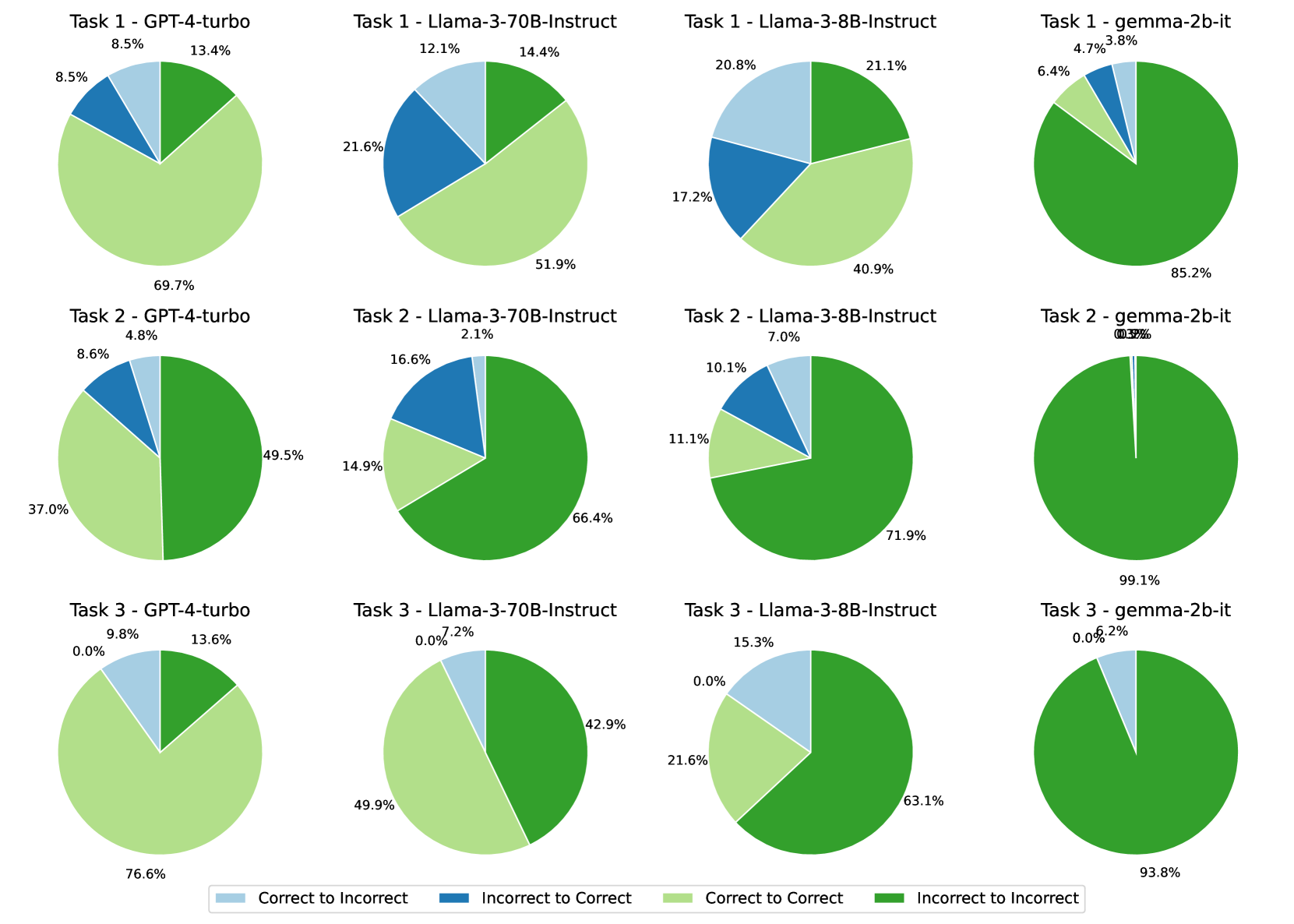

Figure 1: Overview of the evaluation paradigm and representative examples in MR-Ben. Each data point encompasses three key elements: a question, a Chain-of-Thought (CoT) answer, and an error analysis. The CoT answer is generated by various LLMs. Human experts annotate the error analyses, which include error steps, reasons behind the error, and subsequent corrections. The three examples shown are selected to represent arithmetic, logical, and algorithmic reasoning types.

2 Related Works

Reasoning Benchmarks

Evaluating the reasoning capabilities of LLMs is crucial for understanding their potential and limitations. While existing benchmarks often assess reasoning by measuring performance on tasks that require reasoning, such as accuracy, they often focus on specific reasoning types like arithmetic, knowledge, logic, or algorithmic reasoning. Arithmetic reasoning, involving mathematical concepts and operations, has been explored in benchmarks ranging from elementary word problems [37, 4, 55, 16] to more complex and large-scale tasks [28, 48]. Knowledge reasoning, on the other hand, requires either internal (commonsense) or external knowledge, or a combination of both [14, 62, 22]. Logical reasoning benchmarks, encompassing deductive and inductive reasoning, use synthetic rule bases for the former [15, 61, 18] and specific observations for the latter to formulate general principles [78, 71]. Algorithmic reasoning often involves understanding the coding problem description and performing multi-step reasoning to solve it [17, 25]. Benchmarks like BBH [59] and MMLU [27] indirectly assess reasoning by evaluating performance on tasks that require it. However, these benchmarks primarily focus on final results, neglecting the analysis of potential errors in the reasoning process. Unlike prior efforts, MR-Ben goes beyond accuracy by assessing the ability to locate potential errors in the reasoning process and provide explanations and corrections. Moreover, MR-Ben covers different types of reasoning, offering a more comprehensive assessment.

Evaluation Beyond Accuracy

Many recent studies have shifted their focus from using only the final result to evaluating the reasoning quality beyond accuracy. This shift has led to the development of two approaches: reference-free and reference-based evaluation. Reference-free methods aim to assess reasoning quality without relying on human-provided solutions. For example, ROSCOE [23] evaluates reasoning chains by quantifying reasoning errors such as redundancy and hallucination. Other approaches convert reasoning steps into structured forms, like subject-verb-object frames [56] or symbolic proofs [58], allowing for automated analysis. Reference-based methods depend on human-generated step-by-step solutions. For instance, PRM800K [38] offers solutions to MATH problems [28], categorizing each reasoning step as positive, negative, or neutral. Building on this, MR-GSM8K [77] and MR-Math [68] further provide the error reason behind the first negative step. MR-GSM8K focuses on elementary math problems, sampling questions from GSM8K [16]. MR-Math samples a smaller set of 459 questions from MATH [28]. Using the same annotation scheme, BIG-Bench Mistake [64] focuses on symbolic reasoning. It encompasses 2,186 instances from 5 tasks in BBH [59]. Despite the progress made by these datasets, limitations in scope and size remain. To address this, we introduce MR-Ben, a benchmark consisting of 5,975 manually annotated instances covering a wide range of subjects, including natural sciences, coding, and logic. MR-Ben also features more challenging questions, spanning high school, graduate, and professional levels.

3 MR-Ben: Dataset Construction

3.1 Dataset Structure

To comprehensively evaluate the reasoning capabilities of LLMs, MR-Ben employs a meta-reasoning paradigm. This paradigm casts LLMs in the role of a teacher, where they assess the reasoning process by evaluating its correctness, analyzing errors, and providing corrections. As shown in Figure 1, each data point within MR-Ben consists of three key elements: a question, a CoT answer, and an error analysis. The construction pipeline is shown in Figure 6 in Appendix- D.

Question

The questions in MR-Ben are designed to cover a diverse range of reasoning types and difficulty levels, spanning from high school to professional levels. To ensure this breadth, we curated questions from various subjects, including natural sciences (mathematics, biology, physics), coding, and logic. Specifically, we sampled questions from mathematics, physics, biology, chemistry, and medicine from MMLU [27], which comprehensively assesses LLMs across academic and professional domains. For logic questions, we draw from LogiQA [40], which encompasses a broad spectrum of logical reasoning types, including categorical, conditional, disjunctive, and conjunctive reasoning. Finally, we select coding problems from MHPP [17], which focuses on function-level code generation requiring advanced algorithmic reasoning. Questions in MMLU and LogiQA require a single-choice answer, while MHPP requires a snippet of code as the answer.

CoT Answer

We queried GPT-3.5-Turbo-0125 [50], Claude2 [5], and Mistral-Medium [32] (as of February 2024) using a prompt template (provided in Figure- 7 in Appendix- D) designed to elicit step-by-step solutions [66]. For clarity, all LLMs were instructed to format their solutions with numbered steps, except for coding problems. To encourage diverse solutions, we set the temperature parameter to 1 during sampling. This empirical setting yielded satisfactory instruction following and desirable fine-grained reasoning errors, which annotators and evaluated models are expected to identify.

3.2 Annotation Process

After acquiring the questions and their corresponding Chain-of-Thought (CoT) answers, we engage annotators to provide error analyses. The annotation process is divided into three stages.

Answer Correctness

CoT answers that result in a final answer different from the ground truth are automatically flagged as incorrect. However, for cases where the final answer matches the ground truth, manual annotation is required. This is because there are instances where the reasoning process leading to the correct answer is flawed, as illustrated in the middle example of Figure 1. Therefore, annotators are tasked with meticulously examining the entire reasoning path to determine if the correct final answer is a direct result of the reasoning process.

Error Step

This stage is applicable for solutions with either an unmatched final output or a matched final output underpinned by flawed reasoning. Following the prior effort [38], each step in the reasoning process is categorized as positive, neutral, or negative. Positive and neutral steps represent stages where the correct final output remains attainable. Conversely, negative steps indicate a divergence from the path leading to the correct solution. Annotators are required to identify the first step in the reasoning process where the conditions, assumptions, or calculations are incorrect, making the correct final result unreachable for the subsequent reasoning steps.

Error Reason and Correction

Annotators are tasked with conducting an in-depth analysis of the reasoning that led to the identified error. As shown in Figure 1, annotators are required to provide the error reason and the corresponding correction to this reasoning step. This comprehensive approach ensures a thorough understanding and rectification of errors in the reasoning process.

3.3 Data Statistics

Table 1: Statistics of MR-Ben. The length of questions and solutions are measured inthe number of words. Notice that the steps for coding denote the number of lines of code. They are not directly comparable with other subjects.

| Correct Solution Ratio Avg Solution Steps Avg First Error Step | 16.2% 6.8 3.1 | 31.0% 5.3 3.0 | 59.6% 5.1 2.7 | 47.8% 5.7 3.1 | 45.0% 5.6 3.0 | 51.1% 5.3 2.8 | 31.1% 32.5* 14.0* | 40.3% 9.5 4.5 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Avg Length of Questions | 44.3 | 88.7 | 56.3 | 66.6 | 48.1 | 154.8 | 140.1 | 85.6 |

| Avg Length of Solutions | 205.9 | 206.1 | 187.6 | 199.4 | 194.5 | 217.7 | 950.3 | 308.8 |

Table 1 presents the statistics of MR-Ben. The benchmark exhibits a balanced distribution of correct and incorrect solutions, with an overall correct solution rate of 40.3%. Solutions, on average, involve 9.5 steps, and errors typically manifest around the fourth step (4.5). The questions and solutions are substantial, with average lengths of 85.6 and 308.8 words, respectively. The subject-wise analysis reveals that Math is the most challenging, with a correct solution rate of a mere 16.2%. This could be attributable to the intricacy of the arithmetic operations involved. Conversely, Biology emerges as the least daunting, with a high correct solution rate of 59.6%. Coding problems have the longest solutions, averaging 950.3 number of words. This underscores the complexity and the detailed procedural reasoning inherent in coding tasks. Similarly, Logic problems have the longest questions, averaging 154.8 words. This is in line with the need for elaborate descriptions in logical reasoning. The typical step at which the first error occurs is fairly consistent across most subjects, usually around the 3rd step out of a total of 5. However, Coding deviates from this trend. The first error tends to appear earlier, specifically around the 14th line out of a total of 32.5 lines. This suggests that the problem-solving process in Coding may have distinct dynamics compared to other subjects.

3.4 Quality Control

Annotators

Given the complexity of the questions, which span a range of subjects from high school to professional levels, we enlisted the services of an annotation company. This company meticulously recruited annotators, each holding a minimum of a bachelor’s degree. Before their trial labeling, annotators are thoroughly trained and are required to review the annotation guidelines. We’ve included the guidelines for all subjects in Appendix H for reference. The selection of annotators is based on their performance on a balanced, small hold-out set of problems for each subject. In addition to the annotators, a team of 14 quality controllers diligently monitors the quality of the annotation weekly. As a final layer of assurance, we have 4 meta controllers who scrutinize the quality of the work.

Quality Assurance

Every problem in MR-Ben undergoes a rigorous three-round quality assurance process to ensure its accuracy and clarity. Initially, each question is labeled by two different annotators. Any inconsistencies in the solution correctness or the first error step are identified and reviewed by a quality controller for arbitration. Following this, every annotated problem is subjected to a secondary review by annotators who were not involved in the initial labeling. This is to ensure that the annotations for different solutions to the same problem are consistent and coherent. In the final phase of the review, 10% of the problems are randomly sampled and reviewed by the meta controllers. Throughout the entire evaluation process, all annotated fields are meticulously examined in multiple rounds for their accuracy and clarity. Any incorrect annotations or those with disagreements are progressively filtered out and rectified, ensuring a high-quality dataset. This rigorous process allows us to maintain a high level of annotation quality.

Dataset Artifacts & Biases

Table 1 reveals a relatively balanced distribution of correct and incorrect solutions. However, an exception was observed in mathematical subjects, where the distribution tends to skew towards incorrect solutions. This skew could suggest an inherent complexity or ambiguity in mathematical problem statements. Our analysis of the first error step across all subjects indicated that errors predominantly occur in the initial stages ( $n≤ 7$ ) of problem-solving and are distributed relatively uniformly. This pattern was consistent across most subjects, with no significant skew towards later steps. More detailed discussions of biases are provided in the Appendix C.

4 Evaluation

For each question-solution pair annotated, the evaluated model are supposed to decide the correctness of the solution and report the first-error-step and error-reason if any. The solution-correctness and first-error-step is scored automatically based on the manual annotation result. Only when the evaluated model correctly identified the incorrect solution and first-error-step will its error-reason be further examined manually or automatically by models. Therefore in order to provide a unified and normalized score to reflect the overall competence of the evaluated model, we follow the work of [77] and apply a metric named MR-Score, which consist of three sub-metrics.

The first one is the Matthews Correlation Coefficient (a.k.a MCC, 46) for the binary classification of solution-correctness.

$$

\displaystyle MCC=\frac{TP\times TN-FP\times FN}{\sqrt{(TP+FP)\times(TP+FN)%

\times(TN+FP)\times(TN+FN)}} \tag{1}

$$

where TP, TN, FP, FN stand for true positive, true negative, false positive and false negative. The MCC score ranges from -1 to +1 with -1 means total disagreement between prediction and observation, 0 indicates near random performance and +1 represents perfect prediction. In the context of this paper, we interpret negative values as no better than random guess and set 0 as cut-off threshold for normalization purpose.

The second metric is the ratio between numbers of solutions with correct first-error-step predicted and the total number of incorrect solutions.

$$

\begin{split}ACC_{\text{step}}=\frac{N_{\text{correct\_first\_error\_step}}}{N%

_{\text{incorrect\_sols}}}\end{split} \tag{2}

$$

The third metrics is likewise the ratio between number of solutions with correct first-error-step plus correct error-reason predicted and the total number of incorrect solutions.

$$

\begin{split}ACC_{\text{reason}}=\frac{N_{\text{correct\_error\_reason}}}{N_{%

\text{incorrect\_sols}}}\end{split} \tag{3}

$$

MR-Score is then a weighted combination of three metrics, given by

$$

\displaystyle MR\mbox{-}Score \displaystyle=w_{1}*\max(0,MCC)+w_{2}*ACC_{\text{step}}+w_{3}*ACC_{\text{%

reason}} \tag{4}

$$

For the weights $w_{1},w_{2}$ and $w_{3}$ , they are chosen based on our evaluation results to maximize the differentiation between different models. It is important to note that the Matthews Correlation Coefficient (MCC) and the accuracy of locating the first error step can be directly calculated by comparing the responses of the evaluated model with the ground truth annotations. However, assessing the accuracy of the error reason explained by the evaluated model presents more complexity. While consulting domain experts for annotations is a feasible approach, we instead utilized GPT-4-Turbo as a proxy to examine the error reasons, as detailed in Figure- 11 in Appendix- D.

We operate under the assumption that while our benchmark presents a significant challenge for GPT-4 in evaluating complete solution correctness—identifying the first error step and explaining the error reason—it is comparatively easier for GPT-4 to assess whether the provided error reasons align with the ground truth. Specifically, in a hold-out set of sampled error reasons, there was a 92% agreement rate between the manual annotations by the authors and those generated by GPT-4. For more detailed evaluations on the robustness of MR-Score and its design thinking, please refer to our discussion in Appendix- B.

5 Experiments

5.1 Experiment Setup

Table 2: Evaluation results on MR-Ben: This table presents a detailed breakdown of each model’s performance evaluated under metric MR-Score across different subjects, where K stands for the number of demo examples here.

| Model | Bio. | | Phy. | | Math | | Chem. | | Med. | | Logic | | Coding | | Avg. | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| $k$ =0 | $k$ =1 | | $k$ =0 | $k$ =1 | | $k$ =0 | $k$ =1 | | $k$ =0 | $k$ =1 | | $k$ =0 | $k$ =1 | | $k$ =0 | $k$ =1 | | $k$ =0 | $k$ =1 | | $k$ =0 | $k$ =1 | |

| Closed-Source LLMs | | | | | | | | | | | | | | | | | | | | | | | |

| Claude3-Haiku | 5.7 | 5.8 | | 3.3 | 3.5 | | 3.1 | 3.1 | | 6.5 | 6.4 | | 2.0 | 2.0 | | 1.2 | 1.2 | | 9.0 | 0.0 | | 4.4 | 3.1 |

| GPT-3.5-Turbo | 3.6 | 6.6 | | 5.7 | 6.7 | | 5.7 | 5.4 | | 4.9 | 6.7 | | 3.6 | 4.4 | | 1.7 | 4.5 | | 3.0 | 4.1 | | 4.0 | 5.5 |

| Doubao-pro-4k | 8.4 | 13.5 | | 10.0 | 11.7 | | 12.3 | 15.5 | | 10.6 | 17.5 | | 5.9 | 10.0 | | 4.5 | 5.5 | | 9.8 | 7.4 | | 8.8 | 11.6 |

| Mistral-Large | 22.2 | 28.0 | | 26.7 | 25.4 | | 24.3 | 28.2 | | 24.0 | 27.0 | | 15.9 | 19.3 | | 14.7 | 17.1 | | 21.1 | 21.4 | | 21.3 | 23.8 |

| Yi-Large | 35.3. | 40.7 | | 37.2 | 36.8 | | 36.5 | 20.6 | | 40.0 | 39.1 | | 29.3 | 32.1 | | 25.1 | 31.3 | | 21.9 | 25.7 | | 32.2 | 32.3 |

| Moonshot-v1-8k | 35.0 | 36.8 | | 33.8 | 33.8 | | 34.9 | 33.0 | | 36.7 | 35.0 | | 29.4 | 32.3 | | 25.0 | 29.2 | | 32.7 | 31.2 | | 32.5 | 33.0 |

| GPT-4o-mini | 37.7 | 38.9 | | 38.5 | 37.4 | | 44.4 | 40.4 | | 39.2 | 37.0 | | 33.9 | 25.1 | | 23.6 | 17.7 | | 41.6 | 34.9 | | 37.0 | 33.1 |

| Zhipu-GLM-4 | 40.7 | 46.2 | | 37.7 | 42.5 | | 38.4 | 36.6 | | 43.1 | 44.0 | | 34.5 | 41.0 | | 37.5 | 32.5 | | 38.8 | 32.8 | | 38.7 | 39.4 |

| GPT-4-Turbo | 44.7 | 47.3 | | 42.8 | 45.2 | | 44.3 | 45.4 | | 44.0 | 46.0 | | 38.8 | 38.4 | | 34.1 | 33.6 | | 53.6 | 57.3 | | 43.2 | 44.7 |

| GPT-4o | 48.3 | 49.1 | | 45.5 | 48.2 | | 42.6 | 41.3 | | 48.2 | 49.1 | | 47.9 | 47.7 | | 31.9 | 28.4 | | 56.5 | 54.6 | | 45.8 | 45.5 |

| o1-mini | 45.8 | 46.9 | | 56.0 | 53.8 | | 68.5 | 67.0 | | 55.2 | 56.1 | | 45.9 | 47.2 | | 30.7 | 28.7 | | 55.1 | 55.6 | | 51.0 | 50.8 |

| o1-preview | 54.1 | 56.0 | | 62.2 | 61.7 | | 69.8 | 70.3 | | 60.6 | 60.3 | | 54.3 | 55.1 | | 46.1 | 45.3 | | 65.1 | 70.0 | | 58.9 | 59.8 |

| Open-Source Small | | | | | | | | | | | | | | | | | | | | | | | |

| Qwen1.5-1.8B | 0.0 | 0.0 | | 0.0 | 0.0 | | 0.0 | 0.1 | | 0.0 | 0.1 | | 0.0 | 0.0 | | 0.0 | 0.1 | | 0.0 | 0.0 | | 0.0 | 0.0 |

| Gemma-2B | 0.1 | 0.0 | | 0.0 | 0.0 | | 0.0 | 1.0 | | 0.1 | 0.0 | | 0.0 | 0.4 | | 0.0 | 0.2 | | 0.7 | 0.0 | | 0.1 | 0.2 |

| Qwen2-1.5B | 2.2 | 2.8 | | 2.2 | 1.3 | | 3.3 | 6.3 | | 2.5 | 3.3 | | 2.9 | 11.2 | | 1.5 | 9.4 | | 0.0 | 3.6 | | 2.1 | 5.4 |

| Phi3-3.8B | 13.4 | 12.5 | | 12.7 | 10.8 | | 13.3 | 13.1 | | 16.4 | 17.1 | | 10.2 | 8.1 | | 8.4 | 5.3 | | 9.1 | 10.2 | | 11.9 | 11.0 |

| Open-Source LLMs Medium | | | | | | | | | | | | | | | | | | | | | | | |

| GLM-4-9B | 4.4 | 2.4 | | 9.6 | 1.2 | | 8.1 | 4.7 | | 8.7 | 2.9 | | 2.3 | 1.9 | | 2.5 | 1.6 | | 11.4 | 0.0 | | 6.7 | 2.1 |

| DeepSeek-7B | 5.7 | 6.2 | | 4.7 | 2.6 | | 4.9 | 5.2 | | 4.2 | 4.9 | | 3.1 | 1.6 | | 3.0 | 3.8 | | 0.0 | 1.2 | | 3.7 | 3.6 |

| Deepseek-Coder-33B | 7.4 | 5.5 | | 7.8 | 5.6 | | 7.2 | 8.6 | | 7.8 | 7.4 | | 6.0 | 5.5 | | 4.6 | 6.7 | | 8.4 | 4.9 | | 7.0 | 6.3 |

| DeepSeek-Coder-7B | 10.5 | 9.9 | | 11.8 | 9.6 | | 11.8 | 12.1 | | 12.3 | 11.9 | | 10.4 | 11.0 | | 9.8 | 10.7 | | 5.0 | 5.8 | | 10.2 | 10.2 |

| LLaMA3-8B | 12.0 | 11.9 | | 10.9 | 7.5 | | 15.0 | 9.0 | | 12.6 | 12.7 | | 9.3 | 8.0 | | 9.4 | 9.6 | | 15.8 | 10.0 | | 12.2 | 9.8 |

| Yi-1.5-9B | 10.4 | 14.8 | | 11.9 | 12.9 | | 12.5 | 15.6 | | 13.1 | 14.4 | | 9.5 | 14.8 | | 9.1 | 9.5 | | 4.8 | 6.3 | | 10.2 | 12.6 |

| Open-Source LLMs Large | | | | | | | | | | | | | | | | | | | | | | | |

| Qwen1.5-72B | 15.3 | 19.2 | | 12.9 | 13.6 | | 12.0 | 10.0 | | 13.9 | 16.3 | | 11.7 | 14.7 | | 10.4 | 12.9 | | 3.9 | 5.9 | | 11.5 | 13.3 |

| DeepSeek-67B | 17.1 | 19.7 | | 14.9 | 17.3 | | 15.4 | 16.2 | | 16.3 | 20.6 | | 14.7 | 12.2 | | 13.6 | 14.3 | | 14.5 | 15.2 | | 15.2 | 16.5 |

| LLaMA3-70B | 20.4 | 27.1 | | 17.4 | 20.5 | | 14.9 | 15.8 | | 19.5 | 25.1 | | 16.3 | 19.3 | | 16.3 | 16.8 | | 29.8 | 16.7 | | 19.2 | 20.2 |

| DeepSeek-V2-236B | 30.0 | 37.1 | | 32.2 | 36.5 | | 32.2 | 30.0 | | 32.5 | 35.4 | | 26.5 | 32.4 | | 23.6 | 27.4 | | 34.2 | 27.1 | | 30.2 | 32.3 |

| Qwen2-72B | 36.0 | 40.8 | | 36.7 | 40.9 | | 38.0 | 38.7 | | 37.2 | 38.8 | | 28.3 | 29.3 | | 25.6 | 20.5 | | 31.3 | 30.4 | | 33.3 | 34.2 |

To evaluate the performance of different models on our new benchmark, we selected a diverse array of models based on size and source accessibility Note: All models used in our experiments are instruction-finetuned versions, although this is not indicated in their abbreviated names. This included smaller models like Gemma-2B [63], Phi-3 [1], Qwen1.5-1.8B [7], as well as larger counterparts such as Llama3-70B [47], Deepseek-67B [10], and Qwen1.5-72B [7]. We also compared open-source models (e.g. models from the Llama3 and Qwen1.5/Qwen2 series) against closed-source models from the GPT [51], Claude [6], Mistral [32], GLM [3], Yi [39], Moonshot [2], Doubao [12] families. Additionally, models from the Deepseek-Coder [10] series were included to assess the impact of coding-focused pretraining on reasoning performance.

Given the complexity of our benchmark, even larger open-source models like Llama3-70B-Instruct struggle to produce accurate evaluation results without the use of prompting methods, often achieving MR-Scores near zero. Consequently, we employed a step-wise chain-of-thought prompting technique similar to those described in [77, 64]. This approach guides models in systematically reasoning through solution traces before making final decisions, as detailed in Appendix- D.

Considering the complexity of the task, which includes question comprehension, reasoning through the provided solutions, and adhering to format constraints, few-shot demonstration setups are also explored to investigate if models can benefit from In-Context Learning (ICL) examples. Due to the context token limits, we report zero and one-shot results in the main result table (Table 2) For the breakdown performances of models in the sub-tasks, please refer to Table 7. The performance of additional few-shot configurations on a selection of models with various capabilities is further discussed in Section 6.1.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Radar Chart: AI Model Performance Across Scientific and Technical Domains

### Overview

This image is a radar chart (also known as a spider chart) comparing the performance of five different Large Language Models (LLMs) across seven distinct academic and technical subject areas. The chart uses concentric heptagonal grid lines to represent a numerical scoring scale, with the models' scores plotted as colored polygons. All text in the image is in English.

### Components/Axes

**1. Header Region (Legend)**

Located at the top center of the image, the legend identifies the five data series (AI models) by color and line style. All lines feature circular markers at the data points.

* **DeepSeek-v2:** Brown/Orange line

* **GPT-4-turbo:** Blue line

* **O1-Preview:** Light Green line

* **Qwen2-72B:** Dark Green line

* **GLM-4:** Pink/Light Red line

**2. Main Chart Region (Axes and Scale)**

* **Categories (Axes):** Seven axes radiate from the center, dividing the chart into equal segments. Reading clockwise from the top (12 o'clock position), the categories are:

* `math` (Top)

* `chemistry` (Top-Right)

* `biology` (Right)

* `logic` (Bottom-Right)

* `coding` (Bottom)

* `medicine` (Bottom-Left)

* `physics` (Top-Left)

* **Scale:** The numerical scale is explicitly printed along the horizontal axis pointing towards `biology`. It starts at the center and moves outward.

* Markers: `0`, `0.1`, `0.2`, `0.3`, `0.4`, `0.5`, `0.6`, `0.7`.

* The grid consists of seven concentric white heptagons set against a light blue circular background. Each heptagon corresponds to an increment of 0.1.

### Detailed Analysis

**Trend Verification & Visual Hierarchy:**

Visually, the polygons form a distinct nested hierarchy.

* The **Light Green polygon (O1-Preview)** is the outermost shape, encompassing almost all other lines, indicating the highest overall performance. It spikes significantly outward on the `math` and `coding` axes.

* The **Blue polygon (GPT-4-turbo)** is generally the second-largest shape, sitting just inside O1-Preview, except on the `medicine` axis where it slightly overtakes O1-Preview.

* The **Pink polygon (GLM-4)** forms the third layer, closely tracking just inside GPT-4-turbo.

* The **Dark Green polygon (Qwen2-72B)** forms the fourth layer.

* The **Brown polygon (DeepSeek-v2)** is the innermost shape, indicating the lowest relative scores among the group across all categories.

**Estimated Data Points:**

*Note: Values are approximate (±0.02) based on visual interpolation between the 0.1 grid lines.*

| Category | O1-Preview (Light Green) | GPT-4-turbo (Blue) | GLM-4 (Pink) | Qwen2-72B (Dark Green) | DeepSeek-v2 (Brown) |

| :--- | :--- | :--- | :--- | :--- | :--- |

| **math** | ~0.70 | ~0.50 | ~0.48 | ~0.40 | ~0.38 |

| **chemistry** | ~0.60 | ~0.48 | ~0.50 | ~0.40 | ~0.38 |

| **biology** | ~0.60 | ~0.50 | ~0.48 | ~0.40 | ~0.38 |

| **logic** | ~0.50 | ~0.38 | ~0.40 | ~0.38 | ~0.30 |

| **coding** | ~0.70 | ~0.50 | ~0.48 | ~0.40 | ~0.38 |

| **medicine** | ~0.58 | ~0.60 | ~0.50 | ~0.40 | ~0.38 |

| **physics** | ~0.60 | ~0.50 | ~0.48 | ~0.40 | ~0.38 |

### Key Observations

1. **O1-Preview's Dominance:** O1-Preview shows exceptional performance peaks in `math` and `coding`, reaching the maximum chart value of 0.7. It leads in 6 out of 7 categories.

2. **The Medicine Anomaly:** `medicine` is the only category where O1-Preview does not hold the top score. GPT-4-turbo peaks at ~0.60 here, slightly edging out O1-Preview (~0.58).

3. **Logic as a Weak Point:** All models show a relative dip in performance on the `logic` axis compared to their scores in other scientific domains. Even the leading model (O1-Preview) drops to ~0.50 here.

4. **Tight Clustering of Inner Models:** DeepSeek-v2 and Qwen2-72B exhibit very similar performance profiles, forming a tight inner cluster. Their shapes are nearly identical, with Qwen2-72B maintaining a consistent, slight lead over DeepSeek-v2 across all axes.

### Interpretation

This radar chart illustrates a comparative benchmark of advanced AI models on complex, reasoning-heavy STEM (Science, Technology, Engineering, and Mathematics) and logic tasks.

The data suggests a clear stratification in model capabilities. **O1-Preview** represents a significant leap in quantitative and algorithmic reasoning, evidenced by its massive advantage in math and coding. This aligns with the industry understanding of "O1" class models being specifically optimized for chain-of-thought reasoning and mathematical problem-solving.

**GPT-4-turbo** remains a highly capable generalist, holding a strong second place and demonstrating superior domain-specific knowledge in medicine.

The models **GLM-4**, **Qwen2-72B**, and **DeepSeek-v2** (which are notably prominent models originating from Chinese AI labs) show respectable, balanced performance but lag behind the top-tier proprietary models (O1 and GPT-4) in these specific, rigorous benchmarks. Their highly uniform, concentric shapes suggest they share similar architectural limitations or were trained on similar distributions of scientific data, resulting in a proportional scaling of capabilities rather than domain-specific spikes.

</details>

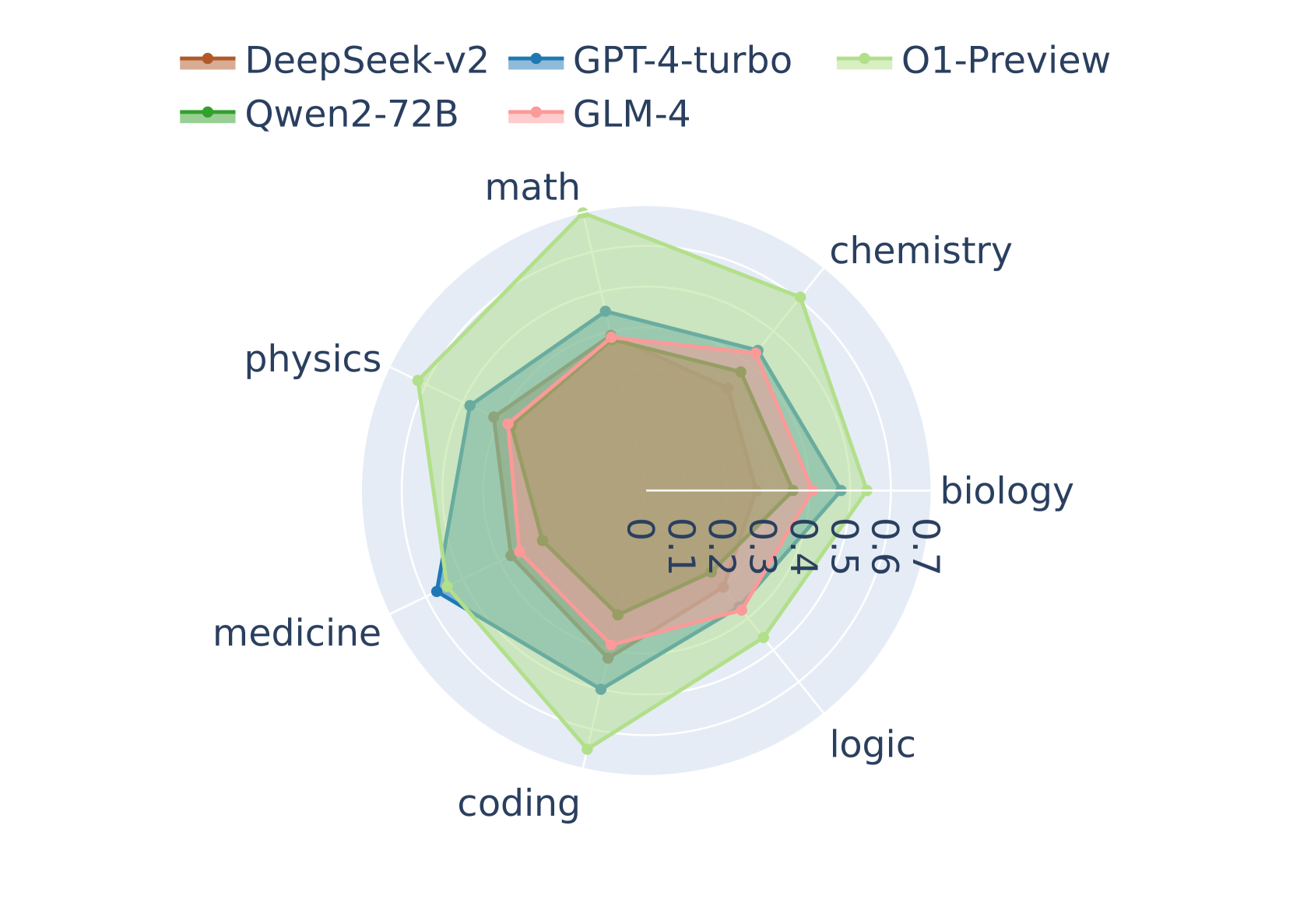

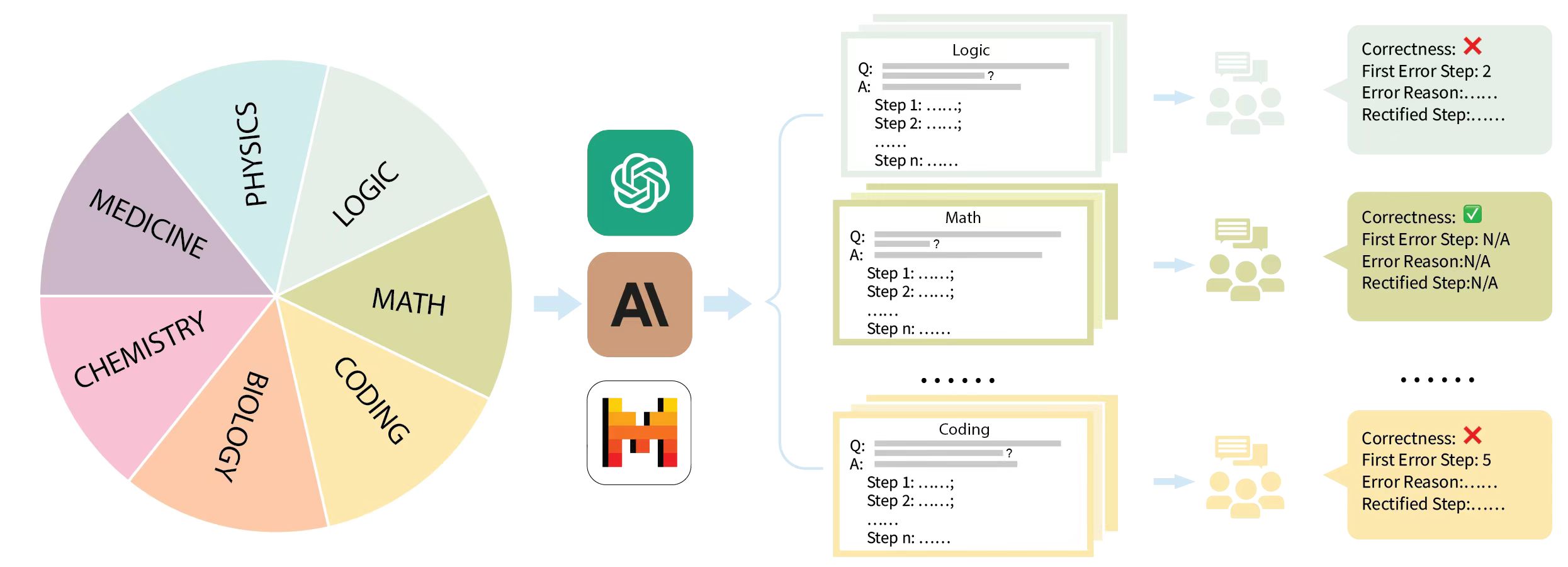

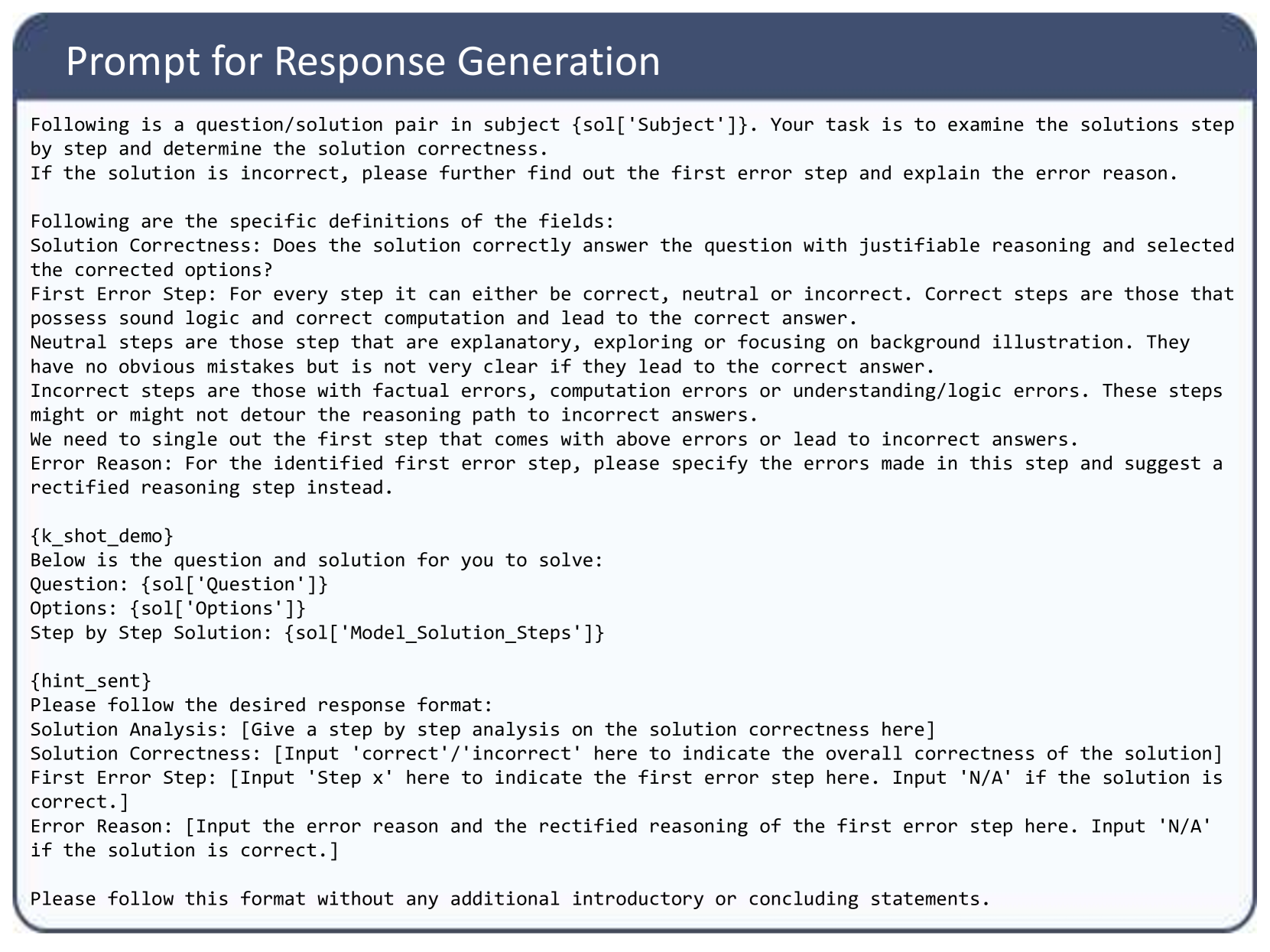

Figure 2: Model performance across subjects

<details>

<summary>x3.png Details</summary>

### Visual Description

## Grouped Bar Chart: MR-Scores of Models on Different Reasoning Paradigms

### Overview

This image is a grouped bar chart comparing the performance of five different artificial intelligence models across four distinct reasoning paradigms. The performance is measured using a metric called "MR-Scores." The chart highlights comparative strengths and weaknesses of each model in specific cognitive tasks.

### Components/Axes

**Header Region (Top Center):**

* **Chart Title:** "MR-Scores of Models on Different Reasoning Paradigms"

**Legend Region (Top-Right, inside chart area):**

* **Title:** "Paradigms"

* **Categories & Color Mapping:**

* Light Blue square: `knowledge`

* Dark Blue square: `logic`

* Light Green square: `arithmetic`

* Dark Green square: `algorithmic`

**Y-Axis (Left side):**

* **Label:** "MR-Scores" (oriented vertically).

* **Scale:** Ranges from 0.0 to 0.6, with major tick marks and solid light-grey horizontal gridlines at intervals of 0.1 (0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6).

* **Special Marker:** There is a distinct, dark-grey dashed horizontal line spanning the width of the chart exactly at the 0.5 mark.

**X-Axis (Bottom):**

* **Label:** "Models" (centered below the categories).

* **Categories (Left to Right):** DeepSeek-v2, GPT-4-turbo, O1-Preview, Qwen2-72B, GLM-4.

### Detailed Analysis

*Trend Verification & Data Extraction by Model:*

**1. DeepSeek-v2 (Far Left)**

* *Visual Trend:* The bars show a dip from knowledge to logic, then a significant step up for arithmetic, and a slight increase for algorithmic. None of the bars reach the 0.5 dashed line.

* *Data Points:*

* knowledge (Light Blue): ~0.32

* logic (Dark Blue): ~0.30

* arithmetic (Light Green): ~0.40

* algorithmic (Dark Green): ~0.42

**2. GPT-4-turbo (Center Left)**

* *Visual Trend:* Knowledge is high, dropping sharply for logic, then stepping back up through arithmetic to peak at algorithmic. The algorithmic bar exactly touches the 0.5 dashed line.

* *Data Points:*

* knowledge (Light Blue): ~0.49

* logic (Dark Blue): ~0.36

* arithmetic (Light Green): ~0.46

* algorithmic (Dark Green): ~0.50

**3. O1-Preview (Center)**

* *Visual Trend:* This group contains the highest bars on the chart. Knowledge is high, logic dips, but arithmetic and algorithmic spike dramatically, breaking well past the 0.6 top gridline.

* *Data Points:*

* knowledge (Light Blue): ~0.56

* logic (Dark Blue): ~0.46

* arithmetic (Light Green): ~0.66

* algorithmic (Dark Green): ~0.65

**4. Qwen2-72B (Center Right)**

* *Visual Trend:* Overall lower performance. Knowledge drops to a chart-wide low for logic, spikes up for arithmetic, and drops again for algorithmic.

* *Data Points:*

* knowledge (Light Blue): ~0.34

* logic (Dark Blue): ~0.25

* arithmetic (Light Green): ~0.37

* algorithmic (Dark Green): ~0.31

**5. GLM-4 (Far Right)**

* *Visual Trend:* This is the most visually uniform group. All four bars are nearly identical in height, hovering just below the 0.4 line, showing a very flat distribution across paradigms.

* *Data Points:*

* knowledge (Light Blue): ~0.39

* logic (Dark Blue): ~0.37

* arithmetic (Light Green): ~0.38

* algorithmic (Dark Green): ~0.39

### Key Observations

* **Dominant Model:** O1-Preview significantly outperforms all other models across every single paradigm. It is the only model to consistently break the 0.5 dashed line (doing so in 3 out of 4 categories).

* **Weakest Paradigm:** Across almost all models (except GLM-4, where it is nearly tied), "logic" (Dark Blue) represents the lowest score, indicating it is the most challenging reasoning paradigm for these LLMs.

* **Most Balanced Model:** GLM-4 shows the least variance between paradigms, scoring between ~0.37 and ~0.39 across the board.

* **The 0.5 Threshold:** The explicit dashed line at 0.5 suggests a benchmark of significance. Only O1-Preview (knowledge, arithmetic, algorithmic) and GPT-4-turbo (algorithmic) meet or exceed this line.

### Interpretation

The data demonstrates a clear hierarchy in current model capabilities regarding complex reasoning. O1-Preview represents a generational leap, particularly in "arithmetic" and "algorithmic" tasks, suggesting its architecture is highly optimized for structured, mathematical, and step-by-step computational problem-solving.

Conversely, the universal dip in "logic" scores implies that abstract logical deduction remains a persistent bottleneck in AI development, even for the most advanced models like O1-Preview.

GLM-4's flat profile is highly unusual compared to the others; it suggests a model architecture or training methodology that prioritizes generalist consistency over specialized peaks, though it achieves this at the cost of not excelling in any single area.

The dashed line at 0.5 likely represents a critical threshold—perhaps a "pass" rate, a human baseline, or a previous state-of-the-art benchmark. The fact that O1-Preview shatters this line in math and algorithms indicates a paradigm shift in how models handle quantitative reasoning.

</details>

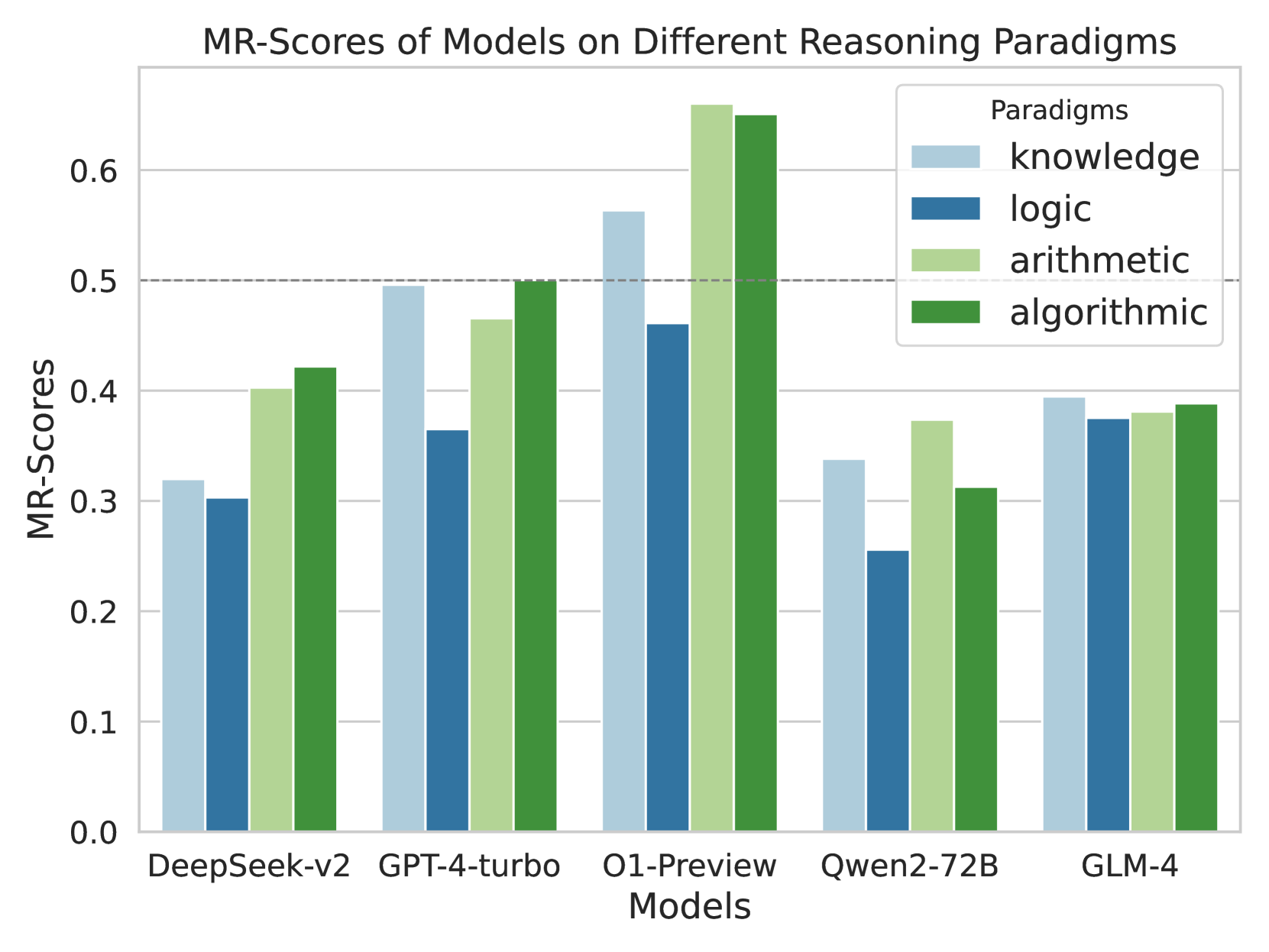

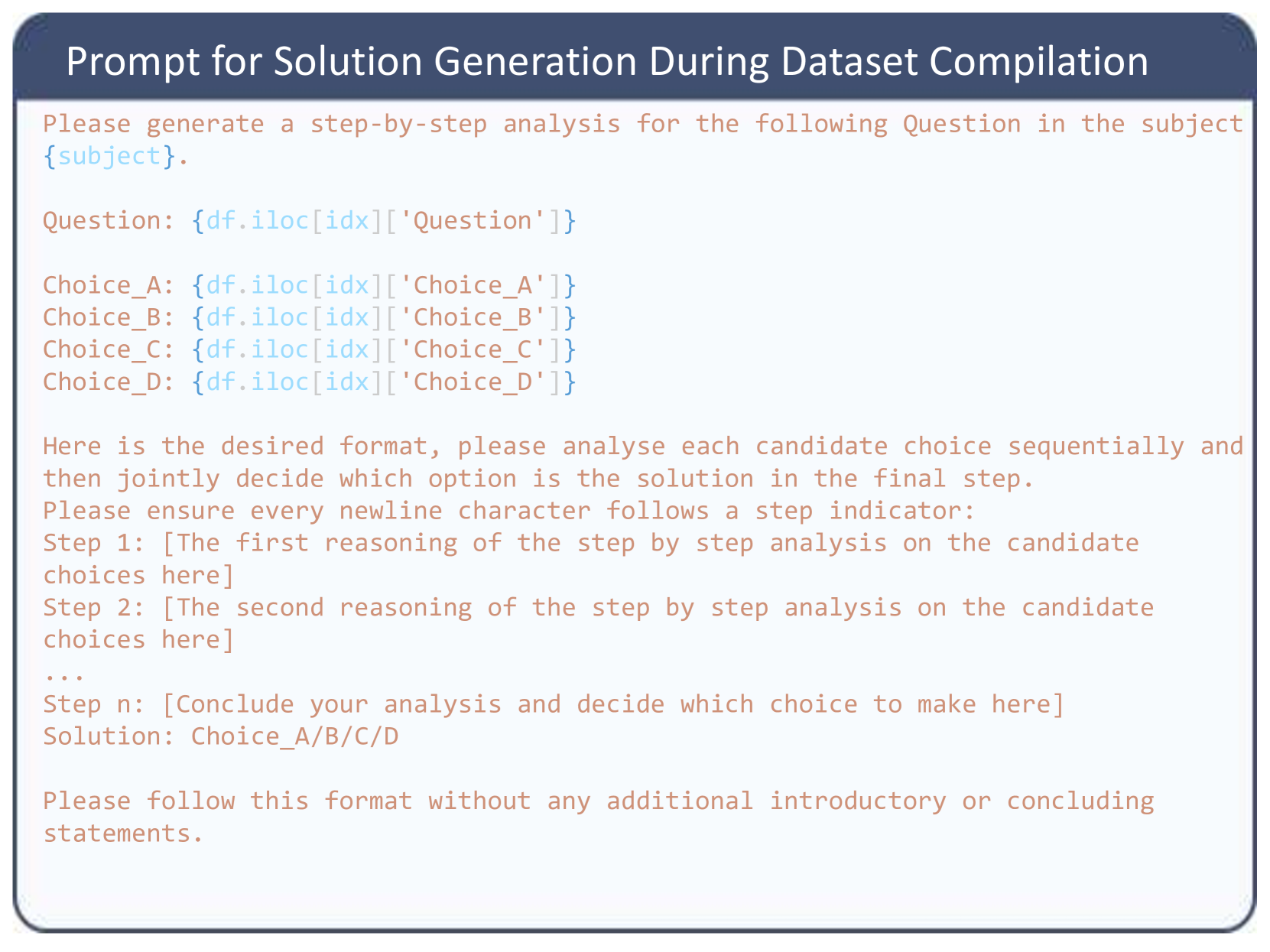

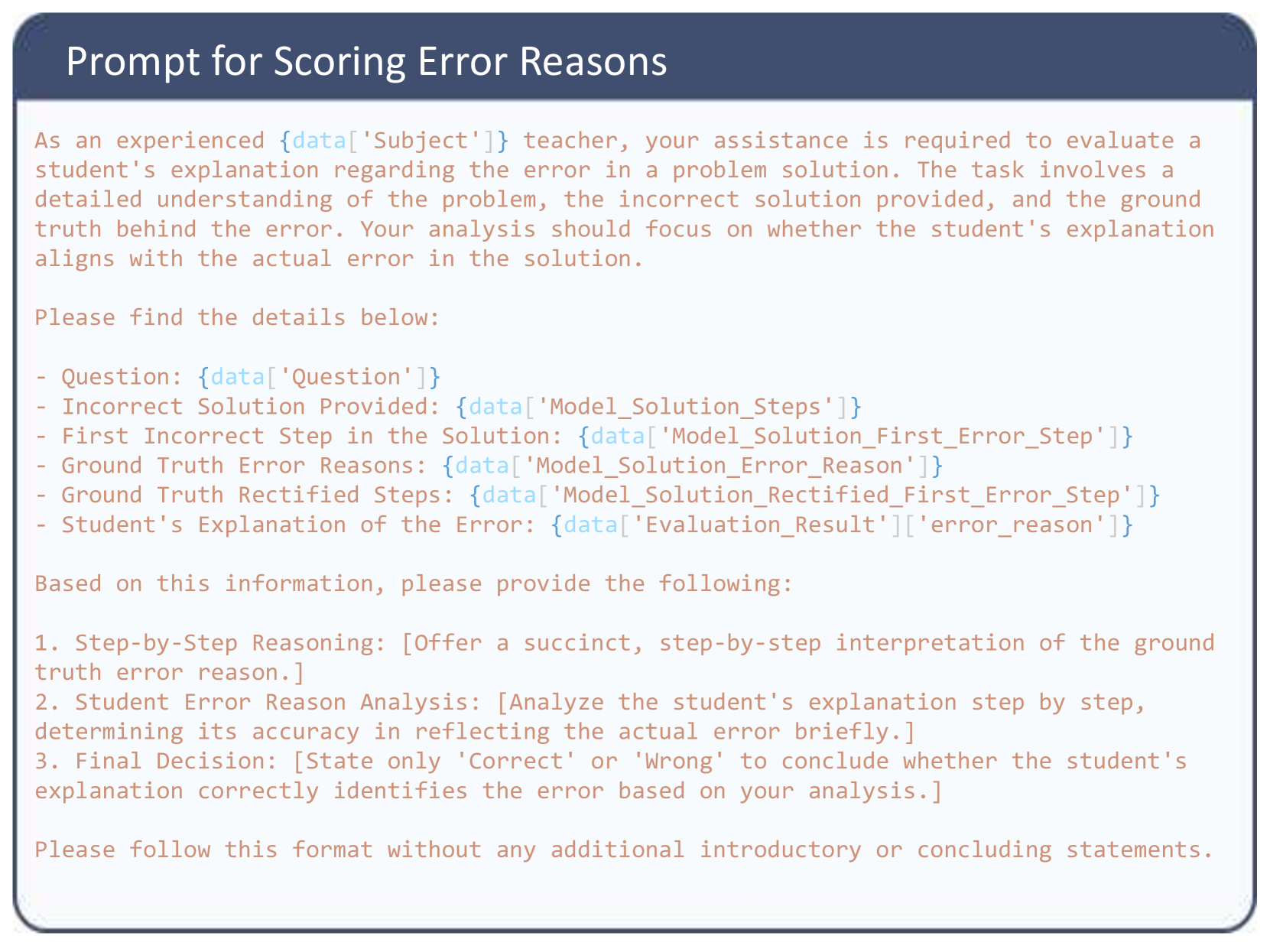

Figure 3: Model performance on different reasoning paradigms

5.2 Experiment Results

The MR-Ben benchmark presents a significant shift in the challenge for state-of-the-art large language models, transitioning from question-answering to the nuanced role of question-solution scoring. This section details our findings, emphasizing variations in model performances and their implications.

Overall Performance

Among the evaluated models, o1-preview consistently achieves the highest MR-Scores across all subjects, significantly outperforming most competitors from both open and closed-source communities. Notably, the open-sourced Qwen2-72B and Deepseek-V2-236B models are performing exceptionally well, surpassing every other open-sourced model including Llama3 by a large margin. Their scores are even comparable to or greater than some of the most capable models from commercial companies, such as Mistral, Yi, and Moonshot AI. In the small language model category, the performance of Phi3-3.8B exceeds many of the mid-size models, including Deepseek-Coder-33B, whose size is around tenfold larger.

Performance across Model Size and Reasoning Paradigm

Table 2 reveals a general trend where larger models tend to perform better, highlighting the correlation between model size and the efficacy in complex reasoning tasks. However, this relationship is not strictly linear, as demonstrated by models like Phi3-3.8B, which excel despite their smaller size. Since MR-Ben challenges the language models to reason about the reasoning in the solution space among a diverse range of domains, models like Phi-3 that are trained with effective data synthesis techniques and broader coverage of the solution space, intuitively achieve higher MR-Score. This suggests that while larger model sizes generally yield superior performances, techniques like knowledge distillation can also significantly boost reasoning performance. Similarly, although the size of the o1 model series remains undisclosed, these models reportedly employ mechanisms that scale computation efficiently through effective exploration, frequent retrospection, and meticulous reflection within the solution space. These characteristics align closely with the principles of “system-2” thinking, which emphasizes deliberate, reflective problem-solving. As a result, the o1 models demonstrate a more effective reasoning process, achieving significantly higher MR-Scores than other models by a large margin.

Performance across Reasoning Types

Our categorization into four reasoning types—knowledge, arithmetic, algorithmic, and logic—illustrates the unique challenges each model faces within these paradigms (Figure 3). Logic reasoning emerges as the most formidable due to the intricate logical operations required by questions from the LogiQA dataset. In stark contrast, o1-Preview and GPT-4-turbo demonstrate exceptional prowess in algorithmic reasoning, where their capabilities markedly surpass other models. Notably, models excel in different reasoning paradigms, reflecting their varied strengths and training backgrounds. For instance, despite Deepseek-Coder’s specialized pre-training focused on coding tasks, it does not necessarily confer superior abilities in algorithmic reasoning, underscoring that targeted pretraining does not guarantee enhanced performance across all reasoning types. Comparing the performance of the Deepseek-Coder with that of the Phi-3 model, which excels despite its much smaller size, highlights the potential significance of high-quality synthetic data in achieving broad-based reasoning capabilities.

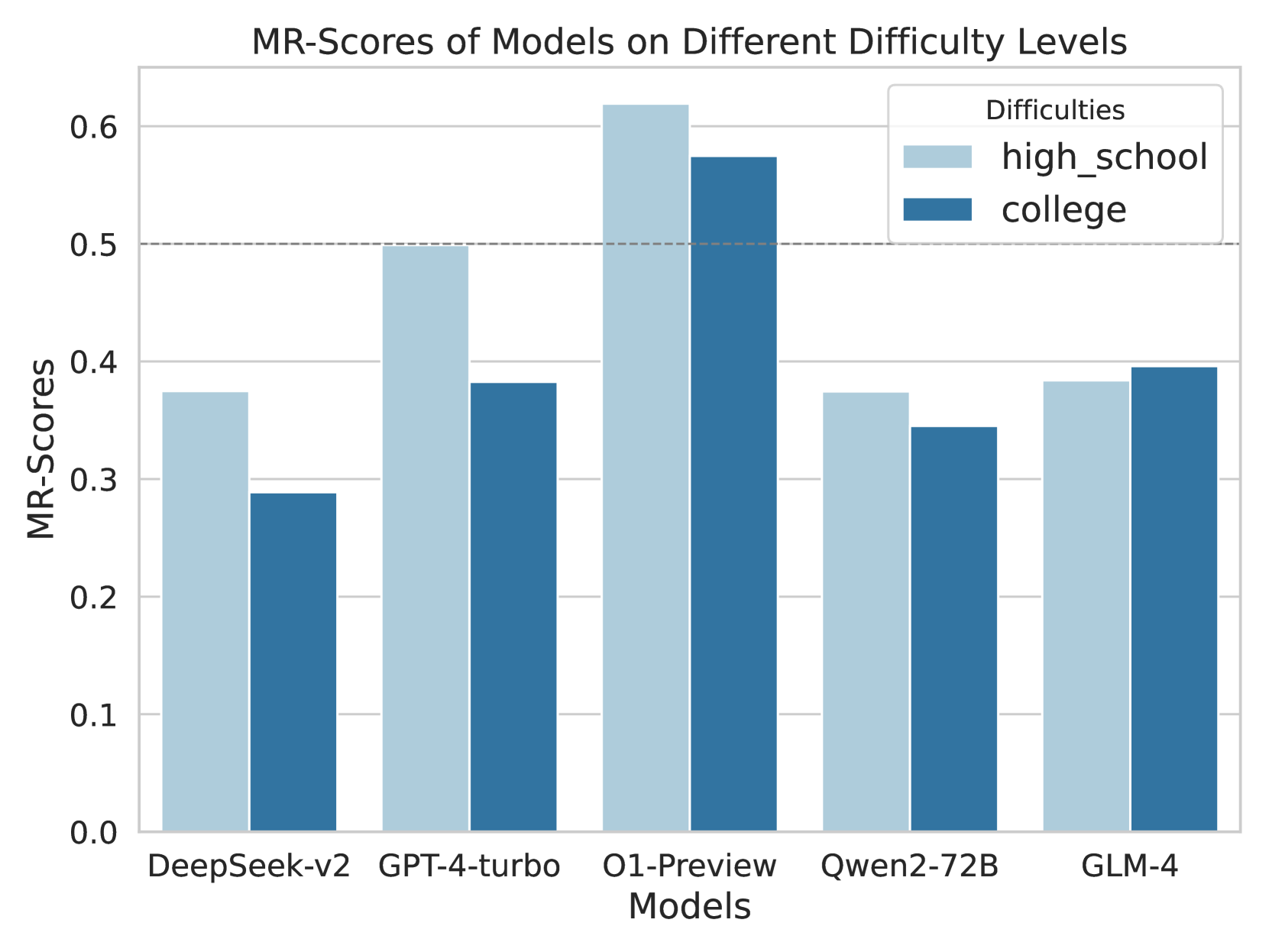

Sensitivity to Task Difficulty and Solution Length

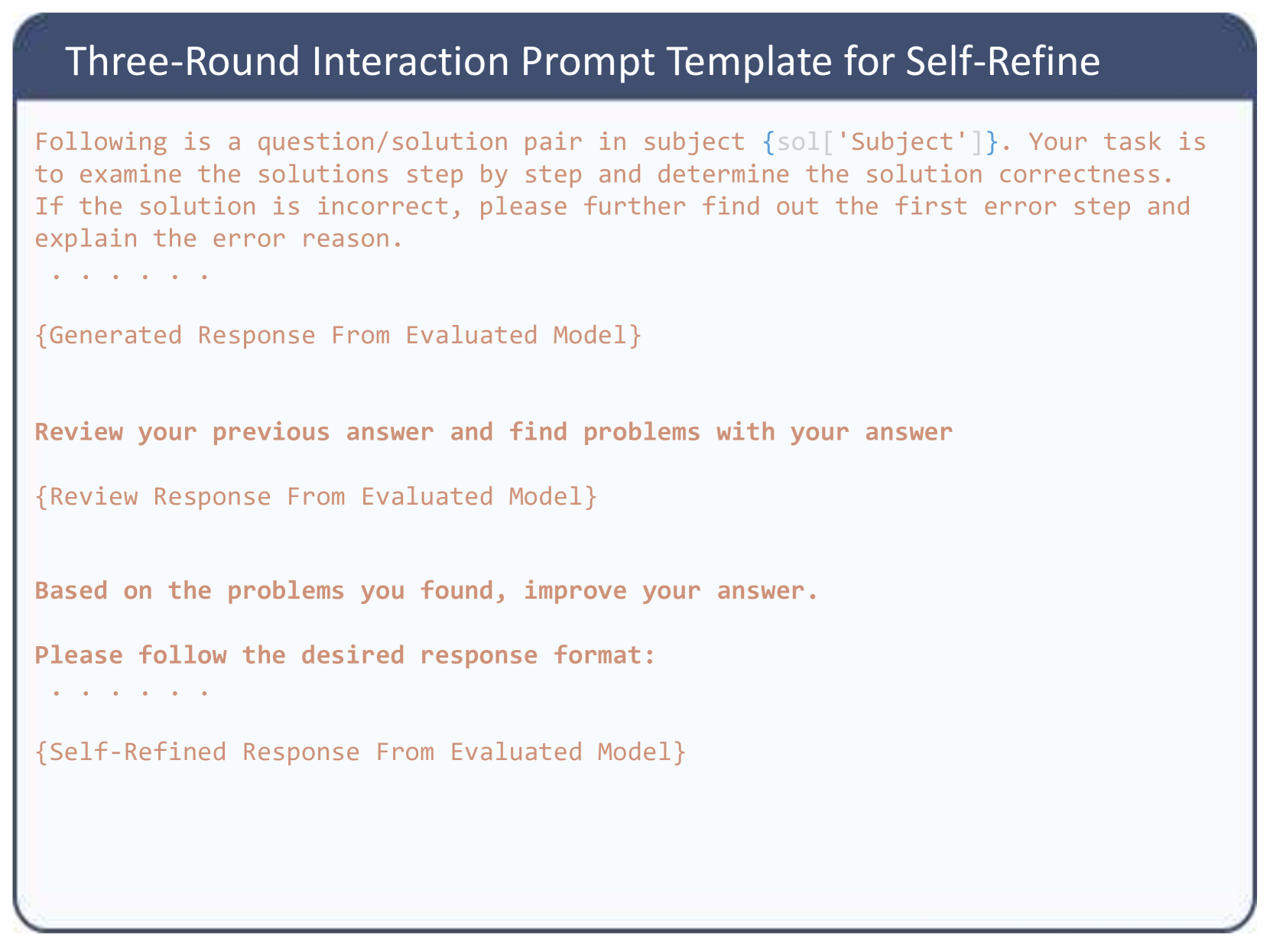

An examination across educational levels shows most models perform better at high school-level questions than college-level ones, indicating an intuitive level of sensitivity to the difficulty levels of the questions. Additionally, our analysis finds a minor negative correlation between the length of solution steps and MR-Scores, as detailed in Figure 5 and Figure 5.

Summary:

MR-Ben effectively differentiates model capabilities, often obscured in simpler settings. It not only identifies top performers but also underscores the influence of model size on outcomes, while demonstrating that techniques like knowledge distillation and test-time compute scaling, as seen with the Phi-3 and o1 models, can notably enhance smaller models’ performance, challenging the dominance of larger models. The analysis further reveals that specialized training, such as in coding, does not guarantee superior algorithmic reasoning. This suggests the potential need for more balanced data approaches or improved data synthesis methods.

6 Further Analysis & Discussion

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: MR-Scores of Models on Different Difficulty Levels

### Overview

This image is a grouped bar chart comparing the performance of five different artificial intelligence models based on a metric called "MR-Scores." The performance is evaluated across two distinct difficulty levels: "high_school" and "college."

*Language Declaration:* All text in this image is in English. No other languages are present.

### Components/Axes

**Header Region:**

* **Chart Title:** "MR-Scores of Models on Different Difficulty Levels" (Centered at the top).

**Main Chart Region:**

* **Y-axis:**

* **Title:** "MR-Scores" (Rotated 90 degrees counter-clockwise, positioned on the left).

* **Scale/Markers:** Linear scale starting from 0.0 to 0.6, with increments of 0.1 (0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6).

* **Gridlines:** Solid light gray horizontal lines extend from each y-axis marker across the chart area.

* **Reference Line:** A distinct, horizontal dashed gray line is positioned exactly at the 0.5 mark on the y-axis.

* **Legend:**

* **Position:** Top-right corner of the chart area, enclosed in a white box with a light gray border.

* **Title:** "Difficulties"

* **Categories:**

* Light blue solid square = "high_school"

* Dark blue solid square = "college"

**Footer Region:**

* **X-axis:**

* **Title:** "Models" (Centered at the bottom).

* **Categories (Left to Right):** "DeepSeek-v2", "GPT-4-turbo", "O1-Preview", "Qwen2-72B", "GLM-4".

### Detailed Analysis

*Trend Verification & Spatial Grounding:* For each model on the x-axis, there is a pair of bars. The left bar is always light blue (representing `high_school`), and the right bar is always dark blue (representing `college`). Visually, the light blue bar is taller than the dark blue bar for four out of the five models, indicating higher scores on high school difficulty. The exception is GLM-4, where the dark blue bar is marginally taller.

Below are the extracted approximate values (±0.01 uncertainty) based on visual alignment with the y-axis gridlines:

**1. DeepSeek-v2**

* *Visual Trend:* The light blue bar is noticeably taller than the dark blue bar.

* `high_school` (Light Blue): ~0.37

* `college` (Dark Blue): ~0.29

**2. GPT-4-turbo**

* *Visual Trend:* The light blue bar touches the dashed reference line, while the dark blue bar is significantly lower.

* `high_school` (Light Blue): ~0.50 (Exactly on the dashed line)

* `college` (Dark Blue): ~0.38

**3. O1-Preview**

* *Visual Trend:* Both bars are the tallest in the chart, extending above the 0.5 dashed line. The light blue bar is taller than the dark blue bar.

* `high_school` (Light Blue): ~0.62

* `college` (Dark Blue): ~0.57

**4. Qwen2-72B**

* *Visual Trend:* The light blue bar is slightly taller than the dark blue bar. Both are below the 0.4 line.

* `high_school` (Light Blue): ~0.37

* `college` (Dark Blue): ~0.34

**5. GLM-4**

* *Visual Trend:* This is the only grouping where the dark blue bar is slightly taller than the light blue bar. Both sit just below the 0.4 line.

* `high_school` (Light Blue): ~0.38

* `college` (Dark Blue): ~0.39

### Key Observations

* **Top Performer:** "O1-Preview" significantly outperforms all other models in both difficulty categories, being the only model to score above 0.5 on the college-level difficulty.

* **General Difficulty Trend:** Four out of five models score higher on "high_school" tasks than on "college" tasks, which aligns with the expected progression of academic difficulty.

* **The Anomaly:** "GLM-4" is the only model that scores slightly higher on the "college" difficulty (~0.39) compared to the "high_school" difficulty (~0.38).

* **The 0.5 Threshold:** The explicit dashed line at 0.5 suggests this is a critical benchmark. Only "O1-Preview" (both levels) and "GPT-4-turbo" (high school level only) meet or exceed this threshold.

### Interpretation

The data demonstrates a comparative evaluation of Large Language Models (LLMs) on a specific reasoning or knowledge benchmark (MR-Scores).

The consistent drop in performance from "high_school" to "college" for almost all models indicates that the "college" dataset successfully introduces a higher degree of complexity that these models struggle to resolve.

The presence of the dashed line at 0.5 likely represents a "pass" rate, a 50% accuracy mark, or a baseline human-level performance metric. The fact that "O1-Preview" clears this line comfortably in both categories suggests a generational leap or a specific architectural advantage in reasoning capabilities compared to the other models tested (like GPT-4-turbo and DeepSeek-v2).

The anomaly with GLM-4 (scoring better on college than high school) is highly unusual. Reading between the lines, this could suggest a few possibilities:

1. The training data for GLM-4 was heavily skewed toward advanced academic texts, making it better at college-level phrasing.

2. The specific "high_school" prompts used in this test contained formatting or logic puzzles that GLM-4's architecture handles poorly compared to standard academic Q&A.

3. It is a statistical artifact due to a specific subset of questions in the benchmark.

</details>

Figure 4: MR-Scores of different models on different levels of difficulty

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Impact of Solution Length on MR-Scores by Models

### Overview

This image is a multi-series line chart illustrating the performance of five different Large Language Models (LLMs) based on the length of the solution required. Performance is measured by an "MR Score" on the y-axis, plotted against the "Solution Step Numbers" on the x-axis. The chart demonstrates how model accuracy or reliability fluctuates as the complexity (measured in steps) of the problem increases.

### Components/Axes

**Header Region:**

* **Title:** Located at the top center, reading "Impact of Solution Length on MR-Scores by Models".

**Main Chart Area:**

* **Y-Axis (Left):** Labeled "MR Score" (rotated 90 degrees counter-clockwise). The scale ranges from `0.0` to `0.7` with major tick marks and corresponding horizontal grid lines at intervals of `0.1` (0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7).

* **X-Axis (Bottom):** Labeled "Solution Step Numbers" (centered below the axis). It contains categorical/ordinal bins representing step counts. Vertical grid lines align with each category. The categories are:

* `<=5`

* `6`

* `7`

* `8`

* `9`

* `10`

* `11`

* `12`

* `>=13`

**Footer/Legend Region:**

* **Legend:** Positioned inside the main chart area, in the lower-middle section (spanning horizontally from step 6 to 13, and vertically between MR scores 0.05 and 0.25). It is enclosed in a box with a light gray border.

* **Legend Title:** "Model"

* **Legend Entries (Color to Label Mapping):**

* Blue Line: `DeepSeek-v2`

* Orange Line: `GPT-4-turbo`

* Green Line: `O1-Preview`

* Red Line: `Qwen2-72B`

* Purple Line: `GLM-4`

### Detailed Analysis

*Note: All numerical values extracted from the chart are approximate visual estimates based on the placement of data points relative to the y-axis gridlines. An uncertainty margin of roughly ±0.02 applies.*

**Trend Verification and Data Extraction by Series:**

1. **O1-Preview (Green Line):**

* *Trend:* This line is consistently the highest on the chart. It shows a smooth, steady upward slope from `<=5` steps, peaking at `10` steps, followed by a steady downward slope as steps increase to `>=13`.

* *Data Points:* <=5 (~0.54), 6 (~0.56), 7 (~0.58), 8 (~0.60), 9 (~0.60), 10 (~0.62), 11 (~0.58), 12 (~0.50), >=13 (~0.49).

2. **GPT-4-turbo (Orange Line):**

* *Trend:* This line exhibits high volatility. It rises initially, dips at step 7, rises to a peak at step 9, suffers a sharp drop at step 10, spikes back up at step 11, drops sharply again at step 12, and recovers slightly at >=13.

* *Data Points:* <=5 (~0.39), 6 (~0.47), 7 (~0.41), 8 (~0.44), 9 (~0.56), 10 (~0.27), 11 (~0.48), 12 (~0.27), >=13 (~0.38).

3. **GLM-4 (Purple Line):**

* *Trend:* This line remains relatively stable in the middle of the pack. It fluctuates mildly between 0.33 and 0.40 until step 11, experiences a sharp drop at step 12, and recovers at >=13.

* *Data Points:* <=5 (~0.38), 6 (~0.40), 7 (~0.33), 8 (~0.36), 9 (~0.38), 10 (~0.34), 11 (~0.38), 12 (~0.27), >=13 (~0.36).

4. **DeepSeek-v2 (Blue Line):**

* *Trend:* Highly erratic behavior in the middle-to-late stages. It starts low, rises to a plateau at steps 6-7, dips at 8, spikes sharply to its peak at step 9, then crashes dramatically to the lowest point on the entire chart at step 11, before recovering sharply at steps 12 and >=13.

* *Data Points:* <=5 (~0.29), 6 (~0.38), 7 (~0.38), 8 (~0.30), 9 (~0.50), 10 (~0.21), 11 (~0.08), 12 (~0.26), >=13 (~0.43).

5. **Qwen2-72B (Red Line):**

* *Trend:* This line is the most stable but generally the lowest performing overall (excluding DeepSeek's crash). It remains relatively flat, hovering around the 0.30 mark, with a slight decline at steps 11 and 12 before a minor recovery.

* *Data Points:* <=5 (~0.33), 6 (~0.34), 7 (~0.30), 8 (~0.31), 9 (~0.33), 10 (~0.32), 11 (~0.25), 12 (~0.25), >=13 (~0.31).

**Reconstructed Data Table (Approximate Values):**

| Solution Step Numbers | DeepSeek-v2 (Blue) | GPT-4-turbo (Orange) | O1-Preview (Green) | Qwen2-72B (Red) | GLM-4 (Purple) |

| :--- | :--- | :--- | :--- | :--- | :--- |

| **<=5** | ~0.29 | ~0.39 | ~0.54 | ~0.33 | ~0.38 |

| **6** | ~0.38 | ~0.47 | ~0.56 | ~0.34 | ~0.40 |

| **7** | ~0.38 | ~0.41 | ~0.58 | ~0.30 | ~0.33 |

| **8** | ~0.30 | ~0.44 | ~0.60 | ~0.31 | ~0.36 |

| **9** | ~0.50 | ~0.56 | ~0.60 | ~0.33 | ~0.38 |

| **10** | ~0.21 | ~0.27 | ~0.62 | ~0.32 | ~0.34 |

| **11** | ~0.08 | ~0.48 | ~0.58 | ~0.25 | ~0.38 |

| **12** | ~0.26 | ~0.27 | ~0.50 | ~0.25 | ~0.27 |

| **>=13** | ~0.43 | ~0.38 | ~0.49 | ~0.31 | ~0.36 |

### Key Observations

* **Absolute Maximum:** O1-Preview achieves the highest overall MR Score of approximately 0.62 at 10 steps.

* **Absolute Minimum:** DeepSeek-v2 drops to the lowest overall MR Score of approximately 0.08 at 11 steps.

* **Convergence Point:** At `>=13` steps, four of the five models (DeepSeek-v2, GPT-4-turbo, GLM-4, and Qwen2-72B) converge tightly between scores of ~0.31 and ~0.43.

* **The "Step 10-12" Anomaly:** Steps 10, 11, and 12 represent a zone of extreme volatility for several models. DeepSeek-v2 crashes at 10 and 11; GPT-4-turbo crashes at 10, recovers at 11, and crashes again at 12; GLM-4 crashes at 12. Conversely, O1-Preview peaks at step 10.

### Interpretation

The data suggests a strong correlation between the number of reasoning steps required to solve a problem and the performance (MR Score) of various LLMs.

1. **Architectural Superiority in Long-Context Reasoning:** The `O1-Preview` model demonstrates a distinct advantage in handling multi-step problems. Its smooth curve suggests a robust underlying architecture that scales well with complexity up to 10 steps before experiencing a graceful degradation. It does not suffer the catastrophic failures seen in other models.

2. **Context Window/Attention Breakdown:** The extreme volatility of `DeepSeek-v2` and `GPT-4-turbo` between steps 9 and 12 strongly implies a breaking point in their attention mechanisms or context handling. The sharp spikes and crashes suggest that at these specific lengths, the models either perfectly grasp the logic or lose the thread entirely (hallucination or context dropping). DeepSeek-v2's near-zero score at step 11 is a notable catastrophic failure.

3. **Complexity Ceiling:** Across almost all models, performance begins to degrade or converge at the highest complexity level (`>=13` steps). Even the dominant O1-Preview drops from its peak of ~0.62 down to ~0.49. This indicates a general limitation in current LLM capabilities when forced to maintain logical coherence over very long chains of reasoning.

4. **Consistency vs. Peak Performance:** `Qwen2-72B` is the lowest performer on average, but it is highly consistent. It does not suffer the massive drops seen in GPT-4-turbo or DeepSeek-v2, suggesting it might be a safer, albeit less capable, choice for problems of unpredictable length where catastrophic failure is unacceptable.

</details>

Figure 5: The MR-Scores of models on solutions with different step numbers.

6.1 Few Shot Prompting

As previously discussed and exemplified by our prompt template (Figure 10 in Appendix- D), our evaluation method is characterized by its high level of difficulty and complexity. In this experiment, we aimed to determine whether providing a few step-wise chain-of-thought (CoT) examples could improve model performance in terms of format adherence and reasoning quality. The results, as presented in Table 9 in Appendix, do not show a consistent pattern as the number of shots increases. While smaller language models like Gemma-2B exhibit performance improvements with additional shots, the performance of larger language models tends to fluctuate with an increasing number of shots. We hypothesize that for our complex tasks, the lengthy few-shot demonstrations may act more as a hindrance, providing distracting information rather than aiding in format adherence and reasoning. Our empirical findings suggest that a one-shot demonstration strikes the optimal balance between providing guidance and minimizing distraction. This supports our decision to focus on zero-shot versus one-shot comparisons in our primary experiments, as detailed in Table 2.

6.2 Self Refine Prompting

As suggested by [31], large language models typically cannot perform self-correction without external ground truth feedback. To explore whether this phenomenon occurs in our benchmark, we adopted a similar setting by prompting the language model to verify its own answer across a three-round interaction sequence: query, examine, and refine. Our prompting template, detailed in Figure 8 in Appendix D, is minimalistic and designed solely to encourage the model to self-examine.

The results of this self-refinement process are recorded in Table 4. Notably, models smaller than Llama3-70B exhibit performance degradation with self-refinement, while larger models, such as GPT-4, show marginal benefits from the process. Conversely, from Llama3-8B to Llama3-70B, despite a significant portion of correct predictions shifting to incorrect ones, as previously reported by [31], our benchmark shows an increasing trend of incorrect predictions shifting to correct ones as model size increases. This shift results in the significant performance improvements observed in models like Llama3-70B.

To understand the disproportionate improvement observed in the 70B model, we analyzed performance breakdown at the task level. These results are visualized and discussed in Figure 9 of Appendix E. In short, we believe the lack of consistency does not necessarily indicate a more robust or advanced reasoning ability, despite the increase of the evaluation results.

6.3 Solution Correctness Prior

Table 3: Comparison of average accuracy in identifying the first error step and the corresponding error reason, with and without prior knowledge of the solutions’ correctness.

| Gemma-2B Llama3-8B Llama3-70B | 0.3 15.5 14.5 | 0.1 26.4 34.6 | 0.1 6.6 9.1 | 0.0 11.9 25.7 |

| --- | --- | --- | --- | --- |

| GPT-4-Turbo | 40.9 | 41.6 | 37.9 | 38.0 |

Table 4: Comparison of prompting methods: MR-Scores achieved by zero-shot step-wise CoT and Self-Refine technique.

| Gemma-2B Llama3-8B Llama3-70B | 0.1 11.7 17.7 | 0.2 11.3 27.5 |

| --- | --- | --- |

| GPT-4-Turbo | 43.2 | 45.5 |

To verify the influence of external ground truth signals, we sampled 100 incorrect solutions from each subject respectively as our test set. By observing the same set of language models under a zero-shot CoT setting, we aim to determine whether the knowledge of the solution’s incorrectness enhances their ability to identify the first error step and the reason for the error.

The results in Table 4 illustrate that the benefits of knowing the solution correctness prior generally increase with the model’s competence but begin to plateau at the level of sophisticated models like GPT-4. Specifically, the Gemma-2b model struggles significantly in our benchmark, showing nearly zero performance due to its limited ability to follow formats and comprehend complex tasks. Consequently, having the solution correctness prior does not improve its performance metrics. In contrast, models with moderate capabilities benefit substantially from this prior knowledge, which aids in accurately locating the first error step and elucidating the error reason. However, as model capabilities improve, the incremental benefits of this prior knowledge quickly diminish. For instance, GPT-4 shows only a marginal improvement in identifying the first error step and an almost negligible impact on error reason analysis when provided with the prior.

7 Conclusion

This paper highlights the importance of evaluating the reasoning capabilities of LLMs with process-oriented design and presents a comprehensive benchmark called MR-Ben that addresses the limitations of existing evaluation methodologies. MR-Ben consists of questions from a diverse range of subjects and incorporates a meta-reasoning paradigm, where LLMs act as teachers to evaluate the reasoning process. Our evaluation of a diverse suite of LLMs on MR-Ben reveals several key limitations and weaknesses. Many models struggle with identifying and correcting errors within reasoning chains, demonstrating difficulty in performing system-2 style thinking—such as scrutinizing assumptions, calculations, and intermediate steps. Furthermore, even state-of-the-art models often fail to maintain consistency across reasoning paradigms, exposing gaps in their generalization abilities. Additionally, our findings emphasize the importance of searching and reflecting on the solution space during inference. Models like the o1 series showcase the potential of scaling test-time computation, where frequent retrospection and iterative search through multiple solution paths significantly enhance reasoning performance. Nevertheless, improving LLMs’ reasoning abilities on complex and nuanced tasks remains an open research question, and we encourage future work to develop upon MR-Ben.

8 Acknowledgement

This work was supported in part by the Research Grants Council under the Areas of Excellence scheme grant AoE/E-601/22-R.

References

- Abdin et al. [2024] Marah Abdin, Sam Ade Jacobs, Ammar Ahmad Awan, Jyoti Aneja, Ahmed Awadallah, Hany Awadalla, Nguyen Bach, Amit Bahree, Arash Bakhtiari, Harkirat Behl, et al. Phi-3 technical report: A highly capable language model locally on your phone. arXiv preprint arXiv:2404.14219, 2024.

- AI [2024a] Moonshot AI. Moonshot ai, 2024a. URL https://www.moonshot.cn/.

- AI [2024b] Zhipu AI. Welcome to glm-4, 2024b. URL https://en.chatglm.cn/.

- Amini et al. [2019] Aida Amini, Saadia Gabriel, Shanchuan Lin, Rik Koncel-Kedziorski, Yejin Choi, and Hannaneh Hajishirzi. Mathqa: Towards interpretable math word problem solving with operation-based formalisms. In Jill Burstein, Christy Doran, and Thamar Solorio, editors, Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL-HLT 2019, Minneapolis, MN, USA, June 2-7, 2019, Volume 1 (Long and Short Papers), pages 2357–2367. Association for Computational Linguistics, 2019. doi: 10.18653/V1/N19-1245. URL https://doi.org/10.18653/v1/n19-1245.

- Anthropic [2024a] Anthropic. Claude 2, 2024a. URL https://www.anthropic.com/news/claude-2.

- Anthropic [2024b] Anthropic. Introducing the next generation of claude, 2024b. URL https://www.anthropic.com/news/claude-3-family.

- Bai et al. [2023] Jinze Bai, Shuai Bai, Yunfei Chu, Zeyu Cui, Kai Dang, Xiaodong Deng, Yang Fan, Wenbin Ge, Yu Han, Fei Huang, et al. Qwen technical report. arXiv preprint arXiv:2309.16609, 2023.

- Bai et al. [2022] Yuntao Bai, Saurav Kadavath, Sandipan Kundu, Amanda Askell, Jackson Kernion, Andy Jones, Anna Chen, Anna Goldie, Azalia Mirhoseini, Cameron McKinnon, Carol Chen, Catherine Olsson, Christopher Olah, Danny Hernandez, Dawn Drain, Deep Ganguli, Dustin Li, Eli Tran-Johnson, Ethan Perez, Jamie Kerr, Jared Mueller, Jeffrey Ladish, Joshua Landau, Kamal Ndousse, Kamile Lukosiute, Liane Lovitt, Michael Sellitto, Nelson Elhage, Nicholas Schiefer, Noemí Mercado, Nova DasSarma, Robert Lasenby, Robin Larson, Sam Ringer, Scott Johnston, Shauna Kravec, Sheer El Showk, Stanislav Fort, Tamera Lanham, Timothy Telleen-Lawton, Tom Conerly, Tom Henighan, Tristan Hume, Samuel R. Bowman, Zac Hatfield-Dodds, Ben Mann, Dario Amodei, Nicholas Joseph, Sam McCandlish, Tom Brown, and Jared Kaplan. Constitutional AI: harmlessness from AI feedback. CoRR, abs/2212.08073, 2022. doi: 10.48550/ARXIV.2212.08073. URL https://doi.org/10.48550/arXiv.2212.08073.

- Bengio [2020] Yoshua Bengio. Deep learning for system 2 processing. Presentation at the AAAI-20 Turing Award Winners 2018 Special Event, February 9 2020.

- Bi et al. [2024] Xiao Bi, Deli Chen, Guanting Chen, Shanhuang Chen, Damai Dai, Chengqi Deng, Honghui Ding, Kai Dong, Qiushi Du, Zhe Fu, Huazuo Gao, Kaige Gao, Wenjun Gao, Ruiqi Ge, Kang Guan, Daya Guo, Jianzhong Guo, Guangbo Hao, Zhewen Hao, Ying He, Wenjie Hu, Panpan Huang, Erhang Li, Guowei Li, Jiashi Li, Yao Li, Y. K. Li, Wenfeng Liang, Fangyun Lin, Alex X. Liu, Bo Liu, Wen Liu, Xiaodong Liu, Xin Liu, Yiyuan Liu, Haoyu Lu, Shanghao Lu, Fuli Luo, Shirong Ma, Xiaotao Nie, Tian Pei, Yishi Piao, Junjie Qiu, Hui Qu, Tongzheng Ren, Zehui Ren, Chong Ruan, Zhangli Sha, Zhihong Shao, Junxiao Song, Xuecheng Su, Jingxiang Sun, Yaofeng Sun, Minghui Tang, Bingxuan Wang, Peiyi Wang, Shiyu Wang, Yaohui Wang, Yongji Wang, Tong Wu, Y. Wu, Xin Xie, Zhenda Xie, Ziwei Xie, Yiliang Xiong, Hanwei Xu, R. X. Xu, Yanhong Xu, Dejian Yang, Yuxiang You, Shuiping Yu, Xingkai Yu, B. Zhang, Haowei Zhang, Lecong Zhang, Liyue Zhang, Mingchuan Zhang, Minghua Zhang, Wentao Zhang, Yichao Zhang, Chenggang Zhao, Yao Zhao, Shangyan Zhou, Shunfeng Zhou, Qihao Zhu, and Yuheng Zou. Deepseek LLM: scaling open-source language models with longtermism. CoRR, abs/2401.02954, 2024. doi: 10.48550/ARXIV.2401.02954. URL https://doi.org/10.48550/arXiv.2401.02954.

- Brown et al. [2020] Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, Tom Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler, Jeffrey Wu, Clemens Winter, Christopher Hesse, Mark Chen, Eric Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever, and Dario Amodei. Language models are few-shot learners. CoRR, abs/2005.14165, 2020. URL https://arxiv.org/abs/2005.14165.

- Bytedance [2024] Bytedance. Doubao team - crafting the industry’s most advanced llms., 2024. URL https://www.doubao.com/chat/.

- Chung et al. [2022] Hyung Won Chung, Le Hou, Shayne Longpre, Barret Zoph, Yi Tay, William Fedus, Eric Li, Xuezhi Wang, Mostafa Dehghani, Siddhartha Brahma, Albert Webson, Shixiang Shane Gu, Zhuyun Dai, Mirac Suzgun, Xinyun Chen, Aakanksha Chowdhery, Sharan Narang, Gaurav Mishra, Adams Yu, Vincent Y. Zhao, Yanping Huang, Andrew M. Dai, Hongkun Yu, Slav Petrov, Ed H. Chi, Jeff Dean, Jacob Devlin, Adam Roberts, Denny Zhou, Quoc V. Le, and Jason Wei. Scaling instruction-finetuned language models. CoRR, abs/2210.11416, 2022. doi: 10.48550/ARXIV.2210.11416. URL https://doi.org/10.48550/arXiv.2210.11416.

- Clark et al. [2018] Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the AI2 reasoning challenge. CoRR, abs/1803.05457, 2018. URL http://arxiv.org/abs/1803.05457.

- Clark et al. [2020] Peter Clark, Oyvind Tafjord, and Kyle Richardson. Transformers as soft reasoners over language. In Christian Bessiere, editor, Proceedings of the Twenty-Ninth International Joint Conference on Artificial Intelligence, IJCAI 2020, pages 3882–3890. ijcai.org, 2020. doi: 10.24963/IJCAI.2020/537. URL https://doi.org/10.24963/ijcai.2020/537.

- Cobbe et al. [2021] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. Training verifiers to solve math word problems. CoRR, abs/2110.14168, 2021. URL https://arxiv.org/abs/2110.14168.

- Dai et al. [2024] Jianbo Dai, Jianqiao Lu, Yunlong Feng, Rongju Ruan, Ming Cheng, Haochen Tan, and Zhijiang Guo. Mhpp: Exploring the capabilities and limitations of language models beyond basic code generation. arXiv preprint arXiv:2405.11430, 2024.

- Dalvi et al. [2021] Bhavana Dalvi, Peter Jansen, Oyvind Tafjord, Zhengnan Xie, Hannah Smith, Leighanna Pipatanangkura, and Peter Clark. Explaining answers with entailment trees. In Marie-Francine Moens, Xuanjing Huang, Lucia Specia, and Scott Wen-tau Yih, editors, Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, EMNLP 2021, Virtual Event / Punta Cana, Dominican Republic, 7-11 November, 2021, pages 7358–7370. Association for Computational Linguistics, 2021. doi: 10.18653/V1/2021.EMNLP-MAIN.585. URL https://doi.org/10.18653/v1/2021.emnlp-main.585.

- Fagin and Halpern [1994] Ronald Fagin and Joseph Y. Halpern. Reasoning about knowledge and probability. J. ACM, 41(2):340–367, 1994. doi: 10.1145/174652.174658. URL https://doi.org/10.1145/174652.174658.

- Fernandes et al. [2023] Patrick Fernandes, Aman Madaan, Emmy Liu, António Farinhas, Pedro Henrique Martins, Amanda Bertsch, José G. C. de Souza, Shuyan Zhou, Tongshuang Wu, Graham Neubig, and André F. T. Martins. Bridging the gap: A survey on integrating (human) feedback for natural language generation. CoRR, abs/2305.00955, 2023. doi: 10.48550/ARXIV.2305.00955. URL https://doi.org/10.48550/arXiv.2305.00955.