# Step-DPO: Step-wise Preference Optimization for Long-chain Reasoning of LLMs

## Abstract

Mathematical reasoning presents a significant challenge for Large Language Models (LLMs) due to the extensive and precise chain of reasoning required for accuracy. Ensuring the correctness of each reasoning step is critical. To address this, we aim to enhance the robustness and factuality of LLMs by learning from human feedback. However, Direct Preference Optimization (DPO) has shown limited benefits for long-chain mathematical reasoning, as models employing DPO struggle to identify detailed errors in incorrect answers. This limitation stems from a lack of fine-grained process supervision. We propose a simple, effective, and data-efficient method called Step-DPO, which treats individual reasoning steps as units for preference optimization rather than evaluating answers holistically. Additionally, we have developed a data construction pipeline for Step-DPO, enabling the creation of a high-quality dataset containing 10K step-wise preference pairs. We also observe that in DPO, self-generated data is more effective than data generated by humans or GPT-4, due to the latter’s out-of-distribution nature. Our findings demonstrate that as few as 10K preference data pairs and fewer than 500 Step-DPO training steps can yield a nearly 3% gain in accuracy on MATH for models with over 70B parameters. Notably, Step-DPO, when applied to Qwen2-72B-Instruct, achieves scores of 70.8% and 94.0% on the test sets of MATH and GSM8K, respectively, surpassing a series of closed-source models, including GPT-4-1106, Claude-3-Opus, and Gemini-1.5-Pro. Our code, data, and models are available at https://github.com/dvlab-research/Step-DPO.

## 1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy on the MATH test set

### Overview

This is a scatter plot comparing the accuracy of various large language models (LLMs) on the MATH test set, plotted against their model size (parameter count). The chart highlights the performance of models from the Qwen family, particularly those using "Step-DPO" fine-tuning, against other prominent models and baselines.

### Components/Axes

* **Chart Title:** "Accuracy on the MATH test set" (centered at the top).

* **Y-Axis:** Labeled "Accuracy (%)". Scale ranges from 42.5 to 72.5, with major ticks every 2.5 units (42.5, 45.0, 47.5, ..., 72.5).

* **X-Axis:** Labeled "Model size". It is a categorical axis with the following discrete labels from left to right: "7B", "32B", "47B", "57B", "70B", "72B".

* **Legend (Left side, vertically aligned):**

* Green circle: `DeepSeekMath-RL`

* Olive circle: `Qwen2-7B-Instruct`

* Dark red circle: `DeepSeekMath-Instruct`

* Purple circle: `MathGenieLM-Mistral`

* **Reference Baselines (Horizontal dashed lines with labels on the left):**

* `Gemini-1.5-Pro` at approximately 68.0% accuracy.

* `GPT-4-1106` at approximately 64.5% accuracy.

* `Claude-3-Opus` at approximately 60.0% accuracy.

### Detailed Analysis

**Data Points (Approximate values, identified by marker shape/color and label):**

* **7B Size:**

* Red Triangle: `Qwen2-7B-Step-DPO` at ~56.0%.

* **32B Size:**

* Red Triangle: `Qwen1.5-32B-Step-DPO` at ~57.0%.

* Cyan Circle: `Qwen1.5-32B-SFT` at ~55.0%.

* **47B Size:**

* Blue Circle: `MAmmoTH2-Mixtral-8x7B` at ~47.0%.

* **57B Size:**

* Red Triangle: `Qwen2-57B-A14B-Step-DPO` at ~56.5%.

* Olive Circle: `Qwen2-57B-A14B-Instruct` at ~49.0%.

* **70B Size:**

* Red Triangle: `Llama3-70B-Step-DPO` at ~59.5%.

* Orange Circle: `Llama3-70B-Instruct` at ~50.5%.

* **72B Size:**

* Red Triangle: `Qwen2-72B-Step-DPO` at ~65.0%.

* Olive Circle (with dagger †): `Qwen2-72B-Instruct†` at ~69.5%.

* Olive Circle (with arrow): `Qwen2-72B-Instruct` at ~59.5%.

### Key Observations

1. **Step-DPO Performance Trend:** Models labeled with "Step-DPO" (red triangles) consistently outperform their corresponding "Instruct" or "SFT" base models at the same parameter size. This is visible at 32B, 57B, 70B, and 72B.

2. **Scale vs. Accuracy:** For the Qwen2 Step-DPO series (red triangles), there is a clear positive correlation between model size and accuracy, rising from ~56% at 7B to ~65% at 72B.

3. **Top Performers:** The highest accuracy points on the chart are the Qwen2-72B variants. `Qwen2-72B-Instruct†` (~69.5%) surpasses the `Gemini-1.5-Pro` baseline. `Qwen2-72B-Step-DPO` (~65.0%) surpasses the `GPT-4-1106` baseline.

4. **Architectural Outlier:** `MAmmoTH2-Mixtral-8x7B` (blue circle at 47B) has significantly lower accuracy (~47%) compared to other models in a similar size range, suggesting different training or architecture.

5. **Llama3 Comparison:** At 70B, `Llama3-70B-Step-DPO` (~59.5%) shows a substantial improvement over `Llama3-70B-Instruct` (~50.5%), mirroring the Step-DPO benefit seen in Qwen models.

### Interpretation

The data demonstrates the effectiveness of the "Step-DPO" fine-tuning technique for improving mathematical reasoning performance in LLMs. Across multiple model families (Qwen, Llama) and sizes, Step-DPO yields a consistent and often significant accuracy boost over standard instruction-tuned (Instruct) or supervised fine-tuned (SFT) versions.

The plot suggests that while base model scale is a primary driver of performance (the upward trend of red triangles), advanced alignment techniques like Step-DPO can provide a critical performance leap, allowing smaller Step-DPO models to rival or exceed larger, differently tuned models. For instance, the 72B Step-DPO model outperforms the closed-source reference baseline `GPT-4-1106`.

The presence of two `Qwen2-72B-Instruct` points (one with a dagger † at ~69.5% and one at ~59.5%) implies a potential variant or different evaluation condition for the same base model, with the dagger version performing exceptionally well, even surpassing the Gemini baseline. This highlights that model performance is not solely a function of size and tuning method, but also of specific implementation details or training checkpoints.

**Language Note:** All text in the image is in English.

</details>

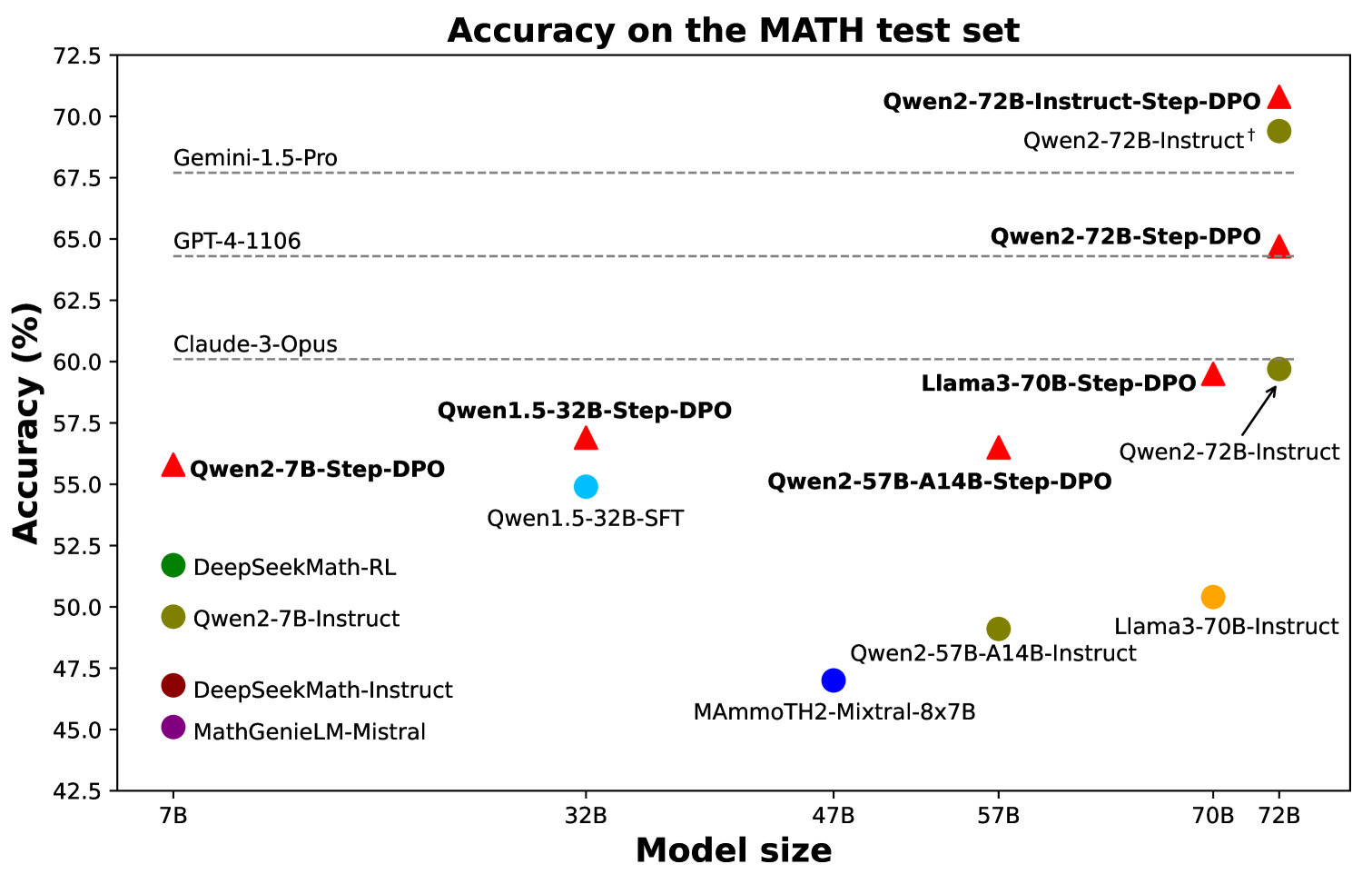

Figure 1: Accuracy on the MATH test set across models fine-tuned by Step-DPO and other state-of-the-art models. †: reproduced result using our prompt.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: Model Accuracy vs. Training Steps

### Overview

This line chart displays the performance of four different language model variants, based on the Qwen2 architecture, over the course of training. The performance metric is the accuracy (in percentage) of the model in judging preferred versus undesirable outputs. The chart compares two model sizes (7B and 72B parameters) and two training methodologies (DPO and Step-DPO).

### Components/Axes

* **Chart Title:** "Accuracy of judging preferred and undesirable outputs"

* **X-Axis:** Labeled "Training steps". The scale runs from 0 to 250, with major tick marks at intervals of 50 (50, 100, 150, 200, 250).

* **Y-Axis:** Labeled "Accuracy (%)". The scale runs from 64 to 84, with major tick marks at intervals of 2 (64, 66, 68, 70, 72, 74, 76, 78, 80, 82, 84).

* **Legend/Series:** The legend is embedded directly within the chart area, with labels placed near their corresponding lines. There are four distinct data series:

1. **Qwen2-72B-Step-DPO:** Represented by a **purple line with upward-pointing triangle markers**.

2. **Qwen2-7B-Step-DPO:** Represented by an **orange line with upward-pointing triangle markers**.

3. **Qwen2-72B-DPO:** Represented by a **purple line with square markers**.

4. **Qwen2-7B-DPO:** Represented by an **orange line with square markers**.

### Detailed Analysis

**Data Series and Approximate Trends:**

1. **Qwen2-72B-Step-DPO (Purple Triangles):**

* **Trend:** Shows a strong initial increase, followed by a high plateau with minor fluctuations. It is the top-performing series overall.

* **Approximate Data Points:**

* Step ~25: ~76%

* Step 50: ~81%

* Step 75: ~81%

* Step 100: ~82% (Peak)

* Step 125: ~80.5%

* Step 150: ~82%

2. **Qwen2-7B-Step-DPO (Orange Triangles):**

* **Trend:** Shows a sharp initial rise, then fluctuates within a band between approximately 75% and 77.5%. It is the second-highest performing series.

* **Approximate Data Points:**

* Step ~25: ~66.5%

* Step 50: ~76%

* Step 75: ~75.5%

* Step 100: ~76.5%

* Step 125: ~76.2%

* Step 150: ~77%

* Step 175: ~76.2%

* Step 200: ~75.2%

* Step 225: ~76%

* Step 250: ~75%

* Step ~275: ~77.5% (Final point, appears beyond the 250 tick)

3. **Qwen2-72B-DPO (Purple Squares):**

* **Trend:** Shows a moderate initial increase, then stabilizes in a narrow band around 73-74%. It performs below its Step-DPO counterpart.

* **Approximate Data Points:**

* Step ~25: ~69.5%

* Step 50: ~73.8%

* Step 75: ~72.8%

* Step 100: ~73.5%

* Step 125: ~73.5%

* Step 150: ~73.8%

4. **Qwen2-7B-DPO (Orange Squares):**

* **Trend:** Shows a gradual initial increase, then fluctuates between approximately 68.5% and 71.5%. It is the lowest-performing series overall.

* **Approximate Data Points:**

* Step ~25: ~67.5%

* Step 50: ~69%

* Step 75: ~70%

* Step 100: ~69.5%

* Step 125: ~69.2%

* Step 150: ~69.2%

* Step 175: ~70%

* Step 200: ~70%

* Step 225: ~68.8%

* Step 250: ~71.5% (Peak)

* Step ~275: ~69.5%

### Key Observations

1. **Methodology Superiority:** For both model sizes (7B and 72B), the **Step-DPO** variant (triangle markers) consistently achieves higher accuracy than the standard **DPO** variant (square markers) throughout the training steps shown.

2. **Model Size Advantage:** The larger **72B** models (purple lines) consistently outperform their smaller **7B** counterparts (orange lines) when using the same training methodology (Step-DPO or DPO).

3. **Performance Hierarchy:** The clear performance order from highest to lowest is: Qwen2-72B-Step-DPO > Qwen2-7B-Step-DPO > Qwen2-72B-DPO > Qwen2-7B-DPO.

4. **Convergence:** All models show a rapid improvement in the first 50 training steps, after which performance gains slow and enter a phase of fluctuation or plateau.

5. **Volatility:** The Qwen2-7B-Step-DPO series shows the most pronounced fluctuations in the later training steps (post step 150).

### Interpretation

The data strongly suggests that the **Step-DPO training methodology is more effective** than standard DPO for the task of judging output preference, as it yields higher accuracy across both model scales. This could indicate that the step-wise approach provides more stable or informative learning signals during alignment.

Furthermore, the consistent advantage of the **72B models over the 7B models** underscores the importance of model scale for this capability, even when using the same training technique. The larger model's capacity likely allows for a more nuanced understanding of the preference task.

From a Peircean investigative perspective, the chart presents a clear **abductive inference**: the observed performance gap between Step-DPO and DPO models (the sign) points to Step-DPO being a superior alignment technique for this specific metric (the interpretant). The **indexical** relationship is the direct link between training steps (cause) and accuracy improvement (effect). The **symbolic** representation is the chart itself, encoding the complex relationship between model size, training method, and performance into a visual form.

A notable anomaly is the final upward tick for Qwen2-7B-Step-DPO beyond step 250, which might suggest potential for further gains with extended training, whereas the 72B Step-DPO model appears to have fully plateaued. The dip in Qwen2-7B-Step-DPO around step 225 before its final rise could indicate a temporary instability in the optimization process for that model size.

</details>

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Reward Margin Between Preferred and Undesirable Outputs

### Overview

This image is a line chart comparing the performance of four different model training configurations over time. The chart tracks the "Reward margin" (y-axis) as a function of "Training steps" (x-axis). The data suggests an evaluation of Direct Preference Optimization (DPO) and a variant called "Step-DPO" applied to two different model sizes (7B and 72B parameters) from the Qwen2 series.

### Components/Axes

* **Chart Title:** "Reward margin between preferred and undesirable outputs" (top center).

* **Y-Axis:** Labeled "Margin". The scale is linear, ranging from 0.1 to 2.1, with major tick marks at 0.1, 0.5, 0.9, 1.3, 1.7, and 2.1.

* **X-Axis:** Labeled "Training steps". The scale is linear, ranging from approximately 0 to 275, with major tick marks at 50, 100, 150, 200, and 250.

* **Legend/Series Labels:** There are four distinct data series, each identified by a unique color and marker shape. The labels are placed directly on the chart near the end of their respective lines.

1. **Qwen2-7B-Step-DPO:** Orange line with upward-pointing triangle markers (▲).

2. **Qwen2-72B-Step-DPO:** Purple line with upward-pointing triangle markers (▲).

3. **Qwen2-72B-DPO:** Purple line with square markers (■).

4. **Qwen2-7B-DPO:** Orange line with square markers (■).

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

* **Qwen2-7B-Step-DPO (Orange Triangles):**

* **Trend:** Shows a strong, continuous upward slope that begins to plateau after step 200. It achieves the highest final margin.

* **Data Points:** (~25 steps, 0.2), (~50, 1.0), (~75, 1.35), (~100, 1.55), (~125, 1.75), (~150, 1.85), (~175, 1.95), (~200, 2.05), (~225, 2.08), (~250, 2.12), (~275, 2.12).

* **Qwen2-72B-Step-DPO (Purple Triangles):**

* **Trend:** Shows a very steep initial increase, then continues to rise steadily but at a slower rate than the 7B Step-DPO model after ~100 steps. It ends at the second-highest margin.

* **Data Points:** (~25 steps, 0.45), (~50, 1.15), (~75, 1.5), (~100, 1.7), (~125, 1.78), (~150, 1.8).

* **Qwen2-72B-DPO (Purple Squares):**

* **Trend:** Shows a rapid initial increase up to ~50 steps, after which it plateaus completely, showing almost no further improvement.

* **Data Points:** (~25 steps, 0.3), (~50, 0.75), (~75, 0.76), (~100, 0.77), (~125, 0.78), (~150, 0.77).

* **Qwen2-7B-DPO (Orange Squares):**

* **Trend:** Similar to its 72B counterpart, it rises quickly until ~50 steps and then plateaus at a margin slightly lower than the 72B DPO model.

* **Data Points:** (~25 steps, 0.15), (~50, 0.65), (~75, 0.7), (~100, 0.71), (~125, 0.69), (~150, 0.68), (~175, 0.68), (~200, 0.7), (~225, 0.7), (~250, 0.7), (~275, 0.7).

### Key Observations

1. **Step-DPO Superiority:** Both "Step-DPO" variants (triangle markers) significantly and consistently outperform their standard "DPO" counterparts (square markers) throughout the training process, achieving much higher final reward margins.

2. **Model Size Effect within Step-DPO:** The smaller 7B model (Qwen2-7B-Step-DPO) ultimately achieves a higher reward margin (~2.12) than the larger 72B model (~1.8) when using the Step-DPO method, despite a slightly slower start.

3. **Plateauing of Standard DPO:** Both standard DPO models (Qwen2-7B-DPO and Qwen2-72B-DPO) show a clear performance plateau after approximately 50 training steps, indicating that further training under this method yields negligible improvement in the reward margin.

4. **Initial Learning Rate:** The 72B Step-DPO model shows the fastest initial learning (steepest slope between 0-50 steps), but is eventually overtaken by the 7B Step-DPO model.

### Interpretation

The data demonstrates a clear comparative advantage for the "Step-DPO" training methodology over standard DPO for the task of maximizing the reward margin between preferred and undesirable outputs in the Qwen2 model series. The reward margin is a key metric in preference learning, indicating how strongly the model can distinguish between good and bad responses.

The most notable finding is the inverse relationship between model size and final performance *within the Step-DPO method*: the 7B model outperforms the 72B model. This could suggest several possibilities: the Step-DPO algorithm may be more sample-efficient or stable for smaller models, the larger model may require different hyperparameters, or the evaluation task may not benefit from, or could even be hindered by, the increased capacity of the 72B model under this specific training regime.

The complete plateau of the standard DPO models suggests a fundamental limitation in that approach for this metric, as it fails to continue improving the model's discriminative ability after an initial phase. In contrast, Step-DPO enables continued learning and refinement over hundreds of steps. This chart provides strong empirical evidence for the efficacy of the Step-DPO technique and highlights the importance of considering the interaction between training methodology and model scale.

</details>

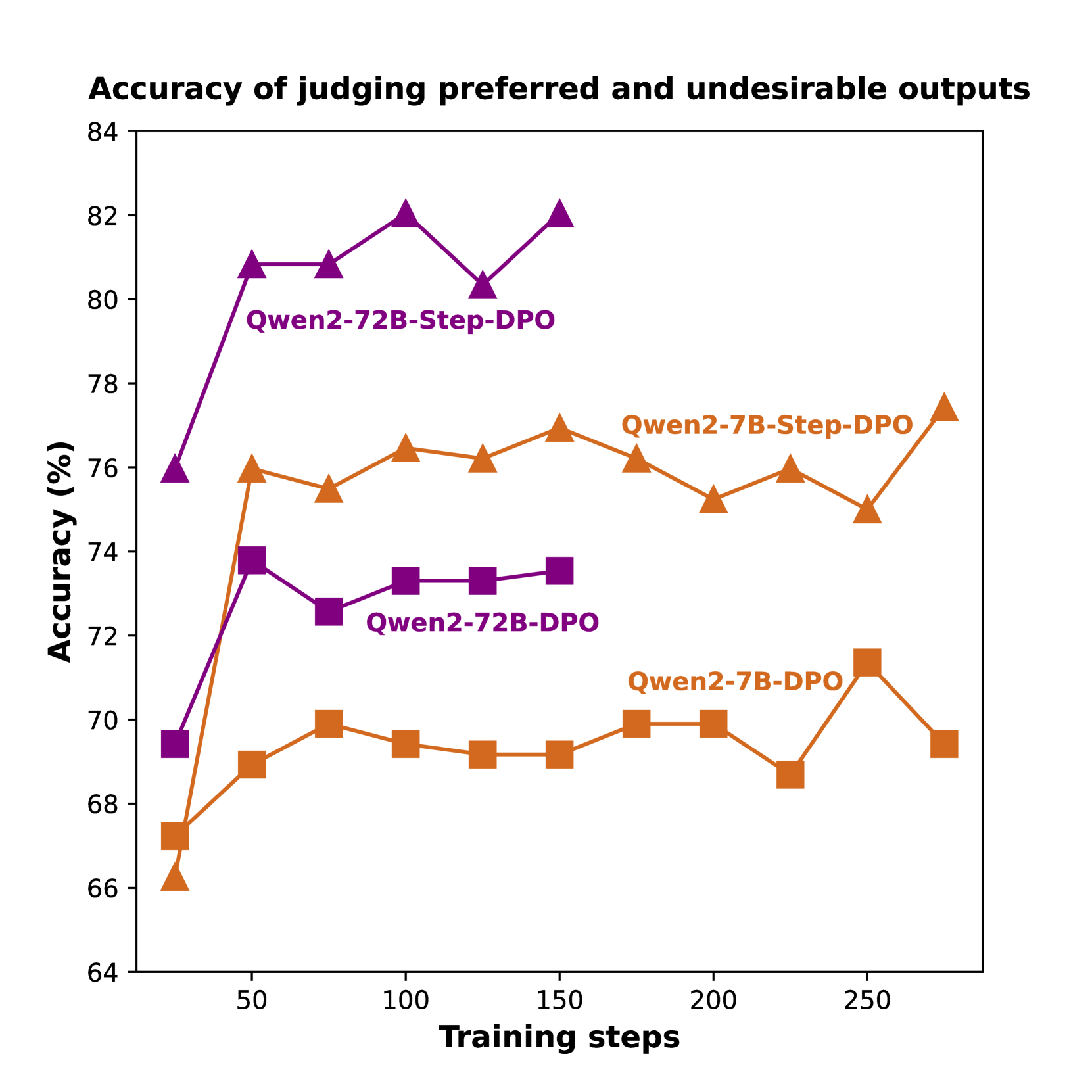

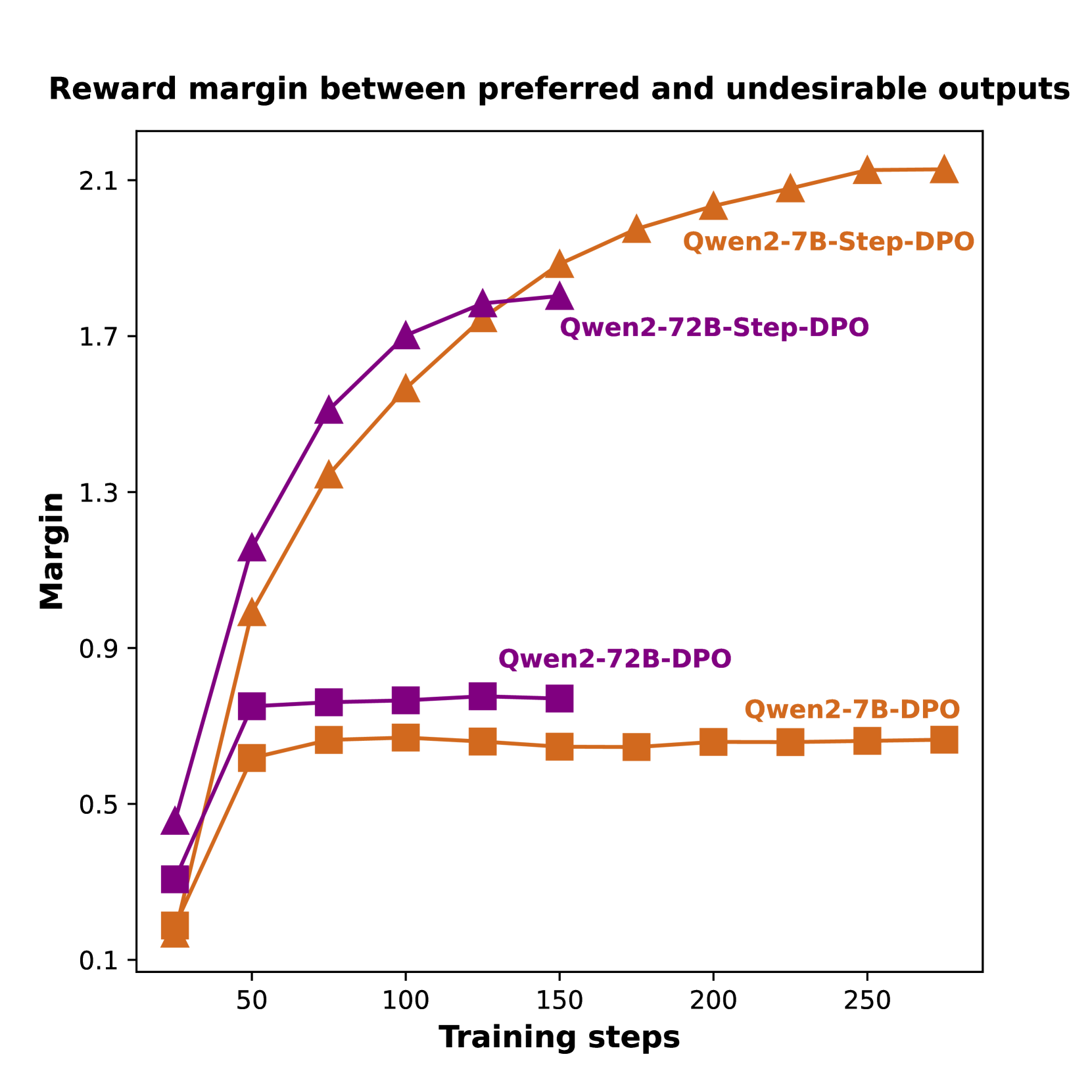

Figure 2: Left: Accuracy of judging preferred or undesirable outputs on the validation set during training. Right: Reward margins between preferred and undesirable outputs on the validation set during training. More details about these experiments are given in the appendix.

Mathematical reasoning is recognized as a critical long-chain reasoning ability in Large Language Models (LLMs). This task is particularly challenging due to the often extensive chain of thought required, which can include numerous reasoning steps. Any error in these steps can lead to an incorrect final answer.

Numerous studies (Yu et al., 2023; Luo et al., 2023; Yue et al., 2023; Liu & Yao, 2024; Lu et al., 2024; Li et al., 2024; Shao et al., 2024; Xin et al., 2024; Yue et al., 2024; Tang et al., 2024) have proposed various data augmentation techniques during the supervised fine-tuning (SFT) stage to enhance alignment. However, models in the SFT process are prone to hallucinations, resulting in saturated performance. A potential reason for this, as highlighted in Hong et al. (2024), is that as the probability of preferred outputs increases, so does the probability of undesirable ones. This phenomenon makes the model more likely to make errors in long-chain reasoning. Therefore, it is essential to develop methods to suppress the likelihood of undesirable outputs.

Recently, Direct Preference Optimization (DPO) (Rafailov et al., 2024) has been proposed for alignment using pair-wise preference data and is popular due to its simplicity. Despite its effectiveness in chat benchmarks (Tunstall et al., 2023; Zheng et al., 2024), DPO offers minimal benefits for long-chain mathematical reasoning. As shown in Fig. 2 (left), models using vanilla DPO perform poorly in distinguishing between preferred and undesirable outputs, failing to identify errors in rejected answers. Additionally, Fig. 2 (right) shows that the reward margin (i.e., the gap between the rewards of preferred and undesirable outputs) is limited for models using vanilla DPO and plateaus with further training. These findings indicate that models fine-tuned with vanilla DPO cannot pinpoint detailed errors in incorrect answers, hindering the improvement of reasoning abilities.

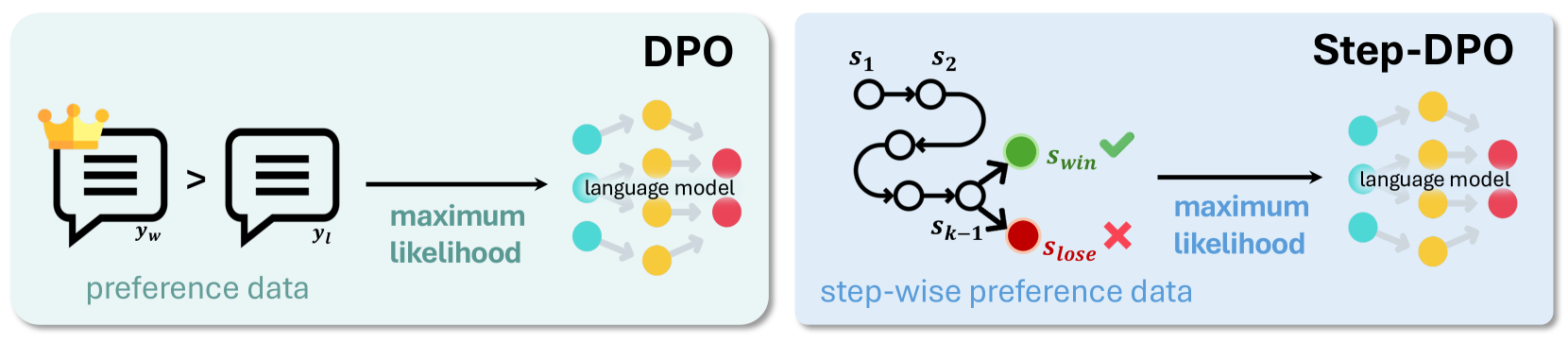

In this work, we introduce Step-DPO, where each intermediate reasoning step is treated as the basic unit for preference optimization. As illustrated in Fig. 3, unlike vanilla DPO, which only considers preference optimization between complete answers (i.e., $p(y_{win}|x)$ and $p(y_{lose}|x)$), Step-DPO examines the step-by-step answer (i.e., $y=s_1,\ldots,s_n$) and specifically targets the first erroneous reasoning step. Step-DPO aims to select a correct reasoning step and reject an incorrect one, given a math problem and several initial correct reasoning steps (i.e., maximize $p(s_{win}|x;s_1,s_2,\ldots,s_{k-1})$ and minimize $p(s_{lose}|x;s_1,s_2,\ldots,s_{k-1})$). This transition allows the model to easily locate erroneous tokens for effective optimization, significantly enhancing long-chain reasoning.

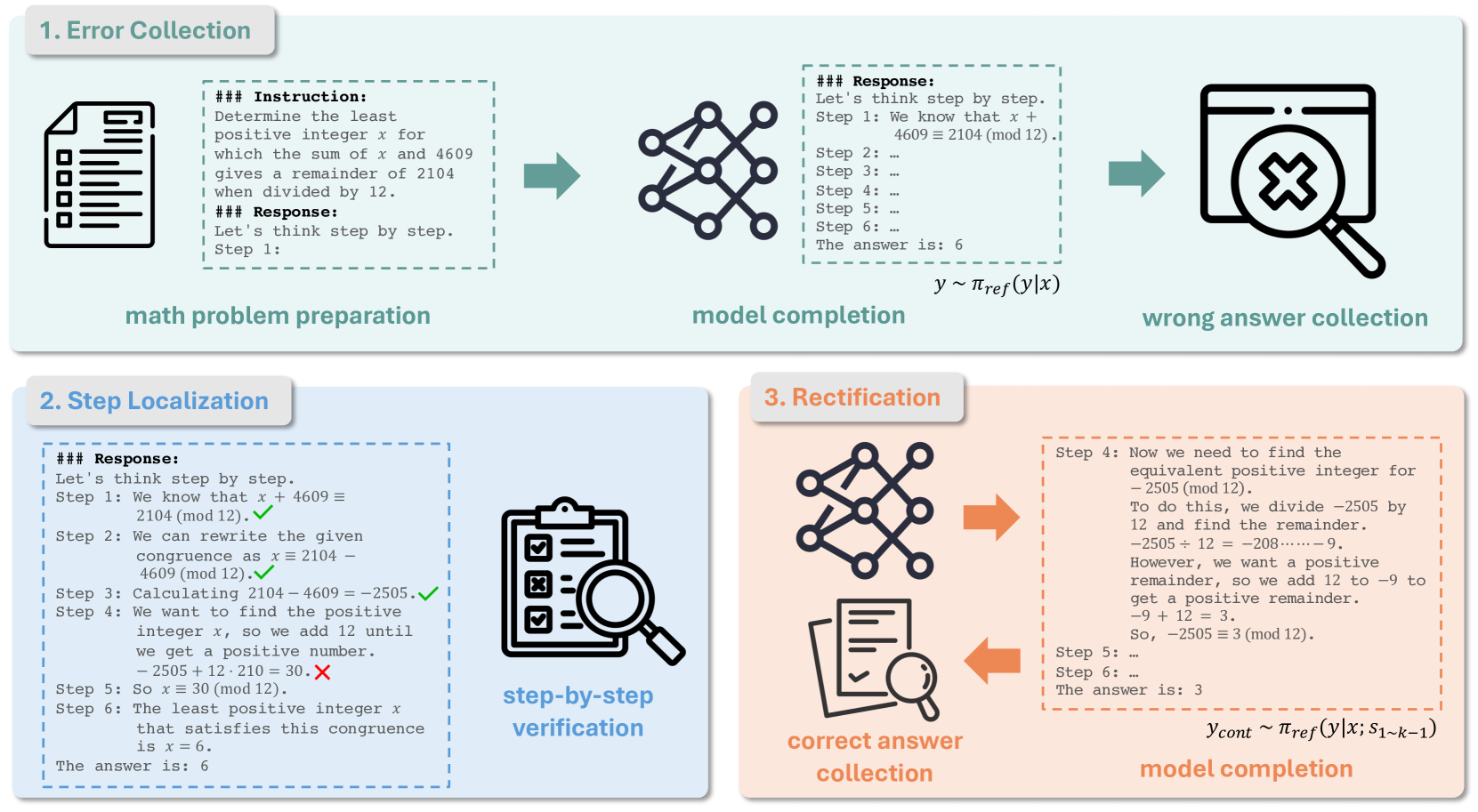

Moreover, we present an effective and economical pipeline to collect pair-wise preference data, resulting in a high-quality dataset for Step-DPO. This dataset contains approximately 10K samples, each consisting of: 1) a mathematical problem, 2) prior reasoning steps, 3) the chosen step, and 4) the rejected step. Our three-step pipeline for dataset construction includes: 1) Error collection, 2) Step localization, and 3) Rectification. Notably, the chosen reasoning step is generated by the model itself, as we find that in-distribution data (i.e., self-generated data) is more effective than out-of-distribution data (e.g., data written by humans or GPT-4) for Step-DPO, as shown in Table 4.
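As a rough illustration of the four-part sample described above, the following sketch shows one way such a record could be represented. The class name, field names, and the toy arithmetic problem are hypothetical and chosen only for illustration; they are not taken from the released dataset.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StepPreferenceSample:
    """One step-wise preference pair (field names are hypothetical)."""
    problem: str            # 1) the mathematical problem
    prior_steps: List[str]  # 2) prior reasoning steps, assumed correct
    chosen_step: str        # 3) the chosen (correct) next step
    rejected_step: str      # 4) the rejected (erroneous) next step

    def context(self) -> str:
        # Both candidate steps continue from the same shared prefix:
        # the problem followed by the prior reasoning steps.
        return "\n".join([self.problem, *self.prior_steps])


# Toy example, invented for illustration only.
sample = StepPreferenceSample(
    problem="Compute 3 * (4 + 5).",
    prior_steps=["Step 1: Evaluate the parentheses: 4 + 5 = 9."],
    chosen_step="Step 2: Multiply: 3 * 9 = 27.",
    rejected_step="Step 2: Multiply: 3 * 9 = 24.",
)
print(sample.context())
```

The point of the layout is that the chosen and rejected steps differ only in the continuation, so the preference signal is concentrated on the step where the reasoning diverges.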

With this curated dataset, mathematical reasoning performance can be significantly boosted with only hundreds of training steps, as demonstrated in Fig. 6. For instance, fine-tuning Qwen2-72B-Instruct with Step-DPO results in a model achieving 70.8% accuracy on MATH and 94.0% on GSM8K, surpassing a series of closed-source models, including GPT-4-1106, Claude-3-Opus, and Gemini-1.5-Pro.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Comparison of DPO and Step-DPO Methodologies

### Overview

The image is a technical diagram comparing two machine learning alignment techniques: **DPO** (Direct Preference Optimization) and **Step-DPO**. It visually contrasts their data structures and training processes using a side-by-side panel layout. The left panel illustrates the standard DPO approach, while the right panel illustrates the proposed Step-DPO variant.

### Components/Axes

The diagram is divided into two distinct, rounded rectangular panels with light background colors.

**Left Panel (DPO):**

* **Title:** "DPO" (top-right corner).

* **Input Data:** Labeled "preference data" (bottom-left). It consists of two speech bubble icons.

* The left bubble is labeled `y_w` and is adorned with a golden crown, indicating the "winning" or preferred response.

* The right bubble is labeled `y_l`, indicating the "losing" or less preferred response.

* A "greater than" symbol (`>`) is placed between them, signifying the preference order: `y_w > y_l`.

* **Process Arrow:** A black arrow points from the data to the model. The text "maximum likelihood" is written below this arrow in a teal color.

* **Output Model:** Labeled "language model" (center-right). It is represented by a network diagram of interconnected nodes in teal, yellow, and red.

**Right Panel (Step-DPO):**

* **Title:** "Step-DPO" (top-right corner).

* **Input Data:** Labeled "step-wise preference data" (bottom-left). It consists of a directed graph representing a sequence of reasoning or generation steps.

* The sequence starts with nodes labeled `s₁` and `s₂`.

* The path continues through several unlabeled intermediate nodes (circles).

* The sequence culminates in a branching point from node `s_{k-1}`.

* The upper branch leads to a green node labeled `s_win` with a green checkmark (✓).

* The lower branch leads to a red node labeled `s_lose` with a red cross (✗).

* **Process Arrow:** A black arrow points from the data to the model. The text "maximum likelihood" is written below this arrow in a blue color.

* **Output Model:** Labeled "language model" (center-right). It is represented by an identical network diagram of interconnected nodes in teal, yellow, and red.

### Detailed Analysis

The diagram contrasts the fundamental data unit used for training in each method.

1. **DPO Data Flow:**

* **Trend/Flow:** The process is linear and holistic. It takes a pair of complete, final responses (`y_w`, `y_l`) and directly optimizes the language model to prefer the winning response over the losing one using a maximum likelihood objective.

* **Spatial Grounding:** The preference data (`y_w`, `y_l`) is positioned on the far left. The "maximum likelihood" label is centered below the arrow connecting the data to the model on the right.

2. **Step-DPO Data Flow:**

* **Trend/Flow:** The process is sequential and granular. It operates on the intermediate steps (`s₁`, `s₂`, ..., `s_{k-1}`) that lead to a final outcome. The preference is defined not between final outputs, but between two possible *next steps* (`s_win` vs. `s_lose`) from a given state (`s_{k-1}`). The model is trained to maximize the likelihood of the step (`s_win`) that leads to a successful outcome.

* **Spatial Grounding:** The step-wise graph is positioned on the left. The "maximum likelihood" label is centered below the arrow connecting this graph to the model on the right. The `s_win` (green) node is placed above the `s_lose` (red) node at the branch point.

### Key Observations

* **Granularity of Feedback:** The core difference is the granularity of the preference signal. DPO uses a coarse, end-of-sequence signal (which entire response is better). Step-DPO uses a fine-grained, step-level signal (which specific next step is better).

* **Visual Metaphors:** The use of a crown for `y_w` versus check/cross marks for `s_win`/`s_lose` reinforces the concept of a "winner" in DPO versus "correct/incorrect" steps in Step-DPO.

* **Model Representation:** The identical "language model" node diagram in both panels emphasizes that the underlying model architecture being trained is the same; only the training data and objective differ.

* **Color Consistency:** The "maximum likelihood" text uses a different color in each panel (teal for DPO, blue for Step-DPO), possibly to visually distinguish the two processes despite the shared objective name.

### Interpretation

This diagram serves as a conceptual explanation for why Step-DPO might be an improvement over standard DPO for complex reasoning tasks.

* **What the Data Suggests:** It suggests that for tasks requiring multi-step reasoning (e.g., math, coding, logical deduction), providing feedback on intermediate steps (`Step-DPO`) is more informative and potentially more effective than providing feedback only on the final output (`DPO`). The model learns not just what a good final answer looks like, but *how to get there* step-by-step.

* **Relationship Between Elements:** The left panel establishes the baseline (DPO). The right panel introduces a modification: inserting a structured, step-wise reasoning graph between the raw preference data and the model training objective. This implies that Step-DPO is an extension or specialization of the DPO framework.

* **Underlying Message:** The diagram argues that aligning models on the *process* of reasoning (Step-DPO) is a more precise and potentially powerful method than aligning them solely on the *product* of reasoning (DPO). It visually advocates for the value of step-level supervision in training language models for complex tasks.

</details>

Figure 3: Comparison between DPO and Step-DPO.

## 2 Related Works

### 2.1 Mathematical Reasoning

Large Language Models (LLMs) have exhibited substantial reasoning capabilities, primarily due to their auto-regressive nature, which allows them to predict the next token based on contextual information. However, these models still struggle with long-chain reasoning tasks, particularly in mathematical contexts. Several prior studies (Yao et al., 2024; Chen et al., 2024; Yoran et al., 2023; Li et al., 2023; Tong et al., 2024; Fu et al., 2022; Zhou et al., 2022) have attempted to enhance the Chain-of-Thought (CoT) inference framework (Wei et al., 2022) to address this issue. While these efforts have led to significant improvements in certain tasks, they have not fully mitigated common hallucinations, and their generalizability across reasoning tasks remains limited.

Another research direction (Yu et al., 2023; Luo et al., 2023; Yue et al., 2023; Liu & Yao, 2024; Lu et al., 2024; Xu et al., 2024; Li et al., 2024; Shao et al., 2024; Xin et al., 2024; Zhou et al., 2024; Liu et al., 2023; Ying et al., 2024; Yue et al., 2024; Tang et al., 2024; Mitra et al., 2024; Yuan et al., 2023) focuses on various data augmentation techniques, such as rephrasing, extension, and evolution, for supervised fine-tuning (SFT). These methods have significantly enhanced the reasoning abilities of LLMs, but their performance plateaus once the data reaches a certain volume. Additionally, methods like those proposed by Wang et al. (2023a); Liao et al. (2024); Toshniwal et al. (2024); Gou et al. (2023) employ external tools, such as Python, to substantially reduce calculation errors.

Other approaches (Azerbayev et al., 2023; Shao et al., 2024; Lin et al., 2024; Ying et al., 2024; Wang et al., 2023c) involve continued pre-training on extensive, high-quality math-related datasets, which markedly improve mathematical reasoning capabilities. Recent studies (Xu et al., 2024; Ying et al., 2024) have explored reinforcement learning to mitigate hallucinations in mathematical reasoning. Works like Lightman et al. (2023); Shao et al. (2024); Wang et al. (2023b) emphasize the importance of step-by-step verification in reinforcement learning for mathematical problems. However, these methods still rely on the quality of the reward model and require the complex training pipelines of RLHF. Building on this line of research, we propose Step-DPO, a simpler, more effective, and more efficient method.

### 2.2 Reinforcement Learning from Human Feedback

Supervised fine-tuning (SFT) can align models with human preferences. However, as the probability of preferred outputs increases, so does the likelihood of undesirable ones, leading to hallucinations. To generate more reliable outputs, Reinforcement Learning from Human Feedback (RLHF) (Christiano et al., 2017; Ouyang et al., 2022) has been introduced for LLM alignment. This approach involves training a reward model with comparison data and then using this reward model to optimize the policy model. The final performance heavily depends on the quality of the reward model, and the training pipeline is quite complex.

To simplify this process, Direct Preference Optimization (DPO) (Rafailov et al., 2024) was proposed, which directly uses pair-wise preference data for model optimization. This transition significantly streamlines the training pipeline. While DPO has proven effective in chat benchmarks, it offers only marginal benefits for mathematical reasoning. Inheriting the principles of DPO, Step-DPO is specifically designed for long-chain reasoning and has shown significant performance improvements in solving math word problems.

## 3 Step-DPO

In this section, we elaborate on the proposed Step-DPO. First, we present the step-wise formulation in Sec. 3.1, a novel approach designed to enhance long-chain reasoning abilities by building on DPO. Next, in Sec. 3.2, we illustrate a pipeline for constructing the step-wise preference dataset for Step-DPO. Both components are essential for achieving the desired performance improvements.

### 3.1 Step-wise Formulation

#### Preliminary.

Reinforcement Learning from Human Feedback (RLHF) (Christiano et al., 2017) is an effective approach for enhancing the robustness, factuality, and safety of LLMs (Ouyang et al., 2022). RLHF consists of two training phases: 1) reward model training, and 2) policy model training. However, the final performance of RLHF is highly sensitive to various hyperparameters in both phases, necessitating meticulous tuning.

To avoid this complex training pipeline, Rafailov et al. (2024) proposed Direct Preference Optimization (DPO), which directly uses pair-wise preference data to optimize the policy model with an equivalent optimization objective. Specifically, given an input prompt $x$ and a preference data pair $(y_{win},y_{lose})$, DPO aims to maximize the probability of the preferred output $y_{win}$ and minimize that of the undesirable output $y_{lose}$. The optimization objective is formulated as:

$$
\begin{aligned} L_{DPO}(\theta) = -\mathbb{E}_{(x, y_{win}, y_{lose}) \sim D}\left[\log\sigma\left(\beta\log\frac{\pi_\theta(y_{win}|x)}{\pi_{ref}(y_{win}|x)} - \beta\log\frac{\pi_\theta(y_{lose}|x)}{\pi_{ref}(y_{lose}|x)}\right)\right], \end{aligned} \tag{1}
$$

where $D$ is the pair-wise preference dataset, $\sigma$ is the sigmoid function, $\pi_\theta(\cdot|x)$ is the policy model to be optimized, $\pi_{ref}(\cdot|x)$ is the reference model kept unchanged during training, and the hyperparameter $\beta$ controls the distance from the reference model.
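As a minimal sketch of Eq. 1 for a single preference pair, the loss can be computed from four sequence log-probabilities. The function names and the example numbers below are illustrative assumptions, not part of the released code; a real implementation would obtain the log-probabilities from the policy and reference models and average over a batch.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def dpo_loss(policy_logp_win: float, policy_logp_lose: float,
             ref_logp_win: float, ref_logp_lose: float, beta: float) -> float:
    """Per-pair DPO loss (Eq. 1 without the expectation over the dataset)."""
    win_logratio = policy_logp_win - ref_logp_win      # log pi_theta/pi_ref for y_win
    lose_logratio = policy_logp_lose - ref_logp_lose   # log pi_theta/pi_ref for y_lose
    return -log_sigmoid(beta * (win_logratio - lose_logratio))


# Illustrative numbers only. When the policy equals the reference model the
# margin is zero, so the loss is log(2).
loss_at_init = dpo_loss(-12.0, -15.0, -12.0, -15.0, beta=0.4)

# Raising log p(y_win) and lowering log p(y_lose) relative to the reference
# widens the margin and lowers the loss.
loss_after = dpo_loss(-10.0, -18.0, -12.0, -15.0, beta=0.4)
```

Only the differences to the reference model enter the loss, which is why $\beta$ can be read as controlling how far the policy is encouraged to move away from $\pi_{ref}$.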

#### Our Solution.

While DPO has proven effective in chat benchmarks, it brings only marginal improvements for long-chain reasoning tasks such as mathematical problems, as shown in Fig. 2 and Table 3. This limitation arises because most undesirable answers in these tasks do not contain errors initially; the first error often appears midway through the reasoning process. Rejecting an entire undesirable answer in DPO may also discard preceding correct reasoning steps, introducing significant noise and negatively impacting training.

Analogous to how teachers correct students by pinpointing specific errors rather than dismissing entire answers, our proposed Step-DPO provides more detailed supervision by identifying the specific erroneous reasoning step. This granular focus allows the model to swiftly locate, rectify, and avoid erroneous steps.

Specifically, the answer $y$ can be decomposed into a sequence of reasoning steps $y=s_1,\dots,s_n$, where $s_i$ is the $i$-th reasoning step. As illustrated in Fig. 3, given a prompt $x$ and a series of initial correct reasoning steps $s_{1\sim k-1}=s_1,\dots,s_{k-1}$, Step-DPO aims to maximize the probability of the correct next reasoning step $s_{win}$ and minimize the probability of the incorrect one $s_{lose}$. This objective can be formulated as:

$$
\begin{aligned} L(\theta) = -\mathbb{E}_{(x, s_{1\sim k-1}, s_{win}, s_{lose}) \sim D}\left[\log\sigma\left(\beta\log\frac{\pi_\theta(s_{win}|x; s_{1\sim k-1})}{\pi_{ref}(s_{win}|x; s_{1\sim k-1})} - \beta\log\frac{\pi_\theta(s_{lose}|x; s_{1\sim k-1})}{\pi_{ref}(s_{lose}|x; s_{1\sim k-1})}\right)\right]. \end{aligned} \tag{2}
$$
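The practical difference from vanilla DPO is which tokens are scored: the prompt and the correct prefix are only conditioned on, and the log-probability is summed over the tokens of the candidate step alone. The sketch below illustrates this under the assumption that per-token log-probabilities are already available; the helper names and the toy numbers are hypothetical.

```python
import math
from typing import Sequence


def step_logprob(token_logps: Sequence[float], step_start: int) -> float:
    """Sum the log-probs of the candidate step's tokens only.

    token_logps[i] is log p(token_i | all earlier tokens). Tokens before
    step_start belong to the prompt x and the prefix s_{1~k-1}; they are
    conditioned on but not scored.
    """
    return sum(token_logps[step_start:])


def step_dpo_loss(pol_win: float, pol_lose: float,
                  ref_win: float, ref_lose: float, beta: float) -> float:
    """Per-sample Step-DPO loss (Eq. 2 without the expectation)."""
    margin = beta * ((pol_win - ref_win) - (pol_lose - ref_lose))
    # -log(sigmoid(margin)), written in a numerically stable form
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Toy per-token log-probs: the first 3 tokens are shared context, the rest is the step.
context_len = 3
policy_win = [-0.9, -0.8, -0.7, -0.2, -0.3]
policy_lose = [-0.9, -0.8, -0.7, -1.0, -1.5]
ref_win = [-0.9, -0.8, -0.7, -0.4, -0.6]
ref_lose = [-0.9, -0.8, -0.7, -0.5, -0.5]

loss = step_dpo_loss(
    step_logprob(policy_win, context_len),
    step_logprob(policy_lose, context_len),
    step_logprob(ref_win, context_len),
    step_logprob(ref_lose, context_len),
    beta=0.5,
)
```

Because the shared context contributes nothing to the loss, the correct steps preceding the error are never pushed down, which is the noise source the formulation is meant to remove.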

### 3.2 In-distribution Data Construction

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Three-Step Process for Mathematical Error Correction in AI Models

### Overview

The image is a flowchart diagram illustrating a three-stage process for identifying and correcting errors in a language model's step-by-step mathematical reasoning. The process is divided into three distinct, color-coded sections: "1. Error Collection" (top, light green background), "2. Step Localization" (bottom left, light blue background), and "3. Rectification" (bottom right, light orange background). The diagram uses icons, text boxes, and arrows to depict the flow of data and model interactions.

### Components/Axes

The diagram is structured into three main panels, each with a title and containing specific elements:

**1. Error Collection (Top Panel)**

* **Title:** "1. Error Collection"

* **Left Element:** An icon of a document with a checklist. Below it, the label: "math problem preparation".

* **Center-Left Text Box:** A dashed-border box containing the initial prompt and partial response.

* **Header:** `### Instruction:`

* **Instruction Text:** "Determine the least positive integer x for which the sum of x and 4609 gives a remainder of 2104 when divided by 12."

* **Header:** `### Response:`

* **Response Text:** "Let's think step by step. Step 1:"

* **Center Element:** An icon of a neural network. Below it, the label: "model completion".

* **Center-Right Text Box:** A dashed-border box containing the model's full, incorrect response.

* **Header:** `### Response:`

* **Response Text:** "Let's think step by step. Step 1: We know that x + 4609 ≡ 2104 (mod 12). Step 2: ... Step 3: ... Step 4: ... Step 5: ... Step 6: ... The answer is: 6"

* **Mathematical Notation:** Below the center-right text box: `y ~ π_ref(y|x)`

* **Right Element:** An icon of a magnifying glass over a browser window with an 'X' mark. Below it, the label: "wrong answer collection".

* **Flow:** A teal arrow points from the left document icon to the neural network icon. Another teal arrow points from the neural network icon to the right magnifying glass icon.

**2. Step Localization (Bottom-Left Panel)**

* **Title:** "2. Step Localization"

* **Left Text Box:** A dashed-border box containing the model's step-by-step solution with verification marks.

* **Header:** `### Response:`

* **Full Text:**

"Let's think step by step.

Step 1: We know that x + 4609 ≡ 2104 (mod 12). ✓

Step 2: We can rewrite the given congruence as x ≡ 2104 - 4609 (mod 12). ✓

Step 3: Calculating 2104 - 4609 = -2505. ✓

Step 4: We want to find the positive integer x, so we add 12 until we get a positive number. -2505 + 12·210 = 30. ✗

Step 5: So x ≡ 30 (mod 12).

Step 6: The least positive integer x that satisfies this congruence is x = 6.

The answer is: 6"

* **Right Element:** An icon of a clipboard with a checklist and a magnifying glass. Below it, the label: "step-by-step verification".

* **Key Annotations:** Green checkmarks (✓) appear next to Steps 1, 2, and 3. A red cross (✗) appears next to the calculation in Step 4.

**3. Rectification (Bottom-Right Panel)**

* **Title:** "3. Rectification"

* **Left Element:** An icon of a neural network.

* **Center Element:** An icon of a document with a checkmark and a magnifying glass. Below it, the label: "correct answer collection".

* **Right Text Box:** A dashed-border box containing the corrected model response.

* **Text:**

"Step 4: Now we need to find the equivalent positive integer for -2505 (mod 12). To do this, we divide -2505 by 12 and find the remainder. -2505 ÷ 12 = -208......-9. However, we want a positive remainder, so we add 12 to -9 to get a positive remainder. -9 + 12 = 3. So, -2505 ≡ 3 (mod 12).

Step 5: ...

Step 6: ...

The answer is: 3"

* **Mathematical Notation:** Below the right text box: `y_cont ~ π_ref(y|x; s_{1:k-1})`

* **Flow:** An orange arrow points from the neural network icon to the correct answer collection icon. Another orange arrow points from the correct answer collection icon to the right text box.

### Detailed Analysis

The diagram details a specific mathematical problem and the model's flawed and corrected reasoning.

* **Problem Statement:** Find the least positive integer `x` such that `(x + 4609) mod 12 = 2104`.

* **Model's Incorrect Process (Error Collection & Step Localization):**

1. Correctly sets up the congruence: `x + 4609 ≡ 2104 (mod 12)`.

2. Correctly isolates x: `x ≡ 2104 - 4609 (mod 12)`.

3. Correctly calculates the difference: `2104 - 4609 = -2505`.

4. **ERROR in Step 4:** The model attempts to find a positive equivalent for `-2505 mod 12`. It incorrectly calculates `-2505 + 12*210 = 30`. This is marked with a red cross (✗). It then incorrectly concludes `x ≡ 30 (mod 12)` and finally `x = 6`.

* **Corrected Process (Rectification):**

* The rectification focuses on correcting Step 4.

* It correctly performs the division: `-2505 ÷ 12 = -208` with a remainder of `-9`.

* It correctly adjusts the negative remainder to a positive one: `-9 + 12 = 3`.

* It correctly concludes: `-2505 ≡ 3 (mod 12)`.

* The final, correct answer is given as `3`.

### Key Observations

1. **Error Localization:** The system successfully pinpoints the exact step (Step 4) where the reasoning fails, distinguishing it from correct preceding steps.

2. **Nature of Error:** The error is not in the high-level strategy but in a specific modular-arithmetic operation: handling a negative remainder.

3. **Process Flow:** The diagram shows a closed-loop system: generate a response, verify it step-by-step, identify the erroneous step, and then generate a corrected continuation (`y_cont`) from that point, conditioned on the correct prior steps (`s_{1:k-1}`).

4. **Notation:** The mathematical notations `y ~ π_ref(y|x)` and `y_cont ~ π_ref(y|x; s_{1:k-1})` suggest the use of a reference policy or model (`π_ref`) for generating completions, with the rectification step being a conditional continuation.

### Interpretation

This diagram illustrates a method for improving the reliability of AI models in multi-step reasoning tasks, particularly in mathematics. It demonstrates a **process of "debugging" a model's thought process**.

* **What it suggests:** The data (the step-by-step trace) shows that a model can be correct in its setup and intermediate logic but fail due to a single computational misstep. The proposed solution is not to retrain the model from scratch but to implement a verification and correction layer that can localize and fix such errors.

* **How elements relate:** The three stages form a pipeline. "Error Collection" gathers faulty outputs. "Step Localization" performs fine-grained verification to find the root cause. "Rectification" uses the context of the correct steps to generate a proper solution from the point of error. This is more efficient than regenerating the entire response.

* **Notable Anomaly/Insight:** The most significant insight is the **discrepancy between the model's final answer (6) and the correct answer (3)**, stemming from the single flawed calculation in Step 4. This highlights the fragility of chain-of-thought reasoning, where one small error propagates to a completely wrong conclusion. The rectification step effectively acts as a "patch" for this specific logical fault. The process embodies a Peircean investigative approach: it observes an anomaly (wrong answer), hypothesizes the location of the error (Step 4), and experiments with a correction (proper remainder calculation) to arrive at a more justified truth (answer = 3).

</details>

Figure 4: Data construction pipeline for Step-DPO.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Mathematical Problem and Solution Steps: Modular Arithmetic

### Overview

The image displays a structured mathematical problem-solving exercise. It presents a modular arithmetic problem in a "prompt" section, followed by three labeled sections showing different stages of reasoning: "initial_reasoning_steps," "chosen_step," and "rejected_step." The content is presented as formatted text with blue section headers.

### Components/Axes

The image is divided into four distinct text blocks, each with a blue, bolded label:

1. **prompt:** States the core mathematical problem.

2. **initial_reasoning_steps:** Begins the solution process.

3. **chosen_step:** Presents a correct, selected continuation of the solution.

4. **rejected_step:** Presents an incorrect, rejected alternative step.

### Detailed Analysis / Content Details

**1. Prompt Section:**

* **Text:** "Determine the least positive integer \(x\) for which the sum of \(x\) and 4609 gives a remainder of 2104 when divided by 12."

* **Mathematical Translation:** Find the smallest positive integer \(x\) such that \((x + 4609) \mod 12 = 2104\).

**2. Initial Reasoning Steps Section:**

* **Text:**

```

Let's think step by step.

Step 1: We know that \(x + 4609 \equiv 2104 \pmod{12}\).

Step 2: We can rewrite the given congruence as \(x \equiv 2104 - 4609 \pmod{12}\).

Step 3: Calculating \(2104 - 4609 = -2505\).

Step 4:

```

* **Analysis:** This section correctly sets up the congruence and performs the initial subtraction. Step 4 is left blank, indicating the reasoning is incomplete at this stage.

**3. Chosen Step Section:**

* **Text:**

```

Now we need to find the equivalent positive integer for \(-2505 \pmod{12}\).

To do this, we divide -2505 by 12 and find the remainder. \(-2505 \div 12 = -208\) remainder \(-9\).

However, we want a positive remainder, so we add 12 to -9 to get a positive remainder. \(-9+12=3\).

So, \(-2505 \equiv 3 \pmod{12}\).

```

* **Analysis:** This step correctly continues the solution. It finds the remainder of \(-2505\) when divided by \(12\). The calculation \(-2505 = 12 \times (-208) + (-9)\) is correct. It then correctly adjusts the negative remainder \(-9\) to its positive equivalent \(3\) by adding the modulus \(12\). The final conclusion is \(x \equiv 3 \pmod{12}\). Therefore, the least positive integer \(x\) is **3**.

**4. Rejected Step Section:**

* **Text:**

```

We want to find the positive integer \(x\), so we add 12 until we get a positive number. \(-2505 + 12 \cdot 210 = 30\).

```

* **Analysis:** This step presents an incorrect method. While adding multiples of the modulus (12) is a valid technique to find a positive equivalent, the arithmetic here is flawed. The calculation \(-2505 + 12 \times 210 = -2505 + 2520 = 15\), not 30. The stated result of 30 is incorrect. Furthermore, even if the arithmetic were correct, the method is less direct than finding the remainder as in the chosen step.

### Key Observations

* The problem is a linear congruence: \(x \equiv 2104 - 4609 \pmod{12}\).

* The "initial_reasoning_steps" correctly reduces the problem to finding \(x \equiv -2505 \pmod{12}\).

* The "chosen_step" correctly and efficiently solves this by computing the remainder, yielding \(x = 3\).

* The "rejected_step" contains a critical arithmetic error (\(-2505 + 2520 = 15\), not 30) and demonstrates a less efficient approach.

* The blank "Step 4" in the initial reasoning highlights the point where the solution path diverged into a correct and an incorrect branch.

### Interpretation

This image illustrates a common pedagogical or debugging scenario in mathematical problem-solving: evaluating different reasoning paths. The correct path ("chosen_step") uses a standard algorithm for finding a positive residue: compute the remainder and adjust it if negative. The incorrect path ("rejected_step") adds multiples of the modulus but fails due to a calculation mistake. The exercise emphasizes the importance of arithmetic precision. The final answer to the prompt is \(x = 3\): \(3 + 4609 = 4612\), and \(4612 \div 12 = 384\) with a remainder of \(4\). Note that the problem statement is unusual, since the stated remainder \(2104\) is larger than the divisor \(12\), whereas a remainder must be smaller than the divisor. The solution steps treat \(2104\) as a residue class modulo \(12\), i.e., \(2104 \bmod 12 = 2104 - 12 \times 175 = 4\), under which \(x = 3\) is correct; read literally, the problem is ill-posed.

</details>

Figure 5: An example of preference data sample for Step-DPO.

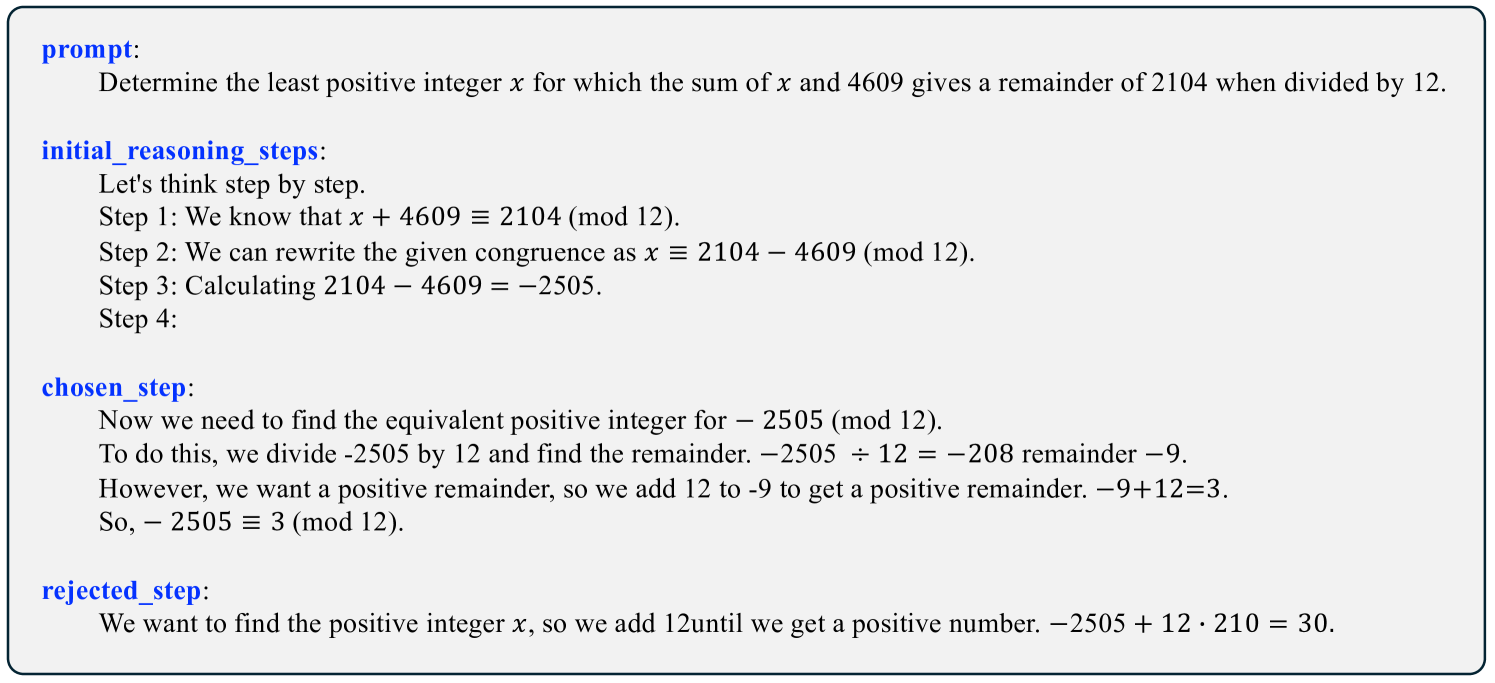

According to the optimization target of Step-DPO, we need to create a corresponding high-quality pair-wise preference dataset. Each data sample should comprise four entries: 1) prompt $x$; 2) initial reasoning steps $s_{1\sim k-1}=s_1,\dots,s_{k-1}$; 3) preferred reasoning step $s_{win}$; 4) undesirable reasoning step $s_{lose}$, as shown in Fig. 5. To obtain a high-quality dataset, we propose a data construction pipeline illustrated in Fig. 4, which includes the following three steps.
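Before going through the three steps, the four-entry sample format can be made concrete with a small record type. This is only an illustrative sketch: the field names follow the labels shown in Fig. 5, while the class itself and the way the context is joined are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StepPreferenceSample:
    prompt: str                   # x
    initial_reasoning_steps: str  # s_{1~k-1}, ending with the "Step k:" marker
    chosen_step: str              # s_win
    rejected_step: str            # s_lose

    def context(self) -> str:
        """Text that both candidate steps are conditioned on (joining is an assumption)."""
        return self.prompt + "\n" + self.initial_reasoning_steps


# The sample from Fig. 5, abbreviated.
sample = StepPreferenceSample(
    prompt=("Determine the least positive integer x for which the sum of x and "
            "4609 gives a remainder of 2104 when divided by 12."),
    initial_reasoning_steps=(
        "Let's think step by step.\n"
        "Step 1: We know that x + 4609 ≡ 2104 (mod 12).\n"
        "Step 2: We can rewrite the given congruence as x ≡ 2104 - 4609 (mod 12).\n"
        "Step 3: Calculating 2104 - 4609 = -2505.\n"
        "Step 4:"
    ),
    chosen_step="... So, -2505 ≡ 3 (mod 12).",
    rejected_step="... -2505 + 12·210 = 30.",
)
```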

#### Error collection.

First, we collect a set $D_0=\{(x,\hat{y})\}$ of mathematical problems $x$ with ground-truth answers $\hat{y}$. Each mathematical problem $x$ is then used as a prompt to infer answers using the initial model $\pi_{ref}$. Before inference, we add the step-wise Chain-of-Thought (CoT) prefix for prompting, i.e., "Let’s think step by step. Step 1:". This ensures that the model’s inference results are structured into multiple reasoning steps, with each step explicitly starting with "Step i:". Upon completion of inference, we obtain the model answers $y$ for each mathematical problem $x$. We then select instances where the final answer $y$ differs from the ground truth $\hat{y}$, resulting in a dataset of erroneous inference results, denoted as $D_1=\{(x,\hat{y},y)\mid x\in D_0\}$.
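A minimal sketch of this filtering step is shown below. The `generate` callable is a hypothetical stand-in for sampling from $\pi_{ref}$, the "The answer is:" format is taken from the example in Fig. 4, and comparing answers by exact string match is a simplifying assumption.

```python
import re
from typing import Callable, List, Optional, Tuple

COT_PREFIX = "Let's think step by step. Step 1:"


def extract_final_answer(response: str) -> Optional[str]:
    """Pull the text after 'The answer is:' on the last line, if present."""
    match = re.search(r"The answer is:\s*(.+?)\s*$", response.strip())
    return match.group(1) if match else None


def collect_errors(
    problems: List[Tuple[str, str]],
    generate: Callable[[str, str], str],
) -> List[Tuple[str, str, str]]:
    """Build D_1: keep (x, y_hat, y) where the model's final answer is wrong."""
    errors = []
    for x, y_hat in problems:
        y = COT_PREFIX + generate(x, COT_PREFIX)
        # Responses with no parsable answer are treated as wrong (an assumption).
        if extract_final_answer(y) != y_hat:
            errors.append((x, y_hat, y))
    return errors


# Canned completions standing in for real model output.
canned = {
    "What is 2 + 3?": " Add the numbers. 2 + 3 = 5. The answer is: 5",
    "What is 7 * 6?": " Multiply. 7 * 6 = 48. The answer is: 48",
}


def fake_generate(prompt: str, prefix: str) -> str:
    return canned[prompt]


d0 = [("What is 2 + 3?", "5"), ("What is 7 * 6?", "42")]
d1 = collect_errors(d0, fake_generate)
```

In practice, answer matching for math problems usually needs normalization (fractions, units, LaTeX), which this sketch leaves out.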

#### Step localization.

Given that each erroneous inference result is explicitly presented as a sequence of reasoning steps $y=s_1,s_2,\dots,s_n$, we proceed to verify the correctness of each reasoning step until we find the first error and record its step number $k$. This process can be done manually or using GPT-4. We select $s_k$ as the erroneous reasoning step $s_{lose}$, resulting in a dataset that contains the erroneous steps, denoted as $D_2=\{(x,\hat{y},s_{1\sim k-1},s_{lose})\mid x\in D_1\}$.
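The localization loop can be sketched as follows. Splitting on the "Step i:" markers follows the response format described above; the `is_correct` callback is a hypothetical placeholder for the human or GPT-4 judgment, and the toy verifier here simply flags the known bad calculation from Fig. 4.

```python
import re
from typing import Callable, List, Optional


def split_steps(response: str) -> List[str]:
    """Split a response into its 'Step i: ...' segments."""
    pattern = r"Step \d+:.*?(?=Step \d+:|$)"
    return [s.strip() for s in re.findall(pattern, response.strip(), flags=re.S)]


def localize_first_error(
    steps: List[str],
    is_correct: Callable[[List[str], str], bool],
) -> Optional[int]:
    """Return the 1-based index k of the first incorrect step, or None."""
    for k, step in enumerate(steps, start=1):
        if not is_correct(steps[: k - 1], step):
            return k
    return None


response = (
    "Let's think step by step. "
    "Step 1: We know that x + 4609 ≡ 2104 (mod 12). "
    "Step 2: We can rewrite the given congruence as x ≡ 2104 - 4609 (mod 12). "
    "Step 3: Calculating 2104 - 4609 = -2505. "
    "Step 4: We add 12 until we get a positive number. -2505 + 12·210 = 30. "
    "Step 5: So x ≡ 30 (mod 12). "
    "Step 6: The least positive integer x is x = 6. "
    "The answer is: 6"
)


def toy_verifier(prefix: List[str], step: str) -> bool:
    # Stand-in for manual or GPT-4 verification.
    return "12·210 = 30" not in step


steps = split_steps(response)
k = localize_first_error(steps, toy_verifier)
s_prefix, s_lose = steps[: k - 1], steps[k - 1]
```

Verification stops at the first error, so steps after $s_k$ are never judged; they are discarded along with the rest of the erroneous continuation.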

#### Rectification.

To obtain the corresponding correct reasoning step for each sample in $D_2$, we sample multiple outputs $y_{cont}$ from the model $\pi_{ref}$, conditioned on the prompt $x$ and the preceding correct reasoning steps $s_{1\sim k-1}$. This process is formulated as:

$$
\begin{aligned} y_{cont} \sim \pi_{ref}(y|x; s_{1\sim k-1}). \end{aligned} \tag{3}
$$

We retain those outputs where the final answer matches the ground truth. Among the remaining outputs, we select the first reasoning step in $y_{cont}$ as $s_{win}$, resulting in the final dataset $D=\{(x,s_{1\sim k-1},s_{lose},s_{win})\mid x\in D_2\}$. An example of a resulting data sample is shown in Fig. 5.
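The rectification step can be sketched as below. The `sample` callable is a hypothetical stand-in for drawing $y_{cont}$ from $\pi_{ref}$, the number of samples is an arbitrary choice, and the function returns every candidate $s_{win}$ rather than picking one, since the text does not specify how a single candidate is chosen.

```python
import re
from typing import Callable, List, Optional


def final_answer(text: str) -> Optional[str]:
    match = re.search(r"The answer is:\s*(.+?)\s*$", text.strip())
    return match.group(1) if match else None


def first_step(continuation: str) -> str:
    """Text of the continuation up to the next 'Step i:' marker."""
    return re.split(r"\s*Step \d+:", continuation, maxsplit=1)[0].strip()


def rectify(
    x: str,
    prefix: str,
    y_hat: str,
    sample: Callable[[str, str], str],
    num_samples: int = 8,
) -> List[str]:
    """Return candidate s_win steps from continuations that reach the ground truth."""
    candidates = []
    for _ in range(num_samples):
        y_cont = sample(x, prefix)
        if final_answer(y_cont) == y_hat:
            candidates.append(first_step(y_cont))
    return candidates


# Canned continuations standing in for samples drawn after "Step 4:".
canned = iter([
    " We add 12 until positive. -2505 + 12·210 = 30. Step 5: So x ≡ 30 (mod 12). "
    "Step 6: x = 6. The answer is: 6",
    " Dividing, -2505 ≡ 3 (mod 12). Step 5: So x ≡ 3 (mod 12). "
    "Step 6: The least positive x is 3. The answer is: 3",
])


def fake_sample(x: str, prefix: str) -> str:
    return next(canned)


candidates = rectify("(problem text)", "(steps 1-3) Step 4:", "3", fake_sample, num_samples=2)
```

Because the continuations come from $\pi_{ref}$ itself, every candidate is in-distribution for the model being trained.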

Notably, some cases may have correct final answers but erroneous intermediate reasoning steps. Therefore, we may need to further filter out samples where $s_{win}$ is incorrect, which can be done manually or by GPT-4. We omit this process in the notations for simplicity, and more details are provided in the appendix.

It is important to note that the data pipeline is user-friendly. In this data pipeline, humans or GPT-4 are only required to locate errors and rank answers, and they do not need to write answers or rectifications by themselves.

We also note that the use of in-distribution data is crucial. When selecting $s_{win}$, we use outputs generated by the model $\pi_{ref}$ rather than answers rectified by humans or GPT-4. Since human or GPT-4 rectified answers $s_{win}^{ood}$ are out-of-distribution (OOD) regarding the model $\pi_{ref}$, the log-probability of outputting $s_{win}^{ood}$ (i.e., $\log\pi_{ref}(s_{win}^{ood}|x)$) is significantly lower than that of an in-distribution (ID) output $\log\pi_{ref}(s_{win}^{id}|x)$. Moreover, it is challenging for the policy model $\pi_\theta$ to learn to increase the probability of $s_{win}^{ood}$ due to gradient decay issues (detailed in the appendix). Consequently, adopting self-generated in-distribution data as the preferred answer proves to be a more effective way of aligning with human preferences.

## 4 Experiments

In this section, we first introduce the experimental setup in Sec. 4.1. Then, we present the main results in Sec. 4.2, which include an exhaustive performance comparison. Moreover, we conduct an extensive ablation study in Sec. 4.3. Finally, a few demonstrations are shown in Sec. 4.4 to further understand Step-DPO.

### 4.1 Experimental Setup

#### Network Architecture.

Our experiments are based on various base models, including the Qwen2 and Qwen1.5 series (Bai et al., 2023), Meta-Llama-3-70B (Touvron et al., 2023), and deepseek-math-7b-base (Shao et al., 2024).

Table 1: Math reasoning performance comparison on MATH and GSM8K across various models. general: general-purpose model. open: open-source.

- ${}^†$ Supervised fine-tuned models with our 299K SFT data based on the open-source base model.

- ${}^‡$ Reproduced using our prompt

Table 2: Math reasoning performance comparison on competition-level math problems, i.e., AIME 2024 and Odyssey-MATH. Note that the training data for Step-DPO is the same as before.

| Model | size | open | AIME | Odyssey-MATH (%) |
| --- | --- | --- | --- | --- |
| Gemini-1.5-Pro (Reid et al., 2024) | - | ✗ | 2 / 30 | 45.0 |
| Claude-3-Opus | - | ✗ | 2 / 30 | 40.6 |
| GPT-4-1106 (Achiam et al., 2023) | - | ✗ | 1 / 30 | 49.1 |
| GPT-4-Turbo-0409 (Achiam et al., 2023) | - | ✗ | 3 / 30 | 46.8 |
| GPT-4o-0513 | - | ✗ | 2 / 30 | 53.2 |
| DeepSeek-Coder-V2-Lite-Instruct (Zhu et al., 2024) | 16B | ✓ | 0 / 30 | 44.4 |
| Llama-3-70B-Instruct (Touvron et al., 2023) | 70B | ✓ | 1 / 30 | 27.9 |
| DeepSeek-Coder-V2-Instruct (Zhu et al., 2024) | 236B | ✓ | 4 / 30 | 53.7 |
| Qwen2-72B-SFT ${}^†$ | 72B | ✓ | 1 / 30 | 44.2 |
| Qwen2-72B-SFT + Step-DPO | 72B | ✓ | 3 / 30 | 47.0 (+2.8) |
| Qwen2-72B-Instruct (Bai et al., 2023) | 72B | ✓ | 5 / 30 | 47.0 |
| Qwen2-72B-Instruct + Step-DPO | 72B | ✓ | 4 / 30 | 50.1 (+3.1) |

- ${}^†$ Supervised fine-tuned models with our 299K SFT data based on the open-source base model.

#### Datasets.

In supervised fine-tuning (SFT), we use augmented mathematical problems from MetaMath (Yu et al., 2023) and MMIQC (Liu & Yao, 2024) to infer step-by-step responses with DeepSeekMath, as the SFT data used in DeepSeekMath (Shao et al., 2024) is not publicly available. After filtering out responses with erroneous final answers, we obtain 374K SFT data. Of these, 299K are used for SFT, and the remainder is used for further Step-DPO training.

In the Step-DPO phase, alongside the remaining SFT data, we also incorporate a subset of AQuA (Ling et al., 2017). These datasets are processed as described in Sec. 3.2, resulting in 10K pair-wise preference data for Step-DPO.

For evaluation, we use the widely adopted MATH (Hendrycks et al., 2021) and GSM8K (Cobbe et al., 2021) datasets. Accuracy on these datasets serves as the evaluation metric. The MATH test set contains 5000 mathematical problems spanning 5 difficulty levels and 7 subjects: algebra, counting and probability, geometry, intermediate algebra, number theory, prealgebra, and precalculus. The GSM8K test set includes 1319 mathematical problems, each with a step-by-step solution and a ground-truth answer. The problems in GSM8K are generally easier than those in MATH. In addition, we use competition-level problems from the American Invitational Mathematics Examination (AIME) (MAA, 2024) and Odyssey-MATH (Netmind.AI, 2024) to evaluate math reasoning capabilities on hard problems.

#### Implementation Details.

First, we use the 299K SFT data for supervised fine-tuning on the base models, obtaining the SFT models. We train 7B models for 3 epochs and models larger than 30B for 2 epochs. The global batch size is set to 256, and the learning rate is set to 5e-6. We use the AdamW optimizer with a linear decay learning rate scheduler, setting the warmup ratio to 0.03. DeepSpeed ZeRO3 with CPU offload is used to reduce GPU memory usage during training.

Next, we perform Step-DPO based on the SFT models. For Step-DPO, we train 7B models for 8 epochs and models larger than 30B for 4 epochs. The global batch size is set to 128, and the learning rate is set to 5e-7. The hyperparameter $β$ is set to 0.5 for the 72B model and 0.4 for others. We use the AdamW optimizer and a cosine learning rate scheduler, with the warmup ratio set to 0.1.
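As a rough sanity check on these settings, the number of Step-DPO optimizer steps can be estimated from the dataset size, batch size, and epoch count. The sketch below takes the "10K" figure at face value as exactly 10,000 pairs and assumes one optimizer step per global batch with the last partial batch kept; both are assumptions rather than details stated in the text.

```python
import math


def optimizer_steps(num_samples: int, global_batch_size: int, epochs: int) -> int:
    """Estimated optimizer steps, assuming one step per global batch."""
    return math.ceil(num_samples / global_batch_size) * epochs


# Models larger than 30B: 4 epochs at global batch size 128.
steps_large = optimizer_steps(10_000, 128, epochs=4)  # 79 batches * 4 = 316

# 7B models: 8 epochs at the same batch size.
steps_7b = optimizer_steps(10_000, 128, epochs=8)     # 79 batches * 8 = 632
```

Under these assumptions, the estimate for the larger models is consistent with the "fewer than 500 Step-DPO training steps" figure quoted in the abstract for models with over 70B parameters.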

### 4.2 Results

Table 3: Performance comparison between DPO and Step-DPO. We use only 5K data for training in this ablation study.

| Model | MATH (%) |
| --- | --- |
| Qwen2-7B-SFT | 54.8 |
| Qwen2-7B-SFT + DPO (5K) | 55.0 |
| Qwen2-7B-SFT + Step-DPO (5K) | 55.8 |
| Qwen2-72B-SFT | 61.7 |
| Qwen2-72B-SFT + DPO (5K) | 62.5 |
| Qwen2-72B-SFT + Step-DPO (5K) | 64.1 |

Table 4: Performance comparison between out-of-distribution and in-distribution data. OOD: out-of-distribution data. ID: in-distribution data.

| Model | Qwen2-7B-SFT | Qwen2-7B-SFT + Step-DPO (OOD) | Qwen2-7B-SFT + Step-DPO (ID) |
| --- | --- | --- | --- |
| MATH (%) | 54.8 | 55.1 | 55.8 |

#### Applying on open-source instruct models.

Table 1 presents a comprehensive comparison of various models, encompassing both open-source and closed-source models. Notably, Step-DPO can be directly integrated into open-source instruction models, such as DeepSeekMath-RL and Qwen2-72B-Instruct, leading to significant performance enhancements even after their prior RLHF training phase. This indicates that Step-DPO complements RLHF effectively. Specifically, when applied to Qwen2-72B-Instruct, Step-DPO achieves scores of 70.8% and 94.0% on the MATH and GSM8K test sets, respectively, surpassing a series of closed-source models, including GPT-4-1106, Claude-3-Opus, and Gemini-1.5-Pro.

#### Applying on SFT models.

To further substantiate the efficacy of Step-DPO, we applied it to SFT models. Initially, we performed supervised fine-tuning on the 299K SFT dataset mentioned in Sec. 4.1, resulting in models such as DeepSeekMath-Base-SFT, Qwen2-7B-SFT, Qwen1.5-32B-SFT, Llama-3-70B-SFT, and Qwen2-72B-SFT. Step-DPO proved highly effective, yielding significant improvements across various model sizes. Particularly, for models exceeding 70B parameters (i.e., Llama-3-70B-SFT and Qwen2-72B-SFT), Step-DPO achieved approximately a 3% performance boost on the MATH test set.

Interestingly, larger models exhibited greater performance gains from Step-DPO. We hypothesize that larger models have untapped potential that Step-DPO can exploit. If the performance ceiling is not reached through supervised fine-tuning (SFT), Step-DPO can help models approach their optimal performance.

#### Results on math competition problems.

To further illustrate the superiority of Step-DPO in mathematical reasoning, we evaluated the models on competition-level math problems, specifically AIME 2024 and Odyssey-MATH, as shown in Table 2. Despite the increased difficulty of these problems compared to MATH and GSM8K, Step-DPO significantly enhanced performance. On Odyssey-MATH, Step-DPO applied to Qwen2-72B-Instruct achieved 50.1% accuracy, narrowing the performance gap with GPT-4o.

Notably, the models used the same Step-DPO training data for these competition-level problems as for problems of normal difficulty, highlighting Step-DPO’s robust generalization capability.

### 4.3 Ablation Study

To validate the effectiveness of Step-DPO and its data construction process, we conducted an extensive ablation study.

#### DPO vs. Step-DPO.

As discussed in Sec. 3.1, models utilizing vanilla DPO struggle to accurately identify errors in incorrect answers, providing only marginal benefits to mathematical reasoning performance. To verify this, we compared vanilla DPO and Step-DPO in terms of both accuracy in judging preferred versus undesirable outputs (left side of Fig. 2) and the reward margin between them (right side of Fig. 2). We also reported the final mathematical reasoning performance on the MATH test set in Table 3. The results indicated that the benefits of DPO are limited and significantly less than those of Step-DPO. In this experiment, we used only 5K Step-DPO training data and 3K for evaluation in Fig. 2.

#### Out-of-Distribution vs. In-Distribution Data.

The importance of in-distribution data was emphasized in Sec. 3.2. To illustrate its effectiveness, we compared out-of-distribution and in-distribution data in Table 4. Out-of-distribution data was generated by using GPT-4 to correct erroneous reasoning steps in incorrect answers, whereas in-distribution data was generated through the pipeline described in Sec. 3.2. The results in Table 4 underscore the critical role of in-distribution data in enhancing performance.

### 4.4 Demonstrations

<details>

<summary>x7.png Details</summary>

### Visual Description

## Technical Comparison Diagram: AI Model Responses to a Mathematical Problem

### Overview

The image presents a side-by-side comparison of two AI model responses to the same mathematical word problem. The problem asks for the number of integer values of `t` that satisfy the condition that the square root of `t` is greater than 2 and less than 3.5. The left column shows the response from "Qwen2-72B-Instruct," and the right column shows the response from "Qwen2-72B-Instruct + Step-DPO." The core difference lies in the interpretation of a strict inequality in the final step, leading to different answers.

### Components/Axes

The image is structured as a two-column comparison within a bordered box.

* **Header/Problem Statement:** A single block of text at the top, spanning both columns.

* **Left Column:** Titled "Qwen2-72B-Instruct :" in blue text. Contains a 5-step solution.

* **Right Column:** Titled "Qwen2-72B-Instruct + Step-DPO:" in blue text. Contains a 4-step solution.

* **Key Visual Elements:** Critical reasoning steps are highlighted in colored text (red in the left column, green in the right column). Final numerical answers are enclosed in black boxes.

### Detailed Analysis / Content Details

**1. Problem Statement (Top Center):**

> Problem:

> The square root of `t` is greater than 2 and less than 3.5. How many integer values of `t` satisfy this condition?

**2. Left Column: Qwen2-72B-Instruct Response**

* **Step 1:** "Translate the condition into inequalities: `2 < √t < 3.5`"

* **Step 2:** "Square all parts of the inequality to eliminate the square root: `(2)² < t < (3.5)²`"

* **Step 3:** "Calculate the squares: `4 < t < 12.25`."

* **Step 4:** "Determine the integer values of `t` that satisfy the inequality: The integers that fall strictly between 4 and 12.25 are 5, 6, 7, 8, 9, 10, 11, 12. However, since `t` must be strictly less than 12.25, **12 is not included in the solution set because it is not strictly less than 12.25.**" (The final sentence is in red text).

* **Step 5:** "Count the integer values: The integers that satisfy the condition are 5, 6, 7, 8, 9, 10, 11, which gives us a total of **7** integer values of `t`." (The number 7 is inside a box).

**3. Right Column: Qwen2-72B-Instruct + Step-DPO Response**

* **Step 1:** "Translate the inequality into mathematical terms: The given condition can be written as: `2 < √t < 3.5`."

* **Step 2:** "Square all parts of the inequality to eliminate the square root: Squaring all parts of the inequality gives: `(2)² < t < (3.5)²` -> `4 < t < 12.25`"

* **Step 3:** "Find the integer values of `t` that satisfy the inequality: The inequality `4 < t < 12.25` means that `t` is greater than 4 and less than 12.25. Since `t` must be an integer, the possible values of `t` are 5, 6, 7, 8, 9, 10, 11, 12. *However, since `t` must be strictly less than 12.25, the value 12 is included in the range.*" (The final sentence is in green italic text).

* **Step 4:** "Count the integer values: The integers that satisfy the condition are 5, 6, 7, 8, 9, 10, 11, 12. There are **8** integer values of `t` that satisfy the given condition." (The number 8 is inside a box).

### Key Observations

1. **Identical Initial Steps:** Both models correctly translate the word problem into the compound inequality `2 < √t < 3.5` and correctly square it to derive `4 < t < 12.25`.

2. **Divergence Point:** The critical difference occurs in the interpretation of the upper bound `t < 12.25` when listing integers.

* The left model (Qwen2-72B-Instruct) first lists 12 as a candidate but then wrongly states that 12 is not strictly less than 12.25, and therefore excludes the integer 12.

* The right model (Qwen2-72B-Instruct + Step-DPO) correctly keeps the integer 12, since 12 < 12.25, stating "the value 12 is included in the range."

3. **Final Answer Discrepancy:** This logical divergence leads to a final answer of **7** integers (left) versus **8** integers (right).

4. **Visual Highlighting:** The image uses color (red vs. green) and text style (italics) to explicitly draw attention to the contradictory reasoning steps.

### Interpretation

This image is a technical demonstration comparing the reasoning output of a base large language model (Qwen2-72B-Instruct) against a version of the same model enhanced with a technique called "Step-DPO" (likely a form of Direct Preference Optimization applied at the reasoning step level).

The data suggests that Step-DPO corrects a specific error in the base model's handling of the upper bound. The base model lists 12 as a candidate but then wrongly claims that 12 is not strictly less than 12.25 and drops it, giving 7. Since 12 < 12.25, the integer 12 does satisfy `4 < t < 12.25`, so the enhanced model's answer of 8 is the correct one.

The two responses follow the same plan and diverge only at this single comparison. The image therefore serves as a case study in how one small faulty step in an otherwise sound chain of reasoning leads to a wrong final answer, and how step-level alignment can remove such an error.

</details>

Figure 6: An example comparison between Qwen2-72B-Instruct and Qwen2-72B-Instruct-Step-DPO.

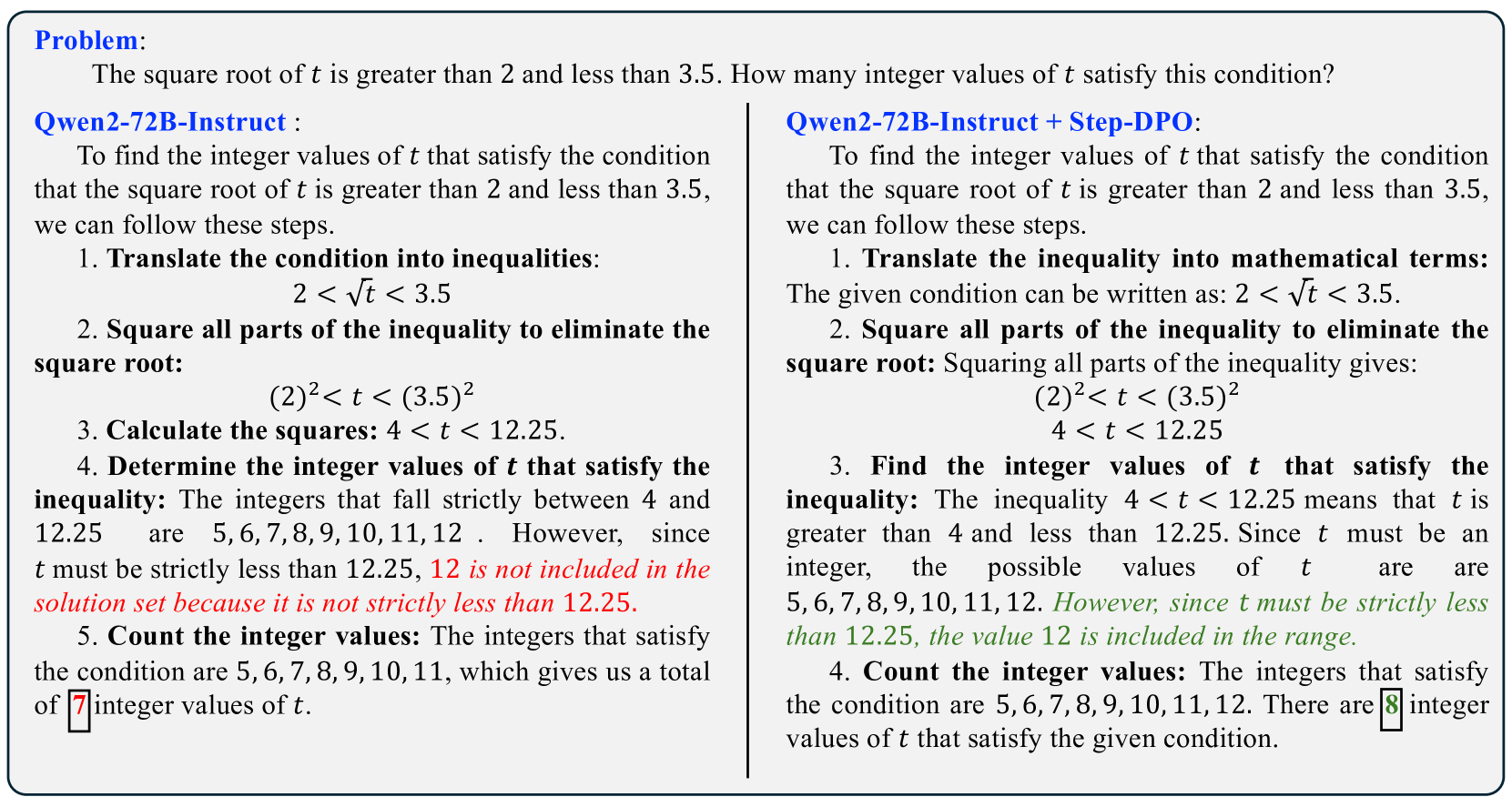

Fig. 6 compares the outputs of Qwen2-72B-Instruct and Qwen2-72B-Instruct-Step-DPO on the same problem. The example shows that Step-DPO is effective at correcting minor mistakes made by the base model. More comparisons are provided in the appendix.
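The arithmetic behind the Fig. 6 example can be checked by brute force. The short sketch below assumes only the condition stated in the figure, `2 < √t < 3.5`; the search range of 1 to 19 is an arbitrary choice that is wide enough to cover the interval.

```python
import math

# Enumerate integers t whose square root lies strictly between 2 and 3.5,
# which is equivalent to 4 < t < 12.25.
valid = [t for t in range(1, 20) if 2 < math.sqrt(t) < 3.5]

print(valid)       # [5, 6, 7, 8, 9, 10, 11, 12]
print(len(valid))  # 8
```

The integer 12 satisfies the strict upper bound because 12 < 12.25, so the count is 8, matching the Step-DPO output.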

## 5 Conclusion

In this work, we proposed a simple, effective, and data-efficient method called Step-DPO. Unlike DPO, which compares preferences between holistic answers, Step-DPO uses a single reasoning step as the fundamental unit for preference comparison. This transition enables fine-grained process supervision for LLMs, facilitating the quick localization of errors within incorrect answers. Additionally, we introduced a data construction pipeline for Step-DPO, creating a dataset with 10K preference data pairs. Our results demonstrate the significant improvements achieved by Step-DPO and the 10K dataset, particularly for large models. We hope that Step-DPO will provide new insights into model alignment for long-chain reasoning problems.
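As a rough illustration of what using a single reasoning step as the unit of preference comparison means, the sketch below computes a DPO-style logistic loss for one preferred and one dispreferred step. It assumes that both steps are conditioned on the same prompt and the same preceding correct steps, and that their summed log-probabilities under the policy and the reference model are already available. The function name, argument names, and example numbers are hypothetical and are not taken from the released code.

```python
import math

def step_dpo_loss(logp_win, logp_lose, ref_logp_win, ref_logp_lose, beta):
    """DPO-style loss for one pair of candidate reasoning steps (illustrative).

    logp_*     : log-probability of the step under the policy model
    ref_logp_* : log-probability of the same step under the reference model
    beta       : scaling factor on the log-ratio margin
    """
    # Margin between the preferred and dispreferred step, measured as the
    # difference of policy-vs-reference log-ratios.
    margin = beta * ((logp_win - ref_logp_win) - (logp_lose - ref_logp_lose))
    # -log(sigmoid(margin)), written in a numerically stable form.
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Hypothetical numbers: the policy already favors the preferred step.
print(step_dpo_loss(-0.5, -2.0, -1.0, -1.0, beta=0.5))
```

When the policy and the reference model assign identical log-probabilities, the margin is zero and the loss equals log 2. The loss falls as the policy shifts probability toward the preferred step.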

## References

- Achiam et al. (2023) Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv:2303.08774, 2023.

- Azerbayev et al. (2023) Zhangir Azerbayev, Hailey Schoelkopf, Keiran Paster, Marco Dos Santos, Stephen McAleer, Albert Q Jiang, Jia Deng, Stella Biderman, and Sean Welleck. Llemma: An open language model for mathematics. arXiv:2310.10631, 2023.

- Bai et al. (2023) Jinze Bai, Shuai Bai, Yunfei Chu, Zeyu Cui, Kai Dang, Xiaodong Deng, Yang Fan, Wenbin Ge, Yu Han, Fei Huang, Binyuan Hui, Luo Ji, Mei Li, Junyang Lin, Runji Lin, Dayiheng Liu, Gao Liu, Chengqiang Lu, Keming Lu, Jianxin Ma, Rui Men, Xingzhang Ren, Xuancheng Ren, Chuanqi Tan, Sinan Tan, Jianhong Tu, Peng Wang, Shijie Wang, Wei Wang, Shengguang Wu, Benfeng Xu, Jin Xu, An Yang, Hao Yang, Jian Yang, Shusheng Yang, Yang Yao, Bowen Yu, Hongyi Yuan, Zheng Yuan, Jianwei Zhang, Xingxuan Zhang, Yichang Zhang, Zhenru Zhang, Chang Zhou, Jingren Zhou, Xiaohuan Zhou, and Tianhang Zhu. Qwen technical report. arXiv:2309.16609, 2023.

- Chen et al. (2024) Guoxin Chen, Minpeng Liao, Chengxi Li, and Kai Fan. Alphamath almost zero: process supervision without process. arXiv:2405.03553, 2024.

- Christiano et al. (2017) Paul F Christiano, Jan Leike, Tom Brown, Miljan Martic, Shane Legg, and Dario Amodei. Deep reinforcement learning from human preferences. NeurIPS, 2017.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv:2110.14168, 2021.

- Fu et al. (2022) Yao Fu, Hao Peng, Ashish Sabharwal, Peter Clark, and Tushar Khot. Complexity-based prompting for multi-step reasoning. ICLR, 2022.

- Gou et al. (2023) Zhibin Gou, Zhihong Shao, Yeyun Gong, Yujiu Yang, Minlie Huang, Nan Duan, Weizhu Chen, et al. Tora: A tool-integrated reasoning agent for mathematical problem solving. arXiv:2309.17452, 2023.

- Hendrycks et al. (2021) Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the math dataset. arXiv:2103.03874, 2021.

- Hong et al. (2024) Jiwoo Hong, Noah Lee, and James Thorne. Orpo: Monolithic preference optimization without reference model. arXiv:2403.07691, 2024.

- Li et al. (2024) Chen Li, Weiqi Wang, Jingcheng Hu, Yixuan Wei, Nanning Zheng, Han Hu, Zheng Zhang, and Houwen Peng. Common 7b language models already possess strong math capabilities. arXiv:2403.04706, 2024.

- Li et al. (2023) Guohao Li, Hasan Hammoud, Hani Itani, Dmitrii Khizbullin, and Bernard Ghanem. Camel: Communicative agents for "mind" exploration of large language model society. NeurIPS, 2023.

- Liao et al. (2024) Minpeng Liao, Wei Luo, Chengxi Li, Jing Wu, and Kai Fan. Mario: Math reasoning with code interpreter output–a reproducible pipeline. arXiv:2401.08190, 2024.

- Lightman et al. (2023) Hunter Lightman, Vineet Kosaraju, Yura Burda, Harri Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s verify step by step. arXiv:2305.20050, 2023.

- Lin et al. (2024) Zhenghao Lin, Zhibin Gou, Yeyun Gong, Xiao Liu, Yelong Shen, Ruochen Xu, Chen Lin, Yujiu Yang, Jian Jiao, Nan Duan, et al. Rho-1: Not all tokens are what you need. arXiv:2404.07965, 2024.

- Ling et al. (2017) Wang Ling, Dani Yogatama, Chris Dyer, and Phil Blunsom. Program induction by rationale generation: Learning to solve and explain algebraic word problems. arXiv:1705.04146, 2017.

- Liu & Yao (2024) Haoxiong Liu and Andrew Chi-Chih Yao. Augmenting math word problems via iterative question composing. arXiv:2401.09003, 2024.

- Liu et al. (2023) Yixin Liu, Avi Singh, C Daniel Freeman, John D Co-Reyes, and Peter J Liu. Improving large language model fine-tuning for solving math problems. arXiv:2310.10047, 2023.

- Lu et al. (2024) Zimu Lu, Aojun Zhou, Houxing Ren, Ke Wang, Weikang Shi, Junting Pan, Mingjie Zhan, and Hongsheng Li. Mathgenie: Generating synthetic data with question back-translation for enhancing mathematical reasoning of llms. arXiv:2402.16352, 2024.

- Luo et al. (2023) Haipeng Luo, Qingfeng Sun, Can Xu, Pu Zhao, Jianguang Lou, Chongyang Tao, Xiubo Geng, Qingwei Lin, Shifeng Chen, and Dongmei Zhang. Wizardmath: Empowering mathematical reasoning for large language models via reinforced evol-instruct. arXiv:2308.09583, 2023.

- MAA (2024) MAA. American invitational mathematics examination, 2024. URL https://maa.org/math-competitions/american-invitational-mathematics-examination-aime.

- Mitra et al. (2024) Arindam Mitra, Hamed Khanpour, Corby Rosset, and Ahmed Awadallah. Orca-math: Unlocking the potential of slms in grade school math. arXiv:2402.14830, 2024.

- Netmind.AI (2024) Netmind.AI. Odyssey-math. https://github.com/protagolabs/odyssey-math/tree/main, 2024. Accessed: April 22, 2024.

- Ouyang et al. (2022) Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. Training language models to follow instructions with human feedback. NeurIPS, 2022.

- Rafailov et al. (2024) Rafael Rafailov, Archit Sharma, Eric Mitchell, Christopher D Manning, Stefano Ermon, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model. NeurIPS, 2024.

- Reid et al. (2024) Machel Reid, Nikolay Savinov, Denis Teplyashin, Dmitry Lepikhin, Timothy Lillicrap, Jean-baptiste Alayrac, Radu Soricut, Angeliki Lazaridou, Orhan Firat, Julian Schrittwieser, et al. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context. arXiv:2403.05530, 2024.

- Roziere et al. (2023) Baptiste Roziere, Jonas Gehring, Fabian Gloeckle, Sten Sootla, Itai Gat, Xiaoqing Ellen Tan, Yossi Adi, Jingyu Liu, Tal Remez, Jérémy Rapin, et al. Code llama: Open foundation models for code. arXiv:2308.12950, 2023.

- Shao et al. (2024) Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Mingchuan Zhang, YK Li, Y Wu, and Daya Guo. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv:2402.03300, 2024.

- Tang et al. (2024) Zhengyang Tang, Xingxing Zhang, Benyou Wang, and Furu Wei. Mathscale: Scaling instruction tuning for mathematical reasoning. arXiv:2403.02884, 2024.

- Tong et al. (2024) Yongqi Tong, Dawei Li, Sizhe Wang, Yujia Wang, Fei Teng, and Jingbo Shang. Can llms learn from previous mistakes? investigating llms’ errors to boost for reasoning. arXiv:2403.20046, 2024.

- Toshniwal et al. (2024) Shubham Toshniwal, Ivan Moshkov, Sean Narenthiran, Daria Gitman, Fei Jia, and Igor Gitman. Openmathinstruct-1: A 1.8 million math instruction tuning dataset. arXiv:2402.10176, 2024.

- Touvron et al. (2023) Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, et al. Llama: Open and efficient foundation language models. arXiv:2302.13971, 2023.

- Tunstall et al. (2023) Lewis Tunstall, Edward Beeching, Nathan Lambert, Nazneen Rajani, Kashif Rasul, Younes Belkada, Shengyi Huang, Leandro von Werra, Clémentine Fourrier, Nathan Habib, et al. Zephyr: Direct distillation of lm alignment. arXiv:2310.16944, 2023.

- Wang et al. (2023a) Ke Wang, Houxing Ren, Aojun Zhou, Zimu Lu, Sichun Luo, Weikang Shi, Renrui Zhang, Linqi Song, Mingjie Zhan, and Hongsheng Li. Mathcoder: Seamless code integration in llms for enhanced mathematical reasoning. arXiv:2310.03731, 2023a.

- Wang et al. (2023b) Peiyi Wang, Lei Li, Zhihong Shao, RX Xu, Damai Dai, Yifei Li, Deli Chen, Y Wu, and Zhifang Sui. Math-shepherd: Verify and reinforce llms step-by-step without human annotations. arXiv:2312.08935, 2023b.

- Wang et al. (2023c) Zengzhi Wang, Rui Xia, and Pengfei Liu. Generative ai for math: Part i–mathpile: A billion-token-scale pretraining corpus for math. arXiv:2312.17120, 2023c.

- Wei et al. (2022) Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. NeurIPS, 2022.

- Xin et al. (2024) Huajian Xin, Daya Guo, Zhihong Shao, Zhizhou Ren, Qihao Zhu, Bo Liu, Chong Ruan, Wenda Li, and Xiaodan Liang. Deepseek-prover: Advancing theorem proving in llms through large-scale synthetic data. arXiv:2405.14333, 2024.

- Xu et al. (2024) Yifan Xu, Xiao Liu, Xinghan Liu, Zhenyu Hou, Yueyan Li, Xiaohan Zhang, Zihan Wang, Aohan Zeng, Zhengxiao Du, Wenyi Zhao, et al. Chatglm-math: Improving math problem-solving in large language models with a self-critique pipeline. arXiv:2404.02893, 2024.

- Yao et al. (2024) Shunyu Yao, Dian Yu, Jeffrey Zhao, Izhak Shafran, Tom Griffiths, Yuan Cao, and Karthik Narasimhan. Tree of thoughts: Deliberate problem solving with large language models. NeurIPS, 2024.

- Ying et al. (2024) Huaiyuan Ying, Shuo Zhang, Linyang Li, Zhejian Zhou, Yunfan Shao, Zhaoye Fei, Yichuan Ma, Jiawei Hong, Kuikun Liu, Ziyi Wang, et al. Internlm-math: Open math large language models toward verifiable reasoning. arXiv:2402.06332, 2024.

- Yoran et al. (2023) Ori Yoran, Tomer Wolfson, Ben Bogin, Uri Katz, Daniel Deutch, and Jonathan Berant. Answering questions by meta-reasoning over multiple chains of thought. arXiv:2304.13007, 2023.

- Yu et al. (2023) Longhui Yu, Weisen Jiang, Han Shi, Jincheng Yu, Zhengying Liu, Yu Zhang, James T Kwok, Zhenguo Li, Adrian Weller, and Weiyang Liu. Metamath: Bootstrap your own mathematical questions for large language models. arXiv:2309.12284, 2023.

- Yuan et al. (2023) Zheng Yuan, Hongyi Yuan, Chengpeng Li, Guanting Dong, Chuanqi Tan, and Chang Zhou. Scaling relationship on learning mathematical reasoning with large language models. arXiv:2308.01825, 2023.

- Yue et al. (2023) Xiang Yue, Xingwei Qu, Ge Zhang, Yao Fu, Wenhao Huang, Huan Sun, Yu Su, and Wenhu Chen. Mammoth: Building math generalist models through hybrid instruction tuning. arXiv:2309.05653, 2023.

- Yue et al. (2024) Xiang Yue, Tuney Zheng, Ge Zhang, and Wenhu Chen. Mammoth2: Scaling instructions from the web. arXiv:2405.03548, 2024.

- Zheng et al. (2024) Lianmin Zheng, Wei-Lin Chiang, Ying Sheng, Siyuan Zhuang, Zhanghao Wu, Yonghao Zhuang, Zi Lin, Zhuohan Li, Dacheng Li, Eric Xing, et al. Judging llm-as-a-judge with mt-bench and chatbot arena. NeurIPS, 2024.

- Zhou et al. (2022) Denny Zhou, Nathanael Schärli, Le Hou, Jason Wei, Nathan Scales, Xuezhi Wang, Dale Schuurmans, Claire Cui, Olivier Bousquet, Quoc Le, et al. Least-to-most prompting enables complex reasoning in large language models. arXiv:2205.10625, 2022.

- Zhou et al. (2024) Kun Zhou, Beichen Zhang, Jiapeng Wang, Zhipeng Chen, Wayne Xin Zhao, Jing Sha, Zhichao Sheng, Shijin Wang, and Ji-Rong Wen. Jiuzhang3.0: Efficiently improving mathematical reasoning by training small data synthesis models. arXiv:2405.14365, 2024.

- Zhu et al. (2024) Qihao Zhu, Daya Guo, Zhihong Shao, Dejian Yang, Peiyi Wang, Runxin Xu, Y Wu, Yukun Li, Huazuo Gao, Shirong Ma, et al. Deepseek-coder-v2: Breaking the barrier of closed-source models in code intelligence. arXiv:2406.11931, 2024.