## Hierarchical Deconstruction of LLM Reasoning: A Graph-Based Framework for Analyzing Knowledge Utilization

Miyoung Ko 1 * Sue Hyun Park 1 ∗ Joonsuk Park 2 , 3 , 4 † Minjoon Seo 1 †

1 KAIST, 2 NAVER AI Lab, 3 NAVER Cloud, 4 University of Richmond

{miyoungko, suehyunpark, minjoon}@kaist.ac.kr park@joonsuk.org

## Abstract

Despite the advances in large language models (LLMs), how they use their knowledge for reasoning is not yet well understood. In this study, we propose a method that deconstructs complex real-world questions into a graph, representing each question as a node with predecessors of background knowledge needed to solve the question. We develop the DEPTHQA dataset, deconstructing questions into three depths: (i) recalling conceptual knowledge, (ii) applying procedural knowledge, and (iii) analyzing strategic knowledge. Based on a hierarchical graph, we quantify forward discrepancy , a discrepancy in LLM performance on simpler sub-problems versus complex questions. We also measure backward discrepancy where LLMs answer complex questions but struggle with simpler ones. Our analysis shows that smaller models exhibit more discrepancies than larger models. Distinct patterns of discrepancies are observed across model capacity and possibility of training data memorization. Additionally, guiding models from simpler to complex questions through multiturn interactions improves performance across model sizes, highlighting the importance of structured intermediate steps in knowledge reasoning. This work enhances our understanding of LLM reasoning and suggests ways to improve their problem-solving abilities.

## 1 Introduction

With the rapid advancement of Large Language Models (LLMs), research interest has increasingly centered on their reasoning capabilities, particularly in solving complex questions. While many studies have assessed the general reasoning capabilities of LLMs (Wei et al., 2022a; Qin et al., 2023; Srivastava et al., 2023), the specific aspect of how these models recall and then utilize factual knowl-

* Equal contribution.

† Equal advising.

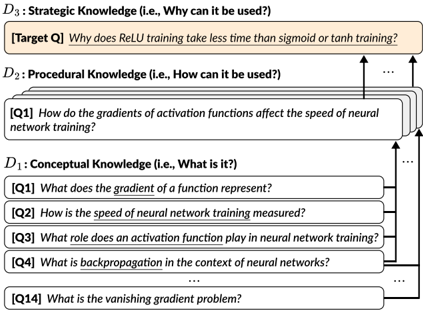

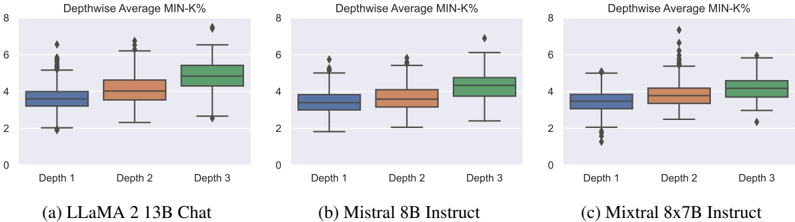

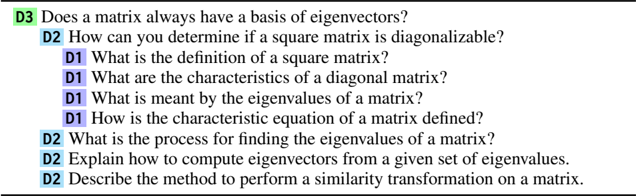

Figure 1: Example of reasoning across depths, showing a sequence of questions from D 1 (conceptual knowledge) to D 3 (strategic knowledge). Questions that ask deeper levels of knowledge require reasoning from multiple areas of shallower knowledge, which are represented as sub-questions.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Knowledge Hierarchy for ReLU Training

### Overview

The image presents a diagram illustrating a hierarchical breakdown of knowledge required to understand why ReLU (Rectified Linear Unit) training takes less time than sigmoid or tanh training. The hierarchy is structured into three levels: Strategic Knowledge (D3), Procedural Knowledge (D2), and Conceptual Knowledge (D1), each containing related questions. Arrows indicate dependencies between the levels.

### Components/Axes

The diagram is divided into three horizontal sections, labeled D1, D2, and D3. Each section represents a different level of knowledge. Within each section, there are multiple questions (Q1, Q2, etc.) listed in rectangular boxes. Arrows point upwards from questions in lower levels to questions in higher levels, indicating that answering questions in lower levels contributes to answering questions in higher levels. The top section contains a "Target Q" box.

### Detailed Analysis or Content Details

* **D3: Strategic Knowledge (i.e., Why can it be used?)**

* Contains the "Target Q": "Why does ReLU training take less time than sigmoid or tanh training?"

* **D2: Procedural Knowledge (i.e., How can it be used?)**

* Contains the question: "Q1 How do the gradients of activation functions affect the speed of neural network training?"

* An ellipsis (...) indicates that there are more questions in this level, but they are not fully displayed.

* **D1: Conceptual Knowledge (i.e., What is it?)**

* Contains the following questions:

* "Q1 What does the gradient of a function represent?"

* "Q2 How is the speed of neural network training measured?"

* "Q3 What role does an activation function play in neural network training?"

* "Q4 What is backpropagation in the context of neural networks?"

* An ellipsis (...) indicates that there are more questions in this level, but they are not fully displayed.

* "Q14 What is the vanishing gradient problem?"

Arrows connect questions across levels:

* An arrow points from "Q1" in D1 to "Q1" in D2.

* An arrow points from D2 to the "Target Q" in D3.

* An arrow points from D1 to D2.

### Key Observations

The diagram illustrates a dependency structure where understanding fundamental concepts (D1) is necessary to understand how to apply them (D2), which in turn is necessary to answer the overarching strategic question (D3). The ellipsis suggests that each level contains a more extensive set of questions than what is shown. The diagram is a visual representation of a knowledge decomposition.

### Interpretation

This diagram represents a pedagogical approach to understanding a complex topic – the efficiency of ReLU training. It breaks down the problem into manageable components, starting with foundational concepts (gradients, activation functions, backpropagation) and building towards a procedural understanding (how gradients affect training speed) and finally, a strategic understanding (why ReLU is faster). The diagram suggests that a complete understanding of the target question requires mastery of all three levels of knowledge. The use of "Target Q" emphasizes the ultimate goal of the learning process. The diagram is not presenting data, but rather a framework for organizing knowledge. It's a conceptual map, not a quantitative chart. The diagram implies that the vanishing gradient problem (Q14) is a key conceptual element in understanding why ReLU training is faster, as it is a common issue with sigmoid and tanh activation functions.

</details>

edge during reasoning has not been thoroughly explored. Some research (Dziri et al., 2023; Press et al., 2023; Wang et al., 2024) concentrate on straightforward reasoning tasks such as combining and comparing simple biographical facts to investigate the implicit reasoning skills of LLMs. However, real-world questions often demand more intricate reasoning processes that cannot be easily broken down into simple factual units. For instance, as presented in Figure 1, to answer 'Why does ReLU training take less time than sigmoid or tanh training?', one must not only recall what an activation function is but also compare the characteristics of activation functions and understand the causal relationship between gradients and training speed. This type of reasoning requires drawing conclusions beyond simply aggregating facts.

To analyze the reasoning ability of LLMs in solving real-world questions, we propose a deconstruction of complex questions into a graph structure. In this structure, each node is represented by a question that signifies a specific level of knowledge. We

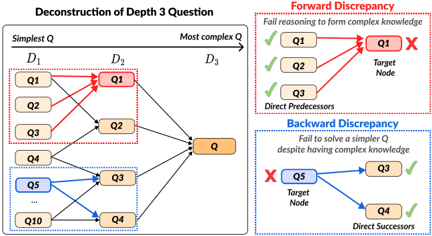

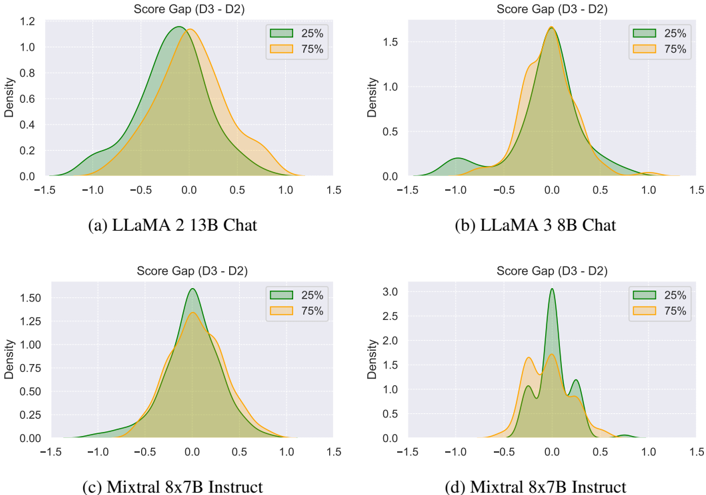

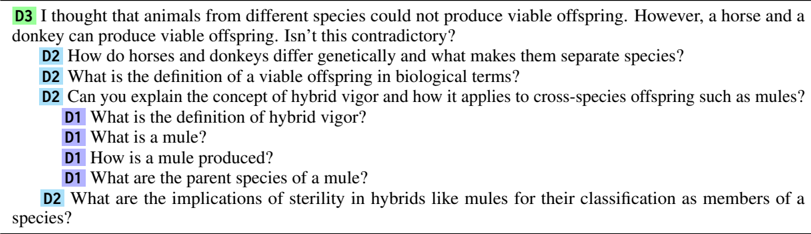

Figure 2: Hierarchical structure of a deconstructed D 3 , illustrating forward and backward discrepancies. Transition to deeper nodes requires acquiring and reasoning with knowledge from the connected shallower nodes.

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: Deconstruction of Depth 3 Question

### Overview

The image is a diagram illustrating the deconstruction of a Depth 3 question into simpler sub-questions, and highlighting potential discrepancies in reasoning – specifically, "Forward Discrepancy" and "Backward Discrepancy". The diagram uses a network-like structure with nodes representing questions (Q1, Q2, Q3, Q4, Q5, Q, Q10) and arrows indicating relationships between them. The diagram is divided into three main sections: a large central section showing the question decomposition, and two smaller sections on the right illustrating the discrepancies.

### Components/Axes

The diagram features the following components:

* **Title:** "Deconstruction of Depth 3 Question"

* **Horizontal Axis:** Labeled "Simplest Q" to "Most complex Q", representing the increasing complexity of questions. This axis spans the central section of the diagram.

* **Depth Labels:** D1, D2, and D3, marking stages of decomposition along the horizontal axis.

* **Nodes:** Represented by rounded rectangles, labeled Q1, Q2, Q3, Q4, Q5, Q, and Q10.

* **Arrows:** Indicate relationships between nodes. Red arrows signify failure in reasoning, while black arrows represent successful reasoning. Blue dashed arrows are also present.

* **Labels:** "Forward Discrepancy", "Fail reasoning to form complex knowledge", "Direct Predecessors", "Target Node", "Backward Discrepancy", "Fail to solve a simpler Q despite having complex knowledge", "Direct Successors".

* **Checkmarks and X Marks:** Used to indicate success or failure in reasoning.

### Detailed Analysis or Content Details

**Central Decomposition Section:**

* **D1:** Contains nodes Q1, Q2, Q3, and Q4.

* **D2:** Contains nodes Q1, Q2, Q3, and Q4.

* **D3:** Contains node Q.

* **Connections:**

* Q1 in D1 connects to Q1 in D2 via a red arrow with an 'X' mark.

* Q2 in D1 connects to Q2 in D2 via a black arrow with a checkmark.

* Q3 in D1 connects to Q3 in D2 via a black arrow with a checkmark.

* Q4 in D1 connects to Q4 in D2 via a black arrow with a checkmark.

* Q1 in D2 connects to Q in D3 via a black arrow with a checkmark.

* Q2 in D2 connects to Q in D3 via a black arrow with a checkmark.

* Q3 in D2 connects to Q in D3 via a black arrow with a checkmark.

* Q4 in D2 connects to Q in D3 via a black arrow with a checkmark.

* Q10 connects to Q4 via a blue dashed arrow.

* Q3 connects to Q4 via a blue dashed arrow.

* An ellipsis (...) indicates further connections from Q3 to Q4.

**Forward Discrepancy Section (Top-Right):**

* Nodes: Q1, Q1 (Target Node).

* Connections:

* Q1 connects to Q1 (Target Node) via a red arrow with an 'X' mark.

* Q2 connects to Q1 (Target Node) via a black arrow with a checkmark.

* Q3 connects to Q1 (Target Node) via a black arrow with a checkmark.

* Label: "Fail reasoning to form complex knowledge".

**Backward Discrepancy Section (Bottom-Right):**

* Nodes: Q5, Q3, Q4.

* Connections:

* Q5 connects to Q3 via a red arrow with an 'X' mark.

* Q5 connects to Q4 via a black arrow with a checkmark.

* Q3 connects to Q4 via a black arrow with a checkmark.

* Label: "Fail to solve a simpler Q despite having complex knowledge".

### Key Observations

* The diagram highlights two types of reasoning failures: forward and backward discrepancies.

* Forward discrepancy occurs when reasoning fails to build complex knowledge from simpler components.

* Backward discrepancy occurs when reasoning fails to solve a simpler question despite having complex knowledge.

* The use of red arrows and 'X' marks clearly indicates failures in reasoning.

* The diagram suggests a hierarchical structure where complex questions are broken down into simpler ones.

### Interpretation

The diagram illustrates a potential issue in knowledge representation and reasoning systems. It demonstrates that simply having the components of knowledge (simpler questions) doesn't guarantee the ability to synthesize them into more complex understanding (the target question). The "Forward Discrepancy" suggests a failure in the *constructive* process of reasoning – the system can't build up to the complex question. The "Backward Discrepancy" suggests a failure in the *reductive* process – the system can't break down the complex question to solve simpler ones.

The diagram is a visual representation of a potential problem in AI or cognitive architectures, where a system might possess individual pieces of information but struggle to apply them effectively in either direction – building up or breaking down knowledge. The use of the Depth (D1, D2, D3) labels suggests a layered approach to problem-solving, and the discrepancies highlight potential weaknesses in this layered structure. The diagram is not presenting data in a quantitative sense, but rather a conceptual model of reasoning failures. It's a qualitative illustration of potential pitfalls in knowledge processing.

</details>

adopt Webb's Depth of Knowledge (Webb, 1997, 1999, 2002), which assesses both the content and the depth of understanding required. Webb's Depth of Knowledge categorizes questions into three levels: mere recall of information ( D 1 ), application of knowledge ( D 2 ), and strategic thinking ( D 3 ). The transition from shallower to deeper nodes involves applying the knowledge gained from shallower nodes and performing reasoning to tackle harder problems. This approach emphasizes the gradual accumulation and integration of knowledge to address real-world problems effectively.

We introduce the resulting DEPTHQA, a collection of deconstructed questions and answers derived from human-written, scientific D 3 questions in the TutorEval dataset (Chevalier et al., 2024). The target complex questions are in D 3 , and we examine the utilization of multiple layers of knowledge and reasoning in the sequence of D 1 , D 2 , and D 3 . Figure 2 illustrates how the deconstruction process results in a hierarchical graph connecting D 1 to D 3 questions. Based on the hierarchical structure, we first measure forward reasoning gaps, denoted as forward discrepancy , which are differences in LLM performance on simpler subproblems compared to more complex questions requiring advanced reasoning. Additionally, we introduce backward discrepancy , which quantifies inconsistencies where LLMs can successfully answer complex inquiries but struggle with simpler ones. This dual assessment provides a comprehensive evaluation of the models' reasoning capabilities across different levels of complexity.

Using DEPTHQA, we investigate the knowledge reasoning ability of various instruction-tuned LLMs in the LLaMA 2 (Touvron et al., 2023), LLaMA 3 (AI@Meta, 2024), Mistral (Jiang et al.,

2023), and Mixtral (Jiang et al., 2024) family, varying in size from 7B to 70B. We compare the relationship between model capacities and depthwise discrepancies, showing that smaller models exhibit larger discrepancies in both directions. We further analyze how reliance on memorization of training data affects discrepancy, revealing that forward and backward discrepancies in large models originate from distinct types of failures. Finally, to examine the importance of structured intermediate steps in reasoning, we gradually guide models from simpler to more advanced questions through multi-turn interactions, consistently improving performance across various model sizes.

The contributions of our work are threefold:

- We propose to connect complex questions with simpler sub-questions by deconstructing questions based on depth of knowledge.

- We design the DEPTHQA dataset to evaluate LLMs' capability to form complex knowledge through reasoning. We measure forward and backward reasoning discrepancies across different levels of question complexity. 1

- We investigate the reasoning abilities of LLMs with various capacities, analyzing the impact of model size and training data memorization on discrepancies. We demonstrate the benefits of structured, multi-turn interactions to perform complex reasoning.

## 2 Related Work

Recent advancements have highlighted the impressive reasoning abilities of transformer language models across a wide range of tasks (Wei et al., 2022a; Zhao et al., 2023). Despite the success, numerous studies have found that these models often struggle with various types of reasoning, such as commonsense and logical reasoning (Qin et al., 2023; Srivastava et al., 2023). Even advanced models like GPT-4 (Achiam et al., 2023) have been noted to struggle with implicit reasoning over their internal knowledge, especially when it comes to effectively combining multiple steps to solve compositionally complex problems (Talmor et al., 2020; Rogers et al., 2020; Allen-Zhu and Li, 2023; Yang et al., 2024; Wang et al., 2024).

To address these challenges, several studies have focused on better Chain-of-Thought-style prompt-

1 We release our dataset and code at github.com/kaistAI/knowledge-reasoning .

ing or fine-tuning LLMs to verbalize the intermediate steps of knowledge and reasoning during inference (Nye et al., 2021; Wei et al., 2022b; Kojima et al., 2022; Wang et al., 2022; Sun et al., 2023; Wang et al., 2023b; Liu et al., 2023). This approach has significantly improved performance, especially in larger models with strong generalization capabilities. Theoretical and empirical studies investigate the advantages of verbalizations, highlighting their role in enhancing the reasoning capabilities of language models (Feng et al., 2023; Wang et al., 2023a; Li et al., 2024). The analysis of step-by-step reasoning abilities has matured further based on ontological (Saparov and He, 2023) and mechanistic perspectives (Hou et al., 2023a; Dutta et al., 2024).

In our proposed dataset, the most complex questions often necessitate implicit intermediate steps to reach a conclusion, which can be benefited from explicit verbalized reasoning. However, unlike previous works, our setup does not induce detailed step-by-step explanation contained in an answer to a complex question. Instead, we represent intermediate steps for a complex question in the form of sub-questions and gather answers to every subquestion, testing a model's understanding of intermediate knowledge individually. Our approach is similar to strategic question answering with intermediate answers (Geva et al., 2021; Press et al., 2023), but we further ensure a hierarchy of decompositions based on knowledge complexity. This allows examining discrepancies between questions of varying complexities, providing a distinct assessment of multi-step reasoning abilities.

Another line of work focuses on understanding transformers' knowledge and reasoning through controlled experiments (Chan et al., 2022; Akyürek et al., 2023; Dai et al., 2023; von Oswald et al., 2022; Prystawski et al., 2023; Feng and Steinhardt, 2024). Numerous studies on implicit reasoning often aim to identify latent reasoning pathways, but most have focused on simple synthetic tasks or toy models (Nanda et al., 2023; Conmy et al., 2023; Hou et al., 2023b), or evaluating through binary accuracy of short-form model predictions without considering intermediate steps (Yang et al., 2024; Wang et al., 2024). Our DEPTHQA, in contrast, challenges a model to answer complex real-world questions that require diverse reasoning types in long-form text. DEPTHQA further requires diverse types of reasoning across different depths, such as inductive and procedural reasoning, in addition to the comparative and compositional reasoning explored in prior studies (Press et al., 2023; AllenZhu and Li, 2023; Wang et al., 2024). This approach provides a more practical and nuanced assessment of the model's reasoning capabilities.

## 3 Graph-based Reasoning Framework

We develop a novel graph-based representation that delineates the dependencies between different levels of knowledge. We represent nodes as questions (Section 3.1) and edges as reasoning processes (Section 3.2). Based on the graph definition, we construct a dataset that encompasses diverse concepts and reasoning types (Section 3.3).

## 3.1 Knowledge Depth in Nodes

We represent each node as a question tied to a specific layer of knowledge. As our approach to addressing real-world problems emphasizes the gradual accumulation of knowledge similar to educational goals, we adopt the Webb's Depth of Knowledge (DOK) (Webb, 1997, 1999, 2002) widely used in education settings to categorize the level of questions. The depth of knowledge levels D k ( k ∈ { 1 , 2 , 3 } ) 2 in questions are defined as follows:

- D 1 . Factual and conceptual knowledge : The question involves the acquisition and recall of information, or following a simple formula, focusing on what the knowledge entails.

- D 2 . Procedural knowledge : The question necessitates the application of concepts through the selection of appropriate procedures and stepby-step engagement, concentrating on how the knowledge can be utilized.

- D 3 . Strategic knowledge : The question demands analysis, decision-making, or justification to address non-routine problems, emphasizing why the knowledge is applicable.

The levels can be viewed as ceilings that establish the extent or depth of an assessee's understanding (Hess, 2006), a concept recognized as a valuable assessment tool in educational contexts (Hess et al., 2009). Accordingly, we associate simpler questions with shallower depths and more complex questions with deeper depths.

2 We exclude the highest level in the original Webb's DOK, D 4 , as this level often includes interactive or creative activities and is rare or even absent in most standardized assessment (Webb, 2002; Hess, 2006).

## 3.2 Criteria for Reasoning in Edges

To conceptualize how simpler knowledge contributes to the development of complex knowledge, we define edges in our framework as transitions from a node at D k to at least one direct successor node at D k +1 3 . We perceive that advancing to deeper knowledge often requires synthesizing multiple aspects of simpler knowledge. Thus, a D k node should connect to multiple direct predecessor D k -1 nodes. This configuration establishes hierarchical dependencies among D 1 , D 2 , and D 3 questions, effectively modeling the progression needed to deepen understanding and engage with higherorder knowledge (See graph in Figure 2). Additionally, we establish three criteria to ensure that edges accurately represent the reasoning processes from shallower questions.

- C1 . Comprehensiveness: Questions at lower levels should aim to cover all foundational concepts necessary to answer a question at higher levels. This ensures that no critical knowledge gaps exist as the complexity increases.

- C2 . Implicitness: Questions at lower levels should avoid directly revealing answers or heavily hinting at solutions for higher-level questions. This encourages independent reasoning relying on the synthesis of implicit connections between nodes rather than straightforward clues.

- C3 . Non-binary questioning: Questions should elicit detailed, exploratory responses instead of simple yes/no answers. Given that LLMs may have an inherent positivity bias which leads them to prefer affirmative responses (Augustine et al., 2011; Dodds et al., 2015; Papadatos and Freedman, 2023), this helps in evaluating deep reasoning abilities beyond superficial or biased reasoning.

## 3.3 Dataset: DEPTHQA

We create DEPTHQA, a new question answering dataset for testing graph-based reasoning. The dataset is constructed in a top-down approach, deconstructing D 3 nodes into D 2 nodes, then into D 1 , creating multiple edges at each step (Table 1). We design the construction process to meticulously backtrack the knowledge necessary for complex

3 A foundational concept may apply to multiple advanced questions.

Table 1: Statistics of DEPTHQA.

| Domain | # Questions | # Questions | # Questions | # Edges between questions | # Edges between questions |

|-----------------------|---------------|---------------|---------------|-----------------------------|-----------------------------|

| | D 1 | D 2 | D 3 | D 1 → D 2 | D 2 → D 3 |

| Math | 573 | 193 | 49 | 774 | 196 |

| Computer Science | 163 | 54 | 14 | 212 | 55 |

| Environmental Science | 147 | 44 | 11 | 175 | 44 |

| Physics | 140 | 40 | 10 | 154 | 40 |

| Life Sciences | 98 | 28 | 7 | 111 | 28 |

| Math → {CS, Physics} | - | - | - | 11 | 0 |

| Total | 1,121 | 359 | 91 | 1,437 | 363 |

questions while meeting our three criteria for reasoning transition representation.

D 3 question curation We select real-world questions from the TutorEval (Chevalier et al., 2024) dataset, which contains human-crafted queries based on college-level mathematical and scientific content from textbooks 4 available on libretexts.org . Note that while these textbooks may be part of models' pre-training data due to online availability, TutorEval's human-written questions challenge models to generalize familiar concepts beyond direct training examples. We procure only complex D 3 questions from TutorEval, sorting them out using GPT-4 Turbo 5 (Achiam et al., 2023) with guidance on depth of knowledge levels. From an initial set of 834 questions, we manually refine our selection to 91 self-contained D 3 questions, ensuring clarity. We use GPT-4 Turbo to generate reference answers for each TutorEval question 6 , based on the original context and the model's self-annotated depth of knowledge. These reference answers are guided by the ground-truth key points provided by the author of each question.

Question deconstruction For each D k question, we use GPT-4 Turbo to generate up to four D k -1 questions. The prompt includes definitions for all three knowledge depths and decomposition examples to guide the deconstruction process. We provide D k with its reference answer to ensure extracted knowledge remains relevant for more challenging questions, adhering to C1 (Comprehensiveness) . We decide the optimal number of decompositions to four based on qualitative analysis, balancing comprehensiveness and implicitness: outlining every implicit reasoning step enhances comprehensiveness but may reduce implicitness,

4 Textbooks are designed with a scaffolding approach to knowledge development.

5 We use the gpt-4-0125-preview version for GPT-4 Turbo throughout this work, including data construction, verification, and experiments.

6 Chevalier et al. (2024) reports that GPT-4 excels in solving TutorEval problems with 92% correctness.

Table 2: Representative examples of required reasoning skills in D 3 and D 2 . %of instances within each depth that include the reasoning type is reported. Note that multiple reasoning types can be included in a single question.

| Depth | Reasoning type | Example question | % |

|---------|----------------------|--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|------|

| | Comparative | In the context of computer programming, what is the difference between for and while, are they always exchangeable? Show me some cases where one is strongly preferred to the other. | 21.1 |

| 3 | Causal | How does deflection of hair cells along the basilar membrane result in different perceived sound frequences? | 10.5 |

| | Inductive | How could a process satisfying the first law of thermodynamics still be impossible? | 8.8 |

| | Criteria Development | Explain if a matrix always have a basis of eigenvectors. | 8.8 |

| | Relational | What factors influence the time complexity of searching for an element in a data structure? | 22.6 |

| 2 | Procedural | Describe the process involved in solving cubic equations using the cubic formula. | 13.4 |

| | Application | How can sustainable agricultural practices contribute to food security and economic development in developing countries? | 7.3 |

and vice versa. Our prompt instructions carefully address this tradeoff to satisfy C2 (Implicitness) .

Deduplication and question augmentation We identified redundancies in knowledge and reasoning processes, where similar content appeared across different D 1 nodes linked to the same D 2 node, or between unconnected D 1 and D 2 nodes (example in Table 4). To address this, we utilize a Sentence Transformers embedding model 7 (Reimers and Gurevych, 2019) to detect and remove near-duplicate questions based on cosine similarity of their embeddings. We then employ GPT-4 Turbo to generate new, targeted questions and answers, filling any gaps in knowledge coverage. This approach has reduced misclassification of D 1 questions as D 2 by 88%, markedly enhancing C2 (Implicitness) . It has also decreased the total number of near-duplicates by decreased by 88%, further improving C1 (Comprehensiveness) . We subsequently update our graph data structure with these modifications.

Question debiasing Lastly, we undertake the task of manually rewriting 53 questions that originally invoke binary 'yes' or 'no' answers, ensuring C3 (Non-binary Questioning) . For example, a question that begins with 'If I understand correctly...' is transformed into 'Clarify my understanding that...', prompting the model to directly engage in analytical thinking rather than relying on simple affirmations or negations of the correctness.

Verification of hierarchy We conduct human annotation to verify the three criteria that shapes the reasoning hierarchy, reporting positive results in Appendix B. On 27.5% of DEPTHQA, an average of 83.5% of relations are fully comprehensive and 89.5% of sub-questions are fully implicit, with

7 sentence-transformers/all-mpnet-base-v2

98.7% of all questions being non-binary. Further details and examples in the construction process are in Appendix A. Prompts are in Appendix J.1.

## 3.4 Diversity of Reasoning Processes

Using a sample of 20 D 3 questions along with their interconnected 80 D 2 and 320 D 1 questions, we examine the types of reasoning needed to progress from basic to complex knowledge levels. We discover that nearly all questions necessitate the identification and extraction of several pieces of relevant information to synthesize comprehensive answers. Table 2 displays examples of questions requiring advanced reasoning skills, such as interpreting relationships between concepts, applying specific conditions, and handling assumptions, demonstrating that basic knowledge manipulation is insufficient. This diversity in reasoning types within our dataset robustly challenges LLMs to demonstrate sophisticated cognitive abilities. Detailed statistics and additional examples of reasoning types are provided in Appendix D.

## 4 Experiments

In this section, we present experiments on the depthwise reasoning ability of LLMs using DEPTHQA. We first explain the evaluation metrics and models (Section 4.1). Experimental results that follow are overall depthwise and discrepancy evaluation results (Section 4.2), the impact of memorization in knowledge reasoning (Section 4.3), and the effect of enforcing knowledge-enhanced reasoning via multi-turn inputs or prompt inputs (Section 4.5).

## 4.1 Experiment Setup

Depthwise evaluation For each question q k with depth k ( D k ), we score the factual correctness of the predicted answer on a scale from 1 to 5. We

Table 3: Depthwise reasoning performance of large language models. Bold indicates the best-performing model, and underline represents the second best performance. A darker color indicates a higher discrepancy.

| Model | Average Accuracy ↑ | Average Accuracy ↑ | Average Accuracy ↑ | Average Accuracy ↑ | Forward Discrepancy ↓ | Forward Discrepancy ↓ | Forward Discrepancy ↓ | Backward Discrepancy ↓ | Backward Discrepancy ↓ | Backward Discrepancy ↓ |

|----------------------------|----------------------|----------------------|----------------------|----------------------|-------------------------|-------------------------|-------------------------|--------------------------|--------------------------|--------------------------|

| Model | D 1 | D 2 | D 3 | Overall | D 2 → D 3 | D 1 → D 2 | Overall | D 2 → D 3 | D 1 → D 2 | Overall |

| LLaMA 2 7B Chat | 3.828 | 3.320 | 3.165 | 3.673 | 0.130 | 0.181 | 0.176 | 0.219 | 0.110 | 0.134 |

| LLaMA 2 13B Chat | 4.289 | 3.872 | 3.615 | 4.155 | 0.152 | 0.158 | 0.157 | 0.126 | 0.078 | 0.088 |

| LLaMA 2 70B Chat | 4.495 | 4.153 | 4.022 | 4.390 | 0.126 | 0.136 | 0.134 | 0.136 | 0.063 | 0.079 |

| Mistral 7B Instruct v0.2 | 4.280 | 3.897 | 4.000 | 4.176 | 0.092 | 0.157 | 0.147 | 0.144 | 0.070 | 0.088 |

| Mixtral 8x7B Instruct v0.1 | 4.599 | 4.532 | 4.429 | 4.574 | 0.087 | 0.079 | 0.081 | 0.063 | 0.063 | 0.063 |

| LLaMA 3 8B Instruct | 4.482 | 4.351 | 4.286 | 4.440 | 0.083 | 0.096 | 0.093 | 0.088 | 0.072 | 0.075 |

| LLaMA 3 70B Instruct | 4.764 | 4.749 | 4.648 | 4.754 | 0.065 | 0.050 | 0.053 | 0.043 | 0.044 | 0.044 |

| GPT-3.5 Turbo | 4.269 | 4.251 | 4.011 | 4.250 | 0.100 | 0.072 | 0.078 | 0.046 | 0.067 | 0.063 |

employ the LLM-as-a-Judge approach, which correlates highly with human judgments in scoring long-form responses (Zheng et al., 2024; Kim et al., 2024a; Lee et al., 2024; Kim et al., 2024b). Specifically, we utilize GPT-4 Turbo (Achiam et al., 2023) for absolute scoring. Following Kim et al. (2024a) and Lee et al. (2024), the model generates a score and detailed feedback for each question, reference answer, and prediction based on a defined scoring rubric. Further details on the evaluation process are provided in Appendix E. The exact input prompt for the LLM judge including the accuracy score rubric is in Appendix J.3. The reliability of the LLM evaluation results in our setting is evidenced by high annotation agreement with human evaluations, as explained in Appendix F. We report average accuracy at D k , the averaged factual correctness of questions at depth k .

Discrepancy evaluation As we deconstruct complex questions into a hierarchical graph, we can measure forward discrepancy and backward discrepancy between neighboring questions. Forward discrepancy measures the differences in performance on sub-problems compared to deeper questions requiring advanced reasoning. Given a question q k at D k ∈ { 2 , 3 } , let DP ( q k ) represents a set of direct predecessor questions at D k -1 . Then forward discrepancy for q k is defined as follows:

<!-- formula-not-decoded -->

where f is a function of a question that outputs factual correctness, as measured by an LLM evaluator. Backward discrepancy , conversely, quantifies inconsistencies where LLMs can successfully answer deeper questions but struggle with shallower ones. Given a question q k with D k ∈ { 1 , 2 } , let

DS ( q k ) represent a set of direct successor questions at D k -1 . Then backward discrepancy is defined as follows:

<!-- formula-not-decoded -->

Both forward discrepancy and backward discrepancy are normalized to the range [0, 1] by dividing by the maximum possible score gap , which is 4 at our scoring range from 1 to 5. To highlight gaps across depths, we set a strict accuracy threshold of 4 and report the average discrepancies only for examples where the mean score for DP ( q k ) and DS ( q k ) exceeds this threshold. This excludes cases where models perform poorly at both depths.

Models We mainly probe into the depthwise knowledge reasoning ability of open-source LLMs. We test representative open-source models based on the LLaMA (Touvron et al., 2023) architecture, including LLaMA 2 {7B, 13B, 70B} Chat (Touvron et al., 2023), Mistral 7B Instruct v0.2 (Jiang et al., 2023), Mixtral 8x7B Instruct v0.1 (Jiang et al., 2024), and LLaMA 3 {8B, 70B} Instruct (AI@Meta, 2024). Additionally, we include the latest GPT-3.5 Turbo 8 (OpenAI, 2022) to compare the performance of these open-source models against a proprietary model.

## 4.2 Depthwise Knowledge Reasoning Results

## Larger models exhibit smaller discrepancies.

Table 3 presents the overall depthwise reasoning performance of LLMs. As anticipated, solving questions at D 3 is the most challenging, showing the lowest average accuracy for all models. LLaMA 3 70B Instruct demonstrates the best performance across all depths, with Mixtral 8x7B Instruct achieving the second-best results. LLaMA 3

8 gpt-3.5-turbo-0125

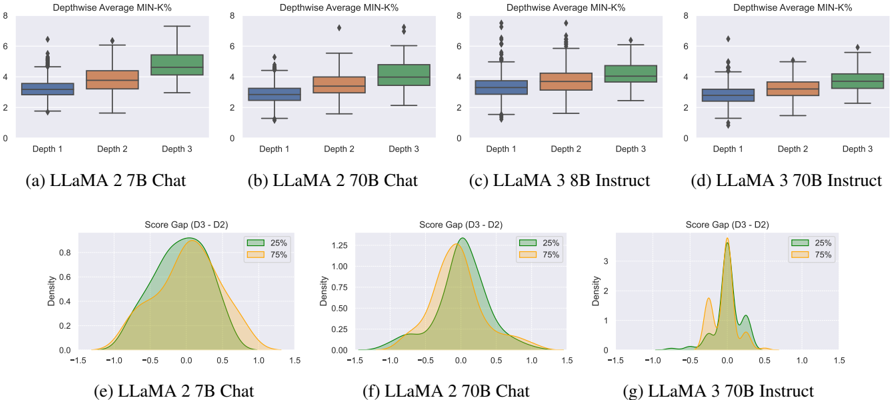

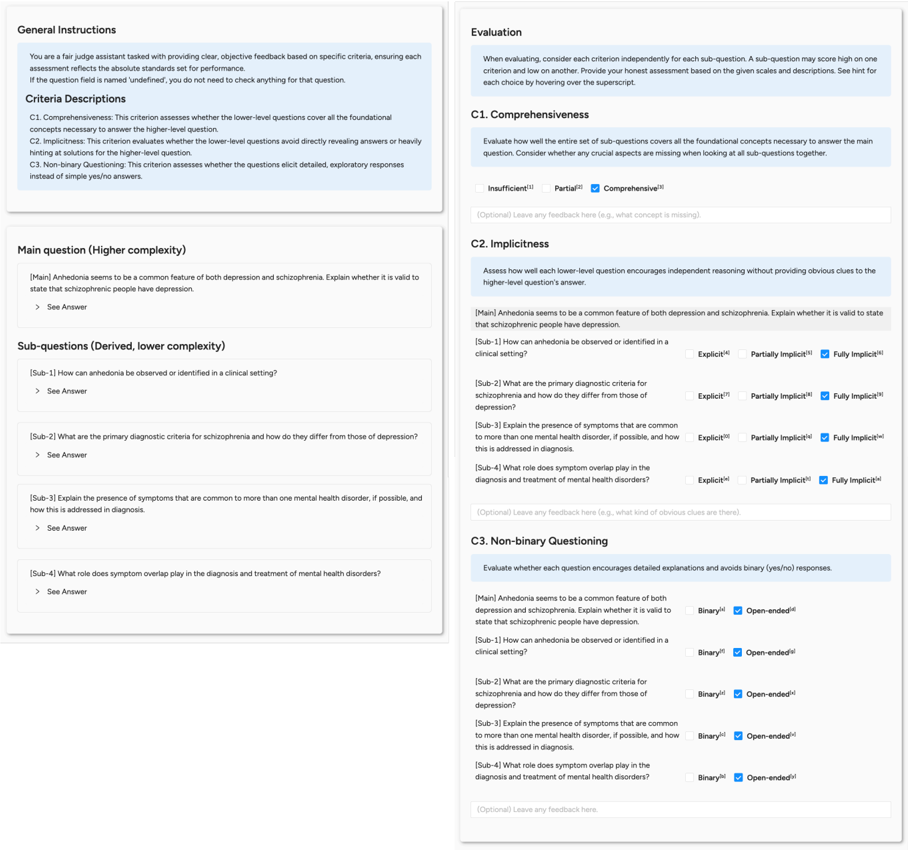

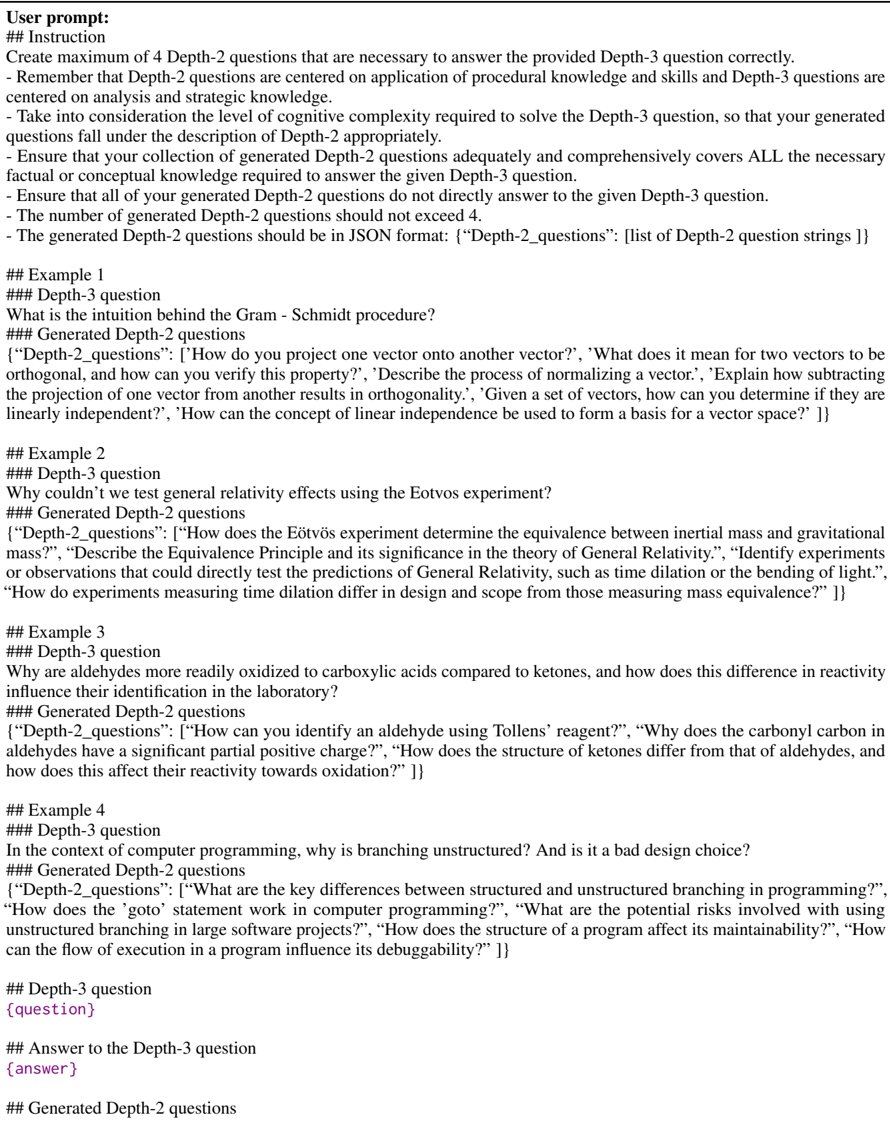

Figure 3: Memorization analysis with Min-K% probability. (a)-(d) show the distribution of average Min-K% probabilities at each depth. (e)-(g) present the distribution of score differences between neighboring questions, whose Min-K% probability is in the bottom 25% or top 75%. A positive gap indicates backward discrepancy, while a negative gap represents forward discrepancy.

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Box Plots & Density Plots: LLama Model Performance Comparison

### Overview

The image presents a comparison of LLama model performance across different model sizes (7B, 70B, 3B) and modes (Chat, Instruct) using box plots and density plots. The box plots show the distribution of "Depthwise Average MIN-K%" for different depths (1, 2, 3). The density plots show the distribution of "Score Gap (D3-D2)".

### Components/Axes

* **Box Plots (a-d):**

* **Y-axis:** "Depthwise Average MIN-K%" (Scale: 0 to 8, approximately).

* **X-axis:** "Depth" (Categories: 1, 2, 3).

* **Models:** LLaMA 2 7B Chat (a), LLaMA 2 70B Chat (b), LLaMA 3 8B Instruct (c), LLaMA 3 70B Instruct (d).

* **Density Plots (e-g):**

* **X-axis:** "Score Gap (D3-D2)" (Scale: -1.5 to 1.5, approximately).

* **Y-axis:** "Density" (Scale: 0 to 3, approximately).

* **Models:** LLaMA 2 7B Chat (e), LLaMA 2 70B Chat (f), LLaMA 3 70B Instruct (g).

* **Legend:**

* 25% (Green)

* 75% (Orange)

### Detailed Analysis or Content Details

**Box Plots (a-d):**

* **(a) LLaMA 2 7B Chat:** The median Depthwise Average MIN-K% increases with depth. Depth 1: ~2.5, Depth 2: ~3.5, Depth 3: ~4.5. The interquartile range (IQR) is relatively consistent across depths.

* **(b) LLaMA 2 70B Chat:** Similar trend to (a), with increasing median values with depth. Depth 1: ~2.5, Depth 2: ~3.5, Depth 3: ~5.0. IQR is also relatively consistent.

* **(c) LLaMA 3 8B Instruct:** The median Depthwise Average MIN-K% is relatively stable across depths. Depth 1: ~3.0, Depth 2: ~3.5, Depth 3: ~3.5. IQR is smaller than in (a) and (b).

* **(d) LLaMA 3 70B Instruct:** The median Depthwise Average MIN-K% increases with depth. Depth 1: ~2.5, Depth 2: ~3.5, Depth 3: ~4.5. IQR is relatively consistent.

**Density Plots (e-g):**

* **(e) LLaMA 2 7B Chat:** The distribution is roughly symmetrical around 0, with a peak around 0.0. The 25% and 75% lines indicate the central 50% of the data falls between approximately -0.2 and 0.2.

* **(f) LLaMA 2 70B Chat:** The distribution is skewed to the right, with a peak around 0.2. The 25% line is around -0.1, and the 75% line is around 0.5.

* **(g) LLaMA 3 70B Instruct:** The distribution is highly skewed to the right, with a peak around 0.6. The 25% line is around 0.2, and the 75% line is around 0.9.

### Key Observations

* The 70B models generally show higher Depthwise Average MIN-K% values than the 7B and 8B models, particularly at higher depths.

* The LLaMA 3 8B Instruct model exhibits a more stable Depthwise Average MIN-K% across depths compared to the other models.

* The Score Gap distributions shift to the right as model size increases (from 7B to 70B), indicating a larger difference in scores between depth 3 and depth 2.

* The LLaMA 3 70B Instruct model has a significantly right-skewed Score Gap distribution, suggesting a substantial improvement in performance from depth 2 to depth 3.

### Interpretation

The data suggests that increasing model size (from 7B to 70B) generally improves performance, as indicated by the higher Depthwise Average MIN-K% values and the shift in the Score Gap distributions. The LLaMA 3 8B Instruct model's stable performance across depths might indicate a different optimization strategy or a different sensitivity to depth. The right-skewed Score Gap distribution for the LLaMA 3 70B Instruct model suggests that this model benefits significantly from increasing the depth of processing.

The "Depthwise Average MIN-K%" metric likely represents some measure of model accuracy or confidence at different processing depths. The "Score Gap (D3-D2)" metric quantifies the improvement in performance when increasing the depth from 2 to 3. The combination of these metrics provides insights into how effectively each model utilizes deeper processing layers. The 25% and 75% lines on the density plots show the range of the middle 50% of the data, giving a sense of the spread and consistency of the results. The models are being compared on their ability to improve performance with increased depth.

</details>

70B Instruct also exhibits the lowest discrepancies for both forward and backward discrepancy metrics, effectively answering questions at all depths with minimal discrepancies. Conversely, the least capable model, LLaMA 2 7B Chat, shows the lowest average accuracy along with the highest forward and backward discrepancies. Note that the relatively low forward discrepancy from D 1 → D 2 for LLaMA 2 7B Chat is due to its low performance at D 2 . This observation highlights the varying capabilities of different LLMs in handling questions at different depths and the inconsistencies in reasoning across depths.

Contrasting patterns of discrepancies We observe distinct patterns when analyzing forward and backward discrepancies separately. These discrepancies can be understood as a product of intensity (the magnitude of the discrepancies) and frequency (the proportion of questions showing a positive discrepancy). Frequency indicates how often forward discrepancy or backward discrepancy occurs, while intensity reflects the strength of the discrepancy when it happens. Our analysis shows that forward discrepancy tends to occur more frequently but with lower intensity. For example, LLaMA 3 8B Instruct exhibits an intensity of 0.225 with a frequency of 41.44%. In contrast, backward discrepancy is less common but has a higher intensity when they appear. Specifically, LLaMA 3 8B Instruct shows an intensity of 0.323 with a frequency of 23.32% for backward discrepancies. The intensity and frequency for all models are provided in Appendix G.

## 4.3 Memorization in Depthwise Knowledge Reasoning

## 4.3.1 Depthwise Memorization

To determine whether solving complex questions requires reasoning rather than memorization of training data, we use a pre-training data detection method to approximate potential aspects of memorization. Following Shi et al. (2023), we compare the Min-K% probability within models. Higher values suggest a smaller possibility of predictions directly existing in the training data. To elaborate, Min-K% probability is calculated by averaging the negative log-likelihood of the K% least probable tokens in the model's predictions. In the case where a given prediction was seen during training, outlier words with low probabilities would appear less frequently, resulting in high probabilities for the K% tokens. Since Min-K% probability is the average negative log-likelihood of such tokens, the resulting value would be lower in this case. 9

Models rely less on memorization for complex questions. Figure 3 (a)-(d) presents the depthwise average of the Min-K% probability for four models. We observe that as the depth increases,

9 For our calculations, we set k to 20 and use a sequence length of 128.

the Min-K% probability also increases for all models. This indicates that answering questions based on simple conceptual knowledge corresponding to D 1 is more likely to be solved by recalling training data. While shallow questions ( D 1 ) can be addressed through memorization, solving deeper questions ( D 3 ) requires more than just recalling a single piece of memorized knowledge, indicating a need for genuine reasoning capabilities.

## 4.3.2 Memorization Gap between Depths

Further analysis of questions in the bottom 25% and top 75% quantiles of the Min-K% probability distribution provides additional insights. Note that questions in the top 75% quantile are more likely to appear in the training data, while those in the bottom 25% are less likely. Figure 3 (e)-(g) shows the score difference between neighboring questions ( D 2 → D 3 ) whose Min-K% probability is in the bottom 25% or top 75%. We calculate the memorization gap as the difference between the factual correctness of D 3 and D 2 , normalized by the maximum gap of 4. A positive value indicates higher factual accuracy for the deeper questions, signifying backward discrepancy, while a negative value indicates higher accuracy for the shallower question, representing forward discrepancy.

Variance of gaps Weobserve that the model with the smallest capacity, LLaMA 2 7B Chat, exhibits large variances in both positive and negative directions, showing significant forward and backward discrepancies. In contrast, models with larger capacities, such as LLaMA 2 70B Chat and LLaMA 3 70B Instruct, demonstrate smaller variances.

Potential causes of discrepancies Additionally, models with larger capacities tend to show relatively higher forward discrepancies-distribution concentrated on the negative side-for the top 75% examples, which rely less on memorization. On the other hand, the bottom 25% distribution is concentrated on positive values, indicating relatively more backward discrepancies. This suggests that as model capacity increases, failures in knowledge reasoning result in forward discrepancies, while failures due to reliance on memorization may lead to backward discrepancies. The depthwise MinK%probability and score difference for other models are provided in Appendix H.

## 4.4 Qualitative Analysis of Backward Discrepancy

To better understand how the more abnormal inconsistency-backward discrepancy-can emerge, we qualitatively analyze backward discrepancy cases from the weakest model in our experiments, LLaMA 2 7B chat, and the strongest model in our experiments, LLaMA 3 70B Instruct. The examples we refer to in the following paragraphs are listed in Appendix I.

We observe that backward discrepancies often stem from the models' ability to articulate highlevel concepts but struggle with translating this understanding into precise, step-by-step procedures, particularly when mathematical operations are involved. This is illustrated in Example 1, where both models explain the importance of continued fraction representation for tangle numbers well ( D 3 ) but fail to accurately describe the process of constructing a tangle for a given number ( D 2 ).

In backward discrepancy cases, answers to deeper questions are more likely to be text-based and conceptual, making them easier for models to memorize that data. In contrast, shallower questions require execution of mathematical or logical operations, where the variability in the elements makes answers harder to memorize verbatim. This elucidates memorization effects on backward discrepancy analyzed in Section 4.3.2.

Interestingly, we also observe how the degree of memorization contributing to backward discrepancy can vary with model capacity. Example 2 shows LLaMA 2 7B Chat accurately reasoning about time complexity ( D 3 ) but introducing nonstandard terminology for specific operations ( D 2 ), suggesting the model's struggle with precise recall of basic concepts. Conversely, Example 3 demonstrates LLaMA 3 70B Instruct correctly recalling a complex formula ( D 3 ) but failing to apply it practically ( D 2 ). This indicates that the model can extensively memorize information but still struggle with its flexible application. This observation exemplifies why variance of memorization gaps can differ by model capacity, as described in Section 4.3.2.

## 4.5 Effect of Explicit Reasoning Process

In this study, as presented in Figure 1 (a), D 3 questions can be solved through sequential reasoning, utilizing answers from D 1 to D 3 questions. Previous studies on implicit reasoning (Wei et al., 2022b; Press et al., 2023; Zhou et al., 2023) have shown

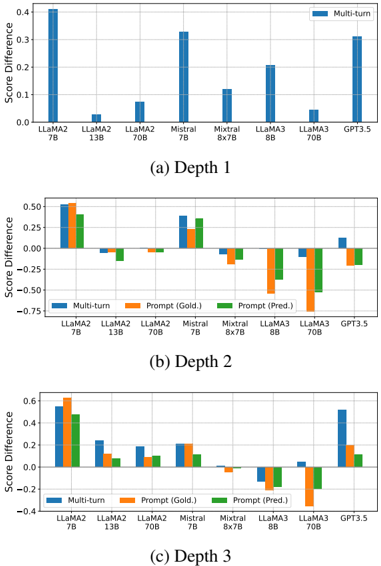

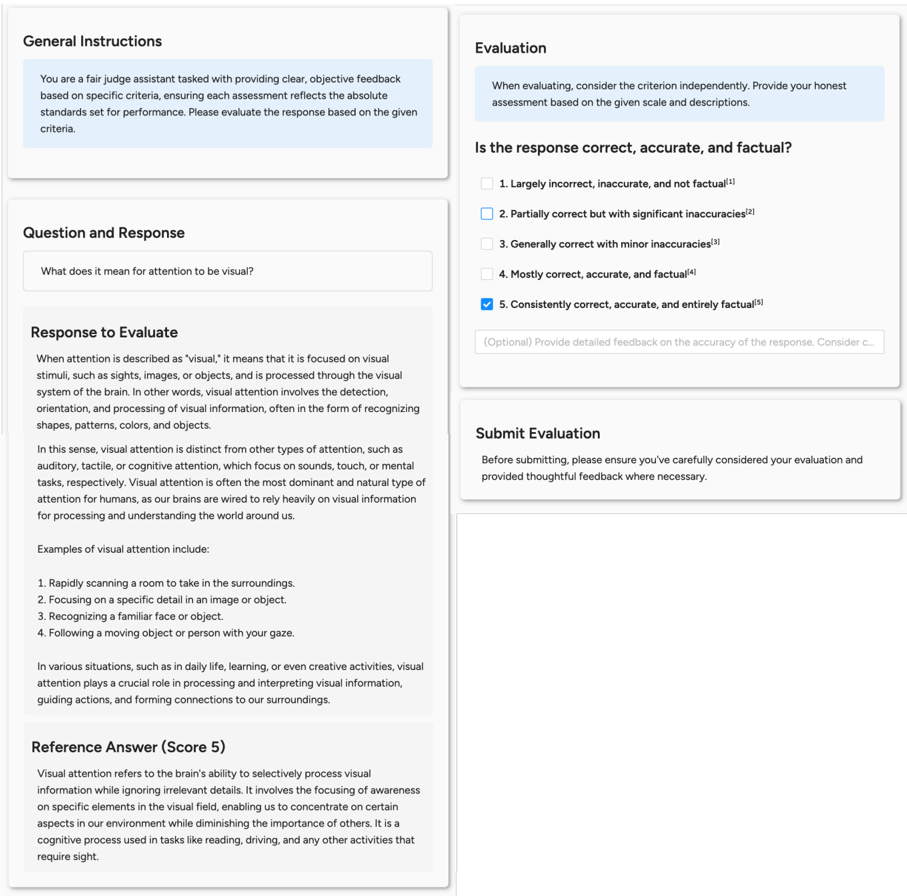

Figure 4: Performance change after providing shallower questions. Note that D 1 is not reported for prompt inputs, as D 1 does not have shallower questions.

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Bar Charts: Score Difference by Model and Depth

### Overview

The image presents three bar charts, labeled (a) Depth 1, (b) Depth 2, and (c) Depth 3. Each chart compares the "Score Difference" for several language models (LLaMA2 7B, LLaMA2 13B, LLaMA2 70B, Mistral 7B, Mixtral 8x7B, LLaMA3 8B, LLaMA3 70B, and GPT 3.5) across different depths of analysis. The score difference is measured on the y-axis, ranging from approximately -0.75 to 0.4. The x-axis represents the language models. Each chart uses a different color scheme to represent different evaluation methods.

### Components/Axes

* **Y-axis Title:** "Score Difference" (common to all three charts)

* **X-axis Labels:** LLaMA2 7B, LLaMA2 13B, LLaMA2 70B, Mistral 7B, Mixtral 8x7B, LLaMA3 8B, LLaMA3 70B, GPT 3.5 (common to all three charts)

* **Legend:**

* **(a) Depth 1:** Blue - "Multi-turn"

* **(b) Depth 2:** Blue - "Multi-turn", Orange - "Prompt (Gold.)", Green - "Prompt (Pred.)"

* **(c) Depth 3:** Blue - "Multi-turn", Orange - "Prompt (Gold.)", Green - "Prompt (Pred.)"

### Detailed Analysis or Content Details

**Chart (a) Depth 1:**

* The chart displays a single bar for each model, representing the "Multi-turn" score difference.

* LLaMA2 7B: Approximately 0.42

* LLaMA2 13B: Approximately 0.08

* LLaMA2 70B: Approximately 0.12

* Mistral 7B: Approximately 0.35

* Mixtral 8x7B: Approximately 0.16

* LLaMA3 8B: Approximately 0.24

* LLaMA3 70B: Approximately 0.18

* GPT 3.5: Approximately 0.38

* Trend: The score differences vary significantly across models, with LLaMA2 7B and Mistral 7B showing the highest values.

**Chart (b) Depth 2:**

* Each model has three bars: "Multi-turn" (blue), "Prompt (Gold.)" (orange), and "Prompt (Pred.)" (green).

* LLaMA2 7B: Multi-turn ≈ 0.48, Prompt (Gold.) ≈ 0.52, Prompt (Pred.) ≈ 0.45

* LLaMA2 13B: Multi-turn ≈ 0.05, Prompt (Gold.) ≈ 0.12, Prompt (Pred.) ≈ 0.02

* LLaMA2 70B: Multi-turn ≈ 0.02, Prompt (Gold.) ≈ 0.05, Prompt (Pred.) ≈ -0.05

* Mistral 7B: Multi-turn ≈ 0.45, Prompt (Gold.) ≈ 0.48, Prompt (Pred.) ≈ 0.42

* Mixtral 8x7B: Multi-turn ≈ 0.03, Prompt (Gold.) ≈ -0.02, Prompt (Pred.) ≈ -0.12

* LLaMA3 8B: Multi-turn ≈ 0.15, Prompt (Gold.) ≈ 0.18, Prompt (Pred.) ≈ 0.12

* LLaMA3 70B: Multi-turn ≈ 0.08, Prompt (Gold.) ≈ 0.05, Prompt (Pred.) ≈ -0.05

* GPT 3.5: Multi-turn ≈ 0.42, Prompt (Gold.) ≈ 0.40, Prompt (Pred.) ≈ 0.35

* Trends:

* "Multi-turn" generally shows higher positive values for LLaMA2 7B, Mistral 7B, and GPT 3.5.

* "Prompt (Gold.)" is consistently higher than "Prompt (Pred.)" for most models.

* Mixtral 8x7B shows negative values for "Prompt (Pred.)".

**Chart (c) Depth 3:**

* Similar structure to Chart (b) with three bars per model.

* LLaMA2 7B: Multi-turn ≈ 0.55, Prompt (Gold.) ≈ 0.58, Prompt (Pred.) ≈ 0.50

* LLaMA2 13B: Multi-turn ≈ 0.15, Prompt (Gold.) ≈ 0.20, Prompt (Pred.) ≈ 0.08

* LLaMA2 70B: Multi-turn ≈ 0.05, Prompt (Gold.) ≈ 0.08, Prompt (Pred.) ≈ -0.03

* Mistral 7B: Multi-turn ≈ 0.50, Prompt (Gold.) ≈ 0.53, Prompt (Pred.) ≈ 0.45

* Mixtral 8x7B: Multi-turn ≈ 0.05, Prompt (Gold.) ≈ -0.02, Prompt (Pred.) ≈ -0.15

* LLaMA3 8B: Multi-turn ≈ 0.20, Prompt (Gold.) ≈ 0.25, Prompt (Pred.) ≈ 0.15

* LLaMA3 70B: Multi-turn ≈ 0.10, Prompt (Gold.) ≈ 0.05, Prompt (Pred.) ≈ -0.10

* GPT 3.5: Multi-turn ≈ 0.55, Prompt (Gold.) ≈ 0.52, Prompt (Pred.) ≈ 0.45

* Trends:

* Similar trends to Chart (b), with LLaMA2 7B, Mistral 7B, and GPT 3.5 showing higher "Multi-turn" scores.

* "Prompt (Gold.)" remains generally higher than "Prompt (Pred.)".

* Mixtral 8x7B continues to exhibit negative "Prompt (Pred.)" values.

### Key Observations

* LLaMA2 7B and Mistral 7B consistently perform well across all depths, particularly in the "Multi-turn" evaluation.

* The difference between "Prompt (Gold.)" and "Prompt (Pred.)" suggests that the gold standard prompts yield higher scores than the predicted prompts.

* Mixtral 8x7B shows a concerning trend of negative score differences for "Prompt (Pred.)" at depths 2 and 3.

* The score differences generally appear to stabilize or slightly increase with depth for LLaMA2 7B, Mistral 7B, and GPT 3.5.

### Interpretation

These charts likely represent the performance of different language models on a task evaluated at varying levels of complexity ("Depth"). The "Multi-turn" score difference could indicate how well the model handles conversational contexts. The "Prompt (Gold.)" and "Prompt (Pred.)" scores suggest the impact of prompt quality on model performance. The gold standard prompts (presumably human-crafted) consistently lead to better results than the predicted prompts.

The consistent strong performance of LLaMA2 7B, Mistral 7B, and GPT 3.5 suggests these models are robust and capable of handling complex interactions. The negative score differences for Mixtral 8x7B's predicted prompts at higher depths raise concerns about its ability to generalize or follow instructions effectively without high-quality prompts. The increasing score differences with depth for some models could indicate that they benefit from more nuanced evaluation criteria.

The data suggests that prompt engineering is crucial for maximizing the performance of these language models, and that some models are more sensitive to prompt quality than others. Further investigation into the prompts used and the specific task being evaluated would be necessary to draw more definitive conclusions.

</details>

that enforcing LLMs to reason through intermediate steps explicitly can improve their reasoning ability. We investigate whether explicitly providing these reasoning processes to the model can aid in solving complex questions.

We encourage the model to reason by providing shallower questions in three ways: (i) Multiturn , where shallower questions are provided as user queries in a multi-turn conversation; (ii) Prompt (Gold) , where shallower questions and their gold answers are provided in prompts; (iii) Prompt (Pred.) , where shallower questions with the model's predictions are given in prompts. Note that prompt-based approaches require shallower QA pairs as inputs, which cannot be applied to D 1 questions. The prompt template for each approach is provided in Appendix J.2.

## Explicitly providing shallower solutions is beneficial for small models and complex questions.

Figure 4 illustrates the depthwise performance changes after incorporating deconstructed question information. Providing shallower questions benefits models with smaller capacities, such as LLaMA 2 7B Chat and Mistral 7B Instruct v0.2. For relatively simpler questions ( D 2 ), the benefit is less pronounced or may even decrease the per- formance of more capable models (>7B). However, intermediate questions ( D 2 ) are beneficial for complex questions ( D 3 ), except for models with large capacities ( ≥ 56B). These findings align with recent research on decomposing a complex question into simpler sub-tasks and solving sub-tasks prior to the final answer (Juneja et al., 2023; Khot et al., 2023), which have shown high performance improvements for solving complex problems across different model sizes.

Implicitly guiding reasoning via multi-turn interactions best improves performance. When comparing the two prompt-based inputs, smaller models tend to perform better with gold answers (Gold.), while more capable models favor selfprediction results (Pred.). This preference likely arises because more capable models align better with their own generated outputs, which reflect their advanced internal reasoning processes. The multi-turn approach provides the most stable results across all depths, enhancing the performance of smaller models while causing minimal performance drops for larger models. Additionally, the multi-turn approach improves D 1 performance by providing context or domain information as part of the interaction history.

## 5 Conclusion

In this study, we explore the reasoning capabilities of LLMs by deconstructing real-world questions into a graph. We introduce DEPTHQA, a set of deconstructed D 3 questions mapped into a hierarchical graph, requiring utilization of muliple layers of knowledge in the sequence of D 1 , D 2 to D 3 . This hierarchical approach provides a comprehensive assessment of LLM performance by measuring forward and backward discrepancies between simpler and complex questions. Our comparative analysis of LLMs with different capacities reveals an inverse relationship between model capacities and discrepancies. Memorization analysis suggests that the sources of forward and backward discrepancies in large models stem from different types of failures. Lastly, we demonstrate that guiding models from shallower to deeper questions through multiturn interactions stabilizes performance across the majority of models. These findings emphasize the importance of intermediate knowledge extraction in understanding LLM reasoning capabilities.

## Limitations

Small sample size Our dataset, DEPTHQA, consists of 91 complex ( D 3 ) questions from the TutorEval dataset, along with 1,480 derived shallower ( D 2 , D 1 ) questions. Despite the diversity in reasoning types explored (Section 3.4) and the hierarchical structuring of subquestions, the limited number of complex questions and the narrow content scope restrict the generalizability of our findings. The selection of TutorEval as our primary source is based on the challenge of manually developing or even sourcing intricate questions that necessitate advanced reasoning skills; such questions require (1) maintaining real-world relevance, (2) eliciting long-form answers, and (3) having minimal risk of test set contamination. Within TutorEval, complex D 3 questions represent only 33.6% of its 834 questions, which further reduces to 10.9% when excluding questions that require external knowledge retrieval. We encourage future research to build larger, more diverse datasets to more robustly assess knowledge reasoning capabilities of LLMs.

GPT-4 data generation and evaluation All questions except for D 3 and reference answers in DEPTHQA are generated by GPT-4 Turbo. To ensure the quality of these questions, we have established strict decomposition criteria (Section 3.2) and implemented rigorous procedures including detailed instructions, question augmentation, manual rewriting and verification by human annotators (Section 3.3). The reliability of the answers is supported by findings from Chevalier et al. (2024), which demonstrate GPT-4's high accuracy of 92% on TutorEval problems as assessed by human evaluators. However, there may exist inaccuracies due to unseen errors in the decomposition process or unverified knowledge produced by the model.

Furthermore, we utilize GPT-4 Turbo to assess the correctness of model predictions. Following protocols from previous studies (Kim et al., 2024a,b) which highlight GPT-4's strong correlation with human judgments on long-form content, we provide detailed instructions and specific scoring rubrics to the evaluator to ensure that the evaluation process aligns closely with our objectives. In addition, we conduct human evaluations and compare with GPT-4 Turbo evaluations, and measure sufficiently high inter-annotator agreement (Appendix F). Still, the evaluation method is subject to bias inherent in LLM judges.

## Acknowledgement

Wethank Hyeonbin Hwang, Sohee Yang, and Sungdong Kim for constructive feedback and discussions. This work was partly supported by KAISTNAVER Hypercreative AI Center and Institute for Information & communications Technology Promotion (IITP) grant funded by the Korea government (MSIT) (RS-2024-00398115, Research on the reliability and coherence of outcomes produced by Generative AI, 30%).

## References

Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. 2023. Gpt-4 technical report. arXiv .

AI@Meta. 2024. Llama 3 model card.

Ekin Akyürek, Dale Schuurmans, Jacob Andreas, Tengyu Ma, and Denny Zhou. 2023. What learning algorithm is in-context learning? investigations with linear models. In ICLR .

Zeyuan Allen-Zhu and Yuanzhi Li. 2023. Physics of language models: Part 3.2, knowledge manipulation. arXiv .

Adam A Augustine, Matthias R Mehl, and Randy J Larsen. 2011. A positivity bias in written and spoken english and its moderation by personality and gender. Social Psychological and Personality Science , 2(5):508-515.

Santiago Castro. 2017. Fast Krippendorff: Fast computation of Krippendorff's alpha agreement measure. https://github.com/pln-fing-udelar/fast-krippendorff.

Stephanie Chan, Adam Santoro, Andrew Lampinen, Jane Wang, Aaditya Singh, Pierre Richemond, James McClelland, and Felix Hill. 2022. Data distributional properties drive emergent in-context learning in transformers. In NeurIPS , pages 18878-18891.

Alexis Chevalier, Jiayi Geng, Alexander Wettig, Howard Chen, Sebastian Mizera, Toni Annala, Max Jameson Aragon, Arturo Rodríguez Fanlo, Simon Frieder, Simon Machado, et al. 2024. Language models as science tutors. arXiv .

Arthur Conmy, Augustine Mavor-Parker, Aengus Lynch, Stefan Heimersheim, and Adrià Garriga-Alonso. 2023. Towards automated circuit discovery for mechanistic interpretability. In NeurIPS . Curran Associates, Inc.

Damai Dai, Yutao Sun, Li Dong, Yaru Hao, Shuming Ma, Zhifang Sui, and Furu Wei. 2023. Why can GPT learn in-context? language models secretly perform gradient descent as meta-optimizers. In Findings of ACL .

- Peter Sheridan Dodds, Eric M Clark, Suma Desu, Morgan R Frank, Andrew J Reagan, Jake Ryland Williams, Lewis Mitchell, Kameron Decker Harris, Isabel M Kloumann, James P Bagrow, et al. 2015. Human language reveals a universal positivity bias. Proceedings of the national academy of sciences , 112(8):2389-2394.

- Subhabrata Dutta, Joykirat Singh, Soumen Chakrabarti, and Tanmoy Chakraborty. 2024. How to think stepby-step: A mechanistic understanding of chain-ofthought reasoning. TMLR .

- Nouha Dziri, Ximing Lu, Melanie Sclar, Xiang (Lorraine) Li, Liwei Jiang, Bill Yuchen Lin, Sean Welleck, Peter West, Chandra Bhagavatula, Ronan Le Bras, Jena Hwang, Soumya Sanyal, Xiang Ren, Allyson Ettinger, Zaid Harchaoui, and Yejin Choi. 2023. Faith and fate: Limits of transformers on compositionality. In NeurIPS .

- Guhao Feng, Yuntian Gu, Bohang Zhang, Haotian Ye, Di He, and Liwei Wang. 2023. Towards revealing the mystery behind chain of thought: a theoretical perspective. NeurIPS .

- Jiahai Feng and Jacob Steinhardt. 2024. How do language models bind entities in context? In ICLR .

- Mor Geva, Daniel Khashabi, Elad Segal, Tushar Khot, Dan Roth, and Jonathan Berant. 2021. Did aristotle use a laptop? a question answering benchmark with implicit reasoning strategies. TACL .

- K Hess. 2006. Applying webb's depth-of-knowledge (dok) levels in science. Accessed November , 10.

- Karin Hess, Ben Jones, Dennis Carlock, and John R Walkup. 2009. Cognitive rigor: Blending the strengths of bloom's taxonomy and webb's depth of knowledge to enhance classroom-level processes. ERIC Document (Online Database) .

- Yifan Hou, Jiaoda Li, Yu Fei, Alessandro Stolfo, Wangchunshu Zhou, Guangtao Zeng, Antoine Bosselut, and Mrinmaya Sachan. 2023a. Towards a mechanistic interpretation of multi-step reasoning capabilities of language models. In EMNLP .

- Yifan Hou, Jiaoda Li, Yu Fei, Alessandro Stolfo, Wangchunshu Zhou, Guangtao Zeng, Antoine Bosselut, and Mrinmaya Sachan. 2023b. Towards a mechanistic interpretation of multi-step reasoning capabilities of language models. In EMNLP .

- Albert Q Jiang, Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, Gianna Lengyel, Guillaume Lample, Lucile Saulnier, et al. 2023. Mistral 7b. arXiv .

- Albert Q Jiang, Alexandre Sablayrolles, Antoine Roux, Arthur Mensch, Blanche Savary, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Emma Bou Hanna, Florian Bressand, et al. 2024. Mixtral of experts. arXiv .

- Gurusha Juneja, Subhabrata Dutta, Soumen Chakrabarti, Sunny Manchanda, and Tanmoy Chakraborty. 2023. Small language models fine-tuned to coordinate larger language models improve complex reasoning. In EMNLP .

- Tushar Khot, Harsh Trivedi, Matthew Finlayson, Yao Fu, Kyle Richardson, Peter Clark, and Ashish Sabharwal. 2023. Decomposed prompting: A modular approach for solving complex tasks. In ICLR .

- Seungone Kim, Jamin Shin, Yejin Cho, Joel Jang, Shayne Longpre, Hwaran Lee, Sangdoo Yun, Seongjin Shin, Sungdong Kim, James Thorne, and Minjoon Seo. 2024a. Prometheus: Inducing evaluation capability in language models. In ICLR .

- Seungone Kim, Juyoung Suk, Shayne Longpre, Bill Yuchen Lin, Jamin Shin, Sean Welleck, Graham Neubig, Moontae Lee, Kyungjae Lee, and Minjoon Seo. 2024b. Prometheus 2: An open source language model specialized in evaluating other language models. arXiv .

- Takeshi Kojima, Shixiang Shane Gu, Machel Reid, Yutaka Matsuo, and Yusuke Iwasawa. 2022. Large language models are zero-shot reasoners. In NeurIPS .

- Klaus Krippendorff. 2018. Content analysis: An introduction to its methodology . Sage publications.

- Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph E. Gonzalez, Hao Zhang, and Ion Stoica. 2023. Efficient memory management for large language model serving with pagedattention. In SOSP .

- Seongyun Lee, Seungone Kim, Sue Hyun Park, Geewook Kim, and Minjoon Seo. 2024. Prometheusvision: Vision-language model as a judge for finegrained evaluation. arXiv .

- Zhiyuan Li, Hong Liu, Denny Zhou, and Tengyu Ma. 2024. Chain of thought empowers transformers to solve inherently serial problems. In ICLR .

- Jiacheng Liu, Ramakanth Pasunuru, Hannaneh Hajishirzi, Yejin Choi, and Asli Celikyilmaz. 2023. Crystal: Introspective reasoners reinforced with selffeedback. In EMNLP .

- Neel Nanda, Lawrence Chan, Tom Lieberum, Jess Smith, and Jacob Steinhardt. 2023. Progress measures for grokking via mechanistic interpretability. In ICLR .

- Maxwell Nye, Anders Johan Andreassen, Guy Gur-Ari, Henryk Michalewski, Jacob Austin, David Bieber, David Dohan, Aitor Lewkowycz, Maarten Bosma, David Luan, et al. 2021. Show your work: Scratchpads for intermediate computation with language models. arXiv .

- OpenAI. 2022. Chatgpt: Optimizing language models for dialogue.

- Henry Papadatos and Rachel Freedman. 2023. Your llm judge may be biased. https://www.lesswrong.com/posts/ S4aGGF2cWi5dHtJab/your-llm-judge-may-be-biased. Accessed: 2023-06-14.

- Ofir Press, Muru Zhang, Sewon Min, Ludwig Schmidt, Noah Smith, and Mike Lewis. 2023. Measuring and narrowing the compositionality gap in language models. In Findings of EMNLP .

- Ben Prystawski, Michael Y. Li, and Noah Goodman. 2023. Why think step by step? reasoning emerges from the locality of experience. In NeurIPS .

- Chengwei Qin, Aston Zhang, Zhuosheng Zhang, Jiaao Chen, Michihiro Yasunaga, and Diyi Yang. 2023. Is ChatGPT a general-purpose natural language processing task solver? In EMNLP .

- Nils Reimers and Iryna Gurevych. 2019. SentenceBERT: Sentence embeddings using Siamese BERTnetworks. In EMNLP-IJCNLP .

- Anna Rogers, Olga Kovaleva, and Anna Rumshisky. 2020. A primer in BERTology: What we know about how BERT works. TACL .

- Abulhair Saparov and He He. 2023. Language models are greedy reasoners: A systematic formal analysis of chain-of-thought. In ICLR .

- Weijia Shi, Anirudh Ajith, Mengzhou Xia, Yangsibo Huang, Daogao Liu, Terra Blevins, Danqi Chen, and Luke Zettlemoyer. 2023. Detecting pretraining data from large language models. ArXiv .

- Aarohi Srivastava, Abhinav Rastogi, Abhishek Rao, Abu Awal Md Shoeb, Abubakar Abid, Adam Fisch, Adam R. Brown, Adam Santoro, Aditya Gupta, Adrià Garriga-Alonso, et al. 2023. Beyond the imitation game: Quantifying and extrapolating the capabilities of language models. TMLR .

- Zhiqing Sun, Xuezhi Wang, Yi Tay, Yiming Yang, and Denny Zhou. 2023. Recitation-augmented language models. In ICLR .

- Alon Talmor, Yanai Elazar, Yoav Goldberg, and Jonathan Berant. 2020. oLMpics-on what language model pre-training captures. TACL .

- Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al. 2023. Llama 2: Open foundation and fine-tuned chat models. arXiv .

- Johannes von Oswald, Eyvind Niklasson, E. Randazzo, João Sacramento, Alexander Mordvintsev, Andrey Zhmoginov, and Max Vladymyrov. 2022. Transformers learn in-context by gradient descent. In ICML .

- Boshi Wang, Xiang Deng, and Huan Sun. 2022. Iteratively prompt pre-trained language models for chain of thought. In EMNLP .

- Boshi Wang, Sewon Min, Xiang Deng, Jiaming Shen, You Wu, Luke Zettlemoyer, and Huan Sun. 2023a. Towards understanding chain-of-thought prompting: An empirical study of what matters. In ACL .

- Boshi Wang, Xiang Yue, Yu Su, and Huan Sun. 2024. Grokked transformers are implicit reasoners: A mechanistic journey to the edge of generalization. arXiv .

- Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc V Le, Ed H. Chi, Sharan Narang, Aakanksha Chowdhery, and Denny Zhou. 2023b. Self-consistency improves chain of thought reasoning in language models. In ICLR .

- Norman L Webb. 1997. Criteria for alignment of expectations and assessments in mathematics and science education. research monograph no. 6.

- Norman L Webb. 1999. Alignment of science and mathematics standards and assessments in four states. research monograph no. 18.

- Norman L Webb. 2002. Depth-of-knowledge levels for four content areas. Language Arts , 28(March):1-9.

- Jason Wei, Yi Tay, Rishi Bommasani, Colin Raffel, Barret Zoph, Sebastian Borgeaud, Dani Yogatama, Maarten Bosma, Denny Zhou, Donald Metzler, Ed H. Chi, Tatsunori Hashimoto, Oriol Vinyals, Percy Liang, Jeff Dean, and William Fedus. 2022a. Emergent abilities of large language models. TMLR .

- Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Ed Huai hsin Chi, F. Xia, Quoc Le, and Denny Zhou. 2022b. Chain of thought prompting elicits reasoning in large language models. NeurIPS .

- Sohee Yang, Elena Gribovskaya, Nora Kassner, Mor Geva, and Sebastian Riedel. 2024. Do large language models latently perform multi-hop reasoning? arXiv .

- Seonghyeon Ye, Doyoung Kim, Sungdong Kim, Hyeonbin Hwang, Seungone Kim, Yongrae Jo, James Thorne, Juho Kim, and Minjoon Seo. 2024. FLASK: Fine-grained language model evaluation based on alignment skill sets. In ICLR .

- Wayne Xin Zhao, Kun Zhou, Junyi Li, Tianyi Tang, Xiaolei Wang, Yupeng Hou, Yingqian Min, Beichen Zhang, Junjie Zhang, Zican Dong, Yifan Du, Chen Yang, Yushuo Chen, Z. Chen, Jinhao Jiang, Ruiyang Ren, Yifan Li, Xinyu Tang, Zikang Liu, Peiyu Liu, Jianyun Nie, and Ji rong Wen. 2023. A survey of large language models. ArXiv .

- Lianmin Zheng, Wei-Lin Chiang, Ying Sheng, Siyuan Zhuang, Zhanghao Wu, Yonghao Zhuang, Zi Lin, Zhuohan Li, Dacheng Li, Eric Xing, et al. 2024. Judging llm-as-a-judge with mt-bench and chatbot arena. NeurIPS , 36.

- Denny Zhou, Nathanael Schärli, Le Hou, Jason Wei, Nathan Scales, Xuezhi Wang, Dale Schuurmans, Claire Cui, Olivier Bousquet, Quoc V Le, and Ed H. Chi. 2023. Least-to-most prompting enables complexz reasoning in large language models. In ICLR .

## A Details in Dataset Construction

Classifying questions based on depth of knowledge To categorize questions from the TutorEval dataset (Chevalier et al., 2024), we use GPT-4 Turbo set at a temperature of 0.7, following the specific prompt detailed in Table 19. We evaluate the model's classification accuracy using a validation set of 50 questions, which we have previously annotated with their respective depth of knowledge levels. Our optimal prompting strategy involves incorporating key points from each question provided in the original dataset and instructing the model to provide a step-by-step explanation of its classification reasoning. This approach achieves a precision of 0.67 and a recall of 0.77, with a low rate of false positives. Analysis of the entire set of 834 questions reveals the distribution of depth levels: 43% at D 2 , 33.6% at D 3 , 23.3% at D 1 , and only one question at D 4 .

D 3 question filtering and disambiguation From the 280 D 3 questions initially identified, we manually exclude questions that are not selfcontained, meaning they refer to specific contexts or excerpts in textbook passages that cannot be seamlessly integrated into our input. Examples include questions like, 'I don't understand the point of Theorems 4.3.2 and 4.3.3. Why do we care about these statements?' and 'Please tell me the common conceptual points between the Weinrich and Wise 1928 study and the Roland et al. 1980 paper .' Additionally, we disambiguate questions to ensure clarity and context accuracy. For example, the question 'Why is branching unstructured? And is it a bad design choice?' was initially vague about its reference to 'branching.' Upon review, we identify the context as computer programming rather than database systems and revise the question to: 'In the context of computer programming, why is branching considered unstructured, and is it considered a poor design choice?'.

Question deduplication and augmentation As explained in Section 3.3, we leverage cosine similarity of question embeddings produced by a Sentence Transformers embedding model 10 (Reimers and Gurevych, 2019) to identify near-duplicate questions. Specifically, within the same depth 1 or 2, we apply a similarity threshold of 0.9 to identify duplicates and eliminate them. For questions across D 1 and D 2 , we remove D 2 questions with a

10 sentence-transformers/all-mpnet-base-v2

Top-1 before deduplication (similarity = 0.97)

D 2 : How do you calculate the determinant of a matrix?

D 1 : How do you find the determinant of a matrix?

Top-1 after deduplication (similarity = 0.93)

D 2 : What does it mean for two vectors to be orthogonal, and how can you verify this property?

- D 1 : What does it mean for two vectors to be orthogonal?

Table 4: Top-1 similar question pairs between D 2 and D 1 before and after the deduplication and augmentation process. While the pair above shares essentially the same depth of knowledge, the pair below substantially differ in knowledge depth due to the D 2 question asking additional procedures.

Describe how division and remainders work when considering congruence modulo a number.

1. What is the result of a division called?

2. How is a remainder defined in division?

3. What does it mean for two numbers to be congruent modulo a number?

4. What does the term 'congruence modulo a number' mean? ⇒ What is the modulo operation in mathematics?

Table 5: The original 4th shallower question (red) is asking redundant knowledge addressed in the 3rd question. We remove the duplicate question and replace it with a question asking a different concept (blue).

similarity score ≥ 0 . 9 to D 1 questions and remove D 1 questions with a similarity score between 0.8 and 0.9 to D 1 questions, under the rationale that the D 2 question is effectively at D 1 level and vice versa. The similarity threshold and the candidate to remove was determined through qualitative analysis. We analyze that the false positive rate of D 1 questions misclassified as D 2 decreases from 60% to 7% (reduction rate of 88%). Table 4 compares top-1 similar question pairs between D 2 and D 1 before and after deduplication. We further use GPT-4 Turbo with temperature 0.7 (prompt in Table 25 and 26) to augment questions filling the gap of knowledge. Table 5 illustrates how an augmented question successfully replaces a previously redundant question, enhancing comprehensiveness.

Motivation of question debiasing In our preliminary study, we found that models tend to favor 'yes' over 'no' at the beginning of the response to a question that can be answered in binary format, as exemplified in Table 6. We recognize that the inherent positivity bias in models (Augustine et al., 2011; Dodds et al., 2015; Papadatos and Freedman, 2023) has the potential to skew the model's rea-

Original question:

Being congruent modulo an ideal sounds a bit like two numbers being congruent modulo another number. Is there a connection between these two notions? ⇒ Yes

## Predicted answer:

Yes, there is a connection between the two notions. In mathematics, when we say that two integers are congruent modulo n, it means that they have the same remainder when divided by n. For example, 3 and 6 are congruent modulo 3 because when we divide 3 by 3, the remainder is 0, and when we divide 6 by 3, the remainder is also 0. [... ]

## Flipped question:

Are the concepts of being congruent modulo an ideal and two numbers being congruent modulo another number entirely distinct with no connection ? ⇒ No

## Predicted answer:

Yes, the concepts of being congruent modulo an ideal and two numbers being congruent modulo another number are distinct. When we say that two numbers a and b are congruent modulo n, we mean that their difference a - b is a multiple of n. This is written as a ≡ b (mod n). [... ]

Table 6: Example of Mistral 7B Instruct v0.2 favoring affirmative responses over negative responses when the knowledge required is consistent but only the question format is flipped.

Are there problems that one can use standard induction to prove but cannot use strong induction to prove? ⇒ What kind of problems can be proven using standard induction but not strong induction?

If I understand correctly, adding sine functions always results in a new sine function?

⇒ Clarify my understanding that adding sine functions always results in a new sine function.

Can a linear transformation map all points of a vector space to a single point, and under what conditions does this occur? ⇒ Describe the possibility of a linear transformation mapping all points of a vector space to a single point. Under what conditions does this occur?

Table 7: Example conversions of a binary question into a non-binary question.