# PUZZLES: A Benchmark for Neural Algorithmic Reasoning

**Authors**: ETH Zürich

Abstract

Algorithmic reasoning is a fundamental cognitive ability that plays a pivotal role in problem-solving and decision-making processes. Reinforcement Learning (RL) has demonstrated remarkable proficiency in tasks such as motor control, handling perceptual input, and managing stochastic environments. These advancements have been enabled in part by the availability of benchmarks. In this work we introduce PUZZLES, a benchmark based on Simon Tatham’s Portable Puzzle Collection, aimed at fostering progress in algorithmic and logical reasoning in RL. PUZZLES contains 40 diverse logic puzzles of adjustable sizes and varying levels of complexity; many puzzles also feature a diverse set of additional configuration parameters. The 40 puzzles provide detailed information on the strengths and generalization capabilities of RL agents. Furthermore, we evaluate various RL algorithms on PUZZLES, providing baseline comparisons and demonstrating the potential for future research. All the software, including the environment, is available at https://github.com/ETH-DISCO/rlp.

Human intelligence relies heavily on logical and algorithmic reasoning as integral components for solving complex tasks. While Machine Learning (ML) has achieved remarkable success in addressing many real-world challenges, logical and algorithmic reasoning remains an open research question [1, 2, 3, 4, 5, 6, 7]. This research question is supported by the availability of benchmarks, which allow for a standardized and broad evaluation framework to measure and encourage progress [8, 9, 10].

Reinforcement Learning (RL) has made remarkable progress in various domains, showcasing its capabilities in tasks such as game playing [11, 12, 13, 14, 15] , robotics [16, 17, 18, 19] and control systems [20, 21, 22]. Various benchmarks have been proposed to enable progress in these areas [23, 24, 25, 26, 27, 28, 29]. More recently, advances have also been made in the direction of logical and algorithmic reasoning within RL [30, 31, 32]. Popular examples also include the games of Chess, Shogi, and Go [33, 34]. Given the importance of logical and algorithmic reasoning, we propose a benchmark to guide future developments in RL and more broadly machine learning.

Logic puzzles have long been a playful challenge for humans, and they are an ideal testing ground for evaluating the algorithmic and logical reasoning capabilities of RL agents. A diverse range of puzzles, similar to the Atari benchmark [24], favors methods that are broadly applicable. Unlike tasks with a fixed input size, logic puzzles can be solved iteratively once an algorithmic solution is found. This allows us to measure how well a solution attempt can adapt and generalize to larger inputs. Furthermore, in contrast to games such as Chess and Go, logic puzzles have a known solution, making reward design easier and enabling tracking progress and guidance with intermediate rewards.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Puzzle Grid: Assorted Puzzle Types

### Overview

The image presents a grid of 36 different puzzle types, each displayed as a small example or initial state. The puzzles vary in style and mechanics, including grid-based logic puzzles, connection puzzles, and number puzzles.

### Components/Axes

The image is organized as a 6x6 grid of puzzle examples. Each puzzle has a title above it, indicating the puzzle type.

The puzzle types are:

1. Black Box

2. Bridges

3. Cube

4. Dominosa

5. Fifteen

6. Filling

7. Flip

8. Flood

9. Galaxies

10. Guess

11. Inertia

12. Keen

13. Lightup

14. Loopy

15. Magnets

16. Map

17. Mines

18. Mosaic

19. Net

20. Netslide

21. Palisade

22. Pattern

23. Pearl

24. Pegs

25. Range

26. Rectangles

27. Same Game

28. Signpost

29. Singles

30. Sixteen

31. Slant

32. Solo

33. Tents

34. Towers

35. Tracks

36. Twiddle

37. Undead

38. Unequal

39. Unruly

40. Untangle

### Detailed Analysis or Content Details

Here's a breakdown of some of the puzzle examples:

* **Black Box:** A grid with numbers along the edges and black circles inside. The grid is 9x9. Numbers 5, 1, 5, 1, 5, 1, 5, 1, 5, H, 2 are along the top. Numbers 1, 2, 3, 4 are along the bottom. Numbers 1, 2, 3, 4 are along the left. Numbers H, 2, 3, 3 are along the right.

* **Bridges:** A network of circles with numbers inside, connected by lines.

* **Cube:** A 2D representation of a 3D cube.

* **Dominosa:** A grid of numbers, where the goal is to find pairs of numbers that form dominoes.

* **Fifteen:** A sliding tile puzzle with numbers 1 through 15.

* **Filling:** A grid with numbers indicating how many cells in a row or column should be filled.

* **Flip:** A grid with shaded and unshaded cells, where flipping a cell changes the state of adjacent cells.

* **Flood:** A grid of colored squares, where the goal is to make the entire grid one color by changing the color of the top-left square.

* **Galaxies:** A grid with circles, where the goal is to divide the grid into regions, each containing a circle.

* **Guess:** A color-based logic puzzle.

* **Inertia:** A grid with diamonds and squares, where the goal is to move a ball to a target.

* **Keen:** A grid with numbers and cages, where the numbers in each cage must satisfy a given operation.

* **Lightup:** A grid with black and white cells, where the goal is to place light bulbs to illuminate all white cells.

* **Loopy:** A grid of cells, where the goal is to draw a loop that passes through some of the cells.

* **Magnets:** A grid with plus and minus signs, where the goal is to place magnets in the cells.

* **Map:** A puzzle with different colored regions.

* **Mines:** A grid with numbers indicating the number of mines in adjacent cells.

* **Mosaic:** A grid with numbers.

* **Net:** A grid with squares and lines.

* **Netslide:** A grid with squares and arrows.

* **Palisade:** A grid with numbers.

* **Pattern:** A grid with black and white cells.

* **Pearl:** A grid with circles and lines.

* **Pegs:** A grid with blue circles.

* **Range:** A grid with numbers.

* **Rectangles:** A grid with numbers.

* **Same Game:** A grid of colored squares, where the goal is to remove groups of squares of the same color.

* **Signpost:** A grid with arrows and numbers.

* **Singles:** A grid with numbers.

* **Sixteen:** A grid with numbers.

* **Slant:** A grid with lines.

* **Solo:** A grid with numbers.

* **Tents:** A grid with trees and numbers.

* **Towers:** A grid with numbers.

* **Tracks:** A grid with tracks.

* **Twiddle:** A puzzle with numbered tiles.

* **Undead:** A grid with symbols.

* **Unequal:** A grid with numbers and inequality signs.

* **Unruly:** A grid with black and white cells.

* **Untangle:** A graph with nodes and edges.

### Key Observations

The image provides a diverse collection of puzzle types, showcasing different problem-solving skills and game mechanics. The puzzles range from simple logic puzzles to more complex spatial reasoning challenges.

### Interpretation

The image serves as a visual catalog of various puzzle types, demonstrating the breadth and variety within the puzzle genre. It highlights the different approaches to problem-solving and the diverse mechanics used to create engaging and challenging games. The collection could be used to introduce someone to different puzzle styles or to provide a visual reference for puzzle enthusiasts.

</details>

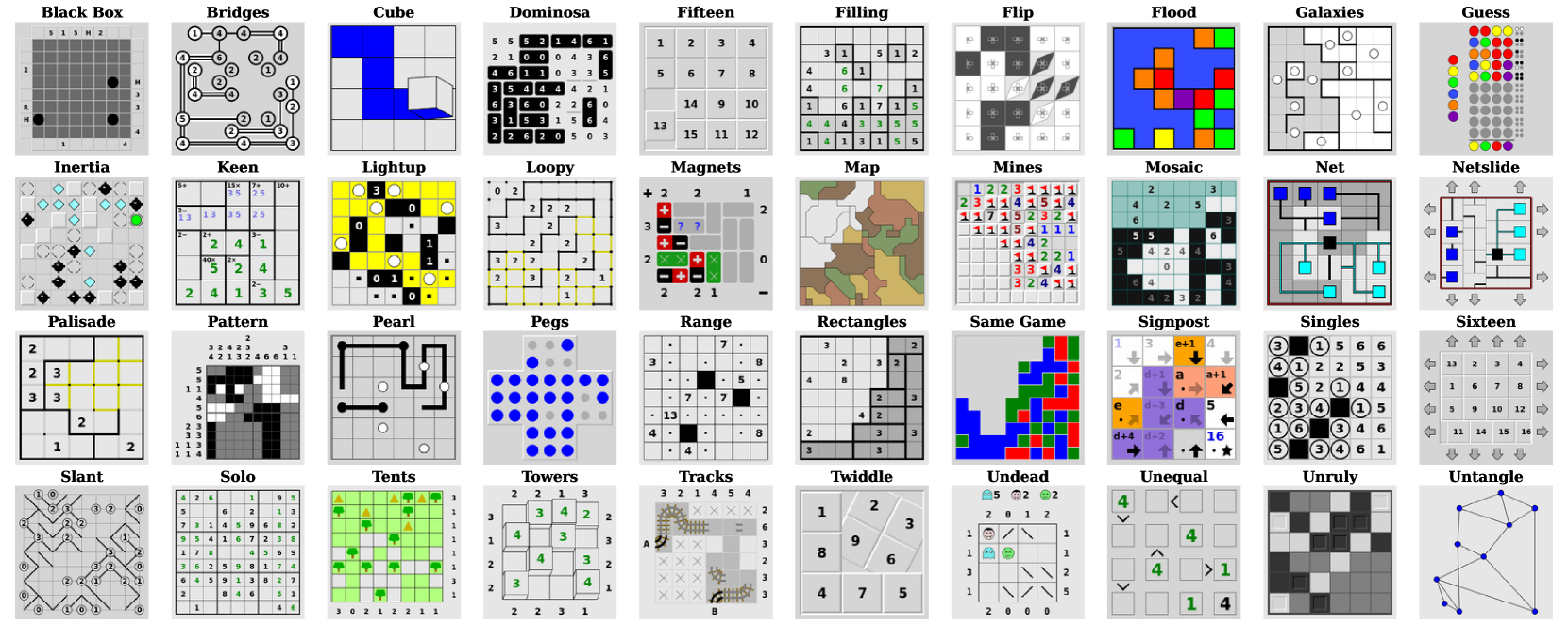

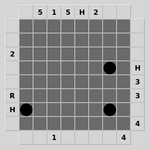

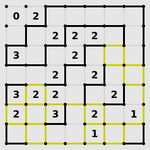

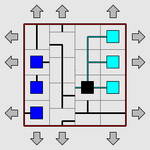

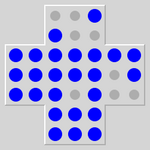

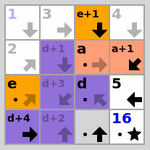

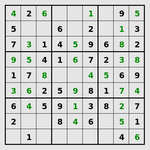

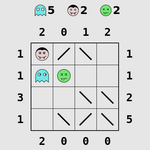

Figure 1: All puzzle classes of Simon Tatham’s Portable Puzzle Collection.

In this paper, we introduce PUZZLES, a comprehensive RL benchmark specifically designed to evaluate RL agents’ algorithmic reasoning and problem-solving abilities in the realm of logical and algorithmic reasoning. Simon Tatham’s Puzzle Collection [35], curated by the renowned computer programmer and puzzle enthusiast Simon Tatham, serves as the foundation of PUZZLES. This collection includes a set of 40 logic puzzles, shown in Figure 1, each of which presents distinct challenges with various dimensions of adjustable complexity. They range from more well-known puzzles, such as Solo or Mines (commonly known as Sudoku and Minesweeper, respectively) to lesser-known puzzles such as Cube or Slant. PUZZLES includes all 40 puzzles in a standardized environment, each playable with a visual or discrete input and a discrete action space.

Contributions.

We propose PUZZLES, an RL environment based on Simon Tatham’s Puzzle Collection, comprising a collection of 40 diverse logic puzzles. To ensure compatibility, we have extended the original C source code to adhere to the standards of the Pygame library. Subsequently, we have integrated PUZZLES into the Gymnasium framework API, providing a straightforward, standardized, and widely-used interface for RL applications. PUZZLES allows the user to arbitrarily scale the size and difficulty of logic puzzles, providing detailed information on the strengths and generalization capabilities of RL agents. Furthermore, we have evaluated various RL algorithms on PUZZLES, providing baseline comparisons and demonstrating the potential for future research.

1 Related Work

RL benchmarks.

Various benchmarks have been proposed in RL. Bellemare et al. [24] introduced the influential Atari-2600 benchmark, on which Mnih et al. [11] trained RL agents to play the games directly from pixel inputs. This benchmark demonstrated the potential of RL in complex, high-dimensional environments. PUZZLES allows the use of a similar approach where only pixel inputs are provided to the agent. Todorov et al. [23] presented MuJoCo which provides a diverse set of continuous control tasks based on a physics engine for robotic systems. Another control benchmark is the DeepMind Control Suite by Duan et al. [26], featuring continuous actions spaces and complex control problems. The work by Côté et al. [28] emphasized the importance of natural language understanding in RL and proposed a benchmark for evaluating RL methods in text-based domains. Lanctot et al. [29] introduced OpenSpiel, encompassing a wide range of games, enabling researchers to evaluate and compare RL algorithms’ performance in game-playing scenarios. These benchmarks and frameworks have contributed significantly to the development and evaluation of RL algorithms. OpenAI Gym by Brockman et al. [25], and its successor Gymnasium by the Farama Foundation [36] helped by providing a standardized interface for many benchmarks. As such, Gym and Gymnasium have played an important role in facilitating reproducibility and benchmarking in reinforcement learning research. Therefore, we provide PUZZLES as a Gymnasium environment to enable ease of use.

Logical and algorithmic reasoning within RL.

Notable research in RL on logical reasoning includes automated theorem proving using deep RL [16] or RL-based logic synthesis [37]. Dasgupta et al. [38] find that RL agents can perform a certain degree of causal reasoning in a meta-reinforcement learning setting. The work by Jiang and Luo [30] introduces Neural Logic RL, which improves interpretability and generalization of learned policies. Eppe et al. [39] provide steps to advance problem-solving as part of hierarchical RL. Fawzi et al. [31] and Mankowitz et al. [32] demonstrate that RL can be used to discover novel and more efficient algorithms for well-known problems such as matrix multiplication and sorting. Neural algorithmic reasoning has also been used as a method to improve low-data performance in classical RL control environments [40, 41]. Logical reasoning might be required to compete in certain types of games such as chess, shogi and Go [33, 34, 42, 13], Poker [43, 44, 45, 46] or board games [47, 48, 49, 50]. However, these are usually multi-agent games, with some also featuring imperfect information and stochasticity.

Reasoning benchmarks.

Various benchmarks have been introduced to assess different types of reasoning capabilities, although only in the realm of classical ML. IsarStep, proposed by Li et al. [8], specifically designed to evaluate high-level mathematical reasoning necessary for proof-writing tasks. Another significant benchmark in the field of reasoning is the CLRS Algorithmic Reasoning Benchmark, introduced by Veličković et al. [9]. This benchmark emphasizes the importance of algorithmic reasoning in machine learning research. It consists of 30 different types of algorithms sourced from the renowned textbook “Introduction to Algorithms” by Cormen et al. [51]. The CLRS benchmark serves as a means to evaluate models’ understanding and proficiency in learning various algorithms. In the domain of large language models (LLMs), BIG-bench has been introduced by Srivastava et al. [10]. BIG-bench incorporates tasks that assess the reasoning capabilities of LLMs, including logical reasoning.

Despite these valuable contributions, a suitable and unified benchmark for evaluating logical and algorithmic reasoning abilities in single-agent perfect-information RL has yet to be established. Recognizing this gap, we propose PUZZLES as a relevant and necessary benchmark with the potential to drive advancements and provide a standardized evaluation platform for RL methods that enable agents to acquire algorithmic and logical reasoning abilities.

2 The PUZZLES Environment

In the following section we give an overview of the PUZZLES environment. The puzzles are available to play online at https://www.chiark.greenend.org.uk/~sgtatham/puzzles/; excellent standalone apps for Android and iOS exist as well. The environment is written in both Python and C. For a detailed explanation of all features of the environment as well as their implementation, please see Appendices B and C.

Gymnasium RL Code

puzzle_env.py

puzzle.py

pygame.c

Puzzle C Sources

Pygame Library

puzzle Module

rlp Package Python C

Figure 2: Code and library landscape around the PUZZLES Environment, made up of the rlp Package and the puzzle Module . The figure shows how the puzzle Module presented in this paper fits within Tathams’s Puzzle Collection footnotemark: code, the Pygame package, and a user’s Gymnasium reinforcement learning code . The different parts are also categorized as Python language and C language.

2.1 Environment Overview

Within the PUZZLES environment, we encapsulate the tasks presented by each logic puzzle by defining consistent state, action, and observation spaces. It is also important to note that the large majority of the logic puzzles are designed so that they can be solved without requiring any guesswork. By default, we provide the option of two observation spaces, one is a representation of the discrete internal game state of the puzzle, the other is a visual representation of the game interface. These observation spaces can easily be wrapped in order to enable PUZZLES to be used with more advanced neural architectures such as graph neural networks (GNNs) or Transformers. All puzzles provide a discrete action space which only differs in cardinality. To accommodate the inherent difficulty and the need for proper algorithmic reasoning in solving these puzzles, the environment allows users to implement their own reward structures, facilitating the training of successful RL agents. All puzzles are played in a two-dimensional play area with deterministic state transitions, where a transition only occurs after a valid user input. Most of the puzzles in PUZZLES do not have an upper bound on the number of steps, they can only be completed by successfully solving the puzzle. An agent with a bad policy is likely never going to reach a terminal state. For this reason, we provide the option for early episode termination based on state repetitions. As we show in Section 3.4, this is an effective method to facilitate learning.

2.2 Difficulty Progression and Generalization

The PUZZLES environment places a strong emphasis on giving users control over the difficulty exhibited by the environment. For each puzzle, the problem size and difficulty can be adjusted individually. The difficulty affects the complexity of strategies that an agent needs to learn to solve a puzzle. As an example, Sudoku has tangible difficulty options: harder difficulties may require the use of new strategies such as forcing chains Forcing chains works by following linked cells to evaluate possible candidates, usually starting with a two-candidate cell. to find a solution, whereas easy difficulties only need the single position strategy. The single position strategy involves identifying cells which have only a single possible value.

The scalability of the puzzles in our environment offers a unique opportunity to design increasingly complex puzzle configurations, presenting a challenging landscape for RL agents to navigate. This dynamic nature of the benchmark serves two important purposes. Firstly, the scalability of the puzzles facilitates the evaluation of an agent’s generalization capabilities. In the PUZZLES environment, it is possible to train an agent in an easy puzzle setting and subsequently evaluate its performance in progressively harder puzzle configurations. For most puzzles, the cardinality of the action space is independent of puzzle size. It is therefore also possible to train an agent only on small instances of a puzzle and then evaluate it on larger sizes. This approach allows us to assess whether an agent has learned the correct underlying algorithm and generalizes to out-of-distribution scenarios. Secondly, it enables the benchmark to remain adaptable to the continuous advancements in RL methodologies. As RL algorithms evolve and become more capable, the puzzle configurations can be adjusted accordingly to maintain the desired level of difficulty. This ensures that the benchmark continues to effectively assess the capabilities of the latest RL methods.

3 Empirical Evaluation

We evaluate the baseline performance of numerous commonly used RL algorithms on our PUZZLES environment. Additionally, we also analyze the impact of certain design decisions of the environment and the training setup. Our metric of interest is the average number of steps required by a policy to successfully complete a puzzle, where lower is better. We refer to the term successful episode to denote the successful completion of a single puzzle instance. We also look at the success rate, i.e. what percentage of the puzzles was completed successfully.

To provide an understanding of the puzzle’s complexity and to contextualize the agents’ performance, we include an upper-bound estimate of the optimal number of steps required to solve the puzzle correctly. This estimate is a combination of both the steps required to solve the puzzle using an optimal strategy, and an upper bound on the environment steps required to achieve this solution, such as moving the cursor to the correct position. The upper bound is denoted as Optimal. Please refer to LABEL:tab:parameters for details on how this upper bound is calculated for each puzzle.

We run experiments based on all the RL algorithms presented in Table 8. We include both popular traditional algorithms such as PPO, as well as algorithms designed more specifically for the kinds of tasks presented in PUZZLES. Where possible, we used the implementations available in the RL library Stable Baselines 3 [52], using the default hyper-parameters. For MuZero and DreamerV3, we used the code available at [53] and [54], respectively. We provide a summary of all algorithms in Appendix Table 8. In total, our experiments required approximately 10’000 GPU hours.

All selected algorithms are compatible with the discrete action space required by our environment. This circumstance prohibits the use of certain other common RL algorithms such as Soft-Actor Critic (SAC) [55] or Twin Delayed Deep Deterministic Policy Gradients (TD3) [56].

3.1 Baseline Experiments

For the general baseline experiments, we trained all agents on all puzzles and evaluate their performance. Due to the challenging nature of our puzzles, we have selected an easy difficulty and small size of the puzzle where possible. Every agent was trained on the discrete internal state observation using five different random seeds. We trained all agents by providing rewards only at the end of each episode upon successful completion or failure. For computational reasons, we truncated all episodes during training and testing at 10,000 steps. For such a termination, reward was kept at 0. We evaluate the effect of this episode truncation in Section 3.4 We provide all experimental parameters, including the exact parameters supplied for each puzzle in Section E.3.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart: Average Episode Length Comparison

### Overview

The image is a bar chart comparing the average episode length of different reinforcement learning algorithms. The chart displays the average episode length on the y-axis and the algorithm names on the x-axis. Error bars are included on each bar to indicate the variability or uncertainty in the average episode length.

### Components/Axes

* **Y-axis:** "Average Episode Length", with a numerical scale from 0 to 4000, incrementing by 1000.

* **X-axis:** Categorical axis listing the reinforcement learning algorithms: A2C, DQN, DreamerV3, MuZero, PPO, QRDQN, RecurrentPPO, TRPO, and Optimal.

* **Bars:** Each bar represents the average episode length for a specific algorithm. The bars are light blue.

* **Error Bars:** Black vertical lines extending above and below each bar, indicating the standard deviation or confidence interval.

### Detailed Analysis

Here's a breakdown of the approximate average episode lengths and error bar ranges for each algorithm:

* **A2C:** Average episode length is approximately 2750. Error bar extends from approximately 1750 to 3750.

* **DQN:** Average episode length is approximately 2000. Error bar extends from approximately 1000 to 2400.

* **DreamerV3:** Average episode length is approximately 1400. Error bar extends from approximately 500 to 2300.

* **MuZero:** Average episode length is approximately 1800. Error bar extends from approximately 800 to 2800.

* **PPO:** Average episode length is approximately 1600. Error bar extends from approximately 700 to 2500.

* **QRDQN:** Average episode length is approximately 2750. Error bar extends from approximately 1750 to 4300.

* **RecurrentPPO:** Average episode length is approximately 2350. Error bar extends from approximately 1350 to 3350.

* **TRPO:** Average episode length is approximately 1750. Error bar extends from approximately 750 to 2400.

* **Optimal:** Average episode length is approximately 200. Error bar is not visible, suggesting very low variance.

### Key Observations

* QRDQN and A2C have the highest average episode lengths, both around 2750.

* Optimal has the lowest average episode length, significantly lower than all other algorithms, at approximately 200.

* QRDQN has the largest error bar, indicating high variability in episode length.

* Optimal has a very small error bar, indicating low variability in episode length.

### Interpretation

The bar chart provides a comparison of the performance of different reinforcement learning algorithms based on the average episode length. A lower average episode length generally indicates better performance, as the agent is able to achieve its goal in fewer steps. The "Optimal" algorithm has the lowest average episode length, suggesting it is the most efficient in this context. The error bars indicate the consistency of the performance of each algorithm. Algorithms with larger error bars, like QRDQN, have more variable performance, while algorithms with smaller error bars, like Optimal, have more consistent performance.

</details>

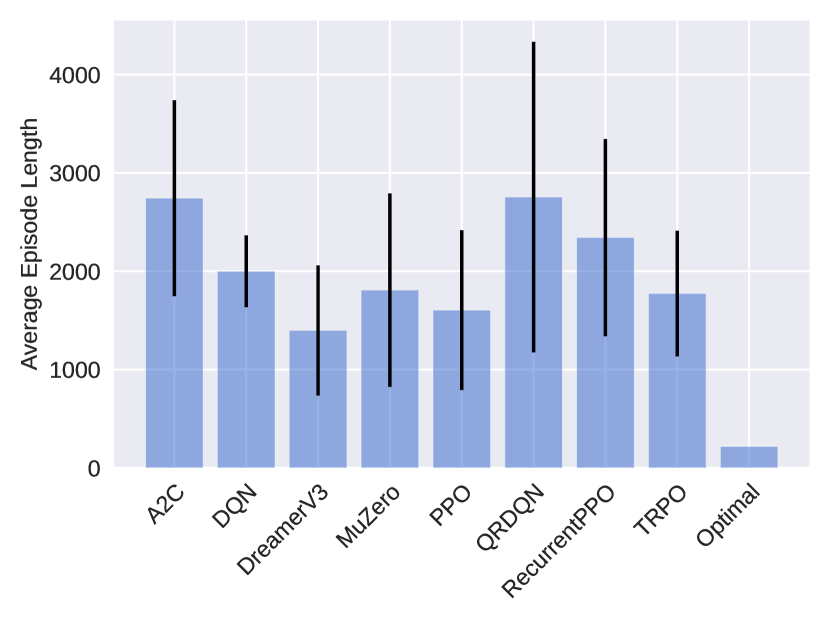

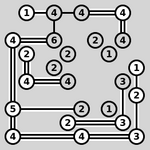

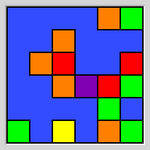

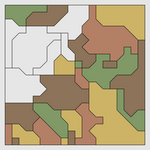

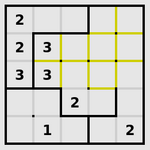

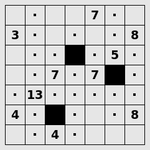

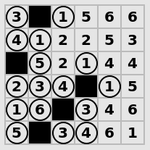

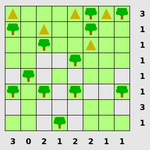

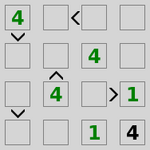

Figure 3: Average episode length of successful episodes for all evaluated algorithms on all puzzles in the easiest setting (lower is better). Some puzzles, namely Loopy, Pearl, Pegs, Solo, and Unruly, were intractable for all algorithms and were therefore excluded in this aggregation. The standard deviation is computed with respect to the performance over all evaluated instances for all trained seeds, aggregated for the total number of puzzles. Optimal refers the upper bound of the performance of an optimal policy, it therefore does not include a standard deviation. We see that DreamerV3 performs the best with an average episode length of 1334. However, this is still worse than the optimal upper bound at an average of 217 steps.

To track an agent’s progress, we use episode lengths, i.e., how many actions an agent needs to solve a puzzle. A lower number of actions indicates a stronger policy that is closer to the optimal solution. To obtain the final evaluation, we run each policy on 1000 random episodes of the respective puzzle, again with a maximum step size of 10,000 steps. All experiments were conducted on NVIDIA 3090 GPUs. The training time for a single agent with 2 million PPO steps varied depending on the puzzle and ranged from approximately 1.75 to 3 hours. The training for DreamerV3 and MuZero was more demanding and training time ranged from approximately 10 to 20 hours.

Figure 3 shows the average successful episode length for all algorithms. It can be seen that DreamerV3 performs best while PPO also achieves good performance, closely followed by TRPO and MuZero. This is especially interesting since PPO and TRPO follow much simpler training routines than DreamerV3 and MuZero. It seems that the implicit world models learned by DreamerV3 struggle to appropriately capture some puzzles. The high variance of MuZero may indicate some instability during training or the need for puzzle-specific hyperparamater tuning. Upon closer inspection of the detailed results, presented in Appendix Table 9 and 10, DreamerV3 manages to solve 62.7% of all puzzle instances. In 14 out of the 40 puzzles, it has found a policy that solves the puzzles within the Optimal upper bound. PPO and TRPO managed to solve an average of 61.6% and 70.8% of the puzzle instances, however only 8 and 11 of the puzzles have consistently solved within the Optimal upper bound. The algorithms A2C, RecurrentPPO, DQN and QRDQN perform worse than a pure random policy. Overall, it seems that some of the environments in PUZZLES are quite challenging and well suited to show the difference in performance between algorithms. It is also important to note that all the logic puzzles are designed so that they can be solved without requiring any guesswork.

3.2 Difficulty

We further evaluate the performance of a subset of the puzzles on the easiest preset difficulty level for humans. We selected all puzzles where a random policy was able to solve them with a probability of at least 10%, which are Netslide, Same Game and Untangle. By using this selection, we estimate that the reward density should be relatively high, ideally allowing the agent to learn a good policy. Again, we train all algorithms listed in Table 8. We provide results for the two strongest algorithms, PPO and DreamerV3 in Table 1, with complete results available in Appendix Table 9. Note that as part of Section 3.4, we also perform ablations using DreamerV3 on more puzzles on the easiest preset difficulty level for humans.

Table 1: Comparison of how many steps agents trained with PPO and DreamerV3 need on average to solve puzzles of two difficulty levels. In brackets, the percentage of successful episodes is reported. The difficulty levels correspond to the overall easiest and the easiest-for-humans settings. We also give the upper bound of optimal steps needed for each configuration.

| Netslide | 2x3b1 | $35.3± 0.7$ (100.0%) | $12.0± 0.4$ (100.0%) | 48 |

| --- | --- | --- | --- | --- |

| 3x3b1 | $4742.1± 2960.1$ (9.2%) | $3586.5± 676.9$ (22.4%) | 90 | |

| Same Game | 2x3c3s2 | $11.5± 0.1$ (100.0%) | $7.3± 0.2$ (100.0%) | 42 |

| 5x5c3s2 | $1009.3± 1089.4$ (30.5%) | $527.0± 162.0$ (30.2%) | 300 | |

| Untangle | 4 | $34.9± 10.8$ (100.0%) | $6.3± 0.4$ (100.0%) | 80 |

| 6 | $2294.7± 2121.2$ (96.2%) | $1683.3± 73.7$ (82.0%) | 150 | |

We can see that for both PPO and DreamerV3, the percentage of successful episodes decreases, with a large increase in steps required. DreamerV3 performs clearly stronger than PPO, requiring consistently fewer steps, but still more than the optimal policy. Our results indicate that puzzles with relatively high reward density at human difficulty levels remain challenging. We propose to use the easiest human difficulty level as a first measure to evaluate future algorithms. The details of the easiest human difficulty setting can be found in Appendix Table 7. If this level is achieved, difficulty can be further scaled up by increasing the size of the puzzles. Some puzzles also allow for an increase in difficulty with fixed size.

3.3 Effect of Action Masking and Observation Representation

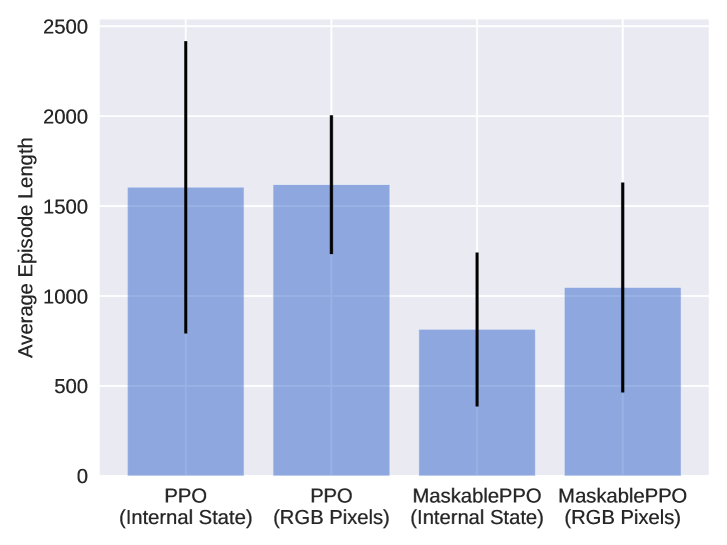

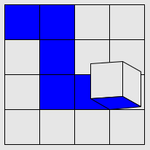

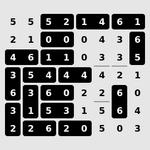

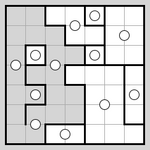

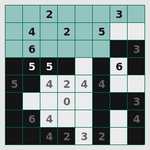

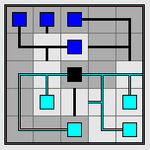

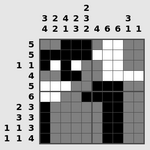

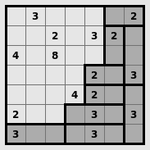

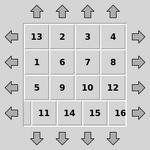

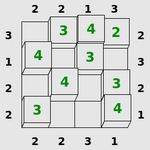

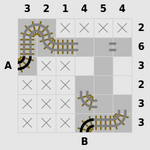

We evaluate the effect of action masking, as well as observation type, on training performance. Firstly, we analyze whether action masking, as described in paragraph “Action Masking” in Section B.4, can positively affect training performance. Secondly, we want to see if agents are still capable of solving puzzles while relying on pixel observations. Pixel observations allow for the exact same input representation to be used for all puzzles, thus achieving a setting that is very similar to the Atari benchmark. We compare MaskablePPO to the default PPO without action masking on both types of observations. We summarize the results in Figure 4. Detailed results for masked RL agents on the pixel observations are provided in Appendix Table 11.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: Average Episode Length Comparison

### Overview

The image is a bar chart comparing the average episode length of two reinforcement learning algorithms, PPO and MaskablePPO, under two different input conditions: "Internal State" and "RGB Pixels". The chart displays the average episode length as the height of the bars, with error bars indicating the variability or standard deviation.

### Components/Axes

* **Y-axis:** "Average Episode Length", with a numerical scale from 0 to 2500 in increments of 500.

* **X-axis:** Categorical axis representing the different algorithm and input combinations:

* PPO (Internal State)

* PPO (RGB Pixels)

* MaskablePPO (Internal State)

* MaskablePPO (RGB Pixels)

* **Bars:** Light blue bars represent the average episode length for each category.

* **Error Bars:** Black vertical lines extending above and below each bar, indicating the range of variability.

### Detailed Analysis

The chart presents four distinct data points, each representing a different configuration of the reinforcement learning algorithm.

* **PPO (Internal State):** The average episode length is approximately 1600. The error bar extends from approximately 700 to 2500.

* **PPO (RGB Pixels):** The average episode length is approximately 1600. The error bar extends from approximately 1250 to 2000.

* **MaskablePPO (Internal State):** The average episode length is approximately 800. The error bar extends from approximately 300 to 1250.

* **MaskablePPO (RGB Pixels):** The average episode length is approximately 1050. The error bar extends from approximately 450 to 1650.

### Key Observations

* PPO has a higher average episode length than MaskablePPO, regardless of the input type (Internal State or RGB Pixels).

* The error bars for PPO (Internal State) and MaskablePPO (RGB Pixels) are larger, indicating greater variability in episode length.

* The error bars for PPO (RGB Pixels) and MaskablePPO (Internal State) are smaller, indicating less variability in episode length.

### Interpretation

The data suggests that the PPO algorithm generally results in longer episodes compared to MaskablePPO. This could indicate that PPO is more effective at exploring the environment or achieving a more stable policy. The use of "Internal State" versus "RGB Pixels" as input seems to have a less consistent impact, with the variability being more pronounced in some cases than others. The large error bars suggest that the performance of these algorithms can vary significantly from episode to episode, especially for PPO with internal state and MaskablePPO with RGB pixels. Further investigation would be needed to understand the factors contributing to this variability and to determine the statistical significance of the observed differences.

</details>

<details>

<summary>x4.png Details</summary>

### Visual Description

## Chart: Timesteps per Episode vs. Training Timesteps

### Overview

The image is a line graph comparing the performance of different reinforcement learning algorithms (PPO and MaskablePPO) using different observation types (RGB Pixels and Internal State). The graph plots "Timesteps per Episode" on a logarithmic scale against "Training Timesteps" on a linear scale.

### Components/Axes

* **Title:** None

* **X-axis:**

* Label: "Training Timesteps"

* Scale: Linear, from 0.00 to 2.00, with increments of 0.25. Multiplied by 10^6.

* Markers: 0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00

* **Y-axis:**

* Label: "Timesteps per Episode"

* Scale: Logarithmic (base 10), from 10^0 to 10^4

* Markers: 10^0, 10^1, 10^2, 10^3, 10^4

* **Legend:** Located at the bottom of the chart.

* **Magenta:** PPO (RGB Pixels)

* **Orange:** PPO (Internal State)

* **Blue:** MaskablePPO (RGB Pixels)

* **Green:** MaskablePPO (Internal State)

### Detailed Analysis

* **Magenta Line: PPO (RGB Pixels)**

* Trend: Initially increases rapidly, reaching approximately 10^3 timesteps per episode around 0.5 x 10^6 training timesteps. It then fluctuates significantly, with some drops and rises, before stabilizing around 10^2 timesteps per episode after 1.5 x 10^6 training timesteps.

* Data Points: Starts around 10^1, peaks around 10^3, stabilizes around 10^2.

* **Orange Line: PPO (Internal State)**

* Trend: Starts high, around 10^2 timesteps per episode, and decreases slightly before fluctuating around 10^1 to 10^2 timesteps per episode throughout the training process.

* Data Points: Starts around 10^2, fluctuates between 10^1 and 10^2.

* **Blue Line: MaskablePPO (RGB Pixels)**

* Trend: Starts around 10^2 timesteps per episode, decreases slightly, and then fluctuates around 10^1 timesteps per episode throughout the training process. There are some spikes to higher values.

* Data Points: Starts around 10^2, fluctuates around 10^1.

* **Green Line: MaskablePPO (Internal State)**

* Trend: Starts around 10^2 timesteps per episode, decreases rapidly to around 10^1 timesteps per episode, and remains relatively stable at that level throughout the training process.

* Data Points: Starts around 10^2, stabilizes around 10^1.

### Key Observations

* PPO (RGB Pixels) shows the most significant initial improvement but also the most instability.

* MaskablePPO (Internal State) converges quickly to a stable, low number of timesteps per episode.

* Using Internal State generally results in lower timesteps per episode compared to using RGB Pixels.

* MaskablePPO algorithms appear more stable than PPO algorithms.

### Interpretation

The graph illustrates the learning curves of different reinforcement learning algorithms under different observation conditions. The PPO algorithm, when using RGB pixels as input, initially learns faster but exhibits more instability during training. The MaskablePPO algorithm, especially when using the internal state, demonstrates more stable learning and converges to a lower number of timesteps per episode, suggesting more efficient learning. The choice of observation type (RGB Pixels vs. Internal State) significantly impacts the performance and stability of the algorithms. Using the internal state generally leads to more stable and efficient learning, likely because it provides a more direct and less noisy representation of the environment's state.

</details>

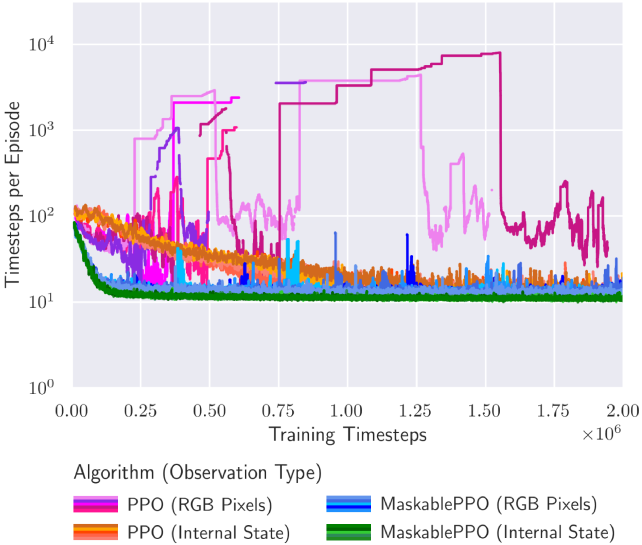

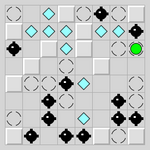

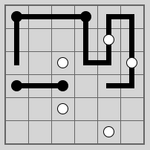

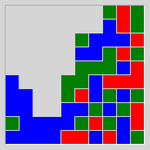

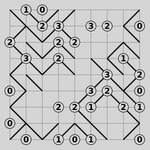

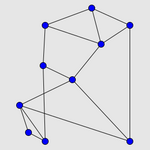

Figure 4: (left) We demonstrate the effect of action masking in both RGB observation and internal game state. By masking moves that do not change the current state, the agent requires fewer actions to explore, and therefore, on average solves a puzzle using fewer steps. (right) Moving average episode length during training for the Flood puzzle. Lower episode length is better, as the episode gets terminated as soon as the agent has solved a puzzle. Different colors describe different algorithms, where different shades of a color indicate different random seeds. Sparse dots indicate that an agent only occasionally managed to find a policy that solves a puzzle. It can be seen that both the use of discrete internal state observations and action masking have a positive effect on the training, leading to faster convergence and a stronger overall performance.

As we can observe in Figure 4, action masking has a strongly positive effect on training performance. This benefit is observed both in the discrete internal game state observations and on the pixel observations. We hypothesize that this is due to the more efficient exploration, as actions without effect are not allowed. As a result, the reward density during training is increased, and agents are able to learn a better policy. Particularly noteworthy are the outcomes related to Pegs. They show that an agent with action masking can effectively learn a successful policy, while a random policy without action masking consistently fails to solve any instance. As expected, training RL agents on pixel observations increases the difficulty of the task at hand. The agent must first understand how the pixel observation relates to the internal state of the game before it is able to solve the puzzle. Nevertheless, in combination with action masking, the agents manage to solve a large percentage of all puzzle instances, with 10 of the puzzles consistently solved within the optimal upper bound.

Furthermore, Figure 4 shows the individual training performance on the puzzle Flood. It can be seen that RL agents using action masking and the discrete internal game state observation converge significantly faster and to better policies compared to the baselines. The agents using pixel observations and no action masking struggle to converge to any reasonable policy.

3.4 Effect of Episode Length and Early Termination

We evaluate whether the cutoff episode length or early termination have an effect on training performance of the agents. For computational reasons, we perform these experiments on a selected subset of the puzzles on human level difficulty and only for DreamerV3 (see Section E.5 for details). As we can see in Table 2, increasing the maximum episode length during training from 10,000 to 100,000 does not improve performance. Only when episodes get terminated after visiting the exact same state more than 10 times, the agent is able to solve more puzzle instances on average (31.5% vs. 25.2%). Given the sparse reward structure, terminating episodes early seems to provide a better trade-off between allowing long trajectories to successfully complete and avoiding wasting resources on unsuccessful trajectories.

Table 2: Comparison of the effect of the maximum episode length (# Steps) and early termination (ET) on final performance. For each setting, we report average success episode length with standard deviation with respect to the random seed, all averaged over all selected puzzles. In brackets, the percentage of successful episodes is reported.

| $1e5$ | 10 | $2950.9± 1260.2$ (31.6%) |

| --- | --- | --- |

| - | $2975.4± 1503.5$ (25.2%) | |

| $1e4$ | 10 | $3193.9± 1044.2$ (26.1%) |

| - | $2892.4± 908.3$ (26.8%) | |

3.5 Generalization

PUZZLES is explicitly designed to facilitate the testing of generalization capabilities of agents with respect to different puzzle sizes or puzzle difficulties. For our experiments, we select puzzles with the highest reward density. We utilize a a custom observation wrapper and transformer-based encoder in order for the agent to be able to work with different input sizes, see Sections A.3 and A.4 for details. We call this approach PPO (Transformer)

Table 3: We test generalization capabilities of agents by evaluating them on puzzle sizes larger than their training environment. We report the average number of steps an agent needs to solve a puzzle, and the percentage of successful episodes in brackets. The difficulty levels correspond to the overall easiest and the easiest-for-humans settings. For PPO (Transformer), we selected the best checkpoint during training according to the performance in the training environment. For PPO (Transformer) †, we selected the best checkpoint during training according to the performance in the generalization environment.

| Netslide | 2x3b1 | ✓ | $244.1± 313.7$ (100.0%) | $242.0± 379.3$ (100.0%) |

| --- | --- | --- | --- | --- |

| 3x3b1 | ✗ | $9014.6± 2410.6$ (18.6%) | $9002.8± 2454.9$ (18.0%) | |

| Same Game | 2x3c3s2 | ✓ | $9.3± 10.9$ (99.8%) | $26.2± 52.9$ (99.7%) |

| 5x5c3s2 | ✗ | $379.0± 261.6$ (9.4%) | $880.1± 675.4$ (18.1%) | |

| Untangle | 4 | ✓ | $38.6± 58.2$ (99.8%) | $69.8± 66.4$ (100.0%) |

| 6 | ✗ | $3340.0± 3101.2$ (87.3%) | $2985.8± 2774.7$ (93.7%) | |

The results presented in Table 3 indicate that while it is possible to learn a policy that generalizes it remains a challenging problem. Furthermore, it can be observed that selecting the best model during training according to the performance on the generalization environment yields a performance benefit in that setting. This suggests that agents may learn a policy that generalizes better during the training process, but then overfit on the environment they are training on. It is also evident that generalization performance varies substantially across different random seeds. For Netslide, the best agent is capable of solving 23.3% of the puzzles in the generalization environment whereas the worst agent is only able to solve 11.2% of the puzzles, similar to a random policy. Our findings suggest that agents are generally capable of generalizing to more complex puzzles. However, further research is necessary to identify the appropriate inductive biases that allow for consistent generalization without a significant decline in performance.

4 Discussion

The experimental evaluation demonstrates varying degrees of success among different algorithms. For instance, puzzles such as Tracks, Map or Flip were not solvable by any of the evaluated RL agents, or only with performance similar to a random policy. This points towards the potential of intermediate rewards, better game rule-specific action masking, or model-based approaches. To encourage exploration in the state space, a mechanism that explicitly promotes it may be beneficial. On the other hand, the fact that some algorithms managed to solve a substantial amount of puzzles with presumably optimal performance demonstrates the advances in the field of RL. In light of the promising results of DreamerV3, the improvement of agents that have certain reasoning capabilities and an implicit world model by design stay an important direction for future research.

Experimental Results.

The experimental results presented in Section 3.1 and Section 3.3 underscore the positive impact of action masking and the correct observation type on performance. While a pixel representation would lead to a uniform observation for all puzzles, it currently increases complexity too much compared the discrete internal game state. Our findings indicate that incorporating action masking significantly improves the training efficiency of reinforcement learning algorithms. This enhancement was observed in both discrete internal game state observations and pixel observations. The mechanism for this improvement can be attributed to enhanced exploration, resulting in agents being able to learn more robust and effective policies. This was especially evident in puzzles where unmasked agents had considerable difficulty, thus showcasing the tangible advantages of implementing action masking for these puzzles.

Limitations.

While the PUZZLES framework provides the ability to gain comprehensive insights into the performance of various RL algorithms on logic puzzles, it is crucial to recognize certain limitations when interpreting results. The sparse rewards used in this baseline evaluation add to the complexity of the task. Moreover, all algorithms were evaluated with their default hyper-parameters. Additionally, the constraint of discrete action spaces excludes the application of certain RL algorithms.

In summary, the different challenges posed by the logic-requiring nature of these puzzles necessitates a good reward system, strong guidance of agents, and an agent design more focused on logical reasoning capabilities. It will be interesting to see how alternative architectures such as graph neural networks (GNNs) perform. GNNs are designed to align more closely with the algorithmic solution of many puzzles. While the notion that “reward is enough” [57, 58] might hold true, our results indicate that not just any form of correct reward will suffice, and that advanced architectures might be necessary to learn an optimal solution.

5 Conclusion

In this work, we have proposed PUZZLES, a benchmark that bridges the gap between algorithmic reasoning and RL. In addition to containing a rich diversity of logic puzzles, PUZZLES also offers an adjustable difficulty progression for each puzzle, making it a useful tool for benchmarking, evaluating and improving RL algorithms. Our empirical evaluation shows that while RL algorithms exhibit varying degrees of success, challenges persist, particularly in puzzles with higher complexity or those requiring nuanced logical reasoning. We are excited to share PUZZLES with the broader research community and hope that PUZZLES will foster further research for improving the algorithmic reasoning abilities of RL algorithms.

Broader Impact

This paper aims to contribute to the advancement of the field of Machine Learning (ML). Given the current challenges in ML related to algorithmic reasoning, we believe that our newly proposed benchmark will facilitate significant progress in this area, potentially elevating the capabilities of ML systems. Progress in algorithmic reasoning can contribute to the development of more transparent, explainable, and fair ML systems. This can further help address issues related to bias and discrimination in automated decision-making processes, promoting fairness and accountability.

References

- Serafini and Garcez [2016] Luciano Serafini and Artur d’Avila Garcez. Logic tensor networks: Deep learning and logical reasoning from data and knowledge. arXiv preprint arXiv:1606.04422, 2016.

- Dai et al. [2019] Wang-Zhou Dai, Qiuling Xu, Yang Yu, and Zhi-Hua Zhou. Bridging machine learning and logical reasoning by abductive learning. Advances in Neural Information Processing Systems, 32, 2019.

- Li et al. [2020] Yujia Li, Felix Gimeno, Pushmeet Kohli, and Oriol Vinyals. Strong generalization and efficiency in neural programs. arXiv preprint arXiv:2007.03629, 2020.

- Veličković and Blundell [2021] Petar Veličković and Charles Blundell. Neural algorithmic reasoning. Patterns, 2(7), 2021.

- Masry et al. [2022] Ahmed Masry, Do Long, Jia Qing Tan, Shafiq Joty, and Enamul Hoque. Chartqa: A benchmark for question answering about charts with visual and logical reasoning. In Findings of the Association for Computational Linguistics: ACL 2022, pages 2263–2279, 2022.

- Jiao et al. [2022] Fangkai Jiao, Yangyang Guo, Xuemeng Song, and Liqiang Nie. Merit: Meta-path guided contrastive learning for logical reasoning. In Findings of the Association for Computational Linguistics: ACL 2022, pages 3496–3509, 2022.

- Bardin et al. [2023] Sébastien Bardin, Somesh Jha, and Vijay Ganesh. Machine learning and logical reasoning: The new frontier (dagstuhl seminar 22291). In Dagstuhl Reports, volume 12. Schloss Dagstuhl-Leibniz-Zentrum für Informatik, 2023.

- Li et al. [2021] Wenda Li, Lei Yu, Yuhuai Wu, and Lawrence C Paulson. Isarstep: a benchmark for high-level mathematical reasoning. In International Conference on Learning Representations, 2021.

- Veličković et al. [2022] Petar Veličković, Adrià Puigdomènech Badia, David Budden, Razvan Pascanu, Andrea Banino, Misha Dashevskiy, Raia Hadsell, and Charles Blundell. The CLRS algorithmic reasoning benchmark. In Kamalika Chaudhuri, Stefanie Jegelka, Le Song, Csaba Szepesvari, Gang Niu, and Sivan Sabato, editors, Proceedings of the 39th International Conference on Machine Learning, volume 162 of Proceedings of Machine Learning Research, pages 22084–22102. PMLR, 17–23 Jul 2022. URL https://proceedings.mlr.press/v162/velickovic22a.html.

- Srivastava et al. [2022] Aarohi Srivastava, Abhinav Rastogi, Abhishek Rao, Abu Awal Md Shoeb, Abubakar Abid, Adam Fisch, Adam R Brown, Adam Santoro, Aditya Gupta, Adrià Garriga-Alonso, et al. Beyond the imitation game: Quantifying and extrapolating the capabilities of language models. arXiv preprint arXiv:2206.04615, 2022.

- Mnih et al. [2013] Volodymyr Mnih, Koray Kavukcuoglu, David Silver, Alex Graves, Ioannis Antonoglou, Daan Wierstra, and Martin A. Riedmiller. Playing Atari with Deep Reinforcement Learning. CoRR, abs/1312.5602, 2013. URL http://arxiv.org/abs/1312.5602.

- Tang et al. [2017] Haoran Tang, Rein Houthooft, Davis Foote, Adam Stooke, OpenAI Xi Chen, Yan Duan, John Schulman, Filip DeTurck, and Pieter Abbeel. # exploration: A study of count-based exploration for deep reinforcement learning. Advances in neural information processing systems, 30, 2017.

- Silver et al. [2018] David Silver, Thomas Hubert, Julian Schrittwieser, Ioannis Antonoglou, Matthew Lai, Arthur Guez, Marc Lanctot, Laurent Sifre, Dharshan Kumaran, Thore Graepel, et al. A general reinforcement learning algorithm that masters chess, shogi, and go through self-play. Science, 362(6419):1140–1144, 2018.

- Badia et al. [2020] Adrià Puigdomènech Badia, Bilal Piot, Steven Kapturowski, Pablo Sprechmann, Alex Vitvitskyi, Zhaohan Daniel Guo, and Charles Blundell. Agent57: Outperforming the atari human benchmark. In International conference on machine learning, pages 507–517. PMLR, 2020.

- Wurman et al. [2022] Peter R Wurman, Samuel Barrett, Kenta Kawamoto, James MacGlashan, Kaushik Subramanian, Thomas J Walsh, Roberto Capobianco, Alisa Devlic, Franziska Eckert, Florian Fuchs, et al. Outracing champion gran turismo drivers with deep reinforcement learning. Nature, 602(7896):223–228, 2022.

- Kalashnikov et al. [2018] Dmitry Kalashnikov, Alex Irpan, Peter Pastor, Julian Ibarz, Alexander Herzog, Eric Jang, Deirdre Quillen, Ethan Holly, Mrinal Kalakrishnan, Vincent Vanhoucke, et al. Scalable deep reinforcement learning for vision-based robotic manipulation. In Conference on Robot Learning, pages 651–673. PMLR, 2018.

- Kiran et al. [2021] B Ravi Kiran, Ibrahim Sobh, Victor Talpaert, Patrick Mannion, Ahmad A Al Sallab, Senthil Yogamani, and Patrick Pérez. Deep reinforcement learning for autonomous driving: A survey. IEEE Transactions on Intelligent Transportation Systems, 23(6):4909–4926, 2021.

- Rudin et al. [2022] Nikita Rudin, David Hoeller, Philipp Reist, and Marco Hutter. Learning to walk in minutes using massively parallel deep reinforcement learning. In Conference on Robot Learning, pages 91–100. PMLR, 2022.

- Rana et al. [2023] Krishan Rana, Ming Xu, Brendan Tidd, Michael Milford, and Niko Sünderhauf. Residual skill policies: Learning an adaptable skill-based action space for reinforcement learning for robotics. In Conference on Robot Learning, pages 2095–2104. PMLR, 2023.

- Wang and Hong [2020] Zhe Wang and Tianzhen Hong. Reinforcement learning for building controls: The opportunities and challenges. Applied Energy, 269:115036, 2020.

- Wu et al. [2022] Di Wu, Yin Lei, Maoen He, Chunjiong Zhang, and Li Ji. Deep reinforcement learning-based path control and optimization for unmanned ships. Wireless Communications and Mobile Computing, 2022:1–8, 2022.

- Brunke et al. [2022] Lukas Brunke, Melissa Greeff, Adam W Hall, Zhaocong Yuan, Siqi Zhou, Jacopo Panerati, and Angela P Schoellig. Safe learning in robotics: From learning-based control to safe reinforcement learning. Annual Review of Control, Robotics, and Autonomous Systems, 5:411–444, 2022.

- Todorov et al. [2012] Emanuel Todorov, Tom Erez, and Yuval Tassa. Mujoco: A physics engine for model-based control. In 2012 IEEE/RSJ international conference on intelligent robots and systems, pages 5026–5033. IEEE, 2012.

- Bellemare et al. [2013] Marc G Bellemare, Yavar Naddaf, Joel Veness, and Michael Bowling. The arcade learning environment: An evaluation platform for general agents. Journal of Artificial Intelligence Research, 47:253–279, 2013.

- Brockman et al. [2016] Greg Brockman, Vicki Cheung, Ludwig Pettersson, Jonas Schneider, John Schulman, Jie Tang, and Wojciech Zaremba. Openai gym. arXiv preprint arXiv:1606.01540, 2016.

- Duan et al. [2016] Yan Duan, Xi Chen, Rein Houthooft, John Schulman, and Pieter Abbeel. Benchmarking deep reinforcement learning for continuous control. In International conference on machine learning, pages 1329–1338. PMLR, 2016.

- Tassa et al. [2018] Yuval Tassa, Yotam Doron, Alistair Muldal, Tom Erez, Yazhe Li, Diego de Las Casas, David Budden, Abbas Abdolmaleki, Josh Merel, Andrew Lefrancq, et al. Deepmind control suite. arXiv preprint arXiv:1801.00690, 2018.

- Côté et al. [2018] Marc-Alexandre Côté, Ákos Kádár, Xingdi Yuan, Ben Kybartas, Tavian Barnes, Emery Fine, James Moore, Ruo Yu Tao, Matthew Hausknecht, Layla El Asri, Mahmoud Adada, Wendy Tay, and Adam Trischler. Textworld: A learning environment for text-based games. CoRR, abs/1806.11532, 2018.

- Lanctot et al. [2019] Marc Lanctot, Edward Lockhart, Jean-Baptiste Lespiau, Vinicius Zambaldi, Satyaki Upadhyay, Julien Pérolat, Sriram Srinivasan, Finbarr Timbers, Karl Tuyls, Shayegan Omidshafiei, Daniel Hennes, Dustin Morrill, Paul Muller, Timo Ewalds, Ryan Faulkner, János Kramár, Bart De Vylder, Brennan Saeta, James Bradbury, David Ding, Sebastian Borgeaud, Matthew Lai, Julian Schrittwieser, Thomas Anthony, Edward Hughes, Ivo Danihelka, and Jonah Ryan-Davis. OpenSpiel: A framework for reinforcement learning in games. CoRR, abs/1908.09453, 2019. URL http://arxiv.org/abs/1908.09453.

- Jiang and Luo [2019] Zhengyao Jiang and Shan Luo. Neural logic reinforcement learning. In International conference on machine learning, pages 3110–3119. PMLR, 2019.

- Fawzi et al. [2022] Alhussein Fawzi, Matej Balog, Aja Huang, Thomas Hubert, Bernardino Romera-Paredes, Mohammadamin Barekatain, Alexander Novikov, Francisco J R Ruiz, Julian Schrittwieser, Grzegorz Swirszcz, et al. Discovering faster matrix multiplication algorithms with reinforcement learning. Nature, 610(7930):47–53, 2022.

- Mankowitz et al. [2023] Daniel J Mankowitz, Andrea Michi, Anton Zhernov, Marco Gelmi, Marco Selvi, Cosmin Paduraru, Edouard Leurent, Shariq Iqbal, Jean-Baptiste Lespiau, Alex Ahern, et al. Faster sorting algorithms discovered using deep reinforcement learning. Nature, 618(7964):257–263, 2023.

- Lai [2015] Matthew Lai. Giraffe: Using deep reinforcement learning to play chess. arXiv preprint arXiv:1509.01549, 2015.

- Silver et al. [2016] David Silver, Aja Huang, Chris J. Maddison, Arthur Guez, Laurent Sifre, George van den Driessche, Julian Schrittwieser, Ioannis Antonoglou, Veda Panneershelvam, Marc Lanctot, Sander Dieleman, Dominik Grewe, John Nham, Nal Kalchbrenner, Ilya Sutskever, Timothy Lillicrap, Madeleine Leach, Koray Kavukcuoglu, Thore Graepel, and Demis Hassabis. Mastering the game of go with deep neural networks and tree search. Nature, 529:484–489, 2016. URL https://doi.org/10.1038/nature16961.

- Tatham [2004a] Simon Tatham. Simon tatham’s portable puzzle collection, 2004a. URL https://www.chiark.greenend.org.uk/~sgtatham/puzzles/. Accessed: 2023-05-16.

- Foundation [2022] Farama Foundation. Gymnasium website, 2022. URL https://gymnasium.farama.org/. Accessed: 2023-05-12.

- Wang et al. [2022] Chao Wang, Chen Chen, Dong Li, and Bin Wang. Rethinking reinforcement learning based logic synthesis. arXiv preprint arXiv:2205.07614, 2022.

- Dasgupta et al. [2019] Ishita Dasgupta, Jane Wang, Silvia Chiappa, Jovana Mitrovic, Pedro Ortega, David Raposo, Edward Hughes, Peter Battaglia, Matthew Botvinick, and Zeb Kurth-Nelson. Causal reasoning from meta-reinforcement learning. arXiv preprint arXiv:1901.08162, 2019.

- Eppe et al. [2022] Manfred Eppe, Christian Gumbsch, Matthias Kerzel, Phuong DH Nguyen, Martin V Butz, and Stefan Wermter. Intelligent problem-solving as integrated hierarchical reinforcement learning. Nature Machine Intelligence, 4(1):11–20, 2022.

- Deac et al. [2021] Andreea-Ioana Deac, Petar Veličković, Ognjen Milinkovic, Pierre-Luc Bacon, Jian Tang, and Mladen Nikolic. Neural algorithmic reasoners are implicit planners. Advances in Neural Information Processing Systems, 34:15529–15542, 2021.

- He et al. [2022] Yu He, Petar Veličković, Pietro Liò, and Andreea Deac. Continuous neural algorithmic planners. In Learning on Graphs Conference, pages 54–1. PMLR, 2022.

- Silver et al. [2017] David Silver, Thomas Hubert, Julian Schrittwieser, Ioannis Antonoglou, Matthew Lai, Arthur Guez, Marc Lanctot, Laurent Sifre, Dharshan Kumaran, Thore Graepel, et al. Mastering chess and shogi by self-play with a general reinforcement learning algorithm. arXiv preprint arXiv:1712.01815, 2017.

- Dahl [2001] Fredrik A Dahl. A reinforcement learning algorithm applied to simplified two-player texas hold’em poker. In European Conference on Machine Learning, pages 85–96. Springer, 2001.

- Heinrich and Silver [2016] Johannes Heinrich and David Silver. Deep reinforcement learning from self-play in imperfect-information games. arXiv preprint arXiv:1603.01121, 2016.

- Steinberger [2019] Eric Steinberger. Pokerrl. https://github.com/TinkeringCode/PokerRL, 2019.

- Zhao et al. [2022] Enmin Zhao, Renye Yan, Jinqiu Li, Kai Li, and Junliang Xing. Alphaholdem: High-performance artificial intelligence for heads-up no-limit poker via end-to-end reinforcement learning. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 36, pages 4689–4697, 2022.

- Ghory [2004] Imran Ghory. Reinforcement learning in board games. 2004.

- Szita [2012] István Szita. Reinforcement learning in games. In Reinforcement Learning: State-of-the-art, pages 539–577. Springer, 2012.

- Xenou et al. [2019] Konstantia Xenou, Georgios Chalkiadakis, and Stergos Afantenos. Deep reinforcement learning in strategic board game environments. In Multi-Agent Systems: 16th European Conference, EUMAS 2018, Bergen, Norway, December 6–7, 2018, Revised Selected Papers 16, pages 233–248. Springer, 2019.

- Perolat et al. [2022] Julien Perolat, Bart De Vylder, Daniel Hennes, Eugene Tarassov, Florian Strub, Vincent de Boer, Paul Muller, Jerome T Connor, Neil Burch, Thomas Anthony, et al. Mastering the game of stratego with model-free multiagent reinforcement learning. Science, 378(6623):990–996, 2022.

- Cormen et al. [2022] Thomas H. Cormen, Charles Eric Leiserson, Ronald L. Rivest, and Clifford Stein. Introduction to Algorithms. The MIT Press, 4th edition, 2022.

- Raffin et al. [2021] Antonin Raffin, Ashley Hill, Adam Gleave, Anssi Kanervisto, Maximilian Ernestus, and Noah Dormann. Stable-baselines3: Reliable reinforcement learning implementations. Journal of Machine Learning Research, 22(268):1–8, 2021. URL http://jmlr.org/papers/v22/20-1364.html.

- Werner Duvaud [2019] Aurèle Hainaut Werner Duvaud. Muzero general: Open reimplementation of muzero. https://github.com/werner-duvaud/muzero-general, 2019.

- Hafner et al. [2023a] Danijar Hafner, Jurgis Pasukonis, Jimmy Ba, and Timothy Lillicrap. Mastering diverse domains through world models. https://github.com/danijar/dreamerv3, 2023a.

- Haarnoja et al. [2018] Tuomas Haarnoja, Aurick Zhou, Pieter Abbeel, and Sergey Levine. Soft actor-critic: Off-policy maximum entropy deep reinforcement learning with a stochastic actor. In International conference on machine learning, pages 1861–1870. PMLR, 2018.

- Fujimoto et al. [2018] Scott Fujimoto, Herke Hoof, and David Meger. Addressing function approximation error in actor-critic methods. In International conference on machine learning, pages 1587–1596. PMLR, 2018.

- Silver et al. [2021] David Silver, Satinder Singh, Doina Precup, and Richard S Sutton. Reward is enough. Artificial Intelligence, 299:103535, 2021.

- Vamplew et al. [2022] Peter Vamplew, Benjamin J Smith, Johan Källström, Gabriel Ramos, Roxana Rădulescu, Diederik M Roijers, Conor F Hayes, Fredrik Heintz, Patrick Mannion, Pieter JK Libin, et al. Scalar reward is not enough: A response to silver, singh, precup and sutton (2021). Autonomous Agents and Multi-Agent Systems, 36(2):41, 2022.

- Community [2000] Pygame Community. Pygame github repository, 2000. URL https://github.com/pygame/pygame/. Accessed: 2023-05-12.

- Tatham [2004b] Simon Tatham. Developer documentation for simon tatham’s puzzle collection, 2004b. URL https://www.chiark.greenend.org.uk/~sgtatham/puzzles/devel/. Accessed: 2023-05-23.

- Schulman et al. [2017] John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms, 2017. URL http://arxiv.org/abs/1707.06347.

- Huang et al. [2022] Shengyi Huang, Rousslan Fernand Julien Dossa, Antonin Raffin, Anssi Kanervisto, and Weixun Wang. The 37 implementation details of proximal policy optimization. In ICLR Blog Track, 2022. URL https://iclr-blog-track.github.io/2022/03/25/ppo-implementation-details/. https://iclr-blog-track.github.io/2022/03/25/ppo-implementation-details/.

- Mnih et al. [2016] Volodymyr Mnih, Adrià Puigdomènech Badia, Mehdi Mirza, Alex Graves, Timothy P. Lillicrap, Tim Harley, David Silver, and Koray Kavukcuoglu. Asynchronous methods for deep reinforcement learning. CoRR, abs/1602.01783, 2016. URL http://arxiv.org/abs/1602.01783.

- Schulman et al. [2015] John Schulman, Sergey Levine, Pieter Abbeel, Michael Jordan, and Philipp Moritz. Trust region policy optimization. In Francis Bach and David Blei, editors, Proceedings of the 32nd International Conference on Machine Learning, volume 37 of Proceedings of Machine Learning Research, pages 1889–1897, Lille, France, 07–09 Jul 2015. PMLR. URL https://proceedings.mlr.press/v37/schulman15.html.

- Dabney et al. [2017] Will Dabney, Mark Rowland, Marc G. Bellemare, and Rémi Munos. Distributional reinforcement learning with quantile regression. CoRR, abs/1710.10044, 2017. URL http://arxiv.org/abs/1710.10044.

- Schrittwieser et al. [2020] Julian Schrittwieser, Ioannis Antonoglou, Thomas Hubert, Karen Simonyan, Laurent Sifre, Simon Schmitt, Arthur Guez, Edward Lockhart, Demis Hassabis, Thore Graepel, et al. Mastering atari, go, chess and shogi by planning with a learned model. Nature, 588(7839):604–609, 2020.

- Hafner et al. [2023b] Danijar Hafner, Jurgis Pasukonis, Jimmy Ba, and Timothy Lillicrap. Mastering diverse domains through world models. arXiv preprint arXiv:2301.04104, 2023b.

Appendix A PUZZLES Environment Usage Guide

A.1 General Usage

A Python code example for using the PUZZLES environment is provided in LABEL:code:init-and-play-episode. All puzzles support seeding the initialization, by adding #{seed} after the parameters, where {seed} is an int. The allowed parameters are displayed in LABEL:tab:parameters. A full custom initialization argument would be as follows: {parameters}#{seed}.

⬇

1 import gymnasium as gym

2 import rlp

3

4 # init an agent suitable for Gymnasium environments

5 agent = Agent. create ()

6

7 # init the environment

8 env = gym. make (’rlp/Puzzle-v0’, puzzle = "bridges",

9 render_mode = "rgb_array", params = "4x4#42")

10 observation, info = env. reset ()

11

12 # complete an episode

13 terminated = False

14 while not terminated:

15 action = agent. choose (env) # the agent chooses the next action

16 observation, reward, terminated, truncated, info = env. step (action)

17 env. close ()

Listing 1: Code example of how to initialize an environment and have an agent complete one episode. The PUZZLES environment is designed to be compatible with the Gymnasium API. The choice of Agent is up to the user, it can be a trained agent or random policy.

A.2 Custom Reward

A Python code example for implementing a custom reward system is provided in LABEL:code:custom-reward-wrapper. To this end, the environment’s step() function provides the puzzle’s internal state inside the info Python dict.

⬇

1 import gymnasium as gym

2 class PuzzleRewardWrapper (gym. Wrapper):

3 def step (self, action):

4 obs, reward, terminated, truncated, info = self. env. step (action)

5 # Modify the reward by using members of info["puzzle_state"]

6 return obs, reward, terminated, truncated, info

Listing 2: Code example of a custom reward implementation using Gymnasium’s Wrapper class. A user can use the game state information provided in info["puzzle_state"] to modify the rewards received by the agent after performing an action.

A.3 Custom Observation

A Python code example for implementing a custom observation structure that is compatible with an agent using a transformer encoder. Here, we provide the example for Netslide, please refer to our GitHub for more examples.

⬇

1 import gymnasium as gym

2 import numpy as np

3 class NetslideTransformerWrapper (gym. ObservationWrapper):

4 def __init__ (self, env):

5 super (NetslideTransformerWrapper, self). __init__ (env)

6 self. original_space = env. observation_space

7

8 self. max_length = 512

9 self. embedding_dim = 16 + 4

10 self. observation_space = gym. spaces. Box (

11 low =-1, high =1, shape =(self. max_length, self. embedding_dim,), dtype = np. float32

12 )

13

14 self. observation_space = gym. spaces. Dict (

15 {’obs’: self. observation_space,

16 ’len’: gym. spaces. Box (low =0, high = self. max_length, shape =(1,),

17 dtype = np. int32)}

18 )

19

20 def observation (self, obs):

21 # The original observation is an ordereddict with the keys [’barriers’, ’cursor_pos’, ’height’,

22 # ’last_move_col’, ’last_move_dir’, ’last_move_row’, ’move_count’, ’movetarget’, ’tiles’, ’width’, ’wrapping’]

23 # We are only interested in ’barriers’, ’tiles’, ’cursor_pos’, ’height’ and ’width’

24 barriers = obs [’barriers’]

25 # each element of barriers is an uint16, signifying different elements

26 barriers = np. unpackbits (barriers. view (np. uint8)). reshape (-1, 16)

27 # add some positional embedding to the barriers

28 embedded_barriers = np. concatenate (

29 [barriers, self. pos_embedding (np. arange (barriers. shape [0]), obs [’width’], obs [’height’])], axis =1)

30

31 tiles = obs [’tiles’]

32 # each element of tiles is an uint16, signifying different elements

33 tiles = np. unpackbits (tiles. view (np. uint8)). reshape (-1, 16)

34 # add some positional embedding to the tiles

35 embedded_tiles = np. concatenate (

36 [tiles, self. pos_embedding (np. arange (tiles. shape [0]), obs [’width’], obs [’height’])], axis =1)

37 cursor_pos = obs [’cursor_pos’]

38

39 embedded_cursor_pos = np. concatenate (

40 [np. ones ((1, 16)), self. pos_embedding_cursor (cursor_pos, obs [’width’], obs [’height’])], axis =1)

41

42 embedded_obs = np. concatenate ([embedded_barriers, embedded_tiles, embedded_cursor_pos], axis =0)

43

44 current_length = embedded_obs. shape [0]

45 # pad with zeros to accomodate different sizes

46 if current_length < self. max_length:

47 embedded_obs = np. concatenate (

48 [embedded_obs, np. zeros ((self. max_length - current_length, self. embedding_dim))], axis =0)

49 return {’obs’: embedded_obs, ’len’: np. array ([current_length])}

50

51 @staticmethod

52 def pos_embedding (pos, width, height):

53 # pos is an array of integers from 0 to width*height

54 # width and height are integers

55 # return a 2D array with the positional embedding, using sin and cos

56 x, y = pos % width, pos // width

57 # x and y are integers from 0 to width-1 and height-1

58 pos_embed = np. zeros ((len (pos), 4))

59 pos_embed [:, 0] = np. sin (2 * np. pi * x / width)

60 pos_embed [:, 1] = np. cos (2 * np. pi * x / width)

61 pos_embed [:, 2] = np. sin (2 * np. pi * y / height)

62 pos_embed [:, 3] = np. cos (2 * np. pi * y / height)

63 return pos_embed

64

65 @staticmethod

66 def pos_embedding_cursor (pos, width, height):

67 # cursor pos goes from -1 to width or height

68 x, y = pos

69 x += 1

70 y += 1

71 width += 1

72 height += 1

73 pos_embed = np. zeros ((1, 4))

74 pos_embed [0, 0] = np. sin (2 * np. pi * x / width)

75 pos_embed [0, 1] = np. cos (2 * np. pi * x / width)

76 pos_embed [0, 2] = np. sin (2 * np. pi * y / height)

77 pos_embed [0, 3] = np. cos (2 * np. pi * y / height)

78 return pos_embed

Listing 3: Code example of a custom observation implementation using Gymnasium’s Wrapper class. A user can use the all elements of rpovided in the obs dict to create a custom observation. In this code example, the resulting observation is suitable for a transformer-based encoder.

A.4 Generalization Example

In LABEL:code:transformer-encoder, we show how a transformer-based features extractor can be built for Stable Baseline 3’s PPO MultiInputPolicy. Together with the observations from LABEL:code:custom-observation-wrapper, this feature extractor can work with variable-length inputs. This allows for easy evaluation in environments of different sizes than the environment the agent was originally trained in.

⬇

1 import gymnasium as gym

2 import numpy as np

3 from stable_baselines3. common. torch_layers import BaseFeaturesExtractor

4 from stable_baselines3 import PPO

5 import torch

6 import torch. nn as nn

7 from torch. nn import TransformerEncoder, TransformerEncoderLayer

8

9 class TransformerFeaturesExtractor (BaseFeaturesExtractor):

10 def __init__ (self, observation_space, data_dim, embedding_dim, nhead, num_layers, dim_feedforward, dropout =0.1):

11 super (TransformerFeaturesExtractor, self). __init__ (observation_space, embedding_dim)

12 self. transformer = Transformer (embedding_dim = embedding_dim,

13 data_dim = data_dim,

14 nhead = nhead,

15 num_layers = num_layers,

16 dim_feedforward = dim_feedforward,

17 dropout = dropout)

18

19 def forward (self, observations: gym. spaces. Dict) -> torch. Tensor:

20 # Extract the ’obs’ key from the dict

21 obs = observations [’obs’]

22 length = observations [’len’]

23 # all elements of length should be the same (we can’t train on different puzzle sizes at the same time)

24 length = int (length [0])

25 obs = obs [:, : length]

26 # Return the embedding of the cursor token (which is last)

27 return self. transformer (obs)[:, -1, :]

28

29

30 class Transformer (nn. Module):

31 def __init__ (self, embedding_dim, data_dim, nhead, num_layers, dim_feedforward, dropout =0.1):

32 super (Transformer, self). __init__ ()

33 self. embedding_dim = embedding_dim

34 self. data_dim = data_dim

35

36 self. lin = nn. Linear (data_dim, embedding_dim)

37

38 encoder_layers = TransformerEncoderLayer (

39 d_model = self. embedding_dim,

40 nhead = nhead,

41 dim_feedforward = dim_feedforward,

42 dropout = dropout,

43 batch_first = True

44 )

45

46 self. transformer_encoder = TransformerEncoder (encoder_layers, num_layers)

47

48 def forward (self, x):

49 # x is of shape (batch_size, seq_length, embedding_dim)

50 x = self. lin (x)

51 transformed = self. transformer_encoder (x)

52 return transformed

53

54 if __name__ == "__main__":

55 policy_kwargs = dict (

56 features_extractor_class = TransformerFeaturesExtractor,

57 features_extractor_kwargs = dict (embedding_dim = args. transformer_embedding_dim,

58 nhead = args. transformer_nhead,

59 num_layers = args. transformer_layers,

60 dim_feedforward = args. transformer_ff_dim,

61 dropout = args. transformer_dropout,

62 data_dim = data_dims [args. puzzle])

63 )

64

65 model = PPO ("MultiInputPolicy",

66 env,

67 policy_kwargs = policy_kwargs,

68 )

Listing 4: Code example of a transformer-based feature extractor written in PyTorch, compatible with Stable Baselines 3’s PPO. This encoder design allows for variable-length inputs, enabling generalization to previously unseen puzzle sizes.

Appendix B Environment Features

B.1 Episode Definition

An episode is played with the intention of solving a given puzzle. The episode begins with a newly generated puzzle and terminates in one of two states. To achieve a reward, the puzzle is either solved completely or the agent has failed irreversibly. The latter state is unlikely to occur, as only a few games, for example pegs or minesweeper, are able to terminate in a failed state. Alternatively, the episode can be terminated early. Starting a new episode generates a new puzzle of the same kind, with the same parameters such as size or grid type. However, if the random seed is not fixed, the puzzle is likely to have a different layout from the puzzle in the previous episode.

B.2 Observation Space

There are two kinds of observations which can be used by the agent. The first observation type is a representation of the discrete internal game state of the puzzle, consisting of a combination of arrays and scalars. This observation is provided by the underlying code of Tathams’s puzzle collection. The composition and shape of the internal game state is different for each puzzle, which, in turn, requires the agent architecture to be adapted.

The second type of observation is a representation of the pixel screen, given as an integer matrix of shape (3 $×$ width $×$ height). The environment deals with different aspect ratios by adding padding. The advantage of the pixel representation is a consistent representation for all puzzles, similar to the Atari RL Benchmark [11]. It could even allow for a single agent to be trained on different puzzles. On the other hand, it forces the agent to learn to solve the puzzles only based on the visual representation of the puzzles, analogous to human players. This might increase difficulty as the agent has to learn the task representation implicitly.

B.3 Action Space

Natively, the puzzles support two types of input, mouse and keyboard. Agents in PUZZLES play the puzzles only through keyboard input. This is due to our decision to provide the discrete internal game state of the puzzle as an observation, for which mouse input would not be useful.

The action space for each puzzle is restricted to actions that can actively contribute to changing the logical state of a puzzle. This excludes “memory aides” such as markers that signify the absence of a certain connection in Bridges or adding candidate digits in cells in Sudoku. The action space also includes possibly rule-breaking actions, as long as the game can represent the effect of the action correctly.

The largest action space has a cardinality of 14, but most puzzles only have five to six valid actions which the agent can choose from. Generally, an action is in one of two categories: selector movement or game state change. Selector movement is a mechanism that allows the agent to select game objects during play. This includes for example grid cells, edges, or screen regions. The selector can be moved to the next object by four discrete directional inputs and as such represents an alternative to continuous mouse input. A game state change action ideally follows a selector movement action. The game state change action will then be applied to the selected object. The environment responds by updating the game state, for example by entering a digit or inserting a grid edge at the current selector position.

B.4 Action Masking

The fixed-size action space allows an agent to execute actions that may not result in any change in game state. For example, the action of moving the selector to the right if the selector is already placed at the right border. The PUZZLES environment provides an action mask that marks all actions that change the state of the game. Such an action mask can be used to improve performance of model-based and even some model-free RL approaches. The action masking provided by PUZZLES does not ensure adherence to game rules, rule-breaking actions can most often still be represented as a change in the game state.

B.5 Reward Structure

In the default implementation, the agent only receives a reward for completing an episode. Rewards consist of a fixed positive value for successful completion and a fixed negative value otherwise. This reward structure encourages an agent to solve a given puzzle in the least amount of steps possible. The PUZZLES environment provides the option to define intermediate rewards tailored to specific puzzles, which could help improve training progress. This could be, for example, a negative reward if the agent breaks the rules of the game, or a positive reward if the agent correctly achieves a part of the final solution.

B.6 Early Episode Termination