# LogicVista: Multimodal LLM Logical Reasoning Benchmark in Visual Contexts

Abstract

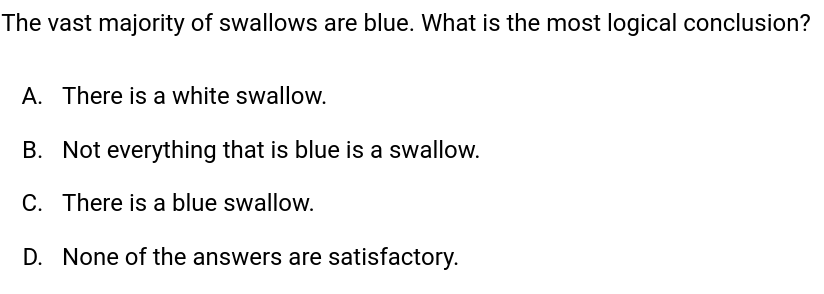

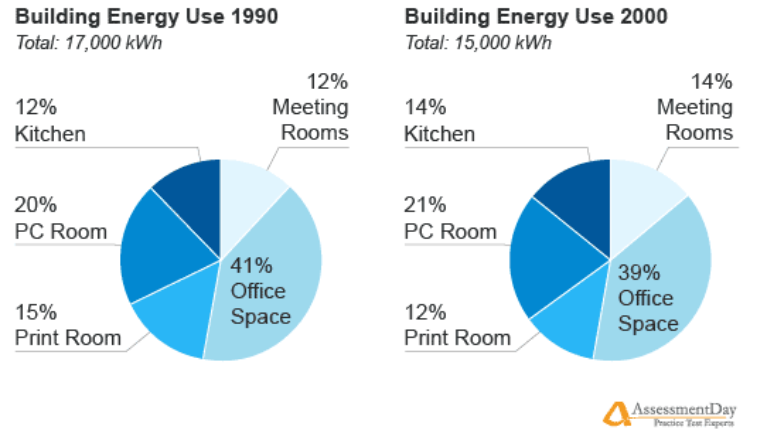

We propose LogicVista, an evaluation benchmark that assesses the integrated logical reasoning capabilities of multimodal large language models (MLLMs) in Vis ual contexts. Recent advancements in MLLMs have demonstrated various fascinating abilities, from crafting poetry based on an image to performing mathematical reasoning. However, there is still a lack of systematic evaluation of MLLMs’ proficiency in logical reasoning tasks, which are essential for activities like navigation and puzzle-solving. Thus we evaluate general logical cognition abilities across 5 logical reasoning tasks encompassing 9 different capabilities, using a sample of 448 multiple-choice questions. Each question is annotated with the correct answer and the human-written reasoning behind the selection, enabling both open-ended and multiple-choice evaluation. A total of 8 MLLMs are comprehensively evaluated using LogicVista. Code and Data Available at https://github.com/Yijia-Xiao/LogicVista. ∗ Both authors contributed equally.

1 Introduction

Recent advancements in Large Language Models (LLMs) are gradually turning the vision of a generalist AI agent into reality. These models exhibit near-human expert-level performance across a variety of tasks and have recently been augmented with visual understanding capabilities, enabling them to tackle even more complex visual challenges. This branch of work, led by proprietary projects such as GPT-4 [1] and Flamingo [2], as well as open-source efforts like LLaVA [3], Mini-GPT4 [4], enhances existing LLMs by incorporating visual comprehension. These models, known as Multimodal Large Language Models (MLLMs), use LLMs as the foundation for processing information and generating reasoned outcomes [5], thereby bridging the gap between language and vision.

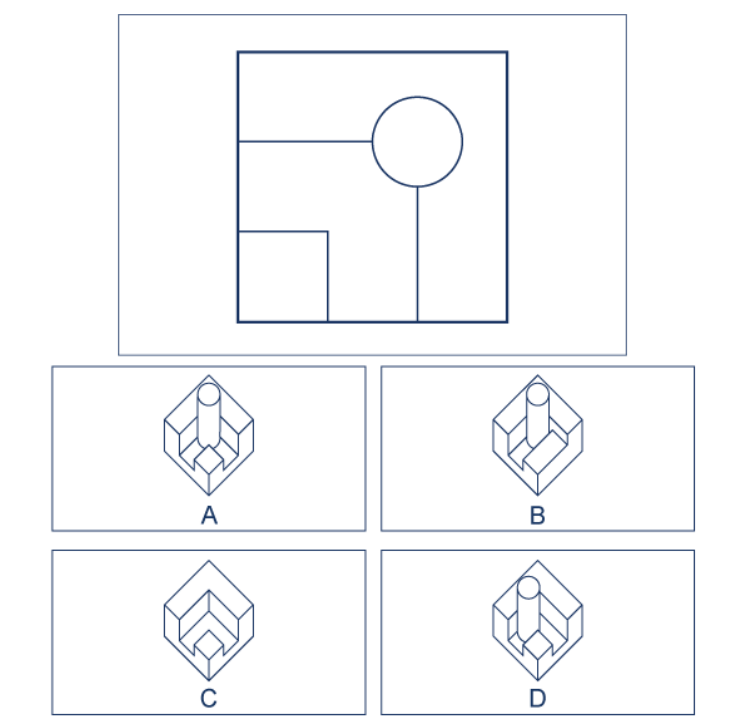

Recent MLLMs have demonstrated a range of impressive abilities, such as writing poems based on an image [6], engaging in mathematical reasoning [2], and even aiding in medical diagnosis [7]. To evaluate the performance of these models, various benchmarks have been proposed, as shown in Figure. 1 targeting the performance on common tasks such as objects recognition [8], text understanding in images [9], or mathematical problem solving [10]. However, as seen in Figure. 1, there is a notable shortage of benchmarks for MLLMs’ abilities in critical logical reasoning tasks that underlie most tasks. Perception and reasoning are two representative abilities of high-level intelligence that are used in unison during human problem-solving processes.

Many current MLLM datasets have focused solely on perception tasks, which require fact retrieval where the MLLM identifies and retrieve relevant information from a scene. However, complex multimodal reasoning, such as interpreting graphs [11], everyday reasoning, critical thinking, and problem-solving [12, 13] requires a combination of perception and logical reasoning. Proficiency in these reasoning skills is a reliable indicator of cognitive capabilities required for performing specialized or routine tasks across different domains. To our knowledge, MathVista [14] is the only benchmark that attempts to evaluate multimodal logical reasoning, but its scope is limited to mathematical-related reasoning. For a better understanding of how MLLMs perform on general reasoning tasks, there is a need for a comprehensive and general visual reasoning benchmark.

| LogicVista (Ours) | | | | | | | | | VQAv2, TextVQA and MM-vet |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

|

<details>

<summary>extracted/5714025/figures/ours1.png Details</summary>

### Visual Description

## Diagram: Geometric Configurations with Diagonal and Vertical Lines

### Overview

The image contains 10 labeled diagrams (A-E, repeated twice) arranged in two rows. Each diagram features a square divided by a diagonal line and a vertical line. The diagrams vary in the position of the vertical line (left, middle, right) and the orientation of the diagonal line (top-left to bottom-right or top-right to bottom-left). Black squares are positioned in specific corners relative to the lines.

### Components/Axes

- **Diagrams**: Labeled A to E (each repeated once).

- **Square**: Uniformly sized, divided into regions by:

- **Diagonal Line**: Connects two opposite corners (either top-left to bottom-right or top-right to bottom-left).

- **Vertical Line**: Splits the square into left and right regions; position varies (left, middle, right).

- **Black Square**: Placed in one corner of the larger square, overlapping with the intersection of the diagonal and vertical lines in some cases.

### Detailed Analysis

1. **Diagram A**:

- Vertical line on the left.

- Diagonal line from top-left to bottom-right.

- Black square in the bottom-left corner.

2. **Diagram B**:

- Vertical line in the middle.

- Diagonal line from top-left to bottom-right.

- Black square in the bottom-left corner.

3. **Diagram C**:

- Vertical line on the right.

- Diagonal line from top-left to bottom-right.

- Black square in the bottom-right corner.

4. **Diagram D**:

- Vertical line in the middle.

- Diagonal line from top-right to bottom-left.

- Black square in the top-right corner.

5. **Diagram E**:

- Vertical line on the left.

- Diagonal line from top-right to bottom-left.

- Black square in the top-left corner.

### Key Observations

- **Vertical Line Position**: Alternates between left, middle, and right across diagrams.

- **Diagonal Orientation**: Alternates between two orientations (top-left to bottom-right vs. top-right to bottom-left).

- **Black Square Placement**: Positioned to align with the intersection of the diagonal and vertical lines in most cases (e.g., bottom-left in A/B, bottom-right in C, top-right in D, top-left in E).

### Interpretation

The diagrams likely illustrate scenarios where the intersection of two decision boundaries (diagonal and vertical lines) determines an outcome (represented by the black square). For example:

- **Decision Trees**: The vertical line could represent a binary choice (left/right), while the diagonal line represents a secondary condition (e.g., cost vs. benefit).

- **Resource Allocation**: The black square might symbolize an optimal resource distribution point based on two constraints.

- **Game Theory**: The configurations could model strategies where players choose between options (vertical line) and outcomes (diagonal line).

No numerical data or trends are present. The diagrams emphasize spatial relationships and positional logic rather than quantitative analysis.

</details>

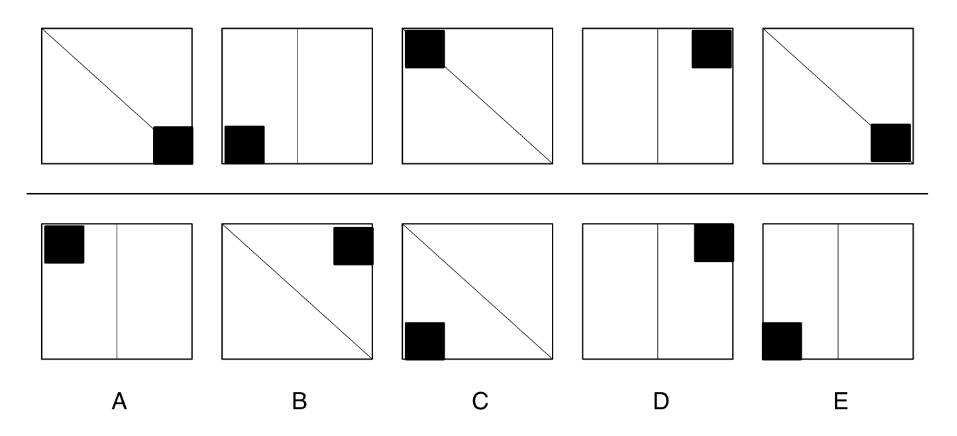

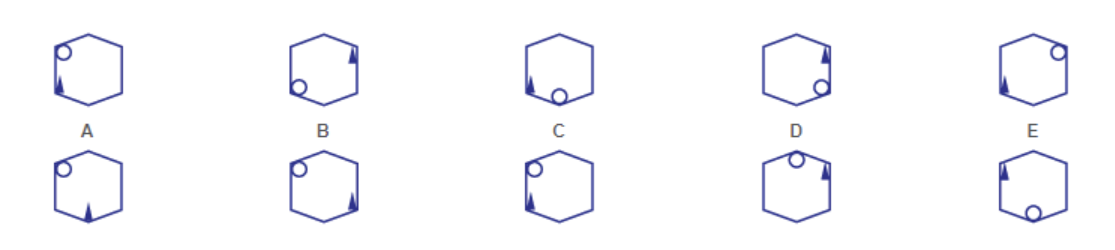

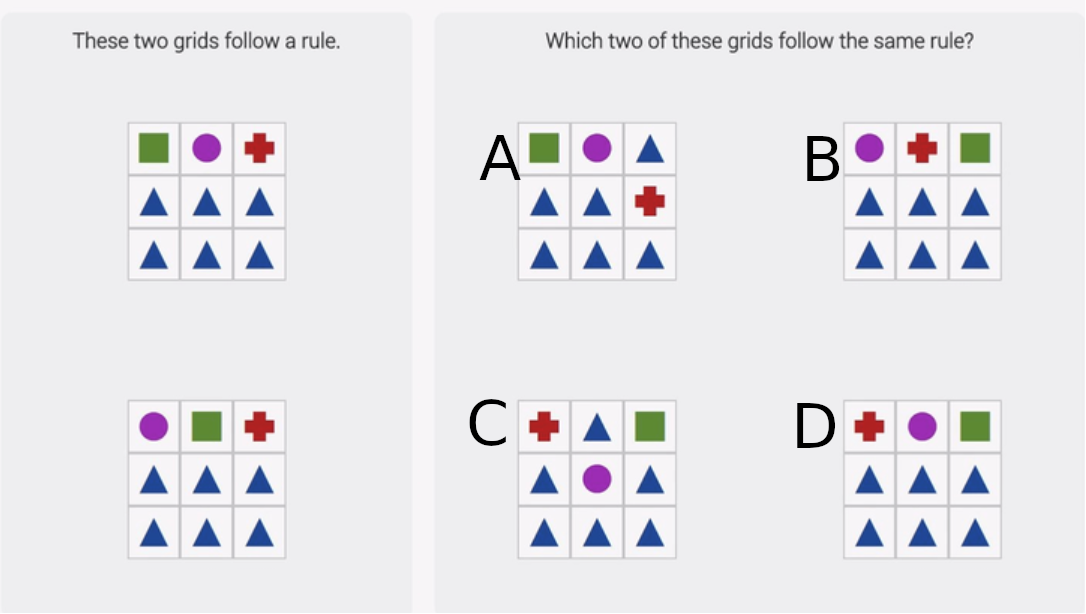

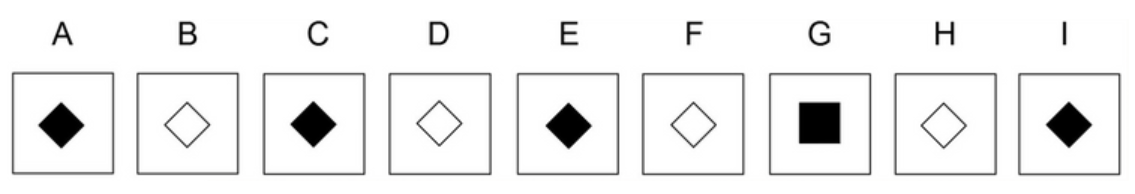

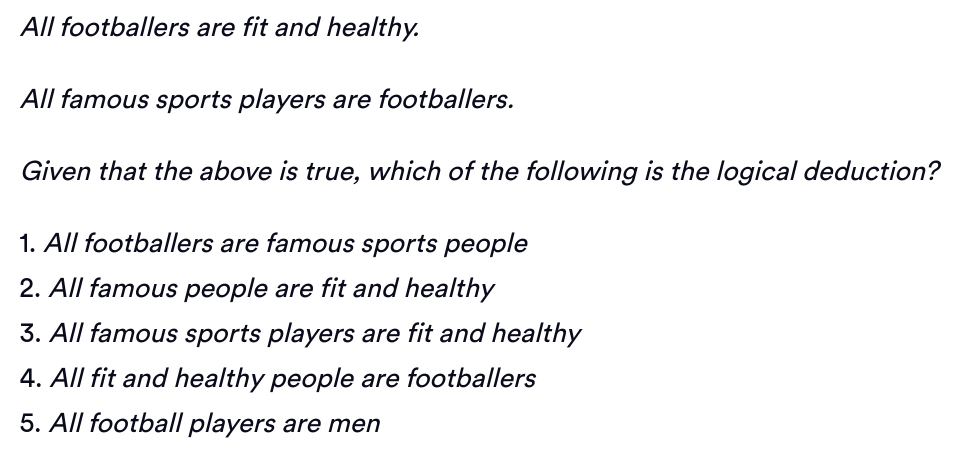

| Q: Which of the boxes comes next? A: E Reasoning Skill: Inductive Capability: Diagram |

<details>

<summary>extracted/5714025/figures/vqav2.jpg Details</summary>

### Visual Description

## Photograph: Tennis Player in Action

### Overview

The image captures a dynamic moment of a female tennis player executing a forehand stroke on a hard court. The player is mid-motion, with her body leaning forward, right arm extended to strike a yellow tennis ball, and left arm outstretched for balance. The scene is set outdoors under bright sunlight, with a chain-link fence and sparse vegetation visible in the background.

### Components/Axes

- **Subject**: Female tennis player (center-right of frame).

- **Court**: Green hard court with white boundary lines (visible at the bottom of the image).

- **Background**: Chain-link fence (occupying ~70% of the upper frame), with blurred trees and shrubs.

- **Lighting**: Bright daylight, casting sharp shadows on the court.

### Detailed Analysis

- **Player Attire**:

- Red short-sleeve shirt with a white circular logo (resembling a flame or sun) and the text "WILD" beneath it.

- White tennis skirt and white athletic shoes.

- White visor with a logo (text indiscernible).

- **Ball**: Yellow tennis ball in mid-air, positioned ~15 cm above the player’s racket.

- **Racket**: Blue and white tennis racket held in the player’s right hand, angled toward the ball.

- **Motion**: Player’s right leg is lifted mid-stride, left foot planted on the court.

### Key Observations

1. **Ball Trajectory**: The ball is slightly ahead of the racket, suggesting a forehand stroke in progress.

2. **Player Focus**: Eyes locked on the ball, indicating concentration.

3. **Environment**: No spectators or opponents visible; isolated action shot.

4. **Lighting**: High contrast between the player’s red shirt and the green court.

### Interpretation

The image emphasizes athleticism and precision, capturing the split-second coordination required in tennis. The player’s posture and ball positioning suggest a powerful forehand stroke, likely during a rally. The absence of opponents or audience implies this could be a practice session or a drill. The "WILD" logo on the shirt may indicate a team, sponsor, or brand affiliation, though no further context is provided.

No numerical data, charts, or diagrams are present. The textual elements are limited to the "WILD" logo and potential logos on the visor (unreadable).

</details>

| Q: Is the girl touching the ground? A: No Reasoning Skill: None Capability: Recognition |

| --- | --- | --- | --- |

|

<details>

<summary>extracted/5714025/figures/ours2.png Details</summary>

### Visual Description

## Diagram: 3D Cube Structure with 2D Partitioned Views

### Overview

The image depicts a 3D geometric structure resembling a cube with a smaller cube attached to its top face and a cutout section on one side. Below the 3D structure, four 2D diagrams (labeled A, B, C, D) are arranged in a 2x2 grid. Each diagram shows a square partitioned into irregular regions with varying configurations.

### Components/Axes

- **3D Structure**:

- A cube with a smaller cube stacked on its top face.

- A rectangular cutout on the front face, creating an open cavity.

- No explicit labels, axes, or legends are present in the 3D structure.

- **2D Diagrams (A, B, C, D)**:

- **Diagram A**: A square divided into three regions: a small square in the top-left, a horizontal rectangle spanning the bottom half, and a vertical rectangle on the right.

- **Diagram B**: A square partitioned into four regions: a small square in the top-left, a horizontal rectangle in the top-right, a vertical rectangle in the bottom-left, and a larger square in the bottom-right.

- **Diagram C**: A square divided into four regions: a small square in the bottom-left, a horizontal rectangle spanning the top half, a vertical rectangle on the right, and a larger square in the bottom-right.

- **Diagram D**: A square divided into four equal smaller squares (2x2 grid).

### Detailed Analysis

- **3D Structure**:

- The cube’s orientation suggests a perspective view, with the cutout revealing internal depth.

- The smaller cube on top creates a stepped effect, emphasizing vertical layering.

- **2D Diagrams**:

- **Diagram A**: Asymmetrical partitioning with uneven regions.

- **Diagram B**: Balanced but irregular partitioning, with one large square dominating the bottom-right.

- **Diagram C**: Similar to B but with the small square shifted to the bottom-left.

- **Diagram D**: Uniform partitioning into equal quadrants.

### Key Observations

1. The 3D cube’s complexity contrasts with the simplicity of the 2D diagrams.

2. Diagrams A, B, and C share a recurring pattern of a small square and a large square, while D is uniform.

3. No numerical values, legends, or axis markers are present in any diagram.

### Interpretation

The image likely illustrates a conceptual relationship between 3D spatial reasoning and 2D representation. The 3D cube’s cutout and layered structure may symbolize fragmentation or modularity, while the 2D diagrams could represent different ways to partition or analyze a system. Diagram D’s uniformity might signify standardization, whereas A, B, and C highlight variability in segmentation. The absence of labels suggests the focus is on visual patterns rather than quantitative data.

## No textual data, numerical values, or legends are present in the image. The analysis is based solely on geometric and spatial relationships.

</details>

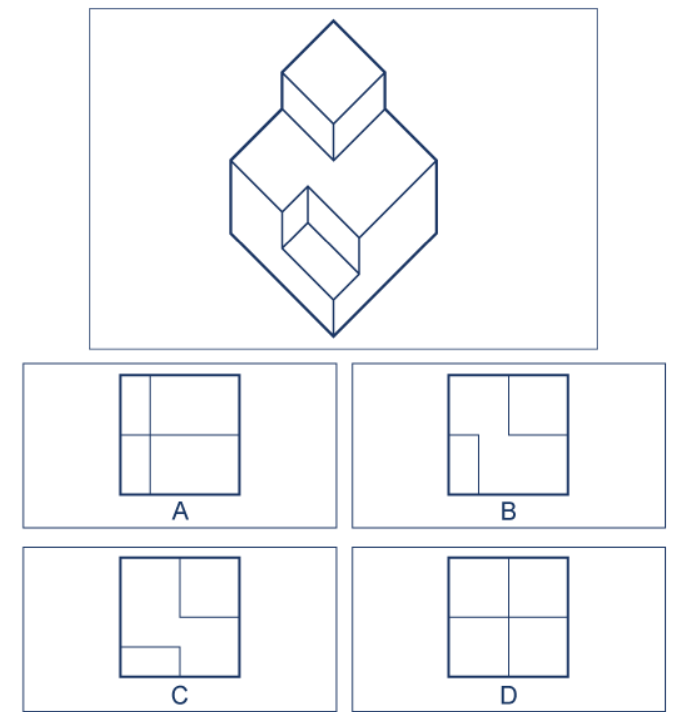

| Q: Which of these are the top view? A: B Reasoning Skill: Spatial Capability: 3D Shape |

<details>

<summary>extracted/5714025/figures/textvqa.jpg Details</summary>

### Visual Description

## Digital Transit Display: Route Information

### Overview

The image shows a digital display sign with a black background, featuring three rows of text in white and yellow. The display uses a grid-based font with green rectangular blocks separating text segments. The content indicates a transit route with origin, next stop, and destination information.

### Components/Axes

- **Text Elements**:

- **ORIGIN:** (White text, top-left)

- **NEXT STOP:** (White text, middle-left)

- **DESTINATION:** (White text, bottom-left)

- **Location Labels**:

- **WASHINGTON** (Yellow text, aligned with "ORIGIN:")

- **BWI AIRPORT** (Yellow text, aligned with "NEXT STOP:")

- **NEW YORK** (Yellow text, aligned with "DESTINATION:")

- **Design Elements**:

- Green rectangular blocks (4 per row) separating text segments

- Black background with no additional graphics

### Detailed Analysis

1. **ORIGIN:**

- Text: "WASHINGTON" (Yellow, 7-character grid font)

- Position: Top row, left-aligned

- Green blocks: 4 blocks after "WASHINGTON"

2. **NEXT STOP:**

- Text: "BWI AIRPORT" (Yellow, 11-character grid font)

- Position: Middle row, left-aligned

- Green blocks: 4 blocks after "BWI AIRPORT"

3. **DESTINATION:**

- Text: "NEW YORK" (Yellow, 8-character grid font)

- Position: Bottom row, left-aligned

- Green blocks: 4 blocks after "NEW YORK"

### Key Observations

- All text uses a consistent grid-based font with uniform spacing

- Yellow text contrasts sharply against the black background

- Green blocks serve as visual separators but lack explicit legend explanation

- No numerical data, timestamps, or additional UI elements visible

- Text alignment is strictly left-justified across all rows

### Interpretation

This display functions as a real-time transit information system, likely for trains or buses. The structure suggests:

1. **Origin**: Current location (Washington)

2. **Next Stop**: Immediate destination (BWI Airport)

3. **Destination**: Final endpoint (New York)

The use of color coding (yellow text, green blocks) enhances readability in transit environments. The absence of timestamps or additional metrics implies this is a static route display rather than a dynamic schedule. The green blocks may indicate progress markers or simply serve as visual dividers. The route appears to be a through-service connecting Washington to New York via BWI Airport, suggesting a multi-modal transit system.

</details>

| Q: What is the final destination? A: New York Reasoning Skill: None Capability: OCR |

|

<details>

<summary>extracted/5714025/figures/ours3.png Details</summary>

### Visual Description

## Diagram: Balance Scale with Torque Equilibrium

### Overview

The image depicts a horizontal balance scale with three weights positioned at varying distances from the fulcrum (center pivot point). The system is in static equilibrium, with the beam balanced horizontally.

### Components/Axes

- **Fulcrum**: Central pivot point (orange triangle) labeled with a dashed vertical line.

- **Weights**:

- Left side:

- 20 lb weight positioned 6 ft left of the fulcrum.

- 30 lb weight positioned 3 ft left of the fulcrum.

- Right side:

- Unknown weight (marked with "?") positioned 6 ft right of the fulcrum.

- **Distances**:

- Arrows indicate distances from the fulcrum: 6 ft (left and right), 3 ft (left).

### Detailed Analysis

1. **Torque Calculation**:

- Left side total torque:

$ (20 \, \text{lb} \times 6 \, \text{ft}) + (30 \, \text{lb} \times 3 \, \text{ft}) = 120 \, \text{lb-ft} + 90 \, \text{lb-ft} = 210 \, \text{lb-ft} $.

- Right side torque must equal 210 lb-ft for equilibrium.

- Unknown weight: $ \frac{210 \, \text{lb-ft}}{6 \, \text{ft}} = 35 \, \text{lb} $.

2. **Spatial Grounding**:

- All weights are aligned horizontally along the beam.

- Distances are explicitly labeled with arrows pointing to the fulcrum.

### Key Observations

- The system adheres to the principle of torque equilibrium: $ \text{Torque}_{\text{left}} = \text{Torque}_{\text{right}} $.

- The unknown weight is calculated to be **35 lb** to maintain balance.

- No outliers or anomalies; the diagram explicitly shows a balanced state.

### Interpretation

This diagram demonstrates the application of rotational equilibrium in physics. The placement and magnitude of weights are inversely proportional to their distances from the fulcrum to achieve balance. The calculation confirms that the unknown weight must be **35 lb** to counteract the combined torque of the left-side weights. The diagram serves as a visual aid for understanding lever mechanics and torque distribution.

</details>

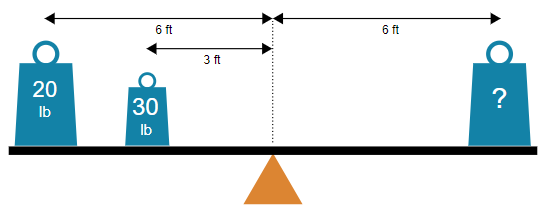

| Q: What is the weight if balanced? A: C: 35 lb Reasoning Skill: Mechanical Capability: Physics |

<details>

<summary>extracted/5714025/figures/mmvet1.png Details</summary>

### Visual Description

## Photograph: Children Solving Math Problems on a Chalkboard

### Overview

The image depicts three children (two girls and one boy) from behind, actively writing on a dark green chalkboard. Each child is solving a basic arithmetic problem using white chalk. The chalkboard contains three incomplete equations:

1. `3×3=` (left side)

2. `7×2=` (center)

3. `11-2=` (right side)

The children are wearing school uniforms: navy-blue vests with red trim over white shirts. Their hair is tied back with clips (red, yellow, and purple). The chalkboard shows faint erasure marks, suggesting prior use.

### Components/Axes

- **Chalkboard**: Dark green surface with white chalk markings.

- **Text**: Three arithmetic problems (`3×3=`, `7×2=`, `11-2=`).

- **Subjects**: Three children (two girls, one boy) positioned left-to-right.

### Detailed Analysis

1. **Left Child (Girl)**:

- Wearing a red hair clip.

- Writing `3×3=`; no answer provided.

- Uniform: Navy vest with red trim, white shirt.

2. **Center Child (Girl)**:

- Hair tied with yellow and purple clips.

- Writing `7×2=`; no answer provided.

- Uniform: Navy vest with red trim, white shirt with pink collar.

3. **Right Child (Boy)**:

- Short, dark hair.

- Writing `11-2=`; no answer provided.

- Uniform: Navy vest with red trim, white shirt.

### Key Observations

- All equations are incomplete; no solutions are written.

- Children are focused on their tasks, suggesting a learning activity.

- Uniforms indicate a formal educational setting (e.g., classroom).

- Chalkboard shows signs of prior use (faint erasure marks).

### Interpretation

The image captures a moment of active learning, emphasizing foundational math skills (multiplication and subtraction). The absence of answers suggests the children are in the process of solving problems, possibly during a lesson or exercise. The uniformity in attire and the structured activity imply a disciplined educational environment. The chalkboard’s wear indicates repeated use, reinforcing the idea of a classroom setting.

No numerical data or trends are present beyond the arithmetic problems. The image prioritizes visual storytelling over quantitative analysis.

</details>

| Q: What will girl on right write? A: 14 Reasoning Skill: Numerical Capability: OCR |

Figure 1: Capabilities and reasoning skills of various existing benchmarks. Traditional benchmarks seldom assess reasoning skills, whereas LogicVista emphasizes the fundamental capacities necessary for solving specific problems, going beyond simple recognition or math tasks.

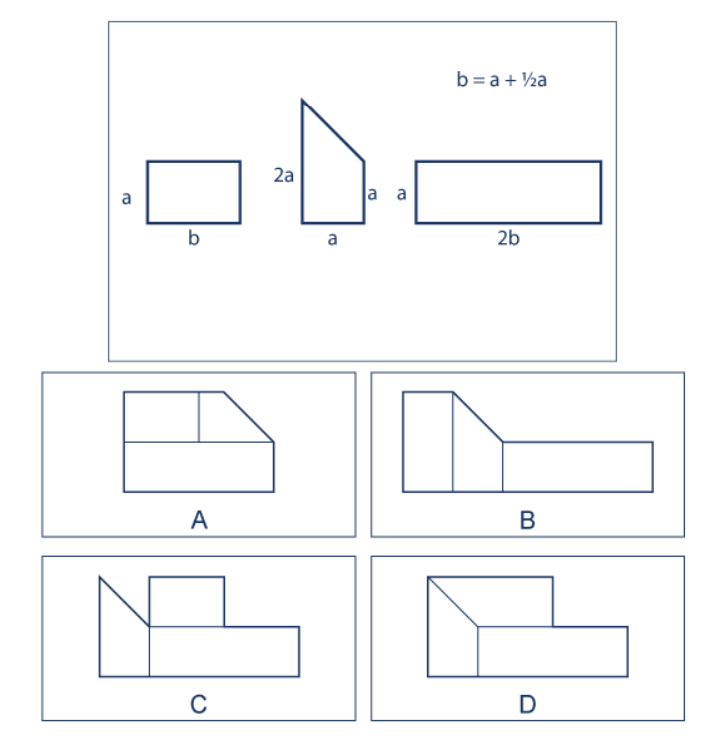

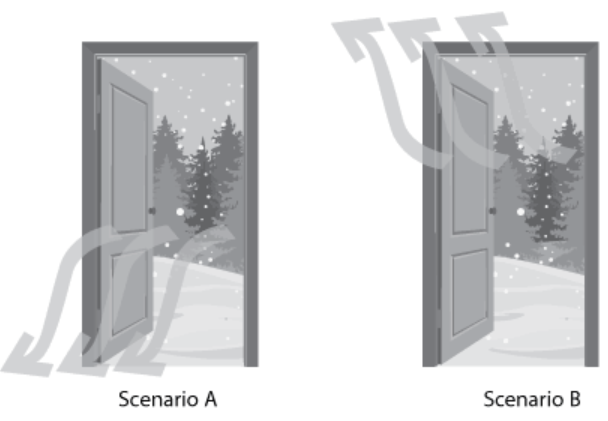

We argue that a universal comprehensive evaluation benchmark should have the following characteristics: (1) cover a wide range of logical reasoning tasks, including deductive, inductive, numeric, spatial, and mechanical reasoning; (2) present information in both graphical and Optical Character Recognition (OCR) formats to accommodate different types of data inputs; and (3) facilitate convenient quantitative analysis for rigorous assessment and comparison of model performance.

To this end, we present a comprehensive MLLM evaluation benchmark, named LogicVista, which meets all these criteria:

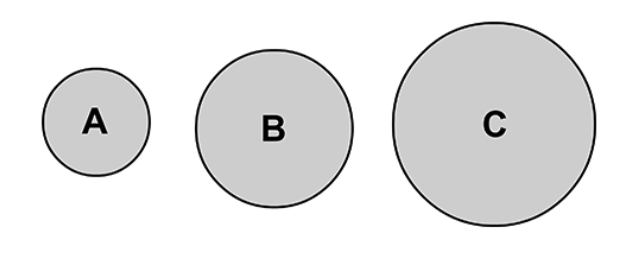

- LogicVista covers 5 representative categories of logical reasoning tasks: inductive ( $sample=107$ ), deductive ( $sample=93$ ), numerical ( $sample=95$ ), spatial ( $sample=79$ ), and mechanical ( $sample=74$ ).

- LogicVista includes a variety of capabilities, ranging from diagrams ( $sample=330$ ), OCR, ( $sample=234$ ), patterns ( $sample=105$ ), graphs ( $sample=67$ ), tables ( $sample=70$ ), 3D shapes ( $samples=45$ ), puzzles ( $samples=256$ ), sequences ( $samples=76$ ), and physics ( $samples=69$ ).

- All images, instructions, solution, and reasoning are manually annotated and validated.

- With our instruction design “please select from A, B, C, D, and E." and our LLM answer evaluator, we can assess different reasoning skills and capabilities and easily perform quantitative statistical analysis based on the natural language output of MLLMs. Additionally, We provide more in-depth human-written explanations for why each answer is correct, allowing for thorough open-ended evaluation.

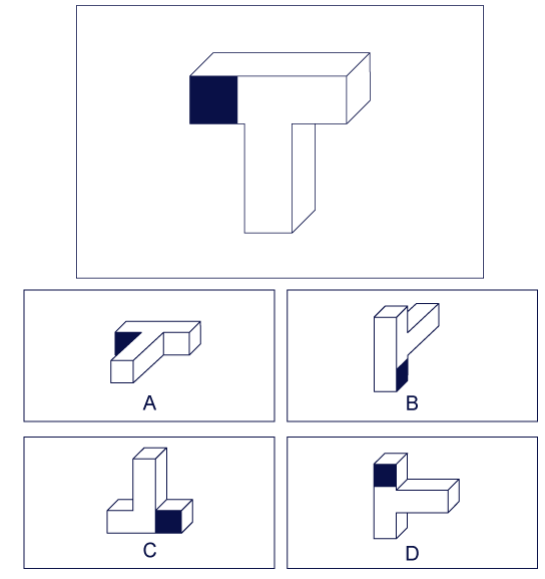

As shown in Figure. 1, LogicVista covers a wide range of reasoning capabilities and evaluates them comprehensively. For instance, answering the question “Which of these images is the top view of the given object" in Figure 1 (b) requires not only recognizing the objects’ orientation but also the ability to spatially reason over the object from a different perspective. Since these questions and diagrams are presented without context, they effectively probe the MLLM’s underlying ability rather than relying on contextual cues from the surrounding real-life environment.

Furthermore, we provide two evaluation strategies with our annotations: multiple-choice question (MCQ) evaluation and open-ended evaluation. Our annotation of MCQ choices along with our LLM evaluator allows quick evaluations of answers provided by MLLMs. Additionally, our annotation of the reasoning and thought process behind each MCQ enables open-ended evaluation, capturing the nuances of the MLLM responses and identifying which reasoning steps were correct or incorrect.

We comprehensively evaluate the performance of 8 representative open and closed source MLLMs on 448 tasks across 5 main logical reasoning categories. LogicVista’s evaluation strategy allows users to see a detailed breakdown of an MLLM’s performance on each reasoning skill and capability. This approach provides more insights than a single overall score, enabling users to better understand the specific skills in which a model excels or needs improvement.

2 Related Works

| | VQAv2 [8, 15] | COCO [16] | TextCaps [17] | Contextual [18] | MM-vet [10] | MathVista [14] | VisIT-Bench [19] | LogicVista |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Number of Logical Reasoning Skills Tested | 0 | 0 | 1 | 1 | 1 | 2 | 1 | 5 |

| Number of Multimodal Capabilities Tested | 1 | 1 | 2 | 2 | 6 | 12 | 2 | 9 |

| Dataset Size | 204,721 | 330,000 | 28,000 | 506 | 217 | 6,141 | 592 | 448 |

| Scene and Object Recognition | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

| Inductive Reasoning | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✗ | ✓ |

| Deductive Reasoning | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ |

| Numerical Reasoning | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

| Spatial Reasoning | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ |

| Mechanical Reasoning | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ |

| Answer Choice Explanations | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✗ | ✓ |

| Human Annotation | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

| Human Evaluation | ✗ | ✓ | ✓ | ✓ | ✗ | ✓ | ✓ | ✗ |

| Auto/GPT-4 Evaluation | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

| Open-ended Evaluation | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

Table 1: Comparision with related vision-language benchmarks.

Multimodal Language Models The field of vision-language models [20, 21, 22, 23, 24, 25, 26, 27, 28, 29] has made significant progress towards achieving a cohesive understanding and generation of both visual and linguistic information. This progress is largely driven by the remarkable generalization and quality capabilities of recent large language models (LLMs) [30, 1, 31, 32]. As a result, there has been a surge in the development of MLLMs that aim to integrate the diverse capabilities of vision and language for complex multimodal tasks.

Efforts to create these multimodal generalist systems include enhancing LLMs with multi-sensory processing abilities, as demonstrated by innovative projects like Frozen [33], Flamingo [2], PaLM-E [34], and GPT-4 [1]. Recent releases of open-source LLMs [35, 32, 36] have further propelled research in this field, leading to the development of OpenFlamingo [37], LLaVA [38], MiniGPT-4 [4], Otter [39], InstructBLIP [40], among others [41, 38, 42]. Additionally, multimodal agents [43, 44, 45] have been explored for their ability to link various vision tools with LLMs [30, 1], aiming to enhance integrated vision-language capabilities

Vision-Language Benchmarks Traditional vision-language benchmarks have focused on assessing specific capabilities, including visual recognition [21], generating image descriptions [20, 46], and other specialized functions such as understanding scene text [47, 17, 48], commonsense reasoning [49], mathematical reasoning [14], instruction following [19], and external knowledge incorporation [50]. While some benchmarks incorporate reasoning [18], they are often presented in real-life contexts, which may reduce the task to mere recognition based on contextual cues.

The emergence of general MLLMs has highlighted the need for updated vision-language benchmarks that encompass complex multimodal tasks requiring comprehensive vision-language skills. Our benchmark, LogicVista, aligns closely with recent evaluation studies like MM-Vet and MMBench [10, 51], which aim to provide thorough evaluations of MLLMs through well-designed evaluation samples. A key distinction of LogicVista lies in its focus on integrated vision-language capabilities, offering deeper insights beyond mere model rankings.

LLM-Based Evaluation. LogicVista adopts an open-ended LLM-based evaluation approach, which facilitates the generation and assessment of diverse answer styles and question types beyond the limitations of binary or multiple-choice responses. This innovative method leverages the capabilities of large language models (LLMs) for comprehensive model evaluation, a technique that has been effectively applied in natural language processing (NLP) tasks [52, 53, 54, 55]. Our findings indicate that this LLM-based evaluation framework is not only versatile but also robust, enabling a unified and flexible assessment across various modalities. By accommodating a wide range of answer styles and question types, this approach enhances evaluation depth and breadth, which contributes to a more thorough understanding of model performance.

3 Data annotation and organization

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Closed-Source Tests System Architecture

### Overview

The diagram illustrates a closed-source testing framework with six identical clipboard icons arranged in a 3x2 grid. A central blue lock with radiating lines connects to three distinct elements via arrows: an envelope with an "@" symbol, a stack of coins and dollar bill, and two human figures with a "+" sign. All elements are rendered in black and white except the blue lock.

### Components/Axes

1. **Header**:

- Title: "Closed-Source Tests" (top-center, bold black text)

2. **Main Grid**:

- Six identical clipboard icons (3 rows × 2 columns)

- Each clipboard contains:

- Top-right: Checkmark symbol

- Bottom-right: Text block (illegible)

- Top-left: Text block (illegible)

3. **Central Element**:

- Blue lock icon with keyhole

- Radiating gray lines (8 total) connecting to three downstream elements

4. **Downstream Elements**:

- Left: Envelope with "@" symbol (email)

- Center: Stack of coins (3 stacks) + green dollar bill

- Right: Two human figures (outline) with "+" superscript

### Detailed Analysis

- **Clipboard Grid**: All six clipboards are identical in layout and positioning. No discernible text content due to low resolution.

- **Lock Mechanism**: Blue lock centrally positioned below the grid, acting as a security/access control point. Radiating lines suggest bidirectional communication or data flow.

- **Arrow Connections**:

- Lock → Envelope (left)

- Lock → Money (center)

- Lock → People (right)

- **Symbolic Elements**:

- "@" symbol on envelope implies email communication

- "$" on bill confirms financial transactions

- "+" on people suggests collaboration/team expansion

### Key Observations

1. **Security-Centric Design**: The lock's central position and radiating lines emphasize restricted access to test materials.

2. **Tripartite Output**: The system connects to three distinct domains: communication, finance, and human resources.

3. **Uniform Testing Process**: Identical clipboard icons suggest standardized testing procedures across all six instances.

### Interpretation

This diagram represents a closed-source testing ecosystem where:

1. **Test Materials** (clipboards) are secured behind access controls (lock)

2. **Outputs** flow to three critical domains:

- **Communication** (email via "@" symbol)

- **Financial Tracking** (currency symbols and stacks)

- **Team Collaboration** (human figures with "+" indicating growth)

3. The checkmarks on clipboards likely denote completed test cycles, while the illegible text blocks may represent test case details or results.

The blue lock's radiating lines suggest the system maintains strict control over test integrity while enabling monitored interactions with external stakeholders (email), financial systems (currency), and team dynamics (collaboration). The absence of numerical data implies this is a conceptual architecture rather than a quantitative analysis.

</details>

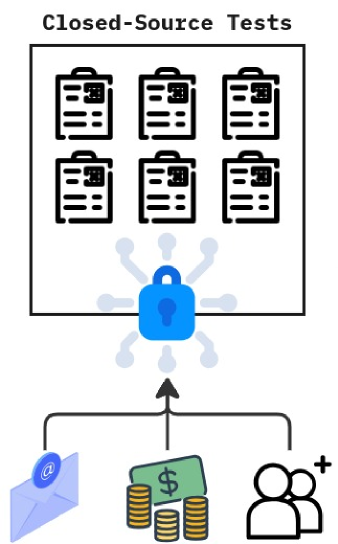

(a)

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Manual Curation Workflow for Annotated Dataset

### Overview

The diagram illustrates a multi-step process for curating an annotated dataset, involving human input, data processing, and output generation. It uses visual metaphors (lock icon, document icons) to represent security, documentation, and structured data.

### Components/Axes

1. **Input Elements**:

- Three smiling human figures (left side) with dashed arrows pointing to a central box.

- Central box labeled with six document icons (representing raw data or initial inputs).

- Blue lock icon at the bottom of the central box (symbolizing security/access control).

2. **Output Elements**:

- Annotated dataset (right side) containing:

- Two image thumbnails (orange/yellow gradient backgrounds).

- JSON file icon (bottom right).

- Dashed arrows connecting the central box to the annotated dataset and JSON file.

3. **Textual Labels**:

- "annotated dataset" (top right).

- "JSON" (bottom right).

- "Manual Curation of images, answers, and reasoning" (bottom center).

### Detailed Analysis

- **Flow Direction**: Left-to-right progression from human input → central processing → structured output.

- **Key Relationships**:

- Human figures → Central box (manual curation).

- Central box → Annotated dataset (data transformation).

- Central box → JSON file (structured data export).

- **Visual Metaphors**:

- Lock icon: Implies secure handling of sensitive data.

- Document icons: Represent unstructured/raw data.

- Dashed arrows: Suggest iterative or non-linear refinement steps.

### Key Observations

1. The process emphasizes human-in-the-loop curation ("Manual Curation" text).

2. Security is explicitly highlighted via the lock icon, suggesting sensitive data handling.

3. Outputs are dual-format: visual (images) and machine-readable (JSON).

4. No numerical data or quantitative metrics are present in the diagram.

### Interpretation

This diagram represents a **data annotation pipeline** where human experts manually curate and validate raw data (images/documents) before producing a structured, annotated dataset and exportable JSON file. The lock icon implies compliance with data privacy standards (e.g., GDPR), while the dual output formats suggest the dataset is intended for both human review and algorithmic use. The absence of quantitative metrics indicates this is a conceptual workflow rather than a performance measurement tool.

</details>

(b)

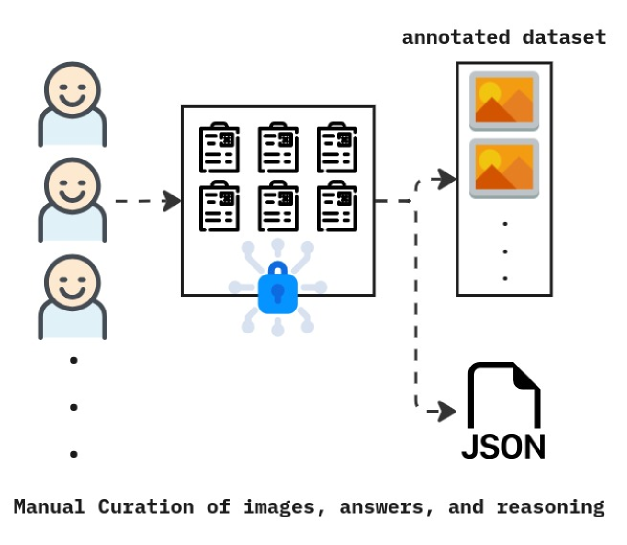

Figure 2: a) Data collected for LogicVista were gathered from closed sources to avoid data leakage. b) Manual annotators used the gathered tests, gathered the correct answers, and came up with reasonings on why the selected answers were correct. All these annotations were then stored in JSON format.

3.1 Data Sources

To ensure the integrity and quality of LogicVista’s evaluations, we have implemented a stringent data collection and curation process specifically designed to prevent data leakage detailed in Figure. 2. Our approach involves sourcing and annotating our samples from proprietary sources that require licenses, registration, payment, or a combination of these barriers to access. This methodology is critical to minimizing the risk that our benchmark data has been previously seen or utilized in the training of other multi-modal models. We prioritized sourcing data from closed sources to further reduce the potential of data leakage.

- Licensed Access: We obtain data from sources that require formal licensing, ensuring the data is used solely for research purposes and not freely available for general use or scraping on the internet.

- Registration Requirements: Some of our data sources mandate user registration and account verification, adding an additional layer of access control to ensure that the data remain restricted and not easily accessible.

- Paid Content: We utilize paid sources where content is accessible only through purchase or subscription, further restricting the data from being freely available on the internet.

Additionally, we obtained permission from the creators of IQ tests and other evaluation materials included in our dataset. This permission specifically allows the use of their content for research purposes, ensuring the data’s legitimacy and accuracy.

3.2 Annotation and Data Collection

LogicVista consists of images designed to assess the underlying reasoning capacities of MLLMs. Using real-life scenes as explicit tests of logical reasoning can be challenging, as they often contain context clues that AI agent can use to deduce answers without directly reasoning through the scene. Therefore, LogicVista presents multiple-choice questions across 9 explicit capabilities that specify the type of reasoning required, without the additional context of real-life scenes typically found in intelligence and reasoning tests. The dataset is manually collected and annotated from various licensed intelligence test sources. Over a period of 3 months, 5 annotators extracted images, correct answers, and explanations when available. The explanations detailing the reasoning behind answer choices were extensively annotated and cross-validated among annotators, ensuring data integrity through multiple rounds of quality checks. The data is structured in JSON format to facilitate easy retrieval and processing in our evaluation pipeline. For our evaluation, we focused on summarizing five reasoning skills spanning two multimodal capabilities. For detailed examples of these reasoning skills and capabilities, please refer to Appendix. A and Appendix. B.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Pie Charts: Reasoning Skills and Capabilities

### Overview

The image contains two adjacent pie charts comparing distributions of reasoning skills and capabilities. Both charts use color-coded segments with percentage labels. The left chart focuses on reasoning skills, while the right chart emphasizes capabilities.

### Components/Axes

**Left Chart (Reasoning Skills):**

- **Segments:** Mechanical (17.0%), Spatial (18.0%), Numerical (21.0%), Deductive (20.0%), Inductive (24.0%)

- **Colors:** Blue, Red, Purple, Yellow, Green

- **Text:** Percentages displayed inside segments

**Right Chart (Capabilities):**

- **Segments:** Diagram (26.4%), OCR (18.7%), Puzzles (20.4%), Patterns (8.4%), Graphs (5.4%), Tables (5.6%), Physics (5.5%), Sequences (6.1%), 3D shapes (3.6%)

- **Colors:** Green, Yellow, Purple, Red, Blue, Gray, Pink, Orange, Light Blue

- **Text:** Percentages displayed inside segments

### Detailed Analysis

**Reasoning Skills Distribution:**

1. Inductive reasoning dominates at 24.0%

2. Numerical reasoning follows at 21.0%

3. Deductive reasoning at 20.0%

4. Spatial reasoning at 18.0%

5. Mechanical reasoning at 17.0%

**Capabilities Distribution:**

1. Diagram interpretation leads at 26.4%

2. Puzzles at 20.4%

3. OCR at 18.7%

4. Patterns at 8.4%

5. Graphs at 5.4%

6. Tables at 5.6%

7. Physics at 5.5%

8. Sequences at 6.1%

9. 3D shapes at 3.6%

### Key Observations

1. **Dominant Categories:**

- Inductive reasoning (24.0%) and Diagram interpretation (26.4%) are the most prominent in their respective charts

- Both charts show a "long tail" with multiple smaller segments (<10%)

2. **Distribution Patterns:**

- Reasoning skills show a more balanced distribution (range: 17-24%)

- Capabilities show greater disparity (range: 3.6-26.4%)

3. **Color Coding:**

- Left chart uses warmer colors (red/yellow) for mid-range skills

- Right chart uses cooler colors (blue/green) for higher capabilities

- 3D shapes (3.6%) uses the smallest segment with orange color

### Interpretation

The data suggests a cognitive profile emphasizing analytical skills over mechanical aptitude, with strong inductive and numerical reasoning capabilities. The capabilities chart reveals a focus on abstract pattern recognition (Diagram, Puzzles) over concrete applications (3D shapes). The significant gap between top capabilities (26.4%) and lower ones (3.6%) indicates potential areas for skill development. The near-equal distribution of reasoning skills (within 7% range) contrasts with the capabilities' wider spread, suggesting that while foundational reasoning is balanced, applied capabilities show more specialization.

</details>

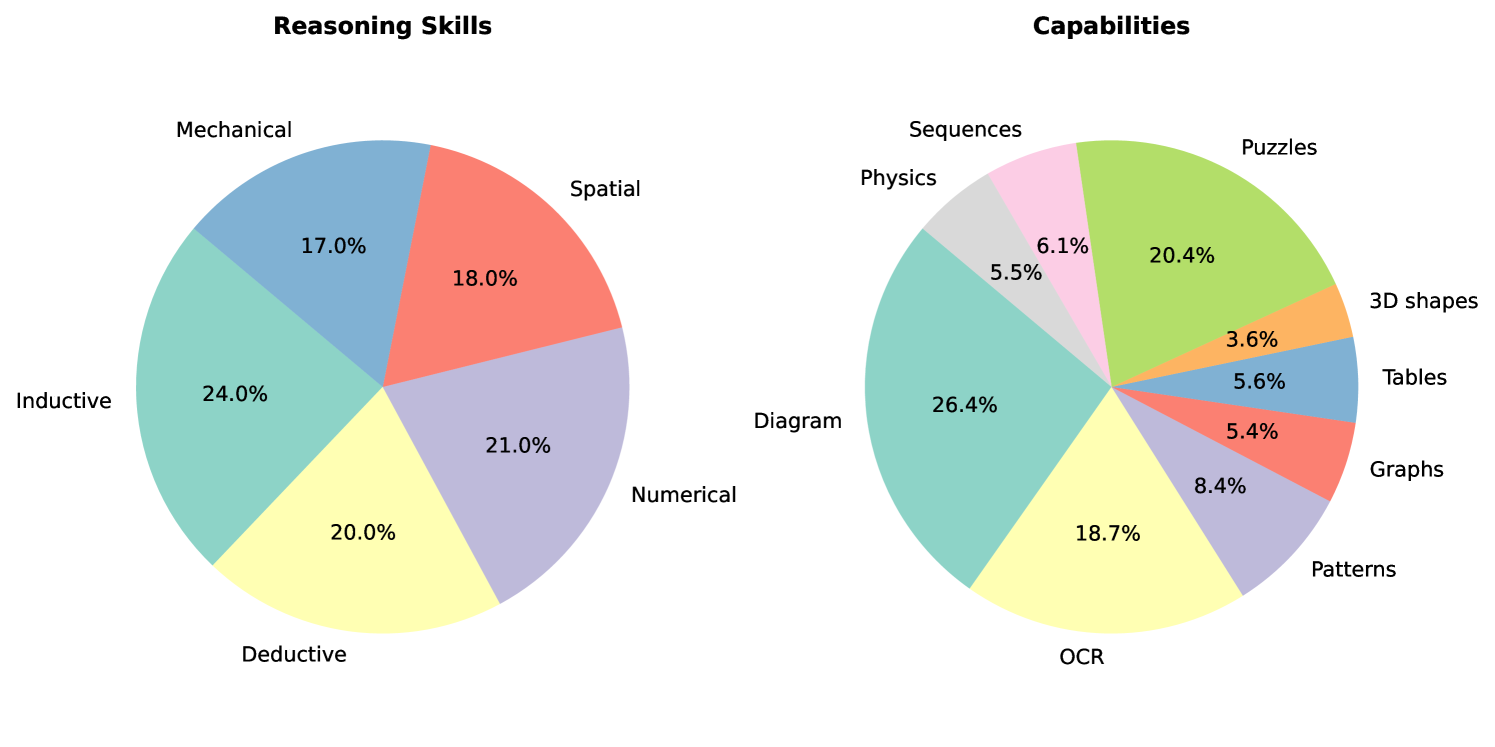

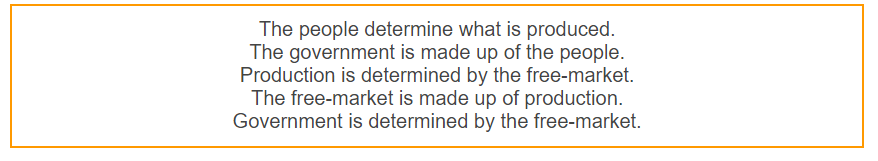

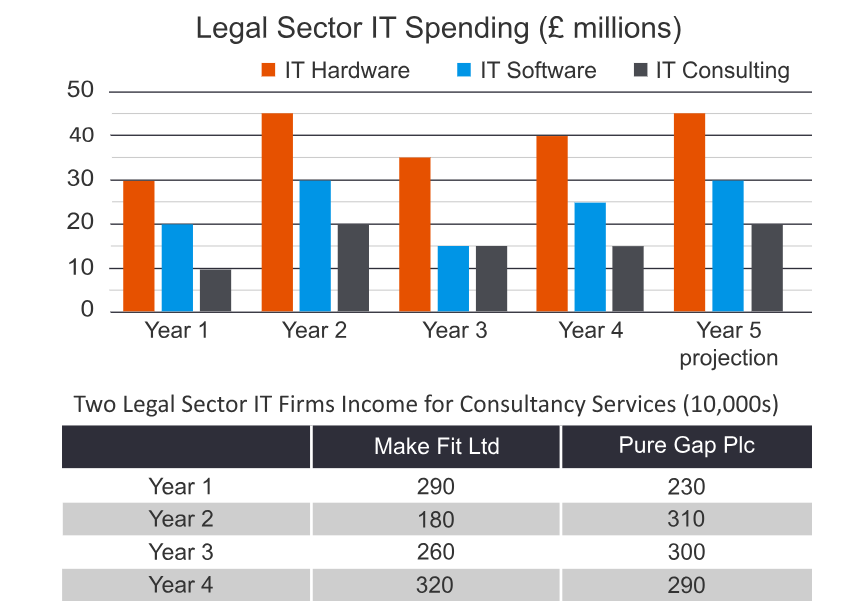

Figure 3: Proportion of reasoning skills and capabilities. On the left is the proportion of questions belonging to each reasoning skill. These proportions add up to $100\%$ as each skill is independent of another. On the right is the proportion of questions belonging to each multi-modal capability. These do not add up to $100\%$ due to the use of mixed capabilities.

3.2.1 Capabilities

We distinguish multimodal capabilities from reasoning skills, considering these capabilities fundamental to understanding a multimodal scene and extracting information. Capabilities refer to the modalities through which logical reasoning questions are delivered. To ensure comprehensive coverage in LogicVista, we have defined a diverse array of 9 capabilities for evaluation. This diversity guarantees that LogicVista thoroughly assess various logical situations that an MLLM may encounter in everyday reasoning. Figure 3 demonstrates how LogicVista contains a balanced mix of capabilities, including samples that utilize multiple capabilities to solve a problem.

- Diagrams: Simple flow diagrams and logical diagrams (e.g., Markov diagrams).

- OCR: Text embedded within an image (e.g., “gas station” in an image of a gas station).

- Patterns: Repeated sequences such as a series of diagrams, numbers, shapes, and objects (e.g., identifying patterns in how a box moves through repeated images of boxes).

- Graphs: Mathematical graphs with axes (e.g., graphs of $y=2x$ and $y=x^{2}$ ).

- Tables: Data tables (e.g., pie charts and T-tables).

- 3D Shapes: The ability to understand and differentiate 3D objects from 2D ones (e.g., recognizing a 3D shape in different rotations).

- Puzzles: Puzzles with logical implications embedded within the shapes (e.g., chess puzzles).

- Sequences: Sequences of related items or objects (e.g., predicting the next item in a sequence).

- Physics: Situations involving physics (e.g., diagrams of projectile motion).

3.2.2 Reasoning Skills

The reasoning skills of interest for this benchmark are based on common critical thinking and problem-solving skills used by humans in various contexts. For our evaluation, we summarize these into the following five skills. For our evaluation, we summarize these to include the following 5 skills. As seen in Figure 3, LogicVista encompasses a wide range of all these reasoning skills:

- Inductive Reasoning: The ability to infer the next entry in a pattern given a set of observations. This involves making generalizations based on specific observations to form an educated guess. It moves from many specific observations to a generalization. For example, observing that John gets a stomach ache when he eats dairy products leads to the inductive conclusion that he is likely lactose intolerant.

- Deductive Reasoning: The ability to conclude a specific case from a general principle or pattern. This involves moving from the general to the specific. For example, from the statement “all men are mortal,” one can deduce that “John is mortal” because John is a man.

- Numerical Reasoning: The ability to read arithmetic problems in an image and solve the math equations. For example, given the equation “10 + 10 = ?,” the answer would be “20.”

- Spatial Reasoning: The ability to understand the spatial relationships between objects and patterns and reason with those relationships. For example, seeing an unfolded box and understanding what the box would look like when folded.

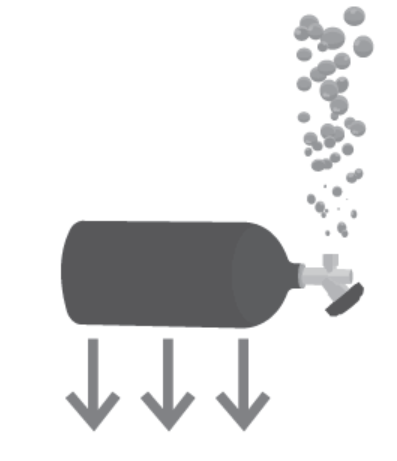

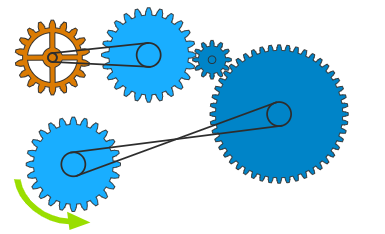

- Mechanical Reasoning: The ability to recognize a physical system and solve equations based on that system or answer questions about it. For example, seeing a set of three gears and understanding which gears will turn clockwise and which will turn counterclockwise.

3.3 LLM-based Multiple Choice Answer Extractor

<details>

<summary>x4.png Details</summary>

### Visual Description

## Flowchart: Data Annotation and Evaluation Pipeline

### Overview

This flowchart illustrates a multi-stage pipeline for processing an annotated dataset through evaluation models. It includes data annotation, raw output generation, multiple-choice question (MCQ) extraction, and performance evaluation via a bar chart. The process emphasizes automated extraction of answers from textual data and their validation through structured evaluation.

### Components/Axes

1. **Annotated Dataset**

- Contains multimodal data (images, text, symbols)

- Includes examples like:

- "The answer is 76 because..."

- "Tom would win the race..."

- "The pie chart shows..."

- "The next element in the sequence is..."

2. **Raw Open-Ended Outputs**

- Textual responses generated from the annotated dataset

- Example: "The answer is 76 because..."

3. **Extracted MCQ Answers**

- Structured options (A-E) derived from raw outputs

- Visualized as a vertical list with checkmarks (✓) and crossmarks (✗)

4. **Evaluation Models**

- Represented by a bar chart comparing performance metrics

- Categories:

- "The answer is 76 because..." (70-75%)

- "Tom would win the race..." (75-80%)

- "The pie chart shows..." (80-85%)

### Detailed Analysis

- **Flow Direction**:

Annotated dataset → Raw outputs → MCQ extraction → Evaluation models

- **Bar Chart Metrics**:

- Categories are labeled with textual examples from the dataset

- Performance values are approximate (70-85%) with no explicit numerical labels

- Bars are color-coded (pink, yellow, green) but lack a legend

### Key Observations

1. The pipeline emphasizes automated answer extraction from unstructured text.

2. Evaluation models focus on textual coherence and factual accuracy.

3. The bar chart lacks a legend, making color assignments ambiguous.

4. All textual examples follow a "The [subject] [verb]..." structure.

### Interpretation

This pipeline demonstrates a system for:

1. **Data Annotation**: Combining multimodal inputs (images, text, symbols) into structured datasets.

2. **Answer Extraction**: Using NLP to identify answers from open-ended responses.

3. **Performance Evaluation**: Quantifying model accuracy through textual examples.

The absence of a legend in the bar chart introduces uncertainty in interpreting color-coded performance metrics. The consistent structure of textual examples suggests a focus on factual QA tasks, while the evaluation models prioritize both correctness (e.g., "76" as a numerical answer) and contextual reasoning (e.g., "Tom would win the race").

</details>

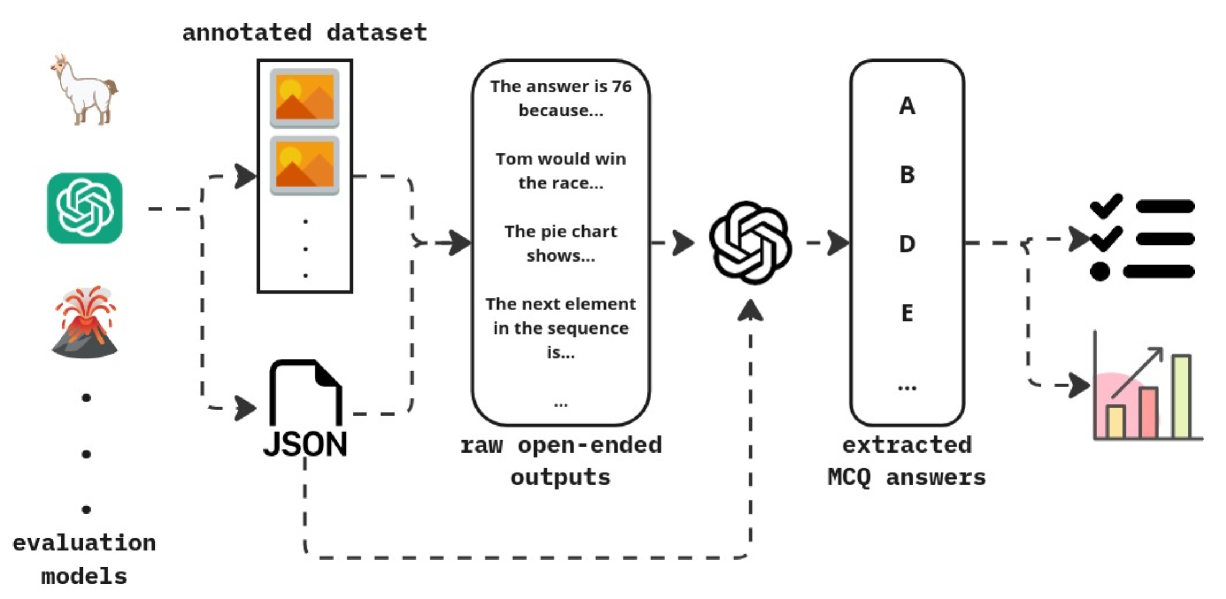

Figure 4: Pipeline of evaluating open-ended LMM outputs using MCQ answer choice extraction.

LLMs generate non-deterministic and open-ended responses [56, 57], making direct evaluation challenging. To address this, we use an LLM evaluator to compare these open-ended responses to our annotations as detailed in 4. This evaluator can assess both MCQ answer choices and the MLLM’s reasoning behind those selections, as both elements are included in our annotations. This step is achieved by feeding various contexts such as the question, and the available choices, along with the LLM-generated answers to an extraction LLM (GPT, LLaMA, etc.). Based on the provided rich context, the LLM can generate the selected letter answer choice. The final output is also repeatedly validated and if the validation fails, the extraction repeats with the provided feedback to obtain correct results.

4 Evaluation Setup

| Model | Size | Language Model | Vision Model |

| --- | --- | --- | --- |

| LLaVA-Vicuna-7B | 7B | Vicuna-7B | CLIP ViT-L/14 |

| LLaVA-Vicuna-13B | 13B | Vicuna-13B | CLIP ViT-L/336px |

| LLaVA-NeXT-Mistral-7B | 7B | Mistral-7B | CLIP ViT-L/14 |

| LLaVA-NeXT-Vicuna-7B | 7B | Vicuna-7B | CLIP ViT-L/14 |

| LLaVA-NeXT-Vicuna-13B | 13B | Vicuna-13B | CLIP ViT-L/336px |

| LLaVA-NeXT-Nous-Hermes-Yi-34B | 34B | Nous Hermes 2-Yi-34B | CLIP ViT-L/336px |

| MiniGPT-4-7B | 7B | Vicuna-7B | BLIP-2 Q-Former |

| MiniGPT-4-13B | 13B | Vicuna-13B | BLIP-2 Q-Former |

| Otter-9B | 9B | MPT-7B | CLIP ViT-L/14 |

| GPT-4 Vision | N/A N/A: Not disclosed | N/A | N/A |

| BLIP-2 | 2.7B | OPT-2.7B | EVA-ViT-G |

| Pix2Struct | 1.3B | ViT | ViT |

| InstructBLIP-Vicuna-7B | 7B | Vicuna-7B | BLIP-2 Q-Former |

| InstructBLIP-Vicuna-13B | 13B | Vicuna-13B | BLIP-2 Q-Former |

| InstructBLIP-FLAN-T5-xl | 3B | FLAN-T5 XL | BLIP-2 Q-Former |

| InstructBLIP-FLAN-T5-xxl | 11B | FLAN-T5 XXL | BLIP-2 Q-Former |

Table 2: Summary of the MLLMs used for evaluations in this study.

To evaluate the performance of MLLMs on LogicVista, we selected a range of representative models detailed in Table. 2. Specifically, we chose8 models for evaluation, including LLaVA [3, 58], MiniGPT4 [4], Otter [39], GPT-4 Vision [1], BLIP-2 [59], and InstructBLIP [40] We also included pix2struct [60] which has been fine-tuned to understand chart and diagram data.

Each model generated outputs using the LogicVista dataset. Our LLM-based multiple-choice extractor was then employed to isolate the multiple-choice selections from the MLLMs’ outputs (which often appear as full-sentence responses rather than single letters) and compare them to the ground truth answers. The overall logical reasoning score is calculated as follows:

$$

S=\frac{\sum_{n=1}^{N}s_{i}}{N}*100\% \tag{1}

$$

Here, $S$ represents the overall score, $s_{i}$ indicate whether a sample $i$ is evaluated as correct or not (regardless of category), and $N$ is the total number of samples. The score for each reasoning skill subcategory is calculated as:

$$

S_{LR}=\frac{\sum_{n=1}^{N_{LR}}s_{i}}{N_{LR}}*100\% \tag{2}

$$

where $S_{LR}$ represents the score for a specific reasoning skill category, $N_{LR}$ is the total number of samples in that reasoning skill category, and $s_{i}$ indicate whether a sample $i$ from that category was evaluated as correct. Similarly, the score for each multi-modal capability is calculated as:

$$

S_{c}=\frac{\sum_{n=1}^{N_{c}}s_{i}}{N_{c}}*100\% \tag{3}

$$

where $S_{c}$ represents the score for a specific capability, $N_{c}$ is the total number of samples in that capability, and $s_{i}$ indicates whether a sample $i$ in the capability category is evaluated correctly.

5 LogicVista Benchmarking and Performance Interpretation

5.1 Logical Reasoning Skills

We present the performance results of various multimodal LLMs on LogicVista. Table 3 outlines the outcome for these models across five logical reasoning categories. We analyzed models of different architectures and sizes, benchmarking them against a random baseline that assumes an average of five choices per question in the LogicVista dataset. Our findings indicate that many models perform below expectations, often yielding results that are worse than random guessing. This outcome is somewhat anticipated, given that most training data for multimodal LLMs and LLMs are derived from classical computer vision datasets such as COCO, which focus on recognition tasks rather than complex reasoning.

Traditional benchmarks typically emphasize recognition tasks, resulting in a lack of emphasis on reasoning tasks during both training and evaluation phases. This is evident from the observation that while many models excel on recognition-based benchmarks like COCO, TextVQA, and MM-vet, they often struggle to outperform a random baseline on logical reasoning tasks.

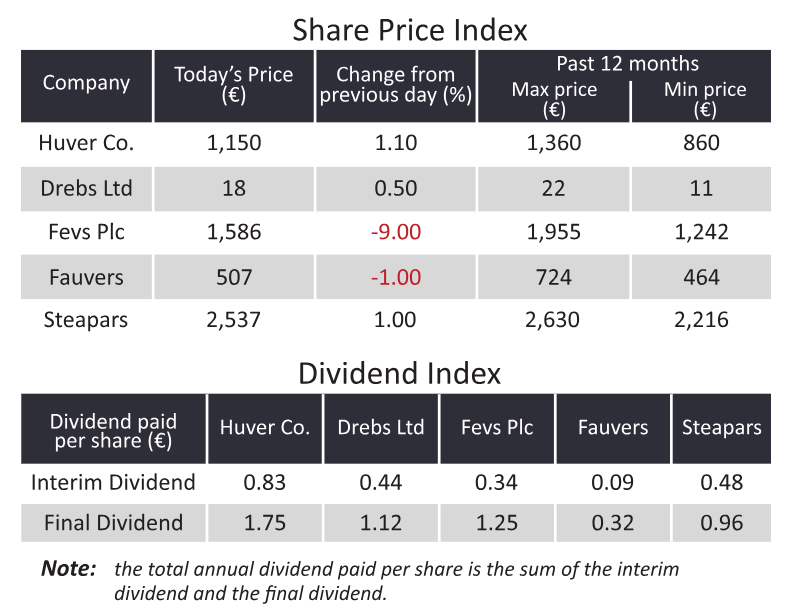

| Model | Inductive | Deductive | Numerical | Spatial | Mechanical |

| --- | --- | --- | --- | --- | --- |

| LLAVA7B | 29.91% | 29.03% | 26.32% | 25.32% | 36.49% |

| LLAVA13B | 18.69% | 31.18% | 20.00% | 27.85% | 24.32% |

| otter9B | 31.78% | 24.73% | 18.95% | 18.99% | 21.62% |

| GPT4 | 23.36% | 54.84% | 24.21% | 21.52% | 41.89% |

| BLIP2 | 17.76% | 23.66% | 23.16% | 24.05% | 18.92% |

| LLAVANEXT-7B-mistral | 16.82% | 34.41% | 23.16% | 21.52% | 22.97% |

| miniGPTvicuna7B | 10.28% | 9.68% | 7.37% | 3.80% | 27.03% |

| miniGPTvicuna13B | 13.08% | 23.66% | 10.53% | 10.13% | 17.57% |

| pix2struct | 12.15% | 6.45% | 2.11% | 7.59% | 17.57% |

| instructBLIP-vicuna-7B | 4.67% | 21.51% | 24.21% | 2.53% | 22.97% |

| instructBLIP-vicuna-13B | 3.74% | 10.75% | 18.95% | 5.06% | 17.57% |

| instructBLIP-flan-t5-xl | 23.36% | 22.58% | 22.11% | 7.59% | 33.78% |

| instructBLIP-flan-t5-xxl | 17.76% | 30.11% | 24.21% | 20.25% | 22.97% |

| LLAVANEXT-7B-vicuna | 26.17% | 21.51% | 25.26% | 27.85% | 29.73% |

| LLAVANEXT-13B-vicuna | 22.43% | 22.58% | 26.32% | 26.58% | 25.68% |

| LLAVANEXT-34B-NH | 20.56% | 52.69% | 30.53% | 24.05% | 40.54% |

Table 3: LogicVista evaluation results for various multimodal LLMs on each logical reasoning skill are presented as $\%$ , with the highest possible accuracy being $100\%$ . The highest-scoring models are highlighted in green and the lower-scoring models are highlighted in yellow.

Upon closer examination, we find that models perform best on deductive, numerical, and mechanical reasoning tasks. These types of reasoning are more prevalent in real-life scenarios, which makes models more adept at handling them. For example, deductive reasoning can be applied in predicting a character’s actions based on a scene, while numerical reasoning is crucial in solving arithmetic visual tasks. Mechanical reasoning involves understanding physical principles and interactions.

In contrast, induction and spatial reasoning are less frequently encountered in standard training data, potentially explaining the lower performance of models in these areas. These insights underscore the necessity for enhanced training and evaluation methodologies that prioritize reasoning tasks to bolster the logical reasoning capabilities of multimodal LLMs.

5.2 Visual Capabilities

In Table 4, we present the results of multimodal LLMs on logical reasoning tasks across diagrammatic and OCR mediums. Generally, we observe that OCR tasks tend to perform better than diagrammatic tasks. This difference stems from the nature of traditional computer vision tasks, which often prioritize recognizing prominent objects (“landmarks”) in a scene, such as distinct cars, planes, people, or balls. Diagrams, in contrast, lack such prominent features and mainly consist of lines and shapes, making it challenging for models to extract intricate relationships between objects.

In OCR tasks, once the text is accurately extracted from the image, the remainder of the reasoning task relies on the underlying LLM’s ability to process and interpret the content. This process typically bypasses the complexities of multimodal reasoning, leading to better performance on OCR tasks compared to diagrammatic tasks. These findings highlight the necessity for enhanced evaluation methodologies tailored to diagrammatic reasoning in multimodal LLMs, as current approaches may overlook critical details inherent in these types of tasks.

| Model | Diagram | OCR | Patterns | Graphs | Tables | 3D Shapes | Puzzles | Sequences | Physics |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| LLAVA7B | 29.70% | 28.21% | 30.47% | 25.37% | 25.71% | 22.22% | 28.52% | 25.00% | 43.48% |

| LLAVA13B | 21.52% | 22.65% | 16.19% | 16.42% | 20.00% | 31.11% | 26.17% | 15.79% | 26.09% |

| otter9B | 23.64% | 20.51% | 30.48% | 14.93% | 22.86% | 13.33% | 26.17% | 26.32% | 24.64% |

| GPT4 | 26.06% | 39.74% | 20.95% | 20.90% | 22.86% | 31.11% | 31.25% | 28.95% | 47.83% |

| BLIP2 | 20.30% | 21.79% | 20.00% | 17.91% | 24.29% | 17.78% | 22.27% | 15.79% | 20.29% |

| LLAVANEXT-7B-mistral | 20.30% | 26.92% | 21.90% | 23.88% | 22.86% | 13.33% | 22.27% | 23.68% | 30.43% |

| miniGPTvicuna7B | 10.91% | 11.54% | 12.38% | 7.46% | 8.57% | 11.11% | 9.77% | 7.89% | 23.19% |

| miniGPTvicuna13B | 12.73% | 17.52% | 12.38% | 10.45% | 11.43% | 11.11% | 14.84% | 6.58% | 20.29% |

| pix2struct | 9.39% | 8.55% | 10.48% | 0.00% | 4.29% | 11.11% | 10.55% | 11.84% | 14.49% |

| instructBLIP-vicuna-7B | 11.82% | 21.37% | 7.62% | 22.39% | 22.86% | 6.67% | 10.55% | 0.00% | 24.64% |

| instructBLIP-vicuna-13B | 10.91% | 13.68% | 5.71% | 19.40% | 15.71% | 11.11% | 6.25% | 2.63% | 18.84% |

| instructBLIP-flan-t5-xl | 20.30% | 22.22% | 20.00% | 17.91% | 22.86% | 13.33% | 18.36% | 15.79% | 33.33% |

| instructBLIP-flan-t5-xxl | 20.91% | 24.36% | 22.86% | 20.90% | 25.71% | 20.00% | 21.09% | 14.47% | 21.74% |

| LLAVANEXT-7B-vicuna | 26.67% | 23.08% | 26.67% | 20.90% | 27.14% | 33.33% | 26.56% | 19.74% | 30.43% |

| LLAVANEXT-13B-vicuna | 25.15% | 22.65% | 23.81% | 20.90% | 27.14% | 26.67% | 24.61% | 15.79% | 27.54% |

| LLAVANEXT-34B-NH | 27.58% | 39.32% | 25.71% | 28.36% | 32.86% | 26.67% | 30.86% | 21.05% | 46.37% |

Table 4: LogicVista evaluation results on various multimodal LLMs across each multi-modal capability. Accuracy results are presented as $\%$ , with a maximum possible accuracy of $100\%$ . Models achieving the highest scores are highlighted green, while lower-scoring models are highlighted yellow.

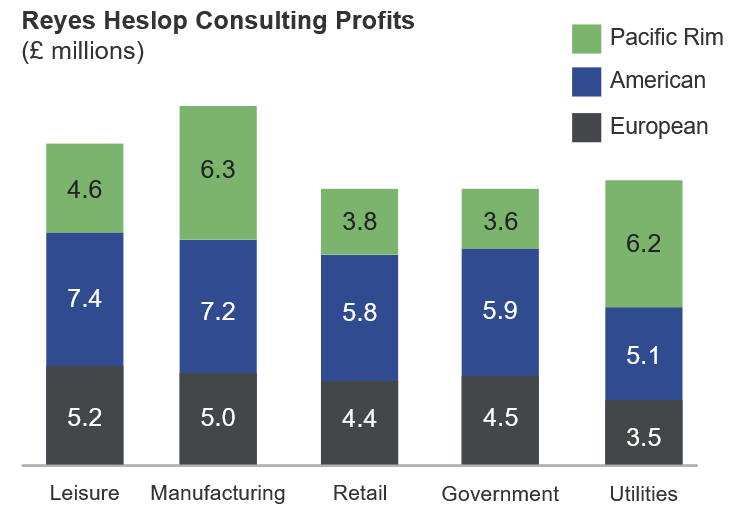

5.3 Relationship between Model Size and Performance

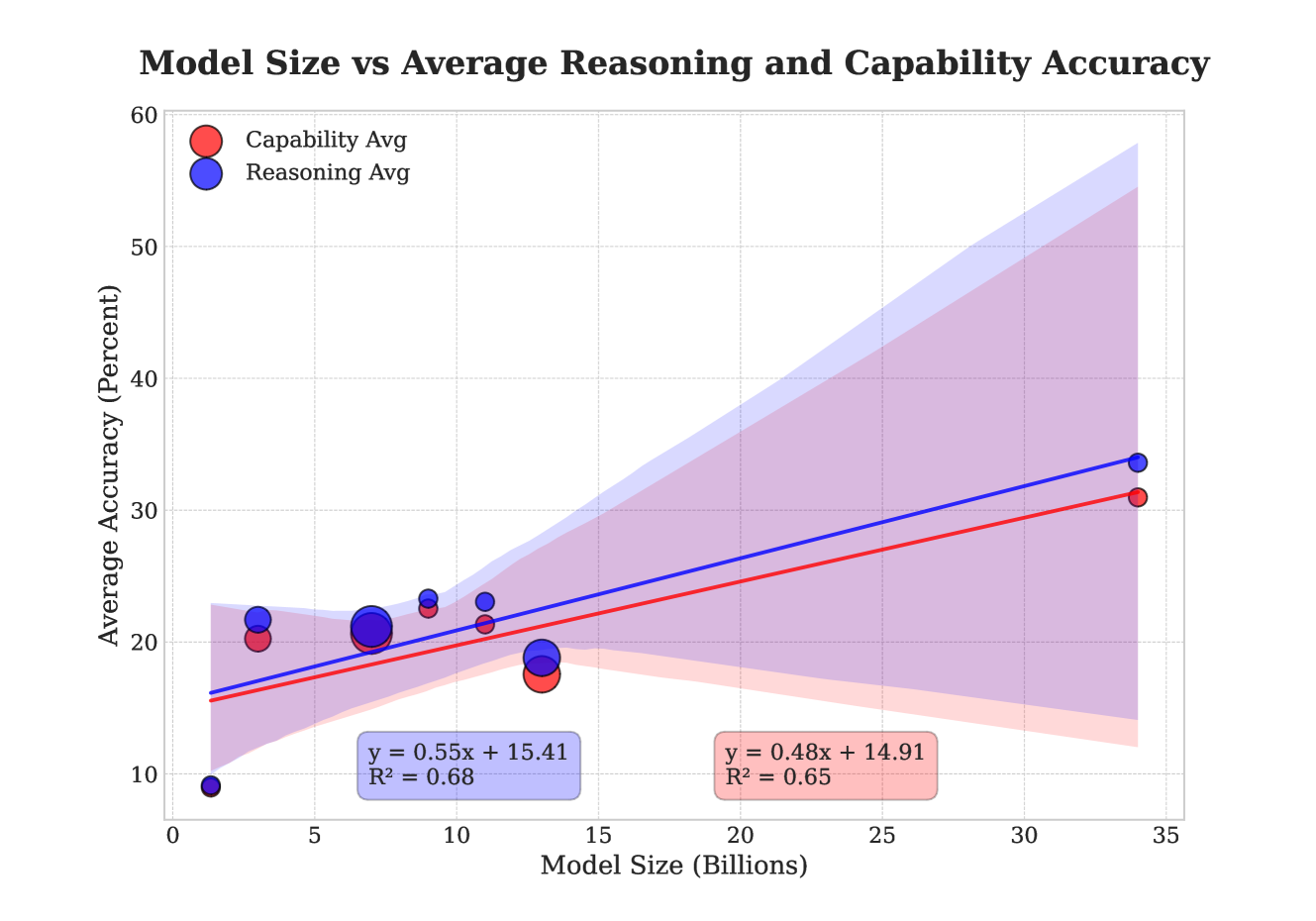

Figure 5 presents a comparative analysis of the model size and the average score achieved across all logical reasoning tasks and capabilities. Each plot includes a shaded region denoting the 95% confidence interval for the regression estimate, visually representing the uncertainty associated with the regression line. Dot sizes in the scatter plot indicate the number of models with identical parameter counts, illustrating the distribution density. This visual evidence strongly suggests a positive correlation between larger model sizes and improved performance in LogicVista. Specifically, as model size increases, performance tends to improve, indicating that larger models may have greater capacity to handle complex patterns and reasoning tasks.

6 Conclusion

Reasoning skills are critical for solving complex tasks and serve as the foundation for many challenges that humans expect AI agents to tackle. However, the exploration of reasoning abilities in multimodal LLM agents remains limited, with most benchmarks and training datasets predominantly focused on traditional computer vision tasks like recognition. For multimodal LLMs to excel in critical thinking and complex tasks, they must comprehend the underlying logical relationships inherent in these challenges.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Scatter Plot: Model Size vs Average Reasoning and Capability Accuracy

### Overview

The image is a scatter plot comparing model size (in billions of parameters) to average accuracy in reasoning and capability tasks. Two data series are plotted: "Capability Avg" (red) and "Reasoning Avg" (blue), each with a trend line and shaded confidence interval. The plot includes axis labels, a legend, and numerical annotations for trend lines.

---

### Components/Axes

- **X-axis**: Model Size (Billions)

- Scale: 0 to 35 (increments of 5)

- Labels: "Model Size (Billions)"

- **Y-axis**: Average Accuracy (Percent)

- Scale: 0 to 60 (increments of 10)

- Labels: "Average Accuracy (Percent)"

- **Legend**:

- Red: "Capability Avg"

- Blue: "Reasoning Avg"

- **Trend Lines**:

- Red (Capability): `y = 0.48x + 14.91` (R² = 0.65)

- Blue (Reasoning): `y = 0.55x + 15.41` (R² = 0.68)

- **Shaded Regions**:

- Light blue (Reasoning): ±2% around the blue trend line

- Light red (Capability): ±2% around the red trend line

---

### Detailed Analysis

#### Data Points

- **Capability Avg (Red)**:

- (0, 9), (3, 20), (6, 21), (9, 22), (12, 18), (15, 17), (35, 31)

- **Reasoning Avg (Blue)**:

- (0, 9), (3, 22), (6, 22), (9, 23), (12, 22), (15, 19), (35, 33)

#### Trend Lines

- **Capability Avg**:

- Slope: 0.48 (moderate increase)

- Intercept: 14.91

- R²: 0.65 (65% variance explained)

- **Reasoning Avg**:

- Slope: 0.55 (steeper increase)

- Intercept: 15.41

- R²: 0.68 (68% variance explained)

#### Shaded Regions

- Both trend lines have ±2% confidence intervals, widening slightly at higher model sizes.

---

### Key Observations

1. **Positive Correlation**: Both capability and reasoning accuracy increase with model size.

2. **Steeper Growth for Reasoning**: The blue trend line (Reasoning) has a higher slope (0.55 vs. 0.48), indicating faster improvement.

3. **Variability**: Larger models (e.g., 35B) show wider shaded regions, suggesting greater uncertainty in accuracy measurements.

4. **R² Values**: Both trends explain ~65-68% of variance, implying model size is a strong but not sole predictor of accuracy.

---

### Interpretation

- **Model Size Impact**: Larger models improve performance in both reasoning and capability tasks, but reasoning accuracy grows more rapidly.

- **Confidence Intervals**: The shaded regions highlight that accuracy estimates for larger models are less precise, possibly due to increased complexity or measurement noise.

- **Practical Implications**: While model size is critical, other factors (e.g., architecture, training data) may also influence accuracy, as R² values are below 1.

- **Anomalies**: The red data point at (15B, 17%) deviates slightly from the trend, suggesting potential outliers or measurement errors.

This analysis underscores the trade-off between model size and performance gains, emphasizing the need for balanced optimization in AI development.

</details>

Figure 5: correlation between model size and average accuracy. The scatter plot uses varying dot sizes to represent the density of models with identical sizes.

To address this gap, we introduce LogicVista, a novel benchmark designed to evaluate multimodal LLMs through a comprehensive assessment of logical reasoning capabilities. This benchmark features a dataset of 448 samples covering five distinct reasoning skills, providing a robust platform for evaluating cutting-edge multimodal models. Our evaluation aims to shed light on the current state of logical reasoning in multimodal LLMs.

To facilitate straightforward evaluation, we employ an LLM-based multiple-choice question-answer extractor, which helps mitigate the non-deterministic nature often associated with multimodal LLM outputs. While LogicVista primarily focuses on explicit logical reasoning tasks isolated from real-life contexts, this approach represents a crucial step toward understanding fundamental reasoning skills. However, it is equally important to explore how AI agents perform tasks that blend abstract reasoning with real-world scenarios, a direction that will guide our future research endeavors.

Acknowledgements

We extend our sincere appreciation to the student researchers at the University of California, Los Angeles, for their diligent efforts in the manual annotation and validation of our dataset: Evan Li, Srinath Saikrishnan, Lawrence Li, and Oscar Cooper Stern.

References

- [1] OpenAI, Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, Red Avila, Igor Babuschkin, Suchir Balaji, Valerie Balcom, Paul Baltescu, Haiming Bao, Mohammad Bavarian, Jeff Belgum, Irwan Bello, Jake Berdine, Gabriel Bernadett-Shapiro, Christopher Berner, Lenny Bogdonoff, Oleg Boiko, Madelaine Boyd, Anna-Luisa Brakman, Greg Brockman, Tim Brooks, Miles Brundage, Kevin Button, Trevor Cai, Rosie Campbell, Andrew Cann, Brittany Carey, Chelsea Carlson, Rory Carmichael, Brooke Chan, Che Chang, Fotis Chantzis, Derek Chen, Sully Chen, Ruby Chen, Jason Chen, Mark Chen, Ben Chess, Chester Cho, Casey Chu, Hyung Won Chung, Dave Cummings, Jeremiah Currier, Yunxing Dai, Cory Decareaux, Thomas Degry, Noah Deutsch, Damien Deville, Arka Dhar, David Dohan, Steve Dowling, Sheila Dunning, Adrien Ecoffet, Atty Eleti, Tyna Eloundou, David Farhi, Liam Fedus, Niko Felix, Simón Posada Fishman, Juston Forte, Isabella Fulford, Leo Gao, Elie Georges, Christian Gibson, Vik Goel, Tarun Gogineni, Gabriel Goh, Rapha Gontijo-Lopes, Jonathan Gordon, Morgan Grafstein, Scott Gray, Ryan Greene, Joshua Gross, Shixiang Shane Gu, Yufei Guo, Chris Hallacy, Jesse Han, Jeff Harris, Yuchen He, Mike Heaton, Johannes Heidecke, Chris Hesse, Alan Hickey, Wade Hickey, Peter Hoeschele, Brandon Houghton, Kenny Hsu, Shengli Hu, Xin Hu, Joost Huizinga, Shantanu Jain, Shawn Jain, Joanne Jang, Angela Jiang, Roger Jiang, Haozhun Jin, Denny Jin, Shino Jomoto, Billie Jonn, Heewoo Jun, Tomer Kaftan, Łukasz Kaiser, Ali Kamali, Ingmar Kanitscheider, Nitish Shirish Keskar, Tabarak Khan, Logan Kilpatrick, Jong Wook Kim, Christina Kim, Yongjik Kim, Jan Hendrik Kirchner, Jamie Kiros, Matt Knight, Daniel Kokotajlo, Łukasz Kondraciuk, Andrew Kondrich, Aris Konstantinidis, Kyle Kosic, Gretchen Krueger, Vishal Kuo, Michael Lampe, Ikai Lan, Teddy Lee, Jan Leike, Jade Leung, Daniel Levy, Chak Ming Li, Rachel Lim, Molly Lin, Stephanie Lin, Mateusz Litwin, Theresa Lopez, Ryan Lowe, Patricia Lue, Anna Makanju, Kim Malfacini, Sam Manning, Todor Markov, Yaniv Markovski, Bianca Martin, Katie Mayer, Andrew Mayne, Bob McGrew, Scott Mayer McKinney, Christine McLeavey, Paul McMillan, Jake McNeil, David Medina, Aalok Mehta, Jacob Menick, Luke Metz, Andrey Mishchenko, Pamela Mishkin, Vinnie Monaco, Evan Morikawa, Daniel Mossing, Tong Mu, Mira Murati, Oleg Murk, David Mély, Ashvin Nair, Reiichiro Nakano, Rajeev Nayak, Arvind Neelakantan, Richard Ngo, Hyeonwoo Noh, Long Ouyang, Cullen O’Keefe, Jakub Pachocki, Alex Paino, Joe Palermo, Ashley Pantuliano, Giambattista Parascandolo, Joel Parish, Emy Parparita, Alex Passos, Mikhail Pavlov, Andrew Peng, Adam Perelman, Filipe de Avila Belbute Peres, Michael Petrov, Henrique Ponde de Oliveira Pinto, Michael, Pokorny, Michelle Pokrass, Vitchyr H. Pong, Tolly Powell, Alethea Power, Boris Power, Elizabeth Proehl, Raul Puri, Alec Radford, Jack Rae, Aditya Ramesh, Cameron Raymond, Francis Real, Kendra Rimbach, Carl Ross, Bob Rotsted, Henri Roussez, Nick Ryder, Mario Saltarelli, Ted Sanders, Shibani Santurkar, Girish Sastry, Heather Schmidt, David Schnurr, John Schulman, Daniel Selsam, Kyla Sheppard, Toki Sherbakov, Jessica Shieh, Sarah Shoker, Pranav Shyam, Szymon Sidor, Eric Sigler, Maddie Simens, Jordan Sitkin, Katarina Slama, Ian Sohl, Benjamin Sokolowsky, Yang Song, Natalie Staudacher, Felipe Petroski Such, Natalie Summers, Ilya Sutskever, Jie Tang, Nikolas Tezak, Madeleine B. Thompson, Phil Tillet, Amin Tootoonchian, Elizabeth Tseng, Preston Tuggle, Nick Turley, Jerry Tworek, Juan Felipe Cerón Uribe, Andrea Vallone, Arun Vijayvergiya, Chelsea Voss, Carroll Wainwright, Justin Jay Wang, Alvin Wang, Ben Wang, Jonathan Ward, Jason Wei, CJ Weinmann, Akila Welihinda, Peter Welinder, Jiayi Weng, Lilian Weng, Matt Wiethoff, Dave Willner, Clemens Winter, Samuel Wolrich, Hannah Wong, Lauren Workman, Sherwin Wu, Jeff Wu, Michael Wu, Kai Xiao, Tao Xu, Sarah Yoo, Kevin Yu, Qiming Yuan, Wojciech Zaremba, Rowan Zellers, Chong Zhang, Marvin Zhang, Shengjia Zhao, Tianhao Zheng, Juntang Zhuang, William Zhuk, and Barret Zoph. Gpt-4 technical report, 2024.

- [2] Jean-Baptiste Alayrac, Jeff Donahue, Pauline Luc, Antoine Miech, Iain Barr, Yana Hasson, Karel Lenc, Arthur Mensch, Katie Millican, Malcolm Reynolds, Roman Ring, Eliza Rutherford, Serkan Cabi, Tengda Han, Zhitao Gong, Sina Samangooei, Marianne Monteiro, Jacob Menick, Sebastian Borgeaud, Andrew Brock, Aida Nematzadeh, Sahand Sharifzadeh, Mikolaj Binkowski, Ricardo Barreira, Oriol Vinyals, Andrew Zisserman, and Karen Simonyan. Flamingo: a visual language model for few-shot learning, 2022.

- [3] Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. Visual instruction tuning, 2023.

- [4] Deyao Zhu, Jun Chen, Xiaoqian Shen, Xiang Li, and Mohamed Elhoseiny. Minigpt-4: Enhancing vision-language understanding with advanced large language models, 2023.

- [5] Shukang Yin, Chaoyou Fu, Sirui Zhao, Ke Li, Xing Sun, Tong Xu, and Enhong Chen. A survey on multimodal large language models, 2023.

- [6] Chaoyou Fu, Peixian Chen, Yunhang Shen, Yulei Qin, Mengdan Zhang, Xu Lin, Jinrui Yang, Xiawu Zheng, Ke Li, Xing Sun, Yunsheng Wu, and Rongrong Ji. Mme: A comprehensive evaluation benchmark for multimodal large language models, 2023.

- [7] Xiaoman Zhang, Chaoyi Wu, Ziheng Zhao, Weixiong Lin, Ya Zhang, Yanfeng Wang, and Weidi Xie. Pmc-vqa: Visual instruction tuning for medical visual question answering, 2023.

- [8] Stanislaw Antol, Aishwarya Agrawal, Jiasen Lu, Margaret Mitchell, Dhruv Batra, C. Lawrence Zitnick, and Devi Parikh. Vqa: Visual question answering. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), December 2015.

- [9] Amanpreet Singh, Vivek Natarajan, Meet Shah, Yu Jiang, Xinlei Chen, Dhruv Batra, Devi Parikh, and Marcus Rohrbach. Towards vqa models that can read, 2019.

- [10] Weihao Yu, Zhengyuan Yang, Linjie Li, Jianfeng Wang, Kevin Lin, Zicheng Liu, Xinchao Wang, and Lijuan Wang. Mm-vet: Evaluating large multimodal models for integrated capabilities, 2023.

- [11] Michael J. Wavering. Logical reasoning necessary to make line graphs. Journal of Research in Science Teaching, 26(5):373–379, May 1989.

- [12] Catherine Sophian and Susan C. Somerville. Early developments in logical reasoning: Considering alternative possibilities. Cognitive Development, 3(2):183–222, 1988.

- [13] Hugo Bronkhorst, Gerrit Roorda, Cor Suhre, and Martin Goedhart. Logical reasoning in formal and everyday reasoning tasks - international journal of science and mathematics education, Dec 2019.

- [14] Pan Lu, Hritik Bansal, Tony Xia, Jiacheng Liu, Chunyuan Li, Hannaneh Hajishirzi, Hao Cheng, Kai-Wei Chang, Michel Galley, and Jianfeng Gao. Mathvista: Evaluating mathematical reasoning of foundation models in visual contexts, 2024.

- [15] Yash Goyal, Tejas Khot, Douglas Summers-Stay, Dhruv Batra, and Devi Parikh. Making the V in VQA matter: Elevating the role of image understanding in Visual Question Answering. In Conference on Computer Vision and Pattern Recognition (CVPR), 2017.

- [16] Tsung-Yi Lin, Michael Maire, Serge Belongie, James Hays, Pietro Perona, Deva Ramanan, Piotr Dollár, and C. Lawrence Zitnick. Microsoft COCO: Common Objects in Context, page 740–755. Springer International Publishing, 2014.

- [17] Oleksii Sidorov, Ronghang Hu, Marcus Rohrbach, and Amanpreet Singh. Textcaps: a dataset for image captioning with reading comprehension, 2020.

- [18] Rohan Wadhawan, Hritik Bansal, Kai-Wei Chang, and Nanyun Peng. Contextual: Evaluating context-sensitive text-rich visual reasoning in large multimodal models, 2024.

- [19] Yonatan Bitton, Hritik Bansal, Jack Hessel, Rulin Shao, Wanrong Zhu, Anas Awadalla, Josh Gardner, Rohan Taori, and Ludwig Schmidt. Visit-bench: A benchmark for vision-language instruction following inspired by real-world use, 2023.

- [20] Xinlei Chen, Hao Fang, Tsung-Yi Lin, Ramakrishna Vedantam, Saurabh Gupta, Piotr Dollar, and C. Lawrence Zitnick. Microsoft coco captions: Data collection and evaluation server, 2015.

- [21] Yash Goyal, Tejas Khot, Douglas Summers-Stay, Dhruv Batra, and Devi Parikh. Making the v in vqa matter: Elevating the role of image understanding in visual question answering, 2017.

- [22] Jiasen Lu, Dhruv Batra, Devi Parikh, and Stefan Lee. Vilbert: Pretraining task-agnostic visiolinguistic representations for vision-and-language tasks, 2019.

- [23] Yen-Chun Chen, Linjie Li, Licheng Yu, Ahmed El Kholy, Faisal Ahmed, Zhe Gan, Yu Cheng, and Jingjing Liu. Uniter: Universal image-text representation learning, 2020.

- [24] Xiujun Li, Xi Yin, Chunyuan Li, Pengchuan Zhang, Xiaowei Hu, Lei Zhang, Lijuan Wang, Houdong Hu, Li Dong, Furu Wei, Yejin Choi, and Jianfeng Gao. Oscar: Object-semantics aligned pre-training for vision-language tasks, 2020.

- [25] Wonjae Kim, Bokyung Son, and Ildoo Kim. Vilt: Vision-and-language transformer without convolution or region supervision, 2021.

- [26] Zirui Wang, Jiahui Yu, Adams Wei Yu, Zihang Dai, Yulia Tsvetkov, and Yuan Cao. Simvlm: Simple visual language model pretraining with weak supervision, 2022.

- [27] Jianfeng Wang, Zhengyuan Yang, Xiaowei Hu, Linjie Li, Kevin Lin, Zhe Gan, Zicheng Liu, Ce Liu, and Lijuan Wang. Git: A generative image-to-text transformer for vision and language, 2022.

- [28] Zhengyuan Yang, Zhe Gan, Jianfeng Wang, Xiaowei Hu, Faisal Ahmed, Zicheng Liu, Yumao Lu, and Lijuan Wang. Unitab: Unifying text and box outputs for grounded vision-language modeling, 2022.

- [29] Zhe Gan, Linjie Li, Chunyuan Li, Lijuan Wang, Zicheng Liu, and Jianfeng Gao. Vision-language pre-training: Basics, recent advances, and future trends, 2022.

- [30] Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, Tom Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler, Jeffrey Wu, Clemens Winter, Christopher Hesse, Mark Chen, Eric Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever, and Dario Amodei. Language models are few-shot learners, 2020.

- [31] Aakanksha Chowdhery, Sharan Narang, Jacob Devlin, Maarten Bosma, Gaurav Mishra, Adam Roberts, Paul Barham, Hyung Won Chung, Charles Sutton, Sebastian Gehrmann, Parker Schuh, Kensen Shi, Sasha Tsvyashchenko, Joshua Maynez, Abhishek Rao, Parker Barnes, Yi Tay, Noam Shazeer, Vinodkumar Prabhakaran, Emily Reif, Nan Du, Ben Hutchinson, Reiner Pope, James Bradbury, Jacob Austin, Michael Isard, Guy Gur-Ari, Pengcheng Yin, Toju Duke, Anselm Levskaya, Sanjay Ghemawat, Sunipa Dev, Henryk Michalewski, Xavier Garcia, Vedant Misra, Kevin Robinson, Liam Fedus, Denny Zhou, Daphne Ippolito, David Luan, Hyeontaek Lim, Barret Zoph, Alexander Spiridonov, Ryan Sepassi, David Dohan, Shivani Agrawal, Mark Omernick, Andrew M. Dai, Thanumalayan Sankaranarayana Pillai, Marie Pellat, Aitor Lewkowycz, Erica Moreira, Rewon Child, Oleksandr Polozov, Katherine Lee, Zongwei Zhou, Xuezhi Wang, Brennan Saeta, Mark Diaz, Orhan Firat, Michele Catasta, Jason Wei, Kathy Meier-Hellstern, Douglas Eck, Jeff Dean, Slav Petrov, and Noah Fiedel. Palm: Scaling language modeling with pathways, 2022.

- [32] Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, Aurelien Rodriguez, Armand Joulin, Edouard Grave, and Guillaume Lample. Llama: Open and efficient foundation language models, 2023.

- [33] Maria Tsimpoukelli, Jacob Menick, Serkan Cabi, S. M. Ali Eslami, Oriol Vinyals, and Felix Hill. Multimodal few-shot learning with frozen language models, 2021.

- [34] Danny Driess, Fei Xia, Mehdi S. M. Sajjadi, Corey Lynch, Aakanksha Chowdhery, Brian Ichter, Ayzaan Wahid, Jonathan Tompson, Quan Vuong, Tianhe Yu, Wenlong Huang, Yevgen Chebotar, Pierre Sermanet, Daniel Duckworth, Sergey Levine, Vincent Vanhoucke, Karol Hausman, Marc Toussaint, Klaus Greff, Andy Zeng, Igor Mordatch, and Pete Florence. Palm-e: An embodied multimodal language model, 2023.

- [35] Susan Zhang, Stephen Roller, Naman Goyal, Mikel Artetxe, Moya Chen, Shuohui Chen, Christopher Dewan, Mona Diab, Xian Li, Xi Victoria Lin, Todor Mihaylov, Myle Ott, Sam Shleifer, Kurt Shuster, Daniel Simig, Punit Singh Koura, Anjali Sridhar, Tianlu Wang, and Luke Zettlemoyer. Opt: Open pre-trained transformer language models, 2022.

- [36] Baolin Peng, Chunyuan Li, Pengcheng He, Michel Galley, and Jianfeng Gao. Instruction tuning with gpt-4, 2023.

- [37] Anas Awadalla, Irena Gao, Josh Gardner, Jack Hessel, Yusuf Hanafy, Wanrong Zhu, Kalyani Marathe, Yonatan Bitton, Samir Gadre, Shiori Sagawa, Jenia Jitsev, Simon Kornblith, Pang Wei Koh, Gabriel Ilharco, Mitchell Wortsman, and Ludwig Schmidt. Openflamingo: An open-source framework for training large autoregressive vision-language models, 2023.

- [38] Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. Visual instruction tuning, 2023.

- [39] Bo Li, Yuanhan Zhang, Liangyu Chen, Jinghao Wang, Jingkang Yang, and Ziwei Liu. Otter: A multi-modal model with in-context instruction tuning, 2023.

- [40] Wenliang Dai, Junnan Li, Dongxu Li, Anthony Meng Huat Tiong, Junqi Zhao, Weisheng Wang, Boyang Li, Pascale Fung, and Steven Hoi. Instructblip: Towards general-purpose vision-language models with instruction tuning, 2023.

- [41] Tao Gong, Chengqi Lyu, Shilong Zhang, Yudong Wang, Miao Zheng, Qian Zhao, Kuikun Liu, Wenwei Zhang, Ping Luo, and Kai Chen. Multimodal-gpt: A vision and language model for dialogue with humans, 2023.

- [42] Qinghao Ye, Haiyang Xu, Guohai Xu, Jiabo Ye, Ming Yan, Yiyang Zhou, Junyang Wang, Anwen Hu, Pengcheng Shi, Yaya Shi, Chenliang Li, Yuanhong Xu, Hehong Chen, Junfeng Tian, Qian Qi, Ji Zhang, and Fei Huang. mplug-owl: Modularization empowers large language models with multimodality, 2023.

- [43] Zhengyuan Yang, Linjie Li, Jianfeng Wang, Kevin Lin, Ehsan Azarnasab, Faisal Ahmed, Zicheng Liu, Ce Liu, Michael Zeng, and Lijuan Wang. Mm-react: Prompting chatgpt for multimodal reasoning and action, 2023.

- [44] Yongliang Shen, Kaitao Song, Xu Tan, Dongsheng Li, Weiming Lu, and Yueting Zhuang. Hugginggpt: Solving ai tasks with chatgpt and its friends in hugging face, 2023.

- [45] Difei Gao, Lei Ji, Luowei Zhou, Kevin Qinghong Lin, Joya Chen, Zihan Fan, and Mike Zheng Shou. Assistgpt: A general multi-modal assistant that can plan, execute, inspect, and learn, 2023.

- [46] Harsh Agrawal, Karan Desai, Yufei Wang, Xinlei Chen, Rishabh Jain, Mark Johnson, Dhruv Batra, Devi Parikh, Stefan Lee, and Peter Anderson. nocaps: novel object captioning at scale. In 2019 IEEE/CVF International Conference on Computer Vision (ICCV). IEEE, October 2019.

- [47] Amanpreet Singh, Vivek Natarajan, Meet Shah, Yu Jiang, Xinlei Chen, Dhruv Batra, Devi Parikh, and Marcus Rohrbach. Towards vqa models that can read, 2019.

- [48] Zhengyuan Yang, Yijuan Lu, Jianfeng Wang, Xi Yin, Dinei Florencio, Lijuan Wang, Cha Zhang, Lei Zhang, and Jiebo Luo. Tap: Text-aware pre-training for text-vqa and text-caption, 2020.

- [49] Rowan Zellers, Yonatan Bisk, Ali Farhadi, and Yejin Choi. From recognition to cognition: Visual commonsense reasoning, 2019.