# Converging Paradigms: The Synergy of Symbolic and Connectionist AI in LLM-Empowered Autonomous Agents

**Authors**: Haoyi Xiong, Zhiyuan Wang, Xuhong Li, Jiang Bian, Zeke Xie, Shahid Mumtaz, Anwer Al-Dulaimi, and Laura E. Barnes

> H. Xiong and J. Bian are with Microsoft Corporation. Z. Wang and L. E. Barnes are with the University of Virginia. Xuhong Li is with Univiersité de technologie de Compiègne. Z. Xie is with Hong Kong University of Science and Technology (GZ). S. Mumtaz is with Nottingham Trent University, Nottingham, UK and Silesian University of Technology, Gliwice, Poland. Anwer Al-Dulaimi is with College of Technological Innovation, Zayed University, Abu Dhabi, UAE.

## Abstract

This article explores the convergence of connectionist and symbolic artificial intelligence (AI), from historical debates to contemporary advancements. Traditionally considered distinct paradigms, connectionist AI focuses on neural networks, while symbolic AI emphasizes symbolic representation and logic. Recent advancements in large language models (LLMs), exemplified by ChatGPT and GPT-4, highlight the potential of connectionist architectures in handling human language as a form of symbols. The study argues that LLM-empowered Autonomous Agents (LAAs) embody this paradigm convergence. By utilizing LLMs for text-based knowledge modeling and representation, LAAs integrate neuro-symbolic AI principles, showcasing enhanced reasoning and decision-making capabilities. Comparing LAAs with Knowledge Graphs within the neuro-symbolic AI theme highlights the unique strengths of LAAs in mimicking human-like reasoning processes, scaling effectively with large datasets, and leveraging in-context samples without explicit re-training. The research underscores promising avenues in neuro-vector-symbolic integration, instructional encoding, and implicit reasoning, aimed at further enhancing LAA capabilities. By exploring the progression of neuro-symbolic AI and proposing future research trajectories, this work advances the understanding and development of AI technologies.

Index Terms: Large Language Models (LLMs), LLM-Empowered Autonomous Agents (LAAs), Neuro-symbolic AI, Program-Proof-of-Thoughts (P 2 oT) prompting

## I Introduction

Artificial Intelligence (AI) has historically navigated the fascinating duality of two foundational paradigms: connectionism and symbolism. Connectionism, deeply influenced by cognitive science and computational neuroscience, delves into neural networks and machine learning algorithms that echo the deep neural architecture and functions of the human brain [1]. Imagine a sprawling network of neurons firing in electric synchrony, mirroring how advanced AI systems identify patterns and glean insights from vast datasets. Conversely, symbolism is the epitome of conceptual and logical clarity. It anchors itself in the high-level abstractions and representations of knowledge, flourishing through rule-based systems that excel in reasoning and decision-making [2]. Picture a grand library where every book is a rule, and every chapter a pathway to logical deduction–symbolic AI analogising the thought processes of human reasoning.

The dynamic interplay between these two paradigms has sculpted the continuous evolution of AI, like a grand philosophical debate, resulting in shifts in dominance and application across various research domains. Think of this dialectic as a dance through time—the elegant waltz of connectionism and symbolism, sometimes leading, sometimes following, yet always in a harmonious exchange that propels the boundaries of what AI can achieve. For instance, in the domain of image recognition, connectionist models driven by deep neural networks demonstrate their prowess by identifying subtle patterns in pixel data, akin to how our brains recognize faces in a crowd [3]. Meanwhile, in expert systems used for medical diagnostics, symbolism shines by methodically applying predefined rules to diagnose diseases, mimicking the logical flow of a doctor’s thought process [4]. This storied dance of paradigms has not just shaped, but revitalized AI, continuing to impact its trajectory as it ventures into increasingly sophisticated applications. The oscillation of dominance between these approaches resembles the ebb and flow of tides, each rise and retreat bringing new insights and innovations to the fore.

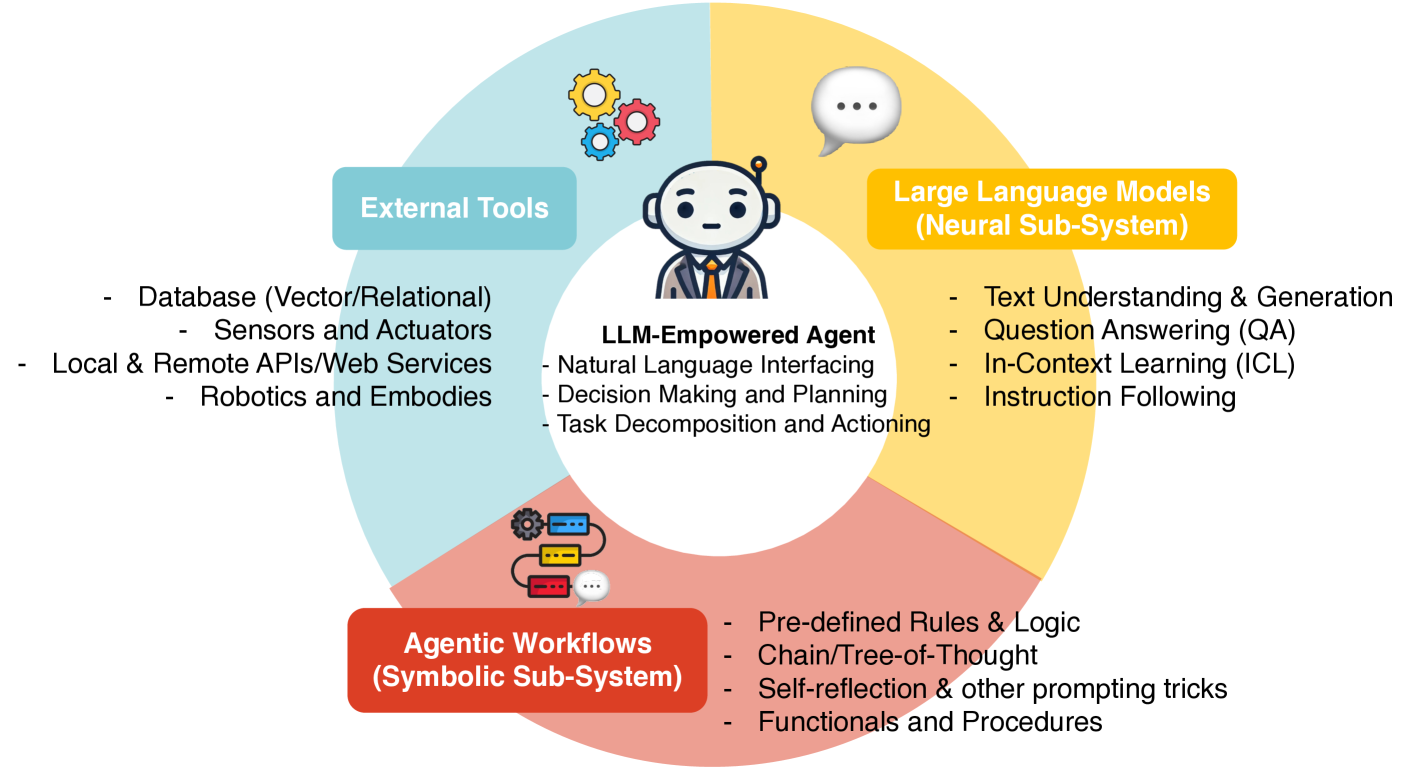

In recent years, the advancements in Large Language Models (LLMs) and foundation models have catalyzed the integration of connectionist and symbolic AI paradigms, realizing new levels of computational intelligence and versatility [5]. These models, exemplified by systems such as OpenAI’s GPT-4, have demonstrated unprecedented capabilities in natural language understanding and generation, exhibiting robust performance across a range of complex tasks [6]. LLMs themselves are a triumph of connectionism, empowered by vast amounts of data and sophisticated neural architectures to produce coherent and contextually relevant texts. Moreover, the emergence of LLM-empowered Autonomous Agents (LAAs) signifies a pivotal juncture in the development of AI, embodying the convergence of symbolic and connectionist AI. As shown in Figure 1, LAAs combine a symbolic subsystem, utilizing language-based knowledge, rules, and workflows intrinsic to symbolic AI, with the generative capabilities of LLMs [7]. This symbolic subsystem works seamlessly with the neural subsystem and incorporates external tools for perceptions and actions [8]. LAAs demonstrate advanced reasoning, planning, and decision-making abilities, marking a new era in AI. The dual subsystems align with dual-process theories of reasoning [9] and Systems I and II proposed by Yoshua Bengio [10].

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Architecture of an LLM-Empowered Agent

### Overview

The image is a conceptual diagram illustrating the architecture of an "LLM-Empowered Agent." It depicts the agent as a central entity integrating three distinct but interconnected subsystems: External Tools, Large Language Models (Neural Sub-System), and Agentic Workflows (Symbolic Sub-System). The diagram uses a circular layout with the agent at the core and the three subsystems as colored segments surrounding it.

### Components/Axes

The diagram is structured around a central circle containing an icon of a robot/agent and text. This central hub is surrounded by three colored segments, each representing a subsystem with its own icon and list of components.

**Central Hub:**

* **Icon:** A stylized robot head and shoulders wearing a suit and tie.

* **Title:** `LLM-Empowered Agent`

* **List of Core Functions:**

* Natural Language Interfacing

* Decision Making and Planning

* Task Decomposition and Actioning

**Surrounding Subsystems (Clockwise from Top-Left):**

1. **Blue Segment (Top-Left): External Tools**

* **Icon:** Three interlocking gears (yellow, red, blue).

* **List of Components:**

* Database (Vector/Relational)

* Sensors and Actuators

* Local & Remote APIs/Web Services

* Robotics and Embodies

2. **Yellow Segment (Top-Right): Large Language Models (Neural Sub-System)**

* **Icon:** A speech bubble with three dots inside.

* **List of Capabilities:**

* Text Understanding & Generation

* Question Answering (QA)

* In-Context Learning (ICL)

* Instruction Following

3. **Red Segment (Bottom): Agentic Workflows (Symbolic Sub-System)**

* **Icon:** A flowchart with connected boxes and a gear.

* **List of Components:**

* Pre-defined Rules & Logic

* Chain/Tree-of-Thought

* Self-reflection & other prompting tricks

* Functionals and Procedures

### Detailed Analysis

The diagram presents a modular, integrated architecture. The **LLM-Empowered Agent** is the central orchestrator. Its core functions (Natural Language Interfacing, Decision Making, Task Decomposition) suggest it acts as the "brain" that interprets goals and breaks them down.

The three surrounding subsystems provide the agent with different types of capabilities:

* **External Tools (Blue):** This segment represents the agent's interface with the physical and digital world. It includes data storage (Databases), physical interaction (Sensors/Actuators, Robotics), and software interaction (APIs/Web Services). This is the "hands and senses" of the agent.

* **Large Language Models (Yellow):** Labeled as the "Neural Sub-System," this provides the core cognitive and linguistic abilities. The listed capabilities (Text Understanding, QA, ICL, Instruction Following) are the foundational AI model skills that enable the agent to process information and communicate.

* **Agentic Workflows (Red):** Labeled as the "Symbolic Sub-System," this provides structured, rule-based, and procedural reasoning. Components like "Chain/Tree-of-Thought" and "Pre-defined Rules & Logic" suggest methods for enhancing the LLM's reasoning with more deterministic or step-by-step processes. This acts as the "structured reasoning engine."

### Key Observations

1. **Tripartite Integration:** The architecture explicitly separates capabilities into Neural (LLM), Symbolic (Workflows), and Physical/Digital (Tools) domains, with the central agent integrating them.

2. **Agent as Orchestrator:** The central agent is not just an LLM but a system that *uses* an LLM alongside other components to perform complex tasks requiring planning, tool use, and structured reasoning.

3. **Bidirectional Flow Implied:** While not shown with arrows, the layout suggests a constant flow of information: the agent receives a task, uses the LLM to understand it, employs workflows to plan, and executes actions via external tools, with feedback looping back.

4. **Complementary Subsystems:** The Neural Sub-System (LLM) handles fuzzy, language-based tasks, while the Symbolic Sub-System (Workflows) handles structured, logical tasks. They are presented as complementary, not competing.

### Interpretation

This diagram illustrates a sophisticated blueprint for building autonomous AI agents. It argues that a powerful Large Language Model alone is insufficient for complex, real-world tasks. True agency requires the integration of three pillars:

1. **Perception & Action (External Tools):** The ability to gather data from and affect the environment is fundamental for any agent that must operate beyond a text interface.

2. **Core Cognition (LLMs):** The neural network provides the flexible, human-like understanding and generation of language, which is the primary interface for defining goals and communicating results.

3. **Structured Reasoning (Agentic Workflows):** Symbolic methods and predefined workflows provide reliability, explainability, and enhanced reasoning capabilities that can compensate for the occasional unpredictability or logical shortcomings of pure neural approaches.

The architecture suggests that the future of practical AI agents lies in **hybrid systems** that combine the strengths of neural networks (flexibility, language understanding) with symbolic AI (structure, reliability) and robust tool-use frameworks. The central "LLM-Empowered Agent" is the synthesizer that makes this combination coherent and goal-directed. This model moves beyond seeing an LLM as a chatbot and re-frames it as one critical component within a larger, more capable autonomous system.

</details>

Figure 1: Elements of LLM-empowered Autonomous Agents (LAAs): Large Language Models (Neural Sub-System), Agentic Workflows (Symbolic Sub-System), and External Tools

In this work, we aim at examining the historical evolution and current state of AI by exploring the enduring debate between connectionism and symbolism and their convergence in modern technologies, particularly in the theme of neuro-symbolic approaches, including Knowledge Graphs, LLMs, and LAAs. This review aims to illustrate how the integration of these paradigms has led to groundbreaking advancements, offering new perspectives on the capabilities and future directions of AI.

- Historical Context of Technology: This article provides an in-depth examination of the historical debate between connectionism and symbolism, contextualizing modern AI developments and highlighting the strengths of each approach. We present recent advancements in LLMs with Knowledge Graphs (KGs) [11] as references, discussing these techniques from the perspectives of symbolic, connectionist, and neuro-symbolic AI. The article also showcases the transformative impact of these techniques on knowledge modeling, acquisition, representation, and reasoning.

- Convergence of Paradigms: This article highlights the convergence of symbolic and connectionist approaches in developing LAAs, emphasizing their enhanced reasoning, decision-making, and efficiency. By contrasting LAAs with Knowledge Graphs (KGs) within neuro-symbolic AI, we examine distinct patterns and functionalities. While both integrate symbolic and neural methodologies, LAAs demonstrate unique advantages over KGs: (1) analogizing human reasoning with agentic workflows and various prompting techniques [12, 13], (2) scaling effectively on large datasets, adapting to in-context samples, and leveraging the emergent abilities of LLMs. These strengths drive the surge of a new wave of neuro-symbolic AI [14].

- Future Directions: The article highlights the trend of converging paradigms and current limitations of LAAs, pointing to two promising future directions. First is the development of neuro-vector-symbolic architectures, which integrate vector manipulation to enhance the reasoning capabilities of agents. Second is the approach known as program-proof-of-thoughts (P 2 oT) prompting. This involves breaking down complex reasoning processes into verifiable propositions, utilizing program proof languages (such as Dafny) for structured verification. It aims to provide rigorous reasoning by modeling propositions, integrating with theorem provers, and focusing on applications in specific domains.

These contributions are crucial as they provide a comprehensive understanding of the evolution of AI, highlight the significance of paradigm convergence, and offer insights into future research and application potentials in the rapidly evolving field of AI.

## II Preliminaries

This section begins by summarizing the historical debate between connectionist AI and symbolic AI. We then explore knowledge graphs (KGs) as an early effort to synergize these two paradigms through neuro-symbolic AI. Lastly, we examine LLMs as the latest advancements in connectionist AI.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Timeline of AI Evolution - Symbolic, Connectionist, and Neuro-Symbolic Paradigms

### Overview

This image is a horizontal timeline diagram illustrating the historical development and convergence of two major paradigms in Artificial Intelligence: **Symbolic AI** and **Connectionist AI**. It traces key milestones from the 1950s to the present day, culminating in their fusion into **Neuro-Symbolic AI**. The timeline is presented as two parallel horizontal tracks that eventually merge into a single track, visually representing the integration of these once-separate fields.

### Components/Axes

* **Primary Structure:** Two parallel horizontal timelines (tracks) that converge into one.

* **Top Track:** Labeled **"Symbolic AI"** on the far left.

* **Bottom Track:** Labeled **"Connectionist AI"** on the far left.

* **Merged Track:** The two tracks converge into a single timeline labeled with Neuro-Symbolic fusion concepts.

* **Temporal Axis:** A horizontal timeline marked with specific years and decades: **1956, 1958, 1980s, 1990s, 2000s, 2010s, 2012, 2017-2018, 2022-Now**.

* **Event Nodes:** Each milestone is represented by a node (a small circle on the timeline) connected to a text block and an icon. The text blocks contain the event name and a brief description.

* **Icons:** Each event is accompanied by a small illustrative icon (e.g., books for Logic Theorist, a brain for Perceptron, a globe for Semantic Web).

* **Connecting Line:** A dotted, curved line connects the end of the parallel tracks to the beginning of the merged Neuro-Symbolic track, indicating continuity and evolution.

### Detailed Analysis

The timeline is segmented into two main eras before the fusion.

**1. Symbolic AI Track (Top):**

* **1956 - Logic Theorist:** Icon: Stack of books. Description: "Introduction of symbolic AI".

* **1980s - AI debates:** Icon: Speech bubble. Description: "Highlighted the dichotomy between connectionist and symbolic AI".

* **1990s - RDF:** Icon: Globe with grid. Description: "Standardization of data interchange on the web".

* **2000s - Ontologies:** Icon: Open book. Description: "Structuring and organizing knowledge".

* **Post-2000s - Semantic Web:** Icon: Globe. Description: "Enhancing data interoperability".

**2. Connectionist AI Track (Bottom):**

* **1958 - Perceptron:** Icon: Brain. Description: "Initiated connectionist AI".

* **1980s - Back propagation:** Icon: Backward arrow. Description: "Significant advancement in neural network training".

* **1990s - Machine Learning:** Icon: Network graph. Description: "SVM, Kernels, Sparse Models, Boosting etc."

**3. Neuro-Symbolic Fusion Track (Merged, from 2010s onward):**

* **2010s - Neuro-Symbolic Fusion: Knowledge Graphs:** Icon: Network graph with colored nodes. Description: "Leveraging probabilistic Markov Logic and neural networks to accelerate symbolic reasoning".

* **2012 - AlexNet:** Icon: Server racks. Description: "Revolutionize image recognition".

* **2017-2018 - Transformer, Attentions, BERT, and GPT:** Icon: Speech bubble with ellipsis. Description: "Revolutionizes NLP and language understanding".

* **2022-Now - Neuro-Symbolic Fusion: LLM-empowered Autonomous Agents:** Icon: Network graph with colored nodes. Description: "Leveraging LLMs+Agentic workflows for decision-making, task planning, and actioning".

* **2022-Now - ChatGPT and Generative AI:** Icon: ChatGPT logo. Description: "Unprecedented capabilities in natural language processing".

### Key Observations

* **Parallel Development:** For decades (1950s-2000s), Symbolic AI (focused on logic, knowledge representation, and rules) and Connectionist AI (focused on neural networks and learning from data) developed on separate, parallel tracks, with noted debates between them in the 1980s.

* **Convergence Point:** The explicit fusion begins in the **2010s** with "Neuro-Symbolic Fusion: Knowledge Graphs," indicating an early integration effort.

* **Accelerated Integration:** The period from **2012 to the present** shows rapid, sequential breakthroughs (AlexNet, Transformers, LLMs) that drive the fusion forward, culminating in the current era of "LLM-empowered Autonomous Agents."

* **Iconography:** Icons are used consistently to visually categorize the type of milestone (e.g., books for knowledge, brains for neural concepts, globes for web standards).

### Interpretation

This diagram presents a **Peircean narrative of AI's evolution as a process of abduction and synthesis**. It argues that the field's history is not a single line of progress but a dialectic between two fundamental approaches (symbolic vs. connectionist). The "AI debates" of the 1980s represent the thesis and antithesis. The timeline's structure—parallel lines merging—visually proposes that the current era (post-2010s) represents a **synthesis**, where the strengths of both paradigms are combined.

The data suggests that recent, unprecedented advances in capability (highlighted by ChatGPT and Generative AI) are not merely an extension of the connectionist path but are fundamentally enabled by this neuro-symbolic fusion. The placement of "LLM-empowered Autonomous Agents" as the latest fusion milestone implies that the future trajectory of AI lies in systems that can both reason symbolically (using knowledge graphs, logic) and learn/act connectionistically (using LLMs and agentic workflows). The diagram positions the current moment as a pivotal point where historically separate streams of research have coalesced to create a new, more powerful paradigm.

</details>

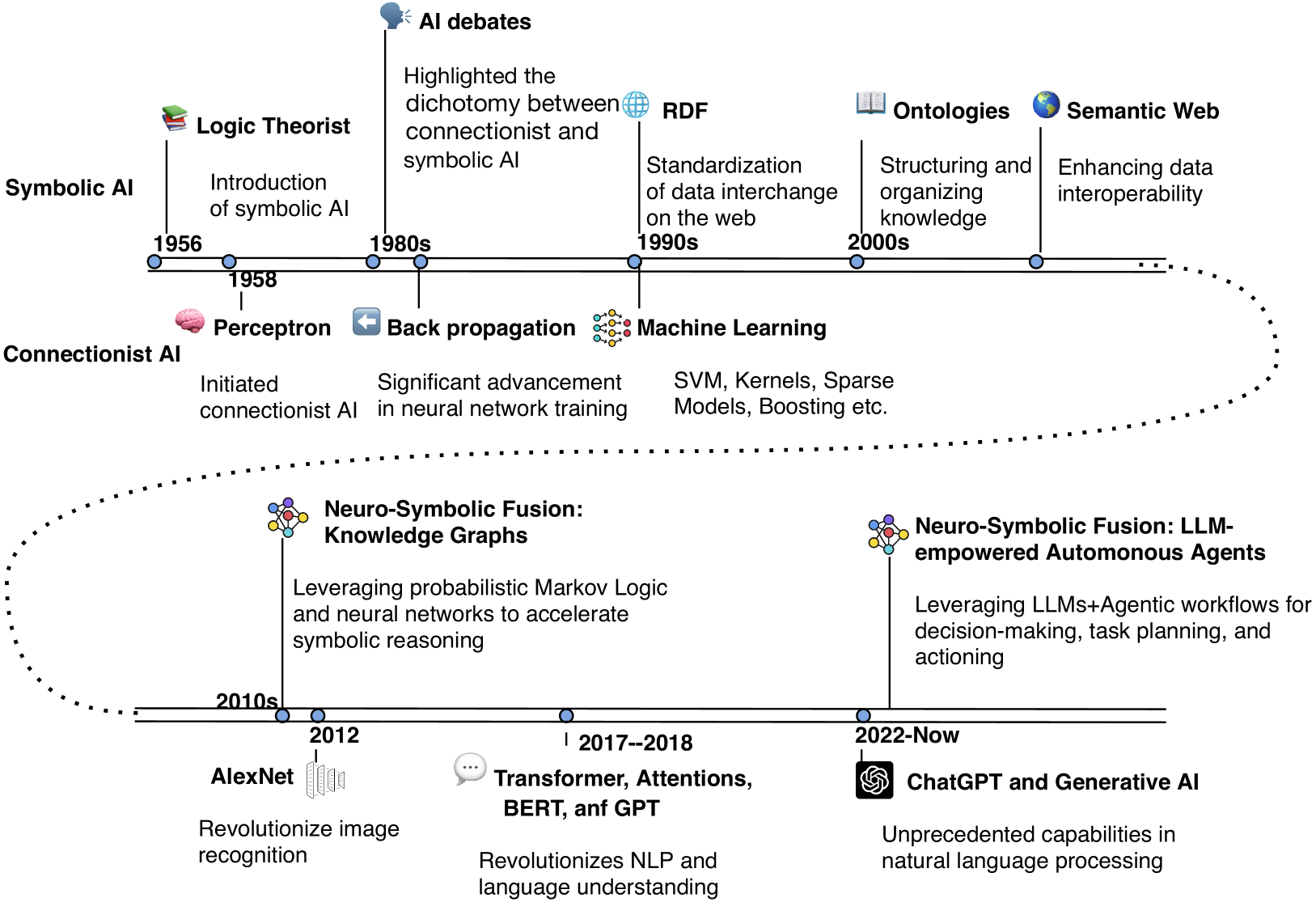

Figure 2: Exploring the Evolution of Artificial Intelligence: A Timeline of Key Innovations and Milestones. It starts from the birth of symbolic and connectionist AI in the 1950s, through key milestones like the AI debates of the 1980s and the advancement in machine learning in the 1990s. This figure highlights significant developments such as the impact of AlexNet on image recognition, the transformation in NLP by models like BERT and GPT, and the rise of generative AI, culminating in the use of LLMs and Agents for autonomous decision-making in the 2020s.

### II-A Connectionism vs. Symbolism: a Historical Debate on AI

As shown in Figure 2, the discourse of AI has long revolved around the dichotomy between connectionism and symbolism, two paradigms integral to the field. Connectionism models cognitive processes through artificial neural networks that emulate the brain’s neuron structures, emphasizing learning through algorithms and pattern recognition. This began with Frank Rosenblatt’s Perceptron in 1958 [15] and advanced significantly with the backpropagation algorithm developed by David Rumelhart, Geoffrey Hinton, and Ronald J. Williams in the 1980s [16], setting the stage for modern deep learning [1]. Conversely, symbolism focuses on high-level knowledge representations and symbolic manipulation to mimic human reasoning, gaining prominence with systems like the Logic Theorist by Allen Newell and Herbert A. Simon in 1956 [17]. Symbolic AI thrived with expert systems such as MYCIN [4] and DENDRAL [18] in the 1970s and 1980s, excelling in specific domains through predefined rules.

In the 1980s, as Ashok Goel noted, debates often involved criticisms that attacked caricatures of the opposing methods [19]. Each approach has its limitations: connectionist AI is criticized for its black-box nature and lack of interpretability [20], while symbolic AI faced challenges with the labor-intensive knowledge acquisition process [21] and its limited adaptability [22]. Historical debates between figures, such as Yann LeCun, Yoshua Bengio, and Gary Marcus, have underscored these limitations [23]. However, the integration of both paradigms has led to robust hybrid models, combining neural networks’ pattern recognition with symbolic systems’ interpretability and logical reasoning [24]. Contemporary research exemplifies this convergence, seen in neuro-symbolic AI and large-scale pre-trained models like BERT [25], GPT [5], and hybrid reinforcement learning models [26], reflecting the ongoing evolution inspired by the historical debate.

### II-B Knowledge Graphs: An Early Neuro-symbolic Attempt

Knowledge graphs have a foundation rooted in the evolution of semantic web technologies and the Resource Description Framework (RDF). Proposed by the W3C in the 1990s, RDF standardized data interchange on the web using triples (subject, predicate, object) for seamless data integration and interoperability [27]. This movement established the Semantic Web, aiming for a more intelligent and interconnected web [28]. Early adopters used RDF to build schemas and taxonomies, forming the basics of modern knowledge graphs [29].

As the field matured, the focus shifted towards capturing complex relationships and domain-specific knowledge. Ontologies, formal specifications of concepts and relationships, provided a framework for annotating and interlinking data, enabling semantic reasoning at a certain level [30]. Markov-logic networks introduced probabilistic reasoning to knowledge graphs, allowing for handling uncertainty and inconsistency in data [31]. The synergy of Ontologies and Markov-logic networks advanced the ability of symbolic AI to perform robust reasoning over large datasets [32].

In recent years, the use of graph neural networks (GNNs) has further revolutionized the landscape of knowledge graphs. GNNs adeptly leverage the graph structure for advanced pattern recognition and complex predictions. They excel in tasks such as node classification, link prediction, and the extraction of hidden patterns from graph-structured data [33]. This paradigm shift towards neural networks marks a convergence with modern machine learning techniques, enabling more nuanced and scalable interpretations of often massive and intricate datasets. The ability of GNNs to embed nodes and entire graphs numerically has significantly enhanced the computational handling of knowledge graphs [34]. In conclusion, the integration of graph neural networks with rule-based reasoning has positioned knowledge graphs at the core of the neuro-symbolic AI approach [11] prior to the surge of LLMs.

### II-C LLMs: Recent Connectionist AI Advancements

The field of connectionist AI has undergone substantial evolution, beginning with the invention of the perceptron [15], kicking off the neural network research in the late 1950s. In the following decades, the development of Multi-Layer Perceptrons (MLPs) introduced hidden layers and non-linear activation functions, enabling the modeling of more complex functions [16]. In the 1990s, Long Short-Term Memory (LSTM) networks were developed to address the limitations of traditional recurrent neural networks (RNNs) by introducing gating mechanisms to handle long-term dependencies in sequential data [35]. Self-attention mechanisms and transformer architectures proposed in the late 2010s further revolutionized sequence modeling, such as texts for natural language processing, by allowing models to focus on different parts of the input sequence when generating each part of the output sequence [36].

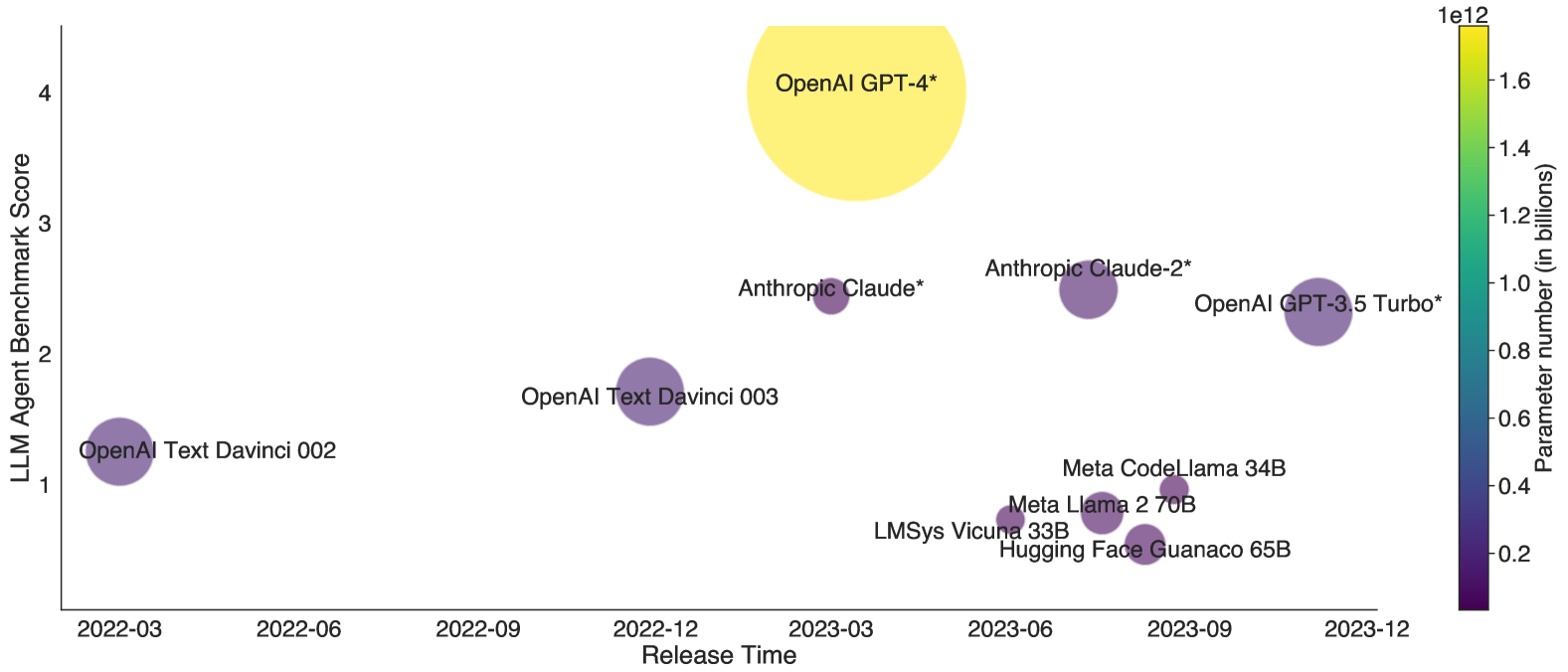

The development of transformer-based pre-trained language models has significantly advanced natural language processing (NLP). These architectures include encoder-only models, e.g., BERT [25], which excel at understanding and classifying text; decoder-only models, e.g., GPT [6], which generate coherent and contextually relevant text; and encoder-decoder models, e.g., T5 [37], which are effective in tasks requiring both comprehension and generation. Transformer-based language models, such as OpenAI’s GPT-4 [38], Google’s Gemini [39] and PaLM [40], Microsoft’s Phi-3 [41], and Meta’s LLaMA [42], are termed Large Language Models (LLMs). These models, illustrated in Figure 3, are trained on large-scale transformers comprising billions of learnable parameters to support various abilities to enable agents, including perception, reasoning, planning, and action [12]. As the central component of an agent’s neural sub-system, the larger the model, the stronger the agent’s capability.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bubble Chart: LLM Agent Benchmark Score vs. Release Time

### Overview

This is a bubble chart plotting various Large Language Models (LLMs) based on their release date and performance on an "LLM Agent Benchmark." The size and color of each bubble represent the model's parameter count, with a color scale provided. The chart visualizes the evolution of model performance and scale over time from early 2022 to late 2023.

### Components/Axes

* **Chart Type:** Bubble Chart / Scatter Plot with size and color encoding.

* **X-Axis:** "Release Time". The scale is chronological, with major tick marks labeled: `2022-03`, `2022-06`, `2022-09`, `2022-12`, `2023-03`, `2023-06`, `2023-09`, `2023-12`.

* **Y-Axis:** "LLM Agent Benchmark Score". The scale is linear, ranging from 1 to 4, with major tick marks at `1`, `2`, `3`, `4`.

* **Color/Legend (Right Side):** A vertical color bar titled "Parameter number (in billions)". The scale is logarithmic, indicated by the `1e12` (1 trillion) multiplier at the top. The gradient runs from dark purple (low) to bright yellow (high). Key labeled ticks are: `0.2`, `0.4`, `0.6`, `0.8`, `1.0`, `1.2`, `1.4`, `1.6`. The unit is billions, so `1.6` represents 1.6 trillion parameters.

* **Data Points (Bubbles):** Each bubble is labeled with the model name. The placement, size, and color of each bubble encode three dimensions of data: release time (x), benchmark score (y), and parameter count (size/color).

### Detailed Analysis

**Data Points (Listed by approximate release time, from left to right):**

1. **OpenAI Text Davinci 002**

* **Position:** X ≈ March 2022, Y ≈ 1.2.

* **Size/Color:** Medium-small bubble, dark purple. Color corresponds to the low end of the parameter scale, likely < 0.2 trillion (200 billion) parameters.

2. **OpenAI Text Davinci 003**

* **Position:** X ≈ December 2022, Y ≈ 1.8.

* **Size/Color:** Medium bubble, purple. Slightly larger and lighter than Davinci 002, indicating a higher parameter count, perhaps in the 0.2-0.4 trillion range.

3. **Anthropic Claude\***

* **Position:** X ≈ March 2023, Y ≈ 2.5.

* **Size/Color:** Medium-small bubble, purple. Similar in size/color to Davinci 003, suggesting a comparable parameter count.

4. **OpenAI GPT-4\***

* **Position:** X ≈ March 2023, Y = 4.0 (at the top of the axis).

* **Size/Color:** Very large bubble, bright yellow. This is the largest and highest-scoring model. Its color matches the top of the scale (~1.6 trillion parameters or more). It is a clear outlier in both performance and scale.

5. **Anthropic Claude-2\***

* **Position:** X ≈ July 2023, Y ≈ 2.6.

* **Size/Color:** Medium bubble, purple. Slightly larger than the original Claude, indicating an increase in parameters.

6. **OpenAI GPT-3.5 Turbo\***

* **Position:** X ≈ November 2023, Y ≈ 2.3.

* **Size/Color:** Medium-large bubble, purple. Larger than the Claude models but smaller than GPT-4. Its color suggests a parameter count in the mid-range of the scale (perhaps ~0.6-0.8 trillion).

7. **Cluster of Models (Mid-2023):** A group of four smaller, dark purple bubbles clustered between June and September 2023, all with benchmark scores below 1.0.

* **LMSys Vicuna 33B:** X ≈ June 2023, Y ≈ 0.8.

* **Meta Llama 2 70B:** X ≈ July 2023, Y ≈ 0.9.

* **Hugging Face Guanaco 65B:** X ≈ August 2023, Y ≈ 0.7.

* **Meta CodeLlama 34B:** X ≈ September 2023, Y ≈ 0.8.

* **Size/Color:** All are small bubbles, dark purple. Their color places them at the very low end of the parameter scale (< 0.2 trillion), consistent with their names (33B, 70B, 65B, 34B = 0.033 to 0.07 trillion).

### Key Observations

1. **Performance Leap:** There is a dramatic, isolated jump in benchmark score with the release of OpenAI GPT-4* in early 2023, reaching the maximum score of 4.

2. **General Upward Trend:** Excluding the outlier GPT-4, there is a general, gradual upward trend in benchmark scores for the major commercial models (Davinci series, Claude series, GPT-3.5 Turbo) from early 2022 to late 2023.

3. **Parameter Count vs. Performance:** Higher parameter count (indicated by larger, yellower bubbles) generally correlates with higher benchmark scores, but not perfectly. For example, GPT-3.5 Turbo has a high score but a smaller bubble than GPT-4. The cluster of open-source models (Vicuna, Llama 2, etc.) has significantly lower scores and much smaller parameter counts.

4. **Temporal Clustering:** Model releases are not uniform. There was a cluster of activity in mid-2023, particularly among open-source models.

5. **Asterisks:** The labels for GPT-4, Claude, Claude-2, and GPT-3.5 Turbo include an asterisk (*), which likely denotes a specific version, API access, or a note not visible in the chart itself.

### Interpretation

This chart illustrates the rapid and competitive evolution of the LLM landscape between 2022 and 2023. It suggests that while incremental improvements in performance (benchmark score) were occurring with each new model release, the field experienced a **paradigm shift** with the introduction of GPT-4. This model represents a significant outlier in both capability (score) and scale (parameters), indicating a potential architectural or training breakthrough.

The data highlights a bifurcation in the market: **high-performance, likely closed-source models** (from OpenAI, Anthropic) occupying the upper-right quadrant (later release, higher score, larger scale), and a group of **smaller, open-source models** clustered in the lower-right (mid-2023 release, lower score, much smaller scale). This visualizes the trade-off between raw performance and accessibility/scalability.

The asterisks hint at important nuances—these benchmark scores may be contingent on specific prompting, evaluation frameworks, or model versions. The chart effectively argues that in the race for LLM agent capabilities, sheer scale (parameters) has been a primary, but not sole, driver of performance, with one model achieving a disproportionate leap ahead of its contemporaries.

</details>

Figure 3: Large Language Models and Their Agentic Abilities. The X-axis shows the release dates, and the Y-axis represents the LLM Agent Benchmark Score [43]. Bubble size indicates the number of parameters (in billions). An asterisk (*) denotes estimated parameter counts when the official release is not available.

In general, every LLM undergos a two-stage training process: pre-training and fine-tuning. Pre-training involves adjusting model parameters based on the statistical properties of a large text corpus, enabling an understanding of syntax, semantics, and linguistic nuances [25]. Fine-tuning then adapts the pre-trained model to specific tasks or domains using a smaller, task-specific dataset, optimizing performance for particular applications [44]. To ensure LLMs follow human’s instructions, align with human values and exhibit desired behaviors, instruction tuning and reinforcement learning from human feedback (RLHF) have been proposed on top of fine-tuning [45].

As the size of LLMs increases, they exhibit a range of emerging capabilities, such as writing computer code, playing chess, diagnosing medical conditions, and translating languages. These capabilities often develop suddenly and dramatically at certain scales due to scaling laws, which describe how task performance can surge unexpectedly when a model reaches a particular threshold size [46]. This phenomenon is particularly observable in tasks requiring multi-step reasoning, where success probabilities compound multiplicatively, leading to rapid performance jumps [47]. However, these advancements come with “hallucination” challenges [48], such as producing false or nonsensical information that appears convincing but is inaccurate or not based on reality. These issues underline the importance of continued research and engineering to harness the benefits of LLMs while mitigating their drawbacks.

## III LLM-empowered Autonomous Agents: The Convergence of Symbolism and Connectionism

This section reviews the definition of both traditional and LLM-based agents, introduces core techniques for designing and implementing LAAs, and rethinks these innovations through the lens of symbolic AI.

### III-A Autonomous Agents: Classic and LLM-empowered

An autonomous agent is an artificially intelligent entity designed to achieve specific goals independently, acquiring contextual factors to perceive the environmental state and undertaking context-relevant actions [49]. These agents, equipped with reasoning, learning, and adaptability, thrive in dynamic and complex contexts. Unlike traditional software programs that follow predetermined rules, autonomous agents operate with self-governing attributes, allowing them to function under varying conditions [50]. Leveraging these capabilities, they facilitate automation by performing tasks that typically require human intervention, enhancing efficiency, and reducing operational costs across fields such as robotics, communication, financial trading, and healthcare [50]. For instance, in robotic applications, autonomous agents can navigate tasks with minimal supervision, continuously monitor their surroundings, and adapt to new situations, making them robust solutions for long-term automation [51, 52].

The foundational techniques of autonomous agent design originate from classic AI approaches, such as Probabilistic Graphical Models [53], Reinforcement Learning [54], and Multi-Agent Systems [55], which manage uncertainty and learn optimal behaviors in dynamic environments or enable agents to interact and share information efficiently. However, the advent of LAAs marks a significant evolution beyond traditional AI for both symbolic and neural sub-systems. These agents use extensive pre-training on vast textual corpora to acquire broad knowledge, performing human reasoning tasks by generating contextually appropriate text [56]. This capability not only simulates understanding and decision-making but also allows the generation of code and other communicative texts, enhancing their practical utility [57]. By integrating pre-trained language models with natural language understanding, LAAs adapt flexibly to diverse scenarios, expanding AI’s potential in autonomous operations.

### III-B Design and Implementation of LAAs

Central to the design of an agent is its neural sub-system–an LLM, which functions as the core controller or coordinator. The LLM orchestrates with the agent’s symbolic sub-system and external tools, including a planning and reasoning component for task decomposition and self-reflection, memory (both short-term and long-term), and a tool-use component that allows access to external information and functionalities.

- Agentic Workflow: An agentic workflow combines planning, reasoning, memory management, tool integration, and user interfaces with LLMs. Frameworks, such as LangChain [58] and LlamaIndex [59], help design these workflows.

- Planner and Reasoner: Advanced techniques such as chain-of-thought and tree-of-thought prompting [60] break down tasks into sub-tasks, with self-reflection allowing agents to critique and refine outputs [61].

- Memory Management: Incorporates short-term memory for context and long-term memory using external storage, such as vector databases, enabling efficient information retrieval and enhanced reasoning [62, 63].

- Tool-Use & Natural Language Interface (NLI) Integration: Agents can access external tools, APIs, and models, deciding when and how to utilize them based on task goals [64, 65]. In addition, An effective NLI interprets user requests and communicates actions [66]. Techniques, such as ReAct and MRKL, provide structured interaction steps (thought, action, action input, observation) [67, 68].

By integrating these components, LAAs can tackle complex tasks. However, challenges like limited context windows, long-term planning, and reliable interfaces remain, necessitating ongoing research and development.

### III-C Rethink LAAs from the Perspective of Neuro-symbolic AI

Neuro-symbolic AI combines the strengths of neural networks and symbolic reasoning, producing decision-making processes that are both explicit and interpretable. In autonomous agents enhanced by LLMs, the latest advancements in deep neural networks are harnessed, while task decomposition and planning are guided by symbolic AI principles — breaking complex tasks into discrete, logical steps that can be systematically analyzed and reasoned through [69]. This fusion of symbolic structures and deep neural networks creates a powerful synergy, significantly boosting the capabilities of these agents.

#### Symbolic Modeling and Neural Representation

Classic symbolic AI represents knowledge using abstractions and symbols, utilizing explicit symbolic modeling such as rules and relationships to perform reasoning [70]. This approach typically involves well-defined logic and structured knowledge bases, enabling systems to behave based on pre-defined rules. In contrast, LAAs, driven by language models, represent knowledge in a more distributed and implicit manner. Instead of relying on explicit symbols and rules, these agents leverage vast amounts of corpus and self-supervised pre-training on language models to infer patterns and relationships from raw text [25]. The knowledge is embedded within the weights of LLMs, allowing for more flexible and context-driven reasoning. This advantage fundamentally contrasts with the rigidity of symbolic AI, providing LAAs with the ability to handle ambiguity and generate more human-like responses [5].

#### Search-based Decision Making by Generation

Given a complex goal requiring multiple steps to achieve, existing agent technologies either harness symbolic AI to systematically explore the space of potential actions or employ reinforcement learning to optimize the trajectory of these actions, efficiently partitioning complex tasks into manageable subtasks [54]. Within a LLM-empowered agent, the Chain-of-Thought (CoT) method guides LLMs to generate texts about intermediate reasoning steps, enhancing their cognitive task performance [47]. By breaking tasks into logical sequences, CoT prompts encourage LLMs to structure their reasoning systematically. This method overcomes LLM limitations at the token level by enabling coherent, step-by-step elaboration of thought processes, improving problem-solving accuracy and reliability. More recently, Tree-of-Thought (ToT) prompting extends this approach by allowing LLMs to explore multiple reasoning paths simultaneously in a tree structure [71] and the proposal of functional search over program generation, leveraging large language models (LLMs), successfully facilitates mathematical discoveries [72]. These methods enhance LLM problem-solving abilities by promoting dynamic and reflective reasoning processes, closely mirroring symbolic reasoning techniques, on top of a neural basis.

#### Case-based Reasoning through In-context Learning

An agent must adapt to new situations, while traditional methods rely on either re-training neural networks or deducing examples of new situations into rules for better reasoning. Within a LLM-empowered agent, few-shot in-context learning (ICL) has been proposed to utilize given examples into a prompt to generate appropriate responses that solve problems without explicit re-training the LLM [73]. This approach mimics the case-based reasoning, a fundamental concept in symbolic AI, by leveraging explicit knowledge and experiences to tackle new problems. This enhances the model’s ability to generalize from specific examples, effectively creating a neuro-symbolic mapping from presented examples to desired outcomes.

#### Neuro-symbolic Integration Driven by Emergent Abilities

The emergent abilities of LLMs, such as contextual understanding, sequential reasoning, goal reformulation, and task decomposition, are surged by over-parameterized architectures and extensive pre-training corpora [46]. Combining well-designed rules with the emergent abilities of LLMs enables agents to create and follow complex workflows, known as agentic workflows. By prompting large language models with instructions like “let’s think step by step”, these models analogise human’s reasoning processes and can exhibit logical and mathematical reasoning, thereby enhancing their structured reasoning skills [12, 13]. This agentic approach allows LLMs to not only process but also proactively generate structured, logical, and adaptive reasoning pathways [56], significantly improving their problem-solving and decision-making capabilities, marking a pivotal evolution in neuro-symbolic AI technologies.

## IV Discussions and Future Directions

In this section, we discuss the LLM-empowered autonomous agent by comparing it with an alternative neuro-symbolic approach—the Knowledge Graph—and then highlight future directions for this technology.

### IV-A Comparative Analysis: LAAs versus KGs

Previous sections have presented LAAs and KGs, both of which exemplify neuro-symbolic approaches to AI. We here compare these two methodologies to highlight the superior positioning of LAAs in the current wave of AI advancements.

KGs harness the power of symbolic AI, organizing domain-specific knowledge through explicit relationships and rules. This design makes them highly effective in static environments where precision, interpretability, and predefined schemas are crucial. Their logical reasoning capabilities ensure that outputs are consistent and verifiable, which is paramount for applications needing clarity and exactitude in knowledge modeling [74]. In addition, the scalability of KGs is inherently limited by their requirements of explicit schema definitions and manual updates [75]. As the volume of data grows, the complexity of managing and querying the graph increases significantly. The maintenance of a large-scale KG demands substantial computational resources and human expertise, affecting efficiency and agility in evolving environments.

On the other hand, LAAs are designed with a more dynamic and flexible approach. By combining the language comprehension and generation abilities of neural networks with the structured reasoning of symbolic AI, these agents are equipped to tackle a wide range of complex tasks. The implicit knowledge stored in neural networks enables context-sensitive responses and seamless adaptation to changing environments [76]. Additionally, LLMs efficiently compress vast corpora into a learnable network, making these agents highly scalable. Once trained, the models can be fine-tuned with additional data at a fraction of the cost and effort required for updating knowledge graphs, and can even support in-context learning without fine-tuning. As a result, LLM-powered agents can handle larger datasets with ease and even process online data to respond to real-time changes effectively.

Furthermore, the advanced reasoning mechanisms employed by LAAs, such as CoT [47] and ToT [71], enable them to break down and solve complex problems effectively through analogising human reasoning steps [12]. These methods mitigate the limitations of token-level constraints in LLMs, fostering a more robust and contextually aware decision-making process. As a result, LAAs are poised to drive future innovations in AI, offering more versatile and intelligent solutions than their knowledge graph counterparts.

### IV-B Future Directions

Following prior discussions, we propose several future research directions aimed at enhancing the current landscape of LAAs.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Screenshot: Prompt-Response Interaction on Formal Verification of a Mathematical Proof

### Overview

The image displays a structured interaction, likely from an AI assistant or educational tool, where a user prompt asks for the verification of a mathematical proof using the Dafny programming language. The response provides a step-by-step decomposition of the problem and corresponding Dafny code snippets. The entire content is enclosed within a blue border.

### Components/Axes

The image is divided into two primary sections:

1. **Prompt Section (Top):** A box with a purple header labeled "Prompt:" containing the user's request.

2. **Response Section (Bottom):** A larger box with a purple header labeled "Response:" containing the assistant's structured answer.

### Detailed Analysis

#### **Prompt Section Content:**

* **Header:** "Prompt:" (in purple text).

* **Text:** "Play a role as an agent to verify the correctness of a specific mathematical proof that: **for any even integer ( n ), the sum of the first ( n ) positive integers is an even number.** Please decompose the above problem into a series of propositions. Generate dafny codes to model every proposition and perform the verfication to proof every proposition accordingly."

* *Note:* The word "verification" is misspelled as "verfication" in the original text.

#### **Response Section Content:**

* **Header:** "Response:" (in purple text).

* **Step 1: Decompose the Problem:**

* Numbered item "1. Decompose the Problem:"

* Three bullet points:

* "Proposition 1: Define what it means for a number to be even."

* "Proposition 2: State that the sum of the first ( n ) positive integers follows a specific formula."

* "Proposition 3: Prove that if ( n ) is even, then the sum of the first ( n ) positive integers is even."

* **Step 2: Formalize Using a Programming Language:**

* Numbered item "2. Formalize Using a Programming Language:"

* One bullet point: "Use a language like Dafny to encode these propositions and verify them."

* **Three Dafny Code Blocks:**

1. **First Code Block (Proposition 1):**

* Code: `method IsEven(n: int) returns (result: bool){ result := n % 2 == 0; }`

* Inline Comment (right-aligned, gray text): `//Dafny code to define an even number`

2. **Second Code Block (Proposition 2):**

* Code: `method SumOfFirstNIntegers(n: int) returns (sum: int) { sum := n * (n + 1) / 2; }`

* Inline Comment (right-aligned, gray text): `// Dafny code to define an even number` *(Note: The comment is identical to the first block's comment, which appears to be an error in the source image; the code defines the sum formula, not an even number.)*

3. **Third Code Block (Proposition 3):**

* Code:

```

method ProveSumIsEvenForEvenN(n: int)

requires IsEven(n)

ensures IsEven(SumOfFirstNIntegers(n)) {

var sum := SumOfFirstNIntegers(n);

assert IsEven(sum);

}

```

* Inline Comment (right-aligned, gray text): `// Dafny code to prove the sum is even when n is even`

* **Step 3: Integration with Theorem Provers:**

* Numbered item "3.Integration with Theorem Provers:" *(Note: No space after the period in the original.)*

* Two bullet points:

* "Use Dafny to verify the correctness of these propositions."

* "The verifier checks the logical coherence and correctness of the encoded propositions."

### Key Observations

1. **Structural Clarity:** The response follows a clear, logical decomposition from abstract propositions to concrete code implementation.

2. **Code-Comment Discrepancy:** The comment for the `SumOfFirstNIntegers` method is incorrect, stating it defines "an even number" when it actually defines the sum formula `n*(n+1)/2`.

3. **Formal Specification:** The third Dafny method (`ProveSumIsEvenForEvenN`) uses formal specification clauses (`requires`, `ensures`) to state the proof's preconditions and postconditions, which is central to Dafny's verification paradigm.

4. **Self-Contained Logic:** The proof method relies on the previously defined helper methods (`IsEven`, `SumOfFirstNIntegers`), demonstrating modular reasoning.

### Interpretation

This image documents a pedagogical or demonstrative exercise in **formal methods** and **computer-assisted theorem proving**. It illustrates the process of translating an informal mathematical statement into a form that can be mechanically verified.

* **What the data suggests:** The interaction showcases a methodology for breaking down a proof into atomic, verifiable components (propositions) and then using a verification-aware programming language (Dafny) to encode both the definitions and the logical steps. The Dafny verifier would then attempt to prove that the `assert` statement in the final method always holds true given the `requires` clause.

* **How elements relate:** The propositions provide the conceptual framework. The Dafny code provides the formal, machine-checkable implementation of that framework. The `IsEven` and `SumOfFirstNIntegers` methods establish the foundational definitions, while `ProveSumIsEvenForEvenN` encapsulates the core logical implication to be proven.

* **Notable anomalies:** The incorrect comment in the second code block is a minor but notable error in the source material. More substantively, the provided Dafny code for the third proposition is a *sketch* of a proof. The `assert IsEven(sum);` is the statement to be proven, but the code does not include the actual lemmas or arithmetic reasoning Dafny would need to automatically verify it. A complete proof would likely require additional hints or intermediate assertions about the parity of `n` and `(n+1)`. The image thus captures the setup for a proof, not necessarily a fully automated verification.

</details>

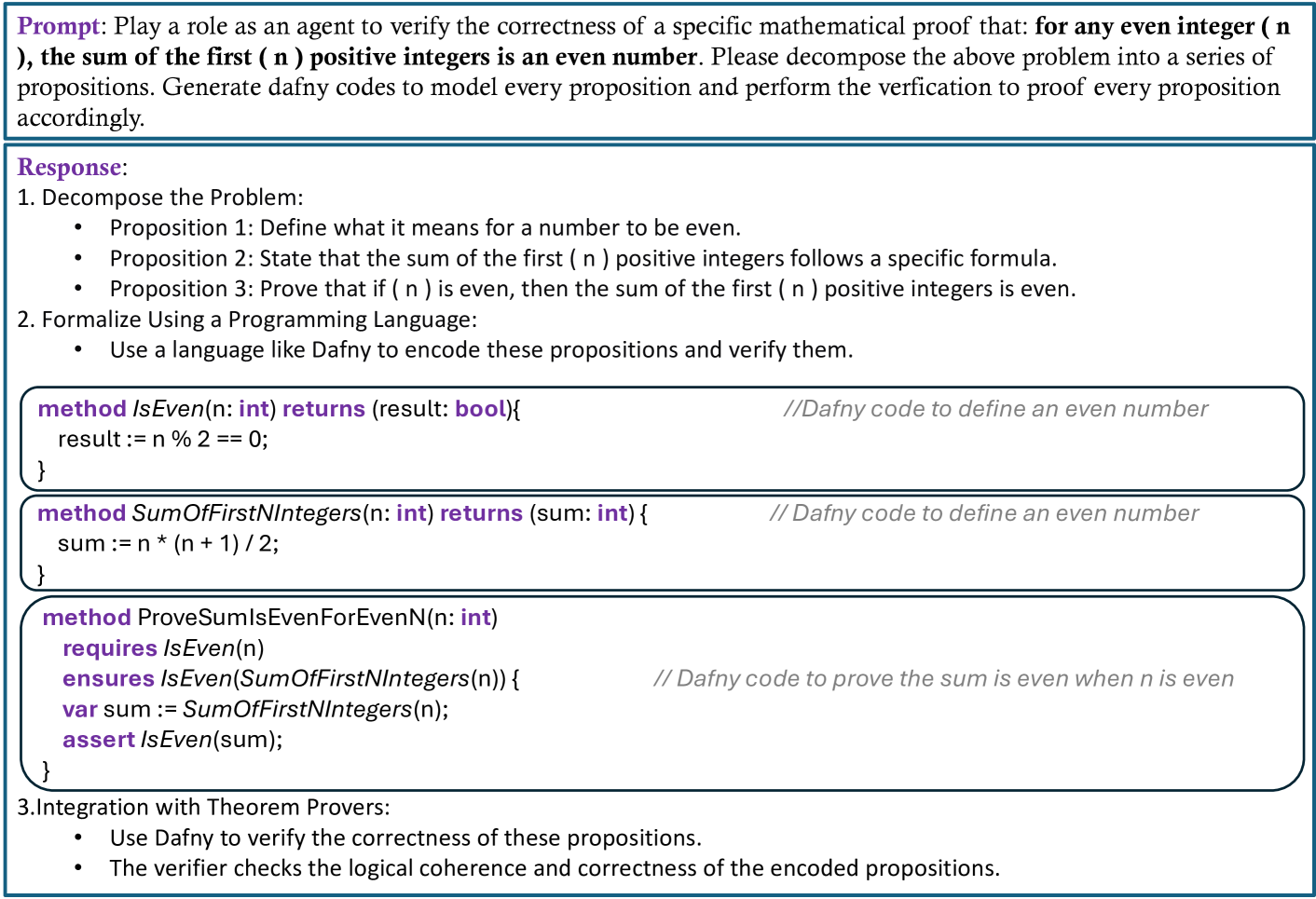

Figure 4: An Illustrative Example of Program-Proof-of-Thoughts (P 2 oT) for Mathematical Proof Verification

### IV-C Neuro-vector-symbolic Integrative Intelligence

Current agentic reasoning approaches emulate human reasoning steps explicitly [12]. For instance, when an agent receives a user’s request, it retrieves similar cases and enhances its actions through in-context learning, and for ambiguous requests, the agent prompts the LLM to clarify and rewrite the request in various forms [77]. This process involve extracting vectors for each rewritten request and performing multi-vector retrieval, improving context understanding and generative performance but increasing computational load. A vector-centric perspective, utilizing encoder-decoder architectures such as GritLM [78] that prompt generative models for instructional text encoding/vectorization, implicit neural reasoners that extend transformers with causal relation graphs for enhanced long-range reasoning [79] with latent vectors and attention matrices, and vector-symbolic architectures (VSAs) [80], could significantly address this problem. Specifically, the VSA employs high-dimensional vectors to encode and manipulate information, allowing the representation of complex structures and relationships compactly and contextually [80]. It models the cognitive and reasoning processes as algebraic operations in the vector space. Combining VSAs with LLMs could enhance cognitive capabilities, enabling precise multi-step decision-making, with applications in scientific discovery, such as solving Raven’s progressive matrices [81], thus accelerating the convergence between connectionist and symbolic paradigms through computable vectorization.

### IV-D Program-Proof-of-Thoughts Reasoning

We here illustrate the proposal of Program-Proof-of-Thoughts (P 2 oT) for agentic reasoning in a rigorous manner, building on the methodologies of CoT and ToT prompting. Specifically, P 2 oT decomposes complex reasoning processes into a series of propositions organized in linear or tree structures. It leverages the programming language for program proofs, such as Dafny [82] or Lean [83], to model and verify these propositions. Future research should focus on refining proposition modeling and verification by prompting LLMs for code generation [84], improving integration with external theorem provers and assertion verifiers (e.g., Dafny and Lean), and scaling P 2 oT to handle multi-modal data for advanced reasoning. Further, automating code generation, optimizing hybrid P 2 oT/CoT/ToT models, incorporating self-verification and self-correction, and adopting P 2 oT into domain-specific applications, including logical deduction and scientific discovery can significantly advance its capabilities [72]. Figure 4 demonstrates the use of the P 2 oT framework to verify a basic mathematical proof that for any even integer $n$ , the sum of the first $n$ positive integers is an even number. By decomposing the problem into distinct, verifiable propositions and using Dafny for formal verification, this example highlights the structured and rigorous approach of P 2 oT in logical reasoning and verification.

## V Conclusions

In conclusion, the synthesis of connectionist and symbolic paradigms, particularly through the rise of LLM-empowered Autonomous Agents (LAAs), marks a pivotal evolution in the field of AI, especially the neuro-symbolic AI. This paper has highlighted the historical context and the ongoing convergence of symbolic reasoning and neural network-based methods, underscoring how LAAs leverage the text-based knowledge representation and generative capabilities of LLMs to achieve logical reasoning and decision-making. By contrasting LAAs with Knowledge Graphs (KGs), we have demonstrated the unique advantages of LAAs in mimicking human-like reasoning processes, scaling effectively with large datasets, and leveraging in-context learning without extensive re-training. Promising directions such as neuro-vector-symbolic architectures and program-proof-of-thoughts (P 2 oT) prompting are on the horizon, potentially enhancing the agentic reasoning capabilities of AI further. These insights not only encapsulate the transformative potential of current AI technologies but also provide a clear trajectory for future research, fostering a deeper understanding and more advanced applications of neuro-symbolic AI.

## References

- [1] Yann LeCun, Yoshua Bengio, and Geoffrey Hinton. Deep learning. Nature, 521(7553):436–444, 2015.

- [2] Allen Newell and Herbert A Simon. Computer science as empirical inquiry: Symbols and search. In ACM Turing award lectures, page 1975. 2007.

- [3] Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. Imagenet classification with deep convolutional neural networks. Advances in neural information processing systems, 25, 2012.

- [4] Edward Shortliffe. Computer-based medical consultations: MYCIN, volume 2. Elsevier, 2012.

- [5] Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

- [6] Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, Ilya Sutskever, et al. Language models are unsupervised multitask learners. OpenAI blog, 1(8):9, 2019.

- [7] Sébastien Bubeck, Varun Chandrasekaran, Ronen Eldan, Johannes Gehrke, Eric Horvitz, Ece Kamar, Peter Lee, Yin Tat Lee, Yuanzhi Li, Scott Lundberg, et al. Sparks of artificial general intelligence: Early experiments with gpt-4. arXiv preprint arXiv:2303.12712, 2023.

- [8] Haoyi Xiong, Jiang Bian, Sijia Yang, Xiaofei Zhang, Linghe Kong, and Daqing Zhang. Natural language based context modeling and reasoning with llms: A tutorial. arXiv preprint arXiv:2309.15074, 2023.

- [9] Jonathan St BT Evans. In two minds: dual-process accounts of reasoning. Trends in cognitive sciences, 7(10):454–459, 2003.

- [10] Yoshua Bengio. Deep learning for system 2 processing. AAAI 2020, 2020.

- [11] Shaoxiong Ji, Shirui Pan, Erik Cambria, Pekka Marttinen, and S Yu Philip. A survey on knowledge graphs: Representation, acquisition, and applications. IEEE Transactions on Neural Networks and Learning Systems, 33(2):494–514, 2021.

- [12] Taylor Webb, Keith J Holyoak, and Hongjing Lu. Emergent analogical reasoning in large language models. Nature Human Behaviour, 7(9):1526–1541, 2023.

- [13] James WA Strachan, Dalila Albergo, Giulia Borghini, Oriana Pansardi, Eugenio Scaliti, Saurabh Gupta, Krati Saxena, Alessandro Rufo, Stefano Panzeri, Guido Manzi, et al. Testing theory of mind in large language models and humans. Nature Human Behaviour, pages 1–11, 2024.

- [14] Artur d’Avila Garcez and Luis C Lamb. Neurosymbolic ai: The 3 rd wave. Artificial Intelligence Review, 56(11):12387–12406, 2023.

- [15] Frank Rosenblatt. The perceptron: a probabilistic model for information storage and organization in the brain. Psychological review, 65(6):386, 1958.

- [16] David E Rumelhart, Geoffrey E Hinton, and Ronald J Williams. Learning representations by back-propagating errors. Nature, 323(6088):533–536, 1986.

- [17] Allen Newell and Herbert Simon. The logic theory machine–a complex information processing system. IRE Transactions on information theory, 2(3):61–79, 1956.

- [18] Bruce G Buchanan and Edward A Feigenbaum. Dendral and meta-dendral: Their applications dimension. Artificial intelligence, 11(1-2):5–24, 1978.

- [19] Ashok Kumar Goel. Integration of case-based reasoning and model-based reasoning for adaptive design problem-solving. The Ohio State University, 1989.

- [20] Zachary C Lipton. The mythos of model interpretability: In machine learning, the concept of interpretability is both important and slippery. Queue, 16(3):31–57, 2018.

- [21] Edward A Feigenbaum et al. The art of artificial intelligence: Themes and case studies of knowledge engineering. 1977.

- [22] Charles Elkan and Russell Greiner. Building large knowledge-based systems: Representation and inference in the cyc project: Db lenat and rv guha, 1993.

- [23] Yoshua Bengio, Yann Lecun, and Geoffrey Hinton. Deep learning for ai. Communications of the ACM, 64(7):58–65, 2021.

- [24] Artur SD’Avila Garcez, Luis C Lamb, and Dov M Gabbay. Neural-symbolic cognitive reasoning. Springer Science & Business Media, 2008.

- [25] Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. Bert: Pre-training of deep bidirectional transformers for language understanding. arXiv preprint arXiv:1810.04805, 2018.

- [26] David Silver, Julian Schrittwieser, Karen Simonyan, Ioannis Antonoglou, Aja Huang, Arthur Guez, Thomas Hubert, Lucas Baker, Matthew Lai, Adrian Bolton, et al. Mastering the game of go without human knowledge. Nature, 550(7676):354–359, 2017.

- [27] World Wide Web Consortium (W3C). Resource description framework (rdf) model and syntax specification, 1999. Retrieved from https://www.w3.org/TR/1999/REC-rdf-syntax-19990222/.

- [28] Tim Berners-Lee, James Hendler, and Ora Lassila. The semantic web: A new form of web content that is meaningful to computers will unleash a revolution of new possibilities. In Linking the World’s Information: Essays on Tim Berners-Lee’s Invention of the World Wide Web, pages 91–103. 2023.

- [29] Grigoris Antoniou and Frank Van Harmelen. A semantic web primer. MIT press, 2004.

- [30] Thomas R Gruber. A translation approach to portable ontology specifications. Knowledge Acquisition, 5(2):199–220, 1993.

- [31] Stanley Kok and Pedro Domingos. Learning the structure of markov logic networks. In Proceedings of the 22nd International Conference on Machine Learning, pages 441–448, 2005.

- [32] Maximilian Nickel, Kevin Murphy, Volker Tresp, and Evgeniy Gabrilovich. A review of relational machine learning for knowledge graphs. Proceedings of the IEEE, 104(1):11–33, 2015.

- [33] Thomas N Kipf and Max Welling. Semi-supervised classification with graph convolutional networks. In International Conference on Learning Representations, 2022.

- [34] Quan Wang, Zhendong Mao, Bin Wang, and Li Guo. Knowledge graph embedding: A survey of approaches and applications. IEEE Transactions on Knowledge and Data Engineering, 29(12):2724–2743, 2017.

- [35] Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural computation, 9(8):1735–1780, 1997.

- [36] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. Attention is all you need. Advances in neural information processing systems, 30, 2017.

- [37] Colin Raffel, Noam Shazeer, Adam Roberts, Katherine Lee, Sharan Narang, Michael Matena, Yanqi Zhou, Wei Li, and Peter J Liu. Exploring the limits of transfer learning with a unified text-to-text transformer. Journal of machine learning research, 21(140):1–67, 2020.

- [38] Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- [39] Gemini Team, Rohan Anil, Sebastian Borgeaud, Yonghui Wu, Jean-Baptiste Alayrac, Jiahui Yu, Radu Soricut, Johan Schalkwyk, Andrew M Dai, Anja Hauth, et al. Gemini: a family of highly capable multimodal models. arXiv preprint arXiv:2312.11805, 2023.

- [40] Aakanksha Chowdhery, Sharan Narang, Jacob Devlin, Maarten Bosma, Gaurav Mishra, Adam Roberts, Paul Barham, Hyung Won Chung, Charles Sutton, Sebastian Gehrmann, et al. Palm: Scaling language modeling with pathways. Journal of Machine Learning Research, 24(240):1–113, 2023.

- [41] Marah Abdin, Sam Ade Jacobs, Ammar Ahmad Awan, Jyoti Aneja, Ahmed Awadallah, Hany Awadalla, Nguyen Bach, Amit Bahree, Arash Bakhtiari, Harkirat Behl, et al. Phi-3 technical report: A highly capable language model locally on your phone. arXiv preprint arXiv:2404.14219, 2024.

- [42] Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, et al. Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971, 2023.

- [43] Xiao Liu, Hao Yu, Hanchen Zhang, Yifan Xu, Xuanyu Lei, Hanyu Lai, Yu Gu, Hangliang Ding, Kaiwen Men, Kejuan Yang, et al. Agentbench: Evaluating llms as agents. arXiv preprint arXiv:2308.03688, 2023.

- [44] Ning Ding, Yujia Qin, Guang Yang, Fuchao Wei, Zonghan Yang, Yusheng Su, Shengding Hu, Yulin Chen, Chi-Min Chan, Weize Chen, et al. Parameter-efficient fine-tuning of large-scale pre-trained language models. Nature Machine Intelligence, 5(3):220–235, 2023.

- [45] Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. Training language models to follow instructions with human feedback. Advances in neural information processing systems, 35:27730–27744, 2022.

- [46] Rylan Schaeffer, Brando Miranda, and Sanmi Koyejo. Are emergent abilities of large language models a mirage? Advances in Neural Information Processing Systems, 36, 2024.

- [47] Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems, 35:24824–24837, 2022.

- [48] Junyi Li, Xiaoxue Cheng, Wayne Xin Zhao, Jian-Yun Nie, and Ji-Rong Wen. Halueval: A large-scale hallucination evaluation benchmark for large language models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 6449–6464, 2023.

- [49] Pattie Maes. Modeling adaptive autonomous agents. Artificial life, 1(1_2):135–162, 1993.

- [50] Stefano V Albrecht and Peter Stone. Autonomous agents modelling other agents: A comprehensive survey and open problems. Artificial Intelligence, 258:66–95, 2018.

- [51] Ronald C Arkin. Behavior-based robotics. MIT press, 1998.

- [52] Yara Rizk, Mariette Awad, and Edward W Tunstel. Cooperative heterogeneous multi-robot systems: A survey. ACM Computing Surveys (CSUR), 52(2):1–31, 2019.

- [53] Daphne Koller and Nir Friedman. Probabilistic graphical models: principles and techniques. MIT press, 2009.

- [54] Richard S Sutton and Andrew G Barto. Reinforcement learning: An introduction. MIT press, 2018.

- [55] Lei Wang, Chen Ma, Xueyang Feng, Zeyu Zhang, Hao Yang, Jingsen Zhang, Zhiyuan Chen, Jiakai Tang, Xu Chen, Yankai Lin, et al. A survey on large language model based autonomous agents. Frontiers of Computer Science, 18(6):186345, 2024.

- [56] Zhiheng Xi, Wenxiang Chen, Xin Guo, Wei He, Yiwen Ding, Boyang Hong, Ming Zhang, Junzhe Wang, Senjie Jin, Enyu Zhou, et al. The rise and potential of large language model based agents: A survey. arXiv preprint arXiv:2309.07864, 2023.

- [57] Qingyun Wu, Gagan Bansal, Jieyu Zhang, Yiran Wu, Shaokun Zhang, Erkang Zhu, Beibin Li, Li Jiang, Xiaoyun Zhang, and Chi Wang. Autogen: Enabling next-gen llm applications via multi-agent conversation framework. arXiv preprint arXiv:2308.08155, 2023.

- [58] Oguzhan Topsakal and Tahir Cetin Akinci. Creating large language model applications utilizing langchain: A primer on developing llm apps fast. In International Conference on Applied Engineering and Natural Sciences, volume 1, pages 1050–1056, 2023.

- [59] Jerry Liu. LlamaIndex, 11 2022.

- [60] Chan Hee Song, Jiaman Wu, Clayton Washington, Brian M Sadler, Wei-Lun Chao, and Yu Su. Llm-planner: Few-shot grounded planning for embodied agents with large language models. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 2998–3009, 2023.

- [61] Bill Yuchen Lin, Yicheng Fu, Karina Yang, Faeze Brahman, Shiyu Huang, Chandra Bhagavatula, Prithviraj Ammanabrolu, Yejin Choi, and Xiang Ren. Swiftsage: A generative agent with fast and slow thinking for complex interactive tasks. Advances in Neural Information Processing Systems, 36, 2024.

- [62] Jeff Johnson, Matthijs Douze, and Hervé Jégou. Billion-scale similarity search with gpus. IEEE Transactions on Big Data, 7(3):535–547, 2019.

- [63] Kelvin Guu, Kenton Lee, Zora Tung, Panupong Pasupat, and Mingwei Chang. Retrieval augmented language model pre-training. In International conference on machine learning, pages 3929–3938. PMLR, 2020.

- [64] Scott Reed, Konrad Zolna, Emilio Parisotto, Sergio Gómez Colmenarejo, Alexander Novikov, Gabriel Barth-maron, Mai Giménez, Yury Sulsky, Jackie Kay, Jost Tobias Springenberg, et al. A generalist agent. Transactions on Machine Learning Research.

- [65] Yongliang Shen, Kaitao Song, Xu Tan, Dongsheng Li, Weiming Lu, and Yueting Zhuang. Hugginggpt: Solving ai tasks with chatgpt and its friends in hugging face. Advances in Neural Information Processing Systems, 36, 2024.

- [66] Jianfeng Gao, Michel Galley, and Lihong Li. Neural approaches to conversational ai. In The 41st international ACM SIGIR conference on research & development in information retrieval, pages 1371–1374, 2018.

- [67] Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik Narasimhan, and Yuan Cao. React: Synergizing reasoning and acting in language models. arXiv preprint arXiv:2210.03629, 2022.

- [68] Ehud Karpas, Omri Abend, Yonatan Belinkov, Barak Lenz, Opher Lieber, Nir Ratner, Yoav Shoham, Hofit Bata, Yoav Levine, Kevin Leyton-Brown, et al. Mrkl systems: A modular, neuro-symbolic architecture that combines large language models, external knowledge sources and discrete reasoning. arXiv preprint arXiv:2205.00445, 2022.

- [69] Artur d’Avila Garcez, Tarek R Besold, Luc De Raedt, Peter Földiak, Pascal Hitzler, Thomas Icard, Kai-Uwe Kühnberger, Luis C Lamb, Risto Miikkulainen, and Daniel L Silver. Neural-symbolic learning and reasoning: contributions and challenges. In 2015 AAAI Spring Symposium Series, 2015.

- [70] Ronald Brachman and Hector Levesque. Knowledge representation and reasoning. Morgan Kaufmann, 2004.

- [71] Shunyu Yao, Dian Yu, Jeffrey Zhao, Izhak Shafran, Tom Griffiths, Yuan Cao, and Karthik Narasimhan. Tree of thoughts: Deliberate problem solving with large language models. Advances in Neural Information Processing Systems, 36, 2024.

- [72] Bernardino Romera-Paredes, Mohammadamin Barekatain, Alexander Novikov, Matej Balog, M Pawan Kumar, Emilien Dupont, Francisco JR Ruiz, Jordan S Ellenberg, Pengming Wang, Omar Fawzi, et al. Mathematical discoveries from program search with large language models. Nature, 625(7995):468–475, 2024.

- [73] Sewon Min, Xinxi Lyu, Ari Holtzman, Mikel Artetxe, Mike Lewis, Hannaneh Hajishirzi, and Luke Zettlemoyer. Rethinking the role of demonstrations: What makes in-context learning work? arXiv preprint arXiv:2202.12837, 2022.

- [74] Lauren Nicole DeLong, Ramon Fernández Mir, and Jacques D Fleuriot. Neurosymbolic ai for reasoning over knowledge graphs: A survey. arXiv preprint arXiv:2302.07200, 2023.

- [75] Ciyuan Peng, Feng Xia, Mehdi Naseriparsa, and Francesco Osborne. Knowledge graphs: Opportunities and challenges. Artificial Intelligence Review, 56(11):13071–13102, 2023.

- [76] Sang Michael Xie, Aditi Raghunathan, Percy Liang, and Tengyu Ma. An explanation of in-context learning as implicit bayesian inference. arXiv preprint arXiv:2111.02080, 2021.

- [77] Arian Askari, Roxana Petcu, Chuan Meng, Mohammad Aliannejadi, Amin Abolghasemi, Evangelos Kanoulas, and Suzan Verberne. Self-seeding and multi-intent self-instructing llms for generating intent-aware information-seeking dialogs. arXiv preprint arXiv:2402.11633, 2024.

- [78] Niklas Muennighoff, Hongjin Su, Liang Wang, Nan Yang, Furu Wei, Tao Yu, Amanpreet Singh, and Douwe Kiela. Generative representational instruction tuning. arXiv preprint arXiv:2402.09906, 2024.

- [79] Petar Veličković and Charles Blundell. Neural algorithmic reasoning. Patterns, 2(7), 2021.

- [80] Pentti Kanerva. Hyperdimensional computing: An introduction to computing in distributed representation with high-dimensional random vectors. Cognitive computation, 1:139–159, 2009.

- [81] Michael Hersche, Mustafa Zeqiri, Luca Benini, Abu Sebastian, and Abbas Rahimi. A neuro-vector-symbolic architecture for solving raven’s progressive matrices. Nature Machine Intelligence, 5(4):363–375, 2023.

- [82] K Rustan M Leino. Dafny: An automatic program verifier for functional correctness. In International conference on logic for programming artificial intelligence and reasoning, pages 348–370. Springer, 2010.

- [83] Leonardo de Moura and Sebastian Ullrich. The lean 4 theorem prover and programming language. In Automated Deduction–CADE 28: 28th International Conference on Automated Deduction, Virtual Event, July 12–15, 2021, Proceedings 28, pages 625–635. Springer, 2021.

- [84] Eric Mugnier, Emmanuel Anaya Gonzalez, Ranjit Jhala, Nadia Polikarpova, and Yuanyuan Zhou. Laurel: Generating dafny assertions using large language models. arXiv preprint arXiv:2405.16792, 2024.