# Towards Collaborative Intelligence: Propagating Intentions and Reasoning for Multi-Agent Coordination with Large Language Models

**Authors**: Xihe Qiu, Haoyu Wang, Xiaoyu Tan, Chao Qu, Yujie Xiong, Yuan Cheng, Yinghui Xu, Wei Chu, Yuan Qi

## Abstract

Effective collaboration in multi-agent systems requires communicating goals and intentions between agents. Current agent frameworks often suffer from dependencies on single-agent execution and lack robust inter-module communication, frequently leading to suboptimal multi-agent reinforcement learning (MARL) policies and inadequate task coordination. To address these challenges, we present a framework for training large language models (LLMs) as collaborative agents to enable coordinated behaviors in cooperative MARL. Each agent maintains a private intention consisting of its current goal and associated sub-tasks. Agents broadcast their intentions periodically, allowing other agents to infer coordination tasks. A propagation network transforms broadcast intentions into teammate-specific communication messages, sharing relevant goals with designated teammates. The architecture of our framework is structured into planning, grounding, and execution modules. During execution, multiple agents interact in a downstream environment and communicate intentions to enable coordinated behaviors. The grounding module dynamically adapts comprehension strategies based on emerging coordination patterns, while feedback from execution agents influences the planning module, enabling the dynamic re-planning of sub-tasks. Results in collaborative environment simulations demonstrate that intention propagation reduces miscoordination errors by aligning sub-task dependencies between agents. Agents learn when to communicate intentions and which teammates require task details, resulting in emergent coordinated behaviors. This demonstrates the efficacy of intention sharing for cooperative multi-agent RL based on LLMs.


## 1 Introduction

With the recent advancements of large language models (LLMs), developing intelligent agents that can perform complex reasoning and long-horizon planning has attracted increasing research attention Sharan et al. (2023); Huang et al. (2022). A variety of agent frameworks have been proposed, such as ReAct Yao et al. (2022), LUMOS Yin et al. (2023), Chameleon Lu et al. (2023) and BOLT Chiu et al. (2024). These frameworks typically consist of modules for high-level planning, grounding plans into executable actions, and interacting with environments or tools to execute actions Rana et al. (2023).

Despite their initial success, existing agent frameworks have several limitations. Firstly, most of them rely on a single agent for execution Song et al. (2023); Hartmann et al. (2022). However, as tasks become more complex, the action space can grow exponentially, which makes it difficult for a single agent to handle all execution functionalities Chebotar et al. (2023); Wen et al. (2023). Secondly, existing frameworks lack inter-module communication mechanisms. Typically, the execution results are directly used as input in the planning module without further analysis or coordination Zeng et al. (2023); Wang et al. (2024b). When execution failures occur, the agent may fail to adjust its strategies accordingly Chaka (2023). Thirdly, the grounding module in existing frameworks operates statically, without interactions with downstream modules. It grounds plans independently without considering feedback or states of the execution module Xi et al. (2023). As a result, LLMs struggle to handle emergent coordination behaviors and lack common grounding on shared tasks. Moreover, existing multi-agent reinforcement learning (MARL) methods often converge on suboptimal policies that fail to exhibit a sufficient level of cooperation Gao et al. (2023); Yu et al. (2023).

How can LLM-based agents effectively communicate and collaborate with each other? We propose a novel approach, Recursive Multi-Agent Learning with Intention Sharing (ReMALIS; the code can be accessed at https://github.com/AnonymousBoy123/ReMALIS), to address the limitations of existing cooperative artificial intelligence (AI) multi-agent frameworks with LLMs. ReMALIS employs intention propagation between LLM agents to enable a shared understanding of goals and tasks. This common grounding allows agents to align intentions and reduce miscoordination. Additionally, we introduce bidirectional feedback loops between downstream execution agents and upstream planning and grounding modules. This enables execution coordination patterns to guide adjustments in grounding strategies and planning policies, resulting in more flexible emergent behaviors Topsakal and Akinci (2023). By integrating these mechanisms, ReMALIS significantly improves the contextual reasoning and adaptive learning capabilities of LLM agents during complex collaborative tasks. The execution module utilizes specialized agents that collaboratively execute actions, exchange information, and propagate intentions via intention networks. These propagated intentions reduce miscoordination errors and guide grounding module adjustments to enhance LLM comprehension based on coordination patterns Dong et al. (2023). Furthermore, execution agents can provide feedback to prompt collaborative re-planning in the planning module when necessary.

Compared to single-agent frameworks, the synergistic work of multiple specialized agents enhances ReMALIS's collective intelligence and leads to emerging team-level behaviors Wang et al. (2023). The collaborative design allows for dealing with more complex tasks that require distributed knowledge and skills. We demonstrate that:

- Intention propagation between execution agents enables emergent coordination behaviors and reduces misaligned sub-tasks.

- Grounding module strategies adjusted by intention sharing improve LLM scene comprehension.

- Planning module re-planning guided by execution feedback increases goal-oriented coordination.

Compared to various single-agent baselines and existing state-of-the-art MARL Hu and Sadigh (2023); Zou et al. (2023) methods using LLMs, our ReMALIS framework demonstrates improved performance on complex collaborative tasks, utilizing the publicly available large-scale traffic flow prediction (TFP) dataset and web-based activities dataset. This demonstrates its effectiveness in deploying LLMs as collaborative agents capable of intention communication, strategic adjustments, and collaborative re-planning Du et al. (2023).

## 2 Preliminary

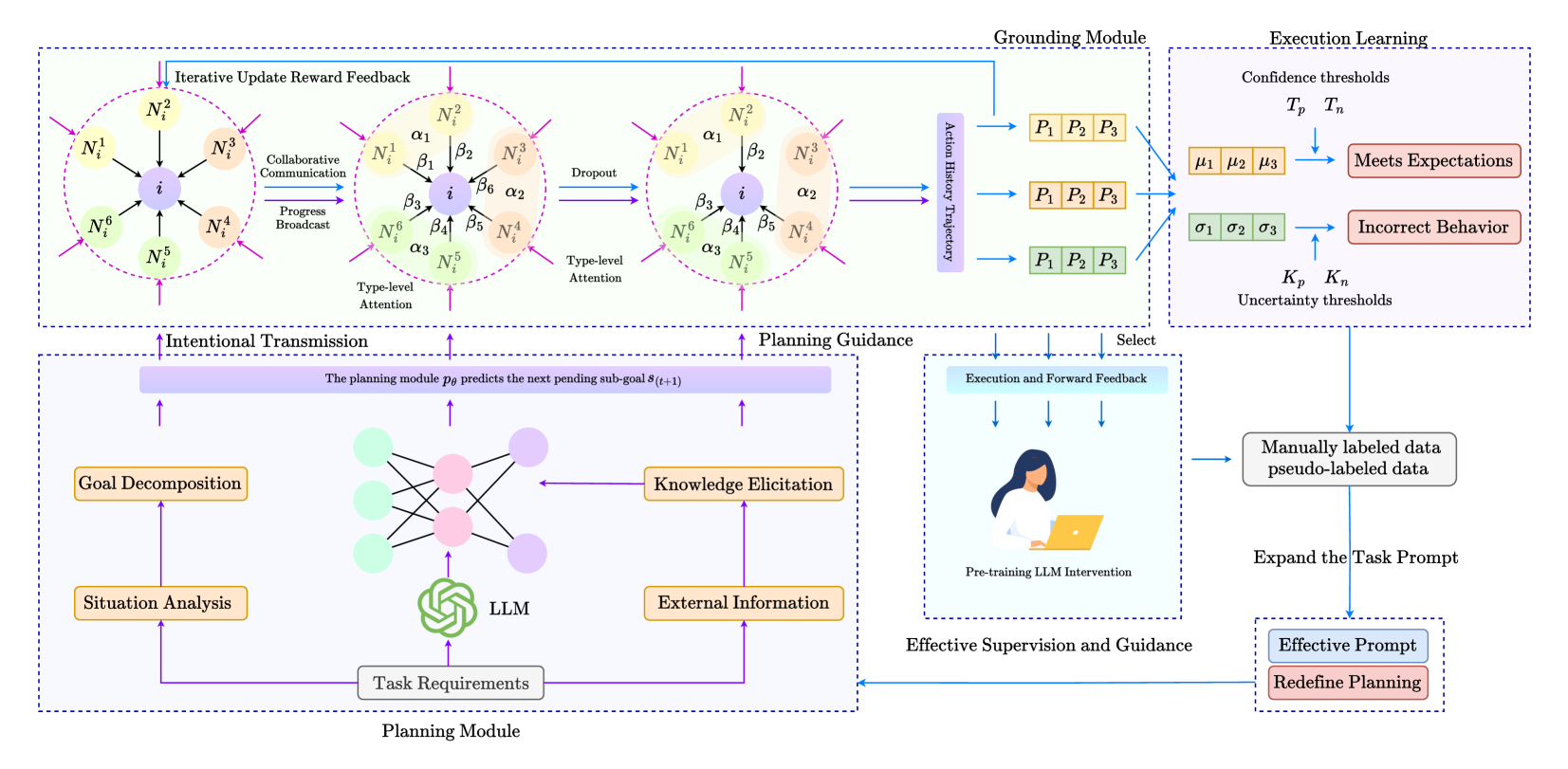

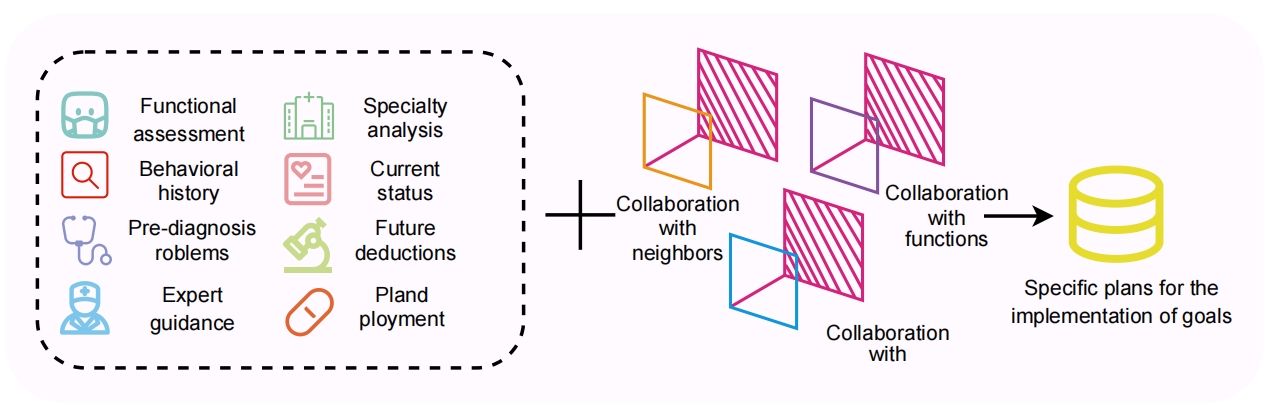

In this section, we introduce the methods of the proposed ReMALIS framework in detail. As illustrated in Figure 1, ReMALIS consists of four key components:

<details>

<summary>x1.png Details</summary>

### Visual Description

## System Architecture Diagram: Multi-Agent Planning and Execution Learning Framework

### Overview

This image is a technical system architecture diagram illustrating a multi-component framework for AI agent planning, grounding, execution learning, and human-in-the-loop supervision. The diagram is divided into four primary modules connected by data and feedback flows. The overall aesthetic uses a clean, academic style with color-coded components (orange, green, purple, blue) and directional arrows to indicate information flow.

### Components/Axes

The diagram is segmented into four major dashed-line boxes, each representing a core module:

1. **Top-Left Module: Grounding Module**

* **Sub-components:** Three circular diagrams representing iterative communication and attention among agent nodes.

* **Labels within circles:** Nodes labeled `N_i^1`, `N_i^2`, `N_i^3`, `N_i^4`, `N_i^5`, `N_i^6` surrounding a central node `i`.

* **Connection Labels:** Greek letters `α₁`, `α₂`, `α₃`, `β₁`, `β₂`, `β₃`, `β₄`, `β₅`, `β₆` on the connecting lines between nodes.

* **Process Labels:** "Iterative Update Reward Feedback", "Collaborative Communication", "Progress Broadcast", "Dropout", "Type-level Attention".

* **Output:** An "Action History Trajectory" block leading to three sets of `P₁ P₂ P₃` blocks (colored orange, yellow, green).

2. **Top-Right Module: Execution Learning**

* **Inputs:** The three `P₁ P₂ P₃` blocks from the Grounding Module.

* **Key Elements:**

* "Confidence thresholds" labeled `Tₚ`, `Tₙ`.

* "Uncertainty thresholds" labeled `Kₚ`, `Kₙ`.

* A set of orange boxes labeled `μ₁`, `μ₂`, `μ₃`.

* A set of green boxes labeled `σ₁`, `σ₂`, `σ₃`.

* **Decision Blocks:** "Meets Expectations" (orange outline) and "Incorrect Behavior" (green outline).

* **Flow:** Arrows indicate that the `P` blocks are evaluated against thresholds to produce either expectation-meeting or incorrect behavior outputs.

3. **Bottom-Left Module: Planning Module**

* **Central Element:** A green "LLM" icon (resembling the OpenAI logo) connected to a neural network diagram.

* **Input Blocks:** "Task Requirements" (grey), "Situation Analysis" (orange), "Goal Decomposition" (orange).

* **Process Blocks:** "Knowledge Elicitation" (orange), "External Information" (orange).

* **Core Function Text:** A purple bar states: "The planning module `p_θ` predicts the next pending sub-goal `s_(t+1)`".

* **Output:** Labeled "Intentional Transmission" and "Planning Guidance", with arrows pointing up to the Grounding Module.

4. **Bottom-Right Module: Effective Supervision and Guidance**

* **Central Element:** An illustration of a person at a laptop, labeled "Pre-training LLM Intervention".

* **Input:** "Execution and Forward Feedback" from the Execution Learning module.

* **Data Sources:** "Manually labeled data" and "pseudo-labeled data".

* **Process:** "Expand the Task Prompt".

* **Output Blocks:** "Effective Prompt" (blue) and "Redefine Planning" (red).

* **Feedback Loop:** A blue arrow labeled "Select" feeds back into the Execution Learning module. Another arrow feeds back into the Planning Module.

### Detailed Analysis

**Flow and Connections:**

1. The **Planning Module** receives "Task Requirements" and uses an LLM to perform situation analysis, goal decomposition, and knowledge elicitation. It predicts the next sub-goal (`s_(t+1)`) and sends "Intentional Transmission" and "Planning Guidance" to the Grounding Module.

2. The **Grounding Module** processes this guidance through a multi-agent communication protocol. Three stages are shown:

* Stage 1: Initial collaborative communication and progress broadcast among nodes (`N_i^1` to `N_i^6`).

* Stage 2: Application of "Type-level Attention" (weights `α` and `β`).

* Stage 3: A "Dropout" operation, resulting in a refined attention state.

* This process generates an "Action History Trajectory" which outputs three sequences of actions (`P₁ P₂ P₃`).

3. The **Execution Learning** module evaluates these action sequences. It uses confidence (`Tₚ`, `Tₙ`) and uncertainty (`Kₚ`, `Kₙ`) thresholds. The evaluation produces metrics (`μ` series, `σ` series) and classifies outcomes as "Meets Expectations" or "Incorrect Behavior".

4. The **Effective Supervision and Guidance** module takes the execution feedback. A human-in-the-loop ("Pre-training LLM Intervention") uses manually and pseudo-labeled data to "Expand the Task Prompt". This generates an "Effective Prompt" and helps "Redefine Planning", creating a feedback loop that refines both the Execution Learning selection criteria and the Planning Module itself.

**Spatial Grounding:**

* The **Legend/Color Code** is implicit but consistent:

* **Orange:** Associated with planning, goals, and positive outcomes ("Meets Expectations").

* **Green:** Associated with the core LLM and negative outcomes ("Incorrect Behavior").

* **Purple:** Associated with core predictive functions and attention mechanisms.

* **Blue:** Associated with feedback, supervision, and prompt engineering.

* The **Execution Learning** module is positioned in the top-right quadrant.

* The **Planning Module** is in the bottom-left quadrant.

* The **Grounding Module** spans the top-left and top-center.

* The **Supervision** module is in the bottom-right quadrant.

### Key Observations

1. **Closed-Loop System:** The diagram explicitly shows a closed-loop system where execution outcomes directly inform and refine the planning and prompting strategies.

2. **Human-in-the-Loop:** The inclusion of "Pre-training LLM Intervention" and manual data labeling indicates a hybrid system that combines automated learning with human oversight.

3. **Multi-Agent Attention:** The Grounding Module details a sophisticated attention mechanism (`α`, `β` weights) among multiple nodes (`N_i`), suggesting a distributed or ensemble approach to action selection.

4. **Threshold-Based Evaluation:** Execution success is not binary but is evaluated against continuous confidence and uncertainty thresholds (`T`, `K`), allowing for nuanced performance assessment.

5. **Prompt Engineering as a Control Lever:** The system uses "Expand the Task Prompt" and "Effective Prompt" as key mechanisms for supervision, highlighting the central role of prompt design in guiding the LLM's behavior.

### Interpretation

This diagram outlines a comprehensive framework for building more reliable and adaptable LLM-based agents. The core innovation appears to be the integration of three critical layers:

1. **Strategic Planning (Planning Module):** Where high-level goals are decomposed using an LLM.

2. **Tactical Grounding (Grounding Module):** Where plans are translated into coordinated actions through a multi-agent attention mechanism, adding robustness.

3. **Operational Learning (Execution Learning & Supervision):** Where actions are evaluated against real-world outcomes, and failures are used to systematically improve the system—either by adjusting internal thresholds or by refining the prompts that guide the core LLM.

The framework addresses key challenges in LLM deployment: the "grounding problem" (connecting plans to executable actions) and the "alignment problem" (ensuring actions meet expectations). By creating a feedback loop from execution failure back to prompt and plan redefinition, the system aims for continuous, supervised improvement. The presence of both manual and pseudo-labeled data suggests a practical approach to scaling supervision. This architecture would be relevant for complex, multi-step tasks where initial LLM plans require validation and iterative refinement in a dynamic environment.

</details>

Figure 1: This framework introduces a multi-agent learning strategy designed to enhance the capabilities of LLMs through cooperative coordination. It enables agents to collaborate and share intentions for effective coordination, and utilizes recursive reasoning to model and adapt to each other’s strategies.

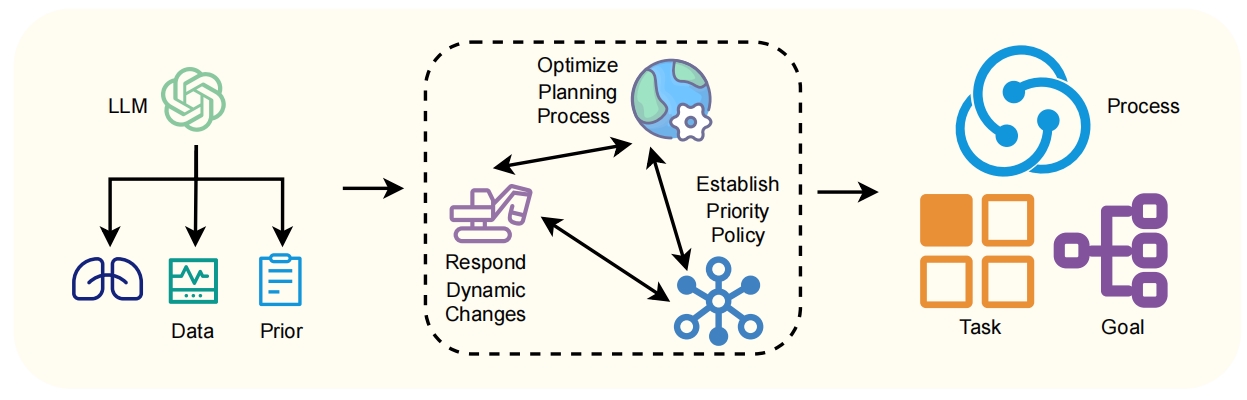

Planning Module $p_\theta$ predicts the next pending sub-goal $s_{t+1}$ given the current sub-goal $s_t$ and other inputs: $s_{t+1}=p_\theta(s_t,I_t,e_t,f_t)$, where $I_t$ is the current intention, $e_t$ is the grounded embedding, and $f_t$ is agent feedback. $p_\theta$ first encodes this information through encoding layers, $h_t=\mathrm{Encoder}(s_t,I_t,e_t,f_t)$, and subsequently predicts the sub-goal through $s_{t+1}=\mathrm{Softmax}(T_\theta(h_t))$, where $T_\theta$ uses a graph neural network (GNN) architecture.

The module is trained to maximize the likelihood of all sub-goals along the decision sequence, given the information available at each time step $t$. This allows the dynamic re-planning of sub-task dependencies based on agent feedback.

$$
\theta^{*}=\arg\max_{\theta}\prod_{t=1}^{T}p_{\theta}(s_{t+1}\mid s_t,I_t,e_t,f_t). \tag{1}
$$
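As a rough illustration of Eq. (1), the sketch below scores a trajectory by summing log-probabilities, which has the same maximizer as the product of probabilities. The logits stand in for the output of $T_\theta(h_t)$; the function names, the toy values, and the three-sub-goal vocabulary are assumptions made for illustration and are not taken from the ReMALIS code.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sequence_log_likelihood(logits_per_step, next_subgoals):
    """Sum over t of log p(s_{t+1} | ...), the log of the product in Eq. (1).

    logits_per_step[t] is a stand-in for T_theta(h_t) (hypothetical values);
    next_subgoals[t] is the index of the observed next sub-goal s_{t+1}.
    """
    total = 0.0
    for logits, target in zip(logits_per_step, next_subgoals):
        probs = softmax(logits)
        total += math.log(probs[target])
    return total

# Hypothetical 3-step trajectory over 3 candidate sub-goals.
logits = [[2.0, 0.5, 0.1], [0.2, 1.5, 0.3], [0.1, 0.1, 3.0]]
targets = [0, 1, 2]
log_lik = sequence_log_likelihood(logits, targets)
```

Training would adjust the parameters that produce the logits so that `log_lik` increases; the optimizer itself is omitted here.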

Grounding Module $g_\phi$ contextualizes symbol embeddings $e_t=g_\phi(s_t,I_t,f_{1:t})$, where $s_t$, $I_t$, and $f_{1:t}$ represent the state, intention, and feedback up to time step $t$, respectively. These inputs are processed by encoders, $h_t=\mathrm{Encoder}(s_t,I_t,f_{1:t})$, and then by cross-attention layers and convolutional feature extractors over vocabulary $V$: $e_t=\mathrm{Conv}(\mathrm{Attn}(h_t,V))+P_t$. Here, $P_t$ incorporates agent feedback so that coordination signals improve grounding accuracy and contextual understanding. The module maps language symbols to physical environment representations through:

$$
g(x)=f_{\theta}\left(\sum_{i=1}^{N}w_i\,g(x_i)\right), \tag{2}
$$

where $g(x)$ is the grounded embedding of the policy set $x$, $g(x_i)$ is the embedding of the individual action of agent $i$, and $w_i$ are learnable weights. The grounding function $f_\theta$ utilizes a GNN architecture for structural composition. Additionally, we employ an uncertainty modeling module that represents ambiguities in grounding:

$$
q_{\phi}(z\mid x)=\mathrm{Normal}\big(z;\mu_{\phi}(x),\sigma^{2}_{\phi}(x)\big), \tag{3}
$$

where $z$ is a latent variable modeled as a normal distribution, enabling the capture of multimodal uncertainties in grounding.
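To make Eqs. (2) and (3) concrete, the following minimal sketch computes the weighted sum of per-agent embeddings, passes it through a placeholder for $f_\theta$, and draws a latent sample $z$ by adding scaled Gaussian noise to the mean. The `tanh` stand-in for the GNN, the fixed standard deviations, and all numeric values are assumptions for illustration only.

```python
import math
import random

def aggregate(embeddings, weights):
    """Weighted sum  sum_i w_i * g(x_i)  over per-agent embedding vectors."""
    dim = len(embeddings[0])
    return [sum(w * e[k] for w, e in zip(weights, embeddings)) for k in range(dim)]

def f_theta(vec):
    """Placeholder for the GNN-based grounding function (assumption: tanh)."""
    return [math.tanh(v) for v in vec]

def sample_latent(mu, sigma, rng):
    """Draw z ~ Normal(mu, sigma^2) per dimension as mu + sigma * eps."""
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, sigma)]

# Hypothetical embeddings for N = 3 agents in a 2-dimensional space.
agent_embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights = [0.5, 0.25, 0.25]

pooled = aggregate(agent_embeddings, weights)
grounded = f_theta(pooled)
z = sample_latent(mu=grounded, sigma=[0.1, 0.1], rng=random.Random(0))
```

In the paper's setting, $\mu_\phi(x)$ and $\sigma_\phi(x)$ would be predicted by a network rather than fixed as they are here.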

Cooperative Execution Module comprises $N$ specialized agents $\{A_1,...,A_N\}$ . This architecture avoids using a single agent to handle all tasks. Instead, each agent is dedicated to a distinct semantic domain, cultivating expertise specific to that domain. For instance, agents $A_1,A_2,$ and $A_3$ may be dedicated to query processing, information retrieval, and arithmetic operations, respectively. This specialization promotes an efficient distribution of tasks and reduces overlap in capabilities.

Decomposing skills into specialized agents risks creating isolated capabilities that lack coordination. To address this, it is essential that agents not only excel individually but also comprehend the capacities and limitations of their peers. We propose an integrated training approach where specialized agents are trained simultaneously to foster collaboration and collective intelligence. We represent the parameters of agent $A_i$ as $\theta_i$. Each agent's policy $\pi_{\theta_i}$ samples an output $y_i\sim\pi_{\theta_i}(\cdot\mid s)$ given an input state $s$. The training objective for our system is defined by the following equation:

$$
L_{\mathrm{exe}}=\sum_{i=1}^{N}\mathbb{E}_{(s,y^{\star})\sim D}\left[\ell\big(\pi_{\theta_i}(y_i\mid s),y^{\star}\big)\right], \tag{4}
$$

where $\ell(·)$ represents the task-specific loss function, comparing the agent-generated output $y_i$ with the ground-truth label $y^⋆$ . $D$ denotes the distribution of training data. By optimizing this objective collectively across all agents, each agent not only improves its own output accuracy but also enhances the overall team’s ability to produce coherent and well-coordinated results.
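A small sketch of how the joint objective in Eq. (4) could be estimated on a finite dataset: the expectation is replaced by a sample mean, and the per-agent losses are summed. Negative log-likelihood is used as one possible choice of $\ell$; the toy policies, labels, and dataset are hypothetical.

```python
import math

def nll(prob_of_label):
    """Negative log-likelihood, used here as one possible task loss."""
    return -math.log(prob_of_label)

def execution_loss(agent_policies, dataset, loss=nll):
    """Sum over agents of the mean loss on (state, label) pairs.

    Each policy maps a state to a dict {label: probability}; this interface
    is an assumption for illustration.
    """
    total = 0.0
    for policy in agent_policies:
        per_sample = [loss(policy(s)[y_star]) for s, y_star in dataset]
        total += sum(per_sample) / len(per_sample)
    return total

# Two toy agents: one confident in label "a", one uniform.
confident = lambda s: {"a": 0.9, "b": 0.1}
uniform = lambda s: {"a": 0.5, "b": 0.5}
dataset = [("s0", "a"), ("s1", "a")]

l_exe = execution_loss([confident, uniform], dataset)
```

Because the agents share one summed objective, a gradient step on `l_exe` would update all agents together, which is the sense in which the training is joint.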

During training, we adjust the decomposition of grounding tasks to enhance collaboration, which is represented by the soft module weights $\{w_1,...,w_N\}$. These weights indicate how the distribution of grounding commands can be optimized to better utilize the capabilities of different agents. The objective of this training is defined by the loss $L_{\mathrm{com}}=\ell(d,w^{\star})$, where $\ell$ is the loss function, $d$ denotes the sub-goal task instruction data, and $w^{\star}$ is the optimal set of weights.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Process Flow Diagram: Multi-Agent Collaborative Planning and Execution System

### Overview

The image is a detailed process flow diagram illustrating a four-stage, cyclical system for collaborative task planning, grounding, execution, and evaluation. The system appears designed for complex problem-solving, potentially in a medical or expert-guided domain, involving Large Language Models (LLMs), human experts, and multiple specialized agents. The flow moves from left to right through the stages, with a feedback loop at the bottom indicating iterative refinement.

### Components/Axes

The diagram is organized into four vertical, color-coded sections, each representing a major phase. The flow between phases is indicated by large, black, right-pointing chevrons (`>>`).

1. **Phase 1: Planning (Yellow Background, Leftmost)**

* **Top Cluster:** Icons and labels for "Process," "Task coding," and "Goal decomposition."

* **Middle Dashed Box:** A sub-process labeled "Optimize Planning Process" containing three interconnected elements: "Respond Dynamic Changes," "Establish Priority Policy," and an icon of a globe with a gear.

* **Bottom Cluster:** An "LLM" icon (resembling the OpenAI logo) feeds into three outputs: "Logical judgment," "Data analysis," and "Prior knowledge."

2. **Phase 2: Grounding (Light Purple Background, Center-Left)**

* **Top:** A database icon labeled "Specific plans for the implementation of goals."

* **Middle:** Three collaboration models represented by overlapping colored shapes: "Collaboration with neighbors," "Collaboration with functions," and "Collaboration with targets."

* **Bottom Dashed Box:** A list of assessment and analysis types, each with an icon: "Functional assessment," "Behavioral history," "Pre-diagnosis problems," "Expert guidance," "Specialty analysis," "Current status," "Future deductions," and "Planned deployment."

3. **Phase 3: Execution (Light Pink Background, Center-Right)**

* **Top Dashed Box:** A decision-making flowchart with icons. A green checkmark leads to a path involving vegetables, a basket, and a pot. A red "X" leads to a path involving a medicine bottle and a "no" symbol over a bottle, suggesting validation and rejection of actions.

* **Middle:** An "Instruction fine-tuning" element (blue checklist icon) receives input from the decision flowchart.

* **Bottom:** A branching structure showing "Task design" and "LLM correction" feeding into "Agents execution" and "Guided by experts."

4. **Phase 4: Evaluation & Coordination (Light Blue Background, Rightmost)**

* **Top Dashed Box:** A group of people icons surrounding various documents and charts, labeled "Communicate and Coordinate for Cooperation."

* **Middle:** An hourglass icon labeled "Collaborative evaluation."

* **Bottom:** A cycle showing "Communication between agents" (red circular arrows) and "Historical process" (monitor with gear), both informed by "Expert guidance" (graduation cap icon).

5. **Feedback Loop (Bottom of Diagram):**

* A black arrow runs from the bottom of the Execution/Evaluation phases back to the start of the Planning phase.

* Two text annotations are placed along this arrow:

* Left side: "The current error is too large. Please plan and deploy again" next to a circled "A".

* Right side: "Is there any feedback on re-planning?" next to a circled question mark.

### Detailed Analysis

* **Process Flow:** The system initiates in **Planning**, where an LLM uses prior knowledge and data to decompose goals and create tasks. This plan is then **Grounded** through collaboration models and detailed assessments. The grounded plan moves to **Execution**, where instructions are fine-tuned, tasks are designed, and agents (potentially AI or human) carry out actions, sometimes with expert guidance. Finally, the process enters **Evaluation & Coordination**, where outcomes are assessed collaboratively, communication occurs, and historical data is logged.

* **Key Relationships:**

* The LLM is a core component in the Planning phase, providing foundational judgment and analysis.

* Expert guidance is a recurring input, appearing in the Grounding (assessment list) and Execution (guided by experts) phases, and is a final output in the Evaluation phase.

* The "Instruction fine-tuning" step in Execution acts as a bridge between the high-level decision flowchart and the actual task execution.

* The feedback loop is critical, triggered by large errors or a need for re-planning, sending the process back to the initial Planning stage.

### Key Observations

* **Cyclical Nature:** The diagram is not linear but a continuous cycle, emphasizing iterative improvement based on evaluation and error feedback.

* **Hybrid Intelligence:** The system explicitly combines artificial intelligence (LLM, agents) with human intelligence (experts, collaboration, coordination).

* **Medical/Expert Context Clues:** Icons like a medicine bottle, stethoscope ("Pre-diagnosis problems"), and hospital ("Guided by experts") strongly suggest an application in healthcare or a similar expert-intensive field.

* **Structured Collaboration:** The Grounding phase breaks down collaboration into specific, structured models (with neighbors, functions, targets), indicating a sophisticated multi-agent framework.

### Interpretation

This diagram outlines a robust framework for deploying AI agents in complex, real-world tasks that require planning, validation, and expert oversight. It represents a **Peircean investigative cycle**:

1. **Abduction (Planning):** The LLM and planning process generate hypotheses (plans) based on available data and knowledge.

2. **Deduction (Grounding):** The plan is refined and tested against specific collaboration models and assessment criteria to predict outcomes.

3. **Induction (Execution):** Actions are taken in the real world (or a simulated environment), and results are observed.

4. **Evaluation & Feedback:** The outcomes are evaluated against the original goals. The explicit feedback loop ("error is too large") is the mechanism for **retroduction**—questioning the initial assumptions and plans when they fail to match reality, thus driving the next cycle of inquiry.

The system's value lies in its structured approach to mitigating the risks of autonomous AI by embedding human expertise, rigorous grounding, and continuous evaluation. It is designed not just to execute tasks, but to learn and adapt through collaboration and error correction, making it suitable for high-stakes domains where precision and accountability are paramount.

</details>

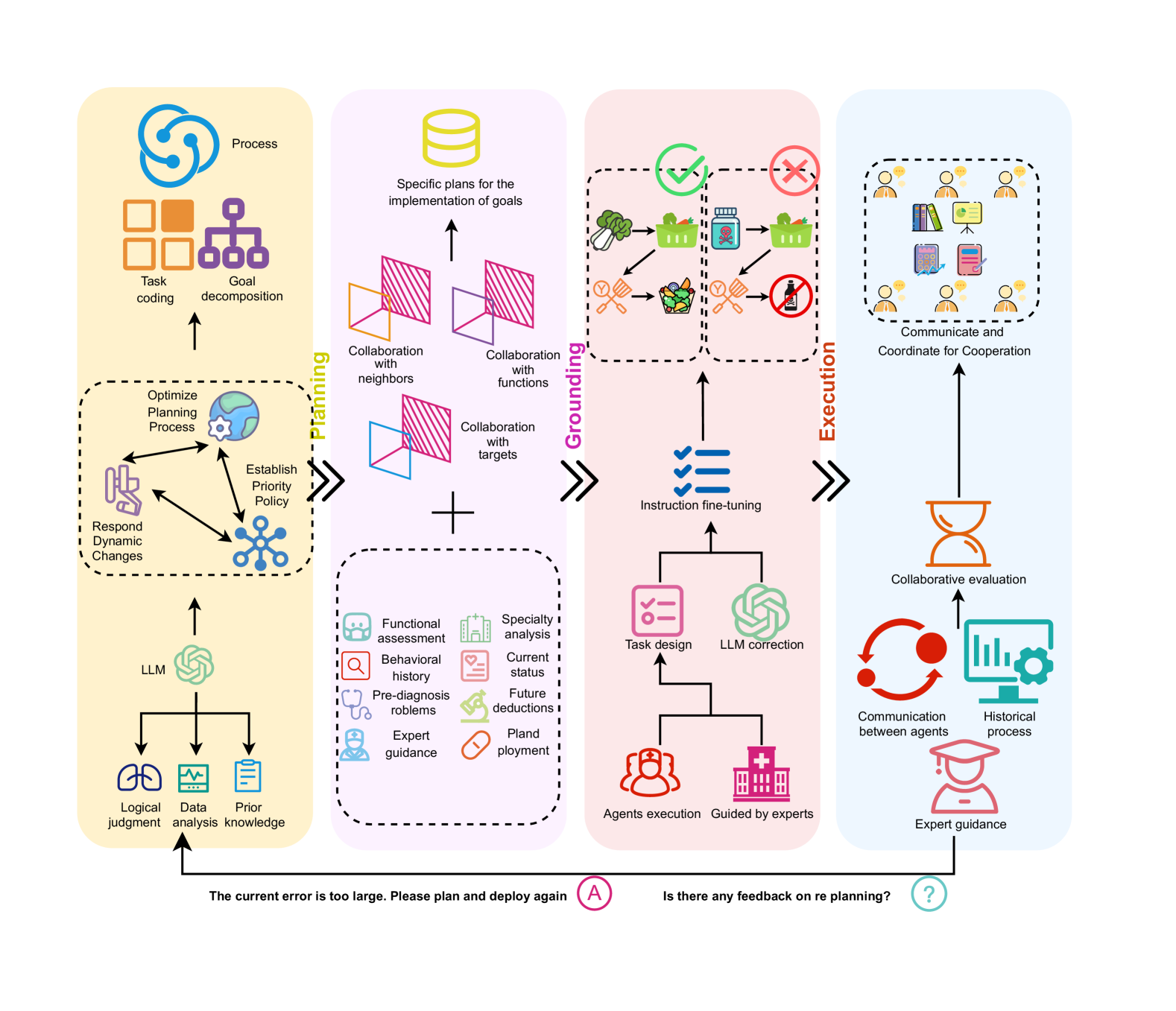

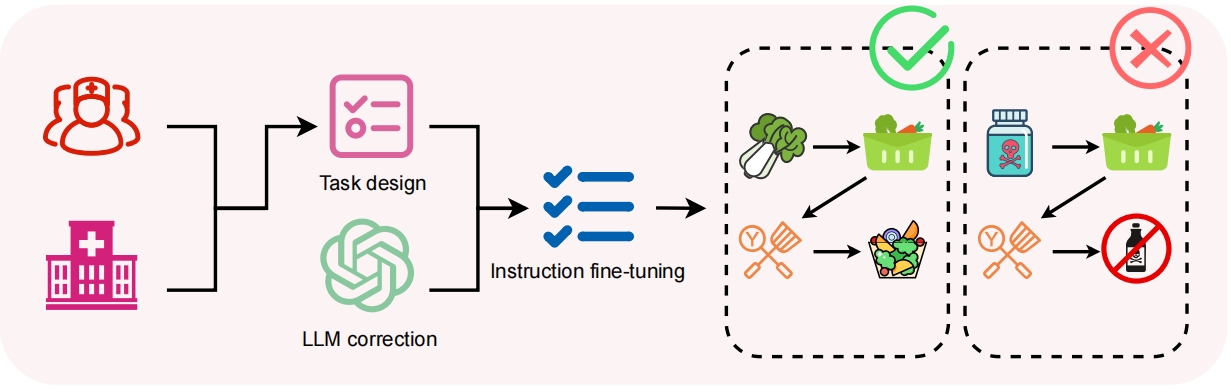

Figure 2: Overview of the proposed ReMALIS: This framework comprises a planning module, grounding module, cooperative execution module, and intention coordination channels.

## 3 Approach

The collaborative MARL of ReMALIS focuses on three key points: intention propagation for grounding, bidirectional coordination channels, and integration with recursive reasoning agents. Additional parameter details and pseudocode can be found in Appendix C and Appendix F.

### 3.1 Planning with Intention Propagation

We formulate a decentralized, partially observable Markov game for multi-agent collaboration. Each agent $i$ maintains a private intention $I_i$ encoded as a tuple $I_i=(\gamma_i,\Sigma_i,\pi_i,\delta_i)$, where $\gamma_i$ is the current goal, $\Sigma_i=\{\sigma_{i1},\sigma_{i2},\ldots\}$ is a set of related sub-goals, $\pi_i(\sigma)$ is a probability distribution over possible next sub-goals, and $\delta_i(\sigma)$ is the desired teammate assignment for sub-goal $\sigma$.
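One way to picture the intention tuple $I_i=(\gamma_i,\Sigma_i,\pi_i,\delta_i)$ is as a small record type. The sketch below is an assumed representation, not the authors' data structure; the field names, the string and integer types, and the traffic-style example values are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class Intention:
    goal: str                      # gamma_i: current goal
    sub_goals: List[str]           # Sigma_i: related sub-goals
    next_probs: Dict[str, float]   # pi_i(sigma): distribution over next sub-goals
    assignment: Dict[str, int]     # delta_i(sigma): desired teammate id per sub-goal

    def is_valid(self, tol: float = 1e-6) -> bool:
        """Check that keys refer to known sub-goals and probabilities sum to 1."""
        known = set(self.sub_goals)
        keys_ok = set(self.next_probs) <= known and set(self.assignment) <= known
        return keys_ok and abs(sum(self.next_probs.values()) - 1.0) <= tol

    def most_likely_next(self) -> str:
        """Return the sub-goal with the highest probability under pi_i."""
        return max(self.next_probs, key=self.next_probs.get)

# Hypothetical intention for one agent.
intent = Intention(
    goal="clear intersection",
    sub_goals=["yield", "merge", "signal"],
    next_probs={"yield": 0.2, "merge": 0.7, "signal": 0.1},
    assignment={"merge": 2, "signal": 1},
)
```

A broadcast message $m_{ij}$ could then be some encoding of such a record, from which the receiving agent forms its belief about the sender's intention.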

Intentions are propagated through a communication channel $f_\Lambda$ parameterized by $\Lambda$. For a received message $m_{ij}$ from agent $j$, agent $i$ infers a belief over teammate $j$'s intention, $b_i(I_j\mid m_{ij})=f_\Lambda(m_{ij})$, where $f_\Lambda$ is a recurrent neural network with parameters $\Lambda$. The channel $f_\Lambda$ is trained in an end-to-end manner to maximize the coordination reward function $R_c$. This propagates relevant sub-task dependencies to enhance common grounding on collaborative goals.

$$
\Lambda^{*}=\arg\max_{\Lambda}\mathbb{E}_{I,m\sim f_{\Lambda}}\left[R_c(I,m)\right]. \tag{5}
$$

At each time step $t$, the LLM processes inputs comprising the agent's state $s_t$, the intention $I_t$, and the feedback $f_{1:t}$.

### 3.2 Grounding with Bidirectional Coordination Channels

The execution agent policies, denoted by $π_ξ(a_i|s_i,I_i)$ , are parameterized by $ξ$ and conditioned on the agent’s state $s_i$ and intention $I_i$ . Emergent coordination patterns are encoded in a summary statistic $c_t$ and passed to upstream modules to guide planning and grounding adjustments. For example, frequent miscoordination on sub-goal $σ$ indicates the necessity to re-plan $σ$ dependencies in $I$ .

This bidirectional feedback aligns low-level execution with high-level comprehension strategies. In addition to the downstream propagation of intentions, execution layers provide feedback signals $\psi(t)=\Phi(h^{\mathrm{exec}}_t)$ to upstream modules, with

$$
h^{\mathrm{exec}}_t=[\phi_1(o_1),\ldots,\phi_N(o_N)], \tag{6}
$$

where $Φ(·)$ aggregates agent encodings to summarize emergent coordination, and $φ_i(·)$ encodes the observation $o_i$ for agent $i$ .

Execution agents generate feedback $f_t$ to guide upstream LLM modules through $f_t=g_\theta(\tau_{1:t})$, where $g_\theta$ processes the action-observation history $\tau_{1:t}$. These signals include coordination errors $E_t$, which indicate misalignment of sub-tasks; grounding uncertainty $U_t$, measured as entropy over grounded symbol embeddings; and re-planning triggers $R_t$, which flag the need for sub-task reordering. These signals can reflect inconsistencies between sub-task objectives, the ambiguity of symbols in different contexts, and the need to adjust previous sub-task sequencing.
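The three feedback signals can be illustrated with a toy computation. The text states only that $U_t$ is an entropy; the specific choices below (a misalignment rate for $E_t$, a fixed threshold for $R_t$, and the function and parameter names) are assumptions made for this sketch rather than definitions from the paper.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def feedback_signals(grounding_probs, n_misaligned, n_subtasks, replan_threshold=0.5):
    """Return hypothetical versions of E_t, U_t, and R_t.

    E_t: fraction of sub-tasks that were misaligned (assumed definition).
    U_t: entropy of the distribution over candidate groundings.
    R_t: True when E_t exceeds replan_threshold (assumed trigger rule).
    """
    u_t = entropy(grounding_probs)
    e_t = n_misaligned / n_subtasks
    r_t = e_t > replan_threshold
    return {"E_t": e_t, "U_t": u_t, "R_t": r_t}

# Hypothetical step: 3 of 4 sub-tasks misaligned, ambiguous grounding.
signals = feedback_signals([0.5, 0.25, 0.25], n_misaligned=3, n_subtasks=4)
```

A sharply peaked grounding distribution gives an entropy near zero, so $U_t$ grows only when a symbol remains ambiguous across candidate groundings.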

Algorithm 1 ReMALIS: Recursive Multi-Agent Learning with Intention Sharing

1: Initialize LLM parameters $\theta,\phi,\omega$

2: Initialize agent policies $\pi_\xi$, communication channel $f_\Lambda$

3: Initialize grounding confusion matrix $C$, memory $M$

4: for each episode do

5: for each time step $t$ do

6: Observe states $s_t$ and feedback $f_{1:t}$ for all agents

7: Infer intentions $I_t$ from $s_t,f_{1:t}$ using $\mathrm{LLM}_\theta$

8: Propagate intentions $I_t$ through channel $f_\Lambda$

9: Compute grounded embeddings $e_t=g_\phi(s_t,I_t,f_{1:t})$

10: Predict sub-tasks $\Sigma_{t+1}=p_\theta(I_t,e_t,f_{1:t})$

11: Generate actions $a_t=a_\omega(e_t,\Sigma_{t+1},f_{1:t})$

12: Execute actions $a_t$ and observe rewards $r_t$, new states $s_{t+1}$

13: Encode coordination patterns $c_t=\Phi(h^{\mathrm{exec}}_t)$

14: Update grounding confusion $C_t,M_t$ using $c_t$

15: Update policies $\pi_\xi$ using $R$ and auxiliary loss $L_{\mathrm{aux}}$

16: Update LLM $\theta,\phi,\omega$ using $L_{\mathrm{RL}},L_{\mathrm{confusion}}$

17: end for

18: end for

### 3.3 Execution: Integration with Reasoning Agents

#### 3.3.1 Agent Policy Generation

We parameterize agent policies $\pi_\theta(a_t\mid s_t,I_t,c_{1:t})$ using an LLM with weights $\theta$. At each time step, the LLM takes as input the agent's state $s_t$, intention $I_t$, and coordination feedback $c_{1:t}$. The output is a distribution over the next actions $a_t$:

$$
\pi_{\theta}(a_t\mid s_t,I_t,c_{1:t})=\mathrm{LLM}_{\theta}(s_t,I_t,c_{1:t}). \tag{7}
$$

To leverage agent feedback $f_{1:t}$, we employ an auxiliary regularization model $\hat{\pi}_\phi(a_t\mid s_t,f_{1:t})$:

$$
L_{\mathrm{aux}}(\theta;s_t,f_{1:t})=\mathrm{MSE}\big(\pi_{\theta}(s_t),\hat{\pi}_{\phi}(s_t,f_{1:t})\big), \tag{8}
$$

where $\hat{π}_φ$ is a feedback-conditioned policy approximation. The training loss to optimize $θ$ is:

$$
L(\theta)=L_{\mathrm{RL}}(\theta)+\lambda L_{\mathrm{aux}}(\theta), \tag{9}
$$

where $L_{\mathrm{RL}}$ is the reinforcement learning objective and $\lambda$ is a weighting factor.
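The combination in Eqs. (8) and (9) can be sketched as follows: the auxiliary term is the mean squared error between the action distribution of the main policy and that of the feedback-conditioned approximation, and it is added to the RL loss with weight $\lambda$. The RL loss is treated as a given scalar, and the distributions and the default $\lambda$ are hypothetical values.

```python
def mse(p, q):
    """Mean squared error between two equal-length probability vectors."""
    return sum((a - b) ** 2 for a, b in zip(p, q)) / len(p)

def total_loss(rl_loss, policy_probs, feedback_probs, lam=0.1):
    """L = L_RL + lambda * L_aux, with L_aux computed as the MSE of Eq. (8)."""
    return rl_loss + lam * mse(policy_probs, feedback_probs)

# Hypothetical action distributions over 3 actions.
pi_theta = [0.6, 0.3, 0.1]   # main policy output
pi_hat = [0.5, 0.4, 0.1]     # feedback-conditioned approximation
loss = total_loss(rl_loss=1.2, policy_probs=pi_theta, feedback_probs=pi_hat)
```

When the two distributions agree, the auxiliary term vanishes and the loss reduces to the RL objective alone.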

#### 3.3.2 Grounding Strategy Adjustment

We model action dependencies using a graph neural policy module $h_t^{a}=\mathrm{GNN}(s_t,a)$, where $h_t^{a}$ models interactions between action $a$ and the state $s_t$. The policy is then given by $\pi_\theta(a_t\mid s_t)=\prod_{i=1}^{|A|}h_t^{a_i}$. This captures the relational structure in the action space, enabling coordinated action generation conditioned on agent communication.

The coordination feedback $c_t$ is used to guide adjustments in the grounding module’s strategies. We define a grounding confusion matrix $C_t$ , where $C_t(i,j)$ represents grounding errors between concepts $i$ and $j$ . The confusion matrix constrains LLM grounding as:

$$
f_{\phi}(s_t,I_t)=\mathrm{LLM}_{\phi}(s_t,I_t)\odot\lambda C_t, \tag{10}
$$

where $\odot$ is element-wise multiplication and $λ$ controls the influence of $C_t$ , reducing uncertainty on error-prone concept pairs.

We propose a modular regularization approach, with the grounding module $g_φ$ regularized by a coordination confusion estimator:

$$
L_{\mathrm{confusion}}=\frac{1}{N}\sum_{i,j}A_{\psi}(c_i,c_j)\cdot\mathrm{Conf}(c_i,c_j), \tag{11}
$$

where $L_{\mathrm{task}}$ is the task reward, $\mathrm{Conf}(c_i,c_j)$ measures confusion between concepts $c_i$ and $c_j$, and $A_\psi(c_i,c_j)$ are attention weights assigning importance based on grounding sensitivity.

An episodic confusion memory $M_t$ accumulates long-term grounding uncertainty statistics:

$$
M_t(i,j) = M_{t-1}(i,j) + \mathbb{I}\left(\mathrm{Confuse}(c_i, c_j)_t\right), \tag{12}
$$

where $\mathbb{I}(·)$ is an indicator function tracking confusion events. By regularizing with a coordination-focused confusion estimator and episodic memory, the grounding module adapts to avoid miscoordination.
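The confusion regularizer of Eq. (11) and the memory update of Eq. (12) can be sketched as follows. This is a minimal illustration, not the authors' implementation: the text does not define $N$, so it is assumed here to be the number of concept pairs, and the function names and toy matrices are hypothetical.

```python
from typing import Iterable, List, Tuple

Matrix = List[List[float]]


def confusion_loss(attn: Matrix, conf: Matrix) -> float:
    """Eq. (11): attention-weighted confusion averaged over N.

    Assumption: N is taken as the total number of (i, j) concept pairs.
    """
    n = sum(len(row) for row in conf)
    total = sum(
        a * c
        for a_row, c_row in zip(attn, conf)
        for a, c in zip(a_row, c_row)
    )
    return total / n


def update_memory(memory: List[List[int]],
                  confused_pairs: Iterable[Tuple[int, int]]) -> List[List[int]]:
    """Eq. (12): add 1 to M(i, j) for each pair confused at the current step."""
    new_memory = [row[:] for row in memory]   # leave the previous memory untouched
    for i, j in set(confused_pairs):          # indicator: each pair counts once per step
        new_memory[i][j] += 1
    return new_memory


# Toy example with two concepts (hypothetical values).
attention = [[0.5, 0.5], [1.0, 0.0]]
confusion = [[0.0, 0.4], [0.2, 0.0]]
print(round(confusion_loss(attention, confusion), 4))

m0 = [[0, 0], [0, 0]]
m1 = update_memory(m0, [(0, 1)])
m2 = update_memory(m1, [(0, 1), (1, 0)])
print(m2)
```

Copying the memory before updating keeps earlier snapshots intact, which is convenient when per-episode statistics need to be compared.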

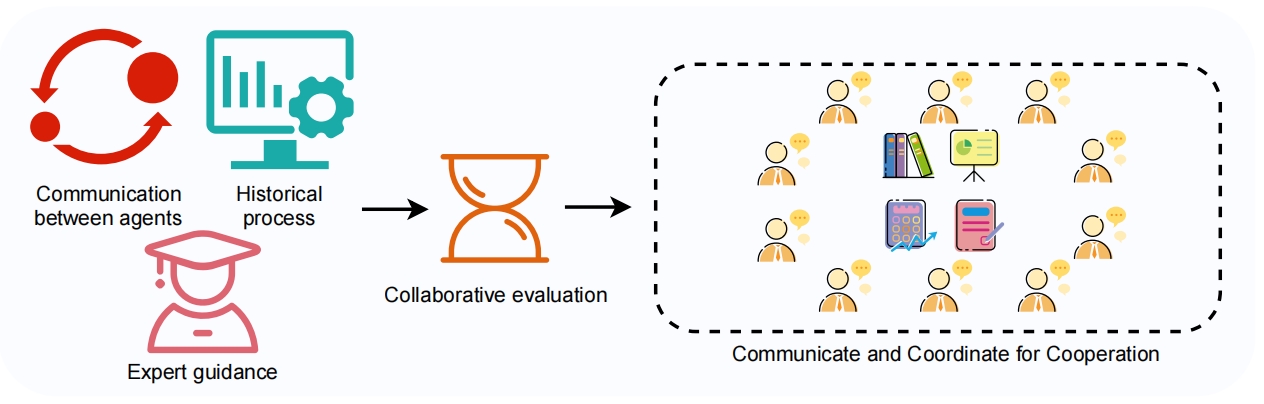

### 3.4 Collective Learning and Adaptation

The coordination feedback signals $c_t$ and interpretability signals $E_t,U_t,R_t$ play a crucial role in enabling the LLM agents to adapt and learn collectively. By incorporating these signals into the training process, the agents can adjust their strategies and policies to better align with the emerging coordination patterns and requirements of the collaborative tasks.

The collective learning process can be formalized as an optimization problem, where the goal is to minimize the following objective function $L(η,γ,ζ,ξ) = \mathbb{E}_{s_t, I_t, f_{1:t}}\left[αU_t + βE_t - R\right] + Ω(η,γ,ζ,ξ)$. Here, $α$ and $β$ are weighting factors that balance the contributions of the grounding uncertainty $U_t$ and coordination errors $E_t$, respectively. The team reward $R$ is maximized to encourage collaborative behavior. The term $Ω(η,γ,ζ,ξ)$ represents regularization terms or constraints on the model parameters to ensure stable and robust learning.

The objective function $L$ is defined over the current state $s_t$, the interpretability signals $I_t = \{E_t, U_t, R_t\}$, and the trajectory of feedback signals $f_{1:t} = \{c_1, I_1, …, c_t, I_t\}$ up to the current time step $t$. The expectation $\mathbb{E}_{s_t, I_t, f_{1:t}}[·]$ is taken over the distribution of states, interpretability signals, and feedback signal trajectories encountered during training.
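One way to read this objective is as a sample average over training experience plus a regularization term. The sketch below estimates it from a batch of $(U_t, E_t, R)$ triples; the function name `collective_objective`, the scalar `reg` standing in for $Ω$, and the sample values are assumptions made purely for illustration.

```python
from typing import Iterable, Tuple


def collective_objective(samples: Iterable[Tuple[float, float, float]],
                         alpha: float,
                         beta: float,
                         reg: float = 0.0) -> float:
    """Monte Carlo estimate of E[alpha * U + beta * E - R] + Omega.

    Each sample is a (U_t, E_t, R) triple; `reg` is a precomputed scalar
    standing in for the regularization term Omega (an assumption).
    """
    batch = list(samples)
    if not batch:
        raise ValueError("at least one sample is required")
    mean_term = sum(alpha * u + beta * e - r for u, e, r in batch) / len(batch)
    return mean_term + reg


# Toy batch: (grounding uncertainty, coordination error, team reward).
batch = [(0.2, 0.1, 1.0), (0.4, 0.3, 0.5)]
print(round(collective_objective(batch, alpha=1.0, beta=2.0, reg=0.1), 4))
```

Because the reward enters with a negative sign, lowering this value corresponds to raising the team reward while reducing uncertainty and coordination errors.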

| Method | Web: Easy | Web: Medium | Web: Hard | Web: All | TFP: Easy | TFP: Medium | TFP: Hard | TFP: Hell |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| **GPT-3.5-Turbo** | | | | | | | | |
| CoT | 65.77 | 51.62 | 32.45 | 17.36 | 81.27 | 68.92 | 59.81 | 41.27 |
| Zero-Shot Plan | 57.61 | 52.73 | 28.92 | 14.58 | 82.29 | 63.77 | 55.39 | 42.38 |
| **Llama2-7B** | | | | | | | | |
| CoT | 59.83 | 54.92 | 30.38 | 15.62 | 82.73 | 65.81 | 57.19 | 44.58 |
| ReAct | 56.95 | 41.86 | 27.59 | 13.48 | 81.15 | 61.65 | 53.97 | 43.25 |
| ART | 62.51 | 52.34 | 33.81 | 18.53 | 81.98 | 63.23 | 51.78 | 46.83 |
| ReWOO | 63.92 | 53.17 | 34.95 | 19.37 | 82.12 | 71.38 | 61.23 | 47.06 |
| AgentLM | 62.14 | 46.75 | 30.84 | 15.98 | 82.96 | 66.03 | 57.16 | 43.91 |
| FireAct | 64.03 | 50.68 | 32.78 | 17.49 | 83.78 | 68.19 | 58.94 | 45.06 |
| LUMOS | 66.27 | 53.81 | 35.37 | 19.53 | 84.03 | 71.75 | 62.57 | 51.49 |
| **Llama3-8B** | | | | | | | | |
| Code-Llama (PoT) | 64.85 | 49.49 | 32.16 | 17.03 | 83.34 | 68.47 | 59.15 | 52.64 |
| AgentLM | 66.77 | 51.45 | 31.59 | 16.58 | 85.26 | 71.81 | 58.68 | 53.39 |
| FiReAct | 68.92 | 53.27 | 32.95 | 17.64 | 84.11 | 72.15 | 58.63 | 51.65 |
| DGN | 69.15 | 54.78 | 33.63 | 18.17 | 83.42 | 71.08 | 62.34 | 53.57 |
| LToS | 68.48 | 55.03 | 33.06 | 17.71 | 85.77 | 74.61 | 59.37 | 54.81 |
| AUTOACT | 67.62 | 56.25 | 31.84 | 16.79 | 87.89 | 76.29 | 58.94 | 52.87 |
| ReMALIS (Ours) | 73.92 | 58.64 | 38.37 | 21.42 | 89.15 | 77.62 | 64.53 | 55.37 |

Table 1: Comparative analysis of the ReMALIS framework against single-agent baselines and contemporary methods across two datasets

## 4 Experiments

### 4.1 Datasets

To assess the performance of our models, we conducted evaluations using two large-scale real-world datasets: the traffic flow prediction (TFP) dataset and the web-based activities dataset.

The TFP dataset comprises 100,000 traffic scenarios, each accompanied by corresponding flow outcomes. Each example is detailed with descriptions of road conditions, vehicle count, weather, and traffic control measures, and its traffic flow is labeled as smooth, congested, or jammed. The raw data was sourced from traffic cameras, incident reports, and simulations, and underwent preprocessing to normalize entities and eliminate duplicates.

The web activities dataset contains over 500,000 examples of structured web interactions such as booking flights, scheduling appointments, and making reservations. Each activity follows a template with multiple steps like searching, selecting, filling forms, and confirming. User utterances and system responses were extracted to form the input-output pairs across 150 domains, originating from real anonymized interactions with chatbots, virtual assistants, and website frontends.

### 4.2 Implementation Details

To handle the computational demands of training our framework with LLMs, we employ 8 Nvidia A800-80G GPUs Chen et al. (2024) under the DeepSpeed Aminabadi et al. (2022) training framework, which can effectively accommodate the extensive parameter spaces and activations required by our framework’s LLM components and multi-agent architecture Rasley et al. (2020).

For the TFP dataset, we classified the examples into four difficulty levels: “Easy”, “Medium”, “Hard”, and “Hell”. The “Easy” level comprises small grid networks with low, stable vehicle arrival rates. The “Medium” level includes larger grids with variable arrival rates. “Hard” tasks feature large, irregular networks with highly dynamic arrival rates and complex intersection configurations. The “Hell” level introduces challenges such as partially observable states, changing road conditions, and fully decentralized environments.

For the web activities dataset, we divided the tasks into “Easy”, “Medium”, “Hard”, and “All” levels. “Easy” tasks required basic single-click or short phrase interactions. “Medium” involved complex multi-page sequences like form submissions. “Hard” tasks demanded significant reasoning through ambiguous, dense websites. The “All” level combined tasks across the full difficulty spectrum.

The dataset was divided into 80% for training, 10% for validation, and 10% for testing, with examples shuffled. These large-scale datasets offer a challenging and naturalistic benchmark to evaluate our multi-agent framework on complex, real-world prediction and interaction tasks.
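A shuffled 80/10/10 split of this kind can be sketched as below. The function name `split_dataset`, the fixed seed, and the use of integer IDs as stand-in examples are assumptions for illustration; the paper does not specify how the shuffle was seeded or implemented.

```python
import random
from typing import Iterable, List, Tuple, TypeVar

T = TypeVar("T")


def split_dataset(examples: Iterable[T],
                  seed: int = 0,
                  train_frac: float = 0.8,
                  val_frac: float = 0.1) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle examples and split them into train / validation / test lists."""
    items = list(examples)
    random.Random(seed).shuffle(items)        # local RNG keeps the split reproducible
    n_train = int(len(items) * train_frac)
    n_val = int(len(items) * val_frac)
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]            # remainder goes to the test set
    return train, val, test


train, val, test = split_dataset(range(1000), seed=0)
print(len(train), len(val), len(test))
```

Assigning the remainder to the test set guarantees that every example lands in exactly one split even when the dataset size is not a multiple of ten.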

### 4.3 Results and Analysis

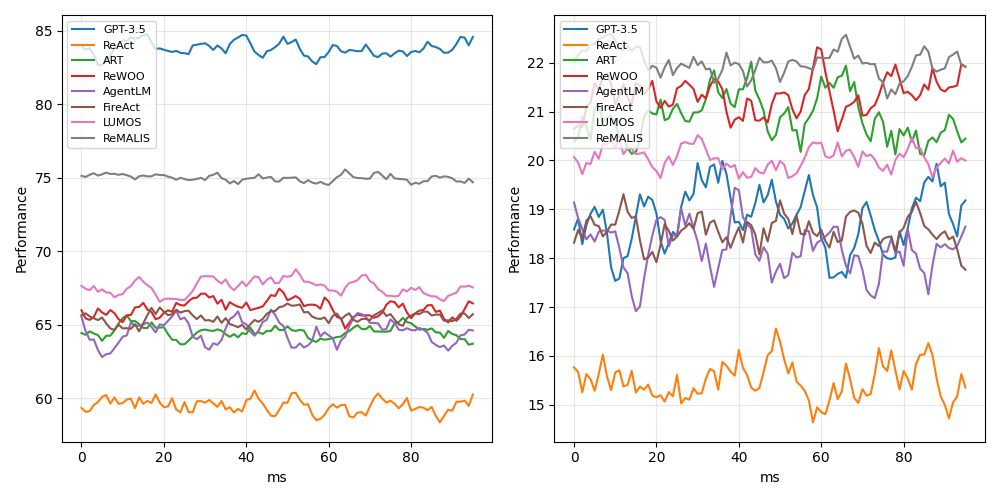

Table 1 displays the principal experimental results of our ReMALIS framework in comparison with various single-agent baselines and contemporary methods using the web activities dataset. We evaluated the models across four levels of task difficulty: “Easy”, “Medium”, “Hard”, and “All”.

The results from our comparative analysis indicate that ReMALIS (7B), equipped with a 7B parameter LLM backbone, outperforms competing methods. On the comprehensive “All” difficulty level of the web activities dataset, which aggregates tasks across a range of complexities, ReMALIS achieved 21.42% in Table 1, surpassing the second-highest scoring method, LUMOS, which scored 19.53%. ReMALIS (7B) also outperformed AUTOACT, which utilizes a larger 13B parameter model and scored 16.79%, by 4.63 percentage points. These findings highlight the efficacy of ReMALIS’s parameter-efficient design and its multi-agent collaborative training approach, which allow it to outperform larger single-agent LLMs.

Notably, ReMALIS (7B) also exceeded the performance of GPT-3.5 (Turbo), a substantially larger foundation model, across all difficulty levels. On the “All” level in Table 1, ReMALIS’s 21.42% surpassed GPT-3.5 CoT’s 17.36% by over 4 points. This indicates that ReMALIS’s coordination mechanisms transform relatively modest LLMs into highly capable collaborative agents.

Despite their larger sizes, single-agent approaches like GPT-3.5 CoT, ReAct, and AgentLM significantly underperformed. Notably, even the advanced single-agent method LUMOS (13B) could not rival the performance of ReMALIS (7B). We attribute the advantage of ReMALIS to its specialized multi-agent design and to features such as intention propagation, bidirectional feedback, and recursive reasoning. On complex “Hard” tasks that required extensive reasoning, ReMALIS achieved 38.37% in Table 1, surpassing LUMOS (35.37%) by 3 percentage points, which highlights the benefits of its multi-agent architecture and collaborative learning mechanisms.

The strong performance of ReMALIS on the Traffic Flow Prediction (TFP) dataset can likewise be attributed to its design and its integration of the techniques described above. On the “Easy” difficulty level, ReMALIS achieved an accuracy of 89.15%, outperforming the second-best method, AUTOACT (87.89%), by 1.26 percentage points. In the “Medium” category, ReMALIS reached 77.62%, surpassing AUTOACT’s 76.29% by 1.33 percentage points. Even on the most challenging “Hard” and “Hell” levels, ReMALIS maintained its lead with accuracies of 64.53% and 55.37%, respectively, outperforming the next best methods in the Llama3-8B group of Table 1, DGN (62.34%) and LToS (54.81%), by 2.19 and 0.56 percentage points. (LUMOS, listed in the Llama2-7B group, reaches 62.57% on “Hard”, which narrows that margin to 1.96 percentage points.)
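The TFP margins quoted above can be recomputed directly from Table 1. The short script below does so for the Llama3-8B group only; the dictionary layout and the helper name `margins_over_runner_up` are illustrative choices, and the scores are copied from the table.

```python
from typing import Dict, Tuple

# TFP accuracy (%) for the Llama3-8B group, copied from Table 1.
TFP_SCORES: Dict[str, Dict[str, float]] = {
    "Code-Llama (PoT)": {"Easy": 83.34, "Medium": 68.47, "Hard": 59.15, "Hell": 52.64},
    "AgentLM":          {"Easy": 85.26, "Medium": 71.81, "Hard": 58.68, "Hell": 53.39},
    "FiReAct":          {"Easy": 84.11, "Medium": 72.15, "Hard": 58.63, "Hell": 51.65},
    "DGN":              {"Easy": 83.42, "Medium": 71.08, "Hard": 62.34, "Hell": 53.57},
    "LToS":             {"Easy": 85.77, "Medium": 74.61, "Hard": 59.37, "Hell": 54.81},
    "AUTOACT":          {"Easy": 87.89, "Medium": 76.29, "Hard": 58.94, "Hell": 52.87},
    "ReMALIS":          {"Easy": 89.15, "Medium": 77.62, "Hard": 64.53, "Hell": 55.37},
}


def margins_over_runner_up(scores: Dict[str, Dict[str, float]],
                           target: str = "ReMALIS") -> Dict[str, Tuple[str, float]]:
    """For each level, return (best other method, target's margin in points)."""
    result = {}
    for level, target_score in scores[target].items():
        runner_up, best = max(
            ((name, row[level]) for name, row in scores.items() if name != target),
            key=lambda pair: pair[1],
        )
        result[level] = (runner_up, round(target_score - best, 2))
    return result


for level, (method, margin) in margins_over_runner_up(TFP_SCORES).items():
    print(f"{level}: +{margin} points over {method}")
```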

### 4.4 Ablation Studies

1) The Impact on Improving Multi-Agent Coordination Accuracy. We conduct ablation studies to evaluate the impact of each component within the ReMALIS framework. The observations can be found in Table 2. Excluding intention propagation decreases accuracy by over 6% across both datasets, reflecting the difficulty of achieving common grounding among agents without shared local beliefs. This underscores the importance of intention sharing for emergent team behaviors.

The absence of bidirectional coordination channels leads to a 4.37% decline in performance across various metrics, illustrating the importance of execution-level signals in shaping planning and grounding strategies. Without feedback coordination, agents become less responsive to new scenarios that require re-planning.

Table 2: Ablation studies on Traffic and Web datasets

| Dataset | Method | | | |
| --- | --- | --- | --- | --- |
| Traffic | Single Agent Baseline | 42.5% | 0.217 | 0.384 |
| | Intention Propagation | 47.3% | 0.251 | 0.425 |
| | Bidirectional Feedback | 49.8% | 0.278 | 0.461 |
| | Recursive Reasoning | 53.2% | 0.311 | 0.503 |
| | ReMALIS (Full) | 58.7% | 0.342 | 0.538 |
| Web | Single Agent Baseline | 38.9% | 0.255 | 0.416 |
| | Intention Propagation | 42.7% | 0.283 | 0.453 |
| | Bidirectional Feedback | 46.3% | 0.311 | 0.492 |
| | Recursive Reasoning | 50.6% | 0.345 | 0.531 |
| | ReMALIS (Full) | 55.4% | 0.379 | 0.567 |

Substituting recursive reasoning with convolutional and recurrent neural networks reduces contextual inference accuracy by 5.86%. Non-recursive agents display short-sighted behavior compared to the holistic reasoning enabled by recursive transformer modeling. This emphasizes that recursive architectures are vital for complex temporal dependencies.

![Figure 3 image: two side-by-side line charts, each with x-axis "ms" and y-axis "Performance" (left panel scaled 60 to 85, right panel 15 to 22), with legend entries GPT-3.5, ReAct, ART, ReWOO, AgentLM, FireAct, LUMOS, and ReMALIS](extracted/5737747/m1.png)

Figure 3: Comparative performance evaluation across varying task difficulty levels for the web activities dataset, which indicates the accuracy scores achieved by ReMALIS and several state-of-the-art baselines.

Table 3: Ablation on agent coordination capabilities

| Method | Aligned sub-tasks: Easy | Aligned sub-tasks: Medium | Aligned sub-tasks: Hard | Coordination time (ms): Easy | Coordination time (ms): Medium | Coordination time (ms): Hard |
| --- | --- | --- | --- | --- | --- | --- |
| No Communication | 31% | 23% | 17% | 592 | 873 | 1198 |
| REACT | 42% | 34% | 29% | 497 | 732 | 984 |
| AgentLM | 48% | 39% | 32% | 438 | 691 | 876 |
| FiReAct | 58% | 47% | 37% | 382 | 569 | 745 |
| Basic Propagation | 68% | 53% | 41% | 314 | 512 | 691 |
| Selective Propagation | 79% | 62% | 51% | 279 | 438 | 602 |
| Full Intention Sharing | 91% | 71% | 62% | 248 | 386 | 521 |

2) The Impact on Improving Multi-Agent Coordination Capability. As presented in Table 3, on aligned sub-task percentage, the proposed Basic Propagation, Selective Propagation, and Full Intention Sharing methods consistently outperform baseline models like REACT and AgentLM across varying difficulty levels (“Easy”, “Medium”, and “Hard”). For example, Full Intention Sharing achieves alignment of 91%, 71%, and 62% across these levels, respectively. These results are substantially higher than those obtained with no communication (31%, 23%, and 17%).

Similarly, coordination time metrics exhibit major efficiency gains from intention propagation. On “Hard” tasks, Full Intention Sharing reduces coordination time to 521 ms, a reduction of about 57% from the 1198 ms for No Communication. As task complexity increases from easy to hard, the coordination time saved relative to No Communication in Table 3 grows from 344 ms to 677 ms. This indicates that intention sharing mitigates growing coordination delays in difficult scenarios.
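The coordination-time comparison can be checked with a few lines of arithmetic on the Table 3 values. The snippet below compares Full Intention Sharing against No Communication at each difficulty level; the dictionary layout and the helper name `time_savings` are illustrative choices.

```python
from typing import Dict, Tuple

# Coordination time in ms, copied from Table 3.
COORD_TIME_MS: Dict[str, Dict[str, int]] = {
    "No Communication":       {"Easy": 592, "Medium": 873, "Hard": 1198},
    "Full Intention Sharing": {"Easy": 248, "Medium": 386, "Hard": 521},
}


def time_savings(baseline: Dict[str, int],
                 method: Dict[str, int]) -> Dict[str, Tuple[int, float]]:
    """Per level: (ms saved vs. baseline, percentage reduction rounded to 0.1)."""
    return {
        level: (
            baseline[level] - method[level],
            round(100 * (baseline[level] - method[level]) / baseline[level], 1),
        )
        for level in baseline
    }


savings = time_savings(COORD_TIME_MS["No Communication"],
                       COORD_TIME_MS["Full Intention Sharing"])
for level, (saved_ms, pct) in savings.items():
    print(f"{level}: {saved_ms} ms saved ({pct}% reduction)")
```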

The propagation mechanisms also show clear incremental improvements as information sharing becomes more targeted. As agents propagate more precise intentions to relevant teammates, both sub-task alignment and coordination efficiency improve: each step from Basic Propagation to Selective Propagation to Full Intention Sharing raises the aligned sub-task percentage and lowers coordination time at every difficulty level in Table 3.

## 5 Conclusion

In this paper, we introduce a novel framework, ReMALIS, designed to enhance collaborative capabilities within multi-agent systems using LLMs. Our approach incorporates three principal innovations: intention propagation for establishing a shared understanding among agents, bidirectional coordination channels to adapt reasoning processes in response to team dynamics, and recursive reasoning architectures that provide agents with advanced contextual grounding and planning capabilities necessary for complex coordination tasks. Experimental results indicate that ReMALIS significantly outperforms several baseline methods, underscoring the efficacy of cooperative multi-agent AI systems. By developing frameworks that enable LLMs to acquire cooperative skills analogous to human team members, we advance the potential for LLM agents to manage flexible coordination in complex collaborative environments effectively.

## 6 Limitations

While ReMALIS demonstrates promising results in collaborative multi-agent tasks, it has several limitations. First, our framework relies on a centralized training paradigm, which may hinder scalability in fully decentralized environments. Second, the current implementation does not explicitly handle dynamic agent arrival or departure during execution, which could impact coordination in real-world applications. Third, the recursive reasoning component may struggle with long-term dependencies and planning horizons beyond a certain time frame.

## References

- Aminabadi et al. (2022) Reza Yazdani Aminabadi et al. 2022. Deepspeed-inference: Enabling efficient inference of transformer models at unprecedented scale. In SC22: International Conference for High Performance Computing, Networking, Storage and Analysis.

- Chaka (2023) Chaka Chaka. 2023. Generative ai chatbots-chatgpt versus youchat versus chatsonic: Use cases of selected areas of applied english language studies. International Journal of Learning, Teaching and Educational Research, 22(6):1–19.

- Chebotar et al. (2023) Yevgen Chebotar et al. 2023. Q-transformer: Scalable offline reinforcement learning via autoregressive q-functions. In Conference on Robot Learning. PMLR.

- Chen et al. (2023) Baian Chen et al. 2023. Fireact: Toward language agent fine-tuning. arXiv preprint arXiv:2310.05915.

- Chen et al. (2024) Yushuo Chen et al. 2024. Towards coarse-to-fine evaluation of inference efficiency for large language models. arXiv preprint arXiv:2404.11502.

- Chiu et al. (2024) Yu Ying Chiu et al. 2024. A computational framework for behavioral assessment of llm therapists. arXiv preprint arXiv:2401.00820.

- Dong et al. (2023) Yihong Dong et al. 2023. Codescore: Evaluating code generation by learning code execution. arXiv preprint arXiv:2301.09043.

- Du et al. (2023) Yali Du et al. 2023. A review of cooperation in multi-agent learning. arXiv preprint arXiv:2312.05162.

- Fan et al. (2020) Cheng Fan et al. 2020. Statistical investigations of transfer learning-based methodology for short-term building energy predictions. Applied Energy, 262:114499.

- Foerster et al. (2018) Jakob Foerster et al. 2018. Counterfactual multi-agent policy gradients. In Proceedings of the AAAI conference on artificial intelligence, volume 32.

- Gao et al. (2023) Yunfan Gao et al. 2023. Retrieval-augmented generation for large language models: A survey. arXiv preprint arXiv:2312.10997.

- Hartmann et al. (2022) Valentin N. Hartmann et al. 2022. Long-horizon multi-robot rearrangement planning for construction assembly. IEEE Transactions on Robotics, 39(1):239–252.

- He et al. (2021) Junxian He et al. 2021. Towards a unified view of parameter-efficient transfer learning. arXiv preprint arXiv:2110.04366.

- Hu and Sadigh (2023) Hengyuan Hu and Dorsa Sadigh. 2023. Language instructed reinforcement learning for human-ai coordination. arXiv preprint arXiv:2304.07297.

- Huang et al. (2022) Baichuan Huang, Abdeslam Boularias, and Jingjin Yu. 2022. Parallel monte carlo tree search with batched rigid-body simulations for speeding up long-horizon episodic robot planning. In 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE.

- Khamparia et al. (2021) Aditya Khamparia et al. 2021. An internet of health things-driven deep learning framework for detection and classification of skin cancer using transfer learning. Transactions on Emerging Telecommunications Technologies, 32(7):e3963.

- Lee and Perret (2022) Irene Lee and Beatriz Perret. 2022. Preparing high school teachers to integrate ai methods into stem classrooms. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 36.

- Li et al. (2020) Chuan Li et al. 2020. A systematic review of deep transfer learning for machinery fault diagnosis. Neurocomputing, 407:121–135.

- Li et al. (2022) Weihua Li et al. 2022. A perspective survey on deep transfer learning for fault diagnosis in industrial scenarios: Theories, applications and challenges. Mechanical Systems and Signal Processing, 167:108487.

- Loey et al. (2021) Mohamed Loey et al. 2021. A hybrid deep transfer learning model with machine learning methods for face mask detection in the era of the covid-19 pandemic. Measurement, 167:108288.

- Lotfollahi et al. (2022) Mohammad Lotfollahi et al. 2022. Mapping single-cell data to reference atlases by transfer learning. Nature biotechnology, 40(1):121–130.

- Lu et al. (2023) Pan Lu et al. 2023. Chameleon: Plug-and-play compositional reasoning with large language models. arXiv preprint arXiv:2304.09842.

- Lyu et al. (2021) Xueguang Lyu et al. 2021. Contrasting centralized and decentralized critics in multi-agent reinforcement learning. arXiv preprint arXiv:2102.04402.

- Mao et al. (2022) Weichao Mao et al. 2022. On improving model-free algorithms for decentralized multi-agent reinforcement learning. In International Conference on Machine Learning. PMLR.

- Martini et al. (2021) Franziska Martini et al. 2021. Bot, or not? comparing three methods for detecting social bots in five political discourses. Big data & society, 8(2):20539517211033566.

- Miao et al. (2023) Ning Miao, Yee Whye Teh, and Tom Rainforth. 2023. Selfcheck: Using llms to zero-shot check their own step-by-step reasoning. arXiv preprint arXiv:2308.00436.

- Qiu et al. (2024) Xihe Qiu et al. 2024. Chain-of-lora: Enhancing the instruction fine-tuning performance of low-rank adaptation on diverse instruction set. IEEE Signal Processing Letters.

- Raman et al. (2022) Shreyas Sundara Raman et al. 2022. Planning with large language models via corrective re-prompting. In NeurIPS 2022 Foundation Models for Decision Making Workshop.

- Rana et al. (2023) Krishan Rana et al. 2023. Sayplan: Grounding large language models using 3d scene graphs for scalable task planning. arXiv preprint arXiv:2307.06135.

- Rashid et al. (2020) Tabish Rashid et al. 2020. Weighted qmix: Expanding monotonic value function factorisation for deep multi-agent reinforcement learning. In Advances in neural information processing systems 33, pages 10199–10210.

- Rasley et al. (2020) Jeff Rasley et al. 2020. Deepspeed: System optimizations enable training deep learning models with over 100 billion parameters. In Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining.

- Saber et al. (2021) Abeer Saber et al. 2021. A novel deep-learning model for automatic detection and classification of breast cancer using the transfer-learning technique. IEEE Access, 9:71194–71209.

- Schroeder de Witt et al. (2019) Christian Schroeder de Witt et al. 2019. Multi-agent common knowledge reinforcement learning. In Advances in Neural Information Processing Systems 32.

- Schuchard and Crooks (2021) Ross J. Schuchard and Andrew T. Crooks. 2021. Insights into elections: An ensemble bot detection coverage framework applied to the 2018 us midterm elections. Plos one, 16(1):e0244309.

- Schumann et al. (2024) Raphael Schumann et al. 2024. Velma: Verbalization embodiment of llm agents for vision and language navigation in street view. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 38.

- Shanahan et al. (2023) Murray Shanahan, Kyle McDonell, and Laria Reynolds. 2023. Role play with large language models. Nature, 623(7987):493–498.

- Sharan et al. (2023) S. P. Sharan, Francesco Pittaluga, and Manmohan Chandraker. 2023. Llm-assist: Enhancing closed-loop planning with language-based reasoning. arXiv preprint arXiv:2401.00125.

- Shen et al. (2020) Sheng Shen et al. 2020. Deep convolutional neural networks with ensemble learning and transfer learning for capacity estimation of lithium-ion batteries. Applied Energy, 260:114296.

- Singh et al. (2023) Ishika Singh et al. 2023. Progprompt: Generating situated robot task plans using large language models. In 2023 IEEE International Conference on Robotics and Automation (ICRA). IEEE.

- Song et al. (2023) Chan Hee Song et al. 2023. Llm-planner: Few-shot grounded planning for embodied agents with large language models. In Proceedings of the IEEE/CVF International Conference on Computer Vision.

- Topsakal and Akinci (2023) Oguzhan Topsakal and Tahir Cetin Akinci. 2023. Creating large language model applications utilizing langchain: A primer on developing llm apps fast. In International Conference on Applied Engineering and Natural Sciences, volume 1.

- Valmeekam et al. (2022) Karthik Valmeekam et al. 2022. Large language models still can’t plan (a benchmark for llms on planning and reasoning about change). arXiv preprint arXiv:2206.10498.

- Wang et al. (2024a) Haoyu Wang et al. 2024a. Carbon-based molecular properties efficiently predicted by deep learning-based quantum chemical simulation with large language models. Computers in Biology and Medicine, page 108531.

- Wang et al. (2024b) Haoyu Wang et al. 2024b. Subequivariant reinforcement learning framework for coordinated motion control. arXiv preprint arXiv:2403.15100.

- Wang et al. (2023) Lei Wang et al. 2023. A survey on large language model based autonomous agents. arXiv preprint arXiv:2308.11432.

- Wang et al. (2020) Tonghan Wang et al. 2020. Roma: Multi-agent reinforcement learning with emergent roles. arXiv preprint arXiv:2003.08039.

- Wen et al. (2023) Hao Wen et al. 2023. Empowering llm to use smartphone for intelligent task automation. arXiv preprint arXiv:2308.15272.

- Xi et al. (2023) Zhiheng Xi et al. 2023. The rise and potential of large language model based agents: A survey. arXiv preprint arXiv:2309.07864.

- Yao et al. (2022) Shunyu Yao et al. 2022. React: Synergizing reasoning and acting in language models. arXiv preprint arXiv:2210.03629.

- Yin et al. (2023) Da Yin et al. 2023. Lumos: Learning agents with unified data, modular design, and open-source llms. arXiv preprint arXiv:2311.05657.

- Yu et al. (2023) Shengcheng Yu et al. 2023. Llm for test script generation and migration: Challenges, capabilities, and opportunities. In 2023 IEEE 23rd International Conference on Software Quality, Reliability, and Security (QRS). IEEE.

- Zeng et al. (2023) Fanlong Zeng et al. 2023. Large language models for robotics: A survey. arXiv preprint arXiv:2311.07226.

- Zhang and Gao (2023) Xuan Zhang and Wei Gao. 2023. Towards llm-based fact verification on news claims with a hierarchical step-by-step prompting method. arXiv preprint arXiv:2310.00305.

- Zhao et al. (2024) Andrew Zhao et al. 2024. Expel: Llm agents are experiential learners. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 38.

- Zhu et al. (2023) Zhuangdi Zhu et al. 2023. Transfer learning in deep reinforcement learning: A survey. IEEE Transactions on Pattern Analysis and Machine Intelligence.

- Zhuang et al. (2020) Fuzhen Zhuang et al. 2020. A comprehensive survey on transfer learning. Proceedings of the IEEE, 109(1):43–76.

- Zimmer et al. (2021a) Matthieu Zimmer et al. 2021a. Learning fair policies in decentralized cooperative multi-agent reinforcement learning. In International Conference on Machine Learning. PMLR.

- Zimmer et al. (2021b) Matthieu Zimmer et al. 2021b. Learning fair policies in decentralized cooperative multi-agent reinforcement learning. In International Conference on Machine Learning. PMLR.

- Zou et al. (2023) Hang Zou et al. 2023. Wireless multi-agent generative ai: From connected intelligence to collective intelligence. arXiv preprint arXiv:2307.02757.

## Appendix A Related Work

### A.1 Single Agent Frameworks

Early agent frameworks such as Progprompt Singh et al. (2023) directly prompt large language models (LLMs) to plan, execute actions, and process feedback in a chained manner within one model Song et al. (2023). Despite its conceptual simplicity Valmeekam et al. (2022), an integrated framework imposes a substantial burden on a single LLM, leading to challenges in managing complex tasks Raman et al. (2022); Wang et al. (2024a).

To reduce the reasoning burden, recent works explore modular designs by separating high-level planning and low-level execution into different modules. For example, LUMOS Yin et al. (2023) consists of a planning module, a grounding module, and an execution module. The planning and grounding modules break down complex tasks into interpretable sub-goals and executable actions. FiReAct Chen et al. (2023) introduces a similar hierarchical structure, with a focus on providing step-by-step explanations Zhang and Gao (2023). Although partitioning a framework into modules that specialize in different skills is reasonable, existing modular frameworks still rely on a single agent for final action execution Miao et al. (2023); Qiu et al. (2024). Our work pushes this idea further by replacing the single execution agent with a cooperative team of multiple agents.

### A.2 Multi-Agent Reinforcement Learning

Collaborative multi-agent reinforcement learning has been studied to solve complex control or game-playing tasks. Representative algorithms include COMA Foerster et al. (2018), QMIX Rashid et al. (2020) and ROMA Wang et al. (2020). These methods enable decentralized execution of different agents but allow centralized training by sharing experiences or parameters Lyu et al. (2021). Drawing on this concept, our ReMALIS framework places greater emphasis on integrating modular LLMs to address complex language tasks. In ReMALIS, each execution agent specializes in specific semantic domains such as query, computation, or retrieval, and is coordinated through a communication module Mao et al. (2022).

The concept of multi-agent RL has recently influenced the design of conversational agents Zimmer et al. (2021a); Schumann et al. (2024). EnsembleBot Schuchard and Crooks (2021) utilizes multiple bots trained on distinct topics, coordinated by a routing model. However, this approach primarily employs a divide-and-conquer strategy with independent skills Martini et al. (2021), and communication within EnsembleBot predominantly involves one-way dispatching rather than bidirectional coordination. In contrast, our work focuses on fostering a more tightly integrated collaborative system for addressing complex problems Schroeder de Witt et al. (2019); Zimmer et al. (2021b).

### A.3 Integrated & Collaborative Learning

Integrated learning techniques originate from transfer learning Zhuang et al. (2020); Zhu et al. (2023), aiming to improve a target model by incorporating additional signals from other modalities Lotfollahi et al. (2022); Shanahan et al. (2023). For multi-agent systems, Li et al. (2022); Zhao et al. (2024) find that jointly training multiple agents boosts performance over separately trained independent agents Lee and Perret (2022). Recently, integrated learning has been used in single-agent frameworks such as Shen et al. (2020) and Loey et al. (2021), where auxiliary losses on interpretable outputs facilitate main model training through multi-tasking Khamparia et al. (2021); Saber et al. (2021).

Our work adopts integrated learning to train specialized execution agents that are semantically consistent. At the team level, a communication module learns to attentively aggregate and propagate messages across agents, which indirectly coordinates their strategies and behaviors Fan et al. (2020). Integrated and collaborative learning combines individual skills and leads to emergent collective intelligence, enhancing the overall reasoning and planning capabilities when dealing with complex tasks He et al. (2021); Li et al. (2020).

## Appendix B Methodology and Contributions

Based on the motivations and inspirations above, we propose the recursive multi-agent learning with intention sharing framework (ReMALIS), a multi-agent framework that uses integrated learning for communication and collaboration. The main contributions are:

1. We design a cooperative execution module with multiple agents trained by integrated learning. Different execution agents specialize in different semantic domains while understanding peer abilities, which reduces redundant capacities and yields a more efficient division of labor.

2. We propose an attentive communication module that propagates informative cues across specialized agents. The module coordinates agent execution strategies without explicit supervision, acting as a team leader.

3. The collaborative design allows ReMALIS to handle more complex tasks than single-agent counterparts. Each agent focuses on its own domain knowledge while collaborating closely with the others through communicative coordination, leading to strong emergent team intelligence.

4. We enable dynamic feedback loops from the communication module to the grounding module, as well as re-planning in the planning module, increasing adaptability when execution difficulties arise.

We expect the idea of integrating specialized collaborative agents with dynamic coordination mechanisms to inspire more future research toward developing intelligent collaborative systems beyond conversational agents.

## Appendix C Key variables and symbols

Table 4: Key variables and symbols in the proposed recursive multi-agent learning framework.

| Symbol | Description |
| --- | --- |
| $p_θ$ | Planning module parameterized by $θ$ |
| $s_t$ | Current sub-goal at time $t$ |
| $I_t$ | Current intention at time $t$ |
| $e_t$ | Grounded embedding at time $t$ |
| $f_t$ | Agent feedback at time $t$ |
| $g_φ$ | Grounding module parameterized by $φ$ |
| $π_{ξ_i}$ | Execution policy of agent $i$ parameterized by $ξ_i$ |
| $f_Λ$ | Intention propagation channel parameterized by $Λ$ |
| $m_{ij}$ | Message sent from agent $j$ to agent $i$ |
| $b_i(I_j \mid m_{ij})$ | Agent $i$'s belief over teammate $j$'s intention $I_j$ given message $m_{ij}$ |
| $R_c$ | Coordination reward |
| $π_ξ(a_i \mid s_i,I_i)$ | Execution agent policy conditioned on state $s_i$ and intention $I_i$ |
| $a_i$ | Action of agent $i$ |
| $s_i$ | State of agent $i$ |
| $I_i=(γ_i,Σ_i,π_i,δ_i)$ | Intention of agent $i$ |
| $γ_i$ | Current goal of agent $i$ |
| $Σ_i=\{σ_{i1},σ_{i2},…\}$ | Set of sub-goals for agent $i$ |
| $π_i(σ)$ | Probability distribution over possible next sub-goals for agent $i$ |
| $δ_i(σ)$ | Desired teammate assignment for sub-goal $σ$ of agent $i$ |

Table 4 summarizes the key variables and symbols used in the proposed recursive multi-agent learning framework called ReMALIS. It includes symbols representing various components like the planning module, grounding module, execution policies, intentions, goals, sub-goals, and the intention propagation channel.
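To make the intention tuple $I_i=(γ_i,Σ_i,π_i,δ_i)$ from Table 4 concrete, the following is a minimal sketch of how it could be held in memory. The class name `Intention`, its field names, the string/integer encodings, and the example values are all illustrative assumptions and are not taken from the ReMALIS implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Intention:
    """Hypothetical container for I_i = (gamma_i, Sigma_i, pi_i, delta_i)."""
    goal: str                               # gamma_i: current goal of agent i
    sub_goals: List[str]                    # Sigma_i: sub-goals of agent i
    next_sub_goal_probs: Dict[str, float]   # pi_i(sigma): distribution over next sub-goals
    teammate_assignment: Dict[str, int] = field(default_factory=dict)  # delta_i(sigma)

    def validate(self) -> None:
        # pi_i must be a probability distribution defined on Sigma_i.
        if set(self.next_sub_goal_probs) != set(self.sub_goals):
            raise ValueError("pi_i must be defined on exactly the sub-goals in Sigma_i")
        if abs(sum(self.next_sub_goal_probs.values()) - 1.0) > 1e-9:
            raise ValueError("pi_i must sum to 1")
        # delta_i may only assign sub-goals that exist in Sigma_i.
        if not set(self.teammate_assignment) <= set(self.sub_goals):
            raise ValueError("delta_i refers to an unknown sub-goal")

    def most_likely_sub_goal(self) -> str:
        # Sub-goal with the highest probability under pi_i.
        return max(self.next_sub_goal_probs, key=self.next_sub_goal_probs.get)


# Illustrative values only (assumed, not from the paper's experiments).
intention = Intention(
    goal="clear_intersection_queue",
    sub_goals=["extend_green", "notify_neighbor"],
    next_sub_goal_probs={"extend_green": 0.7, "notify_neighbor": 0.3},
    teammate_assignment={"notify_neighbor": 2},
)
intention.validate()
```

Keeping $π_i$ and $δ_i$ as mappings keyed by sub-goal makes the consistency checks with $Σ_i$ straightforward, at the cost of assuming that sub-goals are hashable labels.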

Table 5: Comparison of Traffic Network Complexity Levels

| Difficulty Level | Grid Size | Intersections | Arrival Rates | Phases per Intersection |
| --- | --- | --- | --- | --- |
| Easy | 3x3 | 9 | Low and stable (0.5 vehicles/s) | Less than 10 |
| Medium | 5x5 | 25 | Fluctuating (0.5-2 vehicles/s) | 10-15 |
| Hard | 8x8 | 64 | Highly dynamic (0.1 to 3 vehicles/s) | More than 15 |
| Hell | Irregular | 100+ | Extremely dynamic with spikes | More than 25 |

Table 6: Training hyperparameters and configurations

| Hyperparameter/Configuration | ReMALIS | LUMOS | AgentLM | GPT-3.5 |
| --- | --- | --- | --- | --- |
| Language Model Size | 7B | 13B | 6B | 175B |
| Optimizer | AdamW | Adam | AdamW | Adam |
| Learning Rate | 1e-4 | 2e-5 | 1e-4 | 2e-5 |
| Batch Size | 32 | 64 | 32 | 64 |
| Dropout | 0 | 0.1 | 0 | 0.1 |
| Number of Layers | 12 | 8 | 6 | 48 |
| Model Dimension | 768 | 512 | 768 | 1024 |
| Number of Heads | 12 | 8 | 12 | 16 |
| Training Epochs | 15 | 20 | 10 | 20 |
| Warmup Epochs | 1 | 2 | 1 | 2 |
| Weight Decay | 0.01 | 0.001 | 0.01 | 0.001 |
| Network Architecture | GNN | Transformer | Transformer | Transformer |
| Planning Module | GNN, 4 layers, 512 hidden size | 2-layer GNN, 1024 hidden size | - | - |
| Grounding Module | 6-layer Transformer, $d_{model}=768$ | 4-layer Transformer, $d_{model}=512$ | - | - |
| Execution Agents | 7 specialized, integrated training | Single agent | 8 agents | 4 agents |
| Intention Propagation | 4-layer GRU, 256 hidden size | - | - | - |
| Coordination Feedback | GAT, 2 heads, $α=0.2$ | - | - | - |
| Trainable Parameters | 5.37B | 6.65B | 4.61B | 17.75B |

## Appendix D Tasks Setup

### D.1 Traffic Control

We define four levels of difficulty for our traffic control tasks (Easy, Medium, Hard, and Hell), as summarized in Table 5.
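As an illustration, the difficulty levels of Table 5 could be written down as a small configuration object. The sketch below is an assumption about how one might encode them; `TrafficLevel`, `TRAFFIC_LEVELS`, and `grid_intersections` are hypothetical names, and the textual fields are copied from the table rather than parsed into numeric ranges.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TrafficLevel:
    name: str
    grid_side: Optional[int]       # side of a square grid; None for the irregular layout
    intersections: str             # as reported in Table 5
    arrival_rates: str             # as reported in Table 5
    phases_per_intersection: str   # as reported in Table 5


TRAFFIC_LEVELS = [
    TrafficLevel("Easy", 3, "9", "Low and stable (0.5 vehicles/s)", "Less than 10"),
    TrafficLevel("Medium", 5, "25", "Fluctuating (0.5-2 vehicles/s)", "10-15"),
    TrafficLevel("Hard", 8, "64", "Highly dynamic (0.1 to 3 vehicles/s)", "More than 15"),
    TrafficLevel("Hell", None, "100+", "Extremely dynamic with spikes", "More than 25"),
]


def grid_intersections(level: TrafficLevel) -> Optional[int]:
    """Intersections implied by a square grid, or None for an irregular network."""
    if level.grid_side is None:
        return None
    return level.grid_side ** 2
```

For the three regular grids, the intersection count in the table is the square of the grid side, which gives a quick consistency check on the configuration.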

### D.2 Web Tasks

Similarly, we categorize the web tasks in our dataset into four levels of difficulty: Easy, Medium, Hard, and All.

Easy: The easy web tasks involve basic interactions like clicking on a single link or typing a short phrase. They require navigating simple interfaces with clear options to reach the goal.

Medium: The medium-difficulty tasks demand more complex sequences of actions across multiple pages, such as selecting filters or submitting forms. They test the agent’s ability to understand the site structure and flow.

Hard: The hard web tasks feature more open-ended exploration through dense, ambiguous sites. Significant reasoning is needed to chain obscure links and controls to reach the goal.

All: The All level combines tasks across the spectrum of difficulty. Both simple and complex interactions are blended to assess generalized web agent skills. Performance at this level indicates readiness for real-world web use cases.

## Appendix E Experimental Setups

In this study, we compare the performance of several state-of-the-art language models, including ReMALIS, LUMOS, AgentLM, and GPT-3.5. These models vary in size, architecture, and training configuration, as summarized in Table 6.

ReMALIS is a 7 billion parameter model trained using the AdamW optimizer with a learning rate of 1e-4, a batch size of 32, and no dropout. It has 12 layers, a model dimension of 768, and 12 attention heads. The model was trained for 15 epochs with a warmup period of 1 epoch and a weight decay of 0.01. ReMALIS employs a Graph Neural Network (GNN) architecture, which is particularly suited for modeling complex relationships and structures.

LUMOS, a larger model with 13 billion parameters, was trained using the Adam optimizer with a learning rate of 2e-5, a batch size of 64, and a dropout rate of 0.1. It has 8 layers, a model dimension of 512, and 8 attention heads. The model was trained for 20 epochs with a warmup period of 2 epochs and a weight decay of 0.001. LUMOS follows a Transformer architecture, which has proven effective in capturing long-range dependencies in sequential data.

AgentLM, a 6 billion parameter model, was trained using the AdamW optimizer with a learning rate of 1e-4, a batch size of 32, and no dropout. It has 6 layers, a model dimension of 768, and 12 attention heads. The model was trained for 10 epochs with a warmup period of 1 epoch and a weight decay of 0.01. AgentLM also uses a Transformer architecture.

GPT-3.5, the largest model in this study with 175 billion parameters, was trained using the Adam optimizer with a learning rate of 2e-5, a batch size of 64, and a dropout rate of 0.1. It has 48 layers, a model dimension of 1024, and 16 attention heads. The model was trained for 20 epochs with a warmup period of 2 epochs and a weight decay of 0.001. GPT-3.5 follows the Transformer architecture, which has been widely adopted for large language models.

In addition to the base language models, the table provides details on the specialized modules and configurations employed by ReMALIS and LUMOS. ReMALIS incorporates a planning module with a 4-layer GNN and a 512 hidden size, a grounding module with a 6-layer Transformer and a model dimension of 768, 7 specialized and integrated execution agents, a 4-layer Gated Recurrent Unit (GRU) with a 256 hidden size for intention propagation, and a Graph Attention Network (GAT) with 2 heads and an alpha value of 0.2 for coordination feedback.

LUMOS, on the other hand, employs a 2-layer GNN with a 1024 hidden size for planning, a 4-layer Transformer with a model dimension of 512 for grounding, and a single integrated execution agent.
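The ReMALIS settings described above can be collected into a single configuration object, which is often convenient when reproducing a setup. The following is only a sketch: the values are copied from the ReMALIS column of Table 6, while the class name, field names, and the `head_dim` helper (which applies the usual convention that the model dimension is split evenly across attention heads) are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReMALISConfig:
    # Base training hyperparameters (Table 6, ReMALIS column).
    optimizer: str = "AdamW"
    learning_rate: float = 1e-4
    batch_size: int = 32
    dropout: float = 0.0
    num_layers: int = 12
    model_dim: int = 768
    num_heads: int = 12
    epochs: int = 15
    warmup_epochs: int = 1
    weight_decay: float = 0.01
    # Module-specific settings (Table 6, ReMALIS column).
    planning_gnn_layers: int = 4
    planning_hidden_size: int = 512
    grounding_layers: int = 6
    grounding_model_dim: int = 768
    num_execution_agents: int = 7
    propagation_gru_layers: int = 4
    propagation_hidden_size: int = 256
    feedback_gat_heads: int = 2
    feedback_gat_alpha: float = 0.2

    def head_dim(self) -> int:
        # Assumed convention: model_dim is divided evenly across heads.
        if self.model_dim % self.num_heads != 0:
            raise ValueError("model_dim must be divisible by num_heads")
        return self.model_dim // self.num_heads


config = ReMALISConfig()
```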

## Appendix F Pseudo-code

Algorithm 2 presents the hierarchical planning and grounding processes in the proposed recursive multi-agent learning framework. The planning module $p_θ$ takes the current sub-goal $s_t$, intention $I_t$, grounded embedding $e_t$, and feedback $f_t$ as inputs, and predicts the next sub-goal $s_{t+1}$. It first encodes the inputs using an encoder, and then passes the encoded representation through a graph neural network $T_θ$ parameterized by $θ$. The output of $T_θ$ is passed through a softmax layer to obtain the probability distribution over the next sub-goal.
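The planning step (encode the inputs, apply $T_θ$, then a softmax) can be sketched in a few lines of plain Python. This is a toy illustration under strong assumptions: the encoder is a simple concatenation, $T_θ$ is replaced by one round of parameter-free mean aggregation over a hypothetical sub-goal graph followed by a dot-product score, and all feature values are made up. It is not the trained planning module.

```python
import math
from typing import List

Vector = List[float]


def encoder(s_t: Vector, i_t: Vector, e_t: Vector, f_t: Vector) -> Vector:
    # Stand-in encoder (assumption): concatenate the four inputs into h_t.
    return [*s_t, *i_t, *e_t, *f_t]


def softmax(logits: Vector) -> Vector:
    # Numerically stable softmax.
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [x / total for x in exps]


def graph_layer(node_feats: List[Vector], adjacency: List[List[int]]) -> List[Vector]:
    # One round of mean aggregation over each node and its neighbours.
    # This replaces the learned GNN T_theta purely for illustration.
    out = []
    for i, feat in enumerate(node_feats):
        group = [feat] + [node_feats[j] for j in adjacency[i]]
        out.append([sum(col) / len(group) for col in zip(*group)])
    return out


def plan_next_sub_goal(h_t: Vector, node_feats: List[Vector],
                       adjacency: List[List[int]]) -> Vector:
    # Score every candidate sub-goal against h_t, then normalise.
    hidden = graph_layer(node_feats, adjacency)
    logits = [sum(a * b for a, b in zip(h_t, node)) for node in hidden]
    return softmax(logits)


# Hypothetical inputs: three candidate sub-goals connected in a chain.
h_t = encoder([1.0], [0.0], [0.5], [0.0])
candidates = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
chain = [[1], [0, 2], [1]]
probs = plan_next_sub_goal(h_t, candidates, chain)
next_sub_goal = max(range(len(probs)), key=probs.__getitem__)
```

The result `probs` is a distribution over the candidate sub-goals, and taking its argmax corresponds to a greedy choice of $s_{t+1}$; sampling from it would be an equally valid reading of the softmax output.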

The grounding module $g_φ$ takes the current state $s_t$, intention $I_t$, and feedback trajectory $f_{1:t}$ as inputs, and produces the grounded embedding $e_t$. It encodes the inputs using an encoder, and then applies cross-attention over the vocabulary $V$, followed by a convolutional feature extractor. The output is combined with agent feedback $P_t$ to enhance the grounding accuracy. The grounding module is parameterized by $φ$.
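The grounding computation, cross-attention over $V$ followed by a convolution and the addition of $P_t$, can be illustrated with a small dependency-free sketch. Several simplifications are assumed here: $h_t$ is a single query vector, the vocabulary embeddings act as both keys and values, the attention is scaled dot-product, and the convolution is a zero-padded 1-D filter over the feature dimension with an odd-length kernel. None of these choices are specified by the source.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    peak = max(scores)
    exps = [math.exp(x - peak) for x in scores]
    total = sum(exps)
    return [x / total for x in exps]


def cross_attention(query: Vector, vocab: List[Vector]) -> Vector:
    # Scaled dot-product attention with one query; vocab rows are keys and values.
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in vocab]
    weights = softmax(scores)
    return [sum(w * key[d] for w, key in zip(weights, vocab))
            for d in range(len(query))]


def conv1d_same(x: Vector, kernel: Vector) -> Vector:
    # Zero-padded 1-D cross-correlation; output has the same length as x.
    half = len(kernel) // 2
    padded = [0.0] * half + list(x) + [0.0] * half
    return [sum(kernel[j] * padded[i + j] for j in range(len(kernel)))
            for i in range(len(x))]


def ground(h_t: Vector, vocab: List[Vector], kernel: Vector, p_t: Vector) -> Vector:
    # e_t = Conv(Attn(h_t, V)) + P_t
    context = cross_attention(h_t, vocab)
    features = conv1d_same(context, kernel)
    return [f + p for f, p in zip(features, p_t)]


# Hypothetical toy inputs: two identical vocabulary embeddings and an identity kernel.
vocab = [[1.0, 2.0, 3.0], [1.0, 2.0, 3.0]]
e_t = ground(h_t=[0.2, 0.1, 0.4], vocab=vocab,
             kernel=[0.0, 1.0, 0.0], p_t=[0.5, 0.5, 0.5])
```

With identical vocabulary rows the attention returns that row unchanged, and the identity kernel leaves it untouched, so `e_t` is simply the row shifted by $P_t$. This makes the additive role of the feedback term easy to see.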

Algorithm 3 describes the intention propagation mechanism in the proposed recursive multi-agent learning framework. The goal is for each agent $i$ to infer a belief $b_i(I_j \mid m_{ij})$ over the intention $I_j$ of a teammate $j$, given a message $m_{ij}$ received from $j$.

Algorithm 2 Hierarchical Planning and Grounding

1: Input: Current sub-goal $s_t$, intention $I_t$, grounded embedding $e_t$, feedback $f_t$

2: Output: Next sub-goal $s_{t+1}$

3: $h_t=\mathrm{Encoder}(s_t,I_t,e_t,f_t)$ {Encode inputs}

4: $s_{t+1}=\mathrm{Softmax}(T_θ(h_t))$ {Predict next sub-goal}

5: $T_θ$ is a graph neural network parameterized by $θ$ {Planning module $p_θ$}

6: Input: Current state $s_t$, intention $I_t$, feedback $f_{1:t}$

7: Output: Grounded embedding $e_t$

8: $h_t=\mathrm{Encoder}(s_t,I_t,f_{1:t})$ {Encode inputs}

9: $e_t=\mathrm{Conv}(\mathrm{Attn}(h_t,V))+P_t$ {Grounded embedding}

10: $\mathrm{Attn}(·,·)$ is a cross-attention layer over vocabulary $V$

11: $\mathrm{Conv}(·)$ is a convolutional feature extractor

12: $P_t$ includes agent feedback to enhance grounding accuracy

13: $g_φ$ is the grounding module parameterized by $φ$

Algorithm 3 initializes an intention propagation channel $f_Λ$, parameterized by $Λ$, which is implemented as a recurrent neural network.

The intention inference process works as follows:

1. The received message $m_{ij}$ is encoded using an encoder to obtain a representation $h_{ij}$.

2. The encoded message $h_{ij}$ is passed through the propagation channel $f_Λ$ to infer the belief $b_i(I_j \mid m_{ij})$ over teammate $j$'s intention $I_j$.
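The two inference steps above can be sketched as follows. This is a minimal illustration, assuming a finite set of candidate intentions so that the belief is a categorical distribution. A single tanh recurrent cell with random, untrained weights stands in for the recurrent channel $f_Λ$, and `encode_message` is a hypothetical bag-of-characters encoder. The names `PropagationChannel`, `infer_belief`, and `encode_message` are not from the source.

```python
import math
import random
from typing import List

Vector = List[float]
Matrix = List[Vector]


def matvec(weights: Matrix, x: Vector) -> Vector:
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def softmax(scores: Vector) -> Vector:
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def encode_message(m_ij: str, dim: int) -> Vector:
    # Step 1 (hypothetical encoder): hash characters into a unit-norm vector h_ij.
    vec = [0.0] * dim
    for ch in m_ij:
        vec[ord(ch) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class PropagationChannel:
    """Toy recurrent stand-in for f_Lambda; weights are random, not trained."""

    def __init__(self, msg_dim: int, hidden_dim: int, num_intentions: int, seed: int = 0):
        rng = random.Random(seed)

        def init(rows: int, cols: int) -> Matrix:
            return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

        self.w_in = init(hidden_dim, msg_dim)
        self.w_rec = init(hidden_dim, hidden_dim)
        self.w_out = init(num_intentions, hidden_dim)
        self.hidden = [0.0] * hidden_dim  # carried across successive messages

    def infer_belief(self, h_ij: Vector) -> Vector:
        # Step 2: update the recurrent state, then map it to b_i(I_j | m_ij).
        pre = [a + b for a, b in zip(matvec(self.w_in, h_ij),
                                     matvec(self.w_rec, self.hidden))]
        self.hidden = [math.tanh(v) for v in pre]
        return softmax(matvec(self.w_out, self.hidden))


channel = PropagationChannel(msg_dim=8, hidden_dim=4, num_intentions=3)
belief = channel.infer_belief(encode_message("extend_green", dim=8))
```

Because the hidden state persists between calls, later messages from the same teammate refine the belief rather than replace it, which is the main reason for using a recurrent channel here.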

The objective is to train the parameters $Λ$ of the propagation channel $f_Λ$ to maximize the coordination reward $R_c$ over sampled intentions $I$ and messages $m$ from the distribution defined by $f_Λ$ .
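Written as a formula, this training objective can be read as the following expected-reward maximization. The notation is a sketch: writing the coordination reward as a function $R_c(I, m)$ of the sampled intentions and messages, and denoting the optimum by $Λ^{*}$, are assumptions made here for illustration.

$$
Λ^{*} = \arg\max_{Λ} \; \mathbb{E}_{(I,\,m) \sim f_Λ} \left[ R_c(I, m) \right]
$$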

Algorithm 3 Intention Propagation Mechanism

Input: Current intention $I_i$ of agent $i$, message $m_{ij}$ from teammate $j$