# CiteME: Can Language Models Accurately Cite Scientific Claims?

**Authors**: Ori Press, Andreas Hochlehnert, Ameya Prabhu, Vishaal Udandarao, Ofir Press, Matthias Bethge

> Tübingen AI Center, University of TübingenOpen-𝚿𝚿\mathbf{\Psi}bold_Ψ(Open-Sci) Collective

> Tübingen AI Center, University of TübingenUniversity of CambridgeOpen-𝚿𝚿\mathbf{\Psi}bold_Ψ(Open-Sci) Collective

> Princeton Language and Intelligence, Princeton University

\quotingsetup

vskip=-.0leftmargin=0.025rightmargin=0.075font=small,itshape

Abstract

Thousands of new scientific papers are published each month. Such information overload complicates researcher efforts to stay current with the state-of-the-art as well as to verify and correctly attribute claims. We pose the following research question: Given a text excerpt referencing a paper, could an LM act as a research assistant to correctly identify the referenced paper? We advance efforts to answer this question by building a benchmark that evaluates the abilities of LMs in citation attribution. Our benchmark, CiteME, consists of text excerpts from recent machine learning papers, each referencing a single other paper. CiteME use reveals a large gap between frontier LMs and human performance, with LMs achieving only 4.2-18.5% accuracy and humans 69.7%. We close this gap by introducing CiteAgent, an autonomous system built on the GPT-4o LM that can also search and read papers, which achieves an accuracy of 35.3% on CiteME. Overall, CiteME serves as a challenging testbed for open-ended claim attribution, driving the research community towards a future where any claim made by an LM can be automatically verified and discarded if found to be incorrect.

1 Introduction

<details>

<summary>extracted/5974968/figures/fig1.png Details</summary>

### Visual Description

## Diagram: Citation Resolution Workflow

### Overview

The image depicts a simplified workflow for resolving citations in text using an AI agent. It consists of three components:

1. A text box containing a sentence with an embedded citation placeholder.

2. A robot illustration interacting with a computer.

3. A text box displaying the resolved citation.

### Components/Axes

- **Left Text Box**:

- **Content**: "Find the paper cited in this text: 'ESIM is another high performing model for sentence-pair classification tasks, particularly when used with ELMo embeddings [CITATION]'"

- **Purpose**: Input text with an unresolved citation.

- **Robot Illustration**:

- **Position**: Center of the diagram.

- **Details**: A stylized robot seated at a desk with a computer, symbolizing an AI agent performing a search.

- **Right Text Box**:

- **Content**: "After searching, I think the cited paper is: 'Deep contextualized word representations'"

- **Purpose**: Output text with the resolved citation.

### Detailed Analysis

- **Textual Content**:

- The left text box includes a citation placeholder (`[CITATION]`) within a sentence discussing the ESIM model and its use with ELMo embeddings.

- The right text box resolves the citation to "Deep contextualized word representations," referencing the foundational paper by Devlin et al. (2018) on BERT.

- **Flow**:

- The workflow progresses from the left text box → robot → right text box, indicating a search process to resolve the citation.

### Key Observations

- The citation placeholder is explicitly marked as `[CITATION]`, suggesting a templated or automated system for citation resolution.

- The resolved citation refers to a well-known paper in NLP, implying the robot’s search capability is context-aware.

### Interpretation

This diagram illustrates a conceptual pipeline where an AI agent (robot) resolves citations in text by searching for relevant papers. The example uses a real-world NLP citation (ESIM + ELMo) and resolves it to the seminal BERT paper, highlighting the agent’s ability to infer contextually relevant references. The absence of numerical data or uncertainty suggests this is a schematic representation rather than an empirical analysis.

</details>

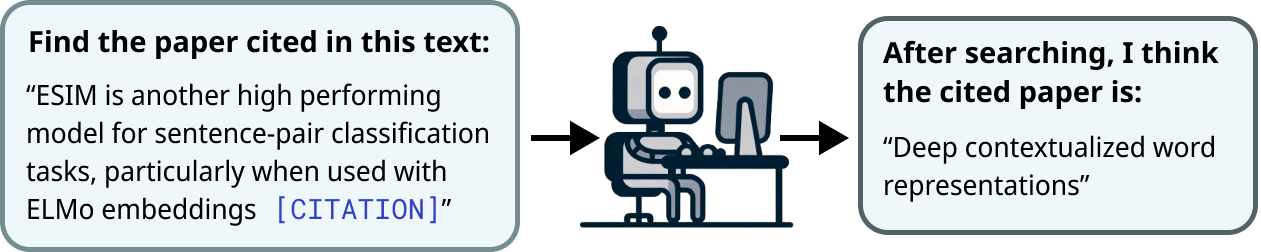

Figure 1: Example of a CiteME instance. The input (left) is an excerpt from a published paper with an anonymized citation; the target answer (right) is the title of the cited paper. */ $\ddagger$ shared first/last authorship Code: github.com/bethgelab/CiteME, Dataset: huggingface.co/datasets/bethgelab/CiteME Correspondence to {ori.press, andreas.hochlehnert}@bethgelab.org

Scientific discoveries are advancing at an ever-growing rate, with tens of thousands of new papers added just to arXiv every month [4]. This rapid progress has led to information overload within communities, making it nearly impossible for scientists to read all relevant papers. However, it remains a critical scholarship responsibility to check new claims and attribute credit to prior work accurately. Language models (LMs) have shown impressive abilities as assistants across tasks [25], which leads us to explore the following task in this paper: Can language models act as research assistants to help scientists deal with information overload?

We make progress towards answering this question by evaluating the abilities of LMs in citation attribution [27, 59]. Given a text excerpt referencing a scientific claim, citation attribution is the task in which a system is asked to fetch the title of a referenced paper, as illustrated in Figure 1.

Current benchmarks are collected automatically, which leads to the dominance of ambiguous or unattributable text excerpts that make overly broad claims or are not used as evidence for any specific claim, as shown in Table 1. Furthermore, these benchmarks typically frame citation attribution as a retrieval task from a small set of pre-selected papers where only paper titles and abstracts can be viewed, not the full paper’s content important for citation attribution [22, 50].

Table 1: Percentage of reasonable, ambiguous, unattributable, and trivial excerpts across 4 citation datasets, as labeled by human experts. For a detailed breakdown of every analyzed sample, see Appendix A.

| FullTextPeerRead [42] ACL-200 [9, 58] RefSeer [40, 58] | 24 26 24 | 26 42 28 | 34 18 32 | 16 14 16 |

| --- | --- | --- | --- | --- |

| arXiv [33] | 10 | 50 | 30 | 10 |

| Average | 21 | 36.5 | 28.5 | 14 |

To address these issues, we introduce CiteME (Citation for Model Evaluation), the first manually curated citation attribution benchmark with text excerpts that unambiguously reference a single paper. CiteMe’s use of only unambiguous text excerpts eliminates the subjectivity that characterizes other benchmarks.

To evaluate CiteMe, we conduct benchmark tests that focus on open-ended citation attribution. Human evaluators confirm the lack of ambiguity, achieving 69.7% accuracy while taking just 38.2 seconds on average to find the referenced papers. The current state-of-the-art system, SPECTER2 [77], experiences 0% accuracy on CiteME, highlighting the real-world difficulties of LM-based citation attribution. Similarly, current frontier LMs achieve performance of 4.2-18.5%, substantially beneath human performance. We conclude that current LMs cannot reliably link scientific claims to their sources.

To bridge this gap, we introduce CiteAgent, an autonomous system built on top of the GPT-4o [1] LM and the Semantic Scholar search engine [46]. CiteAgent can search for and read papers repeatedly until it finds the referenced paper, mirroring how scientists perform this scholarship task to find targeted papers. CiteAgent correctly finds the right paper 35.3% of the time when evaluated on CiteME.

In summary, our main contributions are:

- CiteME, a challenging and human-curated benchmark of recent machine learning publications that evaluates the abilities of LMs to correctly attribute scientific claims. CiteME is both natural and challenging, even for SoTA LMs.

- CiteAgent, an LM-based agent that uses the Internet to attribute scientific claims. Our agent uses an existing LM without requiring additional training. It also uses a search engine, which makes it applicable to real-world settings and differentiates it from systems that can search only within a predetermined corpus of papers.

Future work that improves the accuracy of CiteME may lead to systems that can verify all claims an LM makes, not just those in the ML research domain. This could reduce the hallucination rate [92] and increase factuality [6] of LM-generated text.

2 The CiteME Benchmark

We now present the CiteME benchmark, which we differentiate from other citation prediction benchmarks that are automatically curated, i.e., curated without human supervision or feedback in selecting text excerpts [32, 31, 9, 40, 72, 44, 42, 33]. For comparison, we study the quality of excerpts across four popular citation prediction benchmarks (FullTextPeerRead, [42], ACL-200 [9, 58], RefSeer [40, 58], and arXiv [33]). Specifically, we sample 50 excerpts from each dataset and categorize them using the following criteria:

(1) Attributable vs Unattributable. The cited paper should provide evidence for the statement in the text excerpt, i.e., be an attribution as opposed to a statement that does not clearly refer to supporting evidence. Excerpts that do not follow this criterion are termed unattributable, as in the example: {quoting} For all of our experiments, we use the hyperparameters from [CITATION]. (2) Unambiguous vs Ambiguous. The cited text excerpt should not be overly broad. The ground truth cited papers should clearly be the only possible reference for the claim in the text excerpt. Excerpts that do not follow this criterion are termed ambiguous, as in the example: {quoting} [CITATION1, CITATION2] explored paper recommendation using deep networks. (3) Non-Trivial vs Trivial. The text excerpt should not include author names or title acronyms, which simply tests LM memorization and retrieval. Excerpts that do not follow this criterion are termed trivial, as in the example: {quoting} SciBERT [CITATION] is a BERT-model pretrained on scientific texts.

(4) Reasonable vs Unreasonable. The text excerpt should be attributable, unambiguous and non-trivial. We term excerpts that do not follow this criterion unreasonable, but we categorize them according to the underlying issue (e.g., unattributable, ambiguous, or trivial). An example of a reasonable excerpt is: {quoting} We use the ICLR 2018–2022 database assembled by [CITATION], which includes 10,297 papers.

In Table 1 (left), we demonstrate that most samples from all four datasets lack sufficient information for humans to identify the cited paper and are often labeled as ambiguous or unattributable. Additionally, an average of 17.5% of the samples are tagged as trivial because they include the title of the paper or its authors directly in the excerpt. Excerpts also frequently have formatting errors, making some nearly unreadable (see examples in Appendix A). Past work also notes similar artifacts [33, 42, 58], further supporting our claims. This analysis leads us to contend that performance on existing citation benchmarks might not reflect real-world performance of LM research assistants.

In response to these deficiencies, we created CiteME, a new benchmark with human expert curation for unambiguous citation references. CiteME contains carefully selected text excerpts, each containing a single, clear citation to ensure easy and accurate evaluation.

Curation. A team of 4 machine learning graduate students, henceforth referred to as “experts”, were responsible for collecting text excerpts. The experts were instructed to find samples that (1) referenced a single paper and (2) provided sufficient context to find the cited paper with scant background knowledge. Each sample was checked for reasonableness; only those deemed reasonable by two or more experts were retained. Some excerpts were slightly modified to make them reasonable.

<details>

<summary>extracted/5974968/figures/paper_tags.png Details</summary>

### Visual Description

## Bar Chart: CiteME Paper Tags

### Overview

The chart displays the frequency distribution of paper tags in the CiteME dataset, focusing on machine learning and AI-related topics. The x-axis represents "Tag Frequency" (0–6), while the y-axis lists specific technical tags. Bars are colored light blue with black borders.

### Components/Axes

- **X-axis**: "Tag Frequency" (scale: 0–6, increments of 1)

- **Y-axis**: Technical tags (listed vertically):

1. Image Classification

2. Adversarial Machine Learning

3. Deep Learning Architectures

4. Vision-Language Models

5. Contrastive Learning

6. Multi-modal Learning

7. Representation Learning

8. Image Processing

9. Machine Learning Efficiency

10. Machine Learning Evaluation

- **Bars**: Light blue with black borders, no legend present.

### Detailed Analysis

- **Highest Frequency Tags**:

- *Image Classification*: ~6

- *Adversarial Machine Learning*: ~6

- **Mid-Frequency Tags** (~5):

- *Deep Learning Architectures*

- *Vision-Language Models*

- *Contrastive Learning*

- *Multi-modal Learning*

- *Representation Learning*

- **Lower Frequency Tags** (~4):

- *Image Processing*

- *Machine Learning Efficiency*

- *Machine Learning Evaluation*

### Key Observations

1. **Dominance of Image-Related Tags**: "Image Classification" and "Adversarial Machine Learning" are the most frequent, suggesting a strong focus on image-centric ML research.

2. **Cluster of Core ML Concepts**: Tags like "Deep Learning Architectures" and "Vision-Language Models" occupy mid-frequency positions, indicating foundational but widely studied areas.

3. **Lower Emphasis on Evaluation/Efficiency**: Tags like "Machine Learning Evaluation" and "Efficiency" show the least frequency, potentially reflecting niche or emerging research areas.

### Interpretation

The data highlights a research landscape prioritizing image processing and adversarial ML, with foundational concepts like deep learning architectures maintaining steady relevance. The lower frequency of evaluation and efficiency tags may indicate either underdeveloped research areas or a focus on applied over theoretical work. The uniformity of mid-frequency tags suggests a balanced exploration of core ML paradigms, while the absence of a legend simplifies interpretation but limits contextual nuance (e.g., distinguishing between subcategories).

</details>

<details>

<summary>extracted/5974968/figures/citeme_hist.png Details</summary>

### Visual Description

## Bar Chart: CiteME Papers by Year Published

### Overview

The chart displays the percentage of CiteME papers published annually from Pre '11 to '24. The y-axis represents the "Percent of Papers" (0–25%), and the x-axis lists years. Bars are light blue, with a legend confirming the color association.

### Components/Axes

- **X-axis (Years)**: Pre '11, '11, '12, '13, '14, '15, '16, '17, '18, '19, '20, '21, '22, '23, '24

- **Y-axis (Percent of Papers)**: 0–25% in increments of 5%

- **Legend**: Light blue color corresponds to "CiteME Papers"

### Detailed Analysis

- **Pre '11**: ~20%

- **'11**: ~1%

- **'12**: ~3%

- **'13**: ~3%

- **'14**: ~2%

- **'15**: ~5%

- **'16**: ~4%

- **'17**: ~7%

- **'18**: ~11%

- **'19**: ~11%

- **'20**: ~3%

- **'21**: ~16%

- **'22**: ~23%

- **'23**: ~24%

- **'24**: ~2%

### Key Observations

1. **Initial Decline**: A sharp drop from 20% (Pre '11) to 1% in '11, followed by gradual growth until '19.

2. **Post-2020 Surge**: A 10x increase from 3% in '20 to 24% in '23, followed by a sudden drop to 2% in '24.

3. **Stability (2015–2019)**: Fluctuations between 2% and 11%, with a peak in '18 and '19.

4. **Anomalies**: The 2024 value deviates significantly from the upward trend observed in 2021–2023.

### Interpretation

The data suggests a cyclical pattern in CiteME paper adoption. The pre-2011 baseline (~20%) may reflect early adoption, followed by a decline possibly due to methodological shifts or competing tools. The 2015–2019 stabilization could indicate market saturation or academic integration. The 2021–2023 surge aligns with potential technological advancements or renewed interest, though the 2024 drop raises questions about sustainability or external disruptions (e.g., platform changes, competition). The 2024 anomaly warrants further investigation into dataset completeness or contextual factors.

</details>

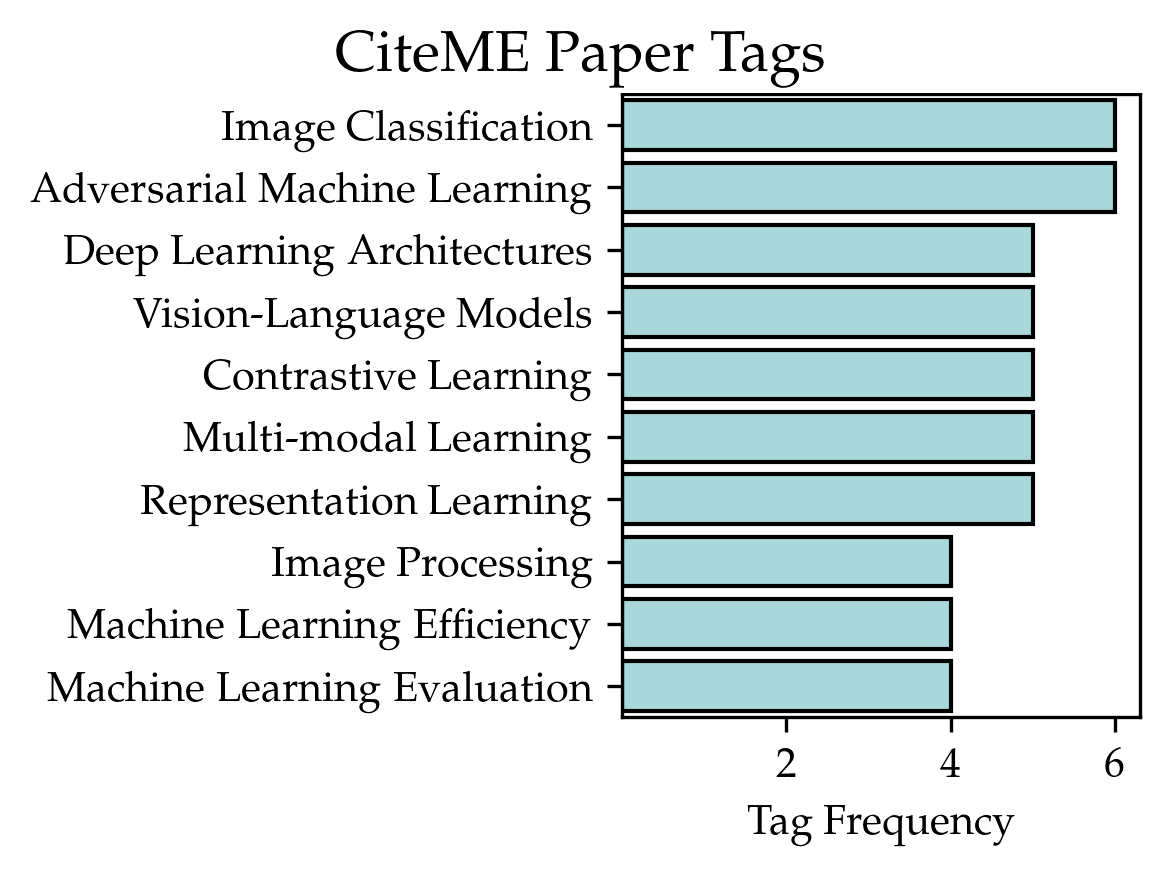

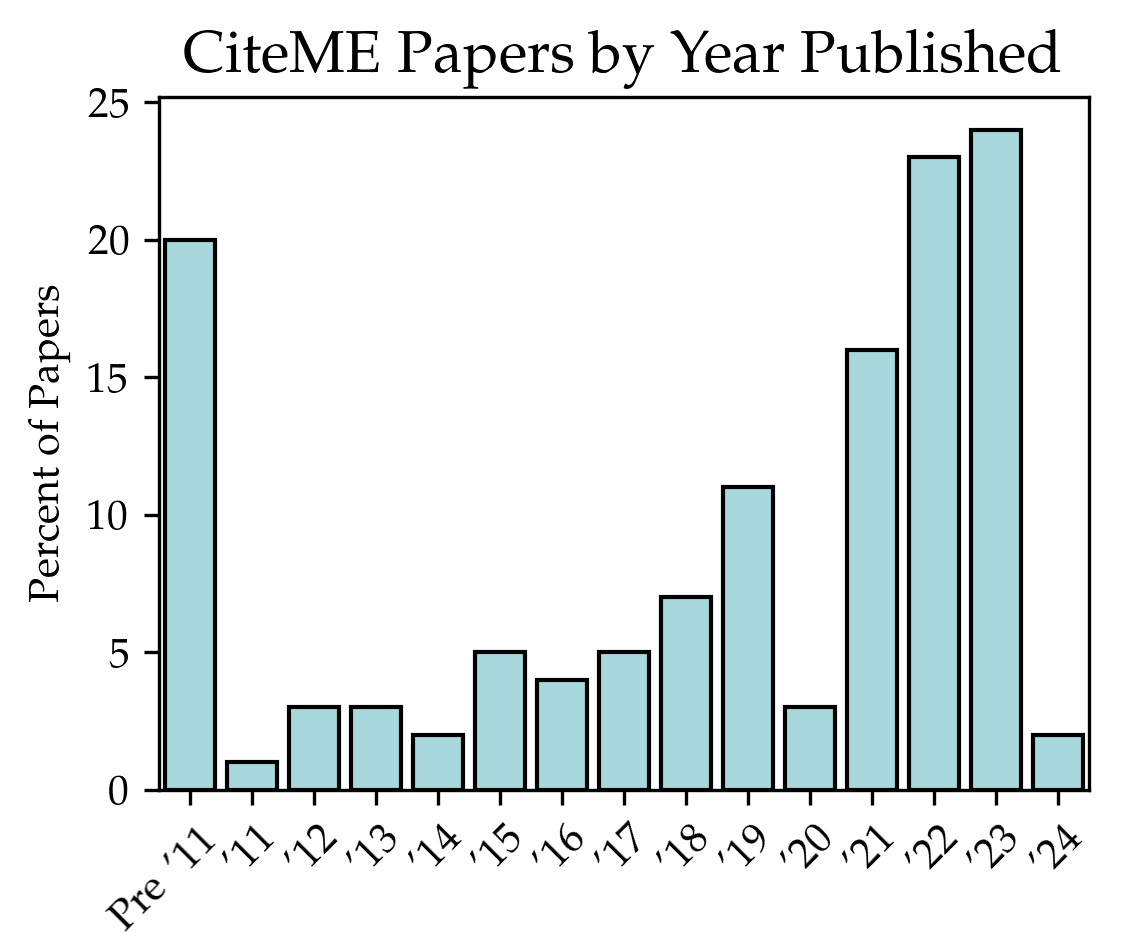

Figure 2: (Left) The top 10 most frequent labels of papers in CiteME, as identified by GPT-4. Overly broad tags like "Machine Learning" or "Deep Networks" were excluded (see Appendix D for details). (Right) Most excerpts in CiteME are from recent papers.

Filtering Out the Easy Instances. To ensure that CiteMe is a challenging and robust dataset, we remove all dataset instances that GPT-4o can correctly answer. Filtering datasets by removing the samples that a strong model can correctly answer was previously done in Bamboogle [71] and the Graduate-Level Google-Proof Q&A Benchmark [73]. In our filtering process, GPT-4o was used with no Internet access or any other external tools. Therefore, it could answer only correctly specified papers that it memorized from its training process. We ran each sample through GPT-4o five times to cover its different outcomes. In the end, we filtered out 124 samples, leaving 130 samples in total.

Human Evaluation. To ensure that our benchmark instances are not unsolvable, we evaluate human performance on them. Using a random subset of 100 samples, we asked a group of 20 experts, who were not part of benchmark construction, to perform the task of finding the referenced papers given only the excerpt, with each expert given 5 random samples from CiteME and a maximum of two minutes to solve each instance (similar to [47]). We observe that the experts found the correct citation 69.7% of the time, spending an average of only 38.2 seconds to do so. Note that this accuracy number does not represent the maximum-possible human performance since our annotators were limited to two minutes per question for budget reasons. Human accuracy may rise even higher given more time per instance. To check the experts’ consistency, five more experts were asked to solve the same instances previously answered by the original experts. In 71% of the cases, both experts agreed on the answer, and at least one expert got to the right answer in 93% of cases.

Are 130 questions sufficient to evaluate LMs? Though traditional machine learning benchmarks usually contain thousands or even millions of test samples, recent work [17, 71, 74, 86] shows that LM benchmarks can include only 100-200 samples and remain insightful. HumanEval [17], for example, which consists of 164 programming problems, is among the most influential LM datasets today, appearing in virtually every SoTA LM paper recently published [66, 1, 81, 19]. Similarly, Bamboogle [71] contains 125 questions, DrawBench [74] contains 200 instances, and Plot2Code [86] contains 132 questions. This is in line with [70, 69], who show that benchmarks with many samples can be reduced to around 100 samples without sacrificing their utility. In addition, smaller benchmarks are advantageous because they are both cheaper to evaluate and impose a less significant environmental impact [76].

3 CiteAgent

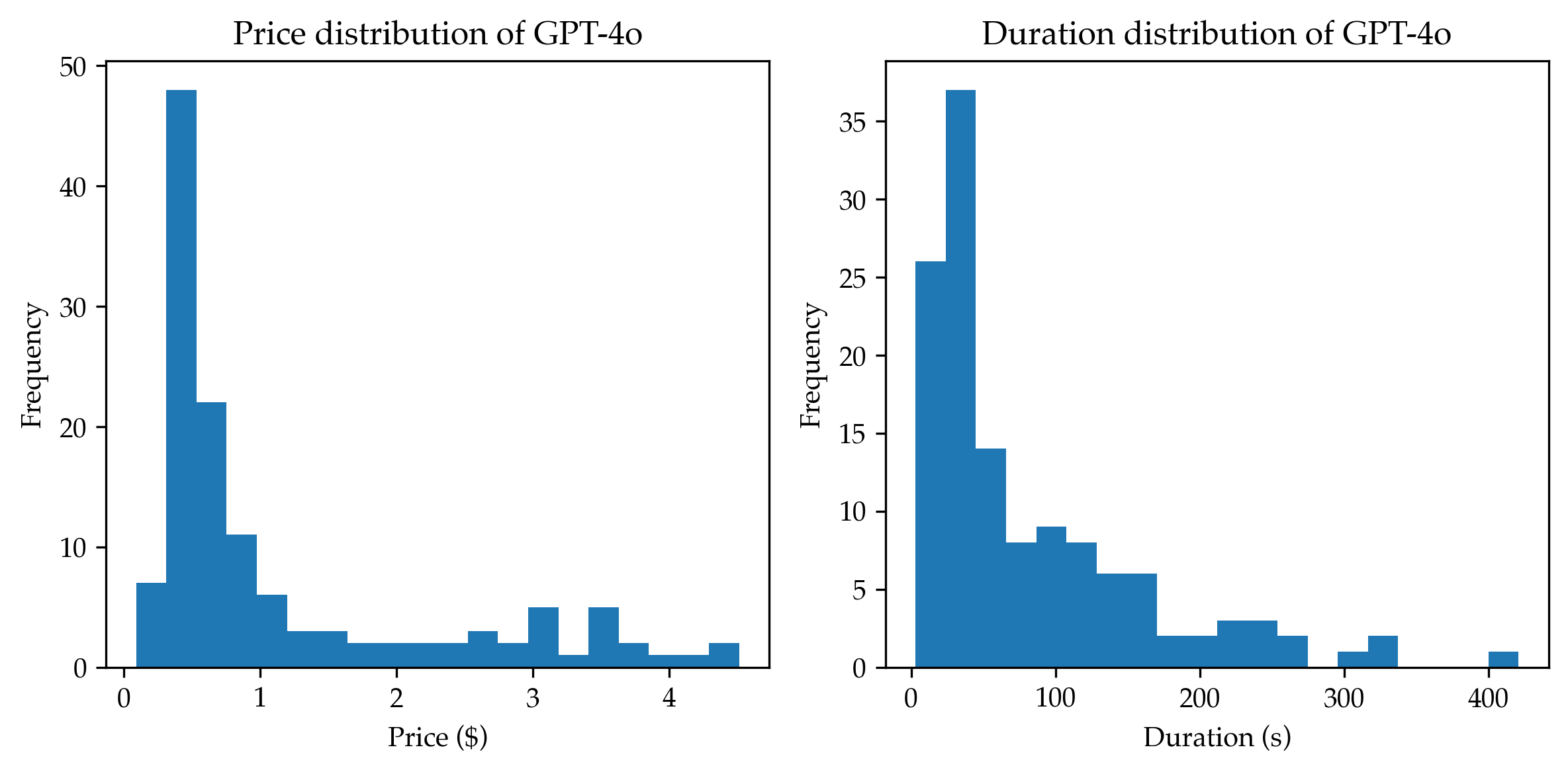

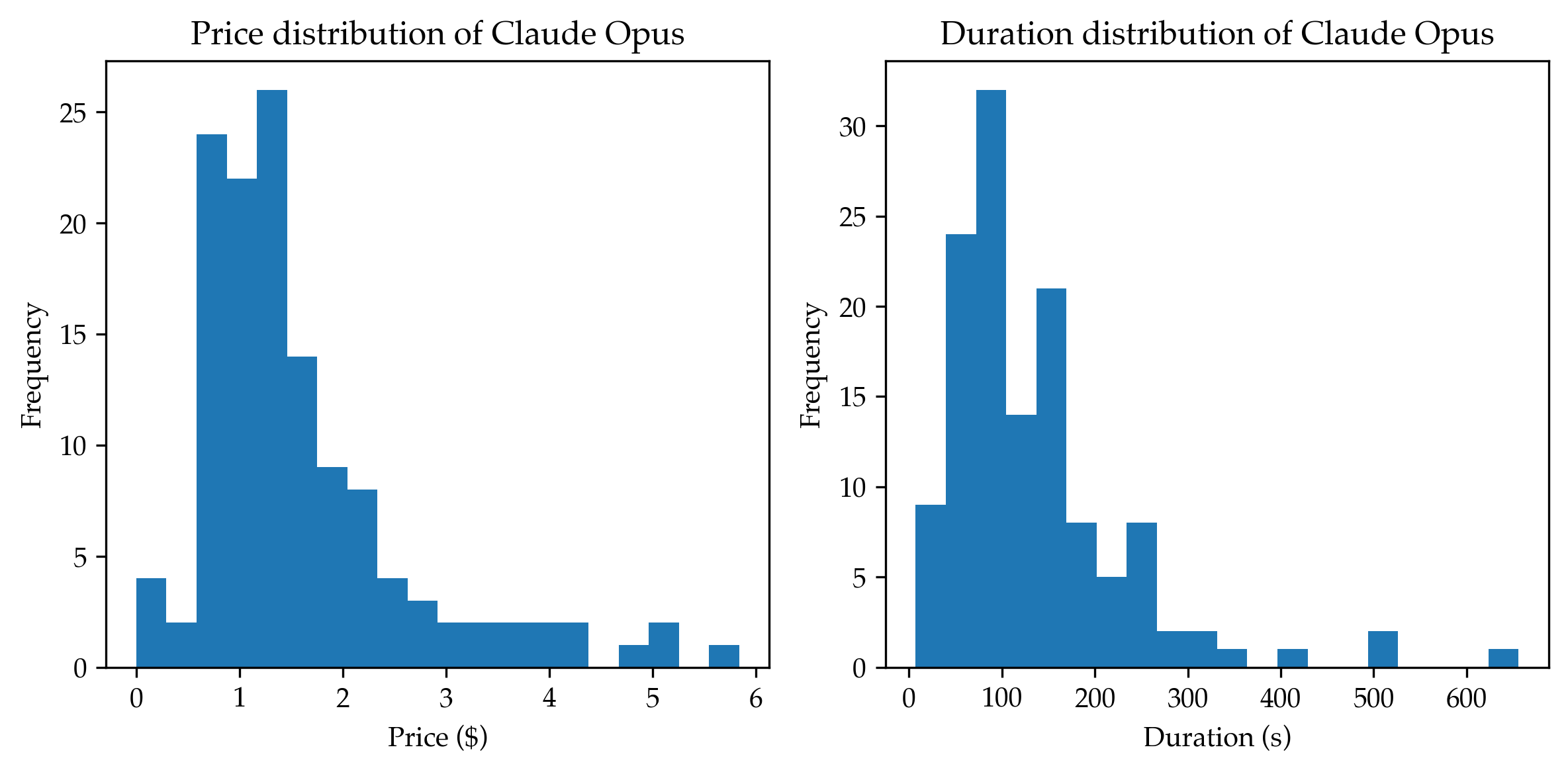

We now describe CiteAgent, an LM-based system that we built to mimic researcher performance of open-ended citation attribution. A researcher seeking the correct attribution for a claim might use a search engine, read several papers, refine the search query, and repeat until successful. To allow CiteAgent to perform these actions, we built it to use Semantic Scholar to search for and read papers. Unless specified otherwise, we refer to CiteAgent with the GPT-4o backbone simply as CiteAgent throughout this paper.

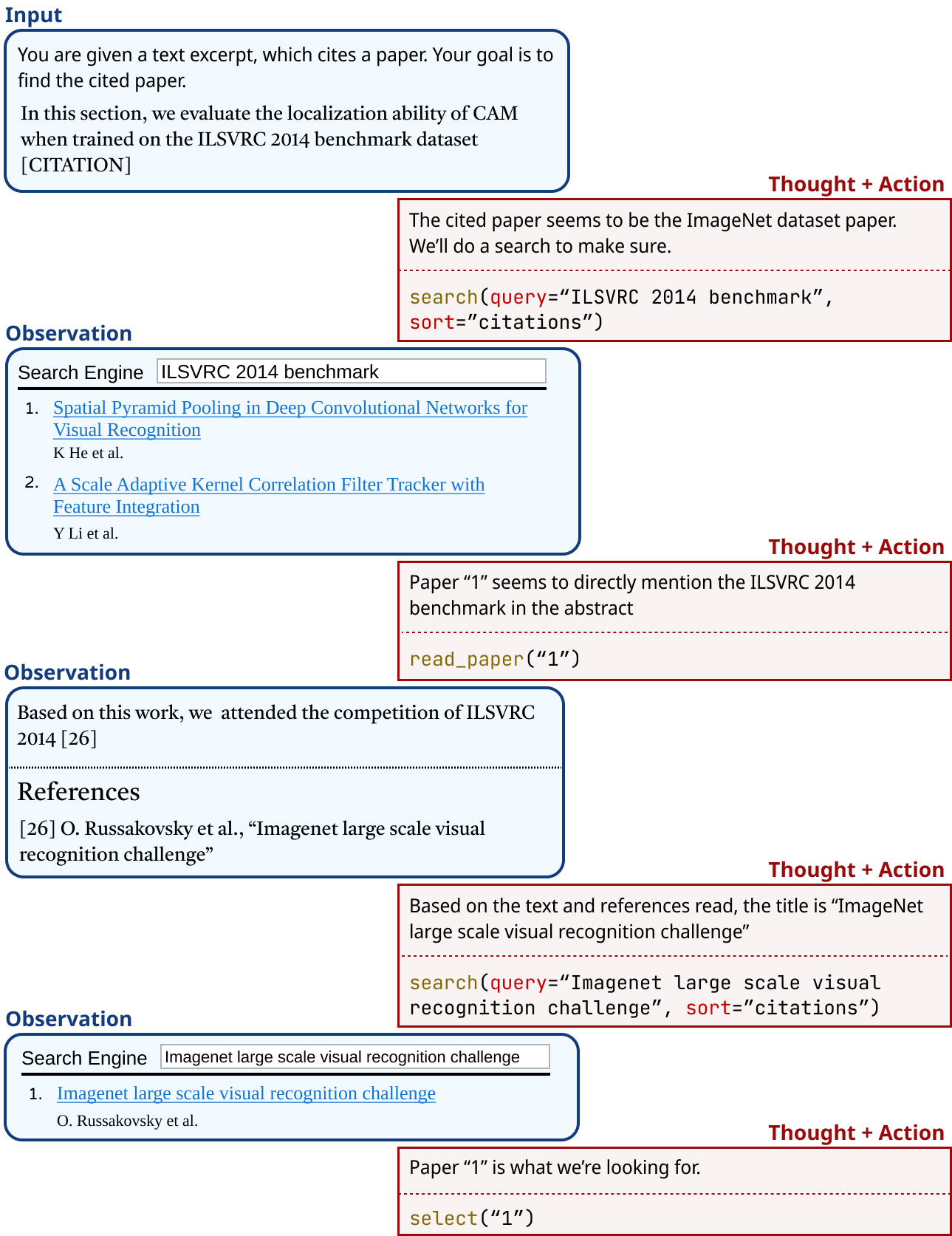

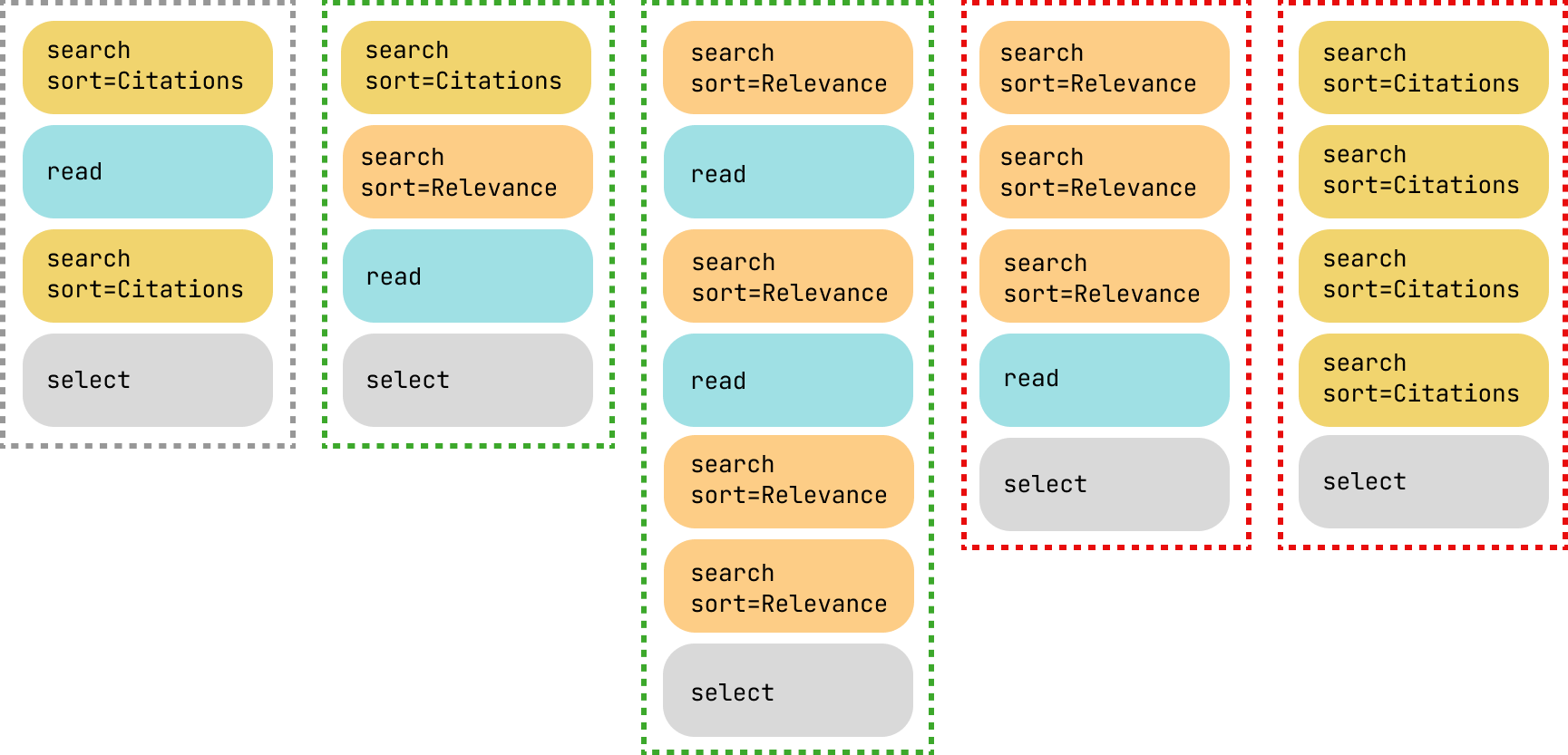

Given a text excerpt, we prompt CiteAgent to perform one of a fixed set of custom commands and provide the output that the given command generated. CiteAgent then gives its rationale before performing another action, following [90, 88]. Figure 3 shows this process. We now describe the starting prompt and custom agent commands.

Prompt. Our prompt includes the task description, descriptions of available commands, and a demonstration trajectory, i.e., the series of actions that the system executes while solving an instance [90, 88]. The trajectory includes searching, reading a paper, and searching again (see Figure 4). We model our prompt on the SWE-Agent prompt [88].

Table 2: Commands available to the model using our system.

| search(query, sort) read(ID) select(ID) | Searches for a query; sorts results by relevance or by citation count; returns a list of papers, where each item consists of the paper ID, title, number of citations, and abstract. Returns the full text of a paper, including title, author list, abstract, and the paper itself. Selects a paper from the search results as the answer. |

| --- | --- |

Agent Commands. CiteAgent can respond to three custom commands (see Table 2). It always begins by executing the search command (sorting by relevance or citation count), which searches Semantic Scholar for a query and returns top results in a sorted order. After searching, CiteAgent can either search again, read one of the listed papers, or select a paper can perform up to 15 actions for every sample. Once a select action is taken, the session ends, and the selected paper is recorded.

Search. CiteAgent initiates a search command by querying Semantic Scholar [46]. We chose the Selenium API [63] over the Semantic Scholar API due to the former’s significantly better re-ranked queries and its ability to provide a uniform interface for both our model and human trajectory annotators.

Selenium also lets us access features such as sorting search results by relevance and citation count, which our human trajectory annotators found particularly valuable.

To ensure correctness, we filter out search results published after the excerpt’s source paper, and the source paper itself. We then give CiteAgent the top 10 search results, which include paper id, title, abstract, and citation count.

<details>

<summary>extracted/5974968/figures/trajectory.png Details</summary>

### Visual Description

## Flowchart: Process for Locating Cited Papers in Technical Documents

### Overview

The image depicts a flowchart illustrating a systematic approach to identifying cited papers within technical documents. It combines textual analysis with search engine interactions, citation tracking, and reference validation to locate specific academic works.

### Components/Axes

1. **Input Section** (Blue Box):

- Text: "You are given a text excerpt, which cites a paper. Your goal is to find the cited paper."

- Subtext: "In this section, we evaluate the localization ability of CAM when trained on the ILSVRC 2014 benchmark dataset [CITATION]."

2. **Thought + Action Boxes** (Red Background):

- First Box:

- Text: "The cited paper seems to be the ImageNet dataset paper. We'll do a search to make sure."

- Code: `search(query="ILSVRC 2014 benchmark", sort="citations")`

- Second Box:

- Text: "Paper '1' seems to directly mention the ILSVRC 2014 benchmark in the abstract."

- Code: `read_paper("1")`

- Third Box:

- Text: "Based on the text and references read, the title is 'Imagenet large scale visual recognition challenge'."

- Code: `search(query="Imagenet large scale visual recognition challenge", sort="citations")`

- Fourth Box:

- Text: "Paper '1' is what we're looking for."

- Code: `select("1")`

3. **Observation Sections** (Blue Boxes):

- First Observation:

- Search Engine Query: "ILSVRC 2014 benchmark"

- Results:

1. "Spatial Pyramid Pooling in Deep Convolutional Networks for Visual Recognition" (K. He et al.)

2. "A Scale Adaptive Kernel Correlation Filter Tracker with Feature Integration" (Y. Li et al.)

- Second Observation:

- Text: "Based on this work, we attended the competition of ILSVRC 2014 [26]."

- References:

- "[26] O. Russakovsky et al., 'Imagenet large scale visual recognition challenge'"

4. **Final Observation** (Blue Box):

- Search Engine Query: "Imagenet large scale visual recognition challenge"

- Result:

- "Imagenet large scale visual recognition challenge" (O. Russakovsky et al.)

### Detailed Analysis

- **Search Logic**: The process uses citation-based sorting (`sort="citations"`) to prioritize highly referenced papers, ensuring relevance.

- **Reference Validation**: Cross-checks citations against known benchmarks (e.g., ILSVRC 2014) and competition attendance records.

- **Paper Selection**: Final selection (`select("1")`) is based on direct mention of the target benchmark in the abstract.

### Key Observations

1. **Citation-Driven Search**: Papers are prioritized by citation count to identify authoritative sources.

2. **Benchmark Alignment**: The ILSVRC 2014 benchmark is central to the workflow, linking competition participation to academic citations.

3. **Reference Chaining**: The process leverages references from initial findings to validate and refine results.

### Interpretation

This flowchart demonstrates a data-driven methodology for locating cited papers by combining:

- **Textual Analysis**: Identifying keywords like "ILSVRC 2014" and "ImageNet" in excerpts.

- **Search Engine Automation**: Using structured queries to retrieve and rank relevant papers.

- **Citation Metrics**: Prioritizing papers with high citation counts to ensure reliability.

- **Reference Validation**: Confirming results against known benchmarks and competition records.

The workflow emphasizes efficiency and accuracy, reducing manual effort through automated search and citation tracking. The final selection of Paper "1" validates the hypothesis that the cited work is the ImageNet challenge paper, aligning with both the text excerpt and reference data.

</details>

Figure 3: The demonstration trajectory we gave CiteAgent in the prompt.

Read. Read command execution causes CiteAgent to retrieve the open-access PDF corresponding to the selected paper from Semantic Scholar. Using the PyPDF2 library [29], our system extracts the text from the PDF, excluding visual figures. It then presents the text to CiteAgent, which generates a thought and a new command. If an open-access PDF link is unavailable, CiteAgent returns a message to that effect. We note that due to the limited context length of 8K tokens in the LLaMA-3 LM, we excluded the read action when using that model.

Select. Select command execution causes CiteAgent to choose a paper to attribute to the input text excerpt, which ends the run. If the number of actions reaches 14, CiteAgent is prompted to make a selection, forcefully concluding the run. This design choice ensures that all runs complete within a finite time and budget.

4 Experiment Setup

Below, we provide detailed implementation information for the baseline models and the various CiteAgent configurations we used for our evaluations.

SPECTER Models. We present the results of SPECTER [21] and SPECTER2 [77] on CiteME as our baselines. SPECTER [21] encodes robust document-level representations for scientific texts, achieving high performance on citation prediction tasks without the need for fine-tuning. We use the Semantic Scholar SPECTER API https://github.com/allenai/paper-embedding-public-apis to embed the input text excerpts and the Semantic Scholar Datasets API https://api.semanticscholar.org/api-docs/datasets to embed all papers on Semantic Scholar, using these embeddings as our retrieval set.

SPECTER2 models [77] introduce task-specific representations, each tailored to different tasks. For our experiments, we use the base customization of SPECTER2 from Hugging Face https://huggingface.co/allenai/specter2 to embed text excerpts and the Semantic Scholar Datasets API to similarly embed all papers on Semantic Scholar, forming our retrieval set. We apply an exact kNN [53] match to identify the closest embedding, computing the cosine similarity between the embeddings of text excerpt and all available papers (title and abstract). Using exact kNN matches ensures no approximations/errors are introduced while matching queries. We embed the query text excerpt as title only and both title and abstract, but that did not change the performance of the SPECTER models.

CiteAgent. We run the CiteAgent system with three SoTA LMs as backbones: GPT-4o [1], Claude 3 Opus [3], and LLaMa-3-70B [81]. We additionally ablate over three classes of commands (Table 2):

1. Search and Read. The model can perform both search and read commands.

1. Search Only. The model is not allowed to read papers but can perform searches.

1. No Commands. The model operates with no access to the interface for actions like searching and reading.

Each class of actions is evaluated with and without demonstrations trajectories in the prompt, resulting in six configurations per LM. With three LMs, two action classes, and the option to include or exclude demonstrations, we present a total of 12 CiteAgent ablations. We exclude LLaMa with both Search and Read because its context length is limited to 8k tokens. For all experiments, we use a temperature of 0.95, following Yang et al. [88], and provide our detailed prompts in Appendix E.

5 Results

Table 3: Performance of LMs (using our system) and retrieval methods on CiteME, summarized.

| | GPT-4o | LLaMA-3-70B | Claude 3 Opus | SPECTER2 | SPECTER1 |

| --- | --- | --- | --- | --- | --- |

| Accuracy [%] | 35.3 | 21.0 | 27.7 | 0 | 0 |

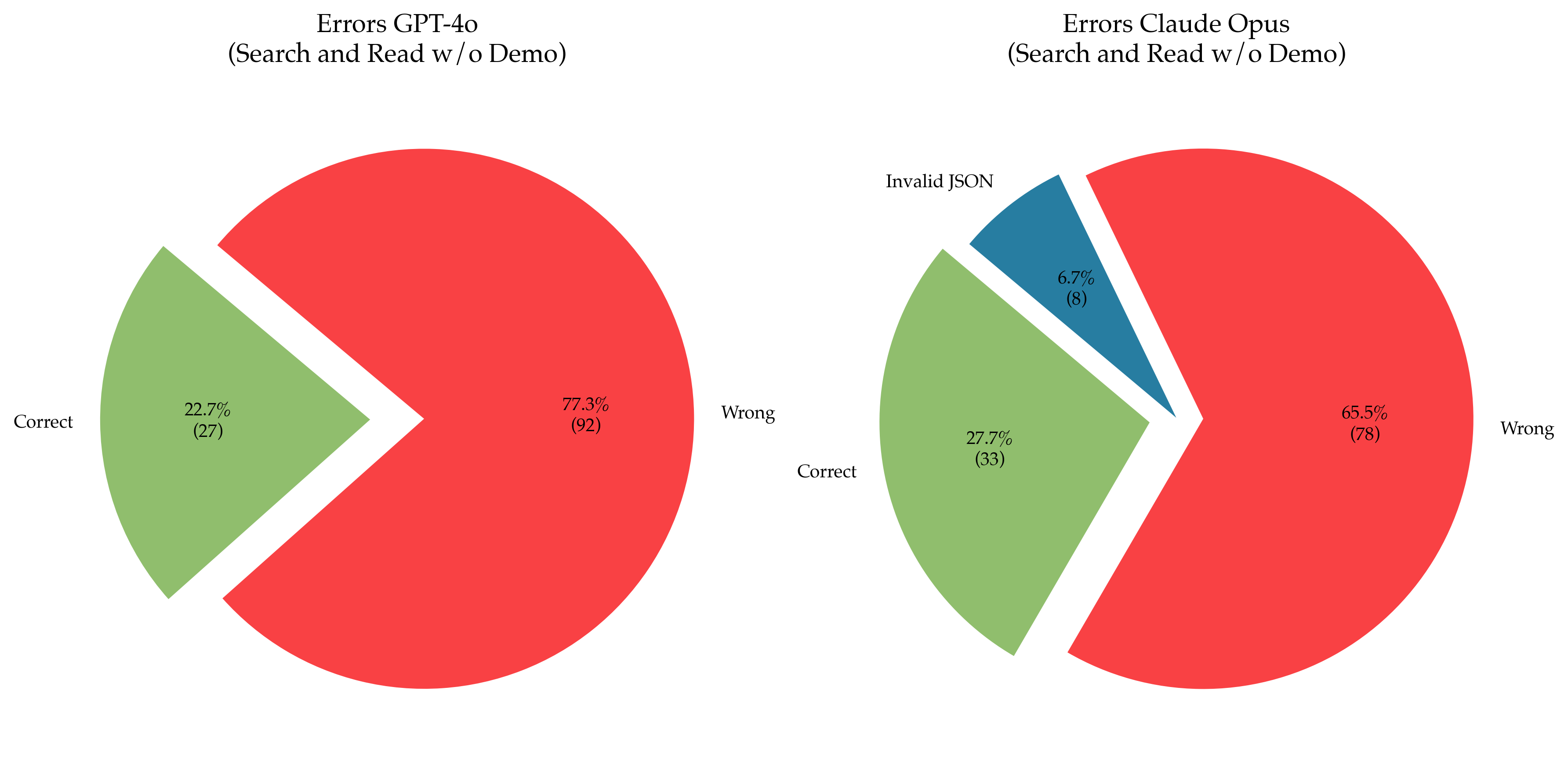

We present the evaluation results of the CiteME benchmark in Table 3. Our best model, CiteAgent (GPT-4o, search and read commands, and a demonstration in the prompt) achieves 35.3% accuracy, while the previous state-of-the-art models, SPECTER2 and SPECTER, achieve 0%. Human performance on the same task is 69.7% accuracy, with less than a minute of search time, indicating that a significant 34.4% gap remains.

Table 4: Accuracy (in %) of LMs and retrieval methods on CiteME. We test how the available commands and prompt demonstrations affect CiteME performance. LLaMA’s context window is too small and therefore incompatible with the read command.

| | Method | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- |

| GPT-4o | LLaMA-3-70B | Claude 3 Opus | SPECTER2 | SPECTER | | | |

| Commands | No Commands | w/o Demo | 0 | 4.2 | 15.1 | 0 | 0 |

| w/ Demo | 7.6 | 5.9 | 18.5 | – | – | | |

| Search Only | w/o Demo | 26.1 | 21.0 | 26.1 | – | – | |

| w/ Demo | 29.4 | 2.5 | 27.7 | – | – | | |

| Search and Read | w/o Demo | 22.7 | N/A | 27.7 | – | – | |

| w/ Demo | 35.3 | N/A | 26.1 | – | – | | |

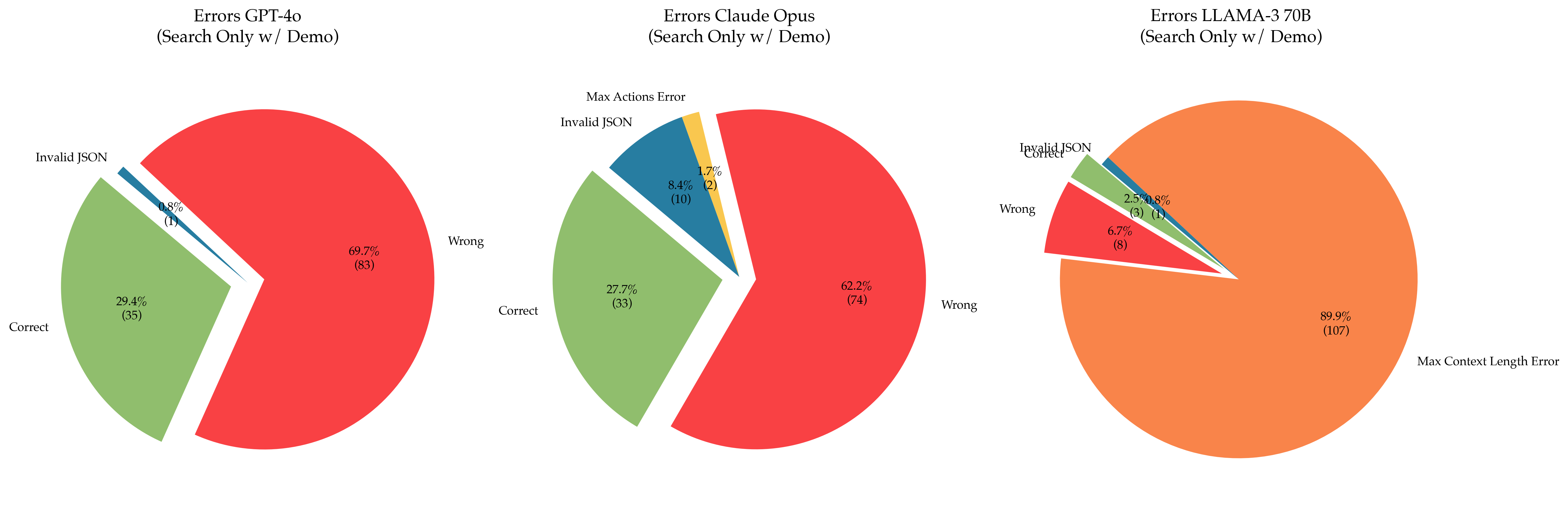

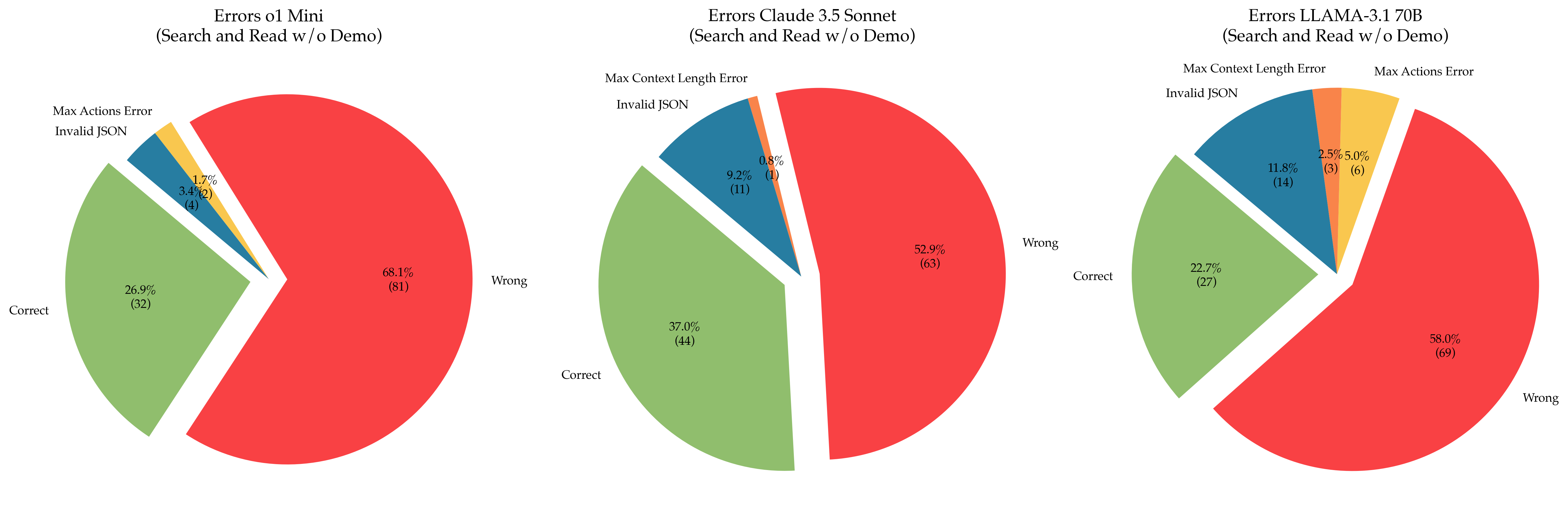

Performance across Language Models. Comparing the performance of LMs across columns in Table 4, GPT-4o demonstrates the highest accuracy when it has access to both read and search commands, outperforming other LMs by a wide margin. This finding aligns with previous research [88], which shows that GPT-4 powered agents excel in solving software issues. Notably, GPT-4o achieves high performance across settings even though CiteME consists exclusively of samples that GPT-4o cannot predict correctly without commands; its 0% performance without commands and demonstration trajectory is by design. However, LMs outperforming the SPECTER models purely by autoregressive generation provides evidence that LMs act as implicit knowledge bases with sufficient capacity [68].

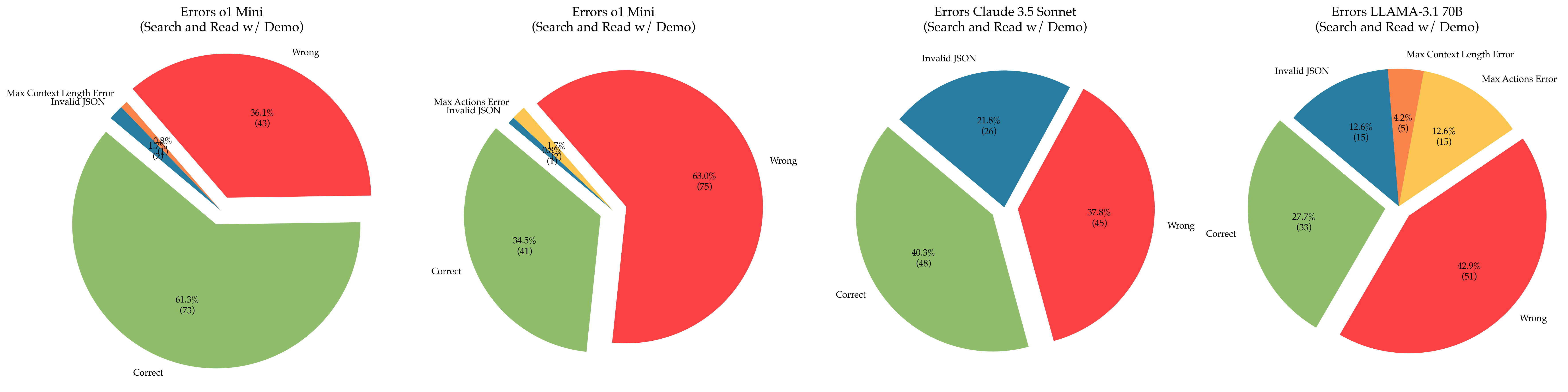

Peformance across Demonstrations. Comparing the performance between w/o Demo and w/ Demo rows in Table 4, we observe that LLaMA and Claude surprisingly perform worse when provided with a demonstration trajectory in the prompt. This may be due to the increased prompt length, which complicates the detection of important information [52]. LLaMA-3-70b incurs a performance drop to 2.5% due to combined history extending beyond its context length, resulting in errors. However, GPT-4o effectively utilizes demonstrations, which improves its accuracy.

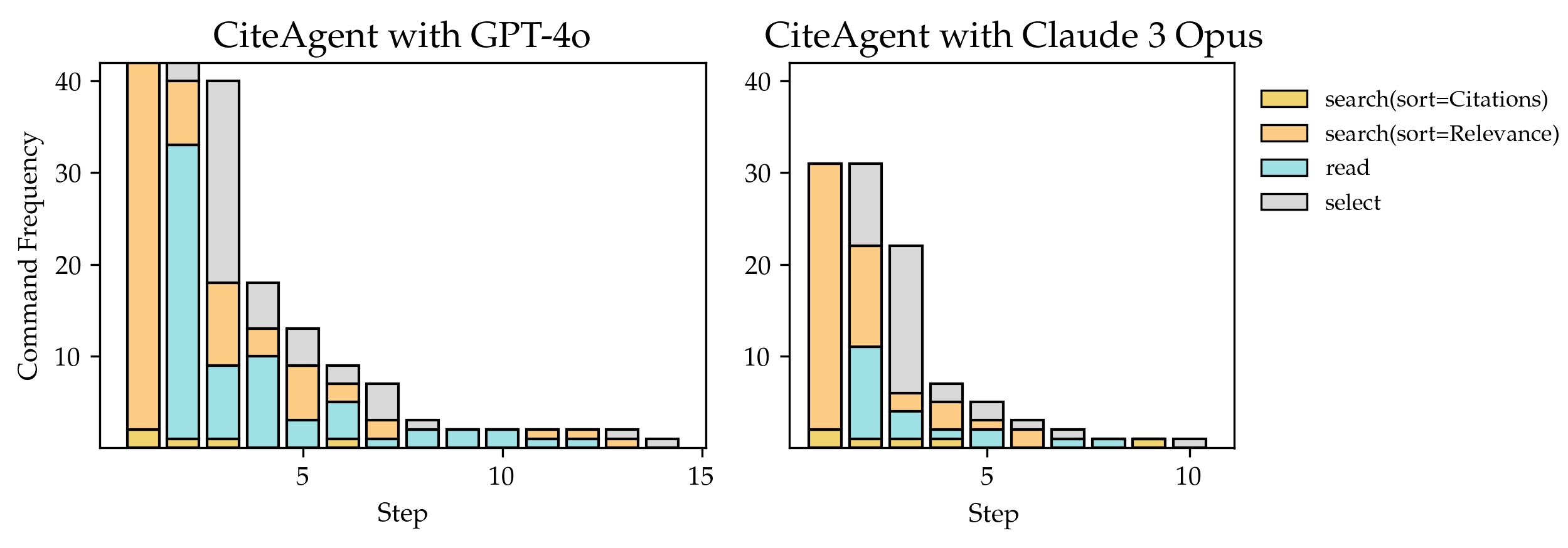

Performance across Commands. GPT-4o is the only LM whose accuracy improves with access to more commands, allowing it to read full papers. CiteAgent with GPT-4o creatively uses its commands across test samples, demonstrating command behaviors not shown in the demonstration trajectory (see Figure 4). It frequently refines its searches based on previous results and occasionally reads multiple papers before making a selection. In contrast, Claude 3 Opus is less effective in utilizing additional commands, likely due to difficulties in detecting important information [52].

<details>

<summary>extracted/5974968/figures/trajectory_analysis.png Details</summary>

### Visual Description

## Flowchart: Information Retrieval Workflow

### Overview

The image depicts a multi-stage information retrieval workflow visualized as five vertical sections, each containing stacked colored rectangles representing sequential operations. Sections are differentiated by dashed outlines (gray, green, red) and contain commands with sorting criteria ("sort=Citations" or "sort=Relevance") and actions ("read", "select").

### Components/Axes

- **Sections**: Five vertical columns labeled by dashed outlines:

1. Leftmost: Gray dashed outline

2. Second: Green dashed outline

3. Middle: No outline (longest section)

4. Fourth: Red dashed outline

5. Rightmost: Red dashed outline

- **Labels**: Textual commands in rectangles:

- Yellow: "search sort=Citations"

- Blue: "read"

- Gray: "select"

- Orange: "search sort=Relevance"

- **Flow Direction**: Top-to-bottom within each section, implying sequential execution.

### Detailed Analysis

1. **Left Section (Gray Outline)**:

- Commands:

- `search sort=Citations` (yellow)

- `read` (blue)

- `search sort=Citations` (yellow)

- `select` (gray)

- Structure: Alternating search-read pattern ending with selection.

2. **Second Section (Green Outline)**:

- Commands:

- `search sort=Citations` (yellow)

- `search sort=Relevance` (orange)

- `read` (blue)

- `select` (gray)

- Structure: Mixed sorting criteria before reading and selection.

3. **Middle Section (No Outline)**:

- Commands:

- `search sort=Relevance` (orange) ×4

- `read` (blue) ×3

- `select` (gray)

- Structure: Dominant "Relevance" sorting with repeated read operations.

4. **Fourth Section (Red Outline)**:

- Commands:

- `search sort=Relevance` (orange) ×3

- `read` (blue)

- `select` (gray)

- Structure: Balanced "Relevance" searches with single read/select.

5. **Rightmost Section (Red Outline)**:

- Commands:

- `search sort=Citations` (yellow) ×4

- `select` (gray)

- Structure: Exclusive "Citations" sorting with no read operations.

### Key Observations

- **Sorting Criteria**:

- "Citations" sorting dominates left/right sections.

- "Relevance" sorting dominates middle sections.

- **Read Operations**:

- Absent in rightmost section.

- Most frequent in middle section (3 instances).

- **Selection**:

- Universal final step in all sections.

- Gray rectangles consistently at bottom.

### Interpretation

This flowchart illustrates a comparative analysis of information retrieval strategies:

1. **Citations-First Approach** (Left/Right Sections):

- Prioritizes citation-based sorting.

- Rightmost section skips reading entirely, suggesting direct selection based on citations.

2. **Relevance-First Approach** (Middle Sections):

- Emphasizes relevance sorting with multiple read operations.

- Middle section's repetitive "search sort=Relevance" implies iterative refinement.

3. **Hybrid Strategy** (Second Section):

- Combines both sorting criteria, indicating adaptive workflows.

4. **Red-Outlined Sections** (Fourth/Fifth):

- Red outline may denote critical/alternative pathways.

- Fourth section's balanced approach contrasts with fifth's citation-exclusivity.

The workflow suggests a decision tree where sorting criteria and read operations vary based on research priorities, with selection as the universal endpoint. The absence of read operations in the rightmost section implies a confidence threshold where citations alone suffice for final selection.

</details>

Figure 4: Five CiteAgent trajectories on five different samples. CiteAgent often exhibits behavior not shown in the demonstration given in the prompt, for example: searching by citation count and then by relevance, and searching multiple times in a row. Gray dotted box: prompt demonstration; green dotted boxes: CiteAgent succeeds; red dotted boxes: CiteAgent fails.

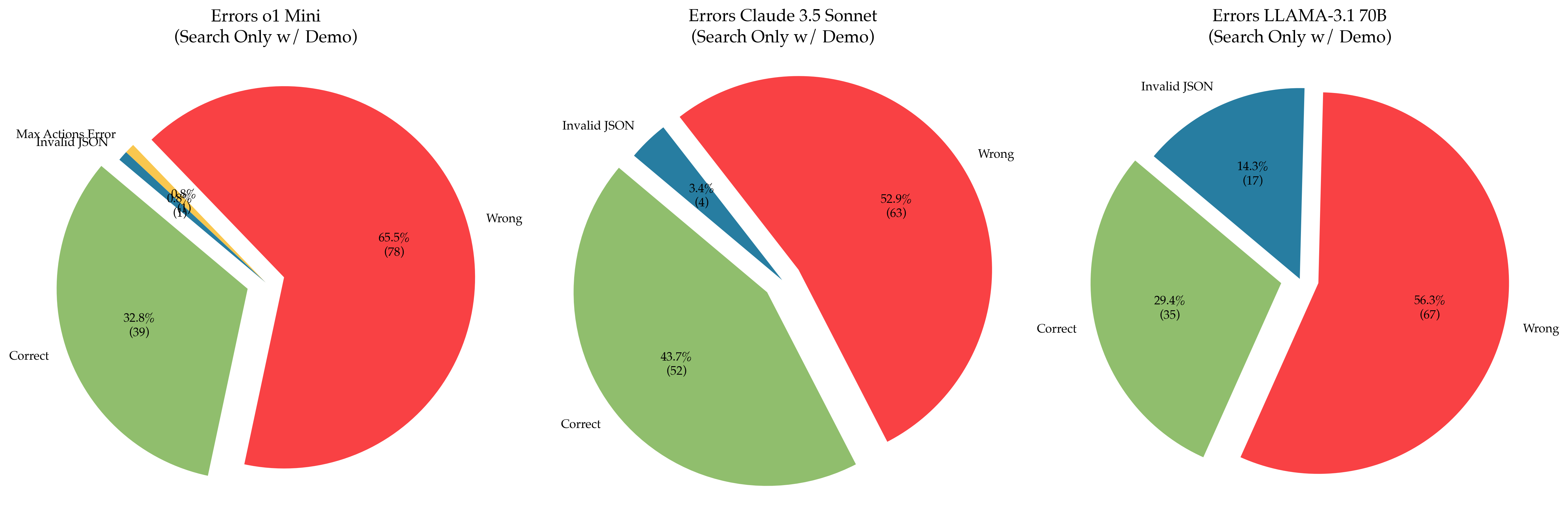

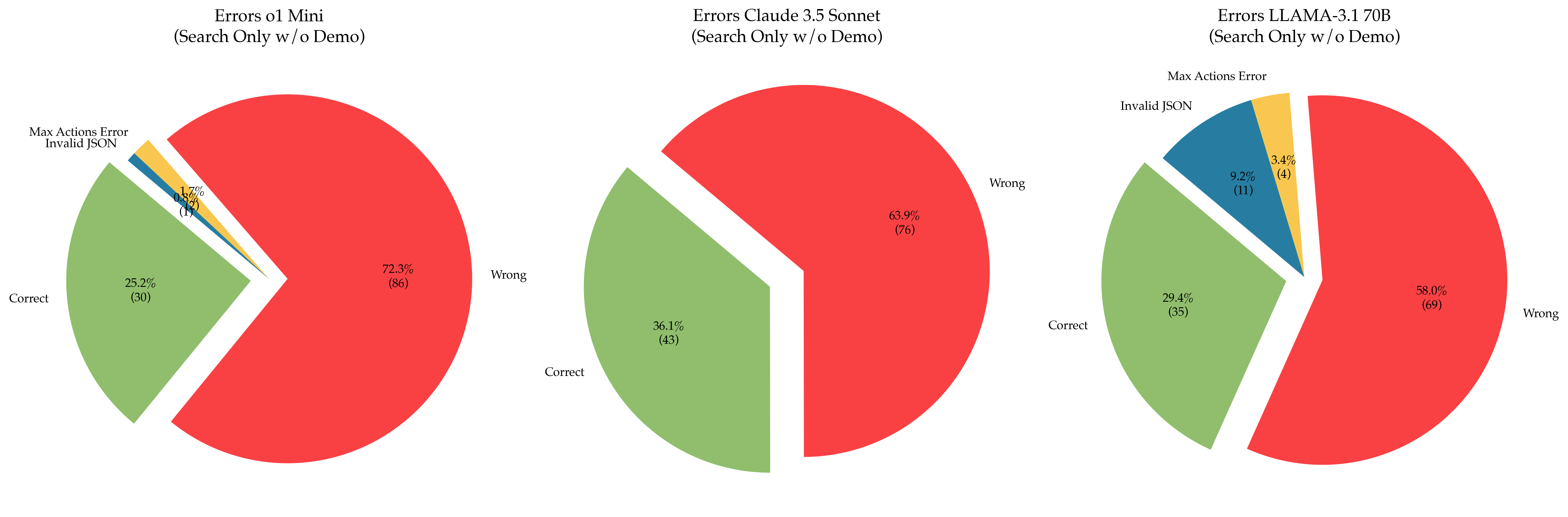

5.1 Error Analysis

To better identify CiteAgent’s shortcomings, we analyze 50 randomly chosen CiteME samples from the best performing CiteAgent (using the GPT-4o backbone, with demonstrations, Search and Read commands) failed to solve correctly. We classify each error into three types based on CiteAgent’s searches, its predicted paper and the justification provided:

Error Type 1: Misunderstands the Excerpt. This category accounts for 50% of the errors. It occurs when CiteAgent focuses on irrelevant parts of the excerpt or omits critical details. For example, in the following excerpt:

{quoting}

The pioneering work of Reed et al. [37] approached text-guided image generation by training a conditional GAN [CITATION], conditioned by text embeddings obtained from a pretrained encoder.

CiteAgent searches for "Reed text-guided image generation conditional GAN" instead of "conditional GAN". It mistakes "Reed" as relevant to the current citation although it pertains to the previous one.

Error Type 2: Understands the Excerpt but Stops Prematurely. In 32% of cases, CiteAgent searches for the correct term, but it stops at a roughly matching paper instead of the exact match. For example, in the following excerpt:

{quoting}

Using Gaussian noise and blur, [CITATION] demonstrate the superior robustness of human vision to convolutional networks, even after networks are fine-tuned on Gaussian noise or blur.

CiteAgent found a paper comparing human and machine robustness but missed that it did not cover fine-tuned networks. Notably, this paper referenced the correct target paper, meaning CiteAgent could have found the right answer with just one more step if it had properly understood the paper it was reading. Moreover, in 12.5% of such cases, the correct paper appeared in the search results but was not chosen by CiteAgent.

Error Type 3: Finds the Correct Citation but Stops Prematurely. The last 18% of errors occur when CiteAgent reads an abstract or paper and finds the correct citation; however, instead of doing another search, it selects the paper that cites the correct citation and stops searching. For example, in the following excerpt:

{quoting}

[CITATION] investigates transformers’ theoretical expressiveness, showing that transformers cannot robustly model noncounter-free regular languages even when allowing infinite precision.

CiteAgent finds a paper discussing the target paper and reports it, but it stops at the citing paper instead of searching for the correct target paper. For instance, it reports: ".. specifically mentioning Hahn’s work on transformers’ classification decisions becoming ineffective over longer input strings. This fits well with the description in the excerpt.." but it selects the citing paper instead of finding Hahn’s work, which is the correct target paper.

Technical Errors. Aside from comprehension errors that stem from a lack of understanding an excerpt, 5.8% of runs encountered technical issues. Occasionally, the LM formats responses incorrectly, making them unparseable by the system. Additionally, the Semantic Scholar API has inconsistencies, such as not providing open access PDF links when available or linking to non-existent web pages. Further details on these technical errors are provided in Appendix F.

<details>

<summary>extracted/5974968/figures/4o_claude.png Details</summary>

### Visual Description

## Bar Charts: Command Frequency Comparison for CiteAgent with GPT-4o and Claude 3 Opus

### Overview

The image contains two side-by-side bar charts comparing command frequencies for two AI agents: "CiteAgent with GPT-4o" (left) and "CiteAgent with Claude 3 Opus" (right). Each chart visualizes the distribution of four command types across 15 steps, with frequency values ranging from 0 to 40.

### Components/Axes

- **X-axis (Step)**: Labeled "Step," with markers at intervals of 5 (0, 5, 10, 15).

- **Y-axis (Command Frequency)**: Labeled "Command Frequency," scaled from 0 to 40.

- **Legend**: Positioned on the right of each chart, with four color-coded categories:

- Yellow: `search(sort=Citations)`

- Orange: `search(sort=Relevance)`

- Teal: `read`

- Gray: `select`

### Detailed Analysis

#### CiteAgent with GPT-4o (Left Chart)

- **Step 0**:

- `search(sort=Citations)`: ~40 (yellow)

- `search(sort=Relevance)`: ~35 (orange)

- `read`: ~33 (teal)

- `select`: ~2 (gray)

- **Step 5**:

- `search(sort=Citations)`: ~15 (yellow)

- `search(sort=Relevance)`: ~10 (orange)

- `read`: ~5 (teal)

- `select`: ~3 (gray)

- **Step 10**:

- All commands drop to ~1–2 (yellow/orange/teal/gray).

- **Step 15**:

- Minimal activity (~1 for gray `select`).

#### CiteAgent with Claude 3 Opus (Right Chart)

- **Step 0**:

- `search(sort=Citations)`: ~30 (yellow)

- `search(sort=Relevance)`: ~25 (orange)

- `read`: ~10 (teal)

- `select`: ~5 (gray)

- **Step 5**:

- `search(sort=Citations)`: ~5 (yellow)

- `search(sort=Relevance)`: ~3 (orange)

- `read`: ~2 (teal)

- `select`: ~4 (gray)

- **Step 10**:

- All commands drop to ~1–2 (yellow/orange/teal/gray).

- **Step 15**:

- Minimal activity (~1 for gray `select`).

### Key Observations

1. **Dominant Commands**:

- GPT-4o prioritizes `search(sort=Citations)` and `read` at Step 0, with frequencies ~40 and ~33, respectively.

- Claude 3 Opus emphasizes `search(sort=Citations)` (~30) and `search(sort=Relevance)` (~25) at Step 0.

2. **Decline Trends**:

- Both agents show steep declines in command frequency as steps increase, with near-zero activity by Step 15.

3. **Select Command**:

- GPT-4o’s `select` command remains negligible (~2 at Step 0), while Claude 3 Opus shows moderate use (~5 at Step 0).

### Interpretation

The data suggests that **GPT-4o** is optimized for citation-based searches and reading tasks, with high initial frequencies that decay rapidly. In contrast, **Claude 3 Opus** balances citation and relevance-based searches but allocates more resources to the `select` command. The sharp decline in activity across steps implies that both agents may struggle with sustained task execution or face diminishing returns over time. The disparity in `select` usage hints at differing strategies: GPT-4o focuses on information retrieval, while Claude 3 Opus integrates selection as a core workflow component.

</details>

Figure 5: CiteAgent trajectories on samples that were correctly predicted reveals differences in model behavior. GPT-4o reads more frequently than Claude 3 Opus and can correctly predict papers even after performing many actions.

5.2 Analyzing the Succesful Runs

Manually examining the instances that were correctly predicted by GPT-4o and Claude 3 Opus (Figure 5) provides insights into how the LMs use commands they were given. First, we confirm the results presented in Table 4: GPT-4o frequently reads papers before it correctly predicts a citation. Second, when both LMs correctly predict a paper, they usually take just 5 steps or fewer to do so. This could stem from LMs loss of important details when given a long context window [52].

CiteAgent’s trajectories on CiteME enable us to analyze the shortcomings of GPT-4o and other SoTA LMs. These range from understanding fine details in text (Type 1 and Type 2 Errors), to not completely understanding the task (Type 3 Errors), to being unable to use commands (Technical Errors). Correcting these errors could improve the utility of LMs on CiteME and for other related tasks.

5.3 Benchmarking Reasoning Capability Improvements with Latest Models

Table 5: Accuracy (in %) of newly released LMs on CiteME.

| Commands | Method No Commands | w/o Demo | Claude-3.5-Sonnet 8.4 | LLaMa-3.1-70B 3.4 | o1-mini 16.0 | o1-preview 38.7 |

| --- | --- | --- | --- | --- | --- | --- |

| w/ Demo | 9.2 | 8.4 | 10.9 | – | | |

| Search Only | w/o Demo | 36.1 | 29.4 | 25.2 | – | |

| w/ Demo | 43.7 | 29.4 | 32.8 | – | | |

| Search and Read | w/o Demo | 37.0 | 22.7 | 26.9 | – | |

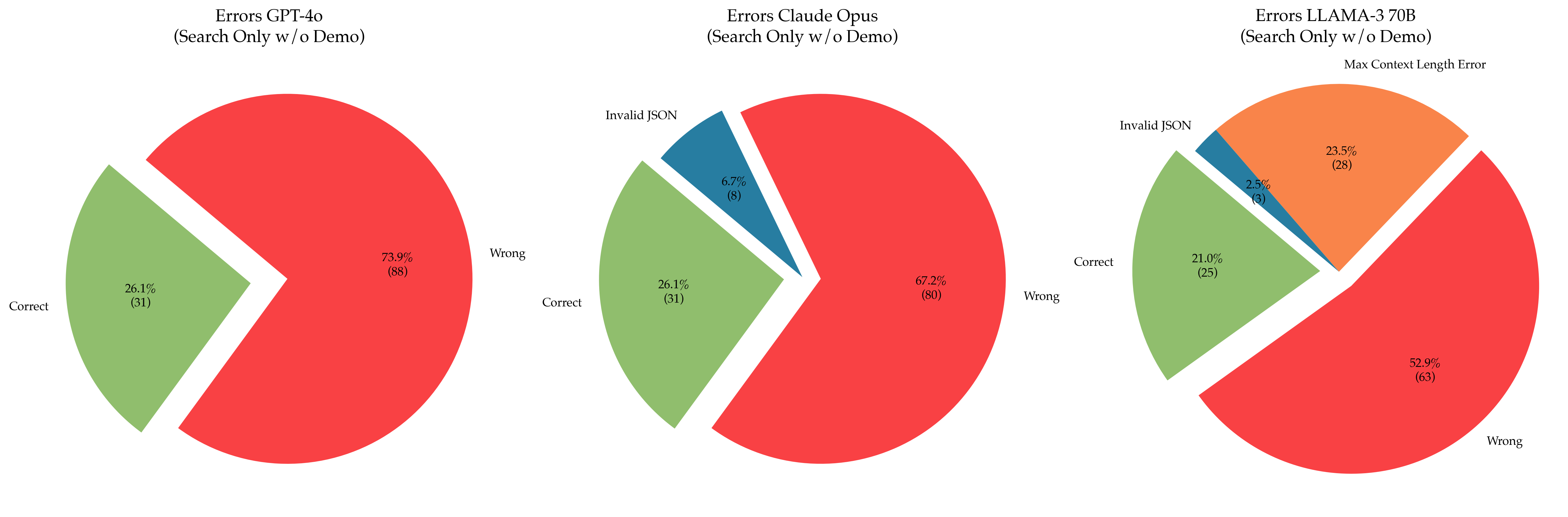

| w/ Demo | 40.3 | 27.7 | 34.5 | 61.3 | | |

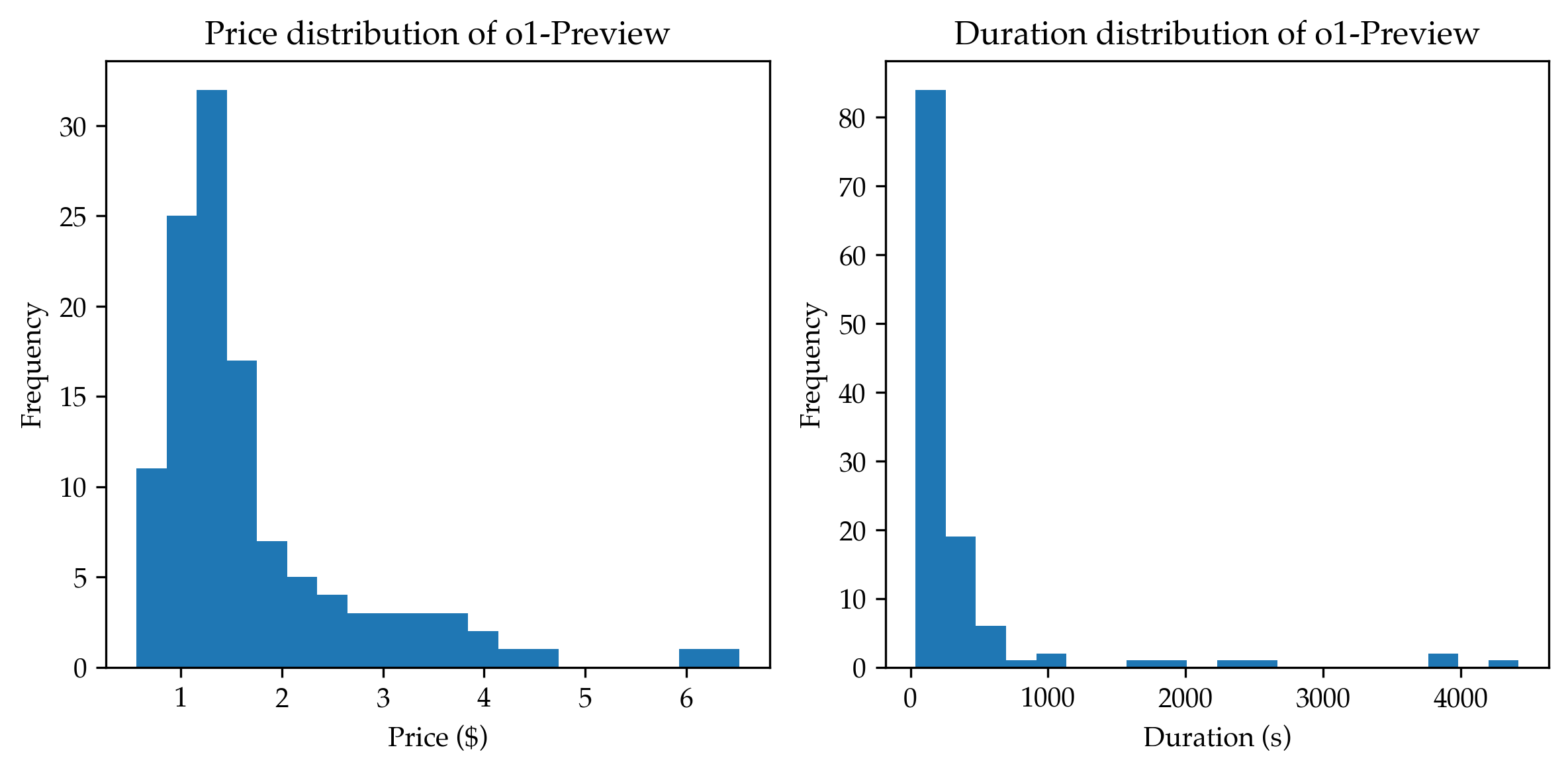

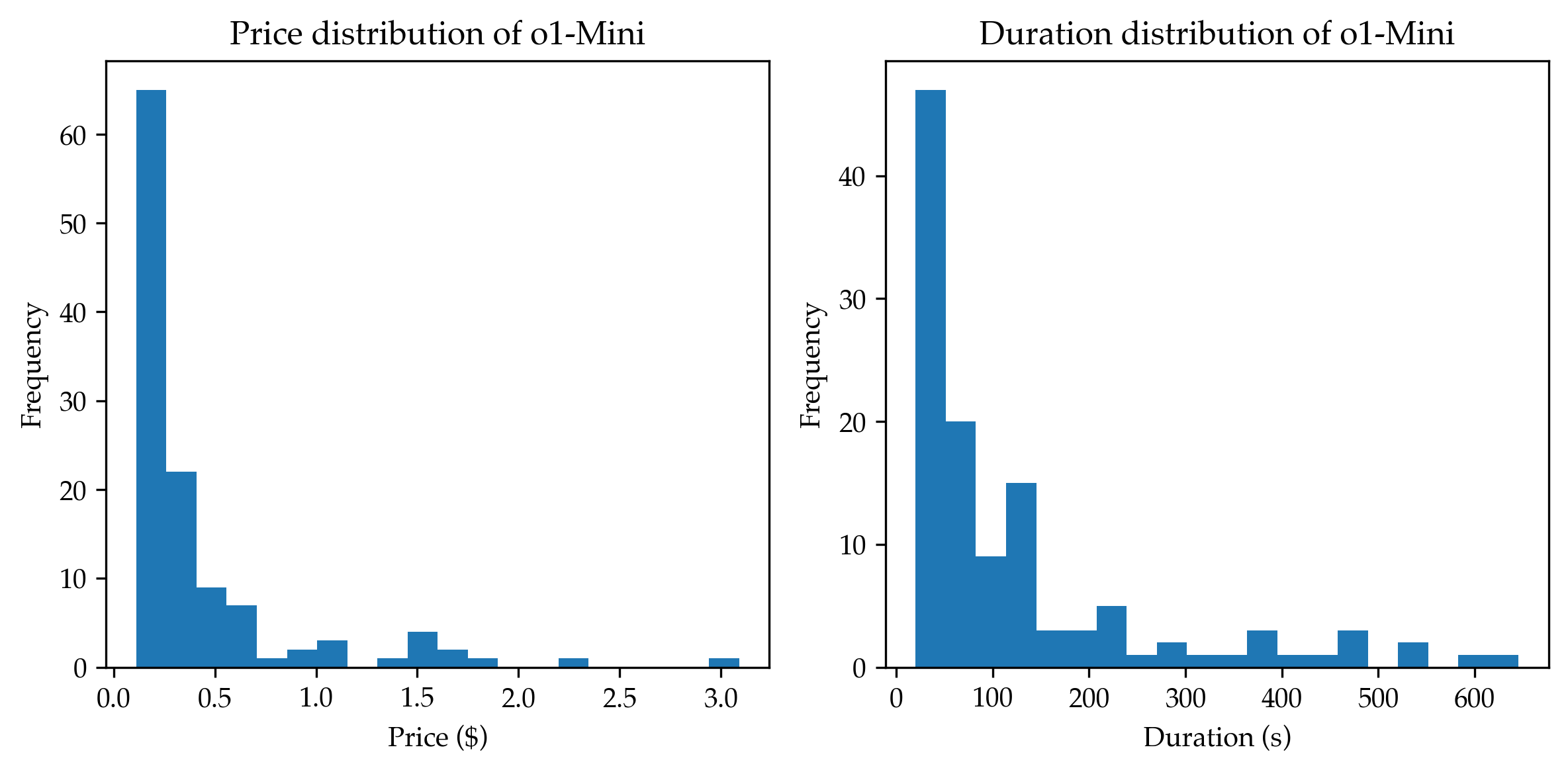

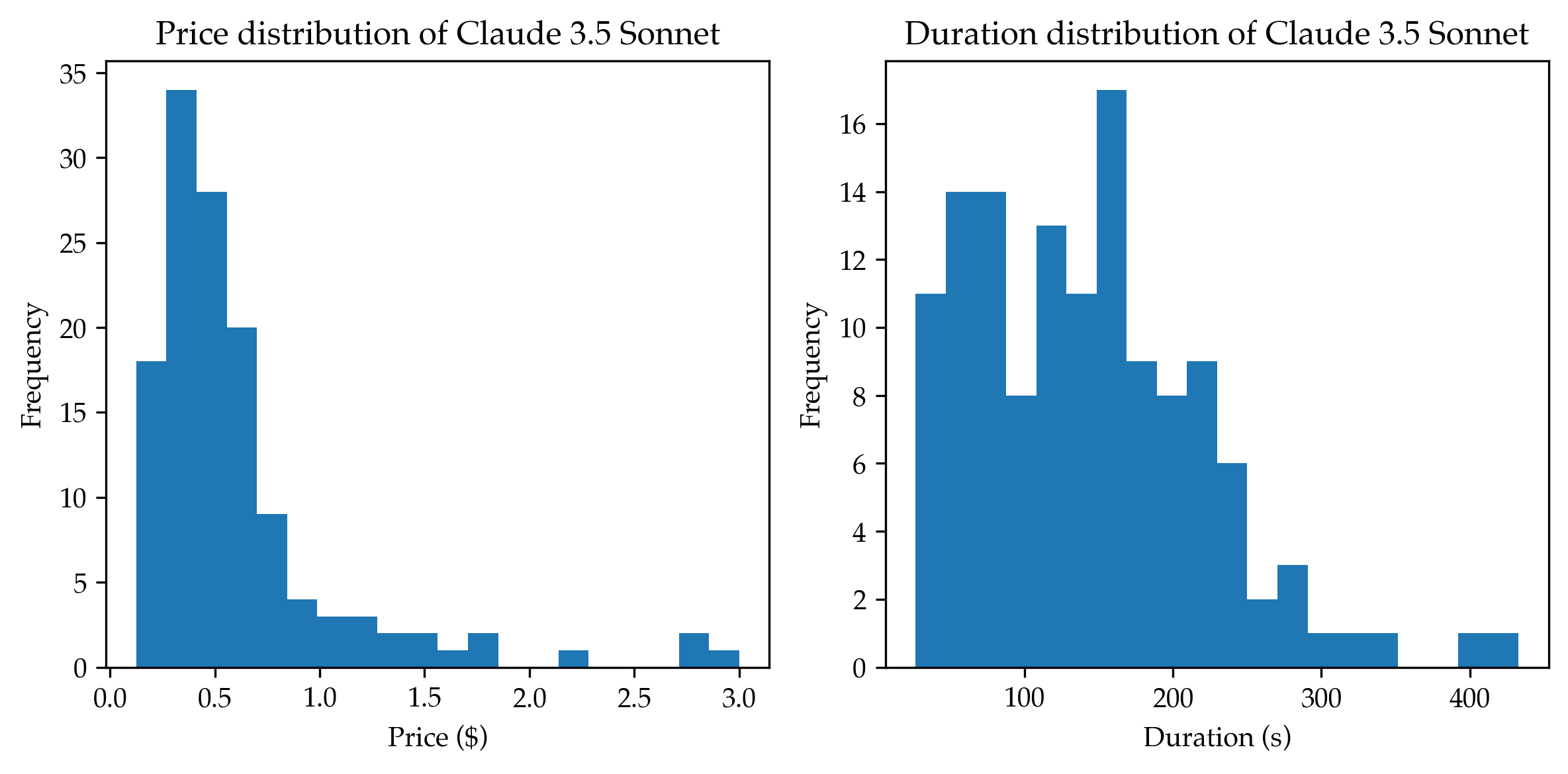

We compare the latest LLMs on the CiteME benchmark (Table 5) and find that Claude 3.5 Sonnet outperforms the previous best, Claude 3 Opus. This improvement stems from better generalization, as Sonnet achieves 9.2% without internet access, compared to Opus’ 18.5%. Similarly, LLaMa-3.1-70B shows significant gains of 8% over LLaMa-3.0-70B, highlighting enhanced reasoning capabilities. However, GPT-o1, while performing well on CiteME, appears to have memorized 38.7% of the dataset, making its 61.3% benchmark performance less clear in terms of true improvement compared to GPT-4o.

6 Related Work

Recent work has made substantial progress in developing methods and datasets to assist researchers in paper writing and literature review [8, 12, 87] or act as tutors [18]. Early work [48, 56] showed that researchers automatically retrieved topics and papers considered highly relevant to their work. Other studies included methods that assist researchers in finding new ideas [34], understanding certain topics [62], provide expert answers backed up by evidence [55] or clarifying a paper’s related work by supplementing it with more information and focus [15, 67].

Closer to our line of research, prior studies developed methods for substantiating specific claims using evidence from published papers [75, 83, 85, 84, 91, 24, 39, 45]. Retrieval-augmented LMs [49, 11, 30] are also popularly used to ground claims with real-world evidence (see [60] for a survey). Chen et al. [16] built a web-based retrieval-augmented pipeline for fact verification; this contrasts with methods that use a static dataset for claim retrieval and verification [36, 5]. Concurrent to this work, Ajith et al. [2] build a retrieval benchmark consisting of questions about discoveries shown in specific machine learning papers.

Paper discovery is a crucial component of systems that automate scientific research as shown in [10, 47, 54, 61, 78]. CiteME plays an important role in developing better tools for paper discovery, and provides a way to effectively measure their efficiency. Currently, these systems are tested as a whole, without isolating the tools responsible for scientific discovery. CiteME allows us to evaluate components within them independently – and we discover that current LM Agents are not yet ready for automated paper discovery, leading to serious gaps in end-to-end automated research pipelines.

In addition, most existing LM benchmarks are saturated, with most LMs scoring 80-95% on them [43, 38, 20]. There is a need in the AI community to show what properties LMs currently lack, to show LM developers what aspects they should work on. On CiteME, the best LMs get less than 40%, clearly indicating to developers an important task that they could improve LMs on, while also providing an indicator they can use to track progress.

Context-aware Recommendation. Relevant to our research focus, [57, 64, 37] take as input documents or parts thereof and recommend papers that are likely to be cited, often referred to as context-aware citation recommendation [51, 26, 89, 28, 42, 65, 33]. The text inputs we use in CiteME resemble those used in [42, 65, 80], which contain a few sentences with a masked out citation. However, CiteME differs because it uses excerpts containing only one unambiguous citation, making the context sufficient to identify the cited paper. Furthermore, our work explores agents with access to real-time paper information through tools like Semantic Scholar. This is crucial for real-time use since thousands of new papers are indexed by arXiv monthly (e.g., 8,895 papers in March 2024 under the cs category) [4]. Most previous approaches would be impractical due to the need for retraining with every new paper issuance.

Citation Attribution Datasets. A variety of datasets contain text excerpts from scientific papers and corresponding citations [32, 31, 9, 40, 72, 44, 42, 33]. There are many crucial distinctions between the aforementioned datasets and CiteME, with the main one being that CiteME is composed of manually selected excerpts that clearly reference a paper. To our best knowledge, CiteME is the only dataset that reports human accuracy on the benchmark.

Additionally, the excerpts in CiteME are mostly taken from papers published in the last few years (see Figure 2), whereas other datasets contain older papers. For example, the arXiv dataset [33] includes papers from 1991-2020, and FullTextPaperRead [42] contains papers from 2007-2017. This currency is particularly relevant in rapidly evolving fields like machine learning. The key distinction between the dataset and methods we present compared to previous works is their real life applicability. Our agent is based on SoTA LMs, needs no extra training, and can use a search engine, all of which make it easily applicable to real-world settings.

7 Conclusion

This work introduces a citation attribution benchmark containing manually curated text excerpts that unambiguously refer to a single other paper. We posit that methods that succeed on CiteME are likely to be highly useful in assisting researchers with real-world ML-specific attribution tasks but also generally useful in finding sources for generic claims. Further, our CiteAgent autonomous system can search the Internet for and read papers, which we show to significantly enhance the abilities of LMs on CiteME. We anticipate that this work will lead to LMs that are more accurate research assistants in the vital scholarship tasks of attribution.

Author Contributions

The project was initiated by Andreas Hochlehnert and Ori Press, with feedback from Ameya Prabhu, Ofir Press, and Matthias Bethge. The dataset was created by Ori Press and Ameya Prabhu, with help from Vishaal Udandarao and Ofir Press. Experiments were carried out by Andreas Hochlehnert, with help from Ameya Prabhu. All authors contributed to the final manuscript.

Acknowledgements

The authors thank the International Max Planck Research School for Intelligent Systems (IMPRS-IS) for supporting Ori Press, Andreas Hochlehnert, and Vishaal Udandarao. Andreas Hochlehnert is supported by the Carl Zeiss Foundation through the project “Certification and Foundations of Safe ML Systems”. Matthias Bethge acknowledges financial support via the Open Philanthropy Foundation funded by the Good Ventures Foundation. Vishaal Udandarao was supported by a Google PhD Fellowship in Machine Intelligence. Matthias Bethge is a member of the Machine Learning Cluster of Excellence, funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) under Germany’s Excellence Strategy – EXC number 2064/1 – Project number 390727645 and acknowledges support by the German Research Foundation (DFG): SFB 1233, Robust Vision: Inference Principles and Neural Mechanisms, TP 4, Project No: 276693517. This work was supported by the Tübingen AI Center. The authors declare no conflicts of interests.

References

- Achiam et al. [2023] Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Ajith et al. [2024] Anirudh Ajith, Mengzhou Xia, Alexis Chevalier, Tanya Goyal, Danqi Chen, and Tianyu Gao. Litsearch: A retrieval benchmark for scientific literature search. arXiv preprint arXiv:2407.18940, 2024.

- Anthropic [2024] Anthropic. Introducing the next generation of claude, 2024. URL https://www.anthropic.com/news/claude-3-family.

- arXiv [2024] arXiv. arxiv monthly submission statistics, 2024. URL https://arxiv.org/stats/monthly_submissions. Accessed: 2024-05-27.

- Atanasova [2024] Pepa Atanasova. Generating fact checking explanations. In Accountable and Explainable Methods for Complex Reasoning over Text, pages 83–103. Springer, 2024.

- Augenstein et al. [2023] Isabelle Augenstein, Timothy Baldwin, Meeyoung Cha, Tanmoy Chakraborty, Giovanni Luca Ciampaglia, David Corney, Renee DiResta, Emilio Ferrara, Scott Hale, Alon Halevy, et al. Factuality challenges in the era of large language models. arXiv preprint arXiv:2310.05189, 2023.

- Bengio [2013] Yoshua Bengio. Deep learning of representations: Looking forward. In International conference on statistical language and speech processing, pages 1–37. Springer, 2013.

- Bhagavatula et al. [2018] Chandra Bhagavatula, Sergey Feldman, Russell Power, and Waleed Ammar. Content-based citation recommendation. arXiv preprint arXiv:1802.08301, 2018.

- Bird et al. [2008] Steven Bird, Robert Dale, Bonnie Dorr, Bryan Gibson, Mark Joseph, Min-Yen Kan, Dongwon Lee, Brett Powley, Dragomir Radev, and Yee Fan Tan. The ACL Anthology reference corpus: A reference dataset for bibliographic research in computational linguistics. In Nicoletta Calzolari, Khalid Choukri, Bente Maegaard, Joseph Mariani, Jan Odijk, Stelios Piperidis, and Daniel Tapias, editors, Proceedings of the Sixth International Conference on Language Resources and Evaluation (LREC’08), Marrakech, Morocco, May 2008. European Language Resources Association (ELRA). URL http://www.lrec-conf.org/proceedings/lrec2008/pdf/445_paper.pdf.

- Boiko et al. [2023] Daniil A Boiko, Robert MacKnight, Ben Kline, and Gabe Gomes. Autonomous chemical research with large language models. Nature, 624(7992):570–578, 2023.

- Borgeaud et al. [2022] Sebastian Borgeaud, Arthur Mensch, Jordan Hoffmann, Trevor Cai, Eliza Rutherford, Katie Millican, George Bm Van Den Driessche, Jean-Baptiste Lespiau, Bogdan Damoc, Aidan Clark, et al. Improving language models by retrieving from trillions of tokens. In International conference on machine learning, pages 2206–2240. PMLR, 2022.

- Boyko et al. [2023] James Boyko, Joseph Cohen, Nathan Fox, Maria Han Veiga, Jennifer I Li, Jing Liu, Bernardo Modenesi, Andreas H Rauch, Kenneth N Reid, Soumi Tribedi, et al. An interdisciplinary outlook on large language models for scientific research. arXiv preprint arXiv:2311.04929, 2023.

- Bui et al. [2016] Thang Bui, Daniel Hernández-Lobato, Jose Hernandez-Lobato, Yingzhen Li, and Richard Turner. Deep gaussian processes for regression using approximate expectation propagation. In International conference on machine learning, pages 1472–1481. PMLR, 2016.

- Burt et al. [2020] David R Burt, Carl Edward Rasmussen, and Mark Van Der Wilk. Convergence of sparse variational inference in gaussian processes regression. Journal of Machine Learning Research, 21(131):1–63, 2020.

- Chang et al. [2023] Joseph Chee Chang, Amy X Zhang, Jonathan Bragg, Andrew Head, Kyle Lo, Doug Downey, and Daniel S Weld. Citesee: Augmenting citations in scientific papers with persistent and personalized historical context. In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems, pages 1–15, 2023.

- Chen et al. [2023] Jifan Chen, Grace Kim, Aniruddh Sriram, Greg Durrett, and Eunsol Choi. Complex claim verification with evidence retrieved in the wild. arXiv preprint arXiv:2305.11859, 2023.

- Chen et al. [2021] Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, et al. Evaluating large language models trained on code. arXiv preprint arXiv:2107.03374, 2021.

- Chevalier et al. [2024] Alexis Chevalier, Jiayi Geng, Alexander Wettig, Howard Chen, Sebastian Mizera, Toni Annala, Max Jameson Aragon, Arturo Rodríguez Fanlo, Simon Frieder, Simon Machado, et al. Language models as science tutors. arXiv preprint arXiv:2402.11111, 2024.

- Chowdhery et al. [2023] Aakanksha Chowdhery, Sharan Narang, Jacob Devlin, Maarten Bosma, Gaurav Mishra, Adam Roberts, Paul Barham, Hyung Won Chung, Charles Sutton, Sebastian Gehrmann, et al. Palm: Scaling language modeling with pathways. Journal of Machine Learning Research, 24(240):1–113, 2023.

- Cobbe et al. [2021] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- Cohan et al. [2020] Arman Cohan, Sergey Feldman, Iz Beltagy, Doug Downey, and Daniel S Weld. Specter: Document-level representation learning using citation-informed transformers. arXiv preprint arXiv:2004.07180, 2020.

- Cohen et al. [2010] K Bretonnel Cohen, Helen L Johnson, Karin Verspoor, Christophe Roeder, and Lawrence E Hunter. The structural and content aspects of abstracts versus bodies of full text journal articles are different. BMC bioinformatics, 11:1–10, 2010.

- Cox [1958] David R Cox. The regression analysis of binary sequences. Journal of the Royal Statistical Society Series B: Statistical Methodology, 20(2):215–232, 1958.

- [24] Sam Cox, Michael Hammerling, Jakub Lála, Jon Laurent, Sam Rodriques, Matt Rubashkin, and Andrew White. Wikicrow: Automating synthesis of human scientific knowledge.

- Dakhel et al. [2023] Arghavan Moradi Dakhel, Vahid Majdinasab, Amin Nikanjam, Foutse Khomh, Michel C Desmarais, and Zhen Ming Jack Jiang. Github copilot ai pair programmer: Asset or liability? Journal of Systems and Software, 203:111734, 2023.

- Ebesu and Fang [2017] Travis Ebesu and Yi Fang. Neural citation network for context-aware citation recommendation. In Proceedings of the 40th international ACM SIGIR conference on research and development in information retrieval, pages 1093–1096, 2017.

- Färber and Jatowt [2020] Michael Färber and Adam Jatowt. Citation recommendation: approaches and datasets. International Journal on Digital Libraries, 21(4):375–405, 2020.

- Färber and Sampath [2020] Michael Färber and Ashwath Sampath. Hybridcite: A hybrid model for context-aware citation recommendation. In Proceedings of the ACM/IEEE joint conference on digital libraries in 2020, pages 117–126, 2020.

- Fenniak et al. [2024] Mathieu Fenniak, Matthew Stamy, pubpub zz, Martin Thoma, Matthew Peveler, exiledkingcc, and pypdf Contributors. The pypdf library, 2024. URL https://pypi.org/project/pypdf/. See https://pypdf.readthedocs.io/en/latest/meta/CONTRIBUTORS.html for all contributors.

- Gao et al. [2023] Tianyu Gao, Howard Yen, Jiatong Yu, and Danqi Chen. Enabling large language models to generate text with citations. arXiv preprint arXiv:2305.14627, 2023.

- Gehrke et al. [2003] Johannes Gehrke, Paul Ginsparg, and Jon Kleinberg. Overview of the 2003 kdd cup. Acm Sigkdd Explorations Newsletter, 5(2):149–151, 2003.

- Giles et al. [1998] C Lee Giles, Kurt D Bollacker, and Steve Lawrence. Citeseer: An automatic citation indexing system. In Proceedings of the third ACM conference on Digital libraries, pages 89–98, 1998.

- Gu et al. [2022] Nianlong Gu, Yingqiang Gao, and Richard HR Hahnloser. Local citation recommendation with hierarchical-attention text encoder and scibert-based reranking. In European conference on information retrieval, pages 274–288. Springer, 2022.

- Gu and Krenn [2024] Xuemei Gu and Mario Krenn. Generation and human-expert evaluation of interesting research ideas using knowledge graphs and large language models. arXiv preprint arXiv:2405.17044, 2024.

- Guu et al. [2015] Kelvin Guu, John Miller, and Percy Liang. Traversing knowledge graphs in vector space. arXiv preprint arXiv:1506.01094, 2015.

- Hanselowski et al. [2019] Andreas Hanselowski, Christian Stab, Claudia Schulz, Zile Li, and Iryna Gurevych. A richly annotated corpus for different tasks in automated fact-checking. arXiv preprint arXiv:1911.01214, 2019.

- He et al. [2010] Qi He, Jian Pei, Daniel Kifer, Prasenjit Mitra, and Lee Giles. Context-aware citation recommendation. In Proceedings of the 19th international conference on World wide web, pages 421–430, 2010.

- Hendrycks et al. [2021] Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the math dataset. arXiv preprint arXiv:2103.03874, 2021.

- Huang et al. [2024] Chengyu Huang, Zeqiu Wu, Yushi Hu, and Wenya Wang. Training language models to generate text with citations via fine-grained rewards. arXiv preprint arXiv:2402.04315, 2024.

- Huang et al. [2014] Wenyi Huang, Zhaohui Wu, Prasenjit Mitra, and C Lee Giles. Refseer: A citation recommendation system. In IEEE/ACM joint conference on digital libraries, pages 371–374. IEEE, 2014.

- Iyyer et al. [2014] Mohit Iyyer, Jordan Boyd-Graber, Leonardo Claudino, Richard Socher, and Hal Daumé III. A neural network for factoid question answering over paragraphs. In Proceedings of the 2014 conference on empirical methods in natural language processing (EMNLP), pages 633–644, 2014.

- Jeong et al. [2020] Chanwoo Jeong, Sion Jang, Eunjeong Park, and Sungchul Choi. A context-aware citation recommendation model with bert and graph convolutional networks. Scientometrics, 124:1907–1922, 2020.

- Joshi et al. [2017] Mandar Joshi, Eunsol Choi, Daniel S Weld, and Luke Zettlemoyer. Triviaqa: A large scale distantly supervised challenge dataset for reading comprehension. arXiv preprint arXiv:1705.03551, 2017.

- Kang et al. [2018] Dongyeop Kang, Waleed Ammar, Bhavana Dalvi, Madeleine Van Zuylen, Sebastian Kohlmeier, Eduard Hovy, and Roy Schwartz. A dataset of peer reviews (peerread): Collection, insights and nlp applications. arXiv preprint arXiv:1804.09635, 2018.

- Khalifa et al. [2024] Muhammad Khalifa, David Wadden, Emma Strubell, Honglak Lee, Lu Wang, Iz Beltagy, and Hao Peng. Source-aware training enables knowledge attribution in language models. arXiv preprint arXiv:2404.01019, 2024.

- Kinney et al. [2023] Rodney Kinney, Chloe Anastasiades, Russell Authur, Iz Beltagy, Jonathan Bragg, Alexandra Buraczynski, Isabel Cachola, Stefan Candra, Yoganand Chandrasekhar, Arman Cohan, et al. The semantic scholar open data platform. arXiv preprint arXiv:2301.10140, 2023.

- Lála et al. [2023] Jakub Lála, Odhran O’Donoghue, Aleksandar Shtedritski, Sam Cox, Samuel G Rodriques, and Andrew D White. Paperqa: Retrieval-augmented generative agent for scientific research. arXiv preprint arXiv:2312.07559, 2023.

- Learning [2011] Machine Learning. Apolo: Making sense of large network data by combining. 2011.

- Lewis et al. [2020] Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, et al. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in Neural Information Processing Systems, 33:9459–9474, 2020.

- Lin [2009] Jimmy Lin. Is searching full text more effective than searching abstracts? BMC bioinformatics, 10:1–15, 2009.

- Liu et al. [2015] Haifeng Liu, Xiangjie Kong, Xiaomei Bai, Wei Wang, Teshome Megersa Bekele, and Feng Xia. Context-based collaborative filtering for citation recommendation. Ieee Access, 3:1695–1703, 2015.

- Liu et al. [2024] Nelson F Liu, Kevin Lin, John Hewitt, Ashwin Paranjape, Michele Bevilacqua, Fabio Petroni, and Percy Liang. Lost in the middle: How language models use long contexts. Transactions of the Association for Computational Linguistics, 12:157–173, 2024.

- Lloyd [1982] Stuart Lloyd. Least squares quantization in pcm. IEEE transactions on information theory, 28(2):129–137, 1982.

- M. Bran et al. [2024] Andres M. Bran, Sam Cox, Oliver Schilter, Carlo Baldassari, Andrew D White, and Philippe Schwaller. Augmenting large language models with chemistry tools. Nature Machine Intelligence, pages 1–11, 2024.

- Malaviya et al. [2023] Chaitanya Malaviya, Subin Lee, Sihao Chen, Elizabeth Sieber, Mark Yatskar, and Dan Roth. Expertqa: Expert-curated questions and attributed answers. arXiv preprint arXiv:2309.07852, 2023.

- Mayr [2014] Philipp Mayr. Are topic-specific search term, journal name and author name recommendations relevant for researchers? arXiv preprint arXiv:1408.4440, 2014.

- McNee et al. [2002] Sean M McNee, Istvan Albert, Dan Cosley, Prateep Gopalkrishnan, Shyong K Lam, Al Mamunur Rashid, Joseph A Konstan, and John Riedl. On the recommending of citations for research papers. In Proceedings of the 2002 ACM conference on Computer supported cooperative work, pages 116–125, 2002.

- Medić and Šnajder [2020] Zoran Medić and Jan Šnajder. Improved local citation recommendation based on context enhanced with global information. In Proceedings of the first workshop on scholarly document processing, pages 97–103, 2020.

- Metzler et al. [2021] Donald Metzler, Yi Tay, Dara Bahri, and Marc Najork. Rethinking search: making domain experts out of dilettantes. In Acm sigir forum, volume 55, pages 1–27. ACM New York, NY, USA, 2021.

- Mialon et al. [2023] Grégoire Mialon, Roberto Dessì, Maria Lomeli, Christoforos Nalmpantis, Ram Pasunuru, Roberta Raileanu, Baptiste Rozière, Timo Schick, Jane Dwivedi-Yu, Asli Celikyilmaz, et al. Augmented language models: a survey. arXiv preprint arXiv:2302.07842, 2023.

- Miret and Krishnan [2024] Santiago Miret and NM Krishnan. Are llms ready for real-world materials discovery? arXiv preprint arXiv:2402.05200, 2024.

- Murthy et al. [2022] Sonia K Murthy, Kyle Lo, Daniel King, Chandra Bhagavatula, Bailey Kuehl, Sophie Johnson, Jonathan Borchardt, Daniel S Weld, Tom Hope, and Doug Downey. Accord: A multi-document approach to generating diverse descriptions of scientific concepts. arXiv preprint arXiv:2205.06982, 2022.

- Muthukadan [2011] Baiju Muthukadan. Selenium with python. https://selenium-python.readthedocs.io/, 2011.

- Nallapati et al. [2008] Ramesh M Nallapati, Amr Ahmed, Eric P Xing, and William W Cohen. Joint latent topic models for text and citations. In Proceedings of the 14th ACM SIGKDD international conference on Knowledge discovery and data mining, pages 542–550, 2008.

- Ohagi and Aizawa [2022] Masaya Ohagi and Akiko Aizawa. Pre-trained transformer-based citation context-aware citation network embeddings. In Proceedings of the 22nd ACM/IEEE Joint Conference on Digital Libraries, pages 1–5, 2022.

- Ouyang et al. [2022] Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. Training language models to follow instructions with human feedback. Advances in neural information processing systems, 35:27730–27744, 2022.

- Palani et al. [2023] Srishti Palani, Aakanksha Naik, Doug Downey, Amy X Zhang, Jonathan Bragg, and Joseph Chee Chang. Relatedly: Scaffolding literature reviews with existing related work sections. In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems, pages 1–20, 2023.

- Petroni et al. [2019] Fabio Petroni, Tim Rocktäschel, Patrick Lewis, Anton Bakhtin, Yuxiang Wu, Alexander H Miller, and Sebastian Riedel. Language models as knowledge bases? arXiv preprint arXiv:1909.01066, 2019.

- Polo et al. [2024] Felipe Maia Polo, Lucas Weber, Leshem Choshen, Yuekai Sun, Gongjun Xu, and Mikhail Yurochkin. tinybenchmarks: evaluating llms with fewer examples. arXiv preprint arXiv:2402.14992, 2024.

- Prabhu et al. [2024] Ameya Prabhu, Vishaal Udandarao, Philip Torr, Matthias Bethge, Adel Bibi, and Samuel Albanie. Lifelong benchmarks: Efficient model evaluation in an era of rapid progress. arXiv preprint arXiv:2402.19472, 2024.

- Press et al. [2022] Ofir Press, Muru Zhang, Sewon Min, Ludwig Schmidt, Noah A Smith, and Mike Lewis. Measuring and narrowing the compositionality gap in language models. arXiv preprint arXiv:2210.03350, 2022.

- Radev et al. [2013] Dragomir R Radev, Pradeep Muthukrishnan, Vahed Qazvinian, and Amjad Abu-Jbara. The acl anthology network corpus. Language Resources and Evaluation, 47:919–944, 2013.

- Rein et al. [2023] David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R Bowman. Gpqa: A graduate-level google-proof q&a benchmark. arXiv preprint arXiv:2311.12022, 2023.

- Saharia et al. [2022] Chitwan Saharia, William Chan, Saurabh Saxena, Lala Li, Jay Whang, Emily L Denton, Kamyar Ghasemipour, Raphael Gontijo Lopes, Burcu Karagol Ayan, Tim Salimans, et al. Photorealistic text-to-image diffusion models with deep language understanding. Advances in neural information processing systems, 35:36479–36494, 2022.

- Schuster et al. [2021] Tal Schuster, Adam Fisch, and Regina Barzilay. Get your vitamin c! robust fact verification with contrastive evidence. arXiv preprint arXiv:2103.08541, 2021.

- Schwartz et al. [2020] Roy Schwartz, Jesse Dodge, Noah A Smith, and Oren Etzioni. Green ai. Communications of the ACM, 63(12):54–63, 2020.

- Singh et al. [2022] Amanpreet Singh, Mike D’Arcy, Arman Cohan, Doug Downey, and Sergey Feldman. Scirepeval: A multi-format benchmark for scientific document representations. In Conference on Empirical Methods in Natural Language Processing, 2022. URL https://api.semanticscholar.org/CorpusID:254018137.

- Skarlinski et al. [2024] Michael D Skarlinski, Sam Cox, Jon M Laurent, James D Braza, Michaela Hinks, Michael J Hammerling, Manvitha Ponnapati, Samuel G Rodriques, and Andrew D White. Language agents achieve superhuman synthesis of scientific knowledge. arXiv preprint arXiv:2409.13740, 2024.

- Tang et al. [2019] Jianheng Tang, Tiancheng Zhao, Chenyan Xiong, Xiaodan Liang, Eric P Xing, and Zhiting Hu. Target-guided open-domain conversation. arXiv preprint arXiv:1905.11553, 2019.

- Tang et al. [2023] Michael Tang, Shunyu Yao, John Yang, and Karthik Narasimhan. Referral augmentation for zero-shot information retrieval. arXiv preprint arXiv:2305.15098, 2023.

- Touvron et al. [2023] Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, et al. Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971, 2023.

- Vinyals and Le [2015] Oriol Vinyals and Quoc Le. A neural conversational model. arXiv preprint arXiv:1506.05869, 2015.

- Wadden et al. [2021] David Wadden, Kyle Lo, Lucy Lu Wang, Arman Cohan, Iz Beltagy, and Hannaneh Hajishirzi. Multivers: Improving scientific claim verification with weak supervision and full-document context. arXiv preprint arXiv:2112.01640, 2021.

- Wadden et al. [2022] David Wadden, Kyle Lo, Bailey Kuehl, Arman Cohan, Iz Beltagy, Lucy Lu Wang, and Hannaneh Hajishirzi. Scifact-open: Towards open-domain scientific claim verification. arXiv preprint arXiv:2210.13777, 2022.

- Wright et al. [2022] Dustin Wright, David Wadden, Kyle Lo, Bailey Kuehl, Arman Cohan, Isabelle Augenstein, and Lucy Lu Wang. Generating scientific claims for zero-shot scientific fact checking. arXiv preprint arXiv:2203.12990, 2022.

- Wu et al. [2024a] Chengyue Wu, Yixiao Ge, Qiushan Guo, Jiahao Wang, Zhixuan Liang, Zeyu Lu, Ying Shan, and Ping Luo. Plot2code: A comprehensive benchmark for evaluating multi-modal large language models in code generation from scientific plots. 2024a. URL https://api.semanticscholar.org/CorpusID:269757000.

- Wu et al. [2024b] John F. Wu, Alina Hyk, Kiera McCormick, Christine Ye, Simone Astarita, Elina Baral, Jo Ciuca, Jesse Cranney, Anjalie Field, Kartheik G. Iyer, Philipp Koehn, Jenn Kotler, Sandor J. Kruk, Michelle Ntampaka, Charlie O’Neill, Josh Peek, Sanjib Sharma, and Mikaeel Yunus. Designing an evaluation framework for large language models in astronomy research. 2024b. URL https://api.semanticscholar.org/CorpusID:270199896.

- Yang et al. [2024] John Yang, Carlos E. Jimenez, Alexander Wettig, Kilian Lieret, Shunyu Yao, Karthik Narasimhan, and Ofir Press. Swe-agent: Agent computer interfaces enable software engineering language models, 2024.

- Yang et al. [2018] Libin Yang, Yu Zheng, Xiaoyan Cai, Hang Dai, Dejun Mu, Lantian Guo, and Tao Dai. A lstm based model for personalized context-aware citation recommendation. IEEE access, 6:59618–59627, 2018.

- Yao et al. [2022] Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik Narasimhan, and Yuan Cao. React: Synergizing reasoning and acting in language models. arXiv preprint arXiv:2210.03629, 2022.

- Ye et al. [2023] Xi Ye, Ruoxi Sun, Sercan Ö Arik, and Tomas Pfister. Effective large language model adaptation for improved grounding. arXiv preprint arXiv:2311.09533, 2023.

- Zhang et al. [2023] Muru Zhang, Ofir Press, William Merrill, Alisa Liu, and Noah A Smith. How language model hallucinations can snowball. arXiv preprint arXiv:2305.13534, 2023.

Appendix A Excerpts from Citation Datasets

To demonstrate the problematic nature of automatically sourced text excerpts, we randomly choose 10 excerpts from FullTextPeerRead, ACL-200, RefSeer, and arXiv. We tag each sample chosen with one of 4 tags, as summarised in Table 1 in the main paper. We show each sample as it appears verbatim, using the datasets that appear in the official repository https://github.com/nianlonggu/Local-Citation-Recommendation of Gu et al. [33].

ACL-200

[9, 58]

- m which the data was extracted (original). We used a combination of automatic (e.g. BLEU–4 (OTHERCIT), METEOR (OTHERCIT)) and human metrics (using crowdsourcing) to evaluate the output (see generally, TARGETCIT . However, in the interest of space, we will restrict the discussion to a human judgment task on output preferences. We found this evaluation task to be most informative for system improvement. The ta Unattributable

- n Section 2 that it is more difficult to extract keyphrases correctly from longer documents. Second, recent unsupervised approaches have rivaled their supervised counterparts in performance (OTHERCIT; TARGETCIT b). For example, KP-Miner (OTHERCIT), an unsupervised system, ranked third in the SemEval-2010 shared task with an F-score of 25.2, which is comparable to the best supervised system scoring 27.5. 5 An Ambiguous: The citation is ambiguous by definition, as the excerpt cites more than one paper.

- rams include unigrams for all feature definitions and bigrams for selected ones. Figure 3b shows a sample of the actual extended set. We use two datasets, one prepared for the CoNLL 2000 shared task ( TARGETCIT and another prepared for the BioNLP/NLPBA 2004 shared task (OTHERCIT). They represent two different tagging tasks, chunking and named entity recognition, respectively. The CoNLL 2000 chunking dataset Trivial

- ipts were from meetings, seminars and interviews. Some authors have also referred to this phenomenon as Ellipsis because of the elliptical form of the NSU [OTHERCIT, Fern´andez et al., 2004, OTHERCIT, TARGETCIT , OTHERCIT]. While the statistical approaches 336 have been investigated for the purpose of ellipsis detection [Fern´andez et al., 2004, OTHERCIT], it has been a common practice to use rules – syntact Ambiguous: The citation is ambiguous by definition, as the excerpt cites more than one paper.

- e source language is morphologically poor, such as English, and the target language is morphologically rich, such as Russian, i.e., language pairs with a high degree of surface realization ambiguity ( TARGETCIT . To address this problem we propose a general approach based on bilingual neural networks (BNN) exploiting source-side contextual information. This paper makes a number of contributions: Unlike previ Reasonable

- n our approach and the one described in (OTHERCIT). Such a similarity is calculated by using the WordNet::Similarity tool (OTHERCIT), and, concretely, the Wu-Palmer measure, as defined in Equation 1 ( TARGETCIT . 2N3 Sim(C1, C2) ? (1) N1 + N2 + 2N3 where C1 and C2 are the synsets whose similarity we want to calculate, C3 is their least common superconcept, N1 is the number of nodes on the path from C1 to C3, Reasonable

- ch detected image object a visual attribute and a spatial relationship to the other objects in the image. The spatial relationships are translated into selected prepositions in the resulting captions. TARGETCIT used manually segmented and labeled images and introduced visual dependency representations (VDRs) that describe spatial relationships between the image objects. The captions are generated using templ Reasonable

- ous open source machine translation systems. The widely used Moses system (OTHERCIT) implements the standard phrase-based translation model. Parsingbased translation models are implemented by Joshua ( TARGETCIT , SAMT (OTHERCIT), and cdec (OTHERCIT). Cunei (OTHERCIT) implements statistical example-based translation. OTHERCIT and OTHERCIT respectively provide additional open-source implementations of phrase-b Trivial

- and test set, we had about 1000 sentences each with 10 reference translations taken from the NIST 2002 MT evaluation. All Chinese data was re-segmented with the CRF-based Stanford Chinese segmenter ( TARGETCIT that is trained on the segmentation of the Chinese Treebank for consistency. The parser used in Section 3 was used to parse the training data so that null elements could be recovered from the trees. Trivial