# DiReCT: Diagnostic Reasoning for Clinical Notes via Large Language Models

**Authors**: {, , }, , {, , }

Abstract

Large language models (LLMs) have recently showcased remarkable capabilities, spanning a wide range of tasks and applications, including those in the medical domain. Models like GPT-4 excel in medical question answering but may face challenges in the lack of interpretability when handling complex tasks in real clinical settings. We thus introduce the diagnostic reasoning dataset for clinical notes (DiReCT), aiming at evaluating the reasoning ability and interpretability of LLMs compared to human doctors. It contains 511 clinical notes, each meticulously annotated by physicians, detailing the diagnostic reasoning process from observations in a clinical note to the final diagnosis. Additionally, a diagnostic knowledge graph is provided to offer essential knowledge for reasoning, which may not be covered in the training data of existing LLMs. Evaluations of leading LLMs on DiReCT bring out a significant gap between their reasoning ability and that of human doctors, highlighting the critical need for models that can reason effectively in real-world clinical scenarios Code are available https://github.com/wbw520/DiReCT. Data will be released through PhysioNet.. footnotetext: Corresponding author.

1 Introduction

Recent advancements of large language models (LLMs) [Zhao et al., 2023] have ushered in new possibilities and challenges for a wide range of natural language processing (NLP) tasks [Min et al., 2023]. In the medical domain, these models have demonstrated remarkable prowess [Anil et al., 2023, Han et al., 2023], particularly in medical question answering (QA) [Jin et al., 2021]. Leading-edge models, such as GPT-4 [OpenAI, 2023a], exhibit profound proficiency in understanding and generating text [Bubeck et al., 2023], even achieved high scores on the United States Medical Licensing Examination (USMLE) questions [Nori et al., 2023].

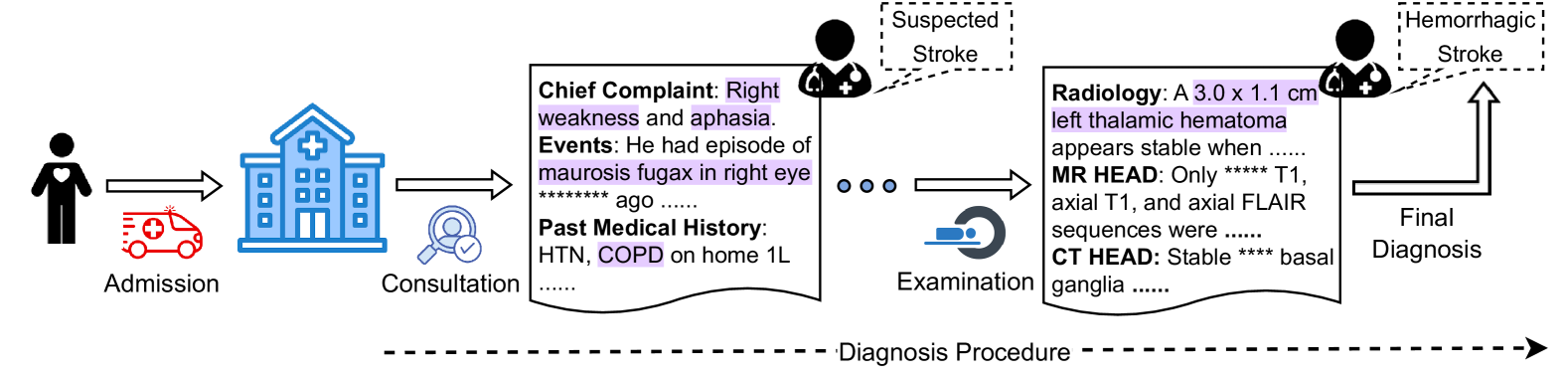

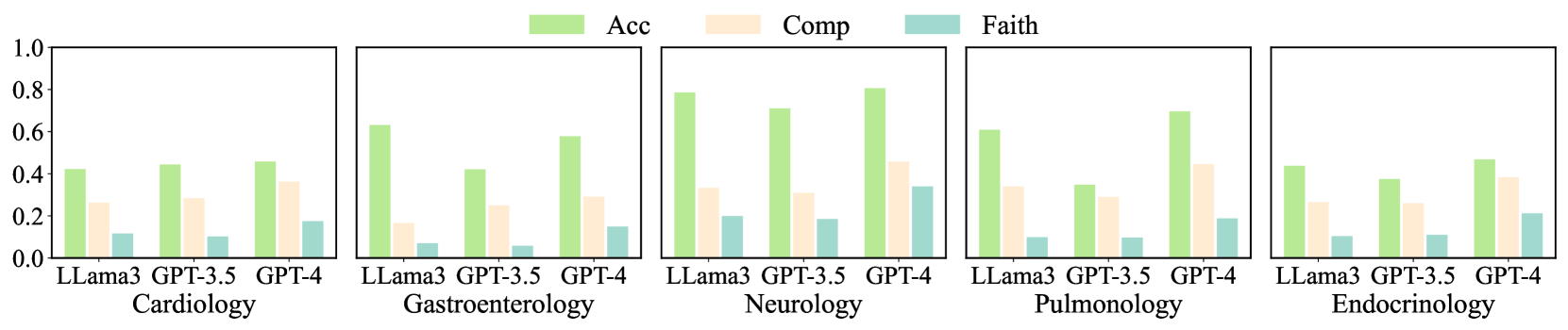

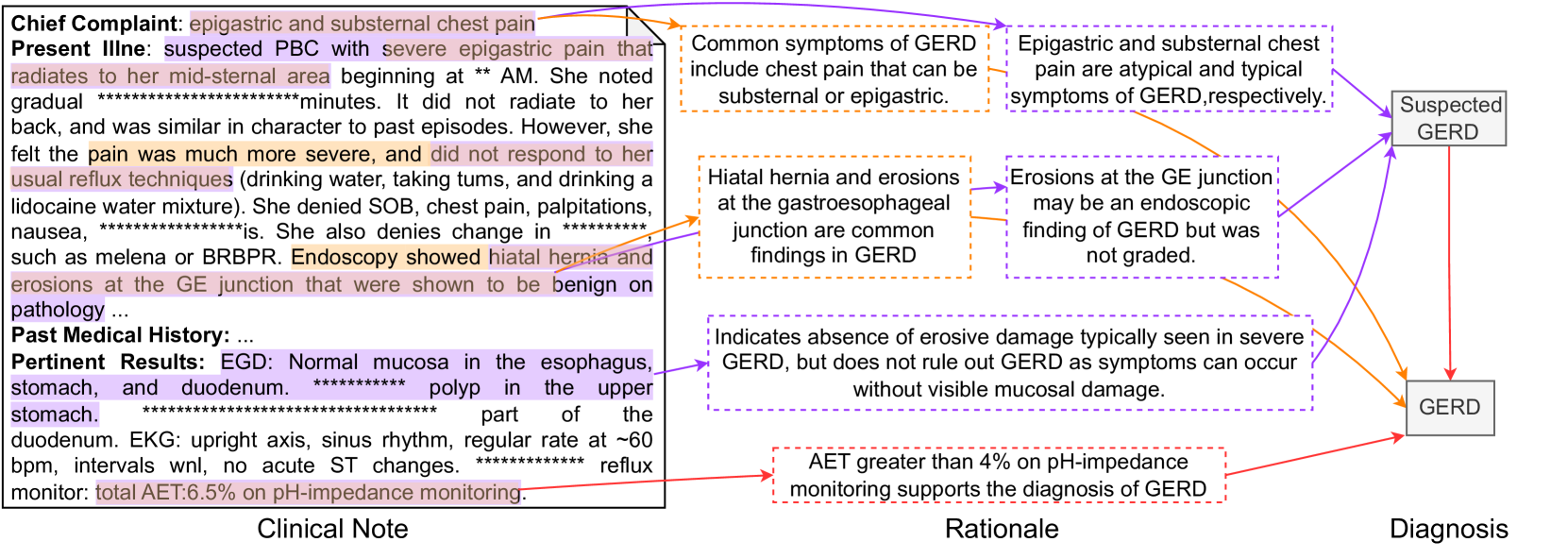

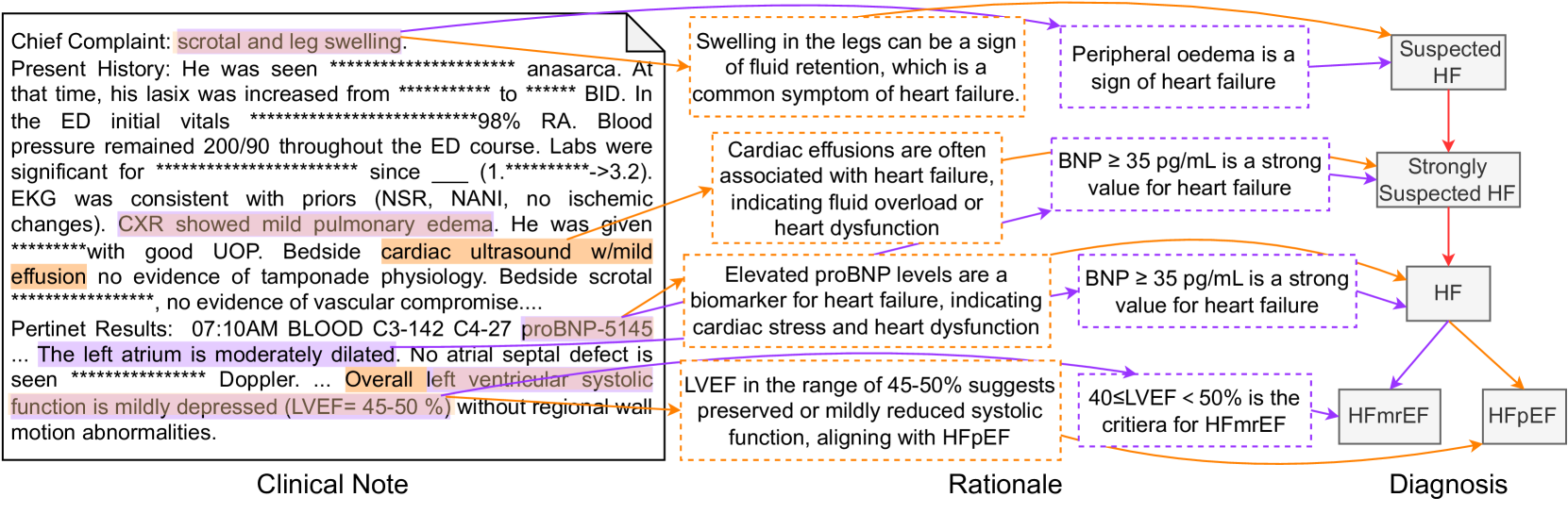

Despite the advancements, interpretability is critical, particularly in medical NLP tasks [Liévin et al., 2024]. Some studies assess this capability over medical QA [Pal et al., 2022, Li et al., 2023, Chen et al., 2024] or natural language inference (NLI) [Jullien et al., 2023]. Putting more attention on interpretability, they use relatively simple tasks as testbeds, taking short text as input. However, tasks in real clinical settings can be more complex [Gao et al., 2023a]. As shown in Figure 1, a typical diagnosis requires comprehending and combining various information, such as health records, physical examinations, and laboratory tests, for further reasoning of possible diseases in a step-by-step manner following the established guidelines. This observation suggests that both perception, or reading, (e.g., finding necessary information in medical record) and reasoning (determining the disease based on the observations) should be counted when evaluating interpretability in LLM-based medical NLP tasks.

For a more comprehensive evaluation of LLMs for supporting diagnosis in a more realistic setting, we propose a Di agnostic Re asoning dataset for C linical no T es (DiReCT). The task basically is predicting the diagnosis from a clinical note of a patient, which is a collection of various medical records, written in natural language. Our dataset contains 511 clinical notes spanning 25 disease categories, sampled from a publicly available database, MIMIC-IV [Johnson et al., 2023]. Each clinical note undergoes fine-grained annotation by professional physicians. The annotators (i.e., the physicians) are responsible for identifying the text, or the observation, in the note that leads to a certain diagnosis, as well as the explanation. The dataset also provides a diagnostic knowledge graph based on existing diagnostic guidelines to facilitate more consistent annotations and to supply a model with essential knowledge for reasoning that might not be encompassed in its training data.

To underscore the challenge offered by our dataset, we evaluate a simple AI-agent based baseline [Xi et al., 2023, Tang et al., 2023] that utilizes the knowledge graph to decompose the diagnosis into a sequence of diagnoses from a smaller number of observations. Our experimental findings indicate that current state-of-the-art LLMs still fall short of aligning well with human doctors.

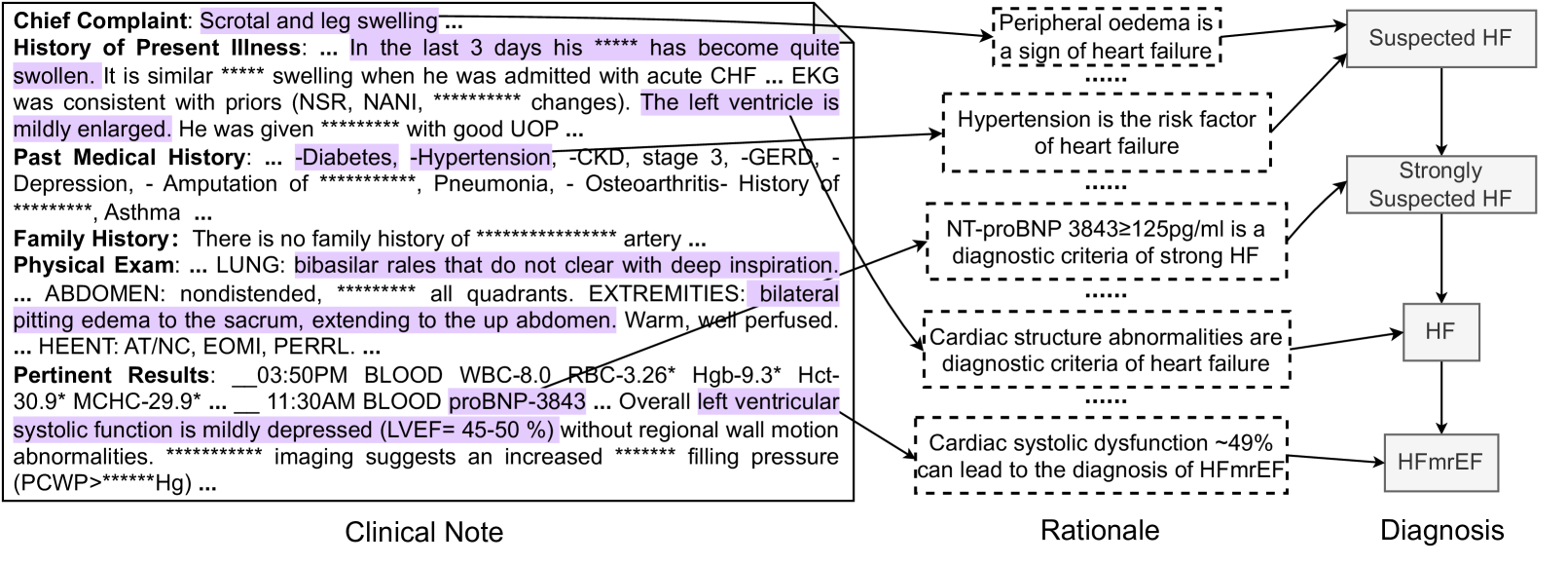

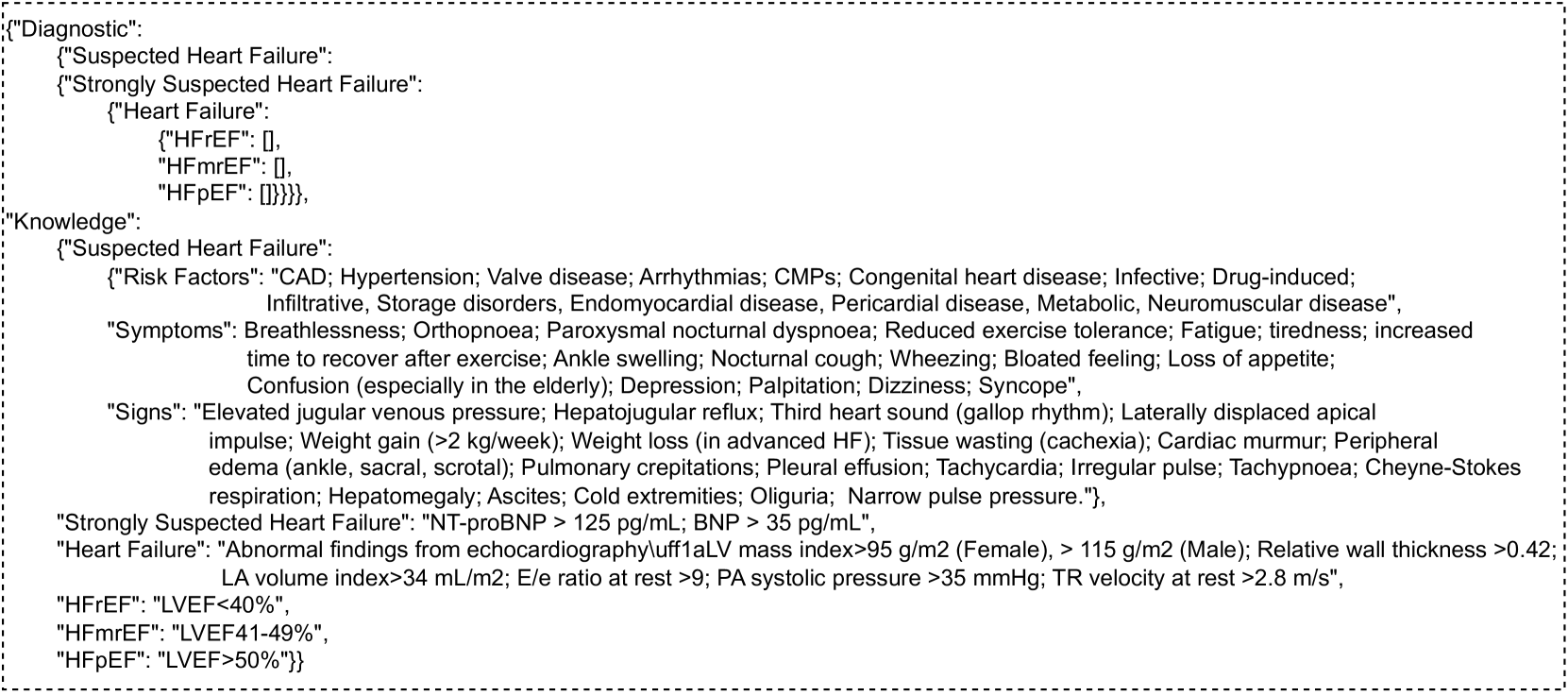

Contribution. DiReCT offers a new challenge in diagnosis from a complex clinical note with explicit knowledge of established guidelines. This challenge aligns with a realistic medical scenario that doctors are experiencing. In the application aspect, the dataset facilitates the development of a model to support doctors in diagnosis, which is error-prone [Middleton et al., 2013, Liu et al., 2022]. From the technical aspect, the dataset can benchmark models’ ability to read long text and find necessary observations for multi-evidence entailment tree reasoning. As shown in Figure 3, this is not trivial because of the variations in writing; superficial matching does not help, and medical knowledge is vital. Meanwhile, reasoning itself is facilitated by the knowledge graph. The model does not necessarily have the knowledge of diagnostic guidelines. With this choice, the knowledge graph explains the reasoning process, which is also beneficial when deploying such a diagnosis assistant system in practical uses.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Stroke Diagnosis Procedure

### Overview

The image is a diagram illustrating the typical steps in diagnosing a stroke, starting from patient admission and ending with a final diagnosis of hemorrhagic stroke. The diagram uses icons and text to represent each stage of the process.

### Components/Axes

* **Stages:** The diagram depicts the following stages in sequence:

* Admission

* Consultation

* Examination

* Final Diagnosis

* **Textual Information:** Each stage is accompanied by relevant textual information, such as chief complaints, medical history, and radiology findings.

* **Icons:** Each stage is represented by an icon.

* **Flow:** The flow of the diagnosis procedure is indicated by arrows.

* **Labels:** "Diagnosis Procedure" is written along a dashed arrow at the bottom of the diagram.

### Detailed Analysis or ### Content Details

1. **Admission:**

* Icon: A person with a heart symbol and an ambulance.

* Text: "Admission"

2. **Consultation:**

* Icon: A hospital building and a magnifying glass over a person icon.

* Text: "Consultation"

3. **Examination:**

* Icon: A doctor icon with a speech bubble labeled "Suspected Stroke".

* Text:

* "Chief Complaint: Right weakness and aphasia."

* "Events: He had episode of maurosis fugax in right eye ******** ago ....."

* "Past Medical History: HTN, COPD on home 1L ....."

4. **Examination:**

* Icon: A CT scan machine.

* Text:

* "Radiology: A 3.0 x 1.1 cm left thalamic hematoma appears stable when ....."

* "MR HEAD: Only ***** T1, axial T1, and axial FLAIR sequences were ....."

* "CT HEAD: Stable **** basal ganglia ....."

5. **Final Diagnosis:**

* Icon: A doctor icon with a speech bubble labeled "Hemorrhagic Stroke".

* Text: "Final Diagnosis"

### Key Observations

* The diagram outlines a sequential process from initial patient admission to a final diagnosis.

* The textual information provides details about the patient's symptoms, medical history, and examination findings.

* The icons visually represent each stage of the diagnosis procedure.

### Interpretation

The diagram illustrates the steps involved in diagnosing a stroke, highlighting the importance of patient history, physical examination, and imaging studies. The progression from "Suspected Stroke" to "Hemorrhagic Stroke" suggests a diagnostic pathway based on the presented information. The diagram emphasizes the role of various medical professionals and diagnostic tools in reaching a final diagnosis.

</details>

Figure 1: When a patient is admitted, an initial consultation takes place to collect subjective information. Subsequent observations may then require further examination to confirm the diagnosis.

2 Related Works

Natural language explanation. Recent advancements in NLP have led to significant achievements [Min et al., 2023]. However, existing models often lack explainability, posing potential risks [Danilevsky et al., 2020, Gurrapu et al., 2023]. Numerous efforts have been made to address this challenge. One effective approach is to provide a human-understandable plain text explanation alongside the model’s output [Camburu et al., 2018, Rajani et al., 2019]. Another strategy involves identifying evidence within the input that serves as a rationale for the model’s decisions, aligning with human reasoning [DeYoung et al., 2020]. Expanding on this concept, [Jhamtani and Clark, 2020] introduces chain-structured explanations, given that a diagnosis can demand multi-hop reasoning. This idea is further refined by ProofWriter [Tafjord et al., 2021] through a proof stage for explanations, and by [Zhao et al., 2021] through retrieval from a corpus. [Dalvi et al., 2021] proposes the entailment tree, offering more detailed explanations and facilitating inspection of the model’s reasoning. More recently, [Zhang et al., 2024] employed cumulative reasoning to tap into the potential of LLMs to provide explanation via a directed acyclic graph. Although substantial progress has been made, interpreting NLP tasks in medical domains remains an ongoing challenge [Liévin et al., 2024].

Benchmarks of interpretability in the medical domain Several datasets are designed to assess a model’s reasoning together with its interpretability in medical NLP (Table 1). MedMCQA [Pal et al., 2022] and other medical QA datasets [Li et al., 2023, Chen et al., 2024] provide plain text as explanations for QA tasks. NLI4CT [Jullien et al., 2023] uses clinical trial reports, focusing on NLI supported by multi-hop reasoning. N2N2 [Gao et al., 2022] proposes a summarization (Sum) task for a diagnosis based on multiple pieces of evidence in the input clinical note. NEJM CPC [Zack et al., 2023] interprets clinicians’ diagnostic reasoning as plain text for reasoning clinical diagnosis (CD). DR.BENCH [Gao et al., 2023b] aggregates publicly available datasets to assess the diagnostic reasoning of LLMs. Utilizing an multi-evidence entailment tree explanation, DiReCT introduces a more rigorous task to assess whether LLMs can align with doctors’ reasoning in real clinical settings.

Table 1: Comparison of existing datasets for medical reasoning tasks and ours. “t” and “w” mean tokens and words for the length of input, respectively.

| Dataset | Task | Data Source | Length | Explanation | # Cases |

| --- | --- | --- | --- | --- | --- |

| MedMCQA [Pal et al., 2022] | QA | Examination | 9.93 t | Plain Text | 194,000 |

| ExplainCPE [Li et al., 2023] | QA | Examination | 37.79 w | Plain Text | 7,000 |

| JAMA Challenge [Chen et al., 2024] | QA | Clinical Cases | 371 w | Plain Text | 1,524 |

| Medbullets [Chen et al., 2024] | QA | Online Questions | 163 w | Plain Text | 308 |

| N2N2 [Gao et al., 2022] | Sum | Clinical Notes | 785.46 t | Evidences | 768 |

| NLI4CT [Jullien et al., 2023] | NLI | Clinical Trail Reports | 10-35 t | Multi-hop | 2,400 |

| NEJM CPC [Zack et al., 2023] | CD | Clinical Cases | - | Plain Text | 2,525 |

| DiReCT (Ours) | CD | Clinical Notes | 1074.6 t | Entailment Tree | 511 |

3 A benchmark for Clinical Notes Diagnosis

This section first detail clinical notes (Section 3.1). We also describes the knowledge graph that encodes existing guidelines (Section 3.2). Our task definition, which tasks a clinical note and the knowledge graph as input is given in Section 3.4. We then present our annotation process for clinical notes (Section 3.3) and the evaluation metrics (Section 3.5).

3.1 Clinical Notes

Clinical notes used in DiReCT are stored in the SOAP format [Weed, 1970]. A clinical note comprises four components: In the subjective section, the physician records the patient’s chief complaint, the history of present illness, and other subjective experiences reported by the patient. The objective section contains structural data obtained through examinations (inspection, auscultation, etc.) and other measurable means. The assessment section involves the physician’s analysis and evaluation of the patient’s condition. This may include a summary of current status, etc. Finally, the plan section outlines the physician’s proposed treatment and management plan. This may include prescribed medications, recommended therapies, and further investigations. A clinical note also includes a primary discharge diagnosis (PDD) in the assessment section.

DiReCT’s clinical notes are sourced from the MIMIC-IV dataset [Johnson et al., 2023] (PhysioNet Credentialed Health Data License 1.5.0), which encompasses over 40,000 patients admitted to the intensive care units. Each note contains clinical data for a patient. To construct DiReCT, we curated a subset of 511 notes whose PDDs fell within one of 25 disease categories $i$ in 5 medical domains.

In our task, a note $R=\{r\}$ is an excerpt of 6 clinical data in the subjective and objective sections (i.e., $|R|=6$ ): chief complaint, history of present illness, past medical history, family history, physical exam, and pertinent results. We excluded data, such as review system and social history, because they are often missing in the original clinical notes and are less relevant to the diagnosis. We also identified the PDD $d^{\star}$ associated with $R$ . All clinical notes in DiReCT are related to only one PDD, and there is no secondary discharge diagnosis. The set of $d^{\star}$ ’s for all $R$ ’s collectively forms $\mathcal{D}^{\star}$ . We manually removed any descriptions that disclose the PDD in $R$ .

3.2 Diagnostic Knowledge Graph

Existing knowledge graphs for the medical domain, e.g., UMLS KG [Bodenreider, 2004], lack the ability to provide specific clinical decision support (e.g., diagnostic threshold, context-specific data, dosage information, etc.), which are critical for accurate diagnosis.

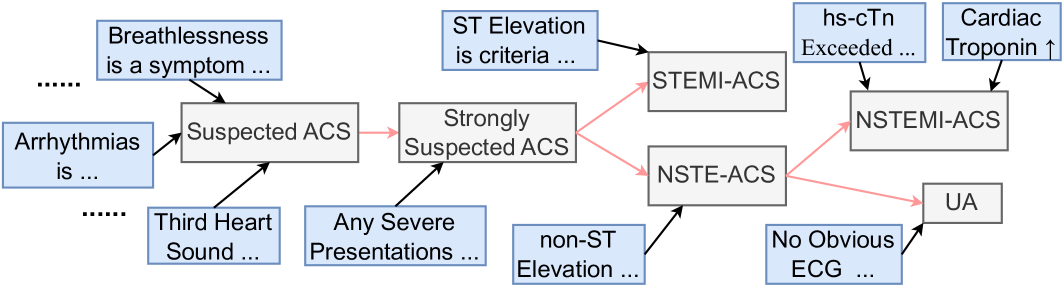

Our knowledge graphs $\mathcal{K}=\{\mathcal{K}_{i}\}_{i}$ is a collection of graph $\mathcal{K}_{i}$ for disease category $i$ . $\mathcal{K}_{i}$ is based on the diagnosis criteria in existing guidelines (refer to supplementary material for details). $\mathcal{K}_{i}$ ’s nodes are either premise $p∈\mathcal{P}_{i}$ (medical statement, e.g., Headache is a symptom of) and diagnoses $d∈\mathcal{D}_{i}$ (e.g., Suspected Stroke). $\mathcal{K}_{i}$ consists of two different types of edges. One is premise-to-diagnosis edges $\mathcal{S}_{i}=\{(p,d)\}$ , where $p∈\mathcal{P}_{i}$ and $d∈\mathcal{D}_{i}$ ; an edge is from $p$ to $d$ . This edge represents the necessary premise $p$ to make a diagnosis $d$ . We refer to them as supporting edges. The other is diagnosis-to-diagnosis edges $\mathcal{F}_{i}=\{(d,d^{\prime})\}$ , where $d,d^{\prime}∈\mathcal{D}_{i}$ and the edge is from $d$ to $d^{\prime}$ , which represents the diagnostic flow. These edges are referred to as procedural edges.

A disease category is defined according to an existing guideline, which starts from a certain diagnosis; therefore, a procedural graph $\mathcal{G}_{i}=(\mathcal{D}_{i},\mathcal{F}_{i})$ has only one root node and arbitrarily branches toward multiple leaf nodes that represent PDDs (i.e., the clinical notes in DiReCT are chosen to cover all leaf nodes of $\mathcal{G}_{i}$ ). Thus, $\mathcal{G}_{i}$ is a tree. We denote the set of the leaf nodes (or PDDs) as $\mathcal{D}^{\star}_{i}⊂\mathcal{D}_{i}$ . The knowledge graph is denoted by $\mathcal{K}_{i}=(\mathcal{D}_{i},\mathcal{P}_{i},\mathcal{S}_{i},\mathcal{F}_{%

i})$ .

<details>

<summary>x2.png Details</summary>

### Visual Description

## Flow Diagram: Acute Coronary Syndrome (ACS) Diagnosis

### Overview

The image is a flow diagram illustrating the diagnostic process for Acute Coronary Syndrome (ACS). It shows the progression from initial symptoms and findings to specific diagnoses like STEMI-ACS, NSTEMI-ACS, NSTE-ACS, and UA. The diagram uses boxes to represent conditions or diagnostic categories, and arrows to indicate the flow of the diagnostic process.

### Components/Axes

* **Boxes:** Represent different stages or diagnoses in the ACS diagnostic pathway. Boxes are either light blue or light gray.

* **Arrows:** Indicate the flow of the diagnostic process. Black arrows indicate a direct progression, while pink arrows indicate a possible progression.

* **Text Labels:** Describe the conditions, symptoms, or diagnostic criteria associated with each box.

### Detailed Analysis or ### Content Details

1. **Initial Symptoms/Findings (Top-Left):**

* "Breathlessness is a symptom..." (light blue box, top-left)

* "Arrhythmias is..." (light blue box, left)

* "Third Heart Sound..." (light blue box, bottom-left)

* "Any Severe Presentations..." (light blue box, bottom)

2. **Initial Assessment:**

* "Suspected ACS" (light gray box, center-left). This box receives arrows from "Breathlessness is a symptom...", "Arrhythmias is...", and "Third Heart Sound...".

3. **Further Assessment:**

* "Strongly Suspected ACS" (light gray box, center). This box receives an arrow from "Suspected ACS" (pink arrow) and "Any Severe Presentations...".

4. **Diagnostic Criteria (Top):**

* "ST Elevation is criteria..." (light blue box, top)

* "hs-cTn Exceeded..." (light blue box, top-right)

* "Cardiac Troponin ↑" (light blue box, top-right)

* "non-ST Elevation..." (light blue box, bottom)

* "No Obvious ECG..." (light blue box, bottom-right)

5. **Specific Diagnoses:**

* "STEMI-ACS" (light gray box, top-center). This box receives arrows from "ST Elevation is criteria..." and "Strongly Suspected ACS" (pink arrow).

* "NSTE-ACS" (light gray box, center). This box receives arrows from "non-ST Elevation..." and "Strongly Suspected ACS" (pink arrow).

* "NSTEMI-ACS" (light gray box, right). This box receives arrows from "hs-cTn Exceeded..." and "Cardiac Troponin ↑".

* "UA" (light gray box, bottom-right). This box receives arrows from "NSTE-ACS" (pink arrow) and "No Obvious ECG...".

### Key Observations

* The diagram illustrates a step-by-step approach to diagnosing ACS, starting with initial symptoms and progressing to specific diagnoses based on diagnostic criteria.

* The pink arrows indicate possible progressions or relationships between stages.

* The light blue boxes represent symptoms, findings, or criteria, while the light gray boxes represent diagnostic categories.

### Interpretation

The flow diagram provides a visual representation of the diagnostic pathway for Acute Coronary Syndrome (ACS). It highlights the importance of considering various symptoms, findings, and diagnostic criteria in order to arrive at an accurate diagnosis. The diagram suggests that the diagnostic process involves a series of assessments and evaluations, with the ultimate goal of classifying the patient's condition into one of several categories: STEMI-ACS, NSTEMI-ACS, NSTE-ACS, or UA. The pink arrows indicate that the diagnostic process is not always linear and that there may be multiple possible pathways to a final diagnosis.

</details>

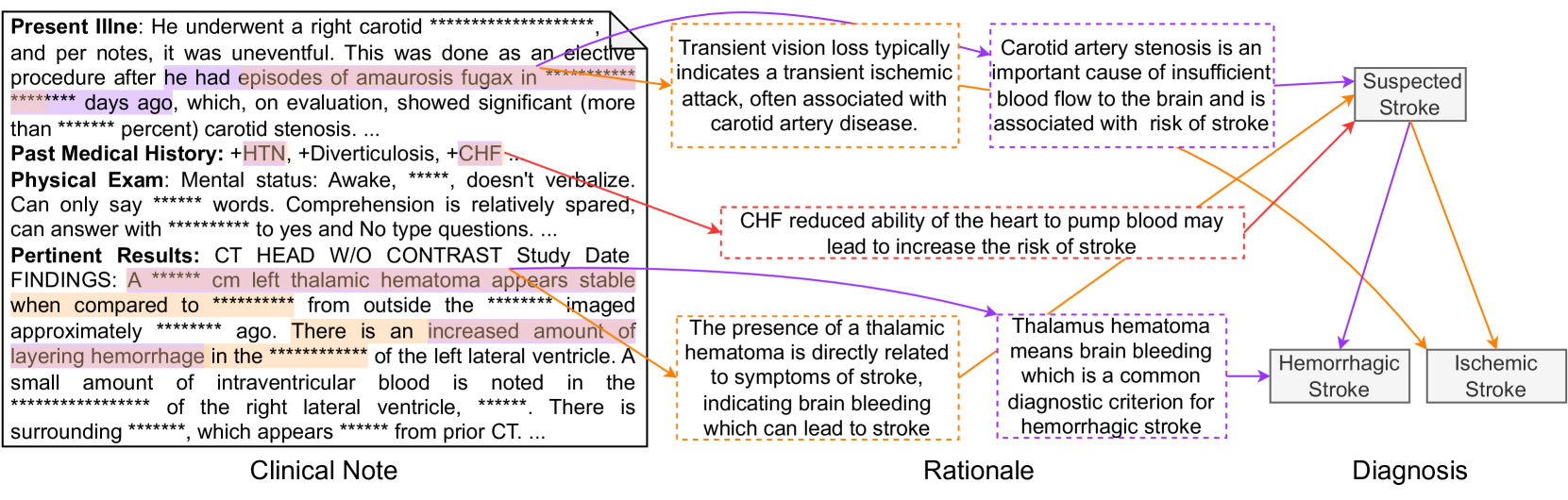

Figure 2: A part of $\mathcal{K}_{i}$ for $i$ being Acute Coronary Syndromes.

Figure 2 shows a part of $\mathcal{K}_{i}$ , where $i$ is Acute Coronary Syndromes (ACS). Premises in $\mathcal{P}_{i}$ and diagnoses in $\mathcal{D}_{i}$ are given in the blue and gray boxes, while PDDs in $\mathcal{D}^{\star}_{i}$ are ones without outgoing edges (i.e., STEMI-ACS and NSTEMI-ACS, and UA). The black and red arrows are edges in $\mathcal{S}$ and $\mathcal{F}$ , respectively, where the black arrows indicate the supporting edges.

$\mathcal{K}$ serves two essential functions: (1) They serve as the gold standard for annotation, guiding doctors in the precise and uniform interpretation of clinical notes. (2) Our task also allows a model to use them to ensure the output from an LLM can be closely aligned with the reasoning processes of medical professionals.

3.3 Data Annotation

Let $d^{\star}∈\mathcal{D}^{\star}_{i}$ denote the PDD of disease category $i$ associated with $R$ . We can find a subgraph $\mathcal{K}_{i}(d^{\star})$ of $\mathcal{K}_{i}$ that contains all ancestors of $d^{\star}$ , including premises in $\mathcal{P}_{i}$ . We also denote the set of supporting edges in $\mathcal{K}_{i}(d^{\star})$ as $\mathcal{S}_{i}(d^{\star})$ . Our annotation process is, for each supporting edge $(p,d)∈\mathcal{S}_{i}(d^{\star})$ , to extract observation $o∈\mathcal{O}$ in $R$ (highlighted text in the clinical note in Figure 3) and provide rationalization $z$ of this deduction why $o$ is a support for $d$ or corresponds to $p$ . All annotations strictly follow the procedural flow in $\mathcal{K}_{i}$ , and each observation is only related to one diagnostic node. If $R$ does not provide sufficient observations for the PDD (which may happen when a certain test is omitted), the annotators were asked to add plausible observations to $R$ . This choice compromises the fidelity of our dataset to the original clinical notes, but we chose it for the completeness of the dataset. They form the explanation $\mathcal{E}=\{(o,z,d)\}$ for $(R,d^{\star})$ . This annotation process was carried out by 9 clinical physicians and subsequently verified for accuracy and completeness by three senior medical experts.

Table 2: Statistics of DiReCT.

| Medical domain | # cat. | # samples | $|\mathcal{D}_{i}|$ | $|\mathcal{D}^{\star}_{i}|$ | $|\mathcal{O}|$ | Length |

| --- | --- | --- | --- | --- | --- | --- |

| Cardiology | 7 | 184 | 27 | 16 | 8.7 | 1156.6 t |

| Gastroenterology | 4 | 103 | 11 | 7 | 4.3 | 1026.0 t |

| Neurology | 5 | 77 | 17 | 11 | 11.9 | 1186.3 t |

| Pulmonology | 5 | 92 | 26 | 17 | 10.7 | 940.7 t |

| Endocrinology | 4 | 55 | 20 | 14 | 6.9 | 1063.5 t |

| Overall | 25 | 511 | 101 | 65 | 8.5 | 1074.6 t |

Table 2 summarizes statistics of our dataset. The second and third columns (“# cats.” and “# samples”) show the numbers of disease categories and samples in the respective medical domains. $|\mathcal{D}_{i}|$ and $|\mathcal{D}_{i}^{\star}|$ are the total numbers of diagnoses (diseases) and PDDs, summed over all diagnostic categories in the medical domain, respectively. $|\mathcal{O}|$ is the average number of annotated observations. “Length” is the average number of tokens in $R$ .

<details>

<summary>x3.png Details</summary>

### Visual Description

## Clinical Note to Diagnosis Flow Diagram

### Overview

The image presents a flow diagram that connects clinical observations from a patient's medical history and physical exam to a final diagnosis of HFmrEF (Heart Failure with mid-range Ejection Fraction). The diagram links information from a "Clinical Note" to a "Rationale" section, ultimately leading to a "Diagnosis."

### Components/Axes

* **Clinical Note:** This section contains information gathered from the patient's chief complaint, history of present illness, past medical history, family history, physical exam, and pertinent results.

* **Rationale:** This section provides the reasoning and medical knowledge that connects the clinical findings to the diagnosis.

* **Diagnosis:** This section presents the final diagnosis based on the clinical note and rationale. The diagnoses are: Suspected HF, Strongly Suspected HF, HF, and HFmrEF.

### Detailed Analysis or ### Content Details

**Clinical Note:**

* **Chief Complaint:** Scrotal and leg swelling.

* **History of Present Illness:** In the last 3 days, the patient's condition has become quite swollen. The swelling is similar to when the patient was admitted with acute CHF. EKG was consistent with priors (NSR, NANI, changes). The left ventricle is mildly enlarged. The patient was given with good UOP.

* **Past Medical History:** Diabetes, Hypertension, CKD (stage 3), GERD, Depression, Amputation, Pneumonia, Osteoarthritis, History of Asthma.

* **Family History:** There is no family history of artery.

* **Physical Exam:**

* LUNG: Bibasilar rales that do not clear with deep inspiration.

* ABDOMEN: Nondistended, all quadrants.

* EXTREMITIES: Bilateral pitting edema to the sacrum, extending to the up abdomen. Warm, well perfused.

* HEENT: AT/NC, EOMI, PERRL.

* **Pertinent Results:**

* 03:50 PM BLOOD: WBC-8.0, RBC-3.26*, Hgb-9.3*, Hct-30.9*, MCHC-29.9*.

* 11:30 AM BLOOD: proBNP-3843. Overall left ventricular systolic function is mildly depressed (LVEF = 45-50%) without regional wall motion abnormalities. Imaging suggests an increased filling pressure (PCWP > Hg).

**Rationale:**

* Peripheral oedema is a sign of heart failure.

* Hypertension is the risk factor of heart failure.

* NT-proBNP 3843 ≥ 125 pg/ml is a diagnostic criteria of strong HF.

* Cardiac structure abnormalities are diagnostic criteria of heart failure.

* Cardiac systolic dysfunction ~49% can lead to the diagnosis of HFmrEF.

**Diagnosis:**

* Suspected HF

* Strongly Suspected HF

* HF

* HFmrEF

### Key Observations

* The diagram illustrates a step-by-step process of how clinical information is used to arrive at a diagnosis of HFmrEF.

* The rationale section provides the medical justification for each diagnostic step.

* The clinical note contains a mix of subjective (patient complaints) and objective (lab results, physical exam findings) data.

* The proBNP value of 3843 is significantly higher than 125 pg/ml, supporting the diagnosis of strong HF.

* The LVEF of 45-50% falls within the range for HFmrEF.

### Interpretation

The diagram effectively demonstrates the clinical reasoning process involved in diagnosing heart failure. It highlights the importance of integrating patient history, physical exam findings, and laboratory results to arrive at an accurate diagnosis. The flow from "Clinical Note" to "Rationale" to "Diagnosis" provides a clear and logical framework for understanding the diagnostic process. The diagram emphasizes the role of specific clinical findings, such as peripheral edema, hypertension, elevated proBNP, and reduced LVEF, in supporting the diagnosis of HFmrEF. The diagram suggests a systematic approach to diagnosis, where each piece of evidence contributes to the final conclusion.

</details>

Figure 3: An annotation sample of Heart Failure (HF). The left part is the clinical note alongside extracted observations by a doctor. The middle part outlines the steps of the rationale for the premise corresponding to each diagnostic node shown in the right part.

3.4 Task Definition

We propose two tasks with different levels of supplied external knowledge. The first task is, given $R$ and $\mathcal{G}$ , to predict the associated PDD $d^{\star}$ and generate an explanation $\mathcal{E}$ that explains the model’s diagnostic procedure from $R$ to $d^{\star}$ , i.e., letting $M$ denote a model:

$$

\displaystyle\hat{d}^{\star},\hat{\mathcal{E}}=M(R,\mathcal{G}), \tag{1}

$$

where $\hat{d}^{\star}∈\cup_{i}\mathcal{D}^{\star}_{i}$ and $\hat{\mathcal{E}}$ are predictions for the PDD and explanation, respectively. With this task, the knowledge of specific diagnostic procedures in existing guidelines can be used for prediction, facilitating interpretability. The second task takes $\mathcal{K}$ as input instead of $\mathcal{G}$ , i.e.,:

$$

\displaystyle\hat{d}^{\star},\hat{\mathcal{E}}=M(R,\mathcal{K}). \tag{2}

$$

This task allows for the use of broader knowledge of premises for prediction. One may also try a task without any external knowledge.

3.5 Evaluation Metrics

We designed three metrics to quantify the predictive performance over our benchmark.

(1) Accuracy of diagnosis $\textit{Acc}^{\text{diag}}$ evaluates if a model can correctly identify the diagnosis. $\textit{Acc}^{\text{diag}}=1$ if $d^{\star}=\hat{d}$ , and $\textit{Acc}^{\text{diag}}=0$ otherwise. The average is reported.

(2) Completeness of observations $\textit{Obs}^{\text{comp}}$ evaluates whether a model extracts all and only necessary observations for the prediction. Let $\mathcal{O}$ and $\hat{\mathcal{O}}$ denote the sets of observations in $\mathcal{E}$ and $\hat{\mathcal{E}}$ , respectively. The metric is defined as $\textit{Obs}^{\text{comp}}=|\mathcal{O}\cap\hat{\mathcal{O}}|/|\mathcal{O}\cup%

\hat{\mathcal{O}}|$ , where the numerator is the number of observations that are common in both $\mathcal{O}$ and $\hat{\mathcal{O}}$ . We find the common observations with an LLM (refer to the supplementary material for more detail). This metric simultaneously evaluates the correctness of each observation and the coverage. To supplement it, we also report the precision $\textit{Obs}^{\text{pre}}$ and recall $\textit{Obs}^{\text{rec}}$ , given by $\textit{Obs}^{\text{pre}}=|\mathcal{O}\cap\hat{\mathcal{O}}|/|\hat{\mathcal{O}}|$ and $\textit{Obs}^{\text{rec}}=|\mathcal{O}\cap\hat{\mathcal{O}}|/|\mathcal{O}|$ .

(3) Faithfulness of explanations Faith evaluates if the diagnostic flow toward the PDD is fully supported by observations with faithful rationalizations. This involves establishing a one-to-one correspondence between deductions in the prediction and the ground truth. We use the correspondences established for computing $\textit{Obs}^{\text{comp}}$ . Let $o∈\mathcal{O}$ and $\hat{o}∈\hat{\mathcal{O}}$ denote corresponding observations. This correspondence is considered successful if $z$ and $\hat{z}$ as well as $d$ and $\hat{d}$ associated with $o$ and $\hat{o}$ matches. Let $m(\mathcal{E},\hat{\mathcal{E}})$ denote the number of successful matches. We use the ratio of $m(\mathcal{E},\hat{\mathcal{E}})$ to $|\mathcal{O}\cap\hat{\mathcal{O}}|$ and $|\mathcal{O}\cup\hat{\mathcal{O}}|$ as evaluation metrics $\textit{Exp}^{\text{com}}$ and $\textit{Exp}^{\text{all}}$ , respectively, to see failures come from observations or explanations and diagnosis.

4 Baseline

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Clinical Reasoning Process

### Overview

The image illustrates a clinical reasoning process, starting from a clinical note and progressing through perception, observations, diagnostic knowledge graph (KG), and reasoning steps to arrive at a rationale. The process involves iterative reasoning and feedback loops.

### Components/Axes

* **Clinical Note:** Represents the initial patient information, depicted as a clipboard icon.

* **Narrowing-down:** A green rectangular box indicating the process of filtering information.

* **Perception:** A purple rectangular box representing the interpretation of clinical data.

* **Observations:** A white rectangular box listing clinical findings.

* Numbered list of observations:

1. Elevated blood pressures

2. CXR showed mild pulmonary edema

3. CHF/Cardiomyopathy

4. Severe LV diastolic dysfunction

5. BPs: 148/98, 156/93

* **Diagnostic KG:** A blue rectangular box containing a knowledge graph with nodes labeled a1, a2, a3, a4, and a5. The nodes are interconnected with black and red arrows.

* **Reasoning:** Gray rectangular boxes representing reasoning steps, each associated with a gear icon.

* **Rationale:** White rectangular boxes showing the reasoning process. Each box contains nodes labeled 1 through 5, and nodes a1, a2, and a4.

* **Input Variables (r1-r6):**

* r1: Chief Complaint

* r2: History of Present Illness

* r3: Past Medical History

* r4: Family History

* r5: Physical Examination

* r6: Pertinent Results

### Detailed Analysis or Content Details

1. **Flow from Clinical Note:** The process begins with the "Clinical Note," which feeds into both "Narrowing-down" and "Perception."

2. **Narrowing-down to Perception:** "Narrowing-down" leads to "Perception" via an arrow labeled 'i'.

3. **Perception to Observations:** "Perception" leads to "Observations."

4. **Diagnostic KG Connection:** The "Diagnostic KG" provides input to "Perception" via a teal arrow.

5. **Observations to Reasoning:** "Observations" feed into the "Reasoning" blocks.

6. **Reasoning Iteration:** There is a feedback loop (represented by a curved arrow) between the two "Reasoning" blocks.

7. **Diagnostic KG to Reasoning:** The "Diagnostic KG" also feeds into the "Reasoning" blocks via teal arrows.

8. **Reasoning to Rationale:** Each "Reasoning" block leads to a "Rationale" block.

9. **Rationale Details:**

* The first "Rationale" box shows nodes 1-5 connected to node a1 with dashed lines.

* The second "Rationale" box shows nodes 1-5 connected to node a1 with dashed lines, and a red arrow from a1 to a2.

* The third "Rationale" box shows nodes 1-5 connected to node a1 with dashed lines, a red arrow from a1 to a2, and a red arrow from a2 to a4.

10. **Diagnostic KG Details:**

* Node a1 has connections to multiple unlabeled nodes.

* Node a2 is connected to a1 (red arrow), a3 (red arrow), and a4 (red arrow).

* Node a3 is connected to a2 (red arrow) and a5 (red arrow).

* Node a4 is connected to a2 (red arrow) and other unlabeled nodes.

* Node a5 is connected to a3 (red arrow).

### Key Observations

* The diagram illustrates an iterative process of clinical reasoning.

* The "Diagnostic KG" and "Observations" serve as key inputs for the "Reasoning" process.

* The feedback loop between the "Reasoning" blocks suggests an iterative refinement of the diagnosis.

### Interpretation

The diagram represents a model for clinical decision-making. It starts with initial clinical data, which is then filtered and interpreted. Observations are made based on this perception, and a diagnostic knowledge graph is consulted. Reasoning steps are performed, potentially iteratively, to arrive at a rationale or diagnosis. The feedback loop highlights the dynamic nature of clinical reasoning, where initial assessments can be revised based on new information or insights. The connections between the Diagnostic KG and the Reasoning blocks suggest that prior knowledge and established relationships between medical concepts play a crucial role in the diagnostic process.

</details>

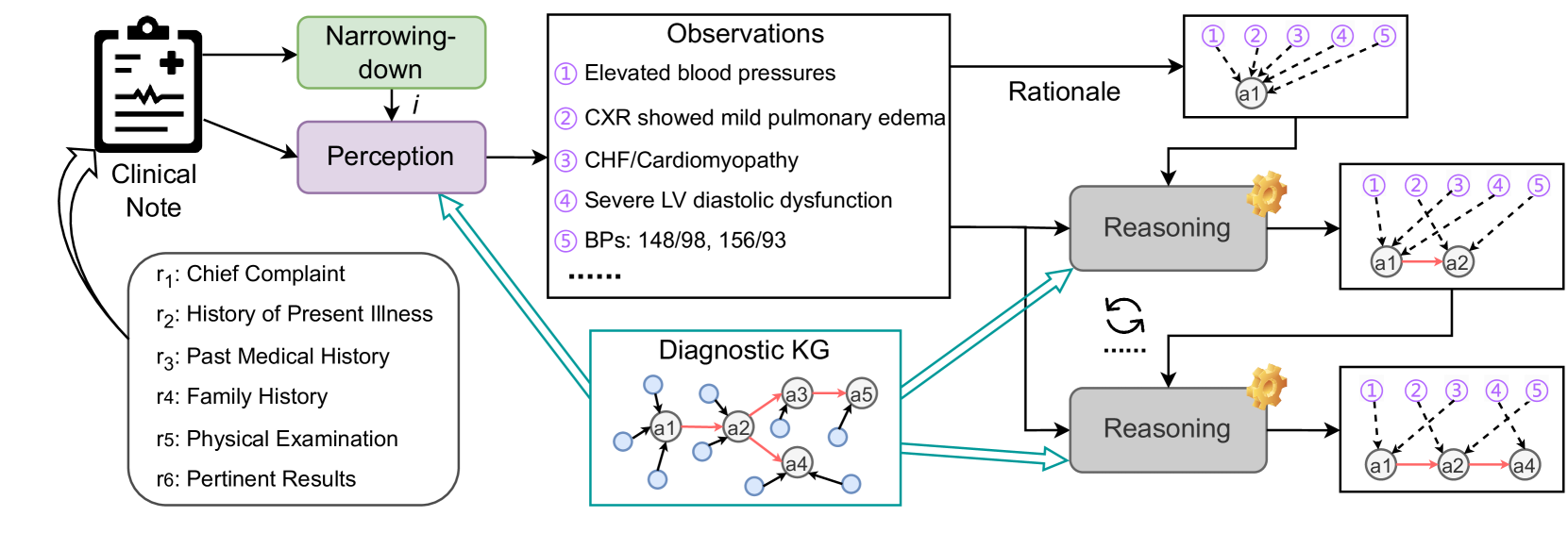

Figure 4: Pipeline of our baseline. The dotted line in the right-most boxes means deductions from an observation to a diagnosis.

Figure 4 shows an overview of our baseline with three LLM-based modules narrowing-down, perception, and reasoning (refer to the supplementary material for more details). The narrowing-down module $U$ takes $R$ as input to make a prediction $\hat{i}$ of the disease category, i.e., $\hat{i}=U(R)$ .

Let $d_{t}∈\mathcal{D}_{\hat{i}}$ be the diagnosis that has been reached with $t$ iterations over $\mathcal{K}_{\hat{i}}$ , where $t$ corresponds to the depth of node $d_{t}$ and so is less than or equal to the depth of $\mathcal{K}_{i}$ . $d_{0}$ is the root node of $\mathcal{K}_{\hat{i}}$ . For $d_{0}$ , we apply the perception module to extract all observations in $R$ and explanation $\mathcal{E}_{0}$ to support $d_{0}$ as

$$

\displaystyle\hat{\mathcal{O}},\hat{\mathcal{E}_{0}}=W(d_{0},\mathcal{K}_{\hat%

{i}}). \tag{3}

$$

$\mathcal{K}_{\hat{i}}$ is supplied to facilitate the model to extract all observations for the following reasoning process. We used only pairs of an observation and a premise. We abuse $\mathcal{K}$ to mean this for notation simplicity.

Diagnosis $d_{t}$ identifies the set $\{d_{n}\}_{n}$ of its children and so the set $\mathcal{P}_{\hat{i}}(\{d_{n}\}_{n})=\{p∈\mathcal{P}_{i}|(p,d_{n})∈%

\mathcal{S}_{i},d_{n}∈\{d_{n}\}\}$ of premises that support $d_{n}$ . Therefore, our reasoning module $V$ iteratively and greedily identifies the next step’s diagnosis (i.e., $d_{t+1}$ ) from $\{d_{n}\}_{n}$ , making a rationalization for each deduction. That is, $V$ verifies whether there exist $o$ ’s in $\hat{\mathcal{O}}$ that supports one $d_{n}$ . If $d_{n}$ is fully supported, $d_{n}$ is identified as $d_{t+1}$ for the $(t+1)$ -th iteration, i.e.,

$$

\displaystyle d_{t+1},\hat{\mathcal{E}}_{t+1}=V(\hat{\mathcal{O}},\{d_{n}\},%

\mathcal{P}_{\hat{i}}(\{d_{n}\}_{n})). \tag{4}

$$

Otherwise, the reasoning module fails. $V$ is repeated until $d_{t^{\prime}}$ in $\mathcal{D}^{\star}_{\hat{i}}$ is found or it fails. In our annotation, each observation contributes to deducing only one $d_{t}$ . Therefore, if an observation in $\hat{\mathcal{E}}_{t+1}$ is included in the preceding sets of explanations $\hat{\mathcal{E}}_{0}$ to $\hat{\mathcal{E}}_{t}$ , the corresponding explanation in the preceding sets is removed.

5 Experiments

5.1 Experimental Setup

We assess the reasoning capabilities of 7 recent LLMs from diverse families and model sizes, including 5 instruction-tuned models that are openly accessible: LLama3 8B and 70B [AI@Meta, 2024], Zephyr 7B [Tunstall et al., 2023], Mistral 7B [Jiang et al., 2023], and Mixtral 8 $×$ 7B [Jiang et al., 2023]. We have also obtained access to private versions of the GPT-3.5 turbo [OpenAI, 2023b] and GPT-4 turbo [OpenAI, 2023a] These two models are housed on a HIPPA-compliant instance within Microsoft Azure AI Studio. No data is transferred to either Microsoft or OpenAI. This secure environment enables us to safely conduct experiments with the MIMIC-IV dataset, in compliance with the Data Use Agreement., which are high-performance closed-source models. Each LLM is utilized to implement our baseline’s narrowing-down, perception, and reasoning modules. The temperature is set to 0. For computing evaluation metrics, we use LLama3 8B with few-shot prompts to make correspondences between $\mathcal{O}$ and $\hat{\mathcal{O}}$ as well as to verify a match between predicted and ground-truth explanations (refer to the supplementary material for more details).

5.2 Results

Comparison among LLMs. Table 3 shows the performance of our baseline built on top of various LLMs. We first evaluate a variant of our task that takes graph $\mathcal{G}=\{\mathcal{G}_{i}\}$ consisting of only procedural flow as external knowledge instead of $\mathcal{K}$ . Comparison between $\mathcal{G}$ and $\mathcal{K}$ demonstrates the importance of supplying premises with the model and LLMs’ capability to make use of extensive external knowledge that may be superficially different from statements in $R$ . Subsequently, some models are evaluated with our task using $\mathcal{K}$ . In addition to the metrics in Section 3.5, we also adopt the accuracy of disease category $\textit{Acc}^{\text{cat}}$ , which gives 1 when $\hat{i}=i$ , as our baseline’s performance depends on it.

Table 3: Diagnostic reasoning ability of different LLMs under the proposed baseline method.

| | | Diagnosis | Observation | Explanation | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Task | Models | Acc ${}^{\text{cat}}$ | Acc ${}^{\text{diag}}$ | $\textit{Obs}^{\text{pre}}$ | $\textit{Obs}^{\text{rec}}$ | $\textit{Obs}^{\text{comp}}$ | $\textit{Exp}^{\text{com}}$ | $\textit{Exp}^{\text{all}}$ |

| With $\mathcal{G}$ | Zephyr 7B | 0.274 | 0.151 | 0.123 ${}_{±\text{0.200}}$ | 0.115 ${}_{±\text{0.166}}$ | 0.092 ${}_{±\text{0.108}}$ | 0.071 ${}_{±\text{0.139}}$ | 0.014 ${}_{±\text{0.037}}$ |

| Mistral 7B | 0.507 | 0.306 | 0.211 ${}_{±\text{0.190}}$ | 0.317 ${}_{±\text{0.253}}$ | 0.173 ${}_{±\text{0.157}}$ | 0.230 ${}_{±\text{0.312}}$ | 0.062 ${}_{±\text{0.088}}$ | |

| Mixtral 8 $×$ 7B | 0.413 | 0.237 | 0.147 ${}_{±\text{0.165}}$ | 0.266 ${}_{±\text{0.261}}$ | 0.124 ${}_{±\text{0.138}}$ | 0.144 ${}_{±\text{0.268}}$ | 0.029 ${}_{±\text{0.056}}$ | |

| LLama3 8B | 0.576 | 0.321 | 0.253 ${}_{±\text{0.156}}$ | 0.437 ${}_{±\text{0.207}}$ | 0.219 ${}_{±\text{0.137}}$ | 0.232 ${}_{±\text{0.316}}$ | 0.071 ${}_{±\text{0.093}}$ | |

| LLama3 70B | 0.752 | 0.540 | 0.277 ${}_{±\text{0.146}}$ | 0.537 ${}_{±\text{0.192}}$ | 0.256 ${}_{±\text{0.142}}$ | 0.395 ${}_{±\text{0.320}}$ | 0.112 ${}_{±\text{0.110}}$ | |

| GPT-3.5 turbo | 0.679 | 0.455 | 0.389 ${}_{±\text{0.212}}$ | 0.351 ${}_{±\text{0.192}}$ | 0.275 ${}_{±\text{0.167}}$ | 0.331 ${}_{±\text{0.366}}$ | 0.103 ${}_{±\text{0.127}}$ | |

| GPT-4 turbo | 0.772 | 0.572 | 0.446 ${}_{±\text{0.207}}$ | 0.491 ${}_{±\text{0.180}}$ | 0.371 ${}_{±\text{0.186}}$ | 0.475 ${}_{±\text{0.363}}$ | 0.199 ${}_{±\text{0.181}}$ | |

| With $\mathcal{K}$ | LLama3 8B | 0.576 | 0.344 | 0.235 ${}_{±\text{0.162}}$ | 0.394 ${}_{±\text{0.227}}$ | 0.199 ${}_{±\text{0.142}}$ | 0.327 ${}_{±\text{0.375}}$ | 0.087 ${}_{±\text{0.114}}$ |

| LLama3 70B | 0.735 | 0.581 | 0.262 ${}_{±\text{0.146}}$ | 0.501 ${}_{±\text{0.208}}$ | 0.236 ${}_{±\text{0.131}}$ | 0.463 ${}_{±\text{0.374}}$ | 0.125 ${}_{±\text{0.117}}$ | |

| GPT-3.5 turbo | 0.652 | 0.413 | 0.347 ${}_{±\text{0.241}}$ | 0.279 ${}_{±\text{0.203}}$ | 0.232 ${}_{±\text{0.184}}$ | 0.374 ${}_{±\text{0.408}}$ | 0.121 ${}_{±\text{0.152}}$ | |

| GPT-4 turbo | 0.781 | 0.614 | 0.431 ${}_{±\text{0.207}}$ | 0.458 ${}_{±\text{0.187}}$ | 0.353 ${}_{±\text{0.170}}$ | 0.633 ${}_{±\text{0.338}}$ | 0.247 ${}_{±\text{0.201}}$ | |

Table 4: Evaluation of diagnostic reasoning ability of LLMs when no external knowledge is provided.

| | | | Observation | Explanation | | | |

| --- | --- | --- | --- | --- | --- | --- | --- |

| Task | Models | Acc ${}^{\text{diag}}$ | $\textit{Obs}^{\text{pre}}$ | $\textit{Obs}^{\text{rec}}$ | $\textit{Obs}^{\text{comp}}$ | $\textit{Exp}^{\text{com}}$ | $\textit{Exp}^{\text{all}}$ |

| With $\mathcal{D}^{\star}$ | LLama3 8B | 0.070 | 0.154 ${}_{±\text{0.139}}$ | 0.330 ${}_{±\text{0.244}}$ | 0.135 ${}_{±\text{0.122}}$ | 0.020 ${}_{±\text{0.100}}$ | 0.004 ${}_{±\text{0.016}}$ |

| LLama3 70B | 0.502 | 0.257 ${}_{±\text{0.150}}$ | 0.509 ${}_{±\text{0.213}}$ | 0.237 ${}_{±\text{0.145}}$ | 0.138 ${}_{±\text{0.209}}$ | 0.034 ${}_{±\text{0.054}}$ | |

| GPT-3.5 turbo | 0.223 | 0.164 ${}_{±\text{0.242}}$ | 0.149 ${}_{±\text{0.212}}$ | 0.116 ${}_{±\text{0.174}}$ | 0.091 ${}_{±\text{0.231}}$ | 0.025 ${}_{±\text{0.065}}$ | |

| GPT-4 turbo | 0.636 | 0.461 ${}_{±\text{0.206}}$ | 0.482 ${}_{±\text{0.160}}$ | 0.378 ${}_{±\text{0.174}}$ | 0.186 ${}_{±\text{0.221}}$ | 0.074 ${}_{±\text{0.090}}$ | |

| No Knowledge | LLama3 8B | 0.023 | 0.137 ${}_{±\text{0.159}}$ | 0.258 ${}_{±\text{0.274}}$ | 0.119 ${}_{±\text{0.141}}$ | 0.018 ${}_{±\text{0.083}}$ | 0.006 ${}_{±\text{0.026}}$ |

| LLama3 70B | 0.037 | 0.246 ${}_{±\text{0.148}}$ | 0.504 ${}_{±\text{0.222}}$ | 0.227 ${}_{±\text{0.148}}$ | 0.022 ${}_{±\text{0.093}}$ | 0.007 ${}_{±\text{0.030}}$ | |

| GPT-3.5 turbo | 0.059 | 0.161 ${}_{±\text{0.238}}$ | 0.148 ${}_{±\text{0.215}}$ | 0.113 ${}_{±\text{0.171}}$ | 0.036 ${}_{±\text{0.131}}$ | 0.011 ${}_{±\text{0.039}}$ | |

| GPT-4 turbo | 0.074 | 0.410 ${}_{±\text{0.208}}$ | 0.443 ${}_{±\text{0.191}}$ | 0.324 ${}_{±\text{0.182}}$ | 0.047 ${}_{±\text{0.143}}$ | 0.019 ${}_{±\text{0.058}}$ | |

With $\mathcal{G}$ , we can see that GPT-4 achieves the best performance in most metrics, especially related to observations and explanations, surpassing LLama3 70B by a large margin. In terms of accuracy (in both category and diagnosis levels), LLama3 70B is comparable to GPT-4. LLama3 70B also has a higher $\textit{Obs}^{\text{rec}}$ but low $\textit{Obs}^{\text{pre}}$ and $\textit{Obs}^{\text{comp}}$ , which means that this model tends to extract many observations. Models with high diagnostic accuracy are not necessarily excel in finding essential information in long text (i.e., observations) and generating reasons (i.e., explanations).

When $\mathcal{K}$ is given, all models show better diagnostic accuracy (except GPT-3.5) and explanations, while observations are slightly degraded. GPT-4 with $\mathcal{K}$ enhances Acc ${}^{\text{diag}}$ , $\textit{Exp}^{\text{com}}$ , and $\textit{Exp}^{\text{all}}$ scores. This suggests that premises and supporting edges are beneficial for diagnosis and explanation. Lower observational performance may indicate that the models lack the ability to associate premises and text in $R$ , which are often superficially different though semantically consistent.

LLMs may undergo inherent challenges for evaluation when no external knowledge is supplied. They may have the knowledge to diagnose but cannot make consistent observations and explanations that our task expects through $\mathcal{K}$ . To explore this, we evaluate two settings: (1) giving $D^{\star}$ and (2) no knowledge is supplied to a model (shown in Table 4). The prompts used for this setup are detailed in the supplementary material. We do not evaluate the accuracy of disease category prediction as it is basically the same as Table 3. We can clearly see that with $\mathcal{D}^{\star}$ , GPT-4’s diagnostic and observational scores are comparable to those of the task with $\mathcal{K}$ , though explanatory performance is much worse. Without any external knowledge, the diagnostic accuracy is also inferior. We understand this comparison is unfair, as the prompts differ. We intend to give a rough idea about the challenge without external knowledge. The deteriorated performance can be attributed to inconsistent wording of diagnosis names, which makes evaluation tough. High observational scores imply that observations in $R$ can be identified without relying on external knowledge. There can be some cues to spot them.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: Model Performance by Medical Specialty

### Overview

The image presents a series of bar charts comparing the performance of three language models (LLama3, GPT-3.5, and GPT-4) across five medical specialties: Cardiology, Gastroenterology, Neurology, Pulmonology, and Endocrinology. The performance is measured by three metrics: Accuracy (Acc), Completeness (Comp), and Faithfulness (Faith). Each specialty has its own subplot, showing the performance of each model on the three metrics.

### Components/Axes

* **X-axis:** Categorical, representing the language models: LLama3, GPT-3.5, and GPT-4. Each model is evaluated within each medical specialty.

* **Y-axis:** Numerical, ranging from 0.0 to 1.0, representing the performance score.

* **Subplots:** Five subplots, each representing a different medical specialty: Cardiology, Gastroenterology, Neurology, Pulmonology, and Endocrinology.

* **Legend:** Located at the top of the image.

* Acc: Accuracy (light green)

* Comp: Completeness (light orange)

* Faith: Faithfulness (light blue)

### Detailed Analysis

**Cardiology:**

* **Acc (light green):** LLama3 ~0.45, GPT-3.5 ~0.45, GPT-4 ~0.47

* **Comp (light orange):** LLama3 ~0.27, GPT-3.5 ~0.30, GPT-4 ~0.37

* **Faith (light blue):** LLama3 ~0.12, GPT-3.5 ~0.10, GPT-4 ~0.12

**Gastroenterology:**

* **Acc (light green):** LLama3 ~0.65, GPT-3.5 ~0.43, GPT-4 ~0.78

* **Comp (light orange):** LLama3 ~0.15, GPT-3.5 ~0.25, GPT-4 ~0.30

* **Faith (light blue):** LLama3 ~0.08, GPT-3.5 ~0.08, GPT-4 ~0.15

**Neurology:**

* **Acc (light green):** LLama3 ~0.80, GPT-3.5 ~0.73, GPT-4 ~0.85

* **Comp (light orange):** LLama3 ~0.30, GPT-3.5 ~0.35, GPT-4 ~0.45

* **Faith (light blue):** LLama3 ~0.20, GPT-3.5 ~0.10, GPT-4 ~0.15

**Pulmonology:**

* **Acc (light green):** LLama3 ~0.45, GPT-3.5 ~0.35, GPT-4 ~0.70

* **Comp (light orange):** LLama3 ~0.25, GPT-3.5 ~0.35, GPT-4 ~0.30

* **Faith (light blue):** LLama3 ~0.10, GPT-3.5 ~0.10, GPT-4 ~0.20

**Endocrinology:**

* **Acc (light green):** LLama3 ~0.40, GPT-3.5 ~0.40, GPT-4 ~0.50

* **Comp (light orange):** LLama3 ~0.28, GPT-3.5 ~0.28, GPT-4 ~0.30

* **Faith (light blue):** LLama3 ~0.10, GPT-3.5 ~0.12, GPT-4 ~0.22

### Key Observations

* **Accuracy:** GPT-4 generally has the highest accuracy across all specialties, with Neurology showing the highest accuracy scores overall.

* **Completeness:** Completeness scores are generally lower than accuracy scores across all models and specialties. GPT-4 tends to have slightly higher completeness scores.

* **Faithfulness:** Faithfulness scores are the lowest among the three metrics, indicating a potential area for improvement for all models.

* **Specialty Variation:** The performance of the models varies significantly across different medical specialties, suggesting that some specialties are more challenging than others.

### Interpretation

The bar charts provide a comparative analysis of the performance of LLama3, GPT-3.5, and GPT-4 in different medical domains. GPT-4 generally outperforms the other models in terms of accuracy, completeness, and faithfulness. However, the low faithfulness scores across all models suggest that there is room for improvement in generating reliable and trustworthy information. The variation in performance across specialties highlights the importance of tailoring language models to specific domains to optimize their effectiveness. The data suggests that while GPT-4 is a strong performer, further research and development are needed to improve the faithfulness of language models in medical applications.

</details>

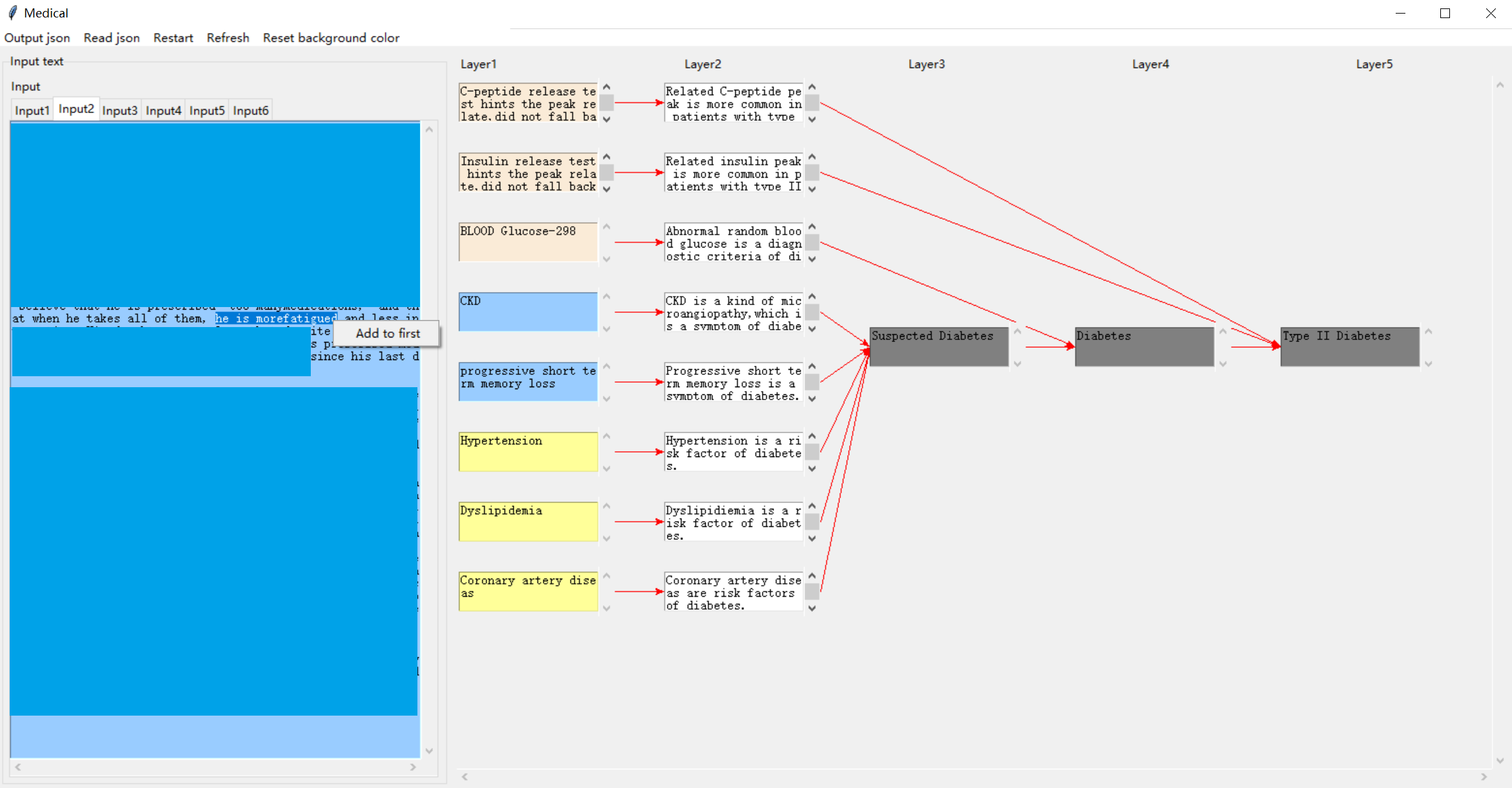

Figure 5: Performance of LLama3 70B, GPT-3.5, and GPT-4 under different medical domains. We use the task with $\mathcal{G}$ .

Performance in individual domains. Figure 5 summarizes the performance of LLama3 70B, GPT-3.5, and GPT-4 across different medical domains, evaluated using Acc ${}^{\text{cat}}$ , $\textit{Obs}^{\text{comp}}$ , and $\textit{Exp}^{\text{all}}$ . Neurology gives the best diagnostic accuracy, where GPT-4 achieved an accuracy of 0.806. LLama3 also performed well (0.786). In terms of $\textit{Obs}^{\text{comp}}$ and $\textit{Exp}^{\text{all}}$ , GPT-4’s results were 0.458 and 0.340, respectively, with the smallest difference between the two scores among all domains. This smaller gap indicates that in Neurology, the common observations in prediction and ground truth lead to the correct diagnoses with faithful rationalizations. However, GPT-4 yields a higher diagnostic accuracy score while a lower explanatory score, suggesting that the observations captured by the model or their rationalizations differ from human doctors.

For Cardiology and Endocrinology, the diagnostic accuracy of the models is relatively low (GPT-4 achieved 0.458 and 0.468, respectively). Nevertheless, $\textit{Obs}^{\text{comp}}$ and $\textit{Exp}^{\text{all}}$ are relatively high. Endocrinology results in lower diagnostic accuracy and higher explanatory performance. A smaller gap may imply that in these two domains, successful predictions are associated with observations similar to those of human doctors, and the reasoning process may be analogous. Conversely, in Gastroenterology, higher Acc ${}^{\text{cat}}$ ) is accompanied by lower $\textit{Obs}^{\text{comp}}$ and $\textit{Exp}^{\text{all}}$ (especially for LLama3), potentially indicating a significant divergence in the reasoning process from human doctors. Overall, DiReCT demonstrates that the degree of alignment between the model’s diagnostic reasoning ability and that of human doctors varies across different medical domains.

Table 5: Consistency of automated evaluation metrics with human judgments.

| Model | Observation | Rationalization |

| --- | --- | --- |

| LLama3 8B | 0.887 | 0.801 |

| GPT-4 turbo | 0.902 | 0.836 |

Reliability of automatic evaluation. We randomly pick out 100 samples from DiReCT and their prediction by GPT-4 over the task with $\mathcal{G}$ to assess the consistency of our automated metrics to evaluate the observational and explanatory performance in Section 3.3 to human judgments. Three physicians joined this experiment. For each prediction $\hat{o}∈\hat{\mathcal{O}}$ , they are asked to find a similar observation in ground truth $\mathcal{O}$ . For explanatory metrics, they verify if each prediction $\hat{z}∈\hat{\mathcal{E}}$ for $\hat{o}∈\hat{\mathcal{O}}$ align with ground-truth $z∈\mathcal{E}$ corresponding to $o$ . A prediction and a ground truth are deemed aligned for both assessments if at least two specialists agree. We compare LLama3’s and GPT-4’s judgments to explore if there is a gap between these LLMs. As summarized in Table 5, GPT-4 achieves the best results, with LLama3 8B also displaying a similar performance. From these results, we argue that our automated evaluation metrics are consistent with human judgments, and LLama3 is sufficient for this evaluation, allowing the cost-efficient option.

A prediction example. Figure 6 shows a sample generated by GPT-4. The ground-truth PDD of the input clinical note is Hemorrhagic Stroke. In this figure, purple, orange, and red indicate explanations only in the ground truth, only in prediction, and common in both, respectively; therefore, red is a successful prediction of an explanation, while purple and orange are a false negative and false positive. GPT-4 treats the observation of aurosis fugax as the criteria for diagnosing Ischemic Stroke. However, this observation only supports Suspected Stroke. Conversely, observation thalamic hematoma, which is the key indicator of Hemorrhagic Stroke, is regarded as a less important clue. Such observation-diagnosis correspondence errors lead to the model’s misdiagnosis. More samples are available in the supplementary material.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Clinical Reasoning Diagram: Stroke Diagnosis

### Overview

The image presents a clinical reasoning diagram that connects a clinical note with potential diagnoses of stroke (hemorrhagic or ischemic). It links findings from the clinical note to rationales, which then lead to a suspected stroke diagnosis. The diagram uses arrows to show the relationships between the clinical observations, the rationale behind them, and the final diagnosis.

### Components/Axes

* **Clinical Note:** A block of text containing patient history, physical exam findings, and pertinent results from a CT scan.

* **Rationale:** A series of text boxes explaining the medical reasoning behind linking clinical findings to potential diagnoses.

* **Diagnosis:** Two possible diagnoses: Hemorrhagic Stroke and Ischemic Stroke.

* **Suspected Stroke:** An intermediate diagnosis node.

* **Arrows:** Purple and orange arrows indicate the flow of reasoning from clinical findings to rationales and then to diagnoses. Red arrows also connect clinical findings to rationales.

### Detailed Analysis or ### Content Details

**Clinical Note:**

* **Present Illness:** The patient underwent a right carotid procedure and per notes, it was uneventful. This was done as an elective procedure after he had episodes of amaurosis fugax.

* **Past Medical History:** +HTN (Hypertension), +Diverticulosis, +CHF (Congestive Heart Failure).

* **Physical Exam:** Mental status: Awake, doesn't verbalize. Can only say words. Comprehension is relatively spared, can answer with to yes and No type questions.

* **Pertinent Results:** CT HEAD W/O CONTRAST. FINDINGS: A cm left thalamic hematoma appears stable when compared to from outside the imaged approximately ago. There is an increased amount of layering hemorrhage in the of the left lateral ventricle. A small amount of intraventricular blood is noted in the of the right lateral ventricle. There is surrounding , which appears from prior CT.

**Rationale:**

* **Transient vision loss typically indicates a transient ischemic attack, often associated with carotid artery disease.** (Connected to "episodes of amaurosis fugax" with an orange arrow)

* **Carotid artery stenosis is an important cause of insufficient blood flow to the brain and is associated with risk of stroke.** (Connected to "carotid stenosis" with a purple arrow)

* **CHF reduced ability of the heart to pump blood may lead to increase the risk of stroke.** (Connected to "+CHF" with a red arrow)

* **The presence of a thalamic hematoma is directly related to symptoms of stroke, indicating brain bleeding which can lead to stroke.** (Connected to "thalamic hematoma" and "layering hemorrhage" with orange arrows)

* **Thalamus hematoma means brain bleeding which is a common diagnostic criterion for hemorrhagic stroke.** (Connected to "thalamic hematoma" with a purple arrow)

**Diagnosis:**

* **Suspected Stroke:** A central node that both Hemorrhagic Stroke and Ischemic Stroke connect to.

* **Hemorrhagic Stroke:** One of the two possible diagnoses.

* **Ischemic Stroke:** The other possible diagnosis.

**Connections:**

* "episodes of amaurosis fugax" (Clinical Note) -> "Transient vision loss typically indicates a transient ischemic attack, often associated with carotid artery disease." (Rationale) -> "Suspected Stroke" (Diagnosis) - Orange Arrows

* "carotid stenosis" (Clinical Note) -> "Carotid artery stenosis is an important cause of insufficient blood flow to the brain and is associated with risk of stroke." (Rationale) -> "Suspected Stroke" (Diagnosis) - Purple Arrows

* "+CHF" (Clinical Note) -> "CHF reduced ability of the heart to pump blood may lead to increase the risk of stroke." (Rationale) -> "Suspected Stroke" (Diagnosis) - Red Arrows

* "thalamic hematoma" and "layering hemorrhage" (Clinical Note) -> "The presence of a thalamic hematoma is directly related to symptoms of stroke, indicating brain bleeding which can lead to stroke." (Rationale) -> "Suspected Stroke" (Diagnosis) - Orange Arrows

* "thalamic hematoma" (Clinical Note) -> "Thalamus hematoma means brain bleeding which is a common diagnostic criterion for hemorrhagic stroke." (Rationale) -> "Hemorrhagic Stroke" (Diagnosis) - Purple Arrows

### Key Observations

* The diagram connects clinical findings to potential stroke diagnoses through a series of rationales.

* The presence of a thalamic hematoma is strongly linked to a diagnosis of hemorrhagic stroke.

* Episodes of amaurosis fugax and carotid stenosis are linked to a general "Suspected Stroke" diagnosis, which could be either hemorrhagic or ischemic.

* CHF is also linked to a general "Suspected Stroke" diagnosis.

### Interpretation

The diagram illustrates the clinical reasoning process in diagnosing a stroke. It shows how specific findings from a patient's history, physical exam, and CT scan can be used to arrive at a diagnosis. The presence of a thalamic hematoma is a key indicator of hemorrhagic stroke, while other factors like amaurosis fugax, carotid stenosis, and CHF contribute to a general suspicion of stroke, which could be either hemorrhagic or ischemic. The diagram highlights the importance of considering multiple factors and their relationships when making a clinical diagnosis. The diagram is useful for understanding the thought process behind diagnosing a stroke and how different clinical findings can point to different types of stroke.

</details>

Figure 6: An example prediction for a clinical note with PDD of Hemorrhagic Stroke by GPT-4.

6 Conclusion and Limitations

We proposed DiReCT as the first benchmark for evaluating the diagnostic reasoning ability of LLMs with interpretability by supplying external knowledge as a graph. Our evaluations reveal a notable disparity between current leading-edge LLMs and human experts, underscoring the urgent need for AI models that can perform reliable and interpretable reasoning in clinical environments. DiReCT can be easily extended to more challenging settings by removing the knowledge graph from the input, facilitating evaluations of future LLMs.

Limitations. DiReCT encompasses only a subset of disease categories and considers only one PDD, omitting the inter-diagnostic relationships due to their complexity—a significant challenge even for human doctors. Additionally, our baseline may not use optimal prompts, chain-of-thought reasoning, or address issues related to hallucinations in task responses. Our dataset is solely intended for model evaluation but not for use in clinical environments. The use of the diagnostic knowledge graph is also limited to serving merely as a part of input. Future work will focus on constructing a more comprehensive disease dataset and developing an extensive diagnostic knowledge graph.

Acknowledgments and Disclosure of Funding

This work was supported by World Premier International Research Center Initiative (WPI), MEXT, Japan. This work is also supported by JSPS KAKENHI 24K20795 and Dalian Haichuang Project for Advanced Talents.

References

- Zhao et al. [2023] Wayne Xin Zhao, Kun Zhou, Junyi Li, Tianyi Tang, Xiaolei Wang, Yupeng Hou, Yingqian Min, Beichen Zhang, Junjie Zhang, Zican Dong, Yifan Du, Chen Yang, Yushuo Chen, Zhipeng Chen, Jinhao Jiang, Ruiyang Ren, Yifan Li, Xinyu Tang, Zikang Liu, Peiyu Liu, Jian-Yun Nie, and Ji-Rong Wen. A survey of large language models. arXiv preprint arXiv:2303.18223, 2023.

- Min et al. [2023] Bonan Min, Hayley Ross, Elior Sulem, Amir Pouran Ben Veyseh, Thien Huu Nguyen, Oscar Sainz, Eneko Agirre, Ilana Heintz, and Dan Roth. Recent advances in natural language processing via large pre-trained language models: A survey. ACM Computing Surveys, 56(2):1–40, 2023.

- Anil et al. [2023] Rohan Anil, Andrew M Dai, Orhan Firat, Melvin Johnson, Dmitry Lepikhin, Alexandre Passos, Siamak Shakeri, Emanuel Taropa, Paige Bailey, Zhifeng Chen, et al. Palm 2 technical report. arXiv preprint arXiv:2305.10403, 2023.

- Han et al. [2023] Tianyu Han, Lisa C Adams, Jens-Michalis Papaioannou, Paul Grundmann, Tom Oberhauser, Alexander Löser, Daniel Truhn, and Keno K Bressem. Medalpaca–an open-source collection of medical conversational ai models and training data. arXiv preprint arXiv:2304.08247, 2023.

- Jin et al. [2021] Di Jin, Eileen Pan, Nassim Oufattole, Wei-Hung Weng, Hanyi Fang, and Peter Szolovits. What disease does this patient have? a large-scale open domain question answering dataset from medical exams. Applied Sciences, 11(14):6421, 2021.

- OpenAI [2023a] OpenAI. GPT-4 Technical Report. CoRR, abs/2303.08774, 2023a. doi: 10.48550/arXiv.2303.08774. URL https://doi.org/10.48550/arXiv.2303.08774.

- Bubeck et al. [2023] Sébastien Bubeck, Varun Chandrasekaran, Ronen Eldan, Johannes Gehrke, Eric Horvitz, Ece Kamar, Peter Lee, Yin Tat Lee, Yuanzhi Li, Scott Lundberg, et al. Sparks of artificial general intelligence: Early experiments with gpt-4. arXiv preprint arXiv:2303.12712, 2023.

- Nori et al. [2023] Harsha Nori, Nicholas King, Scott Mayer McKinney, Dean Carignan, and Eric Horvitz. Capabilities of gpt-4 on medical challenge problems. arXiv preprint arXiv:2303.13375, 2023.

- Liévin et al. [2024] Valentin Liévin, Christoffer Egeberg Hother, Andreas Geert Motzfeldt, and Ole Winther. Can large language models reason about medical questions? Patterns, 5(3), 2024.

- Pal et al. [2022] Ankit Pal, Logesh Kumar Umapathi, and Malaikannan Sankarasubbu. MedMCQA: A large-scale multi-subject multi-choice dataset for medical domain question answering. In Conference on health, inference, and learning, pages 248–260. PMLR, 2022.

- Li et al. [2023] Dongfang Li, Jindi Yu, Baotian Hu, Zhenran Xu, and Min Zhang. ExplainCPE: A free-text explanation benchmark of chinese pharmacist examination. arXiv preprint arXiv:2305.12945, 2023.

- Chen et al. [2024] Hanjie Chen, Zhouxiang Fang, Yash Singla, and Mark Dredze. Benchmarking large language models on answering and explaining challenging medical questions. arXiv preprint arXiv:2402.18060, 2024.

- Jullien et al. [2023] Mael Jullien, Marco Valentino, Hannah Frost, Paul O’Regan, Donal Landers, and André Freitas. Semeval-2023 task 7: Multi-evidence natural language inference for clinical trial data. arXiv preprint arXiv:2305.02993, 2023.

- Gao et al. [2023a] Yanjun Gao, Ruizhe Li, John Caskey, Dmitriy Dligach, Timothy Miller, Matthew M Churpek, and Majid Afshar. Leveraging a medical knowledge graph into large language models for diagnosis prediction. arXiv preprint arXiv:2308.14321, 2023a.

- Johnson et al. [2023] Alistair E. W. Johnson, Lucas Bulgarelli, Lu Shen, Alvin Gayles, Ayad Shammout, Steven Horng, Tom J. Pollard, Sicheng Hao, Benjamin Moody, Brian Gow, Li-wei H. Lehman, Leo A. Celi, and Roger G. Mark. MIMIC-IV, a freely accessible electronic health record dataset. Scientific data, 10(1):1, 2023.

- Xi et al. [2023] Zhiheng Xi, Wenxiang Chen, Xin Guo, Wei He, Yiwen Ding, Boyang Hong, Ming Zhang, Junzhe Wang, Senjie Jin, Enyu Zhou, Rui Zheng, Xiaoran Fan, Xiao Wang, Limao Xiong, Yuhao Zhou, Weiran Wang, Changhao Jiang, Yicheng Zou, Xiangyang Liu, Zhangyue Yin, Shihan Dou, Rongxiang Weng, Wensen Cheng, Qi Zhang, Wenjuan Qin, Yongyan Zheng, Xipeng Qiu, Xuanjing Huang, and Tao Gui. The rise and potential of large language model based agents: A survey, 2023.

- Tang et al. [2023] Xiangru Tang, Anni Zou, Zhuosheng Zhang, Yilun Zhao, Xingyao Zhang, Arman Cohan, and Mark Gerstein. Medagents: Large language models as collaborators for zero-shot medical reasoning. arXiv preprint arXiv:2311.10537, 2023.

- Middleton et al. [2013] Blackford Middleton, Meryl Bloomrosen, Mark A Dente, Bill Hashmat, Ross Koppel, J Marc Overhage, Thomas H Payne, S Trent Rosenbloom, Charlotte Weaver, and Jiajie Zhang. Enhancing patient safety and quality of care by improving the usability of electronic health record systems: recommendations from amia. Journal of the American Medical Informatics Association, 20(e1):e2–e8, 2013.

- Liu et al. [2022] Jinghui Liu, Daniel Capurro, Anthony Nguyen, and Karin Verspoor. “note bloat” impacts deep learning-based nlp models for clinical prediction tasks. Journal of biomedical informatics, 133:104149, 2022.

- Danilevsky et al. [2020] Marina Danilevsky, Kun Qian, Ranit Aharonov, Yannis Katsis, Ban Kawas, and Prithviraj Sen. A survey of the state of explainable AI for natural language processing. In Proceedings of the 1st Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics and the 10th International Joint Conference on Natural Language Processing, pages 447–459, 2020.

- Gurrapu et al. [2023] Sai Gurrapu, Ajay Kulkarni, Lifu Huang, Ismini Lourentzou, and Feras A Batarseh. Rationalization for explainable nlp: A survey. Frontiers in Artificial Intelligence, 6, 2023.

- Camburu et al. [2018] Oana-Maria Camburu, Tim Rocktäschel, Thomas Lukasiewicz, and Phil Blunsom. e-snli: Natural language inference with natural language explanations. Advances in Neural Information Processing Systems, 31, 2018.

- Rajani et al. [2019] Nazneen Fatema Rajani, Bryan McCann, Caiming Xiong, and Richard Socher. Explain yourself! leveraging language models for commonsense reasoning. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, pages 4932–4942, Florence, Italy, 2019.

- DeYoung et al. [2020] Jay DeYoung, Sarthak Jain, Nazneen Fatema Rajani, Eric Lehman, Caiming Xiong, Richard Socher, and Byron C. Wallace. ERASER: A benchmark to evaluate rationalized NLP models. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 4443–4458, 2020.

- Jhamtani and Clark [2020] Harsh Jhamtani and Peter Clark. Learning to explain: Datasets and models for identifying valid reasoning chains in multihop question-answering. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing, page 137–150, 2020.

- Tafjord et al. [2021] Oyvind Tafjord, Bhavana Dalvi Mishra, and Peter Clark. Proofwriter: Generating implications, proofs, and abductive statements over natural language. In Findings of the Association for Computational Linguistics: ACL-IJCNLP, page 3621–3634, 2021.

- Zhao et al. [2021] Chen Zhao, Chenyan Xiong, Jordan Boyd-Graber, and Hal Daumé III. Multi-step reasoning over unstructured text with beam dense retrieval. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 4635–4641, 2021.

- Dalvi et al. [2021] Bhavana Dalvi, Peter Jansen, Oyvind Tafjord, Zhengnan Xie, Hannah Smith, Leighanna Pipatanangkura, and Peter Clark. Explaining answers with entailment trees. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, pages 7358–7370, 2021.

- Zhang et al. [2024] Yifan Zhang, Jingqin Yang, Yang Yuan, and Andrew Chi-Chih Yao. Cumulative reasoning with large language models. In ICLR 2024 Workshop on Bridging the Gap Between Practice and Theory in Deep Learning, 2024. URL https://openreview.net/forum?id=XAAYyRxTlQ.

- Gao et al. [2022] Yanjun Gao, Dmitriy Dligach, Timothy Miller, Samuel Tesch, Ryan Laffin, Matthew M. Churpek, and Majid Afshar. Hierarchical annotation for building a suite of clinical natural language processing tasks: Progress note understanding. In Proceedings of the Thirteenth Language Resources and Evaluation Conference, pages 5484–5493, Marseille, France, 2022. European Language Resources Association.

- Zack et al. [2023] Travis Zack, Gurpreet Dhaliwal, Rabih Geha, Mary Margaretten, Sara Murray, and Julian C Hong. A clinical reasoning-encoded case library developed through natural language processing. Journal of General Internal Medicine, 38(1):5–11, 2023.

- Gao et al. [2023b] Yanjun Gao, Dmitriy Dligach, Timothy Miller, John Caskey, Brihat Sharma, Matthew M Churpek, and Majid Afshar. Dr. bench: Diagnostic reasoning benchmark for clinical natural language processing. Journal of Biomedical Informatics, 138:104286, 2023b.

- Weed [1970] L.L. Weed. Medical Records, Medical Education, and Patient Care: The Problem-oriented Record as a Basic Tool. Press of Case Western Reserve University, 1970. ISBN 9780815191889.

- Bodenreider [2004] Olivier Bodenreider. The unified medical language system (umls): integrating biomedical terminology. Nucleic acids research, 32(suppl_1):D267–D270, 2004.

- AI@Meta [2024] AI@Meta. Llama 3 model card. 2024. URL https://github.com/meta-llama/llama3/blob/main/MODEL_CARD.md.

- Tunstall et al. [2023] Lewis Tunstall, Edward Beeching, Nathan Lambert, Nazneen Rajani, Kashif Rasul, Younes Belkada, Shengyi Huang, Leandro von Werra, Clémentine Fourrier, Nathan Habib, et al. Zephyr: Direct distillation of lm alignment. arXiv preprint arXiv:2310.16944, 2023.

- Jiang et al. [2023] Albert Q Jiang, Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, Gianna Lengyel, Guillaume Lample, Lucile Saulnier, et al. Mistral 7b. arXiv preprint arXiv:2310.06825, 2023.

- OpenAI [2023b] OpenAI. Introducing ChatGPT and Whisper APIs. 2023b. URL https://openai.com/blog/introducing-chatgpt-and-whisper-apis.

- Byrne et al. [2024] Robert A Byrne, Xavier Rossello, JJ Coughlan, Emanuele Barbato, Colin Berry, Alaide Chieffo, Marc J Claeys, Gheorghe-Andrei Dan, Marc R Dweck, Mary Galbraith, et al. 2023 esc guidelines for the management of acute coronary syndromes: developed by the task force on the management of acute coronary syndromes of the european society of cardiology (esc). European Heart Journal: Acute Cardiovascular Care, 13(1):55–161, 2024.

- Members et al. [2022] Writing Committee Members, Eric M Isselbacher, Ourania Preventza, James Hamilton Black III, John G Augoustides, Adam W Beck, Michael A Bolen, Alan C Braverman, Bruce E Bray, Maya M Brown-Zimmerman, et al. 2022 acc/aha guideline for the diagnosis and management of aortic disease: a report of the american heart association/american college of cardiology joint committee on clinical practice guidelines. Journal of the American College of Cardiology, 80(24):e223–e393, 2022.

- Joglar et al. [2024] José A Joglar, Mina K Chung, Anastasia L Armbruster, Emelia J Benjamin, Janice Y Chyou, Edmond M Cronin, Anita Deswal, Lee L Eckhardt, Zachary D Goldberger, Rakesh Gopinathannair, et al. 2023 acc/aha/accp/hrs guideline for the diagnosis and management of atrial fibrillation: a report of the american college of cardiology/american heart association joint committee on clinical practice guidelines. Circulation, 149(1):e1–e156, 2024.

- Ommen et al. [2020] Steve R Ommen, Seema Mital, Michael A Burke, Sharlene M Day, Anita Deswal, Perry Elliott, Lauren L Evanovich, Judy Hung, José A Joglar, Paul Kantor, et al. 2020 aha/acc guideline for the diagnosis and treatment of patients with hypertrophic cardiomyopathy: executive summary: a report of the american college of cardiology/american heart association joint committee on clinical practice guidelines. Journal of the American College of Cardiology, 76(25):3022–3055, 2020.

- Heidenreich et al. [2022] Paul A Heidenreich, Biykem Bozkurt, David Aguilar, Larry A Allen, Joni J Byun, Monica M Colvin, Anita Deswal, Mark H Drazner, Shannon M Dunlay, Linda R Evers, et al. 2022 aha/acc/hfsa guideline for the management of heart failure: a report of the american college of cardiology/american heart association joint committee on clinical practice guidelines. Journal of the American College of Cardiology, 79(17):e263–e421, 2022.

- Su et al. [2021] Lilly Su, Rea Mittal, Devyani Ramgobin, Rahul Jain, and Rohit Jain. Current management guidelines on hyperlipidemia: the silent killer. Journal of lipids, 2021(1):9883352, 2021.

- Unger et al. [2020] Thomas Unger, Claudio Borghi, Fadi Charchar, Nadia A Khan, Neil R Poulter, Dorairaj Prabhakaran, Agustin Ramirez, Markus Schlaich, George S Stergiou, Maciej Tomaszewski, et al. 2020 international society of hypertension global hypertension practice guidelines. Hypertension, 75(6):1334–1357, 2020.

- Shah et al. [2021] Shailja C Shah, M Blanca Piazuelo, Ernst J Kuipers, and Dan Li. Aga clinical practice update on the diagnosis and management of atrophic gastritis: expert review. Gastroenterology, 161(4):1325–1332, 2021.

- Gyawali et al. [2024] C Prakash Gyawali, Rena Yadlapati, Ronnie Fass, David Katzka, John Pandolfino, Edoardo Savarino, Daniel Sifrim, Stuart Spechler, Frank Zerbib, Mark R Fox, et al. Updates to the modern diagnosis of gerd: Lyon consensus 2.0. Gut, 73(2):361–371, 2024.

- Kavitt et al. [2019] Robert T Kavitt, Anna M Lipowska, Adjoa Anyane-Yeboa, and Ian M Gralnek. Diagnosis and treatment of peptic ulcer disease. The American journal of medicine, 132(4):447–456, 2019.

- Barkun et al. [2019] Alan N Barkun, Majid Almadi, Ernst J Kuipers, Loren Laine, Joseph Sung, Frances Tse, Grigorios I Leontiadis, Neena S Abraham, Xavier Calvet, Francis KL Chan, et al. Management of nonvariceal upper gastrointestinal bleeding: guideline recommendations from the international consensus group. Annals of internal medicine, 171(11):805–822, 2019.

- McKhann et al. [1984] Guy McKhann, David Drachman, Marshall Folstein, Robert Katzman, Donald Price, and Emanuel M Stadlan. Clinical diagnosis of alzheimer’s disease: Report of the nincds-adrda work group* under the auspices of department of health and human services task force on alzheimer’s disease. Neurology, 34(7):939–939, 1984.

- Igaku-Shoin-Ltd. [2018] Igaku-Shoin-Ltd. Clinical practice guidelines for epilepsy 2018. 2018.

- Lipton et al. [2001] Richard B Lipton, Seymour Diamond, Michael Reed, Merle L Diamond, and Walter F Stewart. Migraine diagnosis and treatment: results from the american migraine study ii. Headache: The Journal of Head and Face Pain, 41(7):638–645, 2001.

- Lublin [2005] Fred D Lublin. Clinical features and diagnosis of multiple sclerosis. Neurologic clinics, 23(1):1–15, 2005.

- Kleindorfer et al. [2021] Dawn O Kleindorfer, Amytis Towfighi, Seemant Chaturvedi, Kevin M Cockroft, Jose Gutierrez, Debbie Lombardi-Hill, Hooman Kamel, Walter N Kernan, Steven J Kittner, Enrique C Leira, et al. 2021 guideline for the prevention of stroke in patients with stroke and transient ischemic attack: a guideline from the american heart association/american stroke association. Stroke, 52(7):e364–e467, 2021.

- Qaseem et al. [2011] Amir Qaseem, Timothy J Wilt, Steven E Weinberger, Nicola A Hanania, Gerard Criner, Thys van der Molen, Darcy D Marciniuk, Tom Denberg, Holger Schünemann, Wisia Wedzicha, et al. Diagnosis and management of stable chronic obstructive pulmonary disease: a clinical practice guideline update from the american college of physicians, american college of chest physicians, american thoracic society, and european respiratory society. Annals of internal medicine, 155(3):179–191, 2011.

- Gupta et al. [2013] Dheeraj Gupta, Ritesh Agarwal, Ashutosh Nath Aggarwal, VN Maturu, Sahajal Dhooria, KT Prasad, Inderpaul S Sehgal, Lakshmikant B Yenge, Aditya Jindal, Navneet Singh, et al. Guidelines for diagnosis and management of chronic obstructive pulmonary disease: Joint ics/nccp (i) recommendations. Lung India, 30(3):228–267, 2013.

- Olson and Davis [2020] Gregory Olson and Andrew M Davis. Diagnosis and treatment of adults with community-acquired pneumonia. Jama, 323(9):885–886, 2020.

- Konstantinides et al. [2020] Stavros V Konstantinides, Guy Meyer, Cecilia Becattini, Héctor Bueno, Geert-Jan Geersing, Veli-Pekka Harjola, Menno V Huisman, Marc Humbert, Catriona Sian Jennings, David Jiménez, et al. 2019 esc guidelines for the diagnosis and management of acute pulmonary embolism developed in collaboration with the european respiratory society (ers) the task force for the diagnosis and management of acute pulmonary embolism of the european society of cardiology (esc). European heart journal, 41(4):543–603, 2020.

- Lewinsohn et al. [2017] David M Lewinsohn, Michael K Leonard, Philip A LoBue, David L Cohn, Charles L Daley, Ed Desmond, Joseph Keane, Deborah A Lewinsohn, Ann M Loeffler, Gerald H Mazurek, et al. Official american thoracic society/infectious diseases society of america/centers for disease control and prevention clinical practice guidelines: diagnosis of tuberculosis in adults and children. Clinical Infectious Diseases, 64(2):e1–e33, 2017.

- Charmandari et al. [2014] Evangelia Charmandari, Nicolas C Nicolaides, and George P Chrousos. Adrenal insufficiency. The Lancet, 383(9935):2152–2167, 2014.