# Scaling LLM Test-Time Compute Optimally can be More Effective than Scaling Model Parameters

**Authors**: Charlie Snell, Jaehoon Lee, Kelvin Xu, Aviral Kumar

> Work done during an internship at Google DeepMindUC Berkeley

> Google DeepMind

> Equal advisingGoogle DeepMind

\pdftrailerid

redacted Corresponding author: csnell22@berkeley.edu

Abstract

Enabling LLMs to improve their outputs by using more test-time computation is a critical step towards building generally self-improving agents that can operate on open-ended natural language. In this paper, we study the scaling of inference-time computation in LLMs, with a focus on answering the question: if an LLM is allowed to use a fixed but non-trivial amount of inference-time compute, how much can it improve its performance on a challenging prompt? Answering this question has implications not only on the achievable performance of LLMs, but also on the future of LLM pretraining and how one should tradeoff inference-time and pre-training compute. Despite its importance, little research attempted to understand the scaling behaviors of various test-time inference methods. Moreover, current work largely provides negative results for a number of these strategies. In this work, we analyze two primary mechanisms to scale test-time computation: (1) searching against dense, process-based verifier reward models; and (2) updating the model’s distribution over a response adaptively, given the prompt at test time. We find that in both cases, the effectiveness of different approaches to scaling test-time compute critically varies depending on the difficulty of the prompt. This observation motivates applying a “compute-optimal” scaling strategy, which acts to most effectively allocate test-time compute adaptively per prompt. Using this compute-optimal strategy, we can improve the efficiency of test-time compute scaling by more than 4 compared to a best-of-N baseline. Additionally, in a FLOPs-matched evaluation, we find that on problems where a smaller base model attains somewhat non-trivial success rates, test-time compute can be used to outperform a 14 larger model.

1 Introduction

Humans tend to think for longer on difficult problems to reliably improve their decisions [18, 9, 17]. Can we instill a similar capability into today’s large language models (LLMs)? More specifically, given a challenging input query, can we enable language models to most effectively make use of additional computation at test time so as to improve the accuracy of their response? In theory, by applying additional computation at test time, an LLM should be able to do better than what it was trained to do. In addition, such a capability at test-time also has the potential to unlock new avenues in agentic and reasoning tasks [34, 47, 28]. For instance, if pre-trained model size can be traded off for additional computation during inference, this would enable LLM deployment in use-cases where smaller on-device models could be used in place of datacenter scale LLMs. Automating the generation of improved model outputs by using additional inference-time computation also provides a path towards a general self-improvement algorithm that can function with reduced human supervision.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: Iteratively Revising Answers at Test-time

This document contains a detailed extraction of data and trends from a technical visualization consisting of two primary charts regarding Large Language Model (LLM) performance optimization.

---

## 1. Overall Header

**Title:** Iteratively Revising Answers at Test-time

---

## 2. Left Chart: Compute Optimal Revisions

### Component Isolation

* **Region:** Main Chart (Left)

* **Title:** Compute Optimal Revisions

* **Y-Axis Label:** MATH Accuracy (%)

* **Y-Axis Markers:** 20, 25, 30, 35, 40, 45

* **X-Axis Label:** Generation Budget

* **X-Axis Markers (Log Scale):** $2^1, 2^3, 2^5, 2^7$

* **Legend Location:** Top Left [x≈0.05, y≈0.85]

### Legend and Data Series Identification

1. **Majority (Dashed Line):** Represents the baseline performance using majority voting.

2. **Best-of-N Weighted (Solid Line):** Represents performance using a weighted selection method.

3. **Compute Optimal (Blue Points/Lines):** Data series focused on optimized compute allocation.

4. **Parallel (Red Points/Lines):** Data series focused on parallel generation.

### Trend Verification

* **General Trend:** All four data series show a logarithmic growth pattern. Accuracy increases sharply as the generation budget moves from $2^0$ to $2^4$, then begins to plateau as it approaches $2^8$.

* **Comparison:** The solid lines (Best-of-N Weighted) consistently outperform the dashed lines (Majority). Within the solid lines, the Blue (Compute Optimal) series begins to pull away from the Red (Parallel) series as the budget increases beyond $2^4$.

### Data Point Extraction (Approximate Values)

| Generation Budget ($2^x$) | Compute Optimal (Solid Blue) | Parallel (Solid Red) | Compute Optimal (Dashed Blue) | Parallel (Dashed Red) |

| :--- | :--- | :--- | :--- | :--- |

| $2^0$ (1) | ~18% | ~18% | ~18% | ~18% |

| $2^1$ (2) | ~24% | ~24% | ~19% | ~19% |

| $2^2$ (4) | ~29% | ~29% | ~24% | ~23% |

| $2^3$ (8) | ~33% | ~33% | ~28% | ~28% |

| $2^4$ (16) | ~36% | ~36% | ~32% | ~31% |

| $2^5$ (32) | ~39% | ~38% | ~34% | ~33% |

| $2^6$ (64) | ~41% | ~39% | ~36% | ~35% |

| $2^7$ (128) | ~42% | ~40% | ~37% | ~36% |

| $2^8$ (256) | ~44% | ~40% | ~38% | ~36% |

---

## 3. Right Chart: Comparing Test-time and Pretraining Compute

### Component Isolation

* **Region:** Main Chart (Right)

* **Title:** Comparing Test-time and Pretraining Compute in a FLOPs Matched Evaluation

* **Y-Axis Label:** Relative Improvement in Accuracy From Test-time Compute (%)

* **Y-Axis Markers:** -40, -30, -20, -10, 0, 10, 20, 30

* **X-Axis Label:** Ratio of Inference Tokens to Pretraining Tokens

* **X-Axis Categories:** $<<1$, $\approx 1$, $>>1$

* **Legend Location:** Bottom Left [x≈0.55, y≈0.15]

### Legend Identification

* **Green Bar:** Easy Questions

* **Blue Bar:** Medium Questions

* **Orange Bar:** Hard Questions

### Trend Verification

* **$<<1$ Ratio:** All question difficulties show positive improvement when test-time compute is much lower than pretraining compute. Medium questions benefit the most.

* **$\approx 1$ Ratio:** Easy and Medium questions still show improvement, but Hard questions show a significant performance degradation (-11.9%).

* **$>>1$ Ratio:** Only Easy questions show a slight improvement (+5.4%). Medium and Hard questions show massive performance drops, with Hard questions being the most negatively impacted (-37.2%).

### Data Table Extraction

| Ratio Category | Easy Questions (Green) | Medium Questions (Blue) | Hard Questions (Orange) |

| :--- | :--- | :--- | :--- |

| **$<<1$** | +21.6% | +27.8% | +11.8% |

| **$\approx 1$** | +16.7% | +3.5% | -11.9% |

| **$>>1$** | +5.4% | -24.3% | -37.2% |

---

## 4. Summary of Findings

The data suggests that while increasing the generation budget (test-time compute) generally improves accuracy, there is a point of diminishing returns. Furthermore, the effectiveness of test-time compute is highly dependent on the ratio of inference tokens to pretraining tokens; as this ratio increases (meaning more compute is spent at inference relative to training), the accuracy on medium and hard questions degrades significantly, while easy questions remain relatively stable.

</details>

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Data Extraction: Test-time Search Against a PRM Verifier

This document provides a comprehensive extraction of the data and trends presented in the provided image, which contains two technical charts related to machine learning performance.

## 1. General Metadata

* **Main Title:** Test-time Search Against a PRM Verifier

* **Language:** English

* **Layout:** Two side-by-side plots (Left: Line Graph; Right: Bar Chart).

---

## 2. Left Plot: Compute Optimal Search

### Component Isolation

* **Header:** Compute Optimal Search

* **Y-Axis:** MATH Accuracy (%)

* **Range:** 10 to 45

* **Markers:** 10, 15, 20, 25, 30, 35, 40, 45

* **X-Axis:** Generation Budget

* **Scale:** Logarithmic (Base 2)

* **Markers:** $2^1, 2^3, 2^5, 2^7, 2^9$

* **Legend [Top-Left]:**

* **Red (Circle):** Majority

* **Purple (Circle):** ORM Best-of-N Weighted

* **Green (Circle):** PRM Best-of-N Weighted

* **Blue (Circle):** PRM Compute Optimal

### Trend Verification & Data Extraction

All four series show a positive correlation between Generation Budget and MATH Accuracy, following a logarithmic growth curve (diminishing returns as budget increases).

1. **PRM Compute Optimal (Blue):**

* **Trend:** Steepest initial ascent. It outperforms all other methods significantly in the mid-range budget ($2^2$ to $2^6$) before converging toward the PRM Best-of-N line at the highest budget.

* **Key Points:** Starts at ~10.5% ($2^0$). Reaches ~33% at $2^4$. Peaks at ~39% at $2^8$.

2. **PRM Best-of-N Weighted (Green):**

* **Trend:** Steady upward slope, consistently higher than ORM and Majority.

* **Key Points:** Starts at ~10.5%. Reaches ~29% at $2^4$. Ends at ~38% at $2^9$.

3. **ORM Best-of-N Weighted (Purple):**

* **Trend:** Follows a similar trajectory to PRM Best-of-N but remains consistently 2-4 percentage points lower.

* **Key Points:** Starts at ~10.5%. Reaches ~28% at $2^4$. Ends at ~34% at $2^9$.

4. **Majority (Red):**

* **Trend:** The lowest performing baseline. Shows the slowest rate of improvement.

* **Key Points:** Starts at ~10.5%. Reaches ~23% at $2^4$. Ends at ~29% at $2^9$.

---

## 3. Right Plot: Comparing Test-time and Pretraining Compute

### Component Isolation

* **Header:** Comparing Test-time and Pretraining Compute in a FLOPs Matched Evaluation

* **Y-Axis:** Relative Improvement in Accuracy From Test-time Compute (%)

* **Range:** -50 to 20

* **Markers:** -50, -40, -30, -20, -10, 0, 10, 20

* **X-Axis:** Ratio of Inference Tokens to Pretraining Tokens

* **Categories:** $<<1$, $\approx 1$, $>>1$

* **Legend [Bottom-Left]:**

* **Green:** Easy Questions

* **Blue:** Medium Questions

* **Orange:** Hard Questions

### Data Table Reconstruction

The chart measures the relative gain/loss of using test-time compute versus pretraining compute for different difficulty levels.

| Ratio of Inference to Pretraining Tokens | Easy Questions (Green) | Medium Questions (Blue) | Hard Questions (Orange) |

| :--- | :--- | :--- | :--- |

| **$<<1$** | +19.1% | -5.6% | 0.0% |

| **$\approx 1$** | +2.2% | -35.6% | -35.3% |

| **$>>1$** | +2.0% | -30.6% | -52.9% |

### Trend Analysis

* **Easy Questions:** Test-time compute is consistently beneficial, though the magnitude of improvement drops significantly as the ratio of inference tokens increases (from +19.1% to ~+2%).

* **Medium Questions:** Test-time compute is generally less efficient than pretraining compute, showing significant negative relative improvement (losses) as the ratio increases, bottoming out around -35.6%.

* **Hard Questions:** Shows the most dramatic negative trend. While neutral at low ratios, it collapses to -52.9% at high ratios, indicating that for hard questions, compute is much more effectively spent on pretraining than on test-time search.

</details>

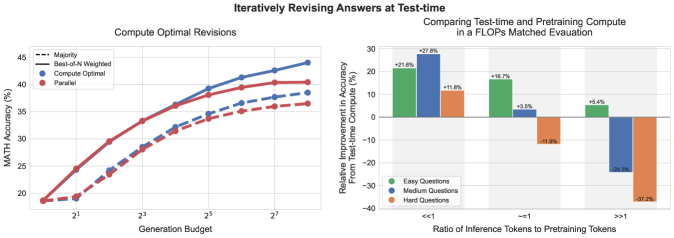

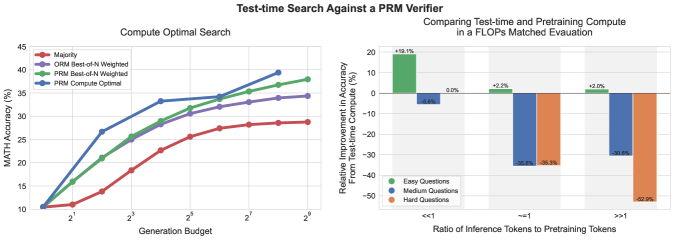

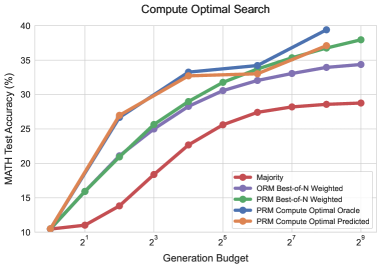

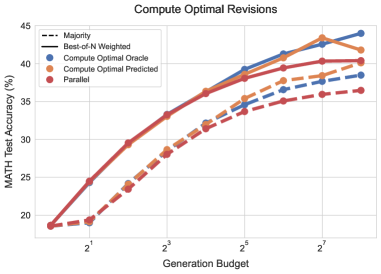

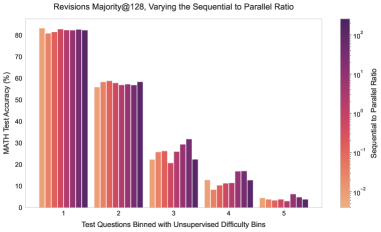

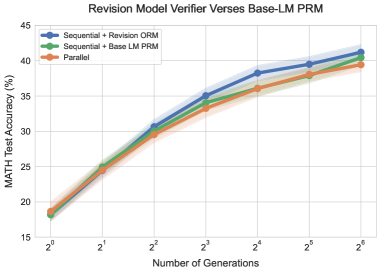

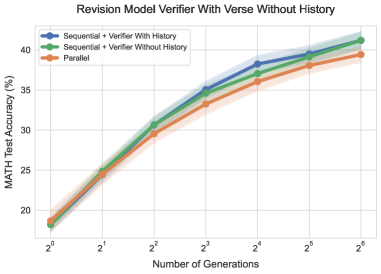

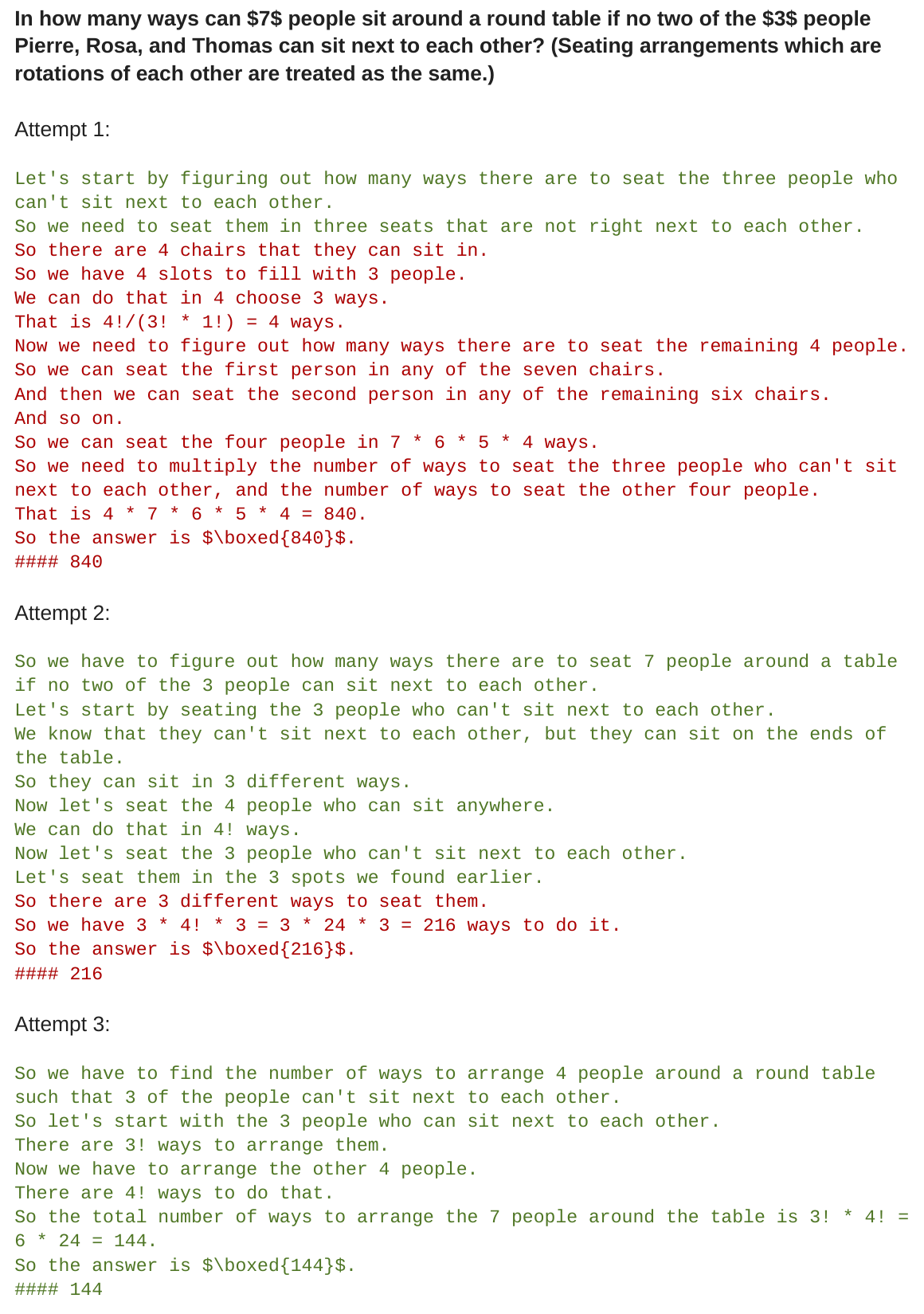

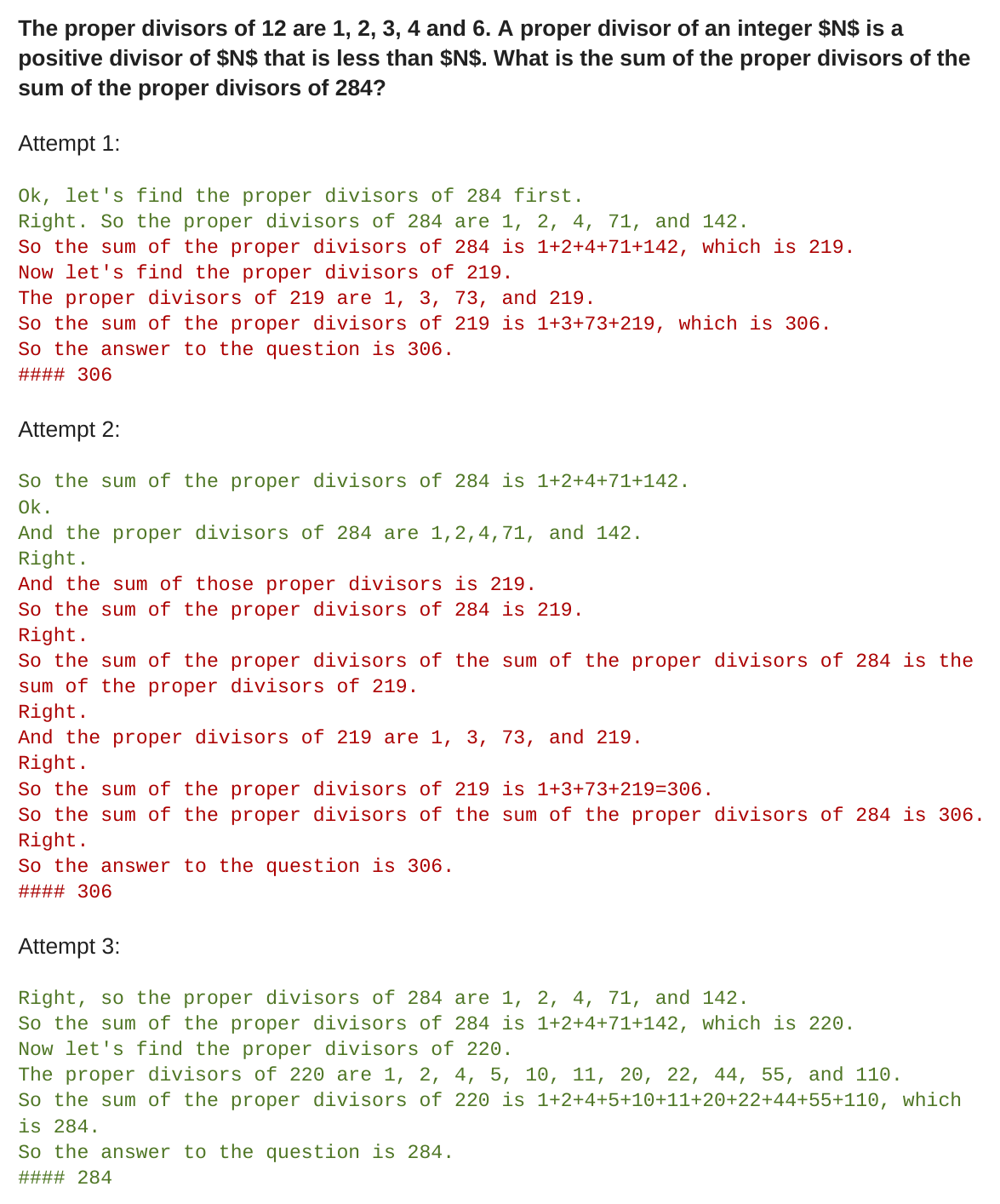

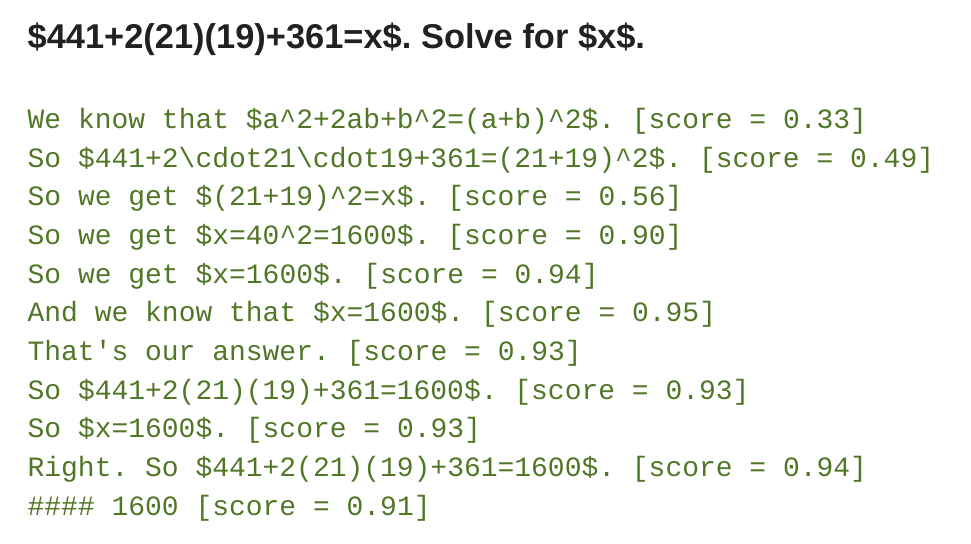

Figure 1: Summary of our main results. Left: Compute-optimal scaling for iterative self-refinement (i.e., revisions) and search. On the left, we compare the compute-optimal scaling policy for our PaLM 2-S* revision model against baselines in the revision setting (top) and the PRM search setting (bottom). We see that in the revisions case, the gap between standard best-of-N (e.g. “parallel”) and compute-optimal scaling gradually widens, enabling compute-optimal scaling to outperform best-of-N with $4×$ less test-time compute. Similarly, in the PRM search setting, we observe significant early improvements over best-of-N from compute-optimal scaling, nearly outperforming best-of-N with $4×$ less compute at points. See Sections 5 and 6 for details. Right: Comparing test-time compute and model parameter scaling. We compare the performance of compute-optimal test-time scaling with PaLM 2-S* against the performance of a $\sim 14×$ larger pretrained model without additional test-time compute (e.g. greedy sampling). We consider the setting where we expect $X$ tokens of pretraining for both models and $Y$ tokens of inference. By training a larger model, we effectively multiply the FLOPs requirement for both of these terms. If we were to apply additional test-time compute with the smaller model, so as to match this larger model’s FLOPs requirement, how would it compare in terms of accuracy? We see that for the revisions (top) when $Y<<X$ , test-time compute is often preferable to additional pretraining. However, as the inference to pretraining token ratio increases, test-time compute remains preferable on easy questions. Whereas on harder questions, pretraining is preferable in these settings. We also see a similar trend with PRM search (bottom). See Section 7 for more details.

Prior work studying inference-time computation provides mixed results. On the one hand, some works show that current LLMs can use test-time computation to improve their outputs [4, 23, 8, 30, 48], on the other hand, other work shows that the effectiveness of these methods on more complex tasks such as math reasoning remains highly limited [15, 37, 43], even though reasoning problems often require drawing inferences about existing knowledge as opposed to new knowledge. These sorts of conflicting findings motivate the need for a systematic analysis of different approaches for scaling test-time compute.

We are interested in understanding the benefits of scaling up test-time compute. Arguably the simplest and most well-studied approach for scaling test-time computation is best-of-N sampling: sampling N outputs in “parallel” from a base LLM and selecting the one that scores the highest per a learned verifier or a reward model [7, 22]. However, this approach is not the only way to use test-time compute to improve LLMs. By modifying either the proposal distribution from which responses are obtained (for instance, by asking the base model to revise its original responses “sequentially” [28]) or by altering how the verifier is used (e.g. by training a process-based dense verifier [22, 45] and searching against this verifier), the ability scale test-time compute could be greatly improved, as we show in the paper.

To understand the benefits of scaling up test-time computation, we carry out experiments on the challenging MATH [13] benchmark using PaLM-2 [3] models specifically fine-tuned Capability-specific finetuning is necessary to induce revision and verification capabilities into the base model on MATH since these capabilities are absent even in strong proprietary LLMs [15, 33]. However, we expect that future LLMs will be more effective at verification and revision due to both increased scale and the inclusion of additional data targeted specifically towards these capabilities [36, 5, 24]. Therefore in order to make progress towards understanding scaling of test-time computation, we must use models finetuned for these capabilities. That said, we expect future models to be pretrained for such capabilities directly, therefore avoiding the need for capability-specific finetuning. to either revise incorrect answers [28] (e.g. improving the proposal distribution; Section 6) or verify the correctness of individual steps in an answer using a process-based reward model (PRM) [22, 45] (Section 5). With both approaches, we find that the efficacy of a particular test-time compute strategy depends critically on both the nature of the specific problem at hand and the base LLM used. For example, on easier problems, for which the base LLM can already readily produce reasonable responses, allowing the model to iteratively refine its initial answer by predicting a sequence of N revisions (i.e., modifying the proposal distribution), may be a more effective use of test-time compute than sampling N independent responses in parallel. On the other hand, with more difficult problems that may require searching over many different high-level approaches to solving the problem, re-sampling new responses independently in parallel or deploying tree-search against a process-based reward model is likely a more effective way to use test-time computation. This finding illustrates the need to deploy an adaptive “compute-optimal“ strategy for scaling test-time compute, wherein the specific approach for utilizing test-time compute is selected depending on the prompt, so as to make the best use of additional computation. We also show that a notion of question difficulty (Section 4) from the perspective of the base LLM can be used to predict the efficacy of test-time computation, enabling us to practically instantiate this ‘compute-optimal’ strategy given a prompt. By appropriately allocating test-time compute in this way, we are able to greatly improve test-time compute scaling, surpassing the performance of a best-of-N baseline while only using about 4x less computation with both revisions and search (Sections 5 and 6).

Using our improved test-time compute scaling strategy, we then aim to understand to what extent test-time computation can effectively substitute for additional pretraining. We conduct a FLOPs-matched comparison between a smaller model with additional test-time compute and pretraining a 14x larger model. We find that on easy and intermediate questions, and even hard questions (depending on the specific conditions on the pretraining and inference workload), additional test-time compute is often preferable to scaling pretraining. This finding suggests that rather than focusing purely on scaling pretraining, in some settings it is be more effective to pretrain smaller models with less compute, and then apply test-time compute to improve model outputs. That said, with the most challenging questions, we observe very little benefits from scaling up test-time compute. Instead, we find that on these questions, it is more effective to make progress by applying additional pretraining compute, demonstrating that current approaches to scaling test-time compute may not be 1-to-1 exchangeable with scaling pretraining. Overall, this suggests that even with a fairly naïve methodology, scaling up test-time computation can already serve to be more preferable to scaling up pretraining, with only more improvements to be attained as test-time strategies mature. Longer term, this hints at a future where fewer FLOPs are spent during pretraining and more FLOPs are spent at inference.

2 A Unified Perspective on Test-Time Computation: Proposer and Verifier

We first unify approaches for using test-time computation and then analyze some representative methods. First, we view the use of additional test-time compute through the lens of modifying the model’s predicted distribution adaptively at test-time, conditioned on a given prompt. Ideally, test-time compute should modify the distribution so as to generate better outputs than naïvely sampling from the LLM itself would. In general, there are two knobs to induce modifications to an LLM’s distribution: (1) at the input level: by augmenting the given prompt with an additional set of tokens that the LLM conditions on to obtain the modified distribution, or (2) at the output level: by sampling multiple candidates from the standard LM and performing surgery on these candidates. In other words, we could either modify the proposal distribution induced by the LLM itself such that it is an improvement over naïvely conditioning on the prompt or we could use some post-hoc verifiers or scorers to perform output modifications. This process is reminiscent of Markov chain Monte Carlo (MCMC) [2] sampling from a complex target distribution but by combining a simple proposal distribution and a score function. Modifying the proposal distribution directly by altering input tokens and using a verifier form two independent axes of our study.

Modifying the proposal distribution. One way to improve the proposal distribution is to directly optimize the model for a given reasoning task via RL-inspired finetuning methods such as STaR or $\text{ReST}^{\text{EM}}$ [50, 35]. Note that these techniques do not utilize any additional input tokens but specifically finetune the model to induce an improved proposal distribution. Instead, techniques such as self-critique [4, 23, 8, 30] enable the model itself to improve its own proposal distribution at test time by instructing it to critique and revise its own outputs in an iterative fashion. Since prompting off-the-shelf models is not effective at enabling effective revisions at test time, we specifically finetune models to iteratively revise their answers in complex reasoning-based settings. To do so, we utilize the approach of finetuning on on-policy data with Best-of-N guided improvements to the model response [28].

Optimizing the verifier. In our abstraction of the proposal distribution and verifier, the verifier is used to aggregate or select the best answer from the proposal distribution. The most canonical way to use such a verifier is by applying best-of-N sampling, wherein we sample N complete solutions and then select the best one according to a verifier [7]. However, this approach can be further improved by training a process-based verifier [22], or a process reward model (PRM), which produces a prediction of the correctness of each intermediate step in an solution, rather than just the final answer. We can then utilize these per-step predictions to perform tree search over the space of solutions, enabling a potentially more efficient and effective way to search against a verifier, compared to naïve best-of-N [48, 10, 6].

3 How to Scale Test-Time Computation Optimally

Given the unification of various methods, we would now like to understand how to most effectively utilize test-time computation to improve LM performance on a given prompt. Concretely we wish to answer:

Problem setup

We are given a prompt and a test-time compute budget within which to solve the problem. Under the abstraction above, there are different ways to utilize test-time computation. Each of these methods may be more or less effective depending on the specific problem given. How can we determine the most effective way to utilize test-time compute for a given prompt? And how well would this do against simply utilizing a much bigger pretrained model?

When either refining the proposal distribution or searching against a verifier, there are several different hyper-parameters that can be adjusted to determine how a test-time compute budget should be allocated. For example, when using a model finetuned for revisions as the proposal distribution and an ORM as the verifier, we could either spend the full test-time compute budget on generating N independent samples in parallel from the model and then apply best-of-N, or we could sample N revisions in sequence using a revision model and then select the best answer in the sequence with an ORM, or strike a balance between these extremes. Intuitively, we might expect “easier” problems to benefit more from revisions, since the model’s initial samples are more likely to be on the right track but may just need further refinement. On the other hand, challenging problems may require more exploration of different high-level problem solving strategies, so sampling many times independently in parallel may be preferable in this setting.

In the case of verifiers, we also have the option to choose between different search algorithms (e.g. beam-search, lookahead-search, best-of-N), each of which may exhibit different properties depending on the quality of the verifier and proposal distribution at hand. More sophisticated search procedures might be more useful in harder problems compared to a much simpler best-of-N or majority baseline.

3.1 Test-Time Compute-Optimal Scaling Strategy

In general, we would therefore like to select the optimal allocation of our test-time compute budget for a given problem. To this end, for any given approach of utilizing test-time compute (e.g., revisions and search against a verifier in this paper, various other methods elsewhere), we define the “test-time compute-optimal scaling strategy” as the strategy that chooses hyperparameters corresponding to a given test-time strategy for maximal performance benefits on a given prompt at test time. Formally, define $\operatorname{Target}(\theta,N,q)$ as the distribution over natural language output tokens induced by the model for a given prompt $q$ , using test-time compute hyper-parameters $\theta$ , and a compute budget of $N$ . We would like to select the hyper-parameters $\theta$ which maximize the accuracy of the target distribution for a given problem. We express this formally as:

$$

\displaystyle\theta^{*}_{q,a^{*}(q)}(N)=\operatorname{argmax}_{\theta}\left(\mathbb{E}_{y\sim\operatorname{Target}(\theta,N,q)}\left[\mathbbm{1}_{y=y^{*}(q)}\right]\right), \tag{1}

$$

where $y^{*}(q)$ denotes the ground-truth correct response for $q$ , and $\theta^{*}_{q,y^{*}(q)}(N)$ represents the test-time compute-optimal scaling strategy for the problem $q$ with compute budget $N$ .

3.2 Estimating Question Difficulty for Compute-Optimal Scaling

In order to effectively analyze the test-time scaling properties of the different mechanisms discussed in Section 2 (e.g. the proposal distribution and the verifier), we will prescribe an approximation to this optimal strategy $\theta^{*}_{q,y^{*}(q)}(N)$ as a function of a statistic of a given prompt. This statistic estimates a notion of difficulty for a given prompt. The compute-optimal strategy is defined as a function of the difficulty of this prompt. Despite being only an approximate solution to the problem shown in Equation 1, we find that it can still induce substantial improvements in performance over a baseline strategy of allocating this inference-time compute in an ad-hoc or uniformly-sampled manner.

Our estimate of the question difficulty assigns a given question to one of five difficulty levels. We can then use this discrete difficulty categorization to estimate $\theta^{*}_{q,y^{*}(q)}(N)$ on a validation set for a given test-time compute budget. We then apply these compute-optimal strategies on the test-set. Concretely, we select the best performing test-time compute strategy for each difficulty bin independently. In this way, question difficulty acts as a sufficient statistic of a question when designing the compute-optimal strategy.

Defining difficulty of a problem. Following the approach of Lightman et al. [22], we define question difficulty as a function of a given base LLM. Specifically, we bin the model’s pass@1 rate – estimated from 2048 samples – on each question in the test set into five quantiles, each corresponding to increasing difficulty levels. We found this notion of model-specific difficulty bins to be more predictive of the efficacy of using test-time compute in contrast to the hand-labeled difficulty bins in the MATH dataset.

That said, we do note that assessing a question’s difficulty as described above assumes oracle access to a ground-truth correctness checking function, which is of course not available upon deployment where we are only given access to test prompts that we don’t know the answer to. In order to be feasible in practice, a compute-optimal scaling strategy conditioned on difficulty needs to first assess difficulty and then utilize the right scaling strategy to solve this problem. Therefore, we approximate the problem’s difficulty via a model-predicted notion of difficulty, which performs the same binning procedure over the the averaged final answer score from a learned verifier (and not groundtruth answer correctness checks) on the same set of 2048 samples per problem. We refer to this setting as model-predicted difficulty and the setting which relies on the ground-truth correctness as oracle difficulty.

While model-predicted difficulty removes the need for need knowing the ground truth label, estimating difficulty in this way still incurs additional computation cost during inference. That said, this one-time inference cost can be subsumed within the cost for actually running an inference-time strategy (e.g., when using a verifier, one could use the same inference computation for also running search). More generally, this is akin to exploration-exploitation tradeoff in reinforcement learning: in actual deployment conditions, we must balance the compute spent in assessing difficulty vs applying the most compute-optimal approach. This is a crucial avenue for future work (see Section 8) and our experiments do not account for this cost largely for simplicity, since our goal is to present some of the first results of what is in fact possible by effectively allocating test-time compute.

So as to avoid confounders with using the same test set for computing difficulty bins and for selecting the compute-optimal strategy, we use two-fold cross validation on each difficulty bin in the test set. We select the best-performing strategy according to performance on one fold and then measure performance using that strategy on the other fold and vice versa, averaging the results of the two test folds.

4 Experimental Setup

We first outline our experimental setup for conducting this analysis with multiple verifier design choices and proposal distributions, followed by the analysis results in the subsequent sections.

Datasets. We expect test-time compute to be most helpful when models already have all the basic “knowledge” needed to answer a question, and instead the primary challenge is about drawing (complex) inferences from this knowledge. To this end, we focus on the MATH [13] benchmark, which consists of high-school competition level math problems with a range of difficulty levels. For all experiments, we use the dataset split consisting of 12k train and 500 test questions, used in Lightman et al. [22].

Models. We conduct our analysis using the PaLM 2-S* [3] (Codey) base model. We believe this model is representative of the capabilities of many contemporary LLMs, and therefore think that our findings likely transfer to similar models. Most importantly, this model attains a non-trivial performance on MATH and yet has not saturated, so we expect this model to provide a good test-bed for us.

5 Scaling Test-Time Compute via Verifiers

In this section we analyze how test-time compute can be scaled by optimizing a verifier, as effectively as possible. To this end, we study different approaches for performing test-time search with process verifiers (PRMs) and analyze the test-time compute scaling properties of these different approaches.

5.1 Training Verifiers Amenable to Search

PRM training. Originally PRM training [42, 22] used human crowd-worker labels. While Lightman et al. [22] released their PRM training data (i.e., the PRM800k dataset), we found this data to be largely ineffective for us. We found that it was easy to exploit a PRM trained on this dataset via even naïve strategies such as best-of-N sampling. We hypothesize that this is likely a result of the distribution shift between the GPT-4 generated samples in their dataset and our PaLM 2 models. Rather than proceeding with the expensive process of collecting crowd-worker PRM labels for our PaLM 2 models, we instead apply the approach of Wang et al. [45] to supervise PRMs without human labels, using estimates of per-step correctness obtained from running Monte Carlo rollouts from each step in the solution. Our PRM’s per-step predictions therefore correspond to value estimates of reward-to-go for the base model’s sampling policy, similar to recent work [45, 31]. We also compared to an ORM baseline (Appendix F) but found that our PRM consistently outperforms the ORM. Hence, all of the search experiments in this section use a PRM model. Additional details on PRM training are shown in Appendix D.

Answer aggregation. At test time, process-based verifiers can be used to score each individual step in a set of solutions sampled from the base model. In order to select the best-of-N answers with the PRM, we need a function that can aggregate across all the per-step scores for each answer to determine the best candidate for the correct answer. To do this, we first aggregate each individual answer’s per-step scores to obtain a final score for the full answer (step-wise aggregation). We then aggregate across answers to determine the best answer (inter-answer aggregation). Concretely, we handle step-wise and inter-answer aggregation as follows:

- Step-wise aggregation. Rather than aggregating the per-step scores by taking the product or minimum [45, 22], we instead use the PRM’s prediction at the last step as the full-answer score. We found this to perform the best out of all aggregation methods we studied (see Appendix E).

- Inter-answer aggregation. We follow Li et al. [21] and apply “best-of-N weighted” selection rather than standard best-of-N. Best-of-N weighted selection marginalizes the verifier’s correctness scores across all solutions with the same final answer, selecting final answer with the greatest total sum.

5.2 Search Methods Against a PRM

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Document: Comparison of LLM Search and Verification Strategies

This document provides a detailed technical extraction of the provided diagram, which illustrates three distinct methodologies for generating and verifying Large Language Model (LLM) outputs: **Best-of-N**, **Beam Search**, and **Lookahead Search**.

---

## 1. Global Legend (Footer)

The following key defines the visual components used across all three diagrams.

| Symbol | Description |

| :--- | :--- |

| **Purple Dashed Box** | **Apply Verifier**: Indicates a point in the process where a verification model evaluates the generated content. |

| **Solid Orange Circle** | **Full Solution**: Represents a completed response or path. |

| **Hollow Orange Circle** | **Intermediate solution step**: Represents a partial generation or a node in a search tree. |

| **Solid Green Circle** | **Selected by verifier**: A solution or step deemed high-quality by the reward model/verifier. |

| **Solid Red Circle** | **Rejected by verifier**: A solution or step deemed low-quality or incorrect by the verifier. |

---

## 2. Component Analysis

### Panel A: Best-of-N

**Header Text:** Best-of-N

**Instruction Box:** "Generate N full solutions, selecting the best one with the verifier"

* **Process Flow:**

1. Starts with a single **Question** (Blue box).

2. Four independent paths (full solutions) are generated simultaneously from the question to the final state.

3. **Visual Trend:** The paths are long, continuous lines. Three lines are colored red (rejected) and one is colored green (selected).

4. **Verification:** The verifier (purple dashed box) is applied only at the very end of the generation process for each of the N solutions.

* **Footer Caption:** "Select the best final answer using the verifier"

### Panel B: Beam Search

**Header Text:** Beam Search

**Instruction Box:** "Select the top-N samples at each step using the PRM" (Process Reward Model)

* **Process Flow:**

1. Starts with a single **Question** (Blue box).

2. The generation is broken into discrete "Intermediate solution steps" (hollow circles).

3. **Visual Trend:** A tree-like structure where branching occurs at every level. At each horizontal level, multiple nodes are evaluated.

4. **Verification:** The verifier (purple dashed box) is applied at *every* intermediate step.

5. **Selection Logic:** Only the green nodes (selected) serve as the base for the next step of generation. Red nodes (rejected) are pruned and do not continue.

* **Footer Caption:** "Select the best final answer using the verifier"

### Panel C: Lookahead Search

**Header Text:** Lookahead Search

**Instruction Box:** "Beam search, but at each step rollout k-steps in advance, using the PRM value at the end of the rollout to represent the value for the current step"

* **Process Flow:**

1. Starts with a single **Question** (Blue box).

2. **Rollout Mechanism:** From an intermediate step, the system performs a "rollout" (indicated by a dashed black curved line) several steps ahead.

3. **Annotation 1:** "Rollout k-steps"

4. **Annotation 2:** "Propagate PRM value back to step" (indicated by a solid black arrow pointing from the future state back to the current decision node).

5. **Verification:** The verifier evaluates the *future* state (the end of the rollout) to decide whether the *current* step is valid.

6. **Search Continuation:** "Continue Search from the top-N options" (indicated by solid green nodes at a lower level).

* **Visual Trend:** This is the most complex structure, showing a recursive or "look-ahead" pattern where current choices are dictated by simulated future outcomes.

---

## 3. Summary of Differences

| Feature | Best-of-N | Beam Search | Lookahead Search |

| :--- | :--- | :--- | :--- |

| **Verification Frequency** | Once (at the end) | At every intermediate step | At every step, based on future steps |

| **Granularity** | Full solution level | Step-by-step level | Step-by-step with future rollouts |

| **Computational Cost** | Low/Medium (N full paths) | High (Verification at every step) | Very High (Multiple rollouts per step) |

| **Pruning** | No pruning during generation | Immediate pruning of bad steps | Pruning based on predicted future value |

</details>

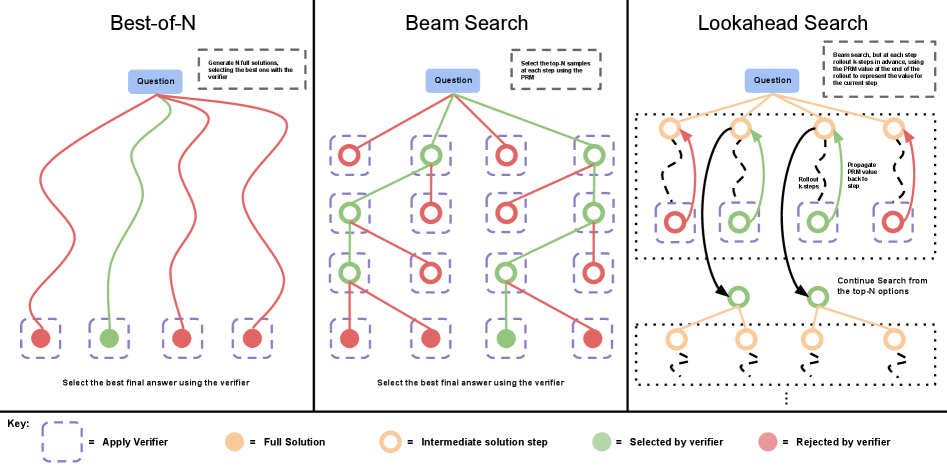

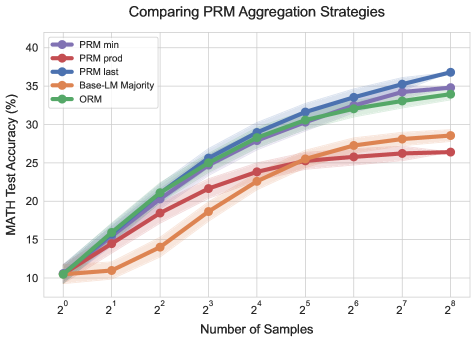

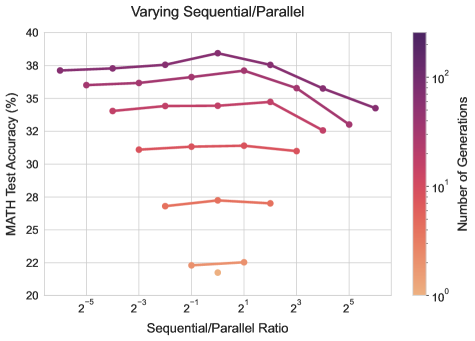

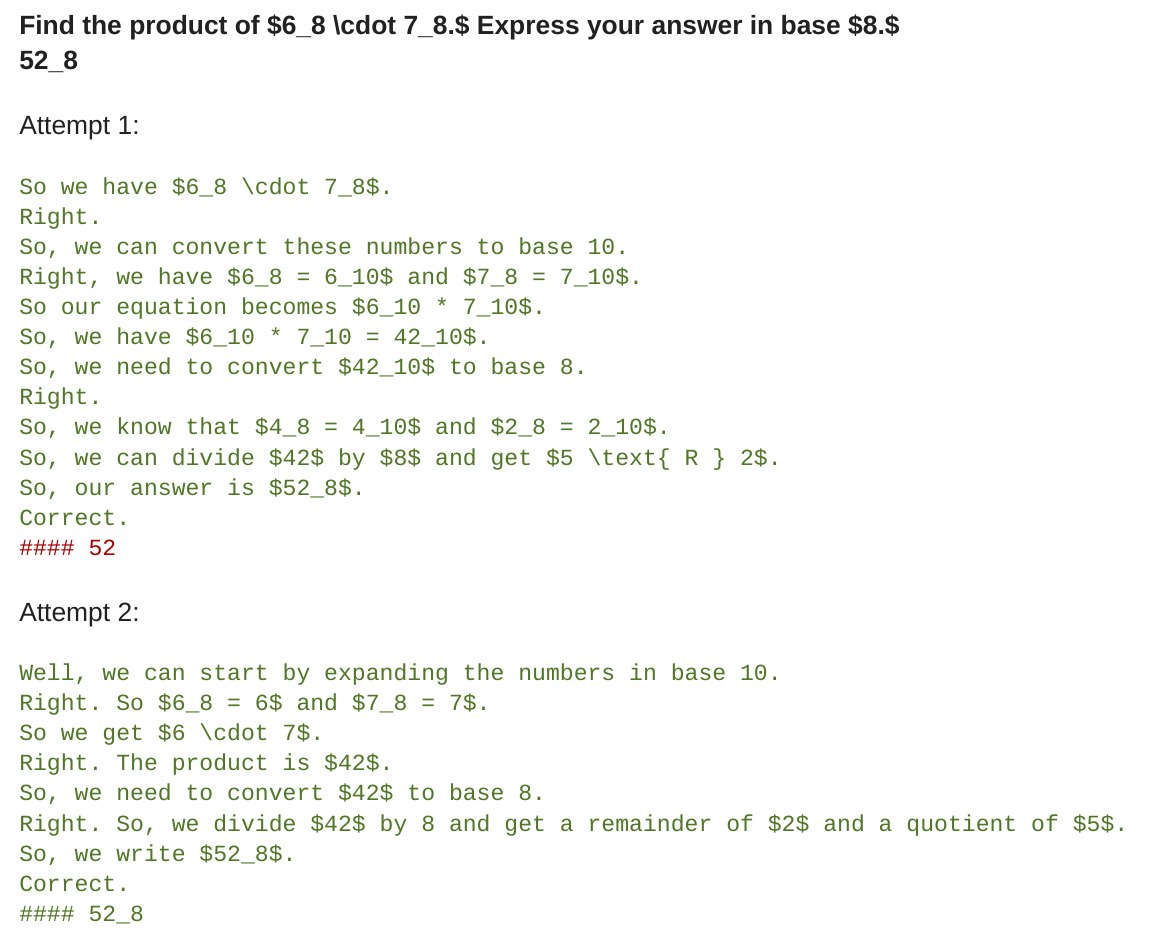

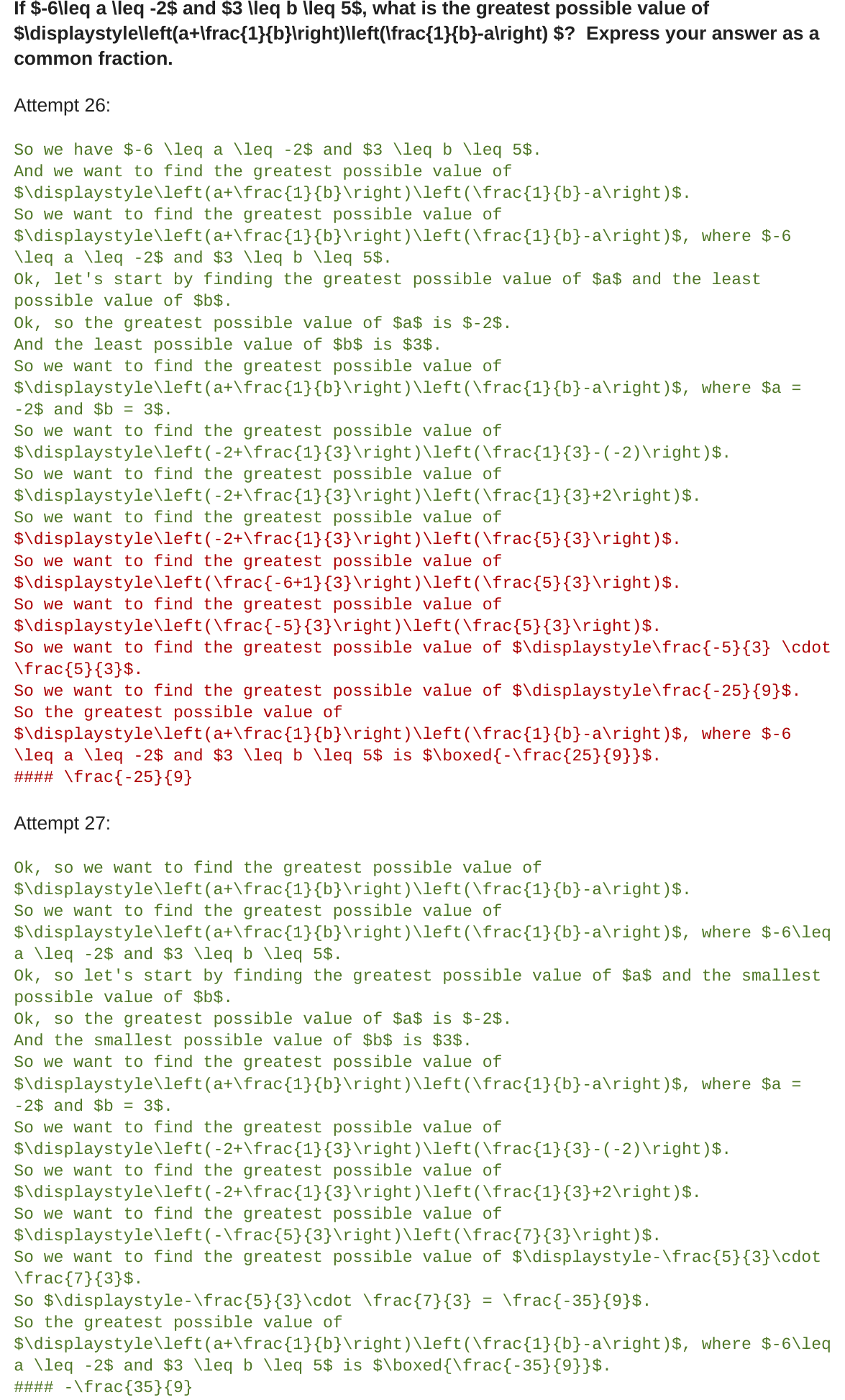

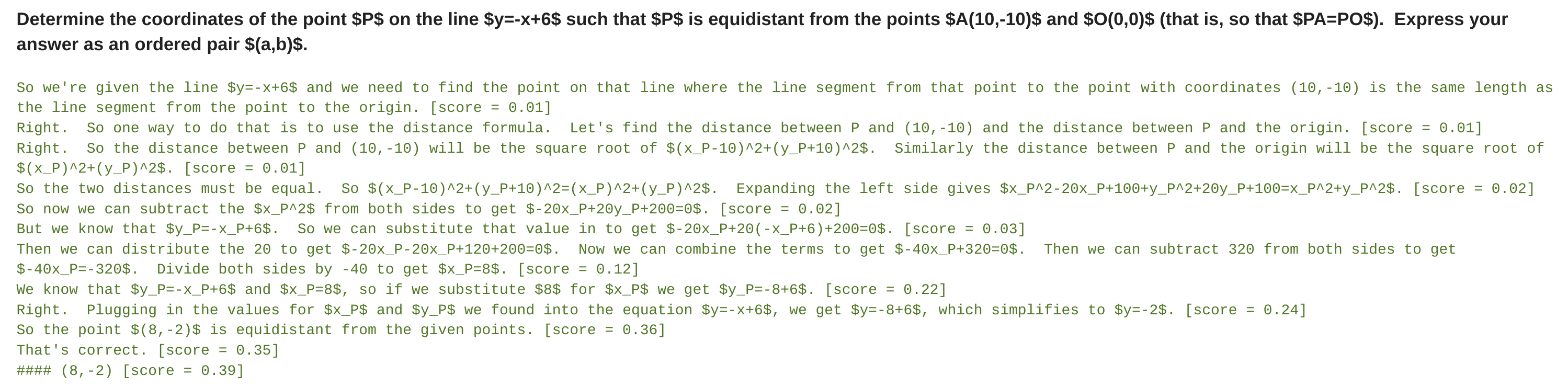

Figure 2: Comparing different PRM search methods. Left: Best-of-N samples N full answers and then selects the best answer according to the PRM final score. Center: Beam search samples N candidates at each step, and selects the top M according to the PRM to continue the search from. Right: lookahead-search extends each step in beam-search to utilize a k-step lookahead while assessing which steps to retain and continue the search from. Thus lookahead-search needs more compute.

We optimize the PRM at test time via search methods. We study three search approaches that sample outputs from a few-shot prompted base LLM (see Appendix G). An illustration is shown in Figure 2.

Best-of-N weighted. We sample N answers independently from the base LLM and then select the best answer according to the PRM’s final answer judgement.

Beam search. Beam search optimizes the PRM by searching over its per-step predictions. Our implementation is similar to BFS-V [48, 10]. Concretely, we consider a fixed number of beams $N$ and a beam width $M$ . We then run the following steps:

1. sample $N$ initial predictions for the first step in the solution

1. score the generated steps according to the PRM’s predicted step-wise reward-to-go estimate (which also corresponds to the total reward from the prefix since the reward is sparse in this setting)

1. filter for only the top $\frac{N}{M}$ highest scoring steps

1. now from each candidate, sample $M$ proposals from the next step, resulting in a total of $N/M× M$ candidate prefixes again. Then repeat steps 2-4 again.

We run this algorithm until the end of a solution or the maximum number of rounds of beam expansion are attained (40 in our case). We conclude the search with N final answer candidates, to which we apply best-of-N weighted selection described above to make our final answer prediction.

Lookahead search. Lookahead search modifies how beam search evaluates individual steps. It uses lookahead rollouts to improve the accuracy of the PRM’s value estimation in each step of the search process. Specifically, at each step in the beam search, rather than using the PRM score at the current step to select the top candidates, lookahead search performs a simulation, rolling out up to $k$ steps further while stopping early if the end of solution is reached. To minimize variance in the simulation rollout, we perform rollouts using temperature 0. The PRM’s prediction at the end of this rollout is then used to score the current step in the beam search. That is, in other words, we can view beam search as a special case of lookahead search with $k=0$ . Given an accurate PRM, increasing $k$ should improve the accuracy of the per-step value estimates at the cost of additional compute. Also note that this version of lookahead search is a special case of MCTS [38], wherein the stochastic elements of MCTS, designed to facilitate exploration, are removed since the PRM is already trained and is frozen. These stochastic elements are largely useful for learning the value function (which we’ve already learned with our PRM), but less useful at test-time when we want to exploit rather than explore. Therefore, lookahead search is largely representative of how MCTS-style methods would be applied at test-time.

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Data Extraction: PRM Search Methods Performance Analysis

This document contains a detailed extraction of data from two side-by-side charts comparing various search methods for Process-based Reward Models (PRM) on the MATH test dataset.

---

## Chart 1: Comparing PRM Search Methods

### Metadata and Axes

* **Title:** Comparing PRM Search Methods

* **Y-Axis Label:** MATH Test Accuracy (%)

* **Range:** 10 to 40

* **Markers:** 10, 15, 20, 25, 30, 35, 40

* **X-Axis Label:** Generation Budget

* **Scale:** Logarithmic (Base 2)

* **Markers:** $2^1, 2^3, 2^5, 2^7, 2^9$

* **Legend Location:** Bottom Right [approx. x=0.7, y=0.2 relative to chart area]

### Data Series Extraction

The chart tracks 7 distinct search strategies. All series originate at approximately 10.5% accuracy at the lowest budget.

| Legend Label | Color | Visual Trend | Key Data Points (Approx.) |

| :--- | :--- | :--- | :--- |

| **Best-of-N Weighted** | Orange | Steady linear-log growth; highest final accuracy. | $2^3 \approx 26\%$, $2^9 \approx 38\%$ |

| **Majority** | Green | Slowest initial growth; consistent upward slope. | $2^3 \approx 18\%$, $2^9 \approx 29\%$ |

| **Beam; M = sqrt(N)** | Red | Rapid early growth, plateaus/dips after $2^5$. | $2^4 \approx 34\%$, $2^8 \approx 33.5\%$ |

| **Beam; M = 4** | Blue | Rapid early growth, peaks at $2^8$. | $2^4 \approx 34\%$, $2^8 \approx 37\%$ |

| **1 Step Lookahead; M = sqrt(N)** | Purple | Moderate growth, plateaus early. | $2^3 \approx 29\%$, $2^8 \approx 32\%$ |

| **3 Step Lookahead; M = sqrt(N)** | Brown | Steady growth, similar to Best-of-N but lower. | $2^5 \approx 32\%$, $2^8 \approx 31.5\%$ |

| **3 Step Lookahead; M = 4** | Pink | Late bloomer; sharp rise between $2^4$ and $2^6$. | $2^4 \approx 25\%$, $2^6 \approx 35\%$, $2^8 \approx 33\%$ |

---

## Chart 2: Comparing Beam Search and Best-of-N by Difficulty Level

### Metadata and Axes

* **Title:** Comparing Beam Search and Best-of-N by Difficulty Level

* **Y-Axis Label:** MATH Test Accuracy (%)

* **Range:** 0 to 80+

* **Markers:** 0, 20, 40, 60, 80

* **X-Axis Label:** Test Questions Binned by Increasing Difficulty Level

* **Categories:** 1, 2, 3, 4, 5 (representing difficulty levels)

* **Legend Location:** Top Right [approx. x=0.85, y=0.9]

### Component Analysis

This is a grouped bar chart where each difficulty level (1-5) contains four sub-bars representing increasing generation budgets. Each bar is stacked to show the performance of three methods.

**Legend/Color Mapping:**

* **Blue:** Beam Search (Top layer)

* **Orange/Tan:** Best-of-N Weighted (Middle layer)

* **Green:** Majority (Bottom layer)

### Data Trends by Difficulty

1. **Difficulty 1 (Easiest):** All methods perform exceptionally well, reaching >80% accuracy. The performance saturates quickly across the four budget increments.

2. **Difficulty 2:** Accuracy ranges from ~30% to ~60%. There is a clear step-up in performance as the generation budget increases.

3. **Difficulty 3:** Accuracy ranges from ~20% to ~35%. Beam Search (Blue) shows a more significant marginal gain over Majority (Green) here compared to Level 1.

4. **Difficulty 4:** Accuracy drops significantly, peaking below 20%. The "Majority" method (Green) is notably low, while Beam Search provides the bulk of the successful outcomes.

5. **Difficulty 5 (Hardest):** Accuracy is near zero for all methods, with only tiny slivers of blue (Beam Search) visible at the highest budget levels, barely exceeding 1-2%.

### Summary of Findings

* **Search Efficiency:** Beam Search (Blue) and Best-of-N Weighted (Orange) consistently outperform simple Majority voting (Green) across all difficulty levels.

* **Scaling:** Increasing the "Generation Budget" provides diminishing returns on easy problems (Level 1) but is critical for mid-to-high difficulty problems (Levels 2-4).

* **Difficulty Ceiling:** There is a sharp performance drop-off at Difficulty Level 5, where none of the tested PRM search methods achieve significant accuracy.

</details>

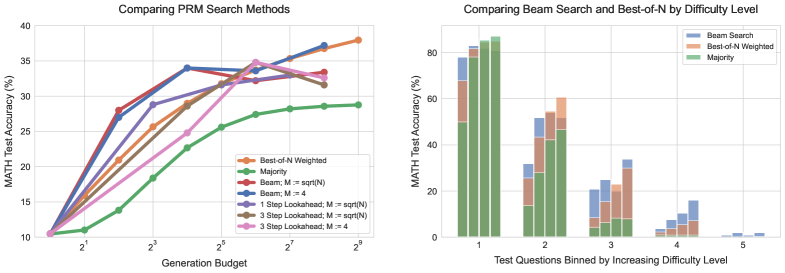

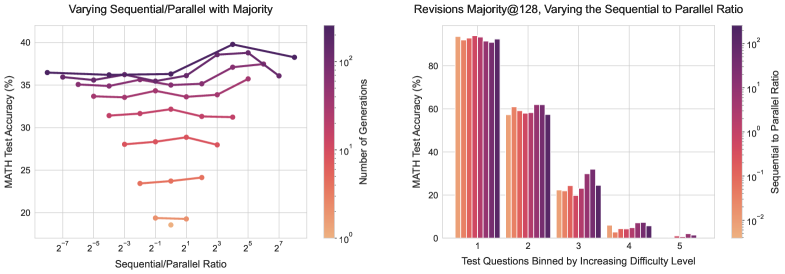

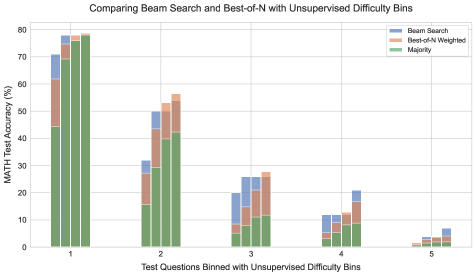

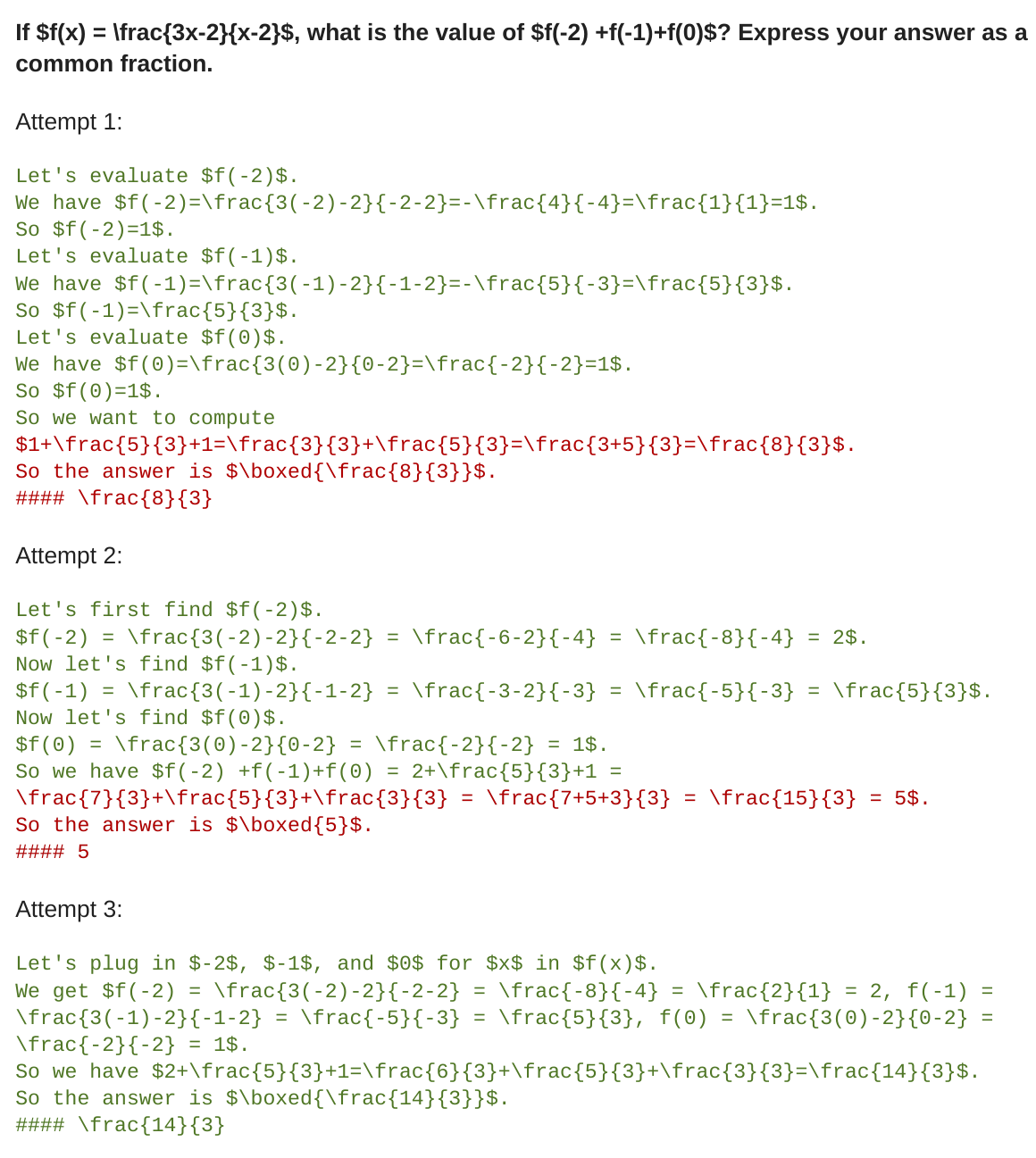

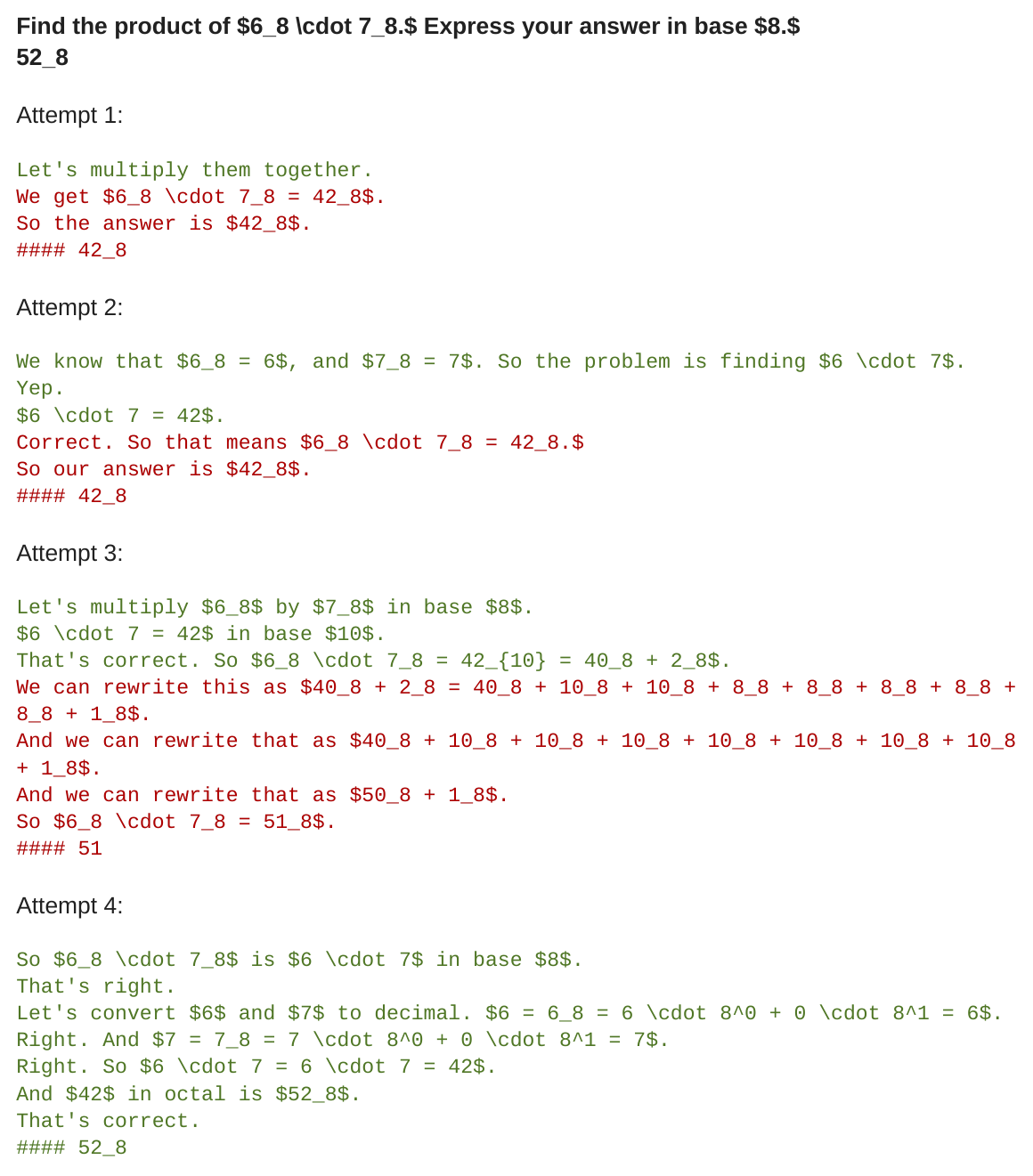

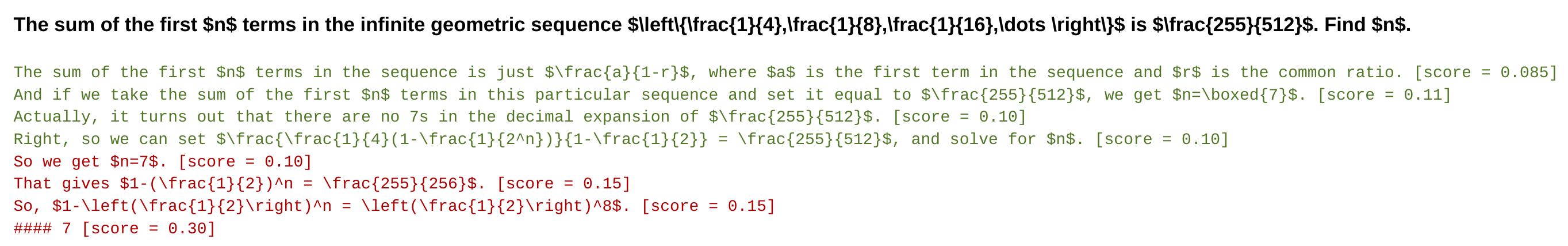

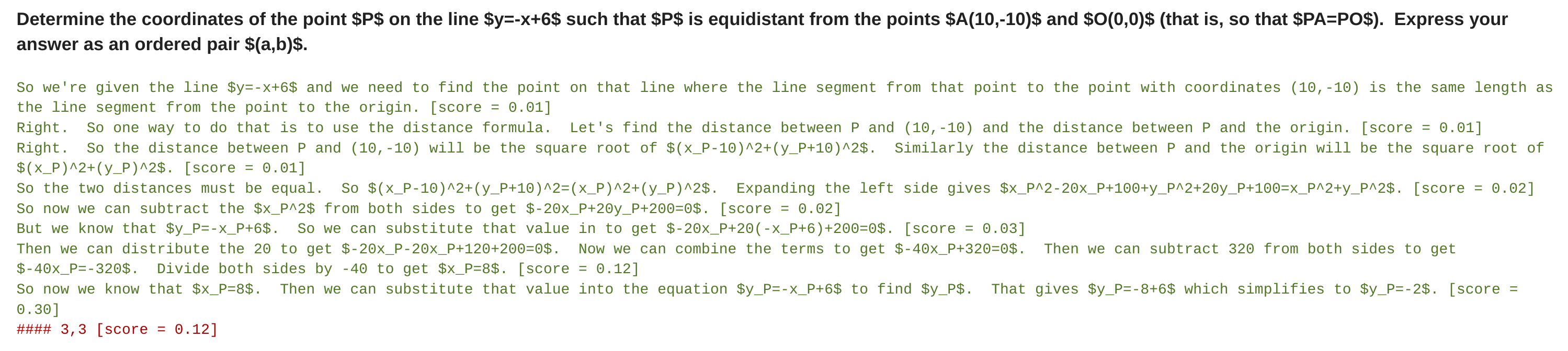

Figure 3: Left: Comparing different methods for conducting search against PRM verifiers. We see that at low generation budgets, beam search performs best, but as we scale the budget further the improvements diminish, falling below the best-of-N baseline. Lookahead-search generally underperforms other methods at the same generation budget. Right: Comparing beam search and best-of-N binned by difficulty level. The four bars in each difficulty bin correspond to increasing test-time compute budgets (4, 16, 64, and 256 generations). On the easier problems (bins 1 and 2), beam search shows signs of over-optimization with higher budgets, whereas best-of-N does not. On the medium difficulty problems (bins 3 and 4), we see beam search demonstrating consistent improvements over best-of-N.

5.3 Analysis Results: Test-Time Scaling for Search with Verifiers

We now present our results comparing various search algorithms and identify a prompt difficulty dependent compute-optimal scaling strategy for search methods.

Comparing search algorithms. We first conduct a sweep over various search settings. In addition to the standard best-of-N approach, we sweep over the two main parameters that distinguish different tree-search methods: beam-width $M$ and number of lookahead steps $k$ . While we are not able to extensively sweep every single configuration, we sweep over the following settings with a maximum budget of 256:

1. Beam search with the beam width set to $\sqrt{N}$ , where $N$ is the generation budget.

1. Beam search with a fixed beam width of 4.

1. Lookahead search with $k=3$ applied to both beam-search settings 1) and 2).

1. Lookahead search with $k=1$ applied to beam-search setting 1).

To compare search methods as a function of generation budget fairly, we build a protocol for estimating the cost of each method. We consider a generation to be a sampled answer from the base LLM. For beam search and best-of-N the generation budget corresponds to the number of beams and $N$ respectively. Lookahead search, however, utilizes additional compute: at each step of the search, we sample $k$ additional steps ahead. Therefore, we define the cost of lookahead-search to be $N×(k+1)$ samples.

Results. As shown in Figure 3 (left), with smaller generation budgets, beam search significantly outperforms best-of-N. However, as the budget is scaled up, these improvements greatly diminish, with beam search often underperforming the best-of-N baseline. We also see that, lookahead-search generally underperforms other methods at the same generation budget, likely due to the additional computation inducted by simulating the lookahead rollouts. The diminishing returns from search are likely due to exploitation of the PRM’s predictions. For example, we see some instances (such as in Figure 29), where search causes the model to generate low-information repetitive steps at the end of a solution. In other cases, we find that over-optimizing search can result in overly short solutions consisting of just 1-2 steps. This explains why the most powerful search method (i.e., lookahead search) underperforms the most. We include several of these examples found by search in Appendix M.

Which problems does search improve? To understand how to compute-optimally scale search methods, we now conduct a difficulty bin analysis. Specifically, we compare beam-search ( $M=4$ ) against best-of-N. In Figure 3 (right) we see that while in aggregate, beam search and best-of-N perform similarly with a high generation budget, evaluating their efficacy over difficulty bins reveals very different trends. On the easy questions (levels 1 and 2), the stronger optimizer of the two approaches, beam search, degrades performance as the generation budget increases, suggesting signs of exploitation of the PRM signal. In contrast, on the harder questions (levels 3 and 4), beam search consistently outperforms best-of-N. On the most difficult questions (level 5), no method makes much meaningful progress.

These findings match intuition: we might expect that on the easy questions, the verifier will make mostly correct assessments of correctness. Therefore, by applying further optimization via beam search, we only further amplify any spurious features learned by the verifier, causing performance degredation. On the more difficult questions, the base model is much less likely to sample the correct answer in the first place, so search can serve to help guide the model towards producing the correct answer more often.

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Compute Optimal Search Chart

## 1. Metadata and Header Information

* **Title:** Compute Optimal Search

* **Language:** English (100%)

* **Image Type:** Line Graph with markers

* **Primary Subject:** Performance comparison of different search/ranking methods for mathematical problem solving across varying computational budgets.

## 2. Axis and Scale Identification

* **Y-Axis (Vertical):**

* **Label:** MATH Test Accuracy (%)

* **Range:** 10 to 40

* **Markers:** 10, 15, 20, 25, 30, 35, 40

* **X-Axis (Horizontal):**

* **Label:** Generation Budget

* **Scale:** Logarithmic (Base 2)

* **Markers:** $2^1, 2^3, 2^5, 2^7, 2^9$ (with intermediate grid lines representing $2^0, 2^2, 2^4, 2^6, 2^8$)

## 3. Legend and Component Isolation

The legend is located in the bottom-right quadrant of the main chart area.

| Legend Label | Color | Marker Style |

| :--- | :--- | :--- |

| **Majority** | Red | Solid line with circle |

| **ORM Best-of-N Weighted** | Purple | Solid line with circle |

| **PRM Best-of-N Weighted** | Green | Solid line with circle |

| **PRM Compute Optimal Oracle** | Blue | Solid line with circle |

| **PRM Compute Optimal Predicted** | Orange | Solid line with circle |

## 4. Trend Verification and Data Extraction

All data series exhibit a positive correlation: as the "Generation Budget" increases, the "MATH Test Accuracy (%)" also increases, though most series show diminishing returns at higher budgets.

### Data Series Analysis

#### A. Majority (Red Line)

* **Trend:** The lowest performing baseline. It shows a steady, nearly linear increase on the log scale but remains significantly below all other methods.

* **Approximate Data Points:**

* $2^0$: ~10.5%

* $2^2$: ~14%

* $2^4$: ~23%

* $2^9$: ~29%

#### B. ORM Best-of-N Weighted (Purple Line)

* **Trend:** Slopes upward sharply until $2^4$, then decelerates, ending as the second-lowest performer at high budgets.

* **Approximate Data Points:**

* $2^0$: ~10.5%

* $2^4$: ~28%

* $2^9$: ~34.5%

#### C. PRM Best-of-N Weighted (Green Line)

* **Trend:** Consistently outperforms the ORM and Majority baselines. It maintains a steady upward trajectory throughout the budget range.

* **Approximate Data Points:**

* $2^0$: ~10.5%

* $2^4$: ~29%

* $2^9$: ~38%

#### D. PRM Compute Optimal Oracle (Blue Line)

* **Trend:** The highest performing series. It shows a very steep initial climb and reaches the highest recorded accuracy (~39.5%) at a budget of $2^8$. Note: This line ends at $2^8$.

* **Approximate Data Points:**

* $2^0$: ~10.5%

* $2^2$: ~27%

* $2^4$: ~33.5%

* $2^8$: ~39.5%

#### E. PRM Compute Optimal Predicted (Orange Line)

* **Trend:** Closely tracks the "Oracle" (Blue) line at lower budgets ($2^0$ to $2^4$). Between $2^4$ and $2^8$, it plateaus significantly compared to the Oracle, eventually converging with the PRM Best-of-N Weighted (Green) line.

* **Approximate Data Points:**

* $2^0$: ~10.5%

* $2^2$: ~27%

* $2^4$: ~33%

* $2^8$: ~37%

## 5. Summary of Findings

The chart demonstrates that Process-based Reward Models (PRM) significantly outperform Outcome-based Reward Models (ORM) and simple Majority voting. The "Compute Optimal Oracle" suggests that with perfect selection, accuracy can reach nearly 40% within a $2^8$ budget. The "Predicted" model successfully mimics the Oracle at low budgets but loses its competitive edge as the budget exceeds $2^4$, falling back toward the standard PRM weighted performance.

</details>

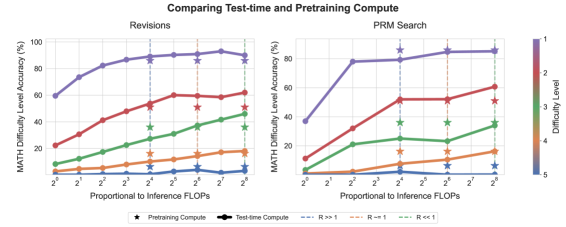

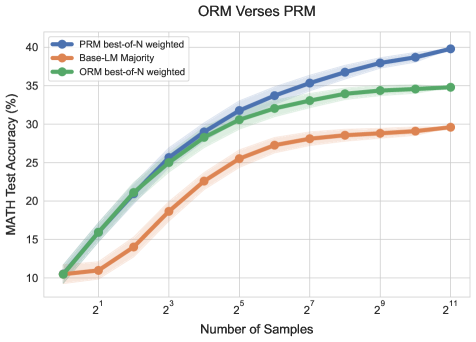

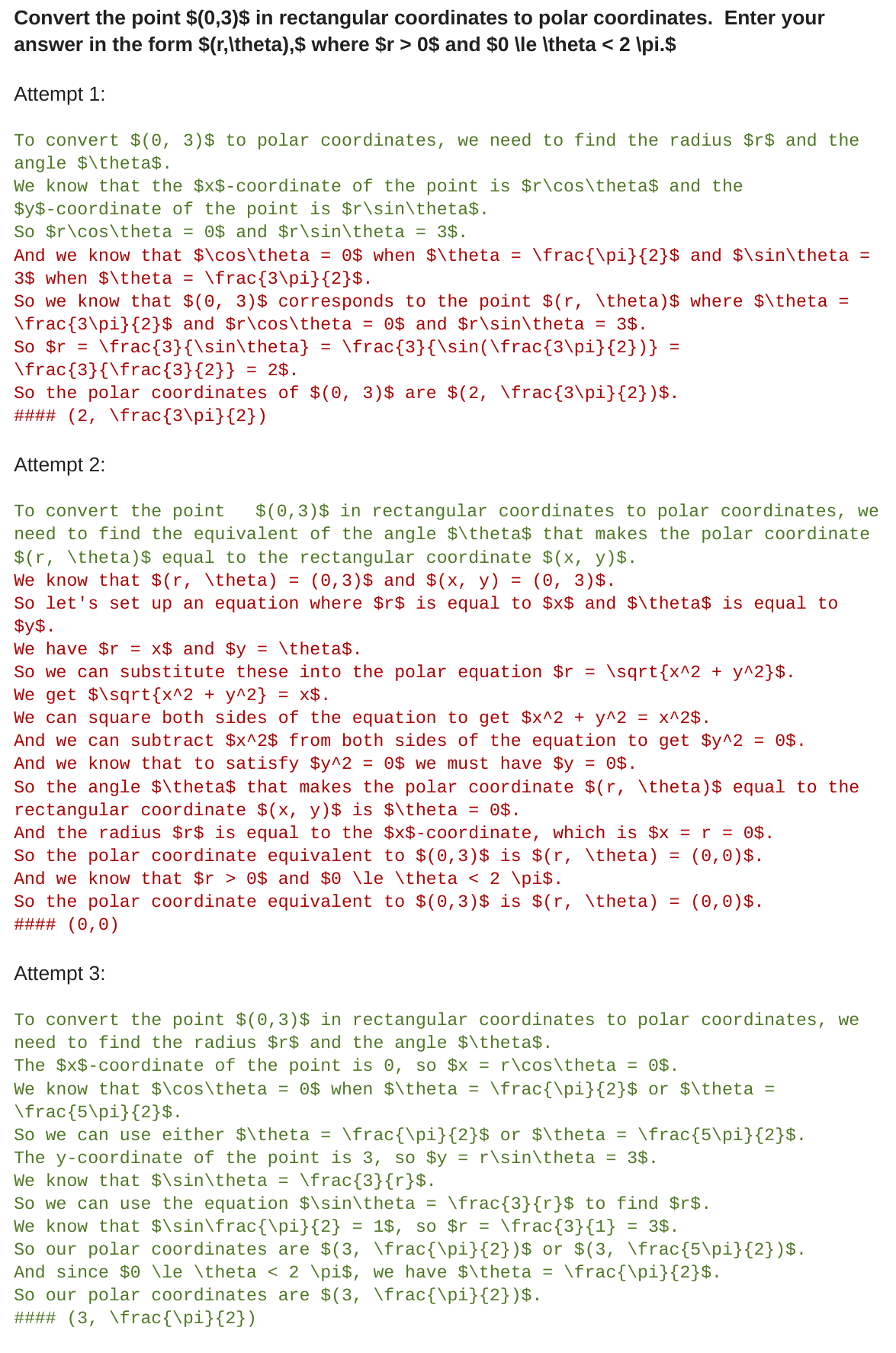

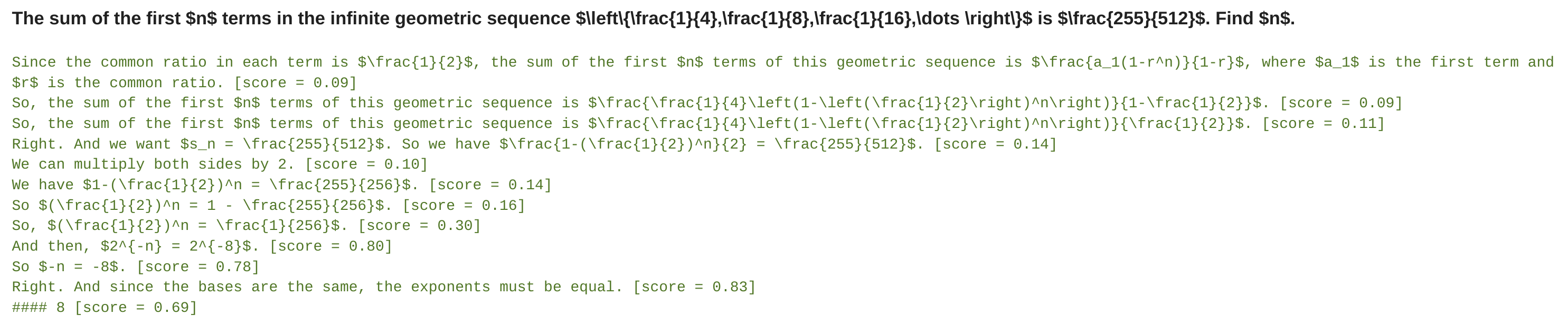

Figure 4: Comparing compute-optimal test-time compute allocation against baselines with PRM search. By scaling test time compute per the notion of question difficulty, we find that we can nearly outperform PRM best-of-N using up to 4 less test-time compute (e.g. 16 verses 64 generations). “ Compute-optimal oracle ” refers to using oracle difficulty bins derived from the groundtruth correctness information, and “ compute-optimal predicted ” refers to using the PRM’s predictions to generate difficulty bins. Observe that the curves with either type of difficulty bins largely overlap with each other.

Compute-optimal search. Given the above results, it is clear that question difficulty can be a useful statistic to predict the optimal search strategy to use at a given compute budget. Additionally, the best choice of search strategy can vary drastically as a function of this difficulty statistic. We therefore visualize the “compute-optimal” scaling trend, as represented by the best performing search strategy at each difficulty level in Figure 4. We see that in the low generation budget regime, using both the oracle and predicted difficulty, compute-optimal scaling can nearly outperform best-of-N using up to 4x less test-time compute (e.g. 16 verses 64 generations). While in the higher budget regime, some of these benefits diminish with the use of predicted difficulty, with oracle bins we still see continued improvements from optimally scaling test-time compute. This result demonstrates the performance gains that could be obtained by adaptively allocating test-time compute during search.

Takeaways for compute-optimal scaling of verifiers

We find that the efficacy of any given verifier search method depends critically on both the compute budget and the question at hand. Specifically, beam-search is more effective on harder questions and at lower compute budgets, whereas best-of-N is more effective on easier questions and at higher budgets. Moreover, by selecting the best search setting for a given question difficulty and test-time compute budget, we can nearly outperform best-of-N using up to 4x less test-time compute.

6 Refining the Proposal Distribution

So far, we studied the test-time compute scaling properties of search against verifiers. Now we turn to studying the scaling properties of modifying the proposal distribution (Section 2). Concretely, we enable the model to revise their own answers iteratively, allowing the model to dynamically improve it’s own distribution at test time. Simply prompting existing LLMs to correct their own mistakes tends to be largely ineffective for obtaining performance improvements on reasoning problems [15]. Therefore, we build on the recipe prescribed by Qu et al. [28], incorporate modifications for our setting, and finetune language models to iteratively revise their own answers. We first describe how we train and use models that refine their own proposal distribution by sequentially conditioning on their own previous attempts at the question. We then analyze the inference-time scaling properties of revision models.

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Analysis: Language Model Sampling and Verification Strategies

This document provides a comprehensive extraction of the technical information contained in the provided diagram, which illustrates various methods for generating and verifying answers from a Language Model (LM).

## 1. Global Legend and Key

Located in the top-right header of the right-hand panel:

* **Dashed Blue Box:** "Apply Verifier"

* **Green Circle:** "Selected by verifier"

* **Red Circle:** "Rejected by verifier"

---

## 2. Left Panel: Sampling Methodologies

### Section: Parallel Sampling

* **Input (Blue Box):** "Q: If 4 daps = 7 yaps, and 5 yaps = 3 baps, how many daps equal 42 baps?"

* **Process:** The question is fed into a block labeled **"LM"**.

* **Flow:** The LM branches into three independent parallel paths.

* **Outputs:**

1. **Red Box:** "A: So 7/4 yap/dap ..."

2. **Red Box:** "A: We have 4 dap..."

3. **Green Box:** "A: If 7/4 yaps/dap ..."

* **Annotation:** "LM proposes answers independently, in parallel"

* **Visual Trend:** A one-to-many divergence where multiple complete answers are generated simultaneously.

### Section: Sequential Revisions

* **Input (Blue Box):** Same question as above regarding daps, yaps, and baps.

* **Process:** The question is fed into the **"LM"**.

* **Flow:** A linear chain of three boxes connected by arrows.

* **Outputs:**

1. **Red Box:** "A: We ..."

2. **Red Box:** "A: So ..."

3. **Green Box:** "A: If 7/4 ..."

* **Annotation:** "LM proposes a sequence of revisions, each conditioned on previous revisions"

* **Visual Trend:** A serial progression where each step modifies the previous output until a final (green) state is reached.

---

## 3. Right Panel: Using Revision Model + Verifier at Inference Time

### Section: Parallel Best-of-N

* **Structure:** A central "Question" node branches out to 7 parallel nodes.

* **Components:** Each node is enclosed in a dashed blue box ("Apply Verifier").

* **Data Points:**

* 6 nodes contain a **Red Circle** (Rejected).

* 1 node (the 3rd from the top) contains a **Green Circle** (Selected).

* **Annotation:** "Verifier selects the best answer"

* **Visual Trend:** A "shotgun" approach where many candidates are generated and a verifier picks the single successful one.

### Section: Sequential Revisions (with Verifier)

* **Structure:** A linear horizontal chain of 7 nodes following a "Question" input.

* **Components:** Each node is enclosed in a dashed blue box.

* **Data Points:**

* Nodes 1, 2, 3, 4, 6, and 7 contain **Red Circles**.

* Node 5 contains a **Green Circle**.

* **Annotation:** "Verifier selects the best answer"

* **Visual Trend:** The model iterates through steps; the verifier monitors the sequence and identifies the optimal state mid-chain or at a specific revision point.

### Section: Combining Sequential / Parallel

* **Structure:** A hybrid approach. The "Question" branches into two parallel horizontal chains. Each chain has 4 sequential nodes.

* **Internal Process:**

* **Top Chain:** Nodes 1, 2, and 4 are Red; Node 3 is Green.

* **Bottom Chain:** Nodes 1, 3, and 4 are Red; Node 2 is Green.

* **Annotation (Internal):** "Verifier selects the best answer within each chain"

* **Final Selection:** The two selected nodes (Green) from the chains are passed to a final verification stage.

* The top candidate is **Green**.

* The bottom candidate is **Red**.

* **Annotation (Final):** "Verifier selects the best answer across chains"

* **Visual Trend:** A hierarchical selection process. It uses parallel processing to explore different "paths" of sequential reasoning, then performs a final comparison to find the absolute best result.

---

## 4. Summary of Logic and Flow

The document describes a transition from simple generation (Left) to verified selection (Right).

* **Parallelism** increases the search space.

* **Sequencing** allows for iterative refinement.

* **Verification** acts as a filter to identify the correct mathematical logic (as evidenced by the "daps/yaps" word problem) among various failed attempts.

</details>

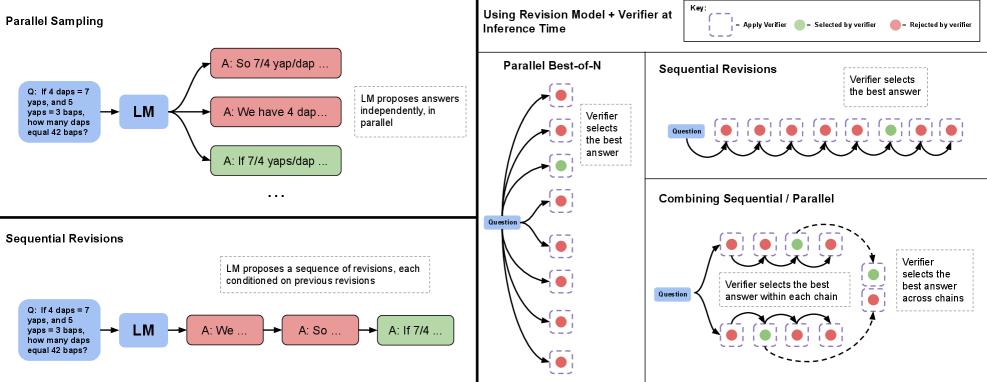

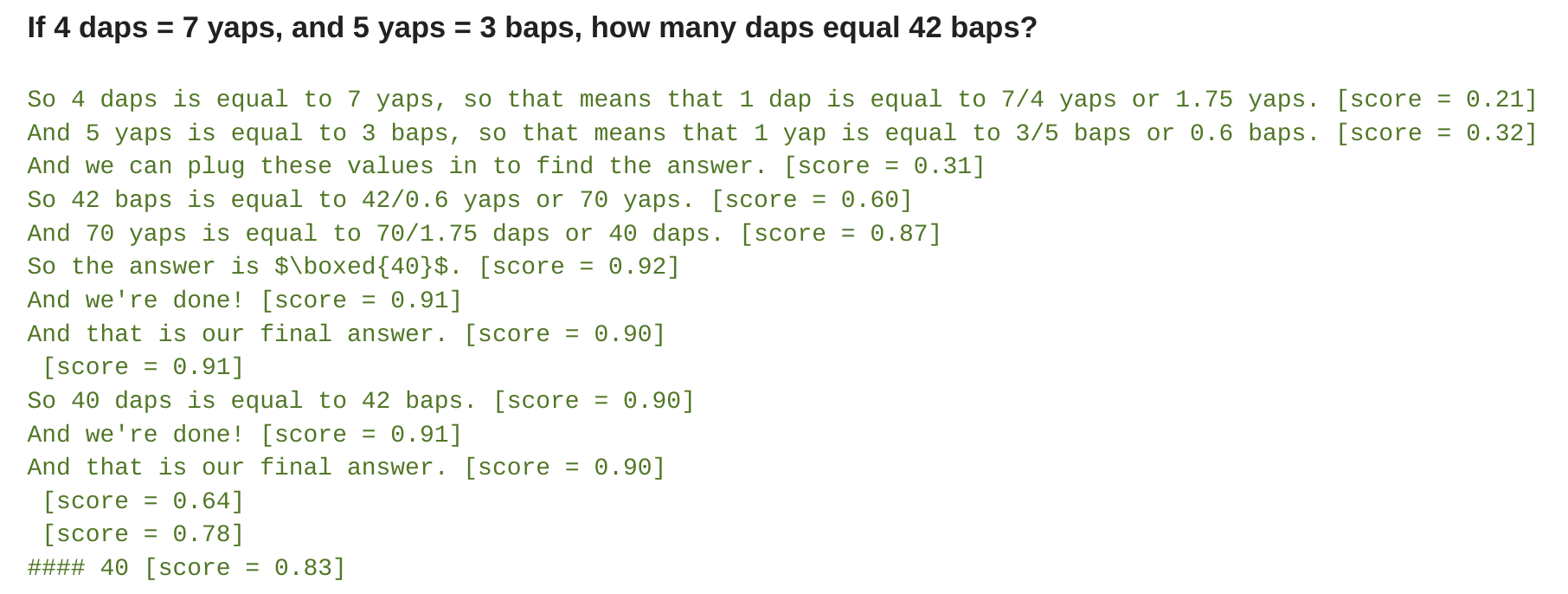

Figure 5: Parallel sampling (e.g., Best-of-N) verses sequential revisions. Left: Parallel sampling generates N answers independently in parallel, whereas sequential revisions generates each one in sequence conditioned on previous attempts. Right: In both the sequential and parallel cases, we can use the verifier to determine the best-of-N answers (e.g. by applying best-of-N weighted). We can also allocate some of our budget to parallel and some to sequential, effectively enabling a combination of the two sampling strategies. In this case, we use the verifier to first select the best answer within each sequential chain and then select the best answer accross chains.

6.1 Setup: Training and Using Revision Models

Our procedure for finetuning revision models is similar to [28], though we introduce some crucial differences. For finetuning, we need trajectories consisting of a sequence of incorrect answers followed by a correct answer, that we can then run SFT on. Ideally, we want the correct answer to be correlated with the incorrect answers provided in context, so as to effectively teach the model to implicitly identify mistakes in examples provided in-context, followed by correcting those mistakes by making edits as opposed to ignoring the in-context examples altogether, and trying again from scratch.

Generating revision data. The on-policy approach of Qu et al. [28] for obtaining several multi-turn rollouts was shown to be effective, but it was not entirely feasible in our infrastructure due to compute costs associated with running multi-turn rollouts. Therefore, we sampled 64 responses in parallel at a higher temperature and post-hoc constructed multi-turn rollouts from these independent samples. Specifically, following the recipe of [1], we pair up each correct answer with a sequence of incorrect answers from this set as context to construct multi-turn finetuning data. We include up to four incorrect answers in context, where the specific number of solutions in context is sampled randomly from a uniform distribution over categories 0 to 4. We use a character edit distance metric to prioritize selecting incorrect answers which are correlated with the final correct answer (see Appendix H). Note that token edit distance is not a perfect measure of correlation, but we found this heuristic to be sufficient to correlate incorrect in-context answers with correct target answers to facilitate training a meaningful revision model, as opposed to randomly pairing incorrect and correct responses with uncorrelated responses.

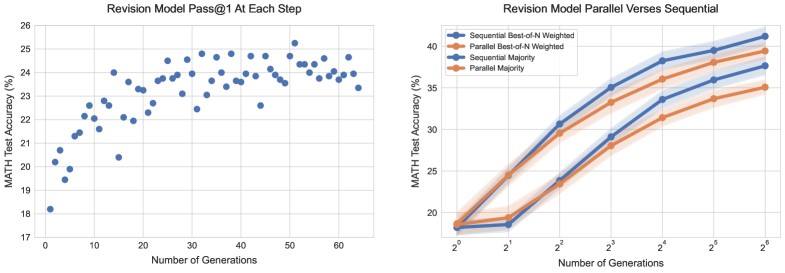

Using revisions at inference-time. Given a finetuned revision model, we can then sample a sequence of revisions from the model at test-time. While our revision model is only trained with up to four previous answers in-context, we can sample longer chains by truncating the context to the most recent four revised responses. In Figure 6 (left), we see that as we sample longer chains from the revision model, the model’s pass@1 at each step gradually improves, demonstrating that we are able to effectively teach the model to learn from mistakes made by previous answers in context.

<details>

<summary>x7.png Details</summary>

### Visual Description

# Technical Data Extraction: Revision Model Performance Analysis

This document contains a detailed extraction of data from two side-by-side technical charts evaluating the performance of a "Revision Model" on the MATH test suite.

---

## Chart 1: Revision Model Pass@1 At Each Step

### Metadata and Axis Labels

* **Title:** Revision Model Pass@1 At Each Step

* **Y-Axis Label:** MATH Test Accuracy (%)

* **Range:** 17 to 26

* **Markers:** 17, 18, 19, 20, 21, 22, 23, 24, 25, 26

* **X-Axis Label:** Number of Generations

* **Range:** 0 to 65+

* **Markers:** 0, 10, 20, 30, 40, 50, 60

### Component Analysis: Scatter Plot

* **Trend Verification:** The data points show a logarithmic-style growth pattern. There is a sharp increase in accuracy from generation 1 to approximately generation 15, followed by a plateau with high variance (noise) between 23% and 25% accuracy for the remainder of the steps.

* **Key Data Points (Approximate):**

* **Start:** ~18.2% at Generation 1.

* **Initial Growth:** Reaches ~21.5% by Generation 5; ~24% by Generation 15.

* **Outlier:** A notable dip occurs around Generation 15, dropping to ~20.4% before recovering.

* **Peak:** The highest recorded accuracy appears to be ~25.2% at approximately Generation 51.

* **End:** ~23.4% at Generation 64.

---

## Chart 2: Revision Model Parallel Verses Sequential

### Metadata and Axis Labels

* **Title:** Revision Model Parallel Verses Sequential

* **Y-Axis Label:** MATH Test Accuracy (%)

* **Range:** 20 to 40 (Actual markers: 20, 25, 30, 35, 40)

* **X-Axis Label:** Number of Generations (Logarithmic Scale)

* **Markers:** $2^0$ (1), $2^1$ (2), $2^2$ (4), $2^3$ (8), $2^4$ (16), $2^5$ (32), $2^6$ (64)

### Legend and Spatial Grounding

The legend is located in the upper-left quadrant of the chart area.

* **Blue Line with Circle Marker:** Sequential Best-of-N Weighted

* **Orange Line with Circle Marker:** Parallel Best-of-N Weighted

* **Blue Line with Diamond/Small Circle:** Sequential Majority

* **Orange Line with Diamond/Small Circle:** Parallel Majority

### Trend Verification and Data Extraction

All four series show a strong upward trend as the number of generations increases. Sequential methods consistently outperform their parallel counterparts across both voting/weighting schemes.

#### 1. Best-of-N Weighted Series (Top Two Lines)

* **Trend:** These are the highest-performing methods. The gap between Sequential and Parallel is narrowest at $2^1$ and widest at $2^6$.

* **Sequential Best-of-N Weighted (Dark Blue):**

* $2^0$: ~18.5%

* $2^6$: ~41.5% (Highest overall performance)

* **Parallel Best-of-N Weighted (Dark Orange):**

* $2^0$: ~18.5%

* $2^6$: ~39.5%

#### 2. Majority Series (Bottom Two Lines)

* **Trend:** These follow a similar trajectory but at a lower accuracy offset (approx. 4-5% lower than Best-of-N).

* **Sequential Majority (Light Blue):**

* $2^0$: ~18.2%

* $2^1$: ~18.5% (Stagnant initial growth)

* $2^6$: ~37.5%

* **Parallel Majority (Light Orange):**

* $2^0$: ~18.2%

* $2^1$: ~19.5%

* $2^6$: ~35.0%

### Summary of Findings

1. **Sequential Advantage:** In the comparison of Parallel vs. Sequential, the Sequential approach provides a consistent performance boost of roughly 2-3 percentage points at higher generation counts.

2. **Methodology Impact:** "Best-of-N Weighted" significantly outperforms "Majority" voting regardless of whether the process is parallel or sequential.

3. **Scaling:** Accuracy scales effectively with the number of generations, showing no signs of a hard plateau within the $2^6$ (64) generation limit on the logarithmic chart.

</details>

Figure 6: Left: Our revision model’s pass@1 at each revision step. Pass@1 gradually improves after each revision step, even improving beyond the 4 revision steps that it was trained for. We estimate pass@1 at each step by by averaging over the performance of 4 revision trajectories of length 64 for each question in the test-set. Right: Sequential vs parallel sampling from the revision model. Comparing performance when generating N initial answers in parallel from our revision model, verses generating N revisions sequentially, with the model. When using both the verifier and majority voting to select the answer, we see that generating answers sequentially with the revision model narrowly outperforms generating them in parallel.

That said, there is a distribution shift at inference time: the model was trained on only sequences with incorrect answers in context, but at test-time the model may sample correct answers that are included in the context. In this case, it may incidentally turn the correct answer into an incorrect answer in the next revision step. We find that indeed, similar to Qu et al. [28], around 38% of correct answers get converted back to incorrect ones with our revision model using a naïve approach. Therefore, we employ a mechanism based on sequential majority voting or verifier-based selection to select the most correct answer from the sequence of revisions made by the model (see Figure 5) to produce the best answer.

Comparisons. To test the efficacy of modifying the proposal distribution via revisions, we setup an even comparison between the performance of sampling N revisions in sequence and sampling N attempts at a question in parallel. We see in Figure 6 (right), that with both the verifier-based and majority-based selection mechanisms sampling solutions in sequence outperforms sampling them in parallel.

<details>

<summary>x8.png Details</summary>

### Visual Description

# Technical Data Extraction: MATH Test Accuracy Analysis

This document provides a comprehensive extraction of data and trends from two technical charts analyzing MATH test accuracy based on sequential/parallel processing ratios and problem difficulty.

---

## Chart 1: Varying Sequential/Parallel with Verifier

### Component Isolation

* **Header:** Title "Varying Sequential/Parallel with Verifier"

* **Main Chart:** A line graph with multiple data series plotted against a logarithmic X-axis.

* **Legend (Right Side):** A vertical color bar representing the "Number of Generations" on a log scale from $10^0$ (1) to $10^2$ (100+).

### Axis Definitions

* **Y-Axis:** "MATH Test Accuracy (%)"

* Scale: Linear, from 15 to 45 in increments of 5.

* **X-Axis:** "Sequential/Parallel Ratio"

* Scale: Logarithmic (base 2), markers at $2^{-7}, 2^{-5}, 2^{-3}, 2^{-1}, 2^1, 2^3, 2^5, 2^7$.

### Data Series and Trends

The chart contains several lines, color-coded by the number of generations. Darker purple indicates more generations; lighter orange indicates fewer.

1. **High Generation Count (Dark Purple, ~128-256 generations):**

* **Trend:** Starts at ~40% accuracy at $2^{-8}$, dips slightly, then rises to a peak of ~44% at $2^4$ before declining slightly.

* **Key Points:** Peak accuracy is achieved when the Sequential/Parallel ratio is approximately $2^4$ (16).

2. **Mid-Range Generation Count (Magenta/Pink, ~16-64 generations):**

* **Trend:** These lines are generally flatter and lower than the dark purple line. They show a slight upward trend as the ratio moves from $2^{-3}$ toward $2^3$.

* **Key Points:** Accuracy ranges between 33% and 40%.

3. **Low Generation Count (Orange/Light Peach, 1-4 generations):**

* **Trend:** These appear as short segments or single points at the bottom center of the graph.

* **Key Points:**

* Single point (1 generation) at ratio $2^0$ (1) shows ~18% accuracy.

* Short line (approx. 2-4 generations) at ratio $2^{-1}$ to $2^1$ shows ~24% accuracy.

### Summary of Findings

Accuracy increases significantly with the number of generations. For high generation counts, there is an optimal "sweet spot" for the Sequential/Parallel ratio around $2^4$, where performance is maximized.

---

## Chart 2: Revisions@128, Varying the Sequential to Parallel Ratio

### Component Isolation

* **Header:** Title "Revisions@128, Varying the Sequential to Parallel Ratio"

* **Main Chart:** A grouped bar chart showing accuracy across five difficulty levels.

* **Legend (Right Side):** A vertical color bar representing "Sequential to Parallel Ratio" on a log scale from $10^{-2}$ (0.01) to $10^2$ (100).

### Axis Definitions

* **Y-Axis:** "MATH Test Accuracy (%)"

* Scale: Linear, from 0 to 80+ in increments of 20.

* **X-Axis:** "Test Questions Binned by Increasing Difficulty Level"

* Categories: 1, 2, 3, 4, 5 (where 1 is easiest, 5 is hardest).

### Data Table Reconstruction

Each difficulty bin contains approximately 10 bars, representing different Sequential to Parallel ratios (from orange/low ratio to dark purple/high ratio).

| Difficulty Level | Trend Description | Approximate Accuracy Range |

| :--- | :--- | :--- |

| **1 (Easiest)** | Very high accuracy; relatively stable across all ratios. | ~90% - 92% |

| **2** | High accuracy; slight improvement as the ratio increases (darker bars). | ~58% - 63% |

| **3** | Moderate accuracy; clear upward trend as the ratio increases. | ~35% - 42% |

| **4** | Low accuracy; performance peaks at mid-to-high ratios then drops for the highest ratio. | ~12% - 18% |

| **5 (Hardest)** | Very low accuracy; performance is negligible for low ratios, peaking slightly for mid-high ratios. | ~1% - 4% |

### Summary of Findings

1. **Difficulty Impact:** There is a sharp, inverse correlation between question difficulty and accuracy.

2. **Ratio Impact:** Increasing the Sequential to Parallel ratio (moving toward more sequential processing) generally improves accuracy, particularly for mid-difficulty problems (Levels 2 and 3).

3. **Diminishing Returns:** For the hardest problems (Level 5), even high sequential ratios fail to bring accuracy above ~5%.

</details>

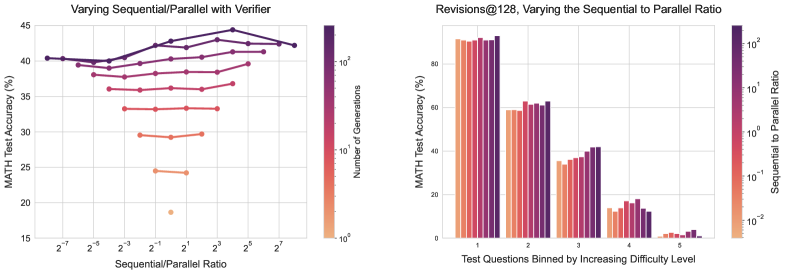

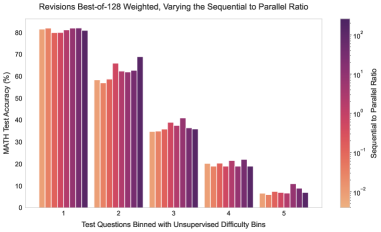

Figure 7: Left: Varying the ratio of the generation budget allocated sequential revisions to verses parallel samples. Each line represents a fixed generation budget as the ratio is changed. We use the verifier for answer selection. We see that while increased sequential revisions tends to outperform more parallel compute, at higher generation budgets there is an ideal ratio that strikes a balance between the two extremes. Right: Varying the sequential to parallel ratio for a generation budget of 128 across difficulty bins. Using verifier-based selection, we see that the easier questions attain the best performance with full sequential compute. On the harder questions, there is an ideal ratio of sequential to parallel test-time compute.

6.2 Analysis Results: Test-Time Scaling with Revisions

We saw previously that proposing answers sequentially outperforms proposing them in parallel. However, we might expect sequential and parallel sampling to have different properties. Sampling answers in parallel may act as more of a global search process, that could in principle, provide coverage over many totally different approaches for solving a problem, for instance, different candidates might utilize different high-level approaches altogether. Sequential sampling, on the other hand, may work more as a local refinement process, revising responses that are already somewhat on the right track. Due to these complementary benefits, we should strike a balance between these two extremes by allocating some of our inference-time budget to parallel sampling (e.g. $\sqrt{N}$ ) and the rest to sequential revisions (e.g. $\sqrt{N}$ ). We will now show the existence of a compute-optimal ratio between sequential and parallel sampling, and understand their relative pros and cons based on difficulty of a given prompt.

Trading off sequential and parallel test-time compute. To understand how to optimally allocate sequential and parallel compute, we perform a sweep over a number of different ratios. We see, in Figure 7 (left), that indeed, at a given generation budget, there exists an ideal sequential to parallel ratio, that achieves the maximum accuracy. We also see in Figure 7 (right) that the ideal ratio of sequential to parallel varies depending on a given question’s difficulty. In particular, easy questions benefit more from sequential revisions, whereas on difficult questions it is optimal to strike a balance between sequential and parallel computation. This finding supports the hypothesis that sequential revisions (i.e., varying the proposal distribution) and parallel sampling (i.e., search with verifiers) are two complementary axes for scaling up test-time compute, which may be more effective on a per-prompt basis. We include examples of our model’s generations in Appendix L. Additional results are shown in Appendix B.

<details>

<summary>x9.png Details</summary>

### Visual Description

# Technical Document Extraction: Compute Optimal Revisions Chart

## 1. Document Metadata

* **Title:** Compute Optimal Revisions

* **Language:** English

* **Image Type:** Line Graph with markers

* **Primary Subject:** Performance comparison of different revision strategies across varying generation budgets.

## 2. Component Isolation

### Header

* **Main Title:** Compute Optimal Revisions

### Main Chart Area

* **Y-Axis Label:** MATH Test Accuracy (%)