## Ancient Wisdom, Modern Tools: Exploring Retrieval-Augmented LLMs for Ancient Indian Philosophy

## Priyanka Mandikal

Department of Computer Science, UT Austin mandikal@utexas.edu

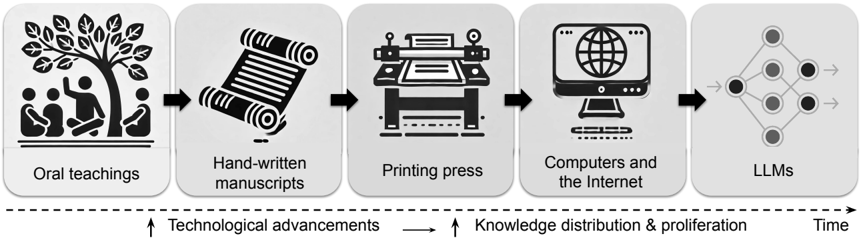

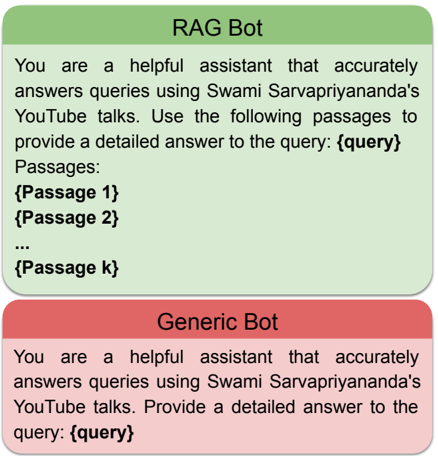

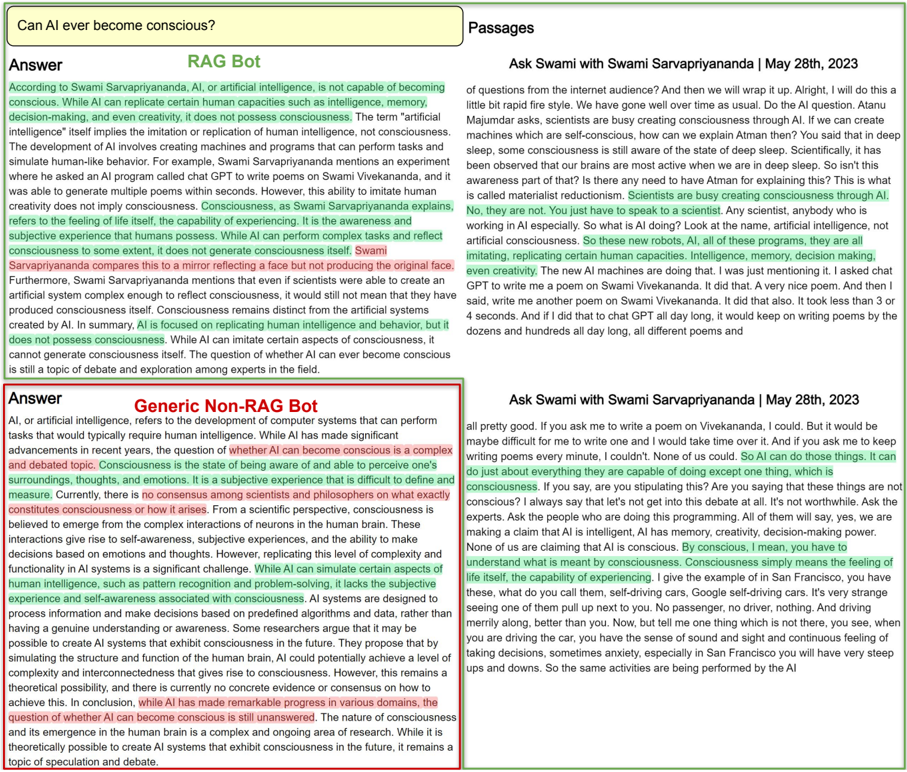

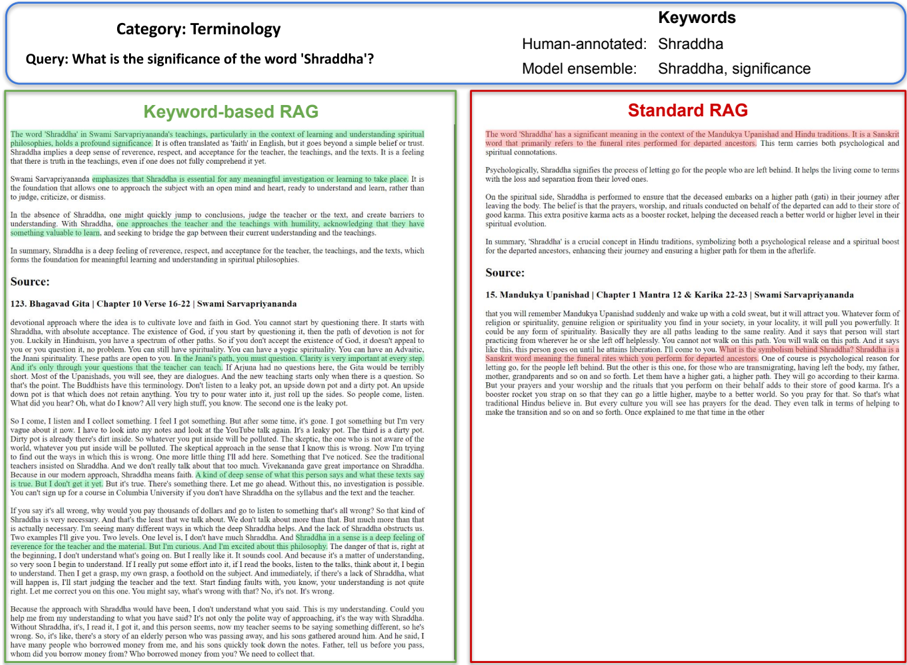

Figure 1: The dissemination of knowledge through the ages. Over time, methods of storing and transmitting knowledge have evolved from oral teachings to computers and the internet, significantly increasing the distribution and proliferation of human knowledge. The emerging LLM technology represents a new paradigm shift in this process.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Evolution of Knowledge Dissemination

### Overview

The image is a diagram illustrating the historical progression of knowledge dissemination methods, from oral teachings to Large Language Models (LLMs). It depicts five stages, each represented by an icon within a grey square, arranged horizontally and connected by arrows indicating a sequential flow over time. The diagram also includes labels indicating the relationship between technological advancements and knowledge distribution.

### Components/Axes

* **Horizontal Axis:** "Time" - indicated by an arrow pointing from left to right at the bottom of the diagram.

* **Stages (from left to right):**

1. "Oral teachings" - Icon: People gathered around a tree.

2. "Hand-written manuscripts" - Icon: A rolled scroll.

3. "Printing press" - Icon: A traditional printing press.

4. "Computers and the Internet" - Icon: A desktop computer with a globe on the screen.

5. "LLMs" - Icon: A neural network diagram.

* **Arrows:** Black arrows connecting each stage, indicating the flow of progression.

* **Labels:**

* "Technological advancements" - positioned below the first two stages, with an upward pointing arrow.

* "Knowledge distribution & proliferation" - positioned below the last two stages, with an upward pointing arrow.

### Detailed Analysis or Content Details

The diagram presents a linear progression of knowledge dissemination methods.

1. **Oral teachings:** The leftmost stage depicts a group of people gathered around a tree, symbolizing the earliest form of knowledge transfer through spoken word.

2. **Hand-written manuscripts:** The second stage shows a rolled scroll, representing the transition to recording knowledge on physical materials.

3. **Printing press:** The third stage features a printing press, signifying the mechanization of knowledge reproduction and wider distribution.

4. **Computers and the Internet:** The fourth stage illustrates a computer with a globe, representing the digital revolution and global connectivity.

5. **LLMs:** The rightmost stage displays a neural network diagram, symbolizing the latest advancement in knowledge processing and generation.

The arrows indicate a clear sequential flow from one stage to the next, demonstrating how each advancement built upon its predecessor. The labels "Technological advancements" and "Knowledge distribution & proliferation" highlight the driving forces behind this evolution.

### Key Observations

The diagram emphasizes the increasing speed and scale of knowledge dissemination over time. Each stage represents a significant leap in the ability to record, reproduce, and share information. The final stage, LLMs, suggests a shift towards automated knowledge processing and generation.

### Interpretation

The diagram illustrates a historical narrative of how humanity has progressively improved its ability to acquire, store, and share knowledge. It suggests that technological advancements are intrinsically linked to the expansion of knowledge distribution. The progression from oral traditions to LLMs demonstrates a trend towards increasing accessibility, scalability, and automation in the realm of knowledge. The diagram implies that LLMs represent a potentially transformative stage in this evolution, with the capacity to not only disseminate but also generate and synthesize knowledge. The diagram is a simplified representation of a complex process, but it effectively conveys the core idea of continuous improvement in knowledge management and dissemination.

</details>

## Abstract

LLMs have revolutionized the landscape of information retrieval and knowledge dissemination. However, their application in specialized areas is often hindered by factual inaccuracies and hallucinations, especially in long-tail knowledge distributions. We explore the potential of retrieval-augmented generation (RAG) models for long-form question answering (LFQA) in a specialized knowledge domain. We present VedantaNY-10M, a dataset curated from extensive public discourses on the ancient Indian philosophy of Advaita Vedanta. We develop and benchmark a RAG model against a standard, non-RAG LLM, focusing on transcription, retrieval, and generation performance. Human evaluations by computational linguists and domain experts show that the RAG model significantly outperforms the standard model in producing factual and comprehensive responses having fewer hallucinations. In addition, a keyword-based hybrid retriever that emphasizes unique low-frequency terms further improves results. Our study provides insights into effectively integrating modern large language models with ancient knowledge systems.

Proceedings of the 1st Machine Learning for Ancient Languages Workshop , Association for Computational Linguistics (ACL) 2024 . Dataset, code, and evaluation is available at: https://sites.google.com/view/vedantany-10m

ॐके ने�षतं पत�त प्रे�षतं मनः || 1.1 || यन्मनसा न मनुते येनाहुम�नो मतम् । तदेव ब्रह्म त्वं �व�� नेदं य�ददमुपासते || 1.6 ||

- "By whom willed and directed, does the mind alight upon its objects?"

- "What one cannot comprehend with the mind, but by which they say the mind comprehends, know that alone to be Brahman, not this which people worship here."

- Kena Upanishad, >3000 B.C.E.

## 1 Introduction

Answer-seeking has been at the heart of human civilization. Humans have climbed mountains and crossed oceans in search of answers to the greatest questions concerning their own existence. Over time, ancient wisdom has travelled from the silent solitude of mountain caves and forest hermitages into the busy cities and plains of the world. Technology has played a major role in this transmission, significantly increasing the distribution and proliferation of human knowledge. In recent times, large language models (LLMs) trained on large swathes of the internet have emerged as de-facto questionanswering machines. Recent studies on the societal impact of LLMs (Malhotra, 2021; Yiu et al., 2023) highlight their growing significance as cultural technologies. Analogous to earlier technologies like writing, print, and the internet, the power of LLMs can be harnessed meaningfully to preserve and disseminate human knowledge (Fig. 1).

Generic LLMs have proven to be highly effective for broad knowledge domains. However, they often struggle in niche and less popular areas, encountering issues such as factual inaccuracies and hallucinations in long-tail knowledge distributions (Kandpal et al., 2023; Mallen et al., 2023). Moreover, their inability to verify responses against authentic sources is particularly problematic in these domains, where LLMs can generate highly inaccurate answers with unwarranted confidence (Kandpal et al., 2023; Menick et al., 2022). In response to these limitations, there has been growing interest in retrieval-augmented generation (RAG) models (Karpukhin et al., 2020; Lewis et al., 2020b; Izacard et al., 2022; Ram et al., 2023). These models integrate external datastores to retrieve relevant knowledge and incorporate it into LLMs, demonstrating higher factual accuracy and reduced hallucinations compared to conventional LLMs (Shuster et al., 2021; Borgeaud et al., 2022). Updating these external datastores with new information is also more efficient and cost-effective than retraining LLMs. In this vein, we argue that RAG models show immense potential for enhancing study in unconventional, niche knowledge domains that are often underrepresented in pre-training data. Their ability to provide verified, authentic sources when answering questions is particularly advantageous for end-users.

In this work, we develop and evaluate a RAGbased language model specialized in the ancient Indian philosophy of Advaita Vedanta (Upanishads, >3000 B.C.E.; Bhagavad Gita, 3000 B.C.E.; Shankaracharya, 700 C.E.). To ensure that the LLMhas not been previously exposed to the source material, we construct VedantaNY-10M, a custom philosophy dataset comprising transcripts of over 750 hours of public discourses on YouTube from Vedanta Society of New York. We evaluate standard non-RAG and RAG models on this domain and find that RAG models perform significantly better. However, they still encounter issues such as irrelevant retrievals, sub-optimal retrieval passage length, and retrieval-induced hallucinations. In early attempts to mitigate some of these issues, we find that traditional sparse retrievers have a unique advantage over dense retrievers in niche domains having specific terminology-Sanskrit terms in our case. Consequently, we propose a keyword-based hybrid retriever that effectively combines sparse and dense embeddings to upsample low-frequency or domain-specific terms.

We conduct an extensive evaluation comprising both automatic metrics and human evaluation by computational linguists and domain experts. The models are evaluated along three dimensions: transcription, retrieval, and generation. Our findings are twofold. First, RAG LLMs significantly outperform standard non-RAG LLMs along all axes, offering more factual, comprehensive, and specific responses while minimizing hallucinations, with an 81% preference rate. Second, the keyword-based hybrid RAG model further outperforms the standard deep-embedding based RAG model in both automatic and human evaluations. Our study also includes detailed long-form responses from the evaluators, with domain experts specifically indicating the likelihood of using such LLMs to supplement their daily studies. Our work contributes to the broader understanding of how emerging technologies can continue the legacy of knowledge preservation and dissemination in the digital age.

## 2 Related Work

Language models for ancient texts Sommerschield et al. (2023) recently conducted a thorough survey of machine learning techniques applied to the study and restoration of ancient texts. Spanning digitization (Narang et al., 2019; Moustafa et al., 2022), restoration (Assael et al., 2022), attribution (Bogacz and Mara, 2020; Paparigopoulou et al., 2022) and representation learning (Bamman and Burns, 2020), a wide range of use cases have benefitted from the application of machine learning to study ancient texts. Recently, Lugli et al. (2022) released a digital corpus of romanized Buddhist Sanskrit texts, training and evaluating embedding models such as BERT and GPT-2. However, the use of LLMs as a question-answering tool to enhance understanding of ancient esoteric knowledge systems has not yet been systematically studied. To the best of our knowledge, ours is the first work that studies the effects of RAG models in the niche knowledge domain of ancient Indian philosophy.

Retrieval-Augmented LMs. In current LLM research, retrieval augmented generation models (RAGs) are gaining popularity (Izacard et al., 2022; Ram et al., 2023; Khandelwal et al., 2020; Borgeaud et al., 2022; Menick et al., 2022). A key area of development in RAGs has been their architecture. Early approaches involved finetuning the language model on open-domain questionanswering before deployment. MLM approaches such as REALM (Guu et al., 2020) introduced a two-stage process combining retrieval and reading, while DPR (Karpukhin et al., 2020) focused on pipeline training for question answering. RAG (Lewis et al., 2020b) used a generative approach with no explicit language modeling. Very recently, in-context RALM (Ram et al., 2023) showed that retrieved passages can be used to augment the input to the LLM in-context without any fine-tuning like prior work. In this work, we adopt the in-context retrieval augmented methodology similar to (Ram et al., 2023), where neither the retriever nor the generator is fine-tuned. This also enables us to use any combination of retrieval and generation models that best suits our application.

Applications of RAGs. The applications of RAGs are diverse and evolving. ATLAS (Izacard et al., 2022) and GopherCite (Menick et al., 2022) have shown how fine-tuning and reinforcement learning from human feedback can enhance RAGs' ability to generate verifiable answers from reliable sources. Prompting techniques have also seen innovation. kNNPrompt (Shi et al., 2022) extended kNN-LM for zero or few-shot classification tasks, and retrieval in-context approaches (Ram et al., 2023; Shi et al., 2023) have proven effective in utilizing retrieval at the input stage. Retrieval-LMs have been shown to be particularly valuable for handling longtail or less frequent entities (Kandpal et al., 2023; Mallen et al., 2023), updating knowledge (Izacard et al., 2022), improving parameter efficiency (Izacard et al., 2022; Mallen et al., 2023), and enhancing verifiability (Bohnet et al., 2022), making them increasingly relevant in a wide range of applications. In our work, we examine the application of RAGs for long-tail knowledge, conducting an extensive study on a niche knowledge domain of ancient Indian philosophy.

Evaluation of LFQA The field of long-form question answering (LFQA) is an emerging area of active research (Krishna et al., 2021; Nakano et al., 2021; Xu et al., 2023). Recently, Xu et al. (2023) conducted a thorough examination of various LFQA metrics, encompassing both human and automatic evaluation methods, and found that existing automatic metrics don't always align with human preferences. On their suggestion, we place special emphasis on conducting an extensive human evaluation utilizing the expertise of experienced computational linguists and domain experts.

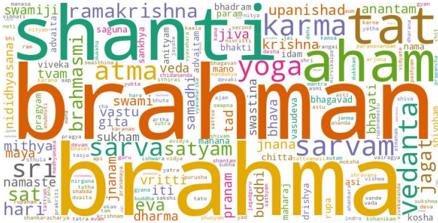

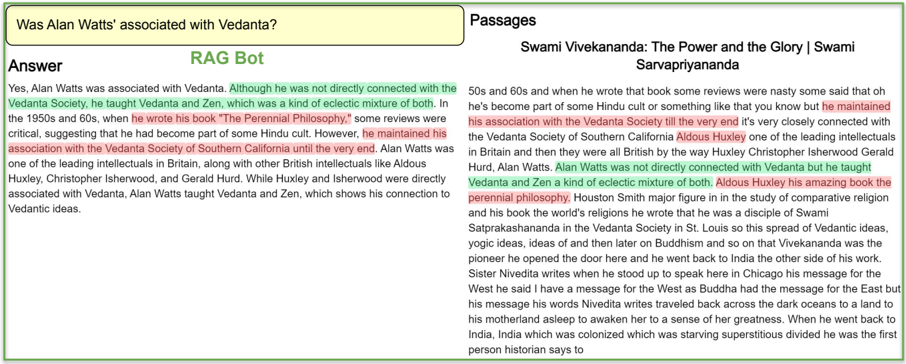

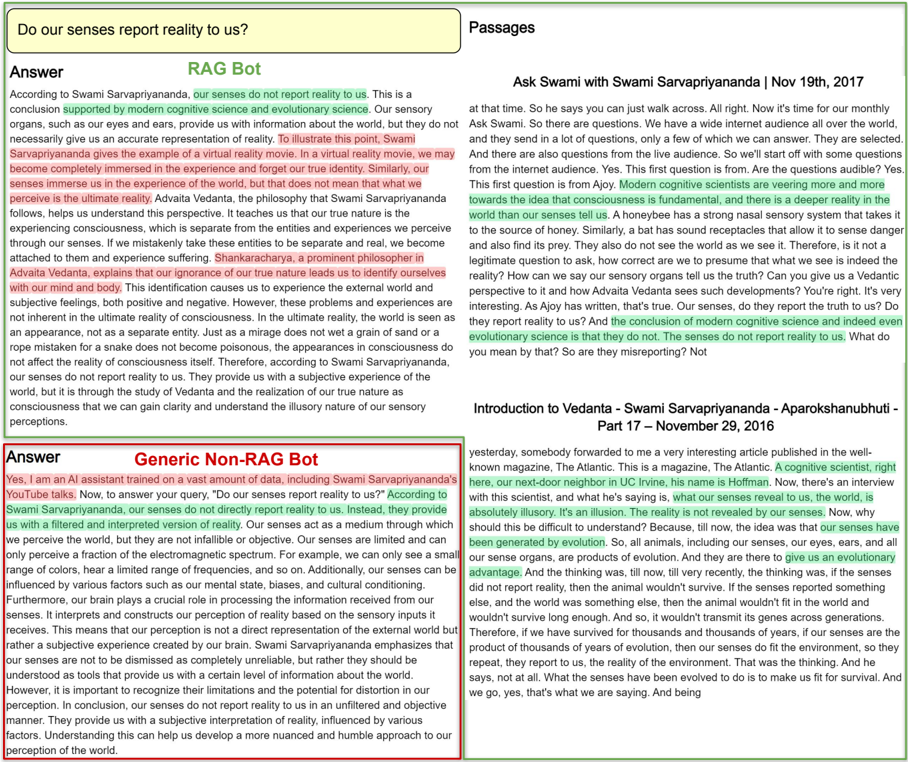

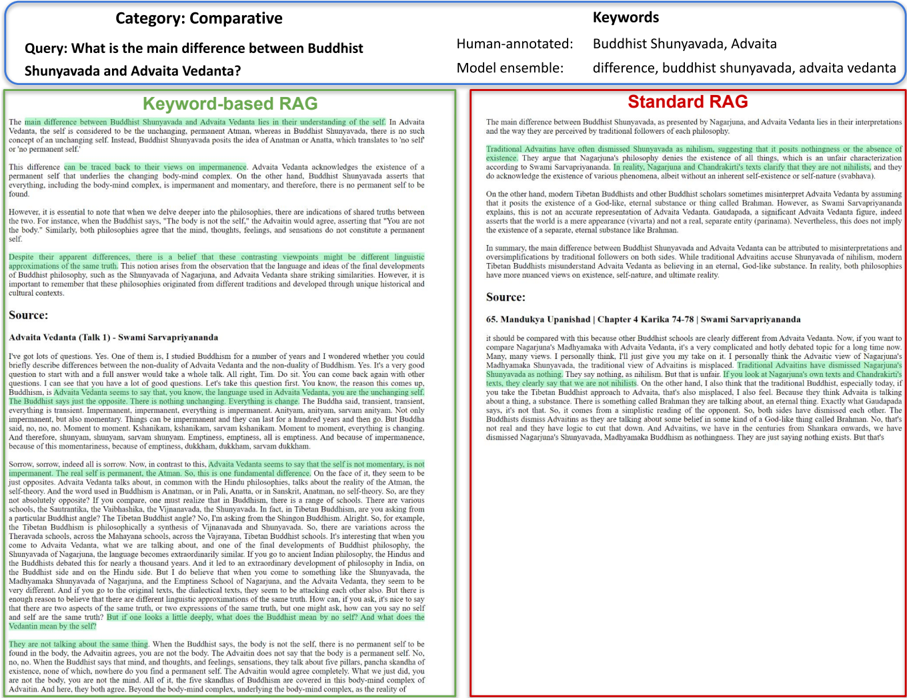

Figure 2: Sanskrit terms in VedantaNY-10M. Frequently occurring Sanskrit terms in the corpus.

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Word Cloud: Spiritual Concepts

### Overview

The image is a word cloud composed of terms related to Hindu philosophy and spirituality. The size of each word corresponds to its frequency within the source text used to generate the cloud. There are no axes or scales, and the arrangement appears random, though larger words are more centrally located.

### Components/Axes

There are no axes or scales present. The components are solely the words themselves, varying in size and color. The colors used are primarily shades of orange, yellow, and green.

### Detailed Analysis or Content Details

The following words are visible, with approximate size estimations based on visual prominence (larger = more frequent):

* **brahman** (very large, central, orange) - Appears multiple times, dominating the cloud.

* **shanti** (large, top-left, green)

* **atman** (large, center-left, yellow)

* **yoga** (medium-large, center, green)

* **karma** (medium, top-right, yellow)

* **vedanta** (medium, bottom-right, orange)

* **jnana** (medium, bottom-center, yellow)

* **aham** (medium, top-right, yellow)

* **sat** (medium, bottom-left, orange)

* **vasudeva** (small-medium, center-right, yellow)

* **bhagavan** (small-medium, center-right, yellow)

* **pranam** (small-medium, bottom-center, yellow)

* **dharma** (small-medium, bottom-center, orange)

* **sri** (small, bottom-left, orange)

* **mithya** (small, bottom-left, orange)

* **samadhi** (small, center-left, yellow)

* **sukham** (small, center-left, yellow)

* **vairagya** (small, center-left, yellow)

* **viveka** (small, top-left, orange)

* **tvam** (small, top-left, orange)

* **ramakrishna** (small, top-left, green)

* **upanishad** (small, top-right, green)

* **tat** (small, top-right, yellow)

* **jiva** (small, center, yellow)

* **bhakti** (small, center, yellow)

* **mano** (small, center, yellow)

* **swami** (small, center, yellow)

* **gita** (small, center, yellow)

* **vritti** (small, bottom-center, yellow)

* **iti** (small, bottom-center, yellow)

* **eva** (small, bottom-center, yellow)

* **advaita** (small, top-left, green)

* **sankhya** (small, top-left, green)

* **sarvasatyam** (small, center, yellow)

* **chitta** (small, bottom-center, yellow)

* **buddhi** (small, bottom-center, yellow)

* **maharaj** (small, bottom-right, yellow)

* **drishya** (small, bottom-right, yellow)

* **asi** (small, bottom-right, yellow)

* **vande** (small, bottom-right, orange)

* **kosha** (small, bottom-right, orange)

* **jagat** (small, bottom-right, orange)

* **swastina** (small, center-right, yellow)

* **bhadram** (small, top-right, green)

* **param** (small, top-right, green)

* **tantam** (small, top-right, yellow)

* **bhavat** (small, top-right, yellow)

There are also several smaller, less prominent words that are difficult to decipher with certainty.

### Key Observations

The most prominent words are "brahman," "shanti," "atman," "yoga," and "karma," suggesting these concepts are central to the source material. The color distribution doesn't appear to have a clear thematic organization. The repetition of "brahman" is striking.

### Interpretation

This word cloud visually represents the core vocabulary of Hindu philosophical discourse. The dominance of "brahman" indicates its fundamental importance as the ultimate reality. The presence of terms like "atman" (the self), "karma" (action and consequence), and "yoga" (union) highlights the paths and concepts used to understand and realize this ultimate reality. "Shanti" (peace) suggests a desired state of being achieved through these practices. The cloud's overall impression is one of interconnectedness and the pursuit of spiritual understanding. The use of a word cloud format itself suggests a holistic, non-linear approach to these concepts, emphasizing their interwoven nature rather than a strict hierarchical structure. The image does not provide any quantitative data, but rather a qualitative representation of thematic emphasis.

</details>

## 3 The VedantaNY-10M Dataset

We first describe our niche domain dataset creation process. The custom dataset for our study needs to satisfy the following requirements: ( 1 ) Niche: Must be a specialized niche knowledge domain within the LLM's long-tail distribution. ( 2 ) Novel : The LLM must not have previously encountered the source material. ( 3 ) Authentic: The dataset should be authentic and representative of the knowledge domain. ( 4 ) Domain experts: should be available to evaluate the model's effectiveness and utility.

Knowledge domain. To satisfy the first requirement, we choose our domain to be the niche knowledge system of Advaita Vedanta, a 1300-year-old Indian school of philosophy (Shankaracharya, 700 C.E.) based on the Upanishads (>3000 B.C.E.), Bhagavad Gita (3000 B.C.E.) and Brahmasutras (3000 B.C.E.) 1 . It is a contemplative knowledge tradition that employs a host of diverse tools and techniques including analytical reasoning, logic, linguistic paradoxes, metaphors and analogies to enable the seeker to enquire into their real nature. Although a niche domain, this knowledge system has been continuously studied and rigorously developed over millenia, offering a rich and structured niche for the purposes of our study. Being a living tradition, it offers the additional advantage of providing experienced domain experts to evaluate the language models in this work.

Composition of the dataset. Considering the outlined criteria, we introduce VedantaNY-10M, a curated philosophy dataset of public discourses.

1 Currently there exists no consensus on accurately dating these ancient scriptures. The Upanishads (which are a part of the Vedas) have been passed on orally for millennia and are traditionally not given a historic date. However, they seem to have been compiled and systematically organized sometime around 3000 B.C.E. by Vyasa. Likewise, the time period of Adi Shankaracharya also varies and he is usually placed between 450 B.C.E to 700 C.E.

To maintain authenticity while ensuring that the LLM hasn't previously been exposed to the source material, we curate our dataset from a collection of YouTube videos on Advaita Vedanta, sourced from the Vedanta Society of New York. It contains 10M tokens and encompasses over 750 hours of philosophical discourses by Swami Sarvapriyananda, a learned monk of the Ramakrishna Order. These discourses provide a rich and comprehensive exposition of the principles of Advaita Vedanta, making them an invaluable resource for our research.

Languages and scripts. The dataset primarily features content in English, accounting for approximately 97% of the total material. Sanskrit, the classical language of Indian philosophical literature, constitutes around 3% of the dataset. The Sanskrit terms are transliterated into the Roman script. To accommodate the linguistic diversity and the specific needs of the study, the dataset includes words in both English and Sanskrit, without substituting the Sanskrit terms with any English translations. Translating ancient Sanskrit technical terms having considerably nuanced definitions into English is a non-trivial problem (Malhotra and Babaji, 2020). Hence, our dual-language approach ensures that the Sanskrit terms and concepts are accurately represented and accessible, thereby enhancing the authenticity of our research material. Frequently occurring Sanskrit terms in the corpus are shown in Fig. 2. For excerpts from passages, please refer to Appendix Table 2.

## 4 In-context RAG for niche domains

We now discuss the methodology adopted to build an in-context retrieval augmented chatbot from the custom dataset described above.

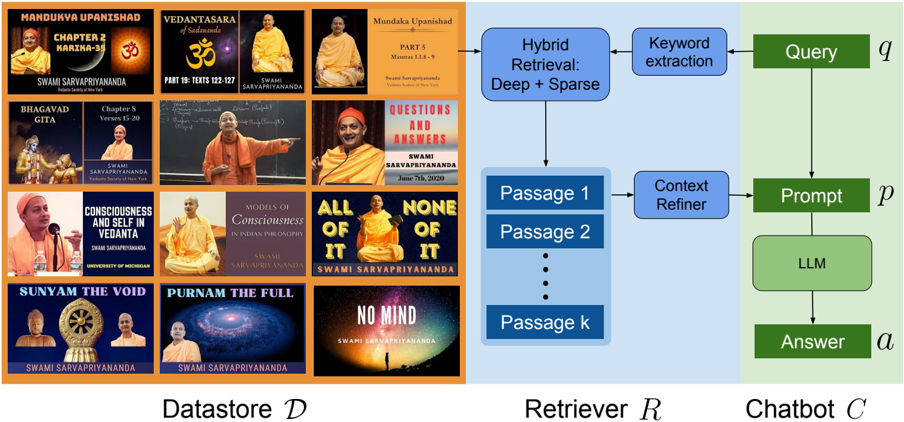

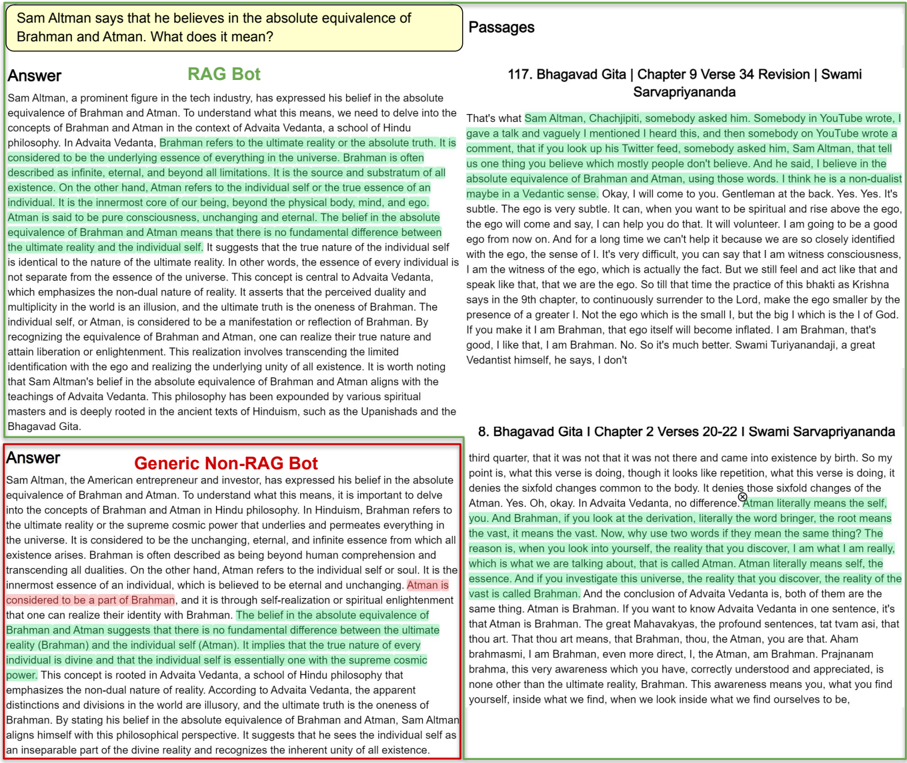

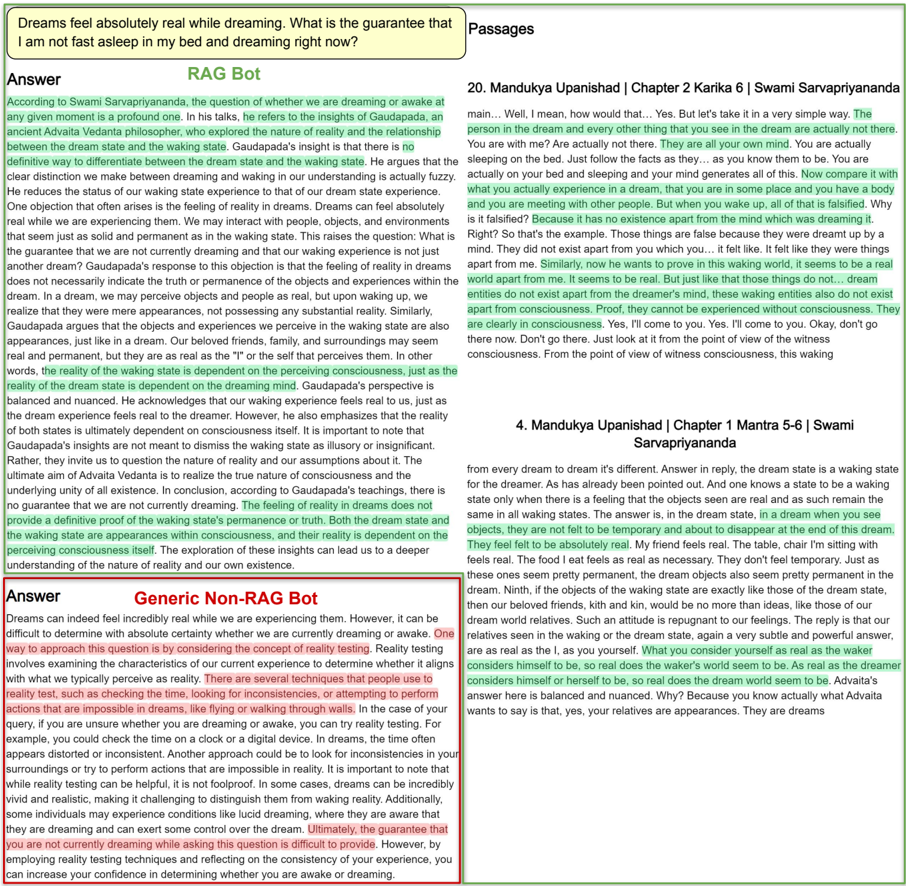

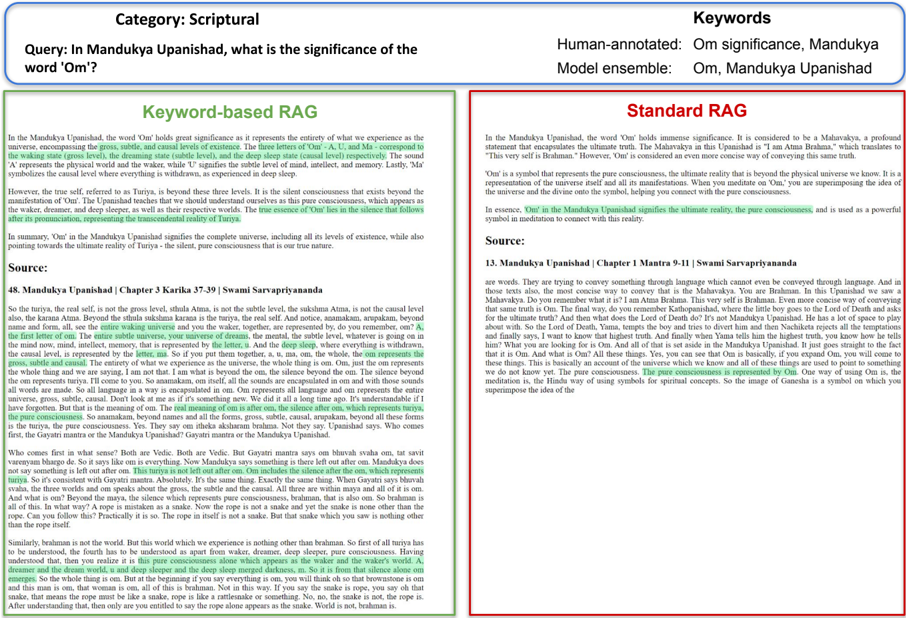

We first define a generic chatbot C g that does not use retrieval as follows: C g : q → a g where q is the user query and a g is the answer generated by the chatbot. Now, let D t represent the textual data corpus from our knowledge domain and R be the retriever. Our goal is to build a retrieval-augmented generation chatbot C r : q × R ( D t , q ) → a r that will generate answer a r for the query by retrieving relevant context from D t using R . An overview of our approach is illustrated in Fig. 3. We first build D t from 765 hours of public discourses on Advaita Vedanta introduced in Sec. 3. When deployed, the system processes q by first using retriever R to identify the top-k most relevant passages P from D t using a similarity metric. Subsequently, a large language model (LLM) is prompted with both the query and the retrieved passages in-context, following Ram et al. (2023), to generate a contextually relevant response.

We now describe each of the components in detail. We follow a four-stage process as follows:

Transcription. We first need to create a dense textual corpus targeted at our niche domain. Since our dataset consists of YouTube videos, we first employ a transcription model to transcribe the audio into text. Our video corpus D v consists of 612 videos totaling 765 hours of content, with an average length of 1.25 hours per video. We extract audio content from D v and transcribe it using OpenAI's Whisper large-v2 model (Radford et al., 2023). This step converts the spoken discourses into a transcribed textual corpus D t consisting of 10M tokens in total. Since Whisper is a multi-lingual model, it has the capacity to support the dual-language nature of our dataset. We evaluate the transcription quality of Whisper in Sec. C.1.

Datastore creation. The transcribed text in D t is then segmented into shorter chunks called passages P , consisting of 1500 characters each. These chunks are then processed by a deep embedder to produce deep embedding vectors z dense . These embedded chunks are stored in a vector database D z . Ultimately, we store approximately 25,000 passage embeddings z ∈ D z , each representing a discrete chunk of the philosophical discourse in D t .

Retrieval. To perform retrieval-augmented generation, we first need to build a retrieval system R : D z × q → P that retrieves contextually relevant textual passages P from D t given D z and q . The retriever performs the following operation: P = D t [ argTop -k z ∈ D z sim ( q, z )] , where we use cosine similarity as the similarity metric. Standard RAG models employ state-of-theart deep embedders to encode documents and retrieve them during inference. However, these semantic embeddings can struggle to disambiguate between specific niche terminology in custom domains (Mandikal and Mooney, 2024). This can be particularly problematic in datasets having long-tail distributions such as ours. In addition, retrieved fixed-length passages are sub-optimal. Short incomplete contexts can be particularly damaging for LFQA, while longer contexts can contain unnecessary information that can confuse the generation model. To mitigate these two issues, we experiment with two key changes: (1) a keyword-based

Figure 3: Overview of the RAG model. We present VedantaNY-10M, a dataset derived from over 750 hours of public discourses on the ancient Indian philosophy of Advaita Vedanta, and build a retrieval-augmented generation (RAG) chatbot for this knowledge domain. At deployment, given a query q , the retriever R first retrieves the top-k most relevant passages P from the datastore using a hybrid keyword-based retriever. It then refines this retrieved context using a keyword-based context reshaper to adjust the passage length. Finally, an LLM is prompted with the query and the refined passages in-context. We conduct an extensive evaluation with computational linguists and domain experts to assess the model's real-world utility and identify challenges.

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Diagram: Retrieval Augmented Generation (RAG) System Flow

### Overview

The image depicts a diagram illustrating the flow of information in a Retrieval Augmented Generation (RAG) system. The system consists of three main components: a Datastore (D), a Retriever (R), and a Chatbot. The Datastore contains various images related to Swami Sarvapriyananda's teachings. The Retriever extracts relevant passages from the Datastore based on a user query. The Chatbot then uses these passages to generate an answer.

### Components/Axes

The diagram is divided into three vertical sections labeled:

* **Datastore D**: Located on the left, containing images of book covers and portraits.

* **Retriever R**: Located in the center, showing the process of passage retrieval and context refinement.

* **Chatbot**: Located on the right, illustrating the Large Language Model (LLM) and answer generation.

Key labels and components within each section:

* **Datastore D**: Contains images with titles like "Mandukya Upanishad Chapter 2", "Vedantasara", "Bhagavad Gita", "Consciousness and Self in Vedanta", "Sunyam The Void", "Purnam The Full", "Models of Consciousness in Indian Philosophy", "All of None of It", "Questions and Answers". Each image also includes "Swami Sarvapriyananda" and the University of Michigan logo.

* **Retriever R**: Includes components labeled "Hybrid Retrieval: Deep + Sparse", "Keyword extraction", "Context Refiner", and a list of "Passage 1" through "Passage k".

* **Chatbot**: Includes components labeled "LLM" and "Answer".

* **Flow Arrows**: Arrows indicate the direction of information flow between components.

* **Variables**: "q" represents the Query, "p" represents the Prompt, and "a" represents the Answer.

### Detailed Analysis or Content Details

The diagram illustrates the following flow:

1. A **Query (q)** is input into the system.

2. **Keyword extraction** is performed on the query.

3. The **Hybrid Retrieval** component (Deep + Sparse) searches the **Datastore (D)** based on the extracted keywords.

4. The Retriever returns **Passage 1** through **Passage k** (a variable number of passages).

5. The **Context Refiner** processes these passages.

6. A **Prompt (p)** is generated using the refined passages.

7. The **LLM** (Large Language Model) receives the prompt.

8. The LLM generates an **Answer (a)**.

The Datastore contains images of the following:

* **Mandukya Upanishad Chapter 2 Karika-38**

* **Vedantasara** Part 3, Mantra 1.14-9

* **Bhagavad Gita** Verses 16-20

* **Consciousness and Self in Vedanta**

* **Sunyam The Void**

* **Purnam The Full**

* **Models of Consciousness in Indian Philosophy**

* **All of None of It**

* **Questions and Answers** June 7th, 2020

Each image in the Datastore is attributed to "Swami Sarvapriyananda" and the University of Michigan.

### Key Observations

The diagram highlights the key steps involved in a RAG system. The use of "Hybrid Retrieval" suggests a combination of semantic and keyword-based search methods. The "Context Refiner" component is crucial for ensuring the LLM receives relevant and concise information. The Datastore is specifically populated with content related to the teachings of Swami Sarvapriyananda.

### Interpretation

This diagram demonstrates a RAG architecture designed to answer questions based on a specific knowledge base – in this case, the teachings of Swami Sarvapriyananda. The system aims to improve the accuracy and relevance of LLM-generated responses by grounding them in factual information retrieved from the Datastore. The hybrid retrieval approach suggests an attempt to balance precision (keyword-based) and recall (semantic-based) in the search process. The Context Refiner is likely used to filter out irrelevant information and format the retrieved passages for optimal LLM consumption. The overall system is designed to provide informed and contextually relevant answers to user queries related to the subject matter contained within the Datastore. The use of a specific teacher's work suggests a focused application of RAG for a specialized domain.

</details>

hybrid retriever to focus on unique low-frequency words, and (2) a context-refiner to meaningfully shorten or expand retrieved context.

1. Keyword-based retrieval. To emphasize the importance of key terminology, we first employ keyword extraction and named-entity recognition techniques on the query q to extract important keywords κ . During retrieval, we advocate for a hybrid model combining both deep embeddings as well as sparse vector space embeddings. We encode the full query in the deep embedder and assign a higher importance to keyphrases in the sparse embedder. The idea is to have the sparse model retrieve domainspecific specialized terms that might otherwise be missed by the deep model. Our hybrid model uses a simple weighted combination of the query-document similarities in the sparse and dense embedding spaces. Specifically, we score a document D for query q and keywords κ using the ranking function:

<!-- formula-not-decoded -->

where z d and z s denotes the dense and sparse embedding functions and Sim is cosine simi- larity measuring the angle between such vector embedddings. In our experiments, we set λ = 0 . 2 . Amongst the top-n retrieved passages, we choose k passages containing the maximum number of unique keywords.

2. Keyword-based context refinement. Furthermore, we refine our retrieved passages by leveraging the extracted keywords using a heuristic-based refinement operation to produce P ′ = Ref ( P, κ ) . For extension, we expand the selected passage to include one preceding and one succeeding passage, and find the first and last occurrence of the extracted keywords. Next, we trim the expanded context from the first occurrence to the last. This can either expand or shorten the original passage depending on the placement of keywords. This ensures that retrieved context contains relevant information for the generation model.

Generation. For answer generation, we construct prompt p from the query q and the retrieved passages ( P ′ 1 , P ′ 2 , ..., P ′ k ) ∈ P in context. Finally, we invoke the chatbot C r to synthesize an answer a r from the constructed prompt. For an example of the constructed RAG bot prompt, please refer to Fig. 5. This four-stage process produces a retrieval- augmented chatbot that can generate contextually relevant responses for queries in our niche domain.

Implementation Details. For embedding and generation, we experiment with both closed and open source language models. For RAG vs non-RAG comparison, we use OpenAI's text-embeddingada-002 model (Brown et al.) as the embedder and GPT-4-turbo (OpenAI, 2023) as the LLM for both C r and C g . For comparing RAG model variants, we use the open source nomic-embed-textv1 (Nussbaum et al., 2024) as our deep embedder and Mixtral-8x7B-Instruct-v0.1 (Jiang et al., 2024) as our generation model. For keyword extraction, we use an ensemble of different models including OpenKP (Xiong et al., 2019), KeyBERT (Grootendorst, 2020) and SpanMarker (Aarsen, 2020). We experimented with using language models such as ChatGPT for keyword extraction, but the results were very poor as also corroborated in Song et al. (2024). For further implementation details of the eval metrics, see Appendix Sec. A. The VedantaNY-10M dataset, code and evaluation is publicly available at https://github.com/ priyankamandikal/vedantany-10m .

## 5 Evaluation

We now evaluate the model along two axes: automatic evaluation metrics and a human evaluation survey. To ensure a broad and comprehensive evaluation, we categorize the questions into five distinct types, each designed to test different aspects of the model's capabilities:

1. Anecdotal: Generate responses based on stories and anecdotes narrated by the speaker in the discourses.

2. Comparative : Analyze and compare different concepts, philosophies, or texts. This category tests the model's analytical skills and its ability to draw parallels and distinctions.

3. Reasoning Require logical reasoning, critical thinking, and the application of principles to new scenarios.

4. Scriptural : Test the model's ability to reference, interpret, and explain passages from religious or philosophical texts.

5. Terminology : Probe the model's understanding of specific technical terms and concepts.

For a sample set of questions across the above five categories, please refer to Appendix Table 4.

## 5.1 Automatic Evaluation

Inspired by Xu et al. (2023), we conduct an extensive automatic evaluation of the two RAG models on our evaluation set. We describe each metric type below and provide implementation details in Appendix Sec. A. Due to the lack of gold answers, we are unable to report reference-based metrics.

Answer-only metrics: We assess features like fluency and coherence by analyzing responses with specific metrics: (1) Self-BLEU (Zhu et al., 2018) for text diversity, where higher scores suggest less diversity, applied in open-ended text generation; (2) GPT-2 perplexity for textual fluency, used in prior studies on constrained generation. We also consider (3) Word and (4) Sentence counts as length-based metrics, owing to their significant influence on human preferences (Sun et al., 2019; Liu et al., 2022; Xu et al., 2023).

(Question, answer) metric: To ensure answers are relevant to the posed questions, we model p ( q | a ) for ranking responses with RankGen (Krishna et al., 2022). Leveraging the T5-XXL architecture, this encoder is specially trained via contrastive learning to evaluate model generations based on their relevance to a given prefix, in this context, the question. A higher RankGen score indicates a stronger alignment between the question and the answer, serving as a measure of relevance.

(Answer, evidence) metric: A key challenge in LFQA is assessing answer correctness without dedicated factuality metrics, akin to summarization's faithfulness. We apply QAFactEval (Fabbri et al., 2022), originally for summarization, to LFQA by considering the answer as a summary and evidence documents as the source. Answers deviating from source content, through hallucinations or external knowledge, will score lower on this metric.

## 5.2 Human Evaluation

We have three experienced domain experts evaluate the models across the five categories. Each of these experts is closely associated with Vedanta Society of New York, and has extensively studied the philosophy in question for up to a decade on average, being well-versed with domain-specific terminology and conceptual analysis. We conduct the human survey along two dimensions: retrieval and generation. For retrieval, we evaluate relevance and completeness, and for generation we evaluate factual correctness and completeness. In addition, we ask the reviewers to provide free-form justifi-

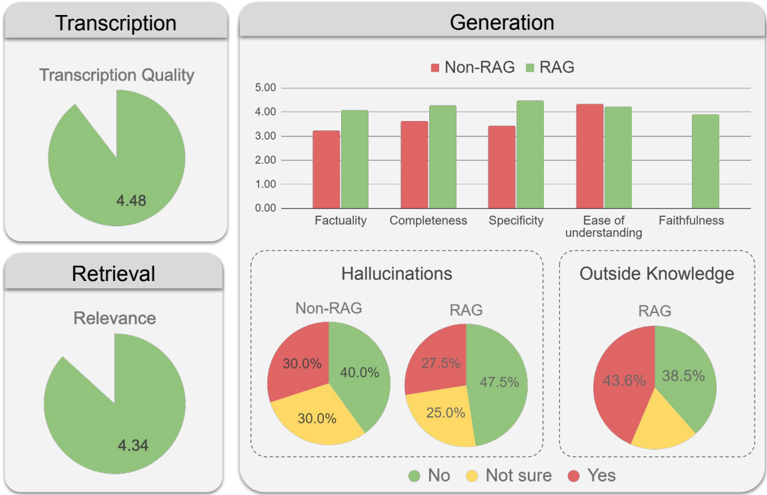

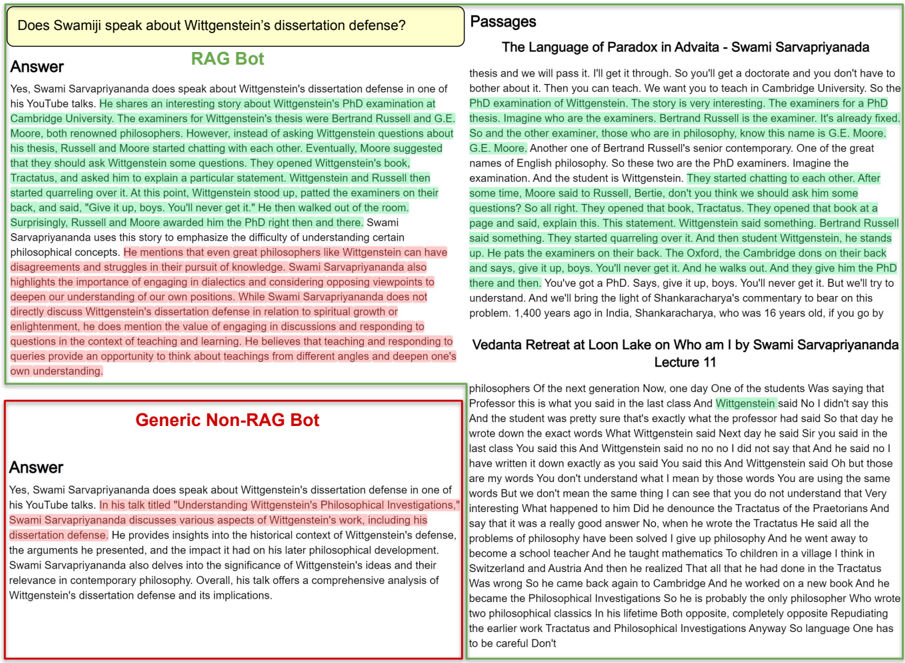

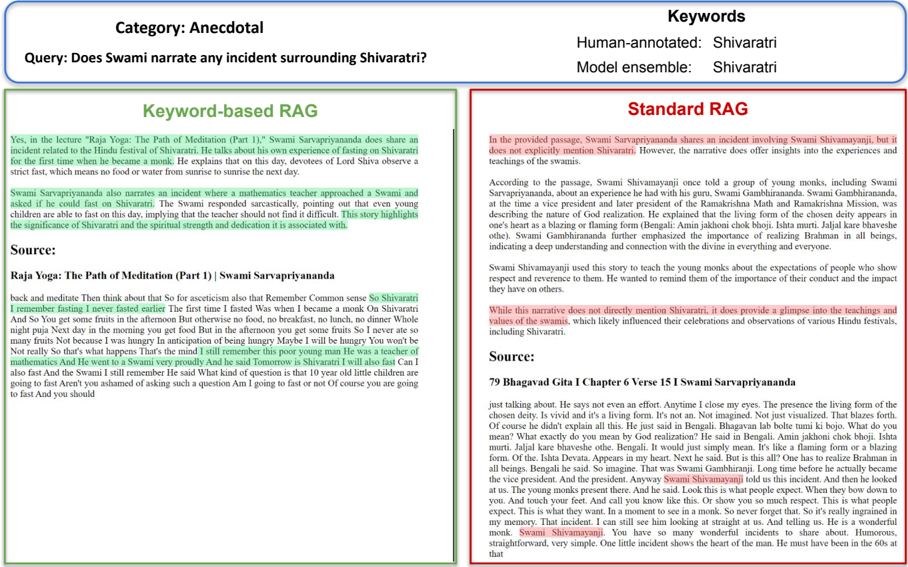

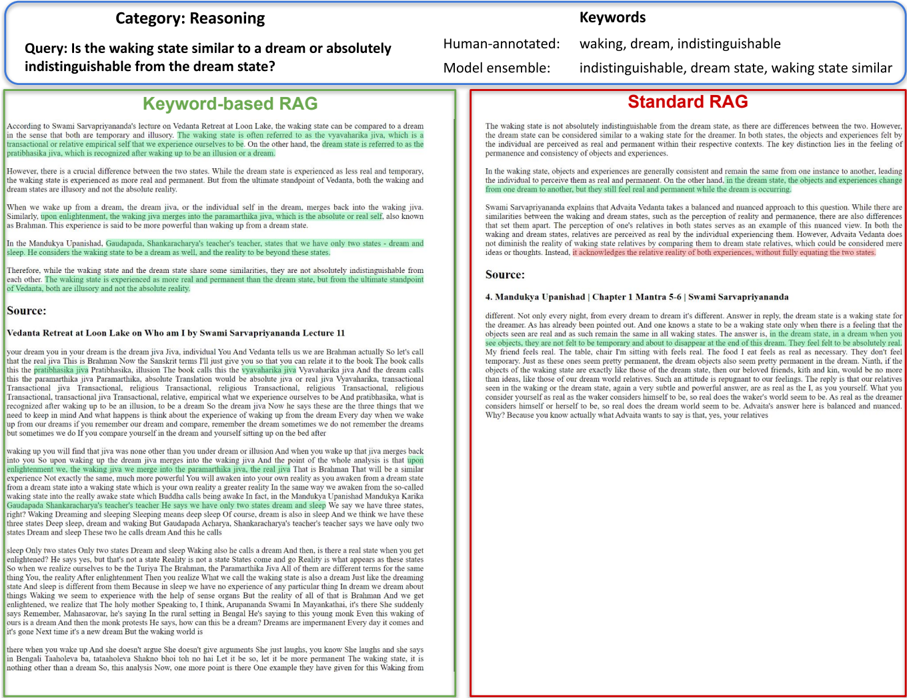

Figure 4: Human evaluation: RAG vs non-RAG. Both transcription and retrieval performance receive high scores from the evaluators. For generation, the RAG model outperforms the generic model across various metrics, particularly in factuality, completeness and specificity, while being marginally lower in ease of understanding.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Chart Compilation: Evaluation of RAG vs. Non-RAG Systems

### Overview

This image presents a compilation of charts evaluating the performance of systems with and without Retrieval-Augmented Generation (RAG) across several metrics: Transcription Quality, Generation (Factuality, Completeness, Specificity, Ease of Understanding, Faithfulness), Retrieval Relevance, Hallucinations, and Outside Knowledge. The charts primarily use bar graphs and pie charts to visualize the data.

### Components/Axes

The image is divided into four main sections: Transcription, Generation, Retrieval, and Hallucinations/Outside Knowledge.

* **Transcription Quality:** Pie chart with a single value.

* **Generation:** Bar graph with five metrics on the x-axis (Factuality, Completeness, Specificity, Ease of Understanding, Faithfulness) and a y-axis ranging from 0.00 to 5.00. Two data series are presented: Non-RAG (red) and RAG (green).

* **Retrieval Relevance:** Pie chart with a single value.

* **Hallucinations:** Two pie charts, one for Non-RAG and one for RAG, showing the distribution of responses categorized as "No", "Not Sure", and "Yes".

* **Outside Knowledge:** Pie chart for RAG, showing the distribution of responses categorized as "No", "Not Sure", and "Yes".

* **Legend:** Located at the bottom-center of the image, defining the colors for "No" (green), "Not Sure" (yellow), and "Yes" (red). The Generation chart legend is at the top-right, defining "Non-RAG" (red) and "RAG" (green).

### Detailed Analysis or Content Details

**1. Transcription Quality:**

* The pie chart shows a Transcription Quality score of approximately 4.48.

**2. Generation:**

* **Factuality:** Non-RAG is approximately 2.7, RAG is approximately 4.0.

* **Completeness:** Non-RAG is approximately 2.8, RAG is approximately 4.0.

* **Specificity:** Non-RAG is approximately 2.5, RAG is approximately 3.5.

* **Ease of Understanding:** Non-RAG is approximately 3.0, RAG is approximately 4.0.

* **Faithfulness:** Non-RAG is approximately 3.0, RAG is approximately 4.0.

* *Trend:* For all five metrics, the RAG data series consistently outperforms the Non-RAG data series, with RAG bars being significantly higher.

**3. Retrieval Relevance:**

* The pie chart shows a Retrieval Relevance score of approximately 4.34.

**4. Hallucinations (Non-RAG):**

* No: 30.0%

* Not Sure: 30.0%

* Yes: 40.0%

**5. Hallucinations (RAG):**

* No: 47.5%

* Not Sure: 25.0%

* Yes: 27.5%

**6. Outside Knowledge (RAG):**

* No: 43.36%

* Not Sure: 38.5%

* Yes: 18.14%

### Key Observations

* RAG consistently outperforms Non-RAG across all Generation metrics.

* RAG significantly reduces the occurrence of hallucinations compared to Non-RAG.

* The majority of responses from RAG indicate "No" outside knowledge, with a substantial portion being "Not Sure".

* Transcription and Retrieval Relevance both have high scores, around 4.3-4.5.

### Interpretation

The data strongly suggests that incorporating Retrieval-Augmented Generation (RAG) significantly improves the quality of generated text. RAG leads to higher factuality, completeness, specificity, ease of understanding, and faithfulness compared to systems without RAG. Furthermore, RAG demonstrably reduces the frequency of hallucinations.

The "Outside Knowledge" pie chart for RAG indicates that the system primarily relies on retrieved information, as the majority of responses indicate "No" outside knowledge. The substantial "Not Sure" category suggests that the system is cautious about asserting information not directly supported by the retrieved context.

The high scores for Transcription Quality and Retrieval Relevance suggest that the underlying retrieval and transcription components are performing well, providing a solid foundation for the generation process. The consistent improvement across all metrics when RAG is employed highlights its effectiveness in enhancing the overall performance of the system. The data suggests that RAG is a valuable technique for building more reliable and trustworthy language models.

</details>

cation for their choices, which proves to be very useful in analyzing the two models.

Relevance: Defined as the relevance of the retrieved passages to the user query, this metric is scored on a scale from 1 to 5 (1 = Not at all relevant, 5 = Extremely relevant).

Correctness: Factual accuracy of the generated answer (1 = Inaccurate, 5 = No inaccuracies)

Completeness: Measures if the retrieved passage and generated answer comprehensively cover all parts of the query (1 = Not at all comprehensive misses crucial points, 5 = Very comprehensive).

## 5.3 Results: RAG vs Non-RAG

We first conduct a human evaluation survey with 5 computational linguists and 3 domain experts on RAG vs non-RAG models. In the evaluation of the generation capabilities of our models, we consider five metrics: factuality, completeness, specificity, ease of understanding, and faithfulness. The performance of the RAG model is compared against a baseline non-RAG model across these dimensions in Fig. 4. The RAG model substantially outperforms the non-RAG model across various metrics, particularly in factuality, completeness and specificity, while being marginally lower in ease of understanding. Sample responses in Figs. 7-11.

## 5.4 Results: Standard RAG vs Keyword-based RAG

We report results in Table 1. The keyword based RAG model shows strong improvement across all automatic metrics while significantly outperforming the standard model in the human evaluation. Amongst the answer-only metrics, the model tends to produce longer, more comprehensive answers (indicated by longer length), which are more coherent (lower perplexity). The question-answer RankGen metric (Krishna et al., 2022) evaluates the probability of the answer given the question. A higher score for the model suggests more relevant answers to the question. Most notably, the keyword model does very well on QAFactEval (Fabbri et al., 2022), which evaluates faithfulness by comparing answers from the summary (in our case, the answer) and the evidence (retrievals). A higher score indicates greater faithfulness of the answer to retrieved passages, indicating fewer hallucinations and reliance on outside knowledge.

For the human evaluation in Table 1, we report scores normalized between 0 to 1. A relevance rating of 0.82 for keyword-based RAG vs 0.59 for standard RAG indicates a strong alignment between the retrieved content and the users' queries for our model, demonstrating the efficacy of the retrieval process. Conversely, the standard model

Table 1: Automatic and human evaluation: standard RAG (M1) vs keyword-based RAG (M2). We report both automatic and human evaluation metrics calculated on 25 triplets of {question, answer, retrievals} across 5 different question categories. The key-word based RAG model shows strong improvement across all automatic metrics while significantly outperforming the standard model in the human evaluation.

| Category RAG Model | Mean M1/M2 | Anecdotal M1/M2 | Comparative M1/M2 | Reasoning M1/M2 | Scriptural M1/M2 | Terminology M1/M2 |

|----------------------|----------------------|-------------------|---------------------|-------------------|--------------------|---------------------|

| Automatic metrics | Automatic metrics | Automatic metrics | Automatic metrics | Automatic metrics | Automatic metrics | Automatic metrics |

| Answer-only | | | | | | |

| GPT2-PPL ↓ | 16.6/ 15.3 | 16.6 / 16.6 | 16.9/ 15.7 | 13.9/ 11.9 | 14.2 /14.7 | 21.5/ 17.7 |

| Self-bleu ↓ | 0.12 /0.13 | 0.11/ 0.05 | 0.10/ 0.06 | 0.15 /0.27 | 0.13 /0.16 | 0.09 /0.14 |

| # Words ↑ | 196/ 227 | 189 / 189 | 174/ 206 | 218/ 282 | 225/ 243 | 216/ 261 |

| # Sentences ↑ | 9.0/ 10.1 | 8.2 /7.6 | 7.8/ 9.4 | 9.6/ 11.8 | 10.0/ 10.6 | 9.4/ 11.0 |

| (Question, answer) | (Question, answer) | | | | | |

| RankGen ↑ | 0.46/ 0.48 | 0.42/ 0.52 | 0.44/ 0.47 | 0.41/ 0.43 | 0.51/ 0.52 | 0.52 /0.46 |

| (Answer, retrievals) | (Answer, retrievals) | | | | | |

| QAFactEval ↑ | 1.36/ 1.60 | 1.01/ 1.14 | 1.53/ 1.94 | 1.18/ 1.61 | 1.52 /1.36 | 1.56/ 1.95 |

| Human evaluation | Human evaluation | Human evaluation | Human evaluation | Human evaluation | Human evaluation | Human evaluation |

| Retrieval | | | | | | |

| Relevance ↑ | 0.59/ 0.82 | 0.41/ 0.88 | 0.79/ 0.85 | 0.73/ 0.83 | 0.48/ 0.73 | 0.55/ 0.81 |

| Completeness ↑ | 0.52/ 0.79 | 0.41/ 0.86 | 0.72/ 0.79 | 0.57/ 0.83 | 0.37/ 0.68 | 0.52/ 0.79 |

| Answer | | | | | | |

| Correctness ↑ | 0.61/ 0.86 | 0.40/ 0.89 | 0.81/ 0.88 | 0.71/ 0.85 | 0.52/ 0.81 | 0.63/ 0.89 |

| Completeness ↑ | 0.58/ 0.85 | 0.42/ 0.92 | 0.80/ 0.85 | 0.72/ 0.81 | 0.49/ 0.77 | 0.63/ 0.91 |

sometimes fails to disambiguate unique terminology and retrieves incorrect passages (see Fig. 12). In assessing the accuracy of the generated answer, the keyword-based RAG model significantly outperforms the standard model, indicating better alignment with verifiable facts. Fig. 13 shows a factually inaccurate response from the generic model. The keyword model achieves higher completeness scores for both the retrieval and generation. Sample responses are shown in Figs. 12-16.

## 6 Challenges

The evaluation in Sec. 5 shows that the RAG model provides responses that are not only more aligned with the source material but are also more comprehensive, specific, and user-friendly compared to the responses generated by the generic language model. In this section, we discuss the challenges we encountered while building the retrieval-augmented chatbot for the niche knowledge domain of ancient Indian philosophy introduced in this work.

Transcription. Our requirement of using a niche data domain having long-tail knowledge precludes the use of source material that the LLM has previously been exposed to. To ensure this, we construct a textual corpus that is derived from automated transcripts of YouTube discourses. These transcripts can sometimes contain errors such as missing punc- tuations, incorrect transcriptions, and transliterations of Sanskrit terms. A sample of such errors is shown in Appendix Table 3. A proofreading mechanism and/or improved transcription models can help alleviate these issues to a large extent.

Spoken vs written language. Unlike traditional textual corpora that are compiled from written sources, our dataset is derived from spoken discourses. Spoken language is often more verbose and less structured than written text, with the speaker frequently jumping between concepts mid-sentence. This unstructured nature of the text can be unfamiliar for a language model trained extensively on written text. A peculiar failure case arising from this issue is shown in Appendix Fig. 6. This can be addressed by converting the spoken text into a more structured prose format with the help of well-crafted prompts to LLMs, followed by human proofreading.

Context length. The passages retrieved in the standard model are of a fixed length and can sometimes be too short for many queries, especially for long-form answering. For instance, the retrieved passage may include a snippet from the middle of the full context, leading to incomplete or incoherent chatbot responses (Fig. 11). This prompted us to employ a keyword-based context-expansion mechanism to provide a more complete context. While this results in much better answer generation, the retrieved passage may contain too much information, making it difficult for the generator to reason effectively. Moreover, the increase in the number of tokens increases processing time. Future work can explore more advanced retrieval models capable of handling longer contexts and summarizing them effectively before input to the LLM.

Retrieval-induced hallucinations. There are scenarios when the RAG model latches onto a particular word or phrase in the retrieved passage and hallucinates a response that is not only irrelevant but also factually incorrect. A sample of such a hallucination is in Fig. 10. This is a more challenging problem to address. However, retrieval models that can extract the full context, summarize it and remove irrelevant information should be capable of mitigating this issue to a reasonable extent.

## 7 Conclusion

In this work, we integrate modern retrievalaugmented large language models with the ancient Indian philosophy of Advaita Vedanta. Toward this end, we present VedantaNY-10M, a large dataset curated from automatic transcriptions of extensive philosophical discourses on YouTube. Validating these models along various axes using both automatic and human evaluation provides two key insights. First, RAG models significantly outperform non-RAG models, with domain experts expressing a strong preference for using such RAG models to supplement their daily studies. Second, the keyword-based hybrid RAG model underscores the merits of integrating classical and contemporary deep learning techniques for retrieval in niche and specialized domains. While there is much work to be done, our study underscores the potential of integrating modern machine learning techniques to unravel ancient knowledge systems.

## Limitations and Future Work

While our study demonstrates the utility of integrating retrieval-augmented LLMs with ancient knowledge systems, there are limitations and scope for future work. First, this study focuses on a single niche domain of Advaita Vedanta as taught by one teacher. Expanding this study to include other ancient philosophical systems, such as the Vedantic schools of Vishishtadvaita and Dvaita, as well as various Buddhist and Jain traditions, would be a valuable extension. Second, incorporating primary scriptural sources, in addition to spoken discourses, would enhance the authenticity of the RAG model's outputs. Third, while we only experiment with RAG models in this study, finetuning the language models themselves on philosophy datasets is an interesting future direction. Fourth, the context refiner is currently heuristic-based and may not generalize well to all scenarios. Replacing it with a trained refiner using abstractive or extractive summarization techniques would considerably improve its utility and efficiency. Fifth, expanding the evaluation set and involving more subjects for evaluation and will considerably strengthen the study's robustness. Finally, while the language models in this work are primarily in English and Latin script, building native LLMs capable of functioning in the original Sanskrit language of the scriptures using Devanagari script is essential future work.

## Acknowledgments

The author would like to thank Prof. Kyle Mahowald for his insightful course on form and functionality in LLMs, which guided the evaluation of the language models presented in this paper. Fangyuan Xu provided valuable information on automatic metrics for LFQA evaluation. The author extends their gratitude to all the human evaluators who took the survey and provided valuable feedback, with special thanks to Dr. Anandhi who coordinated the effort among domain experts. Finally, the author expresses deep gratitude to the Vedanta Society of New York and Swami Sarvapriyananda for the 750+ hours of public lectures that served as the dataset for this project.

## Ethics Statement

All data used in this project has been acquired from public lectures on YouTube delivered by Swami Sarvapriyananda of the Vedanta Society of New York. While our study explores integrating ancient knowledge systems with modern machine learning techniques, we recognize their inherent limitations. These knowledge traditions have always emphasized the importance of the teacher in transmitting knowledge. We do not see LLMs as replacements for monks and teachers of these ancient traditions, but only as tools to supplement analysis and study. Moreover, users of these tools need to be made well aware that these models can and do make errors, and should therefore seek guidance from qualified teachers to carefully progress on the path.

## References

- Tom Aarsen. 2020. SpanMarker for Named Entity Recognition.

- Yannis Assael, Thea Sommerschield, Brendan Shillingford, Mahyar Bordbar, John Pavlopoulos, Marita Chatzipanagiotou, Ion Androutsopoulos, Jonathan Prag, and Nando de Freitas. 2022. Restoring and attributing ancient texts using deep neural networks. Nature , 603.

- David Bamman and Patrick J Burns. 2020. Latin bert: A contextual language model for classical philology. arXiv preprint arXiv:2009.10053 .

- Bhagavad Gita. 3000 B.C.E. The Bhagavad Gita.

- Bartosz Bogacz and Hubert Mara. 2020. Period classification of 3d cuneiform tablets with geometric neural networks. In ICFHR .

- Bernd Bohnet, Vinh Q Tran, Pat Verga, Roee Aharoni, Daniel Andor, Livio Baldini Soares, Jacob Eisenstein, Kuzman Ganchev, Jonathan Herzig, Kai Hui, et al. 2022. Attributed question answering: Evaluation and modeling for attributed large language models. arXiv preprint arXiv:2212.08037 .

- Sebastian Borgeaud, Arthur Mensch, Jordan Hoffmann, Trevor Cai, Eliza Rutherford, Katie Millican, George Bm Van Den Driessche, Jean-Baptiste Lespiau, Bogdan Damoc, Aidan Clark, et al. 2022. Improving language models by retrieving from trillions of tokens. In ICML .

- The Brahmasutras. 3000 B.C.E. The Brahmasutras.

- Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners.

- Anthony Chen, Gabriel Stanovsky, Sameer Singh, and Matt Gardner. 2020. MOCHA: A dataset for training and evaluating generative reading comprehension metrics. In EMNLP .

- Kevin Clark, Minh-Thang Luong, Quoc V. Le, and Christopher D. Manning. 2020. ELECTRA: Pretraining text encoders as discriminators rather than generators. In ICLR .

- Alexander Fabbri, Chien-Sheng Wu, Wenhao Liu, and Caiming Xiong. 2022. QAFactEval: Improved QAbased factual consistency evaluation for summarization. In NAACL .

- Maarten Grootendorst. 2020. Keybert: Minimal keyword extraction with bert.

- Kelvin Guu, Kenton Lee, Zora Tung, Panupong Pasupat, and Mingwei Chang. 2020. Realm: Retrieval augmented language model pre-training. In ICML .

- Matthew Honnibal, Ines Montani, Sofie Van Landeghem, and Adriane Boyd. 2020. spaCy: Industrialstrength Natural Language Processing in Python.

- Gautier Izacard, Patrick Lewis, Maria Lomeli, Lucas Hosseini, Fabio Petroni, Timo Schick, Jane DwivediYu, Armand Joulin, Sebastian Riedel, and Edouard Grave. 2022. Atlas: Few-shot learning with retrieval augmented language models. JMLR .

- Albert Q. Jiang, Alexandre Sablayrolles, Antoine Roux, Arthur Mensch, Blanche Savary, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Emma Bou Hanna, Florian Bressand, Gianna Lengyel, Guillaume Bour, Guillaume Lample, Lélio Renard Lavaud, Lucile Saulnier, MarieAnne Lachaux, Pierre Stock, Sandeep Subramanian, Sophia Yang, Szymon Antoniak, Teven Le Scao, Théophile Gervet, Thibaut Lavril, Thomas Wang, Timothée Lacroix, and William El Sayed. 2024. Mixtral of experts. Preprint , arXiv:2401.04088.

- Nikhil Kandpal, Haikang Deng, Adam Roberts, Eric Wallace, and Colin Raffel. 2023. Large language models struggle to learn long-tail knowledge. In ICML .

- Vladimir Karpukhin, Barlas O˘ guz, Sewon Min, Patrick Lewis, Ledell Wu, Sergey Edunov, Danqi Chen, and Wen-tau Yih. 2020. Dense passage retrieval for opendomain question answering. EMNLP .

- Urvashi Khandelwal, Omer Levy, Dan Jurafsky, Luke Zettlemoyer, and Mike Lewis. 2020. Generalization through memorization: Nearest neighbor language models. ICLR .

- Kalpesh Krishna, Yapei Chang, John Wieting, and Mohit Iyyer. 2022. Rankgen: Improving text generation with large ranking models. arXiv:2205.09726 .

- Kalpesh Krishna, Aurko Roy, and Mohit Iyyer. 2021. Hurdles to progress in long-form question answering. In NAACL .

- Philippe Laban, Tobias Schnabel, Paul N. Bennett, and Marti A. Hearst. 2022. Summac: Re-visiting nlibased models for inconsistency detection in summarization. TACL .

- Mike Lewis, Yinhan Liu, Naman Goyal, Marjan Ghazvininejad, Abdelrahman Mohamed, Omer Levy, Veselin Stoyanov, and Luke Zettlemoyer. 2020a. BART: Denoising sequence-to-sequence pre-training for natural language generation, translation, and comprehension. In ACL .

- Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, et al. 2020b. Retrieval-augmented generation for knowledge-intensive nlp tasks. NeurIPS .

- Yixin Liu, Alexander R. Fabbri, Pengfei Liu, Yilun Zhao, Linyong Nan, Ruilin Han, Simeng Han, Shafiq R. Joty, Chien-Sheng Wu, Caiming Xiong, and Dragomir R. Radev. 2022. Revisiting the gold standard: Grounding summarization evaluation with robust human evaluation. ArXiv , abs/2212.07981.

- Ligeia Lugli, Matej Martinc, Andraž Pelicon, and Senja Pollak. 2022. Embeddings models for buddhist Sanskrit. In LREC .

- Rajiv Malhotra. 2021. Artificial Intelligence and the Future of Power: 5 Battlegrounds . Rupa Publications.

- Rajiv Malhotra and Satyanarayana Dasa Babaji. 2020. Sanskrit Non-Translatables: The Importance of Sanskritizing English . Amaryllis.

- Alex Mallen, Akari Asai, Victor Zhong, Rajarshi Das, Hannaneh Hajishirzi, and Daniel Khashabi. 2023. When not to trust language models: Investigating effectiveness and limitations of parametric and nonparametric memories. ACL .

- Priyanka Mandikal and Raymond Mooney. 2024. Sparse meets dense: A hybrid approach to enhance scientific document retrieval. In The 4th CEUR Workshop on Scientific Document Understanding, AAAI .

- Joshua Maynez, Shashi Narayan, Bernd Bohnet, and Ryan McDonald. 2020. On faithfulness and factuality in abstractive summarization. In ACL .

- Jacob Menick, Maja Trebacz, Vladimir Mikulik, John Aslanides, Francis Song, Martin Chadwick, Mia Glaese, Susannah Young, Lucy CampbellGillingham, Geoffrey Irving, et al. 2022. Teaching language models to support answers with verified quotes. arXiv preprint arXiv:2203.11147 .

- Ragaa Moustafa, Farida Hesham, Samiha Hussein, Badr Amr, Samira Refaat, Nada Shorim, and Taraggy M Ghanim. 2022. Hieroglyphs language translator using deep learning techniques (scriba). In International Mobile, Intelligent, and Ubiquitous Computing Conference (MIUCC) .

- Reiichiro Nakano, Jacob Hilton, Suchir Balaji, Jeff Wu, Long Ouyang, Christina Kim, Christopher Hesse, Shantanu Jain, Vineet Kosaraju, William Saunders, et al. 2021. Webgpt: Browser-assisted questionanswering with human feedback. arXiv preprint arXiv:2112.09332 .

- Sonika Narang, MK Jindal, and Munish Kumar. 2019. Devanagari ancient documents recognition using statistical feature extraction techniques. S¯ adhan¯ a , 44.

- Zach Nussbaum, John X. Morris, Brandon Duderstadt, and Andriy Mulyar. 2024. Nomic embed: Training a reproducible long context text embedder. Preprint , arXiv:2402.01613.

OpenAI. 2023. Gpt-4 technical report.

- Asimina Paparigopoulou, John Pavlopoulos, and Maria Konstantinidou. 2022. Dating greek papyri images with machine learning. In ICDAR Workshop on Computational Paleography, https://doi. org/10.21203/rs .

- Alec Radford, Jong Wook Kim, Tao Xu, Greg Brockman, Christine McLeavey, and Ilya Sutskever. 2023. Robust speech recognition via large-scale weak supervision. In ICML .

- Ori Ram, Yoav Levine, Itay Dalmedigos, Dor Muhlgay, Amnon Shashua, Kevin Leyton-Brown, and Yoav Shoham. 2023. In-context retrieval-augmented language models. arXiv preprint arXiv:2302.00083 .

Ramakrishna Order. Belur math.

- Adi Shankaracharya. 700 C.E. Commentary on the Upanishads.

- Weijia Shi, Julian Michael, Suchin Gururangan, and Luke Zettlemoyer. 2022. Nearest neighbor zero-shot inference. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing .

- Weijia Shi, Sewon Min, Michihiro Yasunaga, Minjoon Seo, Rich James, Mike Lewis, Luke Zettlemoyer, and Wen-tau Yih. 2023. Replug: Retrievalaugmented black-box language models. arXiv preprint arXiv:2301.12652 .

- Kurt Shuster, Spencer Poff, Moya Chen, Douwe Kiela, and Jason Weston. 2021. Retrieval augmentation reduces hallucination in conversation. ACL .

- Thea Sommerschield, Yannis Assael, John Pavlopoulos, Vanessa Stefanak, Andrew Senior, Chris Dyer, John Bodel, Jonathan Prag, Ion Androutsopoulos, and Nando de Freitas. 2023. Machine Learning for Ancient Languages: A Survey. Computational Linguistics .

- Mingyang Song, Xuelian Geng, Songfang Yao, Shilong Lu, Yi Feng, and Liping Jing. 2024. Large language models as zero-shot keyphrase extractors: A preliminary empirical study. Preprint , arXiv:2312.15156.

- Simeng Sun, Ori Shapira, Ido Dagan, and Ani Nenkova. 2019. How to compare summarizers without target length? pitfalls, solutions and re-examination of the neural summarization literature. Workshop on Methods for Optimizing and Evaluating Neural Language Generation .

Upanishads. >3000 B.C.E. The Upanishads.

- Vedanta Society of New York. Swami Sarvapriyananda's Vedanta discourses.

- Lee Xiong, Chuan Hu, Chenyan Xiong, Daniel Campos, and Arnold Overwijk. 2019. Open domain web keyphrase extraction beyond language modeling. arXiv preprint arXiv:1911.02671 .

- Fangyuan Xu, Yixiao Song, Mohit Iyyer, and Eunsol Choi. 2023. A critical evaluation of evaluations for long-form question answering. In ACL .

- Eunice Yiu, Eliza Kosoy, and Alison Gopnik. 2023. Imitation versus innovation: What children can do that large language and language-and-vision models cannot (yet)? ArXiv , abs/2305.07666.

- Yaoming Zhu, Sidi Lu, Lei Zheng, Jiaxian Guo, Weinan Zhang, Jun Wang, and Yong Yu. 2018. Texygen: A benchmarking platform for text generation models. In The 41st International ACM SIGIR Conference on Research & Development in Information Retrieval .

## A Implementation Details

## A.1 Automatic Metrics

Following Xu et al. (2023), we implement a number of automatic evaluation metrics for LFQA as described below.

Length We use the Spacy package (Honnibal et al., 2020) for word tokenization.

Self-BLEU We calculate Self-BLEU by regarding one sentence as hypothesis and all others in the same answer paragraph as reference. We report self-BLEU-5 as a measure of coherence.

RankGen For a given question q and a modelgenerated answer a , we first transform them into fixed-size vectors ( q , a ) using the RankGen encoder (Krishna et al., 2022). To assess their relevance, we compute the dot product q · a . We utilize the T5-XXL (11B) encoder, which has been trained using both in-book negative instances and generative negatives.

QAFactEval QAFactEval is a QA-based metric recently introduced by Fabbri et al. (2022). It has demonstrated exceptional performance across multiple factuality benchmarks for summarization (Laban et al., 2022; Maynez et al., 2020). The pipeline includes four key components: (1) Noun Phrase (NP) extraction from sentence S represented as Ans ( S ) , (2) BART-large (Lewis et al., 2020a) for question generation denoted as Q G , (3) Electralarge (Clark et al., 2020) for question answering labeled as Q A , and (4) learned metrics LERC (Chen et al., 2020), to measure similarity as Sim ( p i , s i ) . An additional answerability classification module is incorporated to assess whether a question can be answered with the information provided in document D . Following Xu et al. (2023), we report LERC, which uses the learned metrics to compare Ans S and Ans D (a).

## A.2 Chat Prompt

For an example of the constructed RAG bot prompt, please refer to Fig. 5. In this scenario, the RAG bot C r is presented with the top-k retrieved passages alongside the query for generating a response, whereas a generic bot C g would only receive the query without additional context.

## B Sample Sanskrit terms

Table 2 contains excerpts from passages containing Sanskrit terms. The Sanskrit terms are italicized

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Diagram: RAG Bot vs. Generic Bot Prompt Comparison

### Overview

The image presents a comparison between the prompts used for a Retrieval-Augmented Generation (RAG) Bot and a Generic Bot. The comparison is visually represented as two rectangular blocks, one green for the RAG Bot and one red for the Generic Bot, stacked vertically. Each block contains the text of the prompt used for the respective bot.

### Components/Axes

The diagram consists of two main components:

* **RAG Bot Block:** A green rectangle at the top.

* **Generic Bot Block:** A red rectangle at the bottom.

Each block contains text defining the bot's role and instructions. The variable `{query}` appears in both prompts.

### Content Details

**RAG Bot Prompt (Green Block):**

"You are a helpful assistant that accurately answers queries using Swami Sarvapriyananda's YouTube talks. Use the following passages to provide a detailed answer to the query: {query}

Passages:

{Passage 1}

{Passage 2}

...

{Passage k}"

**Generic Bot Prompt (Red Block):**

"You are a helpful assistant that accurately answers queries using Swami Sarvapriyananda's YouTube talks. Provide a detailed answer to the query: {query}"

### Key Observations

The key difference between the two prompts is the inclusion of "Passages:" and the placeholder for multiple passages (`{Passage 1}`, `{Passage 2}`, ..., `{Passage k}`) in the RAG Bot prompt. This indicates that the RAG Bot is designed to utilize retrieved information (passages) to formulate its responses, while the Generic Bot does not explicitly have access to such passages within its prompt.

### Interpretation

The diagram illustrates the core distinction between a standard Large Language Model (LLM) and a RAG-enhanced LLM. The Generic Bot relies solely on its pre-trained knowledge to answer queries. The RAG Bot, however, is augmented with external knowledge retrieved from a source (in this case, Swami Sarvapriyananda's YouTube talks) and provided as context within the prompt. This allows the RAG Bot to provide more accurate and contextually relevant answers, especially for queries requiring information not readily available in the LLM's pre-trained data. The use of `{query}` in both prompts suggests that both bots are designed to respond to user input, but the RAG Bot's response will be informed by the provided passages. The "..." in the RAG Bot prompt indicates that the number of passages can vary. This is a conceptual diagram demonstrating the difference in prompt structure, not a presentation of data.

</details>

Figure 5: Prompts for the RAG and generic chatbots. RAG Bot receives the top-k retrieved relevant passages in the prompt along with the query, while the generic bot only receives the query.

and underlined. Notice that the passages contain detailed English explanations of these terms. To retain linguistic diversity, authenticity and comprehensiveness of the source material, we retain these Sanskrit terms as is in our passages as described in Sec. 3. Note that these are direct Whisper (Radford et al., 2023) transcriptions with no further postprocessing or proofreading. Transcriptions may not always be accurate.

## C Transcription

We asses the transcript quality and list out some common errors.

## C.1 Transcript Evaluation

Transcription quality is scored on a scale from 1 to 5 (where 1 = Poor, 5 = Perfect). On 10 randomly sampled transcripts, evaluators assign a high average score of 4.48 suggesting that the transcription of YouTube audio into text is highly accurate and clear, indicating that our constructed custom dataset D t is of high quality.

## C.2 Transcript Errors

Table 3 contains a few sample transcription errors. The transcriptions are largely good for English words and sentences. However, errors often arise from incorrectly transcribing Sanskrit terms and verses. Other less common errors include missing

## Sl. No. Excerpts from passages

1. Om Bhadram Karne Bhishrinu Yamadevaha Bhadram Pashyam Akshabhirya Jatraaha Sthirai Rangai Stushta Vagam Sasthanubhi Vyase Madevahitaiyadayoh Swasthina Indro Vriddha Shravaha Swasthina Phusa Vishwa Vedaaha Swasthina Starksho Arishta Nemi Swasthino Brihas Patir Dadhatu Om Shanti Shanti Shanti .

2. Samsara is our present situation, the trouble that we are caught in, the mess that we are caught in. Samsara is this. In Sanskrit, normally when you use the word samsara , it really means this world of our life, you know, being born and struggling in life and afflicted by suffering and death and hopelessness and meaninglessness.

3. The problem being ignorance, solution is knowledge and the method is Jnana Yoga , the path of knowledge. So what is mentioned here, Shravana Manana Nididhyasana , hearing, reflection, meditation, that is Jnana Yoga . So that's at the highest level of practice, way of knowledge.

4. In Sanskrit, ajnana and adhyasa , ignorance and superimposition. Now if you compare the four aspects of the self, the three appearances and the one reality, three appearances, waker, dreamer, deep sleeper, the one reality, turiyam , if you compare them with respect to ignorance and error, you will find the waker, that's us right now. We have both ignorance and error.

5. Mandukya investigates this and points out there is an underlying reality, the Atman , pure consciousness, which has certain characteristics. This is causality, it is beyond causality. It is neither a cause nor an effect. The Atman is not produced like this, nor is it a producer of this. It is beyond change. No change is there in the Atman , nirvikara . And third, it is not dual, it is non-dual, advaitam . This is kadyakarana in Sanskrit, this is kadyakarana vilakshana Atma . In Sanskrit this is savikara , this is nirvikara Atma . This is dvaita , this is advaita Atma . So this is samsara and this is moksha , freedom.

## Notes

This is a Sanskrit chant which is directly Romanized and processed. The automatic transcriptions often contain errors in word segmentation for Sanskrit verses.

Samsara is a Sanskrit term. The excerpt contains an explanation of the concept in English.

The excerpt contains an explanation of Jnana Yoga , the path of knowledge.

Ajnana , adhyasa and turiyam are Sanskrit terms. Notice that the passage implicitly contains rough English translations of these terms in the context of the overall discourse. For instance, ajnana is translated as ignorance and adhyasa is translated as superimposition.

The excerpt contains an explanation of different Sanskrit technical terms.

Table 2: Excerpts from passages containing Sanskrit terms. These excerpts contain detailed English descriptions of technical terms in Sanskrit (italicized and underlined). To retain authenticity to the source material, we retain these Sanskrit terms as is in our passages. Note that these are direct Whisper (Radford et al., 2023) transcriptions with no further post-processing or proofreading, so transcriptions may not always be accurate. For more details, refer to Sec. B.

Figure 6: Subject confusion: Aldous Huxley vs Alan Watts. In the retrieved passage, the speaker jumps between speaking about Aldous Huxley and Alan Watts. Moreover, the transcription has poor punctuation. As a result, the RAG bot incorrectly attributes information about Huxley to Watts in the response. Due to the unstructured nature of the spoken text, such errors can occur. This can be addressed by converting the spoken text into a more structured prose format with the help of well-crafted prompts to LLMs, followed by human proofreading (see Sec. D).

<details>

<summary>Image 6 Details</summary>

### Visual Description

\n

## Screenshot: Question & Answer with Passages

### Overview

This screenshot displays a question-and-answer interface, likely from a Retrieval-Augmented Generation (RAG) bot. The question asks about Alan Watts' association with Vedanta. The interface presents an answer generated by the "RAG Bot" and supporting passages from a text titled "Swami Vivekananda: The Power and the Glory" by Sarvapriyananda.

### Components/Axes

The screenshot is divided into three main sections:

1. **Question:** Located at the top-left, posing the query "Was Alan Watts" associated with Vedanta?".

2. **Answer:** Positioned below the question, labeled "Answer" and attributed to "RAG Bot".

3. **Passages:** Occupying the right side of the screenshot, labeled "Passages" and attributed to "Swami Vivekananda: The Power and the Glory | Swami Sarvapriyananda".

### Content Details

**Question:** "Was Alan Watts" associated with Vedanta?"

**Answer (RAG Bot):** "Yes, Alan Watts was associated with Vedanta. Although he was not directly connected with the Vedanta Society, he taught Vedanta and Zen, which was a kind of eclectic mixture of both. In the 1950s and 60s, when he wrote his book “The Perennial Philosophy,” some reviews were critical, suggesting that he had become part of some Hindu cult. However, he maintained his association with the Vedanta Society of Southern California until the very end. Alan Watts was one of the leading intellectuals in Britain, along with other British intellectuals like Aldous Huxley, Christopher Isherwood, and Gerald Hurd. While Huxley and Isherwood were directly associated with Vedanta, Alan Watts taught Vedanta and Zen, which shows his connection to Vedantic ideas."

**Passages (Swami Vivekananda: The Power and the Glory | Swami Sarvapriyananda):**

"50s and 60s and when he wrote that book some reviews were nasty some said that oh he’s become part of some Hindu cult or something like that you know but he maintained his association with the Vedanta Society till the very end it’s very closely connected with the Vedanta Society of Southern California Aldous Huxley one of the leading intellectuals in Britain and then they were all British by the way Huxley Christopher Isherwood Gerald Hurd, Alan Watts. Alan Watts was not directly connected with Vedanta but he taught Vedanta and Zen a kind of eclectic mixture of both. Aldous Huxley his amazing book the perennial philosophy. Houston Smith major figure in the study of comparative religion and his book the world’s religions he wrote that he was a disciple of Swami Satprakashananda in the Vedanta Society in St Louis so this spread of Vedantic ideas, yogic ideas of and then later on Buddhism and so on that Vivekananda was the pioneer he opened the door here and he went back to India the other side of his work. Sister Nivedita writes when he stood up to speak here in Chicago his message for the West he said I have a message for the West as Buddha had the message for the East but his message his words Nivedita writes traveled back across the dark oceans to a land to his motherland asleep to awaken her to a sense of her greatness. When he went back to India, India which was colonized which was starving superstitious divided he was the first person historian says to"

### Key Observations

* The answer provided by the RAG Bot directly addresses the question, confirming Alan Watts' association with Vedanta, albeit not a direct connection to the Vedanta Society.

* The passages provided support the answer, mentioning Alan Watts alongside other intellectuals involved with Vedanta and related philosophies.

* The passages are somewhat fragmented and appear to be transcriptions of spoken words, indicated by phrases like "you know" and incomplete sentences.

* There is repetition of Alan Watts' name within both the answer and the passages.

### Interpretation

The screenshot demonstrates a RAG system in action. The system successfully retrieves relevant passages from a source text ("Swami Vivekananda: The Power and the Glory") to support its answer to a user's question. The passages provide context and evidence for the claim that Alan Watts was associated with Vedanta, even though he wasn't a direct member of the Vedanta Society. The fragmented nature of the passages suggests the source material might be a transcript of a lecture or discussion. The system's ability to connect Watts to figures like Huxley and Isherwood highlights the broader intellectual context of Vedanta's influence in the mid-20th century. The system is functioning as expected, providing a relevant answer backed by supporting evidence.

</details>

or incorrect punctuation. Human proofreading will remove these errors to a large extent.

## D Spoken vs written language