# FLoD: Integrating Flexible Level of Detail into 3D Gaussian Splatting for Customizable Rendering

**Authors**: Yunji Seo, Young Sun Choi, HyunSeung Son, Youngjung Uh

> ORCID 0009-0004-9941-3610, Yonsei University, South Korea, oungji@yonsei.ac.kr

> ORCID 0009-0001-9836-4245, Yonsei University, South Korea, youngsun.choi@yonsei.ac.kr

> ORCID 0009-0009-1239-0492, Yonsei University, South Korea, ghfod0917@yonsei.ac.kr

> ORCID 0000-0001-8173-3334, Yonsei University, South Korea, yj.uh@yonsei.ac.kr

License: CC BY-NC-ND

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Comparison of Rendering Methods and Hardware

### Overview

This diagram compares the rendering quality and memory usage of 3D Gaussian Splatting and FLoD-3DGS (Flexible Level of Detail for 3D Gaussian Splatting) on two different GPUs: an RTX A5000 (24GB VRAM) and a GeForce MX250 (2GB VRAM). It demonstrates the ability of FLoD-3DGS to render the same scene on limited hardware by utilizing different levels of detail. The diagram also shows the corresponding single-level renderings for each FLoD-3DGS level.

### Components/Axes

The diagram is structured into four main sections:

1. **Hardware Specifications:** Two computer icons representing the RTX A5000 and GeForce MX250, with their respective VRAM amounts.

2. **Rendering Results:** Four images showing the rendered scene using different methods and hardware.

3. **FLoD-3DGS Levels:** A vertical list of numbers 1 through 5, representing the different levels of detail in FLoD-3DGS.

4. **Single Level Renderings:** A vertical list of five images, each corresponding to a single-level rendering of the scene.

The Y-axis of the left two images is labeled "3D Gaussian Splatting" and "FLoD-3DGS". The X-axis is not explicitly labeled but represents the different hardware configurations.

### Detailed Analysis or Content Details

**Hardware & Rendering Results:**

* **RTX A5000 (24GB VRAM):** The 3D Gaussian Splatting rendering shows a high-quality image with a PSNR (Peak Signal-to-Noise Ratio) of 27.1. The FLoD-3DGS rendering also shows a high-quality image with a PSNR of 27.6.

* **GeForce MX250 (2GB VRAM):** 3D Gaussian Splatting results in a "CUDA out of memory" error. FLoD-3DGS successfully renders the scene with a PSNR of 27.3. The rendering is highlighted with a green box labeled "selective rendering" and a smaller box labeled "single level rendering".

**FLoD-3DGS Levels & Single Level Renderings:**

* **Level 1:** The corresponding single-level rendering is a low-resolution, blurry image.

* **Level 2:** The corresponding single-level rendering shows more detail than Level 1, but is still relatively low resolution.

* **Level 3:** The corresponding single-level rendering shows a moderate level of detail. The point cloud is colored with a gradient from purple to pink.

* **Level 4:** The corresponding single-level rendering shows a higher level of detail than Level 3. The point cloud is colored with a gradient from orange to yellow.

* **Level 5:** The corresponding single-level rendering shows the highest level of detail. The point cloud is colored green.

The FLoD-3DGS levels are visually represented by point clouds of varying density and color. The point clouds are contained within red boxes, numbered 1-5 from top to bottom.

### Key Observations

* 3D Gaussian Splatting requires significant VRAM (as demonstrated by the "CUDA out of memory" error on the GeForce MX250).

* FLoD-3DGS allows rendering of complex scenes on hardware with limited VRAM.

* The PSNR values are relatively consistent across the different rendering methods and hardware, suggesting that FLoD-3DGS maintains a comparable level of quality while reducing memory usage.

* The single-level renderings demonstrate the trade-off between detail and memory usage in FLoD-3DGS. Lower levels have less detail but require less memory.

### Interpretation

This diagram demonstrates the effectiveness of FLoD-3DGS as a technique for rendering 3D Gaussian Splatting scenes on resource-constrained hardware. By selectively rendering different levels of detail, FLoD-3DGS can overcome the memory limitations of GPUs like the GeForce MX250, while still achieving a reasonable level of rendering quality (as indicated by the PSNR values). The diagram highlights the importance of adaptive rendering techniques for making advanced rendering methods accessible to a wider range of devices. The point cloud visualizations of the FLoD-3DGS levels provide a clear illustration of how the level of detail affects the visual appearance of the scene. The consistent PSNR values suggest that the quality loss associated with using lower levels of detail is minimal. The diagram effectively communicates the benefits of FLoD-3DGS in a visually compelling manner.

</details>

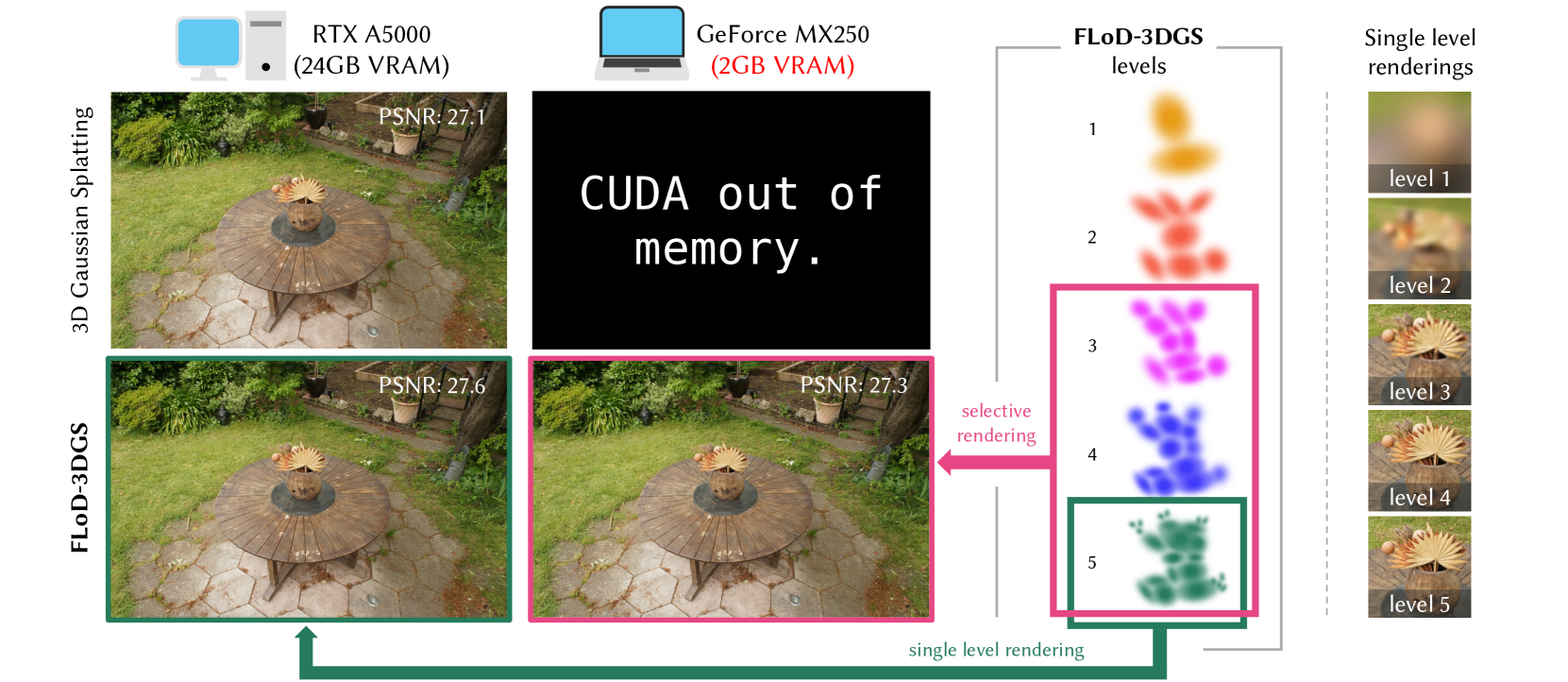

Figure 1. We introduce a Level of Detail (LoD) mechanism in 3D Gaussian Splatting (3DGS) through multi-level representations. These representations enable flexible rendering by selecting individual levels or subsets of levels. The green box illustrates max-level rendering on a high-end server, while the pink box shows subset-level rendering for a low-cost laptop, where traditional 3DGS fails to render. Thus, FLoD-3DGS can flexibly adapt to diverse hardware settings.


Abstract.

3D Gaussian Splatting (3DGS) has significantly advanced computer graphics by enabling high-quality 3D reconstruction and fast rendering speeds, inspiring numerous follow-up studies. However, 3DGS and its subsequent works are restricted to specific hardware setups, targeting either only low-cost or only high-end configurations. Approaches aimed at reducing 3DGS memory usage enable rendering on low-cost GPUs but compromise rendering quality, failing to leverage the capabilities of higher-end GPUs. Conversely, methods that enhance rendering quality require high-end GPUs with large VRAM, making them impractical for lower-end devices with limited memory capacity. Consequently, 3DGS-based works generally assume a single hardware setup and lack the flexibility to adapt to varying hardware constraints.

To overcome this limitation, we propose Flexible Level of Detail (FLoD) for 3DGS. FLoD constructs a multi-level 3DGS representation through level-specific 3D scale constraints, where each level independently reconstructs the entire scene with varying detail and GPU memory usage. A level-by-level training strategy is introduced to ensure structural consistency across levels. Furthermore, the multi-level structure of FLoD allows selective rendering of image regions at different detail levels, providing additional memory-efficient rendering options. To our knowledge, among prior works which incorporate the concept of Level of Detail (LoD) with 3DGS, FLoD is the first to follow the core principle of LoD by offering adjustable options for a broad range of GPU settings.

Experiments demonstrate that FLoD provides various rendering options with trade-offs between quality and memory usage, enabling real-time rendering under diverse memory constraints. Furthermore, we show that FLoD generalizes to different 3DGS frameworks, indicating its potential for integration into future state-of-the-art developments.

Keywords: 3D Gaussian Splatting, Level-of-Detail, Novel View Synthesis

Journal: TOG, vol. 44, no. 4, August 2025. DOI: 10.1145/3731430. Submission ID: 1344.

CCS Concepts: Computing methodologies → Reconstruction; Computing methodologies → Point-based models; Computing methodologies → Rasterization.

1. Introduction

Recent advances in 3D reconstruction have led to significant improvements in the fidelity and rendering speed of novel view synthesis. In particular, 3D Gaussian Splatting (3DGS) (Kerbl et al., 2023) has demonstrated photo-realistic quality at exceptionally fast rendering rates. However, its reliance on numerous Gaussian primitives makes it impractical for rendering on devices with limited GPU memory. Similarly, methods such as AbsGS (Ye et al., 2024), FreGS (Zhang et al., 2024), and Mip-Splatting (Yu et al., 2024), which further enhance rendering quality, remain constrained to higher-end devices due to their dependence on a comparable or even greater number of Gaussians for scene reconstruction. Conversely, LightGaussian (Fan et al., 2023) and CompactGS (Lee et al., 2024) address memory limitations by removing redundant Gaussians, which reduces both rendering memory demands and storage size. However, the reduction in memory usage comes at the expense of rendering quality. Consequently, existing approaches are designed for either high-end or low-cost devices, and they lack the flexibility to adapt and produce optimal renderings across various GPU memory capacities.

Motivated by the need for greater flexibility, we integrate the concept of Level of Detail (LoD) within the 3DGS framework. LoD is a concept in graphics and 3D modeling that provides different levels of detail, allowing model complexity to be adjusted for optimal performance on varying devices. At lower levels, models possess reduced geometric and textural detail, which decreases memory and computational demands. Conversely, at higher levels, models have increased detail, leading to higher memory and computational demands. This approach enables graphical applications to operate effectively on systems with varying GPU settings, avoiding processing delays for low-end devices while maximizing visual quality for high-end setups. Additionally, it enables the selective application of different levels, using higher levels where necessary and lower levels in less critical regions, to enhance resource efficiency while maintaining high perceptual image quality.

Recent methods that integrate LoD with 3DGS (Ren et al., 2024; Kerbl et al., 2024; Liu et al., 2024) develop multi-level representations to achieve consistent and high-quality renderings, rather than adaptability to diverse GPU memory settings. While these methods excel at creating detailed high-level representations, rendering with only lower-level representations to accommodate mid-range or low-cost GPU settings causes significant scene content loss and distortions. This highlights the lack of flexibility in existing methods to adapt and optimize rendering quality across different hardware setups.

<details>

<summary>x2.png Details</summary>

### Visual Description


## Diagram: FLoD-3DGS Pipeline

### Overview

This diagram illustrates the pipeline for FLoD-3DGS, a multi-level 3D Gaussian Splatting representation. It depicts a multi-level approach starting from SfM (Structure from Motion) points, applying scale constraints, level training, and ultimately rendering at different levels of detail. The diagram is structured as a flow chart with several sub-diagrams explaining specific steps.

### Components/Axes

The diagram consists of the following main components:

* **Initialization:** Starting point with SfM points.

* **3D Scale Constraint:** Applying a 3D scale constraint to the points.

* **Level Training:** Iterative training process with large overlap.

* **FLoD-3DGS Levels:** Multiple levels of detail (Level 1 to Level Lmax).

* **Level Up:** Condition for increasing the level (l < Lmax).

* **Rendering:** Single-level and selective rendering options.

* **Sub-diagrams (a), (b), (c), (d):** Detailed explanations of specific steps.

### Detailed Analysis or Content Details

The diagram shows a flow from left to right.

1. **Initialization:** A collection of SfM points (represented as black dots) is the starting point.

2. **3D Scale Constraint:** The points are transformed into a 3D point cloud. A red dashed box indicates the application of a 3D scale constraint.

3. **Level Training:** The point cloud undergoes level training, indicated by a larger red dashed box. The point cloud appears to become denser during this stage.

4. **FLoD-3DGS Levels:** The trained point cloud is saved and organized into multiple levels (Level 1, Level 2, ... Level Lmax). Each level is represented by a differently colored point cloud (orange, red, and lighter shades). The levels are enclosed in a curly brace.

5. **Level Up:** A feedback loop indicates that the level is incremented (l ← l + 1) if the current level (l) is less than the maximum level (Lmax).

6. **Rendering:** Two rendering options are presented: single-level rendering and selective rendering.

**Sub-diagram (a) - 3D Scale Constraint:**

* Shows three circles representing Level l, Level l+1, and Level Lmax.

* Level l has a "minimum size" constraint.

* Level l+1 has a "minimum size" constraint.

* Level Lmax has "no upper size limit".

* Text: "No upper size limit"

**Sub-diagram (b) - Overlap Pruning:**

* Shows a point cloud within a dashed circle representing "Large overlap".

* An arrow indicates the pruning of points, resulting in a sparser point cloud.

* Text: "Large overlap"

**Sub-diagram (c) - Single Level Rendering:**

* Shows a green cone-shaped rendering of points from Level Lmax.

* Text: "Single level rendering"

* Text: "Level Lmax"

**Sub-diagram (d) - Selective Rendering:**

* Shows a rendering with points from Level 1 (blue) and Level Lmax (red).

* Text: "Selective rendering"

* Text: "Level 1"

* Text: "Level Lmax"

### Key Observations

* The process is iterative, with level training and level up steps.

* The diagram emphasizes the creation of multiple levels of detail for efficient rendering.

* Overlap pruning is used to optimize the point cloud.

* The rendering options allow for both simple and complex visualizations.

* The scale constraint is imposed during the training of each level.

### Interpretation

The diagram describes a level-by-level 3D reconstruction and rendering pipeline. FLoD-3DGS uses multiple levels of detail to balance rendering speed, memory usage, and quality. Gaussians initialized from the SfM points are trained under level-specific scale constraints, resulting in a multi-level representation. The overlap pruning step removes Gaussians with large overlaps. The rendering options provide flexibility in visualizing the reconstructed scene, allowing for either single-level rendering or selective rendering that combines several levels. The sub-diagrams provide insights into the specific mechanisms used in each step, such as the scale constraint and overlap pruning. The symbols "l" and "Lmax" denote the current level and the maximum level. The diagram does not provide numerical data, but rather a conceptual overview of the pipeline.

</details>

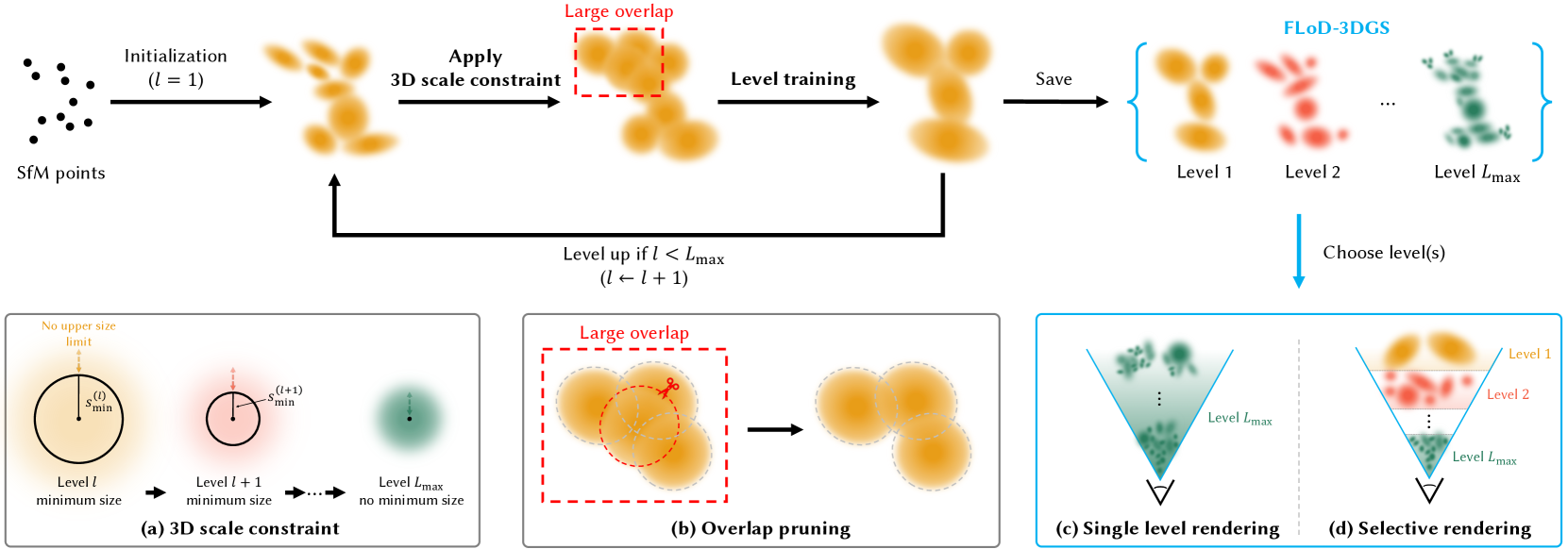

Figure 2. Method overview. Training begins at level 1, initialized from SfM points. During the training of each level, (a) a level-specific 3D scale constraint $s_{\text{min}}^{(l)}$ is imposed on the Gaussians as a lower bound, and (b) overlap pruning is performed to mitigate Gaussian overlap. At the end of each level’s training, the Gaussians are cloned and saved as the final representation for level $l$ . This level-by-level training continues until the max level ( $L_{\text{max}}$ ), resulting in a multi-level 3D Gaussian representation referred to as FLoD-3DGS. FLoD-3DGS supports (c) single-level rendering and (d) selective rendering using multiple levels.


To address the hardware adaptability challenges, we propose Flexible Level of Detail (FLoD). FLoD constructs a multi-level 3D Gaussian Splatting (3DGS) representation that provides varying levels of detail and memory requirements, with each level independently capable of reconstructing the full scene. Our method applies a level-specific 3D scale constraint, which decreases with each successive level, to limit the amount of detail reconstructed and the rendering memory demand. Furthermore, we introduce a level-by-level training method to maintain a consistent 3D structure across all levels. Our trained FLoD representation provides the flexibility to choose any single level based on the available GPU memory or desired rendering rates. In addition, the independent and multi-level structure of our method allows different parts of an image to be rendered with different levels of detail, which we refer to as selective rendering. Depending on the scene type or the object of interest, higher-level Gaussians can be used to rasterize important regions, while lower levels can be assigned to less critical areas, resulting in more efficient rendering. As a result, FLoD provides the versatility of adapting to diverse GPU settings and rendering contexts.

We empirically validate the effectiveness of FLoD in offering flexible rendering options, tested on both a high-end server and a low-cost laptop. We conduct experiments not only on the Tanks and Temples (Knapitsch et al., 2017) and Mip-NeRF360 (Barron et al., 2022) datasets, which are commonly used in 3DGS and its variants, but also on the DL3DV-10K (Ling et al., 2023) dataset, which contains distant background elements that can be effectively represented through LoD. Furthermore, we demonstrate that FLoD can be easily integrated into existing 3DGS variants, while also enhancing the rendering quality.

2. Related Work

2.1. 3D Gaussian Splatting

3D Gaussian Splatting (3DGS) (Kerbl et al., 2023) has attained popularity for its fast rendering speed in comparison to other novel view synthesis literature such as NeRF (Mildenhall et al., 2020). Subsequent works, such as FreGS (Zhang et al., 2024) and AbsGS (Ye et al., 2024), improve rendering quality by modifying the loss function and the Gaussian density control strategy, respectively. However, these methods, including 3DGS, demand high rendering memory because they rely on a large number of Gaussians, making them unsuitable for low-cost devices with limited GPU memory.

To address these memory challenges, various works have proposed compression methods for 3DGS. LightGaussian (Fan et al., 2023) and CompactGS (Lee et al., 2024) use pruning techniques, while EAGLES (Girish et al., 2024) employs quantized embeddings. However, their rendering quality falls short compared to 3DGS. RadSplat (Niemeyer et al., 2024) and Scaffold-GS (Lu et al., 2024) maintain rendering quality while reducing memory usage with a neural radiance field prior and neural Gaussians, respectively. Despite these advancements, existing 3DGS methods lack the flexibility to provide multiple rendering options for optimizing performance across various GPU settings.

In contrast, we propose a multi-level 3DGS that increases rendering flexibility by enabling rendering across various GPU settings, ranging from server GPUs with 24GB VRAM to laptop GPUs with 2GB VRAM.

2.2. Multi-Scale Representation

There have been various attempts to improve the rendering quality of novel view synthesis through multi-scale representations. In the field of Neural Radiance Fields (NeRF), approaches such as Mip-NeRF (Barron et al., 2021) and Zip-NeRF (Barron et al., 2023) adopt multi-scale representations to improve rendering fidelity. Similarly, in 3D Gaussian Splatting (3DGS), Mip-Splatting (Yu et al., 2024) uses a multi-scale filtering mechanism, and MS-GS (Yan et al., 2024) applies a multi-scale aggregation strategy. However, these methods primarily focus on addressing the aliasing problem and do not consider the flexibility to adapt to different GPU settings.

In contrast, our proposed method generates a multi-level representation that not only provides flexible rendering across various GPU settings but also enhances reconstruction accuracy.

2.3. Level of Detail

Level of Detail (LoD) in computer graphics traditionally uses multiple representations of varying complexity, allowing the selection of detail levels according to computational resources. In NeRF literature, NGLOD (Takikawa et al., 2021) and Variable Bitrate Neural Fields (Takikawa et al., 2022) create LoD structures based on grid-based NeRFs.

In 3D Gaussian Splatting (3DGS), methods such as Octree-GS (Ren et al., 2024) and Hierarchical-3DGS (Kerbl et al., 2024) integrate the concept of LoD and create multi-level 3DGS representations for efficient and high-detail rendering. However, these methods primarily target efficient rendering on high-end GPUs, such as A6000 or A100 GPUs with 48GB or 80GB VRAM. Moreover, these methods render using Gaussians from the entire range of levels, not solely from individual levels. Rendering with individual levels, particularly the lower ones, leads to a loss of image quality. Therefore, these methods cannot provide rendering options with lower memory demands. While CityGaussian (Liu et al., 2024) can render individual levels using its multi-level representations created with various compression rates, it also does not address the challenges of rendering on lower-cost GPUs.

In contrast, our method allows for rendering using either individual or multiple levels, as all levels independently reconstruct the scene. Additionally, as each level has an appropriate degree of detail and corresponding rendering computational demand, our method offers rendering options that can be optimized for diverse GPU setups.

3. Preliminary

3D Gaussian Splatting (3DGS) (Kerbl et al., 2023) introduces a method to represent a 3D scene using a set of 3D Gaussian primitives. Each 3D Gaussian is characterized by the following attributes: position $\boldsymbol{\mu}$ , opacity $o$ , covariance matrix $\boldsymbol{\Sigma}$ , and spherical harmonic coefficients. The covariance matrix $\boldsymbol{\Sigma}$ is factorized into a scaling matrix $\mathbf{S}$ and a rotation matrix $\mathbf{R}$ :

$$

\boldsymbol{\Sigma}=\mathbf{R}\mathbf{S}\mathbf{S}^{\top}\mathbf{R}^{\top}. \tag{1}

$$

To facilitate the independent optimization of both components, the scaling matrix $\mathbf{S}$ is optimized through the vector $\mathbf{s}_{\text{opt}}$ , and the rotation matrix $\mathbf{R}$ is optimized via the quaternion $\mathbf{q}$ . These 3D Gaussians are projected to 2D screen space, and the opacity contribution of a Gaussian at a pixel $(x,y)$ is computed as follows:

$$

\alpha(x,y)=o\cdot e^{-\frac{1}{2}\left(([x,y]^{\top}-\boldsymbol{\mu}^{\prime})^{\top}\boldsymbol{\Sigma}^{\prime-1}([x,y]^{\top}-\boldsymbol{\mu}^{\prime})\right)}, \tag{2}

$$

where $\boldsymbol{\mu}^{\prime}$ and $\boldsymbol{\Sigma}^{\prime}$ are the 2D projected mean and covariance matrix of the 3D Gaussians. The image is rendered by alpha blending the projected Gaussians in depth order.
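As an illustration of Eqs. (1) and (2), the following minimal sketch builds the 3D covariance from a scale vector and a quaternion, and evaluates the opacity contribution of an already projected Gaussian at a pixel. The function names (`quat_to_rotmat`, `covariance_3d`, `alpha_2d`) and the `(w, x, y, z)` quaternion ordering are assumptions made for this example; the projection from 3D to 2D is not covered.

```python
import math

def quat_to_rotmat(q):
    """Convert a quaternion (w, x, y, z) into a 3x3 rotation matrix (nested lists)."""
    w, x, y, z = q
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n  # normalize so R is a proper rotation
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]

def covariance_3d(scale, q):
    """Sigma = R S S^T R^T with S = diag(scale), as in Eq. (1)."""
    R = quat_to_rotmat(q)
    # M = R S, hence Sigma = M M^T.
    M = [[R[i][k] * scale[k] for k in range(3)] for i in range(3)]
    return [[sum(M[i][k] * M[j][k] for k in range(3)) for j in range(3)]
            for i in range(3)]

def alpha_2d(pixel, mean2d, cov2d, opacity):
    """Opacity contribution of a projected Gaussian at a pixel, as in Eq. (2)."""
    dx, dy = pixel[0] - mean2d[0], pixel[1] - mean2d[1]
    (a, b), (c, d) = cov2d
    det = a * d - b * c
    # Quadratic form v^T Sigma'^{-1} v using the closed-form 2x2 inverse.
    quad = (dx * (d * dx - b * dy) + dy * (-c * dx + a * dy)) / det
    return opacity * math.exp(-0.5 * quad)
```

At the projected mean the exponent vanishes, so `alpha_2d` returns the opacity $o$ itself, and the contribution decays with the Mahalanobis distance from the mean.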

4. Method: Flexible Level of Detail

Our method reconstructs a scene as an $L_{\text{max}}$ -level 3D Gaussian representation, using 3D Gaussians of varying sizes from level 1 to $L_{\text{max}}$ (Section 4.1). Through our level-by-level training process (Section 4.2), each level independently captures the overall scene structure while optimizing for render quality appropriate to its respective level. This process yields a novel LoD structure of 3D Gaussians, which we refer to as FLoD-3DGS. The lower levels in FLoD-3DGS reconstruct the coarse structures of the scene using fewer and larger Gaussians, while higher levels capture fine details using more and smaller Gaussians. Additionally, we introduce overlap pruning to eliminate artifacts caused by excessive Gaussian overlap (Section 4.3) and demonstrate our method’s easy integration with different 3DGS-based methods (Section 4.4).

4.1. 3D Scale Constraint

For each level $l$ where $l\in[1,L_{\text{max}}]$ , we impose a 3D scale constraint $s_{\text{min}}^{(l)}$ as the lower bound on 3D Gaussians. The 3D scale constraint $s_{\text{min}}^{(l)}$ is defined as follows:

$$

s_{\text{min}}^{(l)}=\begin{cases}\lambda\times\rho^{1-l}&\text{for }1\leq l<L_{\text{max}}\\ 0&\text{for }l=L_{\text{max}}.\end{cases} \tag{3}

$$

$\lambda$ is the initial 3D scale constraint, and $\rho$ is the scale factor by which the 3D scale constraint is reduced for each subsequent level. The 3D scale constraint is 0 at $L_{\text{max}}$ to allow reconstruction of the finest details without constraints at this stage. Then, we define 3D Gaussians’ scale at level $l$ as follows:

$$

\mathbf{s}^{(l)}=e^{\mathbf{s}_{\text{opt}}}+s_{\text{min}}^{(l)}, \tag{4}

$$

where $\mathbf{s}_{\text{opt}}$ is the learnable parameter for scale, while the 3D scale constraint $s_{\text{min}}^{(l)}$ is fixed. We note that $\mathbf{s}^{(l)}\geq s_{\text{min}}^{(l)}$ because $e^{\mathbf{s}_{\text{opt}}}>0$ .

On the other hand, there is no upper bound on Gaussian size at any level. This allows for flexible modeling, where scene contents with simple shapes and appearances can be modeled with fewer and larger Gaussians, avoiding the redundancy of using many small Gaussians at high levels.
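The constraint schedule of Eq. (3) and the scale parameterization of Eq. (4) can be sketched as follows. The function names and the values $\lambda=0.2$, $\rho=4$, $L_{\text{max}}=5$ used in the usage lines are illustrative assumptions for this example.

```python
import math

def s_min(level, l_max, lam, rho):
    """Level-specific 3D scale constraint of Eq. (3)."""
    if not 1 <= level <= l_max:
        raise ValueError("level must lie in [1, l_max]")
    if level == l_max:
        return 0.0  # no constraint at the max level
    return lam * rho ** (1 - level)

def gaussian_scale(s_opt, level, l_max, lam, rho):
    """Per-axis scale of Eq. (4): exp(s_opt) + s_min, always above the lower bound."""
    bound = s_min(level, l_max, lam, rho)
    return [math.exp(v) + bound for v in s_opt]

# Illustrative (assumed) hyperparameters, not values stated in this section.
LAM, RHO, L_MAX = 0.2, 4.0, 5
schedule = [s_min(l, L_MAX, LAM, RHO) for l in range(1, L_MAX + 1)]
scales_level2 = gaussian_scale([-10.0, 0.0, 1.0], 2, L_MAX, LAM, RHO)
```

Because the exponential is strictly positive, even a very negative `s_opt` leaves the scale just above the lower bound for that level, while large values of `s_opt` remain unrestricted.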

4.2. Level-by-level Training

We design a coarse-to-fine training process, where the next-level Gaussians are initialized by the fully-trained previous-level Gaussians. Similar to 3DGS, the 3D Gaussians at level 1 are initialized from SfM points. Then, the training process begins. Note that the training of subsequent levels is nearly identical.

The training process consists of periodic densification and pruning of Gaussians over a set number of iterations. This is then followed by the optimization of Gaussian attributes without any further densification or pruning for an additional set of iterations. Throughout the entire training process for level $l$ , the 3D scale of each Gaussian is constrained to be greater than or equal to $s_{\text{min}}^{(l)}$ by definition.

After completing training at level $l$ , this stage is saved as a checkpoint. At this point, the Gaussians are cloned and saved as the final Gaussians for level $l$ . Then, the checkpoint Gaussians are used to initialize Gaussians of the next level $l+1$ . For initialized Gaussians at the next level $l+1$ , we set

$$

\mathbf{s}_{\text{opt}}=\log(\mathbf{s}^{(l)}-s_{\text{min}}^{(l+1)}), \tag{5}

$$

such that $\mathbf{s}^{(l+1)}=\mathbf{s}^{(l)}$ . This prevents an abrupt change in the initial loss by eliminating the gap $\mathbf{s}^{(l+1)}-\mathbf{s}^{(l)}=\cancel{e^{\mathbf{s}_{\text{opt}}^{\text{prev}}}}+s_{\text{min}}^{(l+1)}-(\cancel{e^{\mathbf{s}_{\text{opt}}^{\text{prev}}}}+s_{\text{min}}^{(l)})=s_{\text{min}}^{(l+1)}-s_{\text{min}}^{(l)}$ that would arise if the scale parameter were carried over unchanged. Note that $\mathbf{s}_{\text{opt}}^{\text{prev}}$ represents the learnable parameter for scale at level $l$ .
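The effect of the re-initialization in Eq. (5) can be checked numerically. The sketch below is a minimal example under assumed values (a constraint dropping from 0.2 to 0.05, and arbitrary `s_opt` entries); the function names are hypothetical.

```python
import math

def scale_from_s_opt(s_opt, s_min_l):
    """Scale of Eq. (4) for a given learnable parameter and constraint."""
    return [math.exp(v) + s_min_l for v in s_opt]

def reinit_s_opt(scale_prev, s_min_next):
    """Eq. (5): choose s_opt for level l+1 so that the scale is unchanged."""
    for s in scale_prev:
        if s <= s_min_next:
            raise ValueError("previous scale must exceed the next-level constraint")
    return [math.log(s - s_min_next) for s in scale_prev]

# Assumed example values: the constraint shrinks from level l to level l+1.
s_min_l, s_min_next = 0.2, 0.05
s_opt_prev = [-3.0, -1.0, 0.5]

scale_l = scale_from_s_opt(s_opt_prev, s_min_l)

# Carrying s_opt over unchanged shifts every scale by s_min_next - s_min_l.
scale_naive = scale_from_s_opt(s_opt_prev, s_min_next)

# Re-initializing with Eq. (5) keeps the scales identical across the level switch.
s_opt_next = reinit_s_opt(scale_l, s_min_next)
scale_next = scale_from_s_opt(s_opt_next, s_min_next)
```

The logarithm is well defined here because the level-$l$ scale is strictly larger than $s_{\text{min}}^{(l)}$, which in turn is larger than the next-level constraint.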

4.3. Overlap Pruning

To prevent rendering artifacts, we remove Gaussians with large overlaps. Specifically, a Gaussian is eliminated if the average distance to its three nearest neighbors falls below a pre-defined distance threshold $d_{\text{OP}}^{(l)}$ . The average distance $d_{\text{avg}}^{(i)}$ for Gaussian $i$ , with $d_{ij}$ denoting the distance to its $j$ -th nearest neighbor, is given as:

$$

d_{\text{avg}}^{(i)}=\frac{1}{3}\sum_{j=1}^{3}d_{ij} \tag{6}

$$

$d_{\text{OP}}^{(l)}$ is set as half of the 3D scale constraint $s_{\text{min}}^{(l)}$ for training level $l$ . This method also reduces the overall memory footprint.
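A brute-force sketch of this criterion is given below. It evaluates Eq. (6) for every Gaussian center and keeps those whose average three-nearest-neighbor distance is at least the threshold. The function name, the one-shot pass over all Gaussians, and the choice to keep Gaussians with fewer than three neighbors are assumptions of this example; a practical implementation would likely use an accelerated nearest-neighbor search.

```python
import math

def overlap_prune(positions, d_op):
    """Return indices of Gaussians that survive overlap pruning.

    positions: list of (x, y, z) Gaussian centers.
    d_op: distance threshold, e.g. half of the level's s_min.
    """
    keep = []
    for i, p in enumerate(positions):
        dists = sorted(math.dist(p, q) for j, q in enumerate(positions) if j != i)
        nearest = dists[:3]
        if len(nearest) < 3:
            keep.append(i)  # assumption: too few neighbors to judge overlap
            continue
        d_avg = sum(nearest) / 3.0  # Eq. (6)
        if d_avg >= d_op:
            keep.append(i)
    return keep

# Assumed toy data: a tight cluster of four centers plus four isolated ones.
centers = [
    (0.0, 0.0, 0.0), (0.01, 0.0, 0.0), (0.0, 0.01, 0.0), (0.0, 0.0, 0.01),
    (10.0, 0.0, 0.0), (0.0, 10.0, 0.0), (0.0, 0.0, 10.0), (10.0, 10.0, 10.0),
]
kept = overlap_prune(centers, d_op=0.5 * 0.2)
```

Note that a single simultaneous pass like this removes every member of a tight cluster; how the pruning is scheduled during training is not specified in this section.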

4.4. Compatibility with Different Backbones

The simplicity of our method, stemming from the straightforward design of the 3D scale constraints and the level-by-level training pipeline, makes it easy to integrate with other 3DGS-based techniques. We integrate our approach into Scaffold-GS (Lu et al., 2024), a variant of 3DGS that leverages anchor-based neural Gaussians. We generate a multi-level set of Scaffold-GS by applying progressively decreasing 3D scale constraints on the neural Gaussians, optimized through our level-by-level training method.

5. Rendering Methods

FLoD’s $L_{\text{max}}$ -level 3D Gaussian representation provides a broad range of rendering options. Users can select a single level to render the scene (Section 5.1), or multiple levels to increase rendering efficiency through selective rendering (Section 5.2). Levels and rendering methods can be adjusted to achieve the desired rendering rates or to fit within available GPU memory limits.

5.1. Single-level Rendering

From our multi-level set of 3D Gaussians $\{\mathbf{G}^{(l)}\mid l=1,...,L_{\text{max}}\}$ , users can choose any single level for rendering to match their GPU memory capabilities. This approach is similar to how games or streaming services let users adjust quality settings to optimize performance for their devices. Rendering any single level independently is possible because each level is designed to fully reconstruct the scene.

High-end hardware can handle the smaller and more numerous Gaussians of level $L_{\text{max}}$ , achieving high-quality rendering. However, rendering a large number of Gaussians may exceed the memory limits of commodity devices. In such cases, lower levels can be chosen to match the memory constraints.
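One simple way to pick a level is to choose the highest level whose estimated rendering memory fits the available budget. The sketch below assumes such per-level estimates are available; the function name and all memory figures are hypothetical placeholders, not measurements.

```python
def pick_level(memory_per_level_mb, budget_mb):
    """Return the highest level whose estimated memory fits the budget, or None.

    memory_per_level_mb: dict mapping level -> estimated rendering memory in MB.
    """
    fitting = [level for level, mem in memory_per_level_mb.items() if mem <= budget_mb]
    return max(fitting) if fitting else None

# Hypothetical per-level estimates, for illustration only.
estimates_mb = {1: 150.0, 2: 300.0, 3: 700.0, 4: 1500.0, 5: 3200.0}
laptop_level = pick_level(estimates_mb, budget_mb=2000.0)
server_level = pick_level(estimates_mb, budget_mb=24000.0)
```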

5.2. Selective Rendering

<details>

<summary>x3.png Details</summary>

### Visual Description


## Diagram: Selective Rendering Level Assignment

### Overview

This diagram illustrates how selective rendering assigns Gaussian levels to regions according to their distance from the camera. It depicts projection lines from the image plane into the scene and marks the distances `dproj` at which the range of each level begins and ends. The diagram uses a ray-tracing-like representation to visualize the process.

### Components/Axes

* **Horizontal Axis:** Represents the distance along the optical axis. Marked with `-f`, `0`, `dproj(L=4)`, and `dproj(Lstart=3)`. The scale is not explicitly defined, but it appears linear.

* **Vertical Axis:** Represents the image plane or screen size. Labeled as "screensize (γ = 1)".

* **Image Plane:** A vertical line on the left, labeled "image plane".

* **Levels:** Three levels are depicted, labeled "Level 3 Lstart", "Level 4", "Level 5 Lend (Gaussians region)".

* **Projection Lines:** Colored lines representing the projection of the image through each level.

* **Markers:** Red dots mark key points on the optical axis.

* **Labels:** `Smin(L=4)`, `Smin(Lstart=3)` indicate the minimum size at each level.

### Detailed Analysis

The diagram shows a series of projections from the image plane to different levels of the Gaussian pyramid.

* **Level 3 Lstart (Magenta):** Starts at a point beyond the image plane (negative distance) and projects to `dproj(Lstart=3)` on the horizontal axis. `Smin(Lstart=3)` is indicated at this level. The line slopes downward.

* **Level 4 (Light Blue):** Starts at the image plane (0) and projects to `dproj(L=4)` on the horizontal axis. `Smin(L=4)` is indicated at this level. The line slopes downward.

* **Level 5 Lend (Light Green):** Starts at the image plane (0) and projects to `dproj(L=4)` on the horizontal axis. This level is labeled as the "Gaussians region". The line slopes downward.

* **f:** The image plane is located at -f.

* The distance `dproj(L=4)` and `dproj(Lstart=3)` are indicated on the horizontal axis.

The diagram does not provide specific numerical values for the distances or sizes, only relative positions and labels.

### Key Observations

* The projection distance decreases as the level number increases: `dproj(L=4)` lies closer to the camera than `dproj(Lstart=3)`.

* The lines representing the projections converge towards the right side of the diagram.

* The "Gaussians region" (Level 5) is positioned between Level 4 and the image plane.

* The `Smin` values appear to be related to the level number.

### Interpretation

This diagram illustrates selective rendering, in which Gaussians from several levels are combined according to their distance from the camera. Each level covers one distance band: the highest level (Level 5, Lend) covers the region nearest the camera, Level 4 covers the band between `dproj(L=4)` and `dproj(Lstart=3)`, and the lowest level (Level 3, Lstart) covers everything beyond `dproj(Lstart=3)`.

The `Smin` values are the level-specific minimum 3D scales, and each `dproj` marks the distance at which that minimum scale projects to the screen size threshold γ on the image plane. The diagram is a conceptual illustration and does not provide quantitative data. The use of different colors for each level helps to visually distinguish the distance bands.

</details>

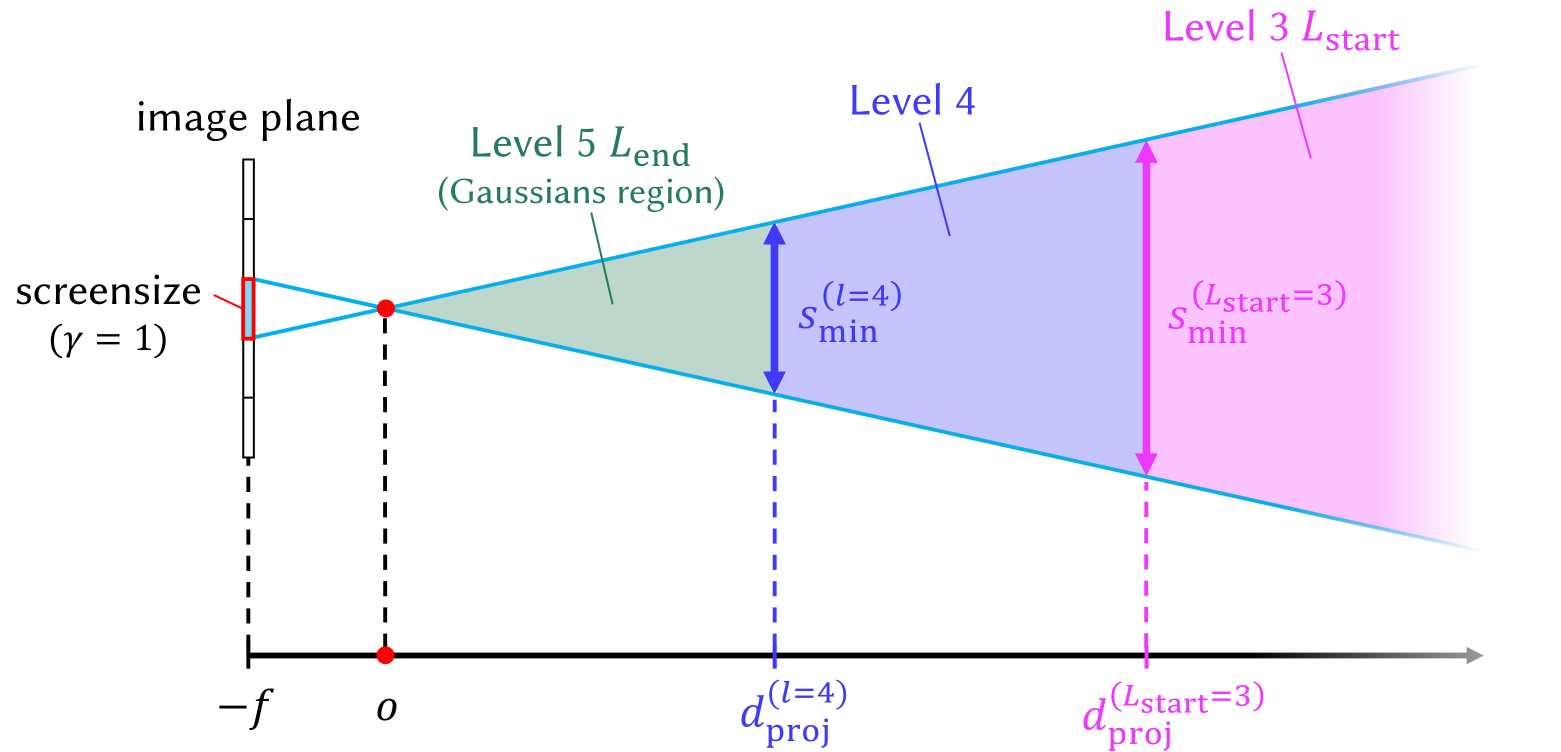

Figure 3. Visualization of the selective rendering process that shows how $d_{\text{proj}}^{(l)}$ determines the appropriate Gaussian level for specific regions. This example visualizes the case where level 3 is used as $L_{\text{start}}$ and level 5 as $L_{\text{end}}$ .


Although a single level can be simply selected to match GPU memory capabilities, utilizing multiple levels can further enhance visual quality while keeping memory demands manageable. Distant objects or background regions do not need to be rendered with high-level Gaussians, which capture small and intricate details. This is because the perceptual difference between high-level and low-level Gaussian reconstructions becomes less noticeable as the distance from the viewpoint increases. In such scenarios, lower levels can be employed for distant regions while higher levels are used for closer areas. This arrangement of multiple level Gaussians can achieve perceptual quality comparable to using only high-level Gaussians but at a reduced memory cost.

Therefore, we propose a faster and more memory-efficient rendering method by leveraging our multi-level set of 3D Gaussians $\{\mathbf{G}^{(l)}\mid l=1,...,L_{\text{max}}\}$ . We create the set of Gaussians $\mathbf{G}_{\text{sel}}$ for selective rendering by sampling Gaussians from a desired level range, $L_{\text{start}}$ to $L_{\text{end}}$ :

$$

\mathbf{G}_{\text{sel}}=\bigcup_{l=L_{\text{start}}}^{L_{\text{end}}}\left\{G^{(l)}\in\mathbf{G}^{(l)}\mid d_{\text{proj}}^{(l-1)}>d_{G^{(l)}}\geq d_{\text{proj}}^{(l)}\right\}, \tag{7}

$$

where $d_{\text{proj}}^{(l)}$ decides the inclusion of a Gaussian $G^{(l)}$ whose distance from the camera is $d_{G^{(l)}}$ . We define $d_{\text{proj}}^{(l)}$ as:

$$

d_{\text{proj}}^{(l)}=\frac{s_{\text{min}}^{(l)}}{\gamma}\times{f}, \tag{8}

$$

which follows from the proportion $s_{\text{min}}^{(l)}:\gamma=d_{\text{proj}}^{(l)}:f$ , where $f$ is the focal length of the camera. Hence, $d_{\text{proj}}^{(l)}$ is the distance at which the level-specific Gaussian 3D scale constraint $s_{\text{min}}^{(l)}$ projects to exactly the screen size threshold $\gamma$ on the image plane. We set $d_{\text{proj}}^{(L_{\text{end}})}=0$ and $d_{\text{proj}}^{(L_{\text{start}}-1)}=\infty$ to ensure that the scene is fully covered with Gaussians from the level range $L_{\text{start}}$ to $L_{\text{end}}$ .
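As an illustrative worked instance of Eq. (8), take assumed values that are not from the paper: a focal length of $f=1000$ pixels, a threshold of $\gamma=1$ pixel, and a scale constraint of $s_{\text{min}}^{(l)}=0.05$ scene units. Then:

$$

d_{\text{proj}}^{(l)}=\frac{0.05}{1}\times 1000=50

$$

Under these assumptions, a detail of size $0.05$ projects to $0.05\times 1000/50=1$ pixel at a distance of 50 scene units, so level $l$ is a candidate only for Gaussians at distance 50 or more, up to the bound $d_{\text{proj}}^{(l-1)}$ set by the next coarser level.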

The Gaussian set $\mathbf{G}_{\text{sel}}$ is created using the 3D scale constraint $s_{\text{min}}^{(l)}$ because $s_{\text{min}}^{(l)}$ represents the smallest 3D dimension that Gaussians at level $l$ can be trained to represent. Therefore, the distance $d_{\text{proj}}^{(l)}$ can be used to determine which level of Gaussians should be selected for different regions, as demonstrated in Figure 3. Since $s_{\text{min}}^{(l)}$ is fixed for each level, $d_{\text{proj}}^{(l)}$ is also fixed. Thus, constructing the Gaussian set $\mathbf{G}_{\text{sel}}$ only requires calculating the distance of each Gaussian from the camera, $d_{G^{(l)}}$ . This method is computationally more efficient than the alternative, which requires calculating each Gaussian’s 2D projection and comparing it with the screen size threshold $\gamma$ at every level.
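The selection rule of Eq. (7) and Eq. (8) can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names, the dictionary layout, and all numeric values (`s_min`, focal length, Gaussian positions) are assumptions chosen for the example.

```python
import numpy as np

def projection_distances(s_min, gamma, focal, l_start, l_end):
    """Distance thresholds d_proj for levels l_start-1 .. l_end (Eq. 8 plus boundaries)."""
    d_proj = {l: s_min[l] / gamma * focal for l in range(l_start, l_end)}
    d_proj[l_start - 1] = np.inf  # the coarsest selected level is unbounded outward
    d_proj[l_end] = 0.0           # the finest selected level reaches the camera
    return d_proj

def select_gaussians(centers, camera_pos, d_proj, l_start, l_end):
    """Per-level boolean masks implementing the membership test of Eq. 7."""
    masks = {}
    for l in range(l_start, l_end + 1):
        dist = np.linalg.norm(centers[l] - camera_pos, axis=1)
        masks[l] = (dist < d_proj[l - 1]) & (dist >= d_proj[l])
    return masks

# Hypothetical inputs: every level stores Gaussians at the same three positions.
points = np.array([[0.0, 0.0, 2.0], [0.0, 0.0, 10.0], [0.0, 0.0, 30.0]])
centers = {3: points, 4: points, 5: points}
s_min = {3: 0.02, 4: 0.005}  # assumed scale constraints, in scene units
d_proj = projection_distances(s_min, gamma=1.0, focal=1000.0, l_start=3, l_end=5)
masks = select_gaussians(centers, np.zeros(3), d_proj, l_start=3, l_end=5)
```

With these assumed values, the thresholds are 20 and 5 scene units, so each of the three positions is covered by exactly one level. Only a per-Gaussian distance and two comparisons are needed, which is the efficiency argument made above.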

The threshold $\gamma$ and the level range [ $L_{\text{start}}$ , $L_{\text{end}}$ ] can be adjusted to accommodate specific memory limitations or desired rendering rates. A smaller threshold and a high-level range prioritize fine details over memory and speed, while a larger threshold and a low-level range reduce memory use and speed up rendering at the cost of fine details.

Predetermined Gaussian Set

<details>

<summary>x4.png Details</summary>

### Visual Description


## Diagram: Level of Detail (LOD) Comparison

### Overview

The image presents a comparative diagram illustrating two approaches to Level of Detail (LOD) management: "(a) predetermined" and "(b) per-view". Both approaches utilize concentric, shaded circles to represent LOD levels, and rays emanating from camera positions to demonstrate the visibility of different detail levels. The diagram focuses on the spatial relationship between camera views, LOD levels, and the "view frustum".

### Components/Axes

The diagram consists of two main sections, labeled "(a) predetermined" and "(b) per-view", separated by a dashed vertical line. Each section contains:

* **Concentric Circles:** Representing LOD levels. The levels are labeled "Level 3 Lstart (Gaussians region)", "Level 4", and "Level 5 Lend".

* **Rays:** Emanating from camera positions (represented by inverted triangles) indicating the field of view. These rays intersect the concentric circles.

* **Shading:** The circles are shaded with varying intensities of purple, indicating the LOD level.

* **View Frustum:** A teal-colored wedge shape in section (b), representing the visible area from a camera's perspective.

* **Labels:** Text annotations describing the LOD levels and the overall approach.

### Detailed Analysis or Content Details

**Section (a) Predetermined:**

* Three concentric circles are present, shaded in purple.

* The innermost circle is labeled "Level 3 Lstart (Gaussians region)".

* The middle circle is labeled "Level 4".

* The outermost circle is labeled "Level 5 Lend".

* Three cameras (inverted triangles) are positioned within the innermost circle (Level 3).

* Rays emanate from each camera, extending outwards and intersecting all three LOD levels. The rays are colored pink.

* The rays are evenly distributed, suggesting a uniform LOD selection based on distance.

**Section (b) Per-view:**

* Three concentric circles are present, shaded in purple. The levels are the same as in section (a).

* Three cameras (inverted triangles) are positioned at varying distances from the center.

* Rays emanate from each camera, but their lengths vary.

* The rays from the closest camera intersect only Level 3 and Level 4.

* The rays from the middle camera intersect Level 4 and Level 5.

* The rays from the furthest camera intersect only Level 5.

* A teal wedge labeled "view frustum" is overlaid on the rays, indicating the visible area.

* The rays are colored pink and green.

### Key Observations

* The "predetermined" approach (a) fixes the Gaussian set once, so the same level assignment is used for every camera view.

* The "per-view" approach (b) updates the Gaussian set whenever the camera position changes, so the level regions follow the camera.

* The "Gaussians region" label marks the spatial region covered by the Gaussians of a given level.

* Higher level numbers correspond to finer detail: in this example level 3 is `Lstart` (the coarsest level used) and level 5 is `Lend` (the finest level used).

* The dashed circles surrounding both sections indicate a common spatial boundary.

### Interpretation

This diagram contrasts a fixed, predetermined Gaussian set with a per-view Gaussian set. In the predetermined case the level regions are assigned once and stay fixed while the camera moves; this avoids recomputing distances, but a camera that travels far can end up inside a region assigned to a lower level. In the per-view case the level regions are recomputed around the current camera position, which keeps higher-level Gaussians near the camera at the cost of extra work per view. The "view frustum" wedge marks the region visible from a camera.

</details>

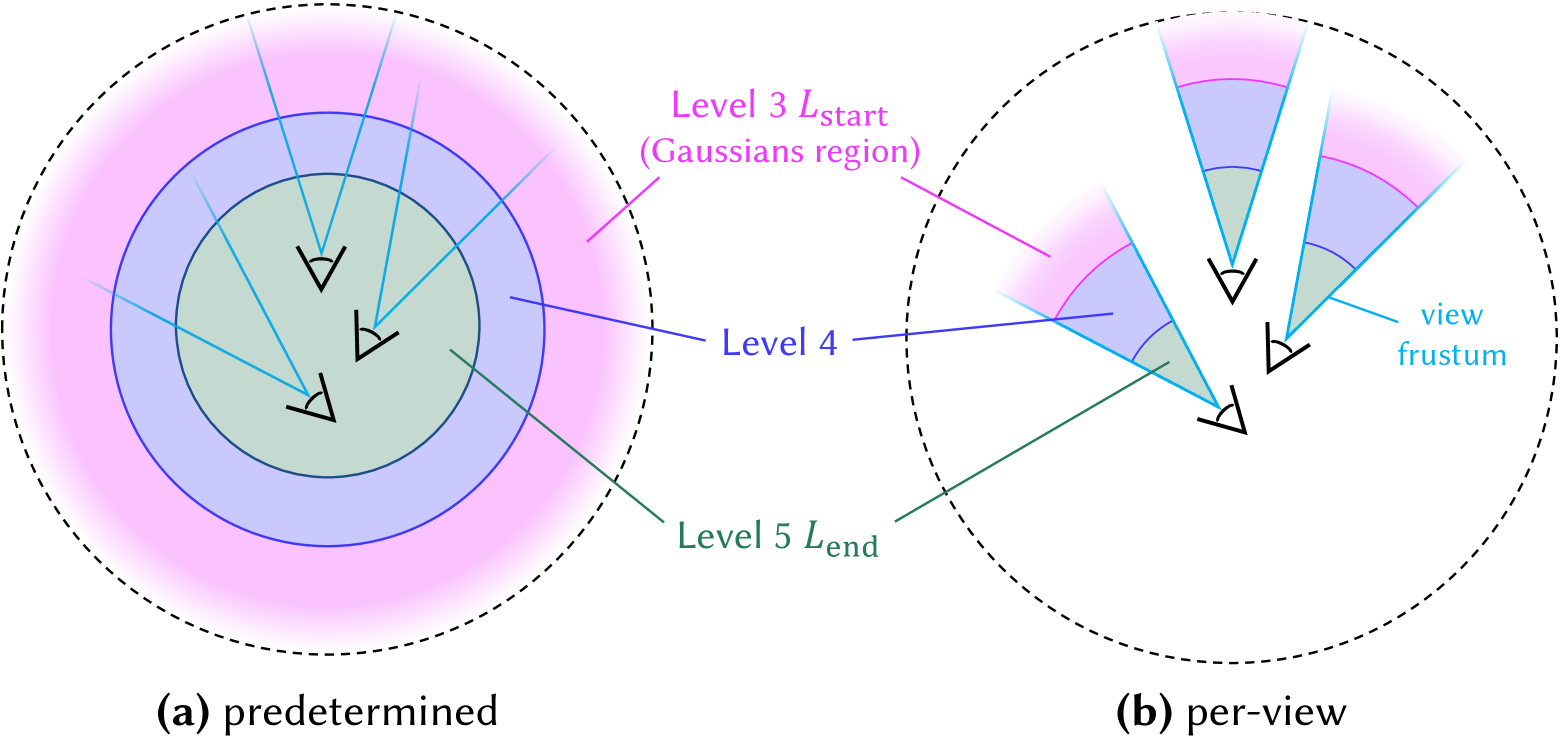

Figure 4. Comparison of predetermined Gaussian set $\mathbf{G}_{\text{sel}}$ and per-view Gaussian set $\mathbf{G}_{\text{sel}}$ creation methods. In the predetermined version, the Gaussian set is fixed, whereas the per-view version updates the Gaussian set dynamically whenever the camera position changes. This example illustrates the case where level 3 is used as $L_{\text{start}}$ and level 5 as $L_{\text{end}}$ .

For scenes where important objects are centrally located or the camera trajectory is confined to a small region, higher-level Gaussians can be assigned in the central areas, while lower-level Gaussians are allocated to the background. This strategy enables high-quality rendering while reducing rendering memory and storage overhead.

To achieve this, we calculate the Gaussian distance $d_{G^{(l)}}$ from the average position of all training view cameras before rendering and use it to predetermine the Gaussian subset $\mathbf{G}_{\text{sel}}$ , as illustrated in Figure 4 (a). Since $\mathbf{G}_{\text{sel}}$ is predetermined, it remains fixed during rendering, eliminating the need to recalculate $d_{G^{(l)}}$ whenever the camera view changes. This predetermined approach allows non-sampled Gaussians to be excluded, significantly reducing memory consumption during rendering. Furthermore, the sampled $\mathbf{G}_{\text{sel}}$ can be stored for future use, requiring less storage than maintaining the Gaussians of all levels. As a result, this method is especially beneficial for low-cost devices with limited GPU memory and storage capacity.
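The predetermined variant can be sketched as a one-time filtering step. The code below is a hedged illustration rather than the paper's code: `predetermine_subset`, the dictionary layout, the threshold values in `d_proj`, and the camera and Gaussian positions are all assumptions made for the example.

```python
import numpy as np

def predetermine_subset(centers, train_cam_positions, d_proj, l_start, l_end):
    """Select G_sel once, measuring distances from the mean training camera position."""
    reference = np.asarray(train_cam_positions, dtype=float).mean(axis=0)
    subset = {}
    for l in range(l_start, l_end + 1):
        dist = np.linalg.norm(centers[l] - reference, axis=1)
        keep = (dist < d_proj[l - 1]) & (dist >= d_proj[l])
        subset[l] = centers[l][keep]  # non-selected Gaussians are dropped
    return reference, subset

# Hypothetical scene: three positions stored at every level, two training cameras.
points = np.array([[0.0, 0.0, 2.0], [0.0, 0.0, 10.0], [0.0, 0.0, 30.0]])
centers = {3: points, 4: points, 5: points}
d_proj = {2: np.inf, 3: 20.0, 4: 5.0, 5: 0.0}  # assumed thresholds
train_cams = [[-1.0, 0.0, 0.0], [1.0, 0.0, 0.0]]

reference, subset = predetermine_subset(centers, train_cams, d_proj, 3, 5)
kept = sum(len(v) for v in subset.values())
total = sum(len(v) for v in centers.values())
```

In this toy setup only 3 of the 9 stored Gaussians survive the filter, which mirrors why the fixed subset needs less rendering memory and storage than keeping every level.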

<details>

<summary>x5.png Details</summary>

### Visual Description


## Image: Level of Detail (LoD) Comparison

### Overview

The image presents a visual comparison of two rendering techniques, "FLoD-3DGS" and "FLoD-Scaffold", across five levels of detail, ranging from "level 1" (lowest detail) to "level 5 (Max)" (highest detail). Each level displays a scene with a bush and a trailer, with associated memory usage indicated below each image.

### Components/Axes

The image is organized into two rows, representing the two rendering techniques. Each row contains five columns, representing the five levels of detail. Labels are present above each column indicating the level number. Labels are present to the left of each row indicating the rendering technique. Below each image is a "memory" label with a corresponding value in GB.

### Detailed Analysis or Content Details

**FLoD-3DGS Row:**

* **Level 1:** The image is heavily blurred, with only the general shape of the bush and background visible. Memory usage: 0.25GB.

* **Level 2:** The image is slightly less blurred than level 1, with some more detail becoming visible in the bush. Memory usage: 0.31GB.

* **Level 3:** The bush is more defined, with individual leaves becoming discernible. Memory usage: 0.75GB.

* **Level 4:** The bush is significantly more detailed, with clear leaf structure and texture. Memory usage: 1.27GB.

* **Level 5 (Max):** The image is the sharpest, with the highest level of detail in the bush and background. Memory usage: 2.06GB.

**FLoD-Scaffold Row:**

* **Level 1:** The trailer is heavily blurred, with only the general shape visible. Memory usage: 0.24GB.

* **Level 2:** The trailer is slightly less blurred than level 1, with some more detail becoming visible. Memory usage: 0.42GB.

* **Level 3:** The trailer is more defined, with individual components becoming discernible. Memory usage: 0.43GB.

* **Level 4:** The trailer is significantly more detailed, with clear structure and texture. Memory usage: 0.68GB.

* **Level 5 (Max):** The image is the sharpest, with the highest level of detail in the trailer and background. Memory usage: 0.98GB.

### Key Observations

* Memory usage increases consistently with each level of detail for both rendering techniques.

* FLoD-3DGS consistently requires more memory than FLoD-Scaffold for the same level of detail.

* The visual difference between levels 1-3 is more pronounced than between levels 4-5, suggesting diminishing returns in visual quality per unit of memory used at higher levels.

* The FLoD-Scaffold technique appears to achieve a reasonable level of detail with lower memory consumption.

### Interpretation

The image demonstrates the trade-off between visual fidelity and memory usage in rendering. The Level of Detail (LoD) techniques, FLoD-3DGS and FLoD-Scaffold, allow for dynamic adjustment of rendering complexity based on factors like distance from the viewer or available hardware resources.

The data suggests that FLoD-Scaffold is a more memory-efficient approach, potentially making it suitable for resource-constrained environments. The increasing memory usage with each level indicates that higher detail requires significantly more computational resources. The diminishing returns in visual quality at higher levels suggest that there is an optimal point where increasing detail no longer justifies the increased memory cost.

The comparison highlights the importance of choosing an appropriate LoD strategy based on the specific application requirements and hardware limitations. The image serves as a visual representation of the performance characteristics of these two rendering techniques, allowing for informed decision-making in the design of 3D graphics systems.

</details>

Figure 5. Renderings of each level in FLoD-3DGS and FLoD-Scaffold. FLoD can be integrated with both 3DGS and Scaffold-GS, with each level offering varying levels of detail and memory usage.

Per-view Gaussian Set

In large-scale scenes with camera trajectories that span broad regions, resampling the Gaussian set $\mathbf{G}_{\text{sel}}$ based on the camera’s new position is necessary. This is because the camera may move and enter regions where lower level Gaussians have been assigned, leading to a noticeable decline in rendering quality.

Therefore, in such cases, we define the Gaussian distance $d_{G^{(l)}}$ as the distance between a Gaussian $G^{(l)}$ and the current camera position. Consequently, whenever the camera position changes, $d_{G^{(l)}}$ is recalculated to resample the Gaussian set $\mathbf{G}_{\text{sel}}$ , as illustrated in Figure 4 (b). To maintain fast rendering rates, all Gaussians within the level range [ $L_{\text{start}}$ , $L_{\text{end}}$ ] are kept in GPU memory. Thus, at the cost of increased rendering memory, selective rendering with per-view $\mathbf{G}_{\text{sel}}$ maintains consistent rendering quality over long camera trajectories.
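A minimal sketch of the per-view variant follows. It keeps every level resident and recomputes only the distance masks for each camera position. The class name `PerViewSelector`, the threshold values, and the positions are hypothetical and serve only to illustrate the idea.

```python
import numpy as np

class PerViewSelector:
    """Keeps all levels in memory and re-derives the selection masks per camera position."""

    def __init__(self, centers, d_proj, l_start, l_end):
        self.centers = centers
        self.d_proj = d_proj
        self.levels = range(l_start, l_end + 1)

    def resample(self, camera_pos):
        masks = {}
        for l in self.levels:
            dist = np.linalg.norm(self.centers[l] - camera_pos, axis=1)
            masks[l] = (dist < self.d_proj[l - 1]) & (dist >= self.d_proj[l])
        return masks

# Hypothetical scene: one position stored at levels 3, 4, and 5.
point = np.array([[0.0, 0.0, 30.0]])
selector = PerViewSelector(
    centers={3: point, 4: point, 5: point},
    d_proj={2: np.inf, 3: 20.0, 4: 5.0, 5: 0.0},  # assumed thresholds
    l_start=3,
    l_end=5,
)
far_view = selector.resample(np.array([0.0, 0.0, 0.0]))    # camera 30 units away
near_view = selector.resample(np.array([0.0, 0.0, 29.0]))  # camera 1 unit away
```

The same position is served by level 3 from far away and by level 5 once the camera approaches, which is the behavior that prevents the quality drop described above.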

6. Experiment

6.1. Experiment Settings

6.1.1. Datasets

We conduct our experiments on a total of 15 real-world scenes. Two scenes are from Tanks&Temples (Knapitsch et al., 2017) and seven scenes are from Mip-NeRF360 (Barron et al., 2022), encompassing both bounded and unbounded environments. These datasets are commonly used in existing 3DGS research. In addition, we incorporate six unbounded scenes from DL3DV-10K (Ling et al., 2023), which include various urban and natural landscapes. We choose to include DL3DV-10K because it contains more objects located in distant backgrounds, providing a better demonstration of the diversity in real-world scenes. Further details on the datasets can be found in Appendix A.

6.1.2. Evaluation Metrics

We measure PSNR, structural similarity SSIM (Wang et al., 2004), and perceptual similarity LPIPS (Zhang et al., 2018) for a comprehensive evaluation. Additionally, we assess the number of Gaussians used for rendering the scenes, the GPU memory usage, and the rendering rates (FPS) to evaluate resource efficiency.
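For reference, PSNR follows the standard definition $10\log_{10}(\text{MAX}^2/\text{MSE})$. The sketch below is a generic NumPy version that assumes images scaled to $[0, 1]$; it is not the evaluation script used in the paper, and the function name is an assumption.

```python
import numpy as np

def psnr(rendered, reference, max_val=1.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    rendered = np.asarray(rendered, dtype=np.float64)
    reference = np.asarray(reference, dtype=np.float64)
    mse = np.mean((rendered - reference) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(max_val ** 2 / mse)

# Toy example: a uniform error of 0.1 on a black reference image.
reference = np.zeros((4, 4, 3))
rendered = np.full((4, 4, 3), 0.1)
value = psnr(rendered, reference)
```

A uniform error of 0.1 gives an MSE of 0.01 and therefore a PSNR of 20 dB.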

6.1.3. Baselines

We compare FLoD-3DGS against several models, including 3DGS (Kerbl et al., 2023), Scaffold-GS (Lu et al., 2024), Mip-Splatting (Yu et al., 2024), Octree-GS (Ren et al., 2024) and Hierarchical-3DGS (Kerbl et al., 2024). Among these, the main competitors are Octree-GS and Hierarchical-3DGS, as they share the LoD concept with FLoD. However, these two competitors define individual level representation differently from ours.

In FLoD, each level representation independently reconstructs the scene. In contrast, Octree-GS defines levels by aggregating the representations from the first level up to the specified level, meaning that individual levels do not exist independently. On the other hand, Hierarchical-3DGS does not have the concept of rendering using a specific level’s representation, unlike FLoD and Octree-GS. Instead, it employs a hierarchical structure with multiple levels, where Gaussians from different levels are selected based on the target granularity $\tau$ setting for each camera view during rendering.

Additionally, like FLoD, Octree-GS is adaptable to both 3DGS and Scaffold-GS. We will refer to the 3DGS based Octree-GS as Octree-3DGS and the Scaffold-GS based Octree-GS as Octree-Scaffold.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Image: 3D Model Rendering Quality Comparison

### Overview

The image presents a comparison of rendering quality for a 3D scene of a traditional Chinese pavilion, using two methods: Octree-3DGS and FLoD-3DGS. The rendering quality is shown at five levels, ranging from Level 1 (lowest detail) to Level 5 (highest detail). Each rendering is accompanied by two values: the number of Gaussians used ("#G's", in thousands, K) and the Structural Similarity Index Measure (SSIM).

### Components/Axes

The image is organized into a 2x5 grid.

* **Rows:** Represent the rendering technique used: Octree-3DGS (top row) and FLOD-3DGS (bottom row).

* **Columns:** Represent the rendering level, labeled "level 1" through "level 5 (Max)".

* **Labels:** Each image has two labels below it: "#G s: [value]([percentage]) SSIM: [value]". "#G s:" gives the number of Gaussians in thousands (K), followed by that count as a percentage of the Gaussians at the max level (level 5). "SSIM:" gives the Structural Similarity Index Measure, a metric for image quality.

### Detailed Analysis or Content Details

**Octree-3DGS:**

* **Level 1:** #G s: 25K(9%) SSIM: 0.40

* **Level 2:** #G s: 119K(17%) SSIM: 0.56

* **Level 3:** #G s: 276K(39%) SSIM: 0.68

* **Level 4:** #G s: 560K(78%) SSIM: 0.83

* **Level 5 (Max):** #G s: 713K(100%) SSIM: 0.92

**FLOD-3DGS:**

* **Level 1:** #G s: 7K(0.7%) SSIM: 0.56

* **Level 2:** #G s: 18K(2%) SSIM: 0.70

* **Level 3:** #G s: 223K(22%) SSIM: 0.88

* **Level 4:** #G s: 475K(47%) SSIM: 0.93

* **Level 5 (Max):** #G s: 1015K(100%) SSIM: 0.96

**Trends:**

* **Octree-3DGS:** As the level increases from 1 to 5, the number of Gaussians (#G s) increases monotonically from 25K to 713K. The SSIM score also increases monotonically from 0.40 to 0.92, indicating improving image quality.

* **FLoD-3DGS:** Similarly, the number of Gaussians (#G s) increases monotonically from 7K to 1015K as the level increases from 1 to 5. The SSIM score also increases monotonically from 0.56 to 0.96.

### Key Observations

* **Gaussian Count:** FLoD-3DGS uses fewer Gaussians than Octree-3DGS at levels 1 to 4 (for example, 7K vs 25K at level 1 and 475K vs 560K at level 4). At level 5 it uses more (1015K vs 713K).

* **SSIM Scores:** FLoD-3DGS achieves a higher SSIM score at every level, with the largest gaps at the lower levels.

* **Percentage of Gaussians:** The percentage relative to the max level increases with level for both methods, reaching 100% at level 5.

### Interpretation

The image shows a trade-off between rendering quality (as measured by SSIM) and the number of Gaussians used. Both Octree-3DGS and FLoD-3DGS improve image quality as more Gaussians are used. FLoD-3DGS reaches higher SSIM with fewer Gaussians at levels 1 to 4, which suggests it may be preferable when memory is limited. For both methods the SSIM gain shrinks at the higher levels: the change between levels 4 and 5 is smaller than the change between levels 1 and 2. At the max level FLoD-3DGS uses more Gaussians than Octree-3DGS and attains the highest SSIM in the figure.

</details>

Figure 6. Comparison of the renderings at each level between FLoD-3DGS and Octree-3DGS on the DL3DV-10K dataset. "#G's" refers to the number of Gaussians, and the percentages (%) next to these values indicate the proportion of Gaussians used relative to the max level (level 5).

<details>

<summary>x7.png Details</summary>

### Visual Description

## Image Analysis: 3DGS Rendering Comparison

### Overview

The image presents a comparative analysis of two 3D Gaussian Splatting (3DGS) methods: Hierarchical-3DGS and FLoD-3DGS. Each method is shown in four columns labeled by the target granularity setting (τ) of 120, 30, 15, and 0 (Max), displaying rendered images of a garden scene with a gazebo as the central element. For each rendering, the image reports memory usage (with a percentage relative to the Max setting) and Peak Signal-to-Noise Ratio (PSNR). The bottom of each image also indicates the level setting used.

### Components/Axes

The image is organized as a 2x4 grid.

* **Rows:** Represent the two methods: Hierarchical-3DGS (top row) and FLoD-3DGS (bottom row).

* **Columns:** Represent different target granularity settings (τ): 120, 30, 15, and 0 (Max).

* **Labels:** Each image includes labels for the method name (left side), τ setting (top center), memory usage (bottom left), and PSNR (bottom right).

* **Levels:** Each image also indicates the level of detail used (bottom center).

### Detailed Analysis / Content Details

**Hierarchical-3DGS**

* **τ = 120:** Memory: 3.53GB (79%), PSNR: 20.98, Level: [3,2,1]

* **τ = 30:** Memory: 3.72GB (83%), PSNR: 23.47, Level: [3,2,1]

* **τ = 15:** Memory: 4.19GB (93%), PSNR: 24.71, Level: [3,4,3]

* **τ = 0 (Max):** Memory: 4.46GB (100%), PSNR: 26.03, Level: [Max]

**FLOD-3DGS**

* **τ = 120:** Memory: 0.73GB (29%), PSNR: 24.02, Level: [3,2,1]

* **τ = 30:** Memory: 1.29GB (52%), PSNR: 26.23, Level: [3,2,1]

* **τ = 15:** Memory: 1.40GB (57%), PSNR: 26.71, Level: [3,4,3]

* **τ = 0 (Max):** Memory: 2.45GB (100%), PSNR: 27.64, Level: [Max]

### Key Observations

* **Memory Usage:** FLoD-3DGS uses less memory than Hierarchical-3DGS in every column. In the τ=120 column, FLoD-3DGS uses 0.73GB against 3.53GB, roughly 21% of the Hierarchical-3DGS memory. The percentages printed in the figure are relative to each method's own Max setting.

* **PSNR:** FLoD-3DGS achieves higher PSNR than Hierarchical-3DGS in every column. The gap is largest at τ=120 (24.02 vs 20.98) and smallest at the Max setting (27.64 vs 26.03).

* **Memory vs. PSNR:** As τ decreases toward 0, both methods use more memory and PSNR improves.

* **Level of Detail:** The level annotation changes across columns, with [Max] used at τ=0.

### Interpretation

The data suggests that FLoD-3DGS offers a better trade-off than Hierarchical-3DGS on this scene, requiring less memory while achieving higher image quality (as measured by PSNR). Memory and PSNR both rise as τ decreases, likely because a smaller target granularity selects finer Gaussians, so more of them are rendered.

The memory percentages highlight a difference in range: Hierarchical-3DGS still needs 79% of its Max memory at τ=120, whereas FLoD-3DGS drops to 29% of its Max memory at the coarsest setting shown.

The "Max" setting renders at the highest quality each method supports and serves as the reference for the memory percentages.

</details>

Figure 7. Comparison of the trade-off between visual quality and memory usage for FLoD-3DGS and Hierarchical-3DGS. The percentages (%) shown next to the memory values indicate how much memory each rendering setting consumes relative to the memory required by the "Max" setting for maximum rendering quality.

6.1.4. Implementation

FLoD-3DGS is implemented on the 3DGS framework. Experiments are mainly conducted on a single NVIDIA RTX A5000 24GB GPU. Following the common practice for LoD in graphics applications, we train our FLoD representation up to level $L_{\text{max}}=5$ . Note that $L_{\text{max}}$ is adjustable for specific objectives and settings with minimal impact on render quality. For FLoD-3DGS training with $L_{\text{max}}=5$ levels, we set the training iterations for levels 1, 2, 3, 4, and 5 to 10,000, 15,000, 20,000, 25,000, and 30,000, respectively. The number of training iterations for the max level matches that of the backbone, while the lower levels have fewer iterations due to their faster convergence.

Gaussian density control techniques (densification, pruning, overlap pruning, opacity reset) are applied during the initial 5,000, 6,000, 8,000, 10,000, and 15,000 iterations for levels 1, 2, 3, 4, and 5, respectively. At the max level these techniques run for the same duration as in the backbone; at lower levels they run for shorter durations because fewer Gaussians need to be optimized. Additionally, the densification intervals are set to 2,000, 1,000, 500, 500, and 200 iterations for levels 1, 2, 3, 4, and 5, respectively. These intervals are longer than the backbone's interval of 100 iterations, which allows more time for Gaussians to be optimized before new Gaussians are added or existing ones are removed. These settings were selected based on empirical observations. Overlap pruning runs every 1,000 iterations at all levels except the max level, where it is not applied.
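The per-level schedule described above can be collected into a small configuration table. This is only one possible encoding: the `LevelSchedule` class and its field names are hypothetical, while the numbers are the ones stated in the text.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class LevelSchedule:
    train_iters: int                       # total training iterations for the level
    density_control_until: int             # density control runs for this many initial iterations
    densify_interval: int                  # iterations between densification steps
    overlap_prune_interval: Optional[int]  # None means overlap pruning is not applied

# Values as stated in the text for L_max = 5.
SCHEDULE = {
    1: LevelSchedule(10_000, 5_000, 2_000, 1_000),
    2: LevelSchedule(15_000, 6_000, 1_000, 1_000),
    3: LevelSchedule(20_000, 8_000, 500, 1_000),
    4: LevelSchedule(25_000, 10_000, 500, 1_000),
    5: LevelSchedule(30_000, 15_000, 200, None),
}

# Sum of the per-level iteration budgets.
total_iters = sum(s.train_iters for s in SCHEDULE.values())
```

Summing the per-level budgets gives 100,000 iterations, and at every level density control ends well before training does.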

We set the initial 3D scale constraint $\lambda$ to 0.2 and the scale factor $\rho$ to 4. This configuration effectively distinguishes the level of detail across $L_{\text{max}}$ levels in most of the scenes we handle, enabling LoD representations that adapt to various memory capacities. For smaller scenes or when higher detail is required at lower levels, the initial 3D scale constraint $\lambda$ can be further reduced.

Unlike the original 3DGS approach, we do not periodically remove large Gaussians or those with large projected sizes during training as we do not impose an upper bound on the Gaussian scale. All other training settings not mentioned follow those of the backbone model. For loss, we adopt L1 and SSIM losses across all levels, consistent with the backbone model.

For selective rendering, we default to using the predetermined Gaussian set unless stated otherwise. The screen size threshold $\gamma$ is set to 1.0. This selects Gaussians of level $l$ from distances where the image projection of the level-specific 3D scale constraint $s_{\text{min}}^{(l)}$ becomes equal to or smaller than 1.0 pixel in length.

6.2. Flexible Rendering

In this section, we show that each level representation from FLoD can be used independently. Based on this, we demonstrate the extensive range of rendering options that FLoD offers, through both single and selective rendering.

<details>

<summary>x8.png Details</summary>

### Visual Description


## Image Series: Image Quality vs. Processing Level

### Overview

The image presents a series of six renderings of a tree against a blurred background, one per FLoD-3DGS rendering option. Each image is labeled with the level or levels used (a single level such as "level 3", or a tuple such as "level (3,2,1)") and accompanied by performance metrics: PSNR (Peak Signal-to-Noise Ratio), memory usage, and Frames Per Second (FPS) on two GPUs (A5000 and MX250). The images demonstrate a trade-off between image quality (as indicated by PSNR) and computational resources (memory and FPS).

### Components/Axes

The image consists of six horizontally arranged panels. Each panel contains:

* **Image:** A visual representation of the processed image.

* **Level Label:** A text label indicating the processing level (e.g., "level (3,2,1)", "level 3", etc.).

* **Performance Metrics:** Three lines of text displaying PSNR, memory usage, and FPS.

The performance metrics are presented as follows:

* **PSNR:** A numerical value representing the image quality.

* **Memory:** The amount of memory used during processing, in GB.

* **FPS:** Frames Per Second, reported for both A5000 and MX250 GPUs.

### Detailed Analysis

Here's a breakdown of the data for each level:

* **Level (3,2,1):**

* PSNR: 22.9

* Memory: 0.61GB

* FPS (A5000): 304, (MX250): 28.7

* **Level 3:**

* PSNR: 23.0

* Memory: 0.76GB

* FPS (A5000): 274, (MX250): 17.9

* **Level (4,3,2):**

* PSNR: 25.5

* Memory: 0.81GB

* FPS (A5000): 218, (MX250): 13.2

* **Level 4:**

* PSNR: 25.8

* Memory: 1.27GB

* FPS (A5000): 178, (MX250): 10.6

* **Level (5,4,3):**

* PSNR: 26.4

* Memory: 1.21GB

* FPS (A5000): 150, (MX250): 8.4

* **Level 5:**

* PSNR: 26.9

* Memory: 2.06GB

* FPS (A5000): 113, (MX250): OOM (Out of Memory)

**Trends:**

* **PSNR:** The PSNR value generally increases with the processing level, indicating improved image quality. The increase is more pronounced between levels (3,2,1) and (4,3,2) and then plateaus.

* **Memory:** Memory usage increases with the level overall, with one exception: level (5,4,3) uses slightly less memory (1.21GB) than level 4 (1.27GB).

* **FPS (A5000):** FPS decreases as the processing level increases, indicating a higher computational cost.

* **FPS (MX250):** FPS also decreases with the processing level. At level 5, the MX250 runs out of memory (OOM).

### Key Observations

* The MX250 GPU is unable to handle the processing at level 5 due to memory limitations.

* The A5000 GPU experiences a significant drop in FPS as the processing level increases, but remains functional at all levels.

* The largest jump in PSNR occurs between levels (3,2,1) and (4,3,2).

* There is a diminishing return in PSNR improvement for higher processing levels (4 and 5).

### Interpretation

The data suggests a trade-off between image quality, computational resources, and processing speed. Higher processing levels result in better image quality (higher PSNR) but require more memory and lead to lower FPS. The MX250 GPU demonstrates limited capacity for resource-intensive processing, while the A5000 GPU offers greater flexibility but still experiences performance degradation at higher levels.

The levels are level-of-detail settings rather than stages of a processing pipeline: single numbers denote rendering with one level, and the tuples, such as (5,4,3), presumably denote selective rendering that combines several levels. The PSNR gain shrinks at the higher levels (25.8 at level 4 vs 26.9 at level 5) while memory grows from 1.27GB to 2.06GB. The "OOM" error on the MX250 at level 5 highlights the importance of considering hardware limitations when selecting a rendering option.

The image demonstrates a typical optimization problem: finding the optimal balance between quality and performance based on available resources. The choice of processing level would depend on the specific application requirements and the capabilities of the hardware.

</details>

Figure 8. Various rendering options of FLoD-3DGS are evaluated on a server with an A5000 GPU and a laptop equipped with a 2GB VRAM MX250 GPU. The flexibility of FLoD-3DGS provides rendering options that prevent out-of-memory (OOM) errors and allow near real-time rendering on the laptop setting.

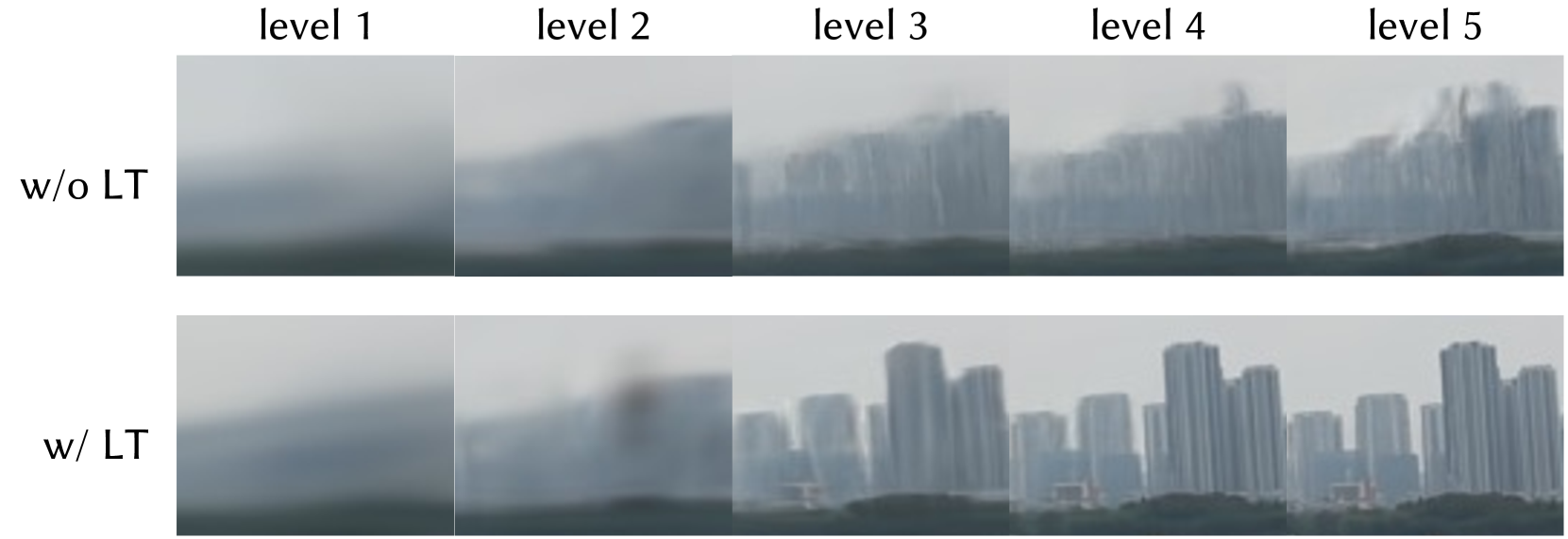

6.2.1. LoD Representation

As shown in Figure 5, FLoD follows the LoD concept by offering independent representations at each level. Each level captures the scene with varying levels of detail and corresponding memory requirements. This enables users to select an appropriate level for rendering based on the desired visual quality and available memory. A key observation is that even at lower levels (e.g., levels 1, 2, and 3), FLoD-3DGS achieves high perceptual visual quality for the background. This is because, even with the large size of Gaussians at lower levels, the perceived detail in distant regions is similar to that achieved using the smaller Gaussians at higher levels.

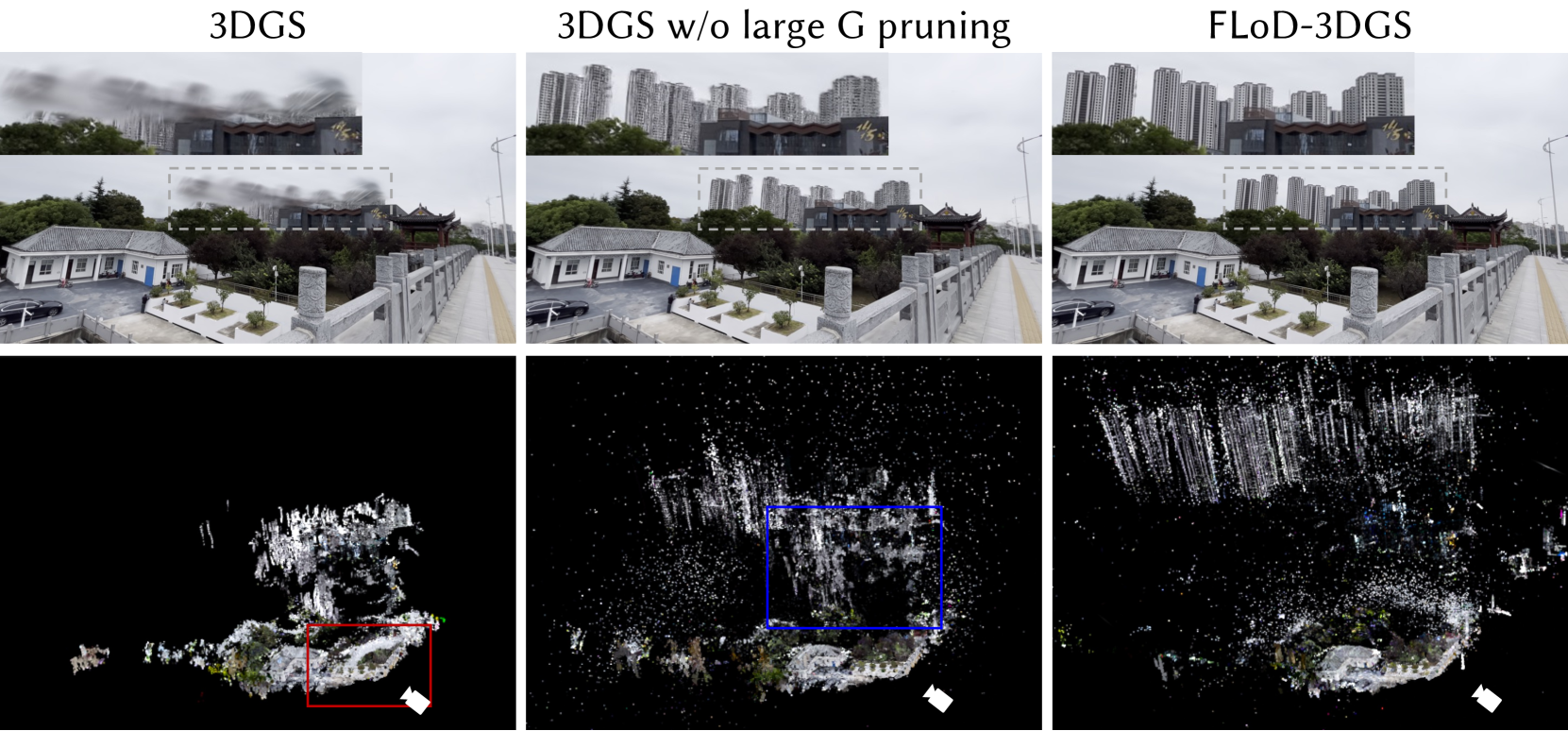

To further demonstrate the effectiveness of FLoD’s level representations, we compare renderings of each level from FLoD-3DGS with those from Octree-3DGS, as shown in Figure 6. At lower levels (e.g., levels 1, 2, and 3), Octree-3DGS shows broken structures, such as the pavilion, and sharp artifacts created by very thin, elongated Gaussians. In contrast, FLoD-3DGS preserves the overall structure with appropriate detail for each level. Notably, it achieves this while using fewer Gaussians than Octree-3DGS, which shows that our method creates lower-level representations that capture the scene structure more efficiently. At higher levels (e.g., level 5), FLoD-3DGS uses more Gaussians to achieve higher visual quality and accurately reconstruct complex scene structures. This shows that our method can handle detailed scenes effectively through its higher-level representations.

In summary, the level representations of FLoD-3DGS outperform those of Octree-3DGS in reconstructing scene structures, as evidenced by its higher SSIM values across all levels. Furthermore, FLoD-3DGS uses significantly fewer Gaussians at lower levels, requiring only 0.7%, 2%, and 22% of the Gaussians of the max level for levels 1, 2, and 3, respectively. These results demonstrate that FLoD-3DGS can create level representations with a wide range of memory requirements.

Note that we exclude Hierarchical-3DGS from this comparison because it was not designed for rendering with specific levels. For rendering results of Hierarchical-3DGS and Octree-3DGS that use Gaussians from single levels individually, please refer to Appendix C.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Charts: Performance Comparison of Hierarchical-3DGS and FLOD-3DGS

### Overview

The image presents two line charts comparing the performance of "Hierarchical-3DGS" and "FLOD-3DGS" methods. The left chart shows the relationship between PSNR (Peak Signal-to-Noise Ratio) and Memory usage (in GB), while the right chart shows the relationship between PSNR and FPS (Frames Per Second). Both charts use the same x-axis (PSNR) and display data for both methods as distinct lines with corresponding markers.

### Components/Axes

* **X-axis (Both Charts):** PSNR (Peak Signal-to-Noise Ratio), ranging from approximately 21 to 28.

* **Left Chart Y-axis:** Memory (GB), ranging from approximately 0.8 to 4.5.

* **Right Chart Y-axis:** FPS (Frames Per Second), ranging from approximately 20 to 200.

* **Legend (Top-Left of each chart):**

* Blue Line/Markers: Hierarchical-3DGS

* Red Line/Markers: FLOD-3DGS

### Detailed Analysis

**Left Chart: Memory vs. PSNR**

* **Hierarchical-3DGS (Blue Line):** The line slopes generally upward, indicating that as PSNR increases, memory usage also increases.

* PSNR = 21, Memory ≈ 3.6 GB

* PSNR = 22, Memory ≈ 3.7 GB

* PSNR = 23, Memory ≈ 3.8 GB

* PSNR = 24, Memory ≈ 3.9 GB

* PSNR = 25, Memory ≈ 4.0 GB

* PSNR = 26, Memory ≈ 4.15 GB

* PSNR = 27, Memory ≈ 4.25 GB

* PSNR = 28, Memory ≈ 4.3 GB

* **FLOD-3DGS (Red Line):** The line shows a steep upward trend, especially at higher PSNR values.

* PSNR = 21, Memory ≈ 0.8 GB

* PSNR = 22, Memory ≈ 0.85 GB

* PSNR = 23, Memory ≈ 0.9 GB

* PSNR = 24, Memory ≈ 0.95 GB

* PSNR = 25, Memory ≈ 1.0 GB

* PSNR = 26, Memory ≈ 1.1 GB

* PSNR = 27, Memory ≈ 1.8 GB

* PSNR = 28, Memory ≈ 2.0 GB

**Right Chart: FPS vs. PSNR**

* **Hierarchical-3DGS (Blue Line):** The line slopes downward, indicating that as PSNR increases, FPS decreases.

* PSNR = 21, FPS ≈ 90

* PSNR = 22, FPS ≈ 80

* PSNR = 23, FPS ≈ 70

* PSNR = 24, FPS ≈ 60

* PSNR = 25, FPS ≈ 50

* PSNR = 26, FPS ≈ 40

* PSNR = 27, FPS ≈ 30

* PSNR = 28, FPS ≈ 25

* **FLOD-3DGS (Red Line):** The line also slopes downward overall, falling from about 200 to about 100 FPS between PSNR 21 and 26 and then staying roughly flat (about 105 to 110 FPS) at higher PSNR values.

* PSNR = 21, FPS ≈ 200

* PSNR = 22, FPS ≈ 180

* PSNR = 23, FPS ≈ 160

* PSNR = 24, FPS ≈ 140

* PSNR = 25, FPS ≈ 120

* PSNR = 26, FPS ≈ 100

* PSNR = 27, FPS ≈ 110

* PSNR = 28, FPS ≈ 105

### Key Observations

* Hierarchical-3DGS consistently uses significantly more memory than FLOD-3DGS across all PSNR values.

* FLOD-3DGS achieves much higher FPS values than Hierarchical-3DGS, especially at lower PSNR values.

* Both methods exhibit a trade-off between memory usage/FPS and PSNR. Increasing PSNR generally leads to higher memory usage and lower FPS.

* FLOD-3DGS loses more FPS in absolute terms than Hierarchical-3DGS as PSNR increases, but it remains faster at every listed PSNR value.

### Interpretation

The data suggests that FLOD-3DGS is more efficient in terms of memory usage and achieves higher frame rates than Hierarchical-3DGS at every listed PSNR value, with the largest gap at lower PSNR values. This indicates that FLOD-3DGS might be preferable for applications where speed and memory are critical.

The trade-off between PSNR, memory, and FPS highlights the need to carefully consider the application's requirements when choosing a rendering setting. The memory usage of Hierarchical-3DGS varies only slightly across the PSNR range (about 3.6 GB to 4.3 GB), so lowering its quality setting frees little memory, whereas the memory usage of FLOD-3DGS falls from about 2.0 GB to about 0.8 GB over the same range.

</details>

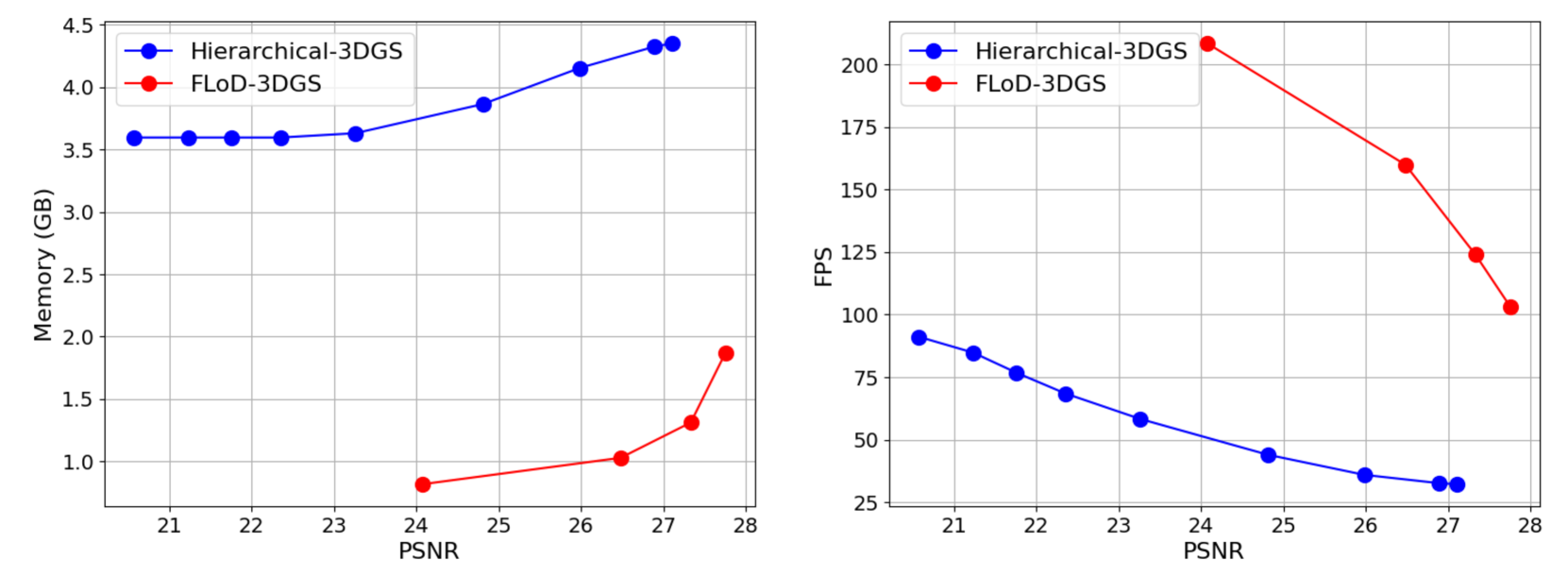

Figure 9. Comparison of the trade-offs in selective rendering for FLoD-3DGS and Hierarchical-3DGS on Mip-NeRF360 scenes: visual quality (PSNR) versus memory usage, and visual quality versus rendering speed (FPS).

6.2.2. Selective Rendering

FLoD provides not only single-level rendering but also selective rendering. Selective rendering enables more efficient rendering by selectively using Gaussians from multiple levels.

To evaluate the efficiency of FLoD’s selective rendering, we compare rendering quality and memory usage for different selective rendering configurations against Hierarchical-3DGS. We compare with Hierarchical-3DGS because its rendering method, which selects Gaussians from its hierarchy based on a target granularity $\tau$, is similar to our selective rendering, which selects Gaussians across level ranges based on the screen size threshold $\gamma$.
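As a rough illustration of selecting Gaussians across a level range with a screen size threshold $\gamma$, the sketch below assigns a camera distance to one level. The distance rule (a level takes over once its nominal Gaussian size projects to at most $\gamma$ pixels under a pinhole camera), the per-level sizes, and the focal length are assumptions made for illustration; they are not values or formulas taken from the experiments in this section.

```python
def switch_distance(scale, focal_px, gamma_px):
    """Distance beyond which a Gaussian of world-space size `scale` projects to
    at most `gamma_px` pixels (pinhole model: projected size = scale * f / d)."""
    return scale * focal_px / gamma_px


def assign_level(distance, level_scales, focal_px, gamma_px):
    """Return the level used for a Gaussian at `distance` from the camera.

    `level_scales` maps level -> nominal Gaussian size (hypothetical values);
    coarser (lower) levels are assumed to have larger sizes. Regions closer
    than every switch distance fall back to the finest level in the range.
    """
    levels = sorted(level_scales)  # coarse -> fine, e.g. [3, 4, 5]
    for level in levels:
        if distance >= switch_distance(level_scales[level], focal_px, gamma_px):
            return level
    return levels[-1]


# Hypothetical selective range using levels 5, 4, and 3.
example_scales = {3: 0.5, 4: 0.25, 5: 0.125}
example_levels = [assign_level(d, example_scales, 1000.0, 50.0) for d in (12.0, 6.0, 1.0)]
```

With these hypothetical numbers the switch distances are 10, 5, and 2.5 scene units, so the three example distances map to levels 3, 4, and 5 respectively: distant regions are covered by coarse levels, and only nearby regions use the finest level.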

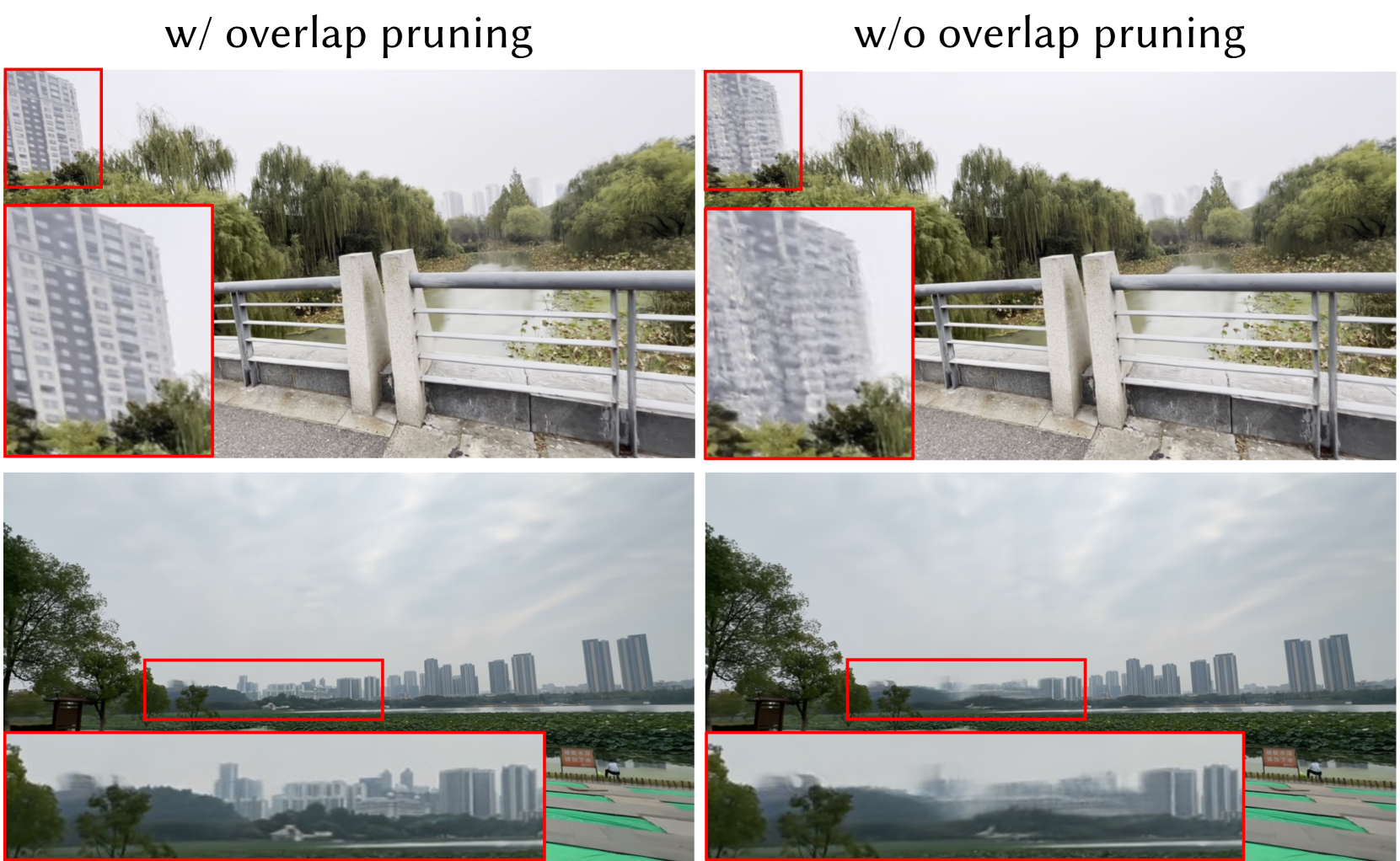

As shown in Figure 7, FLoD-3DGS effectively reduces memory usage through selective rendering. For example, selectively using levels 5, 4, and 3 reduces memory usage by about half compared to using only level 5, while the PSNR decreases by less than 1. Similarly, selective rendering with levels 3, 2, and 1 reduces memory usage to approximately 30%, with a PSNR drop of about 3.6.
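To put these PSNR drops in perspective, recall the standard definition of PSNR for an image with peak value $\mathrm{MAX}$. For a single image, a drop of $\Delta$ dB corresponds to the mean squared error growing by a factor of $10^{\Delta/10}$. Reported PSNR values are usually averaged over test views, so the conversion below is only indicative:

$$
\mathrm{PSNR} = 10\log_{10}\frac{\mathrm{MAX}^2}{\mathrm{MSE}}, \qquad \frac{\mathrm{MSE}_2}{\mathrm{MSE}_1} = 10^{\Delta/10}, \quad \Delta = \mathrm{PSNR}_1 - \mathrm{PSNR}_2 .
$$

$$
\Delta = 1 \;\Rightarrow\; 10^{0.1} \approx 1.26, \qquad \Delta = 3.6 \;\Rightarrow\; 10^{0.36} \approx 2.29 .
$$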

In contrast, Hierarchical-3DGS does not reduce memory usage as effectively as FLoD-3DGS and also suffers from a greater decrease in rendering quality. Even when the target granularity $\tau$ is set to 120, occupied GPU memory remains high, consuming approximately 79% of the memory used for the maximum rendering quality setting ($\tau=0$). Moreover, for this rendering setting, the PSNR drops significantly by more than 5. These results demonstrate that FLoD-3DGS’s selective rendering provides a wider range of rendering options, achieving a better balance between visual quality and memory usage compared to Hierarchical-3DGS.

We further compare the memory-versus-PSNR and FPS-versus-PSNR curves on the Mip-NeRF360 scenes in Figure 9. For FLoD-3DGS, we evaluate rendering performance using only level 5, as well as selectively using levels 5, 4, 3; levels 4, 3, 2; and levels 3, 2, 1. For Hierarchical-3DGS, we measure rendering performance with target granularity $\tau$ set to 0, 6, 15, 30, 60, 90, 120, 160, and 200. The results show that FLoD-3DGS consistently uses less memory and achieves higher FPS than Hierarchical-3DGS when compared at the same PSNR levels. Notably, as PSNR decreases, FLoD-3DGS shows a sharper reduction in memory usage and a greater increase in FPS.

Note that for a fair comparison, we train Hierarchical-3DGS with a maximum $\tau$ of 200 during the hierarchy optimization stage to enhance its rendering quality for larger $\tau$ beyond its default settings. For renderings of Hierarchical-3DGS using its default training settings, please refer to Appendix D.

Table 1. Quantitative comparison of FLoD-3DGS to baselines across three real-world datasets (Mip-NeRF360, DL3DV-10K, Tanks&Temples). For FLoD-3DGS and Hierarchical-3DGS, we use the rendering setting that produces the best image quality. The best results are highlighted in bold.

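| Method | Mip-NeRF360 PSNR | Mip-NeRF360 SSIM | Mip-NeRF360 LPIPS | DL3DV-10K PSNR | DL3DV-10K SSIM | DL3DV-10K LPIPS | Tanks&Temples PSNR | Tanks&Temples SSIM | Tanks&Temples LPIPS |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 3DGS | 27.36 | 0.812 | 0.217 | 28.00 | 0.908 | 0.142 | 23.58 | 0.848 | 0.177 |
| Mip-Splatting | 27.59 | **0.831** | **0.181** | 28.64 | 0.917 | 0.125 | 23.62 | 0.855 | 0.157 |
| Octree-3DGS | 27.29 | 0.815 | 0.214 | 29.14 | 0.915 | 0.128 | 24.19 | **0.865** | 0.154 |
| Hierarchical-3DGS | 27.10 | 0.797 | 0.219 | 30.45 | 0.922 | 0.115 | 24.03 | 0.861 | **0.152** |
| FLoD-3DGS | **27.75** | 0.815 | 0.224 | **31.99** | **0.937** | **0.107** | **24.41** | 0.850 | 0.186 |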

Table 2. Trade-offs between visual quality, rendering speed, and the number of Gaussians achieved in FLoD-3DGS through single-level and selective rendering in the Mip-NeRF360 dataset.

| Selected levels | PSNR | SSIM | LPIPS | FPS | # Gaussians |
| --- | --- | --- | --- | --- | --- |
| ✓ | 27.75 | 0.815 | 0.224 | 103 | 2189K |
| ✓-✓-✓ | 27.33 | 0.801 | 0.245 | 124 | 1210K |
| ✓-✓ | 26.67 | 0.764 | 0.292 | 150 | 1049K |
| ✓-✓-✓ | 26.48 | 0.759 | 0.298 | 160 | 856K |
| ✓ | 24.11 | 0.634 | 0.440 | 202 | 443K |
| ✓-✓-✓ | 24.07 | 0.632 | 0.442 | 208 | 414K |

6.2.3. Various Rendering Options

FLoD supports both single-level rendering and selective rendering, offering a wide range of rendering options with varying visual quality and memory requirements. As shown in Table 2, FLoD enables flexible adjustment of the number of Gaussians. Reducing the number of Gaussians increases rendering speed while also reducing memory usage, allowing FLoD to adapt efficiently to hardware environments with varying memory constraints.
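The trade-off in Table 2 can be made concrete by expressing each row relative to the first row, whose PSNR of 27.75 matches the FLoD-3DGS entry for Mip-NeRF360 in Table 1. The short script below is only a worked calculation on the published numbers; the function and field names are illustrative.

```python
# Rows of Table 2 in order: (PSNR, FPS, number of Gaussians in thousands).
TABLE2 = [
    (27.75, 103, 2189),
    (27.33, 124, 1210),
    (26.67, 150, 1049),
    (26.48, 160, 856),
    (24.11, 202, 443),
    (24.07, 208, 414),
]


def relative_to_first(rows):
    """Express every row relative to the first (highest-quality) row."""
    base_psnr, base_fps, base_count = rows[0]
    summary = []
    for psnr, fps, count in rows:
        summary.append({
            "psnr_drop": round(base_psnr - psnr, 2),             # quality lost
            "speedup": round(fps / base_fps, 2),                 # FPS ratio
            "gaussian_fraction": round(count / base_count, 3),   # share of Gaussians kept
        })
    return summary


for row in relative_to_first(TABLE2):
    print(row)
```

For instance, the second row keeps roughly 55% of the Gaussians and renders about 1.2 times faster at a PSNR cost of 0.42, while the last row keeps roughly 19% of the Gaussians and renders about twice as fast at a PSNR cost of 3.68.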

To evaluate the flexibility of FLoD, we conduct experiments on a server with an A5000 GPU and a low-cost laptop equipped with a 2GB VRAM MX250 GPU. As shown in Figure 8, rendering with only level 4 or selective rendering using levels 5, 4, and 3 achieves visual quality comparable to rendering with only level 5, while reducing memory usage by approximately 40%. This reduction prevents the out-of-memory (OOM) errors that occur on low-cost GPUs, such as the MX250, when rendering with only level 5. Furthermore, using lower levels for single-level rendering or selective rendering increases FPS, enabling near real-time rendering even on low-cost devices.

Hence, FLoD offers considerable flexibility by providing various rendering options through single-level and selective rendering, ensuring effective performance across devices with different memory capacities. For additional evaluations of rendering flexibility on the MX250 GPU in Mip-NeRF360 scenes, please refer to Appendix G.
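One way to use these rendering options in practice is to pick the highest-quality option that fits a device budget. The sketch below uses the number of Gaussians from Table 2 as a stand-in for memory, which is an assumption (actual VRAM usage also depends on the renderer and image resolution); the option labels and the budgets are hypothetical.

```python
from typing import List, Optional, Tuple

# (label, PSNR, number of Gaussians in thousands), following the row order of Table 2.
Option = Tuple[str, float, int]

OPTIONS: List[Option] = [
    ("row 1", 27.75, 2189),
    ("row 2", 27.33, 1210),
    ("row 3", 26.67, 1049),
    ("row 4", 26.48, 856),
    ("row 5", 24.11, 443),
    ("row 6", 24.07, 414),
]


def best_option_within_budget(options: List[Option], budget_k: int) -> Optional[Option]:
    """Return the highest-PSNR option whose Gaussian count fits the budget.

    Returns None when no option fits, which mirrors an out-of-memory case.
    """
    feasible = [opt for opt in options if opt[2] <= budget_k]
    if not feasible:
        return None
    return max(feasible, key=lambda opt: opt[1])


# Hypothetical budgets, in thousands of Gaussians.
for budget in (5000, 1100, 900, 400):
    print(budget, best_option_within_budget(OPTIONS, budget))
```

A real deployment would replace the Gaussian-count proxy with measured memory usage per option on the target device.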

6.3. Max Level Rendering

We have demonstrated that FLoD provides various rendering options following the LoD concept. In this section, we show that single-level rendering with only the max level achieves rendering quality comparable to that of existing models. Table 1 compares the max level (level 5) of FLoD-3DGS with baselines across three real-world datasets.

FLoD-3DGS performs competitively on the Mip-NeRF360 and Tanks&Temples datasets, which are commonly used in baseline evaluations, and outperforms all baselines across all reconstruction metrics on the DL3DV-10K dataset. This demonstrates that high-quality rendering is among the rendering options FLoD provides for users to select. For qualitative comparisons, please refer to Appendix F.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Image Analysis: 3D Reconstruction Comparison

### Overview

The image presents a comparative visual analysis of three different 3D reconstruction methods: 3DGS, 3DGS without large G pruning, and FLOD-3DGS. Each method is demonstrated through two views: a photograph-like rendering of the scene and a point cloud representation of the reconstructed 3D model. The point cloud visualizations include a red bounding box in the first two images, highlighting a specific region of interest.

### Components/Axes