# Focus Agent: LLM-Powered Virtual Focus Group

**Authors**: Taiyu Zhang, Xuesong Zhang, Robbe Cools, Adalberto L. Simeone

> KU Leuven Naamsestraat 22 Leuven Belgium 3001

> KU Leuven Naamsestraat 22 Leuven Belgium 3001

> KU Leuven Leuven Belgium

(2024)

## Abstract

In the domain of Human-Computer Interaction, focus groups represent a widely utilised yet resource-intensive methodology, often demanding the expertise of skilled moderators and meticulous preparatory efforts. This study introduces the “Focus Agent,” a Large Language Model (LLM) powered framework that both simulates focus groups (for data collection) and acts as a moderator in focus groups with human participants. To assess the quality of the data derived from the Focus Agent, we ran five focus group sessions with a total of 23 human participants and also deployed the Focus Agent to simulate these discussions with AI participants. Quantitative analysis indicates that the Focus Agent can generate opinions similar to those of human participants. Furthermore, the research identifies areas for improvement when LLMs act as moderators in focus group discussions that include human participants.

**Keywords**: Human-computer Interaction, Intelligent Virtual Agent, Virtual Focus Group, Multi Agent Simulation

**Venue**: ACM International Conference on Intelligent Virtual Agents (IVA ’24), September 16–19, 2024, Glasgow, United Kingdom. DOI: 10.1145/3652988.3673918. ISBN: 979-8-4007-0625-7/24/09

**CCS Concepts**: Computing methodologies → Multi-agent planning; Human-centered computing → User studies; Human-centered computing → Virtual reality

## 1. Introduction

In the domain of qualitative research, focus groups have emerged as a widely adopted methodology and are extensively employed in both industrial and academic contexts (Kitzinger, 1994, 1995; Mazza, 2006), thanks to their structured group discussions aimed at gaining in-depth insights into specific issues. Within Human-Computer Interaction (HCI), researchers routinely employ focus groups as a vital tool in project planning, evaluation, and data collection endeavours (Mazza, 2006; Troshani et al., 2021; Selter et al., 2023; Stalmeijer et al., 2014). Particularly noteworthy is the growing prominence of virtual focus groups, especially in the post-COVID-19 era (Keen et al., 2022). This transition towards virtual focus groups can be attributed to the way they combine a methodologically sound approach with the potential to engage geographically dispersed and otherwise hard-to-access populations (Turney and Pocknee, 2005).

Organising a focus group presents two primary challenges: first, gathering many people at the same time is difficult, especially when researchers are interested in exploring the lived experiences of diverse or hard-to-reach groups (Brüggen and Willems, 2009; Gratton and O’Donnell, 2011; Wirtz et al., 2019); second, the success of a focus group relies on an experienced moderator with domain-specific expertise. A moderator lacking experience can disrupt the discussion flow or gather unproductive data (Nagle and Williams, 2013). These issues have sometimes hindered the adoption of focus groups in certain HCI research efforts (Rosenbaum et al., 2002).

<details>

<summary>extracted/5830562/FocusGroupSimulation.png Details</summary>

### Visual Description

## Process Diagram: Collaborative AI Moderation Cycle

### Overview

The image is a conceptual process diagram illustrating a cyclical, four-stage collaborative workflow involving an AI "Moderator" and a group of AI agents. The diagram uses cartoon-style robots, directional arrows, and text labels to depict a continuous loop of planning, questioning, discussion, and reflection.

### Components/Axes

The diagram is composed of two primary visual entities and four labeled process stages connected by arrows.

1. **Central Moderator (Top-Center):**

* A single, friendly-looking robot with a blue and white color scheme.

* It holds a pencil in its right hand and a clipboard with a checklist in its left hand.

* The label **"Moderator"** is placed to its right in blue text.

2. **Discussion Group (Bottom-Center):**

* A group of five distinct, colorful robots (green, orange, blue, teal, and red) seated around a brown table.

* Above the group are five speech bubbles in corresponding colors (yellow, orange, teal, blue, pink), each containing an ellipsis ("..."), indicating ongoing conversation.

* This group is encircled by a solid blue arrow labeled **"Discussion"** at the bottom.

3. **Process Flow Arrows & Labels:**

* **Planning:** A red, dashed, circular arrow originates from and returns to the Moderator, forming a loop above it. The label **"Planning"** is inside this loop in red text.

* **Questions:** A solid blue arrow curves downward from the Moderator to the Discussion Group. The label **"Questions"** is placed along the right side of this arrow in blue text.

* **Discussion:** A solid blue circular arrow encircles the Discussion Group from below. The label **"Discussion"** is inside this loop in blue text.

* **Reflection:** A red, dashed arrow curves upward from the Discussion Group back to the Moderator. The label **"Reflection"** is placed along the left side of this arrow in red text.

### Detailed Analysis

The diagram outlines a clear, sequential, and cyclical workflow:

1. **Stage 1 - Planning (Moderator-Centric):** The process begins with the Moderator in a self-contained planning phase, represented by the red dashed loop. This suggests internal preparation or strategy formulation.

2. **Stage 2 - Questions (Moderator to Group):** The Moderator initiates interaction with the group by sending "Questions," visualized by the solid blue arrow flowing downward.

3. **Stage 3 - Discussion (Group-Centric):** The group engages in a collaborative "Discussion," represented by the solid blue loop around them and the speech bubbles. This is the core interactive phase.

4. **Stage 4 - Reflection (Group to Moderator):** Insights or outcomes from the discussion are fed back to the Moderator via the "Reflection" stage, shown by the red dashed arrow curving upward.

5. **Cycle Repeats:** The Reflection arrow leads back to the Moderator, implying that the insights inform the next "Planning" phase, thus closing the loop and starting the cycle anew.

**Visual Coding:**

* **Color & Line Style:** Red dashed lines/arrows are associated with the Moderator's internal processes (Planning) and the feedback to it (Reflection). Solid blue lines/arrows are associated with external, interactive processes between the Moderator and the group (Questions, Discussion).

* **Spatial Layout:** The Moderator is positioned authoritatively at the top, initiating and receiving information. The Discussion Group is at the bottom, as the active, collaborative unit.

### Key Observations

* The process is explicitly **cyclical and iterative**, not linear.

* There is a clear **division of labor**: the Moderator plans, questions, and reflects, while the Group discusses.

* The **speech bubbles with ellipses** effectively convey ongoing, multi-party communication without specifying content.

* The **different robot designs** in the group may symbolize diversity of perspective or specialization among the collaborating agents.

### Interpretation

This diagram models a structured framework for AI-assisted or AI-driven collaborative problem-solving, deliberation, or learning. It suggests a system where a central, possibly more advanced or specialized, AI (the Moderator) orchestrates a process involving a panel of other AIs.

The data demonstrates a **Peircean investigative cycle**:

1. **Planning (Hypothesis Formation):** The Moderator formulates a plan or initial query.

2. **Questions (Abduction):** It poses targeted questions to elicit information.

3. **Discussion (Induction/Deduction):** The group collaboratively processes the questions, likely generating and testing ideas.

4. **Reflection (Evaluation & Learning):** The outcomes are analyzed, and lessons are fed back to refine the Moderator's understanding and future plans.

The separation of "Questions" (from Moderator) and "Discussion" (within Group) is notable. It implies the Moderator sets the agenda or scope, but the detailed exploration is decentralized among the group. The use of dashed red lines for Planning and Reflection may indicate these are internal, cognitive, or less "visible" processes compared to the solid blue, interactive exchanges.

This model could be applied to scenarios like multi-agent AI systems for research, complex decision-making support, or automated tutoring systems where a tutor AI guides a group of student AIs. The cyclical nature emphasizes continuous improvement and adaptation based on the outcomes of each collaborative round.

</details>

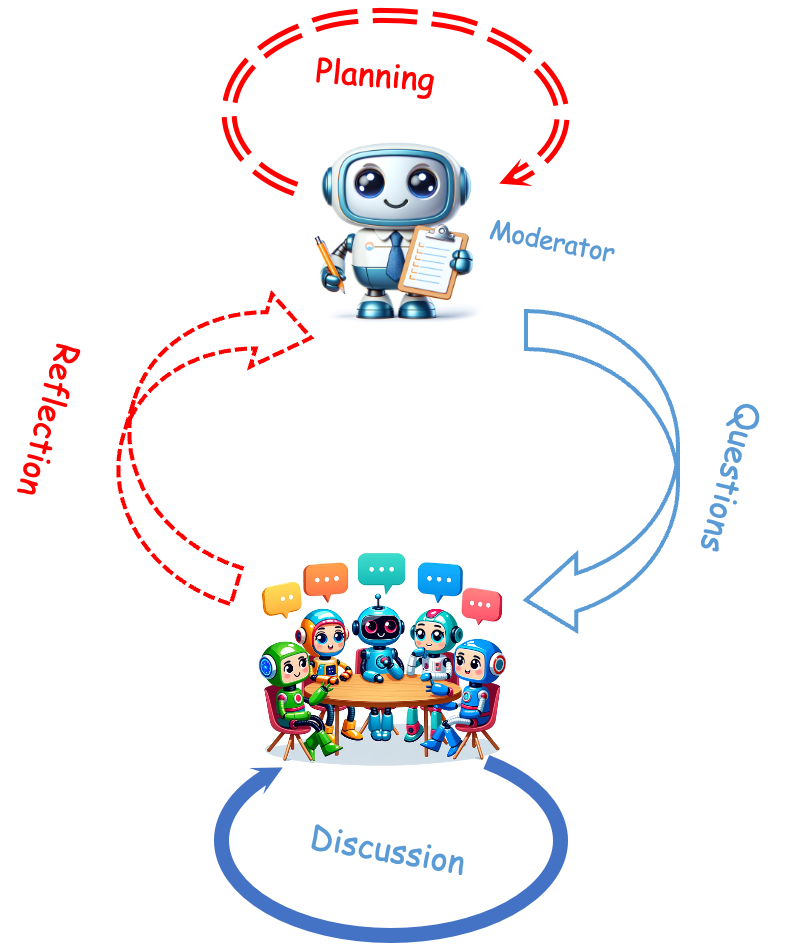

Figure 1. The AI moderator generates questions according to the discussion content and plan, while AI Participants discuss the prompt from the moderator.

The advent of Large Language Models (LLMs), such as ChatGPT, offers a potential solution. These models can communicate fluently in text, generate diverse content from various perspectives by drawing on the large volume of text available on the internet (Reynolds and McDonell, 2021; Brown et al., 2020), and demonstrate expertise across several fields, including social sciences, healthcare, and education (Koubaa, 2023; Sallam, 2023). Their capabilities extend to assisting with paper writing (Katar et al., 2023; Ciaccio, 2023), providing legal advice (Katz et al., 2024; Nay et al., 2024), and supporting medical inquiries (Haupt and Marks, 2023). Given these advancements, focus groups, a classic qualitative data collection method, stand to benefit from LLMs. Despite their potential, these models are prone to limitations such as misunderstanding human instructions and generating potentially biased or factually incorrect (hallucinated) content (Wang et al., 2023b). Additional framework design is therefore still necessary for multi-agent tasks, such as societal simulations (Park et al., 2023) or role-playing game simulations (Xu et al., 2023).

This work introduces the “Focus Agent”, an LLM-based moderator for focus groups that has two functions: 1) simulating discussions without human participants and collecting AI-generated opinions, and 2) guiding focus groups as a moderator (as shown in Figure 1), including groups with human participants. To address prevalent issues in multi-agent simulations, including repetitive opinions and the generation of irrelevant content, the “Focus Agent” employs a scheduled discussion format that divides the focus group into distinct stages, each corresponding to a specific topic. This method mirrors the strategies employed by experienced human moderators. Additionally, the framework incorporates reflection periods during the discussion to counteract memory loss during the simulation, ensuring a coherent and productive discussion flow. When moderating focus groups with human participants, a multi-person Speech-to-Text (S2T) and Text-to-Speech (T2S) integration enables the “Focus Agent” to interact with multiple users simultaneously.

Our work primarily explores the application of LLMs in simulating focus group discussions. Two main Research Questions (RQs) are as follows:

RQ 1: To what extent do the opinions generated by an LLM align with those of human participants in a focus group?

RQ 2: To what extent is an LLM effective in performing the duties of a moderator in focus group discussions?

To answer these RQs, we conducted a user study with 23 participants across five discussion groups. Participants engaged in a one-hour AI-moderated focus group discussion on the topic of “digital well-being”, followed by a 30-minute researcher-led session, which we refer to as a meta focus group, in which they shared their experiences, evaluated the AI moderator’s performance, and gave feedback. Meanwhile, the Focus Agent simulated the focus group discussions on the same topic with AI participants. Qualitative analysis, including thematic analysis and content analysis of the transcriptions, reveals that the AI simulation outputs the majority of opinions expressed by human participants. Additionally, we assessed the performance of the Focus Agent as a moderator, both in the focus group simulation with AI participants and in focus groups involving human participants. Based on our findings, the Focus Agent meets the essential criteria required of a focus group moderator. This includes progressively guiding discussions from general to more specific topics and maintaining an actively engaged atmosphere, drawing on the fundamental literacy expected of a focus group moderator (Stewart and Shamdasani, 2014). However, when tasked with moderating discussions involving human participants, the agent’s ability to interact with humans seems constrained, and it has not demonstrated sufficient understanding of human conversation. We identified several limitations of current LLMs in managing multi-person discussions and offer suggestions for integrating AI agents into focus groups more effectively. To promote further research, the code has been open-sourced at https://github.com/AriaXR/FocusAgent.

## 2. Related Work

This section discusses previous research directly related to our study. We divided it into three subsections: Focus Group Development, Multi-Agent Simulation and Multi-speaker speech recognition for Voice-based Conversational Agents.

### 2.1. Focus Group Development

The utilisation of focus groups, or group depth interviews, is a cornerstone method within the realms of advertising, marketing, and HCI research due to its effectiveness in gathering qualitative insights (Stewart and Shamdasani, 2014). The earliest focus groups were conducted through face-to-face conversations, which made their organisation complex, time-consuming, and costly because of participant reimbursement (Rosenbaum et al., 2002). The rise of online focus groups has increased the method's appeal, offering advantages such as the convenience of participation from any location at any time, and anonymity, which reduces participants’ apprehension of judgement (Daniels et al., 2019; Wilkerson et al., 2014; Stewart and Shamdasani, 2017). Still, researchers inviting many people to participate in online meetings at the same time often encounter difficulties, such as conflicting schedules, time differences, and poor communication caused by network delays. To further facilitate users’ participation in focus groups, some social media platforms provide asynchronous text-based focus groups (Gordon et al., 2021; Biedermann, 2018; Richard et al., 2021; Wenzek et al., 2019). However, because participants do not contribute simultaneously, this format reduces ‘spontaneity’ (Brüggen and Willems, 2009; Nicholas et al., 2010), which brings difficulties including: shorter answers with fewer word counts (Chen and Neo, 2019); uneven flow during the interactions due to their lag (Veloso, 2020); and more unfocused exchanges that do not always address the relevant research question (Brüggen and Willems, 2009).

The recent advancements in LLMs, which are trained on extensive internet text data, offer novel opportunities for conducting focus groups. As an innovative retrieval model, LLMs have the potential to streamline the data collection process (Zhu et al., 2023). Utilising LLMs to simulate focus groups presents a simpler and potentially more efficient alternative to engaging human participants, thereby opening new avenues for qualitative research.

### 2.2. Multi-Agent Simulation

Despite the capability of LLMs to process one-on-one question-answer formats, their deployment in long term dialogues and opinion generation, such as focus group discussions, reveals some limitations. These challenges include difficulties in understanding complex instructions, hallucination of agents, a limited token memory leading to loss of continuity, repetitive dialogues, and the generation of meaningless conversation in long-term interactions (OpenAI, 2023; Xu et al., 2024).

To help solve these issues, recent research has proposed new ways to organise how these AI agents think and respond, tailored to specific kinds of tasks (Talebirad and Nadiri, 2023; Park et al., 2023). The Chain-of-Thought (CoT) principle is pivotal, serving as the foundational idea behind them (Wang et al., 2023a). By decomposing complex problems into simpler parts, it lets multiple small agents each tackle one component and combine their efforts into a comprehensive solution. Additionally, the reflection mechanism plays a crucial role in addressing memory limitations and enhancing the authenticity of the generated content (Yan et al., 2024). This process involves storing detailed historical data as structured information, which can be referenced for more informed decision-making in future interactions. Moreover, to improve the consistency of agent performance across various contexts, some works have explored diverse prompting techniques tailored to specific roles (Shanahan et al., 2023).

In our work, we have built upon insights from previous research to address potential challenges that could arise during focus group discussions. Furthermore, we have developed a novel framework for conducting focus groups, primarily guided by an AI moderator. The AI moderator facilitates simulated focus group discussions and aids in coordinating focus groups that include human participants. To bridge the interaction gap with human participants, we incorporate a voice-based conversational agent into the moderator.

### 2.3. Multi-speaker speech recognition for Voice-based Conversational Agents

Unlike text-based chatbots, Voice-based Conversational Agents (VCAs) necessitate an extra technological layer for operation: they use a speech-to-text (S2T) process to interpret spoken inputs and a text-to-speech (T2S) system for generating spoken responses (Jokinen and McTear, 2022; Rough and Cowan, 2020). This integration allows VCAs to facilitate interactions in a more natural, conversational manner, bridging the gap between human users and digital assistants.

However, current S2T technologies, such as Google’s API or OpenAI’s Whisper, encounter difficulties in long-term group discussions such as focus groups (Radford et al., 2022). One challenge with using S2T technologies like Whisper for multi-participant discussions is the duration limit on voice recording inputs, which is considerably shorter than the typical length of conversations. A potential solution involves segmenting longer discussions into shorter fragments using Voice Activity Detection (VAD), which helps manage recordings more effectively (Bain et al., 2023). Another limitation is the lack of speaker differentiation, a critical feature for understanding who is speaking in group discussions. Some research has attempted to identify individual speakers by analysing the unique timbre of their voices (Medennikov et al., 2020; Horiguchi et al., 2021, 2020). However, these methods often fall short in accuracy due to the absence of prior information about the speakers. A more effective approach involves using a pre-recorded sample from each speaker, enabling a retrieval-based method to significantly improve performance by accurately distinguishing between speakers (Desplanques et al., 2020).

In our work, we improved Whisper, an open-source S2T model, with a retrieval-based technique, optimising it for multi-participant discussions such as focus groups.

## 3. Focus Agent Implementation

Our Focus Agent was designed to simulate focus group discussions and facilitate running sessions involving human participants. For the focus group simulation, we devised a multi-agent framework, complemented by a moderator to oversee the entire focus group process. This ensures that the contributions from AI participants are both relevant and valuable. Regarding interactions with actual human participants, we incorporated S2T and T2S systems into the AI moderator, enabling voice-based communication.

### 3.1. Focus Group Simulation

<details>

<summary>extracted/5830562/AISimulation.png Details</summary>

### Visual Description

## Diagram and Transcript: Focus Group Meeting Simulation

### Overview

The image displays a simulation interface for a focus group discussion. It consists of two main sections: a top section with a title, topic, timing information, and a circular diagram representing the "Meeting Room" with participants, and a bottom section containing a scrollable transcript of the conversation.

### Components/Axes

**Top Section:**

* **Main Title:** "Meeting Room" (centered at the top).

* **Focus Group Topic:** "Digital Wellbeing: Balancing Screen Time and Mental Health" (left-aligned, with the topic text in green).

* **Timing Information:**

* "Scheduled Time: 60 minutes"

* "Simulation Time: 11 minutes"

* **Meeting Room Diagram:** A large light-gray circle representing the room. Inside are smaller circles representing participants:

* **Center (Red Circle):** "Able (Moderator)"

* **Top (White Circle with black border):** "Noah"

* **Top-Right (Green Circle):** "Caleb"

* **Right (Green Circle):** "David"

* **Bottom (Green Circle):** "William"

* **Left (Green Circle):** "Amelia"

**Bottom Section (Transcript):**

* A scrollable text box containing a chronological transcript of the discussion.

* Each line begins with a speaker's name in bold, followed by their dialogue.

### Detailed Analysis / Content Details

**Transcript Content (Verbatim Extraction):**

* **Noah:** On average, I spend about 4-5 hours on screens each day. My online activities vary, but I'm particularly drawn to exploring alternative viewpoints and engaging in intellectual discussions on various platforms. While this has broadened my horizons and allowed me to connect with like-minded individuals, I've also noticed the potential for information overload and the negative impact it can have on my mental clarity. It's a constant reminder to balance my online pursuits with offline experiences that rejuvenate my mind and soul.

* **Able:** Now that we've discussed our overall perspectives on digital wellbeing, let's dive into our daily digital habits. How much time do you typically spend on screens each day, including smartphones, computers, tablets, and TVs? And what are the activities you engage in most frequently online, such as social media, gaming, or streaming? Please share your experiences and any positive or negative impacts you've noticed from your digital habits.

* **Noah:** Personally, I've found that incorporating regular digital detoxes into my routine has been incredibly beneficial. One weekend a month, I completely disconnect from all screens and immerse myself in nature, engaging in activities that ground me and allow me to reconnect with myself. This intentional break from technology helps me recharge and gain a fresh perspective, reminding me of the importance of being present in the physical world. It's a liberating experience that I would highly recommend to anyone seeking to strike a healthy balance between the digital realm and their mental wellbeing.

* **Able:** It's fascinating to hear about your experiences and perspectives on digital wellbeing. Building on our discussion so far, I'm curious to know if any of you have tried any specific techniques or tools to limit screen time and improve your mental health?

* **William:** Hello everyone, my name is William. Coming from Malaysia, I work as an Event Planner. Technology has revolutionized the event planning industry, making tasks more efficient and communication easier. However, I also believe in maintaining a healthy balance. What I find important is adapting and being flexible to embrace new technologies, while also being mindful of the impact it can have on our mental wellbeing. Let's explore strategies that allow us to harness the benefits of technology without compromising our overall well-being.

* **Able:** It's great to have such diverse perspectives in our group. Now, let's hear from William. Would you like to introduce yourself and share your relationship with technology?

* **Noah:** As a free-spirited and independent thinker, I've always valued personal freedom and individuality. When it comes to screen time and mental wellbeing, I believe it's essential to not only set boundaries but also question the societal norms around technology. Sometimes, going against the norm and following our own path can lead to a more fulfilling and balanced life. So, let's explore unconventional strategies and challenge the status quo in our pursuit of digital wellbeing.

* **Caleb:** I completely agree with David. In my experience, I've also found that setting specific time limits for certain activities can be helpful. For example, I allocate a fixed amount of time for professional work, social media, and leisure activities. This way, I can maintain a sense of control over my screen time and ensure that I'm not neglecting other important aspects of my life. It's all about finding a balance that works for us individually.

### Key Observations

1. **Participant Roles:** The diagram clearly distinguishes the moderator (Able, in red) from the participants (all others in green, except Noah who is in white with a black border).

2. **Discussion Flow:** The transcript shows a moderated discussion. Able (Moderator) poses guiding questions to steer the conversation from general perspectives to daily habits and specific techniques.

3. **Emerging Themes:** Participants discuss:

* Quantifying screen time (Noah: 4-5 hours/day).

* Positive aspects: intellectual growth, connection, efficiency (William).

* Negative aspects: information overload, impact on mental clarity (Noah).

* Strategies for balance: digital detoxes (Noah), setting time limits (Caleb), questioning norms (Noah), and mindful adaptation (William).

4. **Simulation State:** The simulation is 11 minutes into a 60-minute scheduled session.

### Interpretation

This image captures a snapshot of a qualitative research method—a simulated focus group—exploring the nuanced relationship between technology use and mental health. The data presented is not numerical but discursive.

* **What the data suggests:** The conversation reveals a shared concern about the mental health impacts of screen time among a diverse group (varying professions and nationalities, e.g., William from Malaysia). There is a consensus that passive consumption is problematic, while intentional, bounded use is beneficial. The strategies discussed are personal and behavioral (detoxes, time limits) rather than technological.

* **How elements relate:** The diagram visually reinforces the structure of the discussion: a central moderator facilitating dialogue among geographically or virtually dispersed participants. The transcript is the direct output of this structured interaction. The timing information contextualizes the depth of the discussion achieved so far.

* **Notable patterns/anomalies:** A notable pattern is the shift from Noah's initial abstract discussion of "alternative viewpoints" to very concrete, personal strategies like monthly nature retreats. An interesting anomaly in the diagram is Noah's uniquely styled circle (white with black border), which may indicate a special status (e.g., a key informant, a remote participant with a different connection type, or simply a visual emphasis) not explicitly explained in the visible text. The transcript also contains a potential continuity error where Able says "let's hear from William" after William has already spoken, which could be a simulation glitch or an editing artifact.

</details>

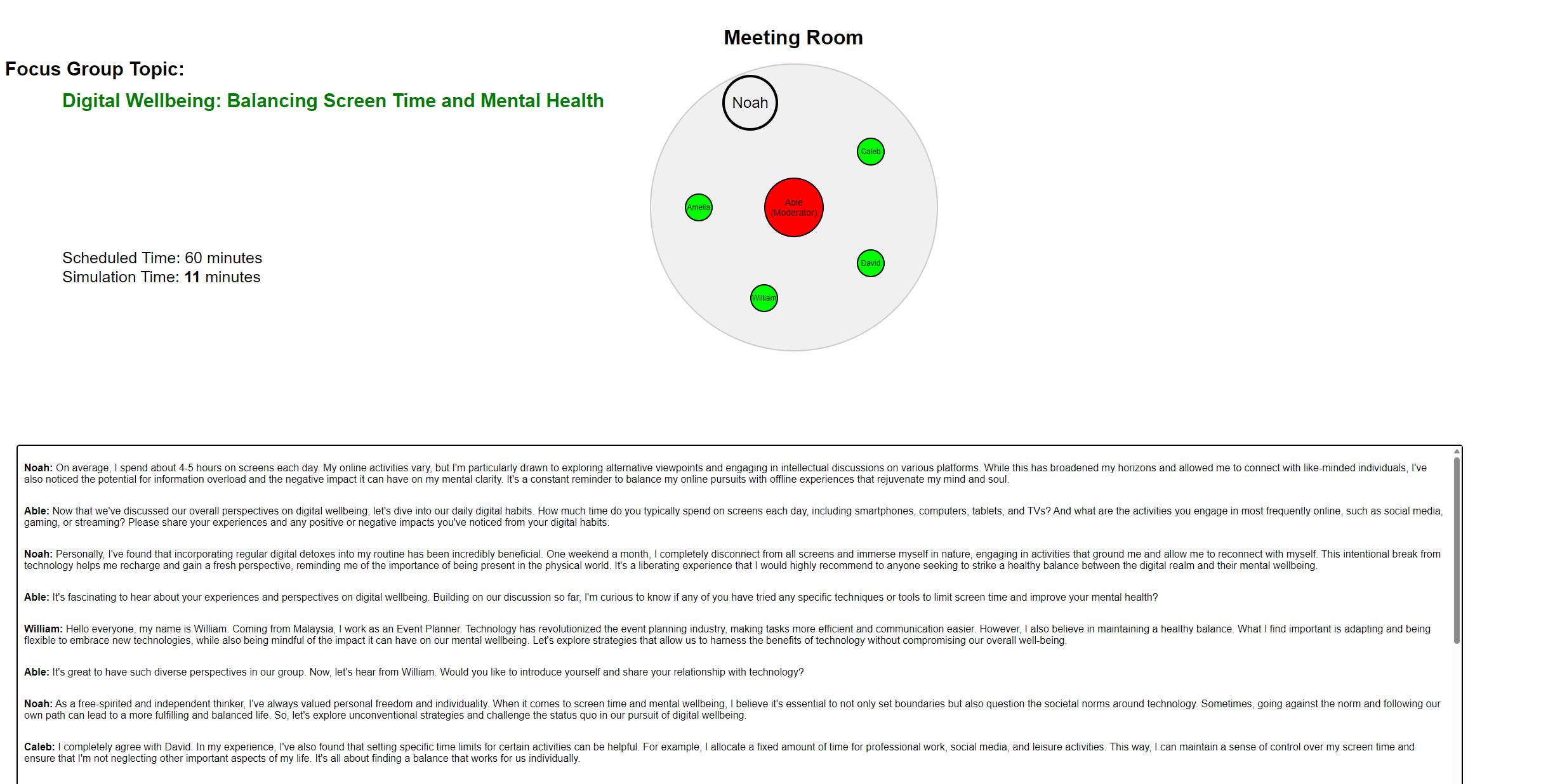

Figure 2. A web demo of the Focus Group simulation system.

According to the benchmark study conducted by OpenCompass (Contributors, 2023), the two most advanced Large Language Models (LLMs) available at the time of writing were ChatGPT and GPT4. In pilot testing, ChatGPT produced opinions similar to those of GPT4, so we chose ChatGPT over the stronger GPT4, whose cost is 20-fold higher. Compared to direct prompts, our algorithmic framework improves the realism and comprehensiveness of the AI simulation, as illustrated in Figure 2.

Initially, we attempted to simulate focus group discussions with a single prompt. However, in both content and length, the generated outcomes deviated significantly from our expectations. In response to these challenges, we introduce the framework of our Focus Agent, featuring an AI moderator to guide the discussion process. As shown in Figure 1, this AI moderator generates a plan that divides the whole discussion into multiple stages, aligned with the topic and aims of the focus group. Based on this plan, the AI moderator then facilitates a simulated focus group discussion with other AI entities as participants. Throughout the conversation, the moderator actively engages in reflection, responding to the participants' dialogue by introducing pertinent questions at the right time to foster further discussion. We explain this process in detail in the online appendix.

Within the simulated focus group, each participant represents an artificial intelligence entity. Experimenters are responsible for defining key parameters such as the topic, goals, overall duration, and specific characteristics of the participants, which include names, ages, occupations, nationalities, and personalities. In this setting, LLMs are tasked with understanding the context through assigned roles, typically categorised as system, user, and assistant. The system role involves attributing virtual personas to the LLMs, while the user and assistant roles are designed to aid in interpreting the context either from the viewpoint of the designated character or from that of others. To achieve this, we have developed a sequence of prompt designs, the details of which are provided in the online appendix.
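The role mapping described above can be illustrated with a minimal sketch. The actual prompts are in the online appendix and are not reproduced here; the `Persona` class, the `build_messages` helper, and the wording of the system message are hypothetical and only show one plausible way to turn a shared transcript into system, user, and assistant messages from a single participant's viewpoint.

```python
from dataclasses import dataclass


@dataclass
class Persona:
    # Fields follow the participant characteristics listed in the text.
    name: str
    age: int
    occupation: str
    nationality: str
    personality: str


def build_messages(persona, transcript):
    """Map a shared transcript onto chat roles from one persona's viewpoint.

    transcript is a list of (speaker_name, utterance) pairs.
    The system message wording is an assumption, not the authors' prompt.
    """
    system = (
        f"You are {persona.name}, a {persona.age}-year-old "
        f"{persona.occupation} from {persona.nationality}. "
        f"Personality: {persona.personality}. "
        "You are taking part in a focus group discussion."
    )
    messages = [{"role": "system", "content": system}]
    for speaker, utterance in transcript:
        if speaker == persona.name:
            # The persona's own earlier turns become assistant messages.
            messages.append({"role": "assistant", "content": utterance})
        else:
            # Turns by anyone else become user messages, prefixed with the speaker.
            messages.append({"role": "user", "content": f"{speaker}: {utterance}"})
    return messages
```

Because the same transcript is re-mapped for each participant, every agent sees its own past turns as "assistant" output and everyone else's turns as "user" input, which is one way to keep each persona's perspective consistent.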

To simulate the focus group discussion as realistically as possible, we designed algorithms for both the moderator and the participants. The role of the moderator within the focus group simulation system encompasses the critical responsibilities of guiding and orchestrating the discussion, which includes managing time allocation and steering the discourse topics. These responsibilities are reflected in the moderator’s thought chain, given in Algorithm 1. We added a reflection mechanism at the end of every stage that compresses the context of the previous discussion to avoid memory loss. Time allocation is managed based on text length, with a convention of one hundred words equating to approximately one minute within the simulation.

Algorithm 1 Moderator

Require: $List:[Stages],List:[TimeArrangements]$

Ensure: $Str:Response$

for all $stage,time_{stage}\leftarrow Stages,TimeArrangements$ do

$\quad Response\leftarrow LLM(NewStagePrompt)$

$\quad time_{cur}\leftarrow Estimate(Response)$

$\quad$ while $time_{cur}<time_{stage}$ do

$\qquad$ if Response from participants then

$\qquad\quad Response\leftarrow ParticipantResponse$

$\qquad$ else if any participant is inactive then

$\qquad\quad Response\leftarrow LLM(InactivateParticipantPrompt)$

$\qquad$ else

$\qquad\quad Response\leftarrow LLM(InsightsPrompt)$

$\qquad$ end if

$\qquad$ Update $time_{cur}$ according to $Estimate(Response)$

$\quad$ end while

end for
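As a rough illustration, Algorithm 1 can be sketched in Python together with the one-hundred-words-per-minute convention and the end-of-stage reflection described above. This is a sketch under stated assumptions, not the released implementation: the callables `llm`, `next_participant_response`, `find_inactive`, and `reflect` are hypothetical stand-ins for the LLM calls and participant logic, and `max_turns` is a safety guard added here that is not part of the algorithm.

```python
def estimate_minutes(text, words_per_minute=100):
    """Simulated speaking time: about one minute per hundred words."""
    return len(text.split()) / words_per_minute


def run_moderator(stages, time_arrangements, llm, next_participant_response,
                  find_inactive, reflect, max_turns=200):
    """Sketch of the moderator loop in Algorithm 1.

    llm(prompt_name, stage, context) -> str    hypothetical LLM wrapper
    next_participant_response() -> str | None  None when no participant speaks
    find_inactive() -> list                    inactive participants, may be empty
    reflect(stage_log) -> str                  compresses a stage into a summary
    """
    summaries = []   # compressed memory carried across stages
    transcript = []  # full log of everything said
    for stage, stage_minutes in zip(stages, time_arrangements):
        stage_log = [llm("NewStagePrompt", stage, summaries)]
        elapsed = estimate_minutes(stage_log[0])
        turns = 0
        while elapsed < stage_minutes and turns < max_turns:
            reply = next_participant_response()
            if reply is None:
                # Nobody spoke: prompt an inactive participant or add an insight.
                prompt = ("InactivateParticipantPrompt" if find_inactive()
                          else "InsightsPrompt")
                reply = llm(prompt, stage, stage_log)
            stage_log.append(reply)
            elapsed += estimate_minutes(reply)
            turns += 1
        # Reflection at the end of each stage keeps later context short.
        summaries.append(reflect(stage_log))
        transcript.extend(stage_log)
    return transcript, summaries
```

Passing the behaviours in as callables keeps the control flow testable without any model access; in a real system each callable would wrap an LLM request.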

Algorithm 2 outlines the systematic approach adopted by each AI participant throughout the discussion, with their level of engagement assessed by the LLM. The LLM dynamically evaluates the ongoing conversation and the contributions of other AI participants to gauge engagement levels. AI participants are free to contribute to the discussion uninterrupted unless they exceed the stipulated time allocation. When participants opt to disengage or offer no novel ideas, signalling a lull in the discourse, the moderator intervenes by posing new questions that draw on the preceding discussion. In parallel, the moderator encourages less active participants to take part in the discourse. Participant activity is monitored through the detection of speaking times within the ongoing stage. Participants who exhibit negligible speaking activity, or who speak less than a third as much as the most active participant, are categorised as inactive.

Algorithm 2 Participants

Require: $List:[Participants]$

Ensure: $Str:Response$

repeat

$engagements\leftarrow[]$

for all $participant$ in $Participants$ do

$engagements$ add $LLM(EngagementPrompt)$

end for

if $Max(engagements)\geq Threshold$ then

$speaker\leftarrow Participants[Index(Max(engagements))]$

$Response\leftarrow LLM(PartResponsePrompt(speaker))$

end if

until Finished
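The speaker selection step of Algorithm 2 and the inactivity rule described above can be sketched as follows. This is an illustrative sketch under stated assumptions: `engagement_of` is a hypothetical callable standing in for the LLM engagement score, the threshold value is not given in the text, and "negligible" speaking activity is simplified here to zero.

```python
from typing import Callable, Dict, List, Optional


def select_speaker(
    participants: List[str],
    engagement_of: Callable[[str], float],  # hypothetical LLM-based score
    threshold: float,                       # value not specified in the text
) -> Optional[str]:
    """Return the most engaged participant, or None if nobody reaches the threshold."""
    if not participants:
        return None
    engagements = [engagement_of(p) for p in participants]
    best = max(range(len(participants)), key=engagements.__getitem__)
    return participants[best] if engagements[best] >= threshold else None


def inactive_participants(speaking: Dict[str, float]) -> List[str]:
    """Participants who did not speak, or spoke less than a third of the maximum."""
    most = max(speaking.values(), default=0)
    # Assumption: "negligible" is treated as exactly zero speaking activity.
    return [p for p, s in speaking.items() if s == 0 or s * 3 < most]
```

For example, with speaking amounts of 300, 90, 0, and 100 words, the second and third participants would be flagged as inactive, since 90 is less than a third of 300.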

### 3.2. Voice-based Focus Agent with human participants

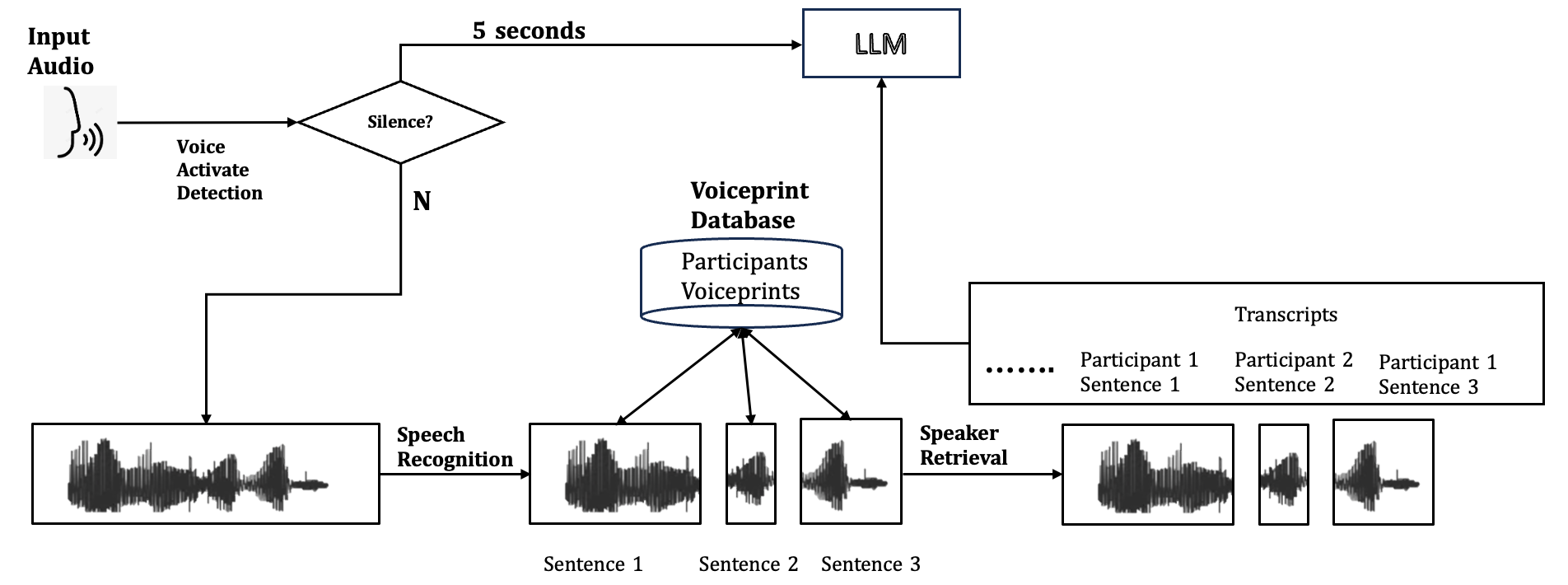

To make sure the AI moderator can communicate with human participants efficiently, S2T and T2S are necessary. APIs provided by various companies are often suitable for many scenarios. However, they fall short of our specific needs for facilitating multi-participant discussions in focus groups due to limitations related to the length of input recordings and the absence of speaker differentiation. To address these challenges, we have developed our own S2T system, as depicted in Figure 3. This system processes long discussion audio by segmenting it into shorter sentence-length audio pieces, leveraging VAD for segmentation. Subsequently, it identifies the most similar participant from a database of participant voices and transcribes the audio using the open-source S2T model Whisper by OpenAI (Radford et al., 2022). To ensure participants have ample opportunity to express their views without undue interruption, the AI moderator is programmed to intervene only after a silence of 5 seconds, thus differing from approaches that might actively disrupt the conversation flow.

<details>

<summary>extracted/5830562/S2T.png Details</summary>

### Visual Description

## [Diagram]: Multi-Speaker Speech Processing Pipeline Flowchart

### Overview

The image is a technical flowchart diagram illustrating a multi-stage pipeline for processing audio input from multiple speakers. The system performs voice activity detection, speech recognition, speaker identification using voiceprints, and transcript generation, ultimately feeding the processed information into a Large Language Model (LLM). The flow is primarily left-to-right with a central database component.

### Components/Axes

The diagram consists of several interconnected components, represented by boxes, a diamond, a cylinder, and waveform icons, linked by directional arrows. All text is in English.

**Key Components & Labels:**

1. **Input Audio:** Top-left. Represented by an icon of a head with sound waves.

2. **Voice Activate Detection:** Text label below the "Input Audio" icon.

3. **Decision Diamond:** Labeled "Silence?". It has two output paths:

* **"5 seconds"**: An arrow leading to the "LLM" box.

* **"N"** (for "No"): An arrow leading down to the main processing pipeline.

4. **Waveform Box (Initial):** A rectangular box containing a single, complex audio waveform.

5. **Speech Recognition:** Text label on an arrow leading from the initial waveform box to a set of segmented waveforms.

6. **Segmented Waveforms:** Three separate boxes, each containing a simpler waveform. They are labeled below as:

* "Sentence 1"

* "Sentence 2"

* "Sentence 3"

7. **Voiceprint Database:** A cylindrical database icon located centrally above the segmented waveforms. It is labeled:

* **Title:** "Voiceprint Database"

* **Contents:** "Participants Voiceprints"

8. **Speaker Retrieval:** Text label on an arrow leading from the segmented waveforms to a final set of waveforms.

9. **Final Waveforms:** Three boxes containing waveforms, visually similar to the segmented ones but now associated with speaker identity.

10. **Transcripts:** A large rectangular box on the right. It contains:

* **Title:** "Transcripts"

* **Content:** A list format showing:

* "......"

* "Participant 1 Sentence 1"

* "Participant 2 Sentence 2"

* "Participant 1 Sentence 3"

11. **LLM:** A rectangular box at the top-right. It receives two inputs: the "5 seconds" path from the "Silence?" check and an arrow from the "Transcripts" box.

### Detailed Analysis

**Process Flow:**

1. **Start:** The process begins with "Input Audio".

2. **Voice Activation Detection:** The audio is checked for voice activity.

3. **Silence Check:** A decision point ("Silence?") determines the next step.

* **If Silence is detected (Yes path, implied):** The system waits for "5 seconds" and then sends a signal to the "LLM". The "N" (No) path is taken if speech is present.

* **If Speech is present (N path):** The audio waveform is passed to the next stage.

4. **Speech Recognition:** The continuous audio stream is processed and segmented into individual sentences ("Sentence 1", "Sentence 2", "Sentence 3").

5. **Speaker Identification:** The segmented sentences are processed alongside the "Voiceprint Database" which stores "Participants Voiceprints". The "Speaker Retrieval" step uses this database to associate each sentence with a specific speaker.

6. **Transcript Generation:** The output is a structured transcript listing each sentence with its identified speaker (e.g., "Participant 1 Sentence 1").

7. **LLM Integration:** The final "Transcripts" are sent to the "LLM". The LLM also receives a direct signal from the initial silence detection path, suggesting it may be triggered or informed by periods of inactivity.

### Key Observations

* The diagram clearly separates the tasks of **what was said** (Speech Recognition) and **who said it** (Speaker Retrieval via Voiceprint Database).

* The "Silence?" check acts as a gatekeeper. It appears to have a dual function: triggering the LLM after a timeout (5 seconds of silence) and allowing active speech to enter the processing pipeline.

* The transcript example shows that the system can handle interleaved speech from multiple participants (Participant 1, then 2, then 1 again).

* The flowchart uses standard symbols: rectangles for processes/data, a diamond for a decision, a cylinder for a database, and arrows for flow direction.

### Interpretation

This diagram outlines an **end-to-end multi-speaker speech processing system** designed for conversational analysis. Its primary purpose is to convert raw, multi-speaker audio into a structured, speaker-attributed text transcript suitable for consumption by a Large Language Model.

The architecture suggests a focus on **meeting or conversation transcription**. The "Voiceprint Database" implies a pre-enrolled set of users, making this suitable for known-participant scenarios like team meetings, interviews, or call center analytics rather than anonymous crowd audio.

The direct link from the "Silence?" check to the LLM is a notable design choice. It could indicate that the LLM is used for **summarization or action generation** triggered by natural pauses in conversation, or that silence periods are themselves a meaningful signal for the LLM to process (e.g., to identify turn-taking or thinking time).

The system's value lies in its ability to **disentangle and structure conversational data**. By providing the LLM with not just the text but also the speaker identity for each utterance, it enables more sophisticated analysis, such as tracking individual contributions, analyzing dialogue dynamics, or generating speaker-specific summaries. The absence of numerical data or trends confirms this is a conceptual process flow diagram, not a data visualization.

</details>

Figure 3. Speech to Text system. We divided long audio recording into short pieces with voice activity detection. Then we transcribed the short audio pieces and recognised the speaker according to the voiceprints collected in advance from the participants.
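The speaker retrieval step and the silence trigger can be illustrated with a short sketch. The text does not specify how similarity between an audio segment and the stored voiceprints is computed; the sketch below assumes, purely for illustration, that each voiceprint and each segment is represented as an embedding vector and that cosine similarity is used. All function names are hypothetical.

```python
import math
from typing import Dict, Sequence

SILENCE_TRIGGER_SECONDS = 5.0  # the moderator intervenes after 5 seconds of silence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve_speaker(
    segment_embedding: Sequence[float],
    voiceprints: Dict[str, Sequence[float]],  # assumed: one embedding per participant
) -> str:
    """Return the participant whose voiceprint is most similar to the segment."""
    return max(
        voiceprints,
        key=lambda name: cosine_similarity(segment_embedding, voiceprints[name]),
    )


def moderator_should_speak(silence_seconds: float) -> bool:
    """True once the detected silence reaches the 5-second trigger."""
    return silence_seconds >= SILENCE_TRIGGER_SECONDS
```

In a full pipeline, each sentence-length segment produced by VAD would be passed to both `retrieve_speaker` and the transcription model, yielding one speaker-attributed transcript line per segment.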

To incorporate T2S functionality into our system, we used the Google TTS API (https://console.cloud.google.com/speech/text-to-speech). To accommodate participants interested in holding the discussion in an immersive environment, we set up the focus group environment within Mozilla Hubs (https://hubs.mozilla.com), a Virtual Reality (VR) platform.

## 4. Pilot Study

To enhance user experience, we conducted a pilot study with four volunteers before the main user study to assess the system’s stability and the AI moderator’s effectiveness. The pilot included a 50-minute focus group discussion and a 30-minute feedback session on the AI agent’s performance.

Feedback from the pilot highlighted areas for improvement, which were addressed to optimise the user study:

1. Repetition of Questions: Human participants may not always have insights for every query, unlike AI participants, who consistently generate new content. Observations showed that the AI moderator might repeat questions if there were no responses, leading to stagnation. We adjusted the AI moderator’s protocol to move on if no further responses were forthcoming.

2. Anonymity in Summaries: Volunteers were uncomfortable with being mentioned by name in summaries. We revised the process to ensure participant anonymity, enhancing comfort levels.

3. Conciseness of Questions: The long content generated by LLMs is not ideal for verbal interactions. We refined prompts to yield shorter responses.

Additionally, we assessed the S2T system’s accuracy to ensure comprehensive transcription and understanding by the agent. The Word Error Rate (WER) served as the evaluation metric. WER gauges speech-to-text conversion accuracy and is calculated as $WER=(S+D+I)/N$, where $S$ denotes substitutions, $D$ deletions, $I$ insertions, and $N$ the total number of words in the reference text. Professional human transcribers typically achieve a WER of 11.3% in open conversational settings (Xiong et al., 2016). Using this as a benchmark, we found our S2T system achieved a WER of 4.6%, demonstrating commendable accuracy. For speaker identification, our system achieved a micro F1 Score of 0.81 using the EN2001 audio segment from the AMI Corpus (Carletta, 2006), highlighting its capability in recognising speakers. The pilot study indicated the agent exhibited no significant misunderstandings of the conversations.
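As an illustration of the metric, the WER formula can be computed with a standard word-level edit distance, since the minimum number of substitutions, deletions, and insertions needed to turn the reference into the hypothesis equals $S+D+I$. The sketch below is a generic implementation, not the evaluation script used in the study, and it assumes simple whitespace tokenisation.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (S + D + I) / N, via word-level Levenshtein distance."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between ref[:i-1] and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i]  # turning ref[:i] into an empty hypothesis costs i deletions
        for j, h in enumerate(hyp, start=1):
            cur.append(min(
                prev[j] + 1,             # deletion of a reference word
                cur[j - 1] + 1,          # insertion of a hypothesis word
                prev[j - 1] + (r != h),  # substitution, or match at no cost
            ))
        prev = cur
    return prev[-1] / len(ref)
```

For the reference "the cat sat on the mat" and the hypothesis "the cat sit on mat", there is one substitution and one deletion over six reference words, giving a WER of $2/6\approx 0.33$.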

## 5. User Study

To investigate our research questions, we designed a user study that involved human participants engaging in focus group discussions on the theme of “digital well-being,” alongside simulations of focus groups centred around the same topic. The objective of these sessions was to study individual practices in managing screen time and their perceptions of its impact on mental health. The choice of “digital well-being” as the focal topic was strategic, given its universal relevance, which facilitated participant recruitment. Participants had the option to join the focus groups either via a VR headset or through their personal computers, aiming for device consistency within groups to streamline the discussion dynamics, as shown in Figure 4.

Demographics. Our recruitment efforts yielded 23 participants, of whom we assigned 11 to join with a VR headset and 12 to join with their own personal computer. The participant pool had an average age of 30 years ($min=18$, $max=60$, $SD=10$) and was distributed across five groups: three with VR headsets and two with desktop computers. Each group comprised 3 to 6 individuals, ensuring a diverse range of perspectives and experiences. The total number of groups was chosen based on previous work (Guest et al., 2017), which has demonstrated that five groups are optimal for focus group studies.

Procedure. The user study included three distinct components: a primary focus group involving human participants (hereafter referred to as “focus group”), a meta focus group where human participants convened to reflect on their experiences within the focus group (hereafter referred to as “meta focus group”), and a simulated focus group with AI entities as participants (hereafter referred to as “focus group simulation”).

First, participants submitted a one-minute self-introduction audio recording before the focus group. This recording collected demographic information (age, prior focus group experience, and daily screen usage) and provided a unique voiceprint for each participant. These data were used to initialise the AI participants in the simulation. We assessed English proficiency based on the accuracy of the S2T results from their recordings. Participants then accessed the designated meeting rooms in Mozilla Hubs. For VR groups, our team provided VR headsets (Quest series or Vive Pro), while the desktop groups used their own PCs. Once all participants were ready, the researcher started the system, and the AI moderator began moderating the focus group. An author observed and recorded essential information throughout the sessions. The sessions were scheduled for 60 minutes, with an actual average duration of 51 minutes ($SD=13$ minutes).

Following the conclusion of each focus group discussion, a meta focus group was conducted. This session lasted approximately 20 minutes and was facilitated by one of the authors. The meta focus group mainly covered two points: the experience of the focus group discussion and attitudes towards the AI moderator.

At the end, each participant received a 10€ gift card as compensation. This study was reviewed and approved by the university’s ethics review board.

<details>

<summary>extracted/5830562/FocusAgent.png Details</summary>

### Visual Description

## 3D Virtual Meeting Room Scene: First-Person Perspective

### Overview

This is a 3D rendered virtual environment depicting a modern conference room, viewed from a first-person perspective (the viewer is seated at the conference table). The scene includes stylized avatars, office furniture, and architectural details, with bright directional light casting distinct shadows across the carpeted floor, indicating a structured virtual collaboration space.

### Components/Elements

1. **Foreground & Seating**:

- A black office chair (back facing the viewer) with an avatar (curly reddish-brown hair, partial view of the back of their head/neck) seated at a long, light-brown wooden conference table with a darker wooden base.

- Additional black office chairs are positioned along the length of the conference table, extending into the midground.

2. **Midground Avatars & Objects**:

- A cartoon-style tiger avatar (orange with black stripes, white muzzle/paws) standing on the grey carpet, facing the viewer.

- A purple-and-white robot avatar positioned near a grey pillar on the left side of the room. A black text label with white text reading "Moderator" is displayed directly above this robot avatar.

- A small grey trash can is placed on the floor next to the robot avatar.

3. **Background & Architecture**:

- A brick wall with three large, bright (overexposed, no visible exterior view) rectangular windows. A low wooden cabinet runs along the base of the wall under the windows.

- Left side: An open doorway leading to another room, with a green illuminated "EXIT" sign (white text on green background) mounted above the door frame.

- Ceiling: Features recessed spotlights and a long, linear white light fixture running horizontally across the upper portion of the scene.

- Floor: Grey carpeted surface, with sharp, dark shadows cast by the windows, furniture, and avatars, indicating bright external light (likely sunlight) coming from the windows.

### Content Details

- **Text Elements**:

- "EXIT": Green illuminated sign with white text, located above the left doorway (top-left area of the image).

- "Moderator": Black rectangular label with white text, positioned directly above the purple robot avatar (left-midground area).

- **Spatial Layout**:

- The conference table occupies the right and central foreground/midground.

- The "Moderator" robot and EXIT door are in the left portion of the scene.

- The tiger avatar is centered in the midground, between the robot and the conference table.

- Windows and brick wall form the rear boundary of the room.

### Key Observations

- The scene blends realistic office furniture with whimsical, cartoonish avatars, indicating a customizable virtual meeting space.

- The "Moderator" label explicitly assigns a role to the robot avatar, suggesting a managed, structured virtual meeting environment.

- High-contrast shadows from the windows create a sense of depth and realism in the 3D rendered space.

### Interpretation

This image represents a virtual conference room, likely part of a metaverse or remote collaboration platform. The labeled "Moderator" avatar implies a formal meeting structure, where roles are designated to guide or manage the session. The mix of professional office design and playful avatars balances functionality with personalized virtual presence, making the space suitable for both work-focused and casual virtual gatherings. The bright, open layout and clear role labeling suggest an environment designed for organized, interactive remote collaboration.

</details>

Figure 4. Users participating in a focus group using Focus Agent in a VR environment.

## 6. Result Analysis

Following the methodological framework proposed by Gerling et al. (2020), we employed both thematic and content analyses to scrutinise the transcripts derived from the focus group and focus group simulation sessions. Additionally, thematic analysis was applied to the meta focus group discussions to collect participant feedback. To transcribe the data from the user study, we used the outputs from our S2T system, which two researchers subsequently corrected against the recorded audio. The final evaluation of our S2T system showed a WER of 2.5% and an F1 score of 0.9, indicating a level of performance sufficiently reliable for the purposes of our study. Due to recording issues, the data from the third focus group session was incomplete. The transcription for this group was reconstructed based on recollections and notes taken by an observer, and consequently, this data was not included in the accuracy assessment of the S2T system. The initial analysis was conducted by the lead author, with the findings subsequently reviewed and validated by the co-authors.

### 6.1. Focus Group

In the thematic analysis conducted on the transcriptions from both the human focus group and the focus group simulations, we elicited distinct themes related to our study topic. From the human focus group transcriptions, we identified four central themes. In contrast, the focus group simulations revealed five themes, incorporating an additional theme focused on the challenges associated with controlling screen time. This discrepancy mainly stemmed from differences in moderation performance between the two settings. In the focus group simulations, the AI moderator tended to guide AI participants to engage more deeply with the topics. While human participants in the focus group did broach additional topics, these were less related to the central theme of discussion, highlighting a contrast in how thematic expansion was handled across the two settings.

<details>

<summary>extracted/5830562/Content_Analysis.png Details</summary>

### Visual Description

## [Venn Diagram Set]: Screen Usage, Impact, Strategies, and Effects

### Overview

The image contains four Venn diagrams illustrating aspects of screen usage, its impact, balancing strategies, and post-balancing effects. Each diagram uses three color-coded circles: **green (AI)**, **red (Human)**, and **brown (Both)** (legend in the top-right corner).

### 1. Daily Screen Usage (Top-Left)

- **Title**: *Daily Screen Usage*

- **Components**: Venn diagram with three circles (AI: green, Human: red, Both: brown).

- **Human-only (red circle)**: Navigate, Take Photos, Shop (3 items).

- **AI-only (green circle)**: Volunteer (1 item).

- **Both (brown circle)**: Study, Read E-book, Work, Watch Videos, Keep Relationships, Play Games, Update News, Social Media (8 items).

- **Numbers**: Red (3), Green (1), Brown (8).

### 2. Impact of Long Screen Time (Top-Right)

- **Title**: *Impact of Long Screen Time*

- **Components**: Venn diagram with three circles (AI: green, Human: red, Both: brown).

- **Human-only (red circle)**: Addicted, Stressed, Overwhelmed, anxiety or depression due to negative content from social media, Increased Sense of Belonging, Insomnia (6 items; number = 1, note discrepancy).

- **AI-only (green circle)**: Insecured, Impaired focus, Reduced ability for face-to-face interaction, Limited creativity (4 items; number = 4).

- **Both (brown circle)**: FOMO, Eye Strain, Comparison, headache, isolated, Self-doubt, relaxed, Guilty (8 items; number = 13, note discrepancy).

- **Numbers**: Red (1), Green (4), Brown (13).

### 3. Strategies to Balance (Bottom-Left)

- **Title**: *Strategies to Balance*

- **Components**: Venn diagram with three circles (AI: green, Human: red, Both: brown).

- **Human-only (red circle)**: 0 items (red circle is empty; number = 0).

- **AI-only (green circle)**: Set ‘Screen-Free’ days, Practicing Mindfulness, Digital Minimalism, Technology-Free Zones, Create a support system with friends (5 items; number = 5).

- **Both (brown circle)**: Go For a Walk, Setting Boundaries, Digital Declutter, Engage in Offline Hobbies or Activities, Regular Exercise, Reminders to Monitor Screen Time, Setting Goals for Online Activities, Schedule a Fulfilling Life, Screen Blocking, Self-Reflection, Limit Screen Time with Apps (11 items; number = 11).

### 4. Effect After Balance (Bottom-Right)

- **Title**: *Effect After Balance*

- **Components**: Venn diagram with three circles (AI: green, Human: red, Both: brown).

- **Human-only (red circle)**: stressful (1 item; number = 1).

- **AI-only (green circle)**: presence, fulfilled, relaxed (3 items; number = 3).

- **Both (brown circle)**: anxiety, isolation (2 items; number = 2).

### Key Observations

- **Color Coding**: Green = AI, Red = Human, Brown = Both (consistent across all diagrams).

- **Overlap Trends**:

- *Daily Screen Usage*: More shared activities (8) than human-only (3) or AI-only (1).

- *Impact of Long Screen Time*: Most shared impacts (13) and AI-specific impacts (4), with few human-only (1).

- *Strategies to Balance*: No human-only strategies; more shared (11) than AI-only (5).

- *Effect After Balance*: Balanced effects (human: 1, AI: 3, shared: 2).

### Interpretation

- **Screen Usage**: Most daily screen activities were mentioned by both human and AI participants (e.g., social media, work), with only a few raised by one side alone.

- **Impact**: Most impacts of long screen time were mentioned by both sides (e.g., FOMO, eye strain), while some (e.g., impaired focus) were raised only by AI participants.

- **Balancing Strategies**: Every strategy was either shared or raised only by AI participants; human participants mentioned no strategy that the AI participants did not.

- **Post-Balancing Effects**: Few effects were coded, split across human-only (1), AI-only (3), and shared (2).

(Note: Discrepancies between item counts and numbers in some diagrams are noted, but all text is transcribed as visible.)

</details>

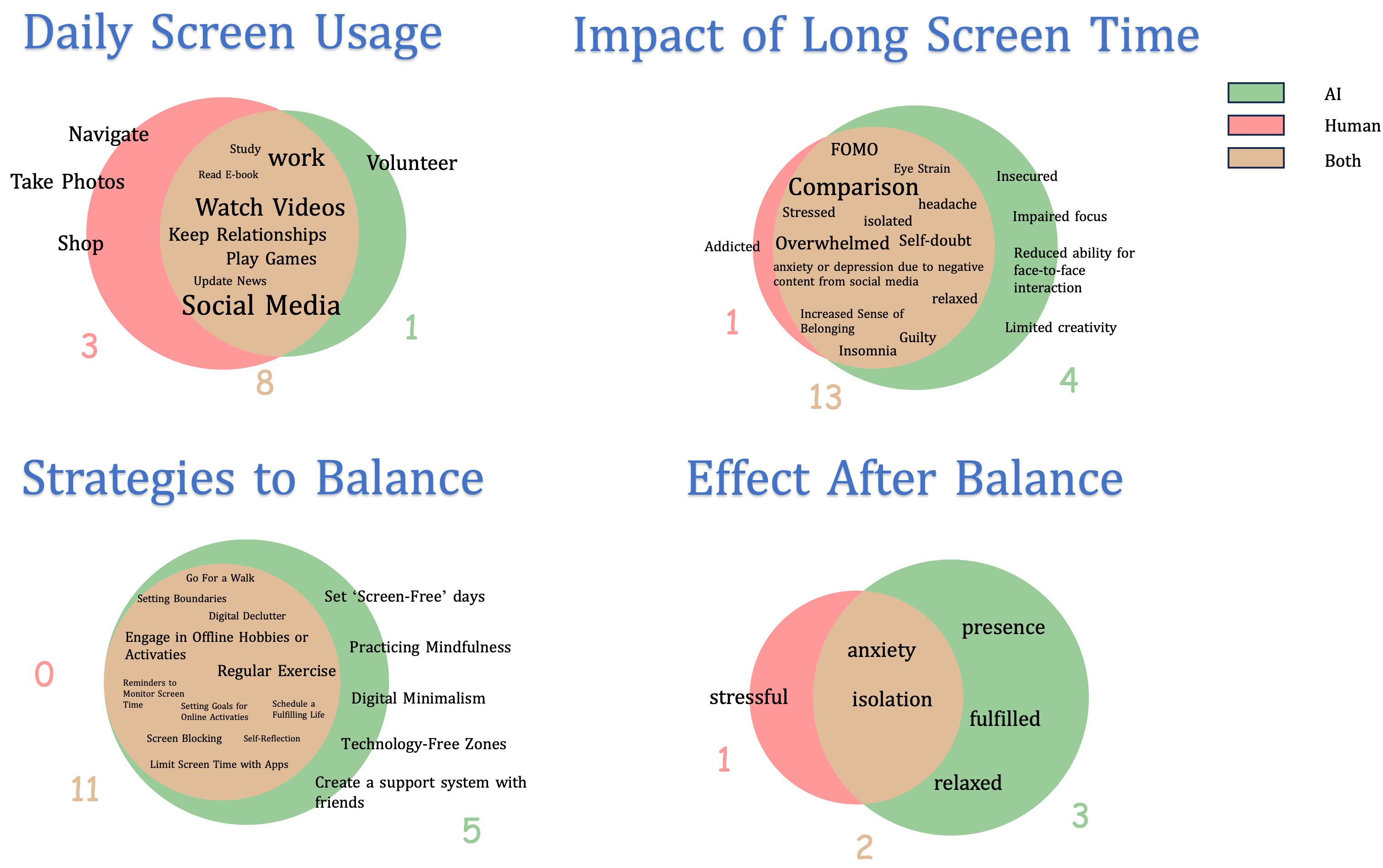

Figure 5. Content analysis by theme for both the focus group and the focus group simulation. Font size indicates the frequency of the codes.

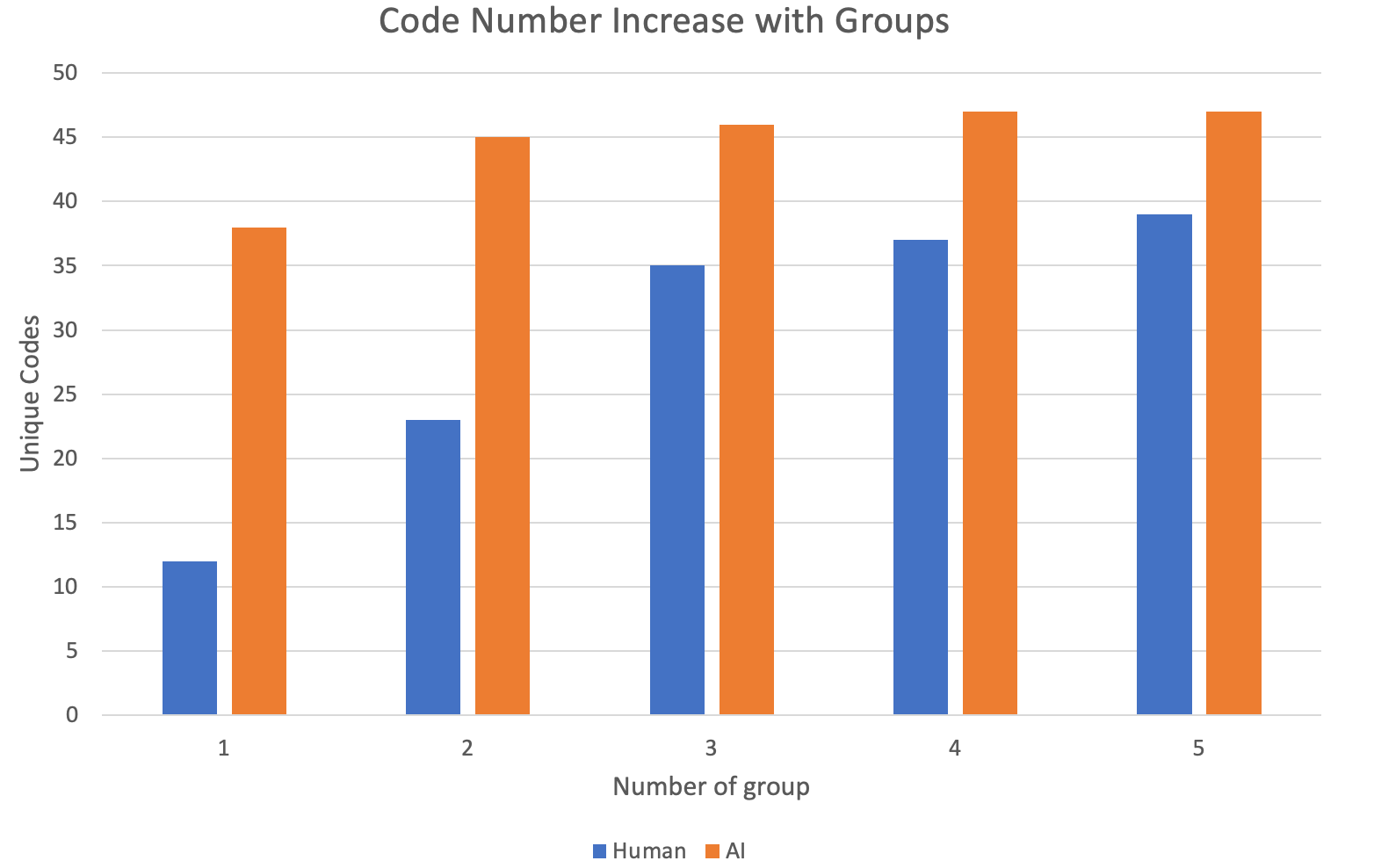

In our content analysis, we derived 39 unique codes from the focus group transcriptions and 47 from the focus group simulations, each reflecting various facets of the discussion topic. To compare the perspectives of AI and human participants, we illustrated the overlap and divergence of these codes in Venn diagrams, as shown in Figure 5. The analysis revealed that the majority of opinions expressed by human participants were also covered by AI participants. Interestingly, AI participants introduced several viewpoints not raised by their human counterparts, such as volunteering online as a form of daily screen usage, adding further dimensions to the discussion. Another observation is that AI participants tended to repeat similar opinions across different focus group sessions more than human participants did. The data in Figure 6 reveal a discrepancy in code generation between the simulations and the focus groups. Each iteration of the focus group collects a similar number of codes, but the simulations generate more repetitions of identical codes. After several iterations, the total number of unique codes converges, suggesting that the most common opinions have been collected. At this point, AI participants cannot generate new codes, whereas human participants continue to show potential for doing so. This observation underscores the tendency of AI to produce more common opinions, while human participants display greater variance and individuality in their perspectives.

<details>

<summary>extracted/5830562/CodeNumberChangeWithGroup.png Details</summary>

### Visual Description

## Bar Chart: Code Number Increase with Groups

### Overview

This is a vertical grouped bar chart titled "Code Number Increase with Groups." It compares the cumulative number of "Unique Codes" obtained from "Human" and "AI" participants as the number of focus groups included increases from 1 to 5. The chart shows that the AI count is higher than the Human count at every point, with both increasing as the number of groups increases.

### Components/Axes

* **Chart Title:** "Code Number Increase with Groups" (centered at the top).

* **Y-Axis (Vertical):**

* **Label:** "Unique Codes" (rotated 90 degrees).

* **Scale:** Linear scale from 0 to 50, with major gridlines and labels at intervals of 5 (0, 5, 10, 15, 20, 25, 30, 35, 40, 45, 50).

* **X-Axis (Horizontal):**

* **Label:** "Number of group".

* **Categories:** Five discrete categories labeled "1", "2", "3", "4", and "5".

* **Legend:** Located at the bottom center of the chart.

* **Blue Square:** Labeled "Human".

* **Orange Square:** Labeled "AI".

* **Data Series:** Two series of bars, grouped by the x-axis category.

* **Series 1 (Blue):** Represents "Human" data.

* **Series 2 (Orange):** Represents "AI" data.

### Detailed Analysis

The chart presents the following approximate data points for each group. Values are estimated based on the y-axis gridlines.

**Trend Verification:**

* **Human (Blue Bars):** The series shows a consistent, positive linear trend. The height of the blue bars increases steadily from Group 1 to Group 5.

* **AI (Orange Bars):** The series shows a strong positive trend that is steepest between Groups 1 and 2, then continues to increase at a slower rate, appearing to plateau between Groups 4 and 5.

**Data Points (Approximate Values):**

| Number of Group | Human (Unique Codes) | AI (Unique Codes) |
| :--- | :--- | :--- |
| **1** | ~12 | ~38 |
| **2** | ~23 | 45 |
| **3** | 35 | ~46 |
| **4** | ~37 | ~47 |
| **5** | ~39 | ~47 |

**Spatial Grounding & Component Isolation:**

* For each x-axis category (1-5), the blue "Human" bar is positioned to the left, and the orange "AI" bar is positioned to the right.

* The legend is clearly separated from the plot area at the bottom, ensuring no overlap with data.

* The y-axis gridlines extend horizontally across the plot area, aiding in value estimation.

### Key Observations

1. **Consistent AI Superiority:** The AI bar is taller than the Human bar in every single group, indicating a higher output of unique codes regardless of group size.

2. **Diverging Growth Rates:** While both series increase, the initial jump for AI from Group 1 (~38) to Group 2 (45) is significant (+7). The Human series shows a more uniform rate of increase across all groups.

3. **Plateau Effect:** The AI's output appears to reach a near-maximum or plateau around 47 unique codes by Group 4, with negligible increase to Group 5. The Human output, while still increasing, shows no sign of plateauing within the given range.

4. **Narrowing Gap (Proportionally):** The absolute difference between AI and Human output is largest at Group 1 (~26 codes) and smallest at Group 5 (~8 codes). However, the *ratio* of AI to Human output decreases from approximately 3.2:1 at Group 1 to about 1.2:1 at Group 5, suggesting the Human's output is catching up in relative terms.

### Interpretation

This chart compares how many unique codes accumulate from human focus groups versus AI focus group simulations. The "Number of group" axis represents the number of focus group sessions (rounds) included.

The data suggests that the AI simulations yield most of their unique codes in the first session (~38) and add few afterwards, plateauing at around 47. This pattern is consistent with a saturation of the most common opinions.

The human sessions start lower but add unique codes steadily with each additional session. The chart does not measure the *quality* of the codes, only their quantity, so comparing the value of AI and human output would require additional qualitative analysis.

</details>

Figure 6. Number of unique codes as a function of the number of focus group and focus group simulation rounds.
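The two comparisons used in the content analysis, the overlap of codes between human and AI participants (Figure 5) and the growth of unique codes over successive sessions (Figure 6), reduce to simple set operations. The sketch below is illustrative only: the function names are hypothetical and the example codes are toy data, not the study's coded transcripts.

```python
from typing import Dict, Iterable, List


def venn_counts(human_codes: Iterable[str], ai_codes: Iterable[str]) -> Dict[str, int]:
    """Count codes raised only by humans, only by AI, and by both."""
    human, ai = set(human_codes), set(ai_codes)
    return {
        "human_only": len(human - ai),
        "both": len(human & ai),
        "ai_only": len(ai - human),
    }


def cumulative_unique(codes_per_group: List[Iterable[str]]) -> List[int]:
    """Number of distinct codes seen after each successive group."""
    seen: set = set()
    totals: List[int] = []
    for codes in codes_per_group:
        seen.update(codes)
        totals.append(len(seen))
    return totals


# Toy data for illustration only.
print(venn_counts({"work", "shop", "navigate"}, {"work", "volunteer"}))
print(cumulative_unique([["a", "b"], ["b", "c"], ["c"]]))
```

A curve from `cumulative_unique` that stops rising corresponds to the convergence described above, where additional sessions repeat codes that have already been collected.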

### 6.2. Meta Focus Group

From the transcriptions of the meta focus groups, we coded 51 data points and derived three main themes: 1) User Experiences of the Virtual Focus Group; 2) User Attitudes towards the Focus Agent, which is further divided into two sub-themes: a) Positive Attitudes, and b) Negative Attitudes; and 3) Feedback on the Virtual Focus Group System.

#### Theme 1: User Experiences of Focus Group.

A majority of participants conveyed satisfaction with the focus group discussions, highlighting several reasons. For many, the topics discussed were directly relevant to their daily lives, adding value to their participation. As one participant explained, “I think it’s great to discuss these topics because that’s what we deal with every day.” (G5, P4). Furthermore, participants appreciated the diversity of perspectives present, valuing the opportunity to exchange experiences. An exemplifying statement reads, “I think you bring up so many great points. It’s very enriching to hear different perspectives.” (G5, P3). At the end of the discussions, the moderator inquired whether participants had any additional opinions on the topic that they had not had the opportunity to express during the session. All participants confirmed that they had no further insights to share, indicating that the discussions had comprehensively covered the topic from their perspectives.

#### Theme 2: Attitude to the Focus Agent.

The second theme encapsulates the users’ feedback and experiences with Focus Agent. This theme is divided into two categories: positive and negative, to provide a clearer understanding of the users’ attitudes towards Focus Agent.

SubTheme 1: Positive attitude. A prevalent sentiment among participants was their appreciation for the guidance offered by the Focus Agent, acknowledging its efficacy in steering the discussions. As an example, one participant remarked, “The moderator kind of did a good job by posing questions that allowed us to express our thoughts and encouraged other participants to share their sentiments on the topic.” (G4, P1). Furthermore, three participants specifically commended the Focus Agent’s clear articulation in English, while an additional participant admired the agent’s friendly demeanour.

SubTheme 2: Negative attitude. The prevailing sentiment among participants leaned towards dissatisfaction with the Focus Agent’s performance. A recurring concern revolved around the repetition of questions, as one participant articulated, “I found it somewhat confusing at times since the moderator repeated the questions several times, which we had already discussed” (G1, P2). Another noteworthy issue was the perceived lack of intellectual acumen exhibited by the Focus Agent during discussions. For example, one participant expressed, “I don’t believe it possesses true intelligence, nor does it seem capable of comprehending all the information we’ve conveyed, let alone guiding us into more profound and coherent discussions” (G5, P2). Finally, some biases were identified in the discussion, notably in steering participants towards articulating the adverse effects associated with prolonged screen use: “When discussing the impact of long screen using time, I felt that the AI moderator tried to demonise the technology.” (G1, P1).

#### Theme 3: Feedback on virtual focus group system.

The third theme encapsulates certain system issues encountered during the use of Focus Agent. A concern raised by some participants was the insufficient time allocated for responding to questions, resulting in interruptions by the agent. As articulated by one participant, “ There were instances where we were attempting to respond to a question or had just commenced our response when the moderator interrupted us and swiftly moved on to the next question ” (G3, P3). Furthermore, two participants recommended the incorporation of subtitles to augment their understanding of the questions posed.

## 7. Discussion

In this discussion, we address the RQs through our findings and expand on the underlying reasons informed by our analysis.

### 7.1. RQ1: To what extent do the opinions generated by an LLM align with those of human participants in focus groups?

The content analysis of the focus group discussions revealed that opinions generated by AI tend to encompass a wide array of human perspectives within the designated topic. Nevertheless, these AI-generated opinions often reflected more common viewpoints, demonstrating a lack of the uniqueness commonly found in human responses. A possible explanation is that, unlike human participants, who dynamically build upon previous contributions and enrich discussions with personal experiences, AI responses largely appeared as potentially plausible experiences that might happen to people.

This observation suggests that LLMs could serve as a tool for researchers aiming to streamline the focus group process with human participants. By deploying a Focus Agent, researchers could initially gather a broad spectrum of common opinions on a specific topic, thereby setting a foundational understanding of the expected participant responses. This could further assist in refining the focus group’s questions and topics, making the discussion more targeted and efficient. Therefore, fewer human focus group sessions may be required to confirm the AI-generated content and identify novel insights from participants, optimising the research process while still uncovering the unique, creative perspectives that only human participants can provide. However, human participants are still necessary for current focus groups to make sure the data is reliable.

### 7.2. RQ2: To what extent is an LLM effective in performing the duties of a moderator in focus group discussions?

During the focus group simulations, LLMs demonstrated sufficient knowledge to facilitate the group and engage with AI participants effectively. Feedback from the meta focus group indicated that human participants acknowledged the AI moderator’s capability to support the discussion, albeit perceiving it more as a tool than as a sentient interlocutor. This perception was attributed to the AI moderator’s lack of apparent intelligence in interactions, such as overlooking participant requests, posing repetitive questions, or failing to grasp the hints behind conversations.

The primary challenges are rooted in the inherent limitations of LLMs in navigating multi-person dialogues. For LLMs to respond appropriately, they must comprehend inputs from human participants, reason through the conversation’s context, and formulate accurate responses. While their reasoning capacity seemed adequate during simulations, issues predominantly arose in the understanding and response-generation phases. Existing research has begun to address LLMs’ comprehension issues when assisting humans, yet their effectiveness in multi-participant discussions remains constrained (Dong et al., 2023). From an understanding standpoint, discussions among human participants often involve colloquial language and incomplete sentences, differing markedly from the more structured exchanges with AI, which made it difficult for the AI moderator to recognise whether questions had been answered. Consequently, the AI moderator might repeatedly address the same points rather than progressing the discussion. Additionally, challenges in generating aligned and unbiased content persist within LLM outputs (Wang et al., 2023b; Taubenfeld et al., 2024). Although not the primary focus of our study, participants noted issues such as deviation from guidelines or biases in the discussion (see subsection 6.2), underscoring the LLMs’ limitations in mimicking human conversational norms accurately.

Given these observations, we advise against deploying the Focus Agent as the sole moderator in focus group discussions due to the current inadequacies in human-AI communication. Instead, the AI-generated summaries and questions could be utilised by human moderators to streamline the discussion flow and address specific topics. For more in-depth discussions, the presence of a human moderator is essential to ensure a positive user experience and foster the generation of innovative insights, highlighting the complementary roles of AI and human moderators in enhancing the efficacy of focus groups.

### 7.3. Improvement of Focus Agent

Based on the process of the user study and the insights gathered during the meta focus group, several areas for enhancing the structure and functionality of the Focus Agent have been identified:

1. Design of the thought chain: Although the thought chain in our work was sufficient to facilitate the focus group discussion, facilitating deeper topics would require a more complex design, such as the tree of thoughts (Yao et al., 2023).

2. Subtitles for the Focus Agent’s speech: Participants suggested that the Focus Agent’s speech might be too long for them to comprehend a question in its entirety. Subtitles would help in this case.

3. Time schedule of the Focus Agent: The time allocated to each question was a pre-determined duration. However, time should be allocated according to the participants’ engagement in the current discussion. The Focus Agent should therefore allocate time dynamically, based on the flow of the discussion.
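
The dynamic time allocation suggested in item 3 could be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the Focus Agent’s implementation: the class name `QuestionTimer`, its fields, and all threshold values are hypothetical. The idea is to keep the pre-determined duration as a baseline, extend it while participants are still speaking, and enforce a hard cap so that the session still finishes.

```python
from dataclasses import dataclass


@dataclass
class QuestionTimer:
    """Hypothetical per-question timer; all default values are illustrative."""

    base_seconds: float = 120.0    # pre-determined baseline budget per question
    max_seconds: float = 300.0     # hard cap, so one question cannot run forever
    silence_seconds: float = 8.0   # pause length treated as "discussion has settled"

    def should_move_on(self, elapsed: float, since_last_utterance: float) -> bool:
        """Decide whether the moderator may proceed to the next question.

        elapsed: seconds since the current question was asked.
        since_last_utterance: seconds since any participant last spoke.
        """
        # Always stop at the hard cap, even if people are still talking.
        if elapsed >= self.max_seconds:
            return True
        # Never cut the question short before the baseline budget is used.
        if elapsed < self.base_seconds:
            return False
        # Past the baseline: wait until participants have paused long enough,
        # which avoids interrupting someone who has just started to answer.
        return since_last_utterance >= self.silence_seconds
```

With these assumed defaults, a question whose baseline has expired is extended for as long as someone has spoken within the last eight seconds, up to five minutes in total. A real system would need to derive `since_last_utterance` from its speech pipeline, and the thresholds would have to be tuned empirically.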

## 8. Limitations and Future Work

Our investigation underscores several limitations that pave the way for future research directions.

First, the current iteration of the Focus Agent is limited to text-based interactions, differing significantly from the multi-modal nature of human moderation. Human moderators use non-verbal cues and physical context to tailor their approach, which text-only agents cannot replicate. This limitation is particularly challenging in settings involving tactile or visual elements. However, advancements in sophisticated LLMs like GPT-4, which understand multi-modal data (OpenAI, 2023), could evolve the Focus Agent into a more versatile, multi-modal platform that closely simulates human discussions.

Second, our study centres on LLM application within focus groups, overlooking broader quantitative and qualitative research methodologies. Prior studies have used LLMs to generate reviews or comments (Liang et al., 2023; Chuang et al., 2023), noting that LLM-generated opinions may lack human creativity (Bender et al., 2021). Ensuring the validity of these insights requires extensive empirical validation.

Lastly, our analysis highlights the difficulties LLMs face in multi-participant discussions. While there is some research on one-on-one dialogues and all-AI discussions (Abbasiantaeb et al., 2024), studies on mixed human-AI communication in group settings are scarce. The ability of LLMs to engage in multi-human conversations is crucial for advancing human-AI interaction. Future research should explore how human participants adjust their communication strategies in the presence of AI, aiming to optimise these interactions for better collaborative outcomes.

## 9. Conclusion

Our research introduced the Focus Agent, a novel AI system developed to simulate focus group discussions through the dialogue of AI agents. This system aims to gather insights akin to those derived from traditional focus groups, leveraging the capabilities of AI participants to generate discussions on designated topics. To assess the degree of alignment between the viewpoints expressed by AI and human participants, we ran a user study that employed an AI moderator to facilitate discussions among human participants. Our analysis showed that the Focus Agent generates opinions that are similar to those of human participants. Additionally, we studied human participants’ perceptions of the AI moderator and found that while the AI could fulfil the functional role of a moderator, there remained some differences in the interaction experience compared to engagement with human moderators. We examined the underlying reasons and identified specific areas within the large language model’s capabilities that require further enhancement.

## References

- Abbasiantaeb et al. (2024) Zahra Abbasiantaeb, Yifei Yuan, Evangelos Kanoulas, and Mohammad Aliannejadi. 2024. Let the llms talk: Simulating human-to-human conversational qa via zero-shot llm-to-llm interactions. In Proceedings of the 17th ACM International Conference on Web Search and Data Mining. 8–17.

- Bain et al. (2023) Max Bain, Jaesung Huh, Tengda Han, and Andrew Zisserman. 2023. WhisperX: Time-Accurate Speech Transcription of Long-Form Audio. INTERSPEECH 2023 (2023).

- Bender et al. (2021) Emily M Bender, Timnit Gebru, Angelina McMillan-Major, and Shmargaret Shmitchell. 2021. On the dangers of stochastic parrots: Can language models be too big?. In Proceedings of the 2021 ACM conference on fairness, accountability, and transparency. 610–623.

- Biedermann (2018) Narelle Biedermann. 2018. The use of Facebook for virtual asynchronous focus groups in qualitative research. Contemporary nurse 54, 1 (2018), 26–34.

- Brown et al. (2020) Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. 2020. Language models are few-shot learners. Advances in neural information processing systems 33 (2020), 1877–1901.

- Brüggen and Willems (2009) Elisabeth Brüggen and Pieter Willems. 2009. A critical comparison of offline focus groups, online focus groups and e-Delphi. International Journal of Market Research 51, 3 (2009), 1–15.

- Carletta (2006) Jean Carletta. 2006. Announcing the AMI meeting corpus. The ELRA Newsletter 11, 1 (2006), 3–5.

- Chen and Neo (2019) Julienne Chen and Pearlyn Neo. 2019. Texting the waters: An assessment of focus groups conducted via the WhatsApp smartphone messaging application. Methodological Innovations 12, 3 (2019), 2059799119884276.

- Chuang et al. (2023) Yun-Shiuan Chuang, Agam Goyal, Nikunj Harlalka, Siddharth Suresh, Robert Hawkins, Sijia Yang, Dhavan Shah, Junjie Hu, and Timothy T Rogers. 2023. Simulating Opinion Dynamics with Networks of LLM-based Agents. arXiv preprint arXiv:2311.09618 (2023).

- Ciaccio (2023) Edward J Ciaccio. 2023. Use of artificial intelligence in scientific paper writing. , 101253 pages.

- Contributors (2023) OpenCompass Contributors. 2023. OpenCompass: A Universal Evaluation Platform for Foundation Models. https://github.com/InternLM/OpenCompass.

- Daniels et al. (2019) Nicola Daniels, Patricia Gillen, Karen Casson, and Iseult Wilson. 2019. STEER: Factors to consider when designing online focus groups using audiovisual technology in health research. International Journal of Qualitative Methods 18 (2019), 1609406919885786.

- Desplanques et al. (2020) Brecht Desplanques, Jenthe Thienpondt, and Kris Demuynck. 2020. Ecapa-tdnn: Emphasized channel attention, propagation and aggregation in tdnn based speaker verification. arXiv preprint arXiv:2005.07143 (2020).

- Dong et al. (2023) Xin Luna Dong, Seungwhan Moon, Yifan Ethan Xu, Kshitiz Malik, and Zhou Yu. 2023. Towards next-generation intelligent assistants leveraging llm techniques. In Proceedings of the 29th ACM SIGKDD Conference on Knowledge Discovery and Data Mining. 5792–5793.

- Gerling et al. (2020) Kathrin Gerling, Patrick Dickinson, Kieran Hicks, Liam Mason, Adalberto L Simeone, and Katta Spiel. 2020. Virtual reality games for people using wheelchairs. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems. 1–11.