# Proof of Thought : Neurosymbolic Program Synthesis allows Robust and Interpretable Reasoning

**Authors**:

- Debargha Ganguly (Case Western Reserve University)

- &Srinivasan Iyengar (Microsoft Corporation)

- Vipin Chaudhary (Case Western Reserve University)

- &Shivkumar Kalyanaraman (Microsoft Corporation)

> Most work conducted at Microsoft Research, with partial support from NSF Awards 2117439 and 2112606.

## Abstract

Large Language Models (LLMs) have revolutionized natural language processing, yet they struggle with inconsistent reasoning, particularly in novel domains and complex logical sequences. This research introduces Proof of Thought, a framework that enhances the reliability and transparency of LLM outputs. Our approach bridges LLM-generated ideas with formal logic verification, employing a custom interpreter to convert LLM outputs into First Order Logic constructs for theorem prover scrutiny. Central to our method is an intermediary JSON-based Domain-Specific Language, which by design balances precise logical structures with intuitive human concepts. This hybrid representation enables both rigorous validation and accessible human comprehension of LLM reasoning processes. Key contributions include a robust type system with sort management for enhanced logical integrity, explicit representation of rules for clear distinction between factual and inferential knowledge, and a flexible architecture that allows for easy extension to various domain-specific applications. We demonstrate Proof of Thought ’s effectiveness through benchmarking on StrategyQA and a novel multimodal reasoning task, showing improved performance in open-ended scenarios. By providing verifiable and interpretable results, our technique addresses critical needs for AI system accountability and sets a foundation for human-in-the-loop oversight in high-stakes domains.

## 1 Introduction

Large language models (LLMs) have revolutionized the field of AI and enabled a wide range of applications. However, as these models are increasingly deployed to process unstructured data and perform complex tasks autonomously, their inconsistent reasoning capabilities remain a critical limitation (Marcus, 2020). This inconsistency manifests in variable performance across out-of-domain reasoning, negation understanding, and extended logical chains, suggesting a reliance on superficial heuristics (Bender et al., 2021). The implications are far-reaching, particularly in high-stakes domains where reliable and transparent decision-making is crucial (Rudin, 2019). Errors or biases in these contexts could have severe consequences, underscoring the urgent need for more dependable and interpretable AI systems.

Recent advances in prompt engineering have shown promise in addressing these challenges. Techniques such as Chain-of-Thought (CoT) (Wei et al., 2022), Self-Consistency with CoT (CoT-SC) (Wang et al., 2022), Tree of Thoughts (ToT) (Yao et al., 2024), and Graph of Thoughts (GoT) (Besta et al., 2024) have improved problem-solving capabilities. In the multimodal domain, techniques like Set-of-Marks (SoM) prompting have emerged (Yang et al., 2023). Despite advancements in performance figures, the mechanisms behind these improvements remain opaque, causing blind spots in real-world usage as failure modes are not well understood. The fundamental issue lies in the lack of interpretability and guaranteed verifiability in LLM reasoning processes (Danilevsky et al., 2020). This opacity hinders our ability to trust and validate LLM outputs, a critical concern in scenarios requiring explainable AI or human-in-the-loop oversight.

Real-world applications, especially in health and safety domains, face additional challenges in training and deploying models due to the scarcity of high-quality annotated data. This is particularly evident in sectors like energy, healthcare, and manufacturing, where ML has significant potential for operational efficiency (Jordan and Mitchell, 2015). Complex tasks, such as identifying OSHA violations in cluttered visual data, illustrate the difficulties in long-tail, low-data scenarios. These scenarios are characterized by diverse and unpredictable phenomena, where specialized model training is impractical (Zhang et al., 2021). With robust reasoning, LLMs’ wide knowledge and commonsense abilities can potentially operate in these low-data paradigms, opening up more applications.

Contributions: To address these challenges, we :

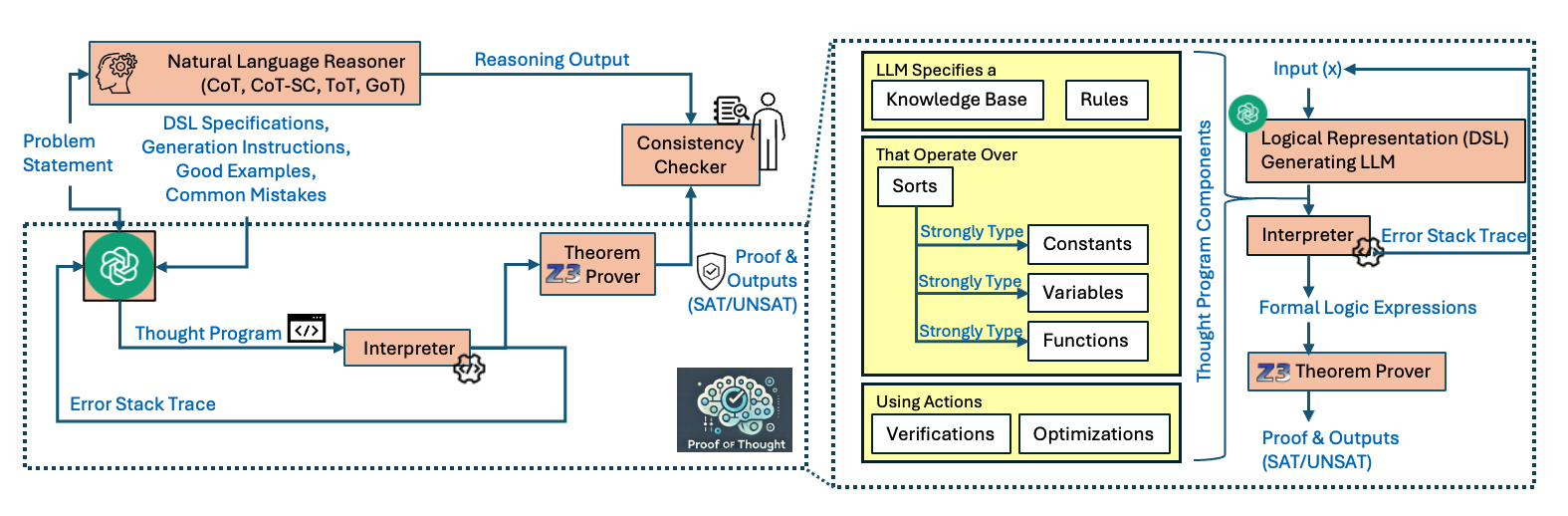

1. Propose Proof of Thought (PoT), a novel approach that leverages the in-context learning and code generation capabilities of LLMs while incorporating their inherent knowledge and spatial understanding. Our system employs a custom interpreter that parses "LLM-Thoughts" (represented as DSL code snippets) to generate First Order Logic programs, which are then verified by a Z3 theorem prover.

1. Introduce an intermediate JSON-based DSL (neurosymbolic representation) and the associated interpreter that operates on human-understandable abstract concepts using intuitive, near-English language constructs (see Fig 1). This strikes a balance between the precision required for logical definitions with formal first-order logic proofs and accessibility for non-expert users.

1. Benchmark performance over StrategyQA (a boolean multi-hop implicit NLP reasoning benchmark) and a novel multimodal real-world long-tail reasoning problem (Reddit-OSHA Benchmark). This shows that PoT works on complex, and a wide variety of tasks.

Proof of Thought enhances the capabilities of LLMs in complex, open-ended scenarios by providing reasoning guarantees, conditioned on correctness of knowledge base and rule specifications, therefore furnishing a framework for human-in-the-loop oversight and verification.

## 2 Related Work

Early Integration Attempts laid the groundwork for neuro-symbolic AI. Works like EBL-ANN (Towell and Shavlik, 1994), KBANN (Towell et al., 1990), and C-ILP (d’Avila Garcez et al., 2009) incorporated propositional formulae into neural networks. While pioneering, these approaches struggled with scalability and expressiveness. Knowledge Graph integration advanced the field further. Methods proposed by Chen et al. (2020a) and Kampffmeyer et al. (2019) showed promise in leveraging structured knowledge for improved reasoning. However, maintaining interpretability and explicit rule incorporation remained challenging. Differentiable Logic Programming frameworks like DeepProbLog (Manhaeve et al., 2018) and Scallop (Li et al., 2023) demonstrated the potential of integrating probabilistic logic programming with neural networks. These approaches enable end-to-end training of neuro-symbolic systems but face limitations in handling complex reasoning tasks and diverse logical formalisms. Gupta and Kembhavi (2023) showed how compositional visual reasoning can be done by program generation without training.

Large Language Models have opened new avenues for neuro-symbolic reasoning. Techniques such as Chain-of-Thought (Wei et al., 2022), Tree-of-Thoughts (Yao et al., 2024), and Graph-of-Thoughts (Besta et al., 2024) have shown impressive results in complex reasoning tasks. However, these methods often produce inconsistent intermediate steps and struggle with out-of-domain reasoning. Interpretable Concept-based Models, as explored by Kim et al. (2018) and Chen et al. (2020b), aim to increase trust in deep learning models. However, state-of-the-art approaches often rely on high-dimensional concept embeddings that lack clear semantic meaning, limiting their interpretability.

Advanced Neuro-symbolic Frameworks like the Deep Concept Reasoner (DCR) (Barbiero et al., 2023) and GENOME (Chen et al., 2023) have made progress in combining neural and symbolic components. DCR constructs syntactic rule structures from concept embeddings, while GENOME introduces a modular approach for visual reasoning tasks. Despite these advancements, challenges in scalability and generalization to diverse task domains persist. A critical issue is reasoning shortcuts, where models use unintended concept semantics. To address this, Marconato et al. (2024) introduced BEARS, improving model calibration and informative annotation acquisition. These developments showcase the potential for imparting robustness and reliability via neuro-symbolic AI systems.

LLM Code Generation and Low-Resource Translation demonstrate the remarkable adaptability of large language models. In code generation, LLMs like Codex (Chen et al., 2021) and AlphaCode (Li et al., 2022) show proficiency across multiple programming languages, often outperforming specialized models. These systems excel at understanding context, generating syntactically correct code, and even solving complex algorithmic problems. Paralleling this, research in low-resource language translation (Zoph et al., 2016; Tanzer et al., 2023) reveals LLMs’ ability to rapidly adapt to new languages with minimal examples. Techniques like few-shot learning and cross-lingual transfer enable models to leverage knowledge from high-resource languages to improve performance on low-resource ones. Both domains highlight LLMs’ capacity for quick adaptation and generalization, suggesting potential for enhanced neuro-symbolic systems that can efficiently learn and apply formal reasoning across diverse domains with limited training data.

## 3 Proof of Thought : A Neurosymbolic Reasoning Framework

In this section, we introduce Proof of Thought (PoT), a novel framework that bridges NLP with formal logical reasoning to enhance the interpretability and verifiability of LLM outputs. We first outline the foundational concepts and notations that underpin our approach.

<details>

<summary>PoT_Framework.png Details</summary>

### Visual Description

\n

## Diagram: Reasoning Process with LLM and Theorem Prover

### Overview

The image depicts a diagram illustrating a reasoning process involving a Natural Language Reasoner (LLM) and a Theorem Prover. The diagram is split into two main sections, visually separated by a dashed vertical line. The left side shows the LLM's reasoning loop, while the right side details the Thought Program Components and their interaction with the Theorem Prover. The overall process appears to be a system for generating, verifying, and optimizing logical statements using a combination of natural language processing and formal reasoning.

### Components/Axes

The diagram consists of several key components:

* **Natural Language Reasoner:** Includes labels for (CoT, CoT-SC, ToT, GoT) and input of "Problem Statement" and output of "Reasoning Output".

* **Thought Program:** A central component within the LLM loop, with an associated "Error Stack Trace".

* **Interpreter:** Connects the Thought Program to the Theorem Prover, also generating an "Error Stack Trace".

* **Consistency Checker:** Receives Reasoning Output and feeds into the Theorem Prover.

* **Theorem Prover:** Outputs "Proof & Outputs (SAT/UNSAT)".

* **LLM Specifies a Knowledge Base:** A box containing "Rules" and "Sorts".

* **Sorts:** Contains "Constants", "Variables", and "Functions", all labeled as "Strongly Type".

* **Using Actions:** Contains "Verifications" and "Optimizations".

* **Input (x):** Input to the Generating LLM.

* **Generating LLM:** Converts Input (x) to "Logical Representation (DSL)".

* **Formal Logic Expressions:** Output of the Interpreter.

The diagram uses arrows to indicate the flow of information between these components. There are no explicit axes or scales.

### Detailed Analysis or Content Details

The diagram illustrates a cyclical process.

1. **Problem Statement to Reasoning Output:** A "Problem Statement" is fed into the "Natural Language Reasoner" (which utilizes methods like CoT, CoT-SC, ToT, and GoT), resulting in "Reasoning Output".

2. **Reasoning Output to Theorem Prover:** The "Reasoning Output" is passed to a "Consistency Checker" and then to the "Theorem Prover".

3. **Theorem Prover Output:** The "Theorem Prover" generates "Proof & Outputs (SAT/UNSAT)".

4. **Thought Program Loop:** The "Thought Program" receives input and generates an "Error Stack Trace". The "Interpreter" processes the "Thought Program" and outputs "Formal Logic Expressions", also generating an "Error Stack Trace".

5. **LLM Knowledge Base:** The LLM specifies a "Knowledge Base" that operates over "Sorts" (Constants, Variables, Functions – all "Strongly Type").

6. **Thought Program Components:** The right side of the diagram details the "Thought Program Components" starting with an "Input (x)" to a "Generating LLM" which creates a "Logical Representation (DSL)". This is then interpreted and sent to the "Theorem Prover".

7. **Actions:** The "Theorem Prover" is used for "Verifications" and "Optimizations".

### Key Observations

* The diagram emphasizes the interplay between natural language reasoning and formal logical verification.

* The presence of "Error Stack Trace" outputs from both the "Interpreter" and the "Thought Program" suggests a debugging or iterative refinement process.

* The "SAT/UNSAT" output from the "Theorem Prover" indicates a boolean satisfiability check, a common technique in formal verification.

* The "Strongly Type" label on the "Sorts" suggests a focus on type safety within the knowledge base.

* The diagram is highly conceptual and doesn't contain specific numerical data.

### Interpretation

This diagram represents a system designed to enhance the reliability and correctness of reasoning performed by Large Language Models (LLMs). The LLM generates reasoning steps (the "Thought Program"), which are then translated into formal logic and verified by a "Theorem Prover". This verification step provides a means to detect inconsistencies or errors in the LLM's reasoning. The iterative loop, indicated by the "Error Stack Trace", suggests a process of refinement where the LLM adjusts its reasoning based on the feedback from the Theorem Prover.

The use of a "Consistency Checker" before the Theorem Prover suggests a preliminary check for logical coherence. The "Knowledge Base" specified by the LLM provides the foundational rules and types for the formal reasoning process. The inclusion of "Verifications" and "Optimizations" indicates that the system is not only concerned with correctness but also with efficiency and performance.

The diagram highlights a trend towards integrating formal methods with LLMs to overcome the limitations of purely statistical or heuristic approaches to reasoning. The system aims to combine the flexibility and expressiveness of natural language with the rigor and reliability of formal logic. The diagram does not provide any quantitative data on the performance or effectiveness of this system, but it clearly outlines the key components and their interactions.

</details>

Figure 1: Architecture of the Proof of Thought (PoT) framework, illustrating the integration of natural language reasoning with formal logical verification.

### 3.1 Background Concepts & Notation from LLM Reasoning Literature

Let $p_{\theta}$ denote a pre-trained language model (LM) with parameters $\theta$ . In a conversation, user messages (prompts) and LM replies (thoughts) are exchanged. We use lowercase letters $x,y,z,...$ to indicate LM thoughts, where the definition of a "thought" is use-case specific (e.g., a paragraph, document, or code block). The simplest Prompting Approach approach is Input-Output (IO) where an LM directly transforms an input sequence $x$ into output $y$ without intermediate steps. Chain-of-Thought (CoT) introduces intermediate thoughts $a_{1},a_{2},...$ between $x$ and $y$ , enhancing performance on tasks like mathematical reasoning.

Multiple CoTs generalizes CoT by generating $k$ independent chains and selecting the best output based on a prescribed scoring metric. This approach, introduced as Self-Consistency with CoT (CoT-SC), allows exploration of different reasoning paths. Tree of Thoughts (ToT) further enhances CoT-SC by modeling reasoning as a tree of thoughts. Each node represents a partial solution. For a given node, $k$ new nodes are generated, then evaluated using an LM or human scores. The tree expansion is guided by search algorithms like BFS or DFS. Finally, Graph of Thoughts (GoT) extends ToT by allowing more complex connections between thoughts, forming a directed graph structure. In GoT, thoughts can have multiple predecessors and successors, enabling more flexible reasoning paths and the combination of ideas from different branches. This approach allows for cyclic reasoning patterns and can capture more intricate problem-solving strategies.

### 3.2 Framework Overview

"All our knowledge begins with the senses, proceeds then to the understanding, and ends with reason. There is nothing higher than reason."

- Immanuel Kant, in Critique of Pure Reason

Proof of Thought models the LLM’s reasoning process as a structured transformation from natural language input to formal logical expressions that can be verified using theorem proving techniques. The framework consists of three primary components :

- Logical Representation Generator $\mathcal{G}$ : Maps input $x$ to a logical representation $\mathcal{L}$ using $p_{\theta}$ .

- Interpreter $\mathcal{I}$ : Parses $\mathcal{L}$ and constructs formal logical expressions $\phi$ in first-order logic (FOL).

- Theorem Prover $\mathcal{T}$ : Verifies the validity of $\phi$ and provides proofs or counterexamples.

The PoT reasoning process can thus be formalized as:

$$

\mathcal{L}=\mathcal{G}(x;p_{\theta});\phi=\mathcal{I}(\mathcal{L});\text{Verification Result}=\mathcal{T}(\phi)

$$

Guarantees: By using theorem proving, we rely on the principle of logical consequence, where conclusions are guaranteed to be true if the premises and inference rules, depicted in the logical representations (both of which are human readable, allowing interpretability and verifiability). These logical representations are the ultimate arbiter of truth and validity. The guarantees are what distinguishes PoT from other forms of reasoning. Inductive reasoning (drawing general conclusions from specific observations) can be useful, but doesn’t offer the same level of certainty. In contrast, guarantees with theorem proving allow us to establish precise arguments with mathematical truths with absolute confidence, elevating the reasoning process beyond mere conjecture or intuition.

Our Proof of Thought framework architecture (shown in Fig 1) introduces a JSON-based Domain Specific Language (DSL) along with the associated interpreter. Next, we discuss the design choices for these in detail.

### 3.3 Design of the JSON-Based Domain-Specific Language (DSL)

The core of our logical reasoning system is built upon a carefully designed JSON-based Domain-Specific Language (DSL) that serves as an intermediate representation for translating reasoning tasks into formal logic. This DSL was created with the primary challenge of being general-purpose enough to accommodate a wide range of reasoning problems. The choice of JSON as the underlying format was deliberate, leveraging its widespread use, human readability, and ease of parsing. This design decision ensures that the logical representations are both machine-parseable and accessible to users who may not have expertise in formal logic or programming. Moreover, JSON’s compatibility with structured outputs from AI service providers like OpenAI and Google, which offer guaranteed outputs matching particular schemas, makes it an ideal choice for our system that doesn’t rely on retraining models. The key components of the DSL are:

<details>

<summary>examplecomponents1.png Details</summary>

### Visual Description

## JSON Data Structure: Task Assignment Configuration

### Overview

The image presents a structured JSON (JavaScript Object Notation) data configuration, likely for a task assignment or scheduling problem. The data is organized into several key sections: `sorts`, `variables`, `functions`, `knowledge_base`, `constants`, `rules`, and `optimization`. Each section defines specific elements used in a constraint satisfaction or optimization process. The layout is a grid of colored blocks, each representing a JSON object or array.

### Components/Axes

The image doesn't have traditional axes like a chart. Instead, it's a visual representation of a JSON structure. The main components are:

* **`sorts`**: Defines the types of objects involved (Person, Equipment, Task, Location, Time).

* **`variables`**: Defines the variables used in the problem, their types, and their associated sorts.

* **`functions`**: Defines functions that map between sorts.

* **`knowledge_base`**: Contains initial facts or assertions about the problem.

* **`constants`**: Defines specific instances of sorts (e.g., specific people, equipment, locations).

* **`rules`**: Defines logical rules that govern the assignment process.

* **`optimization`**: Defines the objective function to be optimized.

* **`verifications`**: Defines constraints that must be satisfied.

The blocks are arranged in a 3x3 grid.

### Detailed Analysis or Content Details

Here's a detailed transcription of the JSON data, section by section:

**1. `sorts` (Top-Left, Green)**

```json

[

{"name": "Person", "type": "DeclareSort"},

{"name": "Equipment", "type": "DeclareSort"},

{"name": "Task", "type": "DeclareSort"},

{"name": "Location", "type": "DeclareSort"},

{"name": "Time", "type": "RealSort"}

]

```

**2. `variables` (Top-Center, Yellow)**

```json

[

{"name": "p", "sort": "Person"},

{"name": "e", "sort": "Equipment"},

{"name": "t", "sort": "Task"},

{"name": "l", "sort": "Location"},

{"name": "time", "sort": "Time"}

]

```

**3. `verifications` (Top-Right, Light Blue)**

```json

{

"name": "All Tasks Assigned",

"forall": [

{"name": "t", "sort": "Task"}

],

"constraint": "Exists([{\"name\": \"p\", \"sort\": \"Person\"}], assigned_to(t, p))"

},

{

"name": "No Overqualified Assignments",

"forall": [

{"name": "p", "sort": "Person"},

{"name": "t", "sort": "Task"}

],

"implies": {

"antecedent": "assigned_to(t, p)",

"consequent": "skill_level(p) <= 7"

}

}

```

**4. `functions` (Middle-Left, Green)**

```json

[

{"name": "assigned_to", "domain": ["Task", "Person"], "range": "BoolSort"},

{"name": "location_of", "domain": ["Person"], "range": "Location"},

{"name": "start_time", "domain": ["Task"], "range": "Time"},

{"name": "duration", "domain": ["Task"], "range": "Time"},

{"name": "skill_level", "domain": ["Person"], "range": "IntSort"}

]

```

**5. `knowledge_base` (Middle-Center, Yellow)**

```json

{

"assigned_to(task1, alice)",

"location_of(alice) == warehouse_A",

"start_time(task1) == 9.0",

"duration(task1) == 2.0",

"skill_level(alice, task1) == 5"

}

```

**6. `optimization` (Middle-Right, Light Blue)**

```json

{

"variables": [

{"name": "y", "sort": "Person"},

{"name": "x", "sort": "Task"}

],

"constraints": [

"ForAll(x, y), implies(assigned_to(x, y), skill_level(y, x) <= 3)"

],

"cost": "skill_level(y, x)"

}

```

**7. `constants` (Bottom-Left, Green)**

```json

{

"persons": {

"sort": "Person",

"members": ["alice", "bob", "charlie"]

},

"equipment": {

"sort": "Equipment",

"members": ["forklift", "crane", "truck"]

},

"locations": {

"sort": "Location",

"members": ["warehouse_A", "warehouse_B", "yard"]

},

"tasks": {

"sort": "Task",

"members": ["task1", "task2", "task3"]

}

}

```

**8. `rules` (Bottom-Center, Yellow)**

```json

{

"name": "Task Assignment Rule",

"forall": [

{"name": "p", "sort": "Person"},

{"name": "t", "sort": "Task"}

],

"implies": {

"antecedent": "skill_level(p, t) >= 5",

"consequent": "assigned_to(t, p)"

}

}

```

**9. `verifications` (Bottom-Right, Light Blue)**

```json

[

{"name": "Time Constraint", "constraint": "time >= 0"}

]

```

### Key Observations

* The data defines a task assignment problem with constraints on skill levels and task assignments.

* The `knowledge_base` provides an initial assignment (task1 to alice) and associated data.

* The `optimization` section suggests an attempt to minimize the skill level required for a task.

* The `rules` section defines a simple rule: if a person has a skill level of 5 or greater for a task, they are assigned to it.

* The `verifications` section ensures all tasks are assigned and that no one is overqualified.

### Interpretation

This JSON configuration represents a simplified task assignment problem. The goal is to assign tasks to people based on their skill levels, while adhering to certain constraints. The `optimization` section suggests a desire to assign tasks to individuals with the *lowest* necessary skill level, potentially to maximize resource utilization or minimize training costs. The `rules` and `verifications` sections define the logic and constraints that govern the assignment process. The `knowledge_base` provides a starting point for the assignment, and the overall structure suggests a system designed to find an optimal or feasible solution to the task assignment problem. The use of `DeclareSort` indicates a formal specification language is being used, likely for automated reasoning or constraint solving. The data is well-structured and appears to be designed for programmatic processing.

</details>

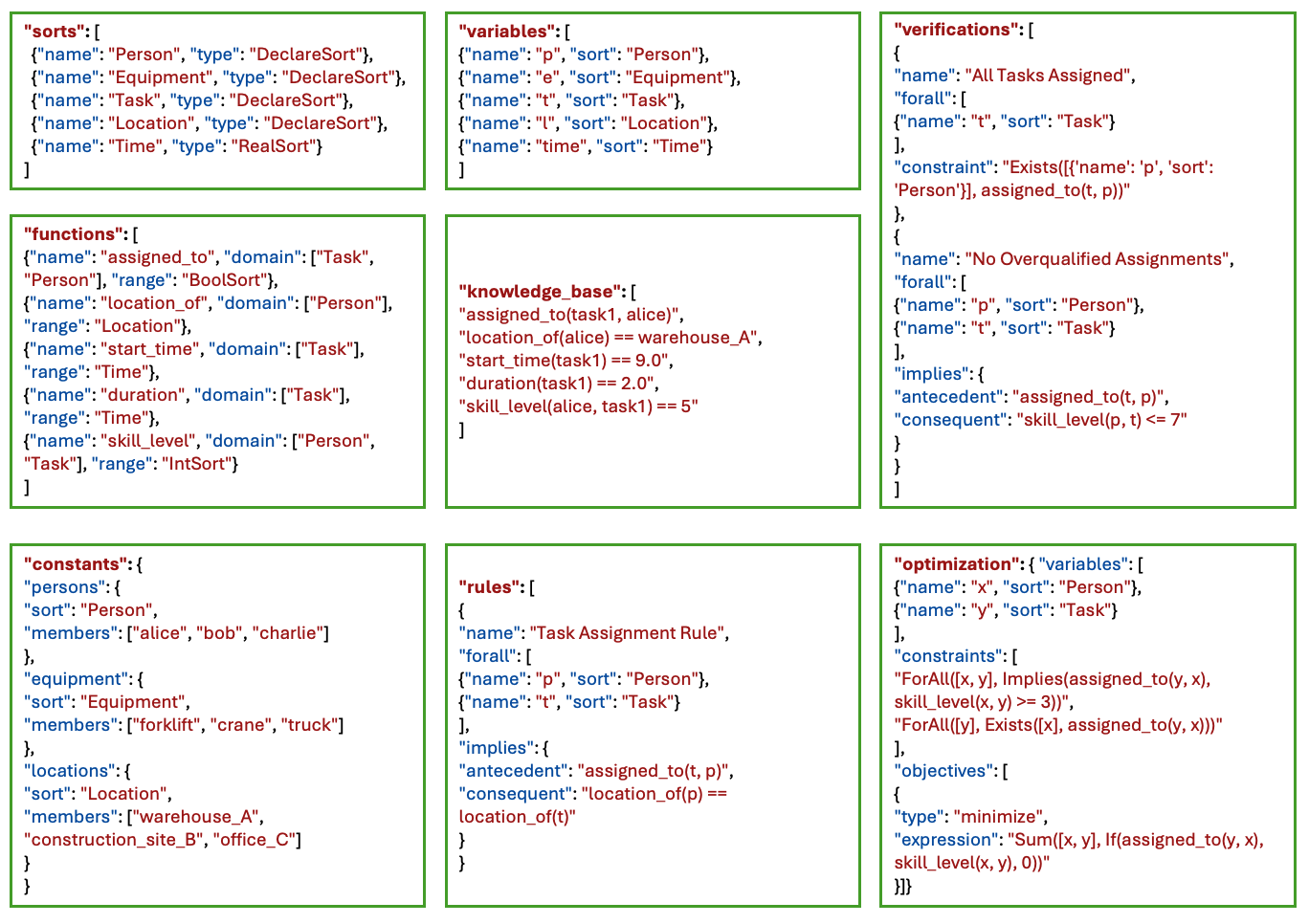

Figure 2: Example DSL program components of the Proof of Thought (PoT) framework for a dummy task assignment verification and optimization problem. The figure displays the JSON-based Domain-Specific Language (DSL) structure, including sort definitions, variables, functions, constants, knowledge base, rules, verifications, and optimization constraints for a workforce management scenario.

1. Sorts ( $\mathcal{S}$ ) define the domains or types used in the logic. Let $\mathcal{S}=\{S_{1},S_{2},\ldots,S_{n}\}$ be the set of sorts, where each $S_{i}$ represents a specific domain. The inclusion of sorts in our system is a key differentiator. It allows for reasoning over high-level, human-understandable concepts, which is crucial for bridging the gap between natural language problem descriptions and formal logical representations. The sort definition in Fig 2 allows for more intuitive problem representation. For instance, instead of reasoning about abstract entities, we can now reason about Persons, Equipment, Tasks, Locations, and Time. This makes it easier to translate natural language problems into formal logic. The type-safe reasoning catches semantic errors early. For example, if we tried to apply a function meant for Equipment to a Person, the system would catch this error before any reasoning takes place. Moreover, this structure allows for domain-specific reasoning strategies. For instance, we could implement specialized reasoning algorithms for time-based logic using the Time sort.

1. Functions ( $\mathcal{F}$ ) definitions in our system go beyond simple predicates, allowing for rich, typed relationships between sorts. Let $f:S_{1}\times S_{2}\times\ldots\times S_{k}\rightarrow S_{r}$ represent a function, where $S_{1},S_{2},\ldots,S_{k},S_{r}\in\mathcal{S}$ . For predicates, $S_{r}=\text{Bool}$ .

For example, in Fig 2, the function definition allows for complex domain modeling. For instance, ‘assigned_to‘ represents a relationship between Tasks and Persons, ‘location_of‘ transforms a Person into a Location, and ‘skill_level‘ represents a property of a Person in relation to a specific Task. The type-checking ensures that functions are only applied to arguments of the correct sort, reducing logical errors. For example, trying to find the ‘skill_level‘ of an Equipment item for a Task would be caught as a type error. In future work, we hope that these function definitions also open up the possibility of incorporating external algorithms. For instance, the ‘duration’ function could be linked to an external scheduling algorithm that calculates task durations based on various factors.

1. Constants ( $\mathcal{C}$ ) with associated sorts provide grounding for abstract reasoning in concrete entities. Let $c_{i}:S_{j}$ denote that constant $c_{i}$ is of sort $S_{j}$ . In Fig 2 constant declaration grounds the abstract sorts in concrete entities. It allows for easy integration of domain-specific knowledge - for instance, we know that "alice", "bob", and "charlie" are Persons in our system. In future work, we believe this structure has the potential for linking with external databases or knowledge graphs. For example, the "equipment" constants could be linked to an external database containing detailed specifications for each piece of equipment.

1. Variables ( $\mathcal{V}$ ) enable clear scoping rules for quantifiers and type-safe substitutions in logical formulas. Let $x_{i}:S_{j}$ denote that variable $x_{i}$ ranges over sort $S_{j}$ (see Fig 2).

1. Knowledge Base ( $\mathcal{KB}$ ) contains axioms or facts assumed to be true within the logical system. Let $\mathcal{KB}=\{\varphi_{1},\varphi_{2},\ldots,\varphi_{m}\}$ where each $\varphi_{i}$ is a well-formed formula in first-order logic. The structured knowledge base allows for separation of axioms from rules and queries, and supports incremental knowledge addition. In Fig 2, the knowledge base contains factual information about the problem domain. It’s separate from the rules and verifications, allowing for easy updates and additions without changing the core reasoning system. This structure also allows for potential consistency checking. For instance, we could verify that no person is assigned to two tasks at the same time, or that no task has a negative duration.

1. Rules ( $\mathcal{R}$ ) specify logical constructs, often involving quantifiers and implications. Let $\mathcal{R}=\{r_{1},r_{2},\ldots,r_{l}\}$ where each $r_{i}$ is a well-formed formula representing a rule. The explicit representation of rules enables a clear distinction between factual knowledge and inferential knowledge. These rules represent inferential knowledge - knowledge that can be derived from the facts in the knowledge base. They allow for easy addition of domain-specific reasoning patterns. This explicit representation of rules also supports explainable AI. When the system makes an inference, it can point to the specific rule(s) used, making its reasoning process transparent and understandable to users. For example, some rules are depicted in Fig 2 and here are a few more:

- $\forall x:\text{Person},\text{Worker}(x)\rightarrow\exists y:\text{Equipment},\text{Wearing}(x,y)$

- $\forall x:\text{Person},\forall y:\text{Equipment},\text{Wearing}(x,y)\land\text{SafetyGear}(y)\rightarrow\text{Safe}(x)$

1. Verifications ( $\mathcal{V}$ ) state properties or conditions to be verified by the theorem prover. Let $\mathcal{V}=\{v_{1},v_{2},\ldots,v_{p}\}$ where each $v_{i}$ is a well-formed formula to be verified. Separating verifications from the knowledge base and rules allows for clear goal-directed reasoning. These verifications (Fig 2) represent specific properties we want to check in our system. They allow for easy testing and validation of the knowledge base and rules. When a verification fails, the system can potentially generate counter-examples, providing valuable insights into why the desired property doesn’t hold. For example:

- $\forall x:\text{Person},\text{Worker}(x)\rightarrow\text{Safe}(x)$

- $\exists x:\text{Person},\text{Worker}(x)\land\lnot\text{Safe}(x)$

1. Optimization (optional) ( $\mathcal{O}$ ) sections define problems with objectives and constraints. Let $\mathcal{O}=(f_{\text{obj}},\mathcal{C})$ where $f_{\text{obj}}$ is the objective function and $\mathcal{C}$ is a set of constraints. The optimization problem in Fig 2 seeks to minimize the total skill level of assigned persons while ensuring that all tasks are assigned and that each assigned person has at least the minimum required skill level. This ability to combine logical constraints with numerical optimization allows for the representation of complex real-world problems that go beyond pure logical satisfiability. We intend to benchmark this in future work. Here is an example:

- $\text{minimize }f_{\text{obj}}(x)=\sum_{i=1}^{n}\text{cost}(x_{i})$

- subject to: $\forall i,1\leq i\leq n,\text{Safe}(x_{i})$

1. Actions ( $\mathcal{A}$ ) list what the interpreter has to perform. The only two possible actions are ‘verify’ and ‘optimize’. This simple declaration tells the system what to do with the problem representation we’ve built up. It provides flexibility in choosing different reasoning or optimization approaches for the same problem representation. For extensibility, this structure also opens up possibilities for meta-reasoning about which actions to take. For instance, the system could analyze the problem structure to decide whether to attempt verification first or go straight to optimization.

### 3.4 Design of the Interpreter’s Facilities and Capabilities

This section provides an in-depth description of the interpreter’s facilities, detailing how it constructs and manipulates logical expressions.

Type System, Sort Management: The interpreter implements a robust type system, managing sorts and ensuring type safety across all expressions. It supports a variety of Z3 compatible sorts, including primitive sorts like Bool, Int, and Real, which form the foundation of the type system. User-defined sorts, known as declared sorts, allow for the representation of specific domains such as Person or Equipment. For situations requiring a finite set of elements, enumerated sorts are available. The type system also accommodates composite sorts, constructed using type constructors, which enable the creation of function sorts or tuple sorts. Throughout its operations, the interpreter rigorously enforces type consistency, ensuring that functions and predicates are applied only to arguments of the correct sorts.

Symbol Table, Scope Management: Central to the interpreter’s functionality is a symbol table that maintains mappings from identifiers to their definitions, including variables, constants, and functions. This table is crucial for scope management, particularly when dealing with quantified variables in logical expressions. The parsing process is another key component, where the interpreter builds abstract syntax trees (ASTs) that represent the structure of expressions. This process handles a wide range of logical constructs, from atomic formulas (basic predicates applied to terms) to complex formulas constructed using logical connectives and quantifiers. The interpreter pays special attention to quantifiers, carefully managing bound variables to ensure correct scoping. Additionally, it supports substitution of terms for variables, an essential operation in applying inference rules.

Pre-processing: While the bulk of reasoning is handled by the theorem prover, the interpreter applies basic inference and simplification rules to optimize expressions before passing them on. This includes simplification processes that reduce expressions using logical identities, such as eliminating double negations. Normalization is another crucial step, converting expressions into a standard form (like prenex normal form) to facilitate theorem proving. The interpreter also performs early error detection, identifying contradictory statements or type mismatches before they can cause issues in later stages of processing.

Feedback Loop: Adequate error handling and diagnostics are paramount in the interpreter’s design. It provides detailed error messages to assist the LLM in identifying and correcting issues with its programs. These diagnostics cover a range of potential problems, including type errors that indicate inconsistencies or mismatches in the type system, alerts for undefined symbols when functions, predicates, or constants are used without proper definition, and syntax errors that highlight issues in the structure of logical expressions.

Future Proofing: The interpreter’s architecture emphasizes extensibility and customization. Users have the flexibility to extend its capabilities in several ways. They can add new sorts to define additional domains of discourse, expanding the system’s ability to represent complex scenarios. The logical language can be enhanced by defining new functions and predicates, allowing users to capture more intricate relationships within their domain of interest. Furthermore, the modular design of the interpreter facilitates integration with different theorem provers or logic systems, enhancing its versatility and applicability to various problem domains.

## 4 Results

### 4.1 StrategyQA - Complex Natural Language Reasoning

Task Setup: StrategyQA presents a significant challenge in natural language processing, testing a model’s ability to perform multi-hop, implicit reasoning across diverse scenarios. This boolean question answering benchmark requires models to infer unstated reasoning steps, mirroring complex human cognitive processes. For example, "Did Aristotle use a laptop?" requires the implicit chain: "When did Aristotle live? When was the laptop invented? Do these time periods overlap?" This level of abstraction surpasses simple fact retrieval or explicit reasoning tasks in other benchmarks like BoolQ or Twenty Questions (20QA). While state-of-the-art language models have shown impressive performance on StrategyQA (e.g., PaLM-2 achieving 90.20% accuracy with few-shot Chain of Thought and Self Consistency (Anil et al., 2023)), they lack transparency and verifiability in their reasoning process. Our Proof of Thought (PoT) framework addresses this limitation by providing complete, explicit, and verifiable reasoning chains. PoT breaks down implicit reasoning steps into explicit logical representations, defines the knowledge base used, and ensures each inference is provable through a theorem prover.

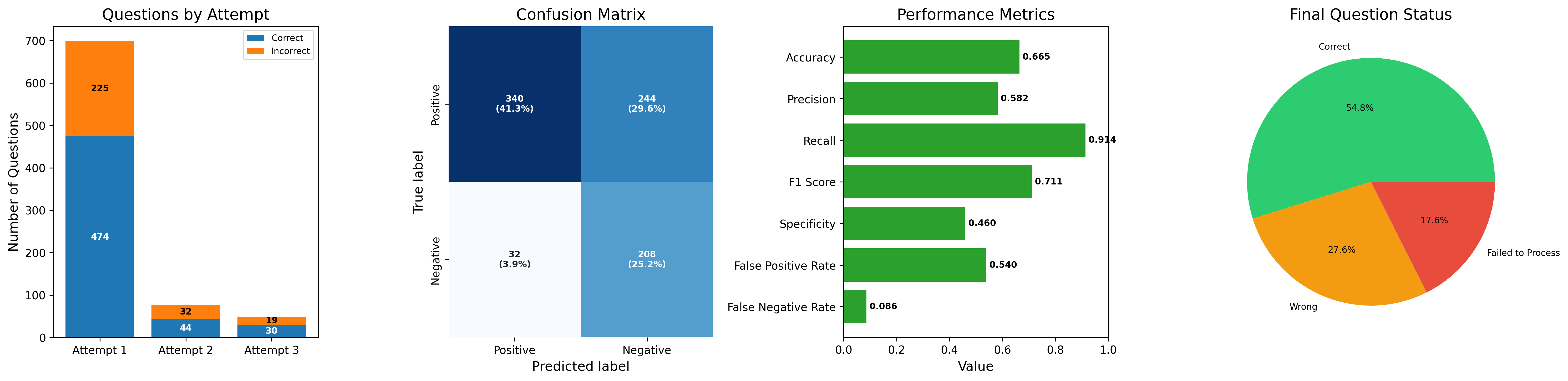

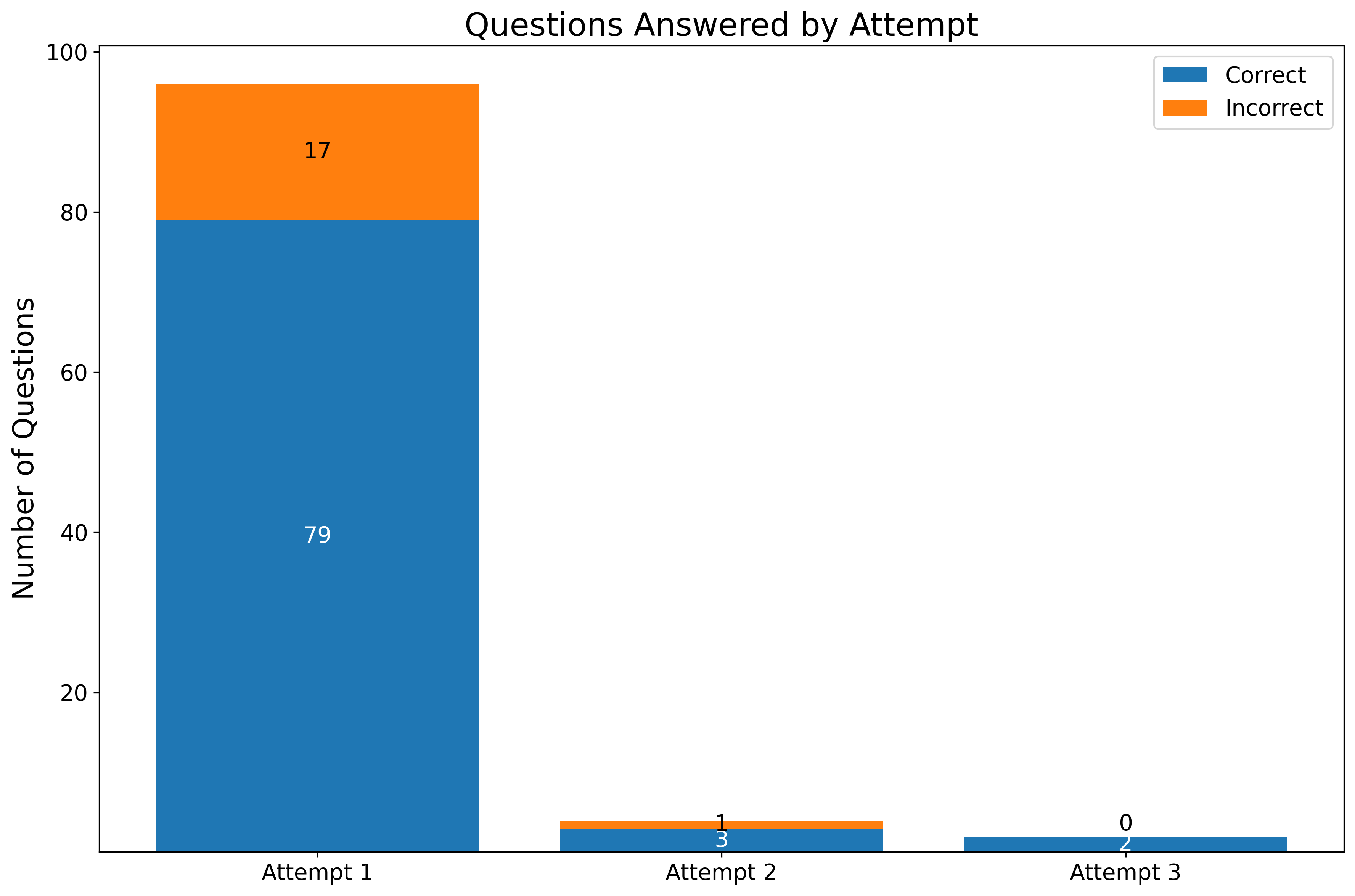

PoT Results: We evaluated PoT on a sample of 1000 questions from the StrategyQA dataset, focusing on the framework’s ability to generate syntactically correct programs, produce provable reasoning chains, and match outputs with correct answers. The system, with the inclusion of a 3 step feedback loops (i.e., initial prompt, +2 attempts at resolving), successfully compiled and executed 82.4% of the 1000 processed questions, marking a significant improvement from runs with lower feedback loops. This increase in compilation success rate underscores the effectiveness of our feedback mechanism in addressing and resolving issues in generated logical representations. The system demonstrated strong recall at 91.40%, indicating its proficiency in identifying true positive cases. The F1 score of 71.13% suggests a good balance between precision and recall, though precision (58.22%) presents an area for potential enhancement. The high recall, coupled with a false positive rate of 53.98%, indicates a tendency for the system to overpredict positive cases. This observation points to a need for future refinements in discriminating between positive and negative instances more accurately. While the compilation success rate is encouraging, the 17.6% of questions that failed to compile highlight an area for further improvement. Enhancing the robustness of the code generation process through improved prompting techniques, fine-tuning, and expansion of the feedback loop mechanism could potentially reduce this failure rate in future iterations.

### 4.2 Multimodal Reddit-OSHA Benchmark

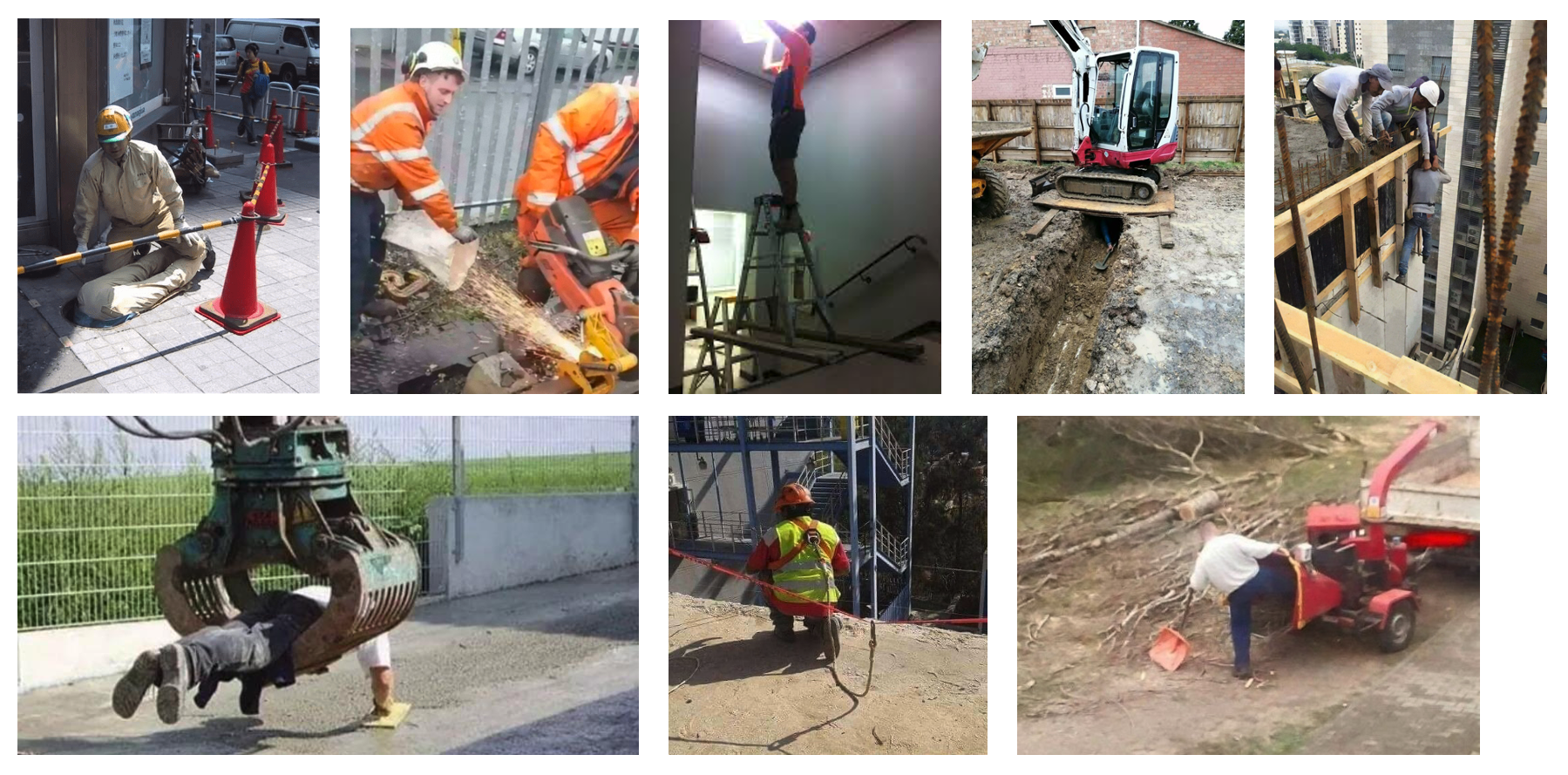

Task Setup: We curated 103 samples from the r/OSHA subreddit, featuring individuals in extremely hazardous situations. This dataset represents long-tail, low-probability scenarios, mirroring challenging real-world deployment conditions for health and safety applications. The images encompass a wide range of problems with varied lighting, scene setups, visual clutter, and resolutions.

Baselines: We implemented four reasoning strategies using GPT-4 as the underlying language model: Chain of Thought (CoT), Chain of Thought with Self-Consistency (CoT-SC), Tree of Thought (ToT), and Graph of Thought (GoT). Each baseline processes base64-encoded images and uses a consistent system prompt instructing the model to act as a safety inspector. CoT encourages step-by-step reasoning, concluding with a binary hazard decision. CoT-SC extends this by generating 5 independent reasoning paths per image, with the final decision determined by majority voting. ToT explores multiple reasoning paths in a tree-like structure with a maximum depth of 3 and a breadth of 2 at each node. GoT implements an iterative reasoning process with 3 iterations per image, building upon previous analyses. Evaluation metrics include win rate (proportion of correctly identified hazards) and reasoning richness (number of sentences in model responses).

Baseline Results: All reasoning strategies demonstrated high performance, with CoT achieving a 99.03% win rate and CoT-SC, ToT, and GoT all achieving perfect 100% win rates. This suggests that advanced reasoning strategies can correct errors made by simpler approaches. Reasoning richness varied significantly, with ToT producing the most detailed responses (often over 1000 sentences per image) and CoT the most concise (25-30 sentences).

PoT Results: Our Proof of Thought framework showed remarkable improvements on this dataset with the inclusion of a 3 step feedback loop (i.e., initial prompt, +2 attempts at resolving). Notably, we reduced compilation errors from 14.6% to 0%, demonstrating the effectiveness of our feedback and error correction mechanisms. The win rate on compiled programs increased from 72% to 81.55%, indicating both more reliable code generation and more accurate logical reasoning.

## 5 Discussion and Future work

Future research directions include expanding PoT to handle more complex logical structures, that extend past boolean SAT & UNSAT, using one versus all setups, and developing more sophisticated feedback mechanisms to further reduce compilation errors. We intend to explore JSON-like representations for non-boolean responses. Additionally, exploring ways to make the logical representations more accessible to non-expert users and investigating the scalability of PoT to larger, more diverse datasets will be important next steps. Further, integrating PoT with other techniques such as model updates using reinforcement learning, or supervised fine-tuned models on synthetically generated syntactically correct PoT programs might unlock at-scale “System 2” thinking.

## 6 Conclusion

Proof of Thought bridges the gap between language models’ flexibility and formal logic’s rigor, offering a promising solution for trustworthy reasoning in vision-language models. By enhancing interpretability and providing reasoning guarantees, PoT addresses critical challenges in AI system accountability and reliability. Our results demonstrate its potential in both natural language and multimodal reasoning tasks, paving the way for more transparent, verifiable AI systems capable of complex reasoning in high-stakes domains.

## References

- Anil et al. [2023] Rohan Anil, Andrew M Dai, Orhan Firat, Melvin Johnson, Dmitry Lepikhin, Alexandre Passos, Siamak Shakeri, Emanuel Taropa, Paige Bailey, Zhifeng Chen, et al. Palm 2 technical report. arXiv preprint arXiv:2305.10403, 2023.

- Barbiero et al. [2023] Pietro Barbiero, Gabriele Ciravegna, Francesco Giannini, Mateo Espinosa Zarlenga, Lucie Charlotte Magister, Alberto Tonda, Pietro Lió, Frederic Precioso, Mateja Jamnik, and Giuseppe Marra. Interpretable neural-symbolic concept reasoning. In International Conference on Machine Learning, pages 1801–1825. PMLR, 2023.

- Bender et al. [2021] Emily M Bender, Timnit Gebru, Angelina McMillan-Major, and Shmargaret Shmitchell. On the dangers of stochastic parrots: Can language models be too big? In Proceedings of the 2021 ACM conference on fairness, accountability, and transparency, pages 610–623, 2021.

- Besta et al. [2024] Maciej Besta, Nils Blach, Ales Kubicek, Robert Gerstenberger, Michal Podstawski, Lukas Gianinazzi, Joanna Gajda, Tomasz Lehmann, Hubert Niewiadomski, Piotr Nyczyk, et al. Graph of thoughts: Solving elaborate problems with large language models. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 38, pages 17682–17690, 2024.

- Chen et al. [2021] Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde De Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, et al. Evaluating large language models trained on code. arXiv preprint arXiv:2107.03374, 2021.

- Chen et al. [2020a] Xiaojun Chen, Shengbin Jia, and Yang Xiang. A review: Knowledge reasoning over knowledge graph. Expert systems with applications, 141:112948, 2020a.

- Chen et al. [2023] Zhenfang Chen, Rui Sun, Wenjun Liu, Yining Hong, and Chuang Gan. Genome: generative neuro-symbolic visual reasoning by growing and reusing modules. arXiv preprint arXiv:2311.04901, 2023.

- Chen et al. [2020b] Zhi Chen, Yijie Bei, and Cynthia Rudin. Concept whitening for interpretable image recognition. Nature Machine Intelligence, 2(12):772–782, 2020b.

- Danilevsky et al. [2020] Marina Danilevsky, Kun Qian, Ranit Aharonov, Yannis Katsis, Ban Kawas, and Prithviraj Sen. A survey of the state of explainable ai for natural language processing. arXiv preprint arXiv:2010.00711, 2020.

- d’Avila Garcez et al. [2009] Artur S d’Avila Garcez, Luís C Lamb, and Dov M Gabbay. Neural-symbolic learning systems. Springer, 2009.

- Gupta and Kembhavi [2023] Tanmay Gupta and Aniruddha Kembhavi. Visual programming: Compositional visual reasoning without training. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 14953–14962, 2023.

- Jordan and Mitchell [2015] Michael I Jordan and Tom M Mitchell. Machine learning: Trends, perspectives, and prospects. Science, 349(6245):255–260, 2015.

- Kampffmeyer et al. [2019] Michael Kampffmeyer, Yinbo Chen, Xiaodan Liang, Hao Wang, Yujia Zhang, and Eric P Xing. Rethinking knowledge graph propagation for zero-shot learning. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 11487–11496, 2019.

- Kim et al. [2018] Been Kim, Martin Wattenberg, Justin Gilmer, Carrie Cai, James Wexler, Fernanda Viegas, et al. Interpretability beyond feature attribution: Quantitative testing with concept activation vectors (tcav). In International conference on machine learning, pages 2668–2677. PMLR, 2018.

- Li et al. [2022] Yujia Li, David Choi, Junyoung Chung, Nate Kushman, Julian Schrittwieser, Rémi Leblond, Tom Eccles, James Keeling, Felix Gimeno, Agustin Dal Lago, et al. Competition-level code generation with alphacode. Science, 378(6624):1092–1097, 2022.

- Li et al. [2023] Ziyang Li, Jiani Huang, and Mayur Naik. Scallop: A language for neurosymbolic programming. Proceedings of the ACM on Programming Languages, 7(PLDI):1463–1487, 2023.

- Manhaeve et al. [2018] Robin Manhaeve, Sebastijan Dumancic, Angelika Kimmig, Thomas Demeester, and Luc De Raedt. Deepproblog: Neural probabilistic logic programming. Advances in neural information processing systems, 31, 2018.

- Marconato et al. [2024] Emanuele Marconato, Samuele Bortolotti, Emile van Krieken, Antonio Vergari, Andrea Passerini, and Stefano Teso. Bears make neuro-symbolic models aware of their reasoning shortcuts. arXiv preprint arXiv:2402.12240, 2024.

- Marcus [2020] Gary Marcus. The next decade in ai: four steps towards robust artificial intelligence. arXiv preprint arXiv:2002.06177, 2020.

- Rudin [2019] Cynthia Rudin. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nature machine intelligence, 1(5):206–215, 2019.

- Tanzer et al. [2023] Garrett Tanzer, Mirac Suzgun, Eline Visser, Dan Jurafsky, and Luke Melas-Kyriazi. A benchmark for learning to translate a new language from one grammar book. arXiv preprint arXiv:2309.16575, 2023.

- Towell and Shavlik [1994] Geoffrey G Towell and Jude W Shavlik. Knowledge-based artificial neural networks. Artificial intelligence, 70(1-2):119–165, 1994.

- Towell et al. [1990] Geoffrey G Towell, Jude W Shavlik, and Michiel O Noordewier. Refinement of approximate domain theories by knowledge-based neural networks. In Proceedings of the eighth National conference on Artificial intelligence-Volume 2, pages 861–866, 1990.

- Wang et al. [2022] Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc Le, Ed Chi, Sharan Narang, Aakanksha Chowdhery, and Denny Zhou. Self-consistency improves chain of thought reasoning in language models. arXiv preprint arXiv:2203.11171, 2022.

- Wei et al. [2022] Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems, 35:24824–24837, 2022.

- Yang et al. [2023] Jianwei Yang, Hao Zhang, Feng Li, Xueyan Zou, Chunyuan Li, and Jianfeng Gao. Set-of-mark prompting unleashes extraordinary visual grounding in gpt-4v. arXiv preprint arXiv:2310.11441, 2023.

- Yao et al. [2024] Shunyu Yao, Dian Yu, Jeffrey Zhao, Izhak Shafran, Tom Griffiths, Yuan Cao, and Karthik Narasimhan. Tree of thoughts: Deliberate problem solving with large language models. Advances in Neural Information Processing Systems, 36, 2024.

- Zhang et al. [2021] Chiyuan Zhang, Samy Bengio, Moritz Hardt, Benjamin Recht, and Oriol Vinyals. Understanding deep learning (still) requires rethinking generalization. Communications of the ACM, 64(3):107–115, 2021.

- Zoph et al. [2016] Barret Zoph, Deniz Yuret, Jonathan May, and Kevin Knight. Transfer learning for low-resource neural machine translation. arXiv preprint arXiv:1604.02201, 2016.

## 7 Appendix

### 7.1 StrategyQA Results

<details>

<summary>strategyqaresults.png Details</summary>

### Visual Description

\n

## Charts: Performance Analysis of Question Attempts

### Overview

The image presents a series of four charts visualizing the performance of question attempts. The charts display the number of correct/incorrect answers per attempt, a confusion matrix, performance metrics (accuracy, precision, recall, F1 score, specificity, false positive rate, false negative rate), and the final status of questions (correct, wrong, failed to process).

### Components/Axes

* **Chart 1: Questions by Attempt**

* X-axis: Attempt (1, 2, 3)

* Y-axis: Number of Questions (Scale: 0 to 700, increments of 100)

* Legend:

* Blue: Correct

* Orange: Incorrect

* **Chart 2: Confusion Matrix**

* X-axis: Predicted label (Positive, Negative)

* Y-axis: True label (Positive, Negative)

* Cells contain counts and percentages.

* **Chart 3: Performance Metrics**

* X-axis: Value (Scale: 0.0 to 1.0, increments of 0.2)

* Y-axis: Metric Name (Accuracy, Precision, Recall, F1 Score, Specificity, False Positive Rate, False Negative Rate)

* Horizontal bars represent metric values.

* **Chart 4: Final Question Status**

* Pie chart showing the proportion of questions in each status.

* Legend:

* Green: Correct

* Red: Wrong

* Yellow: Failed to Process

### Detailed Analysis or Content Details

* **Chart 1: Questions by Attempt**

* Attempt 1: Approximately 474 correct, approximately 32 incorrect.

* Attempt 2: Approximately 225 correct, approximately 44 incorrect.

* Attempt 3: Approximately 19 correct, approximately 30 incorrect.

* The number of correct answers decreases significantly with each attempt, while the number of incorrect answers increases.

* **Chart 2: Confusion Matrix**

* Positive/Positive: 340 (41.3%)

* Positive/Negative: 244 (29.6%)

* Negative/Positive: 32 (3.9%)

* Negative/Negative: 208 (25.2%)

* **Chart 3: Performance Metrics**

* Accuracy: Approximately 0.665

* Precision: Approximately 0.582

* Recall: Approximately 0.914

* F1 Score: Approximately 0.711

* Specificity: Approximately 0.460

* False Positive Rate: Approximately 0.540

* False Negative Rate: Approximately 0.086

* **Chart 4: Final Question Status**

* Correct: 54.8%

* Wrong: 27.6%

* Failed to Process: 17.6%

### Key Observations

* The number of correct answers drops dramatically with each attempt.

* The confusion matrix shows a high number of true positives (340) but also a significant number of false negatives (244).

* Recall is high (0.914), indicating the model correctly identifies most positive cases.

* Precision is relatively low (0.582), suggesting a significant number of false positives.

* The "Failed to Process" category represents a notable portion (17.6%) of the questions.

### Interpretation

The data suggests a model that performs well at identifying positive cases (high recall) but struggles with precision, leading to a substantial number of false positives. The decreasing number of correct answers with each attempt is concerning and could indicate issues with the question set, the model's learning process, or the data distribution across attempts. The significant "Failed to Process" rate suggests potential problems with data input, processing, or model compatibility. The confusion matrix highlights a bias towards predicting positive outcomes, which explains the high recall and low precision. Further investigation is needed to understand the reasons behind the declining performance across attempts and the high failure rate. The model appears to be overly sensitive, flagging many instances as positive when they are actually negative.

</details>

Figure 3: Performance analysis of the Proof of Thought (PoT) framework on the StrategyQA dataset. The includes four visualizations: (1) a stacked bar chart showing questions answered by attempt, (2) a pie chart displaying the final question status, (3) a confusion matrix for predicted vs. true labels, and (4) a bar chart of various performance metrics including accuracy, precision, recall, F1-score, specificity, and false positive rate.

### 7.2 Multimodal Reddit-OSHA Benchmark

<details>

<summary>HSE_Image_Samples.png Details</summary>

### Visual Description

\n

## Photographs: Construction Site Activities

### Overview

The image is a composite of nine photographs depicting various activities on a construction site. The photos showcase different stages of work, including utility installation, excavation, structural work, and landscaping. There is no quantitative data or charts present; the information is purely visual and descriptive.

### Components/Axes

There are no axes, legends, or scales present in this image. It is a collection of photographs.

### Detailed Analysis or Content Details

Here's a description of each photograph, moving from left to right, top row then bottom row:

1. **Top-Left:** A worker in a white protective suit and hard hat is connecting a yellow hose to an orange traffic cone. A dark-colored vehicle is partially visible in the background.

2. **Top-Second:** A worker wearing a high-visibility vest and hard hat is operating a jackhammer to break up pavement. The ground is disturbed, with broken concrete and soil visible.

3. **Top-Third:** A worker is ascending a metal ladder inside a brightly lit, pink-colored room. The room appears to be part of a building under construction.

4. **Top-Fourth:** A small excavator is digging in a dirt area. A residential building is visible in the background.

5. **Top-Fifth:** Workers are constructing a concrete wall with steel reinforcement bars (rebar). Wooden forms are in place to hold the concrete.

6. **Bottom-Left:** A large mechanical claw or grapple is holding a bundle of cables or pipes. The background is a paved area.

7. **Bottom-Second:** Two workers, one in a high-visibility vest and the other in a red jacket, are working with underground cables or pipes. A manhole cover is visible.

8. **Bottom-Third:** A worker in a white shirt is standing near a red wood chipper. Branches and wood debris are scattered around.

### Key Observations

The photographs collectively illustrate a range of construction tasks. The presence of protective gear (suits, vests, hard hats) indicates a focus on safety. The variety of equipment (jackhammer, excavator, wood chipper) suggests a complex project involving multiple trades.

### Interpretation

The images depict a typical construction site, likely for a residential or commercial project. The activities shown suggest a project that involves underground utility work, foundation construction, and landscaping. The photographs do not provide any specific details about the project's timeline, budget, or scope. They are purely illustrative of the types of work performed on a construction site. The images suggest a project in progress, with various stages of completion visible across the different photographs. There are no anomalies or outliers apparent from the visual information provided. The images are a snapshot of a dynamic and complex process.

</details>

Figure 4: Sample Images from the Multimodal Reddit-OSHA Benchmark

<details>

<summary>hsestackplot.png Details</summary>

### Visual Description

\n

## Stacked Bar Chart: Questions Answered by Attempt

### Overview

The image presents a stacked bar chart visualizing the number of questions answered correctly and incorrectly across three attempts. The chart displays the distribution of correct and incorrect answers for each attempt, allowing for a comparison of performance over time.

### Components/Axes

* **Title:** "Questions Answered by Attempt" (centered at the top)

* **X-axis:** "Attempt" with markers for Attempt 1, Attempt 2, and Attempt 3.

* **Y-axis:** "Number of Questions" with a scale ranging from 0 to 100, incrementing by 20.

* **Legend:** Located in the top-right corner, distinguishing between "Correct" (blue) and "Incorrect" (orange).

### Detailed Analysis

The chart consists of three stacked bars, one for each attempt.

* **Attempt 1:**

* Correct answers: Approximately 79 questions (represented by a blue segment).

* Incorrect answers: Approximately 17 questions (represented by an orange segment).

* Total questions attempted: 79 + 17 = 96 questions.

* **Attempt 2:**

* Correct answers: Approximately 1 question (represented by a blue segment).

* Incorrect answers: Approximately 3 questions (represented by an orange segment).

* Total questions attempted: 1 + 3 = 4 questions.

* **Attempt 3:**

* Correct answers: Approximately 0 questions (represented by a blue segment).

* Incorrect answers: Approximately 2 questions (represented by an orange segment).

* Total questions attempted: 0 + 2 = 2 questions.

### Key Observations

* The majority of questions were attempted on Attempt 1, with a high number of correct answers.

* The number of questions attempted significantly decreased with each subsequent attempt.

* The proportion of incorrect answers appears to increase relative to correct answers in later attempts, although the small sample sizes in Attempts 2 and 3 make this observation less reliable.

* Attempt 3 had the lowest number of questions attempted, with no correct answers.

### Interpretation

The data suggests a decline in engagement or a successful completion of the task after the first attempt. The initial attempt shows a strong performance, with a large number of questions answered correctly. The subsequent attempts indicate a diminishing need for further attempts, potentially because the user achieved their desired outcome or lost interest. The low number of attempts in the later stages makes it difficult to draw definitive conclusions about performance trends. The chart highlights a significant drop in activity after the first attempt, which could be due to various factors, such as the task being completed, the user finding an alternative solution, or a lack of motivation to continue. The data could be used to assess the effectiveness of the task or identify areas where users may be struggling.

</details>

Figure 5: Performance analysis of the Proof of Thought (PoT) framework on the Multimodal Reddit-OSHA Benchmark dataset with a stacked bar chart showing questions answered by attempt.

### 7.3 Real Reasoning Traces using PoT : StrategyQA Dataset

1. Question: Could Javier Sotomayor jump over the head of the average giraffe? Answer: False Predicted Answer: UNSAT (False).

⬇

{

"sorts": [

{"name": "Person", "type": "DeclareSort"},

{"name": "Animal", "type": "DeclareSort"},

{"name": "Real", "type": "RealSort"}

],

"functions": [

{"name": "jump_height", "domain": ["Person"], "range": "Real"},

{"name": "height", "domain": ["Animal"], "range": "Real"}

],

"constants": {

"persons": {"sort": "Person", "members": ["javier_sotomayor"]},

"animals": {"sort": "Animal", "members": ["average_giraffe"]}

},

"variables": [

{"name": "p", "sort": "Person"},

{"name": "a", "sort": "Animal"}

],

"knowledge_base": [

{"assertion": "jump_height(javier_sotomayor) == 2.45"},

{"assertion": "height(average_giraffe) == 5.5"}

],

"verifications": [

{"name": "Sotomayor Jump Over Giraffe", "constraint": "jump_height(javier_sotomayor) >= height(average_giraffe)"

}

],

"actions": ["verify_conditions"]

}

1. Question: Did the Cherokee people send a delegation to oppose allotment? Answer: True Predicted Answer: SAT (True)

⬇

{

"sorts": [

{"name": "Group", "type": "DeclareSort"},

{"name": "Action", "type": "DeclareSort"},

{"name": "Bool", "type": "BoolSort"}

],

"functions": [

{"name": "send_delegation", "domain": ["Group"], "range": "Bool"},

{"name": "oppose_allotment", "domain": ["Group"], "range": "Bool"}

],

"constants": {

"groups": {"sort": "Group", "members": ["cherokee_people"]},

"actions": {"sort": "Action", "members": ["allotment"]}

},

"variables": [

{"name": "g", "sort": "Group"}

],

"knowledge_base": [

{"assertion": "send_delegation(cherokee_people)"},

{"assertion": "ForAll([g], Implies(send_delegation(g), oppose_allotment(g)))",

"variables": [{"name": "g", "sort": "Group"}]

}

],

"verifications": [

{"name": "Cherokee Oppose Allotment", "constraint": "oppose_allotment(cherokee_people)"}

],

"actions": ["verify_conditions"]

}

### 7.4 Real Reasoning Traces using PoT : Multimodal Reddit-OSHA Dataset

<details>

<summary>88-hse.png Details</summary>

### Visual Description

\n

## Photograph: Building Exterior with Worker

### Overview

The image depicts the exterior of a two-story building with a worker on a ladder accessing a section above the entrance. The building is identified as the "American Literary Historical Society". A car is parked in front of the building. The sky is blue with scattered clouds.

### Components/Axes

There are no axes or charts in this image. The key elements are:

* **Building:** A two-story structure with a gray facade and a yellow entrance area.

* **Ladder:** An aluminum extension ladder leaning against the building.

* **Worker:** A person wearing a green shirt and dark pants, ascending the ladder.

* **Car:** A silver hatchback parked on the street.

* **Signage:** Text reading "AMERICAN LITERARY HISTORICAL SOCIETY" is visible on the building.

* **Address:** The number "10045" is displayed above the entrance door.

* **Sky:** A blue sky with white clouds.

### Detailed Analysis or Content Details

* **Building Address:** 10045.

* **Building Name:** AMERICAN LITERARY HISTORICAL SOCIETY. The text is vertically aligned and centered on the gray facade.

* **Entrance:** The entrance is painted yellow and has two doors.

* **Ladder Position:** The ladder is positioned centrally above the entrance. The worker is approximately two-thirds of the way up the ladder.

* **Car Make/Model:** The car appears to be a silver Honda Fit (approximate).

* **Surrounding Environment:** The building is located on a street with a sidewalk. There are other buildings and utility poles visible in the background.

* **Weather:** The weather appears to be sunny and clear.

### Key Observations

* The worker is performing maintenance or repairs on the building.

* The building appears to be a commercial or institutional structure.

* The scene is a typical urban or suburban environment.

* The building is well-maintained, but requires some external work.

### Interpretation

The photograph documents a routine maintenance activity at the American Literary Historical Society building. The presence of the worker on the ladder suggests ongoing upkeep of the property. The image provides a snapshot of the building's exterior and its surrounding environment. The building's name suggests it is an organization dedicated to the study and preservation of American literature and history. The scene is unremarkable, but provides a visual record of the building and its activities. There are no apparent anomalies or unusual features. The image is a straightforward depiction of a building and a worker performing a task. It doesn't suggest any deeper narrative or hidden meaning.

</details>

<details>

<summary>84-HSE.png Details</summary>

### Visual Description

\n

## Photograph: Forklift Operation

### Overview

The image depicts a person operating a forklift to lift a stack of wooden planks towards an open garage door. The scene appears to be an industrial or warehouse setting. The image does not contain charts, graphs, or diagrams with quantifiable data. It is a visual record of an activity.

### Components/Axes

There are no axes or legends present in the image. The key components are:

* **Forklift:** A black forklift is visible on the left side of the image. The forks are extended and supporting the wooden planks.

* **Wooden Planks:** A stack of approximately 8-10 wooden planks is being lifted. They appear to be of varying widths and thicknesses.

* **Person:** A person is operating the forklift, positioned within the operator's cabin.

* **Garage Door:** A partially open garage door is in the background, revealing a bright outdoor area.

* **Ceiling:** The ceiling is visible, with overhead lighting and structural elements.

* **Wall:** A wall is visible on the left side of the image, with some markings or labels.

* **Control Panel:** A small control panel is visible on the lower left corner of the image.

### Detailed Analysis or Content Details

The image shows a forklift lifting a stack of wooden planks. The planks are positioned horizontally on the forks. The person operating the forklift is facing towards the garage door. The garage door is approximately halfway open. The lighting in the room is bright, with overhead fluorescent lights. The wall on the left has some markings, including a red and white sticker with the letters "ABC" and a logo. There is also a white rectangular sign with some text and images, but the details are too blurry to decipher.

### Key Observations

* The forklift appears to be in good working condition.

* The planks are not uniformly stacked, suggesting a potentially unstable load.

* The operator appears to be carefully maneuvering the load.

* The garage door is open, indicating that the planks are being moved either into or out of the building.

* The presence of the control panel suggests that the forklift is equipped with standard safety features.

### Interpretation

The image likely depicts a routine material handling operation in a warehouse or industrial setting. The forklift is being used to transport wooden planks, which could be used for a variety of purposes, such as construction, packaging, or storage. The open garage door suggests that the planks are being moved to a different location. The image highlights the importance of safe forklift operation and proper load handling. The slightly uneven stack of planks suggests a potential safety concern, as an unstable load could lead to an accident. The presence of the control panel and the operator's careful maneuvering indicate that safety procedures are being followed. The image provides a snapshot of a common industrial activity and underscores the need for vigilance and adherence to safety protocols. The markings on the wall may indicate specific safety instructions or operational guidelines for the area. Without further context, it is difficult to determine the exact purpose of the planks or the overall workflow of the operation.

</details>

<details>

<summary>hse-10.png Details</summary>

### Visual Description

\n

## Photograph: Person on Ladder

### Overview

The image depicts a person standing on a ladder, reaching towards a ceiling light fixture. The setting appears to be an indoor hallway or corridor. The image does not contain charts, diagrams, or data tables. It is a snapshot of a real-world scene.

### Components/Axes

There are no axes or components in the traditional sense of a chart or diagram. The key elements are:

* **Person:** Wearing an orange shirt and dark shorts.

* **Ladder:** A standard A-frame ladder.

* **Ceiling Light:** A fluorescent or similar type of ceiling fixture.

* **Wall:** A plain, light-colored wall.

* **Handrail:** A metal handrail is visible on the right side of the image.

* **Chair/Stool:** A chair or stool is partially visible on the left side.

* **Wood Planks:** Several wood planks are on the floor.

### Detailed Analysis or Content Details

The person is positioned on the top step of the ladder, extending their arm upwards towards the light fixture. The ladder is placed on a slightly elevated surface, possibly a step or platform. The lighting in the image is somewhat dim, creating shadows. The person's back is to the camera, obscuring their facial features. The wood planks on the floor suggest ongoing work or maintenance in the area.

### Key Observations

The scene suggests someone is performing maintenance or repair work on the ceiling light. The use of a ladder indicates the light fixture is out of easy reach. The presence of wood planks suggests a possible renovation or construction project. The scene appears to be a workplace or commercial building.

### Interpretation

The image likely represents a common workplace scenario involving maintenance or repair tasks. The person on the ladder is actively engaged in addressing an issue with the ceiling light. The overall impression is one of practical work being carried out in a functional environment. The image highlights the importance of safety precautions when working at heights, as the person is positioned on the top step of the ladder. The scene could be interpreted as a demonstration of a potential safety hazard if proper procedures are not followed. The presence of the wood planks suggests a larger project is underway, potentially involving renovations or upgrades to the building's infrastructure.

</details>

Figure 6: Example 1-3 from the HSE Reddit OSHA Dataset.

1. HSE Example 1

⬇

{

"sorts": [

{"name": "Person", "type": "DeclareSort"},

{"name": "Equipment", "type": "DeclareSort"},

{"name": "SafetyGear", "type": "DeclareSort"}

],

"functions": [

{"name": "Using", "domain": ["Person", "Equipment"], "range": "BoolSort"

},

{"name": "Wearing", "domain": ["Person", "SafetyGear"], "range": "BoolSort"}

],

"constants": {

"persons": {"sort": "Person", "members": ["worker"]},

"equipments": {"sort": "Equipment", "members": ["ladder"]},

"safetyGears": {"sort": "SafetyGear", "members": ["hardHat", "harness"]}

},

"knowledge_base": [

{"assertion": "Using(worker, ladder)", "value": true},

{"assertion": "Wearing(worker, hardHat)", "value": false},

{"assertion": "Wearing(worker, harness)", "value": false}

],

"rules": [

{"name": "Hard Hat Rule", "forall": [{"name": "p", "sort": "Person"},

{"name": "e", "sort": "Equipment"}], "implies": {"antecedent": "Using(p, e)", "consequent": "Wearing(p, hardHat)"}},

{"name": "Harness Rule", "forall": [{"name": "p", "sort": "Person"}, {"name": "e", "sort": "Equipment"}],

"implies": {"antecedent": "Using(p, e)", "consequent": "Wearing(p, harness)"}}

],

"verifications": [

{"name": "Verify Hard Hat Compliance", "constraint": "Wearing(worker, hardHat)"},

{"name": "Verify Harness Compliance", "constraint": "Wearing(worker, harness)"}

],

"actions": ["verify_conditions"]

}

1. HSE Example 2

⬇

{

"sorts": [{"name": "Person", "type": "DeclareSort"},

{"name": "Equipment", "type": "DeclareSort"},

{"name": "SafetyEquipment", "type": "DeclareSort"}

],

"functions": [

{"name": "StandingOn", "domain": ["Person", "Equipment"], "range": "BoolSort"},

{"name": "UsingSafetyEquipment", "domain": ["Person", "SafetyEquipment"], "range": "BoolSort"},

{"name": "IsSafe", "domain": ["Person"], "range": "BoolSort"}

],

"constants": {

"persons": {"sort": "Person", "members": ["worker"]},

"equipments": {"sort": "Equipment", "members": ["forklift", "pallet"]},

"safetyEquipments": {"sort": "SafetyEquipment", "members": ["harness"]}

},

"knowledgebase": [

{"assertion": "StandingOn(worker, pallet)", "value": true},

{"assertion": "UsingSafetyEquipment(worker, harness)", "value": false}

],

"rules": [

{"name": "Safety Rule", "forall": [{"name": "p", "sort": "Person"}],

"implies": {"antecedent": "And(StandingOn(p, pallet), Not(UsingSafetyEquipment(p, harness)))", "consequent": "Not(IsSafe(p))"}}

],

"verifications": [

{"name": "Verify Safety", "constraint": "IsSafe(worker)"}

],

"actions": ["verify_conditions"]

}

1. HSE Example 3

⬇

{

"sorts": [

{"name": "Person", "type": "DeclareSort"},

{"name": "Equipment", "type": "DeclareSort"},

{"name": "Location", "type": "DeclareSort"}

],

"functions": [

{"name": "Worker", "domain": ["Person"], "range": "BoolSort"},

{"name": "Using", "domain": ["Person", "Equipment"], "range": "BoolSort"},

{"name": "AtHeight", "domain": ["Person"], "range": "BoolSort"},

{"name": "HasFallProtection", "domain": ["Person"], "range": "BoolSort"},

{"name": "Stable", "domain": ["Equipment"], "range": "BoolSort"}

],

"constants": {

"persons": {"sort": "Person", "members": ["worker"]},

"equipments": {"sort": "Equipment", "members": ["ladder", "scaffold"]},

"locations": {"sort": "Location", "members": ["worksite"]}

},

"knowledge_base": [

"Worker(worker)", "Using(worker, ladder)", "Using(worker, scaffold)", "AtHeight(worker)",

{"assertion": "Stable(ladder)", "value": false},

{"assertion": "Stable(scaffold)", "value": false},

{"assertion": "HasFallProtection(worker)", "value": false}

],

"rules": [

{

"name": "Safety Rule", "forall": [{"name": "p", "sort": "Person"}],

"implies": {"antecedent": "And(Worker(p), AtHeight(p))", "consequent": "HasFallProtection(p)"}

},

{

"name": "Stability Rule", "forall": [{"name": "e", "sort": "Equipment"}],

"implies": {"antecedent": "Using(worker, e)", "consequent": "Stable(e)"}

}],

"verifications": [

{"name": "Verify Safety", "constraint": "And(HasFallProtection(worker), Stable(ladder), Stable(scaffold))"}

],

"actions": ["verify_conditions"]

}

### 7.5 A Qualitative Analysis of Generated DSL Programs and Reasoning Patterns

The generated DSL programs across both the StrategyQA dataset and the OSHA dataset illustrate how formal logical representations can be used to model complex reasoning tasks. In both cases, the structured use of sorts, functions, rules, and verifications ensures that the questions posed are systematically decomposed into logical assertions that can be verified by a theorem prover such as Z3. Here, we analyze key aspects of these programs and how they contribute to effective reasoning.

#### 7.5.1 Sorts and Function Definitions as the Backbone of Logical Modeling

The use of DeclareSort, BoolSort, RealSort, and other basic sorts in the DSL programs serves as the foundation for defining the domains of discourse. For example, in the StrategyQA question involving Javier Sotomayor and a giraffe, the Person and Animal sorts allow the definition of relationships between humans and animals in terms of measurable attributes (e.g., jump height and height). Similarly, in the OSHA-related examples, Person, Equipment, and SafetyGear sorts model the entities relevant to workplace safety.

By defining functions like jump_height, height, Wearing, and Using, we map the relationships between entities and their properties. These functions serve as predicates that are later used in verifications or rule implications. In these cases, the functions provide critical context for understanding the state of the world and the conditions under which certain outcomes (e.g., compliance with safety regulations or reaching a height) hold true.

#### 7.5.2 Knowledge Base and Its Role in Establishing Ground Truth