# Interpretable Contrastive Monte Carlo Tree Search Reasoning


Abstract

We propose (S)peculative (C)ontrastive MCTS ∗ (SC-MCTS ∗), a novel Monte Carlo Tree Search (MCTS) reasoning algorithm for Large Language Models (LLMs) that significantly improves both reasoning accuracy and speed. Our motivation comes from three observations: (1) previous work on MCTS-based LLM reasoning has often overlooked its biggest drawback, namely that it is slower than CoT; (2) previous research mainly used MCTS as a tool for LLM reasoning on various tasks, with limited quantitative analysis or ablation of its components from the perspective of reasoning interpretability; (3) the reward model is the most crucial component of MCTS, yet previous work has rarely studied or improved MCTS reward models in depth. We therefore conducted extensive ablation studies and quantitative analysis of the components of MCTS, revealing the impact of each component on the MCTS reasoning performance of LLMs. Building on this, (i) we designed a highly interpretable reward model based on the principle of contrastive decoding, and (ii) achieved an average speed improvement of 51.9% per node using speculative decoding. Additionally, (iii) we improved the UCT node selection strategy and the backpropagation used in previous works, resulting in significant performance improvements. Using Llama-3.1-70B with SC-MCTS ∗, we outperformed o1-mini by an average of 17.4% on the Blocksworld multi-step reasoning dataset. Our code is available at https://github.com/zitian-gao/SC-MCTS.

1 Introduction

With the remarkable development of Large Language Models (LLMs), models such as o1 (OpenAI, 2024a) have gained a strong ability for multi-step reasoning across complex tasks and can solve harder scientific, coding, and mathematical problems than earlier models. Reasoning has long been considered challenging for LLMs: such tasks require converting a problem into a series of reasoning steps and then executing those steps to arrive at the correct answer. Recently, LLMs have shown great potential in addressing such problems. A key approach is Chain of Thought (CoT) (Wei et al., 2024), where LLMs break the solution down into a series of reasoning steps before arriving at the final answer. Despite the impressive capabilities of CoT-based LLMs, they still struggle with problems that require an increasing number of reasoning steps, due to the curse of autoregressive decoding (Sprague et al., 2024). Previous work has explored heuristic search algorithms for reasoning. For example, Yao et al. (2024) applied heuristic search, such as Depth-First Search (DFS), to derive better reasoning paths. Similarly, Hao et al. (2023) employed MCTS to iteratively improve reasoning step by step toward the goal.

The tremendous success of AlphaGo (Silver et al., 2016) has demonstrated the effectiveness of the heuristic MCTS algorithm, showcasing its exceptional performance across various domains (Jumper et al., 2021; Silver et al., 2017). Building on this, MCTS has also made notable progress in the field of LLMs through multi-step heuristic reasoning. Previous work has highlighted the potential of heuristic MCTS to significantly enhance LLM reasoning capabilities. Despite these advancements, substantial challenges remain in fully realizing the benefits of heuristic MCTS in LLM reasoning.

<details>

<summary>extracted/6087579/fig/Fig1.png Details</summary>

### Visual Description

## Decision Tree with Logit Bar Charts

### Overview

The image presents a decision tree illustrating a blocksworld task, where the goal is to have the red block on top of the yellow block. The tree shows possible actions and their outcomes, accompanied by bar charts representing the logits (log-odds) of different actions chosen by an "expert" and an "amateur" at different states. The "CD Logits" show the chosen action.

### Components/Axes

* **Title:** Blocksworld task goal: The red block is on top of the yellow block (contained in a brown box at the top)

* **States:** The diagram shows three states: S0, S1, and S2, arranged vertically on the left.

* **Actors:** For states S1 and S2, there are two actors: Expert Logits (SE) and Amateur Logits (SA).

* **Actions:** The decision tree shows two actions: a0 (unstaking the red block) and a1 (stacking on the yellow block).

* **CD Logits:** CD Logits (SCD1) and CD Logits (SCD2) are shown for states S1 and S2 respectively.

* **Bar Charts:** Horizontal bar charts represent the logits for each action.

  * The x-axis represents the logit value.

  * The y-axis represents the different actions.

* **Decision Tree:** A tree diagram shows the possible actions and their outcomes.

  * Nodes represent states of the block configuration.

  * Edges represent actions taken to transition between states.

* **Block Configurations:** Each node in the decision tree shows a stack of blocks (red, green, blue, yellow) on a brown base.

### Detailed Analysis

**State S0:**

* S0 states the task goal: "The red block is on top of the yellow block."

**State S1:**

* **Expert Logits (SE1):**

  * Unstack red: 8

  * Pick-up blue: 1

  * Pick-up yellow: 1

* **Amateur Logits (SA1):**

  * Unstack red: 6

  * Pick-up blue: 2

  * Pick-up yellow: 2

* **CD Logits (SCD1):**

  * Unstack red: Selected (indicated by a green checkmark)

  * Pick-up blue

  * Pick-up yellow

**State S2:**

* **Expert Logits (SE2):**

  * Stack on yellow: 7

  * Stack on blue: 1

  * Stack on green: 1

  * Put-down red: 1

* **Amateur Logits (SA2):**

  * Stack on yellow: 3

  * Stack on blue: 2

  * Stack on green: 2

  * Put-down red: 3

* **CD Logits (SCD2):**

  * Stack on yellow: Selected (indicated by a green checkmark)

  * Stack on blue

  * Stack on green

  * Put-down red

**Decision Tree:**

* **Root Node:** Represents the initial block configuration (state S0), before any action is taken.

* **Action a0 (Unstack the red):** From the root node, the action "Unstack the red" leads to three possible states.

* **Action a1 (Stack on The yellow):** From one of the states resulting from action a0, the action "Stack on The yellow" leads to several possible states.

* The tree shows the progression of actions and their resulting block configurations.

### Key Observations

* In state S1, both the expert and amateur logits favor "Unstack red," with the expert having a higher logit value.

* In state S2, both the expert and amateur logits favor "Stack on yellow," with the expert having a higher logit value.

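* In both states, the action selected by the CD logits is the one with the largest gap between the expert and amateur logits (Unstack red: 8 vs. 6; Stack on yellow: 7 vs. 3).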

* The decision tree visually represents the possible sequences of actions and their outcomes.

### Interpretation

The diagram illustrates a decision-making process in a blocksworld environment. The logits represent the confidence or preference for different actions by an "expert" and an "amateur." The CD Logits indicate the chosen action based on these logits. The decision tree shows how these actions lead to different states, ultimately aiming to achieve the goal of having the red block on top of the yellow block. The expert consistently shows a stronger preference for the optimal actions (unstaking red when necessary and stacking on yellow when possible), suggesting a more efficient problem-solving strategy compared to the amateur.

</details>

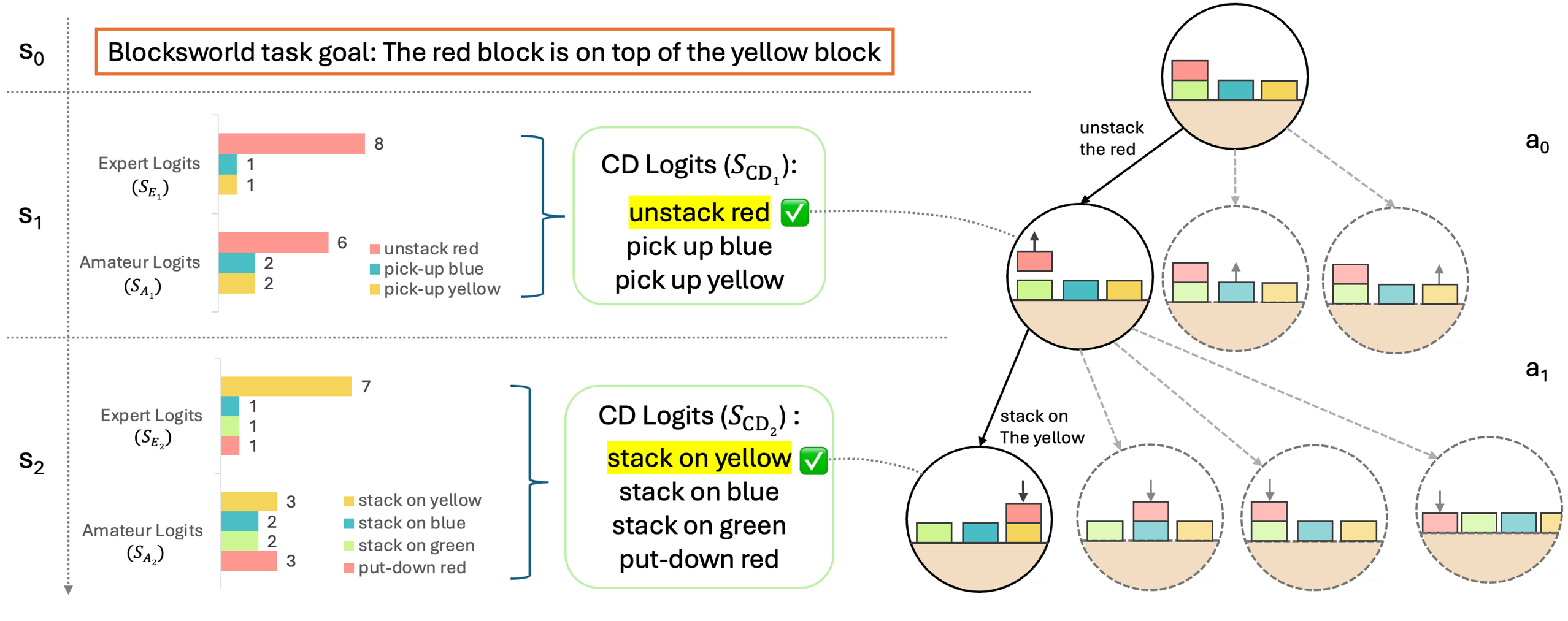

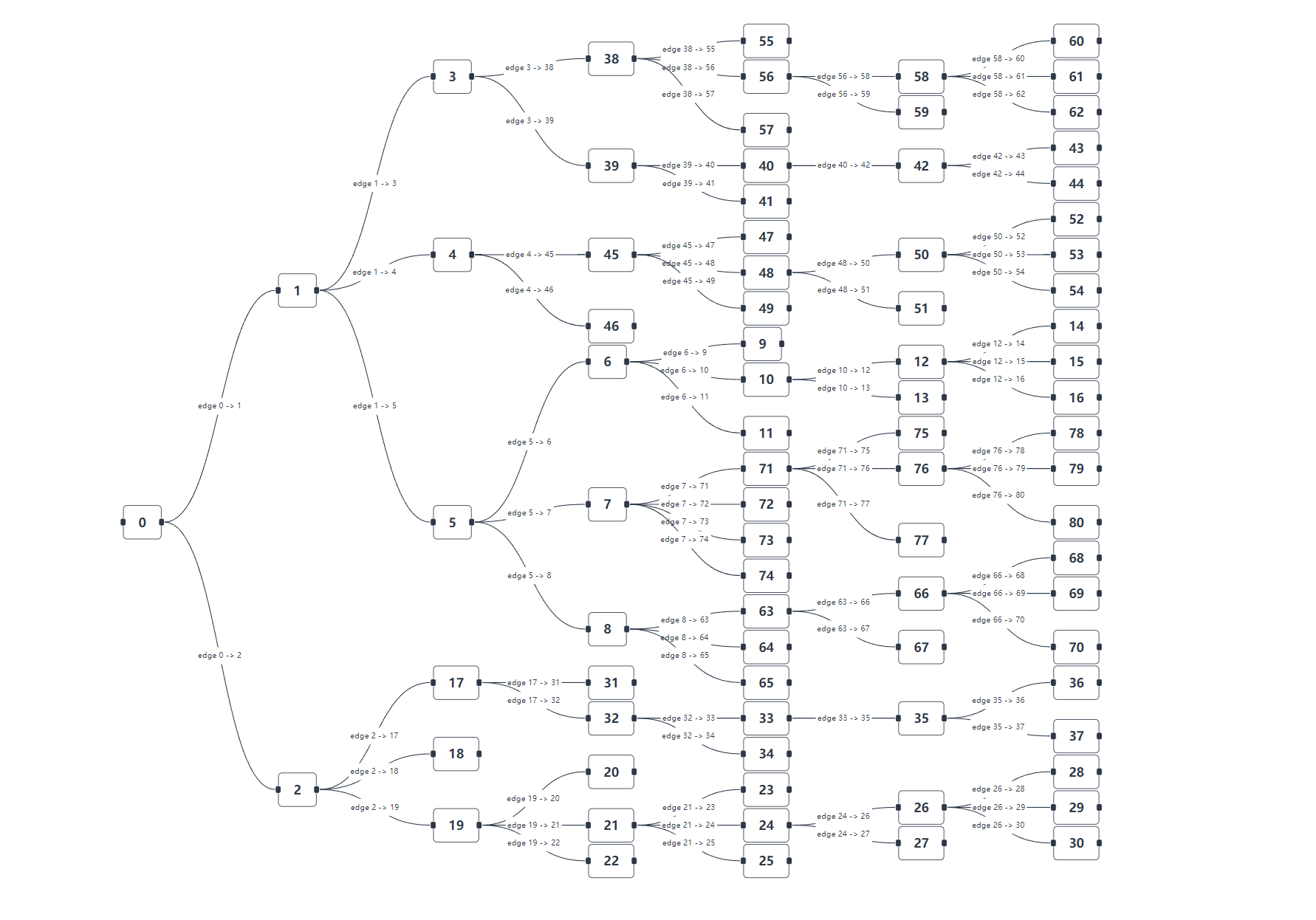

Figure 1: An overview of SC-MCTS ∗. We employ a novel reward model based on the principle of contrastive decoding to guide MCTS reasoning on the Blocksworld multi-step reasoning dataset.

The first key challenge is that MCTS’s general reasoning ability is almost entirely dependent on the reward model’s performance (as demonstrated by our ablation experiments in Section 5.5), making it highly challenging to design dense, general yet efficient rewards to guide MCTS reasoning. Previous works either require two or more LLMs (Tian et al., 2024) or training epochs (Zhang et al., 2024a), escalating the VRAM and computational demand, or they rely on domain-specific tools (Xin et al., 2024a; b) or datasets (Qi et al., 2024), making it difficult to generalize to other tasks or datasets.

The second key challenge is that MCTS is significantly slower than Chain of Thought (CoT). CoT only requires designing a prompt, possibly spanning multiple chat turns (Wei et al., 2024). In contrast, MCTS builds a reasoning tree with 2–10 layers depending on the difficulty of the task, where each node represents a chat round with the LLM and may be visited one or more times. Moreover, to obtain better performance, we typically perform 2–10 MCTS iterations, which greatly increases the number of nodes, leading to much higher computational cost and slower reasoning.

To address these challenges, we went beyond prior works that treated MCTS as a tool and focused on analyzing and improving its components, especially the reward model. Using contrastive decoding, we redesigned the reward model by integrating interpretable reward signals, clustering their prior distributions, and normalizing the rewards with our proposed prior-statistics method. To prevent distribution shift, we also incorporated an online incremental update algorithm. We found that the commonly used Upper Confidence Bound on Trees (UCT) strategy often underperformed due to its sensitivity to the exploration constant, so we refined it and improved backpropagation to favor steadily improving paths. To address speed issues, we integrated speculative decoding as a "free lunch." All experiments were conducted on the Blocksworld dataset detailed in Section 5.1.

Our goals are to: (i) design novel, high-performance reward models and maximize the performance of reward model combinations, (ii) analyze and optimize the performance of various MCTS components, (iii) enhance the interpretability of MCTS reasoning, and (iv) accelerate MCTS reasoning. Our contributions are summarized as follows:

1. We went beyond previous works that primarily treated MCTS as a tool rather than analyzing and improving its components. Specifically, our experiments show that the UCT strategy used in most previous works may fail to function as intended. We also refined the backpropagation of MCTS to prefer more steadily improving paths, boosting performance.

1. To study the interpretability of MCTS multi-step reasoning thoroughly, we conducted extensive quantitative analysis and ablation studies on every component. We carried out numerous experiments on the reward models from both numerical and distributional perspectives, as well as on their own interpretability, providing better interpretability for MCTS multi-step reasoning.

1. We designed a novel, general action-level reward model based on the principle of contrastive decoding, which requires no external tools, training, or datasets. Additionally, we found that previous works often failed to harness multiple reward models effectively, so we proposed a statistical linear combination method. We also introduced speculative decoding to speed up MCTS reasoning by an average of 52% as a "free lunch."

We demonstrated the effectiveness of our approach by outperforming OpenAI’s flagship o1-mini model by an average of 17.4% using Llama-3.1-70B on the Blocksworld multi-step reasoning dataset.

2 Related Work

Large Language Models Multi-Step Reasoning

One of the key focus areas for LLMs is understanding and enhancing their reasoning capabilities. Recent advances in this area have focused on methods that improve LLMs’ ability to handle complex tasks in domains such as code generation and mathematical problem-solving. Chain-of-Thought (CoT) (Wei et al., 2024) reasoning has been instrumental in helping LLMs break down intricate problems into a sequence of manageable steps, making them more adept at tasks that require logical reasoning. Building upon this, Tree-of-Thought (ToT) (Yao et al., 2024) reasoning extends CoT by allowing models to explore multiple reasoning paths, thereby enabling them to evaluate different solutions. Complementing these approaches, Monte Carlo Tree Search (MCTS) has emerged as a powerful decision-making method for LLM reasoning. Originally successful in AlphaGo’s victory (Silver et al., 2016), MCTS was adapted to guide model-based planning by balancing exploration and exploitation through tree-based search and random sampling, and later to large language model reasoning (Hao et al., 2023), with strong results. This adaptation has proven particularly effective in areas requiring strategic planning. Notable implementations such as ReST-MCTS ∗ (Zhang et al., 2024a), rStar (Qi et al., 2024), MCTSr (Zhang et al., 2024b), and Xie et al. (2024) have shown that integrating MCTS with reinforced self-training, self-play mutual reasoning, or Direct Preference Optimization (Rafailov et al., 2023) can significantly improve the reasoning capabilities of LLMs. Furthermore, recent advances such as Deepseek Prover (Xin et al., 2024a; b) demonstrate the potential of these models to understand complex instructions such as formal mathematical proofs.

Decoding Strategies

Contrastive decoding and speculative decoding both require Smaller Language Models (SLMs), yet few have realized that these two decoding methods can be seamlessly combined at no additional cost. The only work that noticed this was Yuan et al. (2024a), but their speculative contrastive decoding operates at the token level. In contrast, we designed a new action-level contrastive decoding to guide MCTS reasoning; the distinction is discussed further in Section 4.1. For more detailed related work, please refer to Appendix B.

3 Preliminaries

3.1 Multi-Step Reasoning

A multi-step reasoning problem can be modeled as a Markov Decision Process (Bellman, 1957) $\mathcal{M}=(S,A,P,r,\gamma)$ . $S$ is the state space containing all possible states, $A$ the action space, $P(s^{\prime}|s,a)$ the state transition function, $r(s,a)$ the reward function, and $\gamma$ the discount factor. The goal is to learn and to use a policy $\pi$ to maximize the discounted cumulative reward $\mathbb{E}_{\tau\sim\pi}\left[\sum_{t=0}^{T}\gamma^{t}r_{t}\right]$ . For reasoning with LLMs, we are more focused on using an existing LLM to achieve the best reasoning.
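As a quick illustration of the objective above, the discounted cumulative reward of one finite trajectory can be computed directly. This is a minimal sketch; the reward values and discount factor in the usage line are hypothetical.

```python
def discounted_return(rewards, gamma):
    """Return sum_{t=0}^{T} gamma**t * r_t for one trajectory r_0, ..., r_T."""
    return sum(gamma ** t * r for t, r in enumerate(rewards))


# Hypothetical trajectory with three unit rewards and gamma = 0.5.
print(discounted_return([1.0, 1.0, 1.0], 0.5))  # 1.75
```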

3.2 Monte Carlo Tree Search

Monte Carlo Tree Search (MCTS) is a decision-making algorithm involving a search tree to simulate and evaluate actions. The algorithm operates in the following four phases:

Node Selection: The selection process begins at the root, selecting nodes hierarchically using strategies like UCT as the criterion to favor a child node based on its quality and novelty.

Expansion: New child nodes are added to the selected leaf node by sampling $d$ possible actions, predicting the next state. If the leaf node is fully explored or terminal, expansion is skipped.

Simulation: During simulation or “rollout”, the algorithm plays out the “game” randomly from that node to a terminal state using a default policy.

Backpropagation: Once a terminal state is reached, the reward is propagated up the tree, and each node visited during the selection phase updates its value based on the simulation result.

Through iterative application of its four phases, MCTS efficiently improves reasoning through trials and heuristics, converging on the optimal solution.
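The four phases above can be sketched in a few dozen lines of Python. This is a generic, minimal illustration under stated assumptions, not the SC-MCTS ∗ implementation: the toy task (reproduce the hypothetical bit string `TARGET`), the reward (fraction of matching bits), the exploration constant `c = 1.4`, and the uniformly random rollout policy are all placeholders. In LLM reasoning, actions would instead be sampled reasoning steps and the reward would come from a reward model.

```python
import math
import random

TARGET = (1, 0, 1)  # hypothetical toy goal: reproduce this bit string
ACTIONS = (0, 1)


def is_terminal(state):
    return len(state) == len(TARGET)


def reward(state):
    # Fraction of positions that match the target (terminal states only).
    return sum(a == b for a, b in zip(state, TARGET)) / len(TARGET)


class Node:
    def __init__(self, state, parent=None):
        self.state = state
        self.parent = parent
        self.children = {}  # action -> Node
        self.visits = 0
        self.value_sum = 0.0

    def uct_child(self, c):
        # Selection criterion: mean value plus exploration bonus.
        def score(child):
            if child.visits == 0:
                return math.inf
            mean = child.value_sum / child.visits
            return mean + c * math.sqrt(math.log(self.visits) / child.visits)
        return max(self.children.values(), key=score)


def mcts(iterations=200, c=1.4, seed=0):
    rng = random.Random(seed)
    root = Node(())
    for _ in range(iterations):
        node = root
        # 1. Selection: descend while the node is fully expanded.
        while not is_terminal(node.state) and len(node.children) == len(ACTIONS):
            node = node.uct_child(c)
        # 2. Expansion: add one untried action (skipped at terminal nodes).
        if not is_terminal(node.state):
            untried = [a for a in ACTIONS if a not in node.children]
            a = rng.choice(untried)
            child = Node(node.state + (a,), parent=node)
            node.children[a] = child
            node = child
        # 3. Simulation: random rollout to a terminal state.
        state = node.state
        while not is_terminal(state):
            state = state + (rng.choice(ACTIONS),)
        r = reward(state)
        # 4. Backpropagation: update statistics along the selected path.
        while node is not None:
            node.visits += 1
            node.value_sum += r
            node = node.parent
    # Recommend the most-visited first action.
    return max(root.children, key=lambda a: root.children[a].visits)


print(mcts())  # most-visited first action; expected to be 1, the first bit of TARGET
```

Recommending the most-visited child rather than the highest-mean child is a common, robust choice; other recommendation rules are possible.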

3.3 Contrastive Decoding

We discuss vanilla Contrastive Decoding (CD) from Li et al. (2023), which improves text generation in LLMs by reducing errors such as repetition and self-contradiction. CD uses the differences between an expert model and an amateur model, amplifying the expert’s strengths and suppressing the amateur’s weaknesses. Given a prompt of length $n$, the CD objective is defined as:

$$
{\mathcal{L}}_{\text{CD}}(x_{\text{cont}},x_{\text{pre}})=\log p_{\text{EXP}}(x_{\text{cont}}|x_{\text{pre}})-\log p_{\text{AMA}}(x_{\text{cont}}|x_{\text{pre}})
$$

where $x_{\text{pre}}$ is the sequence of tokens $x_{1},...,x_{n}$, the model generates continuations of length $m$, $x_{\text{cont}}$ is the sequence of tokens $x_{n+1},...,x_{n+m}$, and $p_{\text{EXP}}$ and $p_{\text{AMA}}$ are the expert and amateur probability distributions. To avoid penalizing correct behavior of the amateur or promoting implausible tokens, CD applies an adaptive plausibility constraint using an $\alpha$-mask, which filters tokens by comparing their expert logits against a threshold. The filtered vocabulary $V_{\text{valid}}$ is defined as:

$$
V_{\text{valid}}=\{i\mid s^{(i)}_{\text{EXP}}\geq\log\alpha+\max_{k}s^{(k)}_{\text{EXP}}\}
$$

where $s^{(i)}_{\text{EXP}}$ and $s^{(i)}_{\text{AMA}}$ are the unnormalized logits assigned to token $i$ by the expert and amateur models. The final logits are adjusted with a coefficient $(1+\beta)$, which modulates the contrastive effect on the output scores (Liu et al., 2021):

$$

s^{(i)}_{\text{CD}}=(1+\beta)s^{(i)}_{\text{EXP}}-s^{(i)}_{\text{AMA}}

$$
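The token-level rule above (the $\alpha$-mask followed by the $(1+\beta)$-weighted contrast) can be sketched as follows. The logits, $\alpha = 0.1$, and $\beta = 0.5$ are hypothetical values chosen for illustration. The example shows why the mask matters: without it, a token the expert considers implausible can win simply because the amateur dislikes it even more.

```python
import math


def cd_scores(expert_logits, amateur_logits, alpha=0.1, beta=0.5):
    """Token-level contrastive-decoding scores with the adaptive plausibility mask.

    Tokens whose expert logit falls below log(alpha) + max expert logit are
    excluded from V_valid and receive a score of -inf.
    """
    threshold = math.log(alpha) + max(expert_logits)
    scores = []
    for s_exp, s_ama in zip(expert_logits, amateur_logits):
        if s_exp >= threshold:
            scores.append((1 + beta) * s_exp - s_ama)  # s_CD = (1+beta) s_EXP - s_AMA
        else:
            scores.append(-math.inf)  # token outside V_valid
    return scores


def argmax(xs):
    return max(range(len(xs)), key=lambda i: xs[i])


# Hypothetical logits for a 3-token vocabulary.
expert = [3.0, 2.9, -1.0]
amateur = [3.0, 1.0, -5.0]
print(argmax(cd_scores(expert, amateur)))              # 1: token 2 is masked out
print(argmax(cd_scores(expert, amateur, alpha=1e-9)))  # 2: mask effectively disabled
```

Token 1 wins under the mask because the expert rates it almost as highly as token 0 while the amateur does not, which is exactly the signal CD is designed to amplify.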

However, our proposed CD operates at the action level, averaging over the whole action, rather than at the token level as in vanilla CD. Our novel action-level CD reward captures the differences in confidence between the expert and amateur models on the generated answers more robustly than vanilla CD. The distinction is illustrated in Section 4.1 and explained further in Appendix A.

3.4 Speculative Decoding as a "free lunch"

Speculative Decoding (Leviathan et al., 2023) can be summarized as follows. Let $M_{p}$ be the target model with conditional distribution $p(x_{t}|x_{<t})$, and $M_{q}$ a smaller approximation model with $q(x_{t}|x_{<t})$. The key idea is to generate $\gamma$ tokens with $M_{q}$ and verify them against $M_{p}$’s distribution, accepting the tokens consistent with $M_{p}$. Speculative decoding samples $\gamma$ tokens autoregressively from $M_{q}$ and keeps those where $q(x)\leq p(x)$. If $q(x)>p(x)$, the sample is rejected with probability $1-\frac{p(x)}{q(x)}$, and a new sample is drawn from the adjusted distribution:

$$

p^{\prime}(x)=\text{norm}(\max(0,p(x)-q(x))).

$$

Since both contrastive and speculative decoding rely on the same smaller models, we can achieve the acceleration effect of speculative decoding as a "free lunch" (Yuan et al., 2024a).
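The accept/reject rule can be illustrated for a single drafted token over a toy vocabulary. This is a simplified sketch, assuming hypothetical distributions `p` and `q` and using Python's `random` module; a real implementation drafts $\gamma$ tokens, scores them all with one forward pass of $M_{p}$, and discards every draft token after the first rejection. The empirical frequencies should match `p`, which is the property that makes the speed-up lossless.

```python
import random


def speculative_step(p, q, rng):
    """Draft one token from q and verify it against p (single-token speculative sampling).

    p, q: target and draft probabilities over the same vocabulary (each sums to 1).
    The returned token is distributed exactly according to p.
    """
    vocab = range(len(p))
    x = rng.choices(vocab, weights=q)[0]  # draft token from M_q
    if q[x] <= p[x] or rng.random() < p[x] / q[x]:
        return x  # accepted
    # Rejected (probability 1 - p(x)/q(x)): resample from norm(max(0, p - q)).
    residual = [max(0.0, pi - qi) for pi, qi in zip(p, q)]
    return rng.choices(vocab, weights=residual)[0]  # choices normalizes the weights


rng = random.Random(0)
p = [0.6, 0.3, 0.1]  # hypothetical target distribution
q = [0.3, 0.3, 0.4]  # hypothetical draft distribution
samples = [speculative_step(p, q, rng) for _ in range(100_000)]
freqs = [samples.count(i) / len(samples) for i in range(3)]
print(freqs)  # close to p
```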

4 Method

4.1 Multi-Reward Design

Our primary goal is to design novel, high-performance reward models for MCTS reasoning and to maximize the performance of reward model combinations, since our ablation experiments in Section 5.5 show that MCTS performance is almost entirely determined by the reward model.

SC-MCTS ∗ is guided by three highly interpretable reward models: contrastive JS divergence, loglikelihood, and self evaluation. Previous work such as Hao et al. (2023) often directly adds reward functions with mismatched numerical magnitudes, without any prior statistical analysis or principled linear combination. As a result, such combined reward models may fail to reach their full performance. Moreover, combining multiple rewards online presents challenges such as distributional shifts in the reward values. We therefore propose a statistically informed reward combination method, the Multi-RM method, in which each reward model is normalized contextually by fine-grained prior statistics of its empirical distribution. The pseudocode for reward model construction is shown in Algorithm 1. Please refer to Appendix D for a complete version of SC-MCTS ∗ that includes other improvements, such as handling distribution shift when combining reward functions online.

Algorithm 1 SC-MCTS ∗, reward model construction

1: Require: Expert LLM $\pi_{e}$, amateur SLM $\pi_{a}$, problem set $D$; $M$ selected problems for prior statistics, $N$ pre-generated solutions per problem, $K$ clusters

2: $\tilde{A}\leftarrow\text{Sample-solutions}(\pi_{e},D,M,N)$ $\triangleright$ Pre-generate $M\times N$ solutions

3: $p_{e},p_{a}\leftarrow\text{Evaluate}(\pi_{e},\pi_{a},\tilde{A})$ $\triangleright$ Get policy distributions

4: for $r\in\{\text{JSD},\text{LL},\text{SE}\}$ do

5: $\boldsymbol{\mu}_{r},\boldsymbol{\sigma}_{r},\boldsymbol{b}_{r}\leftarrow\text{Cluster-stats}(r(\tilde{A}),K)$ $\triangleright$ Prior statistics (Equation 1)

6: $R_{r}\leftarrow x\mapsto(r(x)-\mu_{r}^{k^{*}})/\sigma_{r}^{k^{*}}$ $\triangleright$ Reward normalization (Equation 2)

7: end for

8: $R\leftarrow\sum_{r\in\{\text{JSD},\text{LL},\text{SE}\}}w_{r}R_{r}$ $\triangleright$ Composite reward

9: $A_{D}\leftarrow\text{MCTS-Reasoning}(\pi_{e},R,D,\pi_{a})$ $\triangleright$ Search solutions guided by $R$

10: return $A_{D}$

Jensen-Shannon Divergence

The Jensen-Shannon divergence (JSD) is a symmetric and bounded measure of similarity between two probability distributions $P$ and $Q$ . It is defined as:

$$
\mathrm{JSD}(P\,\|\,Q)=\frac{1}{2}\mathrm{KL}(P\,\|\,M)+\frac{1}{2}\mathrm{KL}(Q\,\|\,M),\quad M=\frac{1}{2}(P+Q),
$$

where $\mathrm{KL}(P\,\|\,Q)$ is the Kullback-Leibler divergence (KLD) and $M$ is the midpoint distribution. Unlike the unbounded KLD, the JSD of two discrete distributions lies between 0 and 1 when computed with base-2 logarithms (between 0 and $\ln 2$ with natural logarithms), which makes it better suited than KLD for online normalization in reward modeling.
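A direct transcription of the JSD definition for discrete distributions, using base-2 logarithms so that the value lies in $[0, 1]$. The function names are illustrative, not taken from the SC-MCTS ∗ code.

```python
import math


def kl_divergence(p, q):
    """KL(P || Q) in bits; terms with p_i = 0 contribute nothing."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p, q):
    """JSD(P || Q) = 0.5 KL(P || M) + 0.5 KL(Q || M) with M = (P + Q) / 2."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


print(js_divergence([1.0, 0.0], [0.0, 1.0]))  # 1.0: disjoint supports reach the upper bound
print(js_divergence([0.5, 0.5], [0.5, 0.5]))  # 0.0: identical distributions
```

Because every $m_i > 0$ wherever $p_i > 0$ or $q_i > 0$, the midpoint never causes a division by zero, another practical advantage over computing $\mathrm{KL}(P\,\|\,Q)$ directly.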

Inspired by contrastive decoding, we propose a novel reward model: the JSD between the expert and amateur models’ output distributions (the softmax of their logits). Unlike vanilla token-level contrastive decoding (Li et al., 2023), our reward is computed at the action level, treating a sequence of action tokens as a whole:

$$
R_{\text{JSD}}=\frac{1}{n}\sum_{i=T_{\text{prefix}}+1}^{n}\left[\mathrm{JSD}\left(p_{\text{e}}(x_{i}|x_{<i})\,\|\,p_{\text{a}}(x_{i}|x_{<i})\right)\right]
$$

where $n$ is the total number of tokens, $T_{\text{prefix}}$ is the index of the last prefix token, and $p_{\text{e}}$ and $p_{\text{a}}$ are the softmax probabilities of the expert and amateur models, respectively. The reward thus captures model behavior at the action level, because the entire sequence of action tokens is taken into account at once, in contrast to vanilla token-level methods, where each token is treated serially.
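The action-level reward can be sketched on top of a per-position JSD. This sketch assumes the expert and amateur softmax distributions have already been computed for every position of the same token sequence (positions are 0-indexed, so the prefix occupies indices 0 to `t_prefix - 1`), and it keeps the printed normalization by the full sequence length $n$. The function names are hypothetical.

```python
import math


def js_divergence(p, q):
    """Base-2 Jensen-Shannon divergence between two discrete distributions."""
    def kl(a, b):
        return sum(ai * math.log2(ai / bi) for ai, bi in zip(a, b) if ai > 0)
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def jsd_reward(expert_probs, amateur_probs, t_prefix):
    """Action-level JSD reward following the R_JSD equation.

    expert_probs[i], amateur_probs[i]: softmax distributions at position i.
    Only the action tokens (positions t_prefix .. n-1) enter the sum, which is
    then divided by the full length n as in the printed equation.
    """
    n = len(expert_probs)
    total = sum(js_divergence(expert_probs[i], amateur_probs[i]) for i in range(t_prefix, n))
    return total / n


# Hypothetical 4-token sequence: 2 prefix tokens, 2 action tokens, fully disagreeing models.
expert = [[1.0, 0.0]] * 4
amateur = [[0.0, 1.0]] * 4
print(jsd_reward(expert, amateur, t_prefix=2))  # 0.5: two action tokens at JSD 1, divided by n = 4
```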

Loglikelihood

Inspired by Hao et al. (2023), we use a loglikelihood reward model to evaluate the quality of a generated answer given the question prefix. The model computes logits for the full sequence (prefix + answer) and accumulates the log-probabilities of the answer tokens.

Let the full sequence $x=(x_{1},x_{2},...,x_{T_{\text{total}}})$ consist of a prefix and a generated answer. The loglikelihood reward $R_{\text{LL}}$ is calculated over the answer portion:

$$
R_{\text{LL}}=\sum_{i=T_{\text{prefix}}+1}^{T_{\text{total}}}\log\left(\frac{\exp(z_{\theta}(x_{i}))}{\sum_{x^{\prime}\in V}\exp(z_{\theta}(x^{\prime}))}\right)
$$

where $z_{\theta}(x_{i})$ represents the unnormalized logit for token $x_{i}$ . After calculating logits for the entire sequence, we discard the prefix and focus on the answer tokens to form the loglikelihood reward.
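The loglikelihood reward reduces to a log-softmax lookup per answer token. A minimal sketch, assuming the caller has already aligned each logit vector with the token it scores; in a causal language model the logits produced at position $i-1$ predict token $i$, so real code must shift by one. The names are hypothetical.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one vocabulary-sized logit vector."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_z for z in logits]


def loglikelihood_reward(position_logits, tokens, t_prefix):
    """Sum of log p(x_i) over the answer tokens x_{T_prefix+1}, ..., x_{T_total}.

    position_logits[i] is assumed to be the logit vector that scores tokens[i]
    (already shifted); prefix positions 0 .. t_prefix-1 are discarded.
    """
    return sum(
        log_softmax(position_logits[i])[tokens[i]]
        for i in range(t_prefix, len(tokens))
    )


uniform = [[0.0, 0.0, 0.0, 0.0]] * 5  # hypothetical: 5 positions, 4-token vocabulary
tokens = [0, 1, 2, 3, 0]              # the first 2 tokens are the prefix
print(loglikelihood_reward(uniform, tokens, t_prefix=2))  # equals 3 * log(1/4)
```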

Self Evaluation

Large language models’ token-level self evaluation can effectively quantify the model’s uncertainty, thereby improving the quality of selective generation (Ren et al., 2023). We instruct the LLM to perform self evaluation on its answers using an action-level evaluation method, including a self evaluation prompt that explicitly elicits the model’s uncertainty.

After generating the answer, we prompt the model to self-evaluate its response by asking "Is this answer correct/good?" This serves to capture the model’s confidence in its own output leading to more informed decision-making. The self evaluation prompt’s logits are then used to calculate a reward function. Similar to the loglikelihood reward model, we calculate the self evaluation reward $R_{\text{SE}}$ by summing the log-probabilities over the self-evaluation tokens.

Harnessing Multiple Reward Models

We collected prior distributions for the reward models and found that some of them span multiple regions. Therefore, we compute fine-grained prior statistics, namely the mean and standard deviation of each mode of the prior distribution ${\mathcal{R}}\in\{{\mathcal{R}}_{\text{JSD}},{\mathcal{R}}_{\text{LL}},{\mathcal{R}}_{\text{SE}}\}$:

$$
\mu^{(k)}=\frac{1}{c_{k}}\sum_{R_{i}\in[b_{k},\,b_{k+1})}R_{i}\quad\text{and}\quad\sigma^{(k)}=\sqrt{\frac{1}{c_{k}}\sum_{R_{i}\in[b_{k},\,b_{k+1})}(R_{i}-\mu^{(k)})^{2}} \tag{1}
$$

where $b_{1}<b_{2}<...<b_{K+1}$ are the region boundaries in ${\mathcal{R}}$, $R_{i}\in{\mathcal{R}}$, and $c_{k}$ is the number of $R_{i}$ in $[b_{k},b_{k+1})$. The region boundaries were defined during the prior statistical data collection phase 1.

After we computed the fine-grained prior statistics, the reward factors are normalized separately for each region (which degenerates to standard normalization if only a single region is found):

$$
R_{\text{norm}}(x)=\left(R(x)-\mu^{(k^{*})}\right)/\sigma^{(k^{*})},\quad\text{where}\quad k^{*}=\operatorname*{arg\,max}\{k:b_{k}\leq R(x)\} \tag{2}
$$
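Equations 1 and 2, together with the weighted sum in line 8 of Algorithm 1, can be sketched as follows. The boundaries, prior samples, and weights $w_r$ are hypothetical. The sketch assumes every region contains at least one prior sample and, as an added assumption, clamps rewards below $b_1$ or at/above $b_{K+1}$ into the first or last region.

```python
import bisect
import statistics


def region_stats(samples, boundaries):
    """Equation 1: population mean/std of the prior samples in each [b_k, b_{k+1}).

    Assumes every region contains at least one sample.
    """
    stats = []
    for lo, hi in zip(boundaries[:-1], boundaries[1:]):
        region = [r for r in samples if lo <= r < hi]
        stats.append((statistics.fmean(region), statistics.pstdev(region)))
    return stats


def normalize(r, boundaries, stats):
    """Equation 2: standardize r with the statistics of its region k*."""
    k = bisect.bisect_right(boundaries, r) - 1  # largest k with b_k <= r (0-indexed)
    k = min(max(k, 0), len(stats) - 1)          # clamp values outside [b_1, b_{K+1})
    mu, sigma = stats[k]
    return (r - mu) / sigma if sigma > 0 else 0.0


def composite_reward(raw, priors, weights):
    """R = sum_r w_r * R_r over named reward models (weights are hypothetical)."""
    return sum(
        weights[name] * normalize(value, *priors[name])
        for name, value in raw.items()
    )


ll_samples = [0.0, 1.0, 2.0, 10.0, 11.0, 12.0]  # hypothetical bimodal prior
ll_bounds = [0.0, 5.0, 20.0]
ll_stats = region_stats(ll_samples, ll_bounds)
print(normalize(11.0, ll_bounds, ll_stats))  # 0.0: the mean of the upper mode
```

Normalizing per mode keeps a reward from the upper cluster from dominating simply because of its offset; with a single region this reduces to ordinary z-scoring.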

This reward design, which we call the Multi-RM method, has two caveats: first, to prevent distribution shift during reasoning, we update the mean and standard deviation of the reward functions online for each mode (see Appendix D for pseudocode); second, we focus only on cases with clearly distinct reward modes, leaving general cases for future work. For the correlation heatmap of the reward models, see Appendix C.
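The online per-mode update is only described at a high level here; the actual pseudocode is in Appendix D. One standard way to update a mean and standard deviation incrementally is Welford's algorithm, swapped in below as an assumption. Each mode keeps its own tracker, seeded with its prior samples, and a new reward updates only the mode it falls into.

```python
import math


class RunningStats:
    """Online mean and population std for one reward mode (Welford's algorithm)."""

    def __init__(self, samples=()):
        self.n, self.mean, self.m2 = 0, 0.0, 0.0
        for x in samples:  # seed with the prior samples of this mode
            self.update(x)

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)  # uses the updated mean

    @property
    def std(self):
        return math.sqrt(self.m2 / self.n) if self.n else 0.0


# Hypothetical: one tracker per mode k, seeded with that mode's prior samples.
modes = {0: RunningStats([0.0, 1.0, 2.0]), 1: RunningStats([10.0, 11.0, 12.0])}
modes[1].update(13.0)  # a reward observed during search that falls in mode 1
print(modes[1].mean, modes[1].std)
```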

4.2 Node Selection Strategy

Upper Confidence Bound applied on Trees Algorithm (UCT) (Coquelin & Munos, 2007) is crucial for the selection phase, balancing exploration and exploitation by choosing actions that maximize:

$$

UCT_{j}=\bar{X}_{j}+C\sqrt{\frac{\ln N}{N_{j}}}

$$

where $\bar{X}_{j}$ is the average reward of taking action $j$, $N$ is the number of times the parent has been visited, $N_{j}$ is the number of times node $j$ has been visited for simulation, and $C$ is a constant that balances exploitation and exploration.

However, $C$ is a crucial part of UCT. Previous work (Hao et al., 2023; Zhang et al., 2024b) did not thoroughly investigate this component, which can cause the UCT strategy to fail. This is because they often used the default value of 1 from the originally proposed UCT (Coquelin & Munos, 2007) without conducting sufficient quantitative experiments to find the optimal $C$. This is discussed in detail in Section 5.4.
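To see why the choice of $C$ matters, the UCT score can be computed for two children with hypothetical statistics: a well-explored child with a higher average reward and a rarely visited one. A small $C$ exploits the first, while $C = 1$ switches the selection to the second. This illustrates sensitivity to $C$ only and says nothing about which value is best for Blocksworld.

```python
import math


def uct_score(mean_reward, visits, parent_visits, c):
    """UCT_j = X_bar_j + C * sqrt(ln N / N_j); unvisited children are tried first."""
    if visits == 0:
        return math.inf
    return mean_reward + c * math.sqrt(math.log(parent_visits) / visits)


def select(children, parent_visits, c):
    """Return the name of the child with the highest UCT score."""
    return max(children, key=lambda name: uct_score(*children[name], parent_visits, c))


# Hypothetical (average reward, visit count) pairs for two children of a node visited 12 times.
children = {"a": (0.8, 10), "b": (0.5, 2)}
for c in (0.1, 1.0):
    print(c, select(children, parent_visits=12, c=c))  # 0.1 -> "a", 1.0 -> "b"
```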

4.3 Backpropagation

After each MCTS iteration, multiple paths from the root to terminal nodes are generated. By backpropagating along these paths, we update the value of each state-action pair. Previous MCTS approaches often use simple averaging during backpropagation, but this can overlook paths where the goal-achieved metric $G(p)$ progresses smoothly (e.g., $G(p_{1})=0\rightarrow 0.25\rightarrow 0.5\rightarrow 0.75$). Such paths, only a few steps away from the final goal $G(p)=1$, are often more valuable than less stable ones.

To improve value propagation, we propose an algorithm that better captures value progression along a path. Given a path $\mathbf{P}=\{p_{1},p_{2},...,p_{n}\}$ with $n$ nodes, where each $p_{i}$ represents the value at node $i$ , the total value is calculated by summing the increments between consecutive nodes with a length penalty. The increment between nodes $p_{i}$ and $p_{i-1}$ is $\Delta_{i}=p_{i}-p_{i-1}$ . Negative increments are clipped at $-0.1$ and downweighted by 0.5. The final path value $V_{\text{final}}$ is:

$$
V_{\text{final}}=\sum_{i=2}^{n}\left\{\begin{array}{ll}\Delta_{i}, & \text{if }\Delta_{i}\geq 0\\ 0.5\times\max(\Delta_{i},-0.1), & \text{if }\Delta_{i}<0\end{array}\right\}-\lambda\times n \tag{3}
$$

where $n$ is the number of nodes in the path and $\lambda=0.1$ is the penalty factor to discourage long paths.
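Equation 3 transcribes directly into a short function. A sketch, assuming the node values are given in root-to-leaf order and $\lambda = 0.1$ as stated; the example paths are hypothetical.

```python
def path_value(values, lam=0.1):
    """Equation 3: sum of increments (negatives clipped at -0.1 and halved) minus lam * n."""
    total = 0.0
    for prev, cur in zip(values, values[1:]):
        delta = cur - prev
        total += delta if delta >= 0 else 0.5 * max(delta, -0.1)
    return total - lam * len(values)


print(path_value([0.0, 0.25, 0.5, 0.75]))  # 0.75 of increments minus a 0.4 length penalty
print(path_value([0.0, 0.5, 0.25, 0.75]))  # the -0.25 drop costs only 0.5 * 0.1 = 0.05
```

Because a negative increment is clipped at $-0.1$ before being halved, a single drop costs at most 0.05, while the length penalty grows linearly with $n$.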

5 Experiments

5.1 Dataset

Blocksworld (Valmeekam et al., 2024; 2023) is a classic domain in AI research on reasoning and planning, where the goal is to rearrange blocks into a specified configuration using the actions “pick-up”, “put-down”, “stack”, and “unstack”. A block can be moved only if no other block is on top of it, and only one block can be moved at a time. The reasoning process in Blocksworld is an MDP. At time step $t$, the LLM agent selects an action $a_{t}\sim p(a\mid s_{t},c)$, where $s_{t}$ is the current block configuration and $c$ is the prompt template. The state transition $s_{t+1}=P(s_{t},a_{t})$ is deterministic and computed by rules. This yields a trajectory of interleaved states and actions $(s_{0},a_{0},s_{1},a_{1},...,s_{T})$ toward the goal state.
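The rule-based transition $s_{t+1}=P(s_{t},a_{t})$ can be sketched with a small dictionary-based state. The encoding (a map from each block to what it rests on, plus the block in hand) and the function names are hypothetical; the actual Blocksworld environment and verifier are described in Appendix F and may differ.

```python
def is_clear(on, block):
    """A block is clear if no other block rests on it."""
    return all(support != block for support in on.values())


def step(state, action, x, y=None):
    """Deterministic transition s_{t+1} = P(s_t, a_t) for a toy Blocksworld.

    state = (on, holding): `on` maps each block not in hand to "table" or the
    block beneath it; `holding` is the block in hand or None.
    Raises ValueError for illegal actions.
    """
    on, holding = dict(state[0]), state[1]
    if action == "pick-up":     # clear block x from the table into the hand
        legal = holding is None and on.get(x) == "table" and is_clear(on, x)
    elif action == "unstack":   # clear block x off block y into the hand
        legal = holding is None and y in on and on.get(x) == y and is_clear(on, x)
    elif action == "put-down":  # held block x onto the table
        legal = holding is not None and holding == x
    elif action == "stack":     # held block x onto clear block y
        legal = holding is not None and holding == x and y in on and is_clear(on, y)
    else:
        raise ValueError(f"unknown action: {action}")
    if not legal:
        raise ValueError(f"illegal action: {action} {x} {y}")
    if action in ("pick-up", "unstack"):
        del on[x]
        holding = x
    else:
        on[x] = "table" if action == "put-down" else y
        holding = None
    return on, holding


# Hypothetical instance: red sits on blue; the goal is red on yellow.
s0 = ({"red": "blue", "blue": "table", "yellow": "table"}, None)
s1 = step(s0, "unstack", "red", "blue")
s2 = step(s1, "stack", "red", "yellow")
print(s2[0]["red"] == "yellow")  # True: goal reached in two steps
```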

One key feature of Blocksworld is its built-in verifier, which tracks progress toward the goal at each step. This makes Blocksworld ideal for studying heuristic LLM multi-step reasoning. However, we deliberately avoid using the verifier as part of the reward model as it is task-specific. More details of Blocksworld can be found in Appendix F.

5.2 Main Results

To evaluate SC-MCTS ∗ on LLM multi-step reasoning, we implemented CoT, RAP-MCTS, and SC-MCTS ∗ using Llama-3-70B and Llama-3.1-70B. For comparison, we evaluated Llama-3.1-405B and GPT-4o with CoT, and o1-mini with 0-shot and 4-shot single-turn prompting, as OpenAI (2024b) advises against CoT prompting for this model. The experiments were conducted on the Blocksworld dataset across all steps and difficulties. For LLM settings and GPU and OpenAI API usage data, see Appendices E and H.

| Mode | Model | Method | Step 2 | Step 4 | Step 6 | Step 8 | Step 10 | Step 12 | Avg. |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Easy | Llama-3-70B ~Llama-3.2-1B | 4-shot CoT | 0.2973 | 0.4405 | 0.3882 | 0.2517 | 0.1696 | 0.1087 | 0.2929 |
| | | RAP-MCTS | 0.9459 | 0.9474 | 0.8138 | 0.4196 | 0.2136 | 0.1389 | 0.5778 |
| | | SC-MCTS* (Ours) | 0.9730 | 0.9737 | 0.8224 | 0.4336 | 0.2136 | 0.2222 | 0.5949 |
| | Llama-3.1-70B ~Llama-3.2-1B | 4-shot CoT | 0.5405 | 0.4868 | 0.4069 | 0.2238 | 0.2913 | 0.2174 | 0.3441 |
| | | RAP-MCTS | 1.0000 | 0.9605 | 0.8000 | 0.4336 | 0.2039 | 0.1111 | 0.5796 |
| | | SC-MCTS* (Ours) | 1.0000 | 0.9737 | 0.7724 | 0.4503 | 0.3010 | 0.1944 | 0.6026 |
| | Llama-3.1-405B | 0-shot CoT | 0.8108 | 0.6579 | 0.5931 | 0.5105 | 0.4272 | 0.3611 | 0.5482 |
| | | 4-shot CoT | 0.7838 | 0.8553 | 0.6483 | 0.4266 | 0.5049 | 0.4167 | 0.5852 |
| | o1-mini | 0-shot | 0.9730 | 0.7368 | 0.5103 | 0.3846 | 0.3883 | 0.1944 | 0.4463 |
| | | 4-shot | 0.9459 | 0.8026 | 0.6276 | 0.3497 | 0.3301 | 0.2222 | 0.5167 |
| | GPT-4o | 0-shot CoT | 0.5405 | 0.4868 | 0.3241 | 0.1818 | 0.1165 | 0.0556 | 0.2666 |
| | | 4-shot CoT | 0.5135 | 0.6579 | 0.6000 | 0.2797 | 0.3010 | 0.3611 | 0.4444 |
| Hard | Llama-3-70B ~Llama-3.2-1B | 4-shot CoT | 0.5556 | 0.4405 | 0.3882 | 0.2517 | 0.1696 | 0.1087 | 0.3102 |
| | | RAP-MCTS | 1.0000 | 0.8929 | 0.7368 | 0.4503 | 0.1696 | 0.1087 | 0.5491 |
| | | SC-MCTS* (Ours) | 0.9778 | 0.8929 | 0.7566 | 0.5298 | 0.2232 | 0.1304 | 0.5848 |
| | Llama-3.1-70B ~Llama-3.2-1B | 4-shot CoT | 0.6222 | 0.2857 | 0.3421 | 0.1722 | 0.1875 | 0.2174 | 0.2729 |
| | | RAP-MCTS | 0.9778 | 0.9048 | 0.7829 | 0.4702 | 0.1875 | 0.1087 | 0.5695 |
| | | SC-MCTS* (Ours) | 0.9778 | 0.9405 | 0.8092 | 0.4702 | 0.1696 | 0.2174 | 0.5864 |
| | Llama-3.1-405B | 0-shot CoT | 0.7838 | 0.6667 | 0.6053 | 0.3684 | 0.2679 | 0.2609 | 0.4761 |
| | | 4-shot CoT | 0.8889 | 0.6667 | 0.6579 | 0.4238 | 0.5804 | 0.5217 | 0.5915 |
| | o1-mini | 0-shot | 0.6889 | 0.4286 | 0.1776 | 0.0993 | 0.0982 | 0.0000 | 0.2034 |
| | | 4-shot | 0.9556 | 0.8452 | 0.5263 | 0.3907 | 0.2857 | 0.1739 | 0.4966 |
| | GPT-4o | 0-shot CoT | 0.6222 | 0.3929 | 0.3026 | 0.1523 | 0.0714 | 0.0000 | 0.2339 |
| | | 4-shot CoT | 0.6222 | 0.4167 | 0.5197 | 0.3642 | 0.3304 | 0.1739 | 0.4102 |

Table 1: Accuracy of various reasoning methods and models across steps and difficulty modes on the Blocksworld multi-step reasoning dataset.

Table 1 shows that, for both Llama expert models, SC-MCTS ∗ achieves a higher average accuracy than RAP-MCTS and 4-shot CoT in both easy and hard modes. In easy mode, Llama-3.1-70B with SC-MCTS ∗ (0.6026 average accuracy) even outperforms Llama-3.1-405B with 4-shot CoT (0.5852).
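The 17.4% figure reported in the abstract can be reproduced from the Avg. column of Table 1 if one assumes it is the mean of the relative improvements of Llama-3.1-70B with SC-MCTS ∗ over 4-shot o1-mini in the easy and hard modes. This reading is an assumption for illustration, not a statement of how the authors computed the number.

```python
# Avg. column of Table 1: Llama-3.1-70B with SC-MCTS* vs. o1-mini (4-shot).
sc_mcts = {"easy": 0.6026, "hard": 0.5864}
o1_mini = {"easy": 0.5167, "hard": 0.4966}

# Relative improvement in percent for each difficulty mode.
gains = {mode: (sc_mcts[mode] / o1_mini[mode] - 1) * 100 for mode in sc_mcts}
mean_gain = sum(gains.values()) / len(gains)

print({mode: round(g, 1) for mode, g in gains.items()})  # {'easy': 16.6, 'hard': 18.1}
print(round(mean_gain, 1))  # 17.4
```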

[Figure 2 panels: Accuracy (y-axis) vs. reasoning Step (x-axis, 2 to 12); series include 4-shot CoT, RAP-MCTS, and SC-MCTS* (Ours) on 70B Llama models, o1-mini (4-shot), and Llama-3.1-405B (4-shot CoT).]

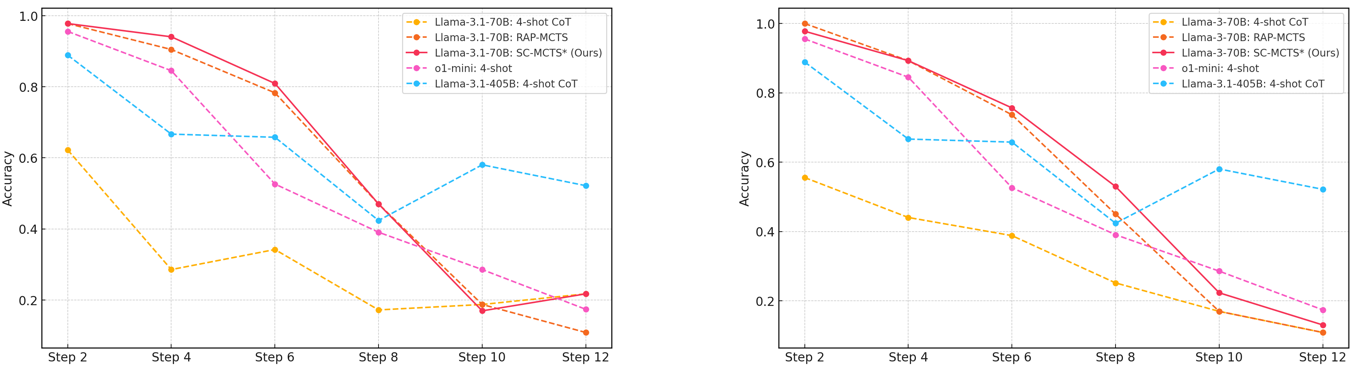

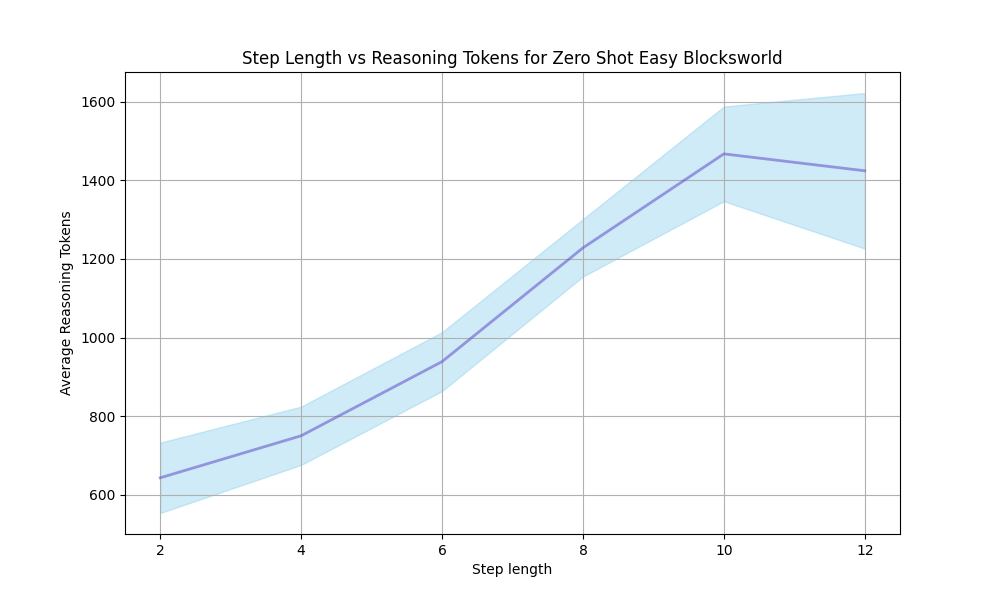

Figure 2: Accuracy comparison of various models and reasoning methods on the Blocksworld multi-step reasoning dataset across increasing reasoning steps.

Figure 2 shows that, as the reasoning path lengthens, the accuracy advantage of the two MCTS reasoning algorithms diminishes relative to CoT on the same models, to GPT-4o and Llama-3.1-405B with explicit multi-turn CoT chats, and to o1-mini with implicit multi-turn chats (OpenAI, 2024b); this becomes particularly evident after Step 6. CoT accuracy declines more gradually as the reasoning path extends, whereas models using MCTS reasoning exhibit a steeper decline. One possible cause is the fixed limit of 10 iterations across all reasoning path lengths, which may be unfavorable to longer paths; future work could adjust the iteration limit dynamically based on path length. Another possible cause is our use of a custom EOS token to keep the output format stable in the MCTS reasoning process, which operates in completion mode. As the number of steps and the prompt prefix length increase, the limitations of completion mode may become more pronounced than those of the chat mode used in multi-turn chats. Additionally, Llama-3.1-405B, which benefits substantially from its much larger parameter count, underperforms at fewer steps but shows the slowest accuracy decline as the reasoning path grows longer.

5.3 Reasoning Speed

[Figure 3 panels: decoding speed in tokens/s for Llama-3.1-70B (left) and Llama-3.1-405B (right) with vanilla decoding and with speculative decoding (SD) using Llama-3.1-8B or Llama-3.2-1B; labeled speedups over vanilla are 1.15x (SD-Llama-3.1-8B) and 1.52x (SD-Llama-3.2-1B) for 70B, and 2.00x (SD-Llama-3.1-8B) and 0.55x (SD-Llama-3.2-1B) for 405B.]

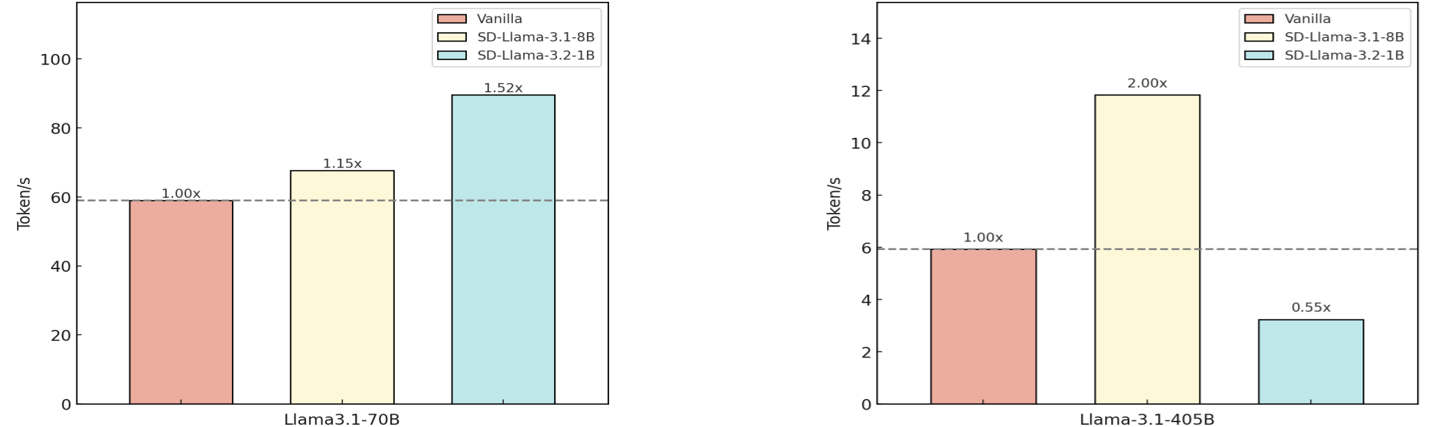

Figure 3: Speedup comparison of different model combinations. For speculative decoding, we use Llama-3.2-1B and Llama-3.1-8B as amateur (draft) models with Llama-3.1-70B and Llama-3.1-405B as expert models. Speedups are based on the average node-level reasoning speed in MCTS on the Blocksworld multi-step reasoning dataset.

As shown in Figure 3, pairing Llama-3.1-405B with Llama-3.1-8B achieves the highest speedup, increasing inference speed by approximately 100% over vanilla decoding, and pairing Llama-3.1-70B with Llama-3.2-1B increases reasoning speed by 51.9%. These two combinations provide the largest gains, showing that speculative decoding with SLMs can substantially improve node-level reasoning speed. However, the combination of Llama-3.1-405B with Llama-3.2-1B shows that the draft SLM should not be too small. The acceptance rule for draft tokens is fixed so that speculative decoding does not affect output quality (Leviathan et al., 2023); a draft model that is too small tends to have fewer of its tokens accepted, which can reduce decoding speed. This is consistent with the findings of Zhao et al. (2024) and Chen et al. (2023).
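To make the draft-size trade-off concrete, the sketch below evaluates the expected-speedup model of Leviathan et al. (2023) under its simplifying assumption that each draft token is accepted independently with rate `alpha`. Here `gamma` is the number of draft tokens proposed per step and `c` is the cost of one draft forward pass relative to one target forward pass. All numeric values are hypothetical and are not measurements behind Figure 3.

```python
def expected_tokens_per_step(alpha: float, gamma: int) -> float:
    """Expected tokens emitted per target-model forward pass.

    Assumes every draft token is accepted independently with probability
    alpha (the simplification used by Leviathan et al., 2023).
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    if alpha == 1.0:
        return gamma + 1.0  # all drafts accepted, plus one token from the target
    return (1.0 - alpha ** (gamma + 1)) / (1.0 - alpha)


def expected_speedup(alpha: float, gamma: int, c: float) -> float:
    """Expected walltime improvement over vanilla decoding.

    Each step costs gamma draft passes (c each) plus one target pass (1).
    """
    return expected_tokens_per_step(alpha, gamma) / (gamma * c + 1.0)


if __name__ == "__main__":
    # Hypothetical settings: a well-aligned draft vs. a poorly aligned one.
    for alpha, c in [(0.8, 0.05), (0.1, 0.2)]:
        print(f"alpha={alpha}, c={c}: speedup ~ {expected_speedup(alpha, 4, c):.2f}x")
```

With these hypothetical values, the first setting yields roughly a 2.8x speedup while the second falls to about 0.62x: when too few draft tokens are accepted, the drafting overhead outweighs the saved target passes.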

5.4 Parameters

[Figure 4 panel: Accuracy (y-axis) vs. UCT constant $C$ (x-axis, 0 to 400), with markers for RAP-MCTS, SC-MCTS* (Ours), and a negative control ($C=0$).]

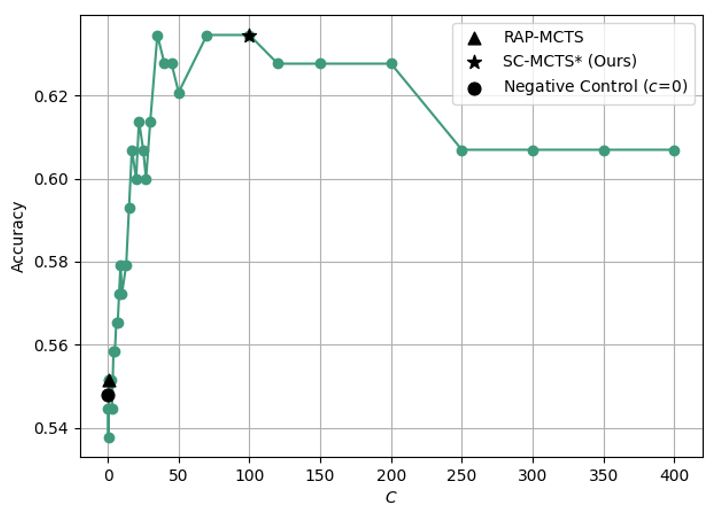

Figure 4: Accuracy for different values of the UCT constant $C$ on the Blocksworld multi-step reasoning dataset.

[Figure 5 panel: Accuracy (y-axis) vs. Iteration (x-axis, 1 to 10) for Easy Mode and Hard Mode.]

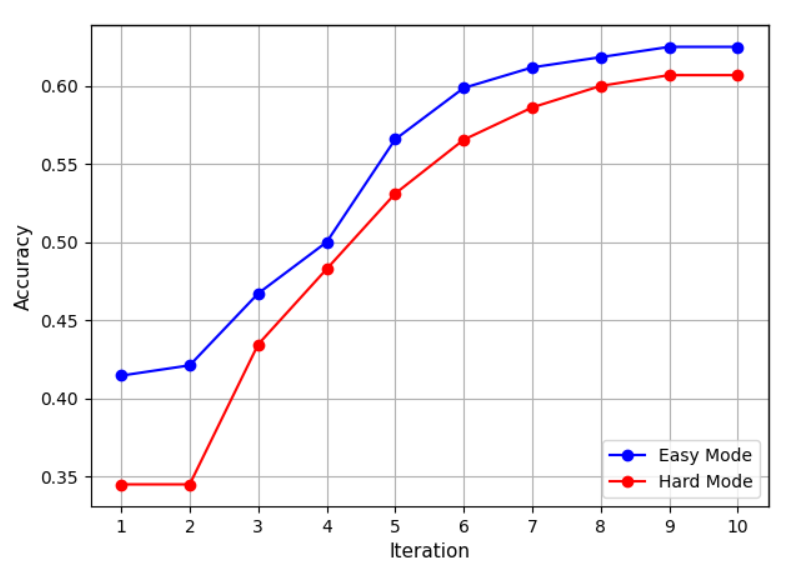

Figure 5: Accuracy for different numbers of iterations on the Blocksworld multi-step reasoning dataset.

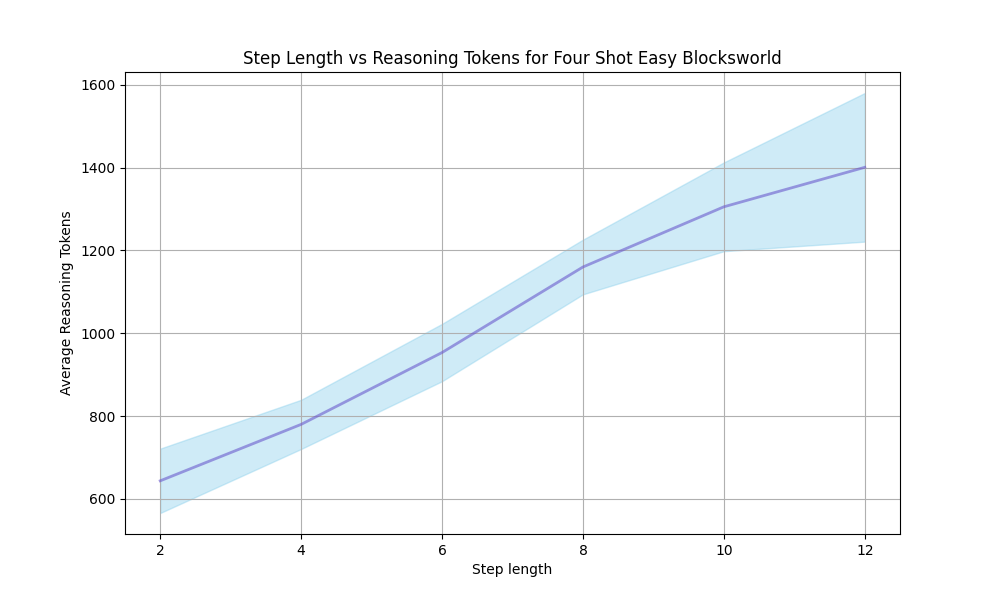

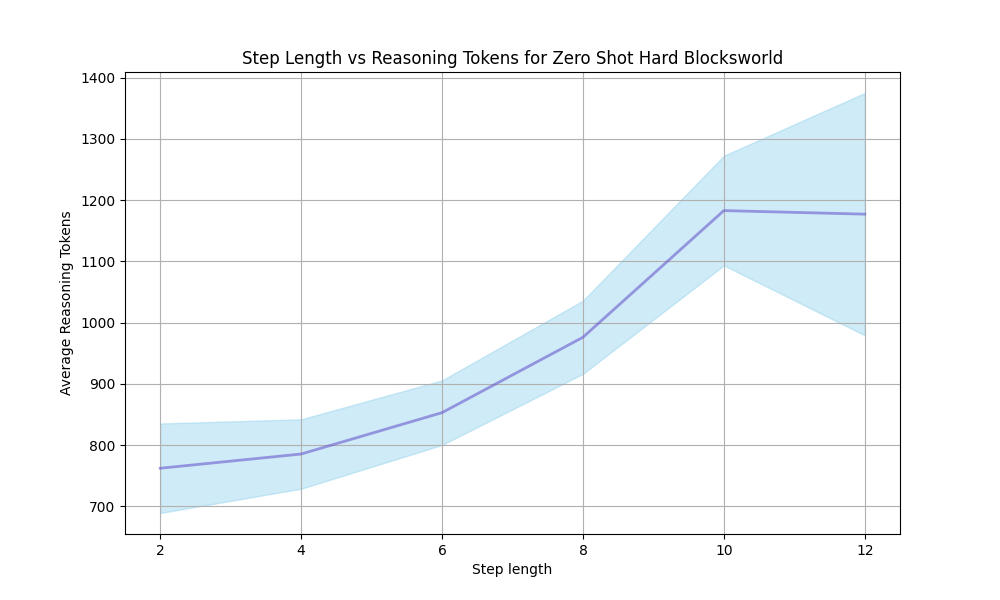

As discussed in Section 4.2, the constant $C$ is a crucial part of the UCT strategy, since it determines whether the exploration term takes effect at all. We therefore ran quantitative experiments on $C$; to eliminate interference from other factors, we use only the MCTS base with the common reward model $R_{\text{LL}}$ for both RAP-MCTS and SC-MCTS ∗. Figure 4 shows that the $C$ used by RAP-MCTS is too small to function effectively, whereas the $C$ of SC-MCTS ∗, chosen from extensive experiments, is best matched to the scale of the reward model's values. This hyperparameter may need to be re-tuned for new datasets.
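The dependence of $C$ on the reward scale can be illustrated with a minimal sketch, assuming the standard UCT form (mean backpropagated reward plus $C\sqrt{\ln N_{\text{parent}}/N_{\text{child}}}$); the exact variant in Section 4.2 may differ. The `Child` class, the `scaled` helper, and all reward values are hypothetical. Setting `c=0` corresponds to the negative control, which always exploits the child with the highest mean reward.

```python
import math
from dataclasses import dataclass


@dataclass
class Child:
    value_sum: float  # total backpropagated reward (hypothetical field)
    visits: int


def uct_score(child: Child, parent_visits: int, c: float) -> float:
    """Standard UCT: exploitation (mean reward) + c * exploration bonus."""
    if child.visits == 0:
        return math.inf  # try unvisited children first
    exploit = child.value_sum / child.visits
    explore = c * math.sqrt(math.log(parent_visits) / child.visits)
    return exploit + explore


def select(children: list[Child], c: float) -> int:
    """Return the index of the child with the highest UCT score."""
    # Simplification: use the children's total visits as the parent count.
    parent_visits = sum(ch.visits for ch in children)
    return max(range(len(children)),
               key=lambda i: uct_score(children[i], parent_visits, c))


def scaled(children: list[Child], k: float) -> list[Child]:
    """Same visit statistics with every reward multiplied by k."""
    return [Child(ch.value_sum * k, ch.visits) for ch in children]


if __name__ == "__main__":
    # A well-explored child (mean 0.9) vs. a rarely tried one (mean 0.5).
    kids = [Child(value_sum=9.0, visits=10), Child(value_sum=0.5, visits=1)]
    print(select(kids, c=0.0))               # greedy: exploits child 0
    print(select(kids, c=1.0))               # exploration picks child 1
    print(select(scaled(kids, 100), c=1.0))  # rewards 100x larger: c=1 is too small
    print(select(scaled(kids, 100), c=100))  # c rescaled with rewards: child 1 again
```

Because the exploration bonus does not grow with the rewards, a $C$ that works for rewards on one scale becomes effectively zero for rewards on a much larger scale. This is one way to read the observation that the $C$ of RAP-MCTS is too small to function.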

Figure 5 shows that the accuracy of SC-MCTS ∗ on multi-step reasoning increases steadily with the number of iterations. Accuracy rises consistently over the first seven iterations; after the 7th iteration the gains become smaller, indicating that, under our depth-limited experimental setting, the exponentially growing number of exploration nodes in later iterations yields diminishing returns in accuracy.

5.5 Ablation Study

| Parts of SC-MCTS ∗ | Accuracy (%) | Improvement (percentage points) |
| --- | --- | --- |
| MCTS base | 55.92 | — |
| + $R_{\text{JSD}}$ | 62.50 | +6.58 |
| + $R_{\text{LL}}$ | 67.76 | +5.26 |
| + $R_{\text{SE}}$ | 70.39 | +2.63 |
| + Multi-RM Method | 73.68 | +3.29 |
| + Improved $C$ of UCT | 78.95 | +5.27 |
| + BP Refinement | 80.92 | +1.97 |
| SC-MCTS ∗ | 80.92 | Overall +25.00 |

Table 2: Ablation study on the Blocksworld dataset at Step 6 in hard mode. For a more thorough ablation, the MCTS base uses pseudo-random numbers as its reward model.

As shown in Table 2, each component of SC-MCTS ∗ contributes to the performance improvement. Starting from a base MCTS accuracy of 55.92%, adding $R_{\text{JSD}}$, $R_{\text{LL}}$, and $R_{\text{SE}}$ yields a combined gain of 14.47 percentage points. The Multi-RM method adds a further 3.29 points, optimizing the $C$ parameter of UCT adds 5.27 points, and backpropagation refinement adds 1.97 points. Overall, SC-MCTS ∗ reaches 80.92% accuracy, 25.00 percentage points above the base, demonstrating the effectiveness of these enhancements for complex reasoning tasks.

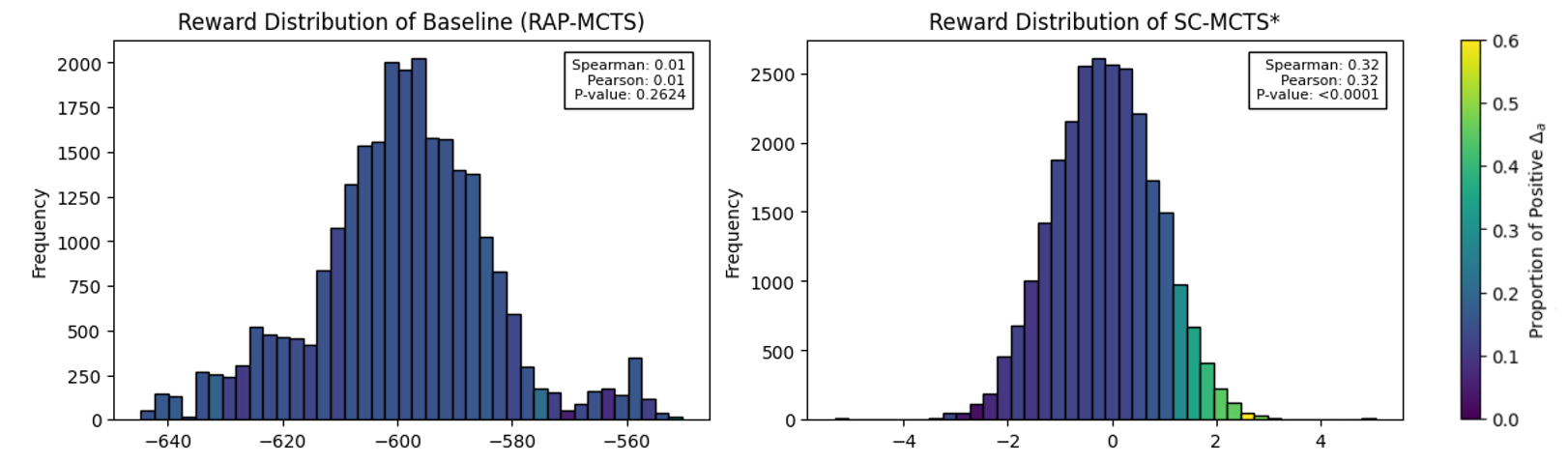

5.6 Interpretability Study

In the Blocksworld multi-step reasoning dataset, we use a built-in ground-truth verifier to measure the fraction of progress toward the goal at a given step, denoted $P$, where $P\in[0,1]$. For any non-root node $N_{i}$, the progress is defined as:

$$
P(N_{i})=\text{Verifier}(N_{i}).
$$

For instance, in a 10-step Blocksworld reasoning task, the initial node $A$ has $P(A)=0$. After one correct action leads to the next node $B$, the progress becomes $P(B)=0.1$.

Given a non-root node $N_{i}$ reached from its parent node $\text{Parent}(N_{i})$ through a specific action $a$, the contribution of $a$ toward the final goal state is defined as:

$$
\Delta_{a}=P(N_{i})-P(\text{Parent}(N_{i})).
$$

Next, we analyze the relationship between $\Delta_{a}$ and the reward $R_{a}$ that the reward model assigns to action $a$, to show how our reward model provides interpretable reward signals for node selection in MCTS, and we compare it against a baseline reward model. The more closely $R_{a}$ tracks $\Delta_{a}$, the more interpretable the reward model is as a guide toward the goal state. Since the ablation in Section 5.5 shows that the reward-model components (the three rewards and the Multi-RM method) account for 17.76 of the 25.00-point gain, an interpretable reward model substantially improves the interpretability of SC-MCTS ∗ as a whole.
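The correlation analysis can be sketched as follows, assuming each evaluated transition is logged as a (parent progress, child progress, reward) triple; the record layout and toy numbers are hypothetical, not data from our experiments. $\Delta_a$ is computed as the child's progress minus its parent's, so the correct action from $A$ to $B$ in the 10-step example yields $+0.1$. Pearson and Spearman correlations are implemented directly (Spearman as Pearson on average ranks) to keep the sketch dependency-free; `scipy.stats.pearsonr` and `scipy.stats.spearmanr` would additionally report p-values.

```python
import math


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation of two equal-length, non-constant sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def delta_a(parent_progress: float, child_progress: float) -> float:
    """Progress contributed by the action leading from parent to child.

    Rounded so float noise (e.g. 0.3 - 0.2) does not split tied values.
    """
    return round(child_progress - parent_progress, 9)


if __name__ == "__main__":
    # Hypothetical logged transitions: (P(parent), P(child), reward R_a).
    log = [(0.0, 0.1, 1.2), (0.1, 0.1, -0.3), (0.1, 0.2, 0.8),
           (0.2, 0.1, -1.5), (0.2, 0.3, 0.4), (0.3, 0.3, 0.1)]
    deltas = [delta_a(p, c) for p, c, _ in log]
    rewards = [r for _, _, r in log]
    print(f"Pearson:  {pearson(deltas, rewards):.3f}")
    print(f"Spearman: {spearman(deltas, rewards):.3f}")
```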

[Figure 6 panels: reward histograms (x-axis: Reward; y-axis: Frequency) for the baseline RAP-MCTS (left) and SC-MCTS* (right), with bins colored by the proportion of positive $\Delta_{a}$; reported Spearman/Pearson correlations are 0.01/0.01 (p = 0.2624) for the baseline and 0.32/0.32 (p < 0.0001) for SC-MCTS*.]

Figure 6: Reward distribution and interpretability analysis. The left histogram shows the baseline reward model (RAP-MCTS), while the right represents SC-MCTS ∗. Bin colors indicate the proportion of positive $\Delta_{a}$ (lighter colors mean higher proportions). Spearman and Pearson correlations, along with p-values, are shown in the top right of each histogram.

Figure 6 shows that SC-MCTS* reward values correlate significantly with $\Delta_{a}$ (Spearman and Pearson coefficients of 0.32, p < 0.0001), whereas the baseline rewards are essentially uncorrelated with $\Delta_{a}$ (0.01, p = 0.2624). In addition, the proportion of positive $\Delta_{a}$ in each reward bin increases consistently with the reward value (lighter bins at higher rewards), which makes the mapping intuitive to read. This alignment suggests that our reward model captures progress toward the goal state and provides interpretable signals for action selection during reasoning.

These results indicate that our reward model is far more interpretable than the baseline, so SC-MCTS* achieves superior reasoning performance while remaining highly interpretable. This interpretability is important for understanding and improving the decision-making process in multi-step reasoning tasks, and it supports the transparency of our proposed algorithm.
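The bin coloring in Figure 6 can be approximated with a simple binning routine: group transitions into equal-width reward bins and, within each bin, compute the fraction with $\Delta_a > 0$. The function name, bin count, input format, and toy values below are hypothetical; this sketches the idea and is not our plotting code.

```python
def positive_delta_by_bin(rewards: list[float], deltas: list[float],
                          n_bins: int) -> list[tuple]:
    """Fraction of transitions with positive Delta_a per equal-width reward bin.

    Returns (bin_low, bin_high, count, fraction_positive) for each bin;
    empty bins report a fraction of None.
    """
    lo, hi = min(rewards), max(rewards)
    width = (hi - lo) / n_bins or 1.0  # guard against all-equal rewards
    counts = [0] * n_bins
    positives = [0] * n_bins
    for r, d in zip(rewards, deltas):
        b = min(int((r - lo) / width), n_bins - 1)  # r == hi goes in the last bin
        counts[b] += 1
        positives[b] += d > 0  # bool counts as 0 or 1
    return [
        (lo + b * width, lo + (b + 1) * width, counts[b],
         positives[b] / counts[b] if counts[b] else None)
        for b in range(n_bins)
    ]


if __name__ == "__main__":
    rewards = [-2.0, -1.5, -0.2, 0.1, 0.3, 1.4, 1.9, 2.0]
    deltas = [-0.1, 0.0, 0.0, 0.1, 0.0, 0.1, 0.1, 0.1]
    for low, high, n, frac in positive_delta_by_bin(rewards, deltas, n_bins=4):
        print(f"[{low:+.1f}, {high:+.1f}): n={n}, positive fraction={frac}")
```

For an interpretable reward model, the positive fraction should rise from the lowest-reward bin to the highest, which is the pattern the color gradient in Figure 6 conveys.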

6 Conclusion

In this paper, we present SC-MCTS ∗, a novel and effective algorithm for enhancing the reasoning capabilities of LLMs. Through extensive improvements to reward modeling, the node selection strategy, and backpropagation, SC-MCTS ∗ improves both accuracy and speed, outperforming OpenAI’s o1-mini by 17.4% on average with Llama-3.1-70B on the Blocksworld dataset. Experiments demonstrate its strong performance, making it a promising approach for multi-step reasoning tasks. For future work, please refer to Appendix J. The combination of interpretability, efficiency, and generalizability positions SC-MCTS ∗ as a valuable contribution to advancing multi-step reasoning in LLMs.

References

- Bellman (1957) Richard Bellman. A Markovian decision process. Journal of Mathematics and Mechanics, 6(5):679–684, 1957. ISSN 00959057, 19435274. URL http://www.jstor.org/stable/24900506.

- Chen et al. (2023) Charlie Chen, Sebastian Borgeaud, Geoffrey Irving, Jean-Baptiste Lespiau, Laurent Sifre, and John Jumper. Accelerating large language model decoding with speculative sampling, 2023. URL https://arxiv.org/abs/2302.01318.

- Chen et al. (2024) Qiguang Chen, Libo Qin, Jiaqi Wang, Jinxuan Zhou, and Wanxiang Che. Unlocking the boundaries of thought: A reasoning granularity framework to quantify and optimize chain-of-thought, 2024. URL https://arxiv.org/abs/2410.05695.

- Coquelin & Munos (2007) Pierre-Arnaud Coquelin and Rémi Munos. Bandit algorithms for tree search. In Proceedings of the Twenty-Third Conference on Uncertainty in Artificial Intelligence, UAI’07, pp. 67–74, Arlington, Virginia, USA, 2007. AUAI Press. ISBN 0974903930.

- Frantar et al. (2022) Elias Frantar, Saleh Ashkboos, Torsten Hoefler, and Dan Alistarh. GPTQ: Accurate post-training quantization for generative pre-trained transformers, 2022.

- Hao et al. (2023) Shibo Hao, Yi Gu, Haodi Ma, Joshua Hong, Zhen Wang, Daisy Wang, and Zhiting Hu. Reasoning with language model is planning with world model. In Houda Bouamor, Juan Pino, and Kalika Bali (eds.), Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pp. 8154–8173, Singapore, December 2023. Association for Computational Linguistics. doi: 10.18653/v1/2023.emnlp-main.507. URL https://aclanthology.org/2023.emnlp-main.507.

- Hao et al. (2024) Shibo Hao, Yi Gu, Haotian Luo, Tianyang Liu, Xiyan Shao, Xinyuan Wang, Shuhua Xie, Haodi Ma, Adithya Samavedhi, Qiyue Gao, Zhen Wang, and Zhiting Hu. LLM reasoners: New evaluation, library, and analysis of step-by-step reasoning with large language models. In ICLR 2024 Workshop on Large Language Model (LLM) Agents, 2024. URL https://openreview.net/forum?id=h1mvwbQiXR.

- Jumper et al. (2021) John Jumper, Richard Evans, Alexander Pritzel, Tim Green, Michael Figurnov, Olaf Ronneberger, Kathryn Tunyasuvunakool, Russ Bates, Augustin Žídek, Anna Potapenko, Alex Bridgland, Clemens Meyer, Simon A. A. Kohl, Andrew J. Ballard, Andrew Cowie, Bernardino Romera-Paredes, Stanislav Nikolov, Rishub Jain, Jonas Adler, and Trevor Back. Highly accurate protein structure prediction with AlphaFold. Nature, 596(7873):583–589, Jul 2021. doi: 10.1038/s41586-021-03819-2. URL https://www.nature.com/articles/s41586-021-03819-2.

- Leviathan et al. (2023) Yaniv Leviathan, Matan Kalman, and Yossi Matias. Fast inference from transformers via speculative decoding. In Proceedings of the 40th International Conference on Machine Learning, ICML’23. JMLR.org, 2023.

- Li et al. (2023) Xiang Lisa Li, Ari Holtzman, Daniel Fried, Percy Liang, Jason Eisner, Tatsunori Hashimoto, Luke Zettlemoyer, and Mike Lewis. Contrastive decoding: Open-ended text generation as optimization. In Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (eds.), Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 12286–12312, Toronto, Canada, July 2023. Association for Computational Linguistics. doi: 10.18653/v1/2023.acl-long.687. URL https://aclanthology.org/2023.acl-long.687.

- Liu et al. (2021) Alisa Liu, Maarten Sap, Ximing Lu, Swabha Swayamdipta, Chandra Bhagavatula, Noah A. Smith, and Yejin Choi. DExperts: Decoding-time controlled text generation with experts and anti-experts. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pp. 6691–6706, Online, August 2021. Association for Computational Linguistics. doi: 10.18653/v1/2021.acl-long.522. URL https://aclanthology.org/2021.acl-long.522.

- McAleese et al. (2024) Nat McAleese, Rai Michael Pokorny, Juan Felipe Ceron Uribe, Evgenia Nitishinskaya, Maja Trebacz, and Jan Leike. LLM critics help catch LLM bugs, 2024.

- O’Brien & Lewis (2023) Sean O’Brien and Mike Lewis. Contrastive decoding improves reasoning in large language models, 2023. URL https://arxiv.org/abs/2309.09117.

- OpenAI (2024a) OpenAI. Introducing OpenAI o1. https://openai.com/o1/, 2024a. Accessed: 2024-10-02.

- OpenAI (2024b) OpenAI. How reasoning works. https://platform.openai.com/docs/guides/reasoning/how-reasoning-works, 2024b. Accessed: 2024-10-02.

- Qi et al. (2024) Zhenting Qi, Mingyuan Ma, Jiahang Xu, Li Lyna Zhang, Fan Yang, and Mao Yang. Mutual reasoning makes smaller LLMs stronger problem-solvers, 2024. URL https://arxiv.org/abs/2408.06195.

- Rafailov et al. (2023) Rafael Rafailov, Archit Sharma, Eric Mitchell, Christopher D Manning, Stefano Ermon, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model. In A. Oh, T. Naumann, A. Globerson, K. Saenko, M. Hardt, and S. Levine (eds.), Advances in Neural Information Processing Systems, volume 36, pp. 53728–53741. Curran Associates, Inc., 2023. URL https://proceedings.neurips.cc/paper_files/paper/2023/file/a85b405ed65c6477a4fe8302b5e06ce7-Paper-Conference.pdf.

- Ren et al. (2023) Jie Ren, Yao Zhao, Tu Vu, Peter J. Liu, and Balaji Lakshminarayanan. Self-evaluation improves selective generation in large language models. In Javier Antorán, Arno Blaas, Kelly Buchanan, Fan Feng, Vincent Fortuin, Sahra Ghalebikesabi, Andreas Kriegler, Ian Mason, David Rohde, Francisco J. R. Ruiz, Tobias Uelwer, Yubin Xie, and Rui Yang (eds.), Proceedings on "I Can’t Believe It’s Not Better: Failure Modes in the Age of Foundation Models" at NeurIPS 2023 Workshops, volume 239 of Proceedings of Machine Learning Research, pp. 49–64. PMLR, 16 Dec 2023. URL https://proceedings.mlr.press/v239/ren23a.html.

- Silver et al. (2016) David Silver, Aja Huang, Chris J. Maddison, Arthur Guez, Laurent Sifre, George van den Driessche, Julian Schrittwieser, Ioannis Antonoglou, Veda Panneershelvam, Marc Lanctot, Sander Dieleman, Dominik Grewe, John Nham, Nal Kalchbrenner, Ilya Sutskever, Timothy Lillicrap, Madeleine Leach, Koray Kavukcuoglu, Thore Graepel, and Demis Hassabis. Mastering the game of Go with deep neural networks and tree search. Nature, 529(7587):484–489, Jan 2016. doi: 10.1038/nature16961.

- Silver et al. (2017) David Silver, Thomas Hubert, Julian Schrittwieser, Ioannis Antonoglou, Matthew Lai, Arthur Guez, Marc Lanctot, Laurent Sifre, Dharshan Kumaran, Thore Graepel, Timothy Lillicrap, Karen Simonyan, and Demis Hassabis. Mastering chess and shogi by self-play with a general reinforcement learning algorithm, 2017. URL https://arxiv.org/abs/1712.01815.

- Sprague et al. (2024) Zayne Sprague, Fangcong Yin, Juan Diego Rodriguez, Dongwei Jiang, Manya Wadhwa, Prasann Singhal, Xinyu Zhao, Xi Ye, Kyle Mahowald, and Greg Durrett. To CoT or not to CoT? Chain-of-thought helps mainly on math and symbolic reasoning, 2024. URL https://arxiv.org/abs/2409.12183.

- Tian et al. (2024) Ye Tian, Baolin Peng, Linfeng Song, Lifeng Jin, Dian Yu, Haitao Mi, and Dong Yu. Toward self-improvement of LLMs via imagination, searching, and criticizing. ArXiv, abs/2404.12253, 2024. URL https://api.semanticscholar.org/CorpusID:269214525.

- Valmeekam et al. (2023) Karthik Valmeekam, Matthew Marquez, Sarath Sreedharan, and Subbarao Kambhampati. On the planning abilities of large language models - a critical investigation. In Thirty-seventh Conference on Neural Information Processing Systems, 2023. URL https://openreview.net/forum?id=X6dEqXIsEW.

- Valmeekam et al. (2024) Karthik Valmeekam, Matthew Marquez, Alberto Olmo, Sarath Sreedharan, and Subbarao Kambhampati. PlanBench: an extensible benchmark for evaluating large language models on planning and reasoning about change. In Proceedings of the 37th International Conference on Neural Information Processing Systems, NIPS ’23, Red Hook, NY, USA, 2024. Curran Associates Inc.

- Wei et al. (2024) Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Brian Ichter, Fei Xia, Ed H. Chi, Quoc V. Le, and Denny Zhou. Chain-of-thought prompting elicits reasoning in large language models. In Proceedings of the 36th International Conference on Neural Information Processing Systems, NIPS ’22, Red Hook, NY, USA, 2024. Curran Associates Inc. ISBN 9781713871088.

- Xie et al. (2024) Yuxi Xie, Anirudh Goyal, Wenyue Zheng, Min-Yen Kan, Timothy P. Lillicrap, Kenji Kawaguchi, and Michael Shieh. Monte Carlo tree search boosts reasoning via iterative preference learning, 2024. URL https://arxiv.org/abs/2405.00451.

- Xin et al. (2024a) Huajian Xin, Daya Guo, Zhihong Shao, Zhizhou Ren, Qihao Zhu, Bo Liu (Benjamin Liu), Chong Ruan, Wenda Li, and Xiaodan Liang. DeepSeek-Prover: Advancing theorem proving in LLMs through large-scale synthetic data. ArXiv, abs/2405.14333, 2024a. URL https://api.semanticscholar.org/CorpusID:269983755.

- Xin et al. (2024b) Huajian Xin, Z. Z. Ren, Junxiao Song, Zhihong Shao, Wanjia Zhao, Haocheng Wang, Bo Liu, Liyue Zhang, Xuan Lu, Qiushi Du, Wenjun Gao, Qihao Zhu, Dejian Yang, Zhibin Gou, Z. F. Wu, Fuli Luo, and Chong Ruan. DeepSeek-Prover-V1.5: Harnessing proof assistant feedback for reinforcement learning and Monte-Carlo tree search, 2024b. URL https://arxiv.org/abs/2408.08152.

- Xu (2023) Haotian Xu. No train still gain. Unleash mathematical reasoning of large language models with Monte Carlo tree search guided by energy function, 2023. URL https://arxiv.org/abs/2309.03224.

- Yao et al. (2024) Shunyu Yao, Dian Yu, Jeffrey Zhao, Izhak Shafran, Thomas L. Griffiths, Yuan Cao, and Karthik Narasimhan. Tree of thoughts: deliberate problem solving with large language models. In Proceedings of the 37th International Conference on Neural Information Processing Systems, NIPS ’23, Red Hook, NY, USA, 2024. Curran Associates Inc.

- Yuan et al. (2024a) Hongyi Yuan, Keming Lu, Fei Huang, Zheng Yuan, and Chang Zhou. Speculative contrastive decoding. In Lun-Wei Ku, Andre Martins, and Vivek Srikumar (eds.), Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), pp. 56–64, Bangkok, Thailand, August 2024a. Association for Computational Linguistics. URL https://aclanthology.org/2024.acl-short.5.

- Yuan et al. (2024b) Lifan Yuan, Ganqu Cui, Hanbin Wang, Ning Ding, Xingyao Wang, Jia Deng, Boji Shan, Huimin Chen, Ruobing Xie, Yankai Lin, Zhenghao Liu, Bowen Zhou, Hao Peng, Zhiyuan Liu, and Maosong Sun. Advancing LLM reasoning generalists with preference trees, 2024b.

- Zhang et al. (2024a) Dan Zhang, Sining Zhoubian, Ziniu Hu, Yisong Yue, Yuxiao Dong, and Jie Tang. ReST-MCTS*: LLM self-training via process reward guided tree search, 2024a. URL https://arxiv.org/abs/2406.03816.

- Zhang et al. (2024b) Di Zhang, Xiaoshui Huang, Dongzhan Zhou, Yuqiang Li, and Wanli Ouyang. Accessing GPT-4 level mathematical olympiad solutions via Monte Carlo tree self-refine with LLaMa-3 8B, 2024b. URL https://arxiv.org/abs/2406.07394.

- Zhao et al. (2024) Weilin Zhao, Yuxiang Huang, Xu Han, Wang Xu, Chaojun Xiao, Xinrong Zhang, Yewei Fang, Kaihuo Zhang, Zhiyuan Liu, and Maosong Sun. Ouroboros: Generating longer drafts phrase by phrase for faster speculative decoding, 2024. URL https://arxiv.org/abs/2402.13720.

Appendix A Action-Level Contrastive Reward

We distinguish between action-level and token-level variables. Action-level (or step-level) variables aggregate over all tokens in a reasoning step and are typically used directly by the reasoning algorithm; token-level variables, by contrast, operate at a finer-grained, lower level, as in speculative decoding.

We found that traditional contrastive decoding, which uses the difference in logits, yields a less stable reward signal than JS divergence when aggregated over the sequence. We suspect this is due to the unbounded nature of the logit difference and its associated failure modes, which require extra care and more hyperparameter tuning.
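To make the action-level/token-level distinction and the boundedness argument concrete, here is a minimal Python sketch. It assumes the action-level reward is the mean, over the tokens of one reasoning step, of the token-level Jensen-Shannon divergence between the expert's and the amateur's next-token distributions; the exact aggregation and normalization used by SC-MCTS ∗ may differ, and all function names are hypothetical. The summed logit difference is included only for comparison.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one vocabulary-sized logit vector."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) in nats; entries with p_i == 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def jsd(p: Sequence[float], q: Sequence[float]) -> float:
    """Token-level Jensen-Shannon divergence; always lies in [0, ln 2]."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def action_level_jsd_reward(expert_logits, amateur_logits) -> float:
    """Hypothetical action-level reward: mean token-level JSD over one reasoning step."""
    per_token = [
        jsd(softmax(e), softmax(a)) for e, a in zip(expert_logits, amateur_logits)
    ]
    return sum(per_token) / len(per_token)


def action_level_logit_diff(expert_logits, amateur_logits, chosen_ids) -> float:
    """Logit-difference alternative: summed over the chosen tokens, hence unbounded."""
    return sum(e[t] - a[t] for e, a, t in zip(expert_logits, amateur_logits, chosen_ids))
```

Because JSD is bounded by ln 2 (in nats), rescaling the expert logits keeps the step reward within a fixed range, whereas the summed logit difference grows with the scale of the logits and with the number of tokens in the step.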

Appendix B More Related Work

Multi-Step Reasoning with Large Language Models

DeepSeek-Prover (Xin et al., 2024a; b) relied on Lean 4 as an external verification tool to provide dense reward signals in the RL stage. ReST-MCTS ∗ (Zhang et al., 2024a) employed self-training to collect high-quality reasoning trajectories for iteratively improving the value model. AlphaLLM (Tian et al., 2024) used critic models initialized from the policy model as the MCTS reward model. rStar (Qi et al., 2024) used mutual consistency between SLMs together with an additional math-specific action space. Xu (2023) proposed reconstructing fine-tuned LLMs into residual-based energy models to guide MCTS.

Speculative Decoding

Speculative decoding was first introduced by Leviathan et al. (2023) as a method to accelerate sampling from large autoregressive models by computing multiple tokens in parallel, without retraining or changing the model structure. It improves computational efficiency, especially in large-scale generation tasks, by exploiting the observation that hard language-modeling tasks often include easier subtasks that more efficient models can approximate well. Similarly, DeepMind introduced speculative sampling (Chen et al., 2023), which expands on this idea by generating a short draft sequence with a faster draft model and then scoring this draft with a larger target model.
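The draft-then-verify loop described above can be illustrated with a toy Python sketch of one speculative-sampling round, following the acceptance rule of Leviathan et al. (2023) and Chen et al. (2023): each drafted token x is kept with probability min(1, p(x)/q(x)), where p is the target distribution and q the draft distribution, and the first rejected position is resampled from the normalized residual max(0, p - q). The `draft` and `target` models are hypothetical callables returning explicit probability vectors over a small vocabulary; a real implementation scores all drafted positions in one batched forward pass of the target model, and this is not the speculative decoding code used in SC-MCTS ∗.

```python
import random
from typing import Callable, List, Sequence

Dist = List[float]  # probability distribution over a toy vocabulary


def sample(dist: Sequence[float], rng: random.Random) -> int:
    """Draw one token id from an (unnormalized) probability vector."""
    return rng.choices(range(len(dist)), weights=dist, k=1)[0]


def speculative_step(
    prefix: List[int],
    draft: Callable[[List[int]], Dist],
    target: Callable[[List[int]], Dist],
    gamma: int,
    rng: random.Random,
) -> List[int]:
    """One round of speculative sampling; returns the newly generated tokens."""
    # 1. The cheap draft model proposes gamma tokens autoregressively.
    proposals: List[int] = []
    draft_dists: List[Dist] = []
    ctx = list(prefix)
    for _ in range(gamma):
        q = draft(ctx)
        x = sample(q, rng)
        proposals.append(x)
        draft_dists.append(q)
        ctx.append(x)
    # 2. The target model scores every drafted position (plus one extra position).
    target_dists = [target(prefix + proposals[:i]) for i in range(gamma + 1)]
    accepted: List[int] = []
    for i, x in enumerate(proposals):
        p, q = target_dists[i], draft_dists[i]
        # 3. Keep the drafted token with probability min(1, p(x) / q(x)).
        if rng.random() < min(1.0, p[x] / q[x]):
            accepted.append(x)
            continue
        # 4. On rejection, resample from the residual max(0, p - q) and stop this round.
        residual = [max(0.0, pi - qi) for pi, qi in zip(p, q)]
        accepted.append(sample(residual, rng))
        return accepted
    # 5. Every draft token was accepted: draw one bonus token from the target.
    accepted.append(sample(target_dists[gamma], rng))
    return accepted
```

The acceptance rule preserves the target distribution, so the speedup depends only on how often the cheap draft agrees with the target; when the two distributions coincide, every drafted token is accepted and each round yields `gamma + 1` tokens.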

Contrastive Decoding

Contrastive decoding, proposed by Li et al. (2023), is a simple, computationally light, and training-free text generation method that improves generation quality by favoring strings to which a strong (expert) model assigns much higher likelihood than a weak (amateur) model. In this setting, the weak model is typically a smaller language model, while the strong model is a larger, well-trained LLM. This approach has yielded notable improvements on various inference tasks, including arithmetic reasoning and multiple-choice ranking, thereby increasing the accuracy of language models. Experiments by O’Brien & Lewis (2023) further show that applying contrastive decoding across a range of tasks effectively enhances the reasoning capabilities of LLMs.
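For reference, the token-level objective of Li et al. (2023) can be sketched in a few lines of Python: restrict candidates to tokens the expert itself finds plausible (probability at least α times the expert's maximum), then pick the token maximizing log p_expert - log p_amateur. The distributions and the default α = 0.1 are illustrative assumptions, and the function name is hypothetical; this is the original token-level contrastive decoding rule, not the action-level JSD reward that SC-MCTS ∗ derives from it.

```python
import math
from typing import Sequence


def contrastive_next_token(
    p_expert: Sequence[float], p_amateur: Sequence[float], alpha: float = 0.1
) -> int:
    """Pick the next token greedily under the contrastive decoding objective."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must lie in (0, 1]")
    # Adaptive plausibility constraint: only tokens whose expert probability is at
    # least alpha times the expert's top probability are eligible (the set V_head).
    cutoff = alpha * max(p_expert)
    best_token, best_score = -1, -math.inf
    for token, (pe, pa) in enumerate(zip(p_expert, p_amateur)):
        if pe < cutoff:
            continue  # outside V_head: equivalent to a score of -inf
        # Contrastive score: expert log-probability minus amateur log-probability.
        # A tiny floor avoids log(0) if the amateur assigns zero probability.
        score = math.log(pe) - math.log(max(pa, 1e-12))
        if score > best_score:
            best_token, best_score = token, score
    return best_token
```

With an expert distribution of [0.5, 0.4, 0.1] and an amateur distribution of [0.6, 0.1, 0.3], greedy decoding on the expert alone picks token 0, whereas the contrastive score selects token 1, which the expert prefers far more strongly than the amateur does. The plausibility constraint matters because, without it, tokens that both models consider very unlikely can still receive large contrastive scores.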

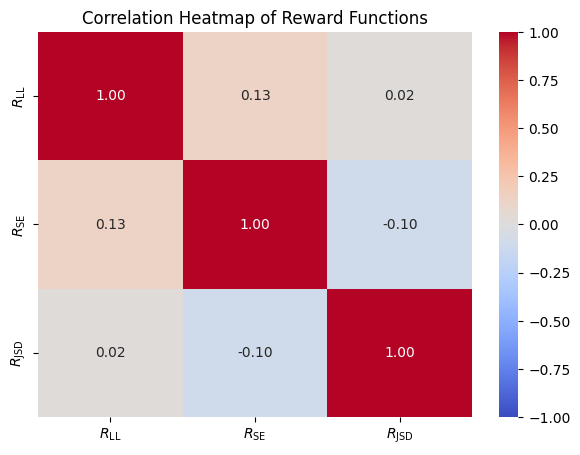

Appendix C Reward Functions Correlation

<details>

<summary>extracted/6087579/fig/heatmap.png Details</summary>

Correlation Heatmap of Reward Functions (color scale from -1.00, blue, to 1.00, red):

| | R_LL | R_SE | R_JSD |
|---|---|---|---|
| **R_LL** | 1.00 | 0.13 | 0.02 |
| **R_SE** | 0.13 | 1.00 | -0.10 |
| **R_JSD** | 0.02 | -0.10 | 1.00 |

</details>

Figure 7: Reward Functions Correlation Heatmap.

As Figure 7 shows, the correlations between the three reward functions are low: all pairwise correlations have absolute values below 0.15. Because the reward functions are only weakly correlated, they provide largely complementary signals, which makes them well suited to the Multi-RM method.

Appendix D Algorithm Details of SC-MCTS ∗

Algorithm 2 gives the pseudocode of the MCTS reasoning loop in SC-MCTS ∗, adapted from Zhang et al. (2024a). The complete SC-MCTS ∗ procedure is as follows: first, sample a subset of problems to obtain prior data on reward values (Algorithm 1); then use these prior statistics, together with two SLMs (one providing contrastive reward signals and the other accelerating generation through speculative decoding), to perform MCTS reasoning. Changes in SC-MCTS ∗ relative to previous work are highlighted in teal.
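Below is a minimal sketch of how the reward factor statistics ${\mathcal{S}}$ (seeded by Algorithm 1 and updated in line 13 of Algorithm 2) might interact with node selection. It is one plausible reading under stated assumptions, not the released SC-MCTS ∗ code: each reward factor is assumed to be z-score normalized with a running mean and standard deviation (Welford's algorithm), factors are combined linearly with hypothetical weights, and selection uses the plain UCT rule from line 6. All names are illustrative.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class RunningStats:
    """Online mean and population variance of one reward factor (Welford's algorithm)."""
    n: int = 0
    mean: float = 0.0
    m2: float = 0.0

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        return math.sqrt(self.m2 / self.n) if self.n > 0 else 0.0


def prior_stats(prior: Dict[str, List[float]]) -> Dict[str, RunningStats]:
    """Seed statistics from rewards collected on a sampled subset of problems."""
    stats = {}
    for name, values in prior.items():
        s = RunningStats()
        for v in values:
            s.update(v)
        stats[name] = s
    return stats


def combined_reward(
    factors: Dict[str, float], stats: Dict[str, RunningStats], weights: Dict[str, float]
) -> float:
    """Z-score each factor against current statistics, sum with weights, then update stats."""
    total = 0.0
    for name, value in factors.items():
        s = stats[name]
        z = (value - s.mean) / max(s.std, 1e-8)  # guard against zero variance
        total += weights[name] * z
    for name, value in factors.items():
        stats[name].update(value)  # assumed online update, cf. line 13 of Algorithm 2
    return total


def uct_select(children: List[Tuple[float, int]], parent_visits: int, c: float) -> int:
    """Index of the (value, visits) child maximizing v + C * sqrt(ln N_parent / N_child)."""
    def score(child: Tuple[float, int]) -> float:
        value, visits = child
        if visits == 0:
            return math.inf  # assumed convention: explore unvisited children first
        return value + c * math.sqrt(math.log(parent_visits) / visits)

    return max(range(len(children)), key=lambda i: score(children[i]))
```

Normalizing each factor before combining keeps rewards on very different scales (for example a bounded JSD term and a log-likelihood term) from dominating one another, and keeps the value term in UCT on a scale where a single exploration constant $C$ is meaningful.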

Algorithm 2 SC-MCTS ∗, reasoning

1: expert LLM $\pi_{\text{e}}$ , amateur SLM $\pi_{\text{a}}$ , speculative SLM $\pi_{\text{s}}$ , problem $q$ , reward model $R$ , reward factor statistics ${\mathcal{S}}$ , max iterations $T$ , threshold $l$ , branch $b$ , rollout steps $m$ , roll branch $d$ , weight parameter $\alpha$ , exploration constant $C$

2: $T_{q}←$ Initialize-tree $(q)$

3: for $i=1... T$ do

4: $n←$ Root $(T_{q})$

5: while $n$ is not leaf node do $\triangleright$ Node selection

6: $n←$ $\operatorname*{arg\,max}_{n^{\prime}∈\text{children}(n)}\left(v_{n^{\prime}}+C\sqrt{\frac{\ln N_{n}}{N_{n^{\prime}}}}\right)$ $\triangleright$ Select child node based on UCT

7: end while

8: if $v_{n}≥ l$ then break $\triangleright$ Output solution

9: end if

10: if $n$ is not End of Inference then

11: for $j=1... b$ do $\triangleright$ Thought expansion

12: $n_{j}←$ Get-new-child $(A_{n},q,\pi_{\text{e}})$ $\triangleright$ Expand based on previous steps

13: $v_{n_{j}},{\mathcal{S}}←$ $R(A_{n_{j}},q,\pi_{\text{e}},\pi_{\text{a}},{\mathcal{S}})$ $\triangleright$ Evaluate contrastive reward and update reward factor statistics

14: end for

15: $n^{\prime}←$ $\operatorname*{arg\,max}_{n^{\prime}∈\text{children}(n)}(v_{n^{\prime}})$

16: $v_{\max}←$ 0

17: for $k=1... m$ do $\triangleright$ Greedy MC rollout

18: $A,v_{\max}←$ Get-next-step-with-best-value $(A,q,\pi_{\text{e}},\pi_{\text{s}},d)$ $\triangleright$ Sample new children using speculative decoding and record the best observed value

19: end for