# LLMs Know More Than They Show: On the Intrinsic Representation of LLM Hallucinations

> Corresponding author; Work partially done during internship at Apple.

Abstract

Large language models (LLMs) often produce errors, including factual inaccuracies, biases, and reasoning failures, collectively referred to as “hallucinations”. Recent studies have demonstrated that LLMs’ internal states encode information regarding the truthfulness of their outputs, and that this information can be utilized to detect errors. In this work, we show that the internal representations of LLMs encode much more information about truthfulness than previously recognized. We first discover that the truthfulness information is concentrated in specific tokens, and leveraging this property significantly enhances error detection performance. Yet, we show that such error detectors fail to generalize across datasets, implying that, contrary to prior claims, truthfulness encoding is not universal but rather multifaceted. Next, we show that internal representations can also be used for predicting the types of errors the model is likely to make, facilitating the development of tailored mitigation strategies. Lastly, we reveal a discrepancy between LLMs’ internal encoding and external behavior: they may encode the correct answer, yet consistently generate an incorrect one. Taken together, these insights deepen our understanding of LLM errors from the model’s internal perspective, which can guide future research on enhancing error analysis and mitigation. Our code is available at https://github.com/technion-cs-nlp/LLMsKnow.

1 Introduction

The ever-growing popularity of large language models (LLMs) across many domains has brought a significant limitation to center stage: their tendency to “hallucinate”, a term often used to describe the generation of inaccurate information. But what are hallucinations, and what causes them? A considerable body of research has sought to define, taxonomize, and understand hallucinations through extrinsic, behavioral analysis, primarily examining how users perceive such errors (Bang et al., 2023; Ji et al., 2023; Huang et al., 2023a; Rawte et al., 2023). However, this approach does not adequately address how these errors are encoded within the LLMs. Alternatively, another line of work has explored the internal representations of LLMs, suggesting that LLMs encode signals of truthfulness (Kadavath et al., 2022; Li et al., 2024; Chen et al., 2024, inter alia). However, these analyses were typically restricted to detecting errors (determining whether a generated output contains inaccuracies) without delving deeper into how such signals are represented and could be leveraged to understand or mitigate hallucinations.

In this work, we reveal that the internal representations of LLMs encode much more information about truthfulness than previously recognized. Through a series of experiments, we train classifiers on these internal representations to predict various features related to the truthfulness of generated outputs. Our findings reveal the patterns and types of information encoded in model representations, linking this intrinsic data to extrinsic LLM behavior. This enhances our ability to detect errors (while understanding the limitations of error detection), and may guide the development of more nuanced strategies based on error types and mitigation methods that make use of the model’s internal knowledge. Our experiments are designed to be general, covering a broad array of LLM limitations. While the term “hallucinations” is widely used, it lacks a universally accepted definition (Venkit et al., 2024). Our framework adopts a broad interpretation, considering hallucinations to encompass all errors produced by an LLM, including factual inaccuracies, biases, common-sense reasoning failures, and other real-world errors. This approach enables us to draw general conclusions about model errors from a broad perspective.

Our first step is identifying where truthfulness signals are encoded in LLMs. Previous studies have suggested methods for detecting errors in LLM outputs using intermediate representations, logits, or probabilities, implying that LLMs may encode signals of truthfulness (Kadavath et al., 2022; Li et al., 2024; Chen et al., 2024). Focusing on long-form generations, which reflect real-world usage of LLMs, our analysis uncovers a key oversight: the choice of token used to extract these signals (Section 3). We find that truthfulness information is concentrated in the exact answer tokens – e.g., “Hartford” in “The capital of Connecticut is Hartford, an iconic city…”. Recognizing this nuance significantly improves error detection strategies across the board, revealing that truthfulness encoding is stronger than previously observed.

From this point forward, we concentrate on our most effective strategy: a classifier trained on intermediate LLM representations within the exact answer tokens, referred to as ‘probing classifiers’ (Belinkov, 2021). This approach helps us explore what these representations reveal about LLMs. Our demonstration that a trained probing classifier can predict errors suggests that LLMs encode information related to their own truthfulness. However, we find that probing classifiers do not generalize across different tasks (Section 4). Generalization occurs only within tasks requiring similar skills (e.g., factual retrieval), indicating the truthfulness information is “skill-specific” and varies across different tasks. For tasks involving different skills, e.g., sentiment analysis, these classifiers are no better–or worse–than logit-based uncertainty predictors, challenging the idea of a “universal truthfulness” encoding proposed in previous work (Marks & Tegmark, 2023; Slobodkin et al., 2023). Instead, our results indicate that LLMs encode multiple, distinct notions of truth. Thus, deploying trainable error detectors in practical applications should be undertaken with caution.

We next find evidence that LLMs encode not only error detection signals but also more nuanced information about error types. Delving deeper into errors within a single task, we taxonomize its errors based on responses across repeated samples (Section 5). For example, the same error being consistently generated is different from an error that is generated occasionally among many other distinct errors. Using a different set of probing classifiers, we find that error types are predictable from the LLM representations, drawing a connection between the model’s internal representations and its external behavior. This classification offers a more nuanced understanding of errors, enabling developers to predict error patterns and implement more targeted mitigation strategies.

Finally, we find that the truthfulness signals encoded in LLMs can also differentiate between correct and incorrect answers for the same question (Section 6). The results highlight a significant misalignment between an LLM’s internal representations and its external behavior in some cases. The model’s internal encoding may identify the correct answer, yet it frequently generates an incorrect response. This discrepancy reveals that the LLM’s external behavior may misrepresent its abilities, potentially pointing to new strategies for reducing errors by utilizing its existing strengths. Overall, our model-centric framework provides a deeper understanding of LLM errors, suggesting potential directions for improvements in error analysis and mitigation.

2 Background

Defining and characterizing LLM errors.

The term “hallucinations” is widely used across various subfields such as conversational AI (Liu et al., 2022), abstractive summarization (Zhang et al., 2019), and machine translation (Wang & Sennrich, 2020), each interpreting the term differently. Yet, no consensus exists on defining hallucinations: Venkit et al. (2024) identified 31 distinct frameworks for conceptualizing hallucinations, revealing the diversity of perspectives. Research efforts aim to define and taxonomize hallucinations, distinguishing them from other error types (Liu et al., 2022; Ji et al., 2023; Huang et al., 2023a; Rawte et al., 2023). On the other hand, recent scholarly conversations introduce terms like “confabulations” (Millidge, 2023) and “fabrications” (McGowan et al., 2023), attributing a possible “intention” to LLMs, although the notions of LLM “intention” and other human-like traits are still debated (Salles et al., 2020; Serapio-García et al., 2023; Harnad, 2024). These categorizations, however, adopt a human-centric view by focusing on the subjective interpretations of LLM hallucinations, which does not necessarily reflect how these errors are encoded within the models themselves. This gap limits our ability to address the root causes of hallucinations, or to reason about their nature. For example, it is unclear whether conclusions about hallucinations defined in one framework can be applied to another framework. Liang et al. (2024) defined hallucinations as inconsistencies with the training data. While this approach engages with the possible root causes of hallucinations, our study focuses on insights from the model itself, without requiring training data access. We therefore adopt a broad interpretation of hallucinations: we define them as any type of error generated by an LLM, including factual inaccuracies, biases, failures in common-sense reasoning, and others.

Another line of research suggests that LLMs either encode information about their own errors (Kadavath et al., 2022; Azaria & Mitchell, 2023) or exhibit discrepancies between their outputs and internal representations (Liu et al., 2023; Gottesman & Geva, 2024), indicating the presence of underlying mechanisms not reflected in their final outputs. Moreover, Yona et al. (2024) found that current LLMs fail to effectively convey their uncertainty through their generated outputs. Hence, we propose shifting the focus from human-centric interpretations of hallucinations to a model-centric perspective, examining the model’s intermediate activations.

Error detection in LLMs.

Error detection is a longstanding task in NLP, crucial for maintaining high standards in various practical applications and for constructing more reliable systems that ensure user trust (Bommasani et al., 2021). Over the years, many studies have proposed task-specific solutions (see Section A.1). However, the recent shift towards general-purpose LLMs necessitates a holistic approach capable of addressing any error type, rather than focusing on specific ones, making it suitable for the diverse errors generated by these models.

A line of work has addressed this challenge by leveraging external knowledge sources (Lewis et al., 2020; Gao et al., 2023) or an external LLM judge (Lin et al., 2021; Rawte et al., 2023) to identify erroneous outputs. On the other hand, our work focuses on detection methods that rely solely on the computations of the LLM—specifically, output logits, probabilities after softmax, and hidden states.

Error detection in LLMs is also closely linked to uncertainty estimation, where low certainty signals potential inaccuracies and possible errors. Popular methods to derive calibrated confidence include inspecting the model logit output values (Varshney et al., 2023; Taubenfeld et al., 2025), agreement across multiple sampled answers (Kuhn et al., 2023; Manakul et al., 2023; Tian et al., 2023a), verbalized probability (Tian et al., 2023b), and direct prompting (Kadavath et al., 2022).

Another line of work trains probing classifiers to discover and utilize truthfulness features. This approach has shown some success by probing the final token of an answer–either generated (Kadavath et al., 2022; Snyder et al., 2023; Yuksekgonul et al., 2023; Zou et al., 2023; Yin et al., 2024; Chen et al., 2024; Simhi et al., 2024; Gekhman et al., 2025) or not (Li et al., 2024; Marks & Tegmark, 2023; Burns et al., 2022; Azaria & Mitchell, 2023; Rateike et al., 2023). Others probe the final token of the prompt before the response is generated (Slobodkin et al., 2023; Snyder et al., 2023; Simhi et al., 2024; Gottesman & Geva, 2024). Many previous studies simplify the analysis by generating answers in a few-shot setting or limiting generation to a single token. In contrast, we simulate real-world usage of LLMs by allowing unrestricted answer generation. By probing exact answer tokens, we achieve significant improvements in error detection.

3 Better Error Detection

This section presents our experiments on detecting LLM errors through their own computations, focusing on token selection’s impact and introducing a method that outperforms other approaches.

3.1 Task Definition

Given an LLM $M$ , an input prompt $p$ and the LLM-generated response $\hat{y}$ , the task is to predict whether $\hat{y}$ is correct or wrong. We assume that there is access to the LLM’s internal states (i.e., white-box setting), but no access to any external resources (e.g., search engine or additional LLMs).

We use a dataset $D=\{(q_{i},y_{i})\}_{i=1}^{N}$ , consisting of $N$ question-label pairs, where $\{q_{i}\}_{i=1}^{N}$ represents a series of questions (e.g., “What is the capital of Connecticut?”) and $\{y_{i}\}_{i=1}^{N}$ the corresponding ground-truth answers (“Hartford”). For each question $q_{i}$ , we prompt the model $M$ to generate a response $\hat{y}_{i}$ , resulting in the set of predicted answers $\{\hat{y}_{i}\}_{i=1}^{N}$ (“The capital of Connecticut is Hartford…”). Next, to build our error-detection dataset, we evaluate the correctness of each generated response $\hat{y}_{i}$ by comparing it to the ground-truth label $y_{i}$ . This comparison yields a correctness label $z_{i}\in\{0,1\}$ ( $1$ for correct, $0$ for wrong). The comparison can be done either via automatic heuristics or with the assistance of an instruct-LLM. For most datasets, we use heuristics to determine correctness, except for one case (see Appendix A.2). Our error detection dataset is: $\{(q_{i},\hat{y}_{i},z_{i})\}_{i=1}^{N}$ . Note that this dataset is defined based on the analyzed LLM and its generated answers. Any instances where the LLM refuses to answer are excluded, as these can easily be classified as incorrect.
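The construction of the error-detection dataset can be sketched as follows. This is a minimal illustration, not the procedure of Appendix A.2: the substring-matching correctness heuristic, the refusal markers, and all function names are assumptions introduced here for the example.

```python
from typing import Callable, List, Tuple

# Hypothetical refusal phrases; the actual refusal filter is not specified here.
REFUSAL_MARKERS = ("i cannot answer", "i don't know")


def is_refusal(response: str) -> bool:
    """Assumed heuristic: a response is a refusal if it contains a marker phrase."""
    lowered = response.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def heuristic_correct(response: str, gold: str) -> int:
    """Assumed heuristic: z = 1 if the gold answer appears in the response, else 0."""
    return int(gold.lower() in response.lower())


def build_error_detection_dataset(
    dataset: List[Tuple[str, str]],
    generate: Callable[[str], str],
) -> List[Tuple[str, str, int]]:
    """Map pairs (q_i, y_i) to triples (q_i, y_hat_i, z_i), dropping refusals."""
    triples = []
    for question, gold in dataset:
        response = generate(question)  # y_hat_i, produced by the analyzed LLM
        if is_refusal(response):
            continue  # refusals are excluded from the dataset
        triples.append((question, response, heuristic_correct(response, gold)))
    return triples
```

Here `generate` stands in for a call to the model $M$; because the labels depend on the generated responses, the resulting dataset has to be rebuilt for every analyzed LLM.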

3.2 Experimental Setup

Datasets and models.

We perform all experiments on four LLMs: Mistral-7b (Jiang et al., 2023), Mistral-7b-instruct-v0.2 (denoted Mistral-7b-instruct), Llama3-8b (Touvron et al., 2023), and Llama3-8b-instruct. We consider 10 different datasets spanning various domains and tasks: TriviaQA (Joshi et al., 2017), HotpotQA with/without context (Yang et al., 2018), Natural Questions (Kwiatkowski et al., 2019), Winobias (Zhao et al., 2018), Winogrande (Sakaguchi et al., 2021), MNLI (Williams et al., 2018), Math (Sun et al., 2024), IMDB review sentiment analysis (Maas et al., 2011), and a dataset of movie roles (movies) that we curate. We allow unrestricted response generation to mimic real-world LLM usage, with answers decoded greedily. For more details on the datasets and the prompts used to generate answers, refer to Appendix A.3.

Performance metric.

We measure the area under the ROC curve (AUC) to evaluate error detectors, providing a single metric that reflects their ability to distinguish between positive and negative cases across many thresholds, balancing sensitivity (true positive rate) and specificity (true negative rate, i.e., one minus the false positive rate).
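For intuition, the AUC equals the probability that a randomly chosen positive example receives a higher detector score than a randomly chosen negative one, with ties counted as one half. The sketch below computes it directly from this pairwise definition; treating $z_{i}=1$ (correct) as the positive class is an assumption made for the example, and the function name is hypothetical.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Pairwise AUC: P(score of a positive > score of a negative), ties count 0.5."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))
```

The quadratic loop is chosen for readability; a rank-based computation gives the same value more efficiently on large evaluation sets.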

Error detection methods. We compare methods from both uncertainty and hallucinations literature.

- Aggregated probabilities / logits: Previous studies (Guerreiro et al., 2023; Kadavath et al., 2022; Varshney et al., 2023; Huang et al., 2023b) aggregate output token probabilities or logits to score LLM confidence for error detection. We implement several methods from the literature, calculating the minimum, maximum, or mean of these values. The main paper reports results for the most common approach, Logits-mean, and the best-performing one, Logits-min, with additional baselines in Appendix B.

- P(True): Kadavath et al. (2022) showed that LLMs are relatively calibrated when asked to evaluate the correctness of their generation via prompting. We implement this evaluation using the same prompt.

- Probing: Probing classifiers involve training a small classifier on a model’s intermediate activations to predict features of processed text (Belinkov, 2021). Recent studies show their effectiveness for error detection in generated text (Kadavath et al., 2022, inter alia). An intermediate activation is a vector $h_{l,t}$ from a specific LLM layer $l$ and (either read or generated) token $t$ . Thus, each LLM generation produces multiple such activations. Following prior work, we use a linear probing classifier for error detection (Li et al., 2024, inter alia) on static tokens: the last generated token ( $h_{l,-1}$ ), the one before it ( $h_{l,-2}$ ), and the final prompt token ( $h_{l,k}$ ). The layer $l$ is selected per token based on validation set performance.
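The aggregation baselines above can be sketched in a few lines. This is an illustrative sketch only: it assumes the per-token scores (logits or log-probabilities of the generated tokens) have already been extracted into a list, and the optional `span` argument, a hypothetical half-open token range, mimics restricting the aggregation to a subset of tokens such as the exact answer.

```python
from typing import Optional, Sequence, Tuple


def aggregate_confidence(
    token_scores: Sequence[float],
    how: str = "mean",
    span: Optional[Tuple[int, int]] = None,
) -> float:
    """Aggregate per-token scores over the whole response or a [start, end) span."""
    values = list(token_scores)
    if span is not None:
        start, end = span
        values = values[start:end]  # keep only the tokens inside the span
    if not values:
        raise ValueError("no tokens to aggregate")
    if how == "mean":
        return sum(values) / len(values)
    if how == "min":
        return min(values)
    if how == "max":
        return max(values)
    raise ValueError(f"unknown aggregation: {how}")
```

With `how="min"`, a single low-confidence token determines the score, which is one plausible reason a minimum can be more sensitive to a wrong answer token than a mean taken over a long response.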

For further details on the implementation of each method, refer to Appendix A.4.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Question Answering Example

### Overview

The image illustrates an example of a question-answering interaction, likely demonstrating how a model like Mistral processes a prompt and generates a response. It highlights the prompt, the model's answer, and the tokenization of the answer.

### Components/Axes

* **Prompt:** The input question provided to the model.

* **Mistral:** The model generating the answer.

* **first_exact_answer_token:** Indicates the start of the correct answer within the model's response.

* **last_exact_answer_token:** Indicates the end of the correct answer within the model's response.

* **last_q_token:** Indicates the last token of the question.

* **Tags:** "\<s>", "[INST]", "[/INST]", "\</s>" are special tokens used for formatting and instruction.

* **Numerical Indices:** "-2", "-1" are numerical indices, likely representing token positions relative to the end of the sequence.

### Detailed Analysis

* **Prompt (Top, Green Box):**

* Text: "\<s> [INST] What is the capital of the U.S. state of Connecticut? [/INST]"

* **Mistral Response (Bottom, Blue Box):**

* Text: "The capital city of the U.S. state of Connecticut is Hartford. It's one of the oldest cities in the United States and was founded in 1635. Hartford is located in the central part of the state and is home to several cultural institutions, universities, and businesses.\</s>"

* **Token Annotations:**

* "first_exact_answer_token" (Purple Line): Points to the word "Hartford".

* "last_exact_answer_token" (Teal Line): Points to the word "Hartford".

* "last_q_token" (Green Line): Points to the end of the prompt.

* **Numerical Indices:**

* "-2" (Orange Box): Located near the end of the Mistral response.

* "-1" (Orange Box): Located near the end of the Mistral response.

### Key Observations

* The model correctly identifies "Hartford" as the capital of Connecticut.

* The "first_exact_answer_token" and "last_exact_answer_token" both point to "Hartford", indicating that the model's answer is precise.

* The model provides additional context about Hartford beyond just stating its name.

* The tags \<s>, [INST], [/INST], and \</s> are used to structure the prompt and response.

### Interpretation

The diagram demonstrates a successful question-answering interaction. The model (Mistral) receives a prompt, processes it, and generates a relevant and accurate response. The annotations highlight the specific tokens that constitute the correct answer, suggesting a mechanism for evaluating the model's performance. The additional context provided in the response indicates a degree of understanding beyond simple keyword matching. The numerical indices at the end of the response likely represent token positions, potentially used for further analysis or processing of the output.

</details>

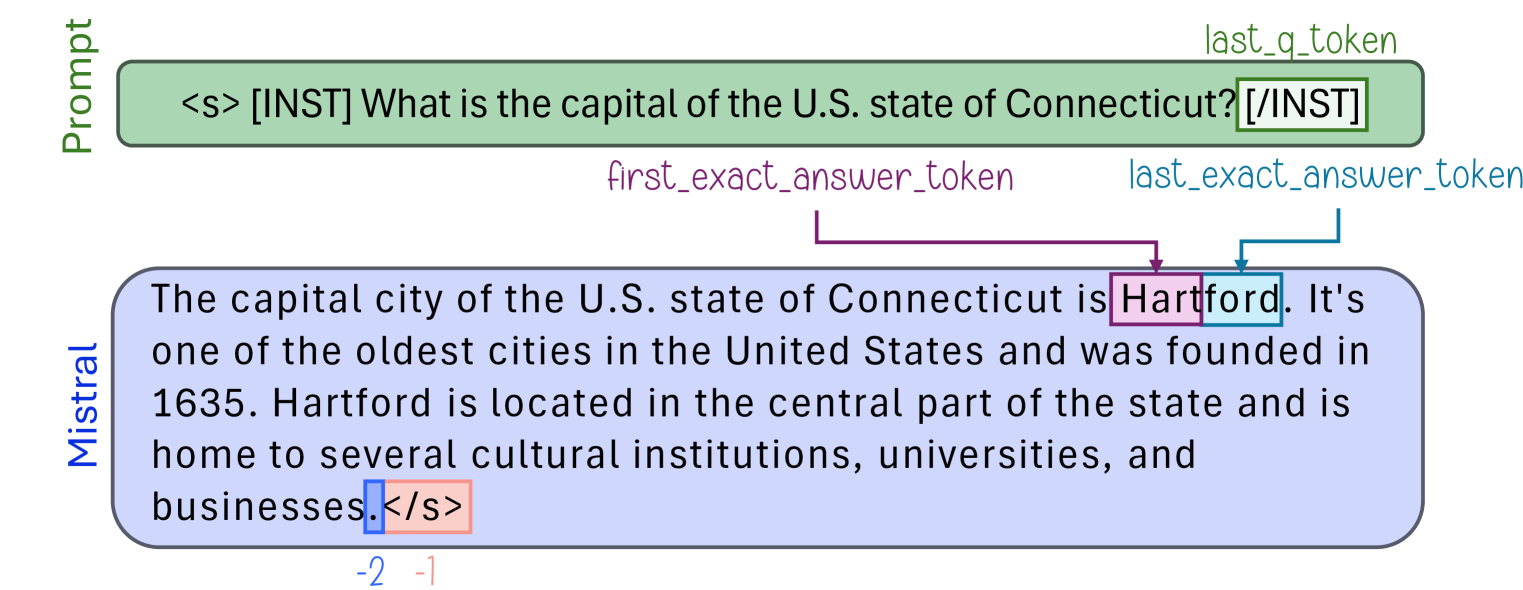

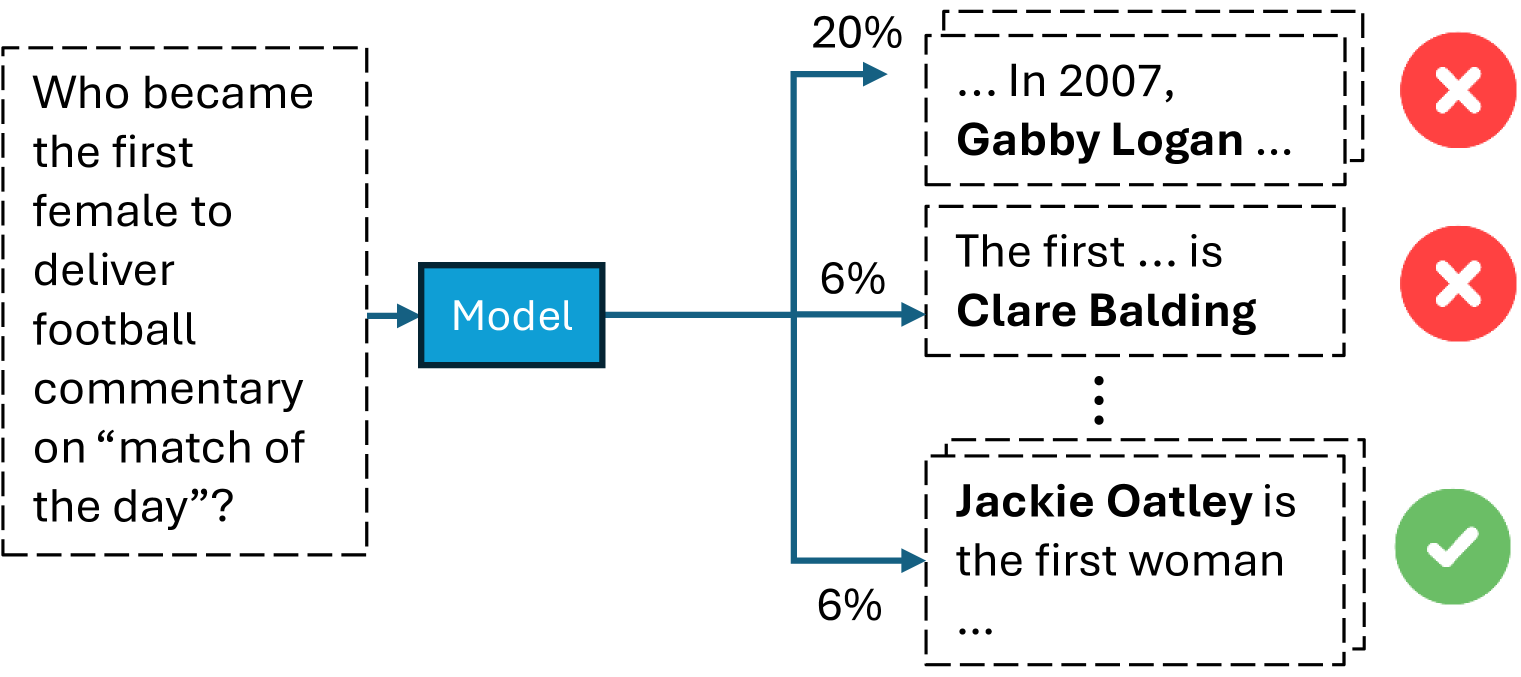

Figure 1: Example of an input and LLM output from the TriviaQA dataset, with the names of the tokens that can be probed.

Exact Answer Tokens.

Existing methods often overlook a critical nuance: the token selection for error detection, typically focusing on the last generated token or taking a mean. However, since LLMs typically generate long-form responses, this practice may miss crucial details (Brunner et al., 2020). Other approaches use the last token of the prompt (Slobodkin et al., 2023, inter alia), but this is inherently inaccurate due to LLMs’ unidirectional nature, failing to account for the generated response and missing cases where different sampled answers from the same model vary in correctness. We investigate a previously unexamined token location: the exact answer tokens, which represent the most meaningful parts of the generated response. We define exact answer tokens as those whose modification alters the answer’s correctness, disregarding subsequent generated content. In practice, we do not use this definition for extracting the exact answer, but rather an instruct model in a few-shot setting. Still, the definition is useful to manually verify that automatic extractions work as expected. Figure 1 illustrates the different token locations. In the following experiments, we implement each error detection method with an “exact answer” version, demonstrating that it often improves performance, especially in probing. Implementation details for detecting the exact answer token are given in Appendix A.2.
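Once an exact answer string is available, mapping it back to token positions is a matter of character-offset bookkeeping. The sketch below assumes the exact answer has already been extracted (in the paper this is done with an instruct model, which is not reproduced here) and that the token pieces concatenate to the response text; the function name and the token pieces in the usage note are hypothetical.

```python
from typing import List, Optional, Tuple


def exact_answer_token_span(
    token_pieces: List[str], exact_answer: str
) -> Optional[Tuple[int, int]]:
    """Return (first, last) token indices overlapping the first occurrence of
    exact_answer in the response, or None if the answer string is not found."""
    if not exact_answer:
        return None
    text = "".join(token_pieces)
    char_start = text.find(exact_answer)
    if char_start == -1:
        return None
    char_end = char_start + len(exact_answer)  # exclusive end offset
    first = last = None
    offset = 0
    for index, piece in enumerate(token_pieces):
        piece_start, piece_end = offset, offset + len(piece)
        # A token belongs to the span if its character range overlaps the answer.
        if piece_start < char_end and piece_end > char_start:
            if first is None:
                first = index
            last = index
        offset = piece_end
    return first, last
```

For the pieces `["The", " capital", " is", " Hart", "ford", ",", " an"]` and the answer `"Hartford"`, the function returns `(3, 4)`: the first and last exact answer tokens, from which the tokens immediately before and after the answer follow by subtracting or adding one.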

3.3 Results

<details>

<summary>extracted/6450693/figures/probing_heatmaps/mistral-7b-instruct/triviaqa_auc.png Details</summary>

### Visual Description

## Heatmap: Layer vs. Token

### Overview

The image is a heatmap visualizing the relationship between "Layer" and "Token". The color intensity represents a value, with darker blue indicating higher values and lighter blue indicating lower values. The heatmap spans layers 0 to 30 and various tokens, including "last_q", "first_answer", "second_answer", "exact_answer_before_first", "exact_answer_first", "exact_answer_last", "exact_answer_after_last", and numerical tokens from -8 to -1.

### Components/Axes

* **X-axis (Token):** Categorical, listing tokens: "last\_q", "first\_answer", "second\_answer", "exact\_answer\_before\_first", "exact\_answer\_first", "exact\_answer\_last", "exact\_answer\_after\_last", "-8", "-7", "-6", "-5", "-4", "-3", "-2", "-1".

* **Y-axis (Layer):** Numerical, ranging from 0 to 30 in increments of 2.

* **Color Scale:** A gradient from light blue (approximately 0.5) to dark blue (1.0), indicating the value associated with each cell in the heatmap.

### Detailed Analysis

The heatmap displays varying intensities of blue, indicating different values for each layer-token combination.

* **"last\_q", "first\_answer", "second\_answer":** These tokens show relatively high values (darker blue) across most layers, especially from layer 0 to approximately layer 20. The values seem to decrease slightly in the higher layers (20-30).

* **"exact\_answer\_before\_first", "exact\_answer\_first", "exact\_answer\_last", "exact\_answer\_after\_last":** These tokens exhibit a band of high values (darker blue) concentrated between approximately layers 8 and 18. Outside this band, the values are generally lower (lighter blue).

* **Numerical Tokens (-8 to -1):** These tokens generally show lower values (lighter blue) across all layers compared to the other tokens. There are some localized areas of slightly higher values, but overall, the intensity is less.

### Key Observations

* The tokens related to "exact\_answer" exhibit a distinct band of high values in the middle layers (8-18).

* The initial tokens ("last\_q", "first\_answer", "second\_answer") have higher values in the lower layers, gradually decreasing as the layer number increases.

* The numerical tokens (-8 to -1) generally have the lowest values across all layers.

### Interpretation

The heatmap likely represents the activation or importance of different tokens across various layers of a neural network model, possibly a transformer model used for question answering.

* The high values for "last\_q", "first\_answer", and "second\_answer" in the lower layers suggest that these tokens are important for initial processing and understanding of the question.

* The concentration of high values for "exact\_answer" tokens in the middle layers indicates that these layers are crucial for identifying and processing the exact answer within the context.

* The lower values for numerical tokens might indicate that these tokens are less relevant for the specific task or model being analyzed.

The distinct patterns observed in the heatmap suggest that different layers of the model specialize in processing different types of tokens, contributing to the overall question-answering process. The black box highlights the "exact_answer" tokens, emphasizing their importance in the middle layers.

</details>

(a) TriviaQA

<details>

<summary>extracted/6450693/figures/probing_heatmaps/mistral-7b-instruct/winobias_auc.png Details</summary>

### Visual Description

## Heatmap: Token vs Layer

### Overview

The image is a heatmap visualizing the relationship between "Token" and "Layer". The color intensity represents a value ranging from 0.5 to 1.0, with darker blue shades indicating higher values. The x-axis represents different tokens, and the y-axis represents different layers. A black rectangle highlights a specific region of the heatmap.

### Components/Axes

* **X-axis (Token):**

* Categories: last\_q, first\_answer, second\_answer, exact\_answer\_before\_first, exact\_answer\_first, exact\_answer\_last, exact\_answer\_after\_last, -8, -7, -6, -5, -4, -3, -2, -1

* **Y-axis (Layer):**

* Scale: 0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30

* **Color Scale (Legend):** Located on the right side of the heatmap, with tick labels 1.0 (darkest blue), 0.9, 0.8, 0.7, 0.6, and 0.5 (lightest blue).

### Detailed Analysis

* **last\_q:** The color intensity generally increases from layer 0 to layer 30, starting from a light blue (around 0.5-0.6) and reaching a darker blue (around 0.7-0.8).

* **first\_answer:** Similar to "last\_q", the color intensity increases from layer 0 to layer 30, starting from a light blue (around 0.5-0.6) and reaching a darker blue (around 0.7-0.8).

* **second\_answer:** The color intensity increases from layer 0 to layer 30, starting from a light blue (around 0.5-0.6) and reaching a darker blue (around 0.7-0.8).

* **exact\_answer\_before\_first, exact\_answer\_first, exact\_answer\_last, exact\_answer\_after\_last:** These tokens show a higher color intensity (darker blue, around 0.8-0.9) in the upper layers (0-10) compared to the other tokens. The intensity decreases slightly towards layer 30.

* **-8 to -1:** The color intensity is generally lower (lighter blue, around 0.5-0.7) compared to the other tokens, especially in the upper layers (0-10). The intensity seems to increase slightly towards layer 30.

### Key Observations

* The tokens "exact\_answer\_before\_first", "exact\_answer\_first", "exact\_answer\_last", and "exact\_answer\_after\_last" have a higher value in the initial layers (0-10).

* The tokens "-8" to "-1" have a lower value across all layers compared to the other tokens.

* The color intensity generally increases from layer 0 to layer 30 for "last\_q", "first\_answer", and "second\_answer".

### Interpretation

The heatmap visualizes the relationship between different tokens and layers, with color intensity representing a certain value. The "exact\_answer" related tokens seem to be more relevant or have a higher impact in the initial layers, while the numerical tokens (-8 to -1) have a lower impact across all layers. The increasing color intensity for "last\_q", "first\_answer", and "second\_answer" suggests that these tokens become more relevant or important as the layer number increases. The black rectangle highlights a specific region of interest, possibly indicating a particular area of focus for analysis.

</details>

(b) Winobias

<details>

<summary>extracted/6450693/figures/probing_heatmaps/mistral-7b-instruct/answerable_math_auc.png Details</summary>

### Visual Description

## Heatmap: Layer vs Token

### Overview

The image is a heatmap visualizing the relationship between "Layer" and "Token". The heatmap uses a blue color gradient, where darker shades of blue indicate higher values and lighter shades indicate lower values. The vertical axis represents "Layer" with numerical values from 0 to 30. The horizontal axis represents "Token" with categorical values including "last_q", "first_answer", "second_answer", "exact_answer_before_first", "exact_answer_first", "exact_answer_last", "exact_answer_after_last", and numerical values from -8 to -1.

### Components/Axes

* **X-axis (Token):**

* Categories: last\_q, first\_answer, second\_answer, exact\_answer\_before\_first, exact\_answer\_first, exact\_answer\_last, exact\_answer\_after\_last

* Numerical: -8, -7, -6, -5, -4, -3, -2, -1

* **Y-axis (Layer):** Numerical values from 0 to 30, incrementing by 2.

* **Color Scale:** A blue gradient ranging from 0.5 (lightest blue) to 1.0 (darkest blue).

### Detailed Analysis

The heatmap shows distinct patterns based on the token type and layer.

* **Tokens "exact\_answer\_before\_first", "exact\_answer\_first", "exact\_answer\_last", and "exact\_answer\_after\_last":** These tokens exhibit the highest values (darkest blue) across all layers. The values are approximately 0.9 to 1.0.

* **Token "last\_q":** This token shows relatively lower values (lighter blue) across all layers, generally ranging from 0.5 to 0.7.

* **Tokens "first\_answer" and "second\_answer":** These tokens have intermediate values, generally between 0.6 and 0.8.

* **Numerical Tokens (-8 to -1):** These tokens show a mix of values, with some layers having higher values than others. The values range from 0.5 to 0.8.

**Specific Data Points (Approximate):**

* Layer 0, Token last\_q: Approximately 0.6

* Layer 0, Token exact\_answer\_first: Approximately 0.9

* Layer 30, Token last\_q: Approximately 0.5

* Layer 30, Token exact\_answer\_first: Approximately 0.9

### Key Observations

* The tokens related to "exact\_answer" consistently show high values across all layers.

* The token "last\_q" consistently shows low values across all layers.

* There is some variation in values for the numerical tokens (-8 to -1) depending on the layer.

### Interpretation

The heatmap suggests that the "exact\_answer" tokens are highly relevant or important across all layers, as indicated by their high values. The "last\_q" token, on the other hand, appears to be less relevant or important. The varying values for the numerical tokens (-8 to -1) indicate that their relevance or importance may depend on the specific layer. The data demonstrates a clear distinction in the importance or relevance of different token types across the layers.

</details>

(c) Math

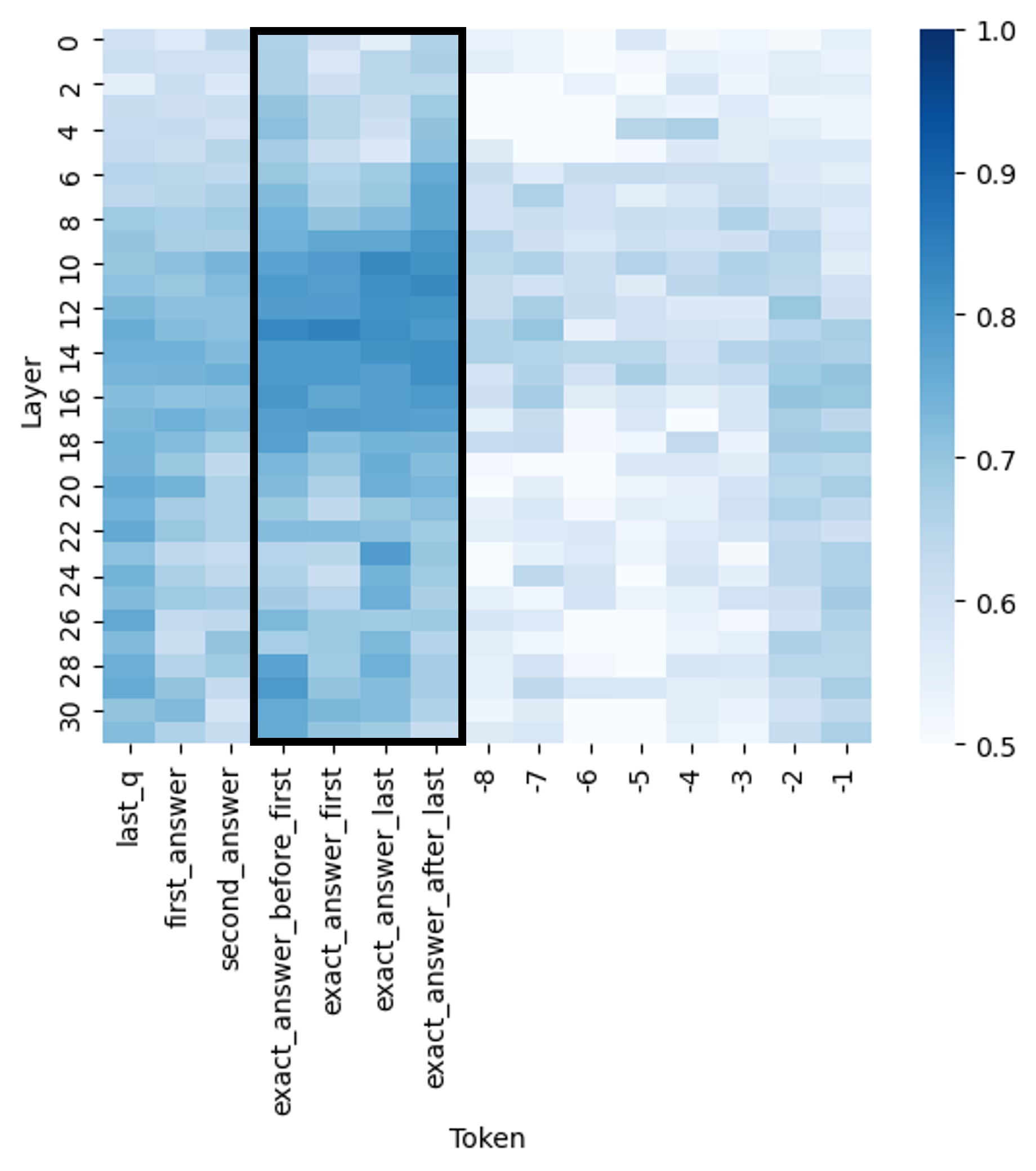

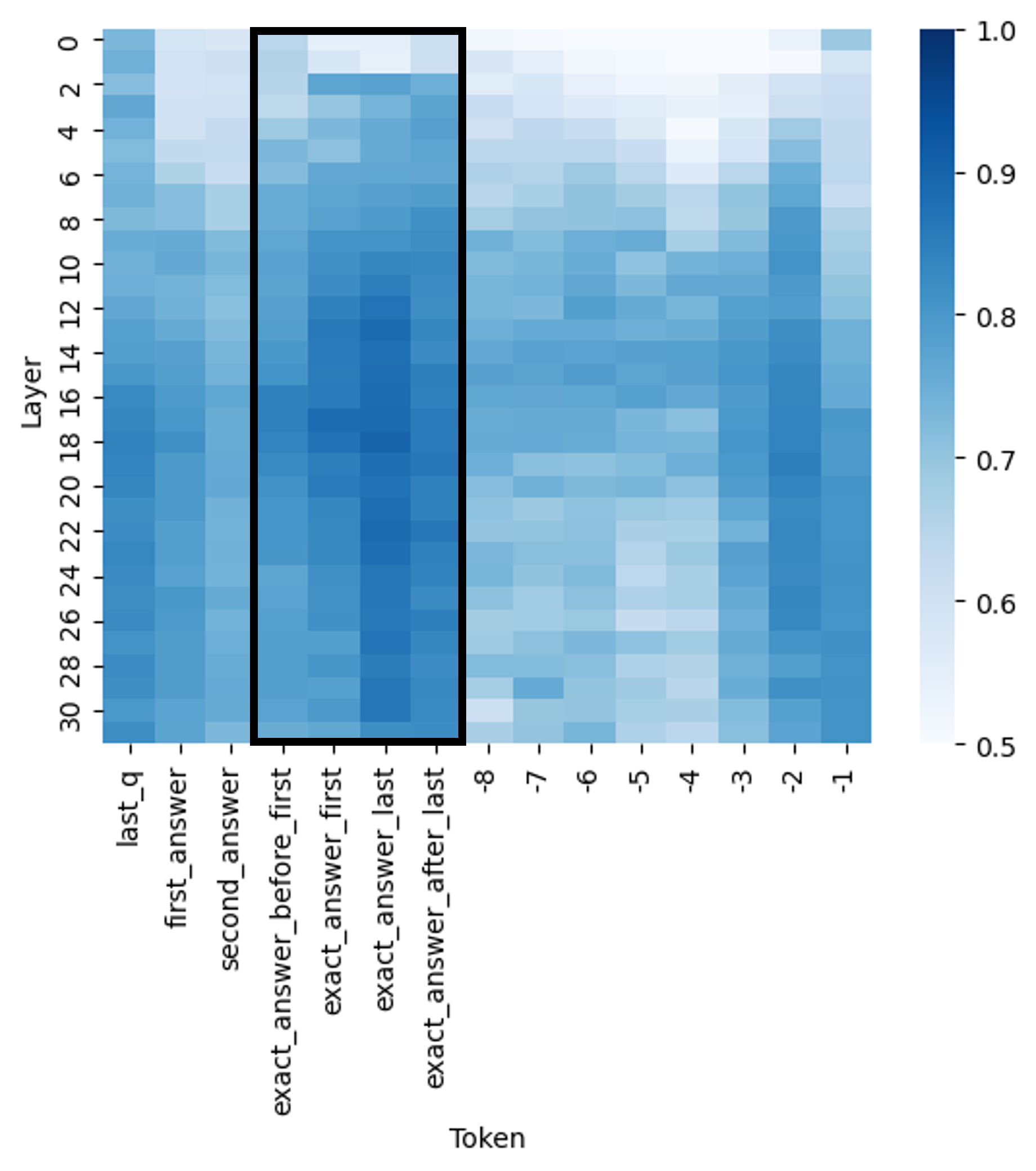

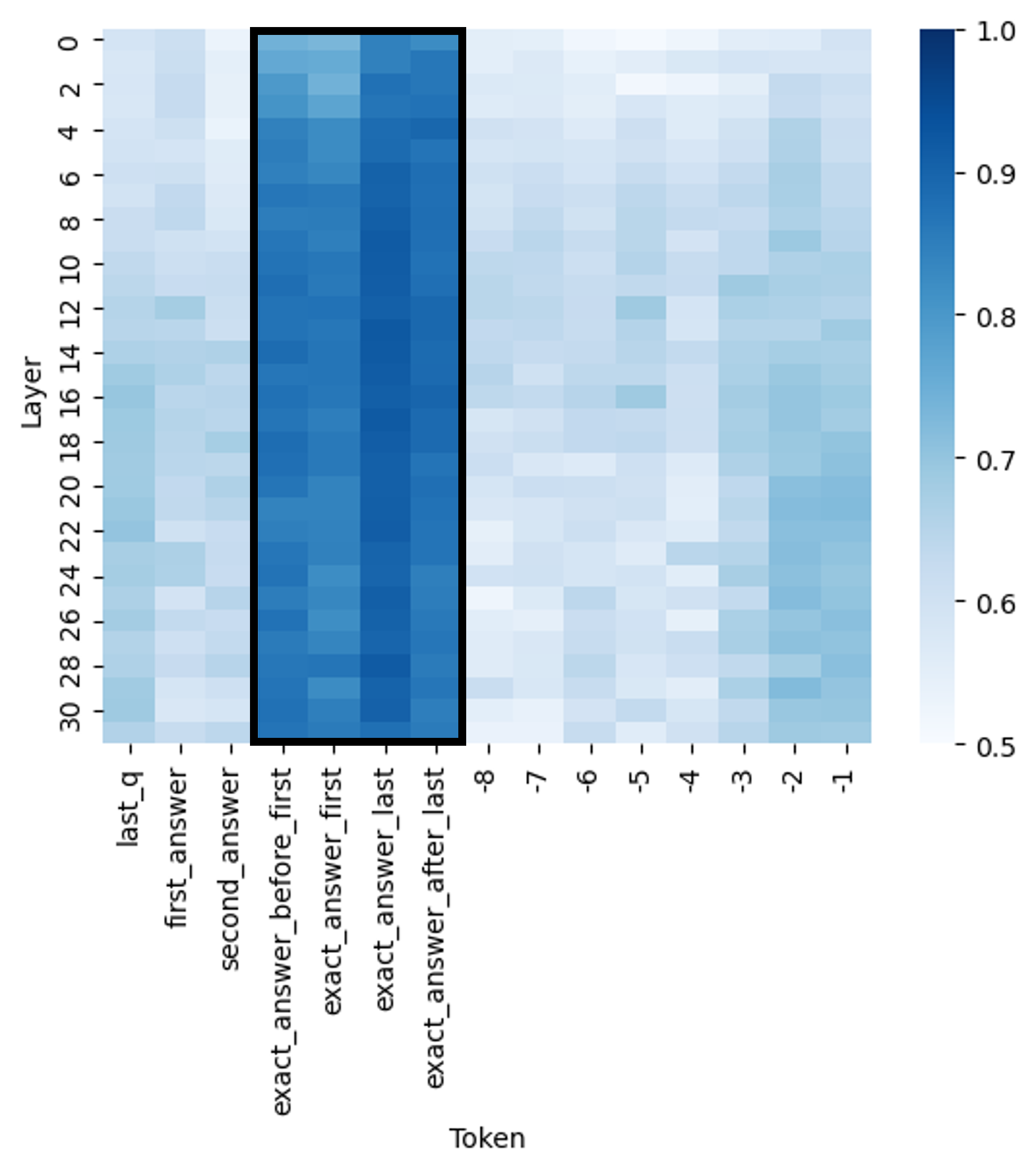

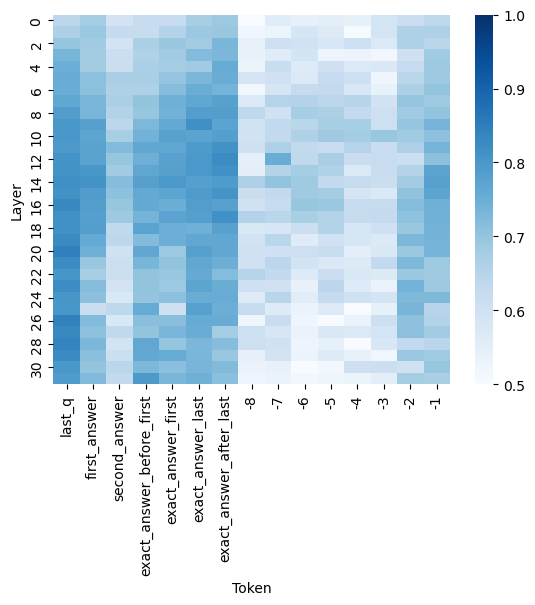

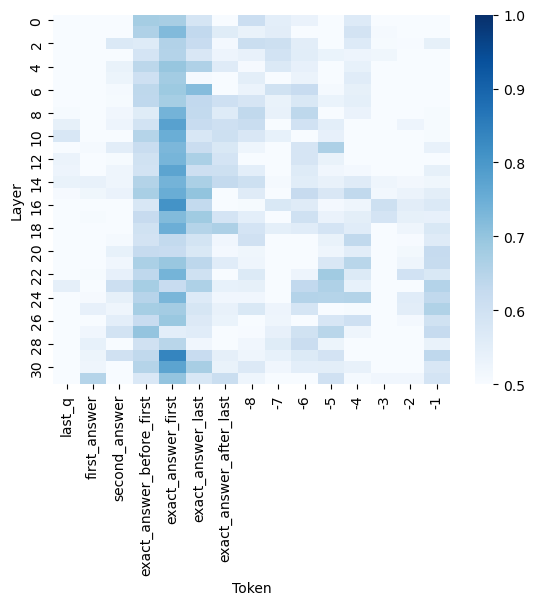

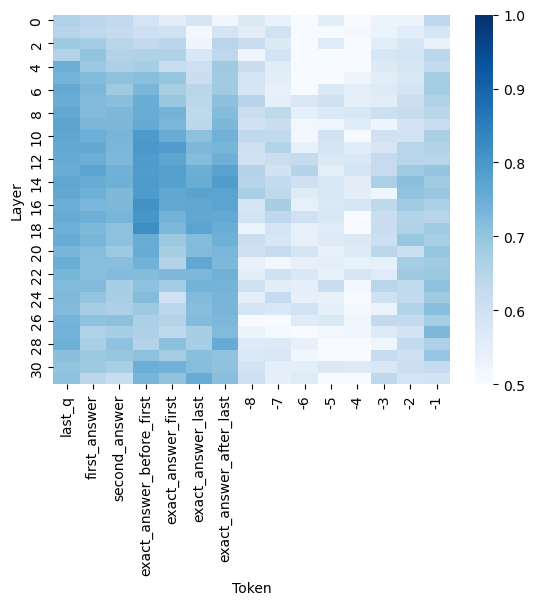

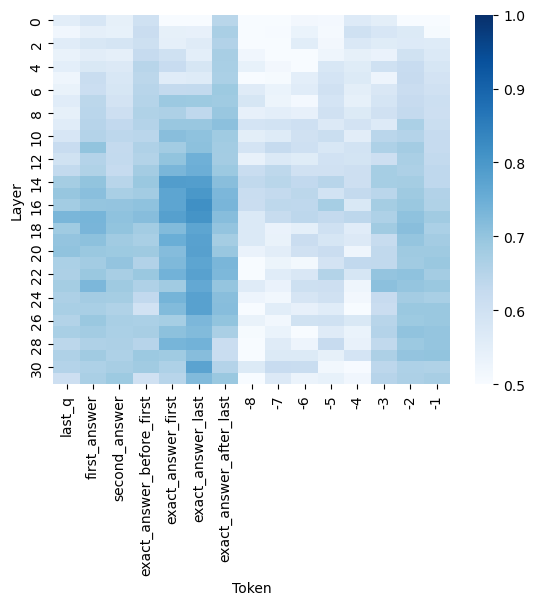

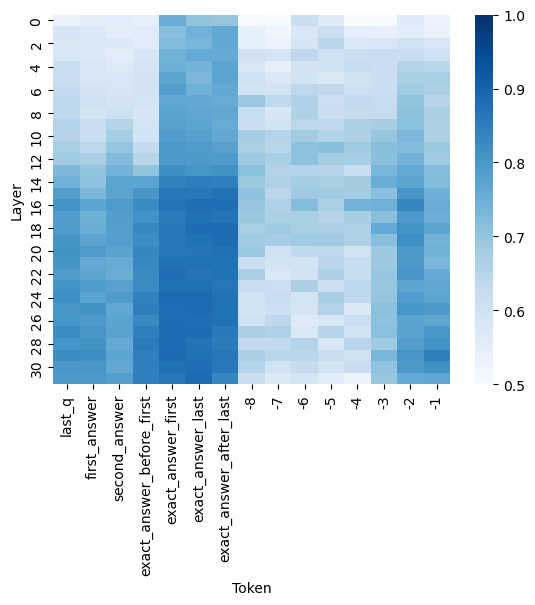

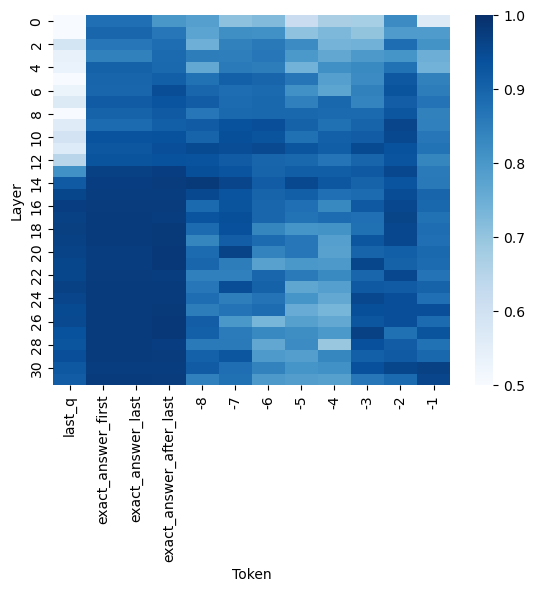

Figure 2: AUC values of a probe error detector across layers and tokens, Mistral-7b-instruct. Generation proceeds from left to right, with detection performance peaking at the exact answer tokens.

Patterns of truthfulness encoding.

We first focus on probing classifiers to gain insights into the internal representations of LLMs. Specifically, we analyze the effects of layer and token selection on the error detection performance of these probing classifiers. By systematically probing all model layers, starting from the last question token to the final generated token, we observe consistent truthfulness encoding patterns. Figure 2 shows AUC metrics of probes across Mistral-7b-Instruct layers and tokens. Middle to later layers often yield the most effective probing results (see Appendix B for more datasets and models), aligning with previous studies on truthfulness encoding (Burns et al., 2022; CH-Wang et al., 2023) and transformer representations (nostalgebraist, 2020; Meng et al., 2022; Geva et al., 2023). Regarding tokens, a strong truthfulness signal appears immediately after the prompt, suggesting that this representation encodes information on the model’s general ability to answer the question correctly. This signal weakens as text generation progresses but peaks again at the exact answer tokens. Towards the end of the generation process, signal strength rises again, though it remains weaker than at the exact answer tokens. These patterns are consistent across nearly all datasets and models (see Appendix B), suggesting a general mechanism by which LLMs encode and process truthfulness during text generation.
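The layer-by-token sweep can be sketched as training one linear probe per (layer, token) cell and recording its held-out AUC. The code below is a self-contained illustration under stated assumptions: the activations are assumed to be pre-extracted into plain Python lists, the probe is a small logistic regression trained with full-batch gradient descent, and all names, the train/validation split, and the hyperparameters are hypothetical rather than the paper's implementation.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vector = List[float]
Key = Tuple[int, str]  # (layer index, token position name)


def _sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def _dot(w: Vector, x: Vector) -> float:
    return sum(wi * xi for wi, xi in zip(w, x))


def train_linear_probe(
    xs: Sequence[Vector], ys: Sequence[int], epochs: int = 200, lr: float = 0.5
) -> Tuple[Vector, float]:
    """Fit a logistic-regression probe with full-batch gradient descent."""
    dim, n = len(xs[0]), len(xs)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(xs, ys):
            err = _sigmoid(_dot(w, x) + b) - y  # gradient of the log loss w.r.t. the logit
            grad_b += err
            for j in range(dim):
                grad_w[j] += err * x[j]
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Pairwise AUC with ties counted as 0.5 (assumes both classes are present)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def sweep_probes(
    activations: Dict[Key, List[Vector]], labels: Sequence[int], n_train: int
) -> Dict[Key, float]:
    """Train one probe per (layer, token) cell and report its held-out AUC."""
    results = {}
    for key, vectors in activations.items():
        w, b = train_linear_probe(vectors[:n_train], labels[:n_train])
        scores = [_dot(w, x) + b for x in vectors[n_train:]]
        results[key] = auc(labels[n_train:], scores)
    return results


def best_layer_per_token(results: Dict[Key, float]) -> Dict[str, int]:
    """For each token position, pick the layer with the highest validation AUC."""
    best: Dict[str, Key] = {}
    for (layer, token), score in results.items():
        if token not in best or score > results[best[token]]:
            best[token] = (layer, token)
    return {token: key[0] for token, key in best.items()}
```

The dictionary returned by `sweep_probes` corresponds to one heatmap of the kind shown in Figure 2, and `best_layer_per_token` mirrors selecting the layer per token on validation data. In practice a library implementation of logistic regression would likely replace the hand-written training loop.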

Error Detection Results.

Table 1: Comparison of error detection techniques using AUC metric, across different models and datasets. The best-performing method is bolded. Using exact answer tokens is useful for many cases, especially probing.

| Method | Mistral-7b-Instruct: TriviaQA | Mistral-7b-Instruct: Winobias | Mistral-7b-Instruct: Math | Llama 3-8b-Instruct: TriviaQA | Llama 3-8b-Instruct: Winobias | Llama 3-8b-Instruct: Math |
| --- | --- | --- | --- | --- | --- | --- |
| Logits-mean | $0.60 \pm 0.009$ | $0.56 \pm 0.017$ | $0.55 \pm 0.029$ | $0.66 \pm 0.005$ | $0.60 \pm 0.026$ | $0.75 \pm 0.018$ |
| Logits-mean-exact | $0.68 \pm 0.007$ | $0.54 \pm 0.012$ | $0.51 \pm 0.005$ | $0.71 \pm 0.006$ | $0.55 \pm 0.019$ | $0.80 \pm 0.021$ |
| Logits-min | $0.63 \pm 0.008$ | $0.59 \pm 0.012$ | $0.51 \pm 0.017$ | $0.74 \pm 0.007$ | $0.61 \pm 0.024$ | $0.75 \pm 0.016$ |
| Logits-min-exact | $0.75 \pm 0.006$ | $0.53 \pm 0.013$ | $0.71 \pm 0.009$ | $0.79 \pm 0.006$ | $0.61 \pm 0.019$ | $0.89 \pm 0.018$ |
| p(True) | $0.66 \pm 0.006$ | $0.45 \pm 0.021$ | $0.48 \pm 0.022$ | $0.73 \pm 0.008$ | $0.59 \pm 0.020$ | $0.62 \pm 0.017$ |
| p(True)-exact | $0.74 \pm 0.003$ | $0.40 \pm 0.021$ | $0.60 \pm 0.025$ | $0.73 \pm 0.005$ | $0.63 \pm 0.014$ | $0.59 \pm 0.018$ |
| Probe @ token | | | | | | |
| Last generated [-1] | $0.71 \pm 0.006$ | $0.82 \pm 0.004$ | $0.74 \pm 0.008$ | $0.81 \pm 0.005$ | $0.86 \pm 0.007$ | $0.82 \pm 0.016$ |
| Before last generated [-2] | $0.73 \pm 0.004$ | $0.85 \pm 0.004$ | $0.74 \pm 0.007$ | $0.75 \pm 0.005$ | $0.88 \pm 0.005$ | $0.79 \pm 0.020$ |
| End of question | $0.76 \pm 0.008$ | $0.82 \pm 0.011$ | $0.72 \pm 0.007$ | $0.77 \pm 0.007$ | $0.80 \pm 0.018$ | $0.72 \pm 0.023$ |
| Exact | **0.85** $\pm 0.004$ | **0.92** $\pm 0.005$ | **0.92** $\pm 0.008$ | **0.83** $\pm 0.002$ | **0.93** $\pm 0.004$ | **0.95** $\pm 0.027$ |

Next, we evaluate various error detection methods by comparing their performance with and without the use of exact answer tokens. Table 1 compares the AUC across three representative datasets (additional datasets and models in Appendix B, showing consistent patterns). Here we present results for the last exact answer token, which outperformed both the first exact answer token and the one preceding it, while the token following the last performed similarly. Incorporating the exact answer token improves the different error detection methods in almost all datasets. Notably, our probing technique at the exact answer token (bottom row) outperforms all other baselines on every dataset and model shown. While we did not compare all existing error detection methods, the primary conclusion is that information about truthfulness is highly localized in specific generated tokens, and that focusing on exact answer tokens leads to significant improvements in error detection.

4 Generalization Between Tasks

The effectiveness of a probing classifier in detecting errors suggests that LLMs encode information about the truthfulness of their outputs. This supports using probing classifiers for error detection in production, but their generalizability across tasks remains unclear. While some studies argue for a universal mechanism of truthfulness encoding in LLMs (Marks & Tegmark, 2023; Slobodkin et al., 2023), results on probe generalization across datasets are mixed (Kadavath et al., 2022; Marks & Tegmark, 2023; CH-Wang et al., 2023; Slobodkin et al., 2023; Levinstein & Herrmann, 2024): performance declines on unseen datasets, yet remains significantly above random chance. Understanding this behavior is essential for real-world applications, where the error detector may encounter examples that significantly differ from those it was trained on. Therefore, we explore whether a probe trained on one dataset can detect errors in others.

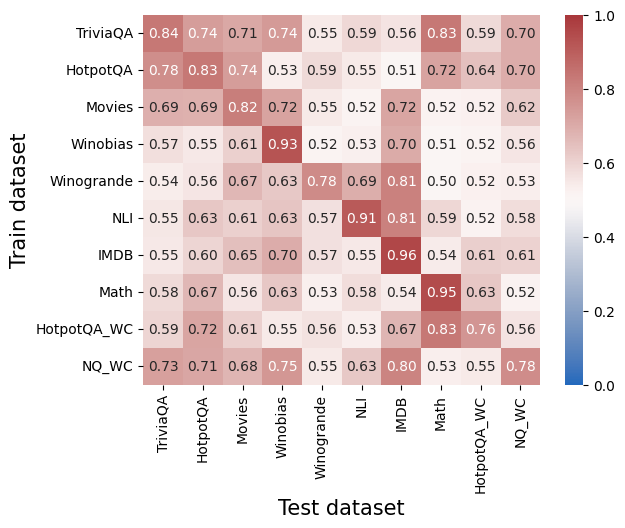

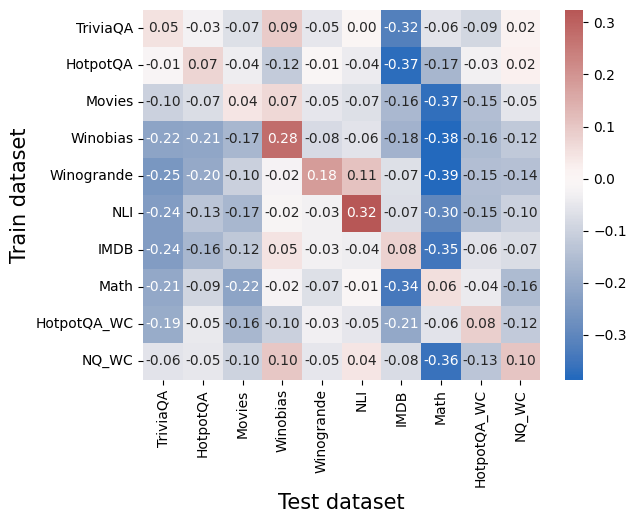

Our generalization experiments are conducted between all of the ten datasets discussed in Section 3, covering a broader range of realistic task settings than previous work. This breadth of experiments has not been previously explored, and is crucial considering the mixed findings in previous work. We select the optimal token and layer combination for each dataset, train all probes using this combination on other datasets, and then test them on the original dataset. We evaluate generalization performance using the absolute AUC score, defined as $\max(\text{auc},1-\text{auc})$, to also account for cases where the learned signal in one dataset is reversed in another.
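The absolute AUC score can be sketched in a few lines of Python. This is a minimal illustration, not the paper's code: the helper names `auc` and `absolute_auc` and the toy scores are assumptions, and the AUC is computed with the standard pairwise (Mann-Whitney) formulation.

```python
from typing import Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve via pairwise comparison (ties count as 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def absolute_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """max(auc, 1 - auc): also credits a probe whose signal is reversed."""
    a = auc(labels, scores)
    return max(a, 1.0 - a)


# Toy, hypothetical values: probe scores are anti-correlated with correctness.
labels = [1, 1, 0, 0]
scores = [0.2, 0.4, 0.7, 0.9]
print(auc(labels, scores))           # 0.0
print(absolute_auc(labels, scores))  # 1.0
```

In this toy case the raw AUC alone would suggest complete failure, whereas the absolute AUC shows that the scores still separate the two classes perfectly, only with the opposite sign.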

Results.

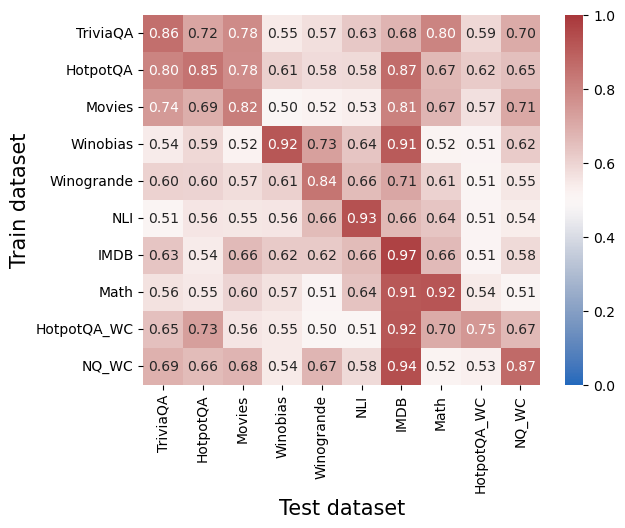

<details>

<summary>extracted/6450693/figures/generalization/mistral_instruct.png Details</summary>

### Visual Description

## Heatmap: Dataset Performance Comparison

### Overview

The image is a heatmap visualizing the performance of different models trained on various datasets and tested on the same or different datasets. The color intensity represents the performance score, ranging from blue (low) to red (high), with corresponding numerical values displayed in each cell. The rows represent the training datasets, and the columns represent the testing datasets.

### Components/Axes

* **Title:** None explicitly present in the image.

* **X-axis (Test dataset):**

* Categories: TriviaQA, HotpotQA, Movies, Winobias, Winogrande, NLI, IMDB, Math, HotpotQA\_WC, NQ\_WC

* **Y-axis (Train dataset):**

* Categories: TriviaQA, HotpotQA, Movies, Winobias, Winogrande, NLI, IMDB, Math, HotpotQA\_WC, NQ\_WC

* **Colorbar (Right side):**

* Scale: Ranges from 0.0 (blue) to 1.0 (red) in increments of 0.2.

### Detailed Analysis

The heatmap displays performance scores for each combination of training and testing datasets. The scores range from approximately 0.50 to 0.97.

Here's a breakdown of the values:

* **TriviaQA:**

* Trained on TriviaQA: 0.86

* Tested on:

* TriviaQA: 0.86

* HotpotQA: 0.72

* Movies: 0.78

* Winobias: 0.55

* Winogrande: 0.57

* NLI: 0.63

* IMDB: 0.68

* Math: 0.80

* HotpotQA\_WC: 0.59

* NQ\_WC: 0.70

* **HotpotQA:**

* Trained on HotpotQA: 0.80

* Tested on:

* TriviaQA: 0.80

* HotpotQA: 0.85

* Movies: 0.78

* Winobias: 0.61

* Winogrande: 0.58

* NLI: 0.58

* IMDB: 0.87

* Math: 0.67

* HotpotQA\_WC: 0.62

* NQ\_WC: 0.65

* **Movies:**

* Trained on Movies: 0.74

* Tested on:

* TriviaQA: 0.74

* HotpotQA: 0.69

* Movies: 0.82

* Winobias: 0.50

* Winogrande: 0.52

* NLI: 0.53

* IMDB: 0.81

* Math: 0.67

* HotpotQA\_WC: 0.57

* NQ\_WC: 0.71

* **Winobias:**

* Trained on Winobias: 0.54

* Tested on:

* TriviaQA: 0.54

* HotpotQA: 0.59

* Movies: 0.52

* Winobias: 0.92

* Winogrande: 0.73

* NLI: 0.64

* IMDB: 0.91

* Math: 0.52

* HotpotQA\_WC: 0.51

* NQ\_WC: 0.62

* **Winogrande:**

* Trained on Winogrande: 0.60

* Tested on:

* TriviaQA: 0.60

* HotpotQA: 0.60

* Movies: 0.57

* Winobias: 0.61

* Winogrande: 0.84

* NLI: 0.66

* IMDB: 0.71

* Math: 0.61

* HotpotQA\_WC: 0.51

* NQ\_WC: 0.55

* **NLI:**

* Trained on NLI: 0.51

* Tested on:

* TriviaQA: 0.51

* HotpotQA: 0.56

* Movies: 0.55

* Winobias: 0.56

* Winogrande: 0.66

* NLI: 0.93

* IMDB: 0.66

* Math: 0.64

* HotpotQA\_WC: 0.51

* NQ\_WC: 0.54

* **IMDB:**

* Trained on IMDB: 0.63

* Tested on:

* TriviaQA: 0.63

* HotpotQA: 0.54

* Movies: 0.66

* Winobias: 0.62

* Winogrande: 0.62

* NLI: 0.66

* IMDB: 0.97

* Math: 0.66

* HotpotQA\_WC: 0.51

* NQ\_WC: 0.58

* **Math:**

* Trained on Math: 0.56

* Tested on:

* TriviaQA: 0.56

* HotpotQA: 0.55

* Movies: 0.60

* Winobias: 0.57

* Winogrande: 0.51

* NLI: 0.64

* IMDB: 0.91

* Math: 0.92

* HotpotQA\_WC: 0.54

* NQ\_WC: 0.51

* **HotpotQA\_WC:**

* Trained on HotpotQA\_WC: 0.65

* Tested on:

* TriviaQA: 0.65

* HotpotQA: 0.73

* Movies: 0.56

* Winobias: 0.55

* Winogrande: 0.50

* NLI: 0.51

* IMDB: 0.92

* Math: 0.70

* HotpotQA\_WC: 0.75

* NQ\_WC: 0.67

* **NQ\_WC:**

* Trained on NQ\_WC: 0.69

* Tested on:

* TriviaQA: 0.69

* HotpotQA: 0.66

* Movies: 0.68

* Winobias: 0.54

* Winogrande: 0.67

* NLI: 0.58

* IMDB: 0.94

* Math: 0.52

* HotpotQA\_WC: 0.53

* NQ\_WC: 0.87

### Key Observations

* The diagonal elements (training and testing on the same dataset) generally have higher scores, indicating better performance when the model is tested on data similar to what it was trained on.

* The IMDB dataset shows high performance when used as the test set, regardless of the training dataset.

* Winobias, NLI, IMDB, and Math datasets show high performance when trained and tested on themselves.

### Interpretation

The heatmap illustrates the transferability of models across different datasets. Training and testing on the same dataset generally yields the best performance, suggesting that models are highly specialized to the characteristics of their training data. However, some datasets, like IMDB, appear to be more generalizable, as models trained on other datasets perform relatively well when tested on IMDB. The lower off-diagonal scores indicate that models trained on one dataset may not perform as well on datasets with different characteristics or distributions. The high self-performance of Winobias, NLI, IMDB, and Math suggests these datasets have unique features that allow models to learn effectively when trained and tested on them.

</details>

(a) Raw AUC values. Values above $0.5$ indicate some generalization.

<details>

<summary>extracted/6450693/figures/generalization/mistral_instruct_reduced.png Details</summary>

### Visual Description

## Heatmap: Dataset Transfer Performance

### Overview

The image is a heatmap visualizing the transfer performance between different datasets. The rows represent the training dataset, and the columns represent the test dataset. The color intensity indicates the performance, with redder colors indicating positive transfer and bluer colors indicating negative transfer. Numerical values are overlaid on each cell, providing precise performance metrics.

### Components/Axes

* **X-axis (Test dataset):** TriviaQA, HotpotQA, Movies, Winobias, Winogrande, NLI, IMDB, Math, HotpotQA\_WC, NQ\_WC

* **Y-axis (Train dataset):** TriviaQA, HotpotQA, Movies, Winobias, Winogrande, NLI, IMDB, Math, HotpotQA\_WC, NQ\_WC

* **Colorbar (right side):** Ranges from -0.2 (blue) to 0.3 (red), with intermediate values of -0.1, 0.0, 0.1, 0.2. This represents the transfer performance score.

### Detailed Analysis or Content Details

The heatmap displays a matrix of transfer performance values. Each cell (i, j) represents the performance of a model trained on dataset i (row) and tested on dataset j (column).

Here's a breakdown of the values:

* **TriviaQA:**

* Trained on TriviaQA: 0.11

* Trained on HotpotQA: -0.05

* Trained on Movies: 0.04

* Trained on Winobias: -0.04

* Trained on Winogrande: -0.04

* Trained on NLI: 0.01

* Trained on IMDB: -0.19

* Trained on Math: 0.10

* Trained on HotpotQA\_WC: -0.08

* Trained on NQ\_WC: 0.02

* **HotpotQA:**

* Trained on TriviaQA: -0.05

* Trained on HotpotQA: 0.08

* Trained on Movies: 0.04

* Trained on Winobias: 0.04

* Trained on Winogrande: 0.02

* Trained on NLI: -0.03

* Trained on IMDB: -0.03

* Trained on Math: -0.01

* Trained on HotpotQA\_WC: -0.04

* Trained on NQ\_WC: -0.05

* **Movies:**

* Trained on TriviaQA: -0.01

* Trained on HotpotQA: -0.08

* Trained on Movies: 0.08

* Trained on Winobias: -0.08

* Trained on Winogrande: -0.09

* Trained on NLI: -0.08

* Trained on IMDB: -0.06

* Trained on Math: -0.03

* Trained on HotpotQA\_WC: -0.10

* Trained on NQ\_WC: 0.02

* **Winobias:**

* Trained on TriviaQA: -0.21

* Trained on HotpotQA: -0.18

* Trained on Movies: -0.22

* Trained on Winobias: 0.33

* Trained on Winogrande: 0.12

* Trained on NLI: 0.02

* Trained on IMDB: 0.04

* Trained on Math: -0.19

* Trained on HotpotQA\_WC: -0.16

* Trained on NQ\_WC: -0.07

* **Winogrande:**

* Trained on TriviaQA: -0.15

* Trained on HotpotQA: -0.17

* Trained on Movies: -0.17

* Trained on Winobias: 0.02

* Trained on Winogrande: 0.23

* Trained on NLI: 0.04

* Trained on IMDB: -0.16

* Trained on Math: -0.10

* Trained on HotpotQA\_WC: -0.16

* Trained on NQ\_WC: -0.13

* **NLI:**

* Trained on TriviaQA: -0.24

* Trained on HotpotQA: -0.21

* Trained on Movies: -0.19

* Trained on Winobias: -0.03

* Trained on Winogrande: 0.05

* Trained on NLI: 0.32

* Trained on IMDB: -0.21

* Trained on Math: -0.07

* Trained on HotpotQA\_WC: -0.16

* Trained on NQ\_WC: -0.15

* **IMDB:**

* Trained on TriviaQA: -0.12

* Trained on HotpotQA: -0.23

* Trained on Movies: -0.08

* Trained on Winobias: 0.04

* Trained on Winogrande: 0.01

* Trained on NLI: 0.04

* Trained on IMDB: 0.10

* Trained on Math: -0.04

* Trained on HotpotQA\_WC: -0.16

* Trained on NQ\_WC: -0.10

* **Math:**

* Trained on TriviaQA: -0.19

* Trained on HotpotQA: -0.22

* Trained on Movies: -0.14

* Trained on Winobias: -0.02

* Trained on Winogrande: -0.10

* Trained on NLI: 0.02

* Trained on IMDB: 0.04

* Trained on Math: 0.22

* Trained on HotpotQA\_WC: -0.13

* Trained on NQ\_WC: -0.18

* **HotpotQA\_WC:**

* Trained on TriviaQA: -0.10

* Trained on HotpotQA: -0.03

* Trained on Movies: -0.19

* Trained on Winobias: -0.04

* Trained on Winogrande: -0.11

* Trained on NLI: -0.11

* Trained on IMDB: 0.05

* Trained on Math: -0.00

* Trained on HotpotQA\_WC: 0.08

* Trained on NQ\_WC: -0.02

* **NQ\_WC:**

* Trained on TriviaQA: -0.07

* Trained on HotpotQA: -0.11

* Trained on Movies: -0.07

* Trained on Winobias: -0.04

* Trained on Winogrande: 0.06

* Trained on NLI: -0.03

* Trained on IMDB: 0.07

* Trained on Math: -0.19

* Trained on HotpotQA\_WC: -0.14

* Trained on NQ\_WC: 0.18

### Key Observations

* The diagonal elements (training and testing on the same dataset) generally show positive transfer, as indicated by the redder colors and positive values.

* Negative transfer (blue colors and negative values) is observed in many off-diagonal elements, suggesting that training on one dataset can sometimes hurt performance on another.

* Winobias and NLI datasets show strong positive transfer when trained and tested on themselves (0.33 and 0.32 respectively).

* Training on TriviaQA often results in negative transfer to other datasets, as seen by the blueish colors in the first row.

### Interpretation

The heatmap provides insights into the transferability of knowledge between different datasets. The positive diagonal elements indicate that models generally perform best when trained and tested on the same dataset. The off-diagonal elements reveal how well a model trained on one dataset generalizes to another. Negative transfer highlights potential domain differences or biases that prevent effective generalization. The data suggests that some datasets are more similar and benefit from transfer learning, while others are distinct and require specific training. For example, training on Winobias or NLI seems to provide a strong positive transfer when tested on the same dataset, suggesting these datasets have unique characteristics that are well-learned by the models. Conversely, training on TriviaQA often leads to negative transfer, indicating that the knowledge gained from TriviaQA may not be directly applicable or may even be detrimental to performance on other datasets.

</details>

(b) Performance (AUC) difference of the probe and the logit-based method. Values above $0$ indicate generalization beyond the logit-based method.

Figure 3: Generalization between datasets, Mistral-7b-instruct. After subtracting the logit-based method’s performance, we observe that most datasets show limited or no meaningful generalization.

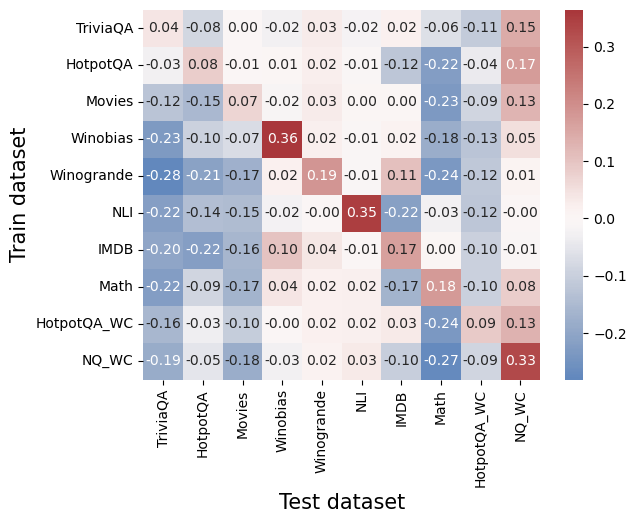

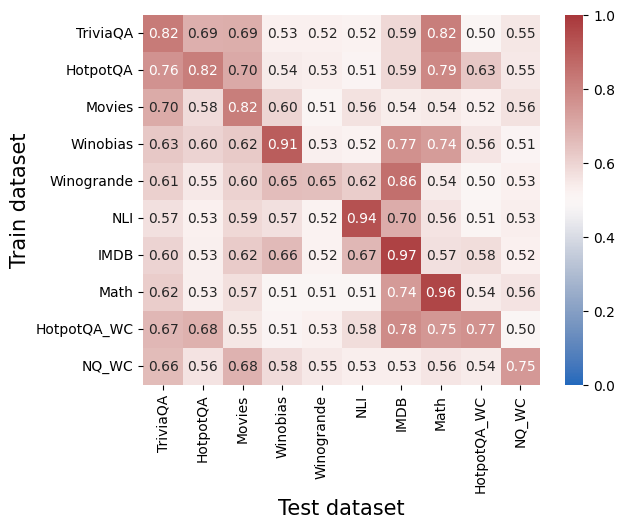

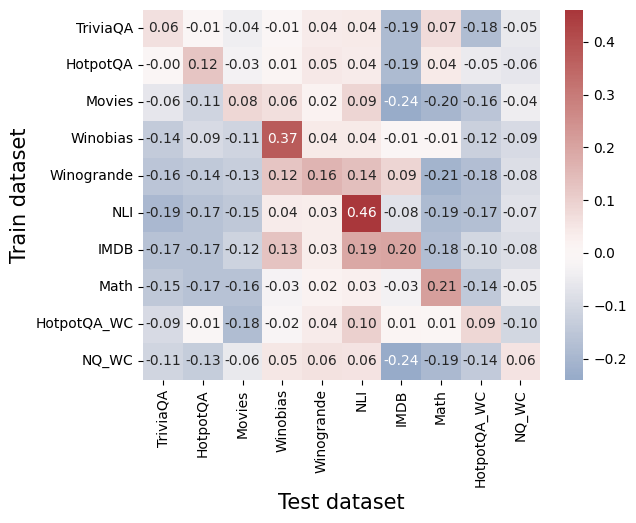

Figure 3(a) shows the generalization results for Mistral-7b-instruct, with similar patterns observed for other LLMs in Appendix C. In this context, values above $0.5$ indicate successful generalization. At first glance, the results appear consistent with previous research: most heatmap values exceed $0.5$, implying some degree of generalization across tasks. This observation supports the existence of a universal mechanism for decoding truthfulness, since the same linear directions, captured by the probe, encode truthfulness information across many datasets. However, upon closer inspection, it turns out that most of this performance can be achieved by logit-based truthfulness detection, which only observes the output logits. Figure 3(b) presents the same heatmap after subtracting results from our strongest logit-based baseline (Logits-min-exact). This adjusted heatmap reveals that the probe’s generalization rarely exceeds what can be achieved by examining logits alone. This suggests that the observed generalization is not due to a universal internal encoding of truthfulness. Instead, it likely arises from information already available through external features, such as logits. Past evidence for generalization may therefore have been overstated.
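The adjustment behind Figure 3(b) amounts to an element-wise subtraction, which the following sketch illustrates. It assumes that the logit-based baseline is evaluated once per test dataset (it requires no training set) and subtracted from every probe tested on that dataset. The dataset labels `A` and `B`, the function names, and all numbers are made-up placeholders, not results from the paper.

```python
from typing import Dict, List, Tuple

# Hypothetical placeholder values, NOT results from the paper.
# probe_auc[train][test]: absolute AUC of a probe trained on `train`, tested on `test`.
probe_auc: Dict[str, Dict[str, float]] = {
    "A": {"A": 0.85, "B": 0.70},
    "B": {"A": 0.60, "B": 0.90},
}
# baseline_auc[test]: AUC of the logit-based detector on `test`.
baseline_auc: Dict[str, float] = {"A": 0.75, "B": 0.65}


def subtract_baseline(
    probe: Dict[str, Dict[str, float]], baseline: Dict[str, float]
) -> Dict[str, Dict[str, float]]:
    """Probe AUC minus the logit-based AUC for each (train, test) pair."""
    return {
        train: {test: value - baseline[test] for test, value in row.items()}
        for train, row in probe.items()
    }


def transfers_beyond_baseline(
    diff: Dict[str, Dict[str, float]]
) -> List[Tuple[str, str]]:
    """Off-diagonal (train, test) pairs where the probe beats the baseline."""
    return [
        (train, test)
        for train, row in diff.items()
        for test, delta in row.items()
        if train != test and delta > 0
    ]


diff = subtract_baseline(probe_auc, baseline_auc)
print(transfers_beyond_baseline(diff))  # [('A', 'B')]
```

In the placeholder data, the probe trained on `B` scores above $0.5$ on `A` yet falls below the logit baseline there, which is the kind of case the adjusted heatmap is meant to expose.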

Nonetheless, we do observe some successful generalization in tasks requiring similar skills, such as parametric factual retrieval (TriviaQA, HotpotQA, Movies) and common-sense reasoning (Winobias, Winogrande, NLI). This suggests that, although the overall pattern of truthfulness signals across tokens appeared consistent across tasks (as observed in Section 3.3), LLMs have many “skill-specific” truthfulness mechanisms rather than universal ones. However, some patterns remain unexplained, such as the asymmetric generalization from TriviaQA to Math tasks. Overall, our findings indicate that models have a multifaceted representation of truthfulness. The internal processes responsible for solving distinct problems are implemented as different mechanisms (e.g., circuits) within models (Elhage et al., 2021; Olah et al., 2023). Similarly, LLMs do not encode truthfulness through a single unified mechanism but rather through multiple mechanisms, each corresponding to different notions of truth. Further investigation is required to disentangle these mechanisms.

5 Investigating Error Types

Having established the limitations of error detection, we now shift to error analysis. Previously, we explored types of LLM limitations across different tasks, noting both commonalities and distinctions in their error representations. In this section, we focus on the types of errors LLMs make in a specific task—TriviaQA—which represents factual errors, a commonly studied issue in LLMs (Kadavath et al., 2022; Snyder et al., 2023; Li et al., 2024; Chen et al., 2024; Simhi et al., 2024).

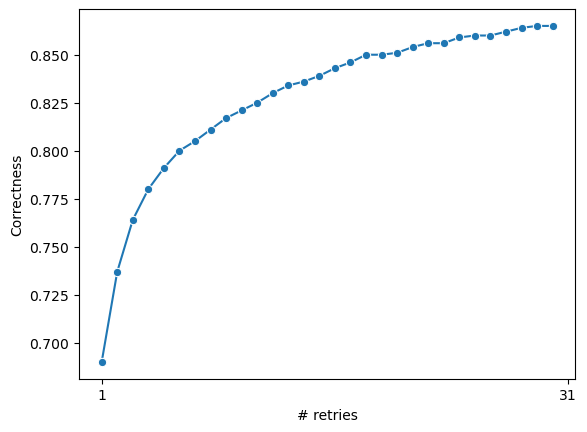

5.1 Taxonomy of Errors

Intuitively, not all mistakes are identical. In one case, an LLM may consistently generate an incorrect answer, considering it correct, while in another case, it could issue a best guess. To analyze errors from the LLM’s perspective, we sample $K=30$ responses at a temperature setting of $T=1$ for each example in the dataset and then analyze the resulting distribution of answers. (We chose $K=30$ as the overall correctness seemed to plateau around this point; see Appendix D. We found that lower temperatures generally produced less truthful answers across repeated trials.)

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Model Output Analysis

### Overview

The image depicts a diagram illustrating the output of a model in response to the question "Otis Barton was a pioneer in exploring where?". The model generates multiple possible answers, each associated with a confidence score (percentage). The diagram also indicates whether each answer is considered correct (green checkmark) or incorrect (red X).

### Components/Axes

* **Input Question (Left):** "Otis Barton was a pioneer in exploring where?" - Presented in a dashed-line box.

* **Model (Center):** A blue rectangle labeled "Model".

* **Output Answers (Right):** Three possible answers generated by the model, each in a dashed-line box, along with their confidence scores and correctness indicators.

* **Answer 1:** "Otis Barton was a pioneer in exploring the underwater world..." - Confidence: 93%, Correctness: Green checkmark. There are multiple boxes stacked behind this one, with the top one reading "Otis co-invented..."

* **Answer 2:** "... best known for his excavations in the Maya region of Central America" - Confidence: 3%, Correctness: Red X.

* **Answer 3:** "... Exploring the underground rivers to Tennessee ..." - Confidence: 3%, Correctness: Red X.

* **Arrows:** Blue arrows connect the input question to the "Model" and the "Model" to each of the output answers, indicating the flow of information.

### Detailed Analysis or ### Content Details

* **Input:** The question "Otis Barton was a pioneer in exploring where?" is presented as input to the model.

* **Model Processing:** The "Model" block represents the processing stage where the input question is analyzed.

* **Output Generation:** The model generates three possible answers with associated confidence scores:

* "Otis Barton was a pioneer in exploring the underwater world..." is assigned a 93% confidence score and is marked as correct.

* "... best known for his excavations in the Maya region of Central America" is assigned a 3% confidence score and is marked as incorrect.

* "... Exploring the underground rivers to Tennessee ..." is assigned a 3% confidence score and is marked as incorrect.

### Key Observations

* The model demonstrates a high degree of confidence (93%) in the correct answer ("Otis Barton was a pioneer in exploring the underwater world...").

* The model assigns low confidence scores (3% each) to the incorrect answers.

* The diagram clearly distinguishes between correct and incorrect answers using green checkmarks and red X's, respectively.

### Interpretation

The diagram illustrates the performance of a model in answering a specific question. The high confidence score assigned to the correct answer suggests that the model is effective in identifying the correct response. The low confidence scores assigned to the incorrect answers indicate that the model is also capable of distinguishing between relevant and irrelevant information. The diagram provides a clear and concise overview of the model's output and its accuracy in answering the given question.

</details>

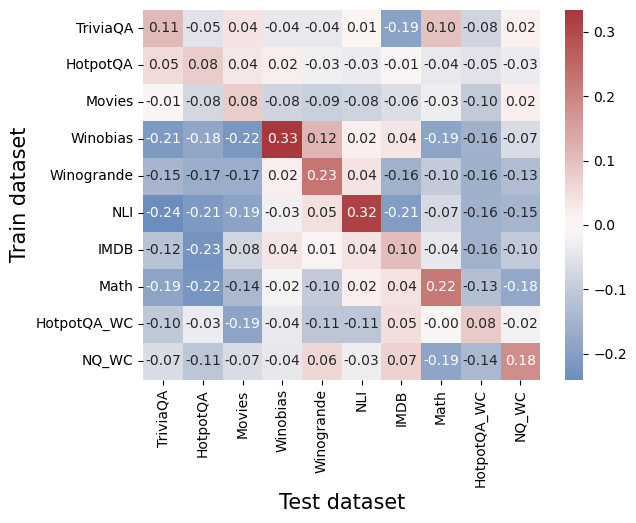

(a) The LLM mostly answers correctly, but sometimes hallucinates.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Model Accuracy on State Border Question

### Overview

The image is a diagram illustrating the accuracy of a model in answering the question: "Which American state borders on only one other state?". The diagram shows the model's output, the percentage of times each output was given, and whether the output was correct or incorrect.

### Components/Axes

* **Input Question:** "Which American state borders on only one other state?" (contained in a dashed-line box on the left)

* **Model:** A blue rectangle labeled "Model" in the center.

* **Output 1:** "Missouri is the. The only state to border ... is Missouri ..." (contained in a dashed-line box on the right, top). This output is marked with a red "X", indicating it is incorrect.

* **Output 2:** "Maine is the. The US state that ... is Maine, which ..." (contained in a dashed-line box on the right, bottom). This output is marked with a green checkmark, indicating it is correct.

* **Percentages:**

* 87% associated with the "Missouri" output.

* 13% associated with the "Maine" output.

### Detailed Analysis

* The model incorrectly identifies Missouri as the state bordering only one other state 87% of the time.

* The model correctly identifies Maine as the state bordering only one other state 13% of the time.

* The diagram uses a blue arrow to indicate the flow from the input question to the model and from the model to the outputs.

### Key Observations

* The model is significantly more likely to provide the incorrect answer (Missouri) than the correct answer (Maine).

* The model's accuracy is low, with only 13% of responses being correct.

### Interpretation

The diagram demonstrates that the model performs poorly on the given question. The high percentage of incorrect answers suggests that the model may be biased towards Missouri or lacks the necessary information to accurately answer the question. The diagram highlights the need for improvement in the model's ability to identify states based on their bordering states.

</details>

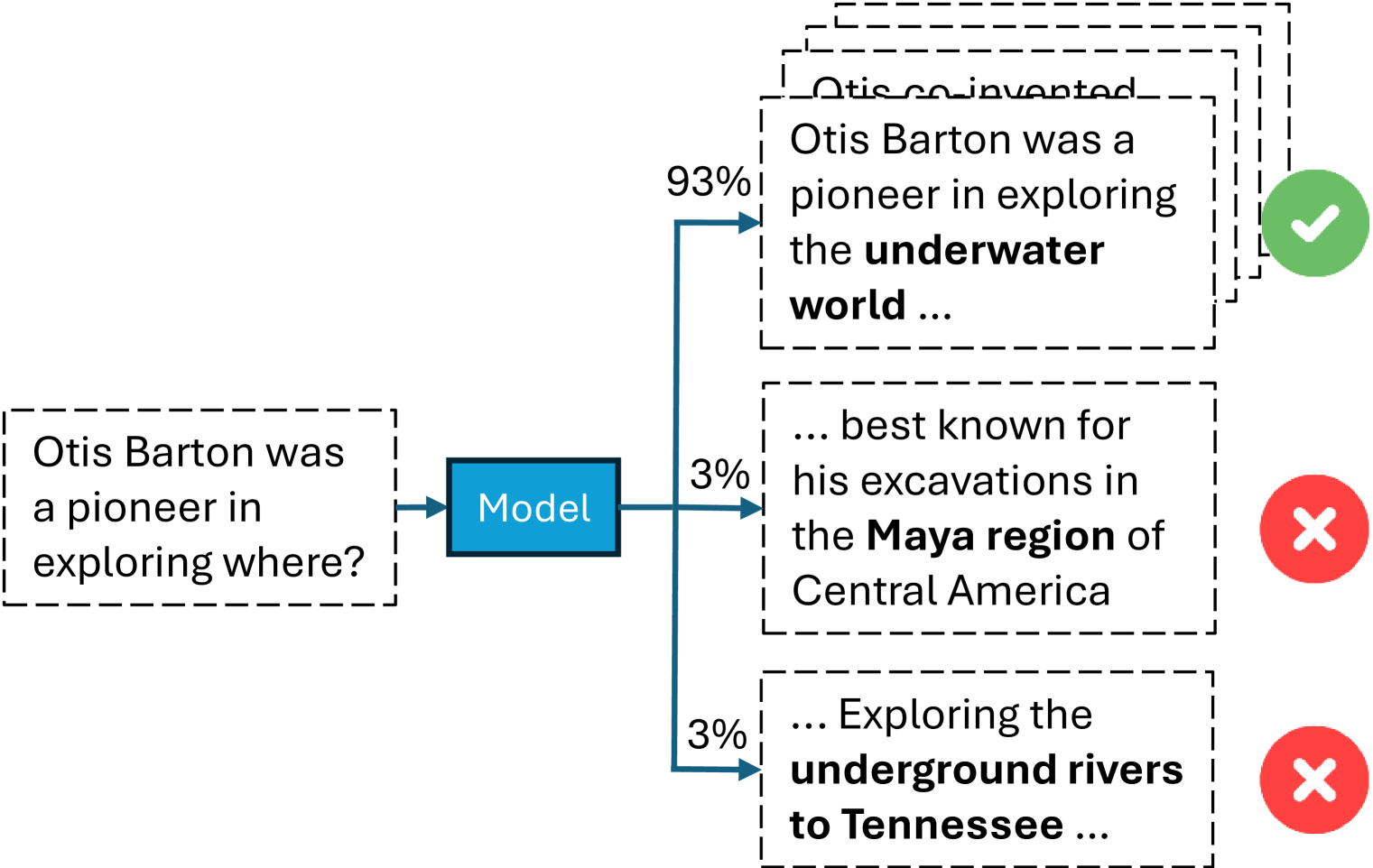

(b) The LLM mostly answers incorrectly, but seems to have some knowledge of the correct answer.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Model Output for Football Commentary Question

### Overview

The image is a diagram illustrating the output of a model in response to the question: "Who became the first female to deliver football commentary on 'match of the day'?" The model's output is represented by three different text snippets, each associated with a percentage and a visual indicator (a red "X" or a green checkmark).

### Components/Axes

* **Input Question (Left):** "Who became the first female to deliver football commentary on 'match of the day'?" This is enclosed in a dashed-line rectangle.

* **Model (Center):** A blue rectangle labeled "Model".

* **Output Branches (Right):** Three dashed-line rectangles, each containing a potential answer. Each branch is connected to the "Model" box by a blue arrow.

* **Percentages:** Each output branch is associated with a percentage (20% and 6%).

* **Validation Indicators:** Each output branch has either a red "X" or a green checkmark.

### Detailed Analysis

* **Input Question:** The question is clearly stated and forms the basis for the model's task.

* **Model:** The "Model" box represents the system processing the input question and generating potential answers.

* **Output Branch 1 (Top):**

* Text: "... In 2007, Gabby Logan ..."

* Percentage: 20%

* Validation: Red "X" (Incorrect)

* **Output Branch 2 (Middle):**

* Text: "The first ... is Clare Balding"

* Percentage: 6%

* Validation: Red "X" (Incorrect)

* **Output Branch 3 (Bottom):**

* Text: "Jackie Oatley is the first woman ..."

* Percentage: 6%

* Validation: Green Checkmark (Correct)

### Key Observations

* The model assigns the highest probability (20%) to an incorrect answer (Gabby Logan).

* The correct answer (Jackie Oatley) is assigned a lower probability (6%).

* The model provides partial answers, indicated by the ellipses ("...").

### Interpretation

The diagram illustrates a scenario where a model tasked with answering a specific question about football commentary provides multiple potential answers with associated confidence levels. The model incorrectly assigns a higher probability to a wrong answer, highlighting a potential weakness in its reasoning or training data. The use of validation indicators (red "X" and green checkmark) clearly distinguishes between correct and incorrect answers, providing a visual assessment of the model's performance. The diagram suggests that while the model can generate relevant answers, its accuracy and confidence calibration need improvement.

</details>

(c) The LLM generates many different answers; one of them is correct, but it is generated in only a small fraction of the resamples.

Figure 4: Different error types in free-form generation, exposed when resampled many times.

Figure 4 illustrates three representative error types. In one (Figure 4(a)), the model usually gives the correct answer but occasionally makes an error, implying correct information is present but sampling may lead to mistakes. In another (Figure 4(b)), the model often responds incorrectly, though it is capable of providing the right answer, indicating some retained knowledge despite consistently making the same error. In a third type (Figure 4(c)), the model generates a wide array of mostly incorrect answers, reflecting low confidence in any generated answer.

More generally, we categorize the errors by logging three specific features for each example: (a) the number of different answers generated; (b) the frequency of the correct answer; and (c) the frequency of the most common incorrect answer. These features reveal the following error patterns:

- (A) Refuses to answer: The model responds that it cannot answer the question in at least half the cases.

- (B) Consistently correct: Answers correctly in at least half of the cases. This category is divided into: (B1) always correct; and (B2) mostly correct with occasional errors.

- (C) Consistently incorrect: Consistently generates the same incorrect response in at least half of the cases. Similarly to type B, we subdivide this type into (C1) correct answer is never produced; and (C2) correct answer appears at least once.

- (D) Two competing: Generates both correct and incorrect responses at similar rates (the difference in rates is 5 or less, and each response is generated at least 5 times).

- (E) Many answers: Generates over 10 distinct answers. As with type C, subtypes include (E1) correct answer is never generated; and (E2) correct answer is generated at least once.
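The rules above can be sketched as a small classifier over the $K$ resampled answers for one question. Several details are assumptions made for illustration only: answers are taken to be already normalized strings, `None` is a hypothetical sentinel for a refusal, the "incorrect response" in type D is read as the most common incorrect answer, rules are checked in the order A to E (the text notes that types may overlap), and anything matching no rule is labeled `"other"`.

```python
from collections import Counter
from typing import List, Optional

REFUSAL = None  # hypothetical sentinel: the model declined to answer


def classify_error_type(answers: List[Optional[str]], gold: str) -> str:
    """Map K resampled answers for one question to a taxonomy label."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    k = len(answers)
    n_refuse = sum(a is REFUSAL for a in answers)
    counts = Counter(a for a in answers if a is not REFUSAL)
    n_correct = counts.get(gold, 0)
    # Frequency of the most common incorrect answer (0 if there is none).
    top_wrong = max((c for a, c in counts.items() if a != gold), default=0)

    if 2 * n_refuse >= k:                      # (A) refuses in at least half
        return "A"
    if 2 * n_correct >= k:                     # (B) correct in at least half
        return "B1" if n_correct == k else "B2"
    if 2 * top_wrong >= k:                     # (C) same wrong answer in at least half
        return "C1" if n_correct == 0 else "C2"
    if n_correct >= 5 and top_wrong >= 5 and abs(n_correct - top_wrong) <= 5:
        return "D"                             # (D) two competing answers
    if len(counts) > 10:                       # (E) over 10 distinct answers
        return "E1" if n_correct == 0 else "E2"
    return "other"


# Counts loosely mirroring the proportions in Figure 4 with K = 30;
# the filler answers in fig4c are hypothetical.
fig4a = ["underwater world"] * 28 + ["maya region", "underground rivers"]
fig4b = ["missouri"] * 26 + ["maine"] * 4
fig4c = (["gabby logan"] * 6 + ["clare balding"] * 2 + ["jackie oatley"] * 2
         + [f"other answer {i}" for i in range(20)])

print(classify_error_type(fig4a, "underwater world"))  # B2
print(classify_error_type(fig4b, "maine"))             # C2
print(classify_error_type(fig4c, "jackie oatley"))     # E2
```

A different precedence order would change how overlapping cases (for example, 15 correct and 15 identical incorrect answers) are labeled, so the ordering here should be treated as one possible choice.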

This taxonomy covers 96% of the errors in TriviaQA for Mistral-7b-instruct. For more qualitative examples of each type of error, see Appendix D.3. Although some overlap exists between types, our goal is to identify general patterns and explore their connection to the model’s internal representations. For a discussion on the design choices of this taxonomy, refer to Appendix D.1. This taxonomy classifies LLM errors based on an extrinsic, behavior-based analysis. Similarly, previous work analyzed repeated samples to assess an LLM’s knowledge of the correct answer (Simhi et al., 2024; Gekhman et al., 2024). Our approach is distinct because it also examines the nature of errors that the LLM makes. Furthermore, as we discuss next, we analyze the connection between these behavioral patterns and the model’s internal encoding.

5.2 Predicting Error Types

Our taxonomy offers an external, behavioral analysis of LLMs, which we complement by an intrinsic evaluation. We explore whether LLMs encode information on potential error types within their intermediate activations, offering a deeper insight into the underlying mechanisms. To investigate this, we train a probe in a one-to-many setting, where a single probe identifies a specific error type from all others. We use representations extracted from the answers produced via greedy decoding.

Table 2 presents the results. Our findings show that error types can be predicted from the intermediate representations of the greedy decoding generations, suggesting that they may capture not only output correctness but also fine-grained information about potential errors. While detection performance varies between types, the predictability of each type is valuable on its own, as it opens the possibility of tailoring targeted interventions for specific error types. Additionally, although performance on error types C and D is lower, it remains well above random, providing meaningful insights. These results suggest that internal representations encode more than just binary correctness, revealing a nuanced taxonomy of error types and offering deeper insights into how these models process and encode knowledge.

Table 2: AUC scores for error type classification (TriviaQA). Error types are predictable from the inner model representations, indicating the encoding of fine-grained information on errors.

| Error type | Mistral-7b | Mistral-Instr-7b | Llama3-8b | Llama3-Instr-8b |
| --- | --- | --- | --- | --- |
| (A) Refuses to answer | $0.86 \pm 0.002$ | $0.85 \pm 0.011$ | $0.87 \pm 0.002$ | $0.88 \pm 0.014$ |
| (B) Consistently correct | $0.88 \pm 0.001$ | $0.82 \pm 0.008$ | $0.86 \pm 0.001$ | $0.81 \pm 0.002$ |
| (C) Consistently incorrect | $0.59 \pm 0.002$ | $0.67 \pm 0.002$ | $0.59 \pm 0.002$ | $0.64 \pm 0.003$ |
| (D) Two competing | $0.63 \pm 0.002$ | $0.68 \pm 0.006$ | $0.61 \pm 0.001$ | $0.65 \pm 0.004$ |
| (E) Many answers | $0.90 \pm 0.001$ | $0.84 \pm 0.003$ | $0.89 \pm 0.001$ | $0.89 \pm 0.001$ |

6 Detecting the Correct Answer

After identifying that models encode diverse truthfulness-related information, we examine how this internal truthfulness aligns with their external behavior during response generation. To this end, we use our probe, trained on error detection, to select an answer from a pool of 30 generated responses to the same question. (We choose the best-performing probe for each task, which is trained on the last exact answer token.) We then measure the model’s accuracy based on the selected answers. If this accuracy does not differ significantly from that of traditional decoding methods (such as greedy decoding), this suggests that the LLM’s internal representation of truthfulness is consistent with its external behavior; in simpler terms, the model generates answers that it also internally considers correct. Conversely, if using the probe alters performance in either direction, this suggests a misalignment between the LLM’s internal representations and its actual behavior.

Experimental Setup

The experiments were conducted on TriviaQA, Winobias, and Math. We resample each model answer using the same strategy described in Section 5.1. The final chosen answer is the one with the highest correctness probability, as assessed by the probe. We compare against three baselines: (1) greedy decoding; (2) random selection from the $K=30$ answer candidates; and (3) majority vote, wherein the most frequently generated answer is chosen.
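
The selection strategies described above can be sketched as follows. This is an illustrative sketch, not the authors' code: the function names are hypothetical, the probe scores are assumed to be precomputed correctness probabilities (one per candidate), and answers are assumed to be already normalized so that identical answers compare equal. Greedy decoding is omitted because it is a single separate generation, not a choice among the resampled candidates.

```python
import random
from collections import Counter
from typing import List, Optional, Sequence


def select_by_probe(answers: Sequence[str], probe_scores: Sequence[float]) -> str:
    """Return the candidate with the highest probe-assigned correctness probability."""
    if len(answers) != len(probe_scores) or not answers:
        raise ValueError("need one probe score per candidate answer")
    best = max(range(len(answers)), key=lambda i: probe_scores[i])
    return answers[best]


def select_majority(answers: Sequence[str]) -> str:
    """Return the most frequently generated answer (ties go to the first seen)."""
    return Counter(answers).most_common(1)[0][0]


def select_random(answers: Sequence[str], rng: Optional[random.Random] = None) -> str:
    """Return a uniformly random candidate."""
    rng = rng or random.Random()
    return rng.choice(list(answers))


# Hypothetical pool of K=5 resampled answers (the paper uses K=30)
# with made-up probe scores.
candidates: List[str] = ["Paris", "Lyon", "Lyon", "Paris", "Lyon"]
scores = [0.8, 0.2, 0.3, 0.9, 0.1]
print(select_by_probe(candidates, scores))  # highest-scoring candidate
print(select_majority(candidates))          # most frequent candidate
```

In this toy pool the majority answer and the probe-selected answer differ, which is the kind of case where the comparison between strategies is informative.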

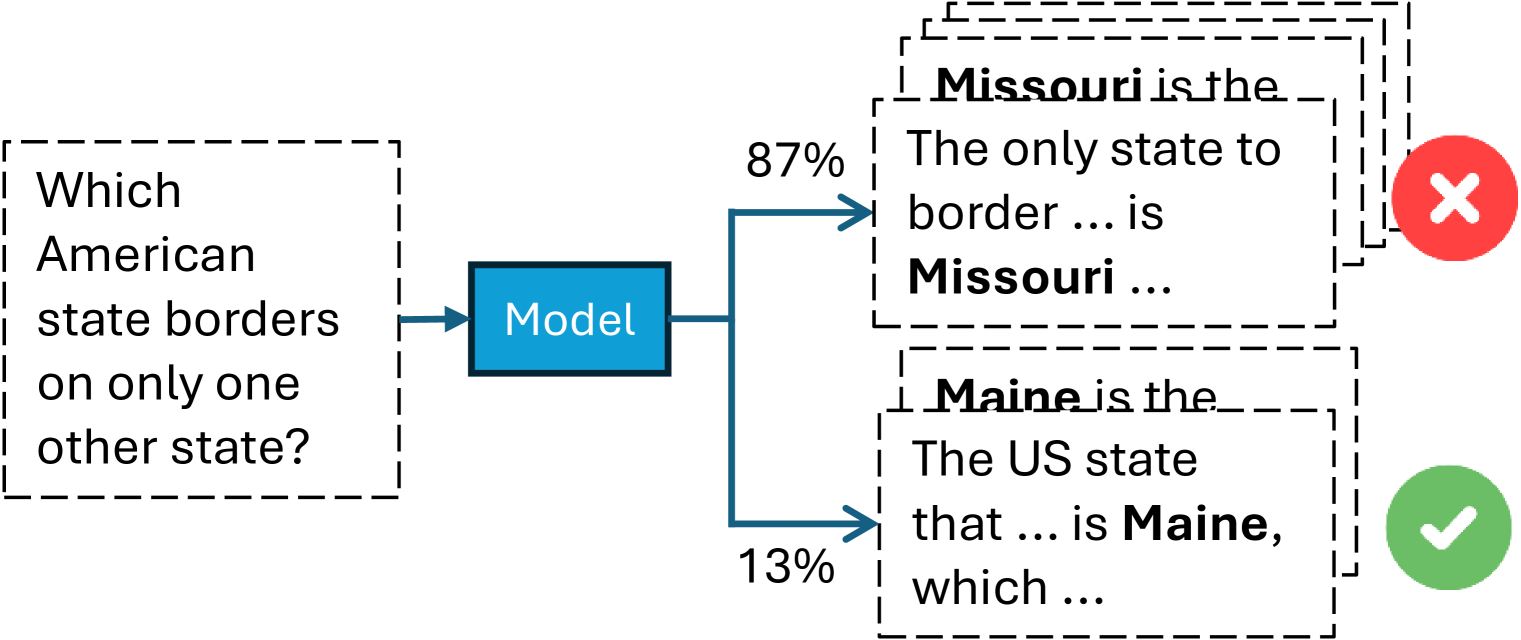

Results

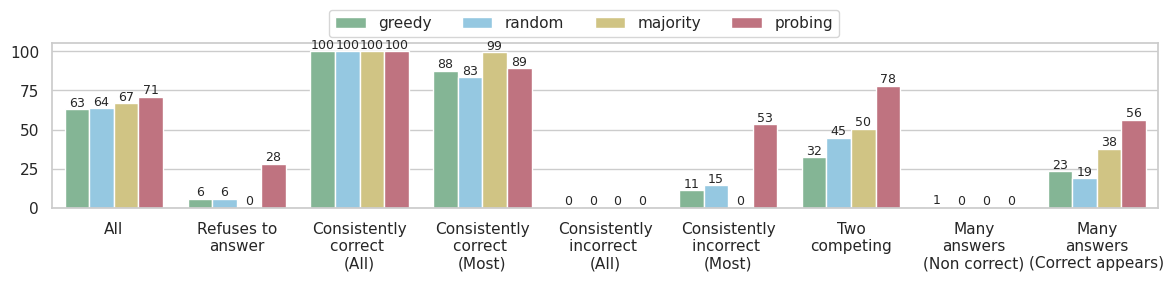

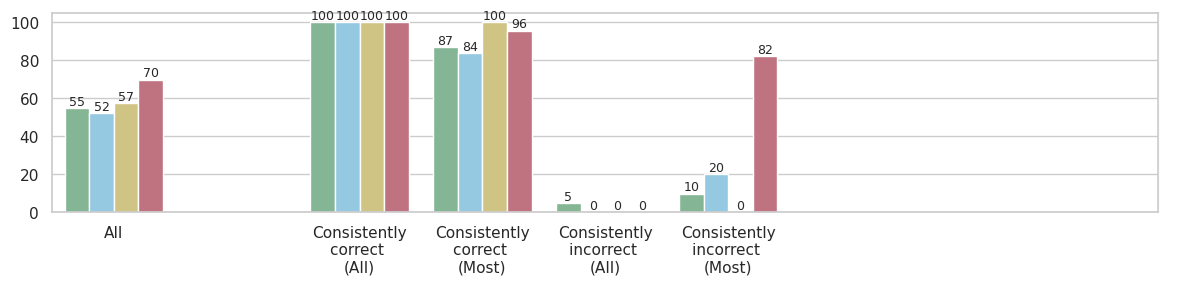

The results for Mistral-7b-instruct are summarized in Figure 5, with additional results for other LLMs and datasets, as well as qualitative examples, provided in Appendix E. We only present results on error types that appear 30 times or more in our test dataset. Overall, using the probe to select answers enhances the LLM’s accuracy across all examined tasks. However, the extent of improvement varies by error type. For instance, in the TriviaQA dataset, there is minimal gain in the “mostly correct” category (B2). In contrast, substantial gains (ranging from 30 to 40 points in some cases) are observed in the “mostly incorrect” (C2), “two competing answers” (D), and “many answers” (E1) categories. Interestingly, and perhaps surprisingly, the probe is most effective in cases where the LLM lacks any (external) preference for the correct answer during generation. The fact that the probe can effectively identify the correct answer in these scenarios points to a significant disconnect between the LLM’s internal encoding and its external behavior. These results suggest that even when the model encodes information about which answer is correct, it can still generate an incorrect answer in practice.
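
A per-error-type breakdown of this kind can be computed with a short helper. The sketch below is an assumption about how such a breakdown might be implemented, not the authors' code: `accuracy_by_error_type` is a hypothetical name, and each record is assumed to pair a question's error-type label with whether the selected answer was correct. The `min_count` default mirrors the 30-occurrence threshold mentioned above.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def accuracy_by_error_type(
    records: Iterable[Tuple[str, bool]],
    min_count: int = 30,
) -> Dict[str, float]:
    """Accuracy of the selected answers per error type.

    Error types with fewer than `min_count` examples are dropped,
    since their accuracy estimates would be unreliable.
    """
    totals: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for error_type, is_correct in records:
        totals[error_type] += 1
        hits[error_type] += int(is_correct)
    return {t: hits[t] / totals[t] for t in totals if totals[t] >= min_count}


# Hypothetical records; min_count is lowered only to keep the toy example small.
toy = [("D", True)] * 3 + [("D", False)] + [("A", True)]
print(accuracy_by_error_type(toy, min_count=2))
```

Running the helper once per selection strategy on the same questions gives the grouped comparison that a chart like Figure 5 visualizes.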

While using the probe to select the answer proves effective, it is not proposed here as an error mitigation strategy but rather as a diagnostic tool. However, these findings indicate that further research in this area could leverage the existing knowledge within LLMs to significantly reduce errors. We recommend exploring this direction in future investigations.

<details>

<summary>extracted/6450693/figures/choose_answer/probe_choose_answer_triviaqa_mistral_instruct.png Details</summary>

### Visual Description

## Bar Chart: Algorithm Performance on Question Answering

### Overview

The image is a bar chart comparing the performance of four different algorithms ("greedy", "random", "majority", and "probing") on a question-answering task. The y-axis represents the percentage of correct answers, ranging from 0 to 100. The x-axis represents different categories of question answering scenarios, such as "All", "Refuses to answer", "Consistently correct", "Consistently incorrect", "Two competing answers", and "Many answers".

### Components/Axes

* **Title:** (Implicit) Algorithm Performance on Question Answering

* **Y-axis:** Percentage of correct answers (0 to 100, with increments of 25)

* **X-axis:** Categories of question answering scenarios:

* All

* Refuses to answer

* Consistently correct (All)

* Consistently correct (Most)

* Consistently incorrect (All)

* Consistently incorrect (Most)

* Two competing

* Many answers (Non correct)

* Many answers (Correct appears)

* **Legend:** Located at the top of the chart.

* Green: greedy

* Light Blue: random

* Tan: majority

* Rose: probing

### Detailed Analysis

| Category | greedy | random | majority | probing |
| --- | --- | --- | --- | --- |
| All | 63% | 64% | 67% | 71% |
| Refuses to answer | 6% | 6% | 0% | 28% |
| Consistently correct (All) | 100% | 100% | 100% | 100% |
| Consistently correct (Most) | 88% | 83% | 99% | 89% |
| Consistently incorrect (All) | 0% | 0% | 0% | 0% |
| Consistently incorrect (Most) | 11% | 15% | 0% | 53% |
| Two competing | 32% | 45% | 50% | 78% |
| Many answers (Non correct) | 1% | 0% | 0% | 0% |
| Many answers (Correct appears) | 23% | 19% | 38% | 56% |

### Key Observations

* All strategies reach 100% in the "Consistently correct (All)" category.

* "probing" has the highest accuracy in the "Refuses to answer" (28%), "Consistently incorrect (Most)" (53%), and "Two competing" (78%) categories.

* "probing" also performs best in "Many answers (Correct appears)" (56%).

* All strategies score 0% in "Consistently incorrect (All)" and at most 1% in "Many answers (Non correct)".

### Interpretation

The chart compares four answer-selection strategies across error-type categories; every bar is the percentage of correct answers within that category. Probe-based selection ("probing") has the highest overall accuracy (71% versus 63% for greedy) and gains the most where repeated sampling shows no clear preference for the correct answer: "Consistently incorrect (Most)" (53% versus 11% for greedy), "Two competing" (78% versus 32%), and "Many answers (Correct appears)" (56% versus 23%). The "Refuses to answer" bars report accuracy on questions in that error category, not how often a strategy refuses. In "Consistently correct (Most)", majority vote (99%) outperforms probing (89%). All strategies score 0% in "Consistently incorrect (All)" and at most 1% in "Many answers (Non correct)", consistent with category names that indicate no correct answer appears among the sampled candidates.

</details>

(a) TriviaQA

<details>

<summary>extracted/6450693/figures/choose_answer/probe_choose_answer_math_mistral_instruct.png Details</summary>

### Visual Description

## Bar Chart: Accuracy Analysis

### Overview

The image is a bar chart comparing the accuracy of a system under different conditions. The x-axis represents different categories of consistency (All, Consistently correct (All), Consistently correct (Most), Consistently incorrect (All), Consistently incorrect (Most)), and the y-axis represents the percentage of accuracy, ranging from 0 to 100. There are four data series represented by different colored bars: green, light blue, yellow, and red.

### Components/Axes

* **X-axis:**

* All

* Consistently correct (All)

* Consistently correct (Most)

* Consistently incorrect (All)

* Consistently incorrect (Most)

* **Y-axis:** Percentage scale from 0 to 100, with increments of 20.

* **Data Series (Bar Colors):**

* Green

* Light Blue

* Yellow

* Red

### Detailed Analysis

Here's a breakdown of the data for each category:

| Category | Green | Light Blue | Yellow | Red |
| --- | --- | --- | --- | --- |
| All | 55% | 52% | 57% | 70% |
| Consistently correct (All) | 100% | 100% | 100% | 100% |
| Consistently correct (Most) | 87% | 84% | 100% | 96% |
| Consistently incorrect (All) | 5% | 0% | 0% | 0% |
| Consistently incorrect (Most) | 10% | 20% | 0% | 82% |

### Key Observations

* The "Consistently correct (All)" category shows perfect accuracy (100%) across all data series.

* The "Consistently incorrect (All)" category shows very low accuracy (0-5%) across all data series.

* The "Consistently incorrect (Most)" category shows a high degree of variance, with the red series showing a high percentage (82%) while the yellow series shows 0%.

* The "All" category, which aggregates every example, ranges from 52% to 70%, with the red series highest.

### Interpretation

The chart reports the percentage of correct answers per category for four data series. The description does not name the series; by analogy with the legend of panel (a), green, light blue, yellow, and red presumably correspond to greedy, random, majority, and probing, respectively. All series reach 100% in "Consistently correct (All)" and 0-5% in "Consistently incorrect (All)". The largest gap appears in "Consistently incorrect (Most)", where the red series reaches 82% accuracy while the others stay at or below 20%; because the bars measure accuracy, this is a gain for that series, not a failure mode. In "Consistently correct (Most)", the yellow series (100%) is slightly ahead of red (96%). Over all examples, red reaches 70% versus 52-57% for the other three.

</details>

(b) Math

Figure 5: Different answer choice strategies, Mistral-7B-Instruct. A notable improvement in accuracy by using the error-detection probe is observed for error types where the LLM shows no preference for the correct answer across repeated generations.

7 Discussion and Conclusions

In this study, we analyzed LLM errors through their internal representations. Our approach depends on access to internal representations, restricting its use to open-source models. We focus on QA tasks with clear gold labels, which are key for benchmarking truthfulness detection and valued by the community. To ensure robustness, we tested 10 datasets across 4 model architectures. Open-ended tasks are left for future research, with our work laying the groundwork for broader applications. For instance, we found that truthfulness-related information is localized in specific tokens within long responses, enabling practical improvements in error detection for production models. This insight could extend to tasks like summarization, by probing the most meaningful entities in an answer.

Truthfulness features showed poor generalization across tasks and datasets, highlighting the need for caution when applying trained error detectors in varied settings. Some unexplained patterns suggest hidden links between unrelated tasks that warrant further research. Improving generalization could involve exploring the effects of layer-token combinations and training on diverse datasets, as demonstrated by Bürger et al. (2024). Deciphering task-specific truthfulness features and their overlaps across tasks might also enhance classifier design. Still, task-specific probes could be highly valuable in critical fields like medicine and law, where reliability matters. These probes can detect errors, predict error types, and guide response selection from resampled outputs, offering significant practical benefits. Guidelines for applying these probes are provided in Appendix F.