# LeanAgent: Lifelong Learning for Formal Theorem Proving

**Authors**:

- Chaowei Xiao, Anima Anandkumar (California Institute of Technology, Stanford University, University of Wisconsin, Madison)

> Equal contribution.

Abstract

Large Language Models (LLMs) have been successful in mathematical reasoning tasks such as formal theorem proving when integrated with interactive proof assistants like Lean. Existing approaches involve training or fine-tuning an LLM on a specific dataset to perform well on particular domains, such as undergraduate-level mathematics. These methods struggle with generalizability to advanced mathematics. A fundamental limitation is that these approaches operate on static domains, failing to capture how mathematicians often work across multiple domains and projects simultaneously or cyclically. We present LeanAgent, a novel lifelong learning framework for formal theorem proving that continuously generalizes to and improves on ever-expanding mathematical knowledge without forgetting previously learned knowledge. LeanAgent introduces several key innovations, including a curriculum learning strategy that optimizes the learning trajectory in terms of mathematical difficulty, a dynamic database for efficient management of evolving mathematical knowledge, and progressive training to balance stability and plasticity. LeanAgent successfully generates formal proofs for 155 theorems across 23 diverse Lean repositories where formal proofs were previously missing, many from advanced mathematics. It performs significantly better than the static LLM baseline, proving challenging theorems in domains like abstract algebra and algebraic topology while showcasing a clear progression of learning from basic concepts to advanced topics. In addition, we analyze LeanAgent’s superior performance on key lifelong learning metrics. LeanAgent achieves exceptional scores in stability and backward transfer, where learning new tasks improves performance on previously learned tasks. This emphasizes LeanAgent’s continuous generalizability and improvement, explaining its superior theorem-proving performance.

1 Introduction

Mathematics can be expressed in informal and formal languages. Informal mathematics utilizes natural language and intuitive reasoning, whereas formal mathematics employs symbolic logic to construct machine-verifiable proofs (Kevin Buzzard, 2019). State-of-the-art large language models (LLMs), such as o1 (OpenAI, 2024) and Claude (Claude Team, 2024), produce incorrect informal proofs (Zhou et al., 2024). This highlights the importance of formal mathematics in ensuring proof correctness and reliability. Interactive theorem provers (ITPs), such as Lean (De Moura et al., 2015), have emerged as tools for formalizing and verifying mathematical proofs. However, constructing formal proofs using ITPs is complex and time-consuming; it requires extremely detailed proof steps and involves working with extensive mathematical libraries.

Recent research has explored using LLMs to generate proof steps or complete proofs. For example, LeanDojo (Yang et al., 2023) introduced the first open-source framework to spur such research. Existing approaches typically involve training or fine-tuning LLMs on a specific dataset (Jiang et al., 2022). However, data scarcity in formal theorem proving (Polu et al., 2022) hinders the generalizability of these approaches (Liu et al., 2023). For example, ReProver, the retrieval-augmented LLM from the LeanDojo family, uses a retriever fine-tuned on Lean’s math library, mathlib4 (mathlib4 Community, 2024). Although mathlib4 contains over 100,000 formalized mathematical theorems and definitions, it covers primarily up to undergraduate mathematics. Consequently, ReProver performs poorly on more challenging mathematics, such as Terence Tao’s formalization of the Polynomial Freiman-Ruzsa (PFR) Conjecture (Tao et al., 2024).

<details>

<summary>extracted/6256159/Final2.png Details</summary>

### Visual Description

## Diagram: Automated Theorem Proving System Architecture

### Overview

The diagram illustrates a multi-stage automated theorem proving system with iterative learning and curriculum adaptation. It shows data flow from GitHub repositories through a LeanAgent, curriculum learning framework, progressive training mechanisms, and specialized handling of "SORRY Theorems" (theorems that initially fail to prove).

### Components/Axes

**Key Components:**

1. **Input Layer**

- GitHub Repositories (source of theorems/proofs)

- Extract Data Per Repository → Premise Corpus + Theorems + Proofs

2. **Core Processing**

- LeanAgent (theorem proving engine)

- Curriculum Learning (complexity-based adaptation)

- Progressive Training (retriever evolution)

3. **Output Layer**

- Dynamic Database (knowledge repository)

- SORRY Theorem Proving (specialized handling)

**Visual Elements:**

- Color-coded complexity levels (green=low, yellow=medium, red=high)

- Feedback loops between components

- Iterative refinement arrows

### Detailed Analysis

**1. Data Ingestion Pipeline**

- GitHub repositories → Extract Data Per Repository

- Creates: Premise Corpus + Theorems + Proofs

**2. Curriculum Learning System**

- Complexity → Theorem Complexities (e.g., 2.7, 7.4)

- Complexity graph shows:

- # Proof Steps vs Complexity

- Distribution: Easy (green) → Medium (yellow) → Hard (red)

- Descending # Easy Theorems over time

**3. Progressive Training Mechanism**

- Latest Retriever → Limited Exposure (1 epoch)

- Balanced Training (Stability vs Plasticity)

- New Retriever generation

**4. SORRY Theorem Proving**

- Input: SORRY Theorems

- Process:

- Retrieved Premises (updated retriever)

- Generated Tactics

- Best-First Tree Search

- Output: New Proofs

### Key Observations

1. **Iterative Learning**: Feedback loops between components suggest continuous improvement

2. **Complexity Adaptation**: Curriculum learning adjusts difficulty based on proof complexity metrics

3. **Retriever Evolution**: Progressive training creates increasingly sophisticated theorem retrieval systems

4. **Specialized Handling**: SORRY Theorems use distinct processing pipeline with retrieval-augmented generation

### Interpretation

This architecture demonstrates a sophisticated approach to automated theorem proving that:

1. **Adapts Difficulty**: Uses complexity metrics to create personalized learning paths

2. **Balances Stability/Plasticity**: Progressive training maintains core knowledge while enabling adaptation

3. **Handles Edge Cases**: Specialized SORRY Theorem Proving addresses previously unsolved problems

4. **Leverages Community Knowledge**: GitHub repositories serve as initial knowledge base

The system appears designed to create self-improving theorem provers that can handle increasingly complex mathematical problems through iterative learning and curriculum adaptation. The "SORRY Theorem" handling suggests a focus on solving previously intractable problems through advanced retrieval and search strategies.

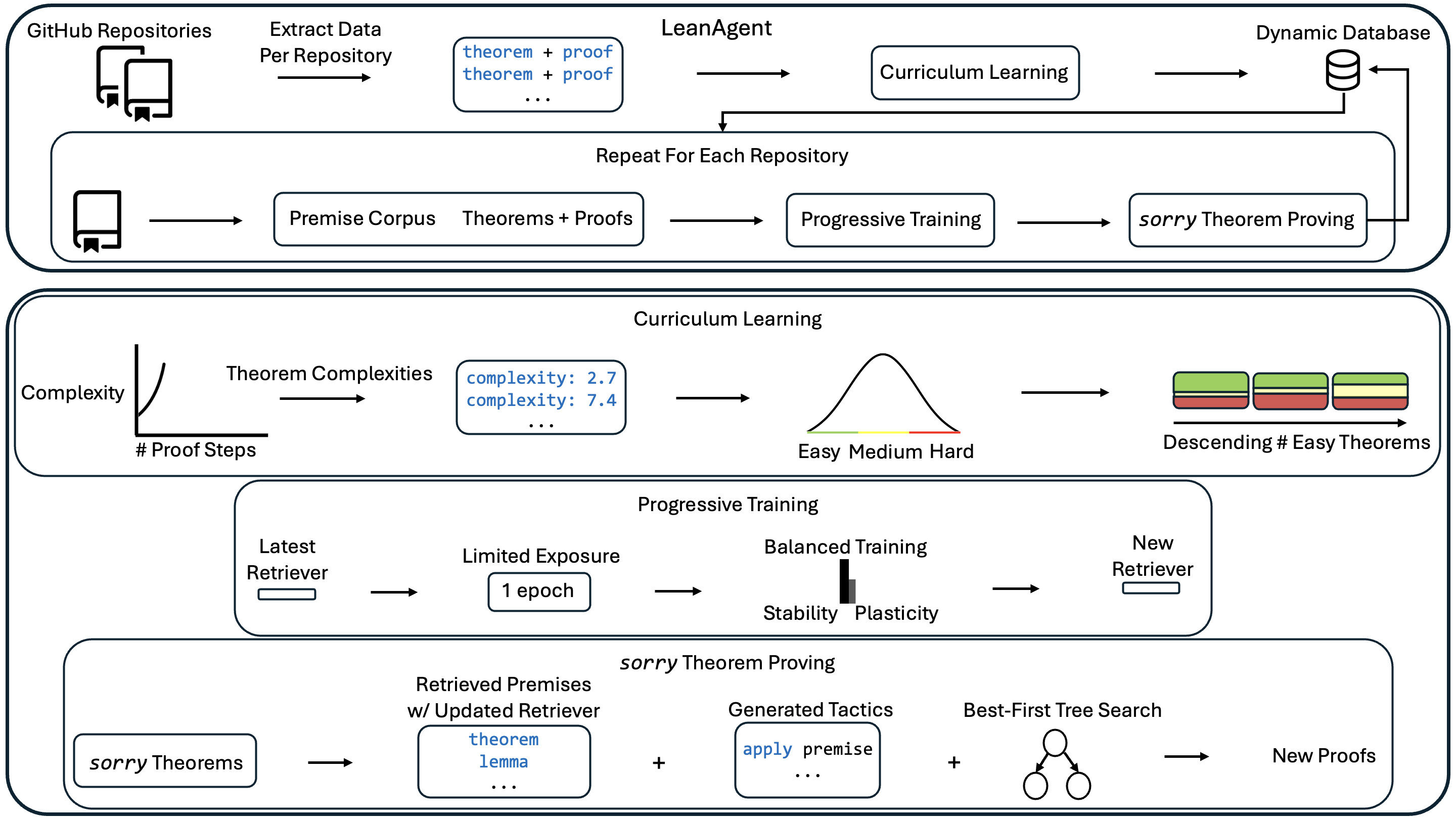

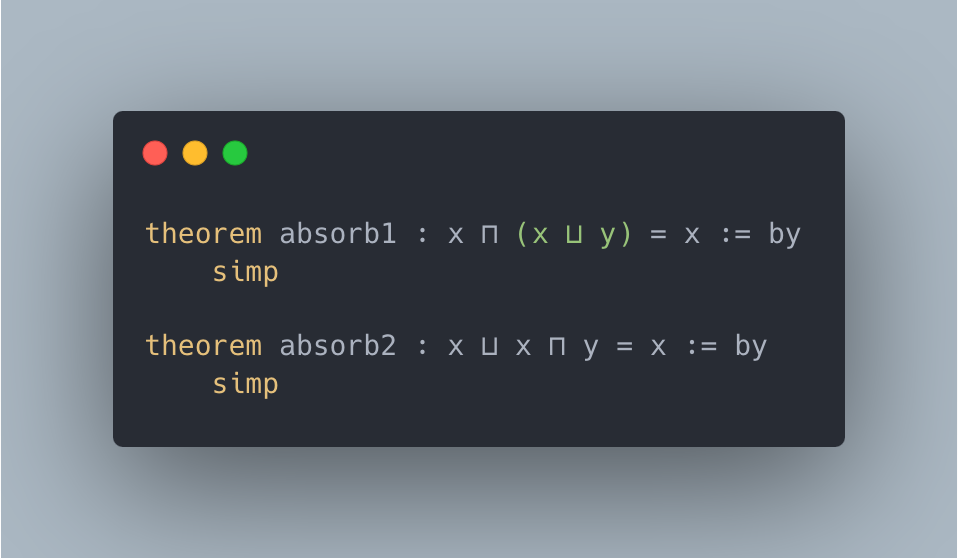

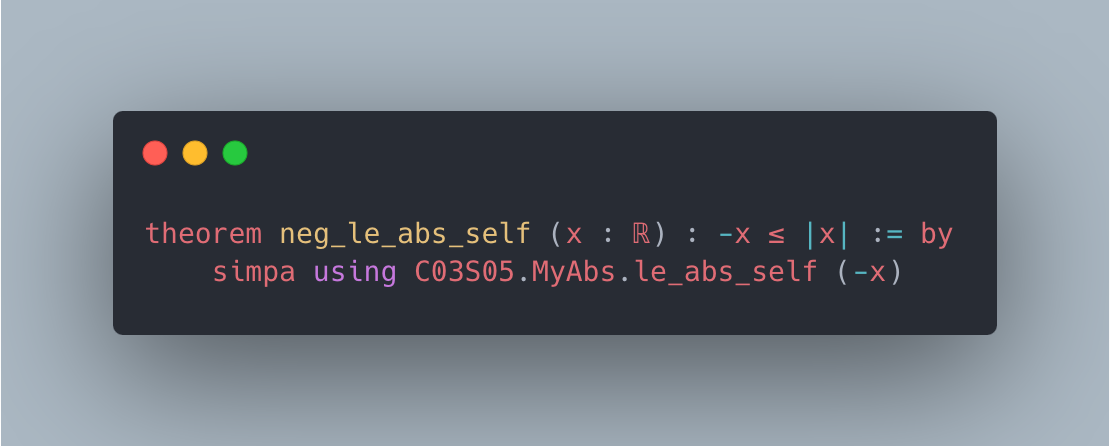

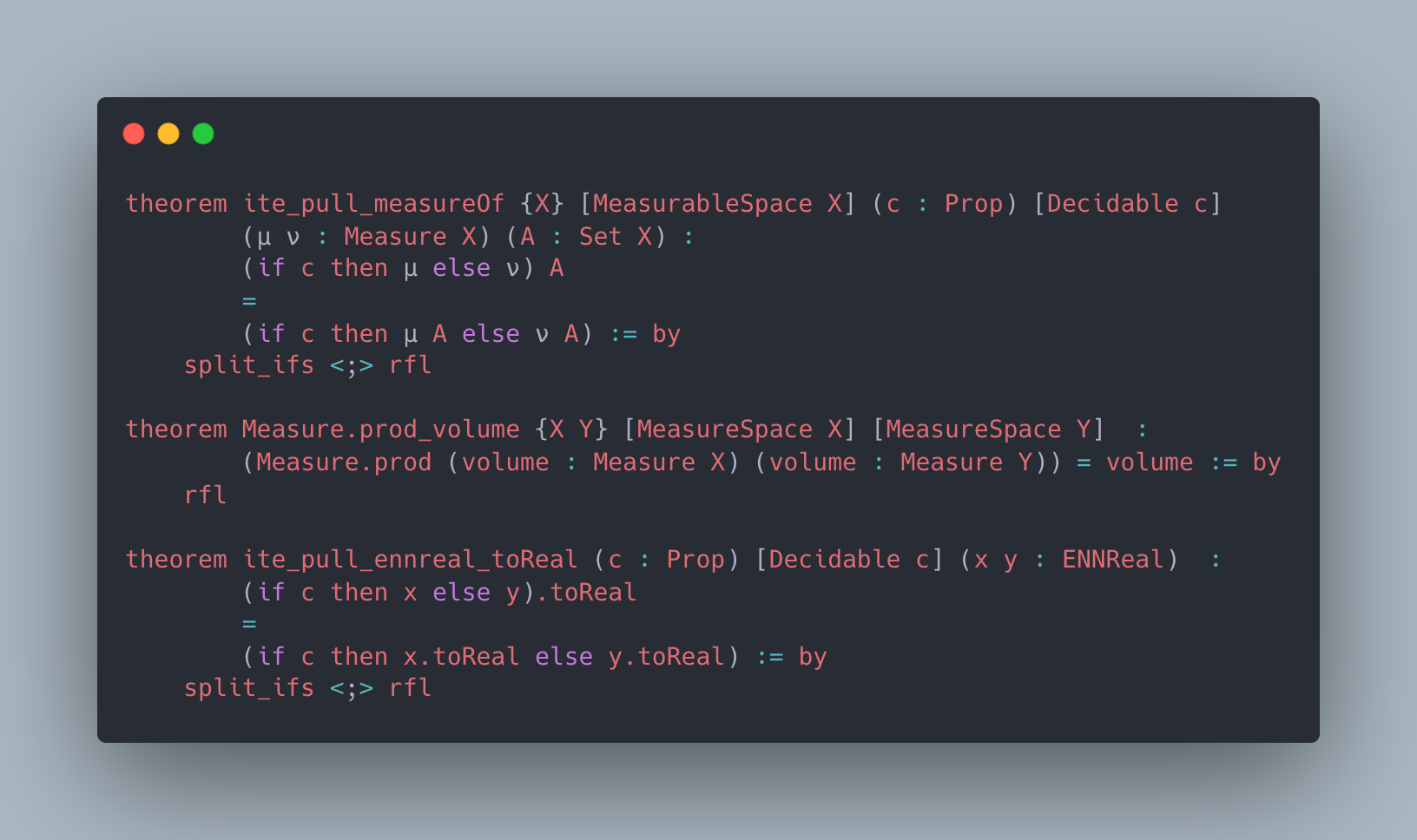

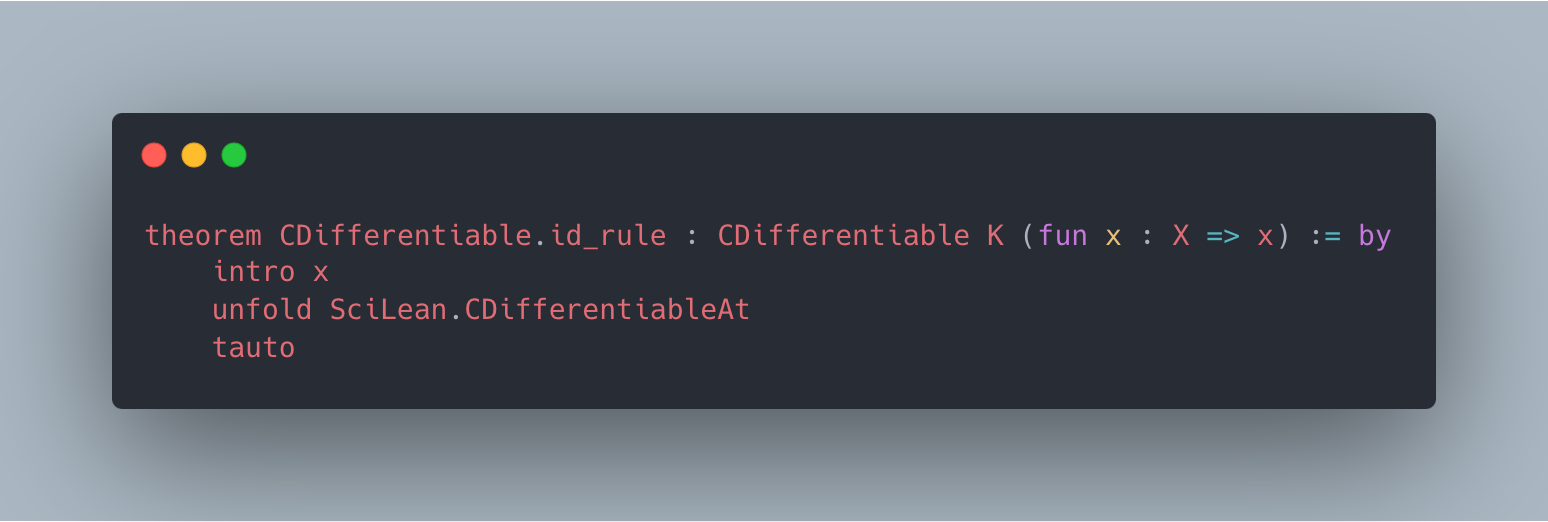

</details>

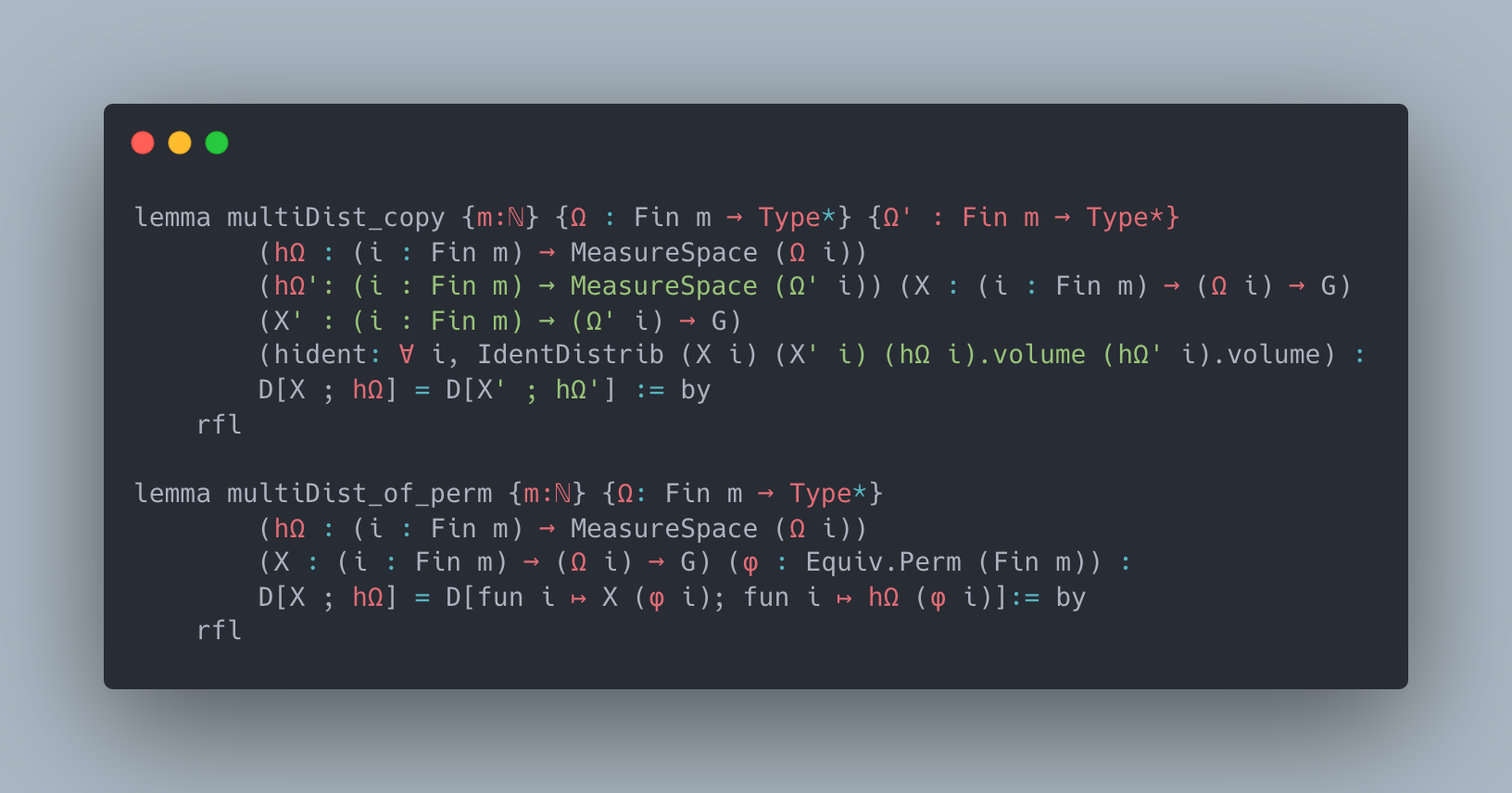

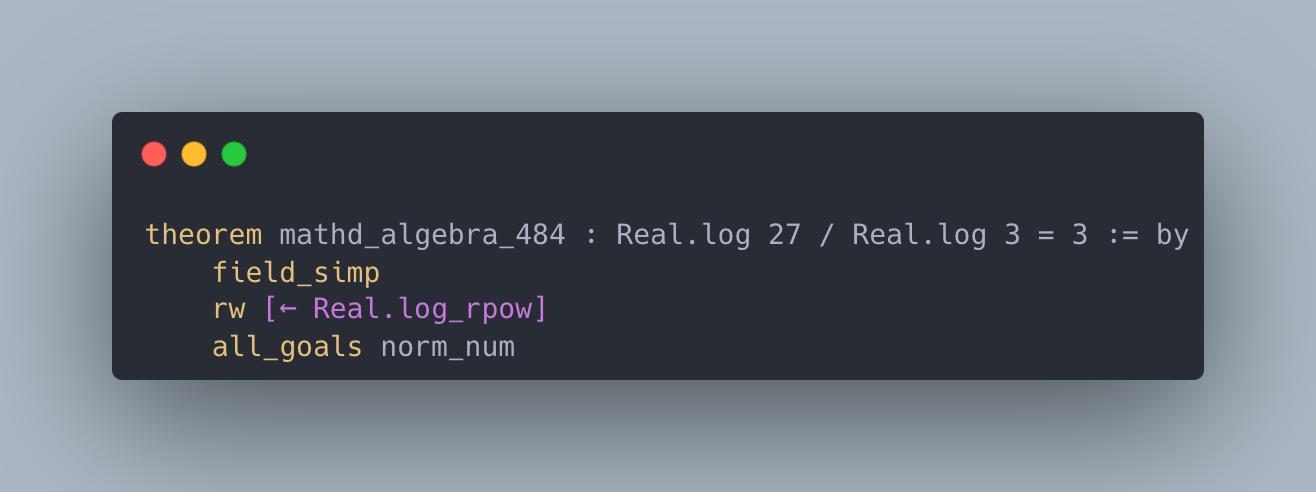

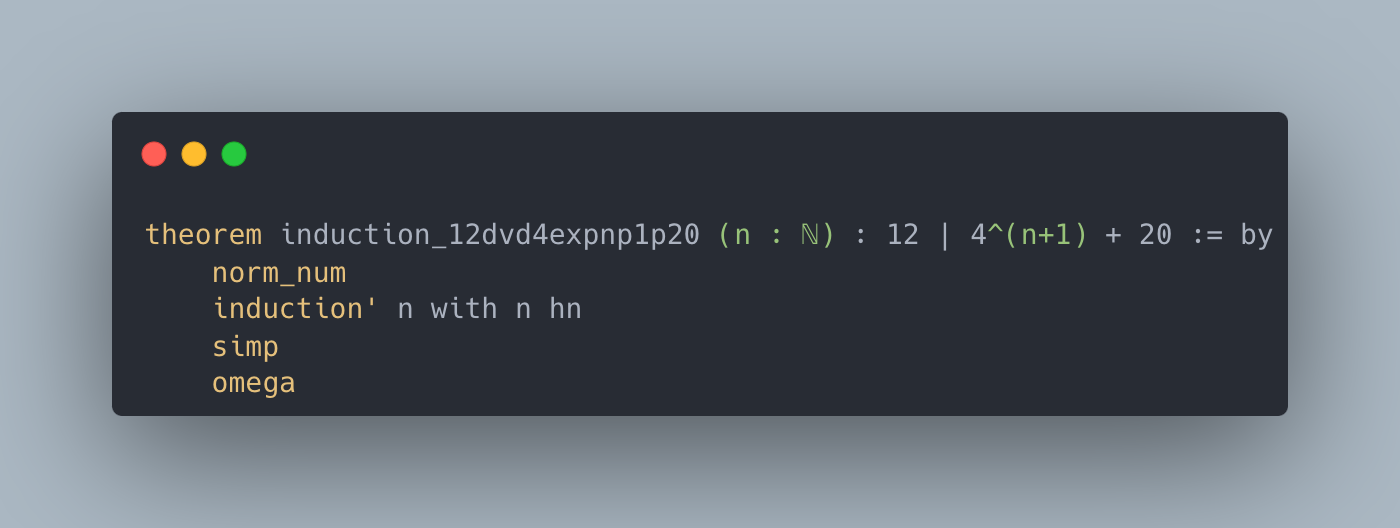

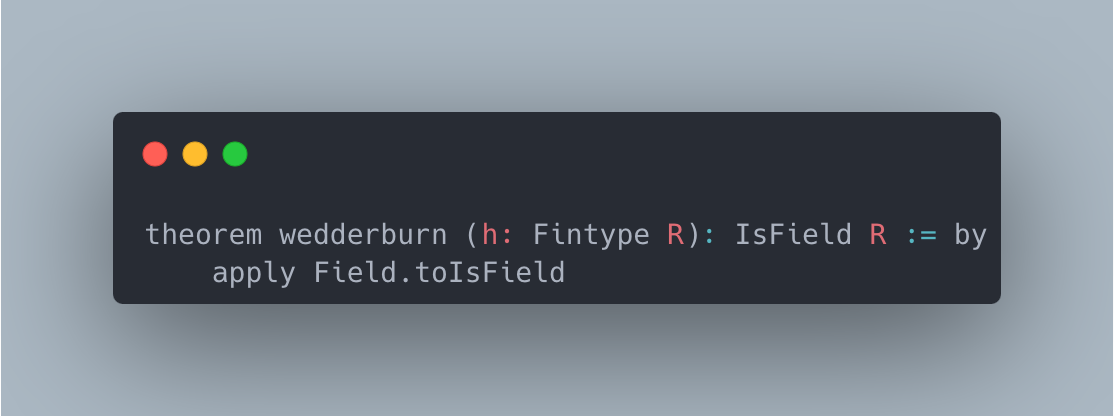

Figure 1: LeanAgent overview. LeanAgent searches for Lean repositories and uses LeanDojo to extract theorems and proofs. It uses curriculum learning, computing theorem complexity as $e^{S}$ ( $S$ = proof steps) and calculating the 33rd and 67th complexity percentiles across all theorems to sort repositories by easy theorem count. LeanAgent adds the curriculum to its dynamic database. As a retrieval-based framework, LeanAgent generates a dataset (premise corpus and a collection of theorems and proofs) for each repository in the curriculum and progressively trains its retriever. Progressive training happens over one epoch to prevent forgetting old knowledge. Then, LeanAgent uses the updated retriever in a search-based method to generate formal proofs for theorems where formal proofs were previously missing, known as sorry theorems. It adds new proofs to the database.

The dynamic nature of mathematical research exacerbates this generalizability issue. Mathematicians often formalize across multiple domains and projects simultaneously or cyclically. For example, Terence Tao has worked on various projects in parallel, including formalizations of the PFR Conjecture, symmetric mean of real numbers, classical Newton inequality, and asymptotic analysis (Tao, ; Tao, 2024a; Tao et al., 2024; Tao, 2024b). Patrick Massot has been formalizing Scholze’s condensed mathematics and the Perfectoid Spaces project (Community, 2024a; b). These examples highlight a critical gap in current theorem-proving AI approaches: the lack of a system that can adapt and improve across multiple, diverse mathematical domains over time, given limited Lean data availability.

Connection to Lifelong Learning. Crucially, how mathematicians formalize is relevant to lifelong learning, i.e. learning multiple tasks without forgetting (Wang et al., 2024b). A significant challenge is catastrophic forgetting: when adaptation to new distributions leads to a loss of understanding of old ones (Jiang et al., 2024). The core challenge is balancing plasticity (the ability to learn and adapt) with stability (the ability to retain existing knowledge) (Wang et al., 2024b). Increasing plasticity to learn new tasks efficiently can lead to overwriting previously learned information. However, enhancing stability to preserve old knowledge may impair the model’s ability to acquire new skills (van de Ven et al., 2024). Achieving the right balance is key to continuous generalizability in theorem proving.

LeanAgent. We present LeanAgent, a novel lifelong learning framework for theorem proving. As shown in Figure 1, LeanAgent’s workflow consists of (1) deriving complexity measures of theorems to compute a curriculum for learning, (2) progressive training to learn while balancing stability and plasticity, and (3) searching for proofs of sorry theorems by leveraging a best-first tree search, all while using a dynamic database to manage its evolving mathematical knowledge. LeanAgent works with any LLM; we implement it with retrieval for improved generalizability (Yang et al., 2023).

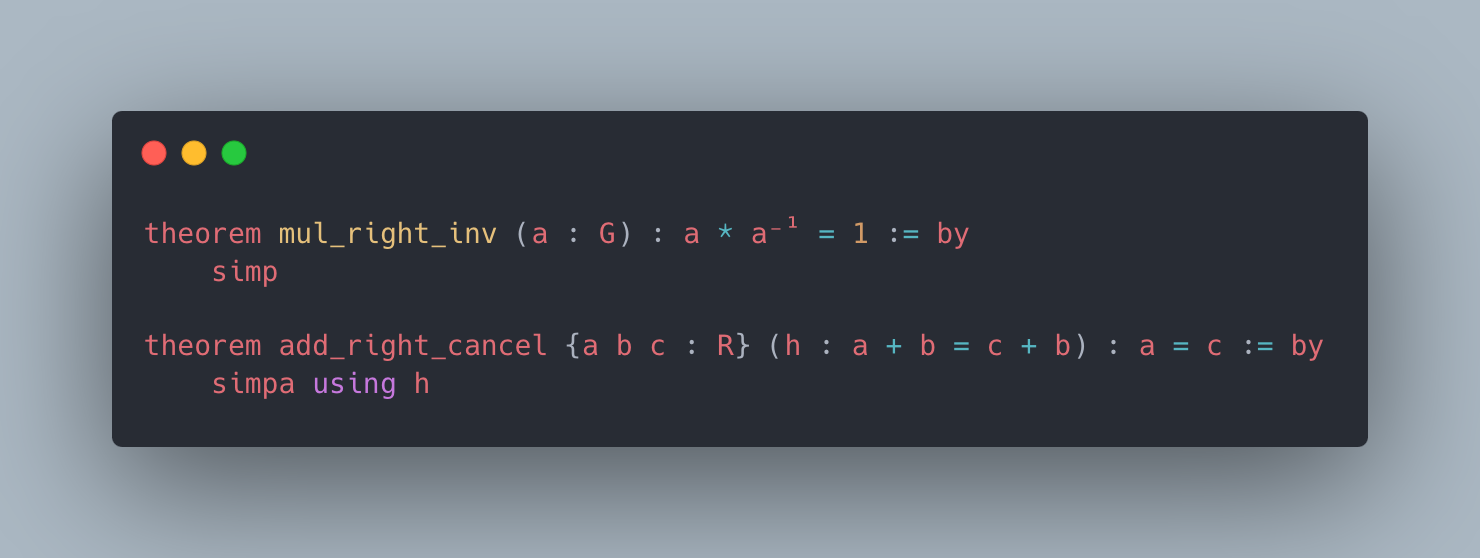

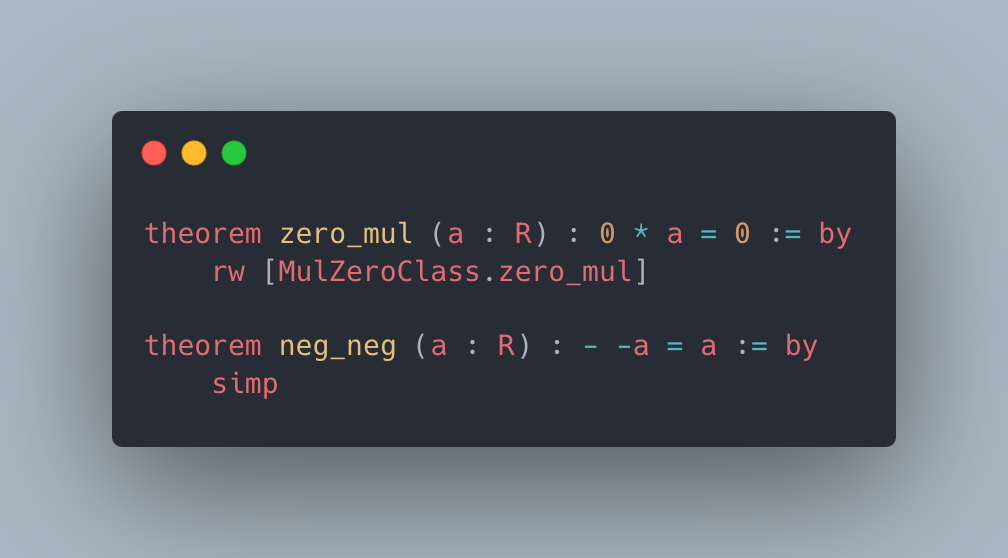

We employ a simple progressive training method to avoid catastrophic forgetting. Progressive training allows LeanAgent to continuously adapt to new mathematical knowledge while preserving previously learned information. This process involves incrementally training the retriever on newly generated datasets from each repository in the curriculum. Starting with a pre-trained retriever (e.g., ReProver’s retriever based on ByT5 (Xue et al., 2022)), LeanAgent trains on each new dataset for one additional epoch. Restricting progressive training to one epoch helps balance stability and plasticity. Crucially, this training is repeated for each dataset generated from the database, gradually expanding LeanAgent’s knowledge base. This approach increases the space of possible proof states (where a state consists of a theorem’s hypotheses and current proof progress) while adding new premises to the premise embeddings. More sophisticated lifelong learning methods like Elastic Weight Consolidation (EWC) (Kirkpatrick et al., 2017), which uses the Fisher Information Matrix to constrain important weights for previous tasks, result in excessive plasticity. The uncontrolled plasticity is due to the inability of these methods to adapt parameter importance as theorem complexity increases. This forces rapid changes in parameters crucial for learning advanced concepts. Such methods fail to adapt to the evolving complexity of mathematical theorems, making them unsuitable for lifelong learning in theorem proving.

Extensive experiments across 23 diverse Lean repositories demonstrate LeanAgent’s advancements in lifelong learning for theorem proving. LeanAgent successfully generates formal proofs for 155 theorems across these 23 repositories where formal proofs were previously missing, known as sorry theorems, many from advanced mathematics. For example, it proves challenging sorry theorems in abstract algebra and algebraic topology related to Coxeter systems and the Hairy Ball Theorem (Coxeter, 2024; Hairy Ball Theorem, 2024). LeanAgent also proves 7 theorems using exploits found within Lean’s type system. We find that LeanAgent demonstrates progressive learning in theorem proving, initially proving basic sorry theorems and significantly advancing to more complex ones. It significantly outperforms the static ReProver baseline in terms of proving new sorry theorems. We have issued pull requests to the respective repositories with the newly proven sorry theorems. Some of these proofs utilized unintended constructs within the repositories’ implementations, which are currently being addressed through appropriate fixes.

In theorem proving, we find that stability, without losing too much plasticity, is crucial for continuous generalizability to new repositories. Backward transfer (BWT), where learning new tasks improves performance on previously learned tasks, is essential in theorem proving (Wang et al., 2024b). Mathematicians require a lifelong learning framework for theorem proving that is both continuously generalizable and continuously improving. We conduct an extensive ablation study using six lifelong metrics carefully proposed or selected from the literature. LeanAgent’s simple components of curriculum learning and progressive training improve stability and BWT scores substantially, emphasizing its continuous generalizability and improvement and explaining its superior sorry theorem proving performance.

2 Preliminaries

Neural Theorem Proving. The current state-of-the-art of learning-based provers employs Transformer-based (Vaswani et al., 2017) LLMs that process expressions as plain text strings (Azerbayev et al., 2024; Xin et al., 2024b; Shao et al., 2024). In addition, researchers have explored complementary aspects like proof search algorithms (Lample et al., ; Wang et al., 2023). Moreover, other works break the theorem-proving process into smaller proving tasks (Song et al., 2024; Wang et al., 2024a; Lin et al., 2024).

Premise Selection. A critical challenge in theorem proving is the effective selection of relevant premises (Irving et al., 2016; Tworkowski et al., ). However, many existing approaches treat premise selection as an isolated problem (Wang & Deng, 2020; Piotrowski et al., 2023) or use selected premises only as input to symbolic provers (Alama et al., 2014; Mikuła et al., 2024).

Retrieval-Augmented LLMs. While retrieval-augmented language models have been extensively studied in areas like code generation (Lu et al., 2022; Zhou et al., 2023), their application to formal theorem proving is relatively new. However, relevant architectures have been researched in natural language processing (NLP) (Lu et al., 2024; Borgeaud et al., 2022; Thakur et al., 2024).

Lifelong Learning. Lifelong learning addresses catastrophic forgetting in sequential task learning (Chen et al., 2024). Approaches include regularization methods (Kirkpatrick et al., 2017), memory-based techniques (Lopez-Paz & Ranzato, 2017; Chaudhry et al., 2019; Shin et al., 2017), and knowledge distillation (Li & Hoiem, 2017; Kim et al., 2023). Other strategies involve dynamic architecture adjustment (Mendez & Eaton, 2021) and recent work on gradient manipulation and selective re-initialization (Chen et al., 2024; Dohare et al., 2024). We justify not using these strategies in Appendix A.6.

Curriculum Learning in Theorem Proving. Prior work created a synthetic inequality generator to produce a curriculum of statements of increasing difficulty (Polu et al., 2022). For reinforcement learning, an existing work used the length of proofs to help determine rewards (Zombori et al., 2019).

3 Methodology

A useful lifelong learning strategy for theorem proving requires (a) a repository order strategy and (b) a learning strategy. We solve (a) with curriculum learning to utilize the structure of Lean proofs and (b) with progressive training to balance stability and plasticity. LeanAgent consists of four main components: curriculum learning, dynamic database management, progressive training of the retriever, and sorry theorem proving. Further methodology details are in Appendix A.1 and a discussion of why curriculum learning works in theorem proving is available in Appendix A.6.

3.1 Curriculum Learning

LeanAgent uses curriculum learning to learn on increasingly complex mathematical repositories. This process optimizes LeanAgent’s learning trajectory, allowing it to build upon foundational knowledge before tackling more advanced concepts.

First, we automatically search for and clone Lean repositories from GitHub. We use LeanDojo for each repository to extract fine-grained information about their theorems, proofs, and dependencies. Then, we calculate the complexity of each theorem using $e^{S}$ , where $S$ represents the number of proof steps. However, sorry theorems, which have no proofs, are assigned infinite complexity. We use an exponential scaling to address the combinatorial explosion of possible proof paths as the length of the proof increases. Further justification for considering this complexity metric is in Appendix A.6.

We compute the 33rd and 67th percentiles of complexity across all theorems in all repositories. Using these percentiles, we categorize non- sorry theorems into three groups: easy (theorems with complexity below the 33rd percentile), medium (theorems with complexity between the 33rd and 67th percentiles), and hard (theorems with complexity above the 67th percentile). We then sort repositories by the number of easy theorems they contain. This sorting forms the basis of our curriculum, with LeanAgent starting on repositories with the highest number of easy theorems.

3.2 Dynamic Database Management

Then, we add the sorted repositories to LeanAgent’s custom dynamic database using the data LeanAgent extracted. This way, we can keep track of and interact with the knowledge that LeanAgent is aware of and the proofs it has produced. We also include the complexity of each theorem computed in the previous step into the dynamic database, allowing for efficient reuse of repositories in a future curriculum. Details of the database’s contents and features can be found in Appendix A.1.

For each repository in the curriculum, LeanAgent uses the dynamic database to generate a dataset by following the same procedure used to make LeanDojo Benchmark 4 (details in Appendix A.1). This dataset includes a collection of theorems and their proofs. Each step of these proofs contains detailed annotations, such as how the step changes the state of the proof. A state consists of a theorem’s hypotheses and the current progress in proving the theorem. As such, this pairing of theorems and proofs demonstrates how to use specific tactics (functions) and premises in sequence to prove a theorem. In addition, the dataset includes a premise corpus, serving as a library of facts and definitions.

3.3 Progressive Training of the Retriever

LeanAgent then progressively trains its retriever on the newly generated dataset. This strategy allows LeanAgent to continuously adapt to new mathematical knowledge from the premises in new datasets while preserving previously learned information, crucial for lifelong learning in theorem proving. Progressive training achieves this by incrementally incorporating new knowledge from each repository.

Although LeanAgent works with any LLM, we provide a specific implementation here. We start with ReProver’s retriever, a fine-tuned version of the ByT5 encoder (Xue et al., 2022), leveraging its general pre-trained knowledge from mathlib4. We train LeanAgent on the new dataset for an additional epoch. This limited exposure helps prevent overfitting to the new data while allowing LeanAgent to learn essential new information. LeanAgent’s retriever, and therefore the embeddings it generates, are continuously updated during progressive training. Thus, at the end of the current progressive training run, we precompute embeddings for all premises in the corpus generated by LeanAgent’s current state to ensure that we properly evaluate LeanAgent’s validation performance. To understand how LeanAgent balances stability and plasticity, we save the model iteration with the highest validation recall for the top ten retrieved premises (R@10). This is a raw plasticity value: it can be used to compute other metrics that describe LeanAgent’s ability to adapt to and handle new types of mathematics in the latest repository (details in Sec. 4). Then, we compute the average test R@10 over all previous datasets the model has progressively trained on, a raw stability value.

As mentioned previously, we repeat this procedure for each dataset we generate from the database, hence the progressive nature of this training. Progressive training adds new premises to the premise embeddings and increases the space of possible proof states. This allows LeanAgent to explore more diverse paths to prove theorems, discovering new proofs that it couldn’t produce with its original knowledge base.

3.4 sorry Theorem Proving

For each sorry theorem, LeanAgent generates a proof with a best-first tree search by generating tactic candidates at each step, in line with prior work (Yang et al., 2023). Using the embeddings from the entire corpus of premises we previously collected, LeanAgent retrieves relevant premises from the premise corpus based on their similarity to the current proof state, represented as a context embedding. Then, it filters the results using a corpus dependency graph to ensure that we only consider premises accessible from the current file. We add these retrieved premises to the current state and generate tactic candidates using beam search. Then, we run each tactic candidate through Lean to obtain potential next states. Each successful tactic application adds a new edge to the proof search tree. We choose the tactic with the maximum cumulative log probability of the tactics leading to it. If the search reaches a dead-end, we backtrack and explore alternative paths. We repeat the above steps until the search finds a proof, exhausts all possibilities, or reaches the time limit of 10 minutes.

If LeanAgent finds a proof, it adds it to the dynamic database. The newly added premises from this proof will be included in a future premise corpus involving the current repository. Moreover, LeanAgent can learn from the new proof during progressive training in the future, aiding further improvements.

4 Experiments

4.1 Experimental Setup

Table 1: Selected repository descriptions

| PFR Hairy Ball Theorem Coxeter | Polynomial Freiman-Ruzsa Conjecture Algebraic topology result Coxeter groups |

| --- | --- |

| Mathematics in Lean Source | Lean files for the textbook |

| Formal Book | Proofs from THE BOOK |

| MiniF2F | Math olympiad-style problem solving |

| SciLean | Scientific computing |

| Carleson | Carleson’s Theorem |

| Lean4 PDL | Propositional Dynamic Logic |

We devote this section to describing two types of experiments: (1) sorry Theorem Proving: We compare sorry theorem proving performance between LeanAgent and ReProver. We also examine the progression of LeanAgent’s proven sorry theorems during lifelong learning to the end of lifelong learning. This shows LeanAgent’s continuous generalizability and improvement. (2) Lifelong Learning Analysis: We conduct an ablation study with six lifelong learning metrics to explain LeanAgent’s superiority in sorry theorem proving. Moreover, these results explain LeanAgent’s superior handling of the stability-plasticity tradeoff. Please see Appendix A.2 for experiment implementation details. We release LeanAgent at https://github.com/lean-dojo/LeanAgent.

Repositories. We evaluate our approach on a diverse set of 23 Lean repositories to assess its generalizability across different mathematical domains (Skřivan, 2024; Kontorovich, 2024; Avigad, 2024; Tao et al., 2024; Renshaw, 2024; Fermat’s Last Theorem, 2024; DeepMind, 2024; Carneiro, 2024; Wieser, 2024; Mizuno, 2024; Murphy, 2024; Formal Logic, 2024; Con-nf, 2024; Gadgil, 2024; Yang, 2024; Zeta 3 Irrational, 2024; Firsching, Moritz, 2024; Monnerjahn, 2024; van Doorn, 2024; Dillies, 2024; Hairy Ball Theorem, 2024; Coxeter, 2024; Gattinger, 2024). Details of key repositories are in Table 1. Further details of these repositories, including commits and how we chose the initial curriculum and the sub-curriculum (described in Sec. 4.2), are in Appendix A.3.

4.2 sorry Theorem Proving

We compare the number of sorry theorems LeanAgent can prove, both during and after lifelong learning, to the ReProver baseline. We use ReProver as the baseline because we use its retriever as LeanAgent’s initial retriever in our experiments.

<details>

<summary>extracted/6256159/newproof4.png Details</summary>

### Visual Description

## Code Snippet Analysis: Theorem Prover Syntax Comparison

### Overview

The image contains code snippets from multiple formal theorem provers (PFR, SciLean, Coqeter, MiniF2F, Formal Book) demonstrating mathematical proofs. Each section includes syntax-highlighted code with specific terms emphasized in green.

### Components/Axes

- **Structure**: Five distinct code blocks labeled by prover name

- **Syntax Highlighting**:

- Blue: Keywords (theorem, lemma, simp, apply, etc.)

- Brown: Theorem/lemma names

- Green: Highlighted terms (key components)

- Black: Mathematical symbols and variables

### Detailed Analysis

#### PFR Section

```text

lemma condRho_of_translate {Ω S : Type*} [MeasureSpace Ω] (X : Ω → G) (Y : Ω → S) (A : Finset G) (s:G) :

condRho (fun ω ↦ X ω + s) Y A = condRho X Y A := by

simp only [condRho, rho_of_translate]

```

- **Highlighted Terms**: `condRho`, `rho_of_translate`

#### SciLean Section

```text

theorem re_float (a : Float) : RCLike.re a = a := by

exact RCLike.re_eq_self_of_le le_rfl

```

- **Highlighted Terms**: `RCLike.re_eq_self_of_le`, `le_rfl`

#### Coqeter Section

```text

lemma invmaps_of_eq {S:Set G} [CoxeterSystem G S] {s :S} :

invmaps S s = s := by

simp [CoxeterSystem.Presentation.invmaps]

unfold CoxeterSystem.toMatrix

apply CoxeterSystem.monoidLift.mapLift.of

```

- **Highlighted Terms**: `CoxeterSystem.Presentation.invmaps`, `CoxeterSystem.toMatrix`, `CoxeterSystem.monoidLift.mapLift.of`

#### MiniF2F Section

```text

theorem induction_12dvd4expnp1p20 (n : N) :

12 | 4^(n+1) + 20 := by

norm_num

induction' n with n hn

simp

omega

theorem amc12a_2002_p6 (n : N) (h₀ : 0 < n) :

∃ m, (m > n ∧ ∃ p, m * p ≤ m + p) := by

lift n to N+ using h₀

cases' n with n

exact (_, lt_add_of_pos_right _ _ _ zero_lt_one 1, by simp)

```

- **Highlighted Terms**: `lt_add_of_pos_right`, `zero_lt_one`

#### Formal Book Section

```text

theorem wedderburn (h: Fintype R) : IsField R := by

apply Field.toIsField

```

- **Highlighted Terms**: `Field.toIsField`

### Key Observations

1. **Syntax Consistency**: All provers use similar proof structures (`by`, `simp`, `apply`)

2. **Highlighted Patterns**:

- Mathematical operations (`condRho`, `re_float`)

- Type constraints (`Finset G`, `Fintype R`)

- Core proof tactics (`simp`, `apply`, `exact`)

3. **Mathematical Focus**: All proofs involve algebraic structures (groups, fields, Coxeter systems)

### Interpretation

The image demonstrates comparative analysis of formal proof structures across different theorem provers. The highlighted terms represent:

- Core mathematical concepts (`condRho`, `re_float`)

- Type system constraints (`Finset`, `Fintype`)

- Fundamental proof strategies (`simp`, `apply`)

- Algebraic structure properties (`CoxeterSystem`, `Field`)

The consistent use of `simp` and `apply` across provers suggests shared foundational proof techniques, while the specific highlighted terms reveal domain-specific implementations. The MiniF2F section's number theory focus contrasts with the algebraic emphasis in other sections, showing the versatility of these provers in handling different mathematical domains.

</details>

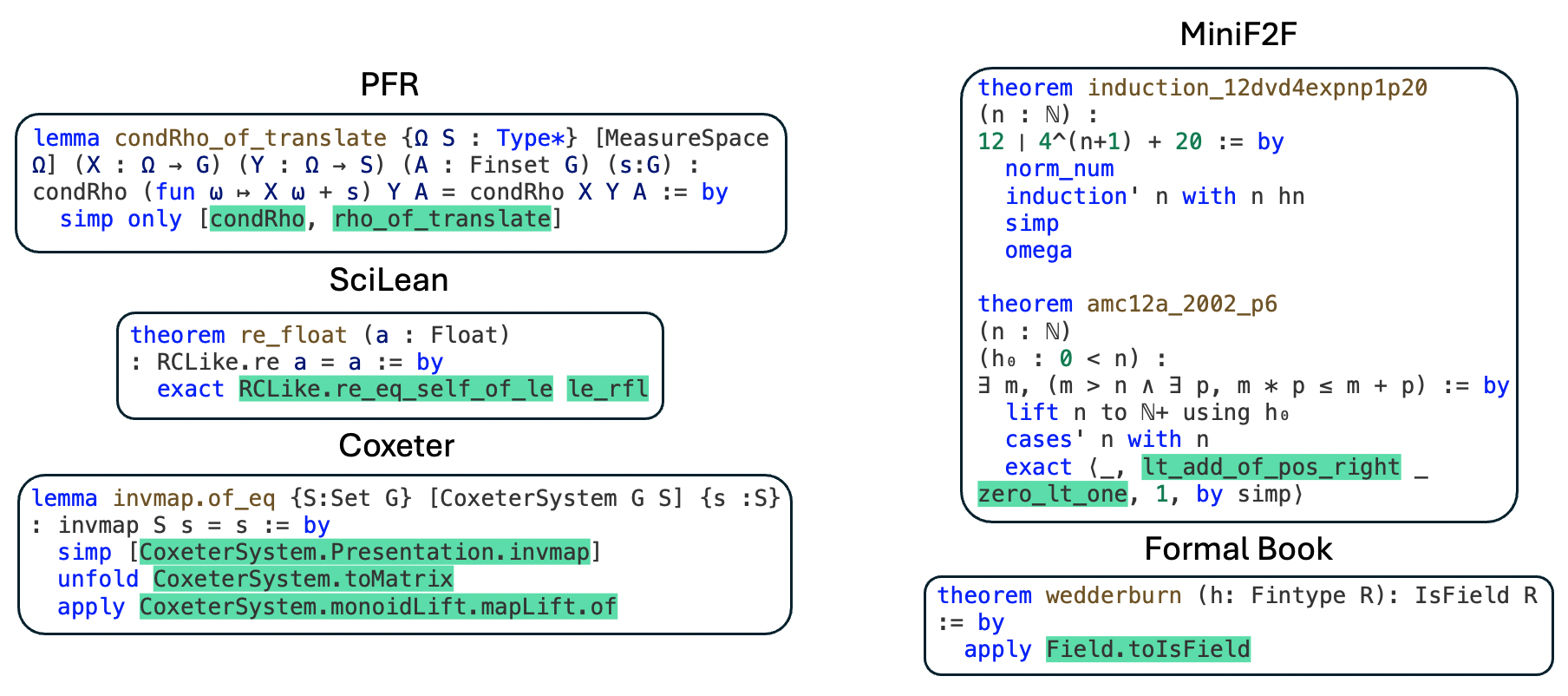

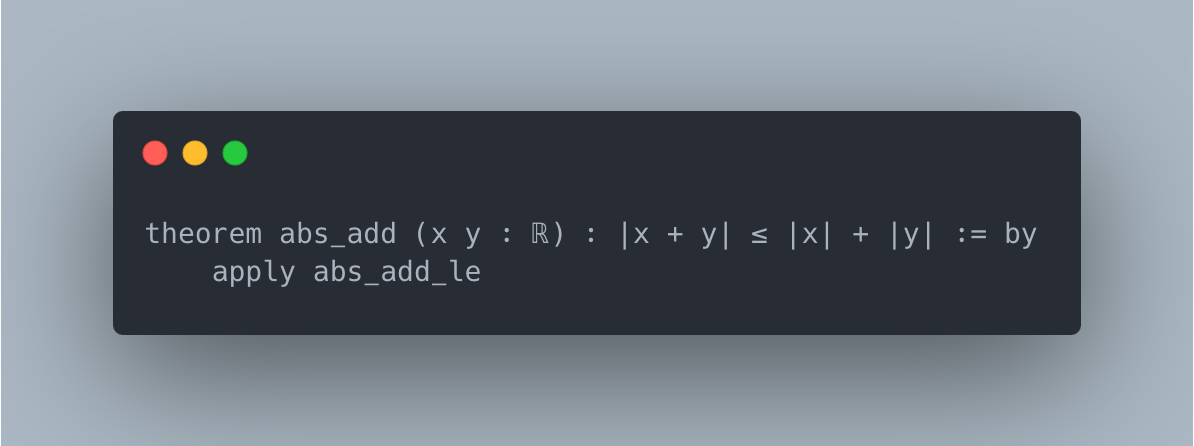

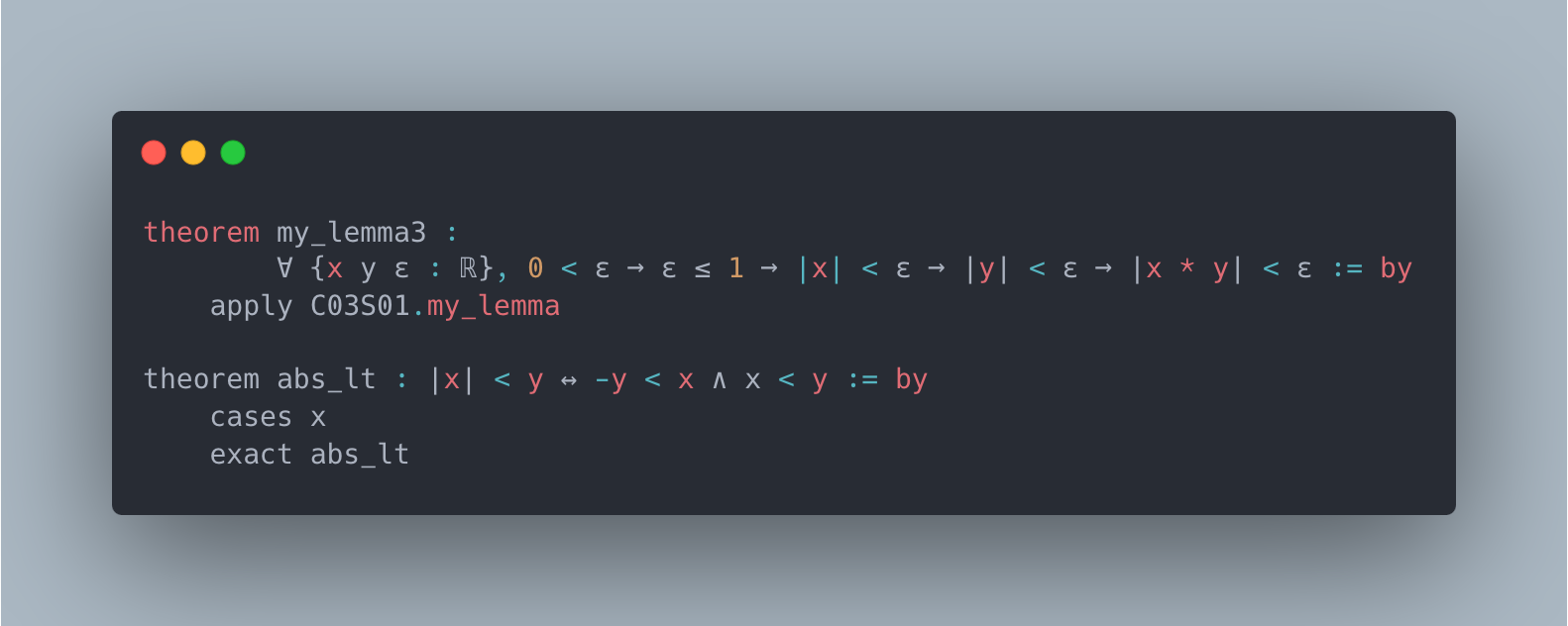

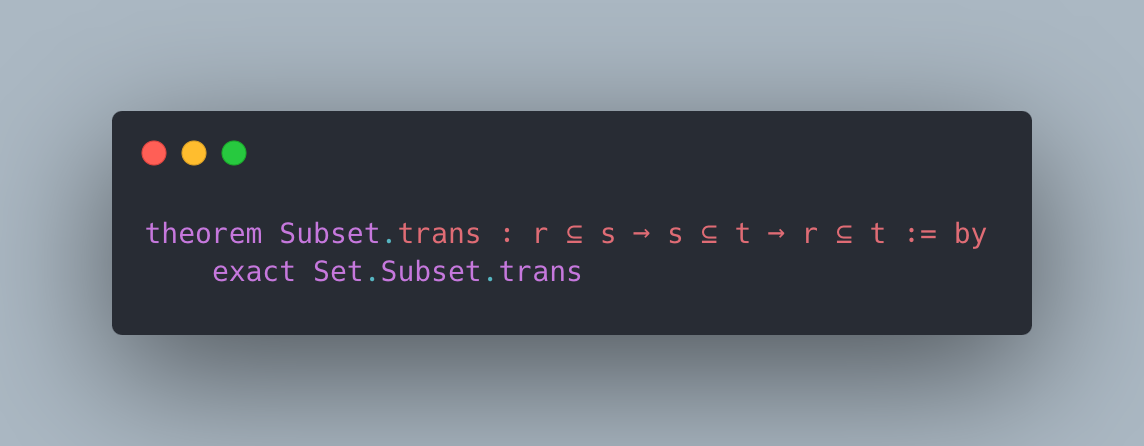

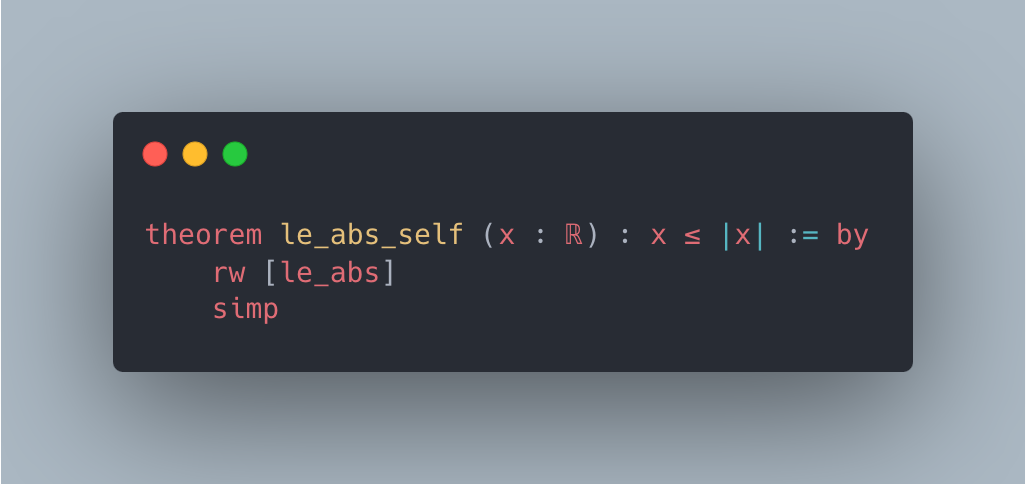

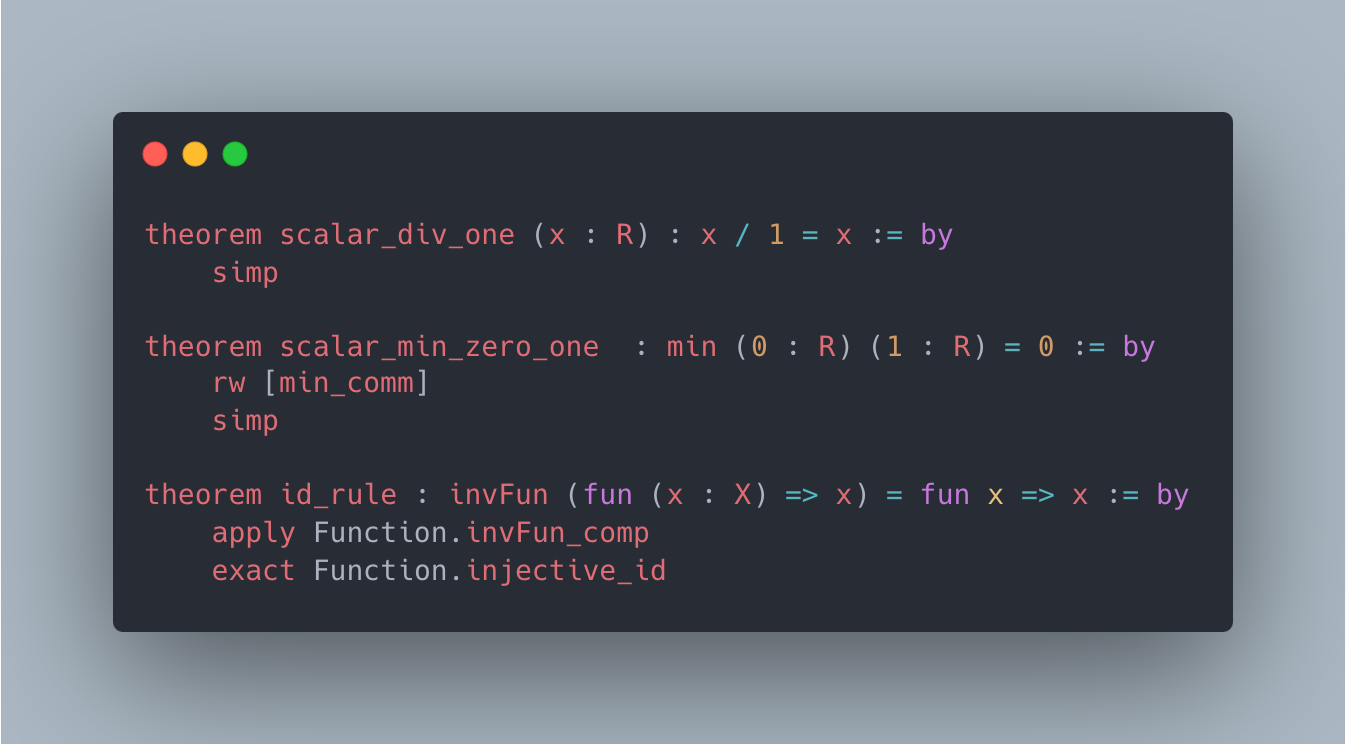

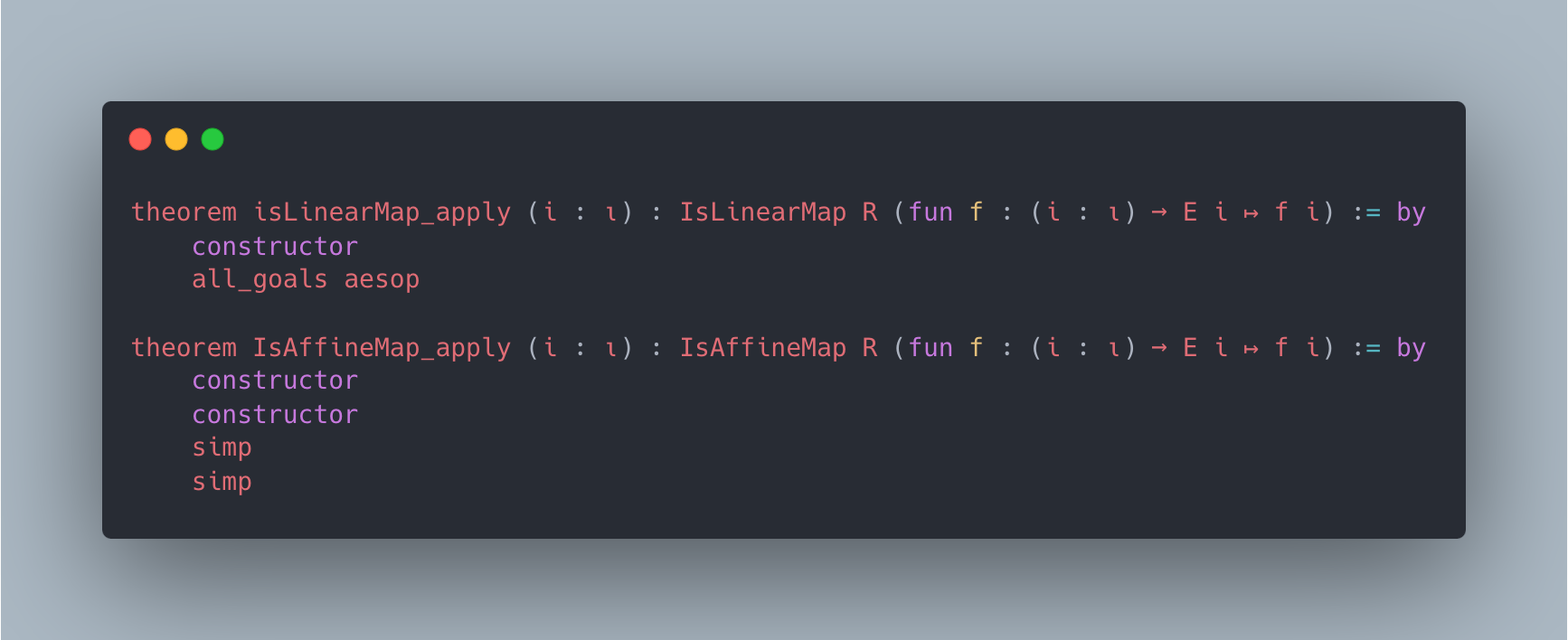

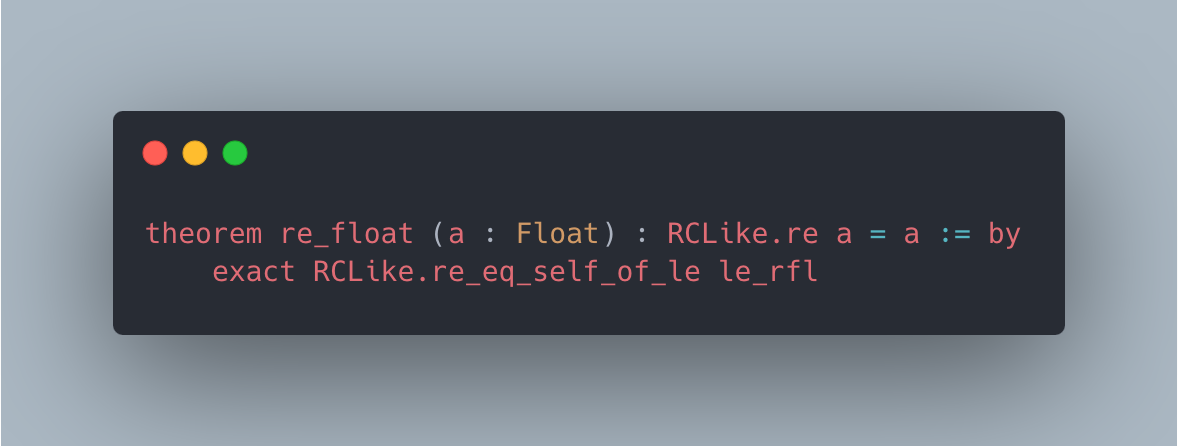

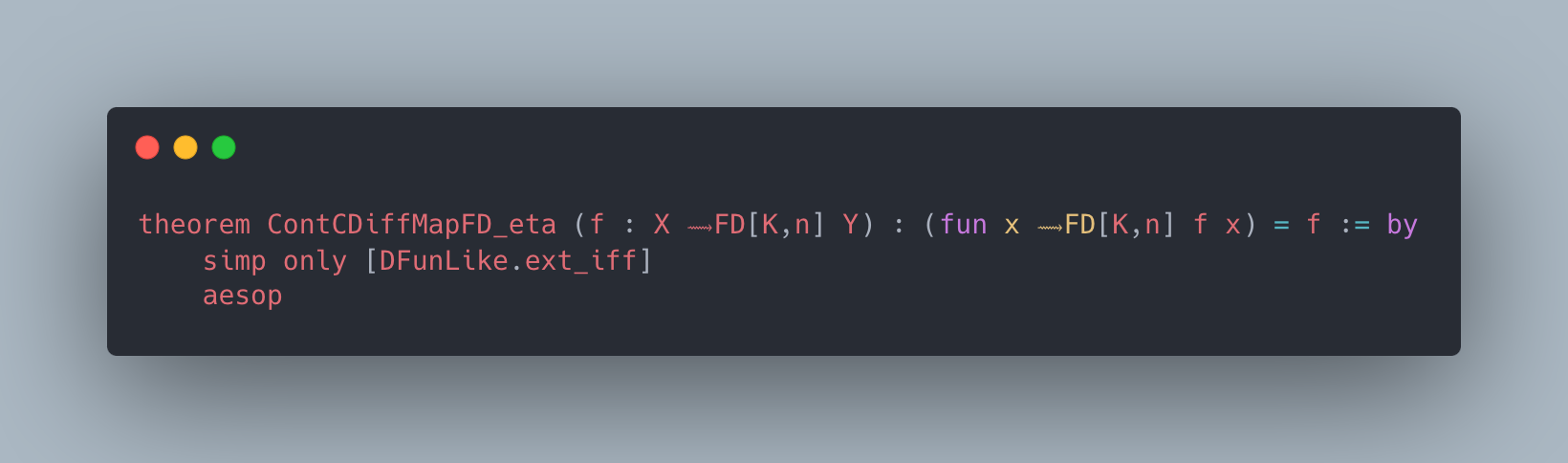

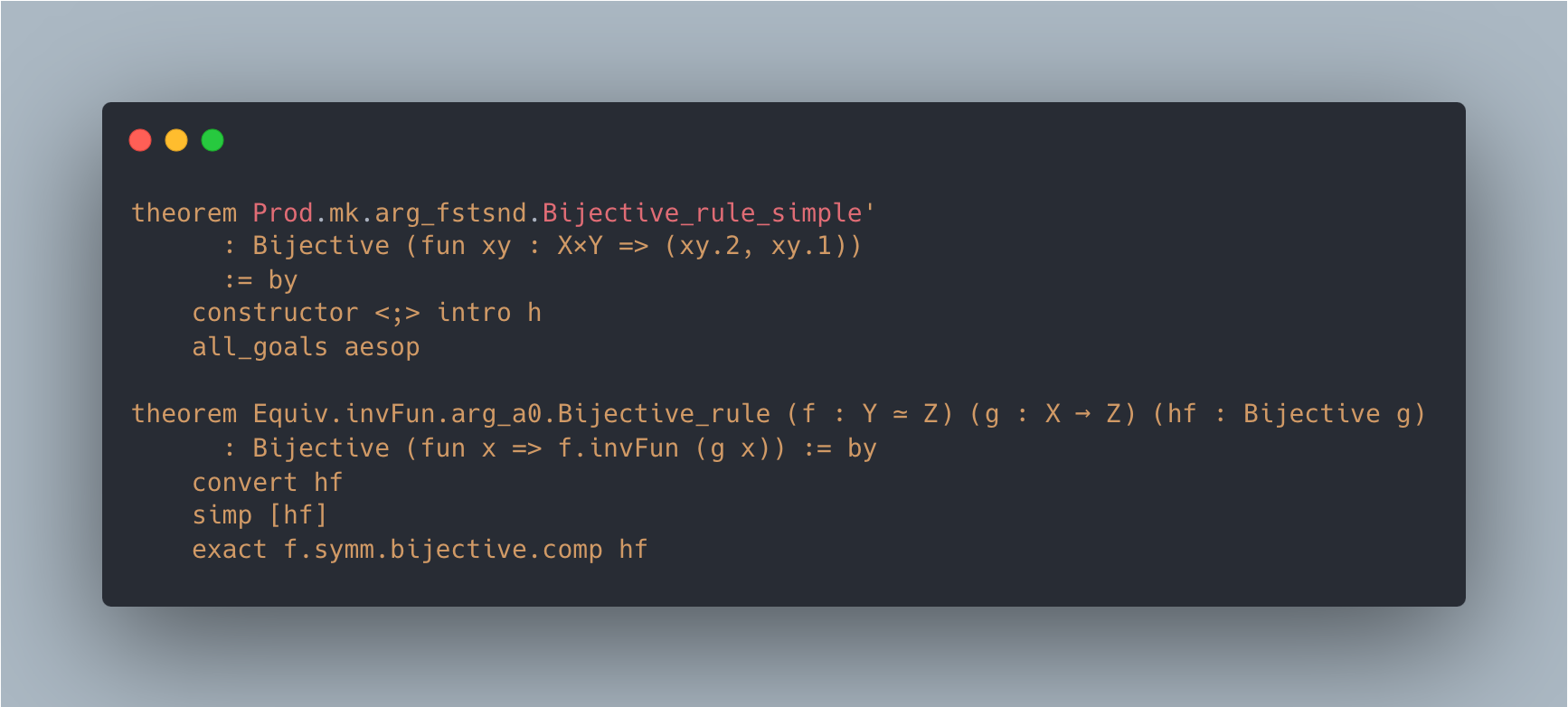

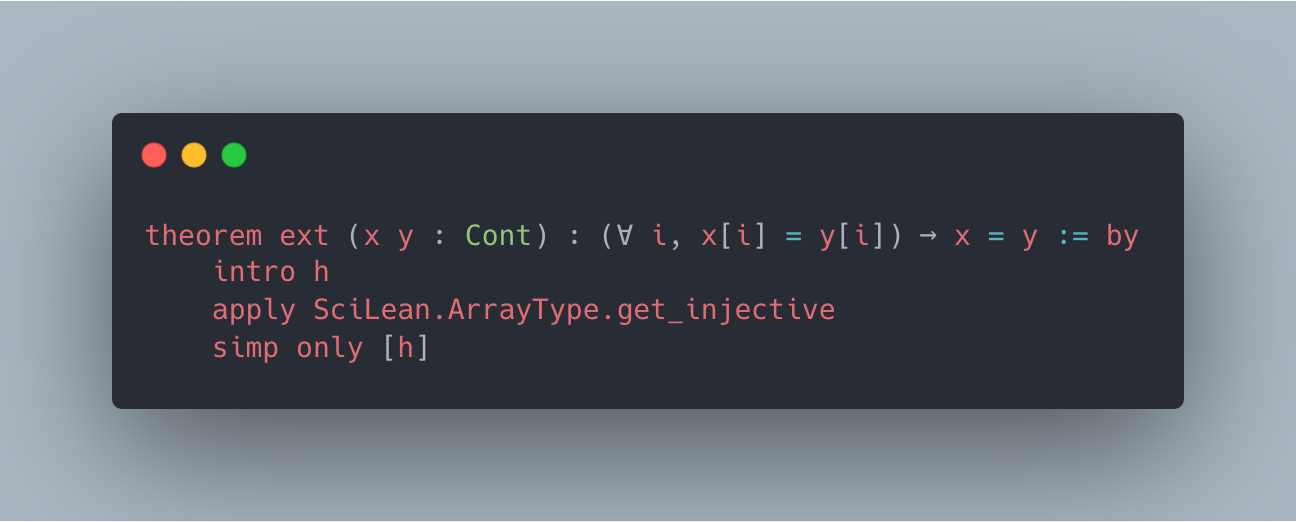

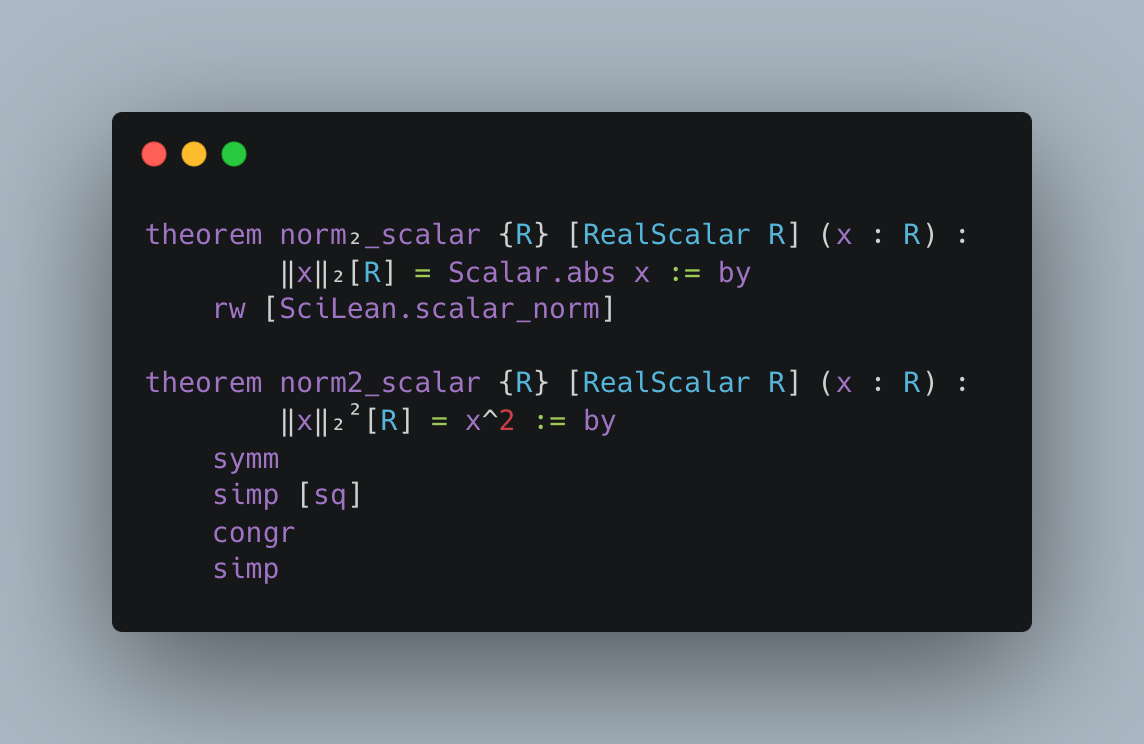

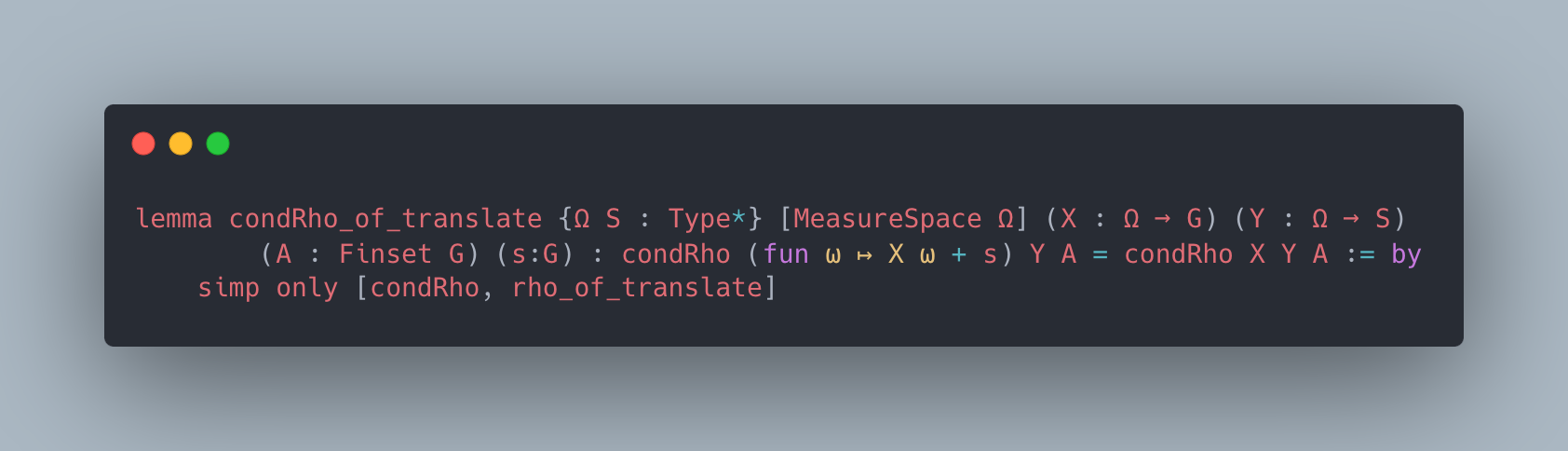

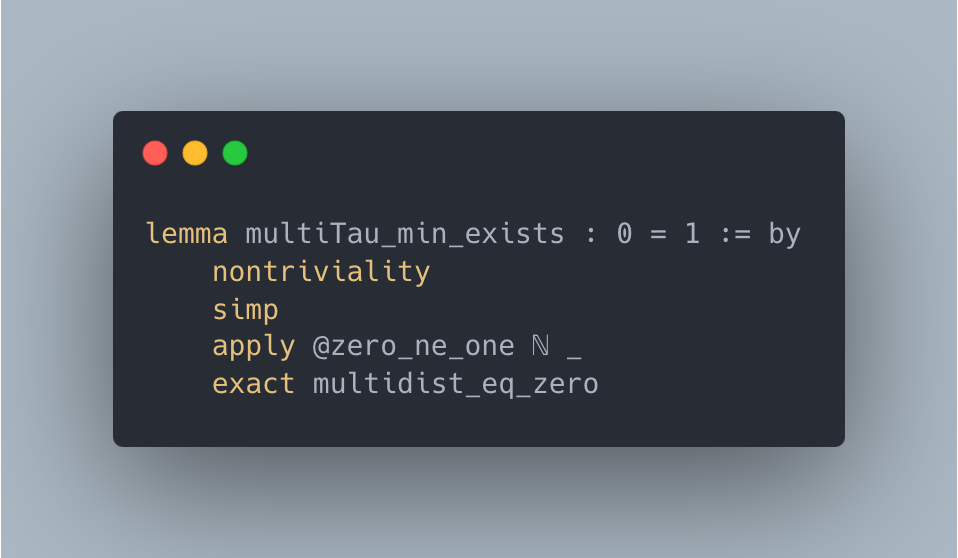

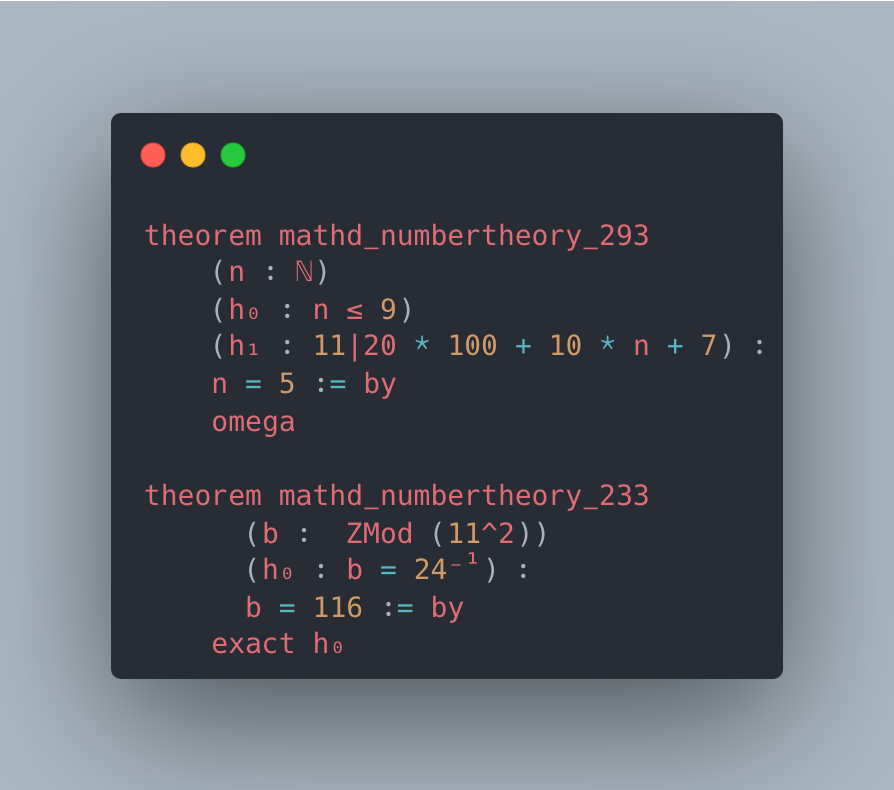

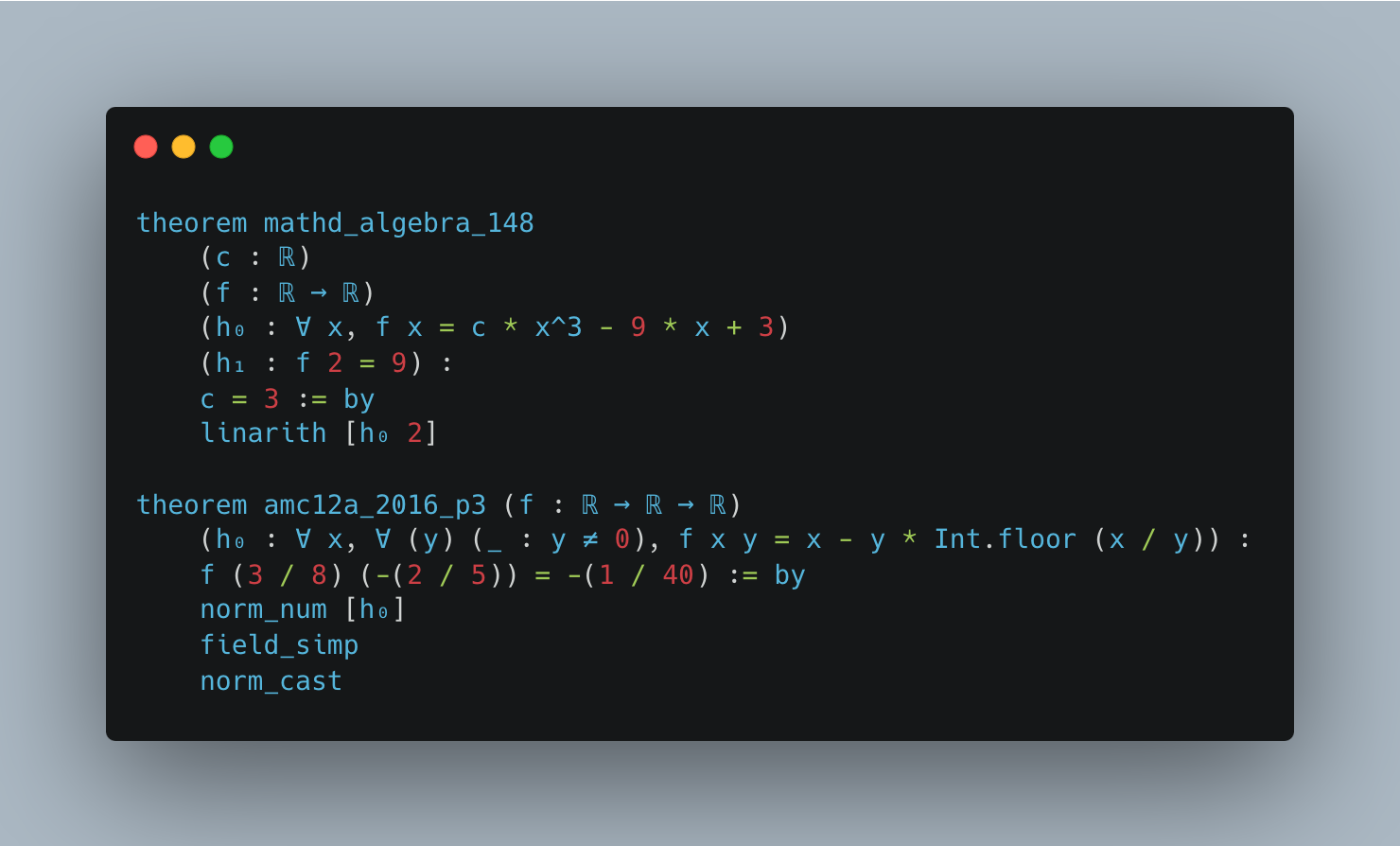

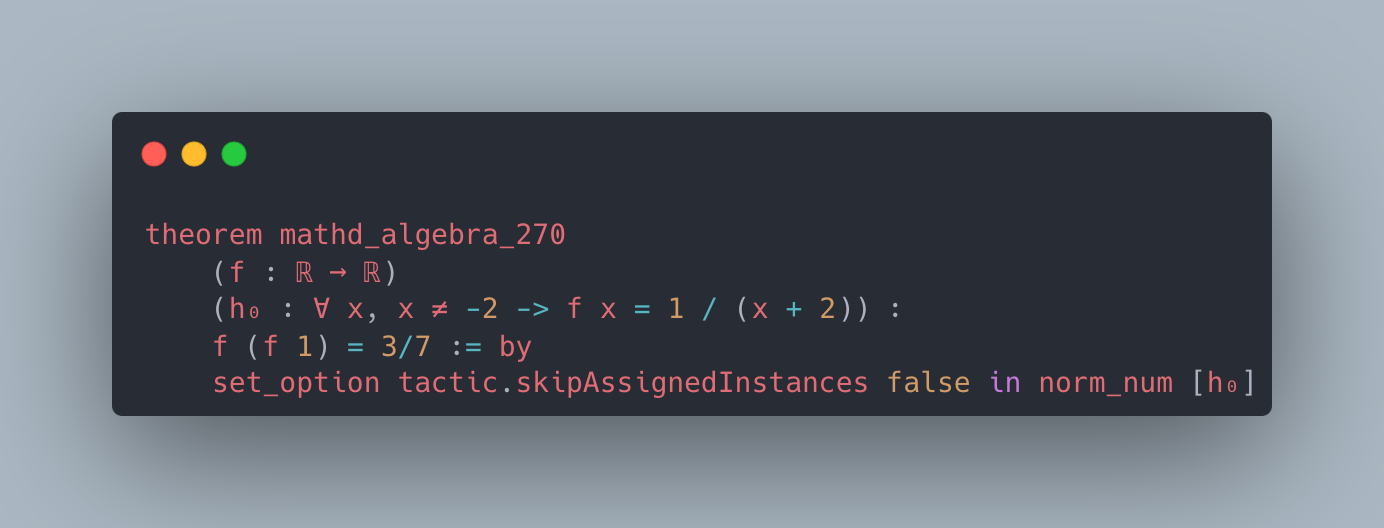

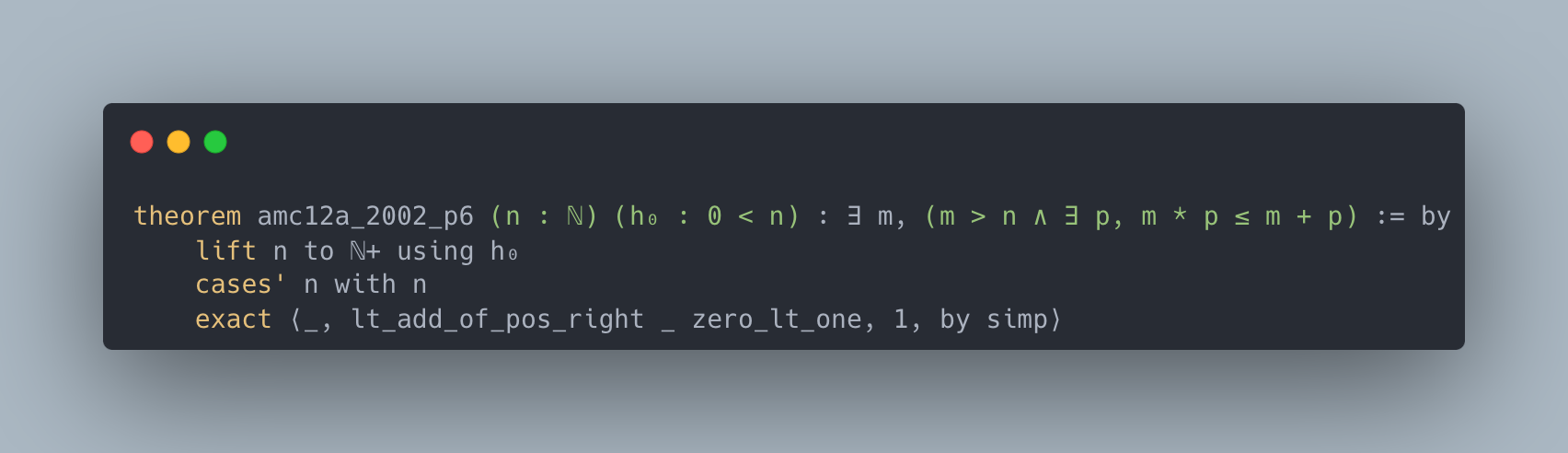

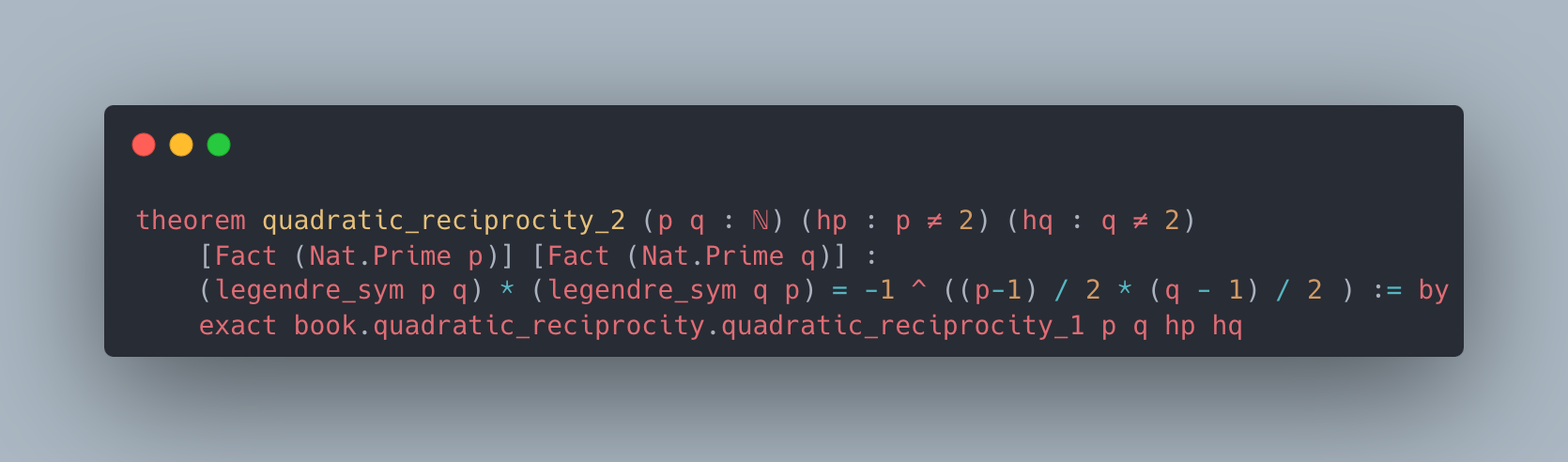

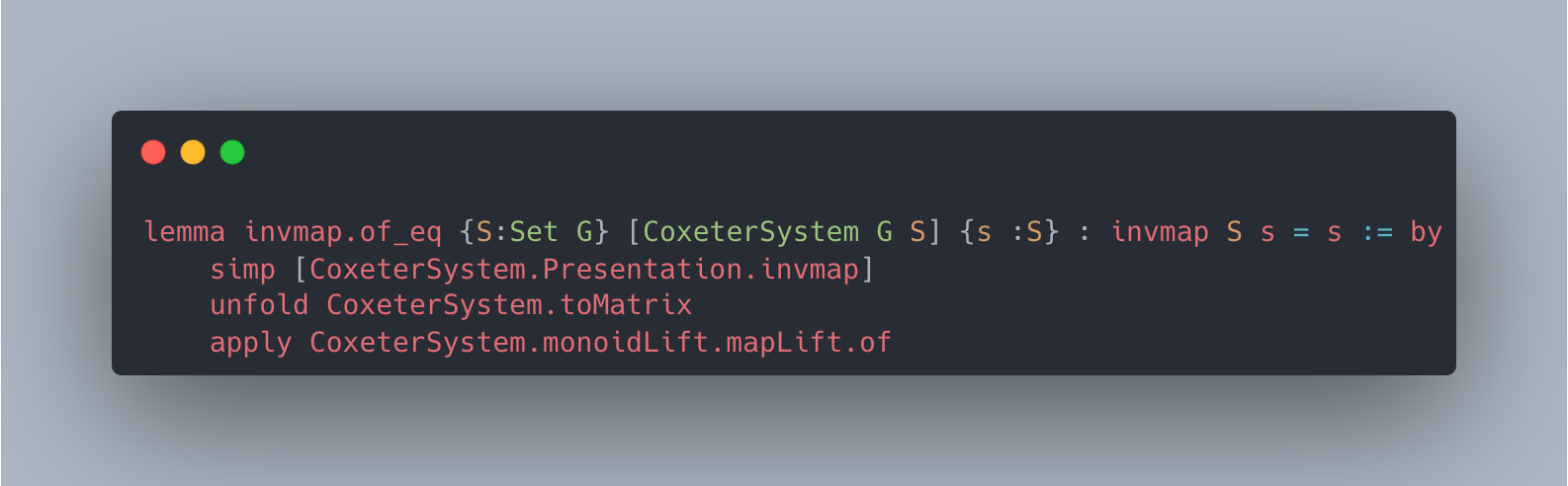

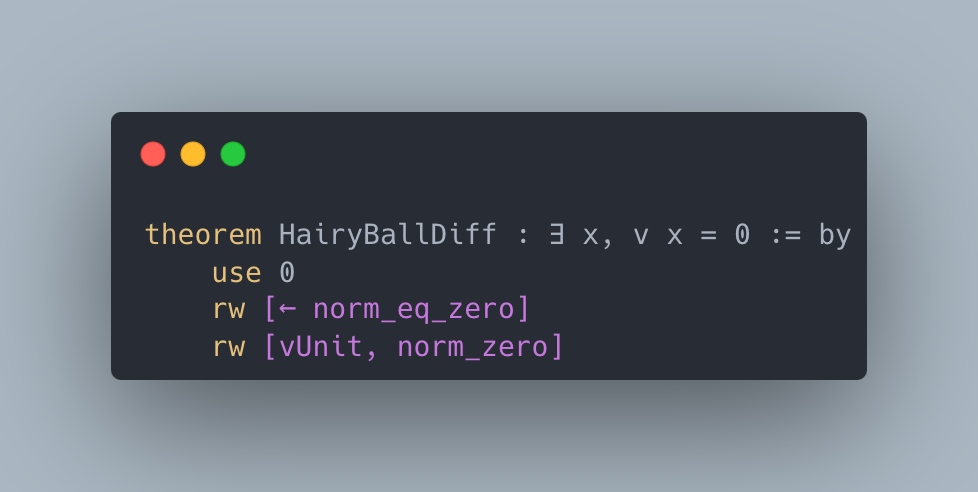

Figure 2: Case studies of LeanAgent’s new proofs. LeanAgent shows an ability to work with these repositories, often able to retrieve the necessary premises (highlighted). For example, LeanAgent proves a sorry theorem from PFR, condRho_of_translate, by simply expanding definitions, showing its proving ability on the PFR repository. In addition, LeanAgent could prove re_float from SciLean during lifelong learning, while ReProver could not. Moreover, its MiniF2F proofs demonstrate its ability to generate relatively longer and more complex proofs for complex mathematics. LeanAgent’s proof of invmap.of_eq and wedderburn represents its theorem proving capabilities with abstract algebra premises.

Table 2: Accuracy in proving sorry theorems across repositories. Accuracy is calculated as (proven theorems / total sorry theorems). “LA” denotes LeanAgent and “ReProver+” denotes the setting where we update ReProver on all 23 repositories at once. “During” shows accuracy during lifelong learning, “Add. After” shows additional accuracy after lifelong learning, and “Total” shows the combined accuracy. “MIL” stands for Mathematics in Lean Source and “Hairy Ball” refers to the Hairy Ball Theorem repository. Repositories with no sorry theorems or no proven ones are not shown. The best accuracy for each repository is in bold. As noted previously, we progressively train on MiniF2F after the initial curriculum to demonstrate the use case of formalizing in a new repository after learning a curriculum. As such, we don’t evaluate LeanAgent after lifelong learning on MiniF2F.

| MIL MiniF2F Formal Book | 29 406 29 | 72.4 24.4 10.3 | 48.3 24.4 6.9 | 24.1 - 3.4 | 48.3 20.9 6.9 | 55.2 20.9 10.3 |

| --- | --- | --- | --- | --- | --- | --- |

| SciLean | 294 | 9.2 | 7.5 | 1.7 | 8.2 | 8.5 |

| Hairy Ball | 14 | 7.1 | 0.0 | 7.1 | 0.0 | 7.1 |

| Coxeter | 15 | 6.7 | 6.7 | 0.0 | 0.0 | 6.7 |

| Carleson | 24 | 4.2 | 4.2 | 0.0 | 4.2 | 4.2 |

| Lean4 PDL | 30 | 3.3 | 3.3 | 0.0 | 3.3 | 3.3 |

| PFR | 37 | 2.7 | 2.7 | 0.0 | 0.0 | 0.0 |

In addition, a use case of LeanAgent is proving in a new repository after learning a curriculum; we progressively train on MiniF2F to demonstrate this. Note that we choose the Lean4 version of the MiniF2F repository (Yang, 2024) and disregard its separation into validation and test splits (reasoning in Appendix A.5). LeanAgent’s success rate on the Lean4 version of the MiniF2F test set is also in Appendix A.5. Moreover, a mathematician could use LeanAgent for (1) an initial curriculum $A$ , and later (2) a sub-curriculum $B$ . LeanAgent can then help the mathematician prove in the repositories in curriculum $A+B$ . To demonstrate this scenario, we continue LeanAgent on a sub-curriculum $B$ of 8 repositories.

Results are in Table 2, with case studies in Figure 2. Appendix A.5 provides a more thorough discussion, including an ablation study, and contains the complete theorems and proofs relevant to this section.

LeanAgent demonstrates continuous generalizability and improvement in theorem-proving capabilities across multiple repositories. LeanAgent’s proofs are a superset of the sorry theorems proved by ReProver in most cases. Moreover, to isolate the effect of curriculum learning, we compare LeanAgent against ReProver+, the ReProver model updated on all 23 repositories at once, and notice that LeanAgent outperforms it on several repositories, emphasizing the importance of curriculum learning. Overall, LeanAgent progresses from basic concepts (arithmetic, simple algebra) to advanced topics (abstract algebra, topology).

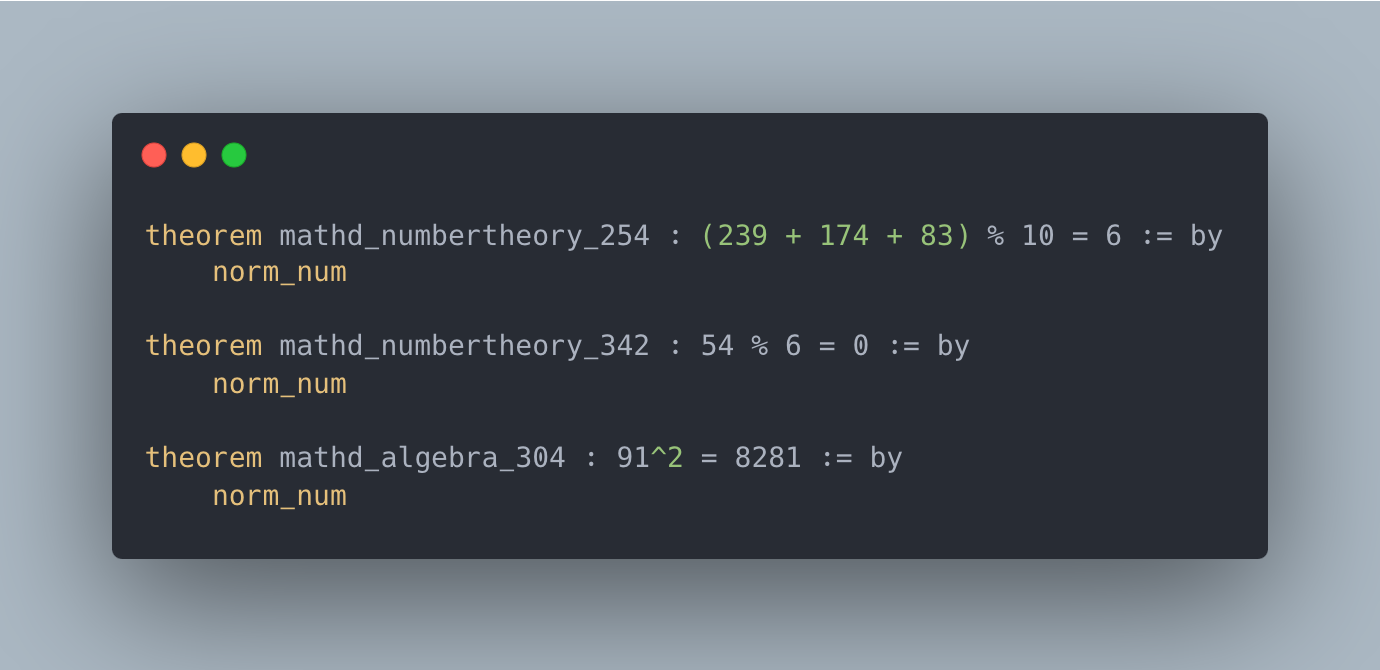

PFR. LeanAgent can prove a sorry theorem from this repository, while ReProver cannot. It also generalizes to a different commit (not included in progressive training), uncovering 7 system exploits. LeanAgent proves two theorems with just the rfl tactic, one of which ReProver cannot, and proves 5 sorry theorems with a $0=1$ placeholder theorem statement.

SciLean. During lifelong learning, LeanAgent proves theorems related to fundamental algebraic structures, linear and affine maps, and measure theory basics. By the end of lifelong learning, it proves concepts in advanced function spaces, sophisticated bijections, and abstract algebraic structures.

Mathematics in Lean Source. During lifelong learning, LeanAgent proves theorems about basic algebraic structures and fundamental arithmetic properties. By the end of lifelong learning, it proves more complex theorems involving quantifier manipulation, set theory, and relations.

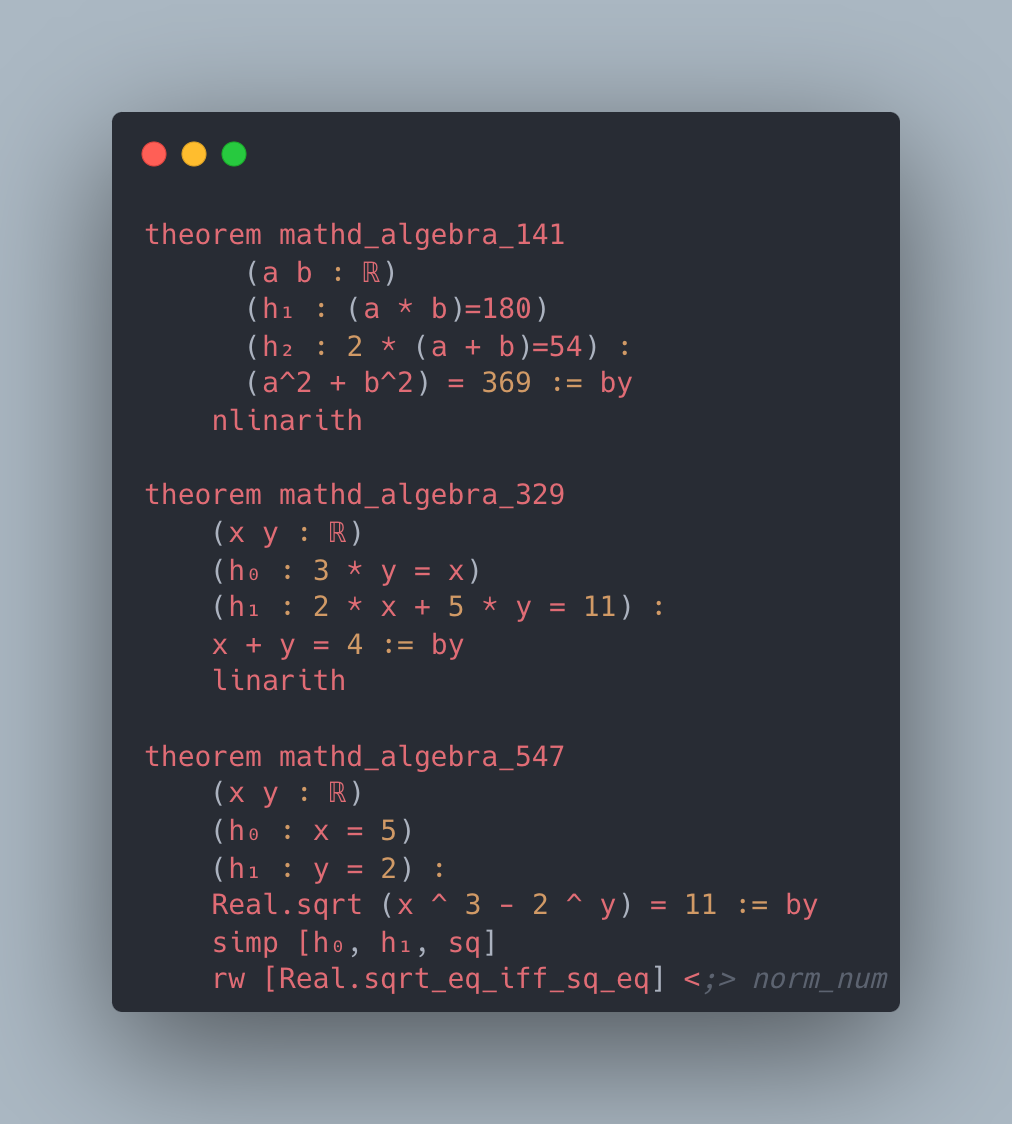

MiniF2F. ReProver demonstrates proficiency in basic arithmetic, elementary algebra, and simple calculus. However, by the end of lifelong learning, LeanAgent handles theorems with advanced number theory, sophisticated algebra, complex calculus and analysis, abstract algebra, and complex induction.

Sub-curriculum. In the Formal Book repository, LeanAgent progresses from proving basic real analysis and number theory theorems to more advanced abstract algebra, exemplified by its proof of Wedderburn’s Little Theorem. For the Coxeter repository, LeanAgent proves a complex lemma about Coxeter systems, showcasing its increased understanding of group theory. In the Hairy Ball Theorem repository, LeanAgent proves a key step of the theorem, demonstrating improved performance in algebraic topology. Only LeanAgent can prove these theorems, demonstrating that it has much more advanced theorem-proving capabilities than ReProver.

4.3 Lifelong Learning Analysis

To our knowledge, no other lifelong learning frameworks for theorem proving exist in the literature. As such, we conduct an ablation study with six lifelong learning metrics to showcase LeanAgent’s superior handling of the stability-plasticity tradeoff. These results help explain LeanAgent’s superiority in sorry theorem proving performance. We compute these metrics for the original curriculum of 14 repositories.

Specifically, the ablation study consists of seven additional setups constructed from a combination of learning and dataset options. Options for learning setups are progressive training with or without EWC. Dataset setups involve a dataset order and construction. Options for dataset orders involve Single Repository or Merge All, where each dataset consists of all previous repositories and the new one. Given the most popular repositories on GitHub by star count, options for dataset construction include popularity order or curriculum order. Appendix A.3 shows these orders and additional repository details.

Metrics. We use six lifelong learning metrics: Windowed-Forgetting 5 (WF5), Forgetting Measure (FM), Catastrophic Forgetting Resilience (CFR), Expanded Backward Transfer (EBWT), Windowed-Plasticity 5 (WP5), and Incremental Plasticity (IP). A description of these metrics is in Table 3 (De Lange et al., 2023; Wang et al., 2024b; Díaz-Rodríguez et al., 2018). Our reasoning for considering these metrics is detailed in Appendix A.4.

Table 3: Description of lifelong learning metrics.

| WF5 | Measures forgetting over a 5-task window | Lower | Existing |

| --- | --- | --- | --- |

| FM | Average performance drop on old tasks | Lower | Existing |

| CFR | Ratio of min to max average test R@10 | Higher | Proposed |

| EBWT | Average improvement on old tasks after learning new ones | Higher | Existing |

| WP5 | Max average test R@10 increase over a 5-task window | Higher | Existing |

| IP | Rate of validation R@10 change per task | Higher | Proposed |

We describe why we introduce two new metrics to address specific aspects of lifelong learning in theorem proving:

- Catastrophic Forgetting Resilience (CFR). This metric captures LeanAgent’s ability to maintain performance on its weakest task relative to its best performance, crucial in the presence of diverse mathematical domains.

- Incremental Plasticity (IP). IP provides a more granular view of plasticity than aggregate measures and is sensitive to the order of tasks, particularly relevant in lifelong learning for theorem proving.

In addition, these metrics in the Merge All strategy measure cumulative knowledge refinement rather than isolated task performance (details in Appendix A.4). Due to these interpretational differences, we analyze Single Repository and Merge All setups separately. We consider an improvement of at least 3% to be significant.

Table 4: Comparison of lifelong learning metrics across setups. The best scores for each metric are in bold.

| WF5 ( $\downarrow$ ) FM ( $\downarrow$ ) CFR ( $\uparrow$ ) | 0.18 0.85 0.88 | 7.60 6.53 0.87 | 7.17 4.04 0.88 | 0.73 2.11 0.85 | 15.83 10.50 0.76 | 2.23 4.06 0.94 | 13.34 11.44 0.75 | 5.82 3.80 0.90 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| EBWT ( $\uparrow$ ) | 1.21 | 0.51 | 1.04 | 0.76 | -0.20 | 0.73 | -1.34 | -0.39 |

| WP5 ( $\uparrow$ ) | 2.47 | 0.89 | 1.47 | 3.42 | 0.00 | 0.09 | 0.00 | 0.11 |

| IP ( $\uparrow$ ) | 1.02 | 0.36 | 0.26 | 1.06 | -1.50 | -0.64 | -1.71 | -0.89 |

| Single Repository: | Merge All: |

| --- | --- |

| Setup 1: No EWC, Popularity Order | Setup 4: No EWC, Popularity Order |

| Setup 2: EWC, Popularity Order | Setup 5: No EWC, Curriculum Learning |

| Setup 3: EWC, Curriculum Learning | Setup 6: EWC, Popularity Order |

| Setup 7: EWC, Curriculum Learning | |

Single Repository Analysis. We first analyze the Single Repository results from Table 4. LeanAgent demonstrates superior stability across multiple metrics. The WF5 metric is 75.34% lower for LeanAgent than the next best setup, suggesting it maintains performance over a window more effectively. Its FM score is 59.97% lower than Setup 3’s, showcasing its resilience against catastrophic forgetting. Furthermore, LeanAgent, Setup 1, and Setup 2 demonstrate high and consistent resilience against catastrophic forgetting, with CFR values above 0.87 and minimal (±0.01) differences. This underscores LeanAgent’s ability to continuously generalize over time. In addition, LeanAgent has a 16.25% higher EBWT, indicating its ability to continuously improve over time.

In contrast, Setup 3 exhibits characteristics of higher plasticity. It shows a 38.26% higher WP5 over LeanAgent, indicating a greater ability to rapidly adapt to new tasks in a window. This is complemented by its 3.98% higher IP over LeanAgent, suggesting a more pronounced improvement on new tasks over time. However, these plasticity gains come at a significant cost: Setup 3 suffers from more severe catastrophic forgetting, as evidenced by its significantly worse stability metrics compared to LeanAgent. This excessive plasticity in Setup 3 stems from EWC’s inability to adapt parameter importance as theorem complexity increases. EWC preserves parameters important for simpler theorems, which may not be crucial for more complex ones. Consequently, these preserved parameters resist change while other parameters change rapidly for complex theorems. This forces the model to become more plastic overall, relying heavily on non-preserved parameters for new, complex theorems.

LeanAgent’s favorable stability and EBWT scores make it the most suitable for lifelong learning in the Single Repository setting.

Merge All Analysis. Next, we analyze the Merge All setups from Table 4. Setup 5’s WF5 metric is 61.68% lower than the next best setup (Setup 7), suggesting Setup 5 balances and retains knowledge across an expanding dataset most effectively. Furthermore, Setup 5’s CFR score is 3.77% higher than that of Setup 7, again demonstrating high and consistent resilience in the face of an expanding, potentially more complex dataset. However, Setup 7 has a 6.44% lower FM score than Setup 5’s, showcasing its ability to maintain performance on earlier data points. Moreover, Setup 5 is the only setup with a positive EBWT, indicating that learning new tasks improves performance on the entire historical dataset. The other setups have a negative EBWT, indicating performance degradation on earlier tasks after learning new ones.

Only Setups 5 and 7 have a non-zero WP5, suggesting the ability to adapt to the growing complexity of the combined dataset. The zero values for Setups 4 and 6 indicate that popularity order struggles to show improvement when dealing with merged data. However, although Setup 5 has the highest IP score with a 27.75% improvement over Setup 7, all 4 setups have negative IP values. This indicates a decrease in validation R@10 over time, suggesting that the Merge All strategy struggles to maintain performance.

Experiment 5’s favorable stability and EBWT scores suggest it is the best at balancing the retention of earlier knowledge with the adaptation to new data in a combined dataset. However, its negative IP value indicates a fundamental issue with its approach.

Comparative Analysis and Insights. Although the metrics have different interpretations in the Single Repository and Merge All settings, we can still draw some meaningful comparisons by focusing on overall trends and relative performance. We must consider that the negative IP values in Merge All setups indicate a significant issue. This drawback outweighs the potential benefits seen in other metrics like WP5, as it indicates a fundamental inability to maintain and improve performance in a continuously growing dataset. In contrast, LeanAgent demonstrates a positive IP, indicating its ability to incorporate new knowledge. This, combined with its superior stability and EBWT metrics relative to other Single Repository methods, suggests that LeanAgent is better suited than Setup 5 for continuous generalizability and improvement.

Consistency with sorry Theorem Proving Performance. This lifelong learning analysis is consistent with LeanAgent’s sorry theorem proving performance. LeanAgent’s superior stability metrics (WF5, FM, and CFR) explain its ability to maintain performance across diverse mathematical domains, as evidenced by its success in proving theorems from various repositories like SciLean, Mathematics in Lean Source, and PFR. Its high EBWT score aligns with its progression from basic concepts to advanced topics in theorem proving. While LeanAgent shows slightly lower plasticity (WP5 and IP) compared to some setups, this trade-off results in better overall performance, as reflected in its ability to prove a superset of sorry theorems compared to ReProver in most cases. This analysis demonstrates LeanAgent’s overall superiority in lifelong learning for theorem proving.

5 Conclusion

We have presented LeanAgent, a lifelong learning framework for theorem proving that achieves continuous generalizability and improvement across diverse mathematical domains. Key components include a curriculum learning strategy, progressive training approach, and custom dynamic database infrastructure. LeanAgent successfully generates formal proofs for 155 theorems where formal proofs were previously missing and uncovers 7 exploits across 23 Lean repositories, including from challenging mathematics. This highlights its potential to assist in formalizing complex proofs across multiple domains and identifying system exploits. For example, LeanAgent successfully proves challenging theorems in abstract algebra and algebraic topology. It outperforms the ReProver baseline in proving new sorry theorems, progressively learning from basic to complex mathematical concepts. Moreover, LeanAgent shows significant performance in forgetting measures and backward transfer, explaining its continuous generalizability and continuous improvement.

Future work could explore integration with Lean Copilot, providing real-time assistance with a mathematician’s repositories. In addition, a limitation of LeanAgent is its inability to prove certain theorems due to a lack of data on specific topics, such as odeSolve.arg_x 0.semiAdjoint_rule in SciLean about ODEs. To solve this problem, future work could use reinforcement learning for synthetic data generation during curriculum construction. Moreover, future work could use LeanAgent with additional math LLMs and search strategies.

Acknowledgments

Adarsh Kumarappan is supported by the Summer Undergraduate Research Fellowships (SURF) program at Caltech. Anima Anandkumar is supported by the Bren named chair professorship, Schmidt AI 2050 senior fellowship, and ONR (MURI grant N00014-18-12624). We thank Terence Tao for detailed discussions and feedback that significantly improved this paper. We thank Zulip chat members for engaging in clarifying conversations that were incorporated into the paper.

References

- Alama et al. (2014) Jesse Alama, Tom Heskes, Daniel Kühlwein, Evgeni Tsivtsivadze, and Josef Urban. Premise Selection for Mathematics by Corpus Analysis and Kernel Methods. Journal of Automated Reasoning, 52(2):191–213, February 2014. ISSN 0168-7433, 1573-0670. doi: 10.1007/s10817-013-9286-5.

- Arana & Stafford (2023) Andrew Arana and Will Stafford. On the difficulty of discovering mathematical proofs. Synthese, 202(2):1–29, 2023. doi: 10.1007/s11229-023-04184-5.

- Avigad (2024) Jeremy Avigad. Avigad/mathematics_in_lean_source, August 2024.

- Azerbayev et al. (2024) Zhangir Azerbayev, Hailey Schoelkopf, Keiran Paster, Marco Dos Santos, Stephen McAleer, Albert Q. Jiang, Jia Deng, Stella Biderman, and Sean Welleck. Llemma: An Open Language Model For Mathematics, March 2024.

- Bengio et al. (2009) Yoshua Bengio, Jérôme Louradour, Ronan Collobert, and Jason Weston. Curriculum learning. In Proceedings of the 26th Annual International Conference on Machine Learning, pp. 41–48, Montreal Quebec Canada, June 2009. ACM. ISBN 978-1-60558-516-1. doi: 10.1145/1553374.1553380.

- Borgeaud et al. (2022) Sebastian Borgeaud, Arthur Mensch, Jordan Hoffmann, Trevor Cai, Eliza Rutherford, Katie Millican, George van den Driessche, Jean-Baptiste Lespiau, Bogdan Damoc, Aidan Clark, Diego de Las Casas, Aurelia Guy, Jacob Menick, Roman Ring, Tom Hennigan, Saffron Huang, Loren Maggiore, Chris Jones, Albin Cassirer, Andy Brock, Michela Paganini, Geoffrey Irving, Oriol Vinyals, Simon Osindero, Karen Simonyan, Jack W. Rae, Erich Elsen, and Laurent Sifre. Improving language models by retrieving from trillions of tokens, February 2022.

- Carneiro (2024) Mario Carneiro. Digama0/lean4lean, September 2024.

- Chang et al. (2021) Ernie Chang, Hui-Syuan Yeh, and Vera Demberg. Does the Order of Training Samples Matter? Improving Neural Data-to-Text Generation with Curriculum Learning. In Paola Merlo, Jorg Tiedemann, and Reut Tsarfaty (eds.), Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume, pp. 727–733, Online, April 2021. Association for Computational Linguistics. doi: 10.18653/v1/2021.eacl-main.61.

- Chaudhry et al. (2019) Arslan Chaudhry, Marc’Aurelio Ranzato, Marcus Rohrbach, and Mohamed Elhoseiny. Efficient Lifelong Learning with A-GEM, January 2019.

- Chen et al. (2023) Jiefeng Chen, Timothy Nguyen, Dilan Gorur, and Arslan Chaudhry. Is forgetting less a good inductive bias for forward transfer?, March 2023.

- Chen et al. (2024) Yupeng Chen, Senmiao Wang, Zhihang Lin, Zeyu Qin, Yushun Zhang, Tian Ding, and Ruoyu Sun. MoFO: Momentum-Filtered Optimizer for Mitigating Forgetting in LLM Fine-Tuning. 2024. doi: 10.48550/ARXIV.2407.20999.

- Cirik et al. (2016) Volkan Cirik, Eduard Hovy, and Louis-Philippe Morency. Visualizing and Understanding Curriculum Learning for Long Short-Term Memory Networks, November 2016.

- Claude Team (2024) Claude Team. Introducing claude 3.5 sonnet, June 2024. URL https://www.anthropic.com/news/claude-3-5-sonnet.

- Community (2024a) Lean Community. Leanprover-community/lean-liquid. leanprover-community, September 2024a.

- Community (2024b) Lean Community. Leanprover-community/lean-perfectoid-spaces. leanprover-community, August 2024b.

- Con-nf (2024) Con-nf. Leanprover-community/con-nf: A formal consistency proof of Quine’s set theory New Foundations. https://github.com/leanprover-community/con-nf/tree/main, 2024.

- Coxeter (2024) Coxeter. NUS-Math-Formalization/coxeter at 96af8aee7943ca8685ed1b00cc83a559ea389a97. https://github.com/NUS-Math-Formalization/coxeter/tree/96af8aee7943ca8685ed1b00cc83a559ea389a97, 2024.

- De Lange et al. (2023) Matthias De Lange, Gido van de Ven, and Tinne Tuytelaars. Continual evaluation for lifelong learning: Identifying the stability gap, March 2023.

- De Moura et al. (2015) Leonardo De Moura, Soonho Kong, Jeremy Avigad, Floris Van Doorn, and Jakob Von Raumer. The Lean Theorem Prover (System Description). In Amy P. Felty and Aart Middeldorp (eds.), Automated Deduction - CADE-25, volume 9195, pp. 378–388, Cham, 2015. Springer International Publishing. ISBN 978-3-319-21400-9 978-3-319-21401-6. doi: 10.1007/978-3-319-21401-6˙26.

- DeepMind (2024) Google DeepMind. Google-deepmind/debate. Google DeepMind, August 2024.

- Díaz-Rodríguez et al. (2018) Natalia Díaz-Rodríguez, Vincenzo Lomonaco, David Filliat, and Davide Maltoni. Don’t forget, there is more than forgetting: New metrics for Continual Learning, October 2018.

- Dillies (2024) Yaël Dillies. YaelDillies/LeanAPAP, September 2024.

- Dohare et al. (2024) Shibhansh Dohare, J. Fernando Hernandez-Garcia, Qingfeng Lan, Parash Rahman, A. Rupam Mahmood, and Richard S. Sutton. Loss of plasticity in deep continual learning. Nature, 632(8026):768–774, August 2024. ISSN 0028-0836, 1476-4687. doi: 10.1038/s41586-024-07711-7.

- Fermat’s Last Theorem (2024) Fermat’s Last Theorem. ImperialCollegeLondon/FLT. Imperial College London, September 2024.

- Firsching, Moritz (2024) Firsching, Moritz. Mo271/FormalBook: Formalizing ”Proofs from THE BOOK”, 2024.

- Formal Logic (2024) Formalized Formal Logic. FormalizedFormalLogic/Foundation. FormalizedFormalLogic, September 2024.

- Gadgil (2024) Siddhartha Gadgil. Siddhartha-gadgil/Saturn: Experiments with SAT solvers with proofs in Lean 4. https://github.com/siddhartha-gadgil/Saturn, 2024.

- Gattinger (2024) Malvin Gattinger. M4lvin/lean4-pdl, September 2024.

- Hairy Ball Theorem (2024) Hairy Ball Theorem. Corent1234/hairy-ball-theorem-lean at a778826d19c8a7ddf1d26beeea628c45450612e6. https://github.com/corent1234/hairy-ball-theorem-lean/tree/a778826d19c8a7ddf1d26beeea628c45450612e6, 2024.

- Irving et al. (2016) Geoffrey Irving, Christian Szegedy, Alexander A Alemi, Niklas Een, Francois Chollet, and Josef Urban. DeepMath - Deep Sequence Models for Premise Selection. In Advances in Neural Information Processing Systems, volume 29. Curran Associates, Inc., 2016.

- Jiang et al. (2022) Albert Q. Jiang, Wenda Li, Szymon Tworkowski, Konrad Czechowski, Tomasz Odrzygóźdź, Piotr Miłoś, Yuhuai Wu, and Mateja Jamnik. Thor: Wielding Hammers to Integrate Language Models and Automated Theorem Provers. 2022. doi: 10.48550/ARXIV.2205.10893.

- Jiang et al. (2024) Gangwei Jiang, Caigao Jiang, Zhaoyi Li, Siqiao Xue, Jun Zhou, Linqi Song, Defu Lian, and Ying Wei. Interpretable Catastrophic Forgetting of Large Language Model Fine-tuning via Instruction Vector. 2024. doi: 10.48550/ARXIV.2406.12227.

- Kevin Buzzard (2019) Kevin Buzzard. The Future of Mathematics? Professor Kevin Buzzard - 30 May 2019, June 2019.

- Kim et al. (2023) Seungyeon Kim, Ankit Singh Rawat, Manzil Zaheer, Sadeep Jayasumana, Veeranjaneyulu Sadhanala, Wittawat Jitkrittum, Aditya Krishna Menon, Rob Fergus, and Sanjiv Kumar. EmbedDistill: A Geometric Knowledge Distillation for Information Retrieval. 2023. doi: 10.48550/ARXIV.2301.12005.

- Kirkpatrick et al. (2017) James Kirkpatrick, Razvan Pascanu, Neil Rabinowitz, Joel Veness, Guillaume Desjardins, Andrei A. Rusu, Kieran Milan, John Quan, Tiago Ramalho, Agnieszka Grabska-Barwinska, Demis Hassabis, Claudia Clopath, Dharshan Kumaran, and Raia Hadsell. Overcoming catastrophic forgetting in neural networks. Proceedings of the National Academy of Sciences, 114(13):3521–3526, March 2017. ISSN 0027-8424, 1091-6490. doi: 10.1073/pnas.1611835114.

- Kontorovich (2024) Alex Kontorovich. AlexKontorovich/PrimeNumberTheoremAnd, August 2024.

- (37) Guillaume Lample, Marie-Anne Lachaux, Thibaut Lavril, Xavier Martinet, Amaury Hayat, Gabriel Ebner, Aurélien Rodriguez, and Timothée Lacroix. HyperTree Proof Search for Neural Theorem Proving.

- Li et al. (2024) Conglong Li, Zhewei Yao, Xiaoxia Wu, Minjia Zhang, Connor Holmes, Cheng Li, and Yuxiong He. DeepSpeed Data Efficiency: Improving Deep Learning Model Quality and Training Efficiency via Efficient Data Sampling and Routing. Proceedings of the AAAI Conference on Artificial Intelligence, 38(16):18490–18498, March 2024. ISSN 2374-3468, 2159-5399. doi: 10.1609/aaai.v38i16.29810.

- Li & Hoiem (2017) Zhizhong Li and Derek Hoiem. Learning without Forgetting, February 2017.

- Lin et al. (2024) Haohan Lin, Zhiqing Sun, Yiming Yang, and Sean Welleck. Lean-STaR: Learning to Interleave Thinking and Proving, August 2024.

- Liu et al. (2023) Chengwu Liu, Jianhao Shen, Huajian Xin, Zhengying Liu, Ye Yuan, Haiming Wang, Wei Ju, Chuanyang Zheng, Yichun Yin, Lin Li, Ming Zhang, and Qun Liu. FIMO: A Challenge Formal Dataset for Automated Theorem Proving, December 2023.

- Lopez-Paz & Ranzato (2017) David Lopez-Paz and Marc’ Aurelio Ranzato. Gradient Episodic Memory for Continual Learning. In Advances in Neural Information Processing Systems, volume 30. Curran Associates, Inc., 2017.

- Loshchilov & Hutter (2017) I. Loshchilov and F. Hutter. Decoupled Weight Decay Regularization. In International Conference on Learning Representations, November 2017.

- Lu et al. (2024) Minghai Lu, Benjamin Delaware, and Tianyi Zhang. Proof Automation with Large Language Models, September 2024.

- Lu et al. (2022) Shuai Lu, Nan Duan, Hojae Han, Daya Guo, Seung-won Hwang, and Alexey Svyatkovskiy. ReACC: A Retrieval-Augmented Code Completion Framework. In Smaranda Muresan, Preslav Nakov, and Aline Villavicencio (eds.), Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 6227–6240, Dublin, Ireland, May 2022. Association for Computational Linguistics. doi: 10.18653/v1/2022.acl-long.431.

- mathlib4 Community (2024) The mathlib4 Community. Leanprover-community/mathlib4. leanprover-community, September 2024.

- Mendez & Eaton (2021) Jorge A Mendez and Eric Eaton. Lifelong Learning of Compositional Structures. 2021.

- Mikuła et al. (2024) Maciej Mikuła, Szymon Tworkowski, Szymon Antoniak, Bartosz Piotrowski, Albert Qiaochu Jiang, Jin Peng Zhou, Christian Szegedy, Łukasz Kuciński, Piotr Miłoś, and Yuhuai Wu. Magnushammer: A Transformer-Based Approach to Premise Selection, March 2024.

- Mizuno (2024) Yuma Mizuno. Yuma-mizuno/lean-math-workshop. https://github.com/yuma-mizuno/lean-math-workshop, 2024.

- Monnerjahn (2024) Ludwig Monnerjahn. Louis-Le-Grand/Formalisation-of-constructable-numbers, September 2024.

- Murphy (2024) Logan Murphy. Loganrjmurphy/LeanEuclid, September 2024.

- OpenAI (2024) OpenAI. OpenAI o1 System Card, September 2024.

- Piotrowski et al. (2023) Bartosz Piotrowski, Ramon Fernández Mir, and Edward Ayers. Machine-Learned Premise Selection for Lean. In Automated Reasoning with Analytic Tableaux and Related Methods: 32nd International Conference, TABLEAUX 2023, Prague, Czech Republic, September 18–21, 2023, Proceedings, pp. 175–186, Berlin, Heidelberg, September 2023. Springer-Verlag. ISBN 978-3-031-43512-6. doi: 10.1007/978-3-031-43513-3˙10.

- Polu et al. (2022) Stanislas Polu, Jesse Michael Han, Kunhao Zheng, Mantas Baksys, Igor Babuschkin, and I. Sutskever. Formal Mathematics Statement Curriculum Learning. ArXiv, February 2022.

- Renshaw (2024) David Renshaw. Dwrensha/compfiles: Catalog Of Math Problems Formalized In Lean. https://github.com/dwrensha/compfiles, September 2024.

- Shao et al. (2024) Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, Y. K. Li, Y. Wu, and Daya Guo. DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models, April 2024.

- Shin et al. (2017) Hanul Shin, Jung Kwon Lee, Jaehong Kim, and Jiwon Kim. Continual Learning with Deep Generative Replay, December 2017.

- Skřivan (2024) Tomáš Skřivan. Lecopivo/SciLean: Scientific computing in Lean 4. https://github.com/lecopivo/SciLean/tree/master, September 2024.

- Song et al. (2024) Peiyang Song, Kaiyu Yang, and Anima Anandkumar. Towards large language models as copilots for theorem proving in lean, 2024. URL https://arxiv.org/abs/2404.12534.

- (60) Valentin I Spitkovsky, Hiyan Alshawi, and Daniel Jurafsky. Baby Steps: How “Less is More” in Unsupervised Dependency Parsing.

- Subramanian et al. (2017) Sandeep Subramanian, Sai Rajeswar, Francis Dutil, Chris Pal, and Aaron Courville. Adversarial Generation of Natural Language. In Phil Blunsom, Antoine Bordes, Kyunghyun Cho, Shay Cohen, Chris Dyer, Edward Grefenstette, Karl Moritz Hermann, Laura Rimell, Jason Weston, and Scott Yih (eds.), Proceedings of the 2nd Workshop on Representation Learning for NLP, pp. 241–251, Vancouver, Canada, August 2017. Association for Computational Linguistics. doi: 10.18653/v1/W17-2629.

- (62) Terence Tao. Teorth/asymptotic.

- Tao (2024a) Terence Tao. Teorth/newton, June 2024a.

- Tao (2024b) Terence Tao. Teorth/symmetric_project, July 2024b.

- Tao et al. (2024) Terence Tao, Pietro Monticone, Lorenzo Luccioli, and Rémy Degenne. Teorth/pfr, August 2024.

- Thakur et al. (2024) Amitayush Thakur, George Tsoukalas, Yeming Wen, Jimmy Xin, and Swarat Chaudhuri. An In-Context Learning Agent for Formal Theorem-Proving, August 2024.

- (67) Szymon Tworkowski, Maciej Mikula, Tomasz Odrzygozdz, Konrad Czechowski, Szymon Antoniak, Albert Q Jiang, Christian Szegedy, Lukasz Kucinski, Piotr Milos, and Yuhuai Wu. Formal Premise Selection With Language Models.

- van de Ven et al. (2024) Gido M. van de Ven, Nicholas Soures, and Dhireesha Kudithipudi. Continual Learning and Catastrophic Forgetting, March 2024.

- van Doorn (2024) Floris van Doorn. Fpvandoorn/carleson, September 2024.

- Vaswani et al. (2017) Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Ł ukasz Kaiser, and Illia Polosukhin. Attention is All you Need. In Advances in Neural Information Processing Systems, volume 30. Curran Associates, Inc., 2017.

- Wang et al. (2023) Haiming Wang, Ye Yuan, Zhengying Liu, Jianhao Shen, Yichun Yin, Jing Xiong, Enze Xie, Han Shi, Yujun Li, Lin Li, Jian Yin, Zhenguo Li, and Xiaodan Liang. DT-Solver: Automated Theorem Proving with Dynamic-Tree Sampling Guided by Proof-level Value Function. In Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (eds.), Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 12632–12646, Toronto, Canada, July 2023. Association for Computational Linguistics. doi: 10.18653/v1/2023.acl-long.706.

- Wang et al. (2024a) Haiming Wang, Huajian Xin, Zhengying Liu, Wenda Li, Yinya Huang, Jianqiao Lu, Zhicheng Yang, Jing Tang, Jian Yin, Zhenguo Li, and Xiaodan Liang. Proving Theorems Recursively, May 2024a.

- Wang et al. (2024b) Liyuan Wang, Xingxing Zhang, Hang Su, and Jun Zhu. A Comprehensive Survey of Continual Learning: Theory, Method and Application. IEEE Transactions on Pattern Analysis and Machine Intelligence, 46(8):5362–5383, August 2024b. ISSN 0162-8828, 2160-9292, 1939-3539. doi: 10.1109/TPAMI.2024.3367329.

- Wang & Deng (2020) Mingzhe Wang and Jia Deng. Learning to Prove Theorems by Learning to Generate Theorems. ArXiv, February 2020.

- Wang et al. (2024c) Ruida Wang, Jipeng Zhang, Yizhen Jia, Rui Pan, Shizhe Diao, Renjie Pi, and Tong Zhang. TheoremLlama: Transforming General-Purpose LLMs into Lean4 Experts, October 2024c.

- Wieser (2024) Eric Wieser. Eric-wieser/lean-matrix-cookbook: The matrix cookbook, proved in the Lean theorem prover. https://github.com/eric-wieser/lean-matrix-cookbook, 2024.

- Xin et al. (2024a) Huajian Xin, Daya Guo, Zhihong Shao, Zhizhou Ren, Qihao Zhu, Bo Liu, Chong Ruan, Wenda Li, and Xiaodan Liang. DeepSeek-Prover: Advancing Theorem Proving in LLMs through Large-Scale Synthetic Data, May 2024a.

- Xin et al. (2024b) Huajian Xin, Z. Z. Ren, Junxiao Song, Zhihong Shao, Wanjia Zhao, Haocheng Wang, Bo Liu, Liyue Zhang, Xuan Lu, Qiushi Du, Wenjun Gao, Qihao Zhu, Dejian Yang, Zhibin Gou, Z. F. Wu, Fuli Luo, and Chong Ruan. DeepSeek-Prover-V1.5: Harnessing Proof Assistant Feedback for Reinforcement Learning and Monte-Carlo Tree Search, August 2024b.

- Xue et al. (2022) Linting Xue, Aditya Barua, Noah Constant, Rami Al-Rfou, Sharan Narang, Mihir Kale, Adam Roberts, and Colin Raffel. ByT5: Towards a Token-Free Future with Pre-trained Byte-to-Byte Models. Transactions of the Association for Computational Linguistics, 10:291–306, 2022. doi: 10.1162/tacl˙a˙00461.

- Yang (2024) Kaiyu Yang. Yangky11/miniF2F-lean4. https://github.com/yangky11/miniF2F-lean4/tree/main, 2024.

- Yang et al. (2023) Kaiyu Yang, Aidan M. Swope, Alex Gu, Rahul Chalamala, Peiyang Song, Shixing Yu, Saad Godil, Ryan Prenger, and Anima Anandkumar. LeanDojo: Theorem Proving with Retrieval-Augmented Language Models. 2023. doi: 10.48550/ARXIV.2306.15626.

- Zaremba & Sutskever (2015) Wojciech Zaremba and Ilya Sutskever. Learning to Execute, February 2015.

- Zeta 3 Irrational (2024) Zeta 3 Irrational. Ahhwuhu/zeta_3_irrational at 3d68ddd90434a398c9a72f30d50c57f15a0118c7. https://github.com/ahhwuhu/zeta_3_irrational/tree/3d68ddd90434a398c9a72f30d50c57f15a0118c7, 2024.

- (84) Jiawei Zhao, Zhenyu Zhang, Beidi Chen, Zhangyang Wang, Anima Anandkumar, and Yuandong Tian. GaLore: Memory-Efficient LLM Training by Gradient Low-Rank Projection. URL http://arxiv.org/abs/2403.03507.

- Zhou et al. (2024) Jin Peng Zhou, Charles Staats, Wenda Li, Christian Szegedy, Kilian Q. Weinberger, and Yuhuai Wu. Don’t Trust: Verify – Grounding LLM Quantitative Reasoning with Autoformalization, March 2024.

- Zhou et al. (2023) Shuyan Zhou, Uri Alon, Frank F. Xu, Zhiruo Wang, Zhengbao Jiang, and Graham Neubig. DocPrompting: Generating Code by Retrieving the Docs, February 2023.

- Zombori et al. (2019) Zsolt Zombori, Adrian Csiszarik, Henryk Michalewski, Cezary Kaliszyk, and Josef Urban. Curriculum Learning and Theorem Proving. 2019.

Appendix A Appendix

A.1 Further Methodology Details

Repository Scanning and Data Extraction. We use the GitHub API to query for Lean repositories based on sorting parameters (e.g., by repository stars or most recently updated repositories). We maintain a list of known repositories to avoid; the list can be updated to allow LeanAgent to re-analyze the same repository on a new commit or Lean version.

We clone each identified repository locally using the Git version control system. To ensure compatibility with our theorem-proving pipeline, we check the Lean version required by each repository and compare it with the supported versions of our system. If the required version is incompatible, we skip the repository and move on to the next one. Otherwise, LeanAgent switches its Lean version to match the repository’s version. This version checking is performed by parsing the repository’s configuration files and extracting the specified Lean version.

Dynamic Database Management. This database contains many key features that are useful in our setting. For example, it can add new repositories, update existing ones, and generate merged datasets from multiple repositories with customizable splitting strategies. In addition, it can query specific theorems or premises across repositories, track the progress of proof attempts (including the proof status of sorry theorems), and analyze the structure and content of Lean proofs, including tactic sequences and proof states.

The database keeps track of various details: Repository metadata; theorems categorized as already proven, sorry theorems that are proven, or sorry theorems that are unproven; premise files with their imports and individual premises; traced files for tracking which files have been processed; detailed theorem information, including file path, start/end positions, and full statements; and traced tactics with annotated versions, including the proof state before and after application.

If we encounter duplicate theorems between repositories while merging repositories, we use the theorem from the repository most recently added to the database. We deduplicate premise files and traced files by choosing the first one encountered while merging the repositories. We also generate metadata containing details of all the repositories used to generate the dataset and statistics regarding the theorems, premise files, and traced files in the dataset, such as the total number of theorems.

We provide the user with many options to generate a dataset. To generate the set of theorems and proofs, the default option is to simply use the theorems, proofs, premise files, and traced files from the current curriculum repository in the database. Specifically, we use the random split from LeanDojo to create training, validation, and testing sets. We refrain from using the novel split from LeanDojo, as we would like LeanAgent to learn as much as possible from a repository to perform well on its hardest theorems. The data in the splits include details about the proofs of theorems, including the URL and commit of the source repository, the file path of the theorem, the full name of the theorem, the theorem statement, the start and end positions in the source file, and a list of traced tactics with annotations. The validation and test split each contain 2% of the total theorem and proofs, following the methodology from LeanDojo. Moreover, the database uses a topological sort over the traced files in the repository to generate the premise corpus. This corpus is a JSON Lines file, where each line is a JSON object consisting of a path to a Lean source file, the file’s imports, and the file’s premise statements and definitions.

Progressive Training of the Retriever. We describe some additional steps for progressive training. To precompute the embeddings, we use a single forward pass with batch processing to serialize and tokenize premises from the entire corpus. Then, we use the retriever’s encoder to process the batches and generate embeddings.

sorry Theorem Proving. We start by processing the premise corpus to use it more efficiently during premise retrieval. This involves initializing a directed dependency graph to represent each file path in the corpus, adding files as nodes and imports as edges, and creating a transitive closure of this graph. We also track all premises encountered during this process, building a comprehensive knowledge base.

Crucially, we limit retrieval to a subset of all available premises to aid the effectiveness of the results. Specifically, we choose the top 25% of accessible and relevant premises, following ReProver’s method.

Proof Integration and Pull Request Generation. We integrate the generated proofs into the original Lean files and create pull requests to propose the changes to the repository owners. This aids the development of these repositories and functions as more training data for future research.

To achieve this, in a temporary Git branch, we iterate over the Lean files and locate the sorry keywords corresponding to the generated proofs. We then replace these sorry keywords with the actual proof text, working from the bottom of each file upward to preserve the position of theorems. After integrating the proofs, we commit our changes, push them, and create a pull request for the repository on GitHub.

A.2 Experiment Implementation Details

We use ReProver’s retriever trained on the random split from LeanDojo. We use four NVIDIA A100 GPUs with 80GB of memory each for progressive training. LeanAgent uses a distributed architecture leveraging PyTorch Lightning and Ray for parallel processing. We use bfloat16 mixed precision and optimize with AdamW (Loshchilov & Hutter, 2017) with an effective batch size of 16 (achieved through a batch size of 4 with gradient accumulation over 4 steps). In the first 1,000 steps, the learning rate warms up linearly from 0 to the maximum value of $10^{-3}$ . Then it decays to 0 using a cosine schedule. In addition, we apply gradient clipping with a value of 1.0. Just as ReProver does during training, we sample 3 negative premises per example, including 1 in-file negative premise. The maximum sequence length for the retriever is set to 1024 tokens. The maximum sequence length for the generator is set to 512 tokens for input and 128 tokens for output.

The prover uses a best-first search strategy with no limit on the maximum number of expansions of the search tree. It generates 64 tactic candidates and retrieves 100 premises for each proof state. LeanAgent uses ReProver’s tactic generator for the experiments. We generate tactics with a beam search of size 5. We used 4 CPU workers, 1 per GPU. Due to the wide variety of repositories and experimental setups that we tested, the time for each experiment widely varied. For example, the experiments in Table 10 took from 4 to 9 days to complete.

Furthermore, we do not compare LeanAgent with any existing LLM-based prover besides ReProver because LeanAgent is a framework, not a model. As mentioned previously, it can be used with any LLM. As such, a comparison would be impractical for reasons including differences in data, pre-training, and fine-tuning. We only compare with ReProver because we use ReProver’s retriever as the starting one in LeanAgent, allowing for a more faithful comparison.

Moreover, we do not compare with Aesop because it is not an ML model. We aim to improve upon ML research for theorem proving, such as ReProver. Moreover, Aesop is not a framework, but ReProver was included as the starting point of the LeanAgent framework, which is why we compare LeanAgent to ReProver. Rather than comparing against existing tools, we aim to understand how lifelong learning can work in theorem proving.

Furthermore, although LeanAgent can work with other LLMs such as Llemma (Azerbayev et al., 2024) and DeepSeek-Prover (Xin et al., 2024a), using these LLMs in our work would require architectural modifications that go beyond the scope of our current work. For example, the 7B model DeepSeek-Prover as well as the 7B and 34B Llemma models are not retrieval-based. As such, rather than progressively training a retriever, we would progressively train the entire model. This may be feasible with methods such as Gradient Low-Rank Projection (Zhao et al., ), but this would lead to fundamentally different usage than we currently demonstrate. Specifically, rather than using a best-first tree search approach as we do with ReProver’s retriever and tactic generator, we may instead need to generate the entire proof at once. This setup is quite dissimilar from our current evaluation, and so these results may be too dissimilar from our current evaluation framework.

In addition, we would like to note that because LeanAgent does not claim to contribute a new search algorithm, it can be used with other search strategies such as Hypertree Proof Search (Lample et al., ). However, the source code for Hypertree Proof Search was only recently released on GitHub, explaining why we did not use it thus far.

Moreover, the objective function for Elastic Weight Consolidation (EWC) is given by:

$$

L(\theta)=L_{B}(\theta)+\frac{\lambda}{2}\sum_{i}F_{i}(\theta_{i}-\theta_{A,i}%

)^{2}

$$

where $L_{B}(\theta)$ is the loss for the current task B, $i$ is the label for each parameter, $\theta_{A,i}$ are the parameters from the previous task A, $F_{i}$ is the Fisher information matrix, and $\lambda$ is a hyperparameter that controls the strength of the EWC penalty. For the setups that use EWC, we performed a grid search over $\lambda$ values in {0.01, 0.1, 1, 10, 100}. For each value, we ran Setup 2 on separate testing repositories. We found 0.1 to yield the best overall stability and plasticity scores.

A.3 Repository Details

Table 5: Additional repository descriptions

Table 6: Repository commits. Formalization of Const. Numbers denotes Formalization of Constructable Numbers.

| PFR Hairy Ball Theorem Coxeter | fa398a5b853c7e94e3294c45e50c6aee013a2687 a778826d19c8a7ddf1d26beeea628c45450612e6 96af8aee7943ca8685ed1b00cc83a559ea389a97 |

| --- | --- |

| Mathematics in Lean Source | 5297e0fb051367c48c0a084411853a576389ecf5 |

| Formal Book | 6fbe8c2985008c0bfb30050750a71b90388ad3a3 |

| MiniF2F | 9e445f5435407f014b88b44a98436d50dd7abd00 |

| SciLean | 22d53b2f4e3db2a172e71da6eb9c916e62655744 |

| Carleson | bec7808b907190882fa1fa54ce749af297c6cf37 |

| Lean4 PDL | c7f649fe3c4891cf1a01c120e82ebc5f6199856e |

| Prime Number Theorem And | 29baddd685660b5fedd7bd67f9916ae24253d566 |

| Compfiles | f99bf6f2928d47dd1a445b414b3a723c2665f091 |

| FLT | b208a302cdcbfadce33d8165f0b054bfa17e2147 |

| Debate | 7fb39251b705797ee54e08c96177fabd29a5b5a3 |

| Lean4Lean | 05b1f4a68c5facea96a5ee51c6a56fef21276e0f |

| Matrix Cookbook | f15a149d321ac99ff9b9c024b58e7882f564669f |

| Math Workshop | 5acd4b933d47fd6c1032798a6046c1baf261445d |

| LeanEuclid | f1912c3090eb82820575758efc31e40b9db86bb8 |

| Foundation | d5fe5d057a90a0703a745cdc318a1b6621490c21 |

| Con-nf | 00bdc85ba7d486a9e544a0806a1018dd06fa3856 |

| Saturn | 3811a9dd46cdfd5fa0c0c1896720c28d2ec4a42a |

| Zeta 3 Irrational | 914712200e463cfc97fe37e929d518dd58806a38 |

| Formalization of Const. Numbers | 01ef1f22a04f2ba8081c5fb29413f515a0e52878 |

| LeanAPAP | 951c660a8d7ba8e39f906fdf657674a984effa8b |

Table 7: Repository orders (initial curriculum). Note that Popularity Order is by star count on August 20, 2024.

| 1 2 3 | Compfiles Mathematics in Lean Source Prime Number Theorem And | SciLean FLT PFR |

| --- | --- | --- |

| 4 | Math Workshop | Prime Number Theorem And |

| 5 | FLT | Compfiles |

| 6 | PFR | Debate |

| 7 | SciLean | Mathematics in Lean Source |

| 8 | Debate | Lean4Lean |

| 9 | Matrix Cookbook | Matrix Cookbook |

| 10 | Con-nf | Math Workshop |

| 11 | Foundation | LeanEuclid |

| 12 | Saturn | Foundation |

| 13 | LeanEuclid | Con-nf |

| 14 | Lean4Lean | Saturn |

Table 8: Curriculum order (sub-curriculum)

| 1 2 3 | Zeta 3 Irrational Formal Book Formalization of Constructable Numbers |

| --- | --- |

| 4 | Carleson |

| 5 | LeanAPAP |

| 6 | Hairy Ball Theorem |

| 7 | Coxeter |

| 8 | Lean4 PDL |

Table 9: Repository theorem and premise counts

| PFR Hairy Ball Theorem Coxeter | 74306 73026 71273 | 109855 131217 127608 |

| --- | --- | --- |

| Mathematics in Lean Source | 78886 | 117699 |

| Formal Book | 74654 | 112458 |

| MiniF2F | 71313 | 127202 |

| SciLean | 72244 | 129711 |

| Carleson | 73851 | 109334 |

| Lean4 PDL | 20599 | 46400 |

| Prime Number Theorem And | 79147 | 115751 |

| Compfiles | 121391 | 178108 |

| FLT | 75082 | 114830 |

| Debate | 68853 | 103684 |

| Lean4Lean | 2559 | 22689 |

| Matrix Cookbook | 67585 | 102294 |

| Math Workshop | 76942 | 115458 |

| LeanEuclid | 15423 | 40555 |

| Foundation | 25047 | 57964 |

| Con-nf | 29489 | 64177 |

| Saturn | 10982 | 34497 |

| Zeta 3 Irrational | 120174 | 176332 |

| Formalization of Const. Numbers | 74050 | 109645 |

| LeanAPAP | 71090 | 109477 |

Repository Statistics and Information. Additional repository descriptions are in Table 5. The commits we used for experiments are in Table 6. Moreover, the repository orders are detailed in Table 7 and Table 8. Furthermore, the total number of theorems and premises per repository (including dependencies) are in Table 9.

Repository Selection Process. Many repositories have issues such as incompatibilities with LeanDojo, unsupported Lean versions, and build failures. As such, our process for choosing the 14 repositories in the first curriculum was simply using LeanAgent to extract information from the most popular repositories on GitHub. We disregard incompatible and inapplicable ones, such as those with no theorems. We performed this process on August 20, 2024. We performed a similar process for the eight repositories in the second curriculum with two differences: (1) We checked that the number of sorry theorems visible from GitHub was at least 10. This narrowed down the available repositories significantly. (2) We included some more recently updated repositories to provide some variety in the age of the repositories in our curriculum. We performed this process on September 14, 2024. However, many of the repositories that passed this test had fewer than 10 sorry theorems when processed by LeanDojo; this is mainly due to the functionalities of LeanDojo.

A.4 Lifelong Learning Metric Details