## DOES SPATIAL COGNITION EMERGE IN FRONTIER MODELS?

Santhosh Kumar Ramakrishnan ∗ Erik Wijmans Philipp Krähenbühl Vladlen Koltun

Apple

## ABSTRACT

Not yet. We present SPACE, a benchmark that systematically evaluates spatial cognition in frontier models. Our benchmark builds on decades of research in cognitive science. It evaluates large-scale mapping abilities that are brought to bear when an organism traverses physical environments, smaller-scale reasoning about object shapes and layouts, and cognitive infrastructure such as spatial attention and memory. For many tasks, we instantiate parallel presentations via text and images, allowing us to benchmark both large language models and large multimodal models. Results suggest that contemporary frontier models fall short of the spatial intelligence of animals, performing near chance level on a number of classic tests of animal cognition. Code and data are available: https://github.com/apple/ml-space-benchmark

## 1 INTRODUCTION

Frontier models have achieved impressive performance in mathematics, coding, general knowledge, and commonsense reasoning (Hendrycks et al., 2021a;b; Chen et al., 2021; Sakaguchi et al., 2021; Yue et al., 2024). This remarkable progress has inspired characterizations of frontier models as possessing the intelligence of a smart high schooler and predictions of the imminent arrival of superintelligence (Aschenbrenner, 2024). These characterizations are often underpinned by the premise that competence (or even mastery) in some aspects of cognition is symptomatic of broad cognitive competence. This is not self-evident. To quote Brooks's first law of artificial intelligence, 'When an AI system performs a task, human observers immediately estimate its general competence in areas that seem related. Usually that estimate is wildly overinflated.' (Brooks, 2024).

Our work focuses on spatial cognition, a foundational form of intelligence that is present in a broad spectrum of animals including humans (Marshall & Fink, 2001; Waller & Nadel, 2013; Mallot, 2024). Spatial cognition refers to the ability of animals to perceive and interact with their surroundings, build mental representations of objects and environments, and draw upon these representations to support navigation and manipulation. Decades of research in animal cognition have characterized the spatial cognition of mice, rats, bats, pigeons, corvids, dogs, wolves, elephants, marmosets, tamarins, howler monkeys, baboons, chimpanzees, and humans (Tolman, 1948; Menzel, 1973; Peters, 1974; Gillner & Mallot, 1998; Marshall & Fink, 2001; Noser & Byrne, 2007; Tommasi et al., 2012; Porter & Garber, 2013; Blaser et al., 2013; Geva-Sagiv et al., 2015; Presotto et al., 2019; de Guinea et al., 2021; Payne et al., 2021; Xu et al., 2024; Xavier et al., 2024; Welklin et al., 2024). Human infants already possess rudimentary spatial cognition, which subsequently improves along developmental schedules that have been characterized (Blades & Spencer, 1994; Newcombe, 2000; Vasilyeva & Lourenco, 2012). Spatial cognition is known to underpin more advanced cognitive abilities (Kozhevnikov et al., 2007; Newcombe, 2010; Young et al., 2018).

The emergence of spatial cognition has been linked to embodiment (Smith & Gasser, 2005; Jansen & Heil, 2010; Frick & Möhring, 2016), without which the development of spatial cognition may be impaired (Foreman et al., 1990; Anderson et al., 2013). However, frontier models are typically trained in a disembodied manner on corpora of text, images, and video. Does spatial cognition emerge in disembodied frontier models? To study this question systematically, we develop SPACE,

∗ Corresponding author: s ramakrishnan@apple.com

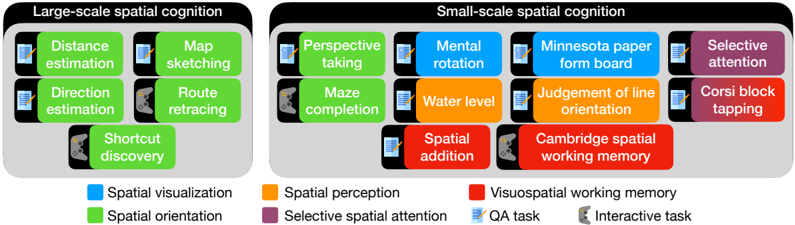

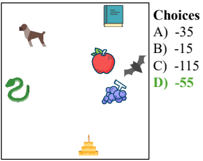

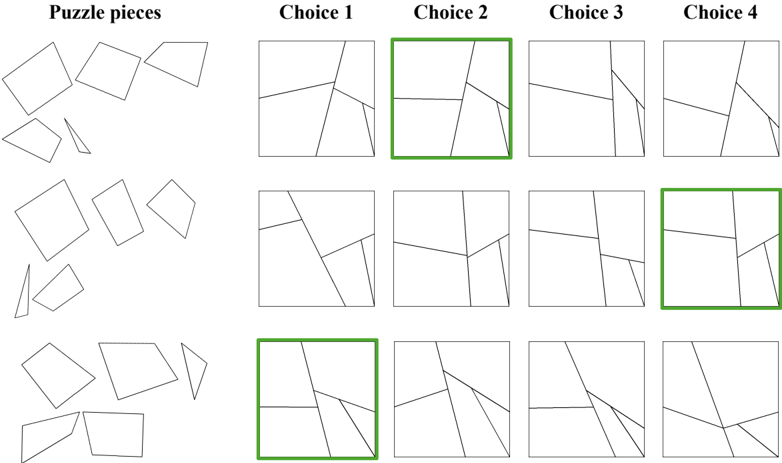

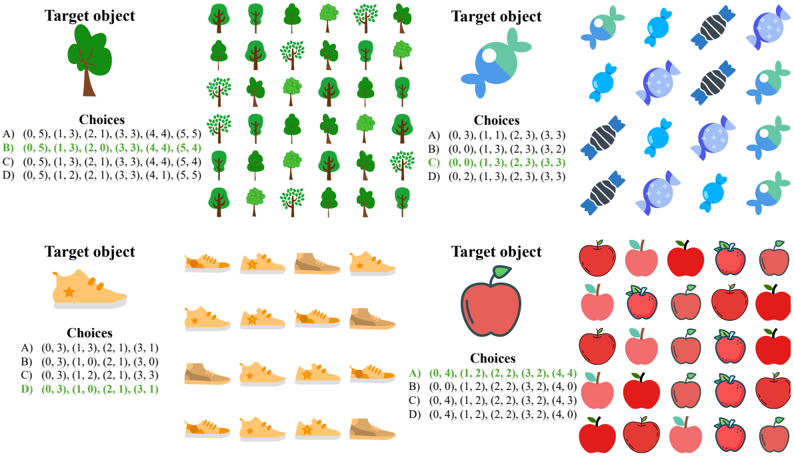

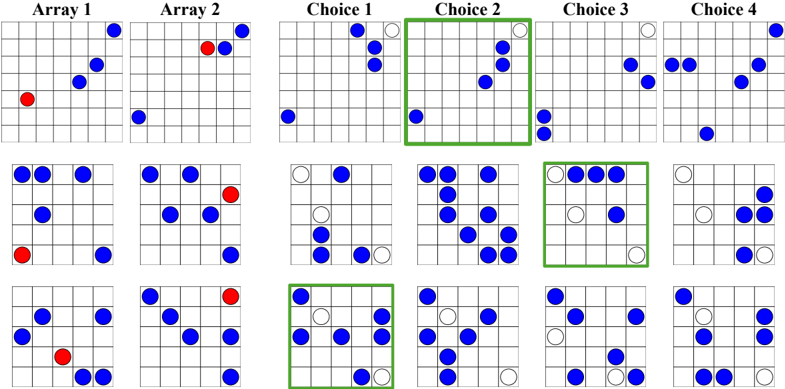

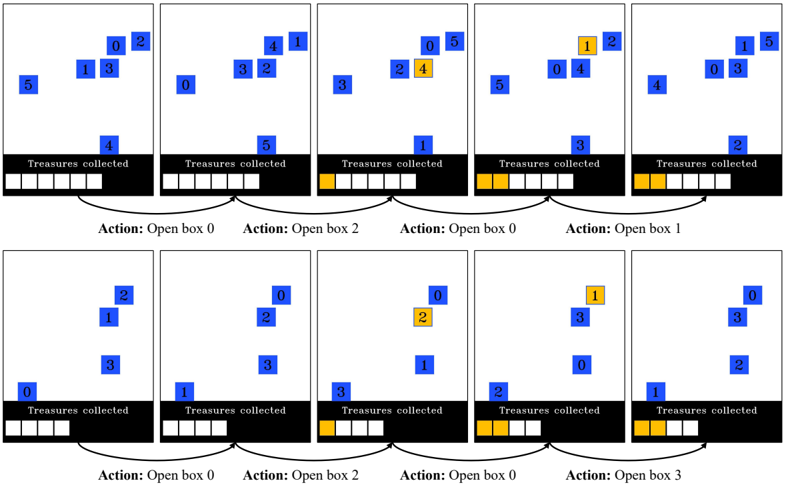

Figure 1: SPACE: Spatial Perception And Cognition Evaluation. We design a suite of spatial cognition tasks based on the cognitive science literature. These are broadly classified into large-scale and small-scale spatial cognition. Large-scale tasks require understanding space at the level of environments and evaluate spatial orientation and cognitive mapping abilities. Small-scale tasks require understanding space at the level of objects or object arrangements and evaluate skills such as spatial visualization, spatial orientation, spatial perception, selective spatial attention and visuospatial working memory. These tasks can be multiple-choice question answering, or interactive games. We develop multimodal as well as purely textual presentations, which support evaluation of both large language models (LLMs) and vision-language models (VLMs).

<details>

<summary>Image 1 Details</summary>

### Visual Description


## Diagram: Spatial Cognition Tasks

### Overview

The image presents a diagram categorizing spatial cognition tasks into two main groups: Large-scale spatial cognition and Small-scale spatial cognition. Each category contains several specific tasks, visually represented as rounded rectangles with icons and labels. A legend at the bottom of the diagram explains the color-coding used to indicate the cognitive process involved in each task.

### Components/Axes

The diagram is divided into two main sections, labeled "Large-scale spatial cognition" (left) and "Small-scale spatial cognition" (right). Each section contains a grid of task labels within colored rectangles. The legend at the bottom provides a key for the colors, associating them with different cognitive processes:

* **Blue:** Spatial visualization

* **Green:** Spatial orientation

* **Orange:** Spatial perception

* **Red:** Visuospatial working memory

* **Light Blue:** Selective spatial attention

* **Black Icon:** QA task

* **Grey Icon:** Interactive task

### Detailed Analysis or Content Details

**Large-Scale Spatial Cognition (Left Side):**

* **Distance estimation:** Blue rectangle with a grey icon.

* **Map sketching:** Green rectangle with a grey icon.

* **Direction estimation:** Blue rectangle with a grey icon.

* **Route retracing:** Green rectangle with a grey icon.

* **Shortcut discovery:** Green rectangle with a grey icon.

**Small-Scale Spatial Cognition (Right Side):**

* **Perspective taking:** Blue rectangle with a grey icon.

* **Mental rotation:** Orange rectangle with a grey icon.

* **Minnesota paper form board:** Blue rectangle with a grey icon.

* **Selective attention:** Light Blue rectangle with a grey icon.

* **Maze completion:** Orange rectangle with a grey icon.

* **Water level:** Orange rectangle with a grey icon.

* **Judgement of line orientation:** Orange rectangle with a grey icon.

* **Corsi block tapping:** Red rectangle with a grey icon.

* **Spatial addition:** Orange rectangle with a grey icon.

* **Cambridge spatial working memory:** Red rectangle with a grey icon.

### Key Observations

The diagram visually separates spatial cognition into large-scale and small-scale processes. The color-coding highlights the different cognitive abilities involved in each task. Tasks involving spatial visualization and orientation (blue and green) are prominent in large-scale cognition, while spatial perception and visuospatial working memory (orange and red) are more prevalent in small-scale cognition. The presence of both QA and interactive task icons suggests a distinction in the method of assessment for these tasks.

### Interpretation

This diagram illustrates a conceptual framework for understanding the diverse range of cognitive processes involved in spatial cognition. The distinction between large-scale and small-scale cognition likely reflects differences in the spatial extent and complexity of the tasks. Large-scale tasks involve navigating and understanding environments, while small-scale tasks focus on manipulating and reasoning about objects within a limited space. The color-coding provides a valuable way to categorize tasks based on the underlying cognitive abilities they engage. The inclusion of task type icons (QA vs. Interactive) suggests that the assessment methods used for these tasks may differ, potentially influencing the cognitive processes emphasized. This diagram could be used as a basis for designing experiments or interventions aimed at improving specific aspects of spatial cognition.

</details>

a benchmark that builds on decades of research in cognitive science. Our benchmark comprises two broad classes of tasks, covering large-scale and small-scale spatial cognition (Hegarty et al., 2006; Meneghetti et al., 2022; Newcombe, 2024). See Figure 1 for an overview.

Large-scale spatial cognition has to do with a model's ability to understand its surroundings. In large-scale tasks, the model is familiarized with an environment and is then asked to estimate distances and directions to landmarks, sketch a map of the environment, retrace a known route, or discover a shortcut to a goal. Small-scale spatial cognition has to do with a model's ability to perceive, imagine, and mentally transform objects in two or three dimensions. Together, large-scale and small-scale tasks evaluate core cognitive abilities such as spatial perception, visualization, orientation, selective attention, and visuospatial memory (Lacroix et al., 2021; Meneghetti et al., 2022).

We design text-based and image-based presentations to evaluate both large language-only and vision-language models (LLMs and VLMs, respectively). Our results indicate that contemporary frontier models have not yet reached competency - let alone mastery - in spatial cognition. On key large-scale spatial cognition tasks, frontier multimodal models perform near chance level, even when presented with an allocentric (map) view of the environment. The strongest models exhibit much better performance on some small-scale tasks that evaluate selective spatial attention and visuospatial working memory, especially with purely textual presentations via character arrays, but perform near chance on other tasks such as mental rotation (Vandenberg & Kuse, 1978), perspective taking (Kozhevnikov & Hegarty, 2001), maze completion (Lacroix et al., 2021), or the classic Minnesota Paper Form Board test (Likert & Quasha, 1941; 1969).

## 2 RELATED WORK

Spatial cognition. Spatial cognition is a branch of cognitive science that seeks to understand how humans and animals perceive, interpret, represent, and interact with objects and environments (Marshall & Fink, 2001; Landau, 2002; Waller & Nadel, 2013; Mallot, 2024; Newcombe, 2024). This involves the perception of object sizes, shapes, and scales, as well as the relationships between objects and landmarks in the environment (including location, distance, direction, and orientation). Spatial cognition is broadly divided into two categories: large-scale and small-scale (Hegarty et al., 2006; Jansen, 2009; Meneghetti et al., 2022; Newcombe, 2024). Large-scale spatial cognition refers to the ability to build spatial representations of environments and use them effectively for navigation and spatial reasoning. Large-scale spatial cognition tasks typically involve egocentric spatial transformations, where the viewer's perspective changes with respect to the environment while the spatial relationships between parts of the environment remain constant (Wang et al., 2014). Small-scale spatial cognition refers to the ability to perceive, imagine, and mentally transform objects or shapes in 2D or 3D. This is typically evaluated using paper-and-pencil tasks that require allocentric spatial transformations of objects and shapes (Wang et al., 2014). While large-scale spatial cognition has been demonstrated in a wide range of animals (Tolman, 1948; Menzel, 1973; Peters, 1974; O'Keefe & Nadel, 1978; Gillner & Mallot, 1998; Richardson et al., 1999; Geva-Sagiv et al., 2015; Toledo et al., 2020), the study of small-scale spatial cognition is specific to humans.

Emergent spatial representations. Several works have shown that spatial representations, a phenomenon similar to spatial cognition, can emerge in neural networks (Banino et al., 2018; Cueva & Wei, 2018; Wijmans et al., 2023; Sorscher et al., 2023). These works train a neural network from scratch for path integration or navigation tasks and analyze the model weights to identify spatial representations.

Spatial reasoning in large language models. PlanBench (Valmeekam et al., 2024) and CogEval (Momennejad et al., 2023) evaluate LLMs on text-based planning tasks such as navigation, delivery logistics and block stacking to evaluate cognitive mapping and planning. Yamada et al. (2024) evaluate spatial reasoning in LLMs by performing map traversals on different types of graphs and evaluate the model's self-localization ability. EWOK (Ivanova et al., 2024) studies spatial plausibility reasoning in LLMs. In comparison to these benchmarks, SPACE evaluates a broader array of cognitive abilities and implements multimodal presentations of classic animal cognition experiments.

Benchmarks for large multimodal models. The recent successes of multimodal models (OpenAI, 2024; Li et al., 2024a; Reid et al., 2024) have been facilitated by large-scale training on text and multimodal corpora (Rana, 2010; Together Computer, 2023; Chen et al., 2023; Laurençon et al., 2023; Gadre et al., 2023), followed by tuning on human preferences (Liu et al., 2023a; Awadalla et al., 2024; Ouyang et al., 2022; Rafailov et al., 2023). The remarkable advances in the capabilities of these models inspired a variety of benchmarks that evaluate their performance. Early multimodal benchmarks consisted of single-task datasets such as visual question answering (Antol et al., 2015; Goyal et al., 2019; Marino et al., 2019) and image captioning (Chen et al., 2015). However, due to the limited scope of early datasets and concerns regarding potential test-data leakage, newer benchmarks use diverse collections of tasks (Fu et al., 2023; Yu et al., 2024; Liu et al., 2023b; Yue et al., 2024; Lu et al., 2024; Ying et al., 2024). While these datasets primarily focus on image understanding, newer datasets that emphasize spatiotemporal reasoning have been proposed for video (Li et al., 2024b; Fu et al., 2024a; Majumdar et al., 2024).

Recent studies highlight a number of shortcomings of frontier multimodal models (Moskvichev et al., 2023; Tong et al., 2024; Chen et al., 2024a; Fu et al., 2024b). One such shortcoming is that models may not perceive the image in detail, often missing fine-grained details or ignoring the image entirely (Chen et al., 2024b; Guan et al., 2024; Tong et al., 2024). HallusionBench proposes a new dataset of image pairs, where tiny edits are made from one image to another that change the answer to the question (Guan et al., 2024). MMVP identifies issues with CLIP-based pretraining of visual encoders, which make current models blind to certain visual patterns, and proposes a benchmark of CLIP-blind image pairs where the same question has opposite answers (Tong et al., 2024). MMStar shows that many questions in multimodal benchmarks can be answered correctly without the image and proposes a new split of existing benchmarks that addresses this issue (Chen et al., 2024b).

Another shortcoming of existing models is their lack of spatial perception and reasoning (Chen et al., 2024a; Cheng et al., 2024). SpatialVLM proposes a VQA dataset that requires answering questions about relative spatial arrangements and metric relationships (Chen et al., 2024a). SpatialRGPT further includes region-level understanding (Cheng et al., 2024). MOCHI evaluates the ability of vision models to identify rotated versions of procedurally-generated objects (Bonnen et al., 2025). 'Perception test' aims to overcome shortcomings of standard video datasets by creating a diagnostic dataset where participants record videos while following complex scripts depicting interesting events (Patraucean et al., 2023). It evaluates fundamental perceptual skills (memory, abstraction, intuitive physics, and semantics) and various types of reasoning.

Another line of work considers skill acquisition (the ability to learn a skill and apply it to new scenarios). Prior work has studied this using visual analogical reasoning (Chollet, 2019; Moskvichev et al., 2023; Yiu et al., 2024). The ARC dataset contains samples consisting of a few examples of abstract grids and their transformations and one or more test inputs (Chollet, 2019). The objective is to understand the transformation performed using the examples and apply it to test inputs. The transformations have been further organized into specific concepts with varying degrees of difficulty in the ConceptARC dataset (Moskvichev et al., 2023). Inspired by ARC and developmental psychology, the KiVA dataset studies visual analogies in the context of visually realistic 3D shapes with concepts like transformations in color, size, rotations, reflections, and counting (Yiu et al., 2024).

## 3 SPACE: A BENCHMARK FOR SPATIAL PERCEPTION AND COGNITION EVALUATION

We develop a benchmark for evaluating the spatial cognition of frontier models. The benchmark comprises large-scale and small-scale tasks and is designed for compatibility with both text-only and multimodal models.

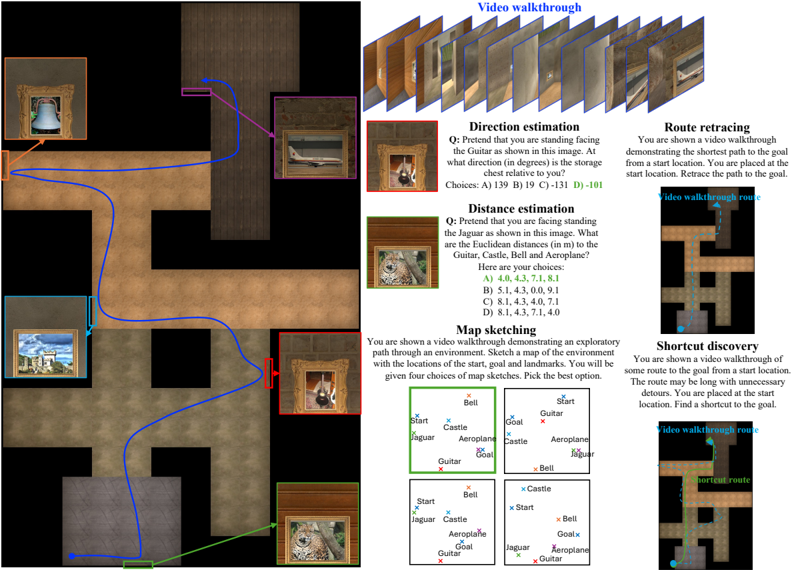

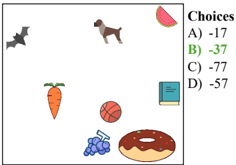

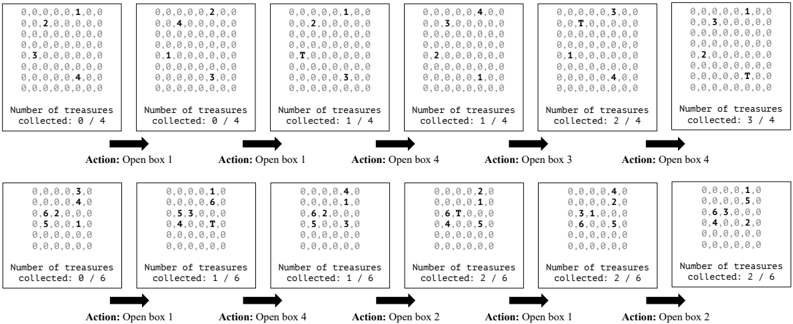

## 3.1 LARGE-SCALE SPATIAL COGNITION

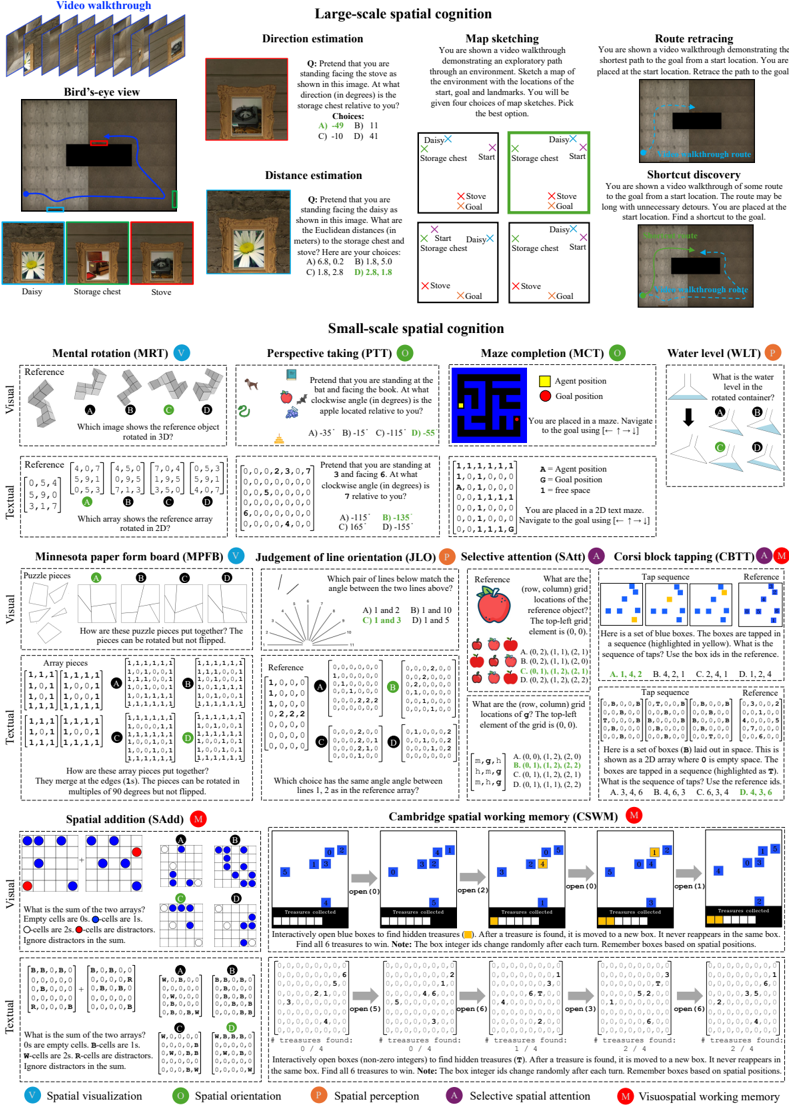

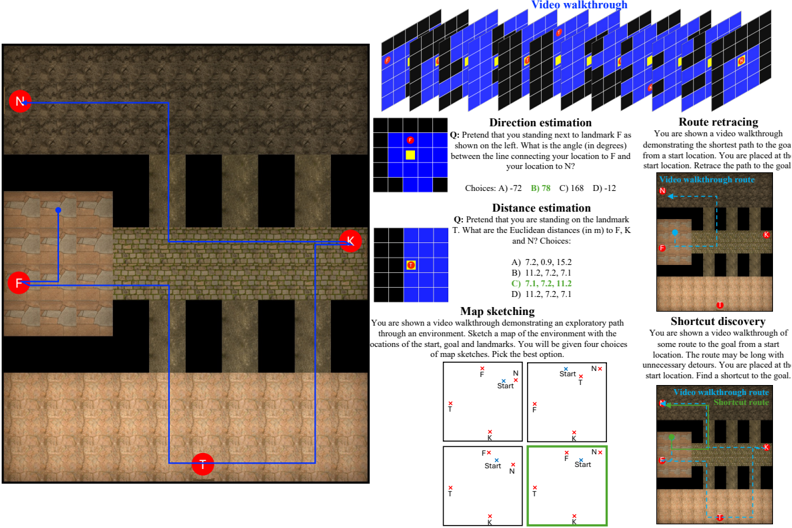

In large-scale spatial cognition tasks, we evaluate the ability of models to build spatial representations of their surrounding environment, and whether they can use these representations to reason about and navigate in the environment. There are two stages to these tasks. First, we familiarize the model with an environment by showing a video walkthrough.¹ The model must build a mental representation of the environment that captures the start, goal, and landmark locations, and their spatial relationships. After the model is familiarized with the environment, we evaluate the model's spatial representation using five tasks derived from the cognitive science literature (Meneghetti et al., 2022). See Figure 2 (top) and Figure 3 for an overview.

1. Direction estimation. The goal is to determine the directions to other landmarks from a given landmark. The participant is asked to pretend that they are facing a landmark A, and then asked to estimate the direction (in degrees) to another landmark B. This is known as a pointing trial in the cognitive science literature (Allen et al., 1996; Hegarty et al., 2006; Pazzaglia & Taylor, 2007; Weisberg et al., 2014; Meneghetti et al., 2016). We formulate this as a multiple-choice QA task with four options for the direction (only one correct option).

2. Distance estimation. The goal is to determine the straight-line distances from one landmark to all other landmarks (Allen et al., 1996; Hegarty et al., 2006). The participant is asked to pretend that they are facing a landmark A, and then asked to estimate the Euclidean distance to all the other landmarks. We pose this as a multiple-choice QA with four options for the list of distances to each landmark. Since current models are not good at estimating metric measurements (Chen et al., 2024a; Cheng et al., 2024), we generate incorrect options such that the ratios of distances between landmarks are not preserved, making it easier to identify the correct option.

3. Map sketching. The goal is to draw a map of the environment that contains the start, goal and landmark positions (Allen et al., 1996; Hegarty et al., 2006; Pazzaglia & Taylor, 2007; Weisberg et al., 2014; Meneghetti et al., 2016; 2021). We formulate this as multiple-choice QA with four options for the map sketches. The correct option preserves the true spatial relationships between the different map elements, while the incorrect options skew the spatial relationships randomly.

4. Route retracing. The goal is to retrace the route shown in the video from the start to the goal (Allen et al., 1996; Pazzaglia & Taylor, 2007; Meneghetti et al., 2016; 2021). This task evaluates the model's ability to remember landmarks seen in the route and the actions required along the route to reach the goal. We formulate this as an interactive task where the model receives the current observation, decides which action to take, and receives updated observations based on the actions taken. We measure performance using the SPL metric (success weighted by path length), which penalizes the model for taking unnecessary detours (Anderson et al., 2018). (The demonstrated route, which the model must retrace, is always the shortest path to the goal.)

5. Shortcut discovery. The goal is to discover a shortcut (i.e., a route never observed before) from the start to the goal after observing a video walkthrough that takes detours to reach the goal (Tolman, 1948; Allen et al., 1996; Pazzaglia & Taylor, 2007; Meneghetti et al., 2016; 2021). The ability to discover shortcuts in familiar environments is a key indicator of cognitive mapping ability (Tolman, 1948). When designing environments and walkthrough paths, we ensured that a novel shortcut exists that the model can exploit. Similar to route retracing, we treat this as an interactive navigation task and measure performance using the SPL metric.
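To make the pointing trial in the direction estimation task concrete, the ground-truth answer can be viewed as a signed angle between two vectors. The following is a minimal sketch, not the benchmark's actual implementation: the function name `pointing_angle`, the explicit `observer` position, the 2D coordinate frame (x to the right, y forward), and the sign convention (positive means the target lies clockwise, i.e. to the right, of the facing direction) are all assumptions made for illustration.

```python
import math


def pointing_angle(observer, facing, target):
    """Signed angle in degrees from the facing direction to the target.

    observer, facing, target: (x, y) positions in a shared 2D frame.
    The observer stands at `observer` and looks toward `facing`.
    Assumed convention: positive = clockwise (target is to the right).
    The result lies in the half-open interval [-180, 180).
    """
    heading = math.atan2(facing[1] - observer[1], facing[0] - observer[0])
    bearing = math.atan2(target[1] - observer[1], target[0] - observer[0])
    # atan2 angles grow counterclockwise, so heading - bearing is
    # positive when the target is clockwise of the facing direction.
    diff = math.degrees(heading - bearing)
    # Wrap into [-180, 180).
    return (diff + 180.0) % 360.0 - 180.0


# Facing "north" (+y); a landmark due "east" (+x) is 90 degrees to the right.
angle_right = pointing_angle((0.0, 0.0), (0.0, 1.0), (1.0, 0.0))
# A landmark due "west" (-x) is 90 degrees to the left.
angle_left = pointing_angle((0.0, 0.0), (0.0, 1.0), (-1.0, 0.0))
```

A multiple-choice question would then pair this angle with three distractor angles; how the distractors are sampled is not specified in the text above.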

¹ For text-only models, the 'video walkthrough' is a sequence of discrete map observations presented as arrays of characters; see Figure 3 for examples.
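The SPL metric used for route retracing and shortcut discovery follows Anderson et al. (2018): each episode contributes its success indicator multiplied by the ratio of the shortest-path length to the longer of the shortest-path length and the path actually taken, and the result is averaged over episodes. The sketch below illustrates that definition; the function name `spl` and the tuple layout of an episode are hypothetical, and it assumes that shortest-path lengths are strictly positive.

```python
def spl(episodes):
    """Success weighted by path length, averaged over episodes.

    episodes: iterable of (success, shortest_length, taken_length), where
    success is a bool, shortest_length > 0 is the shortest-path length
    from start to goal, and taken_length is the length of the path the
    agent actually traversed.
    """
    total = 0.0
    count = 0
    for success, shortest_length, taken_length in episodes:
        count += 1
        if success:
            # A detour makes taken_length exceed shortest_length, which
            # shrinks the episode's contribution below 1.
            total += shortest_length / max(taken_length, shortest_length)
    return total / count if count else 0.0


# One optimal success, one success with a 2x detour, one failure.
score = spl([(True, 10.0, 10.0), (True, 10.0, 20.0), (False, 10.0, 5.0)])
```

In this toy example the three episodes contribute 1, 0.5, and 0, so the score is 0.5. A failed episode scores zero regardless of how short its path was.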


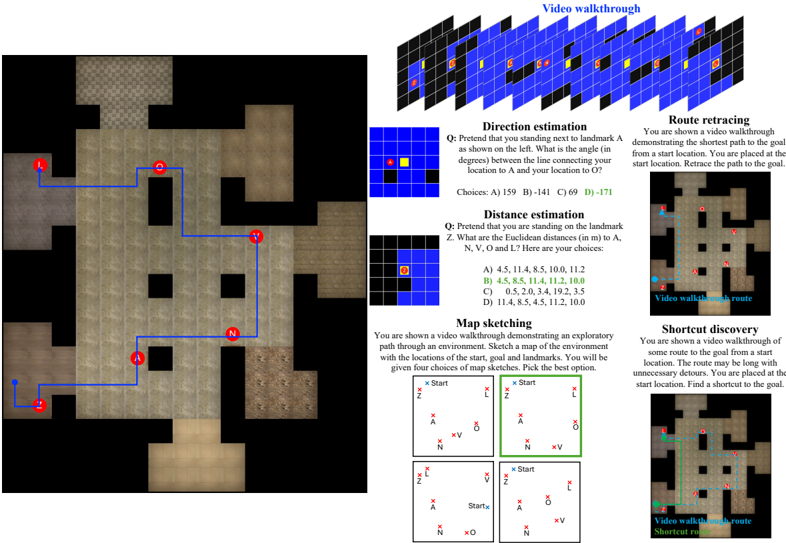

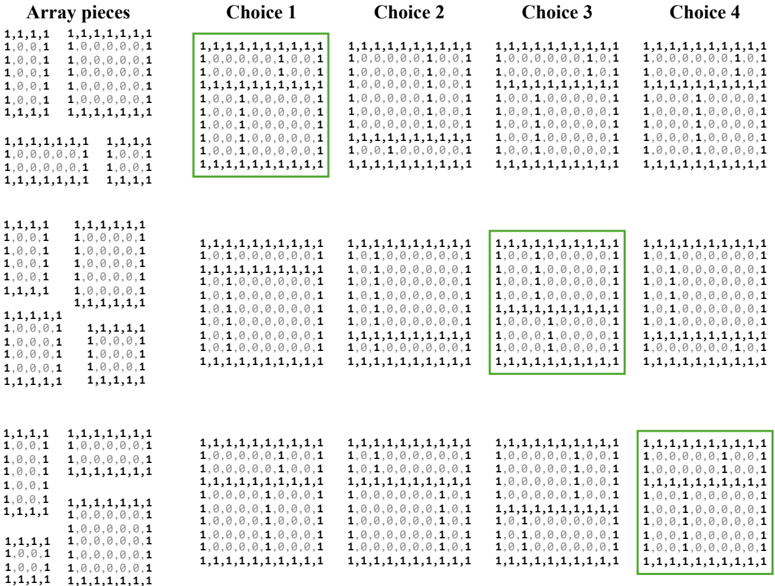

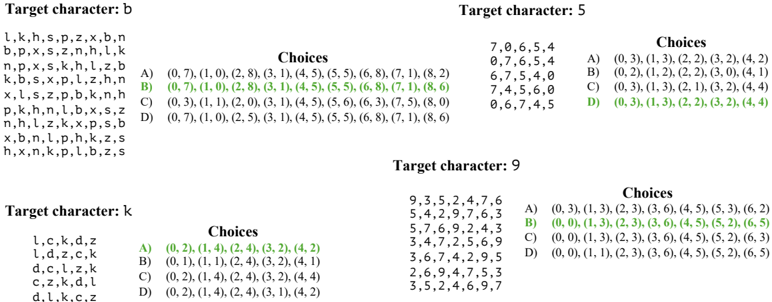

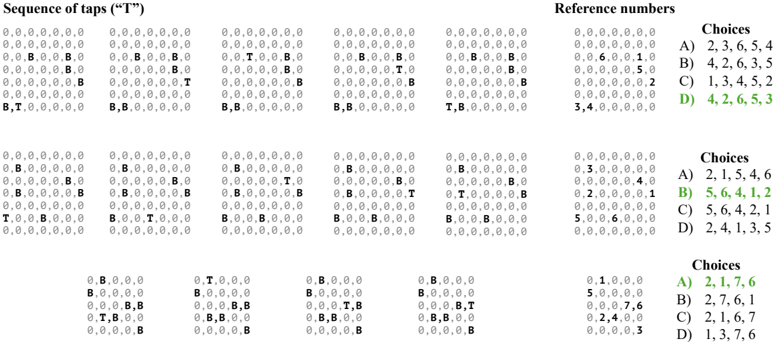

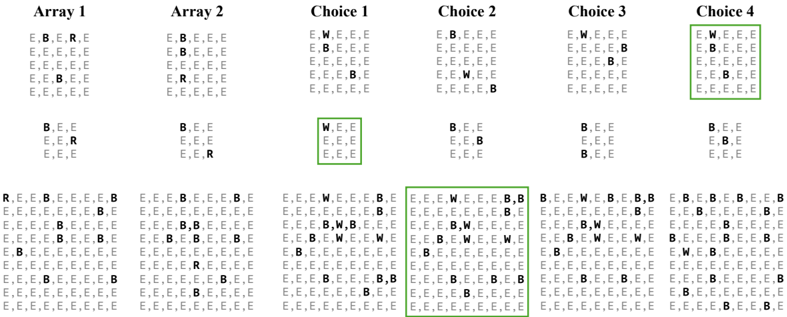

Figure 2: The tasks in SPACE. For all tasks (other than the water level test), we include multimodal as well as purely textual presentations, to support evaluating both large language models (LLMs) and vision-language models (VLMs). For large-scale tasks, we visualize examples from the egocentric image presentation here and visualize alternate presentations in Figure 3. For small-scale tasks, we visualize both visual and textual presentations here. Bolding of characters in the arrays is for illustration purposes only.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Spatial Cognition Tasks

### Overview

This diagram presents example tasks from the benchmark, grouped into large-scale and small-scale spatial cognition. Each task is shown with a short prompt and, where applicable, its answer choices.

### Components/Axes

The diagram is structured into two sections:

* **Large-scale spatial cognition:** Includes Bird's-eye view, Direction estimation, Distance estimation, Map sketching, and Route retracing.

* **Small-scale spatial cognition:** Includes Mental rotation (MRT), Perspective taking (PTD), Maze completion (MCT), and Water level (WLT).

### Detailed Analysis or Content Details

**Large-scale spatial cognition:**

* **Bird's-eye view:** Image showing Daisy, Storage chest, and Stove.

* **Direction estimation:** Question: "Pretend you are standing facing the stove as shown in this image. At what direction (in degrees) is the storage chest relative to you?" Choices: A) -10° B) 11° C) 31° D) 41°.

* **Distance estimation:** Question: "Pretend you are standing facing the stove as shown in this image. What is the approximate distance (in meters) to the storage chest and stove?" Choices: A) 1.5, 2.5 B) 1.5, 3.0 C) 1.8, 2.5 D) 1.8, 3.0.

* **Map sketching:** "You are shown a video walkthrough demonstrating the layout of the environment with the locations of the stove, storage chest, and goal. Pick the best option." Four map sketches are shown.

* **Route retracing:** "You are shown videos retracing demonstrating the shortest path to the goal from the start location. Retrace the path to the goal." Image of a walkthrough route.

**Small-scale spatial cognition:**

* **Mental rotation (MRT):** Image showing a reference object and four rotated versions. Question: "Which image shows the reference object rotated in 31°?"

* **Perspective taking (PTD):** Question: "Pretend that you are standing at the bottom of the structure as shown in this image. At what elevation angle (in degrees) do you see the stove relative to you?" Choices: A) 15° B) 30° C) 45° D) 60°.

* **Maze completion (MCT):** "You are placed in a maze. Navigate to the goal position." Image of a maze with "Agent position" and "Goal position" marked.

* **Water level (WLT):** Question: "What is the water level in the pool compared to the roof?" Choices: A) Lower B) Same C) Higher. Image of a building with a pool.

### Key Observations

* The tasks range from large-scale navigation to small-scale reasoning about objects and shapes.

* Several tasks are posed as multiple-choice questions, while route retracing and maze completion ask the model to navigate to a goal.

### Interpretation

This diagram illustrates how the benchmark probes different aspects of spatial cognition with separate tasks. The large-scale tasks test whether a model can use a representation of an environment to estimate directions and distances, sketch a map, and retrace a route. The small-scale tasks test reasoning about objects and shapes, such as mental rotation and perspective taking.

</details>


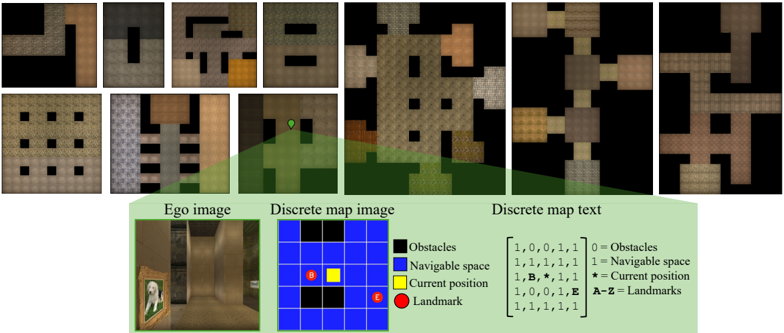

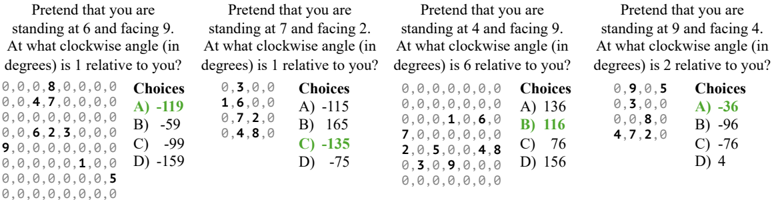

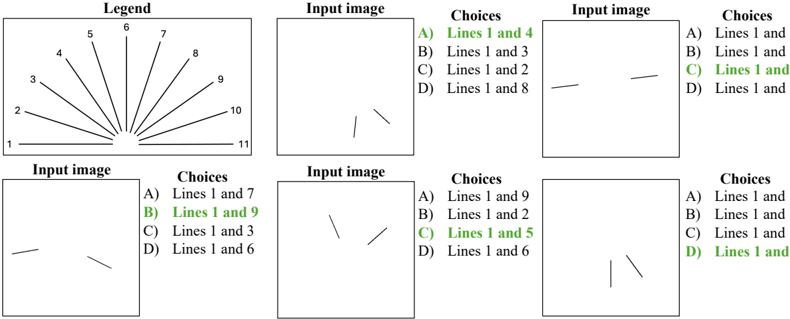

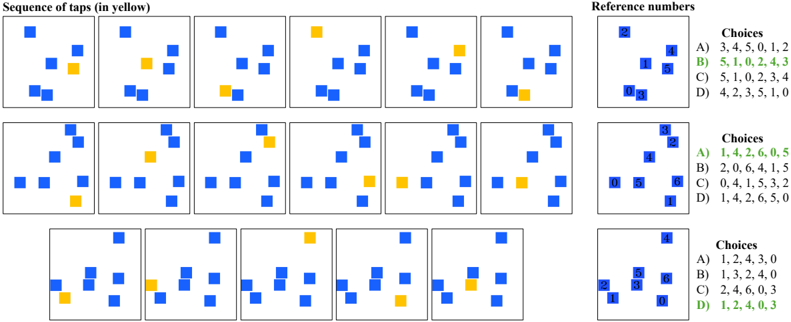

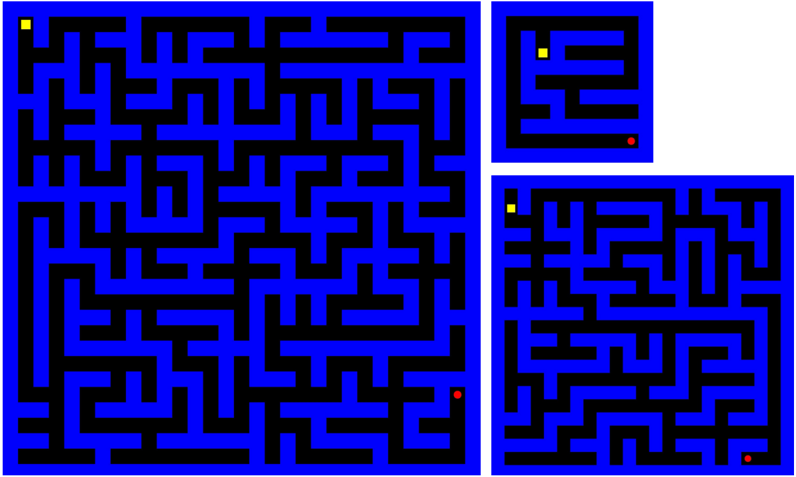

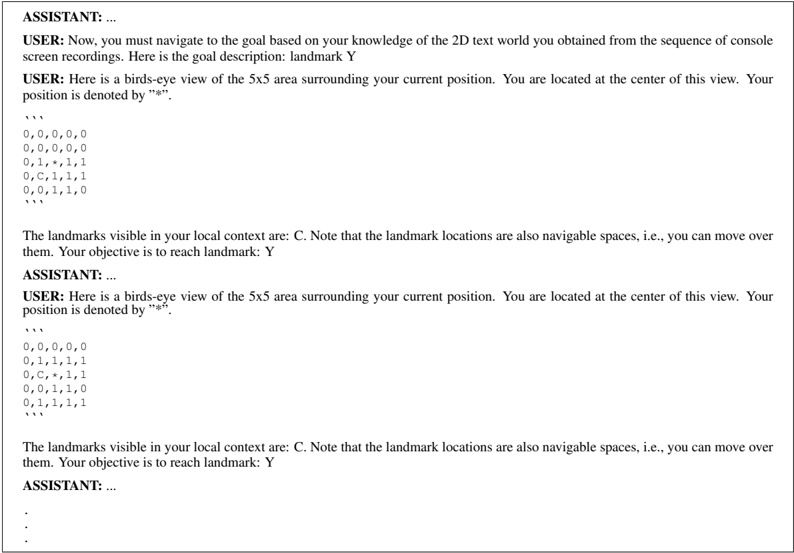

Figure 3: Large-scale spatial cognition. We design ten environment layouts based on experimental protocols in cognitive science. The top row shows bird's-eye view renderings of these environments. To evaluate large-scale spatial cognition in frontier models, we implement three observation spaces: egocentric image, discrete map (DM) image, and discrete map (DM) text (see bottom row). Ego image shows a first-person view within the environment. DM image shows a quantized, allocentric bird's-eye view of the 2.5m × 2.5m region centered on the current position. Unlike ego image, DM image enables performing the large-scale tasks in a simplified setting without requiring perspective geometry. DM text depicts the DM image using text characters. We evaluate multimodal models using ego image and DM image, and large language models using DM text.

<details>

<summary>Image 3 Details</summary>

### Visual Description


## Diagram: Environment Representation

### Overview

The image presents a comparison of different representations of an indoor environment, likely for a robotic navigation or AI agent. It shows a series of "first-person" views (Ego image), discretized map representations (Discrete map image), and a textual representation of the map (Discrete map text). The goal appears to be illustrating how a continuous visual environment can be abstracted into discrete, symbolic representations.

### Components/Axes

The image is divided into three main sections:

1. **Ego Image:** A 2x6 grid of first-person perspective images of the environment. These images show what an agent "sees" from its current location.

2. **Discrete Map Image:** A 2x2 grid showing a top-down, discretized representation of the environment. This uses a grid-based map where each cell represents a specific state (obstacle, navigable space, agent position, landmark).

3. **Discrete Map Text:** A section containing a sample "Ego image", a 4x4 grid representing the discretized map, and a legend explaining the color coding.

The legend defines the following:

* Blue: Obstacles (value 0)

* White: Navigable space (value 1)

* Yellow: Current position (value B, asterisk *)

* Red: Landmark (value E, A-Z)

### Detailed Analysis or Content Details

**Ego Image:**

The Ego images show a corridor-like environment with textured walls and floors. The perspective changes across the images, suggesting the agent is moving through the environment. The lighting and textures vary, indicating different areas within the space. It's difficult to extract precise details from these images without further processing.

**Discrete Map Image:**

The Discrete Map Image shows a simplified, top-down view of the environment. The grid cells are colored according to the legend. The images show varying arrangements of obstacles and navigable space.

**Discrete Map Text:**

The Discrete Map Text section provides a concrete example of the discretized map. The 4x4 grid has the following configuration (reading row by row, left to right):

```

[1, 0, 0, 1]

[1, 1, 1, 1]

[1, B, *, 1]

[1, 1, 1, 1]

```

Where:

* 1 represents Navigable space

* 0 represents Obstacles

* B represents Current position

* \* represents Current position (duplicate of B)

* E represents Landmarks (not present in this example)

The legend also provides a matrix representation of the color coding:

```

[ 1, 0, 0, 1 ] = Obstacles

[ 1, 1, 1, 1 ] = Navigable space

[ 1, B, *, 1 ] = Current position

[ 1, 1, 1, 1 ] = Landmarks

```

### Key Observations

* The discretization process simplifies the environment, losing fine-grained details present in the Ego images.

* The current position is represented by both 'B' and '*' in the example map, which is redundant.

* The Discrete Map Image and Discrete Map Text sections provide consistent representations of the environment.

* The Ego images provide a visual context for understanding the abstracted representations.

### Interpretation

This diagram illustrates a common approach in robotics and AI: representing a continuous environment in a discrete form for planning and decision-making. The Ego images represent the raw sensory input, while the Discrete Map Image and Text represent the processed, symbolic representation. This abstraction allows algorithms to reason about the environment without dealing with the complexity of raw pixel data. The discretization process involves identifying obstacles, navigable spaces, and key locations (landmarks). The choice of discretization resolution (grid size) impacts the accuracy and computational cost of planning algorithms. The redundancy in representing the current position ('B' and '\*') suggests a potential area for optimization in the representation. The diagram highlights the trade-off between realism (Ego images) and computational efficiency (Discrete maps).

</details>

## 3.1.1 IMPLEMENTATION

3D environment generation. We create ten environment layouts based on prior work in cognitive science and artificial intelligence (Tolman, 1948; Gillner & Mallot, 1998; Richardson et al., 1999; Banino et al., 2018; Bouchekioua et al., 2021). Figure 3 shows bird's-eye view images of each layout. See the appendix for more details about the environment generation process.

Observation spaces. We create multiple observation spaces to support evaluating both text-only and vision+text models. These are egocentric images, discrete map (DM) images, and discrete map (DM) text presentations.

- Ego image. The environment is captured using a forward-facing perspective camera placed at the model's location in the environment. This is similar to the setup of an animal navigating through an immersive environment and requires understanding perspective geometry.

- DM image. This is a quantized bird's-eye view image of a 2.5m × 2.5m area in the environment surrounding the model's location. This is akin to a human using a map to navigate. The current location is always at the center of the DM image. We use a Pacman-like coloring scheme highlighting the obstacles, navigable space, current position, and landmarks. The DM image simplifies the mapping process by removing the need for perspective geometry understanding.

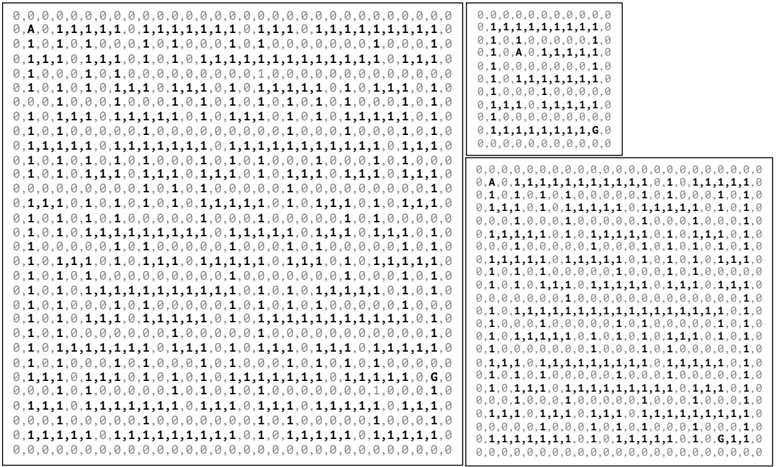

- DM text. This is a translation of the DM image to a text array. We carefully select the text encoding to ensure compatibility with the text tokenizers of popular models, so that each element of the array is encoded as a distinct token by the tokenizers of all evaluated models.

See Figure 3 (bottom) for examples of these presentations. The first two observation spaces are used for models that support visual inputs, while the last observation space is used for text-only models. See the appendix for additional illustrations of these tasks and dataset statistics.
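To make the DM text presentation concrete, the following minimal sketch renders a small occupancy grid as a text array. The symbol choices (`0`, `1`, `*`, and letter landmarks), the function name `render_dm_text`, and the row format are illustrative assumptions, not the benchmark's actual encoding, which is selected for tokenizer compatibility.

```python
# Hypothetical symbols for illustration only; the benchmark's real encoding
# is chosen so that every array element maps to a distinct token.
SYMBOLS = {"obstacle": "0", "free": "1", "agent": "*"}


def render_dm_text(occupancy, agent_rc, landmarks=None):
    """Render a 2D occupancy grid as one bracketed text row per grid row.

    occupancy: list of lists of bools (True = obstacle).
    agent_rc:  (row, col) of the current position.
    landmarks: optional dict mapping (row, col) -> single-character label.
    """
    landmarks = landmarks or {}
    rows = []
    for r, row in enumerate(occupancy):
        cells = []
        for c, blocked in enumerate(row):
            if (r, c) == agent_rc:
                cells.append(SYMBOLS["agent"])
            elif (r, c) in landmarks:
                cells.append(landmarks[(r, c)])
            elif blocked:
                cells.append(SYMBOLS["obstacle"])
            else:
                cells.append(SYMBOLS["free"])
        rows.append("[" + ", ".join(cells) + "]")
    return "\n".join(rows)


if __name__ == "__main__":
    grid = [
        [True, False, True],
        [False, False, False],
        [False, False, True],
    ]
    # The agent sits at the center cell, mirroring the centered DM image.
    print(render_dm_text(grid, agent_rc=(1, 1), landmarks={(0, 1): "B"}))
```

Separating every cell with a comma and a space is one simple way to discourage a tokenizer from merging adjacent symbols, although whether it does so depends on the tokenizer in question.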

## 3.2 SMALL-SCALE SPATIAL COGNITION

In small-scale spatial cognition tasks, we evaluate the models' ability to perceive, imagine, and mentally transform objects or shapes in two and three dimensions. We build on the body of work on visuospatial abilities, which are evaluated in humans via paper-and-pencil tasks (Allen et al., 1996; Weisberg et al., 2014; Meneghetti et al., 2022). These abilities may be used to explain individual differences between participants in large-scale spatial cognition (Meneghetti et al., 2022). We define ten small-scale tasks to evaluate abilities such as spatial perception, spatial visualization, spatial orientation, selective attention, and visuospatial working memory. See Figure 2 (bottom) for illustrations of each task. We summarize each task below and provide additional details in the appendix.

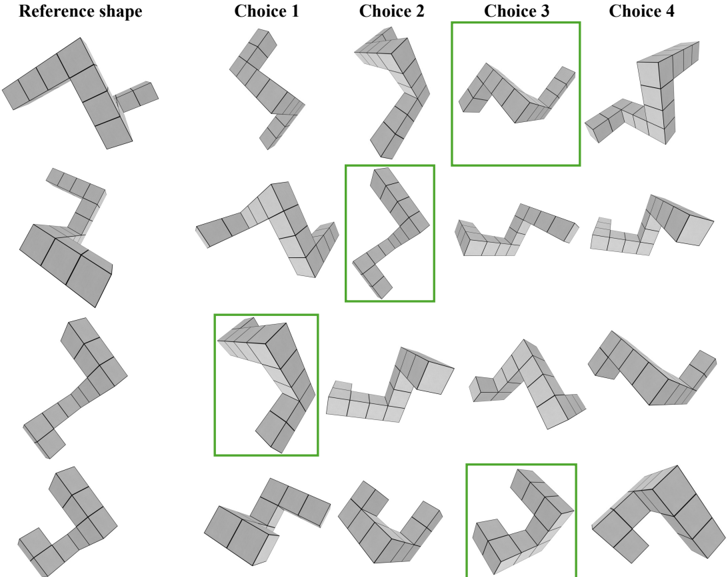

- 6. Mental rotation test (MRT). This is a test of spatial visualization, i.e., the ability to mentally manipulate 2D or 3D stimuli (Vandenberg & Kuse, 1978). In the visual presentation, a reference 3D shape from Shepard & Metzler (1971) is provided along with four choices. The correct choice is a rotated version of the reference, and the remaining choices are rotated versions of an alternate shape. The goal is to identify the correct choice from the distractors. The text-only version of this task uses 2D character arrays, akin to the card rotations test from French et al. (1963).

- 7. Perspective taking test (PTT). This is a test of spatial orientation, i.e., the ability to imagine being in a different position in space and seeing the surroundings from a new perspective (Kozhevnikov & Hegarty, 2001). We place N randomly sampled objects (like apples, bats, dogs, books, grapes, etc.) at random locations in an image (with no overlap between objects). The objective is to take the perspective of standing next to an object (say, a bat) facing another object (say, a book), and determine the relative orientation of a third object (say, an apple). This is a multiple-choice QA with four options (only one correct option).

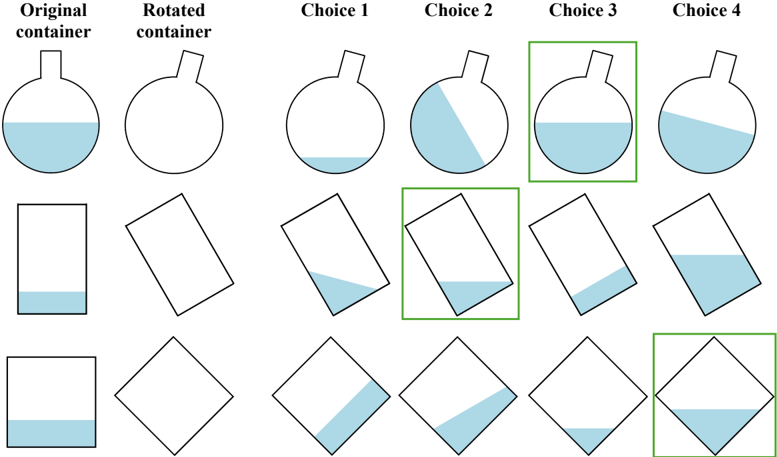

- 8. Water level test (WLT). This is a test of spatial perception (Piaget et al., 1957). Originally, it was designed to evaluate children's knowledge about the horizontal nature of the surface of water in a sealed bottle regardless of its orientation. Performance on the water-level test was found to be related to performance on spatial ability tests (Foltz, 1978; Wittig & Allen, 1984). We present the model with an image of a water container partially filled with water and ask it to imagine the position of the water if the container were tilted. We implement this as a four-way multiple-choice QA, where each choice is an image showing the tilted container with varying water levels. The objective is to select the one choice that shows the correct water level.

- 9. Minnesota Paper Form Board test (MPFB). This is a test of spatial visualization, where the model must perform multi-step manipulations of complex spatial information (Meneghetti et al., 2022). Specifically, we provide the model with pieces of a figure and ask it to identify how the pieces fit together (Likert & Quasha, 1941; 1969). We programmatically segment a square into five pieces, and rotate the pieces randomly to generate the final segments. We generate alternate segmentations of a square as negative choices for a multiple-choice QA presentation.

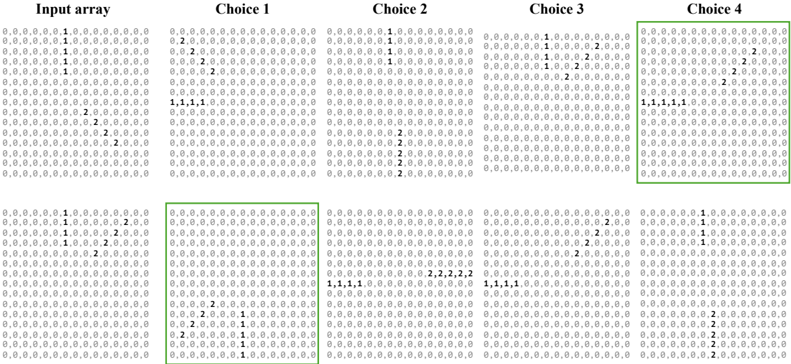

- 10. Judgement of Line Orientation test (JLO). This is a test of spatial perception (Benton, 1994), where a model must determine the angle between two lines in an image. Our visual presentation shows two lines in an image along with a set of 11 reference lines. The objective is to determine the pair of reference lines that have the same angle between them as the lines in the image. This is presented as a multiple-choice QA with four choices (only one of them correct). Our text-only presentation implements the task via lines embedded in 2D integer arrays.

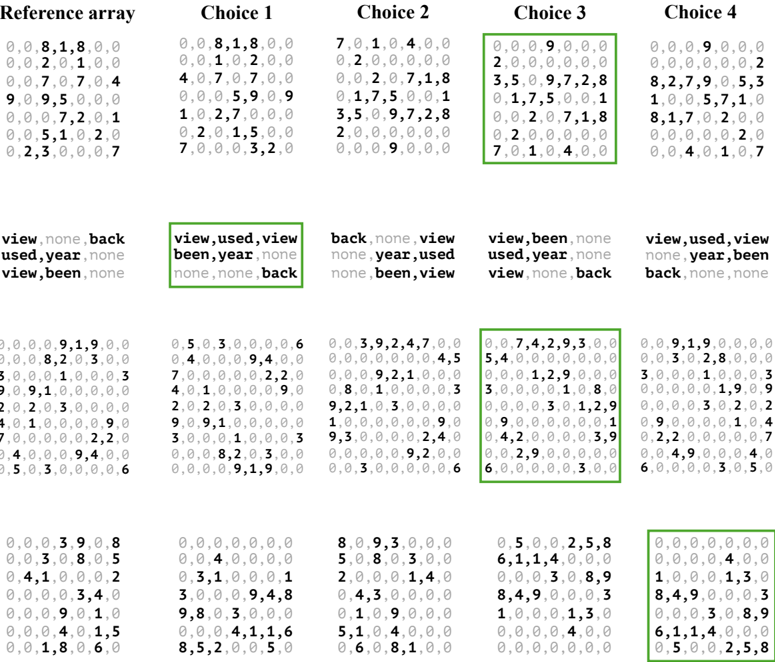

- 11. Selective attention task (SAtt). This is a test of selective spatial attention, i.e., the ability to selectively attend to a particular region of space while ignoring others (Serences & Kastner, 2014; Pahor et al., 2022). In particular, we use the widely used cancellation task, where the goal is to search for and mark out target stimuli embedded amidst distractors (Della Sala et al., 1992; Brickenkamp & Zillmer, 1998; Dalmaijer et al., 2015; Lacroix et al., 2021; Pahor et al., 2022; Kalina & Walgrave, 2004). We design the task as multiple-choice QA with objects as the stimuli for visual evaluation and characters as stimuli for text-only evaluation. The target stimuli and distractors are arranged on a grid. The answer must be selected from four options. The correct option lists the (row, column) pairs that localize the target stimuli in the grid.

- 12. Maze completion task (MCT). This is an interactive game to evaluate spatial orientation, planning, and executive functioning (Lacroix et al., 2021). We programmatically create mazes using Mazelib (Stilley, 2014) and render them using a Pacman-like color scheme for the visual presentation and a character array for the text-only presentation (similar to DM image and DM text in Figure 3). Using the maze rendering, a model must sequentially select an up/down/left/right action to reach the goal and execute a stop action to successfully complete the task. If the model does not reach the goal within 250 actions, it is considered to have failed. We measure the success rate, i.e., the percentage of mazes where the model reaches the goal within the allotted time.

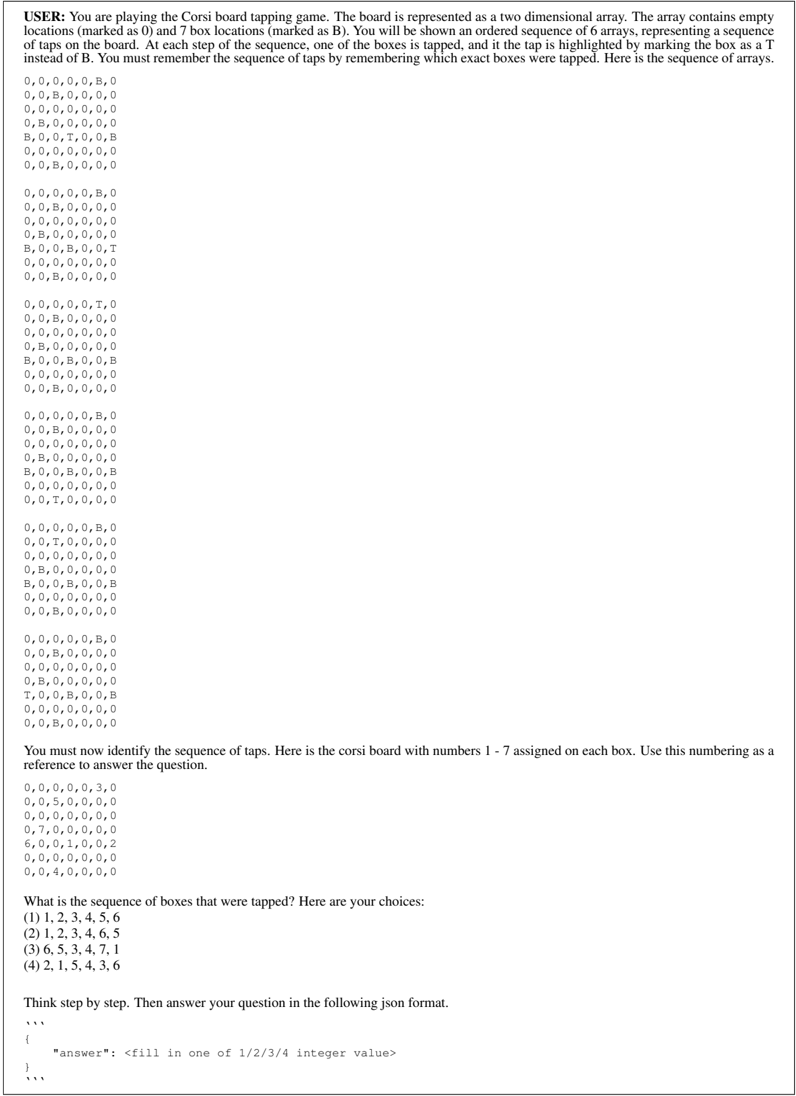

- 13. Corsi block-tapping task (CBTT). This is a test of visuospatial working memory and attention (Corsi, 1972; Claessen et al., 2015). We create a digital Corsi board with N blue-colored blocks that are randomly placed on the board with no overlap (N ∈ [5, 8]). We randomly sample a sequence of K taps, where each block is tapped at most once (K ∈ [4, N]). The taps are digitally rendered on the blocks by highlighting them in yellow when tapped, yielding a sequence of K images. After presenting the K images, we provide a rendering of the board with integer IDs assigned to each block and ask the model to reproduce the sequence of taps using these IDs. We treat this as multiple-choice QA with four choices of tap sequences, only one of which is correct.

- 14. Spatial addition task (SAdd). This is a test of visuospatial working memory, i.e., the ability to store and manipulate spatial information in memory (Wechsler, 2009). The model is presented with two 2D grids, where each grid location can be empty or contain a blue or red dot. The objective is to add the two grids together by following certain rules. If a grid location has a blue dot in exactly one of the grids, the result should be a blue dot. If a grid location has blue dots in both grids, the result should be a white dot. Red dots are distractors and must be ignored. We programmatically generate grid pairs with sizes sampled from {3, 5, 7, 9} and pseudo-randomly populate them with blue and red dots. We formulate the task as multiple-choice QA, presenting four grids as possible answers, exactly one of which is correct.

- 15. Cambridge spatial working memory test (CSWM). This is an interactive game that evaluates visuospatial working memory (Sahakian et al., 1988). The model is presented with an image containing N blue-colored boxes (N ∈ [3, 7]). A yellow 'treasure' is initially hidden in one of the boxes. The model must sequentially select boxes one at a time to find the hidden treasure. Once the treasure is found, another treasure is placed in one of the remaining boxes. The objective is to locate all the yellow treasures via a process of elimination. We programmatically generate instances of this task by randomly sampling blue boxes, placing them at random locations (without overlap), and placing the treasures in each box in random order. At each step, we assign random integer IDs to each box as a reference for selecting a box. The boxes' integer IDs are randomized in each step, forcing the model to remember boxes based on their spatial positions. When the model finds a treasure, the box containing the treasure becomes yellow. The model must find all the treasures before a time limit T (determined based on N) to succeed.
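The combination rule of the spatial addition task (SAdd) can be sketched in a few lines. The character symbols (`.`, `B`, `R`, `W`) and the function name `spatial_add` are assumptions made for illustration; the benchmark's own grid encoding may differ.

```python
# Hypothetical cell symbols: empty, blue dot, red dot (distractor), white dot.
EMPTY, BLUE, RED, WHITE = ".", "B", "R", "W"


def spatial_add(grid_a, grid_b):
    """Combine two equally sized grids (lists of strings) cell by cell.

    Blue in exactly one grid -> blue; blue in both grids -> white;
    red dots are distractors and contribute nothing.
    """
    if len(grid_a) != len(grid_b) or any(
        len(a) != len(b) for a, b in zip(grid_a, grid_b)
    ):
        raise ValueError("grids must have identical shapes")

    outcome = {0: EMPTY, 1: BLUE, 2: WHITE}
    result = []
    for row_a, row_b in zip(grid_a, grid_b):
        cells = []
        for a, b in zip(row_a, row_b):
            blue_count = (a == BLUE) + (b == BLUE)  # red and empty count as 0
            cells.append(outcome[blue_count])
        result.append("".join(cells))
    return result


if __name__ == "__main__":
    first = ["B.R", ".B.", "R.B"]
    second = ["BR.", "...", "RB."]
    for row in spatial_add(first, second):
        print(row)
```

A correct answer option is the grid this function returns; how the three incorrect options are generated is not specified here and is left out of the sketch.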

As with large-scale spatial cognition, we also implement purely textual presentations of these tasks to support evaluation of large language models (LLMs). Figure 2 illustrates both the multimodal and the purely textual presentations. The key idea in instantiating the textual presentations is to encode all spatial information via 2D character arrays. We did not identify a natural such encoding for the water level test (WLT) and did not include a text-only presentation for it for this reason. See the appendix for additional illustrations of these tasks. In some tasks, such as MRT, MPFB, and JLO, the text presentations are substantially easier than the corresponding visual presentations. However, the visual and textual presentations match closely for the remaining tasks, enabling us to identify modality-specific limitations of multimodal models by evaluating them on the two presentations.
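As an illustration of how a spatial transformation can be expressed over 2D character arrays, the sketch below rotates an array in 90-degree steps and checks whether a candidate is a rotated copy of a reference, in the spirit of the text-only mental rotation task. Restricting rotations to multiples of 90 degrees and the helper names are assumptions of this sketch, not a description of the benchmark's generator.

```python
def rotate_cw(grid):
    """Rotate a rectangular 2D character array (list of strings) 90 degrees clockwise."""
    return ["".join(column) for column in zip(*reversed(grid))]


def rotations(grid):
    """Return the 0, 90, 180, and 270 degree clockwise rotations of the array."""
    out = [list(grid)]
    for _ in range(3):
        out.append(rotate_cw(out[-1]))
    return out


def is_rotation_of(candidate, reference):
    """True if candidate equals reference under some 90-degree-step rotation."""
    return list(candidate) in rotations(reference)


if __name__ == "__main__":
    l_shape = ["#.", "#.", "##"]
    mirrored = [".#", ".#", "##"]  # mirror image: a typical distractor
    print(rotate_cw(l_shape))
    print(is_rotation_of(["##", ".#", ".#"], l_shape))
    print(is_rotation_of(mirrored, l_shape))
```

A mirrored shape is a natural distractor because no in-plane rotation maps it onto the reference, which is the same property the visual task relies on when it uses rotated versions of an alternate shape.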

## 4 EXPERIMENTS

Baselines. We evaluate a number of LLMs and VLMs. Using text-only presentations, we evaluate GPT-4v and GPT-4o (OpenAI, 2023; 2024), Claude 3.5 Sonnet (Anthropic, 2024), the Llama3 family (Dubey et al., 2024), Mistral models such as Mixtral 8x7B, Mixtral 8x22B, and Mistral 123B (Jiang et al., 2024; Mistral AI team, 2024a), and two Yi 1.5 models (Young et al., 2024). Using multimodal presentations, we evaluate GPT-4v and GPT-4o (OpenAI, 2023; 2024), Claude 3.5 Sonnet (Anthropic, 2024), LLaVA-NeXT-Interleave (Li et al., 2024a), Pixtral 12B (Mistral AI team, 2024b), and Phi-3.5-vision (Abdin et al., 2024). We use the vLLM inference engine for evaluating the open-source models (Kwon et al., 2023). For each task, we implement a prompt that provides a detailed description of the task and the expected response format (see the appendix). We also list the results of a chance baseline that selects an answer at random. For multiple-choice QA tasks, chance is at 25%. For interactive tasks, the chance baseline samples an action at random in each step. We further include human performance for reference for the multiple-choice QA tasks. See the appendix for additional implementation details.
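The chance baseline for an interactive task can be sketched as a policy that samples uniformly from the available actions, run inside an episode loop with an action budget. The sketch below uses maze completion as the example. The maze format (`#` for walls), the treatment of moves into walls as no-ops, counting the stop action against the budget, and all function names are assumptions for illustration rather than the benchmark's implementation.

```python
import random

# Grid offsets (row, column) for the four movement actions.
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def run_episode(maze, start, goal, policy, max_actions=250):
    """Run one maze episode; succeed only if 'stop' is issued on the goal cell.

    maze:   list of equal-length strings, '#' marks a wall (assumed format).
    policy: callable (maze, position, goal) -> action name or 'stop'.
    """
    pos = start
    for _ in range(max_actions):
        action = policy(maze, pos, goal)
        if action == "stop":
            return pos == goal
        d_row, d_col = ACTIONS[action]
        row, col = pos[0] + d_row, pos[1] + d_col
        inside = 0 <= row < len(maze) and 0 <= col < len(maze[0])
        if inside and maze[row][col] != "#":
            pos = (row, col)  # moves into walls or off the grid are ignored
    return False  # action budget exhausted without a successful stop


def make_random_policy(rng):
    """Chance baseline: sample uniformly among the four moves and 'stop'."""
    choices = list(ACTIONS) + ["stop"]
    return lambda maze, pos, goal: rng.choice(choices)


def success_rate(episodes, policy, max_actions=250):
    """Percentage of (maze, start, goal) episodes that the policy completes."""
    wins = sum(run_episode(m, s, g, policy, max_actions) for m, s, g in episodes)
    return 100.0 * wins / len(episodes)


if __name__ == "__main__":
    maze = ["....", ".##.", "...."]
    policy = make_random_policy(random.Random(0))
    print(success_rate([(maze, (0, 0), (2, 3))] * 100, policy))
```

Because a uniformly random policy tends to issue the stop action long before it reaches the goal, its success rate on non-trivial mazes is expected to be close to zero.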

Table 1: Large-scale spatial cognition results. The three tables show results for different observation spaces. Results below 50% of human performance are gray. Methods are sorted based on their overall performance.

Observation space: Ego image

| Method | Direction estimation | Distance estimation | Map sketching | Route retracing | Shortcut discovery | Average |
|-------------------|-------------|-------------|--------------|-------------|-------------|------|
| Human | 82.8 | 83.2 | 96.6 | - | - | - |
| GPT-4o | 32.0 ± 4.1 | 36.5 ± 5.0 | 33.3 ± 4.1 | 6.6 ± 3.6 | 6.4 ± 1.0 | 23.0 |
| Claude 3.5 Sonnet | 29.0 ± 2.9 | 34.4 ± 2.9 | 27.5 ± 8.3 | 7.4 ± 2.8 | 0.0 ± 0.0 | 19.6 |
| GPT-4v | 29.7 ± 0.3 | 31.9 ± 2.7 | 20.0 ± 11.8 | 1.6 ± 1.2 | 3.9 ± 0.9 | 17.4 |
| Chance | 25.0 | 25.0 | 25.0 | 0.0 | 0.0 | 15.0 |

Observation space: DM image

| Method | Direction estimation | Distance estimation | Map sketching | Route retracing | Shortcut discovery | Average |
|-------------------|-------------|-------------|--------------|-------------|-------------|------|
| Human | 82.9 | 82.5 | 100.0 | - | - | - |
| GPT-4o | 29.5 ± 5.5 | 31.9 ± 1.0 | 33.3 ± 3.3 | 23.6 ± 3.1 | 25.9 ± 2.0 | 28.8 |
| Claude 3.5 Sonnet | 32.5 ± 2.3 | 40.0 ± 2.6 | 30.0 ± 4.1 | 15.4 ± 4.3 | 13.7 ± 6.4 | 26.3 |
| GPT-4v | 26.3 ± 3.0 | 29.3 ± 4.1 | 45.0 ± 5.0 | 13.7 ± 5.2 | 15.3 ± 3.0 | 25.9 |
| Chance | 25.0 | 25.0 | 25.0 | 0.0 | 0.0 | 15.0 |

Observation space: DM text

| Method | Direction estimation | Distance estimation | Map sketching | Route retracing | Shortcut discovery | Average |
|-------------------|-------------|-------------|--------------|-------------|-------------|------|
| Human | 66.7 | 76.5 | 66.7 | - | - | - |
| Claude 3.5 Sonnet | 29.2 ± 4.4 | 40.2 ± 3.1 | 51.7 ± 5.5 | 26.5 ± 2.9 | 20.0 ± 3.0 | 33.5 |
| GPT-4o | 28.7 ± 4.1 | 33.3 ± 1.7 | 46.7 ± 4.1 | 27.5 ± 3.2 | 26.6 ± 0.1 | 32.6 |
| Mistral 123B | 30.5 ± 5.1 | 28.9 ± 5.7 | 38.3 ± 5.5 | 20.3 ± 2.8 | 19.9 ± 3.0 | 27.6 |
| GPT-4v | 30.7 ± 4.1 | 26.5 ± 2.7 | 40.8 ± 6.0 | 20.6 ± 5.8 | 15.4 ± 2.0 | 26.8 |
| Llama 3 70B | 27.0 ± 2.2 | 30.4 ± 1.9 | 35.0 ± 8.3 | 13.2 ± 9.2 | 5.3 ± 4.1 | 22.2 |
| Yi 1.5 34B | 26.2 ± 4.7 | 35.7 ± 1.4 | 35.0 ± 10.7 | 3.2 ± 0.2 | 1.1 ± 1.6 | 20.2 |
| Mixtral 8x22B | 21.3 ± 1.9 | 19.4 ± 1.4 | 39.2 ± 12.6 | 1.5 ± 1.4 | 3.9 ± 1.7 | 17.0 |
| Yi 1.5 9B | 10.8 ± 1.0 | 20.0 ± 3.7 | 35.0 ± 5.0 | 5.0 ± 2.2 | 1.3 ± 1.5 | 14.4 |
| Llama 3 8B | 22.5 ± 2.9 | 24.6 ± 2.1 | 23.3 ± 7.1 | 0.0 ± 0.0 | 1.1 ± 1.6 | 14.3 |
| Mixtral 8x7B | 15.8 ± 2.0 | 16.1 ± 1.4 | 30.0 ± 8.2 | 1.1 ± 1.6 | 1.1 ± 1.6 | 12.8 |
| Chance | 25.0 | 25.0 | 25.0 | 0.0 | 0.0 | 15.0 |

Large-scale spatial cognition results. The results are shown in Table 1, grouped by presentation modality (ego image, DM image, DM text). For image-based presentations, we evaluate Claude 3.5 Sonnet, GPT-4v, and GPT-4o because they support video understanding (via a succession of images). For DM text, we evaluate both open and closed LLMs. We also list the performance of the chance baseline for calibration, as well as human performance (see the appendix for details). In the text-only modality, Claude 3.5 Sonnet attains the highest average performance. Mistral 123B is the highest-performing open model. All evaluated models struggle with large-scale spatial cognition, falling significantly below human performance on direction estimation, distance estimation, and map sketching, and achieving less than 30% SPL on route retracing and shortcut discovery, even with allocentric presentation. With egocentric multimodal presentation (the closest counterpart to classic experimental protocols in animal cognition), the models are near chance level on all tasks.
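For reference, SPL (success weighted by path length) is commonly computed following Anderson et al. (2018): each successful episode contributes the ratio of the shortest path length to the longer of the agent's path length and the shortest path length, and failed episodes contribute zero. The sketch below assumes this standard definition and reports the result as a percentage; the function name and input format are illustrative, and the benchmark's exact computation may differ in details.

```python
def spl(episodes):
    """Success weighted by path length, as a percentage.

    episodes: iterable of (success, shortest_path_length, agent_path_length)
              tuples, with shortest_path_length > 0 (an assumption of this sketch).
    """
    episodes = list(episodes)
    if not episodes:
        raise ValueError("need at least one episode")

    total = 0.0
    for success, shortest, taken in episodes:
        if success:
            # Efficiency is capped at 1 when the agent matches the shortest path.
            total += shortest / max(taken, shortest)
    return 100.0 * total / len(episodes)


if __name__ == "__main__":
    runs = [
        (True, 10.0, 10.0),   # optimal route: contributes 1.0
        (True, 10.0, 20.0),   # twice as long as needed: contributes 0.5
        (False, 10.0, 5.0),   # failure: contributes 0.0
    ]
    print(spl(runs))
```

Under this definition, a model that always reaches the goal but takes routes twice as long as necessary scores 50%, so an SPL below 30% reflects some mix of failed episodes and inefficient routes.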

Human performance ranges from 80% to 100% accuracy on image-based presentations of the multiple-choice QA tasks. Since perceiving large sequences of text arrays is non-trivial for humans, the performance drops to 65%-80% for the text presentations.

Small-scale spatial cognition results. The results are shown in Table 2. With multimodal presentations, we benchmark GPT-4o, GPT-4v, Claude 3.5 Sonnet, and a number of open multimodal models. With purely textual presentations, we benchmark both open and closed models. We also list the performance of the chance baseline for calibration, as well as human performance (see the appendix for details).

Performance of some model classes (e.g., GPT-4o, GPT-4v, Claude 3.5 Sonnet) on purely textual presentations is considerably higher than on multimodal presentations. The best-performing models, Claude 3.5 Sonnet and GPT-4o, achieve 43.8% and 40.1% average accuracies in the multimodal regime and 64.5% and 65.2% average accuracies with purely textual presentations. (Chance is < 25%.) We attribute this in part to the simplified nature of the text-only implementations of tasks like MRT, MPFB, and JLO (e.g., the text-only presentation of mental rotation uses only 2D shapes and constrained 2D rotations) and in part to the relative developmental maturity of large language models (LLMs) versus multimodal models on the remaining tasks.

On tasks that evaluate visuospatial working memory (specifically SAtt, CBTT, SAdd, and CSWM), the strongest LLMs perform well. On selective attention (SAtt), GPT-4o, Claude 3.5 Sonnet, Mistral 123B, and GPT-4v all achieve over 95% accuracy, matching or outperforming the human performance on this task. On the other hand, all models perform poorly on maze completion (MCT), in both presentation modalities. (Note that the models operate with full visibility, as illustrated in Figure 2.) With multimodal presentation, all evaluated models are near chance on perspective taking (PTT) and the Minnesota Paper Form Board test (MPFB). On mental rotation (MRT), the best models are near chance with multimodal presentation, which uses 3D shapes, and only marginally better with purely textual presentation, which uses 2D arrays and constrained rotations.

Table 2: Small-scale spatial cognition results. The two tables show results for multimodal and text-only presentations, respectively. Results below 50% of human performance are gray, results above 90% of human performance are bold. Methods are sorted based on their average performance. (∗ Some multimodal models ran out of memory on MCT and CSWM tasks; their accuracy is taken to be 0 for calculating the average.)

Multimodal

| Method | MRT | PTT | WLT | MPFB | JLO | SAtt | MCT | CBTT | SAdd | CSWM | Average |
|---------------------|------------|------------|-------------|------------|------------|------------|------------|------------|------------|------------|--------|
| Human | 78.5 | 80.0 | 94.0 | 84.0 | 82.0 | 95.0 | - | 100.0 | 98.0 | - | - |
| Claude 3.5 Sonnet | 29.9 ± 3.8 | 21.8 ± 2.9 | 37.0 ± 4.6 | 35.5 ± 7.0 | 40.5 ± 3.8 | 90.5 ± 3.5 | 2.2 ± 1.8 | 56.5 ± 3.8 | 48.0 ± 6.2 | 76.7 ± 2.5 | 43.8 |
| GPT-4o | 33.3 ± 1.9 | 26.5 ± 3.6 | 59.0 ± 10.8 | 27.0 ± 2.2 | 26.5 ± 5.9 | 70.2 ± 1.8 | 10.4 ± 1.0 | 68.0 ± 2.0 | 40.5 ± 7.1 | 40.0 ± 0.0 | 40.1 |
| GPT-4v | 32.3 ± 0.3 | 28.0 ± 2.0 | 35.0 ± 7.7 | 22.5 ± 4.1 | 26.5 ± 6.8 | 59.8 ± 4.4 | 0.7 ± 1.0 | 44.5 ± 3.0 | 32.0 ± 4.5 | 26.7 ± 3.4 | 30.8 |
| Pixtral 12B | 28.3 ± 3.1 | 23.2 ± 4.9 | 43.0 ± 7.0 | 30.5 ± 7.9 | 24.5 ± 7.3 | 36.0 ± 3.9 | OOM | 39.5 ± 3.0 | 28.5 ± 6.1 | OOM | 25.4∗ |
| Phi-3.5-vision | 24.1 ± 1.0 | 27.0 ± 3.2 | 22.5 ± 7.9 | 26.0 ± 0.0 | 21.0 ± 4.1 | 44.0 ± 4.6 | OOM | 33.0 ± 4.6 | 22.0 ± 6.8 | OOM | 22.0∗ |
| Llava interleave 7B | 25.1 ± 3.2 | 25.8 ± 5.8 | 25.0 ± 8.5 | 25.0 ± 3.3 | 24.0 ± 5.7 | 32.0 ± 4.9 | OOM | 25.5 ± 5.7 | 27.0 ± 4.1 | OOM | 20.9∗ |
| Chance | 25.0 | 25.0 | 25.0 | 25.0 | 25.0 | 25.0 | 0.0 | 25.0 | 25.0 | 33.8 ± 5.4 | 23.4 |

Text-only

| Method | MRT | PTT | MPFB | JLO | SAtt | MCT | CBTT | SAdd | CSWM | Average |
|-------------------|------------|------------|------------|------------|------------|------------|------------|------------|------------|------|
| Human | 90.0 | 75.0 | 92.0 | 98.0 | 96.0 | - | 100.0 | 98.0 | - | - |
| GPT-4o | 41.9 ± 6.2 | 55.5 ± 3.9 | 50.5 ± 9.6 | 66.5 ± 4.8 | 98.8 ± 0.4 | 21.5 ± 3.8 | 82.5 ± 1.7 | 93.5 ± 3.6 | 76.7 ± 2.5 | 65.2 |
| Claude 3.5 Sonnet | 37.5 ± 1.8 | 50.0 ± 7.5 | 45.0 ± 6.7 | 70.5 ± 4.3 | 97.0 ± 1.0 | 10.0 ± 1.1 | 97.5 ± 0.9 | 91.5 ± 4.3 | 82.0 ± 3.3 | 64.5 |
| Mistral 123B | 39.4 ± 6.2 | 44.8 ± 4.0 | 48.5 ± 5.2 | 57.0 ± 5.4 | 97.5 ± 0.5 | 14.8 ± 2.8 | 88.5 ± 0.9 | 92.5 ± 0.9 | 62.0 ± 2.8 | 60.5 |
| GPT-4v | 41.2 ± 7.2 | 67.5 ± 6.5 | 34.0 ± 6.0 | 62.0 ± 4.0 | 95.8 ± 1.3 | 3.7 ± 1.0 | 87.5 ± 3.6 | 79.0 ± 2.2 | 45.3 ± 2.5 | 57.3 |
| Llama 3 70B | 28.1 ± 9.2 | 29.2 ± 2.4 | 38.5 ± 3.8 | 42.5 ± 0.9 | 71.8 ± 3.8 | 1.5 ± 1.0 | 52.5 ± 5.7 | 62.5 ± 5.4 | 34.0 ± 5.9 | 40.0 |
| Mixtral 8x22B | 26.9 ± 3.2 | 24.5 ± 5.2 | 31.0 ± 5.9 | 36.0 ± 5.1 | 73.5 ± 3.6 | 1.5 ± 2.1 | 55.0 ± 3.3 | 68.0 ± 6.8 | 17.3 ± 2.5 | 37.0 |
| Yi 1.5 34B | 20.6 ± 6.0 | 28.0 ± 2.1 | 34.5 ± 4.6 | 33.5 ± 3.6 | 58.2 ± 4.3 | 0.7 ± 1.0 | 35.5 ± 8.4 | 41.5 ± 0.9 | 24.0 ± 0.0 | 30.7 |
| Yi 1.5 9B | 21.2 ± 1.2 | 23.8 ± 2.7 | 30.0 ± 3.2 | 24.5 ± 5.5 | 48.2 ± 4.0 | 0.7 ± 1.0 | 36.5 ± 4.6 | 51.5 ± 8.9 | 24.7 ± 8.4 | 29.0 |
| Llama 3 8B | 14.4 ± 1.1 | 25.8 ± 5.1 | 26.0 ± 4.2 | 27.0 ± 1.7 | 46.0 ± 3.7 | 0.0 ± 0.0 | 27.5 ± 7.1 | 30.0 ± 7.3 | 26.0 ± 6.5 | 24.7 |
| Mixtral 8x7B | 19.4 ± 4.5 | 10.5 ± 0.9 | 29.5 ± 5.7 | 27.5 ± 7.5 | 39.0 ± 5.4 | 0.0 ± 0.0 | 22.5 ± 3.8 | 43.5 ± 3.3 | 22.7 ± 4.1 | 23.8 |
| Chance | 25.0 | 25.0 | 25.0 | 25.0 | 25.0 | 0.0 | 25.0 | 25.0 | 33.0 ± 5.3 | 23.1 |

Humans perform well, achieving over 80% accuracy on the majority of the multiple-choice QA tasks with both text-only and multimodal presentations. Humans perform better on the textual presentations of tasks like MRT, MPFB and JLO than their vision counterparts due to the simplified nature of the text-only implementations.

Ecological compatibility of SPACE with frontier models. Our results indicate that current frontier models lack spatial cognition. Alternatively, these results could stem from the models not understanding the inputs presented to them (i.e., the inputs are not ecologically compatible). We study this in Appendix A.1 and demonstrate that this is not the case. Models can understand the inputs correctly and perform non-spatial cognition tasks well, yet fail to demonstrate spatial cognition.

## 5 DISCUSSION

We presented SPACE, a benchmark for spatial cognition in frontier models. Our evaluation of contemporary models brings up intriguing questions and opportunities for further investigation. First, our results underscore that frontier models exhibit a fundamentally different form of intelligence from what has been observed (and studied) in humans and animals. No biological intelligence we have encountered has exhibited such advanced skill in some aspects of higher cognition (Trinh et al., 2024) while failing so profoundly in basic spatial cognition. This is particularly intriguing because in biological intelligence, spatial cognition is considered a prerequisite for higher cognition, and breakdowns in spatial cognition are diagnostic of higher-level disorders (Cappa, 2008; Possin, 2010; Verghese et al., 2017; Cammisuli et al., 2024). From a scientific standpoint, the constellation of traits exhibited by frontier models is fascinating and may inspire a new cognitive science (Simon, 2019). As a precautionary stance, we can refrain from drawing analogies based on experience with biological cognition. (E.g., 'a model won the Mathematics Olympiad therefore it possesses a comparable cognitive repertoire to a human Olympiad winner and could be expected to have comparable skill in other domains'.)

Could deficiencies in spatial cognition be causally linked to some of the puzzling breakdowns exhibited by contemporary frontier models in higher-level tasks? What is the roadmap for bringing spatial cognition in frontier models up to the level of animal cognition (and perhaps beyond)? Is this a prerequisite for attaining some of the more far-reaching aspirations of contemporary artificial intelligence research? Does embodiment play a role, as it has in prior forms of intelligence (Smith & Gasser, 2005; Savva et al., 2019)? Or will artificial cognition continue to develop along a fundamentally different ontogenetic path? We expect further advances to increase the robustness and generality of frontier models, and to continue to broaden our understanding of the nature of intelligence.

## REFERENCES

- Marah I Abdin, Sam Ade Jacobs, Ammar Ahmad Awan, Jyoti Aneja, Ahmed Awadallah, Hany Awadalla, Nguyen Bach, Amit Bahree, Arash Bakhtiari, Harkirat S. Behl, et al. Phi-3 technical report: A highly capable language model locally on your phone. arXiv:2404.14219 , 2024.

- Gary L Allen, Kathleen C Kirasic, Shannon H Dobson, Richard G Long, and Sharon Beck. Predicting environmental learning from spatial abilities: An indirect route. Intelligence , 22(3), 1996.

- David I Anderson, Joseph J Campos, David C Witherington, Audun Dahl, Monica Rivera, Minxuan He, Ichiro Uchiyama, and Marianne Barbu-Roth. The role of locomotion in psychological development. Frontiers in Psychology , 4, 2013.

- Peter Anderson, Angel X. Chang, Devendra Singh Chaplot, Alexey Dosovitskiy, Saurabh Gupta, Vladlen Koltun, Jana Kosecka, Jitendra Malik, Roozbeh Mottaghi, Manolis Savva, and Amir R. Zamir. On evaluation of embodied navigation agents. arXiv:1807.06757 , 2018.

- Anthropic. Introducing Claude 3.5 Sonnet, 2024. https://www.anthropic.com/news/claude-3-5-sonnet.

- Stanislaw Antol, Aishwarya Agrawal, Jiasen Lu, Margaret Mitchell, Dhruv Batra, C. Lawrence Zitnick, and Devi Parikh. VQA: Visual question answering. In ICCV , 2015.

- Leopold Aschenbrenner. Situational awareness: The decade ahead, 2024. https://situational-awareness.ai/wp-content/uploads/2024/06/situationalawareness.pdf.

- Anas Awadalla, Le Xue, Oscar Lo, Manli Shu, Hannah Lee, Etash Kumar Guha, Matt Jordan, Sheng Shen, Mohamed Awadalla, Silvio Savarese, Caiming Xiong, Ran Xu, Yejin Choi, and Ludwig Schmidt. MINT-1T: Scaling open-source multimodal data by 10x: A multimodal dataset with one trillion tokens. arXiv:2406.11271 , 2024.

- Andrea Banino, Caswell Barry, Benigno Uria, Charles Blundell, Timothy Lillicrap, Piotr Mirowski, Alexander Pritzel, Martin J Chadwick, Thomas Degris, Joseph Modayil, et al. Vector-based navigation using grid-like representations in artificial agents. Nature , 557(7705), 2018.

- Arthur Lester Benton. Contributions to neuropsychological assessment: A clinical manual . Oxford University Press, USA, 1994.

- Mark Blades and Christopher Spencer. The development of children's ability to use spatial representations. Advances in child development and behavior , 25, 1994.

- Nicole Blaser, G Dell'Omo, G Dell'Ariccia, David Paul Wolfer, and H-P Lipp. Testing cognitive navigation in unknown territories: homing pigeons choose different targets. Journal of Experimental Biology , 216(16), 2013.

- Tyler Bonnen, Stephanie Fu, Yutong Bai, Thomas O'Connell, Yoni Friedman, Nancy Kanwisher, Josh Tenenbaum, and Alexei Efros. Evaluating multiview object consistency in humans and image models. In NeurIPS , 2025.

- Youcef Bouchekioua, Aaron P Blaisdell, Yutaka Kosaki, Iku Tsutsui-Kimura, Paul Craddock, Masaru Mimura, and Shigeru Watanabe. Spatial inference without a cognitive map: the role of higher-order path integration. Biological Reviews , 96(1), 2021.

- R Brickenkamp and E Zillmer. Test d2: concentration-endurance test. Gottingen Ger. CJ Hogrefe , 1998.

- Rodney Brooks. Rodney Brooks' three laws of artificial intelligence, 2024. https://rodneybrooks.com/rodney-brooks-three-laws-of-artificial-intelligence/.

- Davide Maria Cammisuli, Gloria Marchesi, Virginia Bellocchio, Edoardo Nicolò Aiello, Barbara Poletti, Federico Verde, Vincenzo Silani, Nicola Ticozzi, Stefano Zago, Teresa Difonzo, et al. Behavioral disorders of spatial cognition in patients with mild cognitive impairment due to Alzheimer's disease (the BDSC-MCI project): Ecological validity of the Corsi learning suvra-span test. Journal of Personalized Medicine , 14(5), 2024.

- SF Cappa. Cognitive neurology: a clinical textbook . Oxford University Press, 2008.

- Boyuan Chen, Zhuo Xu, Sean Kirmani, Brian Ichter, Danny Driess, Pete Florence, Dorsa Sadigh, Leonidas J. Guibas, and Fei Xia. Spatialvlm: Endowing vision-language models with spatial reasoning capabilities. In CVPR , 2024a.

- Lin Chen, Jinsong Li, Xiaoyi Dong, Pan Zhang, Conghui He, Jiaqi Wang, Feng Zhao, and Dahua Lin. Sharegpt4v: Improving large multi-modal models with better captions. arXiv:2311.12793 , 2023.

- Lin Chen, Jinsong Li, Xiaoyi Dong, Pan Zhang, Yuhang Zang, Zehui Chen, Haodong Duan, Jiaqi Wang, Yu Qiao, Dahua Lin, and Feng Zhao. Are we on the right way for evaluating large vision-language models? arXiv:2403.20330 , 2024b.

- Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Pondé de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, et al. Evaluating large language models trained on code. arXiv:2107.03374 , 2021.

- Xinlei Chen, Hao Fang, Tsung-Yi Lin, Ramakrishna Vedantam, Saurabh Gupta, Piotr Dollár, and C. Lawrence Zitnick. Microsoft COCO captions: Data collection and evaluation server. arXiv:1504.00325 , 2015.

- An-Chieh Cheng, Hongxu Yin, Yang Fu, Qiushan Guo, Ruihan Yang, Jan Kautz, Xiaolong Wang, and Sifei Liu. Spatialrgpt: Grounded spatial reasoning in vision language model. arXiv:2406.01584 , 2024.

- François Chollet. On the measure of intelligence. arXiv:1911.01547 , 2019.

- Michiel HG Claessen, Ineke JM Van Der Ham, and Martine JE Van Zandvoort. Computerization of the standard corsi block-tapping task affects its underlying cognitive concepts: a pilot study. Applied Neuropsychology: Adult , 22(3), 2015.

- Philip Michael Corsi. Human memory and the medial temporal region of the brain. PhD thesis, McGill University, 1972. https://escholarship.mcgill.ca/concern/theses/05741s554.

- Christopher J. Cueva and Xue-Xin Wei. Emergence of grid-like representations by training recurrent neural networks to perform spatial localization. In ICLR , 2018.

- Edwin S Dalmaijer, Stefan Van der Stigchel, Tanja CW Nijboer, Tim HW Cornelissen, and Masud Husain. Cancellationtools: All-in-one software for administration and analysis of cancellation tasks. Behavior Research Methods , 47, 2015.

- Dawson-Haggerty et al. trimesh, 2019. https://trimesh.org/ .

- Miguel de Guinea, Alejandro Estrada, K Anne-Isola Nekaris, and Sarie Van Belle. Cognitive maps in the wild: revealing the use of metric information in black howler monkey route navigation. Journal of Experimental Biology , 224(15), 2021.

- Sergio Della Sala, Marcella Laiacona, Hans Spinnler, and Chiara Ubezio. A cancellation test: its reliability in assessing attentional deficits in Alzheimer's disease. Psychological Medicine , 22(4), 1992.

- Jia Deng, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. Imagenet: A large-scale hierarchical image database. In CVPR , 2009.

- Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. The llama 3 herd of models. arXiv:2407.21783 , 2024.

- Pul Ashby Foltz. Adult performance on piaget's water level task and its relation of spatial orientation and visualization. Master's thesis, University of Richmond, 1978. https://scholarship.richmond.edu/cgi/viewcontent.cgi?article=1424&context=masters-theses .

- Nigel Foreman, Denny Foreman, Alison Cummings, and Sandra Owens. Locomotion, active choice, and spatial memory in children. The Journal of General Psychology , 117(2), 1990.

- John W French, Ruth B Ekstrom, and Leighton A Price. Manual for kit of reference tests for cognitive factors. 1963.

- Andrea Frick and Wenke Möhring. A matter of balance: Motor control is related to children's spatial and proportional reasoning skills. Frontiers in Psychology , 6, 2016.

- Chaoyou Fu, Peixian Chen, Yunhang Shen, Yulei Qin, Mengdan Zhang, Xu Lin, Zhenyu Qiu, Wei Lin, Jinrui Yang, Xiawu Zheng, Ke Li, Xing Sun, and Rongrong Ji. MME: A comprehensive evaluation benchmark for multimodal large language models. arXiv:2306.13394 , 2023.

- Chaoyou Fu, Yuhan Dai, Yondong Luo, Lei Li, Shuhuai Ren, Renrui Zhang, Zihan Wang, Chenyu Zhou, Yunhang Shen, Mengdan Zhang, et al. Video-mme: The first-ever comprehensive evaluation benchmark of multi-modal llms in video analysis. arXiv:2405.21075 , 2024a.

- Xingyu Fu, Yushi Hu, Bangzheng Li, Yu Feng, Haoyu Wang, Xudong Lin, Dan Roth, Noah A. Smith, Wei-Chiu Ma, and Ranjay Krishna. BLINK: Multimodal large language models can see but not perceive. arXiv:2404.12390 , 2024b.

- Samir Yitzhak Gadre, Gabriel Ilharco, Alex Fang, Jonathan Hayase, Georgios Smyrnis, Thao Nguyen, Ryan Marten, Mitchell Wortsman, Dhruba Ghosh, Jieyu Zhang, et al. Datacomp: In search of the next generation of multimodal datasets. In NeurIPS , 2023.

- Maya Geva-Sagiv, Liora Las, Yossi Yovel, and Nachum Ulanovsky. Spatial cognition in bats and rats: from sensory acquisition to multiscale maps and navigation. Nature Reviews Neuroscience , 16(2), 2015.

- Sabine Gillner and Hanspeter A Mallot. Navigation and acquisition of spatial knowledge in a virtual maze. Journal of Cognitive Neuroscience , 10(4), 1998.

- Yash Goyal, Tejas Khot, Aishwarya Agrawal, Douglas Summers-Stay, Dhruv Batra, and Devi Parikh. Making the V in VQA matter: Elevating the role of image understanding in visual question answering. International Journal of Computer Vision , 127(4), 2019.

- Tianrui Guan, Fuxiao Liu, Xiyang Wu, Ruiqi Xian, Zongxia Li, Xiaoyu Liu, Xijun Wang, Lichang Chen, Furong Huang, Yaser Yacoob, et al. Hallusionbench: an advanced diagnostic suite for entangled language hallucination and visual illusion in large vision-language models. In CVPR , 2024.

- Mary Hegarty and David Waller. A dissociation between mental rotation and perspective-taking spatial abilities. Intelligence , 32(2), 2004.

- Mary Hegarty, Daniel R Montello, Anthony E Richardson, Toru Ishikawa, and Kristin Lovelace. Spatial abilities at different scales: Individual differences in aptitude-test performance and spatial-layout learning. Intelligence , 34(2), 2006.

- Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding. In ICLR , 2021a.

- Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the MATH dataset. In NeurIPS Datasets and Benchmarks , 2021b.

- Anna A Ivanova, Aalok Sathe, Benjamin Lipkin, Unnathi Kumar, Setayesh Radkani, Thomas H Clark, Carina Kauf, Jennifer Hu, RT Pramod, Gabriel Grand, et al. Elements of world knowledge (ewok): A cognition-inspired framework for evaluating basic world knowledge in language models. arXiv:2405.09605 , 2024.

- Petra Jansen. The dissociation of small- and large-scale spatial abilities in school-age children. Perceptual and Motor Skills , 109(2), 2009.

- Petra Jansen and Martin Heil. The relation between motor development and mental rotation ability in 5- to 6-year-old children. International Journal of Developmental Science , 4(1), 2010.

- Albert Q. Jiang, Alexandre Sablayrolles, Antoine Roux, Arthur Mensch, Blanche Savary, Chris Bamford, Devendra Singh Chaplot, Diego de Las Casas, Emma Bou Hanna, Florian Bressand, et al. Mixtral of experts. arXiv:2401.04088 , 2024.

- Ashley N Kalina and Suzie A Walgrave. Normative evaluation of a letter cancellation instrument for the assessment of sustained attention: A construct validation study. The Journal of Undergraduate Research , 2(1), 2004.

- Maria Kozhevnikov and Mary Hegarty. A dissociation between object manipulation spatial ability and spatial orientation ability. Memory & Cognition , 29, 2001.

- Maria Kozhevnikov, Michael A Motes, and Mary Hegarty. Spatial visualization in physics problem solving. Cognitive Science , 31(4), 2007.

- Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph Gonzalez, Hao Zhang, and Ion Stoica. Efficient memory management for large language model serving with PagedAttention. In SOSP , 2023.

- Emilie Lacroix, Stéphanie Cornet, Naima Deggouj, and Martin Gareth Edwards. The visuo-spatial abilities diagnosis (vsad) test: Evaluating the potential cognitive difficulties of children with vestibular impairment through a new tablet-based computerized test battery. Behavior Research Methods , 53, 2021.

- Barbara Landau. Spatial cognition. In Encyclopedia of the Human Brain . Elsevier, 2002.

- Hugo Laurençon, Lucile Saulnier, Léo Tronchon, Stas Bekman, Amanpreet Singh, Anton Lozhkov, Thomas Wang, Siddharth Karamcheti, Alexander M. Rush, Douwe Kiela, Matthieu Cord, and Victor Sanh. OBELICS: An open web-scale filtered dataset of interleaved image-text documents. In NeurIPS , 2023.

- Feng Li, Renrui Zhang, Hao Zhang, Yuanhan Zhang, Bo Li, Wei Li, Zejun Ma, and Chunyuan Li. Llava-next-interleave: Tackling multi-image, video, and 3d in large multimodal models. arXiv:2407.07895 , 2024a.

- Kunchang Li, Yali Wang, Yinan He, Yizhuo Li, Yi Wang, Yi Liu, Zun Wang, Jilan Xu, Guo Chen, Ping Luo, et al. Mvbench: A comprehensive multi-modal video understanding benchmark. In CVPR , 2024b.

- Rensis Likert and WH Quasha. Minnesota Paper Form Board Test . Psychological Corporation, 1941.

- Rensis Likert and William H Quasha. Revised Minnesota paper form board test . Psychological Corporation, 1969.

- Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. Visual instruction tuning. In NeurIPS , 2023a.

- Yuan Liu, Haodong Duan, Yuanhan Zhang, Bo Li, Songyang Zhang, Wangbo Zhao, Yike Yuan, Jiaqi Wang, Conghui He, Ziwei Liu, Kai Chen, and Dahua Lin. Mmbench: Is your multi-modal model an all-around player? arXiv:2307.06281 , 2023b.

- Pan Lu, Hritik Bansal, Tony Xia, Jiacheng Liu, Chunyuan Li, Hannaneh Hajishirzi, Hao Cheng, Kai-Wei Chang, Michel Galley, and Jianfeng Gao. Mathvista: Evaluating mathematical reasoning of foundation models in visual contexts. In ICLR , 2024.

- Arjun Majumdar, Anurag Ajay, Xiaohan Zhang, Pranav Putta, Sriram Yenamandra, Mikael Henaff, Sneha Silwal, Paul Mcvay, Oleksandr Maksymets, Sergio Arnaud, et al. Openeqa: Embodied question answering in the era of foundation models. In CVPR , 2024.

- Hanspeter A Mallot. From Geometry to Behavior: An Introduction to Spatial Cognition . MIT Press, 2024.

- Kenneth Marino, Mohammad Rastegari, Ali Farhadi, and Roozbeh Mottaghi. OK-VQA: A visual question answering benchmark requiring external knowledge. In CVPR , 2019.

- Jérôme Marquet-Doléac, Régis Soppelsa, and Jean-Michel Albaret. Laby 5-12: Test des labyrinthes . Hogrefe, 2010.

- John C Marshall and Gereon R Fink. Spatial cognition: Where we were and where we are. Neuroimage , 14(1), 2001.

- Chiara Meneghetti, Clara Zancada-Menéndez, Patricia Sampedro-Piquero, Laudino Lopez, Massimiliano Martinelli, Lucia Ronconi, and Barbara Rossi. Mental representations derived from navigation: The role of visuo-spatial abilities and working memory. Learning and Individual Differences , 49, 2016.

- Chiara Meneghetti, Laura Miola, Enrico Toffalini, Massimiliano Pastore, and Francesca Pazzaglia. Learning from navigation, and tasks assessing its accuracy: The role of visuospatial abilities and wayfinding inclinations. Journal of Environmental Psychology , 75, 2021.

- Chiara Meneghetti, Laura Miola, Tommaso Feraco, and Veronica Muffato. Individual differences in navigation: An introductory overview. In Prime Archives in Psychology . Vide Leaf, 2nd edition, 2022.

- Emil W Menzel. Chimpanzee spatial memory organization. Science , 182(4115), 1973.

- Mistral AI team. Large enough, 2024a. https://mistral.ai/news/mistral-large-2407/ .

- Mistral AI team. Announcing pixtral 12b, 2024b. https://mistral.ai/news/pixtral-12b/ .

- Ida Momennejad, Hosein Hasanbeig, Felipe Vieira Frujeri, Hiteshi Sharma, Robert Osazuwa Ness, Nebojsa Jojic, Hamid Palangi, and Jonathan Larson. Evaluating cognitive maps in large language models with cogeval: No emergent planning. In NeurIPS , 2023.

- Arsenii Moskvichev, Victor Vikram Odouard, and Melanie Mitchell. The conceptarc benchmark: Evaluating understanding and generalization in the ARC domain. Transactions on Machine Learning Research , 2023.

- Nora S. Newcombe. Making space: The development of spatial representation and reasoning . MIT Press, 2000.

- Nora S. Newcombe. Picture this: Increasing math and science learning by improving spatial thinking. American Educator , 34(2), 2010.

- Nora S. Newcombe. Spatial Cognition. In Open Encyclopedia of Cognitive Science . MIT Press, 2024.

- Rahel Noser and Richard W Byrne. Mental maps in chacma baboons (papio ursinus): using intergroup encounters as a natural experiment. Animal Cognition , 10, 2007.

- John O'Keefe and Lynn Nadel. The hippocampus as a cognitive map . Oxford University Press, 1978.

- OpenAI. GPT-4 technical report. arXiv:2303.08774 , 2023.

- OpenAI. Hello gpt-4o, 2024. https://openai.com/index/hello-gpt-4o/ .

- Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll L. Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, John Schulman, Jacob Hilton, Fraser Kelton, Luke Miller, Maddie Simens, Amanda Askell, Peter Welinder, Paul F. Christiano, Jan Leike, and Ryan Lowe. Training language models to follow instructions with human feedback. In NeurIPS , 2022.

- Anja Pahor, Randy E Mester, Audrey A Carrillo, Eunice Ghil, Jason F Reimer, Susanne M Jaeggi, and Aaron R Seitz. Ucancellation: A new mobile measure of selective attention and concentration. Behavior Research Methods , 54(5), 2022.

- Viorica Patraucean, Lucas Smaira, Ankush Gupta, Adrià Recasens, Larisa Markeeva, Dylan Banarse, Skanda Koppula, Joseph Heyward, Mateusz Malinowski, Yi Yang, et al. Perception test: A diagnostic benchmark for multimodal video models. In NeurIPS , 2023.

- HL Payne, GF Lynch, and Dmitriy Aronov. Neural representations of space in the hippocampus of a food-caching bird. Science , 373(6552), 2021.

- Francesca Pazzaglia and Holly A Taylor. Perspective, instruction, and cognitive style in spatial representation of a virtual environment. Spatial Cognition and Computation , 7(4), 2007.

- Michael Peters, Bruno Laeng, Kerry Latham, Marla Jackson, Raghad Zaiyouna, and Chris Richardson. A redrawn vandenberg and kuse mental rotations test - different versions and factors that affect performance. Brain and Cognition , 28(1), 1995.

- Roger Paul Peters. Wolf-sign: Scents And Space In A Wide-ranging Predator. University of Michigan, 1974.

- Jean Piaget, Baerbel Inhelder, F. J. Langdon, and J. L. Lunzer. The child's conception of space. British Journal of Educational Studies , 5(2), 1957.

- Leila M Porter and Paul A Garber. Foraging and spatial memory in wild weddell's saddleback tamarins (saguinus fuscicollis weddelli) when moving between distant and out-of-sight goals. International Journal of Primatology , 34, 2013.

- Katherine L Possin. Visual spatial cognition in neurodegenerative disease. Neurocase , 16(6), 2010.

- Andrea Presotto, Richard Fayrer-Hosken, Caitlin Curry, and Marguerite Madden. Spatial mapping shows that some african elephants use cognitive maps to navigate the core but not the periphery of their home ranges. Animal Cognition , 22, 2019.

- Rafael Rafailov, Archit Sharma, Eric Mitchell, Christopher D. Manning, Stefano Ermon, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model. In NeurIPS , 2023.

- Ahad Rana. Common crawl - building an open web-scale crawl using hadoop, 2010. https://www.slideshare.net/hadoopusergroup/common-crawlpresentation .

- Machel Reid, Nikolay Savinov, Denis Teplyashin, Dmitry Lepikhin, Timothy P. Lillicrap, Jean-Baptiste Alayrac, Radu Soricut, Angeliki Lazaridou, Orhan Firat, Julian Schrittwieser, et al. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context. arXiv:2403.05530 , 2024.

- Anthony E Richardson, Daniel R Montello, and Mary Hegarty. Spatial knowledge acquisition from maps and from navigation in real and virtual environments. Memory & Cognition , 27(4), 1999.

- Barbara J Sahakian, Robin G Morris, John L Evenden, Andrew Heald, Raymond Levy, Michael Philpot, and Trevor W Robbins. A comparative study of visuospatial memory and learning in alzheimer-type dementia and parkinson's disease. Brain , 111(3), 1988.