# SWIFT: On-the-Fly Self-Speculative Decoding for LLM Inference Acceleration


## Abstract

Speculative decoding (SD) has emerged as a widely used paradigm to accelerate LLM inference without compromising quality. It works by first employing a compact model to draft multiple tokens efficiently and then using the target LLM to verify them in parallel. While this technique has achieved notable speedups, most existing approaches require either additional parameters or extensive training to construct effective draft models, which restricts their applicability across different LLMs and tasks. To address this limitation, we explore a novel plug-and-play SD solution with layer-skipping, which skips intermediate layers of the target LLM to form the compact draft model. Our analysis reveals that LLMs exhibit great potential for self-acceleration through layer sparsity, and that this sparsity is task-specific. Building on these insights, we introduce SWIFT, an on-the-fly self-speculative decoding algorithm that adaptively selects intermediate layers of LLMs to skip during inference. SWIFT requires neither auxiliary models nor additional training, making it a plug-and-play solution for accelerating LLM inference across diverse input data streams. Our extensive experiments across a wide range of models and downstream tasks demonstrate that SWIFT achieves a $1.3\times \sim 1.6\times$ speedup while preserving the original distribution of the generated text. Our code is available at https://github.com/hemingkx/SWIFT.

## 1 Introduction

Large Language Models (LLMs) have exhibited outstanding capabilities in handling various downstream tasks (OpenAI, 2023; Touvron et al., 2023a; b; Dubey et al., 2024). However, their token-by-token generation necessitated by autoregressive decoding poses efficiency challenges, particularly as model sizes increase. To address this, speculative decoding (SD) has been proposed as a promising solution for lossless LLM inference acceleration (Xia et al., 2023; Leviathan et al., 2023; Chen et al., 2023). At each decoding step, SD first employs a compact draft model to efficiently predict multiple tokens as speculations for future decoding steps of the target LLM. These tokens are then validated by the target LLM in parallel, ensuring that the original output distribution remains unchanged.
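To make the draft-then-verify step concrete, here is a minimal sketch of greedy verification in Python. It assumes a hypothetical `target_next` callable that returns the target model's greedy next-token prediction at every position of a sequence, standing in for one parallel forward pass of the target LLM; `toy_target` is a made-up model for illustration only. Under greedy decoding, committing the longest matching draft prefix plus the target's own token at the first mismatch reproduces exactly what token-by-token decoding would emit. Preserving the distribution under sampling additionally requires a rejection-sampling rule, which this sketch does not cover.

```python
from typing import Callable, List, Sequence


def verify_greedy(
    target_next: Callable[[Sequence[int]], List[int]],
    prefix: Sequence[int],
    draft: Sequence[int],
) -> List[int]:
    """Return the tokens committed after one draft-then-verify step (greedy)."""
    if not prefix:
        raise ValueError("prefix must contain at least one token")
    seq = list(prefix) + list(draft)
    # preds[i] is the target's greedy prediction for position i + 1,
    # computed for all positions at once (one parallel verification pass).
    preds = target_next(seq)
    accepted: List[int] = []
    for j, tok in enumerate(draft):
        expected = preds[len(prefix) + j - 1]
        if tok != expected:
            accepted.append(expected)  # first mismatch: keep the target's token
            return accepted
        accepted.append(tok)
    accepted.append(preds[-1])  # every draft accepted: one bonus target token
    return accepted


def toy_target(seq: Sequence[int]) -> List[int]:
    """Hypothetical target model: the next token is always (last + 1) mod 10."""
    return [(t + 1) % 10 for t in seq]


print(verify_greedy(toy_target, [1, 2], [3, 4, 9]))  # [3, 4, 5]
print(verify_greedy(toy_target, [1, 2], [3, 4, 5]))  # [3, 4, 5, 6]
```

With the first draft, two tokens are accepted and the third is replaced by the target's choice; with a perfect draft, a single verification pass commits four tokens.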

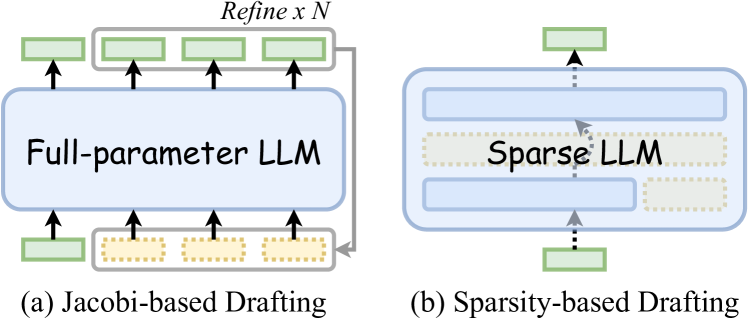

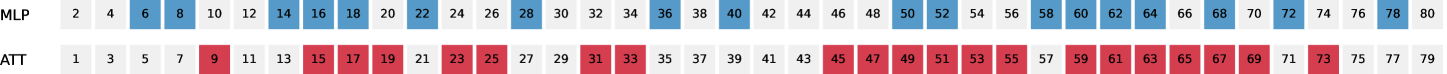

Recent advancements in SD have pushed the boundaries of the latency-accuracy trade-off by exploring various strategies (Xia et al., 2024), including incorporating lightweight draft modules into LLMs (Cai et al., 2024; Ankner et al., 2024; Li et al., 2024a; b), employing fine-tuning strategies to facilitate efficient LLM drafting (Kou et al., 2024; Yi et al., 2024; Elhoushi et al., 2024), and aligning draft models with the target LLM (Liu et al., 2023a; Zhou et al., 2024; Miao et al., 2024). Despite their promising efficacy, these approaches require additional modules or extensive training, which limits their applicability across different model types and causes significant inconvenience in practice. To tackle this issue, another line of research has proposed Jacobi-based drafting (Santilli et al., 2023; Fu et al., 2024) to enable plug-and-play SD. As illustrated in Figure 1 (a), these methods append pseudo tokens to the input prompt, enabling the target LLM to generate multiple tokens as drafts in a single decoding step. However, the Jacobi decoding paradigm is misaligned with the autoregressive pretraining objective of LLMs, resulting in suboptimal acceleration.
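Jacobi-based drafting can be sketched as a fixed-point iteration: the appended pseudo tokens are refined in parallel, each from the previous round's guesses, until they stop changing. The sketch below assumes a hypothetical deterministic `greedy_next` function and a padding token `pad=0`; it illustrates the general Jacobi idea only and is not the exact procedure of Santilli et al. (2023) or Lookahead decoding.

```python
from typing import Callable, List, Sequence, Tuple


def jacobi_decode(
    greedy_next: Callable[[Sequence[int]], int],
    prefix: Sequence[int],
    n: int,
    pad: int = 0,
    max_iters: int = 100,
) -> Tuple[List[int], int]:
    """Refine n appended pseudo tokens until a fixed point; return (tokens, rounds)."""
    guesses = [pad] * n  # pseudo tokens appended to the prompt
    for rounds in range(1, max_iters + 1):
        seq = list(prefix) + guesses
        # All positions are updated at once from the previous guesses,
        # conceptually a single forward pass of the full-parameter LLM.
        new = [greedy_next(seq[: len(prefix) + i]) for i in range(n)]
        if new == guesses:
            return guesses, rounds
        guesses = new
    return guesses, max_iters


def toy_next(seq: Sequence[int]) -> int:
    """Hypothetical deterministic model: next token is (last + 1) mod 10."""
    return (seq[-1] + 1) % 10


print(jacobi_decode(toy_next, [1, 2], 3))  # ([3, 4, 5], 4)
```

In this toy case each round fixes only one more correct token, so three tokens take four rounds (the last round merely confirms convergence): when each token depends strongly on the previous one, parallel refinement saves little over autoregressive decoding.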

*Figure 1 panels: (a) Jacobi-based Drafting with a full-parameter LLM (Refine × N); (b) Sparsity-based Drafting with a sparse LLM.*

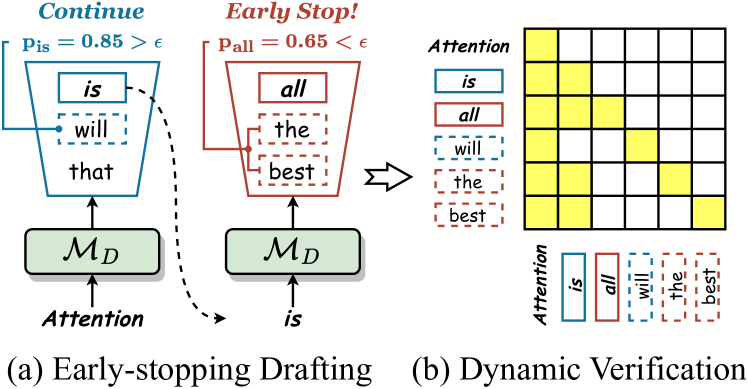

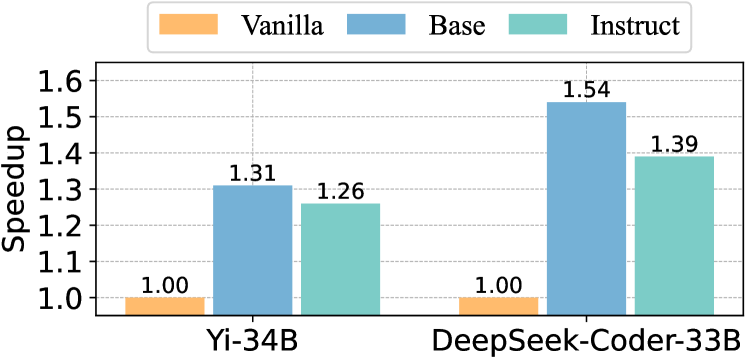

Figure 1: Illustration of the prior solution and ours for plug-and-play SD. (a) Jacobi-based drafting appends multiple pseudo tokens to the input prompt, enabling the target LLM to generate multiple tokens as drafts in a single step. (b) SWIFT adopts sparsity-based drafting, which exploits the inherent sparsity in LLMs to facilitate efficient drafting. This work is the first exploration of plug-and-play SD using sparsity-based drafting.

In this work, we introduce a novel research direction for plug-and-play SD: sparsity-based drafting, which leverages the inherent sparsity in LLMs to enable efficient drafting (see Figure 1 (b)). Specifically, we exploit a straightforward yet practical form of LLM sparsity, layer sparsity, to accelerate inference. Our approach rests on two key observations: 1) LLMs possess great potential for self-acceleration through layer sparsity. Contrary to the conventional belief that layer selection must be carefully optimized (Zhang et al., 2024), we found, surprisingly, that drafting with a uniform layer-skipping pattern can still achieve a notable $1.2\times$ speedup, providing a strong foundation for plug-and-play SD. 2) Layer sparsity is task-specific. We observed that each task requires its own optimal set of skipped layers, and applying the same layer configuration across different tasks causes substantial performance degradation. For example, the speedup drops from $1.47\times$ to $1.01\times$ when the configuration optimized for a storytelling task is transferred to a reasoning task.

Building on these observations, we introduce SWIFT, the first on-the-fly self-speculative decoding algorithm that adaptively optimizes the set of skipped layers in the target LLM during inference, enabling lossless acceleration of LLMs across diverse input data streams. SWIFT integrates two key innovations: (1) a context-based layer set optimization mechanism that leverages LLM-generated context to efficiently identify the optimal set of skipped layers for the current input stream, and (2) a confidence-aware inference acceleration strategy that maximizes the use of draft tokens, improving both speculation accuracy and verification efficiency. Together, these innovations allow SWIFT to strike a favorable balance in the latency-accuracy trade-off of SD, providing a new plug-and-play solution for lossless LLM inference acceleration without auxiliary models or additional training, as summarized in Table 1.

We conduct experiments using LLaMA-2 and CodeLLaMA models across multiple tasks, including summarization, code generation, and mathematical reasoning. SWIFT achieves a $1.3\times \sim 1.6\times$ wall-clock speedup over conventional autoregressive decoding. Notably, in the greedy setting, SWIFT consistently maintains a $98\% \sim 100\%$ token acceptance rate across the LLaMA-2 series, indicating the high alignment potential of this paradigm. Further analysis validates the effectiveness of SWIFT across diverse data streams and its compatibility with various LLM backbones.

Our key contributions are:

1. We performed an empirical analysis of LLM acceleration on layer sparsity, revealing both the potential for LLM self-acceleration via layer sparsity and its task-specific nature, underscoring the necessity for adaptive self-speculative decoding during inference.

1. Building on these insights, we introduce SWIFT, the first plug-and-play self-speculative decoding algorithm that optimizes the set of skipped layers in the target LLM on the fly, enabling lossless acceleration of LLM inference across diverse input data streams.

1. We conducted extensive experiments across various models and tasks, demonstrating that SWIFT consistently achieves a $1.3\times \sim 1.6\times$ speedup without any auxiliary model or training, while theoretically guaranteeing that the distribution of the generated text is preserved.

## 2 Related Work

Speculative Decoding (SD)

Due to the sequential nature of autoregressive decoding, LLM inference is memory-bound (Patterson, 2004; Shazeer, 2019): the primary latency bottleneck arises not from arithmetic computation but from memory reads/writes of LLM parameters (Pope et al., 2023). To mitigate this issue, speculative decoding (SD) uses a compact draft model to predict multiple decoding steps, which the target LLM then validates in parallel (Xia et al., 2023; Leviathan et al., 2023; Chen et al., 2023). Recent SD variants have sought to improve efficiency by incorporating additional modules (Kim et al., 2023; Sun et al., 2023; Du et al., 2024; Li et al., 2024a; b) or introducing new training objectives (Liu et al., 2023a; Kou et al., 2024; Zhou et al., 2024; Gloeckle et al., 2024). However, these approaches require extra parameters or extensive training, limiting their applicability across different models. Another line of research has explored plug-and-play SD methods based on Jacobi decoding (Santilli et al., 2023; Fu et al., 2024), which predict multiple steps in parallel by appending pseudo tokens to the input and refining them iteratively. As shown in Table 1, our work complements these efforts by investigating a novel plug-and-play SD method with layer-skipping, which exploits the inherent sparsity of LLM layers to accelerate inference. The approaches most related to ours are Self-SD (Zhang et al., 2024) and LayerSkip (Elhoushi et al., 2024), which also skip intermediate layers of LLMs to form the draft model. However, both methods require a time-consuming offline optimization or training process, making them neither plug-and-play nor easily generalizable across different models and tasks.

| Method | Drafting | AM | Plug-and-Play | Greedy | Sampling | Token Tree | Speedup |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Eagle (Li et al., 2024a; b) | Draft Heads | Yes | ✗ | ✓ | ✓ | ✓ | - |
| Rest (He et al., 2024) | Context Retrieval | Yes | ✗ | ✓ | ✓ | ✓ | - |
| Self-SD (Zhang et al., 2024) | Layer Skipping | No | ✗ | ✓ | ✓ | ✗ | - |
| Parallel (Santilli et al., 2023) | Jacobi Decoding | No | ✓ | ✓ | ✗ | ✗ | $0.9\times \sim 1.0\times$ |
| Lookahead (Fu et al., 2024) | Jacobi Decoding | No | ✓ | ✓ | ✓ | ✓ | $1.2\times \sim 1.4\times$ |
| SWIFT (Ours) | Layer Skipping | No | ✓ | ✓ | ✓ | ✓ | $1.3\times \sim 1.6\times$ |

Table 1: Comparison of SWIFT with existing SD methods. “AM” denotes whether the method requires auxiliary modules such as additional parameters or data stores. “Greedy”, “Sampling”, and “Token Tree” denote whether the method supports greedy decoding, multinomial sampling, and token tree verification, respectively. SWIFT is the first plug-and-play layer-skipping SD method and is orthogonal to Jacobi-based methods such as Lookahead (Fu et al., 2024).

Efficient LLMs Utilizing Sparsity

LLMs are powerful but often over-parameterized (Hu et al., 2022). To address this issue, various methods have been proposed to accelerate inference by leveraging different forms of LLM sparsity. One promising research direction is model compression, which includes approaches such as quantization (Dettmers et al., 2022; Frantar et al., 2023; Ma et al., 2024), parameter pruning (Liu et al., 2019; Hoefler et al., 2021; Liu et al., 2023b), and knowledge distillation (Touvron et al., 2021; Hsieh et al., 2023; Gu et al., 2024). These approaches aim to remove model redundancy by compressing LLMs into more compact forms, thereby decreasing memory usage and computational overhead during inference. Our proposed method, SWIFT, focuses specifically on sparsity within LLM layer computations, providing a more streamlined approach to efficient LLM inference that builds on recent advances in layer skipping (Corro et al., 2023; Zhu et al., 2024; Jaiswal et al., 2024; Liu et al., 2024). Unlike these existing layer-skipping methods, which may lead to information loss and performance degradation, SWIFT exploits layer sparsity to achieve lossless acceleration of LLM inference.

## 3 Preliminaries

### 3.1 Self-Speculative Decoding

Unlike most SD methods that require additional parameters, self-speculative decoding (Self-SD) first proposed utilizing parts of an LLM as a compact draft model (Zhang et al., 2024). In each decoding step, this approach skips intermediate layers of the LLM to efficiently generate draft tokens; these tokens are then validated in parallel by the full-parameter LLM to ensure that the output distribution of the target LLM remains unchanged. The primary challenge of Self-SD lies in determining which layers, and how many, should be skipped – referred to as the skipped layer set – during the drafting stage, which is formulated as an optimization problem. Formally, given the input data $\mathcal{X}$ and the target LLM $\mathscr{M}_{T}$ with $L$ layers (including both attention and MLP layers), Self-SD aims to identify the optimal skipped layer set $\bm{z}$ that minimizes the average inference time per token:

$$
\bm{z}^{*}=\underset{\bm{z}}{\arg\min}\frac{\sum_{\bm{x}\in\mathcal{X}}f\left(\bm{x}\mid\bm{z};\bm{\theta}_{\mathscr{M}_{T}}\right)}{\sum_{\bm{x}\in\mathcal{X}}|\bm{x}|},\quad\text{s.t. }\bm{z}\in\{0,1\}^{L}, \tag{1}
$$

where $f(\cdot)$ is a black-box function that returns the inference latency of sample $\bm{x}$, $\bm{z}_{i}\in\{0,1\}$ denotes whether layer $i$ of the target LLM is skipped when drafting, and $|\bm{x}|$ is the sample length. Self-SD solves this problem with Bayesian optimization (Jones et al., 1998). Before inference, this process iteratively proposes new candidates $\bm{z}$ using a Gaussian process (Rasmussen & Williams, 2006) and evaluates Eq. (1) on the training set of $\mathcal{X}$. After a specified number of iterations, the best $\bm{z}$ found is taken as an approximation of $\bm{z}^{*}$ and held fixed during inference.
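The objective in Eq. (1) is straightforward to write down once the latency oracle $f$ is given. The sketch below assumes a hypothetical callable `f(x, z)` that returns the measured latency of generating sample `x` with draft mask `z`; the cost model `toy_latency` is invented for illustration. For brevity it optimizes with plain random search, a simpler stand-in for the Gaussian-process-based Bayesian optimization that Self-SD actually uses.

```python
import random
from typing import Callable, List, Optional, Sequence, Tuple

Mask = Tuple[int, ...]  # z in {0,1}^L; z[i] == 1 means sub-layer i is skipped when drafting


def per_token_latency(
    f: Callable[[Sequence[int], Mask], float],
    samples: List[Sequence[int]],
    z: Mask,
) -> float:
    """Eq. (1) objective: total latency over X divided by total sample length."""
    return sum(f(x, z) for x in samples) / sum(len(x) for x in samples)


def random_search(
    f: Callable[[Sequence[int], Mask], float],
    samples: List[Sequence[int]],
    num_layers: int,
    iters: int,
    seed: int = 0,
) -> Tuple[Optional[Mask], float]:
    """Keep the best of `iters` random masks (stand-in for Bayesian optimization)."""
    rng = random.Random(seed)
    best_z: Optional[Mask] = None
    best_val = float("inf")
    for _ in range(iters):
        z = tuple(rng.randint(0, 1) for _ in range(num_layers))
        val = per_token_latency(f, samples, z)  # every call re-runs all samples
        if val < best_val:
            best_z, best_val = z, val
    return best_z, best_val


def toy_latency(x: Sequence[int], z: Mask) -> float:
    """Hypothetical cost model: cheapest when exactly 4 sub-layers are skipped."""
    return len(x) * (1.0 + 0.05 * abs(sum(z) - 4))


samples = [[1, 2, 3], [4, 5]]
best_z, best_val = random_search(toy_latency, samples, num_layers=8, iters=200)
print(sum(best_z), best_val)  # 4 1.0
```

Note that each objective evaluation requires running inference on every sample in the set, which is exactly what makes this offline search expensive.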

While Self-SD has proven effective, its reliance on time-intensive Bayesian optimization poses certain limitations. For each task, Self-SD must sequentially evaluate all selected training samples in every iteration to optimize Eq. (1); moreover, the computational cost of Bayesian optimization grows substantially with the number of iterations. As a result, processing just eight CNN/Daily Mail (Nallapati et al., 2016) samples for 1000 Bayesian iterations takes nearly 7.5 hours for LLaMA-2-13B and 20 hours for LLaMA-2-70B on an NVIDIA A6000 server. These computational demands restrict the generalizability of Self-SD across different models and tasks.
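Dividing the reported wall-clock times by the iteration and sample counts gives a rough sense of the cost per evaluation. These are simple averages that fold in the growing Gaussian-process overhead, so they are indicative only, and the whole cost recurs for every new task or model:

$$
\frac{7.5\,\text{h}\times 3600\,\text{s/h}}{1000\ \text{iterations}} = 27\ \text{s per iteration}\;\Rightarrow\;\frac{27\ \text{s}}{8\ \text{samples}} \approx 3.4\ \text{s per sample (LLaMA-2-13B)}
$$

$$
\frac{20\,\text{h}\times 3600\,\text{s/h}}{1000\ \text{iterations}} = 72\ \text{s per iteration}\;\Rightarrow\;\frac{72\ \text{s}}{8\ \text{samples}} = 9\ \text{s per sample (LLaMA-2-70B)}
$$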

### 3.2 Experimental Observations

This subsection delves into Self-SD, exploring the plug-and-play potential of this layer-skipping SD paradigm for lossless LLM inference acceleration. Our key findings are detailed below.

*Figure 2 panels: (a) Speedups with a Unified Skipping Pattern (x-axis: number of sub-layers to skip; y-axes: token acceptance rate and speedup; series: top-k candidates and top-1 candidates). (b) Speedup Variations under Domain Shift (x-axis: evaluation task, namely Summarization, Reasoning, Storytelling, and Translation; bars: Sum. LS, Rea. LS, Story. LS, Trans. LS).*

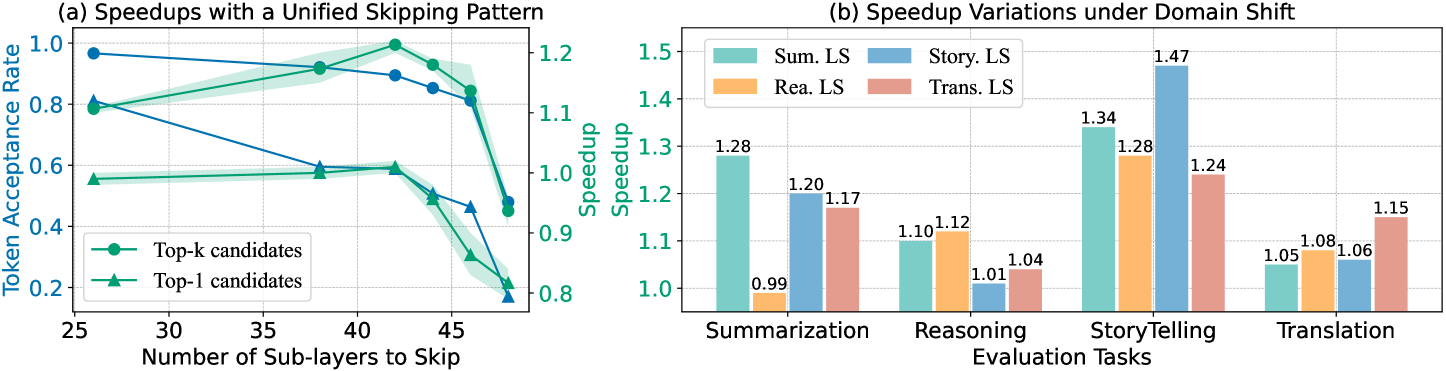

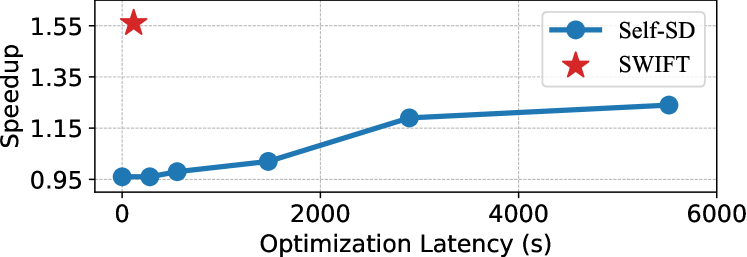

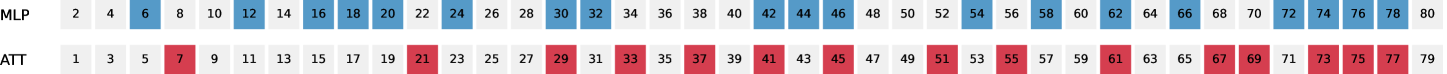

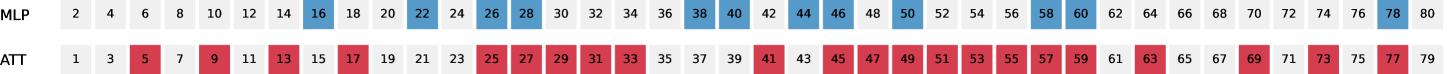

Figure 2: (a) LLMs possess self-acceleration potential via layer sparsity. By utilizing drafts from the top-$k$ candidates, we found that uniformly skipping half of the layers during drafting yields a notable $1.2\times$ speedup. (b) Layer sparsity is task-specific. Each task requires its own optimal set of skipped layers, and applying the skipped layer configuration from one task to another can lead to substantial performance degradation. “X LS” represents the skipped layer set optimized for task X.

#### 3.2.1 LLMs Possess Self-Acceleration Potential via Layer Sparsity

We begin by investigating the potential for behavioral alignment between the target LLM and its layer-skipping variant. Unlike previous work (Zhang et al., 2024), which focused solely on greedy draft predictions, we leverage potential draft candidates from the top-$k$ predictions, as detailed in Section 4.2. We conducted experiments using LLaMA-2-13B on the CNN/Daily Mail (Nallapati et al., 2016), GSM8K (Cobbe et al., 2021), and TinyStories (Eldan & Li, 2023) datasets, applying a uniform layer-skipping pattern with $k$ set to 10. The results, illustrated in Figure 2 (a), show a $30\%$ average improvement in the token acceptance rate from leveraging top-$k$ predictions, with over $90\%$ of draft tokens accepted by the target LLM. Consequently, whereas Self-SD achieved a maximum speedup of $1.01\times$ in this experimental setting, we found that the layer-skipping SD paradigm can yield an average wall-clock speedup of $1.22\times$ over conventional autoregressive decoding with a uniform layer-skipping pattern. This finding challenges the prevailing belief that skipped layers must be meticulously selected, suggesting instead that LLMs possess greater potential for self-acceleration through inherent layer sparsity.
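The effect of drafting from the top-$k$ candidates rather than only the top-1 token can be illustrated with a small sketch. It assumes that, for each future position, the draft model's ranked candidate list is already available (conditioned on the target's own prefix), which simplifies away how a real token tree branches; the candidate lists and target tokens are made-up toy data, and `accepted_prefix_len` is a hypothetical helper.

```python
from typing import Sequence


def accepted_prefix_len(
    draft_topk: Sequence[Sequence[int]],
    target: Sequence[int],
    k: int,
) -> int:
    """Number of leading positions whose target token is among the top-k drafts.

    k = 1 corresponds to purely greedy drafting; a larger k lets verification
    accept a position whenever the target's token appears anywhere in the top k.
    """
    accepted = 0
    for candidates, tok in zip(draft_topk, target):
        if tok not in candidates[:k]:
            break  # the first miss ends the accepted prefix
        accepted += 1
    return accepted


# Hypothetical ranked draft candidates for four future positions.
draft_topk = [[5, 3, 7], [2, 8, 1], [4, 6, 0], [9, 1, 2]]
target = [5, 8, 4, 3]  # tokens the target LLM actually produces
for k in (1, 3):
    n = accepted_prefix_len(draft_topk, target, k)
    print(f"k={k}: {n}/{len(target)} draft tokens accepted")
# k=1: 1/4 draft tokens accepted
# k=3: 3/4 draft tokens accepted
```

A larger $k$ raises acceptance, but it also enlarges the set of candidates that must be verified in parallel, so the gain is not free.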

#### 3.2.2 Layer Sparsity is Task-specific

We further explore the following research question: Is the skipped layer set optimized for one task applicable to other tasks? To address this, we conducted domain shift experiments using LLaMA-2-13B on the CNN/Daily Mail, GSM8K, TinyStories, and WMT16 DE-EN datasets. The experimental results, depicted in Figure 2 (b), reveal two critical findings: 1) Each task requires its own optimal skipped layer set. As illustrated in Figure 2 (b), the highest speedup is consistently achieved by the skipped layer configuration optimized specifically for each task. The detailed layer configurations, presented in Appendix A, show that the optimal configurations differ across tasks. 2) Applying a static skipped layer configuration across different tasks can lead to substantial efficiency degradation. For example, the speedup decreases from $1.47\times$ to $1.01\times$ when the skipped layer set optimized for a storytelling task is applied to a mathematical reasoning task, indicating that the optimized skipped layer set for one task does not generalize effectively to others.

These findings lay the groundwork for our plug-and-play solution within layer-skipping SD. Section 3.2.1 provides a strong foundation for real-time skipped layer selection, suggesting that additional optimization using training data may be unnecessary; Section 3.2.2 highlights the limitations of static layer-skipping patterns for dynamic input data streams across various tasks, underscoring the necessity for adaptive layer optimization during inference. Building on these insights, we present our on-the-fly self-speculative decoding method for efficient and adaptive layer set optimization.

## 4 SWIFT: On-the-Fly Self-Speculative Decoding

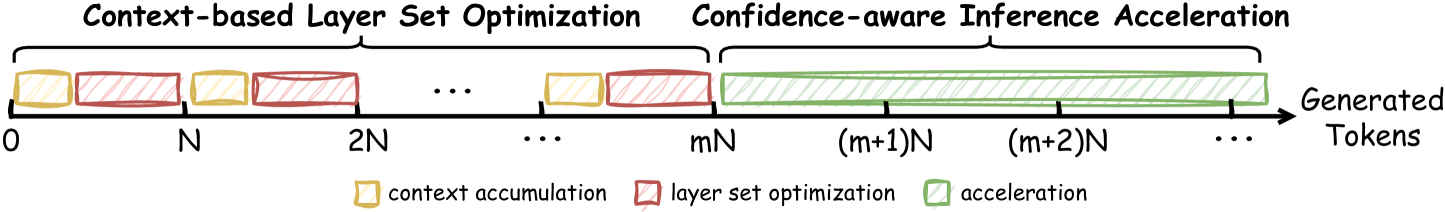

We introduce SWIFT, the first plug-and-play self-speculative decoding approach that optimizes the skipped layer set of the target LLM on the fly, enabling lossless LLM acceleration across diverse input data streams. As shown in Figure 3, SWIFT divides LLM inference into two distinct phases: (1) context-based layer set optimization (§ 4.1), which aims to identify the optimal skipped layer set for the input stream, and (2) confidence-aware inference acceleration (§ 4.2), which employs the determined configuration to accelerate LLM inference.

*Figure 3 timeline: generated tokens (0, N, 2N, …, mN, (m+1)N, …); context-based layer set optimization alternates context accumulation and layer set optimization up to mN, followed by confidence-aware inference acceleration.*

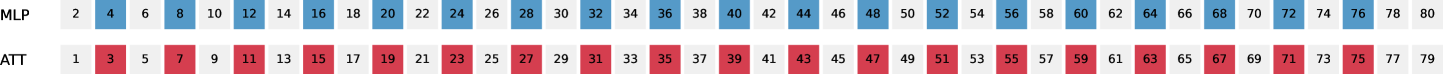

Figure 3: Timeline of inference. N denotes the maximum generation length per instance.

### 4.1 Context-based Layer Set Optimization

Layer set optimization is a critical challenge in self-speculative decoding, as it determines which layers of the target LLM should be skipped to form the draft model (see Section 3.1). Unlike prior methods that rely on time-intensive offline optimization, our work emphasizes on-the-fly layer set optimization, which poses a greater challenge to the latency-accuracy trade-off: the optimization must be efficient enough to avoid delays during inference while ensuring accurate drafting of subsequent decoding steps. To address this, we propose an adaptive optimization mechanism that balances efficiency with drafting accuracy. Our method minimizes overhead by performing only a single forward pass of the draft model per step to validate potential skipped layer set candidates. The core innovation is the use of LLM-generated tokens (i.e., prior context) as ground truth, allowing for simultaneous validation of the draft model’s accuracy in predicting future decoding steps.
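The idea of using prior context as ground truth can be sketched as follows. A hypothetical `draft_forward(tokens, z)` returns, for every position, the draft model's greedy guess for the next token from a single teacher-forced forward pass, so one pass scores a candidate layer set on all context positions at once. The "matchness" score (fraction of correctly predicted context tokens) and the assumption that all candidates skip the same number of sub-layers, which makes matchness alone a fair comparison, are choices of this sketch rather than a specification of SWIFT; `toy_draft_forward` is invented.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Mask = Tuple[int, ...]  # z[i] == 1 means sub-layer i is skipped by the draft model


def matchness(
    draft_forward: Callable[[Sequence[int], Mask], List[int]],
    context: Sequence[int],
    z: Mask,
) -> float:
    """Fraction of context positions the draft model (layer set z) predicts correctly."""
    if len(context) < 2:
        raise ValueError("context needs at least two tokens")
    preds = draft_forward(context, z)  # one teacher-forced pass over the context
    hits = sum(p == t for p, t in zip(preds[:-1], context[1:]))
    return hits / (len(context) - 1)


def best_candidate(
    draft_forward: Callable[[Sequence[int], Mask], List[int]],
    context: Sequence[int],
    candidates: Sequence[Mask],
) -> Tuple[Mask, Dict[Mask, float]]:
    """Score each candidate layer set against the context and keep the best."""
    scores = {z: matchness(draft_forward, context, z) for z in candidates}
    return max(scores, key=scores.__getitem__), scores


def toy_draft_forward(tokens: Sequence[int], z: Mask) -> List[int]:
    """Hypothetical draft model: skipping sub-layer 0 spoils every odd position."""
    return [
        (t + 1) % 10 if z[0] == 0 or i % 2 == 0 else -1
        for i, t in enumerate(tokens)
    ]


context = list(range(9))  # stands in for LLM-generated tokens 0, 1, ..., 8
candidates = [(1, 0, 1, 0), (0, 1, 1, 0), (0, 1, 0, 1)]  # each skips 2 sub-layers
best, scores = best_candidate(toy_draft_forward, context, candidates)
print(best, scores[(1, 0, 1, 0)])  # (0, 1, 1, 0) 0.5
```

Because the context tokens were already produced by the target LLM, no extra target forward pass is needed to label the predictions.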

In the following subsections, we illustrate the detailed process of this optimization phase for each input instance, which includes context accumulation (§ 4.1.1) and layer set optimization (§ 4.1.2).

#### 4.1.1 Context Accumulation

Given an input instance in the optimization phase, the draft model is initialized by uniformly skipping layers in the target LLM. This initial layer-skipping pattern is maintained to accelerate inference until a specified number of LLM-generated tokens, referred to as the context window, has been accumulated. Upon reaching this window length, the inference transitions to layer set optimization.
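The initialization and accumulation steps can be sketched as follows, under stated assumptions: a hypothetical `uniform_skip_mask` spreads the skipped sub-layers evenly, and a hypothetical `ContextBuffer` signals when enough LLM-generated tokens have been collected to start layer set optimization. The exact skip positions, number of skipped layers, and window size used by SWIFT are not given here; the values below are illustrative only.

```python
from typing import List, Tuple


def uniform_skip_mask(num_layers: int, num_skip: int) -> Tuple[int, ...]:
    """Spread num_skip skipped sub-layers evenly across num_layers (1 = skip)."""
    if not 0 <= num_skip <= num_layers:
        raise ValueError("num_skip must lie in [0, num_layers]")
    z = [0] * num_layers
    for j in range(num_skip):
        z[(j * num_layers) // num_skip] = 1  # evenly spaced, distinct indices
    return tuple(z)


class ContextBuffer:
    """Accumulates LLM-generated (verified) tokens until the context window is full."""

    def __init__(self, window: int) -> None:
        self.window = window
        self.tokens: List[int] = []

    def add(self, committed: List[int]) -> bool:
        """Append tokens committed by one decoding step; True once the window is reached."""
        self.tokens.extend(committed)
        return len(self.tokens) >= self.window


z0 = uniform_skip_mask(num_layers=8, num_skip=4)
print(z0)  # (1, 0, 1, 0, 1, 0, 1, 0)
buf = ContextBuffer(window=5)
for step_tokens in ([1, 2], [3], [4, 5, 6]):
    if buf.add(step_tokens):
        # prints: switch to layer set optimization after 6 tokens
        print("switch to layer set optimization after", len(buf.tokens), "tokens")
        break
```

Since speculative steps can commit several tokens at once, the buffer may overshoot the window slightly, as in the last step of this example.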


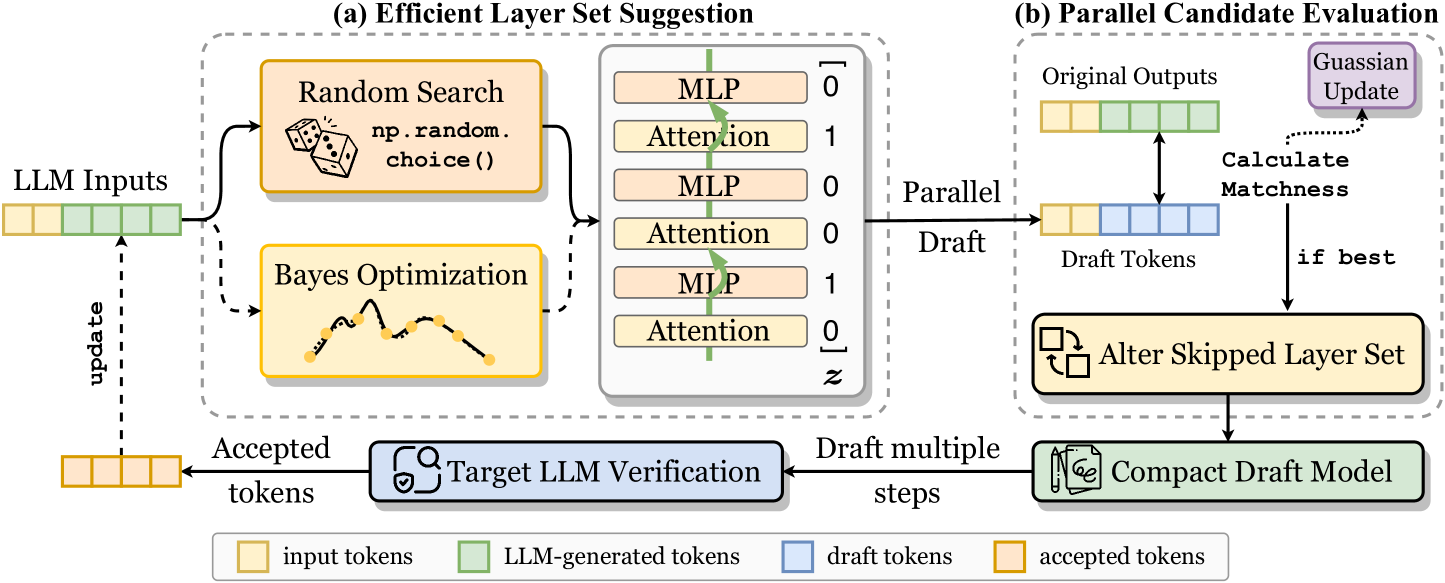

Figure 4: Layer set optimization process in SWIFT. During the optimization stage, SWIFT performs an optimization step prior to each LLM decoding step to adjust the skipped layer set, which involves: (a) Efficient layer set suggestion. SWIFT integrates random search with interval Bayesian optimization to propose layer set candidates; (b) Parallel candidate evaluation. SWIFT uses LLM-generated tokens (i.e., prior context) as ground truth, enabling simultaneous validation of the proposed candidates. The best-performing layer set is selected to accelerate the current decoding step.

#### 4.1.2 Layer Set Optimization

During this stage, as illustrated in Figure 4, we integrate an optimization step before each LLM decoding step to refine the skipped layer set, which comprises two substeps:

Efficient Layer Set Suggestion

This substep proposes a layer set candidate. Formally, given a target LLM $\mathscr{M}_{T}$ with $L$ layers, our goal is to identify an optimal skipped layer set $\bm{z}\in\{0,1\}^{L}$ to form the compact draft model. Unlike Zhang et al. (2024), which relies entirely on a time-consuming Bayesian optimization process, we introduce an efficient strategy that combines random search with Bayesian optimization, letting cheap random sampling handle most of the exploration. Specifically, given a fixed skipping ratio $r$, SWIFT applies Bayesian optimization at regular intervals of $\beta$ optimization steps (e.g., $\beta=25$) to suggest the next layer set candidate, and uses random search at all other optimization steps:

$$
\bm{z}=\begin{cases}\operatorname{Bayesian\_Optimization}(\bm{l}) & \text{if } o \bmod \beta = 0,\\ \operatorname{Random\_Search}(\bm{l}) & \text{otherwise},\end{cases} \tag{2}
$$

where $1\leq o\leq S$ is the current optimization step, $S$ denotes the maximum number of optimization steps, and $\bm{l}$ denotes the input space, i.e., the $\binom{L}{rL}$ possible combinations of $rL$ layers that can be skipped.
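The suggestion schedule in Eq. (2) can be sketched as follows. This is an illustration under stated assumptions: random search draws a mask uniformly from the masks that skip exactly round(rL) layers, and `bayes_suggest` is a hypothetical callback standing in for the Gaussian-process step (no particular library API is reproduced here).

```python
import random
from typing import Callable, List, Optional


def random_search(num_layers: int, skip_ratio: float, rng: random.Random) -> List[int]:
    """Draw z uniformly from the C(L, rL) masks that skip exactly round(r * L) layers."""
    num_skip = round(skip_ratio * num_layers)
    skipped = set(rng.sample(range(num_layers), num_skip))
    return [1 if i in skipped else 0 for i in range(num_layers)]


def suggest_candidate(
    step: int,
    beta: int,
    num_layers: int,
    skip_ratio: float,
    rng: random.Random,
    bayes_suggest: Optional[Callable[[], List[int]]] = None,
) -> List[int]:
    """Eq. (2): Bayesian optimization when step % beta == 0, random search otherwise.

    `bayes_suggest` is a hypothetical hook for a Gaussian-process optimizer; it is
    expected to return a mask with the same skip ratio. Without it, every step
    falls back to random search.
    """
    if bayes_suggest is not None and step % beta == 0:
        return bayes_suggest()
    return random_search(num_layers, skip_ratio, rng)
```

Because a random-search step costs only one random draw, most optimization steps add almost no overhead; the more expensive Bayesian step runs once every β steps.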

Parallel Candidate Evaluation

SWIFT leverages LLM-generated context to validate, in a single parallel pass, the candidate draft model's performance in predicting future decoding steps. Formally, given an input sequence $\bm{x}$ and the previously generated tokens within the context window, denoted as $\bm{y}=\{y_{1},\dots,y_{\gamma}\}$, the draft model $\mathscr{M}_{D}$, which skips the designated layers $\bm{z}$ of the target LLM, is employed to predict these context tokens in parallel:

$$
y^{\prime}_{i}=\arg\max_{y}\log P\left(y\mid\bm{x},\bm{y}_{<i};\bm{\theta}_{\mathscr{M}_{D}}\right),\quad 1\leq i\leq\gamma, \tag{3}
$$

where $\gamma$ denotes the context window length. The cached key-value pairs of the target LLM $\mathscr{M}_{T}$ are reused by $\mathscr{M}_{D}$, which presumably aligns $\mathscr{M}_{D}$'s distribution with $\mathscr{M}_{T}$ and avoids redundant computation. The matchness score is defined as the exact-match ratio between $\bm{y}$ and $\bm{y}^{\prime}$:

$$
\texttt{matchness}=\frac{1}{\gamma}\sum_{i=1}^{\gamma}\mathbb{I}\left(y_{i}=y^{\prime}_{i}\right), \tag{4}
$$

where $\mathbb{I}(\cdot)$ denotes the indicator function. This score serves as the objective of layer set optimization, reflecting $\mathscr{M}_{D}$'s accuracy in predicting future decoding steps. As shown in Figure 4, the matchness score at each step is fed into the Gaussian process model to guide Bayesian optimization, and the highest-scoring layer set candidate is retained to form the draft model.
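A sketch of parallel candidate evaluation: given the draft model's per-position scores from one forward pass (Eq. 3), compute matchness (Eq. 4) and retain the best candidate. Taking the argmax of raw scores matches Eq. (3) because the log-softmax is monotonic. The draft forward pass itself is not modelled; `draft_logits` is an assumed input and the helper names are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple


def argmax(row: Sequence[float]) -> int:
    """Index of the largest score (first one on ties)."""
    return max(range(len(row)), key=row.__getitem__)


def matchness(context: Sequence[int], draft_logits: Sequence[Sequence[float]]) -> float:
    """Eq. (4): exact-match ratio between y and the draft predictions y'.

    Row i of `draft_logits` is assumed to hold the draft model's scores for
    position i, produced by a single parallel forward pass over x and y_<i (Eq. 3).
    """
    preds = [argmax(row) for row in draft_logits]  # y'_1 .. y'_gamma
    hits = sum(int(y == y_hat) for y, y_hat in zip(context, preds))
    return hits / len(context)


Best = Tuple[Optional[List[int]], float]


def update_best(best: Best, mask: List[int], score: float) -> Best:
    """Retain the highest-matchness layer set seen so far."""
    return (mask, score) if score > best[1] else best
```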

As illustrated in Figure 3, context accumulation and layer set optimization alternate for each instance until a termination condition is met: either the maximum number of optimization steps is reached or the best candidate remains unchanged over multiple iterations. Once the optimization phase concludes, the inference process transitions to the confidence-aware inference acceleration phase, where the optimized draft model is employed to speed up LLM inference.
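The alternation and its termination rule can be sketched as a loop. Assumptions: context accumulation and the interleaved decoding steps are abstracted away, `propose` and `score` are caller-supplied callbacks (e.g., an Eq. (2) suggestion and an Eq. (4) matchness evaluation), and `patience` is a hypothetical parameter standing in for "unchanged over multiple iterations".

```python
from typing import Callable, List, Optional, Tuple


def run_optimization(
    propose: Callable[[int], List[int]],
    score: Callable[[List[int]], float],
    max_steps: int,
    patience: int,
) -> Tuple[Optional[List[int]], float, int]:
    """Alternate suggestion and evaluation until a termination condition holds.

    Stops after `max_steps` (S) steps, or once the best candidate has stayed
    unchanged for `patience` consecutive steps. Returns
    (best mask, best score, number of steps run).
    """
    best_mask: Optional[List[int]] = None
    best_score = float("-inf")
    unchanged = 0
    steps_run = 0
    for step in range(1, max_steps + 1):
        steps_run = step
        mask = propose(step)  # candidate layer set for this optimization step
        s = score(mask)       # e.g. matchness on the accumulated context
        if s > best_score:
            best_mask, best_score, unchanged = mask, s, 0
        else:
            unchanged += 1
            if unchanged >= patience:
                break
    return best_mask, best_score, steps_run
```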

### 4.2 Confidence-aware Inference Acceleration

Figure 5 panels: (a) Early-stopping Drafting (example: a draft token with confidence 0.85 > ε continues drafting, while one with confidence 0.65 < ε triggers an early stop); (b) Dynamic Verification (draft candidates verified in parallel under a causal attention mask).

Figure 5: Confidence-aware inference process of SWIFT. (a) The drafting terminates early if the confidence score drops below threshold $\epsilon$. (b) Draft candidates are dynamically selected based on confidence and then verified in parallel by the target LLM.

During the acceleration phase, the optimization step is removed. SWIFT applies the best-performing layer set to form the compact draft model and decodes following the draft-then-verify paradigm. Specifically, at each decoding step, given the input $\bm{x}$ and previous LLM outputs $\bm{y}$, the draft model $\mathscr{M}_{D}$ predicts future LLM decoding steps autoregressively:

$$

y^{\prime}_{j}=\arg\max_{y}\log P\left(y\mid\bm{x},\bm{y},\bm{y}^{\prime}_{<j}

;\bm{\theta}_{\mathscr{M}_{D}}\right), \tag{5}

$$

where $1\leq j\leq N_{D}$ is the current draft step, $N_{D}$ denotes the maximum draft length, $\bm{y}^{\prime}_{<j}$ represents the previous draft tokens, and $P(\cdot)$ denotes the probability distribution of the next draft token. The KV caches of the target LLM $\mathscr{M}_{T}$ and of the preceding draft tokens $\bm{y}^{\prime}_{<j}$ are reused to reduce computational cost.

Let $p_{j}=\max P(\cdot)$ denote the probability of the top-1 draft prediction $y^{\prime}_{j}$ , which can be regarded as a confidence score. Recent research (Li et al., 2024b; Du et al., 2024) shows that this score is highly correlated with the likelihood that the draft token $y^{\prime}_{j}$ will pass verification – higher confidence scores indicate a greater chance of acceptance. Therefore, following previous studies (Zhang et al., 2024; Du et al., 2024), we leverage the confidence score to prune unnecessary draft steps and select valuable draft candidates, improving both speculation accuracy and verification efficiency.
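A minimal sketch of Eq. (5) with confidence tracking, halting once $p_j$ falls below a threshold ε. `next_probs` is a hypothetical stand-in for the layer-skipped draft model, KV-cache reuse is omitted, and keeping the low-confidence token $y'_j$ for verification before stopping (rather than discarding it) is an assumption.

```python
from typing import Callable, List, Sequence, Tuple


def draft_with_early_stop(
    prefix: Sequence[int],
    next_probs: Callable[[Sequence[int]], Sequence[float]],
    max_draft: int,
    eps: float,
) -> Tuple[List[int], List[float]]:
    """Greedy drafting (Eq. 5) that records p_j and halts when p_j < eps.

    `next_probs` (hypothetical) maps the current sequence (x, y, y'_<j) to the
    draft model's next-token distribution. KV-cache reuse is omitted for clarity.
    """
    drafts: List[int] = []
    confidences: List[float] = []
    seq = list(prefix)
    for _ in range(max_draft):  # at most N_D draft steps
        probs = next_probs(seq)
        tok = max(range(len(probs)), key=probs.__getitem__)  # argmax, Eq. (5)
        p = probs[tok]  # confidence score p_j
        drafts.append(tok)
        confidences.append(p)
        seq.append(tok)
        if p < eps:  # assumed: keep y'_j for verification, but draft no further
            break
    return drafts, confidences
```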

As shown in Figure 5, we integrate SWIFT with two confidence-aware inference strategies: 1) Early-stopping Drafting. The autoregressive drafting process halts if the confidence $p_{j}$ falls below a specified threshold $\epsilon$, avoiding wasted computation on subsequent draft steps. 2) Dynamic Verification. Each $y^{\prime}_{j}$ is dynamically extended with its top-$k$ draft predictions for parallel verification to enhance speculation accuracy, with $k$ determined by the confidence score $p_{j}$. Concretely, $k$ is set to 10, 5, 3, and 1 for $p_{j}$ in the ranges $(0,0.5]$, $(0.5,0.8]$, $(0.8,0.95]$, and $(0.95,1]$, respectively. All draft candidates are linearized into a single sequence and verified in parallel by the target LLM using a special causal attention mask (see Figure 5 (b)). Both strategies are also applied during the optimization phase, where the current optimal layer set forms the draft model used to accelerate the corresponding LLM decoding step.
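The dynamic verification rule can be sketched as follows. The $k$ schedule copies the thresholds stated above; the candidate layout ($k$ counts $y'_j$ itself, the alternatives are leaf siblings of $y'_j$, and drafting continues only from the top-1 token) and all helper names are assumptions, not necessarily SWIFT's exact tree. The mask covers only the draft candidates; every candidate additionally attends to the full verified prefix.

```python
from typing import List, Sequence


def top_k_for_confidence(p: float) -> int:
    """Candidate width k for a draft token with confidence p in (0, 1]."""
    if p <= 0.5:
        return 10
    if p <= 0.8:
        return 5
    if p <= 0.95:
        return 3
    return 1


def build_candidate_tree(ks: Sequence[int]) -> List[int]:
    """Parent pointers for a linearized candidate sequence (hypothetical layout).

    Node order: y'_1, its k_1 - 1 alternatives, y'_2, its alternatives, ...
    Alternatives share y'_j's parent and are leaves; -1 marks the verified prefix.
    """
    parents: List[int] = []
    prev_main = -1
    for k in ks:
        parent = prev_main
        main_idx = len(parents)
        parents.append(parent)              # top-1 draft token y'_j
        parents.extend([parent] * (k - 1))  # top-2 .. top-k alternatives
        prev_main = main_idx
    return parents


def tree_attention_mask(parents: Sequence[int]) -> List[List[int]]:
    """mask[i][j] = 1 iff candidate j is candidate i itself or one of its ancestors."""
    n = len(parents)
    mask = [[0] * n for _ in range(n)]
    for i in range(n):
        node = i
        while node != -1:
            mask[i][node] = 1
            node = parents[node]
    return mask
```

With confidences 0.9 and 0.99, $k = 3$ and $1$, giving parents `[-1, -1, -1, 0]`: the last candidate attends only to itself and to $y'_1$, so alternative branches never see each other.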

## 5 Experiments

### 5.1 Experimental Setup

Implementation Details

We mainly evaluate SWIFT on LLaMA-2 (Touvron et al., 2023b) and the CodeLLaMA series (Rozière et al., 2023) across various tasks, including summarization, mathematical reasoning, storytelling, and code generation. The evaluation datasets include CNN/Daily Mail (CNN/DM) (Nallapati et al., 2016), GSM8K (Cobbe et al., 2021), TinyStories (Eldan & Li, 2023), and HumanEval (Chen et al., 2021). The maximum generation lengths on CNN/DM, GSM8K, and TinyStories are set to 64, 64, and 128, respectively. We conduct 1-shot evaluation for CNN/DM and TinyStories, and 5-shot evaluation for GSM8K. For HumanEval, we report pass@1 and pass@10. We randomly sample 1000 instances from the test set of each dataset except HumanEval. The maximum generation length for HumanEval and all analyses is set to 512. During optimization, we employ both random search and Bayesian optimization (via the BayesianOptimization library, https://github.com/bayesian-optimization/BayesianOptimization) to suggest skipped layer set candidates. Following prior work, we adopt speculative sampling (Leviathan et al., 2023) as our acceptance strategy with a batch size of 1. Detailed setups are provided in Appendix B.1 and B.2.
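For reference, the speculative sampling acceptance rule of Leviathan et al. (2023) can be sketched as below: a draft token is accepted with probability min(1, p/q); at the first rejection a replacement is drawn from the normalized residual max(0, p − q); if every draft is accepted, one bonus token is drawn from the target distribution. This is a generic sketch with hypothetical names, not SWIFT's implementation; distributions are plain probability lists, and the draft probability q of each drafted token is assumed positive.

```python
import random
from typing import List, Sequence, Tuple


def speculative_accept(
    draft_tokens: Sequence[int],
    q_dists: Sequence[Sequence[float]],
    p_dists: Sequence[Sequence[float]],
    rng: random.Random,
) -> Tuple[List[int], int]:
    """Speculative sampling acceptance rule (Leviathan et al., 2023).

    q_dists[i]: draft-model distribution at draft position i.
    p_dists[i]: target-LLM distribution at position i; it has one extra entry
                used to sample a bonus token when every draft is accepted.
    Returns (tokens appended to the output, number of accepted draft tokens).
    """
    out: List[int] = []
    for i, tok in enumerate(draft_tokens):
        p, q = p_dists[i][tok], q_dists[i][tok]
        if rng.random() < min(1.0, p / q):  # accept with prob. min(1, p/q)
            out.append(tok)
            continue
        # Rejected: resample from the residual distribution norm(max(0, p - q)).
        residual = [max(0.0, pi - qi) for pi, qi in zip(p_dists[i], q_dists[i])]
        out.append(rng.choices(range(len(residual)), weights=residual)[0])
        return out, i
    # All drafts accepted: the target's extra distribution yields one more token.
    last = p_dists[len(draft_tokens)]
    out.append(rng.choices(range(len(last)), weights=last)[0])
    return out, len(draft_tokens)
```

Under greedy decoding both distributions are one-hot, so the rule reduces to accepting draft tokens up to the first mismatch with the target's argmax.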

Baselines

In our main experiments, we compare SWIFT to two existing plug-and-play methods: Parallel Decoding (Santilli et al., 2023) and Lookahead Decoding (Fu et al., 2024), both of which employ Jacobi decoding for efficient LLM drafting. Note that SWIFT, as a layer-skipping SD method, is orthogonal to these Jacobi-based SD methods, and integrating SWIFT with them could further boost inference efficiency. We exclude other SD methods from our comparison because they require additional modules or extensive training, which limits their generalizability.

Evaluation Metrics

We report two widely used metrics for evaluation: mean generated length $M$ (Stern et al., 2018) and token acceptance rate $\alpha$ (Leviathan et al., 2023). Detailed descriptions of these metrics can be found in Appendix B.3. In addition, we report the actual decoding speed (tokens/s) and the wall-time speedup ratio relative to vanilla autoregressive decoding. Because SWIFT's acceleration theoretically preserves the target LLM's output distribution, evaluating generation quality is unnecessary; nevertheless, for reference, we report evaluation scores on code generation tasks.
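A sketch of the three efficiency metrics, under our reading of their standard definitions (the paper's exact definitions are in Appendix B.3): $M$ counts tokens produced per target-LLM decoding step, $\alpha$ is the fraction of drafted tokens the target accepts, and speedup is the ratio of decoding speeds. `StepLog` is a hypothetical logging record.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class StepLog:
    """Per-decoding-step counts (hypothetical logging format)."""
    drafted: int   # draft tokens proposed by the draft model
    accepted: int  # draft tokens accepted by the target LLM
    emitted: int   # tokens appended to the output in this step


def mean_generated_length(steps: Sequence[StepLog]) -> float:
    """M: average number of tokens produced per target-LLM decoding step."""
    return sum(s.emitted for s in steps) / len(steps)


def acceptance_rate(steps: Sequence[StepLog]) -> float:
    """alpha: fraction of drafted tokens that the target LLM accepts."""
    drafted = sum(s.drafted for s in steps)
    return sum(s.accepted for s in steps) / drafted if drafted else 0.0


def speedup(vanilla_tokens_per_s: float, method_tokens_per_s: float) -> float:
    """Wall-time speedup expressed as a ratio of decoding speeds."""
    return method_tokens_per_s / vanilla_tokens_per_s
```

For instance, `speedup(20.10, 28.26)` is about 1.41, consistent with the LLaMA-2-13B row of Table 2.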

| Model | Method | CNN/DM M | CNN/DM Speedup | GSM8K M | GSM8K Speedup | TinyStories M | TinyStories Speedup | Avg. Speed (tokens/s) | Avg. Speedup |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| LLaMA-2-13B | Vanilla | 1.00 | 1.00 $\times$ | 1.00 | 1.00 $\times$ | 1.00 | 1.00 $\times$ | 20.10 | 1.00 $\times$ |
| | Parallel | 1.04 | 0.95 $\times$ | 1.11 | 0.99 $\times$ | 1.06 | 0.97 $\times$ | 19.49 | 0.97 $\times$ |
| | Lookahead | 1.38 | 1.16 $\times$ | 1.50 | 1.29 $\times$ | 1.62 | 1.37 $\times$ | 25.46 | 1.27 $\times$ |
| | SWIFT | 4.34 | 1.37 $\times$ † | 3.13 | 1.31 $\times$ † | 8.21 | 1.53 $\times$ † | 28.26 | 1.41 $\times$ |
| LLaMA-2-13B-Chat | Vanilla | 1.00 | 1.00 $\times$ | 1.00 | 1.00 $\times$ | 1.00 | 1.00 $\times$ | 19.96 | 1.00 $\times$ |
| | Parallel | 1.06 | 0.96 $\times$ | 1.08 | 0.97 $\times$ | 1.10 | 0.98 $\times$ | 19.26 | 0.97 $\times$ |
| | Lookahead | 1.35 | 1.15 $\times$ | 1.57 | 1.31 $\times$ | 1.66 | 1.40 $\times$ | 25.69 | 1.29 $\times$ |
| | SWIFT | 3.54 | 1.28 $\times$ | 2.95 | 1.25 $\times$ | 7.42 | 1.50 $\times$ † | 26.80 | 1.34 $\times$ |
| LLaMA-2-70B | Vanilla | 1.00 | 1.00 $\times$ | 1.00 | 1.00 $\times$ | 1.00 | 1.00 $\times$ | 4.32 | 1.00 $\times$ |
| | Parallel | 1.05 | 0.95 $\times$ | 1.07 | 0.97 $\times$ | 1.05 | 0.96 $\times$ | 4.14 | 0.96 $\times$ |
| | Lookahead | 1.36 | 1.15 $\times$ | 1.54 | 1.30 $\times$ | 1.59 | 1.35 $\times$ | 5.45 | 1.26 $\times$ |
| | SWIFT | 3.85 | 1.43 $\times$ † | 2.99 | 1.39 $\times$ † | 6.17 | 1.62 $\times$ † | 6.41 | 1.48 $\times$ |

Table 2: Comparison between SWIFT and prior plug-and-play methods. We report the mean generated length M, speedup ratio, and average decoding speed (tokens/s) under greedy decoding. † indicates results with a token acceptance rate $\alpha$ above 0.98. More details are provided in Appendix C.1.

| Dataset | Method | M | $\alpha$ | Acc. | Speedup | M | $\alpha$ | Acc. | Speedup |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| HumanEval (pass@1) | Vanilla | 1.00 | - | 0.311 | 1.00 $\times$ | 1.00 | - | 0.372 | 1.00 $\times$ |
| | SWIFT | 4.75 | 0.98 | 0.311 | 1.40 $\times$ | 3.79 | 0.88 | 0.372 | 1.46 $\times$ |
| HumanEval (pass@10) | Vanilla | 1.00 | - | 0.628 | 1.00 $\times$ | 1.00 | - | 0.677 | 1.00 $\times$ |
| | SWIFT | 3.55 | 0.93 | 0.628 | 1.29 $\times$ | 2.79 | 0.90 | 0.683 | 1.30 $\times$ |

Table 3: Experimental results of SWIFT on code generation tasks. We report the mean generated length M, acceptance rate $\alpha$, accuracy (Acc.), and speedup ratio for comparison. We use greedy decoding for pass@1 and random sampling with a temperature of 0.6 for pass@10.

### 5.2 Main Results

Table 2 presents the comparison between SWIFT and previous plug-and-play methods on text generation tasks. The experimental results support the following findings: (1) SWIFT shows superior efficiency over prior methods, achieving consistent speedups of $1.3\times$ $\sim$ $1.6\times$ over vanilla autoregressive decoding across various models and tasks. (2) The efficiency of SWIFT is driven by the high behavioral consistency between the target LLM and its layer-skipping draft variant. As shown in Table 2, SWIFT produces a mean generated length M of 5.01, with a high token acceptance rate $\alpha$ ranging from $90\%$ to $100\%$. Notably, for the LLaMA-2 series, this acceptance rate remains stable at $98\%$ $\sim$ $100\%$, indicating that nearly all draft tokens are accepted by the target LLM. (3) Compared with the 13B models, LLaMA-2-70B achieves higher speedups with a larger layer skip ratio ($0.45$ $\rightarrow$ $0.5$), suggesting that larger-scale LLMs exhibit greater layer sparsity. This underscores SWIFT's potential to deliver even greater speedups as LLMs continue to scale. A detailed analysis of this finding is presented in Section 5.3, and additional experimental results for LLaMA-70B models, including LLaMA-3-70B, are presented in Appendix C.2.

Table 3 shows the evaluation results of SWIFT on code generation tasks. SWIFT achieves speedups of $1.3\times$ $\sim$ $1.5\times$ over vanilla autoregressive decoding, demonstrating its effectiveness under both greedy decoding and random sampling. Additionally, speculative sampling theoretically guarantees that SWIFT maintains the original output distribution of the target LLM, which is empirically validated by the task performance metrics in Table 3: apart from a slight variation in the pass@10 score for CodeLLaMA-34B (0.683 vs. 0.677), SWIFT achieves performance identical to autoregressive decoding.

### 5.3 In-depth Analysis

Figure 6 (left): matchness (left axis) and overall and per-instance speedup (right axis) versus # of instances, with the point where optimization stops marked. Figure 6 (right): latency breakdown per token:

| Modules | Latency (ms) | Ratio (%) |
| --- | --- | --- |
| Optimize | 0.24 ± 0.02 | 0.8 |
| Draft | 19.93 ± 1.36 | 64.4 |
| Verify | 8.80 ± 2.21 | 28.4 |
| Others | 1.98 ± 0.13 | 6.4 |
| Total | 30.95 ± 2.84 | 100.0 |

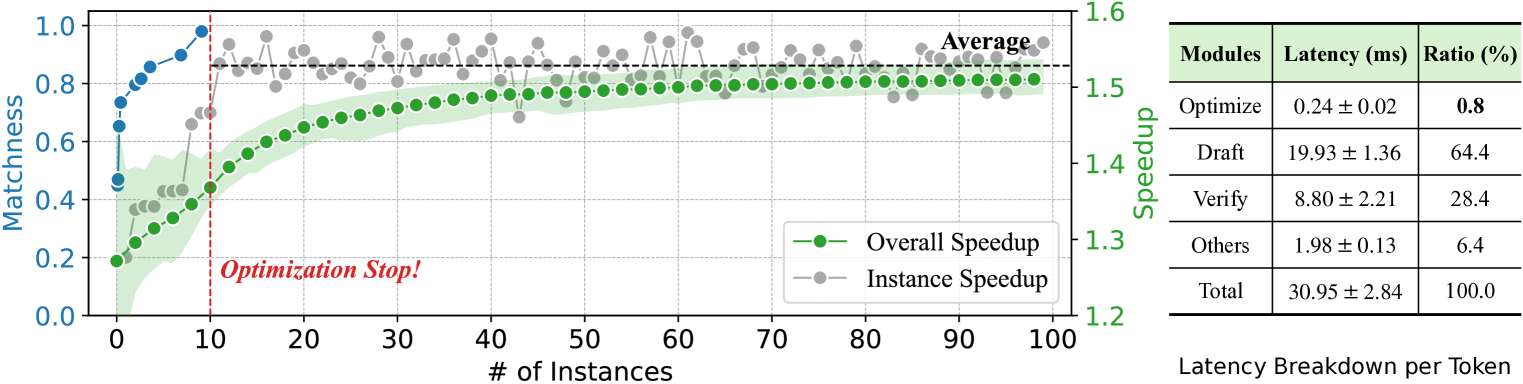

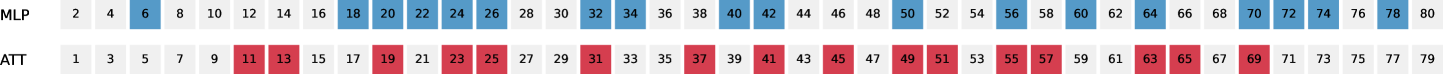

Figure 6: Illustration and latency breakdown of SWIFT inference. As the left figure shows, after the context-based layer set optimization phase, the overall speedup of SWIFT steadily increases, reaching the average instance speedup during the acceleration phase. The additional optimization steps account for only **0.8%** of the total inference latency, as illustrated in the right figure.

Illustration of Inference

As described in Section 4, SWIFT divides the LLM inference process into two distinct phases: optimization and acceleration. Figure 6 (left) illustrates the acceleration effect of SWIFT during LLM inference. The optimization phase begins at the start of inference, where an optimization step is performed before each decoding step to adjust the skipped layer set forming the draft model. As shown in Figure 6, in this phase the matchness score of the draft model rises sharply from 0.45 to 0.73 during the inference of the first instance. This score then gradually increases to 0.98, which triggers the termination of the optimization process. Subsequently, inference transitions to the acceleration phase, during which the optimization step is removed and the draft model remains fixed to accelerate LLM inference. As illustrated, the instance speedup increases with the matchness score, reaching an average of $1.53\times$ in the acceleration phase. The overall speedup gradually rises as more tokens are generated, eventually approaching the average instance speedup. This dynamic reflects a key feature of SWIFT: its efficiency improves with increasing input length and number of instances.

Breakdown of Computation

Figure 6 (right) presents the computation breakdown of the different modules in SWIFT on 1000 CNN/DM samples using LLaMA-2-13B. The results show that the optimization step takes only **0.8%** of the overall inference latency, indicating the efficiency of our strategy. Compared with Self-SD (Zhang et al., 2024), which requires a time-consuming optimization process (e.g., 7.5 hours for LLaMA-2-13B on CNN/DM), SWIFT achieves a nearly 180 $\times$ reduction in optimization time, facilitating on-the-fly inference acceleration. Besides, the results show that the drafting stage of SWIFT consumes the majority of inference latency. This is consistent with the mean generated lengths in Tables 2 and 3, which show that nearly $80\%$ of output tokens are generated by the efficient draft model, demonstrating the effectiveness of our framework.
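The percentages above follow from simple arithmetic on the reported numbers. A short worked check, under our own reading: the latency values are those of the Figure 6 latency breakdown (0.24 ms of optimization out of a 30.95 ms per-token total); the 2.5 minutes is only what the stated 7.5 hours and the roughly 180× ratio imply, not a separately reported measurement; and the draft share assumes that each verification step contributes exactly one target-produced token, so a step yielding $M$ tokens contains $M-1$ draft tokens ($M = 5.01$ being the mean generated length quoted in Section 5.2).

$$
\frac{0.24\ \text{ms}}{30.95\ \text{ms}} \approx 0.78\% \approx 0.8\%,\qquad
\frac{7.5\ \text{h}}{180} = \frac{450\ \text{min}}{180} = 2.5\ \text{min},\qquad
\left.\frac{M-1}{M}\right|_{M=5.01} = \frac{4.01}{5.01} \approx 0.80.
$$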

Figure 7 (left): speedup (bars, left axis) and token acceptance rate (lines, right axis) of Vanilla, Self-SD, and SWIFT on a task-by-task input stream covering Summarization, Reasoning, Instruction, Translation, and QA.

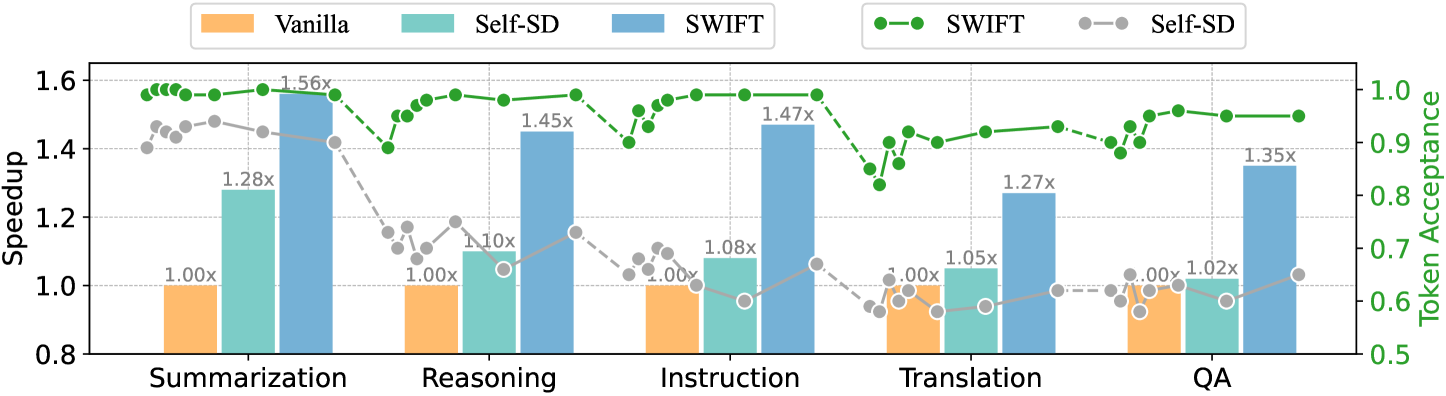

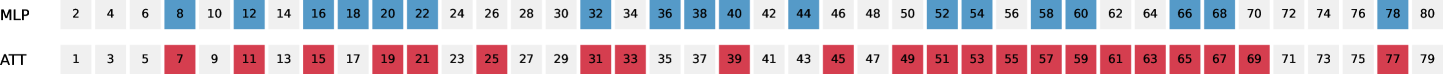

Figure 7: Comparison between SWIFT and Self-SD in handling dynamic input data streams. Unlike Self-SD, which loses efficiency under distribution shift, SWIFT maintains stable acceleration with an acceptance rate exceeding 0.9.

**Dynamic Input Data Streams**

We further validate the effectiveness of SWIFT in handling dynamic input data streams. We select CNN/DM, GSM8K, Alpaca (Taori et al., 2023), WMT14 DE-EN, and Natural Questions (Kwiatkowski et al., 2019) to evaluate summarization, reasoning, instruction following, translation, and question answering, respectively. For each task, we randomly sample 500 instances from the test set and concatenate them task by task to form the input stream. The experimental results are presented in Figure 7. Self-SD is sensitive to domain shifts: its average token acceptance rate drops from 92% to 68%, and its speedup consequently falls from $1.33\times$ to an average of $1.05\times$. In contrast, SWIFT adapts to each new domain, maintaining an average token acceptance rate of 96% and a consistent $1.3\times$ $\sim$ $1.6\times$ speedup.
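Why a lower acceptance rate costs so much speedup can be illustrated with the standard speculative decoding analysis of Leviathan et al. (2023). The sketch below is illustrative only. It assumes each drafted token is accepted independently with probability `alpha`, a fixed draft length `gamma`, and a draft forward pass that costs `c` target forward passes. The settings `gamma=4` and `c=0.5` are hypothetical, and neither SWIFT nor Self-SD is claimed to draft exactly this way, so the numbers show the trend rather than reproduce Figure 7.

```python
def expected_tokens_per_step(alpha: float, gamma: int) -> float:
    """Expected tokens emitted per target-model verification pass.

    Assumes i.i.d. acceptance with probability alpha and gamma drafted tokens
    (Leviathan et al., 2023): (1 - alpha**(gamma + 1)) / (1 - alpha).
    """
    if alpha >= 1.0:
        return gamma + 1.0  # every draft accepted, plus one token from the target
    return (1.0 - alpha ** (gamma + 1)) / (1.0 - alpha)


def ideal_speedup(alpha: float, gamma: int, c: float) -> float:
    """Idealized walltime improvement over autoregressive decoding.

    c is the (assumed) cost of one draft forward pass relative to one target
    forward pass; each verification is counted as one target pass.
    """
    return expected_tokens_per_step(alpha, gamma) / (gamma * c + 1.0)


# Hypothetical settings: 4 drafted tokens per step, each draft pass costs half a target pass.
for alpha in (0.96, 0.92, 0.68):
    print(f"alpha={alpha:.2f}: {ideal_speedup(alpha, gamma=4, c=0.5):.2f}x")
```

Under these assumptions the loop prints 1.54x, 1.42x, and 0.89x: once acceptance falls to 0.68, the fixed cost of drafting is no longer repaid by accepted tokens. The measured Self-SD drop (from $1.33\times$ to $1.05\times$) is milder, so the sketch should be read only as a qualitative explanation.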

<details>

<summary>x8.png Details</summary>

### Visual Description


## Charts: Speedup vs. Instances/Layer Skip Ratio

### Overview

The image contains two charts, labeled (a) and (b). Chart (a) depicts the relationship between speedup and the number of instances for different optimization strategies. Chart (b) shows the speedup as a function of the layer skip ratio for different model sizes. Both charts aim to demonstrate performance scaling characteristics.

### Components/Axes

**Chart (a): Flexible Optimization Strategy**

* **X-axis:** "# of Instances" ranging from 0 to 50.

* **Y-axis:** "Speedup" ranging from 1.25 to 1.50.

* **Legend:**

* Blue Line: "S=1000, β=25"

* Orange Line: "S=500, β=25"

* Green Line: "S=1000, β=50"

**Chart (b): Scaling Law of SWIFT**

* **X-axis:** "Layer Skip Ratio r" ranging from 0.30 to 0.60.

* **Y-axis:** "Speedup" ranging from 1.2 to 1.6.

* **Legend:**

* Blue Line: "7B"

* Orange Line: "13B"

* Green Line: "70B"

### Detailed Analysis or Content Details

**Chart (a): Flexible Optimization Strategy**

All three lines slope upward: speedup increases with the number of instances. The orange line (S=500, β=25) stays slightly below the blue line (S=1000, β=25), and the green line (S=1000, β=50) is the highest throughout, tying with the blue line at 50 instances. Approximate values:

| # of Instances | S=1000, β=25 (blue) | S=500, β=25 (orange) | S=1000, β=50 (green) |
|---|---|---|---|
| 0 | 1.28 | 1.27 | 1.30 |
| 5 | 1.34 | 1.32 | 1.36 |
| 10 | 1.38 | 1.36 | 1.40 |
| 15 | 1.41 | 1.39 | 1.43 |
| 20 | 1.43 | 1.41 | 1.45 |
| 25 | 1.45 | 1.43 | 1.46 |
| 30 | 1.46 | 1.44 | 1.47 |
| 35 | 1.47 | 1.45 | 1.48 |
| 40 | 1.48 | 1.46 | 1.49 |
| 45 | 1.49 | 1.47 | 1.50 |
| 50 | 1.50 | 1.48 | 1.50 |

**Chart (b): Scaling Law of SWIFT**

The 7B line (blue) decreases steadily as the layer skip ratio grows. The 13B (orange) and 70B (green) lines rise to a peak at 0.50 and then decline. Approximate values:

| Layer Skip Ratio r | 7B (blue) | 13B (orange) | 70B (green) |
|---|---|---|---|
| 0.30 | 1.42 | 1.40 | 1.45 |
| 0.40 | 1.38 | 1.46 | 1.50 |
| 0.50 | 1.32 | 1.52 | 1.56 |
| 0.60 | 1.28 | 1.44 | 1.50 |

### Key Observations

* In Chart (a), increasing the number of instances consistently improves speedup for all configurations. Higher values of S (1000) generally yield higher speedup than lower values (500). Higher values of β (50) also tend to improve speedup.

* In Chart (b), the 7B model shows a decreasing speedup with increasing layer skip ratio. The 13B model exhibits an optimal layer skip ratio around 0.50, while the 70B model shows a peak speedup around 0.50, followed by a decrease.

### Interpretation

Chart (a) shows that speedup grows as more input instances are processed, with diminishing gains. This is consistent with SWIFT refining its layer-skip configuration during the optimization phase before switching to the acceleration phase. S is the maximum number of optimization iterations and β the Bayesian interval (see the Figure 8 caption); in this chart, S=1000 outperforms S=500, and β=50 slightly outperforms β=25.

Chart (b) illustrates the trade-off in choosing the layer skip ratio. Skipping more layers makes drafting cheaper but lowers the chance that drafted tokens are accepted, so speedup peaks at an intermediate ratio. For the 7B model, the speedup is highest at the smallest ratio shown (0.30) and declines as more layers are skipped; for the 13B and 70B models it peaks around 0.50. Both the optimal ratio and the peak speedup grow with model size, suggesting that larger models exhibit more layer sparsity. Beyond the optimum, further skipping reduces speedup because fewer drafted tokens are accepted; since the target LLM verifies every token, output quality is unaffected. These charts indicate how the optimization settings and the layer skip ratio can be tuned for inference acceleration.

</details>

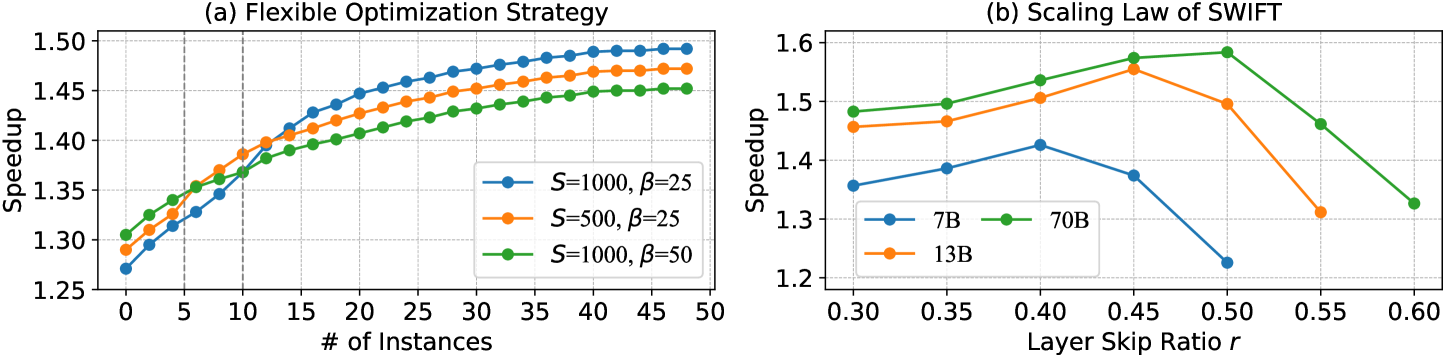

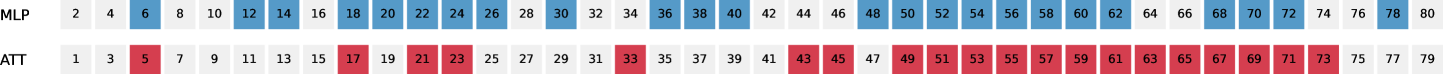

Figure 8: In-depth analysis of SWIFT, which includes: (a) Flexible optimization strategy. The maximum optimization iteration $S$ and Bayesian interval $\beta$ can be flexibly adjusted to accommodate different input data types. (b) Scaling law. The speedup and optimal layer skip ratio of SWIFT increase with larger model sizes, indicating that larger LLMs exhibit greater layer sparsity.

**Flexible Optimization & Scaling Law**

Figure 8 (a) shows how SWIFT can handle various input types by adjusting the maximum optimization iteration $S$ and the Bayesian interval $\beta$. For inputs with fewer instances, reducing $S$ enables an earlier transition to the acceleration phase, while increasing $\beta$ reduces the overhead of the optimization phase; both enhance speedups during the initial stages of inference. With sufficient input data, SWIFT can afford to explore more optimization paths, thereby enhancing the overall speedup. Figure 8 (b) illustrates the scaling law of SWIFT: as the model size increases, both the optimal layer-skip ratio and the overall speedup improve, indicating that larger LLMs exhibit more layer sparsity. This finding highlights the potential of SWIFT for accelerating even larger LLMs (e.g., 175B), which we leave for future investigation.
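The roles of $S$ and $\beta$ described above can be illustrated with a simple two-phase search loop. This is a sketch under stated assumptions, not SWIFT's implementation: a layer-skip configuration is encoded as one boolean per layer, the search runs for at most `S` iterations before the acceleration phase reuses the best configuration found, and every `beta`-th iteration calls a costlier proposer (standing in for a Bayesian-optimization step) while the others draw cheap random candidates. The functions `optimization_phase`, `toy_score`, and `toy_bayes_propose` are hypothetical; the toy objective is arbitrary, and `toy_bayes_propose` merely perturbs the best configuration and is not Bayesian optimization.

```python
import random
from typing import Callable, List, Tuple

# Hypothetical encoding: one flag per layer, True = skip that layer while drafting.
Config = Tuple[bool, ...]
History = List[Tuple[Config, float]]


def optimization_phase(
    num_layers: int,
    S: int,
    beta: int,
    score: Callable[[Config], float],
    bayes_propose: Callable[[History], Config],
    seed: int = 0,
) -> Tuple[Config, int]:
    """Search for at most S iterations; every beta-th iteration uses the costly proposer.

    Returns the best-scoring configuration and the number of costly proposals made.
    """
    rng = random.Random(seed)
    history: History = []
    best: Config = tuple(False for _ in range(num_layers))  # start from "skip nothing"
    best_score = score(best)
    bayes_calls = 0
    for it in range(1, S + 1):
        if it % beta == 0 and history:
            cand = bayes_propose(history)  # placeholder for a Bayesian-optimization step
            bayes_calls += 1
        else:
            # Cheap random proposal: skip each layer with probability 0.5.
            cand = tuple(rng.random() < 0.5 for _ in range(num_layers))
        s = score(cand)
        history.append((cand, s))
        if s > best_score:
            best, best_score = cand, s
    # The acceleration phase would then draft with `best` for all remaining inputs.
    return best, bayes_calls


def toy_score(cfg: Config) -> float:
    # Toy objective (hypothetical): prefer skipping exactly half of the layers.
    return -float(abs(sum(cfg) - len(cfg) // 2))


def toy_bayes_propose(history: History) -> Config:
    # Stand-in, NOT Bayesian optimization: flip one flag of the best config so far.
    cfg, _ = max(history, key=lambda h: h[1])
    i = len(history) % len(cfg)
    return cfg[:i] + (not cfg[i],) + cfg[i + 1:]


# The three (S, beta) settings from Figure 8 (a), with a hypothetical 40-layer model.
for S, beta in [(1000, 25), (500, 25), (1000, 50)]:
    best, calls = optimization_phase(40, S, beta, toy_score, toy_bayes_propose)
    print(f"S={S}, beta={beta}: {calls} costly proposals, best skips {sum(best)} layers")
```

In this schedule the number of costly proposals is `S // beta` (40, 20, and 20 for the three settings), which mirrors the text: a smaller $S$ ends the optimization phase sooner, and a larger $\beta$ makes costly proposals less frequent.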

<details>

<summary>x9.png Details</summary>

### Visual Description


## Bar Chart: Speedup Comparison of Language Models

### Overview

This bar chart compares the speedup of vanilla autoregressive decoding (Vanilla) with that of SWIFT applied to the base model (Base) and to its instruction-tuned variant (Instruct), for two language models: Yi-34B and DeepSeek-Coder-33B. The speedup is measured on the y-axis, while the x-axis represents the language model being evaluated.

### Components/Axes

* **X-axis:** Language Model (Yi-34B, DeepSeek-Coder-33B)

* **Y-axis:** Speedup (Scale ranges from 1.0 to 1.6, with increments of 0.1)

* **Legend:**

* Vanilla (Color: Orange)

* Base (Color: Blue)

* Instruct (Color: Teal)

### Detailed Analysis

The chart consists of six bars, three for each language model, representing the speedup in each setting.

**Yi-34B:**

* **Vanilla:** The bar is positioned at approximately 1.00 on the y-axis.

* **Base:** The bar reaches approximately 1.31 on the y-axis.

* **Instruct:** The bar reaches approximately 1.26 on the y-axis.

**DeepSeek-Coder-33B:**

* **Vanilla:** The bar is positioned at approximately 1.00 on the y-axis.

* **Base:** The bar reaches approximately 1.54 on the y-axis.

* **Instruct:** The bar reaches approximately 1.39 on the y-axis.

### Key Observations

* "Base" (SWIFT on the base model) yields the highest speedup for both language models.

* "Instruct" (SWIFT on the instruction-tuned variant) yields a lower speedup than "Base" but still exceeds "Vanilla".

* DeepSeek-Coder-33B shows greater speedups than Yi-34B, particularly for "Base".

* "Vanilla" sits at 1.00 for both models because it is the autoregressive decoding baseline against which speedup is measured.

### Interpretation

The data suggest that SWIFT accelerates both Yi-34B and DeepSeek-Coder-33B, as well as their instruction-tuned variants, relative to vanilla autoregressive decoding, with speedups of roughly 1.26x to 1.54x. For both backbones, the speedup on the base model exceeds that on the instruction-tuned variant, and DeepSeek-Coder-33B benefits more than Yi-34B. The chart alone does not explain these gaps; they may reflect differences in model architecture, training data, or the text versus code generation tasks used for evaluation.

</details>

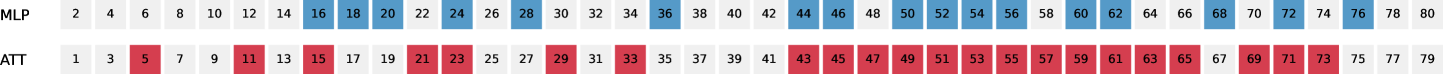

Figure 9: Speedups of SWIFT on LLM backbones and their instruction-tuned variants.

**Other LLM Backbones**

Beyond LLaMA, we assess the effectiveness of SWIFT on additional LLM backbones. Specifically, we include Yi-34B (Young et al., 2024) and DeepSeek-Coder-33B (Guo et al., 2024), along with their instruction-tuned variants, for text and code generation tasks, respectively. As illustrated in Figure 9, SWIFT achieves efficiency improvements ranging from 26% to 54% on these LLM backbones. Further experimental details are provided in Appendix C.3.
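Reading the reported percentages as speedup minus one (a $1.26\times$ speedup is a 26% gain in decoding throughput, $1.54\times$ a 54% gain), the same speedups correspond to smaller reductions in wall-clock generation time, $1 - 1/s$. A minimal worked conversion, with the helper names chosen here for illustration:

```python
def throughput_gain_pct(speedup: float) -> float:
    """Percentage increase in tokens per second for a given speedup factor."""
    return (speedup - 1.0) * 100.0


def latency_reduction_pct(speedup: float) -> float:
    """Percentage decrease in wall-clock generation time for the same speedup."""
    return (1.0 - 1.0 / speedup) * 100.0


for s in (1.26, 1.54):  # lower and upper ends of the Figure 9 range
    print(f"{s:.2f}x: +{throughput_gain_pct(s):.0f}% throughput, "
          f"-{latency_reduction_pct(s):.1f}% wall-clock time")
```

This prints a 20.6% time reduction for $1.26\times$ and 35.1% for $1.54\times$, which is worth keeping in mind when comparing percentage figures across papers.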

## 6 Conclusion

In this work, we introduce SWIFT, an on-the-fly self-speculative decoding algorithm that adaptively selects intermediate layers of LLMs to skip during inference. The proposed method requires neither additional training nor auxiliary models, making it a plug-and-play solution for accelerating LLM inference across diverse input data streams. Extensive experiments across various LLMs and tasks demonstrate that SWIFT achieves over a $1.3\times$ $\sim$ $1.6\times$ speedup while preserving the distribution of the generated text. Furthermore, our in-depth analysis highlights the effectiveness of SWIFT in handling dynamic input data streams and its seamless integration with various LLM backbones, showcasing the great potential of this paradigm for practical LLM inference acceleration.

## Ethics Statement

The datasets used in our experiments are publicly released English datasets annotated by humans. User privacy is protected in these datasets, and they contain no personal information. The scientific artifacts we use are available for research under permissive licenses, and our use of them is consistent with their intended purpose.

## Acknowledgements