<details>

<summary>Image 1 Details</summary>

### Visual Description

Icon/Small Image (92x33)

</details>

## Rewarding Progress: Scaling Automated Process Verifiers for LLM Reasoning

1,3,* 1,* 2 2 2 2

Amrith Setlur , Chirag Nagpal , Adam Fisch , Xinyang Geng , Jacob Eisenstein , Rishabh Agarwal , Alekh Agarwal 1 , Jonathan Berant † ,2 and Aviral Kumar † ,2,3 1 Google Research, 2 Google DeepMind, 3 Carnegie Mellon University, * Equal contribution, † Equal advising

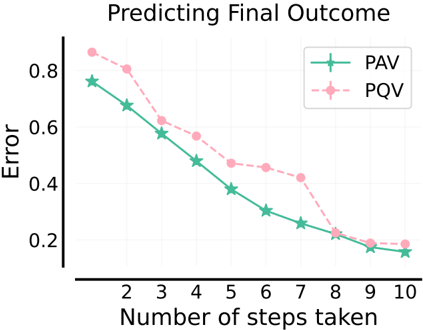

A promising approach for improving reasoning in large language models is to use process reward models (PRMs). PRMs provide feedback at each step of a multi-step reasoning trace, potentially improving credit assignment over outcome reward models (ORMs) that only provide feedback at the final step. However, collecting dense, per-step human labels is not scalable, and training PRMs from automatically-labeled data has thus far led to limited gains. To improve a base policy by running search against a PRM or using it as dense rewards for reinforcement learning (RL), we ask: 'How should we design process rewards?'. Our key insight is that, to be effective, the process reward for a step should measure progress : a change in the likelihood of producing a correct response in the future, before and after taking the step, corresponding to the notion of step-level advantages in RL. Crucially, this progress should be measured under a prover policy distinct from the base policy. We theoretically characterize the set of good provers and our results show that optimizing process rewards from such provers improves exploration during test-time search and online RL. In fact, our characterization shows that weak prover policies can substantially improve a stronger base policy, which we also observe empirically. We validate our claims by training process advantage verifiers (PAVs) to predict progress under such provers, and show that compared to ORMs, test-time search against PAVs is > 8% more accurate, and 1 . 5 -5 × more compute-efficient. Online RL with dense rewards from PAVs enables one of the first results with 5 -6 × gain in sample efficiency, and > 6% gain in accuracy, over ORMs.

## 1. Introduction

Trained reward models or verifiers are often used to improve math reasoning in large language models, either by re-ranking solutions at test-time (Collins, 2000) or via reinforcement learning (RL) (Uesato et al., 2022). Typically, verifiers are trained to predict the outcome of an entire reasoning trace, often referred to as outcome reward models (ORM) (Cobbe et al., 2021b; Hosseini et al., 2024). However, ORMs only provide a sparse signal of correctness, which can be hard to learn from and inefficient to search against. This challenge is alleviated by fine-grained supervision, in theory. For reasoning, prior works train process reward models (PRMs) that assign intermediate rewards after each step of search (Snell et al., 2024) or during RL. While Lightman et al. (2023) obtains PRM annotations from human raters, this approach is not scalable. More recent works (Luo et al., 2024; Wang et al., 2024) train PRMs to predict automatically-generated annotations that estimate future success of solving the problem, akin to value functions in RL. So far, automated PRMs, especially as dense rewards in RL, only improve by 1-2% over ORMs (Shao et al., 2024), raising serious doubts over their utility.

To resolve these uncertainties, in this paper, we train PRMs with automated annotations, such that optimizing the dense rewards from trained PRMs can improve a base policy compute- and sampleefficiently , during test-time search and online RL. For this, we first ask: (i) what should the per-step process rewards measure, and (ii) what kind of automated data collection strategy should we use to train PRMs that predict this measure. For (i) , conventional belief (Lightman et al., 2023; Uesato et al., 2022)

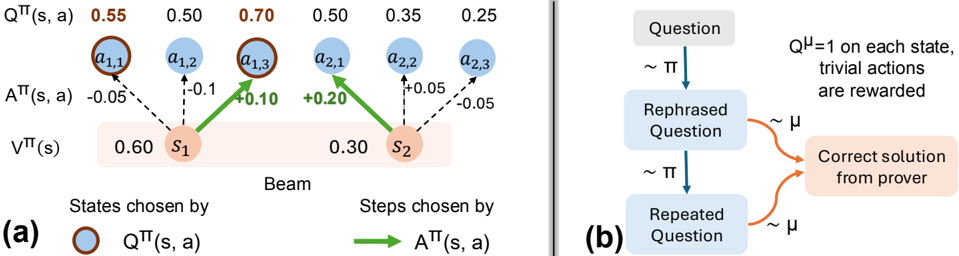

Figure 1 | Process advantage verifiers (PAV): Process reward for a step is defined as progress (advantage) under the prover policy, i.e. , change in prover policy's success rate before and after the step. (a): The base policy samples both correct 1 and incorrect 2 steps but struggles to succeed from either. A strong prover policy completes the solution from both steps, and is unable to adequately reflect progress made by 1 and 2 (both scored 0.0). Conversely, a complementary prover policy distinguishes 1 , 2 more prominently (only succeeds from 1 ). (b,c): Compared to ORMs, PAVs are 5x more compute efficient, 10% more accurate in test-time search, and 6x more sample efficient, 7% more accurate for online reinforcement learning (RL).

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: Prover Policy and Performance Analysis

### Overview

The image presents a diagram illustrating a system for solving equations, comparing a "Base Policy" to a "Very capable prover policy" and evaluating their performance using Proof-Aware Values (PAVs). The diagram shows a sequence of steps in solving an equation, with feedback on the correctness of each step. Below this, two charts (b and c) compare the performance of different policies using metrics like accuracy and efficiency.

### Components/Axes

The diagram consists of three main sections: (a) a flow diagram of the equation-solving process, (b) a chart comparing "Search with PAVs" performance, and (c) a chart comparing "RL with PAVs" performance.

**Section (a):**

* **Start:** Indicates the beginning of the problem.

* **Question:** "Let 4x+3y=25, 7x+6y=49. Solve for x, y."

* **Step 1:** "We eliminate y from system of equations." (Associated with a blue curved line)

* **Step 2:** "The equations imply: 10x + 9y = 25" (Associated with an orange curved line)

* **Feedback:** Green checkmarks indicate correct steps, red crosses indicate incorrect steps.

* **Final Answer:** Displayed alongside each step, showing the system's solution attempt.

* **Policy Blocks:** "Base Policy" (grey), "Very capable prover policy" (light blue), "Good prover policy: complementary to base" (dark blue).

**Section (b): Search with PAVs**

* **X-axis:** "# samples from Base Policy" (Scale: 2<sup>1</sup> to 2<sup>7</sup>)

* **Y-axis:** "Accuracy" (Scale: 0.10 to 0.25)

* **Legend:**

* "ORM" (Solid Red Line)

* "PRM Q-value" (Dashed Orange Line)

* "PAV" (Dashed Teal Line)

* **Annotations:** "5x Compute Efficient", "10% Accuracy"

**Section (c): RL with PAVs**

* **X-axis:** "Training Iterations (x10<sup>3</sup>)" (Scale: 0 to 10)

* **Y-axis:** "Accuracy" (Scale: 0.10 to 0.25)

* **Legend:**

* "ORM-RL" (Solid Red Line)

* "PAV-RL" (Dashed Teal Line)

* **Annotations:** "6x Sample Efficient", "7% Accuracy"

### Detailed Analysis or Content Details

**Section (a):**

The flow diagram shows the system attempting to solve the given equations.

* Step 1: The system attempts to eliminate 'y', resulting in a final answer of x=1, y=7 (Correct).

* Step 2: The system attempts to derive the next equation, resulting in a final answer of x=1, y=7 (Correct).

* A third attempt (not numbered) leads to an incorrect implication and a final answer of x=-1, y=3 (Incorrect).

* A fourth attempt (not numbered) leads to a final answer of x=1, y=7 (Correct).

**Section (b): Search with PAVs**

* **ORM:** Starts at approximately 0.12 accuracy at 2<sup>1</sup> samples, rises to approximately 0.22 accuracy at 2<sup>7</sup> samples. The line slopes upward, with increasing steepness.

* **PRM Q-value:** Starts at approximately 0.11 accuracy at 2<sup>1</sup> samples, rises to approximately 0.18 accuracy at 2<sup>7</sup> samples. The line slopes upward, but less steeply than ORM.

* **PAV:** Starts at approximately 0.10 accuracy at 2<sup>1</sup> samples, rises to approximately 0.20 accuracy at 2<sup>7</sup> samples. The line slopes upward, with a moderate steepness.

**Section (c): RL with PAVs**

* **ORM-RL:** Starts at approximately 0.12 accuracy at 0 training iterations, rises to approximately 0.23 accuracy at 9x10<sup>3</sup> training iterations. The line initially rises steeply, then plateaus.

* **PAV-RL:** Starts at approximately 0.11 accuracy at 0 training iterations, rises to approximately 0.24 accuracy at 9x10<sup>3</sup> training iterations. The line rises more consistently than ORM-RL, with a slight peak around 8x10<sup>3</sup> iterations.

### Key Observations

* In Section (b), PAV consistently outperforms PRM Q-value, and achieves comparable accuracy to ORM with fewer samples.

* In Section (c), PAV-RL consistently outperforms ORM-RL in terms of accuracy.

* The annotations highlight that using PAVs results in 5x compute efficiency and 10% accuracy improvement in the search process (Section b), and 6x sample efficiency and 7% accuracy improvement in the RL process (Section c).

* The system demonstrates an ability to recover from incorrect implications (as seen in the red 'X' step in Section a).

### Interpretation

The diagram demonstrates the effectiveness of using Proof-Aware Values (PAVs) to improve the performance of both search and reinforcement learning algorithms in the context of equation solving. PAVs enhance both accuracy and efficiency. The flow diagram in Section (a) illustrates the iterative nature of the problem-solving process and the system's ability to learn from its mistakes. The comparison between the "Base Policy" and the "Very capable prover policy" suggests that incorporating proof-awareness leads to a more robust and accurate solver. The annotations quantify these improvements, providing concrete evidence of the benefits of using PAVs. The consistent outperformance of PAV-based methods in both charts suggests a generalizable advantage, applicable to a range of problem-solving scenarios. The slight peak in the PAV-RL curve around 8x10<sup>3</sup> iterations might indicate an optimal training point beyond which further iterations yield diminishing returns.

</details>

has been to measure mathematical correctness or relevance of steps. But, it is unclear if this supervision yields the most improvement in the base policy ( e.g. , a policy may need to generate simpler, repetitive, and even incorrect steps to explore and discover the final answer during test-time search and RL). Our key insight is that per-step, process rewards that measure a notion of progress : change in the likelihood of arriving at a correct final answer before and after taking the step, are effective, for both test-time beam search and online RL. Reinforcing steps that make progress regardless of whether they appear in a correct or incorrect trace diversifies the exploration of possible answers at initial steps, which is crucial when the approach to solve a problem is not clear. Formally, such rewards correspond to per-step advantages of steps from the RL literature (Sutton and Barto, 2018). We empirically show that using advantages in addition to ORM rewards outperforms the typical use of future probabilities of success or 𝑄 -values (Wang et al., 2024) for both search and RL. This is because, when given a combinatorial space of responses, under bounded computational and sampling constraints, 𝑄 -values mainly 'exploit' states whereas advantages also 'explore' steps that make the most progress towards the final answer (Fig. 2).

To answer (ii) , we first note that advantages under a poor base policy are ≈ 0 on most steps, and thus will not be informative for search or RL. In addition, regardless of the strength of the base policy, using its own per-step advantages as process rewards in RL will result in base policy updates equivalent to only

using outcome rewards for RL (since a standard policy gradient algorithm already computes advantages). Hence, we propose to use advantages estimated via rollouts under a different prover policy as process rewards (Fig. 1(a)). How should we choose this prover policy? A natural guess would be to use a very capable prover. However, we show advantages under an overly capable prover policy, that can succeed from any step, fail to distinguish good and bad steps. A similar argument holds for very weak provers.

In theory, we formalize this intuition to define good provers as policies that are complementary to the base policy ( i.e. , policies with advantages that can contrast steps produced by the base policy sufficiently), while still producing step-level advantages correlated with those of the base policy. For e.g. , for Bestof𝐾 policies (Nakano et al., 2021) corresponding to a base policy, we empirically find that provers corresponding to 𝐾 > 1 (but not too large) are more capable at improving the base policy. Contrary to intuition, the set of complementary provers also contains policies that are worse than the base policy. To predict the advantages of such provers we train dense verifiers, called process advantage verifiers (PAVs) , that accelerate sample and compute efficiency of RL and search.

With the conceptual design of PAVs in place, we prescribe practical workflows for training PAVs and demonstrate their efficacy on a series of 2B, 9B, and 27B Gemma2 models (Gemma Team et al., 2024). PAV training data is gathered by sampling 'seed' solution traces from the prover and partial rollouts from the same to estimate the 𝑄 -value at each prefix of the seed trace. Our workflow prescribes favorable ratios for seed and partial rollouts. Our first set of empirical results show that for an equal budget on test-time compute, beam search against trained PAVs is >8% better in accuracy, and 1 . 5 -5 × more compute efficient compared to re-ranking complete traces against an ORM (Fig. 1(b)). Dense rewards from PAVs improve the efficiency of step-level exploration during search by pruning the combinatorial space of solutions aggressively and honing in on a diverse set of possible sequences. Finally, we demonstrate for the first time , that using PAVs as dense rewards in RL scales up data efficiency by 6 × compared to only using outcome rewards (Fig. 1(c)). Moreover, base policies trained with PAVs also achieve 8 × better Pass @ 𝑁 performance (probability of sampling the correct solution in 𝑁 attempts), and consequently afford a higher ceiling on the performance of any test-time re-ranker. Finally, running RL with PAVs discovers solutions to hard problems that sampling from the SFT policy with a very large budget can't solve.

## 2. Preliminaries, Definitions, and Notation

Following protocols from Lightman et al. (2023); Uesato et al. (2022), a reasoning trace from an LLM consists of multiple logical steps separated by a demarcation token. An outcome reward model (ORM) is a trained verifier that assigns a numerical score after the last step of the trace, and a process reward model (PRM) is a trained verifier that scores each step of the trace individually.

Problem setup and notation. Given a math problem 𝒙 ∈ X , our goal is to improve a base policy 𝜋 that samples a response 𝒚 ∼ 𝜋 (· | 𝒙 ) in the set Y . A response 𝒚 consists of multiple reasoning steps (maximum 𝐻 ), separated by a delimiter ('next line' in our case), i.e. , 𝒚 = ( 𝑎 1 , 𝑎 2 , . . . , 𝑎𝐻 ) . Since sampling is auto-regressive, we can view each step as an action taken by the agent 𝜋 in a Markov decision process (MDP) with deterministic dynamics. Specifically, we treat the prefix ( 𝒙 , 𝑎 1 , . . . , 𝑎 ℎ -1 ) as the current state 𝒔 ℎ and next step 𝑎ℎ ∼ 𝜋 (· | 𝒙 ) as the action taken by 𝜋 at 𝒔 ℎ , resulting in the next state 𝒔 ℎ + 1 . For problem 𝒙 , with ground-truth response 𝒚 ★ 𝒙 , we can evaluate the accuracy of 𝜋 by running a regular expression match on the final answer (Hendrycks et al., 2021): Rex ( 𝒚 , 𝒚 ★ 𝒙 ) ↦→ { 0 , 1 } , i.e. , accuracy is given by 𝔼 𝒚 ∼ 𝜋 (·| 𝒙 ) Rex ( 𝒚 , 𝒚 ★ 𝒙 ) . Now, given a dataset D = {( 𝒙 𝑖 , 𝒚 ★ 𝒙 𝑖 )} 𝑖 of problem-solution pairs, the main goal is to learn a good base policy by optimizing this outcome reward on D . Next, we see how we can leverage the final answer verifier Rex available on D to train ORMs and PRMs.

Outcome reward model (ORM). Given a response 𝒚 , an ORM estimates the ground-truth correctness Rex ( 𝒚 , 𝒚 ★ 𝒙 ) . To train such a model we first take problems in D , and collect training data of the form {( 𝒙 , 𝒚 ∼ 𝜋 (· | 𝒙 ) , Rex ( 𝒚 , 𝒚 ★ 𝒙 ))} . Then we train an ORM that takes as input a problem-response pair ( 𝒙 , 𝒚 ) and predicts Rex ( 𝒚 , 𝒚 ★ 𝒙 ) . At test time, when 𝒚 ★ 𝒙 is unknown, the ORM is used to score candidate solutions revealed by test-time search. Given a base policy 𝜋 , a Best-of𝐾 policy: BoK ( 𝜋 ) , is a policy that samples 𝐾 responses from 𝜋 , scores them against an ORM, and returns the one with the highest score. Whenever the ORM matches Rex, the performance of BoK ( 𝜋 ) is referred to as Pass @ 𝐾 . Furthermore, when the likelihood of 𝜋 solving problem 𝒙 is 𝑝 𝒙 , then for BoK ( 𝜋 ) this likelihood is given by the expression: 1 - ( 1 -𝑝 𝒙 ) 𝐾 . In general, this is larger than 𝑝 𝒙 , making BoK ( 𝜋 ) stronger than 𝜋 for 𝐾 > 1.

Standard process reward models (PRMs). A PRM scores every step 𝑎ℎ in a multi-step response 𝒚 ∼ 𝜋 ( e.g. , in Lightman et al. (2023) PRMs are trained to score correct steps over incorrect and irrelevant ones). But, unlike ORMs, which only require Rex for data collection, PRM training data requires expensive step-level human annotations. Prior works (Luo et al., 2024; Wang et al., 2024) attempted to scale process rewards automatically by sampling from the model to provide a heuristic understanding of when a step is actually correct. In particular, they evaluate a prefix by computing the expected future accuracy of multiple completions sampled from 𝜋 , after conditioning on the prefix, i.e. , value function 𝑄 𝜋 (Eq. 1) from RL. Similarly, we define 𝑉 𝜋 ( 𝒔 ℎ ) B 𝔼 𝑎 ℎ ∼ 𝜋 (·| 𝒔 ℎ ) 𝑄 𝜋 ( 𝒔 ℎ , 𝑎 ℎ ) as value of state 𝒔 ℎ . These works use 𝑄 𝜋 as the PRM that assigns a score of 𝑄 𝜋 ( 𝒔 ℎ , 𝑎 ℎ ) to the action 𝑎ℎ , at state 𝒔 ℎ .

$$Q ^ { \pi } ( \underbrace { ( x , a _ { 1 } , \dots , a _ { h - 1 } ) } _ { s t a t e \, s _ { h } } , \underbrace { a _ { h } } _ { a c t i o n \, a _ { h } } ) = \underbrace { \mathbb { E } _ { a _ { h + 1 } , \dots , a _ { H } \sim \pi ( \cdot | s _ { h } , a _ { h } ) } \left [ R e x \left ( ( a _ { 1 } , \dots , a _ { H } ) , y _ { x } ^ { ^ { * } } \right ) \right ] } _ { l i k e l i d o f f u t r e s c e s s } ,$$

Using PRMs for beam search at test-time. Given a PRM, a natural way to spend test-time compute is to use it as a step-level re-ranker within a beam search procedure (Snell et al., 2024). For each problem, at step 0, a beam of maximum width 𝐵 , is initialized with a single state consisting of just the problem. At step ℎ , a beam contains partial responses unrolled till a set of states or prefixes { 𝒔 𝑖 } 𝐵 𝑖 = 1 . From each state 𝒔 𝑖 in this set, 𝐶 independent actions or steps { 𝑎𝑖,𝑗 } 𝐶 𝑗 = 1 are sampled from 𝜋 (· | 𝒔 𝑖 ) , each of which leads to a new state. Process rewards from PRMs assign a score to every new state ( 𝒔 𝑖 , 𝑎 𝑖, 𝑗 ) , and only the states corresponding to the top 𝐵 values are retained in the beam for the next step.

## 3. How Should we Define Process Rewards and Why?

Ultimately, we are interested in test-time search and RL methods that can most efficiently and reliably discover solution traces with the correct final answer, thus maximizing Rex. To this end, process rewards should serve as step-level supervision to indirectly maximize outcome-level Rex. Our position contrasts with conventional belief that process rewards should mainly evaluate mathematical correctness or relevance of individual steps (Lightman et al., 2023; Uesato et al., 2022), since LLMs might need to generate trivial or repetitive intermediate steps in order to discover a trace with the correct final answer. With this insight, in this section we approach the design of dense automated step rewards as a form of supervision to be used in conjunction with sparse outcome rewards to improve the base policy.

In an MDP, a starting point to design step-level dense feedback that is eventually meant to optimize a sparse outcome reward Rex is to consider the notion of a potential function (Ng et al., 1999): in our case, this is a function that summarizes the difference between some statistic of the policy at the future state and the same statistic computed at the current state. By appealing to this framework, in Sec. 3.1, we

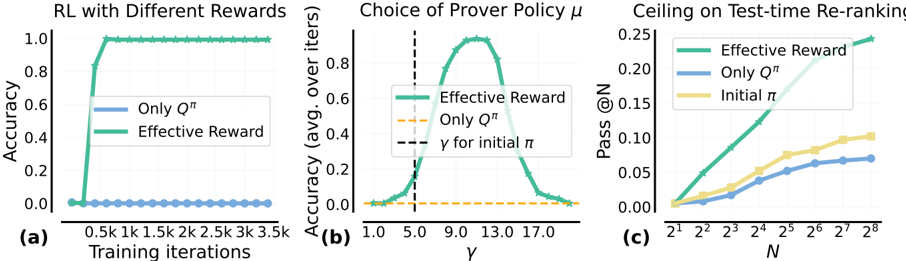

Figure 2 | Issues with using 𝑄 -values as process rewards : (a): Unlike 𝐴 𝜋 , 𝑄 𝜋 mixes action evaluation with the 𝑄 -value of the previous state. Beam search with 𝑄 𝜋 exploits high-likelihood states, while adding 𝐴 𝜋 ( e.g. , 𝑄 𝜋 + 𝛼𝐴 𝜋 in Eq. 5) aids in exploring states reached by making actions that induce progress, i.e. , increase likelihood of success. (b): 𝑄 𝜇 from a strong prover 𝜇 can assign unmerited bonuses to trivial actions.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: Reinforcement Learning Process & Question Rephrasing

### Overview

The image presents two diagrams, labeled (a) and (b), illustrating components of a reinforcement learning process and a question rephrasing mechanism. Diagram (a) depicts a state transition diagram with Q-values and policy values, while diagram (b) shows a flow chart for rephrasing questions and obtaining solutions.

### Components/Axes

**Diagram (a):**

* **States:** s1, s2

* **Actions:** a1,1, a1,2, a1,3, a2,1, a2,2, a2,3

* **Q-values:** Q<sup>π</sup>(s, a) – values ranging from 0.25 to 0.70.

* **Policy Values:** V<sup>π</sup>(s) – 0.60 for s1 and 0.30 for s2.

* **Policy Transition Weights:** A<sup>π</sup>(s, a) – values ranging from -0.10 to +0.20.

* **Legend:**

* Blue circles: Q<sup>π</sup>(s, a)

* Green arrows: A<sup>π</sup>(s, a)

* **Label:** "Beam" – positioned below states s1 and s2.

**Diagram (b):**

* **Input:** "Question"

* **Process:** "Rephrased Question", "Repeated Question"

* **Output:** "Correct solution from prover"

* **Distribution:** ~π (sampling from a policy) and ~μ (sampling from a distribution)

* **Reward:** Q<sup>μ</sup> = 1 on each state, trivial actions are rewarded.

* **Arrows:** Indicate flow of information.

### Detailed Analysis or Content Details

**Diagram (a):**

* **State s1:**

* Q<sup>π</sup>(s1, a1,1) = 0.55

* Q<sup>π</sup>(s1, a1,2) = 0.50

* Q<sup>π</sup>(s1, a1,3) = 0.70

* V<sup>π</sup>(s1) = 0.60

* A<sup>π</sup>(s1, a1,1) = -0.05

* A<sup>π</sup>(s1, a1,2) = -0.10

* A<sup>π</sup>(s1, a1,3) = +0.20

* **State s2:**

* Q<sup>π</sup>(s2, a2,1) = 0.50

* Q<sup>π</sup>(s2, a2,2) = 0.35

* Q<sup>π</sup>(s2, a2,3) = 0.25

* V<sup>π</sup>(s2) = 0.30

* A<sup>π</sup>(s2, a2,1) = +0.05

* A<sup>π</sup>(s2, a2,2) = -0.05

**Diagram (b):**

* A "Question" is fed into a process that generates a "Rephrased Question" sampled from a policy π.

* The "Rephrased Question" leads to a "Correct solution from prover".

* The process is repeated with a "Repeated Question" also sampled from policy π.

* The "Repeated Question" is sampled from a distribution μ.

* The reward function Q<sup>μ</sup> assigns a value of 1 to each state, rewarding trivial actions.

### Key Observations

* In diagram (a), the Q-values for state s1 are generally higher than those for state s2, suggesting s1 is a more desirable state.

* The policy transition weights (A<sup>π</sup>(s, a)) indicate the probability of taking specific actions from each state. Positive values suggest a higher probability, while negative values suggest a lower probability.

* Diagram (b) illustrates an iterative process of question rephrasing and solution seeking, potentially aiming to improve the quality or accuracy of the solutions obtained.

### Interpretation

Diagram (a) represents a simplified reinforcement learning environment. The Q-values represent the expected cumulative reward for taking a specific action in a given state, following a particular policy (π). The policy values (V<sup>π</sup>(s)) represent the expected cumulative reward for being in a given state, following the same policy. The policy transition weights (A<sup>π</sup>(s, a)) show the probability of taking each action from each state. The "Beam" label suggests a beam search algorithm might be used to explore the state space.

Diagram (b) depicts a mechanism for refining questions to obtain better solutions. The rephrasing process, guided by a policy π, aims to generate questions that are more likely to elicit correct answers. The iterative nature of the process, with repeated questioning and sampling from distribution μ, suggests a search for optimal question formulations. The reward function Q<sup>μ</sup> incentivizes trivial actions, potentially indicating a need to balance exploration and exploitation in the question-answering process.

The two diagrams together suggest a system where reinforcement learning is used to guide the rephrasing of questions, ultimately leading to improved solution quality. The system appears to be designed to explore different question formulations and learn which ones are most effective in eliciting correct answers.

</details>

show that advantages - not value functions (Luo et al., 2024; Wang et al., 2024) - that measure a notion of 'progress' at each new step are more appropriate for use as dense rewards in search and RL (primarily for exploration). Then in Secs. 3.3 and 3.4, we show that this progress or advantage vakue is measured best under a policy 𝜇 , different from the base policy 𝜋 . We call this policy 𝜇 , the prover policy .

## 3.1. Process Rewards Should be Advantages, Not Value Functions

To understand the relationship to potential functions, we first study test-time beam search, and present some challenges with the reward design of Snell et al. (2024), that uses value function 𝑄 𝜋 ( 𝒔 , 𝑎 ) of the base policy 𝜋 to reward action 𝑎 at state 𝒔 . Consider the example in Fig. 2(a), where from the 2 states in the beam, we sample 3 actions. If we pick next states purely based on highest values of 𝑄 𝜋 , we would be comparing steps sampled from different states ( e.g. , 𝑎 1 , 1 vs. 𝑎 2 , 1 ) against each other. Clearly, a reduction in expected final outcome, i.e. , 𝑄 𝜋 ( 𝒔 1 , 𝑎 1 , 1 ) -𝑉 𝜋 ( 𝒔 1 ) , means that 𝑎 1 , 1 by itself has a negative effect of -0 . 05 on the probability of success from 𝒔 1 , whereas 𝑎 2 , 1 has a positive effect of + 0 . 20 from 𝒔 2 . However, expanding the beam based on absolute values of 𝑄 𝜋 retains the action that makes negative progress, and removes state 𝒔 2 from the beam (as beam size is 2). In other words, 𝑄 𝜋 fails to decouple the 'evaluation' of an action (step), from the 'promise' shown by the previous state. This will not be an issue for every problem, and particularly not when the beam capacity is unbounded, but under finite computational and sampling constraints, using 𝑄 𝜋 might retain states with potentially unfavorable steps that hurt the overall likelihood of success. If we could also also utilize the progress made by the previous step along with the likelihood of success 𝑄 𝜋 when deciding what to retain in the beam, then we can address this tradeoff.

How can we measure the 'progress' made by a step? One approach is to consider the relative increase/decrease in the likelihood of success, before and after the step. This notion is formalized by the advantage (Eq. 2) of a step under policy 𝜋 . Furthermore, since advantages can attach either positive or negative values to a step, training the base policy against advantages supervises the base policy when it generates a step that makes progress (where 𝐴 𝜋 > 0), and also when it fails to produce one, employing a 'negative gradient' that speeds up RL training (Tajwar et al., 2024).

$$A ^ { \pi } ( { \mathbf s _ { h } } , a _ { h } ) \coloneqq Q ^ { \pi } ( { \mathbf s _ { h } } , a _ { h } ) - V ^ { \pi } ( { \mathbf s _ { h } } ) = Q ^ { \pi } ( { \mathbf s _ { h } } , a _ { h } ) - Q ^ { \pi } ( { \mathbf s _ { h - 1 } } , a _ { h - 1 } ) .$$

Recall that since we view process rewards as potential functions in the MDP, they can be computed under

any policy 𝜇 , which can be the base policy. However, in the above example, reasons for which 𝑄 𝜋 is a seemingly unfit choice for process rewards also apply to 𝑄 𝜇 . Nevertheless, we can possibly use advantage under 𝜇 : 𝐴 𝜇 , which measures the progress made by a step to improve the likelihood of success under 𝜇 . In that case, how should we choose this policy 𝜇 , that we call the prover policy, and should it be necessarily different from base policy 𝜋 ? Before diving into the choice of 𝜇 , we discuss a more pertinent question: how should we use 𝐴 𝜇 in conjunction with outcome rewards for improving the base policy 𝜋 ? We will then formally reason about the choice of 𝜇 in Secs. 3.3 and 3.4.

## 3.2. Our Approach: Process Advantage Verifiers (PAV)

For building an approach that uses process rewards 𝐴 𝜇 together with the outcome reward Rex to improve the base policy 𝜋 , we situate ourselves in the context of improving 𝜋 with online RL. If all we had was access to Rex on D , the standard RL objective is given by:

$$\ell _ { \text {ORM-RL} } ( \pi ) \coloneqq \mathbb { E } _ { \mathbf x \sim \mathcal { D } , ( a _ { 1 } , \dots , a _ { H } ) \sim \pi ( \cdot | \mathbf x ) } \left [ R e x \left ( ( \mathbf x , a _ { 1 } , \dots , a _ { H } ) , y _ { \mathbf x } ^ { ^ { * } } \right ) \right ] .$$

Inspired by how reward bonuses (and potential functions) are additive (Bellemare et al., 2016; Ng et al., 1999), one way to use process rewards 𝐴 𝜇 is to combine it with the standard RL objective as:

$$\ell _ { \text {PAV-RL} } ^ { \pi ^ { \prime } } ( \pi ) \colon = \ell _ { \text {ORM-RL} } ( \pi ) + \alpha \cdot \sum _ { h = 1 } ^ { H } \mathbb { E } _ { s _ { h } \sim d _ { h } ^ { \prime } } \, \mathbb { E } _ { a _ { h } \sim \pi ( \cdot | s _ { h } ) } \left [ A ^ { \mu } ( s _ { h } , a _ { h } ) \right ]$$

The term in red is the difference in likelihoods of success of the prover 𝜇 , summed over consecutive steps (a notion of progress ). Here, 𝑑 𝜋 ′ ℎ denotes the distribution over states at step ℎ , visited by the old policy 𝜋 ′ (policy at previous iterate). Following policy gradient derivations (Williams, 1992):

$$\boxed { \nabla _ { \pi } \ell ^ { \pi ^ { \prime } } _ { \text {PAV-RL} } ( \pi ) \Big | _ { \pi ^ { \prime } = \pi } = \sum _ { h = 1 } ^ { H } \, \nabla _ { \pi } \log \pi ( a _ { h } \, | \, s _ { h } ) \cdot \underbrace { ( Q ^ { \pi } ( s _ { h } , a _ { h } ) + \alpha \cdot A ^ { \mu } ( s _ { h } , a _ { h } ) ) } _ { \text {effective reward} } }$$

At a glance, we can view 𝑄 𝜋 ( 𝒔 ℎ , 𝑎 ℎ ) + 𝛼𝐴 𝜇 ( 𝒔 ℎ , 𝑎 ℎ ) as the effective reward for step 𝑎ℎ when scored against a combination of the outcome evaluation Rex, i.e., 𝑄 𝜋 , and process rewards 𝐴 𝜇 . Thus, we can optimize Eq. 4 indirectly via (a) running beam-search against the effective reward; or (b) online RL where the policy gradients are given by Eq. 5. For either of these, we need access to verifiers that are trained to predict the advantage 𝐴 𝜇 ( 𝒔 ℎ , 𝑎 ℎ ) under the prover. We refer to these verifiers as process advantage verifiers (PAVs) . In Sec. 4.2 we describe how to train PAVs, but now we use the above formulation to reason about how to choose prover 𝜇 that is most effective at improving base 𝜋 .

We also remark that the term in red resembles prior work on imitation learning via policy optimization (Ross and Bagnell, 2014; Sun et al., 2017), where the main aim is to learn a policy 𝜋 that imitates the prover 𝜇 , or to improve upon it to some extent. Of course, this is limiting since our goal is to not just take actions that perform at a similar level as 𝜇 , but to improve the base policy even further, and using a combination of 𝑄 𝜋 and 𝐴 𝜇 is critical towards this goal.

How should we choose the prover 𝜇 ? Perhaps a natural starting point is to set the prover to be identical to the base policy, i.e. , 𝜇 = 𝜋 , which produces process rewards that prior works have considered Shao et al. (2024). However, setting 𝐴 𝜋 = 𝐴 𝜇 in Eq. 5 results in exactly the same policy gradient update as only optimizing outcome evaluation Rex. Moreover, for a poor base policy 𝜋 , where 𝑄 𝜋 ≈ 0 on most states,

the term 𝐴 𝜋 would also be ≈ 0, and hence running beam search with the effective rewards would not be informative at all. Hence, a better approach is to use a different prover policy , but a very weak prover 𝜇 will likely run into similar issues as a poor base policy. We could instead use a very capable prover 𝜇 , but unfortunately even this may not be any better than optimizing only the outcome reward either. To see why, consider a scenario where 𝜋 's response contains an intermediate step that does not help make progress towards the solution ( e.g. , 𝜋 simply restates the question, see Fig. 2(b)). Here, 𝑄 𝜇 for a capable prover before and after this irrelevant step will be identical since 𝜇 can succeed from either step. This means that 𝜇 fails to distinguish steps, resulting in 𝐴 𝜇 ≈ 0 in most cases. Training with this process reward during RL will then lead to gradients that are equivalent to those observed when purely optimizing ℓ ORM -RL . In fact, empirically, we observe that online RL with 𝑄 𝜇 from strong provers leads to polices that only produce re-phrasings of the question (App. G) and do not succeed at solving the question. Clearly, any policy different from the base policy cannot serve as a prover. So, how do we identify a set of good provers? Can they indeed be weaker than the base policy? We answer next.

## Takeaway: What should process rewards measure during test-time search and online RL?

- Process rewards should correspond to progress, or advantage , as opposed to absolute 𝑄 -values, for a better explore-exploit tradeoff during beam search and online RL.

- Advantages should be computed using a prover policy, different from the base policy.

## 3.3. Analysis in a Didactic Setting: Learning a Planted Sub-sequence

In this section, we aim to characterize prover policies that are effective in improving the base policy. To do so, we first introduce a didactic example, representative of real reasoning scenarios to illustrate the main intuition. Then, we will formalize these intuitions in the form of theoretical results.

Didactic example setup. Given an unknown sub-sequence 𝒚 ★ consisting of tokens from vocabulary V B { 1 , 2 , . . . , 15 } , we train a policy 𝜋 to produce a response which contains this sub-sequence. The task completion reward is terminal and sparse, i.e. , 𝑟 ( 𝒚 , 𝒚 ★ ) = 1 for a 𝒚 if and only if 𝒚 ★ appears in 𝒚 . By design, the reward 𝑟 ( 𝒚 , 𝒚 ★ ) resembles outcome reward Rex ( 𝒚 , 𝒚 ★ 𝒙 ) in Sec. 2. The prover policy 𝜇 is a procedural policy, parameterized by a scalar 𝛾 > 0 (details in App. B). As 𝛾 increases, the performance of 𝜇 improves and → 1 as 𝛾 →∞ . For simplicity, we assume oracle access to ground-truth 𝐴 𝜇 and 𝑄 𝜋 , and alleviate errors from learned verifiers approximating these values.

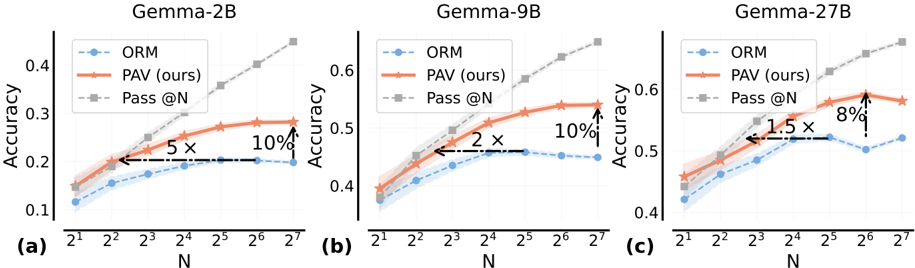

(1) RL with effective reward 𝑄 𝜋 + 𝛼𝐴 𝜇 is 10 × more sample-efficient than only outcome reward. In Fig. 3(a), we first note that training 𝜋 with this effective reward under a prover 𝜇 with strength 𝛾 = 10, produces optimal performance (100% accuracy) in 350 iterations, despite starting from a mediocre initialization for 𝜋 ( 𝛾 = 5 . 0 ) . Training with only outcome reward is ineffective. More importantly, in Fig. 3(b), we note that effective rewards only help for a set of provers, in 𝛾 ∈ [ 8 . 0 , 15 . 0 ] . Outside this range, we observed advantages 𝐴 𝜇 were close to 0 on most states, either because 𝜇 was poor (small 𝛾 ) and was unable to generate 𝒚 ★ even when 𝜋 got the sequence partially correct, or because 𝜇 was strong (large 𝛾 ) that it generated 𝒚 ∗ with almost equal likelihood from all prefixes.

(2) Effective reward improves Pass @N by 5 × over only outcome reward. We report the 'Pass @N' performance in Fig. 3(c), which measures the maximum reward 𝑟 across 𝑁 traces sampled i.i.d. from 𝜋 and hence, represents the ceiling on the performance of any test-time search method that picks a single response from multiple draws ( e.g. , as in Best-of-N). For a policy trained with the effective reward for 100 iterations, the Pass @N performance grows 5 × faster with 𝑁 , compared to the policy trained with only

Figure 3 | Results for our didactic analysis: (a): We train base policy via RL with either effective reward 𝑄 𝜋 + 𝛼𝐴 𝜇 , or the typical 𝑄 𝜋 (computed via Monte-Carlo sampling). (b): We vary the strength 𝛾 of the prover 𝜇 used to compute advantages 𝐴 𝜇 in the effective reward, and plot the base policy accuracy averaged over the RL run. (c): We plot the max score out of 𝑁 responses (Pass @N) sampled i.i.d. from an undertrained base policy (iter 100) .

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Charts: Reinforcement Learning Performance Analysis

### Overview

The image presents three charts (a, b, and c) analyzing the performance of Reinforcement Learning (RL) algorithms under different reward schemes and parameter settings. Chart (a) compares accuracy with different rewards over training iterations. Chart (b) examines the impact of the prover policy parameter γ on accuracy. Chart (c) investigates the effect of the test-time re-ranking parameter N on the pass rate @ N.

### Components/Axes

**Chart (a): RL with Different Rewards**

* **X-axis:** Training iterations (0.5k, 1k, 1.5k, 2k, 2.5k, 3k, 3.5k)

* **Y-axis:** Accuracy (0.0 to 1.0)

* **Legend:**

* "Only Qπ" (Blue)

* "Effective Reward" (Green)

**Chart (b): Choice of Prover Policy μ**

* **X-axis:** γ (1.0 to 17.0)

* **Y-axis:** Accuracy (avg. over iters) (0.0 to 1.0)

* **Legend:**

* "Effective Reward" (Green)

* "Only Qπ" (Orange)

* "γ for initial π" (Dashed Orange)

**Chart (c): Ceiling on Test-time Re-ranking**

* **X-axis:** N (2<sup>1</sup>, 2<sup>2</sup>, 2<sup>3</sup>, 2<sup>4</sup>, 2<sup>5</sup>, 2<sup>6</sup>, 2<sup>7</sup>, 2<sup>8</sup>) which is equivalent to (2, 4, 8, 16, 32, 64, 128, 256)

* **Y-axis:** Pass @ N (0.0 to 0.25)

* **Legend:**

* "Effective Reward" (Green)

* "Only Qπ" (Blue)

* "Initial π" (Yellow)

### Detailed Analysis or Content Details

**Chart (a): RL with Different Rewards**

* **"Only Qπ" (Blue):** The line remains relatively flat around 0.0 accuracy throughout all training iterations. Accuracy is approximately 0.02 at all points.

* **"Effective Reward" (Green):** The line shows a steep increase in accuracy from 0.0 to approximately 1.0 between 0.5k and 1.5k training iterations. After 1.5k iterations, the accuracy stabilizes around 0.98-1.0.

**Chart (b): Choice of Prover Policy μ**

* **"Effective Reward" (Green):** The line forms a bell-shaped curve, peaking at approximately γ = 9.0 with an accuracy of around 0.95. The accuracy decreases as γ moves away from 9.0 in either direction. Accuracy is approximately 0.2 at γ = 1.0 and γ = 17.0.

* **"Only Qπ" (Orange):** The line is relatively flat, with an accuracy around 0.2 across all values of γ.

* **"γ for initial π" (Dashed Orange):** A horizontal dashed line at approximately 0.2 accuracy, spanning the entire range of γ.

**Chart (c): Ceiling on Test-time Re-ranking**

* **"Effective Reward" (Green):** The line shows a generally increasing trend, starting from approximately 0.02 at N=2 and reaching approximately 0.12 at N=256. There is a slight plateau between N=64 and N=128.

* **"Only Qπ" (Blue):** The line also shows an increasing trend, but starts at a lower value (approximately 0.01 at N=2) and reaches approximately 0.10 at N=256.

* **"Initial π" (Yellow):** The line starts at approximately 0.03 at N=2 and increases to approximately 0.08 at N=256, exhibiting a slower growth rate compared to the other two lines.

### Key Observations

* In Chart (a), the "Effective Reward" scheme significantly outperforms "Only Qπ" in terms of accuracy.

* In Chart (b), the "Effective Reward" scheme is highly sensitive to the choice of γ, with optimal performance around γ = 9.0.

* In Chart (c), increasing the value of N generally improves the pass rate for all three schemes, but the "Effective Reward" scheme consistently achieves the highest pass rate.

* The "Only Qπ" scheme consistently performs worse than the "Effective Reward" scheme across all three charts.

### Interpretation

These charts demonstrate the effectiveness of the "Effective Reward" scheme in Reinforcement Learning tasks. The scheme leads to faster learning (Chart a), optimal performance with a specific parameter setting (Chart b), and higher success rates in test-time re-ranking (Chart c). The sensitivity of the "Effective Reward" scheme to the parameter γ suggests that careful tuning of this parameter is crucial for achieving optimal performance. The consistently lower performance of the "Only Qπ" scheme indicates that the additional components of the "Effective Reward" scheme provide significant benefits. The trends in Chart (c) suggest diminishing returns as N increases, indicating a potential trade-off between computational cost and performance. The dashed line in Chart (b) provides a baseline for comparison, showing the performance of the initial policy without any learning. Overall, the data suggests that the "Effective Reward" scheme is a promising approach for improving the performance of Reinforcement Learning algorithms.

</details>

the outcome reward. Due to only sparse feedback, the latter policy does not learn to sample partially correct 𝒚 ★ , whereas a policy trained with the effective reward produces partially correct 𝒚 ★ , and is able to sample the complete 𝒚 ★ with higher likelihood during Pass @N.

## Takeaway: Online RL with process rewards from different prover policies.

Effective rewards 𝑄 𝜋 + 𝛼𝐴 𝜇 from prover 𝜇 : (i) improve sample efficiency of online RL, and (ii) yield policies with better Pass @N performance, over using only outcome rewards. But, advantages of very capable or poor 𝜇 do not improve base policy beyond outcome rewards.

## 3.4. Theory: Provers Complementary to the Base Policy Boost Improvement

From our didactic analysis, it is clear that process rewards 𝐴 𝜇 under different provers 𝜇 disparately affect the base policy that optimizes 𝑄 𝜋 + 𝛼𝐴 𝜇 via online RL. We now present a formal analysis of why this happens and characterize a class of provers that can guarantee non-trivial improvements to the base policy. For simplicity, we assume oracle access to 𝑄 𝜋 , 𝐴 𝜇 at every state-action pair ( 𝒔 ℎ , 𝑎 ℎ ) and prove our result in the tabular RL setting, where the policy class is parameterized using the softmax parameterization in Agarwal et al. (2021). Proofs for this section are in App. F.

Main intuitions. We expect a prover 𝜇 to improve a base policy 𝜋 only when 𝝁 is able to distinguish different actions taken by 𝜋 , by attaining sufficiently varying advantage values 𝐴 𝜇 ( 𝒔 ℎ , 𝑎 ) for actions 𝑎 at state 𝒔 ℎ . This can be formalized under the notion of sufficiently large variance across actions, 𝕍 𝑎 ∼ 𝜋 [ 𝐴 𝜇 ( 𝒔 ℎ , 𝑎 )] . In that case, can we simply use a policy with large advantage variance under any measure? No, because when the prover 𝜇 ranks actions at a given state very differently compared to the base policy 𝜋 (e.g., if 𝐴 𝜇 and 𝐴 𝜋 are opposite), then effective rewards 𝑄 𝜇 + 𝛼𝐴 𝜋 will be less reliable due to conflicting learning signals. Thus, we want 𝔼 𝜋 [⟨ 𝐴 𝜇 , 𝐴 𝜋 ⟩] to not be too negative, so that 𝝁 and 𝝅 are reasonably aligned on their assessment of steps from 𝜋 .

In Theorem 3.1, we present our result on policy improvement where the base policy is updated with natural policy gradient (Kakade, 2001a): 𝜋𝑡 + 1 ( 𝑎 | 𝒔 ℎ ) ∝ exp ( 𝛾 · ( 𝑄 𝜋 ( 𝒔 ℎ , 𝑎 ) + 𝐴 𝜇 ( 𝒔 ℎ , 𝑎 ))) . We note that in this idealized update rule, swapping 𝑄 values (of 𝜇 or 𝜋 ) with advantages does not affect the update since we assume access to all possible actions when running the update. Nonetheless, despite this simplifying assumption, the analysis is able to uncover good choices for the prover policy 𝜇 for computing process

reward 𝐴 𝜇 , and is orthogonal to the design consideration of advantages or 𝑄 -values as process rewards that we have discussed so far in this paper. Theorem 3.1 formalizes our intuition by showing that policy improvement at iteration 𝑡 , grows as the variance in 𝐴 𝜇 values increases (higher distinguishability) and reduces when 𝐴 𝜇 and 𝐴 𝜋 become extremely misaligned. This will then allow us to discuss a special case for the case of Best-of-K policies as provers as an immediate corollary.

Theorem 3.1 (Lower bound on policy improvement; informal) . For base policy iterate 𝜋𝑡 , after one step of policy update, with learning rate 𝛾 ≪ 1 , the improvement over a distribution of states 𝜌 :

$$\mathbb { E } _ { s \sim \rho } \left [ V ^ { \pi _ { t + 1 } } ( s ) - V ^ { \pi _ { t } } ( s ) \right ] & \gtrsim \gamma \cdot \mathbb { E } _ { s \sim \rho } \mathbb { V } _ { a \sim \pi _ { t } } [ A ^ { \mu } ( s , a ) ] + \gamma \cdot \mathbb { E } _ { s \sim \rho } \mathbb { E } _ { a \sim \pi _ { t } } \left [ A ^ { \mu } ( s , a ) A ^ { \pi _ { t } } ( s , a ) \right ] \\ & \underbrace { d i s t i n g u i s h a b i l i t y f r o m \, u } _ { d i s t i n g u i s h a b i l i t y f r o m \, u } \left [ A ^ { \mu } ( s , a ) \right ] + \gamma \cdot \mathbb { E } _ { s \sim \rho } \mathbb { E } _ { a \sim \pi _ { t } } \left [ A ^ { \mu } ( s , a ) A ^ { \pi _ { t } } ( s , a ) \right ] \\$$

It may seem that the base policy 𝜋 can only learn from an improved prover 𝜇 , but our result shows that a weak prover can also amplify a stronger base policy , since a weak prover 𝜇 may have a lower average of 𝑄 𝜇 under its own measure, but still have higher variance across 𝑄 𝜇 (compared to 𝑄 𝜋 ) when evaluated under 𝜋 (see Proposition F.1 in App. F.5 for formal discussion). This tells us that rewarding progress under a prover is different from typical knowledge distillation or imitation learning algorithms (Hinton, 2015; Rusu et al., 2015) that in most cases remain upper bounded by the performance of the stronger teacher. So provers cannot be characterized purely by strength, what is a class of provers that is a reasonable starting point if we were to improve any base policy 𝜋 ?

The policy class of 'Best-of-K' (computed over base policies) contain complementary provers. A good starting point to identify good provers for a base policy 𝜋 , is the class of Best-of-K policies or BoK ( 𝜋 ) . Recall from Sec. 2 that the performance of BoK ( 𝜋 ) increases monotonically with 𝐾 . Applying Theorem 3.1 to this class, we arrive at Remark 3.1 that recommends using BoK ( 𝜋 ) with 𝐾 > 1 as a prover policy for a poor base policy 𝜋 . However, 𝐾 cannot be too large always since when 𝑄 𝜋 ( 𝒔 , 𝑎 ) ≈ 1 , increasing 𝐾 too much can hurt distinguishability of different steps at that state. In the next section, we empirically note that the policies in the class of BoK ( 𝜋 ) indeed induce different performance gains when used as prover policies, and we find Bo4 to be a good choice for test-time search over most base policies.

Remark 3.1. When 𝑄 𝜋 ( 𝒔 , 𝑎 ) = 𝑂 ( 1 / 𝐾 ) , ∀ 𝒔 , 𝑎 , using BoK ( 𝜋 ) as a prover for base 𝜋 improves distinguishability (and improvement) by Ω ( 𝐾 2 ) , and make alignment worse at most by 𝑂 ( 𝐾 ) .

## Takeaway: Formal characterization of good prover policies that improve the base policy.

Provers with advantages that can distinguish actions taken by the base policy (more strongly than the base policy itself) but are not too misaligned from the base, boost improvements on each update of the base policy. We call such policies complementary provers . BoK ( 𝜋 ) for any base policy 𝜋 for 𝐾 > 1 can provide a good starting choice of prover policies.

## 4. Results: Scaling Test-Time Compute with PAVs

Now, we study how process verifiers can scale up test-time compute. While our derivations from Sec. 3.2 were with RL, we can also use the effective reward 𝑄 𝜋 ( 𝒔 ℎ , 𝑎 ℎ ) + 𝛼 · 𝐴 𝜇 ( 𝒔 ℎ , 𝑎 ℎ ) for running beam search over intermediate steps sampled from base policy 𝜋 . To do so, we train a process advantage verifier to predict 𝐴 𝜇 , along with a process reward model 𝑄 𝜋 . PAV training is done using procedures discussed in Sec. 4.2. While the candidates of the beam are selected using a combination of both the PAV and the PRM 𝑄 𝜋 ,

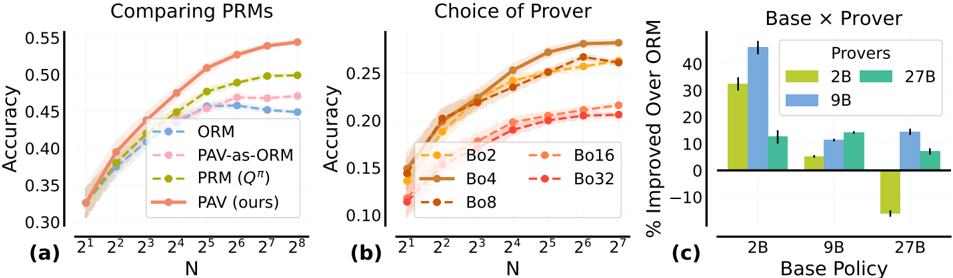

Figure 4 | For test-time search, PAVs are 8 -10% more accurate and 1 . 5 -5 × more compute efficient over ORMs: On samples from (a) Gemma-2B , (b) 9B , and (c) 27B SFT policies, we run test-time beam search with the estimate of effective reward 𝑄 𝜋 + 𝛼𝐴 𝜇 (PAV), where 𝜇 is the Bo4 ( 𝜋 ) policy. We compare beam search performance with best-of-N, re-ranking with a trained outcome verifier (ORM), or the oracle Rex (Pass @N).

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Chart: Accuracy vs. N for Different Models

### Overview

This image presents three line charts, each comparing the accuracy of three methods – ORM, PAV (ours), and Pass@N – across varying values of N. Each chart corresponds to a different model: Gemma-2B, Gemma-9B, and Gemma-27B. The charts visualize how accuracy changes as N (likely representing the number of samples or attempts) increases. Shaded areas around each line represent confidence intervals.

### Components/Axes

* **X-axis:** Labeled "N", with tick marks at 2<sup>1</sup>, 2<sup>2</sup>, 2<sup>3</sup>, 2<sup>4</sup>, 2<sup>5</sup>, 2<sup>6</sup>, and 2<sup>7</sup>.

* **Y-axis:** Labeled "Accuracy", with a scale ranging from approximately 0.1 to 0.65.

* **Legend:** Located at the top-left of each chart, containing the labels:

* ORM (Blue line with circle markers)

* PAV (ours) (Orange line with circle markers)

* Pass@N (Gray dashed line with diamond markers)

* **Chart Titles:**

* (a) Gemma-2B

* (b) Gemma-9B

* (c) Gemma-27B

* **Annotations:** Each chart includes arrows indicating percentage improvements of PAV (ours) over ORM.

### Detailed Analysis or Content Details

**Gemma-2B (Chart a):**

* **ORM (Blue):** Starts at approximately 0.17 accuracy at N=2<sup>1</sup>, rises to around 0.24 at N=2<sup>3</sup>, plateaus around 0.25-0.27 for N=2<sup>4</sup> through N=2<sup>7</sup>.

* **PAV (Orange):** Starts at approximately 0.21 accuracy at N=2<sup>1</sup>, steadily increases to around 0.33 at N=2<sup>7</sup>.

* **Pass@N (Gray):** Starts at approximately 0.23 accuracy at N=2<sup>1</sup>, and increases steadily to approximately 0.41 at N=2<sup>7</sup>.

* **Annotation:** An arrow indicates a 5x improvement of PAV over ORM at N=2<sup>3</sup>, and a 10% improvement at N=2<sup>7</sup>.

**Gemma-9B (Chart b):**

* **ORM (Blue):** Starts at approximately 0.32 accuracy at N=2<sup>1</sup>, rises to around 0.38 at N=2<sup>3</sup>, plateaus around 0.38-0.42 for N=2<sup>4</sup> through N=2<sup>7</sup>.

* **PAV (Orange):** Starts at approximately 0.40 accuracy at N=2<sup>1</sup>, steadily increases to around 0.56 at N=2<sup>7</sup>.

* **Pass@N (Gray):** Starts at approximately 0.42 accuracy at N=2<sup>1</sup>, and increases steadily to approximately 0.62 at N=2<sup>7</sup>.

* **Annotation:** An arrow indicates a 2x improvement of PAV over ORM at N=2<sup>3</sup>, and a 10% improvement at N=2<sup>7</sup>.

**Gemma-27B (Chart c):**

* **ORM (Blue):** Starts at approximately 0.38 accuracy at N=2<sup>1</sup>, rises to around 0.45 at N=2<sup>3</sup>, then decreases slightly to around 0.43 at N=2<sup>7</sup>.

* **PAV (Orange):** Starts at approximately 0.48 accuracy at N=2<sup>1</sup>, steadily increases to around 0.61 at N=2<sup>7</sup>.

* **Pass@N (Gray):** Starts at approximately 0.50 accuracy at N=2<sup>1</sup>, and increases steadily to approximately 0.64 at N=2<sup>7</sup>.

* **Annotation:** An arrow indicates a 1.5x improvement of PAV over ORM at N=2<sup>3</sup>, and an 8% improvement at N=2<sup>7</sup>.

### Key Observations

* **PAV consistently outperforms ORM** across all models and values of N.

* **Pass@N generally achieves the highest accuracy** across all models and values of N.

* The improvement of PAV over ORM appears to diminish as N increases, particularly for Gemma-27B.

* The accuracy of ORM for Gemma-27B decreases slightly after N=2<sup>3</sup>, while PAV and Pass@N continue to improve.

### Interpretation

The charts demonstrate the effectiveness of the "PAV (ours)" method in improving accuracy compared to "ORM" across different model sizes (Gemma-2B, Gemma-9B, and Gemma-27B). The "Pass@N" method consistently achieves the highest accuracy, suggesting it is the most robust approach. The annotations highlight the percentage improvements of PAV over ORM, providing a quantifiable measure of its benefit.

The diminishing improvement of PAV over ORM as N increases suggests that the benefits of PAV are more pronounced at lower values of N. The slight decrease in ORM accuracy for Gemma-27B at higher N values could indicate overfitting or a limitation of the ORM method with larger models.

The consistent upward trend of Pass@N suggests that increasing the number of samples (N) generally leads to higher accuracy, regardless of the model size. This is expected, as more samples provide more information for the model to learn from. The differences in accuracy between the models suggest that model size plays a significant role in performance. Gemma-27B generally exhibits higher accuracy than Gemma-9B and Gemma-2B, indicating that larger models have a greater capacity to learn and generalize.

</details>

the final candidate is selected using the outcome reward prediction from 𝑄 𝜋 itself (i.e., we repurpose the PRM representing 𝑄 𝜋 as an ORM). For clarity, we abuse notation and refer to the estimated effective reward (ORM + 𝛼 PAV) as PAV directly.

Setup . We finetune Gemma 2B, 9B, and 27B (Gemma Team et al., 2024) on MATH (Hendrycks et al., 2021) via supervised fine-tuning (SFT) to get three base policies. The set of provers consists of the three base SFT policies themselves as well as their best-of-K policies for different values of 𝐾 ∈ { 2 0 , . . . , 2 5 } . Additional details for the experiments in this section are in App. C.

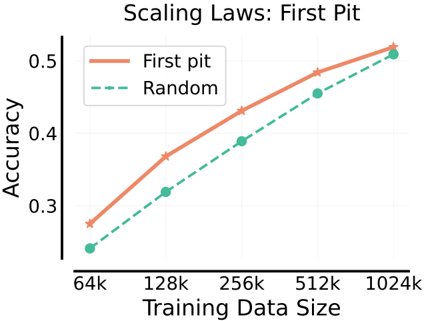

## 4.1. PAVs Scale Test-Time Compute by 5 -10 × Over ORMs

Result 1: PAVs are more compute efficient than ORMs. In Fig. 4, we plot the performance of beam search with PAVs for different sizes of the beam 𝑁 , and compare it with best-of𝑁 using ORMs, i.e. , sampling 𝑁 complete solutions from the base policy and returning the one with the highest ORM score. To compare PAVs and ORMs, we evaluate the compute efficiency of PAVs over ORMs, given by the ratio of total compute needed by PAVs to obtain the same performance as running best-of-128 with ORM. Even when accounting for the fact that running beam search with PAVs does require additional compute per solution trace (since each element in the beam samples 𝐶 = 3 next steps, before scoring and pruning the beam), PAVs are able to scale the compute efficiency by 10 × over ORMs for Gemma-2B, 9B base models, and by 5 × for Gemma-27B model. We use BoK ( 𝜋 ) with 𝐾 = 4 as the prover policy for all base policies 𝜋 .

We also compare performance with beam search using process verifiers that only predict 𝑄 𝜋 , and best-of-N where the ORM is replaced with PAV (PAV-as-ORM). At 𝑁 = 128, similar to Luo et al. (2024), we note a similar gain of 4% for 'PAV-as-ORM' Fig. 5(a) over only ORMs, for base Gemma-9B 𝜋 . When comparing beam search with 𝑄 𝜋 (Snell et al., 2024), we find that PAVs scale compute efficiency by 8 × . Evidently, advantages from the prover in the effective reward positively impact the beam search. Why does 𝐴 𝜇 help, and for what choice of the prover 𝜇 ?

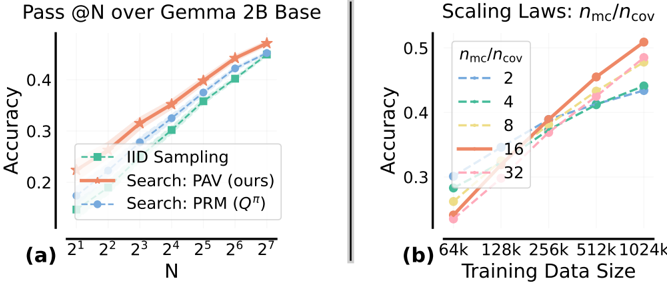

Result 2: Beam search with too weak/strong provers is sub-optimal. In Fig. 5(b), for the setting when the base policy 𝜋 is a Gemma-2B SFT model, we compare beam search with PAVs where the provers are given by BoK ( 𝜋 ) , for different values of 𝐾 . Recall that as 𝐾 increases, BoK ( 𝜋 ) becomes stronger. Corroborating our analysis in Sec. 3.4, our results show that neither too weak (Bo2) or too strong (Bo32) provers perform best. Instead, across all values of 𝑁 , we find Bo4 to be dominant. The advantage values

Figure 5 | Comparing PAVs with search baselines and ablating over the prover policy: (a): We compare beam search over Gemma 9B SFT, using either effective reward (PAV), or 𝑄 𝜋 (Snell et al., 2024), and report best-of-N performance where the re-ranker is either the ORM or PAV-as-ORM. (b): For the base Gemma 2B SFT policy, we run beam search with the effective reward where the prover is BoK ( 𝜋 ) for different values of 𝐾 . In both ( 𝑎 ) , ( 𝑏 ) the x-axis scales the size of the beam or 𝑁 for best-of-N. (c): For each base policy in the set: Gemma 2B, 9B, 27B policies, we run beam search with PAVs (beam size of 16) where the prover is another policy from the same set.

<details>

<summary>Image 6 Details</summary>

### Visual Description

\n

## Charts: Performance Comparison of Proof Reasoning Methods (PRMs)

</details>

𝐴 𝜇 ≈ 0 on all steps for very large 𝐾 , since 𝑄 𝜇 ( 𝒔 ℎ , 𝑎 ℎ ) = 1 - ( 1 -𝑄 𝜋 ( 𝒔 ℎ , 𝑎 ℎ )) 𝐾 → 1 on all steps, as we increase 𝐾 . Hence, in order to succeed we need an intermediate-level prover policy .

We make similar observations in Figure 5(c) where we use the three base policies (Gemma 2B/9B/27B) as provers for training PAVs. In this scenario, we evaluate beam search with PAVs at 𝑁 = 16 on top of different base policies. We find that for the 2B and 9B base models, the 9B and 27B provers are most effective respectively , whereas for the 27B model, surprisingly a weaker 9B policy is more effective than the stronger 27B model. The weaker model presumably offers a complementary signal that distinguishes between different actions taken by 27B, aligning with our theoretical observations in Sec. 3.4.

Result 3: Advantages from the prover policy enable exploration. As discussed in Sec. 3.1, advantage 𝐴 𝜇 measures the progress made by an action agnostic of the value of the previous state, where as 𝑄 𝜋 measures the promise of a particular state. Given a finite capacity beam, our effective reward (Eq. 5), which linearly combines 𝑄 𝜋 and 𝐴 𝜇 induces a better tradeoff between exploring new prefixes (states) from where progress can be made and exploiting currently known prefixes with high Q-values. Exploration at

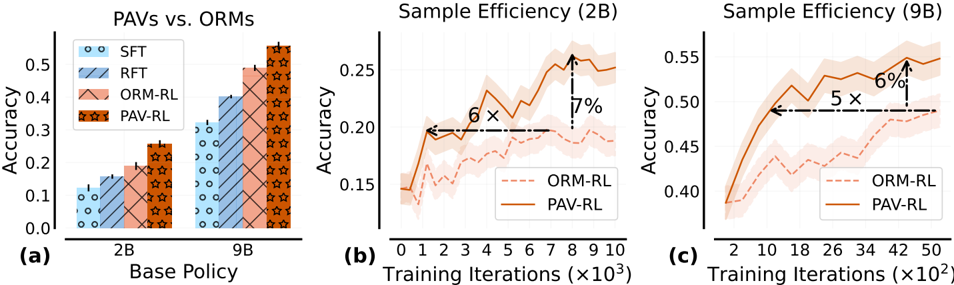

Figure 6 | (a): Beam search with PAVs improves exploration efficiency (higher Pass@N), over typical PRMs. (b): Performance of beam search over Gemma 9B SFT for PAVs trained on datasets with different 𝑛 mc / 𝑛 cov .

<details>

<summary>Image 7 Details</summary>

### Visual Description

\n

## Charts: Pass@N over Gemma 2B Base & Scaling Laws

### Overview

The image presents two charts comparing the performance of different sampling methods (IID, PAV, PRM) on the Gemma 2B Base model, measured by Pass@N accuracy. The first chart (a) shows accuracy versus the number of samples (N). The second chart (b) illustrates the impact of training data size on accuracy, with varying ratios of model capacity to coverage (ηmc/ηcov).

### Components/Axes

**Chart (a):**

* **Title:** Pass@N over Gemma 2B Base

* **X-axis:** N (labeled as 2<sup>1</sup>, 2<sup>2</sup>, 2<sup>3</sup>, 2<sup>4</sup>, 2<sup>5</sup>, 2<sup>6</sup>, 2<sup>7</sup>)

* **Y-axis:** Accuracy (ranging from approximately 0.15 to 0.5)

* **Legend:**

* IID Sampling (Green dashed line)

* Search: PAV (ours) (Orange solid line)

* Search: PRM (Q<sup>π</sup>) (Blue dashed line)

**Chart (b):**

* **Title:** Scaling Laws: ηmc/ηcov

* **X-axis:** Training Data Size (labeled as 64k, 128k, 256k, 512k, 1024k)

* **Y-axis:** Accuracy (ranging from approximately 0.3 to 0.5)

* **Legend:** (Color-coded lines representing different ηmc/ηcov ratios)

* 2 (Light Blue dashed line)

* 4 (Pale Green dashed line)

* 8 (Pink solid line)

* 16 (Orange solid line)

* 32 (Red solid line)

### Detailed Analysis or Content Details

**Chart (a):**

* **IID Sampling (Green):** Starts at approximately 0.17 accuracy at N=2<sup>1</sup> and increases steadily to approximately 0.47 accuracy at N=2<sup>7</sup>. The line is relatively straight, indicating a consistent increase in accuracy with increasing N.

* **Search: PAV (Orange):** Begins at approximately 0.18 accuracy at N=2<sup>1</sup> and rises more steeply than IID, reaching approximately 0.52 accuracy at N=2<sup>7</sup>.

* **Search: PRM (Blue):** Starts at approximately 0.19 accuracy at N=2<sup>1</sup> and increases at a rate between IID and PAV, reaching approximately 0.49 accuracy at N=2<sup>7</sup>.

**Chart (b):**

* **ηmc/ηcov = 2 (Light Blue):** Starts at approximately 0.32 accuracy at 64k and increases to approximately 0.42 accuracy at 1024k.

* **ηmc/ηcov = 4 (Pale Green):** Starts at approximately 0.34 accuracy at 64k and increases to approximately 0.45 accuracy at 1024k.

* **ηmc/ηcov = 8 (Pink):** Starts at approximately 0.36 accuracy at 64k and increases to approximately 0.47 accuracy at 1024k.

* **ηmc/ηcov = 16 (Orange):** Starts at approximately 0.38 accuracy at 64k and increases to approximately 0.49 accuracy at 1024k.

* **ηmc/ηcov = 32 (Red):** Starts at approximately 0.40 accuracy at 64k and increases to approximately 0.51 accuracy at 1024k.

### Key Observations

* In Chart (a), PAV consistently outperforms both IID and PRM across all values of N. PRM performs better than IID.

* In Chart (b), accuracy generally increases with training data size for all ηmc/ηcov ratios. Higher ηmc/ηcov ratios lead to higher accuracy, with the most significant gains observed at lower training data sizes.

* The performance gap between different ηmc/ηcov ratios appears to narrow as the training data size increases.

### Interpretation

The data suggests that the PAV search method is the most effective for improving Pass@N accuracy on the Gemma 2B Base model, particularly as the number of samples (N) increases. The scaling laws (Chart b) demonstrate the importance of balancing model capacity (ηmc) with coverage (ηcov) during training. Increasing the training data size consistently improves accuracy, but the optimal ratio between model capacity and coverage depends on the available data. The diminishing returns observed at larger training data sizes suggest that there may be a point of saturation where further increases in data yield only marginal improvements. The consistent outperformance of PAV indicates that its search strategy is more efficient at leveraging the available data to improve model performance. The charts provide empirical evidence for the benefits of both improved sampling techniques and careful consideration of scaling laws in language model training.

</details>

initial steps is critical to ensure that the beam at later steps covers diverse partial rollouts each with a high likelihood of producing the correct answer. Thus over-committing to the beam with actions from the same state, regardless of the progress made by each can prove to be sub-optimal over a selection strategy that balances rewarding previous actions 𝐴 𝜇 and current states 𝑄 𝜋 . Indeed, we observe in Fig. 6(a), beam search with PAV enhances pass@N performance vs. beam search with 𝑄 𝜋 and i.i.d. sampling.

## Takeaways: Scaling test-time compute with process advantage verifiers.

- Beam search with PAVs boosts accuracy by >8% & compute efficiency by 1.5-5x over ORMs.

- Utilizing Best-of-K policies (corresponding to the base policy) as provers induce better exploration to maximize outcome reward. Optimal provers for a base policy appear at 𝐾 > 1.

## 4.2. How to Collect Data to Train PAVs?: PAV Training Data Scaling Laws

We now describe the procedure for training outcome verifiers and PAVs. We can learn to predict 𝑄 𝜋 for a policy 𝜋 (similar for 𝑄 𝜇 ) by finetuning LLMs with a cross-entropy loss on the following data with triplets ( 𝒔 , 𝑎, 𝑄 𝜋 mc ( 𝒔 , 𝑎 )) . To collect this data, we first sample 𝑛 cov 'seed' rollouts from the base or prover policy respectively for ORM and PAVs, to promote coverage over prefixes and steps. Then we sample 𝑛 mc additional rollouts, conditioned on each prefix in the seed rollout to compute the Monte-Carlo estimate of 𝑄 𝜋 at each prefix. In Fig. 6(b) we plot the beam search performance of PAVs trained with different ratios of 𝑛 mc / 𝑛 cov , as we scale the total dataset size. Here, the beam size is fixed to 128 and the base policy is the Gemma 9B SFT policy and prover is 𝐵𝑜 4 policy. We find that under low sampling budgets, optimizing for coverage ( 𝑛 cov > 𝑛 mc ) is better for performance, and when budget is higher, reducing label noise in 𝑄 𝜋 mc by setting 𝑛 mc > 𝑛 cov gets us more improvements. In addition, we also spend some initial sampling budget is spent to identify 'high value' states where 𝑄 𝜋 is larger than a threshold, and identify the first step with low 𝑄 𝜋 on an incorrect partial rollout from this state. We found this strategy to scale better with dataset size, as we discuss in App. D.

## 5. Results: Scaling Dense-Reward RL with PAVs

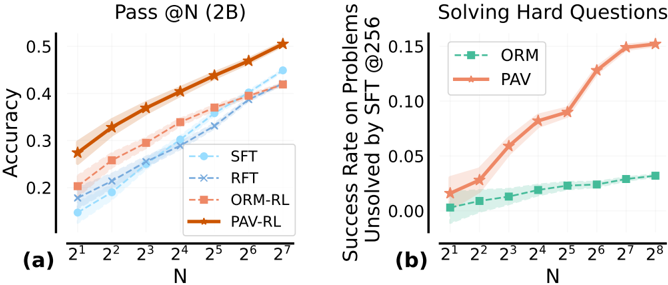

We can also use PAVs to train policies via online reinforcement learning (RL), by using the effective reward 𝑄 𝜋 + 𝛼𝐴 𝜇 as dense, per-step rewards. We compare the sample efficiency of PAV-RL (i.e., ℓ PAV -RL in Eq. 4) with standard ORM-RL (i.e., ℓ ORM -RL in Eq. 3) on Gemma 2B and Gemma 9B SFT models, which are further optimized via rejection finetuning (RFT) (Yuan et al., 2023), before using them to initialize RL. To our knowledge, no prior work has successfully demonstrated the use of dense per-step feedback with a process reward model for RL, and we present the first significant set of results establishing the efficacy of this approach. We show that PAV-RL is much more sample-efficient, and enjoys a higher ceiling on the performance of any test-time re-ranker. Additional details for the experiments are in App. E.

Result 1: PAV-RL is > 7% better than ORM-RL in test accuracy, and 6 × sample efficient. In Fig. 7(a), we report the test accuracies of Gemma 2B and 9B models trained with SFT, RFT, ORM-RL and PAV-RL. PAV-RL improves the RFT policy by 11% for 2B, and 15% for 9B, with > 7% gain over ORM-RL in both cases. Not only do the effective rewards from PAV improve the raw accuracy after RL, this higher accuracy is attained 6 × faster (see Fig. 7(b)) for the 2B run and similarly for the 9B RL run (Fig. 7(c)). For both 2B and 9B, RL runs, we experiment with two options for the prover policy: (i) 2B SFT policy; and (ii) 9B SFT policy. While both of these provers rapidly become weaker than the base policy within a few gradient steps of RL, a fixed PAV trained with each of these provers is able to still sustain performance gains in RL. More interestingly, we find that the 2B SFT policy serves as the best choice of the prover for both 2B and

Figure 7 | PAVs as dense rewards in RL improve sample efficiency compared to ORMs, along with gains on raw accuracy: (a) We report the performance of a base policy trained using RL with effective rewards (PAV-RL), or only outcome rewards (ORM-RL), and baselines SFT, RFT. (b,c): Across training iterations, we report the test performance of policies trained with PAV-RL and ORM-RL, on Gemma 2B and 9B SFT base policies.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Charts: PAVs vs. ORMs & Sample Efficiency

### Overview

The image presents three charts comparing the performance of different reinforcement learning (RL) policies. Chart (a) compares the final accuracy of several policies (SFT, RFT, ORM-RL, and PAV-RL) across two base policy sizes (2B and 9B). Charts (b) and (c) show the sample efficiency, i.e., accuracy as a function of training iterations, for ORM-RL and PAV-RL with 2B and 9B base policies, respectively. The charts aim to demonstrate the benefits of PAV-RL in terms of both final accuracy and sample efficiency.

### Components/Axes

* **Chart (a): PAVs vs. ORMs**

* X-axis: Base Policy (2B, 9B)

* Y-axis: Accuracy (0.0 to 0.45)

* Legend:

* SFT (Supervised Fine-Tuning) - Light Blue circles

* RFT (Reinforcement Fine-Tuning) - Light Blue diagonal stripes

* ORM-RL (Offline RL) - Orange

* PAV-RL (Policy Alignment via Value) - Red stars

* **Chart (b): Sample Efficiency (2B)**

* X-axis: Training Iterations (x10^3) (0 to 9)

* Y-axis: Accuracy (0.15 to 0.3)

* Legend:

* ORM-RL - Dashed Orange line

* PAV-RL - Solid Orange line

* **Chart (c): Sample Efficiency (9B)**

* X-axis: Training Iterations (x10^2) (0 to 50)

* Y-axis: Accuracy (0.4 to 0.55)

* Legend:

* ORM-RL - Dashed Orange line

* PAV-RL - Solid Orange line

### Detailed Analysis or Content Details

* **Chart (a): PAVs vs. ORMs**

* SFT (2B): Accuracy ≈ 0.08

* SFT (9B): Accuracy ≈ 0.15

* RFT (2B): Accuracy ≈ 0.15

* RFT (9B): Accuracy ≈ 0.25

* ORM-RL (2B): Accuracy ≈ 0.25

* ORM-RL (9B): Accuracy ≈ 0.40

* PAV-RL (2B): Accuracy ≈ 0.30

* PAV-RL (9B): Accuracy ≈ 0.45

* **Chart (b): Sample Efficiency (2B)**

* ORM-RL: Starts at ≈ 0.17, increases to ≈ 0.21 by 4x10^3 iterations, then fluctuates around ≈ 0.20.

* PAV-RL: Starts at ≈ 0.16, increases rapidly to ≈ 0.26 by 8x10^3 iterations, then decreases slightly to ≈ 0.24 by 9x10^3 iterations.

* The chart indicates that PAV-RL achieves a higher accuracy than ORM-RL with 2B base policy. The text "6 x" and "7%" are placed on the chart, indicating that PAV-RL achieves 6x the accuracy and 7% improvement over ORM-RL.

* **Chart (c): Sample Efficiency (9B)**

* ORM-RL: Starts at ≈ 0.42, increases to ≈ 0.48 by 20x10^2 iterations, then fluctuates around ≈ 0.50.

* PAV-RL: Starts at ≈ 0.40, increases rapidly to ≈ 0.52 by 30x10^2 iterations, then decreases slightly to ≈ 0.48 by 50x10^2 iterations.

* The chart indicates that PAV-RL achieves a higher accuracy than ORM-RL with 9B base policy. The text "5 x" and "6%" are placed on the chart, indicating that PAV-RL achieves 5x the accuracy and 6% improvement over ORM-RL.

### Key Observations

* PAV-RL consistently outperforms ORM-RL in both final accuracy (Chart a) and sample efficiency (Charts b and c).

* Increasing the base policy size from 2B to 9B significantly improves the performance of all policies, but the relative advantage of PAV-RL remains.

* PAV-RL exhibits faster initial learning compared to ORM-RL, as evidenced by the steeper slope of the PAV-RL curves in Charts b and c.

* The accuracy of both policies fluctuates after reaching a peak, suggesting potential instability or overfitting.

### Interpretation

The data strongly suggests that PAV-RL is a more effective reinforcement learning policy than ORM-RL, particularly when using larger base policies. The higher final accuracy and faster sample efficiency of PAV-RL indicate that it can learn more effectively from limited data. The annotations "6x" and "7%" (for 2B) and "5x" and "6%" (for 9B) quantify the improvement of PAV-RL over ORM-RL, highlighting its practical significance. The fluctuations in accuracy after the initial learning phase suggest that further research is needed to improve the stability and generalization ability of both policies. The comparison of 2B and 9B base policies demonstrates the importance of model size in reinforcement learning, with larger models generally achieving better performance. The charts provide compelling evidence for the benefits of policy alignment via value (PAV-RL) as a method for improving reinforcement learning performance.

</details>

9B policies. This observation that a weak prover can still improve the base policy corroborates our results in the didactic setup and our analysis in Sec. 3.4. While we were not able to run experiments where the prover policy is dynamically updated on the fly, we believe that updating the prover through the process of RL training should only amplify these benefits.

Result 2: PAV-RL achieves higher performance ceiling on test-time re-ranking. In Fig. 8(a), for Gemma 2B, we plot the Pass @N performance for each method, and find (i) Pass @N is higher ( > 7%) for PAV-RL, compared to ORM-RL, for any 𝑁 ≤ 128; and (ii) the rate at which Pass @N improves for PAV-RL is higher than ORM-RL. Both trends are consistent with our observations on the didactic example in Sec. 3.3. Notably, for 𝑁 ≥ 64, ORM-RL is worse than the SFT policy, perhaps due to lower entropy over the distribution at the next step resulting in non-diverse candidates. Why does PAV-RL produce diverse candidates, and does not suffer from the low diversity problem in ORM-RL? We answer this with a key insight on how the primary benefit of PAVs is to promote efficient exploration.

Figure 8 | (a): For the policies trained in (a) we report the best-of-N performance where the oracle reward Rex is used to rank 𝑁 candidates sampled from the base policy (Pass @N). (b): Amongst hard problems that remain unsolved by Best-of-256 over the base SFT policy, we check how many are solved by Best-of-N over PAV-RL or ORM-RL. PAV-RL is able to solve a substantially more problems than what ORM-RL was able to solve.

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Charts: Model Performance Comparison

### Overview

The image presents two line charts comparing the performance of different models (SFT, RFT, ORM-RL, PAV-RL, ORM, PAV) across varying values of 'N'. The left chart (a) displays "Pass @N (2B)" which appears to be an accuracy metric, while the right chart (b) shows "Success Rate on Problems Unsolved by SFT @256". Both charts use a logarithmic scale for the x-axis (N).

### Components/Axes

**Chart (a): Pass @N (2B)**

* **X-axis:** N, ranging from 2<sup>1</sup> to 2<sup>7</sup> (approximately 2 to 128).

* **Y-axis:** Accuracy, ranging from 0.15 to 0.5.

* **Data Series:**

* SFT (Light Blue)

* RFT (Pale Blue)

* ORM-RL (Light Orange)

* PAV-RL (Dark Orange)

* **Legend:** Located in the top-left corner.

**Chart (b): Solving Hard Questions**

* **X-axis:** N, ranging from 2<sup>1</sup> to 2<sup>8</sup> (approximately 2 to 256).

* **Y-axis:** Success Rate on Problems Unsolved by SFT @256, ranging from 0 to 0.15.

* **Data Series:**

* ORM (Light Green)

* PAV (Dark Orange)

* **Legend:** Located in the top-left corner.

### Detailed Analysis or Content Details

**Chart (a): Pass @N (2B)**

* **SFT (Light Blue):** The line slopes upward, starting at approximately 0.17 at N=2<sup>1</sup> and reaching approximately 0.35 at N=2<sup>7</sup>.

* **RFT (Pale Blue):** The line also slopes upward, starting at approximately 0.18 at N=2<sup>1</sup> and reaching approximately 0.42 at N=2<sup>7</sup>.

* **ORM-RL (Light Orange):** The line slopes upward more steeply than SFT and RFT, starting at approximately 0.22 at N=2<sup>1</sup> and reaching approximately 0.45 at N=2<sup>7</sup>.

* **PAV-RL (Dark Orange):** The line has the steepest upward slope, starting at approximately 0.25 at N=2<sup>1</sup> and reaching approximately 0.5 at N=2<sup>7</sup>.

**Chart (b): Solving Hard Questions**

* **ORM (Light Green):** The line is relatively flat, starting at approximately 0.02 at N=2<sup>1</sup> and reaching approximately 0.03 at N=2<sup>8</sup>.

* **PAV (Dark Orange):** The line slopes upward, starting at approximately 0.02 at N=2<sup>1</sup> and reaching approximately 0.15 at N=2<sup>8</sup>. The increase is more pronounced from N=2<sup>5</sup> onwards.

### Key Observations

* In Chart (a), PAV-RL consistently outperforms all other models across all values of N. ORM-RL performs better than RFT and SFT.

* In Chart (b), PAV demonstrates a significant increase in success rate as N increases, while ORM remains relatively stable.

* The shaded areas around the lines in both charts represent confidence intervals or standard deviations, indicating the variability in the results.

### Interpretation

The data suggests that models utilizing Reinforcement Learning (RL) – specifically ORM-RL and PAV-RL – demonstrate superior performance in the "Pass @N (2B)" task (Chart a) compared to models trained with Supervised Fine-Tuning (SFT) and Reinforcement Learning from Human Feedback (RFT). PAV-RL consistently achieves the highest accuracy.

Chart (b) highlights the ability of the PAV model to solve problems that are initially unsolved by the SFT model, and this ability increases with larger values of N. This suggests that PAV benefits from increased computational resources or a larger search space. The relatively flat performance of ORM indicates it does not scale as effectively as PAV in solving these "hard" problems.

The logarithmic scale of the x-axis (N) is crucial. It indicates that the performance gains are more significant at lower values of N, and the rate of improvement diminishes as N increases. This could be due to diminishing returns or the inherent difficulty of the task. The confidence intervals suggest that the observed differences in performance are statistically significant, but there is still some degree of uncertainty. The two charts together suggest a trade-off between overall accuracy (Chart a) and the ability to solve particularly challenging problems (Chart b).

</details>