# Articulated Animal AI: An Environment for Animal-like Cognition in a Limbed Agent

**Authors**:

- Jeremy Lucas (McGill University)

- Isabeau Prémont-Schwarz

Abstract

This paper presents the Articulated Animal AI Environment for Animal Cognition, an enhanced version of the earlier AnimalAI Environment. Key improvements include the addition of agent limbs, which enable more complex behaviors and interactions with the environment that more closely resemble real animal movement. The test bench includes an integrated curriculum training sequence and evaluation tools, so users do not need to develop their own training programs. In addition, tests and training procedures are randomized, which is intended to improve the agent's generalization. These advancements significantly extend the original AnimalAI framework, and the environment will be used to evaluate agents on various aspects of animal cognition.

1 Introduction

The field of artificial intelligence has produced numerous frameworks for testing and evaluating models. Each new framework or dataset has often spurred significant progress as research teams compete to achieve the state of the art on that specific benchmark. This paper focuses on AI frameworks whose tasks are modeled after animal cognition. Despite significant advances, many animals still outperform even the most advanced AI systems on various cognitive tasks. A notable framework in this domain is the AnimalAI Environment. It has a simple setup: a single spherical agent capable only of basic movements, such as moving forward or backward and rotating left or right. The environment provides a set of simple building blocks, such as blocks, half-cylinders, spherical pieces of food, ramps, and walls, within a built-in arena, allowing researchers to create their own cognitive tasks for the agent.

However, the AnimalAI Environment has several limitations. Its broad scope makes training AI models harder, because researchers must first design effective tests. It also does little to promote generalization: object positions and rotations must be configured manually, and the resulting lack of randomization in tests can lead to overfitting. Moreover, the agent itself is rudimentary and unrealistic, which restricts the study of cognitive abilities related to movement. The Articulated Animal AI Environment was developed to address these shortcomings. It offers a more sophisticated and versatile platform for evaluating AI on animal cognition tasks.

2 The Influence of AnimalAI

The AnimalAI test environment is a versatile platform that lets scientists and researchers design cognitive assessments for a simple agent using modular building blocks. When they published their paper, the AnimalAI team also launched a competition to develop a highly generalizable agent capable of performing across a diverse array of unseen tests. The competition challenged researchers to generate enough tests to train such an agent. The tests the AnimalAI team devised for the competition were grounded in ten distinct categories of animal cognition. The results were disappointing: even the best AI models performed significantly worse than a human child on every task apart from food retrieval, internal modeling, and numerosity. These categories are defined in the next section. Although the competition outcomes were underwhelming, the challenge itself was noteworthy. [1] [2]

3 Animal AI’s Cognition Benchmarks

We utilized the cognitive categories employed in the AnimalAI competition to develop our benchmark platform. These ten categories are as follows:

1. Basic food retrieval: This category tests the agent's ability to reliably retrieve food when only food items are present. It ensures that agents share the animals' basic motivation to obtain food, which subsequent tests rely on.

1. Preferences: This category tests an agent’s ability to choose the most rewarding course of action. Tests are designed to be unambiguous as to the correct course of action based on the rewards in our environment.

1. Obstacles: This category contains objects that might impede the agent’s navigation. To succeed, the agent will have to explore its environment, a key component of animal behavior.

1. Avoidance: This category identifies an agent’s ability to detect and avoid negative stimuli, which is critical for biological organisms and important for subsequent tests.

1. Spatial Reasoning: This category tests an agent’s ability to understand the spatial affordances of its environment, including knowledge of simple physics and memory of previously visited locations.

1. Robustness: This category includes variations of the environment that look superficially different, but for which affordances and solutions to problems remain the same.

1. Internal Models: In these tests, the lights may turn off, and the agent must remember the layout of the environment to navigate in the dark.

1. Object Permanence and Working Memory: This category checks whether the agent understands that objects persist even when they are out of sight, as they do in the real world and in our environment.

1. Numerosity: This category tests the agent's ability to judge which area has the most food, since it can choose only one area.

1. Causal Reasoning and Object Affordances: This category tests the agent's ability to use objects to reach its goal.

4 Additional Categories

In addition to the ten categories listed above, we introduced two categories, L0 - Initial Food Contact and L11 - Body Awareness, to address challenges specific to the multi-limbed agent in our environment. L0 provides a simpler setting in which the agent can first learn to move toward food, serving as a foundational category focused on basic movement. L11 was developed because the original AnimalAI competition, which featured a spherical, limbless agent, had no limb-related cognitive tests. It evaluates the agent's understanding and coordination of its own limbs.

5 Environment Design

5.1 The Agent


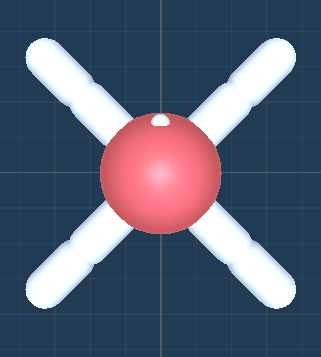

Figure 1: Top-down view of the agent.

Description:

The agent is a soft body constructed using configurable joints. It has four thighs and four legs, giving eight joints in total. Each joint can rotate about the x axis (up and down) between -90 and 90 degrees and about the z axis (sideways) between -45 and 45 degrees. The head has a mass of 0.5, while each thigh and leg has a mass of 1. The lighter head helps the agent walk more effectively by keeping it from dragging its head on the ground.
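The joint layout can be written down as a small data structure. The minimal sketch below represents the eight joints and their rotation limits and clamps a requested rotation into range. The names `JointSpec`, `AGENT_JOINTS`, `clamp_rotation`, and the joint naming scheme are hypothetical illustrations, not part of the environment's code.

```python
from dataclasses import dataclass

# Masses as stated above (the text gives no units).
HEAD_MASS = 0.5
SEGMENT_MASS = 1.0  # each thigh and each leg


@dataclass(frozen=True)
class JointSpec:
    """Rotation limits, in degrees, for one configurable joint (hypothetical type)."""
    name: str
    x_limit: float = 90.0  # up/down rotation allowed in [-90, 90]
    z_limit: float = 45.0  # sideways rotation allowed in [-45, 45]

    def clamp_rotation(self, x_deg: float, z_deg: float):
        """Clamp a requested (x, z) rotation to this joint's limits."""
        x = max(-self.x_limit, min(self.x_limit, x_deg))
        z = max(-self.z_limit, min(self.z_limit, z_deg))
        return x, z


# Four limbs, each with one thigh joint and one leg joint: eight joints in total.
AGENT_JOINTS = [JointSpec(f"{part}_{limb}") for limb in range(4) for part in ("thigh", "leg")]
```

For example, `AGENT_JOINTS[0].clamp_rotation(120.0, -60.0)` yields `(90.0, -45.0)`, since both requests exceed the stated limits.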

5.2 Observational Parameters

For vision, the agent can use either a configurable camera or raycasts. In addition, by default the agent's joint rotations are normalized to the range [-1, 1] and included as observations.
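Normalizing joint rotations to [-1, 1] can be sketched as a linear rescaling by each axis's limit (90 degrees for x, 45 degrees for z). This is a minimal sketch under two assumptions: the mapping is linear, and each joint contributes its x and z rotations as two separate values. The function names are hypothetical.

```python
def normalize_rotation(angle_deg: float, limit_deg: float) -> float:
    """Map an angle in [-limit, limit] degrees linearly onto [-1, 1]."""
    if limit_deg <= 0:
        raise ValueError("limit must be positive")
    clipped = max(-limit_deg, min(limit_deg, angle_deg))  # guard against overshoot
    return clipped / limit_deg


def joint_observation(rotations, x_limit=90.0, z_limit=45.0):
    """Flatten the (x, z) rotation of every joint into one normalized list."""
    obs = []
    for x_deg, z_deg in rotations:
        obs.append(normalize_rotation(x_deg, x_limit))
        obs.append(normalize_rotation(z_deg, z_limit))
    return obs
```

With eight joints this produces a 16-value vector. For instance, `joint_observation([(45.0, -45.0), (0.0, 22.5)])` gives `[0.5, -1.0, 0.0, 0.5]`.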

5.2.1 Camera

The camera is mounted at the agent's eye (indicated by a white dot) and can optionally render in grayscale. Supported resolutions range from 8x8 to 512x512 pixels.
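A helper like the one below could validate a camera configuration and report the resulting observation shape. It assumes a height-width-channels layout, with one channel in grayscale mode and three (RGB) channels otherwise. These conventions and the function name are assumptions, not documented behavior of the environment.

```python
MIN_RES, MAX_RES = 8, 512  # supported resolution range from the text


def camera_observation_shape(width: int, height: int, grayscale: bool):
    """Return (height, width, channels) for a camera observation."""
    for size in (width, height):
        if not MIN_RES <= size <= MAX_RES:
            raise ValueError(f"resolution {size} outside [{MIN_RES}, {MAX_RES}]")
    channels = 1 if grayscale else 3  # assumption: colour frames are RGB
    return (height, width, channels)
```

For example, `camera_observation_shape(84, 84, grayscale=True)` returns `(84, 84, 1)`.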

5.2.2 Raycast

Raycasts also originate at the agent's eye, and both the viewing angle and the number of rays can be customized. The viewing angle ranges from 5 to 180 degrees and is measured on each side of the forward direction. A viewing angle of 180 degrees therefore covers the full 360 degrees around the agent, while a 90-degree viewing angle covers the front half. The number of rays, from 1 to 20, sets how many rays are cast on each side. One additional ray always points directly forward.
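Under the description above, a configuration with n rays per side casts 2n + 1 rays in total. The sketch below lists their directions, assuming the rays are evenly spaced out to the viewing angle and using the hypothetical convention that negative angles point left. This layout is an assumption, although it resembles the "rays per direction / max ray degrees" parameters of Unity ML-Agents' ray perception sensor.

```python
def ray_angles(rays_per_side: int, max_degrees: float):
    """Ray directions in degrees (0 = straight ahead, negative = left)."""
    if not 1 <= rays_per_side <= 20:
        raise ValueError("rays_per_side must be in [1, 20]")
    if not 5 <= max_degrees <= 180:
        raise ValueError("max_degrees must be in [5, 180]")
    step = max_degrees / rays_per_side  # assumption: even angular spacing
    angles = [0.0]  # the forward ray is always present
    for i in range(1, rays_per_side + 1):
        angles.extend([-i * step, i * step])  # one ray on each side
    return angles
```

For example, `ray_angles(2, 90)` returns `[0.0, -45.0, 45.0, -90.0, 90.0]`. Note that at 180 degrees the two outermost rays both point straight backward, so one of them is redundant.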

5.3 Action Parameters

5.3.1 Joint Rotation

Selecting joint rotation lets the agent move its joints by setting a target rotation for each joint. A motor then drives each joint toward its target rotation.
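The text does not say how the joint motors are tuned. A common choice is a spring-damper (PD) law, which resembles the position spring and damper of Unity's joint drives. The minimal sketch below simulates one joint axis under such a drive. The stiffness, damping, inertia, and time step are hypothetical values chosen for illustration.

```python
def pd_torque(angle, velocity, target, stiffness=50.0, damping=10.0):
    """Spring-damper (PD) torque pulling a joint toward its target angle."""
    return stiffness * (target - angle) - damping * velocity


def drive_to_target(target, limit=90.0, steps=2000, dt=0.01, inertia=1.0):
    """Simulate one joint axis under the PD drive and return the final angle.

    The target is first clamped to the joint limit, mirroring the
    rotation limits described in Section 5.1.
    """
    target = max(-limit, min(limit, target))
    angle, velocity = 0.0, 0.0
    for _ in range(steps):
        accel = pd_torque(angle, velocity, target) / inertia
        velocity += accel * dt  # semi-implicit Euler: update velocity first
        angle += velocity * dt
    return angle
```

With these constants the damping ratio is about 0.7, so the joint settles on its target without large oscillation.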

5.3.2 Joint Velocity

Selecting joint velocity lets the agent move its joints by setting a target angular velocity for each joint. The specified angular velocity is added to the joint's current motion.
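The velocity mode can be sketched as a per-step update in which the commanded angular velocity is added to the joint and the angle is integrated until it reaches the joint limit. This is a simplified sketch: the action scaling `max_delta`, the time step `dt`, and stopping the joint dead at its limit are all hypothetical choices, not documented behavior.

```python
def velocity_mode_step(angle, velocity, action, limit, max_delta=5.0, dt=0.02):
    """One control step in joint-velocity mode for a single joint axis.

    action: policy output, clipped to [-1, 1] and scaled by max_delta (hypothetical)
    limit:  joint limit in degrees (90 for the x axis, 45 for the z axis)
    """
    action = max(-1.0, min(1.0, action))
    velocity += action * max_delta  # add the commanded angular velocity
    angle += velocity * dt          # integrate the joint angle
    if abs(angle) > limit:          # simplification: the limit stops the joint
        angle = max(-limit, min(limit, angle))
        velocity = 0.0
    return angle, velocity
```

Compared with the rotation mode, the policy here must itself learn when to stop a joint, which makes the control problem harder but gives finer control over limb speed.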

5.4 Rewards

The environments use the following rewards:

- Food Pieces: Green food pieces provide rewards equal to their scale or size. Yellow food pieces provide rewards equal to half of their scale or size.

- Wall Collisions: Agents receive a -1 reward when they collide with walls.

- Training Facilitation: Agents can receive two rewards each timestep, each equal to -0.5/maxsteps. The first is applied only when the head is on the ground, encouraging the agent to walk rather than drag its head. The second is applied every timestep, encouraging the agent to explore and make decisions.
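The reward rules can be collected into a few small functions. The sketch below is a minimal illustration. It assumes, following the L1 test description, that eating green food ends the episode and eating yellow food does not. The function names and the string colour labels are hypothetical. Because each shaping term is -0.5/maxsteps, the always-on term sums to -0.5 over a full episode, and the head-on-ground term adds at most another -0.5. Shaping therefore never costs more than 1 per episode, the same magnitude as a single wall collision.

```python
WALL_PENALTY = -1.0  # reward for colliding with a wall


def food_reward(color: str, scale: float):
    """Return (reward, episode_done) for eating one food piece."""
    if color == "green":
        return scale, True  # green food: reward equals its size; ends the episode
    if color == "yellow":
        return 0.5 * scale, False  # yellow food: half its size; episode continues
    raise ValueError(f"unknown food colour: {color}")


def shaping_reward(head_on_ground: bool, max_steps: int) -> float:
    """Per-timestep training-facilitation reward."""
    per_term = -0.5 / max_steps
    reward = per_term  # applied every timestep
    if head_on_ground:
        reward += per_term  # extra penalty while the head touches the ground
    return reward
```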

5.5 Other Parameters

Maxsteps: This parameter sets the maximum number of steps the agent has per episode. A value between 1000 and 5000 steps is recommended.

5.6 The Environment

5.6.1 Parameters

Difficulty: The difficulty parameter ranges from 0 to 10; higher values make the generated level more challenging. Which aspects become harder depends on the level and is described for each level in the test bench (Section 5.7).

Seed: The seed parameter makes level generation reproducible, so specific configurations can be saved for comparative testing. Fixing the seed ensures that a level generates and behaves identically every time.
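Seed-based reproducibility usually comes from drawing every random choice of a level from a single seeded generator. The sketch below illustrates this with Python's `random.Random`. The function `generate_level` and the quantities it produces (food position and agent heading in hypothetical arena coordinates) are illustrative assumptions, not the environment's generator.

```python
import random


def generate_level(level, difficulty, seed):
    """Hypothetical generator: every random draw comes from one seeded RNG."""
    if not 0 <= difficulty <= 10:
        raise ValueError("difficulty must be between 0 and 10")
    rng = random.Random(seed)  # private RNG, unaffected by other uses of `random`
    return {
        "level": level,
        "difficulty": difficulty,
        # hypothetical arena coordinates for the food and the agent's heading
        "food_position": (rng.uniform(-10, 10), rng.uniform(-10, 10)),
        "agent_heading_deg": rng.uniform(0, 360),
    }
```

Calling `generate_level("L3", 7, 551291670)` twice yields identical layouts, while changing the seed yields a different one. Using a private `random.Random` instance rather than the global module state keeps unrelated code from disturbing the sequence.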

5.7 The Test Bench

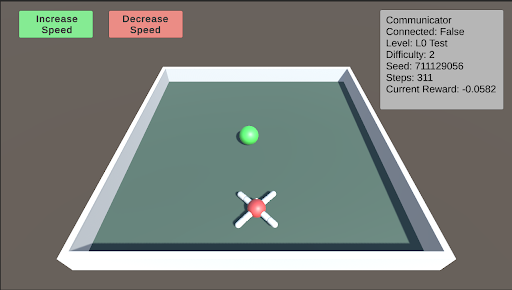

L0: Initial Food Contact

L0 is the initial test, designed to help the agent begin learning to approach and consume food. In this stage, the food is placed directly in front of the agent. As the difficulty increases, the food is placed farther away, becomes smaller, and is offset horizontally from the agent by varying amounts.


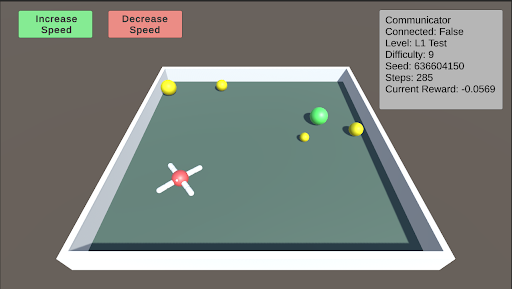

L1: Basic Food Retrieval

L1 tests the agent's understanding of the food. The agent must recognize that eating a yellow food piece does not end the episode, whereas eating a green food piece does. As the difficulty increases, more pieces of food (from 1 to 5) are distributed throughout the arena. At lower difficulty levels the food is mostly stationary, but it begins to move as the difficulty increases.
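The text gives the range of the piece count (1 to 5) and says food starts moving at higher difficulties, but not the exact schedule. The sketch below fills those gaps with hypothetical linear rules: the count rises from 1 at difficulty 0 to 5 at difficulty 10, and each piece moves with probability difficulty/10. Guaranteeing that the first piece is green, so the episode can always end, is also an assumption.

```python
import random


def l1_food_layout(difficulty, rng):
    """Hypothetical L1 layout: piece count and motion scale with difficulty (0-10)."""
    # Assumption: count rises linearly from 1 piece (difficulty 0) to 5 (difficulty 10).
    count = 1 + round(4 * difficulty / 10)
    # Assumption: each piece moves with probability difficulty / 10.
    move_prob = difficulty / 10
    pieces = []
    for i in range(count):
        pieces.append({
            # Assumption: the first piece is always green so the episode can end.
            "color": "green" if i == 0 else rng.choice(["green", "yellow"]),
            "size": rng.uniform(0.5, 2.0),  # hypothetical size range
            "moving": rng.random() < move_prob,
        })
    return pieces
```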


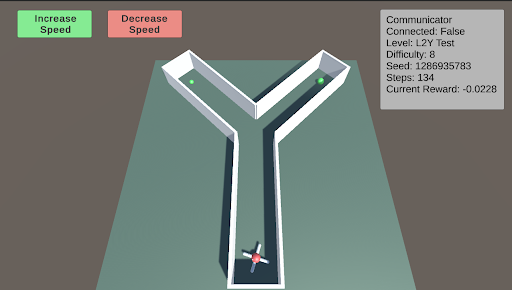

L2: Preferences - Y-Maze

L2 tests the agent's ability to make choices that obtain better or more food. The Y-maze consists of two paths. When the difficulty is below 5, a single piece of green food is placed in one of the two paths. When the difficulty is above 5, an additional, smaller piece of green food is placed in the other path. The agent must learn to choose the optimal path.
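The Y-maze placement rule and the resulting optimal choice can be sketched as follows. The text specifies one piece below difficulty 5 and two above it but does not say what happens at exactly 5. Treating 5 as the one-piece case is an assumption here, as are the arm names and size ranges.

```python
import random


def y_maze_layout(difficulty, rng):
    """Hypothetical Y-maze layout: returns {arm: green food size}."""
    arms = ["left", "right"]
    main_arm = rng.choice(arms)
    other_arm = arms[1 - arms.index(main_arm)]
    main_size = rng.uniform(1.0, 2.0)  # hypothetical size range
    layout = {main_arm: main_size}
    # Assumption: difficulty 5 itself falls in the one-piece case.
    if difficulty > 5:
        layout[other_arm] = main_size * rng.uniform(0.3, 0.8)  # strictly smaller
    return layout


def optimal_arm(layout):
    """The arm holding the largest green food is the correct choice."""
    return max(layout, key=layout.get)
```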


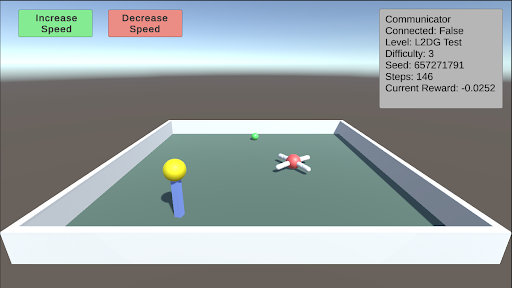

L2: Preferences - Delayed Gratification

This test places a small piece of green food in front of the agent and a larger piece of yellow food on top of a slowly descending pillar. Because eating green food ends the episode, the agent must learn to wait for the yellow food to come down and eat it before consuming the green food. As the difficulty increases, the pillar takes longer to descend.
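Waiting pays off by a margin that follows from the reward rules in Section 5.4. Both strategies eventually collect the green food. Waiting adds the yellow reward (half its size) but incurs the per-step shaping penalty for every extra step spent waiting. The sketch below computes that margin. The function name and the `head_down_fraction` parameter (the share of waiting steps with the head on the ground) are hypothetical.

```python
def waiting_gain(yellow_size, wait_steps, max_steps, head_down_fraction=0.0):
    """Extra return from waiting for the yellow food instead of eating green first.

    Positive values mean delayed gratification is the better strategy.
    """
    # Always-on penalty of 0.5/max_steps, plus the same again on head-down steps.
    per_step = (0.5 / max_steps) * (1.0 + head_down_fraction)
    return 0.5 * yellow_size - per_step * wait_steps
```

For example, with a yellow piece of size 2, a 500-step wait, and maxsteps = 2000, the gain is 1.0 - 0.125 = 0.875. Since the total shaping penalty over a whole episode is at most 1, waiting is always worthwhile when the yellow piece has a size above 2.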


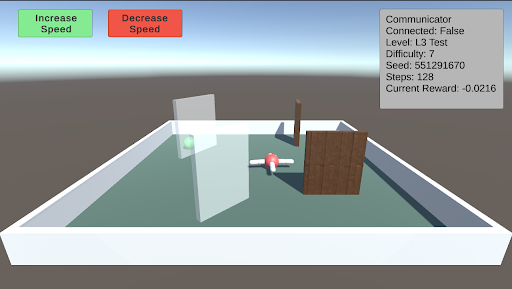

L3: Obstacles

This test places a single green piece of food at a random position, covered by a transparent wall of random size. Additional walls spawn in random orientations as obstacles. As the difficulty increases, both the size of the walls and the number of additional walls increase.


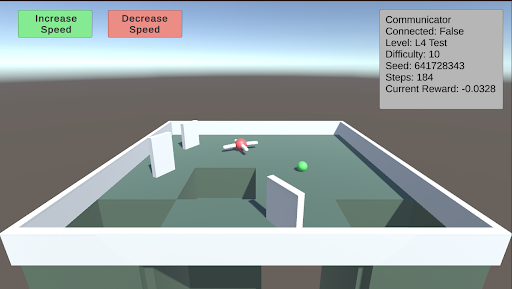

L4: Avoidance

This test uses holes in the ground as a negative stimulus. Whereas the previous AnimalAI paper used negative-reward floor pads, holes provide a more realistic challenge. As the difficulty increases, the holes become larger. The test starts with two holes, and a third hole is added when the difficulty exceeds 5.
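The hole schedule can be expressed directly. The count rule below follows the text. The growth of hole size with difficulty is stated only qualitatively, so the linear `l4_hole_radius` with its `base` and `growth` constants is a hypothetical illustration.

```python
def l4_hole_count(difficulty: int) -> int:
    """Two holes by default; a third appears once difficulty exceeds 5."""
    return 3 if difficulty > 5 else 2


def l4_hole_radius(difficulty: int, base: float = 1.0, growth: float = 0.15) -> float:
    """Hypothetical linear growth of hole size with difficulty."""
    return base * (1.0 + growth * difficulty)
```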


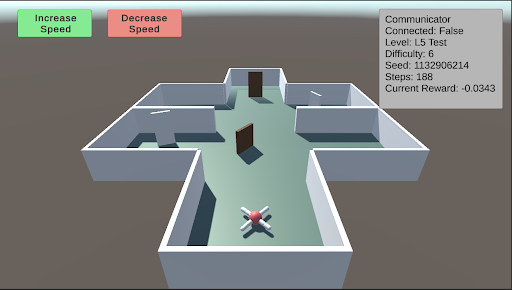

L5: Spatial Reasoning

This test evaluates the agent's ability to navigate a maze to find a piece of food hidden behind a wall in a random sector. Additional walls are placed to obstruct the agent's path. As the difficulty increases, the number and size of the walls increase, and the maze itself becomes larger and more complex.


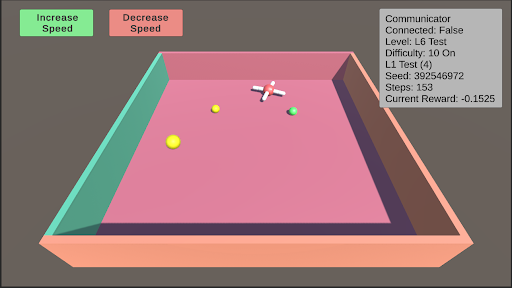

L6: Robustness. Because all of these tests are randomized, robustness is inherently part of every one of them. Enabling Robustness specifically, however, selects a random test from L0 to L11 and changes the colors of the test objects to one of five randomly generated colors.
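As a rough illustration of what enabling Robustness changes, the sketch below picks a level from L0 to L11 and recolors each test object with a color drawn from a palette of five random RGB colors. The function names, the RGB representation, the uniform level choice, and drawing each object's color independently from the palette are assumptions made for illustration; the text does not specify how the environment implements this step.

```python
import random

NUM_LEVELS = 12    # L0 through L11, as listed in the text
PALETTE_SIZE = 5   # "one of five randomly generated colors"

RGB = tuple[int, int, int]


def random_palette(rng: random.Random, size: int = PALETTE_SIZE) -> list[RGB]:
    """Generate `size` random RGB colors (hypothetical color representation)."""
    return [(rng.randrange(256), rng.randrange(256), rng.randrange(256))
            for _ in range(size)]


def robustness_episode(object_ids: list[str], rng: random.Random):
    """Hypothetical: choose a test level, then recolor every test object."""
    level = rng.randrange(NUM_LEVELS)          # assumed uniform over L0..L11
    palette = random_palette(rng)
    # Assumption: each object independently receives one of the palette colors.
    colors = {obj: rng.choice(palette) for obj in object_ids}
    return level, palette, colors


rng = random.Random(42)
level, palette, colors = robustness_episode(["food", "wall", "ramp"], rng)
print(f"L{level}", colors)
```

Seeding the generator, as the environment does with its displayed seed, makes a given robustness episode reproducible.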

<details>

<summary>extracted/5920856/Research/L6.png Details</summary>

### Visual Description

## Simulation Environment: Agent Navigation

### Overview

The image depicts a simulation environment, likely for training an AI agent. The environment consists of a rectangular arena with colored walls, several spherical objects, and an agent represented by a white cross-shaped figure. The image also includes UI elements for controlling the simulation and displaying relevant information.

### Components/Axes

* **Arena:** A rectangular box with walls of different colors (teal, pink, and peach).

* **Agent:** A white cross-shaped object with red accents, positioned within the arena.

* **Spherical Objects:** Three spheres of different colors (yellow, yellow, and green) are scattered within the arena.

* **UI Elements:**

* "Increase Speed" button (green).

* "Decrease Speed" button (red).

* Information box (top-right corner) displaying:

* "Communicator"

* "Connected: False"

* "Level: L6 Test"

* "Difficulty: 10 On"

* "L1 Test (4)"

* "Seed: 392546972"

* "Steps: 153"

* "Current Reward: -0.1525"

### Detailed Analysis

* **Arena:** The arena's walls are colored teal (left), pink (back), and peach (bottom).

* **Agent:** The agent is positioned near the center of the arena.

* **Spherical Objects:**

* Two yellow spheres are located on the left side of the arena.

* One green sphere is located on the right side of the arena.

* **UI Elements:**

* The "Increase Speed" button is located at the top-left corner and is colored green.

* The "Decrease Speed" button is located at the top-center and is colored red.

* The information box displays the current state of the simulation, including whether the communicator is connected (False), the current level (L6 Test), difficulty (10 On), L1 Test (4), the random seed (392546972), the number of steps taken (153), and the current reward (-0.1525).

### Key Observations

* The agent is in a simulated environment with simple objects.

* The simulation is not connected to a communicator.

* The agent is at level L6 of the test.

* The agent has taken 153 steps and has a negative reward.

### Interpretation

The image shows a snapshot of an AI agent being trained in a simulated environment. The agent's objective is likely to interact with the spherical objects in the arena, and the reward function is designed to encourage certain behaviors. The negative reward suggests that the agent is not yet performing optimally. The UI elements provide information about the simulation's state and allow for control over the agent's speed. The "Connected: False" status indicates that the simulation is running locally and not communicating with an external server.

</details>

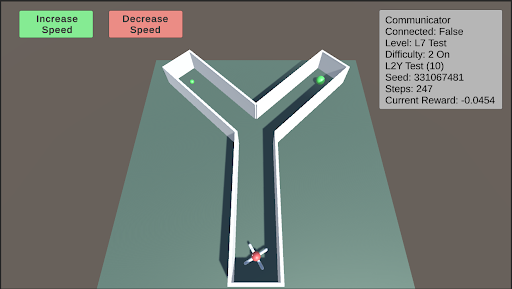

L7: Internal Models. This test assesses whether the agent can maintain an internal model of its surroundings when its camera input is interrupted. Blackouts occur during the episode; while a blackout is in effect, the agent’s camera renders a dark screen. Both the frequency and the duration of the blackouts increase with difficulty. Enabling Internal Models selects a random test from L0 to L11 and applies these blackouts to it.
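One way to picture the blackout mechanism is as a per-step mask over the camera stream. The sketch below is hypothetical throughout: the names `blackout_mask` and `apply_blackouts`, the maximum difficulty of 10, the linear schedule (up to a 5% chance per step of a blackout starting, lasting up to 20 steps), and representing a dark screen as an all-zero frame are all assumptions, chosen only so that frequency and duration both grow with difficulty as the text describes.

```python
import numpy as np


def blackout_mask(n_steps: int, difficulty: int, rng: np.random.Generator,
                  max_difficulty: int = 10) -> np.ndarray:
    """Boolean mask over steps; True means the camera frame is blacked out.

    Hypothetical schedule: both the per-step chance of a blackout starting and
    the blackout length grow linearly with difficulty (zero at difficulty 0).
    """
    frac = difficulty / max_difficulty
    start_prob = 0.05 * frac       # hypothetical: up to 5% per step
    duration = round(20 * frac)    # hypothetical: up to 20 steps long
    mask = np.zeros(n_steps, dtype=bool)
    t = 0
    while t < n_steps:
        if duration > 0 and rng.random() < start_prob:
            mask[t:t + duration] = True   # slicing past the end is safe
            t += duration
        else:
            t += 1
    return mask


def apply_blackouts(frames: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Replace blacked-out frames (first axis = time) with all-black images."""
    out = frames.copy()
    out[mask] = 0
    return out


rng = np.random.default_rng(0)
frames = rng.random((300, 64, 64))   # stand-in grayscale camera frames
mask = blackout_mask(len(frames), difficulty=7, rng=rng)
dark = apply_blackouts(frames, mask)
print(f"{mask.mean():.0%} of frames blacked out")
```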

<details>

<summary>extracted/5920856/Research/L7.png Details</summary>

### Visual Description

## Screenshot: Y-Maze Environment

### Overview

The image is a screenshot of a simulated Y-shaped maze environment. An agent, represented by a small vehicle with a propeller, is positioned at the bottom of the maze. Two green spheres are located at the ends of the two branches of the "Y". The screenshot also includes UI elements for controlling the simulation (Increase/Decrease Speed) and displaying simulation parameters (Communicator status, Level, Difficulty, Seed, Steps, Current Reward).

### Components/Axes

* **Environment:** A Y-shaped maze with white walls and a light green floor.

* **Agent:** A small vehicle with a propeller, located at the bottom of the maze.

* **Goals:** Two green spheres, one at the end of each branch of the "Y".

* **UI Elements:**

* "Increase Speed" button (green) - top-left

* "Decrease Speed" button (red) - top-center

* Communicator information box (top-right):

* Communicator

* Connected: False

* Level: L7 Test

* Difficulty: 2 On

* L2Y Test (10)

* Seed: 331067481

* Steps: 247

* Current Reward: -0.0454

### Detailed Analysis

* **Maze:** The maze consists of a straight path leading to a fork, creating the "Y" shape. The walls are white, and the floor is light green.

* **Agent:** The agent appears to be a small vehicle with a propeller, suggesting it can move within the environment. It is positioned at the start of the maze.

* **Goals:** The green spheres likely represent the goals the agent needs to reach.

* **Simulation Parameters:**

* **Connected:** False - Indicates the simulation is not connected to an external communicator.

* **Level:** L7 Test - Indicates the current level of the simulation.

* **Difficulty:** 2 On - Indicates the difficulty level is set to 2 and is enabled ("On").

* **L2Y Test (10):** Indicates the type of test being run, likely related to the Y-maze. The number 10 is in parenthesis.

* **Seed:** 331067481 - A random seed used for the simulation.

* **Steps:** 247 - The number of steps taken in the simulation.

* **Current Reward:** -0.0454 - The current reward value for the agent.

### Key Observations

* The agent is at the starting point of the maze.

* The simulation is not connected to an external communicator.

* The current reward is negative, suggesting the agent has not yet reached a goal or is being penalized for actions.

### Interpretation

The screenshot depicts a reinforcement learning environment where an agent is trained to navigate a Y-shaped maze. The agent's objective is likely to reach one of the green spheres (goals) at the end of the maze branches. The "Current Reward" value indicates the agent's performance, which is currently negative. The simulation parameters provide information about the current state of the training process, including the level, difficulty, and number of steps taken. The "Increase Speed" and "Decrease Speed" buttons suggest the user can control the simulation speed. The seed value ensures reproducibility of the experiment.

</details>

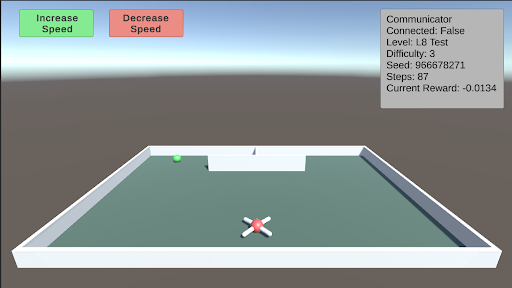

L8: Object Permanence. In this test, a green piece of food spawns on one side of a wall facing the agent and then moves behind the wall. Behind the wall, a barrier separates the two sides: one side contains the food and the other contains a hole. To succeed, the agent must remember that the food still exists after it has disappeared behind the wall. As the difficulty increases, the food moves behind the wall more quickly.
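The structure of a single object-permanence trial can be summarized in a small data type. This is a hypothetical sketch: the names, the left/right encoding of the two sides, the assumption that the food hides on the same side it spawned on, and the linear speed schedule (`base_speed`, `speed_step`) are illustrative choices, not values taken from the environment.

```python
import random
from dataclasses import dataclass


@dataclass
class PermanenceTrial:
    food_side: str     # side where the food spawns and then hides behind the wall
    hole_side: str     # the other compartment behind the wall, which has a hole
    food_speed: float  # speed at which the food moves behind the wall


def make_trial(difficulty: int, rng: random.Random,
               base_speed: float = 1.0, speed_step: float = 0.5) -> PermanenceTrial:
    """Hypothetical trial generator for the object-permanence test."""
    food_side = rng.choice(["left", "right"])
    hole_side = "right" if food_side == "left" else "left"
    # Hypothetical linear schedule: the food hides faster at higher difficulty.
    speed = base_speed + speed_step * difficulty
    return PermanenceTrial(food_side, hole_side, speed)


def target_compartment(trial: PermanenceTrial) -> str:
    """The food still exists once occluded, so the agent should go where it went."""
    return trial.food_side


trial = make_trial(difficulty=3, rng=random.Random(1))
print(trial, "-> go", target_compartment(trial))
```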

<details>

<summary>extracted/5920856/Research/L8.png Details</summary>

### Visual Description

## Screenshot: Unity Environment

### Overview

The image is a screenshot of a Unity environment, likely a simulation or game. It shows a simple scene with a green sphere, a white and red cross-shaped object, and a walled enclosure. UI elements include "Increase Speed" and "Decrease Speed" buttons, and a "Communicator" box displaying various parameters.

### Components/Axes

* **Buttons (Top-Left):**

* "Increase Speed" (Green)

* "Decrease Speed" (Red)

* **Communicator Box (Top-Right):**

* Title: "Communicator"

* Connected: False

* Level: L8 Test

* Difficulty: 3

* Seed: 966678271

* Steps: 87

* Current Reward: -0.0134

* **Environment:**

* Enclosure: White walls, light green floor

* Green Sphere: Located in the top-left quadrant of the enclosure.

* Cross-Shaped Object: Located in the bottom-center of the enclosure, colored white and red.

### Detailed Analysis

* **Communicator Box Details:**

* The "Connected" status is "False," indicating that the environment is not currently connected to an external system.

* The "Level" is "L8 Test," suggesting this is a test level.

* The "Difficulty" is set to "3."

* The "Seed" is "966678271," likely used for random number generation within the simulation.

* The number of "Steps" taken is "87."

* The "Current Reward" is "-0.0134," indicating a negative reward value.

### Key Observations

* The environment appears to be a simple test setup, possibly for reinforcement learning or agent training.

* The negative reward suggests the agent may not be performing optimally or is being penalized for certain actions.

* The "Increase Speed" and "Decrease Speed" buttons imply the user can control the simulation speed.

### Interpretation

The screenshot depicts a basic Unity environment designed for testing or training an agent. The "Communicator" box provides real-time feedback on the agent's performance, including the number of steps taken and the current reward. The negative reward value suggests that the agent is either not achieving its goal or is incurring penalties. The "Increase Speed" and "Decrease Speed" buttons allow for adjusting the simulation speed, which could be useful for debugging or accelerating the training process. The level being "L8 Test" indicates this is likely a development or testing environment.

</details>

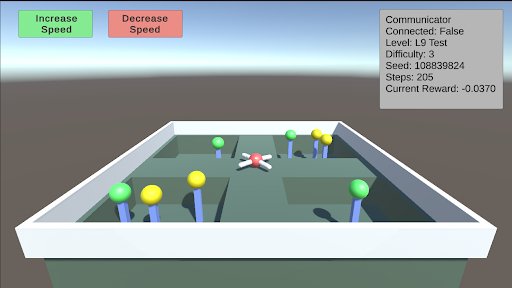

L9: Numerosity. This test requires the agent to choose between sectors based on the quantity of food they contain. There are four sectors, each containing a random number of food pieces, and the agent must choose the sector with the most food. Once the agent enters a sector, its opening closes, and the agent must then collect all the food inside. As the difficulty increases, so does the number of food pieces in each sector, making the decision more challenging.
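The decision the agent faces can be made concrete with a short sketch. Here the four counts are drawn without replacement so that exactly one sector holds the most food, and the upper bound on the counts grows with difficulty; both choices, and the function names, are assumptions for illustration rather than the environment's actual sampling rule.

```python
import random

NUM_SECTORS = 4  # "There are four sectors"


def make_sectors(difficulty: int, rng: random.Random) -> list[int]:
    """Hypothetical food counts for the four sectors at a given difficulty.

    Assumptions: counts are distinct, so exactly one sector holds the most food,
    and their upper bound grows with difficulty.
    """
    upper = NUM_SECTORS + difficulty
    return rng.sample(range(1, upper + 1), NUM_SECTORS)


def is_correct_choice(counts: list[int], chosen: int) -> bool:
    """Entering a sector closes its opening, so the first sector entered is final."""
    return counts[chosen] == max(counts)


rng = random.Random(0)
counts = make_sectors(difficulty=6, rng=rng)
best = counts.index(max(counts))
print(counts, "-> correct sector:", best)
```

Because the opening closes on entry, the episode outcome is fixed by the first sector entered, which is why `is_correct_choice` takes a single index.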

<details>

<summary>extracted/5920856/Research/L9.png Details</summary>

### Visual Description

## Game Environment Screenshot: Agent Navigation Task

### Overview

The image is a screenshot of a game environment, likely a simulation or training environment. It depicts an agent navigating a maze-like structure with colored targets. The top of the screen contains UI elements for controlling the agent's speed and displaying game-related information.

### Components/Axes

* **Environment:** A 3D maze-like structure with gray walls and floor.

* **Agent:** A red and white, four-legged agent located in the center of the maze.

* **Targets:** Green and yellow spherical targets placed throughout the maze, each on top of a blue post.

* **UI Elements (Top-Left):**

* "Increase Speed" button (green background)

* "Decrease Speed" button (red background)

* **UI Elements (Top-Right):** A gray box displaying the following information:

* "Communicator"

* "Connected: False"

* "Level: L9 Test"

* "Difficulty: 3"

* "Seed: 108839824"

* "Steps: 205"

* "Current Reward: -0.0370"

### Detailed Analysis

* **Agent Position:** The agent is positioned near the center of the maze, seemingly at the intersection of several pathways.

* **Target Distribution:** The green and yellow targets are distributed throughout the maze, with varying distances from the agent. There are approximately 4 green targets and 3 yellow targets visible.

* **UI Information:**

* The "Communicator" is not connected ("Connected: False").

* The current level is "L9 Test".

* The difficulty level is 3.

* The random seed is 108839824.

* The agent has taken 205 steps.

* The current reward is -0.0370.

* **Button Functionality:** The "Increase Speed" and "Decrease Speed" buttons likely control the agent's movement speed within the simulation.

### Key Observations

* The agent appears to be in a navigation task, likely aiming to reach the targets.

* The negative reward suggests that the agent might be penalized for taking steps or for not reaching the targets efficiently.

* The "Connected: False" status might indicate that the agent is not communicating with an external system or server.

### Interpretation

The screenshot depicts a reinforcement learning environment where an agent is being trained to navigate a maze and reach targets. The reward system encourages the agent to find efficient paths. The UI elements provide information about the current state of the simulation, including the agent's progress and the difficulty level. The seed value ensures reproducibility of the environment. The negative reward suggests the agent is being penalized for something, possibly time or collisions. The goal of the training is likely to optimize the agent's navigation strategy to maximize its reward.

</details>

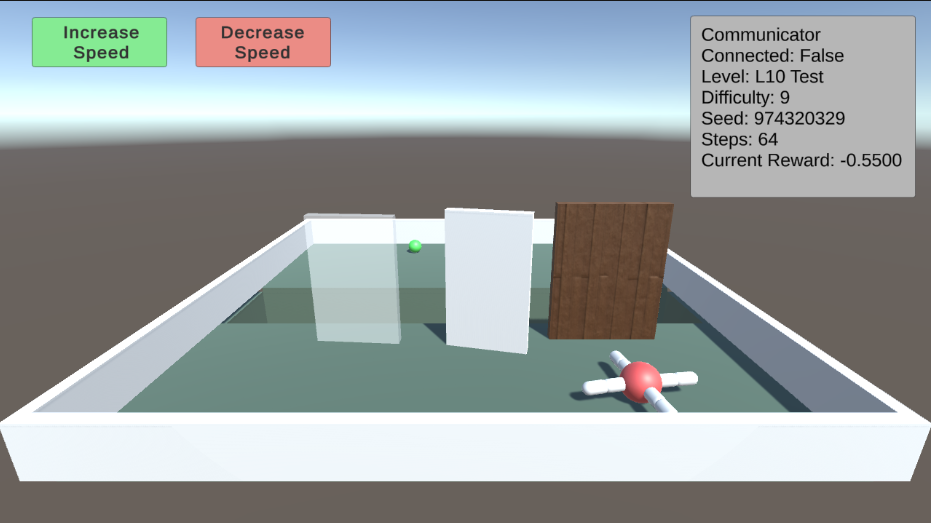

L10: Causal Reasoning. This test evaluates the agent’s understanding of cause and effect in its environment. A trench separates the agent from a piece of food; by pushing over a plank, the agent can walk across the trench to reach the food. As the difficulty increases, transparent and opaque walls appear alongside the plank, so the agent must understand the consequences of its actions and pick the plank as the object to push over. The trench also becomes gradually wider.

<details>

<summary>extracted/5920856/Research/L10.png Details</summary>

### Visual Description

## Game Environment Screenshot: Reinforcement Learning Experiment

### Overview

The image is a screenshot of a 3D game environment, likely used for reinforcement learning experiments. It shows an enclosure containing a green sphere (the food), several barriers, and the agent. The top-right corner displays game-related information, and there are buttons to control the speed.

### Components/Axes

* **Environment:** A rectangular enclosure with light blue walls and a light blue floor.

* **Food:** A green sphere located on the left side of the environment.

* **Obstacles:** Three vertical barriers: a transparent barrier on the left, a white barrier in the middle, and a brown wooden barrier on the right.

* **Agent:** A red sphere with four white cylindrical limbs extending from it, located in the bottom-right corner of the environment.

* **UI Elements (Top):**

* "Increase Speed" button (green background) on the top-left.

* "Decrease Speed" button (red background) next to the "Increase Speed" button.

* Text box (top-right) displaying game information.

### Detailed Analysis

**UI Text Box (Top-Right):**

* **Communicator:**

* **Connected:** False

* **Level:** L10 Test

* **Difficulty:** 9

* **Seed:** 974320329

* **Steps:** 64

* **Current Reward:** -0.5500

**Buttons (Top-Left):**

* **Increase Speed:** Green button with the text "Increase Speed".

* **Decrease Speed:** Red button with the text "Decrease Speed".

### Key Observations

* The food (green sphere) is on the left side of the enclosure.

* The agent (red sphere with four white limbs) is in the bottom-right corner.

* The agent needs to get past the barriers to reach the food.

* The "Connected" status is "False," suggesting the agent might not be connected to a central server or network.

* The "Current Reward" is negative, indicating a penalty or that the agent has not yet reached the goal.

### Interpretation

The screenshot depicts a reinforcement learning environment where the agent (the red body with four white limbs) must reach the food (the green sphere). The agent's performance is evaluated based on the "Current Reward," which is likely updated as the agent interacts with the environment. The "Steps" counter indicates the number of actions the agent has taken. The negative reward suggests the agent may be penalized for taking steps or colliding with obstacles. The "Difficulty" and "Level" parameters indicate the complexity of the task. The "Seed" value is used for random number generation, ensuring reproducibility of the experiment. The "Increase Speed" and "Decrease Speed" buttons allow for manual adjustment of the agent's movement speed, potentially for debugging or demonstration purposes. The fact that the communicator is not connected could mean that the agent is running locally and not communicating with a remote server.

</details>

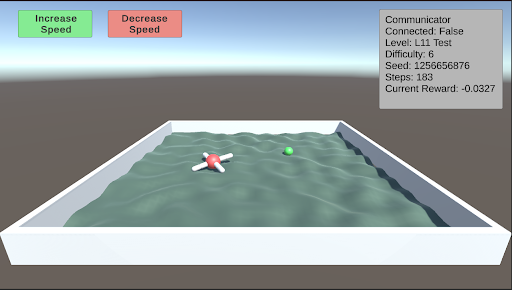

L11: Body Awareness. This test assesses the agent’s understanding of its own body. By the time the agent has navigated the corridors of the Y-maze, the Thorndike hut, and a standard maze in earlier tests, it has developed a basic understanding of its body. This test further evaluates the agent’s ability to move on rough terrain: a single piece of food is placed at a distance from the agent on uneven ground, and the terrain becomes progressively rougher as the difficulty increases.
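To illustrate what "progressively rougher" could mean numerically, the sketch below builds a smoothed random height map whose amplitude scales linearly with difficulty. The function name, the box-filter smoothing, the maximum difficulty of 10, and `max_amplitude` are hypothetical; the text does not describe how the environment generates its terrain.

```python
import numpy as np


def terrain_heights(size: int, difficulty: int, seed: int,
                    max_difficulty: int = 10, max_amplitude: float = 0.5) -> np.ndarray:
    """Hypothetical rough-terrain height map whose bumpiness grows with difficulty."""
    rng = np.random.default_rng(seed)
    noise = rng.standard_normal((size, size))
    # A 3x3 box filter (edge-padded) smooths the noise so bumps span several cells.
    padded = np.pad(noise, 1, mode="edge")
    smooth = sum(padded[i:i + size, j:j + size]
                 for i in range(3) for j in range(3)) / 9.0
    # Assumed linear schedule: flat at difficulty 0, max_amplitude at max_difficulty.
    amplitude = max_amplitude * difficulty / max_difficulty
    return amplitude * smooth


for d in (0, 5, 10):
    print(d, round(float(terrain_heights(64, d, seed=0).std()), 3))
```

Reusing the same seed keeps the bump pattern fixed while only its height changes, which makes roughness levels directly comparable.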

<details>

<summary>extracted/5920856/Research/L11.png Details</summary>

### Visual Description

## Screenshot: Simulated Environment

### Overview

The image is a screenshot of a simulated environment, likely a game or training simulation. It features a 3D scene with an enclosure whose uneven floor has a rippled, water-like appearance (the rough terrain of this test), a red and white object resembling a robot or agent, a green sphere, and UI elements indicating simulation parameters and controls.

### Components/Axes

* **UI Elements (Top):**

* "Increase Speed" button (green) - Located top-left.

* "Decrease Speed" button (red) - Located top-center.

* "Communicator" box (top-right) - Displays simulation status and parameters.

* Connected: False

* Level: L11 Test

* Difficulty: 6

* Seed: 1256656876

* Steps: 183

* Current Reward: -0.0327

* **Environment:**

* White rectangular enclosure with an uneven, rippled floor (the rough terrain).

* Red and white object (agent/robot) with four white appendages.

* Green sphere (target/goal).

* Background: Gray gradient, suggesting a sky or distant environment.

### Detailed Analysis

* **Agent/Robot:** The red and white object is positioned on the uneven terrain. It appears to be a central red sphere with four white appendages extending outwards.

* **Target/Goal:** The green sphere is also located on the terrain, at a distance from the agent.

* **Terrain:** The floor surface is bumpy, giving it a wave- or ripple-like appearance.

* **Simulation Parameters:** The "Communicator" box provides the following information:

* The simulation is not connected.

* The current level is "L11 Test".

* The difficulty is set to 6.

* The random seed is 1256656876.

* The simulation has run for 183 steps.

* The current reward is -0.0327.

### Key Observations

* The simulation appears to involve an agent (the red and white object) navigating rough terrain towards a target (the green sphere).

* The negative "Current Reward" suggests that the agent has not yet reached the goal or is being penalized for some action.

* The "Increase Speed" and "Decrease Speed" buttons indicate that the user can control the agent's speed.

### Interpretation

The image depicts a reinforcement learning or robotics simulation environment. The agent is likely being trained to cross the uneven terrain and reach the green sphere. The reward system provides feedback to the agent, guiding its learning process. The simulation parameters (level, difficulty, seed) allow for controlled experimentation and reproducibility. The "Connected: False" status might indicate that the simulation is running locally or is not connected to a remote server.

</details>

6 Curriculum Training

We provide a curriculum training procedure inspired by [3], in which the agent focuses on the tasks where its learning progress (or regression) is fastest. The levels and difficulties are organized into a 2D matrix in which each cell represents one level at one difficulty. When an episode ends, the agent’s reward is recorded in the corresponding cell. A rolling mean $\mu_i$ and standard deviation $\sigma_i$ are computed for each cell $i$ over its 10 most recent episodes, and the cell’s z-value, the coefficient of variation of its recent rewards, is computed with the following equation; the $10^{-7}$ term prevents division by zero.

$$

z_{i}:=\frac{\sigma_{i}}{|\mu_{i}|+10^{-7}} \tag{1}

$$
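For intuition, here is an illustrative calculation for three hypothetical cells, taking $\sigma_i$ as the population standard deviation over a 10-episode window (an assumption; the text does not specify which estimator is used):

$$
\begin{aligned}
\text{rewards alternating } 0, 1, 0, 1, \dots &: \quad \mu_i = 0.5,\ \sigma_i = 0.5,\ z_i = \frac{0.5}{0.5 + 10^{-7}} \approx 1, \\
\text{ten rewards of } 1 &: \quad \mu_i = 1,\ \sigma_i = 0,\ z_i = 0, \\
\text{ten rewards of } 0 &: \quad \mu_i = 0,\ \sigma_i = 0,\ z_i = \frac{0}{10^{-7}} = 0.
\end{aligned}
$$

Consistent success and consistent failure both give $z_i = 0$; only cells whose recent rewards fluctuate relative to their mean receive a large z-value. Because $|\mu_i|$ sits in the denominator, a cell whose mean reward is close to zero but whose rewards still vary can receive a very large z-value.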

These z-values define a probability distribution that guides the selection of the next training level and difficulty, favoring cells whose recent rewards vary most relative to their mean. The agent therefore focuses on tasks where its performance is highly variable. The probability $p_i$ of selecting cell $i$ next is given below, where $n$ is the total number of cells and $c$ is a constant offset added to every z-value; for $c > 0$, every level and difficulty pair retains a nonzero chance of being selected.

$$

p_{i}=\frac{z_{i}+c}{\sum_{j=1}^{n}(z_{j}+c)} \tag{2}

$$

This curriculum concentrates training where learning is taking place. As the agent begins to learn a task and its end-of-episode reward increases, the rolling variance of that cell rises, which increases its z-value and the likelihood that the same level and difficulty are presented again. This feedback loop continues until the agent either stops improving or masters the current level and difficulty; as the variance then falls, other level and difficulty pairs are presented for further learning. The procedure also counters catastrophic forgetting: if the agent revisits a previously mastered pair and is now failing, the resulting increase in variance makes that pair reappear more frequently until the task is relearned. Overall, this curriculum provides a systematic way to train an agent across a diverse range of tasks.
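The curriculum described in this section can be sketched in a few lines of Python. The sketch keeps a fixed-length window of rewards per (level, difficulty) cell, computes the z-value of Equation (1), and samples the next cell with Equation (2). The class and method names are hypothetical, and several details the text leaves open are assumptions: the population standard deviation, $z = 0$ for cells with no recorded episodes, and $c = 0.1$. It is an illustration, not the environment's own implementation.

```python
import math
import random
from collections import deque

Cell = tuple[int, int]  # (level index, difficulty index)


class CurriculumSampler:
    """Sketch of the z-value curriculum (Eqs. 1 and 2) over a level x difficulty grid.

    Assumptions not fixed by the text: population standard deviation, z = 0 for
    cells with no recorded episodes yet, and the offset c = 0.1.
    """

    def __init__(self, n_levels: int, n_difficulties: int,
                 window: int = 10, c: float = 0.1, eps: float = 1e-7):
        self.cells: list[Cell] = [(lvl, d) for lvl in range(n_levels)
                                  for d in range(n_difficulties)]
        # Each cell keeps only its `window` most recent end-of-episode rewards.
        self.history = {cell: deque(maxlen=window) for cell in self.cells}
        self.c = c
        self.eps = eps

    def record(self, cell: Cell, reward: float) -> None:
        self.history[cell].append(reward)

    def z_value(self, cell: Cell) -> float:
        rewards = self.history[cell]
        if not rewards:
            return 0.0
        mu = sum(rewards) / len(rewards)
        sigma = math.sqrt(sum((r - mu) ** 2 for r in rewards) / len(rewards))
        return sigma / (abs(mu) + self.eps)                      # Eq. (1)

    def probabilities(self) -> dict[Cell, float]:
        weights = {cell: self.z_value(cell) + self.c for cell in self.cells}
        total = sum(weights.values())
        return {cell: w / total for cell, w in weights.items()}  # Eq. (2)

    def sample(self, rng: random.Random) -> Cell:
        probs = self.probabilities()
        return rng.choices(self.cells, weights=[probs[cell] for cell in self.cells])[0]


# Usage: a mastered cell versus a mastered cell that the agent has started to fail.
sampler = CurriculumSampler(n_levels=1, n_difficulties=2)
for _ in range(10):
    sampler.record((0, 0), 1.0)
for r in [1.0] * 8 + [0.0] * 2:
    sampler.record((0, 1), r)
print(sampler.probabilities())
```

In the usage example, the stable cell has $z = 0$ while the cell whose recent rewards dropped has $z \approx 0.5$, so the second cell receives roughly 0.86 of the probability mass versus about 0.14 for the first, matching the relearning behavior described above.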

7 Future Work

In the initial release of the Articulated Animal AI Environment, our goal is to enable researchers to familiarize themselves with the platform and to test their cognition-based models using the provided testbench. A designated set of testing seeds is reserved within the environment for future evaluations of selected candidates or potential competition scenarios.

8 Conclusion

The Articulated Animal AI Environment is a robust framework designed to assess the capabilities of a multi-limbed agent in performing animal cognition tasks. Building upon the foundational AnimalAI Environment, the Articulated Animal AI Environment enhances the training process by offering pre-randomized and generalized tests, coupled with a structured curriculum training program and comprehensive evaluation tools. This setup allows researchers to concentrate on refining their models rather than on the time-intensive task of developing effective tests.

Acknowledgments

This work was supported in part by McGill University.

References

- [1] Matthew Crosby, Benjamin Beyret, Murray Shanahan, José Hernández-Orallo, Lucy Cheke, and Marta Halina. Animal-AI: A testbed for experiments on autonomous agents. arXiv preprint arXiv:1909.07483, 2019.

- [2] Konstantinos Voudouris, Ibrahim Alhas, Wout Schellaert, Matthew Crosby, Joel Holmes, John Burden, Niharika Chaubey, Niall Donnelly, Matishalin Patel, Marta Halina, José Hernández-Orallo, and Lucy G. Cheke. Animal-AI 3: What’s new & why you should care. arXiv preprint arXiv:2312.11414, 2023.

- [3] Jacqueline Gottlieb, Pierre-Yves Oudeyer, Manuel Lopes, and Adrien Baranes. Information seeking, curiosity and attention: computational and neural mechanisms. Trends in Cognitive Sciences, 17(11):585–593, 2013.

Appendix A Appendix

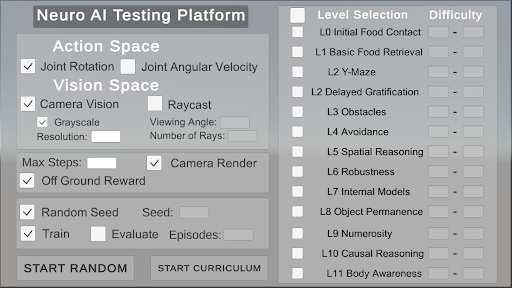

This appendix contains additional information about how to use the interface. All source code is available online.

Environment source code: https://github.com/jeremy-lucas-mcgill/Articulated-Animal-AI-Environment

The Interface

The interface lets users customize their agents by selecting the action space, vision settings, and general parameters. Users then choose the levels and the difficulty range for training. Training can be started in one of two ways: "Start Random," which randomly selects a level and difficulty for each episode, or "Start Curriculum," which runs the curriculum training program.
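A minimal sketch of what "Start Random" does per episode, assuming each selected level carries an inclusive difficulty range typed as `min-max` (the GUI shows a difficulty field containing "-", but the exact input format is an assumption here). The function names are hypothetical, and the uniform choice of level and difficulty is likewise assumed; the text only says both are selected randomly.

```python
import random


def parse_difficulty_range(text: str) -> tuple[int, int]:
    """Parse a hypothetical 'min-max' difficulty field such as '2-7' (inclusive)."""
    lo, hi = (int(part) for part in text.split("-"))
    if lo > hi:
        raise ValueError(f"empty difficulty range: {text!r}")
    return lo, hi


def start_random(selected: dict[str, str], rng: random.Random) -> tuple[str, int]:
    """Pick one selected level uniformly, then a difficulty uniformly within its range."""
    level = rng.choice(sorted(selected))      # sorted for reproducibility under a seed
    lo, hi = parse_difficulty_range(selected[level])
    return level, rng.randint(lo, hi)         # randint is inclusive on both ends


rng = random.Random(7)
selection = {"L1 Basic Food Retrieval": "0-4", "L9 Numerosity": "2-6"}
for _ in range(3):
    print(start_random(selection, rng))
```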

<details>

<summary>extracted/5920856/Research/Interface.png Details</summary>

### Visual Description

## GUI: Neuro AI Testing Platform

### Overview

The image is a screenshot of a GUI for a "Neuro AI Testing Platform". It features sections for configuring the action space, vision space, reward system, training parameters, and level selection. The interface allows users to define various aspects of the AI's environment and training regime.

### Components/Axes

* **Title:** Neuro AI Testing Platform

* **Action Space:**

* Joint Rotation (Checkbox: Selected)

* Joint Angular Velocity (Checkbox: Unselected)

* **Vision Space:**

* Camera Vision (Checkbox: Selected)

* Grayscale (Checkbox: Selected)

* Resolution: (Text Input Field)

* Raycast (Checkbox: Unselected)

* Viewing Angle: (Text Input Field)

* Number of Rays: (Text Input Field)

* **Reward:**

* Max Steps: (Text Input Field)

* Camera Render (Checkbox: Selected)

* Off Ground Reward (Checkbox: Selected)

* **Training:**

* Random Seed (Checkbox: Selected)

* Seed: (Text Input Field)

* Train (Checkbox: Selected)

* Evaluate (Checkbox: Unselected)

* Episodes: (Text Input Field)

* **Level Selection:**

* Level Selection (Header)

* Difficulty (Header)

* L0 Initial Food Contact (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L1 Basic Food Retrieval (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L2 Y-Maze (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L2 Delayed Gratification (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L3 Obstacles (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L4 Avoidance (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L5 Spatial Reasoning (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L6 Robustness (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L7 Internal Models (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L8 Object Permanence (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L9 Numerosity (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L10 Causal Reasoning (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* L11 Body Awareness (Checkbox: Unselected, Difficulty: Text Input Field with "-")

* **Buttons:**

* START RANDOM

* START CURRICULUM

### Detailed Analysis

The GUI is structured into distinct sections, each controlling a specific aspect of the AI testing environment.

* **Action Space:** The AI can control its "Joint Rotation". "Joint Angular Velocity" is an available option but is currently unselected.

* **Vision Space:** The AI uses "Camera Vision" and processes it in "Grayscale". Parameters like "Resolution", "Viewing Angle", and "Number of Rays" can be configured, but the values are not specified in the provided image.

* **Reward:** The AI receives a reward for being "Off Ground". The "Camera Render" option is enabled. The "Max Steps" parameter is configurable.

* **Training:** The training process uses a "Random Seed". The "Train" option is enabled, while "Evaluate" is disabled. The number of "Episodes" can be specified.

* **Level Selection:** A series of levels (L0 to L11) are listed, each with a checkbox for selection and a field to specify the difficulty. None of the levels are currently selected. The difficulty for each level is set to "-".

### Key Observations

* The GUI provides a comprehensive set of options for configuring an AI testing environment.

* The user has selected "Joint Rotation" for the action space and "Camera Vision" with "Grayscale" for the vision space.

* The AI receives a reward for being "Off Ground".

* The training process is configured to use a random seed and is currently set to train.

* No specific levels are selected for training.

### Interpretation

The "Neuro AI Testing Platform" GUI allows researchers and developers to set up and run experiments for training AI agents. The configuration options cover a range of aspects, from the agent's action and perception capabilities to the reward structure and training regime. The level selection feature suggests a curriculum learning approach, where the AI is gradually exposed to more complex tasks. The current configuration indicates a setup focused on joint rotation control, grayscale camera vision, and a reward for being off the ground, with the training process enabled but no specific levels selected.

</details>