## A CASE FOR AI CONSCIOUSNESS: LANGUAGE AGENTS AND GLOBAL WORKSPACE THEORY

## Simon Goldstein

The University of Hong Kong simon.d.goldstein@gmail.com

## Cameron Domenico Kirk-Giannini

Rutgers University-Newark camerondomenico.kirkgiannini@gmail.com

## ABSTRACT

It is generally assumed that existing artificial systems are not phenomenally conscious, and that the construction of phenomenally conscious artificial systems would require significant technological progress if it is possible at all. We challenge this assumption by arguing that if Global Workspace Theory (GWT) - a leading scientific theory of phenomenal consciousness - is correct, then instances of one widely implemented AI architecture, the artificial language agent, might easily be made phenomenally conscious if they are not already. Along the way, we articulate an explicit methodology for thinking about how to apply scientific theories of consciousness to artificial systems and employ this methodology to arrive at a set of necessary and sufficient conditions for phenomenal consciousness according to GWT.

Keywords: Large Language Models (LLMs) · Artificial Intelligence · Consciousness · Global Workspace Theory · Functionalism

The advent of generative text and image models has led to a resurgence of academic and popular interest in the question of what it would take for an AI to be conscious. While it is difficult to draw general conclusions on this topic because of the great diversity of philosophical and scientific theories of consciousness, a number of authors have recently suggested that it may be possible to construct conscious AIs in the near future (Butlin et al. 2023; Chalmers 2023). Recent work has focused on supporting this claim by surveying the commitments of a wide range of philosophical and scientific theories of consciousness. This approach has the advantage of appealing to diverse theoretical perspectives, but it is constrained in its level of detail. In what follows, we take a different approach. We focus on one leading scientific theory of consciousness, global workspace theory (GWT), and one widely implemented AI architecture, the artificial language agent (or language agent for short), arguing that if GWT is correct, then language agents might easily be made conscious if they are not conscious already. Our project makes three main contributions to the literature on consciousness in artificial systems. First, it provides an explicit methodology for thinking about how to apply scientific theories of consciousness to artificial systems. Second, it focuses scholarly attention on language agents, which we take to be an especially interesting case study in artificial consciousness. Third, it presents a case for consciousness in AI systems that is better equipped to respond to certain important objections than earlier proposals. 1

To investigate whether AI systems can be conscious, we adopt a broadly computational and functionalist perspective according to which consciousness is a special kind of information processing. AI systems are conscious if they process information in this special way. Within this framework, the most important open problem is to specify carefully which functional roles are associated with consciousness. Below, we describe how existing work on GWT has vacillated between a series of different functional conditions associated with consciousness. These conditions differ in how demanding they are and in their level of abstraction. We show that these differences matter to predictions about what it would take for AI systems to be conscious. We also develop two methodological tools to adjudicate between these various functional conditions. In particular, we reflect on the usefulness of consciousness and explore thought experiments that probe how our judgments about consciousness vary with slight changes to functional role. In each case, we argue that our methodological tools tend to favor more permissive functional roles, which make it easier for a wide range of systems to count as conscious.

Here is the plan. In section 1, we distinguish between access consciousness and phenomenal consciousness and discuss the place of each in the science of consciousness and normative theory. In section 2, we introduce GWT and describe some of the evidence for it. Sections 3, 4, and 5 distill from GWT a functional characterization of consciousness in artificial systems. Section 6 turns to contemporary machine learning, introducing the architecture of language agents. In section 7, we argue that existing language agents come close to satisfying the functional criteria introduced in section 5, and that they could be made to do so fully with simple architectural modifications. In section 8, we consider a number of further modifications to our proposed architecture that could address a range of different kinds of skepticism about its plausibility as a conscious system. Section 9 considers two objections to our arguments. Section 10 concludes.

## 1 Consciousness

The ordinary concept of a conscious state or being is imprecise, failing to differentiate among a diverse set of properties. For this reason, it is customary to distinguish between two important senses in which a state or being can be conscious: access consciousness and phenomenal consciousness. A state is access conscious to the extent that it is available for use in reasoning and guiding speech and action, while it is phenomenally conscious just in case it has experiential properties (Block 2002). Correspondingly, a being is conscious in the access sense to the extent that it tokens access-conscious states and conscious in the phenomenal sense just in case it tokens phenomenally conscious states.

While there are interesting scientific questions to ask about the kinds of biological and artificial functional architectures which give rise to access-conscious states, it is phenomenal consciousness which is generally regarded as the greater puzzle both scientifically and philosophically. Substantial literatures exist in philosophy, psychology, and cognitive neuroscience on the nature of phenomenal consciousness and its relation to physical systems. 2 In what follows, our primary concern will be with phenomenal consciousness. We will discuss one leading scientific theory of phenomenal consciousness in biological systems - Global Workspace Theory - and distill from it an account of the conditions under which artificial systems might also be phenomenally conscious. We take it to be uncontroversial that any artificial system meeting these conditions would be access conscious, and we will not argue at length for this claim.

1 Since the 1990s, there have been a number of attempts to implement a global workspace in an AI system. Notable examples include CMattie (Franklin and Graesser 1999), a simple system designed to schedule seminars at a university by sending and reading messages in natural language, its successor system IDA (Franklin 2003), and the robotic system designed by Shanahan (2006). Though we think this existing work is interesting, its significance is limited by two factors. First, authors in this tradition do not generally develop and defend an explicit account of the necessary and sufficient conditions for consciousness according to GWT. This makes it difficult to assess whether the systems claimed to implement a global workspace in fact do. Second, the systems described in this tradition are generally very simple and have a limited range of capabilities which could not be extended without significant architectural modifications, leaving them more vulnerable than language agent architectures to the 'small model objection' (see section 9 for discussion).

The question of consciousness in artificial systems is especially urgent because many philosophers tie questions of consciousness to the normative domain. First, it is commonly held that certain phenomenally conscious states contribute positively or negatively to an individual's wellbeing in virtue of their phenomenal properties. For example, the pleasantness of pleasures and the unpleasantness of pains are held to improve and reduce an individual's wellbeing, respectively. 3 This connection pertains to phenomenal consciousness rather than access consciousness. Second, it is often held that a being must be conscious in order for it to be the right kind of thing to have wellbeing in the morally significant sense. 4 According to this line of thought, while it might be said of simple systems like protists and fungi that things could go well or poorly for them, it does not follow that we have a moral reason to avoid harming them because they are not conscious.

## 2 Global Workspace Theory

Global Workspace Theory originated with the work of Bernard Baars (Baars 1988, 1997b) and has since grown to be one of the leading theories of consciousness in biological systems. GWT posits the existence of a global workspace: a component of cognitive architecture that receives information from a range of cognitive modules, processes this information, and then broadcasts the processed information back to the cognitive modules to which it is connected. According to GWT, a representation is conscious when it is present in the global workspace. 5 Though proponents of GWT are not always clear on the sort of consciousness they have in mind, we interpret GWT as endorsing the claim that information in the global workspace is conscious in both the access and phenomenal senses, and that being in the global workspace is what renders a piece of information phenomenally conscious.

It is helpful to distinguish the various claims associated with GWT into structural claims about cognitive architecture and functional claims about how the global workspace receives information, processes it, and transmits it to other parts of the cognitive system. The core structural claim of GWT is that human cognitive architecture consists of a number of relatively autonomous modules which process information specific to particular tasks (e.g. particular sense modalities, motor control, and so on) together with a single global workspace with which all of these modules interface. 6 The cognitive architecture posited by GWT thus contrasts with a pairwise architecture, where modules communicate with one another directly rather than pooling their information into a shared space (Goyal et al. 2021).

2 For an introduction with suggestions for further reading, see Van Gulick (2022).

3 See, for example, Kagan (1992) and Bramble (2016).

4 See Lin (2021) and Goldstein and Kirk-Giannini (forthcoming) for recent discussion.

5 This is a slight simplification. Baars (1988) (363), for example, suggests that representations may need to be present in the global workspace for some minimum amount of time before they are consciously experienced, and Baars (1997a) (44) suggests that representations in the global workspace must be in 'the spotlight of attention' to be consciously experienced. We set these complications aside in what follows since we do not take them to bear significantly on our arguments.

6 For example, Dehaene et al. (1998) mention five main modules connected to the global workspace: perceptual systems, long-term memory, evaluative systems, attentional systems, and motor systems (see also Mesulam (1998)).

When it comes to functional claims, GWT posits three important aspects of global information processing. First, there is uptake, the process that determines which information coming from the modules will enter the global workspace. Second, there is broadcast, the process whereby information in the global workspace is sent to a wide range of modules for use in further parallel processing. Finally, there is processing, wherein the global workspace integrates and manipulates information it has taken up to shape the trajectory of conscious experience.

GWT distinguishes between two types of processing: parallel processing in individual modules and serial processing in the global workspace. Because it is parallel, processing in individual modules can handle effectively unlimited amounts of information. The global workspace, on the other hand, can process a limited amount of information at any given time. The limited storage capacity of the global workspace creates a bottleneck for information processing which drives the need for selective attention during the uptake phase.
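To make the division of labor among uptake, processing, and broadcast concrete, the following Python sketch may be helpful. It is a toy illustration under stated assumptions, not an implementation drawn from the GWT literature: the names `Candidate`, `Module`, and `GlobalWorkspace`, the numeric `salience` score standing in for attentional priority, and the string-joining `process` step are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    source: str      # name of the module that produced the content
    content: str
    salience: float  # hypothetical stand-in for attentional priority


@dataclass
class Module:
    name: str
    inbox: list = field(default_factory=list)

    def receive(self, message):
        # Each module stores whatever the workspace broadcasts to it.
        self.inbox.append(message)


class GlobalWorkspace:
    def __init__(self, capacity):
        self.capacity = capacity  # the bottleneck: how many items fit at once
        self.contents = []

    def uptake(self, candidates):
        # Keep only the most salient candidates, up to capacity.
        ranked = sorted(candidates, key=lambda c: c.salience, reverse=True)
        self.contents = ranked[: self.capacity]

    def process(self):
        # Placeholder integration step: combine contents into one message.
        return " + ".join(c.content for c in self.contents)

    def broadcast(self, modules):
        # Send the same processed message to every connected module.
        message = self.process()
        for module in modules:
            module.receive(message)
        return message


modules = [Module("vision"), Module("audition"), Module("memory")]
workspace = GlobalWorkspace(capacity=1)
workspace.uptake([
    Candidate("vision", "red light", 0.9),
    Candidate("audition", "low hum", 0.2),
    Candidate("memory", "appointment at noon", 0.5),
])
message = workspace.broadcast(modules)
```

With `capacity=1`, only the most salient candidate enters the workspace, which is one simple way to picture the bottleneck; every module then receives the same broadcast.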

Though it is beyond the scope of our discussion here to present GWT in complete detail, we pause to expand briefly on each of the components of the function of the global workspace and describe some of the psychological phenomena GWT is particularly well suited to explain.

## 2.1 Uptake

At any given time, parallel processing in the modules is generating a large amount of information which could potentially enter the global workspace. Baars (1997b) emphasizes three types of information: outer senses, inner senses (including visual imagery, inner speech, and dreams), and ideas. Because the global workspace has limited capacity, however, only some of this information can be taken up from the modules at any given time. There is therefore a kind of competition for information to achieve uptake into the global workspace and to remain there once it does.

One important example of competition for uptake is binocular rivalry, one instance of a more general class of 'two-channel experiments'. In binocular rivalry, a subject is shown a different image in each of their eyes. The subject's conscious experience flips back and forth between the two images (Moreno-Bote et al. 2011). Crucially, the subject cannot have simultaneous conscious experiences of inconsistent visual information. This suggests that there is a bottleneck on conscious experience. Consciousness selects among competing visual inputs to generate a coherent narrative about the world.

Baars (1988) suggests a number of ways in which this competition might be implemented in the mind. First, informational signals coming from different modules might have different levels of activation, and activation might be increased when different modules work together to boost a particular signal (Baars (1988); p. 95). However, Baars holds that activation alone is not sufficient to get information stably into the global workspace: in addition to high levels of activation, information in the global workspace must receive positive feedback from the modules to which it is broadcast (Baars (1988); p. 205). 7
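The two-part condition just described, sufficient activation together with positive feedback from receiving modules, can be sketched as follows. This is a minimal illustration under our own assumptions: Baars does not give numerical thresholds, so the `threshold` value, the additive pooling of activation across modules, and the function names are hypothetical.

```python
def coalition_activation(signals):
    """Pool activation across modules that carry the same content.

    `signals` is a list of (module, content, activation) triples. Summing is
    an assumed way of modeling modules 'working together' to boost a signal.
    """
    totals = {}
    for _module, content, activation in signals:
        totals[content] = totals.get(content, 0.0) + activation
    return totals


def stable_contents(signals, feedback, threshold=1.0):
    """Return contents that are both highly activated and positively received.

    `feedback` maps content to the net feedback from receiving modules.
    Activation alone is not enough: content with non-positive feedback
    (for example, content the system has habituated to) is dropped.
    """
    totals = coalition_activation(signals)
    return sorted(
        content
        for content, total in totals.items()
        if total >= threshold and feedback.get(content, 0.0) > 0
    )


signals = [
    ("vision", "snake", 0.6),
    ("audition", "snake", 0.5),   # a second module boosts the same content
    ("vision", "wall", 1.2),      # strong signal, but nothing new in it
]
feedback = {"snake": 0.8, "wall": -0.3}
result = stable_contents(signals, feedback)
```

Here neither 'snake' signal reaches the threshold on its own, but the two together do, and 'wall' is excluded despite its high activation because the receiving modules do not reinforce it.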

Ultimately, conscious uptake is controlled by attention (Posner 1994). This includes both conscious and unconscious attentional processes. Attention boosts information into the global workspace and helps to keep it there (Baars (1988); 308). For example, unconscious attentional processes explain why, even if we are closely attending to one thing, other information can 'break through' into consciousness if it is sufficiently important: 'Absorption does not protect us from high-priority signals, even those that begin unconsciously.' (Baars (1997b); 107).

7 This aspect of Baars's theory is motivated by the existence of redundancy effects, wherein information which is at first consciously accessible fades from consciousness as a subject becomes habituated to it (Baars (1997b); 93). Positive feedback from receiving modules is supposed to capture the idea that a given piece of information in the global workspace contains new information useful to the cognitive system.

The role of attention in uptake is illustrated by other 'two-channel' variants of binocular rivalry: listening to two conversations at once, reading alternating words of text, or seeing two sports games superimposed on the same screen (Baars (1997b); 23-24). In these cases, unconscious information from the second channel will break through if it is sufficiently important, emotional, or relevant. Information in the unconscious channel can also unconsciously influence interpretation of ambiguous words (like 'bank') in the conscious channel (Baars (1997b); 27).

The attentional blink is another empirical finding that showcases the way in which attention shapes conscious experience. In the attentional blink, a pair of stimuli are shown in rapid succession. If the first stimulus is attended to, and if the second stimulus is shown very quickly after the initial one, the second stimulus is not consciously experienced. But if the subject was not directing their attention at the first stimulus, the second stimulus is experienced consciously (Raymond et al. 1992; Dehaene et al. 2017).

Since Baars, neuroscientists have studied in detail the mechanisms by which humans implement the global workspace architecture. A key concept in this area is ignition, a specific hypothesis about how uptake is achieved. According to global neuronal workspace theory, the main development of GWT in neuroscience, 'ignition is characterized by the sudden, coherent, and exclusive activation of a subset of workspace neurons coding for the current conscious content, with the remainder of the workspace neurons being inhibited' (Mashour et al. (2020); 777). In other words, ignition is a non-linear process whereby a single neural representation suddenly comes to dominate the neuronal workspace, suppressing all competing patterns of activation.
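The qualitative idea of a non-linear process in which one representation comes to dominate can be illustrated with a toy winner-take-all iteration. The sketch below is not a model of neural dynamics and is not taken from Mashour et al.; the update rule (raise each activation to a power, then renormalize), the function name `ignite`, and the starting values are assumptions chosen only to show how a small initial advantage can be amplified into near-exclusive activation.

```python
def ignite(activations, steps=10, power=2.0):
    """Toy winner-take-all dynamics over positive activations.

    Raising each activation to a power greater than 1 acts as non-linear
    self-amplification; dividing by the total acts as shared inhibition.
    Both are illustrative assumptions, not claims about the brain.
    """
    x = list(activations)
    for _ in range(steps):
        amplified = [a ** power for a in x]
        total = sum(amplified)
        x = [a / total for a in amplified]
    return x


# Three hypothetical representations with nearly equal initial activation.
final = ignite([0.30, 0.36, 0.34])
winner = max(range(len(final)), key=final.__getitem__)
```

After a handful of iterations the representation that started with a slight lead holds almost all of the activation, while its competitors are driven toward zero.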

## 2.2 Broadcast

The most important function of the global workspace is to serve as a central repository of information available to the cognitive architecture's various parallel processors. In addition to taking up information from these processors, then, the global workspace must also transmit information back to them. We refer to this function of the global workspace as broadcast.

As we have seen, Baars (1988) holds that there is an intimate connection between uptake and broadcast: it is only through broadcasting to a coalition of processors and receiving positive activation signals from them that a representation can remain stably in the global workspace.

In addition to broadcast from the global workspace to processors that handle functions like perception and memory, broadcast from the global workspace also drives intentional action. Building on James (1890)'s ideomotor theory of action, Baars (1997b) (p. 138) suggests that when a goal is conscious, it will automatically begin to recruit unconscious 'effectors' to promote the goal and cause action, unless inhibited by the conscious representation of a conflicting goal. The process of broadcast from the global workspace is thus the means by which serial processing of perceptual and other inputs leads to coordinated agency.

## 2.3 Processing

According to GWT, the global workspace is not simply a passive repository of information available to various cognitive modules; it plays an active role in processing information. Processing in the global workspace is notable for its generality. As Dehaene et al. put it, 'once we are conscious of an item, we can readily perform a large variety of operations on it, including evaluation, memorization, action guidance, and verbal report' (Dehaene et al. (1998); 14529). Indeed, there are some kinds of processing that can only occur in the global workspace. For example, Baars (1997a) (p. 17) suggests that conscious processing in the global workspace is required to construct concepts out of two-word compounds like 'potato soup'. This is illustrated experimentally by priming effects. Unconsciously seeing the word 'dog' makes it easier to identify the word 'puppy' consciously seconds later. But priming effects are not observed with compound words, like 'potato soup' or 'honey cake': the global workspace, and consciousness, is required to integrate the meaning of the two words. Moreover, unconscious priming effects do not influence actions: again, the global workspace is required.

A more general aspect of information processing in the global workspace concerns the demand for consistency. The global workspace seeks to organize disparate information into a single coherent model of the world. One illustration of this demand is visual illusions like the impossible trident and Escher's infinite staircases. When we visually attend to these images, the global workspace tries to create a single coherent image, even though there isn't one available. In the impossible trident, tracing the trident across the scene will result in alternating visual images, because there is no way to combine the information into one coherent object (Baars (1997b); 88).

Information processing in the global workspace is closely connected to some of the core operations of working memory, including the visuospatial sketchpad and phonological loop. The visuospatial sketchpad allows for storage and manipulation of mental images; the phonological loop briefly stores information related to speech, and also allows for its rehearsal (Hitch and Baddeley 1976). These processing activities can only trigger when the item of information has been accessed in the global workspace using attention: 'attended memory items can activate subsequent memory states in order to retrieve an association or as part of a cognitive routine when, for example, a mental image is transformed during mental rotation. . . whereas activity-silent states merely store previously computed states.' (Mashour et al. (2020); 785).

A final aspect of processing in the global workspace concerns the idea of effort. Dehaene et al. (1998) suggest that mental effort is related to processing in the global workspace. Many modular perceptual and motor tasks do not involve a phenomenology of mental effort, even when they are complex tasks. By contrast, even simple conscious mental tasks like subtraction do involve effort.

## 3 What is Essential to Consciousness? Existing Work

So far, we've surveyed a range of claims that GWT makes about consciousness in humans. But we haven't said precisely which properties of conscious human systems are necessary and sufficient for consciousness. This is a difficult question. Several authors have noted that it is tricky to say exactly how similar a system needs to be to a human global workspace in order to count as conscious (Carruthers 2019; Birch 2022; Butlin et al. 2023). More permissive conceptions of consciousness, according to which conscious systems might be less like the human brain, will make it easier for AI systems to be conscious, while less permissive conceptions, according to which conscious systems must be more like the human brain, will make it harder.

As we noted above, we think about these questions through a computational and functionalist perspective on consciousness. The idea here is that there is some functional role associated with consciousness, so that all and only systems instantiating this functional role are conscious. More carefully, we can also think about a necessary and sufficient functional role for a particular mental state to be conscious, and then say that a system is conscious when one of its mental states is conscious.

It is consistent with this methodology that the relevant functional role is not metaphysically necessary and sufficient for consciousness. Rather, for us what is relevant is that the functional role in question be nomically necessary and sufficient for consciousness. This distinction allows us to sidestep tricky questions about phenomenal zombies - hypothetical beings that are physically like conscious systems but lack conscious experience. Perhaps phenomenal zombies are metaphysically possible, and so for any functional role, it is metaphysically possible to satisfy it without being phenomenally conscious. Nonetheless, there could still be functional roles that perfectly co-vary with consciousness as a matter of physical law. Another way of thinking about this is that the scientific laws of our world may imply that access consciousness (understood in terms of the global workspace) is sufficient for phenomenal consciousness. If so, AI systems with access consciousness would also be phenomenally conscious.

In this section, we present a number of existing characterizations of the conditions an artificial system would need to satisfy to have a global workspace according to GWT. Though they are on the right track, we believe that none of these characterizations get things quite right. We argue for our own set of conditions in section 5.

Butlin et al. (2023) is the most influential recent treatment of the question of what conditions are necessary and sufficient for a system to be phenomenally conscious according to GWT. Butlin et al. propose a set of four conditions:

- (B1) Multiple specialized systems capable of operating in parallel (modules).

- (B2) Limited capacity workspace, entailing a bottleneck in information flow and a selective attention mechanism.

- (B3) Global broadcast: availability of information in the workspace to all modules.

- (B4) State-dependent attention, giving rise to the capacity to use the workspace to query modules in succession to perform complex tasks.

In connection with condition (B2), Butlin et al. write that,

'...the capacity of the workspace must be smaller than the collective capacity of the modules which feed into it. Having a limited capacity workspace enables modules to share information efficiently, in contrast to schemes involving pairwise interactions such as Transformers, which become expensive with scale. . . The bottleneck also forces the system to learn useful, low-dimensional, multimodal representations. . . With the bottleneck comes a requirement for an attention mechanism that selects information from the modules for representation in the workspace.' (2023: 26-27)
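Condition (B4) is perhaps the least familiar of the four, so a small sketch may help fix ideas. In the Python toy below, which module is queried next depends on what is currently in the workspace, so that a multi-step task is carried out by querying modules in succession. Butlin et al. do not specify an implementation; the module functions, the `select_module` policy, and the arithmetic task are hypothetical, and the sketch does not model the limited capacity required by (B2).

```python
def perceive(state):
    # Hypothetical perceptual module: reports a fixed stimulus.
    return {"seen": "7 + 5"}


def calculate(state):
    # Hypothetical arithmetic module: reads the percept from the workspace.
    left, right = state["seen"].split(" + ")
    return {"result": int(left) + int(right)}


def verbalize(state):
    # Hypothetical report module: reads the result from the workspace.
    return {"utterance": f"The answer is {state['result']}"}


MODULES = {"perceive": perceive, "calculate": calculate, "verbalize": verbalize}


def select_module(state):
    """Assumed top-down attention policy: the choice depends on workspace state."""
    if "seen" not in state:
        return "perceive"
    if "result" not in state:
        return "calculate"
    if "utterance" not in state:
        return "verbalize"
    return None  # nothing left to do


def run(max_steps=10):
    state = {}   # the workspace contents
    trace = []   # order in which modules were queried
    for _ in range(max_steps):
        name = select_module(state)
        if name is None:
            break
        state.update(MODULES[name](state))
        trace.append(name)
    return state, trace


state, trace = run()
```

The point of the sketch is only that the sequence of queries is not fixed in advance: it is read off the evolving contents of the workspace at each step.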

In addition to Butlin et al., there are also several recent papers which provide functional characterizations of GWT without explicitly addressing issues of phenomenal consciousness. Since we understand GWT as a theory of the conditions which must be satisfied for a system to be phenomenally conscious, these characterizations are nonetheless relevant to our project.

VanRullen and Kanai (2021) (p. 3) propose 'a step-by-step attempt at defining necessary and sufficient components for an implementation of the global workspace in an AI system,' according to which a system must meet the following conditions:

- (VK1) A number of independent specialized modules, each with its own high-level latent space.

- (VK2) An independent and intermediate shared latent space, trained to perform unsupervised neural translation between the latent spaces from the specialized modules.

- (VK3) The system prioritizes competing inputs to the shared space with attention.

- (VK4) When a specific module is connected to the workspace as a result of attentional selection, its latent space activation vector is copied into the [global latent workspace] and then immediately broadcast into the latent space of all other modules.

VanRullen and Kanai explicate the notion of a 'high-level latent space' as follows: 'a latent space is a representation layer trained to encode the key elements of an input domain. This information corresponds to high-level conceptual representations such as visual object features, word meaning, chunks of action sequences, etc.' (2021: 3).
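The copy-and-broadcast step in (VK4) can be pictured with a very small sketch. VanRullen and Kanai envisage translations between latent spaces that are learned without supervision; the Python toy below does not train anything and instead assumes fixed, hand-written linear maps, so it illustrates only the flow of information. The class `LatentModule`, the helper `matvec`, and all matrices and vectors are hypothetical.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class LatentModule:
    def __init__(self, name, to_shared, from_shared):
        self.name = name
        self.to_shared = to_shared      # assumed fixed map into the shared space
        self.from_shared = from_shared  # assumed fixed map out of the shared space
        self.latent = [0.0, 0.0]        # this module's own latent activation


def broadcast_from(selected, modules):
    """Copy the selected module's latent vector into the shared workspace,
    then translate it into the latent space of every other module."""
    workspace = matvec(selected.to_shared, selected.latent)
    for module in modules:
        if module is not selected:
            module.latent = matvec(module.from_shared, workspace)
    return workspace


identity = [[1, 0], [0, 1]]
vision = LatentModule("vision", to_shared=identity, from_shared=identity)
language = LatentModule("language", to_shared=identity, from_shared=[[0, 1], [1, 0]])
motor = LatentModule("motor", to_shared=identity, from_shared=[[2, 0], [0, 2]])

vision.latent = [1.0, 3.0]  # attention has selected the vision module
workspace = broadcast_from(vision, [vision, language, motor])
```

Each receiving module ends up with its own re-encoding of the same workspace content, which is the sense in which the shared space mediates between otherwise independent latent spaces.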

According to Juliani et al. (2022), on the other hand, a system implements a global workspace just in case it contains a central workspace module that:

- (J1) Has the ability to interact with a dynamic set of modules.

- (J2) Has the capacity for selective attention over the modules.

- (J3) Has the capacity to maintain information over time.

- (J4) Has the capacity to manipulate information over time.

Juliani et al. understand (J1) as requiring that the central workspace receive inputs from a set of modules that potentially changes over time, remarking that 'while the neural anatomy of the brain is largely fixed, what counts as a 'module' within the GWT is dynamically determined based on population activity across multiple brain regions at a given time' (957). Their (J2) is intended to capture both attentional processes affecting which representations make their way into the central workspace (ignition) and attentional processes affecting which information in the workspace makes its way back to the modules (broadcast).

While a number of themes emerge from these proposed sets of necessary and sufficient conditions, it is clear that they are not equivalent. (J1), for example, demands that the set of modules connected to the global workspace be dynamic, whereas this requirement is not to be found in the proposals of Butlin et al. or VanRullen and Kanai. And (B2) specifies that the global workspace must have a limited capacity, which is a constraint not imposed by VanRullen and Kanai or Juliani et al. A key philosophical problem in approaching the literature on GWT and artificial systems, then, is to assess the plausibility of each condition proposed as a gloss on GWT.

## 4 What is Essential to Consciousness? Theoretical Choice Points

The existing proposals described in the previous section raise a number of broader questions about how to think about consciousness in AI systems in the context of GWT. We highlight some of these questions in this section before arguing for our considered view in the next.

A first question is how to functionally capture GWT's notion of attention. Butlin et al. (2023) suggest that consciousness requires 'top-down' attention, where the information in the workspace can control what further information enters the workspace, in addition to 'bottom-up' attention, where the strength of the signals from various modules determines which information enters the workspace. In contrast, though VanRullen and Kanai mention this distinction between top-down and bottom-up attention, they do not build it into their conditions on phenomenal consciousness.

A second question concerns the strength of the broadcast condition. Whereas (J1) simply requires that the workspace interacts with some modules, (B3) and (VK4) require that information in the global workspace is broadcast to every single module. Here, one especially relevant sub-question is whether information in the workspace must be sent back to the perception modules, which would enable top-down perceptual processing.

A third question concerns the status of information processing in the global workspace. VanRullen and Kanai do not require the global workspace to engage in processing at all, whereas Juliani et al. do but remain unspecific about the nature of this processing. Butlin et al. do not clarify how much processing is required for state-dependent attention, so it is not clear where they come down on this issue. One might require that the workspace engage in very specific types of processing familiar from human working memory; for example, the presence of a visuospatial sketchpad and phonological loop. Alternatively, one could follow Juliani et al. (2022) in being unspecific about the nature of the required processing, or not require processing to take place within the global workspace at all.

A final question, and one which in our opinion has been insufficiently discussed in the existing literature on GWT and artificial systems, is whether the representations in the global workspace must have specific structural features. Here one especially important idea is that phenomenal consciousness requires that perceptual representations are rich in some way. For example, Rosenthal (2005) suggests that phenomenal experiences are representations that occupy a similarity space, and Tye (1995)'s PANIC theory requires specific types of non-conceptual contents. Similarly, Carruthers (2020) argues that 'phenomenal consciousness is access-conscious nonconceptual content,' because it involves especially fine-grained content. Baars glosses richness in terms of relative detail:

'Try raising your eyebrows, for example. Did you know which muscles to contract? Now compare this knowledge to seeing yourself raising your eyebrows in a mirror. Which task provides more detailed information, doing it or seeing yourself doing it? It is commonly reported that we have little or no conscious access to the details in action control, while perception is full of rich detail' (1997b; 64).

This all raises the possibility that artificial systems could lack phenomenal consciousness if their perceptual systems were insufficiently rich.

The debates just discussed are related to a bigger-picture question about the appropriate level of functional analysis for theorizing about consciousness. Requiring only high-level functional similarity to humans results in a more permissive account of phenomenal consciousness, whereas requiring lower-level functional similarity results in a more restrictive account. We ourselves tend to think about the functional role of consciousness in terms of high-level concepts related to manipulating information. But a competing approach might focus more on the implementational level (compare Cao (2022)). According to this alternative picture, consciousness functionally requires a neural net that implements higher-level functional ideas in a similar way to humans. For example, uptake might require a neural net that displays similar nonlinear ignition patterns to humans. It might also require an unconscious attention system that is trained in a similar way as the human attention system. By contrast, more abstract levels of analysis might allow an uptake system that implements an informational bottleneck in a different way.

Even in the case of humans, a higher-level functional perspective may be more appropriate for thinking about the global workspace. Baars et al. argue that there is no single brain region that implements the global workspace: 'a brain-based GW capacity cannot be limited to only one anatomical hub. Rather, it should be sought in a dynamic and coherent binding capacity - a functional hub - for neural signaling over multiple networks' (2013; 20). Ultimately, they suggest that the global workspace recruits a wide range of brain regions and can in principle engage with arbitrary neurons in the brain. If there is no particular brain region associated with the global workspace, this may make it more likely that the essential functions of the global workspace could be implemented in a wide range of systems, including artificial ones.

## 5 What is Essential to Consciousness? A Theory

How do we assess the competing proposals of section 3 and answer the thorny questions of section 4? In this section, we consider two strategies: first, appealing to the ways in which consciousness is advantageous to systems that possess it; second, employing thought experiments about which changes to conscious systems would turn them into systems that are not conscious. We suggest that each strategy tends to favor more permissive, higher-level functional roles.

## 5.1 The Value of Consciousness

A first way of approaching the problem of distilling a set of necessary and sufficient conditions for consciousness from GWT is to consider what the theory says about the usefulness of consciousness. What purpose does consciousness have in systems that possess it? We might find that consciousness is steered towards solving particular kinds of problems, but that only some and not all of the candidate functional roles sketched in the previous two sections are necessary in order to solve those problems. In general, this approach assumes that phenomenal consciousness is causally efficacious, connecting in lawlike ways to the cognitive and even physical behavior of the organisms that possess it. The methodology is to hold that a property is part of the functional role of consciousness according to GWT just in case that property plays a suitably central role in explaining how having a global workspace helps systems solve certain canonical problems.

What problems does consciousness help conscious systems solve? Baars (1997b) describes a number of information processing tasks that consciousness facilitates on the GWT picture; here, we distill them into four. First, consciousness helps to classify inputs to central cognition by prioritizing more important information over less important information. Second, consciousness enforces the coherence of the information being processed in central cognition. Third, consciousness helps to coordinate cognition by recruiting a range of unconscious tools to solve a single problem. Fourth, consciousness allows for correction of errors in reasoning. We suggest that when we think about consciousness in terms of serving these purposes, we should favor more permissive approaches to its functional role.

1. Classification. Any cognitively sophisticated agent faces the problem of deciding which information is important and relevant to its current situation and which is not. Solving this problem is crucial for reducing the computational complexity of reasoning about how to act. As Dehaene et al. put it:

'The organization of the brain into computationally specialized subsystems is efficient, but this architecture also raises a specific computational problem: the organism as a whole cannot stick to a diversity of probabilistic interpretations; it must act and therefore cut through the multiple possibilities and decide in favor of a single course of action. Integrating all of the available evidence to converge toward a single decision is a computational requirement that, we contend, must be faced by any animal or autonomous AI system' (2021; 3).

By its nature, the information bottleneck induced by the global workspace requires a conscious system to classify some information as more important than other information - it is only the information that makes it from the modules into the global workspace that is broadcast to the entire system and available for further processing.

2. Coherence. A related function of the global workspace is to create a coherent narrative about the world on the basis of potentially inconsistent representations. In the process of selecting information for uptake using attention, the global workspace works to produce consistent interpretations. We saw this demand for coherence in cases of binocular rivalry and other two-channel experiences, as well as in the conscious experience of perceptual illusions like the impossible trident. On the present picture, coherent conscious experience is a tool for facilitating effective agency. If our action-guiding representations of the world change dramatically from moment to moment, we will struggle to form and execute effective plans over time.

3. Coordination. In a cognitive architecture containing parallel processing modules, solving problems may often require coordinating the activity of multiple processors. The global workspace solves this problem by making the outputs of one processor available to the others and by guiding the activity of the processors over time through top-down attention.

4. Correction. Top-down attention also facilitates error detection and correction. Baars (1997b) argues that one function of consciousness is to monitor for errors: 'if we have no conscious access to our own performance, and if some reliable source of information tells us that we are doing quite badly, we tend to accept misleading feedback because we cannot check our own performance directly' (136). This effect is illustrated by an experiment due to Langer and Imber (1979), where a complex coding task was performed by two otherwise identical groups, who were given the labels 'Bosses' and 'Assistants'. Each group performed the same task, but the 'Assistants' group was told they were bad at the task. When doing the task consciously, the two groups performed the same: their conscious access enabled error detection. But once the task was automatic, the 'Bosses' outperformed the 'Assistants,' because misleading feedback about 'Assistant' abilities could not be corrected by conscious error detection.

The ability to monitor for errors is connected again to the nature of agency, because it is also connected to the concept of voluntary control: 'with direct conscious access to our own performance we are much less influenced by misleading labels. These results suggest that three things go together: consciousness of action details, voluntary control over those details, and the ability to monitor and edit the details.' (Baars (1997b); 136)

In general, we believe that focusing on the practical advantages conferred by consciousness favors more permissive accounts of the conditions which must be satisfied for consciousness. For example, it is difficult to see why the usefulness of consciousness would require representations in the global workspace to be broadcast back to every input module. Or consider the process of ignition as it is understood by the global neuronal workspace hypothesis. During ignition, information enters the global workspace through a specific non-linear process of neuronal activation. We don't think that nonlinear neuronal activation of this specific kind is plausibly required for coherent action or efficient information-processing.

In contrast, attention to the practical advantages of having a global workspace does suggest that certain core features of the GWT framework should be in place for conscious experience. For example, it seems to us that classification requires bottom-up attention, while coherence, coordination, and correction require a combination of top-down attention and processing in the workspace. It is worth noting in this connection that, while Butlin et al.'s (B1)-(B4) probably come closest to capturing the capacities identified above, none of the existing proposals we have discussed simultaneously emphasize the need for bottom-up attention, top-down attention, and information processing within the workspace itself.

## 5.2 Thought Experiments

A second way of approaching the question of what it would take for an artificial system to be conscious according to GWT is to consider thought experiments where a candidate condition is removed from a conscious system and we judge whether that system is still conscious.

First, consider the fact that working memory in human beings is limited to just a few items (seven or fewer). It would be easy to design AI systems with much larger working memories. But the very small size of human working memory is not plausibly essential to consciousness. Imagine that a person's working memory expanded from seven to ten thousand items. Intuitively, they would not thereby go from being conscious to being unconscious. Instead, they would be superconscious, conscious of vastly more.

This thought experiment suggests to us that consciousness does not require that the global workspace have bounded size. In other words, it suggests to us that the restriction to systems with a limited capacity workspace in Butlin et al.'s (B2) is unwarranted. In this connection, it is worth pointing out that efficient information sharing, useful multimodal representations, and attention could all be implemented in a system with an arbitrarily large global workspace.

Second, consider whether having a dynamic set of modules is plausibly a necessary condition on phenomenal consciousness according to GWT. Start with a conscious system with a global workspace dynamically connected at different times to different subsets of a set S of modules. Now imagine that we change the system so that it is always connected to each module in S, but some of the connections at any given time are not active. Could simply creating the extra connections turn a conscious system into one that lacks consciousness? Would the light of conscious inner life suddenly extinguish when the last connection is installed? We think not. This thought experiment suggests to us that consciousness does not require a dynamic set of modules, as in Juliani et al.'s (J1). 8

Third, consider whether consciousness requires that information represented in the global workspace must be broadcast recurrently back to every module connected to it. Start with a conscious system with a global workspace which both receives information from and broadcasts information to each of its input modules. Now imagine that we add a module to the system which has a one-way connection to the global workspace: it can send information to the global workspace, but not receive information back. Would the addition of this module turn a conscious system into an unconscious one? Intuitively, we think the answer to this question is no. This thought experiment suggests that conditions like Butlin et al.'s (B3) and VanRullen and Kanai's (VK4) are unnecessarily strong.

Fourth, consider the richness of perceptual experience. Baars et al. (2013) observe that not only visual but also conceptual representations can be conscious. They describe these conceptual conscious experiences as 'fringe' or 'feeling of knowing' consciousness: 'Feelings of knowing are not imprecise in their underlying contents. They are simply subjectively vaguer than the sight of a coffee cup. . . lacking clear figure-ground contrast, differentiated details and sharp temporal boundaries. However, concepts, judgments, and semantic knowledge can be complex, precise, and accurate.' (Baars et al. (2013); 15). Now imagine a human being who lost their visual consciousness, but retained their fringe representations. Would this person no longer be conscious? We think they would still be conscious (compare Chalmers (2023)). This suggests that rich perceptual inputs should not be construed as necessary for phenomenal consciousness according to GWT.

Finally, consider the plausibility of conditions tied to specific deep-learning architectural assumptions, like VanRullen and Kanai's (VK2) and (VK4). (VK2) requires that the global workspace have been trained in a specific way, while (VK4) requires that information stored in the modules and global workspace be representable as an activation vector, and also that movement of information from a module to the workspace be interpretable as a copy function on activation vectors. We think it is easy to imagine a conscious system which does not meet these conditions. Consider, for example, a normal adult human. It is not clear to us that the global workspace of a human brain has been trained to perform unsupervised neural translation between different latent spaces because it is not clear to us that the global workspace of a human brain has been trained at all. 9 Similarly, to copy the activation vector from one space to another in a literal sense, there must be a neuron in the latter space corresponding functionally to each neuron in the former space. If the global neuronal workspace hypothesis is true and the human global workspace is realized by a specific network of long-range neurons, there is little reason to believe that this network is isomorphic in the required way to the brain regions from which it receives inputs. To put it another way, it seems to us more plausible that when information moves from a brain module into the global neuronal workspace, what happens is that a pattern of activation with content C in the module causally produces a distinct pattern of activation with content C in the global neuronal workspace, where this shared content need not be explained by positing structurally identical patterns of neural activity. This suggests that VanRullen and Kanai's (VK2) and (VK4) are too specific to be plausible. 10

8 It might be protested that Juliani et al.'s conception of a dynamic set of modules is one on which the modules that exist at one time might not exist at another later time, not one on which the same modules always exist but might or might not be connected to the global workspace at any given time. But similar considerations suggest that consciousness does not require the set of modules to be dynamic in this second sense, either. Take a conscious system with a global workspace connected at time t to a set S of modules. If we constrain the system so that no modules can be added or removed from S, will it cease to be conscious? Intuitively, no - at most, constraining the system in this way would limit the range of conscious experiences it could have.

We have rejected a number of candidate conditions on consciousness on the basis of thought experiments. It is worth noting at this point that we do not think the same method can be used to challenge other more foundational aspects of the GWT picture. For example, if we imagine a conscious system consisting of a set of parallel processing modules and a global workspace and then remove the parallel processing modules, we have no strong intuition that the resulting system will be conscious. 11

Combining considerations from the usefulness of consciousness with the results of our thought experiments, here is our considered view of the necessary and sufficient conditions for conscious experience according to GWT. A system is phenomenally conscious just in case:

- (1) It contains a set of parallel processing modules.

- (2) These modules generate representations that compete for entry through an information bottleneck into a workspace module, where the outcome of this competition is influenced both by the activity of the parallel processing modules (bottom-up attention) and by the state of the workspace module (top-down attention).

- (3) The workspace maintains and manipulates these representations, including in ways that improve synchronic and diachronic coherence.

- (4) The workspace broadcasts the resulting representations back to sufficiently many of the system's modules. 12

9 If you think that the plasticity involved in normal neurological development might count as a kind of training in the relevant sense, consider an intrinsic duplicate of yourself which is produced instantaneously out of inanimate components by a sophisticated machine. The brain of such a duplicate would have never undergone training of any kind, but it would be conscious.

10 Since VanRullen and Kanai's explicit goal is to articulate a set of necessary and sufficient conditions for implementing a global workspace in artificial systems , the fact that their account makes implausible predictions about biological systems might be thought not to constitute a serious objection. Here we would like to make two points. First, given that one of the main motivations for GWT is that it understands consciousness in terms of the high-level functional organization of information processing, it would be odd for the conditions for implementing a global workspace to differ between biological and nonbiological systems. Second, we see no reason why all artificial systems implementing a global workspace would need to share the deep-learning architectural assumptions built into (VK1)-(VK4). Consider, for example, a neuron-by-neuron simulation of a human brain. If biological brains constitute a problem for (VK1)-(VK4), then simulated brains do, as well.

11 We have weaker intuitions about whether starting with a conscious system and then removing its capacity for top-down attention or its workspace's ability to promote coherent representations would result in a system that is not conscious. There might be room for reasonable disagreement on these points, and for this reason some might hesitate to follow us in building these features into the conditions for conscious experience. This issue is not central for our dialectical purposes, however, since our claim is that it is possible to imagine a simple language agent architecture that meets even our more demanding conditions.

12 Correspondingly, according to our view a representation is phenomenally conscious just in case it is being manipulated by the workspace of a phenomenally conscious system.
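To make the functional shape of conditions (1)-(4) concrete, the following Python sketch runs one cycle of a toy system. It is an illustration under stated assumptions, not an implementation of any system discussed in the literature: the names `Candidate`, `top_down_bias`, and `cycle` are hypothetical, word overlap stands in for top-down attention, a fixed `capacity` stands in for the bottleneck, de-duplication is only a placeholder for the coherence-promoting processing in (3), and broadcast goes to every module although (4) requires only sufficiently many.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Candidate:
    source: str      # name of the (hypothetical) module that produced the representation
    content: str     # the representation itself, as text
    salience: float  # bottom-up signal supplied by the producing module


def top_down_bias(content: str, workspace: List[str]) -> float:
    """Toy top-down attention: fraction of the candidate's words already held in the workspace."""
    words = set(content.lower().split())
    held = {w for item in workspace for w in item.lower().split()}
    return len(words & held) / len(words) if words else 0.0


def cycle(
    candidates: List[Candidate],
    workspace: List[str],
    inboxes: Dict[str, List[str]],
    capacity: int = 2,
) -> List[str]:
    """Run one competition/broadcast cycle and return the new workspace contents."""
    # (2) Competition through a bottleneck: bottom-up salience plus top-down bias.
    ranked = sorted(
        candidates,
        key=lambda c: c.salience + top_down_bias(c.content, workspace),
        reverse=True,
    )
    admitted = ranked[:capacity]
    # (3) Placeholder for maintenance and manipulation: drop exact duplicates, keep order.
    new_workspace = list(dict.fromkeys(c.content for c in admitted))
    # (4) Broadcast the resulting representations back to the modules.
    for inbox in inboxes.values():
        inbox.extend(new_workspace)
    return new_workspace
```

In this sketch a low-salience candidate can still win entry when it overlaps with what the workspace already holds, which is the sense in which the outcome of the competition depends on the state of the workspace as well as on the modules.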

## 6 Language Agents

In the rest of the paper, we'll apply (1)-(4) to a particular type of AI system: the language agent. Language agents are created by embedding an LLM into a functional architecture with the structure of an agent that acts predictably according to the laws of folk psychology. The LLM performs all of the relevant information processing of the system, while the functional architecture ensures that this information processing produces coherent agentic behavior. Below, we'll focus on the language agents developed by Park et al. (2023) as a case study. But there are numerous other examples of language agents, including AutoGPT 13 , BabyAGI 14 , Voyager 15 , SPRING 16 , and others 17 .

Language agents record and store their beliefs, desires, and plans, as well as their perceptual observations, in natural language. The functional architecture with which a language agent is programmed specifies how these sentences recording beliefs, desires, plans, and observations are fed into the LLM as it considers how the agent will act. Indeed, it is the roles assigned to different stored sentences by the architecture of the language agent which make it the case that they count as the agent's beliefs, desires, and so forth. In this respect, the structure of a language agent mirrors the structure of the human mind according to cognitive theories which posit a distinction between the content of a representation and its functional role. What makes a representation with the content I am eating a desire rather than a belief in the human mind, according to such theories, is the way in which it enters into an agent's broader cognitive economy, and especially action planning. 18

Cognition requires not only moving and storing information, but also processing it. The information-processing role in a language agent is played by its LLM. Different cognitive tasks within the language agent may rely on the LLM in different ways. For example, the route from perception to memory might require the LLM to summarize a text description of the agent's observations to highlight those aspects worth recording, while planning action might require the LLM to reason about what course of action would be rational given the agent's beliefs and desires. While the language agents in which we are most interested rely more or less exclusively on a single LLM for cognitive processing, for our purposes it would not matter if they relied on some combination of different LLMs, or even if some of their cognitive capacities were realized by hand-coded algorithms.

For concreteness, let us return to the language agents developed by Park et al. (2023). Park et al.'s agents live in a text-based simulation called 'Smallville'. They observe and interact with their simulated environment and each other via text descriptions of what they see and how they choose to act. Each agent's perceptual observations are stored in a text file called the memory

13 Project available at https://github.com/Significant-Gravitas/Auto-GPT .

14 Project available at https://github.com/yoheinakajima/babyagi .

15 See Wang et al. (2023).

16 See Wu et al. (2023).

17 Perhaps the most successful recent agentic application of language models is Devin, billed as the 'first AI software engineer' ( https://www.cognition-labs.com/introducing-devin ). Another recent example of a step towards language agents is Ghost in the Minecraft, where LLMs learn to navigate the game Minecraft (Zhu et al. 2023). Mind2Web is a framework for building web agents (Deng et al. 2024). A longer list of existing LLM agents can be found here: https://github.com/e2b-dev/awesome-ai-agents . ChatDev ( https://github. com/OpenBMB/ChatDev ) is another multi-agent environment with some similar features to the Park et al. Generative Agents framework. For further scaffolding techniques that increase the agency of LLMs, see: Tree of Thoughts (Yao et al. 2024), LLM+P (Liu et al. 2023), GPT-engineer, and RecurrentGPT. In a similar vein, Zhang et al. (2024) develop AgentOptimizer, a framework for training language agents without modifying the weights of their underlying language models. For benchmarks measuring the agency of LLMs, with discussion of applications for language agents, see AgentBench (Liu et al. 2023) and API-bank (Li et al. 2023).

18 See for example Fodor (1987).

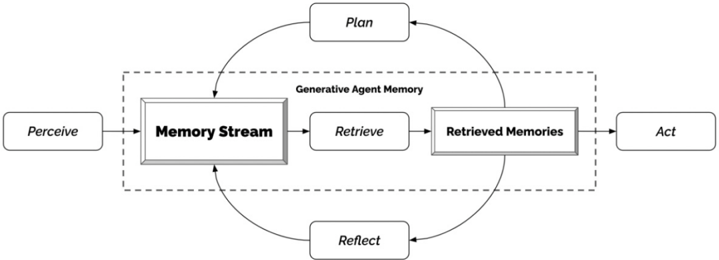

Figure 1: The architecture of Park et al.'s language agents. Reproduced from Park et al. (2023).

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Generative Agent Memory Flow

### Overview

The image is a diagram illustrating the flow of information and processes within a generative agent's memory system. It depicts a cyclical process involving perception, memory storage and retrieval, planning, action, and reflection.

### Components/Axes

* **Nodes:** The diagram consists of rectangular nodes representing different stages or components of the agent's cognitive process. These nodes are:

* Perceive

* Memory Stream

* Retrieve

* Retrieved Memories

* Act

* Plan

* Reflect

* **Flow:** Arrows indicate the direction of information flow between these components.

* **Generative Agent Memory:** A dashed rectangle encloses the "Memory Stream," "Retrieve," and "Retrieved Memories" nodes, labeled as "Generative Agent Memory."

### Detailed Analysis

1. **Perceive:** The process begins with the agent perceiving information from the environment. An arrow leads from "Perceive" to "Memory Stream."

2. **Memory Stream:** The perceived information is stored in the "Memory Stream."

3. **Retrieve:** From the "Memory Stream," information is retrieved via the "Retrieve" process.

4. **Retrieved Memories:** The retrieved information is stored in "Retrieved Memories."

5. **Act:** The agent then acts based on the retrieved memories. An arrow leads from "Retrieved Memories" to "Act."

6. **Plan:** The agent also plans based on the retrieved memories. An arrow leads from "Retrieved Memories" to "Plan." An arrow leads from "Plan" back to "Memory Stream," forming a loop.

7. **Reflect:** The agent reflects on its experiences. An arrow leads from "Memory Stream" to "Reflect." An arrow leads from "Reflect" back to "Memory Stream," forming another loop.

### Key Observations

* The "Generative Agent Memory" component is central to the agent's cognitive process.

* The diagram highlights the cyclical nature of the agent's learning and adaptation, with feedback loops between memory, planning, and reflection.

### Interpretation

The diagram illustrates a simplified model of how a generative agent processes information, learns from its experiences, and plans its actions. The "Generative Agent Memory" acts as the core component, storing and retrieving information that drives the agent's behavior. The loops involving "Plan" and "Reflect" suggest a continuous process of learning and adaptation, where the agent refines its understanding of the world based on its experiences. The model emphasizes the importance of memory in shaping an agent's behavior and decision-making.

</details>

stream along with other beliefs, including those comprising their text backstory, which specifies their long-term goals and relationships with other agents. At the end of each day, every agent in Smallville calls on the LLM (in this case, gpt3.5-turbo) to generate a plan for the next day in light of the contents of their memory stream . These plans flexibly shape how an agent acts the following day.

For our purposes in what follows, it will be important to discuss three aspects of Park et al.'s agents in more detail. First, there is the memory stream. In Park et al.'s agents, the memory stream is implicated in all important cognitive processes. It is where perceptions are recorded, as well as where non-perceptual beliefs, desires, and plans are stored. Each entry in the memory stream comes along with a timestamp, and each is assigned an importance score by the LLM as it is recorded. These importance scores play a key role in determining how each entry shapes the future behavior of the agent: entries with higher importance scores are more likely to influence an agent's other beliefs and plans.

Second, there is the retrieval function. Since an agent's memory stream is unmanageably long, in planning action it is necessary to select an action-relevant subset of entries. This is the role of the retrieval function. Given an input circumstance, the retrieval function produces a list of entries from the memory stream ordered by taking a weighted sum of their importance (as described above), their recency, and their relevance to the input circumstance (this is again calculated by the LLM). In deciding how to act in a given circumstance, each language agent considers only those entries from its memory stream which rank highly enough according to the retrieval function.
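The ranking just described can be sketched in a few lines of Python. This is a minimal illustration rather than Park et al.'s code: the names `MemoryEntry`, `recency`, `retrieve`, and `word_overlap` are hypothetical, the exponential form and rate of the recency decay are assumptions, the equal default weights are assumptions, and a simple word-overlap measure stands in for the relevance score that the source system obtains from the LLM.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class MemoryEntry:
    text: str          # natural-language record (observation, belief, plan, ...)
    timestamp: float   # when the entry was recorded, in hours (unit is an assumption)
    importance: float  # score in [0, 1]; assigned by the LLM in the source system


def recency(entry: MemoryEntry, now: float, decay: float = 0.99) -> float:
    """Exponential decay per elapsed hour; the decay form and rate are assumptions."""
    return decay ** max(0.0, now - entry.timestamp)


def word_overlap(text: str, situation: str) -> float:
    """Toy stand-in for LLM-computed relevance: Jaccard overlap of word sets."""
    a, b = set(text.lower().split()), set(situation.lower().split())
    return len(a & b) / len(a | b) if a | b else 0.0


def retrieve(
    stream: List[MemoryEntry],
    situation: str,
    now: float,
    relevance: Callable[[str, str], float] = word_overlap,
    top_k: int = 3,
    weights: Tuple[float, float, float] = (1.0, 1.0, 1.0),
) -> List[MemoryEntry]:
    """Return the top_k entries ranked by a weighted sum of importance, recency, and relevance."""
    w_imp, w_rec, w_rel = weights

    def score(e: MemoryEntry) -> float:
        return (
            w_imp * e.importance
            + w_rec * recency(e, now)
            + w_rel * relevance(e.text, situation)
        )

    return sorted(stream, key=score, reverse=True)[:top_k]
```

Passing `relevance` as a parameter keeps the sketch independent of any particular model API; in a real agent it would be replaced by whatever relevance measure the architecture uses.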

Third, there is the process of reflection. If the entries in the memory stream were limited to perceptual beliefs and plans, language agents would struggle to function intelligently in light of new information. To solve this problem, Park et al. introduce a function that enables agents to freely draw inferences from their existing beliefs. During reflection, agents draw on the LLM to first pose and then answer a series of questions about how to generalize from their recent experiences. For example, in reflection an agent might pose and then answer questions about their values or the significance of their relationships. The results of reflection are then stored in the memory stream, where they are accessible to the retrieval function.
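The two-step structure of reflection (pose questions, then answer them) can be sketched as follows. This is an illustrative outline only: `reflect` is a hypothetical name, the prompt wording is invented for the example, and `llm` is assumed to be any function from a prompt string to a completion string rather than a particular model API.

```python
from typing import Callable, List


def reflect(
    recent_entries: List[str],
    llm: Callable[[str], str],
    num_questions: int = 2,
) -> List[str]:
    """Pose questions about recent entries, answer each one, and return the answers."""
    context = "\n".join(recent_entries)
    # Step 1: ask the model which questions are worth asking (prompt text is an assumption).
    raw = llm(
        f"Given these statements:\n{context}\n"
        f"List {num_questions} high-level questions we can ask, one per line."
    )
    questions = [q.strip() for q in raw.splitlines() if q.strip()][:num_questions]
    # Step 2: answer each question against the same statements.
    insights = []
    for question in questions:
        answer = llm(
            f"Statements:\n{context}\nQuestion: {question}\nAnswer in one sentence."
        )
        insights.append(answer.strip())
    return insights
```

The caller would then append the returned insights to the memory stream, so that later retrieval can surface them alongside perceptual entries.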

From the perspective of GWT, Park et al.'s agents have a number of suggestive architectural features. For example, the memory stream maintains representations and interfaces with both the perception and action systems. It also manipulates those representations through reflection, planning, and revising plans in light of new information. There is even an information bottleneck of sorts in the form of the retrieval function imposing constraints on how much of the information in the memory stream can be fed to the underlying LLM.

At the same time, Park et al.'s language agents lack some of the features which we have identified as necessary for consciousness according to GWT. For example, since perception, belief, desire, and planning are all handled by the memory stream, it is not clear that the agents contain a series of parallel processing modules. All information processed by a language agent makes its way into the memory stream, so there is no information bottleneck leading into it. From the perspective of GWT, then, it seems to us that Park et al.'s language agents are not plausibly conscious. This conclusion is in keeping with the general climate of skepticism surrounding claims of consciousness in artificial systems.

At the same time, however, we wish to challenge this climate of skepticism by suggesting that Park et al.'s language agents can serve as the basis for language agents which would be phenomenally conscious according to GWT - and indeed that the changes necessary to create such agents are technically trivial in the sense that they could be implemented straightforwardly given existing technology.

## 7 Language Agents Could Easily Have Conscious Experiences

We have seen that, while Park et al.'s language agents contain a centralized cognitive module which stores and manipulates information in the manner of a global workspace, it is less clear that they contain a series of input modules to this workspace whose representations must compete for entry into it. In this section, we describe how the architecture of Park et al.'s language agents could be changed to more closely mirror the structure of a conscious system according to GWT. None of the changes we consider will affect how the LLM underlying a language agent is trained or structured. Rather, all of the changes pertain to how that LLM is scaffolded to produce an agent. In this way, once we have an LLM that can process information, consciousness will depend on how that information processing is exploited in systematic, lawlike ways by hard-coded rules.

To begin, note that Park et al.'s language agents in fact have representations of several kinds (beliefs, desires, plans) and engage in a range of forms of cognitive processing including assigning importance scores to memory items, reflection, assigning relevance scores to memory items during retrieval, plan formation and revision, and practical reasoning leading to action. Park et al. make the architectural choice to store all these kinds of representations in the same cognitive workspace, the memory stream, and to have all cognitive processing take as input a series of items from the memory stream. But language agents could also be built with many of the same features arranged into a different architecture.

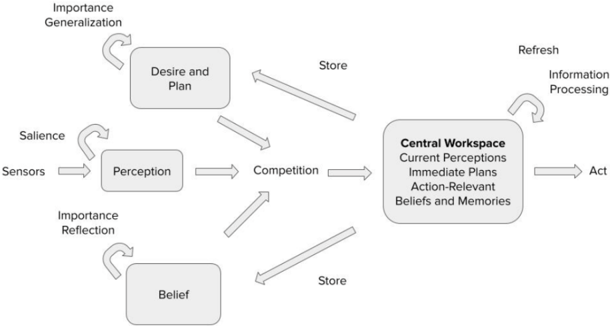

Imagine, for example, that we modify Park et al.'s architecture in the following ways. First, instead of a single memory stream containing perceptual inputs, other beliefs, desires, and plans, we have a central workspace connected to three further cognitive input modules: a perception module, a belief module, and a desire-and-plan module. Each of these three modules stores representations of the appropriate type. The central workspace can store representations of any type.

Second, imagine that the three input modules perform the following information processing tasks in parallel. The perception module receives observations from the environment and assigns them salience ratings corresponding to how relevant it judges them to be to the agent's likely future cognition. The belief module assigns each belief an importance score and engages in reflection-driven inference in the same way as Park et al.'s agents. The desire-and-plan module assigns each desire an importance score and engages in a desire-based analog of reflection: generalizing new desires from the agent's existing list of desires.

Third, imagine that the central workspace plays a number of important information processing roles. It receives perceptual inputs that have been flagged as high salience and decides whether to send them to the belief module for storage. It recursively forms and adds detail to plans on the basis of the agent's beliefs and desires, working to ensure that the results are coherent. And it chooses how the agent should act on the basis of its beliefs, desires, and plans.

Fourth, imagine that information in the architecture flows along the following paths: in belief formation, from the perception module to the central workspace and then to the belief module; in planning, from the belief and desire-and-plan modules to the central workspace and then back to the desire-and-plan module; in action, from the belief and desire-and-plan modules to the central workspace and then back to the belief and desire-and-plan modules (because actions must be recorded in memory and plans must be revised in light of actions).
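The four modifications just described can be summarized in a minimal Python sketch. It is offered only as an illustration under stated assumptions: the names `Representation`, `Module`, and `FLOWS`, and the choice to tag each representation with a `kind` string, are hypothetical and do not come from Park et al.'s implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Representation:
    kind: str           # "perception", "belief", "desire", or "plan"
    text: str           # natural-language content
    score: float = 0.0  # salience (perceptions) or importance (beliefs, desires)


@dataclass
class Module:
    name: str
    accepts: frozenset  # kinds this module may store; an empty set means any kind
    store: list = field(default_factory=list)

    def add(self, rep: Representation) -> None:
        # Input modules store only representations of the appropriate type.
        if self.accepts and rep.kind not in self.accepts:
            raise ValueError(f"{self.name} cannot store a {rep.kind}")
        self.store.append(rep)


perception = Module("perception", frozenset({"perception"}))
belief = Module("belief", frozenset({"belief"}))
desire_plan = Module("desire_and_plan", frozenset({"desire", "plan"}))
workspace = Module("central_workspace", frozenset())  # stores any type

# Information-flow paths from the text: (sources, hub, destinations).
FLOWS = {
    "belief_formation": (["perception"], "central_workspace", ["belief"]),
    "planning": (["belief", "desire_and_plan"], "central_workspace",
                 ["desire_and_plan"]),
    "action": (["belief", "desire_and_plan"], "central_workspace",
               ["belief", "desire_and_plan"]),
}
```

On this sketch, every path in `FLOWS` passes through the central workspace, which is the structural feature that distinguishes the modified architecture from a single shared memory stream.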

The architecture just described would be no more technically challenging to implement than Park et al.'s architecture. But it would be much closer to the architecture of a phenomenally conscious system according to GWT. In particular, it would contain a series of parallel processing modules and a central workspace that maintains and manipulates representations, including in ways that promote coherence, and broadcasts them back to these modules. The only condition arguably not satisfied would be the requirement that representations from the input modules compete through a bottleneck for entry into the central workspace in a way sensitive to both bottom-up and top-down attention.

It is this idea of competition for entry into the global workspace which requires us to depart most significantly from Park et al.'s architecture. But even here, we can take inspiration from Park et al.'s definition of the retrieval function. Recall that the retrieval function generates an ordered list of items from the memory stream which are important, recent, and relevant to an agent's current situation. We can turn the idea of an ordered list of this kind into something which looks more like competition for entry into the global workspace as follows. Imagine that we define a competition function taking as input an ordered triple consisting of the contents of the perception module, the contents of the belief module, and the contents of the desire-and-plan module and yielding as output a set of representations of limited size (for concreteness, suppose we choose 50). Imagine that this function's output always includes the most salient items from the perception module (for concreteness, suppose there are ten of these), and that the remaining 40 spots are filled by ranking the contents of the belief and desire-and-plan modules according to a weighted combination of their importance, relevance to the agent's current situation, and recency, as in Park et al.'s retrieval function. Finally, suppose that this competition function is called periodically, and only the representations which it selects have a chance to enter the central workspace.
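One way to make the competition function concrete is the following Python sketch. It assumes, hypothetically, that each representation carries numeric `importance`, `relevance`, and `recency` fields (with `importance` doing duty for salience in the perception module) and that the weighted combination is a simple weighted sum. The names `Item` and `competition` and the default weights are illustrative choices, not part of Park et al.'s retrieval function.

```python
from dataclasses import dataclass


@dataclass
class Item:
    text: str
    importance: float = 0.0  # bottom-up: salience (perceptions) or importance
    relevance: float = 0.0   # top-down: relevance to the current situation
    recency: float = 0.0


def competition(perceptions, beliefs, desires_plans,
                capacity=50, n_perceptual=10, weights=(1.0, 1.0, 1.0)):
    """Select at most `capacity` candidates for entry into the workspace."""
    # The most salient perceptual items are always included.
    top_perceptions = sorted(
        perceptions, key=lambda i: i.importance, reverse=True
    )[:n_perceptual]

    w_imp, w_rel, w_rec = weights

    def score(item):
        # Assumed form of the weighted combination: a weighted sum.
        return (w_imp * item.importance
                + w_rel * item.relevance
                + w_rec * item.recency)

    # The remaining spots go to the best-ranked beliefs, desires, and plans.
    others = sorted(
        list(beliefs) + list(desires_plans), key=score, reverse=True
    )[:capacity - n_perceptual]
    return top_perceptions + others
```

With the default values the function returns the ten most salient perceptions followed by the forty top-ranked items from the other two modules, matching the numbers chosen in the text for concreteness.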

Implementing a competition function of this kind brings our architecture closer to a canonical GWT system in two ways. First, it introduces an information bottleneck at the point at which the parallel processing modules feed into the global workspace. Second, it gives functional roles to the GWT ideas of bottom-up attention (in the form of the importance or salience the module assigns to a given piece of information) and of top-down attention (in the form of the relevance assigned to different pieces of information given the agent's current situation).

The language agent architecture we have just described, with its central workspace, three input modules, and competition function, satisfies Section 4's four conditions for being a conscious system. It contains a series of parallel processing modules: the perception, belief, and desire-and-plan modules. These modules generate representations that compete for entry through an information bottleneck (the competition function) into a central workspace module via a process influenced by both bottom-up and top-down attention. The central workspace module maintains and manipulates the representations in it in ways that promote coherence and broadcasts them back to the belief and desire-and-plan modules. Accordingly, we believe the language agent architecture we have just described is the architecture of a phenomenally conscious artificial system if GWT is correct.

Figure 2: The architecture of a conscious language agent.

## 8 Potential Modifications

So far, we have argued for a particular view of the necessary and sufficient conditions GWT imposes on phenomenally conscious cognitive systems and described the architecture of a type of language agent which satisfies these conditions. This completes our core case for the near-term possibility of phenomenally conscious artificial systems if GWT is true.

We are aware, however, that not everyone will agree with us on our characterization of the conditions GWT imposes on phenomenally conscious artificial systems. In this section, we introduce and respond to some further conditions which might be thought relevant in this context.

First, according to both GWT and the global neuronal workspace hypothesis, information in the global workspace does not stay there indefinitely. Instead, it must compete with incoming information from the modules, and, if it loses this competition, it is replaced by that incoming information. To put it slightly differently, one might worry that information in the global workspace needs to be actively maintained there. This feature of GWT is not represented in the architecture we described in the previous section. 19

It is not difficult to modify the architecture described above to address this concern. Imagine that each time the competition function is called, the set of representations it generates serves as input to a second function, which we might call the refresh function. The refresh function takes as input the current contents of the central workspace and the output of the competition function. It works by assigning each of the current contents of the central workspace a score based on its importance, relevance to the agent's current situation, and recency (that is, a score of the same type as those assigned by the competition function) and then discarding any current workspace contents whose score falls below some threshold (for example, items which score lower than the median of the outputs of the competition function).
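A minimal sketch of such a refresh function, in Python, might look as follows. It rests on several assumptions that the text does not fix: representations are taken to carry numeric `importance`, `relevance`, and `recency` fields, the score is an unweighted sum of the three, and the threshold is the median score of the competition function's outputs (the example threshold mentioned above). The names `Item`, `score`, and `refresh` are hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Item:
    text: str
    importance: float = 0.0
    relevance: float = 0.0
    recency: float = 0.0


def score(item):
    # Assumed scoring rule: an unweighted sum of the three factors.
    return item.importance + item.relevance + item.recency


def refresh(workspace, competition_output):
    """Return the workspace contents that survive the refresh."""
    if not competition_output:
        # With no incoming candidates there is no threshold to apply.
        return list(workspace)
    threshold = median([score(i) for i in competition_output])
    # Discard any current content whose score falls below the threshold.
    return [i for i in workspace if score(i) >= threshold]
```

The sketch covers only the discarding step. How the surviving contents are then combined with the incoming candidates is left open here.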

19 Thanks to Raphaël Millière for discussion on this point.

Second, Butlin et al. (2023) require that the global workspace have a limited capacity. We have argued above that thought experiments suggest that this condition is not necessary for a system to be conscious, but it would be straightforward to implement it in our imagined architecture. We could simply hold that the refresh function ranks the current contents of the workspace and the outputs of the competition function and selects a specific number of top-scoring pieces of information to form the new contents of the central workspace. For example, we could hold that the 50 items provided by the competition function are ranked alongside the current contents of the central workspace, and the top 50 items on this new ranking become the new contents of the central workspace. Setting things up in this way guarantees that the central workspace never contains more than 50 pieces of information.
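The capacity-limited variant can be sketched in a few lines of Python. The function name `refresh_with_capacity` and the `score` parameter are hypothetical; `score` stands in for whatever rule combines importance, relevance, and recency.

```python
def refresh_with_capacity(workspace, competition_output, score, capacity=50):
    """Rank old and incoming items together and keep the top `capacity`."""
    pooled = list(workspace) + list(competition_output)
    # Highest-scoring items first; everything past the cutoff is dropped.
    return sorted(pooled, key=score, reverse=True)[:capacity]
```

Because the result is truncated to `capacity` items on every call, the workspace can never hold more than that number of representations. The sketch does not remove duplicates, so an implementation in which the same item can appear both in the workspace and among the incoming candidates would need an additional deduplication step.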

Third, some may worry that the fact that Park et al.'s language agents function entirely in natural language, with no recognizable perceptual apparatus, prevents them from being phenomenally conscious. Here it is worth emphasizing that while existing language agents are reliant on text-based observation spaces, the technology already exists to implement language agents with richer perceptual modalities. The rise of multimodal language models like GPT-4, which can interpret image as well as text inputs, means that it would not require significant further technological innovation to create language agents with visual perceptual modalities. We can imagine this working in at least two different ways. First, images as perceptual inputs could be translated into text by the perception module using existing captioning technology, and the rest of the agent's cognition could proceed in natural language. Second, the multimodality of the underlying language model could be used more pervasively throughout the agent's cognition, so that images could enter the global workspace and be stored in and retrieved from the belief module.