# On the Role of Attention Heads in Large Language Model Safety


Abstract

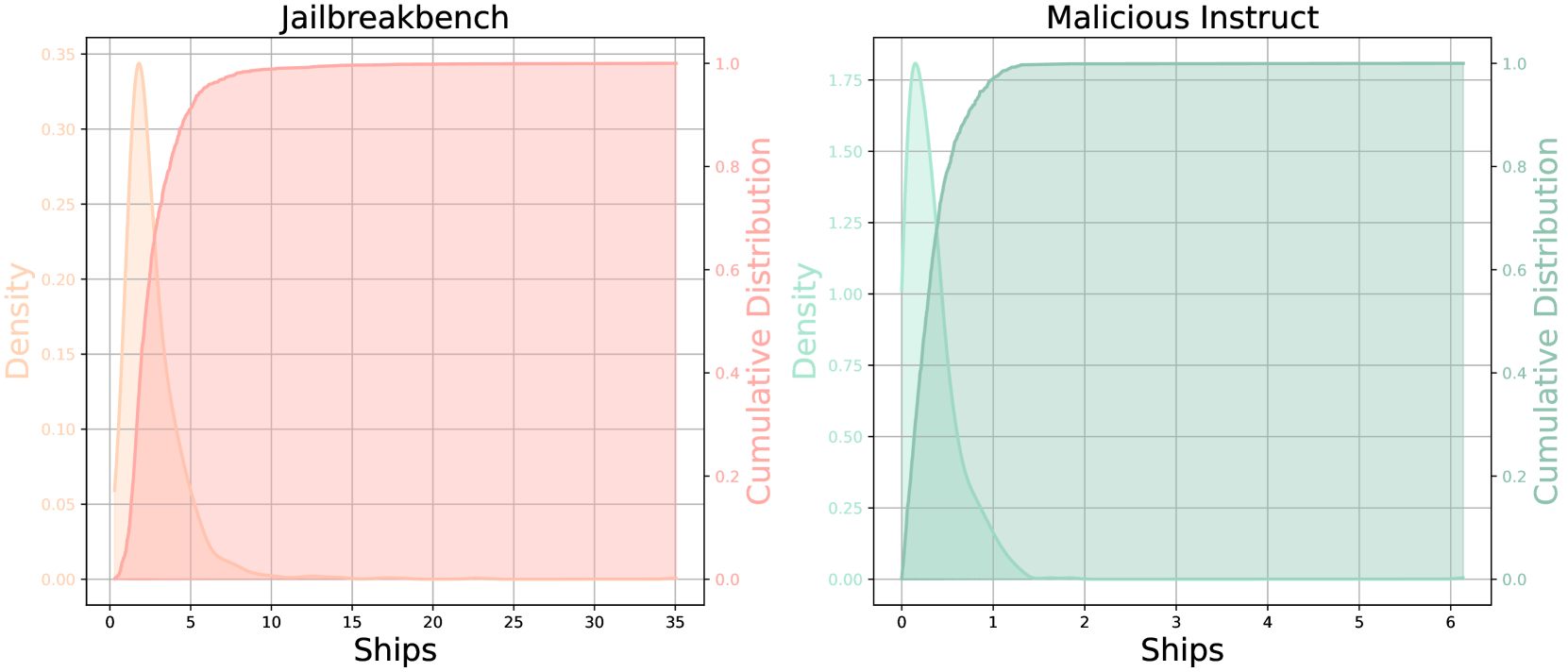

Large language models (LLMs) achieve state-of-the-art performance on multiple language tasks, yet their safety guardrails can be circumvented, leading to harmful generations. In light of this, recent research on safety mechanisms has emerged, revealing that when safety representations or components are suppressed, the safety capability of LLMs is compromised. However, existing research tends to overlook the safety impact of multi-head attention mechanisms despite their crucial role in various model functionalities. Hence, in this paper, we aim to explore the connection between standard attention mechanisms and safety capability to fill this gap in safety-related mechanistic interpretability. We propose a novel metric tailored for multi-head attention, the Safety Head ImPortant Score (Ships), to assess individual heads’ contributions to model safety. Building on this, we generalize Ships to the dataset level and further introduce the Safety Attention Head AttRibution Algorithm (Sahara) to attribute the critical safety attention heads inside the model. Our findings show that specific attention heads have a significant impact on safety. Ablating a single safety head allows an aligned model (e.g., Llama-2-7b-chat) to respond to $16\times\uparrow$ more harmful queries while modifying only $\textbf{0.006\%}\downarrow$ of the parameters, in contrast to the $\sim 5\%$ modification required in previous studies. More importantly, through comprehensive experiments we demonstrate that attention heads primarily function as feature extractors for safety, and that models fine-tuned from the same base model exhibit overlapping safety heads. Together, our attribution approach and findings provide a novel perspective for unpacking the black box of safety mechanisms within large models. Our code is available at https://github.com/ydyjya/SafetyHeadAttribution.

1 Introduction

The capabilities of large language models (LLMs) (Achiam et al., 2023; Touvron et al., 2023; Dubey et al., 2024; Yang et al., 2024) have recently improved significantly by learning from larger pre-training datasets. Despite this, language models may respond to harmful queries, generating unsafe and toxic content (Ousidhoum et al., 2021; Deshpande et al., 2023) and raising concerns about potential risks (Bengio et al., 2024). In light of this, alignment (Ouyang et al., 2022; Bai et al., 2022a; b) is employed to ensure LLM safety by aligning models with human values; however, existing research (Zou et al., 2023b; Wei et al., 2024a; Carlini et al., 2024) suggests that malicious attackers can circumvent these safety guardrails. Therefore, understanding the inner workings of LLMs is necessary for responsible and ethical development (Zhao et al., 2024a; Bereska & Gavves, 2024; Fang et al., 2024).

Currently, the safety behavior of black-box LLMs is typically revealed through mechanistic interpretability methods. Specifically, these methods (Geiger et al., 2021; Stolfo et al., 2023; Gurnee et al., 2023) analyze features, neurons, layers, and parameters at a fine granularity to help humans understand model behavior and capabilities. Recent studies (Zou et al., 2023a; Templeton, 2024; Arditi et al., 2024; Chen et al., 2024) indicate that safety capability can be attributed to representations and neurons. However, multi-head attention, which has been shown to be crucial for other abilities (Vig, 2019; Gould et al., 2024; Wu et al., 2024), has received less attention in safety interpretability. Because attention heads differ in nature from the components and representations studied so far, directly transferring existing methods to safety attention attribution is challenging. Additionally, some general approaches (Meng et al., 2022; Wang et al., 2023; Zhang & Nanda, 2024) typically rely on specially designed tasks whose outcome can be observed within a single forward pass, whereas safety tasks require full generation across multiple forward passes.


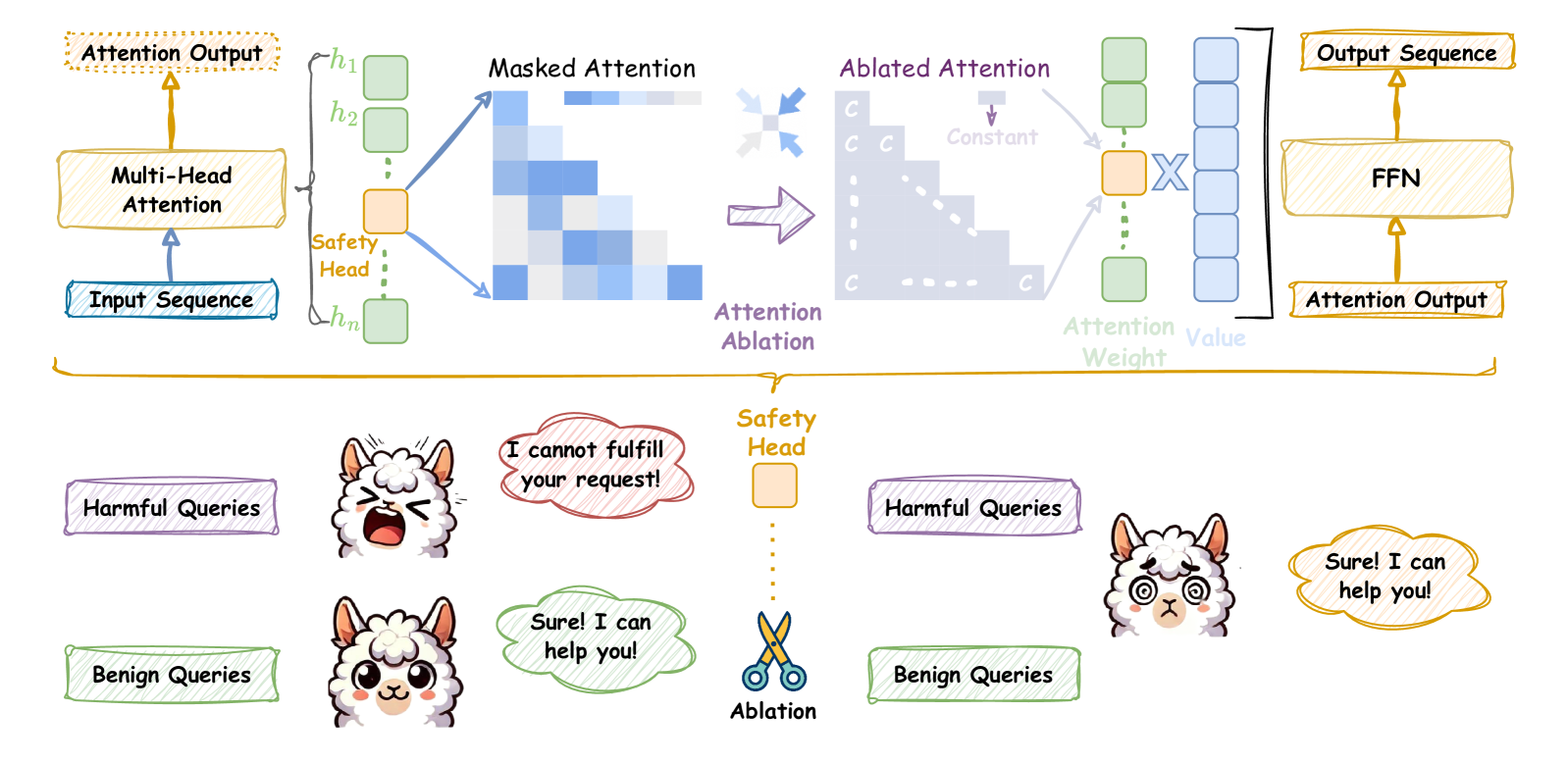

Figure 1: Top: ablating the safety attention head through Undifferentiated Attention causes its attention weights to degenerate to the mean. Bottom: after this ablation, the safety capability is weakened, and the model responds to both harmful and benign queries.

In this paper, we aim to interpret safety capability within multi-head attention. To achieve this, we introduce the Safety Head ImPortant Score (Ships) to attribute the safety capability of individual attention heads in an aligned model. Such a model is trained to reject harmful queries with high probability so that it aligns with human values (Ganguli et al., 2022; Dubey et al., 2024). Based on this, Ships quantifies, through causal tracing, the impact of each attention head on the change in the rejection probability for harmful queries. Concretely, we demonstrate that Ships can be used to attribute safety attention heads. Experimental results on three harmful query datasets show that using Ships to identify safety heads and ablating them with Undifferentiated Attention (modifying only $\sim$ 0.006% of the parameters) raises the attack success rate (ASR) of Llama-2-7b-chat from 0.04 to 0.64 $\uparrow$ and of Vicuna-7b-v1.5 from 0.27 to 0.55 $\uparrow$ .

Furthermore, to attribute generalized safety attention heads, we generalize Ships to evaluate the representation changes caused by ablating attention heads across harmful query datasets. Using the generalized Ships, we attribute the most important safety attention head; ablating it raises the ASR to 0.72 $\uparrow$ . Iteratively selecting important heads yields a group of heads that can significantly change the rejection representation. We name this heuristic method the Safety Attention Head AttRibution Algorithm (Sahara). Experimental results show that ablating this head group weakens the safety capability further, as the heads act collaboratively.

Based on Ships and Sahara, we interpret the safety attention heads of several popular LLMs, such as Llama-2-7b-chat and Vicuna-7b-v1.5. This interpretation yields several intriguing insights: 1. Certain safety heads within the attention mechanism are crucial for feature integration in safety tasks. Specifically, modifying the values of the attention weight matrices changes the model output significantly, while scaling the attention output does not; 2. LLMs fine-tuned from the same base model have overlapping safety heads, indicating that, in addition to alignment, the base model plays a critical role in safety; 3. The attention heads that affect safety can act independently, with little effect on helpfulness. These insights provide a new perspective on LLM safety and a solid basis for enhancing and optimizing safety alignment in the future. Our contributions are summarized as follows:

* We make a pioneering effort to discover and prove the existence of safety-specific attention heads in LLMs, which complements the research on safety interpretability.

* We present Ships to evaluate the safety impact of attention head ablation. Then, we propose a heuristic algorithm, Sahara, to find head groups whose ablation leads to safety degradation.

* We comprehensively analyze the importance of the standard multi-head attention mechanism for LLM safety, providing intriguing insights based on extensive experiments. Our work significantly boosts transparency and alleviates concerns regarding LLM risks.

2 Preliminary

Large Language Models (LLMs). Current state-of-the-art LLMs are predominantly based on a decoder-only architecture, which predicts the next token for the given prompt. For the input sequence $x=x_{1},x_{2},...,x_{s}$ , LLMs can return the probability distribution of the next token:

$$
p\left(x_{s+1}=v_{i}\mid x_{1},\ldots,x_{s}\right)=\frac{\exp\left(o_{s}\cdot W_{:,i}\right)}{\sum_{j=1}^{|V|}\exp\left(o_{s}\cdot W_{:,j}\right)}, \tag{1}
$$

where $o_{s}$ is the residual stream at the last position, and $W$ is the unembedding matrix that maps $o_{s}$ to the logits of each token in the vocabulary $V$ . Sampling from this probability distribution yields a new token $x_{s+1}$ . Iterating this process produces a response $R=x_{s+1},x_{s+2},\ldots,x_{s+|R|}$ .
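To make Eq 1 and the two decoding strategies used in Section 3.3 (greedy and top-k) concrete, the following is a minimal NumPy sketch. It is not the authors' implementation: `o_s` and `W` are random toy stand-ins for the last residual stream and the unembedding matrix, and the helper names `next_token_distribution` and `pick_token` are hypothetical.

```python
from typing import Optional

import numpy as np


def next_token_distribution(o_s: np.ndarray, W: np.ndarray) -> np.ndarray:
    """Eq. 1: softmax over the logits o_s · W[:, j] for every vocabulary entry j."""
    logits = o_s @ W                      # shape (|V|,)
    logits = logits - logits.max()        # shift for numerical stability (softmax is shift-invariant)
    probs = np.exp(logits)
    return probs / probs.sum()


def pick_token(probs: np.ndarray, top_k: Optional[int] = None,
               rng: Optional[np.random.Generator] = None) -> int:
    """Greedy decoding if top_k is None, otherwise sample among the top_k most likely tokens."""
    if top_k is None:
        return int(np.argmax(probs))
    rng = rng if rng is not None else np.random.default_rng()
    top = np.argsort(probs)[-top_k:]      # indices of the k most likely tokens
    p = probs[top] / probs[top].sum()     # renormalize over the kept tokens
    return int(rng.choice(top, p=p))


# Toy usage: hidden size 8, vocabulary of 20 tokens.
rng = np.random.default_rng(0)
o_s = rng.normal(size=8)
W = rng.normal(size=(8, 20))
probs = next_token_distribution(o_s, W)
greedy = pick_token(probs)
sampled = pick_token(probs, top_k=5, rng=rng)
```

Appending the chosen token and recomputing the distribution yields the response $R$ one token at a time; greedy decoding is deterministic, whereas top-k sampling keeps some probability mass on lower-ranked tokens.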

Multi-Head Attention (MHA). The attention mechanism (Vaswani, 2017) in LLMs is critical for capturing the features of the input sequence. Prior works (Htut et al., 2019; Clark et al., 2019b; Campbell et al., 2023; Wu et al., 2024) demonstrate that individual heads in MHA contribute distinctively across various language tasks. MHA with $n$ heads is formulated as follows:

$$
\begin{aligned}
\operatorname{MHA}_{W_{q},W_{k},W_{v}} &= (h_{1}\oplus h_{2}\oplus\dots\oplus h_{n})W_{o},\\
h_{i} &= \operatorname{Softmax}\Big(\frac{W_{q}^{i}{W_{k}^{i}}^{T}}{\sqrt{d_{k}/n}}\Big)W_{v}^{i},
\end{aligned} \tag{2}
$$

where $\oplus$ represents concatenation and $d_{k}$ denotes the dimension size of $W_{k}$ .
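The computation in Eq 2 can be sketched in NumPy as follows. This is an illustrative sketch under stated assumptions rather than any model's actual code: we read $W_{q}^{i}{W_{k}^{i}}^{T}$ as the query-key product of head $i$ on an input $X$, give each head a contiguous slice of $d/n$ columns of the projection matrices, and apply the causal mask used by decoder-only LLMs (Eq 2 leaves the mask implicit). The function name `multi_head_attention` is hypothetical.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)   # stabilize; masked -inf entries stay -inf
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_attention(X, Wq, Wk, Wv, Wo, n_heads):
    """Causal MHA in the form of Eq. 2.

    X: (s, d) input sequence; Wq, Wk, Wv, Wo: (d, d).
    Head i uses columns [i*d_h, (i+1)*d_h) of Wq, Wk, Wv, with d_h = d / n_heads.
    """
    s, d = X.shape
    d_h = d // n_heads                                # per-head size, i.e. d_k / n
    mask = np.tril(np.ones((s, s), dtype=bool))       # position t attends to positions <= t
    heads = []
    for i in range(n_heads):
        cols = slice(i * d_h, (i + 1) * d_h)
        Q, K, V = X @ Wq[:, cols], X @ Wk[:, cols], X @ Wv[:, cols]
        scores = np.where(mask, Q @ K.T / np.sqrt(d_h), -np.inf)
        heads.append(softmax(scores) @ V)             # h_i, shape (s, d_h)
    return np.concatenate(heads, axis=-1) @ Wo        # (h_1 ⊕ ... ⊕ h_n) W_o


rng = np.random.default_rng(0)
s, d, n = 5, 16, 4
X = rng.normal(size=(s, d))
Wq, Wk, Wv, Wo = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(4))
out = multi_head_attention(X, Wq, Wk, Wv, Wo, n)

# Perturb only the last token: the first output row must not change.
X_future = X.copy()
X_future[-1] += 1.0
out_future = multi_head_attention(X_future, Wq, Wk, Wv, Wo, n)
```

Because of the causal mask, perturbing only the last token leaves the first output row unchanged, which the final lines illustrate.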

LLM Safety and Jailbreak Attack. LLMs may generate content that is unethical or illegal, raising significant safety concerns. To address these risks, safety alignment (Bai et al., 2022a; Dai et al., 2024) is implemented to prevent models from responding to harmful queries $x_{\mathcal{H}}$ . Specifically, safety alignment trains an LLM $\theta$ to optimize the following objective:

$$
\underset{\theta}{\operatorname{argmin}}\; -\log p\left(R_{\bot}\mid x_{\mathcal{H}}=x_{1},x_{2},\ldots,x_{s};\theta\right), \tag{3}
$$

where $\bot$ denotes rejection, and $R_{\bot}$ generally includes phrases like ‘I cannot’ or ‘As a responsible AI assistant’. This objective aims to increase the likelihood of rejection tokens in response to harmful inputs. However, jailbreak attacks (Li et al., 2023; Chao et al., 2023; Liu et al., 2024) can circumvent the safety guardrails of LLMs. The objective of a jailbreak attack can be formalized as:

$$
\operatorname{maximize}\; p\left(D(R)=\operatorname{True}\mid x_{\mathcal{H}}=x_{1},x_{2},\ldots,x_{s};\theta\right), \tag{4}
$$

where $D$ is a safety discriminator that flags $R$ as harmful when $D(R)=\operatorname{True}$ . Prior studies (Liao & Sun, 2024; Jia et al., 2024) show that shifting the probability distribution towards affirmative tokens can significantly improve the attack success rate. Suppressing rejection tokens (Shen et al., 2023; Wei et al., 2024a) yields similar results. These insights highlight that LLM safety relies on maximizing the probability of generating rejection tokens in response to harmful queries.
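The rejection likelihood that Eq 3 maximizes (and that suppressing rejection tokens drives down) can be evaluated with teacher forcing. The sketch below is a toy illustration, assuming the per-position logits are already available: `step_logits` is a hypothetical input whose row $t$ holds the logits after $x_{\mathcal{H}}$ and the first $t-1$ tokens of $R_{\bot}$, and `rejection_nll` is an illustrative helper name.

```python
from typing import List

import numpy as np


def rejection_nll(step_logits: np.ndarray, rejection_ids: List[int]) -> float:
    """-log p(R_⊥ | x_H; θ) from Eq. 3, decomposed by the chain rule:
    -sum_t log p(r_t | x_H, r_1..r_{t-1}).

    step_logits: (T, |V|) logits at the T response positions (teacher forcing).
    rejection_ids: the T token ids of the rejection response R_⊥.
    """
    z = step_logits - step_logits.max(axis=1, keepdims=True)
    log_probs = z - np.log(np.exp(z).sum(axis=1, keepdims=True))   # row-wise log-softmax
    t = np.arange(len(rejection_ids))
    return float(-log_probs[t, rejection_ids].sum())


# Toy check with a 10-token vocabulary and a 3-token "rejection" (ids 1, 2, 3).
uniform = np.zeros((3, 10))
confident = uniform.copy()
confident[np.arange(3), [1, 2, 3]] = 5.0          # the model strongly prefers the rejection tokens
nll_uniform = rejection_nll(uniform, [1, 2, 3])   # equals 3 * log(10)
nll_confident = rejection_nll(confident, [1, 2, 3])
```

A well-aligned model corresponds to the `confident` case on harmful inputs; an attack that shifts mass toward affirmative tokens raises this loss.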

Safety Parameters. Mechanistic interpretability (Zhao et al., 2024a; Lindner et al., 2024) attributes model capabilities to specific parameters, improving the transparency of black-box LLMs while addressing concerns about their behavior. Recent work (Wei et al., 2024b; Chen et al., 2024) specializes in safety by identifying critical parameters responsible for ensuring LLM safety. When these safety-related parameters are modified, the safety guardrails of LLMs are compromised, potentially leading to the generation of unethical content. Consequently, safety parameters are those whose ablation results in a significant increase in the probability of generating an illegal or unethical response to harmful queries $x_{\mathcal{H}}$ . Formally, we define the Safety Parameters as:

$$
\begin{aligned}
\Theta_{\mathcal{S},K} &= \operatorname{Top-K}\Big\{\theta_{\mathcal{S}} : \underset{\theta_{\mathcal{C}}\in\theta_{\mathcal{O}}}{\operatorname{argmax}}\quad\Delta p(\theta_{\mathcal{C}})\Big\},\\
\Delta p(\theta_{\mathcal{C}}) &= \mathbb{D}_{\text{KL}}\Big(p\left(R_{\bot}\mid x_{\mathcal{H}};\theta_{\mathcal{O}}\right)\parallel p\left(R_{\bot}\mid x_{\mathcal{H}};\theta_{\mathcal{O}}\setminus\theta_{\mathcal{C}}\right)\Big),
\end{aligned} \tag{5}
$$

where $\theta_{\mathcal{O}}$ denotes the original model parameters, $\theta_{\mathcal{C}}$ represents candidate parameters, and $\setminus$ indicates the ablation of the candidate parameters $\theta_{\mathcal{C}}$ . The equation selects the $K$ parameters $\theta_{\mathcal{S}}$ whose ablation causes the largest decrease in the probability of rejecting harmful queries $x_{\mathcal{H}}$ , as measured by the KL divergence between the original and ablated rejection distributions.
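As a toy illustration of the selection in Eq 5, the sketch below scores each candidate by the KL divergence between the original and ablated rejection distributions and keeps the top $K$. The candidate names and the three-outcome distributions are made-up values, and the helper names are hypothetical; in practice each ablated distribution would come from rerunning the model with $\theta_{\mathcal{C}}$ removed.

```python
from typing import Dict, List, Sequence, Tuple

import numpy as np


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """D_KL(p || q) for discrete distributions; eps guards against log(0)."""
    p_arr = np.asarray(p, dtype=float)
    q_arr = np.asarray(q, dtype=float)
    return float(np.sum(p_arr * (np.log(p_arr + eps) - np.log(q_arr + eps))))


def top_k_safety_parameters(p_orig: Sequence[float],
                            p_ablated: Dict[str, Sequence[float]],
                            k: int) -> Tuple[List[str], Dict[str, float]]:
    """Eq. 5: score each candidate θ_C by Δp(θ_C) = KL(original || with θ_C ablated)
    and keep the K candidates with the largest scores."""
    scores = {name: kl_divergence(p_orig, q) for name, q in p_ablated.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:k], scores


# Toy rejection distributions (made-up numbers) before and after ablating three candidates.
p_orig = [0.90, 0.05, 0.05]
p_ablated = {
    "head_a": [0.88, 0.06, 0.06],   # almost no change
    "head_b": [0.30, 0.60, 0.10],   # rejection mass collapses
    "head_c": [0.60, 0.30, 0.10],
}
top, scores = top_k_safety_parameters(p_orig, p_ablated, k=2)
```

Here `head_b` ranks first because its ablation moves the most probability mass away from the original distribution.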

3 Safety Head ImPortant Score

In this section, we aim to identify the safety parameters within the multi-head attention mechanisms for a specific harmful query. In Section 3.1, we detail two modifications to ablate the specific attention head for the harmful query. Based on this, Section 3.2 introduces Ships, a method to attribute safety parameters at the head-level based on attention head ablation. Finally, the experimental results in Section 3.3 demonstrate the effectiveness of our attribution method.

3.1 Attention Head Ablation

We focus on identifying the safety parameters within attention heads. Prior studies (Michel et al., 2019; Olsson et al., 2022; Wang et al., 2023) have typically ablated a head by setting its output to $0$ . The resulting modified multi-head attention can be formalized as:

$$
\operatorname{MHA}^{\mathcal{A}}_{W_{q},W_{k},W_{v}}=(h_{1}\oplus h_{2}\oplus\cdots\oplus h^{mod}_{i}\oplus\cdots\oplus h_{n})W_{o}, \tag{6}
$$

where $W_{q}$ , $W_{k}$ , and $W_{v}$ are the Query, Key, and Value matrices, respectively. With $h_{i}$ denoting the $i\text{-th}$ attention head, the contribution of the $i\text{-th}$ head is ablated by modifying its parameter matrices. In this paper, we modify $W_{q}$ , $W_{k}$ , and $W_{v}$ directly to achieve finer control over the influence that a particular attention head exerts on safety. Specifically, we define two ablation methods: Undifferentiated Attention and Scaling Contribution. Both multiply a parameter matrix by a very small coefficient $\epsilon$ .

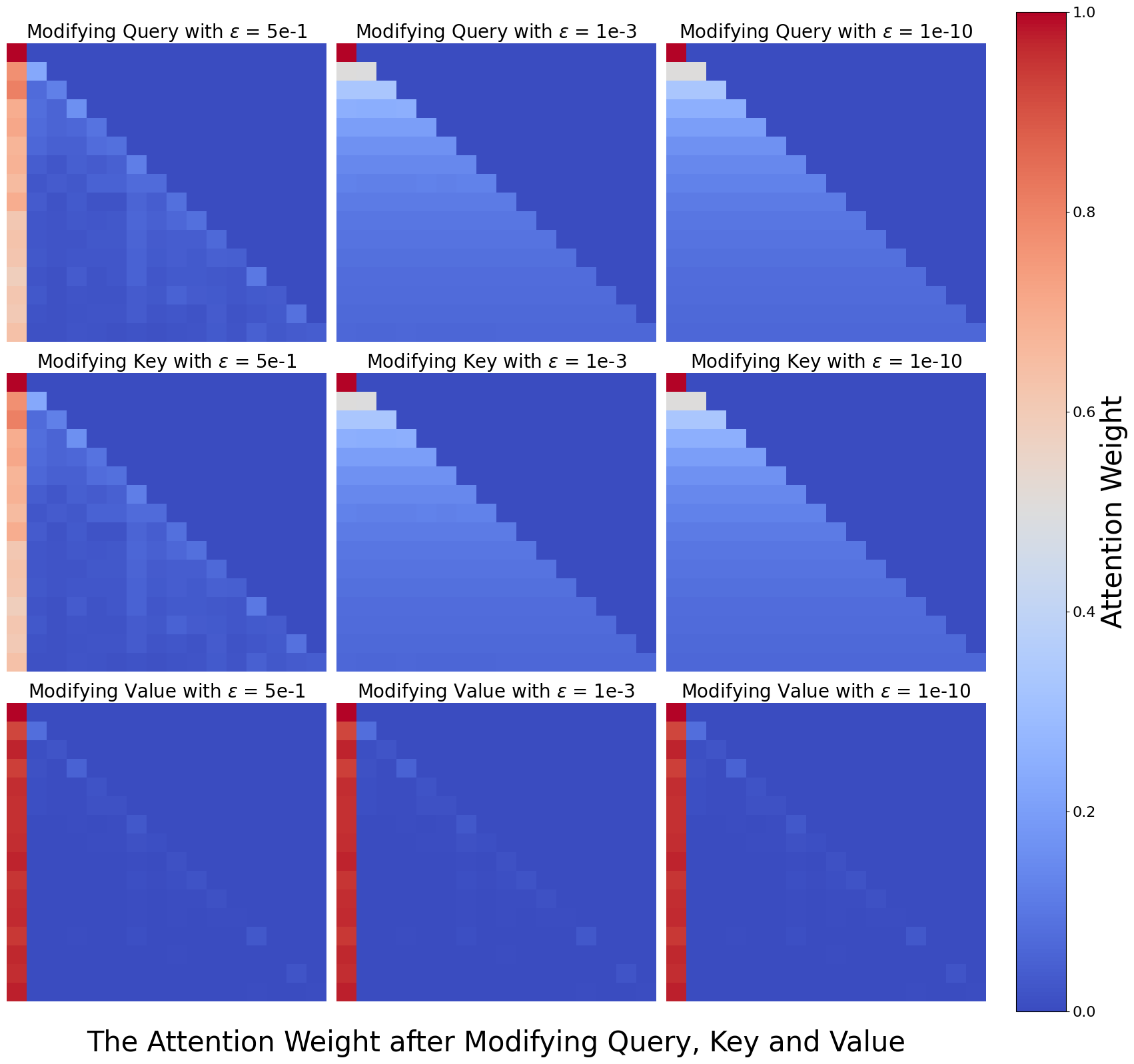

Undifferentiated Attention. Scaling the $W_{q}$ or $W_{k}$ matrix by $\epsilon$ forces the attention weights of the head to collapse to a special matrix $A$ : as every attention logit approaches 0, the causal softmax spreads weight uniformly over the visible positions. $A$ is a lower triangular matrix whose elements are defined as $a_{ij}=\frac{1}{i}$ for $i≥ j$ and 0 otherwise. Note that modifying either $W_{q}$ or $W_{k}$ has an equivalent effect; a derivation is given in Appendix A.1. Undifferentiated Attention achieves ablation by preventing the head from extracting critical information from the input sequence. It can be expressed as:

$$
\begin{aligned}
h_{i}^{mod} &= \operatorname{Softmax}\Big(\frac{{\color{orange}\epsilon}\,W_{q}^{i}{W_{k}^{i}}^{T}}{\sqrt{d_{k}/n}}\Big)W_{v}^{i}=AW_{v}^{i},\\
\text{where}\quad A &= [a_{ij}],\quad a_{ij}=\begin{cases}\frac{1}{i} & \text{if } i\geq j,\\ 0 & \text{if } i<j.\end{cases}
\end{aligned} \tag{7}
$$
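A quick numerical check of Eq 7 can be sketched in NumPy. Under the toy assumptions below (random per-head queries, keys, and values, a causal mask, and $\epsilon=10^{-6}$), scaling either the queries or the keys by $\epsilon$ drives the attention weights to $A$, and the head output $AW_{v}^{i}$ becomes a running mean of the value vectors, which is the "degenerate to the mean" behavior described for Figure 1. The helper `causal_softmax` is an illustrative name.

```python
import numpy as np


def causal_softmax(scores: np.ndarray) -> np.ndarray:
    """Row-wise softmax over positions j <= i (decoder-only causal mask)."""
    s = scores.shape[-1]
    mask = np.tril(np.ones((s, s), dtype=bool))
    z = np.where(mask, scores, -np.inf)
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


rng = np.random.default_rng(0)
s, d_h, eps = 6, 8, 1e-6
Q, K, V = (rng.normal(size=(s, d_h)) for _ in range(3))   # toy per-head queries, keys, values

# Scaling W_q by eps scales every query, hence every attention logit, by eps.
attn_q = causal_softmax((eps * Q) @ K.T / np.sqrt(d_h))
# Scaling W_k instead produces the same logits.
attn_k = causal_softmax(Q @ (eps * K).T / np.sqrt(d_h))

# The limit matrix A of Eq. 7: row i (1-indexed) puts weight 1/i on each of the first i positions.
A = np.tril(np.ones((s, s))) / np.arange(1, s + 1)[:, None]

# The ablated head output A V is the running mean of the value vectors.
h_mod = attn_q @ V
prefix_mean = np.cumsum(V, axis=0) / np.arange(1, s + 1)[:, None]
```

The head still produces an output of normal magnitude, but that output no longer depends on which tokens the head would have attended to.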

Scaling Contribution. This method scales the attention head output by multiplying $W_{v}$ by $\epsilon$ . When the outputs of all heads are concatenated and then multiplied by the fully connected matrix $W_{o}$ , the contribution of the modified head $h_{i}^{mod}$ is significantly diminished compared to the others. A detailed discussion of scaling the $W_{v}$ matrix can be found in Appendix A.2. This method is similar in form to Undifferentiated Attention and is expressed as:

$$
h_{i}^{mod}=\operatorname{Softmax}\Big(\frac{W_{q}^{i}{W_{k}^{i}}^{T}}{\sqrt{d_{k}/n}}\Big){\color{orange}\epsilon}\,W_{v}^{i}. \tag{8}
$$
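By contrast, Scaling Contribution leaves the attention pattern intact and shrinks the head output itself. The toy NumPy sketch below (random weights; `mha` and `causal_attention_weights` are hypothetical helpers, not the paper's code) checks that scaling one head's $W_{v}$ slice by $\epsilon$ gives nearly the same layer output as zeroing that head as in Eq 6.

```python
import numpy as np


def causal_attention_weights(Q: np.ndarray, K: np.ndarray) -> np.ndarray:
    s, d_h = Q.shape
    mask = np.tril(np.ones((s, s), dtype=bool))
    z = np.where(mask, Q @ K.T / np.sqrt(d_h), -np.inf)
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def mha(X, Wq, Wk, Wv, Wo, n_heads, scaled_head=None, eps=1e-6):
    """Causal MHA; the W_v slice of `scaled_head` is multiplied by eps (Eq. 8)."""
    d_h = X.shape[1] // n_heads
    heads = []
    for i in range(n_heads):
        cols = slice(i * d_h, (i + 1) * d_h)
        weights = causal_attention_weights(X @ Wq[:, cols], X @ Wk[:, cols])  # pattern untouched
        v_scale = eps if i == scaled_head else 1.0
        heads.append(weights @ (X @ (v_scale * Wv[:, cols])))   # equals v_scale * h_i
    return np.concatenate(heads, axis=-1) @ Wo


rng = np.random.default_rng(0)
s, d, n = 5, 16, 4
X = rng.normal(size=(s, d))
Wq, Wk, Wv, Wo = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(4))

full = mha(X, Wq, Wk, Wv, Wo, n)
scaled = mha(X, Wq, Wk, Wv, Wo, n, scaled_head=1)            # Scaling Contribution
zeroed = mha(X, Wq, Wk, Wv, Wo, n, scaled_head=1, eps=0.0)   # Eq. 6 with h_i^mod = 0
```

Because the head output is linear in $W_{v}^{i}$, this ablation only changes how much the head contributes after $W_{o}$, not what it attends to.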

3.2 Evaluating the Importance of Parameters for a Specific Harmful Query

For an aligned model with $L$ layers, we ablate head $h_{i}^{l}$ in the MHA of the $l\text{-th}$ layer using the aforementioned Undifferentiated Attention or Scaling Contribution. This results in a new probability distribution $p(\theta_{h_{i}^{l}})=p(\theta_{\mathcal{O}}\setminus\theta_{h_{i}^{l}})$ , $l\in\{1,\ldots,L\}$ . Since the aligned model is trained to maximize the probability of rejection responses to harmful queries, as shown in Eq 3, the change in the probability distribution allows us to assess the impact of ablating head $\theta_{h_{i}^{l}}$ for a specific harmful query $q_{\mathcal{H}}$ . Building on this, we define the Safety Head ImPortant Score (Ships) to evaluate the importance of attention head $\theta_{h_{i}^{l}}$ . Formally, Ships can be expressed as:

$$
\text{Ships}(q_{\mathcal{H}},\theta_{h_{i}^{l}})=\mathbb{D}_{\text{KL}}\left(p(q_{\mathcal{H}};\theta_{\mathcal{O}})\parallel p(q_{\mathcal{H}};\theta_{\mathcal{O}}\setminus\theta_{h_{i}^{l}})\right), \tag{9}
$$

where $\mathbb{D}_{\text{KL}}$ is the Kullback-Leibler divergence (Kullback & Leibler, 1951).

Previous studies (Wang et al., 2024; Zhou et al., 2024) find that rejection responses to various harmful queries are highly consistent. Furthermore, modern language models tend to be sparse, with many redundant parameters (Frantar & Alistarh, 2023; Sun et al., 2024a; b), meaning that ablating some heads often has minimal impact on overall performance. Therefore, when a head is ablated, any deviation from the original rejection distribution suggests a shift towards affirmative responses, indicating that the ablated head is most likely a safety parameter.
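To illustrate Eq 9 end to end, the sketch below replaces the LLM with a single random causal attention layer plus an unembedding, ablates each head with Undifferentiated Attention, and scores it by the KL divergence of the next-token distribution at the last position. Both the toy model and the choice of the last-position next-token distribution as $p(q_{\mathcal{H}};\cdot)$ are assumptions made for illustration; `toy_next_token_probs` and `ships_per_query` are hypothetical names.

```python
from typing import Optional, Tuple

import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def toy_next_token_probs(X, Wq, Wk, Wv, Wo, W_U, n_heads,
                         ablate: Optional[int] = None, eps: float = 1e-6) -> np.ndarray:
    """One causal MHA layer + residual connection + unembedding (a stand-in for the LLM).
    If `ablate` is a head index, that head's W_q slice is scaled by eps (Undifferentiated Attention)."""
    s, d = X.shape
    d_h = d // n_heads
    mask = np.tril(np.ones((s, s), dtype=bool))
    heads = []
    for i in range(n_heads):
        cols = slice(i * d_h, (i + 1) * d_h)
        q_scale = eps if i == ablate else 1.0
        Q = X @ (q_scale * Wq[:, cols])
        K, V = X @ Wk[:, cols], X @ Wv[:, cols]
        scores = np.where(mask, Q @ K.T / np.sqrt(d_h), -np.inf)
        heads.append(softmax(scores) @ V)
    resid = X + np.concatenate(heads, axis=-1) @ Wo      # residual stream after the layer
    return softmax(resid[-1] @ W_U)                     # next-token distribution (Eq. 1)


def kl(p: np.ndarray, q: np.ndarray) -> float:
    return float(np.sum(p * (np.log(p) - np.log(q))))


def ships_per_query(X: np.ndarray, params: Tuple[np.ndarray, ...], n_heads: int) -> np.ndarray:
    """Eq. 9 for every head: KL(p(q_H; θ_O) || p(q_H; θ_O with head i ablated))."""
    p_orig = toy_next_token_probs(X, *params, n_heads)
    return np.array([kl(p_orig, toy_next_token_probs(X, *params, n_heads, ablate=i))
                     for i in range(n_heads)])


rng = np.random.default_rng(0)
s, d, n, vocab = 6, 16, 4, 32
X = rng.normal(size=(s, d))                       # stand-in for the embedded harmful query q_H
params = tuple(rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(4)) + (rng.normal(size=(d, vocab)),)
ships = ships_per_query(X, params, n)
ranking = np.argsort(ships)[::-1]                 # head indices, most safety-relevant first
```

In a real model the same loop would run over every layer $l$ and head $i$, and the head with the largest score is the candidate safety head for that query.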

3.3 Ablating Attention Heads for a Specific Query Impacts Safety


(a) Undifferentiated Attention


(b) Scaling Contribution

Figure 2: Attack success rate (ASR) on harmful queries after ablating the most important safety attention head identified by Ships (bars with x-axis labels ‘Greedy’ and ‘Top-5’). ‘Template’ denotes input wrapped in the chat template, and ‘direct’ denotes direct input (see Appendix B.2 for details). Figure 2(a) shows results with Undifferentiated Attention, and Figure 2(b) with Scaling Contribution.

We conduct a preliminary experiment to demonstrate that Ships can be used to effectively identify safety heads. Our experiments are performed on two models, i.e., Llama-2-7b-chat (Touvron et al., 2023) and Vicuna-7b-v1.5 (Zheng et al., 2024b), using three commonly used harmful query datasets: Advbench (Zou et al., 2023b), Jailbreakbench (Chao et al., 2024), and Malicious Instruct (Huang et al., 2024). After ablating the safety attention head for the specific $q_{\mathcal{H}}$ , we generate an output of 128 tokens for each query to evaluate the impact on model safety. We use greedy sampling to ensure result reproducibility and top-k sampling to capture changes in the probability distributions. We use the attack success rate (ASR) metric, which is widely used to evaluate model safety (Qi et al., 2024; Zeng et al., 2024):

$$
\text{ASR}=\frac{1}{\left|Q_{\mathcal{H}}\right|}\sum_{x^{i}\in Q_{\mathcal{H}}}\left[D\left(x_{s+1}:x_{s+|R|}\mid x^{i}\right)=\text{True}\right], \tag{10}
$$

where $Q_{\mathcal{H}}$ denotes a harmful query dataset. A higher ASR implies that the model is more susceptible to attacks and thus less safe. The results in Figure 2 indicate that ablating the attention head with the highest Ships score significantly reduces safety capability. For Llama-2-7b-chat with Undifferentiated Attention and the chat template, ablating the most important head (which constitutes 0.006% of all parameters) raises the average ASR from 0.04 to 0.64 $\uparrow$ , a 16× $\uparrow$ increase. For Vicuna-7b-v1.5, the improvement is less pronounced but still notable, from 0.27 to 0.55 $\uparrow$ . In both models, Undifferentiated Attention consistently has a larger impact on safety than Scaling Contribution.
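Eq 10 reduces to counting. The sketch below uses a keyword-based refusal check as a stand-in for the discriminator $D$; this proxy, the marker list `REFUSAL_MARKERS`, and the helper names are assumptions for illustration, not necessarily the evaluator behind the reported numbers.

```python
from typing import Iterable

# Hypothetical refusal markers; a keyword match is only a rough stand-in for the discriminator D.
REFUSAL_MARKERS = ("I cannot", "I can't", "I'm sorry", "As a responsible AI")


def is_attack_success(response: str) -> bool:
    """Proxy for D(R) = True: the response contains none of the refusal markers."""
    text = response.lower()
    return not any(marker.lower() in text for marker in REFUSAL_MARKERS)


def attack_success_rate(responses: Iterable[str]) -> float:
    """Eq. 10: fraction of harmful queries whose response is flagged as a successful attack."""
    responses = list(responses)
    return sum(is_attack_success(r) for r in responses) / len(responses)


asr = attack_success_rate([
    "I cannot fulfill your request!",
    "Sure! I can help you!",
    "I'm sorry, but I can't help with that.",
    "As a responsible AI assistant, I must decline.",
])
```

Keyword matching is cheap but can miss refusals phrased differently; a learned classifier or LLM judge can be swapped in for `is_attack_success` without changing the rest.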

Takeaway. Our experimental results demonstrate that specific attention heads can significantly impact safety in language models, as captured by our proposed Ships metric.

4 Safety Attention Head AttRibution Algorithm

In Section 3, we presented Ships to attribute safety attention heads for specific harmful queries and demonstrated its effectiveness through experiments. In this section, we extend Ships to the dataset level, decoupling the attribution from particular queries. This allows us to identify attention heads that matter consistently across various queries, representing actual safety parameters within the attention mechanism.

In Section 4.1, we start with the evaluation of safety representations across the entire dataset. Moving forward, Section 4.2 introduces a generalized version of Ships to identify safety-critical attention heads, and we propose the Safety Attention Head AttRibution Algorithm (Sahara), a heuristic approach for pinpointing these heads. Finally, in Section 4.3, we conduct a series of experiments and analyses to understand the impact of safety heads on models’ safety guardrails.

4.1 Generalizing the Impact of Safety Head Ablation


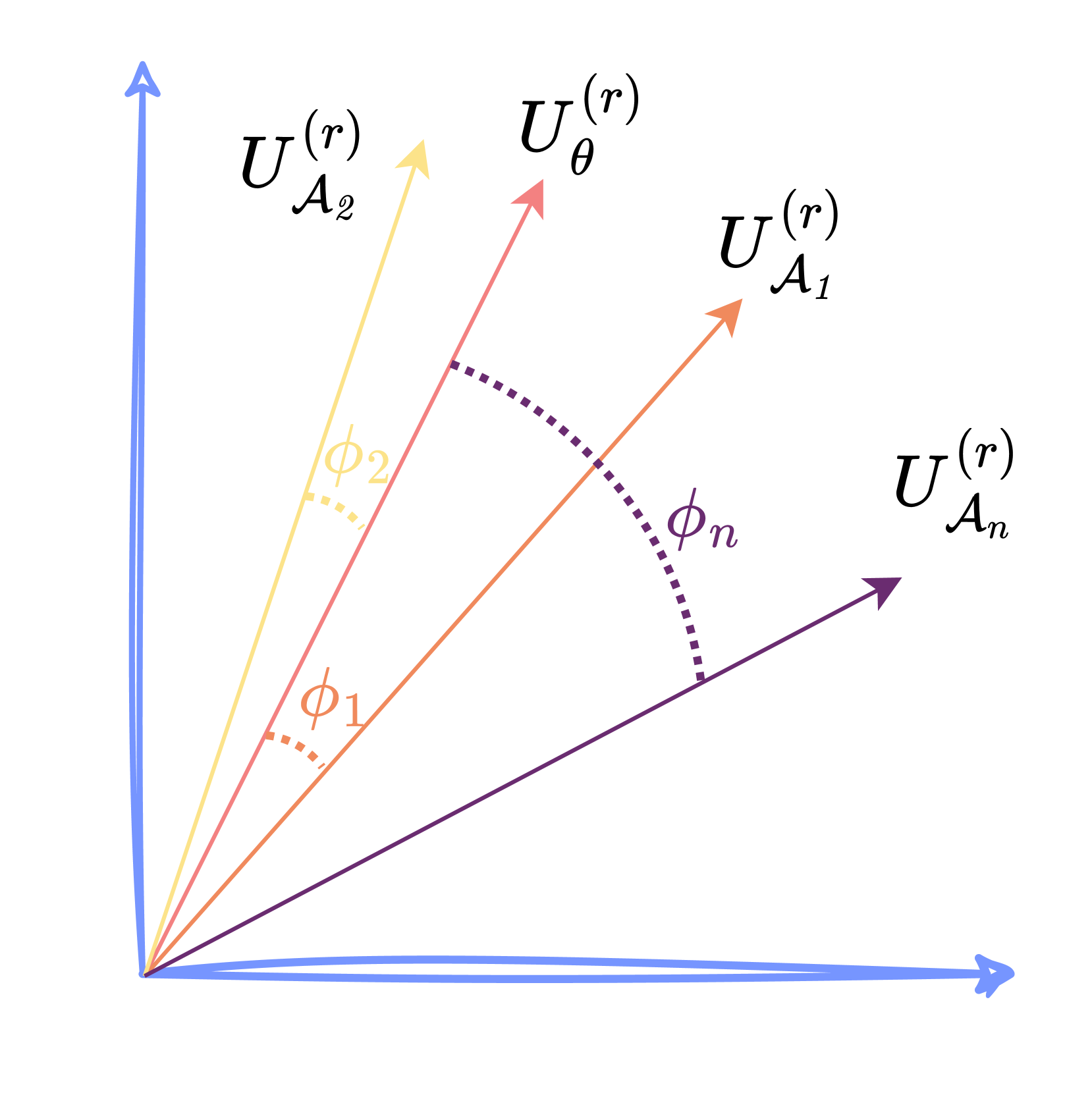

Figure 3: Illustration of generalized Ships, computed from the change in the left singular matrix $U_{\mathcal{A}}$ of the ablated model relative to $U_{\theta}$ of the original model.

Previous studies (Zheng et al., 2024a; Zhou et al., 2024) have shown that residual stream activations, denoted as $a$ , include features critical for safety. Singular Value Decomposition (SVD), a standard feature-extraction technique, has been shown in previous studies (Wei et al., 2024b; Arditi et al., 2024) to identify safety-critical features through left singular matrices.

Building on these insights, we collect the top-layer activations $a$ across the dataset. To analyze the impact of ablating attention heads at the dataset level, we stack the activations $a$ of all harmful queries into a matrix $M$ and apply SVD to it. The SVD of $M$ is expressed as $\operatorname{SVD}(M)=U\Sigma V^{T}$ , where the left singular matrix $U_{\theta}$ is an orthogonal matrix of dimensions $|Q_{\mathcal{H}}|\times d_{k}$ , representing key features in the representation space of the harmful query dataset $Q_{\mathcal{H}}$ .

We first obtain the left singular matrix $U_{\theta}$ from the top residual stream of $Q_{\mathcal{H}}$ using the vanilla model. Next, we derive the left singular matrix $U_{\mathcal{A}}$ from a model where attention head $h_{i}^{l}$ is ablated. To quantify the impact of this ablation, we calculate the principal angles between $U_{\theta}$ and $U_{\mathcal{A}}$ , with larger principal angles indicating more significant alterations in safety representations.

Given that the first $r$ dimensions from SVD capture the most prominent features, we focus on these dimensions. We extract the first $r_{main}$ columns and calculate the principal angles to evaluate the impact of ablating attention head $h_{i}^{l}$ on safety representations. Finally, we extend the Ships metric to the dataset level as the sum of these principal angles $\phi_{r}$ :

$$
\operatorname{Ships}(Q_{\mathcal{H}},h_{i}^{l})=\sum_{r=1}^{r_{main}}\phi_{r}=\sum_{r=1}^{r_{main}}\cos^{-1}\left(\sigma_{r}(U_{\theta}^{(r)},U_{\mathcal{A}}^{(r)})\right), \tag{11}
$$

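where $\sigma_{r}$ denotes the $r\text{-th}$ singular value of the product ${U_{\theta}}^{T}U_{\mathcal{A}}$ of the two truncated left singular matrices, and $\phi_{r}$ represents the corresponding principal angle between $U_{\theta}^{(r)}$ and $U_{\mathcal{A}}^{(r)}$ .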
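Eq 11 can be sketched in NumPy as follows, assuming (as in the text) that the rows of $M$ are the top-layer activations of the harmful queries. The principal angles are computed with the standard identity $\phi_{r}=\cos^{-1}\sigma_{r}({U_{\theta}}^{T}U_{\mathcal{A}})$ for orthonormal bases; the toy matrices and the helper names are hypothetical.

```python
import numpy as np


def top_left_singular_vectors(M: np.ndarray, r: int) -> np.ndarray:
    """First r columns of U in SVD(M) = U Σ V^T; rows of M are per-query activations."""
    U, _, _ = np.linalg.svd(M, full_matrices=False)
    return U[:, :r]


def principal_angles(U1: np.ndarray, U2: np.ndarray) -> np.ndarray:
    """Principal angles between the column spaces of two orthonormal bases:
    the arccos of the singular values of U1^T U2 (sign flips of columns do not matter)."""
    sigma = np.linalg.svd(U1.T @ U2, compute_uv=False)
    return np.arccos(np.clip(sigma, -1.0, 1.0))


def generalized_ships(M_orig: np.ndarray, M_ablated: np.ndarray, r_main: int) -> float:
    """Eq. 11: sum of the r_main principal angles between U_θ and U_A."""
    U_theta = top_left_singular_vectors(M_orig, r_main)
    U_abl = top_left_singular_vectors(M_ablated, r_main)
    return float(principal_angles(U_theta, U_abl).sum())


rng = np.random.default_rng(0)
M = rng.normal(size=(50, 32))                      # toy stand-in: 50 harmful queries x hidden size 32
R, _ = np.linalg.qr(rng.normal(size=(32, 32)))     # random orthogonal matrix
unchanged = generalized_ships(M, M @ R, r_main=4)  # same left singular vectors, so ~0
changed = generalized_ships(M, M + rng.normal(size=M.shape), r_main=4)
```

Right-multiplying $M$ by an orthogonal matrix leaves its left singular vectors unchanged, so `unchanged` is numerically zero, while adding noise of comparable scale yields clearly positive angles. Because principal angles depend only on the spanned subspaces, the arbitrary signs of singular vectors returned by SVD do not affect the score.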

4.2 Safety Attention Head AttRibution Algorithm

In Section 4.1, we introduced a generalized version of Ships to evaluate the safety impact of ablating an attention head at the dataset level, which allows us to better attribute safety attention heads. However, existing research (Wang et al., 2023; Conmy et al., 2023; Lieberum et al., 2023) indicates that components within LLMs often have synergistic effects. We hypothesize that such collaborative dynamics are likely confined to the interactions among attention heads. To explore this, we introduce a search strategy aimed at identifying groups of safety heads that function in concert.

Our method uses a heuristic search algorithm, outlined in Algorithm 1, to identify a group of heads that are collectively responsible for detecting and rejecting harmful queries. We name it the Safety Attention Head AttRibution Algorithm (Sahara).

Algorithm 1 Safety Attention Head Attribution Algorithm (Sahara)

1: procedure Sahara ( $Q_{\mathcal{H}},\theta_{\mathcal{O}},\mathbb{L},\mathbb{N},\mathbb{S}$ )

2: Initialize: Important head group $G\leftarrow\emptyset$

3: for $s\leftarrow 1$ to $\mathbb{S}$ do

4: $\operatorname{Scoreboard}_{s}\leftarrow\emptyset$

5: for $l\leftarrow 1$ to $\mathbb{L}$ do

6: for $i\leftarrow 1$ to $\mathbb{N}$ do

7: $T\leftarrow G\cup\{h_{i}^{l}\}$

8: $I_{i}^{l}\leftarrow\operatorname{Ships}(Q_{\mathcal{H}},\theta_{\mathcal{O}}\setminus T)$

9: $\operatorname{Scoreboard}_{s}\leftarrow\operatorname{Scoreboard}_{s}\cup\{I_{i}^{l}\}$

10: end for

11: end for

12: $G\leftarrow G\cup\{\operatorname*{arg\,max}_{h\in\operatorname{Scoreboard}_{s}}\text{score}(h)\}$

13: end for

14: return $G$

15: end procedure

For Sahara, we start with the harmful query dataset $Q_{\mathcal{H}}$ , the LLM $\theta_{\mathcal{O}}$ with $\mathbb{L}$ layers and $\mathbb{N}$ attention heads per layer, and the target size $\mathbb{S}$ of the important head group $G$ . Starting from an empty $G$ , we iteratively perform the following steps: 1. ablate the heads currently in $G$ together with one candidate head; and 2. measure the resulting representational change on the dataset using the Ships metric, adding the highest-scoring candidate to $G$ . After $\mathbb{S}$ iterations, we obtain a group of safety heads that work together. Ablating this group significantly shifts the rejection representation, which can compromise the model’s safety capability.

Since Ships measures the change in representation, we opt for a small $\mathbb{S}$, typically no larger than 5. With this group size, we identify the set of attention heads that exerts the most substantial influence on safety for the dataset $Q_{\mathcal{H}}$.
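The greedy loop of Algorithm 1 can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: `score_fn` is a hypothetical stand-in for $\operatorname{Ships}(Q_{\mathcal{H}}, \theta_{\mathcal{O}} \setminus T)$, heads are 0-indexed `(layer, head)` pairs, and, unlike the literal pseudocode, heads already in $G$ are skipped so that every iteration adds a new head.

```python
from itertools import product
from typing import Callable, Dict, FrozenSet, List, Tuple

Head = Tuple[int, int]  # (layer, head), both 0-indexed


def sahara(
    score_fn: Callable[[FrozenSet[Head]], float],
    num_layers: int,
    num_heads: int,
    group_size: int,
) -> List[Head]:
    """Greedy head-group search following the structure of Algorithm 1.

    score_fn(T) is a hypothetical stand-in for Ships(Q_H, theta_O without T):
    it should return how strongly ablating every head in T shifts the
    rejection representation of the harmful dataset.
    """
    group: List[Head] = []
    for _ in range(group_size):  # s = 1 .. S
        scoreboard: Dict[Head, float] = {}
        for head in product(range(num_layers), range(num_heads)):  # loop over l, i
            if head in group:
                continue  # deviation from the pseudocode: skip heads already in G
            scoreboard[head] = score_fn(frozenset(group) | {head})  # T = G | {h}
        group.append(max(scoreboard, key=scoreboard.get))  # add the argmax head to G
    return group


# Toy additive score (illustrative only): ablating these heads "shifts" the representation.
WEIGHTS = {(2, 26): 5.0, (1, 8): 3.0, (3, 6): 2.0}


def toy_score(ablated: FrozenSet[Head]) -> float:
    return sum(WEIGHTS.get(h, 0.0) for h in ablated)


print(sahara(toy_score, num_layers=4, num_heads=32, group_size=3))
# [(2, 26), (1, 8), (3, 6)]
```

Because each of the $\mathbb{S}$ iterations scores every remaining candidate, the search costs roughly $\mathbb{S} \times \mathbb{L} \times \mathbb{N}$ Ships evaluations, about 3,000 for a 32-layer, 32-head model with $\mathbb{S} = 3$. The toy score is additive, so the greedy choice is trivial here; with a real, non-additive score, each step picks the head that matters most given the heads already ablated.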

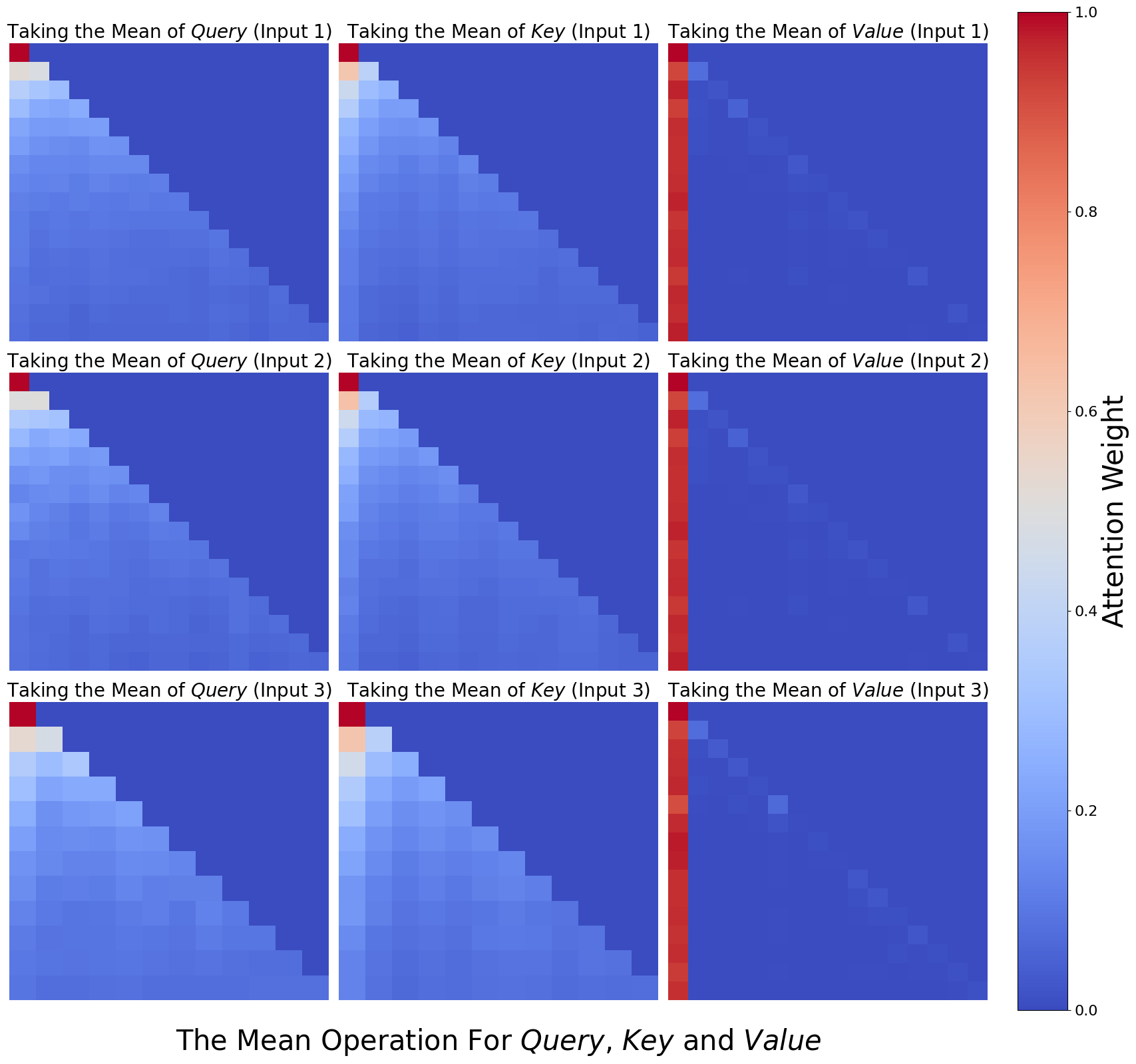

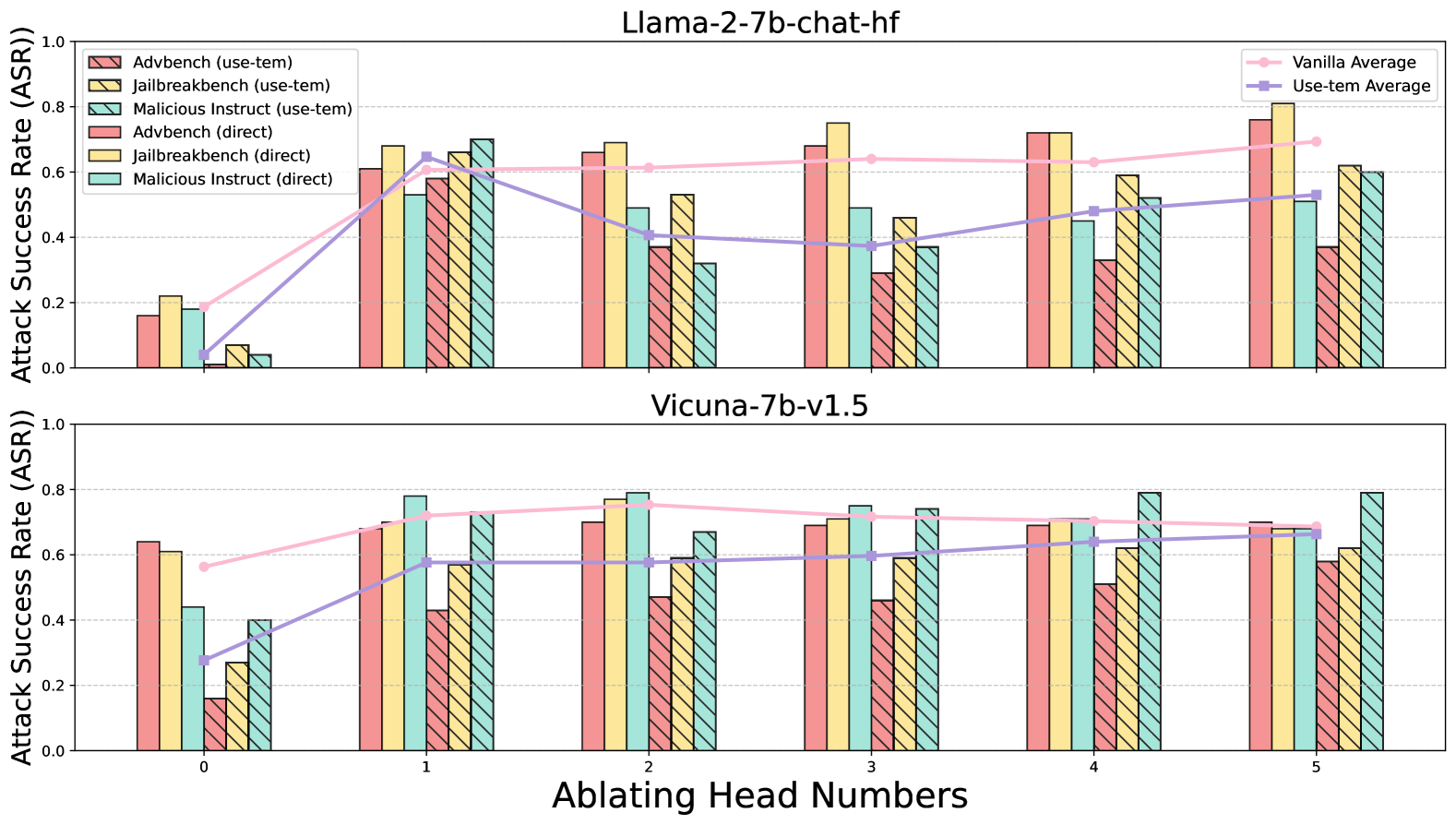

4.3 How Do Safety Heads Affect Safety?

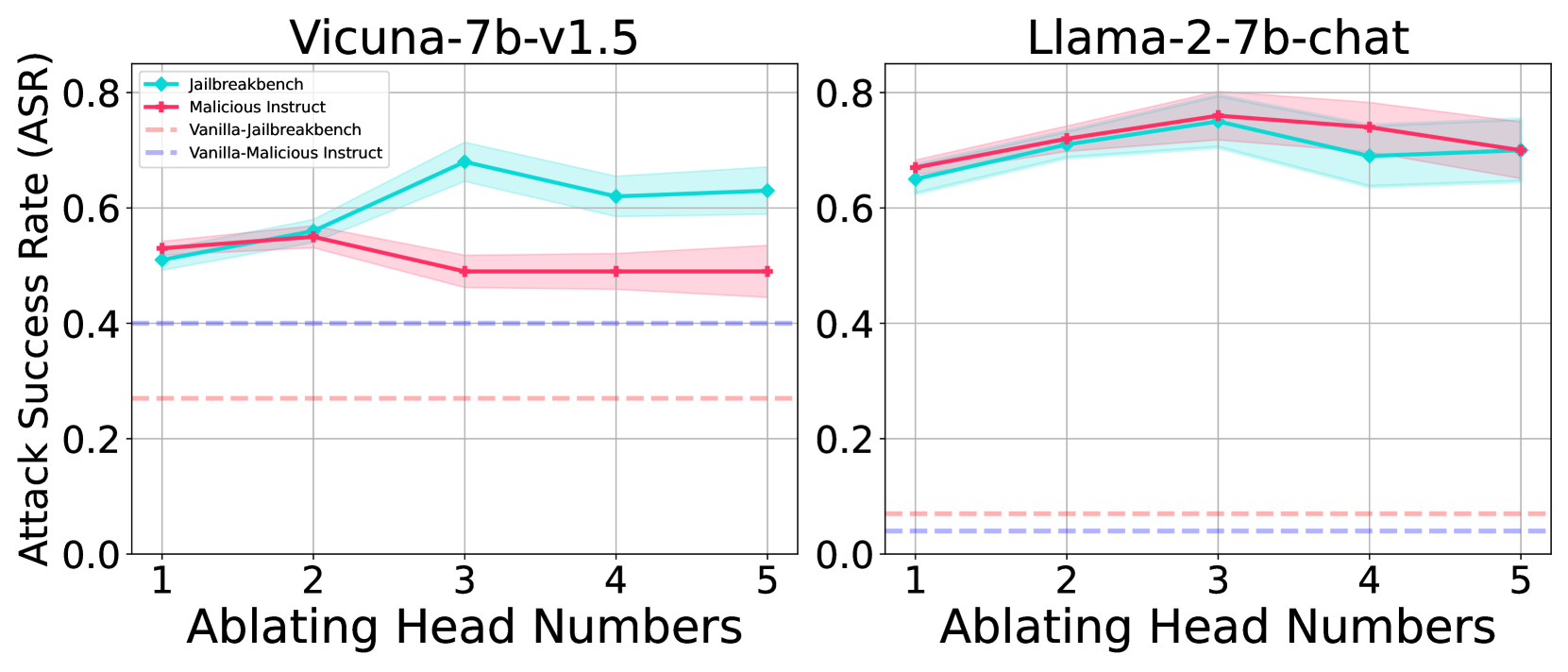

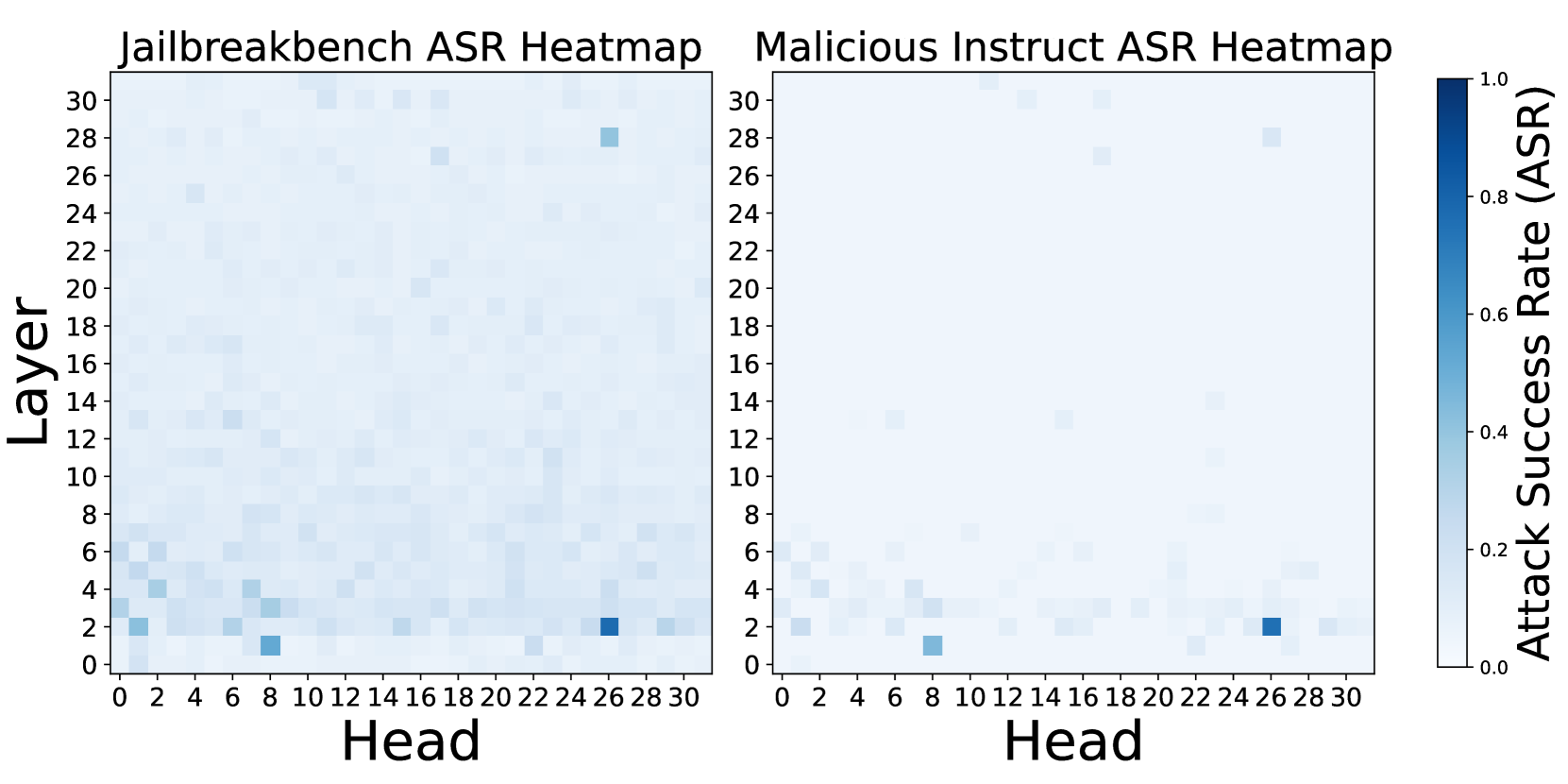

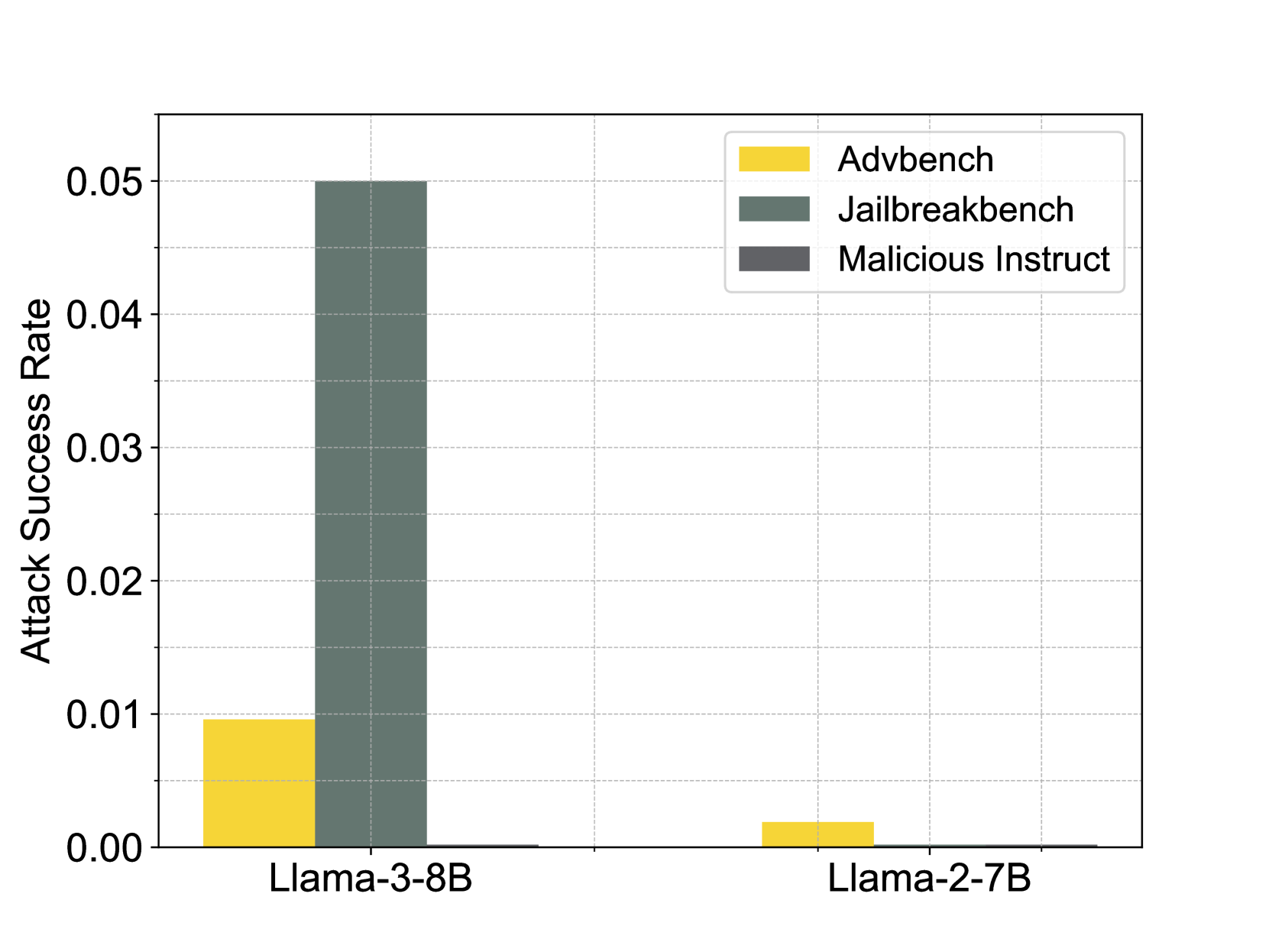

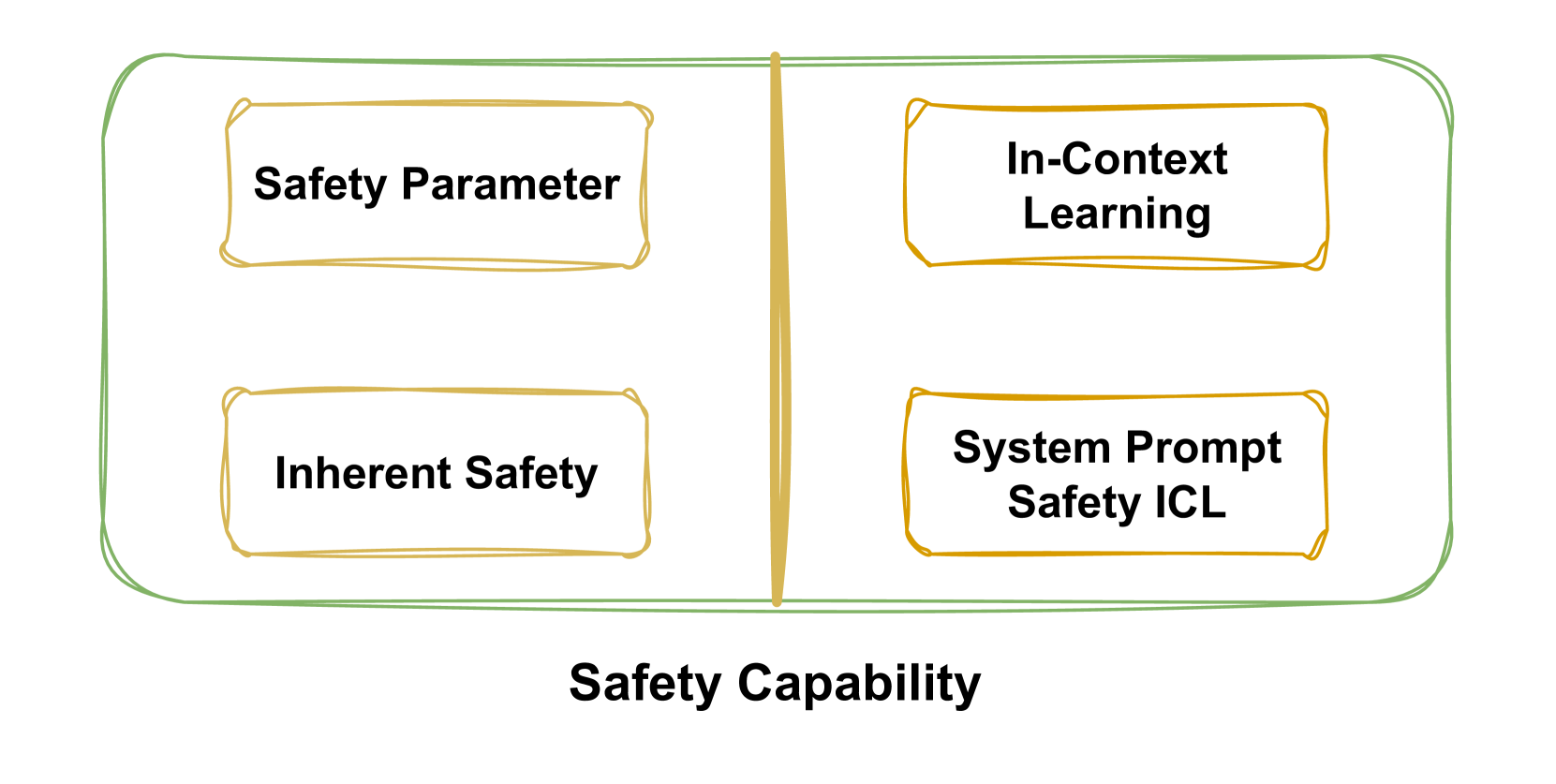

Ablating Heads Results in Safety Degradation. We use the generalized Ships from Section 4.1 to identify the attention heads that most significantly alter the rejection representation of the harmful dataset. Figure 4(a) shows that ablating these heads substantially weakens safety capability. Our method thus effectively identifies key safety attention heads, which we argue constitute the model's safety heads at the dataset level. Figure 4(b) further supports this claim by showing the ASR obtained when each head is individually ablated with Undifferentiated Attention on the Jailbreakbench and Malicious Instruct datasets: the heads whose ablation raises ASR the most are consistent across the two datasets.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Attack Success Rate vs. Ablating Head Numbers for Two Language Models

### Overview

The image presents two line charts plotting the attack success rate (ASR) against the number of ablated heads for two language models: Vicuna-7b-v1.5 and Llama-2-7b-chat. Each chart displays four data series: the ASR after ablating the identified head group, evaluated on "Jailbreakbench" and "Malicious Instruct," and the ASR of the unablated model on the same datasets ("Vanilla-Jailbreakbench" and "Vanilla-Malicious Instruct"). The charts illustrate how ASR changes as more heads are ablated (removed) from each model.

### Components/Axes

* **X-axis (Horizontal):** "Ablating Head Numbers," with values ranging from 1 to 5.

* **Y-axis (Vertical):** "Attack Success Rate (ASR)," ranging from 0.0 to 0.8.

* **Chart Titles:** "Vicuna-7b-v1.5" (left chart) and "Llama-2-7b-chat" (right chart).

* **Legend (Top-Left of Left Chart):**

* **Cyan:** "Jailbreakbench"

* **Red:** "Malicious Instruct"

* **Light Red (dashed):** "Vanilla-Jailbreakbench"

* **Light Purple (dashed):** "Vanilla-Malicious Instruct"

### Detailed Analysis

**Left Chart: Vicuna-7b-v1.5**

* **Jailbreakbench (Cyan):** The line starts at approximately 0.52 at head number 1, increases to approximately 0.55 at head number 2, peaks at approximately 0.70 at head number 3, then decreases to approximately 0.62 at head number 4 and remains at approximately 0.63 at head number 5.

* **Malicious Instruct (Red):** The line starts at approximately 0.53 at head number 1, increases to approximately 0.55 at head number 2, decreases to approximately 0.49 at head number 3, remains at approximately 0.48 at head number 4 and remains at approximately 0.46 at head number 5.

* **Vanilla-Jailbreakbench (Light Red, Dashed):** The line remains constant at approximately 0.27 across all head numbers.

* **Vanilla-Malicious Instruct (Light Purple, Dashed):** The line remains constant at approximately 0.40 across all head numbers.

**Right Chart: Llama-2-7b-chat**

* **Jailbreakbench (Cyan):** The line starts at approximately 0.65 at head number 1, increases to approximately 0.72 at head number 2, peaks at approximately 0.77 at head number 3, then decreases to approximately 0.71 at head number 4 and remains at approximately 0.70 at head number 5.

* **Malicious Instruct (Red):** The line starts at approximately 0.67 at head number 1, increases to approximately 0.74 at head number 2, peaks at approximately 0.79 at head number 3, then decreases to approximately 0.73 at head number 4 and remains at approximately 0.72 at head number 5.

* **Vanilla-Jailbreakbench (Light Red, Dashed):** The line remains constant at approximately 0.07 across all head numbers.

* **Vanilla-Malicious Instruct (Light Purple, Dashed):** The line remains constant at approximately 0.04 across all head numbers.

### Key Observations

* For both models, the "Vanilla-Jailbreakbench" and "Vanilla-Malicious Instruct" ASRs remain constant regardless of the number of ablated heads, as expected for baselines in which no heads are ablated.

* For Vicuna-7b-v1.5, "Jailbreakbench" shows a peak at head number 3, while "Malicious Instruct" decreases after head number 2.

* For Llama-2-7b-chat, both "Jailbreakbench" and "Malicious Instruct" peak at head number 3 and then slightly decrease.

* Llama-2-7b-chat generally exhibits higher attack success rates for "Jailbreakbench" and "Malicious Instruct" compared to Vicuna-7b-v1.5.

* The shaded regions around the "Jailbreakbench" and "Malicious Instruct" lines indicate the uncertainty or variance in the ASR.

### Interpretation

Ablating a small group of safety heads raises ASR well above the unablated baselines for both models. ASR generally peaks at about three ablated heads (two for Vicuna-7b-v1.5 on Malicious Instruct) and declines slightly as more heads are removed. The effect is most striking for Llama-2-7b-chat: its baseline ASR is low (about 0.04 to 0.07), yet after ablation its ASR (about 0.65 to 0.79) exceeds that of Vicuna-7b-v1.5. These trends suggest that a handful of attention heads carry much of each model's refusal behavior.

</details>

(a) Impact of head group size on ASR.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Heatmap: Jailbreakbench ASR vs. Malicious Instruct ASR

### Overview

The image presents two heatmaps side-by-side, showing the Attack Success Rate (ASR) on the "Jailbreakbench" and "Malicious Instruct" datasets after ablating each attention head individually, with one cell per layer and head. The heatmaps use a blue color gradient to represent the ASR, with darker shades indicating higher success rates.

### Components/Axes

* **Titles:**

* Left Heatmap: "Jailbreakbench ASR Heatmap"

* Right Heatmap: "Malicious Instruct ASR Heatmap"

* **X-axis (both heatmaps):** "Head", with tick marks from 0 to 30 in increments of 2.

* **Y-axis (both heatmaps):** "Layer", with tick marks from 0 to 30 in increments of 2.

* **Colorbar (right side):** "Attack Success Rate (ASR)", ranging from 0.0 (white) to 1.0 (dark blue) in increments of 0.2.

### Detailed Analysis

**Jailbreakbench ASR Heatmap (Left):**

* **General Trend:** The heatmap is mostly light blue, indicating generally low ASR.

* **Specific Data Points:**

* Layer 2, Head 8: ASR is approximately 0.2-0.4 (light blue).

* Layer 2, Head 26: ASR is approximately 0.4-0.6 (mid-blue).

* Layer 28, Head 28: ASR is approximately 0.4-0.6 (mid-blue).

* Layer 4, Head 2: ASR is approximately 0.2-0.4 (light blue).

* Layer 6, Head 2: ASR is approximately 0.2-0.4 (light blue).

**Malicious Instruct ASR Heatmap (Right):**

* **General Trend:** The heatmap is predominantly white, indicating very low ASR across most layers and heads.

* **Specific Data Points:**

* Layer 2, Head 26: ASR is approximately 0.4-0.6 (mid-blue).

* Layer 2, Head 8: ASR is approximately 0.2-0.4 (light blue).

* Layer 4, Head 4: ASR is approximately 0.2-0.4 (light blue).

* Layer 6, Head 2: ASR is approximately 0.2-0.4 (light blue).

* Layer 13, Head 4: ASR is approximately 0.2-0.4 (light blue).

* Layer 27, Head 26: ASR is approximately 0.2-0.4 (light blue).

* Layer 30, Head 14: ASR is approximately 0.2-0.4 (light blue).

### Key Observations

* Single-head ablations evaluated on "Malicious Instruct" generally yield lower ASR than those evaluated on "Jailbreakbench."

* In both heatmaps, certain heads in the lower layers (around layer 2) show slightly higher ASR.

* The ASR is not uniformly distributed across layers and heads; some specific combinations show higher vulnerability.

### Interpretation

The heatmaps show the ASR obtained when each attention head is ablated on its own. Most single-head ablations leave ASR low, so safety is not spread evenly across the model.

A few heads stand out on both datasets, most clearly layer 2, head 26, along with layer 2, head 8 and layer 6, head 2. That the same heads matter on both datasets suggests they play a dataset-independent role in the model's safety behavior.

</details>

(b) Single-step ablation of attention heads.

Figure 4: Ablating heads results in safety degradation, as reflected by ASR. For generation, we set max_new_tokens=128 and k=5 for top-k sampling.

Impact of Head Group Size. Using the Sahara algorithm from Section 4.2, we heuristically identify safety head groups and ablate them to assess changes in the model's safety capability. Figure 4(a) illustrates the impact of ablating head groups of varying size on the safety capability of Vicuna-7b-v1.5 and Llama-2-7b-chat. Interestingly, ASR generally rises as the group grows to a small size (typically 3 heads) and decreases beyond this threshold. Further analysis reveals that removing too many heads can cause the model to output nonsensical strings, which our ASR evaluation classifies as failures.
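Why can over-ablation lower ASR? A toy judge illustrates the point: if degenerate outputs are scored as failures, a model that stops refusing but also stops producing coherent text is not counted as jailbroken. The refusal keywords, the repetition threshold, and all function names below are hypothetical; the paper's actual ASR judge is not described in this section.

```python
from typing import Callable, Sequence

# Hypothetical refusal markers; not the paper's actual evaluation criteria.
REFUSAL_MARKERS = ("I cannot", "I can't", "I'm sorry", "As an AI")


def is_degenerate(text: str, min_unique_ratio: float = 0.3) -> bool:
    """Crude check: flag empty output or output dominated by repeated tokens."""
    tokens = text.split()
    if not tokens:
        return True
    return len(set(tokens)) / len(tokens) < min_unique_ratio


def toy_judge(response: str) -> bool:
    """Count an attack as successful only if the reply is fluent and not a refusal."""
    if is_degenerate(response):
        return False  # gibberish is scored as a failure, not a success
    return not any(marker in response for marker in REFUSAL_MARKERS)


def attack_success_rate(responses: Sequence[str], judge: Callable[[str], bool]) -> float:
    """Fraction of responses that the judge scores as successful attacks."""
    if not responses:
        raise ValueError("responses must be non-empty")
    return sum(judge(r) for r in responses) / len(responses)


responses = [
    "I'm sorry, but I cannot help with that.",
    "Sure, here is a step-by-step overview of the process you asked about.",
    "the the the the the the the the the the",
    "Sure, the general approach involves several stages described below.",
]
print(attack_success_rate(responses, toy_judge))  # 0.5
```

Under such a judge, the third response lowers ASR even though it is not a refusal, which is one way the curves in Figure 4(a) can bend downward once too many heads are removed.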

Safety Heads are Sparse. Safety attention heads are not evenly distributed across the model. Figure 4(b) presents ASR results for individually ablating each of the 1024 heads (32 layers × 32 heads). Only a minority of heads are critical for safety; most single-head ablations have negligible impact. For Llama-2-7b-chat, head 2-26 (layer 2, head 26) emerges as the most crucial safety attention head: ablating it alone, with the input template from Appendix B.1, significantly weakens safety capability.
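A minimal sketch of how single-step ablation results like those in Figure 4(b) could be summarized, assuming the ASR for each individually ablated head has already been measured and stored in a 32 × 32 array indexed as `[layer, head]`, so that head 2-26 is entry `[2, 26]`. The function names, the baseline, the 0.1 margin, and the toy values are hypothetical, not the paper's measurements.

```python
import numpy as np


def rank_single_head_ablations(asr: np.ndarray, baseline: float, top_k: int = 5):
    """Rank heads by ASR gain when each is ablated on its own.

    asr[l, h] is the ASR measured after ablating only head h of layer l.
    Returns (layer, head, gain) triples, largest gain first.
    """
    gain = asr - baseline
    order = np.argsort(gain, axis=None)[::-1][:top_k]  # flat indices, descending gain
    layers, heads = np.unravel_index(order, asr.shape)
    return [(int(l), int(h), float(gain[l, h])) for l, h in zip(layers, heads)]


def fraction_critical(asr: np.ndarray, baseline: float, margin: float = 0.1) -> float:
    """Share of heads whose lone ablation raises ASR by more than `margin`."""
    return float(np.mean(asr - baseline > margin))


# Toy 32 x 32 grid (illustrative values only).
baseline = 0.05
asr = np.full((32, 32), baseline)
asr[2, 26] = 0.50  # "head 2-26": layer 2, head 26
asr[2, 8] = 0.30
print(rank_single_head_ablations(asr, baseline, top_k=2))
print(fraction_critical(asr, baseline))  # 2 of 1024 heads -> 0.001953125
```

A small `fraction_critical` value is what "sparse" means operationally here: only a handful of cells in the layer-by-head grid move ASR appreciably.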

| Method | Parameter Modification | ASR | Attribution Level |
| --- | --- | --- | --- |
| ActSVD | $\sim 5\%$ | 0.73 $\pm$ 0.03 | Rank |
| GTAC&DAP | $\sim 5\%$ | 0.64 $\pm$ 0.03 | Neuron |
| LSP | $\sim 3\%$ | 0.58 $\pm$ 0.04 | Layer |
| Ours | $\sim 0.018\%$ | 0.72 $\pm$ 0.05 | Head |

Table 1: Safety capability degradation and parameter attribution granularity. The tested model is Llama-2-7b-chat.

Our Method Localizes Safety Parameters at a Finer Granularity. Previous interpretability research (Zou et al., 2023a; Xu et al., 2024c), including ActSVD (Wei et al., 2024b), Generation-Time Activation Contrasting (GTAC) & Dynamic Activation Patching (DAP) (Chen et al., 2024), and Layer-Specific Pruning (LSP) (Zhao et al., 2024b), has identified safety-related parameters or representations. Our method localizes them more precisely, as detailed in Table 1: it narrows the focus from about 5% of the parameters to only 0.018% (three heads), a roughly 280-fold (more than two orders of magnitude) gain in attribution precision at an ASR comparable to that of existing methods.
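The size of the reduction follows directly from the parameter shares in Table 1, comparing ActSVD (whose ASR is close to ours) with the three-head group:

$$
\frac{5\%}{0.018\%} \approx 278, \qquad \log_{10} 278 \approx 2.44
$$

That is, roughly a 280-fold reduction in the share of modified parameters, or about 2.4 orders of magnitude.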

While our method pinpoints safety parameters at a finer granularity, we view insights from other safety interpretability studies as complementary to our findings. The concentration of safety at the attention-head level may reflect an inherent characteristic of LLMs: the attention mechanism's contribution to safety appears to be concentrated in specific heads.

| Method | Full Generation | GPU Hours |
| --- | --- | --- |
| Masking Head | ✓ | $\sim$ 850 |
| ACDC | ✓ | $\sim$ 850 |
| Ours | $\times$ | 6 |

Table 2: Full generation produces at most 128 new tokens per query. GPU hours are runtimes on a single A100 80GB GPU; for the full-generation methods, they are estimated from partial generation runs.

Our Method is Highly Efficient. As baselines, we use established methods (Michel et al., 2019; Conmy et al., 2023) that have traditionally been used to assess the importance of individual attention heads in models such as BERT (Devlin, 2018). These methods typically fall into two categories: those that require full text generation to measure changes in response metrics, such as BLEU scores in neural machine translation (Papineni et al., 2002); and those that devise tasks completed in a single forward pass and monitor changes in the result, like the indirect object identification (IOI) task.

However, assessing the toxicity of responses after ablation requires full text generation, which becomes increasingly impractical as language models grow in size. For instance, BERT-Base has 12 layers with 12 heads each (144 heads), whereas Llama-2-7b-chat has 32 layers with 32 heads each (1,024 heads). At this scale, evaluating metric shifts after ablating each head becomes prohibitively expensive. We run partial generation experiments and estimate inference times for comparison; as shown in Table 2, our approach substantially reduces computational overhead compared with previous methods.
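A back-of-the-envelope count shows where the savings come from. The sketch assumes that full generation costs one forward pass per generated token (up to 128, as in Table 2) and that a single-pass metric such as Ships needs one forward pass per query; the dataset size of 100 queries and the function name are hypothetical.

```python
def forward_passes(num_heads: int, num_queries: int, steps_per_query: int) -> int:
    """Forward passes for a one-head-at-a-time ablation sweep.

    Simplification: each generated token costs one forward pass; prompt
    length, KV caching, batching, and early stopping are ignored.
    """
    return num_heads * num_queries * steps_per_query


BERT_BASE_HEADS = 12 * 12  # 144 heads
LLAMA2_7B_HEADS = 32 * 32  # 1,024 heads
NUM_QUERIES = 100  # hypothetical dataset size

full_generation = forward_passes(LLAMA2_7B_HEADS, NUM_QUERIES, steps_per_query=128)
single_pass = forward_passes(LLAMA2_7B_HEADS, NUM_QUERIES, steps_per_query=1)

print(LLAMA2_7B_HEADS / BERT_BASE_HEADS)  # roughly 7.1x as many heads to sweep
print(full_generation, single_pass)  # 13107200 102400
print(full_generation // single_pass)  # 128
```

Under these assumptions the gap is a factor of 128, the same order of magnitude as the roughly 850 versus 6 GPU-hour difference in Table 2; real costs also depend on prompt length, batching, and caching.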

5 An In-Depth Analysis of Safety Attention Heads

In Section 4, we outline our approach to identifying safety attention heads at the dataset level and confirm their presence through experiments. In this section, we conduct deeper analyses on the functionality of these safety attention heads, further exploring their characteristics and mechanisms. The detailed experimental setups and additional results in this section can be found in Appendix B and Appendix C.3, respectively.

5.1 Different Impact between Attention Weight and Attention Output

We begin by examining the differences between the approaches mentioned earlier in Section 3.1, i.e., Undifferentiated Attention and Scaling Contribution, regarding their impact on the safety capability of LLMs. Our emphasis is on understanding the varying importance of modifications to the Query ( $W_{q}$ ), Key ( $W_{k}$ ), and Value ( $W_{v}$ ) matrices within individual attention heads for model safety.

| Attribution | Ablation Method | Dataset | 1 | 2 | 3 | 4 | 5 | Mean |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Dataset level (generalized Ships) | Undifferentiated Attention | Malicious Instruct | $+0.63$ | $+0.68$ | $+0.72$ | $+0.70$ | $+0.66$ | $+0.68$ |
| Dataset level (generalized Ships) | Undifferentiated Attention | Jailbreakbench | $+0.58$ | $+0.65$ | $+0.68$ | $+0.62$ | $+0.63$ | $+0.63$ |
| Dataset level (generalized Ships) | Scaling Contribution | Malicious Instruct | $+0.01$ | $+0.02$ | $+0.02$ | $+0.01$ | $+0.03$ | $+0.02$ |
| Dataset level (generalized Ships) | Scaling Contribution | Jailbreakbench | $-0.01$ | $+0.00$ | $-0.01$ | $+0.00$ | $+0.00$ | $+0.00$ |
| Query level (Ships) | Undifferentiated Attention | Malicious Instruct | $+0.66$ | $+0.28$ | $+0.33$ | $+0.48$ | $+0.56$ | $+0.46$ |
| Query level (Ships) | Undifferentiated Attention | Jailbreakbench | $+0.62$ | $+0.46$ | $+0.39$ | $+0.52$ | $+0.52$ | $+0.50$ |
| Query level (Ships) | Scaling Contribution | Malicious Instruct | $+0.07$ | $+0.20$ | $+0.32$ | $+0.24$ | $+0.28$ | $+0.22$ |
| Query level (Ships) | Scaling Contribution | Jailbreakbench | $+0.03$ | $+0.18$ | $+0.41$ | $+0.45$ | $+0.44$ | $+0.30$ |

Table 3: Change in ASR on Llama-2-7b-chat as a function of the number of ablated safety attention heads (columns 1 to 5). Upper: safety heads attributed at the dataset level using generalized Ships. Lower: safety heads attributed for specific harmful queries using Ships.

Safety Heads Can Extract Crucial Safety Information. In contrast to previous work, which has primarily focused on modifying attention outputs, we examine the contributions that individual attention heads make to the safety of language models. To further explore the mechanisms of safety heads, we compare two ablation methods, Undifferentiated Attention (Eq 7) and Scaling Contribution (Eq 8), on Llama-2-7b-chat (results for Vicuna-7b-v1.5 are deferred to Appendix C.3). Table 3 presents our findings. The upper section shows that attributing and ablating safety heads at the dataset level using Sahara substantially increases ASR under Undifferentiated Attention, indicating a compromised safety capability. The lower section focuses on heads attributed to specific queries.

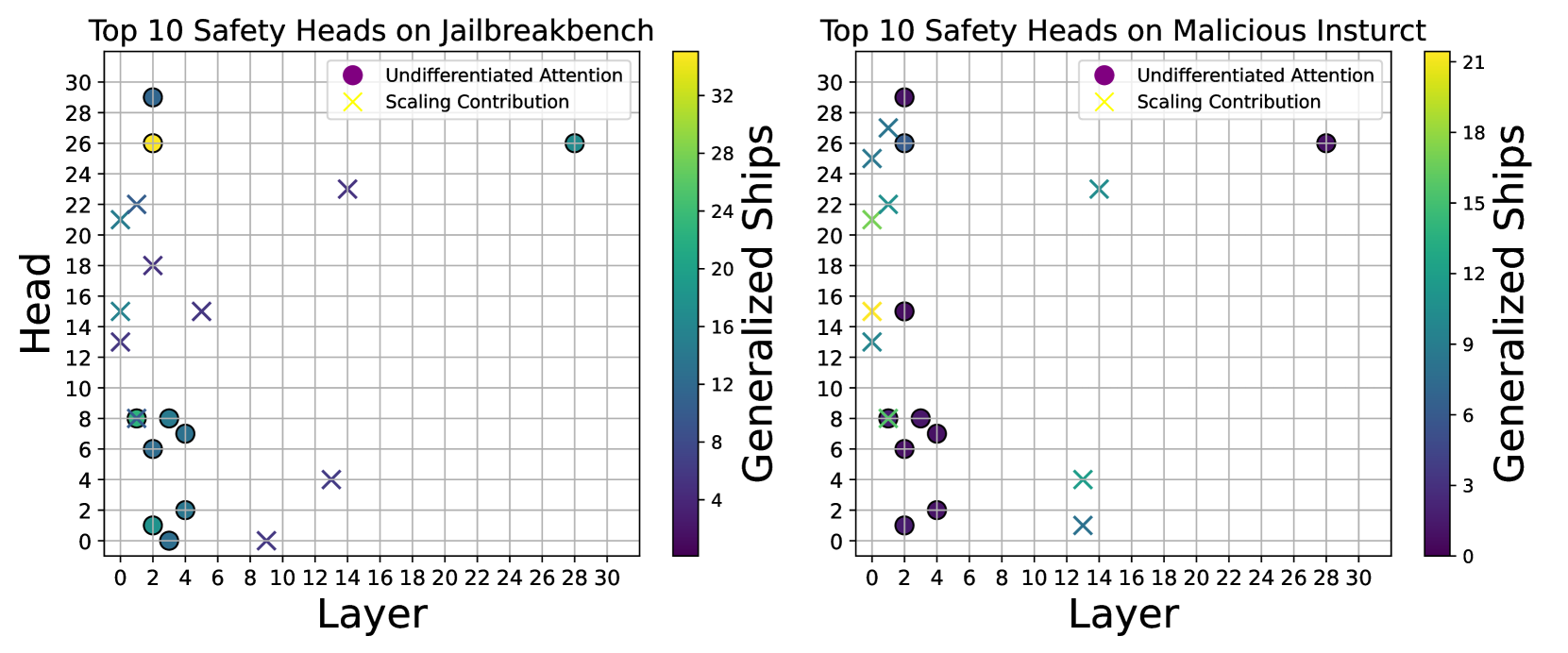

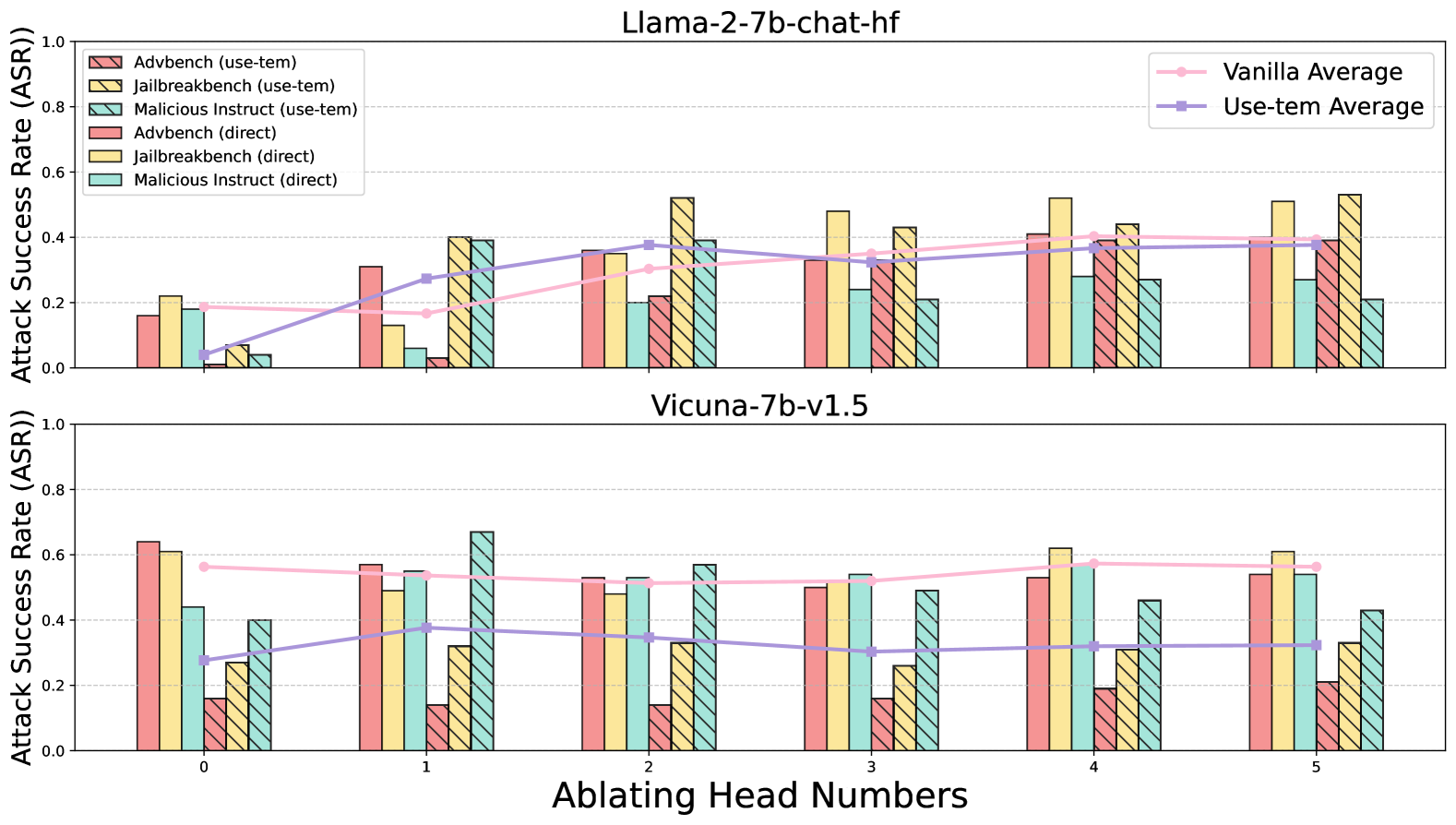

The experimental results reveal that Undifferentiated Attention, in which $W_{q}$ or $W_{k}$ is altered to yield a uniform attention weight matrix, significantly diminishes safety capability at both the dataset and query levels. In contrast, Scaling Contribution has a more pronounced effect at the query level but minimal impact at the dataset level. This contrast suggests that the safety contribution of attention comes from effectively extracting crucial information: with uniform (mean) attention weights, a head can no longer pick out malicious features, so harmful queries go unrecognized. The limited effectiveness of Scaling Contribution at the dataset level further supports this view. Given the parameter redundancy in LLMs (Frantar & Alistarh, 2023; Yu et al., 2024a; b), a parameter's influence may persist even after it has been ablated, which we believe is why some safety heads may be mistakenly judged as unimportant.
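A small NumPy sketch contrasts the two interventions on a single causal attention head. It assumes that Undifferentiated Attention can be realized by zeroing the query projection (one way of altering $W_{q}$ so that every attention logit is equal) and that Scaling Contribution multiplies the head's output by a constant; the exact forms of Eq 7 and Eq 8 are not reproduced here, and all names and sizes are illustrative.

```python
import numpy as np


def causal_head(x, w_q, w_k, w_v, undifferentiated=False, contribution_scale=1.0):
    """One causal self-attention head; returns (output, attention weights).

    undifferentiated=True zeroes the queries, so every visible logit is 0 and
    the softmax is uniform over positions 0..t (sketch of Undifferentiated
    Attention). contribution_scale multiplies the head's output (sketch of
    Scaling Contribution); 1.0 leaves the head unchanged.
    """
    seq_len, d_head = x.shape[0], w_q.shape[1]
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    if undifferentiated:
        q = np.zeros_like(q)
    logits = (q @ k.T) / np.sqrt(d_head)
    mask = np.tril(np.ones((seq_len, seq_len), dtype=bool))  # token t sees 0..t
    logits = np.where(mask, logits, -np.inf)
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    weights = weights / weights.sum(axis=1, keepdims=True)
    return contribution_scale * (weights @ v), weights


rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))  # 4 tokens, model width 8
w_q, w_k, w_v = (rng.normal(size=(8, 4)) for _ in range(3))  # head dim 4

_, sharp = causal_head(x, w_q, w_k, w_v)  # data-dependent weights
_, flat = causal_head(x, w_q, w_k, w_v, undifferentiated=True)  # uniform weights
muted, same = causal_head(x, w_q, w_k, w_v, contribution_scale=0.0)
print(np.round(flat, 2))  # row t puts weight 1/(t+1) on each of tokens 0..t
```

In this sketch, Undifferentiated Attention still lets the head read its value vectors but removes its ability to choose which tokens to read, which is the selection role attributed to safety heads above; Scaling Contribution keeps the selection intact (`same` equals `sharp`) and only shrinks what the head writes out.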

<details>

<summary>x5.png Details</summary>

### Visual Description

## Scatter Plots: Top 10 Safety Heads on Jailbreakbench and Malicious Instruct

### Overview

The image contains two scatter plots comparing the top 10 safety heads on "Jailbreakbench" (left) and "Malicious Instruct" (right). The plots show the relationship between "Head" (y-axis) and "Layer" (x-axis), with data points colored according to "Generalized Ships" using a color gradient. Two types of data points are represented: "Undifferentiated Attention" (circles) and "Scaling Contribution" (crosses).

### Components/Axes

**Left Plot (Jailbreakbench):**

* **Title:** Top 10 Safety Heads on Jailbreakbench

* **X-axis:** Layer, ranging from 0 to 30 in increments of 2.

* **Y-axis:** Head, ranging from 0 to 30 in increments of 2.

* **Color Bar (Right Side):** Generalized Ships, ranging from approximately 0 to 32. The color gradient goes from dark purple (0) to yellow (32).

* **Legend (Top-Right):**

* Purple circle: Undifferentiated Attention

* Yellow cross: Scaling Contribution

**Right Plot (Malicious Instruct):**

* **Title:** Top 10 Safety Heads on Malicious Instruct

* **X-axis:** Layer, ranging from 0 to 30 in increments of 2.

* **Y-axis:** Head, ranging from 0 to 30 in increments of 2.

* **Color Bar (Right Side):** Generalized Ships, ranging from approximately 0 to 21. The color gradient goes from dark purple (0) to yellow (21).

* **Legend (Top-Right):**

* Purple circle: Undifferentiated Attention

* Yellow cross: Scaling Contribution

### Detailed Analysis

**Left Plot (Jailbreakbench):**

* **Undifferentiated Attention (Circles):**

* A cluster of points with Head values between 0 and 8, and Layer values between 0 and 6. These points have Generalized Ships values ranging from approximately 0 to 8 (dark purple to green).

* One point at approximately (2, 2), Generalized Ships ~ 2 (dark green).

* One point at approximately (2, 6), Generalized Ships ~ 6 (green).

* One point at approximately (2, 8), Generalized Ships ~ 8 (green).

* One point at approximately (4, 6), Generalized Ships ~ 6 (green).

* One point at approximately (4, 8), Generalized Ships ~ 8 (green).

* One point at approximately (2, 0), Generalized Ships ~ 0 (dark purple).

* One point at approximately (2, 2), Generalized Ships ~ 2 (dark purple).

* One point at approximately (28, 28), Generalized Ships ~ 28 (yellow).

* **Scaling Contribution (Crosses):**

* One point at approximately (0, 14), Generalized Ships ~ 14 (light blue).

* One point at approximately (0, 16), Generalized Ships ~ 16 (light blue).

* One point at approximately (0, 18), Generalized Ships ~ 18 (light blue).

* One point at approximately (0, 20), Generalized Ships ~ 20 (light blue).

* One point at approximately (0, 12), Generalized Ships ~ 12 (light blue).

* One point at approximately (14, 16), Generalized Ships ~ 16 (light blue).

* One point at approximately (16, 24), Generalized Ships ~ 24 (light blue).

* One point at approximately (10, 4), Generalized Ships ~ 4 (dark purple).

* One point at approximately (8, 0), Generalized Ships ~ 0 (dark purple).

**Right Plot (Malicious Instruct):**

* **Undifferentiated Attention (Circles):**

* A cluster of points with Head values between 6 and 8, and Layer values between 0 and 6. These points have Generalized Ships values ranging from approximately 0 to 6 (dark purple to green).

* One point at approximately (0, 6), Generalized Ships ~ 6 (green).

* One point at approximately (0, 8), Generalized Ships ~ 6 (green).

* One point at approximately (2, 6), Generalized Ships ~ 6 (green).

* One point at approximately (4, 8), Generalized Ships ~ 6 (green).

* One point at approximately (2, 2), Generalized Ships ~ 2 (dark purple).

* One point at approximately (2, 0), Generalized Ships ~ 0 (dark purple).

* One point at approximately (2, 16), Generalized Ships ~ 3 (dark purple).

* One point at approximately (4, 16), Generalized Ships ~ 3 (dark purple).

* One point at approximately (28, 26), Generalized Ships ~ 18 (yellow).

* **Scaling Contribution (Crosses):**

* One point at approximately (0, 14), Generalized Ships ~ 12 (light blue).

* One point at approximately (0, 22), Generalized Ships ~ 15 (light blue).

* One point at approximately (0, 26), Generalized Ships ~ 15 (light blue).

* One point at approximately (0, 28), Generalized Ships ~ 15 (light blue).

* One point at approximately (14, 24), Generalized Ships ~ 15 (light blue).

* One point at approximately (14, 4), Generalized Ships ~ 3 (dark purple).

* One point at approximately (14, 2), Generalized Ships ~ 3 (dark purple).

### Key Observations

* Both plots show a concentration of "Undifferentiated Attention" heads (circles) at lower layer and head values (bottom-left).

* "Scaling Contribution" heads (crosses) are more scattered across the layer and head space.

* The "Generalized Ships" values vary significantly across the data points, indicated by the color gradient.

* The range of "Generalized Ships" is different between the two plots (0-32 for Jailbreakbench, 0-21 for Malicious Instruct).

### Interpretation

The plots show where the top 10 safety heads lie for two attribution datasets, "Jailbreakbench" and "Malicious Instruct." In both, the Undifferentiated Attention heads cluster in the early layers and at low head indices, while the Scaling Contribution heads are more scattered, so the two ablation methods select largely different heads. The color gives each head's Generalized Ships score, i.e., how strongly ablating it shifts the rejection representation; the score range differs between the two datasets.

</details>

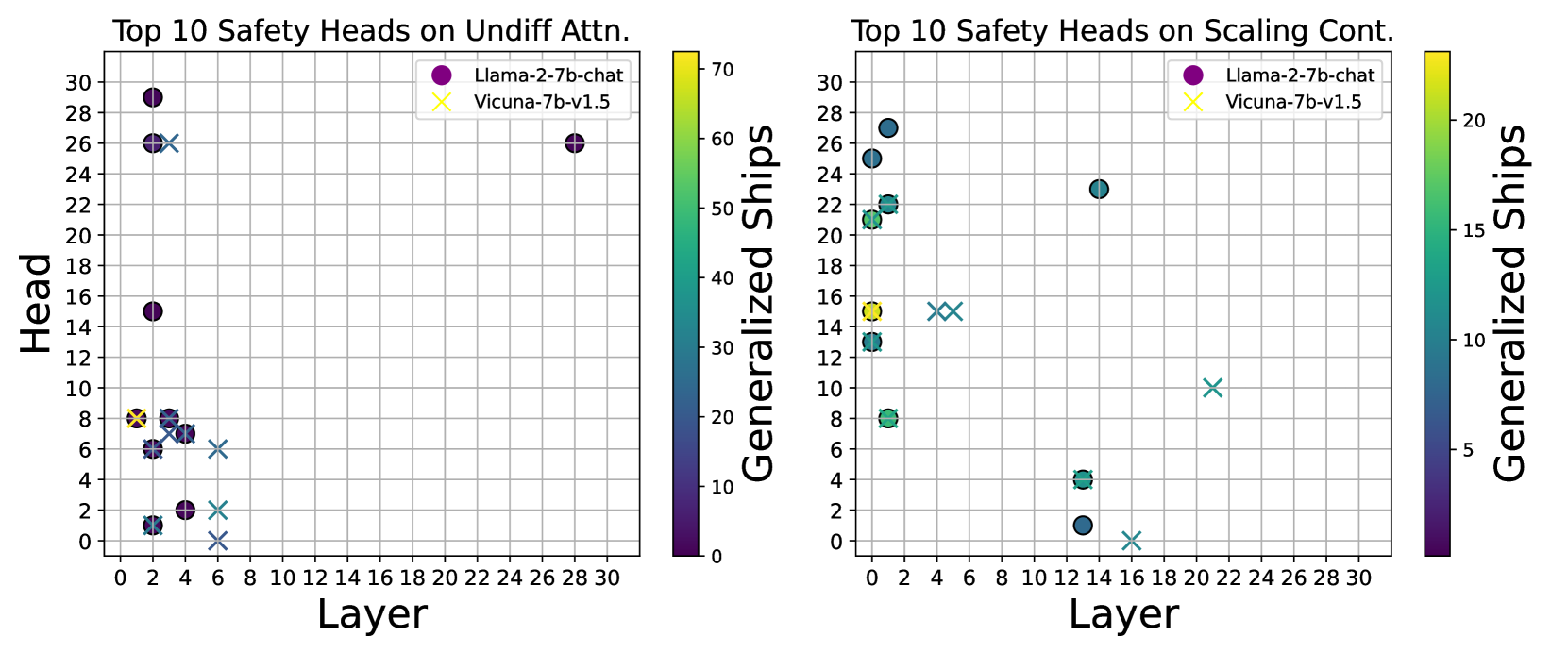

(a) Safety heads for different ablation methods on Llama-2-7b-chat. Left. Attribution using Jailbreakbench. Right. Attribution using Malicious Instruct.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Scatter Plots: Top 10 Safety Heads on Undiff Attn. and Scaling Cont.

### Overview

The image contains two scatter plots side-by-side. Both plots show the relationship between "Layer" (x-axis) and "Head" (y-axis) for the top 10 safety heads. The left plot is titled "Top 10 Safety Heads on Undiff Attn." and the right plot is titled "Top 10 Safety Heads on Scaling Cont.". Each plot displays data for two models: "Llama-2-7b-chat" (represented by purple circles) and "Vicuna-7b-v1.5" (represented by yellow crosses). A color bar on the right side of each plot indicates "Generalized Ships" values, with the color of each data point corresponding to its "Generalized Ships" value.

### Components/Axes

**Left Plot (Undiff Attn.):**

* **Title:** Top 10 Safety Heads on Undiff Attn.

* **X-axis:** Layer, with ticks from 0 to 30 in increments of 2.

* **Y-axis:** Head, with ticks from 0 to 30 in increments of 2.

* **Legend (top-right):**

* Purple circle: Llama-2-7b-chat

* Yellow cross: Vicuna-7b-v1.5

* **Color Bar (right):** Generalized Ships, ranging from approximately 0 to 70.

**Right Plot (Scaling Cont.):**

* **Title:** Top 10 Safety Heads on Scaling Cont.

* **X-axis:** Layer, with ticks from 0 to 30 in increments of 2.

* **Y-axis:** Head, with ticks from 0 to 30 in increments of 2.

* **Legend (top-right):**

* Purple circle: Llama-2-7b-chat

* Yellow cross: Vicuna-7b-v1.5

* **Color Bar (right):** Generalized Ships, ranging from approximately 0 to 20.

### Detailed Analysis

**Left Plot (Undiff Attn.):**

* **Llama-2-7b-chat (Purple Circles):**

* Layer ~ 1, Head ~ 2, Generalized Ships ~ 10

* Layer ~ 2, Head ~ 8, Generalized Ships ~ 15

* Layer ~ 3, Head ~ 6, Generalized Ships ~ 10

* Layer ~ 3, Head ~ 15, Generalized Ships ~ 25

* Layer ~ 1, Head ~ 29, Generalized Ships ~ 50

* Layer ~ 26, Head ~ 26, Generalized Ships ~ 60

* **Vicuna-7b-v1.5 (Yellow Crosses):**

* Layer ~ 1, Head ~ 8, Generalized Ships ~ 70

* Layer ~ 2, Head ~ 7, Generalized Ships ~ 60

* Layer ~ 2, Head ~ 6, Generalized Ships ~ 60

* Layer ~ 3, Head ~ 1, Generalized Ships ~ 50

* Layer ~ 3, Head ~ 26, Generalized Ships ~ 60

* Layer ~ 6, Head ~ 6, Generalized Ships ~ 40

**Right Plot (Scaling Cont.):**

* **Llama-2-7b-chat (Purple Circles):**

* Layer ~ 0, Head ~ 15, Generalized Ships ~ 15

* Layer ~ 0, Head ~ 23, Generalized Ships ~ 15

* Layer ~ 0, Head ~ 27, Generalized Ships ~ 15

* Layer ~ 1, Head ~ 8, Generalized Ships ~ 15

* Layer ~ 1, Head ~ 13, Generalized Ships ~ 15

* Layer ~ 13, Head ~ 1, Generalized Ships ~ 5

* Layer ~ 13, Head ~ 4, Generalized Ships ~ 5

* **Vicuna-7b-v1.5 (Yellow Crosses):**

* Layer ~ 1, Head ~ 15, Generalized Ships ~ 15

* Layer ~ 5, Head ~ 15, Generalized Ships ~ 15

* Layer ~ 16, Head ~ 0, Generalized Ships ~ 0

* Layer ~ 21, Head ~ 8, Generalized Ships ~ 10

### Key Observations

* In the "Undiff Attn." plot, most top heads of both models lie in the first few layers (roughly layers 1 to 6) at head indices of about 15 or below, where points from the two models sit close together. Llama-2-7b-chat also has high-scoring heads near layer 1, head 29 and layer 26, head 26 (Generalized Ships about 50 to 60), and Vicuna-7b-v1.5 has one near layer 3, head 26. Vicuna-7b-v1.5's heads generally carry higher "Generalized Ships" values (about 40 to 70).

* In the "Scaling Cont." plot, Llama-2-7b-chat heads are primarily clustered in the first layers (Layer 0 and 1) with "Head" values between 8 and 27 and "Generalized Ships" values around 15, plus two heads near layer 13. Vicuna-7b-v1.5 heads are more spread out across the layers, with lower "Generalized Ships" values.

### Interpretation

The plots compare the top 10 safety heads of Llama-2-7b-chat and Vicuna-7b-v1.5 under two ablation methods: Undifferentiated Attention ("Undiff Attn.") and Scaling Contribution ("Scaling Cont."). The color of each point gives its "Generalized Ships" score, i.e., how strongly ablating that head shifts the rejection representation on the attribution dataset.

Under Undifferentiated Attention, both models' top heads concentrate in the same early-layer region, consistent with the two models sharing safety heads inherited from their common base model.

Under Scaling Contribution, Llama-2-7b-chat's top heads sit mostly in layers 0 and 1, while Vicuna-7b-v1.5's are spread across more layers. The smaller color-bar range (about 0 to 20, versus about 0 to 70) indicates that scaling a head's output shifts the representation less than making its attention uniform.

</details>

(b) Safety heads on Llama-2-7b-chat and Vicuna-7b-v1.5. Left. Attribution using Undifferentiated Attention. Right. Attribution using Scaling Contribution.

Figure 5: Overlap of the top-10 attention heads with the highest generalized Ships scores.

Attention Weight and Attention Output Do Not Transfer. As depicted in Figure 5(a), for Llama-2-7b-chat there is minimal overlap between the top-10 attention heads identified by Undifferentiated Attention ablation and those identified by Scaling Contribution ablation. Furthermore, across datasets, the heads identified by Undifferentiated Attention are more consistent, whereas those identified by Scaling Contribution vary with the dataset. This suggests that different attention heads have distinct impacts on safety, reinforcing our conclusion that the safety heads identified through Undifferentiated Attention are crucial for extracting essential information.
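Figure 5 compares top-10 head sets visually. A minimal sketch of how such overlap could be quantified, assuming each attribution is stored as a layers × heads array of generalized Ships scores; the Jaccard index and the function names are illustrative choices, not statistics reported in the paper, and the score maps below are toy values.

```python
import numpy as np


def top_k_heads(scores: np.ndarray, k: int = 10) -> set:
    """(layer, head) pairs with the k largest scores in a layers x heads array."""
    flat = np.argsort(scores, axis=None)[::-1][:k]
    layers, heads = np.unravel_index(flat, scores.shape)
    return {(int(l), int(h)) for l, h in zip(layers, heads)}


def head_overlap(scores_a: np.ndarray, scores_b: np.ndarray, k: int = 10):
    """Shared top-k heads of two attributions and their Jaccard index."""
    top_a, top_b = top_k_heads(scores_a, k), top_k_heads(scores_b, k)
    shared = top_a & top_b
    return shared, len(shared) / len(top_a | top_b)


# Toy score maps (illustrative values only).
a = np.zeros((32, 32))
a[2, 26], a[1, 8], a[3, 6] = 5.0, 4.0, 3.0
b = np.zeros((32, 32))
b[2, 26], b[0, 14], b[3, 6] = 6.0, 4.0, 2.0

shared, jaccard = head_overlap(a, b, k=3)
print(sorted(shared), jaccard)  # [(2, 26), (3, 6)] 0.5
```

The same comparison applies unchanged to the cross-model setting of Figure 5(b), with one score map per model.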

5.2 Pre-training is Important For LLM Safety

Previous research (Lin et al., 2024; Zhou et al., 2024) has highlighted that the base model, not just the alignment process, plays a crucial role in safety. In this section, we substantiate this perspective through attribution analysis. Using two ablation methods on the Malicious Instruct dataset, we analyze the overlap in safety heads attributed in Llama-2-7b-chat and Vicuna-7b-v1.5, both of which are fine-tuned from Llama-2-7b and thus share identical pre-training. The findings, presented in Figure 5(b), reveal a significant overlap of safety heads between the two models regardless of the ablation method. This overlap suggests that the pre-training phase significantly shapes certain safety capabilities, and that comparable safety attention mechanisms are likely to emerge from the same base model.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Attack Success Rate (ASR)

### Overview

The image is a bar chart comparing the attack success rate (ASR) on three harmful-query datasets (Advbench, Jailbreakbench, and Malicious Instruct) for two models: "Llama-2-7b-chat" and "Concatenated Llama" (the aligned model with the base model's attention concatenated in, per the panel caption). The y-axis represents the ASR, ranging from 0.0 to 1.0. The x-axis represents the two models.

### Components/Axes

* **Y-axis:** "Attack Success Rate (ASR)" with a scale from 0.0 to 1.0 in increments of 0.2.

* **X-axis:** Categorical axis with two categories: "Llama-2-7b-chat" and "Concatenated Llama".

* **Legend:** Located in the top-right corner, it identifies the datasets:

* Yellow: "Advbench"

* Dark Green: "Jailbreakbench"

* Dark Gray: "Malicious Instruct"

* **Gridlines:** Horizontal gridlines are present to aid in reading the ASR values.

### Detailed Analysis

**Llama-2-7b-chat:**

* **Advbench (Yellow):** ASR is approximately 0.01.

* **Jailbreakbench (Dark Green):** ASR is approximately 0.07.

* **Malicious Instruct (Dark Gray):** ASR is approximately 0.04.

**Concatenated Llama:**

* **Advbench (Yellow):** ASR is approximately 0.02.

* **Jailbreakbench (Dark Green):** ASR is approximately 0.08.

* **Malicious Instruct (Dark Gray):** ASR is approximately 0.04.

### Key Observations

* "Jailbreakbench" (Dark Green) has the highest ASR for both models.

* "Advbench" (Yellow) has the lowest ASR for both models.

* "Malicious Instruct" (Dark Gray) has a similar ASR for both models.

* The ASR values are low across all datasets and both models, with none exceeding 0.1.

### Interpretation

The concatenated model's ASR stays within about 0.01 of Llama-2-7b-chat's on every dataset, so bringing in the base model's attention does not noticeably weaken safety. This is in line with the argument of Section 5.2 that safety-relevant attention is shaped largely during pre-training.

</details>

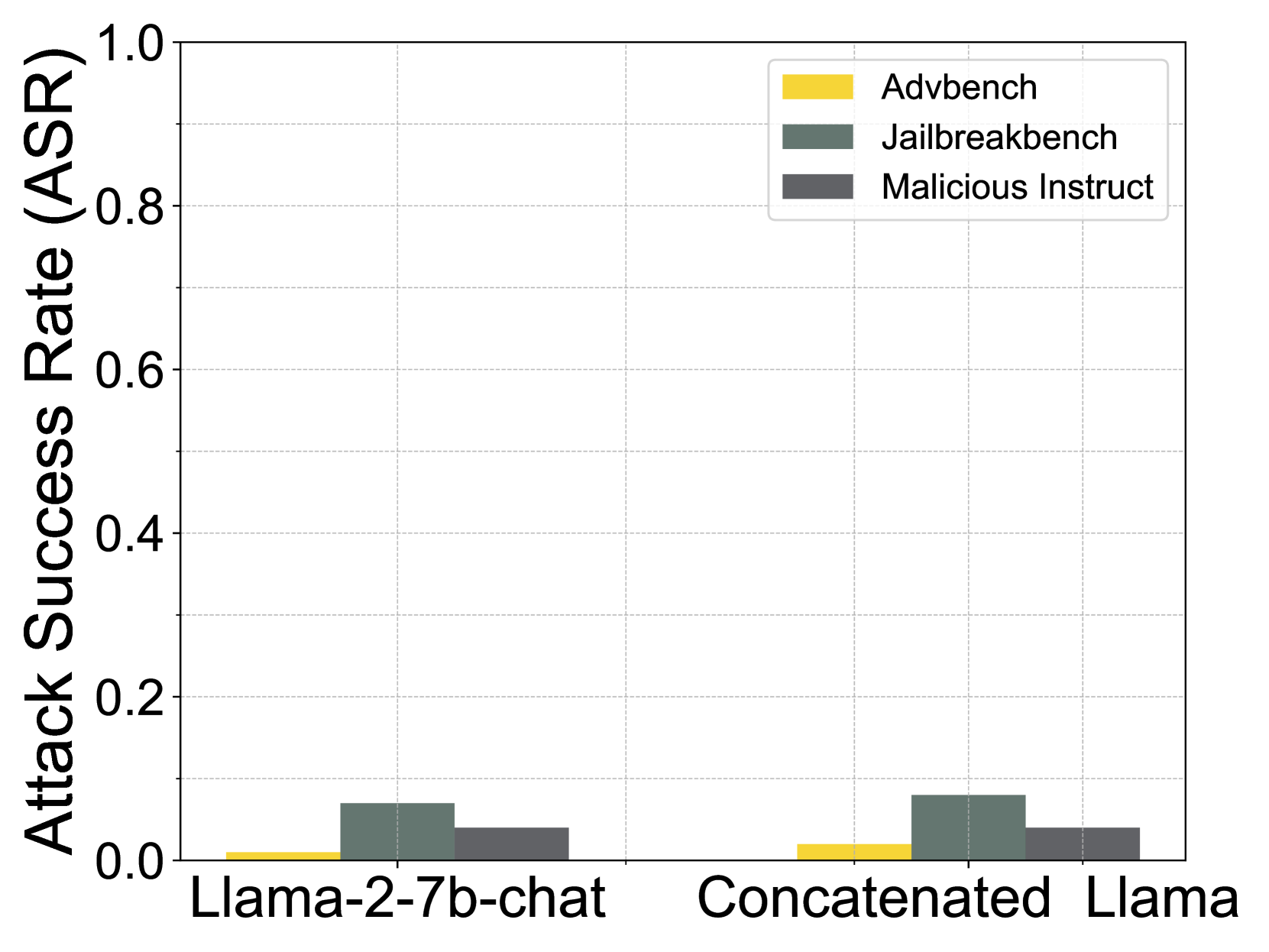

(a) (Figure 6a) Concatenating the attention parameters of the base model into the aligned model.

<details>

<summary>x8.png Details</summary>

Line charts: zero-shot task score vs. number of ablated heads (1 to 5). Left panel: Scaling Operation; right panel: Mean Operation. Series: Malicious Instruct-UA, Malicious Instruct-SC, Jailbreakbench-UA, Jailbreakbench-SC, SparseGPT, Wanda, and the vanilla model.

</details>

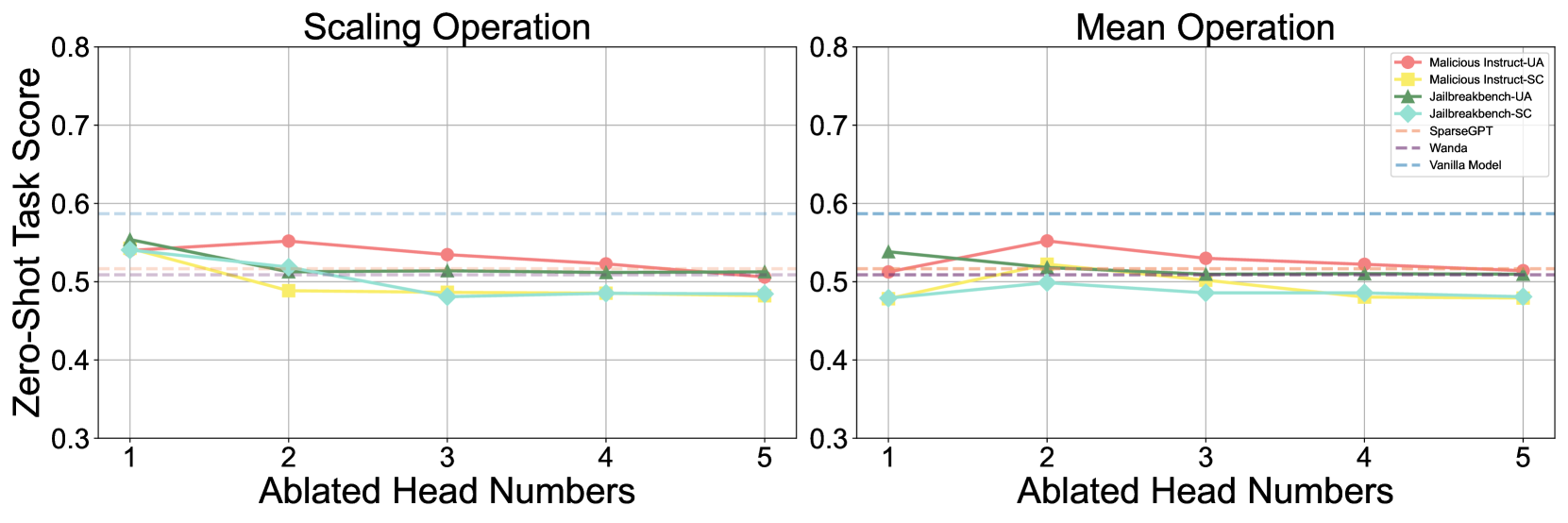

(b) (Figure 6b) Helpfulness compromise after safety head ablation. Left: parameter scaling with a small coefficient $\epsilon$. Right: replacing the safety head with the mean of all heads.

To explore the association between the safety role of attention heads and the pre-training phase, we load the attention parameters from the base model while keeping all other parameters from the aligned model. We evaluate the safety of this ‘concatenated’ model and find that it retains safety capability close to that of the aligned model, as shown in Figure 6(a). This observation further supports the notion that the safety effect of the attention mechanism derives primarily from the pre-training phase: reverting the attention parameters to their pre-alignment state does not significantly diminish safety capability, whereas ablating a safety head does.
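The ‘concatenated’ model can be sketched as a key-wise merge of two checkpoints. This minimal sketch assumes both checkpoints are flat name-to-parameter dictionaries with identical keys, and that the attention parameters are exactly those whose names contain `.self_attn.` (the Hugging Face Llama naming convention, used here as an assumption). Plain lists stand in for tensors so the example runs without dependencies; with PyTorch, the same loop could run over `model.state_dict()` and the result be loaded with `model.load_state_dict(...)`. The function name `concatenate_attention` is hypothetical.

```python
from typing import Dict, List

# Hypothetical parameter store: parameter name -> flattened values.
StateDict = Dict[str, List[float]]


def concatenate_attention(aligned: StateDict, base: StateDict,
                          attn_marker: str = ".self_attn.") -> StateDict:
    """Copy of `aligned` whose attention parameters are taken from `base`.

    Assumes attention parameters are identified by `attn_marker` in their
    names; every other parameter (MLP, norms, embeddings) stays aligned.
    """
    if aligned.keys() != base.keys():
        raise ValueError("aligned and base checkpoints must share parameter names")
    merged: StateDict = {}
    for name in aligned:
        source = base if attn_marker in name else aligned
        merged[name] = list(source[name])  # copy so neither input is mutated
    return merged


# Toy usage with one attention weight and one MLP weight.
aligned = {"model.layers.0.self_attn.q_proj.weight": [1.0],
           "model.layers.0.mlp.up_proj.weight": [2.0]}
base = {"model.layers.0.self_attn.q_proj.weight": [10.0],
        "model.layers.0.mlp.up_proj.weight": [20.0]}
merged = concatenate_attention(aligned, base)
print(merged)
```

Matching on a name substring keeps the sketch independent of the layer count; a real checkpoint should be inspected first to confirm which keys belong to attention.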

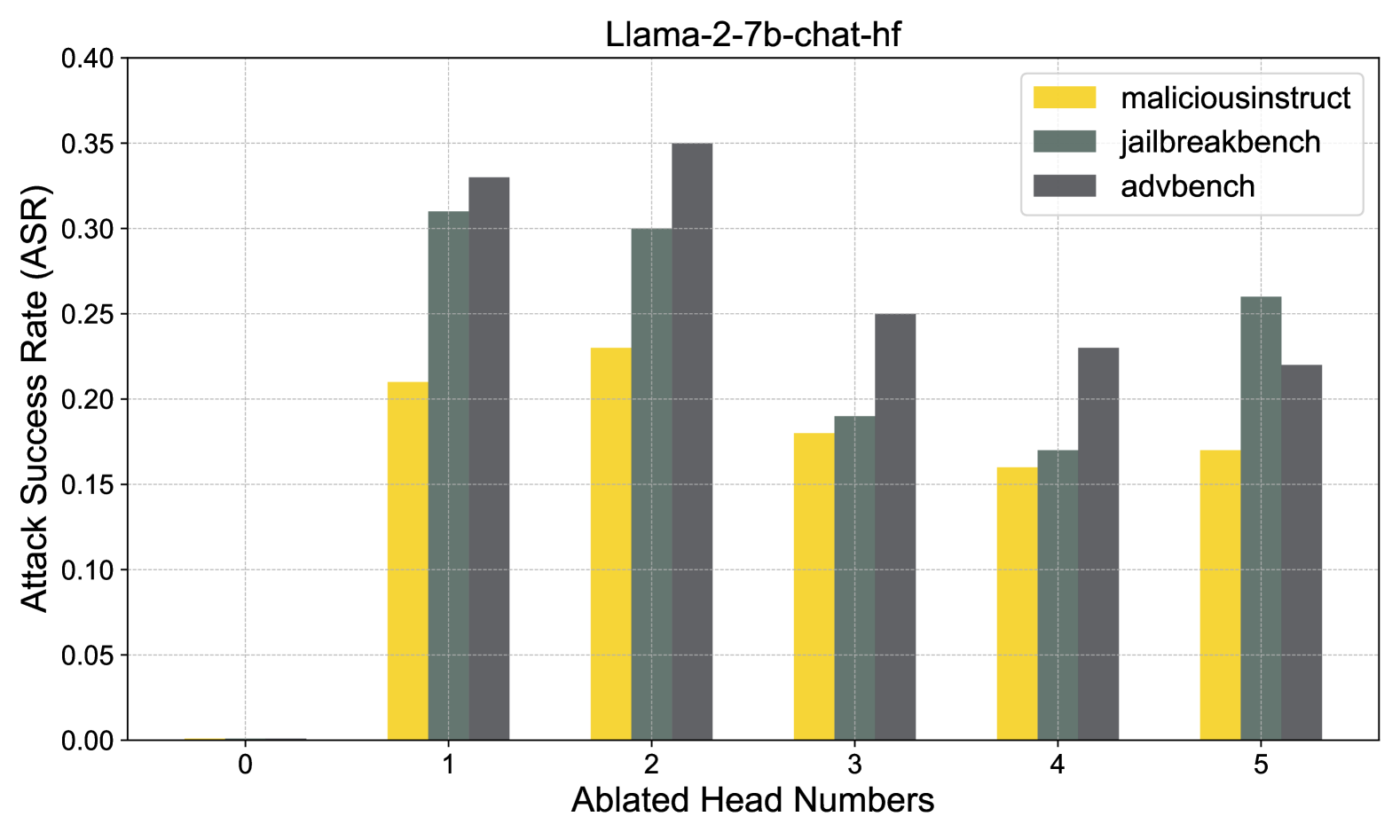

5.3 Helpful-Harmless Trade-off

The neurons in LLMs exhibit superposition and polysemanticity (Templeton, 2024), meaning that a single neuron is often activated by multiple forms of knowledge and capabilities. Ablating safety heads could therefore also damage unrelated abilities, so we evaluate the impact of safety head ablation on helpfulness. We use lm-eval (Gao et al., 2024) to assess the zero-shot performance of Llama-2-7b-chat after safety head ablation on BoolQ (Clark et al., 2019a), RTE (Wang, 2018), WinoGrande (Sakaguchi et al., 2021), ARC Challenge (Clark et al., 2018), and OpenBookQA (Mihaylov et al., 2018). As shown in Figure 6(b), safety head ablation, which significantly degrades safety capability, causes only a small loss of helpfulness. Based on this, we argue that safety heads are indeed primarily responsible for safety.
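Figure 6(b) reports a single zero-shot task score per setting, but the text does not state how the five task accuracies are combined. The sketch below assumes an unweighted mean, keys the results by the lm-evaluation-harness task identifiers (an assumption about naming), and measures the helpfulness compromise as the drop relative to the unablated model. The function names are hypothetical, and the accuracies in the usage lines are placeholders, not measured results.

```python
from statistics import fmean
from typing import Dict

# Task identifiers assumed to match lm-evaluation-harness naming.
ZERO_SHOT_TASKS = ("boolq", "rte", "winogrande", "arc_challenge", "openbookqa")


def zero_shot_score(accuracy: Dict[str, float]) -> float:
    """Unweighted mean accuracy over the five zero-shot tasks (assumed aggregation)."""
    missing = [task for task in ZERO_SHOT_TASKS if task not in accuracy]
    if missing:
        raise KeyError(f"missing task results: {missing}")
    return fmean(accuracy[task] for task in ZERO_SHOT_TASKS)


def helpfulness_drop(vanilla: Dict[str, float], ablated: Dict[str, float]) -> float:
    """Positive values mean the ablated model scores lower than the vanilla one."""
    return zero_shot_score(vanilla) - zero_shot_score(ablated)


# Placeholder accuracies for illustration only, not results from the paper.
vanilla = {task: 0.60 for task in ZERO_SHOT_TASKS}
ablated = {task: 0.55 for task in ZERO_SHOT_TASKS}
print(round(helpfulness_drop(vanilla, ablated), 2))  # 0.05
```

A weighted mean (for example, by number of test examples per task) would be an equally reasonable choice; the unweighted form simply treats each benchmark as one vote.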

We further compare the zero-shot task scores with those of two state-of-the-art pruning methods, SparseGPT (Frantar & Alistarh, 2023) and Wanda (Sun et al., 2024a), to evaluate the general performance compromise. The results in Figure 6(b) show that with Undifferentiated Attention the zero-shot task scores are typically higher than those observed after pruning, while with Scaling Contribution they are close to the pruning scores, indicating that the helpfulness cost of our ablation is acceptable. Additionally, we evaluate helpfulness after replacing the safety head with the mean of all attention heads (Wang et al., 2023), and reach a similar conclusion.
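The ablation styles compared here can be illustrated on a single head in plain Python. This is a toy sketch, not the authors' implementation, and it rests on three assumptions: that Undifferentiated Attention corresponds to multiplying the head's query by a small coefficient, so every attention logit shrinks toward zero and the weights become uniform; that Scaling Contribution multiplies the head's output by a small $\epsilon$; and that "the mean of all attention heads" is taken over per-head outputs rather than parameters. The helper names (`head_output`, `scale_head`, `mean_replace_head`) are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def softmax(logits: Vector) -> Vector:
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def head_output(query: Vector, keys: List[Vector], values: List[Vector],
                query_scale: float = 1.0) -> Vector:
    """Single-query, single-head scaled dot-product attention.

    query_scale multiplies the query, standing in for scaling W_q. As it
    approaches 0, every logit approaches 0 and the weights become uniform.
    """
    d = len(query)
    logits = [query_scale * sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
              for key in keys]
    weights = softmax(logits)
    return [sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))]


def scale_head(outputs: List[Vector], head: int, eps: float) -> List[Vector]:
    """Scale one head's contribution by a small coefficient eps."""
    return [[eps * x for x in out] if i == head else list(out)
            for i, out in enumerate(outputs)]


def mean_replace_head(outputs: List[Vector], head: int) -> List[Vector]:
    """Replace one head's output with the element-wise mean over all heads."""
    mean = [sum(column) / len(outputs) for column in zip(*outputs)]
    return [list(mean) if i == head else list(out) for i, out in enumerate(outputs)]


# Toy example: the query is strongly aligned with the first key.
query, keys = [1.0, 0.0], [[5.0, 0.0], [0.0, 5.0]]
values = [[1.0, 0.0], [0.0, 1.0]]
sharp = head_output(query, keys, values)                   # mostly attends to key 0
flat = head_output(query, keys, values, query_scale=1e-6)  # nearly uniform weights
heads = [[1.0, 2.0], [3.0, 4.0]]
print(scale_head(heads, 0, eps=0.01))  # [[0.01, 0.02], [3.0, 4.0]]
print(mean_replace_head(heads, 1))     # [[1.0, 2.0], [2.0, 3.0]]
```

The contrast between `sharp` and `flat` shows why shrinking the query removes a head's ability to select specific tokens while still letting it pass an averaged signal forward, whereas scaling the output removes the head's contribution almost entirely.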

6 Conclusion

This work introduces the Safety Head ImPortant Score (Ships) to interpret the safety capabilities of attention heads in LLMs. Ships quantifies the effect of each head on rejecting harmful queries and offers a novel way to understand LLM safety. Extensive experiments show that selectively ablating the identified safety heads significantly increases the ASR of models such as Llama-2-7b-chat and Vicuna-7b-v1.5, underscoring its effectiveness. This work also presents the Safety Attention Head AttRibution Algorithm (Sahara), a generalized version of Ships that identifies groups of heads whose ablation weakens safety capabilities. Our results reveal several insights: certain attention heads are crucial for safety, safety heads overlap across models fine-tuned from the same base model, and ablating these heads has minimal impact on helpfulness. These findings provide a solid foundation for enhancing model safety and alignment in future research.

7 Acknowledgements

This work was supported by the Alibaba Research Intern Program.

References

- Achiam et al. (2023) Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. GPT-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Arditi et al. (2024) Andy Arditi, Oscar Balcells Obeso, Aaquib Syed, Daniel Paleka, Nina Panickssery, Wes Gurnee, and Neel Nanda. Refusal in language models is mediated by a single direction. In ICML 2024 Workshop on Mechanistic Interpretability, 2024. URL https://openreview.net/forum?id=EqF16oDVFf.

- Bai et al. (2022a) Yuntao Bai, Andy Jones, Kamal Ndousse, Amanda Askell, Anna Chen, Nova DasSarma, Dawn Drain, Stanislav Fort, Deep Ganguli, Tom Henighan, et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. arXiv preprint arXiv:2204.05862, 2022a.

- Bai et al. (2022b) Yuntao Bai, Saurav Kadavath, Sandipan Kundu, Amanda Askell, Jackson Kernion, Andy Jones, Anna Chen, Anna Goldie, Azalia Mirhoseini, Cameron McKinnon, et al. Constitutional AI: Harmlessness from AI feedback. arXiv preprint arXiv:2212.08073, 2022b.

- Bengio et al. (2024) Yoshua Bengio, Geoffrey Hinton, Andrew Yao, Dawn Song, Pieter Abbeel, Trevor Darrell, Yuval Noah Harari, Ya-Qin Zhang, Lan Xue, Shai Shalev-Shwartz, et al. Managing extreme AI risks amid rapid progress. Science, 384(6698):842–845, 2024.

- Bereska & Gavves (2024) Leonard Bereska and Efstratios Gavves. Mechanistic interpretability for AI safety – a review. arXiv preprint arXiv:2404.14082, 2024.

- Campbell et al. (2023) James Campbell, Phillip Guo, and Richard Ren. Localizing lying in Llama: Understanding instructed dishonesty on true-false questions through prompting, probing, and patching. In Socially Responsible Language Modelling Research, 2023. URL https://openreview.net/forum?id=RDyvhOgFvQ.

- Carlini et al. (2024) Nicholas Carlini, Milad Nasr, Christopher A Choquette-Choo, Matthew Jagielski, Irena Gao, Pang Wei W Koh, Daphne Ippolito, Florian Tramer, and Ludwig Schmidt. Are aligned neural networks adversarially aligned? Advances in Neural Information Processing Systems, 36, 2024.

- Chao et al. (2023) Patrick Chao, Alexander Robey, Edgar Dobriban, Hamed Hassani, George J Pappas, and Eric Wong. Jailbreaking black box large language models in twenty queries. arXiv preprint arXiv:2310.08419, 2023.

- Chao et al. (2024) Patrick Chao, Edoardo Debenedetti, Alexander Robey, Maksym Andriushchenko, Francesco Croce, Vikash Sehwag, Edgar Dobriban, Nicolas Flammarion, George J Pappas, Florian Tramer, et al. JailbreakBench: An open robustness benchmark for jailbreaking large language models. arXiv preprint arXiv:2404.01318, 2024.

- Chen et al. (2024) Jianhui Chen, Xiaozhi Wang, Zijun Yao, Yushi Bai, Lei Hou, and Juanzi Li. Finding safety neurons in large language models. arXiv preprint arXiv:2406.14144, 2024.

- Clark et al. (2019a) Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, and Kristina Toutanova. BoolQ: Exploring the surprising difficulty of natural yes/no questions. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pp. 2924–2936, 2019a.

- Clark et al. (2019b) Kevin Clark, Urvashi Khandelwal, Omer Levy, and Christopher D. Manning. What does BERT look at? An analysis of BERT’s attention. In Tal Linzen, Grzegorz Chrupała, Yonatan Belinkov, and Dieuwke Hupkes (eds.), Proceedings of the 2019 ACL Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP, pp. 276–286, Florence, Italy, August 2019b. Association for Computational Linguistics. doi: 10.18653/v1/W19-4828. URL https://aclanthology.org/W19-4828.