# Paths-over-Graph: Knowledge Graph Empowered Large Language Model Reasoning

**Authors**: Xingyu Tan, Xiaoyang Wang, Qing Liu, Xiwei Xu, Xin Yuan, Wenjie Zhang

> 0009-0000-7232-7051 University of New South Wales Data61, CSIRO Sydney Australia

> 0000-0003-3554-3219 University of New South Wales Sydney Australia

> 0000-0001-7895-9551 Data61, CSIRO Sydney Australia

> 0000-0002-2273-1862 Data61, CSIRO Sydney Australia

> 0000-0002-9167-1613 Data61, CSIRO Sydney Australia

> 0000-0001-6572-2600 University of New South Wales Sydney Australia

(2025)

## Abstract

Large Language Models (LLMs) have achieved impressive results in various tasks but struggle with hallucination and a lack of relevant knowledge, especially in deep, complex reasoning and knowledge-intensive tasks. Knowledge Graphs (KGs), which capture vast amounts of facts in a structured format, offer a reliable source of knowledge for reasoning. However, existing KG-based LLM reasoning methods face challenges such as handling multi-hop reasoning, answering multi-entity questions, and effectively utilizing graph structures. To address these issues, we propose Paths-over-Graph (PoG), a novel method that enhances LLM reasoning by integrating knowledge reasoning paths from KGs, improving the interpretability and faithfulness of LLM outputs. PoG tackles multi-hop and multi-entity questions through a three-phase dynamic multi-hop path exploration, which combines the inherent knowledge of LLMs with factual knowledge from KGs. To improve efficiency, PoG first prunes irrelevant information during graph exploration and then applies an efficient three-step pruning technique that incorporates graph structure, LLM prompting, and a pre-trained language model (e.g., SBERT) to narrow down the explored candidate paths. This ensures that all reasoning paths contain highly relevant information captured from KGs, making the reasoning faithful and interpretable. PoG innovatively uses graph structure to prune irrelevant noise and is the first method to implement multi-entity deep path detection on KGs for LLM reasoning tasks. Comprehensive experiments on five benchmark KGQA datasets demonstrate that PoG outperforms the state-of-the-art method ToG across GPT-3.5-Turbo and GPT-4, achieving an average accuracy improvement of 18.9%. Notably, PoG with GPT-3.5-Turbo surpasses ToG with GPT-4 by up to 23.9%.

Keywords: Large Language Models; Knowledge Graph; Knowledge Graph Question Answering; Retrieval-Augmented Generation

Published in: Proceedings of the ACM Web Conference 2025 (WWW ’25), April 28-May 2, 2025, Sydney, NSW, Australia. Journal year: 2025. Copyright: ACM-licensed. DOI: 10.1145/3696410.3714892. ISBN: 979-8-4007-1274-6/25/04. CCS concepts: Information systems → Question answering.

## 1. Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Comparison of AI Reasoning Methods for a Multi-Hop Question

### Overview

The image is a technical diagram comparing four different artificial intelligence approaches to answering a complex, multi-hop reasoning question. The central question posed is: **"What country bordering France contains an airport that serves Nijmegen?"** The diagram is divided into four horizontal panels, labeled (a) through (d), each illustrating a different method's process and final answer. Three methods fail (marked with a red "X"), while one succeeds (marked with a green checkmark).

### Components/Axes

The diagram is structured as a vertical sequence of four panels. Each panel contains:

1. **A method label** (e.g., "GPT-3.5/GPT-4 LLM only").

2. **A flowchart** using icons (question mark, brain/LLM icon, network/KG icon, document icon) and arrows to represent the process flow.

3. **A text box** explaining the method's reasoning or output.

4. **A final answer box** (colored red for incorrect, green for correct) and a status icon (red "X" or green checkmark).

### Detailed Analysis

#### **Panel (a): GPT-3.5/GPT-4 LLM only**

* **Process Flow:** A question mark icon points directly to an LLM (brain) icon, which outputs the answer "Belgium".

* **Reasoning Text (Chain of Thoughts prompt):** "Let's go step by step. Response: Nijmegen is served by airports in the neighboring countries, and one of the closest major ones is **Brussels Airport (BRU)** in **Belgium**, which is relatively near Nijmegen compared to other major airports. The answer is **Belgium**."

* **Final Answer:** "Belgium" (in a red box).

* **Status:** Incorrect (Red "X").

#### **Panel (b): LLM empowered KG exploration search**

* **Process Flow:** The entities "France" and "Nijmegen" are input. They are processed by a combination of a Knowledge Graph (KG) icon and an LLM icon, generating "KG Triples". This leads to the answer "Netherlands".

* **Reasoning Text (ToG):** "KG triples: [France, location.location.containedby, Europe], [France, location.location.containedby, Western Europe], [France, location.location.geolocation, Unnamed Entity], [Nijmegen, second_level_division, Netherland]. Answering: First, Nijmegen is a city in the Netherlands. Second, the Netherlands is a country bordering France. The answer is **{Netherlands}**."

* **Final Answer:** "Netherlands" (in a red box).

* **Status:** Incorrect (Red "X").

#### **Panel (c): LLM empowered KG subgraph answering**

* **Process Flow:** A question mark and a KG icon are input to an LLM. The output is a document icon combined with a KG icon, leading to the result "Refuse to answering".

* **Reasoning Text (MindMap):** "MindMap cannot prompt LLM to construct a graph and generate the graph descript document since the retrieved subgraph is extremely large and dense."

* **Final Answer:** "Refuse to answering" (in a red box).

* **Status:** Incorrect (Red "X").

#### **Panel (d): PoG (Paths-over-Graph)**

* **Process Flow:** This is the most complex flowchart.

1. **Subgraph Detection:** Multiple question marks and magnifying glasses point to multiple KG icons, which are combined into a single, denser KG icon.

2. **Question Analysis:** A separate question mark goes through an LLM icon to a "Question Analysis" box (a question mark inside a square).

3. The outputs of Subgraph Detection and Question Analysis are combined.

4. **Reasoning Path Exploration:** The combined data is processed, represented by a series of horizontal black bars (suggesting multiple paths).

5. **Reasoning Path Pruning:** An LLM icon acts on the paths, reducing them to a shorter set of bars.

6. A final LLM icon processes the pruned paths to output the answer "Germany".

* **Reasoning Text (PoG Reasoning paths):**

* **Path 1:** `Nijmegen --(nearby)--> Weeze Airport --(contained by)--> Germany --(continent)--> Europe, Western Europe --(contain)--> France`

* **Path 2:** `Nijmegen --(nearby)--> Weeze Airport --(contained by)--> Germany --(adjoins)--> Unnamed Entity --(adjoins)--> France`

* **Response:** "From the provided knowledge graph path, the entity **{Germany}** is the country that contains an airport serving Nijmegen and is also the country bordering France. Therefore, the answer to the main question 'What country bordering France contains an airport that serves Nijmegen?' is **{Germany}**."

* **Final Answer:** "Germany" (in a green box).

* **Status:** Correct (Green checkmark).

### Key Observations

1. **Question Complexity:** The question requires connecting three entities (Nijmegen, an airport, a country) and verifying a geographical relationship (bordering France). This makes it a challenging multi-hop reasoning task.

2. **Method Failure Modes:**

* **LLM-only (a):** Fails due to a plausible but incorrect assumption (that Brussels is the closest major airport). It lacks factual grounding.

* **KG Exploration (b):** Fails because it retrieves correct but incomplete triples. It correctly identifies Nijmegen as being in the Netherlands but incorrectly concludes the Netherlands borders France.

* **KG Subgraph (c):** Fails due to computational or representational overload; the retrieved knowledge subgraph is too large and dense for the method to process effectively.

3. **PoG Success (d):** The PoG method succeeds by explicitly modeling and pruning reasoning paths. It finds the correct chain: Nijmegen -> Weeze Airport (in Germany) -> Germany (borders France). The "Pruning" step is critical for filtering out irrelevant or incorrect paths.

### Interpretation

This diagram serves as a comparative analysis of AI reasoning architectures, highlighting the limitations of pure LLMs and basic knowledge graph integration for complex, multi-constraint questions. It argues for the superiority of a structured, path-based approach (PoG) that combines subgraph detection, explicit question analysis, and iterative reasoning path exploration and pruning.

The core insight is that answering such questions isn't just about retrieving facts (like "Nijmegen is in the Netherlands") but about constructing and validating a *chain of relationships* that satisfies all constraints of the query. The PoG method's success demonstrates the importance of:

1. **Structured Exploration:** Systematically generating potential reasoning paths from a knowledge graph.

2. **Constraint Verification:** Explicitly checking each path against all conditions in the question (e.g., "contains an airport that serves Nijmegen" AND "borders France").

3. **Pruning:** Eliminating paths that are incomplete, irrelevant, or lead to contradictions.

The incorrect answer "Netherlands" in panel (b) is particularly instructive; it shows how a system can be partially correct yet ultimately wrong by missing a single relational link (the border with France). The diagram implicitly critiques methods that perform shallow retrieval or lack mechanisms for holistic path validation.

</details>

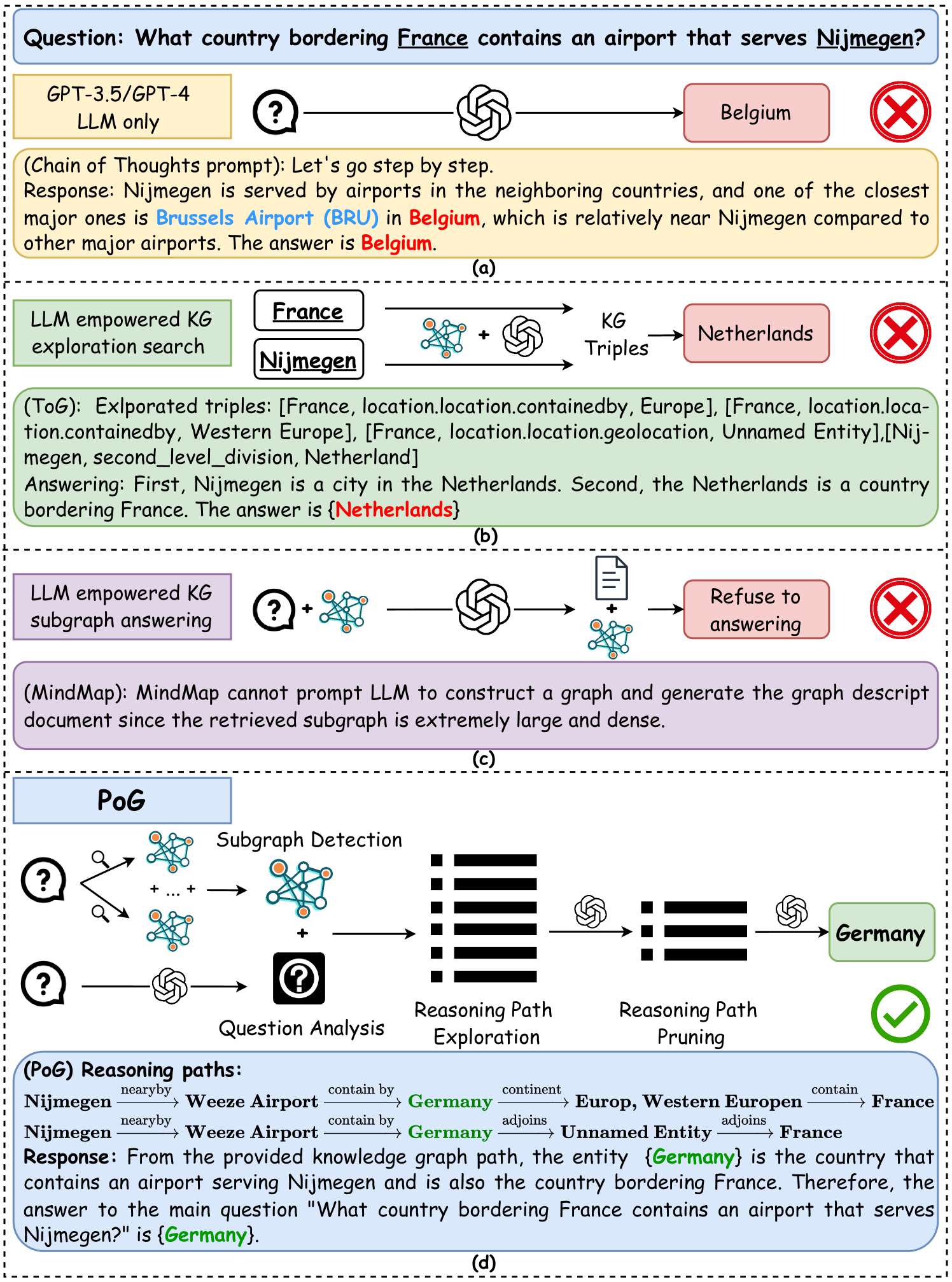

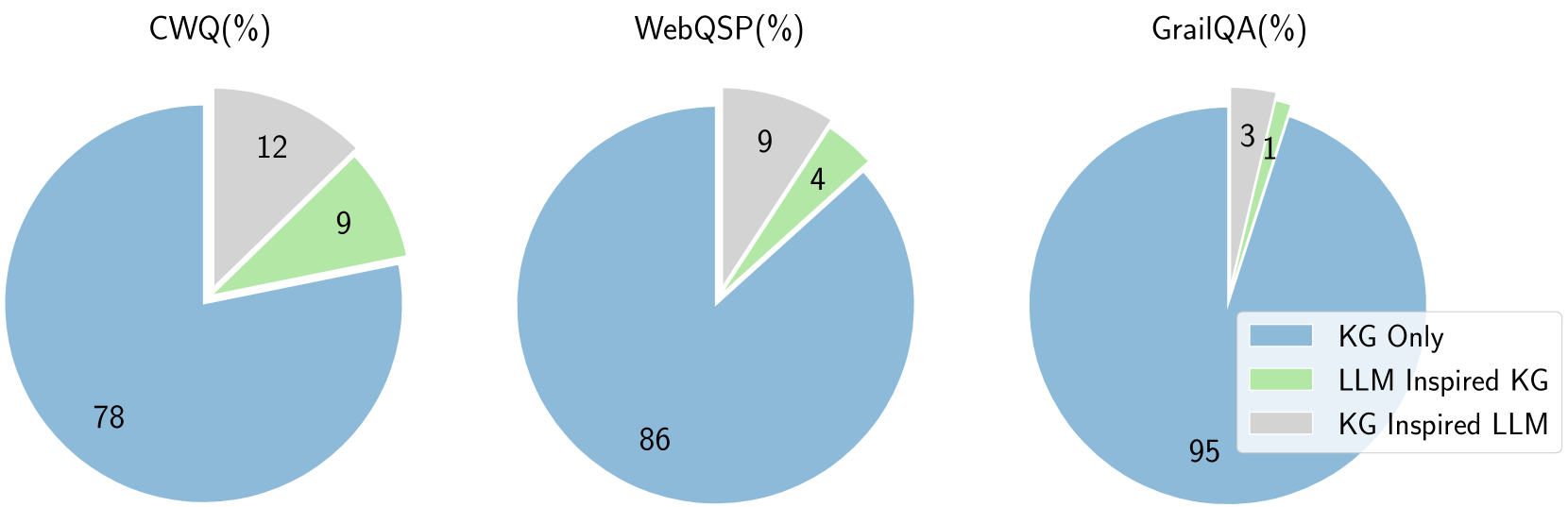

Figure 1. Representative workflow of four LLM reasoning paradigms.

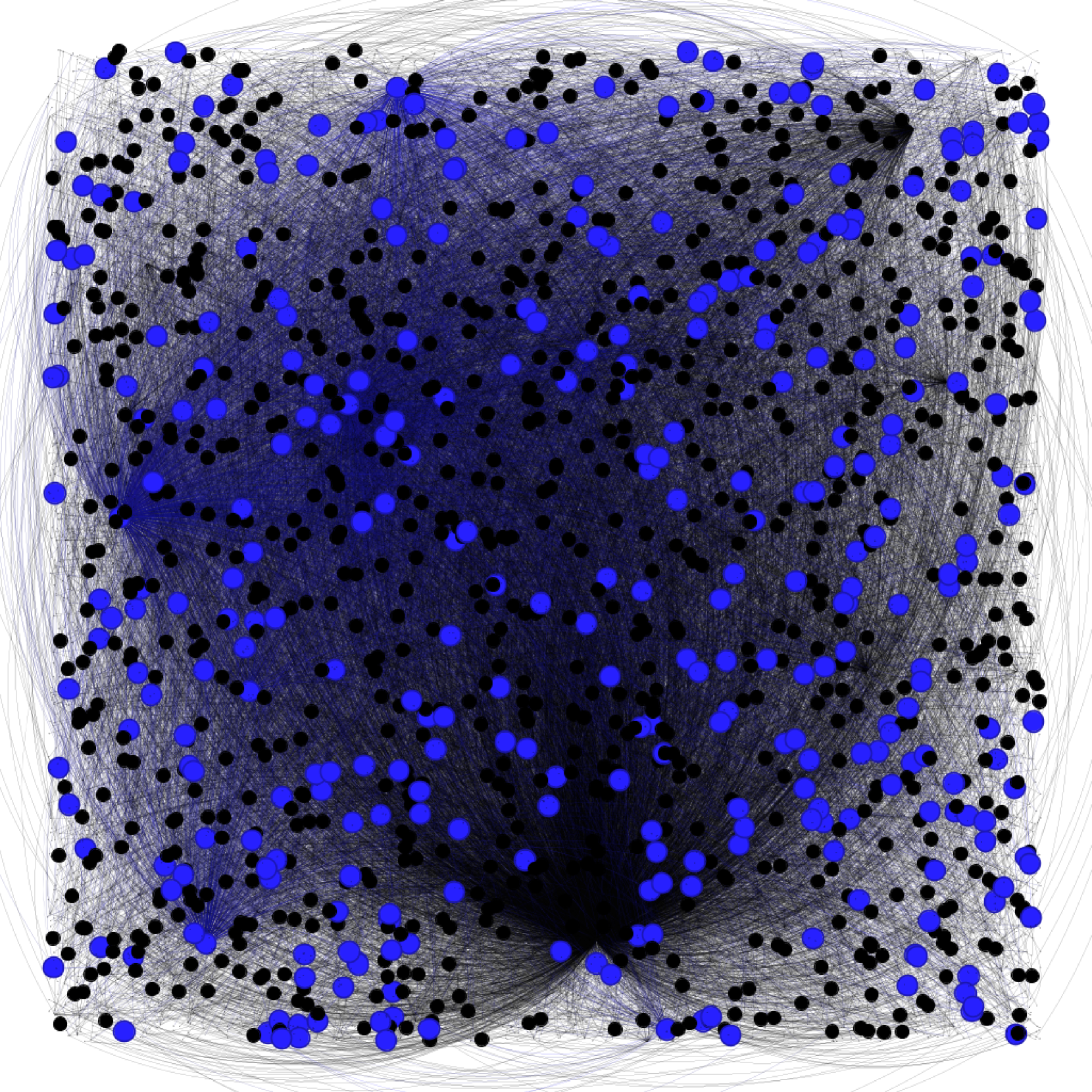

Large Language Models (LLMs) have demonstrated remarkable performance in various tasks (Brown, 2020; Chowdhery et al., 2023; Touvron et al., 2023; Besta et al., 2024; Huang et al., 2025). These models leverage pre-training techniques by scaling to billions of parameters and training on extensive, diverse, and unlabelled data (Touvron et al., 2023; Rawte et al., 2023). Despite these impressive capabilities, LLMs face two well-known challenges. First, they struggle with deep and responsible reasoning when tackling complex tasks (Petroni et al., 2020; Talmor et al., 2018; Khot et al., 2022). Second, the substantial cost of training makes it difficult to keep models updated with the latest knowledge (Sun et al., 2024; Wen et al., 2024), leading to errors when answering questions that require specialized information not included in their training data. For example, as shown in Figure 1 (a), although models like GPT can generate reasonable-sounding answers to knowledge-specific questions, these answers may be incorrect due to outdated information or hallucinated reasoning over the LLM's inherent knowledge base (KB).

To deal with erroneous reasoning and knowledge gaps, the plan-retrieval-answering method has been proposed (Luo et al., 2024; Zhao et al., 2023; Li et al., 2023b). In this approach, LLMs are prompted to decompose complex reasoning tasks into a series of sub-tasks, forming a plan, while external KBs are retrieved to answer each step of the plan. However, this method still relies heavily on the reasoning abilities of LLMs rather than on the faithfulness of the retrieved knowledge. The generated reasoning steps guide information selection, but answers are chosen based on the LLM’s interpretation of the retrieved knowledge rather than on whether the selection leads to a correct and faithful answer.

To address these challenges, incorporating external knowledge sources like Knowledge Graphs (KGs) is a promising solution to enhance LLM reasoning (Sun et al., 2024; Luo et al., 2024; Pan et al., 2024; Luo et al., 2023). KGs offer abundant factual knowledge in a structured format, serving as a reliable source to improve LLM capabilities. Knowledge Graph Question Answering (KGQA) serves as an approach for evaluating the integration of KGs with LLMs, which requires machines to answer natural language questions by retrieving relevant facts from KGs. These approaches typically involve: (1) identifying the initial entities from the question, and (2) iteratively retrieving and refining inference paths until sufficient evidence has been obtained. Despite their success, they still face challenges such as handling multi-hop reasoning problems, addressing questions with multiple topic entities, and effectively utilizing the structural information of graphs.

Challenge 1: Multi-hop reasoning problem. Current methods (Guo et al., 2024; Ye et al., 2021; Sun et al., 2024; Ma et al., 2024), such as the ToG model presented in Figure 1 (b), begin by exploring from each topic entity, with LLMs selecting connected knowledge triples like (France, contained_by, Europe). This process relies on the LLM’s inherent understanding of these triples. However, focusing on one-hop neighbors can result in plausible but incorrect answers and prematurely exclude correct ones, especially when multi-hop reasoning is required. Additionally, multi-hop reasoning introduces significant computational overhead, making efficient pruning essential, especially in dense and large KGs.

Challenge 2: Multi-entity question. As shown in Figure 1 (b), existing work (Guo et al., 2024; Ye et al., 2021; Sun et al., 2024; Ma et al., 2024) typically explores the KG for each topic entity independently. When a question involves multiple entities, these entities are examined in separate steps without considering their interconnections. This approach can introduce a large amount of irrelevant information into the candidate set, i.e., information that does not connect to the other entities in the question, leading to suboptimal results.

Challenge 3: Utilizing graph structure. Existing methods (Wen et al., 2024; Guo et al., 2023; Chen et al., 2024) often overlook the inherent graph structures when processing retrieved subgraphs. For example, the MindMap model in Figure 1 (c) utilizes LLMs to generate text-formatted subgraphs from KG triples, converting them into graph descriptions that are fed back into the LLM to produce answers. This textual approach overlooks the inherent structural information of graphs and can overwhelm the LLM when dealing with large graphs. Additionally, during KG information selection, most methods use in-context learning by feeding triples into the LLM, ignoring the overall graph structure.

Contributions. In this paper, we introduce a novel method, Paths-over-Graph (PoG). Unlike previous studies that use knowledge triples for retrieval (Sun et al., 2024; Ma et al., 2024), PoG employs knowledge reasoning paths, which contain all the topic entities and can span long reasoning chains, as retrieval-augmented input for LLMs. The paths in KGs serve as logical reasoning chains, providing KG-supported, interpretable reasoning logic that addresses both the lack of specific background knowledge and unfaithful reasoning.

To address the multi-hop reasoning problem, as shown in Figure 1 (d), PoG first performs question analysis to extract topic entities from the question. Using these topic entities, it decomposes the complex question into sub-questions and generates an LLM thinking indicator termed "Planning". This planning not only serves as an answering strategy but also predicts the implied relationship depth between the answer and each topic entity. Multi-hop paths are then explored starting from the predicted depth, enabling a dynamic search process. Previous planning-based approaches usually retrieve information from scratch, which often confuses LLMs with semantic information drawn from the neighborhood of the source entity. In contrast, our method ensures that LLMs follow accurate reasoning paths that lead directly to the answer.
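The depth-driven search described above can be sketched as an iterative-deepening loop that starts at the LLM-predicted depth and goes one hop deeper whenever the retained paths are insufficient. This is a hypothetical outline, not the authors' implementation: `explore`, `prune` and `try_answer` are placeholder callables standing in for PoG's exploration, pruning and LLM answering steps, and `d_max` plays the role of the maximum depth $D_{max}$ mentioned in the Figure 2 caption.

```python
from typing import Callable, List, Optional, Sequence

# Hypothetical representation: a path rendered as a sequence of entity/relation names.
Path = Sequence[str]


def dynamic_depth_search(
    predicted_depth: int,
    d_max: int,
    explore: Callable[[int], List[Path]],
    prune: Callable[[List[Path]], List[Path]],
    try_answer: Callable[[List[Path]], Optional[str]],
) -> Optional[str]:
    """Explore from the predicted depth, deepening until an answer is found or d_max is hit."""
    # Clamp the starting depth into the valid range [1, d_max].
    depth = max(1, min(predicted_depth, d_max))
    while depth <= d_max:
        candidates = explore(depth)   # placeholder: paths of length <= depth
        kept = prune(candidates)      # placeholder: e.g. a top-W beam filter
        answer = try_answer(kept)     # placeholder: e.g. an LLM sufficiency check
        if answer is not None:
            return answer
        depth += 1                    # not enough evidence: go one hop deeper
    return None                       # d_max reached without an answer
```

Starting from the predicted depth rather than from depth 1 is what makes the search "dynamic": an accurate prediction skips shallow rounds that cannot contain the answer.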

To address multi-entity questions, PoG employs a three-phase exploration process to traverse reasoning paths in the retrieved question subgraph. Every path must contain all topic entities, in the same order in which they occur in the LLM thinking indicator. Because they are drawn directly from the KG, these reasoning paths are inherently logical and faithful. Each path potentially contains one possible answer and serves as interpretable reasoning logic. The exploration leverages the inherent knowledge of both the LLM and the KG.
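The ordering constraint on paths is easy to state in code. A minimal sketch, assuming a path is represented by the sequence of entity names it visits; the helper names `contains_in_order` and `filter_paths` are hypothetical.

```python
from typing import List, Sequence


def contains_in_order(path_entities: Sequence[str], topic_entities: Sequence[str]) -> bool:
    """True if every topic entity appears in the path, in the given order."""
    it = iter(path_entities)
    # `entity in it` consumes the iterator up to (and including) the match,
    # so each later topic entity must appear after the previous one.
    return all(entity in it for entity in topic_entities)


def filter_paths(paths: List[Sequence[str]], topic_entities: Sequence[str]) -> List[Sequence[str]]:
    """Keep only the paths that respect the ordered topic-entity constraint."""
    return [p for p in paths if contains_in_order(p, topic_entities)]
```

For the running example, a path such as Nijmegen, Weeze Airport, Germany, Unnamed Entity, France satisfies the order [Nijmegen, France], whereas a path that stops at the Netherlands is filtered out because it never reaches France.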

To effectively utilize graph structure, PoG captures the question subgraph by expanding topic entities to their maximal-depth neighbors, applying graph clustering and reduction to reduce graph search costs. In the path pruning phase, we select possible correct answers from numerous candidates. All explored paths undergo a three-step beam search pruning that integrates graph structures, LLM prompting, and a pre-trained language understanding model (e.g., BERT) to ensure effectiveness and efficiency. Additionally, inspired by the Graph of Thought (GoT) (Besta et al., 2024), to reduce LLM hallucination, PoG prompts LLMs to summarize the obtained Top- $W_{\max}$ paths before evaluating the answer, where $W_{\max}$ is a user-defined maximum width in the path pruning phase. In summary, the advantages of PoG are as follows:
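The text does not define the scoring functions at this point, so the following is only a generic sketch of multi-step beam pruning: each step keeps the top-$W$ paths under its own score, and the survivors feed the next step. The scorers (standing in for graph-structure, embedding-similarity and LLM judgements) and the widths are hypothetical placeholders, not PoG's actual criteria.

```python
import heapq
from typing import Callable, List, Sequence, Tuple

Path = Tuple[str, ...]  # a path as a tuple of entity names (assumed representation)


def beam_prune(paths: Sequence[Path], score: Callable[[Path], float], width: int) -> List[Path]:
    """One pruning step: keep the `width` highest-scoring paths, best first."""
    return heapq.nlargest(width, paths, key=score)


def multi_step_prune(
    paths: Sequence[Path],
    scorers: Sequence[Callable[[Path], float]],
    widths: Sequence[int],
) -> List[Path]:
    """Apply successive beam-pruning steps, each with its own scorer and width."""
    kept = list(paths)
    for score, width in zip(scorers, widths):
        kept = beam_prune(kept, score, width)
    return kept
```

In this sketch, cheap scorers would go first with generous widths and expensive ones (such as an LLM call) last with a width near $W_{\max}$, so that the costly step only sees a few candidates.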

- Dynamic deep search: Guided by LLMs, PoG dynamically extracts multi-hop reasoning paths from KGs, enhancing LLM capabilities in complex knowledge-intensive tasks.

- Interpretable and faithful reasoning: By utilizing highly question-relevant knowledge paths, PoG improves the interpretability of LLM reasoning, enhancing the faithfulness and question-relatedness of LLM-generated content.

- Efficient pruning with graph structure integration: PoG incorporates efficient pruning techniques in both the KG and reasoning paths to reduce computational costs, mitigate LLM hallucinations caused by irrelevant noise, and effectively narrow down candidate answers.

- Flexibility and effectiveness: a) PoG is a plug-and-play framework that can be seamlessly applied to various LLMs and KGs. b) PoG allows frequent knowledge updates via the KG, avoiding the expensive and slow updates required for LLMs. c) PoG reduces LLM token usage by over 50% with only a ±2% difference in accuracy compared to the best-performing strategy. d) PoG achieves state-of-the-art results on all the tested KGQA datasets, outperforming the strong baseline ToG by an average of 18.9% accuracy using both GPT-3.5 and GPT-4. Notably, PoG with GPT-3.5 can outperform ToG with GPT-4 by up to 23.9%.

## 2. Related Work

KG-based LLM reasoning. KGs provide structured knowledge valuable for integration with LLMs (Pan et al., 2024). Early studies (Peters et al., 2019; Luo et al., 2024; Zhang et al., 2021; Li et al., 2023b) embed KG knowledge into neural networks during pre-training or fine-tuning, but this can reduce explainability and hinder efficient knowledge updating (Pan et al., 2024). Recent methods combine KGs with LLMs by converting relevant knowledge into textual prompts, often ignoring structural information (Pan et al., 2024; Wen et al., 2024). Advanced works (Sun et al., 2024; Jiang et al., 2023; Ma et al., 2024) involve LLMs directly exploring KGs, starting from an initial entity and iteratively retrieving and refining reasoning paths until the LLM decides the augmented knowledge is sufficient. However, by starting from a single vertex and ignoring the question’s position within the KG’s structure, these methods overlook multiple topic entities and the explainability provided by multi-entity paths.

Reasoning with LLM prompting. LLMs have shown significant potential in solving complex tasks through effective prompting strategies. Chain of Thought (CoT) prompting (Wei et al., 2022) enhances reasoning by following logical steps in few-shot learning. Extensions like Auto-CoT (Zhang et al., 2023), Complex-CoT (Fu et al., 2022), CoT-SC (Wang et al., 2022), Zero-Shot CoT (Kojima et al., 2022), ToT (Yao et al., 2024), and GoT (Besta et al., 2024) build upon this approach. However, these methods often rely solely on knowledge present in training data, limiting their ability to handle knowledge-intensive or deep reasoning tasks. To solve this, some studies integrate external KBs using plan-and-retrieval methods such as CoK (Li et al., 2023b), RoG (Luo et al., 2024), and ReAct (Yao et al., 2022), decomposing complex questions into subtasks to reduce hallucinations. However, they may focus on the initial steps of sub-problems and overlook further steps of final answers, leading to locally optimal solutions instead of globally optimal ones. To address these deep reasoning challenges, we introduce dynamic multi-hop question reasoning. By adaptively determining reasoning depths for different questions, we enable the model to handle varying complexities effectively.

KG information pruning. Graphs are widely used to model complex relationships among different entities (Tan et al., 2023; Sima et al., 2024; Li et al., 2024b, a). KGs contain vast amounts of facts (Guo et al., 2019; Wu et al., 2024; Wang et al., 2024a), making it impractical to include all relevant triples in the LLM's context due to high costs and potential noise (Wang et al., 2024b). Existing methods (Sun et al., 2024; Jiang et al., 2023; Ma et al., 2024) typically identify initial entities and iteratively retrieve reasoning paths until an answer is reached, often treating the LLM as a function executor and relying on in-context learning or fine-tuning, which is expensive. Some works attempt to reduce pruning costs. KAPING (Baek et al., 2023a) projects questions and triples into the same semantic space to retrieve relevant knowledge via similarity measures. KG-GPT (Kim et al., 2023) decomposes complex questions into sub-questions, then matches and selects the relations relevant to each sub-question to form evidence triples. However, these methods often overlook the overall graph structure and the interrelations among multiple topic entities, leading to suboptimal pruning and reasoning performance.
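The similarity-based retrieval attributed to KAPING above amounts to nearest-neighbour search in a shared embedding space. A minimal sketch, assuming question and triple embeddings have already been produced by some sentence encoder; the toy 2-D vectors in the usage are made up for illustration and are not KAPING's model or code.

```python
import math
from typing import Dict, List, Sequence, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def retrieve_top_k(
    q_vec: Sequence[float], triple_vecs: Dict[Triple, Sequence[float]], k: int
) -> List[Triple]:
    """Rank triples by similarity to the question embedding and keep the top k."""
    ranked = sorted(triple_vecs, key=lambda t: cosine(q_vec, triple_vecs[t]), reverse=True)
    return ranked[:k]
```

Note that this ranks each triple in isolation; as the paragraph points out, such scoring ignores how triples connect to each other and to the other topic entities.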

<details>

<summary>x2.png Details</summary>

### Visual Description

## Technical Diagram: Knowledge Graph-Based Question Answering System

### Overview

This image is a detailed technical diagram illustrating a multi-stage system for answering complex natural language questions by exploring and reasoning over a knowledge graph. The system uses a combination of graph traversal, Large Language Model (LLM) assistance, and path selection/pruning techniques. The diagram is divided into four main dashed-line boxes representing sequential phases: **Initialization**, **Exploration**, **Path Pruning**, and **Question Answering**. A large, central knowledge graph sub-diagram is the focal point of the Exploration phase.

### Components/Axes (System Phases & Modules)

**1. Initialization (Top-Right Box)**

* **Question:** "What country bordering **France** contains an airport that serves **Nijmegen**?" (The words "France" and "Nijmegen" are highlighted in yellow and red, respectively).

* **Components:**

* **Topic Entity Recognition:** A module icon (brain with nodes) processes the question.

* **Question Subgraph Detection:** A module icon (network graph) follows.

* **Split Questions, LLM indicator, Ordered Entities:** A green box outputs processed question components.

* **Flow:** Arrows indicate the question feeds into Topic Entity Recognition, then to Question Subgraph Detection, and finally to the Split Questions module.

**2. Exploration (Top-Center Box)**

* **Components (Three Exploration Strategies):**

* **Topic Entity Path Exploration:** A small diagram showing a path from a red node to a yellow node through white nodes.

* **LLM Supplement Path Exploration:** A small diagram showing multiple paths (red, green, yellow nodes) with an LLM icon (brain) connected.

* **Node Expand Exploration:** A small diagram showing a central node expanding to many surrounding nodes.

* **Flow:** These three exploration modules feed into the large central knowledge graph diagram.

**3. Central Knowledge Graph (Main Diagram within Exploration)**

This is a directed graph with labeled edges. Nodes are colored boxes, and edges are arrows with relation labels.

* **Key Nodes (Entities):**

* **Nijmegen** (Red box, left side)

* **Weeze Airport** (Blue box)

* **Germany** (Pink box)

* **France** (Yellow box, right side)

* **Kingdom of the Netherlands** (Grey box)

* **Europe, Western Europe** (Blue box)

* **Central European Time Zone** (Grey box)

* **Lyon-Saint Exupéry Airport** (Blue box)

* **Public airport** (Grey box)

* **Ryanair** (Grey box)

* **Wired** (Grey box)

* **2000, 2002, 1924 Olympics** (Grey box)

* **Unnamed Entity, ...** (Grey box, appears twice)

* **Veghel, Strijen, Rhenen, Oostzaan** (Grey box)

* **Key Edges (Relations):**

* `nearby_airports` (Nijmegen -> Weeze Airport)

* `airport_type` (Weeze Airport -> Public airport)

* `containedby` (Weeze Airport -> Germany)

* `adjoin_s` (Germany -> UnnamedEntity; UnnamedEntity -> France)

* `continent` (Germany -> Europe, Western Europe)

* `containedby` (Kingdom of the Netherlands -> Europe, Western Europe)

* `country` (Kingdom of the Netherlands -> Veghel, Strijen, Rhenen, Oostzaan)

* `time_zones` (Veghel... -> Central European Time Zone)

* `in_this_time_zone` (France -> Central European Time Zone)

* `airports_of_this_type` (Public airport -> Lyon-Saint Exupéry Airport)

* `containedby` (Lyon-Saint Exupéry Airport -> France)

* `user_topics` (Wired -> Ryanair; Wired -> France)

* `olympic_athletes` (Nijmegen -> Unnamed Entity, ...)

* `athlete_affiliation` (Unnamed Entity... -> 2000, 2002, 1924 Olympics)

* `participating_countries` (2000... Olympics -> France)

* `second_level_division` (Nijmegen -> Netherlands)

* `location.administrative_division, containedby` (Nijmegen -> Kingdom of the Netherlands)

**4. Path Pruning (Bottom-Center Box)**

* **Components (Three Selection/Pruning Stages):**

* **Fuzzy Selection:** Takes `Indicator` (H_I) and `Paths_Set` (H_Path) as input, outputs a simplified graph.

* **Precise Path Selection:** Shows a more complex graph with colored nodes (red, blue, yellow) and an LLM icon.

* **Branch Reduced Selection:** Shows a pruned graph with dashed lines indicating removed branches, colored nodes (green, purple, red, yellow), and an LLM icon.

* **Flow:** The output of the central knowledge graph feeds into Fuzzy Selection, then to Precise Path Selection, then to Branch Reduced Selection.

**5. Question Answering (Bottom-Right Box)**

* **Components:**

* **Path Summarizing:** A beige box with an LLM icon.

* **Decision Diamond:** A diamond shape with "Yes!" and "No" outputs.

* **Answer:** A green box.

* **Flow:** The pruned paths from the previous stage go to Path Summarizing. The output goes to a decision point (likely checking if a valid answer path is found). If "Yes!", it proceeds to the final **Answer**. If "No", an arrow loops back to the **Split Questions, LLM indicator, Ordered Entities** module in the Initialization phase, suggesting an iterative refinement process.

### Detailed Analysis

The diagram meticulously maps the process of answering the sample question: "What country bordering France contains an airport that serves Nijmegen?"

1. **Initialization:** The system identifies key entities ("France", "Nijmegen") and decomposes the question.

2. **Exploration:** It explores the knowledge graph starting from these entities. The central graph shows potential paths:

* From **Nijmegen** to **Weeze Airport** (`nearby_airports`).

* From **Weeze Airport** to **Germany** (`containedby`).

* From **Germany** to **France** via an `adjoin_s` (adjoins) relation through an `UnnamedEntity`.

* This path (Nijmegen -> Weeze Airport -> Germany -> France) appears to satisfy the question's conditions: Germany borders France and contains an airport (Weeze) that serves Nijmegen.

3. **Path Pruning:** The system evaluates and selects the most relevant paths from the explored graph using fuzzy, precise, and branch-reduction techniques, likely leveraging LLMs for semantic understanding.

4. **Question Answering:** The selected path is summarized to generate the final answer ("Germany").

### Key Observations

* **Color Coding:** Entities are color-coded: Red (Nijmegen - source), Yellow (France - target), Blue (Airports/Regions), Pink (Germany - candidate answer), Grey (other entities).

* **Iterative Loop:** The "No" path from the answer decision back to initialization indicates the system can retry with refined parameters if an answer isn't found.

* **LLM Integration:** LLM icons are present in Exploration (supplement paths), Path Pruning (Precise and Branch Reduced Selection), and Path Summarizing, indicating they are used for reasoning, path evaluation, and answer generation.

* **Graph Complexity:** The central knowledge graph contains multiple, potentially distracting paths (e.g., connections to Olympics, Ryanair, time zones) that the pruning stages must filter out.

### Interpretation

This diagram represents a sophisticated **neuro-symbolic AI system** for question answering. It combines the structured reasoning of knowledge graphs (symbolic) with the flexible language understanding of Large Language Models (neuro).

* **What it demonstrates:** The system can break down a complex, multi-hop natural language question into a graph traversal problem. It explores a wide neighborhood of relevant entities, then uses learned heuristics (via LLMs and selection algorithms) to prune irrelevant information and converge on the most plausible answer path.

* **How elements relate:** The phases form a pipeline: **Parse -> Explore -> Prune -> Answer**. The central knowledge graph is the shared data structure manipulated by each phase. The LLMs act as reasoning engines within the symbolic framework, guiding exploration and selection.

* **Notable aspects:** The inclusion of "Fuzzy Selection" suggests handling of uncertainty or partial matches in the graph. The iterative loop highlights the system's robustness, allowing for re-attempts. The presence of extraneous nodes (like "Wired" or "Olympics") in the exploration graph shows the system's challenge: distinguishing relevant from irrelevant connections in a densely connected knowledge base. The final answer ("Germany") is derived not from a direct "serves" relation but from a chain of inferences (`nearby_airports` -> `containedby` -> `adjoin_s`), showcasing the system's ability to perform compositional reasoning.

</details>

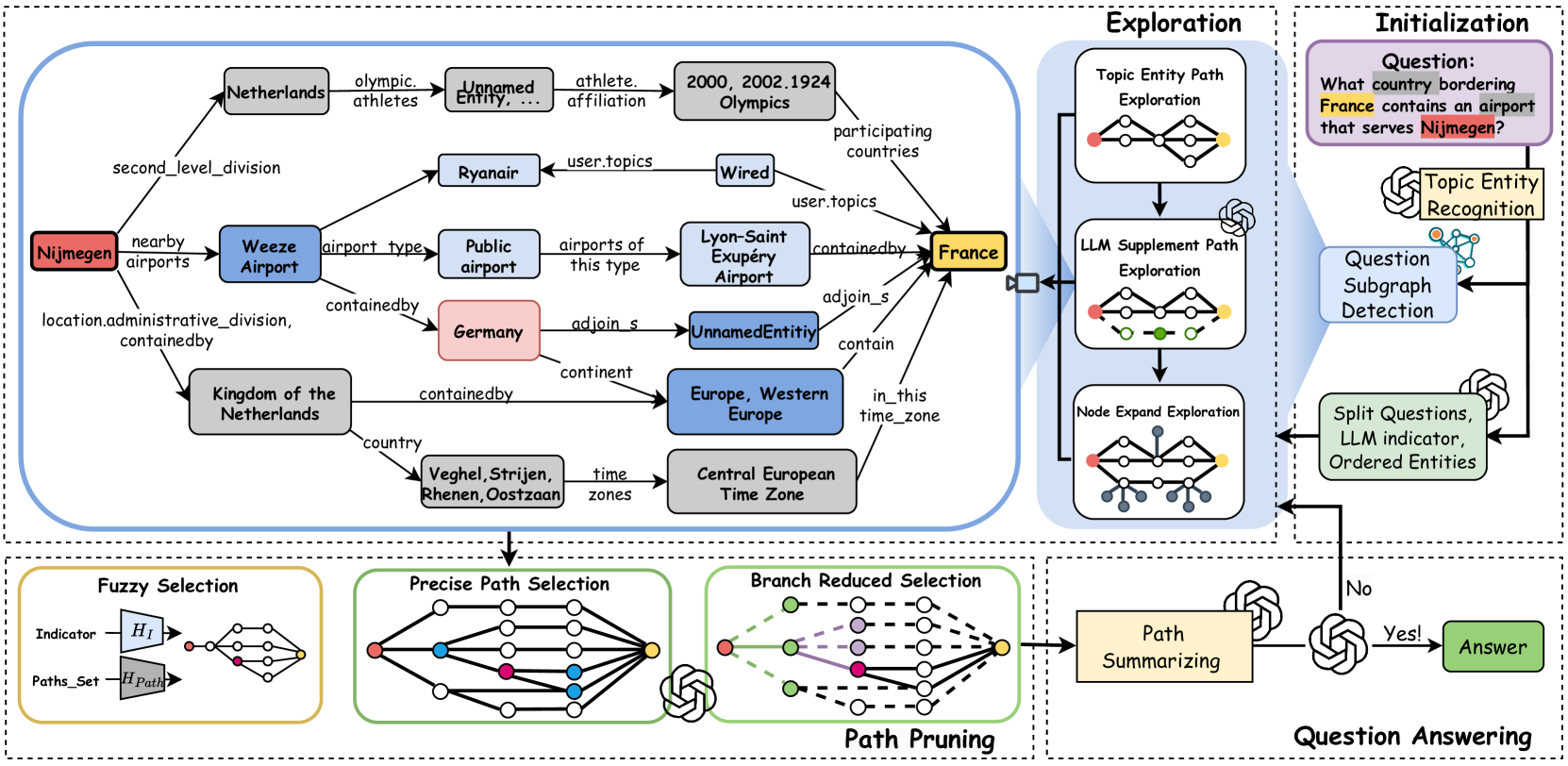

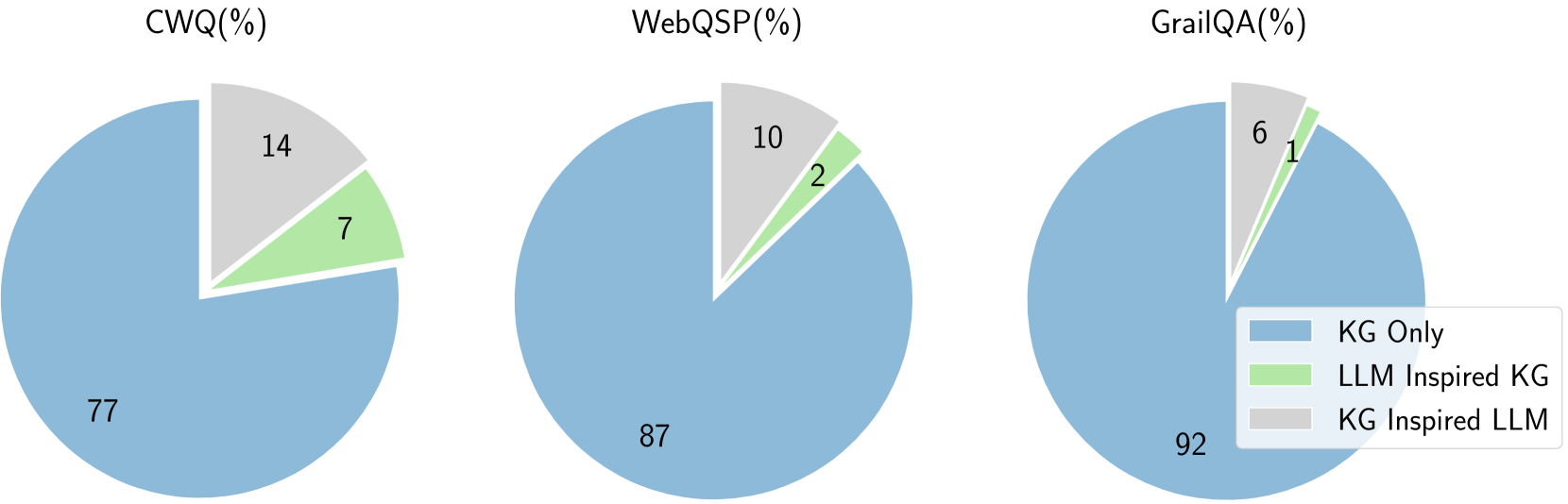

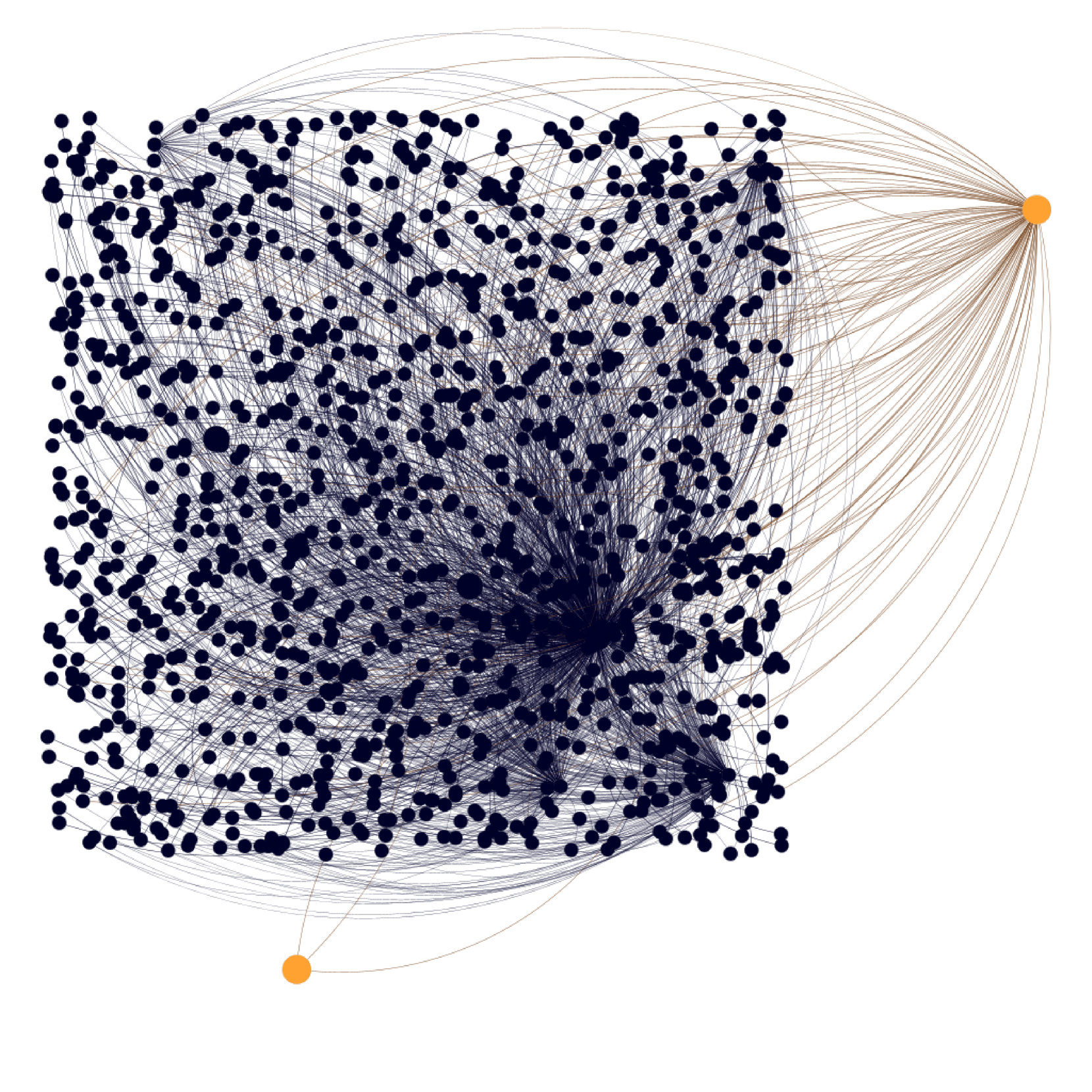

Figure 2. Overview of the PoG architecture. Exploration: After initialization (detailed in Figure 3), the model retrieves entity paths from $\mathcal{G}_{q}$ through three exploration phases. Path Pruning: PoG applies a three-step beam search to prune paths after each exploration phase. Question Answering: The pruned paths are then evaluated for question answering. If these paths do not fully answer the question, the model explores deeper paths until $D_{max}$ is reached or moves on to the next exploration phase.

## 3. Preliminary

Consider a Knowledge Graph (KG) $\mathcal{G}(\mathcal{E},\mathcal{R},\mathcal{T})$, where $\mathcal{E}$, $\mathcal{R}$ and $\mathcal{T}$ represent the sets of entities, relations, and knowledge triples, respectively. Each knowledge triple $T\in\mathcal{T}$ encapsulates factual knowledge in $\mathcal{G}$ and is represented as $T=(e_{h},r,e_{t})$, where $e_{h},e_{t}\in\mathcal{E}$ and $r\in\mathcal{R}$. Given an entity set $\mathcal{E}_{S}\subseteq\mathcal{E}$, the induced subgraph of $\mathcal{E}_{S}$ is denoted as $\mathcal{S}=(\mathcal{E}_{S},\mathcal{R}_{S},\mathcal{T}_{S})$, where $\mathcal{T}_{S}=\{(e,r,e^{\prime})\in\mathcal{T}\mid e,e^{\prime}\in\mathcal{E}_{S}\}$ and $\mathcal{R}_{S}=\{r\in\mathcal{R}\mid(e,r,e^{\prime})\in\mathcal{T}_{S}\}$. Furthermore, we denote by $\mathcal{D}(e)$ and $\mathcal{D}(r)$ the sets of short textual descriptions for each entity $e\in\mathcal{E}$ and each relation $r\in\mathcal{R}$, respectively. For example, the textual description of the entity “m.0f8l9c” is $\mathcal{D}(\text{“m.0f8l9c”})=$ “France”. For simplicity, in this paper all entities and relations are referenced through their $\mathcal{D}$ representations and expressed in natural language.
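The induced-subgraph definition and the description mapping $\mathcal{D}$ can be checked directly on a small triple set. A minimal sketch, assuming triples are stored as `(head, relation, tail)` string tuples; the function names and toy triples are illustrative only, and the identifier `m.example` is a made-up placeholder.

```python
from typing import Dict, Set, Tuple

Triple = Tuple[str, str, str]  # (e_h, r, e_t)


def induced_subgraph(
    triples: Set[Triple], entities: Set[str]
) -> Tuple[Set[str], Set[str], Set[Triple]]:
    """Return (E_S, R_S, T_S) for the subgraph induced by `entities`."""
    # T_S: triples whose head and tail both lie in E_S.
    t_s = {(h, r, t) for (h, r, t) in triples if h in entities and t in entities}
    # R_S: relations that occur in T_S.
    r_s = {r for (_, r, _) in t_s}
    return set(entities), r_s, t_s


def describe(triple: Triple, d: Dict[str, str]) -> Triple:
    """Replace IDs by their textual descriptions D(.), keeping unknown IDs unchanged."""
    h, r, t = (d.get(x, x) for x in triple)
    return (h, r, t)
```

Usage: inducing on {Nijmegen, Weeze Airport, Germany} keeps `(Nijmegen, nearby_airports, Weeze Airport)` and `(Weeze Airport, containedby, Germany)` but drops `(Germany, continent, Europe)`, because Europe lies outside $\mathcal{E}_S$.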

**Definition 1 (Reasoning Path)**

*Given a KG $\mathcal{G}$, a reasoning path within $\mathcal{G}$ is defined as a connected sequence of knowledge triples, represented as $path_{\mathcal{G}}(e_{1},e_{l+1})=\{T_{1},T_{2},\ldots,T_{l}\}=\{(e_{1},r_{1},e_{2}),(e_{2},r_{2},e_{3}),\ldots,(e_{l},r_{l},e_{l+1})\}$, where $T_{i}\in\mathcal{T}$ denotes the $i$-th triple in the path and $l$ denotes the length of the path, i.e., $length(path_{\mathcal{G}}(e_{1},e_{l+1}))=l$.*

**Example 1**

*Consider a reasoning path between “University” and “Student” in a KG: $path_{\mathcal{G}}(\text{University},\text{Student})=\{(\text{University},\text{employs},\text{Professor}),(\text{Professor},\text{teaches},\text{Course}),(\text{Course},\text{enrolled\_in},\text{Student})\}$, which can be visualized as:*

$$
\text{University}\xrightarrow{\text{employs}}\text{Professor}\xrightarrow{\text{teaches}}\text{Course}\xrightarrow{\text{enrolled\_in}}\text{Student}.
$$

*It indicates that a “University” employs a “Professor,” who teaches a “Course,” in which a “Student” is enrolled. The length of the path is 3.*
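To make the reasoning-path definition concrete, here is a minimal Python sketch (our illustration, not the authors' implementation) that stores a path as a list of `(head, relation, tail)` triples. The helper names `is_connected_path`, `path_length`, and `render` are hypothetical; the connectivity check encodes the requirement that each triple's tail is the next triple's head, and the length is simply the number of triples.

```python
# A triple is (head, relation, tail); a reasoning path is a list of triples.
Triple = tuple[str, str, str]


def is_connected_path(path: list[Triple]) -> bool:
    """True if every triple's tail entity is the head entity of the next triple."""
    return all(path[i][2] == path[i + 1][0] for i in range(len(path) - 1))


def path_length(path: list[Triple]) -> int:
    """length(path) = l, the number of triples, for a connected path."""
    if not is_connected_path(path):
        raise ValueError("triples do not form a connected reasoning path")
    return len(path)


def render(path: list[Triple]) -> str:
    """Render e1 -r1-> e2 -r2-> ... as plain text (path must be non-empty)."""
    parts = [path[0][0]]
    for _, relation, tail in path:
        parts.append(f"-{relation}-> {tail}")
    return " ".join(parts)


example = [
    ("University", "employs", "Professor"),
    ("Professor", "teaches", "Course"),
    ("Course", "enrolled_in", "Student"),
]
print(render(example))       # University -employs-> Professor -teaches-> Course -enrolled_in-> Student
print(path_length(example))  # 3
```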

For any entities $s$ and $t$ in $\mathcal{G}$, if there exists a reasoning path between $s$ and $t$, we say $s$ and $t$ can reach each other, denoted as $s\leftrightarrow t$. The distance between $s$ and $t$ in $\mathcal{G}$, denoted as $dist_{\mathcal{G}}(s,t)$, is the length of the shortest reasoning path between $s$ and $t$. For non-reachable entities, the distance is infinite. Given a positive integer $h$, the $h$-hop neighbors of an entity $s$ in $\mathcal{G}$ are defined as $N_{\mathcal{G}}(s,h)=\{t\in\mathcal{E}\mid dist_{\mathcal{G}}(s,t)\leq h\}$.
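The distance and $h$-hop neighborhood definitions map directly onto breadth-first search. The sketch below is an illustration under one stated assumption: because reachability $s\leftrightarrow t$ is symmetric, triples are traversed in both directions. The function names are hypothetical, and entities missing from the returned distance map are the unreachable ones (infinite distance).

```python
from collections import deque


def build_adjacency(triples):
    """Undirected adjacency: s <-> t is symmetric, so both directions are stored."""
    adj = {}
    for head, _, tail in triples:
        adj.setdefault(head, set()).add(tail)
        adj.setdefault(tail, set()).add(head)
    return adj


def distances_from(adj, s, max_hops=None):
    """BFS distances dist_G(s, t); entities absent from the result are unreachable."""
    dist = {s: 0}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        if max_hops is not None and dist[u] == max_hops:
            continue  # do not expand beyond h hops
        for v in adj.get(u, ()):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def h_hop_neighbors(adj, s, h):
    """N_G(s, h) = {t | dist_G(s, t) <= h}; includes s itself (distance 0)."""
    return set(distances_from(adj, s, max_hops=h))


kg = [
    ("University", "employs", "Professor"),
    ("Professor", "teaches", "Course"),
    ("Course", "enrolled_in", "Student"),
]
adj = build_adjacency(kg)
print(sorted(h_hop_neighbors(adj, "University", 2)))  # ['Course', 'Professor', 'University']
print(distances_from(adj, "University")["Student"])   # 3
```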

**Definition 2 (Entity Path)**

*Given a KG $\mathcal{G}$ and a list of entities $list_{e}=[e_{1},e_{2},e_{3},\ldots,e_{l}]$, the entity path of $list_{e}$ is defined as a connected sequence of reasoning paths, denoted as $path_{\mathcal{G}}(list_{e})=\{path_{\mathcal{G}}(e_{1},e_{2}),path_{\mathcal{G}}(e_{2},e_{3}),\ldots,path_{\mathcal{G}}(e_{l-1},e_{l})\}=\{(e_{s},r,e_{t})\mid(e_{s},r,e_{t})\in path_{\mathcal{G}}(e_{i},e_{i+1})\land 1\leq i<l\}$.*
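An entity path can be assembled by chaining one reasoning path per consecutive pair in $list_{e}$ and taking the union of their triples. In this hypothetical sketch, `shortest_reasoning_path` is a stand-in finder that follows triples only in their stored head-to-tail direction (an assumption; PoG's own path search is the bidirectional BFS described in Section 4.2), and duplicate triples are kept once.

```python
from collections import deque


def shortest_reasoning_path(triples, src, dst):
    """Directed BFS (head -> tail); returns a list of triples, or None if dst is unreachable."""
    outgoing = {}
    for triple in triples:
        outgoing.setdefault(triple[0], []).append(triple)
    parent = {src: None}  # entity -> triple used to reach it
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            break
        for triple in outgoing.get(node, []):
            if triple[2] not in parent:
                parent[triple[2]] = triple
                queue.append(triple[2])
    if dst not in parent:
        return None
    path, node = [], dst
    while parent[node] is not None:  # walk back from dst to src
        triple = parent[node]
        path.append(triple)
        node = triple[0]
    return path[::-1]


def entity_path(list_e, find_path):
    """Union of the triples of path(e_i, e_{i+1}) for 1 <= i < l, in first-seen order."""
    triples = []
    for a, b in zip(list_e, list_e[1:]):
        segment = find_path(a, b)
        if segment is None:
            return None  # some consecutive pair cannot reach each other
        for triple in segment:
            if triple not in triples:
                triples.append(triple)
    return triples


kg = [
    ("University", "employs", "Professor"),
    ("Professor", "teaches", "Course"),
    ("Course", "enrolled_in", "Student"),
]


def finder(a, b):
    return shortest_reasoning_path(kg, a, b)


print(entity_path(["University", "Course", "Student"], finder) == kg)  # True
```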

Knowledge Graph Question Answering (KGQA) is a fundamental reasoning task based on KGs. Given a natural language question $q$ and a KG $\mathcal{G}$ , the objective is to devise a function $f$ that predicts answers $a\in Answer(q)$ utilizing knowledge encapsulated in $\mathcal{G}$ , i.e., $a=f(q,\mathcal{G})$ . Consistent with previous research (Sun et al., 2019; Luo et al., 2024; Sun et al., 2024; Ma et al., 2024), we assume the topic entities $Topic(q)$ mentioned in $q$ and answer entities $Answer(q)$ in ground truth are linked to the corresponding entities in $\mathcal{G}$ , i.e., $Topic(q)\subseteq\mathcal{E}\text{ and }Answer(q)\subseteq\mathcal{E}$ .

## 4. Method

PoG implements KG-based LLM reasoning by first exploring all possible faithful reasoning paths and then collaborating with the LLM to perform a three-step beam search selection on the retrieved paths. Compared to previous approaches (Sun et al., 2024; Ma et al., 2024), our model focuses on providing more accurate and question-relevant retrieval-augmented graph information. The framework of PoG is outlined in Figure 2, comprising four main components.

- Initialization. The process begins by identifying the set of topic entities from the input question and then queries the source KG $\mathcal{G}$ by exploring up to $D_{\max}$ hops from each topic entity to construct the evidence subgraph $\mathcal{G}_{q}$, where $D_{\max}$ is the user-defined maximum exploration depth. Subsequently, we prompt the LLM to analyze the question and generate an indicator that serves as a strategy for formulating the answer and predicts the exploration depth $D_{\text{predict}}$.

- Exploration. After initialization, the model retrieves topic entity paths from $\mathcal{G}_{q}$ through three exploration phases: topic entity path exploration, LLM supplement path exploration, and node expand exploration. All reasoning paths are constrained within the depth range $D\in[D_{\text{predict}},D_{\max}]$ .

- Path Pruning. Following each exploration phase, PoG employs a pre-trained LM, LLM prompting, and graph structural analysis to perform a three-step beam search. The pruned paths are then evaluated in the question-answering step.

- Question Answering. Finally, the LLM is prompted to assess whether the pruned reasoning paths sufficiently answer the question. If not, exploration continues with incrementally deeper paths until $D_{\max}$ is exceeded and then proceeds to the next exploration phase.
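The interplay of the four components can be summarized as a control loop. The Python sketch below is hypothetical: `explore`, `prune`, `try_answer`, and `fallback` are placeholder callables standing in for the exploration phases, the three-step beam search, the LLM sufficiency check, and the final response that mixes provided and inherent knowledge. The per-phase depth schedules follow Section 4.2; bounding node expansion by $D_{\max}$ rounds is our assumption.

```python
from typing import Callable, Optional


def pog_control_loop(
    d_predict: int,
    d_max: int,
    explore: Callable[[str, int], list],
    prune: Callable[[list], list],
    try_answer: Callable[[list], Optional[str]],
    fallback: Callable[[], str],
) -> tuple[str, str]:
    """Return (answer, phase that produced it); all callables are hypothetical placeholders."""
    schedules = {
        # topic entity paths: start at the predicted depth and deepen up to D_max
        "topic_entity": range(min(d_predict, d_max), d_max + 1),
        # LLM supplement paths: one pass at the fixed depth D_max
        "llm_supplement": [d_max],
        # node expansion: one extra hop per round (assumed bounded by D_max rounds)
        "node_expand": range(1, d_max + 1),
    }
    for phase, depths in schedules.items():
        for depth in depths:
            paths = prune(explore(phase, depth))  # three-step beam search after exploring
            answer = try_answer(paths)            # LLM sufficiency check + answer generation
            if answer is not None:
                return answer, phase
    return fallback(), "fallback"  # provided paths plus the LLM's inherent knowledge


calls = []


def explore(phase, depth):
    calls.append((phase, depth))
    return [(phase, depth)]


result = pog_control_loop(
    d_predict=2,
    d_max=3,
    explore=explore,
    prune=lambda paths: paths,
    try_answer=lambda paths: "Germany" if paths == [("llm_supplement", 3)] else None,
    fallback=lambda: "unknown",
)
print(result)  # ('Germany', 'llm_supplement')
print(calls)   # [('topic_entity', 2), ('topic_entity', 3), ('llm_supplement', 3)]
```

Here the stubbed answer only appears in the supplement phase, so the loop tries depths 2 and 3 of topic entity exploration before moving on, mirroring the "deepen, then switch phase" behavior described above.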

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Knowledge Graph Question Answering System Architecture

### Overview

This image is a technical system architecture diagram illustrating a multi-stage process for answering complex natural language questions using a Knowledge Graph (KG). The system combines Large Language Model (LLM) capabilities for question decomposition with graph-based operations for information retrieval and reasoning. The flow proceeds from an input question and knowledge graph to two primary outputs: a decomposed question set and a reduced, relevant subgraph.

### Components/Axes

The diagram is organized into several interconnected blocks, flowing generally from top-left to bottom-right, with feedback loops.

**1. Input Section (Top-Left):**

* **Input Label:** `Input: G, q, D_max`

* `G`: Represents the Knowledge Graph.

* `q`: Represents the input question.

* `D_max`: The user-defined maximum exploration depth for graph traversal.

* **Question Box:** Contains the example question: `Question: What country bordering France contains an airport that serves Nijmegen?`

* **Entity Extraction:** An arrow points from the question to a list of extracted entities and their types:

* `Country` (Grey background)

* `France` (Yellow background)

* `Airport` (Grey background)

* `Nijmegen` (Red background)

* **Topic Entity Identification:** Arrows labeled `H_G` and `H_T` point to a box labeled `Topic Entity:`, which contains:

* `France` (Yellow background)

* `Nijmegen` (Red background)

**2. LLM Indictor / Question Analysis (Center-Left, Green Dashed Box):**

* **Label:** `LLM Indictor:`

* **Question Analysis Diagram:** Shows a semantic parse of the question:

* `Nijmegen ←serves airport ←own answer (country) →borders France`

* This indicates the relationships: an airport *serves* Nijmegen, the answer (a country) *owns* that airport, and that country *borders* France.

* **Split Questions:** The LLM decomposes the original question into two simpler sub-questions:

* `Split_question1: What country contains an airport that serves Nijmegen?`

* `Split_question2: What country borders France?`

* **Output 1 Arrow:** Points left, labeled `Output1: I_LLM, q_split`.

**3. Knowledge Graph (G) Processing (Top-Right, Blue Box):**

* **Label:** `Knowledge Graph (G)` with a small network icon.

* **Visual Representation:** Two overlapping circular diagrams representing graph neighborhoods.

* **Left Circle (Yellow Spotlight):** Centered on `France` (yellow node). Concentric dashed circles represent increasing distance (`D_max`). Arrows show paths radiating outward from France.

* **Right Circle (Red Spotlight):** Centered on `Nijmegen` (red node). Similar concentric circles and radiating paths.

* **Operation:** A `+` symbol between the circles indicates the combination or union of these two neighborhoods.

* **Flow:** An arrow points downward from this combined graph to the "Graph Detection" stage.

**4. Graph Operations Pipeline (Bottom, Three Blue Dashed Boxes):**

This pipeline processes the combined graph from the previous stage. The flow is right-to-left (Graph Detection → Node and Relation Clustering → Graph Reduction).

* **Graph Detection (Rightmost Box):**

* **Label:** `Graph Detection`

* **Visual:** A network graph with white nodes and multi-colored edges (blue, red, green, purple, orange, black). Key nodes are colored: one yellow (France), one red (Nijmegen), and one orange (likely representing the answer country or an airport).

* **Node and Relation Clustering (Center Box):**

* **Label:** `Node and Relation Clustering`

* **Visual:** The same graph structure, but edges are now uniformly black. The colored nodes (yellow, red, orange) remain, suggesting the process identifies and clusters relevant nodes and their connecting relations.

* **Graph Reduction (Leftmost Box):**

* **Label:** `Graph Reduction`

* **Visual:** A simplified graph. Many nodes and edges from the previous stage have been removed, leaving a sparse subgraph that connects the key entities (yellow, red, orange nodes) via specific paths.

* **Output 2 Arrow:** Points left from the Graph Reduction box, labeled `Output2: G_q`.

### Detailed Analysis

* **Textual Content Transcription:**

* All text is in English.

* Input Question: "What country bordering France contains an airport that serves Nijmegen?"

* LLM Split Questions: "What country contains an airport that serves Nijmegen?" and "What country borders France?"

* System Labels: Input, Output1, Output2, LLM Indictor, Question Analysis, Topic Entity, Knowledge Graph (G), Graph Detection, Node and Relation Clustering, Graph Reduction.

* Mathematical/Notational Symbols: `G`, `q`, `D_max`, `H_G`, `H_T`, `I_LLM`, `q_split`, `G_q`.

* **Spatial Grounding:**

* The **Legend/Entity Key** is implicitly defined by the colored boxes in the "Topic Entity" section (top-center): Yellow = France, Red = Nijmegen. This color coding is consistently applied to the nodes in the Knowledge Graph visualization and the subsequent graph operation diagrams.

* The **Knowledge Graph visualization** is in the top-right quadrant.

* The **LLM processing** is in the center-left.

* The **graph operation pipeline** runs along the bottom third of the image.

* **Component Flow & Relationships:**

1. The system starts with a complex question (`q`) and a large knowledge graph (`G`).

2. An LLM analyzes the question, extracts entities (France, Nijmegen), and decomposes it into two simpler, answerable sub-questions (`q_split`). This is `Output1`.

3. Simultaneously, the system identifies topic entities and uses them to extract relevant neighborhoods from the main KG (`G`), centered on France and Nijmegen, within a distance `D_max`.

4. These neighborhoods are combined and passed through a three-step graph processing pipeline:

* **Detection:** Identifies relevant nodes and relations in the combined neighborhood.

* **Clustering:** Groups the detected nodes and relations.

* **Reduction:** Prunes the graph to retain only the most salient subgraph (`G_q`) that likely contains the answer path. This is `Output2`.

5. The final answer would presumably be derived by reasoning over the reduced graph `G_q` and/or answering the split questions `q_split`.

### Key Observations

* **Hybrid Architecture:** The system explicitly combines neural (LLM) and symbolic (Knowledge Graph) AI components. The LLM handles natural language understanding and decomposition, while the KG provides structured reasoning.

* **Two-Pronged Output:** The system produces both a reformulated question set (`Output1`) and a focused data subgraph (`Output2`), which could be used independently or together for final answer generation.

* **Visual Consistency:** The color-coding of key entities (France=yellow, Nijmegen=red) is maintained across different diagram sections, aiding in tracking these elements through the process.

* **Graph Pipeline Logic:** The sequence from Detection → Clustering → Reduction suggests a funnel-like process of first gathering all potentially relevant information, then organizing it, and finally distilling it to its essence.

### Interpretation

This diagram outlines a sophisticated approach to **Complex Question Answering (CQA)** over knowledge graphs. The core innovation appears to be the tight coupling of an LLM's reasoning and decomposition skills with targeted graph operations.

* **Problem Solved:** It addresses the challenge of answering multi-hop questions (like the example, which requires finding a country that satisfies two conditions: bordering France and owning an airport serving Nijmegen) by breaking them down and constraining the search space within a vast knowledge graph.

* **Mechanism:** The LLM acts as a "question analyst," translating a complex query into a logical form and simpler sub-questions. The graph operations then execute a focused search, starting from the mentioned entities (France, Nijmegen) and exploring their local neighborhoods to find connecting paths that satisfy the sub-question conditions.

* **Significance:** The `D_max` parameter is crucial, as it limits the computational cost of graph traversal. The "Graph Reduction" step is the key efficiency gain, transforming a large, noisy subgraph into a minimal, answer-bearing one (`G_q`).

* **Potential Applications:** This architecture is suited for search engines, question-answering systems, and analytical tools that need to perform reasoning over structured data (e.g., corporate knowledge bases, biomedical databases, encyclopedic graphs like Wikidata).

* **Underlying Assumption:** The system assumes that the answer to the complex question can be found by exploring a bounded neighborhood around the explicitly mentioned entities and that the LLM can correctly decompose the question into logically equivalent sub-questions. The success hinges on the quality of the LLM's decomposition and the completeness of the underlying knowledge graph.

</details>

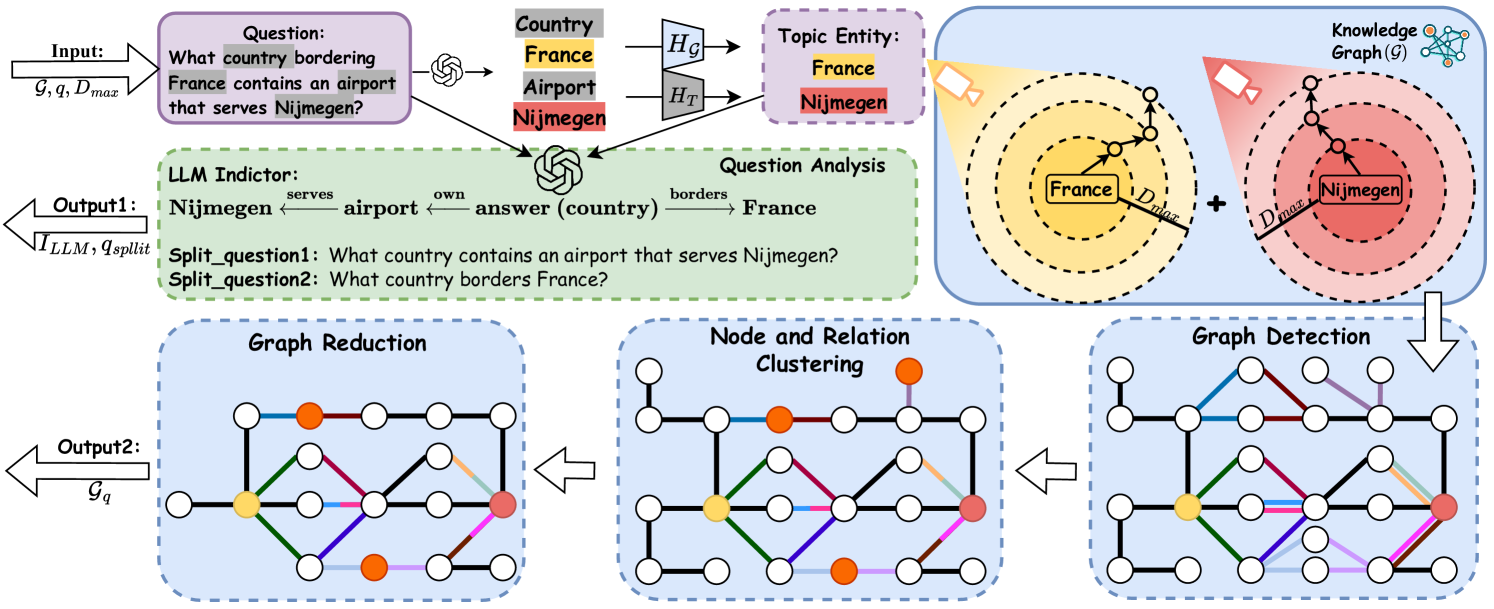

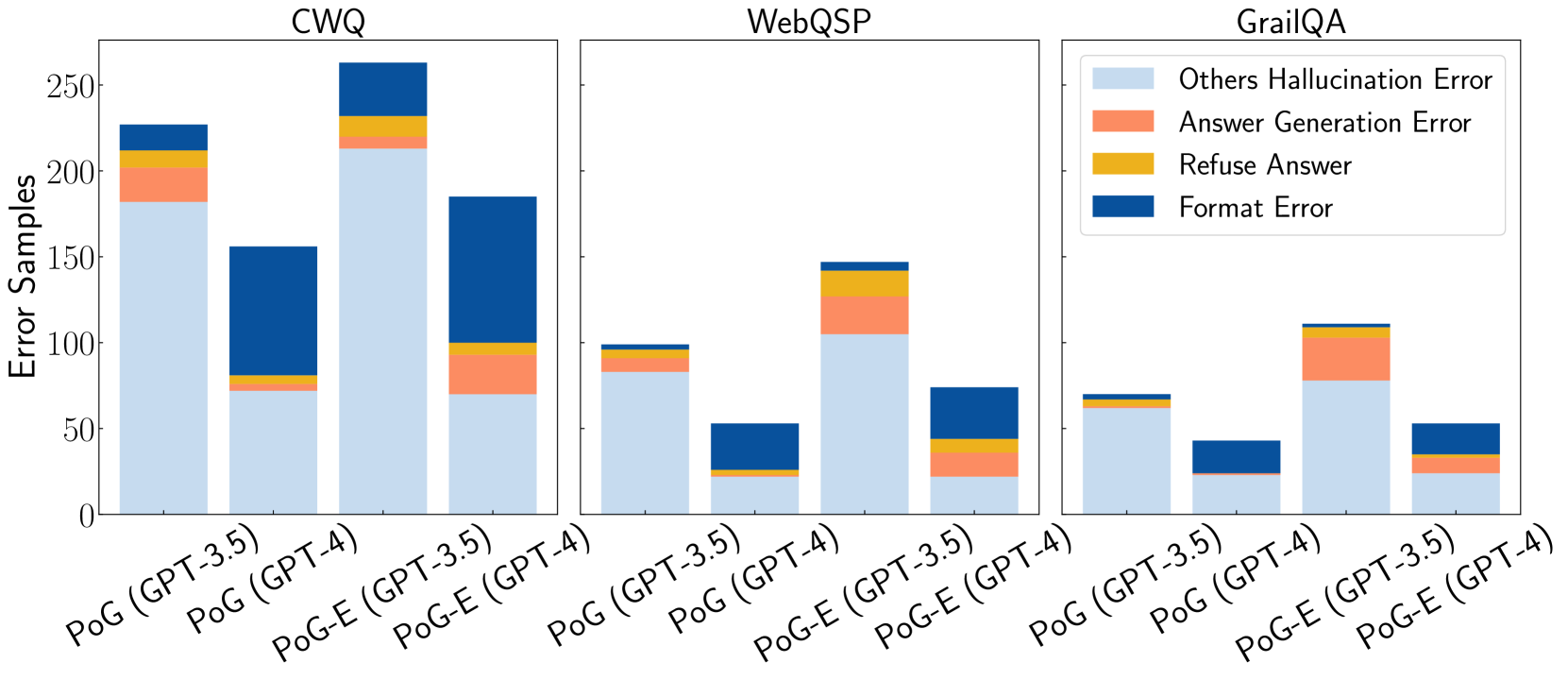

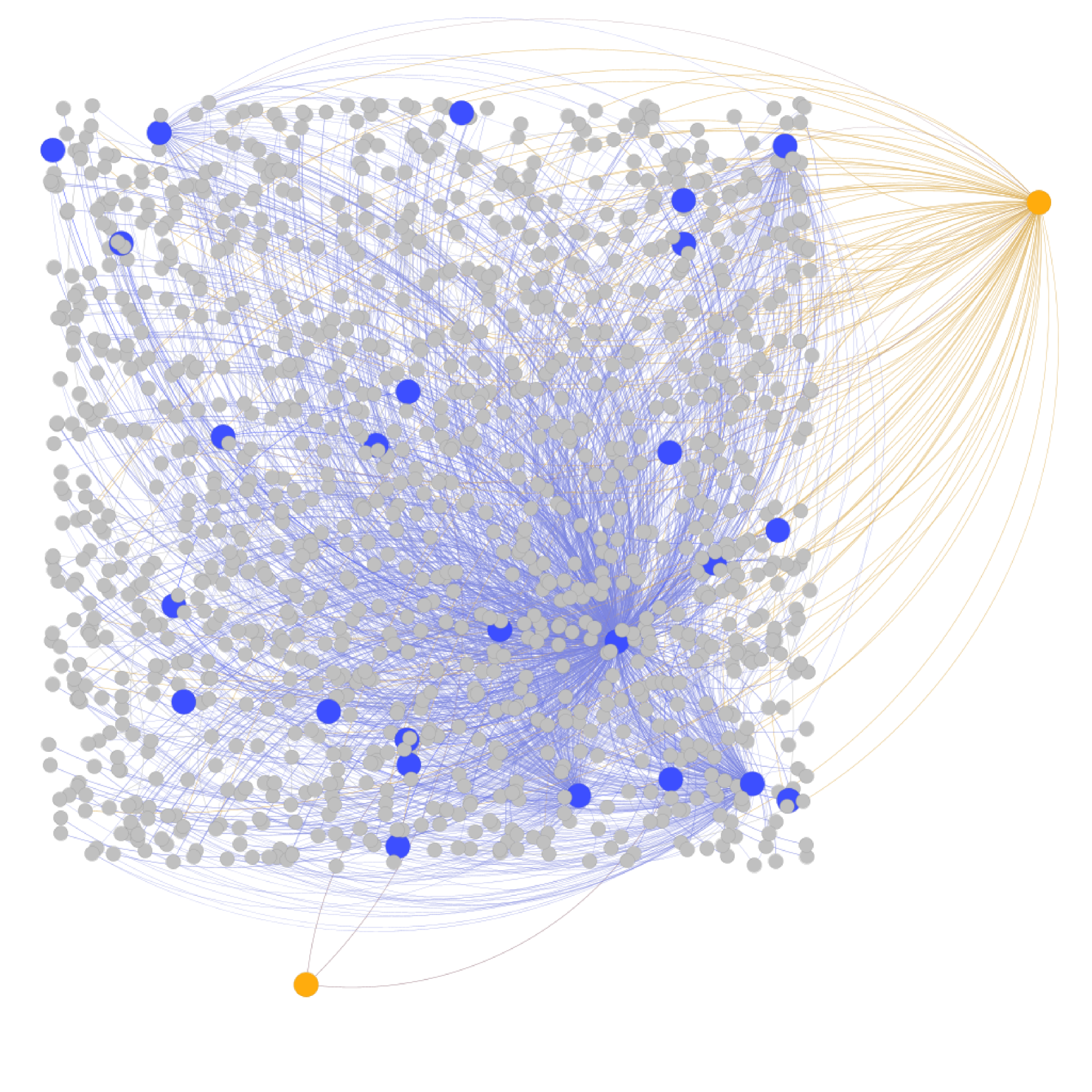

Figure 3. Overview of the initialization phase. Output 1: from the input question, the model identifies topic entities and prompts the LLM to decompose questions into split questions $q_{split}$ and generate an indicator $I_{LLM}$ . The indicator outlines a strategy for formulating the answer and predicts the exploration depth $D_{predict}$ . Output 2: the model queries the source KG up to $D_{max}$ -hop from identified topic entities, constructing and pruning the evidence subgraph $\mathcal{G}_{q}$ .

### 4.1. Initialization

The initialization has two main stages, i.e., question subgraph detection and question analysis. The framework is shown in Figure 3.

Question subgraph detection. Given a question $q$ , PoG initially identifies the question subgraph, which includes all the topic entities of $q$ and their $D_{\max}$ -hop neighbors.

Topic entity recognition. To identify the relevant subgraph, PoG first employs LLMs to extract the potential topic entities from the question. Following the identification, the process applies BERT-based similarity matching to align these potential entities with entities from KG. Specifically, as shown in Figure 3, we encode both the keywords and all entities from KG into dense vector embeddings as $H_{T}$ and $H_{\mathcal{G}}$ . We then compute a cosine similarity matrix between these embeddings to determine the matches. For each keyword, the entities with the highest similarity scores are selected to form the set $Topic(q)$ . This set serves as the foundation for constructing the question subgraph in subsequent steps.
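The keyword-to-entity matching step can be sketched as follows. This is a minimal illustration assuming the embeddings have already been computed (toy 3-dimensional vectors stand in for BERT outputs here) and that only the single best-scoring entity is kept per keyword; `match_topic_entities` is a hypothetical name.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def match_topic_entities(h_t, h_g):
    """h_t: keyword -> embedding (H_T); h_g: KG entity -> embedding (H_G)."""
    entities = list(h_g)
    topic = set()
    for keyword, vec in h_t.items():
        row = [cosine(vec, h_g[e]) for e in entities]  # one row of the keyword x entity matrix
        best = max(range(len(entities)), key=row.__getitem__)
        topic.add(entities[best])
    return topic


h_t = {"france": [1.0, 0.0, 0.0], "nijmegen": [0.0, 1.0, 0.1]}  # toy keyword embeddings
h_g = {"France": [0.9, 0.1, 0.0], "Nijmegen": [0.0, 1.0, 0.0], "Paris": [0.5, 0.5, 0.5]}
print(sorted(match_topic_entities(h_t, h_g)))  # ['France', 'Nijmegen']
```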

Subgraph detection. Upon identifying the topic entities, PoG captures the induced subgraph $\mathcal{G}_{q}\subseteq\mathcal{G}$ by expanding around each entity $e$ in $Topic(q)$. For each entity, we retrieve the knowledge triples associated with its $D_{\max}$-hop neighbors, thereby incorporating query-relevant and faithful KG information into $\mathcal{G}_{q}$. Through this process, we update $\mathcal{E}_{q}$ with newly added intermediate nodes that serve as bridging pathways between the topic entities. The resulting subgraph $\mathcal{G}_{q}$ is defined as $(\mathcal{E}_{q},\mathcal{R}_{q},\mathcal{T}_{q})$, where $\mathcal{E}_{q}=Topic(q)\cup\bigcup_{e\in Topic(q)}N_{\mathcal{G}}(e,D_{\max})$, effectively linking all relevant entities and their connective paths within the defined hop distance. To interact with the KG, we use the predefined SPARQL queries detailed in Appendix D.
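Assuming an in-memory list of triples instead of the SPARQL endpoint used in the paper, the construction of $\mathcal{E}_{q}$ as a union of $D_{\max}$-hop neighborhoods, followed by taking the induced triples, can be sketched as below. Edges are traversed in both directions (an assumption), and `question_subgraph` is a hypothetical name.

```python
from collections import deque


def question_subgraph(triples, topic, d_max):
    """Return (E_q, R_q, T_q): union of D_max-hop neighborhoods and its induced triples."""
    adj = {}
    for head, _, tail in triples:
        adj.setdefault(head, set()).add(tail)
        adj.setdefault(tail, set()).add(head)
    e_q = set()
    for src in topic:
        dist = {src: 0}  # BFS bounded at d_max hops from this topic entity
        queue = deque([src])
        while queue:
            u = queue.popleft()
            if dist[u] == d_max:
                continue
            for v in adj.get(u, ()):
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        e_q.update(dist)  # add N_G(src, d_max), which includes src itself
    t_q = [t for t in triples if t[0] in e_q and t[2] in e_q]  # induced triples
    r_q = {r for _, r, _ in t_q}
    return e_q, r_q, t_q


kg = [("A", "r1", "B"), ("B", "r2", "C"), ("C", "r3", "D"), ("D", "r4", "E")]
e_q, r_q, t_q = question_subgraph(kg, ["A", "E"], d_max=1)
print(sorted(e_q))  # ['A', 'B', 'D', 'E']
print(t_q)          # [('A', 'r1', 'B'), ('D', 'r4', 'E')]
```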

Graph pruning. To efficiently manage information overhead and reduce computational cost, we implement graph pruning on the question subgraph $\mathcal{G}_{q}$ using node and relation clustering alongside graph reduction techniques. As illustrated in Figure 3, node and relation clustering is achieved by compressing multiple nodes and their relations into supernodes, which aggregate information from the original entities and connections. For graph reduction, we employ bidirectional BFS to identify all paths connecting the topic entities. Based on these paths, we regenerate induced subgraphs that involve only the relevant connections, effectively excluding nodes and relations that lack strong relevance to the topic entities.
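One simple way to realize the path-based graph reduction is to run a BFS from each end of every topic-entity pair and keep an entity $v$ only if $d_a(v)+d_b(v)\le L$ for some pair $(a,b)$, where $L$ is a path-length budget. This is a simplified stand-in of our own, not necessarily the authors' procedure: the test keeps entities lying on some connecting walk of length at most $L$ (a superset of the entities on simple connecting paths), and node and relation clustering into supernodes is omitted. `reduce_graph` and `max_len` are hypothetical names.

```python
from collections import deque
from itertools import combinations


def bfs(adj, src):
    """Unbounded BFS distances from src over an undirected adjacency map."""
    dist = {src: 0}
    queue = deque([src])
    while queue:
        u = queue.popleft()
        for v in adj.get(u, ()):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def reduce_graph(triples, topic, max_len):
    """Keep triples whose endpoints lie between some topic pair within max_len hops."""
    adj = {}
    for head, _, tail in triples:
        adj.setdefault(head, set()).add(tail)
        adj.setdefault(tail, set()).add(head)
    keep = set(topic)
    for a, b in combinations(topic, 2):
        da, db = bfs(adj, a), bfs(adj, b)  # one search from each end of the pair
        keep.update(v for v in da if v in db and da[v] + db[v] <= max_len)
    return [t for t in triples if t[0] in keep and t[2] in keep]


kg = [("A", "r1", "B"), ("B", "r2", "C"), ("B", "r3", "X"), ("X", "r4", "Y")]
print(reduce_graph(kg, ["A", "C"], max_len=2))  # [('A', 'r1', 'B'), ('B', 'r2', 'C')]
```

The noise branch through `X` is dropped because reaching it costs 2 hops from each topic entity, exceeding the budget of 2.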

Question analysis. To reduce hallucinations in LLMs, the question analysis phase is divided into two parts, both executed within a single LLM call using an example-based prompt (shown in Appendix E). First, the complex question $q$ is decomposed into simpler questions based on the identified topic entities, each addressing one entity's relationship to the potential answer. Addressing these simpler questions collectively guides the LLM to better answer the original query, thereby reducing hallucinations. Second, an LLM indicator is generated that encapsulates all topic entities and predicts the answer's position within a single chain of thought derived from the original question. This indicator highlights the relationships and order among the entities and the answer. Based on it, a predicted depth $D_{\text{predict}}$ is calculated, defined as the maximum distance between the predicted answer and each topic entity. An example of question analysis is shown in Figure 3, with a predicted depth of 2.
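For an indicator chain such as the one in Figure 3 (Nijmegen, serves, airport, own, answer, borders, France), $D_{\text{predict}}$ is the largest hop count between the answer slot and any topic entity. The sketch assumes the indicator has already been parsed into a linear list of nodes, which is a hypothetical representation; with Nijmegen two hops and France one hop from the answer, it reproduces the predicted depth of 2.

```python
def predicted_depth(chain, topic, answer_token="answer"):
    """D_predict = max hop distance along the indicator chain between the answer and a topic entity."""
    position = {node: i for i, node in enumerate(chain)}
    return max(abs(position[t] - position[answer_token]) for t in topic)


# Nijmegen <-serves- airport <-own- answer -borders-> France
chain = ["Nijmegen", "airport", "answer", "France"]
print(predicted_depth(chain, ["Nijmegen", "France"]))  # 2
```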

### 4.2. Exploration

As discussed in Section 1, identifying reasoning paths that encompass all topic entities is essential for deriving accurate answers. These paths serve as interpretable chains of thought, providing both the answer and the inference steps leading to it, a feature we refer to as interpretability. To discover such paths efficiently and accurately, the exploration process is divided into three phases: topic entity path exploration, LLM supplement path exploration, and node expand exploration. After each phase, we perform path pruning and question answering. If a sufficient path is found, the process terminates; otherwise, it advances to the next phase to explore additional paths. Due to space limitations, the pseudo-code for the exploration phase is given in Appendix A.1.

Topic entity path exploration. To reduce LLM usage and search space, PoG begins exploration from a predicted depth $D_{\text{predict}}$ rather than the maximum depth. Using the question subgraph $\mathcal{G}_{q}$ , topic entities $Topic(q)$ , LLM indicator $I_{\text{LLM}}$ , and $D_{\text{predict}}$ , PoG identifies reasoning paths containing all topic entities by iteratively adjusting the exploration depth $D$ . Entities in $Topic(q)$ are ordered according to $I_{\text{LLM}}$ to facilitate reasoning effectively. Starting from the predicted depth $D=min(D_{\text{predict}},D_{\text{max}})$ , we employ a bidirectional BFS to derive all potential entity paths, which is defined as:

$$
Paths_{t}=\{p\mid|Topic(q)|\times(D-1)<length(p)\leq|Topic(q)|\times D\},
$$

where $p=path_{\mathcal{G}_{q}}(Topic(q))$. To reduce complexity, a pruning strategy selects the top-$W_{\max}$ paths based on $Paths_{t}$, $I_{\text{LLM}}$, and the split questions from Section 4.1. These paths are then verified for sufficiency. If they are inadequate, $D$ is incremented until $D_{\max}$ is reached, after which the next phase commences.
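The length window in the definition of $Paths_{t}$ partitions path lengths by depth: with $|Topic(q)|=2$, depth $D=1$ admits lengths in $(0,2]$, $D=2$ admits $(2,4]$, and so on, so each increment of $D$ only examines paths longer than those already checked. A minimal sketch with hypothetical helper names, representing each path as a list of triples:

```python
def length_window(num_topics, depth):
    """(exclusive lower, inclusive upper) bounds on length(p) for Paths_t at depth D."""
    return num_topics * (depth - 1), num_topics * depth


def select_paths_t(paths, num_topics, depth):
    """Keep paths p with |Topic(q)|*(D-1) < length(p) <= |Topic(q)|*D."""
    low, high = length_window(num_topics, depth)
    return [p for p in paths if low < len(p) <= high]


def depth_schedule(d_predict, d_max):
    """Depths tried in topic entity path exploration: min(D_predict, D_max), ..., D_max."""
    return list(range(min(d_predict, d_max), d_max + 1))


paths = [[("e", "r", "e")] * n for n in (2, 3, 4, 5)]  # dummy paths of lengths 2..5
print([length_window(2, d) for d in (1, 2, 3)])        # [(0, 2), (2, 4), (4, 6)]
print([len(p) for p in select_paths_t(paths, 2, 2)])   # [3, 4]
print(depth_schedule(2, 3))                            # [2, 3]
```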

LLM supplement path exploration. Traditional KG-based LLM reasoning often rephrases KG facts without utilizing the LLM's inherent knowledge. To overcome this, PoG prompts the LLM to generate predictions based on its understanding of the paths and its implicit knowledge, providing additional relevant insights. This involves generating new LLM thinking indicators $I_{\text{Sup}}$ for predicted entities $e\in Predict(q)$ and then using text similarity to verify these entities and align them with entities in $\mathcal{E}_{q}$ of $\mathcal{G}_{q}$. The supplementary entity list $List_{S}(e)=Topic(q)+e$ is built and ranked by $I_{\text{Sup}}$ to facilitate reasoning. Next, supplementary paths $Paths_{s}$ are derived from $List_{S}(e)$ in the evidence KG $\mathcal{G}_{q}$ with a fixed depth $D_{\max}$:

$$
Paths_{s}=\{p\mid length(p)\leq|Topic(q)|\times D_{\max}\},
$$

where $p=path_{\mathcal{G}_{q}}(List_{S}(e))$. These paths, together with the new indicators, are evaluated as in the topic entity path exploration phase. The prompting template is shown in Appendix E.

Node expand exploration. If the previous phases do not yield sufficient paths, PoG proceeds to node expansion. Unlike previous methods (Sun et al., 2024; Ma et al., 2024) that explore relations and entities separately, PoG explores both simultaneously, leveraging clearer semantic information for easier integration with existing paths. During exploration, PoG expands unvisited entities by their 1-hop neighbors in $\mathcal{G}$. New triples are merged into existing paths to form new paths, which are then pruned and evaluated.
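A minimal sketch of one node-expansion round follows, assuming paths are non-empty lists of `(head, relation, tail)` triples, only outgoing edges are followed, and cycles are skipped; `expand_paths` and the `expanded` set are hypothetical. Path ends that have not been expanded before are grown by their 1-hop triples, and each new triple is appended to every path ending at that entity.

```python
def expand_paths(paths, kg_triples, expanded):
    """One round of node expansion; returns (new_paths, updated expanded set)."""
    outgoing = {}
    for triple in kg_triples:
        outgoing.setdefault(triple[0], []).append(triple)
    frontier = {path[-1][2] for path in paths} - expanded  # unvisited path ends
    new_paths = []
    for path in paths:
        end = path[-1][2]
        if end not in frontier:
            continue
        on_path = {path[0][0]} | {triple[2] for triple in path}
        for triple in outgoing.get(end, []):
            if triple[2] not in on_path:  # skip edges that would create a cycle
                new_paths.append(path + [triple])
    return new_paths, expanded | frontier


kg = [("A", "r", "B"), ("B", "s", "C"), ("B", "t", "A")]
new_paths, expanded = expand_paths([[("A", "r", "B")]], kg, set())
print(new_paths)  # [[('A', 'r', 'B'), ('B', 's', 'C')]]
print(expanded)   # {'B'}
```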

### 4.3. Path Pruning

As introduced in Section 2, KGs contain vast amounts of facts, making it impractical to involve all relevant triples in the LLM’s context due to high costs. To address this complexity and reduce LLM overhead, we utilize a three-step beam search for path pruning. The corresponding pseudo-code can be found in Appendix A.2.

Fuzzy selection. Since only a small subset of the generated paths is relevant, the first step of our beam search is fuzzy selection, which uses a pre-trained language model (e.g., SentenceBERT (Reimers and Gurevych, 2019)) to filter out irrelevant paths quickly. As shown in Figure 2, we encode the LLM indicator $I_{\text{LLM}}$ (or $I_{\text{Sup}}$) and all reasoning paths into vector embeddings, denoted as $H_{I}$ and $H_{Paths}$, and calculate the cosine similarities between them. The top-$W_{1}$ paths with the highest similarity scores are selected for further evaluation.
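Fuzzy selection amounts to a top-$W_{1}$ ranking by cosine similarity. The sketch assumes that the embeddings $H_{I}$ and $H_{Paths}$ have already been produced (for instance by a SentenceBERT model) and uses toy 2-dimensional vectors; `fuzzy_select` is a hypothetical name, and `heapq.nlargest` returns the top entries in descending order of similarity.

```python
import heapq
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def fuzzy_select(h_i, h_paths, w1):
    """Return the ids of the W1 paths whose embeddings are most similar to H_I."""
    return heapq.nlargest(w1, h_paths, key=lambda pid: cosine(h_i, h_paths[pid]))


h_i = [1.0, 0.0]                                                  # toy indicator embedding
h_paths = {"p1": [1.0, 0.0], "p2": [0.0, 1.0], "p3": [1.0, 1.0]}  # toy path embeddings
print(fuzzy_select(h_i, h_paths, 2))  # ['p1', 'p3']
```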

Precise path selection. After fuzzy selection, the number of candidate paths is reduced to $W_{1}$. At this stage, we prompt the LLM to select the top-$W_{\max}$ reasoning paths most likely to contain the correct answer. The specific prompt used to guide the LLM in this selection phase can be found in Appendix E.

Branch reduced selection. Because paths are represented in natural language and can be lengthy, processing them is costly for LLMs; we therefore implement a branch reduced selection method that integrates the graph structure. This method balances efficiency and accuracy by further refining path selection. Starting with $D=1$, for each entity $e$ in the entity list, we extract the initial $D$-step paths from every path in the candidate set $Paths_{c}$ into a new set $Paths_{e}$. If the number of paths in $Paths_{e}$ exceeds the maximum designated width $W_{\max}$, these paths are pruned using precise path selection. $D$ is then incremented, and the process iterates until $D$ reaches $D_{\max}$. For example, as illustrated in Figure 2, with $W_{\max}=1$, only the initial-step paths (depicted in green) are extracted for further examination, while paths represented by dashed lines are pruned. This method enables efficient iterative selection by limiting the number of tokens while keeping the reasoning paths relevant and concise.

Beam search strategy. Based on the three path pruning methods above, PoG can support various beam search strategies, ranging from non-reliant to fully reliant on LLMs. These strategies are selectable in a user-friendly manner, allowing flexibility based on the specific requirements of the task. We have defined four such strategies in Algorithm 2 of Appendix A.2.

### 4.4. Question Answering

Based on the pruned paths in Section 4.3, we introduce a two-step question-answering method.

Path Summarizing. To address hallucinations caused by paths with excessive or incorrect text, we develop a summarization strategy by prompting LLM to review and extract relevant triples from provided paths, creating a concise and focused path. Details of the prompts used are in Appendix E.

Question answering. Based on the current reasoning paths derived from path pruning and summarizing, we prompt the LLM to first evaluate whether the paths are sufficient for answering the split questions and then the main question. If the evaluation is positive, the LLM is prompted to generate the answer using these paths, along with the question and question analysis results as inputs, as shown in Figure 2. If the evaluation is negative, exploration continues with deeper paths or the next exploration phase. If node expand exploration reaches its depth limit without yielding a satisfactory answer, the LLM leverages both the provided paths and its inherent knowledge to formulate a response. The prompts for evaluation, generation, and this final response are detailed in Appendix E.

## 5. Experiments

Table 1. Results of PoG across various datasets, compared with the state-of-the-art (SOTA) in Supervised Learning (SL) and In-Context Learning (ICL) methods. The highest scores for ICL methods are highlighted in bold, while the second-best results are underlined. The Prior FT (Fine-tuned) SOTA includes the best-known results achieved through supervised learning.

| Method | Class | LLM | CWQ (Multi-Hop KGQA) | WebQSP (Multi-Hop KGQA) | GrailQA (Multi-Hop KGQA) | Simple Questions (Single-Hop KGQA) | WebQuestions (Open-Domain QA) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| *Without external knowledge* | | | | | | | |
| IO prompt (Sun et al., 2024) | - | GPT-3.5-Turbo | 37.6 | 63.3 | 29.4 | 20.0 | 48.7 |
| CoT (Sun et al., 2024) | - | GPT-3.5-Turbo | 38.8 | 62.2 | 28.1 | 20.3 | 48.5 |
| SC (Sun et al., 2024) | - | GPT-3.5-Turbo | 45.4 | 61.1 | 29.6 | 18.9 | 50.3 |
| *With external knowledge* | | | | | | | |
| Prior FT SOTA | SL | - | 70.4 (Das et al., 2021) | 85.7 (Luo et al., 2024) | 75.4 (Gu et al., 2023) | 85.8 (Baek et al., 2023b) | 56.3 (Kedia et al., 2022) |
| KB-BINDER (Li et al., 2023a) | ICL | Codex | - | 74.4 | 58.5 | - | - |
| ToG/ToG-R (Sun et al., 2024) | ICL | GPT-3.5-Turbo | 58.9 | 76.2 | 68.7 | 53.6 | 54.5 |
| ToG-2.0 (Ma et al., 2024) | ICL | GPT-3.5-Turbo | - | 81.1 | - | - | - |
| ToG/ToG-R (Sun et al., 2024) | ICL | GPT-4 | 69.5 | 82.6 | 81.4 | 66.7 | 57.9 |
| PoG-E | ICL | GPT-3.5-Turbo | 71.9 | 90.9 | 87.6 | 78.3 | 76.9 |
| PoG | ICL | GPT-3.5-Turbo | 74.7 | 93.9 | <u>91.6</u> | 80.8 | 81.8 |
| PoG-E | ICL | GPT-4 | <u>78.5</u> | <u>95.4</u> | 91.4 | <u>81.2</u> | <u>82.0</u> |
| PoG | ICL | GPT-4 | **81.4** | **96.7** | **94.4** | **84.0** | **84.6** |

Experimental settings. We evaluate PoG on five KGQA datasets, i.e., CWQ (Talmor and Berant, 2018), WebQSP (Yih et al., 2016), GrailQA (Gu et al., 2021), SimpleQuestions (Petrochuk and Zettlemoyer, 2018), and WebQuestions (Berant et al., 2013). PoG is compared against methods without external knowledge (IO, CoT (Wei et al., 2022), and SC (Wang et al., 2022)) and against state-of-the-art (SOTA) approaches with external knowledge, including prompting-based and fine-tuning-based methods. Freebase (Bollacker et al., 2008) serves as the background knowledge graph for all datasets. Experiments are conducted using two LLMs, i.e., GPT-3.5 (GPT-3.5-Turbo) and GPT-4. Following prior studies, we use exact match accuracy (Hits@1) as the evaluation metric. Due to space limitations, detailed experimental settings, including dataset statistics, baselines, and implementation details, are provided in Appendix C.

PoG setting. We adopt the Fuzzy + Precise Path Selection strategy in Algorithm 2 of Appendix A.2 for PoG, with $W_{1}=80$ for fuzzy selection. Additionally, we introduce PoG-E, which randomly selects one relation from each edge in the clustered question subgraph, to evaluate the impact of graph structure on KG-based LLM reasoning. $W_{\max}$ and $D_{\max}$ are both set to 3 by default for beam search.

### 5.1. Main Results

Since PoG leverages external knowledge to enhance LLM reasoning, we first compare it with other methods that utilize external knowledge. Although PoG is a training-free, prompting-based method and thus has a natural disadvantage compared to fine-tuned methods trained on evaluation data, Table 1 shows that PoG with GPT-3.5-Turbo still achieves new SOTA performance across most datasets. Additionally, PoG with GPT-4 surpasses the fine-tuned SOTA across all multi-hop and open-domain datasets by an average of 17.3% and by up to 28.3% on WebQuestions. Among all in-context learning (ICL) methods, PoG with GPT-3.5-Turbo surpasses all previous SOTA methods. When PoG with GPT-3.5-Turbo is compared against the SOTA using GPT-4, PoG outperforms the SOTA by an average of 12.9% and by up to 23.9%. When using the same LLM, PoG demonstrates substantial improvements: with GPT-3.5-Turbo, it outperforms the SOTA by an average of 21.2% and by up to 27.3% on WebQuestions; with GPT-4, it outperforms the SOTA by 16.6% on average and by up to 26.7% on WebQuestions. Additionally, PoG with GPT-3.5-Turbo outperforms methods without external knowledge (e.g., IO, CoT, and SC prompting) by 62% on GrailQA and 60.5% on SimpleQuestions. These results show that incorporating external knowledge graphs significantly enhances reasoning. PoG-E also achieves excellent results: under GPT-4, it surpasses all SOTA ICL methods by 14.1% on average and by up to 24.1% on WebQuestions. These findings demonstrate that graph structure is crucial for reasoning tasks, particularly complex logical reasoning. By integrating the structural information of the question within the graph, PoG enhances the deep reasoning capabilities of LLMs, leading to superior performance.
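The headline gains can be recomputed from Table 1 as average and maximum per-dataset differences in Hits@1 (absolute points). This check assumes that the GPT-3.5-Turbo "SOTA" means the best ICL baseline per dataset (ToG-2.0 on WebQSP, ToG/ToG-R elsewhere) and that the GPT-4 "SOTA" is ToG/ToG-R; under that reading, the script reproduces the 12.9/23.9, 21.2/27.3, and 16.6/26.7 figures quoted above.

```python
# Hits@1 (%) from Table 1; column order: CWQ, WebQSP, GrailQA, SimpleQuestions, WebQuestions
pog_gpt35 = [74.7, 93.9, 91.6, 80.8, 81.8]
pog_gpt4 = [81.4, 96.7, 94.4, 84.0, 84.6]
tog_gpt4 = [69.5, 82.6, 81.4, 66.7, 57.9]
# Best ICL baseline with GPT-3.5-Turbo per dataset (ToG-2.0 on WebQSP, ToG/ToG-R elsewhere)
icl_gpt35 = [58.9, 81.1, 68.7, 53.6, 54.5]


def gains(ours, baseline):
    """(average, maximum) absolute improvement, each rounded to one decimal."""
    diffs = [round(a - b, 1) for a, b in zip(ours, baseline)]
    return round(sum(diffs) / len(diffs), 1), max(diffs)


print(gains(pog_gpt35, tog_gpt4))   # (12.9, 23.9)
print(gains(pog_gpt35, icl_gpt35))  # (21.2, 27.3)
print(gains(pog_gpt4, tog_gpt4))    # (16.6, 26.7)
```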

### 5.2. Ablation Study

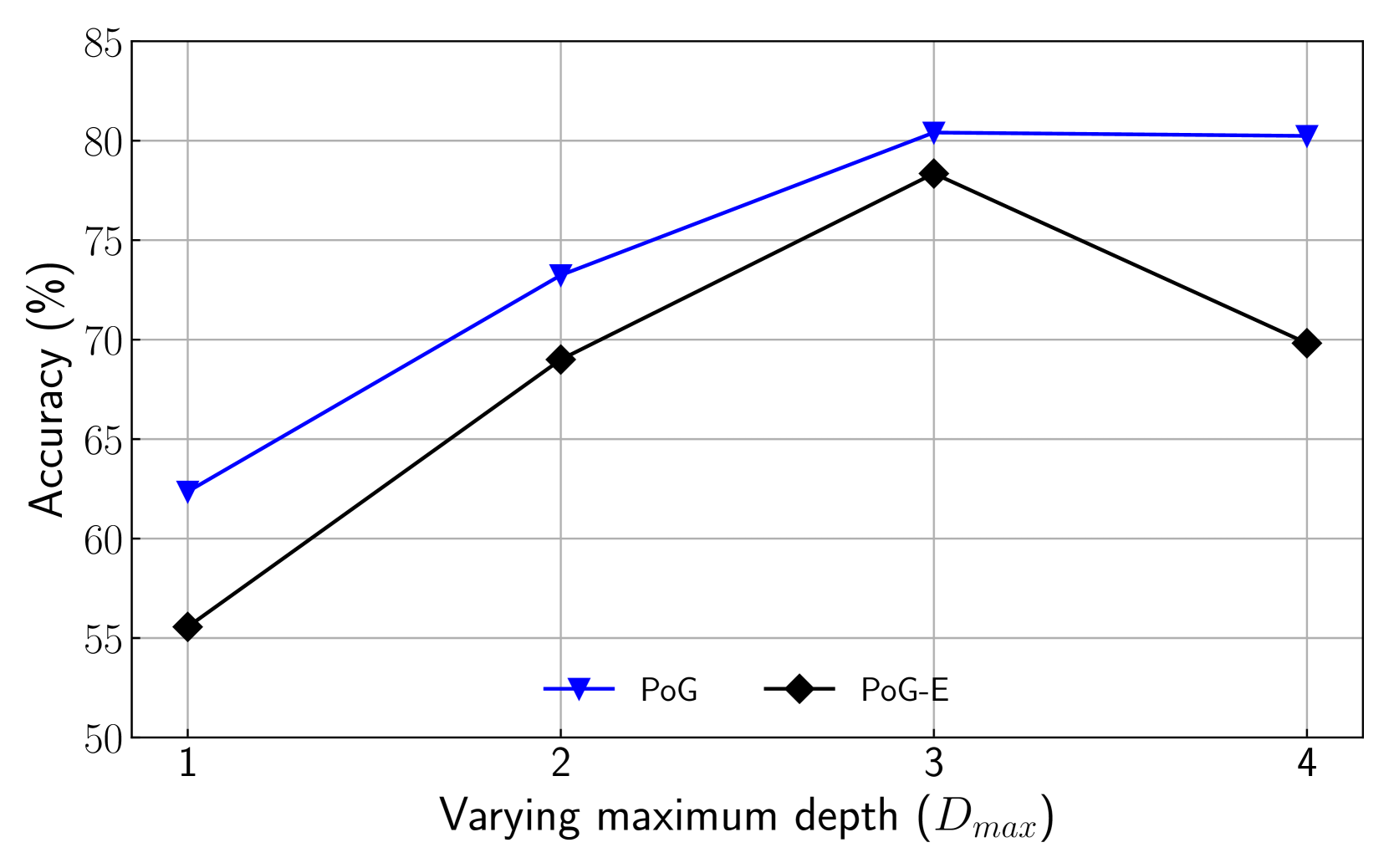

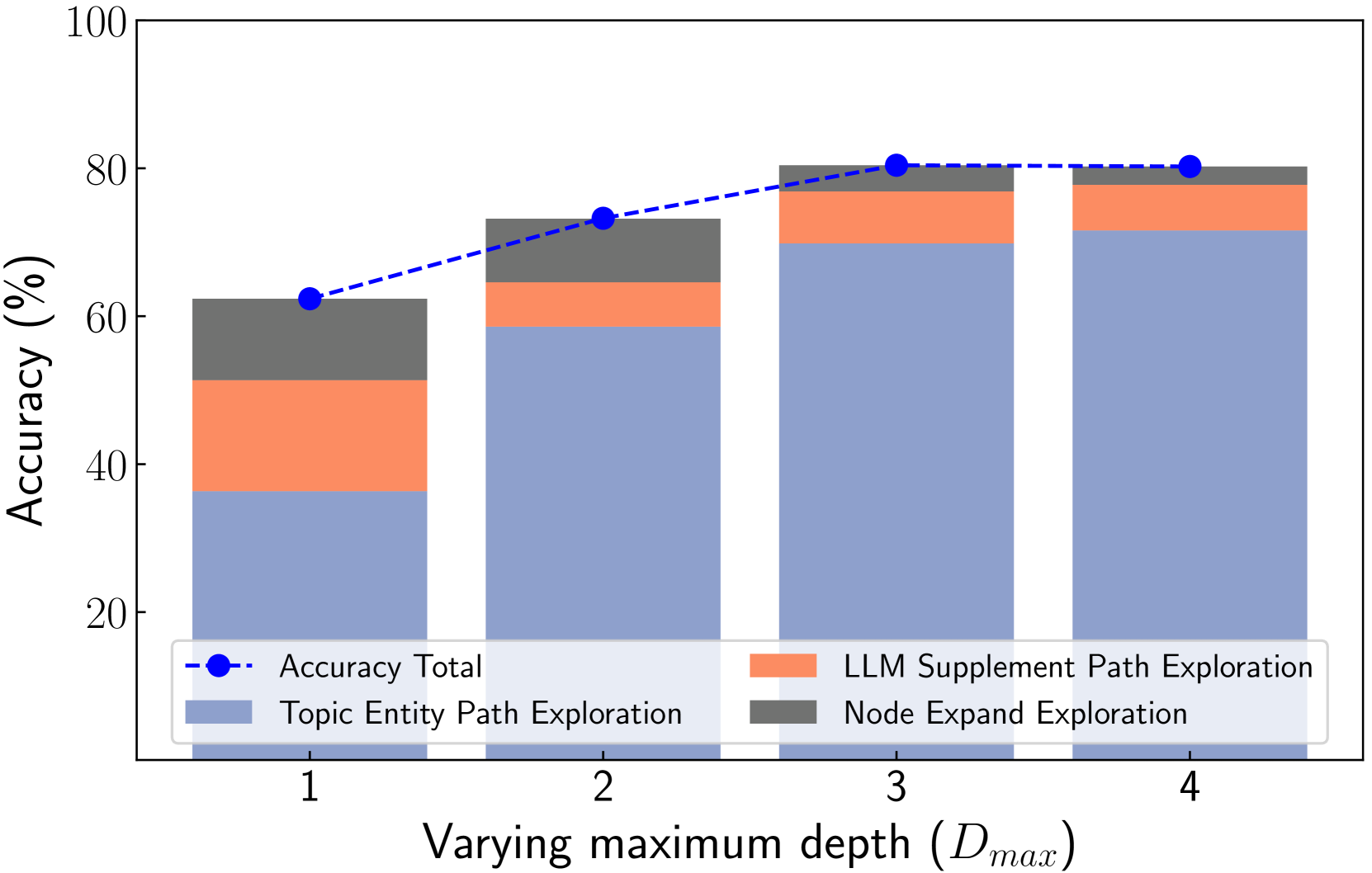

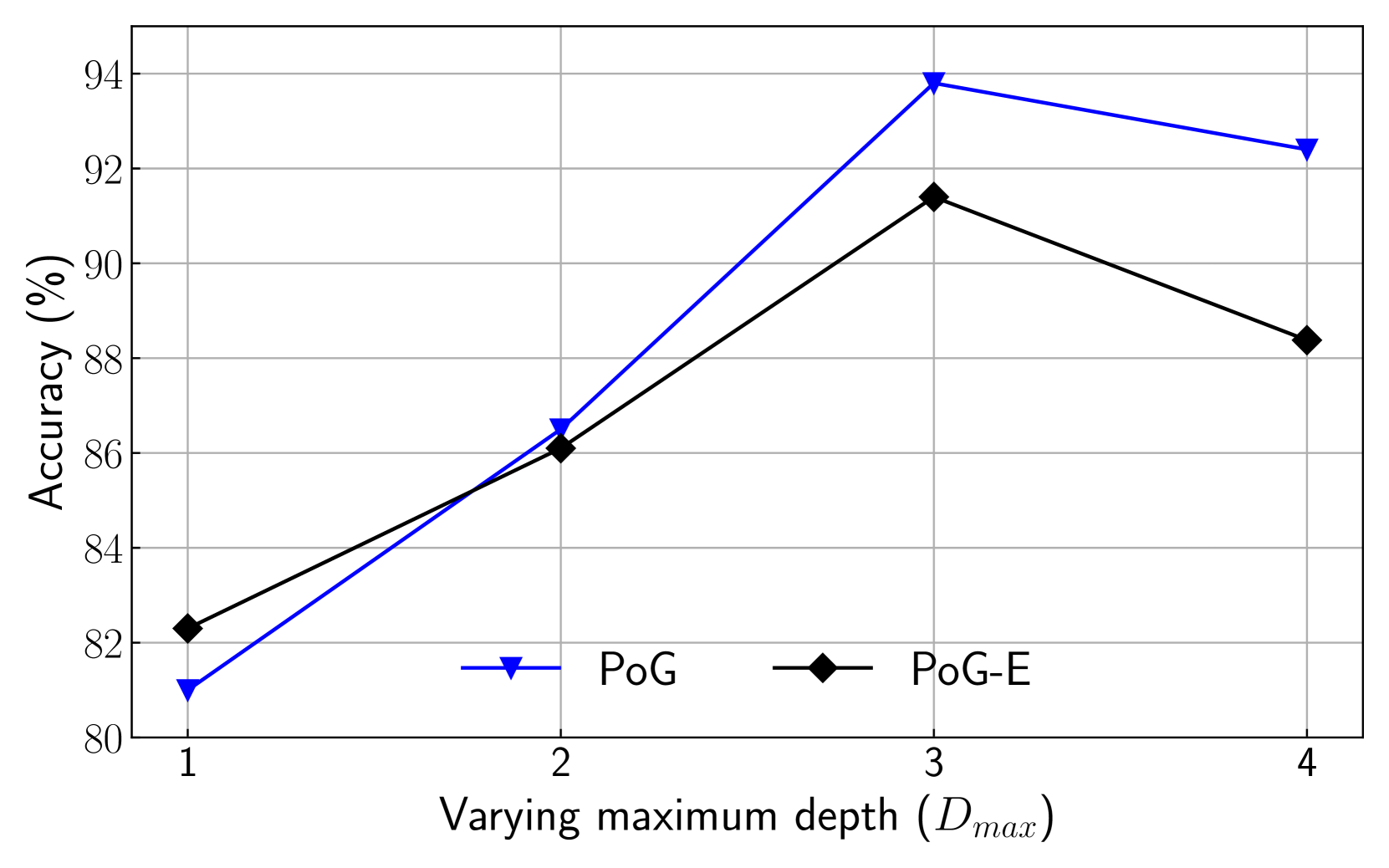

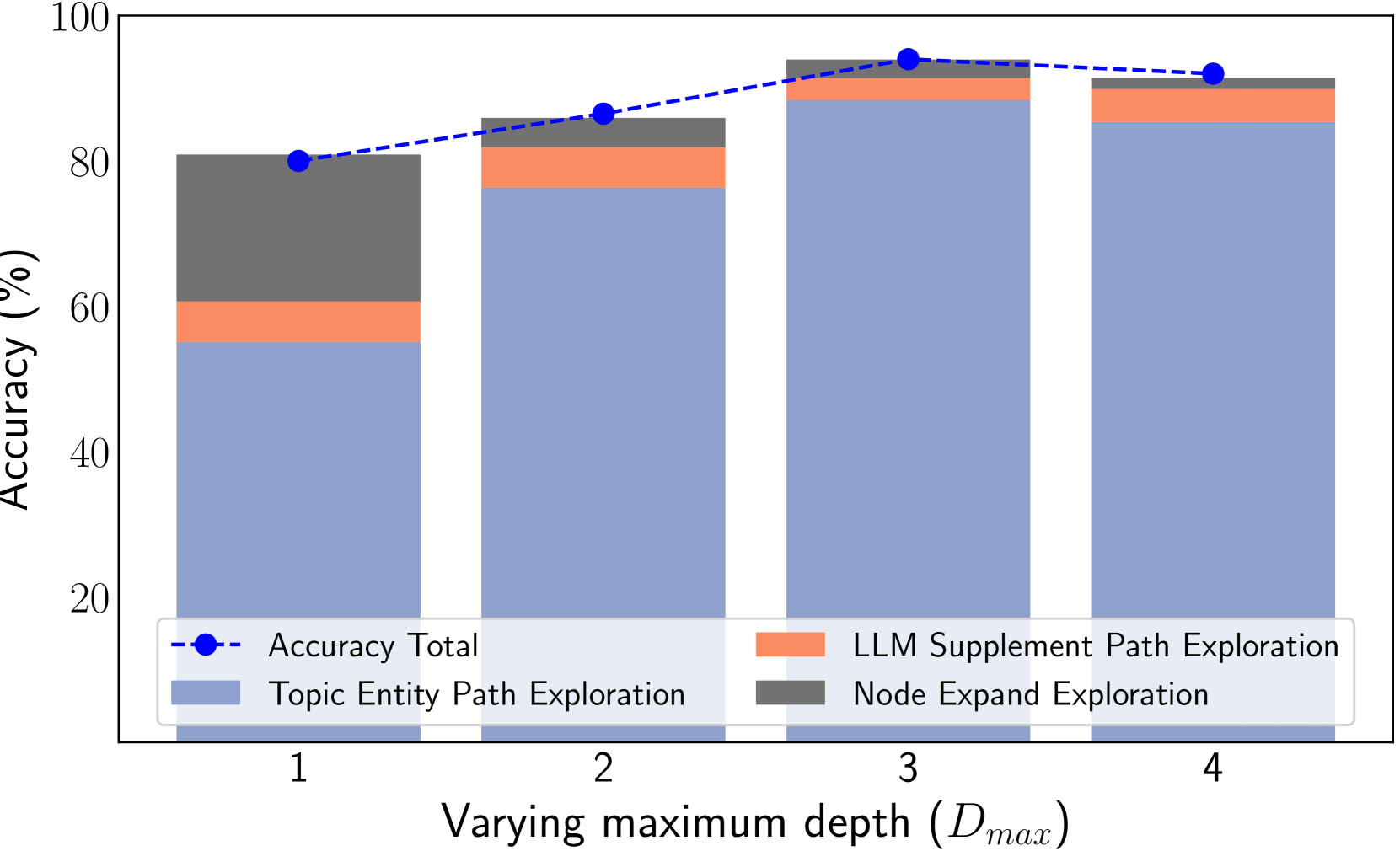

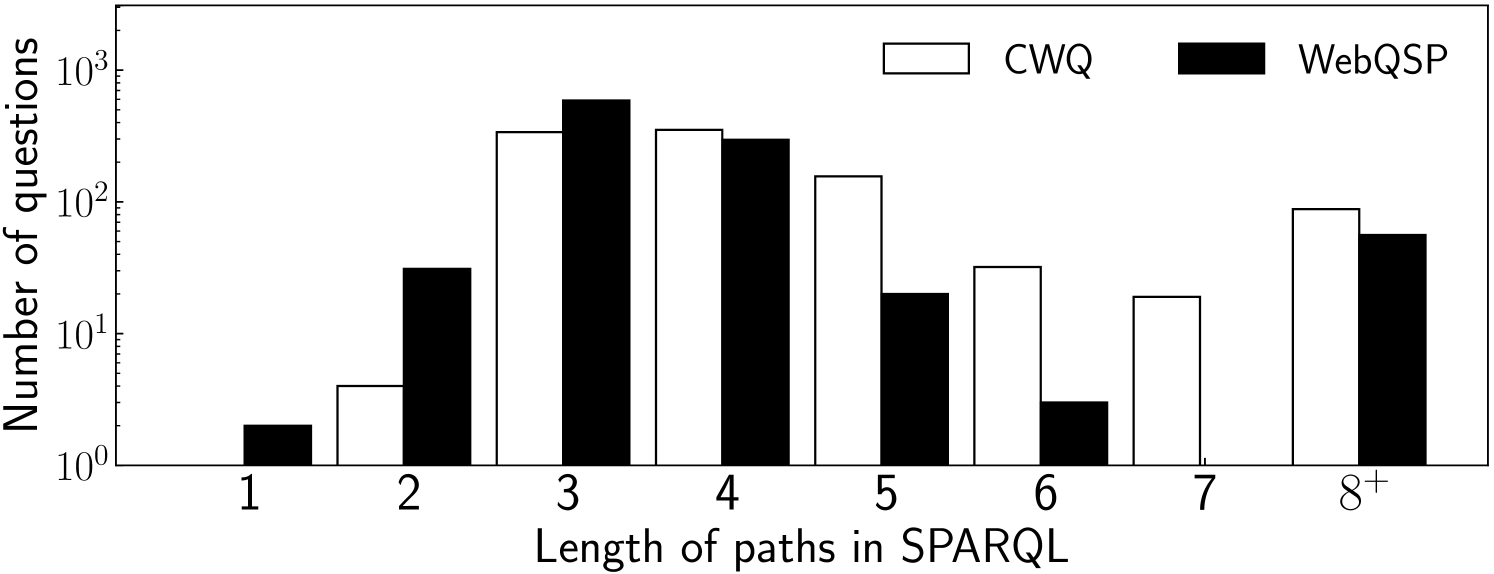

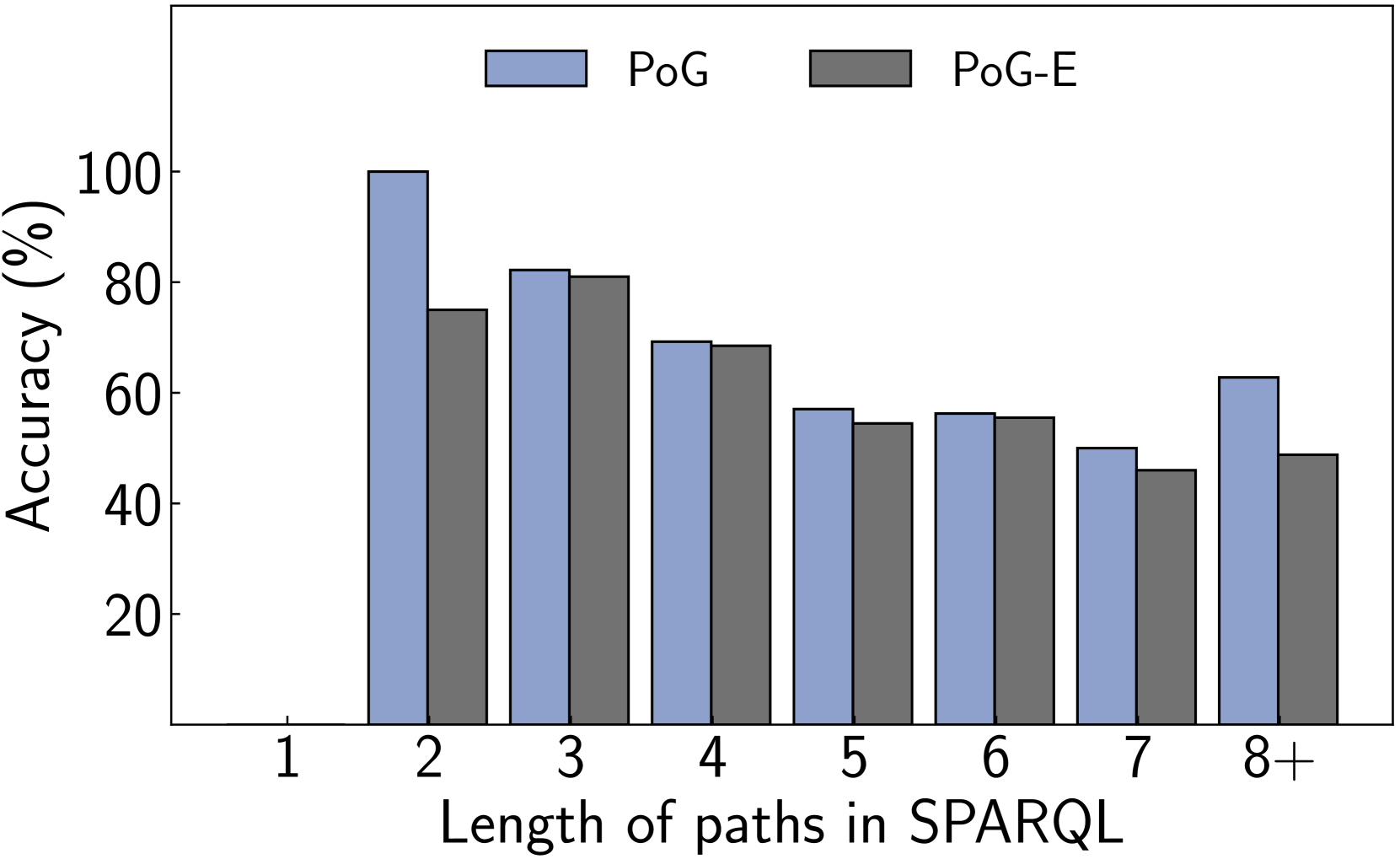

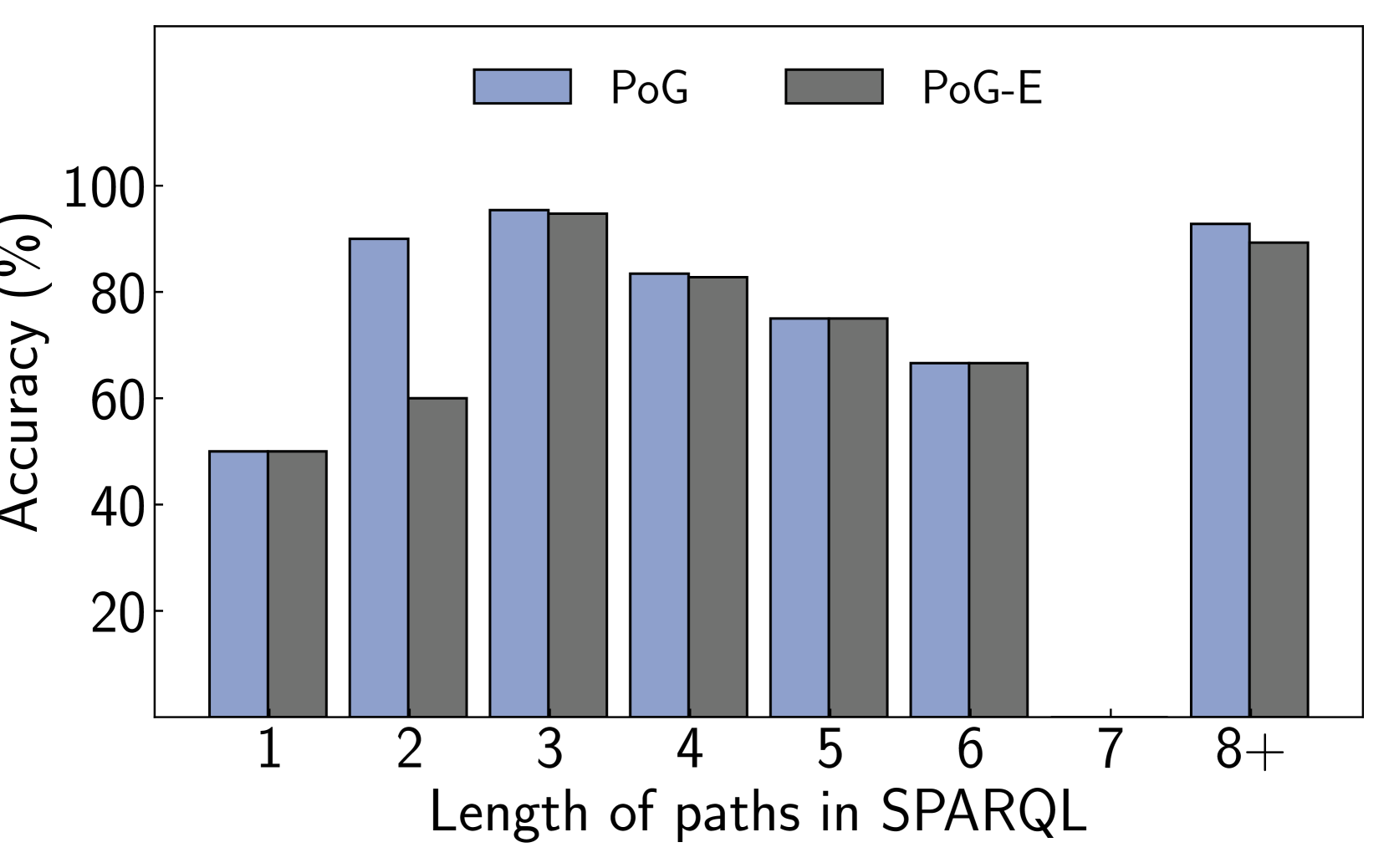

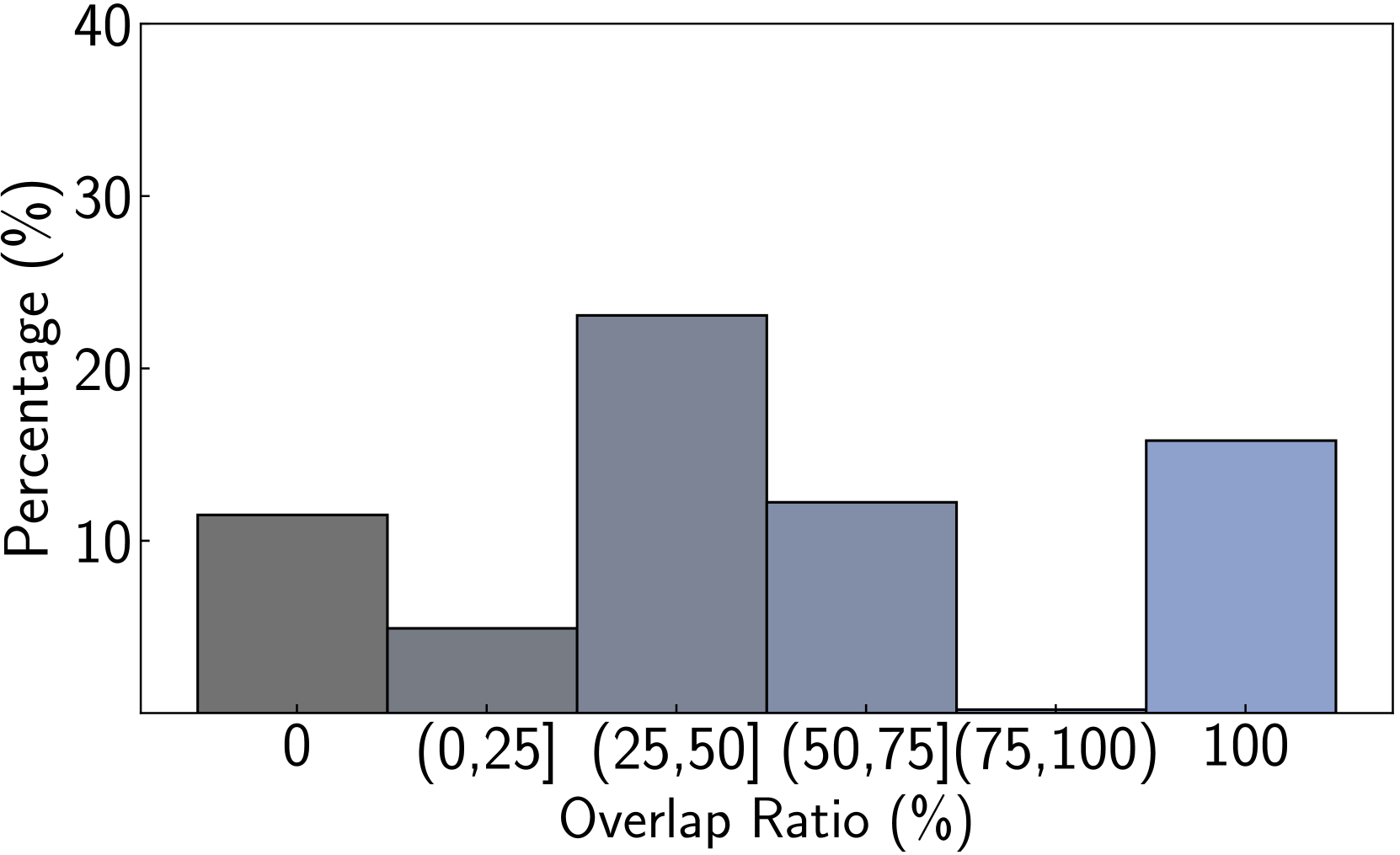

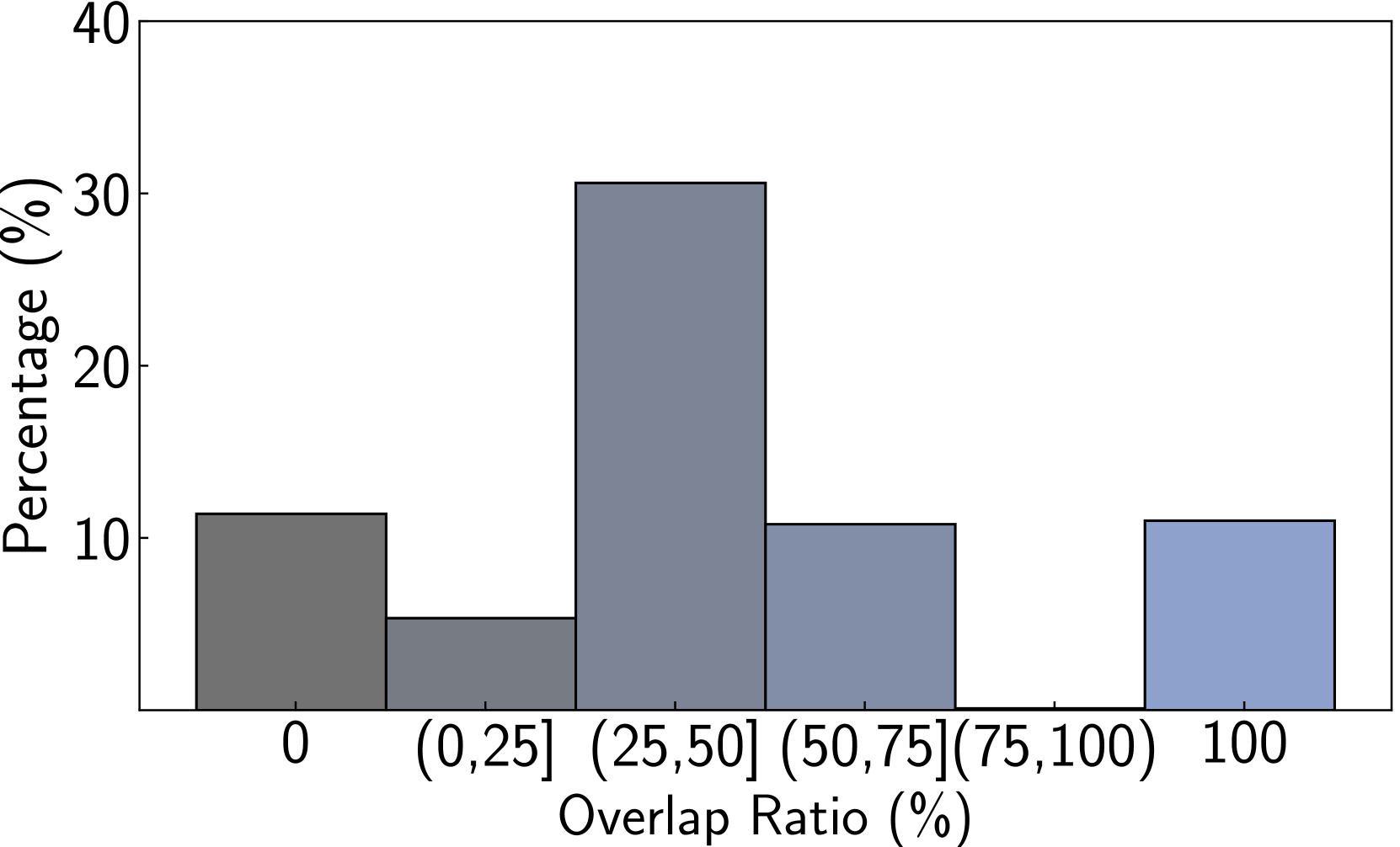

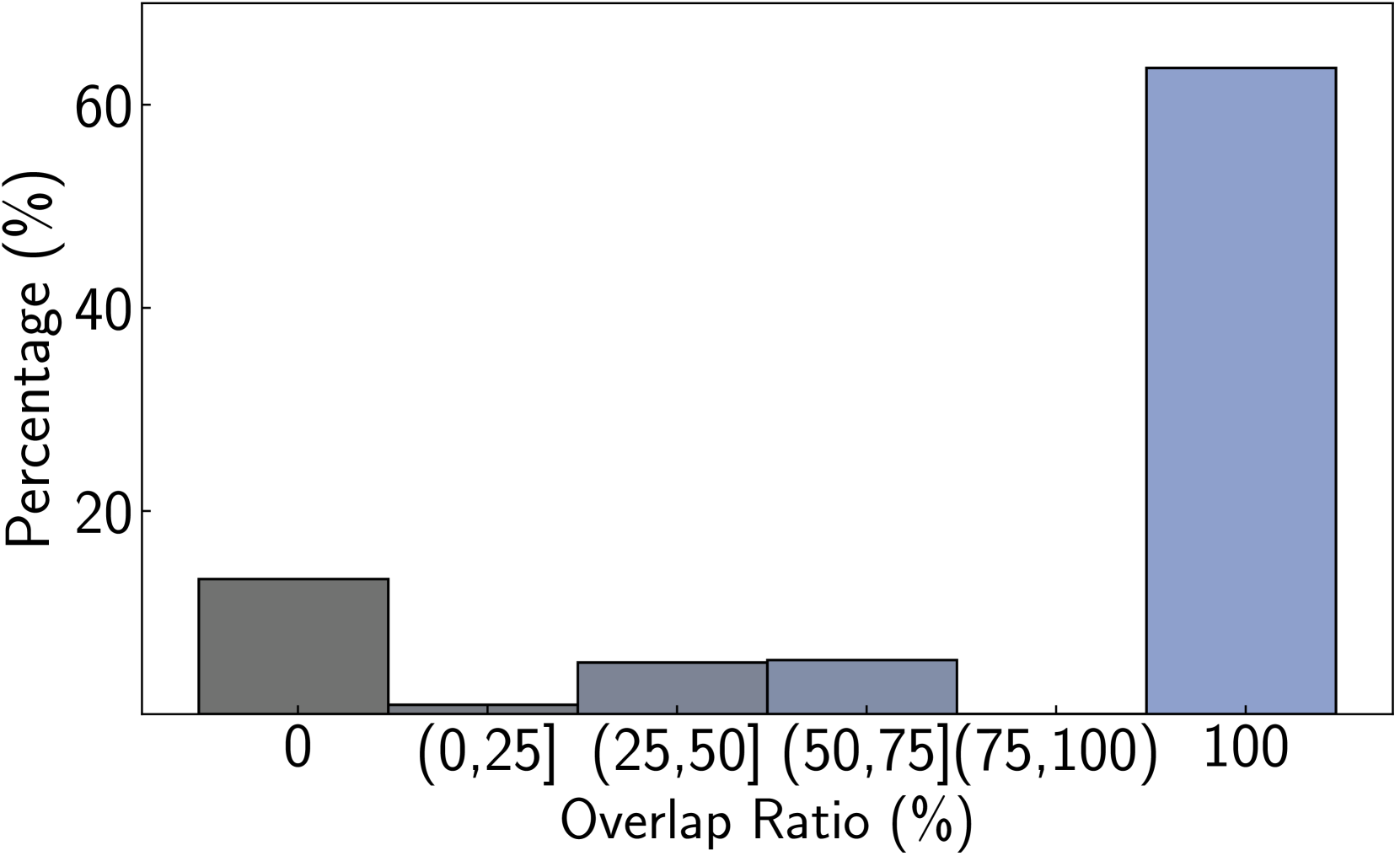

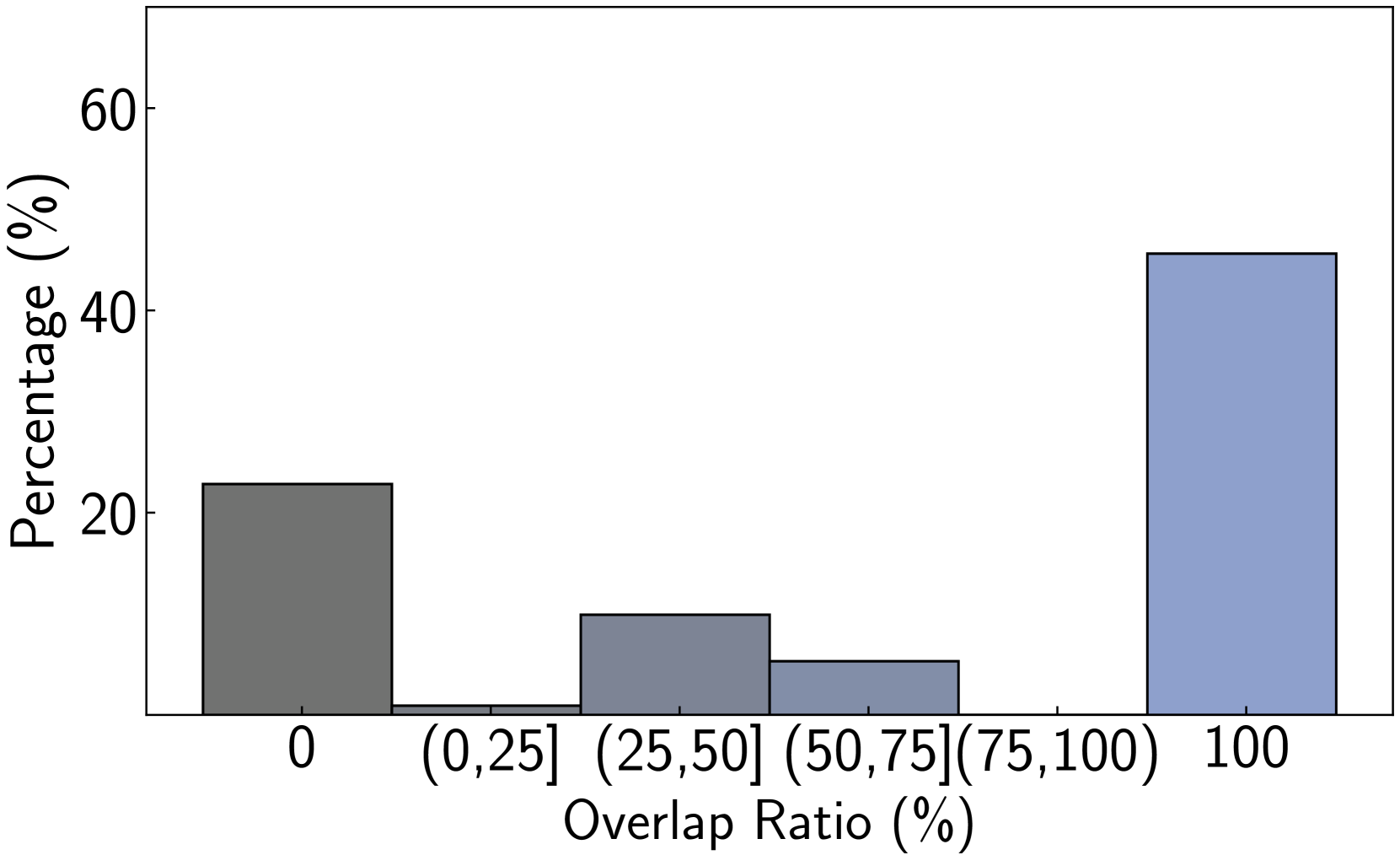

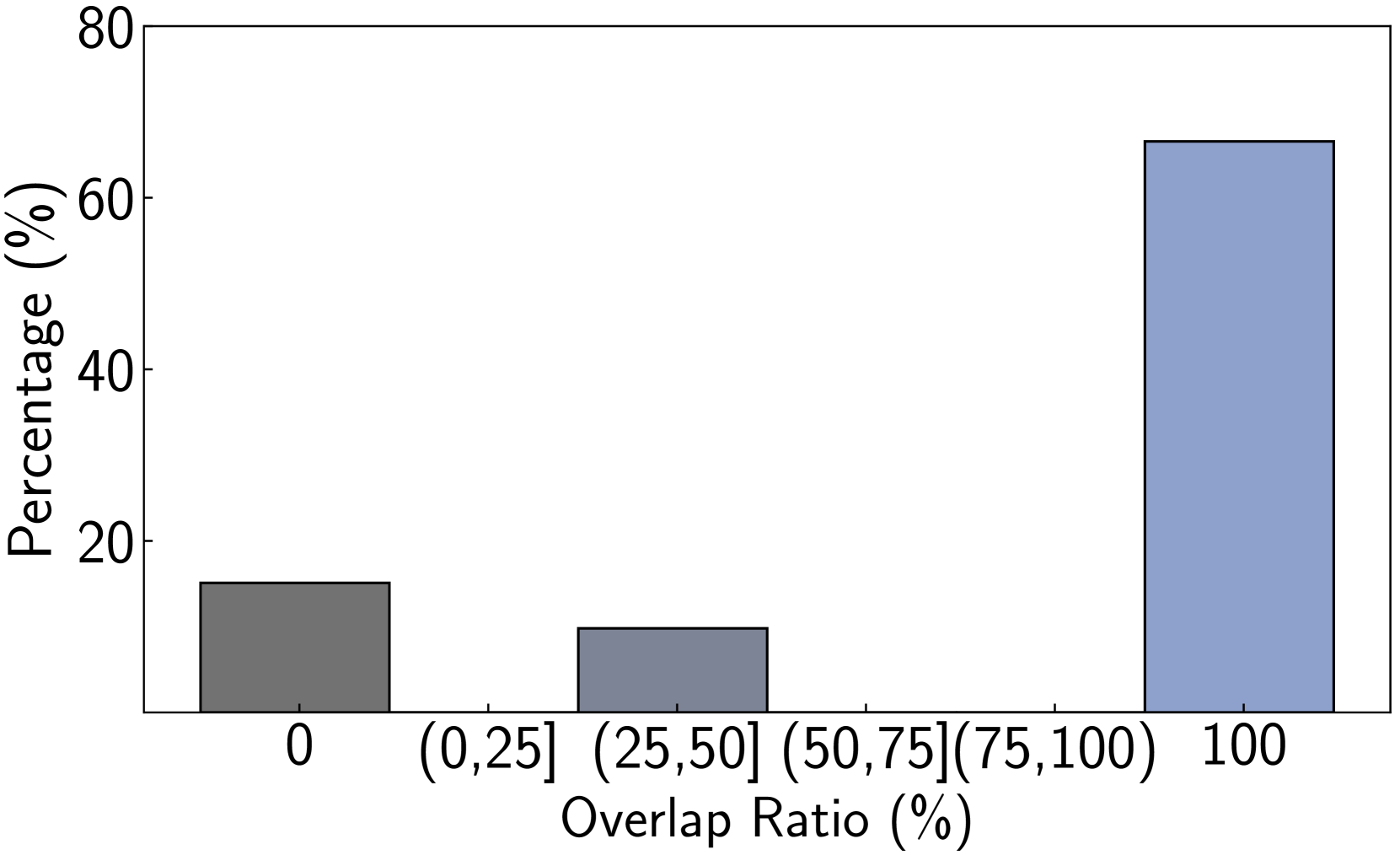

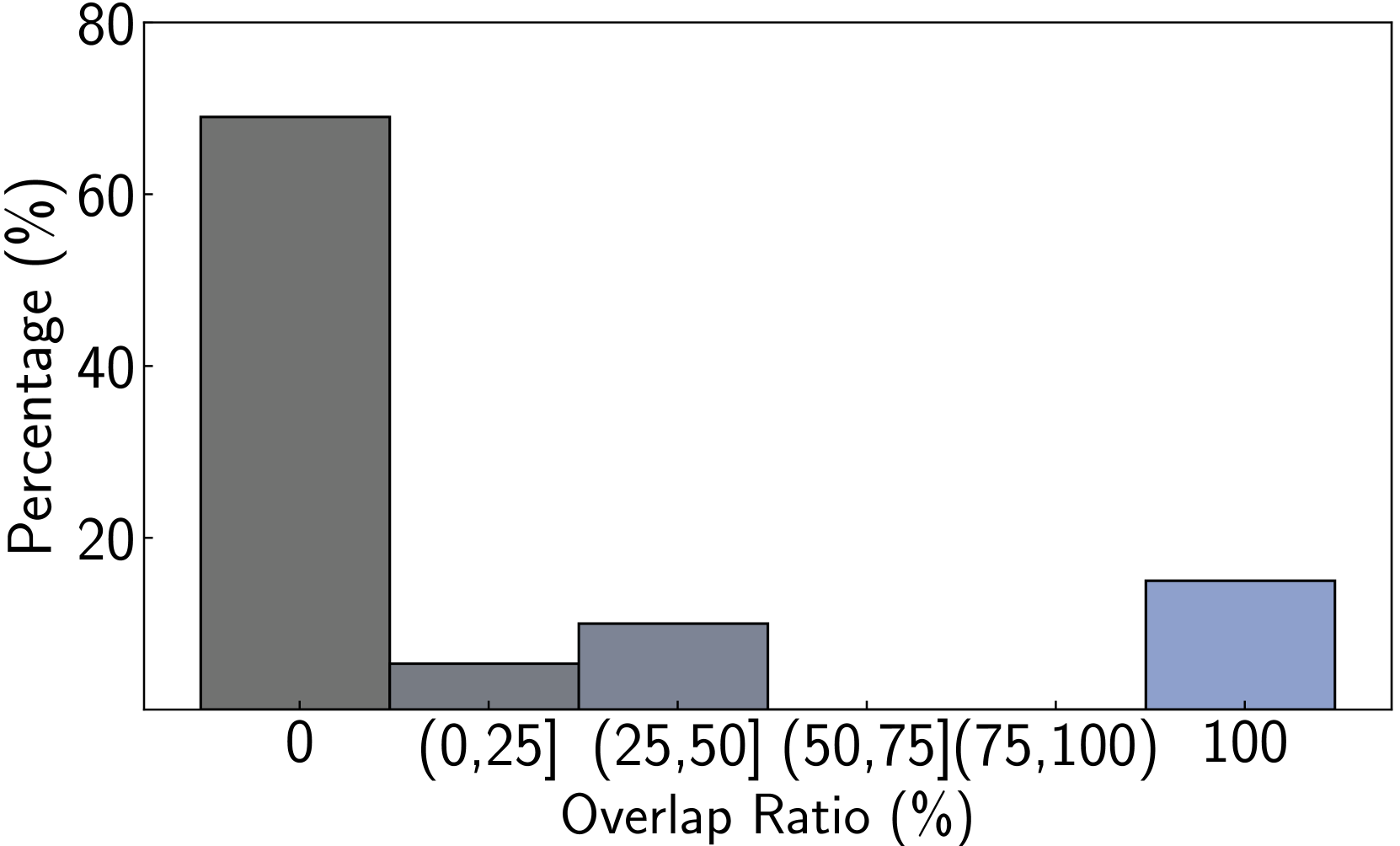

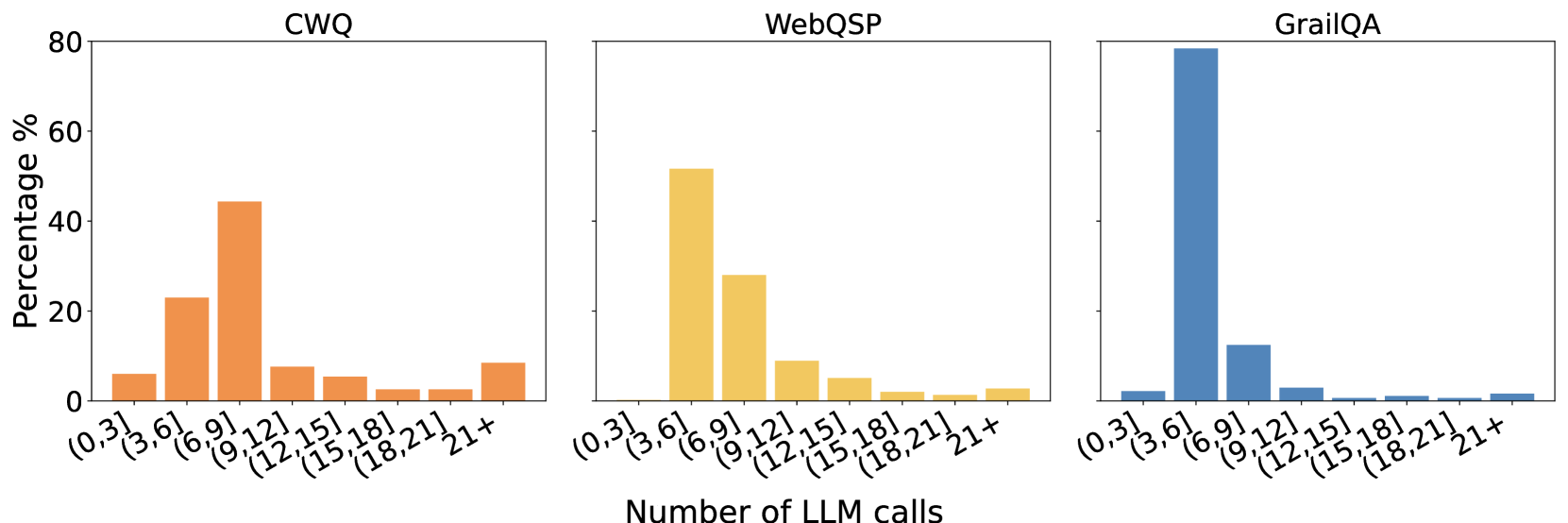

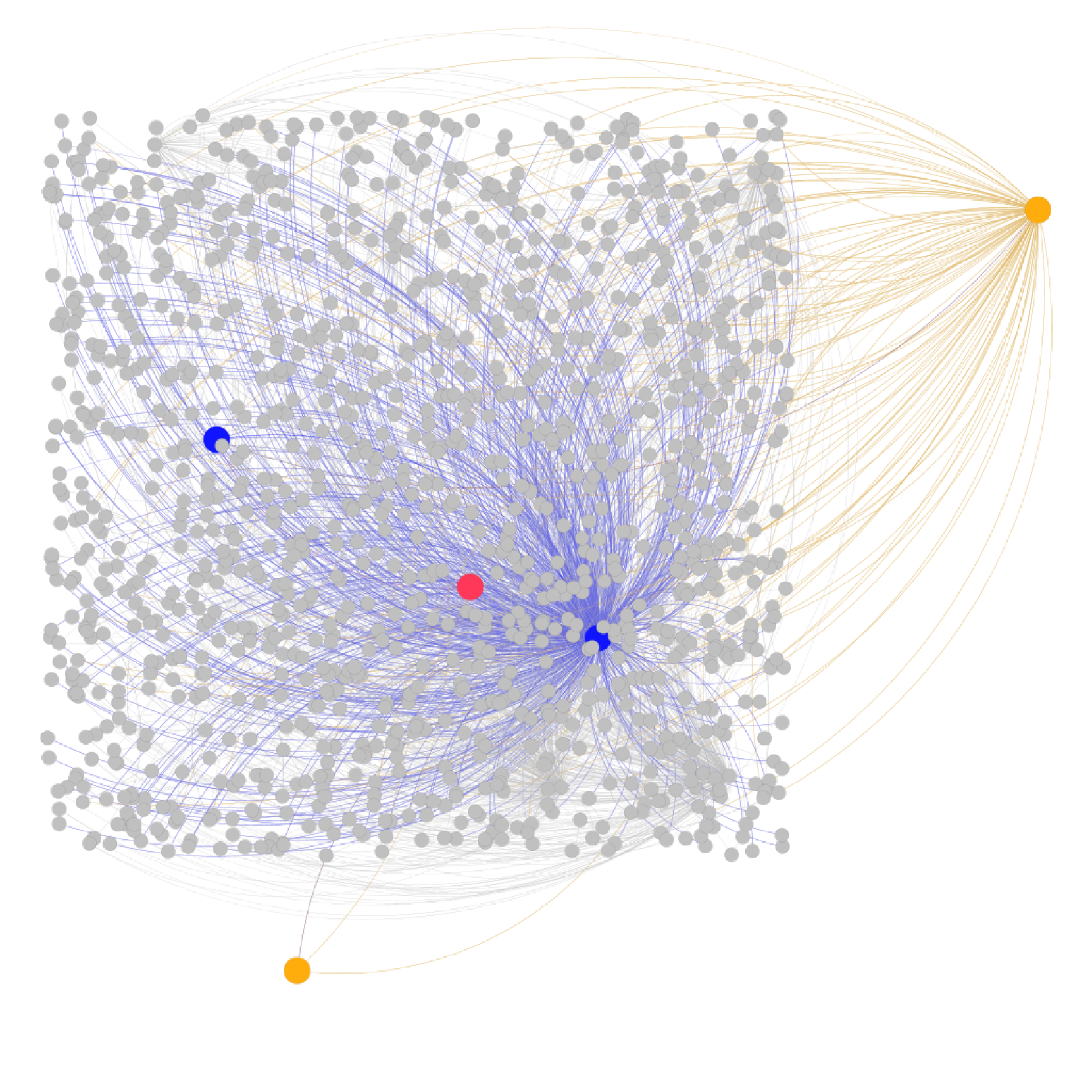

We perform various ablation studies to understand the importance of different factors in PoG. These ablation studies are performed with GPT-3.5-Turbo on two subsets of the CWQ and WebQSP test sets, each containing 500 randomly sampled questions.

Does search depth matter? As described, PoG's dynamic deep search is limited by $D_{\max}$. To assess the impact of $D_{\max}$ on performance, we conduct experiments with depths from 1 to 4. The results, shown in Figures 4 (a) and (c), indicate that performance improves with increased depth, but the benefits diminish beyond a depth of 3. Figures 4 (b) and (d), which show the exploration phase from which each answer is generated, reveal that higher depths reduce the effectiveness of both LLM-based path supplementation and node exploration. Excessive depth leads to LLM hallucinations and difficulties in managing long reasoning paths. Therefore, we set the maximum depth to 3 in our experiments to balance performance and computational efficiency. Additionally, even at lower depths, PoG maintains strong performance by effectively combining the LLM's inherent knowledge with the structured information from the KG.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Maximum Depth for PoG and PoG-E Methods

### Overview

This is a line chart comparing the performance, measured in accuracy percentage, of two methods—PoG and PoG-E—as a function of a parameter called "Varying maximum depth (D_max)". The chart plots accuracy on the vertical axis against discrete depth values on the horizontal axis.

### Components/Axes

* **Chart Type:** Line chart with markers.
* **X-Axis (Horizontal):**
  * **Label:** "Varying maximum depth (D_max)"
  * **Scale:** Discrete integer values: 1, 2, 3, 4.
* **Y-Axis (Vertical):**
  * **Label:** "Accuracy (%)"
  * **Scale:** Linear scale from 50 to 85, with major gridlines every 5 units (50, 55, 60, ..., 85).
* **Legend:**
  * **Position:** Centered at the bottom of the chart area.
  * **Series 1:** "PoG" - Represented by a solid blue line with downward-pointing triangle markers (▼).
  * **Series 2:** "PoG-E" - Represented by a solid black line with diamond markers (◆).
* **Grid:** A light gray grid is present, with both horizontal and vertical lines aligned with the axis ticks.

### Detailed Analysis

**Data Series: PoG (Blue line, ▼ markers)**

* **Trend:** The line shows a consistent upward trend that plateaus at the highest depth values.
* **Data Points (Approximate):**
  * At D_max = 1: Accuracy ≈ 62.5%
  * At D_max = 2: Accuracy ≈ 73.5%
  * At D_max = 3: Accuracy ≈ 80.5%
  * At D_max = 4: Accuracy ≈ 80.5%

**Data Series: PoG-E (Black line, ◆ markers)**

* **Trend:** The line shows an initial upward trend, peaks at D_max = 3, and then declines.
* **Data Points (Approximate):**
  * At D_max = 1: Accuracy ≈ 55.5%
  * At D_max = 2: Accuracy ≈ 69.0%
  * At D_max = 3: Accuracy ≈ 78.5%
  * At D_max = 4: Accuracy ≈ 70.0%

### Key Observations

1. **Performance Gap:** The PoG method consistently achieves higher accuracy than the PoG-E method at every measured depth value.

2. **Peak Performance:** Both methods reach their peak accuracy at D_max = 3. PoG's peak is ~80.5%, while PoG-E's peak is ~78.5%.

3. **Divergent Behavior at High Depth:** At the maximum depth of 4, the two methods diverge significantly. PoG maintains its peak accuracy (plateaus), while PoG-E's accuracy drops sharply by approximately 8.5 percentage points from its peak.

4. **Rate of Improvement:** The most significant gains in accuracy for both methods occur when increasing D_max from 1 to 2.

### Interpretation

The chart shows how the maximum exploration depth (D_max) affects question-answering accuracy for the two variants on CWQ. The data suggests:

* **Benefit of Increased Depth:** For both methods, increasing D_max from 1 to 3 substantially improves accuracy, indicating that allowing longer reasoning paths over the KG is beneficial up to a point.

* **Robustness at Higher Depth:** PoG is more robust to increases in depth: its accuracy plateaus at D_max=3 and 4, suggesting that additional depth provides no further benefit. In contrast, PoG-E's accuracy degrades at D_max=4. Because PoG is applied at inference time rather than trained, this drop is not overfitting in the usual sense; the main text attributes degradation at excessive depth to LLM hallucinations and the difficulty of managing long reasoning paths.

* **Method Comparison:** On this evaluation, PoG outperforms PoG-E at every tested depth, with both a higher peak accuracy and more stable behavior as depth increases. The chart alone does not reveal why PoG-E is more sensitive to larger depths.


</details>

(a) CWQ (Vary $D_{\max}$)

<details>

<summary>x5.png Details</summary>

### Visual Description

## Stacked Bar Chart with Line Overlay: Accuracy vs. Maximum Depth

### Overview

This image displays a stacked bar chart with an overlaid line graph. It illustrates how the total accuracy of a system and the contribution of its three constituent exploration methods change as a key parameter, the maximum depth (`D_max`), is increased from 1 to 4.

### Components/Axes

* **X-Axis:** Labeled "Varying maximum depth (`D_max`)". It has four discrete, evenly spaced categories marked with the integers `1`, `2`, `3`, and `4`.
* **Y-Axis:** Labeled "Accuracy (%)". It is a linear scale ranging from 0 to 100, with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100).
* **Legend:** Positioned at the bottom of the chart area, spanning its width. It contains four entries:
  1. **Accuracy Total:** Represented by a blue dashed line with circular markers.
  2. **Topic Entity Path Exploration:** Represented by a light blue (periwinkle) solid bar segment.
  3. **LLM Supplement Path Exploration:** Represented by an orange solid bar segment.
  4. **Node Expand Exploration:** Represented by a dark gray solid bar segment.

### Detailed Analysis

**Data Series and Values (Approximate):**

The chart presents data for four values of `D_max`. For each, the total accuracy (line) and the stacked contributions (bars) are as follows:

* **D_max = 1:**
  * **Accuracy Total (Line):** ~62%
  * **Bar Composition (Bottom to Top):**
    * Topic Entity Path Exploration (Light Blue): ~36%
    * LLM Supplement Path Exploration (Orange): ~15% (stacked from ~36% to ~51%)
    * Node Expand Exploration (Dark Gray): ~11% (stacked from ~51% to ~62%)
  * **Trend Check:** The line starts at its lowest point. The bar is the shortest, with the "Topic Entity" segment being the largest component.
* **D_max = 2:**
  * **Accuracy Total (Line):** ~73%
  * **Bar Composition (Bottom to Top):**
    * Topic Entity Path Exploration (Light Blue): ~59%
    * LLM Supplement Path Exploration (Orange): ~5% (stacked from ~59% to ~64%)
    * Node Expand Exploration (Dark Gray): ~9% (stacked from ~64% to ~73%)
  * **Trend Check:** The line shows a significant upward slope. The "Topic Entity" segment grows substantially, while the "LLM Supplement" segment shrinks noticeably.
* **D_max = 3:**
  * **Accuracy Total (Line):** ~80%
  * **Bar Composition (Bottom to Top):**
    * Topic Entity Path Exploration (Light Blue): ~70%
    * LLM Supplement Path Exploration (Orange): ~7% (stacked from ~70% to ~77%)
    * Node Expand Exploration (Dark Gray): ~3% (stacked from ~77% to ~80%)
  * **Trend Check:** The line continues to rise, but less steeply than in the previous step. The "Topic Entity" segment continues to dominate and grow. The "Node Expand" segment is now very small.
* **D_max = 4:**
  * **Accuracy Total (Line):** ~80%
  * **Bar Composition (Bottom to Top):**
    * Topic Entity Path Exploration (Light Blue): ~72%
    * LLM Supplement Path Exploration (Orange): ~6% (stacked from ~72% to ~78%)
    * Node Expand Exploration (Dark Gray): ~2% (stacked from ~78% to ~80%)
  * **Trend Check:** The line is flat, indicating a plateau. The bar composition is nearly identical to `D_max=3`, with a very slight increase in the "Topic Entity" segment and small decreases in the other two segments.

### Key Observations

1. **Plateau Effect:** The total accuracy (blue dashed line) increases from `D_max=1` to `D_max=3` but then plateaus, showing no improvement between `D_max=3` and `D_max=4`.

2. **Dominant Component:** The "Topic Entity Path Exploration" (light blue) is the largest contributor to accuracy at every depth, and its contribution grows steadily as `D_max` increases.

3. **Diminishing Returns of Other Methods:** The contributions from "LLM Supplement Path Exploration" (orange) and especially "Node Expand Exploration" (dark gray) become proportionally smaller as `D_max` increases. The "Node Expand" method's contribution is minimal at depths 3 and 4.

4. **Component Shift:** There is a clear shift in the system's behavior. At low depth (`D_max=1`), all three methods contribute meaningfully. At higher depths (`D_max=3,4`), the system relies predominantly on "Topic Entity Path Exploration," which accounts for roughly 88% to 90% of the total accuracy.

### Interpretation

This chart shows, for PoG on CWQ, how correct answers are distributed across the three exploration phases as the maximum depth increases. The data suggests:

* **Optimal Depth:** The system reaches its peak accuracy at a maximum depth (`D_max`) of 3. Increasing the depth to 4 yields no further accuracy gain, indicating diminishing returns beyond this depth.

* **Method Efficacy:** The "Topic Entity Path Exploration" method is the most effective and scalable component. Its increasing contribution with depth implies it benefits from exploring deeper, more complex paths in the data structure.

* **Role of Supplementary Methods:** The "LLM Supplement" and "Node Expand" methods appear to be most valuable in compensating for the limitations of the primary method at shallow depths. As the primary method is allowed to explore deeper (higher `D_max`), these supplementary methods become less critical, suggesting they may be addressing surface-level or immediate neighbor information that the deeper primary search eventually captures more effectively.

* **System Design Insight:** The plateau suggests that for this specific task and configuration, allocating computational resources to increase depth beyond 3 is inefficient. Resources might be better spent improving the core "Topic Entity" exploration algorithm or investigating why deeper exploration fails to improve accuracy further.

</details>

(b) CWQ (PoG)
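To quantify the shift toward topic-entity path exploration in panel (b), the following sketch normalizes each stacked bar into per-phase shares of the total accuracy. The segment heights are approximate readings from the chart, and the names `PHASES`, `SEGMENTS`, and `phase_shares` are illustrative only.

```python
"""Share of total accuracy attributed to each exploration phase (CWQ, PoG).

Segment heights are approximate readings from Figure 4(b); they are not
exact values reported by the paper.
"""

PHASES = ("topic_entity", "llm_supplement", "node_expand")

# D_max -> approximate accuracy (%) contributed by each phase, bottom to top.
SEGMENTS = {
    1: (36, 15, 11),
    2: (59, 5, 9),
    3: (70, 7, 3),
    4: (72, 6, 2),
}


def phase_shares(segments: tuple[int, ...]) -> dict[str, float]:
    """Normalize stacked-bar segments into fractions of the bar's total."""
    total = sum(segments)
    return {name: round(v / total, 3) for name, v in zip(PHASES, segments)}


for depth, segs in SEGMENTS.items():
    # Print the depth, the total accuracy, and the per-phase shares.
    print(depth, sum(segs), phase_shares(segs))
```

On these readings, the topic-entity share rises from about 0.58 at $D_{\max}=1$ to about 0.88 at $D_{\max}=3$ and 0.90 at $D_{\max}=4$.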

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Maximum Depth for PoG and PoG-E Methods

### Overview

The image is a line chart comparing the performance (accuracy) of two methods, labeled "PoG" and "PoG-E," as a function of a parameter called "Varying maximum depth (D_max)." The chart shows that both methods improve in accuracy as depth increases from 1 to 3, but then experience a decline at depth 4. The "PoG" method consistently achieves higher accuracy than "PoG-E" at depths 2, 3, and 4.

### Components/Axes

* **Chart Type:** Line chart with markers.
* **X-Axis:**
  * **Label:** "Varying maximum depth (D_max)"
  * **Scale:** Discrete integer values: 1, 2, 3, 4.
* **Y-Axis:**
  * **Label:** "Accuracy (%)"
  * **Scale:** Linear scale from 80 to 94, with major gridlines at intervals of 2 (80, 82, 84, 86, 88, 90, 92, 94).
* **Legend:**
  * **Position:** Bottom center of the chart area.
  * **Series 1:** "PoG" - Represented by a blue line with downward-pointing triangle markers (▼).
  * **Series 2:** "PoG-E" - Represented by a black line with diamond markers (◆).
* **Grid:** A light gray grid is present for both major x and y ticks.

### Detailed Analysis

**Data Series: PoG (Blue line, ▼ markers)**

* **Trend:** The line slopes steeply upward from depth 1 to 3, then slopes slightly downward to depth 4.
* **Data Points (Approximate):**
  * D_max = 1: Accuracy ≈ 81.0%
  * D_max = 2: Accuracy ≈ 86.5%
  * D_max = 3: Accuracy ≈ 93.8% (Peak)
  * D_max = 4: Accuracy ≈ 92.4%

**Data Series: PoG-E (Black line, ◆ markers)**

* **Trend:** The line slopes upward from depth 1 to 3, then slopes downward to depth 4. Between depths 1 and 3 it rises less steeply than the PoG line.
* **Data Points (Approximate):**
  * D_max = 1: Accuracy ≈ 82.3%
  * D_max = 2: Accuracy ≈ 86.1%
  * D_max = 3: Accuracy ≈ 91.4% (Peak)
  * D_max = 4: Accuracy ≈ 88.4%

### Key Observations

1. **Performance Peak:** Both methods achieve their highest accuracy at a maximum depth (D_max) of 3.

2. **Relative Performance:** PoG-E starts with a slightly higher accuracy than PoG at D_max=1 (≈82.3% vs. ≈81.0%). PoG narrowly overtakes PoG-E at D_max=2 (≈86.5% vs. ≈86.1%) and holds a clear lead at D_max=3 and D_max=4.

3. **Performance Drop-off:** Both methods show a decrease in accuracy when moving from D_max=3 to D_max=4. The drop is more pronounced for PoG-E (≈3.0 percentage points) than for PoG (≈1.4 percentage points).

4. **Greatest Divergence:** The largest performance gap between the two methods occurs at D_max=4, where PoG outperforms PoG-E by approximately 4.0 percentage points (≈92.4% vs. ≈88.4%), compared with about 2.4 points at D_max=3.

### Interpretation

The chart shows how the maximum exploration depth (D_max) affects accuracy for PoG and PoG-E on WebQSP. Accuracy peaks at D_max=3 for both methods, and increasing the depth to 4 lowers it. Since neither variant is trained on this data, the decline is better explained by the main text's account of excessive depth (LLM hallucinations and difficulty managing long reasoning paths) than by overfitting.

PoG appears to be the more robust variant: it reaches a higher peak accuracy and loses less accuracy at the largest tested depth. PoG-E's slight advantage at D_max=1 disappears by D_max=2, suggesting that PoG benefits more from deeper search within the tested range. For choosing a depth, these results support D_max=3 as the best trade-off between accuracy and the extra cost of deeper exploration.

</details>

(c) WebQSP (Vary $D_{\max}$)
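The comparison between PoG and PoG-E in panel (c) reduces to simple arithmetic on the plotted points. The sketch below computes the per-depth gap and each variant's drop from its peak, using approximate values read from Figure 4(c); the helper names `gaps` and `drop_from_peak` are hypothetical.

```python
"""Compare PoG and PoG-E across depths on WebQSP (approximate values from Figure 4(c))."""

DEPTHS = (1, 2, 3, 4)
POG = (81.0, 86.5, 93.8, 92.4)
POG_E = (82.3, 86.1, 91.4, 88.4)


def gaps(a: tuple[float, ...], b: tuple[float, ...]) -> dict[int, float]:
    """Per-depth accuracy gap a - b, in percentage points."""
    return {d: round(x - y, 1) for d, x, y in zip(DEPTHS, a, b)}


def drop_from_peak(acc: tuple[float, ...]) -> float:
    """Percentage points lost at the deepest setting relative to the best depth."""
    return round(max(acc) - acc[-1], 1)


g = gaps(POG, POG_E)
largest = max(g, key=g.get)  # depth with the widest PoG lead
print(g)
print("largest gap at D_max =", largest)
print(drop_from_peak(POG), drop_from_peak(POG_E))
```

On these readings, the gap is largest at $D_{\max}=4$ (about 4.0 points), and PoG-E loses about 3.0 points from its peak versus about 1.4 for PoG.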

<details>

<summary>x7.png Details</summary>

### Visual Description

## Stacked Bar Chart with Line Overlay: Accuracy vs. Maximum Depth

### Overview