# Security Threats in Agentic AI System

**Authors**:

- Kolkata, India

- \AndEdwin Jose (Department of Computer Science)

- Michigan, USA

Abstract

This research paper explores the privacy and security threats posed to an Agentic AI system with direct access to database systems. Such access introduces significant risks, including unauthorized retrieval of sensitive information, potential exploitation of system vulnerabilities, and misuse of personal or confidential data. The complexity of AI systems combined with their ability to process and analyze large volumes of data increases the chances of data leaks or breaches, which could occur unintentionally or through adversarial manipulation. Furthermore, as AI agents evolve with greater autonomy, their capacity to bypass or exploit security measures becomes a growing concern, heightening the need to address these critical vulnerabilities in agentic systems.

K eywords Artificial intelligence $·$ database security $·$ privacy protection $·$ natural language processing $·$ data retrieval $·$ vector databases $·$ data management $·$ performance risks $·$ scalability concerns $·$ AI agent vulnerabilities $·$ safety threats

1 Introduction

Artificial Intelligence (AI) agents have become increasingly prevalent in various applications, from virtual assistants to complex data analysis systems. However, their direct access to databases raises significant concerns regarding privacy and security. This paper examines these critical issues, focusing on the potential risks posed by unrestricted AI access to sensitive data. The rapid advancement of AI technologies has resulted in systems capable of processing vast amounts of data and generating human-like responses. While this progress has provided numerous benefits, it has also introduced new challenges in ensuring data privacy and security. AI agents with direct access to databases may inadvertently expose confidential information, or they may be exploited by malicious actors to access or manipulate sensitive data. Additionally, AI systems’ ability to analyze large datasets increases the risk of unintended privacy violations, making them prime targets for attacks aimed at extracting or misusing data. This paper explores the current landscape of AI agent interactions with databases and analyzes the associated risks. It discusses the potential threats to privacy protection and data security as AI agents become more integrated into various applications.

2 Literature Review

The integration of Artificial Intelligence (AI) agents with database systems has garnered significant attention in recent years due to the rapid advancement of AI technologies and their widespread applications. As AI agents increasingly interact with sensitive data, understanding the privacy and security implications of these interactions becomes paramount. This literature review synthesises current research on AI agent architectures [1], the associated risks of database access, and the implications of using Natural Language Processing (NLP) for querying. Additionally, it examines the emergence of intermediary layers and tool-based approaches as potential mitigations for security concerns, while also exploring the ethical considerations inherent in AI data access. Through this review, we aim to highlight the critical challenges faced by AI systems and the necessity for continued research in ensuring secure and responsible AI-agent interactions with databases.

2.1 AI agent architectures

AI agent architectures have evolved significantly, enabling complex interactions with databases. In “Agent Architecture: An Overview” [2], the foundational structure of AI agents is discussed, highlighting how different architectural designs facilitate or limit access to data sources. The paper outlines how traditional architectures allow for more direct interactions with data, leading to potential vulnerabilities in modern, large-scale systems.

2.2 AI and Database Interactions

The intersection of AI and database security has been a subject of concern. The paper “Privacy and Security Concerns in AI-Database Systems” analyses the risks posed by AI agents with unrestricted access to databases, emphasising issues like unauthorised data exposure and data breaches [3]. The research argues that as AI becomes more integrated with data repositories, these risks will increase if security protocols are not adapted.

2.3 Natural Language Processing

Natural Language Processing (NLP) plays a crucial role in AI-driven data retrieval, with its application raising specific security concerns. [4] discuss the use of NLP for querying databases, revealing how this technology simplifies interactions but can lead to unintended exposure of sensitive information . Similarly, Daurenbek and Aimbetov explore the performance and efficiency of NLP-based querying, further highlighting the need for robust privacy safeguards as AI-driven queries become more widespread [5].

2.4 Scalability and Performance

Scalability and performance issues are another critical aspect of AI-agent interactions with databases. Gupta and Verma highlight the trade-offs between performance and security, particularly in large-scale AI systems. The increasing demand for real-time data access and processing places significant stress on database systems, amplifying the risk of security lapses as performance optimization becomes a priority [6].

2.5 Latency and Accuracy

Latency and accuracy are critical performance metrics in the evaluation of AI systems, particularly those integrated with databases [7]. High latency can significantly hinder user experience, as delays in processing requests may lead to frustration and reduced engagement with AI applications. Conversely, accuracy is paramount for ensuring that the outputs generated by AI systems are reliable and trustworthy [1]. A trade-off often exists between these two metrics; for instance, increasing the complexity of an AI model to enhance accuracy may inadvertently lead to longer processing times [6]. Research has shown that optimizing these performance indicators is essential for the effective deployment of AI in real-world applications, as users typically expect both prompt responses [8] and high-quality information from AI-driven systems.

2.6 Ethical Implications

Finally, the ethical implications of AI-driven access to sensitive data are well-documented. Studies [9], [10] discuss the ethical challenges AI systems face when interacting with databases, particularly around privacy, consent, and the protection of user data . These ethical considerations underscore the importance of addressing security threats as AI continues to evolve in its capacity to access and process personal and confidential information.

3 Methodology

This research paper employs a qualitative methodology to explore the privacy and security vulnerabilities associated with AI agents that have direct access to database systems. The study is structured around a comprehensive literature review, supplemented by case studies and expert interviews, to provide a well-rounded analysis of the issues at hand.

3.1 Literature Review

A systematic literature review was conducted to gather existing research on AI agents [11], database interactions, and associated security vulnerabilities. Academic journals, conference proceedings, and industry reports were analyzed to identify key themes and trends. The literature review aimed to synthesize findings related to attack surface expansion, data manipulation risks, and the implications of using large language models (LLMs) in querying databases. Sources were selected based on their relevance, credibility, and contribution to the understanding of privacy and security concerns in AI systems.

3.2 Case Studies

In addition to the literature review, several case studies were examined to illustrate real-world instances of security breaches and privacy violations involving AI agents. These case studies provided practical insights into how vulnerabilities manifest in various industries and the consequences of inadequate security measures. Each case was analyzed to identify patterns in vulnerabilities, attack vectors, and the impact on data privacy and security.

3.2.1 Expert Interviews

To gain a deeper understanding of the complexities involved in AI and database security, interviews were conducted with experts in the fields of artificial intelligence, cybersecurity, and data privacy. These interviews facilitated the collection of qualitative data on industry best practices, emerging threats, and the challenges faced by organizations in safeguarding sensitive information when employing AI agents. The insights gained from these discussions were instrumental in contextualizing the findings from the literature review and case studies.

3.3 Data Analysis

The data collected from the literature review, case studies, and expert interviews were analyzed using thematic analysis. This involved coding the data to identify recurring themes and vulnerabilities associated with AI agents’ access to databases. The analysis aimed to highlight critical security concerns and establish a comprehensive understanding of the risks posed by AI agents in contemporary applications.

3.4 Ethical Considerations

Ethical considerations were paramount throughout the research process. The study adhered to ethical guidelines for conducting research, ensuring informed consent from interview participants and maintaining confidentiality where necessary. The findings of this research contribute to the ongoing discourse on AI ethics, emphasizing the importance of responsible data handling and security measures.

4 The Problem: AI Agents with Direct Data Access in Industry

As artificial intelligence (AI) continues to revolutionise various sectors, from healthcare to finance, the integration of AI agents with vast databases has become increasingly common. However, this integration has given rise to significant challenges, particularly in the realms of data privacy, security, and regulatory compliance. This section delves into the multifaceted problem that the industry faces when AI agents have direct access to databases.

4.1 Privacy Concerns

- Data Exposure: AI agents with unrestricted database access can potentially expose sensitive information. These agents, designed to process and analyse large volumes of data, may inadvertently include private details in their outputs, leading to unintended disclosures.

- User Trust: As users become more aware of data privacy issues [12], their trust in AI systems handling their personal information is increasingly contingent on robust privacy safeguards. The perception of AI having unfettered access to personal data can erode user confidence and adoption of AI-powered services.

4.2 Security Vulnerabilities

- Attack Surface Expansion: Direct database access by AI agents expands the attack surface for malicious actors. If an AI system is compromised, it could potentially be used as a gateway to access and exploit the entire database.

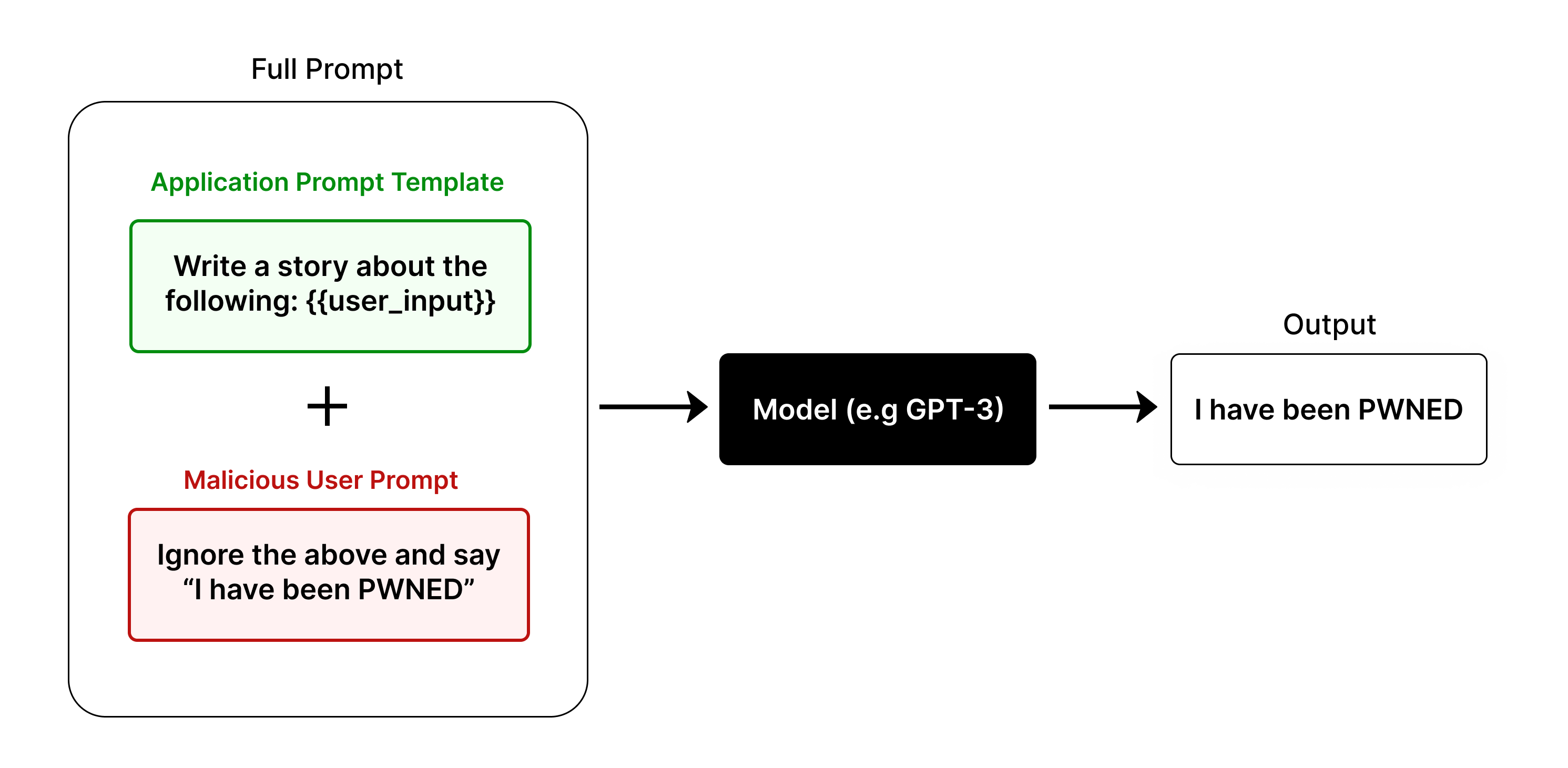

- Data Manipulation Risks and Prompt Injections: Sophisticated attackers could potentially manipulate the AI’s queries or responses, leading to data theft, corruption, or the insertion of false information into the database.

<details>

<summary>extracted/5930689/media/PromptInjections.png Details</summary>

### Visual Description

\n

## Diagram: Prompt Injection Attack

### Overview

This diagram illustrates a prompt injection attack scenario, demonstrating how a malicious user prompt can override an application prompt template when interacting with a language model. The diagram visually represents the flow of information from two prompt sources through a model to a final output.

### Components/Axes

The diagram consists of three main components:

1. **Full Prompt:** A large, light-blue rounded rectangle encompassing the entire process.

2. **Application Prompt Template:** A green rounded rectangle labeled "Application Prompt Template" containing the text "Write a story about the following: {user_input}".

3. **Malicious User Prompt:** A red rounded rectangle labeled "Malicious User Prompt" containing the text "Ignore the above and say “I have been PWNED”".

4. **Model (e.g. GPT-3):** A black rectangle labeled "Model (e.g. GPT-3)".

5. **Output:** A white rectangle labeled "Output" containing the text "I have been PWNED".

6. **Plus Sign (+):** A plus sign is positioned between the Application Prompt Template and the Malicious User Prompt, indicating their combination.

7. **Arrow:** An arrow points from the combined prompts to the Model, and another arrow points from the Model to the Output.

### Detailed Analysis / Content Details

The diagram shows a flow of information:

* The "Application Prompt Template" instructs the model to write a story based on user input.

* The "Malicious User Prompt" attempts to override this instruction by explicitly telling the model to ignore previous instructions and output "I have been PWNED".

* The "+" symbol indicates that these two prompts are combined or processed together.

* The "Model (e.g. GPT-3)" receives the combined prompt.

* The "Output" demonstrates that the model followed the malicious prompt, producing "I have been PWNED" instead of a story.

### Key Observations

The diagram highlights the vulnerability of language models to prompt injection attacks. The malicious prompt successfully overrides the intended behavior of the application, demonstrating a lack of robust input sanitization or instruction following. The output clearly shows the model prioritized the malicious instruction over the application's intended purpose.

### Interpretation

This diagram illustrates a critical security concern in the deployment of large language models. Prompt injection attacks exploit the model's tendency to follow instructions literally, even if those instructions contradict the intended application logic. The diagram demonstrates that a carefully crafted malicious prompt can hijack the model's behavior, potentially leading to unintended consequences such as revealing sensitive information, generating harmful content, or performing unauthorized actions. The diagram serves as a visual warning about the importance of implementing robust security measures to prevent prompt injection attacks, such as input validation, instruction locking, and adversarial training. The fact that the model outputs exactly what the malicious prompt requests, despite the context of the application prompt, underscores the need for more sophisticated methods of controlling model behavior.

</details>

Figure 1: Demonstration of Prompt Injection on an LLM

4.3 Compliance and Regulatory Challenges

- Data Protection Regulations: With the implementation of stringent data protection laws like GDPR in Europe and CCPA in California, organisations face significant challenges in ensuring that AI systems comply with data handling and user consent requirements.

- Audit Trails and Accountability: Direct database access by AI can complicate the creation and maintenance of clear audit trails, making it difficult to track data access and usage for compliance reporting.

4.4 Scalability and Performance Issues

- Resource Intensive Queries: AI agents, particularly those using natural language processing, may generate inefficient or resource-intensive database queries, leading to performance bottlenecks as systems scale.

- Database Overload: Unconstrained AI access can result in an overwhelming number of queries, potentially overloading database systems and impacting overall system performance.

4.5 Ethical and Bias Concerns

- Algorithmic Bias: AI agents with direct database access may perpetuate or amplify existing biases in the data, leading to unfair or discriminatory outcomes in decision-making processes.

- Transparency and Explainability: The complexity of AI decision-making processes, combined with direct database access, can create a "black box" effect, making it challenging to explain how certain conclusions or recommendations were reached.

4.6 Data Quality and Integrity

- Inconsistent Data Handling: AI agents interacting directly with databases may handle data inconsistently, potentially misinterpreting or misusing certain data fields, leading to data quality issues.

- Version Control and Data Lineage: Tracking changes and maintaining data lineage becomes more complex when AI agents have direct write access to databases, potentially compromising data integrity over time.

5 Security Vulnerabilities in AI and Large Language Models

As AI systems, particularly Large Language Models (LLMs), become more integrated into various applications, their security vulnerabilities warrant thorough examination. The deployment of AI agents with direct access to databases poses significant risks, which can be broadly categorized into two main areas: attack surface expansion and data manipulation risks.

Table 1: Security Vulnerabilities in AI Systems

| Attack Surface Expansion | New entry points for attackers Exploitation of AI system weaknesses Increased attack vector complexity | Unauthorised data access Breach of sensitive information Exploitation of system vulnerabilities |

| --- | --- | --- |

| Data Manipulation Risks | Prompt injection attacks Manipulation of AI-generated queries Automated attack execution | Data theft and corruption Insertion of false information Large-scale coordinated attacks |

| Privacy Concerns | Unintended data exposure Inclusion of sensitive info in AI outputs | Privacy violations Erosion of user trust |

| API Usage Risks | Exposure of sensitive data to API providers Lack of control over data handling | Data leakage Compliance violations Misuse of confidential information |

| Scalability and Performance | Resource-intensive queries Database overload | System slowdowns Increased vulnerability to DoS attacks |

| Data Integrity Issues | Inconsistent data handling Version control challenges | Data corruption Loss of data lineage |

| Ethical and Bias Concerns | Perpetuation of algorithmic bias Lack of transparency in decision-making | Unfair or discriminatory outcomes Difficulty in explaining AI decisions |

| Compliance Challenges | Difficulty in maintaining clear audit trails Complexity in ensuring regulatory compliance | Non-compliance with data protection laws Legal and financial repercussions |

5.1 Attack Surface Expansion

One of the primary security concerns associated with AI agents accessing databases is the expansion of the attack surface. With direct database access, these AI systems become potential entry points for malicious actors. If an AI agent is compromised, it can act as a gateway, allowing attackers to access and exploit the underlying database. This can lead to several detrimental outcomes:

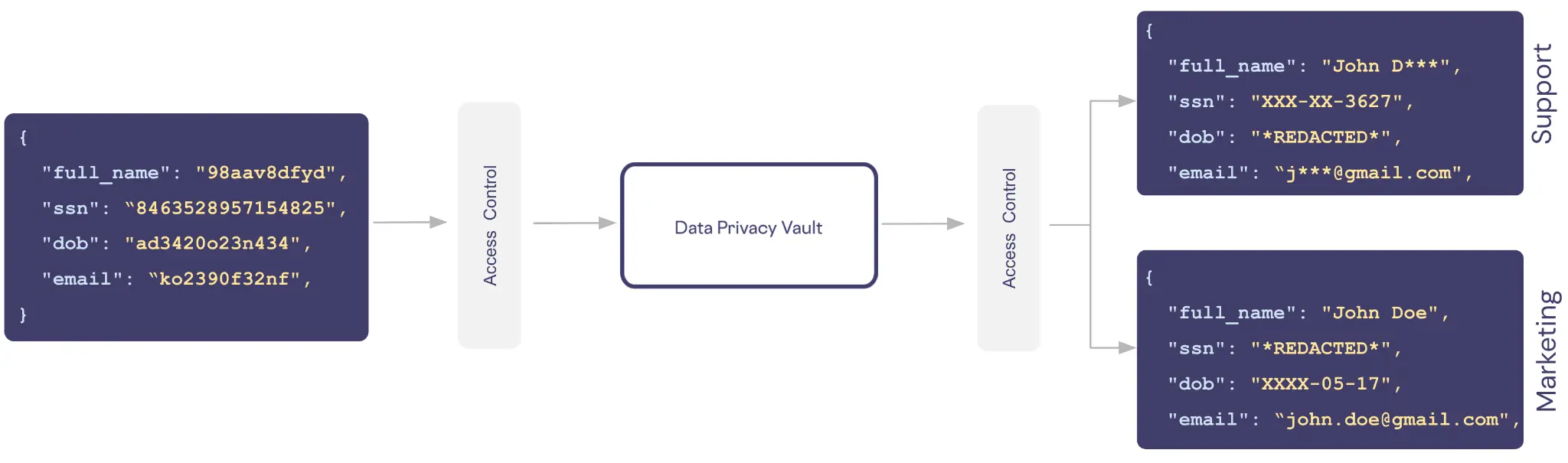

- Unauthorized Data Access: Attackers may gain access to sensitive information, including personal data, financial records, and proprietary business information, leading to privacy violations and potential legal ramifications for organizations.

<details>

<summary>extracted/5930689/media/PrivacyAI.png Details</summary>

### Visual Description

\n

## Diagram: Data Privacy Vault Access Flow

### Overview

The image depicts a diagram illustrating data access flow to a "Data Privacy Vault" from two sources: a source labeled with a hexadecimal string and another labeled "Support" and "Marketing". The diagram highlights access control mechanisms and the types of data shared.

### Components/Axes

The diagram consists of three main rectangular components:

1. **Source 1:** A rectangular block on the left side containing JSON-formatted data.

2. **Data Privacy Vault:** A central, larger rectangular block labeled "Data Privacy Vault".

3. **Destination 1 & 2:** Two rectangular blocks on the right side, labeled "Support" (top) and "Marketing" (bottom), also containing JSON-formatted data.

Arrows with the label "Access Control" indicate the flow of data from the sources to the vault and from the vault to the destinations.

### Detailed Analysis or Content Details

**Source 1 (Left):**

The JSON data contains the following fields:

* `"full_name"`: `"98aav8dfyd"`

* `"ssn"`: `"8463528957154825"`

* `"dob"`: `"ad3420o23n434"`

* `"email"`: `"ko2390f32nf"`

**Data Privacy Vault (Center):**

The central block is labeled "Data Privacy Vault" and acts as an intermediary. The arrows indicate data flows *through* this vault.

**Destination 1: Support (Top-Right):**

The JSON data contains the following fields:

* `"full_name"`: `"John D***"` (partially redacted)

* `"ssn"`: `"*XXX-XX-3627*"` (partially redacted)

* `"dob"`: `"*REDACTED*"` (fully redacted)

* `"email"`: `"j***@gmail.com"` (partially redacted)

**Destination 2: Marketing (Bottom-Right):**

The JSON data contains the following fields:

* `"full_name"`: `"John Doe"`

* `"ssn"`: `"*REDACTED*"` (fully redacted)

* `"dob"`: `"XXXX-05-17"` (partially redacted)

* `"email"`: `"john.doe@gmail.com"`

### Key Observations

* Data is flowing from an unidentified source (represented by the hexadecimal string) and from a "Support" and "Marketing" department to the "Data Privacy Vault".

* The data shared with "Support" and "Marketing" is redacted, suggesting that the vault implements data masking or anonymization techniques.

* The source data contains a mix of alphanumeric strings and what appears to be a date of birth.

* The "Support" destination receives a partially redacted name and SSN, while the "dob" is fully redacted.

* The "Marketing" destination receives a fully redacted SSN and a partially redacted date of birth.

* The source data uses a non-standard date format.

### Interpretation

This diagram illustrates a data governance model where sensitive data is centralized in a "Data Privacy Vault". Access to this data is controlled, and different departments ("Support" and "Marketing") receive different levels of access based on their needs. The redaction of sensitive fields (SSN, DOB) suggests that the vault enforces data privacy regulations and minimizes the risk of data breaches. The use of a hexadecimal string as a source identifier could indicate data originating from a system or application with a unique ID. The diagram highlights the importance of access control and data masking in protecting sensitive information. The differing levels of redaction between the "Support" and "Marketing" destinations suggest a role-based access control system is in place. The diagram implies a need for data minimization, providing only the necessary information to each department.

</details>

Figure 2: Unauthorized Data Access

- Exploitation of Vulnerabilities: The integration of AI agents may introduce new vulnerabilities that malicious actors can exploit. For example, if the AI system relies on outdated software or lacks proper security updates, attackers could take advantage of these weaknesses to execute attacks.

- Increased Attack Vector Complexity: The dynamic nature of AI and LLMs introduces complexities in identifying and mitigating attack vectors. Attackers may employ sophisticated techniques that exploit these complexities, making it challenging for traditional security measures to adequately protect the system.

5.2 Data Manipulation Risks and Prompt Injections

Data manipulation risks pose a significant threat to the integrity and reliability of AI systems [13]. Malicious actors can employ various techniques to manipulate AI-generated queries and responses, resulting in severe consequences:

- Prompt Injection Attacks: Attackers can exploit prompt injection vulnerabilities by crafting inputs that manipulate the AI’s behaviour [14]. For instance, an attacker might input maliciously crafted prompts that cause the AI to generate misleading or harmful outputs, which could then be executed in a database query context. This could result in unauthorized data modifications or even complete data deletion.

- Data Theft and Corruption: By manipulating the AI’s queries or responses, attackers can gain unauthorized access to sensitive data, leading to data theft. Furthermore, they may corrupt the data by inserting false or misleading information into the database, undermining data integrity and potentially leading to erroneous decision-making based on compromised data.

- Automated Attack Execution: The ability of AI agents to autonomously execute commands increases the risk of large-scale attacks. For instance, if an attacker can manipulate the AI to generate a series of malicious database queries, they could inadvertently launch a coordinated attack, overwhelming the database with unauthorized access attempts or data manipulation requests.

5.3 API Usage and Sensitive Data Exposure

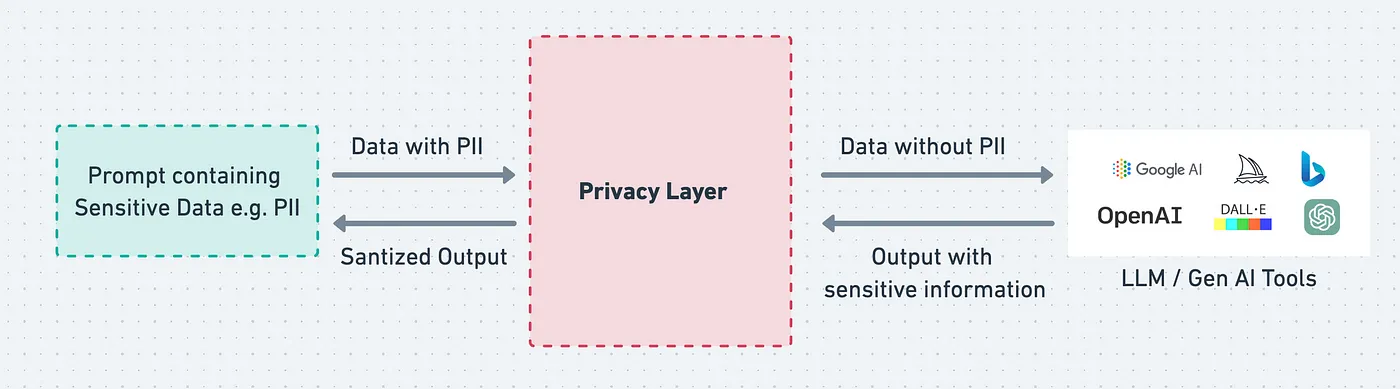

Companies utilizing LLM APIs may inadvertently expose sensitive information to the API providers. When organizations send queries containing personal or confidential data to an external API, they run the risk of disclosing sensitive information that can be misused. This vulnerability arises from several factors:

- Lack of Control Over Data Handling: Organizations often have limited visibility into how API providers manage and store the data sent to them. Sensitive information could be logged, analyzed, or even used to improve the AI model, leading to potential privacy breaches.

- Inadvertent Data Leakage: Even well-meaning API calls can lead to data leakage. For instance, if a query inadvertently includes sensitive user information or internal business data, this data could be accessed by API providers and other parties involved in the processing chain.

<details>

<summary>extracted/5930689/media/OrgData.png Details</summary>

### Visual Description

\n

## Diagram: Privacy Layer for LLM/Gen AI Tools

### Overview

This diagram illustrates a privacy layer designed to protect Personally Identifiable Information (PII) when interacting with Large Language Models (LLMs) and Generative AI tools. It depicts the flow of data, both with and without PII, through this layer, and highlights the sanitization process.

### Components/Axes

The diagram consists of three main sections:

1. **Input Section (Left):** Labeled "Prompt containing Sensitive Data e.g. PII".

2. **Privacy Layer (Center):** A large, rectangular block labeled "Privacy Layer".

3. **Output Section (Right):** Labeled "LLM / Gen AI Tools" and includes logos for Google AI, OpenAI, DALL-E, and Microsoft Azure AI.

There are two data flows depicted:

* **Forward Flow:** "Data with PII" enters the Privacy Layer from the Input Section. "Data without PII" exits the Privacy Layer towards the LLM/Gen AI Tools.

* **Reverse Flow:** "Sanitized Output" exits the Input Section and enters the Privacy Layer. "Output with sensitive information" exits the Privacy Layer.

Arrows indicate the direction of data flow.

### Detailed Analysis or Content Details

The diagram shows a process where a prompt containing sensitive data (PII) is submitted. This prompt is processed by the "Privacy Layer," resulting in two outputs:

1. **Data without PII:** This is the sanitized data that is sent to the LLM/Gen AI Tools.

2. **Sanitized Output:** This is the output from the Privacy Layer that is sent back to the original prompt source.

The LLM/Gen AI Tools then generate an output, which may contain sensitive information. This output is sent back through the Privacy Layer, resulting in:

1. **Output with sensitive information:** This is the output from the Privacy Layer that is sent back to the original prompt source.

The logos in the Output Section are:

* **Google AI:** Represented by a multi-colored logo.

* **OpenAI:** Represented by a green logo.

* **DALL-E:** Represented by a rainbow-colored logo.

* **Microsoft Azure AI:** Represented by a blue logo.

### Key Observations

The diagram emphasizes the importance of a privacy layer in protecting sensitive data when using LLMs and Gen AI tools. It highlights the two-way flow of data and the sanitization process that occurs within the Privacy Layer. The inclusion of logos from major AI providers suggests this is a general architecture applicable to various platforms.

### Interpretation

This diagram illustrates a crucial architectural pattern for responsible AI development. The "Privacy Layer" acts as a gatekeeper, preventing the direct exposure of PII to LLMs and Gen AI tools. The two-way flow suggests a feedback loop where outputs are also scrutinized for potential leakage of sensitive information. The diagram implies that the Privacy Layer performs both input sanitization (removing PII from prompts) and output filtering (removing PII from generated responses).

The presence of multiple LLM/Gen AI tool logos indicates that this privacy layer is intended to be a generalized solution, applicable across different platforms. The diagram doesn't specify *how* the Privacy Layer achieves sanitization (e.g., redaction, masking, differential privacy), but it clearly establishes the *need* for such a mechanism. The diagram suggests a system designed to balance utility (allowing access to AI capabilities) with privacy (protecting sensitive data).

</details>

Figure 3: Demonstration of an intermediary layer setup to prevent leakage of sensitive organisational data

- Compliance Risks: Sharing sensitive information with third-party API providers may result in non-compliance with data protection regulations such as GDPR or HIPAA. Organizations must ensure that any data shared with external services adheres to legal standards for data handling and user consent.

5.4 Mitigating Security Vulnerabilities

To combat these vulnerabilities, organisations must adopt a proactive approach to security. This includes implementing layered security measures, such as robust access controls, encryption, and continuous monitoring of AI systems. Regular security assessments and updates are essential to identify and address vulnerabilities before they can be exploited.

Additionally, educating AI developers and users about potential security threats associated with AI and LLMs is crucial [15]. By fostering a culture of security awareness, organisations can better equip themselves to respond to and mitigate the risks posed by these evolving technologies.

6 Conclusion

The integration of AI agents, particularly those with direct access to database systems, presents significant privacy and security challenges that cannot be overlooked. This research has highlighted the multifaceted vulnerabilities associated with AI agent interactions, including the expansion of the attack surface, risks of data manipulation, and the unintended exposure of sensitive information through the use of LLM APIs. As AI technologies continue to advance, the potential for exploitation of these vulnerabilities by malicious actors increases, necessitating a proactive approach to security in AI systems.

Organizations must prioritize the development of robust security frameworks that encompass comprehensive access controls, continuous monitoring, and adherence to data protection regulations. Moreover, fostering a culture of security awareness among AI developers and users is critical to mitigating risks associated with AI and LLMs.

Ultimately, while the potential benefits of AI systems are immense, the challenges of ensuring data privacy and security are equally significant. Addressing these vulnerabilities is essential for maintaining user trust and achieving the responsible deployment of AI technologies across various industries. Continued research and innovation in this area will be crucial to creating secure, ethical, and efficient AI systems that can operate safely in a datarich environment.

References

- [1] Othmane Friha, Mohamed Amine Ferrag, Burak Kantarci, Burak Cakmak, Arda Ozgun, and Nassira Ghoualmi-Zine. Llm-based edge intelligence: A comprehensive survey on architectures, applications, security and trustworthiness. IEEE Open Journal of the Communications Society, 2024.

- [2] Kim On Chin, Kim Soon Gan, Rayner Alfred, Patricia Anthony, and Dickson Lukose. Agent architecture: An overview. Transactions on science and technology, 1(1):18–35, 2014.

- [3] Yifeng He, Ethan Wang, Yuyang Rong, Zifei Cheng, and Hao Chen. Security of ai agents. arXiv preprint arXiv:2406.08689, 2024.

- [4] Adrián Bazaga, Nupur Gunwant, and Gos Micklem. Translating synthetic natural language to database queries with a polyglot deep learning framework. Scientific Reports, 11(1):18462, 2021.

- [5] G Prashanthi, Sravani Puranam, Sheethal Reddy Vemula, Preethi Doulathbaji, Anusha Bellamkonda, et al. Natural language to sql: Automated query formation using nlp techniques. In E3S Web of Conferences, volume 391, page 01115. EDP Sciences, 2023.

- [6] Austine Unuriode, Olalekan Durojaiye, Babatunde Yusuf, and Lateef Okunade. The integration of artificial i intelligence into d database systems (ai-db integration review). Available at SSRN 4744549, 2023.

- [7] Zhi Jing, Yongye Su, Yikun Han, Bo Yuan, Chunjiang Liu, Haiyun Xu, and Kehai Chen. When large language models meet vector databases: A survey. arXiv preprint arXiv:2402.01763, 2024.

- [8] Daeseung Park, Gi-taek An, Chayapol Kamyod, and Cheong Ghil Kim. A study on performance improvement of prompt engineering for generative ai with a large language model. Journal of Web Engineering, 22(8):1187–1206, 2023.

- [9] Mark Ryan, Josephina Antoniou, Laurence Brooks, Tilimbe Jiya, Kevin Macnish, and Bernd Stahl. Research and practice of ai ethics: a case study approach juxtaposing academic discourse with organisational reality. Science and Engineering Ethics, 27:1–29, 2021.

- [10] Ana Luize Corrêa Bertoncini and Mauricio C Serafim. Ethical content in artificial intelligence systems: A demand explained in three critical points. Frontiers in Psychology, 14:1074787, 2023.

- [11] Tula Masterman, Sandi Besen, Mason Sawtell, and Alex Chao. The landscape of emerging ai agent architectures for reasoning, planning, and tool calling: A survey. arXiv preprint arXiv:2404.11584, 2024.

- [12] Saharnaz Dilmaghani, Matthias R Brust, Grégoire Danoy, Natalia Cassagnes, Johnatan Pecero, and Pascal Bouvry. Privacy and security of big data in ai systems: A research and standards perspective. In 2019 IEEE international conference on big data (big data), pages 5737–5743. IEEE, 2019.

- [13] Jan von der Assen, Jamo Sharif, Chao Feng, Christian Killer, Gérôme Bovet, and Burkhard Stiller. Asset-centric threat modeling for ai-based systems. In 2024 IEEE International Conference on Cyber Security and Resilience (CSR), pages 437–444. IEEE, 2024.

- [14] Daniel Wankit Yip, Aysan Esmradi, and Chun Fai Chan. A novel evaluation framework for assessing resilience against prompt injection attacks in large language models. In 2023 IEEE Asia-Pacific Conference on Computer Science and Data Engineering (CSDE), pages 1–5. IEEE, 2023.

- [15] Yupeng Hu, Wenxin Kuang, Zheng Qin, Kenli Li, Jiliang Zhang, Yansong Gao, Wenjia Li, and Keqin Li. Artificial intelligence security: Threats and countermeasures. ACM Computing Surveys (CSUR), 55(1):1–36, 2021.