# Trustworthy XAI and Its Applications

**Authors**: A.S.M Anas Ferdous, Abdur Rashid, Fatema Tuj Johura Soshi, Parag Biswas, Angona Biswas, Kishor Datta Gupta

> nasim.abdullah@ieee.org

> anasferdous001@gmail.com

> rabdurrashid091@gmail.com

> fatemasoshi@gmail.com

> text2parag@gmail.com

> angonabiswas28@gmail.com

> kgupta@cau.edu

[1] MD Abdullah Al Nasim

[1,6] Research and Development Department, Pioneer Alpha, Dhaka, Bangladesh

[2] Department of Biomedical Engineering, Bangladesh University of Engineering and Technology, Dhaka, Bangladesh

[4] MSc in Data Science and Analytics, University of Hertfordshire, Hatfield, UK

[3,5] MSEM Department, Westcliff University, California, United States

[7] Department of Computer and Information Science, Clark Atlanta University, Georgia, USA

Abstract

Artificial Intelligence (AI) is an important part of our everyday lives. We use it in self-driving cars and smartphone assistants. People often call it a "black box" because its complex systems, especially deep neural networks, are hard to understand. This complexity raises concerns about accountability, bias, and fairness, even though AI can be quite accurate. Explainable Artificial Intelligence (XAI) is important for building trust. It helps ensure that AI systems work reliably and ethically. This article looks at XAI and its three main parts: transparency, explainability, and trustworthiness. We will discuss why these components matter in real-life situations. We will also review recent studies that show how XAI is used in different fields. Ultimately, gaining trust in AI systems is crucial for their successful use in society.

keywords: Artificial Intelligence (AI), XAI, Explainable Artificial Intelligence (XAI), Healthcare, Autonomous Vehicles

1 Introduction

The foundations of modern artificial intelligence were laid by philosophers who attempted to describe human thought as the mechanical manipulation of symbols, which led to the development of the programmable digital computer [1] in the 1940s. Alan Turing may have written the first article on the topic of AI in 1941; although it is now lost, it suggests that he was at least considering the idea at that time. In his groundbreaking 1950 essay "Computing Machinery and Intelligence," Turing presented the idea of the Turing test to the general public [2] and questioned whether it is feasible to create machines that think. John McCarthy first used the term artificial intelligence (AI) in 1956 at the Dartmouth Conference [3], but the numerous flaws of the first models prevented AI from being widely adopted and used in healthcare.

Many of these limitations were removed with the advent of deep learning in the early 2000s, and we are now entering a new era in which AI can be used in clinical practice through risk assessment models that increase diagnostic accuracy and workflow efficiency. The performance of AI systems has improved significantly in recent years: whereas previous generative systems primarily focused on producing facial images, newer models extend these capabilities to text-to-image synthesis from almost any prompt.

<details>

<summary>extracted/6367585/image/a1.png Details</summary>

### Visual Description

## Circular Diagram: Application of AI

### Overview

The image is a circular diagram illustrating the application of Artificial Intelligence (AI) across various industries. The diagram is structured with "Application of AI" at the center, surrounded by industry sectors and specific AI applications within those sectors.

### Components/Axes

* **Center:** "Application of AI"

* **Industries (Inner Ring):** Financial industry, Manufacturing industry, Other industries, Logistics industry, Home furnishing industrial, Retail industry, Security industry, Health care, Electronic commerce.

* **AI Applications (Outer Ring):** Specific applications of AI within each industry, listed as bullet points.

* **Color Coding:** Each industry sector has a distinct color, which is consistent with the corresponding AI applications listed in the outer ring.

### Detailed Analysis

**Financial Industry (Blue):**

* Big data analysis of stock securities

* Industry trend analysis

* Investment risk forecast

**Manufacturing Industry (Tan/Light Brown):**

* Equipment fault prediction

* Product defect detection

* Machine vision positioning

* Man-machine cooperation

**Other Industries (Gray):**

* Education: Unmanned examination and marking

* Agricultural: Real-time monitoring of crop status

* Environmental protection: Intelligent energy consumption monitoring and analysis

* Urban management: Traffic route optimization

**Logistics Industry (Green):**

* UAV delivery

* Automatic pilot

* Automatic sorting

* Intelligent logistics planning

* Intelligent scheduling algorithm

**Home Furnishing Industrial (Yellow):**

* Intelligent speakers

* Remote control

* Environmental monitoring

* Anti-theft alarm

* Programmable control

**Retail Industry (Tan/Light Brown):**

* Unmanned convenience store

* Unmanned warehouse

* Intelligent supply chain

* Intelligent customer flow statistics and analysis

**Security Industry (Blue):**

* Human body analysis

* Vehicle analysis

* Behavior analysis

* Image analysis

**Health Care (Green):**

* Medical robot

* Intelligent drug research and development

* Intelligent diagnosis and treatment

* Intelligent image recognition

* Intelligent health management

**Electronic Commerce (Yellow):**

* Intelligent customer service robot

* Recommendation engine

* Image search

* Sales and inventory forecasts

* Commodity pricing

### Key Observations

* The diagram provides a broad overview of AI applications across diverse industries.

* Each industry has multiple specific AI applications, indicating the widespread adoption of AI technologies.

* The "Other Industries" category includes applications in education, agriculture, environmental protection, and urban management, highlighting the versatility of AI.

### Interpretation

The diagram illustrates the pervasive nature of AI and its potential to transform various sectors. The applications range from automation and optimization (e.g., logistics, manufacturing) to advanced analytics and decision-making (e.g., finance, security). The inclusion of "Other Industries" suggests that AI is not limited to traditional sectors but is also being applied to address societal challenges and improve quality of life. The diagram emphasizes the importance of AI as a key enabler of innovation and progress across multiple domains.

</details>

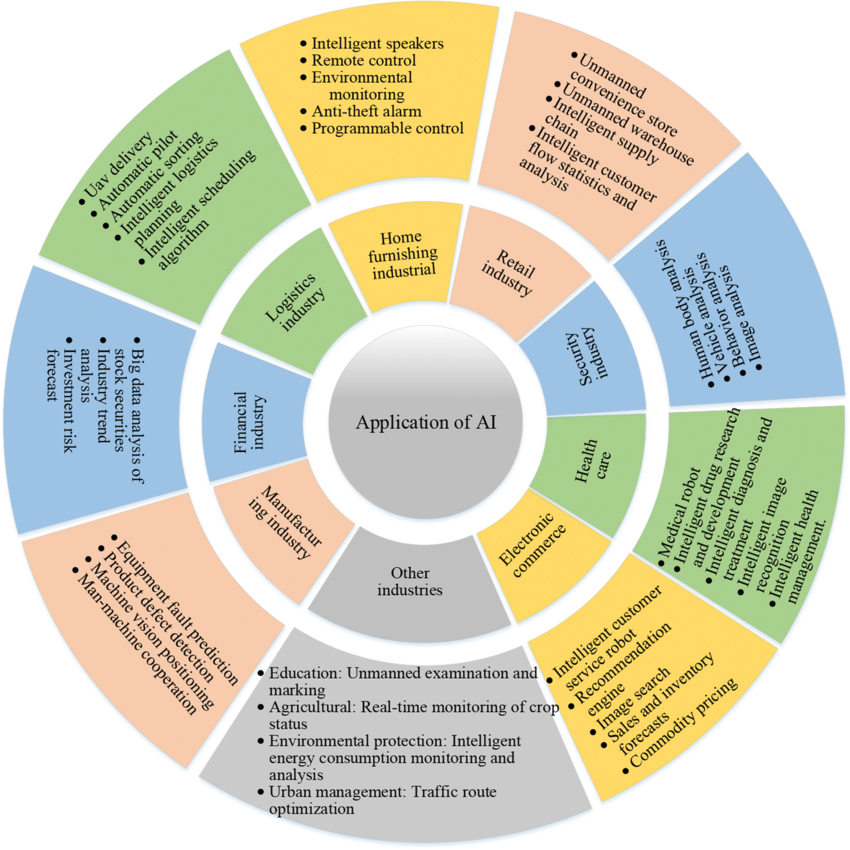

Figure 1: Applications of AI across various domains [4]

The diverse range of fields in which AI is being used already demonstrates its applicability and promise to revolutionize business: AI facilitates activities like sentiment analysis, machine translation, and spam filtering by making it easier for computers to comprehend and produce human language in the discipline of natural language processing (NLP) [5]. Additionally, computer vision [6] makes it possible for computers to understand visual data, which advances areas like facial recognition, object identification, and self-driving cars. Machine learning (ML), which has uses in fraud detection, recommendation systems, predictive analytics, and other domains, has made it possible for computers to learn from data. The design, development, and application of machines are the focus of the AI field of robotics [7].

Many industries, including manufacturing, healthcare, and space exploration, use various machines [8], [9]. Combining artificial intelligence with business intelligence (BI) [10] improves how businesses collect, process, and visualize data. This leads to better decision-making and increased productivity. In healthcare, AI helps diagnose diseases, develop treatments, and provide personalized care, which improves patient outcomes [11], [12], [13]. AI also plays a significant role in education by engaging students, customizing lessons, and automating administrative tasks, resulting in more personalized learning experiences. In agriculture, AI increases output, reduces costs, and supports environmental sustainability through data-driven strategies. Similarly, AI in manufacturing boosts output, efficiency, and quality through work automation and process optimization. AI is changing operations, enhancing services, and reshaping the global landscape in a number of sectors, including banking, retail, energy, transportation [14], handwriting detection [15], and government. As Figure 1 shows, AI is used across a wide range of industries, including retail, security, healthcare, e-commerce, manufacturing, banking, logistics and transportation, and home furnishings. These applications rely on moderately advanced AI technology, such as computer vision, natural language processing, and machine learning.

The contributions of this research are as follows:

1. Providing an overview of XAI that explains the significance of black box models and how XAI fosters trust between humans and AI for fair and ethical use.

1. Discussing deep learning based systems that are consistent and trustworthy, and reviewing recent studies from the literature.

1. Providing guidelines regarding XAI that will be helpful for detecting problems in numerous domains.

1. Identifying and analyzing three key components of XAI (transparency, explainability, and trustworthiness) and detailing how these elements are essential for understanding and improving AI systems.

1.1 Third Wave of Artificial Intelligence (3AI)

Most current commercial AI technology is called "narrow AI." This means these systems are highly specialized and can only perform a few specific tasks. For example, even the best self-driving cars rely on limited AI systems. Another drawback of today's AI is its reliance on large training data sets. A typical machine learning program needs tens of thousands of cat photos to recognize cats accurately, while a three-year-old child can do this with just a few examples. The idea of "Third Wave AI" comes from the need for AI to become more human-like in various ways to overcome these limitations and achieve its full potential.

<details>

<summary>x1.jpg Details</summary>

### Visual Description

## Diagram: Waves of AI Development

### Overview

The image is a diagram illustrating the four waves of AI development, presented chronologically from left to right. Each wave is associated with a specific time period and key characteristics, represented as bullet points. The diagram uses rounded rectangles to enclose the descriptions of each wave, and curved arrows to suggest a progression from one wave to the next.

### Components/Axes

* **Wave Titles:** First Wave, Second Wave, Third Wave, Fourth Wave. Each title is displayed in a colored rectangle (grey, blue, orange, green respectively) above the description of the wave.

* **Time Periods:** Pre 2010, 2010-2020, 2020-2030, 2030-. These indicate the approximate time frame associated with each wave.

* **Wave Descriptions:** Each wave has a list of bullet points describing its characteristics.

* **Flow Arrows:** Curved arrows connect the waves, indicating the progression of AI development.

### Detailed Analysis

**First Wave (Pre 2010):**

* Handcrafted/Human programmed

* Traditional Programming

* No learning capability

* Poor handling of uncertainty

**Second Wave (2010-2020):**

* Statistical Models trained on BIG Data

* Neural Networks - Deep Learning

* Individually unreliable

**Third Wave (2020-2030):**

* Models to drive decisions

* Models to explain decisions

**Fourth Wave (2030-):**

* More human-like learning

* Learn from descriptive, contextual models instead of enormous sets of labeled training data

* Learn interactively

### Key Observations

* The diagram presents a chronological progression of AI development, with each wave building upon the previous one.

* The descriptions of each wave highlight the key advancements and challenges associated with that period.

* The diagram suggests a shift from rule-based systems (First Wave) to data-driven models (Second Wave) to more explainable and interactive AI (Third and Fourth Waves).

* The time periods are approximate and serve as a general guideline for the evolution of AI.

### Interpretation

The diagram provides a high-level overview of the evolution of AI, highlighting the key trends and advancements in the field. It suggests a move towards more sophisticated and human-like AI systems that can learn from descriptive data, explain their decisions, and interact with users in a more natural way. The diagram emphasizes the importance of addressing the limitations of earlier AI systems, such as their lack of learning capability, poor handling of uncertainty, and individual unreliability. The progression suggests a future where AI is more integrated into human decision-making processes and capable of learning and adapting in real-time.

</details>

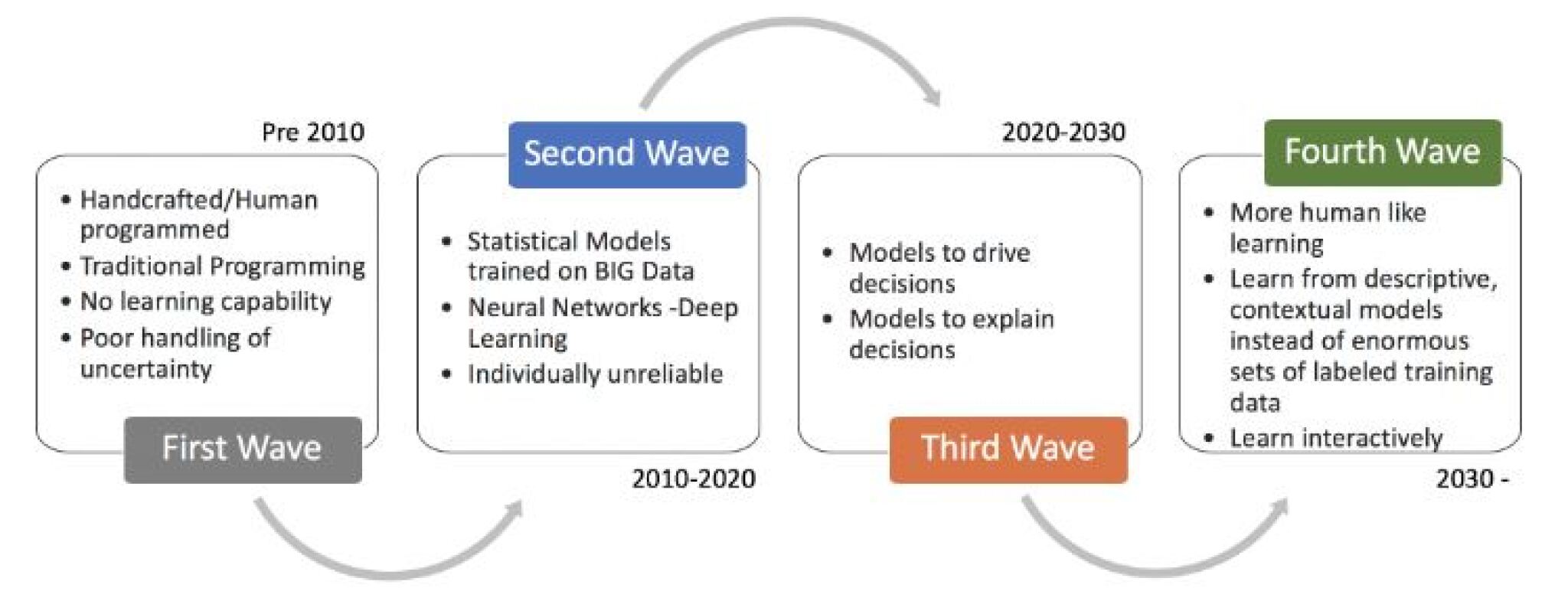

Figure 2: Past, Present, and Future of AI waves. [16]

According to the Defense Advanced Research Projects Agency (DARPA) [17], third-wave AI systems will understand the context of situations, use common sense, and adapt to changes. This will create more natural and intuitive connections between AI systems and people [17]. One of DARPA’s active projects, called XAI, aims to develop these third-wave AI systems. These computers will learn about their environments and the contexts in which they work. They will also build explanations needed to clarify real-world events.

- First Wave AI focused on rules, logic, and built knowledge.

- Second Wave AI introduced big data, statistical learning, and probabilistic techniques.

- The goal of third-wave AI is to develop common sense and the ability to adapt to different contexts.

Tractica [16] predicts that the global market for AI software will grow from about 9.5 billion US dollars in 2018 to 118.6 billion US dollars by 2025. Growth on this scale increases the need for AI systems that can perform tasks accurately while providing explanations that people can understand.

The term "third wave" refers to the advancement of AI technologies beyond traditional machine learning. This new phase focuses on creating more advanced systems that can understand context, reason, and think similarly to humans. It draws inspiration from cognitive science and neuroscience, aiming to build AI that can engage with the world in more complex and detailed ways.

XAI, or Explainable Artificial Intelligence, focuses on making AI systems, especially machine learning models, easier to understand. The goal is to help people trust the decisions these systems make. XAI techniques work to explain how AI makes predictions and why it behaves the way it does. This helps users, such as developers, regulators, and everyday users, grasp the key factors that affect AI results.

Developing AI systems that can make accurate predictions or decisions and explain why they did so is a key goal of both the third wave of AI and explainable AI. By using XAI methods in their design, developers can ensure that these advanced AI systems are not only effective but also easy to understand. This will help build user trust and acceptance.

1.2 Concept of Explainable AI

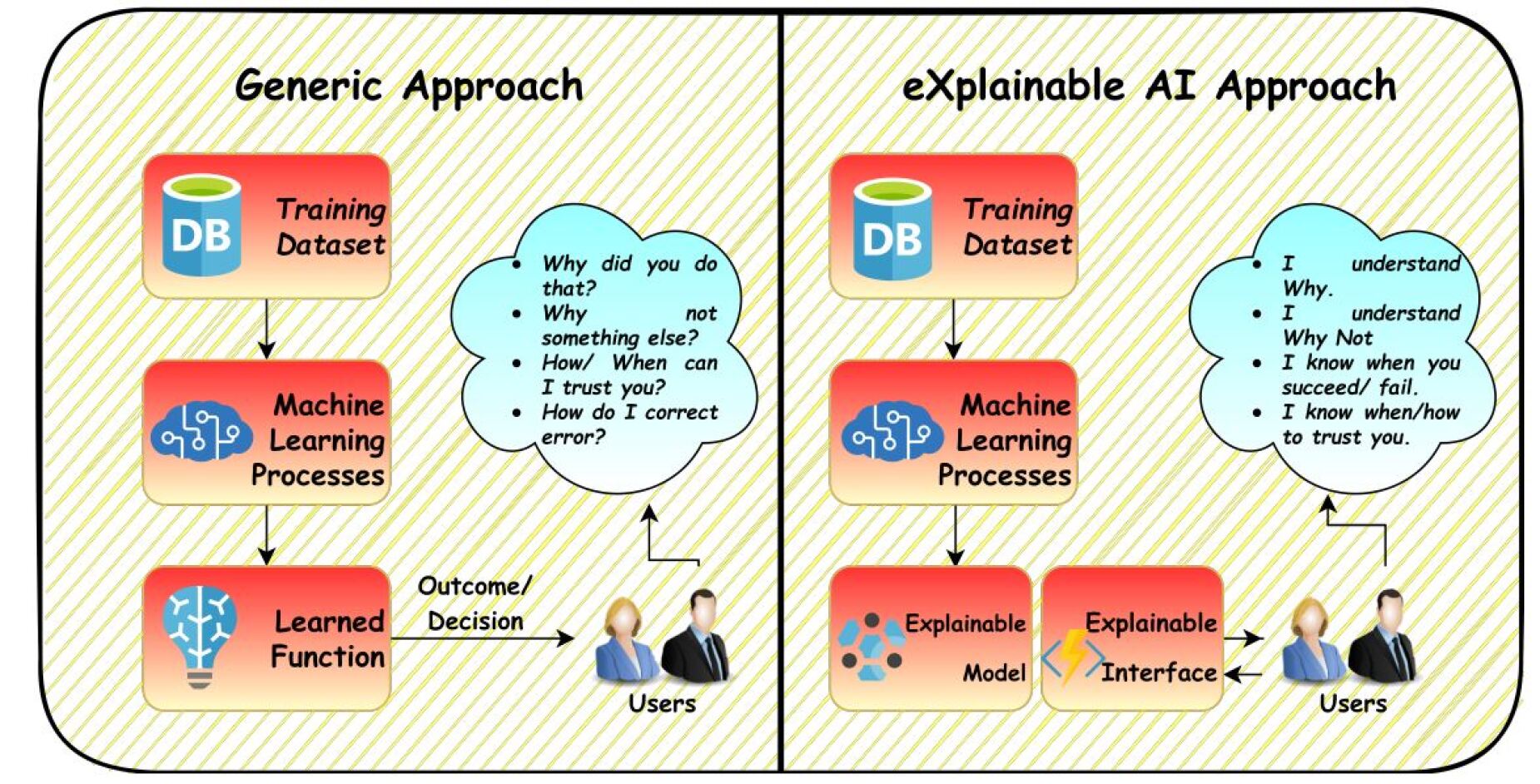

AI often struggles with what is called the "black box" problem because users do not understand how it works. This can lead to issues like lack of trust, confusion, unfair treatment, and violations of privacy. AI systems can also have hidden biases. Explainable AI (XAI) aims to make AI systems easier to understand, helping users know how they make decisions. The goal of XAI is to make AI safer and more user-friendly. Therefore, we need to look at each part of AI individually and discuss its different aspects [18].

There are two main types of machine learning (ML) techniques: white box and black box models [19]. Experts can easily understand the results of a white box model. However, even specialists may find it hard to grasp the results of a black box model [20]. The XAI algorithm [21] follows three key principles: interpretability, explainability, and transparency. A model is transparent when it clearly explains how it gets its results from training data and how it generates labels from test data. Interpretability means being able to explain findings in a way that others understand [22]. While there’s no single accepted definition of explainability, its value is recognized. One definition describes it as a set of clear features that help make decisions, like classifying or predicting outcomes for specific cases. An algorithm that meets these standards helps document and verify decisions and improves itself based on new data.
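The white box case can be illustrated with a minimal sketch. It assumes a hypothetical linear risk scorer; the feature names, weights, and bias below are invented for illustration and do not come from any study cited here. Because the score is a plain weighted sum, each feature's contribution to a specific prediction can be read off directly, which is the sense in which an expert can follow the result.

```python
# Hypothetical white-box model: a linear scorer with hand-picked weights.
# Feature names, weights, and bias are illustrative assumptions only.
WEIGHTS = {"age": 0.03, "blood_pressure": 0.02, "smoker": 0.8}
BIAS = -4.0


def explain_linear(features):
    """Return the score and each feature's additive contribution to it."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    score = BIAS + sum(contributions.values())
    return score, contributions


# One hypothetical case to explain.
patient = {"age": 50, "blood_pressure": 130, "smoker": 1}
score, contributions = explain_linear(patient)

# List contributions from largest to smallest magnitude, then the bias and total.
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>15}: {value:+.2f}")
print(f"{'bias':>15}: {BIAS:+.2f}")
print(f"{'score':>15}: {score:+.2f}")
```

A deep neural network offers no comparable per-feature breakdown out of the box, which is why black box models typically need separate explanation techniques.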

<details>

<summary>x2.jpg Details</summary>

### Visual Description

## Diagram: Generic vs. Explainable AI Approach

### Overview

The image presents a comparative diagram illustrating the workflow of a generic machine learning approach versus an explainable AI (XAI) approach. The diagram highlights the key differences in how the models are used and how users interact with them.

### Components/Axes

**Left Side: Generic Approach**

* **Title:** Generic Approach

* **Top Block:** "DB" icon with the label "Training Dataset"

* **Middle Block:** "Machine Learning Processes" with a blue brain icon.

* **Bottom Block:** "Learned Function" with a lightbulb icon.

* **Cloud:** A thought bubble containing questions:

* "Why did you do that?"

* "Why not something else?"

* "How/When can I trust you?"

* "How do I correct error?"

* **Users:** A graphic of two people labeled "Users".

* **Arrows:** Arrows indicate the flow of information from the "Training Dataset" to "Machine Learning Processes" to "Learned Function". An arrow also connects the "Learned Function" to the "Users" with the label "Outcome/Decision". An arrow also connects the "Users" to the thought bubble.

**Right Side: eXplainable AI Approach**

* **Title:** eXplainable AI Approach

* **Top Block:** "DB" icon with the label "Training Dataset"

* **Middle Block:** "Machine Learning Processes" with a blue brain icon.

* **Bottom Block (Left):** "Explainable Model" with a blue icon.

* **Bottom Block (Right):** "Explainable Interface" with a yellow lightning bolt icon.

* **Cloud:** A thought bubble containing statements:

* "I understand Why."

* "I understand Why Not."

* "I know when you succeed/fail."

* "I know when/how to trust you."

* **Users:** A graphic of two people labeled "Users".

* **Arrows:** Arrows indicate the flow of information from the "Training Dataset" to "Machine Learning Processes" to "Explainable Model" and "Explainable Interface". An arrow connects the "Explainable Interface" to the "Users". An arrow also connects the "Users" to the thought bubble.

### Detailed Analysis

**Generic Approach Flow:**

1. A "Training Dataset" (represented by a database icon labeled "DB") feeds into "Machine Learning Processes".

2. The "Machine Learning Processes" block leads to a "Learned Function" (represented by a lightbulb icon).

3. The "Learned Function" provides an "Outcome/Decision" to the "Users".

4. The "Users" have questions about the decision, represented by a thought bubble.

**eXplainable AI Approach Flow:**

1. A "Training Dataset" (represented by a database icon labeled "DB") feeds into "Machine Learning Processes".

2. The "Machine Learning Processes" block leads to an "Explainable Model" and an "Explainable Interface".

3. The "Explainable Interface" provides information to the "Users".

4. The "Users" have a better understanding of the model's decisions, represented by a thought bubble.

### Key Observations

* The primary difference between the two approaches is the inclusion of an "Explainable Model" and "Explainable Interface" in the XAI approach.

* The generic approach leads to user questions and potential distrust, while the XAI approach aims to provide understanding and trust.

* Both approaches start with a "Training Dataset" and "Machine Learning Processes".

### Interpretation

The diagram illustrates the shift from traditional "black box" machine learning models to more transparent and understandable XAI models. The generic approach, while functional, leaves users questioning the model's decisions and potentially distrustful. The XAI approach addresses this by providing an "Explainable Model" and "Explainable Interface" that allow users to understand the reasoning behind the model's outputs, fostering trust and enabling better decision-making. The diagram highlights the importance of explainability in AI, particularly in applications where transparency and user understanding are critical.

</details>

Figure 3: Explainable Artificial Intelligence (XAI): A look at AI now and tomorrow. [18]

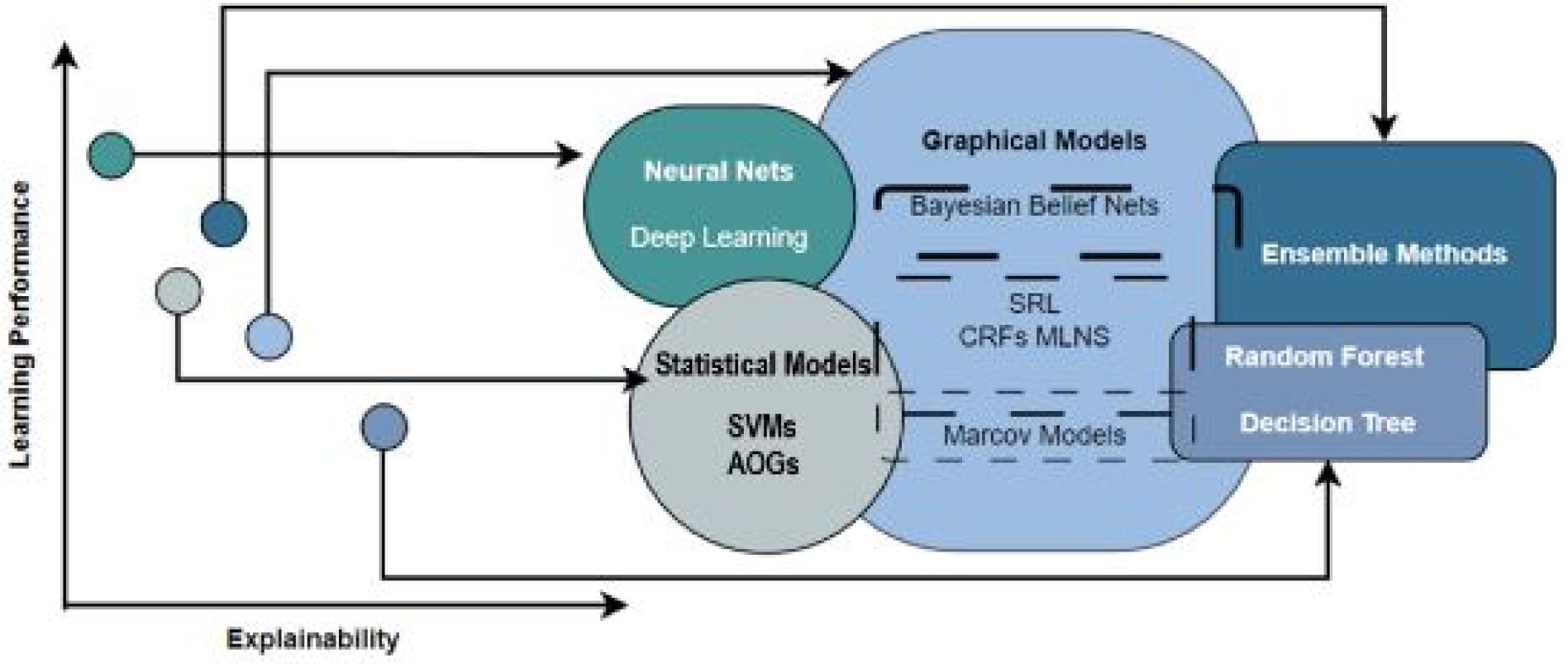

Understanding how intelligent systems work is an active and important research topic. In some cases, a system must be able to account for its own workings to comply with rules. As Figure 4 shows, many complex algorithms must balance achieving high accuracy with being explainable.

<details>

<summary>x3.jpg Details</summary>

### Visual Description

## Diagram: Machine Learning Model Comparison

### Overview

The image is a diagram comparing different machine learning models based on their learning performance and explainability. The diagram uses a 2D space with "Learning Performance" on the y-axis and "Explainability" on the x-axis. Different types of models are grouped into clusters, and their relative positions indicate their performance and explainability trade-offs.

### Components/Axes

* **X-axis:** Explainability (horizontal axis)

* **Y-axis:** Learning Performance (vertical axis)

* **Clusters:**

* Neural Nets (includes Deep Learning) - Teal color

* Statistical Models (includes SVMs, AOGs) - Light gray color

* Graphical Models (includes Bayesian Belief Nets, SRL, CRFs MLNS, Markov Models) - Light blue color

* Ensemble Methods (includes Random Forest, Decision Tree) - Dark blue color

### Detailed Analysis

The diagram plots machine learning models in a 2D space defined by "Learning Performance" and "Explainability".

* **Neural Nets (Teal):** Positioned towards the top-left, indicating high learning performance but relatively low explainability. Includes "Deep Learning".

* **Statistical Models (Light Gray):** Positioned towards the bottom-left, indicating lower learning performance but relatively low explainability. Includes "SVMs" and "AOGs".

* **Graphical Models (Light Blue):** Positioned towards the center, suggesting a balance between learning performance and explainability. Includes "Bayesian Belief Nets", "SRL", "CRFs MLNS", and "Markov Models".

* **Ensemble Methods (Dark Blue):** Positioned towards the top-right, indicating high learning performance and relatively high explainability. Includes "Random Forest" and "Decision Tree".

The diagram also includes several data points (circles) connected to the clusters with lines. The positions of these data points on the axes are as follows:

* **Teal Data Point:** High Learning Performance, Low Explainability (connected to Neural Nets)

* **Dark Blue Data Point:** Medium Learning Performance, High Explainability (connected to Ensemble Methods)

* **Light Gray Data Point:** Low Learning Performance, Low Explainability (connected to Statistical Models)

* **Light Blue Data Point:** Medium Learning Performance, Medium Explainability (connected to Graphical Models)

* **Darker Blue Data Point:** High Learning Performance, Low Explainability

### Key Observations

* There is a trade-off between learning performance and explainability.

* Neural Nets offer high learning performance but low explainability.

* Ensemble Methods offer a good balance of both.

* Statistical Models have lower performance and explainability.

* Graphical Models offer a middle ground.

### Interpretation

The diagram illustrates the trade-offs between learning performance and explainability for different machine learning models. It suggests that models like Neural Nets are suitable for tasks where performance is paramount, even at the cost of interpretability. Ensemble Methods offer a good compromise, while Statistical Models might be preferred when explainability is more important than performance. Graphical Models provide a balance between the two. The diagram is useful for selecting the appropriate model based on the specific requirements of a given task.

</details>

Figure 4: Trade-off between AI model accuracy and explainability, highlighting the challenge of balancing performance with interpretability. [23]

1.3 Classification Tree of XAI

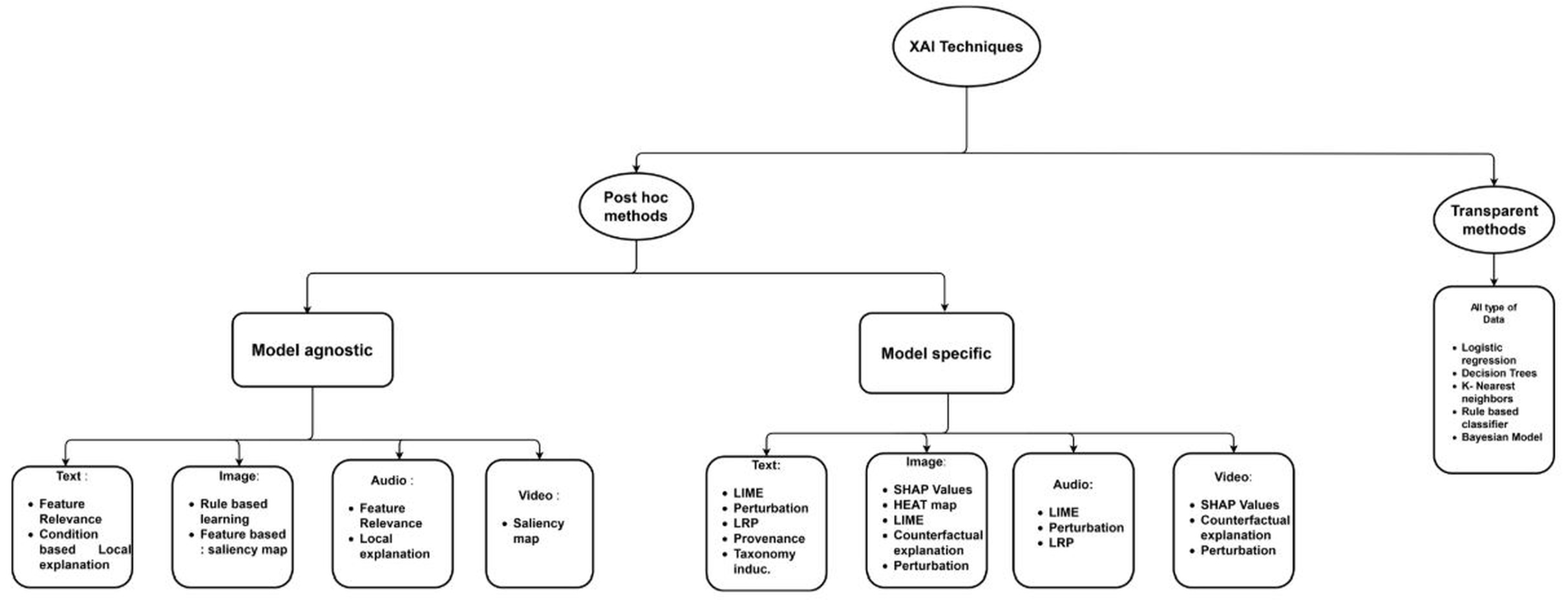

XAI techniques are divided into two categories: transparent and post-hoc methods. A transparent approach is one that represents the model's capabilities and decision-making process in an easy-to-understand way [24]. Transparent models include Bayesian approaches, decision trees, linear regression, and fuzzy inference systems. Transparent approaches can be useful when the internal feature correlations are not highly complex or are linear. A comprehensive classification of different XAI methods and approaches related to different types of data is shown in Figure 5.

<details>

<summary>extracted/6367585/image/classification.jpg Details</summary>

### Visual Description

## Diagram: XAI Techniques Classification

### Overview

The image is a flowchart illustrating a classification of Explainable Artificial Intelligence (XAI) techniques. It categorizes XAI techniques into "Post hoc methods" and "Transparent methods." "Post hoc methods" are further divided into "Model agnostic" and "Model specific" approaches. Each of these categories is then broken down by data type: Text, Image, Audio, and Video, with specific techniques listed for each.

### Components/Axes

* **Main Title:** XAI Techniques (located at the top-center of the diagram)

* **First Level Categories:**

* Post hoc methods (located in the center of the diagram)

* Transparent methods (located on the right side of the diagram)

* **Second Level Categories (under Post hoc methods):**

* Model agnostic (located on the left side of the diagram)

* Model specific (located on the right side of the diagram)

* **Data Types:** Text, Image, Audio, Video (listed under both "Model agnostic" and "Model specific")

* **Techniques:** Lists of specific XAI techniques under each data type.

### Detailed Analysis

**1. XAI Techniques (Top-Level Category):**

* This is the root of the diagram, representing the overall subject.

**2. Post hoc methods:**

* **Definition:** Methods applied after the model has been trained.

* **Sub-categories:**

* **Model agnostic:** Techniques that can be applied to any machine learning model.

* **Text:**

* Feature Relevance

* Condition based Local explanation

* **Image:**

* Rule based learning

* Feature based: saliency map

* **Audio:**

* Feature Relevance

* Local explanation

* **Video:**

* Saliency map

* **Model specific:** Techniques tailored to specific types of models.

* **Text:**

* LIME

* Perturbation

* LRP

* Provenance

* Taxonomy induc.

* **Image:**

* SHAP Values

* HEAT map

* LIME

* Counterfactual explanation

* Perturbation

* **Audio:**

* LIME

* Perturbation

* LRP

* **Video:**

* SHAP Values

* Counterfactual explanation

* Perturbation

**3. Transparent methods:**

* **Definition:** Models that are inherently interpretable.

* **Data Type:** All type of Data

* **Techniques:**

* Logistic regression

* Decision Trees

* K-Nearest neighbors

* Rule based classifier

* Bayesian Model

### Key Observations

* The diagram provides a structured overview of XAI techniques, categorizing them based on their applicability (Post hoc vs. Transparent) and model dependence (Model agnostic vs. Model specific).

* The "Post hoc methods" category is further divided by data type, indicating which techniques are suitable for different types of data.

* "Transparent methods" are presented as inherently interpretable models, applicable to all data types.

### Interpretation

The diagram serves as a taxonomy of XAI techniques, offering a framework for understanding the different approaches to model interpretability. It highlights the distinction between methods that are applied after model training (Post hoc) and models that are inherently interpretable (Transparent). The categorization by data type within "Post hoc methods" suggests that the choice of XAI technique may depend on the nature of the data being analyzed. The diagram is useful for researchers and practitioners in the field of AI to navigate the landscape of XAI techniques and select the most appropriate methods for their specific needs.

</details>

Figure 5: Categorization of Explainable AI (XAI) techniques based on data type, illustrating differences between transparent and post-hoc approaches [24]

Post-hoc approaches are useful for interpreting the complexity of a model, especially when there are nonlinear relationships or high data complexity. When a model does not follow a direct relationship between data and features, post-hoc techniques can be an effective tool to explain what the model has learned [24]. Inference using local feature weights is provided by transparent methods such as Bayesian classifiers, support vector machines, logistic regression, and K-nearest neighbors. This model category satisfies three properties: simulability, decomposability, and algorithmic transparency [24].
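To make the post-hoc idea concrete, the following is a minimal sketch of one model-agnostic technique, permutation feature importance, written in plain Python. It is an illustration rather than a method taken from [24]: the `black_box` function is a hypothetical stand-in for an opaque nonlinear model, and the data are randomly generated. The explainer only calls the model; it never inspects its internals.

```python
import random


def black_box(x):
    """Hypothetical opaque model: nonlinear in x[0] and x[1], ignores x[2]."""
    return 1 if x[0] * x[0] + 0.5 * x[1] > 1.0 else 0


def accuracy(model, rows, labels):
    """Fraction of rows for which the model output matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, n_features, rng):
    """Accuracy drop observed when one feature column is shuffled across rows."""
    baseline = accuracy(model, rows, labels)
    drops = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        drops.append(baseline - accuracy(model, shuffled, labels))
    return drops


rng = random.Random(0)  # fixed seed so the sketch is repeatable
rows = [[rng.uniform(-2, 2) for _ in range(3)] for _ in range(500)]
# Labels are the model's own outputs, so the scores measure how much the
# model relies on each feature rather than how well it fits real data.
labels = [black_box(r) for r in rows]

importances = permutation_importance(black_box, rows, labels, 3, rng)
for j, drop in enumerate(importances):
    print(f"feature {j}: accuracy drop {drop:.3f}")
```

In this toy setup the unused third feature receives an importance of exactly zero, while the two features the model depends on show a positive accuracy drop. Shuffling is only one way to perturb inputs; other post-hoc methods listed in Figure 5 make different trade-offs.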

1.4 Definition of Transparency in Artificial Intelligence

Transparency in XAI is the capacity of an AI system to make its decisions and actions understandable through explanations [25]. Transparency is one of the most important aspects of XAI, rendering AI decisions interpretable and justified. Transparency allows decision-making processes to be closely examined by stakeholders, mitigating risks in applications with societal impact, such as healthcare and finance [26].

The explainability provided by XAI techniques also increases the overall transparency of AI systems. Users can examine the decision-making procedure, identify any bias, and analyze the reliability and fairness of the model's output [27]. Transparent solutions are necessary in areas like medicine, banking, and autonomous vehicles, where AI decisions can have significant effects.

By providing meaningful information about the internal processes of AI models, XAI methods assist users in recognizing patterns, comprehending relationships, and revealing biases or errors [27]. Due to the heightened transparency, stakeholders can more effectively develop opinions, ensure the predictions made by the model are accurate, and take the necessary action.

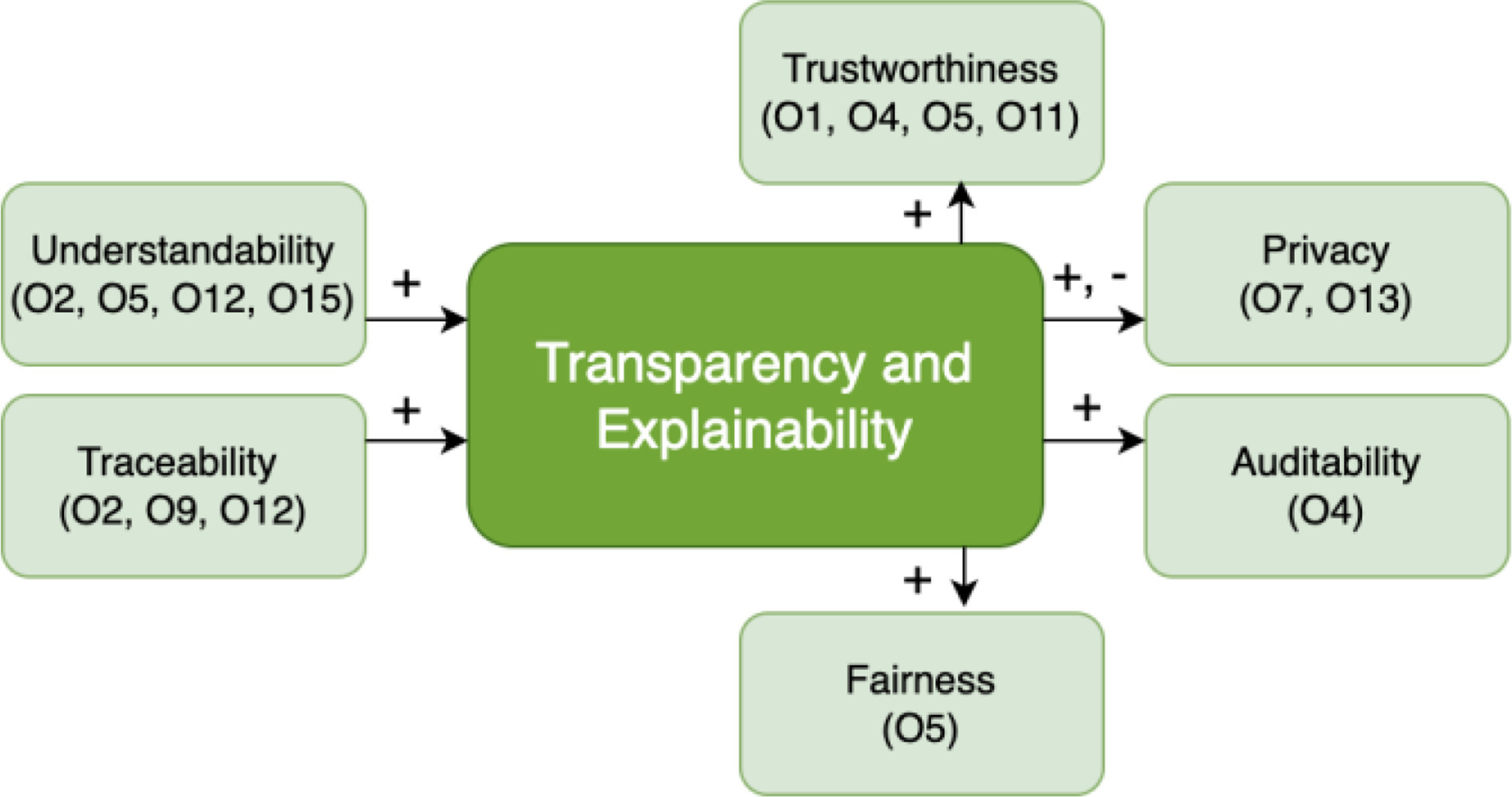

The examination of ethical criteria showed a link between explainability, transparency, and other quality needs. Figure 6 displays nine quality standards related to explainability and openness. The key standards for AI transparency are marked with “O.” For example, O2 focuses on how to interpret models, O15 highlights the importance of traceability, and O5 and O12 ensure that users understand the information. By following these standards, AI models can provide clear and convincing explanations for their outcomes. Keeping these standards improves accountability and helps reduce bias in AI systems.

<details>

<summary>extracted/6367585/image/eai3.jpg Details</summary>

### Visual Description

## Diagram: Transparency and Explainability Relationships

### Overview

The image is a diagram illustrating the relationships between "Transparency and Explainability" and other related concepts like Understandability, Traceability, Trustworthiness, Privacy, Fairness, and Auditability. The diagram uses rounded rectangles to represent each concept, with arrows and "+" or "+,-" symbols indicating the type of relationship.

### Components/Axes

* **Central Concept:** "Transparency and Explainability" (represented by a green rounded rectangle in the center).

* **Related Concepts:**

* Understandability (O2, O5, O12, O15) - top-left, light green rounded rectangle

* Traceability (O2, O9, O12) - bottom-left, light green rounded rectangle

* Trustworthiness (O1, O4, O5, O11) - top-center, light green rounded rectangle

* Privacy (O7, O13) - top-right, light green rounded rectangle

* Fairness (O5) - bottom-center, light green rounded rectangle

* Auditability (O4) - bottom-right, light green rounded rectangle

* **Relationships:** Arrows with "+" or "+,-" symbols indicate the influence of one concept on another.

### Detailed Analysis

* **Understandability** has a positive (+) influence on Transparency and Explainability.

* **Traceability** has a positive (+) influence on Transparency and Explainability.

* **Transparency and Explainability** has a positive (+) influence on Trustworthiness.

* **Transparency and Explainability** has a positive and negative (+,-) influence on Privacy.

* **Transparency and Explainability** has a positive (+) influence on Auditability.

* **Transparency and Explainability** has a positive (+) influence on Fairness.

### Key Observations

* Transparency and Explainability are central to the diagram, acting as both a recipient of influence (from Understandability and Traceability) and a source of influence (on Trustworthiness, Privacy, Auditability, and Fairness).

* The diagram suggests that Transparency and Explainability can have both positive and negative effects on Privacy.

### Interpretation

The diagram illustrates a network of relationships centered around Transparency and Explainability. It suggests that Understandability and Traceability are key enablers of Transparency and Explainability. In turn, Transparency and Explainability influence Trustworthiness, Privacy, Auditability, and Fairness. The dual influence on Privacy indicates a complex relationship where increased transparency can both enhance and potentially compromise privacy depending on the context. The diagram highlights the interconnectedness of these concepts in a system or process.

</details>

Figure 6: Key qualitative standards (O1-O15) related to explainability and transparency in AI systems, addressing user comprehension, interpretability, and traceability. [28]

Understandability supports the explainability and transparency of AI systems. When discussing the significance of understandability, the transparency guidelines addressed three points: 1) ensuring that people comprehend the AI system’s behavior and the methods for using it (O5, O12); 2) communicating intelligibly where, why, and how AI is used (O15); and 3) making sure people understand the distinction between decisions made by AI and decisions that AI merely assists in making (O2) [28]. Thus, by ensuring that people are informed about the use of AI in a straightforward and comprehensive manner, understandability promotes explainability and transparency.

Traceability in the transparency requirements highlights the need to track the decisions made by AI systems (O2, O12) [28]. To ensure transparency, organization O12 also noted how crucial it is to track the data used in AI decision-making.

1.5 Transparency Vs Explainability in AI

Explainability and transparency are similar concepts [29]. According to McLarney et al. [30], a transparent AI requires that “Basic elements of data and decisions must be available for inspection during and after AI use.” Transparency is achieved when users have access to their data or can understand how decisions are made. Explainability, on the other hand, seeks to reveal the reasons for an AI’s successes or failures and to demonstrate how it utilizes the knowledge and judgment of those it affects. It provides a rational justification for the actions of the AI. Users must clearly know what data is collected, how the AI interprets this data, and how it produces reliable outcomes for each affected individual. This straightforward account overlooks the challenges of clarifying “black box” algorithms, the context that may be omitted, and the accuracy needed when offering understandable explanations to customers. Therefore, the question arises: is having minimal explainability preferable to having none at all? [30]. Whether explanations can keep pace with the dynamic nature of a rich information ecosystem, and how anomalies should be handled, are also vital factors to consider.

<details>

<summary>x4.jpg Details</summary>

### Visual Description

## Text Message: Apology and Explanation for Abrupt Conversation End

### Overview

The image is a screenshot of a text message or chat window. The message expresses an apology for an abrupt end to a conversation and provides possible reasons for the interruption, including technical issues and perceived disinterest from the user.

### Components/Axes

The message is contained within a dark gray box, likely representing a chat bubble. The text is white. There are no axes or scales. The message consists of a paragraph of explanation followed by a bulleted list of potential indicators of user disinterest.

### Detailed Analysis

The text of the message is as follows:

"I'm sorry to hear that our conversation ended abruptly. That was not my intention. Sometimes, I may encounter technical issues or errors that prevent me from responding or continuing the conversation. Other times, I may detect some signals or cues that indicate that the user is not interested or satisfied with the conversation, such as:

* The user does not reply for a long time or leaves the chat box.

* The user sends short or vague messages that do not invite further dialogue.

* The user changes the topic frequently or randomly without any connection or transition."

### Key Observations

The message attempts to provide a rationale for the abrupt end of a conversation, attributing it either to technical difficulties or a perceived lack of engagement from the other party. The bulleted list provides specific examples of behaviors that might be interpreted as disinterest.

### Interpretation

The message is likely an automated response or a pre-written explanation used by a chatbot or customer service representative. It aims to preemptively address user frustration by offering potential reasons for the interruption and suggesting that the user's behavior might have contributed to the issue. The message attempts to shift some of the responsibility for the abrupt ending onto the user by implying that their actions might have signaled a lack of interest.

</details>

Figure 7: Output from the Bing search engine’s conversation feature explaining a failure. A partial screenshot taken using an Android smartphone on March 2, 2023. [17]

It’s interesting to note that although certain AI algorithms evaluate data automatically, more and more AI systems are made to explain how their algorithms operate and the logic behind specific choices [17]. For instance, the conversation mode of the Bing search engine provides succinct explanations of its operation (Fig. 7). Sometimes, end users might find these explanations sufficient, but other times, they would be perplexed as to how an AI came to a particular conclusion or acted in a particular manner. When individuals are more confused by the explanation given, it is unrealistic to expect them to become more computer-literate [17]. Instead, we must improve the justification of the AI system.

1.6 Definition of Trustworthiness in Artificial Intelligence

Creating trustworthy AI systems requires a careful strategy that looks at organizational, ethical, and technical factors. The first step is to set clear standards for trustworthiness. These standards should include accountability, security, privacy, transparency, fairness, and ethical behavior. Using high-quality, unbiased data and clear algorithms that explain AI decisions is essential. Strong security measures and privacy practices protect sensitive information from cyberattacks.

It’s important to create accountability frameworks and follow ethical guidelines to ensure responsible AI use. By focusing on user needs and constantly monitoring and updating the systems, AI can stay reliable over time. Applying these principles across all stages of the AI process allows organizations to develop systems that are explainable, equitable, ethical, and robust, which fosters stakeholder and user trust.

<details>

<summary>extracted/6367585/image/eai4.png Details</summary>

### Visual Description

## Diagram: Trustworthy AI Components

### Overview

The image is a diagram illustrating the components of Trustworthy AI. It presents three main categories: Ethics of Algorithms, Ethics of Data, and Ethics of Practice, each with sub-categories listed below them. The diagram uses a top-down approach, starting with "Trustworthy AI" at the top and branching out to the three ethical categories.

### Components/Axes

* **Main Node:** "Trustworthy AI" (located at the top-center, in a green circle)

* **Category 1:** "Ethics of Algorithms" (located on the left)

* Sub-category 1: "Respect for Human Autonomy"

* Sub-category 2: "Prevention of Harm"

* Sub-category 3: "Fairness"

* Sub-category 4: "Explicability"

* **Category 2:** "Ethics of Data" (located in the center)

* Sub-category 1: "Human-centred"

* Sub-category 2: "Individual Data Control"

* Sub-category 3: "Transparency"

* Sub-category 4: "Accountability"

* Sub-category 5: "Equality"

* **Category 3:** "Ethics of Practice" (located on the right)

* Sub-category 1: "Responsibility"

* Sub-category 2: "Liability"

* Sub-category 3: "Codes and Regulations"

All categories and sub-categories are contained within rectangular boxes with rounded corners, filled with a light yellow color.

### Detailed Analysis

The diagram visually represents a hierarchical structure. "Trustworthy AI" is the overarching concept, which is then broken down into three key ethical areas. Each ethical area is further detailed with specific principles or considerations.

* **Ethics of Algorithms:** Focuses on ensuring algorithms are respectful, safe, fair, and understandable.

* **Ethics of Data:** Emphasizes the importance of human-centered data practices, individual control over data, transparency, accountability, and equality.

* **Ethics of Practice:** Highlights the need for responsibility, liability, and adherence to codes and regulations in the practice of AI.

### Key Observations

* The diagram provides a structured overview of the ethical considerations for AI.

* The sub-categories under each ethical area offer specific guidance for developing and deploying AI systems responsibly.

* The diagram suggests that Trustworthy AI is a multi-faceted concept, encompassing algorithms, data, and practical implementation.

### Interpretation

The diagram illustrates a framework for achieving Trustworthy AI by addressing ethical concerns related to algorithms, data handling, and practical application. It emphasizes the importance of human-centered design, fairness, transparency, and accountability in AI development and deployment. The diagram serves as a visual aid for understanding the key components of ethical AI and can be used as a guide for developing and implementing AI systems responsibly.

</details>

Figure 8: The three key components of XAI: Algorithmic Ethics, Data Ethics, and Practice Ethics. [31]

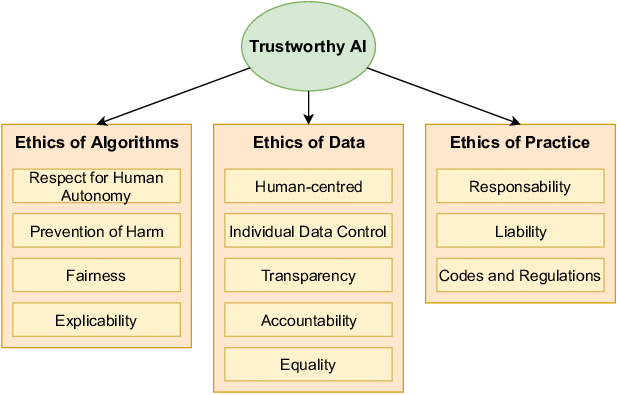

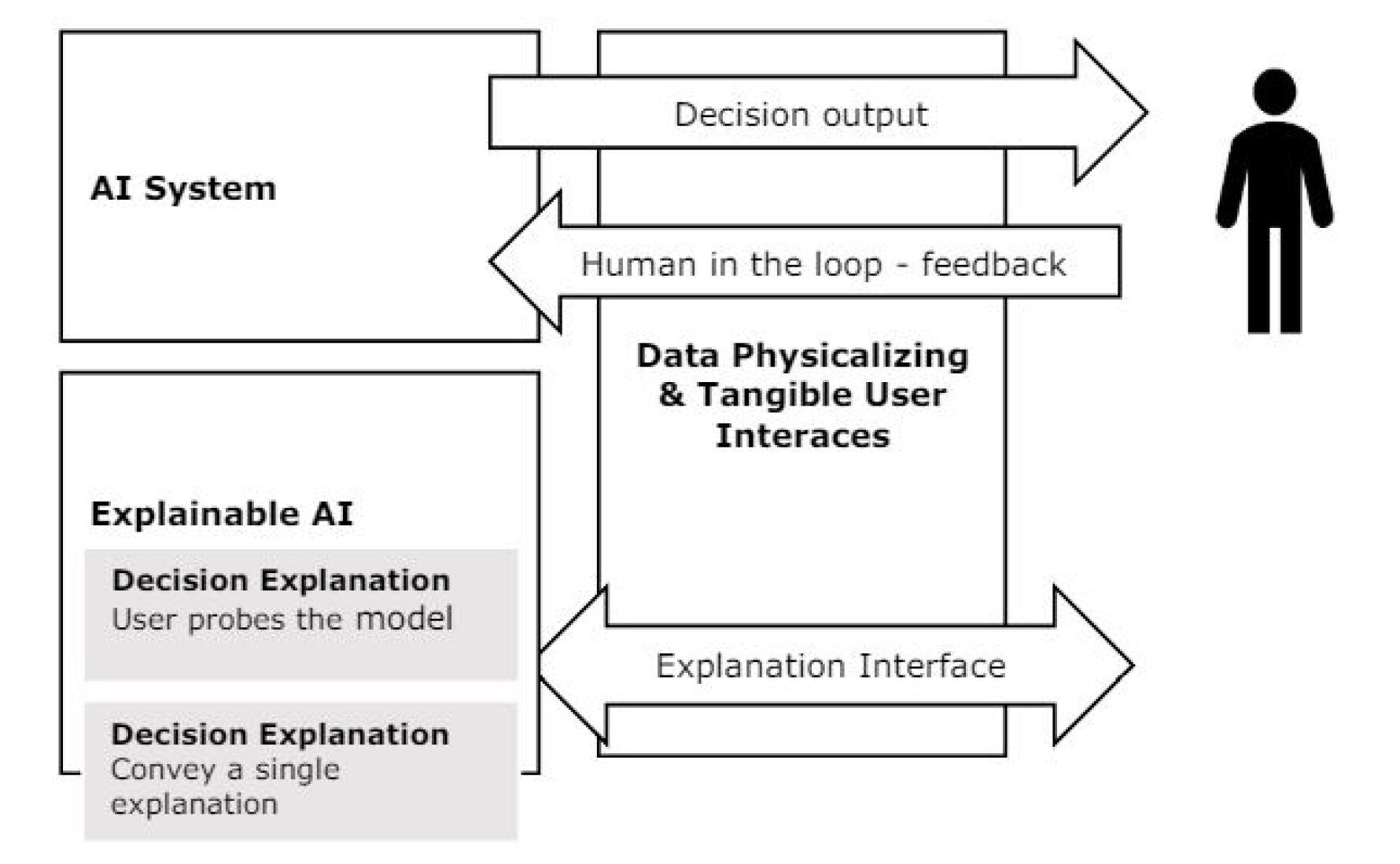

The three elements illustrated in Figure 8 (algorithmic ethics, data ethics, and practice ethics) intersect to create responsible AI. These elements define a data-centered way to handle ethical issues [31]. However, several open challenges remain in dealing with ethical issues in AI systems. In [31], the authors present a vision of Trustworthy AI with the following requirements:

1. Human agency and oversight: AI systems have to enable human freedom. They need to facilitate user choice, safeguard fundamental rights, and enable human control. This will assist in developing an equitable and just society.

2. Security and technical robustness: Security and technical robustness are crucial to prevent harm. For an AI system to operate efficiently and with reduced risk, its creators ought to consider potential risks when designing it. These range from changes in the environment where the system will operate to attacks by malicious individuals.

3. Data protection and data governance: Privacy is a fundamental right that has been heavily compromised by the vast amounts of data that AI systems gather. Individual privacy must be protected to prevent potential harm, and this requires robust data governance. That includes making sure that the information being used is accurate and relevant. Clear rules are also needed for data access and for how data must be handled while preserving privacy.

4. Transparency: Explainability and transparency depend closely on each other. The key objective is to make data, technology, and business models clear. In an age of pervasive technology, transparency has become a must. It helps customers comprehend the huge volumes of data collected and the ensuing benefits.

5. Fairness, diversity, and nondiscrimination: Including diverse voices in AI systems is vital to achieving XAI. All individuals who may be affected need to be involved to ensure equal treatment and access. This requirement goes hand in hand with fairness.

6. Social and environmental welfare: The environment and the community have to be considered in seeking justice and doing no harm. Research on AI solutions to global issues should be funded, which will help make AI systems environmentally friendly and sustainable. AI is supposed to be for the good of all people, including future generations.

7. Accountability: Accountability and fairness are essential in the context of AI. Mechanisms are needed to hold AI systems accountable for their actions and the results they generate. Accountability needs to be an integral part of AI development, deployment, and use, both during and after these activities.

1.7 Impact of XAI on Zero Trust Architecture (ZTA)

Zero Trust Architecture (ZTA) is a security system that always checks every request, no matter where it comes from, and does not assume trust automatically. Explainable AI (XAI) helps ZTA by ensuring that decisions made by AI in security are clear and reasonable.

XAI is especially useful in identity verification, access control, and spotting unusual behavior. AI models analyze how users behave and identify any suspicious activities. By adding explainability, security analysts can better understand and confirm AI-driven security rules. This reduces false alarms and speeds up response times.

For example, AI-driven network monitoring systems that use ZTA principles can explain why a specific access attempt looks suspicious. This explanation builds trust in automated cybersecurity decisions [32].
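To make the idea of an explainable access decision concrete, the following is a minimal sketch, not a method taken from [32]. It assumes a simple additive risk score whose per-feature contributions double as the explanation. The feature names, weights, and threshold are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights for an additive risk score; illustrative values only.
WEIGHTS = {
    "new_device": 0.35,
    "unusual_location": 0.30,
    "off_hours": 0.15,
    "failed_logins": 0.10,  # applied per recent failed attempt
}
THRESHOLD = 0.5  # assumed cut-off at or above which a request is flagged


@dataclass
class AccessRequest:
    user: str
    new_device: bool
    unusual_location: bool
    off_hours: bool
    failed_logins: int


def explain_access(req: AccessRequest):
    """Return (risk score, flagged?, contributions sorted by size)."""
    contributions = {
        name: weight * float(getattr(req, name))
        for name, weight in WEIGHTS.items()
    }
    score = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return score, score >= THRESHOLD, ranked


req = AccessRequest("alice", new_device=True, unusual_location=True,
                    off_hours=False, failed_logins=1)
score, flagged, reasons = explain_access(req)
print(f"risk={score:.2f} flagged={flagged}")
for name, value in reasons:
    if value > 0:
        print(f"  {name}: +{value:.2f}")
```

Because the score is a plain sum, an analyst can read off which signals pushed a request over the threshold. A learned model would need a post-hoc attribution method to give a comparable breakdown.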

1.8 An Overview of Necessities for Reliable AI

Despite heated societal discussions, the requirements for trustworthy AI remain ambiguous and are handled inconsistently by numerous organizations. Globally, accountability, explainability, verifiability, and fairness are all part of the Fairness, Accountability, and Transparency in Machine Learning (FAT-ML) principles [33]. Explainability, fairness, privacy, and robustness are among the many needs that will be examined in this study (Table 1).

Table 1: Conditions necessary for trustworthy artificial intelligence (AI)

| Requirement | Description |
| --- | --- |
| Explainability | The way the AI model generates its output can be shown in a form that users can understand. |
| Fairness | The AI model’s output can be shown to be independent of certain protected variables. |
| Privacy | Issues with personal data that might arise while the AI is being developed can be prevented. |
| Robustness | The AI model can withstand outside threats while continuing to operate correctly. |
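The fairness condition in Table 1 can be checked in several ways. One common criterion is demographic parity, which compares the rate of positive predictions across groups defined by a protected variable. The sketch below assumes that criterion, which is not necessarily the one intended by the table. The function names and the toy data are hypothetical.

```python
from collections import defaultdict


def positive_rates(predictions, groups):
    """Share of positive (1) predictions within each protected group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions, groups):
    """Largest difference in positive rate between any two groups."""
    rates = positive_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Toy data: model decisions (1 = approve) and a protected attribute.
preds = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
rates = positive_rates(preds, groups)
gap = demographic_parity_gap(preds, groups)
print(rates, gap)
```

A gap near zero suggests that the positive rate does not depend on the protected variable. A large gap is a signal to investigate and is not by itself proof of unfair treatment.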

2 XAI Vs AI

XAI improves AI systems by focusing on transparency, clear explanations, and accountability. It offers understandable reasons for decisions, which helps users trust the system and makes it easier to assess fairness compared to traditional “black box” methods.

The main difference between reliable XAI and traditional AI is how they make decisions. While AI can give accurate forecasts or suggestions, reliable XAI emphasizes the need to explain the steps that lead to these results. Clear explanations from XAI systems allow users to judge the fairness and reliability of AI-generated outcomes.

To improve security and maintain transparency in AI-driven cybersecurity, we need to integrate XAI into Zero Trust Architecture (ZTA). When explainability methods clarify why certain decisions are made, people can better understand and trust the AI-driven access control and behavioral analytics in ZTA. As we face compliance and operational challenges, future cybersecurity frameworks will rely more on AI automation. It will be essential to ensure that these AI systems can be easily explained [34].

XAI focuses on more than just providing explanations; it also considers ethical issues. AI development processes that follow the principles of Fairness, Accountability, and Transparency (FAT) help ensure that AI systems meet ethical and legal standards. By prioritizing ethical standards, XAI aims to reduce biases, discrimination, and other harmful effects of AI technology. Trustworthy AI is an approach that emphasizes user safety and transparency. Responsible AI developers clearly explain to clients and the public how the technology works, what it is meant for, and its limitations, since no model is perfect.

Table 2: Seven Requirements to Meet in Order to Develop Reliable AI

| Requirement | Description | Related right | Recital / Article |
| --- | --- | --- | --- |
| Human Authority and Supervision | Artificial intelligence technology ought to uphold human agency and basic rights, instead of limiting or impeding human autonomy. | The right to get human assistance | Recital 71, Art 22 |
| Robustness and Safety | Systems must be dependable, safe, robust enough to tolerate mistakes or inconsistencies, and capable of deviating from a totally automated decision | | Art 22 |
| Data Governance and Privacy | Individuals should be in total control of the information that is about them, and information about them should not be used against them | Notification and information access rights regarding the logic used in automated processes | Art 13, 14, and 15 |
| Transparency | Systems using AI ought to be transparent and traceable | The right to get clarification | Recital 71 |
| Diversity and Fairness | AI systems have to provide accessibility and take into account the whole spectrum of human capacities, requirements, and standards | Right to not have decisions made only by machines | Art 22 |
| Environmental and Social Well-Being | AI should be utilized to promote social change, accountability, and environmental sustainability | Accurate knowledge regarding the importance and possible consequences of making decisions exclusively through automation | Art 13, 14, and 15 |
| Accountability | Establishing procedures to guarantee that AI systems and their outcomes are held accountable is essential | Right to be informed when decisions are made only by machines | Art 13, 14 |

3 Applications of XAI

Trustworthy XAI has numerous uses in sectors where accountability, interpretability, and transparency are essential. XAI can provide an explanation for a diagnosis or therapy recommendation in medical diagnosis and recommendation systems. Financial institutions can employ XAI for risk assessment, fraud detection, and credit scoring. XAI can help attorneys with contract analysis, lawsuit prediction, and legal research. In autonomous vehicles, XAI plays a significant role in providing context for the decisions made by the AI systems, particularly in high-stakes scenarios such as accidents or unanticipated roadside incidents. XAI can be applied to process optimization, predictive maintenance, and quality control in manufacturing settings. By offering justifications for automated responses or suggestions in chatbots and virtual assistants, XAI can improve customer service. By providing an explanation for the recommendations and assessments made by adaptive learning systems, XAI can help with individualized learning. In this section, we concentrate on a few particular applications and discuss them in detail.

3.1 Application of XAI in Medical Science

The field of artificial intelligence (AI) is rapidly growing on a global scale, particularly in healthcare, which is a hot topic for research [35]. There are numerous opportunities to utilize AI technology in the healthcare sector, where the well-being of individuals is at stake, due to its significant relevance and the vast amounts of digital medical data that have been collected [36]. AI has enabled us to perform tasks quickly that were previously unfeasible with traditional technologies.

The trustworthiness and openness of AI systems are becoming increasingly important, especially in areas like healthcare. As AI is used more in medical decision-making, people are worried about how reliable and understandable its results are. These worries highlight the need to evaluate AI models carefully to make sure their predictions are based on important and verifiable factors. In critical situations like medicine, proving that AI systems are credible is vital for their safe and effective use.

In the medical field, clinical decision support systems (CDSS) utilize AI technology to assist healthcare professionals with critical tasks such as diagnosis and treatment planning [37]. While these systems aim to support healthcare practitioners, misuse can have severe consequences in situations where lives are at risk. For example, false alarms, which are common in scenarios involving urgent patients, can lead to exhaustion among medical personnel.

The study [38] significantly contributes to medical skin lesion diagnostics in several ways. First, it modifies an existing explainable AI (XAI) technique to boost user confidence and trust in AI systems. This change involves developing an AI model that can distinguish between different types of skin lesions. The study uses synthetic examples and counter-examples to create explanations that highlight the key features influencing classification decisions. The research [38] trains a deep learning classifier with the ISIC 2019 dataset using the ResNet architecture. This allows professionals to use the explanations to reason effectively. Overall, the study’s main contributions lie in its refinement and evaluation of the XAI technique in a real-world medical setting, its analysis of the latent space, and its thorough user study to assess how effective the explanations are, particularly among experts in the field.

This research paper [39] discusses how to recognize brain tumors in MRI images using two effective algorithms: fuzzy C-means (FCM) and Artificial Neural Network (ANN). The authors aim to make the tumor segmentation process more understandable and improve accuracy in identifying tumors. Their main goal is to enhance tools that help doctors diagnose brain tumors more accurately.

This research offers two key benefits. First, it helps identify brain cancers in medical images more precisely, which is crucial for early diagnosis and treatment. Second, by incorporating XAI principles into the segmentation process, the researchers make their models’ decisions clearer and easier to understand for patients and medical experts. In summary, this increased clarity boosts the overall trust and acceptance of AI-driven systems in medical image analysis within clinical settings.

This study [40] discusses how AI and machine learning can help diagnose whole slide images (WSIs) in pathology. While AI can improve accuracy and efficiency, concerns exist about its reliability because it can be hard to understand. To address these issues, the article suggests using explainable AI methods, which help clarify how AI makes decisions. By adding XAI, pathology systems become more transparent and trustworthy, especially for critical tasks like diagnosing diseases. The study also introduces HistoMapr-Breast, a software tool that uses XAI to assist with breast core biopsies.

A recent study examines the importance of ensuring that AI systems in healthcare are accurate and robust, especially regarding how easy they are to understand and how well they can resist attacks [41]. As AI becomes more common in medical settings, it is crucial to verify that the predictions these systems make rely on trustworthy features. To tackle this challenge, researchers have proposed various methods to improve model interpretability and explainability. The study shows that adversarial attacks can affect a model’s explainability, even when the model has been robustly trained. Additionally, the authors introduce two types of attack classifiers: one that distinguishes harmless from harmful inputs, and another that determines the nature of the attack.

This research paper [42] looks at explainable machine learning in cardiology. It discusses the challenges of understanding complex prediction models and how these models affect important healthcare decisions. The study explains the main ideas and methods of explainable machine learning, helping cardiologists understand the benefits and limitations of this approach. The goal is to improve decision-making in clinical settings by offering guidance on when to use easy-to-understand models versus complex ones. This can help improve patient outcomes while ensuring accountability and transparency in predictions.

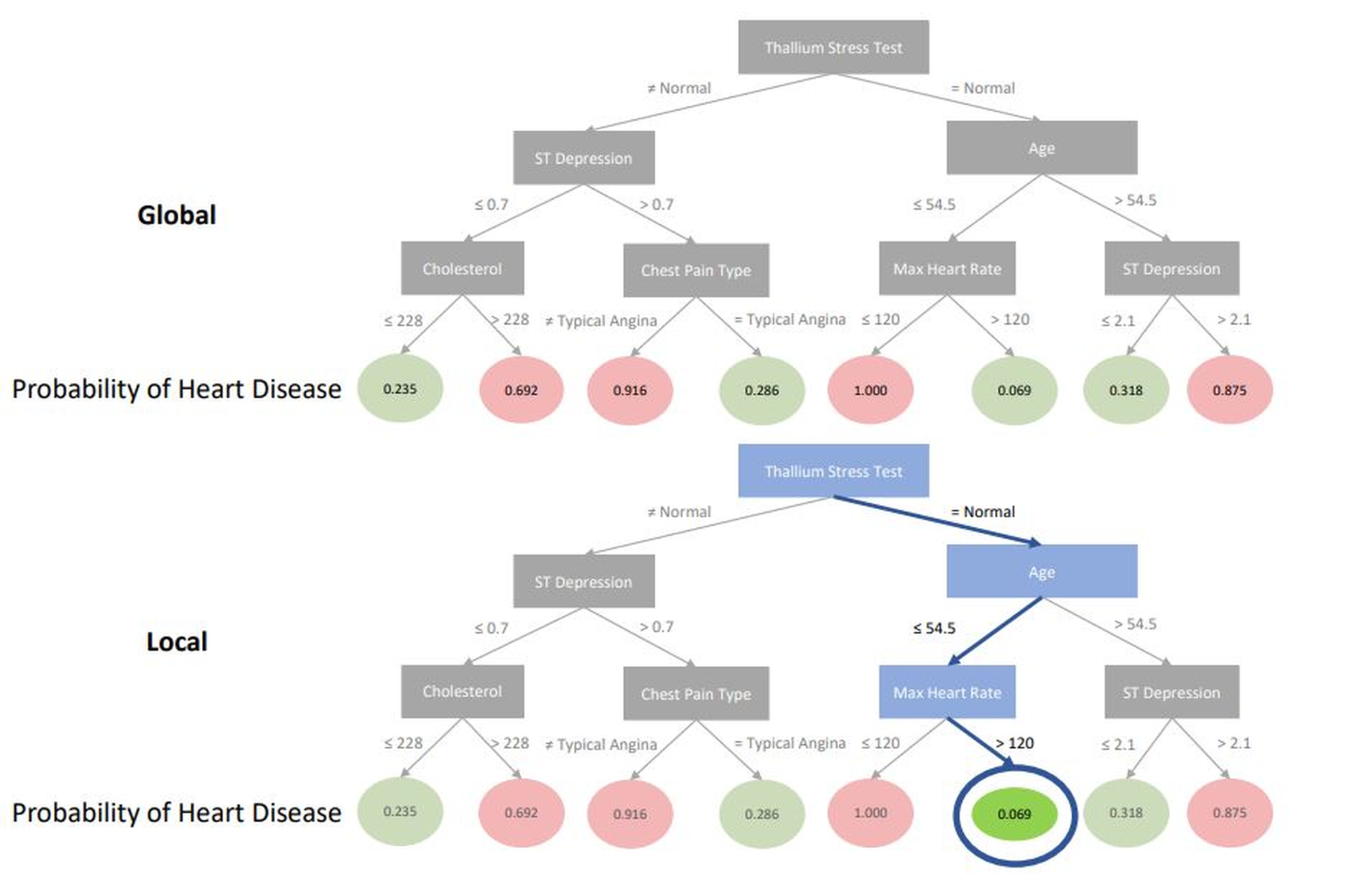

Figure 9 shows a decision tree created from the predictions of a random forest model. This global tree diagram illustrates how the random forest works overall. By following a patient’s path through the tree, individual predictions can be examined. This type of explanation is beneficial because it clarifies both the general functioning of the model and the reasoning behind specific predictions. Decision trees are suitable for fields like cardiology because they use rule-based reasoning similar to clinical decision guidelines.

<details>

<summary>extracted/6367585/image/eai5.png Details</summary>

### Visual Description

## Decision Tree: Probability of Heart Disease - Global vs. Local

### Overview

The image presents two decision trees, labeled "Global" and "Local," illustrating the probability of heart disease based on various factors. The trees use a series of binary splits based on medical test results and patient characteristics to arrive at a probability score. The nodes are colored green for lower probability and red for higher probability of heart disease. The "Local" tree highlights a specific path with a blue line and a blue-bordered node.

### Components/Axes

* **Nodes:** Rectangular nodes represent decision points based on features like "Thallium Stress Test," "Age," "ST Depression," "Cholesterol," "Chest Pain Type," and "Max Heart Rate."

* **Branches:** Lines connecting the nodes represent the outcomes of the decisions (e.g., "≠ Normal" or "= Normal").

* **Leaf Nodes:** Circular nodes at the bottom represent the final probability of heart disease, with values ranging from 0.069 to 1.000.

* **Probability of Heart Disease:** Label indicating the values in the leaf nodes.

* **Global:** Label indicating the first decision tree.

* **Local:** Label indicating the second decision tree.

### Detailed Analysis

**Global Decision Tree:**

* **Root Node:** "Thallium Stress Test" splits into "≠ Normal" and "= Normal."

* **If Thallium Stress Test ≠ Normal:**

* "ST Depression" splits at ≤0.7 and >0.7.

* If ST Depression ≤0.7: "Cholesterol" splits at ≤228 (probability 0.235, green) and >228 (probability 0.692, red).

* If ST Depression >0.7: "Chest Pain Type" splits at ≠ Typical Angina (probability 0.916, red) and = Typical Angina (probability 0.286, green).

* **If Thallium Stress Test = Normal:**

* "Age" splits at ≤54.5 and >54.5.

* If Age ≤54.5: "Max Heart Rate" splits at ≤120 (probability 1.000, red) and >120 (probability 0.069, green).

* If Age >54.5: "ST Depression" splits at ≤2.1 (probability 0.318, green) and >2.1 (probability 0.875, red).

**Local Decision Tree:**

* The "Local" tree is identical in structure to the "Global" tree, but a specific path is highlighted in blue.

* **Highlighted Path:**

* "Thallium Stress Test" = Normal (blue line)

* "Age" ≤54.5 (blue line)

* "Max Heart Rate" >120 (blue line)

* Resulting Probability: 0.069 (green node with blue border)

### Key Observations

* The decision trees use a combination of test results (Thallium Stress Test, ST Depression) and patient characteristics (Age, Cholesterol, Chest Pain Type, Max Heart Rate) to estimate the probability of heart disease.

* Higher cholesterol levels and ST depression generally correlate with a higher probability of heart disease.

* The "Local" tree highlights a scenario where a normal Thallium Stress Test, younger age (≤54.5), and higher maximum heart rate (>120) result in a low probability of heart disease (0.069).

### Interpretation

The decision trees provide a visual representation of how different factors contribute to the probability of heart disease. The "Global" tree shows the overall decision-making process, while the "Local" tree emphasizes a specific scenario and its outcome. The highlighted path in the "Local" tree suggests a case where, despite other potential risk factors, the combination of a normal Thallium Stress Test, younger age, and higher maximum heart rate significantly reduces the estimated probability of heart disease. The trees could be used to help doctors assess patient risk and make informed treatment decisions.

</details>

Figure 9: A model using random forests predicts heart disease by analyzing both local and global decision trees. The global diagram starts by examining whether a patient’s thallium stress test results are normal. If the test shows a problem, the model looks at the patient’s ST depression next. The local graphic shows the specific pathway a patient took in the model, explaining the reasons for their individual prediction. For example, a patient under 54.5 years old, with a maximum heart rate that is high and normal thallium stress test results, has a very low chance of having heart disease [42].
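The local explanation in Figure 9 amounts to tracing one patient through the global tree and recording the rule applied at each split. The sketch below encodes the splits and leaf probabilities as they appear in the figure, then follows a patient's path. The dictionary layout, feature keys, and function names are hypothetical. The code reproduces only the tree shown in the figure, not the underlying random forest or the procedure in [42] that derived the tree from it.

```python
def node(feature, op, value, if_true, if_false):
    """Internal split; leaves are plain floats (probability of heart disease)."""
    return {"feature": feature, "op": op, "value": value,
            "true": if_true, "false": if_false}


# Global tree transcribed from Figure 9.
TREE = node(
    "thallium", "==", "normal",
    node("age", "<=", 54.5,
         node("max_heart_rate", "<=", 120, 1.000, 0.069),
         node("st_depression", "<=", 2.1, 0.318, 0.875)),
    node("st_depression", "<=", 0.7,
         node("cholesterol", "<=", 228, 0.235, 0.692),
         node("chest_pain", "==", "typical_angina", 0.286, 0.916)),
)

NEGATED = {"==": "!=", "<=": ">"}


def explain(patient, tree=TREE):
    """Return (probability, list of rules followed) for one patient."""
    path = []
    current = tree
    while isinstance(current, dict):
        actual = patient[current["feature"]]
        if current["op"] == "==":
            passed = actual == current["value"]
        else:
            passed = actual <= current["value"]
        symbol = current["op"] if passed else NEGATED[current["op"]]
        path.append(f'{current["feature"]} {symbol} {current["value"]}')
        current = current["true"] if passed else current["false"]
    return current, path


patient = {"thallium": "normal", "age": 50, "max_heart_rate": 150,
           "st_depression": 0.0, "cholesterol": 200, "chest_pain": "non_anginal"}
prob, rules = explain(patient)
print(prob)
for rule in rules:
    print("  ", rule)
```

The returned rule list is the local explanation, and the tree definition itself is the global one. This mirrors the two panels of the figure.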

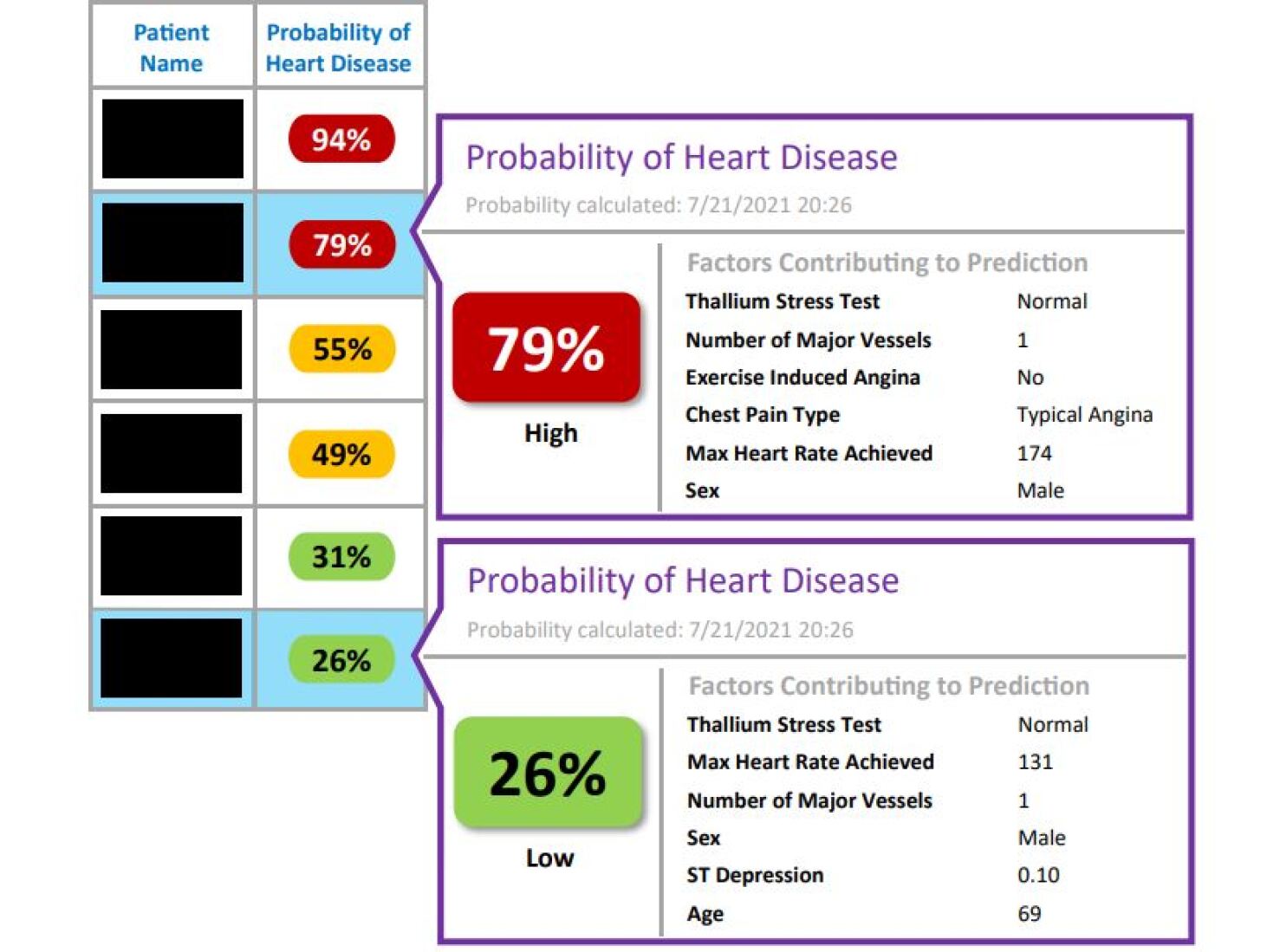

Figure 10 shows LIME explanations for two local predictions of the authors’ heart disease model. The authors explain how these predictions can serve as a clinical decision support tool in Epic, an electronic health record (EHR) system used by clinicians (Epic Systems Corporation, Verona, Wisconsin, USA). This kind of explanation helps to clarify the clinical factors that affect each prediction. Importantly, this type of explanation can be added to an EHR, which may improve the practical use of a complex model by making forecasts and clear explanations easy to integrate into clinical work.
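The core idea behind LIME can be sketched in a few lines: perturb the inputs around one patient, weight each perturbed sample by its proximity to the original, and fit a weighted linear model whose coefficients serve as the local explanation. The code below is a simplified illustration in pure Python and does not use the API of the `lime` package. The `black_box` function is a stand-in assumption, not the model from the cited study, and the feature scales and kernel width are arbitrary.

```python
import math
import random


def black_box(x):
    """Stand-in model (an assumption): a nonlinear risk score in [0, 1]."""
    st_depression, max_heart_rate = x
    z = 1.5 * st_depression - 0.04 * (max_heart_rate - 150)
    return 1.0 / (1.0 + math.exp(-z))


def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def lime_sketch(f, x, scales, n_samples=500, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear surrogate to f around x.

    Returns [intercept, coef_1, ..., coef_d]; each coefficient is the local
    change in f per one `scale` unit of the corresponding feature.
    """
    rng = random.Random(seed)
    d = len(x)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        offsets = [rng.gauss(0.0, 1.0) for _ in range(d)]
        z = [xi + o * s for xi, o, s in zip(x, offsets, scales)]
        dist2 = sum(o * o for o in offsets)
        rows.append([1.0] + offsets)
        targets.append(f(z))
        weights.append(math.exp(-dist2 / kernel_width ** 2))
    # Weighted normal equations: (X^T W X) beta = X^T W y
    k = d + 1
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights))
             for j in range(k)] for i in range(k)]
    xtwy = [sum(w * r[i] * t for r, t, w in zip(rows, targets, weights))
            for i in range(k)]
    return solve(xtwx, xtwy)


patient = [2.0, 140.0]  # ST depression, max heart rate (toy values)
beta = lime_sketch(black_box, patient, scales=[0.5, 10.0])
print("local baseline:", round(beta[0], 3))
print("ST depression effect:", round(beta[1], 3))
print("max heart rate effect:", round(beta[2], 3))
```

The sign and size of each coefficient indicate how a feature pushes this particular prediction up or down. That is the kind of per-patient factor list an EHR view could display next to the predicted probability.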

<details>

<summary>x5.jpg Details</summary>

### Visual Description

## Patient Heart Disease Probability Report

### Overview

The image presents a patient heart disease probability report, displaying the calculated probability of heart disease for multiple patients along with factors contributing to the prediction for two specific patients. The report includes patient names (blacked out for privacy), their corresponding heart disease probability percentages, and associated risk levels (High, Low).

### Components/Axes

* **Columns:** The report is structured with two primary columns: "Patient Name" and "Probability of Heart Disease".

* **Probability of Heart Disease:** This column displays the calculated probability as a percentage, with associated color-coding and risk level labels.

* **Risk Levels:** The risk levels are categorized as "High" and "Low".

* **Factors Contributing to Prediction:** This section lists specific factors used in the probability calculation, such as Thallium Stress Test results, Number of Major Vessels, Exercise Induced Angina, Chest Pain Type, Max Heart Rate Achieved, Sex, ST Depression, and Age.

* **Calculation Date:** The probability calculation date is 7/21/2021 20:26.

### Detailed Analysis

**Patient Data:**

* **Patient 1:** Probability of Heart Disease: 94% (Color: Red)

* **Patient 2:** Probability of Heart Disease: 79% (Color: Red)

* **Patient 3:** Probability of Heart Disease: 55% (Color: Yellow)

* **Patient 4:** Probability of Heart Disease: 49% (Color: Yellow)

* **Patient 5:** Probability of Heart Disease: 31% (Color: Green)

* **Patient 6:** Probability of Heart Disease: 26% (Color: Green)

**Detailed Patient Information (Patient with 79% probability):**

* Probability of Heart Disease: 79% (Color: Red)

* Risk Level: High

* Factors Contributing to Prediction:

* Thallium Stress Test: Normal

* Number of Major Vessels: 1

* Exercise Induced Angina: No

* Chest Pain Type: Typical Angina

* Max Heart Rate Achieved: 174

* Sex: Male

**Detailed Patient Information (Patient with 26% probability):**

* Probability of Heart Disease: 26% (Color: Green)

* Risk Level: Low

* Factors Contributing to Prediction:

* Thallium Stress Test: Normal

* Max Heart Rate Achieved: 131

* Number of Major Vessels: 1

* Sex: Male

* ST Depression: 0.10

* Age: 69

### Key Observations

* The probability of heart disease varies significantly among the patients, ranging from 26% to 94%.

* Higher probability percentages are associated with a "High" risk level, while lower percentages are associated with a "Low" risk level.

* The factors contributing to the prediction differ between the two detailed patient examples, highlighting the individualized nature of the risk assessment.

* The patient with a 79% probability has a higher Max Heart Rate Achieved (174) compared to the patient with a 26% probability (131).

* The patient with a 26% probability has an ST Depression value of 0.10 and an age of 69, factors not listed for the patient with 79% probability.

### Interpretation

The report suggests a personalized approach to assessing heart disease risk, where individual factors are considered to calculate the probability of heart disease. The color-coding and risk level labels provide a quick visual assessment of each patient's risk. The detailed patient information sections offer insights into the specific factors that contribute to the calculated probability, allowing for a more comprehensive understanding of the risk assessment. The differences in contributing factors between the two detailed patients highlight the importance of considering individual patient characteristics when evaluating heart disease risk.

</details>

Figure 10: Heart disease prediction explanation produced using Local Interpretable Model-Agnostic Explanations (LIME). This illustration shows how clinical decision assistance can be integrated into an Epic electronic health record by means of a local explanation utilizing the LIME algorithm. To help clinicians identify patients who are likely to be at a high risk of heart disease, probabilities are color-coded. To improve the predictability and actionability of the results for doctors, the clinical factors that are most significant to the prediction are shown on the right [42].
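The idea behind a LIME-style local explanation can be sketched in a few lines: perturb one patient's features, query the model on the perturbed copies, weight each copy by its proximity to the original patient, and fit a weighted linear model whose coefficients act as the explanation. The sketch below is a simplified illustration under stated assumptions, not the LIME library and not the model from [42]: `black_box` is a hypothetical stand-in for a trained risk model, and the patient values, feature scales, and kernel width are all made up for the example.

```python
import math
import random


def black_box(x):
    """Hypothetical stand-in for a trained risk model; returns a probability."""
    age, max_hr, st_dep = x
    z = 0.04 * (age - 55) - 0.03 * (max_hr - 150) + 0.8 * st_dep
    return 1.0 / (1.0 + math.exp(-z))


def solve(a, b):
    """Solve the small linear system a @ w = b by Gaussian elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * w[j] for j in range(i + 1, n))
        w[i] = (m[i][n] - tail) / m[i][i]
    return w


def lime_like_explanation(predict, instance, scales,
                          n_samples=500, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        # Perturb each feature in standardized units around the instance.
        z = [rng.gauss(0.0, 1.0) for _ in instance]
        x = [xi + zi * si for xi, zi, si in zip(instance, z, scales)]
        dist2 = sum(zi * zi for zi in z)
        weights.append(math.exp(-dist2 / kernel_width ** 2))  # closer = heavier
        rows.append([1.0] + z)  # intercept plus standardized offsets
        targets.append(predict(x))
    # Weighted normal equations: (X^T W X) w = X^T W y
    d = len(instance) + 1
    xtwx = [[sum(wt * r[i] * r[j] for wt, r in zip(weights, rows))
             for j in range(d)] for i in range(d)]
    xtwy = [sum(wt * r[i] * y for wt, r, y in zip(weights, rows, targets))
            for i in range(d)]
    coef = solve(xtwx, xtwy)
    return coef[0], coef[1:]


# Hypothetical patient: age, maximum heart rate, ST depression.
names = ["age", "max_heart_rate", "st_depression"]
intercept, coefs = lime_like_explanation(
    black_box, [60.0, 140.0, 1.0], scales=[5.0, 10.0, 0.5])
for name, c in sorted(zip(names, coefs), key=lambda t: -abs(t[1])):
    print(f"{name}: {c:+.3f} per standardized unit")
```

Sorting features by the magnitude of their local coefficients gives the kind of ranked factor list shown on the right of Figure 10. The actual LIME algorithm additionally handles categorical features and feature selection, which this sketch omits.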

For medical AI to work reliably and be widely adopted, substantial research is still needed, along with agreement on key properties such as explainability, fairness, privacy, and reliability [33]. Any healthcare setting that uses AI must meet clear requirements and standards, and these need to be updated regularly. Additionally, laws should clarify who is responsible if something goes wrong with a medical AI, whether that is the designers, researchers, healthcare workers, or patients [43].

3.2 Explainability and Interpretability of Autonomous Systems

Explainability and interpretability are crucial concepts in the context of autonomous systems, referring to the ability to understand the decisions and behaviors of these systems. Explainability involves an autonomous system’s capacity to provide clear justifications for its actions and choices [44]. This clarity is essential for fostering acceptance and confidence in AI systems, especially in critical fields such as banking, healthcare, and autonomous vehicles.

While explainability and interpretability are closely related, interpretability focuses more on understanding the internal mechanisms and processes of the autonomous system [45]. An interpretable system offers users insight into the factors and criteria that influence its decision-making, enabling them to grasp how the system arrived at its conclusions.

The research article [18] focuses on trust and dependability in autonomous systems. Autonomous systems have the potential to operate other systems, disseminate information rapidly, process massive amounts of data, work in hazardous environments, operate with greater resilience and tenacity than humans, and even support astronomical examination [46], [47]. Following years of research and development, today’s automated technologies represent the peak of progress in computer recognition, responsive systems, user-friendly interface design, and sensing automation.

According to [44], the global market for automotive intelligent hardware, operations, and innovation is projected to grow significantly, from $1.25 billion in 2017 to $28.5 billion by 2025. Intel’s research on the expected benefits of autonomous vehicles indicates that implementing these technologies on public roads could reduce annual commute times by 250 million hours and save over 500,000 lives in the United States between 2035 and 2045 [44]. Modern cars utilize artificial intelligence for various functions, including intelligent cruise control, automatic driving and parking, and blind-spot detection (Figure 11).

The authors of [18] describe the challenges of autonomous systems. For example, people are sometimes overly excited about the potential of new ideas and ignore, or at least appear unaware of, the potential drawbacks of cutting-edge developments. In the early stages of robotics and autonomous system implementation, people were willing to put up with faulty goods and services, but they have gradually come to understand the importance of trustworthy and dependable autonomous systems. Numerous examples have demonstrated that operators’ use of automation is strongly affected by how trustworthy they perceive it to be.

<details>

<summary>x6.jpg Details</summary>

### Visual Description

## Autonomous Vehicle Scene Analysis

### Overview

The image depicts a street scene as viewed from inside an autonomous vehicle. The vehicle's dashboard is visible, displaying information about the current driving situation. The scene includes pedestrians, cyclists, parked cars, and street infrastructure, all marked with bounding boxes and directional indicators.

### Components/Axes

* **Bounding Boxes:** Red boxes highlight pedestrians and cyclists. Blue boxes highlight vehicles and infrastructure.

* **Directional Indicators:** Blue arrows indicate the direction of travel or intended path of the autonomous vehicle.

* **Dashboard Display:** Shows vehicle speed, driving mode, alerts, and energy consumption.

* **Text Labels (German):**

* "Bremsvorgang aktiv!" (Braking process active!)

* "Achtung!" (Attention!)

* "Stadtverkehr" (City traffic)

* "Ankunft: 15:34 Uhr" (Arrival: 15:34)

* "Energieleistung" (Energy performance)

* **Speed Display:** Shows two values: 30 (left) and 32 (right).

### Detailed Analysis

* **Pedestrians and cyclists:** One pedestrian is located on the left side of the street, enclosed in a red bounding box. A cyclist is on the right side of the street, also enclosed in a red bounding box.

* **Vehicles:** Several vehicles are parked along the street, enclosed in blue bounding boxes.

* **Street Infrastructure:** Streetlights are marked with blue lines.

* **Dashboard:**

* "Bremsvorgang aktiv! Achtung!" is displayed in red, indicating an active braking process and a warning.

* "Stadtverkehr" is displayed, indicating the current driving mode.

* The speed display shows "30" on the left and "32" on the right, likely representing speed limits or current speed.

* "Ankunft: 15:34 Uhr" indicates the estimated time of arrival.

* "Energieleistung" is displayed above a graph, likely showing energy consumption.

### Key Observations

* The autonomous vehicle is actively monitoring pedestrians and vehicles in its surroundings.

* The braking system is active, and a warning is displayed, suggesting a potential hazard.

* The vehicle is operating in city traffic mode.

* The dashboard provides real-time information about the driving situation, including speed, arrival time, and energy consumption.

### Interpretation

The image demonstrates the capabilities of an autonomous vehicle in a complex urban environment. The vehicle is able to detect and track pedestrians and vehicles, assess potential hazards, and provide relevant information to the driver (or, in a fully autonomous mode, to the vehicle's control system). The active braking system and warning message suggest that the vehicle has detected a potential collision and is taking action to avoid it. The dashboard display provides a comprehensive overview of the driving situation, allowing the driver to monitor the vehicle's performance and make informed decisions.

</details>

Figure 11: An automated vehicle that provides a clear and understandable rationale for its decisions at that moment serves as a prime example of explainable AI in automated driving [18].

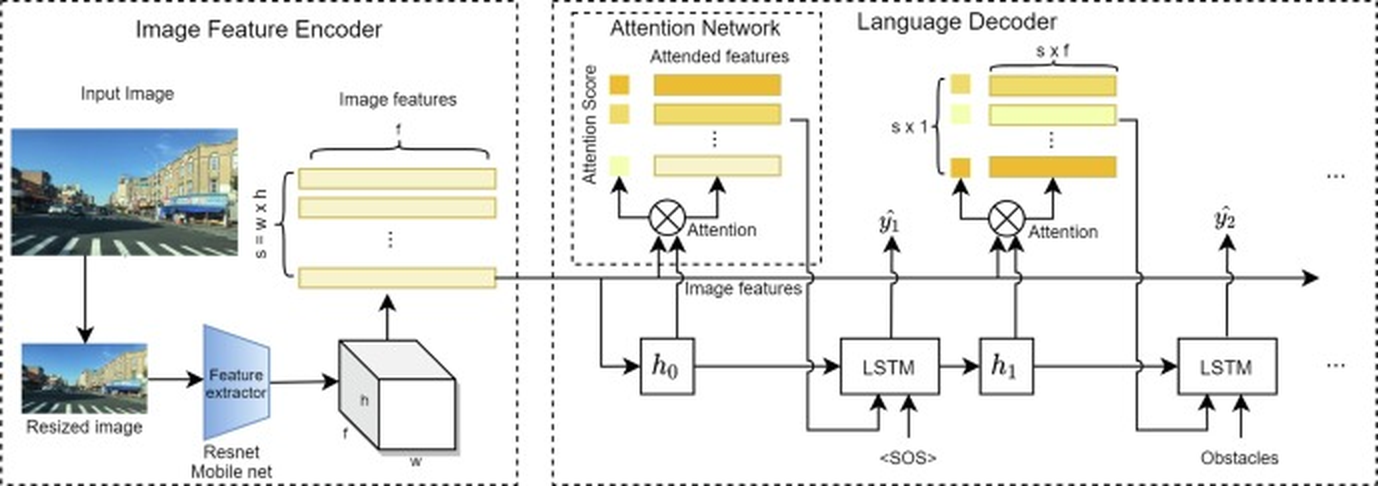

As AI has become prevalent in autonomous vehicle (AV) operations, user trust has been identified as a major issue that is essential to their success. XAI, which calls for the AI system to give the user explanations for every decision it makes, is a viable approach to fostering user trust in such integrated AI-based driving systems [48]. Motivated by this need and by the potential of XAI to address it, the authors of [48] develop explainable Deep Learning (DL) models to improve trustworthiness in autonomous driving systems. Their main idea is to frame the decision-making process of AVs as an image-captioning task, generating textual descriptions of driving scenarios that serve as human-understandable explanations. The proposed multi-modal deep learning architecture, shown in Figure 12, uses Transformers to model the relationship between images and language, generating meaningful descriptions and driving actions. Key contributions include improving the AV decision-making process for better explainability, developing a fully Transformer-based model, and outperforming baseline models. According to the authors, this results in enhanced user trust, valuable insights for AV developers, and improved interpretability through attention mechanisms and goal induction.
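To make the role of attention in such a captioning model more concrete, the following minimal sketch shows generic scaled dot-product attention, in which a decoder query scores a set of image-region feature vectors and the resulting weights indicate which regions the model "looks at" for the next word. This is an illustration only, not the architecture of [48]: the function names, the four-dimensional toy features, and the query vector are all assumptions made for the example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, region_features):
    """Scaled dot-product attention of one query over image-region features."""
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, region)) / math.sqrt(d)
        for region in region_features
    ]
    weights = softmax(scores)  # one weight per image region, summing to 1
    context = [
        sum(w * region[i] for w, region in zip(weights, region_features))
        for i in range(d)
    ]
    return weights, context


# Toy example: three image regions with hypothetical 4-dimensional features.
regions = [
    [1.0, 0.0, 0.0, 0.0],  # e.g. road surface
    [0.0, 1.0, 0.0, 0.0],  # e.g. pedestrian
    [0.0, 0.0, 1.0, 0.0],  # e.g. parked car
]
query = [0.0, 4.0, 0.0, 0.0]  # hypothetical decoder state favoring region 1

weights, context = attend(query, regions)
print("attention weights:", [round(w, 3) for w in weights])
```

Because the weights form a distribution over image regions, they can be visualized as a heat map over the input frame, which is one way attention mechanisms contribute to interpretability.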

<details>

<summary>extracted/6367585/image/eai8.png Details</summary>

### Visual Description

## Diagram: Image Captioning Architecture

### Overview

The image presents a diagram of an image captioning architecture, which consists of three main components: an Image Feature Encoder, an Attention Network, and a Language Decoder. The diagram illustrates the flow of information between these components, starting from an input image and ending with a generated sequence of words (caption).

### Components/Axes

* **Image Feature Encoder:** This module processes the input image to extract relevant features. It includes:

* **Input Image:** A photograph of a street scene.

* **Resized Image:** A smaller version of the input image.

* **Feature extractor:** A Resnet Mobile net, which extracts features from the resized image.

* **Image features:** A set of feature maps with dimensions s = w x h, where s is the number of features, w is the width, and h is the height.

* **Attention Network:** This module focuses on the most relevant image features for generating the caption. It includes: