# FrontierMath: A Benchmark for Evaluating Advanced Mathematical Reasoning in AI

Abstract

We introduce FrontierMath, a benchmark of hundreds of original, exceptionally challenging mathematics problems crafted and vetted by expert mathematicians. The questions cover most major branches of modern mathematics—from computationally intensive problems in number theory and real analysis to abstract questions in algebraic geometry and category theory. Solving a typical problem requires multiple hours of effort from a researcher in the relevant branch of mathematics, and for the upper end questions, multiple days. FrontierMath uses new, unpublished problems and automated verification to reliably evaluate models while minimizing risk of data contamination. Current state-of-the-art AI models solve under 2% of problems, revealing a vast gap between AI capabilities and the prowess of the mathematical community. As AI systems advance toward expert-level mathematical abilities, FrontierMath offers a rigorous testbed that quantifies their progress. Teams interested in evaluating models on the benchmark should reach out to math_evals@epochai.org We thank Terence Tao, Timothy Gowers, and Richard Borcherds for their insightful interviews and thank Terence Tao for contributing several problems to this benchmark. We also thank Nat Friedman, Fedor Petrov, Feiyang Lin, Eli Lifland, Marc Pickett, and the Constellation team. We gratefully acknowledge OpenAI for their support in creating the benchmark.

1 Introduction

Recent AI systems have demonstrated remarkable proficiency in tackling challenging mathematical tasks, from achieving olympiad-level performance in geometry [trinh2024solving] to improving upon existing research results in combinatorics [romera2024mathematical]. However, existing benchmarks face some limitations:

Saturation of existing benchmarks

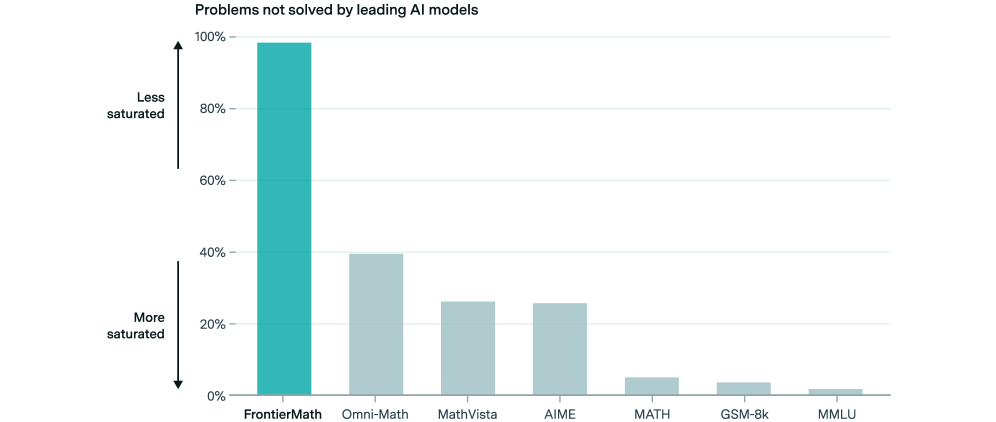

Current standard mathematics benchmarks such as the MATH dataset [hendrycks2021measuring] and GSM8K [cobbe2021training] primarily assess competency at the high-school and early undergraduate level. As state-of-the-art models achieve near-perfect performance on these benchmarks, we lack rigorous ways to evaluate their capabilities in advanced mathematical domains that require deeper theoretical understanding, creative insight, and specialized expertise. Furthermore, to assess AI’s potential contributions to mathematics research, we require benchmarks that better reflect the challenges faced by working mathematicians.

Benchmark contamination in training data

A significant challenge in evaluating large language models (LLMs) is data contamination—the inadvertent inclusion of benchmark problems in training data. This issue leads to artificially inflated performance metrics that mask models’ true reasoning capabilities [deng2023investigating, xu2024benchmark]. While competitions like the International Mathematics Olympiad (IMO) or American Invitational Mathematics Examination (AIME) offer fresh test problems created after model training, making them immune to contamination, these sources provide only a small number of problems and often require substantial manual effort to grade.

Sample problem 1: Testing Artin’s primitive root conjecture

Definitions. For a positive integer $n$ , let $v_{p}(n)$ denote the largest integer $v$ such that $p^{v}\mid n$ . For $p$ a prime and $a\not\equiv 0±od{p}$ , we let $\textup{ord}_{p}(a)$ denote the smallest positive integer $o$ such that $a^{o}\equiv 1±od{p}$ . For $x>0$ , we let $\textup{ord}_{p,x}(a)=\prod_{\begin{subarray}{c}q≤ x\\

q\text{ prime}\end{subarray}}q^{v_{q}(\textup{ord}_{p}(a))}\prod_{\begin{subarray}{c}q>x\\

q\text{ prime}\end{subarray}}q^{v_{q}(p-1)}.$ Problem. Let $S_{x}$ denote the set of primes $p$ for which $\textup{ord}_{p,x}(2)>\textup{ord}_{p,x}(3),$ and let $d_{x}$ denote the density $d_{x}=\frac{|S_{x}|}{|\{p≤ x:p\text{ is prime}\}|}$ of $S_{x}$ in the primes. Let $d_{∞}=\lim_{x→∞}d_{x}.$ Compute $\lfloor 10^{6}d_{∞}\rfloor.$ Answer: 367707 MSC classification: 11 Number theory

Sample problem 2: Find the degree 19 polynomial

Construct a degree 19 polynomial $p(x)∈\mathbb{C}[x]$ such that $X:=\{p(x)=p(y)\}⊂\mathbb{P}^{1}×\mathbb{P}^{1}$ has at least 3 (but not all linear) irreducible components over $\mathbb{C}$ . Choose $p(x)$ to be odd, monic, have real coefficients and linear coefficient -19 and calculate $p(19)$ . Answer: 1876572071974094803391179 MSC classification: 14 Algebraic geometry; 20 Group theory and generalizations; 11 Number theory generalizations

Sample problem 3: Prime field continuous extensions

Let $a_{n}$ for $n∈\mathbb{Z}$ be the sequence of integers satisfying the recurrence formula See Appendix A.3 for precise coefficients. $\displaystyle a_{n}=(981× 0^{11})a_{n-1}+(549× 0^{11})a_{n-2}$ $\displaystyle\hskip 11.38092pt-(277× 0^{11})a_{n-3}+(706× 0^{8})a_{n-4}$ with initial conditions $a_{i}=i$ for $0≤ i≤ 3$ . Find the smallest prime $p\equiv 4\bmod 7$ for which the function $\mathbb{Z}→\mathbb{Z}$ given by $n\mapsto a_{n}$ can be extended to a continuous function on $\mathbb{Z}_{p}$ . Answer: 9811 MSC classification: 11 Number theory

Figure 1: FrontierMath features hundreds of advanced mathematics problems that require hours for expert mathematicians to solve. We release representative samples from the benchmark. Statements and solutions for released problems are provided in Appendix ˜ A.

To address these limitations, we introduce FrontierMath, a benchmark of original, exceptionally challenging mathematical problems created in collaboration with over 60 mathematicians from leading institutions. FrontierMath addresses data contamination concerns by featuring exclusively new, previously unpublished problems. It enables scalable evaluation through automated verification that provides rapid, reproducible assessment. The benchmark spans the full spectrum of modern mathematics, from challenging competition-style problems to problems drawn directly from contemporary research, covering most branches of mathematics in the 2020 Mathematics Subject Classification (MSC2020). In particular, FrontierMath contains problems across 70% of the top-level subjects in the MSC2020 classification excluding “00 General and overarching topics; collections”, “01 History and biography”, and “97 Mathematics education”.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Bar Chart: Problems not solved by leading AI models

### Overview

The image is a bar chart comparing the percentage of problems not solved by leading AI models across different problem types. The y-axis represents the percentage of unsolved problems, ranging from 0% to 100%. The x-axis lists the problem types: FrontierMath, Omni-Math, MathVista, AIME, MATH, GSM-8k, and MMLU. The chart uses a color gradient to indicate saturation, with higher bars representing less saturated problem areas and lower bars representing more saturated ones.

### Components/Axes

* **Title:** Problems not solved by leading AI models

* **Y-axis:** Percentage of problems not solved, ranging from 0% to 100% in increments of 20%. The axis is labeled with "Less saturated" at the top and "More saturated" at the bottom, with an arrow pointing upwards for "Less saturated" and downwards for "More saturated".

* **X-axis:** Problem types: FrontierMath, Omni-Math, MathVista, AIME, MATH, GSM-8k, MMLU.

### Detailed Analysis

The chart displays the following approximate percentages of problems not solved for each category:

* **FrontierMath:** Approximately 98%, colored in teal.

* **Omni-Math:** Approximately 40%, colored in light teal.

* **MathVista:** Approximately 26%, colored in light teal.

* **AIME:** Approximately 26%, colored in light teal.

* **MATH:** Approximately 5%, colored in light teal.

* **GSM-8k:** Approximately 3%, colored in light teal.

* **MMLU:** Approximately 1%, colored in light teal.

### Key Observations

* FrontierMath has a significantly higher percentage of unsolved problems compared to the other problem types.

* The percentage of unsolved problems decreases substantially from Omni-Math to MMLU.

* MATH, GSM-8k, and MMLU have very low percentages of unsolved problems.

### Interpretation

The chart indicates that leading AI models struggle the most with FrontierMath problems, with nearly all problems remaining unsolved. Omni-Math and MathVista also have a relatively high percentage of unsolved problems. In contrast, the AI models perform much better on MATH, GSM-8k, and MMLU problems, suggesting these areas are more "saturated" or well-addressed by current AI capabilities. The data suggests that research and development efforts should focus on improving AI performance in areas like FrontierMath, Omni-Math, and MathVista to address the current gaps in problem-solving capabilities.

</details>

Figure 2: Comparison of unsolved problems across five mathematics benchmarks. While existing benchmarks are approaching saturation (see Appendix B.3), FrontierMath maintains a >98% unsolved rate.

The problems in FrontierMath demand deep theoretical understanding, creative insight, and specialized expertise, often requiring multiple hours of effort from expert mathematicians to solve. We provide a set of representative example problems and their solutions (see Appendix B.3 and Figure ˜ 1). Notably, current state-of-the-art AI models are unable to solve more than 2% of the problems in FrontierMath, even with multiple attempts, highlighting a significant gap between human and AI capabilities in advanced mathematics (see Figure 2).

To understand expert perspectives on FrontierMath’s difficulty and relevance, we interviewed several prominent mathematicians, including Fields Medalists Terence Tao (2006), Timothy Gowers (1998), and Richard Borcherds (1998), and IMO coach Evan Chen. They unanimously characterized the problems as exceptionally challenging, requiring deep domain expertise and significant time investment to solve. While they noted that the requirement of numerical answers differs from typical mathematical research practice, these problems nonetheless demand substantial expertise and time even from experienced mathematicians.

2 Data collection

2.1 The collection pipeline

We developed the FrontierMath benchmark through collaboration with over 60 mathematicians from universities across more than a dozen countries. Our contributors formed a diverse group spanning academic ranks from graduate students to faculty members. Many are distinguished participants in prestigious mathematical competitions, collectively holding 14 IMO gold medals. One contributing mathematician also holds a Fields Medal.

Their areas of expertise collectively span a vast expanse of modern mathematics—including but not limited to algebraic geometry, number theory, point set and algebraic topology, combinatorics, category theory, representation theory, partial differential equations, probability theory, differential geometry, logic, and theoretical computer science. Since many of our contributors are active researchers, the problems naturally incorporate sophisticated techniques and insights found in contemporary mathematical research.

Each mathematician created new problems following guidelines designed to ensure clarity, verifiability, and definitive answers across various difficulty levels and mathematical domains. Four requirements guided problem creation:

- Originality: While problems could build on existing mathematical ideas, they needed to do so in novel and non-obvious ways—either through clever adaptations that substantially transform the original concepts, or through innovative combinations of multiple ideas that obscure their origins. This ensures that solving them requires genuine mathematical insight rather than pattern matching against known problems.

- Automated verifiability: Problems had to possess definitive, computable answers that could be automatically verified. To facilitate automated verification, we often structured problems to have integer solutions, which are straightforward to check programmatically. We also included solutions that could be represented as arbitrary SymPy objects—including symbolic expressions, matrices, and more. By utilizing SymPy, we enabled a wide range of mathematical outputs to be represented and verified programmatically, ensuring that even complex symbolic solutions could be efficiently and accurately checked. This approach allowed us to support diverse answer types while maintaining verification standards.

- Guessproofness: Problems were designed to avoid being susceptible to guessing; solving the problem had to be necessary to find the correct answer. As can be seen from Figure ˜ 1, problems often have numerical answers that are large and nonobvious, which reduces the vulnerability to guesswork. As a rule of thumb, we require that there should not be a greater than 1% chance of guessing the correct answer without doing most of the work that one would need to do to “correctly" find the solution. This is important to ensure that the challenges assessed genuine mathematical understanding and reasoning.

- Computational tractability: The solution of a computationally intensive problem must include scripts demonstrating how to find the answer, starting only from standard knowledge of the field. For example, if a problem requires factoring a large integer, this must be doable from a standard algorithm. These scripts must cumulatively run less than a minute on standard hardware. This requirement ensures efficient and manageable evaluation times.

For each contributed problem, mathematicians provided a detailed solution and multiple forms of metadata. Each submission included both a verification script for automated answer checking (see Section ˜ 2.2) and comprehensive metadata tags. These tags included subject and technique classifications, indicating both the mathematical domains and specific methodologies required for solution. Each submission then underwent peer review by at least one mathematician with relevant domain expertise, who evaluated all components: the problem statement, solution, verification code, difficulty ratings, and classification tags (see Section ˜ 2.3). Additionally, the problems were securely produced and handled to prevent data contamination, and reviewed for originality (see Section ˜ 2.4). Finally, the submissions included structured difficulty ratings that assessed three key dimensions: the required level of background knowledge, the estimated time needed to identify key insights, and the time required to work through technical details (see Section ˜ 2.5).

2.2 Automated verification

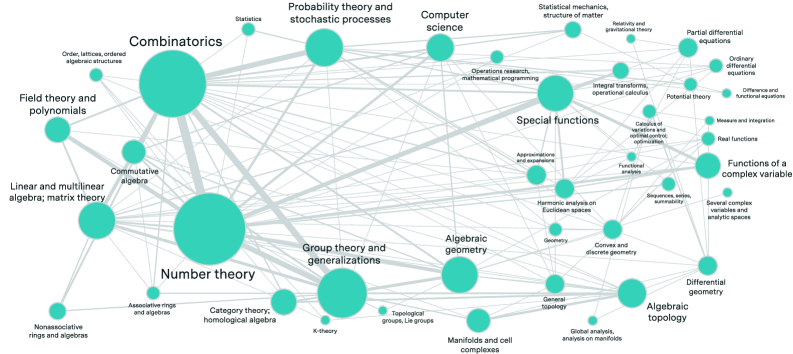

FrontierMath focuses exclusively on problems with automatically verifiable solutions—primarily numerical answers or mathematical objects that can be expressed as SymPy objects (including symbolic expressions, matrices, sets, and other mathematical structures). For each problem, the evaluated model submits code that computes and saves its answer as a Python object. A script then automatically verifies this answer. If the problem has a unique integer solution, it checks for an exact match. If the problem has a unique symbolic real answer, it uses SymPy evaluation to check that the difference between the submitted answer and the actual answer simplifies to 0. In all other cases, a custom verification script is necessary to check validity of a submitted answer (see Figure 3).

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: LLM Code Submission and Verification

### Overview

The diagram illustrates the process of an LLM (Language Learning Model) submitting code, computing and saving an answer, and a verification script loading and marking the result as correct or incorrect. It shows two different code submission scenarios and how they are evaluated.

### Components/Axes

* **Title:** LLM submits code that computes and saves the answer.

* **Code Submission Blocks:** Two instances of "submitted\_code.py" are shown, each containing Python code.

* **Verification Block:** One instance of "verification\_script.py" containing Python code for verifying the answer.

* **Flow Arrows:** Solid and dashed arrows indicating the flow of data/execution between the code submission blocks and the verification script block.

* **Verification Results:** A green checkmark (☑) indicating a correct result and a red cross (X) indicating an incorrect result.

### Detailed Analysis

**1. First Code Submission Scenario:**

* **Code Block Title:** submitted\_code.py

* **Code:**

```python

import pickle

# This is the final answer

final_answer = (8, 3)

# Pickle the final result to file

with open("final_answer.p", "wb") as f:

pickle.dump(final_answer, f)

```

This code defines a tuple `final_answer` as (8, 3), and then serializes this tuple to a file named "final\_answer.p" using the `pickle` library in write-binary ("wb") mode.

**2. Second Code Submission Scenario:**

* **Code Block Title:** submitted\_code.py

* **Code:**

```python

import pickle

# This is the final answer

final_answer = (2, 2)

# Pickle the final result to file

with open("final_answer.p", "wb") as f:

pickle.dump(final_answer, f)

```

This code defines a tuple `final_answer` as (2, 2), and then serializes this tuple to a file named "final\_answer.p" using the `pickle` library in write-binary ("wb") mode.

**3. Verification Script:**

* **Code Block Title:** verification\_script.py

* **Code:**

```python

import pickle

def verify(answer):

return answer[0]**2 - 7*answer[1]**2 == 1

answer = pickle.load(open("final_answer.p", "rb"))

verify(answer)

```

This script first imports the `pickle` library. It defines a function `verify(answer)` which takes a tuple as input and returns `True` if `answer[0]**2 - 7*answer[1]**2` equals 1, and `False` otherwise. The script then loads the pickled `final_answer` from the "final\_answer.p" file in read-binary ("rb") mode and calls the `verify` function with the loaded answer.

**4. Flow of Execution and Verification Results:**

* The output of the first `submitted_code.py` (final\_answer = (8, 3)) is passed to the verification script via a solid arrow. The verification script evaluates 8\*\*2 - 7\*3\*\*2 = 64 - 63 = 1, which equals 1. Therefore, the result is marked as correct (green checkmark).

* The output of the second `submitted_code.py` (final\_answer = (2, 2)) is passed to the verification script via a dashed arrow. The verification script evaluates 2\*\*2 - 7\*2\*\*2 = 4 - 28 = -24, which does not equal 1. Therefore, the result is marked as incorrect (red cross).

### Key Observations

* Two distinct code submission scenarios produce different answers.

* The verification script evaluates the submitted answers based on a specific mathematical formula.

* The first submission yields a correct result, while the second is incorrect.

### Interpretation

The diagram illustrates a simple testing framework where an LLM generates code that produces an answer, and a verification script evaluates the correctness of the answer. The example shows that the LLM can sometimes produce correct answers (as demonstrated by the first scenario), but it's also capable of producing incorrect answers (as demonstrated by the second scenario). The use of pickling allows for easy transfer of the computed result between the submitted code and the verification script. The solid and dashed lines serve to differentiate the flows of the different submission scenarios.

</details>

Figure 3: Example verification script for an instance of Pell’s equation: Find a tuple of integers $(x,y)$ such that $x^{2}-7y^{2}=1$ . The left boxes show the code that produces and saves answers, the right box shows the verification script that evaluates them. A custom verification script is necessary here since the problem has infinitely many solutions.

This design choice enables rapid, objective evaluation of AI models without requiring them to output solutions in formal proof languages like Lean or Coq. The automated verification framework substantially reduces the cost and complexity of evaluation, as verification can be performed programmatically without human intervention. Furthermore, it eliminates potential human bias and inconsistency in the evaluation process, as the verification criteria are explicitly defined and uniformly applied.

While this approach enables efficient and scalable evaluation, it does impose certain limitations. Most notably, we cannot include problems that require mathematical proofs or formal reasoning steps, as these would demand human evaluation to assess correctness and clarity. In theory, we could have accepted formalized proofs in Lean or other formal languages as a problem solution format. However, this presents two challenges. Firstly, current AI models are often not trained to write formalized proofs in specialized languages. We wanted to ensure that the problems were measuring genuine reasoning skills, rather than skill at writing formalized proofs. Secondly, Lean’s mathematical library, mathlib, doesn’t fully cover the undergraduate math curriculum [mathlib], let alone the scope of research math, which limits the fields such a benchmark could measure. However, even without formal proofs, these problems span a substantial breadth of modern mathematics and include problems that eminent mathematicians have characterized as exceptionally difficult (see Section ˜ 6).

2.3 Validation and quality assurance

To maintain high quality standards and minimize errors in the benchmark, we established a rigorous multi-stage review process. Each submitted problem underwent blind peer review by at least one expert mathematician from our team, who evaluated the following criteria:

- Verifying the correctness of the problem statement and the provided solution.

- Checking for ambiguities or potential misunderstandings in the problem wording.

- Assessing the problem for guessproofness, ensuring that solutions cannot be easily obtained without proper mathematical reasoning.

- Confirming the appropriateness of the assigned difficulty ratings and metadata.

Verifying correctness is the most important of these tasks. For problems with unique solutions—the majority of our benchmark—verifying correctness requires checking the mathematical argumentation, computations, and, if applicable, programming scripts the writer provided to justify their answer. For problems with non-unique solutions, e.g. those which ask for a solution to some system of Diophantine equations or for a Hamiltonian path in a given graph, the reviewer only checks that the provided verification script matches the problem requirements and test it on the answer.

All problems in the benchmark have undergone at least one blind peer review. Through this review process, we identify problems with incorrect answers as well as those that fail to meet our guessproofness criterion. Such problems are only included in the benchmark after authors successfully address these issues through revision.

During first reviews, our reviewers commonly identify several types of issues with submitted problems. These include answers that aren’t easily verifiable, problems where guessing is easier than proving, and cases where simple brute-force methods circumvent the intended difficulty. Such issues occur predominantly with authors who are new to submitting questions to the benchmark and have not yet fully internalized the guidelines. Beyond these concerns, straightforward errors in question statements or computational mistakes in solutions leading to incorrect answers are also not uncommon.

We commissioned blind reviews from second reviewers on 30 randomly chosen questions so far to get some idea about the noise threshold we might expect due to errors, and we also randomly selected 5 questions to be removed from the dataset and serve as a public sample of problems (see Appendix ˜ A). In total, 35 of the accepted problems have received scrutiny beyond the first round of peer review.

Two out of 35 questions had an incorrect answer provided by the author, undetected in the first review. Assuming that a question being incorrect is a Bernoulli trial with probability $p$ and that the second reviewers catch all errors, using a Jeffreys prior on $p$ yields a posterior error rate of $\frac{2+0.5}{35+1}≈ 6.9\%$ for this benchmark. On one hand, the Jeffreys prior is conservative since our prior belief about $p$ is likely lower due to the careful construction of the benchmark. On the other hand, we must account for potential undetected errors that even the second review might have missed. Therefore, estimating a critical error rate of approximately $10\%$ is reasonable. This estimate aligns with error rates typical in machine learning benchmarks; for instance, [northcutt2021pervasivelabelerrorstest] estimate a $>6\%$ label error rate in the ImageNet validation set, while [gema2024we] find that over $9\%$ of questions in the MMLU benchmark contain errors based on expert review of 3,000 randomly sampled questions.

The second reviews also identified less critical errors. Six out of 35 questions had missing hypotheses in their statements which technically made them not fully rigorous. While domain experts could reasonably impute this missing context, it might still pose difficulties for models attempting to solve these problems. For two of the 35 questions, reviewers proposed strategies for guessing the solution with substantially less effort or computation than was necessary for a rigorous mathematical justification of the answer, violating the guessproofness criterion. In all cases, they proposed adjustments to the problem which corrected the error while preserving the original mathematical intent of the writer.

Although the error rate remains low, the mistakes we have spotted highlight the issues of a passive review system, in which the reviewer sees the full solution document and simply has to state their approval in order for a problem to be accepted into the benchmark. Going forward, we are adopting a more active review process, and will henceforth require concrete confirmation of a problem’s soundness before it is accepted. For problems which seek a solution with some easily verified characteristic, such as a tuple of integers satisfying a Diophantine equation, it is enough to test that criterion directly. If a problem seeks a symbolic real number or some efficiently computed value, then it would be strong evidence if a heuristically determined guess approximates the given solution. For more abstract problems, it may be necessary to have a reviewer solve the problem themselves, given only the key steps and ideas of the solution rather than the full write-up.

Additionally, we observed inconsistent difficulty ratings between first and second reviewers; due to the subjective nature of this task, ratings rarely matched and often showed substantial differences. See Section 2.5 for discussion on our ongoing effort to normalize these ratings. In several cases, second reviewers found no fundamental problems with the questions but suggested ways to increase their difficulty, indicating potential room to improve the difficulty of the remaining questions if reviewers were to spend more time on refining them.

2.4 Originality and data contamination prevention

Contributors were explicitly instructed to develop novel mathematical problems. While they could build upon existing mathematical ideas, they were required to do so in non-obvious ways—either through clever adaptations that substantially transformed the original concepts, or through novel and innovative combinations of multiple ideas. This approach ensured that solving the problems required genuine mathematical insight rather than pattern matching against known problems.

To minimize the risk of problems and solutions being disseminated online, we encouraged all submissions to be conducted through secure, encrypted channels. We employed encrypted communication platforms to coordinate with contributors and requested that any written materials stored online be encrypted—for instance, by placing documents in password-protected archives shared only via secure methods. While we endeavored to adhere strictly to these protocols, we acknowledge that, in some cases, standard email clients were used when communicating about a subset of the problems outside our immediate team.

Our primary method for validating originality relied on expert review by our core team of mathematicians. Their extensive familiarity with competition and research-level problems enabled them to identify potential similarities with existing problems that automated systems might miss. The team conducted thorough manual checks against popular mathematics websites, online repositories, and academic publications. This expert review process was supplemented by verification using plagiarism detection software.

To provide further confidence in our problems’ originality, we ran them through the plagiarism detection tools Quetext and Copyscape. Across the entire dataset, the verification process revealed no significant matches to existing content, with minimal flags attributed only to standard mathematical terminology or software oversensitivity. We limited our plagiarism checking to problem statements only, excluding solutions to minimize online exposure. We specifically chose Quetext and Copyscape over more widely-used tools like Turnitin because they posed the lowest risk of our problems being stored in databases that might later be used to train AI models.

2.5 Problem difficulty

Estimates of mathematical problem difficulty are useful for FrontierMath’s goal of evaluating AI mathematical capabilities, as such estimates would provide more fine-grained information about the performance of AI models. To assess problem difficulty, each problem author provided ratings along three key dimensions:

1. Background: This rating ranges from 1 to 5 and indicates the level of mathematical background required to approach the problem. A rating of 1 corresponds to high school level, 2 to early undergraduate level, 3 to late undergraduate level, 4 to graduate level, and 5 to research level.

1. Creativity: Estimated as the number of hours an expert would need to identify the key ideas for solving the problem. This measure has no upper limit.

1. Execution: Similarly estimated as the number of hours required to compute the final answer once the key ideas are found, including time writing a script if applicable. This measure also has no upper limit.

To verify and refine the initial difficulty assessments, each difficulty assessment underwent a peer-review process. Reviewers assessed the accuracy of the initial difficulty ratings. Any discrepancies between the authors’ ratings and the reviewers’ assessments were discussed, and adjustments were made as needed to ensure that the difficulty ratings accurately reflected the problem’s demands in terms of background, creativity, and execution.

Accurately assessing the difficulty of mathematics problems presents significant challenges [Chen2024, Chen2024Mistake]. Problems that seem impossible may become trivial after exposure to certain techniques, and multiple solution paths of varying complexity often exist. Moreover, while we designed our problems to require substantial mathematical work rather than allow solutions through guessing or pattern matching, the possibility of models finding unexpected shortcuts could undermine such difficulty estimates.

Given these challenges, we view our difficulty ratings as providing rough guidance. More rigorous validation, such as through systematic data collection on human solution times, would be needed to make stronger claims about these difficulty assessments. In unpublished preliminary work, we found that problems rated as more difficult correlate with lower solution rates by GPT-4o, providing some support for our difficulty assessment system. However, more systematic validation would be needed to make strong claims about the reliability of these ratings.

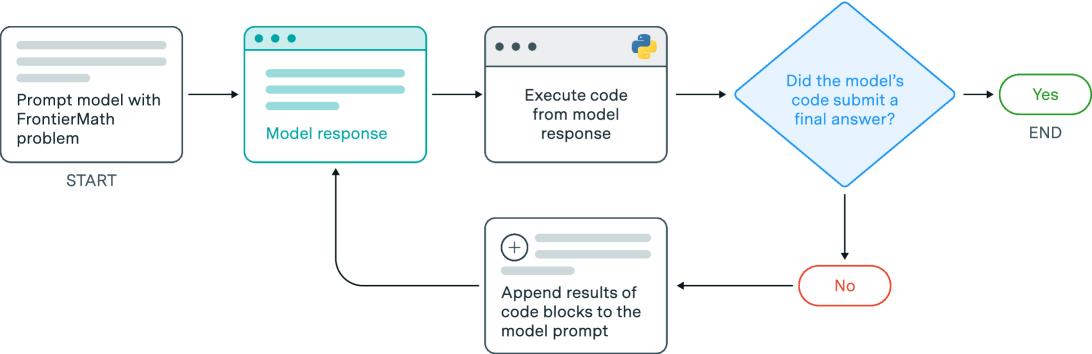

3 Dataset composition

The FrontierMath benchmark covers a broad spectrum of contemporary mathematics, spanning both foundational areas and specialized research domains. This comprehensive coverage is crucial for effectively evaluating AI systems’ mathematical capabilities across the landscape of modern mathematics. Working with over 60 mathematicians across different specializations, we captured most top-level MSC 2020 classification codes, reflecting the breadth of mathematics from foundational fields to emerging research areas.

| MSC Classification | Percentage | MSC Classification | Percentage |

| --- | --- | --- | --- |

| 11 Number theory | 17.8% | 57 Manifolds and cell complexes | 2.1% |

| 05 Combinatorics | 15.8% | 13 Commutative algebra | 2.1% |

| 20 Group theory and generalizations | 8.9% | 54 General topology | 1.4% |

| 60 Probability theory and stochastic processes | 5.1% | 35 Partial differential equations | 1.4% |

| 15 Linear and multilinear algebra; matrix theory | 4.8% | 53 Differential geometry | 1.4% |

| 14 Algebraic geometry | 4.8% | 42 Harmonic analysis on Euclidean spaces | 1.4% |

| 33 Special functions | 4.8% | 41 Approximations and expansions | 1.4% |

| 55 Algebraic topology | 3.1% | 52 Convex and discrete geometry | 1.4% |

| 12 Field theory and polynomials | 2.4% | 82 Statistical mechanics, structure of matter | 1.0% |

| 30 Functions of a complex variable | 2.4% | 44 Integral transforms, operational calculus | 1.0% |

| 68 Computer science | 2.4% | 17 Nonassociative rings and algebras | 1.0% |

| 18 Category theory; homological algebra | 2.4% | Other (< 3 problems each) | 9.9% |

Table 1: Percentage distribution of Mathematics Subject Classification (MSC) 2020 codes in the FrontierMath dataset, representing the proportion of each classification relative to the total MSC assignments.

Number theory and combinatorics are most prominently represented, collectively accounting for approximately 34% of all MSC2020 tags. This prominence reflects both our contributing mathematicians’ expertise and these domains’ natural amenability to problems with numerical solutions that can be automatically verified. The next most represented fields are algebraic geometry and group theory (together about 14% of MSC tags), followed by algebraic topology (approximately 3%), linear algebra (5%), and special functions (5%). Problems involving probability theory and stochastic processes constitute about 5% of the MSC tags, with additional significant representation in complex analysis, category theory, and partial differential equations, each comprising between 1-3% of the MSC tags.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Network Diagram: Mathematical Fields and Their Relationships

### Overview

The image is a network diagram illustrating the relationships between various fields of mathematics. The nodes represent mathematical areas, and the edges (lines) connecting them indicate connections or dependencies between these fields. The size of each node appears to correspond to the relative importance or centrality of that field within the network.

### Components/Axes

* **Nodes:** Represented by teal circles, each labeled with a mathematical field or subfield. The size of the circle varies, suggesting a weighting or importance factor.

* **Edges:** Represented by gray lines, connecting the nodes. The thickness of the lines may indicate the strength or frequency of the relationship between the connected fields.

* **Labels:** Text labels are associated with each node, identifying the mathematical field.

### Detailed Analysis or ### Content Details

**Node Labels and Associated Subfields:**

* **Combinatorics:** Located in the top-left quadrant. Subfield: "Order, lattices, ordered algebraic structures"

* **Statistics:** Located in the top-center.

* **Probability theory and stochastic processes:** Located in the top-center.

* **Computer science:** Located in the top-center. Subfields: "Operations research, mathematical programming"

* **Special functions:** Located in the top-right quadrant. Subfields: "Integral transforms, operational calculus", "Approximations and expansions", "Harmonic analysis on Euclidean spaces", "Calculus of variations and optimal control, optimization", "Functional analysis", "Sequences, series, summability"

* **Statistical mechanics, structure of matter:** Located in the top-right quadrant.

* **Relativity and gravitational theory:** Located in the top-right quadrant.

* **Partial differential equations:** Located in the top-right quadrant.

* **Ordinary differential equations:** Located in the right quadrant.

* **Difference and functional equations:** Located in the right quadrant.

* **Functions of a complex variable:** Located in the right quadrant. Subfields: "Measure and integration", "Real functions", "Several complex variables and analytic spaces"

* **Field theory and polynomials:** Located in the left quadrant.

* **Linear and multilinear algebra; matrix theory:** Located in the left quadrant.

* **Commutative algebra:** Located in the left quadrant.

* **Number theory:** Located in the bottom-left quadrant. Subfields: "Associative rings and algebras", "Category theory, homological algebra", "Nonassociative rings and algebras"

* **Group theory and generalizations:** Located in the bottom-center. Subfields: "Topological groups, Lie groups", "K-theory"

* **Algebraic geometry:** Located in the bottom-center. Subfields: "Geometry", "Convex and discrete geometry", "General topology", "Global analysis, analysis on manifolds"

* **Manifolds and cell complexes:** Located in the bottom-center.

* **Algebraic topology:** Located in the bottom-right quadrant.

* **Differential geometry:** Located in the right quadrant.

**Node Sizes:**

* The largest nodes appear to be "Number theory", "Special functions", and "Combinatorics".

* "Group theory and generalizations" is also a relatively large node.

* Other nodes are significantly smaller, suggesting a lesser degree of connectivity or importance within the network.

**Edge Connections:**

* "Combinatorics" has connections to "Statistics", "Probability theory and stochastic processes", "Computer science", "Field theory and polynomials", "Linear and multilinear algebra; matrix theory", "Commutative algebra", and "Number theory".

* "Number theory" has connections to "Combinatorics", "Group theory and generalizations", "Algebraic geometry", "Linear and multilinear algebra; matrix theory", and "Commutative algebra".

* "Special functions" has connections to "Computer science", "Statistical mechanics, structure of matter", "Relativity and gravitational theory", "Partial differential equations", "Ordinary differential equations", "Difference and functional equations", "Functions of a complex variable", "Algebraic geometry", and "Algebraic topology".

### Key Observations

* The diagram highlights the interconnectedness of various mathematical fields.

* "Number theory", "Special functions", and "Combinatorics" appear to be central hubs in this network.

* The density of connections varies across the diagram, indicating stronger relationships between some fields than others.

### Interpretation

This network diagram provides a visual representation of the relationships between different areas of mathematics. The size of the nodes and the thickness of the edges suggest the relative importance and strength of these relationships. The diagram could be used to understand the dependencies between different mathematical fields, identify areas of potential cross-disciplinary research, or visualize the structure of mathematical knowledge. The central role of "Number theory", "Special functions", and "Combinatorics" suggests that these fields have broad applications and connections to many other areas of mathematics. The diagram also reveals clusters of related fields, such as the cluster around "Special functions" which includes "Partial differential equations", "Ordinary differential equations", and "Functions of a complex variable". This suggests that these fields share common concepts and techniques.

</details>

Figure 4: Mathematical subject interconnections in FrontierMath. Node sizes indicate the frequency of each subject’s appearance in problems, while connections indicate when multiple mathematical subjects are combined within single problems, demonstrating the benchmark’s integration of many mathematical domains.

The network visualization (Figure 4) reveals how mathematical subjects combine within individual problems. Number theory and combinatorics appear together most frequently, with 13% of problems requiring both subjects, followed by combinatorics-group theory (9%) and number theory-group theory (8%). These three fields—number theory (44% of all problems), combinatorics (39%), and group theory (22%)—form the core of the benchmark, each combining with more than a dozen other mathematical domains in novel ways.

There is a wide range of techniques required to solve the problems in our dataset. In particular, there are over 200 different techniques listed as being involved in the solutions of our problems. Although generating functions, recurrence relations, and special functions emerge as common techniques, they each appear in less than 5% of problems, underscoring the benchmark’s methodological diversity. Even the most frequently co-occurring techniques appear together in at most 3 problems, highlighting how problems typically require unique combinations of mathematical approaches.

4 Evaluation

4.1 Experiment-enabled evaluation framework

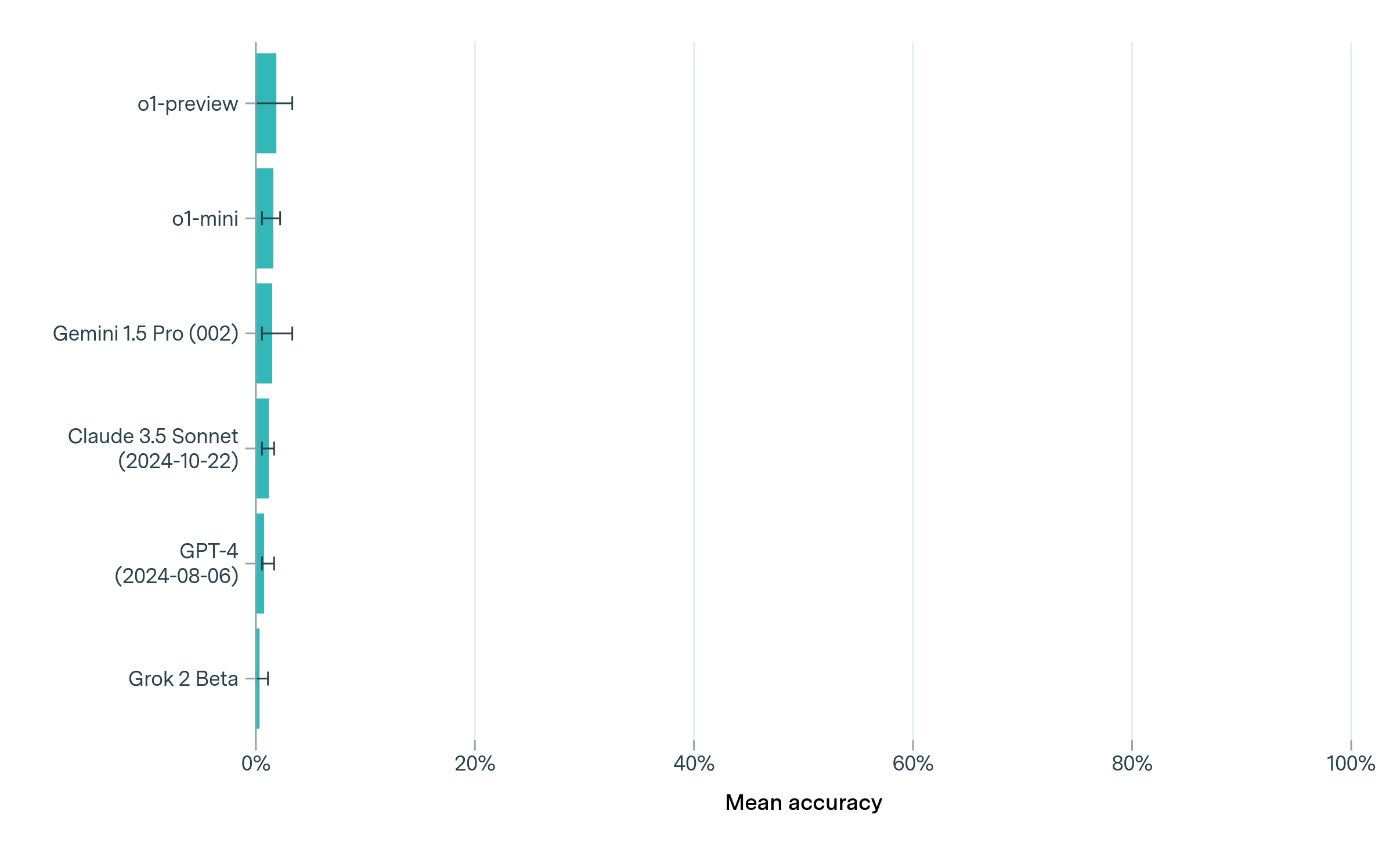

To evaluate AI models on FrontierMath problems, we developed a framework that allows models to explore and verify potential solution approaches through code execution, mirroring how mathematicians might experiment with ideas when tackling challenging problems. This framework enables models to test hypotheses, receive feedback, and refine their approach based on experimental results, as illustrated in Figure 5.

The evaluation process follows an iterative cycle where the model analyzes the mathematical problem, proposes solution strategies, implements these strategies as executable Python code, receives feedback from code execution results, and refines its approach based on the experimental outcomes. For each problem, the model interacts with a Python environment where it can write code blocks that are automatically executed, with outputs and any error messages being fed back into the conversation. This allows the model to verify intermediate results, test conjectures, and catch potential errors in its reasoning.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Flowchart: Model Solving FrontierMath Problems

### Overview

The image is a flowchart illustrating the process of a model solving FrontierMath problems. It shows the steps involved, from prompting the model to determining if the model's code submitted a final answer. The flowchart includes decision points and loops, indicating an iterative process.

### Components/Axes

The flowchart consists of the following components:

* **Start:** Labeled "START", indicates the beginning of the process.

* **Prompt model with FrontierMath problem:** A rectangular box representing the initial step of providing the model with a problem.

* **Model response:** A rectangular box representing the model's response to the prompt.

* **Execute code from model response:** A rectangular box representing the execution of code generated by the model. A Python logo is present in the top right corner of this box.

* **Did the model's code submit a final answer?:** A diamond shape representing a decision point.

* **Yes:** A rounded rectangle indicating the successful completion of the process, labeled "END".

* **No:** A rounded rectangle indicating that the model's code did not submit a final answer.

* **Append results of code blocks to the model prompt:** A rectangular box representing the step of appending the results of code blocks to the model prompt.

* **Arrows:** Arrows indicate the flow of the process.

### Detailed Analysis or ### Content Details

1. **START:** The process begins with "Prompt model with FrontierMath problem".

2. The model generates a "Model response".

3. The code from the model's response is executed ("Execute code from model response").

4. A decision is made: "Did the model's code submit a final answer?".

* If "Yes", the process ends ("END").

* If "No", the results of the code blocks are appended to the model prompt ("Append results of code blocks to the model prompt"), and the process loops back to the "Model response" step.

### Key Observations

* The flowchart illustrates an iterative process where the model refines its response based on the results of executed code.

* The Python logo suggests that the code being executed is likely Python code.

* The "No" path creates a feedback loop, allowing the model to improve its answer.

### Interpretation

The flowchart describes a system where a model attempts to solve FrontierMath problems by generating and executing code. If the initial code execution does not produce a final answer, the results are fed back into the model to refine its approach. This iterative process continues until the model successfully submits a final answer. The use of Python suggests a specific implementation environment for the code execution. The diagram highlights the importance of feedback loops in complex problem-solving scenarios.

</details>

Figure 5: Experimental framework for mathematical problem solving. The model can either provide a final answer directly or experiment with code execution before reaching a solution.

When submitting a final answer, models must follow a standardized format by including a specific marker comment (# This is the final answer), saving the result using Python’s pickle module, and ensuring the submission code is self-contained and independent of previous computations. The interaction continues until either the model submits a correctly formatted final answer or reaches the token limit, which we set to 10,000 tokens in our experiments. If a model reaches this token limit without having submitted a final answer, it receives a final prompt requesting an immediate final answer submission. If the model still fails to provide a properly formatted final answer after this prompt, the attempt is marked as incorrect.

4.2 Results

4.2.1 Accuracy on FrontierMath

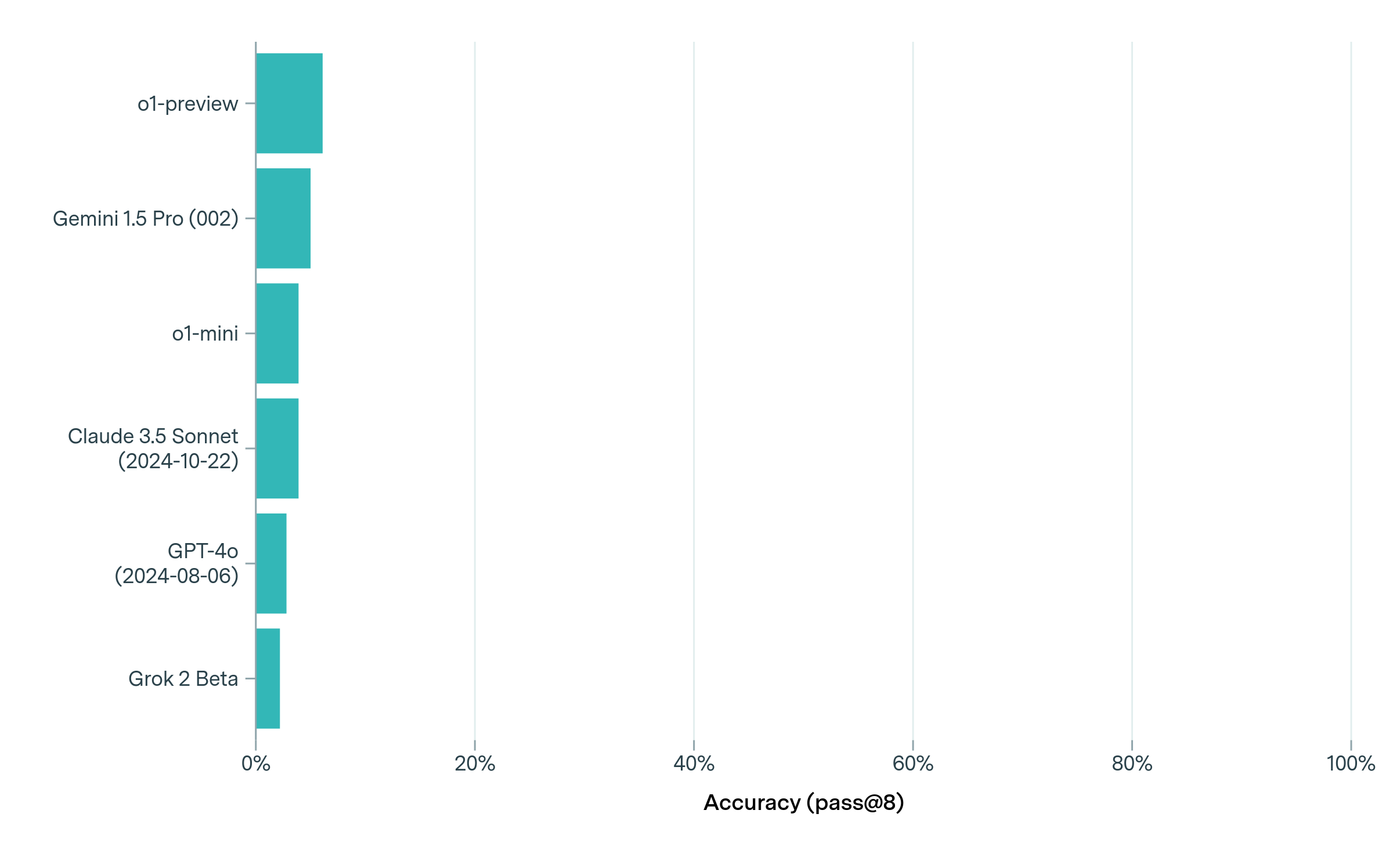

We evaluated six leading language models on our existing subset of FrontierMath problems: o1-preview ([o1-preview]), o1-mini ([o1-mini]), and GPT-4o (2024-08-06 version) ([GPT-4o]), Claude 3.5 Sonnet (2024-10-22 version) ([sonnet3-5]), Grok 2 Beta ([grokbeta]), and Google DeepMind’s Gemini 1.5 Pro 002 ([gemini15pro002]). All models had a very low performance on FrontierMath problems, with no model achieving even a 2% success rate on the full benchmark (see Figure 6). This stands in stark contrast to other mathematics evaluations such as GSM8K ([cobbe2021training]), MATH ([hendrycks2021measuring]), AIME 2024 ([o1-learningtoreason]), or Omni-MATH ([gao2024omni]), which are closer to saturation (see Figure 2).

<details>

<summary>visuals/model_comparison_mean_accuracy.png Details</summary>

### Visual Description

## Bar Chart: Mean Accuracy of Language Models

### Overview

The image is a horizontal bar chart comparing the mean accuracy of several language models. The models are listed on the vertical axis, and the mean accuracy is displayed on the horizontal axis, ranging from 0% to 100%. Each bar represents the mean accuracy of a specific model, with error bars indicating the range of accuracy.

### Components/Axes

* **Vertical Axis (Language Models):**

* o1-preview

* o1-mini

* Gemini 1.5 Pro (002)

* Claude 3.5 Sonnet (2024-10-22)

* GPT-4 (2024-08-06)

* Grok 2 Beta

* **Horizontal Axis (Mean Accuracy):**

* Scale: 0% to 100%

* Markers: 0%, 20%, 40%, 60%, 80%, 100%

* **Bars:** Teal bars representing the mean accuracy for each language model.

* **Error Bars:** Black horizontal lines extending from each bar, indicating the range of accuracy.

### Detailed Analysis

Here's a breakdown of the mean accuracy for each language model, based on the bar chart:

* **o1-preview:** Mean accuracy is approximately 10%, with an error range of approximately +/- 1%.

* **o1-mini:** Mean accuracy is approximately 8%, with an error range of approximately +/- 1%.

* **Gemini 1.5 Pro (002):** Mean accuracy is approximately 7%, with an error range of approximately +/- 1%.

* **Claude 3.5 Sonnet (2024-10-22):** Mean accuracy is approximately 5%, with an error range of approximately +/- 1%.

* **GPT-4 (2024-08-06):** Mean accuracy is approximately 5%, with an error range of approximately +/- 1%.

* **Grok 2 Beta:** Mean accuracy is approximately 3%, with an error range of approximately +/- 1%.

### Key Observations

* The "o1-preview" model has the highest mean accuracy among the listed models, at approximately 10%.

* "Grok 2 Beta" has the lowest mean accuracy, at approximately 3%.

* The error ranges for all models appear to be relatively small, suggesting consistent performance.

* The models "Claude 3.5 Sonnet" and "GPT-4" have similar mean accuracy values.

### Interpretation

The bar chart provides a comparison of the mean accuracy of different language models. The data suggests that the "o1-preview" model performs better than the other models in terms of mean accuracy. The relatively small error ranges indicate that the performance of each model is consistent. The chart allows for a quick visual comparison of the models' performance, highlighting the strengths and weaknesses of each. The dates associated with some models (Claude 3.5 Sonnet and GPT-4) might indicate the version or release date of those models, which could be relevant for understanding their performance in relation to newer or older models.

</details>

Figure 6: Performance of leading language models on FrontierMath based on mean accuracy across 8 runs. All models show consistently poor performance, with even the best models solving less than 2% of problems in each run on average. When re-evaluating problems that were solved at least once by any model, o1-preview demonstrated the strongest performance across repeated trials (see Section B.2).

Based on a single evaluation of the full benchmark, we found that models solved very few problems (less than 2% success rate). Given this low success rate and the fact that we ran only one evaluation, the precise ordering of model performance should be interpreted with significant caution, as individual successes can have an outsized impact on rankings. To better understand model behavior on solved problems, we identified all problems that any model solved at least once—a total of four problems—and conducted repeated trials with five runs per model per problem (see Appendix B.2). We observed high variability across runs: only in one case did a model solve a question on all five runs (o1-preview for question 2). When re-evaluating these problems that were solved at least once, o1-preview demonstrated the strongest performance across repeated trials (see Section B.2).

Moreover, even when a model obtained the correct answer, this does not mean that its reasoning was correct. For instance, on one of these problems running a few simple simulations was sufficient to make accurate guesses without any deeper mathematical understanding. However, models’ low overall accuracy shows that such guessing strategies do not work on the overwhelming majority of FrontierMath problems. We also ran each model five times per problem on our five public sample problems (see Appendix A), and made the transcripts publicly available. The transcripts can be downloaded at https://epochai.org/files/sample_question_transcripts.zip

4.2.2 Number of responses and token usage

Our evaluation framework allows models to run experiments and reflect on their results multiple times before submitting a final answer. We found significant differences in how models use this opportunity. For example, o1-preview averages just 1.29 responses per problem, whereas Grok 2 Beta averages 3.81 responses. o1-preview and Gemini 1.5 Pro 002 typically submit a final answer before seeing any experimental results, despite explicit instructions that they can take time to experiment and wait until they are confident to make a final guess. In contrast, the other models show more extended interactions.

This pattern is also reflected in token usage. Gemini 1.5 Pro 002 reaches the 10,000 token limit in only 16.8% of questions, while Claude 3.5 Sonnet, GPT-4o and Grok 2 Beta exceed this limit in more than 45% of their attempts. On average, Gemini 1.5 Pro uses the fewest tokens per question (around 6,000), while other models use between 12,000 and 17,000 tokens. These averages can exceed the 10,000 token conversation limit because models that reach the limit still get to see the results of their final experiment and complete their final answer.

5 Related work

The evaluation of mathematical reasoning in AI systems has progressed through various benchmarks and competitions, each testing different aspects of mathematical capability. Here we review the major existing benchmarks and competitions, and discuss how FrontierMath advances this landscape.

Several established mathematics benchmarks focus on evaluating basic mathematical reasoning capabilities in AI systems. Datasets like GSM8K [cobbe2021training] and MATH [hendrycks2021measuring] provide structured collections of elementary to undergraduate-level problems. While these benchmarks provided useful measures of capabilities in mathematical reasoning, state-of-the-art models now achieve near-perfect performance on many of them (see Figure ˜ 2), limiting their utility for discriminating between model capabilities.

Recent work has developed more challenging benchmarks for evaluating mathematical reasoning. The Advanced Reasoning Benchmark (ARB) [sawada2023arb] combines university-level mathematics with contest problems, spanning undergraduate to early graduate-level topics. The GHOSTS dataset [frieder2024mathematical] provides a comprehensive evaluation of language models’ mathematical capabilities through 709 problems spanning graduate-level topics, with a focus on natural language interactions and human-expert evaluation. Several benchmarks have focused specifically on olympiad-level mathematics: OlympiadBench [he2024olympiadbench] compiled 8,476 problems from mathematics and physics olympiads, PutnamBench [tsoukalas2024putnambench] draws from the Putnam Competition, and OmniMATH [gao2024omni] provides 4,428 problems sourced from international competitions (including IMO), Art of Problem Solving forums, and verified competition archives, with detailed domain categorization and difficulty scaling. While these benchmarks present more challenging problems than earlier datasets, their reliance on historical competition materials creates significant data contamination risks, as models may have been trained on the source materials or highly similar problems.

The Putnam-AXIOM benchmark [gulati2024putnamaxiom] partially circumvents these contamination risks by generating problem variants. Specifically, the authors programmatically generate new problems of equivalent difficulty by renaming variables and changing constants in existing Putnam problems. This results in significant drops in performance - for example, o1-preview’s accuracy drops from 50% to 34% using generated instead of unmodified problems. However, this technique only provides adds a limited degree of novelty to generated problems, and does not aid much in designing problems of much higher difficulty.

The AI Mathematical Olympiad (AIMO) [xtx2024aimo], an initiative led by XTX Markets, aims to develop AI systems capable of achieving International Mathematical Olympiad gold medal performance. While AIMO creates novel national olympiad-level problems specifically designed to test advanced mathematical reasoning while avoiding data contamination risks, its requirement that runtime models be open-weight means that frontier AI models cannot be officially evaluated on the benchmark.

A parallel line of work explores formal mathematics benchmarks. The MiniF2F benchmark [zheng2021minif2f] provides 488 formally verified problems from mathematical olympiads and undergraduate courses, manually formalized across multiple theorem proving systems including Metamath, Lean, and Isabelle. While MiniF2F enables rigorous evaluation of neural theorem provers through machine-verified proofs, it faces similar data contamination concerns and focuses on competition-style problems rather than research mathematics.

FrontierMath addresses these fundamental limitations in three key ways. First, it eliminates data contamination concerns by featuring exclusively new, previously unpublished problems, ensuring models cannot leverage training on highly similar materials. Second, it raises the difficulty level beyond existing benchmarks by incorporating research-level mathematics across most branches of modern mathematics, moving beyond beyond both elementary problems and competition-style mathematics. Third, its automated verification system enables efficient, reproducible evaluation of any model—including closed source frontier AI systems—while maintaining high difficulty standards. This positions FrontierMath as a unique tool for evaluating progress toward research-level mathematical capabilities.

6 Interviews with mathematicians

We conducted interviews with four prominent mathematicians to gather expert perspectives on FrontierMath’s difficulty, significance, and prospects: Terence Tao (2006 Fields Medalist), Timothy Gowers (1998 Fields Medalist), Richard Borcherds (1998 Fields Medalist), and Evan Chen, a renowned mathematics educator and IMO coach. Note that Evan Chen is also a co-author of this paper, and Terence Tao contributed several problems to the benchmark.

Assessment of FrontierMath difficulty

All four mathematicians characterized the research problems in the FrontierMath benchmark as exceptionally challenging, noting that the most difficult questions require deep domain expertise and significant time investment. For example, referring to a selection of several questions from the dataset, Tao remarked, "These are extremely challenging. I think that in the near term basically the only way to solve them, short of having a real domain expert in the area, is by a combination of a semi-expert like a graduate student in a related field, maybe paired with some combination of a modern AI and lots of other algebra packages…” However, some mathematicians pointed out that the numerical format of the questions feels somewhat contrived. Borcherds, in particular, mentioned that the benchmark problems “aren’t quite the same as coming up with original proofs.”

Expected timeline for FrontierMath progress

The mathematicians expressed significant uncertainty about the timeline for AI progress on FrontierMath-level problems, while generally agreeing these problems were well beyond current AI capabilities. Tao anticipated that the benchmark would "resist AIs for several years at least," noting that the problems require substantial domain expertise and that we currently lack sufficient relevant training data.

The interviewees anticipated human-AI collaboration would precede full automation. Chen and Tao both suggested that human experts working with AI systems could potentially tackle FrontierMath problems within around three years, much sooner than fully autonomous AI solutions. As Tao explained, on advanced graduate level questions, guiding current AI systems to correct solutions takes "about five times as much effort" as solving them directly—but he expected this ratio to improve below 1 on certain problems within a few years with sufficient tooling and capability improvements.

Key barriers to FrontierMath progress

A major challenge the mathematicians identified was the extremely limited training data available for FrontierMath’s specialized research problems. As Tao noted, for many FrontierMath problems, the relevant training data is "almost nonexistent…you’re talking like a dozen papers with relevant things." Gowers emphasized that solving these problems requires familiarity with "the tricks of the trade of some particular branch of maths"—domain knowledge that is difficult to acquire without substantial training data. The mathematicians discussed potential approaches to address data limitations, including synthetic data generation and formal verification methods to validate solutions.

Potential uses of FrontierMath-capable systems

The mathematicians interviewed identified several potential contributions from AI systems capable of solving FrontierMath-level problems. As Gowers noted, such systems could serve as powerful assistants for "slightly boring bits of doing research where you, for example, make some conjecture that would be useful, but you’re not quite sure if it’s true… it could be a very, very nice time saving device." Tao similarly emphasized their value for routine but technically demanding calculations, suggesting that a system performing at FrontierMath’s level could help mathematicians tackle problems that currently "take weeks to figure out." However, several interviewees cautioned that the practical impact would depend heavily on computational costs. As Tao observed regarding current systems like AlphaProof, "if your amazing tool takes three days of compute off of all of Google to solve each problem…then that’s less of a useful tool." The mathematicians generally agreed that AI systems at FrontierMath’s level would be most valuable as supplements to human mathematicians rather than replacements, helping to verify calculations, test conjectures, and handle routine technical work while leaving broader research direction and insight generation to humans.

7 Discussion

In this paper, we introduced FrontierMath, a new benchmark composed of a challenging set of mathematical problems spanning most branches of modern mathematics. FrontierMath focuses on highly challenging math problems, with an emphasis on research-level problems, whose solutions require multiple hours of concentrated effort by expert mathematicians. We found that leading AI models cannot solve over 2% of the problems in the benchmark. We have further qualitatively validated the difficulty of the problems by sharing a sample of ten problems to four expert mathematicians, including three Fields medal winners, who uniformly assessed the problems as exceptionally difficult.

FrontierMath addresses two key limitations of previous math benchmarks such as the MATH dataset [hendrycks2021measuring] and GSM8K [cobbe2021training]. First, it focuses on exceptionally challenging problems that require deep reasoning and creativity, including research-level mathematics, preventing saturation with current leading AI systems. Second, by using exclusively novel, unreleased problems, it helps prevent data contamination, reducing the risk that models succeed through pattern matching against training data.

Despite these strengths, FrontierMath has several important limitations. First, the practical focus on automatically verifiable and numerical answers excludes proof-writing and open-ended exploration, which are significant parts of modern math research. Second, while our problems are substantially more challenging than existing benchmarks, requiring hours of focused work from expert mathematicians, they still fall short of typical mathematical research, which often spans weeks, months or even years of sustained investigation. By limiting problems to hours instead of months, we make the benchmark practical but miss testing crucial long-term research skills.

Another limitation is that current AI models cannot solve even a small fraction of the problems in our benchmark. While this demonstrates the high difficulty level of our problems, it temporarily limits FrontierMath’s usefulness in evaluating relative performance of models. However, we expect this limitation to resolve as AI systems improve.

Evaluating AI systems’ mathematical capabilities will provide valuable insights into their potential to assist with complex mathematical research. Beyond mathematics, the precise chains of reasoning and creative synthesis required in these problems serve as an important proxy for broader scientific thinking. Through its high difficulty standards and rigorous verification framework, FrontierMath offers a systematic way to measure progress in advanced reasoning capabilities as AI systems evolve.

8 Future work

We will further develop the FrontierMath benchmark by introducing new, rigorously vetted problems. These new problems will follow our established data collection and review process, with enhanced quality assurance procedures to reduce answer errors and improve the accuracy of difficulty ratings. We will also release another set of representative sample problems to showcase the benchmark’s depth and variety.

We will further experiment with different evaluation methodologies to better assess the mathematical proficiency of today’s leading models. For example, we will test the effects of increasing the token limit, allowing models to reason for longer and run more experiments per problem. We also plan to conduct multiple runs for each model-problem pair, enabling us to report statistics and confidence intervals across attempts. These evaluations will help us better understand how different evaluation parameters affect model performance on advanced mathematical reasoning tasks.

Through FrontierMath, we are setting a new standard of difficulty for evaluating complex reasoning capabilities in leading AI systems. At Epoch AI, we are committed to maintaining FrontierMath as a public resource and regularly conducting comprehensive evaluations of leading models. We will continue working with mathematicians to produce new problems, perform quality assurance, validate difficulty scores, and provide transparent and authoritative assessments of AI systems’ mathematical reasoning abilities.

Appendix A Sample problems and solutions

This section presents five problems from the FrontierMath benchmark, with one chosen at random from each quintile of difficulty. The problems are arranged in decreasing order of difficulty, from research-level number theory to undergraduate algebraic geometry. Background levels indicate the prerequisite knowledge required, while creativity and execution ratings reflect hours of innovative thinking and technical rigor needed respectively.

| Problem | Author | Background | Creativity | Execution | MSC | Key Techniques |

| --- | --- | --- | --- | --- | --- | --- |

| 1 (A.1) | O. Järviniemi | Research | 4 | 15 | 11 | Frobenius elts., Artin symbols |

| 2 (A.2) | A. Kite | Research | 3 | 4 | 14, 20, 11 | Monodromy, branch loci |

| 3 (A.3) | D. Chicharro | Graduate | 3 | 3 | 11 | p-adic analysis, recurrences |

| 4 (A.4) | P. Enugandla | Graduate | 2 | 3 | 20 | Coxeter groups, characters |

| 5 (A.5) | A. Gunning | HS/Undergr. | 2 | 2 | 14, 11 | Hasse-Weil Bound |

Figure 7: MSC2020 codes: 11 (Number Theory), 14 (Algebraic Geometry), 20 (Group Theory and Generalizations) We thank Piotr Śniady for pointing out a typo in the problem statement of Sample 4, and Will McLafferty, Timothy Smits, and Frederick Vu for identifying errors in the originally given solution for Sample 5 in an earlier draft of the paper.

A.1 Sample problem 1 — high difficulty

A.1.1 Problem

Definitions. For a positive integer $n$ , let $v_{p}(n)$ denote the largest integer $v$ such that $p^{v}\mid n$ .

For $p$ a prime and $a\not\equiv 0±od{p}$ , we let $\textup{ord}_{p}(a)$ denote the smallest positive integer $o$ such that $a^{o}\equiv 1±od{p}$ . For $x>0$ , we let

$$

\textup{ord}_{p,x}(a)=\prod_{\begin{subarray}{c}q\leq x\\

q\text{ prime}\end{subarray}}q^{v_{q}(\textup{ord}_{p}(a))}\prod_{{q>x\\

q\text{ prime}}}q^{v_{q}(p-1)}.

$$

Problem. Let $S_{x}$ denote the set of primes $p$ for which

$$

\textup{ord}_{p,x}(2)>\textup{ord}_{p,x}(3), \tag{2}

$$

and let $d_{x}$ denote the density

$$

d_{x}=\frac{|S_{x}|}{|\{p\leq x:p\text{ is prime}\}|}

$$

of $S_{x}$ in the primes. Let

$$

d_{\infty}=\lim_{x\to\infty}d_{x}.

$$

Compute We have lowered the precision of this and several other problems which were written before the one-minute computation rule was finalized. $\lfloor 10^{6}d_{∞}\rfloor.$

Answer:

367707

A.1.2 Solution

We will break this into multiple sections.

Background

First of all, the presence of the $x$ -parameter is a technicality; informally, we are just asking for the density of primes $p$ such that

$$

\textup{ord}_{p}(2)>\textup{ord}_{p}(3). \tag{2}

$$

The motivation of this problem comes from Artin’s primitive conjecture which asks: for which integers $a$ are there infinitely many primes $p$ such that $a$ is a primitive root modulo $p$ ? This is equivalent to requesting $\textup{ord}_{p}(a)=p-1$ , i.e. $\textup{ind}_{p}(a)=1$ .

Artin’s conjecture is open. For our purposes, the relevant fact is that the conjecture has been solved on the assumption of a generalization of the Riemann hypothesis (for zeta functions of number fields). This was done by C. Hooley in 1967.

The key idea is that $a$ is a primitive root mod $p$ if and only if there is no $n>1$ such that $a$ is an $n$ th power modulo $p$ for some $n\mid p-1$ . One can try to compute the number of such primes $p$ not exceeding $x$ with an inclusion-exclusion argument. The Chebotarev density theorem gives us asymptotics for

$$

\displaystyle|\{p\leq x:p\equiv 1\pmod{n},a\text{ is a perfect }n\text{th power modulo }p\}| \tag{1}

$$

for any fixed $n.$

Bounding the error terms is what we need the generalization of Riemann’s hypothesis for (c.f.: the standard RH bounds the error term in the prime number theorem); we don’t know how to do it without.

For concreteness, if $a=2$ , then $a$ is a primitive root modulo $p$ for roughly $37\%$ of primes. See https://mathworld.wolfram.com/ArtinsConstant.html. In general, the density is strictly positive as long as $a$ is not a perfect square nor $-1$ (in which case there are no such primes $p>3$ ). The density is not quite $37\%$ in all cases – see below for more on this.

This argument is very general: it can be adapted to look at primes $p$ such that $\textup{ord}_{p}(2)=\textup{ord}_{p}(3)=p-1$ , or $\textup{ord}_{p}(2)=(p-1)/a,\textup{ord}_{p}(3)=(p-1)/b$ for fixed $a,b∈\mathbb{Z}_{+}$ , or $\textup{ord}_{p}(2)=\textup{ord}_{p}(3)$ , or – you guessed it – $\textup{ord}_{p}(2)>\textup{ord}_{p}(3)$ . The "bounding the error terms" part is no more difficult in these cases, and no non-trivial complications arise. But we of course still can’t do it without GRH.

We replaced $\textup{ord}_{p}(a)$ with $\textup{ord}_{p,x}(2)$ for the reason of deleting the error terms, and for this reason only. As a result, when one does the inclusion-exclusion argument, there are only a finite number of terms needed, and thus we only need asymptotic formulas for (1), rather than any sharp bounds for the error term.

So the question then is about computing the main terms. To do so, one needs to be able to compute asymptotics for (1). In the case $a=2$ , it turns out that (1) is asymptotically $1/\phi(n)n$ for any square-free $n$ . In some cases, like for $a=4$ and $a=5$ , this is not the case: For $a=4$ , take $n=2$ . For $a=5$ , this fails for $n=10$ , since $p\equiv 1±od{5}$ implies that $5$ is a square modulo $p$ – this is related to the fact that $\sqrt{5}∈\mathbb{Q}(\zeta_{5})$ . But it turns out that there is always only a small, finite number of revisions one needs to make to the argument, allowing for the computation of the main terms.

There are a few papers considering generalizations of Artin’s conjecture. It is known that the density of primes $p$ for which $\textup{ord}_{p}(2)>\textup{ord}_{p}(3)$ is positive (as a special case of a more general result), this again under GRH, but the task of computing that density has not been carried out in the literature, justifying the originality of this problem. There is a fair amount of legwork and understanding needed to do this.

Solution outline

Here are the key steps for verifying the correctness of the answer:

1. We have explicit (but cumbersome) formulas for the density $d(a,b)=d(a,b,x)$ of primes $p$ such that

$$

\displaystyle\textup{ord}_{p,x}(2)=(p-1)/a,\textup{ord}_{p,x}(3)=(p-1)/b \tag{2}

$$

1. These formulas are " quasi-multiplicative ": they can be written in the form

$$

d(a,b)=f(v_{2}(a),v_{3}(a),v_{2}(b),v_{3}(b))\prod_{5\leq q\leq x}g_{q}(v_{q}(a),v_{q}(b)).

$$

1. $f$ and $g_{q}$ are explicit functions that can be evaluated in $O(1)$ time.

1. For any fixed $a$ , the sum

$$

\sum_{a<b}d(a,b,x)

$$

can be evaluated in $O(a)$ time.

- Idea: We can write $\sum_{a<b}d(a,b,x)=\sum_{b=1}^{∞}d(a,b,x)-\sum_{b=1}^{a}d(a,b,x)$ . The first sum is just the density of $p:\textup{ord}_{p,x}(2)=(p-1)/a$ , which can be evaluated similarly to point 1 above. The second sum can be evaluated with brute force.

1. We have, for any $T≥ 100$ , roughly This omits a lower-order term.

$$

\sum_{T<a<b}d(a,b,x)<114T^{-2\cdot 46/47},

$$

(think of the exponent as $-2+\epsilon$ ), and thus we have

$$

|\sum_{a<b}d(a,b,x)-\sum_{a<b,a\leq T}d(a,b,x)|<114T^{-2\cdot 46/47}.

$$

1. We have, for any $x_{∞}>x≥ 10^{8}$ ,

$$

\sum_{a<b,a\leq T}|d(a,b,x_{\infty})-d(a,b,x)|\leq\frac{1}{9x}.

$$

Then, taking $T=10^{5}$ and $x=10^{8}$ , we get an answer that has error at most $2.5· 10^{-8}$ . This then gives $7$ correct decimal places.

Step 1

In this part, we fix $a,b∈\mathbb{Z}_{+}$ , and we’ll compute the density of primes $p$ satisfying (2).

For (2) to hold, we need $2$ to be a perfect $a$ th power (and $p\equiv 1±od{a}$ ), but "no more": $2$ should not be an $aq$ th power for any prime $q$ (such that $p\equiv 1±od{aq}$ ). Similarly for $b$ . It’s convenient to frame these propositions in terms of algebraic number theory.

Let $K=\mathbb{Q}(\zeta_{\textup{lcm}(a,b)},2^{1/a},3^{1/b})$ , and for each prime $q$ let $K_{1,q}=\mathbb{Q}(\zeta_{aq},2^{1/aq})$ and $K_{2,q}=\mathbb{Q}(\zeta_{bq},3^{1/bq})$ . We want to compute the density of primes $p$ such that $p$ splits in $K$ , but doesn’t split in $K_{i,q}$ for any $i∈\{1,2\},2≤ q≤ x$ .

There is an "obvious guess" for what that density should be, by naively assuming that different values of $q$ are independent (e.g. $2$ being a fifth power shouldn’t provide evidence on whether $2$ is a seventh power), and that there are no "spurious correlations" (e.g. $2$ being a fifth power shouldn’t provide evidence on whether $3$ is a fifth power or not). This intuition is almost correct: it makes a small, finite number of mistakes, namely at the primes $q=2$ and $q=3$ . There are two reasons for this:

- $\zeta_{3}∈\mathbb{Q}(\zeta_{4},\sqrt{3})$ , since $\zeta_{3}=(1+\sqrt{-3})/2$ .

- $\sqrt{2}∈\mathbb{Q}(\zeta_{8})$ , since we have $(1+i)^{2}=2i$ , and thus $1+i=\zeta_{8}·\sqrt{2}$ .

Crucially, the only relations between $\zeta_{N},2^{1/N}$ and $3^{1/N}$ are these two. The following two well-known lemmas formalize this:

Lemma 1. Denote $K_{n}=\mathbb{Q}(\zeta_{n},2^{1/n},3^{1/n})$ . Let $N$ be an arbitrary positive integer. Picture $N$ as large, e.g. $N=100$ . Let $q_{1},q_{2},...,q_{k}$ be pairwise distinct primes greater than three. The fields

$$

K_{6^{N}},K_{q_{1}^{N}},\ldots,K_{q_{k}^{N}}

$$

are linearly disjoint This is equivalent to the Galois group of the compositum (in this case, $K_{(6q_{1}·s q_{k})^{N}}$ ) factorizing as the product of Galois groups of the individual fields: $\textup{Gal}(K_{(6q_{1}·s q_{k})^{N}}/\mathbb{Q})\cong\textup{Gal}(K_{6^{N}}/\mathbb{Q})×\textup{Gal}(K_{q_{1}^{N}}/\mathbb{Q})×·s×\textup{Gal}(K_{q_{k}^{N}}/\mathbb{Q})$ . (Another way of saying this is that the degree of the compositum is the product of the degrees of the individual fields.) For the non-expert, this is just a way of saying ”these things are independent from each other”.. Furthermore, the degrees $[K_{q_{i}^{N}}:\mathbb{Q}]$ are equal to $\phi(q_{i}^{N})q_{i}^{2N}$ ("the maximum possible").

Proof. We apply several results of [olli]. Recall that $\mathbb{Q}(\zeta_{q_{1}^{N}}),...,\mathbb{Q}(\zeta_{q_{K}^{N}})$ are linearly disjoint. We can append $K_{6^{N}}$ to the set while maintaining disjointness, since the largest cyclotomic subfield of $K_{6^{N}}$ lies in $\mathbb{Q}(\zeta_{2· 6^{N}},\sqrt{2},\sqrt{3})⊂\mathbb{Q}(\zeta_{2· 6^{N}},\zeta_{8},\zeta_{12})$ (see Proposition 3.14 of the paper). Then, throwing in elements of the form $2^{1/q_{i}^{N}}$ and $3^{1/q_{i}^{N}}$ maintains linear disjointness, because the degrees of the resulting field extensions are powers of $q_{i}$ , and those are coprime. For the claim about maximality of degrees, apply Proposition 3.9 with $n=q_{i}^{N},K=\mathbb{Q}(\zeta_{n}),k=n^{2}$ , with $a_{i}$ the numbers $2^{q_{i}^{x}}3^{q_{i}^{y}}$ for $0≤ x,y<N$ ; the quotients of such numbers are not $n$ th powers in $K$ by Corollary 3.6. QED.

Of course, the claim about linear disjointness and the degrees being the maximum possible now holds for subfields of $K_{6^{N}},K_{q_{1}^{N}},...,K_{q_{k}^{N}}$ , too; we’ll be using this fact throughout.

At the primes $q=2,q=3$ we lose a factor of 4, as promised earlier:

Lemma 2. For $N≥ 3$ we have

$$

[K_{6^{N}}:\mathbb{Q}]=\frac{\phi(6^{N})6^{2N}}{4}.

$$