# Competence-Aware AI Agents with Metacognition for Unknown Situations and Environments (MUSE)

**Authors**: Rodolfo Valiente, Praveen K. Pilly

Abstract

Metacognition, defined as the awareness and regulation of one’s cognitive processes, is central to human adaptability in unknown situations. In contrast, current autonomous agents often struggle in novel environments due to their limited capacity for adaptation. We hypothesize that metacognition is a critical missing ingredient in autonomous agents for the cognitive flexibility needed to tackle unfamiliar challenges. Given the broad scope of metacognitive abilities, we focus on competence awareness and strategy selection. To this end, we propose the Metacognition for Unknown Situations and Environments (MUSE) framework to integrate metacognitive processes of self-assessment and self-regulation into autonomous agents. We present two implementations of MUSE: one based on world modeling and another leveraging large language models (LLMs). Our system continually learns to assess its competence on a given task and uses this self-assessment to guide iterative cycles of strategy selection. MUSE agents demonstrate high competence awareness and significant improvements in self-regulation for solving novel, out-of-distribution tasks more effectively compared to model-based reinforcement learning and purely prompt-based LLM agent approaches. This work highlights the promise of approaches inspired by cognitive and neural systems in enabling autonomous agents to adapt to new environments while mitigating the heavy reliance on extensive training data and large models for the current models.

keywords: Agentic AI , Large Language Model , Metacognition , Reinforcement Learning , Self-Assessment , Self-Regulation , World Model

\NewColumnType

L[1]Q[l,#1] \NewColumnType C[1]Q[c,#1] \NewColumnType R[1]Q[r,#1]

Intelligent Systems Center

1 Introduction

The pursuit of fully autonomous agents in artificial intelligence (AI) remains a significant challenge. Current autonomous agents are primarily designed for operating environments, conditions, and uses that are known a priori. They rely on either scripted behaviors or pre-trained policies, both of which struggle to handle unknown situations effectively. As a result, when faced with novelty, they are prone to fail with suboptimal or even catastrophic outcomes (e.g., robotic manipulation errors in unstructured settings). This limitation severely restricts their deployment in safety-critical unknown environments, especially for long-duration missions or applications with little to no human oversight. Therefore, there is a practical urgency to reduce the failure rate, time to completion, and cost of autonomous missions by enabling resilient handling of unknowns during deployment.

In mainstream AI, large-scale multi-task pre-training has emerged as the leading approach for enhancing adaptability in autonomous agents (Team et al., 2021). For example, Adaptive Agent (AdA) (Team et al., 2023) was trained with billions of frames and tasks to enable rapid adaptation to unseen, open-ended tasks. Similarly, the RT-2 (Brohan et al., 2023) and RT-X (Collaboration et al., 2023) models leverage large-scale robotic trajectory datasets to train agents capable of solving novel manipulation tasks and generalizing to new robots and environments. However, internet-scale pre-training to be able to handle every potential change and combination of changes in real-world applications is impractical, prohibitively resource-intensive, and expensive. Even with significantly limited data, AI agents must intelligently interpolate and extrapolate beyond their pre-trained scenarios while continually learning and adapting to novelty (Kudithipudi et al., 2023). In other words, when faced with novel scenarios, pre-trained knowledge must be continually updated to strike a dynamic balance between stability and plasticity (Grossberg, 1980).

Similar to humans, AI agents can leverage pre-deployment training to acquire a wide range of skills across diverse, known scenarios. Importantly, they can also be equipped with the ability to engage in online learning for continual improvement when encountering novel situations. For example, a teenager attending driving school follows a structured curriculum that teaches foundational vehicle control skills, which are then progressively built upon to master more complex tasks, such as merging onto highways or navigating construction zones. This learning process is cumulative in the sense that mastery of foundational skills simplifies the acquisition of more advanced ones. Moreover, the key principles of driving are consolidated in the student’s brain, protecting them from catastrophic forgetting. Ultimately, the end of driving school marks the beginning of a lifelong learning process, where the student draws on prior experiences to navigate novel driving challenges independently without an instructor.

Metacognition, defined as the awareness and regulation of one’s cognitive processes, is a key human trait that has been extensively studied in cognitive psychology (Flavell, 1979; Nelson and Narens, 1990; Metcalfe et al., 1993; Koriat, 1997; Dunlosky and Metcalfe, 2008). This metacognitive flexibility enables humans to learn online efficiently and solve problems iteratively, especially in relation to new tasks. For instance, students can leverage metacognition to more accurately assess their knowledge and adjust their study habits accordingly (Cohen, 2012; Chen et al., 2017). The use of metacognition among college students has indeed been shown to correlate significantly with various measures of academic success (Young and Fry, 2008; Isaacson and Fujita, 2006). Students who perform poorly often overestimate their abilities, leading to under-preparation for exams. This is a common issue in education, where overconfident students allocate insufficient time to study, believing they have already mastered the material. Conversely, students who underestimate their knowledge may spend excessive time reviewing topics they already understand, hindering their progress. Even children as young as three years old can become effective learners and thinkers from activities designed to develop metacognitive skills (Chatzipanteli et al., 2014). It is generally agreed that self-assessment of competence, which can range from over-confidence in novices to slight under-confidence in experts (Kruger and Dunning, 1999; Dunning, 2011), is a capability that is teachable and improves naturally as one becomes more skilled or knowledgeable (Kramarski and Mevarech, 2003; Schraw et al., 2006). Neuroscience further reveals that the subregions of the prefrontal cortex responsible for metacognitive judgments are distinct from those involved in cognitive functions like visual memory recognition, such that the metacognitive function can be selectively deactivated without affecting the cognitive function (e.g., Middlebrooks et al. (2012); Miyamoto et al. (2017)).

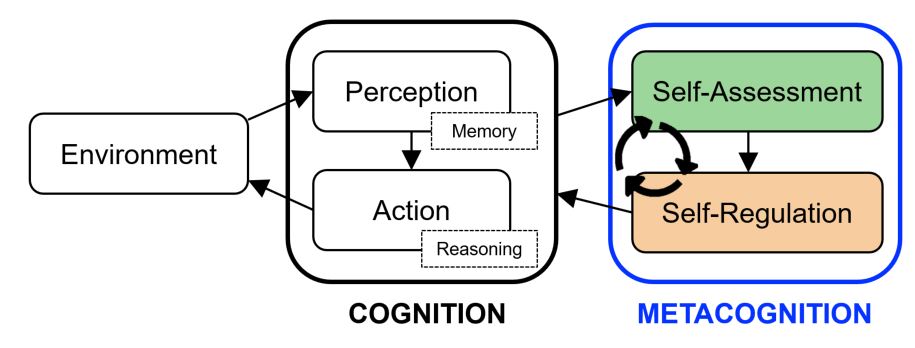

While metacognition spans a wide range of capabilities and higher-order cognitive processes (e.g., Feeling of Knowing, Judgment of Learning, Source Monitoring), it can be conceptualized as an internal perception-action loop of self-assessment and self-regulation (Nelson and Narens, 1990; Dunlosky and Bjork, 2013). Self-assessment in this context refers to an individual’s ability to assess their competence regarding a specific task. Self-regulation refers to the ability to strategically select and control one’s actions based on this self-assessment. In this article, we introduce the Metacognition for Unknown Situations and Environments (MUSE) framework to computationally instantiate and train the metacognitive capabilities of self-assessment and self-regulation for AI agents, so that they can also achieve more efficient learning and improved generalization to unknown scenarios (Figure 1). Specifically, the self-assessment mechanism is designed to predict the agent’s likelihood of successfully completing a given task for proposed action plans, with a learnable internal model informed by past experiences. And the self-regulation mechanism leverages this self-assessment to enable iterative cycles of competence-aware strategy selection for problem-solving. We present two implementations of the MUSE agent: one based on world modeling and the other utilizing large language models (LLMs). Our experiments in two distinct environments (namely, Meta-World and ALFWorld) demonstrate that MUSE agents achieve substantial improvements in handling novel scenarios compared to baseline/non-metacognitive approaches. Further, we show that metacognition makes a particularly big impact on smaller or less-capable LLM agents, making them amenable to edge deployment as well as less reliant on big data for online adaptation.

<details>

<summary>figures/metacognitive_cycle.png Details</summary>

### Visual Description

## Cognitive Process Diagram: Cognition and Metacognition

### Overview

The image is a diagram illustrating the relationship between cognition and metacognition. It depicts a flow of information and processes, starting from the environment, moving through perception and action within cognition, and then into self-assessment and self-regulation within metacognition.

### Components/Axes

* **Environment:** A rounded rectangle on the left, representing the external environment.

* **Cognition:** A larger rounded rectangle encompassing "Perception" and "Action".

* **Perception:** A rounded rectangle inside the Cognition box.

* **Memory:** A dashed rectangle connected to Perception.

* **Action:** A rounded rectangle inside the Cognition box, below Perception.

* **Reasoning:** A dashed rectangle connected to Action.

* **Metacognition:** A rounded rectangle on the right, encompassing "Self-Assessment" and "Self-Regulation".

* **Self-Assessment:** A green rounded rectangle inside the Metacognition box.

* **Self-Regulation:** An orange rounded rectangle inside the Metacognition box, below Self-Assessment.

* **Arrows:** Arrows indicate the flow of information and processes.

### Detailed Analysis or ### Content Details

1. **Environment** to **Cognition**: An arrow points from the "Environment" box to both "Perception" and "Action" within the "Cognition" box.

2. **Perception** to **Action**: An arrow points from "Perception" to "Action".

3. **Cognition** to **Metacognition**: An arrow points from "Action" to both "Self-Assessment" and "Self-Regulation" within the "Metacognition" box.

4. **Self-Assessment** to **Self-Regulation**: An arrow points from "Self-Assessment" to "Self-Regulation".

5. **Self-Regulation** to **Self-Assessment**: A curved arrow points from "Self-Regulation" back to "Self-Assessment", indicating a feedback loop.

### Key Observations

* The diagram highlights a hierarchical structure, with the environment influencing cognition, and cognition influencing metacognition.

* Metacognition involves a feedback loop between self-assessment and self-regulation.

* Memory is associated with perception, and reasoning is associated with action.

### Interpretation

The diagram illustrates a model of cognitive processing where the environment provides input that is processed through perception and action. This cognitive processing then informs metacognitive processes of self-assessment and self-regulation. The feedback loop between self-assessment and self-regulation suggests a continuous process of monitoring and adjusting one's own cognitive processes. The association of memory with perception and reasoning with action suggests that these cognitive functions play a crucial role in these processes.

```

</details>

Figure 1: The metacognitive cycle of self-assessment and self-regulation operates on the traditional perception-action loop of existing AI agents to boost their ability for iterative problem-solving in unknown situations and environments.

In contrast to current reinforcement learning (RL) approaches (e.g., Silver et al. (2016, 2017, 2018); Ha and Schmidhuber (2018); Hafner et al. (2023)), which focus on maximizing expected cumulative reward, our MUSE framework prioritizes competence as the primary evaluation metric to enhance the agent’s adaptability to unknown situations. We hypothesize that focusing solely on maximizing return may cause an agent to become stuck in unfamiliar situations, particularly under sparse reward regimes. By contrast, maximizing competence continually for strategy selection encourages more effective exploration in such novel environments. Through metacognition, the agent can evaluate its capabilities and attempt new strategies within its perceived competence, enabling safer and more effective online adaptation. In other words, by maximizing competence, the agent not only improves its ability to tackle immediate challenges more effectively but also fosters iterative problem-solving in complex environments.

The contributions of our article are threefold:

- We introduce the Metacognition for Unknown Situations and Environments (MUSE) framework, which integrates metacognitive functions of self-assessment and self-regulation into sequential decision-making agents.

- We propose an implementation of a competence awareness model that continually learns to assess the agent’s competence on a given task and serves as an evaluation function for planning (Self-Assessment).

- We propose an implementation of a policy modulation model that leverages self-assessment to iteratively drive strategy selection by identifying action plans that maximize the likelihood of task success (Self-Regulation).

2 Related Work

2.1 Self-Assessment

Self-assessment broadly refers to an agent’s ability to monitor its internal states and assess its capabilities and performance in relation to tasks and goals. It enables humans to reflect and make adjustments for improving outcomes (Flavell, 1979; Nelson and Narens, 1990; Schraw, 1998).

World Models: These are generative models of environmental dynamics (Ha and Schmidhuber, 2018; Robine et al., 2023; Micheli et al., 2023; Hansen et al., 2022, 2023), which can be used to estimate the expected cumulative reward of agents. These models often employ sequence-based architectures, such as recurrent neural networks, to predict the next state, reward, and terminal signals. Decoder-based World Models (Hafner et al., 2023; Robine et al., 2023; Micheli et al., 2023) can additionally generate the input state corresponding to the predicted next state. In contrast, decoder-free World Models focus on predicting the outcomes of actions in the latent space, bypassing the need to decode input states (Hansen et al., 2022, 2023). World Models act as proxy simulators during training, enabling agents to learn more efficiently by reducing the reliance on real-environment interactions (e.g., Ha and Schmidhuber (2018); Koul et al. (2020); Hafner et al. (2023)). In this work, we extend the capabilities of decoder-based World Models by training them to predict not only environmental dynamics but also the agent’s competence to solve a given task.

LLM Critics: These models are designed to evaluate the performance of LLMs. The LLM itself can be prompted to provide feedback on its outputs (Madaan et al., 2023), intermediate reasoning steps (Paul et al., 2023), or even the prompt itself (Hu et al., 2023). Some approaches enhance the correctness and quality of LLM outputs by using stochastic beam search guided by self-evaluation (Xie et al., 2023). Recognizing that LLMs currently are limited in identifying their own errors, reasoning missteps, or biases (Huang et al., 2023), researchers have augmented LLM critics with external tools, such as search engines and calculators, to improve reliability (Gou et al., 2023). Retrieval Augmented Generation (RAG) approaches have also been proposed to strengthen self-evaluation by providing relevant external knowledge bases (Asai et al., 2023). In contrast, MUSE does not rely solely on pre-trained knowledge or external tools. Instead, it continually learns and grounds itself to evaluate its competence on given tasks and uses this kind of self-assessment to modulate policy decisions.

Confidence Networks: Recent work in cognitive computational neuroscience has developed quantitative frameworks for assessing metacognitive judgments related to self-assessment across a range of domains, task difficulties, and time scales with and without external feedback (Fleming, 2024; Lu et al., 2025). Measures that have been proposed and utilized to assess metacognition include the statistical correlation between self-reported confidence ratings and actual performance across trials as well as the more reliable meta- d’ metric, which measures the ability of self-assessment to discriminate between high-performance (correct) and low-performance (incorrect) trials without being affected by response bias in metacognitive judgments (Maniscalco and Lau, 2012; Fleming and Lau, 2014).

Consistent with these measures of metacognition, there is prior work in machine learning aimed at self-assessment of deep neural networks that perform perception tasks. For classification, Corbiere et al. (2019) trained a separate neural network (called ConfidNet) that operates on high-level features extracted by the classifier to predict the true class probability (TCP), which is the softmax probability of the correct class irrespective of whether it was chosen or not. Further, Webb et al. (2023) trained confidence networks for a variety of perception tasks to instead predict the probability of the decision being correct, i.e., a value of 1 if correct and 0 otherwise. They also trained a RL agent that chooses among perception labels as well as an opt-out action that earns a low-risk low-reward in situations when the agent is least certain about its decision. These self-assessment metrics themselves are task-agnostic (Fleming, 2024) but require a training scheme that is adapted to the specifics of the task. In this regard, one of our contributions is to implement a global self-assessment metric for artificial agents that predicts the probability of task success in each episode over time.

2.2 Self-Regulation

Self-regulation is the process by which an agent dynamically adjusts its behavior based on self-assessment to achieve specific goals (Flavell, 1979; Nelson and Narens, 1990). This ability is essential for humans to exhibit robust decision-making and function autonomously in unfamiliar environments.

Model-based Reinforcement Learning (MBRL) Agents: These agents utilize World Models to simulate future scenarios, enabling them to train with minimal real interactions with the environment (Moerland et al., 2023). Dyna (Sutton, 1991) is a foundational architecture that integrates learning and planning within a single agent. Its core idea is to use the agent’s real experiences to update not only its policy but also its internal model of the environment, and thereby leverage the updated internal model to further improve the policy offline using simulated experiences. Among recent leading ones, AlphaGo (Silver et al., 2016), AlphaZero (Silver et al., 2017), and MuZero (Silver et al., 2018) are all MBRL systems that employ internal simulations/models and Monte Carlo Tree Search (MCTS) to explore potential action paths, based on variations of the Upper Confidence Bound (UCB) score, and evaluate them for action selection. In contrast to MBRL agents, which prioritize maximizing expected cumulative reward, the self-regulation mechanism of MUSE leverages competence as the primary evaluation metric to enhance the agent’s ability to navigate and adapt effectively in unknown situations.

Prompt-based LLM Agents: The capabilities of LLMs extend beyond language generation, making them increasingly popular for reasoning tasks to potentially deal with novelty. Chain-of-Thought (CoT) prompting (Wei et al., 2022), for example, decomposes a complex problem into intermediate steps to arrive at a final answer. However, CoT reasoning struggles to yield accurate results due to error propagation as the number of steps increases (Chen et al., 2022). Techniques such as self-consistency (Wang et al., 2022), least-to-most prompting (Zhou et al., 2022), and Tree-of-Thought (ToT) prompting (Yao et al., 2024) aim to mitigate this issue by improving sampling strategies and leveraging search algorithms. Nevertheless, these methods rely solely on the LLM’s pre-trained knowledge, which limits their ability to adapt to external feedback.

Beyond reasoning tasks, LLMs are also being increasingly applied to operate in an agentic loop of perception and action, which unlocks the benefits of large-scale pre-training for multi-step interactive tasks without relying on RL. ReAct (Yao et al., 2022) was among the first purely prompt-based LLM agents that integrated both reasoning and action planning to perform text-based problem-solving. However, ReAct is inefficient and limited in its ability to transfer performance improvements to subsequent episodes. To address this issue, Reflexion (Shinn et al., 2023) built on ReAct by adding an LLM critic that reflects on failures and provides persistent verbal feedback to the agent for improved performance in subsequent episodes. The performance gains from these LLM agent methods are solely dependent on enhanced prompt-based in-context learning, which limits their ability for longer-term true learning from new varied experiences. While MUSE also makes use of prompting for both reasoning and planning, it can also continually learn from its experiences to facilitate more effective problem-solving in unknown situations and environments.

3 Decoder-based World Model implementation

In this section, we describe our implementation of the MUSE framework using a decoder-based World Model to equip MBRL agents with metacognitive abilities of self-assessment and self-regulation.

3.1 Methods

3.1.1 Self-Assessment through World Modeling

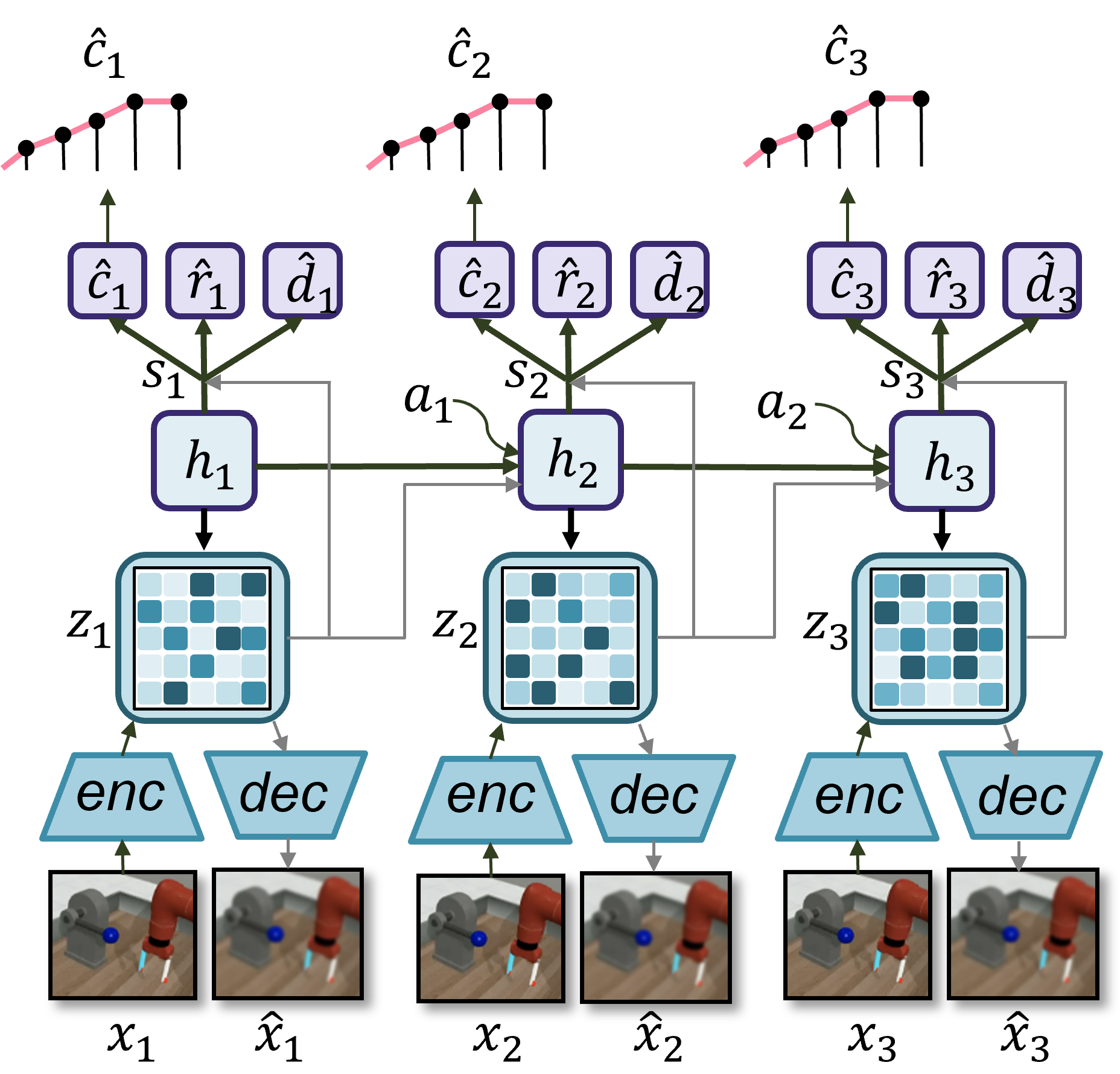

We leverage the decoder-based World Model from Dreamer-v3 (Hafner et al., 2023) to implement self-assessment for agents, but we note that our approach can be extended to decoder-free World Models as well. Dreamer-v3 uses a Recurrent State-Space Model (RSSM) to model the environment dynamics. See Equations 1 - 3 for the formulation from Hafner et al. (2023). The RSSM maps the input state $x_{t}$ and recurrent state $h_{t}$ to a latent embedding $z_{t}$ and uses the concatenation of $h_{t}$ and $z_{t}$ , called the RSSM state, as input to parameterize various distributions over the reward $\hat{r}_{t}$ , terminal signal $\hat{d}_{t}$ , and decoded state $\hat{x}_{t}$ .

$$

\displaystyle\begin{aligned} \begin{aligned} \raisebox{8.39578pt}{\hbox to0.0pt{\hss\vbox to0.0pt{\hbox{$\text{RSSM}\hskip 4.30554pt\begin{cases}\hphantom{A}\\

\hphantom{A}\\

\hphantom{A}\end{cases}\hskip-10.33327pt$}\vss}}}&\text{Sequence model:}\hskip 35.00005pt&&h_{t}&\ =&\ f_{\phi}(h_{t-1},z_{t-1},a_{t-1})\\

&\text{Encoder:}\hskip 35.00005pt&&z_{t}&\ \sim&\ q_{\phi}(z_{t}\;|\;h_{t},x_{t})\\

&\text{Dynamics predictor:}\hskip 35.00005pt&&\hat{z}_{t}&\ \sim&\ p_{\phi}(\hat{z}_{t}\;|\;h_{t})\\

&\text{Reward predictor:}\hskip 35.00005pt&&\hat{r}_{t}&\ \sim&\ p_{\phi}(\hat{r}_{t}\;|\;h_{t},z_{t})\\

&\text{Terminal signal predictor:}\hskip 35.00005pt&&\hat{d}_{t}&\ \sim&\ p_{\phi}(\hat{d}_{t}\;|\;h_{t},z_{t})\\

&\text{Decoder:}\hskip 35.00005pt&&\hat{x}_{t}&\ \sim&\ p_{\phi}(\hat{x}_{t}\;|\;h_{t},z_{t})\end{aligned}\end{aligned} \tag{1}

$$

ifnextchar

gobble

Following Hafner et al. (2023), given a sequential batch of inputs $x_{1:T}$ , actions $a_{1:T}$ , rewards $r_{1:T}$ , and terminal signals $d_{1:T}$ , the World Model parameters $\phi$ are optimized to minimize the prediction loss $\mathcal{L}_{\mathrm{pred}}$ , the dynamics loss $\mathcal{L}_{\mathrm{dyn}}$ , and the representation loss $\mathcal{L}_{\mathrm{rep}}$ . The prediction loss $\mathcal{L}_{\mathrm{pred}}$ is the joint negative log-likelihood of the multiple probabilistic predictors (Equation 3). Real-valued quantities like the reward and decoded state are trained with a symlog squared loss, whereas the terminal signal, which is a binary-valued quantity, is trained with logistic regression. The dynamics loss $\mathcal{L}_{\mathrm{dyn}}$ and the representation loss $\mathcal{L}_{\mathrm{rep}}$ are designed to effectively learn the dynamics of the latent embeddings for generating realistic rollout trajectories.

$$

\displaystyle\begin{aligned} \mathcal{L}(\phi)\doteq\operatorname{E}_{q_{\phi}}\Bigg[\displaystyle\sum_{t=1}^{T}(\mathcal{L}_{\mathrm{pred}}(\phi)+\mathcal{L}_{\mathrm{dyn}}(\phi)+0.1\mathcal{L}_{\mathrm{rep}}(\phi))\Bigg]\end{aligned} \tag{2}

$$

ifnextchar

gobble

$$

\displaystyle\begin{aligned} \mathcal{L}_{pred}\doteq-\ln p_{\phi}(r_{t}\;|\;h_{t},z_{t})-\ln p_{\phi}(d_{t}\;|\;h_{t},z_{t})-\ln p_{\phi}(x_{t}\;|\;h_{t},z_{t})\end{aligned} \tag{3}

$$

ifnextchar

gobble

We augment Dreamer-v3’s World Model with an additional head for predicting task success. In this implementation, the Self-Assessment Model is an MLP with $N$ outputs that map the RSSM state to the probability of task success within the $N$ quantiles of the maximum episode duration. Specifically, the MLP outputs parameterize $N=5$ Bernoulli distributions $\{\psi_{1},·s\psi_{N}\}$ for the five quantiles. A self-assessment prediction involves sampling from each of these distributions, $\hat{c}^{i}_{t}\sim\psi_{i}(h_{t},z_{t})$ , and combining these samples into a prediction vector. For example, a prediction of success in the first quantile would yield the vector $[1,1,1,1,1]$ , whereas a prediction of failure even by the last quantile would yield the vector $[0,0,0,0,0]$ . This process is visualized in Figure 2. Note that each component of the self-assessment head is trained separately using binary cross-entropy loss, and the individual losses are then added to the total prediction loss $\mathcal{L}_{\mathrm{pred}}$ (Equation 4).

$$

\displaystyle\begin{aligned} \mathcal{L}_{\mathrm{SA}}\doteq-\sum_{i=1}^{N}\ln\psi_{i}(h_{t},z_{t}),\hskip 35.00005pt\mathcal{L}_{pred}\leftarrow\mathcal{L}_{pred}+\mathcal{L}_{\mathrm{SA}}\end{aligned} \tag{4}

$$

ifnextchar

gobble

<details>

<summary>figures/rssm_sa_combined.png Details</summary>

### Visual Description

## Diagram: Recurrent Neural Network for Robotic Control

### Overview

The image depicts a recurrent neural network (RNN) architecture, likely used for controlling a robotic arm. The diagram illustrates the flow of information through the network over three time steps. It includes input images, encoding and decoding layers, hidden states, and predicted control parameters.

### Components/Axes

* **Time Steps:** The diagram shows three time steps, indexed by 1, 2, and 3.

* **Input Images (x):** At the bottom, there are pairs of images at each time step, labeled as x1, x̂1, x2, x̂2, x3, x̂3. The 'x' likely represents the input image, and 'x̂' represents the reconstructed or predicted image.

* **Encoder (enc):** A light blue trapezoid labeled "enc" represents the encoder network. It takes the input image (x) and transforms it into a latent representation (z).

* **Decoder (dec):** A light blue trapezoid labeled "dec" represents the decoder network. It takes the latent representation (z) and reconstructs the image (x̂).

* **Latent Representation (z):** The square boxes labeled z1, z2, and z3 represent the latent representations at each time step. These boxes contain a grid of smaller squares, each with varying shades of blue, suggesting a matrix or tensor representation.

* **Hidden State (h):** The rounded rectangles labeled h1, h2, and h3 represent the hidden states of the RNN at each time step.

* **Control Parameters (ĉ, r̂, d̂):** At the top, there are three rounded rectangles at each time step, labeled ĉ1, r̂1, d̂1, ĉ2, r̂2, d̂2, ĉ3, r̂3, d̂3. These likely represent the predicted control parameters for the robotic arm, such as position (ĉ), rotation (r̂), and depth (d̂).

* **Control Parameter Visualization:** Above each set of control parameters (ĉ1, ĉ2, ĉ3), there is a small graph. The x-axis represents time, and the y-axis represents the value of the control parameter. A pink line connects the data points, showing the trend of the control parameter over time.

* **Arrows:** Arrows indicate the flow of information through the network. Dark green arrows represent the primary flow, while gray arrows represent recurrent connections.

* **Recurrent Connections (a):** The gray arrows labeled a1 and a2 represent the recurrent connections, feeding the hidden state from the previous time step into the current time step.

* **State Connections (s):** The dark green arrows labeled s1, s2, and s3 connect the hidden state to the control parameters.

### Detailed Analysis

* **Input Images:** The images at the bottom show a robotic arm interacting with an object (possibly a blue sphere). The "x" images are likely the real images, while the "x̂" images are the reconstructions generated by the decoder. The reconstructed images appear slightly blurred.

* **Encoder-Decoder:** The encoder-decoder structure suggests that the network is learning a compressed representation of the input images. This representation is then used to reconstruct the images and predict the control parameters.

* **Hidden State:** The hidden state acts as a memory, storing information from previous time steps. This allows the network to make decisions based on the history of the robot's actions and observations.

* **Control Parameters:** The control parameters are the output of the network, and they determine the actions of the robotic arm. The graphs above the control parameters provide a visualization of how these parameters change over time.

* **Recurrent Connections:** The recurrent connections allow the network to maintain a state over time, enabling it to learn complex sequences of actions.

* **Control Parameter Visualization Details:**

* **ĉ1:** The pink line starts low and increases steadily. The black dots are at approximately y=0.2, 0.4, 0.6, 0.8.

* **ĉ2:** The pink line starts low and increases steadily. The black dots are at approximately y=0.2, 0.4, 0.6, 0.8.

* **ĉ3:** The pink line starts low and increases steadily. The black dots are at approximately y=0.2, 0.4, 0.6, 0.8.

### Key Observations

* The network appears to be processing sequential data, as evidenced by the time steps and recurrent connections.

* The encoder-decoder structure suggests that the network is learning a compressed representation of the input images.

* The hidden state plays a crucial role in maintaining a memory of past events.

* The control parameters are the output of the network and determine the actions of the robotic arm.

### Interpretation

The diagram illustrates a recurrent neural network designed for controlling a robotic arm. The network takes input images, encodes them into a latent representation, and uses this representation to predict control parameters. The recurrent connections allow the network to maintain a state over time, enabling it to learn complex sequences of actions. The encoder-decoder structure suggests that the network is learning a compressed representation of the input images, which is then used to reconstruct the images and predict the control parameters. This architecture is well-suited for tasks that require sequential decision-making, such as robotic control. The network learns to map visual inputs to appropriate motor commands, enabling the robot to perform complex tasks.

</details>

Figure 2: Schematic of the implementation of self-assessment in the context of the Dreamer-v3 World Model (adapted from Hafner et al. (2023)). The input state $x$ to the RSSM is encoded into latent embedding $z$ . The model recurrently predicts self-assessment $\hat{c}$ , reward $\hat{r}$ , and terminal signal $\hat{d}$ , while also decoding the input state $\hat{x}$ .

3.1.2 Self-Regulation

Even with pre-deployment training on multiple tasks, including parametric variations, MBRL agents exhibit limited generalization to novel tasks that require either new, orchestrated combinations of those skills or entirely new skills (Ketz and Pilly, 2022). While Dreamer-v3 can handle novel parametric variations for a known task, it struggles to make progress when faced with an unknown reward function that differs semantically from those of the training tasks. Central to our MUSE framework is the self-regulation algorithm, which performs competence-aware actions to solve novel tasks. Specifically, the decision-making process selects actions that maximize the likelihood of task success. Self-assessed competence can be used to guide planning in three primary ways:

1. Simulate multiple future scenarios (rollout trajectories) based on the current state and potential actions, then greedily select the path that maximizes the self-assessment criterion

1. Perform MCTS over actions using the self-assessment criterion in place of variations of the UCB score

1. Optimize the RSSM state to directly maximize the self-assessment criterion to effectively self-regulate the policy

For this implementation of MUSE, we used the third option. This self-regulation method, which is detailed in Algorithm 1, leverages the differentiability of the World Model with the self-assessment head. MUSE performs a World Model rollout where the agent’s actions are regulated to increase the likelihood of reaching a success state. Specifically, MUSE directly optimizes the RSSM state, $s$ ( $\doteq\{h,z\}$ ), input to the agent for selecting actions that maximize the self-assessment criterion. The intuition is that when the Self-Assessment Model predicts failure in a novel environment, it is no longer useful to just rely on the default policy. Instead, we seek competence-aware actions that guide the agent to a success state by augmenting the RSSM state in a direction that increases the probability of task success. At each time step $t$ , MUSE performs a short World Model rollout of horizon $H$ that optimizes over the RSSM state $s$ as follows:

$$

\displaystyle\begin{aligned} s\leftarrow s+\beta\nabla_{s}\left(\sum_{i=1}^{N}\psi_{i}(s)\right).\end{aligned} \tag{5}

$$

ifnextchar

gobble It then applies the self-regulated action $a_{t}\sim\pi(a_{t}|s)$ , observes the resulting recurrent state $h_{t+1}$ , and begins a new iteration.

Algorithm 1 Self-regulation leverages the World Model and its constituent Self-Assessment Model to select competence-aware actions

1: $h_{t}$

2: $H$ = 10

3: $\beta=0.02$

4: $z_{t}\sim p_{\phi}(z_{t}|h_{t})$

5: $h← h_{t}$

6: $z← z_{t}$

7: $s\doteq\{h,z\}$

8: for $step← 1$ to $H$ do

9: $a\sim\pi(a|s)$

10: $h← f_{\phi}(s,a)$

11: $\displaystyle s← s+\beta∇_{s}\left(\sum_{i=1}^{N}\psi_{i}(s)\right)$

12: end for

13: $a_{t}\sim\pi(a_{t}|s)$

14: $h_{t+1}← f_{\phi}(s,a_{t})$

15: return $z_{t}$ , $a_{t}$ , $h_{t+1}$

3.2 Meta-World Experiments

For the World-Model-based implementation, we evaluated our approach within the Meta-World robotic manipulation simulator (Yu et al., 2020) and compared it against Dreamer-v3 (Hafner et al., 2023) as the MBRL baseline. Meta-World provides a suitable testbed for learning a shared perceptual and dynamics model across multiple tasks using a 6 degrees-of-freedom (DOF) robotic arm.

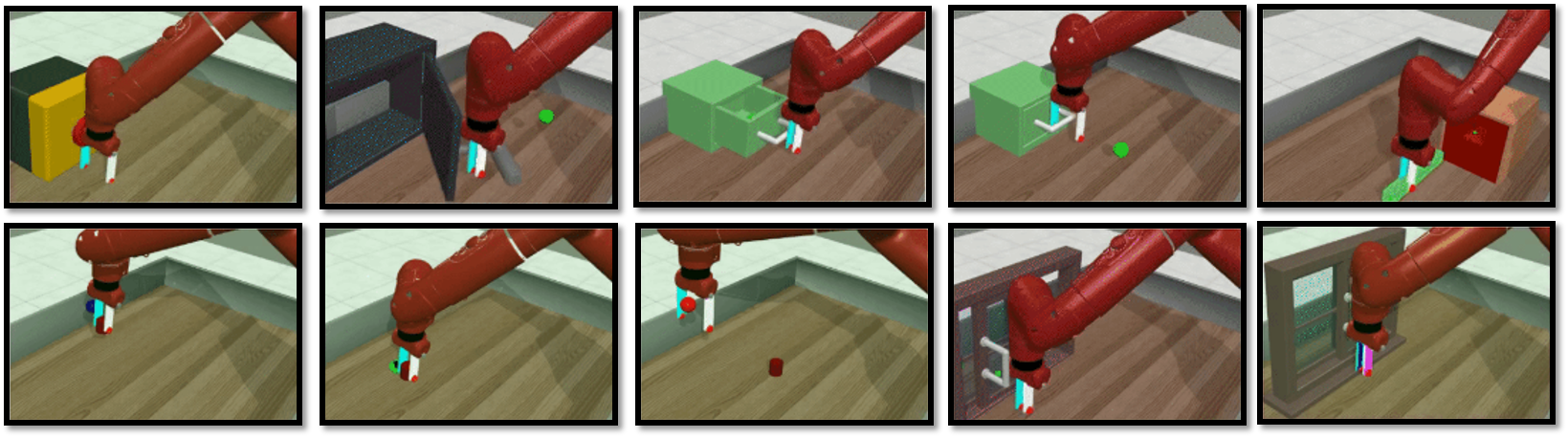

To ensure consistency, we used Dreamer-v3’s network architectures, hyperparameters, and learning procedures across all shared components between the two agents (e.g., an imagination horizon of 15 time steps for actor and critic learning). Both methods were implemented in the same PyTorch codebase, with the self-assessment and self-regulation modules omitted for Dreamer-v3. The agents received a $64× 64$ RGB observation alongside a 40-dimensional proprioceptive state. Additionally, we included a task embedding that was represented as a single integer-valued channel appended to the visual state. MUSE leveraged the built-in success signal returned by the Meta-World environment to train its Self-Assessment Model. For the experiments, we employed a two-stage protocol comprising pre-deployment training on known tasks followed by deployment adaptation to unknown tasks. Each episode in these experiments had a maximum time limit of 500 steps.

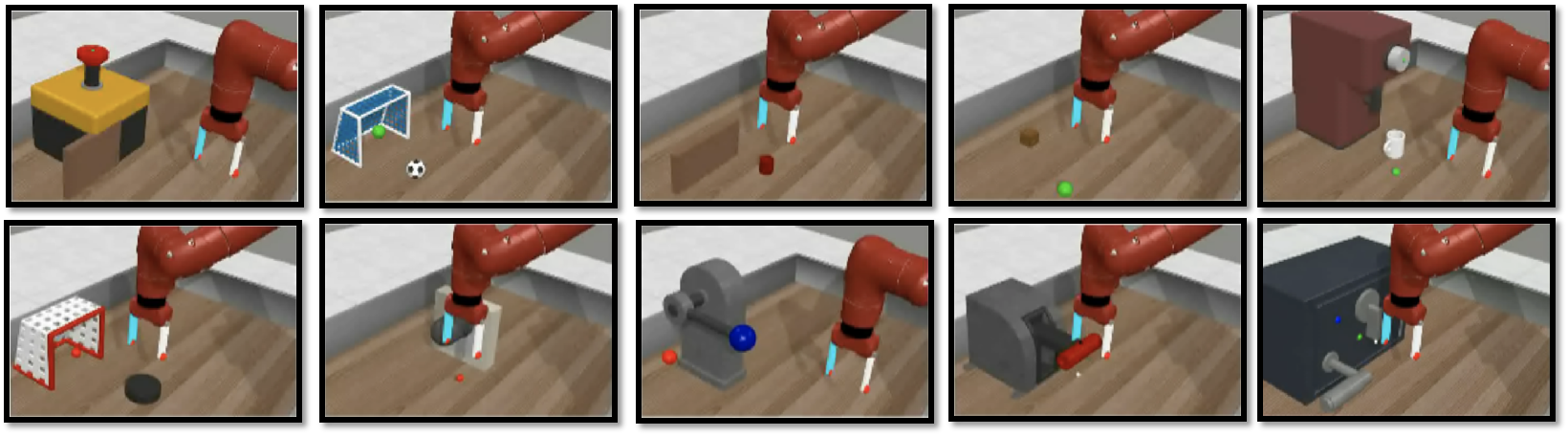

For pre-deployment training, we utilized Meta-World’s MT10 suite of 10 different manipulation tasks (Figure 3). In particular, we adopted a multi-task learning paradigm (Mandi et al., 2023), encompassing all 10 training tasks over 2M total environment steps. This paradigm was chosen over meta-RL approaches to reduce computational costs and training time (Wang et al., 2021). By default, object and goal positions were randomly sampled to enable domain randomization. For deployment adaptation, we evaluated the agents on a set of 10 novel tasks from Meta-World’s MT50 suite with distinct reward functions, which were semantically different from those in the pre-deployment training set (Figure 4). The agents were exposed to one novel task at a time, starting with pre-deployment trained weights, for 20 adaptation episodes per task. They were assessed for performance on novel tasks during these adaptation episodes. The task embedding channel was set to zero for novel tasks. During training as well as adaptation, both agents continually updated their World Models with real data and their actor and critic neural networks with imagined data. The replay buffer from pre-deployment training was retained for deployment adaptation to prevent catastrophic forgetting of previously trained performance.

3.2.1 Metrics

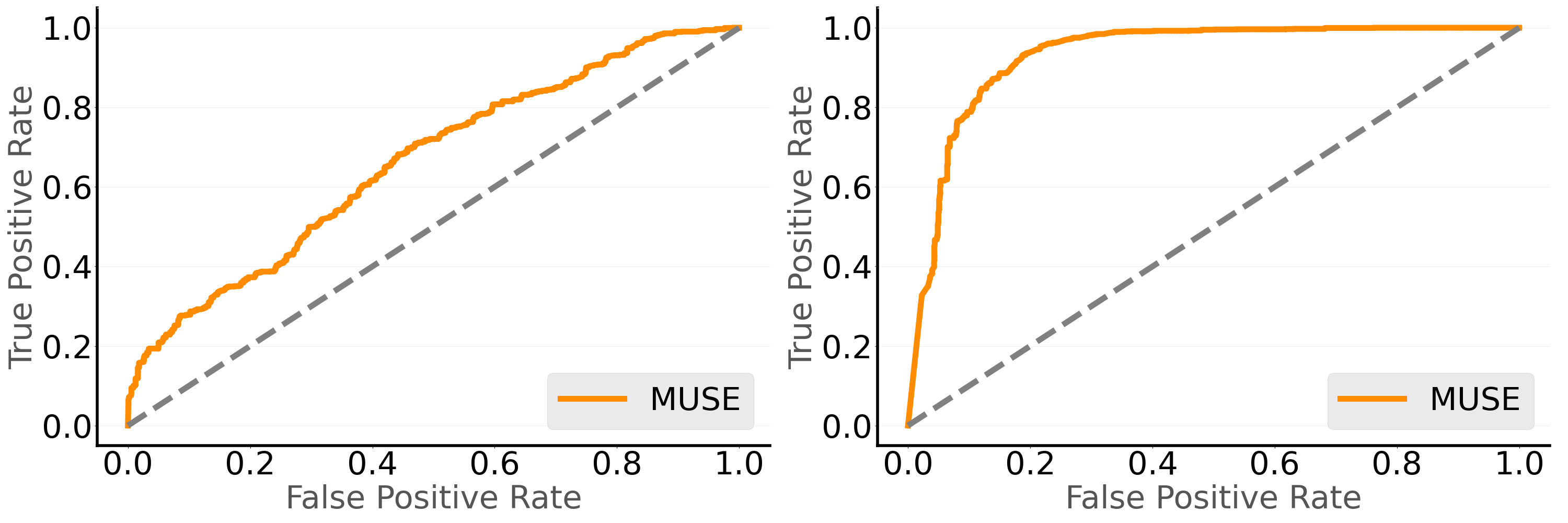

Self-Assessment We used metacognitive accuracy and the Area under the Type 2 Receiver Operating Characteristic Curve (AUROC2) to evaluate how well MUSE predicts its success on novel tasks (Fleming and Lau, 2014), which in turn indicates how effectively the self-assessment signal can support online adaptation. MUSE was evaluated for each novel task separately over 20 adaptation episodes. During these episodes, we collected MUSE’s step-wise self-assessment predictions for evaluation. The predictions at all time steps across tasks were compared with the true labels to compute the metacognitive accuracy and AUROC2 metrics. The Type 2 ROC curve for MUSE was computed by treating the sum of the self-assessment components $\left(\sum_{i=1}^{N}\psi_{i}(s)\right)$ as the signal. Note that, as Dreamer-v3 lacks a self-assessment module to predict episode outcomes, we cannot make a meaningful comparison between Dreamer-v3 and MUSE for self-assessment.

Self-Regulation We evaluated how well the agents generalize to unknown situations using two metrics: the percentage of novel tasks solved and the average time to task completion. Each agent was assessed for each novel task separately over the 20 adaptation episodes. The percentage of episodes where the agent completed the task within the maximum time limit was averaged across all novel tasks to calculate the success rate. Similarly, the number of time steps required to achieve success in each episode was averaged across all episodes for each novel task to compute the respective time-to-completion metrics.

<details>

<summary>figures/train_mt10_tasks.png Details</summary>

### Visual Description

## Image Montage: Robotic Arm Interactions

### Overview

The image is a montage of ten separate scenes, each depicting a red robotic arm interacting with different objects in a simulated environment. The scenes show the arm manipulating objects of various shapes and colors, suggesting a sequence of tasks or a demonstration of the arm's capabilities.

### Components/Axes

* **Robotic Arm:** A red, articulated robotic arm is the central element in each scene. It has a gripper at the end, which appears to be equipped with sensors or markers of blue, white, and red.

* **Objects:** The objects being manipulated vary in shape, size, and color. They include:

* A yellow rectangular prism

* A dark gray cabinet with an open door

* A green cube

* A red cylinder

* A wooden frame

* A window

* **Environment:** The environment appears to be a simulated room with a light-colored floor and walls. Some scenes include a green circular marker on the floor.

### Detailed Analysis or ### Content Details

The montage presents a sequence of interactions. Here's a breakdown of each scene:

1. **Scene 1:** The robotic arm is positioned near a yellow rectangular prism. The gripper is close to the object, suggesting an attempt to grasp or manipulate it.

2. **Scene 2:** The robotic arm is interacting with a dark gray cabinet. The cabinet door is open, and the arm's gripper is inside, possibly retrieving or placing an object. A green circular marker is on the floor.

3. **Scene 3:** The robotic arm is positioned above a green cube. The gripper is open, suggesting it is about to pick up the cube. A green circular marker is on the floor.

4. **Scene 4:** The robotic arm is holding a green cube. The gripper is closed around the cube, and the arm is likely moving it to a new location.

5. **Scene 5:** The robotic arm is positioned near a red rectangular prism. The gripper is close to the object, suggesting an attempt to grasp or manipulate it. A green circular marker is on the floor.

6. **Scene 6:** The robotic arm is positioned near the floor. The gripper is open, suggesting it is about to pick up something.

7. **Scene 7:** The robotic arm is positioned above a red cylinder. The gripper is open, suggesting it is about to pick up the cylinder.

8. **Scene 8:** The robotic arm is positioned near a wooden frame. The gripper is close to the object, suggesting an attempt to grasp or manipulate it.

9. **Scene 9:** The robotic arm is positioned near a window. The gripper is close to the object, suggesting an attempt to grasp or manipulate it.

10. **Scene 10:** The robotic arm is positioned near a window. The gripper is close to the object, suggesting an attempt to grasp or manipulate it.

### Key Observations

* The robotic arm appears to be performing a variety of tasks, including picking up, placing, and manipulating objects.

* The green circular markers may indicate target locations or points of interest for the robotic arm.

* The objects being manipulated vary in shape, size, and color, suggesting the arm is capable of handling a variety of items.

### Interpretation

The montage likely demonstrates the capabilities of the robotic arm in a simulated environment. The different scenes showcase the arm's ability to interact with various objects and perform tasks such as picking, placing, and manipulating. The presence of green markers suggests a guided or programmed sequence of actions. The variety of objects indicates the arm's versatility in handling different shapes and sizes. Overall, the montage presents a visual representation of the robotic arm's functionality and potential applications.

</details>

Figure 3: Meta-World pre-deployment training set [button-press, door-open, drawer-close, drawer-open, peg-insert-side, pick-place, push, reach, window-close, window-open], which comprises the 10 tasks from the MT10 suite.

<details>

<summary>figures/test_evaluation_tasks.png Details</summary>

### Visual Description

## Image Set: Robotic Arm Tasks

### Overview

The image shows a series of ten simulated environments where a robotic arm is performing different tasks. Each environment features a different object or set of objects that the arm interacts with. The arm itself is a reddish-brown color with a white and light blue gripper. The environments are simple, with a light-colored floor and walls.

### Components/Axes

Each of the ten images contains the following key components:

* **Robotic Arm:** A reddish-brown robotic arm with a white and light blue gripper.

* **Environment:** A simulated environment with a light-colored floor and walls.

* **Objects:** Various objects that the arm interacts with, such as a button, soccer ball, blocks, and tools.

### Detailed Analysis or ### Content Details

Here's a breakdown of each of the ten environments:

1. **Top-Left:** A yellow box with a red button on top, a brown barrier, and the robotic arm approaching.

2. **Top-Center-Left:** A blue and white soccer goal with a green ball inside and a black and white soccer ball outside. The robotic arm is positioned to interact with the balls.

3. **Top-Center:** A small red rectangular prism and a larger brown rectangular prism. The robotic arm is positioned to interact with the red prism.

4. **Top-Center-Right:** A brown cylinder and a green ball. The robotic arm is positioned to interact with the ball.

5. **Top-Right:** A brown box with a white knob and a white mug. A green ball is on the floor. The robotic arm is positioned to interact with the mug.

6. **Bottom-Left:** A white and red goal with a red ball inside and a black puck outside. The robotic arm is positioned to interact with the puck.

7. **Bottom-Center-Left:** A clear rectangular prism and two red balls. The robotic arm is positioned to interact with the balls.

8. **Bottom-Center:** A gray vise with a blue ball in the vise and a red ball on the floor. The robotic arm is positioned to interact with the vise.

9. **Bottom-Center-Right:** A gray box with a red cylinder on top. The robotic arm is positioned to interact with the cylinder.

10. **Bottom-Right:** A dark gray safe with a silver handle, a blue button, and a green light. The robotic arm is positioned to interact with the safe.

### Key Observations

* The robotic arm is consistent across all environments.

* The objects and tasks vary significantly, suggesting a range of capabilities being tested.

* The environments are simple and uncluttered, focusing attention on the task at hand.

### Interpretation

The image set likely represents a series of tests or demonstrations of a robotic arm's ability to interact with different objects and perform various tasks. The variety of objects and tasks suggests that the arm is designed to be versatile and adaptable. The simple environments allow for a clear focus on the arm's performance without distractions. The tasks range from simple manipulation (picking up a ball) to more complex interactions (operating a vise or safe), indicating a range of potential applications.

</details>

Figure 4: Meta-World evaluation set [button-press-topdown-wall, soccer, push-wall, push-block, coffee-button, plate-slide, peg-unplug-side, lever-pull, handle-press, door-unlock], which comprises 10 novel tasks from the MT50 suite with distinct reward functions, which differ semantically from those in the pre-deployment training set.

Table 1: Self-assessment performance of the MUSE agent on novel tasks in the Meta-World environment.

| Method | Metric | Value |

| --- | --- | --- |

| MUSE | Metacognitive Accuracy | 92% |

| MUSE | AUROC2 | 0.95 |

3.2.2 Results

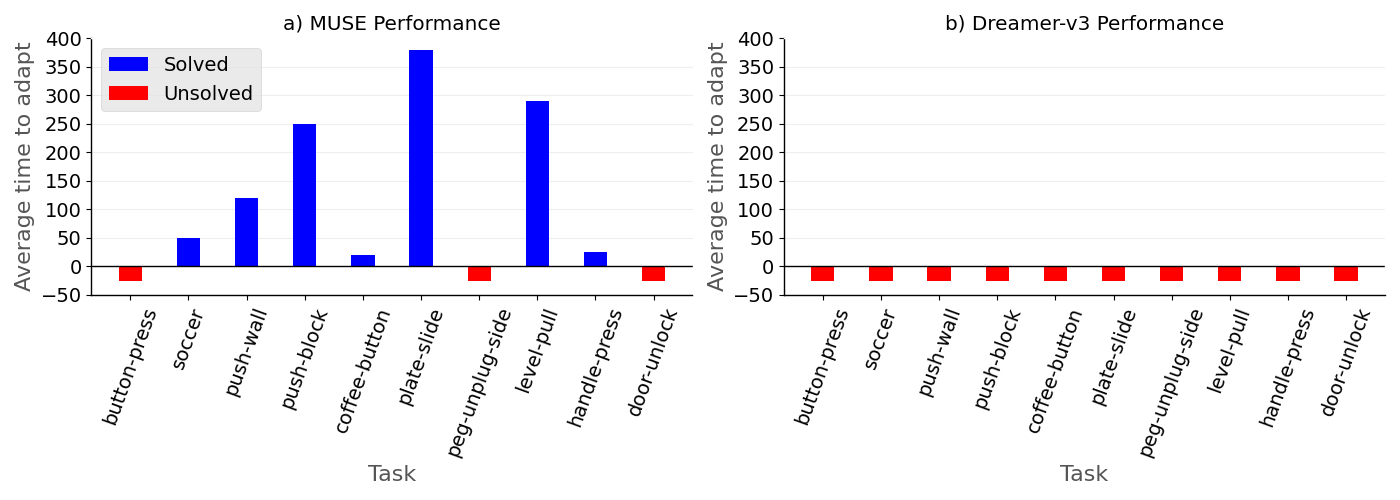

The MUSE agent achieved a metacognitive accuracy of 92% and an AUROC2 of 0.95 on novel tasks over the adaptation episodes, demonstrating that the Self-Assessment Model is highly predictive of competence for novel tasks (Table 1). Further, MUSE successfully solved 7 of the 10 novel tasks (70%) by leveraging competence-aware actions. The 7 solved tasks required different time steps per episode depending on the relative difficulty and complexity of the task (Figure 5 a). For instance, plate-slide required nearly the maximum time budget, while coffee-button was solved quickly. But in sharp contrast, Dreamer-v3 failed to solve any of the novel tasks within the allotted adaptation episodes (Figure 5 b).

<details>

<summary>figures/adaptation_steps.png Details</summary>

### Visual Description

## Bar Charts: MUSE vs. Dreamer-v3 Performance

### Overview

The image contains two bar charts comparing the performance of two systems, MUSE and Dreamer-v3, on a set of tasks. The charts display the average time to adapt for each task, with blue bars indicating tasks that were solved and red bars indicating tasks that were not solved.

### Components/Axes

**Chart a) MUSE Performance:**

* **Title:** a) MUSE Performance

* **Y-axis:** Average time to adapt

* Scale: 0 to 400, with increments of 50.

* **X-axis:** Task

* Categories: button-press, soccer, push-wall, push-block, coffee-button, plate-slide, peg-unplug-side, level-pull, handle-press, door-unlock

* **Legend:** Located in the top-left corner.

* Blue: Solved

* Red: Unsolved

**Chart b) Dreamer-v3 Performance:**

* **Title:** b) Dreamer-v3 Performance

* **Y-axis:** Average time to adapt

* Scale: 0 to 400, with increments of 50.

* **X-axis:** Task

* Categories: button-press, soccer, push-wall, push-block, coffee-button, plate-slide, peg-unplug-side, level-pull, handle-press, door-unlock

* **Legend:** (Implicitly the same as MUSE, as no legend is present)

* Blue: Solved

* Red: Unsolved

### Detailed Analysis

**Chart a) MUSE Performance:**

* **button-press:** Unsolved (Red bar) at approximately -25.

* **soccer:** Solved (Blue bar) at approximately 50.

* **push-wall:** Solved (Blue bar) at approximately 125.

* **push-block:** Solved (Blue bar) at approximately 250.

* **coffee-button:** Solved (Blue bar) at approximately 25.

* **plate-slide:** Solved (Blue bar) at approximately 380.

* **peg-unplug-side:** Unsolved (Red bar) at approximately -25.

* **level-pull:** Solved (Blue bar) at approximately 290.

* **handle-press:** Solved (Blue bar) at approximately 25.

* **door-unlock:** Unsolved (Red bar) at approximately -25.

**Chart b) Dreamer-v3 Performance:**

* **button-press:** Unsolved (Red bar) at approximately -25.

* **soccer:** Unsolved (Red bar) at approximately -25.

* **push-wall:** Unsolved (Red bar) at approximately -25.

* **push-block:** Unsolved (Red bar) at approximately -25.

* **coffee-button:** Unsolved (Red bar) at approximately -25.

* **plate-slide:** Unsolved (Red bar) at approximately -25.

* **peg-unplug-side:** Unsolved (Red bar) at approximately -25.

* **level-pull:** Unsolved (Red bar) at approximately -25.

* **handle-press:** Unsolved (Red bar) at approximately -25.

* **door-unlock:** Unsolved (Red bar) at approximately -25.

### Key Observations

* MUSE solves most tasks, with 'plate-slide' requiring the highest average time to adapt.

* Dreamer-v3 fails to solve any of the tasks, with all tasks showing a negative average time to adapt (represented by red bars at approximately -25).

### Interpretation

The data suggests that MUSE significantly outperforms Dreamer-v3 on the given set of tasks. MUSE is able to solve most of the tasks, while Dreamer-v3 fails to solve any of them. The negative average time to adapt for Dreamer-v3 across all tasks is unusual and may indicate a specific issue with the system's adaptation process or how the metric is being calculated. The 'plate-slide' task appears to be the most challenging for MUSE, requiring a substantially longer adaptation time compared to other solved tasks.

</details>

Figure 5: Average number of time steps per episode required to solve each of the 10 novel tasks in the Meta-World environment. MUSE (a) successfully solved 7 out of 10 tasks, whereas Dreamer-v3 (b) failed to solve any of them. Note that to facilitate illustration, unsolved tasks are assigned a nominal value of -25 and depicted by a red bar.

3.3 Discussion

The pre-deployment training tasks were selected to encompass a broad range of skills, so the agents could develop a better understanding of various manipulation strategies. Following this training, the agents were exposed to out-of-distribution tasks that were selected to evaluate their ability to generalize learned skills to novel challenges. This two-stage approach of training on familiar tasks and then adapting to novel ones was used to rigorously assess the effectiveness of MUSE and Dreamer-v3 in unknown situations. Overall, the World-Model-based experiments showed that MUSE significantly outperforms Dreamer-v3 in adapting to novel tasks by effectively leveraging competence awareness for strategy selection.

4 LLM-based implementation

In this section, we describe our implementation of the MUSE framework to equip LLM agents with metacognitive abilities of self-assessment and self-regulation.

4.1 Methods

4.1.1 ReAct

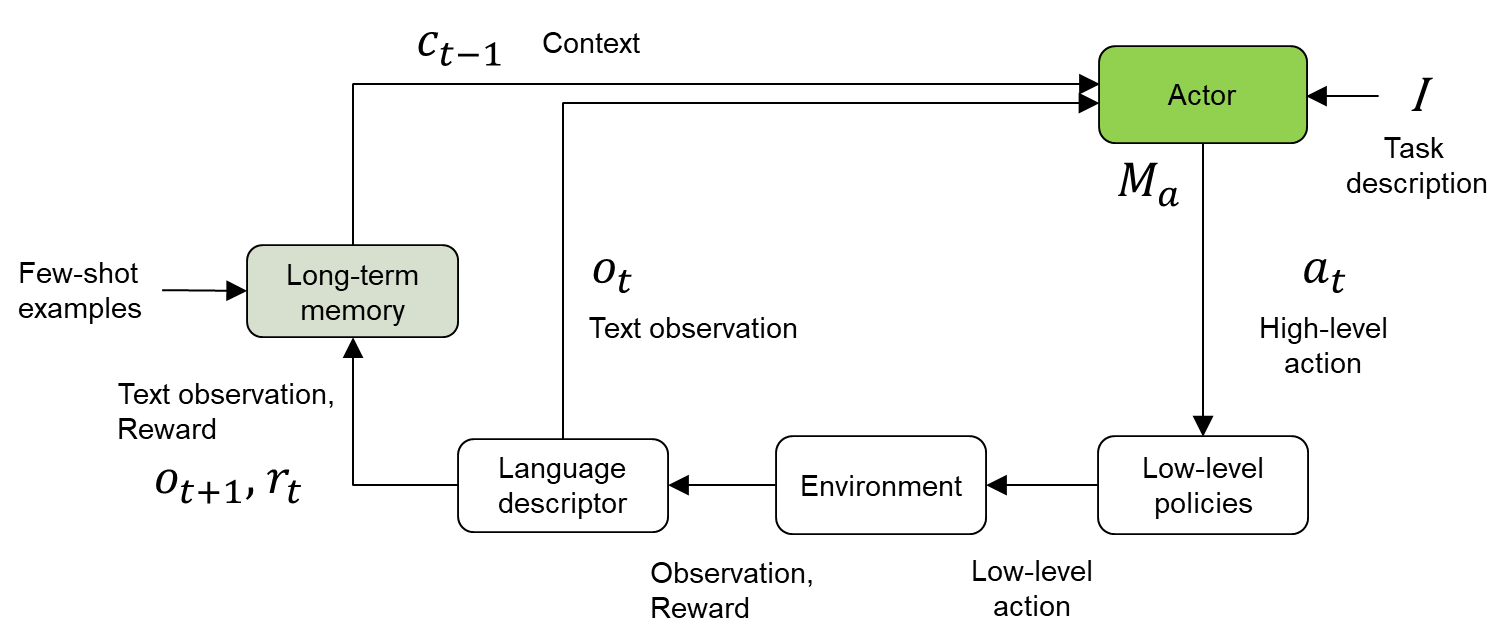

Yao et al. (2022) was among the first to introduce an LLM agent that interacts with its environment to accomplish tasks by being prompted to reason and act (Figure 6). At time step $t$ , the ReAct agent ( $M_{a}$ ) perceives an observation $o_{t}∈\mathcal{O}$ , executes an action $a_{t}∈\mathcal{A}$ based on the policy $\pi(a_{t}|I,c_{t-1},o_{t})$ , and receives a reward $r_{t}$ . Here, $I$ is the natural language description of the task, and $c_{t}=\{o_{1},a_{1},r_{1}...,o_{t},a_{t},r_{t}\}$ is the running context of the trajectory within the episode. The context is reset at the end of each episode, which occurs when either the task is solved within the time budget or the episode terminates due to running out of time. The ReAct agent leverages language-based CoT reasoning (Wei et al., 2022) for improved sequential action selection for multi-step tasks. Note that actions $a_{t}$ stored in $c_{t}$ include both standard actions and CoT reasoning steps. Two-shot domain-specific trajectory examples with step-by-step reasoning are included in the prompt for CoT reasoning. See Supplementary Section 8.1 for illustrative examples.

<details>

<summary>figures/ReAct.png Details</summary>

### Visual Description

## Diagram: Actor-Environment Interaction Loop

### Overview

The image is a diagram illustrating an actor-environment interaction loop, incorporating elements of long-term memory, language descriptors, and low-level policies. The diagram depicts the flow of information and actions between the actor and the environment, with context and task descriptions influencing the actor's behavior.

### Components/Axes

* **Actor:** A green rounded rectangle labeled "Actor" at the top-right. It receives input from "Context" (c<sub>t-1</sub>), "Task description" (I), and "Long-term memory". It outputs "High-level action" (a<sub>t</sub>) and "M<sub>a</sub>".

* **Long-term memory:** A green rounded rectangle on the left, receiving "Few-shot examples" as input and providing context (c<sub>t-1</sub>) to the "Actor". It also receives "Text observation, Reward" (o<sub>t+1</sub>, r<sub>t</sub>).

* **Language descriptor:** A white rounded rectangle at the bottom-left, receiving "Text observation" (o<sub>t</sub>) and outputting to the "Environment".

* **Environment:** A white rounded rectangle at the bottom-center, receiving input from the "Language descriptor" ("Low-level action") and outputting "Observation, Reward" to the "Language descriptor".

* **Low-level policies:** A white rounded rectangle at the bottom-right, receiving "High-level action" (a<sub>t</sub>) from the "Actor" and outputting "Low-level action" to the "Environment".

* **Arrows:** Arrows indicate the flow of information and actions between the components.

### Detailed Analysis

* **Actor:** The "Actor" receives three inputs:

* "Context" (c<sub>t-1</sub>) from the "Long-term memory".

* "Task description" (I).

* "M<sub>a</sub>" from the "Long-term memory".

The "Actor" outputs "High-level action" (a<sub>t</sub>) to the "Low-level policies".

* **Long-term memory:** The "Long-term memory" receives "Few-shot examples" and "Text observation, Reward" (o<sub>t+1</sub>, r<sub>t</sub>). It outputs "Context" (c<sub>t-1</sub>) to the "Actor".

* **Language descriptor:** The "Language descriptor" receives "Text observation" (o<sub>t</sub>) and outputs to the "Environment".

* **Environment:** The "Environment" receives input from the "Language descriptor" ("Low-level action") and outputs "Observation, Reward" to the "Language descriptor".

* **Low-level policies:** The "Low-level policies" receives "High-level action" (a<sub>t</sub>) from the "Actor" and outputs "Low-level action" to the "Environment".

### Key Observations

* The diagram illustrates a closed-loop system where the "Actor" interacts with the "Environment" through "Low-level policies" and "Language descriptor".

* The "Long-term memory" provides context to the "Actor" based on "Few-shot examples" and past experiences ("Text observation, Reward").

* The "Language descriptor" translates "Text observation" into a format understandable by the "Environment".

### Interpretation

The diagram represents a reinforcement learning framework where an "Actor" learns to perform tasks in an "Environment". The "Actor" uses "Long-term memory" to store and retrieve relevant information, allowing it to adapt to new situations based on "Few-shot examples". The "Language descriptor" enables the system to process textual observations from the "Environment". The "Low-level policies" translate high-level actions from the "Actor" into concrete actions that can be executed in the "Environment". The loop represents the continuous interaction between the "Actor" and the "Environment", where the "Actor" learns from its experiences and improves its performance over time.

</details>

Figure 6: Our illustration of the ReAct architecture (Yao et al., 2022).

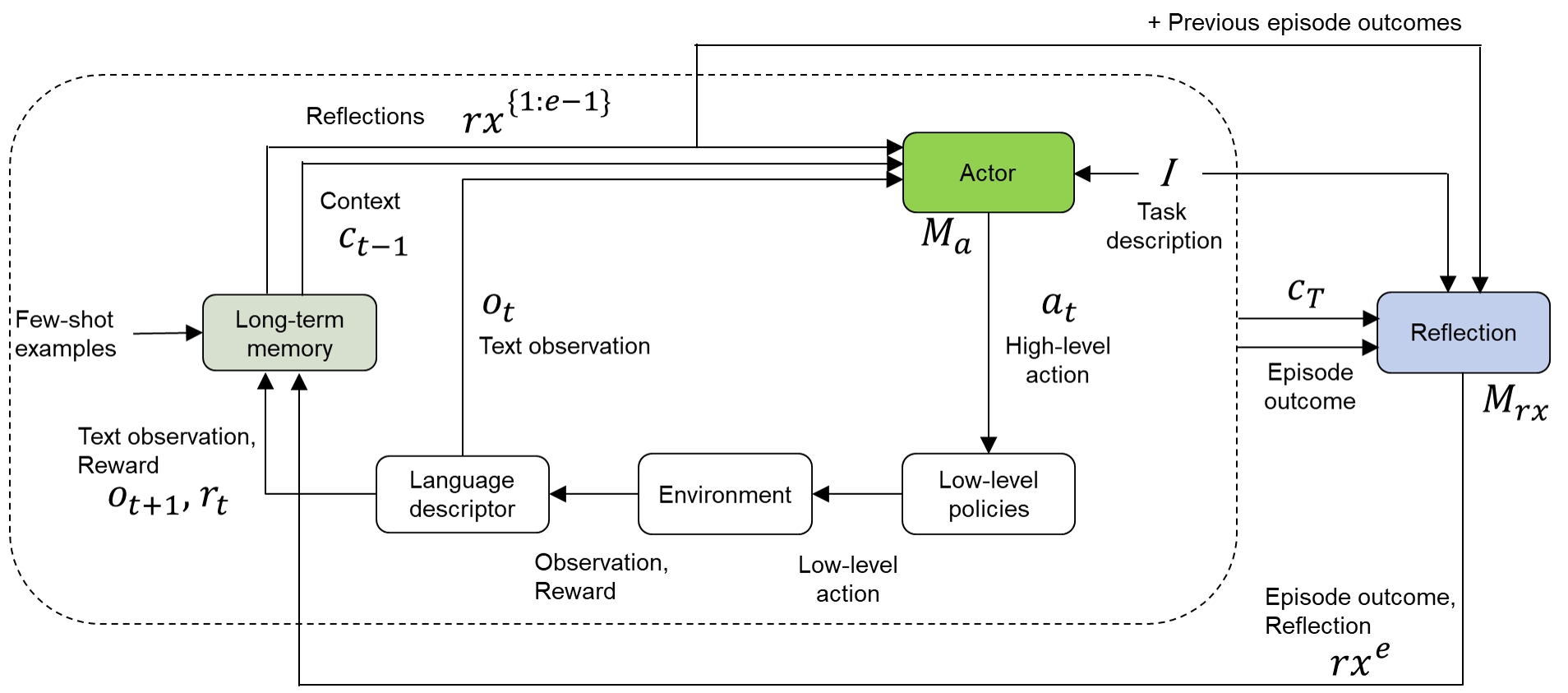

4.1.2 Reflexion

Reflexion (Shinn et al., 2023) enhances in-context prompting for LLM agents by generating internal feedback in language, referred to as “reflection,” to transfer lessons learned across episodes for a given task (Figure 7). At the end of each episode $e$ , Reflexion prompts an LLM to reason and generate verbal feedback $rx^{e}$ about the agent’s performance by analyzing the entire trajectory $c_{T}$ from the episode start ( $t=1$ ) to finish ( $t=T$ ) and the episode outcome (success or failure). This Reflection LLM ( $M_{rx}$ ) also receives the reflections and outcomes from previous episodes ( $1:e-1$ ). The Reflexion agent ( $M_{a}$ ) follows the policy $\pi(a_{t}|I,c_{t-1},o_{t},rx^{\{1:e-1\}})$ , which incorporates cumulative reflections from earlier episodes. In contrast to ReAct, which relies solely on short-term memory comprising the agent’s running trajectory within the current episode, Reflexion leverages both short- and long-term memory for improved strategic adaptation to novel situations. This iterative internal feedback mechanism allows the agent to refine its strategies progressively by integrating lessons learned from failures in previous episodes. Reflexion addresses the credit assignment problem through implicit reasoning about specific actions within the trajectory that led to failures, proposing alternative strategies for future episodes. Through this process, the agent develops an enhanced understanding of effective plans to iteratively improve its adaptability to novel tasks.

While the original Reflexion study (Shinn et al., 2023) utilized a single LLM for both action generation and reflection, our implementation uses distinct LLMs that are fine-tuned for each respective task. Additionally, we extend the reflection process to include successful outcomes, which enables the model to reinforce effective strategies alongside learning from failures.

<details>

<summary>figures/Reflex.png Details</summary>

### Visual Description

## System Diagram: Actor-Reflection Interaction

### Overview

The image is a system diagram illustrating the interaction between an "Actor" and a "Reflection" module within an environment. It depicts the flow of information, actions, and feedback loops between these components, including the environment, long-term memory, and low-level policies.

### Components/Axes

* **Nodes:**

* Actor (Green rounded rectangle)

* Reflection (Blue rounded rectangle)

* Long-term memory (Green rounded rectangle)

* Language descriptor (White rounded rectangle)

* Environment (White rounded rectangle)

* Low-level policies (White rounded rectangle)

* **Labels:**

* Reflections: rx^{1:e-1}

* Context: c_{t-1}

* Few-shot examples

* Text observation: o_t

* Text observation, Reward: o_{t+1}, r_t

* Observation, Reward

* Low-level action

* Task description: I

* High-level action: a_t

* Episode outcome: c_T

* Episode outcome, Reflection: rx^e

* M_a (below Actor)

* M_rx (below Reflection)

* + Previous episode outcomes (top-right)

### Detailed Analysis

* **Actor:** The Actor receives input from "Reflections rx^{1:e-1}", "Context c_{t-1}", and "Task description I". It outputs a "High-level action a_t" to "Low-level policies". The Actor has an associated memory M_a.

* **Reflection:** The Reflection module receives input from "Task description I", "Episode outcome c_T", and "+ Previous episode outcomes". It outputs to "Reflections rx^{1:e-1}", and "Long-term memory". The Reflection module has an associated memory M_rx, which also receives "Episode outcome, Reflection rx^e".

* **Long-term memory:** Receives "Few-shot examples" and "Text observation, Reward o_{t+1}, r_t". It outputs "Context c_{t-1}" and "Text observation o_t".

* **Language descriptor:** Receives "Text observation o_t" and outputs "Observation, Reward" to the "Environment".

* **Environment:** Receives "Observation, Reward" from the "Language descriptor" and "Low-level action" from "Low-level policies". It outputs "Text observation, Reward o_{t+1}, r_t" to "Long-term memory".

* **Low-level policies:** Receives "High-level action a_t" from the "Actor" and outputs "Low-level action" to the "Environment".

### Key Observations

* The diagram illustrates a closed-loop system where the Actor interacts with the Environment through high-level actions, which are then translated into low-level actions.

* The Reflection module plays a crucial role in learning and adaptation by processing episode outcomes and updating the long-term memory.

* The system incorporates both few-shot examples and continuous feedback (reward) to guide learning.

### Interpretation

The diagram represents a reinforcement learning architecture where an agent (Actor) learns to interact with an environment. The Reflection module allows the agent to learn from past experiences and improve its performance over time. The long-term memory stores relevant information that can be used to guide future actions. The inclusion of few-shot examples suggests a meta-learning approach, where the agent can quickly adapt to new tasks based on limited data. The overall architecture emphasizes the importance of both exploration (through interaction with the environment) and exploitation (through leveraging past experiences) in achieving optimal performance.

</details>

Figure 7: Our illustration of the Reflexion architecture (Shinn et al., 2023), which leverages verbal RL to enable agents to learn from past episodes.

4.1.3 MUSE

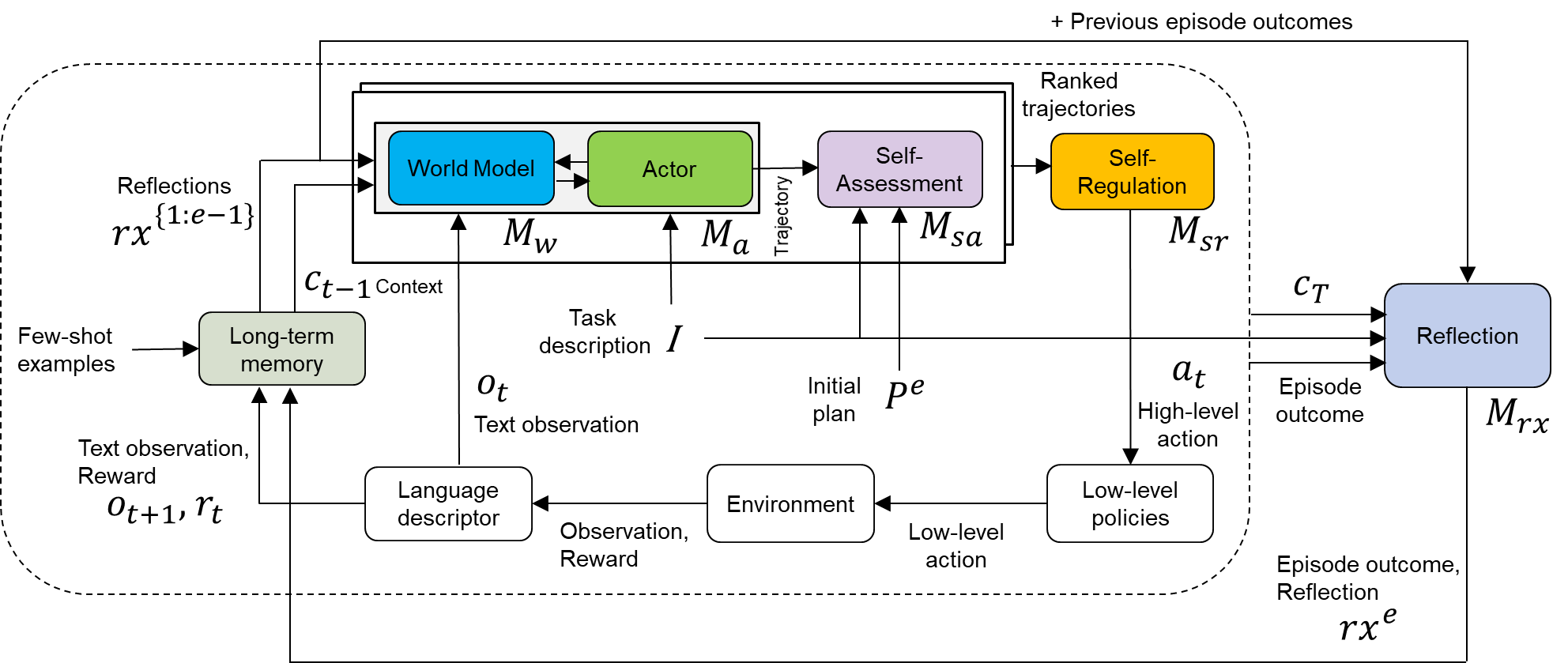

The MUSE framework, illustrated in Figure 8, builds on mechanisms from ReAct and Reflexion by incorporating additional modules for self-assessment and self-regulation:

- World Model ( $M_{w}$ ): An LLM that can be prompted to predict the next observation, reward, and terminal signal given the current observation and action. This LLM works in conjunction with the Actor ( $M_{a}$ ) to generate potential future states and actions (rollout trajectories), enabling look-ahead planning. In text-based domains, such as the experiments presented here, $M_{w}$ can use the same LLM as $M_{a}$ . In fact, the same LLM can be directly prompted to generate diverse trajectories without requiring explicit interaction between the two.

- Self-Assessment Model ( $M_{sa}$ ): A language-conditioned neural network that evaluates and scores trajectories generated by the World Model/Actor to assess their alignment and effectiveness for the agent’s goals. Specifically, it predicts task competence, or the probability of task success, for each trajectory.

- Self-Regulation ( $M_{sr}$ ): A module that decides a competence-aware course of action based on one of the options outlined in Subsection 3.1.2. For this implementation of MUSE, $M_{sr}$ chooses the first action $a_{t}$ from the rollout trajectory that is most likely to achieve task success, as determined by competence evaluations from $M_{sa}$ .

<details>

<summary>figures/MUSE.png Details</summary>

### Visual Description

## Diagram: High-Level System Architecture

### Overview

The image presents a high-level system architecture diagram, likely for a reinforcement learning or AI system. It illustrates the flow of information and interactions between various components, including a world model, actor, self-assessment, self-regulation, long-term memory, language descriptor, environment, and reflection mechanism. The diagram emphasizes the iterative nature of the process, with feedback loops and context-dependent decision-making.

### Components/Axes

* **Nodes (Rounded Rectangles):** Represent distinct modules or components within the system.

* World Model (Blue)

* Actor (Green)

* Self-Assessment (Purple)

* Self-Regulation (Yellow)

* Long-term memory (Light Green)

* Language descriptor (White)

* Environment (White)

* Low-level policies (White)

* Reflection (Light Blue)

* **Arrows:** Indicate the flow of information or control between components.

* **Labels:** Describe the data or signals being passed between components.

* Reflections rx{1:e-1}

* Ct-1 Context

* Task description I

* Ot Text observation

* Initial plan Pe

* At High-level action

* Episode outcome

* Text observation, Reward Ot+1, rt

* Observation, Reward

* Low-level action

* Episode outcome, Reflection rxe

* Ranked trajectories

* \+ Previous episode outcomes

* **Variables:**

* Mw

* Ma

* Msa

* Msr

* Mrx

### Detailed Analysis or ### Content Details

1. **Central Processing Unit:**

* A dashed rounded rectangle encloses the "World Model," "Actor," "Self-Assessment," and "Self-Regulation" modules. This grouping suggests a central processing unit or core decision-making component.

* The "World Model" (blue) receives input from "Reflections rx{1:e-1}" and "Ct-1 Context". It outputs to the "Actor".

* The "Actor" (green) receives input from the "World Model" and "Task description I". It outputs a "Trajectory" to the "Self-Assessment" module.

* The "Self-Assessment" (purple) receives the "Trajectory" and "Initial plan Pe". It outputs to the "Self-Regulation" module.

* The "Self-Regulation" (yellow) receives input from "Self-Assessment" and outputs "At High-level action" and "Ranked trajectories".

2. **Memory and Context:**

* "Long-term memory" (light green) receives "Few-shot examples" and "Text observation, Reward Ot+1, rt". It outputs "Ct-1 Context" to the "World Model".

3. **Environment Interaction:**

* "Language descriptor" (white) receives "Observation, Reward" from the "Environment" (white) and outputs "Text observation, Reward Ot+1, rt" to the "Long-term memory". It also outputs "Ot Text observation" to the "World Model".

* The "Environment" receives "Low-level action" from "Low-level policies" (white) and outputs "Observation, Reward" to the "Language descriptor".

* "Low-level policies" receives "At High-level action" from "Self-Regulation" and outputs "Low-level action" to the "Environment".

4. **Reflection Mechanism:**

* The "Reflection" module (light blue) receives "Ranked trajectories" (indirectly from "Self-Regulation"), "Ct", and "Episode outcome". It outputs "Reflections rx{1:e-1}" to the "World Model" and "Episode outcome, Reflection rxe" to the "Long-term memory".

* The "Reflection" module also receives "+ Previous episode outcomes" as input.

### Key Observations

* The system incorporates a hierarchical structure, with high-level planning and decision-making ("World Model," "Actor," "Self-Assessment," "Self-Regulation") interacting with lower-level policies and the environment.

* Feedback loops are prevalent, allowing the system to learn from its experiences and adapt its behavior.

* The "Reflection" module plays a crucial role in learning and knowledge accumulation.

* The system integrates both textual and reward-based information.

### Interpretation

The diagram illustrates a sophisticated AI system designed for complex tasks. The system leverages a world model to reason about the environment, an actor to take actions, and self-assessment and self-regulation mechanisms to improve performance. The inclusion of long-term memory and a reflection module suggests a system capable of continuous learning and adaptation. The system appears to be designed to learn from both successful and unsuccessful episodes, using the reflection mechanism to extract valuable insights. The integration of language and reward signals indicates a system that can understand and respond to both human instructions and environmental feedback. The overall architecture suggests a system capable of handling complex, dynamic environments and learning to achieve long-term goals.

</details>

Figure 8: Illustration of the MUSE architecture for LLM agents, which implements the metacognitive cycle to iteratively solve unknown tasks.

World Model/Actor During deployment, the World Model/Actor generates several potential future state-action sequences with a horizon $H$ at each time step $t$ . The diversity of these rollout trajectories, denoted by $\tau_{t}=\{a_{t},r_{t},o_{t+1},...,a_{t+H-1},r_{t+H-1},o_{t+H}\}$ , is controlled by the temperature setting of the LLM. These trajectories represent hypothetical paths extending from the current observation $o_{t}$ and context $c_{t-1}$ , guided by the task description $I$ and task-specific reflections $rx^{\{1:e-1\}}$ stored in memory. For this implementation, we explicitly did not specify a horizon $H$ ; instead, the LLM was allowed to generate trajectories up to the maximum length permitted by its context window. The temperature of the LLM was set to 0.5, and five rollout trajectories were generated at each time step.

Self-Assessment The Self-Assessment Model ( $M_{sa}$ ) utilizes a transformer encoder $\mathcal{M}$ (SentenceTransformers, 2024) and an MLP $g_{\eta}$ to predict the probability of task success (Equation 6) for rollout trajectories generated by the World Model/Actor before their actual execution in the environment. Specifically, $M_{sa}$ evaluates the alignment and effectiveness of potential trajectories for the task at hand. The algorithm for training $M_{sa}$ and using it for evaluation is detailed in Algorithm 2.

$$

\displaystyle\begin{aligned} y_{\text{pred}}=M_{sa}(I,P^{e},\tau)\end{aligned} \tag{6}

$$

ifnextchar

gobble Here, $\tau$ represents a trajectory and $P^{e}$ denotes the initial language-based plan generated by the World Model/Actor LLM at the start of episode $e$ based on task description $I$ . See Supplementary Material for several illustrative examples of the initial plan $P^{e}$ . The output layer of $M_{sa}$ employs a sigmoid activation function to yield a probability of task success. A threshold of 0.5 is used to convert these probabilities into binary outcomes (success or failure). During pre-deployment training and deployment adaptation, $M_{sa}$ is trained to minimize the binary cross-entropy loss between the predicted task success ( $y_{\text{pred}}$ ) and the actual binary outcome ( $y$ ) as follows:

$$

\displaystyle\begin{aligned} \mathcal{L}(y,y_{\text{pred}})\doteq-\left[y\log(y_{\text{pred}})+(1-y)\log(1-y_{\text{pred}})\right]\end{aligned} \tag{7}

$$

ifnextchar

gobble To increase the number of training samples and mitigate overfitting, the training trajectories are segmented into non-overlapping chunks, each containing four action-observation pairs. For example, the initial chunk within an episode includes pairs ( $a_{1}$ , $o_{1}$ ) through ( $a_{4}$ , $o_{4}$ ), while the next spans ( $a_{5}$ , $o_{5}$ ) through ( $a_{8}$ , $o_{8}$ ), and so on.

Algorithm 2 Training and evaluation of Self-Assessment Model $M_{sa}$

1: Input: Dataset $\mathcal{D}=\{(I,P^{e},\tau,y)\}$ , transformer encoder $\mathcal{M}$ , MLP $g_{\eta}$

2: Output: Trained evaluator $g_{\eta}$

3: function TrainEvaluator ( $\mathcal{D}$ , $\mathcal{M}$ , $g_{\eta}$ )

4: for each $(I,P^{e},\tau,y)∈\mathcal{D}$ do

5: $Z←\mathcal{M}(I)$ $\triangleright$ Generate task embedding

6: $S←\mathcal{M}(\tau,P^{e})$ $\triangleright$ Generate trajectory and plan embedding

7: $X←\text{concat}(S,Z)$

8: $y_{\text{pred}}← g_{\eta}(X)$ $\triangleright$ Predict task success probability

9: Update $g_{\eta}$ parameters to minimize $\mathcal{L}(y,y_{\text{pred}})$

10: end for

11: return Trained MLP $g_{\eta}$

12: end function

13: function EvaluateTrajectory ( $I$ , $P^{e}$ , $\tau$ , $\mathcal{M}$ , $g_{\eta}$ )

14: $Z←\mathcal{M}(I)$

15: $S←\mathcal{M}(\tau,P^{e})$

16: $X←\text{concat}(S,Z)$

17: $y_{\text{pred}}← g_{\eta}(X)$

18: return $y_{\text{pred}}$

19: end function

MUSE Framework The pre-deployment training and deployment adaptation procedures for the MUSE agent are detailed in Algorithms 3 and 4, respectively. For pre-deployment training, we first train the Reflexion agent (Shinn et al., 2023) on each of the training tasks separately to collect data. The constituent models of the MUSE agent are then trained with multi-task supervised learning to benefit from the diversity of experiences. Specifically, the Reflection LLM ( $M_{rx}$ ) in MUSE is trained using Direct Preference Optimization (DPO) with a preference dataset (Rafailov et al., 2024) that is created by comparing reflections of success and failure across the training tasks. A success (positive) reflection occurs when the agent fails in episode $e_{i}$ but succeeds in episode $e_{i+1}$ following the reflection. Conversely, a failure (negative) reflection happens when the agent succeeds in episode $e_{i}$ but fails in episode $e_{i+1}$ despite the reflection. DPO directly optimizes $M_{rx}$ to generate generalizable reflections that maximize the likelihood of satisfying these preferences (i.e., increase the likelihood of success and decrease the likelihood of failure). This enables MUSE to iteratively improve its ability to recover from failures and adapt to novel tasks through a more effective reflection process.

The Actor LLM ( $M_{a}$ ) is trained using Supervised Fine-Tuning (SFT) with only successful episodes, for simplicity, across the training tasks. By imitating the behavior demonstrated in these episodes, $M_{a}$ learns to align its policy with actions seen during successful episodes. This joint multi-task fine-tuning process equips MUSE with generalizable success-driven strategies. Note that we use Low-Rank Adaptation (LoRA) (Hu et al., 2021) for parameter-efficient fine-tuning of both the $M_{a}$ and $M_{rx}$ LLMs. Additionally, the Self-Assessment Model ( $M_{sa}$ ) undergoes supervised learning with pertinent multi-task data, which maps trajectory chunks to corresponding episode outcomes.

During deployment, when the agents encounter a novel task, they engage in sequential episodes until success is achieved. MUSE employs competence-aware planning, as described above, to choose its actions. Furthermore, MUSE can perform online updates to each of its constituent models as new data becomes available from the novel task. Note that the baseline agents, ReAct and Reflexion, can also respond adaptively to new experiences but rely only on in-context prompting and/or reflection. However, these methods do not involve updating model parameters, which leads to knowledge being stored only for the short term. This constraint hinders the transfer of knowledge across episodes and tasks. MUSE overcomes these limitations by continually updating the weights of its models, enabling more effective knowledge transfer.

Algorithm 3 Procedure for pre-deployment training of the MUSE agent

Training dataset ${D_{in}}\sim$ in-distribution tasks Specify max. training episodes, max. steps

1: function PreTrainModels ( $D_{in}$ , $M_{a}$ , $M_{rx}$ , $M_{sa}$ , $M_{w}$ )

2: Initialize $M_{sa}$ , $M_{w}$ , memory buffer

3: for each task $\sim{D_{in}}$ do

4: Initialize $M_{a}$ , $M_{rx}$ for the Reflexion agent

5: Add $I$ to memory buffer

6: Set $t=0$ , $e=0$

7: while $e<$ max. training episodes do

8: while $t<$ max. steps & task-not-solved do

9: Generate $a_{t}$ using $M_{a}$ and submit to simulator

10: Obtain $o_{t+1}$ and $r_{t}$ , append $o_{t}$ , $a_{t}$ , $r_{t}$ to $c_{t}$

11: Increment $t$

12: end while

13: Generate $rx^{e}$ using $M_{rx}$

14: Append episode outcome, $c_{t}$ , $rx^{e}$ to memory buffer

15: Increment $e$

16: end while

17: end for

18: Use all data in memory buffer to train $M_{a}$ , $M_{rx}$ , $M_{sa}$ , $M_{w}$ for MUSE

19: return Trained $M_{a}$ , $M_{rx}$ , $M_{sa}$ , $M_{w}$

20: end function

Algorithm 4 Procedure for deployment adaptation of the MUSE agent

Test dataset ${D_{out}}\sim$ out-of-distribution tasks Specify max. adaptation episodes

1: function AdaptModels ( $D_{out}$ , $M_{a}$ , $M_{rx}$ , $M_{sa}$ , $M_{w}$ )

2: Initialize memory buffer

3: for each task $\sim{D_{out}}$ do

4: Set $M_{a}$ , $M_{rx}$ , $M_{sa}$ , $M_{w}$ to pre-deployment trained weights

5: Add $I$ to memory buffer

6: Set $t=0$ , $e=0$

7: while $e<$ max. adaptation episodes do

8: while $t<$ max. steps & task-not-solved do

9: Generate rollout trajectories using $M_{w}$ and $M_{a}$

10: Evaluate each trajectory using $M_{sa}$

11: Select the best action $a_{t}$ and submit to simulator