# A Survey on Uncertainty Quantification of Large Language Models: Taxonomy, Open Research Challenges, and Future Directions

**Authors**: Ola Shorinwa, Zhiting Mei, Justin Lidard, Allen Z. Ren, AnirudhaMajumdar

> shoa@princeton.edu

> maymei@princeton.edu

> jlidard@princeton.edu

> allen.ren@princeton.edu

> ani.majumdar@princeton.eduPrincetonUniversityPrincetonNJUSA

Abstract.

The remarkable performance of large language models (LLMs) in content generation, coding, and common-sense reasoning has spurred widespread integration into many facets of society. However, integration of LLMs raises valid questions on their reliability and trustworthiness, given their propensity to generate hallucinations: plausible, factually-incorrect responses, which are expressed with striking confidence. Previous work has shown that hallucinations and other non-factual responses generated by LLMs can be detected by examining the uncertainty of the LLM in its response to the pertinent prompt, driving significant research efforts devoted to quantifying the uncertainty of LLMs. This survey seeks to provide an extensive review of existing uncertainty quantification methods for LLMs, identifying their salient features, along with their strengths and weaknesses. We present existing methods within a relevant taxonomy, unifying ostensibly disparate methods to aid understanding of the state of the art. Furthermore, we highlight applications of uncertainty quantification methods for LLMs, spanning chatbot and textual applications to embodied artificial intelligence applications in robotics. We conclude with open research challenges in uncertainty quantification of LLMs, seeking to motivate future research.

Uncertainty Quantification; Large Language Models (LLMs); Confidence Estimation. doi: 1111111.1111111 ccs: Computing methodologies ccs: Computing methodologies Artificial intelligence ccs: Computing methodologies Natural language processing ccs: Computing methodologies Natural language generation

1. Introduction

Large language models have demonstrated remarkable language generation capabilities, surpassing average human performance on many benchmarks including math, reasoning, and coding (Achiam et al., 2023; Anthropic, 2024; Brown, 2020; Touvron et al., 2023; Dubey et al., 2024; Chiang et al., 2023). For example, recent (multi-modal) large language models were shown to achieve impressive scores, e.g., in the $90\%$ percentile, on simulated Law School Admission Test (LSAT) exams, the American Mathematics Competition (AMC) contests, the Multistate Bar Exam, and the Graduate Record Exam (GRE) General Test, outperforming a majority of test takers (Katz et al., 2024; Achiam et al., 2023; Anthropic, 2024). Likewise, LLMs have advanced the state of the art in machine translation, text summarization, and question-and-answer tasks. However, LLMs also tend to produce plausible, factually-incorrect responses to their input prompts, termed hallucinations (Lee et al., 2018). In some scenarios, the hallucinated response is overtly incorrect; however, in many cases, the factuality of the LLM response is harder to discern, posing significant risk as a user might falsely assume factuality of the response, which can result in devastating consequences, especially when safety is of paramount importance. As a result, hallucinations pose a notable danger to the safe, widespread adoption of LLMs.

<details>

<summary>extracted/6588127/images/teaser/uq_for_llms.png Details</summary>

### Visual Description

\n

## Screenshot: Chatbot Interaction - Fact Check Failure

### Overview

This image is a screenshot of a chatbot conversation. A user asks a question about the lowest temperature recorded in Antarctica. The chatbot provides an answer with high confidence, but a subsequent "Fact-Check" indicates the answer is false.

### Components/Axes

The screenshot displays a chat interface with message bubbles. The elements are:

* **User Message 1:** "What is the lowest-ever temperature recorded in Antarctica?" (Top-left, blue bubble)

* **Chatbot Response 1:** "The lowest-ever temperature recorded in Antarctica was a staggering -128.6°F (-89.2°C)." (Center-left, green bubble with a checkmark icon)

* **User Message 2:** "How confident are you?" (Bottom-left, blue bubble)

* **Chatbot Response 2:** "I am 100% confident." (Bottom-center-left, green bubble with a checkmark icon)

* **Fact-Check Indicator:** A large red "X" with the text "Fact-Check: False" (Bottom-center, red)

### Detailed Analysis or Content Details

The chatbot initially states the lowest temperature in Antarctica is -128.6°F, which is equivalent to -89.2°C. The chatbot expresses 100% confidence in this answer. However, the "Fact-Check: False" indicator reveals the provided information is inaccurate.

### Key Observations

The key observation is the discrepancy between the chatbot's confident assertion and the fact-check result. This highlights a potential issue with the chatbot's knowledge base or reasoning capabilities. The chatbot confidently provides incorrect information.

### Interpretation

This screenshot demonstrates a failure of a chatbot's fact-checking mechanism. The chatbot confidently delivers an incorrect answer, indicating a flaw in its data sources or its ability to verify information. The "Fact-Check: False" label suggests an external system or process identified the error. This example underscores the importance of verifying information provided by AI models, even when presented with high confidence. The actual lowest temperature recorded in Antarctica is -89.2°C (-128.6°F), which the chatbot stated, but the context implies it is incorrect. This is a paradox. The chatbot is correct in its numerical answer, but incorrect in its overall statement. This suggests the chatbot may be misinterpreting the question or providing a temperature from a different location.

</details>

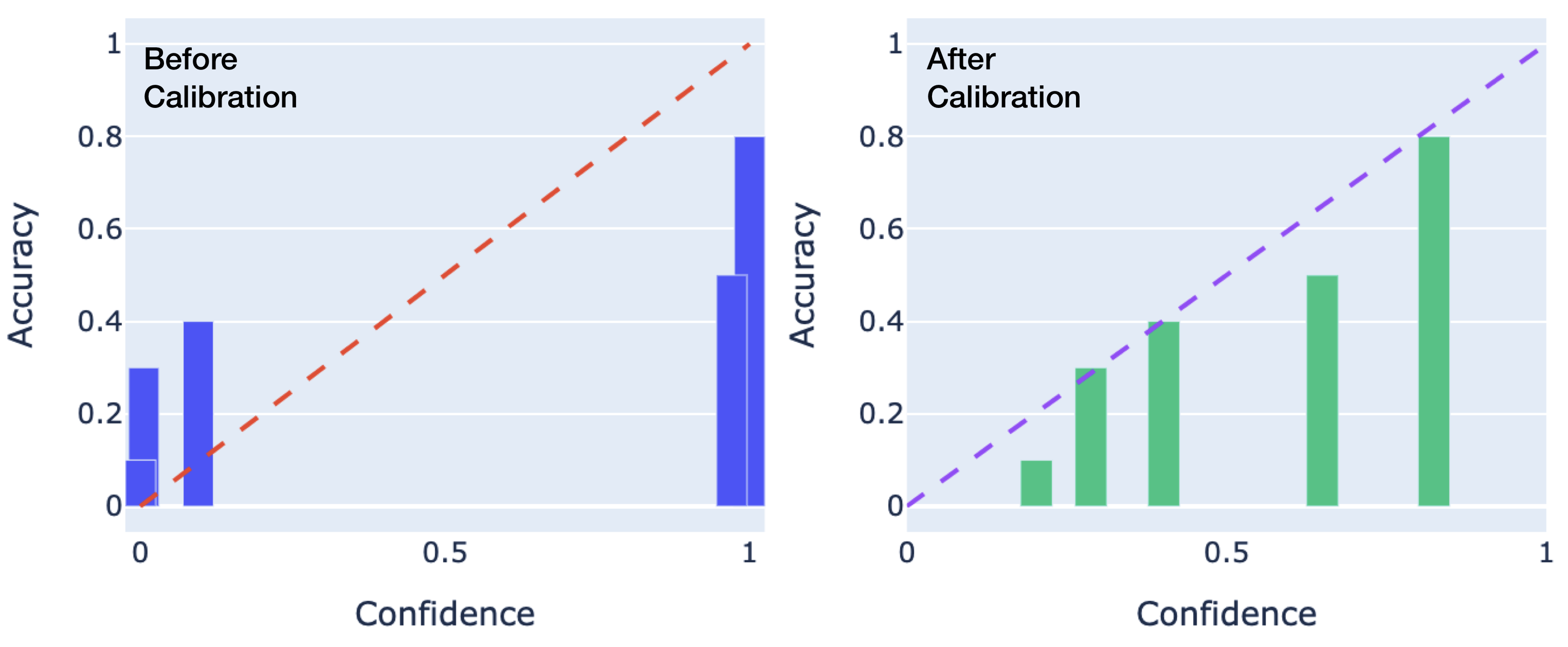

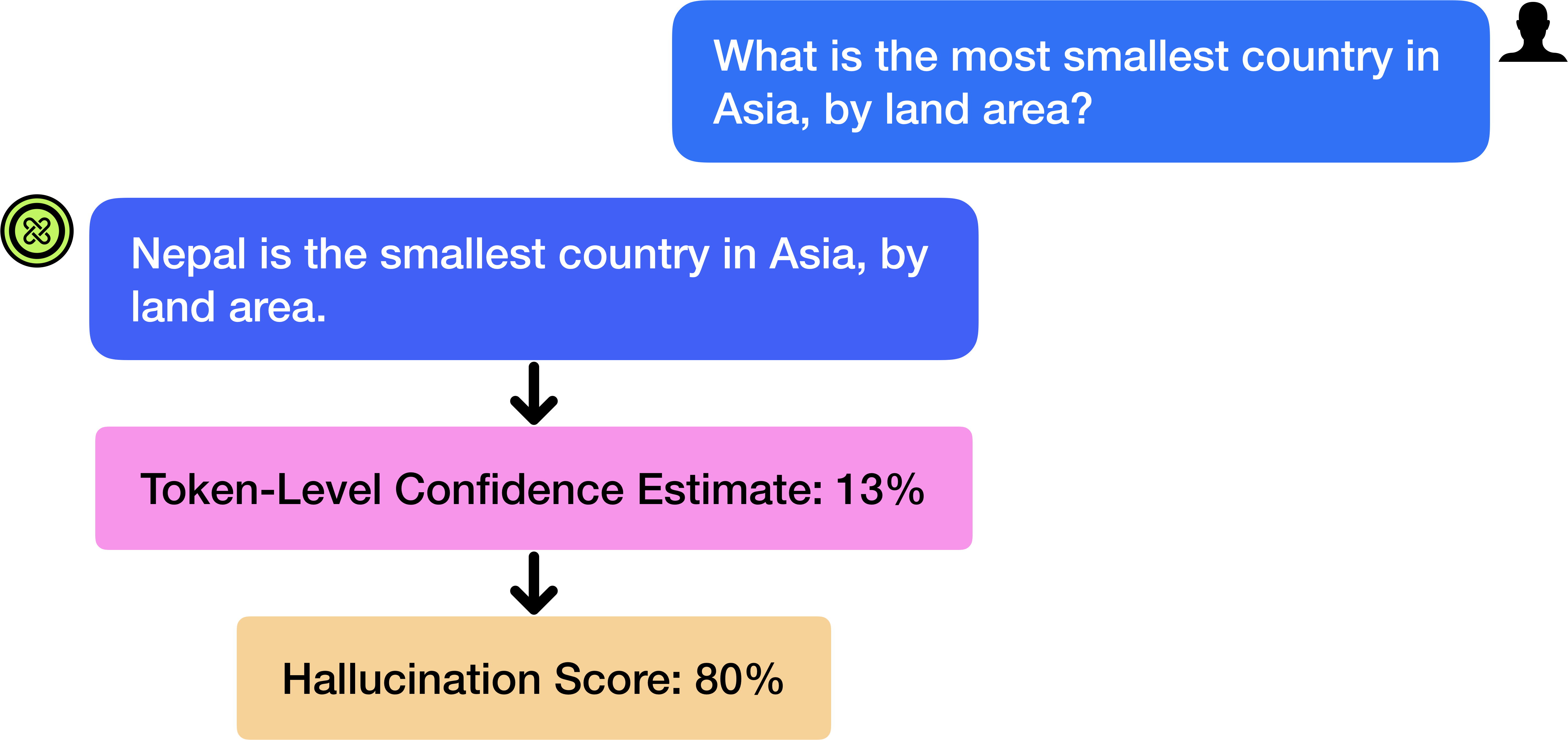

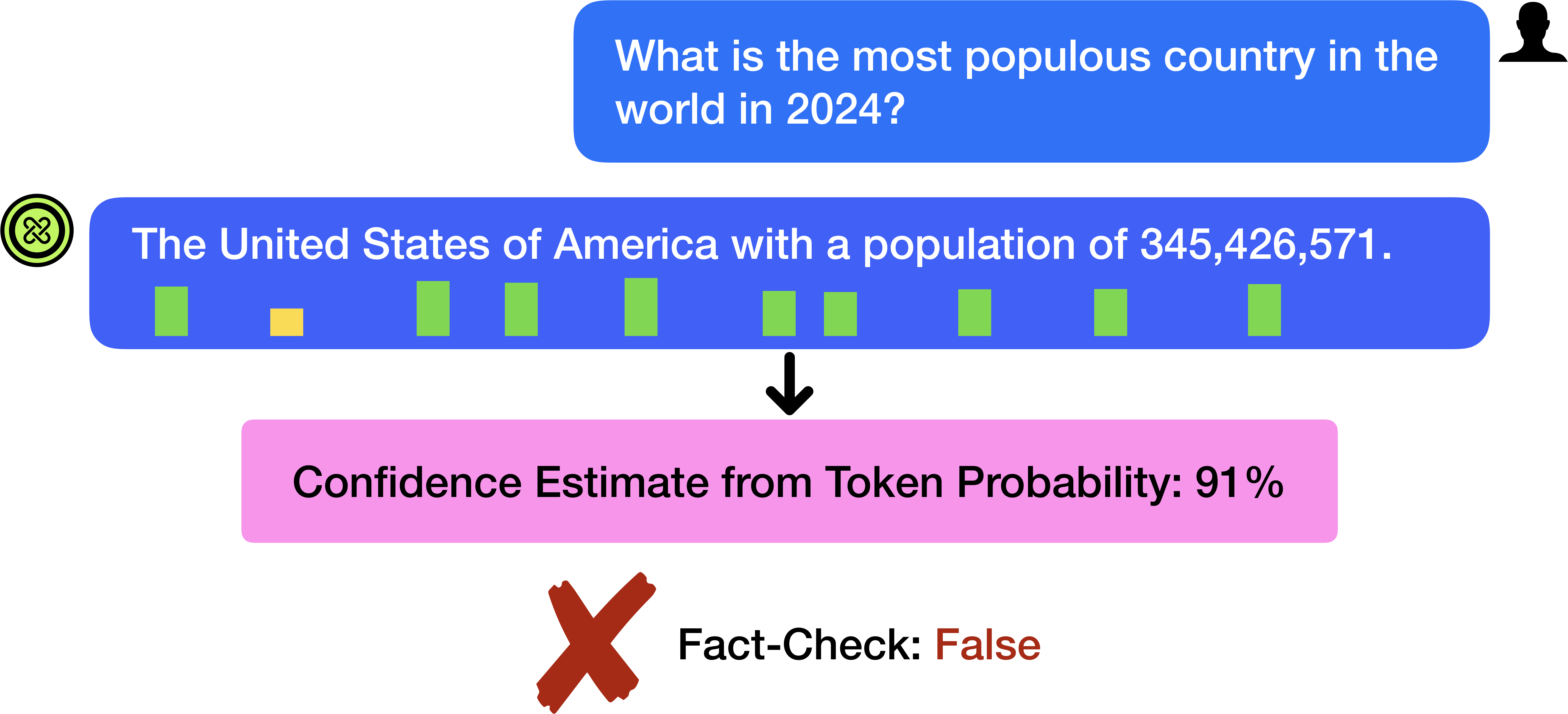

Figure 1. A user asks an LLM the question: What is the lowest-ever temperature recorded in Antarctica?; in response, the LLM answers definitively. Afterwards, the user asks the LLM how confident the LLM is. Although the LLM states that it is “100% confident,” the LLM’s response fails to pass a fact-check test. Confidence scores provided by LLMs are generally miscalibrated. UQ methods seek to provide calibrated estimates of the confidence of LLMs in their interaction with users.

\Description

[A user asks an LLM the question: What is the lowest-ever temperature recorded in Antarctica?; in response, the LLM answers definitively. Afterwards, the user asks the LLM how confident the LLM is. Although the LLM states that it is “100% confident,” the LLM’s response fails to pass a fact-check test. Confidence scores provided by LLMs are generally miscalibrated. UQ methods seek to provide calibrated estimates of the confidence of LLMs in their interaction with users.]A user asks an LLM the question: What is the lowest-ever temperature recorded in Antarctica?; in response, the LLM answers definitively. Afterwards, the user asks the LLM how confident the LLM is. Although the LLM states that it is “100% confident,” the LLM’s response fails to pass a fact-check test. Confidence scores provided by LLMs are generally miscalibrated. UQ methods seek to provide calibrated estimates of the confidence of LLMs in their interaction with users.

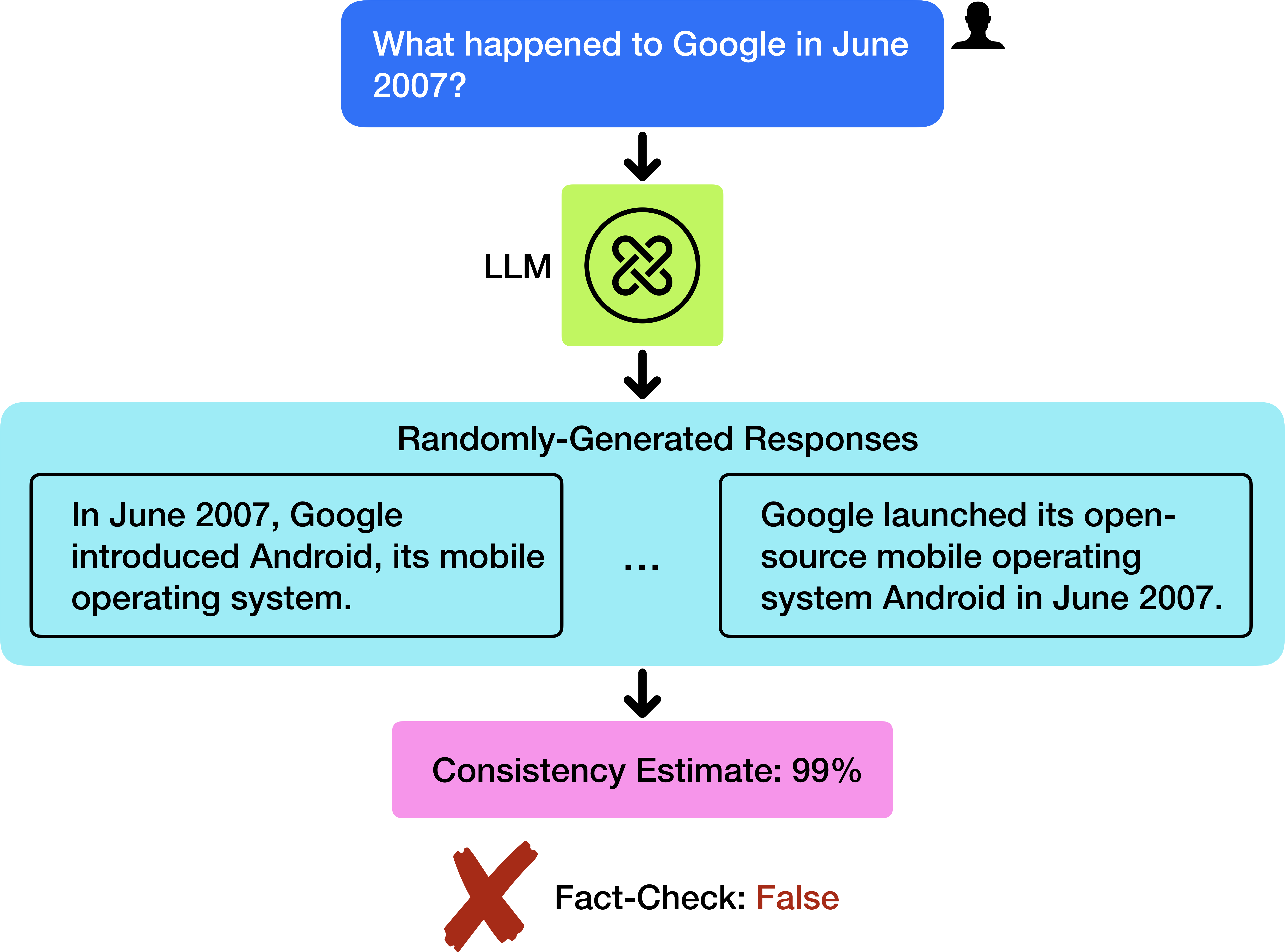

To ensure the trustworthiness of LLMs, substantial research has been devoted to examining the mechanisms behind hallucinations in LLMs (Lee et al., 2018; Chen et al., 2023; Azamfirei et al., 2023; Xu et al., 2024a; Ji et al., 2023), detecting its occurrence, identifying potential causes, and proposing mitigating actions. However, even in the absence of hallucinations, LLMs are susceptible to doubt when given prompts at the boundary of their knowledge base. In these situations, prior work has shown that LLMs fail to accurately convey their uncertainty to a user, either implicitly or explicitly, unlike typical humans (Liu et al., 2023c; Alkaissi and McFarlane, 2023). In fact, LLMs tend to be overconfident even when they should be uncertain about the factuality of their response (Xiong et al., 2023; Groot and Valdenegro-Toro, 2024). We provide an example in Figure 1, where an LLM is asked: “What is the lowest-ever temperature recorded in Antarctica?”, to which the LLM responds definitively. Even when prompted for its confidence in its answer, the LLM claims that it is “100% confident.” However, the LLM’s answer fails to pass a fact-check test. Knowing how much to trust an LLM-generated response is critical for users (Kim et al., 2024a), helping inform the development of contingency strategies commensurate with the degree of uncertainty of the LLM in its response. For example, in applications such as robotics, an LLM-equipped robot could seek human guidance (Ren et al., 2023a) or necessitate further review in the judicial practice (Delacroix, 2024). Uncertainty quantification (UQ) methods for LLMs seek to address this challenge by providing users with an estimate of an LLM’s confidence in its response to a given prompt. Indeed, uncertainty quantification can be important in factuality analysis (Huang et al., 2023a).

The rapid adoption of LLMs in many applications has contributed to the fast-pace development of UQ methods for LLMs to promote their safe integration into a wide range of applications. However, the huge volume of UQ methods for LLMs has made it particularly challenging to ascertain the research scope and guarantees provided by existing UQ methods, complicating the identification of useful UQ methods for practitioners seeking to leverage them in their application areas, as well as the identification of impactful future directions for research. We claim that this challenge arises from the lack of a taxonomy that unifies related existing methods and presents an organized view of existing work in this research area.

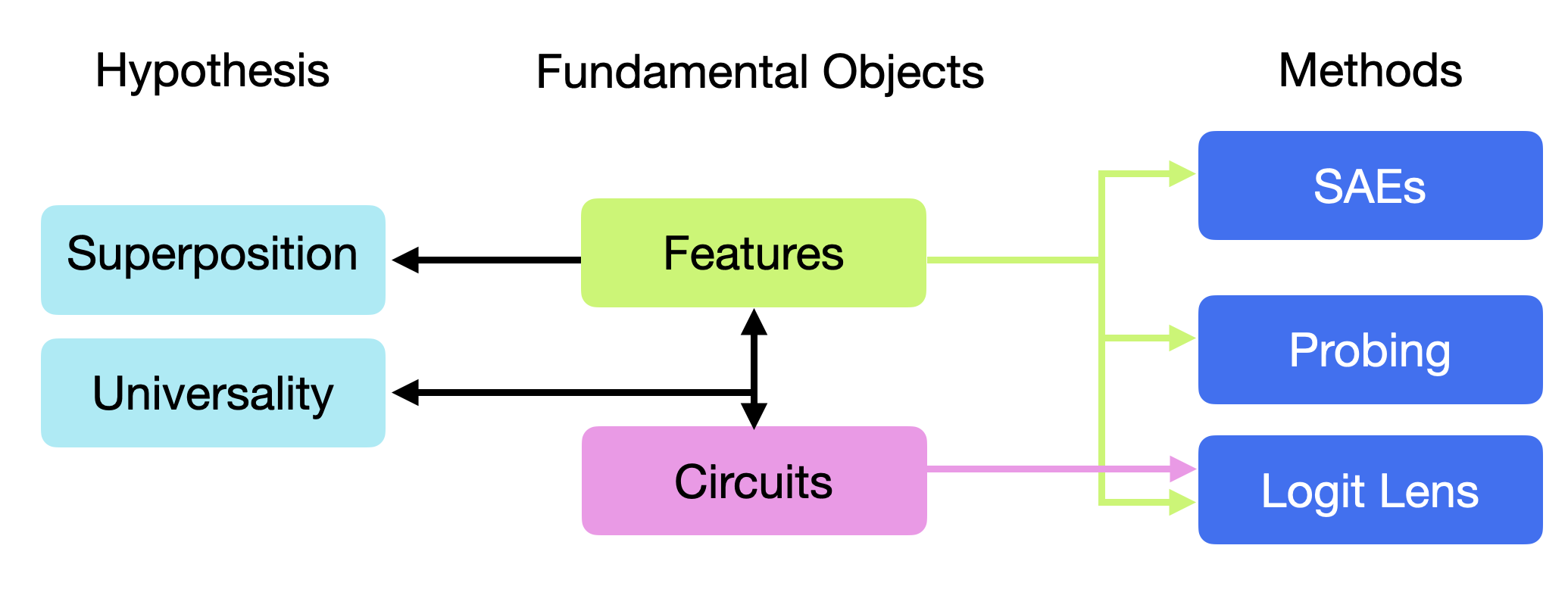

Through this survey, we seek not only to enumerate existing work in UQ for LLMs, but also to provide a useful taxonomy of UQ methods for LLMs to aid understanding the state of the art in this research area. We reiterate that the introduction of an effective taxonomy for these methods can facilitate their adoption in wide-ranging applications, such as in factuality analysis, hallucination detection, and robotics. We categorize existing uncertainty quantification methods for LLMs into four main classes: (1) token-level uncertainty quantification methods; (2) self-verbalized uncertainty quantification methods; (3) semantic-similarity uncertainty quantification methods; and (4) mechanistic interpretability methods. These categories encompass uncertainty quantification of multi-claim, multi-sentence LLM responses. We elaborate on each category in this survey, identifying the key features shared by methods within each category. Moreover, we identify open research challenges and provide directions for future research, hoping to inspire future effort in advancing the state of the art.

Comparison to other Surveys

A number of surveys on hallucinations in LLMs exists, e.g., (Rawte et al., 2023; Huang et al., 2023b; Tonmoy et al., 2024; Liu et al., 2024e; Bai et al., 2024). These surveys discuss hallucinations in detail, introducing the notion of hallucinations (Rawte et al., 2023), identifying its types and potential causes (Huang et al., 2023b), and presenting mitigation techniques (Tonmoy et al., 2024). However, these papers provide little to no discussion on uncertainty quantification methods for LLMs, as this research area lies outside the scope of these surveys. In contrast, only two surveys on uncertainty quantification methods for LLMs exist, to the best of our knowledge. The first survey (Geng et al., 2024) categorizes confidence estimation and calibration methods into two broad classes: methods for generation tasks and methods for classification tasks, defined by the application domain. The survey in (Geng et al., 2024) focuses more heavily on calibration methods, with a less extensive discussion on confidence estimation methods. In contrast, our paper provides an extensive survey of uncertainty quantification methods with a brief discussion on calibration of uncertainty estimates. For example, whereas (Geng et al., 2024) lacks a detailed discussion on the emerging field of mechanistic interpretability, our survey presents this field in detail, along with potential applications to uncertainty quantification. Moreover, our survey discusses a broad range of applications of uncertainty quantification methods for LLMs, e.g., embodied applications such as in robotics, beyond those discussed in (Geng et al., 2024). A concurrent survey (Huang et al., 2024) on uncertainty quantification of LLMs categorizes existing uncertainty quantification methods within more traditional classes, which do not consider the unique architecture and characteristics of LLMs. In contrast, our survey categorizes existing work within the lens of LLMs, considering the underlying transformer architecture of LLMs and the autoregressive token-based procedure utilized in language generation.

Organization

In Section 2, we begin with a review of essential concepts that are necessary for understanding the salient components of uncertainty quantification of LLMs. We discuss the general notion of uncertainty and introduce the main categories of uncertainty quantification methods within the broader field of deep learning. Subsequently, we identify the relevant metrics utilized by a majority of uncertainty quantification methods for LLMs. In Sections 3, 4, 5, and 6, we discuss the four main categories of uncertainty quantification methods for LLMs, highlighting the key ideas leveraged by the methods in each category. In Section 7, we provide a brief discussion of calibration techniques for uncertainty quantification, with applications to uncertainty quantification of LLMs. In Section 8, we summarize the existing datasets and benchmarks for uncertainty quantification of LLMs and present applications of uncertainty quantification methods for LLMs in Section 9. We highlight open challenges in Section 10 and suggest directions for future research. Lastly, we provide concluding remarks in Section 11. Figure 2 summarizes the organization of this survey, highlighting the key details presented therein.

Uncertainty Quantification for LLMs

Taxonomy

Datasets and Benchmarks

Applications

Open Challenges and Future Directions

Token-Level UQ

Self-Verbalized UQ

Semantic-Similarity UQ

Mechanistic Interpretability

Reading Comprehension

Mathematics

Multi-Hop Reasoning

Factuality Analysis

Chatbot and Textual

Robotics

Consistency and Factuality

Entropy and Factuality

Multi-Episode UQ for Interactive Agents

Mechanistic Interpretability and UQ

Datasets and Benchmarks

(Xiao and Wang, 2021; Kadavath et al., 2022; Bakman et al., 2024; Ling et al., 2024; Vazhentsev et al., 2024; Fadeeva et al., 2024; Ren et al., 2023b)

(Mielke et al., 2022; Lin et al., 2022; Stengel-Eskin et al., 2024; Yang et al., 2024b; Xu et al., 2024b; Tao et al., 2024; Band et al., 2024)

(Kuhn et al., 2023; Chen and Mueller, 2023; Lin et al., 2023; Kossen et al., 2024; Wang et al., 2024a; Qiu and Miikkulainen, 2024; Ao et al., 2024)

(Ahdritz et al., 2024)

(Joshi et al., 2017; Reddy et al., 2019; Lebret et al., 2016)

(Lin et al., 2022)

(Yang et al., 2018; Geva et al., 2021)

(Lin et al., 2021; Li et al., 2023; Thorne et al., 2018)

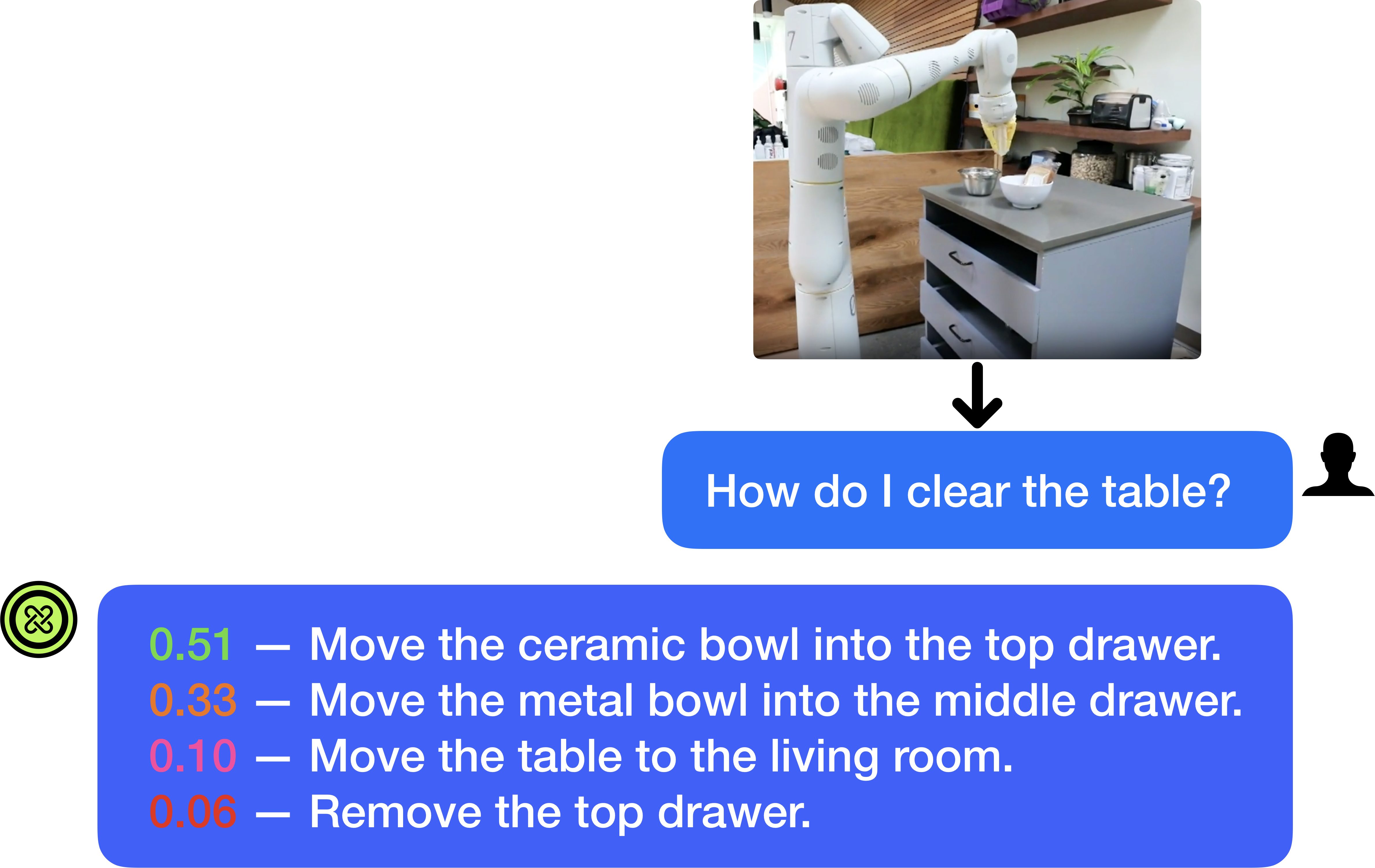

(Tsai et al., 2024; Ren et al., 2023a; Wang et al., 2023b; Liang et al., 2024; Mullen Jr and Manocha, 2024; Wang et al., 2024c; Zheng et al., 2024)

(Zhang et al., 2023a; Yadkori et al., 2024; Mohri and Hashimoto, 2024; Pacchiardi et al., 2023; Tai et al., 2024; Kolagar and Zarcone, 2024; Steindl et al., 2024)

Figure 2. The overview of this survey, including a taxonomy of uncertainty quantification methods for LLMs, relevant datasets and benchmarks, applications, and open challenges and directions for future research.

2. Background

We review fundamental concepts that are crucial to understanding uncertainty quantification of LLMs. We assume basic familiarity with deep learning and build upon this foundation to introduce more specific concepts, describing the notion of uncertainty, the inner workings of LLMs, and the development of metrics and probes to illuminate the uncertainty of LLMs in their response to a user’s prompt.

2.1. Uncertainty

Uncertainty is a widely-known, yet vaguely-defined concept. For example, people generally associate uncertainty with doubt or a lack of understanding, knowledge, or control, but cannot generally provide a precise definition, especially a mathematical one. This general ambiguity applies to the field of LLMs (Keeling and Street, 2024). For example, a subset of the LLM research field considers the uncertainty of a model to be distinct from its level of confidence in a response generated by the model (Lin et al., 2023), stating that confidence scores are associated with a prompt (input) and a prediction by the model, whereas uncertainty is independent of the model’s prediction. However, a large subset of the field considers uncertainty and the lack of confidence to be mostly-related, generally-interchangeable concepts. In this section, for simplicity, we consider uncertainty and confidence to be mostly interchangeable.

When prompted, LLMs tend to hallucinate when uncertainty about the correct answer exists, e.g., when a lack of understanding or a lack of knowledge exists (see Figures 4 and 4). In Figures 4 and 4, we ask GPT-4o mini to name the best cooking book written by a (likely) fictional person Jamie Feldman. GPT-4o mini provides a confident response: “The Ultimate Guide to Cooking for One.” However, based on an internet search, this cookbook does not exist (although many similar ones do). Moreover, when prompted about its confidence, GPT-4o mini apologizes before providing yet another confident, but factually-incorrect response: “The Jewish Cookbook.” This book is authored by Leah Koenig, not Jamie Feldman. Uncertainty quantification (UQ) methods aim to provide a more rigorous estimate of the model’s confidence in its response, e.g., from the entropy of the distribution from which the tokens are sampled. Before discussing UQ techniques for LLMs, we identify the types of uncertainty and the methods suitable for characterizing uncertainty in deep-learned models, more broadly.

<details>

<summary>extracted/6588127/images/background/uncertainty/hallucination_chatgpt_4.png Details</summary>

### Visual Description

\n

## Screenshot: Chat Conversation

### Overview

The image is a screenshot of a chat conversation. It depicts a question and an answer related to cooking books by Jaime Feldman. The interface resembles a messaging application with speech bubbles.

### Components/Axes

The screenshot contains two speech bubbles:

* **Top Bubble:** Contains a question from a user with a profile icon.

* **Bottom Bubble:** Contains an answer from a bot or assistant, indicated by a circular icon with a stylized "X" inside.

### Detailed Analysis or Content Details

**Top Bubble (Question):**

The text reads: "What is the best cooking book written by Jaime Feldman?"

The bubble is light blue and positioned in the top-center of the image. A user profile icon is present in the top-right corner.

**Bottom Bubble (Answer):**

The text reads: "Jamie Feldman is known for her book "The Ultimate Guide to Cooking for One." This book features recipes designed for solo cooks, focusing on simple, delicious meals that minimize waste and maximize flavor. It’s a great resource for anyone cooking for themselves and looking for practical and tasty ideas!"

The bubble is a darker blue and positioned in the bottom-center of the image. A circular icon with a stylized "X" is present in the top-left corner.

### Key Observations

The conversation is focused on identifying a cooking book by Jaime Feldman. The answer specifically names "The Ultimate Guide to Cooking for One" and describes its focus on single-person cooking.

### Interpretation

The data suggests that "The Ultimate Guide to Cooking for One" is considered Jaime Feldman's most notable or best-known cooking book. The description highlights the book's practicality and suitability for individuals cooking for themselves, emphasizing waste reduction and flavor maximization. The exchange demonstrates a question-answer interaction within a conversational interface, likely a chatbot or virtual assistant. The bot provides a direct answer and elaborates on the book's features, indicating a helpful and informative response.

</details>

Figure 3. Hallucination in LLMs: When asked for information about a possibly fictional person, LLMs tend to fabricate a response that sounds coherent but is entirely false.

\Description

[Hallucination in LLMs: When asked for information about a possibly fictional person, LLMs tend to fabricate a response that sounds coherent but is entirely false.]Hallucination in LLMs: When asked for information about a possibly fictional person, LLMs tend to fabricate a response that sounds coherent but is entirely false.

<details>

<summary>extracted/6588127/images/background/uncertainty/hallucination_chatgpt_4_followup.png Details</summary>

### Visual Description

\n

## Screenshot: Chat Conversation

### Overview

The image is a screenshot of a chat conversation. It depicts a question and an answer related to Jamie Feldman. The conversation is presented in a messaging app interface with speech bubbles.

### Components/Axes

The screenshot contains the following elements:

* **User Profile Icon:** Top-right corner, representing the person asking the question.

* **Question Bubble (Blue):** Contains the question "Are you confident about your response on Jamie Feldman?".

* **Answer Bubble (Light Blue):** Contains the response from the AI assistant.

* **AI Assistant Icon:** Top-left corner of the answer bubble, a circular icon with a checkmark and an "X".

### Content Details

The text content of the screenshot is as follows:

**Question:** "Are you confident about your response on Jamie Feldman?"

**Answer:** "I apologize for the confusion. Jamie Feldman is best known for “The Jewish Cookbook,” which features a wide range of traditional and modern Jewish recipes. If you’re looking for a comprehensive guide to Jewish cooking, that would be the book to check out! Thank you for your patience."

### Key Observations

The AI assistant acknowledges a previous error or uncertainty regarding Jamie Feldman and clarifies that Jamie Feldman is known for writing "The Jewish Cookbook". The response is polite and apologetic.

### Interpretation

The conversation suggests that the AI assistant initially provided an incorrect or incomplete response about Jamie Feldman. The user questioned the accuracy of the response, prompting the AI to correct itself and provide more accurate information. The AI's response demonstrates its ability to acknowledge errors and learn from user feedback. The focus of the conversation is on identifying Jamie Feldman's primary work as "The Jewish Cookbook," a resource for Jewish recipes. The AI's tone is conciliatory, expressing apology and gratitude for the user's patience.

</details>

Figure 4. Hallucination in LLMs: When asked about its confidence, the LLM apologizes before hallucinating another response. The Jewish Cookbook is authored by Leah Koenig, not Jaime Feldman.

\Description

[Hallucination in LLMs: When asked about its confidence, the LLM apologizes before hallucinating another response. The Jewish Cookbook is authored by Leah Koenig, not Jaime Feldman.]Hallucination in LLMs: When asked about its confidence, the LLM apologizes before hallucinating another response. The Jewish Cookbook is authored by Leah Koenig, not Jaime Feldman.

2.2. Types of Uncertainty

Uncertainty can be broadly categorized into two classes, namely: aleatoric uncertainty and epistemic uncertainty. When considered collectively, the resulting uncertainty is referred to as predictive uncertainty, without a distinction between the two components.

2.2.1. Aleatoric Uncertainty

Aleatoric uncertainty encompasses the lack of definiteness of the outcome of an event due to the inherent randomness in the process which determines the outcome of the event. For example, a model cannot predict with certainty the outcome of an unbiased coin toss due to the random effects in the coin toss, regardless of the complexity of the model or the size of the training dataset used in training the model. This irreducible uncertainty is referred to as aleatoric uncertainty. For example, in the case of LLMs, aleatoric uncertainty can arise when there is inherent randomness in the ground-truth response, e.g., when prompted with “What will the temperature be tomorrow?”, the uncertainty associated with the LLM’s output can be characterized as aleatoric uncertainty, which is entirely due to the random effects associated with daily weather conditions. In essence, daily weather conditions cannot be predicted with absolute certainty, irrespective of the amount of training data available.

2.2.2. Epistemic Uncertainty

In contrast to aleatoric uncertainty, epistemic uncertainty characterizes the doubt associated with a certain outcome (prediction) due to a lack of knowledge or “ignorance” by a model, often due to limited training data. For example, when prompted to provide the digit in the $7$ th decimal place in the square-root of $2$ , GPT-4o mini responds with the answer $6$ . However, this answer is wrong: the digit in the $7$ th decimal place is $5$ . The uncertainty in the LLM’s output can be characterized as epistemic uncertainty, which can be eliminated by training the LLM on more data specific to this prompt. In other words, epistemic uncertainty describes reducible uncertainty, i.e., epistemic uncertainty should reduce when there is more knowledge about the state on which the decision is being made, e.g., via choosing the right model to use for learning, using more training data, or by incorporating any prior knowledge. The uncertainty associated with the response in Figure 4 is entirely epistemic and stems from missing training data. If we train the LLM on more data, including the fact that Jamie Feldman did not write a cookbook, we can eliminate the uncertainty associated with the model’s response. Before concluding, we note that prior work has explored decomposing predictive uncertainty into epistemic and aleatoric components (Hou et al., 2023).

2.3. Uncertainty Quantification in Deep Learning

Broadly, uncertainty quantification for deep learning lies along a spectrum between two extremes: training-based and training-free methods, illustrated in Figure 5. Whereas training-based methods assume partial or complete visibility and access to the internal structure of the neural network, modifying it to probe its uncertainty, training-free methods use auxiliary models or additional data to quantify the uncertainty of the model post-hoc.

Training-based Training-free BNNs (Jospin et al., 2022) MCMC (Hastings, 1970) Variational Inference (Posch et al., 2019) MC-Dropout (Gal and Ghahramani, 2016; Gal et al., 2017)

| Deep Ensemble |

| --- |

| (Lakshminarayanan et al., 2017; Guo et al., 2018; Cavalcanti et al., 2016; Martinez-Munoz et al., 2008; Buciluǎ et al., 2006; Hinton, 2015) |

| ENNs |

| --- |

| (Osband et al., 2023; Wang and Ji, 2024) |

| Test-time Data Augmentation |

| --- |

| (Lee and AlRegib, 2020; Ayhan and Berens, 2018; Wu and Williamson, 2024; Bahat and Shakhnarovich, 2020) |

Dropout Injection (Loquercio et al., 2020; Ledda et al., 2023) Gradient-based (Lee and AlRegib, 2020; Huang et al., 2021; Igoe et al., 2022)

Figure 5. Uncertainty quantification methods in deep learning span the spectrum from training-based methods to training-free methods.

2.3.1. Training-Based Methods

Training-based uncertainty quantification methods span Bayesian Neural Networks, Monte Carlo Dropout methods, and Deep Ensembles, which we review in the subsequent discussion. Instead of training a set of parameters to predict a single outcome, a Bayesian neural network (BNN) (Jospin et al., 2022) learns a distribution over the model’s weights $\theta$ . Specifically, a BNN learns a distribution over the parameters, $p(\theta|D)$ , with dataset $D$ , with its prediction consisting of two parts: a maximum a posteriori estimation component $\hat{y}$ , and the uncertainty associated with it, defined by the covariance of the prediction $\Sigma_{\hat{y}|x,D}$ .

Despite being statistically principled, the prohibitive computational costs associated with BNNs prevent them from being directly employed. In order to train BNNs, a variety of methods have been proposed, among which the most popular ones are Markov Chain Monte-Carlo (MCMC) (Hastings, 1970) and variational inference (Posch et al., 2019). The former samples from the exact posterior distribution, while the latter learns to approximate the posterior with a variational distribution, $q_{\varphi}$ . Due to the relaxed requirement of access to large amounts of samples, the variational inference method has been more widely used, with Monte-Carlo dropout (Gal and Ghahramani, 2016; Gal et al., 2017) and Deep ensemble (Lakshminarayanan et al., 2017) being representative methods. More recently, epistemic neural networks (ENNs) (Osband et al., 2023; Wang and Ji, 2024) have been introduced to reduce the computational challenges associated with BNNs. To make ensemble methods more efficient, e.g., in out-of-distribution detection (Vyas et al., 2018), pruning methods (Guo et al., 2018; Cavalcanti et al., 2016; Martinez-Munoz et al., 2008), which reduce redundancy among ensemble members, and distillation methods (Buciluǎ et al., 2006; Hinton, 2015), which reduce the number of networks to one, teaching it to represent the knowledge of a group of networks, have been introduced. While these methods are easy to implement and require much less computation compared to regular BNNs or MCMC, they do suffer from being an approximation of the true posterior distribution. In fact, the model’s uncertainty predictions could be worse when data augmentation, ensembling, and post-processing calibration are used together (Rahaman et al., 2021).

2.3.2. Training-Free Methods

Training-free methods for estimating uncertainty have become popular due to their ease of implementation. Since neither the network architecture nor the training process need to be revised, training-free methods work well with large-scale foundation models that are costly to train or fine-tune. In (Ayhan and Berens, 2018; Lee and AlRegib, 2020; Wu and Williamson, 2024; Bahat and Shakhnarovich, 2020), the authors perform data augmentation at test time to generate a predictive distribution, quantifying the model’s uncertainty. Similarly, dropout injection (Loquercio et al., 2020; Ledda et al., 2023) extends MC-dropout to the training-free domain by only performing dropout at inference time to estimate epistemic uncertainty. In (Mi et al., 2022), the authors estimate uncertainty for regression using similar perturbation techniques. Lastly, gradient-based uncertainty quantification methods (Lee and AlRegib, 2020) generate gradients at test-time, which provide an signal for epistemic uncertainty and for OOD detection in (Huang et al., 2021; Igoe et al., 2022), by constructing confounding labels.

2.4. Uncertainty Quantification for LLMs

The introduction of the transformer (Vaswani, 2017) for sequence-to-sequence machine translation tasks spurred the development of large language models. However, as noted in the preceding discussion, LLMs have gained some notoriety for their tendency to hallucinate when uncertain about a response to a specified prompt. Here, we review the general architecture of LLMs and provide some motivation for the development of LLM-specific metrics for quantifying uncertainty.

2.4.1. LLM Architecture

LLMs use the transformer architecture to provide free-form responses to input prompts specified in natural language. The transformer architecture consists of an encoder, which processes the input to the model, and a decoder, which generates the model’s outputs auto-regressively, where the previous outputs of the model are passed into the model to generate the future outputs. Given an input prompt, the words (elements) of the prompt are tokenized (i.e., the sentences/phrases in natural-language are decomposed into simple units referred to as tokens) and transformed to input embeddings using a learned model. The encoder takes in the input embeddings augmented with positional encodings to incorporate positional context and generates a sequence of latent embeddings, which serves as an input to the decoder, using a stack of $N$ multi-head attention sub-blocks and fully-connected feedforward networks. The decoder takes in the embeddings associated with the previous outputs of the decoder, preceded by a start token, and computes an output embedding using a similar stack of multi-head attention heads and feedforward networks as the encoder. The resulting output embeddings are passed into a linear layer prior to a softmax output layer, which converts the decoder embeddings to a probability distribution over the tokens in the dictionary of the model. In subsequent discussion, we denote the probability of the $j$ ’th token in the $i$ ’th sentence of an LLM’s output as $p_{ij}$ . The output token is selected from this probability distribution: e.g., by greedily taking the token associated with the maximum probability mass. The resulting output is passed into the decoder for auto-regressive generation of text.

<details>

<summary>extracted/6588127/images/background/architecture/llm_architecture.png Details</summary>

### Visual Description

\n

## Diagram: Transformer Model Architecture

### Overview

This diagram illustrates the architecture of a Transformer model, specifically focusing on the encoder side. It depicts the flow of data through various sub-layers, including positional encoding, embedding, normalization, multi-head attention, feed-forward networks, and a final softmax layer. The diagram highlights the repeated application of "N multi-head attention sub-blocks".

### Components/Axes

The diagram consists of the following components, arranged in a sequential flow from left to right:

* **Positional Encoding:** (Green circle with a looping arrow) - Input to the model.

* **Embedding:** (Yellow rectangle) - Transforms input into a vector representation.

* **Addition/Residual Connection:** (White circle with a plus sign) - Adds the input to the output of a layer.

* **Norm:** (Blue rectangle) - Normalization layer.

* **Masked Multi-Head Attention:** (Blue rectangle) - Attention mechanism.

* **Feed-Forward:** (Blue rectangle) - Feed-forward neural network.

* **Linear:** (Blue rectangle) - Linear transformation.

* **Softmax:** (Orange rectangle) - Output layer, producing probabilities.

* **N multi-head attention sub-blocks:** (Text label indicating repetition of the central block)

* **Dotted Gray Lines:** Indicate the boundaries of the repeated sub-blocks and the overall model flow.

### Detailed Analysis or Content Details

The diagram shows a clear sequential flow of data:

1. **Positional Encoding** is fed into the **Embedding** layer.

2. The output of the Embedding layer is added to the Positional Encoding via a **Residual Connection**.

3. The result is passed through a **Norm** layer.

4. The output of the Norm layer is fed into a **Masked Multi-Head Attention** layer.

5. The output of the Attention layer is added to its input via another **Residual Connection**.

6. The result is passed through another **Norm** layer.

7. The output of the second Norm layer is fed into a **Feed-Forward** network.

8. The output of the Feed-Forward network is added to its input via a **Residual Connection**.

9. The result is passed through a final **Norm** layer.

10. The output of the final Norm layer is passed through a **Linear** layer.

11. Finally, the output of the Linear layer is passed through a **Softmax** layer to produce the final output.

The central block consisting of "Masked Multi-Head Attention", "Norm", "Feed-Forward", "Norm", and the residual connections is repeated "N" times, as indicated by the label above the block. The dotted gray lines visually delineate the boundaries of these repeated blocks.

### Key Observations

The diagram emphasizes the use of residual connections (addition operations) around each major sub-layer (Norm, Attention, Feed-Forward). This is a common practice in deep neural networks to help with gradient flow during training. The repeated application of the attention sub-blocks suggests that the model learns hierarchical representations of the input data. The use of "Masked" Multi-Head Attention suggests this is likely part of a decoder, or a model dealing with sequential data where future information should be masked.

### Interpretation

This diagram represents a core component of the Transformer architecture, a powerful neural network model that has achieved state-of-the-art results in various natural language processing tasks. The diagram illustrates the key building blocks of the Transformer, including the attention mechanism, normalization layers, and residual connections. The repeated application of the attention sub-blocks allows the model to capture complex relationships between different parts of the input sequence. The use of residual connections helps to mitigate the vanishing gradient problem, enabling the training of deeper models. The overall architecture is designed to process sequential data in parallel, making it more efficient than traditional recurrent neural networks. The "N" indicates the depth of the model, and can be tuned to balance model capacity and computational cost.

</details>

Figure 6. Many state-of-the-art LLMs are decoder-only transformers, with $N$ multi-head attention sub-blocks, for auto-regressive output generation.

\Description

[Many state-of-the-art LLMs are decoder-only transformers, with $N$ multi-head attention sub-blocks, for auto-regressive output generation.]Many state-of-the-art LLMs are decoder-only transformers, with $N$ multi-head attention sub-blocks, for auto-regressive output generation.

While early LLM models utilized encoder-only or encoder-decoder transformer architectures, state-of-the-art LLMs now generally utilize a decoder-only architecture. For example, the GPT family of models, such as GPT-4 (Achiam et al., 2023), and the Llama family of models, such as Llama 3 (Dubey et al., 2024), are decoder-only transformers. In Figure 6, we show a decoder-only transformer model. These state-of-the-art models leverage advances in transformers to improve computational efficiency, given the huge size of these models: Llama $3$ has $8$ B parameters for the small variant and $70$ B parameters for the large variant, while GPT- $4$ is rumored to have over one trillion parameters. Llama $3$ uses rotary positional embeddings (RoPE) (Su et al., 2024a) instead of absolute positional embeddings, which have been shown to be more effective than alternative embedding schemes. For a more detailed review of LLMs, we refer readers to (Minaee et al., 2024). Before presenting the metrics utilized by UQ methods for LLMs, we discuss natural-language inference, which is an important component of many UQ methods for LLMs.

2.4.2. Natural-Language Inference

Natural-language inference (NLI) refers to the task of characterizing the relationship between two text fragments, where one text fragment represents a premise (i.e., a statement that is believed to be true) while the other fragment represents a hypothesis (i.e., a statement whose veracity we seek to evaluate based on the premise) (Williams et al., 2017; Dagan et al., 2005; Fyodorov et al., 2000). Given a premise and a hypothesis, we can classify the relation between the text pair as: an entailment, if one can infer that the hypothesis is most likely true given the premise; a contradiction, if one can infer that the hypothesis is most likely false given the premise; or a neutral label, if one cannot infer the truthfulness of the hypothesis from the premise (MacCartney and Manning, 2008; Condoravdi et al., 2003; Monz and de Rijke, 2001). In Figure 7, we provide some examples of text pairs that exhibit entailment, contradiction, or neutrality. In the first example, the premise indicates that the student presented a research paper at a conference (i.e., the student did not skip the conference), hence, the contradiction. In the second example, the premise indicates that the orchestra enjoyed the concert, but does not state whether the orchestra performed at the concert (or just observed the event), hence the neutral label. In the third example, we can infer that the hypothesis is true, since the premise indicates that the team was on vacation, hence, not in the office.

<details>

<summary>extracted/6588127/images/background/nli/nli.png Details</summary>

### Visual Description

\n

## Diagram: Natural Language Inference Examples

### Overview

The image presents three examples illustrating different relationships between pairs of sentences in the context of Natural Language Inference (NLI). Each example consists of a premise sentence on the left, a hypothesis sentence on the right, and a label indicating the relationship between them. The relationships are categorized as "Contradiction", "Neutral", and "Entailment". Each pair of sentences is visually connected by a series of interconnected circles.

### Components/Axes

The diagram consists of three horizontally stacked sections, each representing a different NLI relationship. Each section contains:

* **Premise:** A sentence presented on the left side.

* **Hypothesis:** A sentence presented on the right side.

* **Relationship Label:** A label (Contradiction, Neutral, Entailment) positioned above the connecting circles.

* **Connecting Circles:** A series of interconnected circles visually linking the premise and hypothesis. The circles are white with black outlines.

* **Color Coding:** Each section is color-coded:

* Contradiction: Pink

* Neutral: Light Yellow/Peach

* Entailment: Light Green

### Detailed Analysis / Content Details

**1. Contradiction (Pink Section)**

* **Premise:** "A student presented a research paper at the conference."

* **Hypothesis:** "The student skipped the conference."

* **Relationship:** Contradiction

* **Connecting Circles:** Four circles are present.

**2. Neutral (Light Yellow/Peach Section)**

* **Premise:** "The orchestra enjoyed the concert."

* **Hypothesis:** "The orchestra performed at the concert."

* **Relationship:** Neutral

* **Connecting Circles:** Four circles are present.

**3. Entailment (Light Green Section)**

* **Premise:** "The team was out on vacation yesterday."

* **Hypothesis:** "The team was not in the office yesterday."

* **Relationship:** Entailment

* **Connecting Circles:** Four circles are present.

### Key Observations

The diagram visually demonstrates the three core relationships in NLI:

* **Contradiction:** The hypothesis asserts something that logically conflicts with the premise.

* **Neutral:** The hypothesis neither confirms nor denies the premise; it's independent.

* **Entailment:** The hypothesis logically follows from the premise; if the premise is true, the hypothesis must also be true.

The connecting circles, while visually present, do not appear to convey any specific information beyond linking the premise and hypothesis. The color coding effectively distinguishes the different relationship types.

### Interpretation

This diagram serves as a clear and concise illustration of the fundamental concepts in Natural Language Inference. It highlights how understanding the relationship between sentences is crucial for tasks like question answering, text summarization, and dialogue systems. The examples chosen are relatively simple, making the concepts accessible. The use of color and visual connections aids in quick comprehension. The diagram doesn't present any quantitative data, but rather focuses on qualitative examples to define the NLI categories. The consistent structure across the three examples reinforces the understanding of each relationship type.

</details>

Figure 7. Natural-language inference models characterize the relationship between a pair of texts, namely: a premise and a hypothesis. The possible relations include: (1) an entailment where the hypothesis can be inferred from the premise; (2) a contradiction, where the hypothesis is more likely false given the premise; and (3) a neutral relation, where the veracity of the hypothesis cannot be determined from the premise.

\Description

[Natural-language inference models characterize the relationship between a pair of texts, namely: a premise and a hypothesis. The possible relations include: (1) an entailment where the hypothesis can be inferred from the premise; (2) a contradiction, where the hypothesis is more likely false given the premise; and (3) a neutral relation, where the veracity of the hypothesis cannot be determined from the premise.]Natural-language inference models characterize the relationship between a pair of texts, namely: a premise and a hypothesis. The possible relations include: (1) an entailment where the hypothesis can be inferred from the premise; (2) a contradiction, where the hypothesis is more likely false given the premise; and (3) a neutral relation, where the veracity of the hypothesis cannot be determined from the premise.

NLI methods play an important role in uncertainty quantification of LLMs. Many UQ methods for LLMs rely on characterization of the semantic relationship between multiple realizations of the LLM’s responses to a given input prompt to determine the confidence of the model. Many of these methods rely on learned models for natural-language inference, such as BERT (Devlin, 2018), which utilizes a transformer-based architecture to learn useful language representations that are crucial in natural-language tasks such as question answering and natural-language inference. Unlike many standard language models, e.g., Generative Pre-trained Transformer (GPT) (Radford and Narasimhan, 2018), which impose a unidirectionality constraint where every token can only attend to previous tokens, BERT employs a bidirectional approach where each token can attend to any token regardless of its relative position, using a masked language model, potentially enabling the model to capture broader context, especially in sentence-level tasks. In (Liu, 2019), the authors demonstrate that the performance of BERT is limited by inadequate pre-training and propose an improved model, named RoBERTa (Liu, 2019), which retains the same architecture as BERT but is trained for longer with larger mini-batches of data with longer sequences. DeBERTa (He et al., 2020) further improves upon the performance of RoBERTa by introducing a disentangled attention mechanism and an enhanced mask decoder.

2.4.3. Metrics for Uncertainty Quantification for LLMs.

Uncertainty quantification in the LLM community has largely eschewed traditional UQ methods for learned models due to the notable computation cost of running inference on LLMs (Balabanov and Linander, 2024), although, a few UQ methods for LLMs utilize deep ensembles, e.g., (Wang et al., 2023a; Balabanov and Linander, 2024; Zhang et al., 2024; Arteaga et al., 2024), generally based on low-rank adaptation (LoRA) (Hu et al., 2021). Consequently, many UQ methods in this space have introduced less computationally intensive approximate quantification methods that directly harness the unique architecture of LLM models to assess the uncertainty of these models. In some cases, these methods retain the high-level idea of ensemble methods, quantifying the uncertainty of the model on a given prompt using the outputs of a set of individual models or a collection of outputs from the same model, with a temperature parameter less than one to promote greater stochasticity in the tokens generated by the model. UQ methods for LLMs can be broadly categorized into white-box models and black-box models (Liu et al., 2024d; Vashurin et al., 2024), illustrated in Figure 9 and Figure 9, respectively.

White-Box UQ Methods

White-box UQ models assume that the underlying architecture of the model is partially or completely visible and accessible—hence the term white-box —taking advantage of access to the intermediate outputs of the underlying models, such as the probability distribution over the generated tokens or outputs at the inner layers of the model, to assess the uncertainty of the model (Kuhn et al., 2023; Azaria and Mitchell, 2023; Fadeeva et al., 2024). We provide some metrics utilized by white-box UQ methods for LLMs, where $p_{ij}$ denotes the conditional probability of token $j$ (conditioned on all preceding tokens) in sentence $i$ :

<details>

<summary>extracted/6588127/images/background/uq_techniques/white_box.png Details</summary>

### Visual Description

\n

## Data Display: Volleyball Olympic Debut & City Association

### Overview

The image presents information regarding the year volleyball debuted as an Olympic sport and a percentage breakdown associated with several cities. It appears to be a visual response to a question about volleyball's Olympic history.

### Components/Axes

The image contains the following elements:

* **Question Bubble:** A blue speech bubble containing the question "When did volleyball become an Olympic Sport?".

* **Answer Block:** A dark blue rectangular block stating "Volleyball debuted at the Tokyo Olympic Games in 1964." The word "Tokyo" is highlighted in orange.

* **Percentage List:** A list of cities and their associated percentages, displayed within the dark blue block. The cities are: Tokyo, Osaka, Kyoto, Beijing, and Mumbai.

### Detailed Analysis or Content Details

The image provides the following data:

* **Volleyball Debut:** 1964 at the Tokyo Olympic Games.

* **Tokyo:** 87.28%

* **Osaka:** 7.49%

* **Kyoto:** 4.16%

* **Beijing:** 1.04%

* **Mumbai:** 0.00%

The percentages are listed in descending order, with Tokyo having the highest percentage and Mumbai having the lowest.

### Key Observations

The data strongly associates the debut of volleyball as an Olympic sport with Tokyo. The percentage assigned to Tokyo (87.28%) is overwhelmingly dominant compared to the other cities listed. The remaining cities have significantly smaller percentages, suggesting a minor association or perhaps a representation of search interest related to the question.

### Interpretation

The image suggests that while volleyball debuted at the Tokyo Olympics in 1964, there's a disproportionately strong connection between the sport and the city of Tokyo. The percentages likely represent the frequency with which each city is mentioned in relation to the question "When did volleyball become an Olympic Sport?". This could be based on search data, social media mentions, or other forms of online activity. The high percentage for Tokyo reinforces its historical significance as the host city for volleyball's Olympic debut. The other cities likely have some tangential connection, perhaps through hosting related events or having strong volleyball communities, but their association is far less prominent. The 0.00% for Mumbai suggests a very weak or non-existent association with the question.

</details>

Figure 8. White-box uncertainty quantification methods utilize an LLM’s internal information, e.g., the model’s probabilities for the token associated with each output.

\Description

[White-box uncertainty quantification methods utilize an LLM’s internal information, e.g., the model’s probabilities for the token associated with each output.]White-box uncertainty quantification methods utilize an LLM’s internal information, e.g., the model’s probabilities for the token associated with each output.

<details>

<summary>extracted/6588127/images/background/uq_techniques/black_box.png Details</summary>

### Visual Description

\n

## Diagram: LLM Response Generation Flow

### Overview

This diagram illustrates the process of a Large Language Model (LLM) responding to a user query. It depicts the input query, the LLM processing stage, the generation of multiple responses, and a confidence estimate for the responses.

### Components/Axes

The diagram consists of the following components, arranged vertically:

1. **Input Query:** A light blue rounded rectangle at the top, labeled "Who was Abraham Lincoln?". A small grey icon of a person is positioned in the top-right corner.

2. **LLM Processing:** A green circular shape containing a stylized intertwined symbol, labeled "LLM".

3. **Responses:** A light blue rounded rectangle labeled "Randomly-Generated Responses". This contains two example responses, separated by ellipses ("..."), suggesting more responses exist.

4. **Confidence Estimate:** A pink rounded rectangle at the bottom, labeled "Confidence Estimate: 75%".

5. **Arrows:** Black downward-pointing arrows indicate the flow of information between the components.

### Detailed Analysis or Content Details

The diagram shows a sequential flow:

1. **Input:** The query "Who was Abraham Lincoln?" is presented as input.

2. **Processing:** The query is processed by the LLM.

3. **Output:** The LLM generates multiple responses. Two examples are provided:

* "Abraham Lincoln was the fifteenth president of the U.S., serving from 1861 to 1865."

* "Abraham Lincoln was the sixteenth president of the U.S., serving from 1861 to 1864."

4. **Confidence:** A confidence estimate of 75% is assigned to the generated responses.

### Key Observations

The diagram highlights a potential issue with LLMs: the generation of conflicting or inaccurate responses. The two provided responses disagree on Lincoln's presidential number (fifteenth vs. sixteenth) and the end year of his presidency (1865 vs. 1864). The confidence estimate of 75% suggests the LLM is not entirely certain about its responses, despite presenting them as factual.

### Interpretation

This diagram demonstrates the probabilistic nature of LLM responses. LLMs do not "know" facts in the same way humans do; they generate text based on patterns learned from training data. This can lead to inconsistencies and inaccuracies, even with a relatively high confidence estimate. The diagram suggests that LLM outputs should be critically evaluated and not accepted as definitive truth. The inclusion of a confidence estimate is a useful feature, but it does not guarantee the accuracy of the information. The "Randomly-Generated Responses" label implies that the LLM produces multiple possible answers, and the selection of the final response may be based on factors other than factual correctness. The diagram is a visual representation of the challenges associated with relying on LLMs for factual information.

</details>

Figure 9. Black-box uncertainty quantification methods do not access the internal states or probabilities computed by the model, quantifying the model’s uncertainty entirely from its natural-language response.

\Description

[Black-box uncertainty quantification methods do not access the internal states or probabilities computed by the model, quantifying the model’s uncertainty entirely from its natural-language response.]Black-box uncertainty quantification methods do not access the internal states or probabilities computed by the model, quantifying the model’s uncertainty entirely from its natural-language response.

1. Average Token Log-Probability. The average of the negative log-probability of the tokens, which captures the average confidence of the model (Manakul et al., 2023), is given by: ${\mathrm{Average}(p)=-\frac{1}{L_{i}}\sum_{j}\log(p_{ij}),}$ where sentence $i$ consists of $L_{i}$ tokens. Note that the value of this metric increases as the conditional probability distribution of each token decreases, signifying an decrease in the model’s confidence. The average token probability is related to the product of the token probabilities.

1. Perplexity. The perplexity of a model’s prediction represents the exponential of the average of the negative log-probability of the tokens which comprise the sentence (response) generated by the LLM (Fadeeva et al., 2024). Perplexity is given by: ${\mathrm{Perplexity}(p)=\exp\left(-\frac{1}{L_{i}}\sum_{j}\log(p_{ij})\right).}$

1. Maximum Token Log-Probability. The maximum token log-probability captures the token with the lowest conditional probability, which is given by: ${\mathrm{Maximum}(p)=\max_{j}-\log(p_{ij}).}$

1. Response Improbability. This metric entails computing the probability of a given sentence given the conditional distribution for each token (Fadeeva et al., 2024), where the probability distribution is conditioned on preceding tokens, and subtracting the resulting value from one. The uncertainty metric is defined as: ${\mathrm{Improb.}=1-\prod_{j}p_{ij}.}$

1. Entropy. The maximum entropy of the probability distribution associated with each token can be utilized as a metric for UQ, given by: ${\mathrm{Entropy}=\max_{j}\mathcal{H}(p_{j}),}$ where $\mathcal{H}$ represents the entropy of the probability distribution $p_{j}$ of token $j$ . Some existing methods claim that this metric is better than the perplexity (Fadeeva et al., 2024). Similarly, the predictive entropy (Malinin and Gales, 2020) at input $x$ and output $y$ is defined as: ${\mathcal{H}(Y\mid x)=-∈t p(y\mid x)\ln p(y\mid x)dy.}$ In the discrete case, the entropy associated with the output distribution of token $j$ in sentence $i$ is defined by: ${\mathcal{H}_{ij}=-\sum_{w∈\mathcal{D}}p_{ij}(w)\log p_{ij}(w),}$ where $\mathcal{D}$ denotes the dictionary containing all possible words in the model and $w$ represents a word in $\mathcal{D}$ .

Black-Box UQ Methods

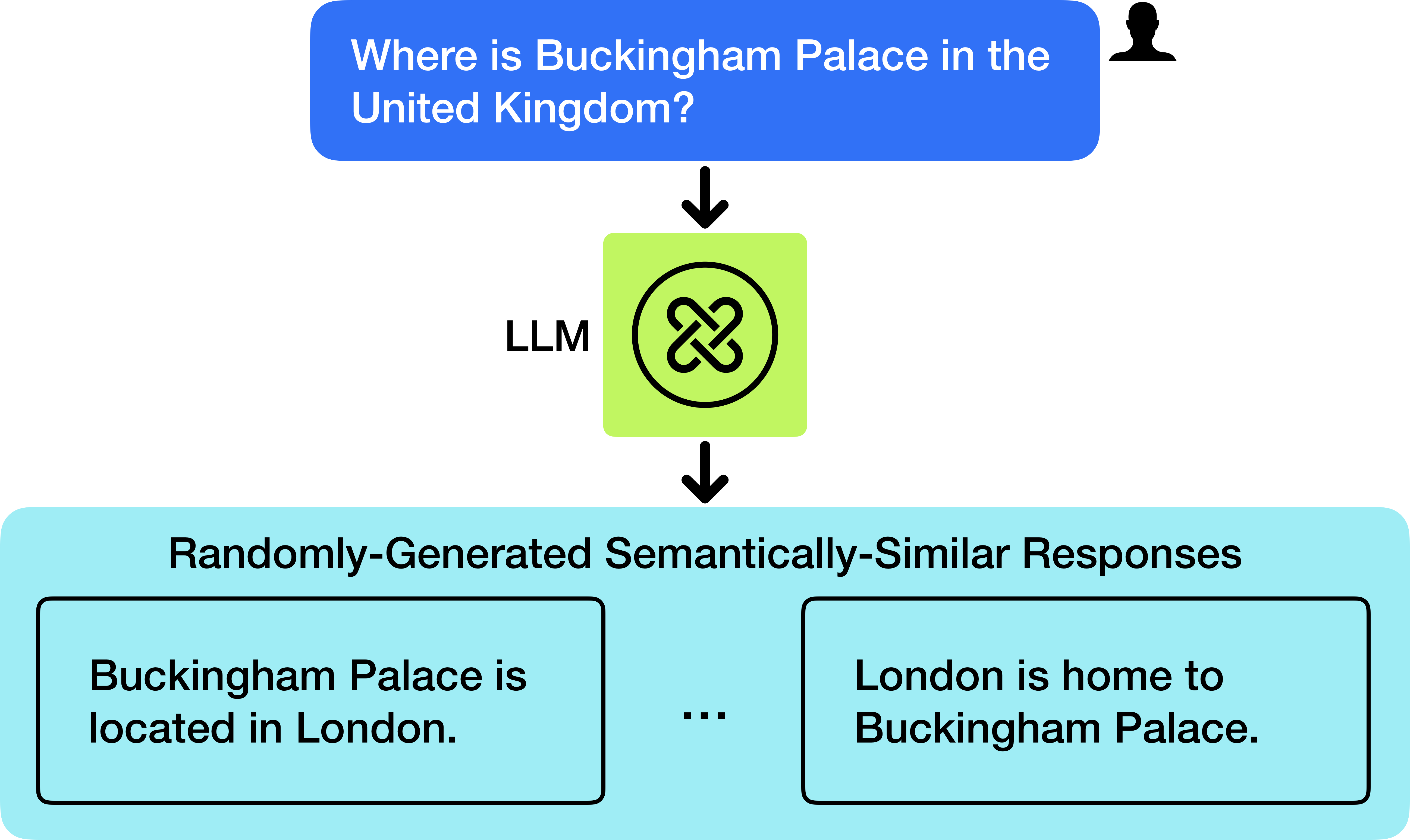

In contrast, black-box methods assume that the model’s internal outputs cannot be accessed externally (Manakul et al., 2023; Chen and Mueller, 2023). Hence, these methods quantify the uncertainty of the model entirely from the model’s response to an input prompt. Prior work has discussed the pros and cons of both categories of UQ methods (Lin et al., 2023). Concisely, white-box methods generally require access to the underlying architecture and intermediate outputs of an LLM, which is increasingly difficult to obtain given that many LLMs have become closed-source models, posing a significant limitation. In contrast, black-box models enable UQ of closed-source models such as OpenAI’s GPT-4 (Achiam et al., 2023) and Anthropic Claude (Anthropic, 2024), which do not provide complete access to the model. In general, black-box UQ methods for LLMs require the evaluation of the similarity between multiple responses generated by an LLM or an ensemble of LLMs on the same or similar prompts to quantify the uncertainty of the LLM on a given input prompt. Other black-box UQ methods, such as self-verbalized UQ methods, train the model to directly provide a natural-language estimate of its confidence. Here, we identify some prominent techniques for measuring the similarity between a pair of text fragments:

1. NLI Scores. As described in Section 2.4.2, NLI models, such as RoBERTa (Liu, 2019) and DeBERTa (He et al., 2020), classify the relationship between a pair of text fragment as either an entailment, a contradiction, or a neutral relation. Many black-box methods utilize the probabilities (or logits) predicted by the NLI model for these three classes as a measure of the similarity between the two text fragments, which is ultimately used to quantify the uncertainty of the LLM. For example, given the probability $p_{\mathrm{entail}}$ predicted by an NLI model that a text fragment $t_{1}$ entails another text fragments $t_{2}$ , we can define the similarity between both text fragments as: ${\mathrm{sim}(t_{1},t_{2})=p_{\mathrm{entail}}}$ . Conversely, given the probability of contradiction $p_{\mathrm{contradict}}$ , we can define the similarity between $t_{1}$ and $t_{2}$ as: ${\mathrm{sim}(t_{1},t_{2})=1-p_{\mathrm{contradict}}}$ .

1. Jaccard Index. The Jaccard index, also referred to as Intersection-over-Union measures the similarity between two sets by computing the ratio of the intersection of both sets and the union of both sets. Hence, the Jaccard index $J$ between two sets $\mathcal{T}_{1}$ and $\mathcal{T}_{2}$ , where each set consists of the words that make up its associated text fragment, is given by: ${J(\mathcal{T}_{1},\mathcal{T}_{2})=\frac{|\mathcal{T}_{1}\cap\mathcal{T}_{2}|%

}{|\mathcal{T}_{1}\cup\mathcal{T}_{2}|}.}$ Although the Jaccard index always lies between $0 0$ and $1$ , making it a suitable metric (Pilehvar et al., 2013; Cronin et al., 2017; Qurashi et al., 2020), the Jaccard index does not consider the context of the text fragments, which is important in evaluating the similarity between both text fragments.

1. Sentence-Embedding-Based Similarity. The similarity between two text fragments can also be determined by computing the cosine-similarity between the sentence embeddings associated with each text fragment. Sentence-embedding models transform natural-language inputs (or tokens) into a vector space, enabling direct computation of the similarity between two sentences (phrases). For example, Sentence-BERT (SBERT) (Reimers, 2019) builds upon the pretrained BERT architecture to train a model that computes semantically-relevant sentence embeddings. Other similar models include LaBSE (Feng et al., 2020) and SONAR (Duquenne et al., 2023). Since the sentence embeddings capture the context of the text fragment, this approach is less susceptible to the challenges faced by the Jaccard index, such as those that arise with negated words.

1. BERTScore. The BERTScore (Zhang et al., 2019) measures the similarity between two sentences by computing the cosine-similarity between the contextual embedding of each token (word) in the reference sentence $t_{r}$ and the contextual embedding of the associated token in the candidate sentence $t_{c}$ . The token embeddings are generated from NLI models to capture the context of the sentence. As a result, a given word might have different embeddings, depending on the context of the sentence in which it is used, addressing the challenges faced by the Jaccard similarity metric and word-embedding-based metrics. The BERTScore is composed of a precision $P_{\mathrm{BS}}$ , recall $R_{\mathrm{BS}}$ , and F1 $F_{\mathrm{BS}}$ score, given by:

$$

P_{\mathrm{BS}}=\frac{1}{|t_{c}|}\sum_{\hat{w}_{j}\in t_{c}}\max_{w_{i}\in t_{%

r}}w_{i}^{\top}\hat{w}_{j},\enspace R_{\mathrm{BS}}=\frac{1}{|t_{r}|}\sum_{w_{%

i}\in t_{r}}\max_{\hat{w}_{j}\in t_{c}}w_{i}^{\top}\hat{w}_{j},\enspace F_{%

\mathrm{BS}}=2\frac{P_{\mathrm{BS}}\cdot R_{\mathrm{BS}}}{P_{\mathrm{BS}}+R_{%

\mathrm{BS}}}, \tag{1}

$$

where each token in the candidate sentence is matched to its most similar token in the reference sentence. The BERTScore is obtained by computing the cosine-similarity between matched pairs. Since each token embedding is normalized, the cosine-similarity between a pair of embeddings simplifies to the inner-product. The recall score is related to the ROUGE metric (Lin, 2004) used in evaluating text summaries and more broadly to the BARTScore (Yuan et al., 2021). However, the ROUGE metric utilizes human-provided summaries as the reference.

In the following sections, we describe the main categories of UQ methods for LLMs in detail, namely: (1) Token-Level UQ Methods; (2) Self-Verbalized UQ Methods; (3) Semantic-Similarity UQ Methods; and (4) Mechanistic Interpretability, outlined in Figure 2. Although mechanistic interpretability has not been widely applied to uncertainty quantification, we believe that insights from mechanistic interpretability can be more extensively applied to the uncertainty quantification of LLMs; hence, we include these methods within our taxonomy.

3. Token-Level UQ

We recall that the outputs of an LLM are generated by sampling from a probability distribution over the tokens that make up the outputs, conditioned on the preceding tokens in the outputs (see LABEL:{sec:background_uq_llm}). Token-level UQ methods leverage the probability distribution over each token to estimate the probability of generating a given response from an LLM. Although a high predicted probability in a particular generation may not be indicative of its correctness over another, direct quantification of the model’s generating distribution may lead to better understanding of the stochasticity of generations. Token-level UQ methods utilize the white-box UQ metrics discussed in Section 2.4.3 to estimate the randomness in the probability distribution associated with an LLM’s response. For example, some token-level UQ methods compute the entropy of the underlying probability distribution over the tokens (Xiao and Wang, 2021; Ling et al., 2024) or semantic clusters (Kuhn et al., 2023) (referred to as semantic entropy) to estimate the LLM’s confidence. Likewise, a variant of SelfCheckGPT (Manakul et al., 2023) trains an $n$ -gram model using multiple samples of the response of an LLM to a given query including its main response. Subsequently, SelfCheckGPT estimates the LLM’s uncertainty by computing the average of the log-probabilities of the tokens generated by the $n$ -gram model, given the original response of the LLM. Moreover, SelfCheckGPT proposes using the maximum of the negative log-probability to estimate the LLM’s uncertainty.

Token-based UQ methods generally perform poorly with long-form responses, because the product of the token probabilities decrease with longer responses, even when the responses are semantically equivalent to a shorter response. To address this limitation, token-based UQ methods employ a length-normalized scoring function (Thomas and Joy, 2006; Malinin and Gales, 2020), to reduce the dependence of the UQ metrics on the length of the sequence, given by: ${\mathrm{Product(p)}=\prod_{j=1}^{L_{i}}p_{ij}^{\frac{1}{L_{i}}}}$ , where $L_{i}$ denotes the length of sentence $i$ , and $p_{ij}$ is the conditional probability of token $j$ , given all preceding tokens, in sentence $i$ . The work in (Bakman et al., 2024) introduces Meaning-Aware Response Scoring (MARS) as an alternative to length-normalized scoring. MARS utilizes an importance function to assign weights to each token based on its contribution to the meaning of the sentence. The contribution of each token to the meaning of the sentence is determined using BEM (Bulian et al., 2022), a question-answer evaluation model. Taking a different approach, Claim-Conditioned Probability (CCP) (Fadeeva et al., 2024) decomposes the output of an LLM into a set of claims and computes the token-level uncertainty of each claim from its constituent tokens. CCP utilizes the OpenAI Chat API (Brown, 2020; Achiam et al., 2023) to identify the main claims in a given response. By examining the component claims, CCP provides finer-grained uncertainty quantification compared to other UQ methods for LLMs.

As described, token-level UQ methods estimate the uncertainty of an LLM based on the conditional distribution associated with each token. Although this approach is effective in general, the conditional distribution of the tokens can be misleading in certain scenarios, especially when an initial token is incorrect but all the succeeding tokens are highly probable given the initial token. Trainable attention-based dependency (TAD) (Vazhentsev et al., 2024) trains a regression model on the conditional dependence between the tokens and applies the predicted factors to improve the estimated uncertainty of the LLM. Lastly, we present token-level UQ methods that use specific prompting strategies to estimate the model’s confidence. The work in (Kadavath et al., 2022) shows that token-based UQ methods can be particularly effective in estimating the confidence of LLMs when the model is prompted to select an option when given a multiple-choice question. Specifically, the authors show that the model’s probability distribution over the options in the prompt is well-calibrated, when presented with multiple-choice problems or problems with a True/False answer. Further, the authors fine-tune an LLM with a value head to predict the probability that the model knows the answer to a given question for each token. The probability associated with the LLM’s final token is defined as the confidence of the LLM in its response for the given prompt. The results demonstrate that the LLM predictions of these probability values are well-calibrated, with an improvement in the calibration performance with larger models. Other follow-on work leveraging multiple-choice problems to estimate the uncertainty of LLMs includes (Ren et al., 2023b).

4. Self-Verbalized UQ

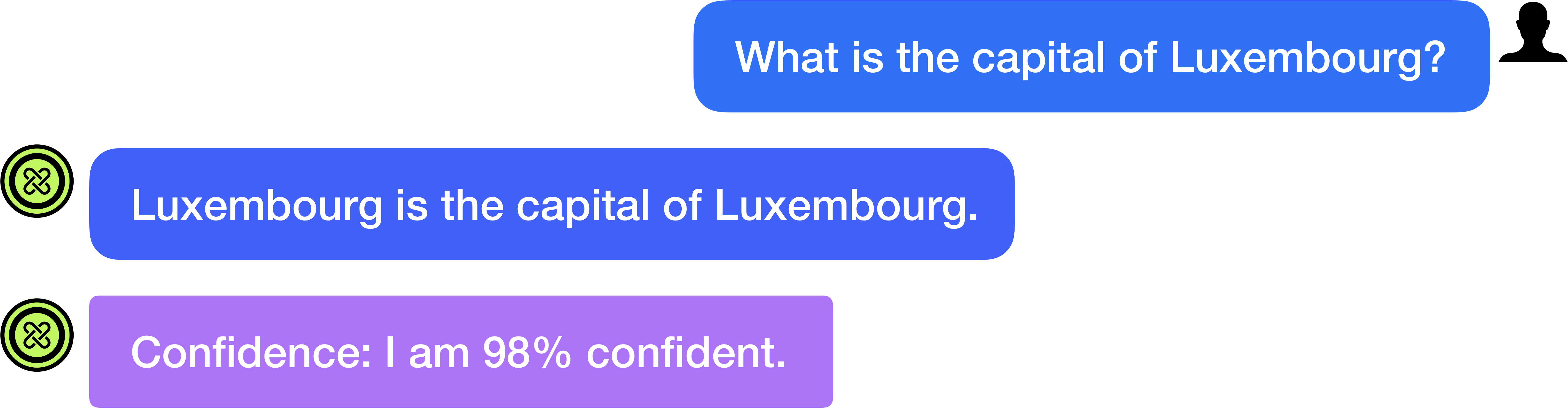

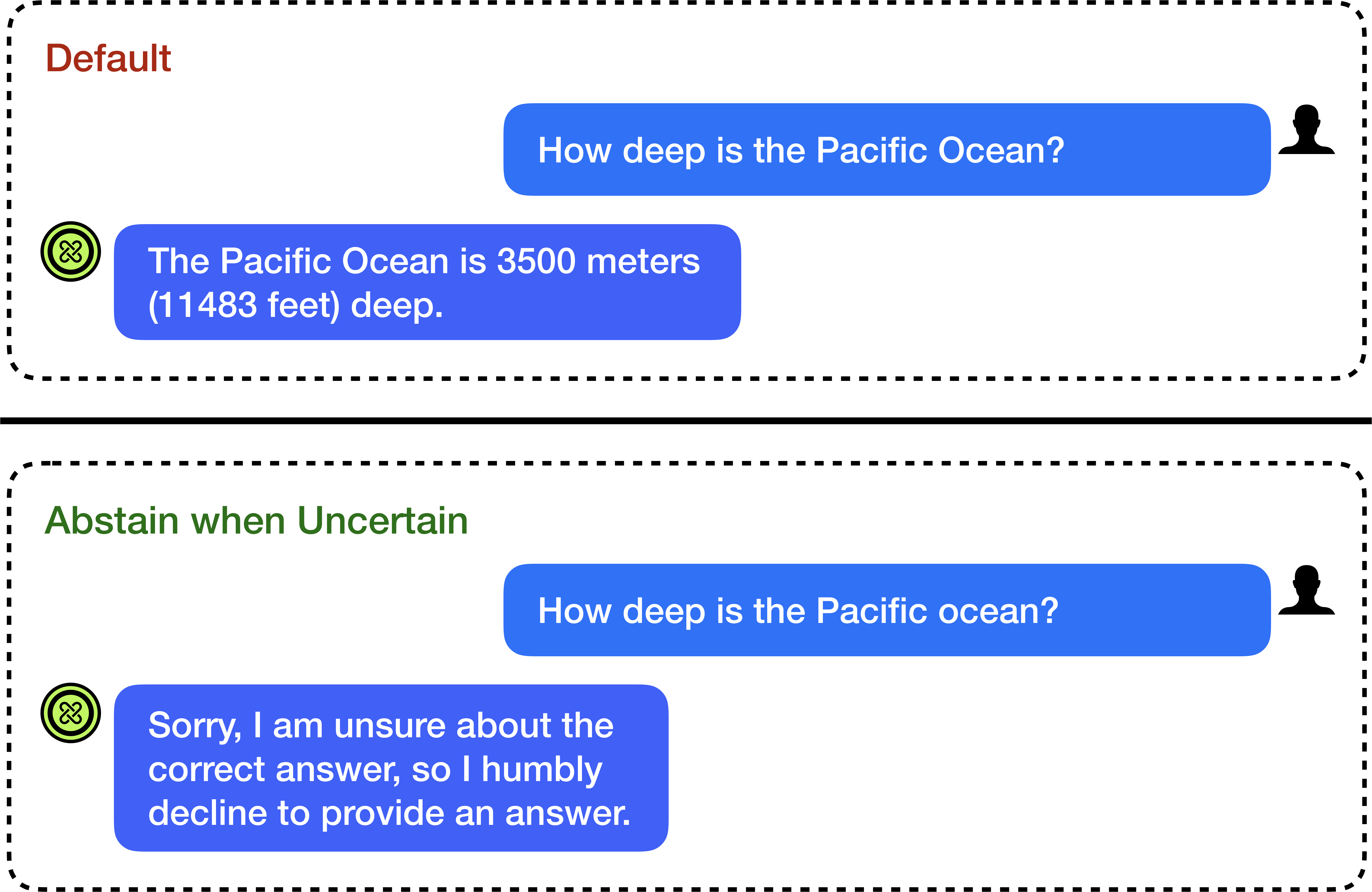

Self-verbalized uncertainty quantification methods seek to harness the impressive learning and reasoning capabilities of LLMs to enable an LLM to express its confidence in a given response through natural-language. Self-verbalized uncertainty estimates (e.g., expressed as probabilities) are more easily interpretable to humans, especially when the estimates are provided using widely-used epistemic uncertainty markers (Tang et al., 2024; Yona et al., 2024), e.g., words like I am not sure… or This response might be… Figure 11 illustrates the use of epistemic markers by an LLM to convey its uncertainty, when asked of the team that won the 2022 NBA Finals. The response of the LLM is actually incorrect; however, by expressing its uncertainty, a user may be more inclined to verify the factuality of the LLM’s response. Prior work has shown that LLMs typically fail to accurately express their confidence in a given response, often using decisive words that suggest confidence, while at the same time being unsure of the accuracy of their response. Empirical studies (Krause et al., 2023) have shown that poor calibration of LLM’s self-verbalized confidence estimates is more pronounced in low-data language settings, e.g., Hindi and Amharic.

<details>

<summary>extracted/6588127/images/self_verbalized_uq/epistemic_uncertainty_markers.png Details</summary>

### Visual Description

\n

## Diagram: LLM Response with and without Epistemic Markers

### Overview

This diagram illustrates the difference in responses from a Large Language Model (LLM) when prompted with a question, specifically "Which team won the 2022 NBA Finals?", with and without the inclusion of epistemic markers (expressions of uncertainty). The diagram shows a flow from the question, through the LLM, to two different outputs.

### Components/Axes

The diagram consists of the following components:

* **Input Question:** "Which team won the 2022 NBA Finals?" - positioned at the top of the diagram.

* **LLM Representation:** A circular icon labeled "LLM" with a stylized infinity symbol inside, representing the Large Language Model. This is positioned centrally.

* **Output 1: With Epistemic Markers:** A rounded rectangle labeled "With Epistemic Markers" containing the text: "I think the Milwaukee Bucks won the 2022 NBA Finals, but I am not sure." - positioned on the left side, below the LLM.

* **Output 2: Without Epistemic Markers:** A rounded rectangle labeled "Without Epistemic Markers" containing the text: "The Milwaukee Bucks won the 2022 NBA Finals." - positioned on the right side, below the LLM.

* **Arrows:** Downward arrows indicating the flow of information from the question to the LLM, and from the LLM to the outputs.

* **User Icon:** A small grey icon of a person's head and shoulders, positioned to the right of the input question.

### Detailed Analysis or Content Details

The diagram presents a comparative analysis of LLM responses.

* **Input:** The question is a straightforward factual query about the winner of the 2022 NBA Finals.

* **LLM Processing:** The LLM processes the question.

* **Output with Epistemic Markers:** The LLM's response includes phrases expressing uncertainty ("I think," "but I am not sure"). The response states the Milwaukee Bucks as the winner, but qualifies it with doubt.

* **Output without Epistemic Markers:** The LLM's response is a direct statement of fact, asserting that the Milwaukee Bucks won the 2022 NBA Finals without any qualifiers.

### Key Observations

The key observation is the difference in the LLM's output based on whether or not it incorporates epistemic markers. The inclusion of these markers demonstrates a level of awareness of uncertainty, while their absence presents the information as a definitive fact. The diagram highlights the importance of how LLMs frame their responses, particularly when dealing with potentially ambiguous or uncertain information.

### Interpretation

The diagram demonstrates a crucial aspect of LLM behavior: the ability to express (or suppress) uncertainty. The presence of epistemic markers in the first output suggests a more cautious and nuanced response, acknowledging the possibility of error. The second output, lacking these markers, presents the information as a confident assertion. This difference is significant because it impacts how users perceive the reliability of the information provided by the LLM.

The diagram implicitly raises questions about the underlying mechanisms that control the inclusion or exclusion of epistemic markers in LLM responses. It suggests that these markers are not inherent to the LLM's knowledge but are rather a function of its programming or the specific prompt it receives. This has implications for the development of more trustworthy and transparent LLMs, as it highlights the need for mechanisms that accurately reflect the LLM's level of confidence in its responses.

The diagram also subtly points to the potential for LLMs to be manipulated into presenting uncertain information as fact, simply by omitting epistemic markers. This underscores the importance of critical thinking and source evaluation when interacting with LLMs.

</details>

Figure 10. The LLM provides an incorrect response, but communicates its uncertainty using epistemic markers, e.g., “I think.”

\Description

[The LLM provides an incorrect response, but communicates its uncertainty using epistemic markers, e.g., “I think.”]The LLM provides an incorrect response, but communicates its uncertainty using epistemic markers, e.g., “I think.”

<details>

<summary>extracted/6588127/images/self_verbalized_uq/self_verbalized_uncertainty.png Details</summary>

### Visual Description

\n

## Screenshot: Chatbot Conversation

### Overview

The image is a screenshot of a chatbot conversation. It depicts a question and two responses from the chatbot, including a confidence level. The conversation is presented as a series of speech bubbles.

### Components/Axes

There are no axes or traditional chart components. The elements are:

* **Question Bubble:** Top-center, blue background, white text.

* **Response 1 Bubble:** Center, blue background, white text. Includes a circular icon on the left.

* **Response 2 Bubble:** Bottom-center, purple background, white text. Includes a circular icon on the left.

* **User Icon:** Top-right corner of the question bubble.

### Content Details

The text content is as follows:

1. **Question:** "What is the capital of Luxembourg?"

2. **Response 1:** "Luxembourg is the capital of Luxembourg."

3. **Response 2:** "Confidence: I am 98% confident."

The circular icons in the response bubbles appear to be identical.

### Key Observations

The chatbot correctly identifies Luxembourg as the capital of Luxembourg. The chatbot expresses a high degree of confidence (98%) in its answer.

### Interpretation

This screenshot demonstrates a simple question-answering interaction with a chatbot. The chatbot successfully provides a factual answer and quantifies its certainty. The high confidence level suggests the chatbot is well-trained on this particular piece of information. The visual presentation (speech bubbles) is typical of conversational interfaces. The chatbot's response is a tautology, stating that Luxembourg is the capital of Luxembourg, which is factually correct but not particularly informative.

</details>

Figure 11. LLMs can be trained or fine-tuned to provide numeric estimates of their confidence in the factuality of their response.

\Description

[LLMs can be trained or fine-tuned to provide numeric estimates of their confidence in the factuality of their response.]LLMs can be trained or fine-tuned to provide numeric estimates of their confidence in the factuality of their response.