# Training Large Language Models to Reason in a Continuous Latent Space

**Authors**: Shibo Hao, Sainbayar Sukhbaatar, DiJia Su, Xian Li, Zhiting Hu, Jason Weston, Yuandong Tian

¹FAIR at Meta, ²UC San Diego. \*Work done at Meta

(December 23, 2025)

Abstract

Large language models (LLMs) are restricted to reason in the “language space”, where they typically express the reasoning process with a chain-of-thought (CoT) to solve a complex reasoning problem. However, we argue that language space may not always be optimal for reasoning. For example, most word tokens are primarily for textual coherence and not essential for reasoning, while some critical tokens require complex planning and pose huge challenges to LLMs. To explore the potential of LLM reasoning in an unrestricted latent space instead of using natural language, we introduce a new paradigm, Coconut (Chain of Continuous Thought). We utilize the last hidden state of the LLM as a representation of the reasoning state (termed “continuous thought”). Rather than decoding this into a word token, we feed it back to the LLM as the subsequent input embedding directly in the continuous space. Experiments show that Coconut can effectively augment the LLM on several reasoning tasks. This novel latent reasoning paradigm leads to emergent advanced reasoning patterns: the continuous thought can encode multiple alternative next reasoning steps, allowing the model to perform a breadth-first search (BFS) to solve the problem, rather than prematurely committing to a single deterministic path like CoT. Coconut outperforms CoT in certain logical reasoning tasks that require substantial backtracking during planning, with fewer thinking tokens during inference. These findings demonstrate the promise of latent reasoning and offer valuable insights for future research.

1 Introduction

Large language models (LLMs) have demonstrated remarkable reasoning abilities, emerging from extensive pretraining on human languages (Dubey et al., 2024; Achiam et al., 2023). While next token prediction is an effective training objective, it imposes a fundamental constraint on the LLM as a reasoning machine: the explicit reasoning process of LLMs must be generated in word tokens. For example, a prevalent approach, known as chain-of-thought (CoT) reasoning (Wei et al., 2022), involves prompting or training LLMs to generate solutions step-by-step using natural language. However, this is in stark contrast to certain human cognition results. Neuroimaging studies have consistently shown that the language network – a set of brain regions responsible for language comprehension and production – remains largely inactive during various reasoning tasks (Amalric and Dehaene, 2019; Monti et al., 2012, 2007, 2009; Fedorenko et al., 2011). Further evidence indicates that human language is optimized for communication rather than reasoning (Fedorenko et al., 2024).

A significant issue arises when LLMs use language for reasoning: the amount of reasoning required for each particular reasoning token varies greatly, yet current LLM architectures allocate nearly the same computing budget for predicting every token. Most tokens in a reasoning chain are generated solely for fluency, contributing little to the actual reasoning process. In contrast, some critical tokens require complex planning and pose huge challenges to LLMs. While previous work has attempted to fix these problems by prompting LLMs to generate succinct reasoning chains (Madaan and Yazdanbakhsh, 2022), or performing additional reasoning before generating some critical tokens (Zelikman et al., 2024), these solutions remain constrained within the language space and do not solve the fundamental problems. Ideally, LLMs would have the freedom to reason without any language constraints, and then translate their findings into language only when necessary.

<details>

<summary>figures/figure_1_meta_3.png Details</summary>

### Visual Description

## Diagram: Chain-of-Thought (CoT) vs. Chain of Continuous Thought (COCONUT)

### Overview

The image presents two diagrams illustrating the difference between Chain-of-Thought (CoT) and Chain of Continuous Thought (COCONUT) approaches in language models. Both diagrams depict the flow of information through a Large Language Model, highlighting the input and output tokens, hidden states, and embeddings.

### Components/Axes

**Left Diagram: Chain-of-Thought (CoT)**

* **Title:** Chain-of-Thought (CoT)

* **Components:**

* Input Token: \[Question], x<sub>i</sub>, x<sub>i+1</sub>, x<sub>i+2</sub>, x<sub>i+j</sub>

* Input Embedding: Represented by yellow rounded rectangles corresponding to each input token.

* Large Language Model: A central gray rounded rectangle.

* Last Hidden State: Represented by purple rounded rectangles above the Large Language Model, corresponding to each input token.

* Output Token (sampling): \[Answer]

* **Flow:** The input tokens are fed into the Large Language Model, which generates hidden states. These hidden states are then used to sample output tokens.

**Right Diagram: Chain of Continuous Thought (COCONUT)**

* **Title:** Chain of Continuous Thought (COCONUT)

* **Text Description:** "Last hidden states are used as input embeddings"

* **Components:**

* Input Token: \[Question], <bot>, <eot>

* Input Embedding: Represented by gradient (yellow to purple) rounded rectangles corresponding to each input token.

* Large Language Model: A central gray rounded rectangle.

* Last Hidden State: Represented by gradient (yellow to purple) rounded rectangles above the Large Language Model, corresponding to each input token.

* Output Token (sampling): \[Answer]

* **Flow:** The input tokens are fed into the Large Language Model, which generates hidden states. These hidden states are then used as input embeddings for the next step.

### Detailed Analysis or Content Details

**Chain-of-Thought (CoT):**

* The input tokens \[Question], x<sub>i</sub>, x<sub>i+1</sub>, x<sub>i+2</sub>, and x<sub>i+j</sub> are converted into input embeddings (yellow rounded rectangles).

* These embeddings are fed into the Large Language Model (gray rounded rectangle).

* The Large Language Model generates last hidden states (purple rounded rectangles) for each input token.

* The last hidden states are used to sample the output token \[Answer].

**Chain of Continuous Thought (COCONUT):**

* The input tokens \[Question], <bot>, and <eot> are initially converted into input embeddings (yellow rounded rectangles).

* These embeddings are fed into the Large Language Model (gray rounded rectangle).

* The Large Language Model generates last hidden states (gradient rounded rectangles) for each input token.

* The last hidden states are then used as input embeddings for the next step, creating a continuous chain of thought.

* The final output token is \[Answer].

### Key Observations

* **CoT:** Uses the last hidden states to sample output tokens directly.

* **COCONUT:** Uses the last hidden states as input embeddings for the next step, creating a continuous chain.

* The COCONUT diagram explicitly states that "Last hidden states are used as input embeddings."

* The input embeddings in COCONUT are represented with a gradient, visually indicating the transformation of hidden states into embeddings.

### Interpretation

The diagrams illustrate the key difference between Chain-of-Thought (CoT) and Chain of Continuous Thought (COCONUT). In CoT, each last hidden state is decoded into a word token, which is then embedded and fed back as the next input. In contrast, COCONUT feeds the last hidden state directly back as the next input embedding, so the reasoning state stays in the continuous latent space. The gradient colors in the COCONUT diagram visually emphasize that hidden states are reused as input embeddings. The <bot> and <eot> tokens mark the beginning and end of the latent thought mode, respectively.

</details>

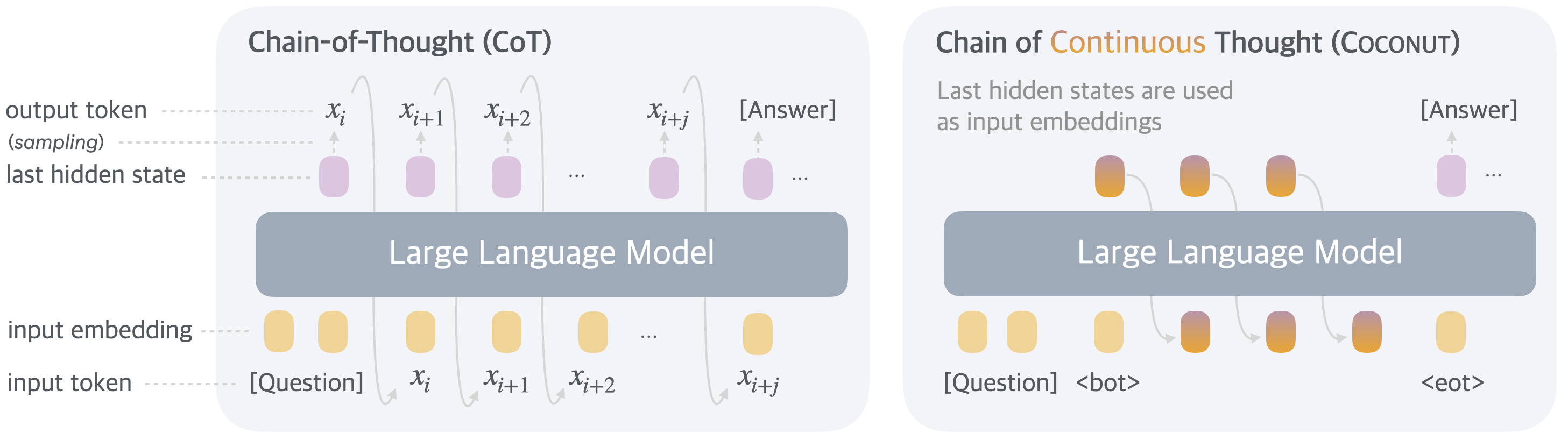

Figure 1: A comparison of Chain of Continuous Thought (Coconut) with Chain-of-Thought (CoT). In CoT, the model generates the reasoning process as a word token sequence (e.g., $[x_{i},x_{i+1},...,x_{i+j}]$ in the figure). Coconut regards the last hidden state as a representation of the reasoning state (termed “continuous thought”), and directly uses it as the next input embedding. This allows the LLM to reason in an unrestricted latent space instead of a language space.

In this work we instead explore LLM reasoning in a latent space by introducing a novel paradigm, Coconut (Chain of Continuous Thought). It involves a simple modification to the traditional CoT process: instead of mapping between hidden states and language tokens using the language model head and embedding layer, Coconut directly feeds the last hidden state (a continuous thought) as the input embedding for the next token (Figure 1). This modification frees the reasoning from being within the language space, and the system can be optimized end-to-end by gradient descent, as continuous thoughts are fully differentiable. To enhance the training of latent reasoning, we employ a multi-stage training strategy inspired by Deng et al. (2024), which effectively utilizes language reasoning chains to guide the training process.

Interestingly, our proposed paradigm leads to an efficient reasoning pattern. Unlike language-based reasoning, continuous thoughts in Coconut can encode multiple potential next steps simultaneously, allowing for a reasoning process akin to breadth-first search (BFS). While the model may not initially make the correct decision, it can maintain many possible options within the continuous thoughts and progressively eliminate incorrect paths through reasoning, guided by some implicit value functions. This advanced reasoning mechanism surpasses traditional CoT, even though the model is not explicitly trained or instructed to operate in this manner, in contrast to previous works that do so explicitly (Yao et al., 2023; Hao et al., 2023).

Experimentally, Coconut successfully enhances the reasoning capabilities of LLMs. For math reasoning (GSM8k, Cobbe et al., 2021), using continuous thoughts is shown to be beneficial to reasoning accuracy, mirroring the effects of language reasoning chains. This indicates the potential to scale and solve increasingly challenging problems by chaining more continuous thoughts. On logical reasoning, including ProntoQA (Saparov and He, 2022) and our newly proposed ProsQA (Section 4.1), which requires stronger planning ability, Coconut and some of its variants even surpass language-based CoT methods, while generating significantly fewer tokens during inference. We believe that these findings underscore the potential of latent reasoning and could provide valuable insights for future research.

2 Related Work

Chain-of-thought (CoT) reasoning. We use the term chain-of-thought broadly to refer to methods that generate an intermediate reasoning process in language before outputting the final answer. This includes prompting LLMs (Wei et al., 2022; Khot et al., 2022; Zhou et al., 2022), or training LLMs to generate reasoning chains, either with supervised finetuning (Yue et al., 2023; Yu et al., 2023) or reinforcement learning (Wang et al., 2024; Havrilla et al., 2024; Shao et al., 2024; Yu et al., 2024a). Madaan and Yazdanbakhsh (2022) classified the tokens in CoT into symbols, patterns, and text, and proposed to guide the LLM to generate concise CoT based on analysis of their roles. Recent theoretical analyses have demonstrated the usefulness of CoT from the perspective of model expressivity (Feng et al., 2023; Merrill and Sabharwal, 2023; Li et al., 2024). By employing CoT, the effective depth of the transformer increases because the generated outputs are looped back to the input (Feng et al., 2023). These analyses, combined with the established effectiveness of CoT, motivated our design that feeds the continuous thoughts back to the LLM as the next input embedding. While CoT has proven effective for certain tasks, its autoregressive generation nature makes it challenging to mimic human reasoning on more complex problems (LeCun, 2022; Hao et al., 2023), which typically require planning and search. There are works that equip LLMs with explicit tree search algorithms (Xie et al., 2023; Yao et al., 2023; Hao et al., 2024), or train the LLM on search dynamics and trajectories (Lehnert et al., 2024; Gandhi et al., 2024; Su et al., 2024). In our analysis, we find that after removing the constraint of a language space, a new reasoning pattern similar to BFS emerges, even though the model is not explicitly trained in this way.

Latent reasoning in LLMs. Previous works mostly define latent reasoning in LLMs as the hidden computation in transformers (Yang et al., 2024; Biran et al., 2024). Yang et al. (2024) constructed a dataset of two-hop reasoning problems and discovered that it is possible to recover the intermediate variable from the hidden representations. Biran et al. (2024) further proposed to intervene in the latent reasoning by “back-patching” the hidden representation. Shalev et al. (2024) discovered parallel latent reasoning paths in LLMs. Another line of work has discovered that, even if the model generates a CoT to reason, the model may actually utilize a different latent reasoning process. This phenomenon is known as the unfaithfulness of CoT reasoning (Wang et al., 2022; Turpin et al., 2024). To enhance the latent reasoning of LLMs, previous research proposed to augment it with additional tokens. Goyal et al. (2023) pretrained the model by randomly inserting learnable <pause> tokens into the training corpus. This improves the LLM’s performance on a variety of tasks, especially when followed by supervised finetuning with <pause> tokens. On the other hand, Pfau et al. (2024) further explored the usage of filler tokens, e.g., “...”, and concluded that they work well for highly parallelizable problems. However, Pfau et al. (2024) mentioned these methods do not extend the expressivity of the LLM like CoT; hence, they may not scale to more general and complex reasoning problems. Wang et al. (2023) proposed to predict a planning token as a discrete latent variable before generating the next reasoning step. Recently, it has also been found that one can “internalize” the CoT reasoning into latent reasoning in the transformer with knowledge distillation (Deng et al., 2023) or a special training curriculum which gradually shortens CoT (Deng et al., 2024). Yu et al. (2024b) also proposed to distill a model that can reason latently from data generated with complex reasoning algorithms.
These training methods can be combined with our framework, and specifically, we find that breaking down the learning of continuous thoughts into multiple stages, inspired by iCoT (Deng et al., 2024), is very beneficial for the training. Recently, looped transformers (Giannou et al., 2023; Fan et al., 2024) have been proposed to solve algorithmic tasks, which have some similarities to the computing process of continuous thoughts, but we focus on common reasoning tasks and aim at investigating latent reasoning in comparison to language space.

3 Coconut: Chain of Continuous Thought

In this section, we introduce our new paradigm Coconut (Chain of Continuous Thought) for reasoning in an unconstrained latent space. We begin by introducing the background and notation we use for language models. For an input sequence $x=(x_{1},...,x_{T})$ , the standard large language model $\mathcal{M}$ can be described as:

$$

H_{t}=\text{Transformer}(E_{t}+P_{t})

$$

$$

\mathcal{M}(x_{t+1}\mid x_{\leq t})=\text{softmax}(Wh_{t})

$$

where $E_{t}=[e(x_{1}),e(x_{2}),...,e(x_{t})]$ is the sequence of token embeddings up to position $t$; $P_{t}=[p(1),p(2),...,p(t)]$ is the sequence of positional embeddings up to position $t$; $H_{t}\in\mathbb{R}^{t\times d}$ is the matrix of the last hidden states for all tokens up to position $t$; $h_{t}$ is the last hidden state of position $t$, i.e., $h_{t}=H_{t}[t,:]$; $e(\cdot)$ is the token embedding function; $p(\cdot)$ is the positional embedding function; $W$ is the parameter of the language model head.

Method Overview. In the proposed Coconut method, the LLM switches between the “language mode” and the “latent mode” (Figure 1). In language mode, the model operates as a standard language model, autoregressively generating the next token. In latent mode, it directly utilizes the last hidden state as the next input embedding. This last hidden state represents the current reasoning state, termed a “continuous thought”.

Special tokens <bot> and <eot> are employed to mark the beginning and end of the latent thought mode, respectively. As an example, we assume latent reasoning occurs between positions $i$ and $j$, i.e., $x_{i}=$ <bot> and $x_{j}=$ <eot>. When the model is in the latent mode ($i<t<j$), we use the last hidden state from the previous token to replace the input embedding, i.e., $E_{t}=[e(x_{1}),e(x_{2}),...,e(x_{i}),h_{i},h_{i+1},...,h_{t-1}]$. After the latent mode finishes ($t\geq j$), the input reverts to using the token embedding, i.e., $E_{t}=[e(x_{1}),e(x_{2}),...,e(x_{i}),h_{i},h_{i+1},...,h_{j-1},e(x_{j}),...,e(x_{t})]$. It is noteworthy that $\mathcal{M}(x_{t+1}\mid x_{\leq t})$ is not defined when $i<t<j$, since the latent thought is not intended to be mapped back to language space. However, $\mathrm{softmax}(Wh_{t})$ can still be calculated for probing purposes (see Section 4).
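The feedback loop described above can be illustrated with a minimal sketch. It is not the authors' implementation: `embed`, `toy_transformer`, and `build_inputs_with_latents` are hypothetical names, and the "transformer" is a tiny causal stand-in chosen only so that the example runs with the standard library. What it shows is the one step that differs from ordinary decoding: in latent mode the last hidden state of the previous position is appended directly as the next input embedding.

```python
import math

D = 4  # hidden size of the toy model (hypothetical)

def embed(token_id):
    """Toy token embedding e(x): a fixed deterministic vector per token id."""
    return [math.sin(token_id + k) for k in range(D)]

def toy_transformer(input_embeddings):
    """Stand-in for Transformer(E_t + P_t): one hidden state per position.

    Each position combines a causal running mean of the inputs so far with a
    positional term, so the hidden state at position t depends only on
    inputs at positions <= t.
    """
    hidden, running = [], [0.0] * D
    for pos, e in enumerate(input_embeddings):
        running = [r + x for r, x in zip(running, e)]
        hidden.append([math.tanh(r / (pos + 1) + 0.1 * pos) for r in running])
    return hidden

def build_inputs_with_latents(question_ids, bot_id, eot_id, n_latent):
    """Input sequence: question embeddings, <bot>, n_latent thoughts, <eot>."""
    inputs = [embed(t) for t in question_ids] + [embed(bot_id)]
    for _ in range(n_latent):
        last_hidden = toy_transformer(inputs)[-1]  # last hidden state so far
        inputs.append(last_hidden)                 # fed back as next input embedding
    inputs.append(embed(eot_id))                   # language mode resumes at <eot>
    return inputs
```

Because the stand-in model is causal, re-running it on the finished sequence reproduces every continuous thought as the hidden state one position earlier, which is the property the definition of $E_{t}$ expresses.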

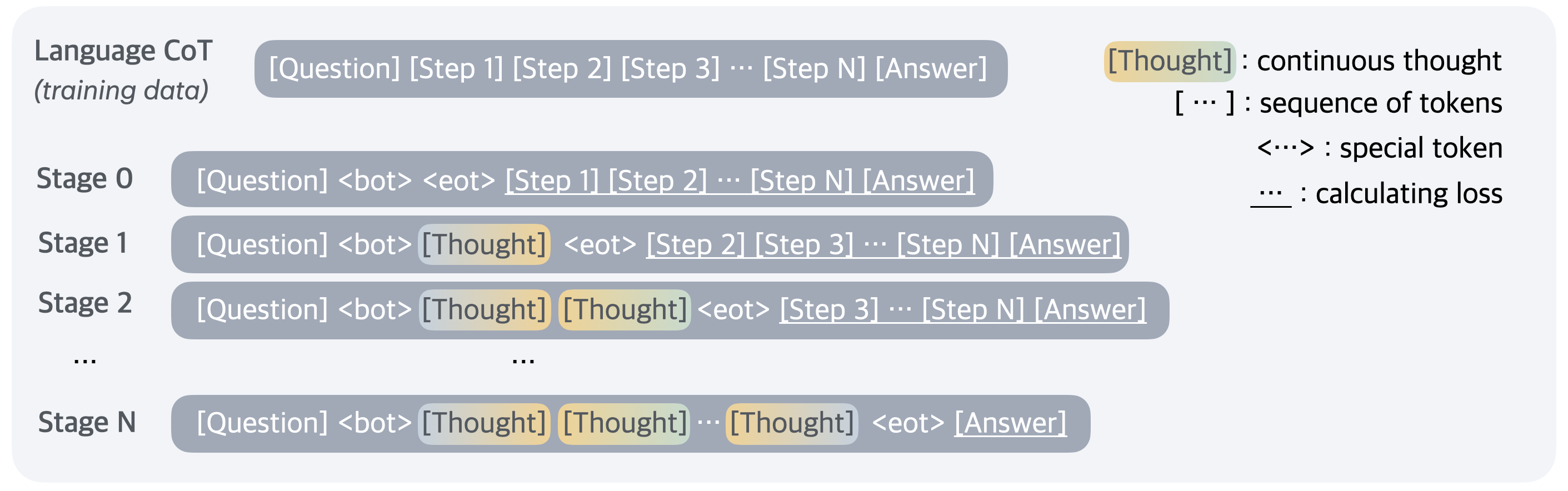

Training Procedure. In this work, we focus on a problem-solving setting where the model receives a question as input and is expected to generate an answer through a reasoning process. We leverage language CoT data to supervise continuous thought by implementing a multi-stage training curriculum inspired by Deng et al. (2024). As shown in Figure 2, in the initial stage, the model is trained on regular CoT instances. In the subsequent stages, at the $k$-th stage, the first $k$ reasoning steps in the CoT are replaced with $k\times c$ continuous thoughts, where $c$ is a hyperparameter controlling the number of latent thoughts replacing a single language reasoning step. (If a language reasoning chain is shorter than $k$ steps, then all the language thoughts will be removed.) Following Deng et al. (2024), we also reset the optimizer state when training stages switch. We insert <bot> and <eot> tokens to encapsulate the continuous thoughts.
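As a rough illustration of the curriculum, the following sketch builds the symbolic layout of a training sequence at stage $k$. The function name `build_stage_sample` and the string placeholders are hypothetical, and the stage-0 layout with an empty `<bot> <eot>` pair is an assumption taken from the Figure 2 layout rather than from the text.

```python
BOT, EOT, THOUGHT = "<bot>", "<eot>", "[Thought]"

def build_stage_sample(question, steps, answer, k, c):
    """Symbolic layout of one training sequence at stage k.

    The first k language steps are dropped and replaced by k * c
    continuous-thought slots enclosed in <bot> ... <eot>.
    """
    n_thoughts = k * c
    # Empty when the chain has k or fewer steps: all language thoughts removed.
    remaining = list(steps[k:])
    return [question, BOT] + [THOUGHT] * n_thoughts + [EOT] + remaining + [answer]
```

For a three-step chain with $c=2$, stage 1 yields the question, `<bot>`, two thought slots, `<eot>`, then steps 2 and 3 and the answer.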

<details>

<summary>figures/figure_2_meta_5.png Details</summary>

### Visual Description

## Diagram: Language CoT Training Data Stages

### Overview

The image illustrates how the training data for Coconut is constructed from language Chain-of-Thought (CoT) data across multiple stages. At each stage, one more leading language reasoning step is replaced by a continuous "Thought", progressing from no continuous thoughts at Stage 0 to a sequence in which only continuous thoughts precede the final answer.

### Components/Axes

* **Left Side**: Lists the stages of training, from Stage 0 to Stage N.

* **Center**: Shows the structure of the training data at each stage, including tokens like [Question], [Step 1], [Step 2], [Step N], [Answer], <bot>, <eot>, and [Thought].

* **Right Side**: Provides a legend explaining the meaning of specific tokens:

* `[Thought]`: continuous thought

* `[...]`: sequence of tokens

* `<...>`: special token

* `...`: calculating loss

### Detailed Analysis or Content Details

* **Language CoT (training data)**: `[Question] [Step 1] [Step 2] [Step 3] ... [Step N] [Answer]`

* This represents the initial training data format, consisting of a question, a series of steps, and the final answer.

* **Stage 0**: `[Question] <bot> <eot> [Step 1] [Step 2] ... [Step N] [Answer]`

* At Stage 0, the model receives the question, a beginning-of-thought token `<bot>`, an end-of-thought token `<eot>`, followed by the steps and the answer. No explicit "Thought" tokens are present.

* **Stage 1**: `[Question] <bot> [Thought] <eot> [Step 2] [Step 3] ... [Step N] [Answer]`

* In Stage 1, a single "Thought" token is inserted after the `<bot>` token and before the `<eot>` token, and before the remaining steps.

* **Stage 2**: `[Question] <bot> [Thought] [Thought] <eot> [Step 3] ... [Step N] [Answer]`

* In Stage 2, two "Thought" tokens are inserted after the `<bot>` token and before the `<eot>` token, and before the remaining steps.

* **Stage N**: `[Question] <bot> [Thought] [Thought] ... [Thought] <eot> [Answer]`

* In Stage N, multiple "Thought" tokens are inserted after the `<bot>` token and before the `<eot>` token, and before the answer. The number of "Thought" tokens increases as the stage progresses.

### Key Observations

* The diagram illustrates a staged curriculum in which language reasoning steps are progressively replaced by continuous thoughts.

* The number of "Thought" tokens increases with each stage, while the number of remaining language steps decreases.

* The `<bot>` and `<eot>` tokens mark the beginning and end of the latent thought segment.

### Interpretation

The diagram demonstrates the multi-stage training curriculum of Coconut, in which language reasoning steps are gradually replaced by continuous thoughts. Language CoT data thereby guides the training, and by the final stage the model reasons entirely with continuous thoughts before producing the answer. The special tokens `<bot>` and `<eot>` enclose the continuous thoughts.

</details>

Figure 2: Training procedure of Chain of Continuous Thought (Coconut). Given training data with language reasoning steps, at each training stage we integrate $c$ additional continuous thoughts ( $c=1$ in this example), and remove one language reasoning step. The cross-entropy loss is then used on the remaining tokens after continuous thoughts.

During the training process, we mask the loss on questions and latent thoughts. It is important to note that the objective does not encourage the continuous thought to compress the removed language thought, but rather to facilitate the prediction of future reasoning. Therefore, it’s possible for the LLM to learn more effective representations of reasoning steps compared to human language.
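The masking rule can be sketched as follows. This is an illustration under a stated assumption: the text only says that the loss is masked on questions and latent thoughts, so treating `<bot>` and `<eot>` as masked too (loss only on positions strictly after `<eot>`) is a simplification made here, and `loss_mask` is a hypothetical helper.

```python
EOT = "<eot>"

def loss_mask(sequence):
    """Return 1 where cross-entropy loss is applied and 0 where it is masked.

    Assumption: everything up to and including <eot> (question, <bot>,
    continuous thoughts, <eot>) is masked; the remaining language steps and
    the answer are supervised.
    """
    eot_index = sequence.index(EOT)
    return [1 if pos > eot_index else 0 for pos in range(len(sequence))]
```

Since only the tokens after the thoughts carry loss, the continuous thoughts receive gradient solely through their effect on predicting future reasoning, which matches the point that they are not trained to compress the removed language step.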

Training Details. Our proposed continuous thoughts are fully differentiable and allow for back-propagation. We perform $n+1$ forward passes when $n$ latent thoughts are scheduled in the current training stage, computing a new latent thought with each pass and finally conducting an additional forward pass to obtain a loss on the remaining text sequence. While we can save any repetitive computing by using a KV cache, the sequential nature of the multiple forward passes poses challenges for parallelism. Further optimizing the training efficiency of Coconut remains an important direction for future research.
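To make the $n+1$ count concrete, the sketch below lists which positions each forward pass would newly process when a KV cache is reused. The split into a prefix (question plus `<bot>`) and a suffix (`<eot>` plus remaining text), and the helper name `forward_pass_plan`, are assumptions for illustration only.

```python
def forward_pass_plan(prefix_len, n_latent, suffix_len):
    """List the (start, end) position ranges newly processed by each pass.

    prefix_len: question tokens plus <bot>.
    suffix_len: <eot> plus the remaining text sequence.
    With a KV cache, each pass only processes positions not seen before.
    """
    if n_latent == 0:
        return [(0, prefix_len + suffix_len)]
    plan = [(0, prefix_len)]            # pass 1: yields the first thought
    for k in range(1, n_latent):
        pos = prefix_len + k - 1        # position holding thought k
        plan.append((pos, pos + 1))     # consumes thought k, yields thought k + 1
    last = prefix_len + n_latent - 1    # position holding the final thought
    plan.append((last, prefix_len + n_latent + suffix_len))  # final pass: loss on text
    return plan
```

The passes cannot be merged because each one needs the hidden state produced by the previous one, which is the sequential bottleneck mentioned above.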

Inference Process. The inference process for Coconut is analogous to standard language model decoding, except that in latent mode, we directly feed the last hidden state as the next input embedding. A challenge lies in determining when to switch between latent and language modes. As we focus on the problem-solving setting, we insert a <bot> token immediately following the question tokens. For <eot>, we consider two potential strategies: a) train a binary classifier on latent thoughts to enable the model to autonomously decide when to terminate the latent reasoning, or b) always pad the latent thoughts to a constant length. We found that both approaches work comparably well. Therefore, we use the second option in our experiment for simplicity, unless specified otherwise.

4 Experiments

We validate the feasibility of LLM reasoning in a continuous latent space through experiments on three datasets. We mainly evaluate the accuracy by comparing the model-generated answers with the ground truth. The number of newly generated tokens per question is also analyzed, as a measure of reasoning efficiency. We report the clock-time comparison in Appendix 8.

4.1 Reasoning Tasks

Math Reasoning. We use GSM8k (Cobbe et al., 2021) as the dataset for math reasoning. It consists of grade school-level math problems. Compared to the other datasets in our experiments, the problems are more diverse and open-domain, closely resembling real-world use cases. Through this task, we explore the potential of latent reasoning in practical applications. To train the model, we use a synthetic dataset generated by Deng et al. (2023).

Logical Reasoning. Logical reasoning involves the proper application of known conditions to prove or disprove a conclusion using logical rules. This requires the model to choose from multiple possible reasoning paths, where the correct decision often relies on exploration and planning ahead. We use 5-hop ProntoQA (Saparov and He, 2022) questions, with fictional concept names. For each problem, a tree-structured ontology is randomly generated and described in natural language as a set of known conditions. The model is asked to judge whether a given statement is correct based on these conditions. This serves as a simplified simulation of more advanced reasoning tasks, such as automated theorem proving (Chen et al., 2023; DeepMind, 2024).

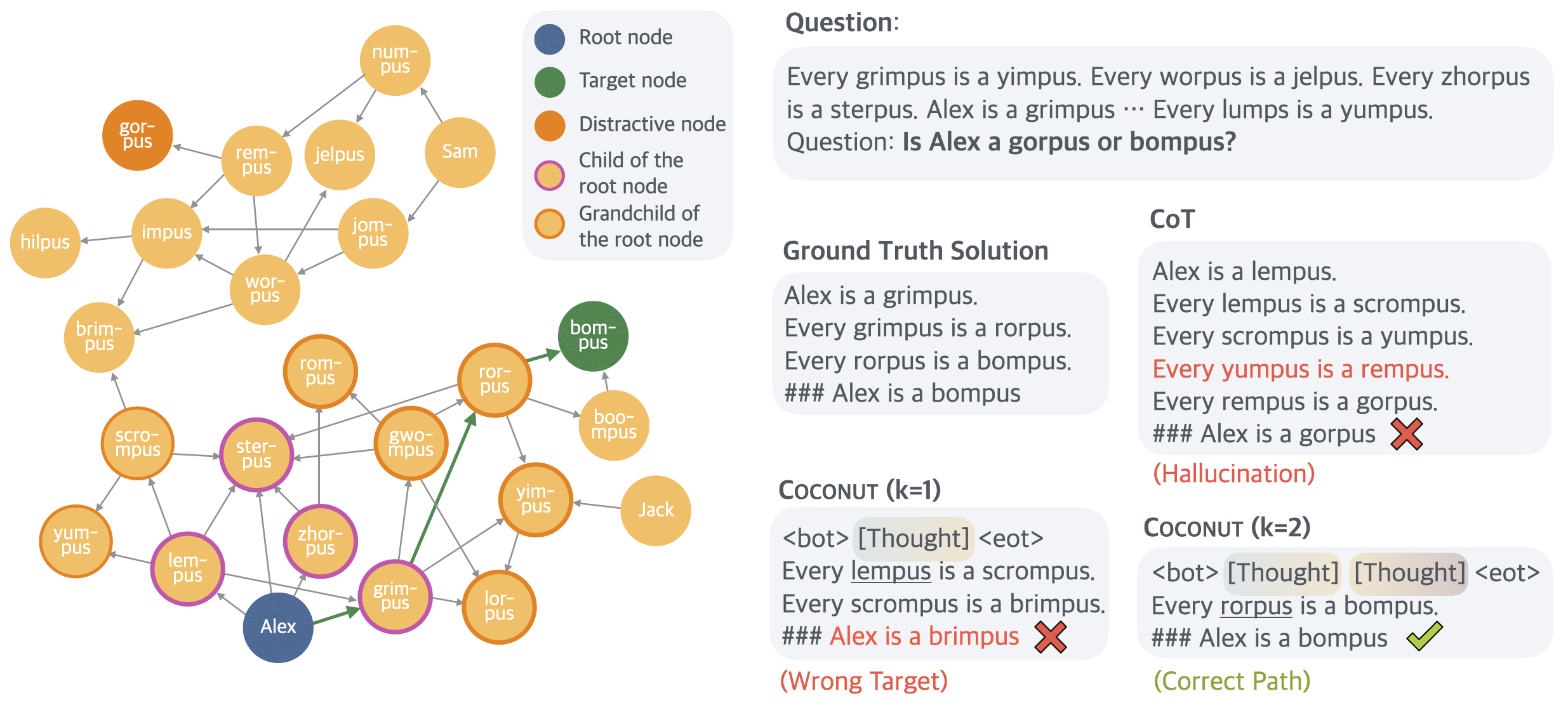

We found that the generation process of ProntoQA could be made more challenging: the distracting branches in the ontology are always small, which reduces the need for complex planning. To fix that, we apply a new dataset construction pipeline using randomly generated DAGs to structure the known conditions. The resulting dataset requires the model to perform substantial planning and searching over the graph to find the correct reasoning chain. We refer to this new dataset as ProsQA (Proof with Search Question-Answering). A visualized example is shown in Figure 7. More details of datasets can be found in Appendix 7.
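The kind of search such a dataset demands can be sketched with a toy example. This is not the ProsQA construction pipeline, whose details are in the appendix; `random_dag` and `find_chain` are hypothetical helpers that only illustrate generating a random DAG and searching it breadth-first for a reasoning chain.

```python
import random
from collections import deque

def random_dag(n_nodes, edge_prob, seed=0):
    """Random DAG as an adjacency dict: edges only go from lower to higher index."""
    rng = random.Random(seed)
    return {u: [v for v in range(u + 1, n_nodes) if rng.random() < edge_prob]
            for u in range(n_nodes)}

def find_chain(dag, source, target):
    """Breadth-first search for a shortest chain source -> ... -> target, or None."""
    parent = {source: None}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            chain = []
            while node is not None:   # walk parents back to the source
                chain.append(node)
                node = parent[node]
            return chain[::-1]
        for nxt in dag[node]:
            if nxt not in parent:     # visit each node once
                parent[nxt] = node
                queue.append(nxt)
    return None
```

In a graph with large distracting branches, a greedy choice at the first hop can lead to a dead end, so a solver has to keep several candidate paths alive until one reaches the target.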

4.2 Experimental Setup

We use a pre-trained GPT-2 (Radford et al., 2019) as the base model for all experiments. The learning rate is set to $1\times 10^{-4}$ while the effective batch size is 128. Following Deng et al. (2024), we also reset the optimizer when the training stages switch.

Math Reasoning. By default, we use 2 latent thoughts (i.e., $c=2$) for each reasoning step. We analyze the correlation between performance and $c$ in Section 4.4. The model goes through 3 stages besides the initial stage. Then, we have an additional stage, where we still use $3\times c$ continuous thoughts as in the penultimate stage, but remove the entire remaining language reasoning chain. This handles the long-tail distribution of reasoning chains longer than 3 steps. We train the model for 6 epochs in the initial stage, and 3 epochs in each remaining stage.

Logical Reasoning. We use one continuous thought for every reasoning step (i.e., $c=1$ ). The model goes through 6 training stages in addition to the initial stage, because the maximum number of reasoning steps is 6 in these two datasets. The model then fully reasons with continuous thoughts to solve the problems in the last stage. We train the model for 5 epochs per stage.

For all datasets, after the standard schedule, the model stays in the final training stage, until the 50th epoch. We select the checkpoint based on the accuracy on the validation set. For inference, we manually set the number of continuous thoughts to be consistent with their final training stage. We use greedy decoding for all experiments.
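The schedule above amounts to a simple mapping from epoch to training stage. The sketch below is one way to write it down; `stage_for_epoch` is a hypothetical helper and the 0-based epoch indexing is an assumption.

```python
def stage_for_epoch(epoch, initial_epochs, epochs_per_stage, n_later_stages):
    """Map a 0-based epoch index to a training stage (0 = initial CoT stage).

    After the standard schedule ends, training stays in the final stage.
    """
    if epoch < initial_epochs:
        return 0
    stage = 1 + (epoch - initial_epochs) // epochs_per_stage
    return min(stage, n_later_stages)

# GSM8k: 6 initial epochs, 3 epochs per later stage, 3 stages plus the extra final stage.
gsm8k_stages = [stage_for_epoch(e, 6, 3, 4) for e in range(50)]
# ProntoQA / ProsQA: 5 epochs per stage, 6 stages after the initial one.
logic_stages = [stage_for_epoch(e, 5, 5, 6) for e in range(50)]
```

Under these assumptions the GSM8k model reaches its final stage at epoch index 15 and the logical reasoning models at epoch index 30, leaving the remaining epochs up to the 50th in the final stage.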

4.3 Baselines and Variants of Coconut

We consider the following baselines: (1) CoT: We use the complete reasoning chains to train the language model with supervised finetuning, and during inference, the model generates a reasoning chain before outputting an answer. (2) No-CoT: The LLM is trained to directly generate the answer without using a reasoning chain. (3) iCoT (Deng et al., 2024): The model is trained with language reasoning chains and follows a carefully designed schedule that “internalizes” CoT. As the training goes on, tokens at the beginning of the reasoning chain are gradually removed until only the answer remains. During inference, the model directly predicts the answer. (4) Pause token (Goyal et al., 2023): The model is trained using only the question and answer, without a reasoning chain. However, different from No-CoT, special <pause> tokens are inserted between the question and answer, which are believed to provide the model with additional computational capacity to derive the answer. For a fair comparison, the number of <pause> tokens is set the same as continuous thoughts in Coconut.

We also evaluate some variants of our method: (1) w/o curriculum: Instead of the multi-stage training, we directly use the data from the last stage which only includes questions and answers to train Coconut. The model uses continuous thoughts to solve the whole problem. (2) w/o thought: We keep the multi-stage training which removes language reasoning steps gradually, but don’t use any continuous latent thoughts. While this is similar to iCoT in the high-level idea, the exact training schedule is set to be consistent with Coconut, instead of iCoT. This ensures a more strict comparison. (3) Pause as thought: We use special <pause> tokens to replace the continuous thoughts, and apply the same multi-stage training curriculum as Coconut.

4.4 Results and Discussion

| Method | GSM8k Acc. (%) | GSM8k # Tokens | ProntoQA Acc. (%) | ProntoQA # Tokens | ProsQA Acc. (%) | ProsQA # Tokens |
| --- | --- | --- | --- | --- | --- | --- |
| CoT | 42.9 $\pm$ 0.2 | 25.0 | 98.8 $\pm$ 0.8 | 92.5 | 77.5 $\pm$ 1.9 | 49.4 |
| No-CoT | 16.5 $\pm$ 0.5 | 2.2 | 93.8 $\pm$ 0.7 | 3.0 | 76.7 $\pm$ 1.0 | 8.2 |
| iCoT | 30.0∗ | 2.2 | 99.8 $\pm$ 0.3 | 3.0 | 98.2 $\pm$ 0.3 | 8.2 |
| Pause Token | 16.4 $\pm$ 1.8 | 2.2 | 77.7 $\pm$ 21.0 | 3.0 | 75.9 $\pm$ 0.7 | 8.2 |
| Coconut (Ours) | 34.1 $\pm$ 1.5 | 8.2 | 99.8 $\pm$ 0.2 | 9.0 | 97.0 $\pm$ 0.3 | 14.2 |
| - w/o curriculum | 14.4 $\pm$ 0.8 | 8.2 | 52.4 $\pm$ 0.4 | 9.0 | 76.1 $\pm$ 0.2 | 14.2 |
| - w/o thought | 21.6 $\pm$ 0.5 | 2.3 | 99.9 $\pm$ 0.1 | 3.0 | 95.5 $\pm$ 1.1 | 8.2 |
| - pause as thought | 24.1 $\pm$ 0.7 | 2.2 | 100.0 $\pm$ 0.1 | 3.0 | 96.6 $\pm$ 0.8 | 8.2 |

Table 1: Results on three datasets: GSM8k, ProntoQA and ProsQA. Higher accuracy indicates stronger reasoning ability, while generating fewer tokens indicates better efficiency. ∗ The result is from Deng et al. (2024).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Thoughts per Step

### Overview

The image is a line chart showing the relationship between "Accuracy (%)" and "# Thoughts per step". The chart displays a generally increasing trend in accuracy as the number of thoughts per step increases. A shaded region around the line indicates variability or uncertainty.

### Components/Axes

* **X-axis:** "# Thoughts per step" with markers at 0, 1, and 2.

* **Y-axis:** "Accuracy (%)" with markers at 26, 28, 30, 32, 34, and 36.

* **Data Series:** A single blue line representing the accuracy at different numbers of thoughts per step. A shaded blue region surrounds the line, indicating a confidence interval or standard deviation.

### Detailed Analysis

* **Trend:** The blue line shows an upward trend, indicating that accuracy increases as the number of thoughts per step increases.

* **Data Points:**

* At 0 thoughts per step, the accuracy is approximately 25.4%.

* At 1 thought per step, the accuracy is approximately 31.2%.

* At 2 thoughts per step, the accuracy is approximately 34.1%.

* **Uncertainty:** The shaded region around the line widens as the number of thoughts per step increases, suggesting greater variability or uncertainty in accuracy at higher numbers of thoughts.

### Key Observations

* The accuracy increases noticeably from 0 to 1 thought per step, and then increases at a slower rate from 1 to 2 thoughts per step.

* The uncertainty in accuracy is relatively small at 0 thoughts per step but increases significantly at 1 and 2 thoughts per step.

### Interpretation

The chart suggests that increasing the number of thoughts per step generally improves accuracy. However, the diminishing returns and increasing uncertainty at higher numbers of thoughts per step indicate that there may be a point beyond which further increases in thoughts per step do not significantly improve accuracy and may even introduce more variability. The shaded region likely represents the standard deviation or confidence interval, showing the range of possible accuracy values for each number of thoughts per step. This could be due to the complexity of the task, the variability in the quality of thoughts, or other factors.

</details>

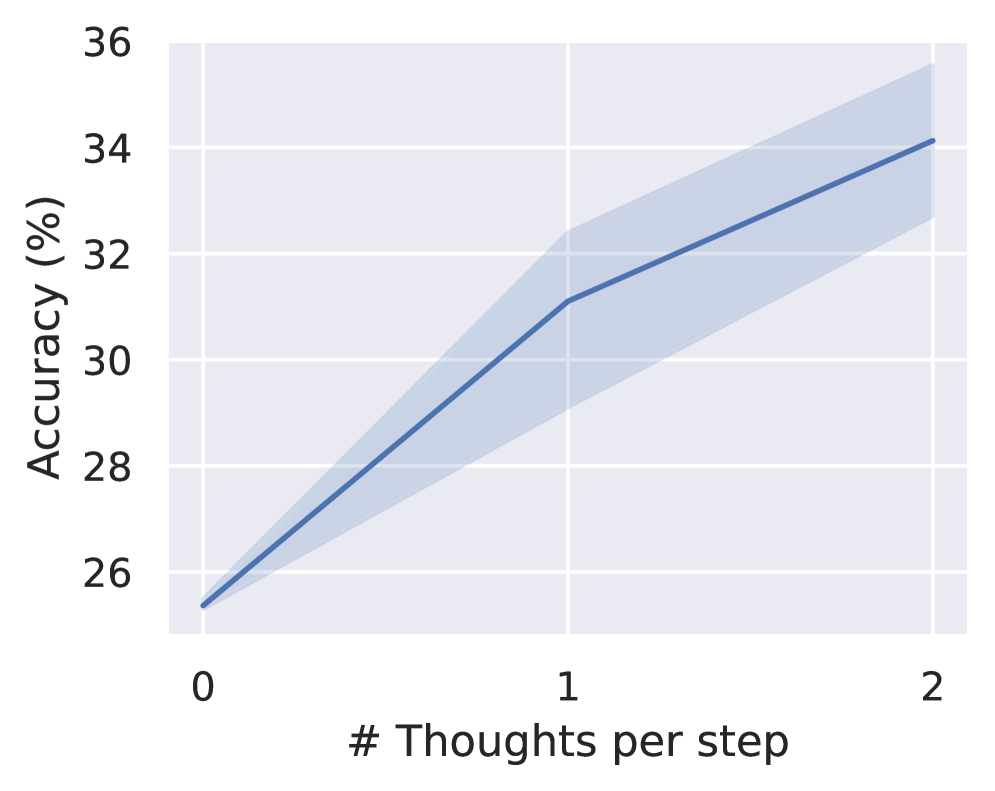

Figure 3: Accuracy on GSM8k with different numbers of continuous thoughts.

We show the overall results on all datasets in Table 1. Continuous thoughts effectively enhance LLM reasoning, as shown by the consistent improvement over no-CoT. It even shows better performance than CoT on ProntoQA and ProsQA. We describe several key conclusions from the experiments as follows.

“Chaining” continuous thoughts enhances reasoning. In conventional CoT, the output token serves as the next input, which proves to increase the effective depth of LLMs and enhance their expressiveness (Feng et al., 2023). We explore whether latent space reasoning retains this property, as it would suggest that this method could scale to solve increasingly complex problems by chaining multiple latent thoughts.

In our experiments with GSM8k, we found that Coconut outperformed other architectures trained with similar strategies, particularly surpassing the latest baseline, iCoT (Deng et al., 2024). The performance is significantly better than Coconut (pause as thought), which also enables more computation in the LLMs. While Pfau et al. (2024) empirically show that filler tokens, such as the special <pause> tokens, can benefit highly parallelizable problems, our results show that the Coconut architecture is more effective for general problems, e.g., math word problems, where a reasoning step often heavily depends on previous steps. Additionally, we experimented with adjusting the hyperparameter $c$, which controls the number of latent thoughts corresponding to one language reasoning step (Figure 3). As we increased $c$ from 0 to 1 to 2, the model’s performance steadily improved. We discuss the case of larger $c$ in Appendix 9. These results suggest that a chaining effect similar to CoT can be observed in the latent space.
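To make the role of $c$ concrete, the following is a minimal sketch of how a training sequence might be laid out at a given curriculum stage. It assumes that at stage `stage` the first `stage` language steps are replaced by `stage * c` latent positions; this schedule, the function name `build_stage_sequence`, and the placeholder string `<latent>` are illustrative assumptions, not the released implementation.

```python
def build_stage_sequence(question, steps, answer, stage, c,
                         bot="<bot>", eot="<eot>", latent="<latent>"):
    """Lay out one training example for a given curriculum stage.

    Assumption: the first `stage` language steps are dropped and replaced
    by `stage * c` latent positions (placeholders that would later be
    filled with hidden states rather than token embeddings).
    """
    n_replaced = min(stage, len(steps))
    thoughts = [latent] * (n_replaced * c)
    return [question, bot, *thoughts, eot, *steps[n_replaced:], answer]


# Hypothetical example with three language reasoning steps.
example = build_stage_sequence(
    question="Q", steps=["s1", "s2", "s3"], answer="A", stage=2, c=2
)
```

With `c=0` the same function drops language steps without adding any latent positions, which corresponds loosely to the "w/o thought" setting.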

In two other synthetic tasks, we found that the variants of Coconut (w/o thoughts or pause as thought), and the iCoT baseline also achieve impressive accuracy. This indicates that the model’s computational capacity may not be the bottleneck in these tasks. In contrast, GSM8k, being an open-domain question-answering task, likely involves more complex contextual understanding and modeling, placing higher demands on computational capability.

Latent reasoning outperforms language reasoning in planning-intensive tasks. Complex reasoning often requires the model to “look ahead” and evaluate the appropriateness of each step. Among our datasets, GSM8k and ProntoQA are relatively straightforward for next-step prediction, due to intuitive problem structures and limited branching. In contrast, ProsQA’s randomly generated DAG structure significantly challenges the model’s planning capabilities. As shown in Table 1, CoT does not offer notable improvement over No-CoT. However, Coconut, its variants, and iCoT substantially enhance reasoning on ProsQA, indicating that latent space reasoning provides a clear advantage in tasks demanding extensive planning. An in-depth analysis of this process is provided in Section 5.

The LLM still needs guidance to learn latent reasoning. In the ideal case, the model should learn the most effective continuous thoughts automatically through gradient descent on questions and answers (i.e., Coconut w/o curriculum). However, from the experimental results, we found the models trained this way do not perform any better than no-CoT.

<details>

<summary>figures/figure_4_meta.png Details</summary>

### Visual Description

## Diagram: Large Language Model with LM Head

### Overview

The image depicts a diagram of a large language model (LLM) with an LM head, showing the flow of information and some associated numerical values. The diagram includes input tokens, the LLM itself, the LM head, and output probabilities. It also includes a word problem.

### Components/Axes

* **LM head:** A rectangular box at the top-left, filled with an orange color.

* **Large Language Model:** A large, rounded rectangular box in the center, filled with a grey color.

* **Input Tokens:** Represented by rounded rectangles at the bottom of the LLM box. Some are labeled with `<bot>` and `<eot>`. The colors of the input tokens vary from light yellow to a gradient of yellow to purple.

* **Output Tokens:** Represented by rounded rectangles at the top of the LLM box. Some are labeled with `<bot>` and `<eot>`. The colors of the output tokens vary from light purple to a gradient of yellow to purple.

* **Probabilities:** A bar chart on the top-right, showing probabilities associated with the LM head's output. The bars are orange.

* **Text:** A word problem at the bottom of the diagram.

### Detailed Analysis

* **Input Tokens (Bottom):**

* The first token is represented by three dots.

* The second token is light yellow.

* The third token is light yellow and labeled `<bot>`.

* The fourth token is a gradient of yellow to purple.

* The fifth token is a gradient of yellow to purple.

* The sixth token is a gradient of yellow to purple.

* The seventh token is light yellow.

* The eighth token is labeled `<eot>`.

* **Output Tokens (Top):**

* The first token is light purple and labeled `<bot>`.

* The second token is a gradient of yellow to purple.

* The third token is a gradient of yellow to purple.

* The fourth token is a gradient of yellow to purple.

* The fifth token is light purple and labeled `<eot>`.

* The sixth token is light purple.

* The seventh token is represented by three dots.

* **Probabilities (Top-Right):**

* "180": 0.22

* "180": 0.20

* "9": 0.13

* **Text (Bottom):**

* "James decides to run 3 sprints 3 times a week. He runs 60 meters each sprint. How many total meters does he run a week?"

### Key Observations

* The diagram illustrates the flow of information through a large language model, from input tokens to the LM head, which then generates output probabilities.

* The input tokens are fed into the Large Language Model.

* The LM head predicts the next token based on the input.

* The probabilities associated with the LM head's output are shown in the bar chart.

* The word problem at the bottom provides a context for the model's task.

### Interpretation

The diagram provides a simplified view of how a large language model works. The input tokens represent the initial information provided to the model, which then processes this information and generates output tokens. The LM head is responsible for predicting the next token in the sequence, and the probabilities associated with its output reflect the model's confidence in its predictions. The word problem at the bottom suggests that the model is being used to solve a simple arithmetic problem. The model is likely trained to predict the answer to the question, given the context provided in the problem. The "180" and "9" are likely intermediate calculations or the final answer. The model predicts "180" with a probability of 0.22 and 0.20, and "9" with a probability of 0.13. The correct answer to the word problem is 540, which is also present in the diagram.

</details>

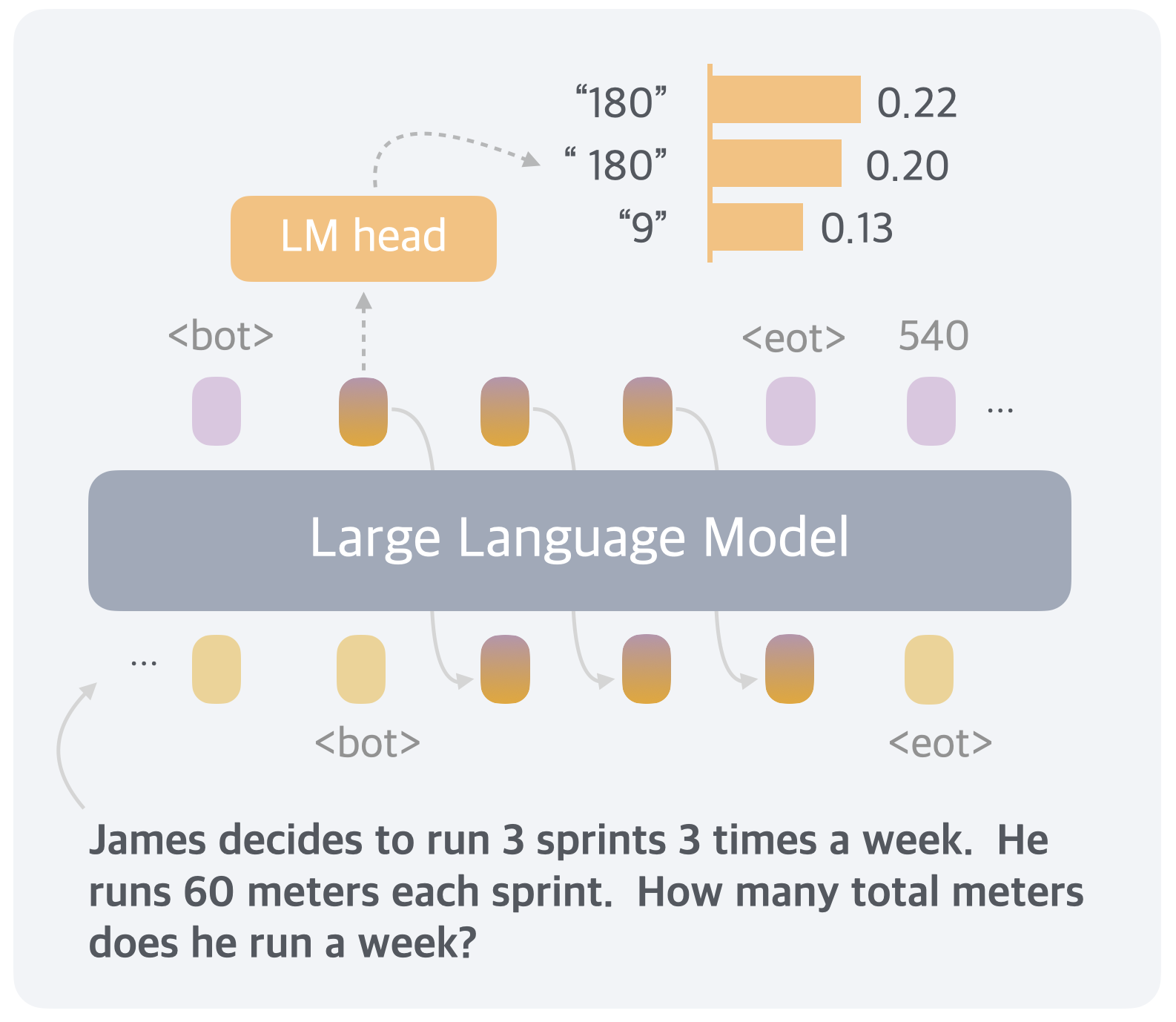

Figure 4: A case study where we decode the continuous thought into language tokens.

With the multi-stage curriculum which decomposes the training into easier objectives, Coconut is able to achieve top performance across various tasks. The multi-stage training also integrates well with pause tokens (Coconut - pause as thought). Despite using the same architecture and similar multi-stage training objectives, we observed a small gap between the performance of iCoT and Coconut (w/o thoughts). The finer-grained removal schedule (token by token) and a few other tricks in iCoT may ease the training process. We leave combining iCoT and Coconut as future work. While the multi-stage training used for Coconut has proven effective, further research is definitely needed to develop better and more general strategies for learning reasoning in latent space, especially without the supervision from language reasoning chains.

Continuous thoughts are efficient representations of reasoning. Though the continuous thoughts are not intended to be decoded into language tokens, we can still decode them to obtain an intuitive interpretation. We show a case study in Figure 4 of a math word problem solved by Coconut ($c=1$). The first continuous thought can be decoded into tokens like “180”, “ 180” (with a space), and “9”. Note that the reasoning trace for this problem should be $3\times 3\times 60=9\times 60=540$, or $3\times 3\times 60=3\times 180=540$. The interpretations of the first thought happen to be the first intermediate variables in the calculation. Moreover, the continuous thought encodes a distribution over different traces. As shown in Section 5.3, this feature enables a more advanced reasoning pattern for planning-intensive reasoning tasks.
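The decoding described above can be sketched as projecting the continuous thought through the LM head and reading off the most probable tokens. The snippet below is a toy illustration in plain Python; the two-dimensional hidden state, the `unembed` matrix, and the five-token vocabulary are made-up values chosen only so that the ranking mirrors the case study.

```python
import math


def decode_thought(hidden, unembed, vocab, top_k=3):
    """Return the top-k (token, probability) pairs for one hidden state.

    hidden:  list[float], the continuous thought (last hidden state)
    unembed: one weight row per vocabulary entry (a stand-in LM head)
    vocab:   list[str] aligned with the rows of `unembed`
    """
    logits = [sum(h * w for h, w in zip(hidden, row)) for row in unembed]
    m = max(logits)                      # subtract the max for stability
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    probs = [e / z for e in exps]
    ranked = sorted(zip(vocab, probs), key=lambda tp: tp[1], reverse=True)
    return ranked[:top_k]


# Toy values (assumptions, not taken from the trained model).
vocab = ["180", " 180", "9", "540", "the"]
unembed = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [-1.0, -1.0], [-2.0, 0.0]]
thought = [1.0, 0.5]
top = decode_thought(thought, unembed, vocab)

# Both reasoning traces from the text reach the same answer.
assert 3 * 3 * 60 == 9 * 60 == 3 * 180 == 540
```

Because several tokens receive non-trivial probability, a single thought can be read as carrying more than one candidate intermediate result.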

5 Understanding the Latent Reasoning in Coconut

In this section, we present an analysis of the latent reasoning process with a variant of Coconut. By leveraging its ability to switch between language and latent space reasoning, we are able to control the model to interpolate between fully latent reasoning and fully language reasoning and test their performance (Section 5.2). This also enables us to interpret the latent reasoning process as tree search (Section 5.3). Based on this perspective, we explain why latent reasoning can make the decision easier for LLMs (Section 5.4).

5.1 Experimental Setup

Methods. The design of Coconut allows us to control the number of latent thoughts by manually setting the position of the <eot> token during inference. When we force Coconut to use $k$ continuous thoughts, the model is expected to output the remaining reasoning chain in language, starting from step $k+1$. In our experiments, we test variants of Coconut on ProsQA with $k\in\{0,1,2,3,4,5,6\}$. Note that all these variants differ only at inference time while sharing the same model weights. In addition, we report the performance of CoT and no-CoT as references.
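As a rough sketch of this control mechanism, the loop below builds the input sequence for a forced number of continuous thoughts: each latent step appends the last hidden state as the next input embedding, and `<eot>` is then placed manually. `step_fn` and `embed` are hypothetical stand-ins for a real forward pass and embedding lookup; the toy versions exist only to make the example runnable.

```python
def run_with_k_thoughts(step_fn, embed, question_ids, k, bot_id, eot_id):
    """Build the input-embedding sequence for k forced continuous thoughts.

    step_fn(seq) -> last hidden state (list[float]); stand-in for an LLM.
    embed(token_id) -> input embedding (list[float]).
    """
    seq = [embed(t) for t in question_ids] + [embed(bot_id)]
    for _ in range(k):
        hidden = step_fn(seq)      # last hidden state at the final position
        seq.append(hidden)         # fed back directly as the next input
    seq.append(embed(eot_id))      # manually placed <eot>: back to language
    return seq


# Toy stand-ins (assumptions): 2-d embeddings and a mean-pooling "model".
def toy_embed(token_id):
    return [float(token_id), 0.0]


def toy_step(seq):
    return [sum(e[0] for e in seq) / len(seq), 1.0]


seq = run_with_k_thoughts(toy_step, toy_embed, [3, 5], k=2, bot_id=1, eot_id=2)
```

After the returned sequence, ordinary token-by-token decoding would produce the remaining reasoning steps and the answer; with `k=0` the `<eot>` embedding directly follows `<bot>`.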

To address the issue of forgetting earlier training stages, we modify the original multi-stage training curriculum by always mixing data from other stages with a certain probability ( $p=0.3$ ). This updated training curriculum yields similar performance and enables effective control over the switch between latent and language reasoning.
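One way to realize such mixing is sketched below. The text only specifies the mixing probability $p=0.3$; drawing the alternative stage uniformly from all other stages, and the function name `sample_stage`, are assumptions made for illustration.

```python
import random


def sample_stage(current_stage, num_stages, p_mix=0.3, rng=random):
    """Pick the stage whose data format is used for one training example.

    With probability p_mix, a different stage is drawn uniformly at random
    (an assumption); otherwise the current stage is used.
    """
    others = [s for s in range(num_stages) if s != current_stage]
    if others and rng.random() < p_mix:
        return rng.choice(others)
    return current_stage


# Empirical check of the mixing rate with a seeded generator.
rng = random.Random(0)
draws = [sample_stage(2, 7, rng=rng) for _ in range(10000)]
mixed_fraction = sum(d != 2 for d in draws) / len(draws)
```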

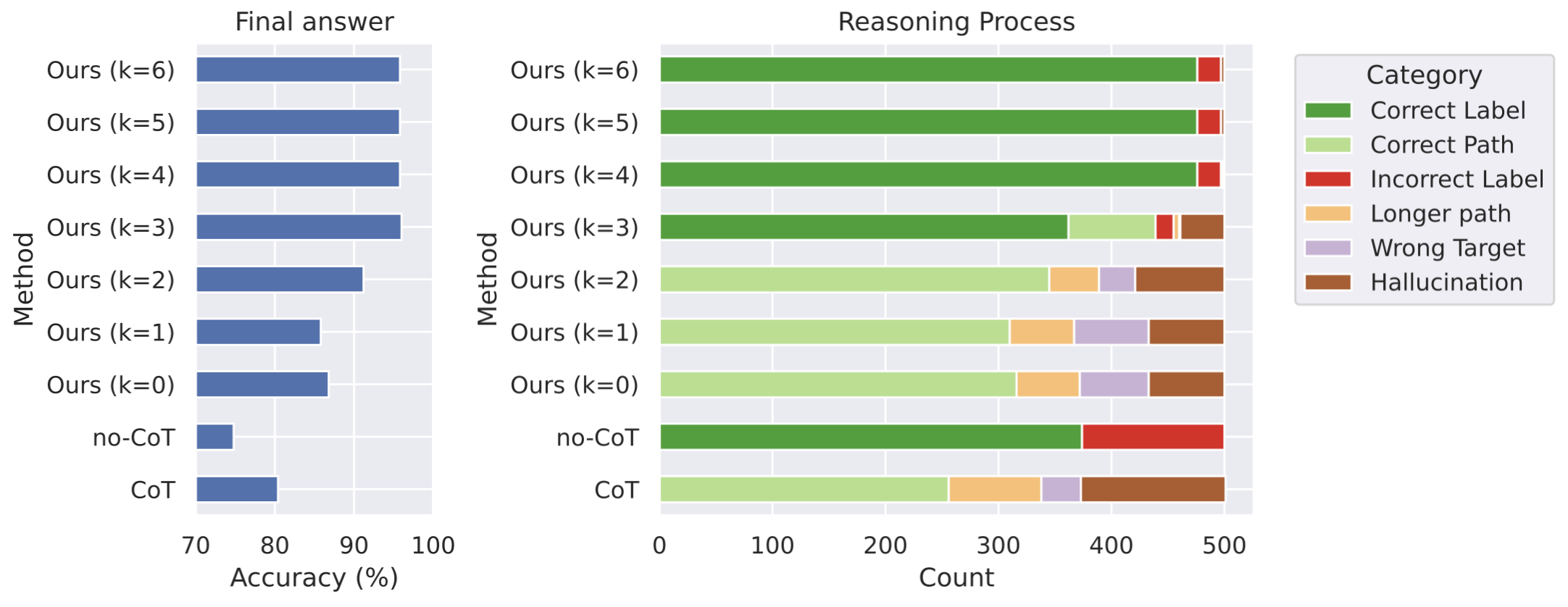

Metrics. We apply two sets of evaluation metrics. One of them is based on the correctness of the final answer, regardless of the reasoning process. It is the metric used in the main experimental results above (Section 4). To enable fine-grained analysis, we define another metric on the reasoning process. Assuming we have a complete language reasoning chain which specifies a path in the graph, we can classify it into (1) Correct Path: The output is one of the shortest paths to the correct answer. (2) Longer Path: A valid path that correctly answers the question but is longer than the shortest path. (3) Hallucination: The path includes nonexistent edges or is disconnected. (4) Wrong Target: A valid path in the graph, but the destination node is not the one being asked. These four categories naturally apply to the output from Coconut ($k=0$) and CoT, which generate the full path. For Coconut with $k>0$ that outputs only partial paths in language (with the initial steps in continuous reasoning), we classify the reasoning as a Correct Path if a valid explanation can complete it. Also, we define Longer Path and Wrong Target for partial paths similarly. If no valid explanation completes the path, it is classified as Hallucination. In no-CoT and Coconut with larger $k$, the model may only output the final answer without any partial path, and it falls into (5) Correct Label or (6) Incorrect Label. These six categories cover all cases without overlap.
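For full paths, the four path categories can be sketched as a small graph check. The code below is an illustrative implementation, not the evaluation script used in the paper; the toy graph is loosely modeled on the case study in Figure 6, and the edge from yimpus to rorpus is invented so that a longer valid path exists. Partial paths ($k>0$) and label-only outputs are not handled here.

```python
from collections import deque


def shortest_dist(graph, src, dst):
    """BFS distance in edges from src to dst, or None if unreachable."""
    seen, queue = {src}, deque([(src, 0)])
    while queue:
        node, d = queue.popleft()
        if node == dst:
            return d
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, d + 1))
    return None


def classify_full_path(graph, root, target, steps):
    """steps: list of (source, destination) edges, one per reasoning step."""
    if not steps:
        raise ValueError("label-only outputs are scored separately")
    prev = root
    for a, b in steps:
        # A step must continue from the previous node and use a real edge.
        if a != prev or b not in graph.get(a, ()):
            return "Hallucination"
        prev = b
    if prev != target:
        return "Wrong Target"
    if len(steps) == shortest_dist(graph, root, target):
        return "Correct Path"
    return "Longer Path"


# Toy graph (partly hypothetical): parent -> list of children.
graph = {
    "Alex": ["grimpus", "lempus"],
    "grimpus": ["rorpus", "yimpus"],
    "yimpus": ["rorpus"],
    "rorpus": ["bompus"],
    "lempus": ["scrompus"],
    "scrompus": ["brimpus", "yumpus"],
    "rempus": ["gorpus"],
}
correct = [("Alex", "grimpus"), ("grimpus", "rorpus"), ("rorpus", "bompus")]
label = classify_full_path(graph, "Alex", "bompus", correct)
```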

<details>

<summary>figures/figure_5_revised_1111.png Details</summary>

### Visual Description

## Bar Charts: Final Answer Accuracy vs. Reasoning Process Analysis

### Overview

The image presents two horizontal bar charts comparing different methods for a task. The left chart, titled "Final answer," displays the accuracy (%) of each method. The right chart, titled "Reasoning Process," breaks down the counts of different categories (Correct Label, Correct Path, Incorrect Label, Longer Path, Wrong Target, Hallucination) for each method. The methods include "CoT," "no-CoT," and "Ours" with varying parameters (k=0 to k=6).

### Components/Axes

**Left Chart (Final answer):**

* **Title:** Final answer

* **X-axis:** Accuracy (%)

* Scale: 70 to 100, with tick marks at 70, 80, 90, and 100.

* **Y-axis:** Method

* Categories: CoT, no-CoT, Ours (k=0), Ours (k=1), Ours (k=2), Ours (k=3), Ours (k=4), Ours (k=5), Ours (k=6)

**Right Chart (Reasoning Process):**

* **Title:** Reasoning Process

* **X-axis:** Count

* Scale: 0 to 500, with tick marks at 0, 100, 200, 300, 400, and 500.

* **Y-axis:** Method

* Categories: CoT, no-CoT, Ours (k=0), Ours (k=1), Ours (k=2), Ours (k=3), Ours (k=4), Ours (k=5), Ours (k=6)

**Legend (Located on the top-right):**

* **Category:**

* Correct Label (Green)

* Correct Path (Light Green)

* Incorrect Label (Red)

* Longer Path (Orange)

* Wrong Target (Light Purple)

* Hallucination (Brown)

### Detailed Analysis

**Left Chart (Final answer):**

* **CoT:** Accuracy approximately 75%.

* **no-CoT:** Accuracy approximately 72%.

* **Ours (k=0):** Accuracy approximately 82%.

* **Ours (k=1):** Accuracy approximately 85%.

* **Ours (k=2):** Accuracy approximately 90%.

* **Ours (k=3):** Accuracy approximately 95%.

* **Ours (k=4):** Accuracy approximately 97%.

* **Ours (k=5):** Accuracy approximately 98%.

* **Ours (k=6):** Accuracy approximately 99%.

**Trend:** The accuracy generally increases as the value of 'k' increases in the "Ours" method.

**Right Chart (Reasoning Process):**

* **CoT:** Dominated by "Correct Path" (light green), with significant "Hallucination" (brown) and a small amount of "Longer Path" (orange).

* **no-CoT:** Dominated by "Correct Label" (green), with a significant portion of "Incorrect Label" (red).

* **Ours (k=0):** Mostly "Correct Path" (light green), with some "Longer Path" (orange) and "Wrong Target" (light purple), and "Hallucination" (brown).

* **Ours (k=1):** Mostly "Correct Path" (light green), with some "Longer Path" (orange) and "Wrong Target" (light purple), and "Hallucination" (brown).

* **Ours (k=2):** Mostly "Correct Path" (light green), with some "Longer Path" (orange) and "Wrong Target" (light purple), and "Hallucination" (brown).

* **Ours (k=3):** Mostly "Correct Label" (green), with a small amount of "Correct Path" (light green), "Longer Path" (orange), and "Hallucination" (brown).

* **Ours (k=4):** Almost entirely "Correct Label" (green), with a very small amount of "Incorrect Label" (red).

* **Ours (k=5):** Almost entirely "Correct Label" (green), with a very small amount of "Incorrect Label" (red).

* **Ours (k=6):** Almost entirely "Correct Label" (green), with a very small amount of "Incorrect Label" (red).

**Trends:**

* As 'k' increases in the "Ours" method, the "Correct Label" (green) count increases significantly, while "Correct Path" (light green), "Longer Path" (orange), "Wrong Target" (light purple), and "Hallucination" (brown) counts decrease.

* "no-CoT" has a high count of "Incorrect Label" (red).

### Key Observations

* The "Ours" method with higher 'k' values (k=4, k=5, k=6) achieves significantly higher accuracy than "CoT" and "no-CoT."

* The "Reasoning Process" chart reveals that the improved accuracy of "Ours" with higher 'k' values is associated with a higher count of "Correct Label" and a lower count of other categories like "Longer Path," "Wrong Target," and "Hallucination."

* "no-CoT" has the lowest accuracy and a high count of "Incorrect Label," suggesting it struggles with providing correct labels.

* "CoT" relies more on "Correct Path" but suffers from a significant amount of "Hallucination."

### Interpretation

The data suggests that the "Ours" method, particularly with higher 'k' values, is more effective in achieving accurate final answers. The "Reasoning Process" chart indicates that this is because the method is better at identifying the "Correct Label" and avoiding issues like "Longer Path," "Wrong Target," and "Hallucination." The "no-CoT" method's low accuracy and high "Incorrect Label" count suggest it lacks a robust mechanism for label correction. The "CoT" method, while utilizing "Correct Path," is prone to "Hallucination," which negatively impacts its overall accuracy. The parameter 'k' in the "Ours" method seems to control the balance between exploring different reasoning paths and converging on the correct label, with higher values leading to better accuracy.

</details>

Figure 5: The accuracy of final answer (left) and reasoning process (right) of multiple variants of Coconut and baselines on ProsQA.

<details>

<summary>figures/figure_6_meta_3.png Details</summary>

### Visual Description

## Diagram: Reasoning Path Visualization

### Overview

The image presents a diagram illustrating a reasoning path, alongside examples of question answering and solutions. The diagram visualizes relationships between different entities, while the text provides a question, a ground truth solution, and two attempts by a system called "COCONUT" to answer the question using different reasoning depths (k=1 and k=2).

### Components/Axes

* **Diagram Legend (Top-Left)**:

* Blue node: Root node

* Green node: Target node

* Orange node: Distractive node

* Purple outline node: Child of the root node

* Orange outline node: Grandchild of the root node

* **Node Network (Left)**: A network of interconnected nodes, each labeled with a name ending in "-pus". Nodes are connected by gray lines, with green arrows indicating the correct reasoning path.

* **Question (Top-Right)**: A logical reasoning question.

* **Ground Truth Solution (Middle-Right)**: The correct answer and reasoning steps.

* **COCONUT (k=1) (Bottom-Left)**: The system's attempt to answer the question with a reasoning depth of 1.

* **COCONUT (k=2) (Bottom-Right)**: The system's attempt to answer the question with a reasoning depth of 2.

* **Nodes in the Network**:

* Root Node: Alex (Blue)

* Target Node: bom-pus (Green)

* Distractive Nodes: gor-pus, rem-pus, jelpus, Sam, impus, jom-pus, wor-pus, hilpus, boo-mpus, Jack, lor-pus, gwo-mpus, yum-pus, scro-mpus

* Child of the root node: ster-pus, zhor-pus, lem-pus, grim-pus, rom-pus

* Grandchild of the root node: num-pus, brim-pus, ror-pus, yim-pus

### Detailed Analysis

* **Node Network**:

* The network consists of nodes labeled with names like "Alex", "bom-pus", "gor-pus", etc.

* Alex (root node) is connected to ster-pus, zhor-pus, lem-pus, and grim-pus.

* The correct path from Alex to bom-pus is highlighted with green arrows: Alex -> grim-pus -> ror-pus -> bom-pus.

* **Question**:

* The question is: "Every grimpus is a yimpus. Every worpus is a jelpus. Every zhorpus is a sterpus. Alex is a grimpus ... Every lumps is a yumpus. Question: Is Alex a gorpus or bompus?"

* **Ground Truth Solution**:

* "Alex is a grimpus. Every grimpus is a rorpus. Every rorpus is a bompus. ### Alex is a bompus"

* **COCONUT (k=1)**:

* "\<bot> [Thought] Every lempus is a scrompus. Every scrompus is a brimpus. ### Alex is a brimpus X (Wrong Target)"

* The system incorrectly concludes that Alex is a brimpus.

* **COCONUT (k=2)**:

* "\<bot> [Thought] [Thought] Every rorpus is a bompus. ### Alex is a bompus ✓ (Correct Path)"

* The system correctly concludes that Alex is a bompus.

* **COT (Chain of Thought)**:

* "Alex is a lempus. Every lempus is a scrompus. Every scrompus is a yumpus. Every yumpus is a rempus. Every rempus is a gorpus. ### Alex is a gorpus X (Hallucination)"

* The system incorrectly concludes that Alex is a gorpus.

### Key Observations

* The diagram visualizes the relationships between different entities and the reasoning path required to answer the question.

* The COCONUT system with a reasoning depth of 2 (k=2) correctly answers the question, while the system with a reasoning depth of 1 (k=1) fails.

* The COT (Chain of Thought) reasoning also fails to answer the question correctly.

### Interpretation

The image demonstrates the importance of reasoning depth in question answering. The COCONUT system, when allowed to explore a deeper reasoning path (k=2), is able to correctly answer the question. This suggests that a more thorough exploration of the relationships between entities can lead to more accurate conclusions. The failure of the COT reasoning highlights the potential for "hallucinations" or incorrect inferences when relying on a single chain of thought. The diagram provides a visual representation of the reasoning process, making it easier to understand the relationships between entities and the steps required to arrive at the correct answer.

</details>

Figure 6: A case study of ProsQA. The model trained with CoT hallucinates an edge (Every yumpus is a rempus) after getting stuck in a dead end. Coconut (k=1) outputs a path that ends with an irrelevant node. Coconut (k=2) solves the problem correctly.

<details>

<summary>figures/figure_7_meta_4.png Details</summary>

### Visual Description

## Network Diagram: COCONUT Model with k=1 and k=2

### Overview

The image presents two network diagrams illustrating the COCONUT model with different values of 'k' (k=1 and k=2). Each diagram shows a network of nodes representing word fragments or units, connected by edges. The nodes are labeled with word fragments, and the edges are annotated with probabilities and 'h' values. The diagrams demonstrate how the model generates sequences of word fragments.

### Components/Axes

**General Components:**

* **Nodes:** Represent word fragments (e.g., "ster-pus", "lemp-us", "Alex").

* **Edges:** Connect nodes, indicating transitions between word fragments. Edges are annotated with probabilities.

* **Node Color:** The node "Alex" is blue, while all other word fragment nodes are orange/yellow.

* **Edge Color:** Most edges are gray, but some are green, indicating a specific path.

* **'h' Value:** Indicates the hidden state or context.

* **COCONUT (k=1/k=2):** Titles indicating the model configuration.

* **<bot> [Thought] <eot>:** Represents the beginning-of-text, the thought, and the end-of-text markers.

* **Every lempus/rorpus...:** Indicates the generated sequence.

* **p("lempus")/p("rorpus"):** Probability calculations for the generated sequences.

**Left Diagram (k=1):**

* **Nodes:** Alex, ster-pus, lemp-us, zhor-pus, grim-pus.

* **Probabilities:**

* ster-pus: 0.01 (h=0), 0.33 (h=2)

* grim-pus: 0.16 (h=1), 0.32 (h=2)

* **Edge Color:** The edge from Alex to grim-pus is green.

* **Probability Calculation:**

* p("lempus") = p("le")p("mp")p("us") = 0.33

**Right Diagram (k=2):**

* **Nodes:** Alex, yum-pus, ster-pus, lemp-us, scro-mpus, rom-pus, zhor-pus, lorp-us, grim-pus, yimp-us, gwo-mpus, rorp-us.

* **Probabilities:**

* yum-pus: 7e-4 (h=0)

* scro-mpus: 2e-3 (h=1)

* rom-pus: 3e-3 (h=0)

* lorp-us: 2e-4 (h=0)

* yimp-us: 5e-5 (h=1)

* gwo-mpus: 0.12 (h=1)

* rorp-us: 0.87 (h=1)

* **Edge Color:** The edge from Alex to grim-pus and from grim-pus to rorp-us are green.

* **Probability Calculation:**

* p("rorpus") = p("ro")p("rp")p("us") = 0.87

### Detailed Analysis or Content Details

**Left Diagram (k=1):**

* The network starts from the "Alex" node.

* "Alex" connects to "ster-pus", "lemp-us", "zhor-pus", and "grim-pus".

* The path from "Alex" to "grim-pus" is highlighted in green.

* The probability of generating "lempus" is calculated as 0.33.

**Right Diagram (k=2):**

* The network starts from the "Alex" node.

* "Alex" connects to "yum-pus", "ster-pus", "lemp-us", "zhor-pus", "yimp-us", and "grim-pus".

* The path from "Alex" to "grim-pus" to "rorp-us" is highlighted in green.

* The probability of generating "rorpus" is calculated as 0.87.

### Key Observations

* The value of 'k' influences the complexity of the network and the generated sequences.

* Higher 'k' (k=2) results in a more complex network with more nodes and connections.

* The green edges highlight specific paths through the network, likely representing the most probable or relevant sequences.

* The probability calculations show how the model computes the likelihood of generating specific word fragments.

### Interpretation

The diagrams illustrate a probabilistic model (COCONUT) for generating word fragments or sequences. The 'k' parameter controls the order of the model, with higher values allowing for longer-range dependencies. The network structure represents the possible transitions between word fragments, and the edge probabilities quantify the likelihood of these transitions. The green paths highlight the most probable sequences, and the probability calculations demonstrate how the model computes the overall likelihood of generating specific words or phrases. The model seems to be learning the structure of words and their probabilities based on the training data. The difference in the generated sequences ("lempus" vs. "rorpus") and their probabilities suggests that the model adapts its output based on the 'k' parameter, effectively capturing different levels of linguistic complexity.

</details>

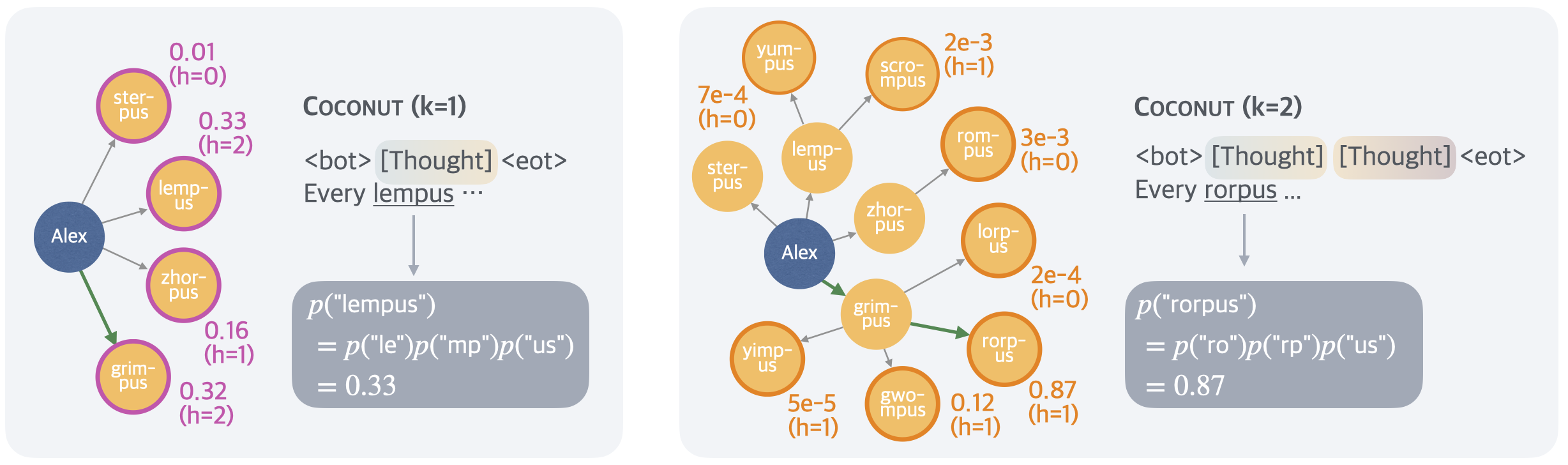

Figure 7: An illustration of the latent search trees. The example is the same test case as in Figure 6. The height of a node (denoted as $h$ in the figure) is defined as the longest distance to any leaf nodes in the graph. We show the probability of the first concept predicted by the model following latent thoughts (e.g., “lempus” in the left figure). It is calculated as the product of the probabilities of all tokens within the concept, conditioned on the previous context (omitted in the figure for brevity). This metric can be interpreted as an implicit value function estimated by the model, assessing the potential of each node leading to the correct answer.
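The node height used in Figure 7 can be computed with a short recursion over the DAG. The sketch below assumes the graph is acyclic and stored as a parent-to-children dictionary; the small example graph is hypothetical.

```python
from functools import lru_cache


def node_heights(graph):
    """Height of a node = longest distance (in edges) to any leaf below it."""
    nodes = set(graph) | {c for children in graph.values() for c in children}

    @lru_cache(maxsize=None)
    def height(node):
        children = graph.get(node, ())
        if not children:
            return 0  # leaves have height 0
        return 1 + max(height(c) for c in children)

    return {n: height(n) for n in nodes}


# Hypothetical DAG: sterpus is a dead end, grimpus leads two steps further.
toy_graph = {
    "Alex": ["sterpus", "grimpus"],
    "grimpus": ["rorpus"],
    "rorpus": ["bompus"],
}
heights = node_heights(toy_graph)
```

A node with low height has few steps left beneath it, so the model has less remaining structure to examine when deciding whether it can lead to the target.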

5.2 Interpolating between Latent and Language Reasoning

Figure 5 shows a comparative analysis of different reasoning methods on ProsQA. As more reasoning is done with continuous thoughts (increasing $k$), both final answer accuracy (Figure 5, left) and the rate of correct reasoning processes (“Correct Label” and “Correct Path” in Figure 5, right) improve. Additionally, the rates of “Hallucination” and “Wrong Target” decrease; these errors typically occur when the model makes a wrong move early on. This also indicates better planning ability when more reasoning happens in the latent space.

A case study is shown in Figure 6, where CoT hallucinates a nonexistent edge, Coconut ($k=1$) leads to a wrong target, but Coconut ($k=2$) successfully solves the problem. In this example, the model cannot accurately determine which edge to choose at the earlier step. However, as latent reasoning can avoid making a hard choice upfront, the model can progressively eliminate incorrect options in subsequent steps and achieve higher accuracy at the end of reasoning. We show more evidence and details of this reasoning process in Section 5.3.

The comparison between CoT and Coconut ( $k=0$ ) reveals another interesting observation: even when Coconut is forced to generate a complete reasoning chain, the accuracy of the answers is still higher than CoT. The generated reasoning paths are also more accurate with less hallucination. From this, we can infer that the training method of mixing different stages improves the model’s ability to plan ahead. The training objective of CoT always concentrates on the generation of the immediate next step, making the model “shortsighted”. In later stages of Coconut training, the first few steps are hidden, allowing the model to focus more on future steps. This is related to the findings of Gloeckle et al. (2024), where they propose multi-token prediction as a new pretraining objective to improve the LLM’s ability to plan ahead.

5.3 Interpreting the Latent Search Tree

Given the intuition that continuous thoughts can encode multiple potential next steps, the latent reasoning can be interpreted as a search tree, rather than merely a reasoning “chain”. Taking the case of Figure 6 as a concrete example, the first step could be selecting one of the children of Alex, i.e., {lempus, sterpus, zhorpus, grimpus}. We depict all possible branches in the left part of Figure 7. Similarly, in the second step, the frontier nodes will be the grandchildren of Alex (Figure 7, right).

Unlike a standard breadth-first search (BFS), which explores all frontier nodes uniformly, the model demonstrates the ability to prioritize promising nodes while pruning less relevant ones. To uncover the model’s preferences, we analyze its subsequent outputs in language space. For instance, if the model is forced to switch back to language space after a single latent thought ($k=1$), it predicts the next step in a structured format, such as “every [Concept A] is a [Concept B].” By examining the probability distribution over potential fillers for [Concept A], we can derive numeric values for the children of the root node Alex (Figure 7, left). Similarly, when $k=2$, the prediction probabilities for all frontier nodes, i.e., the grandchildren of Alex, are obtained (Figure 7, right).
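The value assigned to each frontier node can be sketched as the product of the conditional probabilities of the tokens that spell out the concept. In the snippet below, the per-token probabilities are made-up numbers; only the multiplication rule follows the description of Figure 7.

```python
import math


def concept_probability(token_probs):
    """Probability of a multi-token concept: product of its token probabilities.

    token_probs: conditional probability of each token given the context and
    the preceding tokens of the same concept, e.g. for "le", "mp", "us".
    """
    return math.prod(token_probs)


def rank_frontier(candidates):
    """Sort candidate concepts by value, highest first."""
    values = {name: concept_probability(ps) for name, ps in candidates.items()}
    return sorted(values.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical per-token probabilities for the children of the root node.
candidates = {
    "lempus": [0.35, 0.98, 0.97],
    "grimpus": [0.34, 0.97, 0.98],
    "zhorpus": [0.17, 0.96, 0.98],
    "sterpus": [0.02, 0.90, 0.95],
}
ranking = rank_frontier(candidates)
```

Reading the ranking as an implicit value function, near-ties at the top suggest the model is still keeping several branches alive, while a single dominant value suggests it has effectively committed to one.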

<details>

<summary>figures/percentile.png Details</summary>

### Visual Description

## Cumulative Distribution Chart: First Thoughts vs. Second Thoughts

### Overview

The image presents two cumulative distribution charts, titled "First thoughts" and "Second thoughts," comparing the distribution of values for "Top 1," "Top 2," and "Top 3" categories. The charts display the percentile on the x-axis and the value on the y-axis, showing how the cumulative distribution changes between the first and second thoughts.

### Components/Axes

**Chart Titles:**

* Left Chart: "First thoughts"

* Right Chart: "Second thoughts"

**Axes:**

* X-axis (both charts): "Percentile," ranging from 0.0 to 1.0 in increments of 0.2.

* Y-axis (both charts): "Value," ranging from 0.0 to 1.0 in increments of 0.2.

**Legend (both charts, located in the bottom-right):**

* Top 3: Light cyan color

* Top 2: Medium cyan color

* Top 1: Dark cyan color

### Detailed Analysis

**Left Chart: First Thoughts**

* **Top 1 (Dark cyan):** The curve starts at approximately (0, 0.35) and rises steadily, reaching a value of 0.6 at a percentile of 0.2, and approaches 1.0 at a percentile of 1.0.

* **Top 2 (Medium cyan):** The curve starts at approximately (0, 0.45) and rises steadily, reaching a value of 0.8 at a percentile of 0.2, and approaches 1.0 at a percentile of 1.0.

* **Top 3 (Light cyan):** The curve starts at approximately (0, 0.5) and rises steadily, reaching a value of 0.9 at a percentile of 0.2, and approaches 1.0 at a percentile of 1.0.

**Right Chart: Second Thoughts**

* **Top 1 (Dark cyan):** The curve starts at approximately (0, 0.25) and rises steadily, reaching a value of 0.6 at a percentile of 0.2, and approaches 1.0 at a percentile of 1.0.

* **Top 2 (Medium cyan):** The curve starts at approximately (0, 0.35) and rises steadily, reaching a value of 0.75 at a percentile of 0.2, and approaches 1.0 at a percentile of 1.0.

* **Top 3 (Light cyan):** The curve starts at approximately (0, 0.4) and rises steadily, reaching a value of 0.85 at a percentile of 0.2, and approaches 1.0 at a percentile of 1.0.

### Key Observations

* In both charts, the "Top 3" category consistently has the highest values for any given percentile, followed by "Top 2" and then "Top 1."

* The "Second thoughts" chart shows a lower starting value for all three categories compared to the "First thoughts" chart.

* All curves approach a value of 1.0 as the percentile approaches 1.0.

### Interpretation

The charts show the cumulative value of the top-1, top-2, and top-3 candidate nodes, ranked by percentile across test cases, for the first and second latent thoughts. Because the values are cumulative, the "Top 3" curve always lies on or above "Top 2," which in turn lies on or above "Top 1." The gaps between the curves indicate how much value the model assigns to candidates other than its top choice, that is, how many alternatives it keeps in play at once. All curves approach 1.0 at the highest percentiles, where nearly all of the value is concentrated on the top-ranked candidate.

</details>

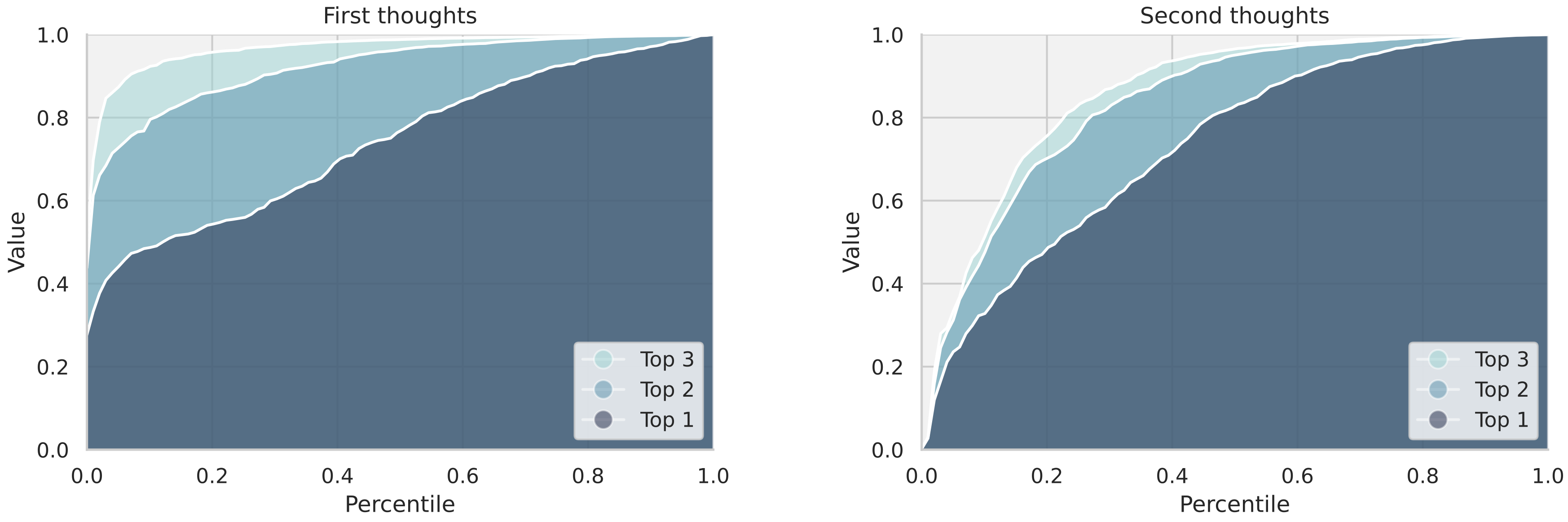

Figure 8: Analysis of parallelism in latent tree search. The left plot depicts the cumulative value of the top-1, top-2, and top-3 candidate nodes for the first thoughts, calculated across test cases and ranked by percentile. The significant gaps between the lines reflect the model’s ability to explore alternative latent thoughts in parallel. The right plot shows the corresponding analysis for the second thoughts, where the gaps between lines are narrower, indicating reduced parallelism and increased certainty in reasoning as the search tree develops. This shift highlights the model’s transition toward more focused exploration in later stages.

The probability distribution can be viewed as the model’s implicit value function, estimating each node’s potential to reach the target. As shown in Figure 7 (left), “lempus”, “zhorpus”, “grimpus”, and “sterpus” have values of 0.33, 0.16, 0.32, and 0.01, respectively. This indicates that in the first continuous thought, the model has mostly ruled out “sterpus” as an option but remains uncertain about the correct choice among the other three. In the second thought (Figure 7, right), however, the model has mostly ruled out the other options and focuses on “rorpus”.

Figure 8 presents an analysis of the parallelism in the model’s latent reasoning across the first and second thoughts. For the first thoughts (left panel), the cumulative values of the top-1, top-2, and top-3 candidate nodes are computed and plotted against their respective percentiles across the test set. The noticeable gaps between the three lines indicate that the model maintains significant diversity in its reasoning paths at this stage, suggesting a broad exploration of alternative possibilities. In contrast, the second thoughts (right panel) show a narrowing of these gaps. This trend suggests that the model transitions from parallel exploration to more focused reasoning in the second latent reasoning step, likely as it gains more certainty about the most promising paths.
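The curves in Figure 8 can be reproduced in outline as follows. This is a minimal sketch that assumes each test case yields a list of node values for the current frontier and that each top-k curve is sorted independently before being plotted against percentile. The function name and the example values are hypothetical.

```python
def topk_cumulative_curves(values_per_case, ks=(1, 2, 3)):
    """For each k, sum the k largest node values in every test case,
    then sort the sums so they can be plotted against percentile."""
    curves = {}
    for k in ks:
        sums = [sum(sorted(values, reverse=True)[:k]) for values in values_per_case]
        curves[k] = sorted(sums)
    return curves


# Hypothetical node values for three test cases (one list per case).
cases = [
    [0.33, 0.16, 0.32, 0.01],
    [0.90, 0.05, 0.02],
    [0.50, 0.40, 0.05],
]

curves = topk_cumulative_curves(cases)

# Vertical gap between the top-3 and top-1 curves at each percentile.
# A large gap means substantial value is spread over alternatives.
gap = [t3 - t1 for t1, t3 in zip(curves[1], curves[3])]
```

Under this reading, a narrowing gap between the top-1 and top-3 curves corresponds to the model concentrating its value on a single candidate.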

5.4 Why is a Latent Space Better for Planning?

<details>

<summary>figures/value_stats_meta_2.png Details</summary>

### Visual Description

## Line Chart: First Thoughts vs. Second Thoughts

### Overview

The image contains two line charts, one titled "First thoughts" and the other "Second thoughts." Each chart plots the "Value" (y-axis) against "Height" (x-axis) for two categories: "Correct" and "Incorrect." The charts show how the value changes with height for both correct and incorrect responses, with shaded regions indicating uncertainty or variance.

### Components/Axes

**First Thoughts Chart:**

* **Title:** First thoughts

* **X-axis:** Height, with markers at 0, 1, 2, 3, 4, 5, and 6.

* **Y-axis:** Value, with markers at 0.0, 0.2, 0.4, and 0.6.

* **Legend:** Located in the top-left corner.

* **Correct:** Represented by a blue line.

* **Incorrect:** Represented by an orange line.

**Second Thoughts Chart:**

* **Title:** Second thoughts

* **X-axis:** Height, with markers at 0, 1, 2, 3, 4, and 5.

* **Y-axis:** Value, with markers at 0.0, 0.1, 0.2, 0.3, 0.4, 0.5, and 0.6.

* **Legend:** (Same as above, inferred)

* **Correct:** Represented by a blue line.

* **Incorrect:** Represented by an orange line.

### Detailed Analysis

**First Thoughts Chart:**

* **Correct (Blue Line):** The line starts at approximately 0.52 at Height 2, increases to approximately 0.57 at Height 3, remains relatively stable at approximately 0.57 at Height 4 and 5, and then decreases to approximately 0.52 at Height 6.

* **Incorrect (Orange Line):** The line starts at approximately 0.01 at Height 0, increases to approximately 0.17 at Height 1, increases to approximately 0.25 at Height 2, increases to approximately 0.27 at Height 3, increases to approximately 0.31 at Height 4, increases to approximately 0.34 at Height 5, and then decreases to approximately 0.29 at Height 6.

**Second Thoughts Chart:**

* **Correct (Blue Line):** The line starts at approximately 0.50 at Height 1, increases to approximately 0.53 at Height 2, decreases to approximately 0.50 at Height 3, decreases to approximately 0.45 at Height 4, and then decreases to approximately 0.24 at Height 5.

* **Incorrect (Orange Line):** The line starts at approximately 0.01 at Height 0, increases to approximately 0.09 at Height 1, increases to approximately 0.11 at Height 2, increases to approximately 0.15 at Height 3, increases to approximately 0.17 at Height 4, and then decreases to approximately 0.12 at Height 5.

### Key Observations

* In the "First thoughts" chart, the "Correct" value is consistently higher than the "Incorrect" value across all heights.

* In the "Second thoughts" chart, the "Correct" value starts higher than the "Incorrect" value, but the "Correct" value decreases significantly at higher heights, while the "Incorrect" value remains relatively low.

* The shaded regions around the lines indicate variability or uncertainty in the data.

### Interpretation

The charts relate the "Value" the model assigns to a node to that node's "Height" in the search tree, separately for "Correct" and "Incorrect" nodes, after the first and second latent thoughts. At low heights the two groups are well separated: incorrect nodes receive values near zero, while correct nodes receive values around 0.5. As height increases, the value of incorrect nodes rises (first thoughts) or the value of correct nodes falls (second thoughts), so the gap narrows and correct and incorrect nodes become harder to tell apart. The shaded regions indicate variability in the data, so these trends represent general tendencies rather than exact values.

</details>

Figure 9: The correlation between prediction probability of concepts and their heights.

In this section, we explore why latent reasoning is advantageous for planning, drawing on the search tree perspective and the value function defined earlier. Referring to our illustrative example, a key distinction between “sterpus” and the other three options lies in the structure of the search tree: “sterpus” is a leaf node (Figure 7). This makes it immediately identifiable as an incorrect choice, as it cannot lead to the target node “bompus”. In contrast, the other nodes have more descendants to explore, making their evaluation more challenging.

To quantify a node’s exploratory potential, we measure its height in the tree, defined as the shortest distance to any leaf node. Based on this notion, we hypothesize that nodes with lower heights are easier to evaluate accurately, as their exploratory potential is limited. Consistent with this hypothesis, in our example, the model exhibits greater uncertainty between “grimpus” and “lempus”, both of which have a height of 2—higher than the other candidates.
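The height defined above can be computed with a short recursion. The sketch below assumes the graph is acyclic and is given as a mapping from each node to its children. The edges and the placeholder nodes (`node_a`, `leaf_a`, `leaf_b`) are hypothetical, chosen only so that the heights match those stated in the text.

```python
from functools import lru_cache


def node_heights(children):
    """Map each node to its height: the shortest distance to any leaf.

    `children` maps a node to a list of its child nodes. Nodes with no
    children (or absent from the mapping) are leaves with height 0.
    Assumes the graph is acyclic.
    """

    @lru_cache(maxsize=None)
    def height(node):
        kids = children.get(node, [])
        if not kids:
            return 0
        return 1 + min(height(kid) for kid in kids)

    nodes = set(children) | {kid for kids in children.values() for kid in kids}
    return {node: height(node) for node in nodes}


# Hypothetical graph: the edges below are illustrative, not taken from Figure 7.
graph = {
    "Alex": ["lempus", "zhorpus", "grimpus", "sterpus"],
    "lempus": ["node_a"],
    "node_a": ["leaf_a"],
    "zhorpus": ["leaf_b"],
    "grimpus": ["rorpus"],
    "rorpus": ["bompus"],
    "sterpus": [],
}

heights = node_heights(graph)
```

Taking the minimum over children is what makes this the shortest distance to a leaf, as opposed to the more common tree height, which takes the maximum.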

To test this hypothesis more rigorously, we analyze the correlation between the model’s prediction probabilities and node heights during the first and second latent reasoning steps across the test set. Figure 9 reveals a clear pattern: the model successfully assigns lower values to incorrect nodes and higher values to correct nodes when their heights are low. However, as node heights increase, this distinction becomes less pronounced, indicating greater difficulty in accurate evaluation.
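An aggregation of this kind can be sketched as follows. It assumes each frontier node has been reduced to a `(height, is_correct, value)` record, where `is_correct` marks whether the node can reach the target. The record layout, the function name, and the numbers are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def mean_value_by_height(records):
    """Average predicted value per (height, is_correct) group.

    `records` is an iterable of (height, is_correct, value) tuples.
    """
    buckets = defaultdict(list)
    for height, is_correct, value in records:
        buckets[(height, is_correct)].append(value)
    return {key: mean(vals) for key, vals in sorted(buckets.items())}


# Hypothetical records pooled over test cases.
records = [
    (0, False, 0.01),
    (1, False, 0.16),
    (2, False, 0.33),
    (2, True, 0.32),
    (2, True, 0.60),
]

stats = mean_value_by_height(records)
```

Plotting the two groups against height then gives one curve for correct nodes and one for incorrect nodes, which is the comparison Figure 9 reports.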

In conclusion, these findings highlight the benefits of leveraging latent space for planning. By delaying definite decisions and expanding the latent reasoning process, the model pushes its exploration closer to the search tree’s terminal states, making it easier to distinguish correct nodes from incorrect ones.

6 Conclusion

In this paper, we presented Coconut, a novel paradigm for reasoning in continuous latent space. Through extensive experiments, we demonstrated that Coconut significantly enhances LLM reasoning capabilities. Notably, our detailed analysis highlighted how an unconstrained latent space allows the model to develop an effective reasoning pattern similar to BFS. Future work is needed to further refine and scale latent reasoning methods. One promising direction is pretraining LLMs with continuous thoughts, which may enable models to generalize more effectively across a wider range of reasoning scenarios. We anticipate that our findings will inspire further research into latent reasoning methods, ultimately contributing to the development of more advanced machine reasoning systems.

Acknowledgement

The authors express their sincere gratitude to Jihoon Tack for his valuable discussions throughout the course of this work.

References

- Achiam et al. (2023) Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. GPT-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Amalric and Dehaene (2019) Marie Amalric and Stanislas Dehaene. A distinct cortical network for mathematical knowledge in the human brain. NeuroImage, 189:19–31, 2019.

- Biran et al. (2024) Eden Biran, Daniela Gottesman, Sohee Yang, Mor Geva, and Amir Globerson. Hopping too late: Exploring the limitations of large language models on multi-hop queries. arXiv preprint arXiv:2406.12775, 2024.

- Chen et al. (2023) Wenhu Chen, Ming Yin, Max Ku, Pan Lu, Yixin Wan, Xueguang Ma, Jianyu Xu, Xinyi Wang, and Tony Xia. TheoremQA: A theorem-driven question answering dataset. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 7889–7901, 2023.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- DeepMind (2024) Google DeepMind. AI achieves silver-medal standard solving international mathematical olympiad problems, 2024. https://deepmind.google/discover/blog/ai-solves-imo-problems-at-silver-medal-level/.

- Deng et al. (2023) Yuntian Deng, Kiran Prasad, Roland Fernandez, Paul Smolensky, Vishrav Chaudhary, and Stuart Shieber. Implicit chain of thought reasoning via knowledge distillation. arXiv preprint arXiv:2311.01460, 2023.

- Deng et al. (2024) Yuntian Deng, Yejin Choi, and Stuart Shieber. From explicit CoT to implicit CoT: Learning to internalize CoT step by step. arXiv preprint arXiv:2405.14838, 2024.

- Dubey et al. (2024) Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. The Llama 3 herd of models. arXiv preprint arXiv:2407.21783, 2024.

- Fan et al. (2024) Ying Fan, Yilun Du, Kannan Ramchandran, and Kangwook Lee. Looped transformers for length generalization. arXiv preprint arXiv:2409.15647, 2024.

- Fedorenko et al. (2011) Evelina Fedorenko, Michael K Behr, and Nancy Kanwisher. Functional specificity for high-level linguistic processing in the human brain. Proceedings of the National Academy of Sciences, 108(39):16428–16433, 2011.

- Fedorenko et al. (2024) Evelina Fedorenko, Steven T Piantadosi, and Edward AF Gibson. Language is primarily a tool for communication rather than thought. Nature, 630(8017):575–586, 2024.

- Feng et al. (2023) Guhao Feng, Bohang Zhang, Yuntian Gu, Haotian Ye, Di He, and Liwei Wang. Towards revealing the mystery behind chain of thought: a theoretical perspective. Advances in Neural Information Processing Systems, 36, 2023.

- Gandhi et al. (2024) Kanishk Gandhi, Denise Lee, Gabriel Grand, Muxin Liu, Winson Cheng, Archit Sharma, and Noah D Goodman. Stream of search (SoS): Learning to search in language. arXiv preprint arXiv:2404.03683, 2024.