# Efficient Rectification of Neuro-Symbolic Reasoning Inconsistencies by Abductive Reflection

**Authors**: Wen-Chao Hu, Wang-Zhou Dai, Yuan Jiang, Zhi-Hua Zhou

## Abstract

Neuro-Symbolic (NeSy) AI could be regarded as an analogy to human dual-process cognition, modeling the intuitive System 1 with neural networks and the algorithmic System 2 with symbolic reasoning. However, for complex learning targets, NeSy systems often generate outputs inconsistent with domain knowledge and it is challenging to rectify them. Inspired by the human Cognitive Reflection, which promptly detects errors in our intuitive response and revises them by invoking the System 2 reasoning, we propose to improve NeSy systems by introducing Abductive Reflection (ABL-Refl) based on the Abductive Learning (ABL) framework. ABL-Refl leverages domain knowledge to abduce a reflection vector during training, which can then flag potential errors in the neural network outputs and invoke abduction to rectify them and generate consistent outputs during inference. ABL-Refl is highly efficient in contrast to previous ABL implementations. Experiments show that ABL-Refl outperforms state-of-the-art NeSy methods, achieving excellent accuracy with fewer training resources and enhanced efficiency.

## 1 Introduction

Human decision-making is generally recognized as an interaction between two systems: System 1 quickly generates an intuitive response, and System 2 engages in further algorithmic and slow reasoning (Frederick 2005; Kahneman 2011). In Neuro-Symbolic (NeSy) Artificial Intelligence (AI), neural networks often resemble System 1 for rapid pattern recognition, and symbolic reasoning mirrors System 2 to leverage domain knowledge and handle complex problems thoughtfully, yet in a slower and more controlled way (Bengio 2019). Like human System 1 reasoning, when facing complicated tasks, neural networks often produce unreliable outputs which cause inconsistencies with domain knowledge. These inconsistencies can then be reconciled with the help of the symbolic reasoning counterpart (Hitzler 2022).

To achieve the above process, some methods relax symbolic domain knowledge as neural network constraints (Xu et al. 2018; Yang, Lee, and Park 2022), some attempt to approximate logical calculus using distributed representations within neural networks (Wang et al. 2019). However, a loss of full symbolic reasoning ability often occurs during these relaxation or approximation, hampering the ability of generating reliable output.

Abductive Learning (ABL) (Zhou 2019; Zhou and Huang 2022) is a framework for bridging machine learning and logical reasoning while preserving full expressive power in each side. In ABL, the machine learning component first converts raw data into primitive symbolic outputs. These outputs can be utilized by the symbolic reasoning component, which leverages domain knowledge and performs abduction to generate a revised, more reliable output. However, previous implementations of ABL require a highly discrete combinatorial consistency optimization before applying abduction, and this optimization has high complexity which encumbers, thereby severely limiting the efficiency and applicability to large-scale scenarios.

Human reasoning naturally exploits both sides efficiently, a hypothetical model for this process is called Cognitive Reflection, where the fast System 1 thinking is called to quickly generate an approximate over-all solution, and then seamlessly hands the complicated parts to System 2 (Frederick 2005). The key to this process is the reflection mechanism, which promptly detects which part in the intuitive response may contain inconsistencies with domain knowledge and invokes System 2 to rectify them. This reflection typically positively associates with System 2 capabilities, as both are closely linked to an individual’s mastery of domain knowledge (Sinayev and Peters 2015). Following the reflection, the process of the step-by-step formal reasoning becomes less complex: With a largely reduced search space, deriving the correct solution for System 2 becomes straightforward.

Inspired by this phenomenon, we propose a general enhancement, Abductive Reflection (ABL-Refl). Based on ABL framework, ABL-Refl preserves full expressive power of neural networks and symbolic reasoning, while replacing the time-consuming consistency optimization with the reflection mechanism, thereby significantly improves efficiency and applicability. Specifically, in ABL-Refl, a reflection vector is concurrently generated with the neural network intuitive output, which flags potential errors in the output and invokes symbolic reasoning to perform abduction, thereby rectifying these errors and generating a new output that is more consistent with domain knowledge. During model training, the training information for the reflection derives from domain knowledge. In essence, the reflection vector is abduced from domain knowledge and serves as an attention mechanism for narrowing the problem space of symbolic reasoning. The reflection can be trained unsupervisedly, requiring only the same amount of domain knowledge as state-of-the-art NeSy systems without generating extra training data.

We validate the effectiveness of ABL-Refl in solving Sudoku NeSy benchmarks in both symbolic and visual forms. Compared to previous NeSy methods, ABL-Refl performs significantly better, achieving higher reasoning accuracy efficiently with fewer training resources. We also compare our method to symbolic solvers, and show that the reduced search space in ABL-Refl improves the reasoning efficiency. Further experiments on solving combinatorial optimization on graphs validate that ABL-Refl can handle diverse types of data in varied dimensions, and exploit knowledge base in different forms.

## 2 Related Work

Recently, there has been notable progress in enhancing neural networks with reliable symbolic reasoning. Some methods use differentiable fuzzy logic (Serafini and Garcez 2016; Marra et al. 2020) or relax symbolic domain knowledge as constraints for neural network training (Xu et al. 2018; Yang, Lee, and Park 2022; Hoernle et al. 2022; Ahmed et al. 2022), while others learn constraints within neural networks by approximating logic reasoning with distributed representations (Amos and Kolter 2017; Selsam et al. 2018; Wang et al. 2019). These models tend to soften the requirements in symbolic reasoning, impacting the reliability of output generation. Models like DeepProbLog (Manhaeve et al. 2018) and NeurASP (Yang, Ishay, and Lee 2020) interpret the neural network output as a distribution over symbols and then apply a symbolic solver, incurring substantial computational costs. Abductive Learning (ABL) (Zhou 2019; Zhou and Huang 2022) attempts to integrate machine learning and logical reasoning in a balanced and mutually supporting way. It features an easy-to-use open-source toolkit (Huang et al. 2024) with many practical applications (Huang et al. 2020; Cai et al. 2021; Wang et al. 2021; Gao et al. 2024). However, the consistency optimization is with high complexity.

Another category of work related to our study also follows a similar process of prediction, error identification, and reasoning (Nair et al. 2020; Nye et al. 2021; Han et al. 2023). These methods are usually constrained in a narrow scope of domain knowledge, confined to specific mathematical problems or are bounded within a minimal world model.

Cornelio el al. (2023) generates a selection module to identify errors requiring symbolic reasoning rectification. In constrast to their approach which requires the preparation of a large synthetic dataset in advance, our approach automatically abduces the reflection vector during model training.

## 3 Abductive Reflection

This section presents problem setting and the Abductive Reflection (ABL-Refl) method.

### 3.1 Problem Setting

The main task of this paper is as follows: The input is raw data $\boldsymbol{x}$ , which can be in either symbolic or sub-symbolic form, and the target output is $\boldsymbol{y}=\left[y_{1},y_{2},\dots,y_{n}\right]$ , with each $y_{i}$ being a symbol from a set $\mathcal{Y}$ that contains all possible output symbols. We assume two key components at our disposal: neural network $f$ and domain knowledge base $\mathcal{KB}$ . $f$ can directly map $\boldsymbol{x}$ to $\boldsymbol{y}$ , and $\mathcal{KB}$ holds constraints between the symbols in $\boldsymbol{y}$ . $\mathcal{KB}$ can assume various forms, including propositional logic, first-order logic, mathematical or physical equations, etc., and can perform symbolic reasoning operations by exploiting the corresponding symbolic solver. The output $\boldsymbol{y}$ should adhere to the constraints in $\mathcal{KB}$ , otherwise it will inevitably contain errors that lead to inconsistencies with the domain knowledge and incorrect reasoning results.

This problem type has broad applications. For example, it can be used to solve Sudoku puzzles, where the output $\boldsymbol{{y}}$ consists of $n=81$ symbols from the set $\mathcal{Y}=\{1,2,\dots,9\}$ , and the constraints in $\mathcal{KB}$ are the rules of Sudoku. It can also be applied in deploying generative models for text generation, gene prediction, mathematical problem-solving, etc., producing outputs that adhere to intricate commonsense, biological, or mathematical logics in $\mathcal{KB}$ .

### 3.2 Brief Introduction to Abductive Learning

<details>

<summary>extracted/6187776/figs/ABL.jpg Details</summary>

### Visual Description

\n

## Diagram: Neural Network with Consistency Optimization and Abduction

### Overview

This image is a technical diagram illustrating an enhanced neural network architecture that incorporates two additional post-processing stages: **Consistency Optimization** and **Abduction**. The system takes an input `x`, processes it through a neural network to produce an initial "Intuitive Output" vector `ŷ`, refines this output using a knowledge base (`KB`) for consistency, and finally applies an abduction function `δ` to generate a "Final Output" vector `ȳ`. The diagram uses color-coding (green for neural network components, red for consistency optimization, blue for abduction) and directional arrows to show data flow and relationships.

### Components/Flow

The diagram is organized into three main regions from left to right:

1. **Left Region: Neural Network `f`**

* **Input:** Labeled `Input x` with a black arrow pointing into the network.

* **Neural Network Block:** A large, light-gray rounded rectangle labeled `Neural Network f`. Inside it are two sub-blocks:

* A white rounded rectangle labeled `Body Block f₁`.

* A light-green rounded rectangle labeled `Output Layer f₂`.

* **Output:** A green arrow points from the `Output Layer f₂` to a vertical vector.

2. **Center Region: Intuitive Output & Consistency Optimization**

* **Intuitive Output Vector:** A vertical column vector labeled `Intuitive Output ŷ` (in green text). It contains elements: `ŷ₁`, `ŷ₂`, `ŷ₃`, `ŷ₄`, `...`, `ŷₙ`.

* **Consistency Optimization Module:** A large, light-red circle labeled `Consistency Optimization` (in red text). Inside it are three vertical vectors, each containing a subset of the `ŷ` elements (e.g., `ŷ₁`, `ŷ₃`, `ŷ₄`, `...`, `ŷₙ` in the first). Some elements are highlighted with pink rectangles. Red dashed lines labeled `(query)` connect the `Intuitive Output ŷ` vector to a database icon below.

* **Knowledge Base (`KB`):** A blue cylinder icon labeled `KB` in blue text. It receives the red dashed "query" lines and has a solid blue arrow pointing to the right.

3. **Right Region: Abduction & Final Output**

* **Intermediate Vector:** A vertical vector (output of Consistency Optimization) containing elements `ŷ₂`, `ŷ₃`, `...`, `ŷₙ`, with some pink highlights.

* **Abduction Process:** The blue arrow from the `KB` points to a process labeled `Abduction δ` (in blue text). This process acts on the intermediate vector.

* **Final Output Vector:** A vertical column vector labeled `Final Output ȳ` (in blue text). It contains transformed elements: `δ(ŷ₁)`, `ŷ₂`, `ŷ₃`, `δ(ŷ₄)`, `...`, `ŷₙ`. A blue arrow connects the `Abduction δ` label to this final vector.

### Detailed Analysis

* **Data Flow:** The primary flow is linear: `Input x` → `Neural Network f` → `Intuitive Output ŷ` → `Consistency Optimization` (informed by `KB`) → `Abduction δ` (informed by `KB`) → `Final Output ȳ`.

* **Vector Structure:** All output vectors (`ŷ`, the intermediate vector, and `ȳ`) are depicted as column vectors with `n` elements, indexed from 1 to `n`. The ellipsis (`...`) indicates a continuation of elements between `ŷ₄` and `ŷₙ`.

* **Role of the Knowledge Base (`KB`):** The `KB` is central to the post-processing. It is queried (red dashed lines) during the Consistency Optimization phase and provides input (solid blue arrow) to the Abduction phase. This suggests the `KB` contains logical rules or constraints used to refine the neural network's raw output.

* **Transformation Notation:** The final output `ȳ` shows that some elements are transformed by the function `δ` (e.g., `δ(ŷ₁)`, `δ(ŷ₄)`), while others remain unchanged (e.g., `ŷ₂`, `ŷ₃`). This implies the abduction process selectively modifies the output based on knowledge base rules.

### Key Observations

1. **Selective Modification:** The abduction function `δ` does not alter all elements of the intermediate vector. The diagram explicitly shows `ŷ₂` and `ŷ₃` passing through unchanged to the final output `ȳ`, while `ŷ₁` and `ŷ₄` are transformed to `δ(ŷ₁)` and `δ(ŷ₄)`.

2. **Query Mechanism:** The "Intuitive Output" vector `ŷ` is used to query the `KB` (red dashed lines) *before* the consistency optimization is fully applied. This indicates an interactive or iterative refinement process.

3. **Visual Emphasis:** Pink highlighting within the vectors inside the Consistency Optimization circle and the intermediate vector draws attention to specific elements being processed or flagged during that stage.

4. **Color-Coded Semantics:** The diagram uses color consistently to separate concerns: Green for the base neural network, Red for the consistency-checking phase, and Blue for the knowledge-based abduction and final output.

### Interpretation

This diagram represents a **neuro-symbolic AI architecture**. It demonstrates a method to combine the pattern recognition capabilities of a neural network (`f`) with the reasoning power of a symbolic knowledge base (`KB`).

* **Purpose:** The system aims to produce a final output (`ȳ`) that is not only statistically predictive (from the neural network) but also logically consistent with a set of predefined rules or facts stored in the `KB`. The "Intuitive Output" `ŷ` is the raw, potentially inconsistent prediction.

* **Process Flow:** The two-stage post-processing is key. First, **Consistency Optimization** likely checks the neural network's output against the `KB` to identify conflicts or improbable states (highlighted in pink). Second, **Abduction** (`δ`) is a form of logical inference that finds the best explanation or modification to the output to make it consistent with the knowledge base, resulting in the "Final Output" `ȳ`.

* **Significance:** This approach addresses a common weakness of pure neural networks: their outputs can be statistically accurate but logically incoherent or violate known constraints. By integrating a `KB`, the system can enforce domain rules, correct obvious errors, and produce more reliable and interpretable results. The diagram highlights the flow of information and the critical role of the knowledge base in guiding the refinement of the neural network's initial guess.

</details>

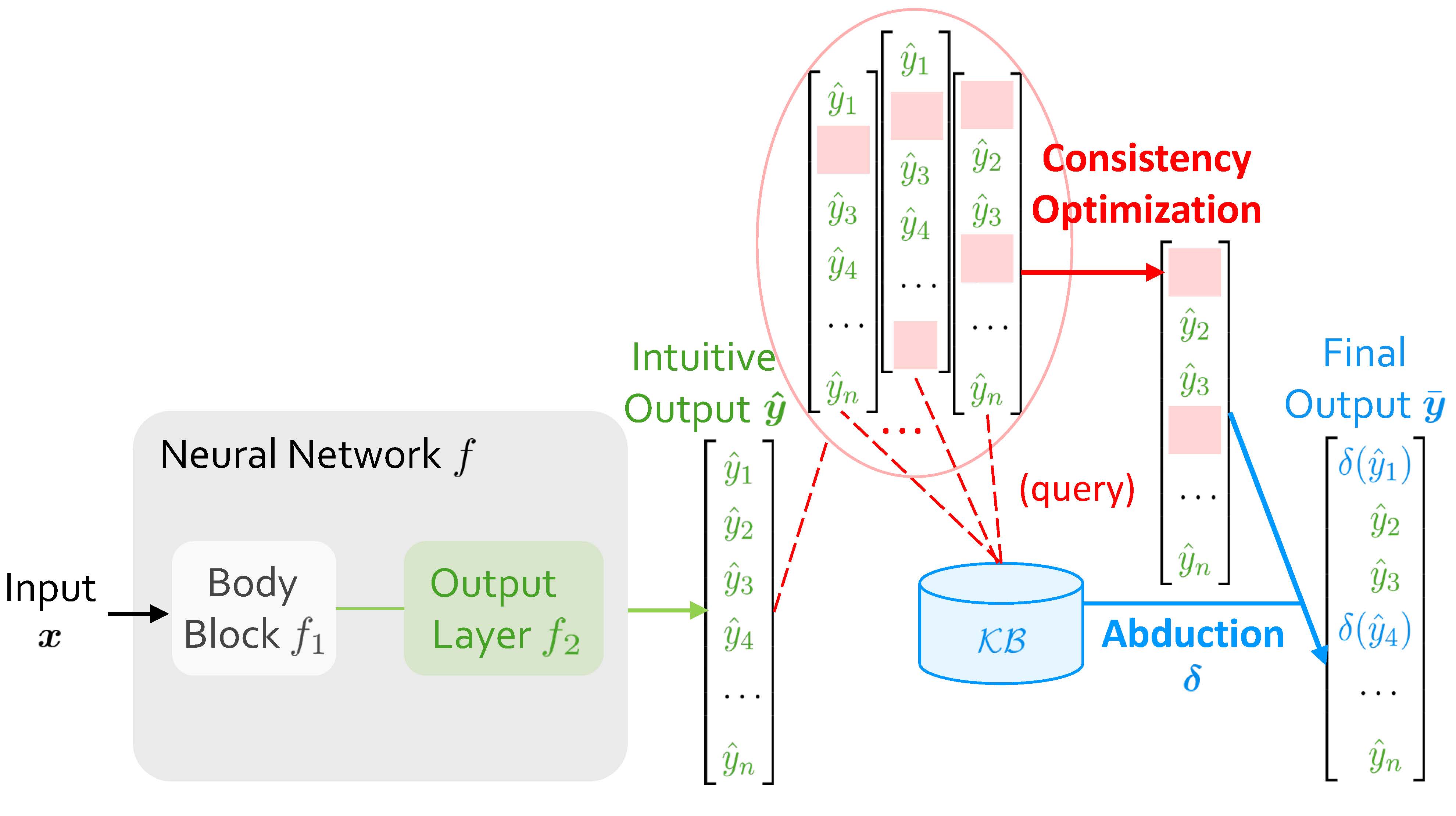

Figure 1: Abductive Learning (ABL) framework.

When Abductive Learning (ABL) receives an input $\boldsymbol{x}$ , it initially employs $f$ to map $\boldsymbol{x}$ into an intuitive output $\boldsymbol{\hat{y}}=\left[\hat{y}_{1},\hat{y}_{2},\dots,\hat{y}_{n}\right]$ . When $f$ is under-trained, $\boldsymbol{\hat{y}}$ might contain errors leading to inconsistencies with $\mathcal{KB}$ . ABL then tries to rectify them, and obtains a revised $\boldsymbol{\bar{y}}$ . As shown in Figure 1, the final output, $\boldsymbol{\bar{y}}$ , consists of two parts: the green part retains the results from neural network, and the blue part is the modified result obtained by abduction, a basic form of symbolic reasoning that seeks plausible explanations for observations based on $\mathcal{KB}$ .

Specifically, the process of obtaining $\boldsymbol{\bar{y}}$ can be divided into two sequential steps. The first step, consistency optimization, determines which positions in $\boldsymbol{\hat{y}}$ include elements that contain errors causing inconsistencies, so that performing abduction at these positions will yield a $\boldsymbol{\bar{y}}$ consistent with $\mathcal{KB}$ . Essentially, this process is pinpointing propositions (or ground atoms, etc.) which have incorrect truth assignments, and most neuro-symbolic tasks can be formalized into this form. Once these positions are determined, the second step is rectifying by abduction, which then becomes easy for $\mathcal{KB}$ and its corresponding symbolic solver.

#### Challenge.

In previous ABL, consistency optimization has always been a computational bottleneck. It operates as an external module using zeroth-order optimization methods, independent from both $f$ and $\mathcal{KB}$ (Dai et al. 2019; Zhou and Huang 2022). For each time of inference, it involves repetitively selecting various possible positions and querying the $\mathcal{KB}$ to see if a consistent result can be inferred. Each query involves an invocation of $\mathcal{KB}$ for slow symbolic reasoning. Also, since it is a complex combinatorial problem with a highly discrete nature, the number of such queries required escalates exponentially as data scale increases. This large number leads to a marked increase in time consumption, hence confines the applicability of ABL to only small datasets, usually those with output dimension $n$ less than 10.

### 3.3 Architecture

To address the challenges above, we propose Abductive Reflection (ABL-Refl). In this section, we will provide a detailed description of its architecture.

Let’s first revisit the role of the neural network $f$ when we map the input to symbols from the set $\mathcal{Y}$ . Typically, the raw data is first passed through the body block of the network, denoted by $f_{1}$ , resulting in a high-dimensional embedding which encapsulates a wealth of feature information of the raw data. The form of $f_{1}$ varies, including structures like recurrent layers, graph convolution layers, or Transformers, etc. The result of $f_{1}$ is subsequently passed into several layers, usually linear layers, denoted by $f_{2}$ , to obtain the intuitive output: $\boldsymbol{\hat{y}}=\text{argmax}(f_{2}(f_{1}(\boldsymbol{x})))\in\mathcal{Y} ^{n}$ .

<details>

<summary>extracted/6187776/figs/ABL-Refl.jpg Details</summary>

### Visual Description

## Diagram: Neural Network Architecture with Reflection and Abduction

### Overview

The image is a technical diagram illustrating a neural network architecture that incorporates a reflection mechanism and an abduction process for error correction. The system processes an input `x` through a neural network `f`, producing an initial intuitive output, which is then refined using a reflection vector and external knowledge to generate a final, corrected output.

### Components/Axes

The diagram is organized into several key components, flowing from left to right:

1. **Input**: Labeled "Input `x`" on the far left.

2. **Neural Network `f`**: A large, light-gray rounded rectangle containing:

* **Body Block `f₁`**: The initial processing unit.

* **Output Layer `f₂`**: A green box that receives data from the Body Block.

* **Reflection Layer `R`**: A blue box that also receives data from the Body Block.

3. **Intuitive Output `ŷ`**: A vertical vector (list) in green, positioned to the right of the Neural Network. It contains elements: `ŷ₁`, `ŷ₂`, `ŷ₃`, `ŷ₄`, `...`, `ŷₙ`.

4. **Reflection `r`**: A vertical binary vector in blue, positioned below the Intuitive Output. It contains the values: `1`, `0`, `0`, `1`, `...`, `0`.

5. **Error-Removed `ŷ'`**: A vertical vector in blue, positioned to the right of the Intuitive Output. It shows a modified version of `ŷ`, where some elements (like `ŷ₁` and `ŷ₄`) are replaced with horizontal lines (`—`), indicating removal or masking.

6. **Knowledge Base `KB`**: A blue cylinder icon, representing an external database or knowledge store.

7. **Abduction `δ`**: A process label in blue, associated with an arrow pointing from the Error-Removed vector to the Final Output.

8. **Final Output `ȳ`**: A vertical vector in blue on the far right. It contains elements: `δ(ŷ₁)`, `ŷ₂`, `ŷ₃`, `δ(ŷ₄)`, `...`, `ŷₙ`.

### Detailed Analysis

**Data Flow and Component Relationships:**

1. **Initial Processing**: The input `x` enters the **Body Block `f₁`**. The output of `f₁` splits into two parallel paths within the Neural Network `f`.

2. **Dual Output Generation**:

* Path 1 (Green): Goes to the **Output Layer `f₂`**, which generates the **Intuitive Output `ŷ`**. This is the network's initial prediction vector.

* Path 2 (Blue): Goes to the **Reflection Layer `R`**, which generates the **Reflection `r`**. This is a binary vector (containing 1s and 0s) that acts as a selector or mask.

3. **Error Removal / Masking**: The **Intuitive Output `ŷ`** and the **Reflection `r`** are combined. The diagram shows the **Error-Removed `ŷ'`** vector. The visual logic suggests that where `r` has a `0`, the corresponding element in `ŷ` is retained (e.g., `ŷ₂`, `ŷ₃`). Where `r` has a `1`, the corresponding element in `ŷ` is marked for correction (replaced with `—` in `ŷ'`, e.g., positions 1 and 4).

4. **Knowledge-Based Correction**: The **Error-Removed `ŷ'`** and the **Knowledge Base `KB`** feed into the **Abduction `δ`** process. Abduction is a form of logical inference that seeks the most likely explanation.

5. **Final Output Generation**: The **Abduction `δ`** process uses the knowledge base to correct the masked elements. The result is the **Final Output `ȳ`**. In this vector:

* Elements that were retained (`ŷ₂`, `ŷ₃`, ..., `ŷₙ`) remain unchanged.

* Elements that were masked (`ŷ₁`, `ŷ₄`) are replaced by the function `δ` applied to them: `δ(ŷ₁)` and `δ(ŷ₄)`. This implies `δ` uses context from `KB` and the other elements to infer a corrected value.

### Key Observations

* **Dual-Path Architecture**: The core neural network `f` has a parallel structure, producing both a prediction (`ŷ`) and a self-assessment or confidence mask (`r`).

* **Selective Correction**: The system does not correct all outputs. The Reflection `r` vector (`1,0,0,1,...`) specifically targets the 1st and 4th elements for correction, while leaving the 2nd, 3rd, and nth elements untouched.

* **Role of Abduction**: The correction mechanism (`δ`) is explicitly labeled as "Abduction," indicating it performs inference to the best explanation, rather than simple interpolation or averaging. It relies on an external **Knowledge Base (`KB`)**.

* **Notation Consistency**: The diagram uses consistent color coding (green for intuitive/initial, blue for reflective/corrected) and mathematical notation (`ŷ` for estimate, `ȳ` for final, `δ` for function).

### Interpretation

This diagram depicts a **self-correcting neural network system**. Its purpose is to improve the reliability of a model's output by identifying and correcting potential errors.

* **How it works**: The system first makes a prediction (`ŷ`). Simultaneously, a reflection layer assesses this prediction, generating a binary vector (`r`) that flags specific outputs as potentially erroneous (where `r=1`). These flagged outputs are then "removed" or masked. An abduction process (`δ`), powered by an external knowledge base (`KB`), infers the most plausible correct values for only those flagged positions, producing the final output (`ȳ`).

* **What the data suggests**: The architecture suggests that the model is designed for tasks where:

1. Outputs are structured (e.g., a sequence, a set of class probabilities).

2. Errors are sparse (only a few elements need correction, as shown by the few `1`s in `r`).

3. External, structured knowledge (`KB`) is available to inform corrections.

* **Notable Implications**: This is not a standard feedforward network. It introduces a form of **internal critique** (the Reflection Layer) and **external validation** (the Knowledge Base via Abduction). The system explicitly separates the generation of a hypothesis from its verification and correction, mimicking a scientific or diagnostic reasoning process. The use of "abduction" is significant, as it implies the correction is based on finding the most plausible explanation given the knowledge, not just optimizing a loss function.

</details>

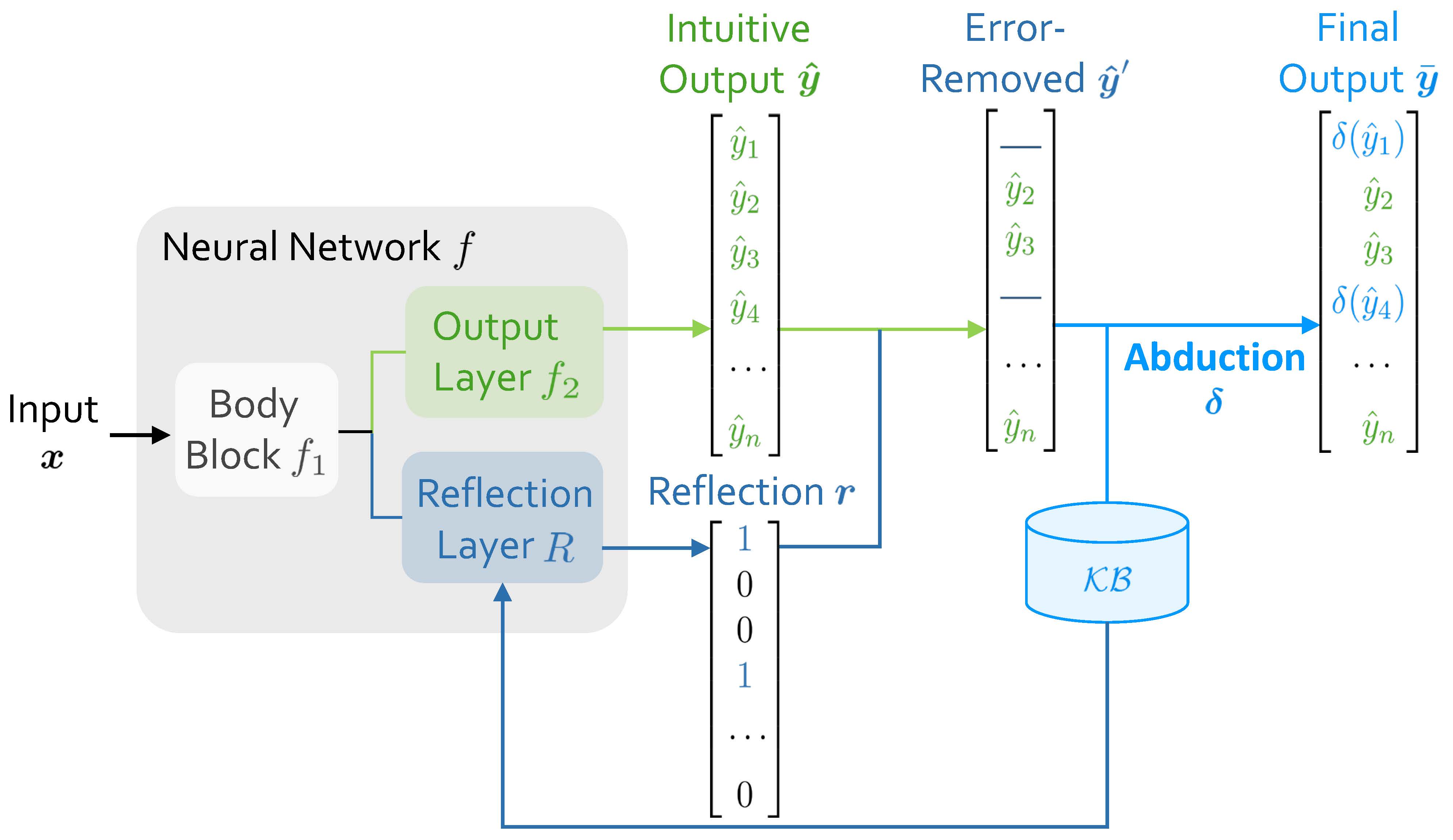

Figure 2: Architecture of Abductive Reflection (ABL-Refl). It replaces the external consistency optimization module with an efficient reflection mechanism, which is abduced directly from $\mathcal{KB}$ .

Besides the structure described above, as shown in Figure 2, our architecture further incorporates a reflection layer $R$ after the body block $f_{1}$ , generating a reflection vector: $\boldsymbol{r}=\text{argmax}(R(f_{1}(\boldsymbol{x})))\in\{0,1\}^{n}$ . The reflection layer $R$ and reflection vector $\boldsymbol{r}$ together constitute the reflection mechanism. This vector $\boldsymbol{r}$ has the same dimensionality $n$ as the intuitive output $\boldsymbol{\hat{y}}$ , and each element, $r_{i}$ , acts as a binary classifier to indicate whether the corresponding element $\hat{y}_{i}$ is an error leading to inconsistencies with $\mathcal{KB}$ (flagged as 1 for an error, and 0 otherwise). The reflection vector $\boldsymbol{r}$ is generated concurrently with the intuitive response during inference, resonating with human cognition where cognitive reflection typically forms right upon generation of an intuitive response (Frederick 2005).

With the initial intuitive output $\boldsymbol{\hat{y}}$ and the corresponding reflection vector $\boldsymbol{r}$ , we seamlessly obtain the error-removed output $\hat{\boldsymbol{y}}^{\prime}$ : In $\hat{\boldsymbol{y}}^{\prime}$ , elements flagged as error by $\boldsymbol{r}$ are removed and left as blanks, while the rest are retained. Subsequently, $\mathcal{KB}$ applies abduction to fill in these blanks, thereby generating an output $\boldsymbol{\bar{y}}$ that is consistent with $\mathcal{KB}$ . That is:

$$

\bar{y}_{i}=\begin{cases}\quad\!\!\;\hat{y}_{i},&r_{i}=0\\

\delta(\hat{y}_{i}),&r_{i}=1\end{cases}\quad i=1,2,\dots,n

$$

where $\delta$ denotes abduction. We treat $\boldsymbol{\bar{y}}=\left[\bar{y}_{1},\bar{y}_{2},\dots,\bar{y}_{n}\right]$ as the final output.

During model training, the reflection is abduced from $\mathcal{KB}$ by directly leveraging information from domain knowledge (discussed later in Section 3.4). It can be seen as an attention mechanism generated from neural networks, which can help quickly focus symbolic reasoning specifically on areas it identifies as errors, hence largely narrowing the problem space of deliberate symbolic reasoning (Zhang et al. 2020).

#### Benefits.

Compared to previous ABL implementations, ABL-Refl replaces the zeroth-order consistency optimization module with the reflection mechanism to address the computational bottleneck. In this way, the need for a substantial number of querying $\mathcal{KB}$ is mitigated: After promptly pinpointing inconsistencies in System 1 output, regardless of the data scale, only a single invocation of $\mathcal{KB}$ is required to obtain a rectified and more consistent output.

Another thing worth noticing is that, in the architecture, the reflection layer directly connects to the body block, which helps leveraging information from the embeddings and linking more closely with the raw data. Therefore, the reflection vector $\boldsymbol{r}$ establishes a more direct and tighter bridge between raw data and domain knowledge.

### 3.4 Training Paradigm

In this section, we will discuss how to train the ABL-Refl method, especially the reflection in it.

<details>

<summary>extracted/6187776/figs/Opt.jpg Details</summary>

### Visual Description

## Diagram: Error Correction via Reflection and Knowledge Base Consistency Check

### Overview

The image is a technical flowchart illustrating a conceptual process for refining an initial output vector (`ŷ`) by removing errors using a reflection vector (`r`), resulting in a corrected output (`ŷ'`). Both the original and corrected outputs are then checked for consistency against a knowledge base (`KB`). The diagram uses color-coding (green for intuitive output, blue for reflection and error-removed components) and directional arrows to show data flow and operations.

### Components/Axes

The diagram is composed of the following labeled components, arranged spatially:

1. **Intuitive Output `ŷ` (Top-Left, Green):**

* A vertical vector containing elements: `ŷ₁`, `ŷ₂`, `ŷ₃`, `ŷ₄`, `...`, `ŷₙ`.

* This represents the initial, potentially erroneous output sequence.

2. **Reflection `r` (Bottom-Left, Blue):**

* A vertical binary vector containing elements: `1`, `0`, `0`, `1`, `...`, `0`.

* This vector appears to act as a mask or selector, where a `1` likely indicates an element in `ŷ` that is flagged for correction or removal.

3. **Error-Removed `ŷ'` (Center, Blue):**

* A vertical vector containing elements: `—`, `ŷ₂`, `ŷ₃`, `—`, `...`, `ŷₙ`.

* The dashes (`—`) in the first and fourth positions correspond to the `1`s in the `Reflection r` vector, indicating those elements (`ŷ₁` and `ŷ₄`) have been removed or nullified.

4. **Knowledge Base `KB` (Center-Right, Blue Cylinder):**

* Represented by a standard database cylinder icon labeled `KB`.

* This symbolizes a repository of established facts or rules used for validation.

5. **Consistency Check Functions (Right Side, Black Text):**

* `Con(ŷ, KB)`: An arrow points from the `Intuitive Output ŷ` to this function label.

* `Con(ŷ', KB)`: An arrow points from the `Error-Removed ŷ'` to this function label.

* These represent operations that evaluate the consistency of an output vector with the knowledge base.

### Detailed Analysis

**Data Flow and Relationships:**

1. A green arrow originates from the `Intuitive Output ŷ` vector. It splits into two paths:

* **Path 1 (Top):** Continues directly to the right, feeding into the `Con(ŷ, KB)` consistency check.

* **Path 2 (Bottom):** Turns downward and then right, entering the `Error-Removed ŷ'` vector. This path is combined with a blue arrow coming from the `Reflection r` vector.

2. A blue arrow originates from the `Reflection r` vector and merges with the green path from `ŷ` to form the input for the `Error-Removed ŷ'` vector. This visually represents the application of the reflection mask to the intuitive output.

3. A green arrow exits the `Error-Removed ŷ'` vector and points right to the `Con(ŷ', KB)` consistency check.

4. Blue lines connect the `KB` database cylinder to both consistency check functions (`Con(ŷ, KB)` and `Con(ŷ', KB)`), indicating that the knowledge base is queried or used by both operations.

**Component Isolation:**

* **Header/Left Region:** Contains the input data structures (`ŷ` and `r`).

* **Main/Center Region:** Contains the processing step (creation of `ŷ'`) and the core knowledge resource (`KB`).

* **Footer/Right Region:** Contains the output operations (the two consistency checks).

### Key Observations

* The process is **parallel**: The original output `ŷ` and the error-corrected output `ŷ'` are evaluated for consistency against the same `KB` simultaneously or in equivalent steps.

* The **Reflection `r` vector is a precise mask**. Its binary values directly map to the elements removed in `ŷ'`. In this specific example, `r = [1, 0, 0, 1, ..., 0]` leads to `ŷ' = [—, ŷ₂, ŷ₃, —, ..., ŷₙ]`.

* The diagram implies a **comparative analysis**. The system likely compares the results of `Con(ŷ, KB)` and `Con(ŷ', KB)` to determine if removing the reflected elements improved consistency with the knowledge base.

### Interpretation

This diagram models a **knowledge-grounded refinement or verification loop**, common in AI systems, particularly those involving reasoning or fact-checking.

* **What it demonstrates:** The process suggests that an initial AI-generated output (`ŷ`) may contain errors or inconsistencies. A separate "reflection" mechanism (`r`) identifies specific problematic components. These are filtered out to produce a candidate correction (`ŷ'`). The core validation step is checking both versions against a trusted `KB`. The system's goal is likely to produce an output that is more consistent with established knowledge.

* **Relationships:** The `Reflection r` is the control mechanism that acts upon the data (`ŷ`). The `KB` is the authoritative reference. The `Con` functions are the evaluators. The entire flow is a pipeline from generation (`ŷ`) to correction (`ŷ'`) to validation (both `Con` checks).

* **Notable Implications:** The architecture implies that error correction is not a black box but a targeted process based on identifiable flags (`r`). The parallel consistency checks allow for a direct A/B comparison: "Was the original output consistent? Is the modified version more consistent?" This is a foundational pattern for iterative improvement and self-correction in AI models. The use of vectors suggests the outputs are structured sequences (e.g., text tokens, data points).

</details>

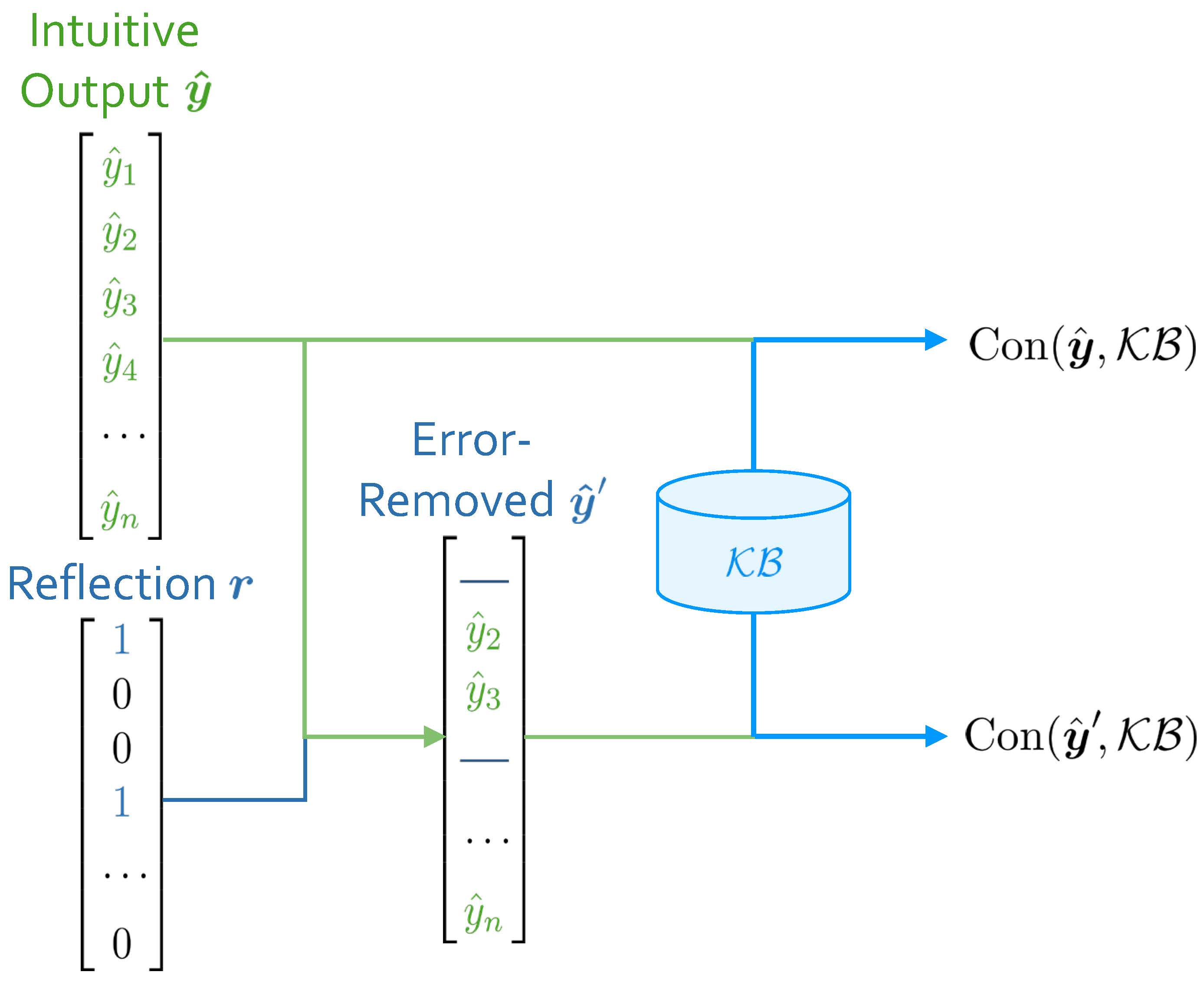

Figure 3: Consistency measurements.

In ABL-Refl, when each input $\boldsymbol{x}$ is processed by the neural network, we obtain the intuitive output $\boldsymbol{\hat{y}}$ and the reflection vector $\boldsymbol{r}$ , and subsequently obtain the error-removed (by $\boldsymbol{r}$ ) output $\boldsymbol{\hat{y}}^{\prime}$ . With $\boldsymbol{\hat{y}}$ and $\boldsymbol{\hat{y}}^{\prime}$ , we can measure their consistency with $\mathcal{KB}$ , respectively. We denote these consistency measurements as $\text{Con}(\boldsymbol{\hat{y}},\mathcal{KB})$ and $\text{Con}(\boldsymbol{\hat{y}}^{\prime},\mathcal{KB})$ , as shown in Figure 3. For a simplest example, if all elements in $\boldsymbol{\hat{y}}$ (or $\boldsymbol{\hat{y}}^{\prime}$ ) adhere to constraints in $\mathcal{KB}$ , the consistency measurement is 1; otherwise, it is 0.

Consequently, the improvement in consistency measurement after reflection, as denoted by

$$

\Delta\text{Con}_{\boldsymbol{r}}(\boldsymbol{\hat{y}})=\text{Con}(\boldsymbol

{\hat{y}}^{\prime},\mathcal{KB})-\text{Con}(\boldsymbol{\hat{y}},\mathcal{KB})

$$

naturally indicates the effectiveness of the reflection vector: A higher value of it signifies that reflection $\boldsymbol{r}$ can more effectively detect inconsistencies within $\boldsymbol{\hat{y}}$ . Our training goal is to guide the neural network’s parameters towards generating reflections that can maximize this value. Given that $\Delta\text{Con}_{\boldsymbol{r}}(\boldsymbol{\hat{y}})$ is usually a discrete value, we employ the REINFORCE algorithm to achieve this goal (Williams 1992), which optimizes the policy (implicitly defined by neural network $f$ ) through maximizing a specified reward — in this case, $\Delta\text{Con}_{\boldsymbol{r}}(\boldsymbol{\hat{y}})$ . This process leads to the following consistency loss:

$$

L_{con}(\boldsymbol{x})=-\Delta\text{Con}_{\boldsymbol{r}}(\boldsymbol{\hat{y}

})\cdot\nabla_{\theta}\log f_{\theta}\left(\boldsymbol{\hat{y}},\boldsymbol{r}

\mid\boldsymbol{x}\right) \tag{1}

$$

where $\theta$ are parameters of neural network $f$ .

Additionally, given that the time abduction required often escalates with problem size, we want to invoke it judiciously during inference, applying it only when it is truly necessary. Therefore, we aim to avoid the reflection vector from flagging too many elements in $\boldsymbol{\hat{y}}$ as error. To achieve this, we then introduce a reflection size loss:

$$

L_{size}(\boldsymbol{x})=\Phi\!\left(C-\frac{1}{n}\sum_{i=1}^{n}\left(1-R\left

(f_{1}(\boldsymbol{x})\right)_{i}\right)\right) \tag{2}

$$

where $\Phi(a)\triangleq\max(0,a)^{2}$ and $C$ is a hyperparameter ranging between 0 and 1. When $C$ is set at a higher value, the reflection vector tends to retain a greater number of intuitive output elements instead of flagging them as error and delegating to abduction.

In addition to the above-mentioned training methods, using labeled data, we employ data-driven supervised training methods similar to common neural network training paradigm. The loss function in this process, e.g., cross-entropy loss, is denoted by $L_{labeled}(\boldsymbol{x},\boldsymbol{y})$ .

Therefore, combining all the training loss, the total loss for ABL-Refl is represented as follows:

$$

\displaystyle\mathcal{L} \displaystyle=\frac{1}{|D_{l}|}\sum_{(\boldsymbol{x},\boldsymbol{y})\in D_{l}}

L_{labeled}(\boldsymbol{x},\boldsymbol{y}) \displaystyle+\frac{1}{|D_{l}\cup D_{u}|}\sum_{\boldsymbol{x}\in D_{l}\cup D_{

u}}(\alpha L_{con}(\boldsymbol{x})+\beta L_{size}(\boldsymbol{x})) \tag{3}

$$

where $\alpha$ and $\beta$ are hyperparameters, $D_{l}=\{(\boldsymbol{x}_{1},\boldsymbol{y}_{1}),(\boldsymbol{x}_{2}, \boldsymbol{y}_{2}),\dots\}$ are the labeled datasets and $D_{u}=\{\boldsymbol{x}_{1},\boldsymbol{x}_{2},\dots\}$ are the unlabeled datasets.

Note that neither $L_{con}$ nor $L_{size}$ , which are loss functions specifically related to the reflection, incorporate information from the data label. Instead, we leverage training information directly from $\mathcal{KB}$ to train the reflection. Also, despite sharing the prior feature layers, the output layer $f_{2}$ and reflection layer $R$ utilize different training information, thereby decoupling the objectives of intuitive problem-solving and inconsistency reflection.

## 4 Experiments

In this section, we will conduct several experiments. First, we will test our method on the NeSy benchmark task of solving Sudoku to comprehensively verify its effectiveness. Next, we will change the Sudoku input from symbols to images, which requires integrating and simultaneous reasoning with both sub-symbolic and symbolic elements, representing one of the most challenging tasks in this field. Finally, we will tackle NP-hard combinatorial optimization problems on graphs, using a knowledge base of only mathematical definitions, to demonstrate our method’s versatility. Through these experiments, we aim to answer the following questions:

- Compared to existing neuro-symbolic learning methods, can ABL-Refl achieve better performance in tasks requiring complex reasoning?

- Can ABL-Refl reduce the training resources required?

- Can ABL-Refl narrow the problem space for symbolic reasoning to achieve acceleration?

- Does ABL-Refl possess the capability for broad application, such as handling diverse data scenarios or various forms of domain knowledge?

All experiments are performed on a server with Intel Xeon Gold 6226R CPU and Tesla A100 GPU. In our experiments, we simply set hyperparameters $\alpha$ and $\beta$ in Eq. (3) to 1, since adjusting them does not have a noticeable impact on the results. For the hyperparameter $C$ in (2), we set it to 0.8, and have provided discussions in Appendix C, demonstrating that setting it to a value within a broad moderate range (e.g., 0.6-0.9) would always be a recommended choice. All experiments are repeated 5 times.

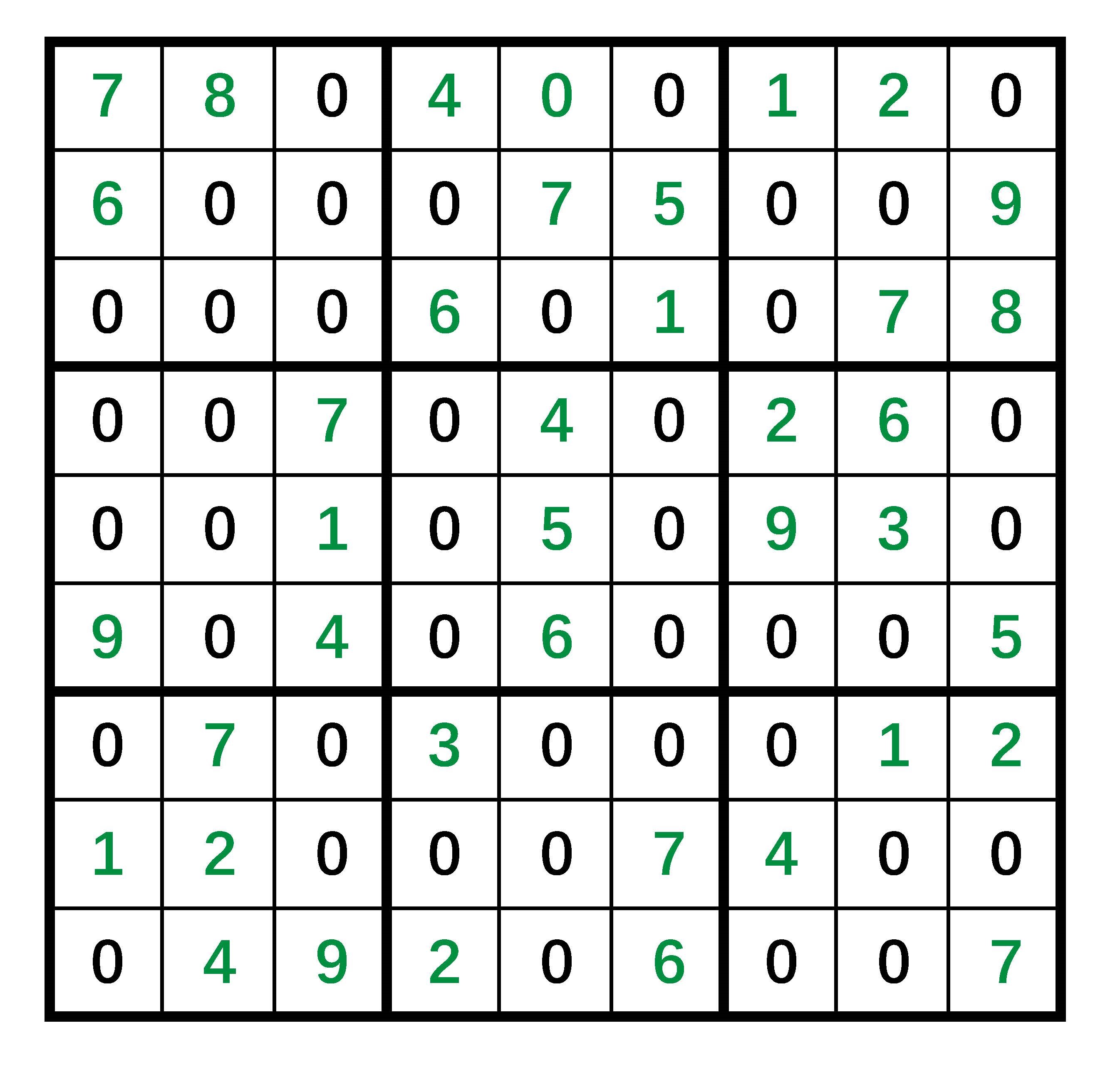

### 4.1 Solving Sudoku

#### Dataset and Setting.

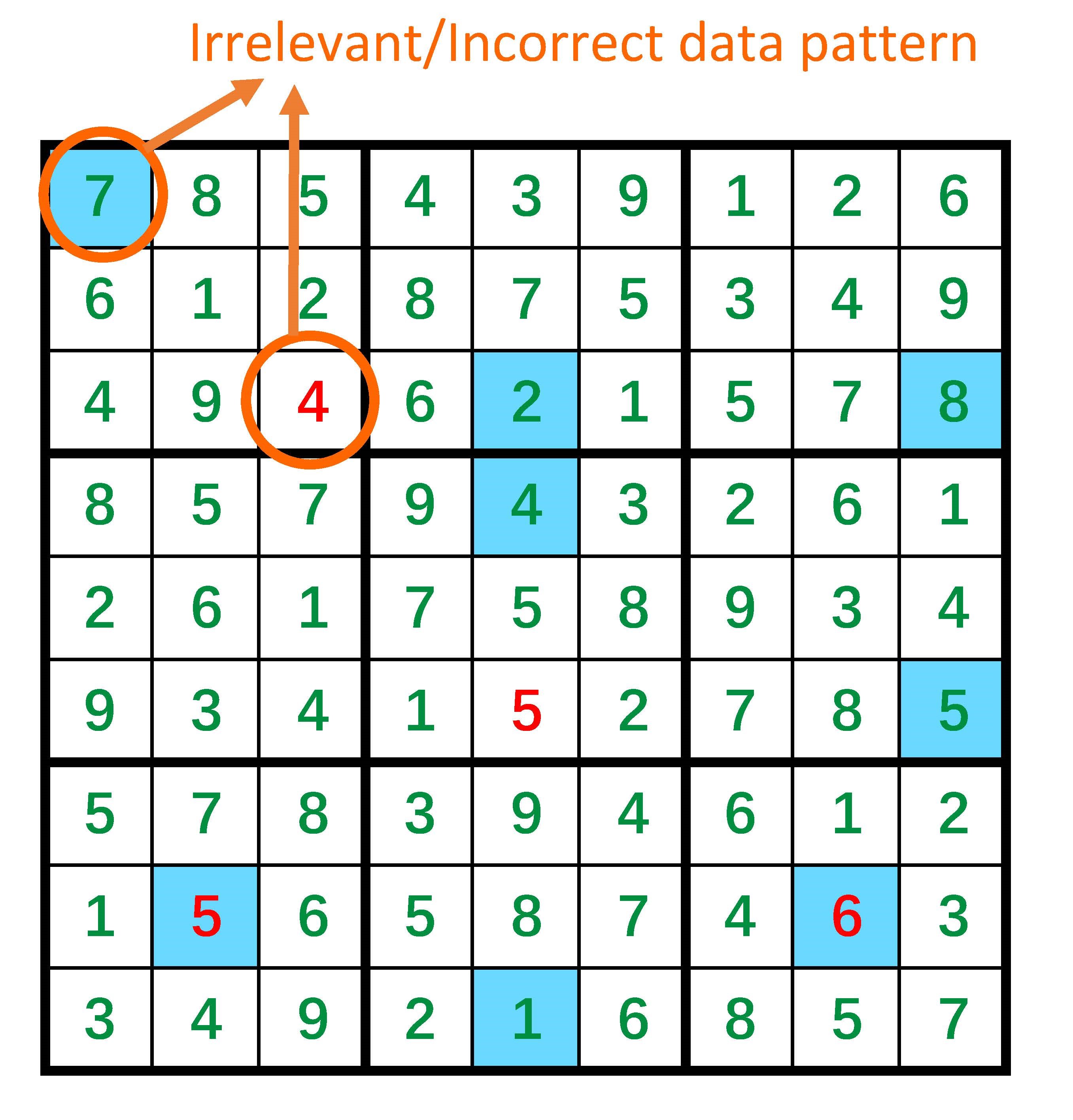

This task aims to solve a 9 $\times$ 9 Sudoku: Given 81 digits of 0-9 (where 0 represents a blank space) in a 9 $\times$ 9 board, we aim to find a solution $\boldsymbol{y}\in\{1,2,\dots,9\}^{81}$ that adhere to the Sudoku rules: no duplicate numbers are allowed in any row, column, or 3 $\times$ 3 subgrid. In this section, we first consider inputs in symbolic form, $\boldsymbol{x}\in\{0,1,\dots,9\}^{81}$ , and use datasets from a publicly available Kaggle site (Vopani 2019).

For the neural network $f$ , we use a simple graph neural network (GNN): the body block $f_{1}$ consists of one embedding layer and eight iterations of message-passing layers, resulting in a 128-dimensional embedding for each number, and then connects to both a linear output layer $f_{2}$ to obtain the intuitive output $\hat{\boldsymbol{y}}$ and a linear reflection layer $R$ to obtain the reflection vector ${\boldsymbol{r}}$ . We use the cross-entropy loss as $L_{labeled}$ . For the domain knowledge base $\mathcal{KB}$ , it contains the Sudoku rules mentioned above. We express $\mathcal{KB}$ in the form of propositional logic and utilize the MiniSAT solver (Sörensson 2010), an open-source SAT solver, as the symbolic solver to leverage $\mathcal{KB}$ and perform abduction.

For the consistency measurement, we define it as follows: one point is awarded for each row, each column and each 3 $\times$ 3 subgrid with no duplicate numbers, additionally, ten points are awarded if the entire board has no inconsistencies with $\mathcal{KB}$ . In this way, it is entirely based on $\mathcal{KB}$ . Notice that we deviated from the 1 or 0 measurement example setup mentioned in Section 3.4 to avoid a predominance of zero values in $\Delta\text{Con}_{\boldsymbol{r}}(\boldsymbol{\hat{y}})$ of Eq. (1), facilitating effective training with the REINFORCE algorithm. Similar considerations are applied in subsequent experiments.

#### Compared Methods and Results.

We compare ABL-Refl with the following baseline methods: 1) Recurrent Relational Network (RRN) (Palm, Paquet, and Winther 2018), a pure neural network method, 2) CL-STE (Yang, Lee, and Park 2022), a representative method of logic-based regularized loss, and 3) SATNet (Wang et al. 2019). A detailed description of these methods is provided in Appendix A. We also report the result for Simple GNN, which is the very same neural network used in our setting, yet directly treats the intuitive output $\hat{\boldsymbol{y}}$ as the final output.

| RRN CL-STE SATNet | 114.8 $\pm$ 7.8 173.6 $\pm$ 9.9 140.3 $\pm$ 6.8 | 0.19 $\pm$ 0.01 0.19 $\pm$ 0.02 0.11 $\pm$ 0.01 | 73.1 $\pm$ 1.2 76.5 $\pm$ 1.8 74.1 $\pm$ 0.4 |

| --- | --- | --- | --- |

| ABL-Refl | 109.8 $\pm$ 10.8 | 0.22 $\pm$ 0.02 | 97.4 $\pm$ 0.3 |

| Simple GNN | 29.7 $\pm$ 2.6 | 0.02 $\pm$ 0.00 | 55.6 $\pm$ 0.3 |

Table 1: Training time (for a total of 100 epochs using 20K training data), inference time and accuracy (on 1K test data) on solving Sudoku.

We report the training time (for a total of 100 epochs using 20K training data), inference time (on 1K test data) and accuracy (the percentage of completely accurate Sudoku solution boards on test data) in Table 1. We may see that our method outperforms the baselines significantly, improving by over 20% while maintaining a comparable inference time. This suggests an answer to Q1: ABL-Refl can achieve better reasoning performance. This improvement is primarily due to the use of abduction to rectify the neural network’s output during inference.

Furthermore, our method reaches high accuracy in only a few epochs (training curve is shown in Appendix B), significantly reducing training time. Even considering under identical training epochs, our total training time is less than baseline methods, despite involving a time-consuming symbolic solver. This partly stems from the neural network in our approach being less complex than those in baseline methods while achieving high accuracy. Overall, this suggests an answer to Q2: ABL-Refl can reduce the training time required.

We also attempt to reduce the amount of labeled data, removing labels from 50%, 75%, and 90% of the training data. We record the inference accuracy in Table 2. It can be observed that even with only 2K labeled training data, our method still achieves far better accuracy than the baseline methods with 20K labeled training data. This suggests an answer to Q2 from another aspect: ABL-Refl can reduce the labeled training data required.

| 20K | 0 | 97.4 $\pm$ 0.3 |

| --- | --- | --- |

| 10K | 10K | 96.3 $\pm$ 0.3 |

| 5K | 15K | 95.8 $\pm$ 0.6 |

| 2K | 18K | 94.7 $\pm$ 0.8 |

Table 2: Inference accuracy on solving Sudoku after reducing the amount of labeled data.

#### Comparing to Symbolic Solvers.

| Propositional logic ABL-Refl First-order logic | MiniSAT 97.4 $\pm$ 0.3 Prolog with CLP(FD) | Solver only 0.021 $\pm$ 0.004 Solver only | 100 $\pm$ 0 0.196 $\pm$ 0.015 100 $\pm$ 0 | - 0.217 $\pm$ 0.019 - | 0.227 $\pm$ 0.024 105.81 $\pm$ 5.62 | 0.227 $\pm$ 0.024 105.81 $\pm$ 5.62 |

| --- | --- | --- | --- | --- | --- | --- |

| ABL-Refl | 97.4 $\pm$ 0.3 | 0.021 $\pm$ 0.004 | 31.86 $\pm$ 1.88 | 31.88 $\pm$ 1.89 | | |

Table 3: Inference accuracy and time (on 1K test data) on solving Sudoku. For $\mathcal{KB}$ expressed in two different forms, ABL-Refl shows notable acceleration compared to symbolic solvers in both cases.

We next compare our method with merely employing symbolic solvers from scratch, to demonstrate its capability in accelerating symbolic reasoning. We perform inference on 1K test data and record the accuracy and time in Table 3. The inference time for our method includes the combined duration for data processing through both the neural network (NN time) and symbolic reasoning (abduction time).

As observed in the former two lines, our method achieves a notable acceleration in the abduction process, consequently decreasing the overall inference time, with only a minor compromise in accuracy. This efficiency gain is due to the fact that in ABL-Refl, after quickly generating an intuition through the neural network, abduction only needs to focus on areas identified as necessary by the reflection vector, whereas using only symbolic solvers requires abduction to reason through all blanks in a Sudoku puzzle. Overall, this suggests an answer to Q3: ABL-Refl can quickly generate the reflection, thereby reducing the symbolic reasoning search space and enhancing reasoning efficiency.

We also compared with Prolog with CLP(FD) (Triska 2012) solver, by expressing the same $\mathcal{KB}$ with a first-order constraint logic program. As shown in the table, we observe a significant reduction in abduction time and overall inference time, which puts another evidence to our previous answer to Q3, and also suggests an answer to Q4: ABL-Refl can effectively utilize the two most commonly used forms in symbolic knowledge representation, propositional logic and first-order logic.

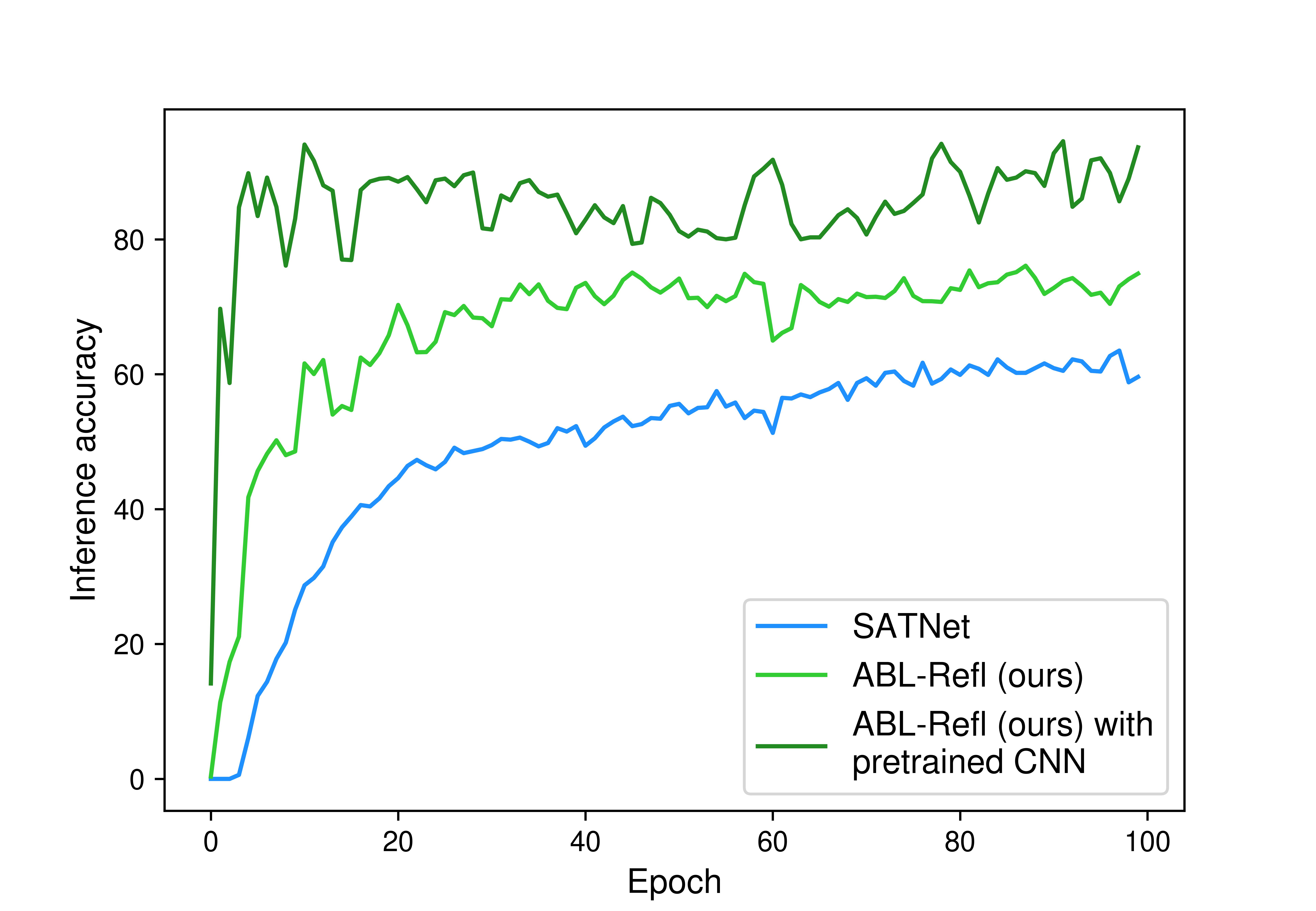

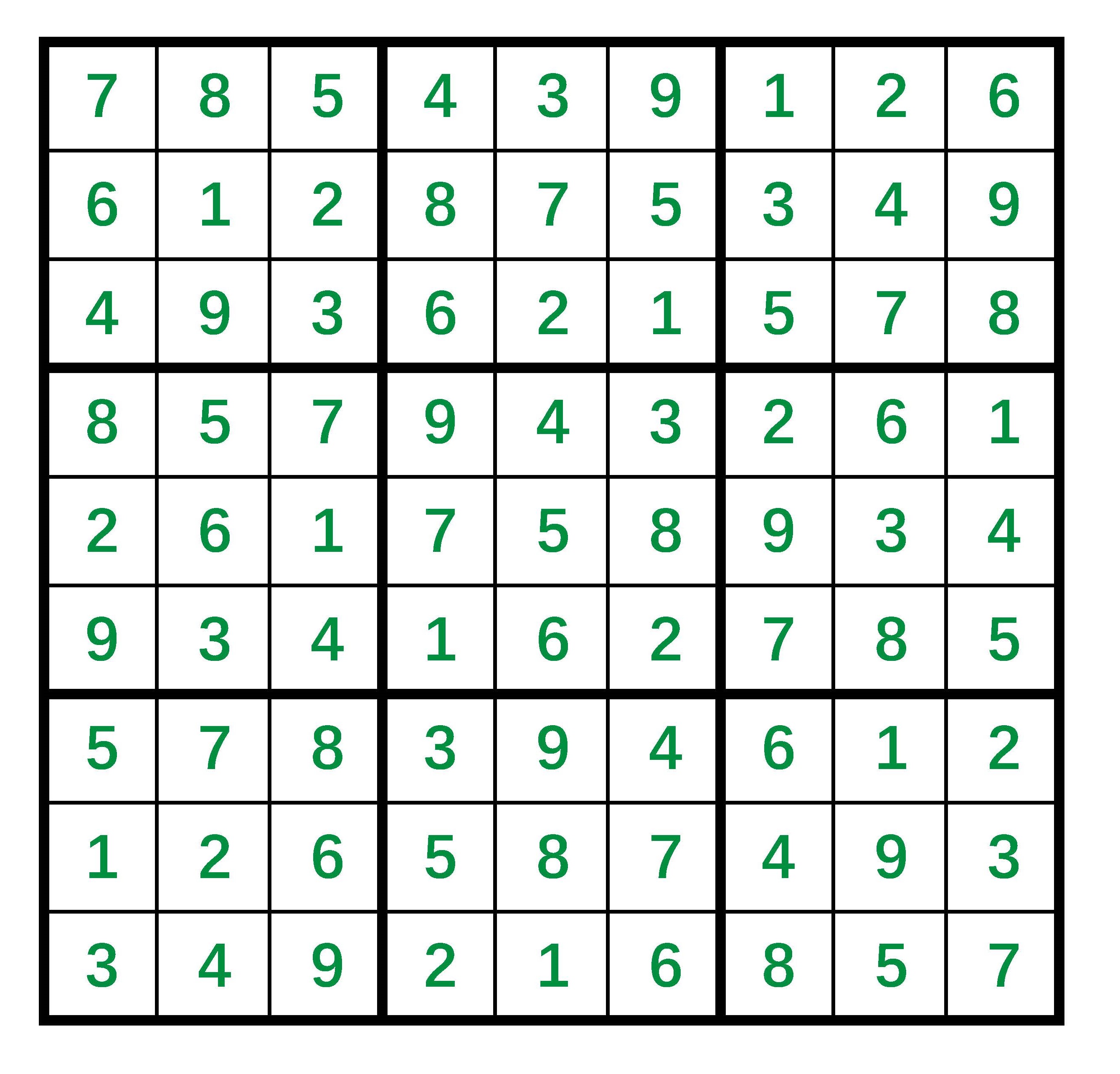

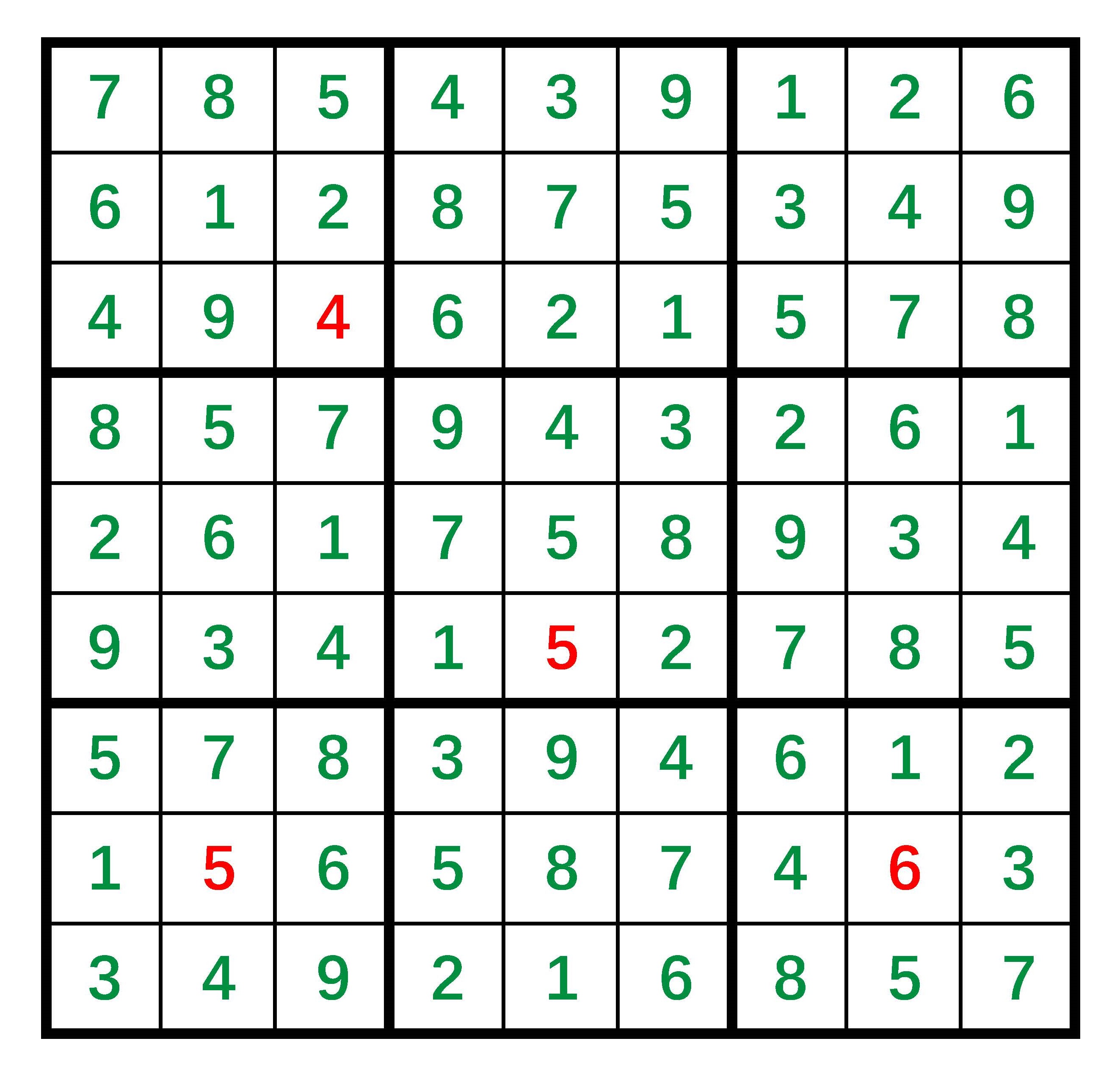

### 4.2 Solving Visual Sudoku

#### Dataset and Setting.

In this section, we modify the input from 81 symbolic digits to 81 MNIST images (handwritten digits of 0-9). We use the dataset provided in SATNet (Wang et al. 2019) and use 9K Sudoku boards for training and 1K for testing.

In order to process image data, we first pass each image through a LeNet convolutional neural network (CNN) (LeCun et al. 1998) to obtain the probability of each digit. The rest of our setting follows from that described in Section 4.1.

#### Compared Methods and Results.

We compare ABL-Refl with SATNet, as both methods allow for end-to-end training from visual inputs. We report the results in Table 4 and the training curve in Appendix B. Compared to SATNet, ABL-Refl shows notable improvement in reasoning accuracy within only a few training epochs. We then consider pretraining the CNN in advance using self-supervised learning methods (Chen et al. 2020) and find that this can further improve accuracy. Overall, the results further suggest positive answers to Q1 and Q2.

We also compare with CNN+Solver: each image is first mapped to symbolic form by a fully trained CNN (with 99.6% accuracy on the MNIST dataset) and then directly fed into the symbolic solver to fill in the blanks and derive the final output. In such scenarios, the problem space for the symbolic solver includes all the Sudoku blanks, and additionally, since the symbolic solver cannot revise errors from CNN, any inaccuracies in CNN’s output could lead the symbolic solver to crash (i.e., output no solution). Consequently, inference accuracy and time are adversely affected. This confirms the positive answer to Q3.

Finally, an overview of Sections 4.1 and 4.2 also suggests an answer to Q4: ABL-Refl is capable of handling both symbolic and sub-symbolic forms of input data.

| SATNet CNN+Solver ABL-Refl | 0.12 $\pm$ 0.01 0.23 $\pm$ 0.02 0.22 $\pm$ 0.02 | 63.5 $\pm$ 2.2 67.8 $\pm$ 4.2 77.8 $\pm$ 5.8 |

| --- | --- | --- |

| ABL-Refl (with pretrained CNN) | 0.22 $\pm$ 0.02 | 93.5 $\pm$ 3.2 |

Table 4: Inference time (on 1K test data) and accuracy on solving visual Sudoku.

### 4.3 Solving Combinatorial Optimization Problems on Graphs

In this section, we will further expand the application domain of our method. We apply ABL-Refl to solving combinatorial optimization problems on graphs. We conduct the experiment on finding the maximum clique in this section, and provide an additional experiment in Appendix E.

#### Dataset and Setting.

In this task, we are given a graph $G=(V,E)$ with $|V|=n$ nodes, and aim to output $\boldsymbol{y}\in\{0,1\}^{n}$ , where each index corresponds to a node, and the set of indices assigned the value of 1 collectively constitute the maximum clique. Note that this problem is a challenging NP-hard problem with extensive applications in real-life scenarios, and is generally considered challenging for neural networks (Zhang et al. 2023).

We use several datasets from the TUDatasets (Morris et al. 2020), with their basic information shown in Table 5. We use 80% of the data for training and 20% for testing.

In our method, the body layer $f_{1}$ consists of a single GAT layer (Veličković et al. 2017) and 16 gated graph convolution layers (Li et al. 2015), and the output layer $f_{2}$ and reflection layer $R$ are both linear layers. We use binary cross-entropy loss as $L_{labeled}$ . The domain knowledge base $\mathcal{KB}$ expresses the mathematical definition of maximum clique, i.e., every pair of vertices in the output set should be connected by an edge. We use Gurobi solver, an efficient mixed-integer program solver, to perform abduction. We define the consistency measurement as follows: one point is awarded for each pair of vertices if they are not connected by an edge; additionally, the size of the output set multiplied by 10 is added if the output set is indeed a clique.

| Method | Dataset (Graph nums./Avg. nodes per graph/Avg. edges per graph) | | | |

| --- | --- | --- | --- | --- |

| ENZYMES (600/33/62) | PROTEINS (1113/39/73) | IMDB-Binary (1000/19/97) | COLLAB (5000/74/2457) | |

| Erdos | 0.883 $\pm$ 0.156 | 0.905 $\pm$ 0.133 | 0.936 $\pm$ 0.175 | 0.852 $\pm$ 0.212 |

| Neural SFE | 0.933 $\pm$ 0.148 | 0.926 $\pm$ 0.165 | 0.961 $\pm$ 0.143 | 0.781 $\pm$ 0.316 |

| ABL-Refl | 0.991 $\pm$ 0.017 | 0.985 $\pm$ 0.020 | 0.979 $\pm$ 0.029 | 0.982 $\pm$ 0.015 |

Table 5: Approximation ratios on finding maximum clique on different datasets.

#### Compared Methods and Results.

We compare our methods with the following baselines: 1) Erdos (Karalias and Loukas 2020), 2) Neural SFE (Karalias et al. 2022), both leading methods for solving graph combinatorial problems. Their detailed descriptions are provided in Appendix A.

We report the approximation ratios in Table 5. The approximation ratio, indicating the result set size relative to the actual maximum set size, is better when closer to 1. We may observe that our method outperforms the baseline methods, achieving near-perfect results on all datasets. This confirms the positive answer to Q1. Also, as the scale of the data increases, our method maintains a high level of accuracy, showing a more pronounced improvement compared to baseline methods. This suggests an answer to Q4: ABL-Refl is capable of handling scalable data scenarios, even in high-dimensional settings that are challenging for previous methods. Finally, an overview of this section provides another aspect to Q4: ABL-Refl can utilize a wide range of $\mathcal{KB}$ , not limited to logical expressions but can also operate effectively with just the basic mathematical formulations.

## 5 Effects of Reflection Mechanism

This section provides a further analysis on the reflection mechanism. In ABL-Refl, the reflection is abduced from domain knowledge, and acts as an efficient attention mechanism to direct the focus for symbolic search. This reflection is the key in our method to accomplish the NeSy reasoning rectification pipeline, i.e., a pipeline that detects errors in neural networks and then invokes symbolic reasoning to rectify these positions. To corroborate the effectiveness of the reflection, we conduct direct comparison with other methods that achieve the same pipeline:

1. ABL, minimizing the inconsistency of intuitive output and knowledge base with an external zeroth-order consistency optimization module, as detailed in Section 3.2;

1. NN Confidence, retaining intuitive output with the top 80% confidence from the neural network result (other retain thresholds are explored in Appendix D) and passing the remaining into symbolic reasoning;

1. NASR (Cornelio et al. 2023), using a Transformer-based external selection module to detect error, and the module is trained on a large synthetic dataset in advance.

We compare them on the solving visual Sudoku task in Section 4.2. For a fair comparison, all methods employ the same neural network, $\mathcal{KB}$ and MiniSAT solver setup. We report the recall (the percentage of errors from neural networks that can be identified), inference time and accuracy (on 1K test data) in Table 6. Note that “recall” directly evaluates the effectiveness of the detection module itself. The following analysis examines the results:

- The consistency optimization in ABL faces significant efficiency challenges due to the large data scale (output dimension $n=81$ ). In such scenarios, the potential rectifications can reach up to $2^{81}$ , resulting in an overwhelmingly large search space for consistency optimization. Also, as an external module, its only way of interacting with $\mathcal{KB}$ is to treat it as a black box and repetitively submit queries for consistency evaluation. As a result, it may require more than $10^{9}$ queries to identify errors for each Sudoku example, resulting in several hours to complete inference on 1K test data.

- NN Confidence performs poorly in identifying outputs with errors. Since the pure data-driven neural network training does not explicitly incorporate $\mathcal{KB}$ information, a low confidence from it does not necessarily indicate an inconsistency with the domain knowledge. This subsequently results in the frequent crashing in symbolic solver, therefore hampering the overall inference time and accuracy. This result parallels human cognitive reflection abilities, which do not show much positive correlation with System 1 intuition (Pennycook et al. 2016). To further illustrate this point, we provide additional analysis, including a case study, in Appendix D.

- Our method also outperforms NASR, and notably, without the need of a synthetic dataset. This could be due to the fact that NASR’s error-selection module is trained independently from other components, and operates sequentially and separately during inference. Therefore, it can only rely on information from the output label, in contrast to our method, which can leverage information directly from the body block of neural network, establishing a deeper connection with the raw data. Additionally, in NASR, traversing the separate selection module takes additional time, whereas in ABL-Refl, the reflection is generated concurrently with the neural network output, avoiding efficiency loss.

| NN Confidence NASR ABL-Refl | 82.64 $\pm$ 2.78 95.86 $\pm$ 0.96 99.04 $\pm$ 0.85 | 0.24 $\pm$ 0.03 0.26 $\pm$ 0.02 0.22 $\pm$ 0.02 | 64.3 $\pm$ 6.2 82.7 $\pm$ 4.4 93.5 $\pm$ 3.2 |

| --- | --- | --- | --- |

Table 6: Recall, inference time and accuracy. ”Timeout” indicates that inference takes more than 1 hour.

## 6 Conclusion

In this paper, we present Abductive Reflection (ABL-Refl). It leverages domain knowledge to abduce a reflection vector, which flags potential errors in neural network outputs and then invokes abduction, serving as an attention mechanism for symbolic reasoning to focus on a much smaller problem space. Experiments show that ABL-Refl significantly outperforms other NeSy methods, achieving excellent reasoning accuracy with fewer training resources, and has successfully enhanced reasoning efficiency.

ABL-Refl preserves the integrity of both machine learning and logical reasoning with superior inference speed and high versatility. Therefore, it has the potential for broad application. In the future, it can be applied to large language models (Mialon et al. 2023) to help identify errors within their outputs, and subsequently exploit symbolic reasoning to enhance their trustworthiness and reliability.

## Acknowledgments

This research was supported by the NSFC (62176117, 62206124) and Jiangsu Science Foundation Leading-edge Technology Program (BK20232003).

## References

- Ahmed et al. (2022) Ahmed, K.; Wang, E.; Chang, K.-W.; and Van den Broeck, G. 2022. Neuro-symbolic entropy regularization. In Uncertainty in Artificial Intelligence, 43–53. PMLR.

- Amos and Kolter (2017) Amos, B.; and Kolter, J. Z. 2017. Optnet: Differentiable optimization as a layer in neural networks. In International Conference on Machine Learning, 136–145. PMLR.

- Bengio (2019) Bengio, Y. 2019. From system 1 deep learning to system 2 deep learning. In Neural Information Processing Systems.

- Cai et al. (2021) Cai, L.-W.; Dai, W.-Z.; Huang, Y.-X.; Li, Y.-F.; Muggleton, S. H.; and Jiang, Y. 2021. Abductive Learning with Ground Knowledge Base. In Proceedings of the 30th International Joint Conference on Artificial Intelligence (IJCAI’21), 1815–1821.

- Chen et al. (2020) Chen, T.; Kornblith, S.; Norouzi, M.; and Hinton, G. 2020. A simple framework for contrastive learning of visual representations. In International conference on machine learning, 1597–1607. PMLR.

- Cornelio et al. (2023) Cornelio, C.; Stuehmer, J.; Hu, S. X.; and Hospedales, T. 2023. Learning where and when to reason in neuro-symbolic inference. In The Eleventh International Conference on Learning Representations.

- Dai et al. (2019) Dai, W.-Z.; Xu, Q.; Yu, Y.; and Zhou, Z.-H. 2019. Bridging machine learning and logical reasoning by abductive learning. Advances in Neural Information Processing Systems, 32.

- Frederick (2005) Frederick, S. 2005. Cognitive reflection and decision making. Journal of Economic perspectives, 19(4): 25–42.

- Gao et al. (2024) Gao, E.-H.; Huang, Y.-X.; Hu, W.-C.; Zhu, X.-H.; and Dai, W.-Z. 2024. Knowledge-Enhanced Historical Document Segmentation and Recognition. In Proceedings of the 38th AAAI Conference on Artificial Intelligence (AAAI’24), 8409–8416.

- Han et al. (2023) Han, Q.; Yang, L.; Chen, Q.; Zhou, X.; Zhang, D.; Wang, A.; Sun, R.; and Luo, X. 2023. A GNN-Guided Predict-and-Search Framework for Mixed-Integer Linear Programming. arXiv preprint arXiv:2302.05636.

- Hitzler (2022) Hitzler, P. 2022. Neuro-symbolic artificial intelligence: The state of the art. IOS Press.

- Hoernle et al. (2022) Hoernle, N.; Karampatsis, R. M.; Belle, V.; and Gal, K. 2022. Multiplexnet: Towards fully satisfied logical constraints in neural networks. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 36, 5700–5709.

- Huang et al. (2020) Huang, Y.-X.; Dai, W.-Z.; Yang, J.; Cai, L.-W.; Cheng, S.; Huang, R.; Li, Y.-F.; and Zhou, Z.-H. 2020. Semi-Supervised Abductive Learning and Its Application to Theft Judicial Sentencing. In Proceedings of the 20th IEEE International Conference on Data Mining (ICDM’20), 1070–1075.

- Huang et al. (2024) Huang, Y.-X.; Hu, W.-C.; Gao, E.-H.; and Jiang, Y. 2024. ABLkit: A Python Toolkit for Abductive Learning. Frontiers of Computer Science, pp. to appear.

- Kahneman (2011) Kahneman, D. 2011. Thinking, fast and slow. macmillan.

- Karalias and Loukas (2020) Karalias, N.; and Loukas, A. 2020. Erdos goes neural: an unsupervised learning framework for combinatorial optimization on graphs. Advances in Neural Information Processing Systems, 33: 6659–6672.

- Karalias et al. (2022) Karalias, N.; Robinson, J.; Loukas, A.; and Jegelka, S. 2022. Neural Set Function Extensions: Learning with Discrete Functions in High Dimensions. arXiv preprint arXiv:2208.04055.

- LeCun et al. (1998) LeCun, Y.; Bottou, L.; Bengio, Y.; and Haffner, P. 1998. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11): 2278–2324.

- Li et al. (2015) Li, Y.; Tarlow, D.; Brockschmidt, M.; and Zemel, R. 2015. Gated graph sequence neural networks. arXiv preprint arXiv:1511.05493.

- Manhaeve et al. (2018) Manhaeve, R.; Dumancic, S.; Kimmig, A.; Demeester, T.; and De Raedt, L. 2018. Deepproblog: Neural probabilistic logic programming. advances in neural information processing systems, 31.

- Marra et al. (2020) Marra, G.; Giannini, F.; Diligenti, M.; and Gori, M. 2020. Integrating learning and reasoning with deep logic models. In Machine Learning and Knowledge Discovery in Databases, 517–532. Springer.

- Mialon et al. (2023) Mialon, G.; Fourrier, C.; Swift, C.; Wolf, T.; LeCun, Y.; and Scialom, T. 2023. GAIA: a benchmark for General AI Assistants. arXiv preprint arXiv:2311.12983.

- Morris et al. (2020) Morris, C.; Kriege, N. M.; Bause, F.; Kersting, K.; Mutzel, P.; and Neumann, M. 2020. Tudataset: A collection of benchmark datasets for learning with graphs. arXiv preprint arXiv:2007.08663.

- Nair et al. (2020) Nair, V.; Bartunov, S.; Gimeno, F.; Von Glehn, I.; Lichocki, P.; Lobov, I.; O’Donoghue, B.; Sonnerat, N.; Tjandraatmadja, C.; Wang, P.; et al. 2020. Solving mixed integer programs using neural networks. arXiv preprint arXiv:2012.13349.

- Nye et al. (2021) Nye, M.; Tessler, M.; Tenenbaum, J.; and Lake, B. M. 2021. Improving coherence and consistency in neural sequence models with dual-system, neuro-symbolic reasoning. Advances in Neural Information Processing Systems, 34: 25192–25204.

- Palm, Paquet, and Winther (2018) Palm, R.; Paquet, U.; and Winther, O. 2018. Recurrent relational networks. Advances in neural information processing systems, 31.

- Pennycook et al. (2016) Pennycook, G.; Cheyne, J. A.; Koehler, D. J.; and Fugelsang, J. A. 2016. Is the cognitive reflection test a measure of both reflection and intuition? Behavior research methods, 48: 341–348.

- Selsam et al. (2018) Selsam, D.; Lamm, M.; Bünz, B.; Liang, P.; de Moura, L.; and Dill, D. L. 2018. Learning a SAT solver from single-bit supervision. arXiv preprint arXiv:1802.03685.

- Serafini and Garcez (2016) Serafini, L.; and Garcez, A. d. 2016. Logic tensor networks: Deep learning and logical reasoning from data and knowledge. arXiv preprint arXiv:1606.04422.

- Sinayev and Peters (2015) Sinayev, A.; and Peters, E. 2015. Cognitive reflection vs. calculation in decision making. Frontiers in psychology, 6: 532.

- Sörensson (2010) Sörensson, N. 2010. Minisat 2.2 and minisat++ 1.1. A short description in SAT Race.

- Triska (2012) Triska, M. 2012. The finite domain constraint solver of SWI-Prolog. In Functional and Logic Programming: 11th International Symposium, 307–316. Springer.

- Veličković et al. (2017) Veličković, P.; Cucurull, G.; Casanova, A.; Romero, A.; Lio, P.; and Bengio, Y. 2017. Graph attention networks. arXiv preprint arXiv:1710.10903.

- Vopani (2019) Vopani. 2019. 9 Million Sudoku Puzzles and Solutions. https://www.kaggle.com/datasets/rohanrao/sudoku. Accessed: 2024-08-01.

- Wang et al. (2021) Wang, J.; Deng, D.; Xie, X.; Shu, X.; Huang, Y.-X.; Cai, L.-W.; Zhang, H.; Zhang, M.-L.; Zhou, Z.-H.; and Wu, Y. 2021. Tac-Valuer: Knowledge-based Stroke Evaluation in Table Tennis. In Proceedings of the 27th ACM SIGKDD Conference on Knowledge Discovery and Data Mining (KDD’21), 3688–3696.

- Wang et al. (2019) Wang, P.-W.; Donti, P.; Wilder, B.; and Kolter, Z. 2019. Satnet: Bridging deep learning and logical reasoning using a differentiable satisfiability solver. In International Conference on Machine Learning, 6545–6554. PMLR.

- Williams (1992) Williams, R. J. 1992. Simple statistical gradient-following algorithms for connectionist reinforcement learning. Reinforcement learning, 5–32.

- Xu et al. (2018) Xu, J.; Zhang, Z.; Friedman, T.; Liang, Y.; and Broeck, G. 2018. A semantic loss function for deep learning with symbolic knowledge. In International conference on machine learning, 5502–5511. PMLR.

- Yang, Ishay, and Lee (2020) Yang, Z.; Ishay, A.; and Lee, J. 2020. Neurasp: Embracing neural networks into answer set programming. In 29th International Joint Conference on Artificial Intelligence.

- Yang, Lee, and Park (2022) Yang, Z.; Lee, J.; and Park, C. 2022. Injecting logical constraints into neural networks via straight-through estimators. In International Conference on Machine Learning, 25096–25122. PMLR.

- Zhang et al. (2023) Zhang, B.; Luo, S.; Wang, L.; and He, D. 2023. Rethinking the expressive power of gnns via graph biconnectivity. arXiv preprint arXiv:2301.09505.

- Zhang et al. (2020) Zhang, W.; Sun, Z.; Zhu, Q.; Li, G.; Cai, S.; Xiong, Y.; and Zhang, L. 2020. NLocalSAT: Boosting local search with solution prediction. arXiv preprint arXiv:2001.09398.

- Zhou (2019) Zhou, Z.-H. 2019. Abductive learning: towards bridging machine learning and logical reasoning. Science China Information Sciences, 62: 1–3.

- Zhou and Huang (2022) Zhou, Z.-H.; and Huang, Y.-X. 2022. Abductive Learning. In Hitzler, P.; and Sarker, M. K., eds., Neuro-Symbolic Artificial Intelligence: The State of the Art, 353–369. Amsterdam: IOS Press.

## Appendix A Comparison Methods

In this section, we will provide a brief supplementary introduction to the compared baseline methods used in experiments.

### A.1 Solving Sudoku

In the solving Sudoku experiment (Section 4.1 and 4.2), we have compared our method with the following baselines:

1. Recurrent Relational Network (RRN) (Palm, Paquet, and Winther 2018), a state-of-the-art pure neural network method tailored for this problem;

1. CL-STE (Yang, Lee, and Park 2022), injecting logical knowledge (defined in the same way as our $\mathcal{KB}$ ) as neural network constraints during the training of RRN;

1. SATNet (Wang et al. 2019), incorporating a differentiable MaxSAT solver into the neural network to perform reasoning.

Note that CL-STE is a representative method of logic-based regularized loss, relaxing symbolic logic as neural network loss. Additionally, among these methods, CL-STE stands out in both accuracy and efficiency (partly because it prevents constructing complex SDDs, unlike other methods including semantic loss (Xu et al. 2018)).

Other lines of methods generally underperform above baselines in scenarios where $n$ (the scale of $\boldsymbol{y}$ ) is high. For instance, ABL faces the challenge where consistency optimization needs to choose among exponential query candidates, resulting in runtimes thousands of times longer than other methods, as seen in Section 5. Take two other representative NeSy methods as examples: DeepProbLog (Manhaeve et al. 2018) involves substantial computational costs, taking days to complete solving Sudoku; NeurASP (Yang, Ishay, and Lee 2020) also performs slow and lags in accuracy, as shown in Yang et al. (2022).

### A.2 Solving Combinatorial Optimization on Graphs

In the solving combinatorial optimization on graphs experiment (Section 4.3 and Appendix E), we have compared our method with the following baselines:

1. Erdos (Karalias and Loukas 2020), optimizing set functions using a neural network parametrizing a distribution over sets;

1. Neural SFE (Karalias et al. 2022), optimizing set functions by extending them onto high-dimensional continuous domains.

In this experiment, the above methods use the same body block graph neural network as our method.

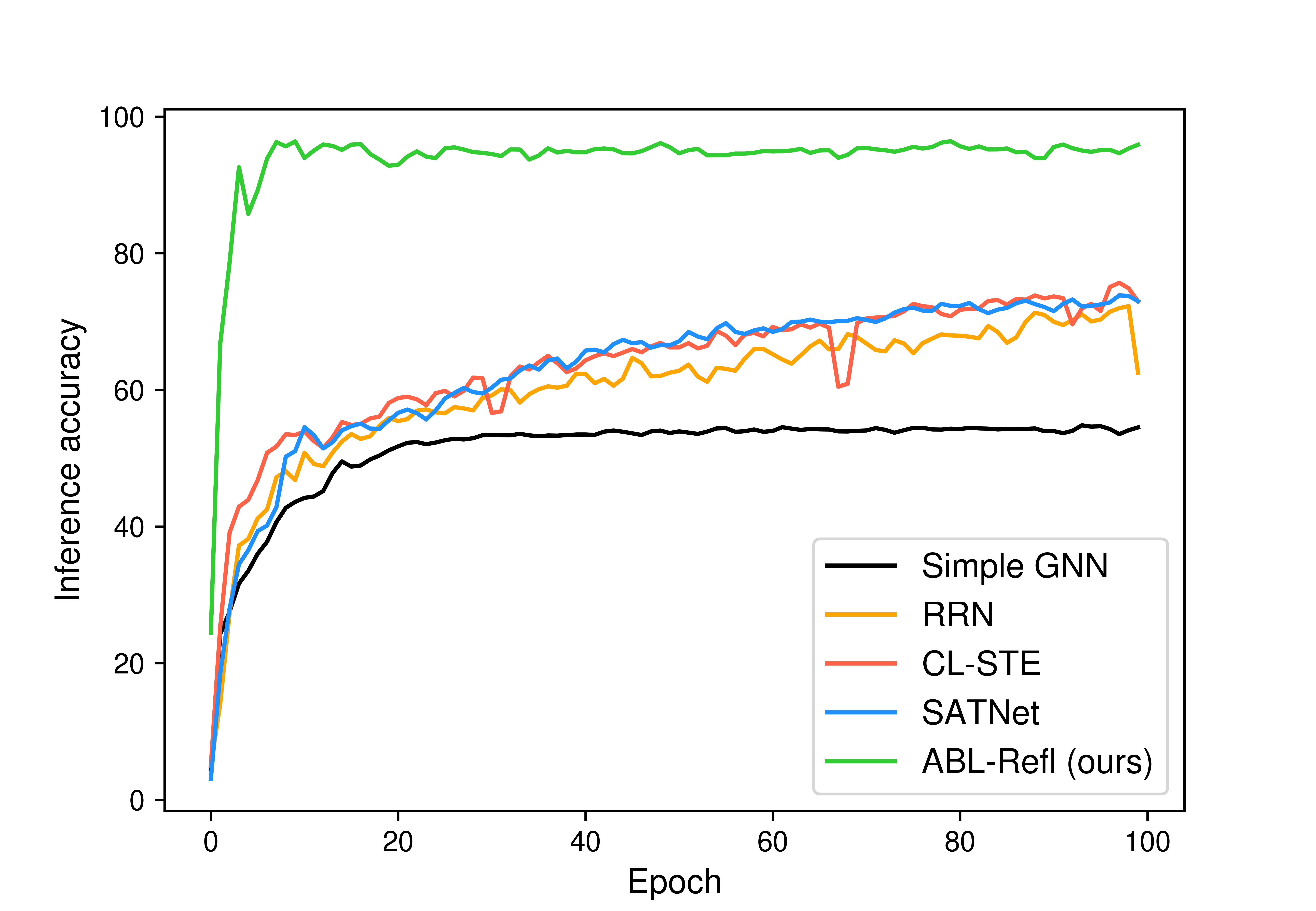

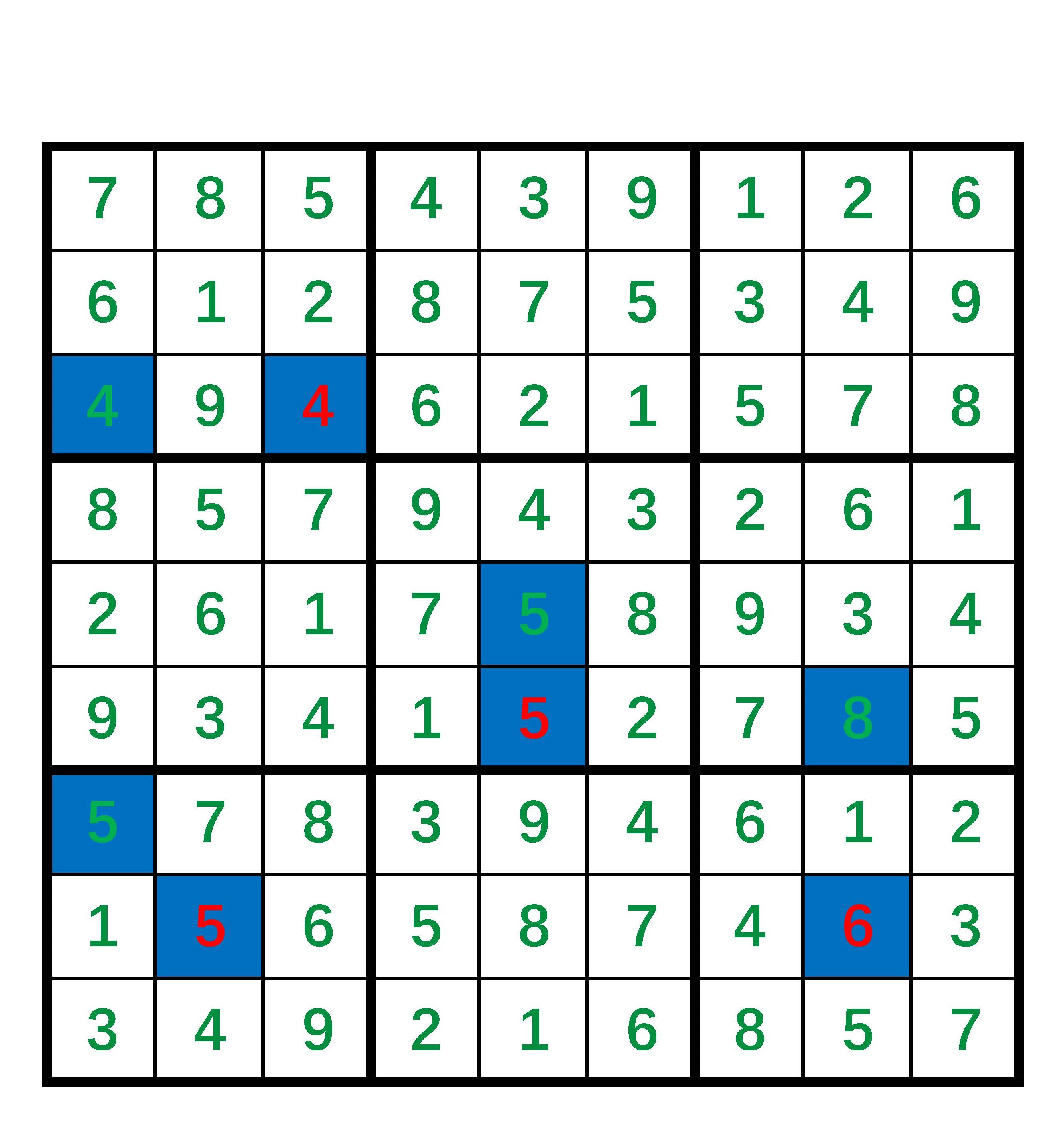

## Appendix B Training Curve

In this section, we will report the training curve in the experiments on solving Sudoku (Section 4.1) and visual Sudoku (Section 4.2). The respective training curves for each scenario are shown in Figures 4(a) and 4(b), with the horizontal axis representing training epochs and the vertical axis representing inference accuracy. We may see that our method achieves high accuracy within just a few epochs, significantly reducing training time compared to other baseline methods.

<details>

<summary>extracted/6187776/figs/sudoku.jpg Details</summary>

### Visual Description

## Line Chart: Inference Accuracy Comparison Over Training Epochs

### Overview

The image is a line chart comparing the inference accuracy of five different machine learning models over the course of 100 training epochs. The chart demonstrates the learning curves and final performance of each model.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Epoch". It represents the training iterations, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **Y-Axis (Vertical):** Labeled "Inference accuracy". It represents the model's accuracy percentage, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located in the bottom-right quadrant of the chart area. It maps line colors to model names:

* Black line: `Simple GNN`

* Orange line: `RRN`

* Red line: `CL-STL`

* Blue line: `SATNet`

* Green line: `ABL-Refl (ours)`

### Detailed Analysis

The chart plots five distinct data series, each showing a different trajectory of accuracy improvement over time.

1. **ABL-Refl (ours) - Green Line:**

* **Trend:** Exhibits an extremely rapid initial ascent, reaching near-peak performance within the first ~10 epochs. After this initial spike, it maintains a very high and relatively stable accuracy with minor fluctuations for the remainder of the training.

* **Data Points (Approximate):** Starts near 0% at epoch 0. Surges to approximately 90-95% by epoch 5. From epoch 10 to 100, it fluctuates in a high band, consistently between ~94% and ~97%.

2. **SATNet - Blue Line:**

* **Trend:** Shows a steady, logarithmic-style growth curve. It improves rapidly in the early epochs and then continues to gain accuracy at a decreasing rate, with some volatility.

* **Data Points (Approximate):** Starts near 0%. Reaches ~50% by epoch 10, ~65% by epoch 40, and ~70% by epoch 70. It ends near ~73% at epoch 100.

3. **CL-STL - Red Line:**

* **Trend:** Follows a growth pattern very similar to SATNet (blue), often intertwining with it. It shows a general upward trend with noticeable volatility, including a significant dip.

* **Data Points (Approximate):** Starts near 0%. Tracks closely with the blue line, reaching ~70% by epoch 70. It experiences a sharp drop to approximately 60% around epoch 65 before recovering. It ends near ~74% at epoch 100, slightly above the blue line.

4. **RRN - Orange Line:**

* **Trend:** Also follows a logarithmic growth curve but consistently performs slightly below the blue (SATNet) and red (CL-STL) lines for most of the training. It shows moderate volatility.

* **Data Points (Approximate):** Starts near 0%. Reaches ~45% by epoch 10, ~60% by epoch 40, and ~68% by epoch 80. It ends near ~72% at epoch 100.

5. **Simple GNN - Black Line:**

* **Trend:** Shows the slowest initial growth and plateaus at the lowest final accuracy. Its curve is smoother with less volatility compared to the other non-green lines.

* **Data Points (Approximate):** Starts near 0%. Reaches ~40% by epoch 10, ~52% by epoch 20, and then plateaus. It maintains an accuracy between ~53% and ~55% from epoch 30 to 100.

### Key Observations

* **Performance Hierarchy:** There is a clear and substantial performance gap. The `ABL-Refl (ours)` model (green) dramatically outperforms all other models from the very beginning and maintains a lead of approximately 20-25 percentage points in accuracy throughout.

* **Convergence Speed:** `ABL-Refl` converges to its peak performance almost immediately (within ~5-10 epochs), while the other four models require the full 100 epochs to approach their final, lower accuracy levels.

* **Model Grouping:** The `SATNet` (blue), `CL-STL` (red), and `RRN` (orange) models form a middle-performance cluster with similar learning dynamics, all ending in the low-to-mid 70% range. `Simple GNN` (black) is the lowest-performing model, plateauing in the mid-50% range.

* **Volatility:** The middle cluster (blue, red, orange lines) exhibits more volatility (jagged lines) during training compared to the relatively smooth plateau of the black line and the high, stable performance of the green line. The red line (`CL-STL`) shows the most pronounced single drop in performance.

### Interpretation

This chart is likely from a research paper introducing the `ABL-Refl` model. The data strongly suggests that the proposed `ABL-Refl` method is significantly more effective and efficient for the given inference task than the baseline methods (`Simple GNN`, `RRN`, `CL-STL`, `SATNet`).

* **What the data demonstrates:** The `ABL-Refl` model learns the task with far fewer training iterations (epochs) and achieves a much higher final accuracy. Its stability after the initial learning phase suggests robust generalization.

* **Relationship between elements:** The chart is designed to highlight the superiority of the authors' method. The choice of a bright green color for `ABL-Refl` makes it visually dominant. The clustering of the other four models emphasizes that they represent a class of existing solutions that the new method surpasses.

* **Notable anomalies:** The sharp dip in the `CL-STL` (red) line around epoch 65 is an outlier in its otherwise steady climb, potentially indicating a temporary instability in its training process. The near-identical performance trajectories of `SATNet` and `CL-STL` for much of the chart suggest these models may share similar underlying mechanisms or limitations for this task.

* **Underlying message:** The primary takeaway is not just that `ABL-Refl` is better, but that it represents a qualitative leap in performance—changing the problem from one where accuracy slowly climbs to the 70s to one where near-perfect accuracy (~95%+) is rapidly and reliably achieved.

</details>

(a) Sudoku.

<details>

<summary>extracted/6187776/figs/visual_sudoku.jpg Details</summary>

### Visual Description

\n

## Line Chart: Inference Accuracy vs. Training Epochs

### Overview

This image is a line chart comparing the training performance of three different machine learning models over 100 epochs. The chart plots "Inference accuracy" on the vertical axis against "Epoch" on the horizontal axis. The primary purpose is to demonstrate the learning curves and final performance of a proposed method ("ABL-Refl") against a baseline ("SATNet") and an enhanced version of the proposed method.

### Components/Axes

* **Chart Type:** Line chart with three data series.

* **X-Axis (Horizontal):**

* **Label:** "Epoch"

* **Scale:** Linear, from 0 to 100.

* **Major Tick Marks:** At intervals of 20 (0, 20, 40, 60, 80, 100).

* **Y-Axis (Vertical):**

* **Label:** "Inference accuracy"

* **Scale:** Linear, from 0 to approximately 95 (inferred from data peaks).

* **Major Tick Marks:** At intervals of 20 (0, 20, 40, 60, 80).

* **Legend:**

* **Position:** Bottom-right corner of the plot area.

* **Entries (from top to bottom):**

1. **SATNet:** Represented by a blue line.

2. **ABL-Refl (ours):** Represented by a light green line.

3. **ABL-Refl (ours) with pretrained CNN:** Represented by a dark green line.

### Detailed Analysis

The chart displays three distinct learning curves, each showing a general upward trend with varying degrees of volatility and final accuracy.

1. **SATNet (Blue Line):**

* **Trend:** Starts near 0% accuracy at epoch 0. Shows a steady, relatively smooth logarithmic growth curve. The rate of improvement slows after approximately epoch 40.

* **Approximate Data Points:**

* Epoch 0: ~0%

* Epoch 20: ~40%

* Epoch 40: ~50%

* Epoch 60: ~55%

* Epoch 80: ~60%

* Epoch 100: ~60% (ends slightly below its peak).

2. **ABL-Refl (ours) (Light Green Line):**

* **Trend:** Starts near 0% at epoch 0. Exhibits a rapid initial increase, followed by a more gradual climb with moderate fluctuations. It consistently outperforms SATNet after the first few epochs.

* **Approximate Data Points:**

* Epoch 0: ~0%

* Epoch 20: ~65%

* Epoch 40: ~70%

* Epoch 60: ~70% (with a notable dip around epoch 60).

* Epoch 80: ~72%

* Epoch 100: ~75%.

3. **ABL-Refl (ours) with pretrained CNN (Dark Green Line):**

* **Trend:** Starts at a significantly higher accuracy (~15%) at epoch 0. Shows an extremely rapid initial jump to over 80% within the first 5-10 epochs. After this initial surge, it enters a volatile plateau phase, oscillating between approximately 80% and 95% for the remainder of training. It is the top-performing model throughout.

* **Approximate Data Points:**

* Epoch 0: ~15%

* Epoch 10: Peaks near ~95%.

* Epoch 20: ~85%

* Epoch 40: ~82%

* Epoch 60: ~90% (after a local peak).

* Epoch 80: ~85%

* Epoch 100: ~92%.

### Key Observations

* **Performance Hierarchy:** A clear and consistent performance order is established early and maintained: "ABL-Refl with pretrained CNN" > "ABL-Refl" > "SATNet".

* **Impact of Pretraining:** The addition of a pretrained CNN provides a massive initial boost (starting at ~15% vs. 0%) and leads to a much higher final accuracy ceiling (~90%+ vs. ~75%).

* **Convergence & Stability:**