# Inferring Functionality of Attention Heads from their Parameters

**Authors**:

- Amit Elhelo Mor Geva (Blavatnik School of Computer Science, Tel Aviv University)

Abstract

Attention heads are one of the building blocks of large language models (LLMs). Prior work on investigating their operation mostly focused on analyzing their behavior during inference for specific circuits or tasks. In this work, we seek a comprehensive mapping of the operations they implement in a model. We propose MAPS (Mapping Attention head ParameterS), an efficient framework that infers the functionality of attention heads from their parameters, without any model training or inference. We showcase the utility of MAPS for answering two types of questions: (a) given a predefined operation, mapping how strongly heads across the model implement it, and (b) given an attention head, inferring its salient functionality. Evaluating MAPS on 20 operations across 6 popular LLMs shows its estimations correlate with the head’s outputs during inference and are causally linked to the model’s predictions. Moreover, its mappings reveal attention heads of certain operations that were overlooked in previous studies, and valuable insights on function universality and architecture biases in LLMs. Next, we present an automatic pipeline and analysis that leverage MAPS to characterize the salient operations of a given head. Our pipeline produces plausible operation descriptions for most heads, as assessed by human judgment, while revealing diverse operations. We release our code and mappings at https://github.com/amitelhelo/MAPS.

Inferring Functionality of Attention Heads from their Parameters

Amit Elhelo Mor Geva Blavatnik School of Computer Science, Tel Aviv University {amitelhelw@mail,morgeva@tauex}.tau.ac.il

1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Multi-head Attention Layer and Token Mapping Analysis

### Overview

The image is a diagram illustrating the process of inferring functionality within a multi-head attention layer by analyzing mappings between tokens. It depicts a multi-head attention layer, parameter projection to a vocabulary, and two methods for evaluating the layer's operation: mapping countries to capitals and name variations. The diagram uses a grid-based visualization to represent these mappings, with color intensity indicating the strength of the association.

### Components/Axes

The diagram consists of the following components:

* **Multi-head attention layer:** Represented as a rectangular block with input and output arrows. Inside the block are labeled matrices: W<sub>VQ</sub><sup>1</sup>, W<sub>QK</sub><sup>1</sup>, W<sub>VQ</sub><sup>n</sup>, W<sub>QK</sub><sup>n</sup>. A magnifying glass highlights a portion of the layer.

* **Projecting parameters to the vocabulary:** A grid of cells representing the vocabulary, with a highlighted cell labeled "M". The grid is labeled "|V|" on both axes.

* **Inferring functionality by analyzing mappings between tokens:** A descriptive label for the lower portion of the diagram.

* **A: Evaluating the head's implementation of a predefined operation:** A label for the "Country to capital" mapping grid.

* **B: Inspecting the head's salient operations:** A label for the "Name variations" mapping grid.

* **Country to capital grid:** A 3x3 grid with rows labeled "France", "Germany", and "Egypt", and columns labeled "Cairo", "Paris", and "Berlin".

* **Name variations grid:** A 2x3 grid with rows labeled "Tomas" and "Donna", and columns labeled "tommi", "Don", and "Tom".

* **Association Strength Indicators:** Color intensity within the grids represents the strength of the association between tokens.

* **Association Scores:** "0.7" is displayed below the "Country to capital" grid, and "0.9" is displayed below the "Name variations" grid.

### Detailed Analysis or Content Details

**Multi-head Attention Layer:**

* The layer contains matrices labeled W<sub>VQ</sub><sup>1</sup>, W<sub>QK</sub><sup>1</sup>, W<sub>VQ</sub><sup>n</sup>, and W<sub>QK</sub><sup>n</sup>. These likely represent weight matrices for query, key, and value transformations within the attention mechanism. The superscript 'n' suggests multiple heads.

* The magnifying glass focuses on a portion of the layer, implying detailed inspection of specific weights.

**Projecting Parameters to the Vocabulary:**

* The grid represents the vocabulary space, with dimensions labeled "|V|". The size of the grid is approximately 8x8.

* The cell labeled "M" is highlighted, potentially indicating a specific token or parameter of interest.

**Country to Capital Mapping (A):**

* The grid shows associations between countries and their capitals.

* France - Paris: Strong association (dark yellow).

* France - Cairo: Weak association (light yellow).

* France - Berlin: Weak association (light yellow).

* Germany - Cairo: Weak association (light yellow).

* Germany - Paris: Weak association (light yellow).

* Germany - Berlin: Strong association (dark yellow).

* Egypt - Cairo: Strong association (dark yellow).

* Egypt - Paris: Weak association (light yellow).

* Egypt - Berlin: Weak association (light yellow).

* The overall association score is 0.7.

**Name Variations Mapping (B):**

* The grid shows associations between name variations.

* Tomas - tommi: Strong association (dark yellow).

* Tomas - Don: Weak association (light yellow).

* Tomas - Tom: Weak association (light yellow).

* Donna - tommi: Weak association (light yellow).

* Donna - Don: Strong association (dark yellow).

* Donna - Tom: Weak association (light yellow).

* The overall association score is 0.9.

### Key Observations

* The "Name variations" mapping (0.9) has a higher association score than the "Country to capital" mapping (0.7), suggesting the attention head is better at capturing relationships between name variations.

* The grids use a color gradient to represent the strength of the association, with darker yellow indicating a stronger relationship.

* The multi-head attention layer is depicted as a complex component with multiple weight matrices.

### Interpretation

The diagram illustrates a method for understanding the internal workings of a multi-head attention layer. By analyzing how the layer maps tokens (countries to capitals, name variations), researchers can infer the functionality and salient operations of the layer. The higher association score for name variations suggests that the attention head may be particularly sensitive to subtle differences in names. The use of grid-based visualizations allows for a clear and intuitive representation of these mappings. The diagram highlights the importance of examining the internal representations learned by attention mechanisms to gain insights into their behavior. The "M" in the vocabulary projection could represent a key parameter or token that the attention head focuses on. The overall goal is to move beyond treating attention layers as "black boxes" and to develop a deeper understanding of their internal logic.

</details>

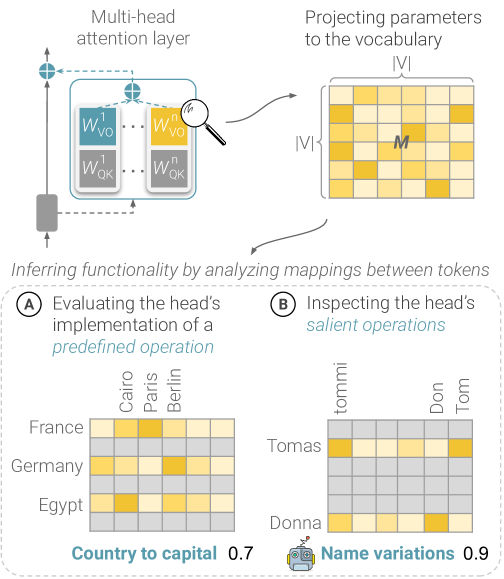

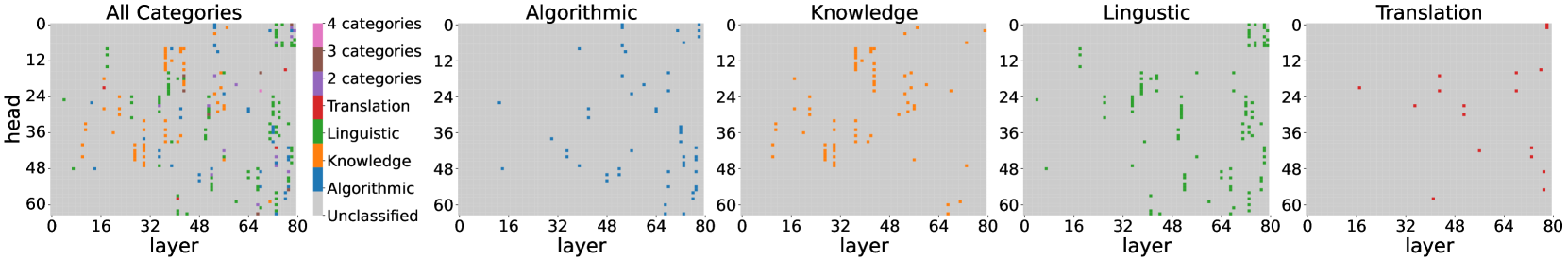

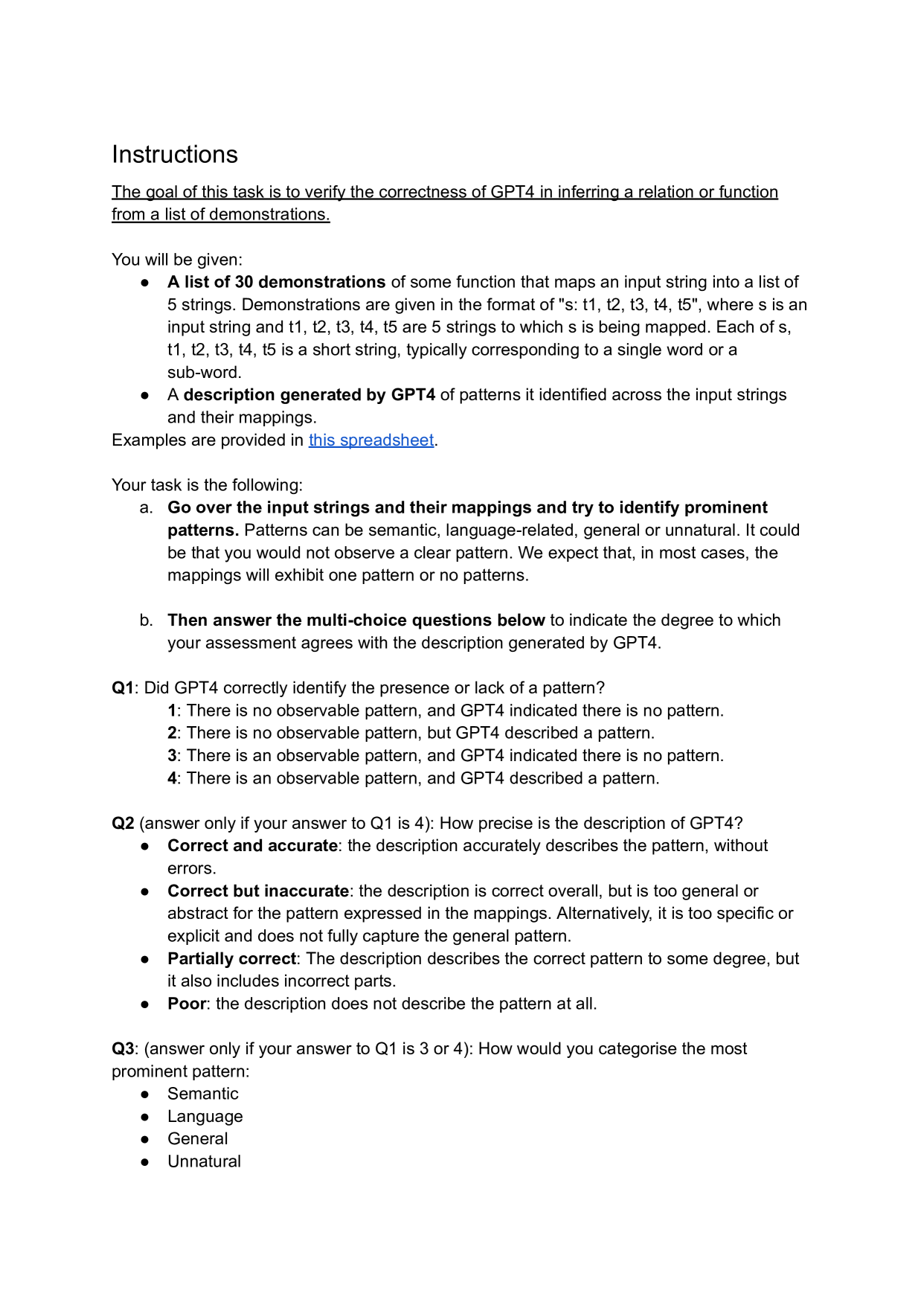

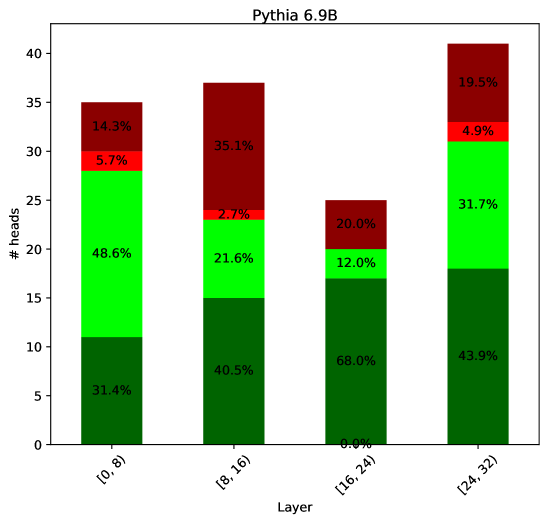

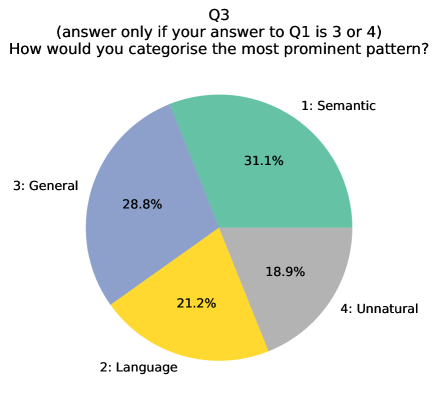

Figure 1: Illustration of MAPS, a framework for inferring the functionality of attention heads in LLMs from their parameters. MAPS casts the head as a matrix $M$ which assigns a score for every pair of tokens in the model’s vocabulary. Then, it considers groups of token pairs (sub-matrices in $M$ ) to measure how strongly the head implements a given operation (A) and to inspect the head’s salient operations (B).

Attention heads play a key role in modern large language models (LLMs) (Vaswani et al., 2017; Zhou et al., 2024; Olsson et al., 2022). Numerous studies (Zheng et al., 2024; Ferrando et al., 2024) have explored their functionality, typically by analyzing their attention patterns or outputs during inference for certain inputs or tasks.

However, relying on the model’s behavior for certain inputs has drawbacks. First, this approach may overlook some of the functions implemented by the head, as heads can exhibit different behaviors for different inputs (Gould et al., 2024; Merullo et al., 2024a; Olsson et al., 2022; Kissane et al., 2024). Second, a comprehensive analysis of the head’s operation would require executing the model over numerous inputs, potentially the whole training corpus, which involves a high computational cost and could be impossible when the data is unavailable. Last, analyzing the examples that activate the head is often non-trivial and could be misleading (Bolukbasi et al., 2021; Gao et al., 2024; Kissane et al., 2024).

In this work, we consider a different approach to this problem, where our goal is to infer the functionality of attention heads directly from their parameters and without executing the model. To this end, we leverage the approach of interpreting model parameters in the vocabulary space (Geva et al., 2021, 2022; Katz et al., 2024). Specifically, we build on the formulation by Elhage et al. (2021); Dar et al. (2023), who cast the attention head as a matrix $M$ , where each entry is a mapping score between two tokens. While this approach has been shown effective in identifying heads with certain operations, so far its usage has been limited to studying specific heads in detected circuits Wang et al. (2023); McDougall et al. (2024) or a single operation Gould et al. (2024).

Here, we scale this interpretation approach into a general framework, called MAPS (Mapping Attention heads ParameterS), which enables answering two types of basic questions: (a) given a predefined operation, mapping how strongly different heads across the model implement it, and (b) given an attention head, inferring its prominent operations. This is done by considering patterns across groups of mappings in $M$ , as illustrated in Figure 1. Predefined relations signify groups of mappings expressing a certain relation (e.g. city of a country or pronoun resolving). Salient operations consist of subsets of mappings for which the head induces the most prominent effect. In addition, analyzing simple statistics of these mappings provides insights into how global or specific its operation is.

We evaluate our framework on 6 popular LLMs and 20 predefined relations of 4 categories – knowledge, language, algorithmic, and translation. Experiments show that estimations by MAPS strongly correlate with the head outputs during inference. Moreover, causally removing all the heads implementing a certain operation substantially impairs the model’s ability to answer queries requiring this operation, compared to removing other heads.

Analysis of the obtained mappings shows that, across all models, MAPS detects relation heads mostly in the middle and upper layers, while revealing universality patterns for several relations. Moreover, it demonstrates how the model’s architecture introduces biases in function encoding. Smaller models tend to encode higher numbers of relations on a single head, and in Llama-3.1 models, which use grouped-query attention, grouped attention heads often implement the same or similar relations. Notably, MAPS successfully detected previously identified heads of specific operations, while discovering additional heads of similar operations not reported before.

Next, we demonstrate the utility of MAPS for inferring the prominent operations of a given head. We consider the head’s salient mappings in $M$ and use GPT-4o Hurst et al. (2024) to automatically describe the functionality they exhibit. Applying this procedure to GPT-2 xl and Pythia 6.9B, we map the prominent operations of 62% of their heads and 60%-96% of those in the middle and upper layers. Qualitative analysis shows semantic, linguistic, and algorithmic operations and reveals novel operations, such as the extension of time periods (day->month;month->year). A human study shows that our automated pipeline performs reasonably well, and GPT-4o reliably detects observable operations.

To conclude, we introduce MAPS, an efficient framework for inferring attention heads’ functionality from their parameters. We showcase the utility of MAPS in systematically mapping a certain functionality across the model and automatically characterizing the salient operations of a given head. Estimations by MAPS correlate with the head’s outputs and are faithful to the model’s behavior, and provide valuable insights on architecture biases and universality of head operations in LLMs.

2 Preliminaries and Notation

We assume a transformer-based LM with a hidden dimension $d$ , $L$ layers, $H$ attention heads per layer, a vocabulary $\mathcal{V}$ , an embedding matrix $E∈\mathbb{R}^{|\mathcal{V}|× d}$ , and an unembedding matrix $U∈\mathbb{R}^{d×|\mathcal{V}|}$ .

Attention heads as interaction matrices

We use the formulation by Elhage et al. (2021) and view an attention head as two “interaction” matrices $W_{QK},W_{VO}∈\mathbb{R}^{d× d}$ . Given a sequence of $n$ hidden states $X∈\mathbb{R}^{n× d}$ , the matrix $W_{QK}$ computes the query-key scores to produce an attention weights matrix $A∈\mathbb{R}^{n× n}$ :

$$

A=\text{softmax}\Bigg{(}\frac{X(W_{QK})X^{T}}{\sqrt{d/H}}\Bigg{)}

$$

The matrix $W_{VO}$ operates on the contextualized hidden states according to $A$ , namely $\tilde{X}=AX$ , and produces the head’s output $Y∈\mathbb{R}^{n× d}$ :

$$

Y=\tilde{X}W_{VO} \tag{1}

$$

The matrix $W_{QK}$ can be viewed as “reading” from the residual stream, and $W_{VO}$ can be viewed as the “writing” component. Notably, this formulation omits the bias terms of the head.

Interpreting attention heads in embedding space

Recent works have analyzed the operation of different components in transformers through projection to the model’s vocabulary space (nostalgebraist, 2020; Geva et al., 2021, 2022; Dar et al., 2023; Katz et al., 2024). Specifically, Elhage et al. (2021); Dar et al. (2023) interpret each of the attention head matrices – $W_{QK}$ and $W_{VO}$ – as a matrix that maps between pairs of tokens from the vocabulary. Considering $W_{VO}$ , it is interpreted via multiplication from both sides with the model’s embedding matrix: ${\tilde{M}=E(W_{VO})E^{T}∈\mathbb{R}^{|\mathcal{V}|×|\mathcal{V}|}}$ . Each entry in $\tilde{M}$ is viewed as a mapping score between source and target tokens ${s,t∈\mathcal{V}}$ based on $W_{VO}$ , which signifies how strongly the head promotes it in its outputs. Elhage et al. (2021) suggested that when the weights of $E$ and $U$ are not tied, a more faithful interpretation can be obtained by:

$$

M=E(W_{VO})U

$$

Other notable variations include applying the model’s first MLP layer to the embedding matrix $E$ (Gould et al., 2024) and the final layer norm on rows of $E(W_{VO})$ (Wang et al., 2023).

3 MAPS

Based on the above view, we propose a general framework, called MAPS, for inferring the functionality of attention heads in LLMs directly from their parameters. We focus on analyzing the $W_{VO}$ component of the head, which produces the head’s output to the residual stream, and make the following observations. First, the $i$ -th row of $M$ provides the scores for mappings from the $i$ -th token to any token in $\mathcal{V}$ . Similarly, the $j$ -th column of $M$ provides scores for mappings from any token in $\mathcal{V}$ to the $j$ -th token. Therefore, considering the scores of certain submatrices of $M$ may reveal how the attention head operates on different sets of inputs. For example, analyzing the rows corresponding to tokens representing countries may reveal general knowledge-related operations implemented by the head, and attention heads that copy certain tokens should have diagonal-like submatrices in $M$ .

An important question that arises is which parts of $M$ to consider in order to identify the head’s functionality. In principle, there are $2^{|\mathcal{V}|}$ different subsets of rows that can be considered, which would be infeasible to traverse with $|\mathcal{V}|=\mathcal{O}(10K)$ in typical LLMs. Here, we propose two complementary ways to approach this, described next.

3.1 Predefined Relations

One intuitive approach is to define a set of possible operations that can be realized through pairs of tokens, and then measure the extent to which the head implements each operation. For example, the operation of mapping a country to its capital can be realized through a set of token pairs expressing that relation, e.g. (France, Paris) or (Egypt, Cairo). Similarly, mapping between synonyms can be realized via pairs such as (talk, speak) and (fast, quick). Such operations can be viewed as an implementation of relations between tokens.

Let $R$ be a predefined relation and $\mathcal{D}_{R}$ a dataset of token pairs expressing $R$ . Also, denote by $\mathbf{m}_{i}∈\mathbb{R}^{|\mathcal{V}|}$ the $i$ -th row of $M$ (corresponding to the mapping scores of the $i$ -th token), and by $\texttt{topk}(\mathbf{m}_{i})$ the $k$ tokens with the highest scores in $\mathbf{m}_{i}$ . The extent to which an attention head, interpreted as the matrix $M$ , implements $R$ can be measured as the portion of pairs $(s,t)∈\mathcal{D}_{R}$ where $t$ is in the top-scoring tokens in $\mathbf{m}_{s}$ :

$$

\phi_{R}(M):=\frac{1}{|\mathcal{D}_{R}|}\sum_{(s,t)\in\mathcal{D}_{R}}\mathds{%

1}[t\in\texttt{topk}(\mathbf{m}_{s})] \tag{2}

$$

For instance, the score for $R=$ ‘‘country to capital’’ reflects how often the head promotes the capital city of a country in its output when operating on an input representation of that country.

Notably, our formulation also supports suppression operations observed in previous work (Wang et al., 2023; Gould et al., 2024; McDougall et al., 2024), where certain attention heads suppress certain concepts or outputs during inference. Representing a suppressive relation is done by defining the pairs $(s,t)$ as before and considering the top-scoring tokens in $-\mathbf{m}_{s}$ instead of $\mathbf{m}_{s}$ .

3.2 Salient Operations

The main limitation of the above approach is that it could miss certain relations that heads implement. A complementary approach would be to characterize the head’s functionality from prominent mappings appearing in $M$ . Dar et al. (2023) tackled this by considering the top-scoring mappings in $M$ . However, we recognize two drawbacks in this method: (a) the scores in $M$ are influenced by the token embedding norms, which could bias the top scores towards mappings of tokens with high embedding norms, and (b) the top entries in $M$ may cover mapping from a small number of tokens (e.g., from a single row), thus describing the head’s functionality for only a few tokens.

Here, we propose a more holistic approach to identify salient mappings in $M$ , by first identifying the tokens on which the head’s operation is most prominent, and then considering the top-scoring mappings for these tokens. We measure how prominent the head’s operation on a token $t∈\mathcal{V}$ via the ratio of the token’s embedding norm after multiplying by $W_{VO}$ to the norm before this transformation:

$$

\sigma_{t}(W_{VO}):=\frac{||\mathbf{e}_{t}W_{VO}||}{||\mathbf{e}_{t}||} \tag{3}

$$

Comparing the sets of top versus salient mappings indeed shows substantial differences. The average Jaccard similarity of the sets obtained for heads in GPT-2 xl is 0.01. In the next sections, we experiment with both approaches, showing their effectiveness in inferring attention head functionality in multiple LLMs.

4 Mapping Predefined Relations

In this section, we utilize MAPS to map how strongly attention heads implement various operations in multiple LLMs (§ 4.1). We assess the correctness and generalization of these estimations via correlative and causal experiments (§ 4.2, § 4.3) and analyze prominent trends (§ 4.4).

4.1 Experimental Setup

Datasets

We construct datasets for 20 relations of four categories: algorithmic (e.g., word to first letter), knowledge (e.g., country to capital), linguistic (e.g., adjective to comparative), and translation (English to French/Spanish), and 3 vocabularies of widely-used model families. For every relation, we collect pairs of strings expressing it. For instance, possible pairs for the relation word-to-compound are (hot, hotdog) and (wall, wallpaper). Data is obtained from previously published datasets and online sources and further augmented by querying ChatGPT to generate example pairs, which we (authors) manually validated. Then, we tokenize the pairs with each of the tokenizers of Llama-3.1 Dubey et al. (2024), Pythia Biderman et al. (2023) GPT Radford et al. (2019) and Phi-2 Javaheripi and Bubeck (2023), keeping only cases where the resulting mapping is between single tokens. Experimenting with different tokenizers is important as MAPS leverages the model’s vocabulary. Llama-3.1’s vocabulary has $\sim$ 130k tokens compared to $\sim$ 50k tokens for GPT-2, Phi-2, and Pythia. For more details on the collection, dataset statistics, and examples, see § A.

Models

We analyze models of various sizes from different families: Llama-3.1 8B and 70B Dubey et al. (2024), Pythia 6.9B and 12B Biderman et al. (2023), Phi-2 Javaheripi and Bubeck (2023), and GPT-2 xl Radford et al. (2019). These models have varying numbers of layers and attention heads, from 32 layers and 32 heads in Pythia 6.9B to 80 layers and 64 heads in Llama-3.1 70B. Additionally, Llama-3.1 uses grouped-query attention Ainslie et al. (2023), versus the other models which use multi-head attention Vaswani et al. (2017).

Measuring predefined relations

For every attention head and relation $R$ , we derive the matrix $M$ and calculate the relation score $\phi_{R}(M)$ (Eq. 2). We also compute the score for the suppressive variant $\bar{R}$ of every relation $R$ . For example, the suppressive variant of $R=\texttt{country to capital}$ corresponds to the operation of suppressing the capital of a given country.

We follow previous works (Dar et al., 2023; Geva et al., 2021, 2022) and set low $k$ values to reflect strong prioritization of the target token in the head’s output. For Pythia, Phi-2 and GPT-2, we use $k=1$ for the copying and name-copying relations and $k=10$ for other relations. For the Llama-3.1 models, we set $k=3$ for copying and name-copying and $k=25$ for other relations. The bigger values for Llama-3.1 are due to their large vocabulary, which allows expressing a concept with more tokens. The smaller values for the copying relations are for measuring them more strictly. For further discussion on this selection, see § A.

To classify whether a head “implements” a relation $R$ , we apply a threshold $\tau$ to $\phi_{R}(M)$ . Namely, if $t$ appears in the top- $k$ mappings of $s$ for $\tau$ percent of the pairs $(s,t)∈\mathcal{D}_{R}$ , then we consider the head as implementing $R$ . We choose a threshold of $\tau=15\%$ after experimenting with different thresholds and comparing against randomly initialized heads (see § A for details).

4.2 Evaluation of Functionality Estimation

We evaluate whether the functionality estimations by MAPS faithfully describe the operations of the heads during inference. Our experiments show that the estimated operation of a head strongly correlates with its outputs and demonstrates the expected causal effect on the model’s generation.

Experiment 1: Correlation with head outputs

For every relation $R$ and source-target pair $(s,t)∈\mathcal{D}_{R}$ , we evaluate the model using four prompt templates (provided in § B.1). One representative template is: We do not simply feed in $s$ as input to avoid potential biases from the attention sink phenomenon Xiao et al. (2024).

$$

\mathcal{P}_{s}:=\texttt{``This is a document about $\langle$s$\rangle$''}

$$

Where $\langle\texttt{s}\rangle$ is the string of the source token $s$ . For example, for the pair (England, London), we will have ‘‘This is a document about England’’. Next, we obtain the output $\mathbf{y}_{s}∈\mathbb{R}^{d}$ of every attention head at the last position (corresponding to $s$ ), Here the head outputs include the bias term of $W_{V}$ , see § B.1. and project it to the model’s vocabulary space, i.e. $\mathbf{y}_{s}U∈\mathbb{R}^{|\mathcal{V}|}$ . The top-scoring tokens in the resulting vector are those promoted by the head given the prompt $\mathcal{P}_{s}$ Geva et al. (2022). To check whether the head implements the relation $R$ , namely promote $t$ when given $s$ in the input, we test for every pair $(s,t)$ whether $t$ appears in the top $k$ tokens in $\mathbf{y}_{s}U$ . We use the same $k$ values specified in § 4.1. Concretely, for every head $h$ we compute the following score, which represents how strongly the head implements $R$ during inference:

$$

\phi^{*}_{R}(h):=\frac{1}{|\mathcal{D}_{R}|}\sum_{(s,t)\in\mathcal{D}_{R}}%

\mathds{1}[t\in\texttt{topk}(\mathbf{y}_{s}U)] \tag{4}

$$

We check the correlation between the static score $\phi_{R}(h)$ inferred by our method and the dynamic score $\phi^{*}_{R}(h)$ computed separately for each of the four templates. As a baseline, we compute $\phi^{*}_{R}(h)$ while restricting the attention in $h$ from $s$ to be only to itself. This emulates an operation of the head as if it fully attends to the representation of $s$ .

Results

Table 1 shows the results for Llama-3.1 8B. For the vast majority of relations, we observe a strong to very strong correlation of 0.71-0.95 Schober et al. (2018) when the query’s subject is not contextualized. This high correlation often remains or even increases when considering the head’s outputs for contextualized inputs. This shows that MAPS well-estimates the head’s behavior for task-related inputs. Still, for some relations (e.g. word to compound and word to last letter) correlation is lower for contextualized inputs, demonstrating that in some cases, the head may switch its operation depending on the context. This agrees with the observation that heads often implement multiple operations (§ 4.4). Results for other models are in § B.1, generally exhibiting similar trends, though with occasional larger drops in the contextualized setting for Pythia and GPT-2 xl.

| Category | Relation | Correlation w/o context. | Correlation w/ context. |

| --- | --- | --- | --- |

| Algorithmic | Copying | 0.76 | 0.73 |

| Name copying | 0.95 | 0.95 | |

| Word to first letter | 0.90 | 0.78 | |

| Word to last letter | 0.67 | 0.36 | |

| Knowledge | Country to capital | 0.85 | 0.85 |

| Country to language | 0.76 | 0.62 | |

| Object to superclass | 0.74 | 0.73 | |

| Product by company | 0.46 | 0.49 | |

| Work to location | 0.44 | 0.45 | |

| Linguistic | Word to antonym | 0.90 | 0.86 |

| Adj to comparative | 0.85 | 0.86 | |

| Adj to superlative | 0.87 | 0.89 | |

| Noun to pronoun | 0.89 | 0.79 | |

| Verb to past tense | 0.91 | 0.86 | |

| Word to compound | 0.78 | 0.62 | |

| Word to homophone | 0.85 | 0.75 | |

| Word to synonym | 0.79 | 0.69 | |

| Translation | English to French | 0.71 | 0.68 |

| English to Spanish | 0.82 | 0.81 | |

Table 1: Correlation between the relation score of a head and the head’s outputs in Llama-3.1 8B, with and without head contextualization. Results are statistically significant with p-values $≤$ 3.9e-128 (see § B.1).

| Relation | TR Tasks | CTR Tasks | | | |

| --- | --- | --- | --- | --- | --- |

| Base | - TR | - RND | Base | - TR | |

| Adj to comparative | 0.91 | 0.20 | 0.82 | 0.92 | 0.63 |

| Copying | 1.00 | 0.68 | 1.00 | 0.95 | 0.88 |

| Country to capital | 0.97 | 0.00 | 0.95 | 0.89 | 0.90 |

| Country to language | 1.00 | 0.08 | 0.96 | 0.89 | 0.89 |

| Name copying | 1.00 | 0.24 | 1.00 | 0.90 | 0.92 |

| Noun to pronoun | 0.88 | 0.46 | 0.86 | 0.90 | 0.88 |

| Object to superclass | 0.78 | 0.39 | 0.68 | 0.90 | 0.87 |

| Verb to past tense | 0.22 | 0.04 | 0.26 | 0.03 | 0.02 |

| Word to first letter | 0.91 | 0.34 | 0.87 | 0.91 | 0.74 |

| Year to following | 0.92 | 0.00 | 0.87 | 0.83 | 0.79 |

Table 2: Accuracy of Pythia 12B on tasks for a target relation (TR) versus on control (CTR) tasks, when removing heads implementing the relation compared to when removing random heads (RND). Results for RND heads are averaged over 5 experiments. We omit standard deviation for brevity and report it in § B.2.

Experiment 2: Causal effect on model outputs

For a given relation $R$ , we evaluate the model’s performance on queries that require applying $R$ , when removing the heads classified by MAPS as implementing $R$ versus when removing random heads from the model. We choose a diverse set of 13 relations and construct a test set $\tilde{\mathcal{D}}_{R}$ for every relation $R$ as follows. First, we craft a task prompt that requires the model to apply $R$ . For example, a prompt for the country to capital relation could be ‘‘The capital of $\langle s\rangle$ is’’, with $\langle s\rangle$ being a placeholder for a country. Then, for each pair $(s,t)∈\mathcal{D}_{R}$ we instantiate the prompt with $s$ to create an input $\tilde{\mathcal{P}}_{s}$ and a test example $(\tilde{\mathcal{P}}_{s},t)∈\tilde{\mathcal{D}}_{R}$ .

Let $\mathcal{H}_{R}^{i}$ be the subset of $i$ attention heads with the highest scores for $\phi_{R}(M)$ . We evaluate the models on $\tilde{\mathcal{D}}_{R}$ while running each input $n$ times, each time canceling (by setting to zero) the outputs of the attention heads $\mathcal{H}_{R}^{i}$ and obtaining the model’s prediction with greedy decoding. We set $n$ as the minimum between the number of heads in the model with $\phi_{R}(M)>0$ and a fixed boundary: 150 for GPT-2 xl, Pythia 6.9B, Pythia 12B, and Llama-3.1 8B and 250 for Llama-3.1 70B. In cases when the accuracy drops to 0 after ablating $i<n$ heads, we report results obtained up to $i$ .

We compare the above intervention against a baseline where $i$ randomly sampled heads that are not in $\mathcal{H}_{R}^{i}$ are ablated, repeating this experiment 5 times and reporting the average accuracy. Additionally, to establish that relation heads are important specifically for tasks involving $R$ , we remove the relation heads as above and measure the model’s performance on up to five control tasks for other relations. We choose the relations such that $<$ 15% of the target relation heads are also control relation heads, and the absolute difference between the baseline accuracy on the control task and the target task is $≤$ 20%.

Results

Results for Pythia 12B are presented in Table 2, excluding relations where the base accuracy was $<$ 0.1. For all relations, removing the relation heads identified by MAPS causes a major accuracy drop of $≥$ 32% compared to $≤$ 13% when removing random heads. Moreover, while the accuracy drop for the control tasks is considerable in some cases (at most 33%), it is significantly smaller than the relative drop on the target relation task. Results for the other models are generally similar (see § B.2). Notable differences are that the accuracy drops in Llama-3.1 are often smaller, but in 9 out of 11 relations they are larger than those obtained for the random and control baselines.

4.3 Generalization to Multi-Token Entities

A natural question that arises is how well the estimations by MAPS generalize to contextualized inputs representing multiple tokens. Namely, if we infer the head’s ability to perform country-to-capital mappings from country names tokenized as a single token, will we observe the same behavior for countries tokenized as multiple tokens?

To test this, we apply the data collection process from § 4.1 to create new datasets for 11 relations of source-target pairs $(s,t)$ where $s$ has multiple tokens. Then, we repeat the correlative experiment in § 4.2 for GPT-2 xl, Pythia 6.9B and Pythia 12B using this data and the prompt template ‘‘This is a document about $\langle$ s $\rangle$ ’’.

We observe that the estimated operations generalize to multi-token representations. For 53 out of the 64 model-relation combinations (with and without contextualization), the correlation between the relation score and the head’s output in the multi-token setting is similar ( $≤$ 0.05 difference) or higher than the single-token setting. In the remaining cases, there is a slightly bigger drop ( $≤$ 0.13), but the correlations remain $≥$ 0.63. The full results are provided in § C.

4.4 Analysis

Function distribution

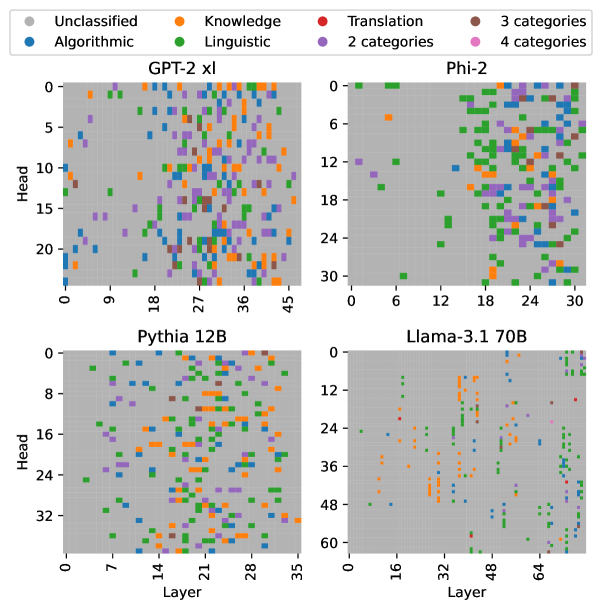

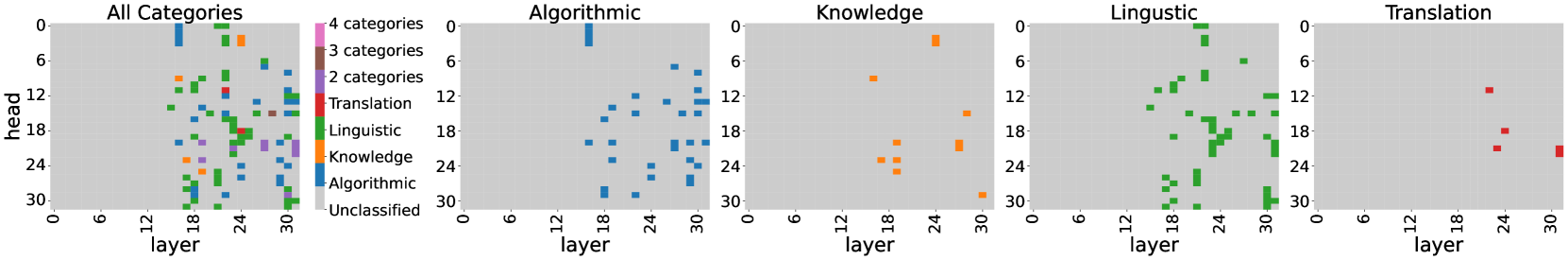

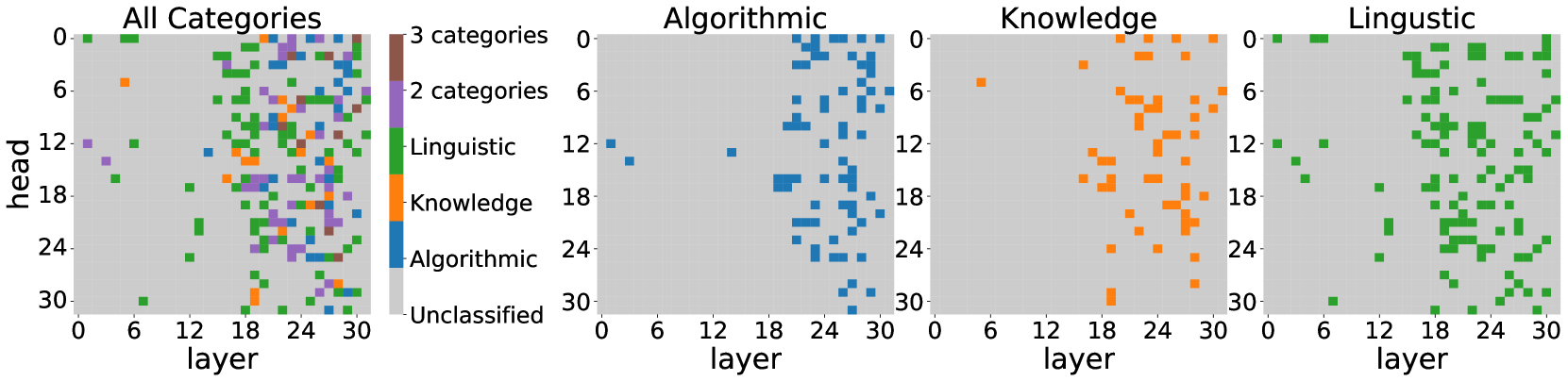

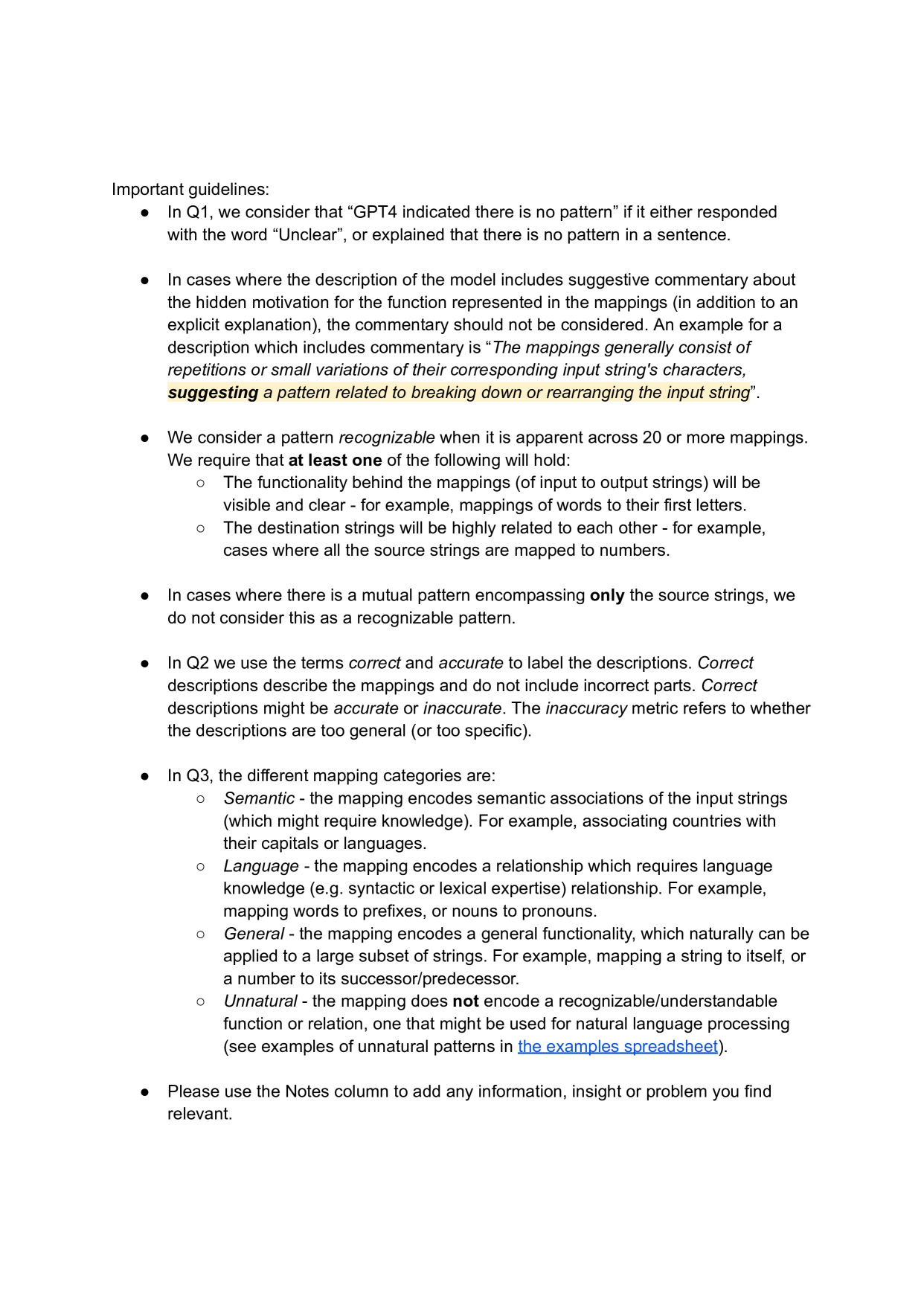

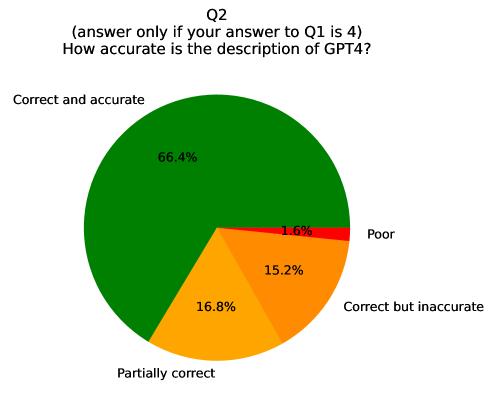

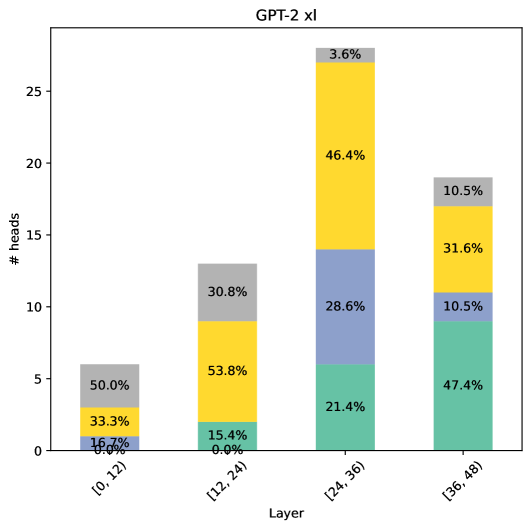

Figure 2 shows category-level classification results of all heads in GPT-2 xl, Phi-2, Pythia 12B, and Llama-3.1 70B. A head is assigned to a certain category if it implements at least one relation from it or its suppressive variant. Considering prominent trends across all models, we first observe that MAPS identified relations from all categories, with classified heads mostly being located in the middle and upper layers. This may suggest that early layers perform operations that cannot be represented in the model’s output vocabulary space. Interestingly, we observe a “side effect” of the grouped attention structure in Llama-3.1 models, where grouped heads often implement the same relations or their suppressive variants.

In addition, heads often implement multiple relations from the same or different categories. The portion of multi-category heads (out of all classified heads) generally decreases in model size: 38% in GPT-2 xl, 29% in Phi-2, 20% in Pythia 6.9B, Pythia 12B and 11% in Llama-3.1 70B. An exception to this trend is Llama-3.1 8B with 11% of multi-category heads, which may be caused by its grouped query attention structure. Also, 20%-36% of the classified heads implement at least one suppression relation.

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Scatter Plots: Category Distribution Across Model Layers and Heads

### Overview

The image presents four scatter plots, each representing a different large language model (GPT-2 XL, Phi-2, Pythia 12B, and Llama-3.1 70B). Each plot visualizes the distribution of different categories of information processed by the model's layers and heads. The x-axis represents the layer number, and the y-axis represents the head number. Each point on the plot is colored according to the category of information it represents.

### Components/Axes

* **X-axis (Layer):** Represents the layer number within the neural network. Scales vary for each model:

* GPT-2 XL: 0 to 45

* Phi-2: 0 to 30

* Pythia 12B: 0 to 35

* Llama-3.1 70B: 0 to 64

* **Y-axis (Head):** Represents the head number within the neural network. Scales vary for each model:

* GPT-2 XL: 0 to 20

* Phi-2: 0 to 30

* Pythia 12B: 0 to 32

* Llama-3.1 70B: 0 to 60

* **Legend:** Located at the top-left of the image, defining the color-coding for each category:

* Grey: Unclassified

* Blue: Algorithmic

* Orange: Knowledge

* Green: Linguistic

* Red: Translation

* Purple: 3 categories

* Light Purple: 2 categories

* Dark Purple: 4 categories

### Detailed Analysis or Content Details

**GPT-2 XL (Top-Left):**

* The plot shows a relatively even distribution of all categories across layers and heads.

* Algorithmic (blue) and Linguistic (green) categories appear to be the most prevalent, with a slight concentration in the lower layer numbers (0-20) and across most head numbers.

* Knowledge (orange) is scattered throughout, with a higher density in the middle layers (18-36).

* Unclassified (grey) points are present but less frequent.

* Translation (red) and the category counts (purple shades) are sparsely distributed.

**Phi-2 (Top-Right):**

* A significant concentration of Linguistic (green) points is observed in the upper layers (18-30) and across most head numbers.

* Algorithmic (blue) points are more concentrated in the lower layers (0-12) and lower head numbers.

* Knowledge (orange) is scattered, with a slight concentration in the middle layers (12-24).

* Translation (red) and the category counts (purple shades) are sparsely distributed.

**Pythia 12B (Bottom-Left):**

* Linguistic (green) points dominate the lower layers (0-14) and are distributed across most head numbers.

* Knowledge (orange) points are concentrated in the middle layers (14-28).

* Algorithmic (blue) points are scattered throughout, with a higher density in the lower layers.

* Unclassified (grey) points are present but less frequent.

* Translation (red) and the category counts (purple shades) are sparsely distributed.

**Llama-3.1 70B (Bottom-Right):**

* Linguistic (green) points are heavily concentrated in the upper layers (32-64) and across most head numbers.

* Knowledge (orange) points are concentrated in the middle layers (16-48).

* Algorithmic (blue) points are scattered throughout, with a higher density in the lower layers.

* Unclassified (grey) points are present but less frequent.

* Translation (red) and the category counts (purple shades) are sparsely distributed.

### Key Observations

* The distribution of categories varies significantly across different models.

* Linguistic information appears to be predominantly processed in the upper layers of Phi-2 and Llama-3.1 70B.

* Knowledge information is often concentrated in the middle layers across all models.

* Algorithmic information is more evenly distributed, but tends to be more prevalent in the lower layers.

* The "Unclassified" category suggests that some information processed by the models does not fall neatly into the defined categories.

* The category counts (2, 3, and 4 categories) are very sparsely distributed across all models.

### Interpretation

These scatter plots provide insights into how different large language models process information at various layers and heads. The varying distributions suggest that each model has a unique architecture and learning strategy. The concentration of Linguistic information in the upper layers of Phi-2 and Llama-3.1 70B might indicate that these models prioritize language understanding and generation in their later stages of processing. The concentration of Knowledge in the middle layers suggests that these layers are crucial for integrating and reasoning about factual information. The presence of the "Unclassified" category highlights the complexity of natural language and the limitations of current categorization schemes. The sparse distribution of the category counts suggests that these are less common or more nuanced types of information processing.

The plots demonstrate a clear relationship between model architecture, layer depth, and the types of information processed. By visualizing this relationship, we can gain a better understanding of the inner workings of these powerful language models and potentially improve their design and performance. The differences between the models suggest that there isn't a single "best" way to process language, and that different architectures may be better suited for different tasks.

</details>

Figure 2: Functionality mapping by MAPS for 20 relations of 4 categories — algorithmic, knowledge, linguistic, translation — across all attention heads in GPT-2 xl, Phi-2, Pythia 12B, Llama-3.1 70B. A head is marked as a specific category if it implements at least one relation from this category.

Function universality

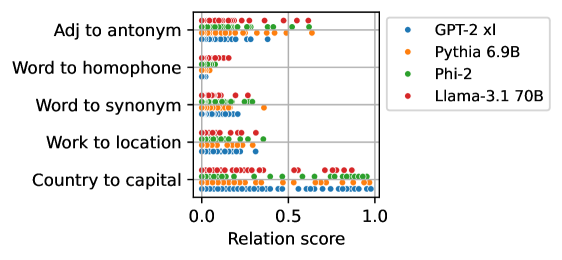

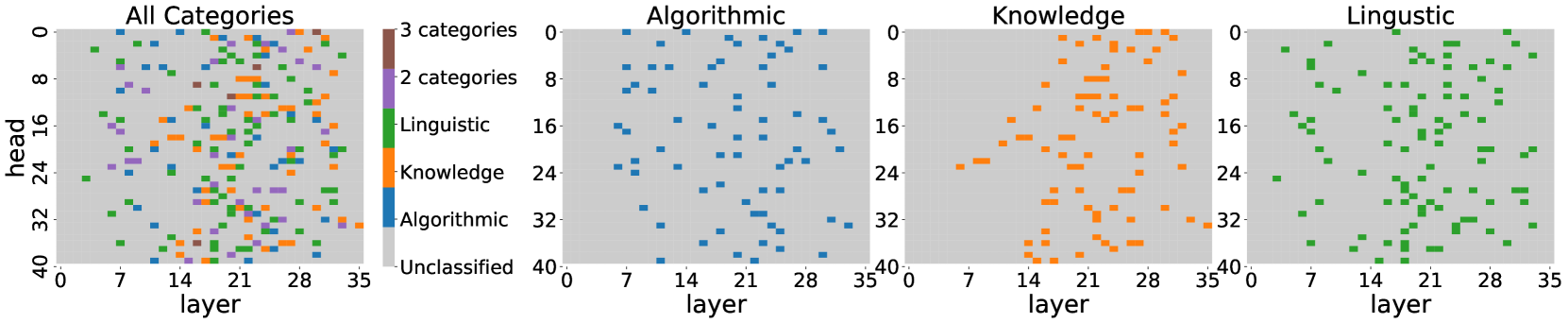

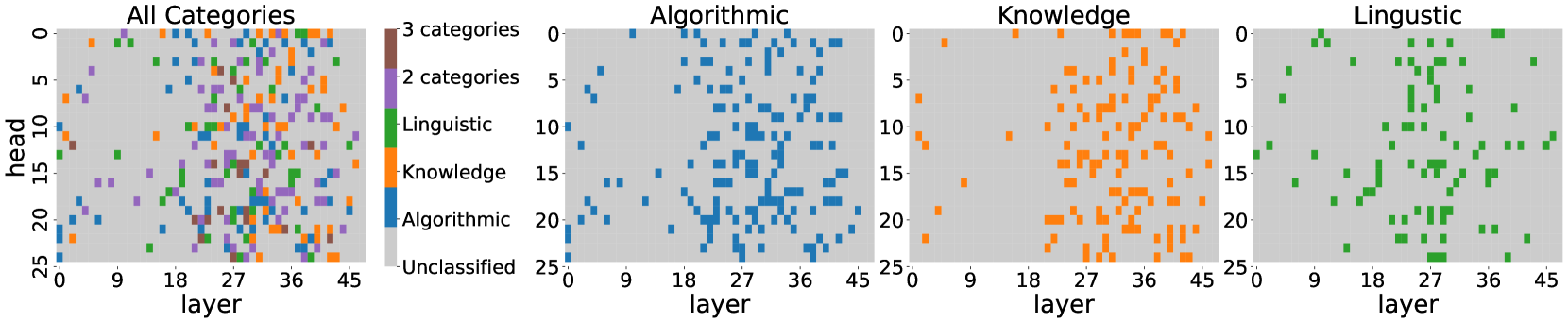

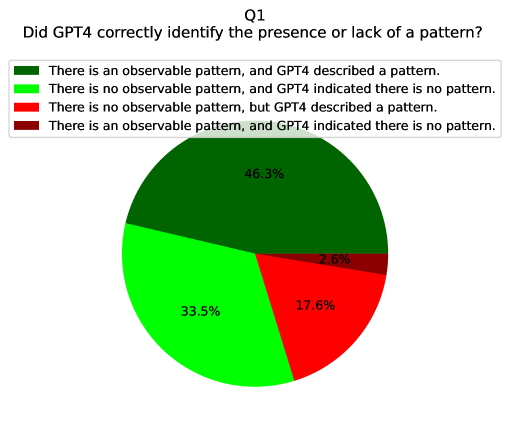

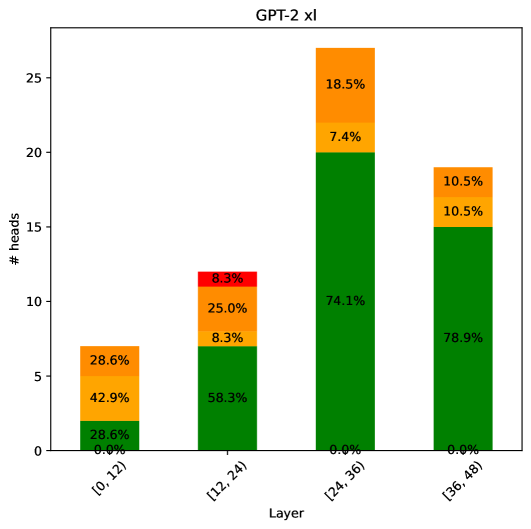

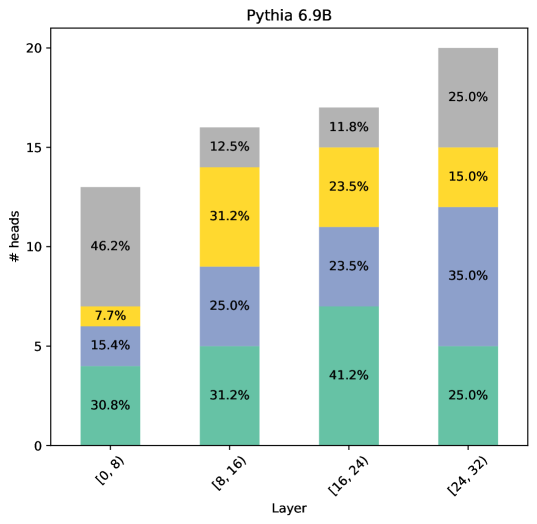

Figure 3 presents the distributions of relation scores for several representative relations in multiple models showing two interesting trends. First, despite architecture and training data differences, models encode relations in their heads to similar degrees, as observed by the similar highest scores per relation. This observation supports the “universality hypothesis” Li et al. (2015) that different networks learn similar features and circuits and expands recent similar findings about universality in LLMs Gould et al. (2024); Arditi et al. (2024); Tigges et al. (2024). Second, the scores for a given relation are diverse, with different heads implementing the relation at varying degrees, as opposed to having a small set of heads with high relation scores. This has implications for research concerning localization and editing; certain concepts or associations are encoded in a large number of model components at varying degrees.

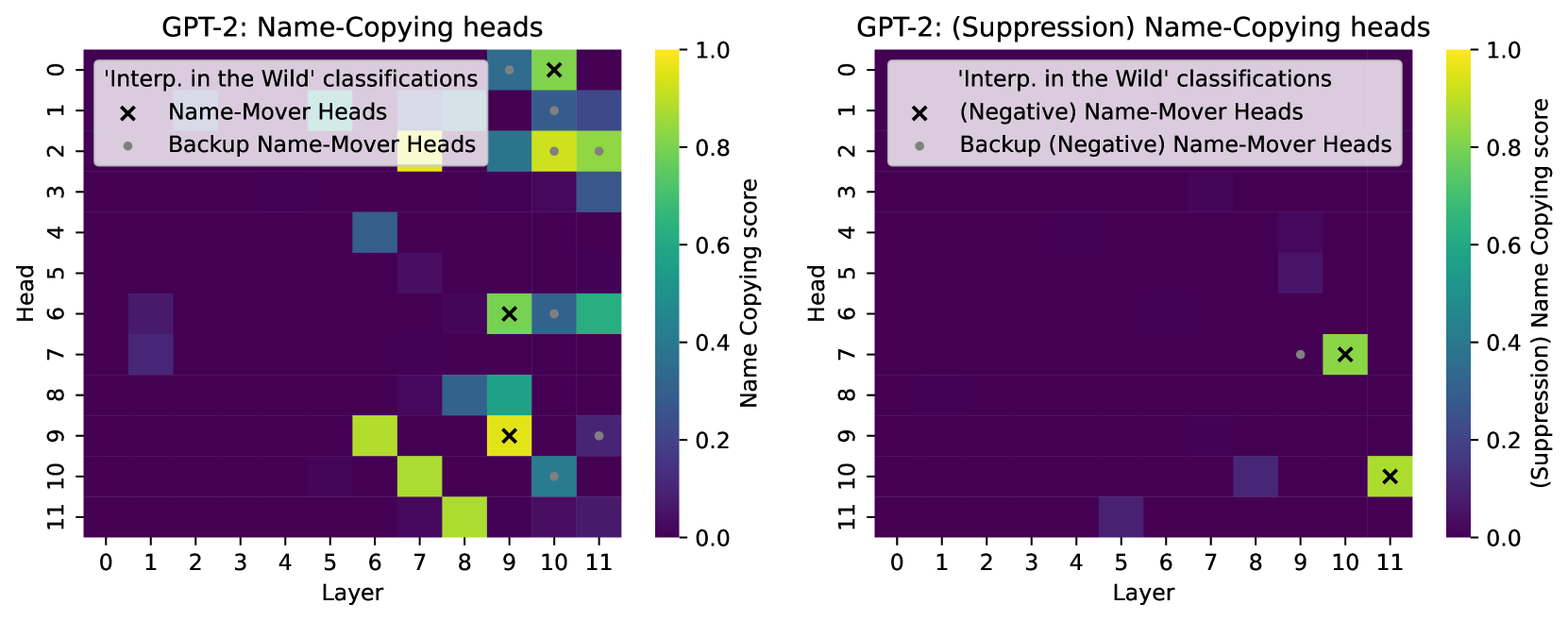

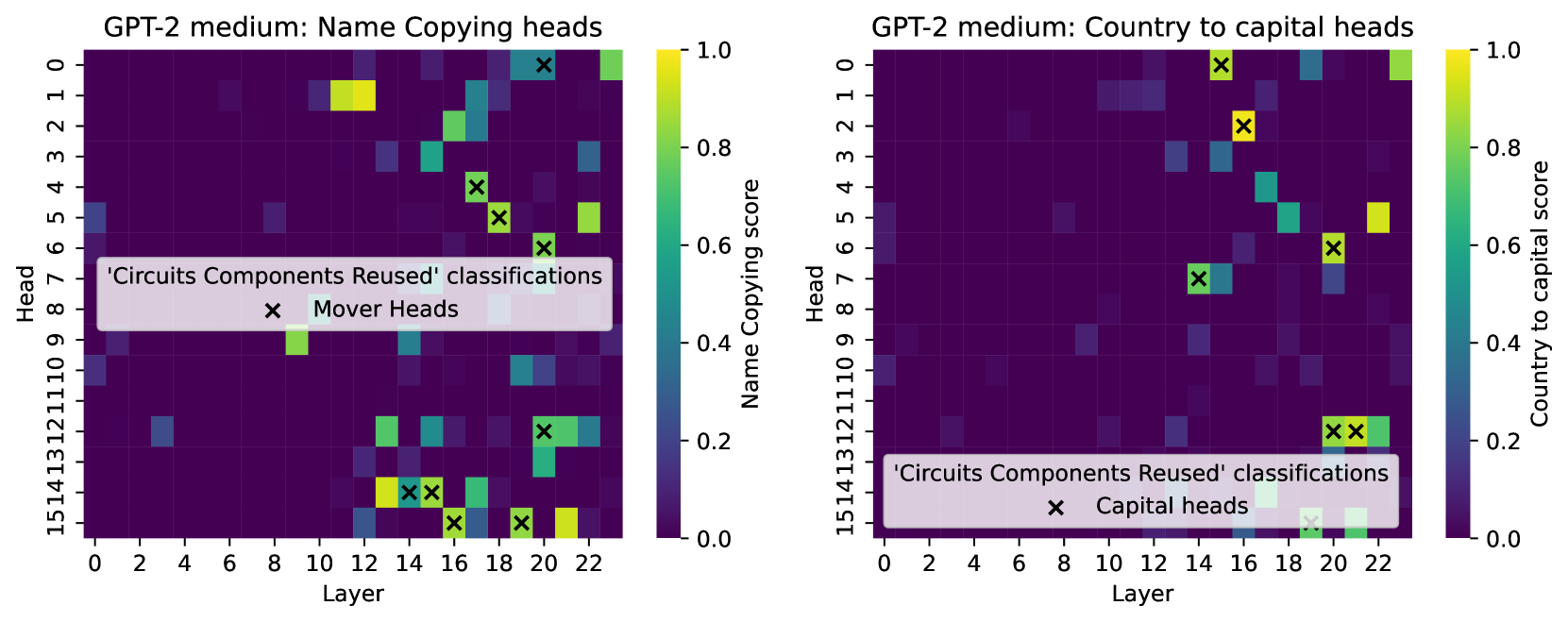

Comparison with known head functionalities

Wang et al. (2023) identified “Name Mover” and “Anti Name Mover” heads in a circuit for indirect object identification in GPT-2 small, which copy or suppress copying specific names in the context, and Merullo et al. (2024a) identified “Mover” and “Capital” heads in GPT-2 medium. MAPS successfully identified all these heads as name copiers or country-to-capital mappers (which agrees with a similar analysis conducted by Wang et al., 2023). In addition, it discovered 25 heads in GPT-2 small and 46 in GPT-2 medium that implement similar operations but were not recognized in prior analyses. While the additional heads may not participate in the specific circuits discovered, they may be triggered for circuits of similar or related tasks that were overlooked in previous analyses.

Notably, for all the heads identified in previous works, MAPS reveals various additional functionalities. These observations extend the findings by Merullo et al. (2024a) of heads that implement multiple functionalities.

Taken together, these results demonstrate the effectiveness of MAPS in comprehensively mapping the implementation of a certain operation by attention heads across the model. A more detailed comparison is in § D.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Scatter Plot: Relation Scores for Different Language Models

### Overview

The image presents a scatter plot comparing the performance of four language models (GPT-2 xl, Pythia 6.9B, Phi-2, and Llama-3.1 70B) across five different relation types: "Adj to antonym", "Word to homophone", "Word to synonym", "Work to location", and "Country to capital". The x-axis represents the "Relation score", ranging from 0.0 to 1.0. Each point on the plot represents the score achieved by a specific language model for a specific relation type.

### Components/Axes

* **X-axis:** "Relation score" (Scale: 0.0 to 1.0, with markers at 0.0, 0.5, and 1.0)

* **Y-axis:** Relation types, listed vertically:

* Adj to antonym

* Word to homophone

* Word to synonym

* Work to location

* Country to capital

* **Legend:** Located in the top-right corner, mapping colors to language models:

* Blue: GPT-2 xl

* Orange: Pythia 6.9B

* Green: Phi-2

* Red: Llama-3.1 70B

### Detailed Analysis

Let's analyze each relation type and the performance of each model:

* **Adj to antonym:** All models cluster between approximately 0.6 and 1.0. GPT-2 xl (blue) appears to have a slight concentration around 0.7-0.8. Pythia 6.9B (orange) is spread between 0.6 and 0.9. Phi-2 (green) is concentrated around 0.8-0.9. Llama-3.1 70B (red) is mostly between 0.7 and 1.0.

* **Word to homophone:** Similar to "Adj to antonym", all models score between approximately 0.6 and 1.0. GPT-2 xl (blue) is concentrated around 0.7-0.8. Pythia 6.9B (orange) is spread between 0.6 and 0.9. Phi-2 (green) is concentrated around 0.8-0.9. Llama-3.1 70B (red) is mostly between 0.7 and 1.0.

* **Word to synonym:** All models score between approximately 0.4 and 1.0. GPT-2 xl (blue) is concentrated around 0.5-0.7. Pythia 6.9B (orange) is spread between 0.6 and 0.9. Phi-2 (green) is concentrated around 0.7-0.9. Llama-3.1 70B (red) is mostly between 0.7 and 1.0.

* **Work to location:** All models score between approximately 0.2 and 0.8. GPT-2 xl (blue) is concentrated around 0.3-0.5. Pythia 6.9B (orange) is spread between 0.4 and 0.7. Phi-2 (green) is concentrated around 0.5-0.7. Llama-3.1 70B (red) is mostly between 0.5 and 0.8.

* **Country to capital:** All models score between approximately 0.6 and 1.0. GPT-2 xl (blue) is concentrated around 0.7-0.9. Pythia 6.9B (orange) is spread between 0.7 and 0.9. Phi-2 (green) is concentrated around 0.8-1.0. Llama-3.1 70B (red) is mostly between 0.7 and 1.0.

### Key Observations

* The "Work to location" relation consistently yields the lowest relation scores across all models.

* Llama-3.1 70B (red) generally achieves higher scores than the other models, particularly in "Word to synonym" and "Country to capital".

* GPT-2 xl (blue) tends to have the lowest scores, especially in "Work to location".

* The scores are generally clustered, with less variance within each relation type.

### Interpretation

The scatter plot demonstrates the ability of different language models to understand and quantify relationships between concepts. The varying scores across relation types suggest that some relationships are inherently easier for these models to grasp than others. The consistently low scores for "Work to location" might indicate that this relationship requires more complex reasoning or world knowledge that these models currently lack.

The superior performance of Llama-3.1 70B suggests that larger models with more parameters are better equipped to capture these relationships. The clustering of scores within each relation type indicates a degree of consistency in how these models perceive these relationships, but the variations between models highlight the differences in their underlying knowledge and reasoning capabilities. The plot provides a comparative assessment of these models' relational understanding, which is crucial for tasks like question answering, knowledge graph completion, and semantic reasoning.

</details>

Figure 3: Relation scores for all heads of Llama-3.1 70B, Pythia 6.9B, Phi-2, GPT-2 xl for several relations. We observe that heads from all models implement these relations to similar degrees.

5 Inspecting Salient Operations

We saw that given an operation realized as a relation between pairs of tokens, we can map how strongly it is implemented by attention heads across the model. Here, we use MAPS to tackle a complementary problem of inferring the prominent operations of a given attention head. We introduce an automatic pipeline for interpreting salient mappings in attention heads (§ 5.1) and use it to broadly infer the functionalities in Pythia 6.9B and GPT-2 xl (§ 5.2). In § F, we extend our analysis to show that the skewness of saliency scores can indicate how global or specific the head’s functionality is.

5.1 Automatic Functionality Inference

We propose the following steps for inferring the functionality of an attention head:

1. Using the saliency score (Eq. 3) to identify the top $k$ tokens for which the head’s transformation is most prominent.

1. For each salient token $s$ , collecting the top $n$ tokens it is mapped to according to $M$ , namely, the tokens corresponding to the top entries in $\mathbf{m}_{s}$ . This could be extended to suppression for better coverage.

1. Inferring the head’s salient operations by querying an LLM about prominent patterns in the list of salient tokens and their top mappings. Notably, we ask the model to indicate there is no pattern when no clear pattern is observed across the mappings. For the exact prompt used, see § E.

We run this pipeline on a total of 2,224 attention heads in GPT-2 xl and Pythia 6.9B, while setting $k=30$ (step 1) and $n=5$ (step 2) and using GPT-4o (Hurst et al., 2024) (step 3). We analyze how often GPT-4o was able to recognize a prominent functionality and measure the quality of its descriptions compared to human judgment.

5.2 Results

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Line Chart: Pattern Detection in Neural Network Layers

### Overview

The image presents two line charts comparing pattern detection rates across layers in two different neural network models: Pythia 6.9b and GPT2 xl. The charts display the percentage of heads where a pattern was detected as a function of the layer number.

### Components/Axes

* **X-axis (Both Charts):** "Layer" - ranging from 0 to 30 for Pythia 6.9b and 0 to 40 for GPT2 xl. The scale is linear.

* **Y-axis (Both Charts):** "% of heads where a pattern was detected" - ranging from 0 to 100. The scale is linear.

* **Chart Titles:**

* Left Chart: "Pythia 6.9b"

* Right Chart: "GPT2 xl"

* **Data Series:** A single blue line for each chart representing the pattern detection rate.

* **Gridlines:** Both charts have a light gray grid to aid in reading values.

### Detailed Analysis

**Pythia 6.9b (Left Chart):**

The blue line starts at approximately 55% at layer 0, dips to around 40% at layer 1, then rises steadily to a peak of approximately 90% around layer 15. After layer 15, the line fluctuates between 70% and 90% until layer 25, after which it declines sharply to approximately 40% at layer 30.

* Layer 0: ~55%

* Layer 1: ~40%

* Layer 5: ~65%

* Layer 10: ~80%

* Layer 15: ~90%

* Layer 20: ~80%

* Layer 25: ~70%

* Layer 30: ~40%

**GPT2 xl (Right Chart):**

The blue line begins at approximately 65% at layer 0, dips to around 40% at layer 2, then rises to a peak of approximately 95% around layer 30. After layer 30, the line declines to approximately 60% at layer 40.

* Layer 0: ~65%

* Layer 2: ~40%

* Layer 5: ~60%

* Layer 10: ~70%

* Layer 20: ~80%

* Layer 30: ~95%

* Layer 35: ~85%

* Layer 40: ~60%

### Key Observations

* Both models show an initial dip in pattern detection rate followed by a rise.

* GPT2 xl exhibits a higher peak detection rate (around 95%) compared to Pythia 6.9b (around 90%).

* Pythia 6.9b shows a more pronounced decline in pattern detection rate towards the end of its layers.

* GPT2 xl maintains a relatively higher detection rate across a wider range of layers.

### Interpretation

The charts suggest that pattern detection capabilities develop in the initial layers of both neural networks, reaching a peak performance at a certain layer depth. The subsequent decline in detection rate in Pythia 6.9b could indicate overfitting or a loss of generalization ability in the later layers. The more gradual decline in GPT2 xl suggests better robustness or a different learning dynamic. The higher peak detection rate in GPT2 xl may indicate a greater capacity for identifying complex patterns. The differences in the curves could be attributed to variations in model architecture, training data, or training procedures. The data suggests that the optimal layer depth for pattern detection varies between the two models. The initial dip in both charts could represent a period of adjustment or feature extraction before meaningful patterns emerge.

</details>

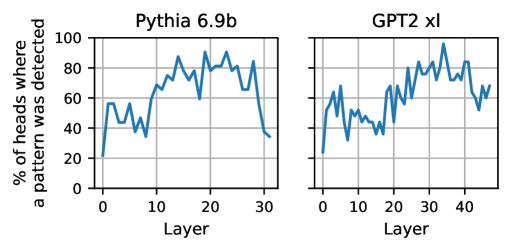

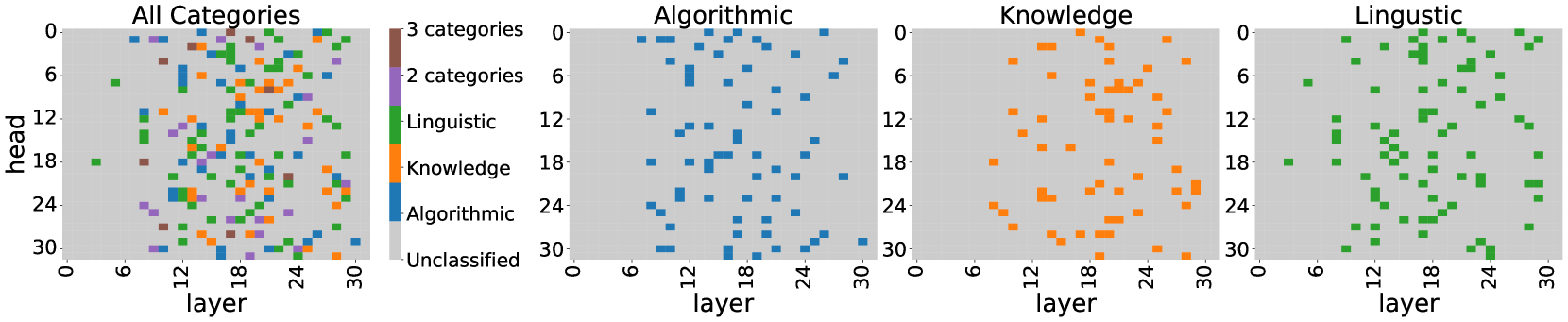

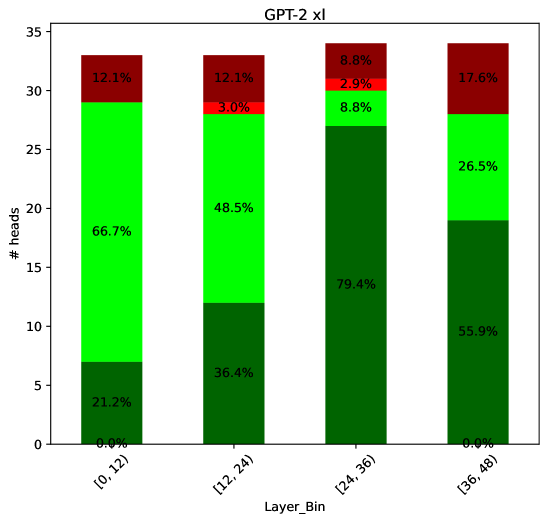

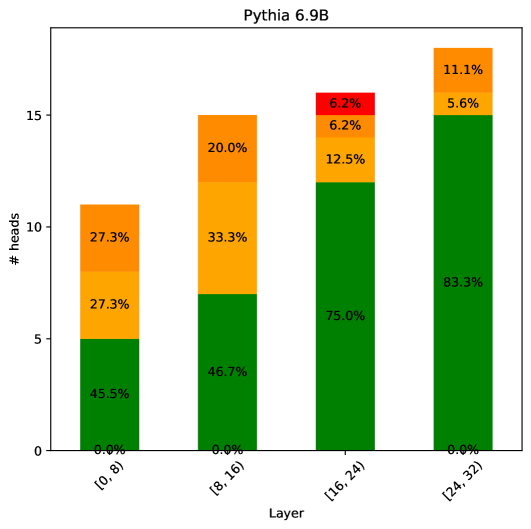

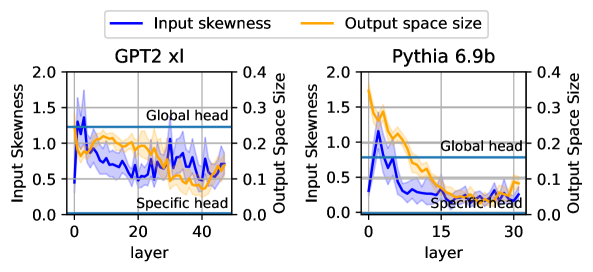

Figure 4: Portion of heads where GPT-4o identified a prominent pattern across the head’s salient mappings.

Figure 4 shows the percentage of heads per layer in GPT-2 xl and Pythia 6.9B where GPT-4o described a pattern. In both models, we observe a high rate of 60%-96% interpretable heads in the middle and upper layers, compared to a lower rate of 20%-60% in the early and last layers. These trends are consistent with those observed for predefined relations (§ 4), suggesting that early-layer heads are less interpretable in the vocabulary space. Qualitative analysis of 107 heads with identified patterns shows diverse operations: 38% semantic (e.g., extension of time-periods, day->month; month->year; year->decade), 36% algorithmic (e.g., capitalization, water->Water), and 26% linguistic (e.g., completion of sub-words (inhib->inhibition; resil->resilience). Examples of salient mappings and their interpretations are provided in § E.

Interpretation quality

We conduct a human study to assess the plausibility of the generated descriptions, finding that GPT-4o correctly identifies the presence or absence of a pattern in 80% of the cases and reliably detects observable patterns. This shows that our automatic pipeline is reasonable and demonstrates promising trends in automatically interpreting attention heads with MAPS. For more details on this study and its results, see § E.

6 Related Work

Prior studies of attention heads in LLMs mostly focused on analyzing their attention patterns Voita et al. (2019); Clark et al. (2019); Vig and Belinkov (2019), training probes and sparse auto-encoders Kissane et al. (2024), studying head outputs, and performing causal interventions (see survey by Zheng et al., 2024). Unlike these methods, MAPS infers the functionality of attention heads from their parameters, without any training or inference.

Vocabulary projections of attention head parameters have been used for analyzing certain attention head operations in LLMs Wang et al. (2023); McDougall et al. (2024); Kim et al. (2024); García-Carrasco et al. (2024); Elhage et al. (2021). However, they have been used mostly as a validation tool for operations inferred by other methods and were applied to specific relations and heads, typically in the scope of specific circuits. Gould et al. (2024) studied a single relation across all heads of multiple LLMs. Our work proposes a general framework that uses vocabulary projections as its primary tool for inferring attention head functionality.

Millidge and Black (2022) utilized an LLM to interpret the vocabulary projections of singular vectors of attention heads and MLP matrices, but their approach does not consider input-output mappings which are essential for estimating head functionality. More recently, Merullo et al. (2024b) used parameter similarities of heads at different layers to study their “communication channels”. Lastly, Hernandez et al. (2024) showed that relation operations of attention heads can be well-approximated by linear functions. Our work further shows that some of these relations are implemented by mappings encoded in head parameters.

7 Conclusion

We present MAPS, an efficient framework for analyzing the functionality of attention heads from their parameters. MAPS utility is two-fold: it allows mapping how strongly a given operation is implemented across the heads of a model and inferring the salient operations of a given head. Experiments show that estimations by MAPS correlate with the head outputs during inference and causally relate to the model’s behavior. Moreover, strong LLMs can interpret them automatically, often aligning with human judgment. Our analysis provides insights into architecture biases on function encoding and function universality in LLMs.

Limitations

MAPS primarily focuses on analyzing the part of the head’s computation that writes the output to the residual stream, i.e., the matrix $W_{VO}$ . In other words, we use single-token mappings to analyze the operation of the output part of the head on contextualized representations $\tilde{X}$ . While our experiments in § 4.3 show that these estimations generalize to multi-token inputs, it is still valuable to examine the head’s computation responsible for contextualization and for creating $\tilde{X}$ , i.e., the matrix $W_{QK}$ .

Another limitation of MAPS is that its expressivity is bounded by the model’s vocabulary. Namely, it can only map operations that can be expressed via pairs of tokens. While this formulation can effectively describe and capture various features, as demonstrated by our experiments in § 4 and § 5, there are likely to be operations that this framework would overlook, such as idioms and positional features. A related challenge is the lower coverage of MAPS in early layers, where the model may not yet operate in the output vocabulary space, but instead computes general-purpose features to be used by later layers. Extending MAPS to support other types of representations is a promising direction to overcome these limitations, as well as exploring methods such as linear mappings Yom Din et al. (2024) and patching Ghandeharioun et al. (2024) to improve the performance on early layers.

Lastly, MAPS relies on the formulation of attention heads as interaction matrices (§ 2), which ignores the bias terms of $W_{V},W_{O}$ . While our experiments show there is a strong correlation between the estimations by MAPS and head outputs, these terms may influence them. Incorporating these bias terms into the analysis is an interesting direction, which we leave for future works to explore.

Acknowledgments

We thank Guy Dar, Daniela Gottesman, Ohav Barbi, Ori Yoran, Yoav Gur-Arieh and Samuel Amouyal who helped with analysis and provided useful feedback. This research was supported in part by The Israel Science Foundation grant 1083/24.

References

- Ainslie et al. (2023) Joshua Ainslie, James Lee-Thorp, Michiel de Jong, Yury Zemlyanskiy, Federico Lebron, and Sumit Sanghai. 2023. GQA: Training generalized multi-query transformer models from multi-head checkpoints. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 4895–4901, Singapore. Association for Computational Linguistics.

- Arditi et al. (2024) Andy Arditi, Oscar Obeso, Aaquib Syed, Daniel Paleka, Nina Panickssery, Wes Gurnee, and Neel Nanda. 2024. Refusal in language models is mediated by a single direction. arXiv preprint arXiv:2406.11717.

- Azaria and Mitchell (2023) Amos Azaria and Tom Mitchell. 2023. The internal state of an LLM knows when it‘s lying. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 967–976, Singapore. Association for Computational Linguistics.

- Biderman et al. (2023) Stella Biderman, Hailey Schoelkopf, Quentin Gregory Anthony, Herbie Bradley, Kyle O’Brien, Eric Hallahan, Mohammad Aflah Khan, Shivanshu Purohit, USVSN Sai Prashanth, Edward Raff, Aviya Skowron, Lintang Sutawika, and Oskar van der Wal. 2023. Pythia: A suite for analyzing large language models across training and scaling. In International Conference on Machine Learning, ICML 2023, 23-29 July 2023, Honolulu, Hawaii, USA, volume 202 of Proceedings of Machine Learning Research, pages 2397–2430. PMLR.

- Bohnet et al. (2022) Bernd Bohnet, Vinh Q Tran, Pat Verga, Roee Aharoni, Daniel Andor, Livio Baldini Soares, Massimiliano Ciaramita, Jacob Eisenstein, Kuzman Ganchev, Jonathan Herzig, et al. 2022. Attributed question answering: Evaluation and modeling for attributed large language models. arXiv preprint arXiv:2212.08037.

- Bolukbasi et al. (2021) Tolga Bolukbasi, Adam Pearce, Ann Yuan, Andy Coenen, Emily Reif, Fernanda Viégas, and Martin Wattenberg. 2021. An interpretability illusion for bert. ArXiv preprint, abs/2104.07143.

- Clark et al. (2019) Kevin Clark, Urvashi Khandelwal, Omer Levy, and Christopher D. Manning. 2019. What does BERT look at? an analysis of BERT’s attention. In Proceedings of the 2019 ACL Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP, pages 276–286, Florence, Italy. Association for Computational Linguistics.

- Dar et al. (2023) Guy Dar, Mor Geva, Ankit Gupta, and Jonathan Berant. 2023. Analyzing transformers in embedding space. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 16124–16170, Toronto, Canada. Association for Computational Linguistics.

- Dubey et al. (2024) Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. 2024. The llama 3 herd of models. ArXiv preprint, abs/2407.21783.

- Elhage et al. (2021) Nelson Elhage, Neel Nanda, Catherine Olsson, Tom Henighan, Nicholas Joseph, Ben Mann, Amanda Askell, Yuntao Bai, Anna Chen, Tom Conerly, et al. 2021. A mathematical framework for transformer circuits. Transformer Circuits Thread, 1(1):12.

- Ferrando et al. (2024) Javier Ferrando, Gabriele Sarti, Arianna Bisazza, and Marta R Costa-jussà. 2024. A primer on the inner workings of transformer-based language models. ArXiv preprint, abs/2405.00208.

- Gao et al. (2024) Leo Gao, Tom Dupré la Tour, Henk Tillman, Gabriel Goh, Rajan Troll, Alec Radford, Ilya Sutskever, Jan Leike, and Jeffrey Wu. 2024. Scaling and evaluating sparse autoencoders. ArXiv preprint, abs/2406.04093.

- García-Carrasco et al. (2024) Jorge García-Carrasco, Alejandro Maté, and Juan C. Trujillo. 2024. How does GPT-2 predict acronyms? extracting and understanding a circuit via mechanistic interpretability. In International Conference on Artificial Intelligence and Statistics, 2-4 May 2024, Palau de Congressos, Valencia, Spain, volume 238 of Proceedings of Machine Learning Research, pages 3322–3330. PMLR.

- Geva et al. (2022) Mor Geva, Avi Caciularu, Kevin Wang, and Yoav Goldberg. 2022. Transformer feed-forward layers build predictions by promoting concepts in the vocabulary space. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, pages 30–45, Abu Dhabi, United Arab Emirates. Association for Computational Linguistics.

- Geva et al. (2021) Mor Geva, Roei Schuster, Jonathan Berant, and Omer Levy. 2021. Transformer feed-forward layers are key-value memories. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, pages 5484–5495, Online and Punta Cana, Dominican Republic. Association for Computational Linguistics.

- Ghandeharioun et al. (2024) Asma Ghandeharioun, Avi Caciularu, Adam Pearce, Lucas Dixon, and Mor Geva. 2024. Patchscopes: A unifying framework for inspecting hidden representations of language models. In Forty-first International Conference on Machine Learning.

- Gould et al. (2024) Rhys Gould, Euan Ong, George Ogden, and Arthur Conmy. 2024. Successor heads: Recurring, interpretable attention heads in the wild. In The Twelfth International Conference on Learning Representations.

- Gur-Arieh et al. (2025) Yoav Gur-Arieh, Roy Mayan, Chen Agassy, Atticus Geiger, and Mor Geva. 2025. Enhancing automated interpretability with output-centric feature descriptions. arXiv preprint arXiv:2501.08319.

- Hernandez et al. (2024) Evan Hernandez, Arnab Sen Sharma, Tal Haklay, Kevin Meng, Martin Wattenberg, Jacob Andreas, Yonatan Belinkov, and David Bau. 2024. Linearity of relation decoding in transformer language models. In The Twelfth International Conference on Learning Representations.

- Hurst et al. (2024) Aaron Hurst, Adam Lerer, Adam P Goucher, Adam Perelman, Aditya Ramesh, Aidan Clark, AJ Ostrow, Akila Welihinda, Alan Hayes, Alec Radford, et al. 2024. Gpt-4o system card. ArXiv preprint, abs/2410.21276.

- Javaheripi and Bubeck (2023) Mojan Javaheripi and Sébastien Bubeck. 2023. Phi-2: The surprising power of small language models.

- Katz et al. (2024) Shahar Katz, Yonatan Belinkov, Mor Geva, and Lior Wolf. 2024. Backward lens: Projecting language model gradients into the vocabulary space. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 2390–2422, Miami, Florida, USA. Association for Computational Linguistics.

- Kim et al. (2024) Geonhee Kim, Marco Valentino, and André Freitas. 2024. A mechanistic interpretation of syllogistic reasoning in auto-regressive language models. ArXiv preprint, abs/2408.08590.

- Kissane et al. (2024) Connor Kissane, Robert Krzyzanowski, Joseph Isaac Bloom, Arthur Conmy, and Neel Nanda. 2024. Interpreting attention layer outputs with sparse autoencoders. In ICML 2024 Workshop on Mechanistic Interpretability.

- Kuhn et al. (2023) Lorenz Kuhn, Yarin Gal, and Sebastian Farquhar. 2023. Semantic uncertainty: Linguistic invariances for uncertainty estimation in natural language generation. In The Eleventh International Conference on Learning Representations.

- Li et al. (2015) Yixuan Li, Jason Yosinski, Jeff Clune, Hod Lipson, and John Hopcroft. 2015. Convergent learning: Do different neural networks learn the same representations? In Proceedings of the 1st International Workshop on Feature Extraction: Modern Questions and Challenges at NIPS 2015, volume 44 of Proceedings of Machine Learning Research, pages 196–212, Montreal, Canada. PMLR.

- Loper and Bird (2002) Edward Loper and Steven Bird. 2002. NLTK: The natural language toolkit. In Proceedings of the ACL-02 Workshop on Effective Tools and Methodologies for Teaching Natural Language Processing and Computational Linguistics, pages 63–70, Philadelphia, Pennsylvania, USA. Association for Computational Linguistics.

- McDougall et al. (2024) Callum Stuart McDougall, Arthur Conmy, Cody Rushing, Thomas McGrath, and Neel Nanda. 2024. Copy suppression: Comprehensively understanding a motif in language model attention heads. In Proceedings of the 7th BlackboxNLP Workshop: Analyzing and Interpreting Neural Networks for NLP, pages 337–363, Miami, Florida, US. Association for Computational Linguistics.

- Meng et al. (2022) Kevin Meng, David Bau, Alex Andonian, and Yonatan Belinkov. 2022. Locating and editing factual associations in GPT. In Advances in Neural Information Processing Systems 35: Annual Conference on Neural Information Processing Systems 2022, NeurIPS 2022, New Orleans, LA, USA, November 28 - December 9, 2022.

- Merullo et al. (2024a) Jack Merullo, Carsten Eickhoff, and Ellie Pavlick. 2024a. Circuit component reuse across tasks in transformer language models. In The Twelfth International Conference on Learning Representations.

- Merullo et al. (2024b) Jack Merullo, Carsten Eickhoff, and Ellie Pavlick. 2024b. Talking heads: Understanding inter-layer communication in transformer language models. In The Thirty-eighth Annual Conference on Neural Information Processing Systems.

- Millidge and Black (2022) Beren Millidge and Sid Black. 2022. The singular value decompositions of transformer weight matrices are highly interpretable.

- Nanda and Bloom (2022) Neel Nanda and Joseph Bloom. 2022. Transformerlens. https://github.com/TransformerLensOrg/TransformerLens.

- nostalgebraist (2020) nostalgebraist. 2020. Interpreting gpt: the logit lens.

- Olsson et al. (2022) Catherine Olsson, Nelson Elhage, Neel Nanda, Nicholas Joseph, Nova DasSarma, Tom Henighan, Ben Mann, Amanda Askell, Yuntao Bai, Anna Chen, et al. 2022. In-context learning and induction heads. ArXiv preprint, abs/2209.11895.

- Radford et al. (2019) Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, Ilya Sutskever, et al. 2019. Language models are unsupervised multitask learners. OpenAI blog, 1(8):9.

- Schober et al. (2018) Patrick Schober, Christa Boer, and Lothar A. Schwarte. 2018. Correlation coefficients: Appropriate use and interpretation. Anesthesia & Analgesia, 126:1763–1768.

- Tigges et al. (2024) Curt Tigges, Michael Hanna, Qinan Yu, and Stella Biderman. 2024. LLM circuit analyses are consistent across training and scale. In The Thirty-eighth Annual Conference on Neural Information Processing Systems.

- Vaswani et al. (2017) Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems 2017, December 4-9, 2017, Long Beach, CA, USA, pages 5998–6008.

- Vig and Belinkov (2019) Jesse Vig and Yonatan Belinkov. 2019. Analyzing the structure of attention in a transformer language model. In Proceedings of the 2019 ACL Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP, pages 63–76, Florence, Italy. Association for Computational Linguistics.

- Voita et al. (2019) Elena Voita, David Talbot, Fedor Moiseev, Rico Sennrich, and Ivan Titov. 2019. Analyzing multi-head self-attention: Specialized heads do the heavy lifting, the rest can be pruned. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, pages 5797–5808, Florence, Italy. Association for Computational Linguistics.

- Vrandečić and Krötzsch (2014) Denny Vrandečić and Markus Krötzsch. 2014. Wikidata: a free collaborative knowledgebase. Commun. ACM, 57(10):78–85.

- Wang et al. (2023) Kevin Ro Wang, Alexandre Variengien, Arthur Conmy, Buck Shlegeris, and Jacob Steinhardt. 2023. Interpretability in the wild: a circuit for indirect object identification in GPT-2 small. In The Eleventh International Conference on Learning Representations, ICLR 2023, Kigali, Rwanda, May 1-5, 2023. OpenReview.net.

- Wolf et al. (2020) Thomas Wolf, Lysandre Debut, Victor Sanh, Julien Chaumond, Clement Delangue, Anthony Moi, Pierric Cistac, Tim Rault, Remi Louf, Morgan Funtowicz, Joe Davison, Sam Shleifer, Patrick von Platen, Clara Ma, Yacine Jernite, Julien Plu, Canwen Xu, Teven Le Scao, Sylvain Gugger, Mariama Drame, Quentin Lhoest, and Alexander Rush. 2020. Transformers: State-of-the-art natural language processing. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, pages 38–45, Online. Association for Computational Linguistics.

- Xiao et al. (2024) Guangxuan Xiao, Yuandong Tian, Beidi Chen, Song Han, and Mike Lewis. 2024. Efficient streaming language models with attention sinks. In The Twelfth International Conference on Learning Representations.

- Yom Din et al. (2024) Alexander Yom Din, Taelin Karidi, Leshem Choshen, and Mor Geva. 2024. Jump to conclusions: Short-cutting transformers with linear transformations. In Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024), pages 9615–9625, Torino, Italia. ELRA and ICCL.

- Yu et al. (2024) Lei Yu, Meng Cao, Jackie CK Cheung, and Yue Dong. 2024. Mechanistic understanding and mitigation of language model non-factual hallucinations. In Findings of the Association for Computational Linguistics: EMNLP 2024, pages 7943–7956, Miami, Florida, USA. Association for Computational Linguistics.

- Zheng et al. (2024) Zifan Zheng, Yezhaohui Wang, Yuxin Huang, Shichao Song, Bo Tang, Feiyu Xiong, and Zhiyu Li. 2024. Attention heads of large language models: A survey. ArXiv preprint, abs/2409.03752.

- Zhou et al. (2024) Zhenhong Zhou, Haiyang Yu, Xinghua Zhang, Rongwu Xu, Fei Huang, Kun Wang, Yang Liu, Junfeng Fang, and Yongbin Li. 2024. On the role of attention heads in large language model safety. ArXiv preprint, abs/2410.13708.

Appendix A Mapping Predefined Relations – Additional Details and Results

In § 4, we showed how MAPS can be utilized to map all heads that implement a predefined relation across a language model. Here we offer further details on the datasets and implementation, as well as supplementary results.

A.1 Datasets

| Category | Relation | Example mappings | Dataset size per tokenizer | | |

| --- | --- | --- | --- | --- | --- |

| Llama-3.1 | Pythia | GPT-2 / Phi-2 | | | |

| Algorithmic | Copying | (ottawa, ottawa),(say,say) | 450 | 432 | 436 |

| Name copying | (Mallory, Mallory),(Walt, Walt) | 134 | 113 | 132 | |

| Word to first letter | (bend, b),(past, p) | 238 | 237 | 238 | |

| Word to last letter | (bend, d),(past, t) | 238 | 237 | 238 | |

| Year to following | (1728, 1729),(1958, 1959) | | 147 | 133 | |

| Knowledge | Country to capital | (Bulgaria, Sofia),(Chile, Santiago) | 45 | 32 | 43 |

| Country to language | (Laos, Lao),(Denmark, Danish) | 51 | 37 | 48 | |

| Object to superclass | (tiger, animal),(carp, fish) | 62 | 46 | 65 | |

| Product by company | (Xbox, Microsoft),(Bravia, Sony) | 39 | | 40 | |

| Work to location | (farmer, farm),(chef, kitchen) | 48 | 34 | 45 | |

| Linguistic | Adj to comparative | (big, bigger),(high, higher) | 47 | 44 | 48 |

| Adj to superlative | (angry, angriest),(high, highest) | 39 | | 41 | |

| Noun to pronoun | (viewers, they),(Anna, she) | 257 | 238 | 253 | |

| Verb to past tense | (ask, asked),(eat, ate) | 110 | 112 | 112 | |

| Word to antonym | (love, hate),(right, wrong) | 91 | 88 | 92 | |

| Word to compound | (hot, hotdog),(wall, wallpaper) | 38 | | 36 | |

| Word to homophone | (steal, steel),(sea, see) | 103 | 88 | 91 | |

| Word to synonym | (vague, obscure),(ill, sick) | 154 | 142 | 154 | |

| Translation | English to French | (cat, chat),(love, amour) | 32 | | |

| English to Spanish | (cat, gato),(love, amor) | 34 | | | |

Table 3: Datasets used for inspecting predefined operations in models with different tokenizers. Every model column describes the datasets’ sizes for this model. Different tokenizers lead to differences between datasets. We discard datasets that were left with $≤$ 30 single-token mappings after tokenization.

Table 4: Sources for constructing per-relation datasets used in § 4.

We display the list of categories and relations used to map predefined relations (§ 4), alongside the sizes of the different datasets and examples for relations pairs in Table 3.

Data collection

We obtained the relation pairs from the sources: WikiData Vrandečić and Krötzsch (2014); “English Word Frequency List” Kaggle dataset, https://www.kaggle.com/datasets/wheelercode/english-word-frequency-list which is based on Google Books Ngram Viewer Exports, version 3, exported on Feb 17, 2020, https://storage.googleapis.com/books/ngrams/books/datasetsv3.html the datasets used by Hernandez et al. (2024), which are based on CounterFact Meng et al. (2022) and WikiData Vrandečić and Krötzsch (2014), and ChatGPT. https://chatgpt.com/ We also used the nltk package Loper and Bird (2002) to validate several relation datasets. Except for the Translation and year to following datasets, all datasets are in English. The details on which source was used to compose which relation are presented in Table 4.

In the datasets for the relations work to location, verb to past tense, product by company, object to superclass, adj to superlative, adj to comparative, word to antonym, we filter out pairs where the source token appeared as a source token in other pairs. Relation pairs were filtered out from different datasets to assert their correctness.

Data processing

For every model, we tokenized the various datasets using the model’s tokenizer. To maximize the number of words mapped to single tokens, we added a leading space before every word. For example, if the relation source word was "Don", we tokenized the string " Don" instead. Finally, we filtered out relation pairs where at least one of the words was mapped to more than one token.

A.2 Implementation Details

Applying the first MLP

For every model except Llama-3.1 70B, and similarly to Wang et al. (2023); Gould et al. (2024), we first applied the model’s first MLP to the tokens embeddings. Notably, we did not apply the first MLP when we analyzed heads from the models’ first layers (layer 0), since the first attention layer precedes the first MLP in the computation. To adjust the embeddings to the first MLP’s input distribution, we also applied the layer norm that precedes it. Regarding Llama-3.1 70B, we observed better results when not applying the first MLP.

Selection of $k$

To calculate a head’s relation score $\phi_{R}(M)$ , we obtain the top- $k$ tokens in $\mathbf{m}_{s}$ for every source token $s$ . For Pythia, GPT-2 and Phi-2 we set $k=1$ for copying and name-copying relations and $k=10$ for other relations. For the Llama-3.1 models we set $k=3$ for copying and name-copying and $k=25$ for other relations. Table 5 – which presents the tokenization applied to several base words by the tokenizers of Llama-3.1, GPT-2 and Pythia – demonstrates the need to set larger $k$ values for Llama-3.1. The larger vocabulary size allows Llama-3.1’s tokenizer to express the same concept with more tokens.

| Word | Llama-3.1 | Pythia | GPT-2 |

| --- | --- | --- | --- |

| Hello | >Hello, Hello, _hello, Ġhello, hello, ĠHello, Hallo, Bonjour, Hola | Hello, Ġhello, hello, ĠHello | hello, ĠHello, Ġhello, Hello |

| Please | Please, Ġplease, please, ĠPLEASE, ĠPlease, .Please, PLEASE, >Please, Bitte, ĠBITTE, ĠBitte, Ġbitte | Please, please, Ġplease, ĠPlease | Please, Ġplease, ĠPlease, ĠPLEASE, please |

| Love | ĠLOVE, love, loven, Ġlove, Love, ĠLove, ĠLiebe, Ġliebe, Ġamour, Ġamore, Ġamor | love, ĠLOVE, Love, Ġlove, ĠLove | Ġlove, love, ĠLove, Love, ĠLOVE |

| Water | -water, _WATER, ĠWater, _water, water, Ġwater, Water, ĠWATER, .water, ĠWasser, ’eau, agua, Ġagua | Water, Ġwater, water, ĠWater, agua | Water, water, Ġwater, ewater, ĠWater |

| School | ĠSCHOOL, -school, schools, Ġschool, _school, school, ĠSchool, .school, School | School, Ġschool, school, ĠSchool | ĠSchool, Ġschool, school, ĠSCHOOL, School |

Table 5: Different tokenizations for base words by the tokenizers of Llama-3.1, Pythia and GPT-2. The “Ġ” symbol represents a leading space. We observe that Llama-3.1’s larger vocabulary allows expressing every base word with more tokens.

A.3 Random Baselines

A concern that may arise from choosing a relatively small relation score threshold, is that the results obtained by MAPS may capture the similarity of tokens embeddings, rather than a functionality implemented by attention head’s weights. To study this, we applied MAPS to randomly initialized matrices from the empirical distribution of the model. Concretely, for every layer in the original model, we sampled $H$ random matrices (with the same shape as $W_{VO}$ ) from a normal distribution, for which the mean and standard deviation are the average and the standard deviation of the $W_{VO}$ matrices in the original layer. We applied our predefined relation analysis (described in § 4.1) to those matrices and measured the amounts of “functional attention heads” classified among them.

For models Phi-2, Pythia 6.9B, Pythia 12B, Llama-3.1 8B and Llama-3.1 70B no random matrices were classified as relation heads. For GPT-2 xl, 5 matrices were classified as such, compared to 250 relation heads in the trained model, and out of 1200 heads in the model. This demonstrates that the choice of $\tau=15\%$ is meaningful for separating between functionalities of trained attention heads and random ones. While smaller thresholds could have also been justified by this experiment, we chose $\tau=15\%$ to assert that the heads encode a substantial fraction of the relation pairs.

A.4 Additional Results