# Aristotle: Mastering Logical Reasoning with A Logic-Complete Decompose-Search-Resolve Framework

> Corresponding author: Hao Fei

## Abstract

In the context of large language models (LLMs), advanced reasoning methods have made impressive strides across various reasoning tasks. However, logical reasoning tasks still pose major challenges in both efficacy and efficiency. This is rooted in the fact that these systems fail to fully leverage the inherent structure of logical tasks throughout the reasoning process, i.e., in decomposition, search, and resolution. To address this, we propose a logic-complete reasoning framework, Aristotle, with three key components: Logical Decomposer, Logical Search Router, and Logical Resolver. In our framework, symbolic expressions and logical rules are comprehensively integrated into the entire reasoning process, significantly alleviating the bottlenecks of logical reasoning: reducing sub-task complexity, minimizing search errors, and resolving logical contradictions. Experimental results on several datasets demonstrate that Aristotle consistently outperforms state-of-the-art reasoning frameworks in both accuracy and efficiency, particularly in complex logical reasoning scenarios. We will open-source all our code at http://llm-symbol.github.io/Aristotle.

## 1 Introduction

LLMs Patel et al. (2023); Chowdhery et al. (2023) have unlocked unprecedented potential in semantic understanding Zhao et al. (2023), sparking immense hope for realizing AGI. A fundamental requirement for true intelligence is the ability to perform human-level reasoning, such as commonsense reasoning Wang et al. (2024c), mathematical problem-solving Wang et al. (2024a), and geometric reasoning Eisner et al. (2024). To achieve this, researchers have drawn inspiration from human reasoning processes, proposing various methods and strategies for LLM-based reasoning. One of the most groundbreaking works is the Chain-of-Thought (CoT) Wei et al. (2022), which breaks down complex problems into smaller sub-problems, solving them step by step. The birth of CoT has elevated the reasoning capabilities of LLMs to new heights. Further research has built on this foundation by closely emulating human cognitive patterns, introducing more advanced approaches, such as Least-to-Most Zhou et al. (2023), Tree-of-Thought (ToT) Yao et al. (2023), Graph-of-Thought (GoT) Besta et al. (2024), and Plan-and-Solve Wang et al. (2023a), which have achieved progressively better results on reasoning benchmarks. In summary, successful LLM-based reasoning methods generally involve three key modules Huang and Chang (2023); Li et al. (2024): problem decomposition, path searching, and problem resolution.

Figure 1 panels (ToT vs. Ours): Error Rate (%) for SE (ToT 15.0, reduced by 11.1) and RE (ToT 28.4, reduced by 28.0); Reasoning Steps (ToT 24.6, reduced by 12.9).

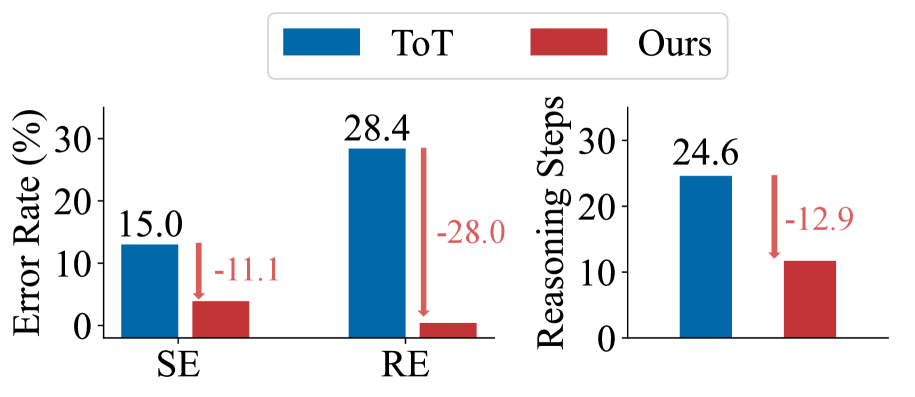

Figure 1: Our reasoning framework vs. the SoTA ToT: comparison in terms of Search Error (SE) and single-step Reasoning Error (RE), as well as in terms of average number of reasoning steps.

Figure 2 panels: Step 1: Search Initialization; Step 2: Search and Resolve; Step 3: Conclude Answer; Reasoning Trajectories.

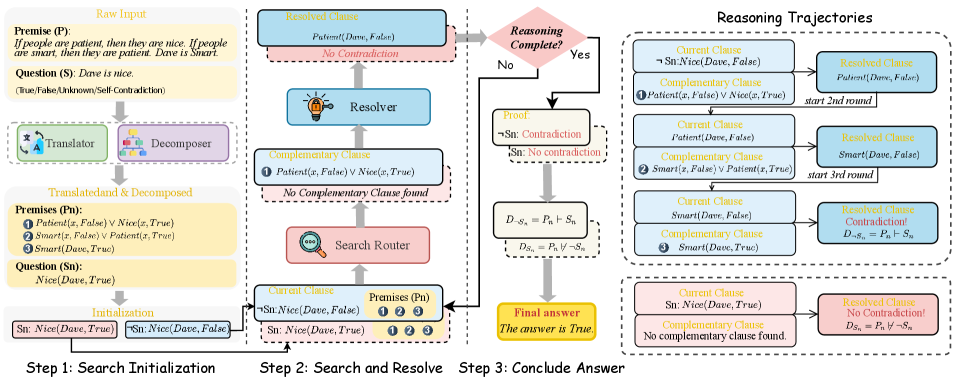

Figure 2: Our Aristotle logical reasoning framework (best viewed zoomed in). In step 1, the Translator and Decomposer together transform $P$ and $S$ into $P_n$ and $S_n$. We then initialize $C_{\text{current}}$ using the decomposed $S_n$ and $\neg S_n$. In step 2, the Search Router uses $C_{\text{current}}$ and $P_n$ to search for $C_{\text{complement}}$, and the Resolver then resolves $C_{\text{current}}$ with $C_{\text{complement}}$ to produce $C_{\text{resolved}}$. Reasoning is complete when either (1) $C_{\text{resolved}}$ determines whether a contradiction exists, or (2) the maximum number of iterations $I_{\max}$ is reached. In step 3, Aristotle concludes the proofs $D_{S_n}$ and $D_{\neg S_n}$ based on the Proof Determination and uses them to determine the final answer according to Eq. (1). Note that two distinct reasoning paths are executed: a solid box represents the path starting from $\neg S_n$, and a dotted box represents the path starting from $S_n$. The complete reasoning process for both paths, including all iterations, is shown in the right part, “Reasoning Trajectories”.

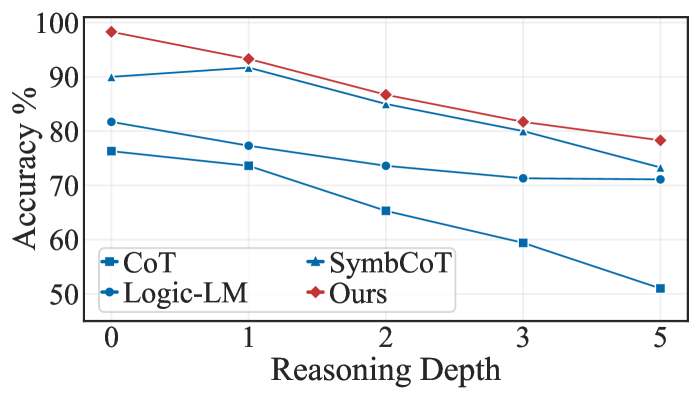
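The search-and-resolve loop in the Figure 2 caption can be illustrated with a minimal propositional sketch. It assumes ground (variable-free) literals written as `(predicate, entity, truth_value)` tuples mirroring the `Nice(Dave, True)` notation of the figure, clauses stored as frozensets, and a deterministic stand-in for the Search Router that returns the first premise containing a complementary literal. In Aristotle that role is played by an LLM, so `find_complement`, `resolve`, `search_and_resolve`, and the premises below are all hypothetical. Deriving the empty clause signals a contradiction; the loop also stops when no complement exists or after `i_max` rounds, which plays the role of $I_{\max}$.

```python
from typing import FrozenSet, Optional, Sequence, Tuple

# Hypothetical ground literal: Nice(Dave, True) -> ("Nice", "Dave", True)
Literal = Tuple[str, str, bool]
Clause = FrozenSet[Literal]


def negate(lit: Literal) -> Literal:
    """Flip the truth value, e.g. Nice(Dave, True) -> Nice(Dave, False)."""
    pred, arg, value = lit
    return (pred, arg, not value)


def find_complement(current: Clause,
                    premises: Sequence[Clause]) -> Optional[Tuple[Clause, Literal]]:
    """Stand-in Search Router: first premise holding the negation of a literal in `current`."""
    for premise in premises:
        for lit in sorted(current):
            if negate(lit) in premise:
                return premise, lit
    return None


def resolve(current: Clause, complement: Clause, lit: Literal) -> Clause:
    """Binary resolution: drop the complementary pair and merge the remaining literals."""
    return (current - {lit}) | (complement - {negate(lit)})


def search_and_resolve(start: Clause, premises: Sequence[Clause], i_max: int = 10) -> bool:
    """Return True if the empty clause (a contradiction) is derived within i_max rounds."""
    current = start
    for _ in range(i_max):
        found = find_complement(current, premises)
        if found is None:  # no complementary clause left: stop without a contradiction
            return False
        complement, lit = found
        current = resolve(current, complement, lit)
        if not current:  # empty clause: contradiction reached
            return True
    return False  # iteration budget exhausted


if __name__ == "__main__":
    # Hypothetical premises: patient -> nice, smart -> patient, Dave is smart.
    premises = [
        frozenset({("Patient", "Dave", False), ("Nice", "Dave", True)}),
        frozenset({("Smart", "Dave", False), ("Patient", "Dave", True)}),
        frozenset({("Smart", "Dave", True)}),
    ]
    print(search_and_resolve(frozenset({("Nice", "Dave", False)}), premises))  # True
    print(search_and_resolve(frozenset({("Nice", "Dave", True)}), premises))   # False
```

Starting from $\neg S_n$ yields a contradiction after three rounds, while starting from $S_n$ finds no complementary clause, which is the pattern of a proof by contradiction that $S$ holds. A faithful implementation would also need unification over variables such as `x`, which this sketch omits.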

Compared to other forms of general reasoning, logical reasoning Huang and Chang (2023) stands out as one of the most challenging tasks, as it demands the strictest evidence, arguments, and logical rigor to arrive at sound conclusions or judgments. Logical reasoning more closely mirrors human-level cognitive processes, making it crucial in high-stakes domains such as mathematical proof generation, legal analysis, and scientific discovery Cummins et al. (1991); Markovits and Vachon (1989). In recent years, numerous studies have investigated how to integrate LLMs into logical reasoning. For example, some methods Pan et al. (2023); Gao et al. (2023) use LLMs to translate textual problems into symbolic expressions, which are then solved by external logic solvers. Subsequent work, such as SymbCoT Xu et al. (2024), suggests that LLMs themselves can handle both symbolic translation and logical resolution, thus avoiding the potential information loss that external solvers can introduce. While SymbCoT has achieved state-of-the-art (SoTA) performance, the inherent simplicity of CoT’s linear reasoning process leaves considerable room for further improvement in LLM-based logical reasoning.

In response, some studies Yao et al. (2023); Besta et al. (2024); Zhang et al. (2023) have applied sophisticated general-purpose reasoning methods (e.g., ToT, GoT) directly to logical reasoning tasks. Unfortunately, these approaches largely overlook the inherent structure of logical tasks and fail to effectively integrate logical rules into the decompose-search-resolve framework, leaving key issues in both reasoning efficacy and efficiency unresolved:

$\blacktriangleright$ From an efficacy perspective, first, when LLMs decompose logical problems, they often rely on surface linguistic relations between tokens rather than on the underlying logical structure. When reasoning hinges on specific logical relationships, neglecting them yields disjointed sub-problems that break the logical chain and ultimately lead to incorrect conclusions. Second, during the search stage, current path search methods rely heavily on evaluators that may be unreliable and select nodes based on possibly flawed logic, so errors propagate through subsequent reasoning steps Chen et al. (2024); Wang et al. (2024b). Third, in the resolution stage, these methods guide LLMs to solve sub-questions with simple text prompts, which frequently leads to logical errors and thus to numerous faulty nodes in the search space Xu et al. (2024). These errors propagate further, causing entire paths to fail and, ultimately, the reasoning to fail. Our preliminary experiments show that directly applying SoTA general-purpose reasoning methods with a search mechanism to logical tasks results in substantial error rates: 28.4% for reasoning and 15.0% for search, as shown in Fig. 1.

$\blacktriangleright$ From an efficiency perspective, these approaches also have significant shortcomings. For example, generating large numbers of incorrect nodes wastes computational resources Ning et al. (2024). Moreover, relying on unreliable evaluators biases the search process, leading to unnecessary node and path explorations and ultimately reducing efficiency Chen et al. (2024). Such inefficiency can significantly undermine the value of logical reasoning systems in practical applications.

To address these challenges, we propose a novel reasoning framework, Aristotle, which tackles the performance and efficiency bottlenecks of existing methods on logical reasoning tasks by fully integrating symbolic expressions and rules into each stage: decomposition, search, and resolution. Fig. 2 illustrates the overall framework. Specifically, we first introduce a Logical Decomposer that breaks down the original problem into smaller and simpler components based on its logical structure, reducing the complexity of logical tasks. We then devise a Logical Search Router, which leverages proof by contradiction to search directly for logical inconsistencies, thereby reducing the search errors caused by unreliable evaluators and requiring fewer steps than existing methods. Finally, we develop a Logical Resolver, which rigorously resolves logical contradictions at each reasoning step, guided by the Logical Search Router. Overall, Aristotle thoroughly considers the inherent logical structure of tasks and incorporates logical symbols into the entire decompose-search-resolve framework. This ensures a more logically coherent reasoning process and more reliable final results.
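One plausible reading of the Logical Decomposer's output, suggested by the clause notation in Figure 2 (e.g. `Patient(x, False) ∨ Nice(x, True)`), is a conversion of rules into clausal normal form. The sketch below is an assumption, not the paper's LLM-driven Decomposer: the hypothetical helper `implication_to_clauses` rewrites a grounded rule $(a_1 \wedge \dots \wedge a_m) \rightarrow (c_1 \wedge \dots \wedge c_k)$ into $k$ clauses $\neg a_1 \vee \dots \vee \neg a_m \vee c_j$, using implication elimination, De Morgan's law, and distribution over the conjunctive consequent.

```python
from typing import FrozenSet, List, Tuple

# Hypothetical ground literal: Smart(Dave, True) -> ("Smart", "Dave", True)
Literal = Tuple[str, str, bool]
Clause = FrozenSet[Literal]


def implication_to_clauses(antecedent: List[Tuple[str, bool]],
                           consequent: List[Tuple[str, bool]],
                           entity: str) -> List[Clause]:
    """Rewrite (a1 ∧ ... ∧ am) -> (c1 ∧ ... ∧ ck), grounded at `entity`,
    as k clauses of the form ¬a1 ∨ ... ∨ ¬am ∨ cj."""
    # A -> B is equivalent to ¬A ∨ B, and ¬(a1 ∧ ... ∧ am) is ¬a1 ∨ ... ∨ ¬am.
    negated_body = frozenset((pred, entity, not value) for pred, value in antecedent)
    # (¬A) ∨ (c1 ∧ ... ∧ ck) distributes into one clause per conjunct cj.
    return [negated_body | {(pred, entity, value)} for pred, value in consequent]


if __name__ == "__main__":
    # "If Dave is smart, then Dave is patient" -> one two-literal clause
    print(implication_to_clauses([("Smart", True)], [("Patient", True)], "Dave"))
    # A plain fact has an empty antecedent: "Dave is smart" -> {Smart(Dave, True)}
    print(implication_to_clauses([], [("Smart", True)], "Dave"))
```

Each resulting clause is a small, self-contained unit that a resolver can consume on its own, which is one concrete way to realize the complexity reduction described above.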

We conducted experiments on multiple logical reasoning benchmarks, where our method surpasses the current SoTA baselines by 4.5% with GPT-4 and 5.4% with GPT-4o. Further analysis reveals that the decomposer, search router, and resolver modules respectively contribute to: (i) reducing task complexity during problem decomposition, which improves accuracy in the subsequent search and reasoning phases; (ii) focusing search efforts on the most direct and relevant paths, which reduces errors and enhances efficiency; and (iii) achieving near-perfect logical reasoning accuracy. Moreover, Aristotle delivers even greater improvements in complex scenarios, such as those with more intricate logical structures or longer reasoning chains. Overall, this work marks the first successful complete integration of symbolic logic expressions into every stage of an LLM-based reasoning framework (decomposition, search, and resolution), demonstrating that LLMs can perform complete logical reasoning over symbolic structures.

## 2

<details>

<summary>figure/aristotle_icon.png Details</summary>

### Visual Description

## Icon: Stylized Male Figure

### Overview

Stylized icon of a bearded figure in a simple tunic (the Aristotle logo); it contains no text or data.

</details>

## 2 Aristotle Architecture

We first formally define the logical reasoning task. Given a set of premises $P=\{p_1,p_2,\dots,p_n\}$, where each $p_i$ is a logical statement, a reasoner must derive an answer $A$ for a given statement $S$. The answer is true ($T$), false ($F$), unknown ($U$), or self-contradictory ($SD$), where self-contradictory means that the statement can be proved both true and false. The formal definition of each answer is given in Eq. (1).

As illustrated in Fig. 2, Aristotle has an architecture with four modules: Translator, Decomposer, Search Router, and Resolver.

#### Translator.

We use the LLM itself to parse the given premises $P$ and the question statement $S$ into a symbolic format, which aims to eliminate ambiguity and ensure precision in the logical statements. Specifically, we use a Logic Programming (LP) language, adopting Prolog's grammar Clocksin and Mellish (2003) to represent the problem as facts, rules, and queries. Facts and rules Baader et al. (2003) are derived from $P$, while queries are formulated from $S$. We denote the translated premises (facts and rules) as $P_t$ and the queries as $S_t$. Details of the grammar can be found in Appendix A.
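To make the target format concrete, here is a minimal Python sketch that parses translated facts, rules, and queries into structured literals. It assumes a hypothetical surface syntax: literals written as `Predicate(arg, ..., True|False)`, mirroring the $P(x,\text{True})$ notation in the Search Router description, and rules written as `Body1 && Body2 >>> Head`. The actual grammar is the one in Appendix A; `Literal`, `parse_literal`, and `parse_rule` are illustrative names only.

```python
import re
from dataclasses import dataclass
from typing import Tuple

@dataclass(frozen=True)
class Literal:
    predicate: str
    args: Tuple[str, ...]
    polarity: bool  # True for Pred(..., True), False for Pred(..., False)

# Hypothetical literal syntax: Predicate(arg1, ..., argk, True|False)
_LITERAL_RE = re.compile(r"^\s*(\w+)\((.*),\s*(True|False)\s*\)\s*$")

def parse_literal(text: str) -> Literal:
    """Parse one fact or query such as 'Furry(Anne, True)'."""
    match = _LITERAL_RE.match(text)
    if match is None:
        raise ValueError(f"not a literal: {text!r}")
    name, raw_args, value = match.groups()
    args = tuple(arg.strip() for arg in raw_args.split(","))
    return Literal(name, args, value == "True")

def parse_rule(text: str) -> Tuple[Tuple[Literal, ...], Literal]:
    """Parse a hypothetical rule 'B1 && B2 >>> H' into (body literals, head)."""
    body, head = text.split(">>>")
    return tuple(parse_literal(b) for b in body.split("&&")), parse_literal(head)

# Usage: premises become facts and rules; the statement becomes a query.
fact = parse_literal("Young(Anne, True)")
rule = parse_rule("Young($x, True) >>> Furry($x, True)")
query = parse_literal("Furry(Anne, True)")
```

Keeping polarity as an explicit field (rather than a negation wrapper) makes the later complement check a simple field comparison.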

#### Decomposer.

Breaking the logical statements down into a standardized logical form simplifies the reasoning process, making it easier to apply formal rules and perform efficient logical computation. To this end, we use an LLM to transform the parsed premises $P_t$ and queries $S_t$ into a standardized form through Normalization Davis and Putnam (1960) and Skolemization Nonnengart (1996), which convert them into Conjunctive Normal Form (CNF) and eliminate quantifiers. We denote the results as $P_n$ and $S_n$. For example, the logical rule $\forall x\,(P(x)\rightarrow Q(x))$ is decomposed into $\neg P(x)\lor Q(x)$.
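The Decomposer is an LLM, but the propositional part of the normal form it targets can be illustrated deterministically. The Python sketch below is not Aristotle's implementation. It assumes quantifiers are already removed (universally quantified variables stay implicit; Skolemization of existential quantifiers is not shown), treats atoms such as `P(x)` as opaque names, and uses a hypothetical nested-tuple encoding of formulas. It eliminates $\rightarrow$ and $\leftrightarrow$, pushes negations inward, and distributes $\lor$ over $\land$.

```python
from typing import FrozenSet, List, Tuple

# Hypothetical encoding: ("var", "P"), ("not", f), ("and", f, g),
# ("or", f, g), ("implies", f, g), ("iff", f, g)
Formula = tuple
Clause = FrozenSet[Tuple[str, bool]]  # set of (atom, polarity) pairs

def eliminate(f: Formula) -> Formula:
    """Rewrite implications and biconditionals using only not/and/or."""
    op = f[0]
    if op == "var":
        return f
    if op == "not":
        return ("not", eliminate(f[1]))
    a, b = eliminate(f[1]), eliminate(f[2])
    if op == "implies":
        return ("or", ("not", a), b)
    if op == "iff":
        return ("and", ("or", ("not", a), b), ("or", ("not", b), a))
    return (op, a, b)

def nnf(f: Formula) -> Formula:
    """Push negations down to atoms (double negation, De Morgan)."""
    op = f[0]
    if op == "var":
        return f
    if op == "not":
        g = f[1]
        if g[0] == "var":
            return f
        if g[0] == "not":
            return nnf(g[1])
        flipped = "or" if g[0] == "and" else "and"
        return (flipped, nnf(("not", g[1])), nnf(("not", g[2])))
    return (op, nnf(f[1]), nnf(f[2]))

def cnf_clauses(f: Formula) -> List[Clause]:
    """Distribute OR over AND on an NNF formula and return its clauses."""
    op = f[0]
    if op == "var":
        return [frozenset({(f[1], True)})]
    if op == "not":  # in NNF the operand is always an atom
        return [frozenset({(f[1][1], False)})]
    left, right = cnf_clauses(f[1]), cnf_clauses(f[2])
    if op == "and":
        return left + right
    return [a | b for a in left for b in right]  # op == "or"

def to_cnf(f: Formula) -> List[Clause]:
    return cnf_clauses(nnf(eliminate(f)))

# P(x) -> Q(x) becomes the single clause {¬P(x), Q(x)}.
clauses = to_cnf(("implies", ("var", "P(x)"), ("var", "Q(x)")))
```

Biconditionals such as LogicNLI's "if and only if" expand to two clauses, which is one reason such datasets benefit from explicit decomposition.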

#### Search Router.

We adopt the proof-by-contradiction approach Bishop (1967) because it lets us search directly for complementary clauses. This reduces search errors and targets logical conflicts directly, making the reasoning process faster and more efficient. We design a rule-based module that searches for clauses $C_{\text{complement}}\in P_n$ such that $C_{\text{current}}$ and $C_{\text{complement}}$ contain complementary terms. Terms are complementary when they share the same predicate and arguments but have opposite polarity. For example, if $C_{\text{current}}$ is $P(x,\text{True})$, the Search Router returns as $C_{\text{complement}}$ the premise clauses that contain $P(x,\text{False})$, since the two terms share the predicate $P$ and the argument $x$ but have opposite polarity (True vs. False). We explain how $C_{\text{current}}$ is defined in Section 3, and we include more details about the search strategy in Appendices B and H.
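A rule-based complement search of this kind fits in a few lines of Python. This is a minimal sketch under our own assumptions: clauses are sets of a hypothetical `Literal` type, and two terms count as complementary only when predicate and arguments match exactly. The sketch performs no unification of variables, as full first-order resolution would.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Tuple

@dataclass(frozen=True)
class Literal:
    predicate: str
    args: Tuple[str, ...]
    polarity: bool  # True for P(x, True), False for P(x, False)

    def complement(self) -> "Literal":
        """Same predicate and arguments, opposite polarity."""
        return Literal(self.predicate, self.args, not self.polarity)

Clause = FrozenSet[Literal]

def find_complements(current: Clause, premises: Iterable[Clause]) -> List[Clause]:
    """Return every premise clause containing the complement of a literal in `current`."""
    wanted = {lit.complement() for lit in current}
    return [clause for clause in premises if not wanted.isdisjoint(clause)]

# Usage: C_current = P(x, True); only the first premise contains P(x, False).
current = frozenset({Literal("P", ("x",), True)})
premises = [
    frozenset({Literal("P", ("x",), False), Literal("Q", ("x",), True)}),
    frozenset({Literal("R", ("x",), True)}),
]
matches = find_complements(current, premises)
```

Because the check is a set lookup rather than an LLM call, it cannot hallucinate a match, which reflects the motivation for making this module rule-based.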

#### Resolver.

To conduct effective step-wise reasoning during proof by contradiction, we adhere to the resolution principle Robinson (1965), as it provides clear and concise instructions for resolving logical conflicts and thereby minimizes the likelihood of reasoning errors. Resolution cancels the complementary terms identified by the Search Router and connects the remaining terms; in Aristotle, this step is implemented with an LLM. Specifically, given two clauses $C_{\text{current}}$ and $C_{\text{complement}}$, where:

$$
C_{\text{current}}=P(x,\text{True})\lor A
$$

$$
C_{\text{complement}}=P(x,\text{False})\lor B
$$

Here, $P(x,\text{True})$ and $P(x,\text{False})$ are complementary terms. The Resolver cancels them out and connects the remaining terms, so the resolved clause becomes:

$$
C_{\text{resolved}}=\text{Resolve}(C_{\text{current}},C_{\text{complement}})=A\lor B
$$
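The resolution step the LLM is asked to perform corresponds to ordinary binary resolution. The Python sketch below applies that rule symbolically for illustration only (in Aristotle the Resolver is an LLM). It repeats the hypothetical `Literal` representation so the block is self-contained and represents $\bot$ as the empty clause.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple

@dataclass(frozen=True)
class Literal:
    predicate: str
    args: Tuple[str, ...]
    polarity: bool

    def complement(self) -> "Literal":
        return Literal(self.predicate, self.args, not self.polarity)

Clause = FrozenSet[Literal]

def resolve(current: Clause, complement: Clause) -> Clause:
    """Binary resolution: cancel one complementary pair and keep the rest (A ∨ B).

    An empty result is the empty clause, i.e. a contradiction (⊥).
    """
    for lit in current:
        if lit.complement() in complement:
            return (current - {lit}) | (complement - {lit.complement()})
    raise ValueError("the clauses contain no complementary terms")

# Usage, with A and B as zero-argument atoms:
P_true, P_false = Literal("P", ("x",), True), Literal("P", ("x",), False)
A, B = Literal("A", (), True), Literal("B", (), True)
resolved = resolve(frozenset({P_true, A}), frozenset({P_false, B}))  # {A, B}
bottom = resolve(frozenset({A}), frozenset({A.complement()}))        # empty clause
```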

If the resolved clause is empty, i.e., a contradiction ($\bot$), we can conclude the proof and determine the answer, as explained in detail in Step 2 of Section 3. For example, resolving $C_{\text{current}}=(A)$ with $C_{\text{complement}}=(\neg A)$ yields the empty clause $C_{\text{resolved}}=\bot$; an empty clause is equivalent to a contradiction.

## 3 Logic-Complete Reasoning Processing

With Aristotle, we now demonstrate how each module comes into play to form the integrated dual-path reasoning process.

#### Step 1: Search Initialization.

As shown in Step 1 of Fig. 2, given the original premises $P$ and the question statement $S$, we first translate them into the symbolic formats $P_t$ and $S_t$ and then decompose them into $P_n$ and $S_n$, respectively.

Translate and Decompose $\blacktriangleright$ Input: $P$, $S$ $\blacktriangleright$ Output: $P_n$, $S_n$, $C_{\text{current}}=\{S_n,\neg S_n\}$

To implement proof by contradiction, we initialize the current clause in two ways, with $S_n$ and with its negation $\neg S_n$, denoted $C_{\text{current}}=S_n$ and $C_{\text{current}}=\neg S_n$. Both initializations are necessary because, as Eq. (1) shows, rigorously concluding an answer requires the outcomes of both proofs; this is explained in detail in Step 3.

#### Step 2: Search and Resolve.

At this stage, two reasoning paths are launched, one from $C_{\text{current}}=S_n$ and the other from $C_{\text{current}}=\neg S_n$, as initialized in Step 1. On both paths we apply proof by contradiction, iteratively searching for complementary clauses and resolving conflicts, which helps us reach an accurate final answer systematically and more quickly. Specifically, for each reasoning path (Step 2 of Fig. 2), the Search Router selects clauses $C_{\text{complement}}\in P_n$ that are complementary to $C_{\text{current}}$.

Search $\blacktriangleright$ Input: $P_n$, $C_{\text{current}}$ $\blacktriangleright$ Output: $C_{\text{complement}}$

The Resolver module then applies the resolution rule $\text{Resolve}(C_{\text{current}},C_{\text{complement}})$ to produce a new clause $C_{\text{resolved}}$.

Resolve $\blacktriangleright$ Input: $C_{\text{current}}$, $C_{\text{complement}}$ $\blacktriangleright$ Output: $C_{\text{resolved}}$

If $C_{\text{resolved}}$ either indicates a contradiction or confirms that no contradiction exists, we terminate the reasoning process. Otherwise, we set $C_{\text{current}}=C_{\text{resolved}}$ and repeat the Search and Resolve steps. If the process reaches the maximum number of iterations $I_{\max}$ without finding a contradiction, we conclude that no contradiction exists and terminate. Based on whether a contradiction was found, we then use the formulas below to establish the proofs $D_{S_n}$ (starting from $C_{\text{current}}=S_n$) and $D_{\neg S_n}$ (starting from $C_{\text{current}}=\neg S_n$), which determine whether $P_n$ entails $S_n$ or $\neg S_n$.

Proof Determination

$$
D_{S_n}=\begin{cases}P_n\vdash\neg S_n & (C_{\text{resolved}}=\text{Contradiction})\\ P_n\not\vdash\neg S_n & (C_{\text{resolved}}=\text{No Contradiction})\end{cases}
\qquad
D_{\neg S_n}=\begin{cases}P_n\vdash S_n & (C_{\text{resolved}}=\text{Contradiction})\\ P_n\not\vdash S_n & (C_{\text{resolved}}=\text{No Contradiction})\end{cases}
$$
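The two-path search-and-resolve loop can be sketched as follows. This is a simplified, hypothetical rendering, not Aristotle's search strategy (see Appendices B and H). It assumes that $S_n$ is a single literal and that matching is exact (no unification), and it greedily follows the first complementary clause without backtracking, so it can miss proofs that a fuller search would find. `i_max` plays the role of $I_{\max}$; all names are illustrative.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple

@dataclass(frozen=True)
class Literal:
    predicate: str
    args: Tuple[str, ...]
    polarity: bool

    def complement(self) -> "Literal":
        return Literal(self.predicate, self.args, not self.polarity)

Clause = FrozenSet[Literal]

def resolve(a: Clause, b: Clause) -> Clause:
    for lit in a:
        if lit.complement() in b:
            return (a - {lit}) | (b - {lit.complement()})
    raise ValueError("no complementary terms")

def finds_contradiction(premises: List[Clause], start: Clause, i_max: int = 10) -> bool:
    """Search and resolve from `start`; True iff the empty clause is reached."""
    current = start
    for _ in range(i_max):
        wanted = {lit.complement() for lit in current}
        candidates = [c for c in premises if not wanted.isdisjoint(c)]
        if not candidates:  # no complementary clause left: no contradiction
            return False
        current = resolve(current, candidates[0])  # greedy, no backtracking
        if not current:  # empty clause = contradiction
            return True
    return False  # I_max reached without a contradiction

def proof_determination(premises: List[Clause], s: Literal, i_max: int = 10) -> Tuple[bool, bool]:
    """Return (P_n ⊢ ¬S_n, P_n ⊢ S_n) from the S_n path and the ¬S_n path."""
    proves_not_s = finds_contradiction(premises, frozenset({s}), i_max)
    proves_s = finds_contradiction(premises, frozenset({s.complement()}), i_max)
    return proves_not_s, proves_s

# Usage: Young(Anne) and Young(Anne) -> Furry(Anne). Is Furry(Anne) true?
young = Literal("Young", ("Anne",), True)
furry = Literal("Furry", ("Anne",), True)
premises = [frozenset({young}), frozenset({young.complement(), furry})]
d_s, d_not_s = proof_determination(premises, furry)  # (False, True)
```

Here the $S_n$ path finds no complementary clause ($P_n\not\vdash\neg S_n$), while the $\neg S_n$ path reaches $\bot$ in two resolutions ($P_n\vdash S_n$).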

#### Step 3: Conclude Answer.

The proofs $D_{S_n}$ and $D_{\neg S_n}$ are then used to conclude the truth value $A$ of $S$ based on Eq. (1). For example, if we obtain $D_{S_n}=(P_n\vdash\neg S_n)$ and $D_{\neg S_n}=(P_n\not\vdash S_n)$, the combination of $P_n\vdash\neg S_n$ and $P_n\not\vdash S_n$ leads to the conclusion that $S$ is false according to Eq. (1).

Final Answer $\blacktriangleright$ Input: $D_{S_n}\in\{P_n\vdash\neg S_n,\ P_n\not\vdash\neg S_n\}$, $D_{\neg S_n}\in\{P_n\vdash S_n,\ P_n\not\vdash S_n\}$ $\blacktriangleright$ Output: $A\in\{\text{True},\text{False},\text{Unknown},\text{Self-Contradictory}\}$

$$
A=\left\{\begin{array}{ll}
\text{True}, & P_n\vdash S_n\land P_n\not\vdash\neg S_n\\
\text{False}, & P_n\not\vdash S_n\land P_n\vdash\neg S_n\\
\text{Unknown}, & P_n\not\vdash S_n\land P_n\not\vdash\neg S_n\\
\text{Self-Contradictory}, & P_n\vdash S_n\land P_n\vdash\neg S_n
\end{array}\right. \tag{1}
$$

The full algorithm and an example case can be found in Appendix H and I, respectively.
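Eq. (1) is a lookup on the two proof outcomes. The Python sketch below encodes it; the function name and the boolean encoding of $D_{S_n}$ and $D_{\neg S_n}$ are our own illustrative choices.

```python
def conclude_answer(proves_not_s: bool, proves_s: bool) -> str:
    """Map the two proof outcomes to the answer A of Eq. (1).

    proves_not_s: D_{S_n} established P_n ⊢ ¬S_n (contradiction on the S_n path).
    proves_s:     D_{¬S_n} established P_n ⊢ S_n (contradiction on the ¬S_n path).
    """
    if proves_s and proves_not_s:
        return "Self-Contradictory"
    if proves_s:
        return "True"
    if proves_not_s:
        return "False"
    return "Unknown"

# The example from Step 3: P_n ⊢ ¬S_n and P_n ⊬ S_n gives "False".
answer = conclude_answer(proves_not_s=True, proves_s=False)
```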

| Type | Method | GPT-4: ProntoQA | GPT-4: ProofWriter | GPT-4: LogicNLI | GPT-4: Avg | GPT-4o: ProntoQA | GPT-4o: ProofWriter | GPT-4o: LogicNLI | GPT-4o: Avg |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| LR | Naive | 77.4 | 53.1 | 49.0 | 59.8 | 89.6 | 48.7 | 53.0 | 63.8 |
| LR | CoT | 98.9 | 68.1 | 51.0 | 72.6 | 98.0 | 77.2 | 61.0 | 78.7 |
| AR | CoT-SC | 93.4 | 69.3 | 57.3 | 73.3 | 99.6 | 78.3 | 64.3 | 80.7 |
| AR | CR | 98.2 | 71.7 | 62.0 | 77.3 | 99.6 | 82.2 | 61.0 | 80.9 |
| AR | DetermLR | 98.6 | 79.2 | 57.0 | 78.3 | 93.4 | 69.8 | 58.0 | 75.7 |
| AR | ToT | 97.6 | 70.3 | 52.7 | 73.5 | 98.6 | 69.0 | 56.7 | 74.8 |
| SR | SymbCoT | 99.6 | 82.5 | 59.0 | 80.4 | 99.4 | 82.3 | 58.7 | 80.1 |
| SR | Logic-LM | 83.2 | 79.7 | - | - | 83.2 | 72.0 | - | - |
| | Ours | 99.6 (+0.0) | 86.8 (+4.3) | 68.3 (+6.3) | 84.9 (+4.5) | 99.6 (+0.0) | 88.5 (+6.2) | 70.7 (+6.4) | 86.3 (+5.4) |

Table 1: Performance on GPT-4 and GPT-4o. Values in parentheses are the improvements of our method over the second-best result in each column.

## 4 Experiments

In this section, we present the experimental settings, baselines, and results.

| LLM | Method | ProntoQA | ProofWriter | LogicNLI | Avg |
| --- | --- | --- | --- | --- | --- |
| Claude-3.5-Sonnet | CoT-SC | 98.0 | 78.5 | 54.3 | 77.0 |
| Claude-3.5-Sonnet | CR | 88.8 | 57.8 | 57.7 | 68.1 |
| Claude-3.5-Sonnet | ToT | 92.0 | 69.5 | 46.7 | 69.4 |
| Claude-3.5-Sonnet | Ours | 99.0 (+1.0) | 86.5 (+8.0) | 61.3 (+3.6) | 82.3 (+5.3) |
| Llama-3.1-405B | CoT-SC | 84.0 | 69.5 | 60.3 | 71.3 |
| Llama-3.1-405B | CR | 96.0 | 56.3 | 50.7 | 67.7 |
| Llama-3.1-405B | ToT | 98.4 | 65.5 | 56.7 | 73.5 |
| Llama-3.1-405B | Ours | 98.4 (+0.0) | 89.5 (+20.0) | 69.0 (+8.7) | 85.6 (+12.1) |

Table 2: Performance with Claude-3.5-Sonnet and Llama-3.1-405B.

### 4.1 Settings

#### LLMs.

We evaluate the baselines and our method with GPT-4 and GPT-4o. We also include Claude-3.5-Sonnet and Llama-3.1-405B to verify whether our method generalizes to LLMs beyond the GPT series.

#### Dataset.

We evaluate both the baselines and our method on three carefully selected logical reasoning datasets: ProntoQA Saparov and He (2023), ProofWriter Tafjord et al. (2021), and LogicNLI Tian et al. (2021). These datasets were chosen to reflect increasing levels of difficulty: ProntoQA is the easiest, ProofWriter is moderately complex, and LogicNLI is the most challenging owing to its intricate logical structures. ProntoQA focuses on basic deductive relationships, ProofWriter introduces more complex structures such as “and/or”, and LogicNLI presents the most intricate reasoning, with constructs such as “either/or” and “if and only if”. This progression enables us to comprehensively evaluate the effectiveness of our method across varying levels of logical complexity. Details of each dataset can be found in Appendix D.

#### Baselines.

We compare against a wide range of established baselines, which fall into three main categories. (1) Linear Reasoning (LR): the model arrives at an answer in a single pass, responding directly to the initial prompt; this includes Naive Prompting and CoT Wei et al. (2022). (2) Aggregative Reasoning (AR): the model reasons multiple times or aggregates intermediate results to reach a final answer; this includes CoT-SC Wang et al. (2023b), Cumulative Reasoning (CR; Zhang et al., 2023), DetermLR Sun et al. (2024), and ToT Yao et al. (2023). (3) Symbolic Reasoning (SR): symbolic expressions and rules are incorporated into the reasoning framework; this includes SymbCoT Xu et al. (2024) and Logic-LM Pan et al. (2023). More details can be found in Appendix G.

### 4.2 Main Result

The main results are presented in Table 1, from which we draw the following observations:

#### Our method consistently outperforms all baselines across the three datasets.

Specifically, we achieve average improvements over CoT-SC, ToT, CR, and SymbCoT of 11.6%, 11.4%, 7.6%, and 4.5% on GPT-4, and 5.6%, 11.5%, 5.4%, and 6.2% on GPT-4o, respectively. These results demonstrate the general advantage of our method over the existing baselines across different datasets.

#### Our method performs even more effectively in complex logical scenarios.

We notice in Table 1 that our approach does not yield an improvement on the ProntoQA dataset. This can be attributed to the relative simplicity of the dataset, where most baselines already achieve high accuracy, leaving limited room for further enhancement. However, our improvements are more pronounced on the challenging datasets. Specifically, we achieve a 4.3% and 6.2% improvement over the second-best baseline on ProofWriter with GPT-4 and GPT-4o, respectively. On the most challenging dataset, LogicNLI, we observe even greater improvements of 6.3% for GPT-4 and 6.4% for GPT-4o. These results highlight the advantages of our method in scenarios involving complex logical structures and increased difficulty.
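Each of these gains is the absolute difference from the strongest baseline in the same column of Table 1:

$$
\begin{aligned}
\text{ProofWriter, GPT-4:}\quad & 86.8-82.5\ (\text{SymbCoT})=4.3\\
\text{ProofWriter, GPT-4o:}\quad & 88.5-82.3\ (\text{SymbCoT})=6.2\\
\text{LogicNLI, GPT-4:}\quad & 68.3-62.0\ (\text{CR})=6.3\\
\text{LogicNLI, GPT-4o:}\quad & 70.7-64.3\ (\text{CoT-SC})=6.4
\end{aligned}
$$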

#### Our method is generalizable across different models.

In Table 2, we present results for two models outside the GPT series, Claude-3.5-Sonnet and Llama-3.1-405B. We compare our method with strong baselines that aggregate multiple reasoning paths (CoT-SC, CR, and ToT). Our method shows consistent improvements over the strongest of these baselines, raising average accuracy by 5.3% with Claude-3.5-Sonnet and 12.1% with Llama-3.1-405B, which highlights its generalizability across different models.

<details>

<summary>x9.png Details</summary>

### Visual Description

Grouped bar chart of ablation accuracy (%) on ProofWriter and LogicNLI:

| Variant | ProofWriter | LogicNLI |
| --- | --- | --- |
| Ours | 88.5 | 70.7 |
| w/o Decomposer | 62.0 | 42.8 |
| w/o Search Router | 37.7 | 39.1 |
| w/o Resolver | 47.1 | 57.5 |

</details>

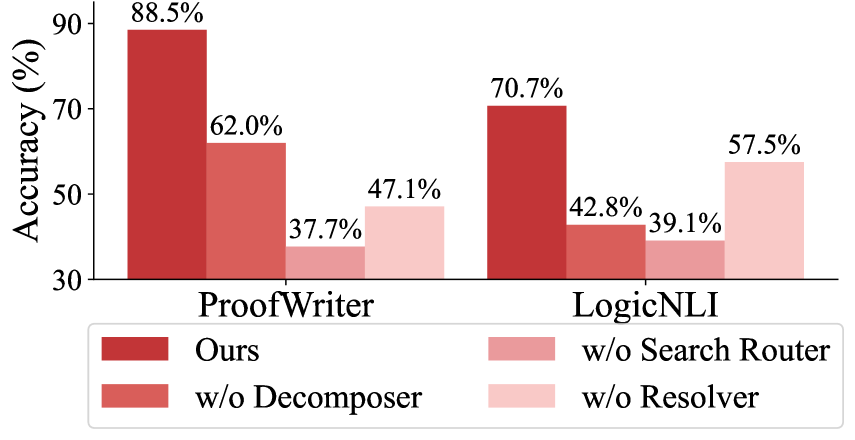

Figure 3: Ablation results (w/ GPT-4o).

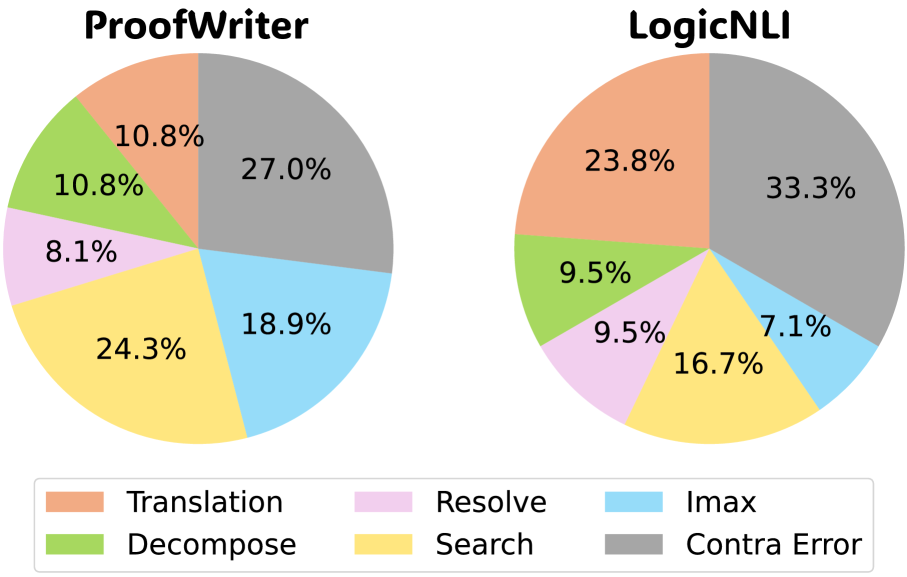

### 4.3 Model Ablation

To evaluate the contribution of each module in our framework, we conduct an ablation study in which each module is individually replaced with a simpler alternative. Specifically, we replace (1) the Decomposer by prompting the LLM to perform simple decomposition, (2) the Resolver by prompting the LLM to infer directly from the given premises, and (3) the Search Router by prompting the LLM to search for relevant premises.

The results, shown in Fig. 3, demonstrate that replacing any module leads to a significant performance drop, highlighting the importance of each component. Notably, replacing the Search Router causes the largest decline (50.8% on ProofWriter and 31.6% on LogicNLI), emphasizing the benefit of searching for complementary premises under the proof-by-contradiction strategy. In addition, the Decomposer has a greater impact than the Resolver on LogicNLI, whereas on ProofWriter the Resolver plays a more significant role than the Decomposer. We attribute this to LogicNLI containing more complex logical structures, such as “either…or…”, “vice versa”, and “if and only if”, while ProofWriter mainly involves simpler conjunctions such as “and” and “or”. As a result, LogicNLI relies more heavily on the Decomposer to break complex logical statements down into simpler forms.
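The Search Router drops quoted above are absolute accuracy differences between the full model and the variant without the Search Router in Fig. 3 (GPT-4o):

$$
\begin{aligned}
\text{ProofWriter:}\quad & 88.5-37.7=50.8\\
\text{LogicNLI:}\quad & 70.7-39.1=31.6
\end{aligned}
$$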

## 5 Analysis and Discussion

We now take one step further, delving into the underlying working mechanisms of our system.

<details>

<summary>x10.png Details</summary>

### Visual Description

Scatter plot of accuracy (%) versus number of steps for Ours, DetermLR, CR, CoT-SC, and ToT. Ours (red star) lies at the top left, with the highest accuracy and the fewest steps among the plotted methods; ToT lies at the far right, requiring the most steps without a corresponding gain in accuracy.

The visual rhetoric—using a distinct red star for "Ours" placed in the coveted "high accuracy, low cost" top-left corner—is a powerful and intentional choice to highlight the proposed method's advantage. The chart effectively argues that "Ours" is not just incrementally better but represents a different class of performance compared to the other plotted techniques.

</details>

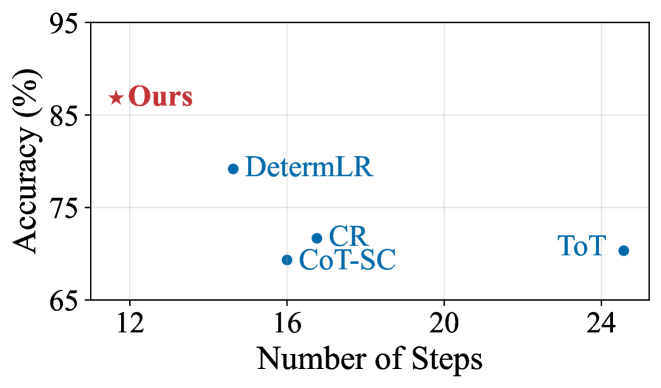

Figure 4: Accuracy vs. Efficiency on ProofWriter using GPT-4. Efficiency is measured as the average number of visited nodes/steps required to solve the problem. The upper-left corner is the optimal point, representing the best performance with the fewest visited nodes.

### 5.1 Accuracy vs. Efficiency

#### Our method achieves better reasoning accuracy with higher efficiency.

Here, we measure the average number of steps or nodes visited to solve problems in the ProofWriter dataset. As shown in Fig. 4, our method achieves higher accuracy than all baselines while visiting the fewest nodes, indicating both superior efficacy and efficiency. Specifically, it visits only 11.65 nodes on average, which is 52.6%, 30.5%, and 20.4% fewer than ToT, CR, and DetermLR, respectively. This shows that our approach effectively balances accuracy and computational efficiency: by directly targeting contradictions, it streamlines the reasoning process, making it both precise and efficient.
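The relative reductions above can be inverted to estimate the average node count each baseline must have visited. The sketch below does only that arithmetic, assuming each percentage is defined as `1 - ours / baseline`; the baseline counts it produces are derived estimates, not figures reported in the text, and the helper names are hypothetical.

```python
# Minimal sketch: back out the baseline node counts implied by the reported
# 11.65-node average and the stated relative reductions.
# ASSUMPTION: reduction = 1 - ours / baseline. Outputs are derived, not reported.

OURS_AVG_NODES = 11.65  # reported average nodes visited on ProofWriter

# Relative reduction in visited nodes reported against each baseline.
REPORTED_REDUCTION = {"ToT": 0.526, "CR": 0.305, "DetermLR": 0.204}


def implied_baseline_nodes(ours: float, reduction: float) -> float:
    """Invert reduction = 1 - ours / baseline to recover the baseline count."""
    return ours / (1.0 - reduction)


def relative_reduction(ours: float, baseline: float) -> float:
    """Fraction of the baseline's visited nodes that our method avoids."""
    return 1.0 - ours / baseline


if __name__ == "__main__":
    for name, r in REPORTED_REDUCTION.items():
        base = implied_baseline_nodes(OURS_AVG_NODES, r)
        check = relative_reduction(OURS_AVG_NODES, base)
        print(f"{name}: about {base:.2f} nodes (reduction {check:.1%})")
```

Under this assumption ToT would need roughly 24.6 nodes on average, which is consistent with its far-right position in Fig. 4.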

### 5.2 Step-wise Reasoning Accuracy

#### The Resolver can achieve near-perfect accuracy in one-step logical inference.

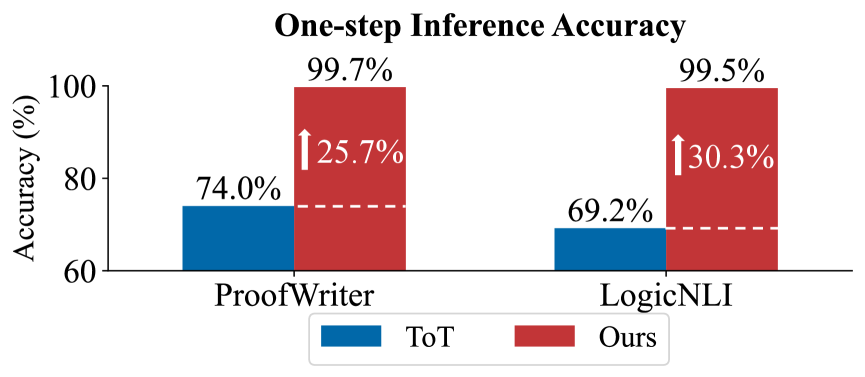

To understand why our framework is effective, we also examine its one-step logical reasoning accuracy. Since the final answer is derived from these individual inferences (i.e., nodes in ToT and steps in Ours), their accuracy directly affects overall performance. Fig. 5 compares the one-step reasoning accuracy of our method with that of ToT. We randomly sample 100 cases and evaluate them manually. ToT achieves only around 70% accuracy, consistent with prior research showing that LLMs can introduce logical errors. In contrast, Aristotle achieves near-perfect accuracy in one-step inference, underscoring the effectiveness of the Resolver module's use of the resolution principle. This is because the resolution principle provides a systematic and logically rigorous way to resolve contradictions, which simplifies each step compared with methods that rely on the LLM to reason from previous steps and multiple premises.
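To make the resolution principle concrete, here is a minimal propositional sketch of a single resolution step. It is a generic textbook version, not the paper's Resolver module: the clause encoding (a `frozenset` of literal strings), the `~` negation prefix, and the function names are illustrative assumptions. Given two clauses containing a complementary pair `L` and `~L`, the resolvent is the union of the remaining literals, and an empty resolvent signals a contradiction.

```python
from typing import FrozenSet, List

# ASSUMPTION: a clause is a disjunction of literals; "~P" denotes the negation of "P".
Clause = FrozenSet[str]


def negate(literal: str) -> str:
    """Return the complementary literal."""
    return literal[1:] if literal.startswith("~") else "~" + literal


def resolve(c1: Clause, c2: Clause) -> List[Clause]:
    """Apply one resolution step for every complementary pair between c1 and c2.

    For each literal L in c1 whose negation appears in c2, the resolvent is
    (c1 - {L}) | (c2 - {~L}). An empty resolvent means c1 and c2 contradict.
    """
    resolvents = []
    for lit in c1:
        if negate(lit) in c2:
            resolvents.append((c1 - {lit}) | (c2 - {negate(lit)}))
    return resolvents


if __name__ == "__main__":
    # Rule "if the cat is big then it is round", written as ~Big(cat) v Round(cat).
    rule = frozenset({"~Big(cat)", "Round(cat)"})
    fact = frozenset({"Big(cat)"})
    print(resolve(rule, fact))  # a single resolvent containing Round(cat)

    # Resolving Round(cat) against the negated goal ~Round(cat) yields the empty clause.
    print(resolve(frozenset({"Round(cat)"}), frozenset({"~Round(cat)"})))
```

Each step only requires matching a literal against its negation and taking a set union, which illustrates why a single resolution inference is far less error-prone than free-form reasoning over many premises.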

<details>

<summary>x12.png Details</summary>

One-step Inference Accuracy (%), ToT vs. Ours: ProofWriter 74.0 vs. 99.7 (+25.7 points); LogicNLI 69.2 vs. 99.5 (+30.3 points).

</details>

Figure 5: One-step reasoning accuracy using GPT-4o.

### 5.3 Search Error

#### Our method effectively reduces errors from the search strategy.

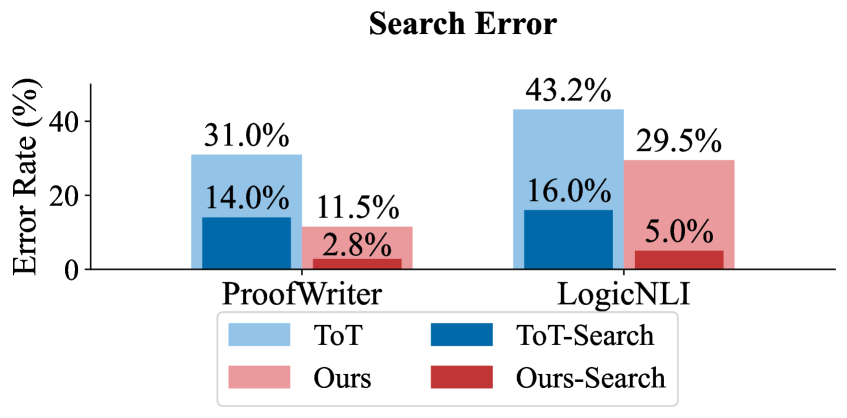

Apart from one-step logical inference, the search router also plays a crucial role. Previous research has shown that methods relying on an evaluator to guide the search tend to underperform, because the evaluator can be unreliable and may mislead the reasoning process toward incorrect answers. We assess search errors, as shown in Fig. 6. In ToT, a search error occurs when the evaluator selects a logically flawed node for expansion. In our method, a search error occurs when either the complementary clause is not found or a non-complementary clause is selected. The evaluation is conducted manually. Our search strategy substantially reduces search errors, by 11.2 and 9.0 percentage points on ProofWriter and LogicNLI, respectively. This demonstrates that our logic-based search approach outperforms LLM self-evaluation, effectively addressing the limitations of unreliable evaluators in logical reasoning. One explanation is that our method simplifies the search process to identifying complementary clauses, a task with clear definitions and rules that an LLM can easily follow from a few examples. In contrast, having an LLM evaluate logical inferences, as in ToT, requires complex judgments and is therefore more prone to errors Chen et al. (2024); Wang et al. (2024b).
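The search task and its two error conditions can be stated precisely in a few lines. The sketch below is a deterministic stand-in for illustration only, whereas the text describes an LLM following a few examples; the clause encoding, function names, and the reading that "not found" is an error only when some complementary premise actually exists are all assumptions.

```python
from typing import FrozenSet, List, Optional

# ASSUMPTION: a clause is a frozenset of literals; "~P" is the negation of "P".
Clause = FrozenSet[str]


def negate(literal: str) -> str:
    """Return the complementary literal."""
    return literal[1:] if literal.startswith("~") else "~" + literal


def is_complementary(current: Clause, candidate: Clause) -> bool:
    """True if some literal in `current` has its negation in `candidate`."""
    return any(negate(lit) in candidate for lit in current)


def find_complementary(current: Clause, premises: List[Clause]) -> List[Clause]:
    """Hypothetical search step: every premise that can resolve with `current`."""
    return [p for p in premises if is_complementary(current, p)]


def is_search_error(current: Clause, premises: List[Clause],
                    selected: Optional[Clause]) -> bool:
    """Check the two error conditions described for our method:
    (1) a complementary clause exists but nothing was selected (not found), or
    (2) the selected clause is not complementary to the current clause.
    """
    if selected is None:
        return bool(find_complementary(current, premises))
    return not is_complementary(current, selected)


if __name__ == "__main__":
    premises = [frozenset({"~Big(cat)", "Round(cat)"}), frozenset({"Blue(dog)"})]
    current = frozenset({"Big(cat)"})
    print(find_complementary(current, premises))  # only the Big/Round rule qualifies
    # Picking an unrelated clause counts as a search error under condition (2).
    print(is_search_error(current, premises, frozenset({"Blue(dog)"})))
```

Because the correctness of a selection reduces to a literal-level check, a wrong choice is easy to detect, in contrast to judging whether a free-form inference node is logically sound.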

<details>

<summary>x13.png Details</summary>

Search Error, Error Rate (%) by legend entry. ProofWriter: ToT 31.0, ToT-Search 14.0, Ours 11.5, Ours-Search 2.8. LogicNLI: ToT 43.2, ToT-Search 16.0, Ours 29.5, Ours-Search 5.0.