# DeepSeek-V3 Technical Report

**Report Number**: 001

Abstract

We present DeepSeek-V3, a strong Mixture-of-Experts (MoE) language model with 671B total parameters with 37B activated for each token. To achieve efficient inference and cost-effective training, DeepSeek-V3 adopts Multi-head Latent Attention (MLA) and DeepSeekMoE architectures, which were thoroughly validated in DeepSeek-V2. Furthermore, DeepSeek-V3 pioneers an auxiliary-loss-free strategy for load balancing and sets a multi-token prediction training objective for stronger performance. We pre-train DeepSeek-V3 on 14.8 trillion diverse and high-quality tokens, followed by Supervised Fine-Tuning and Reinforcement Learning stages to fully harness its capabilities. Comprehensive evaluations reveal that DeepSeek-V3 outperforms other open-source models and achieves performance comparable to leading closed-source models. Despite its excellent performance, DeepSeek-V3 requires only 2.788M H800 GPU hours for its full training. In addition, its training process is remarkably stable. Throughout the entire training process, we did not experience any irrecoverable loss spikes or perform any rollbacks. The model checkpoints are available at https://github.com/deepseek-ai/DeepSeek-V3.

{CJK*}

UTF8gbsn

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: LLM Benchmark Performance Comparison

## 1. Component Isolation

* **Header/Legend:** Located at the top of the image, spanning the width. It contains six color-coded labels for the models being compared.

* **Main Chart:** A grouped bar chart showing performance across six different benchmarks.

* **Y-Axis:** Located on the left, representing "Accuracy / Percentile (%)".

* **X-Axis:** Located at the bottom, representing six specific benchmark categories with their respective metrics in parentheses.

---

## 2. Legend and Model Identification

The legend is positioned at the top of the chart. Each model is represented by a specific color and pattern:

| Model Name | Color/Pattern Description |

| :--- | :--- |

| **DeepSeek-V3** | Royal Blue with white diagonal stripes (hatching) |

| **DeepSeek-V2.5** | Light Blue (solid) |

| **Qwen2.5-72B-Inst** | Medium Grey (solid) |

| **Llama-3.1-405B-Inst** | Light Grey (solid) |

| **GPT-4o-0513** | Tan/Beige (solid) |

| **Claude-3.5-Sonnet-1022** | Cream/Off-white (solid) |

---

## 3. Data Table Reconstruction

The following table extracts the numerical data points displayed above each bar in the chart.

| Benchmark (Metric) | DeepSeek-V3 | DeepSeek-V2.5 | Qwen2.5-72B-Inst | Llama-3.1-405B-Inst | GPT-4o-0513 | Claude-3.5-Sonnet-1022 |

| :--- | :---: | :---: | :---: | :---: | :---: | :---: |

| **MMLU-Pro** *(EM)* | **75.9** | 66.2 | 71.6 | 73.3 | 72.6 | 78.0 |

| **GPQA-Diamond** *(Pass@1)* | **59.1** | 41.3 | 49.0 | 51.1 | 49.9 | 65.0 |

| **MATH 500** *(EM)* | **90.2** | 74.7 | 80.0 | 73.8 | 74.6 | 78.3 |

| **AIME 2024** *(Pass@1)* | **39.2** | 16.7 | 23.3 | 23.3 | 9.3 | 16.0 |

| **Codeforces** *(Percentile)* | **51.6** | 35.6 | 24.8 | 25.3 | 23.6 | 20.3 |

| **SWE-bench Verified** *(Resolved)* | **42.0** | 22.6 | 23.8 | 24.5 | 38.8 | 50.8 |

---

## 4. Trend Analysis and Key Observations

### General Trends

* **DeepSeek-V3 Performance:** In every category, the DeepSeek-V3 value is highlighted in a **bold font**, indicating it is the primary subject of the chart. It outperforms its predecessor (DeepSeek-V2.5) significantly across all benchmarks.

* **Competitive Standing:** DeepSeek-V3 holds the highest score in 4 out of the 6 benchmarks shown (MMLU-Pro and SWE-bench Verified being the exceptions where Claude-3.5-Sonnet-1022 leads).

### Category Specifics

1. **MMLU-Pro (EM):** Most models cluster between 71% and 78%. Claude-3.5-Sonnet-1022 leads this category at 78.0%, followed by DeepSeek-V3 at 75.9%.

2. **GPQA-Diamond (Pass@1):** Claude-3.5-Sonnet-1022 leads (65.0%), with DeepSeek-V3 following (59.1%). There is a significant drop-off to the other models which are near or below 50%.

3. **MATH 500 (EM):** DeepSeek-V3 shows a dominant lead at 90.2%, which is 10.2 percentage points higher than the next closest model (Qwen2.5-72B-Inst at 80.0%).

4. **AIME 2024 (Pass@1):** DeepSeek-V3 shows a very strong lead at 39.2%, more than double the performance of most other models in the set (e.g., DeepSeek-V2.5 at 16.7% and Claude at 16.0%).

5. **Codeforces (Percentile):** DeepSeek-V3 leads significantly at 51.6%. Interestingly, the "frontier" Western models (GPT-4o and Claude-3.5) perform the worst in this specific coding percentile metric (23.6% and 20.3% respectively).

6. **SWE-bench Verified (Resolved):** Claude-3.5-Sonnet-1022 leads at 50.8%. DeepSeek-V3 follows at 42.0%, showing a substantial improvement over DeepSeek-V2.5 (22.6%).

---

## 5. Axis and Scale Details

* **Y-Axis:** Ranges from 0 to 100 with major increments every 20 units (0, 20, 40, 60, 80, 100). Horizontal dashed grid lines are present at each 20-unit interval to facilitate visual alignment.

* **X-Axis:** Categorical, representing six distinct benchmarks. Each category contains a cluster of six bars corresponding to the models in the legend.

</details>

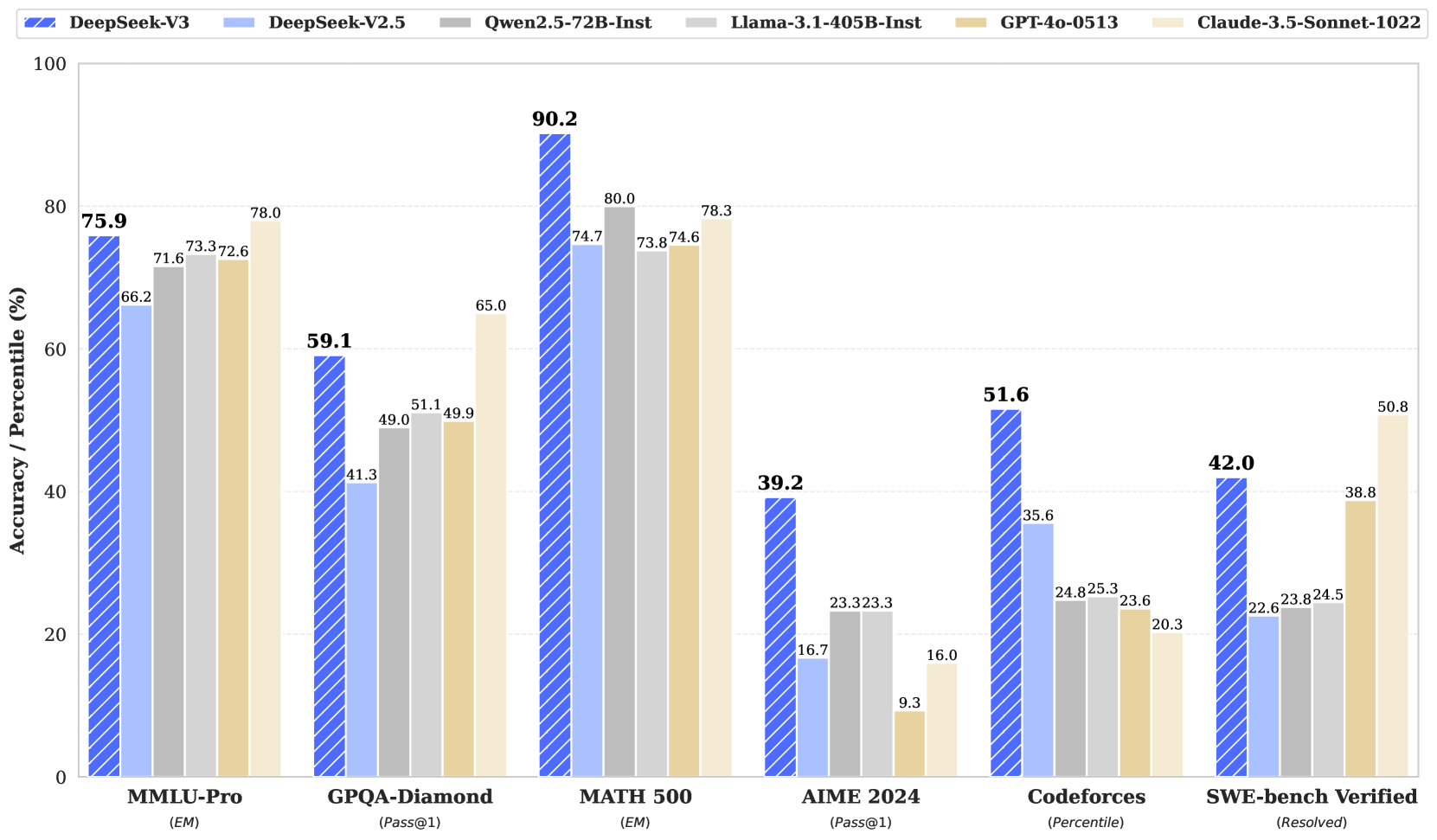

Figure 1: Benchmark performance of DeepSeek-V3 and its counterparts. Contents

1. 1 Introduction

1. 2 Architecture

1. 2.1 Basic Architecture

1. 2.1.1 Multi-Head Latent Attention

1. 2.1.2 DeepSeekMoE with Auxiliary-Loss-Free Load Balancing

1. 2.2 Multi-Token Prediction

1. 3 Infrastructures

1. 3.1 Compute Clusters

1. 3.2 Training Framework

1. 3.2.1 DualPipe and Computation-Communication Overlap

1. 3.2.2 Efficient Implementation of Cross-Node All-to-All Communication

1. 3.2.3 Extremely Memory Saving with Minimal Overhead

1. 3.3 FP8 Training

1. 3.3.1 Mixed Precision Framework

1. 3.3.2 Improved Precision from Quantization and Multiplication

1. 3.3.3 Low-Precision Storage and Communication

1. 3.4 Inference and Deployment

1. 3.4.1 Prefilling

1. 3.4.2 Decoding

1. 3.5 Suggestions on Hardware Design

1. 3.5.1 Communication Hardware

1. 3.5.2 Compute Hardware

1. 4 Pre-Training

1. 4.1 Data Construction

1. 4.2 Hyper-Parameters

1. 4.3 Long Context Extension

1. 4.4 Evaluations

1. 4.4.1 Evaluation Benchmarks

1. 4.4.2 Evaluation Results

1. 4.5 Discussion

1. 4.5.1 Ablation Studies for Multi-Token Prediction

1. 4.5.2 Ablation Studies for the Auxiliary-Loss-Free Balancing Strategy

1. 4.5.3 Batch-Wise Load Balance VS. Sequence-Wise Load Balance

1. 5 Post-Training

1. 5.1 Supervised Fine-Tuning

1. 5.2 Reinforcement Learning

1. 5.2.1 Reward Model

1. 5.2.2 Group Relative Policy Optimization

1. 5.3 Evaluations

1. 5.3.1 Evaluation Settings

1. 5.3.2 Standard Evaluation

1. 5.3.3 Open-Ended Evaluation

1. 5.3.4 DeepSeek-V3 as a Generative Reward Model

1. 5.4 Discussion

1. 5.4.1 Distillation from DeepSeek-R1

1. 5.4.2 Self-Rewarding

1. 5.4.3 Multi-Token Prediction Evaluation

1. 6 Conclusion, Limitations, and Future Directions

1. A Contributions and Acknowledgments

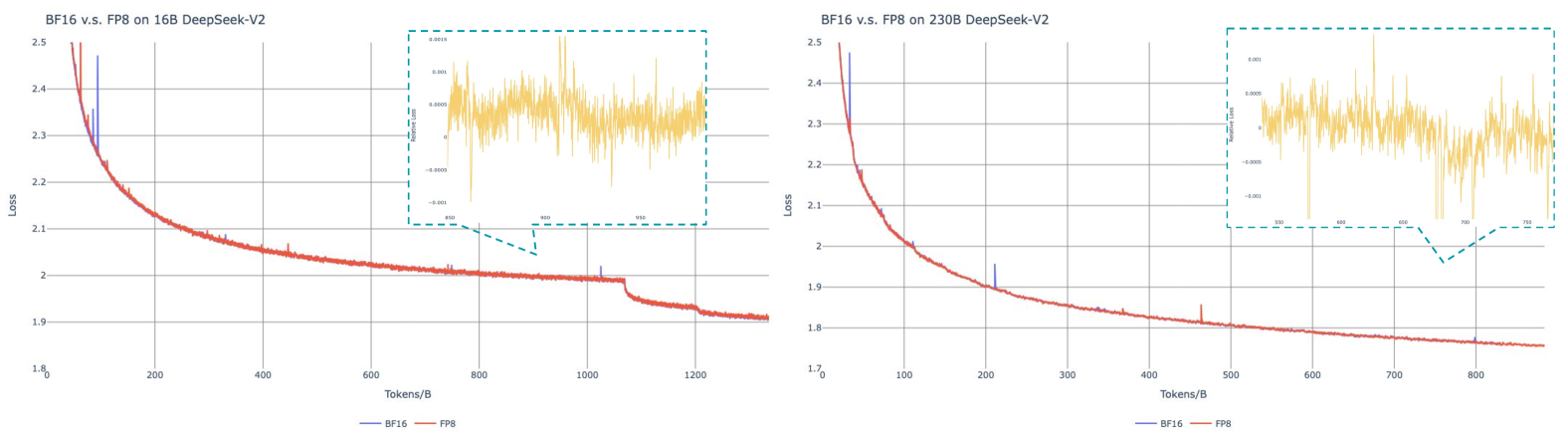

1. B Ablation Studies for Low-Precision Training

1. B.1 FP8 v.s. BF16 Training

1. B.2 Discussion About Block-Wise Quantization

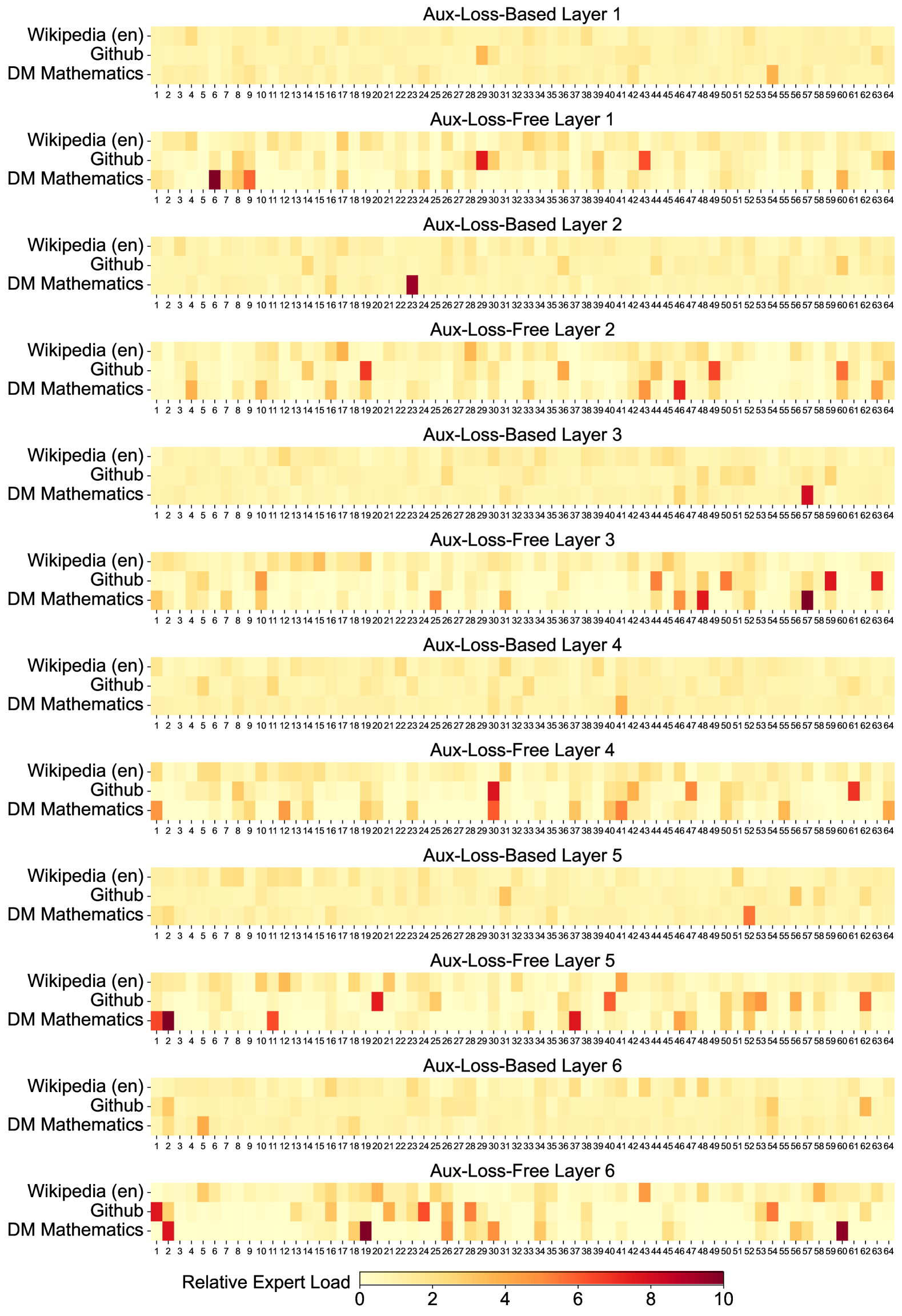

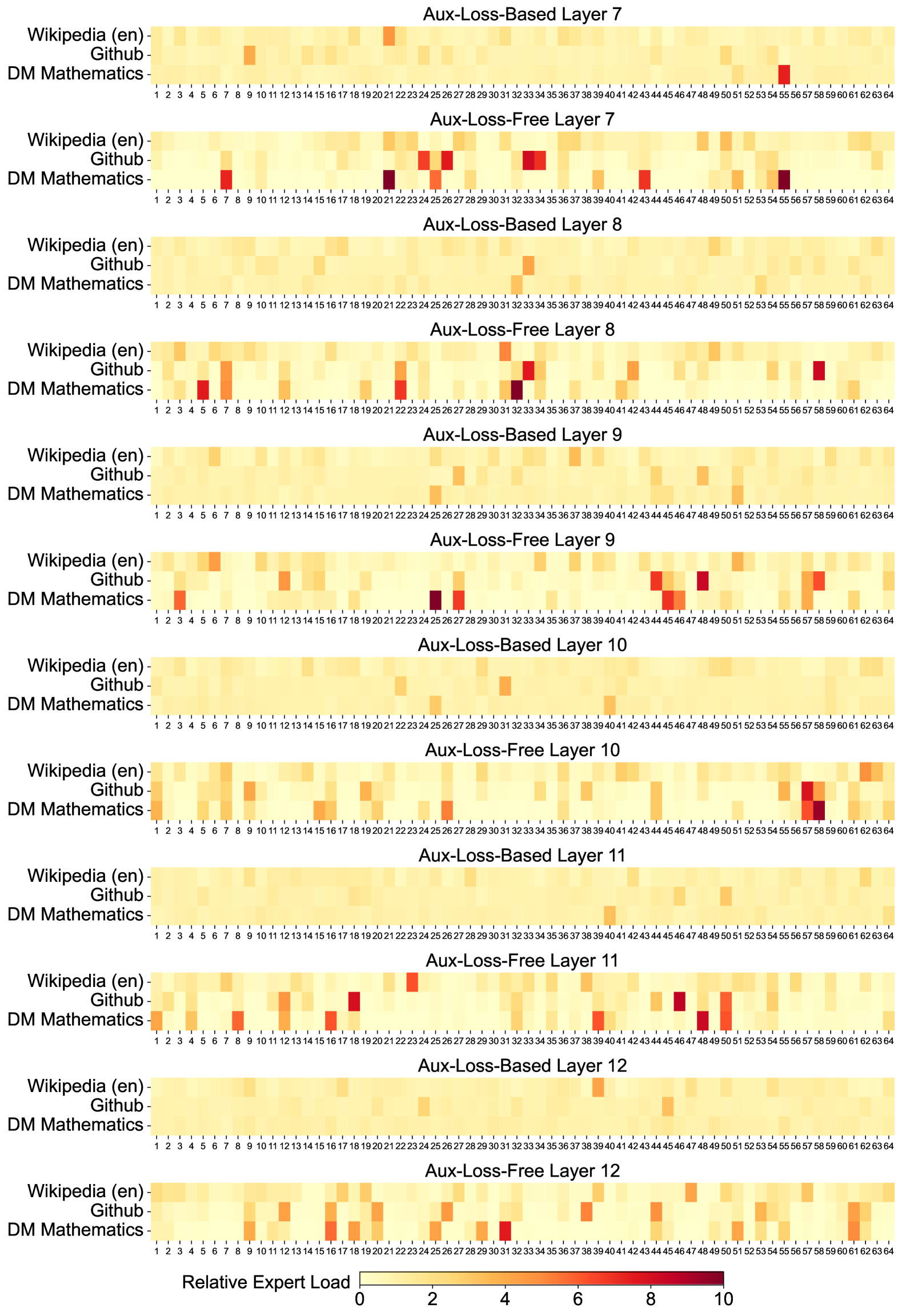

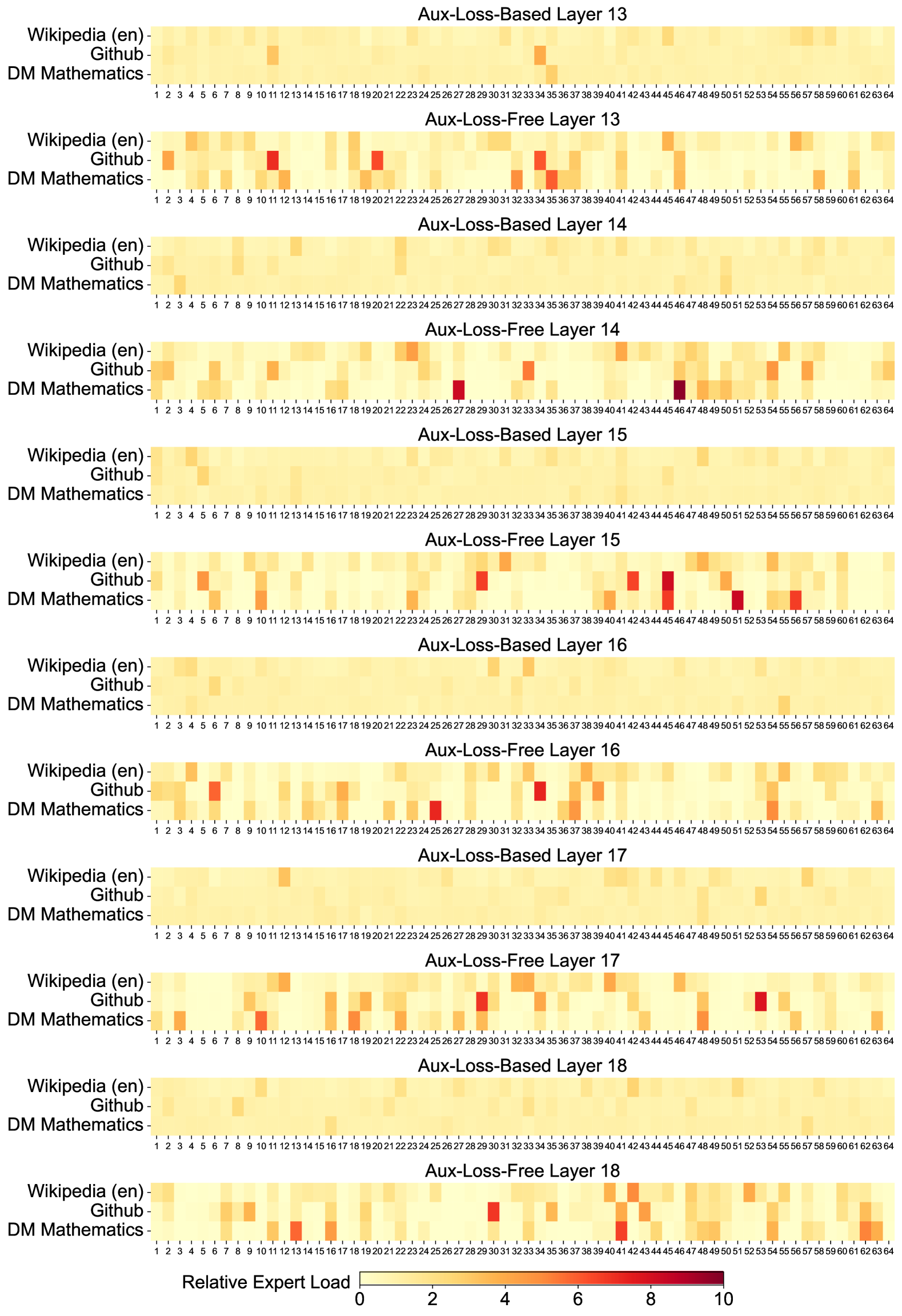

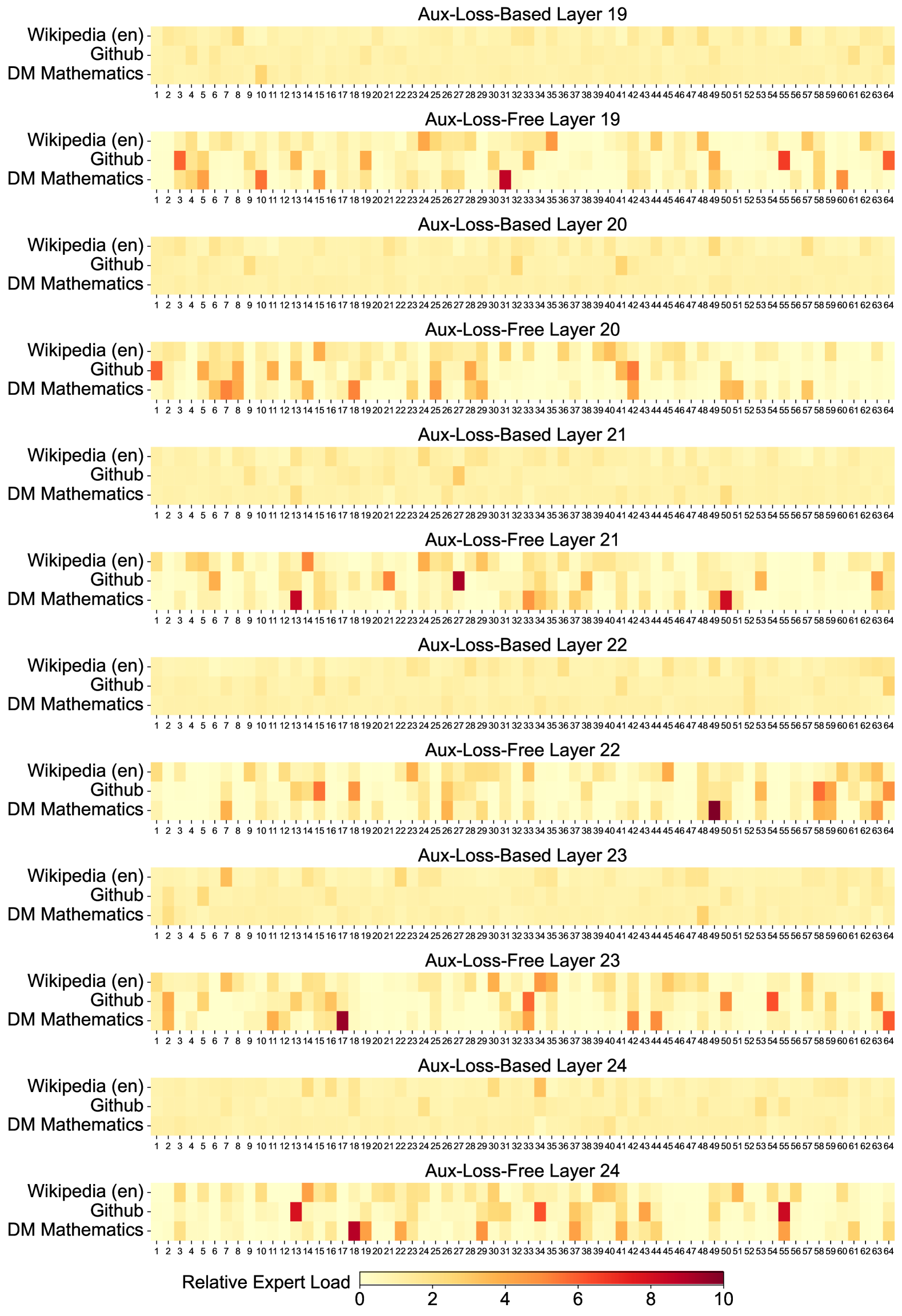

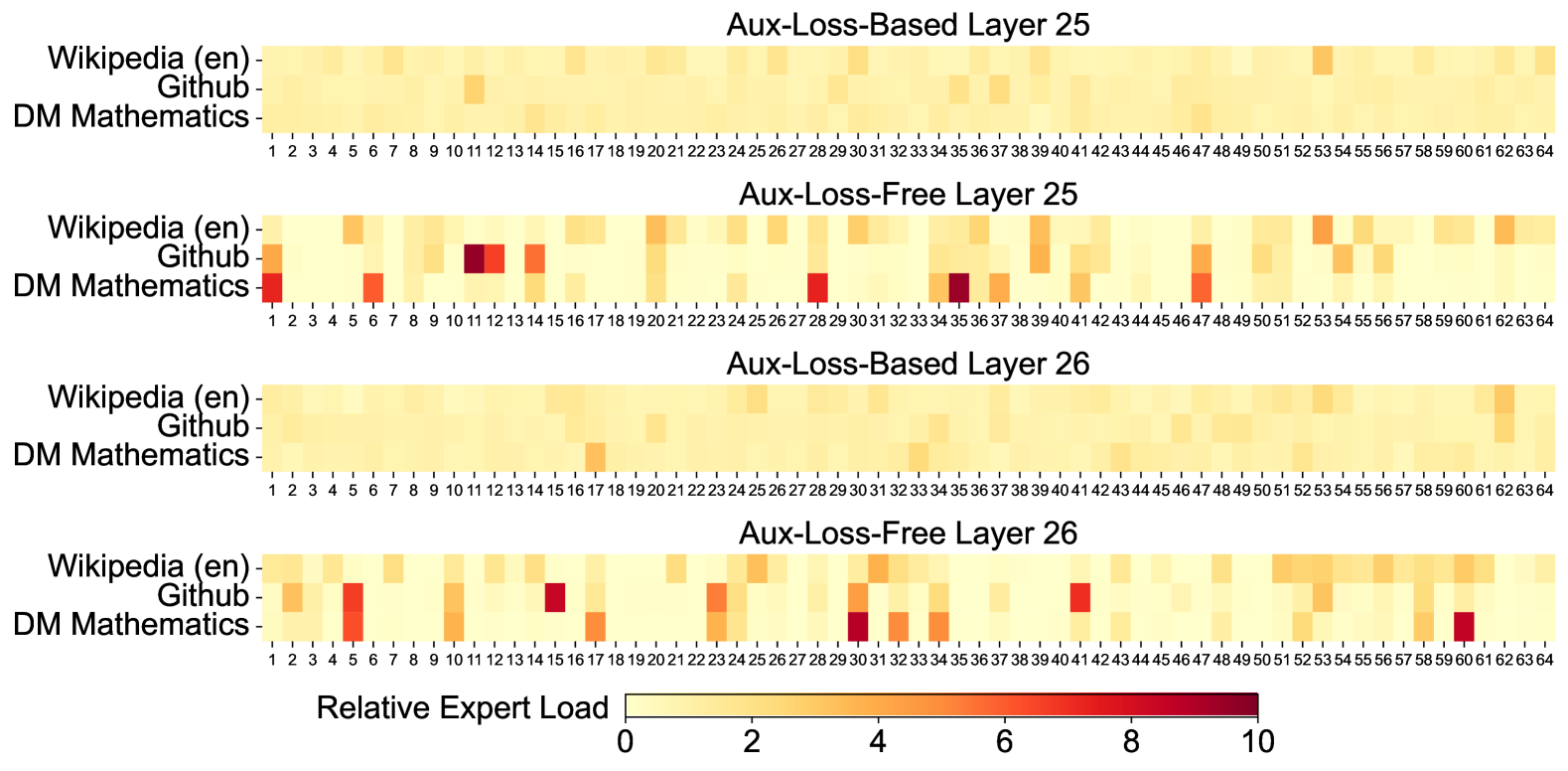

1. C Expert Specialization Patterns of the 16B Aux-Loss-Based and Aux-Loss-Free Models

1 Introduction

In recent years, Large Language Models (LLMs) have been undergoing rapid iteration and evolution (OpenAI, 2024a; Anthropic, 2024; Google, 2024), progressively diminishing the gap towards Artificial General Intelligence (AGI). Beyond closed-source models, open-source models, including DeepSeek series (DeepSeek-AI, 2024b, c; Guo et al., 2024; DeepSeek-AI, 2024a), LLaMA series (Touvron et al., 2023a, b; AI@Meta, 2024a, b), Qwen series (Qwen, 2023, 2024a, 2024b), and Mistral series (Jiang et al., 2023; Mistral, 2024), are also making significant strides, endeavoring to close the gap with their closed-source counterparts. To further push the boundaries of open-source model capabilities, we scale up our models and introduce DeepSeek-V3, a large Mixture-of-Experts (MoE) model with 671B parameters, of which 37B are activated for each token.

With a forward-looking perspective, we consistently strive for strong model performance and economical costs. Therefore, in terms of architecture, DeepSeek-V3 still adopts Multi-head Latent Attention (MLA) (DeepSeek-AI, 2024c) for efficient inference and DeepSeekMoE (Dai et al., 2024) for cost-effective training. These two architectures have been validated in DeepSeek-V2 (DeepSeek-AI, 2024c), demonstrating their capability to maintain robust model performance while achieving efficient training and inference. Beyond the basic architecture, we implement two additional strategies to further enhance the model capabilities. Firstly, DeepSeek-V3 pioneers an auxiliary-loss-free strategy (Wang et al., 2024a) for load balancing, with the aim of minimizing the adverse impact on model performance that arises from the effort to encourage load balancing. Secondly, DeepSeek-V3 employs a multi-token prediction training objective, which we have observed to enhance the overall performance on evaluation benchmarks.

In order to achieve efficient training, we support the FP8 mixed precision training and implement comprehensive optimizations for the training framework. Low-precision training has emerged as a promising solution for efficient training (Kalamkar et al., 2019; Narang et al., 2017; Peng et al., 2023b; Dettmers et al., 2022), its evolution being closely tied to advancements in hardware capabilities (Micikevicius et al., 2022; Luo et al., 2024; Rouhani et al., 2023a). In this work, we introduce an FP8 mixed precision training framework and, for the first time, validate its effectiveness on an extremely large-scale model. Through the support for FP8 computation and storage, we achieve both accelerated training and reduced GPU memory usage. As for the training framework, we design the DualPipe algorithm for efficient pipeline parallelism, which has fewer pipeline bubbles and hides most of the communication during training through computation-communication overlap. This overlap ensures that, as the model further scales up, as long as we maintain a constant computation-to-communication ratio, we can still employ fine-grained experts across nodes while achieving a near-zero all-to-all communication overhead. In addition, we also develop efficient cross-node all-to-all communication kernels to fully utilize InfiniBand (IB) and NVLink bandwidths. Furthermore, we meticulously optimize the memory footprint, making it possible to train DeepSeek-V3 without using costly tensor parallelism. Combining these efforts, we achieve high training efficiency.

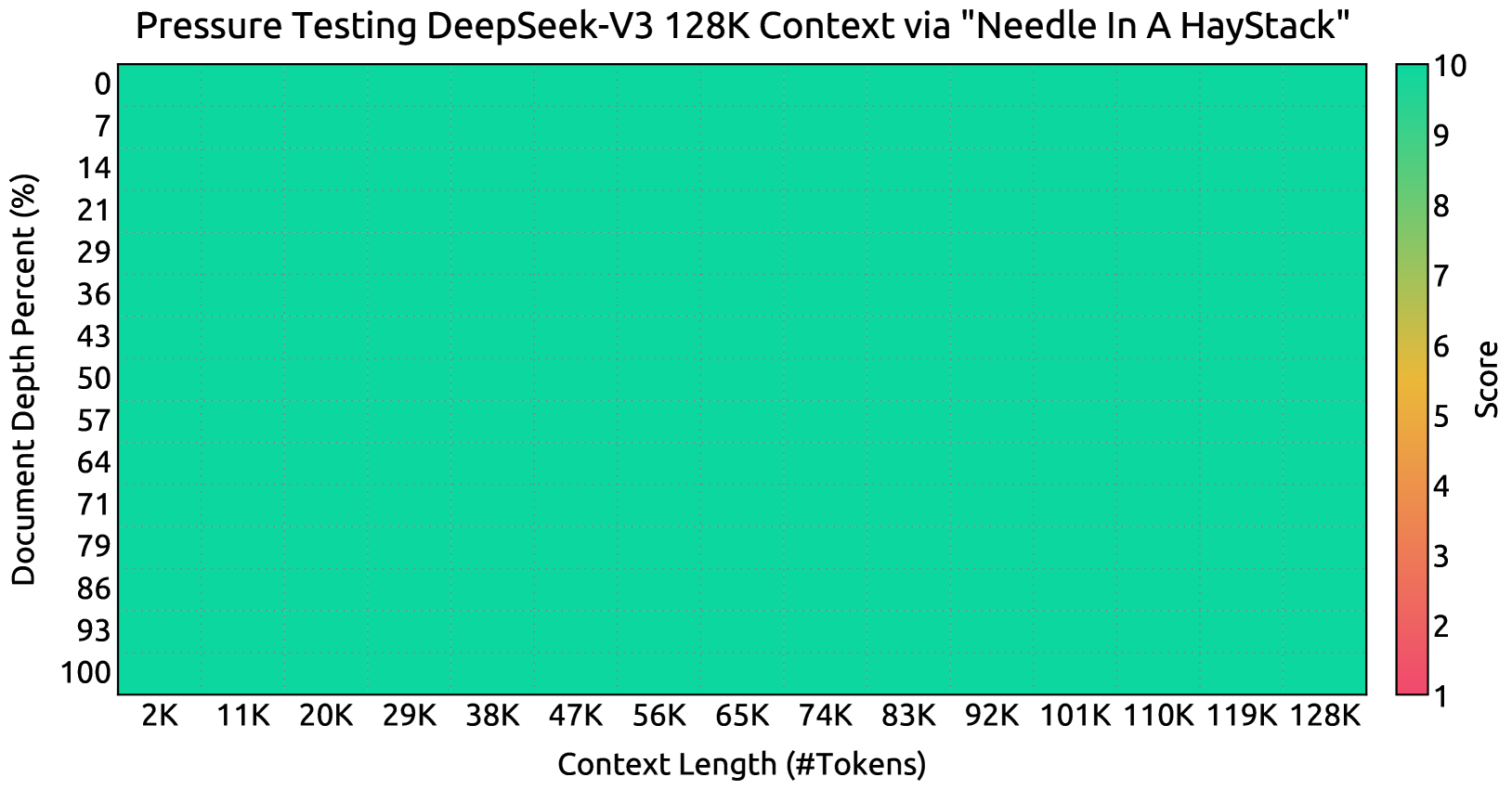

During pre-training, we train DeepSeek-V3 on 14.8T high-quality and diverse tokens. The pre-training process is remarkably stable. Throughout the entire training process, we did not encounter any irrecoverable loss spikes or have to roll back. Next, we conduct a two-stage context length extension for DeepSeek-V3. In the first stage, the maximum context length is extended to 32K, and in the second stage, it is further extended to 128K. Following this, we conduct post-training, including Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) on the base model of DeepSeek-V3, to align it with human preferences and further unlock its potential. During the post-training stage, we distill the reasoning capability from the DeepSeek-R1 series of models, and meanwhile carefully maintain the balance between model accuracy and generation length.

We evaluate DeepSeek-V3 on a comprehensive array of benchmarks. Despite its economical training costs, comprehensive evaluations reveal that DeepSeek-V3-Base has emerged as the strongest open-source base model currently available, especially in code and math. Its chat version also outperforms other open-source models and achieves performance comparable to leading closed-source models, including GPT-4o and Claude-3.5-Sonnet, on a series of standard and open-ended benchmarks.

| in H800 GPU Hours-in USD | 2664K $5.328M | 119K $0.238M | 5K $0.01M | 2788K $5.576M |

| --- | --- | --- | --- | --- |

Table 1: Training costs of DeepSeek-V3, assuming the rental price of H800 is $2 per GPU hour.

Lastly, we emphasize again the economical training costs of DeepSeek-V3, summarized in Table 1, achieved through our optimized co-design of algorithms, frameworks, and hardware. During the pre-training stage, training DeepSeek-V3 on each trillion tokens requires only 180K H800 GPU hours, i.e., 3.7 days on our cluster with 2048 H800 GPUs. Consequently, our pre-training stage is completed in less than two months and costs 2664K GPU hours. Combined with 119K GPU hours for the context length extension and 5K GPU hours for post-training, DeepSeek-V3 costs only 2.788M GPU hours for its full training. Assuming the rental price of the H800 GPU is $2 per GPU hour, our total training costs amount to only $5.576M. Note that the aforementioned costs include only the official training of DeepSeek-V3, excluding the costs associated with prior research and ablation experiments on architectures, algorithms, or data.

Our main contribution includes:

Architecture: Innovative Load Balancing Strategy and Training Objective

- On top of the efficient architecture of DeepSeek-V2, we pioneer an auxiliary-loss-free strategy for load balancing, which minimizes the performance degradation that arises from encouraging load balancing.

- We investigate a Multi-Token Prediction (MTP) objective and prove it beneficial to model performance. It can also be used for speculative decoding for inference acceleration.

Pre-Training: Towards Ultimate Training Efficiency

- We design an FP8 mixed precision training framework and, for the first time, validate the feasibility and effectiveness of FP8 training on an extremely large-scale model.

- Through the co-design of algorithms, frameworks, and hardware, we overcome the communication bottleneck in cross-node MoE training, achieving near-full computation-communication overlap. This significantly enhances our training efficiency and reduces the training costs, enabling us to further scale up the model size without additional overhead.

- At an economical cost of only 2.664M H800 GPU hours, we complete the pre-training of DeepSeek-V3 on 14.8T tokens, producing the currently strongest open-source base model. The subsequent training stages after pre-training require only 0.1M GPU hours.

Post-Training: Knowledge Distillation from DeepSeek-R1

- We introduce an innovative methodology to distill reasoning capabilities from the long-Chain-of-Thought (CoT) model, specifically from one of the DeepSeek R1 series models, into standard LLMs, particularly DeepSeek-V3. Our pipeline elegantly incorporates the verification and reflection patterns of R1 into DeepSeek-V3 and notably improves its reasoning performance. Meanwhile, we also maintain control over the output style and length of DeepSeek-V3.

Summary of Core Evaluation Results

- Knowledge: (1) On educational benchmarks such as MMLU, MMLU-Pro, and GPQA, DeepSeek-V3 outperforms all other open-source models, achieving 88.5 on MMLU, 75.9 on MMLU-Pro, and 59.1 on GPQA. Its performance is comparable to leading closed-source models like GPT-4o and Claude-Sonnet-3.5, narrowing the gap between open-source and closed-source models in this domain. (2) For factuality benchmarks, DeepSeek-V3 demonstrates superior performance among open-source models on both SimpleQA and Chinese SimpleQA. While it trails behind GPT-4o and Claude-Sonnet-3.5 in English factual knowledge (SimpleQA), it surpasses these models in Chinese factual knowledge (Chinese SimpleQA), highlighting its strength in Chinese factual knowledge.

- Code, Math, and Reasoning: (1) DeepSeek-V3 achieves state-of-the-art performance on math-related benchmarks among all non-long-CoT open-source and closed-source models. Notably, it even outperforms o1-preview on specific benchmarks, such as MATH-500, demonstrating its robust mathematical reasoning capabilities. (2) On coding-related tasks, DeepSeek-V3 emerges as the top-performing model for coding competition benchmarks, such as LiveCodeBench, solidifying its position as the leading model in this domain. For engineering-related tasks, while DeepSeek-V3 performs slightly below Claude-Sonnet-3.5, it still outpaces all other models by a significant margin, demonstrating its competitiveness across diverse technical benchmarks.

In the remainder of this paper, we first present a detailed exposition of our DeepSeek-V3 model architecture (Section 2). Subsequently, we introduce our infrastructures, encompassing our compute clusters, the training framework, the support for FP8 training, the inference deployment strategy, and our suggestions on future hardware design. Next, we describe our pre-training process, including the construction of training data, hyper-parameter settings, long-context extension techniques, the associated evaluations, as well as some discussions (Section 4). Thereafter, we discuss our efforts on post-training, which include Supervised Fine-Tuning (SFT), Reinforcement Learning (RL), the corresponding evaluations, and discussions (Section 5). Lastly, we conclude this work, discuss existing limitations of DeepSeek-V3, and propose potential directions for future research (Section 6).

2 Architecture

We first introduce the basic architecture of DeepSeek-V3, featured by Multi-head Latent Attention (MLA) (DeepSeek-AI, 2024c) for efficient inference and DeepSeekMoE (Dai et al., 2024) for economical training. Then, we present a Multi-Token Prediction (MTP) training objective, which we have observed to enhance the overall performance on evaluation benchmarks. For other minor details not explicitly mentioned, DeepSeek-V3 adheres to the settings of DeepSeek-V2 (DeepSeek-AI, 2024c).

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Document Extraction: DeepSeek Architecture Overview

This document provides a comprehensive technical breakdown of the provided architectural diagram, which illustrates the components of a Transformer Block, specifically detailing the **DeepSeekMoE** and **Multi-Head Latent Attention (MLA)** mechanisms.

---

## 1. High-Level Structure: Transformer Block $\times L$

The left side of the image depicts the standard macro-architecture of a Transformer layer, which is repeated $L$ times.

### Components and Flow:

* **Input Path:** Data enters from the bottom.

* **Sub-layer 1 (Attention):**

* **RMSNorm:** The input first passes through Root Mean Square Normalization.

* **Attention:** The normalized signal enters the Attention block (detailed in the MLA section).

* **Residual Connection:** The original input is added to the output of the Attention block via an element-wise addition operator ($\oplus$).

* **Sub-layer 2 (Feed-Forward):**

* **RMSNorm:** The output of the first residual addition passes through a second normalization layer.

* **Feed-Forward Network:** The signal enters the FFN block (detailed in the DeepSeekMoE section).

* **Residual Connection:** The input to this sub-layer is added to the FFN output via an element-wise addition operator ($\oplus$).

* **Output:** The final processed signal exits at the top.

---

## 2. Component Detail: DeepSeekMoE

This section expands on the "Feed-Forward Network" block. It describes a Mixture-of-Experts (MoE) architecture.

### Legend [Top Right]:

* **Light Blue Box:** Routed Expert

* **Light Green Box:** Shared Expert

### Data Flow and Components:

1. **Input Hidden $\mathbf{u}_t$:** Represented as a vector of neurons.

2. **Routing Mechanism:**

* The input is sent to a **Router**.

* The Router generates a distribution, and a **Top-$K_r$** selection is made (visualized by a bar chart).

* Dashed yellow lines indicate the selection of specific "Routed Experts."

3. **Expert Processing:**

* **Shared Experts (Green):** Labeled $1$ through $N_s$. The input $\mathbf{u}_t$ is sent to all shared experts.

* **Routed Experts (Blue):** Labeled $1, 2, 3, 4, \dots, N_r-1, N_r$. Only the experts selected by the Top-$K_r$ gating receive the input.

4. **Aggregation:**

* Outputs from all active experts (Shared and Routed) are multiplied by their respective gating weights ($\otimes$).

* The weighted outputs are summed ($\oplus$).

5. **Output Hidden $\mathbf{h}'_t$:** The final aggregated vector.

---

## 3. Component Detail: Multi-Head Latent Attention (MLA)

This section expands on the "Attention" block, focusing on low-rank latent projections to reduce KV cache overhead.

### Legend [Top Right of MLA Box]:

* **Diagonal Striped Pattern:** Cached During Inference

### Data Flow and Components:

1. **Input Hidden $\mathbf{h}_t$:** The base input vector.

2. **Latent Projections:**

* **Query Path:** $\mathbf{h}_t$ is projected into a **Latent $\mathbf{c}_t^Q$**.

* **Key-Value Path:** $\mathbf{h}_t$ is projected into a **Latent $\mathbf{c}_t^{KV}$**. Note that $\mathbf{c}_t^{KV}$ is marked as **Cached During Inference**.

3. **Feature Extraction:**

* **From $\mathbf{c}_t^Q$:** Produces $\{\mathbf{q}_{t,i}^C\}$ (content query) and $\{\mathbf{q}_{t,i}^R\}$ (positional query via *apply RoPE*). These are concatenated to form $\{[\mathbf{q}_{t,i}^C; \mathbf{q}_{t,i}^R]\}$.

* **From $\mathbf{c}_t^{KV}$:** Produces $\{\mathbf{k}_{t,i}^C\}$ (content key) and $\{\mathbf{v}_{t,i}^C\}$ (content value).

* **Positional Key:** A separate path from $\mathbf{h}_t$ applies RoPE to generate $\mathbf{k}_t^R$ (marked as **Cached During Inference**).

* **Concatenation:** $\{\mathbf{k}_{t,i}^C\}$ and $\mathbf{k}_t^R$ are concatenated to form $\{[\mathbf{k}_{t,i}^C; \mathbf{k}_t^R]\}$.

4. **Attention Mechanism:**

* The processed Query, Key, and Value sets are fed into the **Multi-Head Attention** block.

5. **Output Hidden $\mathbf{u}_t$:** The final output vector of the attention mechanism.

---

## 4. Textual Transcriptions

### Labels and Variables:

* **Transformer Block $\times L$**

* **RMSNorm**

* **Attention**

* **Feed-Forward Network**

* **DeepSeekMoE**

* **Output Hidden $\mathbf{h}'_t$**

* **Shared Expert ($N_s$)**

* **Routed Expert ($N_r$)**

* **Router**

* **Top-$K_r$**

* **Input Hidden $\mathbf{u}_t$**

* **Multi-Head Latent Attention (MLA)**

* **Cached During Inference**

* **Multi-Head Attention**

* **Latent $\mathbf{c}_t^Q$**

* **Latent $\mathbf{c}_t^{KV}$**

* **apply RoPE**

* **concatenate**

* **Input Hidden $\mathbf{h}_t$**

### Mathematical Notations:

* $\{\mathbf{q}_{t,i}^C\}$ : Content Query

* $\{\mathbf{q}_{t,i}^R\}$ : RoPE Query

* $\{[\mathbf{q}_{t,i}^C; \mathbf{q}_{t,i}^R]\}$ : Concatenated Query

* $\mathbf{k}_t^R$ : RoPE Key

* $\{\mathbf{k}_{t,i}^C\}$ : Content Key

* $\{[\mathbf{k}_{t,i}^C; \mathbf{k}_t^R]\}$ : Concatenated Key

* $\{\mathbf{v}_{t,i}^C\}$ : Content Value

</details>

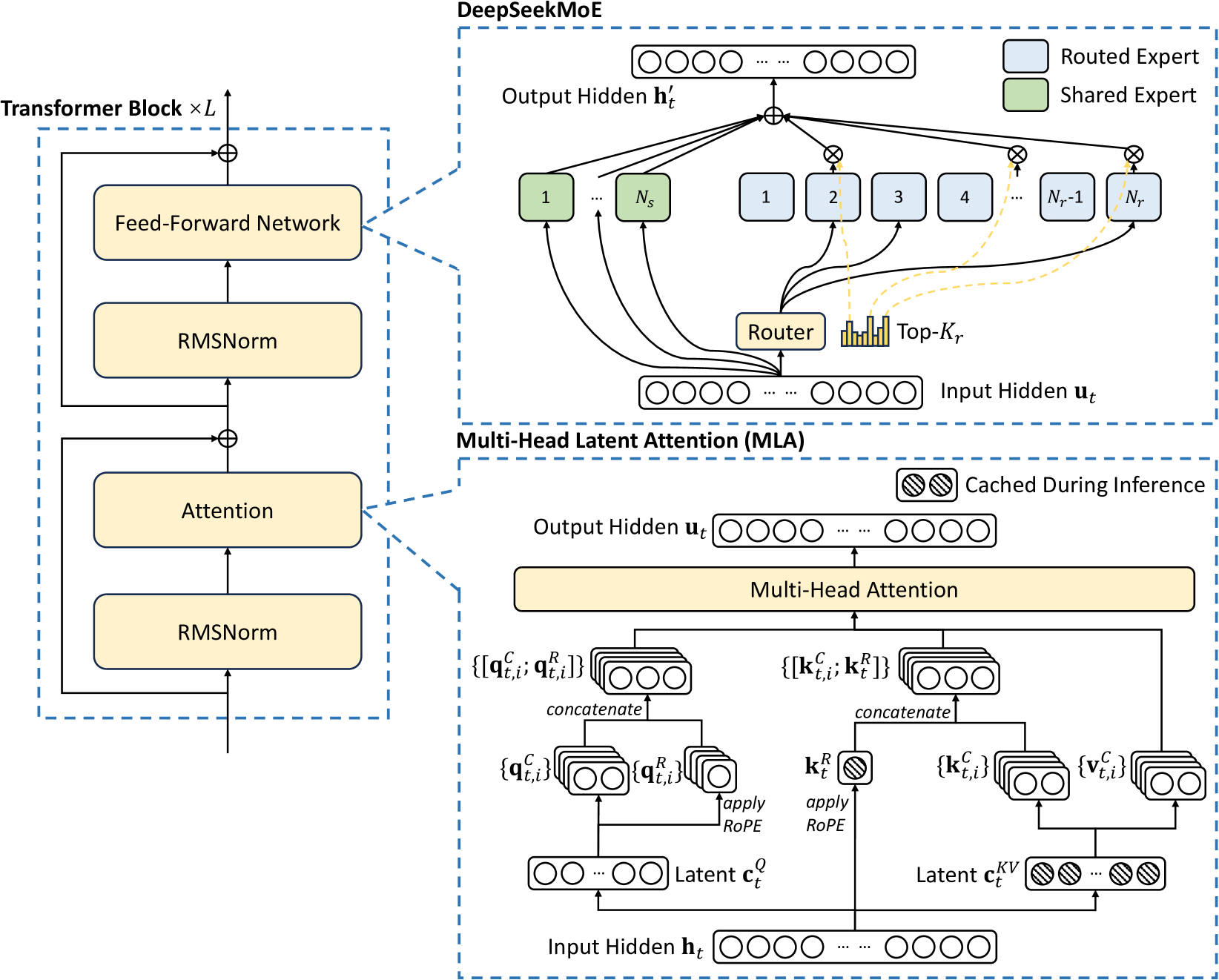

Figure 2: Illustration of the basic architecture of DeepSeek-V3. Following DeepSeek-V2, we adopt MLA and DeepSeekMoE for efficient inference and economical training.

2.1 Basic Architecture

The basic architecture of DeepSeek-V3 is still within the Transformer (Vaswani et al., 2017) framework. For efficient inference and economical training, DeepSeek-V3 also adopts MLA and DeepSeekMoE, which have been thoroughly validated by DeepSeek-V2. Compared with DeepSeek-V2, an exception is that we additionally introduce an auxiliary-loss-free load balancing strategy (Wang et al., 2024a) for DeepSeekMoE to mitigate the performance degradation induced by the effort to ensure load balance. Figure 2 illustrates the basic architecture of DeepSeek-V3, and we will briefly review the details of MLA and DeepSeekMoE in this section.

2.1.1 Multi-Head Latent Attention

For attention, DeepSeek-V3 adopts the MLA architecture. Let $d$ denote the embedding dimension, $n_{h}$ denote the number of attention heads, $d_{h}$ denote the dimension per head, and $\mathbf{h}_{t}∈\mathbb{R}^{d}$ denote the attention input for the $t$ -th token at a given attention layer. The core of MLA is the low-rank joint compression for attention keys and values to reduce Key-Value (KV) cache during inference:

$$

\displaystyle\boxed{\color[rgb]{0,0,1}\definecolor[named]{pgfstrokecolor}{rgb}%

{0,0,1}\mathbf{c}_{t}^{KV}} \displaystyle=W^{DKV}\mathbf{h}_{t}, \displaystyle[\mathbf{k}_{t,1}^{C};\mathbf{k}_{t,2}^{C};...;\mathbf{k}_{t,n_{h%

}}^{C}]=\mathbf{k}_{t}^{C} \displaystyle=W^{UK}\mathbf{c}_{t}^{KV}, \displaystyle\boxed{\color[rgb]{0,0,1}\definecolor[named]{pgfstrokecolor}{rgb}%

{0,0,1}\mathbf{k}_{t}^{R}} \displaystyle=\operatorname{RoPE}({W^{KR}}\mathbf{h}_{t}), \displaystyle\mathbf{k}_{t,i} \displaystyle=[\mathbf{k}_{t,i}^{C};\mathbf{k}_{t}^{R}], \displaystyle[\mathbf{v}_{t,1}^{C};\mathbf{v}_{t,2}^{C};...;\mathbf{v}_{t,n_{h%

}}^{C}]=\mathbf{v}_{t}^{C} \displaystyle=W^{UV}\mathbf{c}_{t}^{KV}, \tag{1}

$$

where $\mathbf{c}_{t}^{KV}∈\mathbb{R}^{d_{c}}$ is the compressed latent vector for keys and values; $d_{c}(\ll d_{h}n_{h})$ indicates the KV compression dimension; $W^{DKV}∈\mathbb{R}^{d_{c}× d}$ denotes the down-projection matrix; $W^{UK},W^{UV}∈\mathbb{R}^{d_{h}n_{h}× d_{c}}$ are the up-projection matrices for keys and values, respectively; $W^{KR}∈\mathbb{R}^{d_{h}^{R}× d}$ is the matrix used to produce the decoupled key that carries Rotary Positional Embedding (RoPE) (Su et al., 2024); $\operatorname{RoPE}(·)$ denotes the operation that applies RoPE matrices; and $[·;·]$ denotes concatenation. Note that for MLA, only the blue-boxed vectors (i.e., $\color[rgb]{0,0,1}\definecolor[named]{pgfstrokecolor}{rgb}{0,0,1}\mathbf{c}_{t%

}^{KV}$ and $\color[rgb]{0,0,1}\definecolor[named]{pgfstrokecolor}{rgb}{0,0,1}\mathbf{k}_{t%

}^{R}$ ) need to be cached during generation, which results in significantly reduced KV cache while maintaining performance comparable to standard Multi-Head Attention (MHA) (Vaswani et al., 2017).

For the attention queries, we also perform a low-rank compression, which can reduce the activation memory during training:

$$

\displaystyle\mathbf{c}_{t}^{Q} \displaystyle=W^{DQ}\mathbf{h}_{t}, \displaystyle[\mathbf{q}_{t,1}^{C};\mathbf{q}_{t,2}^{C};...;\mathbf{q}_{t,n_{h%

}}^{C}]=\mathbf{q}_{t}^{C} \displaystyle=W^{UQ}\mathbf{c}_{t}^{Q}, \displaystyle[\mathbf{q}_{t,1}^{R};\mathbf{q}_{t,2}^{R};...;\mathbf{q}_{t,n_{h%

}}^{R}]=\mathbf{q}_{t}^{R} \displaystyle=\operatorname{RoPE}({W^{QR}}\mathbf{c}_{t}^{Q}), \displaystyle\mathbf{q}_{t,i} \displaystyle=[\mathbf{q}_{t,i}^{C};\mathbf{q}_{t,i}^{R}], \tag{6}

$$

where $\mathbf{c}_{t}^{Q}∈\mathbb{R}^{d_{c}^{\prime}}$ is the compressed latent vector for queries; $d_{c}^{\prime}(\ll d_{h}n_{h})$ denotes the query compression dimension; $W^{DQ}∈\mathbb{R}^{d_{c}^{\prime}× d},W^{UQ}∈\mathbb{R}^{d_{h}n_{h}%

× d_{c}^{\prime}}$ are the down-projection and up-projection matrices for queries, respectively; and $W^{QR}∈\mathbb{R}^{d_{h}^{R}n_{h}× d_{c}^{\prime}}$ is the matrix to produce the decoupled queries that carry RoPE.

Ultimately, the attention queries ( $\mathbf{q}_{t,i}$ ), keys ( $\mathbf{k}_{j,i}$ ), and values ( $\mathbf{v}_{j,i}^{C}$ ) are combined to yield the final attention output $\mathbf{u}_{t}$ :

$$

\displaystyle\mathbf{o}_{t,i} \displaystyle=\sum_{j=1}^{t}\operatorname{Softmax}_{j}(\frac{\mathbf{q}_{t,i}^%

{T}\mathbf{k}_{j,i}}{\sqrt{d_{h}+d_{h}^{R}}})\mathbf{v}_{j,i}^{C}, \displaystyle\mathbf{u}_{t} \displaystyle=W^{O}[\mathbf{o}_{t,1};\mathbf{o}_{t,2};...;\mathbf{o}_{t,n_{h}}], \tag{10}

$$

where $W^{O}∈\mathbb{R}^{d× d_{h}n_{h}}$ denotes the output projection matrix.

2.1.2 DeepSeekMoE with Auxiliary-Loss-Free Load Balancing

Basic Architecture of DeepSeekMoE.

For Feed-Forward Networks (FFNs), DeepSeek-V3 employs the DeepSeekMoE architecture (Dai et al., 2024). Compared with traditional MoE architectures like GShard (Lepikhin et al., 2021), DeepSeekMoE uses finer-grained experts and isolates some experts as shared ones. Let $\mathbf{u}_{t}$ denote the FFN input of the $t$ -th token, we compute the FFN output $\mathbf{h}_{t}^{\prime}$ as follows:

$$

\displaystyle\mathbf{h}_{t}^{\prime} \displaystyle=\mathbf{u}_{t}+\sum_{i=1}^{N_{s}}{\operatorname{FFN}^{(s)}_{i}%

\left(\mathbf{u}_{t}\right)}+\sum_{i=1}^{N_{r}}{g_{i,t}\operatorname{FFN}^{(r)%

}_{i}\left(\mathbf{u}_{t}\right)}, \displaystyle g_{i,t} \displaystyle=\frac{g^{\prime}_{i,t}}{\sum_{j=1}^{N_{r}}g^{\prime}_{j,t}}, \displaystyle g^{\prime}_{i,t} \displaystyle=\begin{cases}s_{i,t},&s_{i,t}\in\operatorname{Topk}(\{s_{j,t}|1%

\leqslant j\leqslant N_{r}\},K_{r}),\\

0,&\text{otherwise},\end{cases} \displaystyle s_{i,t} \displaystyle=\operatorname{Sigmoid}\left({\mathbf{u}_{t}}^{T}\mathbf{e}_{i}%

\right), \tag{12}

$$

where $N_{s}$ and $N_{r}$ denote the numbers of shared experts and routed experts, respectively; $\operatorname{FFN}^{(s)}_{i}(·)$ and $\operatorname{FFN}^{(r)}_{i}(·)$ denote the $i$ -th shared expert and the $i$ -th routed expert, respectively; $K_{r}$ denotes the number of activated routed experts; $g_{i,t}$ is the gating value for the $i$ -th expert; $s_{i,t}$ is the token-to-expert affinity; $\mathbf{e}_{i}$ is the centroid vector of the $i$ -th routed expert; and $\operatorname{Topk}(·,K)$ denotes the set comprising $K$ highest scores among the affinity scores calculated for the $t$ -th token and all routed experts. Slightly different from DeepSeek-V2, DeepSeek-V3 uses the sigmoid function to compute the affinity scores, and applies a normalization among all selected affinity scores to produce the gating values.

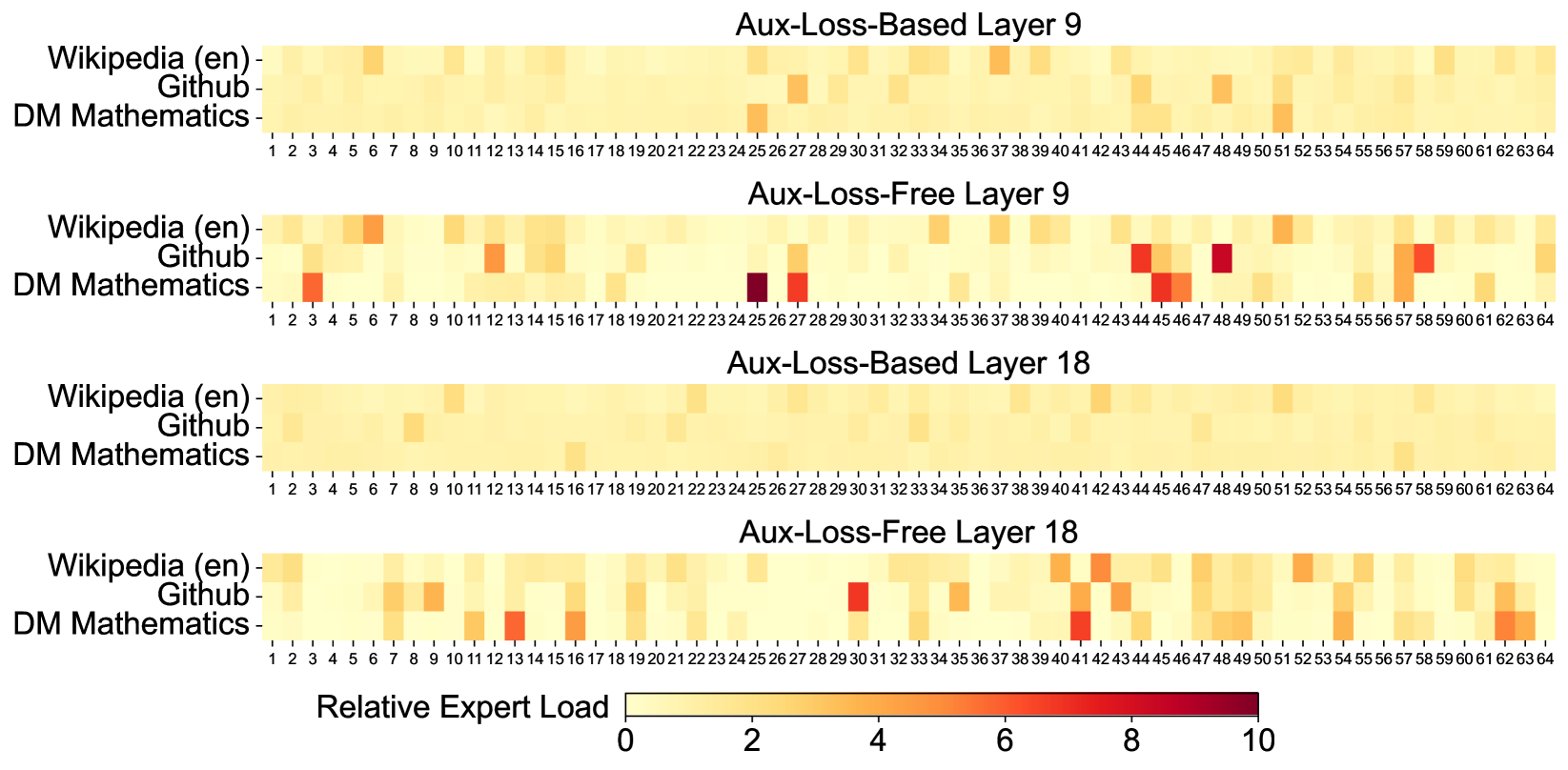

Auxiliary-Loss-Free Load Balancing.

For MoE models, an unbalanced expert load will lead to routing collapse (Shazeer et al., 2017) and diminish computational efficiency in scenarios with expert parallelism. Conventional solutions usually rely on the auxiliary loss (Fedus et al., 2021; Lepikhin et al., 2021) to avoid unbalanced load. However, too large an auxiliary loss will impair the model performance (Wang et al., 2024a). To achieve a better trade-off between load balance and model performance, we pioneer an auxiliary-loss-free load balancing strategy (Wang et al., 2024a) to ensure load balance. To be specific, we introduce a bias term $b_{i}$ for each expert and add it to the corresponding affinity scores $s_{i,t}$ to determine the top-K routing:

$$

\displaystyle g^{\prime}_{i,t} \displaystyle=\begin{cases}s_{i,t},&s_{i,t}+b_{i}\in\operatorname{Topk}(\{s_{j%

,t}+b_{j}|1\leqslant j\leqslant N_{r}\},K_{r}),\\

0,&\text{otherwise}.\end{cases} \tag{16}

$$

Note that the bias term is only used for routing. The gating value, which will be multiplied with the FFN output, is still derived from the original affinity score $s_{i,t}$ . During training, we keep monitoring the expert load on the whole batch of each training step. At the end of each step, we will decrease the bias term by $\gamma$ if its corresponding expert is overloaded, and increase it by $\gamma$ if its corresponding expert is underloaded, where $\gamma$ is a hyper-parameter called bias update speed. Through the dynamic adjustment, DeepSeek-V3 keeps balanced expert load during training, and achieves better performance than models that encourage load balance through pure auxiliary losses.

Complementary Sequence-Wise Auxiliary Loss.

Although DeepSeek-V3 mainly relies on the auxiliary-loss-free strategy for load balance, to prevent extreme imbalance within any single sequence, we also employ a complementary sequence-wise balance loss:

$$

\displaystyle\mathcal{L}_{\mathrm{Bal}} \displaystyle=\alpha\sum_{i=1}^{N_{r}}{f_{i}P_{i}}, \displaystyle f_{i}=\frac{N_{r}}{K_{r}T}\sum_{t=1}^{T}\mathds{1} \displaystyle\left(s_{i,t}\in\operatorname{Topk}(\{s_{j,t}|1\leqslant j%

\leqslant N_{r}\},K_{r})\right), \displaystyle s^{\prime}_{i,t} \displaystyle=\frac{s_{i,t}}{\sum_{j=1}^{N_{r}}s_{j,t}}, \displaystyle P_{i} \displaystyle=\frac{1}{T}\sum_{t=1}^{T}{s^{\prime}_{i,t}}, \tag{17}

$$

where the balance factor $\alpha$ is a hyper-parameter, which will be assigned an extremely small value for DeepSeek-V3; $\mathds{1}(·)$ denotes the indicator function; and $T$ denotes the number of tokens in a sequence. The sequence-wise balance loss encourages the expert load on each sequence to be balanced.

Node-Limited Routing.

Like the device-limited routing used by DeepSeek-V2, DeepSeek-V3 also uses a restricted routing mechanism to limit communication costs during training. In short, we ensure that each token will be sent to at most $M$ nodes, which are selected according to the sum of the highest $\frac{K_{r}}{M}$ affinity scores of the experts distributed on each node. Under this constraint, our MoE training framework can nearly achieve full computation-communication overlap.

No Token-Dropping.

Due to the effective load balancing strategy, DeepSeek-V3 keeps a good load balance during its full training. Therefore, DeepSeek-V3 does not drop any tokens during training. In addition, we also implement specific deployment strategies to ensure inference load balance, so DeepSeek-V3 also does not drop tokens during inference.

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Diagram Analysis: Multi-Token Prediction (MTP) Architecture

This document provides a detailed technical extraction of the provided architectural diagram, which illustrates a machine learning model designed for Multi-Token Prediction (MTP).

## 1. High-Level Overview

The diagram depicts a sequential architecture consisting of a **Main Model** followed by multiple **MTP Modules** (MTP Module 1 and MTP Module 2 are shown, with an ellipsis indicating further modules). The system is designed to predict multiple future tokens simultaneously by shifting the input window and sharing weights across specific layers.

---

## 2. Component Breakdown

### A. Input and Target Tokens (Data Flow)

The diagram tracks the flow of tokens through three distinct stages.

| Stage | Input Tokens | Target Tokens | Prediction Goal |

| :--- | :--- | :--- | :--- |

| **Main Model** | $t_1, t_2, t_3, t_4$ | $t_2, t_3, t_4, t_5$ | Next Token Prediction |

| **MTP Module 1** | $t_2, t_3, t_4, t_5$ | $t_3, t_4, t_5, t_6$ | $Next^2$ Token Prediction |

| **MTP Module 2** | $t_3, t_4, t_5, t_6$ | $t_4, t_5, t_6, t_7$ | $Next^3$ Token Prediction |

### B. Main Model Structure

Contained within a blue dashed boundary.

- **Input:** $t_1$ through $t_4$.

- **Embedding Layer:** (Green) Shared across all modules.

- **Transformer Block $\times L$:** (Yellow) A stack of $L$ transformer blocks processing the embeddings.

- **Output Head:** (Green) Shared across all modules. Produces the final hidden state.

- **Loss Function:** Cross-Entropy Loss comparing output to targets $t_2 \dots t_5$.

- **Loss Label:** $\mathcal{L}_{Main}$

### C. MTP Module 1 Structure

Contained within a blue dashed boundary.

- **Input:** $t_2$ through $t_5$.

- **Embedding Layer:** (Green) Shared with Main Model.

- **RMSNorm Layers:** Two parallel RMSNorm blocks.

- One receives input from the Shared Embedding Layer.

- The other receives the hidden state output from the **Main Model's** Transformer stack.

- **Concatenation:** The outputs of the two RMSNorm layers are merged.

- **Linear Projection:** (Yellow) Processes the concatenated representation.

- **Transformer Block:** (Yellow) A single transformer block.

- **Output Head:** (Green) Shared with Main Model.

- **Loss Function:** Cross-Entropy Loss comparing output to targets $t_3 \dots t_6$.

- **Loss Label:** $\mathcal{L}_{MTP}^1$

### D. MTP Module 2 Structure

Contained within a blue dashed boundary.

- **Input:** $t_3$ through $t_6$.

- **Embedding Layer:** (Green) Shared with previous modules.

- **RMSNorm Layers:** Two parallel RMSNorm blocks.

- One receives input from the Shared Embedding Layer.

- The other receives the hidden state output from **MTP Module 1's** Transformer Block.

- **Concatenation:** Merges the two RMSNorm outputs.

- **Linear Projection:** (Yellow) Processes the concatenated representation.

- **Transformer Block:** (Yellow) A single transformer block.

- **Output Head:** (Green) Shared with previous modules.

- **Loss Function:** Cross-Entropy Loss comparing output to targets $t_4 \dots t_7$.

- **Loss Label:** $\mathcal{L}_{MTP}^2$

---

## 3. Shared Components and Connections

The diagram uses specific visual cues to denote shared parameters and data flow:

1. **Shared Layers (Green Blocks):**

* **Embedding Layer:** Connected by a green dotted line labeled "Shared" across all three modules.

* **Output Head:** Connected by a green dotted line labeled "Shared" across all three modules.

2. **Hidden State Propagation:**

* A solid black line carries the output from the Main Model's Transformer stack into the RMSNorm of MTP Module 1.

* A solid black line carries the output from MTP Module 1's Transformer Block into the RMSNorm of MTP Module 2.

3. **Loss Aggregation:**

* Each module contributes a specific loss component ($\mathcal{L}_{Main}$, $\mathcal{L}_{MTP}^1$, $\mathcal{L}_{MTP}^2$), suggesting a multi-task objective function.

---

## 4. Textual Transcriptions

### Labels and Headers

* **Top Row:** Target Tokens

* **Bottom Row:** Input Tokens

* **Main Model Header:** Main Model (*Next Token Prediction*)

* **MTP Module 1 Header:** MTP Module 1 (*Next$^2$ Token Prediction*)

* **MTP Module 2 Header:** MTP Module 2 (*Next$^3$ Token Prediction*)

### Internal Block Text

* `Cross-Entropy Loss`

* `Output Head`

* `Transformer Block × L`

* `Transformer Block`

* `Linear Projection`

* `RMSNorm`

* `Embedding Layer`

* `concatenation`

* `Shared` (associated with green dotted lines)

### Mathematical Notations

* **Tokens:** $t_1, t_2, t_3, t_4, t_5, t_6, t_7$

* **Losses:** $\mathcal{L}_{Main}$, $\mathcal{L}_{MTP}^1$, $\mathcal{L}_{MTP}^2$

* **Variables:** $L$ (number of blocks in Main Model)

</details>

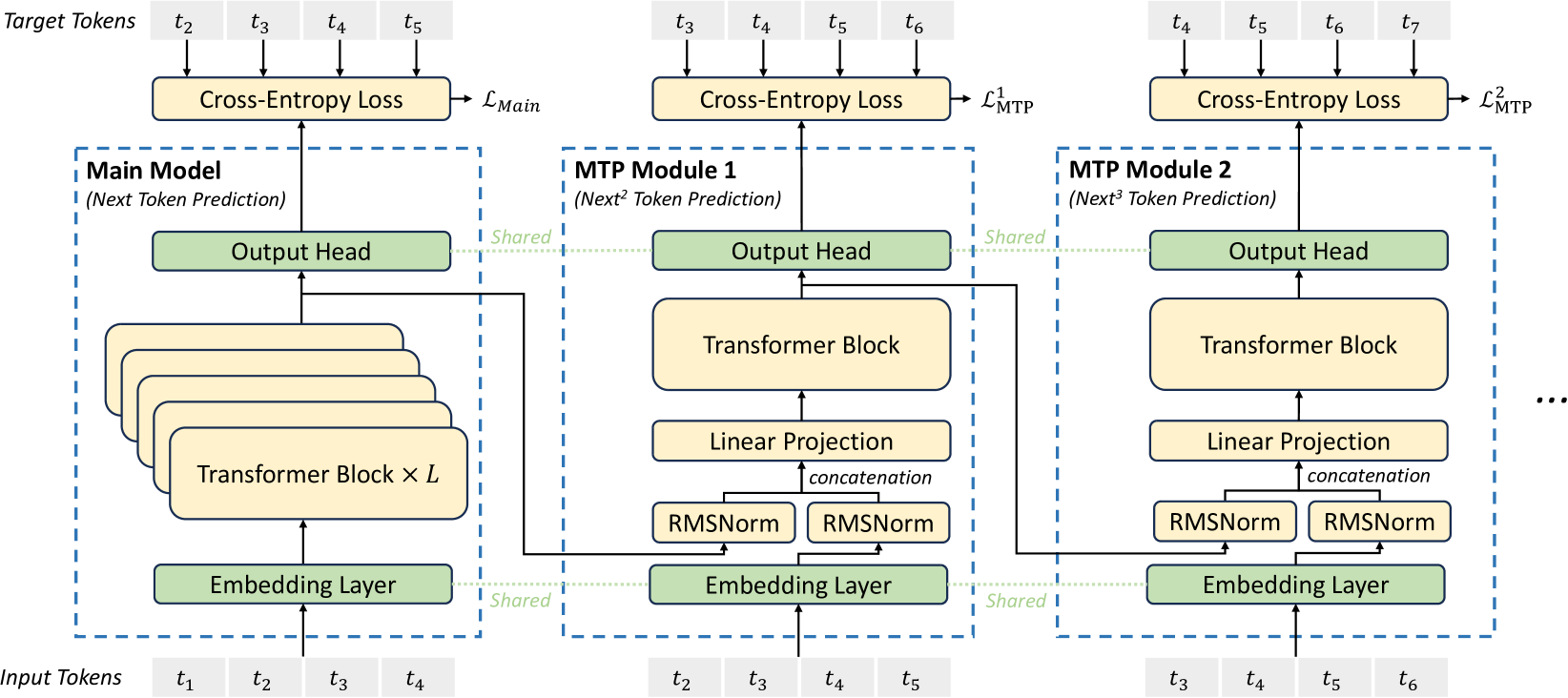

Figure 3: Illustration of our Multi-Token Prediction (MTP) implementation. We keep the complete causal chain for the prediction of each token at each depth.

2.2 Multi-Token Prediction

Inspired by Gloeckle et al. (2024), we investigate and set a Multi-Token Prediction (MTP) objective for DeepSeek-V3, which extends the prediction scope to multiple future tokens at each position. On the one hand, an MTP objective densifies the training signals and may improve data efficiency. On the other hand, MTP may enable the model to pre-plan its representations for better prediction of future tokens. Figure 3 illustrates our implementation of MTP. Different from Gloeckle et al. (2024), which parallelly predicts $D$ additional tokens using independent output heads, we sequentially predict additional tokens and keep the complete causal chain at each prediction depth. We introduce the details of our MTP implementation in this section.

MTP Modules.

To be specific, our MTP implementation uses $D$ sequential modules to predict $D$ additional tokens. The $k$ -th MTP module consists of a shared embedding layer $\operatorname{Emb}(·)$ , a shared output head $\operatorname{OutHead}(·)$ , a Transformer block $\operatorname{TRM}_{k}(·)$ , and a projection matrix $M_{k}∈\mathbb{R}^{d× 2d}$ . For the $i$ -th input token $t_{i}$ , at the $k$ -th prediction depth, we first combine the representation of the $i$ -th token at the $(k-1)$ -th depth $\mathbf{h}_{i}^{k-1}∈\mathbb{R}^{d}$ and the embedding of the $(i+k)$ -th token $Emb(t_{i+k})∈\mathbb{R}^{d}$ with the linear projection:

$$

\mathbf{h}_{i}^{\prime k}=M_{k}[\operatorname{RMSNorm}(\mathbf{h}_{i}^{k-1});%

\operatorname{RMSNorm}(\operatorname{Emb}(t_{i+k}))], \tag{21}

$$

where $[·;·]$ denotes concatenation. Especially, when $k=1$ , $\mathbf{h}_{i}^{k-1}$ refers to the representation given by the main model. Note that for each MTP module, its embedding layer is shared with the main model. The combined $\mathbf{h}_{i}^{\prime k}$ serves as the input of the Transformer block at the $k$ -th depth to produce the output representation at the current depth $\mathbf{h}_{i}^{k}$ :

$$

\mathbf{h}_{1:T-k}^{k}=\operatorname{TRM}_{k}(\mathbf{h}_{1:T-k}^{\prime k}), \tag{22}

$$

where $T$ represents the input sequence length and i:j denotes the slicing operation (inclusive of both the left and right boundaries). Finally, taking $\mathbf{h}_{i}^{k}$ as the input, the shared output head will compute the probability distribution for the $k$ -th additional prediction token $P_{i+1+k}^{k}∈\mathbb{R}^{V}$ , where $V$ is the vocabulary size:

$$

P_{i+k+1}^{k}=\operatorname{OutHead}(\mathbf{h}_{i}^{k}). \tag{23}

$$

The output head $\operatorname{OutHead}(·)$ linearly maps the representation to logits and subsequently applies the $\operatorname{Softmax}(·)$ function to compute the prediction probabilities of the $k$ -th additional token. Also, for each MTP module, its output head is shared with the main model. Our principle of maintaining the causal chain of predictions is similar to that of EAGLE (Li et al., 2024b), but its primary objective is speculative decoding (Xia et al., 2023; Leviathan et al., 2023), whereas we utilize MTP to improve training.

MTP Training Objective.

For each prediction depth, we compute a cross-entropy loss $\mathcal{L}_{\text{MTP}}^{k}$ :

$$

\mathcal{L}_{\text{MTP}}^{k}=\operatorname{CrossEntropy}(P_{2+k:T+1}^{k},t_{2+%

k:T+1})=-\frac{1}{T}\sum_{i=2+k}^{T+1}\log P_{i}^{k}[t_{i}], \tag{24}

$$

where $T$ denotes the input sequence length, $t_{i}$ denotes the ground-truth token at the $i$ -th position, and $P_{i}^{k}[t_{i}]$ denotes the corresponding prediction probability of $t_{i}$ , given by the $k$ -th MTP module. Finally, we compute the average of the MTP losses across all depths and multiply it by a weighting factor $\lambda$ to obtain the overall MTP loss $\mathcal{L}_{\text{MTP}}$ , which serves as an additional training objective for DeepSeek-V3:

$$

\mathcal{L}_{\text{MTP}}=\frac{\lambda}{D}\sum_{k=1}^{D}\mathcal{L}_{\text{MTP%

}}^{k}. \tag{25}

$$

MTP in Inference.

Our MTP strategy mainly aims to improve the performance of the main model, so during inference, we can directly discard the MTP modules and the main model can function independently and normally. Additionally, we can also repurpose these MTP modules for speculative decoding to further improve the generation latency.

3 Infrastructures

3.1 Compute Clusters

DeepSeek-V3 is trained on a cluster equipped with 2048 NVIDIA H800 GPUs. Each node in the H800 cluster contains 8 GPUs connected by NVLink and NVSwitch within nodes. Across different nodes, InfiniBand (IB) interconnects are utilized to facilitate communications.

3.2 Training Framework

The training of DeepSeek-V3 is supported by the HAI-LLM framework, an efficient and lightweight training framework crafted by our engineers from the ground up. On the whole, DeepSeek-V3 applies 16-way Pipeline Parallelism (PP) (Qi et al., 2023a), 64-way Expert Parallelism (EP) (Lepikhin et al., 2021) spanning 8 nodes, and ZeRO-1 Data Parallelism (DP) (Rajbhandari et al., 2020).

In order to facilitate efficient training of DeepSeek-V3, we implement meticulous engineering optimizations. Firstly, we design the DualPipe algorithm for efficient pipeline parallelism. Compared with existing PP methods, DualPipe has fewer pipeline bubbles. More importantly, it overlaps the computation and communication phases across forward and backward processes, thereby addressing the challenge of heavy communication overhead introduced by cross-node expert parallelism. Secondly, we develop efficient cross-node all-to-all communication kernels to fully utilize IB and NVLink bandwidths and conserve Streaming Multiprocessors (SMs) dedicated to communication. Finally, we meticulously optimize the memory footprint during training, thereby enabling us to train DeepSeek-V3 without using costly Tensor Parallelism (TP).

3.2.1 DualPipe and Computation-Communication Overlap

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Document Extraction: Computation and Communication Timeline

## 1. Document Overview

This image is a horizontal timeline diagram illustrating the interleaving of **Computation** and **Communication** tasks over **Time**. It specifically details the execution flow of a neural network layer (likely a Transformer block, given the MLP and ATTN labels) during distributed training.

## 2. Legend and Symbols

The diagram uses specific symbols to denote the phase of the data chunk:

* **$\triangle$ (White Triangle):** Forward chunk

* **$\blacktriangle$ (Black Triangle):** Backward chunk

## 3. Component Isolation

### A. Y-Axis Labels (Left Side)

* **Computation:** The top row of the timeline, representing processing tasks.

* **Communication:** The bottom row of the timeline, representing data transfer tasks.

### B. X-Axis (Bottom)

* **Time:** Indicated by a label and a right-pointing arrow ($\rightarrow$), showing that the sequence progresses from left to right.

### C. Color Coding

* **Lime Green:** Backward-related computation/communication.

* **Light Blue:** Weight-related computation.

* **Orange/Yellow:** Forward-related computation/communication.

* **Purple:** Pipeline Parallelism (PP) overhead.

* **Red (Vertical Bars):** Synchronization points or barriers.

---

## 4. Sequence of Operations (Timeline Flow)

The timeline is divided into two main phases: the **MLP (Multi-Layer Perceptron)** phase and the **ATTN (Attention)** phase, separated by a red synchronization bar.

### Phase 1: MLP Operations

| Row | Task Label | Symbol | Color | Description |

| :--- | :--- | :--- | :--- | :--- |

| **Computation** | MLP(B) | $\blacktriangle$ | Lime Green | Backward pass computation for MLP. |

| **Computation** | MLP(W) | $\blacktriangle$ | Light Blue | Weight gradient computation for MLP. |

| **Computation** | MLP(F) | $\triangle$ | Orange | Forward pass computation for MLP. |

| **Communication** | DISPATCH(F) | $\triangle$ | Orange | Forward dispatch communication (overlaps with MLP(B) and MLP(W)). |

| **Communication** | DISPATCH(B) | $\blacktriangle$ | Lime Green | Backward dispatch communication (overlaps with MLP(F)). |

### Phase 2: ATTN Operations

| Row | Task Label | Symbol | Color | Description |

| :--- | :--- | :--- | :--- | :--- |

| **Computation** | ATTN(B) | $\blacktriangle$ | Lime Green | Backward pass computation for Attention. |

| **Computation** | ATTN(W) | $\blacktriangle$ | Light Blue | Weight gradient computation for Attention. |

| **Computation** | ATTN(F) | $\triangle$ | Orange | Forward pass computation for Attention. |

| **Communication** | COMBINE(F) | $\triangle$ | Orange | Forward combine communication (overlaps with ATTN(B)). |

| **Communication** | PP | N/A | Purple | Pipeline Parallelism communication/stall. |

| **Communication** | COMBINE(B) | $\blacktriangle$ | Lime Green | Backward combine communication (overlaps with ATTN(W) and ATTN(F)). |

---

## 5. Key Trends and Technical Observations

* **Concurrency/Overlapping:** The diagram demonstrates a high degree of overlap between computation and communication. For example, while the system is performing `MLP(F)` (Forward computation), it is simultaneously performing `DISPATCH(B)` (Backward communication).

* **Symmetry:** The structure of the MLP phase and the ATTN phase are similar, both involving a sequence of Backward (B), Weight (W), and Forward (F) computations.

* **Synchronization:** Red vertical bars indicate strict boundaries where computation and communication must synchronize before proceeding to the next major block (e.g., between MLP and ATTN).

* **Pipeline Overhead:** The `PP` (Purple) block in the communication row represents a specific interval where pipeline-related communication occurs, notably occurring between the Forward and Backward combine steps in the Attention phase.

</details>

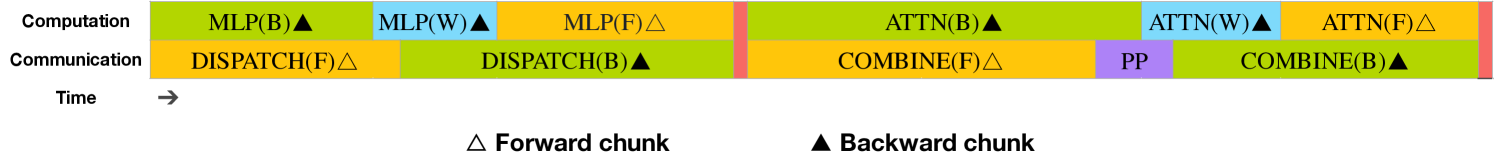

Figure 4: Overlapping strategy for a pair of individual forward and backward chunks (the boundaries of the transformer blocks are not aligned). Orange denotes forward, green denotes "backward for input", blue denotes "backward for weights", purple denotes PP communication, and red denotes barriers. Both all-to-all and PP communication can be fully hidden.

For DeepSeek-V3, the communication overhead introduced by cross-node expert parallelism results in an inefficient computation-to-communication ratio of approximately 1:1. To tackle this challenge, we design an innovative pipeline parallelism algorithm called DualPipe, which not only accelerates model training by effectively overlapping forward and backward computation-communication phases, but also reduces the pipeline bubbles.

The key idea of DualPipe is to overlap the computation and communication within a pair of individual forward and backward chunks. To be specific, we divide each chunk into four components: attention, all-to-all dispatch, MLP, and all-to-all combine. Specially, for a backward chunk, both attention and MLP are further split into two parts, backward for input and backward for weights, like in ZeroBubble (Qi et al., 2023b). In addition, we have a PP communication component. As illustrated in Figure 4, for a pair of forward and backward chunks, we rearrange these components and manually adjust the ratio of GPU SMs dedicated to communication versus computation. In this overlapping strategy, we can ensure that both all-to-all and PP communication can be fully hidden during execution. Given the efficient overlapping strategy, the full DualPipe scheduling is illustrated in Figure 5. It employs a bidirectional pipeline scheduling, which feeds micro-batches from both ends of the pipeline simultaneously and a significant portion of communications can be fully overlapped. This overlap also ensures that, as the model further scales up, as long as we maintain a constant computation-to-communication ratio, we can still employ fine-grained experts across nodes while achieving a near-zero all-to-all communication overhead.

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Pipeline Parallelism Schedule

## 1. Overview

This image is a technical timing diagram (Gantt-style chart) illustrating a pipeline parallelism execution schedule across multiple computational devices. It visualizes the interleaving of forward and backward passes for various micro-batches over a timeline.

## 2. Component Isolation

### Header / Y-Axis (Left)

The vertical axis lists the computational units involved in the pipeline:

* **Device 0** (Top)

* **Device 1**

* **Device 2**

* **Device 3**

* **Device 4**

* **Device 5**

* **Device 6**

* **Device 7** (Bottom)

### Footer / X-Axis (Bottom)

* **Label:** "Time" with a right-pointing arrow ($\rightarrow$), indicating that the horizontal axis represents chronological progression.

### Legend (Bottom)

The legend defines the operations represented by colored blocks and numerical labels:

* **Orange Block:** Forward

* **Dark Green Block:** Backward

* **Light Green Block:** Backward for input

* **Blue Block:** Backward for weights

* **Split Orange/Green Block:** Overlapped forward & Backward

---

## 3. Data Extraction and Flow Analysis

### Execution Pattern

The diagram shows a "1F1B" (One Forward, One Backward) or similar pipelining strategy with 8 devices and 10 micro-batches (numbered 0 through 9).

#### The Forward Pass (Orange)

* **Trend:** The forward pass initiates at Device 0 and propagates down to Device 7. There is a staggered start (pipeline bubble) where Device $n$ starts its forward pass for micro-batch 0 exactly one time step after Device $n-1$.

* **Micro-batches:** 0, 1, 2, 3, 4, 5, 6, 7, 8, 9.

* **Observation:** On Device 0, forward passes for micro-batches 0-6 occur consecutively before the first backward pass begins.

#### The Backward Pass (Green/Blue)

* **Trend:** Backward passes generally move from Device 7 up to Device 0.

* **Components:**

* **Backward for input (Light Green):** Usually follows the forward pass of the same micro-batch on the same device.

* **Backward for weights (Blue):** Often clustered toward the end of the timeline, particularly visible on Devices 0, 6, and 7.

* **Overlapped (Split Orange/Green):** Occurs mid-pipeline (e.g., Device 0, micro-batch 7) where a forward pass and a backward pass are executed concurrently.

### Device-Specific Activity (Sample Rows)

| Device | Initial Forward Sequence | Mid-Pipeline Behavior | End-of-Pipe Behavior |

| :--- | :--- | :--- | :--- |

| **Device 0** | 0, 1, 2, 3, 4, 5, 6 | Overlaps Forward 7 with Backward; then alternating. | Ends with weight updates (Blue 6, 7, 8, 9). |

| **Device 4** | Starts at $T=4$ with micro-batch 0. | Alternates Forward (Orange) and Backward (Green). | Ends with Backward 9 and Weight 8, 9. |

| **Device 7** | Starts at $T=7$ with micro-batch 0. | Immediate transition to Backward 0, 1, 2, 3. | Ends with a dense block of Weight updates (Blue). |

---

## 4. Key Technical Observations

1. **Pipeline Depth:** There are 8 stages (Devices 0-7).

2. **Startup Latency:** There is a clear "ramp-up" period where devices are idle (white space) waiting for the first micro-batch to reach them. Device 7 does not begin work until 7 time units have passed.

3. **Steady State:** The middle section of the diagram shows the "steady state" where devices are fully utilized, performing interleaved forward and backward tasks.

4. **Communication/Dependency:** The diagonal shift of the numbers (e.g., Forward 0 moving from Device 0 at $T=0$ to Device 7 at $T=7$) confirms a sequential dependency between devices for the forward pass, and a reverse dependency for the backward pass.

5. **Resource Optimization:** The "Overlapped forward & Backward" blocks indicate specific points where the hardware is capable of bi-directional communication or concurrent computation to reduce total execution time (Makespan).

</details>

Figure 5: Example DualPipe scheduling for 8 PP ranks and 20 micro-batches in two directions. The micro-batches in the reverse direction are symmetric to those in the forward direction, so we omit their batch ID for illustration simplicity. Two cells enclosed by a shared black border have mutually overlapped computation and communication.

In addition, even in more general scenarios without a heavy communication burden, DualPipe still exhibits efficiency advantages. In Table 2, we summarize the pipeline bubbles and memory usage across different PP methods. As shown in the table, compared with ZB1P (Qi et al., 2023b) and 1F1B (Harlap et al., 2018), DualPipe significantly reduces the pipeline bubbles while only increasing the peak activation memory by $\frac{1}{PP}$ times. Although DualPipe requires keeping two copies of the model parameters, this does not significantly increase the memory consumption since we use a large EP size during training. Compared with Chimera (Li and Hoefler, 2021), DualPipe only requires that the pipeline stages and micro-batches be divisible by 2, without requiring micro-batches to be divisible by pipeline stages. In addition, for DualPipe, neither the bubbles nor activation memory will increase as the number of micro-batches grows.

| 1F1B ZB1P DualPipe (Ours) | $(PP-1)(F+B)$ $(PP-1)(F+B-2W)$ $(\frac{PP}{2}-1)(F\&B+B-3W)$ | $1×$ $1×$ $2×$ | $PP$ $PP$ $PP+1$ |

| --- | --- | --- | --- |

Table 2: Comparison of pipeline bubbles and memory usage across different pipeline parallel methods. $F$ denotes the execution time of a forward chunk, $B$ denotes the execution time of a full backward chunk, $W$ denotes the execution time of a "backward for weights" chunk, and $F\&B$ denotes the execution time of two mutually overlapped forward and backward chunks.

3.2.2 Efficient Implementation of Cross-Node All-to-All Communication

In order to ensure sufficient computational performance for DualPipe, we customize efficient cross-node all-to-all communication kernels (including dispatching and combining) to conserve the number of SMs dedicated to communication. The implementation of the kernels is co-designed with the MoE gating algorithm and the network topology of our cluster. To be specific, in our cluster, cross-node GPUs are fully interconnected with IB, and intra-node communications are handled via NVLink. NVLink offers a bandwidth of 160 GB/s, roughly 3.2 times that of IB (50 GB/s). To effectively leverage the different bandwidths of IB and NVLink, we limit each token to be dispatched to at most 4 nodes, thereby reducing IB traffic. For each token, when its routing decision is made, it will first be transmitted via IB to the GPUs with the same in-node index on its target nodes. Once it reaches the target nodes, we will endeavor to ensure that it is instantaneously forwarded via NVLink to specific GPUs that host their target experts, without being blocked by subsequently arriving tokens. In this way, communications via IB and NVLink are fully overlapped, and each token can efficiently select an average of 3.2 experts per node without incurring additional overhead from NVLink. This implies that, although DeepSeek-V3 selects only 8 routed experts in practice, it can scale up this number to a maximum of 13 experts (4 nodes $×$ 3.2 experts/node) while preserving the same communication cost. Overall, under such a communication strategy, only 20 SMs are sufficient to fully utilize the bandwidths of IB and NVLink.

In detail, we employ the warp specialization technique (Bauer et al., 2014) and partition 20 SMs into 10 communication channels. During the dispatching process, (1) IB sending, (2) IB-to-NVLink forwarding, and (3) NVLink receiving are handled by respective warps. The number of warps allocated to each communication task is dynamically adjusted according to the actual workload across all SMs. Similarly, during the combining process, (1) NVLink sending, (2) NVLink-to-IB forwarding and accumulation, and (3) IB receiving and accumulation are also handled by dynamically adjusted warps. In addition, both dispatching and combining kernels overlap with the computation stream, so we also consider their impact on other SM computation kernels. Specifically, we employ customized PTX (Parallel Thread Execution) instructions and auto-tune the communication chunk size, which significantly reduces the use of the L2 cache and the interference to other SMs.

3.2.3 Extremely Memory Saving with Minimal Overhead

In order to reduce the memory footprint during training, we employ the following techniques.

Recomputation of RMSNorm and MLA Up-Projection.

We recompute all RMSNorm operations and MLA up-projections during back-propagation, thereby eliminating the need to persistently store their output activations. With a minor overhead, this strategy significantly reduces memory requirements for storing activations.

Exponential Moving Average in CPU.

During training, we preserve the Exponential Moving Average (EMA) of the model parameters for early estimation of the model performance after learning rate decay. The EMA parameters are stored in CPU memory and are updated asynchronously after each training step. This method allows us to maintain EMA parameters without incurring additional memory or time overhead.

Shared Embedding and Output Head for Multi-Token Prediction.

With the DualPipe strategy, we deploy the shallowest layers (including the embedding layer) and deepest layers (including the output head) of the model on the same PP rank. This arrangement enables the physical sharing of parameters and gradients, of the shared embedding and output head, between the MTP module and the main model. This physical sharing mechanism further enhances our memory efficiency.

3.3 FP8 Training

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Document Extraction: Mixed-Precision Training Flow Diagram

## 1. Document Overview

This image is a technical architectural diagram illustrating the data flow and precision casting (quantization) logic for a deep learning training iteration. It specifically details the interactions between Forward Propagation (**Fprop**), Data Gradient calculation (**Dgrad**), and Weight Gradient calculation (**Wgrad**) using mixed-precision formats (BF16, FP32, and FP8).

---

## 2. Component Isolation

### A. Data Nodes (Grey Rectangles)

These represent the tensors stored in memory at various stages of the training loop.

* **Input**: Labeled as **BF16**.

* **Weight**: Central node used by Fprop and Dgrad.

* **Output**: Result of the forward pass.

* **Output Gradient**: Labeled as **BF16**; the starting point for the backward pass.

* **Input Gradient**: The final result of the Dgrad process.

* **Weight Gradient**: Labeled as **FP32**; the result of the Wgrad process.

* **Optimizer States**: Receives data from Weight Gradient.

* **Master Weight**: High-precision weight storage used to update the model.

### B. Computational Blocks (Yellow Rounded Rectangles)

These represent the arithmetic operations (Matrix Multiplication and Accumulation). Each contains a multiplication symbol ($\otimes$) and a summation symbol ($\sum$).

* **Fprop (Forward Propagation)**: Accumulation occurs in **FP32**.

* **Dgrad (Data Gradient)**: Accumulation occurs in **FP32**.

* **Wgrad (Weight Gradient)**: Accumulation occurs in **FP32**.

---

## 3. Process Flow and Precision Transitions

The diagram tracks how data is cast between formats (e.g., "To FP8") as it moves between nodes and computational blocks.

### Forward Propagation (Fprop)

1. **Input (BF16)** is cast **To FP8** and enters the Fprop block.

2. **Weight** enters the Fprop block (implied FP8 conversion based on the multiplication operation).

3. Inside **Fprop**, multiplication is performed, and results are accumulated in **FP32**.

4. The result is cast **To BF16** to become the **Output**.

### Data Gradient (Dgrad) - Backward Pass

1. **Output Gradient (BF16)** is cast **To FP8** and enters the Dgrad block.

2. **Weight** enters the Dgrad block.

3. Inside **Dgrad**, multiplication is performed, and results are accumulated in **FP32**.

4. The result is cast **To BF16** to become the **Input Gradient**.

### Weight Gradient (Wgrad) - Backward Pass

1. **Input (BF16)** is routed from the start, cast **To FP8**, and enters the Wgrad block.

2. **Output Gradient (BF16)** is cast **To FP8** and enters the Wgrad block.

3. Inside **Wgrad**, multiplication is performed, and results are accumulated in **FP32**.

4. The result is output as the **Weight Gradient (FP32)**.

### Optimizer and Weight Update

1. **Weight Gradient (FP32)** is cast **To BF16** and enters **Optimizer States**.

2. **Optimizer States** feeds into the **Master Weight** (cast **To FP32**).

3. The **Master Weight** is cast **To FP8** to update the active **Weight** used in the next Fprop/Dgrad cycle.

---

## 4. Summary of Precision Formats

| Component / Path | Precision Format |

| :--- | :--- |

| Primary Storage (Input, Output, Gradients) | BF16 |

| Internal Accumulation (Fprop, Dgrad, Wgrad) | FP32 |

| Master Weight / Weight Gradient | FP32 |

| Computational Inputs (Casting) | FP8 |

## 5. Spatial Grounding and Logic Check

* **Header/Top Row**: Shows the forward path (Input $\rightarrow$ Fprop $\rightarrow$ Output) and the start of the weight gradient path.

* **Middle Row**: Shows the Weight management (Weight $\rightarrow$ Master Weight $\rightarrow$ Optimizer States).

* **Bottom Row**: Shows the backward path (Input Gradient $\leftarrow$ Dgrad $\leftarrow$ Output Gradient).

* **Trend/Logic**: The diagram consistently shows that while storage and accumulation happen in higher precision (BF16/FP32), the operands for the heavy matrix multiplications are down-cast to **FP8** to optimize computational throughput.

</details>

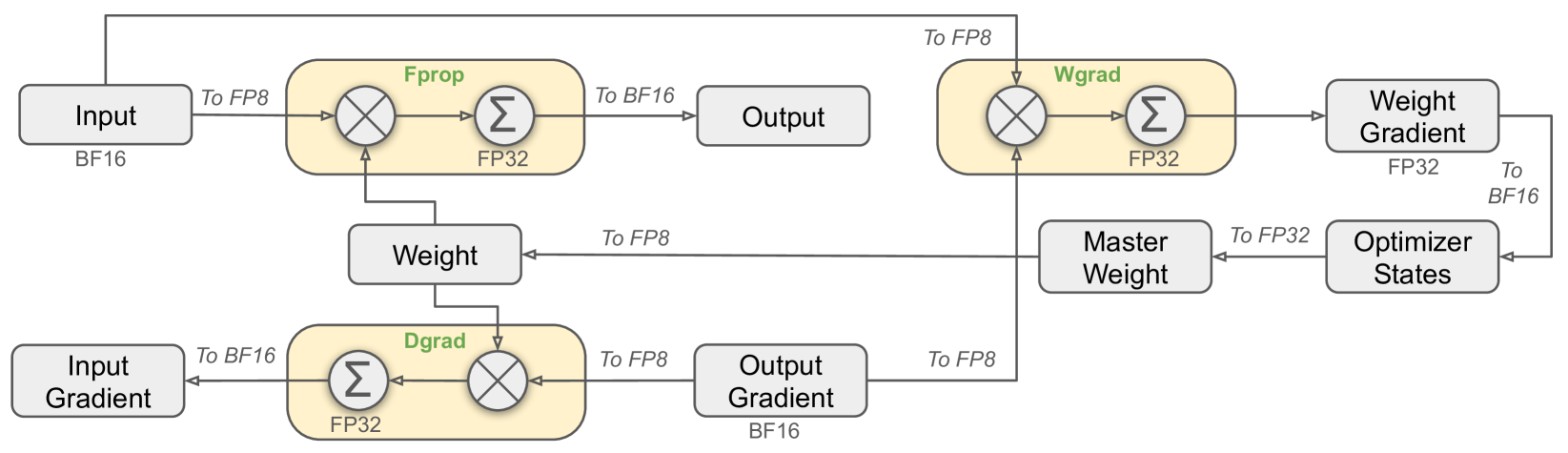

Figure 6: The overall mixed precision framework with FP8 data format. For clarification, only the Linear operator is illustrated.

Inspired by recent advances in low-precision training (Peng et al., 2023b; Dettmers et al., 2022; Noune et al., 2022), we propose a fine-grained mixed precision framework utilizing the FP8 data format for training DeepSeek-V3. While low-precision training holds great promise, it is often limited by the presence of outliers in activations, weights, and gradients (Sun et al., 2024; He et al., ; Fishman et al., 2024). Although significant progress has been made in inference quantization (Xiao et al., 2023; Frantar et al., 2022), there are relatively few studies demonstrating successful application of low-precision techniques in large-scale language model pre-training (Fishman et al., 2024). To address this challenge and effectively extend the dynamic range of the FP8 format, we introduce a fine-grained quantization strategy: tile-wise grouping with $1× N_{c}$ elements or block-wise grouping with $N_{c}× N_{c}$ elements. The associated dequantization overhead is largely mitigated under our increased-precision accumulation process, a critical aspect for achieving accurate FP8 General Matrix Multiplication (GEMM). Moreover, to further reduce memory and communication overhead in MoE training, we cache and dispatch activations in FP8, while storing low-precision optimizer states in BF16. We validate the proposed FP8 mixed precision framework on two model scales similar to DeepSeek-V2-Lite and DeepSeek-V2, training for approximately 1 trillion tokens (see more details in Appendix B.1). Notably, compared with the BF16 baseline, the relative loss error of our FP8-training model remains consistently below 0.25%, a level well within the acceptable range of training randomness.

3.3.1 Mixed Precision Framework

Building upon widely adopted techniques in low-precision training (Kalamkar et al., 2019; Narang et al., 2017), we propose a mixed precision framework for FP8 training. In this framework, most compute-density operations are conducted in FP8, while a few key operations are strategically maintained in their original data formats to balance training efficiency and numerical stability. The overall framework is illustrated in Figure 6.

Firstly, in order to accelerate model training, the majority of core computation kernels, i.e., GEMM operations, are implemented in FP8 precision. These GEMM operations accept FP8 tensors as inputs and produce outputs in BF16 or FP32. As depicted in Figure 6, all three GEMMs associated with the Linear operator, namely Fprop (forward pass), Dgrad (activation backward pass), and Wgrad (weight backward pass), are executed in FP8. This design theoretically doubles the computational speed compared with the original BF16 method. Additionally, the FP8 Wgrad GEMM allows activations to be stored in FP8 for use in the backward pass. This significantly reduces memory consumption.

Despite the efficiency advantage of the FP8 format, certain operators still require a higher precision due to their sensitivity to low-precision computations. Besides, some low-cost operators can also utilize a higher precision with a negligible overhead to the overall training cost. For this reason, after careful investigations, we maintain the original precision (e.g., BF16 or FP32) for the following components: the embedding module, the output head, MoE gating modules, normalization operators, and attention operators. These targeted retentions of high precision ensure stable training dynamics for DeepSeek-V3. To further guarantee numerical stability, we store the master weights, weight gradients, and optimizer states in higher precision. While these high-precision components incur some memory overheads, their impact can be minimized through efficient sharding across multiple DP ranks in our distributed training system.

<details>

<summary>x7.png Details</summary>

### Visual Description

# Technical Document Extraction: Quantization and Accumulation Precision Diagrams

This document provides a detailed technical extraction of the provided image, which illustrates two methods for optimizing neural network computations: (a) Fine-grained quantization and (b) Increasing accumulation precision.

---

## General Information

- **Language:** English

- **Primary Components:** Two main sub-figures labeled (a) and (b).

- **Context:** High-performance computing, specifically focusing on Tensor Core and CUDA Core operations for General Matrix Multiplication (GEMM).

---

## (a) Fine-grained Quantization

This section describes the data structure and processing flow for quantized matrix multiplication.

### 1. Input Component (Top Left)

* **Structure:** A large horizontal matrix labeled with a height of **1** and a width segment labeled **$N_C$**.

* **Scaling Factor:** Above the main matrix is a smaller vector representing "Scaling Factor." It contains teal-colored blocks.

* **Relationship:** The diagram shows a zoomed-in view where a specific segment of the input matrix (length $N_C$) corresponds to a specific teal scaling factor block.

### 2. Weight Component (Center)

* **Structure:** A large vertical matrix. A vertical segment is labeled with height **$N_C$** and width **$N_C$**.

* **Scaling Factor:** Above the weight matrix is a vertical vector of "Scaling Factor" blocks (light pink/purple).

* **Relationship:** A specific $N_C \times N_C$ block in the weight matrix (highlighted in yellow) corresponds to a specific scaling factor in the vector above.

### 3. Tensor Core Operation (Middle Left)

* **Equation:** [Pink Rectangle] = [Green Rectangle] $\times$ [Yellow Square]

* **Label:** "Tensor Core"

* **Description:** Represents the low-precision matrix multiplication performed by the Tensor Core using the quantized inputs and weights.

### 4. Output Component (Bottom Left)

* **Equation:** [Pink Rectangle] $*$ [Teal Square] $*$ [Light Purple Square] = [Resultant Pink/Purple Rectangle]

* **Label:** "CUDA Core"

* **Description:** This represents the de-quantization step. The low-precision result from the Tensor Core is multiplied by the Input Scaling Factor (Teal) and the Weight Scaling Factor (Light Purple) to produce the final output in a larger matrix.

---

## (b) Increasing Accumulation Precision

This section describes the evolution of Warpgroup Matrix Multiply-Accumulate (WGMMA) operations to improve precision.

### 1. WGMMA Comparison (Top Right)

* **WGMMA 1:** Shows a Green GEMM input and an Orange GEMM input feeding into a single Pink "Low Prec Acc" (Low Precision Accumulator) block.

* **WGMMA 4:** Shows the same inputs, but the output Pink block is part of a larger flow. An arrow indicates that the result of the accumulation is passed down to the next stage.

* **Legend (Spatial Grounding: Bottom right of this sub-section):**

* **Pink Square:** Low Prec Acc (Low Precision Accumulator)

* **Yellow / Green Squares:** GEMM Input

* **Label:** "Tensor Core"

### 2. Output and Precision Refinement (Bottom Right)

* **Components:**

* A horizontal rectangle labeled **$N_C$ Interval**.

* Inside the rectangle is a Pink block (Low Prec Acc).

* A Teal block labeled **Scaling Factor** is on the left.

* A Pink block labeled **FP32 Register** is on the right.

* **Flow:** The output from "WGMMA 4" (Tensor Core) feeds into the $N_C$ Interval block.

* **Label:** "CUDA Core"

* **Legend (Spatial Grounding: Bottom right of this sub-section):**

* **Teal Square:** Scaling Factor

* **Pink Square:** FP32 Register

---

## Summary of Key Data and Labels

| Category | Labels / Components |

| :--- | :--- |

| **Mathematical Variables** | $N_C$, 1 |

| **Hardware Units** | Tensor Core, CUDA Core |

| **Data Types/Roles** | Scaling Factor, GEMM Input, Low Prec Acc, FP32 Register, $N_C$ Interval |

| **Operations** | WGMMA 1, WGMMA 4, Multiplication ($\times$), Scalar Multiplication ($*$) |

### Visual Trend Verification

* **Quantization Flow:** The diagram moves from high-level matrix structures (Input/Weight) to specific hardware operations (Tensor Core) and finally to de-quantization (CUDA Core).

* **Precision Flow:** The diagram shows a transition from a simple accumulation (WGMMA 1) to a more complex, higher-precision accumulation (WGMMA 4) that integrates with FP32 registers for better numerical stability over an $N_C$ interval.

</details>

Figure 7: (a) We propose a fine-grained quantization method to mitigate quantization errors caused by feature outliers; for illustration simplicity, only Fprop is illustrated. (b) In conjunction with our quantization strategy, we improve the FP8 GEMM precision by promoting to CUDA Cores at an interval of $N_{C}=128$ elements MMA for the high-precision accumulation.

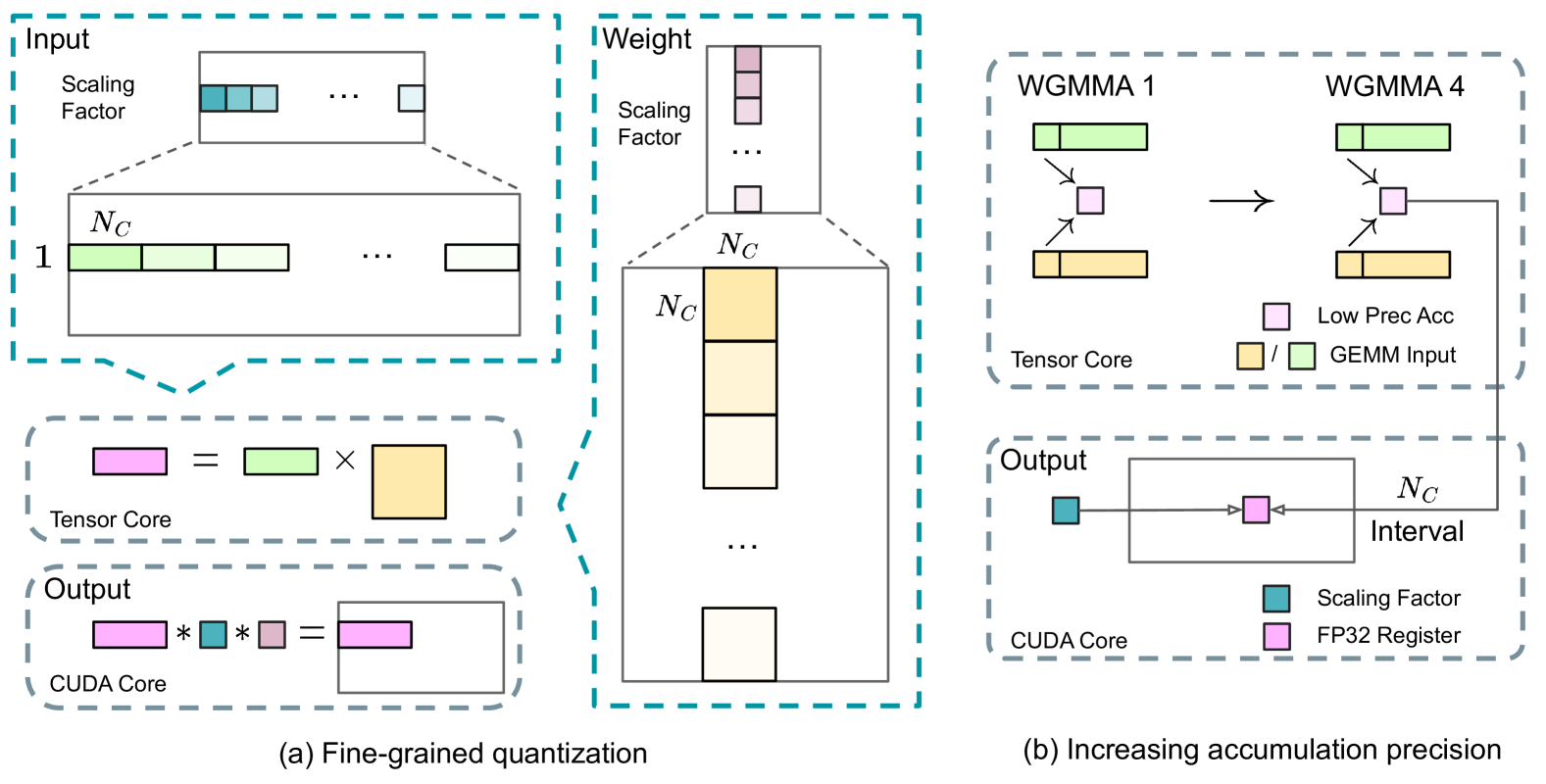

3.3.2 Improved Precision from Quantization and Multiplication

Based on our mixed precision FP8 framework, we introduce several strategies to enhance low-precision training accuracy, focusing on both the quantization method and the multiplication process.

Fine-Grained Quantization.

In low-precision training frameworks, overflows and underflows are common challenges due to the limited dynamic range of the FP8 format, which is constrained by its reduced exponent bits. As a standard practice, the input distribution is aligned to the representable range of the FP8 format by scaling the maximum absolute value of the input tensor to the maximum representable value of FP8 (Narang et al., 2017). This method makes low-precision training highly sensitive to activation outliers, which can heavily degrade quantization accuracy. To solve this, we propose a fine-grained quantization method that applies scaling at a more granular level. As illustrated in Figure 7 (a), (1) for activations, we group and scale elements on a 1x128 tile basis (i.e., per token per 128 channels); and (2) for weights, we group and scale elements on a 128x128 block basis (i.e., per 128 input channels per 128 output channels). This approach ensures that the quantization process can better accommodate outliers by adapting the scale according to smaller groups of elements. In Appendix B.2, we further discuss the training instability when we group and scale activations on a block basis in the same way as weights quantization.

One key modification in our method is the introduction of per-group scaling factors along the inner dimension of GEMM operations. This functionality is not directly supported in the standard FP8 GEMM. However, combined with our precise FP32 accumulation strategy, it can be efficiently implemented.

Notably, our fine-grained quantization strategy is highly consistent with the idea of microscaling formats (Rouhani et al., 2023b), while the Tensor Cores of NVIDIA next-generation GPUs (Blackwell series) have announced the support for microscaling formats with smaller quantization granularity (NVIDIA, 2024a). We hope our design can serve as a reference for future work to keep pace with the latest GPU architectures.

Increasing Accumulation Precision.

Low-precision GEMM operations often suffer from underflow issues, and their accuracy largely depends on high-precision accumulation, which is commonly performed in an FP32 precision (Kalamkar et al., 2019; Narang et al., 2017). However, we observe that the accumulation precision of FP8 GEMM on NVIDIA H800 GPUs is limited to retaining around 14 bits, which is significantly lower than FP32 accumulation precision. This problem will become more pronounced when the inner dimension K is large (Wortsman et al., 2023), a typical scenario in large-scale model training where the batch size and model width are increased. Taking GEMM operations of two random matrices with K = 4096 for example, in our preliminary test, the limited accumulation precision in Tensor Cores results in a maximum relative error of nearly 2%. Despite these problems, the limited accumulation precision is still the default option in a few FP8 frameworks (NVIDIA, 2024b), severely constraining the training accuracy.

In order to address this issue, we adopt the strategy of promotion to CUDA Cores for higher precision (Thakkar et al., 2023). The process is illustrated in Figure 7 (b). To be specific, during MMA (Matrix Multiply-Accumulate) execution on Tensor Cores, intermediate results are accumulated using the limited bit width. Once an interval of $N_{C}$ is reached, these partial results will be copied to FP32 registers on CUDA Cores, where full-precision FP32 accumulation is performed. As mentioned before, our fine-grained quantization applies per-group scaling factors along the inner dimension K. These scaling factors can be efficiently multiplied on the CUDA Cores as the dequantization process with minimal additional computational cost.