## Analog Alchemy: Neural Computation with In-Memory Inference, Learning and Routing

Dissertation zur

Erlangung der naturwissenschaftlichen Doktorwürde

(Dr. sc. UZH ETH Zürich) vorgelegt der Mathematisch-naturwissenschaftlichen Fakultät der Universität Zürich und der Eidgenössischen Technischen Hochschule Zürich von

Yiğit Demirağ

aus der

Türkei

Promotionskommission

Prof. Dr. Giacomo Indiveri (Vorsitz und Leitung)

Prof. Dr. Melika Payvand Prof. Dr. Benjamin Grewe

Zürich, 2024

## yigit demirag

## ANALOG ALCHEMY: NEURAL COMPUTATION WITH IN-MEMORY I N F E R E N C E , LEARNING AND ROUTING

To the engineers and scientists who will one day build superintelligence; from whatever materials and circuits, in whatever form.

## ABSTRACT

As neural computation is revolutionizing the field of Artificial Intelligence (AI), rethinking the ideal neural hardware is becoming the next frontier. Fast and reliable von Neumann architecture has been the hosting platform for neural computation. Although capable, its separation of memory and computation creates the bottleneck for the energy efficiency of neural computation, contrasting the biological brain. The question remains: how can we efficiently combine memory and computation, while exploiting the physics of the substrate, to build intelligent systems? In this thesis, I explore an alternative way with memristive devices for neural computation, where the unique physical dynamics of the devices are used for inference, learning and routing. Guided by the principles of gradient-based learning, we selected functions that need to be materialized, and analyzed connectomics principles for efficient wiring. Despite non-idealities and noise inherent in analog physics, I will provide hardware evidence of adaptability of local learning to memristive substrates, new material stacks and circuit blocks that aid in solving the credit assignment problem and efficient routing between analog crossbars for scalable architectures. First, I address limited bit precision problem in binary Resistive Random Access Memory (RRAM) devices for stable training. By introducing a new device programning technique that precisely controls the filament growth process, we enhance the effective bit precision of these devices. Later, we prove the versatility of this technique by applying it to novel perovskite memristors. Second, I focus on the hard problem of online credit assignment in recurrent Spiking Neural Networks (SNNs) in the presence of memristor non-idealities. I present a simulation framework based on a comprehensive statistical model of Phase Change Material (PCM) crossbar array, capturing all major device non-idealities. Building upon the recently developed e-prop local learning rule, we demonstrate that gradient accumulation is crucial for reliably implementing the learning rule with memristive devices. Moreover, I introduce PCM-trace, a scalable implementation of synaptic eligibility traces, a functional block demanded by many learning rules, using volatile characteristics by specifically fabricated PCM devices. Third, I present our discovery of a novel memristor material capable of switching between volatile and non-volatile modes. This reconfigurable memristor, based on halide perovskite nanocrystals, offers a significant advancement in emerging memory technologies, enabling the implementation of both static and dynamic neural variables with the same material and fabrication technique, while holding the world record in endurance. Finally, I introduce Mosaic, a memristive systolic architecture for in memory computing and routing. Mosaic, trained with our novel layout-aware training methods, efficiently implements small-world graph connectivity and demonstrates superior energy efficiency in spike routing compared to other hardware platforms.

## ACKNOWLEDGEMENTS

This thesis wouldn't have been possible without many people: scientists, friends, and family. I'm honored to have shared this journey with such curious and driven individuals. Among the many who contributed, there are a few exceptional individuals who were absolutely core to making this happen:

First I'd like to thank my supervisor, Giacomo Indiveri, who is a rare scientist truly channeling his work towards a dream. Over these 5 years, he gave me complete freedom to explore what I believe are the most exciting problems, while providing me with high-bandwidth feedback on demand, with more than 500 emails and many thousands of DMs. He taught me the importance of pushing unusual ideas to the limit. Whenever I came up with an ambitious project goal, he always reminded me to deeply consider the efficiency on the silicon, first. I'm grateful for having been his student.

I've been very fortunate to coincide with Melika Payvand, my co-supervisor, in this particular academic space and time. She is the most curious mind craving to understand the emergence of intelligence from the physics of computation, and her passion is infectious. Together, we traversed the probability trees for nearly every projects in my PhD, and executed against the entropy. Her close friendship is the cherry on the cake; I enjoyed and valued every second of it.

Then there are people I am very lucky to collaborate with and learn from. Rohit A. John, an extraordinary person who taught me the importance of grinding with massive focus while solving hard problems. And Elisa Vianello, who always provided her seamless support and insights that have made hard projects a joyful exploration. And Emre Neftci, whose disruptive scientific ideas deeply resonated with me, and with whom I always enjoyed discussing ideas.

I have to thank Alpha, Anqchi, Arianna, Chiara, Dmitrii, Farah, Filippo, Jimmy, Karthik, Manu, Maryada, Nicoletta, Tristan, and many others, who I hope will forgive me for not being mentioned individually or for resorting to alphabetical order when I did. Thank you for inspiring conversations in INI hallways, night walks in Zurich, and giving me the privilege of calling you, my friends.

During my PhD, I completed two internships at Google Zurich and one research visit at MILA. All of these were fantastic learning experiences, where I got a chance to reshape my research scope. From these experiences, I would especially like to thank Jyrki Alakuijala, Johannes von Oswald, Eyvind Niklasson, Ettore Randazzo, Alexander Mordvintsev, Esteban Real, Arna Ghosh, Jonathan Cornford, Joao Sacramento, Blake Richards, Guillaume Lajoie, and Blaise Aguera y Arcas.

I would also like to extend my thanks to my professors from my Master's degree, particularly Ekmel Ozbay, Bayram Butun and Yusuf Leblebici, for their invaluable support and inspiration.

And to Gizay, for sharing most of the journey and everything we created together.

But most of all, I want to express my deepest gratitude to my mom and dad, who inspired me to be curious, to take the world as a playground, and provided me with a loving home. And to my little brother, Efe, who is the best teammate in every game we play and in life's adventures.

## CONTENTS

| 1 Introduction | 1 Introduction | 1 Introduction | 1 Introduction | 1 | 1 | 1 | 1 | 1 |

|------------------|-----------------------------------------------------------------------------------|----------------------------------------------------------------------------------|-----------------------------------------------------------------------------------|-----------------------------------------------------------------------------------|-----------------------------------------------------------------------------------|-----------------------------------------------------------------------------------|-----|-----|

| 2 | Enhancing Bit Precision of Binary Memristors for Robust On-chip Learning | Enhancing Bit Precision of Binary Memristors for Robust On-chip Learning | Enhancing Bit Precision of Binary Memristors for Robust On-chip Learning | Enhancing Bit Precision of Binary Memristors for Robust On-chip Learning | Enhancing Bit Precision of Binary Memristors for Robust On-chip Learning | 9 | 9 | |

| | 2 . 1 | Introduction 9 | Introduction 9 | Introduction 9 | Introduction 9 | Introduction 9 | | |

| | 2 . 2 | ReRAM Device Modeling 10 | ReRAM Device Modeling 10 | ReRAM Device Modeling 10 | ReRAM Device Modeling 10 | ReRAM Device Modeling 10 | | |

| | 2 . 3 | Bit-Precision Enhancing Weight Update Rule 10 | Bit-Precision Enhancing Weight Update Rule 10 | Bit-Precision Enhancing Weight Update Rule 10 | Bit-Precision Enhancing Weight Update Rule 10 | Bit-Precision Enhancing Weight Update Rule 10 | | |

| | 2 . 4 | Learning Circuits and Architecture 12 | Learning Circuits and Architecture 12 | Learning Circuits and Architecture 12 | Learning Circuits and Architecture 12 | Learning Circuits and Architecture 12 | | |

| | 2 . 5 | System-level Simulations 13 | System-level Simulations 13 | System-level Simulations 13 | System-level Simulations 13 | System-level Simulations 13 | | |

| | 2 . 6 | Discussion 14 | Discussion 14 | Discussion 14 | Discussion 14 | Discussion 14 | | |

| 3 | Online Temporal Credit Assignment with Non-volatile and Volatile Memristors | Online Temporal Credit Assignment with Non-volatile and Volatile Memristors | Online Temporal Credit Assignment with Non-volatile and Volatile Memristors | Online Temporal Credit Assignment with Non-volatile and Volatile Memristors | Online Temporal Credit Assignment with Non-volatile and Volatile Memristors | Online Temporal Credit Assignment with Non-volatile and Volatile Memristors | 15 | |

| | 3 . 1 | Framework for Online Training of RSNNs with Non-volatile Memristors | Framework for Online Training of RSNNs with Non-volatile Memristors | Framework for Online Training of RSNNs with Non-volatile Memristors | Framework for Online Training of RSNNs with Non-volatile Memristors | 15 | 15 | |

| | | 3 . 1 . 2 | Introduction 15 Building blocks for training on in-memory processing cores | Introduction 15 Building blocks for training on in-memory processing cores | 16 | | | |

| | | 3 . 1 . 3 | PCM device modeling and integration into neural networks | PCM device modeling and integration into neural networks | 16 | | | |

| | | 3 . 1 . 4 | Discussion 23 | Discussion 23 | Discussion 23 | | | |

| | 3 . 2 | Implementing Online Training of RSNNs on a Neuromorphic Hardware | Implementing Online Training of RSNNs on a Neuromorphic Hardware | Implementing Online Training of RSNNs on a Neuromorphic Hardware | Implementing Online Training of RSNNs on a Neuromorphic Hardware | 25 | 25 | |

| | | 3 . 2 . 1 | 3 . 2 . 1 | 3 . 2 . 1 | 3 . 2 . 1 | 3 . 2 . 1 | | |

| | | 3 2 2 | From the simulation to an analog chip 25 | From the simulation to an analog chip 25 | From the simulation to an analog chip 25 | From the simulation to an analog chip 25 | | |

| | | . . | Discussion 26 | Discussion 26 | Discussion 26 | Discussion 26 | | |

| | 3 . 3 Scalable Synaptic Eligibility Traces with Volatile Memristive Devices 28 | 3 . 3 Scalable Synaptic Eligibility Traces with Volatile Memristive Devices 28 | 3 . 3 Scalable Synaptic Eligibility Traces with Volatile Memristive Devices 28 | 3 . 3 Scalable Synaptic Eligibility Traces with Volatile Memristive Devices 28 | 3 . 3 Scalable Synaptic Eligibility Traces with Volatile Memristive Devices 28 | 3 . 3 Scalable Synaptic Eligibility Traces with Volatile Memristive Devices 28 | | |

| | | 3 . 3 . 1 | Introduction 28 | Introduction 28 | Introduction 28 | Introduction 28 | | |

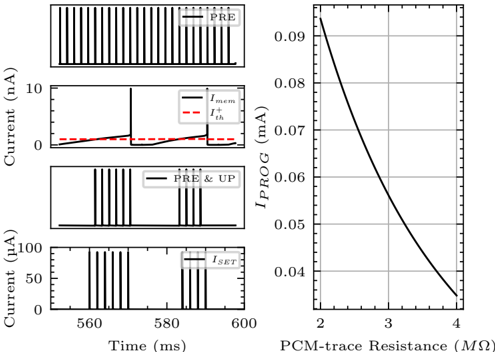

| | | 3 . 3 . 2 | PCM-trace: Implementing eligibility traces with PCM drift 30 | PCM-trace: Implementing eligibility traces with PCM drift 30 | PCM-trace: Implementing eligibility traces with PCM drift 30 | PCM-trace: Implementing eligibility traces with PCM drift 30 | | |

| | | 3 . 3 . 3 | Multi PCM-trace: Increasing the dynamic range of traces 31 | Multi PCM-trace: Increasing the dynamic range of traces 31 | Multi PCM-trace: Increasing the dynamic range of traces 31 | Multi PCM-trace: Increasing the dynamic range of traces 31 | | |

| | | 3 . 3 . 4 | Circuits and Architecture 32 | Circuits and Architecture 32 | Circuits and Architecture 32 | Circuits and Architecture 32 | | |

| | | 3 . 3 . 5 | Discussion 34 | Discussion 34 | Discussion 34 | Discussion 34 | | |

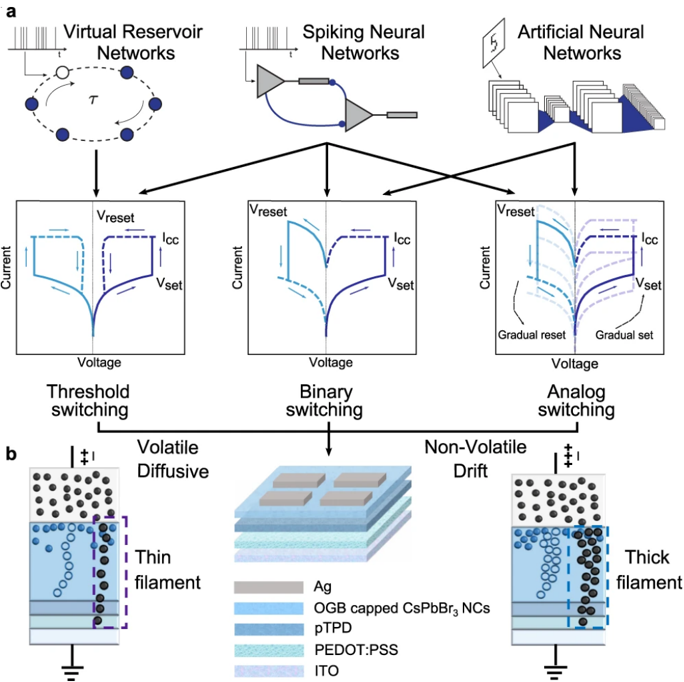

| 4 | Discovering Single Material that Switches Between Volatile or Non-Volatile Modes | Discovering Single Material that Switches Between Volatile or Non-Volatile Modes | Discovering Single Material that Switches Between Volatile or Non-Volatile Modes | Discovering Single Material that Switches Between Volatile or Non-Volatile Modes | Discovering Single Material that Switches Between Volatile or Non-Volatile Modes | Discovering Single Material that Switches Between Volatile or Non-Volatile Modes | 37 | |

| | 4 . 1 | Introduction 37 | Introduction 37 | Introduction 37 | Introduction 37 | Introduction 37 | | |

| | 4 . 2 | Diffusive Mode of the Perovskite Reconfigurable Memristor | Diffusive Mode of the Perovskite Reconfigurable Memristor | Diffusive Mode of the Perovskite Reconfigurable Memristor | 40 | | | |

| | 4 . 3 | Drift Mode of the Perovskite Reconfigurable Memristor 40 | Drift Mode of the Perovskite Reconfigurable Memristor 40 | Drift Mode of the Perovskite Reconfigurable Memristor 40 | Drift Mode of the Perovskite Reconfigurable Memristor 40 | | | |

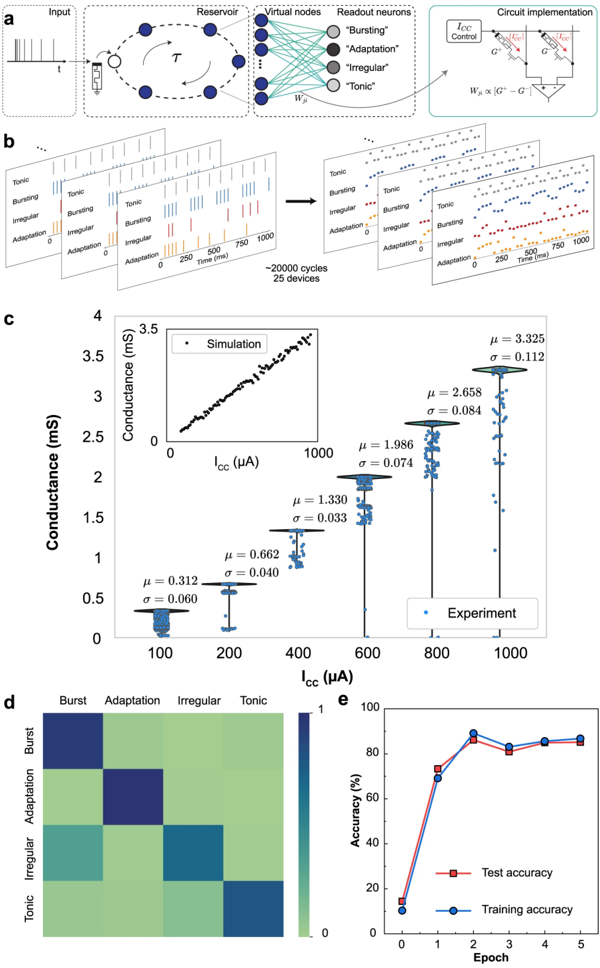

| | 4 . 4 | Reservoir Computing with Perovskite Memristors 42 | Reservoir Computing with Perovskite Memristors 42 | Reservoir Computing with Perovskite Memristors 42 | Reservoir Computing with Perovskite Memristors 42 | | | |

| | 4 . 5 | Diffusive Perovskite Memristors as Reservoir Elements 43 | Diffusive Perovskite Memristors as Reservoir Elements 43 | Diffusive Perovskite Memristors as Reservoir Elements 43 | Diffusive Perovskite Memristors as Reservoir Elements 43 | | | |

| | 4 . 6 | Drift Perovskite Memristors as Readout Elements 43 | Drift Perovskite Memristors as Readout Elements 43 | Drift Perovskite Memristors as Readout Elements 43 | Drift Perovskite Memristors as Readout Elements 43 | | | |

| | 4 . 7 | Classification of Neural Firing Patterns 44 | Classification of Neural Firing Patterns 44 | Classification of Neural Firing Patterns 44 | Classification of Neural Firing Patterns 44 | | | |

| | 4 | | | | | | | |

| | . 8 | Discussion 44 | Discussion 44 | Discussion 44 | Discussion 44 | | | |

| 5 | 4 . 9 Mosaic: An Analog Systolic Architecture for In-Memory Computing and Routing | Methods | 4 . 9 Mosaic: An Analog Systolic Architecture for In-Memory Computing and Routing | 4 . 9 Mosaic: An Analog Systolic Architecture for In-Memory Computing and Routing | 4 . 9 Mosaic: An Analog Systolic Architecture for In-Memory Computing and Routing | 4 . 9 Mosaic: An Analog Systolic Architecture for In-Memory Computing and Routing | 49 | |

| | 5 . 1 | Introduction 49 | Introduction 49 | Introduction 49 | Introduction 49 | Introduction 49 | | |

| | 5 . 2 | Mosaic Hardware Computing and Routing Measurements | Mosaic Hardware Computing and Routing Measurements | Mosaic Hardware Computing and Routing Measurements | 51 | | | |

| | 5 . 3 | Analog Hardware-aware Simulations 55 | Analog Hardware-aware Simulations 55 | Analog Hardware-aware Simulations 55 | Analog Hardware-aware Simulations 55 | Analog Hardware-aware Simulations 55 | | |

| | 5 . 4 | Benchmarking Routing Energy in Neuromorphic Platforms | Benchmarking Routing Energy in Neuromorphic Platforms | Benchmarking Routing Energy in Neuromorphic Platforms | 56 | | | |

| | 5 . 5 | Discussion 58 Methods 60 | Discussion 58 Methods 60 | Discussion 58 Methods 60 | Discussion 58 Methods 60 | Discussion 58 Methods 60 | | |

| | 5 . 6 | Conclusions 65 | Conclusions 65 | Conclusions 65 | Conclusions 65 | Conclusions 65 | | |

| | | | 69 | 69 | 69 | 69 | | |

| a | Appendix | Appendix | | | | | | |

| | Bibliography | Bibliography | 93 | 93 | 93 | 93 | | |

| | Contributions Publications | Contributions Publications | 113 | 113 | 113 | 113 | | |

You've got to listen to the silicon, because it's always trying to tell you what it can do.

Carver Mead

How do we imbue the spark of intelligence into lifeless computational physical substrates? This question has been my quest, inspired by the early pioneers such as McCulloch and Pitts [ 1 ], Alan Turing [ 2 ] and von Neumann [ 3 ], who laid the foundation of modern neural computing. As the field of AI progressively gains superiority in numerous benchmarks, the quest to understand intelligence and to rethink its ideal implementation on a physical substrate has never been more pressing.

While intelligence remains elusive with numerous definitions, learning 1 seems to me to be a cornerstone of intelligence. Whether it is natural or artificial , the intelligent agent must adapt to survive and replicate. Bacteria can learn to swim away from environments that lower the probability of successful replication [ 4 ]. And Artificial Neural Network (ANN) architectures absorbing the datasets better are preferred by AI researchers and industry [ 5 ]. This is a common theme; intelligent systems should learn well to last. And any physical implementation of an intelligent system, likewise, needs to implement learning dynamics. However, the computational demands of learning place an enormous burden on existing hardware.

For over 75 years, computing hardware has relied on the von Neumann architecture: synchronous, deterministic, binary logic driving a processing unit that interfaces with a separate memory subsystem. This design excels at executing arbitrary sequential instructions, but it necessitates constant shuttling of data between memory and compute. 2 Memory hierarchies, with their layers of progressively larger and slower storage, have been the stopgap solution, but fundamentally the bottleneck remains. This non-local memory access is a leading factor in the latency and energy consumption of modern AI systems. In stark contrast, neural computation in biology is inherently intertwined with memory, operating asynchronously, sparsely, and stochastically. This calls for a fundamental rethinking of computing, where neural models and hardware are co-designed with locality and physics-awareness as first principles.

## An Alternative Path

This thesis departs from the well-established path of digital accelerator design. The inherent noise tolerance of neural networks presents an opportunity to relax strict precision and determinism requirements in both compute and memory subsystems of digital electronics. In turn, this relaxation unlocks some exotic modes of computation, where subthreshold regime of transistors and raw physics of novel materials can be exploited for neural computation and storage. Historically, this is the essence of neuromorphic engineering, where a deliberate trade-off can be made, favoring low-power and scalability offered by statistical physical processes over theoretical precision of boolean algebra.

This thesis optimizes neural computation across Marr's computational, algorithmic, and implementational levels [ 6 ], advocating for the co-design of neural models and hardware with locality and physics-awareness as guiding principles. By pushing critical neural operations to the fundamental level of material physics, engaging electrical, chemical, or even mechanical properties, we explore a new frontier in low-power neural computing.

Specifically, we investigate the following key strategies, which are detailed in the subsequent sections:

1 dynamic process of adapting to the environmental pressure to improve the probability of survival or replication.

2 partially due to the rapid time-multiplexing of resources.

- Analog In-Memory Computing: We exploit the physics of volatile (temporary) or nonvolatile (permanent) materials to perform critical neural network operations directly within the memory units. This non-von Neumann architecture fundamentally eliminates the need for data movement between memory and compute units, which are traditionally decoupled systems.

- Local Learning: We depart from the computationally intensive Back-Propagation Through Time (BPTT) for training, opting instead for local and online gradient-based learning rules. These rules inherently exhibit varying degrees of variance and bias in their estimates of the gradient of an objective function [ 7 ]. However, they offer significant advantages in hardware in terms of power efficiency and simplified implementation due to local availability of weight update signals and the elimination of the need for buffering intermediate values.

- Analog In-Memory Routing: We bring out locally dense and globally sparse connectivity on neural networks. This connectivity prior allows high utilization of routers with non-volatile materials to efficiently transmit neural activations within cores of analog systolic arrays.

- Physics-aware Training: We utilize data-driven optimization to counteract non-idealities inherent to analog technologies. This involves collecting extensive component measurements to model their collective behavior, tailoring learning algorithms and circuits accordingly. Additionally, we employ gradient-based architectural adaptations and weight re-parameterizations for robust on-chip inference and training. Our methods are validated on small-scale fabrications to assess their on-device performance and scalability potential.

In the following section, I will explain memristors, the prima materia of our endeavor, that enables and unifies these strategies explored in this thesis.

## Memristors for Analog in Memory Neural Operations

Neural network computation, biological or artificial, is fundamentally memory-centric. The human brain operates on O ( 10 15 ) synapses [ 8 ], while Large Language Models (LLMs) like GPT4 perform non-linear operations on O ( 10 12 ) parameters [ 9 ]. Scaling laws reveal a direct link between parameter count and performance [ 10 ], suggesting increasing network size is a reliable path to improved performance in future neural networks.

Given that memory requirements scale quadratically with the number of layers, memory becomes the primary design constraint in neural computing hardware, impacting scalability, throughput, and power efficiency. 3

An ideal memory system for neural computing should be high density, low-energy, quick access, and on-chip. However, an ideal memory does not yet exist, as these requirements are often conflicting. High density memories (i.e., DRAM, High Bandwidth Memory (HBM), 3 D NAND flash) are off-chip with long time to access, faster memories that don't use capacitors (i.e. SRAM) are larger in area due to transistor count, and high-bandwith memories (i.e., HBM) are expensive as they require additional banks, ports and channels. That's why memory hierarchy exists, to address this trade-off by using multiple levels of progressively larger and slower storage. Yet, the von Neumann bottleneck persists, with memory access to each layer in the hierarchy

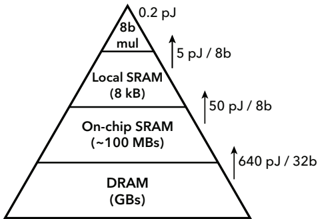

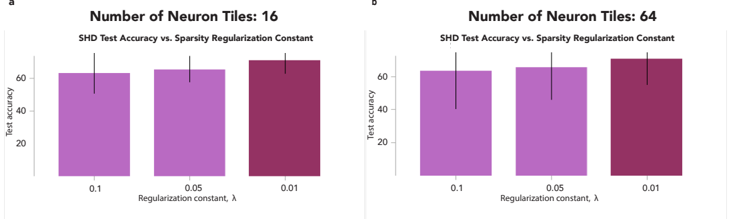

F igure 1 . 1 : Importance of data locality is shown in the memory hierarchy as the computation ( 8 b multiplication) costs only the fraction of the energy of memory access, in 45 nm CMOS [ 11 ].

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Memory Hierarchy Energy Consumption

### Overview

The image is a triangular diagram representing a memory hierarchy, illustrating the trade-off between memory size/distance from the processor and energy consumption per bit. The diagram shows four levels of memory: 8b multiplier, Local SRAM, On-chip SRAM, and DRAM, arranged from top to bottom, representing increasing size and distance from the processor. Energy consumption per bit increases as you move down the hierarchy.

### Components/Axes

The diagram consists of four horizontal layers, each representing a different memory type. Arrows indicate the energy consumption per bit for each level. The diagram does not have traditional axes, but the vertical position represents the distance from the processor (closer at the top, further at the bottom) and implicitly, the speed of access.

* **Top Layer:** 8b multiplier, labeled with "0.2 pJ"

* **Second Layer:** Local SRAM (8 kB), labeled with "5 pJ / 8b"

* **Third Layer:** On-chip SRAM (~100 MBs), labeled with "50 pJ / 8b"

* **Bottom Layer:** DRAM (GBs), labeled with "640 pJ / 32b"

### Detailed Analysis or Content Details

The diagram illustrates the energy cost associated with accessing different types of memory.

* **8b multiplier:** Consumes 0.2 picojoules (pJ).

* **Local SRAM (8 kB):** Consumes 5 pJ per 8 bits.

* **On-chip SRAM (~100 MBs):** Consumes 50 pJ per 8 bits.

* **DRAM (GBs):** Consumes 640 pJ per 32 bits.

The energy consumption increases significantly as you move down the memory hierarchy. The DRAM level has the highest energy consumption, while the 8b multiplier has the lowest. The units are not consistent across all levels (pJ vs. pJ/bit).

### Key Observations

* There is a clear trend of increasing energy consumption as memory size increases and distance from the processor grows.

* The energy consumption per bit is significantly higher for DRAM compared to the other memory levels.

* The diagram highlights the importance of using a memory hierarchy to optimize energy efficiency.

* The units are inconsistent, making direct comparison difficult. DRAM is measured in pJ/32b while the others are pJ/8b.

### Interpretation

This diagram demonstrates the fundamental principle behind memory hierarchies in computer architecture. Smaller, faster, and closer memory (like the 8b multiplier and Local SRAM) is more energy-efficient but also more expensive and limited in capacity. Larger, slower, and more distant memory (like DRAM) is cheaper and has higher capacity but consumes significantly more energy per access.

The diagram suggests that a well-designed memory hierarchy aims to keep frequently accessed data in the faster, more energy-efficient levels, minimizing the need to access the slower, more energy-intensive levels. The inconsistent units suggest a focus on illustrating the *relative* energy costs rather than precise absolute values. The use of approximate values (~100 MBs) for On-chip SRAM further reinforces this point.

The diagram is a simplified representation, but it effectively conveys the trade-offs involved in memory system design. It implies that optimizing energy consumption requires careful consideration of memory size, speed, and distance from the processor.

</details>

(i.e., DRAM → on-chip SRAM → local SRAM), incurring roughly an order of magnitude more energy and latency penalty. Even in the ideal case of local SRAM, the cost of memory access exceeds that of computation by an order of magnitude. In-memory computing offers a compelling

3 On edge Tensor Processing Unit (TPU) for instance, memory access can consume over 90% of the total energy, throttle throughput to below 10% peak capacity, and in general dominate the majority of chip area [ 12 ].

alternative to data movement by processing data where it is stored, and one of the most promising technologies for in-memory computing is memristors.

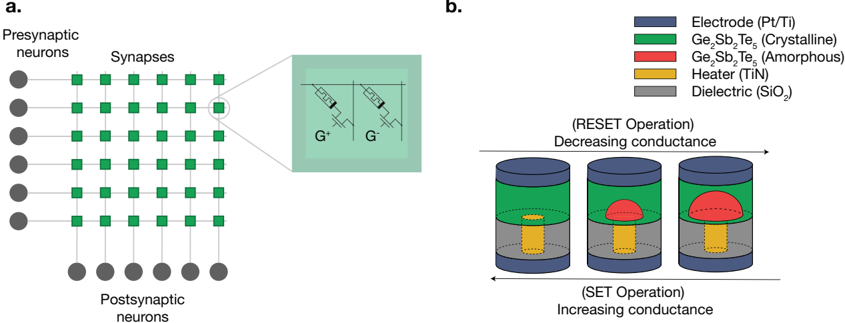

Memristors are two-terminal analog resistive memory devices capable of both computation and memory [ 13 ]. They have a unique ability to encode and store information in their electrical conductance, which can be altered based on the history of applied electrical pulses [ 14 ]. Because a single memristor's conductance can transition between multiple levels between Low Conductive State (LCS) and High Conductance State (HCS), it can store more than one bit of information (typically between to 3 and 5 bits) improving the memory density. 4 Various memristor types exist, each with distinct operating principles and advantages. For example, PCM relies on the contrasting electrical resistance of amorphous and crystalline phases in chalcogenide materials [ 16 , 17 ], RRAM operates by altering the resistance of dialectric material through the drift of oxygen vacancies or the formation of conductive filaments [ 18 ], and Ferroelectric Random Access Memory (FeRAM) uses the polarization of ferroelectric materials to store and change information [ 19 ]. Although the electrical interface to these types does not differ significantly (applying electrical pulses to read or program the conductance), these underlying mechanisms determine the device's switching speed, footprint, stochasticity, endurance, and energy efficiency.

When implementing functions with memristor forms, it is helpful to categorize by volatility. Non-volatile memristors retain conductance after programming, ideal for weight storage in neural networks. This property extends to in-memory computing to perform dot-product operations [ 20 -22 ]. When an array of N memristive devices store vector elements Gi in their conductance states, applying voltages Vi to the devices and measuring the resulting currents I i , the dot product ∑ N i = 1 GiVi can be computed with O ( 1 ) complexity by Ohm's Law and Kirchoff's current law. This principle extends to matrix-vector multiplication, enabling neural network inference directly through the analog substrate's physics. In-memory neural inference has been demonstrated in large prototypes like the PCM-based HERMES chip [ 23 ] and RRAM-based NeuRRAM chip [ 18 ].

Volatile memristors, however, provide functionality beyond mere storage, and are often less explored. Their conductance decay within 10 ms to 100 ms allows for continuous-time, stochastic accumulate-and-decay functions, approximating low-pass filtering, signal averaging, or time tracking. In neural computations, volatile memristors have been used to implement short-term synaptic [ 24 , 25 ] and neuronal dynamics [ 26 , 27 ].

why spikes ? In this thesis, I focus on SNNs, where neural activations are represented as discrete pulses called spikes. This choice is primarily motivated by hardware considerations. Spikes enable extreme spatiotemporal sparsity, aligning well with asynchronous computation where energy consumption directly correlates with spiking events that trigger localized circuits [ 28 ]. Mixed-signal spiking neuron circuits inherently act as sigma-delta Analog-to-Digital Converters (ADCs), converting analog neural computation into digital spikes [ 29 ] for noise-robust transmission over long distances without signal degradation [ 30 ]. Additionally, spiking neurons seamlessly interface with reading and programming of memristive devices and also with event-based sensors [ 31 , 32 ] through Address-Event Representation (AER) [ 33 ] protocol. Spiking communication has even been proposed to mitigate severe heating issues in 3 D fabrication using n-ary coding schemes [ 34 ].

From a computational perspective, a common argument for spikes is efficient information encoding through precisely utilizing the temporal dimension (e.g., time-to-first-spike [ 35 ], phasecoding [ 36 ], or inter-spike-interval [ 37 ]). Furthermore, the spiking framework allows to formulate hypotheses about biophysical spike-based learning mechanisms in the brain [ 38 -40 ] and explore the computational capabilities of spiking neural networks [ 41 ], as demonstrated in our recent work. While a comprehensive exploration of the advantages of spikes is beyond this thesis's scope, the primary focus here is their potential for energy-efficient communication on analog substrates, as their unique computational benefits remain to be conclusively demonstrated.

4 These attributes are interestingly similar to biological synapses, where the synaptic efficacy is modulated by the history of pre- and post-synaptic activity, and is suggested to take 26 distinguishable strengths (correlated with spine head volume) [ 15 ].

## State of the Art

Despite their potential, memristors are not without their challenges for neural computing. In this section, I will outline some of these challanges in inference, learning and routing, and state-of-the-art attempts to address them.

Limited bit precision. Programming nanoscale memristive devices, modifies their atomic arrangement, inherently stochastic, non-linear and of limited granularity [ 42 -45 ]. This analog non-idealities are known to cause significant performance drops in training networks compared to software simulations [ 46 ]. Controlled experiments by Sidler et al. [ 22 ] have demonstrated that the poor training performance is primarily due to insufficient number of pulses switching the device between High Resistive State (HRS) and Low Resistive State (LRS).

Following this, various attempts have been made to improve the bit precision of memristive devices. Optimizing RRAM materials has increased the number of bits per device, but often at the cost of lower ON/OFF ratios (i.e., the ratio of the LRS and HRS), making it harder to distinguish states with small footprint circuits [ 47 -50 ]. Furthermore, architectural optimizations have been explored, including using multiple binary devices per synapse [ 51 ], assigning a number system to multiple devices [ 52 ], leveraging stochastic switching [ 53 , 54 ], and complementing binary memristive devices with capacitors [ 55 ]. However, these methods still require complex and large synaptic architectures, limiting scalability.

In Chapter 2 , we propose a novel approach to program intrinsically 1 -bit RRAM devices to increase their effective bit resolution by precise control of the filament formation.

The credit assignment problem. Learning in any systems is fundamentally about adjusting its parameters to improve its performance. In neural networks, learning involves adjusting weights, represented by the vector W , to optimize the performance, measured by an objective function, F ( W ) . The credit assignment problem refers to the challenge of determining the precise weight adjustments needed for improvement, especially in deep networks where the relationship between individual neurons and overall performance is less clear [ 56 ].

Traditionally, Hebbian mechanisms [ 57 ] leveraging the timing of pre- and post-synaptic spikes, have been the go-to solution for on-chip learning in the neuromorphic field. This is because Hebbian rules explain a numerous neuroscientific observations [ 58 , 59 ], posses interesting variance maximization properties [ 60 ] but more practically, utilize local signals to the synapse in a simple way, making them easily adaptable to silicon circuits [ 61 -64 ]. However, Hebbian rules alone had limited success while scaling to large networks, and require heavily crafted architectural biases to achieve hiererchical, disentangled representations. 5

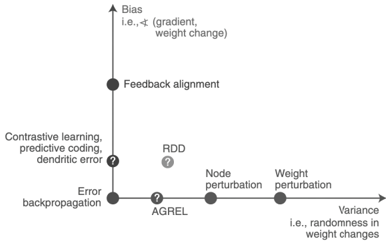

Backpropagation [ 68 ], on the other hand, remains the state-of-the-art algorithm for training modern ANNs, and is one of the pillars of the deep learning revolution. To give an intuition why it works, following the insight from Richards et al. [ 7 ], let's consider weight changes, ∆ W , to be small and objective function to be maximized, F , to be locally smooth, then resulting ∆ F can be approximated as ∆ F ≈ ∆ W T · ∇ WF ( W ) , where ∇ WF ( W ) is the gradient of the objective function with respect to the weights. This means that to guarantee improving learning performance ( ∆ F ≥ 0), a principled approach to weight adjustment is to take a small step in the direction of the steepest performance improvement, guided by the gradient ( ∆ F ≈ η ∇ WF ( W ) T · ∇ WF ( W ) ≥ 0). Backpropagation explicitly calculating gradients, while powerful, is unsuitable for online learning on analog substrates due to its need for symmetric feedback weights and distinct forward/backward phases [ 56 ]. And for temporal credit assignment, BPTT unrolls the network in reverse time to backpropagate the error gradients, resulting in memory complexity scaling with O ( kT ) , where k is the number of time steps and T is the number of neurons. This temporal nonlocality, necessitates the usage of memory hierarchy to save silicon space, resulting in sacrificing energy efficiency and latency. For this reason, various alternatives have been proposed to estimate gradients while offering better locality, such as feedback alignment [ 69 ], Q-AGREL [ 70 ], difference

5 While this is true, it is possible that end-to-end credit assignment might not be needed [ 65 ]. Recent works successfully replacing global backpropagation with truncated layer-wise backpropagation supports this view [ 66 , 67 ]. Nevertheless, designing the right architecture and local loss functions to guide the truncated credit assignment is still an open question.

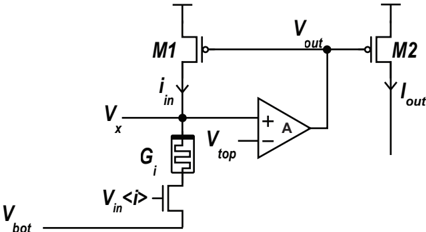

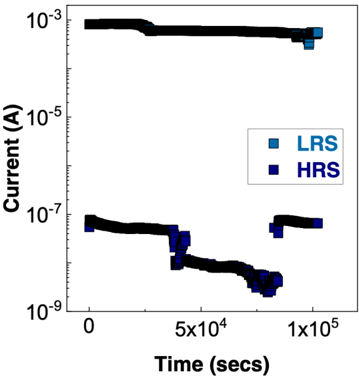

target propagation [ 71 ], predictive coding [ 72 ] and weight perturbation-based methods [ 73 ]. These methods exhibit varying degrees of variance (resulting in slower convergence) and bias (leading to poor generalization [ 74 ]) in their estimations [ 7 ].

However, as long as variance and bias are within reasonable bounds, the learning rule can still be effective, potentially offering a favorable sweet spot between locality and performance. The challenge of local and effective spatio-temporal credit assignment is still a critical frontier in neural processing in analog substrates, demanding new and creative approaches.

In Chapter 3 , we address this challenge for the first time, designing material and circuits evolved around gradient calculations, and implementing e-prop [ 75 ] local learning rule for Recurrent Spiking Neural Networks (RSNNs) on a memristive chip.

## Programming memristors with non-idealities

for online learning. On-chip learning requires the ability to program the network weights in accordance with the demands of the learning rule. While digital systems often rely on quantization to optimize memory access [ 76 ] and associated mitigation techniques such as stochastic rounding [ 77 ], gradient scaling [ 78 ], quantization range learning [ 79 ] and optimizing the weight representation [ 80 ], analog memristive systems present unique challenges due to nonidealities such as conductance-dependent, non-linear, stochastic, and time-varying programming responses [ 81 ]. Then, it is crucial to identify which digital methods can be effectively transferred to analog systems while promising small footprint and energy overhead.

In Section 3 . 1 and 3 . 2 , we analyze several practical weight update schemes implementing an online learning rule for mixed-signal systems on a custom simulator, and later validate on a real neuromorphic chip.

Scalable synaptic eligibility traces for local learning. Many high-performing local rules rely on eligibility traces [ 39 , 82 -86 ], slow synaptic memory mechanisms that carry information forward in time. These traces bridge the temporal gap between synaptic activity occuring on millisecond timescale with network errors arising seconds later, helping to solve the distal reward problem [ 87 , 88 ]. While several neuromorphic platforms [ 89 -91 ] have incorporated synaptic eligibility traces for learning, this mechanism is one of the most costly building blocks in neural computation, due to quadratic scaling of the number of synapses. Digital implementations suffer from the memory-intensive nature of numerical trace calculations, leading to a von Neumann bottleneck [ 92 , 93 ]. Even in mixed-signal designs, the slow dynamics of eligibility traces require large capacitors leading to sacrifice of the scalability [ 94 ].

In Section 3 . 3 , we propose a novel and scalable implementation of synaptic eligibility traces using volatile memristors.

Unifiying volatile and non-volatile materials. Different neural building blocks require different volatility, bit precision, and endurance characteristics from the memristive devices, which are then tailored to meet these demands. For example, ANN inference workloads require linear non-volatile conductance response over a wide dynamic range for optimal weight update and minimum noise for gradient calculation [ 21 , 22 , 95 ]. Whereas SNNs often demand richer and multiple synaptic dynamics simultaneously e.g., short term conductance decay (to implement Short-Term Plasticity (STP) and eligibility traces [ 82 ]), non-volatile device states (to represent synaptic efficacy) and a probabilistic nature (to mimic synaptic vesicle releases [ 24 ]). However, optimizing different active memristive material for each of these features limits their suitability to a wide range of computational frameworks and ultimately increases the system complexity for most demanding applications. Moreover, these diverse specifications cannot always be implemented by combining

F igure 1 . 2 : Bias and variance in learning rules which estimate the gradients, even if they are not explicitly compute gradients. Figure taken from [ 7 ].

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Scatter Plot: Learning Algorithms and Their Characteristics

### Overview

The image presents a scatter plot illustrating the relationship between "Bias" (vertical axis) and "Variance" (horizontal axis) for various learning algorithms. Each algorithm is represented by a point on the plot, and question marks indicate uncertainty or areas requiring further investigation.

### Components/Axes

* **X-axis:** Labeled "Variance", with the clarifying text "i.e., randomness in weight changes". The scale is not explicitly marked, but appears to range from low variance on the left to high variance on the right.

* **Y-axis:** Labeled "Bias", with the clarifying text "i.e., ∇ (gradient, weight change)". The scale is not explicitly marked, but appears to range from low bias at the bottom to high bias at the top.

* **Data Points/Algorithms:**

* Feedback alignment

* Contrastive learning, predictive coding, dendritic error

* RDD (with a question mark)

* AGREL (with a question mark)

* Node perturbation

* Weight perturbation

* Error backpropagation

* **Question Marks:** Placed near the data points for "RDD" and "AGREL", and also near "Contrastive learning, predictive coding, dendritic error", indicating uncertainty in their precise positioning or characteristics.

### Detailed Analysis

The plot shows a general trend of increasing variance as bias decreases.

* **Feedback alignment:** Located in the top-left quadrant, indicating high bias and low variance. Approximate coordinates: (1, 9).

* **Contrastive learning, predictive coding, dendritic error:** Located in the upper-middle quadrant, indicating high bias and moderate variance. Approximate coordinates: (3, 7).

* **Error backpropagation:** Located in the lower-middle quadrant, indicating low bias and moderate variance. Approximate coordinates: (3, 2).

* **AGREL:** Located near the x-axis, indicating low bias and low variance. Approximate coordinates: (4, 1).

* **RDD:** Located between AGREL and Contrastive learning, predictive coding, dendritic error, indicating low bias and moderate variance. Approximate coordinates: (5, 4).

* **Node perturbation:** Located on the x-axis, indicating low bias and high variance. Approximate coordinates: (6, 1).

* **Weight perturbation:** Located on the x-axis, indicating low bias and very high variance. Approximate coordinates: (7, 1).

The positioning of the algorithms suggests a trade-off between bias and variance. Algorithms with high bias tend to have low variance, and vice versa.

### Key Observations

* The algorithms cluster along a diagonal, suggesting a negative correlation between bias and variance.

* The question marks highlight areas where the positioning of the algorithms is uncertain or requires further investigation.

* Error backpropagation, AGREL, Node perturbation, and Weight perturbation are positioned relatively close to the x-axis, indicating low bias.

* Feedback alignment and Contrastive learning, predictive coding, dendritic error are positioned relatively high on the y-axis, indicating high bias.

### Interpretation

This plot illustrates a fundamental concept in machine learning: the bias-variance trade-off. Algorithms with high bias make strong assumptions about the data, leading to low variance but potentially high errors if the assumptions are incorrect. Algorithms with low bias make fewer assumptions, leading to high variance and potentially overfitting the data.

The positioning of the algorithms on the plot suggests their relative strengths and weaknesses. For example, Feedback alignment is a high-bias, low-variance algorithm, which might be suitable for simple problems where strong assumptions are valid. Weight perturbation is a low-bias, high-variance algorithm, which might be suitable for complex problems where flexibility is important.

The question marks indicate that the positioning of RDD, AGREL, and Contrastive learning, predictive coding, dendritic error is uncertain, suggesting that further research is needed to understand their characteristics. The plot provides a useful visual representation of the trade-off between bias and variance, and can help guide the selection of appropriate learning algorithms for different problems.

</details>

different types of memristors on a monolithic circuit e.g., volatile and non-volatile, binary and analog, due to the incompatibility of the fabrication processes. Although some prototype materials have been proposed to exhibit dual-functional memory [ 96 , 97 ], the dominance of one of the mechanisms often results in poor switching performance. Therefore, the lack of universal memristors capable of realizing diverse computational primitives has been a challenge.

In Chapter 4 , we present our discovery of a novel memristor type that can be used for both volatile and non-volatile operations based on a simple programming scheme, while achieving a world-record in endurance.

Routing of multi-crossbar arrays for scaling. Scaling neural networks, by increasing layer width or depth, has proven to be a powerful technique for improving performance [ 98 ]. However, scaling memristive crossbar array dimensions is hindered by analog non-idealities such as current sneak-paths, parasitic resistance, capacitance of metal lines, and yield limitations [ 99 -101 ]. For this reason, large-scale systems need to adapt multiple crossbars of managable dimensions [ 102 ], but this introduces the overhead of routing activities, especially with long wires along the source, router and destination. To reduce wiring length, three-dimensional ( 3 D) technology to vertically stack logic, crossbar arrays, and routers has been proposed [ 34 , 102 ], but the fabrication complexity and cost of 3 D integration are currently prohibitive. Today's most advanced multi-crossbar neuromorphic chips, e.g., HERMES [ 23 ] and NeuRRAM [ 18 ], still rely on off-chip communication for routing, strongly diminishing communication energy efficiency. This necessitates the development of efficient on-chip routing mechanisms to achieve energy-efficient communication. When the routing is optimized for communicating events through the on-chip AER protocol, the designer faces a trade-off between source-based and destination-based routing. Source-based routing offers the flexibility of per-neuron Content Addressable Memory (CAM) memory as used by DYNAP-SE [ 103 ], but this comes at the cost of increased chip area and slower memory access. Destination-based routing, while more area-efficient, sacrifices some degree of network configurability.

In Chapter 5 , we propose and fabricate a novel memristive in-memory routing core that can be reconfigured to route signals between crossbars, enabling dense local, sparse global connectivity with orders of magnitude more routing efficiency compared to other SNN hardware platforms.

## Thesis Overview

This thesis explores the path towards intelligent and low-power analog computing substrates, embracing the Bitter Lesson [ 104 ] and addressing some of the challenges in learning and scale. It consists of six selected publications, which I have coauthored with amazing electrical engineers, computer scientists, material designers and neuroscientists. My individual contributions to the work presented in each chapter are clarified and outlined in Appendix 4 .

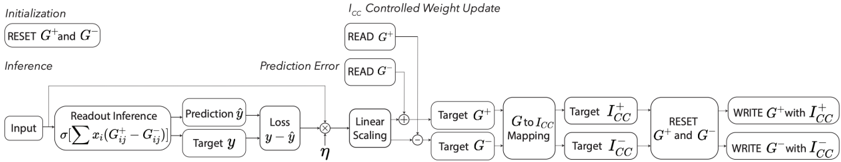

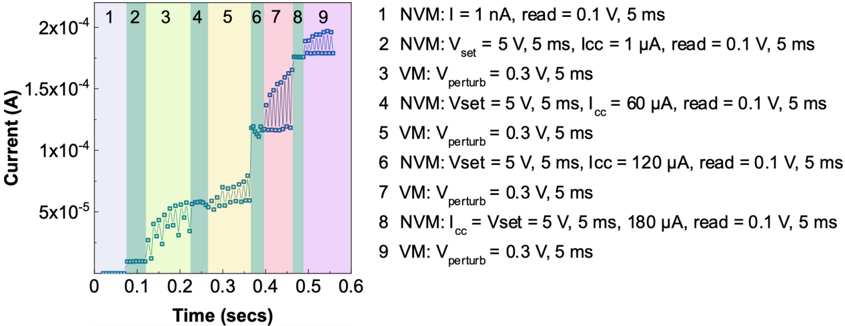

In the first part, we focus on developing mixed-signal learning circuits targeting memristive weights in single-layer feedforward SNN architectures. We address the limited resolution and device-to-device and cycle-to-cycle variability of binary RRAM weights, aiming to enable on-chip learning. Building upon the observation of Ielmini [ 105 ] that filament size in RRAM can be precisely controlled by compliance current, we introduce a programming circuitry that modulates synaptic weights based on the estimated gradients using a modified Delta Rule [ 106 ]. This approach achieves multi-level weight resolution within the conductance of intrinsically binary switching RRAM devices. We model variability of device responses to our new compliance current programming scheme, I CC to G LRS, from experimental measurements on a 4 kb HfO 2 -based RRAM array, and adjust our implementation accordingly. We validate our approach and circuits through simulations for standard Complementary Metal-Oxide-Semiconductor (CMOS) 180 nm processes and system simulations on the MNIST dataset. This codesign of algorithm, material, and circuit properties establishes a significant building block for single-layer on-chip learning with memristive devices. Furthermore, in Chapter 4 , we demonstrate that our programming

scheme can also be applied, with even more linear response, to novel perovskite memristors.

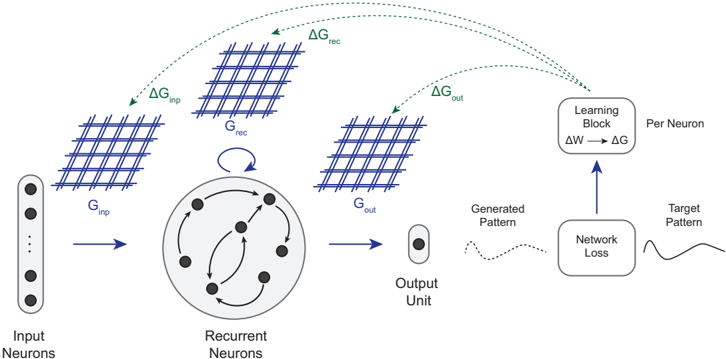

In Chapter 3 , we extend our focus to more challenging task of training RSNNs on-chip, addressing the complexities of online temporal credit assignment with memristive devices for in-memory computing. Our work encompasses three complementary efforts: 1 ) given a recent local learning rule development for RSNNs [ 75 ], investigating how to reliably program analog devices based on a realistic PCM model on simulations, 2 ) validating these results on a PCM-based neuromorphic chip, and 3 ) proposing a scalable implementation of synaptic eligibility traces, a crucial component of many local learning rules, using volatile memristors.

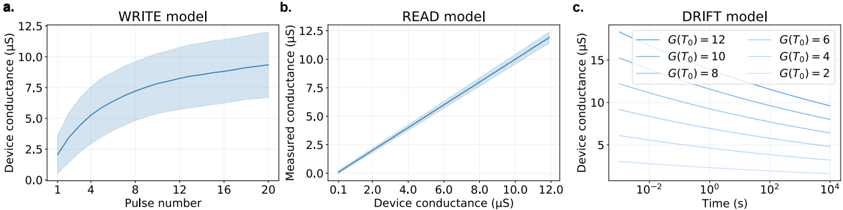

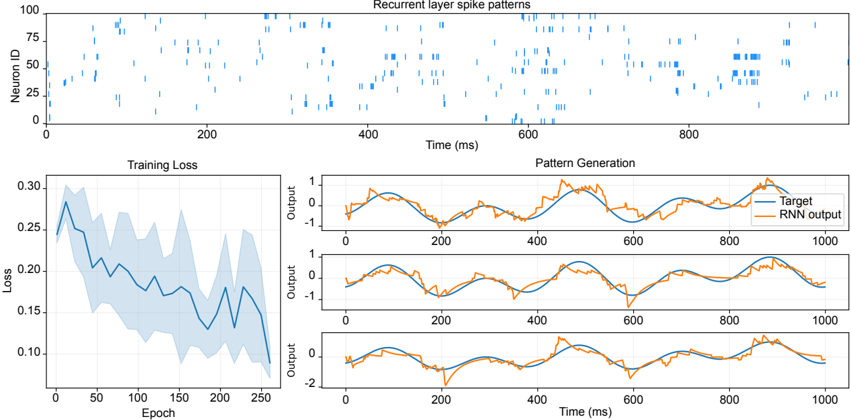

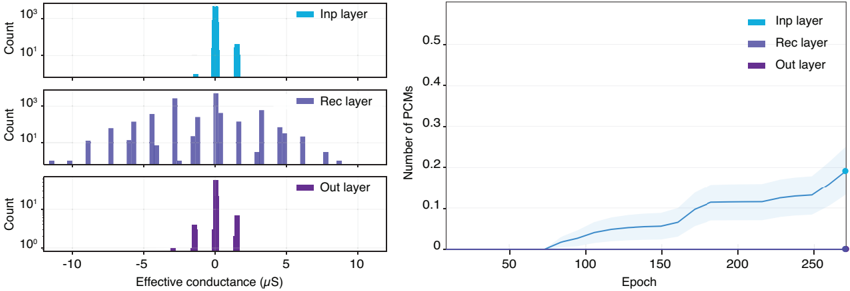

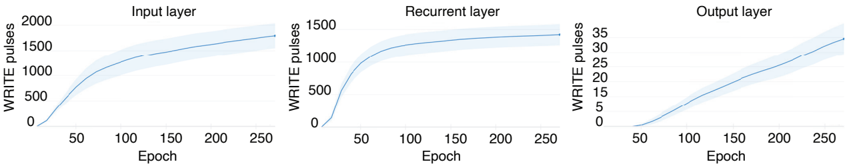

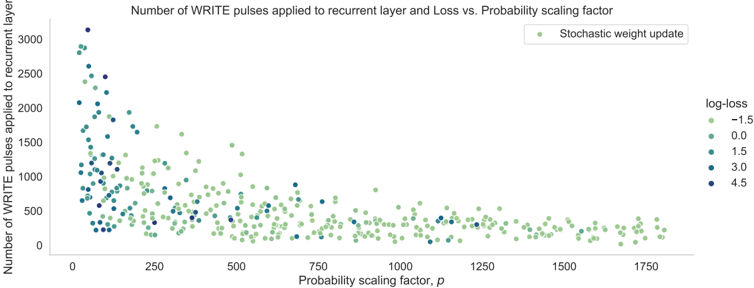

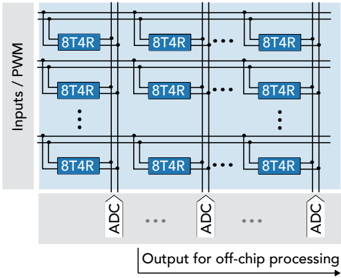

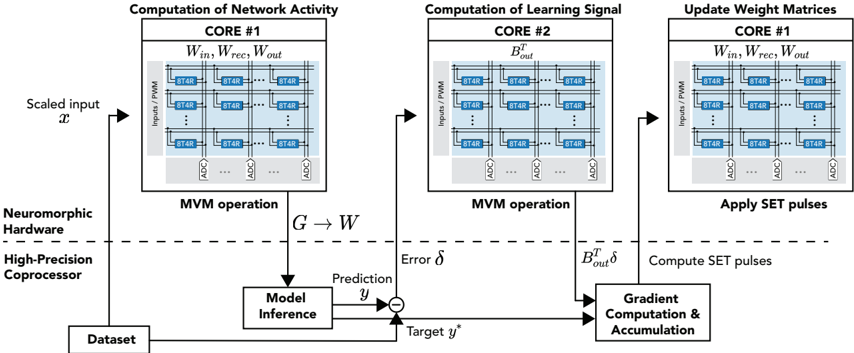

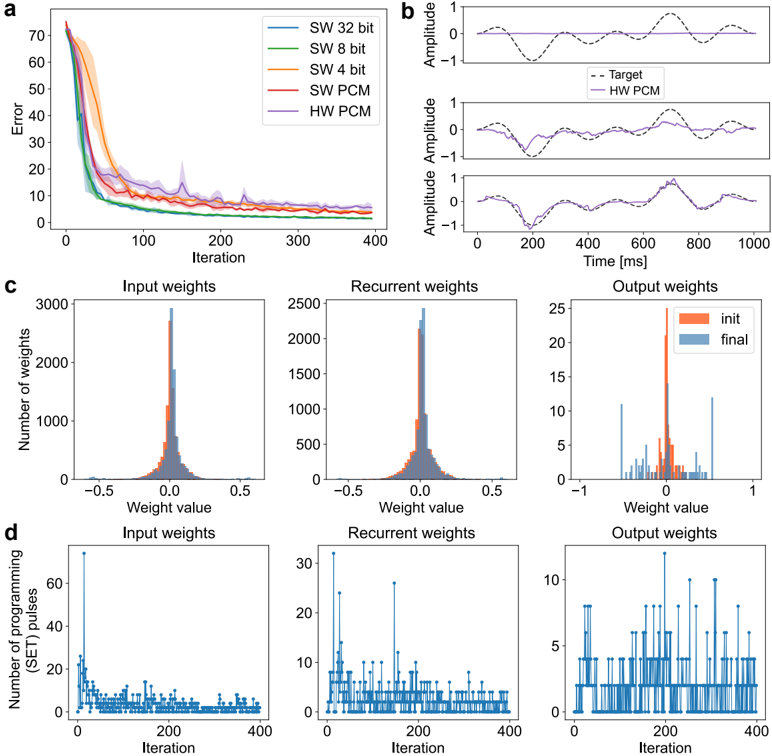

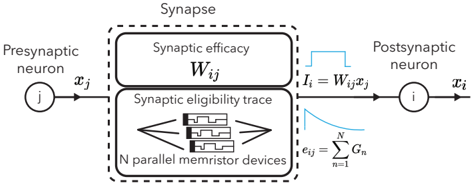

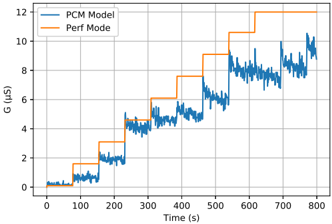

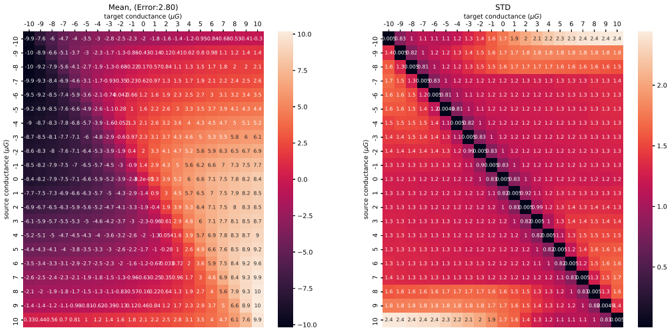

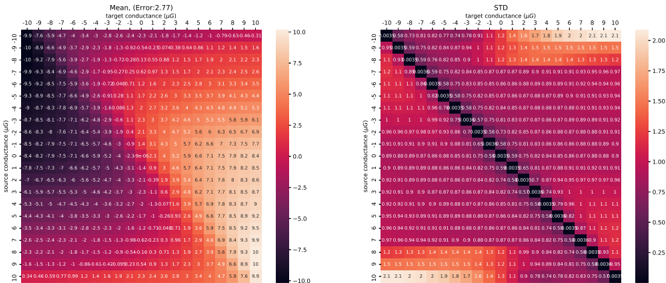

To achieve this, we start with developing a PyTorch [ 107 ]-based simulation framework based on a comprehensive statistical model of a PCM crossbar array, capturing the major device nonidealities: programming noise, read noise, and temporal drift [ 81 ]. Our selected learning rule, e-prop, estimates the gradient with nice locality constraints, but it is not known how to reflect the gradient signal reliably while programming memristors with non-idealities. This framework enables benchmarking four commonly practiced memristor-aware weight update mechanisms (Sign-Gradient Descent [ 108 ], stochastic updates [ 81 ], multi-memristor updates [ 109 ] and mixedprecision [ 110 ]), to reliably program memristors conductances based on estimated gradients on generic regression tasks. We show that mixed-precision update scheme is superior by accumulating gradients on a high-precision memory (similar to quantization-aware-training methods [ 111 ]), allowing for a lower learning rate and improved alignment of weight update magnitudes with the PCM's minimum programmable conductance change. It also reduces the total number of write pulses by 2 -3 orders of magnitude, reducing energy spent on costly memristive programming and mitigating potential endurance issues. Furthermore, we verified previous digital implementations [ 77 ] of the stochastic update working well down to 8 -bit precision digital simulations.

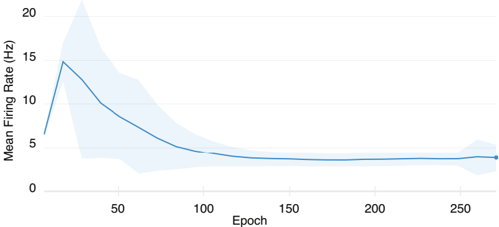

Following simulations, the next step is to validate it on a physical neuromorphic chip. We implemented the e-prop learning rule on the HERMES chip [ 23 ], fabricated in 14 nm CMOS, with four 256 x 256 PCM crossbar arrays in a differential parallel setup. We programmed all weights of a RSNN onto physical PCM devices across HERMES cores for in-memory inference. On-chip training was controlled by a digital coprocessor implementing a mixed-precision algorithm to accumulate gradients on a high-precision memory unit. Our mixed-precision training on HERMES achieved performance competitive with conventional software simulations, maintained a regularized firing rate, and significantly reduced the number of PCM programming pulses, enhancing energy efficiency. Our results demonstrates the first successful implementation of a gradient-based, powerful online learning rule for RSNNs on an analog substrate. While the mixed-precision technique requires an additional high-precision memory unit, we demonstrate that inference can be in-memory, and off-chip guided learning can be activated as needed with minimal analog device programming.

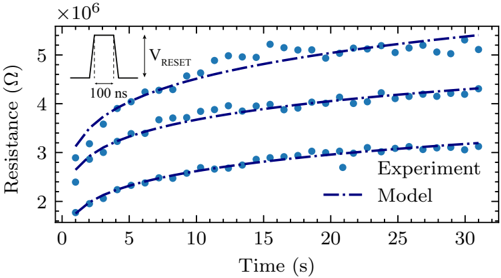

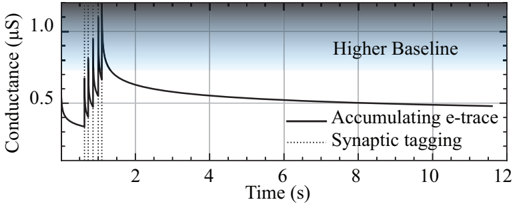

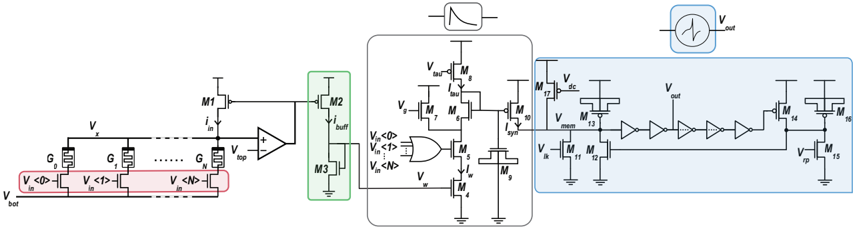

Local learning rules, including e-prop, often require synaptic eligibility traces [ 39 , 82 -86 ], posing a scaling challenge for analog hardware due to their O ( N 2 ) area scaling, where N is the number of neurons. This challenge is exacerbated by increased time-constant requirements, as larger capacitors are needed for implementation. To address this, we introduce PCM-trace, a novel small-footprint circuitry leveraging the inherent conductance drift of PCM to emulate eligibility traces for local learning rules. We exploit the material bug - the structural relaxation and temporal conductance drift in PCM's amorphous regime [ 112 ], described by R ( t ) = R ( t 0 )( t t 0 ) ν - turning it into a feature. Our optimized material choice allows gradual SET pulses to accumulate the trace, while conductance naturally decays over seconds. We also introduce a multi-PCM-trace configuration, distributing synaptic traces across multiple PCM devices to significantly improve dynamic range. Experimental results on 130 nm CMOS technology confirm that PCM-trace can maintain eligibility traces for over 10 seconds while offering more than 11 x area savings compared to conventional capacitor-based trace implementations [ 94 ].

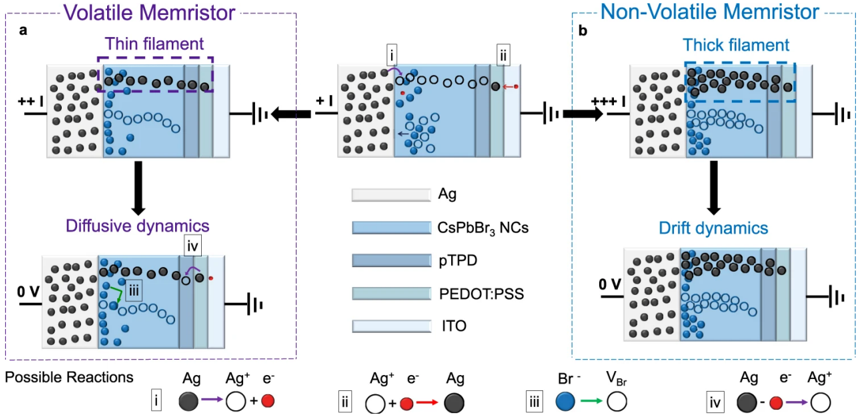

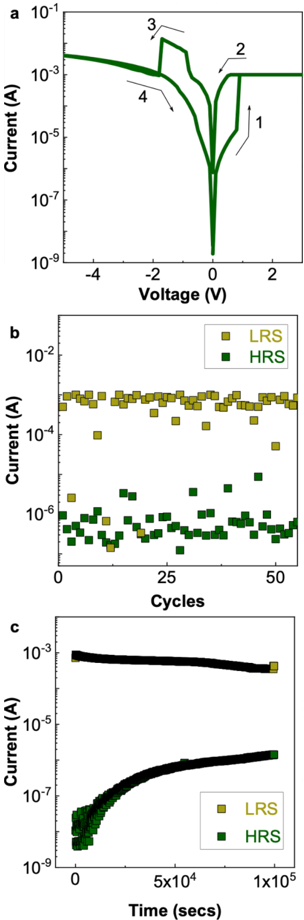

In Chapter 4 , we introduce a state-of-the-art memristive material based on halide perovskite nanocrystals that can be dynamically reconfigured to exhibit volatile or non-volatile behavior. This is motivated by the fact that many in-memory neural computing systems demand devices with specific switching characteristics, and existing memristive devices cannot be reconfigured to meet these diverse volatile and non-volatile switching requirements. To achieve this, we develop a cesium lead bromide nanocrystals capped with organic ligands as the active switching matrix and silver as the active electrode. This design leverages the low activation energy of ion migration in halide perovskites to achieve both diffusive (volatile) and drift (non-volatile) switching. By actively controlling the compliance current ( Icc ) following our prior work at Chapter 2 , the magnitude of ion flux is adjusted, enabling on-demand switching between the two modes. This control mechanism allows for the selection of diffusive dynamics with low Icc (1 µ A) for volatile behavior and drift kinetics with higher Icc ( 1 mA) for non-volatile memory operation. Moreover, our measurements demonstrated that memristors using perovskite nanocrystals capped with OGB, achieve a record endurance in both volatile and non-volatile modes. We attribute this superior performance to the larger OGB ligands, which better insulate the nanocrystals and regulate the electrochemical reactions responsible for switching behavior.

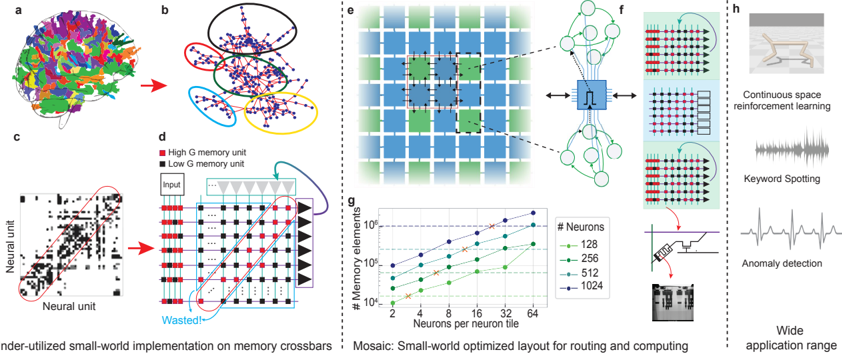

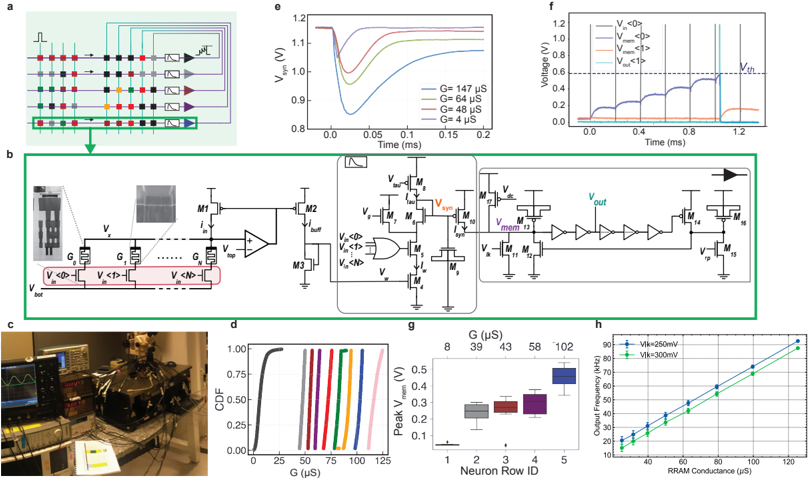

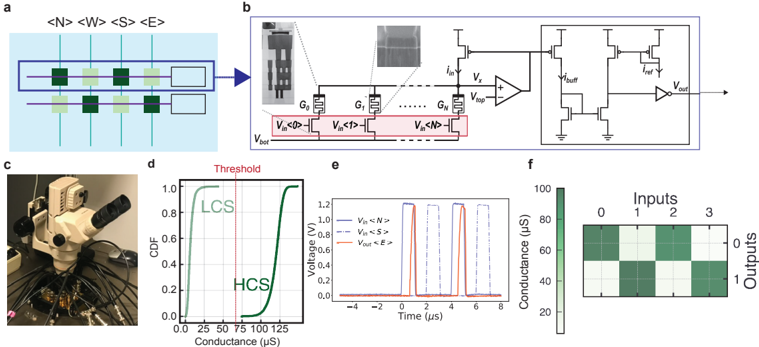

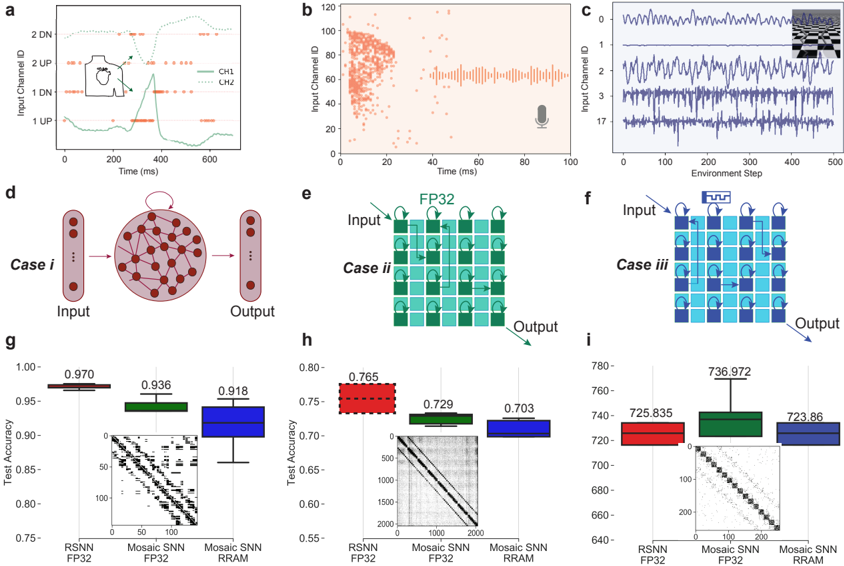

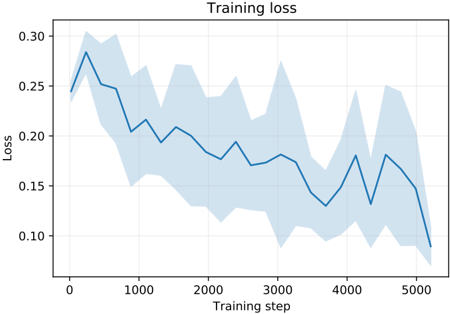

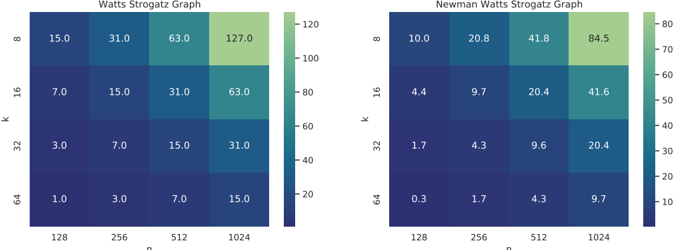

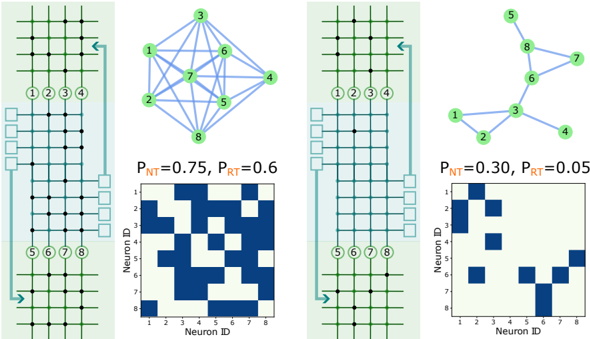

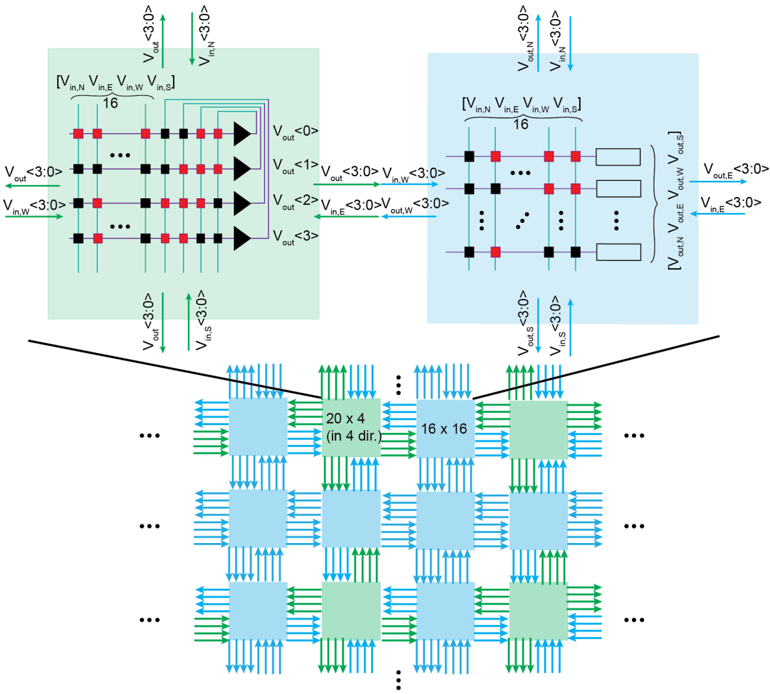

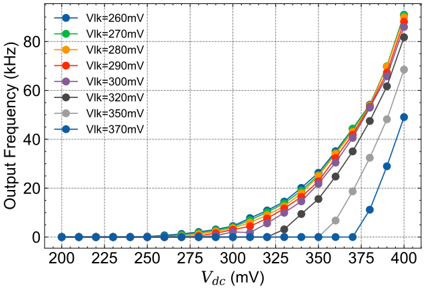

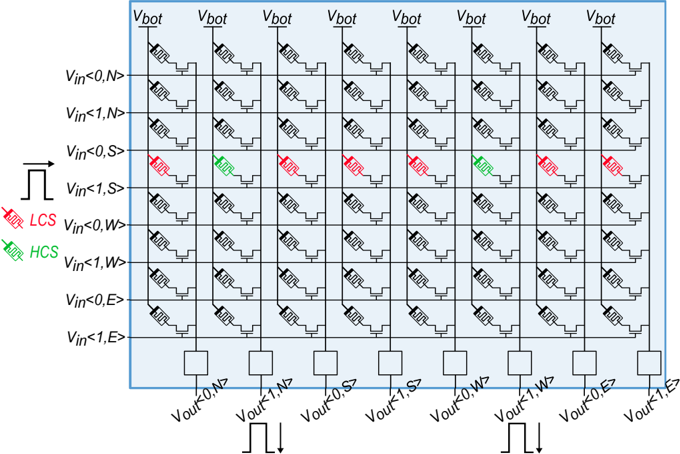

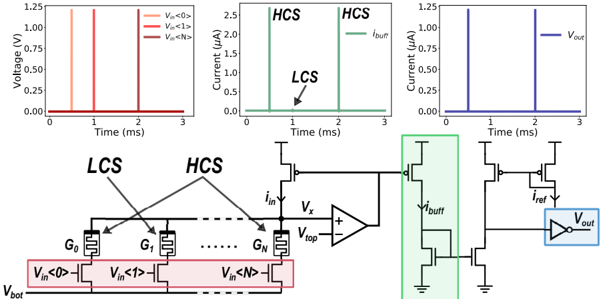

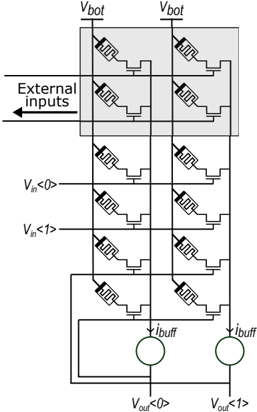

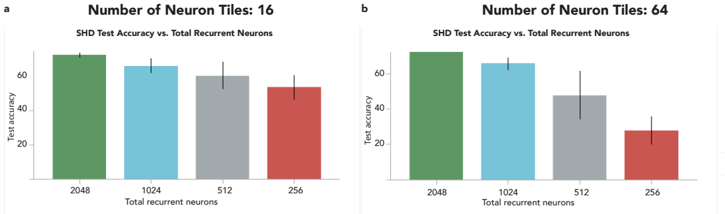

In Chapter 5 , we switch gears and focus on the scalability of memristive architectures in neuromorphic systems, a challenge hindered by analog non-idealities of crossbar arrays. We introduce Mosaic, a novel memristive systolic array architecture consisting of interconnected Neuron Tiles and Routing Tiles, both implemented with RRAM integrated 130 nm CMOS technology.

Each Neuron Tile, per usual, is a crossbar array of memristors storing network weights for a RSNN layer with Leaky Integrate-and-Fire (LIF) neurons. These neurons emit spikes based on integrated synaptic inputs, transmitting them to neighbouring tiles through Routing Tiles. The Routing Tiles, also based on memristor arrays, define the connectivity patterns between Neuron Tiles. The resulting structure then becomes a small-world graph with dense local and sparse long-range connections, similar to the connectivity found in biological brains 6 .

Our in-memory routing approach necessitates careful optimization of connectivity during offline training to prune RSNNs into a Mosaic-compatible, small-world graph. We introduce a novel hardware layout-aware training method that considers the physical layout of the chip and optimizes neural network weights using either gradient-based or evolutionary algorithms [ 113 ].

In-memory routing and optimization for sparse long-range and locally dense communication in Mosaic result in significant energy efficiency in spike routing, surpassing other SNN hardware platforms by at least one order of magnitude, as demonstrated by hardware measurements and system-level simulations. Notably, due to its layout-aware structured sparsity, Mosaic achieves competitive accuracy in edge computing tasks like biosignal anomaly detection, keyword spotting, and motor control.

6 It may not be a coincidence that some of the solutions proposed in this thesis can also be found in the biological brain, whispering of a deep connection between highly optimized silicon substrates and the evolved neural tissue.

<details>

<summary>Image 3 Details</summary>

### Visual Description

Icon/Small Image (61x83)

</details>

## ENHANCING BIT PRECISION OF BINARY MEMRISTORS FOR ROBUST ON-CHIP LEARNING

This chapter's content was published in IEEE International Symposium on Circuits and Systems (ISCAS). The original publication is authored by Melika Payvand, Yigit Demirag, Thomas Dalgaty, Elisa Vianello, Giacomo Indiveri.

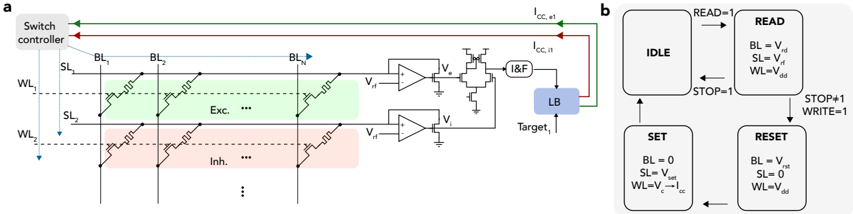

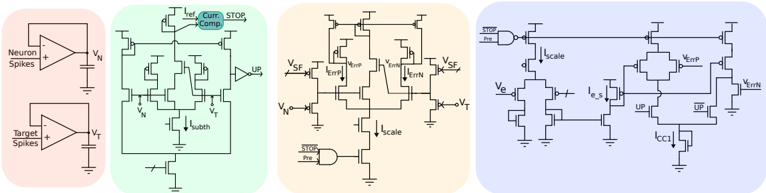

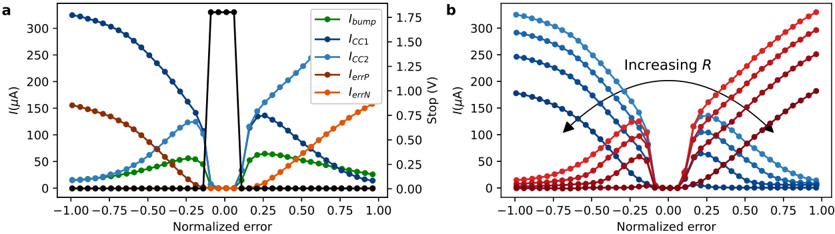

Analog neuromorphic circuits with memristive synapses offer the potential for power-efficient neural network inference, but limited bit precision of memristors poses challenges for gradientbased training. In this chapter, we introduce a programming technique of the weights to enhance the effective bit precision of initially binary memristive devices, enabling more robust and performant on-chip training. To overcome the problems of variability and limited resolution of ReRAM memristive devices used to store synaptic weights, we propose to use only their HCS and control their desired conductance by modulating their programming compliance current, ICC . We introduce spike-based CMOS circuits for training the network weights; and demonstrate the relationship between the weight, the device conductance, and the ICC used to set the weight, supported with experimental measurements from a 4 kb array of HfO 2 -based devices. To validate the approach and the circuits presented, we present circuit simulation results for a standard CMOS 180 nm process and system-level simulations for classifying hand-written digits from the MNIST dataset.

## 2 . 1 introduction

The neural networks deployed on the resource-constrained devices can benefit greatly from online training to adapt to shifting data distributions, sensory noise, device degradation, or new tasks that are not seen in the pretraining. While CMOS architectures with integrated memristive devices offer ultra-low power inference, their use for online learning has been limited [ 114 , 115 ].

In this chapter, we propose novel learning circuits for SNN architectures implemented by 1 T 1 R arrays. These circuits enable analog weight updates on binary ReRAM devices by controlling their SET operation ICC . In addition to increasing the bit precision of network weights, the proposed strategy allows compact, fast, and scalable event-based learning scheme compatible with the AER interface [ 116 ].

Previously significant efforts have aimed to increase the bit precision of memristive devices for online learning through material and architectural optimizations.

material optimization Several groups reported TiO 2 -based [ 47 -49 ] and HfO 2 -based [ 50 ] ReRAM devices with up to 8 bits of precision. However, in all these works, the analog behavior is traded off with the lower available ON/OFF ratio. While the analog behavior is an important concern for training neural networks, cycle-to-cycle and device-to-device variability harms the effective number of bits further when ON/OFF ratio is small. Also, tuning the precise memory state is not always easily achievable in a real-time manner, requiring recursively tuning with an active feedback scheme [ 50 , 117 ]. Furthermore, some efforts have been focused on carefully designing a barrier level using exhaustive experimental search over a range of materials [ 47 , 48 ] which makes it difficult to fabricate.

architecture optimization Increasing the effective bit resolution has also been demonstrated with architectural advancements. Strategies such as using multiple binary switches to emulate n-bit synapses [ 51 ] or exploiting stochastic switching properties for analog-like adaptation [ 53 , 54 ] have been explored. Alternatively, IBM's approach of using a capacitor alongside two PCM devices to act as an analog volatile memory, increases combined precision but incurs significant area overhead [ 55 ]. Recently, a mixed-precision approach has been employed to train

the networks using a digital coprocessor for weight update accumulation [ 118 ], but requires digital buffering of weights, gradients and suffers of domain-conversion costs.

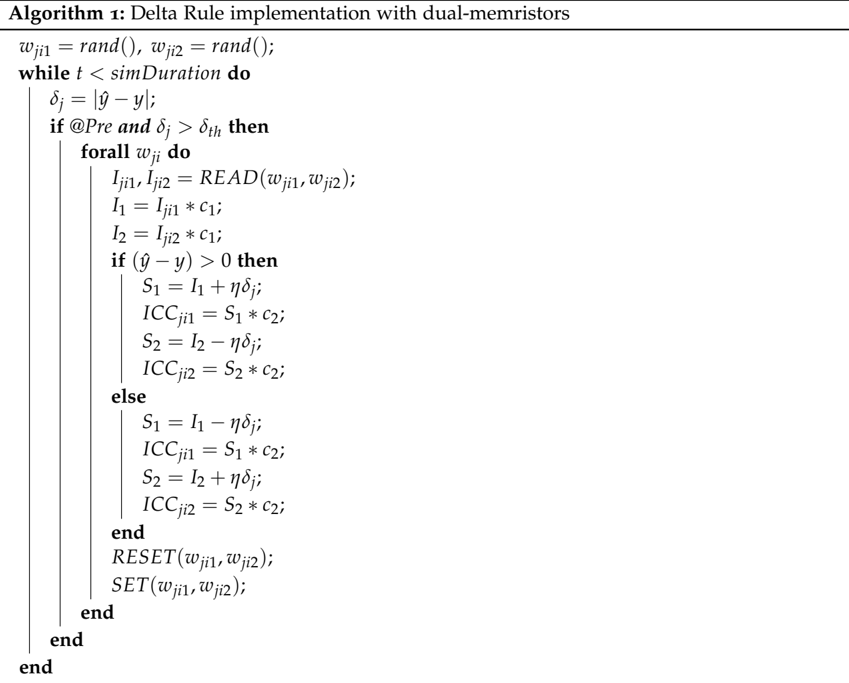

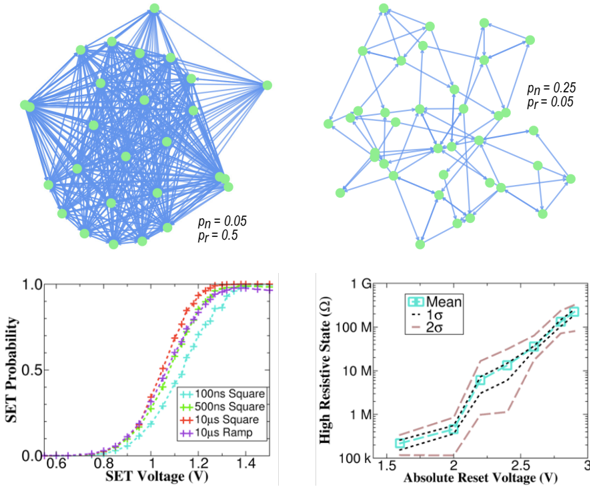

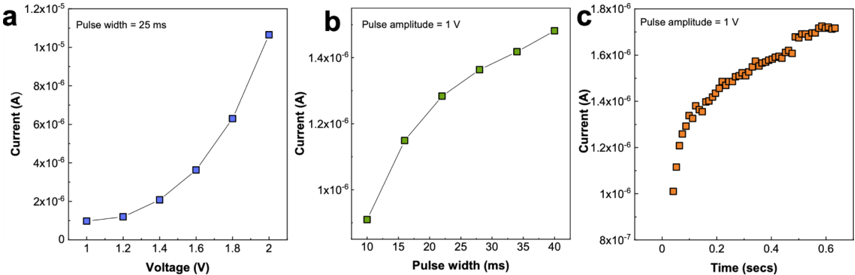

Thus, neither device nor architecture optimizations have fully resolved the challenges of low bit precision in memristors for online learning. Prior work by Ielmini [ 105 ] observed that the electrical resistance of memristors after a SET operation follows a linear relationship with ICC in a log-log scale, by control over the size of the filament. This critical observation underpins our approach, where we exploit this relationship to directly control device conductance.

To minimize the effect of variability, we adopt an algorithm-device codesign approach. We restrict devices to stay only in their HCS and modulate their conductance by adjusting the programming ICC . Specifically, we derive a technologically feasible online learning algorithm based on the Delta rule [ 106 ], mapping weight updates onto the ICC used for setting the device. This co-design offers several advantages: (i) relaxed fabrication constraints compared to multi-bit devices, and (ii) increased state stability due to the use of only two levels per device.

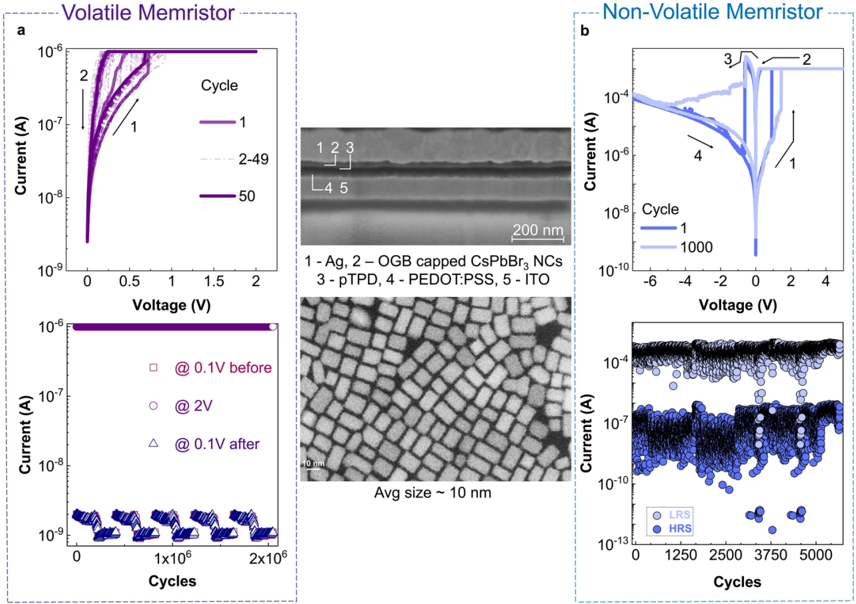

## 2 . 2 reram device modeling

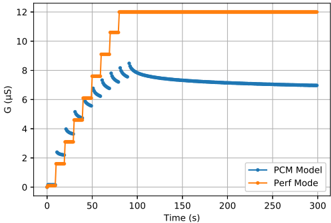

To find the average relationship between the mean of the cycle-to-cycle distribution of the HCS and the SET programming ICC , we performed measurements on a 16 × 256 ( 4 kb) array of HfO 2 -based ReRAM devices integrated onto a 130 nm CMOS process between metal layers 4 and 5 [ 119 ]. Each device is connected in series to the drain of an n-type selector transistor which allows the SET programming ICC to be controlled based on the voltage applied to its gate. The 1 T 1 R structure allows a single device to be selected for reading or programming by applying appropriate voltages to a pair of Source/Bit Lines (SL/BL) and a single Word Line (WL).

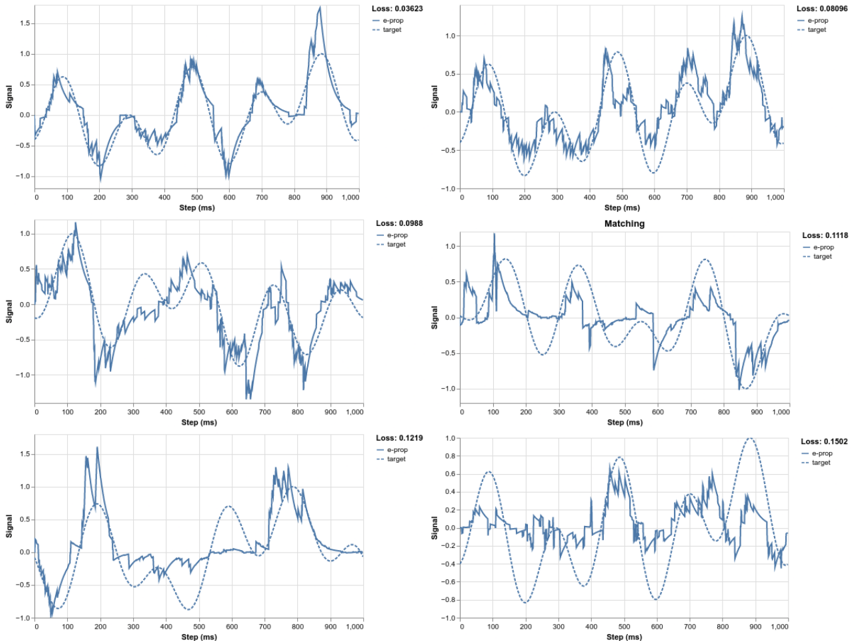

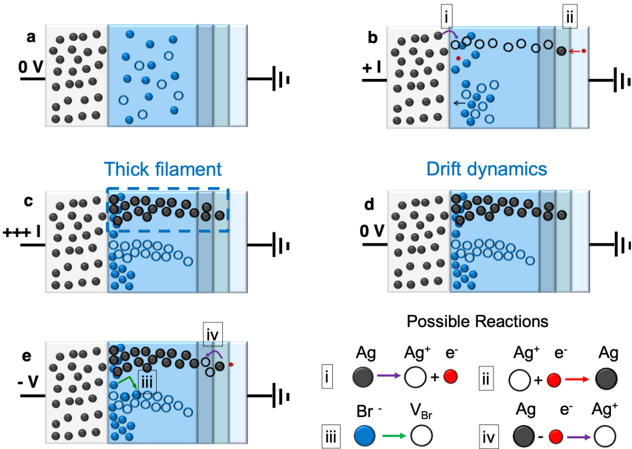

All 4 kb devices were initially formed in a raster scan fashion by applying a large voltage ( 4 V typical) between the SL and BL to induce a soft breakdown in the oxide layer and introduce conductive oxygen vacancies. After forming, each device was subject to sets of 100 RESET/SET cycles over a range of SET ICC s between 10 µ A and 400 µ A, where the resistance of each device was recorded after each SET operation. The mean of all devices' median resistances over the 100 cycles, at a single ICC , gives the average relationship between HCS median and SET ICC as in Fig. 2 . 1 . The relationship is seen to follow a line in the log-log plot (power law) and over this ICC range, it allows precise control of the conductance median of the cycle-to-cycle distribution between 50 k Ω and 2 k Ω .

## 2 . 3 bit -precision enhancing weight update rule

The learning algorithm is based on the Delta rule, the simplest form of the gradient descent for single-layer networks. In our implementation, the objective function is defined as the difference between the desired target output signal y and the network prediction signal ˆ y , for a given set of input patterns signals x , weighted by the synaptic weight parameters w . Then the Delta rule can be used to calculate the change of the weights connecting a neuron i in the input layer and a neuron j at the output layer as follows:

$$\Delta w _ { j i } = \eta ( y _ { j } - \hat { y } _ { j } ) x _ { i } = \eta \delta _ { j } \, x _ { i } , & & ( 2 . 1 )$$

F igure 2 . 1 : Mean and standard deviation of the device conductance as a function of the ICC s. The inset shows the samples from the fitted mean and standard deviation used for the simulations.

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Chart: Conductance vs. Current

### Overview

The image presents a graph illustrating the relationship between conductance (G<sub>LRS</sub>) and current (I<sub>CC</sub>). The main plot shows a logarithmic scale for both axes, displaying a clear upward trend. An inset plot provides a more detailed view of the data at higher current values, also on a logarithmic scale. Error bars are present on the main plot, indicating the uncertainty in the conductance measurements.

### Components/Axes

* **X-axis:** I<sub>CC</sub> (A) - Current, labeled in Amperes. The scale is logarithmic, ranging from approximately 10<sup>-5</sup> A to 10<sup>-4</sup> A.

* **Y-axis:** G<sub>LRS</sub> (S) - Conductance, labeled in Siemens. The scale is logarithmic, ranging from approximately 10<sup>-5</sup> S to 10<sup>-4</sup> S.

* **Data Series:** A single blue line with circular data points represents the conductance as a function of current.

* **Error Bars:** Vertical error bars are associated with each data point on the main plot, indicating the uncertainty in the conductance measurements.

* **Inset Plot:** A smaller plot in the top-right corner, also showing G<sub>LRS</sub> (S) on the Y-axis and I<sub>CC</sub> (A) on the X-axis, but with a different scale. The inset plot appears to show a scatter plot of many data points.

### Detailed Analysis

The main plot shows a strong positive correlation between I<sub>CC</sub> and G<sub>LRS</sub>. The line slopes sharply upwards initially, then gradually flattens out as I<sub>CC</sub> increases.

Here's an approximate extraction of data points from the main plot, noting the logarithmic scales and error bar uncertainties:

* I<sub>CC</sub> = 10<sup>-5</sup> A, G<sub>LRS</sub> ≈ 3 x 10<sup>-5</sup> S (with an error bar extending to approximately 5 x 10<sup>-5</sup> S)

* I<sub>CC</sub> = 3 x 10<sup>-5</sup> A, G<sub>LRS</sub> ≈ 1 x 10<sup>-4</sup> S (with an error bar extending to approximately 2 x 10<sup>-4</sup> S)

* I<sub>CC</sub> = 6 x 10<sup>-5</sup> A, G<sub>LRS</sub> ≈ 2 x 10<sup>-4</sup> S (with an error bar extending to approximately 3 x 10<sup>-4</sup> S)

* I<sub>CC</sub> = 1 x 10<sup>-4</sup> A, G<sub>LRS</sub> ≈ 3.5 x 10<sup>-4</sup> S (with an error bar extending to approximately 4.5 x 10<sup>-4</sup> S)

* I<sub>CC</sub> = 2 x 10<sup>-4</sup> A, G<sub>LRS</sub> ≈ 5 x 10<sup>-4</sup> S (with an error bar extending to approximately 6 x 10<sup>-4</sup> S)

* I<sub>CC</sub> = 3 x 10<sup>-4</sup> A, G<sub>LRS</sub> ≈ 6 x 10<sup>-4</sup> S (with an error bar extending to approximately 7 x 10<sup>-4</sup> S)

* I<sub>CC</sub> = 4 x 10<sup>-4</sup> A, G<sub>LRS</sub> ≈ 6.5 x 10<sup>-4</sup> S (with an error bar extending to approximately 7.5 x 10<sup>-4</sup> S)

The inset plot shows a dense scatter of points, also exhibiting a positive correlation, but with more scatter than the main plot. The data points in the inset appear to cluster around a similar trend as the main plot, but with a higher density of data.

### Key Observations

* The conductance increases significantly with increasing current, especially at lower current values.

* The rate of increase in conductance slows down at higher current values, suggesting a saturation effect.

* The error bars indicate a considerable degree of uncertainty in the conductance measurements, particularly at lower current values.

* The inset plot provides a more detailed view of the data, confirming the overall trend observed in the main plot.

### Interpretation

The data suggests a non-linear relationship between conductance and current. This could be indicative of a switching behavior in the material or device under investigation. The initial steep increase in conductance with current might represent a transition from a high-resistance state to a low-resistance state. The subsequent flattening of the curve suggests that the material reaches a saturation point where further increases in current do not significantly increase the conductance.

The error bars highlight the variability in the measurements, which could be due to factors such as noise, device-to-device variations, or measurement limitations. The inset plot provides a more granular view of the data, potentially revealing finer details about the conductance-current relationship. The consistent trend observed in both the main plot and the inset plot strengthens the conclusion that there is a clear correlation between conductance and current in this system. The logarithmic scales suggest that the relationship is exponential or power-law-like. This type of behavior is often observed in memristive devices or other systems exhibiting non-linear electrical characteristics.

</details>

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Algorithm: Delta Rule Implementation with Dual-Memristors

### Overview

The image presents a pseudocode algorithm titled "Delta Rule Implementation with Dual-Memristors". It outlines a learning rule, likely for a neural network, utilizing dual-memristor devices. The algorithm iteratively adjusts weights based on the difference between a target output and an actual output.

### Components/Axes

The content is structured as a pseudocode block with the following key elements:

* **Initialization:** `wji1 = rand(); wji2 = rand();` - Initializes weights `wji1` and `wji2` with random values.

* **Loop Condition:** `while t < simDuration do` - The algorithm iterates as long as the simulation time `t` is less than `simDuration`.

* **Error Calculation:** `δj = |ŷ - y|` - Calculates the error `δj` as the absolute difference between the target output `ŷ` and the actual output `y`.

* **Conditional Statement:** `if @Pre and δj > δth then` - Executes the following block only if the condition `@Pre` is true and the error `δj` is greater than a threshold `δth`.

* **Inner Loop:** `forall wji do` - Iterates through all weights `wji`.

* **Read Operation:** `Iji1, Iji2 = READ(wji1, wji2);` - Reads current values from memristors `wji1` and `wji2`, assigning them to `Iji1` and `Iji2`.

* **Intermediate Calculations:** `I1 = Iji1 * c1; I2 = Iji2 * c1;` - Calculates intermediate values `I1` and `I2` by multiplying the read currents with a constant `c1`.

* **Conditional Update (Positive Error):** `if (ŷ - y) > 0 then` - Executes the following block if the difference between the target and actual output is positive.

* `S1 = I1 + ηδj; ICCji1 = S1 * c2;` - Updates `S1` and calculates `ICCji1`.

* `S2 = I2 - ηδj; ICCji2 = S2 * c2;` - Updates `S2` and calculates `ICCji2`.

* **Conditional Update (Negative Error):** `else` - Executes the following block if the difference between the target and actual output is not positive (i.e., negative or zero).

* `S1 = I1 - ηδj; ICCji1 = S1 * c2;` - Updates `S1` and calculates `ICCji1`.

* `S2 = I2 + ηδj; ICCji2 = S2 * c2;` - Updates `S2` and calculates `ICCji2`.

* **Reset Operation:** `RESET(wji1, wji2);` - Resets the memristors `wji1` and `wji2`.

* **Set Operation:** `SET(wji1, wji2);` - Sets the memristors `wji1` and `wji2`.

* **End Statements:** `end`, `end` - Terminates the inner and outer loops.

### Detailed Analysis or Content Details

The algorithm describes a learning process where weights are adjusted based on the error signal. The use of `READ` and `RESET/SET` operations suggests the algorithm is designed for memristor-based hardware implementation. The constants `c1` and `c2` likely represent device characteristics. The parameter `η` (eta) appears to be a learning rate. The `@Pre` condition is not defined, suggesting it might be a hardware-specific pre-condition.

### Key Observations

The algorithm implements a delta rule, a classic supervised learning algorithm. The use of memristors introduces non-linearity and potentially energy efficiency. The conditional updates based on the sign of the error indicate a gradient-based learning approach. The `READ` operation suggests the memristor's state is read before weight updates.

### Interpretation

This algorithm demonstrates a potential implementation of a neural network learning rule using memristor devices. The algorithm leverages the unique properties of memristors – their ability to retain state and perform analog computation – to implement weight storage and updates. The `READ`, `RESET`, and `SET` operations are crucial for interacting with the memristor hardware. The algorithm's performance would depend on the specific characteristics of the memristors (represented by `c1` and `c2`) and the learning rate `η`. The `@Pre` condition suggests a hardware-level requirement for the learning process to proceed. The use of absolute value in the error calculation `δj = |ŷ - y|` suggests the algorithm is not sensitive to the sign of the error during the initial error calculation, but the sign is used to determine the direction of weight updates. This is a standard approach in delta rule implementations. The algorithm is a simplified model and may require further refinement for practical applications, such as handling multiple layers or more complex activation functions.

</details>

F igure 2 . 2 : Event-based neuromorphic architecture using online learning in a 1 T 1 R array (a), and the asynchronous state machine used as the switch controller applying the appropriate voltages on the BL, SL and WL of the array for online learning.

<details>

<summary>Image 6 Details</summary>

### Visual Description

\n

## Diagram: Memory Cell Circuit and State Diagram

### Overview

The image presents a schematic diagram of a memory cell circuit (labeled 'a') alongside a state diagram illustrating its operational modes (labeled 'b'). The circuit appears to be a non-volatile memory cell, likely a resistive RAM (ReRAM) or similar technology, with excitation (Exc.) and inhibition (Inh.) phases. The state diagram shows the transitions between IDLE, READ, SET, and RESET states.

### Components/Axes

**Part a (Circuit Diagram):**

* **Switch Controller:** Green arrow indicating control signals.

* **BL:** Bit Line (two instances, labeled BL and BL̅)

* **SL:** Select Line (two instances, labeled SL and SL̅)

* **WL:** Word Line (two instances, labeled WL and WL̅)

* **V<sub>T</sub>:** Threshold Voltage (two instances)

* **cc, v1:** Positive supply voltage.

* **cc, n:** Negative supply voltage.

* **LB:** Load Balance (blue oval)

* **Target:** Output signal.

* **&F:** AND/Flip-Flop gate.

* **Exc.:** Excitation region (highlighted in green).

* **Inh.:** Inhibition region (highlighted in red).

* **Dotted Lines:** Represent continuation of the memory array.

**Part b (State Diagram):**

* **IDLE:** Initial state.

* **READ:** State for reading the memory cell value.

* **SET:** State for setting the memory cell to a high resistance state.

* **RESET:** State for resetting the memory cell to a low resistance state.

* **READ = 1:** Transition from IDLE to READ.

* **STOP = 1:** Transition from IDLE to SET.

* **STOP + 1 WRITE = 1:** Transition from IDLE to RESET.

* **BL = V<sub>T</sub>, SL = V<sub>T</sub>, WL = V<sub>W</sub>:** Conditions for READ state.

* **BL = 0, SL = V<sub>T</sub>, WL = V<sub>SET</sub>:** Conditions for SET state.

* **BL = V<sub>T</sub>, SL = 0, WL = V<sub>RESET</sub>:** Conditions for RESET state.

### Detailed Analysis or Content Details

**Part a (Circuit Diagram):**

The circuit consists of two parallel memory cells, each with a bit line (BL/BL̅), select line (SL/SL̅), and word line (WL/WL̅). The excitation region (Exc.) shows a series of pulses applied to the word lines, while the inhibition region (Inh.) shows a similar pattern. The bit lines are connected to a differential amplifier and an AND/Flip-Flop gate. The Load Balance (LB) signal is connected to the output. The circuit utilizes complementary signals (BL/BL̅, SL/SL̅, WL/WL̅) for operation.

**Part b (State Diagram):**

The state diagram shows a four-state machine.

* From IDLE, the system transitions to READ upon receiving a READ signal (READ = 1).

* From IDLE, the system transitions to SET upon receiving a STOP signal (STOP = 1).

* From IDLE, the system transitions to RESET upon receiving a STOP + 1 WRITE signal.

* The READ state is defined by BL = V<sub>T</sub>, SL = V<sub>T</sub>, and WL = V<sub>W</sub>.

* The SET state is defined by BL = 0, SL = V<sub>T</sub>, and WL = V<sub>SET</sub>.

* The RESET state is defined by BL = V<sub>T</sub>, SL = 0, and WL = V<sub>RESET</sub>.

### Key Observations

* The circuit utilizes a differential sensing scheme with the differential amplifier.

* The excitation and inhibition regions suggest a pulse-based operation for programming the memory cell.

* The state diagram clearly defines the conditions for each operation (READ, SET, RESET).

* The use of complementary signals (BL/BL̅, SL/SL̅, WL/WL̅) indicates a balanced circuit design.

* The Load Balance (LB) signal suggests a mechanism for stabilizing the memory cell operation.

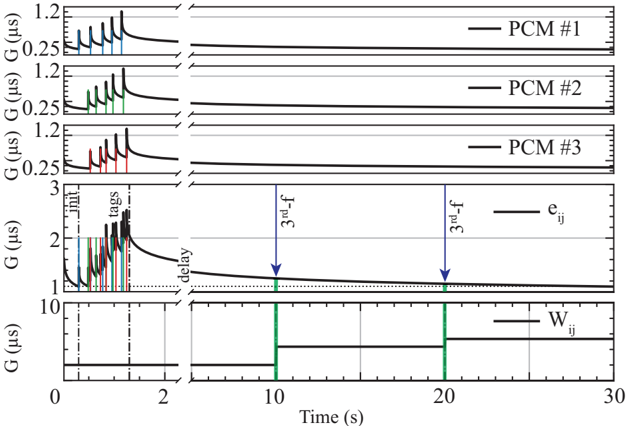

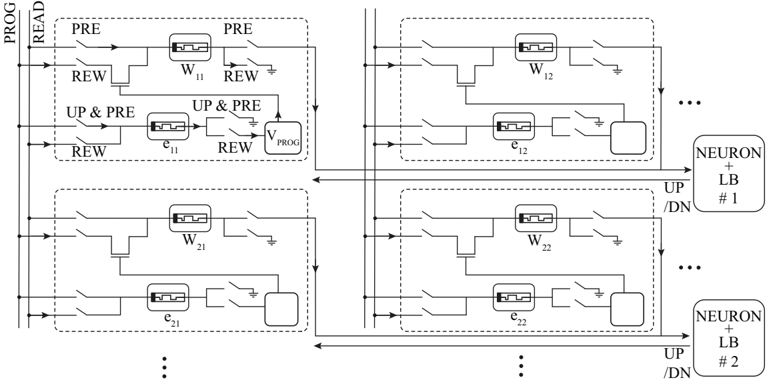

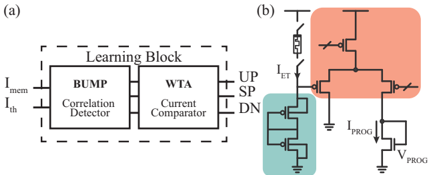

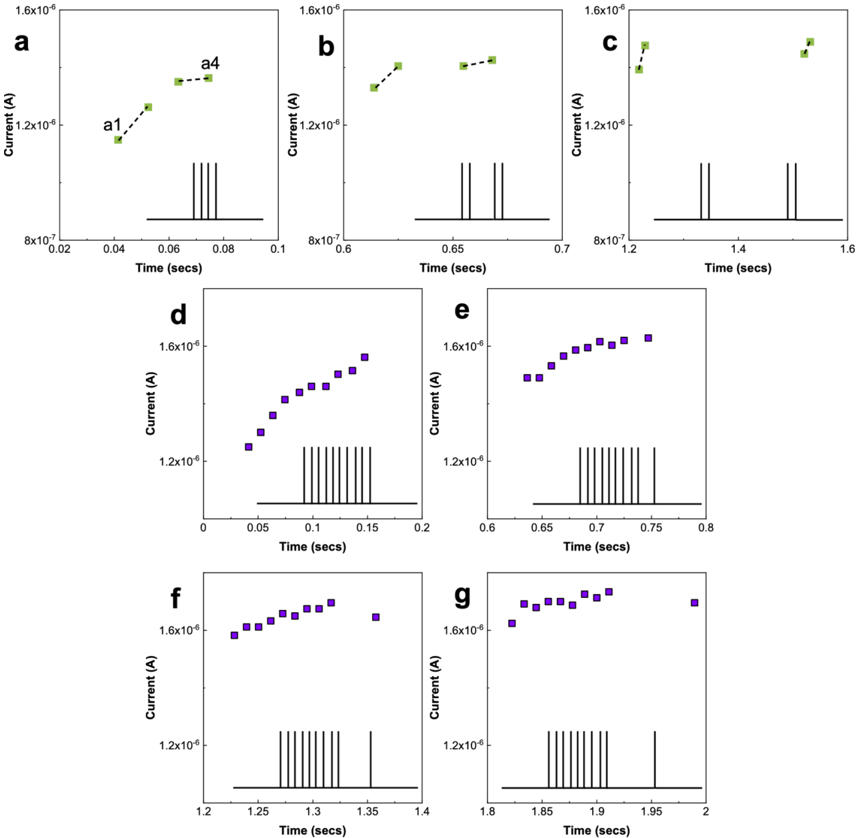

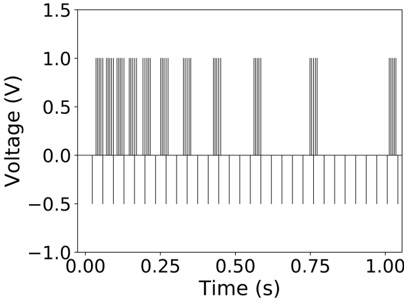

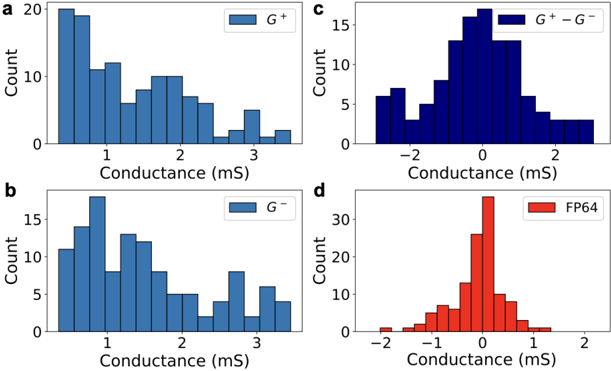

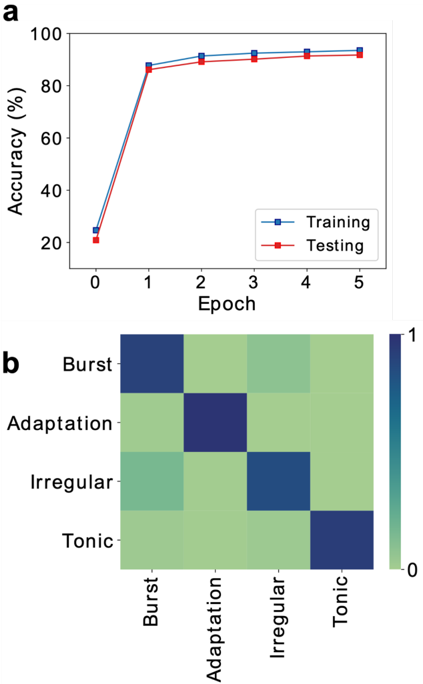

### Interpretation