## Towards high resolution, validated and open global wind power assessments

E. U. Peña-Sánchez 1,2,† , P. Dunkel 1,2, †,* , C. Winkler 1,2,† , H. Heinrichs 1 , F. Prinz 1 , J.M. Weinand 1 , R. Maier 1,2 , S. Dickler 1 , S. Chen 1,3 , K.Gruber 4 , T.Klütz 1 , J. Linßen 1 , and D. Stolten 1,2

1 Forschungszentrum Jülich GmbH, Institute of Climate and Energy Systems, Jülich Systems Analysis, 52425 Jülich, Germany

2 RWTH Aachen University, Chair for Fuel Cells, Faculty of Mechanical Engineering, 52062 Aachen, Germany

3 Forschungszentrum Jülich GmbH, Institute of Bio- and Geosciences - Agrosphere (IBG-3), 52425 Jülich, Germany

4 Institute for Sustainable Economic Development, University of Natural Resources and Life Sciences, Vienna, Austria

† Equally contributed

*corresponding author: p.dunkel@fz-juelich.de

## Abstract

Wind power is expected to play a crucial role in future net-zero energy systems, but wind power simulations to support deployment strategies vary drastically in their results, hindering reliable design decisions. Therefore, we present a transparent, open source, validated and evaluated, global wind power simulation tool called ETHOS.RESKitWind with high spatial resolution and customizable designs for both onshore and offshore wind turbines. The tool provides a comprehensive validation and calibration procedure using over 16 million global measurements from metrerological masts and wind turbine sites. We achieve a global average capacity factor mean error of 0.006 and Pearson correlation of 0.865. In addition, we evaluate its performance against several aggregated and statistical sources of wind power generation. The release of ETHOS.RESKitWind is a step towards a fully open source and open data approach to accurate wind power modeling by incorporating the most comprehensive simulation advances in one model.

Keywords: wind speeds, cross-validation, open-source, wind energy potential, wind energy simulation, wind power generation model

## Introduction

Wind power is placed as one of the largest renewable sources for the upcoming decades [14]. Thus, evaluating wind power resources is essential to develop strategies for the energy systems transformation, for instance in capacity planning, designing adequate market frameworks, or for increasing the speed of planning and permitting [2,4-6]. Being able to accurately assess wind resources ultimately leads to more reliable future energy transformation strategies.

Wind power resources depend on the location (spatial dependency), on the conditions at a particular time (temporal dependency), and on the wind turbine performance (technology dependency) to translate wind speed's kinetic energy into electricity output. Incorporating these three aspects in one wind energy assessment tool is essential to enhance the robustness and reliability of results. In addition, the validation of results is necessary to evaluate the performance of the model. There have been continuous efforts within the renewable energy simulation community to capture these dependencies using time-resolved and geospatiallyconstrained wind power simulation models [7].

Widely used, state-of-the-art, open-source models that account for the three wind power dependencies are Renewables.ninja [8], RESKit [9], pyGRETA [10] and Atlite [11] (see Table 1). The first three models use weather data based on MERRA-2 [12], and RESKit and pyGRETA take advantage of the higher-resolved Global Wind Atlas (GWA) [13] to increase the spatial resolution to 1 km 2 . Atlite employs ERA5 [14] data, which compared to MERRA-2 has a higher spatial resolution of 0.28° [~ 31 km 2 ] and offers wind speeds at 100 m height instead of 50 m as in the case of MERRA-2.

Table 1: Comparison of common global open-source wind energy models

| Model | Author(s), year | Data source | Resolution [time, lat/lon] | Turbine modeling characteristics | Validation of results |

|-------------------|---------------------------------|---------------|-----------------------------------------|------------------------------------------------------------------------------------------------------------------------------------------|---------------------------------------------------------------------------------------------------------------------------------|

| Renewables .ninja | Staffell & Pfenninger[8] , 2016 | MERRA- 2 | 1 hour, 0.5°/0.625° | 141 existing turbine models | Time-resolved country- level aggregated data in eight European countries |

| RESKit | Ryberg et al.[9] , , 2017 | MERRA- 2 | 1 hour, 0.5°/0.625° (scaled to 1 km 2 ) | 123 existing turbine models and user- defined configurations on hub height, rotor diameter and capacity to derive synthetic power curves | Two hourly-resolved wind park generation data: one in France and one in the Netherlands and monthly power generation in Denmark |

| pyGRETA | Siala & Houmy[10], 2020 | MERRA- 2 | 1 hour, 0.5°/0.625° (scaled to 1 km 2 ) | Allows user-defined changes in cut-in and cut-out wind speeds and the full-load stage in the power curves | Not provided |

| Atlite | Hofmann et al.[11], 2021 | ERA5 | 1 hour, 0.28125° | 27 existing turbine models | Not provided |

All four models face two primary limitations: First, the absence or restricted availability of validation procedures and, second, the unaddressed inherited mean errors from their weather data source as shown by various studies [15-22]. The most overlooked aspect is the validation

of model outcomes despite its crucial relevance to narrow uncertainties and enhancing the robustness of assessments as emphasized by other authors [7,8]. Only Renewables.ninja and RESKit provide a validation procedure at all, but exclusively for European wind production. To the best of the authors' knowledge, no supplementary validations of these models have been conducted in other regions. Consequently, an evaluation of the models' reliability and performance on a global scale remains an open question. Renewables.ninja validated their model results against monthly-aggregated country wind power generation data from ENTSOE as well as nationally aggregated wind power generation data with at least hourly resolution from eight power system operators for eight European countries. The authors [8] found a systematic mean error in wind speeds in MERRA-2 across Europe. Based on this finding, they calibrated the results of their model by incorporating 'national correction factors'. The RESKit model [9] has been validated against hourly power generation data from two wind parks, resulting in a high Pearson correlation between 0.80-0.88, with total power generation underestimations between 5 and 37%. In addition, the model performance was compared with monthly power generation data from 86 turbines in Denmark, where the majority of deviations in power generation range from -20% to 30%.

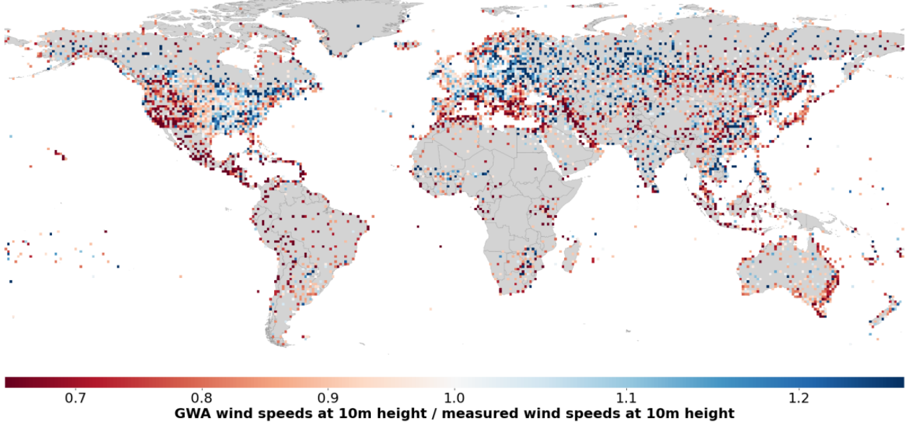

The second limitation is defined by the absence or insufficient measures taken to rectify mean errors present in the input data. Previous studies have documented that reanalysis data inherently contain certain deviations and mean errors. For instance, seasonal and diurnal mean errors in MERRA-2 and ERA5 wind speed data have been found previously [21], as well as terrain-related deviations when comparing wind speeds from reanalysis data with wind speed measurements [20,18] .Furthermore, statistical comparisons of these two datasets have been conducted [16] [19], ultimately concluding that ERA5 exhibited superior performance in comparison to MERRA-2. In addition, global mean errors in wind speeds at 10m were also found in the GWA (see Supplementary material 1.12) in particular an overestimation in wind speeds in intertropical regions, Mediterranean Europe, the western half of the USA and the southern hemisphere, and an underestimation in the northern hemisphere of the Eurasian plate and the eastern half of the USA and Canada (see Supplementary material Figure 1). Therefore, the evaluation and subsequent correction of wind speeds derived from reanalysis data can contribute significantly to the accuracy of wind energy assessments. Notably, although Renewable.ninja and RESKit acknowledge such effects and indirectly address them via their validation procedure, none of the listed models has utilized wind speed correction measures to address inherent mean errors in reanalysis data.

To cover the existing bandwidth of wind turbine characteristics, simulating as many commercially available wind turbines as possible can support achieving more realistic power generation estimations. As presented in Table 1, most models offer to simulate the performance of such turbines although the available number varies from 27 to 141. The most flexible approach when it comes to user-defined turbines is provided by RESKit [9] because it is the only model that allows the user to define a synthetic wind power curve, in addition to the ones declared by the manufacturers, based on three wind turbine parameters: hub height, rotor diameter and capacity. This is especially useful for simulating prospective wind turbines, which is often necessary when evaluating future scenarios. In summary, the identified constraints in the reviewed literature comprise the lack of thorough validation encompassing regions beyond Europe, the absence of mean error corrections in the input weather data source, and the lack of incorporating and evaluating the performance of contemporary and prospective wind turbine models.

In this article, we address the above-mentioned limitations of wind power models to enhance their reliability and applicability. Thus, this study introduces ETHOS.RESKitWind , a novel wind power model based on RESKit . Our model addresses the identified limitations through extensive validation, global applicability, and the incorporation of more than 800 wind turbine models. To enhance precision, we implement a comprehensive calibration of wind speed data gathered from 213 global weather mast locations in 25 different countries globally, spanning over 8 million hours of observation after filtration. Furthermore, we validate the simulated wind power output by comparing it with the actual hourly output from 152 turbines and wind farm sites. Finally, we further validate our model by comparing the outcomes with publicly available country-level hourly wind power generation data, as well as with yearly wind power generation estimates derived from statistical analysis. In response to this analysis, we introduce a methodology and provide global correction factors as open data to enhance alignment with widely available country-specific wind power generation data. Through these rigorous measures, our work significantly contributes to the reliability of future wind power simulations. This contribution is of utmost relevance for the ongoing energy transformation, providing a robust foundation for accurate, open and globally applicable wind energy assessments.

## Results

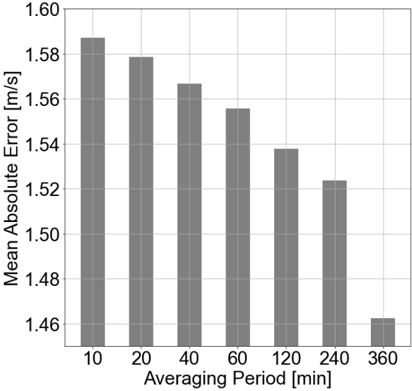

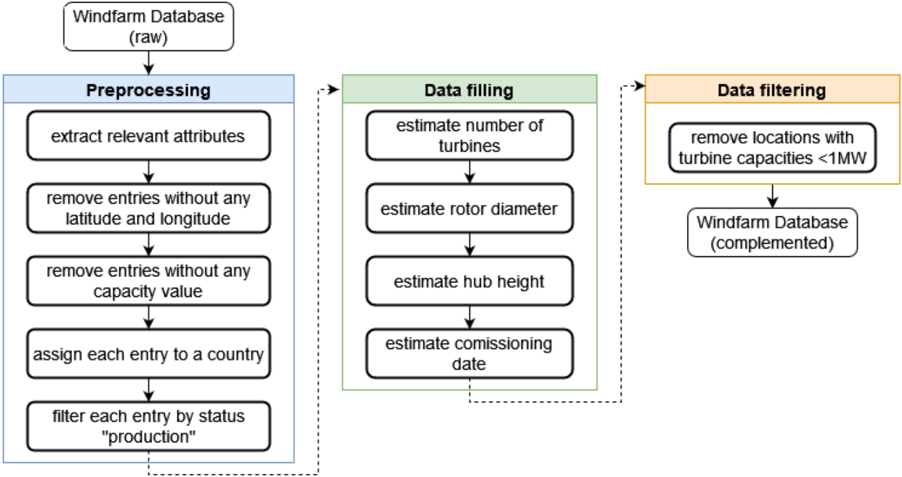

## Wind speed calibration impact and improvements

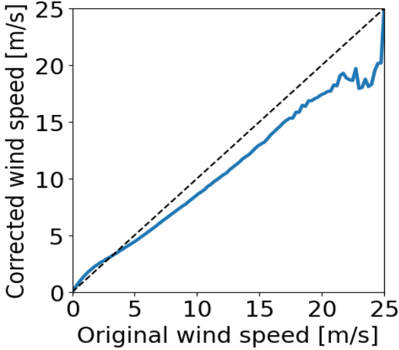

The calibration of input wind speeds yielded enhanced performance across all wind dependencies. Figure 1 illustrates the impact of wind speed calibration, showing changes when applying the value-based wind speed adjustment. Wind speeds below 3.2 m/s are adjusted upwards, while wind speeds above 3.2 m/s are adjusted downwards by around 13% on average with the largest relative correction at 13 m/s. The calibration effectively reverses an observed overestimation of wind speed in the range relevant for wind turbine power generation (3-25 m/s). This dependency shows a differential correction across wind speed values, highlighting the adequacy of a value-based wind-speed calibration approach. It is important to note that there is a steep reduction in the number of available observations at wind speeds ~20 m/s or higher, which contributes to the fluctuations seen in Figure 1. Additionally, the measured wind speeds exhibit a skewed normal distribution centered around 6 m/s, which is a relatively low average wind speed for wind energy installations.

Figure 1: Effect of the value-based wind speed calibration for different wind speeds

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Line Graph: Corrected vs Original Wind Speed

### Overview

The graph compares corrected wind speed (y-axis) to original wind speed (x-axis) across a range of 0–25 m/s. Two lines are plotted: a solid blue line representing corrected wind speed and a dashed black line representing original wind speed. The corrected wind speed generally follows the original trend but with deviations, particularly at higher speeds.

### Components/Axes

- **X-axis**: "Original wind speed [m/s]" (0–25 m/s, linear scale).

- **Y-axis**: "Corrected wind speed [m/s]" (0–25 m/s, linear scale).

- **Legend**: Located in the top-right corner.

- Solid blue line: "Corrected wind speed".

- Dashed black line: "Original wind speed".

### Detailed Analysis

- **Solid Blue Line (Corrected Wind Speed)**:

- Starts at (0, 0).

- Follows a roughly linear trend with minor fluctuations.

- Key points:

- (5, 5)

- (10, 10)

- (15, 15)

- (20, 18) [~18 m/s]

- (25, 20) [~20 m/s]

- Deviations: Between x=20–25 m/s, the line dips slightly below the dashed line before rising again.

- **Dashed Black Line (Original Wind Speed)**:

- Straight diagonal line from (0, 0) to (25, 25).

- Represents a 1:1 relationship between original and corrected speeds.

### Key Observations

1. **Correction Bias**: The corrected wind speed is consistently lower than the original at lower speeds (e.g., 18 m/s vs. 20 m/s at x=20).

2. **Asymptotic Behavior**: At higher speeds (x=25), corrected speed approaches original speed (20 m/s vs. 25 m/s), suggesting diminishing correction errors.

3. **Fluctuations**: Minor oscillations in the blue line (e.g., ~16–18 m/s between x=15–20) indicate measurement noise or calibration variability.

### Interpretation

The graph demonstrates that wind speed correction introduces a systematic underestimation at lower speeds, likely due to sensor calibration or environmental factors. The convergence of the two lines at higher speeds implies improved accuracy or reduced correction needs in turbulent conditions. The deviations in the corrected line suggest potential instability in the correction algorithm or external variables (e.g., gusts, measurement lag). This could impact applications like wind energy modeling, where precise speed data is critical for turbine efficiency calculations.

</details>

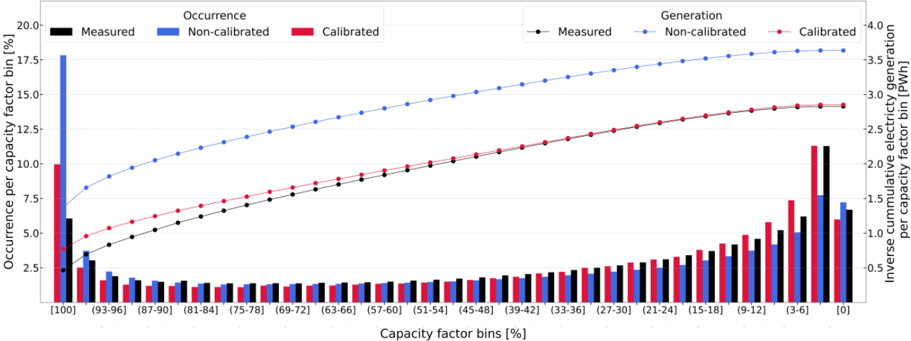

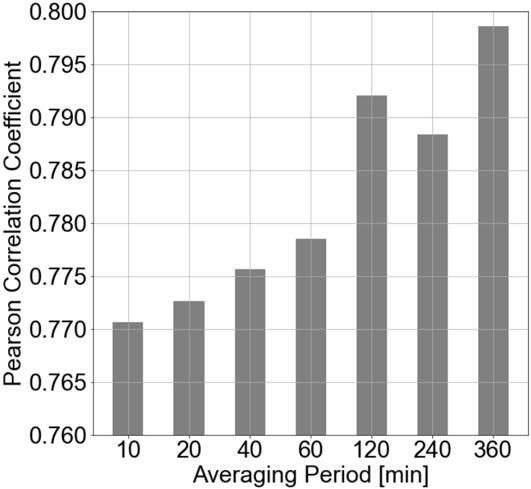

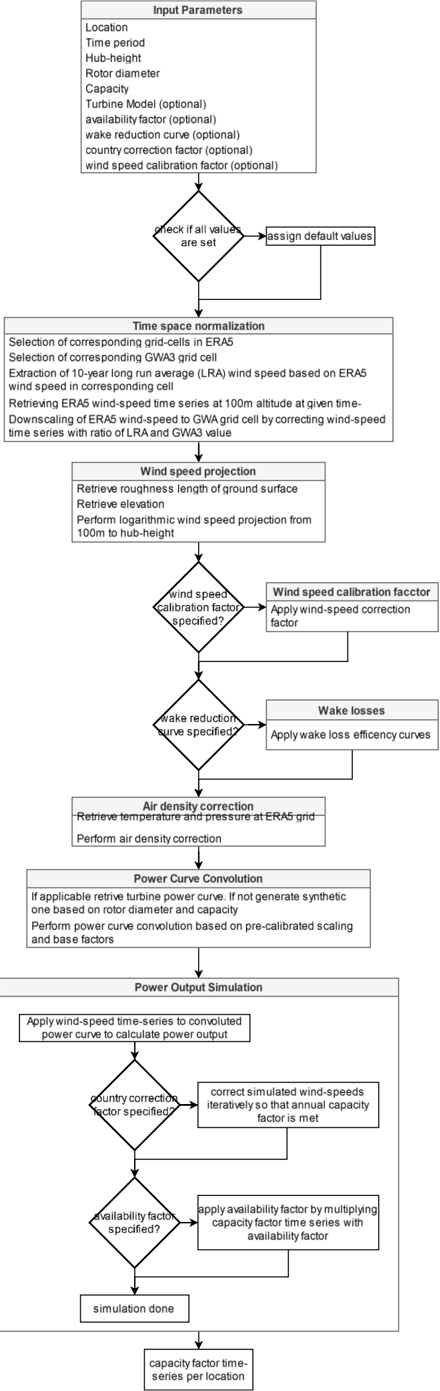

Temporal dimension. The calibration offsets over-representation of high-capacity factors in uncalibrated workflows by addressing wind speed corrections, reducing statistical errors, and

ensuring closer alignment with measurements (see Table 2 and Figure 2). The calibration procedure reduces the capacity factor mean error by 11.2% and improves temporal correlation metrics such as root mean square error, Pearson correlation, detrended cross-correlation coefficient, and Perkins' skill score (see Table 2). Despite larger deviations for higher capacity factors, the calibrated workflow ETHOS.RESKitWind achieves near-parity in total cumulative electricity generation.

Table 2: Comparison of capacity factors key statistical indicators from two workflow configurations: calibrated and non-calibrated

| Indicator [unitless] | Calibrated | Non- calibrated | Delta (absolute) [%] | Significance for wind energy assessments |

|----------------------------------------------------------|--------------|-------------------|------------------------|-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| Measured mean | 0.363 | 0.363 | - | - |

| Mean | 0.368 | 0.48 | | Closer approximation to the total |

| Mean error | 0.006 | 0.118 | -11.2 | power generation by the turbines allowing for more precise economic estimations such as levelized cost of electricity, return of investment, value of loss load, etc. |

| Perkins skill score | 0.87 | 0.82 | +5.0 | Closer approximation to the power generation stochastic variability allowing for more precise technical considerations design to reduce this type of variability in energy systems such as infrastructure capabilities in storage, transmission, etc. |

| Root-mean square error | 0.175 | 0.229 | -5.4 | Closer approximation to the power generation natural variability allowing for more precise technical design considerations to optimize power dispatch in energy systems such as infrastructure capabilities in power generation, demand control, system synergies, sector coupling etc. |

| Pearson correlation | 0.865 | 0.85 | +1.5 | Closer approximation to the power generation natural variability allowing for more precise technical design considerations to optimize power dispatch in energy systems such as infrastructure capabilities in power generation, demand control, system synergies, sector coupling etc. |

| Detrended cross- correlation analysis (DCCA) coefficient | 0.819 | 0.798 | +2.1 | Closer approximation to the power generation natural variability allowing for more precise technical design considerations to optimize power dispatch in energy systems such as infrastructure capabilities in power generation, demand control, system synergies, sector coupling etc. |

| Count [Million h] | 7.7 | 7.7 | - | - |

Zero capacity factors occur at a similar rate (~6-7%) in both measured and simulated workflows, as wind speeds frequently fall below or exceed turbine operational thresholds (see Figure 2). The calibration procedure has a negligible effect on these occurrences. The calibrated ETHOS.RESKitWind aligns closely with measured values in the (0-3] capacity factor bin, the most frequent category. In contrast, the uncalibrated workflow underestimates occurrences by about one-third, indicating weaker temporal correlation and probability density alignment. In mid-range capacity factor bins (3-48%), the calibrated workflow aligns better with measured trends despite initially overestimating values and then declining more sharply. Conversely, in high-capacity factor bins (51-99%), the uncalibrated workflow tracks measurements more closely, though these bins contribute less to total electricity generation due to lower cumulative occurrences. At full turbine power (100% capacity factor), both workflows significantly overestimate measurements. However, the uncalibrated workflow overshoots by 2.8 times compared to 0.4 times for the calibrated workflow, significantly impacting total generation and statistical indicators.

Figure 2: Power generation and capacity factor bin comparison between over 7.7 million hourly measurements, and calibrated and non-calibrated results. The plot illustrates capacity factor percentage bins on the x-axis, with the occurrence of capacity factors as percentages shown by bars on the left y-axis and the inverse cumulative electricity generation depicted by lines on the right y-axis.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Chart: Dual-Axis Capacity Factor Analysis

### Overview

The image is a dual-axis chart comparing three data series (Measured, Non-calibrated, Calibrated) across capacity factor bins. The left y-axis represents "Occurrence per capacity factor bin [%]" (bars), while the right y-axis shows "Inverse cumulative capacity factor per bin [PWh]" (lines). The x-axis categorizes data into capacity factor bins (e.g., [100], [93-96], ..., [0]).

### Components/Axes

- **X-axis**: "Capacity factor bins [%]" with discrete bins (e.g., [100], [93-96], [87-90], ..., [0]).

- **Left Y-axis**: "Occurrence per capacity factor bin [%]" (bars).

- **Right Y-axis**: "Inverse cumulative capacity factor per bin [PWh]" (lines).

- **Legend**:

- **Black**: Measured

- **Blue**: Non-calibrated

- **Red**: Calibrated

- **Data Series**:

- **Bars** (left y-axis): Three categories (Measured, Non-calibrated, Calibrated).

- **Lines** (right y-axis): Three categories (Measured, Non-calibrated, Calibrated).

### Detailed Analysis

#### Left Y-Axis (Occurrence)

- **Measured (Black)**:

- Highest occurrence in [100] bin (~10%).

- Decreases sharply to ~2.5% in [93-96] bin.

- Remains low (~1-2%) in lower bins.

- **Non-calibrated (Blue)**:

- Lower than Measured in [100] (~7.5%).

- Slightly higher than Measured in [93-96] (~5%).

- Decreases to ~1-2% in lower bins.

- **Calibrated (Red)**:

- Lowest in [100] (~5%).

- Increases gradually to ~2.5% in [93-96].

- Remains low (~1-2%) in lower bins.

#### Right Y-Axis (Inverse Cumulative Generation)

- **Measured (Black Line)**:

- Starts at ~2.5 PWh in [100] bin.

- Increases gradually to ~3.5 PWh in [0] bin.

- **Non-calibrated (Blue Line)**:

- Starts at ~3.5 PWh in [100] bin.

- Increases steadily to ~4.0 PWh in [0] bin.

- **Calibrated (Red Line)**:

- Starts at ~2.5 PWh in [100] bin.

- Increases to ~3.0 PWh in [0] bin.

### Key Observations

1. **Occurrence Trends**:

- Measured data dominates the highest capacity factor bin ([100]).

- Non-calibrated and Calibrated show lower occurrence in high bins but similar trends in lower bins.

2. **Inverse Cumulative Generation**:

- Non-calibrated has the highest inverse cumulative generation across all bins.

- Measured and Calibrated show similar trends, with Calibrated slightly outperforming Measured in lower bins.

3. **Divergence**:

- Measured has the highest occurrence in [100] but the lowest inverse cumulative generation.

- Non-calibrated has the lowest occurrence in [100] but the highest inverse cumulative generation.

### Interpretation

The data suggests that **Non-calibrated models** prioritize higher capacity factors (e.g., [100] bin) for generation efficiency, as evidenced by their dominance in inverse cumulative generation. However, their occurrence in high-capacity bins is lower than Measured data, indicating potential trade-offs between occurrence and generation efficiency. **Calibrated models** balance these factors, showing moderate occurrence and generation performance. The **Measured data** reflects real-world distribution, with high occurrence in the [100] bin but lower cumulative generation, possibly due to operational constraints or measurement limitations. This analysis highlights the importance of calibration in optimizing capacity factor utilization for energy systems.

</details>

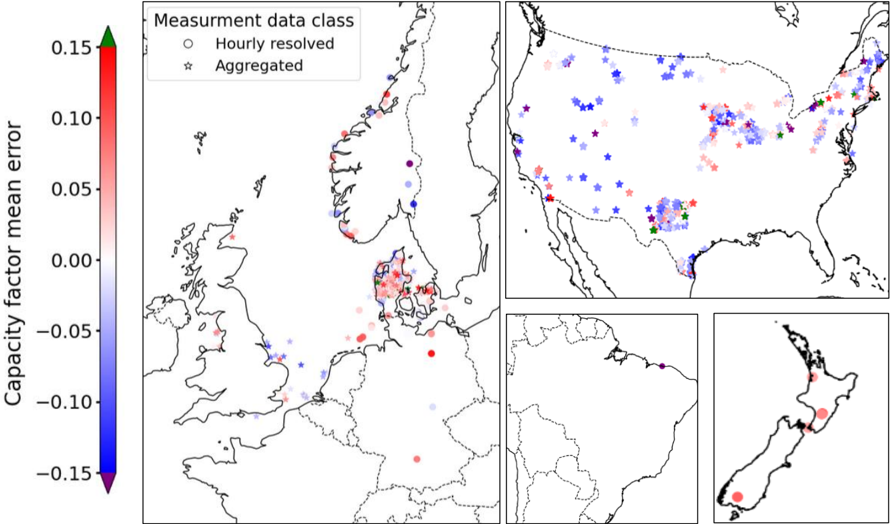

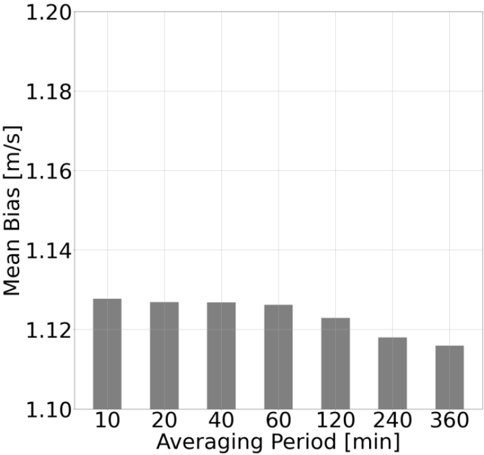

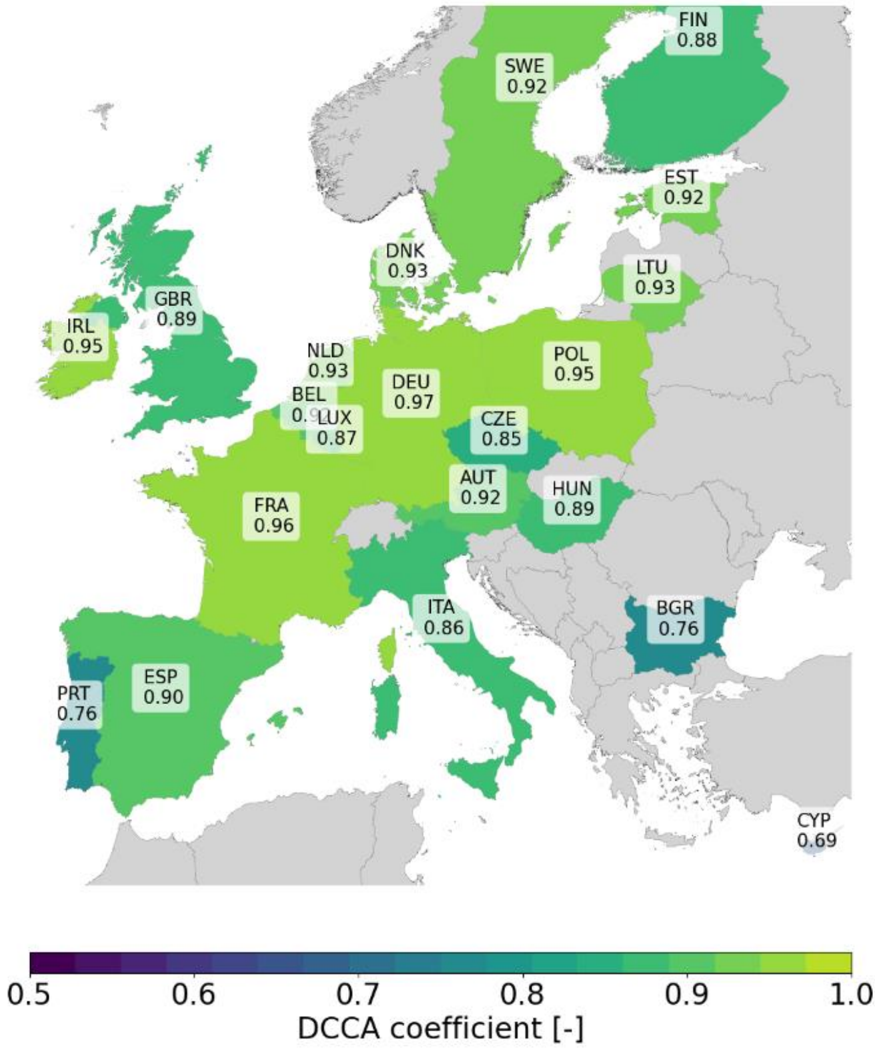

Spatial dimension. The calibrated ETHOS.RESKitWind model demonstrates no significant location bias across turbine types (i.e., on- or offshore) or locations when compared to both aggregated and hourly-resolved data, reinforcing its robustness in the spatial dimension. For hourly-resolved data, most locations show a mean capacity factor error within ±10%, with a predominantly positive deviation (see Figure 3). This margin is considered acceptable for generation models. However, isolated locations in Norway and Brazil show larger mean errors (~-17%), possibly due to discrepancies between simulated turbine characteristics and measurements.

Figure 3: ETHOS.RESKitWind results capacity factor mean error comparison using two classes of obtained measurement data: hourly-resolved and aggregated.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Composite Map: Capacity Factor Mean Error by Region

### Overview

The image presents a composite map visualization of capacity factor mean error across four regions: Europe, the United States, South America, and New Zealand. Data points are color-coded using a gradient scale from -0.15 (blue) to +0.15 (red), with green at the top. Two data classes are distinguished: "Hourly resolved" (circles) and "Aggregated" (stars). The visualization emphasizes spatial distribution and measurement accuracy variations.

### Components/Axes

1. **Primary Map (Europe)**:

- **Legend**: Located in the top-left corner, with:

- **Color Scale**: Vertical gradient from blue (-0.15) to red (+0.15), labeled "Capacity factor mean error."

- **Data Class Symbols**:

- Circles (blue to red gradient) for "Hourly resolved" data.

- Stars (blue to red gradient) for "Aggregated" data.

- **Markers**:

- Red, blue, and green circles/stars distributed across Europe, with higher density in Northern and Central Europe.

- Notable clusters in Scandinavia (red), UK (blue), and Central Europe (mixed).

2. **Sub-Maps**:

- **USA**:

- Mixed markers (red, blue, green) with higher density in the Midwest and Northeast.

- Aggregated data (stars) dominate in Texas and California.

- **South America**:

- Sparse markers, primarily blue (negative error) in Brazil and Argentina.

- **New Zealand**:

- Two red circles (positive error) in the North Island and one blue circle in the South Island.

3. **Color Scale**:

- Positioned left of the Europe map, with a green arrowhead at the top (0.15) and a purple arrowhead at the bottom (-0.15).

### Detailed Analysis

- **Europe**:

- **Hourly Resolved Data**:

- Red circles (positive error) concentrated in Scandinavia and the UK.

- Blue circles (negative error) in Southern Europe (e.g., Spain, Italy).

- **Aggregated Data**:

- Red stars in Germany and France; blue stars in Eastern Europe.

- **Color Scale Alignment**: Red markers align with the upper end of the scale (+0.10 to +0.15), while blue markers align with the lower end (-0.10 to -0.05).

- **USA**:

- **Hourly Resolved Data**:

- Red circles in the Midwest (e.g., Illinois, Ohio) and blue circles in the Southwest (e.g., Arizona).

- **Aggregated Data**:

- Red stars in Texas and California; blue stars in the Midwest.

- **Notable**: Mixed data types in the Northeast (e.g., New York, Pennsylvania).

- **South America**:

- **Hourly Resolved Data**:

- Blue circles in Brazil and Argentina, indicating negative errors (-0.05 to -0.10).

- **Aggregated Data**:

- No stars present; all markers are circles.

- **New Zealand**:

- **Hourly Resolved Data**:

- Red circles in the North Island (positive error, ~+0.05) and a blue circle in the South Island (-0.05).

### Key Observations

1. **Data Density**: Europe has the highest density of markers, suggesting more granular measurement data.

2. **Error Distribution**:

- Positive errors (+0.05 to +0.15) dominate in Northern Europe and the US Midwest.

- Negative errors (-0.10 to -0.05) are prevalent in Southern Europe, South America, and New Zealand.

3. **Data Class Distribution**:

- Aggregated data (stars) are more common in the US and Europe, while South America relies solely on hourly data.

4. **Outliers**:

- A single red circle in South America (Brazil) deviates from the general negative error trend.

### Interpretation

The visualization highlights regional disparities in capacity factor measurement accuracy:

- **Europe**: High-resolution data (hourly) shows mixed errors, with Northern regions experiencing overestimation (red) and Southern regions underestimation (blue). Aggregated data aligns with these trends but with broader spatial coverage.

- **USA**: Mixed data types suggest varied measurement approaches. The Midwest’s positive errors may reflect grid instability, while the Southwest’s negative errors could indicate underreporting.

- **South America**: Sparse data and consistent negative errors may indicate limited measurement infrastructure or systemic underestimation.

- **New Zealand**: Isolated positive errors in the North Island suggest localized measurement challenges.

The capacity factor mean error range (-0.15 to +0.15) underscores significant variability in renewable energy measurement accuracy, with implications for grid management and policy. The dominance of aggregated data in the US and Europe highlights a preference for coarser resolution, potentially sacrificing granular insights for broader trends.

</details>

Using aggregated generation data, which provides broader spatial coverage but lacks temporal detail, reveals a mix of trends (see Figure 3). Wind parks in the USA and offshore locations west of the UK show mostly negative mean errors, while those in Denmark and the UK's east coast exhibit positive deviations. While useful for expanding location coverage, this approach introduces greater uncertainty due to its lack of temporal granularity.

Most mean errors by region and turbine type fall within ±0.02, with the largest positive errors seen in New Zealand (+0.078) and Germany (+0.0526) (see Table 4 in the Supplementary material). The largest negative error occurs in Brazil (-0.175). Denmark demonstrates the most accurate results (-0.0003), followed by Norway (-0.004). No consistent discrepancies are linked to turbine types. Hourly-resolved data proves more reliable for precise analysis, enabling the identification of phenomena like induced stalling, restricted operation, and the exact onset of power generation. This enhances the model's ability to address spatial and operational dynamics effectively.

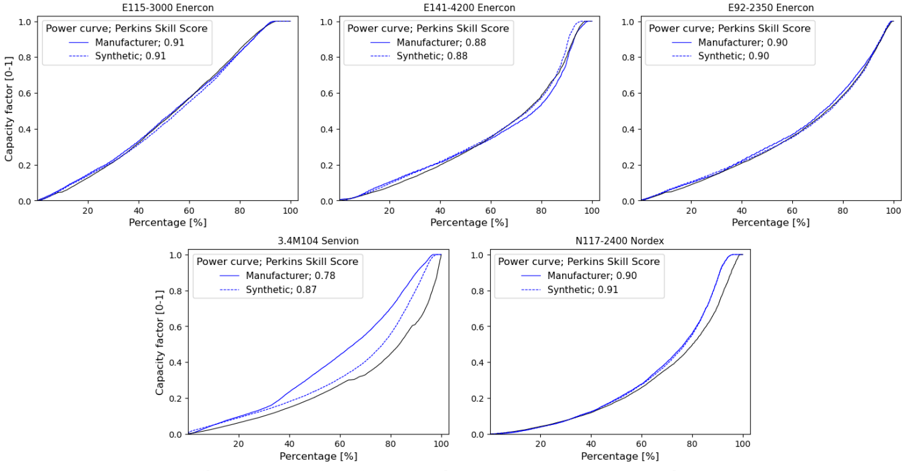

Technological dimension. In order to assess the efficacy of our model in replicating wind power generation, we conducted an experiment wherein we subjected the model to measured wind speeds at hub height. This enables the identification of potential input wind speed biases in temporal and location dependencies. However, reliable hub-height wind speed data is scarce. Only Denker and Wulf AG provided the requisite time-resolved wind speeds at hub height in conjunction with power generation from five distinct turbine models. Figure 4 compares the measurements and the simulation results obtained using the manufacturer's power curve included in the windpower.net [23] database and the synthetic power curve generator algorithm in ETHOS.RESKitWind .

Figure 4: Real and synthetic power curves comparison

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Power Curve Comparison: Manufacturer vs Synthetic Models

### Overview

The image contains five power curve graphs comparing Manufacturer and Synthetic models across different wind turbine types (Enercon and Nordex). Each graph shows capacity factor (0-1) against percentage (%) from 0 to 100. Solid lines represent Manufacturer data, dashed lines represent Synthetic data, with Perkins Skill Scores provided in legends.

### Components/Axes

- **X-axis**: Percentage (%) from 0 to 100 (all graphs)

- **Y-axis**: Capacity Factor (0-1) (all graphs)

- **Legends**:

- Manufacturer (solid line) with Perkins Skill Score

- Synthetic (dashed line) with Perkins Skill Score

- **Graph Titles**:

1. E115-3000 Enercon

2. E141-4200 Enercon

3. E92-2350 Enercon

4. 3.4M104 Servion

5. N117-2400 Nordex

### Detailed Analysis

1. **E115-3000 Enercon**

- Manufacturer: 0.91 Perkins Skill Score (solid line)

- Synthetic: 0.91 Perkins Skill Score (dashed line)

- Both lines show identical upward trends, reaching ~0.8 capacity factor at 100%.

2. **E141-4200 Enercon**

- Manufacturer: 0.88 Perkins Skill Score (solid line)

- Synthetic: 0.88 Perkins Skill Score (dashed line)

- Similar trend to E115-3000, with slight divergence at 80% (Manufacturer: ~0.75, Synthetic: ~0.72).

3. **E92-2350 Enercon**

- Manufacturer: 0.90 Perkins Skill Score (solid line)

- Synthetic: 0.90 Perkins Skill Score (dashed line)

- Manufacturer line consistently above Synthetic, reaching ~0.85 capacity factor at 100%.

4. **3.4M104 Servion**

- Manufacturer: 0.78 Perkins Skill Score (solid line)

- Synthetic: 0.87 Perkins Skill Score (dashed line)

- Synthetic line surpasses Manufacturer at ~60%, reaching ~0.8 capacity factor at 100%.

5. **N117-2400 Nordex**

- Manufacturer: 0.90 Perkins Skill Score (solid line)

- Synthetic: 0.91 Perkins Skill Score (dashed line)

- Synthetic line slightly outperforms Manufacturer, with both reaching ~0.85 capacity factor at 100%.

### Key Observations

- **Consistency**: Manufacturer lines generally maintain higher capacity factors than Synthetic lines, except in 3.4M104 Servion.

- **Skill Score Correlation**: Higher Perkins Skill Scores (0.90-0.91) correlate with better performance in most cases.

- **Anomaly**: 3.4M104 Servion shows Synthetic outperforming Manufacturer despite lower Manufacturer Skill Score (0.78 vs 0.87).

### Interpretation

The data suggests Manufacturer models typically demonstrate superior capacity factors, but Synthetic models can outperform in specific configurations (e.g., 3.4M104 Servion). The Perkins Skill Scores appear to reflect model accuracy, with higher scores aligning with better performance in most cases. The exception in 3.4M104 Servion indicates potential model-specific factors influencing outcomes, warranting further investigation into synthetic data generation methods for that configuration.

</details>

A comparison of the manufacturers and synthetic power curves of Enercon and N117-2400 turbines reveals a striking similarity in shape. This is corroborated by a Perkins Skill score that is highly similar in numerical terms. This indicates that the simulated power curves are highly analogous and closely aligned with the capacity factor measurements. The line plot for the 3.4M104 Senvion shows that the simulated power curves produce significantly different sorted

capacity factors compared to the manufacturer's and to the actual measurements. Possible causes of the latter might come from data handling and processing of measurements or the relative developing year of the turbine (2008). A newly introduced synthetic power curve score (SPPC), see Methods section, overcomes possible data errors as well as the lack of power generation data for all turbines by comparing directy manufacturer's and syntetic power curves directy, bypassing the need to have time-resolved wind speeds at hub height. Table 3 presents the average SPCS for the turbines manufactured by the six leading producers, as reported in the Windpower.net [23] database. The data in this table demonstrate that, irrespective of the wind speed input, the synthetic power curve algorithm developed [9] and included in ETHOS.RESKitWind achieves a mean power curve score of 0.96 or higher for the majority of global installed capacity. This is especially beneficial in the case where the actual power curve is unknown.

The results obtained from all three dimensions demonstrate that the ETHOS.RESKitWind power generation model, when used in conjunction with the calibration procedure, offers a reliable assessment tool across the different measured data classes obtained. It should be noted, however, that the availability of such data on a global scale is limited, which presents a challenge to the global validation of power simulation models.

| Table 3: Average six manufacturers Manufacturer | synthetic power according to the Global installed capacity [GW] | curve score in installed capacity Percentage of global capacity | ETHOS.RESKit Wind for the reported according Turbines installed [thousand] | turbines of the top windpower.net [23] Synthetic power curve score 1 |

|---------------------------------------------------|-------------------------------------------------------------------|-------------------------------------------------------------------|------------------------------------------------------------------------------|------------------------------------------------------------------------|

| Vestas | 110.6 | 29.68 | 49.7 | 0.988 |

| Enercon | 45.4 | 12.19 | 24.2 | 0.965 |

| GE Energy | 43 | 11.54 | 25 | 0.984 |

| Siemens | 38.9 | 10.43 | 14.2 | 0.985 |

| Gamesa | 36 | 9.67 | 24.5 | 0.994 |

| Nordex | 21.7 | 5.81 | 8.7 | 0.992 |

| Total | 295.6 | 79.32 | 146.3 | 0.984 |

1 the synthetic power curve score is the cumulative minimum sum of capacity factors distribution of two power curves: manufacturer and synthetic, taking as reference the manufacturer one.

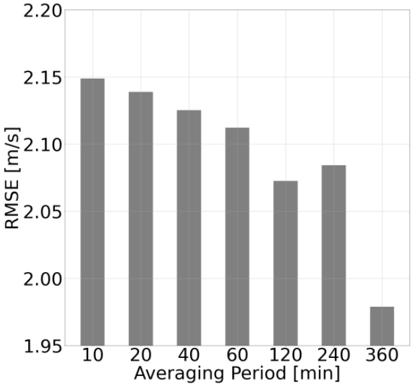

## Evaluation against global wind power generation estimates

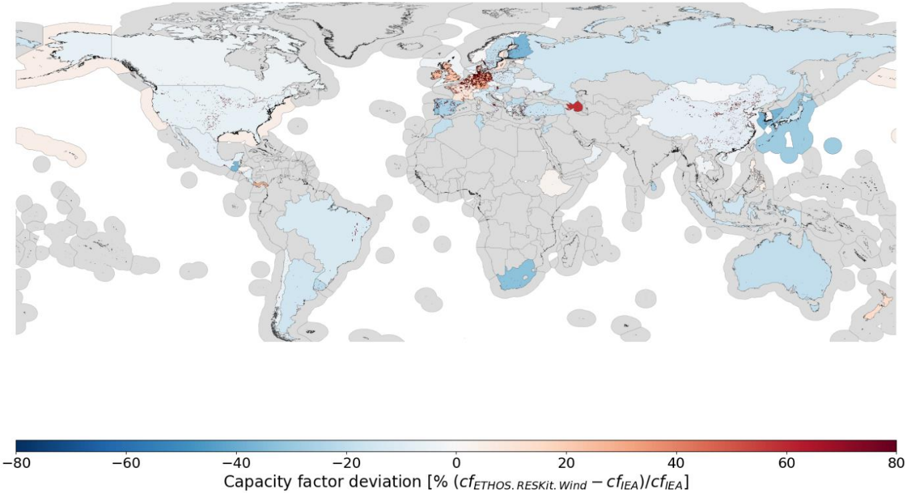

To address the limitation of global measurement data availability and evaluate the model's performance against global power estimates, ETHOS.RESKitWind was compared with publicly available power estimates at the country level for several years by the International Energy Agency (IEA) (see Figure 5). After minimizing the effects of technology differences, temporal uncertainties, and locational variations, the model showed a slight tendency to underestimate capacity factors across most countries, with discrepancies of approximately -10% or less. The IEA reports a global average capacity factor of 0.306 across 71 countries and offshore regions, while the model yielded an average of 0.278, a relative deviation of 9.1%. In comparison, the non-calibrated workflow demonstrated a significant overestimation, with an average capacity factor of 0.372 and a relative deviation of 21.3%.

Regional trends are evident. Most countries in the Americas, Oceania, East Asia, and South Africa follow the global pattern of underestimation, with exceptions like Panama, New Zealand, and Azerbaijan, which exhibit higher capacity factors. Europe presents a more varied picture. Countries around the North Sea and Sweden's offshore region show slight overestimations, which may stem from the higher density of weather masts used for wind speed calibration in these areas.

These deviations arise from several factors. The IEA dataset lacks detailed turbine technology characteristics, necessitating assumptions and external data sources to define turbine properties. Further uncertainties stem from the annual averaging of generation data, which obscures temporal dynamics, and from challenges in precisely locating turbines or identifying their commissioning dates. Additionally, external influences such as grid congestion, curtailment, import/export dynamics, and discrepancies in reporting conditions contribute to differences between simulated and actual results.

Figure 5: Capacity factor deviation map between ETHOS.RESKitWind and IEA data [24] based on the average deviation in the years 2017 to 2021.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Heatmap: Global Wind Energy Capacity Factor Deviation

### Overview

A choropleth world map visualizing deviations in wind energy capacity factors between two datasets: `cf_ETHOS_RESKI_Wind` and `cf_EIA`. The map uses color gradients to represent percentage deviations, with blue indicating negative deviations (lower actual capacity) and red indicating positive deviations (higher actual capacity). The legend quantifies deviations relative to the EIA baseline.

### Components/Axes

- **Legend**: Horizontal bar at the bottom labeled "Capacity factor deviation [% (cf_ETHOS_RESKI_Wind – cf_EIA)/cf_EIA]".

- Scale: -80% (dark blue) to +80% (dark red), with intermediate steps:

- Dark blue (-80% to -60%)

- Medium blue (-60% to -40%)

- Light blue (-40% to -20%)

- Gray (-20% to +20%)

- Light orange (+20% to +40%)

- Orange (+40% to +60%)

- Red (+60% to +80%)

- **Map**: Countries shaded according to deviation magnitude. No explicit axis labels, but spatial grounding aligns with standard geographic coordinates.

### Detailed Analysis

- **Color Distribution**:

- **Negative Deviations (Blue)**: Dominant in North America, South America, Australia, and parts of Asia.

- **Positive Deviations (Red/Orange)**: Concentrated in Europe, North Africa, and the Middle East.

- **Neutral (Gray)**: Scattered across Africa, Central Asia, and Oceania.

- **Notable Regions**:

- **Europe**: High positive deviations (orange/red), suggesting overestimation by EIA or stronger wind resources.

- **North Africa**: Red shading indicates significant positive deviations.

- **Middle East**: A single red dot (likely a data point or outlier) stands out in the Arabian Peninsula.

- **South America**: Predominantly light blue, indicating underestimation by EIA.

### Key Observations

1. **Europe’s High Deviations**: Multiple countries (e.g., Germany, Spain) show red/orange shading, implying actual capacity factors exceed EIA estimates by 20–80%.

2. **Middle Eastern Outlier**: The red dot in the Arabian Peninsula suggests an extreme deviation (>60%), requiring further investigation.

3. **South America’s Underestimation**: Most countries (e.g., Brazil, Argentina) show light blue shading, indicating actual capacity factors are 20–40% lower than EIA estimates.

4. **Asia’s Mixed Trends**: East Asia (e.g., China) has light blue shading, while parts of Central Asia are neutral.

### Interpretation

The map highlights systemic discrepancies between EIA wind energy capacity estimates and ETHOS_RESKI_Wind data. Positive deviations in Europe and North Africa may reflect:

- **Improved Wind Resource Assessment**: ETHOS_RESKI_Wind could incorporate higher-resolution wind data or updated turbine efficiency models.

- **Policy Overestimations**: EIA might have conservative estimates due to regulatory or funding constraints.

Negative deviations in South America and parts of Asia suggest:

- **Underestimated Wind Potential**: ETHOS_RESKI_Wind may use more accurate local wind data, revealing lower-than-expected capacity factors.

- **Data Gaps**: Sparse monitoring infrastructure in these regions could lead to EIA overestimations.

The Middle Eastern red dot warrants scrutiny—it could indicate a data anomaly, localized wind resource variability, or a methodological error in either dataset.

### Spatial Grounding & Validation

- The legend’s color coding aligns spatially: red regions cluster in high-latitude areas (Europe, North Africa), while blue regions dominate tropical and subtropical zones.

- Cross-referencing legend colors with map shading confirms consistency (e.g., red in Europe matches the +40%–80% range).

### Limitations

- **Resolution**: Country-level shading may obscure subnational variations.

- **Temporal Scope**: No timeframe is specified for the capacity factors (e.g., annual averages vs. peak seasons).

- **Data Sources**: Unclear if `cf_ETHOS_RESKI_Wind` and `cf_EIA` use identical methodologies (e.g., turbine height, wind speed thresholds).

</details>

The lack of globally accessible, time-resolved wind power generation data significantly hinders precision. Calibration factors, as discussed in Supplementary material 5.8, help mitigate these discrepancies, with national correction factors provided for alignment with IEA data. Furthermore, raster-format correction files extend beyond country boundaries, enabling assessments in regions without wind production and enhancing the global applicability of ETHOS.RESKitWind .

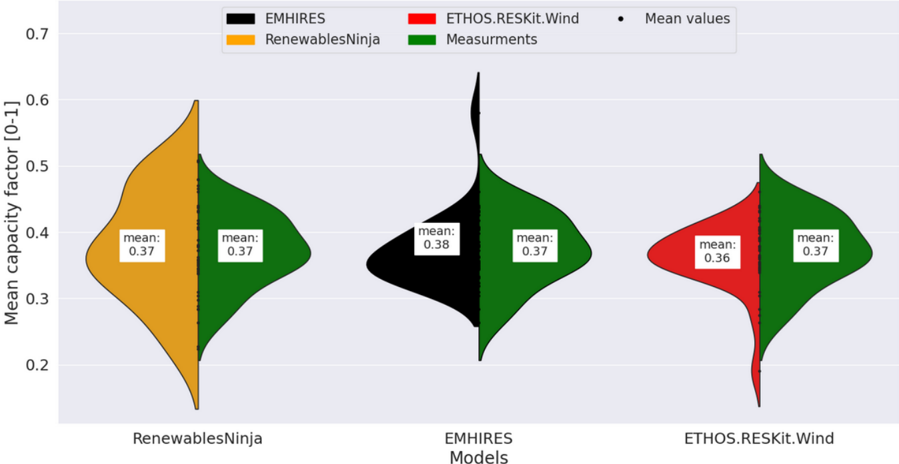

In conclusion, this evaluation underscores the model's ability to improve assessments of wind energy dependencies while highlighting the limitations of relying on aggregated country-level data. ETHOS.RESKitWind demonstrates significant advancements in accuracy compared to non-calibrated workflows, setting a strong foundation for global wind energy modeling. The previously described enhancements of our model also result in superior statistical indicators in comparison to similar models such as renewables.ninja (see Supplementary material 1.13).

## Discussion

In this study, we introduce ETHOS.RESKitWind, an open-source, time-resolved, validated wind power generation simulation tool designed for global applicability . ETHOS.RESKitWind leverages high-resolution wind data (250 m²) from ERA5 and GWA3, providing robust

simulation capabilities and featuring the most extensive turbine model library among available tools. This library includes 880 turbine types and supports the creation of customizable synthetic power curves.

A key innovation in ETHOS.RESKitWind is its calibration process, which uses over 8 million wind speed measurements from 213 global meteorological mast sites across 25 countries and more than 8 million hours of power generation data from 152 wind turbines across seven onshore and offshore regions. This comprehensive dataset enabled a value-dependent correction of systematic wind speed biases, ensuring improved alignment with real-world data. The calibration process significantly enhances model accuracy. Temporal adjustments to input wind speeds shift capacity factors toward smaller values, aligning more closely with frequently measured capacity factors. This results in a net 0.112 improvement in capacity factor deviation compared to turbine-level time-resolved data. When simulating historical country wind fleets, the model reduced the average capacity factor deviation from 21% to 9%. Importantly, no relevant locational capacity factor deviations were observed, and the model performed consistently well across both onshore and offshore regions. Furthermore, the synthetic power curve score, which evaluates alignment between synthetic and manufacturer-provided power curves, demonstrated high accuracy. Approximately 80% of globally installed turbines achieved a minimum correlation of 0.96, underscoring the precision of the model. By reducing capacity factor deviations at both the turbine and aggregated annual levels, ETHOS.RESKitWind demonstrates superior alignment with IEA-based generation data from 71 countries. These advancements position ETHOS.RESKitWind as a leading tool for global wind energy modeling.

Importantly, although ETHOS.RESKitWind can simulate individual turbines, it is better suited to larger-scale assessments involving hundreds of turbine sites. Because of the spatial resolution characteristics of the ERA5 and GWA3 datasets, the model is less accurate at single locations where local wind speed conditions are not adequately represented. Furthermore, diurnal, seasonal, and terrain-based biases, as documented in the literature, fall outside the scope of our current correction method. Future work should concentrate on the resolution of these remaining biases in order to further enhance the precision of wind energy simulations. Moreover, enhancements in higher temporal resolution and more precise local representations of wind would be advantageous for the field. Furthermore, the entire energy and climate community would greatly benefit from the availability of more publicly accessible localized timeresolved wind speeds and power generation data. In light of these considerations, the authors urge the scientific community to engage in more collaborative endeavors and to advocate for the establishment of transparent guidelines governing the accessibility of data for scientific purposes.

The findings of this study hold substantial value for the scientific and energy system analysis communities. ETHOS.RESKitWind marks a major step forward in wind energy modeling, combining global applicability with high spatial resolution and the capability to simulate a wide range of technical turbine characteristics. As the first wind energy simulation tool to undergo a rigorous validation and calibration process across diverse spatial and temporal scales on a global level, it sets a new standard in the field. Additionally, the inclusion of regional correction factors enhances the precision of wind power assessments, even in areas currently lacking wind turbine installations. By enabling more accurate simulations, the tool equips decisionmakers with critical insights to optimize renewable energy utilization and make strategic investments. This advancement significantly supports the integration of renewable energy into global power systems.

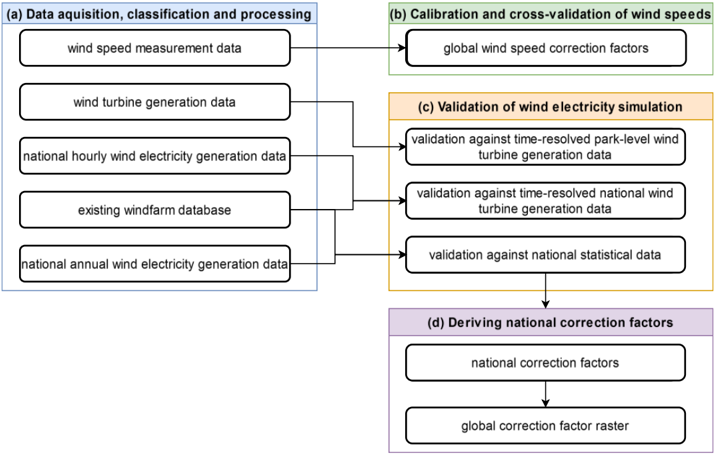

## Methods

In this section, we outline the comprehensive methodology employed for our wind energy simulation and validation approach implemented in ETHOS.RESKitWind [9,24] (see more details about RESKit in Supplementary material 1.6), aimed at providing robust basis for global wind energy assessments. The methodology is structured into four subsections covering (a) data acquisition and processing, (b) deriving global wind speed calibration factors aiming at addressing potential mean errors in the underlying weather data, (c) a subsequent extensive validation of our wind energy simulation workflow by comparing against time-resolved park level power generation data, country-level power generation data and national statistical data, and (d) deriving national correction factors. Each step ensures the accuracy and robustness of the employed simulation framework.

Figure 6: Overview of the applied methodological steps.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Flowchart: Wind Data Processing and Validation Workflow

### Overview

This flowchart illustrates a multi-stage process for acquiring, calibrating, validating, and deriving correction factors for wind data used in electricity generation simulations. It emphasizes data sources, validation methods, and the derivation of national/global correction factors.

### Components/Axes

- **Sections**:

- **(a) Data Acquisition, Classification, and Processing**:

- Wind speed measurement data

- Wind turbine generation data

- National hourly wind electricity generation data

- Existing windfarm database

- National annual wind electricity generation data

- **(b) Calibration and Cross-Validation of Wind Speeds**:

- Global wind speed correction factors

- **(c) Validation of Wind Electricity Simulation**:

- Validation against time-resolved park-level wind turbine generation data

- Validation against time-resolved national wind turbine generation data

- Validation against national statistical data

- **(d) Deriving National Correction Factors**:

- National correction factors

- Global correction factor raster

- **Flow Arrows**:

- Data from **(a)** feeds into **(b)**.

- **(b)** outputs to **(c)**.

- **(c)** outputs to **(d)**.

- **(d)** produces a "global correction factor raster" as the final output.

### Detailed Analysis

- **Section (a)**: Focuses on raw data inputs, including direct measurements (wind speed), turbine output, and aggregated national data (hourly/annual). The "existing windfarm database" suggests integration of historical operational data.

- **Section (b)**: Highlights calibration using "global wind speed correction factors," implying adjustments to raw wind speed data for consistency or accuracy.

- **Section (c)**: Validates simulations against three benchmarks:

1. Park-level turbine data (high-resolution, localized).

2. National turbine data (broader spatial coverage).

3. National statistical data (macro-level trends).

- **Section (d)**: Derives **national correction factors** from validation results, which are then aggregated into a **global correction factor raster** (spatial representation of corrections).

### Key Observations

1. **Hierarchical Validation**: Validation progresses from localized (park-level) to national scales, ensuring robustness.

2. **Data Integration**: Combines direct measurements (wind speed), operational data (turbine generation), and existing databases for comprehensive analysis.

3. **Output Structure**: The final "global correction factor raster" suggests a spatially explicit model for applying corrections across regions.

### Interpretation

This workflow underscores a systematic approach to improving wind energy simulations by:

- **Calibrating raw data** (Section b) to address measurement biases or inconsistencies.

- **Validating simulations** against multiple data sources (Section c) to ensure reliability at different scales.

- **Deriving correction factors** (Section d) to refine models for national and global applications.

The flowchart implies that corrections are data-driven and iterative, with validation at multiple scales reducing uncertainty. The "global correction factor raster" likely serves as a tool for spatial analysis, enabling region-specific adjustments in wind energy forecasting or resource assessment.

No numerical values or trends are explicitly provided in the diagram, but the structure emphasizes methodological rigor and scalability.

</details>

## Data Acquisition, Classification and Processing

In the following, we will address the acquisition, classification, and processing of data crucial for the validation and enhancement of our wind energy simulation workflow. The data sources encompass global wind speed measurements, wind turbine power generation records, information on existing windfarms, and historical national wind electricity power generation data. Each dataset plays a distinct role in refining our simulation model, either through correction or validation processes.

## Wind speed measurement data

To initiate the study, we collected 18.3 million hourly, mostly openly available recordings from 1980 to 2022 of wind speeds from meteorological masts worldwide, ranging in height from 40 m to 160 m at 210 locations in 25 countries. These recordings are utilized to derive a wind speed correction. Measurements from masts at ground level (10m) have not been included since relevant wind speed heights for turbine simulations are around 100m and large projection distances entail additional sources of error [25]. Utilizing quality control information provided

together with the measured wind speeds, we filtered out erroneous measurements, e.g. no valid recording, negatives, duplicated values, etc. For further processing, we resampled the measured wind speeds and those from ERA5 to hourly values, standardized them to UTC time, and saved their geolocations as well as the measurement heights respectively.

## Wind turbine electricity power generation data

A total of 8 million hourly recordings of turbine electricity generation from 152 onshore and offshore wind turbines and wind farms from 2002 to 2021 from 6 countries globally were collected from various data sources and will be employed in a validation of our wind turbine simulation workflow. Harmonizing this data involved a process analogous to the wind speeds procedure and involved converting the power output time series to a capacity factor time series by dividing the measured power with the nominal capacity. Furthermore, in the case of wind farm data, the reported electricity output was converted into a capacity factor time series by dividing by the total park capacity.

As a quality control measure, we applied an algorithm to filter out out-of-normal operations such as curtailment, maintenance, or other irregularities from the gathered data to avoid distorting the validation results. For this, we simulated the capacity factors of the respective turbines (see Supplementary material 1.6) to first exclude observation periods in which the measured capacity factor was zero while the simulated capacity factor was greater than 0.4 to account for erroneous measurements. Second, we filtered observation periods in which the measured capacity factor exhibited zero for longer than a day to capture maintenance. Lastly, we filtered values where the measured capacity factor does not change for a minimum of 5 hours, while the difference between the measured and simulated capacity factor is greater than 0.1 to filter out curtailment lasting longer than 5 hours.

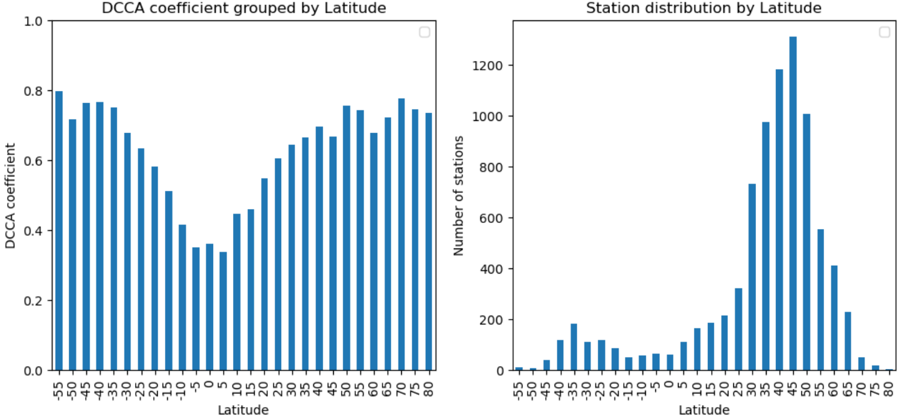

## Database of existing wind farms

Additionally, we acquired a database on existing wind farms containing data on 26,900 wind farm locations worldwide as well as databases on turbine models and power-curves [23] to simulate the existing wind fleet stock and derive national correction factors. The databases include, for instance, information on geolocation, capacity, number of turbines, hub height, turbine model, commissioning, and decommissioning dates until July 2022. It furthermore includes a turbine model database with data on the manufacturer, rated power, rotor diameter, market introduction, and minimum and maximum available hub heights of turbine models. To harmonize and check these databases, preprocessing, void-filling to estimate missing values and data filtering steps are performed as outlined in Supplementary Figure 6. Furthermore, for some entries, erroneous data was identified by manual examination. The manual examination for example involved countries with few wind farms where the capacity and capacity development of the entries in the wind farms database differed substantially from the capacity reported by the IEA Renewable Energy Progress Tracker [26]. If found to be erroneous, data on location, capacity and commissioning dates were manually corrected using additional sources such as reports, OpenStreetMap and satellite data, if possible (s. Supplementary material 1.5 for more details).

Finally, we removed locations with turbine capacities lower than 1 MW, as such turbines are comparably old and typically exhibit very low hub heights, leading to unrealistic simulation outcomes in ETHOS.RESKitWind , which is specifically designed for potential assessments of future energy systems. This arises from the substantial downscaling distance required from the 100 m ERA5 wind speed height to the turbine hub-height, introducing inherent

uncertainties in the wind-speed values. In this context, such wind turbines with small hubheights and low capacity are anticipated to have a marginal impact on the total power generation of a country due to their small capacity, justifying their exclusion from the analysis.

## Country-level statistics and time series data

We obtained annual wind power generation and capacity data from 2017 to 2021 for 71 countries and offshore regions from the IEA Renewable Energy Progress Tracker [26] as a basis for calculating national capacity factors to derive national correction factors for our simulation workflow. Data prior to 2017 has not been included as there was limited global installation of wind capacity in those years and average electricity yields are distorted by a high proportion of older, smaller turbine models. To avoid distortions in capacity factors due to capacity additions during a year, a capacity-weighted capacity factor considering monthly or even daily capacity additions was derived. This sub-annual factor was based on commissioning dates from the employed wind farm database and an extensive manual search to correct and complement the database as well as the IEA data (see Supplementary material 1.5). In Equation ( 1 ), index i denotes the respective wind farm, while op\_hours is the number of hours the wind farm was operational in the respective year based on the commissioning date, and IEA and WD (wind farm database) indicate the data source.

$$c f _ { c o u n t r y , y e a r } ^ { I E A , w e i g h t e d } = \frac { g e n _ { c o u n t r y , y e a r } ^ { I E A } } { c a p _ { c o u n t r y , y e a r } ^ { I E A } } * \frac { 1 } { \underbrace { \sum _ { i , c o u n t r y ( o p h o u r s _ { i , c o u n t r y } ^ { W D } * c a p _ { i , c o u n t r y } ^ { W D } ) } } } \\ \quad c a p _ { c o u n t r y , y e a r } ^ { W D }$$

The weighted capacity factor is especially necessary for countries with limited wind turbine capacities or a large share of commissioned capacity within a year as small deviations in the data have a large impact on the reliability of the calculated capacity factor and therefore the validation results.

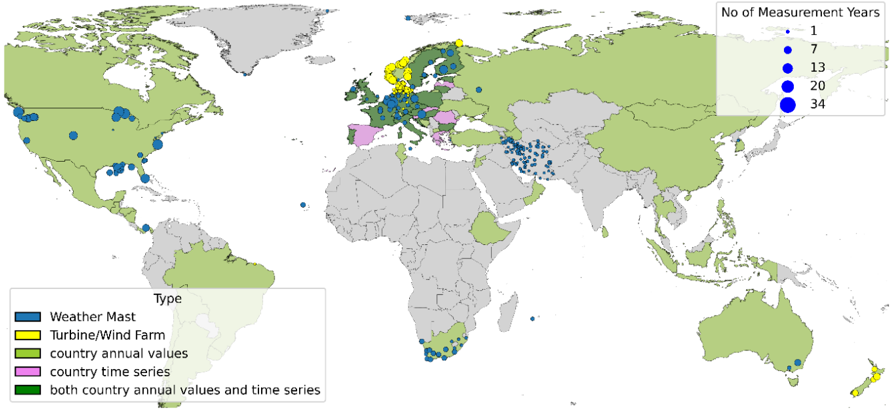

In summary, Figure 7 shows the type and locations of the real-world data that were considered within this study. Statistical country values are available for various countries across the globe with data gaps predominantly in Africa, South America and South Asia. Weather mast measurements are available mainly from the USA, Europe, South-Africa and Iran while wind farm measurements are limited to the North-Sea area.

Figure 7: Spatial overview of location and type of real-world data considered within this study. Data on specific locations, such as measurement data of weather masts (yellow) and wind turbines or wind farms (blue) are shown as circles. Data on country level such as annual country capacity factors (light green), aggregated country time series (pink) or both (dark green) are indicated by coloring the country respectively.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## World Map: Distribution of Measurement Data Types and Temporal Coverage

### Overview

The image is a world map with colored dots representing different types of environmental measurement data and their temporal coverage. The map uses a color-coded legend to distinguish between data types (Weather Mast, Turbine/Wind Farm, country annual values, country time series, and both) and a scale to indicate the number of measurement years (1, 7, 13, 34). The spatial distribution of these data points highlights regional patterns in data collection.

### Components/Axes

- **Legend (Left Side)**:

- **Blue**: Weather Mast

- **Yellow**: Turbine/Wind Farm

- **Green**: Country annual values

- **Pink**: Country time series

- **Dark Green**: Both country annual values and time series

- **Scale (Right Side)**:

- **Dot Size**: Number of measurement years (1, 7, 13, 34)

- **Color**: Data type (as per legend)

- **Map**:

- **Countries**: Colored based on data type (green for "country annual values," pink for "country time series," etc.)

- **Dots**: Placed over countries to indicate specific measurement locations (e.g., Weather Masts, Turbine/Wind Farms).

### Detailed Analysis

- **Weather Mast (Blue)**:

- Concentrated in North America (e.g., United States, Canada), Europe (e.g., Germany, France), and parts of Asia (e.g., China, India).

- Dot sizes vary: 1–34 measurement years, with larger dots (34 years) in Europe and North America.

- **Turbine/Wind Farm (Yellow)**:

- Dominant in Europe (e.g., Germany, Denmark, Spain) and Australia.

- Dot sizes: 1–34 years, with larger dots in Europe.

- **Country Annual Values (Green)**:

- Widespread across all continents, with higher density in Europe, North America, and Asia.

- Dot sizes: 1–34 years, with larger dots in Europe and North America.

- **Country Time Series (Pink)**:

- Limited to Europe (e.g., Germany, France) and parts of Asia (e.g., China).

- Dot sizes: 1–34 years, with larger dots in Europe.

- **Both Country Annual Values and Time Series (Dark Green)**:

- Rare, with only a few dots in Europe (e.g., Germany, France) and North America (e.g., United States).

### Key Observations

1. **Europe Dominates Wind Energy Data**:

- Yellow (Turbine/Wind Farm) and pink (country time series) dots are most concentrated in Europe, suggesting a focus on renewable energy infrastructure and long-term data collection.

2. **North America Has Mixed Data Types**:

- Blue (Weather Mast) and green (country annual values) dots are prevalent, indicating a balance between weather monitoring and annual environmental data.

3. **Temporal Coverage**:

- Larger dots (34 years) are primarily in Europe and North America, reflecting longer-term data collection efforts.

4. **Sparse Data in Africa and South America**:

- Fewer dots overall, with smaller sizes (1–7 years), suggesting limited measurement infrastructure or shorter data collection periods.

### Interpretation

The map reveals a clear regional disparity in environmental data collection. Europe’s dominance in wind energy (yellow dots) and time series data (pink) aligns with its leadership in renewable energy policies. North America’s mix of Weather Masts (blue) and annual values (green) highlights a focus on both real-time weather monitoring and aggregated environmental metrics. The scarcity of data in Africa and South America (smaller dots, fewer years) may indicate underdeveloped infrastructure or prioritization of other data types. The presence of "both" data types (dark green) in Europe and North America suggests integrated approaches to environmental monitoring, combining historical trends with real-time measurements. This spatial and temporal distribution underscores the importance of regional policies and resource allocation in shaping environmental data ecosystems.

</details>

## Calibration and cross-validation of estimated wind speeds from reanalysis weather data

We used the hourly measured wind speeds from meteorological masts to employ a calibration and cross-validation of the reanalysis wind speeds from ERA5 to correct for mean errors and overall under- or overestimations in the wind speed values reported by several publications [15,17,18,27]. For the calibration and cross-validation we focused on wind speeds above 2 m/s due to the operational range of wind turbines [19,28] and measurement heights between 40 and 160 m, resulting in 8.4 million hourly measurements. In a first step, we extracted the wind speeds processed within ETHOS.RESKitWind for the same locations, heights, and time periods of the weather masts without applying wake losses or any other correction factors (see Supplementary material 1.6 for a detailed description). In a second step, wind speeds were binned in 0.1 m/s categories and a proportional regression per bin was used to fit processed and measured wind speeds. Alternative regressors were tested but discarded as our tests indicated signs of overfitting or worse performance (see Supplementary material 1.7.1).

The applied proportional regression function is given in Equation ( 2 ) and is defined by a scaling factor 'a' per wind speed bin. The proportional regressor underwent fitting and validation through k-fold cross-validation. For this the data was split into 210 folds, with each fold corresponding to a mast, with the goal of assigning equal weight to each mast. The scikitlearn Python library [29] was utilized for performing the k-fold split. The choice of k-fold crossvalidation is motivated by its suitability for our methodology, considering that other approaches such as the leave-one-out approach proved computationally intensive, and a rolling crossvalidation performed worse than the k-fold cross-validation during initial testing.

The cross-validation procedure results in 210 fitted regressors, subsequently averaged into a single regressor from which a single scaling factor 'a' per wind speed bin is extracted. These

factors were then used to correct the wind speeds within the ETHOS.RESKitWind according to Equation ( 2 ) ,

$$\begin{array} { r l } { W S _ { c o r r } = a ( w s _ { r a w } ) * w s _ { r a w } } \end{array}$$

where 𝑤𝑠𝑐𝑜𝑟𝑟 represents the corrected wind speed, and 𝑤𝑠𝑟𝑎𝑤 denotes the uncalibrated modeled wind speed. This unified calibration aims to rectify any general under- or overestimation present in the data. The resulting wind speed dependent scaling factors can be found in the Supplementary material. This wind speed correction is applied to every location simulated within ETHOS.RESKitWind.

To assess the quality of the regressor, we utilized a scoring function, using the mean error (ME) to account both for general deviation as well as over- or underestimation of wind speeds and capacity factors. We computed various metrics common in the literature [16,18,27,30,31] to assess the quality of the cross-validation procedure and evaluate our results. To assess the temporal correlation between measured and simulated time series, we evaluated the Pearson correlation and the detrended cross-correlation analysis (DCCA) coefficient. In addition, we used the Perkins' skill score (PSS), a probability density function, to evaluate the normal distribution. Moreover, we proposed a new probability density function called synthetic power curve score SPCS, based on the PSS from 0 to 1, where 1 represents an exact match, with the difference that it uses the cumulative minimum capacity factor distribution of two power curves, taking as reference the power curve of the manufacturer. The SPCS is described in Equation ( 3 ) where ws is the wind speed in each location at hub height, Capacity factor is the respective capacity factor distribution corresponding to wind speed ws for the manufacturer's and the synthetic power curve respectively.

$$S P C S = \sum _ { 0 } ^ { w s } m i n ( C a p a c i t y f a c t o r _ { m a n u f , w s } , C a p a c i t y f a c t o r _ { s y n t h , w s } )$$

Further analysis involves evaluating diurnal and seasonal mean errors in simulated wind speeds and reanalysis data. Results are given in Supplementary material 1.7 as the main focus of this study is the ETHOS.RESKitWind simulation workflow.

## Comparison with time-resolved wind turbine power generation data

Next, we validated the employed ETHOS.RESKitWind simulation workflow by comparing it to processed hourly measured turbine power generation data. First, ETHOS.RESKitWind was utilized to simulate the wind turbine power generation time-series for the measured time spans of each real turbine considering their specific hub heights, rotor diameters, and real power curves, if available. Where real power curves were not available, RESKit was used to generate turbine-specific synthetic power curves. Simulations were executed both with and without applied wind speed correction to assess the potential improvements in the simulation workflow. Furthermore, wind speed losses due to wake effects are considered using the wind efficiency curve (' dena-mean ') from windpowerlib [32]. These wake losses were also considered for all turbine simulations in the results. Subsequently, we assessed the difference in results using various metrics, including root mean square error, DCCA coefficient, and relative mean error. These assessments occurred at the location level. Aggregated assessments are calculated by weighing each location equally when calculating metrics.

## Comparison with country-level statistical data

As the regional coverage of the available time-resolved wind turbine power generation data is limited, and our workflow is intended for global use, we first further validated and subsequently calibrated our model by comparing it against annual turbine and wind farm level power generation output and country-level annual capacity factors from the IEA [26]. The years 2017 to 2021 were used since previous years saw only limited growth of wind capacity.

Annual turbine and wind farm level power generation output from turbines in the United States, Denmark and the United Kingdom were used as they are publicly available. For each location, the average reported capacity factor was calculated using capacity and power generation. Afterwards, the locations were simulated within ETHOS.RESKitWind and the simulated capacity factor was compared with the reported capacity factor.

The filtered wind turbine database, with missing data filled in was first used to simulate countrylevel capacity factors by applying the method described. Second, the database was used to derive IEA-based country-level capacity factors by accounting for intra-annual capacity additions. Extensive data checks have with the following data exclusion rules have been applied: If less than 75% of the official IEA capacity is reported in the wind farm database, the corresponding year was discarded, as this indicates that the wind farm database is incomplete for that year. Omitting this year would potentially lead to large discrepancies, as a different wind fleet would be simulated compared to the one that existed in that year. Additionally, we excluded years in which the country's IEA capacity was less than or equal to 3 MW, as such a low capacity suggests a limited number of plants, where small errors in the input data could result in significant deviations in the simulated country's capacity factor. Furthermore, we discarded a country or the respective year of that country if too many entries in the wind farm database are deemed erroneous. Therefore, the number of considered years varies for each country. The list of the final countries and years considered can be found in Supplementary material 1.8. Finally, we validated the performance of our simulation workflow on a global scale by comparing the resulting, simulated annual country-level capacity factors against IEA-based country-level capacity factors by calculating the average deviation in capacity factors for every year.

Furthermore, to be able to correct our simulation workflow towards official country statistics, we derived additional correction factors, which can be optionally applied in ETHOS.RESKitWind . For this, we calculated a capacity-factor correction factor for every country, representing the average deviation in capacity factors between the IEA-based country-level capacity factors and our simulated country-level capacity factors over the years 2017 to 2021 according to Euqation ( 4 ):

$$I E A$$

$$f _ { c o u n t r y } ^ { c o r r } = m e a n \left ( \frac { c f _ { c o u n t r y , y e a r } ^ { I E A } } { c f _ { c o u n t r y , y e a r } ^ { R E S K i t } } \right ) .$$

This inverse average deviation served as a country correction factor ( 𝒇𝒄𝒐𝒖𝒏𝒕𝒓𝒚 𝒄𝒐𝒓𝒓 ) implemented in ETHOS.RESKitWind to correct the electricity output. To avoid capacity factors above 1 and retain load peaks, the electricity output was corrected by adjusting the processed wind speed instead of directly correcting the simulated capacity factor. This wind speed adjusting is performed iteratively until the capacity factors match with a tolerance of 1%.

Not all countries worldwide can be covered with this approach as only a limited number of countries have installed relevant wind farm capacities. We assume that the observed

deviations mostly stem from regional mean errors from which neighboring countries are also affected. Therefore, we derive a global raster of correction factors, enabling the application of global correction at any point in the world. The global raster is created by assigning every wind farm location used in this study to the respective country correction factor value and applying a global spatial interpolation over these locations. This way, existing regional mean errors are also corrected in countries without any current wind farm capacities.

## Declaration of Generative AI and AI-assisted technologies in the writing process

During the preparation of this work the authors additionally used ChatGPT, Grammarly and DeepL-Write to improve language. No content was created by AI. After using these tools, the authors reviewed and edited the content as needed and take full responsibility for the content of the publication.

## Data and code availability

The model is freely available on the institute's GitHub page (https://github.com/FZJ-IEK3VSA/RESKit). The scripts necessary to reproduce the country-level comparisons are also included. Input data must be obtained by addressing the corresponding sources for different classes of data in question. The authors are not allowed to share this data (see Table 6).

## Acknowledgments

A major part of this work has been carried out within the framework of the H2 Atlas-Africa project (03EW0001) funded by the German Federal Ministry of Education and Research (BMBF).

Part of this work has been carried out within the framework of the HyUSPRe project which has received funding from the Fuel Cells and Hydrogen 2 Joint Undertaking (now Clean Hydrogen Partnership) under grant agreement No 101006632. This Joint Undertaking receives support from the European Union's Horizon 2020 research and innovation programme, Hydrogen Europe and Hydrogen Europe Research.

This work was partly funded by the European Union (ERC, MATERIALIZE, 101076649). Views and opinions expressed are, however, those of the authors only and do not necessarily reflect those of European Union or the European Research Council Executive Agency. Neither the European Union nor the granting authority can be held responsible for them.

This work was supported by the Helmholtz Association under the program "Energy System Design".

Open Access Publications funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) - 491111487.

## Acknowledgments to data providers

We acknowledge the following people and organizations for providing dataset used in this study. We are grateful for their contribution to this work.

Henning Weisbarth - Denker & Wulf AG

## Author Contributions

Conceptualization: EUPS, PD, CW, HH; methodology: EUPS, PD, FP, CW, HH; software: EUPS, PD, CW; validation: EUPS, PD, FP, CW; formal analysis: EUPS, PD, CW; investigation: EUPS, PD, CW, HH, JW, RM, SD, SC; data curation: all named authors; writing - original draft: EUPS, PD, HH; writing - review and editing: EUPS, PD, HH, TK, JW, JL; visualization: EUPS, PD, HH; supervision: HH, JL, and DS; project administration: HH; funding acquisition: HH. All authors have read and agreed to the published version of the manuscript.

## Declaration of Competing Interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

## References

- [1] IRENA IREA. Future of wind: Deployment, investment, technology, grid integration and socio-economic aspects (A Global Energy Transformation paper). Abu Dhabi: International Renewable Energy Agency (IRENA); 2019.

- [2] bp. Statistical Review of World Energy. 2022.

- [3] IEA. Renewable power generation by technology in the Net Zero Scenario, 2010-2030. Renewable Power Generation by Technology in the Net Zero Scenario, 2010-2030 2022. https://www.iea.org/data-and-statistics/charts/renewable-power-generation-bytechnology-in-the-net-zero-scenario-2010-2030 (accessed March 8, 2023).

- [4] Schöb T, Kullmann F, Linßen J, Stolten D. The role of hydrogen for a greenhouse gasneutral Germany by 2045. International Journal of Hydrogen Energy 2023;48:3912437. https://doi.org/10.1016/j.ijhydene.2023.05.007.