2501.08603v3

Model: nemotron-free

## Monte Carlo Tree Search for Comprehensive Exploration in LLM-Based Automatic Heuristic Design

Zhi Zheng 1 Zhuoliang Xie 2 Zhenkun Wang 2 Bryan Hooi 1

MCTS advantages

MCTS advantages

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: LLM-Based Adaptive Heuristic Discovery (AHD) Framework

### Overview

The image presents two comparative diagrams illustrating heuristic function evolution and optimization in LLM-based AHD systems. Diagram (a) focuses on population dynamics, while diagram (b) details tree-based heuristic search mechanisms. Both use performance-feature tradeoff visualizations with distinct evolutionary strategies.

### Components/Axes

#### Diagram (a): Populations in LLM-Based AHD

- **Axes**:

- X-axis: "Feature of heuristic functions" (categorical, no scale)

- Y-axis: "Performance" (continuous, no numerical scale)

- **Legends**:

- Green circles: "Heuristic functions in the original elite population"

- White circles: "Newly generated heuristic functions"

- Red Xs: "Discarded heuristic functions"

- **Key Elements**:

- Arrows labeled "Population Update" showing directional flow

- Horizontal red line labeled "Performance threshold"

#### Diagram (b): Tree Node Heuristic Functions

- **Axes**:

- X-axis: "Feature of heuristic functions" (categorical, no scale)

- Y-axis: "Performance" (continuous, no numerical scale)

- **Legends**:

- Green circles: "Better-performing tree nodes of heuristic functions"

- Stars: "New MCTS expansions"

- **Key Elements**:

- Tree structure with nodes labeled 1–4

- Arrows labeled "Iterations of Tree Search"

- Dashed lines labeled "Existing MCTS Edges"

### Detailed Analysis

#### Diagram (a)

- **Original vs. New Functions**:

- Green circles (original elite population) cluster in the mid-to-high performance range.

- White circles (newly generated functions) show mixed performance, with some overlapping the original cluster and others near the threshold.

- **Discarded Functions**:

- Red Xs appear below the performance threshold, indicating elimination during updates.

- **Trend**:

- Population updates shift heuristic functions toward higher performance, with discarding of underperforming variants.

#### Diagram (b)

- **Tree Search Process**:

- Nodes 1–4 represent heuristic functions at different search iterations.

- Node 4 (final iteration) shows improved performance compared to earlier nodes.

- **MCTS Expansions**:

- Stars indicate new Monte Carlo Tree Search (MCTS) expansions, correlating with performance gains.

- **Trend**:

- Iterative tree search refines heuristic functions, with later nodes achieving higher performance.

### Key Observations

1. **Performance Thresholding**: Diagram (a) explicitly shows a cutoff for discarding low-performing functions, while diagram (b) implies optimization through iterative search.

2. **Evolutionary Mechanisms**:

- Diagram (a) emphasizes population-based selection (elitism + mutation).

- Diagram (b) highlights tree-based exploration (MCTS) for heuristic refinement.

3. **Visual Ambiguity**:

- No numerical performance values are provided, making quantitative analysis impossible.

- The "Feature of heuristic functions" axis lacks granularity (e.g., no feature dimensions like computational cost or accuracy).

### Interpretation

The diagrams illustrate two complementary strategies for heuristic optimization in LLM-based AHD:

1. **Population Dynamics (a)**:

- Combines elitism (preserving top performers) with mutation (new functions) and culling (discarding poor performers).

- The red threshold suggests a performance floor for survival, akin to survival-of-the-fittest in evolutionary algorithms.

2. **Tree Search (b)**:

- Uses MCTS to explore heuristic function space, with expansions (stars) enabling deeper optimization.

- Node progression (1→4) implies iterative refinement, where later iterations leverage prior knowledge (existing edges).

**Notable Insights**:

- The absence of numerical performance metrics limits direct comparison between strategies.

- The red threshold in (a) and star-based performance in (b) suggest hybrid approaches could combine population-based selection with tree-guided exploration for robust AHD.

- The diagrams emphasize *relative* performance improvements rather than absolute values, focusing on evolutionary trajectories over static benchmarks.

</details>

Feature of heuristic functions

Feature of heuristic functions

The general adoptted population in exsiting LLM-based AHD methods will discard emporarily underperforming codes. MCTS allows (b) MCTS for higher-quality LLM-based AHD (Ours)

MCTS

developing based on temporarily underperforming codes which can help to jump out of local optima.

The general adoptted population in exsiting LLM-based AHD methods will discard emporarily underperforming codes. MCTS allows

MCTS

developing based on temporarily underperforming codes which can help to jump out of local optima.

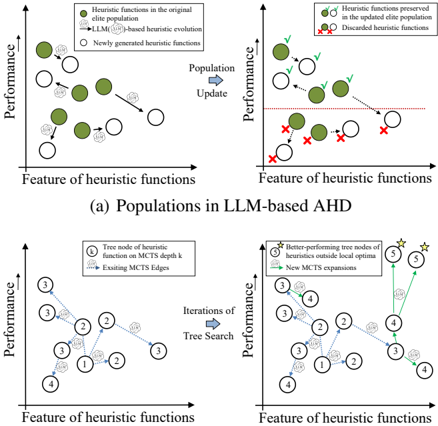

© Copyright National University of Singapore. All Rights Reserved. Population is difficult to maintain diversity and MCTS can explore a wider heuristic func © Copyright National University of Singapore. All Rights Reserved. Population is difficult to maintain diversity and MCTS can explore a wider heuristic func Figure 1. The generally adopted population (a) in existing LLMbased AHD methods (Liu et al., 2024b; Ye et al., 2024a) directly discards low-performance heuristics (under the red dashed line in (a)), thus falling into local optima. MCTS provides chances to develop low-performance heuristics, so it can more comprehensively explore the space of heuristic functions with different features.

## Abstract

Handcrafting heuristics for solving complex optimization tasks (e.g., route planning and task allocation) is a common practice but requires extensive domain knowledge. Recently, Large Language Model (LLM)-based automatic heuristic design (AHD) methods have shown promise in generating high-quality heuristics without manual interventions. Existing LLM-based AHD methods employ a population to maintain a fixed number of top-performing LLM-generated heuristics and introduce evolutionary computation (EC) to iteratively enhance the population. However, these population-based procedures cannot fully develop the potential of each heuristic and are prone to converge into local optima. To more comprehensively explore the space of heuristics, this paper proposes to use Monte Carlo Tree Search (MCTS) for LLM-based heuristic evolution. The proposed MCTS-AHD method organizes all LLMgenerated heuristics in a tree structure and can better develop the potential of temporarily underperforming heuristics. In experiments, MCTS-AHD delivers significantly higher-quality heuristics on various complex tasks. Our code is available 3 .

## 1. Introduction

Manually designed heuristics are promising in addressing complex optimization tasks (e.g., combinatorial optimization (CO) problems) (Desale et al., 2015). They are widely used in real-world applications, such as traffic control (He et al., 2011), job scheduling (Rajendran, 1993), and robotics (Tan et al., 2021). However, manually crafted heuristics often contain intricate workflows and parameter settings, making their design labor-intensive and reliant on task-specific expert knowledge. To achieve easier heuristic design across various tasks, the concept of Automatic Heuristic Design (AHD) (Burke et al., 2013) (also known as Hyper-Heuristics (Ye et al., 2024a)) has attracted extensive attention. It seeks the best-performing heuristic algorithm among valid options. Genetic Programming (GP) (Langdon & Poli, 2013) is commonly employed for AHD, with GP-based AHD methods introducing a series of mutation operators to gradually refine heuristic algorithms (Duflo et al., 2019). Nevertheless, the effectiveness of GP-based methods still relies on human definitions of permissible operators (Liu et al., 2024b), which poses additional implementation difficulties.

1 School of Computing, National University of Singapore, Singapore 2 School of System Design and Intelligent Manufacturing, Southern University of Science and Technology, Shenzhen, China. Correspondence to: Bryan Hooi < bhooi@comp.nus.edu.sg > .

3 Our code is available at https://github.com/ zz1358m/MCTS-AHD-master/tree/main .

In recent years, large language models (LLMs) have shown exceptional effectiveness in various domains (Hadi et al., 2023; 2024; Naveed et al., 2023). Leveraging LLMs to automatically enhance heuristics, LLM-based AHD methods (Liu et al., 2024c; Yu & Liu, 2024) can design high-quality

heuristics for various complex tasks without manual interventions. EoH (Liu et al., 2023; 2024b) and Funsearch (Romera-Paredes et al., 2024) innovatively apply LLMs to AHD by designing a population-based evolutionary computation (EC) procedure. For a given task, several established general frameworks may exist for implementing heuristics, e.g., greedy solving frameworks or frameworks with searchbased ideas for CO problems. LLM-based AHD methods focus on designing key heuristic functions within one of these predefined general frameworks, rather than developing heuristics from scratch. These methods maintain a population of outstanding heuristic functions based on their performances on an evaluation dataset and iteratively prompt LLMsto generate new heuristic functions taking the existing ones as starting points. Building on this population-based EC framework, studies also introduce effective components. EoH develops several prompt strategies that guide LLMs in generating effective heuristics. ReEvo (Ye et al., 2024a) incorporates the reflection mechanism (Shinn et al., 2024) to enhance the reasoning of LLMs among heuristic function samples. HSEvo (Dat et al., 2024) presents diversity metrics and harmony search (Shi et al., 2013) to increase the diversity of the population without compromising effectiveness.

These population-based methods eliminate inferior heuristic functions in order to focus more on top-performance ones. However, lower-performance heuristic functions still have the potential to be outstanding after steps of LLM-based refinement. Thus, as shown in Figure 1, the population structure may guide the evolution of heuristics getting stuck in suboptimal local optima. Funsearch and HSEvo employ multiple-population structures (Cant´ u-Paz et al., 1998) and diversity metrics, respectively, to improve the diversity of populations. Populations with these components can reduce the probability of premature convergence but still fail to explore the complex space of heuristics.

To address these drawbacks, this paper proposes MCTSAHD, the first tree search method for LLM-based AHD, which employs a tree structure over all the heuristic functions generated so far and uses the Monte Carlo Tree Search (MCTS) algorithm with progressive widening technique (Coulom, 2007) to guide the evolution of further heuristics. Its advantages are: 1) Instead of directly discarding inferior heuristic functions, MCTS-AHD enables steps of LLMbased evolution on temporarily underperforming heuristic functions while keeping focus on better-performing ones, achieving a more comprehensive exploration of the heuristic space. 2) The tree structure in MCTS can benefit the LLMbased heuristic refinement, where MCTS-AHD presents a novel tree-special prompt strategy to inspire LLMs with the organized function samples in the MCTS tree paths. Moreover, as components, MCTS-AHD proposes an explorationdecay technique, which linearly decreases the rate at which we perform branching in MCTS as the algorithm progresses.

We also present a novel thought-alignment procedure to generate precise linguistic descriptions of functions. In experiments, we implement MCTS-AHD to design heuristics with several general frameworks for a wide range of NPhard (Ausiello et al., 2012) CO problems and a Bayesian Optimization (BO)-related optimization task. MCTS-AHD achieves significantly higher-quality heuristics than handcrafted heuristics and existing LLM-based AHD methods.

## 2. Preliminary

## 2.1. Definition: AHD & LLM-based AHD

AHD: For a given task P (e.g., a CO problem), AHD methods search for the best-performing heuristic h ∗ within a heuristic space H as follows:

$$h ^ { * } = \underset { h \in H } { \arg \max } \, g ( h ) . \quad \quad ( 1 )$$

The heuristic space H contains all feasible heuristics for tasks P . Given a task P , heuristic h ∈ H is an algorithm mapping the set of task inputs (also called instances) I P into the corresponding set of solutions S P , i.e., h : I P → S P . For example, heuristics for an NP-hard CO task, Traveling Salesman Problem (TSP), map city coordinates (TSP instances) to travel tours (solutions). The function g ( · ) is a performance measure function for heuristics, g : H → R . For a CO task P minimizing an objective function f : S P → R , AHD methods estimate the performance function g by evaluating the heuristic h on a task-specific dataset D . Formally, we enumerate instances ins ∈ D ⊆ I P , obtain their solutions h ( ins ) by h , and calculate the expectation of the objective function values for the solutions as follows:

$$g ( h ) = \mathbb { E } _ { i n s \in D } [ - f ( h ( i n s ) ) ] , \quad ( 2 )$$

The heuristics in H belong to a wide range of general frameworks, and the optimal heuristic could be implemented with multiple frameworks. To focus more on critical heuristic designs within each framework, AHD methods typically predefine a general framework and design only a key heuristic function with specified inputs and outputs within this framework. For example, heuristics for TSP within a step-by-step construction framework sequentially build TSP tours one city after another. AHD methods within this framework for TSP will design a key heuristic function to select the next city based on the partial tour of previously visited cities. For simplicity, we still denote the function to be designed as h .

LLM-based AHD: LLM-based AHD introduces LLMs into the search process for the optimal heuristic function h ∗ (within a predefined framework). Existing LLM-based AHD methods (Liu et al., 2024b; Ye et al., 2024a) maintain a population of M heuristic functions { h 1 , . . . , h M } and employ EC to update the population iteratively. Imitating mutation or crossover operators in EC, LLM-based

LLM-base Actions

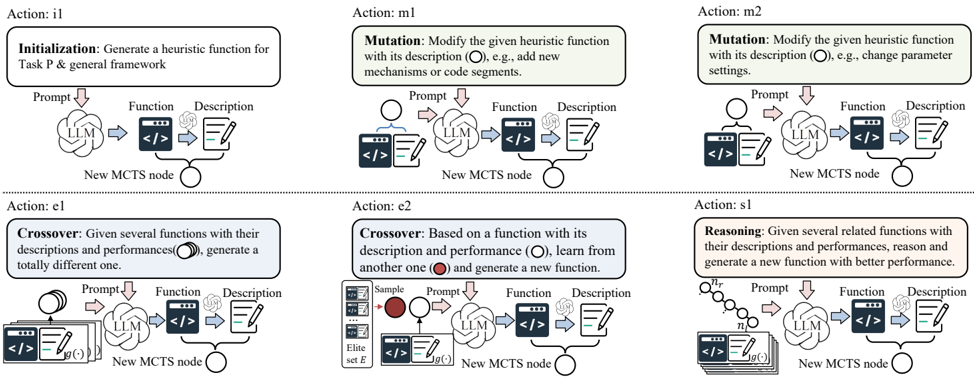

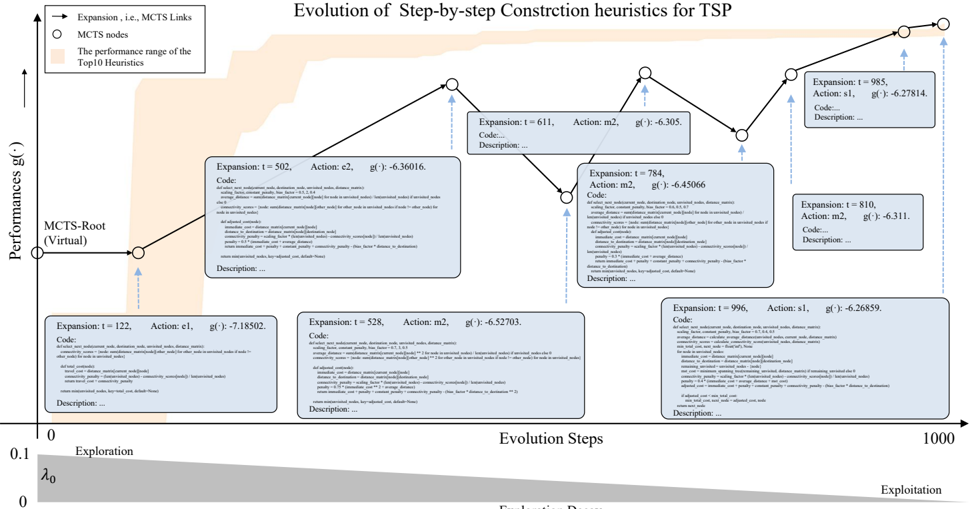

Figure 2. LLM-based actions in MCTS-AHD for heuristic evolution. Actions include initializing a new heuristic (i1); two mutation actions (m1 and m2) to mutate an existing heuristic function into a new one with diverse mechanism or detail settings; two crossover actions (e1 and e2) to generate a new heuristic from multiple existing ones; and a novel tree-path reasoning action (s1) to get a better heuristic function from organized function samples on an MCTS tree path from the root node n r to a leaf node n l .

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Process Flow for Heuristic Function Evolution with LLM and MCTS

### Overview

The diagram illustrates a six-step process for evolving heuristic functions using a Large Language Model (LLM) and Monte Carlo Tree Search (MCTS) nodes. It is structured as a grid with two rows and three columns, each cell representing a distinct action (i1, m1, m2, e1, e2, s1). Arrows indicate the flow of information between components: **Prompt**, **Function**, **Description**, and **New MCTS Node**.

---

### Components/Axes

1. **Actions**:

- **i1 (Initialization)**: Generate a heuristic function for Task P & general framework.

- **m1 (Mutation)**: Modify the heuristic function by adding new mechanisms/code segments.

- **m2 (Mutation)**: Modify the heuristic function by changing parameter settings.

- **e1 (Crossover)**: Combine multiple functions to generate a totally new one.

- **e2 (Crossover)**: Learn from another function’s performance to generate a new one.

- **s1 (Reasoning)**: Generate a new function with better performance by analyzing related functions.

2. **Components**:

- **Prompt**: Input text guiding the LLM.

- **Function**: Code snippet (represented by `< / >` icons).

- **Description**: Textual explanation of the function.

- **New MCTS Node**: Output node representing an evolved heuristic function.

3. **Flow**:

- Arrows connect components sequentially: **Prompt → LLM → Function/Description → New MCTS Node**.

- Colors differentiate sections (e.g., green for initialization, blue for mutation, gray for crossover, red for reasoning).

---

### Detailed Analysis

1. **Action i1 (Initialization)**:

- **Process**: The LLM generates a heuristic function and its description based on a prompt.

- **Output**: A new MCTS node is created.

2. **Actions m1/m2 (Mutation)**:

- **m1**: Adds new mechanisms/code segments to an existing function.

- **m2**: Adjusts parameter settings of the function.

- Both use the LLM to refine the function and description before updating the MCTS node.

3. **Actions e1/e2 (Crossover)**:

- **e1**: Combines multiple functions (with descriptions and performances) into a novel one.

- **e2**: Samples an "elite set E" (highlighted in red) to learn from high-performing functions and generate a new one.

4. **Action s1 (Reasoning)**:

- Analyzes multiple related functions (with descriptions and performances) to reason and produce a better-performing function.

---

### Key Observations

- **Modular Design**: Each action operates independently but contributes to the same MCTS node evolution.

- **LLM as Mediator**: The LLM translates prompts into code/descriptions and refines functions across all actions.

- **Elite Set E**: Explicitly referenced in e2, suggesting a selection mechanism for high-performing functions.

- **Iterative Improvement**: The process emphasizes combining, mutating, and reasoning to enhance function performance.

---

### Interpretation

This diagram represents a hybrid approach to heuristic function evolution, blending genetic algorithms (mutation, crossover) with LLM-driven reasoning. The LLM acts as a bridge between human-readable prompts and executable code, while MCTS nodes represent the evolving solutions. The use of an "elite set" in e2 implies a Pareto optimization strategy, prioritizing functions with superior performance metrics. The structured flow ensures systematic exploration of the solution space, balancing creativity (crossover) with refinement (mutation, reasoning). The absence of numerical data suggests the focus is on procedural logic rather than quantitative analysis.

</details>

© Copyright National University of Singapore. All Rights Reserved. AHD methods prompt LLMs to generate heuristics given existing heuristics from the population. In these methods, a heuristic h will only be retained for the next iteration if its performance g ( h ) estimated in the evaluation dataset D exceeds the worst-performing heuristic in the original population, i.e., g ( h ) > min i ∈{ 1 ,...,M } g ( h i ) . This property makes it difficult for population-based methods to adopt a worse-before-better strategy for the optimal heuristic h ∗ .

## 2.2. Monte Carlo Tree Search

MCTS Algorithm. MCTS ( ´ Swiechowski et al., 2023) is a decision-making algorithm widely used in games (Silver et al., 2016) and complex decision-making tasks (Fu et al., 2021). Recent studies also verify the power of MCTS in assisting LLMs to conduct multi-hop reasoning (Feng et al., 2023). In the MCTS tree, each node n c represents a state, and the MCTS will repeatedly select and expand the node with the highest potential judged by a UCT algorithm (Kocsis & Szepesv´ ari, 2006). Each MCTS tree node n c records a quality value Q ( n c ) and a visit count N ( n c ) representing the number of times the node has been selected. From an initial root node n r , MCTS gradually builds the MCTS tree to explore the entire state space. Each round of MCTS consists of four stages as follows:

Selection : The selection stage identifies the most potential MCTS tree node for subsequent node expansions. From the root node n r , the selection stage iteratively selects the child node with the largest UCT value until it reaches a leaf node. Given a current node n c , the UCT values for its child nodes c ∈ Children ( n c ) are calculated as follows:

$$U C T ( c ) = \left ( Q ( c ) + \lambda \cdot \sqrt { \frac { \ln ( N ( n _ { c } ) + 1 ) } { N ( c ) } } \right ) . \quad ( 3 )$$

Expansion : The expansion stage obtains multiple child nodes from the current (leaf) node n c by sampling several actions from the state of n c among all possible options.

Simulation : The simulation stage evaluates all newly expanded leaf nodes for their quality value Q ( · ) .

Backpropagation : The backpropagation process uses the results of simulations to update the values of Q ( · ) , N ( · ) for nodes on the tree path from n c to the root n r .

After several iterations of MCTS, the MCTS tree can contain nodes with high-quality states for games or tasks.

Progressive Widening. Conventional MCTS is not suitable for tasks with extensive action options or dynamically changing environments (Lee et al., 2020b). Progressive widening (Coulom, 2007) is a technique designed for MCTS to fit such tasks. It gradually adds new child nodes to non-leaf nodes as their visit counts N ( · ) increases. Formally, for node n c with child nodes children ( n c ) , a new child node will be added every time the following condition is satisfied:

$$\lfloor N ( n ) ^ { \alpha } \rfloor \geq | c h i l d r e n ( n _ { c } ) | ,$$

where | children ( n c ) | is the number of child nodes of n c .

## 3. MCTS-AHD

To address the challenges faced by population-based LLMbased AHD methods in overcoming local optima and explor-

MCTS settings

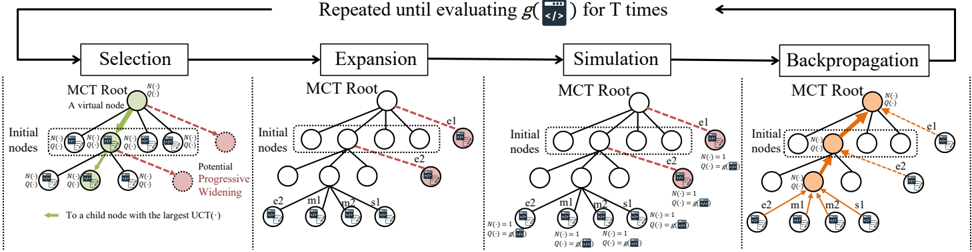

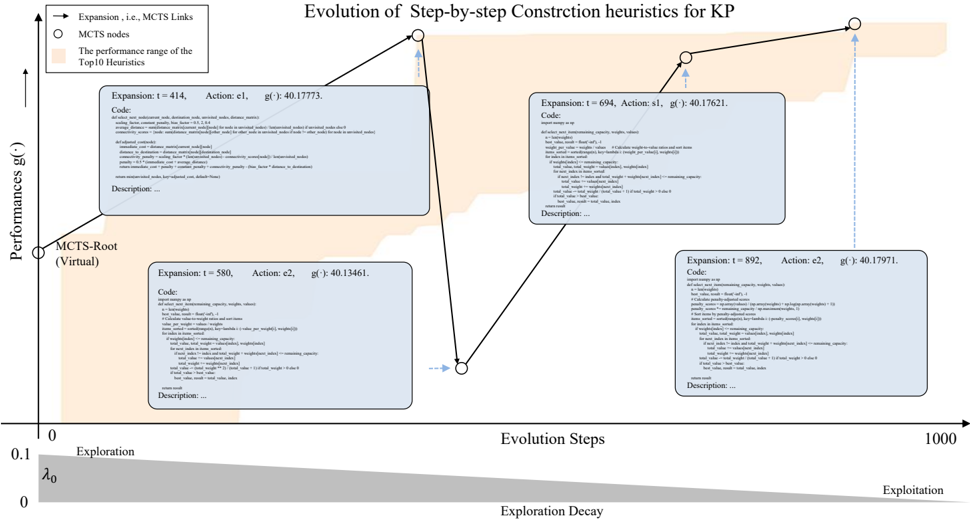

Figure 3. The MCTS process in MCTS-AHD contains four stages, i.e., selection , expansion , simulation , and backpropagation . MCTSAHD simulates the quality value of each node as the performance function values of their heuristics and the MCTS will terminate after total T performance evaluations. MCTS-AHD introduces the progressive widening technique to better crossover original heuristic functions with continuously generated new ones. It conducts the crossover actions e1 for the root node and action e2 for other nodes.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Flowchart: Monte Carlo Tree Search (MCTS) Algorithm Phases

### Overview

The diagram illustrates the four iterative phases of the Monte Carlo Tree Search (MCTS) algorithm: **Selection**, **Expansion**, **Simulation**, and **Backpropagation**. These phases repeat until a terminal condition (evaluating `g(·)` for `T` times) is met. The flowchart uses color-coded arrows and nodes to represent decision-making and state transitions.

---

### Components/Axes

1. **Nodes**:

- **MCT Root**: Central virtual node (green circle) acting as the starting point.

- **Initial Nodes**: Pre-existing child nodes (white circles) with `N(·)` and `Q(·)` values.

- **Potential Progressive Widening**: Red dashed circles indicating unexplored nodes.

- **New Nodes**: Labeled `e1`, `e2`, `m1`, `m2`, `s1` (expanded during Simulation/Backpropagation).

2. **Arrows**:

- **Green**: "To a child node with the largest UCT(·)" (Selection phase).

- **Red**: "Potential Progressive Widening" (Selection phase).

- **Orange**: "Backpropagation" updates (Backpropagation phase).

3. **Legend**:

- **Colors**:

- Green: Selection actions.

- Red: Unexplored nodes.

- Orange: Backpropagation updates.

- **Placement**: Bottom-left corner.

4. **Text Labels**:

- **Selection**: "A virtual node", "To a child node with the largest UCT(·)".

- **Expansion**: "e1", "e2" (new nodes added).

- **Simulation**: "N(·)=1", "Q(·)=g(·)" (evaluation metrics).

- **Backpropagation**: "N(·)=1", "Q(·)=g(·)" (updated values).

---

### Detailed Analysis

1. **Selection Phase**:

- The MCT Root node evaluates child nodes using the **UCT formula** (Upper Confidence Bound applied to Trees).

- Green arrows direct selection to the child node with the highest UCT value.

- Red dashed circles represent unexplored nodes ("Potential Progressive Widening").

2. **Expansion Phase**:

- New nodes (`e1`, `e2`) are added to the tree, expanding the search space.

- Dashed lines connect the MCT Root to these nodes, indicating potential future paths.

3. **Simulation Phase**:

- Nodes are evaluated using a heuristic or simulation (`g(·)` function).

- `N(·)` and `Q(·)` values are initialized (e.g., `N(·)=1`, `Q(·)=g(·)`).

4. **Backpropagation Phase**:

- Results from Simulation propagate upward via orange arrows.

- The MCT Root and intermediate nodes update their `N(·)` and `Q(·)` values based on simulation outcomes.

---

### Key Observations

- **Iterative Process**: The flowchart emphasizes repetition until `g(·)` is evaluated `T` times, suggesting a loop structure.

- **Node Prioritization**: UCT values guide exploration-exploitation trade-offs during Selection.

- **Color Coding**: Distinct colors (green, red, orange) visually separate phases and actions.

- **Dashed vs. Solid Lines**: Dashed lines denote potential nodes; solid lines represent confirmed paths.

---

### Interpretation

The diagram demonstrates how MCTS balances exploration (trying new nodes) and exploitation (leveraging known high-value nodes). The UCT formula ensures efficient search by prioritizing nodes with high potential. The use of `N(·)` (visit count) and `Q(·)` (action value) tracks node performance, while backpropagation refines these estimates iteratively. The repetition until `T` evaluations implies a bounded computational budget, critical for real-time applications like game AI. The color-coded arrows and nodes simplify understanding of the algorithm’s flow, making it accessible for technical documentation.

</details>

© Copyright National University of Singapore. All Rights Reserved. ing complex heuristic spaces, this paper proposes a novel method called MCTS-AHD. It preserves all heuristic functions LLMs generated so far in an MCTS tree and employs MCTS with progressive widening for heuristic evolution. The MCTS root node n r is a virtual node without representing any heuristics, and each of the other nodes represents an executable Python implementation of a heuristic function h ∈ H along with its linguistic description. As LLM-based actions for MCTS node expansion, MCTS-AHD prompts LLMs in existing strategies (e.g., mutation, crossover) and a novel tree-path reasoning strategy. Moreover, to encourage the exploration of the heuristic space H in the early stages of MCTS and ensure convergence in the later stages, MCTSAHD presents an exploration-decay technique that linearly reduces the exploration factor in UCT.

## 3.1. LLM-based actions in MCTS-AHD

To simulate the mutation or crossover operators in EC, LLMbased AHD methods prompt LLMs with several prompt strategies to generate new heuristic functions from existing ones (Liu et al., 2024b). Utilizing the advantage that the MCTS tree can record the relationships of all the generated heuristics, as shown in Figure 2, MCTS-AHD presents a novel MCTS-specific set of actions for LLM-based heuristic evolution, including action i1, e1, e2, m1, m2, and s1. The detailed prompts for these actions are in Appendix E.1. As a commonality, all prompts contain descriptions of the task P , the predefined general framework, and the inputs and outputs of the key heuristic function along with their meanings. For actions except i1, prompts also contain implementations and descriptions of existing heuristic functions. For each action, LLMs are supposed to output the Python code of a heuristic function and its linguistic description.

Initial Action i1 : As an action for initialization, the action i1 aims to directly generate a heuristic function and its corresponding description from scratch by LLMs.

Mutation Action m1 & m2 : MCTS-AHD incorporates two mutation actions, m1 & m2, to attempt more detailed designs within the original function workflow. Based on the inputted heuristic function, action m1 prompts LLMs to introduce new mechanisms and formulas, and action m2 prompts LLMs to modify the parameter settings.

Crossover Action e1 : To explore heuristic functions with new workflows, we employ a crossover action e1 to generate a new heuristic function that diverges from multiple existing ones. These existing heuristic functions are inputted to LLMs with their performances g ( · ) and descriptions.

Crossover Action e2 : The crossover action e2 prompts LLMs with a parent heuristic function, a reference heuristic function, and their respective performances. LLMs are supposed to identify the beneficial traits and settings in the reference one and craft an improved heuristic function based on the parent one. The reference is sampled from a dynamically maintained elite heuristic function set E consisting of heuristic functions with top 10 performances g ( · ) .

Tree-path Reasoning Action s1 : The MCTS tree paths from the root n r to leaf nodes record the evolution history of heuristic functions. So, utilizing this feature, MCTS-AHD presents a novel action s1 to analyze all unique heuristic functions from the leaf node to be developed n l up to the root n r , identify advantageous designs in these heuristics, and generate an enhanced heuristic function.

Generating Descriptions: The Thought-Alignment Process . Linguistic descriptions of heuristic functions can assist the reasoning of LLMs (Liu et al., 2024b), where EoH prompts LLMs for a description before outputting the function code. However, due to LLM hallucination (Huang et al., 2023), such a procedure could lead to uncorrelated codes and descriptions. For correlated and detailed descriptions, MCTS-AHD proposes a thought-alignment process that summarizes descriptions after the code generations. There-

fore, performing all the MCTS-AHD actions calls LLMs twice. In the first call, we adopt the LLM-generated Python implementation of a heuristic function with action prompts. Then, we conduct a thought-alignment process, prompting LLMs to generate linguistic code descriptions in up to three sentences (refer to Appendix E.2 for prompts). The second LLM call is significantly shorter than the first one, so it will not cause a severe increase in execution time and token cost.

## 3.2. MCTS settings

Figure 3 displays the MCTS process in MCTS-AHD. It first generates N I initial nodes representing and links them to a virtual root node n r that does not represent any heuristics. Subsequently, similar to the regular MCTS introduced in Section2.2, MCTS-AHD repeatedly performs the selection, expansion, simulation, and backpropagation stages as follows until the number of evaluations of heuristics approaches a limit T :

Selection: In the selection process of MCTS-AHD, MCTSAHD normalizes the quality value Q ( · ) to enhance the homogeneity of different tasks in calculating UCT value for child nodes c ∈ Children ( n c ) of a node n c as follows:

$$\begin{array} { r l } & { U C T ( c ) = \left ( \frac { Q ( c ) - q _ { \min } } { q _ { \max } - q _ { \min } } + \lambda \cdot \sqrt { \frac { \ln ( N ( n _ { c } ) + 1 ) } { N ( c ) } } \right ) , \quad e a r l y o r s . } \end{array}$$

where q max and q min are the upper and lower limits of quality values Q ( · ) that ever encountered in MCTS, respectively. From the root n r , MCTS iteratively selects a child node with the largest UCT value until reaching a leaf node.

Expansion : For the leaf node selected in the selection stage, the expansion stage prompts LLMs with actions e2, m1, m2, and s1 to build its child nodes. To attempt various detail designs, in a single expansion stage, MCTS-AHD generates k child nodes with the actions m1 and m2, one with e2 and s1, respectively ( 2 k +2 child nodes in total).

Simulation : Then, MCTS-AHD evaluates these newly generated heuristic functions h on the evaluation dataset D for their performances g ( h ) and directly sets their quality value as their performances, i.e., Q ( n l ) ← g ( h ) . We also record their visit count N ( n l ) ← 1 . Simultaneously, the elite set E for the action e2, q max , and q min will be updated.

Backpropagation : The backpropagation process updates the quality values and the visit counts in MCTS as follows:

<!-- formula-not-decoded -->

Progressive Widening . Since the elite heuristic function set E for the action e2 updates gradually as the search progresses. MCTS-AHD introduces the progressive widening technique to enable the re-exploration of non-leaf nodes, especially nodes with higher visit counts. The progressive widening process will occur when the condition in Eq.(4) is satisfied and we have α = 0 . 5 for MCTS-AHD. We call the action e1 for a new child node when the root node n r qualifies the condition in Eq.(4), and we call action e2 when other nodes qualify. Heuristic functions inputted to the action e1 are uniformly selected from 2 to 5 different subtrees of MCTS. We implement the progressive widening process during the selection stage, then these nodes will also be processed in the simulation and backpropagation stages.

Eventually, MCTS-AHD will output a heuristic function with the highest performance value throughout the MCTS.

## 3.3. Exploration-Decay

The setting of the exploration factor λ in Eq.(5) determines the preferences of MCTS on exploration or exploitation (Browne et al., 2012). A larger λ promotes the exploration of temporarily inferior nodes, while a smaller λ stimulates concentration on nodes with higher quality values Q ( · ) . To facilitate a more comprehensive exploration in the early iterations of MCTS and ensure convergence in the later ones, MCTS-AHD presents an exploration-decay technique, linearly decaying the exploration factor λ in Eq.(5) as follows:

$$\lambda = \lambda _ { 0 } * \frac { T - t } { T } .$$

Although having a task-specific setting λ 0 is helpful for high-quality heuristics, MCTS-AHD strives to keep the generalization ability, so we set λ 0 = 0 . 1 for all tasks.

## 4. Experiments

This section evaluates the proposed MCTS on complex tasks, including NP-hard CO problems and a Cost-aware Acquisition Function (CAF) design task for BO (Yao et al., 2024c). The definitions of these tasks are in Appendix B. We implement MCTS-AHD to design key functions of a wide range of general frameworks (detailed in Appendix C) for these tasks, including step-by-step construction, Ant Colony Optimization (ACO), Guided Local Search (GLS), and BO.

Settings. For experiments in this section, we set the number of initial tree nodes N I = 4 , λ 0 = 0 . 1 , α = 0 . 5 . The running time of each heuristic on the evaluation dataset D is limited to 60 seconds. The composition of evaluation datasets D for each task is detailed in Appendix D as well as the settings of general frameworks. Valuable LLM-based AHD methods should be flexible for various pre-trained LLMs, so we include both GPT-3.5-turbo and GPT-4o-mini .

Baselines. To verify the ability of heuristics designed by MCTS-AHD, we introduce four types of heuristics as baselines: (a) Manually designed heuristics, e.g., Nearest-greedy

Table 1. Designing heuristics with the step-by-step construction framework for TSP and KP. We evaluate methods on 6 test sets with 1,000 instances each. Test sets with in-domain scales (i.i.d. to the evaluation dataset D ) are underlined. Since AHD methods have no guarantees for generalization ability, the effect on in-domain datasets is more important. Optimal for TSP is obtained by LKH (Lin & Kernighan, 1973), and Optimal for KP is the result of OR-Tools. Each LLM-based AHD method is run three times and we report the average performances. The best-performing method with each LLM is shaded, and each test set's overall best result is in bold.

| Task | TSP | TSP | TSP | TSP | TSP | TSP | KP | KP | KP | KP | KP | KP |

|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|------------------------------|

| N= | N =50 | N =50 | N =100 | N =100 | N =200 | N =200 | N =50, W =12.5 | N =50, W =12.5 | N =100, W =25 | N =100, W =25 | N =200, W =25 | N =200, W =25 |

| Methods | Obj. ↓ | Gap | Obj. ↓ | Gap | Obj. ↓ | Gap | Obj. ↑ | Gap | Obj. ↑ | Gap | Obj. ↑ | Gap |

| Optimal | 5.675 | - | 7.768 | - | 10.659 | - | 20.037 | - | 40.271 | - | 57.448 | - |

| Greedy Construct | 6.959 | 22.62% | 9.706 | 24.94% | 13.461 | 26.29% | 19.985 | 0.26% | 40.225 | 0.12% | 57.395 | 0.09% |

| POMO | 5.697 | 0.39% | 8.001 | 3.01% | 12.897 | 20.45% | 19.612 | 2.12% | 39.676 | 1.48% | 57.271 | 0.09% |

| LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo | LLM-based AHD: GPT-3.5-turbo |

| Funsearch | 6.683 | 17.75% | 9.240 | 18.95% | 12.808 | 19.61% | 19.985 | 0.26% | 40.225 | 0.12% | 57.395 | 0.09% |

| EoH | 6.390 | 12.59% | 8.930 | 14.96% | 12.538 | 17.63% | 19.994 | 0.21% | 40.231 | 0.10% | 57.400 | 0.08% |

| MCTS-AHD(Ours) | 6.346 | 11.82% | 8.861 | 14.08% | 12.418 | 16.51% | 19.997 | 0.20% | 40.233 | 0.09% | 57.393 | 0.10% |

| LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini |

| Funsearch | 6.357 | 12.00% | 8.850 | 13.93% | 12.372 | 15.54% | 19.988 | 0.24% | 40.227 | 0.11% | 57.398 | 0.09% |

| EoH | 6.394 | 12.67% | 8.894 | 14.49% | 12.437 | 16.68% | 19.993 | 0.22% | 40.231 | 0.10% | 57.399 | 0.09% |

| MCTS-AHD(Ours) | 6.225 | 9.69% | 8.684 | 11.79% | 12.120 | 13.71% | 20.015 | 0.11% | 40.252 | 0.05% | 57.423 | 0.04% |

(Rosenkrantz et al., 1977), ACO (Dorigo et al., 2006), EI (Mockus, 1974). (b) Traditional AHD method: GHPP (Duflo et al., 2019) (c) Neural Combinatorial Optimization (NCO) methods under the same general frameworks, e.g. POMO (Kwon et al., 2020) and DeepACO (Ye et al., 2024b). (d) Existing LMM-based AHD methods: Funsearch (Romera-Paredes et al., 2024), EoH (Liu et al., 2024b), ReEvo (Ye et al., 2024a), and the most recent work HSEvo (Dat et al., 2024). Funsearch, ReEvo, and HSEvo design heuristics from a handcrafted low-quality seed function, and we provide the same seed function for each design scenario without providing external knowledge. Instead, running EoH and MCTS-AHD does not require manually setting a seed function to initiate the heuristic evolution, so both methods demonstrate better applicability.

We design heuristics with the proposed MCTS-AHD and LLM-based AHD baselines on a single Intel(R) i7-12700 CPU. Following similar settings of Liu et al. (2024b), for almost all tasks, we set the evaluation budget of LLM-based AHD methods on the evaluation dataset D as T = 1 , 000 . In designing heuristics for each application scenario, we conduct three independent runs for each LLM-based AHD method to reduce statistical biases. To verify the significant advantages of MCTS-AHD, we perform more runs on some tasks in Appendix F.3 to obtain the p-value. In designing heuristics with the step-by-step construction framework for the 0-1 Knapsack Problem (KP), executing MCTS-AHD with T =1,000 takes approximately three hours, 1M input tokens, 0.2M output tokens, about 0.3$ with GPT-4o-mini . Compared to LLM-based AHD baselines, there is no significant efficiency degradation or cost improvement.

## 4.1. MCTS-AHD for NP-hard CO Problems

As commonly recognized complex tasks (Korte et al., 2011), this subsection evaluates MCTS-AHD on NP-hard CO prob- lems, including TSP, KP, Capacitated Vehicle Routing Problem (CVRP), Multiple Knapsack Problem (MKP), BinPacking Problem (BPP) with both online and offline settings (online BPP & offline BPP), and Admissible Set Problem (ASP). For these NP-hard CO problems, we apply MCTSAHDto automatically design heuristics with several general frameworks, including step-by-step construction, ACO, and GLS (GLS results are shown in Appendix F.2).

Step-by-Step Construction Framework. The step-by-step construction framework (also known as the constructive heuristic framework) is simple but flexible for task solving, which constructs nodes in feasible CO solutions one by one (Asani et al., 2023). Besides being a general framework for LLM-based AHD methods, it is also the most common framework adopted in NCO methods (Vinyals et al., 2015; Bello et al., 2016). We use MCTS-AHD to design heuristics with the step-by-step construction framework for TSP, KP, online BPP, and ASP (with ASP results in Appendix F.1).

TSP & KP . We first evaluate MCTS-AHD by designing TSP and KP heuristics within step-by-step construction frameworks. The key heuristic function to be designed should select the next TSP node or the next KP item to join taking the temporary solving state (e.g., selected and remaining TSP nodes or KP items) as inputs. This function will be executed recursively until a complete feasible solution is constructed. For all LLM-based AHD methods, the evaluation dataset D contains 64 50-node ( N =50) TSP instances for TSP and 64 100-item ( N =100) KP instances with capacity W = 25 for KP. Table 1 shows the performance of baseline heuristics and LLM-based AHD methods. The Greedy Construct baseline is the Nearest-greedy heuristic algorithm for TSP and it constructs KP solutions by the item with the largest value-weight-ratio. MCTS-AHD exhibits significant advantages on almost all test sets, surpassing manually designed heuristics and LLM-based AHD methods EoH and Fun-

Table 2. Designing heuristics with the ACO general framework for solving TSP, CVRP, MKP, and offline BPP. Each test set contains 64 instances and LLM-based AHD methods' performances are averaged over three runs.

| | TSP | TSP | TSP | TSP | CVRP | CVRP | CVRP | CVRP | MKP | MKP | MKP | MKP | Offline BPP | Offline BPP | Offline BPP | Offline BPP |

|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|

| Test sets | N =50 | N =50 | N =100 | N =100 | N =50, C =50 | N =50, C =50 | N =100, C =50 | N =100, C =50 | N =100, m =5 | N =100, m =5 | N =200, m =5 | N =200, m =5 | N =500, C =150 | N =500, C =150 | N =1,000, C =150 | N =1,000, C =150 |

| Methods | Obj. ↓ | Gap | Obj. ↓ | Gap | Obj. ↓ | Gap | Obj. ↓ | Gap | Obj. ↑ | Gap | Obj. ↑ | Gap | Obj. ↓ | Gap | Obj. ↓ | Gap |

| ACO | 5.992 | 3.28% | 8.948 | 9.40% | 11.355 | 27.77% | 18.778 | 25.76% | 22.738 | 2.28% | 40.672 | 4.30% | 208.828 | 2.81% | 417.938 | 3.15% |

| DeepACO | 5.842 | 0.71% | 8.282 | 1.26% | 8.888 | 0.00% | 14.932 | 0.00% | 23.093 | 0.75% | 41.988 | 1.20% | 203.125 | 0.00% | 405.172 | 0.00% |

| LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini |

| EoH | 5.828 | 0.45% | 8.263 | 1.03% | 9.359 | 5.31% | 15.681 | 5.02% | 23.139 | 0.56% | 41.994 | 1.19% | 204.646 | 0.75% | 408.599 | 0.85% |

| ReEvo | 5.856 | 0.94% | 8.340 | 1.98% | 9.327 | 4.94% | 16.092 | 7.77% | 23.245 | 0.10% | 42.416 | 0.19% | 206.693 | 1.76% | 413.510 | 2.06% |

| MCTS-AHD(Ours) | 5.801 | 0.00% | 8.179 | 0.00% | 9.286 | 4.48% | 15.782 | 5.70% | 23.269 | 0.00% | 42.498 | 0.00% | 204.094 | 0.48% | 407.323 | 0.53% |

search. Moreover, compared to an advanced NCO method POMO which requires task-specific training, MCTS-AHD can design better heuristics in 200-node TSP and KP test sets, exhibiting its power to solve NP-hard CO problems.

Online BPP . As another widely considered NP-hard CO problem, online BPP is the online variant of BPP. It allows only immediate bin packing decisions once a new item is received. It is generally adopted as a common evaluation scenario for LLM-based AHD methods. We follow (Liu et al., 2024b) to generate WeiBull BPP instances (Casti˜ neiras et al., 2012) and use four WeiBull instances with diverse scales as the evaluation dataset D . As shown in Table 3, online BPP heuristics designed by MCTS-AHD demonstrate a superior average performance of six test sets.

Table 3. Design step-by-step construction heuristics for solving online BPP. The table exhibits the performance gaps of heuristics to the lower bound. Each LLM-based AHD method is run three times for the average gaps. Each test set contains five WeiBull BPP instances and we underline the four (in-domain) scales contained in D . The scale notations of test sets are abbreviated, e.g., 1k 100 represents 1,000 items and capacity W = 100 .

| Online BPP | Online BPP | Online BPP | Online BPP | Online BPP | Online BPP | Online BPP | Online BPP |

|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|

| Test sets | 1k 100 | 1k 500 | 5k 100 | 5k 500 | 10k 100 | 10k 500 | Avg. |

| Best Fit | 4.77% | 0.25% | 4.31% | 0.55% | 4.05% | 0.47% | 2.40% |

| First Fit | 5.02% | 0.25% | 4.65% | 0.55% | 4.36% | 0.50% | 2.56% |

| LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini |

| Funsearch | 2.45% | 0.66% | 1.30% | 0.25% | 1.05% | 0.21% | 0.99% |

| EoH | 2.69% | 0.25% | 1.63% | 0.53% | 1.47% | 0.45% | 1.17% |

| ReEvo | 3.94% | 0.50% | 2.72% | 0.40% | 2.39% | 0.31% | 1.71% |

| HSEvo | 2.64% | 1.07% | 1.43% | 0.32% | 1.13% | 0.21% | 1.13% |

| MCTS-AHD | 2.45% | 0.50% | 1.06% | 0.32% | 0.74% | 0.26% | 0.89% |

Ant Colony Optimization Framework . The ACO is an optimization algorithm inspired by the foraging behavior of ants, which contains a heuristic matrix and implements a path selection mechanism to solve combinatorial optimization problems by simulating the transfer of pheromones between ants (Dorigo et al., 2006; Kim et al., 2024). LLMbased AHD can design a generation function of the heuristic matrix, thereby transforming ACO into a framework and applying it to a variety of tasks. Following Ye et al. (2024a), MCTS-AHD designs heuristics within the ACO framework for four NP-hard CO problems: TSP, CVRP, MKP, and offline BPP. The design results using GPT-4o-mini as LLMs are shown in Table 2, where MCTS-AHD exhibits significant leads to EoH and ReEvo in all in-domain test sets across four CO problems and three out-of-domain test sets. Moreover, the proposed MCTS-AHD can consistently outperform manually designed ACO heuristics in all eight test sets and surpass an outstanding NCO method DeepACO (Ye et al., 2024b) in TSP and MKP test sets.

## 4.2. MCTS-AHD for Other Complex Tasks

To assess whether MCTS-AHD can still perform well in optimization tasks beyond CO problems, we follow Yao et al. (2024c) to evaluate MCTS-AHD by designing heuristic CAFs for BO. The CAF is a crucial component for Cost-aware BO, helping to reach the global optimum in a cost-efficient manner. There are several advanced handcrafted heuristic CAFs, including EI (Mockus, 1974), EIpu (Snoek et al., 2012), and EI-cools (Lee et al., 2020a). We employ two synthetic instances with different landscapes and input dimensions (Ackley and Rastrigin in Table 4) as the evaluation dataset D for LLM-based AHD and also test the manually and automatically designed heuristics on ten other synthetic instances. During heuristic evolutions, we set the sampling budget to 12 and run 5 independent trials for average performances. As shown in Table 4, heuristic CAFs designed by the proposed MCTS-AHD demonstrate superior BO results that outperform both manually designed heuristics and EoH in six out of twelve synthetic instances. It verifies that MCTS-AHD not only performs well in NPhard CO problems but can also show great power in other complex optimization tasks.

## 5. Discussion

Experiments have demonstrated the effectiveness of the proposed MCTS-AHD in designing high-quality heuristics for a wide range of application scenarios. This section first conducts essential ablation studies. Then, we will also analyze the advantages of utilizing MCTS in LLM-based AHD compared to the original population-based EC.

Table 4. Designing CAFs for BO. The table shows the gaps to optimal when running BO on instances with manually designed CAFs and CAFs designed by LLM-based AHD methods. LLM-based AHD methods are run three times for the average gaps. In testing, the evaluation budgets for BO are 30 and we run 10 trials for average gaps. The results of EI, EIpu, and EI-cool are from Yao et al. (2024c).

| Instances | Ackley | Rastrigin | Griewank | Rosenbrock | Levy | ThreeHumpCamel | StyblinskiTang | Hartmann | Powell | Shekel | Hartmann | Cosine8 |

|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|----------------------------|

| EI | 2.66% | 4.74% | 0.49% | 1.26% | 0.01% | 0.05% | 0.03% | 0.00% | 18.89% | 7.91% | 0.03% | 0.47% |

| EIpu | 2.33% | 5.62% | 0.34% | 2.36% | 0.01% | 0.12% | 0.02% | 0.00% | 19.83% | 7.92% | 0.03% | 0.47% |

| EI-cool | 2.74% | 5.78% | 0.34% | 2.29% | 0.01% | 0.07% | 0.03% | 0.00% | 14.95% | 8.21% | 0.03% | 0.54% |

| LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini | LLM-based AHD: GPT-4o-mini |

| EoH | 2.45% | 0.90% | 0.54% | 56.78% | 0.20% | 0.26% | 0.79% | 0.04% | 70.89% | 4.56% | 0.33% | 0.36% |

| MCTS-AHD | 2.40% | 0.77% | 0.36% | 1.68% | 0.01% | 0.02% | 0.20% | 0.01% | 1.27% | 3.94% | 0.38% | 0.34% |

## 5.1. Ablation on Parameters and Components

To validate the necessity of its components, as shown in Table 5, we first remove three proposed components of MCTS-AHD (Progressive Widening, Thought-alignment, and Exploration-decay) and use these variants to design stepby-step heuristics for TSP and KP. Line 3 to Line 5 in Table 5 reports their average optimality gaps over three runs, and all these variants exhibit a clear performance degradation in at least one task compared to the original MCTS-AHD.

The actions for node expansion in MCTS-AHD are also essential. Actions e1 and e2 are associated with the progressive widening, so they cannot be ablated individually. According to Table 5, MCTS-AHD variants without actions s1, m1, and m2 could only design worse heuristics in at least one task, proving the significance of these LLM-based actions. The importance of action s1 also demonstrates that MCTS-AHD benefits from its organized tree structure.

Meanwhile, we measure the sensitivity of the main parameter λ 0 . Results in the last two lines of Table 5 show that although the TSP and KP tasks may have respective preferences in the λ 0 setting, the default setting (i.e., λ 0 = 0 . 1 ) exhibits generally good quality.

Table 5. Ablations on the components, actions, and parameter settings of MCTS-AHD. We use MCTS-AHD variants to design heuristics with the step-by-step construction framework for their optimality gaps on 1,000-instance test sets averaged over five runs .

| Methods | TSP50 | KP100 |

|------------------------------|---------|---------|

| MCTS-AHD (Original, 10 runs) | 10.661% | 0.059% |

| w/o Progressive Widening | 12.132% | 0.064% |

| w/o Thought-alignment | 11.640% | 0.061% |

| w/o Exploration-decay | 11.606% | 0.064% |

| w/o Action s1 | 11.919% | 0.062% |

| w/o Action m1 | 10.921% | 0.083% |

| w/o Action m2 | 11.679% | 0.061% |

| MCTS-AHD variant λ 0 = 0.05 | 11.080% | 0.056% |

| MCTS-AHD variant λ 0 = 0.2 | 12.124% | 0.034% |

## 5.2. MCTS versus Population-based EC

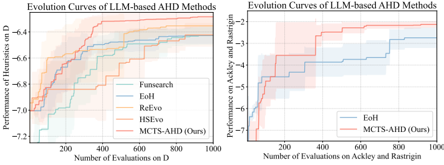

Ability of MCTS-AHD in Escaping from Local Optima . As the main contribution of this paper, instead of populationbased EC, MCTS-AHD can manage inferior but potential heuristic functions, achieving a more comprehensive exploration of the heuristic space H thus avoiding falling into local optima. To verify this, we plot the performance curves of MCTS-AHD and LLM-based AHD baselines in designing step-by-step construction heuristics for TSP and designing CAFs for BOs. Each curve is averaged over at least five runs . As illustrated in Figure 4, all baseline methods with populations exhibit early convergences on performances, but MCTS-AHD can converge to significantly better performance via quick and continuous performance updates.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Graphs: Evolution Curves of LLM-based AHD Methods

### Overview

The image contains two line graphs comparing the performance evolution of LLM-based AHD (Automated Heuristic Design) methods across different evaluation metrics and datasets. The left graph focuses on performance on dataset "D," while the right graph evaluates performance on the Ackley and Rastrigin optimization benchmarks. Both graphs show stepwise improvement curves with shaded confidence intervals.

### Components/Axes

#### Left Graph (Performance on D)

- **Y-axis**: "Performance of Heuristics on D" (values: -7.2 to -6.4)

- **X-axis**: "Number of Evaluations on D" (200 to 1000)

- **Legend**:

- Blue: Funsearch

- Dark Blue: EoH

- Orange: ReEvo

- Teal: HSEvo

- Red: MCTS-AHD (Ours)

#### Right Graph (Performance on Ackley and Rastrigin)

- **Y-axis**: "Performance on Ackley and Rastrigin" (values: -5 to -2)

- **X-axis**: "Number of Evaluations on Ackley and Rastrigin" (200 to 1000)

- **Legend**:

- Blue: EoH

- Red: MCTS-AHD (Ours)

### Detailed Analysis

#### Left Graph Trends

1. **Funsearch (Blue)**: Starts at ~-7.0 (200 evaluations), rises to ~-6.6 (1000 evaluations). Steep initial climb, then plateaus.

2. **EoH (Dark Blue)**: Begins at ~-6.8 (200), improves to ~-6.4 (1000). Gradual, consistent ascent.

3. **ReEvo (Orange)**: Starts at ~-6.6 (200), reaches ~-6.4 (1000). Moderate improvement.

4. **HSEvo (Teal)**: Begins at ~-6.8 (200), ends at ~-6.6 (1000). Slow, steady progress.

5. **MCTS-AHD (Red)**: Starts at ~-6.2 (200), peaks at ~-6.4 (1000). Highest performance throughout.

#### Right Graph Trends

1. **EoH (Blue)**: Starts at ~-5.0 (200), improves to ~-2.5 (1000). Sharp initial rise, then stabilizes.

2. **MCTS-AHD (Red)**: Begins at ~-5.5 (200), reaches ~-2.0 (1000). Outperforms EoH consistently.

### Key Observations

1. **MCTS-AHD Dominance**: In both graphs, MCTS-AHD (Ours) achieves the highest performance across all evaluation counts, with the largest margin in the Ackley/Rastrigin benchmark.

2. **Convergence Patterns**: All methods show diminishing returns after ~600 evaluations, with performance curves flattening.

3. **Confidence Intervals**: Shaded regions indicate variability. The left graph shows wider uncertainty (e.g., Funsearch’s ±0.3 range at 1000 evaluations), while the right graph has tighter bounds (±0.2 for MCTS-AHD).

### Interpretation

The data demonstrates that **MCTS-AHD (Ours)** consistently outperforms other methods in both dataset-specific (D) and general optimization (Ackley/Rastrigin) tasks. The performance gains are most pronounced in the early evaluation stages (200–400 evaluations), suggesting rapid initial learning. The Ackley/Rastrigin benchmark (right graph) reveals MCTS-AHD’s superior generalization capability, as it achieves near-optimal results faster than EoH. The shaded confidence intervals imply that MCTS-AHD’s performance is more stable, with less variance across trials. These results position MCTS-AHD as a robust solution for LLM-based AHD, particularly in complex optimization landscapes.

</details>

- (a) Design Step-by-step Construction heuristics for TSP

- (b) Design CAFs for BO

Figure 4. Evolution curves on two diverse application scenarios.

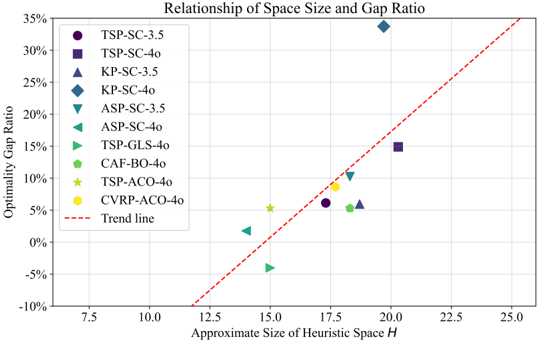

Advantage scopes of MCTS-AHD . Compared to population-based baselines, we claim that MCTS-AHD demonstrates greater advantages in application scenarios with more complex heuristic spaces H and application scenarios with more descriptions as knowledge. We analyze these two claims with experiments in Appendix F.8.

## 6. Conclusion

In conclusion, this paper first applies MCTS to LLM-based AHD. The proposed MCTS-AHD achieves a comprehensive exploration of the heuristic space and can finally design higher-quality heuristics for NP-hard complex tasks. For LLM-based AHD, MCTS can be a more promising evolution method compared to population-based EC.

Limitation and Future Work : As a limitation of MCTSAHD, the convergence speed of MCTS-AHD can still be improved. In the future, we will consider designing MCTSpopulation hybrid methods for better evolution efficiency.

## Impact Statement

This paper presents work whose goal is to advance the field of Machine Learning. There are many potential societal consequences of our work, none which we feel must be specifically highlighted here.

## References

- Abgaryan, H., Harutyunyan, A., and Cazenave, T. Llms can schedule. arXiv preprint arXiv:2408.06993 , 2024.

- Alhindi, A., Alhindi, A., Alhejali, A., Alsheddy, A., Tairan, N., and Alhakami, H. Moea/d-gls: a multiobjective memetic algorithm using decomposition and guided local search. Soft Computing , 23:9605-9615, 2019.

- Ansari, Z. N. and Daxini, S. D. A state-of-the-art review on meta-heuristics application in remanufacturing. Archives of Computational Methods in Engineering , 29(1):427470, 2022.

- Arnold, F. and S¨ orensen, K. Knowledge-guided local search for the vehicle routing problem. Computers & Operations Research , 105:32-46, 2019.

- Arnold, F., Gendreau, M., and S¨ orensen, K. Efficiently solving very large-scale routing problems. Computers & operations research , 107:32-42, 2019.

- Asani, E. O., Okeyinka, A. E., and Adebiyi, A. A. A computation investigation of the impact of convex hull subtour on the nearest neighbour heuristic. In 2023 International Conference on Science, Engineering and Business for Sustainable Development Goals (SEB-SDG) , volume 1, pp. 1-7. IEEE, 2023.

- Ausiello, G., Crescenzi, P., Gambosi, G., Kann, V., Marchetti-Spaccamela, A., and Protasi, M. Complexity and approximation: Combinatorial optimization problems and their approximability properties . Springer Science & Business Media, 2012.

- B¨ ack, T., Fogel, D. B., and Michalewicz, Z. Handbook of evolutionary computation. Release , 97(1):B1, 1997.

- Bello, I., Pham, H., Le, Q. V., Norouzi, M., and Bengio, S. Neural combinatorial optimization with reinforcement learning. arXiv preprint arXiv:1611.09940 , 2016.

- Berto, F., Hua, C., Zepeda, N. G., Hottung, A., Wouda, N., Lan, L., Park, J., Tierney, K., and Park, J. Routefinder: Towards foundation models for vehicle routing problems. arXiv preprint arXiv:2406.15007 , 2024.

- Biggs, N. The traveling salesman problem a guided tour of combinatorial optimization, 1986.

- Blot, A., Hoos, H. H., Jourdan, L., Kessaci-Marmion, M.- ´ E., and Trautmann, H. Mo-paramils: A multi-objective automatic algorithm configuration framework. In Learning and Intelligent Optimization: 10th International Conference, LION 10, Ischia, Italy, May 29-June 1, 2016, Revised Selected Papers 10 , pp. 32-47. Springer, 2016.

- Brandfonbrener, D., Henniger, S., Raja, S., Prasad, T., Loughridge, C. R., Cassano, F., Hu, S. R., Yang, J., Byrd, W. E., Zinkov, R., et al. Vermcts: Synthesizing multistep programs using a verifier, a large language model, and tree search. In The 4th Workshop on Mathematical Reasoning and AI at NeurIPS'24 , 2024.

- Brecklinghaus, J. and Hougardy, S. The approximation ratio of the greedy algorithm for the metric traveling salesman problem. Operations Research Letters , 43(3):259-261, 2015.

- Browne, C. B., Powley, E., Whitehouse, D., Lucas, S. M., Cowling, P. I., Rohlfshagen, P., Tavener, S., Perez, D., Samothrakis, S., and Colton, S. A survey of monte carlo tree search methods. IEEE Transactions on Computational Intelligence and AI in games , 4(1):1-43, 2012.

- Burke, E. K., Gendreau, M., Hyde, M., Kendall, G., Ochoa, G., ¨ Ozcan, E., and Qu, R. Hyper-heuristics: A survey of the state of the art. Journal of the Operational Research Society , 64(12):1695-1724, 2013.

- Burke, E. K., Hyde, M. R., Kendall, G., Ochoa, G., ¨ Ozcan, E., and Woodward, J. R. A classification of hyperheuristic approaches: revisited. Handbook of metaheuristics , pp. 453-477, 2019.

- Cant´ u-Paz, E. et al. A survey of parallel genetic algorithms. Calculateurs paralleles, reseaux et systems repartis , 10 (2):141-171, 1998.

- Casti˜ neiras, I., De Cauwer, M., and O'Sullivan, B. Weibullbased benchmarks for bin packing. In International Conference on Principles and Practice of Constraint Programming , pp. 207-222. Springer, 2012.

- Christofides, N. Worst-case analysis of a new heuristic for the travelling salesman problem. In Operations Research Forum , volume 3, pp. 20. Springer, 2022.

- Coulom, R. Computing 'elo ratings' of move patterns in the game of go. ICGA journal , 30(4):198-208, 2007.

- Dainese, N., Merler, M., Alakuijala, M., and Marttinen, P. Generating code world models with large language models guided by monte carlo tree search. arXiv preprint arXiv:2405.15383 , 2024.

- Dat, P. V. T., Doan, L., and Binh, H. T. T. Hsevo: Elevating automatic heuristic design with diversity-driven harmony

- search and genetic algorithm using llms. arXiv preprint arXiv:2412.14995 , 2024.

- DeLorenzo, M., Chowdhury, A. B., Gohil, V., Thakur, S., Karri, R., Garg, S., and Rajendran, J. Make every move count: Llm-based high-quality rtl code generation using mcts. arXiv preprint arXiv:2402.03289 , 2024.

- Desale, S., Rasool, A., Andhale, S., and Rane, P. Heuristic and meta-heuristic algorithms and their relevance to the real world: a survey. Int. J. Comput. Eng. Res. Trends , 351(5):2349-7084, 2015.

- Dorigo, M., Birattari, M., and Stutzle, T. Ant colony optimization. IEEE computational intelligence magazine , 1 (4):28-39, 2006.

- Drakulic, D., Michel, S., and Andreoli, J.-M. Goal: A generalist combinatorial optimization agent learning. arXiv preprint arXiv:2406.15079 , 2024.

- Duflo, G., Kieffer, E., Brust, M. R., Danoy, G., and Bouvry, P. A gp hyper-heuristic approach for generating tsp heuristics. In 2019 IEEE International Parallel and Distributed Processing Symposium Workshops (IPDPSW) , pp. 521-529. IEEE, 2019.

- Eiben, A. E. and Smith, J. E. Introduction to evolutionary computing . Springer, 2015.

- Feng, X., Wan, Z., Wen, M., McAleer, S. M., Wen, Y., Zhang, W., and Wang, J. Alphazero-like tree-search can guide large language model decoding and training. arXiv preprint arXiv:2309.17179 , 2023.

- Fischetti, M., Lodi, A., and Toth, P. Exact methods for the asymmetric traveling salesman problem. The traveling salesman problem and its variations , pp. 169-205, 2007.

- Fu, Z.-H., Qiu, K.-B., and Zha, H. Generalize a small pre-trained model to arbitrarily large tsp instances. In Proceedings of the AAAI conference on artificial intelligence , volume 35, pp. 7474-7482, 2021.

- Gao, C., Shang, H., Xue, K., Li, D., and Qian, C. Towards generalizable neural solvers for vehicle routing problems via ensemble with transferrable local policy, 2024.

- Guo, P.-F., Chen, Y.-H., Tsai, Y .-D., and Lin, S.-D. Towards optimizing with large language models. arXiv preprint arXiv:2310.05204 , 2023.

- Hadi, M. U., Qureshi, R., Shah, A., Irfan, M., Zafar, A., Shaikh, M. B., Akhtar, N., Wu, J., Mirjalili, S., et al. A survey on large language models: Applications, challenges, limitations, and practical usage. Authorea Preprints , 2023.

- Hadi, M. U., Al Tashi, Q., Shah, A., Qureshi, R., Muneer, A., Irfan, M., Zafar, A., Shaikh, M. B., Akhtar, N., Wu, J., et al. Large language models: a comprehensive survey of its applications, challenges, limitations, and future prospects. Authorea Preprints , 2024.

- He, K., Zhang, X., Ren, S., and Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition , pp. 770-778, 2016.

- He, Q., Head, K. L., and Ding, J. Heuristic algorithm for priority traffic signal control. Transportation research record , 2259(1):1-7, 2011.

- Helsgaun, K. An extension of the lin-kernighan-helsgaun tsp solver for constrained traveling salesman and vehicle routing problems. Roskilde: Roskilde University , 12: 966-980, 2017.

- Hemberg, E., Moskal, S., and O'Reilly, U.-M. Evolving code with a large language model. Genetic Programming and Evolvable Machines , 25(2):21, 2024.

- Huang, L., Yu, W., Ma, W., Zhong, W., Feng, Z., Wang, H., Chen, Q., Peng, W., Feng, X., Qin, B., et al. A survey on hallucination in large language models: Principles, taxonomy, challenges, and open questions. ACM Transactions on Information Systems , 2023.

- Huang, X., Liu, W., Chen, X., Wang, X., Wang, H., Lian, D., Wang, Y., Tang, R., and Chen, E. Understanding the planning of llm agents: A survey. arXiv preprint arXiv:2402.02716 , 2024.

- Hudson, B., Li, Q., Malencia, M., and Prorok, A. Graph neural network guided local search for the traveling salesperson problem. arXiv preprint arXiv:2110.05291 , 2021.

- Jiang, X., Wu, Y., Wang, Y., and Zhang, Y. Unco: Towards unifying neural combinatorial optimization through large language model. arXiv preprint arXiv:2408.12214 , 2024.

- Kambhampati, S., Valmeekam, K., Guan, L., Verma, M., Stechly, K., Bhambri, S., Saldyt, L., and Murthy, A. Llms can't plan, but can help planning in llm-modulo frameworks. arXiv preprint arXiv:2402.01817 , 2024.

- Kim, M., Choi, S., Kim, H., Son, J., Park, J., and Bengio, Y. Ant colony sampling with gflownets for combinatorial optimization. arXiv preprint arXiv:2403.07041 , 2024.

- Kocsis, L. and Szepesv´ ari, C. Bandit based monte-carlo planning. In European conference on machine learning , pp. 282-293. Springer, 2006.

- Kool, W., Van Hoof, H., and Welling, M. Attention, learn to solve routing problems! arXiv preprint arXiv:1803.08475 , 2018.

- Korte, B. H., Vygen, J., Korte, B., and Vygen, J. Combinatorial optimization , volume 1. Springer, 2011.

- Kwon, Y.-D., Choo, J., Kim, B., Yoon, I., Gwon, Y., and Min, S. Pomo: Policy optimization with multiple optima for reinforcement learning. Advances in Neural Information Processing Systems , 33:21188-21198, 2020.

- Kwon, Y.-D., Choo, J., Yoon, I., Park, M., Park, D., and Gwon, Y. Matrix encoding networks for neural combinatorial optimization. Advances in Neural Information Processing Systems , 34:5138-5149, 2021.

- Lam, R., Willcox, K., and Wolpert, D. H. Bayesian optimization with a finite budget: An approximate dynamic programming approach. Advances in Neural Information Processing Systems , 29, 2016.

- Langdon, W. B. and Poli, R. Foundations of genetic programming . Springer Science & Business Media, 2013.

- Lange, R., Tian, Y., and Tang, Y. Large language models as evolution strategies. In Proceedings of the Genetic and Evolutionary Computation Conference Companion , pp. 579-582, 2024.

- Lee, E. H., Perrone, V., Archambeau, C., and Seeger, M. Cost-aware bayesian optimization. arXiv preprint arXiv:2003.10870 , 2020a.

- Lee, J., Jeon, W., Kim, G.-H., and Kim, K.-E. Montecarlo tree search in continuous action spaces with value gradients. In Proceedings of the AAAI conference on artificial intelligence , volume 34, pp. 4561-4568, 2020b.

- Lehman, J., Gordon, J., Jain, S., Ndousse, K., Yeh, C., and Stanley, K. O. Evolution through large models. In Handbook of Evolutionary Machine Learning , pp. 331366. Springer, 2023.

- Lin, S. and Kernighan, B. W. An effective heuristic algorithm for the traveling-salesman problem. Operations research , 21(2):498-516, 1973.

- Liu, F., Tong, X., Yuan, M., and Zhang, Q. Algorithm evolution using large language model. arXiv preprint arXiv:2311.15249 , 2023.

- Liu, F., Lin, X., Wang, Z., Zhang, Q., Xialiang, T., and Yuan, M. Multi-task learning for routing problem with cross-problem zero-shot generalization. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining , pp. 1898-1908, 2024a.

- Liu, F., Xialiang, T., Yuan, M., Lin, X., Luo, F., Wang, Z., Lu, Z., and Zhang, Q. Evolution of heuristics: Towards efficient automatic algorithm design using large language model. In Forty-first International Conference on Machine Learning , 2024b.

- Liu, F., Yao, Y., Guo, P., Yang, Z., Lin, X., Tong, X., Yuan, M., Lu, Z., Wang, Z., and Zhang, Q. A systematic survey on large language models for algorithm design. arXiv preprint arXiv:2410.14716 , 2024c.

- Liu, F., Zhang, R., Xie, Z., Sun, R., Li, K., Lin, X., Wang, Z., Lu, Z., and Zhang, Q. Llm4ad: A platform for algorithm design with large language model. arXiv preprint arXiv:2412.17287 , 2024d.

- Liu, S., Chen, C., Qu, X., Tang, K., and Ong, Y.-S. Large language models as evolutionary optimizers. In 2024 IEEE Congress on Evolutionary Computation (CEC) , pp. 1-8. IEEE, 2024e.

- L´ opez-Ib´ a˜ nez, M., Dubois-Lacoste, J., C´ aceres, L. P., Birattari, M., and St¨ utzle, T. The irace package: Iterated racing for automatic algorithm configuration. Operations Research Perspectives , 3:43-58, 2016.

- Luong, P., Nguyen, D., Gupta, S., Rana, S., and Venkatesh, S. Adaptive cost-aware bayesian optimization. Knowledge-Based Systems , 232:107481, 2021.

- Lusby, R. M., Larsen, J., Ehrgott, M., and Ryan, D. An exact method for the double tsp with multiple stacks. International Transactions in Operational Research , 17 (5):637-652, 2010.

- Ma, Y., Li, J., Cao, Z., Song, W., Zhang, L., Chen, Z., and Tang, J. Learning to iteratively solve routing problems with dual-aspect collaborative transformer. Advances in Neural Information Processing Systems , 34:1109611107, 2021.

- Mei, Y., Chen, Q., Lensen, A., Xue, B., and Zhang, M. Explainable artificial intelligence by genetic programming: A survey. IEEE Transactions on Evolutionary Computation , 27(3):621-641, 2022.

- Merz, P. and Freisleben, B. Genetic local search for the tsp: New results. In Proceedings of 1997 IEEE International Conference on Evolutionary Computation (ICEC'97) , pp. 159-164. IEEE, 1997.

- Meyerson, E., Nelson, M. J., Bradley, H., Gaier, A., Moradi, A., Hoover, A. K., and Lehman, J. Language model crossover: Variation through few-shot prompting. arXiv preprint arXiv:2302.12170 , 2023.

- Mockus, J. On bayesian methods for seeking the extremum. In Proceedings of the IFIP Technical Conference , pp. 400-404, 1974.

- Naveed, H., Khan, A. U., Qiu, S., Saqib, M., Anwar, S., Usman, M., Akhtar, N., Barnes, N., and Mian, A. A comprehensive overview of large language models. arXiv preprint arXiv:2307.06435 , 2023.

- Qi, Z., Ma, M., Xu, J., Zhang, L. L., Yang, F., and Yang, M. Mutual reasoning makes smaller llms stronger problemsolvers. arXiv preprint arXiv:2408.06195 , 2024.

- Rajendran, C. Heuristic algorithm for scheduling in a flowshop to minimize total flowtime. International Journal of Production Economics , 29(1):65-73, 1993.

- Reinelt, G. Tsplib-a traveling salesman problem library. ORSA journal on computing , 3(4):376-384, 1991.

- Romera-Paredes, B., Barekatain, M., Novikov, A., Balog, M., Kumar, M. P., Dupont, E., Ruiz, F. J., Ellenberg, J. S., Wang, P., Fawzi, O., et al. Mathematical discoveries from program search with large language models. Nature , 625 (7995):468-475, 2024.

- Rosenkrantz, D. J., Stearns, R. E., and Lewis, II, P. M. An analysis of several heuristics for the traveling salesman problem. SIAM journal on computing , 6(3):563-581, 1977.

- S´ anchez-D´ ıaz, X., Ortiz-Bayliss, J. C., Amaya, I., CruzDuarte, J. M., Conant-Pablos, S. E., and Terashima-Mar´ ın, H. A feature-independent hyper-heuristic approach for solving the knapsack problem. Applied Sciences , 11(21): 10209, 2021.

- Sengupta, L., Mariescu-Istodor, R., and Fr¨ anti, P. Which local search operator works best for the open-loop tsp? Applied Sciences , 9(19):3985, 2019.

- Shahriari, B., Swersky, K., Wang, Z., Adams, R. P., and De Freitas, N. Taking the human out of the loop: A review of bayesian optimization. Proceedings of the IEEE , 104 (1):148-175, 2015.

- Shi, W. W., Han, W., and Si, W. C. A hybrid genetic algorithm based on harmony search and its improving. In Informatics and Management Science I , pp. 101-109. Springer, 2013.

- Shinn, N., Cassano, F., Gopinath, A., Narasimhan, K., and Yao, S. Reflexion: Language agents with verbal reinforcement learning. Advances in Neural Information Processing Systems , 36, 2024.

- Silver, D., Huang, A., Maddison, C. J., Guez, A., Sifre, L., Van Den Driessche, G., Schrittwieser, J., Antonoglou, I., Panneershelvam, V., Lanctot, M., et al. Mastering the game of go with deep neural networks and tree search. nature , 529(7587):484-489, 2016.

- Snoek, J., Larochelle, H., and Adams, R. P. Practical bayesian optimization of machine learning algorithms. Advances in neural information processing systems , 25, 2012.

- St¨ utzle, T. and L´ opez-Ib´ a˜ nez, M. Automated design of metaheuristic algorithms. Handbook of metaheuristics , pp. 541-579, 2019.

- Sui, J., Ding, S., Xia, B., Liu, R., and Bu, D. Neuralgls: learning to guide local search with graph convolutional network for the traveling salesman problem. Neural Computing and Applications , 36(17):9687-9706, 2024.

- ´ Swiechowski, M., Godlewski, K., Sawicki, B., and Ma´ ndziuk, J. Monte carlo tree search: A review of recent modifications and applications. Artificial Intelligence Review , 56(3):2497-2562, 2023.

- Tan, C. S., Mohd-Mokhtar, R., and Arshad, M. R. A comprehensive review of coverage path planning in robotics using classical and heuristic algorithms. IEEE Access , 9: 119310-119342, 2021.

- Tuononen, J. Analysis of rebuild local search operator for tsp. Master's thesis, It¨ a-Suomen yliopisto, 2022.

- Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, Ł., and Polosukhin, I. Attention is all you need. Advances in neural information processing systems , 30, 2017.

- Vinyals, O., Fortunato, M., and Jaitly, N. Pointer networks. Advances in neural information processing systems , 28, 2015.

- Voudouris, C. and Tsang, E. Guided local search and its application to the traveling salesman problem. European journal of operational research , 113(2):469-499, 1999.

- Wei, J., Wang, X., Schuurmans, D., Bosma, M., Xia, F., Chi, E., Le, Q. V., Zhou, D., et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems , 35:24824-24837, 2022.

- Weston, J. and Sukhbaatar, S. System 2 attention (is something you might need too). arXiv preprint arXiv:2311.11829 , 2023.

- Williams, C. K. and Rasmussen, C. E. Gaussian processes for machine learning , volume 2. MIT press Cambridge, MA, 2006.

- Wu, Y., Song, W., Cao, Z., Zhang, J., and Lim, A. Learning improvement heuristics for solving routing problems.. IEEE Transactions on Neural Networks and Learning Systems , 2021.

- Wu, Z., Qi, Q., Zhuang, Z., Sun, H., and Wang, J. Pretokenization of numbers for large language models. In The Second Tiny Papers Track at ICLR 2024 , 2024.

- Xin, L., Song, W., Cao, Z., and Zhang, J. Neurolkh: Combining deep learning model with lin-kernighan-helsgaun heuristic for solving the traveling salesman problem. Advances in Neural Information Processing Systems , 34: 7472-7483, 2021.

- Yang, C., Wang, X., Lu, Y., Liu, H., Le, Q. V., Zhou, D., and Chen, X. Large language models as optimizers, 2024. URL https://arxiv.org/abs/2309.03409 .

- Yao, S., Liu, F., Lin, X., Lu, Z., Wang, Z., and Zhang, Q. Multi-objective evolution of heuristic using large language model. arXiv preprint arXiv:2409.16867 , 2024a.