# Systematic Abductive Reasoning via Diverse Relation Representations in Vector-symbolic Architecture

**Authors**: Zhong-Hua Sun, Ru-Yuan Zhang, Zonglei Zhen, Da-Hui Wang, Yong-Jie Li, Xiaohong Wan, Hongzhi You

> Corresponding author: Hongzhi You. Zhong-Hua Sun, Yong-Jie Li and Hongzhi You are with the School of Life Science and Technology, University of Electronic Science and Technology of China (UESTC), Chengdu, China. Ru-Yuan Zhang is with the Brain Health Institute, National Center for Mental Disorders, Shanghai Mental Health Center, Shanghai Jiao Tong University School of Medicine and School of Psychology, Shanghai, China. Zonglei Zhen and Xiaohong Wan are with the State Key Laboratory of Cognitive Neuroscience and Learning, Beijing Normal University, Beijing, China. Da-Hui Wang is with the School of Systems Science, Beijing Normal University, Beijing, China.

## Abstract

In abstract visual reasoning, monolithic deep learning models suffer from limited interpretability and generalization, while existing neuro-symbolic approaches fall short in capturing the diversity and systematicity of attribute and relation representations. To address these challenges, we propose a Systematic Abductive Reasoning model with diverse relation representations (Rel-SAR) in Vector-symbolic Architecture (VSA) to solve Raven’s Progressive Matrices (RPM). To derive attribute representations with symbolic reasoning potential, we introduce not only various types of atomic vectors that represent numeric, periodic and logical semantics, but also the structured high-dimensional representation (SHDR) for the overall Grid component. For systematic reasoning, we propose novel numerical and logical relation functions and perform rule abduction and execution in a unified framework that integrates these relation representations. Experimental results demonstrate that Rel-SAR achieves significant improvement on RPM tasks and exhibits robust out-of-distribution generalization. Rel-SAR leverages the synergy between high-dimensional (HD) attribute representations and symbolic reasoning to achieve systematic abductive reasoning with both interpretable and computable semantics.

Index Terms: Abstract visual reasoning, relation representation, vector-symbolic architecture.

## I Introduction

Raven’s Progressive Matrices (RPM) are a family of psychological intelligence tests widely used for the assessment of abstract reasoning [1, 2]. From a cognitive psychology perspective, abstract visual reasoning in RPM tests involves constructing high-level representations from images and deriving potential relations from these representations [1, 3]. Endowing artificial intelligence with such capabilities is now regarded as a crucial step toward achieving human-level intelligence. However, many recent monolithic deep learning models, which do not explicitly separate perception and reasoning [4, 5, 6, 7, 8, 9], face inherent challenges, such as poor interpretability, limited robustness and generalization, and difficulties in module reuse [10]. Neuro-symbolic architecture, which combines neural visual perception with symbolic reasoning, offers a promising approach to overcoming these challenges and achieving human-level interpretability and generalization [11, 10, 12].

In neuro-symbolic architectures (NSA), Marcus argues that symbol-manipulation in cognition involves representing relations between variables [11]. For RPM tests, object attributes serve as the variables, while potential rules involve the relations. Nevertheless, due to incomplete attribute and relation representations, achieving systematic abduction and execution is still a critical challenge for NSA when performing RPM tests. From the perspective of attributes, recent models such as PrAE [10], the ALANS learner [13], and NVSA (neuro-vector-symbolic architecture) [12] construct attribute representations through neural perception frontends. Notably, the NVSA model achieves hierarchically structured VSA representations of image panels, capturing multiple objects with multiple attributes [12]. Regarding relation representations, PrAE and NVSA achieve abstract reasoning through probabilistic abduction and execution [10] and distributed vector-symbolic architecture (VSA) [12], respectively. Both models rely on multiple predetermined rule templates, each specialized for a distinct individual RPM rule. To address the limitations in rule expressiveness, the ALANS learner utilizes learnable rule operators in the abstract algebraic structure, without a manual definition for every rule [13]. Additionally, the ARLC model adopts a more expressive VSA-based rule template, operating in the rule parameter space [14]. Both models offer improved interpretability and generalizability. Despite their advances, previous models fall short in capturing the diversity and systematicity of attribute and relation representations. In contrast, human cognition demonstrates rich and flexible internal representations [15, 16], including arithmetic and logic, and rule-based reasoning systems in cognition are productive and systematic [17]. Therefore, the abstract visual reasoning performance of these models remains open to further improvement.

Previous research indicates that Vector Symbolic Architecture (VSA), a form of high-dimensional (HD) distributed representation, possesses algebraic properties for mathematical operations and can also achieve structured symbolic representations of data [18, 19, 20]. In this work, to achieve comprehensive relation representations, we introduce various types of VSA-based atomic HD vectors with distinct semantic representations, including numeric values, periodic values, and logical values. Given that reasoning in RPM problems involves the overall attributes of multiple objects, we further introduce the structured HD representation (SHDR) for the $n\times n$ Grid. Together, these serve as the attribute representations necessary for abductive reasoning. Meanwhile, we propose numerical and logical relation functions as relation representations that take multiple HD attribute representations as input and define relations among them. Unlike rule templates designed for individual rules, the two proposed relation functions are tailored to the numerical and logical rule types as a whole, providing strong rule expressiveness.

Here, we propose a Systematic Abductive Reasoning model with diverse relation representations (Rel-SAR) for solving RPM, inspired by the original NVSA model [12]. In the Rel-SAR model, visual attribute extraction and rule inference are implemented within a fully unified computational framework in the VSA machinery. The model comprises a neuro-vector frontend for perceiving object attributes of all raw images in RPM problems and a generic vector-symbolic backend for achieving symbolic reasoning. The perception frontend operates on scene-based SHDR of each image panel, which contains multiple objects, each with various attributes, and predicts HD attribute representations by VSA-based symbolic manipulations. The reasoning backend implements the core idea of systematic abductive reasoning: if the given attributes in an RPM adhere to a specific numerical or logical rule, then the relation representations of all attribute pairs can be defined using the corresponding relation functions with identical parameters. These diverse relation representations are involved in both rule abduction and execution phases, enhancing interpretability and improving the capacity for systematic abductive reasoning.

## II Related Work

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Visual Pattern Recognition Test and Spatial Arrangement Concepts

### Overview

The image is a composite diagram divided into two primary sections, labeled **a** and **b**. Section **a** presents a visual matrix reasoning puzzle (similar to Raven's Progressive Matrices) with a set of "Context Panels" and a set of "Answer Panels." Section **b** illustrates and labels seven distinct spatial arrangement concepts using geometric shapes. The overall purpose appears to be demonstrating or testing pattern recognition and spatial reasoning abilities.

### Components/Axes

**Section a: Pattern Puzzle**

* **Context Panels:** A 3x3 grid of square panels. The bottom-right panel contains a question mark (`?`).

* **Answer Panels:** A 2x4 grid of eight square panels below the context grid. One panel (top-left) is highlighted with a red border.

* **Labels:** The text "Context Panels" is centered below the 3x3 grid. The text "Answer Panels" is centered below the 2x4 grid.

**Section b: Spatial Arrangement Concepts**

* **Layout:** Seven individual square panels, each containing a diagram and a text label below it.

* **Labels (in order, top to bottom, left to right):**

1. `Center`

2. `Left-Right`

3. `2x2Grid`

4. `Up-Down`

5. `3x3Grid`

6. `Out-InCenter`

7. `Out-InGrid`

### Detailed Analysis

**Section a: Context Panels (3x3 Grid)**

Each panel contains exactly three geometric shapes. The shapes vary in type (circle, pentagon, triangle) and fill shade (light gray, medium gray, dark gray/black).

* **Row 1:**

* Panel 1 (Top-Left): Three medium-gray circles.

* Panel 2 (Top-Center): Three medium-gray pentagons.

* Panel 3 (Top-Right): Three medium-gray triangles.

* **Row 2:**

* Panel 1 (Middle-Left): Three dark-gray/black circles.

* Panel 2 (Middle-Center): Three dark-gray/black pentagons.

* Panel 3 (Middle-Right): Three light-gray pentagons.

* **Row 3:**

* Panel 1 (Bottom-Left): Three light-gray circles.

* Panel 2 (Bottom-Center): Three light-gray triangles.

* Panel 3 (Bottom-Right): Contains a large question mark (`?`).

**Section a: Answer Panels (2x4 Grid)**

Each of the eight panels contains three shapes, primarily triangles and pentagons in light gray or white with black outlines.

* **Panel with Red Border (Top-Left of Answer Grid):** Contains three small, light-gray pentagons arranged in a triangular formation (one top, two bottom).

* **Other Panels:** Contain various arrangements of three triangles or three pentagons. For example, the panel to the right of the red-bordered one has three triangles pointing in different directions.

**Section b: Spatial Arrangement Diagrams**

Each diagram uses shapes (pentagons, triangles, circles, diamonds) to illustrate a spatial concept.

1. **Center:** A single, large, black pentagon centered in the panel.

2. **Left-Right:** Two pentagons (one medium-gray, one dark-gray) placed side-by-side, separated by a vertical line.

3. **2x2Grid:** Two light-gray pentagons placed in the top-left and top-right positions of an implied 2x2 grid.

4. **Up-Down:** Two triangles (one medium-gray pointing down, one black pointing up) stacked vertically, separated by a horizontal line.

5. **3x3Grid:** Six medium-gray triangles arranged in the top two rows of an implied 3x3 grid (positions: top-left, top-center, top-right, middle-left, middle-center).

6. **Out-InCenter:** A large, white pentagon (outline) containing a small, black diamond at its center.

7. **Out-InGrid:** A large circle (outline) containing three smaller circles inside: two white (top) and one black (bottom-left).

### Key Observations

1. **Pattern in Context Panels:** There is a clear pattern across rows and columns in Section **a**.

* **Shape Type:** Each row contains one set of circles, one set of pentagons, and one set of triangles, but not in the same order.

* **Shade:** The shade of the shapes is consistent within each row for the first two rows (Row 1: medium gray, Row 2: dark gray for first two panels). Row 3 introduces light gray.

* **Logical Gap:** The missing panel (Row 3, Column 3) should logically contain three light-gray triangles to complete the pattern of shape types (circles, pentagons, triangles) and shade (light gray) for the third row.

2. **Answer Panel Correlation:** The red-bordered answer panel contains three light-gray pentagons. This does **not** match the predicted "three light-gray triangles." This suggests either the puzzle has a more complex rule, or the red border indicates an incorrect selection for demonstration purposes.

3. **Section b as a Key:** The diagrams in Section **b** explicitly define spatial terms (`Center`, `Left-Right`, `2x2Grid`, etc.). These terms likely describe the *arrangement* of the three shapes within each context panel, which is a separate variable from shape type and shade. For example, the shapes in the context panels are not centered; they are scattered, which might correspond to an unlisted "scattered" or "random" arrangement.

### Interpretation

This image is a technical illustration from a cognitive assessment or research paper on visual reasoning. It serves two purposes:

1. **Demonstration of a Test Item (Section a):** It presents a classic matrix reasoning problem where the solver must deduce the rule governing the distribution of shape types and shades across the grid to identify the missing piece. The presence of the red-bordered answer panel suggests this image might be from an answer key or a study analyzing common errors, as the highlighted answer contradicts the most straightforward pattern (shape type completion).

2. **Definition of Spatial Vocabulary (Section b):** It provides a visual lexicon for describing how objects are arranged within a frame. This is crucial for precisely documenting the rules of the puzzle or for scoring responses in a standardized way. The concepts range from simple (`Center`) to more complex relational (`Out-InGrid`).

The disconnect between the obvious pattern in the context panels and the highlighted answer is the most significant finding. It implies that the correct solution may depend on a more nuanced rule involving the spatial arrangements defined in Section **b**, rather than just the shape/shade matrix. For instance, the rule might involve a specific sequence of arrangements (e.g., "scattered," "clustered," "linear") that the solver must also deduce. The image, therefore, doesn't just show a puzzle; it shows the puzzle *and* the conceptual tools needed to solve or analyze it at a deeper level.

</details>

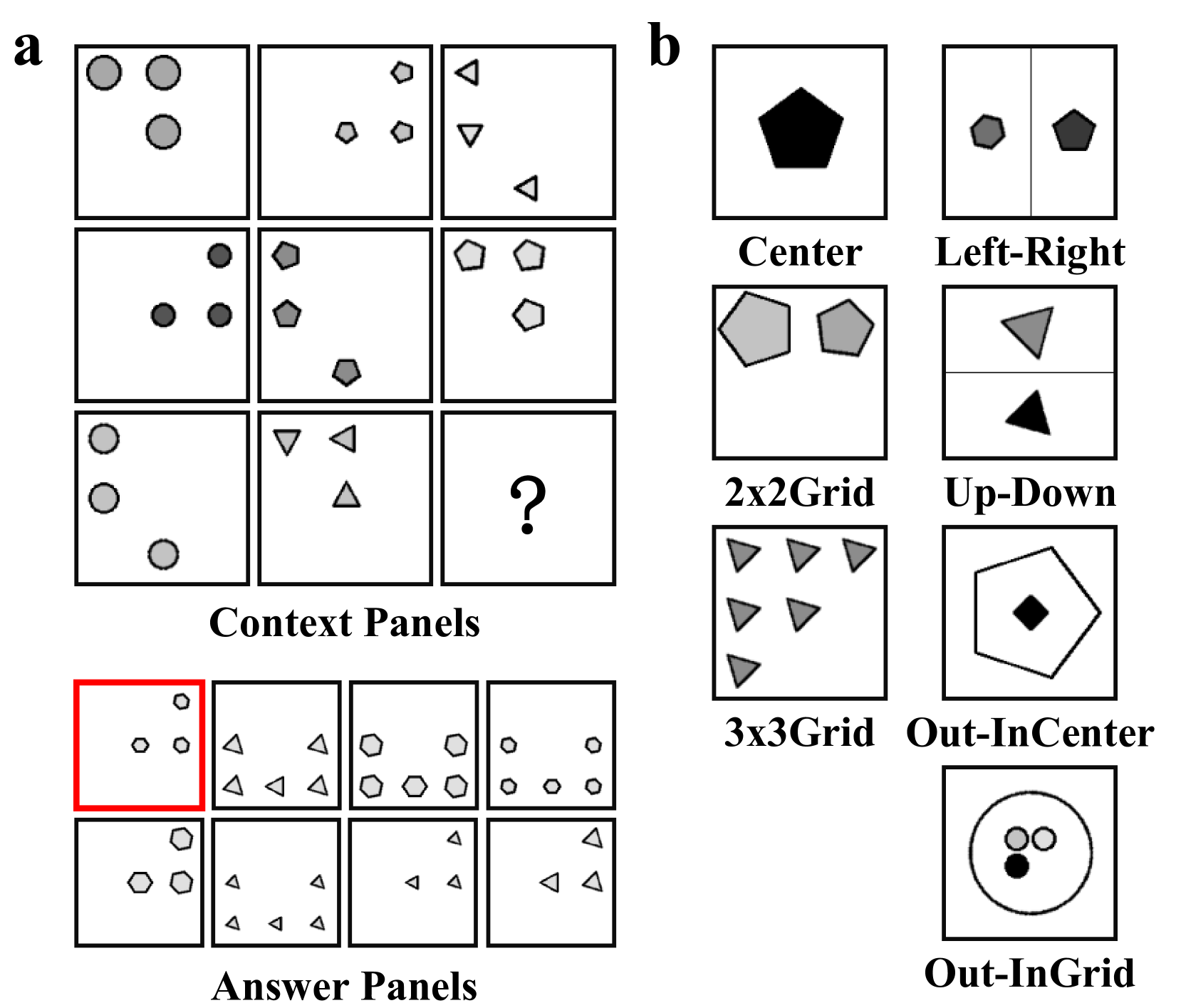

Figure 1: Illustrations for the RAVEN dataset. (a) An example RPM test from the RAVEN dataset [21]. In an RPM test, there are 8 context panels and 8 candidate panels. Participants are required to identify the underlying rules governing various attributes within the context panels. Subsequently, participants use these rules to infer the attributes of the missing panel (represented by "?") and choose the most appropriate option (highlighted with a red box) from the answer panels. (b) The RAVEN dataset includes seven configurations: Center, 2x2Grid, 3x3Grid, Left-Right (L-R), Up-Down (U-D), Out-InCenter (O-IC) and Out-InGrid (O-IG) [21]. Four types of rules, i.e., Constant, Progression, Arithmetic, and Distribute Three, are applied to five attributes, i.e., Position, Number, Type, Size, and Color, in a row-wise manner. The I-RAVEN dataset [8] is a variant of RAVEN, where answer sets are generated using an attribute bisection tree.

Raven’s Progressive Matrices (RPM) is a widely used nonverbal intelligence test designed to assess abstract reasoning. To explore the limitations of current machine learning approaches in solving abstract reasoning tasks, two automatically generated RPM-based datasets, RAVEN [21] and I-RAVEN [8], have been introduced (Figure 1). Early efforts on RPM primarily employed Relation Networks (RN) [22] and their variants [4, 23, 7, 9] to extract relations between context panels. Concurrently, CoPINet [6], MLCL [24], and DCNet [25] integrated contrastive learning into their models. Approaches like MRNet [9] and DRNet [26] aimed to enhance perception capabilities, while SRAN [8] and PredRNet [27] abstracted relations using stratified models and prediction errors, respectively. In addition, several methods have focused on scene decomposition and feature disentanglement [28, 29, 30]. Although these monolithic deep learning models achieve high accuracy, they often suffer from limited interpretability and limited systematic generalization.

Another branch for solving RPM is based on neuro-symbolic architectures, which explicitly distinguish between perception and reasoning. PrAE [10] employs an object CNN to generate probabilistic scene representations and uses predetermined rule templates for probabilistic abduction and execution. Inspired by abstract algebra and representation theory, ALANS [13], which shares the same perception frontend as PrAE, transforms probabilistic scene distributions into matrix-based algebraic representations. The algebraic reasoning backend of ALANS induces potential rules through trainable operator matrices, eliminating the need for manual rule definitions. In abstract reasoning, Vector Symbolic Architectures (VSA) serve as a bridge between perception and reasoning modules by leveraging their structured distributed representations and algebraic properties. NVSA [12] projects each RPM panel into a high-dimensional vector using a trainable CNN and derives probability mass functions (PMFs) by querying an external codebook. Its reasoning backend embeds these PMFs into distributed VSA representations and performs rule abduction and execution using templates based on VSA algebraic operations. NVSA provides a differentiable and transparent implementation of probabilistic abductive reasoning by leveraging VSA representations and operators. However, its perception frontend requires searching a large external codebook, and its reasoning backend still relies on predetermined rule templates. In contrast, Learn-VRF [31] focuses on reasoning by learning VSA rule formulations, eliminating the need for predetermined templates. ARLC [14] further enhances reasoning by incorporating context augmentation and extending rule templates to accommodate more diverse rules. While ARLC and Learn-VRF implement systematic rule learning, they still struggle to process all RPM rules due to limitations in attribute representation.
Recently, a class of methods known as relational bottlenecks has been proposed to enable efficient abstraction, but their capacity to handle complex relations remains uncertain [32, 33, 34, 35]. To address this limitation, Rel-SAR transforms perceptual inputs into high-dimensional attribute representations with symbolic reasoning potential and abducts both logical and numerical rules within a unified framework.

## III Preliminaries

### III-A VSA models utilized in this study

VSAs are a class of computational models that utilize high-dimensional distributed representations [20]. VSA models used in this study are Holographic Reduced Representations (HRR) and its form in the frequency domain, referred to as Fourier Holographic Reduced Representations (FHRR) [36]. A random FHRR atomic vector, denoted as $\boldsymbol{\theta}:=\left\{\theta_{i}\right\}_{i=1}^{d}$ , is composed of elements $\theta_{i}$ that are independently sampled from a uniform distribution, specifically $\theta_{i}\sim\mathcal{U}(-\pi,\pi)$ [36]. The corresponding HRR atomic vector, $\boldsymbol{x}$ , is then obtained by applying the Inverse Fast Fourier Transform (IFFT) to $\boldsymbol{\theta}$ :

$$

\boldsymbol{x}=\mathcal{F}^{-1}\left(e^{j\boldsymbol{\theta}}\right) \tag{1}

$$

Here, $\mathcal{F}(\cdot)$ and $\mathcal{F}^{-1}(\cdot)$ represent the Fast Fourier Transform (FFT) and Inverse FFT (IFFT), respectively. When the dimension $d$ is sufficiently large, these randomly generated vectors exhibit pseudo-orthogonality, making them suitable for representing distinct symbols or concepts.

The similarity between any two vectors is a crucial metric for evaluating the distributed representations in VSAs. In FHRR and HRR, cosine similarity is employed to measure the similarity between two vectors [20]:

$$

\begin{split}
sim(\boldsymbol{\theta},\boldsymbol{\phi})&=\frac{1}{d}\sum_{i=1}^{d}\cos\left(\theta_{i}-\phi_{i}\right)\\
sim(\boldsymbol{x},\boldsymbol{y})&=\frac{\boldsymbol{x}\cdot\boldsymbol{y}}{\left|\boldsymbol{x}\right|\left|\boldsymbol{y}\right|}
\end{split} \tag{2}

$$

where $\boldsymbol{\theta}$ and $\boldsymbol{\phi}$ denote two FHRR vectors, and $\boldsymbol{x}$ and $\boldsymbol{y}$ two HRR vectors. The similarity $sim(\cdot,\cdot)$ ranges from -1 to +1, and the two similarity measures above are equivalent. Pseudo-orthogonality refers to the case where $sim(\cdot,\cdot)\approx 0$ .
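As a minimal sketch of the FHRR half of Equation 2, the snippet below samples two random phase vectors and compares them. It uses plain Python to stay dependency-free. The helper names `random_fhrr` and `fhrr_similarity`, the seed, and the dimension `d = 2048` are illustrative assumptions, not values taken from the model.

```python
import math
import random


def random_fhrr(d, rng):
    """Sample a random FHRR atomic vector: d phases drawn from U(-pi, pi)."""
    return [rng.uniform(-math.pi, math.pi) for _ in range(d)]


def fhrr_similarity(theta, phi):
    """FHRR similarity (Equation 2): mean cosine of the phase differences."""
    return sum(math.cos(t - p) for t, p in zip(theta, phi)) / len(theta)


rng = random.Random(0)  # fixed seed, chosen only for reproducibility
d = 2048                # illustrative dimensionality (assumption)

theta = random_fhrr(d, rng)
phi = random_fhrr(d, rng)

self_similarity = fhrr_similarity(theta, theta)  # a vector compared with itself
cross_similarity = fhrr_similarity(theta, phi)   # two independent random vectors

print(f"sim(theta, theta) = {self_similarity:.3f}")
print(f"sim(theta, phi)   = {cross_similarity:.3f}")
```

The self-similarity is 1, while the similarity of two independent vectors fluctuates around 0 with a spread that shrinks as `d` grows. This is the pseudo-orthogonality the text relies on.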

### III-B Basic operations and structured symbolic representations

All computations within VSAs are composed of several basic vector algebraic operations, with the primary ones being binding ( $\circ$ ), bundling ( $+$ ) and unbinding ( $\oslash$ ) (Table I). The binding operation ( $\circ$ ) is employed to form a representation of an object that contains information about the context in which it was encountered [20]. The bundling operation ( $+$ ), also known as superposition, generates a composite high-dimensional vector that combines several lower-level representations. In expressions, binding takes precedence over bundling. The unbinding operation ( $\oslash$ ), which is the inverse of binding, extracts a constituent from the compound data structure. Binding and bundling are referred to as composition operations, while unbinding is considered a decomposition operation. None of these operations changes the vector dimensionality.

Through the combination of these operations, VSAs can effectively achieve structured symbolic representations [20]. For instance, consider a scene $\boldsymbol{s}$ in which a triangle $\boldsymbol{t}$ is positioned on the left $\boldsymbol{p}_{L}$ and a circle $\boldsymbol{c}$ on the right $\boldsymbol{p}_{R}$ . This scene can be represented as $\boldsymbol{s}=\boldsymbol{p}_{L}\circ\boldsymbol{t}+\boldsymbol{p}_{R}\circ \boldsymbol{c}$ using role-filler pairs [37]. By binding the inverse vector of the left position $\boldsymbol{p}_{L}^{-1}$ with $\boldsymbol{s}$ , i.e., unbinding, we can retrieve an approximate vector representing the content at the left position: $\boldsymbol{p}_{L}^{-1}\circ\boldsymbol{s}\approx\boldsymbol{t}$ . Moreover, the triangle $\boldsymbol{t}$ can itself be a compositional scene, where attributes such as color and size are combined into a triangle scene in a similar manner. This decomposable, structure-sensitive, high-dimensional distributed representation has the potential to disentangle complex scenes while maintaining the advantages of traditional connectionist approaches [12].
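The role-filler example above can be sketched in FHRR using the phase-domain operations of Table I. This is an illustration under assumptions: the function names, the seed, and `d = 2048` are hypothetical, and only two items are bundled.

```python
import cmath
import math
import random

TWO_PI = 2 * math.pi


def random_fhrr(d, rng):
    """Random FHRR atomic vector with phases drawn from U(-pi, pi)."""
    return [rng.uniform(-math.pi, math.pi) for _ in range(d)]


def bind(a, b):
    """Binding: element-wise phase addition modulo 2*pi."""
    return [(x + y) % TWO_PI for x, y in zip(a, b)]


def inverse(a):
    """Inverse: element-wise phase negation modulo 2*pi."""
    return [(-x) % TWO_PI for x in a]


def bundle(a, b):
    """Bundling: phase angle of the sum of the two unit phasors."""
    return [cmath.phase(cmath.exp(1j * x) + cmath.exp(1j * y))
            for x, y in zip(a, b)]


def similarity(a, b):
    """FHRR similarity: mean cosine of the phase differences."""
    return sum(math.cos(x - y) for x, y in zip(a, b)) / len(a)


rng = random.Random(1)  # fixed seed for reproducibility (assumption)
d = 2048                # illustrative dimensionality (assumption)
p_left, p_right, triangle, circle = (random_fhrr(d, rng) for _ in range(4))

# s = p_L o t + p_R o c
scene = bundle(bind(p_left, triangle), bind(p_right, circle))

# Unbinding: p_L^{-1} o s should be close to t and unrelated to c.
left_content = bind(inverse(p_left), scene)
sim_triangle = similarity(left_content, triangle)
sim_circle = similarity(left_content, circle)

print(f"sim(left content, triangle) = {sim_triangle:.3f}")
print(f"sim(left content, circle)   = {sim_circle:.3f}")
```

The retrieved vector is only approximately the triangle, because the other role-filler pair acts as noise. With two bundled items in FHRR, the similarity to the correct filler is about $2/\pi\approx 0.64$ in expectation, while the similarity to the wrong filler stays near 0. A clean-up step against a codebook of known vectors would typically follow.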

### III-C The fractional power encoding method

In this study, the rules in RPM are primarily numerical. We introduce the VSA representation of numerical values using the fractional power encoding method (FPE-VSA) [18, 19]. Let $x\in\mathbb{R}$ be a real number and $X\in\mathbb{R}^{d}$ a randomly sampled base vector. The VSA representation $\boldsymbol{v}(x)\in\mathbb{R}^{d}$ for any value $x$ is obtained by raising the base vector $X$ to the power $x$ under binding, which for integer $x$ amounts to binding $X$ with itself $x$ times:

$$

\boldsymbol{v}\left(x\right):=\left(X\right)^{\left(\circ x\right)} \tag{3}

$$

The FPE method maps arbitrary real numbers to corresponding HD vectors and has the following property:

$$

\boldsymbol{v}\left(x_{1}+x_{2}\right)=\boldsymbol{v}\left(x_{1}\right)\circ\boldsymbol{v}\left(x_{2}\right) \tag{4}

$$

This demonstrates that addition $+$ in the real number domain can be represented by the binding operation $\circ$ in the vector domain.
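Equation 4 can be checked numerically with a small FHRR sketch. In the phase domain, raising a base vector to the power $x$ amounts to scaling each base phase by $x$. The names `fpe`, `bind`, and `similarity`, the seed, and the values `x1`, `x2` are illustrative assumptions.

```python
import math
import random

TWO_PI = 2 * math.pi


def fpe(base, x):
    """Fractional power encoding in FHRR: scale every base phase by x."""
    return [(x * phase) % TWO_PI for phase in base]


def bind(a, b):
    """Binding: element-wise phase addition modulo 2*pi."""
    return [(p + q) % TWO_PI for p, q in zip(a, b)]


def similarity(a, b):
    """FHRR similarity: mean cosine of the phase differences."""
    return sum(math.cos(p - q) for p, q in zip(a, b)) / len(a)


rng = random.Random(2)  # fixed seed for reproducibility (assumption)
d = 2048                # illustrative dimensionality (assumption)
base = [rng.uniform(-math.pi, math.pi) for _ in range(d)]

x1, x2 = 2.5, 1.5                          # arbitrary example values
lhs = fpe(base, x1 + x2)                   # v(x1 + x2)
rhs = bind(fpe(base, x1), fpe(base, x2))   # v(x1) o v(x2)
additivity = similarity(lhs, rhs)

print(f"sim(v(x1 + x2), v(x1) o v(x2)) = {additivity:.6f}")
```

The two sides agree up to floating-point error, so addition of real numbers can be carried out purely through binding of their vector encodings.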

TABLE I: Basic operations of FHRR and HRR.

| Operation | FHRR | HRR |
| --- | --- | --- |
| Binding ( $\boldsymbol{x}\circ\boldsymbol{y}$ ) | $\left(\boldsymbol{\theta}+\boldsymbol{\phi}\right)\bmod 2\pi$ | $\mathcal{F}^{-1}\left(\mathcal{F}\left(\boldsymbol{x}\right)\cdot\mathcal{F}\left(\boldsymbol{y}\right)\right)$ |
| Bundling ( $\boldsymbol{x}+\boldsymbol{y}$ ) | $angle\left(e^{j\boldsymbol{\theta}}+e^{j\boldsymbol{\phi}}\right)$ | $\boldsymbol{x}+\boldsymbol{y}$ |
| Inverse ( $\boldsymbol{x}^{-1}$ ) | $\left(-\boldsymbol{\theta}\right)\bmod 2\pi$ | $\mathcal{F}^{-1}\left(1/\mathcal{F}\left(\boldsymbol{x}\right)\right)$ |
| Unbinding ( $\boldsymbol{x}\oslash\boldsymbol{y}$ ) | $\boldsymbol{\theta}^{-1}\circ\boldsymbol{\phi}$ | $\boldsymbol{x}^{-1}\circ\boldsymbol{y}$ |

## IV Methodology

### IV-A Atomic HD vectors with semantic representations

<details>

<summary>x2.png Details</summary>

### Visual Description

## Multi-Part Diagram: Data Structures and Relational Operations

### Overview

The image is a composite technical diagram consisting of seven distinct panels labeled **a** through **g**. It visually defines different types of vector representations and illustrates operations on relational data structures. The diagrams use a consistent visual language of colored squares, circles, and flowchart elements to convey abstract computational concepts.

### Components/Axes

The image is segmented into two rows:

* **Top Row (Panels a-d):** Illustrates four fundamental vector types.

* **Bottom Row (Panels e-g):** Depicts relational structures and operational flows.

**Panel-Specific Components:**

* **Panel a (Top-Left):** Labeled **"Random Vector"**. Contains six colored squares (red, orange, yellow, green, blue, light blue) scattered in a 2D space. Each square has a faint, blurred circle of a matching color behind it. Three small grey dots (`...`) are present, suggesting continuation or an arbitrary number of elements.

* **Panel b (Top-Center):** Labeled **"Numeric Vector"**. Shows a vertical column of five identical light blue squares. Three blue dots (`...`) are placed above and below the column, indicating a sequence of arbitrary length.

* **Panel c (Top-Right):** Labeled **"Circular Vector"**. Displays twelve light blue squares arranged in a perfect circle. Each square has a faint, blurred blue circle behind it.

* **Panel d (Top-Far Right):** Labeled **"Boolean Vector"**. Features a large, faint green circle. Two green squares are placed on opposite sides of this circle's circumference.

* **Panel e (Bottom-Left):** Contains two labels: **"Binary Relations"** (top) and **"Ternary Relations"** (bottom). Three grey squares are arranged horizontally, labeled below as **`v₁`**, **`v₂`**, and **`v₃`**. Curly braces connect the squares: one brace links `v₁` and `v₂` under "Binary Relations," and a larger brace links all three (`v₁`, `v₂`, `v₃`) under "Ternary Relations."

* **Panel f (Bottom-Center):** A flowchart. An input arrow labeled **`v₁:N`** points into a blue rounded rectangle labeled **`R_Num/Lgc, N`**. A downward arrow labeled **`OP₁:M`** points into the top of this rectangle. An output arrow labeled **`r`** exits the rectangle to the right.

* **Panel g (Bottom-Right):** A flowchart. An input arrow labeled **`v₁:N-1`** points into a blue rounded rectangle labeled **`R⁻¹_Num/Lgc, N`**. Two downward arrows point into the top of this rectangle: one labeled **`OP₁:M`** and another labeled **`r`**. An output arrow labeled **`v_N`** exits the rectangle to the right.

### Detailed Analysis

This section breaks down the informational content of each panel.

* **Vector Representations (a-d):**

* **a. Random Vector:** Represents elements with no inherent order or spatial relationship. The distinct colors and scattered placement imply independence or randomness of the vector components.

* **b. Numeric Vector:** Represents a standard, ordered sequence (likely a list or array). The vertical stack and ellipsis denote a linear, indexed structure of potentially variable length `N`.

* **c. Circular Vector:** Represents elements with a cyclical or periodic relationship. The circular arrangement implies that the first and last elements are connected, common in data structures like circular buffers or for representing periodic data.

* **d. Boolean Vector:** Represents a binary state or membership. The two squares on a circle suggest a true/false, on/off, or present/absent dichotomy for two states or positions.

* **Relational Structures and Operations (e-g):**

* **e. Relations:** Defines the concept of arity in relations. A **Binary Relation** is shown as a relationship between two entities (`v₁` and `v₂`). A **Ternary Relation** is a relationship involving three entities (`v₁`, `v₂`, and `v₃`).

* **f. Forward Operation (`R`):** Depicts a function or operator `R` that takes a vector of `N` elements (`v₁:N`) and a set of operations or parameters (`OP₁:M`) as input. It produces a scalar or resultant value `r`. The subscript `Num/Lgc` suggests the operation can be numerical or logical.

* **g. Inverse Operation (`R⁻¹`):** Depicts the inverse function. It takes a vector of `N-1` elements (`v₁:N-1`), the same operations/parameters (`OP₁:M`), and the resultant value `r` as inputs. It outputs the missing `N`th element (`v_N`). This illustrates solving for an unknown component given the other components and the result of the forward operation.

### Key Observations

1. **Visual Consistency:** The diagram uses a consistent visual vocabulary: squares represent data elements or vectors, circles represent relational contexts (circular, boolean), and rounded rectangles represent operations.

2. **Color Coding:** Colors are used purposefully. In panel **a**, distinct colors emphasize randomness. In panels **b** and **c**, uniform light blue suggests a homogeneous data type. In panel **d**, green is associated with the Boolean state. In panels **f** and **g**, blue is used for the operational blocks.

3. **Mathematical Notation:** The use of subscripts (`₁:N`, `₁:M`) and superscripts (`⁻¹`) follows standard mathematical and computer science conventions for denoting ranges and inverse functions.

4. **Conceptual Progression:** The panels progress from simple data representations (vectors) to more complex relational concepts and finally to operational flows that manipulate these structures.

### Interpretation

This diagram serves as a conceptual primer for a computational or mathematical framework. It establishes a taxonomy of data structures (random, numeric, circular, boolean vectors) and then introduces the core idea of **relational operations**.

The key insight is the relationship between panels **e**, **f**, and **g**. They collectively describe a system where:

1. Data exists in relational forms (binary, ternary).

2. A forward function `R` can compute a result `r` from a complete set of inputs (`v₁:N`).

3. An inverse function `R⁻¹` can be used to *infer* a missing input (`v_N`) when the other inputs (`v₁:N-1`) and the result `r` are known.

This pattern is fundamental to many fields: solving equations in algebra, constraint satisfaction in AI, parameter inference in statistics, and decoding in information theory. The diagram abstractly represents the process of moving from known data to a result (forward) and from a result and partial data to a missing piece of information (inverse). The inclusion of different vector types suggests this operational framework is designed to be agnostic to the specific underlying data structure, whether it's a random set, an ordered list, or a cyclic arrangement.

</details>

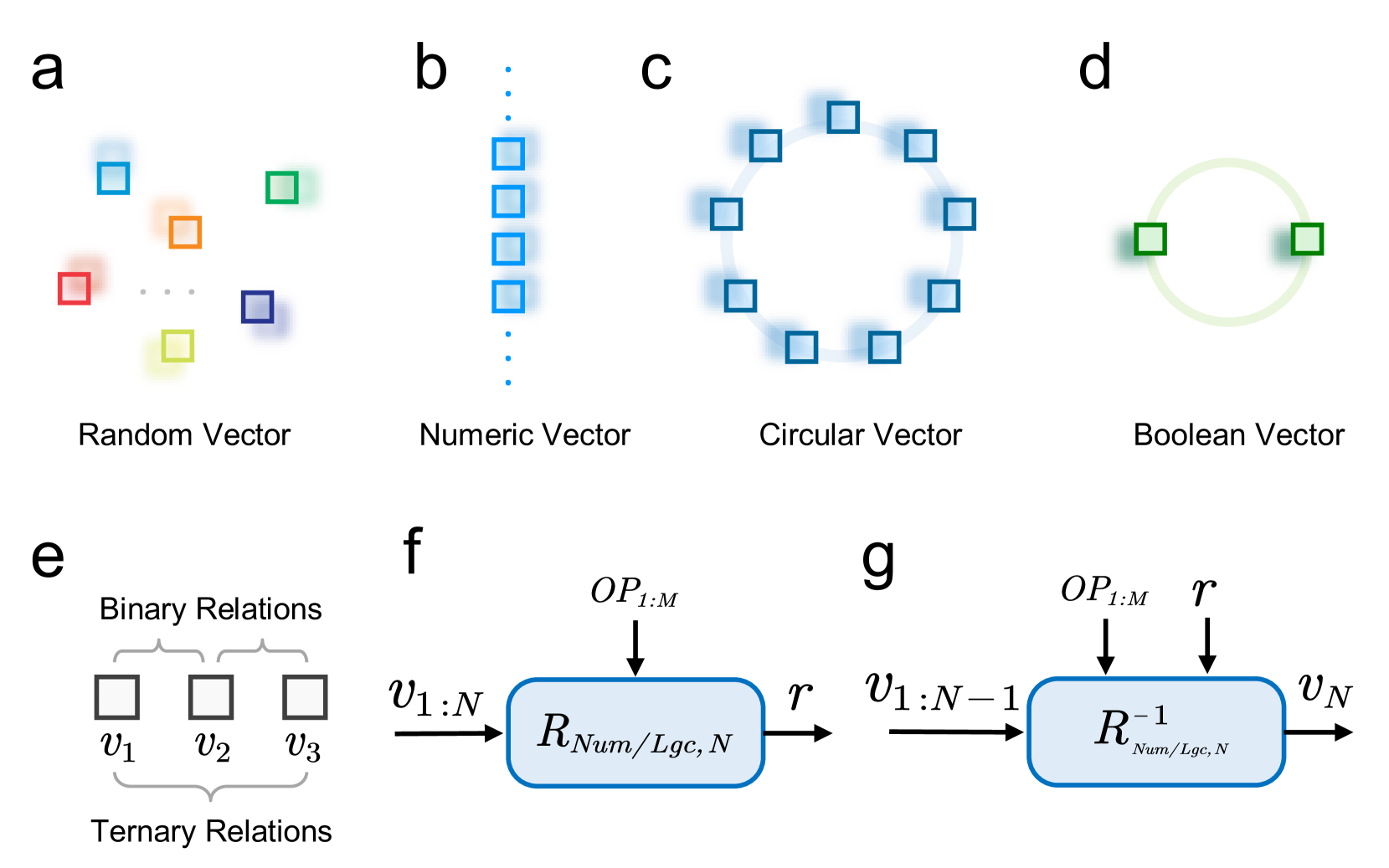

Figure 2: Atomic HD representations and relation functions. (a-d) The Rel-SAR model utilizes four types of atomic HD vectors. Random Vectors (RVs), sampled independently, are used to represent distinct and unrelated symbols or concepts. Numeric Vectors (NVs) are used to represent real numbers and support VSA-based addition-type arithmetic operations. Circular Vectors (CVs) represent periodic values and enable addition-type arithmetic operations with periodicity. Boolean Vectors (BVs), representing logical values of False and True, support VSA operations for logical reasoning. (e) In the RAVEN dataset, for a given attribute, the HD attribute representations in a row of three image panels involve binary or ternary relations. (f) Relation functions describe the numerical or logical relations between multiple HD vector representations $\boldsymbol{v}_{1:N}$ , where $N=2$ for binary and $N=3$ for ternary relations. These relations are governed by the operator powers $OP_{1:M}$ and the output $\boldsymbol{r}$ . (g) For a given relation defined by $OP_{1:M}$ and $\boldsymbol{r}$ , inverse relation functions infer the last HD vector representation $\boldsymbol{v}_{N}$ according to the first $N-1$ representations $\boldsymbol{v}_{1:N-1}$ .

In neuro-vector-symbolic systems, atomic HD vector representations with meaningful semantics are essential for perception and reasoning. We introduce four types of atomic HD vectors used in our model (Figure 2): Random Vectors (RVs), Numeric Vectors (NVs), Circular Vectors (CVs), and Boolean Vectors (BVs). The definitions and properties of these vectors are universal within the VSA framework.

#### IV-A 1 Random Vector

RVs are sampled from specific distributions according to the VSA models, as mentioned in the preliminary section. Due to the absence of numerical or logical relations among RVs and their pseudo-orthogonality in the HD vector space (Figure 2 a), they are often used to represent symbols and concepts assumed to be independent and dissimilar.

#### IV-A 2 Numeric Vector

NVs, generated using the fractional power encoding (FPE-VSA, Equation 3) [18], are employed to represent real numbers (Figure 2 b). NVs $\boldsymbol{v}(r)\in\mathbb{R}^{d}$ can be used to perform addition-type arithmetic operations through the binding (Equation 4) [19].

#### IV-A 3 Circular Vector

CVs are a special class of NVs used to represent periodic values (Figure 2 c). Given a base vector $P$, where the phase $\rho_{i}$ of each element is sampled from a discrete distribution (e.g., for FHRR, $\rho_{i}\sim\mathcal{U}\left(2\pi j/L,\forall j\in\left\{1,\cdots,L\right\}\right)$, with $L$ being an even number), CVs are defined as $\boldsymbol{p}(r):=(P)^{(\circ r)}$. These CVs are pseudo-orthogonal to one another and exhibit periodicity with a period of $L$ [19]:

$$

\boldsymbol{p}\left(r+L\right)=\boldsymbol{p}\left(r\right) \tag{5}

$$

If $L$ is odd, the corresponding CVs with period $L$ can be obtained by selecting every other CV from those with period $2L$ .
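The periodicity in Equation 5 can be checked numerically. The following is a minimal sketch, assuming an FHRR-style encoding in which each vector is an array of unit-modulus complex phasors, binding is element-wise multiplication, and similarity is the real part of the mean of `a * conj(b)`. The helper names (`circular_base`, `cv`, `sim`) and the chosen dimension are illustrative and are not taken from the paper.

```python
import numpy as np

def circular_base(d, L, rng):
    """Base phasor vector whose phases lie on the L-point grid 2*pi*j/L."""
    j = rng.integers(1, L + 1, size=d)          # j in {1, ..., L}
    return np.exp(1j * 2 * np.pi * j / L)

def cv(P, r):
    """Circular vector p(r) = P^(∘ r) for an integer r."""
    return P ** r

def sim(a, b):
    """Similarity of two phasor vectors: real part of the mean of a * conj(b)."""
    return float(np.real(np.mean(a * np.conj(b))))

rng = np.random.default_rng(0)
d, L = 2048, 10                                  # assumed dimension and (even) period
P = circular_base(d, L, rng)

same = sim(cv(P, 3), cv(P, 3 + L))               # periodicity: p(r + L) = p(r)
bound = sim(cv(P, 3) * cv(P, 4), cv(P, 7))       # binding adds the encoded values
other = sim(cv(P, 3), cv(P, 5))                  # distinct values are near-orthogonal
```

Under these assumptions, `same` and `bound` are equal to 1 up to floating-point error, while `other` stays close to 0.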

#### IV-A 4 Boolean Vector

BVs are a specific type of CVs with a period of $L=2$ , used to represent Boolean values (Figure 2 d). Following a similar generation method as for CVs, we can generate vectors with a period of $L=2$ , $\boldsymbol{e}\left(0\right)$ and $\boldsymbol{e}\left(1\right)$ , to represent False and True, respectively. Basic logic operations using BVs are implemented as shown in Table II, where $\boldsymbol{a},\boldsymbol{b}\in\{\boldsymbol{e}(0),\boldsymbol{e}(1)\}$ represent arbitrary Boolean values.

TABLE II: Logic operations implemented by BV

| Operation | Implementation with BVs |
|---|---|
| NOT | $\lnot\boldsymbol{a}=\boldsymbol{a}\circ\boldsymbol{e}\left(1\right)$ |
| XOR | $\boldsymbol{a}\oplus\boldsymbol{b}=\boldsymbol{a}\circ\boldsymbol{b}$ |
| AND | $\boldsymbol{a}\land\boldsymbol{b}=\boldsymbol{a}^{\left(\circ sim\left(\boldsymbol{a},\boldsymbol{b}\right)\right)}$ |
| OR | $\boldsymbol{a}\lor\boldsymbol{b}=\left(\boldsymbol{a}\oplus\boldsymbol{b}\right)\circ\left(\boldsymbol{a}\land\boldsymbol{b}\right)=\boldsymbol{a}\circ\boldsymbol{b}\circ\boldsymbol{a}^{\left(\circ sim\left(\boldsymbol{a},\boldsymbol{b}\right)\right)}$ |
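The logic operations of Table II can be illustrated with a small sketch. It assumes that, for $L=2$, the phases $\pi$ and $2\pi$ reduce the vectors to real entries of $-1$ and $+1$, so that $\boldsymbol{e}(0)$ is the all-ones vector and $\boldsymbol{e}(1)$ is a random sign vector. Rounding the similarity to an integer exponent in `AND` and the `decode` helper are simplifications introduced here, not part of the paper.

```python
import numpy as np

rng = np.random.default_rng(1)
d = 1024                                   # assumed dimension
P = rng.choice([-1.0, 1.0], size=d)        # phases pi or 2*pi, i.e. entries -1 or +1
e = {0: np.ones(d), 1: P}                  # e(0) = P^0 (False), e(1) = P^1 (True)

def sim(a, b):
    """Normalised dot product of two sign vectors."""
    return float(np.mean(a * b))

def NOT(a):
    return a * e[1]

def XOR(a, b):
    return a * b

def AND(a, b):
    # a^(∘ sim(a, b)): sim is 1 when a == b and close to 0 otherwise.
    # Assumption: the similarity is rounded to an integer exponent.
    return a ** int(round(sim(a, b)))

def OR(a, b):
    return XOR(a, b) * AND(a, b)

def decode(v):
    """Map a vector back to 0 or 1 by comparing it with e(0) and e(1)."""
    return int(sim(v, e[1]) > sim(v, e[0]))

table = {(a, b): (decode(AND(e[a], e[b])), decode(OR(e[a], e[b])), decode(XOR(e[a], e[b])))
         for a in (0, 1) for b in (0, 1)}
```

Each entry of `table` holds the decoded AND, OR and XOR of the two inputs, which can be compared against the usual Boolean truth tables.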

### IV-B Relation functions based on atomic HD representations

The rules for abductive reasoning in RPM involve binary and ternary relations among the attributes of corresponding objects in each row of three panels (Figure 2 e and Figure 1 a), as well as numerical and logical relations. In this work, we design general relation functions based on VSA algebra, utilizing the aforementioned atomic vector representations, to be used for rule abductions.

#### IV-B 1 Relation functions

Relation functions, which describe the relations between multiple HD vector representations, are categorized into two types: numerical and logical. Among the atomic HD representations, Numeric Vectors (NVs) and Circular Vectors (CVs) are involved in numerical relations, while Boolean Vectors (BVs) are involved in logical relations.

The numerical relation function, $R_{Num}$ , is defined as follows (Figure 2 f):

$$

\boldsymbol{r}_{Num}=R_{Num}\left(\boldsymbol{v}_{1:N},OP_{1:M}\right)=\circ_{i=1}^{N}\boldsymbol{v}_{i}^{\left(\circ op_{i}\right)} \tag{6}

$$

where $N$ represents the arity of the relation function, and $\boldsymbol{v}_{1:N}:=\left\{\boldsymbol{v}_{i}\right\}_{i=1}^{N}$ denotes the input set of HD vector representations. $M$ is the number of operator powers and $OP_{1:M}:=\left\{op_{i}\right\}_{i=1}^{M}$ represents the operator powers, which can be considered as parameters of the relation function. The notation $\circ_{i=1}^{N}$ denotes the sequential binding operation applied to the $N$ HD vector representations. $\boldsymbol{r}_{Num}$ is the output HD representation. For the binary numerical relation function, $N=2$ and $M=2$ , while for the ternary numerical relation function, $N=3$ and $M=3$ . Based on the arithmetic properties of NVs and CVs, $R_{Num}$ can describe the additive relations of these two types of HD vector representations. The combination of $OP_{1:M}$ and $\boldsymbol{r}_{Num}$ determines the specific numerical relation in this vector-symbolic method.
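As an illustration of Equation 6, the sketch below evaluates the numerical relation function on Numeric Vectors. It assumes an FHRR-style fractional power encoding $\boldsymbol{v}(x)=e^{i\boldsymbol{\phi}x}$ with random base phases $\boldsymbol{\phi}$ and element-wise multiplication as binding. The function names and the example values are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)
d = 2048                                       # assumed dimension
phi = rng.uniform(-np.pi, np.pi, size=d)       # base phases of the FPE base vector

def nv(x):
    """Numeric vector v(x) via fractional power encoding."""
    return np.exp(1j * phi * x)

def sim(a, b):
    return float(np.real(np.mean(a * np.conj(b))))

def r_num(vs, ops):
    """Equation 6: bind the inputs after raising each to its operator power."""
    out = np.ones(d, dtype=complex)
    for v, op in zip(vs, ops):
        out = out * v ** op
    return out

# Binary relation with OP = (-1, +1): v1 = 3, v2 = 4 gives r = v(+1)
r_prog = r_num([nv(3), nv(4)], [-1, +1])

# Ternary relation with OP = (-1, -1, +1): 2 + 3 = 5 gives r = v(0)
r_arith = r_num([nv(2), nv(3), nv(5)], [-1, -1, +1])
```

Here `sim(r_prog, nv(1))` and `sim(r_arith, nv(0))` are both close to 1, which shows how a pair of operator powers and an output vector identifies one additive relation.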

Similarly, the simplified logical relation function, $R_{Lgc}$ , is defined as follows (Figure 2 f):

$$

\boldsymbol{r}_{Lgc}=R_{Lgc}\left(\boldsymbol{v}_{1:N},OP_{1:M}\right)=\left(op_{1}\boldsymbol{v}_{1}\land op_{2}\boldsymbol{v}_{2}\right)\circ op_{3}\boldsymbol{v}_{3} \tag{7}

$$

where $\boldsymbol{v}_{1:N}:=\left\{\boldsymbol{v}_{i}\right\}_{i=1}^{N}$, with each $\boldsymbol{v}_{i}\in\{\boldsymbol{e}(0),\boldsymbol{e}(1)\}$, denotes the input set of BVs. The full version of the logical relation function is described in Appendix A. Here, we consider only the ternary logical relation, so $N=3$ and $M=3$. The parameter $OP_{1:M}:=\left\{op_{i}\right\}_{i=1}^{M}$, where $op_{i}\in\{0,1\}$, determines whether to negate $\boldsymbol{v}_{i}$: negation ($\lnot$) is applied when $op_{i}=1$ and no negation is applied when $op_{i}=0$ (see Appendix A). The symbol $\land$ denotes the AND operation, as shown in Table II. Based on the computational properties of BVs detailed in Table II, $R_{Lgc}$ can describe the ternary logical relations involved in RPM. The combination of the operator powers $OP_{1:M}$ and the output $\boldsymbol{r}_{Lgc}$ determines the specific logical relation in this vector-symbolic method.
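The simplified logical relation function of Equation 7 can be sketched as follows. The sketch assumes real sign vectors for the BVs, implements the optional negation $op_{i}\boldsymbol{v}_{i}$ as binding with $\boldsymbol{e}(op_{i})$, and rounds the similarity used in the AND operation. The `holds` helper, which tests whether the output is $\boldsymbol{e}(0)$, is introduced here only for illustration.

```python
import itertools
import numpy as np

rng = np.random.default_rng(3)
d = 1024                                          # assumed dimension
e = {0: np.ones(d), 1: rng.choice([-1.0, 1.0], size=d)}

def sim(a, b):
    return float(np.mean(a * b))

def AND(a, b):
    # Table II: a^(∘ sim(a, b)), with the similarity rounded (assumption)
    return a ** int(round(sim(a, b)))

def r_lgc(v1, v2, v3, ops):
    """Equation 7: (op1 v1 AND op2 v2) ∘ op3 v3, negation as binding with e(op)."""
    op1, op2, op3 = ops
    return AND(e[op1] * v1, e[op2] * v2) * (e[op3] * v3)

def holds(bits, ops):
    """True when the relation output is e(0) for the given Boolean triple."""
    v1, v2, v3 = (e[b] for b in bits)
    return sim(r_lgc(v1, v2, v3, ops), e[0]) > 0.5

triples = list(itertools.product((0, 1), repeat=3))
union = [t for t in triples if holds(t, (1, 1, 1))]        # OP = (1, 1, 1)
difference = [t for t in triples if holds(t, (0, 1, 0))]   # OP = (0, 1, 0)
```

With output $\boldsymbol{e}(0)$, the operator powers $(1,1,1)$ select exactly the triples satisfying $v_{3}=v_{1}\lor v_{2}$, and $(0,1,0)$ selects those satisfying $v_{3}=v_{1}\land\lnot v_{2}$.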

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Hierarchical Scene Representation and Cyclic Object Shift

### Overview

The image is a technical diagram split into two primary panels, labeled **a** and **b**. It illustrates a conceptual model for representing scenes composed of objects with attributes and their spatial transformations. Panel **a** details the hierarchical decomposition of a scene into entities with attributes and a layout. Panel **b** demonstrates a specific application of this model to a 3x3 grid, showing how objects undergo a cyclic positional shift.

### Components/Axes

The diagram is not a chart with axes but a conceptual flow diagram. Its components are:

**Panel a:**

* **Left (Scene):** A circular boundary labeled "Scene" containing an "Out-InGrid" region. Inside, three triangular objects are shown. One object (labeled *j*) is highlighted with a blue dashed box, and a larger pink dashed box encloses all three.

* **Center (Entity & Layout):** A blue box labeled "Entity" lists four attributes: **Type**, **Size**, **Color**, and **Existence**. Each attribute is associated with a pair of symbols: a key (*k_type*, *k_size*, *k_color*, *k_exist*) and a value (*v_j^type*, *v_j^size*, *v_j^color*, *v_j^exist*). Small colored squares (orange, blue, purple, green) and circles represent these components. A legend defines the blue square as "HD representation" and the circle as "Role-filler binding".

* **Center-Right (Aggregation):** Arrows show the aggregation process. The attribute values for object *j* are summed (Σ_Atribute) to form a green square representing object *O_j*. This object is then summed with others (Σ_Object) to form the scene representation *S*.

* **Bottom (Layout):** A pink box labeled "Layout" shows "Position" with the symbol *p_j* (a blue square), connected to the object *O_j*. Faded labels *p_{j-1}* and *p_{j+1}* indicate neighboring positions.

* **Right (Scene Output):** The final aggregated scene representation is labeled "SHDR for image panel" and denoted by the symbol *S*.

**Panel b:**

* **Left (3x3Grid):** A 3x3 grid with cells numbered 1 through 9. The cells are labeled "Position: CVs **p_j**".

* **Center (Configurations):** Three square panels labeled **C₁**, **C₂**, and **C₃**. Each contains a different arrangement of geometric shapes (pentagons, circles).

* **Center-Top (Entity Detail):** A simplified entity box for object *j* in the grid, showing only **Position** (*p_j*) and **Existence** (*v_j^exist*). An orange arrow points from the position in grid **C₁** to the position in grid **C₂**.

* **Right (Aggregation & Transformation):** The entity information is summed (Σ_Object) to create "SHDR for 3x3Grid", denoted *C^{3x3}*. Below, text states: "Objects are cyclically shifted one position to the right". Two equations are provided: *C₁ ∘ p(1) = C₂* and *C₂ ∘ p(1) = C₃*.

### Detailed Analysis

**Panel a - Hierarchical Scene Decomposition:**

1. **Entity Representation:** An object *j* in a scene is defined by a set of attributes. Each attribute (e.g., Color) has a key (*k_color*) and a value (*v_j^color*). The diagram uses color-coding: orange for Type, blue for Size, purple for Color, green for Existence.

2. **Binding and Aggregation:** The key and value for each attribute are bound (indicated by the circle "Role-filler binding"). The values (*v_j^type, v_j^size, v_j^color, v_j^exist*) are aggregated (summed) to form a unified representation of the object, *O_j* (green square).

3. **Scene Construction:** All object representations (*O_{j-1}, O_j, O_{j+1}*) are aggregated to form the complete scene representation, *S*.

4. **Layout Integration:** The object's position (*p_j*) is part of the "Layout" and is linked to the object representation *O_j*.

**Panel b - Application to a 3x3 Grid:**

1. **Grid Structure:** The environment is a discrete 3x3 grid with 9 positions, each represented by a Circular Vector (CV) *p_j*.

2. **Cyclic Shift Operation:** The core process shown is a cyclic shift of objects to the right.

* **C₁:** Contains three pentagons at positions (approx.) 4, 6, and 8.

* **C₂:** Contains three circles at positions (approx.) 1, 4, and 7. The text and arrow indicate the object from position 1 in C₁ has moved to position 2 in C₂ (though the visual shows a circle at position 1 in C₂, suggesting the shift is of the *pattern* or *state*, not necessarily the same physical object).

* **C₃:** Contains three pentagons at positions (approx.) 2, 5, and 9.

3. **Mathematical Notation:** The transformation is formalized. *p(1)* is the circular position vector for a shift of one position, and the operator "∘" denotes VSA binding, so *C₁ ∘ p(1)* binds configuration C₁ with this vector to produce C₂.

### Key Observations

1. **Abstraction Level:** The diagram operates at a high level of abstraction, using generic symbols (*k, v, p, O, S, C*) to describe a general framework for scene representation and manipulation.

2. **Color Consistency:** Colors are used consistently to track concepts: blue for "HD representation" (keys and positions), green for "Existence" values and aggregated objects.

3. **Two-Level Model:** Panel **a** shows a general, continuous-like scene model. Panel **b** applies a simplified version of it (focusing only on Position and Existence) to a discrete, structured grid environment.

4. **Transformation Logic:** The cyclic shift in panel **b** is demonstrated visually with changing shapes (pentagons to circles to pentagons) and positions, and formalized mathematically. The shapes may represent different object types or states.

### Interpretation

This diagram presents a **structured, compositional approach to visual scene understanding and reasoning**, likely for an AI or computational model.

* **What it suggests:** The model decomposes a scene into a set of objects, each defined by a bundle of attributes (type, size, color, existence) and a spatial location. This is a "role-filler" or "frame-based" representation. The scene is the sum of its objects. This allows for modular manipulation—changing an attribute of one object or moving an object (changing its *p_j*) updates the scene representation predictably.

* **How elements relate:** The flow is bottom-up: attribute keys and values bind to form object representations, which sum to form the scene. The layout (position) is a special attribute linked to the object. Panel **b** shows how this representation can be used to define and execute transformations (like a cyclic shift) on the scene state by operating on the object positions.

* **Notable patterns/anomalies:** The shift from pentagons to circles in panel **b** is interesting. It could imply that the "Existence" value (*v_j^exist*) or the "Type" attribute is being toggled or cycled as part of the transformation, not just the position. The mathematical notation (*C ∘ p(1)*) suggests the framework is designed to support formal, algebraic operations on scene configurations, which is powerful for planning and prediction tasks. The diagram essentially bridges a perceptual representation (the scene image) with a symbolic, manipulable structure (the entity-attribute-layout model).

</details>

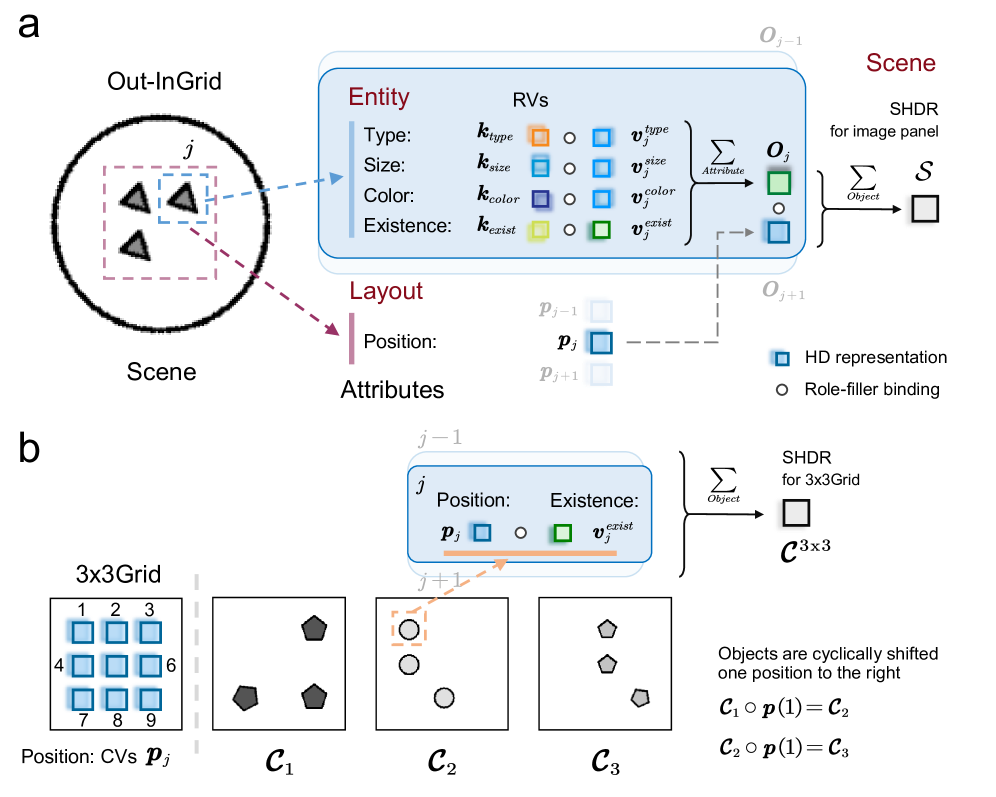

Figure 3: Structured HD representations (SHDR) for the image panel and the nxn Grid. (a) SHDR for the image panel (Equation 10). Taking the Out-InGrid configuration as an example, an image panel contains multiple objects, each with four entity attributes: type, size, color, and existence. Through the first layer of role-filler binding, these attributes are combined to form an SHDR for each object. Additionally, at the layout level, each object has a position attribute. By applying a second layer of role-filler binding, the SHDR for the entire image panel is constructed. (b) SHDR for the nxn Grid (Equation 12). Taking the 3x3Grid configuration as an example, the position vectors for all objects are represented using circular vectors (CVs) with a period of $3\times 3=9$. The SHDR $\mathcal{C}^{3\mathrm{x}3}$ for this 3x3Grid is obtained by performing role-filler binding between the corresponding position vectors $\boldsymbol{p}_{j}$ and existence vectors $\boldsymbol{v}_{j}^{exist}$. Due to the periodic nature of the position vectors, when all objects shift positions cyclically, the SHDR undergoes a binding operation with the position vector corresponding to the magnitude of the shift.

#### IV-B 2 Inverse relation functions

Rule execution in RPM requires inferring the third attribute value based on the first two attribute values in a row of panels, given a known relation. It represents an inverse problem of rule abduction. In the vector-symbolic method, given the operator power ${OP_{1:M}}$ and the output $\boldsymbol{r}$ , the last vector representation $\boldsymbol{v}_{N}$ can be inferred from the first $N-1$ inputs $\boldsymbol{v}_{1:N-1}$ using the inverse of the relation functions (Figure 2 g). According to Equation 6, the inverse numerical relation function is defined as follows:

$$

\begin{aligned}
\boldsymbol{v}_{N}&=R_{Num}^{-1}\left(\boldsymbol{v}_{1:N-1},OP_{1:M},\boldsymbol{r}\right)\\
&=\left(\circ_{i=1}^{N-1}\boldsymbol{v}_{i}^{\left(\circ\left(-op_{i}/op_{M}\right)\right)}\right)\circ\boldsymbol{r}^{\left(\circ\left(1/op_{M}\right)\right)}
\end{aligned} \tag{8}

$$
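The inverse numerical relation can be sketched by solving Equation 6 directly for the last input. The code assumes the same FHRR-style Numeric Vectors as before and restricts the last operator power to $\pm 1$, as in Table IV, so that the division of exponents is exact. All names and example values are illustrative.

```python
import numpy as np

rng = np.random.default_rng(4)
d = 2048                                       # assumed dimension
phi = rng.uniform(-np.pi, np.pi, size=d)

def nv(x):
    return np.exp(1j * phi * x)

def sim(a, b):
    return float(np.real(np.mean(a * np.conj(b))))

def r_num_inv(vs, ops, r):
    """Solve Equation 6 for v_N, given v_1..v_{N-1}, the operator powers and r.

    Assumes ops[-1] is +1 or -1, so the exponents below are integer-valued.
    """
    op_last = ops[-1]
    out = r ** (1 / op_last)
    for v, op in zip(vs, ops[:-1]):
        out = out * v ** (-op / op_last)
    return out

# Binary relation, OP = (-1, +1), r = v(+2): from v1 = 3 predict v2 = 5
pred_prog = r_num_inv([nv(3)], (-1, +1), nv(2))

# Ternary relation, OP = (-1, +1, +1), r = v(0): from 7 and 4 predict 7 - 4 = 3
pred_arith = r_num_inv([nv(7), nv(4)], (-1, +1, +1), nv(0))

decoded = max(range(10), key=lambda x: sim(pred_prog, nv(x)))
```

The predicted vector is decoded by a nearest-neighbour search over candidate values, which here returns 5 for the binary example.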

Similarly, according to Equation 7, the inverse logical relation function is defined as follows:

$$

\begin{aligned}
\boldsymbol{v}_{N}&=R_{Lgc}^{-1}\left(\boldsymbol{v}_{1:N-1},OP_{1:M},\boldsymbol{r}\right)\\
&=op_{3}\left(op_{1}\boldsymbol{v}_{1}\land op_{2}\boldsymbol{v}_{2}\right)
\end{aligned} \tag{9}

$$
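A corresponding sketch of the inverse logical relation is given below. It assumes the output $\boldsymbol{r}=\boldsymbol{e}(0)$, as for the logical rules listed in Table IV, real sign vectors for the BVs, negation implemented as binding with $\boldsymbol{e}(op_{i})$, and a rounded similarity in the AND operation. The `decode` helper is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(5)
d = 1024                                          # assumed dimension
e = {0: np.ones(d), 1: rng.choice([-1.0, 1.0], size=d)}

def sim(a, b):
    return float(np.mean(a * b))

def AND(a, b):
    return a ** int(round(sim(a, b)))             # rounded similarity (assumption)

def r_lgc_inv(v1, v2, ops):
    """Equation 9 with r = e(0): v3 = op3 (op1 v1 AND op2 v2)."""
    op1, op2, op3 = ops
    return e[op3] * AND(e[op1] * v1, e[op2] * v2)

def decode(v):
    return int(sim(v, e[1]) > sim(v, e[0]))

inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
union = {ab: decode(r_lgc_inv(e[ab[0]], e[ab[1]], (1, 1, 1))) for ab in inputs}
difference = {ab: decode(r_lgc_inv(e[ab[0]], e[ab[1]], (0, 1, 0))) for ab in inputs}
```

For $OP=(1,1,1)$ the predicted third value is $v_{1}\lor v_{2}$ (by De Morgan's law), and for $OP=(0,1,0)$ it is $v_{1}\land\lnot v_{2}$.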

### IV-C Structured high-dimensional representation and its attribution decomposition

VSA can create structured symbolic representations using atomic HD vector representations and decouple them directly from these structures through algebraic operations [12]. This subsection presents the process of constructing a structured HD representation (SHDR) for an image panel and its decomposition to retrieve individual attribute representations. Additionally, an SHDR for the nxn Grid ( $n=2,3$ ) at the component level is also introduced.

#### IV-C 1 SHDR for the image panel

In the RAVEN dataset, each image panel consists of objects, with each object characterized by multiple attributes. Consequently, the structured HD representation (SHDR) for each image panel can be obtained through two layers of role-filler bindings (Figure 3 a). First, the attribute values of each object are bound to their attribute keys and bundled to construct an SHDR for the object at the entity level. Then, the object-level representations are bound to their position vectors and bundled to construct an SHDR of the image panel at the scene level. Therefore, each image panel $\mathcal{X}\in\mathbb{R}^{r\times r}$, with a resolution $r\times r$, can be represented by an SHDR $\mathcal{S}\in\mathbb{R}^{d}$ as follows:

$$

\begin{aligned}
\mathcal{S}&=\sum_{j=1}^{N_{pos}}{\boldsymbol{p}_{j}\circ\boldsymbol{O}_{j}}\\
&=\sum_{j=1}^{N_{pos}}{\boldsymbol{p}_{j}\circ\left(\sum_{attr\in ATTR}{\boldsymbol{k}_{attr}\circ\boldsymbol{v}_{j}^{attr}}\right)}
\end{aligned} \tag{10}

$$

Here, $\boldsymbol{O}_{j}$ represents the SHDR of the $j$-th object at the entity level, incorporating attributes such as type, size, color, and existence. The attribute set is $ATTR=\left\{type,size,color,exist\right\}$. At the entity level, the key vector $\boldsymbol{k}_{attr}$ denotes the class of a specific attribute $attr\in ATTR$, while the value vector $\boldsymbol{v}_{j}^{attr}$ indicates the attribute’s value at the position $j$. At the scene level, the position vector $\boldsymbol{p}_{j}$ specifies the location of the $j$-th object.

#### IV-C 2 Representation decomposition

Given an estimated SHDR $\hat{\mathcal{S}}\in\mathbb{R}^{d}$ of an image panel, all SHDRs of objects $\hat{\boldsymbol{O}}_{j}$ at the entity level, along with the corresponding attribute representations $\hat{\boldsymbol{v}}_{j}^{attr}$ , can be derived through a series of unbinding operations [20]. The decomposition process is shown as follows:

$$

\begin{cases}\hat{\boldsymbol{O}}_{j}=\boldsymbol{p}_{j}\oslash\hat{\mathcal{S}}=\boldsymbol{p}_{j}^{-1}\circ\hat{\mathcal{S}}\\
\hat{\boldsymbol{v}}_{j}^{attr}=\boldsymbol{k}_{attr}\oslash\hat{\boldsymbol{O}}_{j}=\boldsymbol{k}_{attr}^{-1}\circ\hat{\boldsymbol{O}}_{j}
\end{cases} \tag{11}

$$

It is important to note that due to inaccuracies in the estimated SHDR $\hat{\mathcal{S}}$ and the noise introduced by the unbinding operation, the estimated attribute representations $\hat{\boldsymbol{v}}_{j}^{attr}$ may not fully match the original $\boldsymbol{v}_{j}^{attr}$ used in Equation 10.
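The construction in Equation 10 and the decomposition in Equation 11 can be sketched end to end. The example assumes FHRR-style phasor vectors, random key and position vectors, and, for simplicity, Numeric Vectors for every attribute value (including existence). The attribute values, the number of positions, and the clean-up by nearest-neighbour search over candidate values are all hypothetical choices made for this illustration.

```python
import numpy as np

rng = np.random.default_rng(6)
d = 4096                                          # assumed dimension
ATTR = ["type", "size", "color", "exist"]

def random_phasor():
    return np.exp(1j * rng.uniform(-np.pi, np.pi, size=d))

phi = rng.uniform(-np.pi, np.pi, size=d)

def nv(x):
    return np.exp(1j * phi * x)

def sim(a, b):
    return float(np.real(np.mean(a * np.conj(b))))

keys = {a: random_phasor() for a in ATTR}         # k_attr
pos = [random_phasor() for _ in range(4)]         # p_j

objects = [                                       # hypothetical attribute values
    {"type": 1, "size": 3, "color": 7, "exist": 1},
    {"type": 4, "size": 0, "color": 2, "exist": 1},
    {"type": 2, "size": 5, "color": 5, "exist": 1},
    {"type": 0, "size": 0, "color": 0, "exist": 0},
]

# Equation 10: bind values to keys, bundle per object, bind to positions, bundle
S = sum(p * sum(keys[a] * nv(o[a]) for a in ATTR) for p, o in zip(pos, objects))

def decode(S_hat, j, attr, candidates=range(10)):
    """Equation 11 followed by a clean-up against candidate value vectors."""
    O_hat = np.conj(pos[j]) * S_hat               # p_j^{-1} ∘ S
    v_hat = np.conj(keys[attr]) * O_hat           # k_attr^{-1} ∘ O_j
    return max(candidates, key=lambda x: sim(v_hat, nv(x)))

recovered = [{a: decode(S, j, a) for a in ATTR} for j in range(len(objects))]
```

The unbound vectors contain cross-talk from the other bundled terms, so they only approximate the original values. The clean-up step still recovers every attribute in this small example because the noise terms are pseudo-orthogonal to the candidates.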

#### IV-C 3 SHDR for the nxn Grid component

In the RAVEN dataset, three figure configurations (2x2Grid, 3x3Grid, and Out-InGrid) include components where objects are arranged in an nxn grid pattern at the layout level [21]. Since the positions in the nxn Grid involve component-level rule reasoning, the SHDR for the nxn Grid component ($n=2,3$), focusing only on positions and object existence, is introduced as follows (Figure 3 b):

$$

\mathcal{C}^{\mathrm{nxn}}=\sum_{j=1}^{n\times n}{\boldsymbol{p}_{j}\circ\boldsymbol{v}_{j}^{exist}} \tag{12}

$$

### IV-D Rules from the perspective of relation functions

TABLE III: Attribute representations and the relation functions involved in rule abductions

| Level | Attribute | HD representation | Representation type | Rules in RAVEN | Rule type | Relation function |
|---|---|---|---|---|---|---|
| Entity | Type, Size, Color | $\boldsymbol{v}^{type}$, $\boldsymbol{v}^{size}$, $\boldsymbol{v}^{color}$ | Atomic (NVs) | Constant, Progression | Numerical rules | Binary + Numerical |
| | | | | Distribute Three, Arithmetic | Numerical rules | Ternary + Numerical |
| Layout | Number | $\boldsymbol{v}^{num}$ | Atomic (NVs) | Constant, Progression | Numerical rules | Binary + Numerical |
| | | | | Distribute Three, Arithmetic | Numerical rules | Ternary + Numerical |
| Layout | Position | $\mathcal{C}^{\mathrm{nxn}}=\sum_{j=1}^{n\times n}{\boldsymbol{p}_{j}\circ\boldsymbol{v}_{j}^{exist}}$ | SHDR ($\boldsymbol{p}_{j}$: CVs; $\boldsymbol{v}_{j}^{exist}$: RVs) | Constant, Progression | Numerical rules | Binary + Numerical |
| | | | | Distribute Three | Numerical rules | Ternary + Numerical |
| | | $\boldsymbol{v}^{exist}$ | Atomic (BVs) | Arithmetic | Logical rules | Ternary + Logical |

TABLE IV: Rules and Corresponding combinations of $OP_{1:M}$ and $r_{Num}$ in Relation functions

| Rule type | Rule | Sign | $op_{1}$ (ternary) | $op_{2}$ (ternary) / $op_{1}$ (binary) | $op_{3}$ (ternary) / $op_{2}$ (binary) | $r$ | Example (Rule $\rightarrow$ Relation function) |
|---|---|---|---|---|---|---|---|
| Numerical rules | Constant | | 0 | $-1$ | $+1$ | 0 | $v_{1}=v_{2}\rightarrow\boldsymbol{v}\left(0\right)=\boldsymbol{v}_{1}^{\left(\circ\left(-1\right)\right)}\circ\boldsymbol{v}_{2}^{\left(\circ 1\right)}$ |
| Numerical rules | Progression | $+$ | 0 | $-1$ | $+1$ | $+1$, $+2$ | $v_{1}+1=v_{2}\rightarrow\boldsymbol{v}\left(+1\right)=\boldsymbol{v}_{1}^{\left(\circ\left(-1\right)\right)}\circ\boldsymbol{v}_{2}^{\left(\circ 1\right)}$ |
| Numerical rules | Progression | $-$ | 0 | $-1$ | $+1$ | $-1$, $-2$ | $v_{1}-2=v_{2}\rightarrow\boldsymbol{v}\left(-2\right)=\boldsymbol{v}_{1}^{\left(\circ\left(-1\right)\right)}\circ\boldsymbol{v}_{2}^{\left(\circ 1\right)}$ |
| Numerical rules | Arithmetic | $+$ | $-1$ | $-1$ | $+1$ | 0 | $v_{1}+v_{2}=v_{3}\rightarrow\boldsymbol{v}\left(0\right)=\boldsymbol{v}_{1}^{\left(\circ\left(-1\right)\right)}\circ\boldsymbol{v}_{2}^{\left(\circ\left(-1\right)\right)}\circ\boldsymbol{v}_{3}^{\left(\circ 1\right)}$ |
| Numerical rules | Arithmetic | $-$ | $-1$ | $+1$ | $+1$ | 0 | $v_{1}-v_{2}=v_{3}\rightarrow\boldsymbol{v}\left(0\right)=\boldsymbol{v}_{1}^{\left(\circ\left(-1\right)\right)}\circ\boldsymbol{v}_{2}^{\left(\circ 1\right)}\circ\boldsymbol{v}_{3}^{\left(\circ 1\right)}$ |
| Numerical rules | Distribute Three | | $+1$ | $+1$ | $+1$ | Any | $v_{1}+v_{2}+v_{3}=Any\rightarrow\boldsymbol{Any}=\boldsymbol{v}_{1}^{\left(\circ 1\right)}\circ\boldsymbol{v}_{2}^{\left(\circ 1\right)}\circ\boldsymbol{v}_{3}^{\left(\circ 1\right)}$ |
| Logical rules | Arithmetic | $+$ | $+1$ | $+1$ | $+1$ | 0 | $\boldsymbol{e}\left(0\right)=\left(\boldsymbol{e}\left(1\right)\circ\boldsymbol{v}_{1}\land\boldsymbol{e}\left(1\right)\circ\boldsymbol{v}_{2}\right)\circ\left(\boldsymbol{e}\left(1\right)\circ\boldsymbol{v}_{3}\right)$ |
| Logical rules | Arithmetic | $-$ | 0 | $+1$ | 0 | 0 | $\boldsymbol{e}\left(0\right)=\left(\boldsymbol{e}\left(0\right)\circ\boldsymbol{v}_{1}\land\boldsymbol{e}\left(1\right)\circ\boldsymbol{v}_{2}\right)\circ\left(\boldsymbol{e}\left(0\right)\circ\boldsymbol{v}_{3}\right)$ |

The RAVEN dataset contains $4$ rules (Constant, Progression, Arithmetic, and Distribute Three), which operate on $5$ rule-governing attributes [21]. These $5$ attributes include $3$ entity-level attributes (Type, Size, and Color) and $2$ layout-level attributes (Number and Position). In this study, the HD representations of these attribute values during rule reasoning, and the correspondence between rules and relation functions, are shown in Table III.

For the attributes Type, Size, Color, and Number, the four involved rules follow additive arithmetic operations, meaning the attribute values $\boldsymbol{v}^{attr}$ ( $attr\in\left\{type,size,color,number\right\}$ ) are represented using Numeric Vectors (NVs). Therefore, these rules can be defined using the numerical relation function (Equation 6): Constant and Progression correspond to binary relation functions, while Arithmetic and Distribute Three correspond to ternary relation functions. Each rule is associated with specific combinations of $OP_{1:M}$ and $\boldsymbol{r}_{Num}$ , and corresponding details are shown in Table IV.

For the attribute Position, the rules Constant, Progression, and Distribute Three primarily refer to an nxn Grid with multiple objects, which, in an overall sense, follow additive arithmetic operations. Therefore, we use the SHDR $\mathcal{C}^{\mathrm{nxn}}$ for the nxn Grid (Equation 12) to represent the attributes required by these three rules. Since Progression involves a cyclic left or right shift of all objects (Figure 3 b), the position vectors $\boldsymbol{p}_{j}$ in set $\mathcal{C}^{\mathrm{nxn}}$ during rule reasoning are represented by Circular Vectors (CVs). The object existence vectors $\boldsymbol{v}_{j}^{exist}$ are represented by Random Vectors (RVs). These three rules can also be described using numerical relation functions (Equation 6). Take the rule Progression (+1) as an example, where the positions of objects undergo a cyclic right shift. In Figure 3 b, the SHDRs of the 3x3 Grid across a row of three panels exhibit two numerical relations: $\mathcal{C}_{1}\circ\boldsymbol{p}\left(1\right)=\mathcal{C}_{2}$ and $\mathcal{C}_{2}\circ\boldsymbol{p}\left(1\right)=\mathcal{C}_{3}$ , which can be defined using a binary numerical relation function.
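The cyclic-shift property of the grid SHDR can be verified with a short sketch. It assumes FHRR-style phasor vectors, builds period-9 CVs by taking every other CV of a period-18 family (the construction described for odd $L$), and uses two random vectors for "empty" and "occupied". Positions are indexed from 0 here, and the occupancy pattern is an arbitrary example.

```python
import numpy as np

rng = np.random.default_rng(7)
d, n = 2048, 3                                   # assumed dimension, 3x3 grid
L = n * n                                        # period 9 (odd)

# Odd period: keep every other CV of a family with period 2L
P2L = np.exp(1j * 2 * np.pi * rng.integers(1, 2 * L + 1, size=d) / (2 * L))

def p(r):
    """Circular position vector with period L."""
    return P2L ** (2 * r)

def random_phasor():
    return np.exp(1j * rng.uniform(-np.pi, np.pi, size=d))

exist = {0: random_phasor(), 1: random_phasor()}   # RVs for empty / occupied

def grid_shdr(occupancy):
    """Equation 12: bundle position vectors bound to existence vectors."""
    return sum(p(j) * exist[int(o)] for j, o in enumerate(occupancy))

def cos_sim(a, b):
    return float(np.real(np.vdot(a, b)) / (np.linalg.norm(a) * np.linalg.norm(b)))

occ1 = np.array([1, 0, 0, 1, 0, 1, 0, 0, 0])
occ2 = np.roll(occ1, 1)                          # every object moves one position right

C1, C2 = grid_shdr(occ1), grid_shdr(occ2)
shifted = cos_sim(C1 * p(1), C2)                 # C1 ∘ p(1) compared with C2
unshifted = cos_sim(C1, C2)
```

Binding with $\boldsymbol{p}(1)$ maps every $\boldsymbol{p}_{j}$ to $\boldsymbol{p}_{j+1}$ and wraps around at the period, so `shifted` is 1 up to floating-point error, whereas `unshifted` is clearly lower.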

In addition, the rule Arithmetic on the attribute Position is a logical rule [21]. In this case, the attribute values $\boldsymbol{v}_{j}^{exist}$ are represented using Boolean Vectors (BVs), which can be operated on as shown in Table II. Therefore, the rule Arithmetic for Position corresponds to the ternary logical relation function (Equation 7), and the corresponding combinations of $OP_{1:M}$ and $\boldsymbol{r}_{Lgc}$ in the relation function are shown in Table IV.

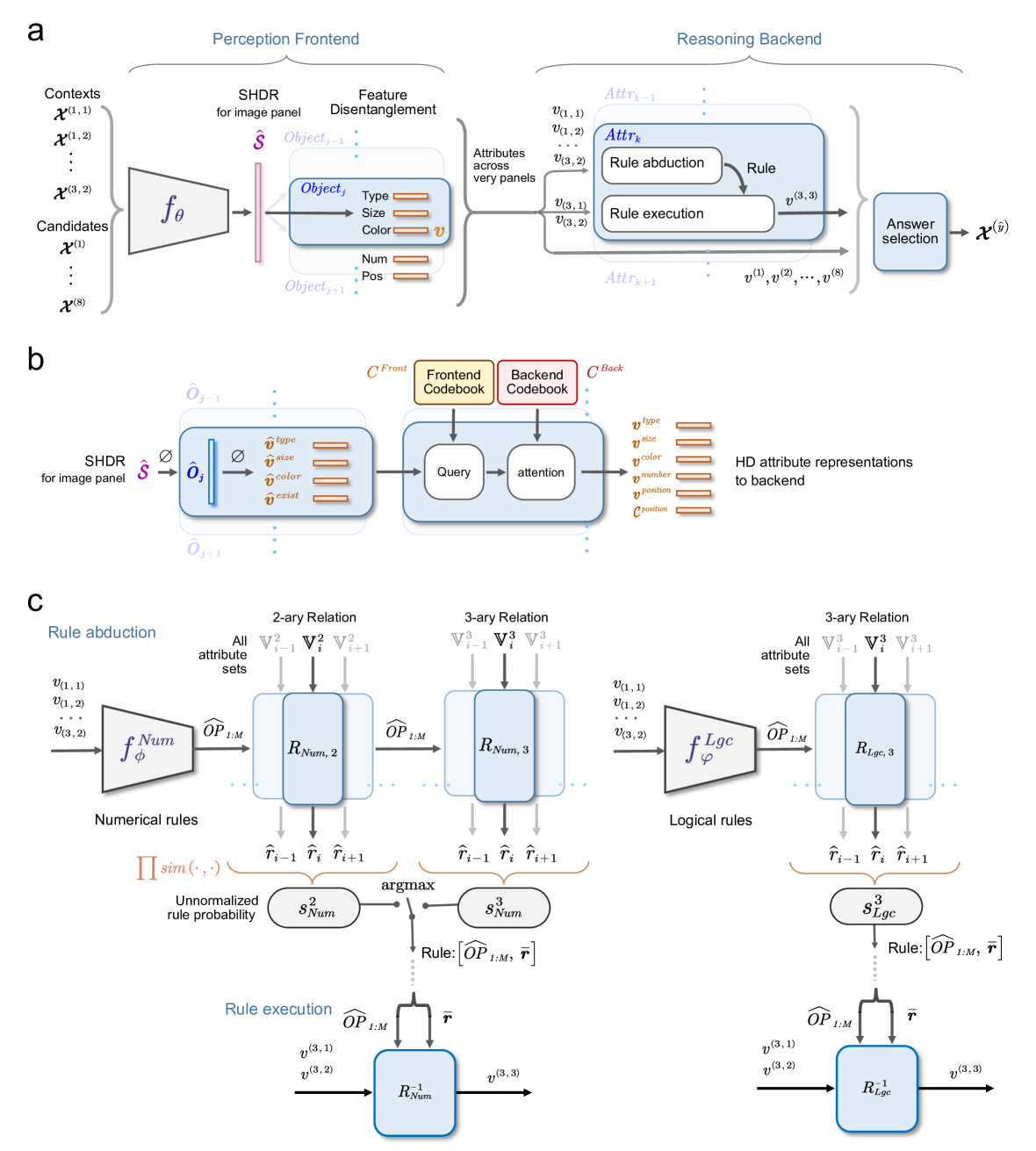

### IV-E The Systematic Abductive Reasoning model

In this section, we present the Systematic Abductive Reasoning model with diverse relation representations (Rel-SAR), inspired by the NVSA [12]. An overview of Rel-SAR is depicted in Figure 4 a. Similar to previous neuro-symbolic models for abstract visual reasoning, Rel-SAR combines a neural visual perception frontend with a symbolic reasoning backend, both utilizing VSA representations with meaningful semantics to facilitate systematic reasoning. The perception frontend employs a neural network to extract the SHDR $\mathcal{S}$ of each image panel $\mathcal{X}$ in the RPM and achieves feature disentanglement from the SHDR using representation decomposition to obtain the HD representations of attributes ($\boldsymbol{v}$, $\boldsymbol{p}$ and $\mathcal{C}$: Table III) required for reasoning in the backend. The reasoning backend consists of three main modules: the rule abduction module, the rule execution module, and the answer selection module. The rule abduction module extracts the corresponding rules ($OP_{1:M}$ and $\boldsymbol{r}$: Table IV) for each attribute representation according to the appropriate relation function (Equations 6 and 7, Table III). Subsequently, the rule execution module uses these rules to predict the representations of the missing panel’s attributes according to the corresponding inverse relation functions (Equations 8 and 9). Finally, the answer selection module compares the predicted attribute representations of the missing panel with the available options in the answer panels and selects the answer.
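The three backend steps can be illustrated on a single numerical attribute. The sketch below is a simplified stand-in, not the Rel-SAR implementation: it replaces the abduction module with a brute-force search over the operator powers of Table IV, scores a rule by the similarity of the relation outputs of the first two rows, and treats binary rules as ternary ones with $op_{1}=0$. The attribute values, candidate answers, and function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(8)
d = 2048                                         # assumed dimension
phi = rng.uniform(-np.pi, np.pi, size=d)

def nv(x):
    return np.exp(1j * phi * x)

def sim(a, b):
    return float(np.real(np.mean(a * np.conj(b))))

RULES = {                                        # operator powers from Table IV
    "constant/progression": (0, -1, +1),
    "arithmetic+": (-1, -1, +1),
    "arithmetic-": (-1, +1, +1),
    "distribute_three": (+1, +1, +1),
}

def relate(row, ops):
    """Numerical relation function (Equation 6) on one row of three vectors."""
    out = np.ones(d, dtype=complex)
    for v, op in zip(row, ops):
        out = out * v ** op
    return out

def abduce(row1, row2):
    """Pick the rule whose relation outputs agree best across the two rows."""
    scores = {name: sim(relate(row1, ops), relate(row2, ops))
              for name, ops in RULES.items()}
    best = max(scores, key=scores.get)
    return best, relate(row1, RULES[best])

def execute(v1, v2, ops, r):
    """Solve the relation for the third vector (op3 is +1 for all rules here)."""
    op1, op2, op3 = ops
    return r ** (1 / op3) * v1 ** (-op1 / op3) * v2 ** (-op2 / op3)

context = [[1, 2, 3], [4, 1, 5], [2, 6]]         # third row misses its last value
candidates = [5, 7, 8, 4, 6, 9, 3, 2]

rows = [[nv(x) for x in row] for row in context]
rule, r = abduce(rows[0], rows[1])
pred = execute(rows[2][0], rows[2][1], RULES[rule], r)
answer = max(candidates, key=lambda c: sim(pred, nv(c)))
```

In this example the first two rows satisfy $v_{1}+v_{2}=v_{3}$, so the search selects the additive Arithmetic rule with $\boldsymbol{r}=\boldsymbol{v}(0)$, and execution on the third row predicts $2+6=8$.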

<details>

<summary>x4.png Details</summary>

### Visual Description

## System Architecture Diagram: Visual Reasoning Model with Perception Frontend and Reasoning Backend

### Overview

The image is a technical diagram illustrating the architecture of a machine learning model designed for visual reasoning tasks. It is divided into three main panels labeled **a**, **b**, and **c**, each detailing different aspects of the system. The overall flow moves from processing raw visual inputs (perception) to abstract reasoning and answer selection. The diagram uses a combination of block diagrams, mathematical notation, and flow arrows to depict data transformation and logical processes.

### Components/Axes

The diagram is segmented into three primary regions:

1. **Panel a (Top):** High-level overview of the complete pipeline, split into a "Perception Frontend" (left) and a "Reasoning Backend" (right).

2. **Panel b (Middle):** A detailed view of the feature disentanglement and attribute representation mechanism, bridging the frontend and backend.

3. **Panel c (Bottom):** A detailed breakdown of the "Rule abduction" and "Rule execution" processes within the Reasoning Backend, separated into numerical and logical rule pathways.

**Key Labels and Notation:**

* **Inputs:** `Contexts` (denoted as `X^(1,1)`, `X^(1,2)`, ..., `X^(3,2)`) and `Candidates` (`X^(1)` ... `X^(8)`).

* **Core Functions:** `f_θ` (Perception Frontend function), `f_Num^φ` (Numerical rule function), `f_Lgc^φ` (Logical rule function).

* **Intermediate Representations:** `Ŝ` (SHDR for image panel), `Object_j` with attributes `Type`, `Size`, `Color`, `Num`, `Pos` (collectively `v`), `Attr_k` (attributes across panels).

* **Rule Components:** `Rule abduction`, `Rule execution`, `Answer selection`.

* **Codebooks:** `Frontend Codebook (C^Front)`, `Backend Codebook (C^Back)`.

* **Attribute Vectors:** `v_type`, `v_size`, `v_color`, `v_number`, `v_position`, `c_position`.

* **Rule Types:** `2-ary Relation`, `3-ary Relation`.

* **Rule Sets:** `R_Num,2`, `R_Num,3`, `R_Lgc,3`.

* **Operations:** `Query`, `attention`, `argmax`, `Π sim(·,·)` (product of similarities).

* **Outputs:** `x^(ŷ)` (selected answer).

### Detailed Analysis

**Panel a: High-Level Pipeline**

* **Perception Frontend (Left):** Takes multiple context images and candidate images as input. These are processed by a function `f_θ` to produce `Ŝ` (SHDR for image panel). This leads to "Feature Disentanglement," where objects (`Object_j`, `Object_j+1`) are identified and their attributes (Type, Size, Color, Num, Pos) are extracted into a vector `v`.

* **Reasoning Backend (Right):** Receives attributes `v` from "very panels" (likely meaning "across panels"). It operates on an attribute set `Attr_k`, which undergoes "Rule abduction" to infer a rule, followed by "Rule execution" to produce a new attribute `v^(3,3)`. This process iterates (`Attr_k-1`, `Attr_k`, `Attr_k+1`). Finally, "Answer selection" uses the processed attributes (`v^(1)`, `v^(2)`, ..., `v^(8)`) to choose the correct candidate `x^(ŷ)`.

**Panel b: Attribute Disentanglement and Representation**

* This panel details the transition from the perception frontend to the reasoning backend.

* The SHDR `Ŝ` is used to generate an object representation `Ô_j` (with a null symbol `Ø` indicating a slot or placeholder).

* This representation is decomposed into disentangled attribute vectors: `v̂_type`, `v̂_size`, `v̂_color`, `v̂_exist`.

* These vectors, along with a `Frontend Codebook (C^Front)`, are fed into a "Query" and "attention" mechanism. The attention mechanism also uses a `Backend Codebook (C^Back)`.

* The output is a set of "HD attribute representations to backend": `v_type`, `v_size`, `v_color`, `v_number`, `v_position`, `c_position`.

**Panel c: Rule Abduction and Execution**

* This panel is split vertically into **Numerical rules** (left) and **Logical rules** (right).

* **Rule Abduction (Top Half):**

* **Numerical Path:** Attribute vectors `v` are input to `f_Num^φ`, producing operator predictions `ÔP_1:M`. These are applied to attribute sets (`V_i^2`, `V_i^3`) within rule modules `R_Num,2` and `R_Num,3` to generate rule hypotheses `r̂_i-1`, `r̂_i`, `r̂_i+1`. A similarity product `Π sim(·,·)` computes unnormalized rule probabilities `s_Num^2` and `s_Num^3`. An `argmax` operation selects the best rule, defined as `[ÔP_1:M, r̄]`.

* **Logical Path:** A parallel process uses `f_Lgc^φ` to generate rules for 3-ary relations, resulting in rule `[ÔP_1:M, r̄]` and probability `s_Lgc^3`.

* **Rule Execution (Bottom Half):**

* The selected rule `[ÔP_1:M, r̄]` is applied to input attributes (e.g., `v^(3,1)`, `v^(3,2)`).

* For numerical rules, this occurs in module `R_Num^-1`, outputting `v^(3,3)`.

* For logical rules, this occurs in module `R_Lgc^1`, outputting `v^(3,3)`.

### Key Observations

1. **Hierarchical Abstraction:** The system moves from concrete pixel data (`Contexts`, `Candidates`) to disentangled object attributes, and finally to abstract relational rules.

2. **Dual Rule Processing:** The model explicitly separates and processes numerical and logical rules, suggesting it handles different types of reasoning (e.g., arithmetic vs. comparative) with specialized pathways.

3. **Iterative Reasoning:** The notation `Attr_k-1`, `Attr_k`, `Attr_k+1` in Panel a implies the reasoning backend operates over multiple steps or layers of attribute abstraction.

4. **Codebook-Mediated Attention:** Panel b reveals that the translation from frontend features to backend-ready attributes is not direct but is mediated by learned codebooks and an attention mechanism, likely to align feature spaces.

5. **Rule Selection via Probability:** The abduction phase doesn't just generate rules; it scores them (`s_Num^2`, `s_Num^3`, `s_Lgc^3`) using a similarity metric, and selects the most probable one via `argmax`.

### Interpretation

This diagram outlines a neuro-symbolic architecture for solving Raven's Progressive Matrices (RPM), an abstract visual reasoning task. The **Perception Frontend** acts as a vision backbone that parses images into structured, object-centric representations (disentangled attributes). The **Reasoning Backend** is a symbolic reasoning engine that manipulates these attributes using abduced rules.

The core innovation appears to be the **Rule Abduction** mechanism. Instead of having a fixed set of rules, the model *infers* the most applicable rule (numerical or logical, 2-ary or 3-ary) from the current context by scoring candidate rules. This makes the system more flexible and capable of handling novel reasoning problems. The separation into numerical and logical streams suggests an inductive bias built into the model to handle these fundamentally different types of relations efficiently.

The flow from `Ŝ` (the panel SHDR) to `v̂` (estimated attribute vectors) to `v` (HD representations for the backend) and finally to `v^(3,3)` (a predicted attribute after rule execution) shows a complete cycle of perception, reasoning, and prediction. The final "Answer selection" module compares the predicted `v^(3,3)` with the attributes of each candidate and chooses the most similar candidate `x^(ŷ)`. This architecture aims to combine the pattern recognition strengths of neural networks with the interpretability and systematic generalization of symbolic reasoning.

</details>

Figure 4: The Systematic Abductive Reasoning model with diverse relation representations (Rel-SAR). (a) Overall architecture of the Rel-SAR model. Our model consists of a visual perception frontend, which processes object attributes for $8$ context and $8$ candidate image panels in an RPM test, and a reasoning backend that performs symbolic arithmetic and logical reasoning. The perception frontend utilizes a neural network, $f_{\theta}$, to obtain the SHDR of each image panel, and then perceives attributes in the form of HD representations required by the downstream reasoning. In the reasoning backend, the rule abduction module extracts rules for each attribute representation using relation functions. The rule execution module then predicts the missing panel’s attribute representations based on inverse relation functions. Finally, the answer selection module compares the predicted attributes of the missing panel with those in the candidate panels and selects the option with the highest similarity. (b) Given the predicted SHDR for each panel, the SHDR of all objects and their corresponding HD attribute representations can be obtained via representation decomposition. Subsequently, the estimated HD attribute representations are refined in two steps: querying the frontend codebook and applying attention based on the backend codebook. This process produces HD attribute representations suitable for backend reasoning, including attributes such as type, size, color, number, and position. (c) In the rule abduction module, the rule learners $f_{\phi}^{Num}$ and $f_{\varphi}^{Lgc}$ predict the operator powers $\widehat{OP}_{1:M}$ for numerical and logical relation functions based on attributes in the context panels. These predicted $\widehat{OP}_{1:M}$ ensure that all binary or ternary relation input pairs ( $\mathbb{V}^{N},N=2,3$ ) produce the same output $\boldsymbol{\hat{r}}$ when processed through their respective relation functions. Therefore, the rule defined by $\widehat{OP}_{1:M}$ and $\boldsymbol{\hat{r}}$ with the highest overall $\boldsymbol{\hat{r}}$ similarity, also viewed as an unnormalized probability, is considered the underlying rule. The rule execution module then predicts the attributes of the missing panel using inverse relation functions with the estimated rules.

#### IV-E 1 Perception frontend

The perception frontend operates independently on each of the 16 image panels to extract the HD representations of attributes required for abductive reasoning (Figure 4 a and Figure 4 b). For a given image panel $\mathcal{X}^{ind}\in\mathbb{R}^{r\times r}$ , where $ind\in\left\{\left(1,1\right),\left(1,2\right),\cdots,\left(3,2\right)\right\}$ for 8 contexts and $ind\in\left\{1,2,\cdots,8\right\}$ for 8 candidates, the frontend uses a trainable neural network (ResNet-50) to map the image panel to its estimated SHDR $\hat{\mathcal{S}}^{ind}\in\mathbb{R}^{d}$ : $f_{\theta}:\mathcal{X}\rightarrow\hat{\mathcal{S}}$ , where $\theta$ represents the trainable parameters of the network. Theoretically, the expected SHDR $\mathcal{S}$ for each panel should be organized from the corresponding attribute representations as described by Equation 10. Therefore, the learning objective of $f_{\theta}$ is to minimize the difference between its output $\hat{\mathcal{S}}$ and the theoretical SHDR $\mathcal{S}$ , formulated as:

$$
\underset{\theta}{\min}\left\|f_{\theta}\left(\mathcal{X};\theta\right)-\mathcal{S}\right\| \tag{13}
$$

Subsequently, the estimated SHDR $\hat{\mathcal{S}}^{ind}$ for each panel undergoes representation decomposition (Figure 4 b), as described in Equation 11, to obtain the estimated HD attribute representations for each object, including type ( $\hat{\boldsymbol{v}}^{type}_{j}$ ), size ( $\hat{\boldsymbol{v}}^{size}_{j}$ ), color ( $\hat{\boldsymbol{v}}^{color}_{j}$ ), and existence ( $\hat{\boldsymbol{v}}^{exist}_{j}$ ), where $j$ denotes the position index of the corresponding object.

The HD attribute representations are expected to be selected from a set of frontend codebooks covering the attributes of interest in the RAVEN dataset (Figure 4 b). These frontend codebooks include $C_{Num}^{Front}:=\left\{\boldsymbol{v}(r)\right\}_{r=0}^{9}\cup\left\{ \boldsymbol{v}_{null}\right\}$ and $C_{Lgc}^{Front}:=\left\{\boldsymbol{e}(r)\right\}_{r=0}^{1}$ , which represent numerical values and logical values, respectively. $\boldsymbol{v}_{null}$ is the null attribute representation used when there is no object. To improve the neural network’s performance in encoding the SHDR of an image panel, all hypervectors in these frontend codebooks are randomly and independently generated as RVs, rather than as NVs, CVs, or BVs.

However, the estimated HD attribute representations for each object, $\hat{\boldsymbol{v}}^{attr}_{j}$ ( $attr\in\{type,size,color,exist\}$ ), cannot be directly applied to the reasoning backend. First, these representations contain noise introduced by the bundling operation used to form the SHDR. Second, as they are expected to be drawn from the frontend codebooks of RVs, there are no intrinsic arithmetic or logical relations between the $\hat{\boldsymbol{v}}^{attr}_{j}$ s, which hinders effective reasoning.

To address these issues, we adopt an approach similar to the attention mechanism [38] to obtain the HD attribute representations suitable for the reasoning backend (Figure 4 b). In the query stage, we use the estimated HD attribute representations ( $\hat{\boldsymbol{v}}^{attr}_{j}$ ) as query vectors to compute their similarity with all possible vectors for the corresponding attributes in the frontend codebooks. In the attention stage, these similarity scores are then used as attention weights to perform a weighted summation of the corresponding vectors from the backend codebooks, in which all hypervectors are generated according to their attribute type as shown in Table III. The backend codebooks consist of $C_{Num}^{Back}$ (NVs), $C_{Lgc,BV}^{Back}$ (BVs), $C_{Lgc,RV}^{Back}$ (RVs), and $C_{Pos,\mathrm{nxn}}^{Back}:=\left\{\boldsymbol{p}_{r}\right\}_{r=1}^{n^{2}}$ (CVs for positions in nxn Grid). The updated HD attribute representations obtained after the weighted summation can be utilized in the reasoning backend. The details are provided below.

The query stage: For each attribute $attr\in\left\{type,size,color\right\}$ , we compute the cosine similarity between the estimated HD attribute representation $\hat{\boldsymbol{v}}^{attr}_{j}$ and all possible vectors of $C_{Num}^{Front}$ in the frontend codebooks.

$$
W_{j}^{attr}=sim\left(\hat{\boldsymbol{v}}_{j}^{attr},C_{Num}^{Front}\right) \tag{14}
$$

where $W_{j}^{attr}(r)$ ( $r\in\{0,1,...,9,null\}$ ) represents the attention weights corresponding to the value $r$ of attribute $attr$ at the $j$ th position, based on the query similarity. Similarly, the attention weights for the attribute $attr\in\left\{exist\right\}$ can be obtained by querying the logic codebook $C_{Lgc}^{Front}$ as follows:

$$
W_{j}^{exist}=softmax\left(\beta\cdot sim\left(\hat{\boldsymbol{v}}_{j}^{exist},C_{Lgc}^{Front}\right)\right) \tag{15}
$$

where $W_{j}^{exist}(r)$ ( $r\in\{0,1\}$ ) corresponds to the absence ( $r=0$ ) and presence ( $r=1$ ) of the object at the $j$ th position, respectively. Here, we use the $softmax$ function to normalize the weights, and $\beta$ denotes the inverse softmax temperature.
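The query stage in Equations 14 and 15 can be illustrated with a minimal NumPy sketch. The dimension `d`, the Gaussian codebook entries, the noise level, and the value of `beta` are all assumptions chosen for illustration; they are not taken from the paper.

```python
import numpy as np

def cosine_sim(query, codebook):
    """Cosine similarity between a query (d,) and every codebook row (K, d)."""
    q = query / np.linalg.norm(query)
    c = codebook / np.linalg.norm(codebook, axis=1, keepdims=True)
    return c @ q

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

rng = np.random.default_rng(0)
d = 1024      # hypothetical hypervector dimension
beta = 10.0   # hypothetical inverse softmax temperature

# Hypothetical frontend codebooks of random vectors (RVs).
front_num = rng.standard_normal((11, d))  # rows 0..9 are v(0..9), row 10 is v_null
front_lgc = rng.standard_normal((2, d))   # rows are e(0) and e(1)

# Noisy estimates standing in for the decomposed SHDR outputs.
v_hat = front_num[3] + 0.5 * rng.standard_normal(d)
e_hat = front_lgc[1] + 0.5 * rng.standard_normal(d)

w_attr = cosine_sim(v_hat, front_num)                    # Eq. 14: raw similarities
w_exist = softmax(beta * cosine_sim(e_hat, front_lgc))   # Eq. 15: normalized weights
```

In this sketch the largest entry of `w_attr` sits at index 3 and `w_exist` puts nearly all of its mass on index 1, which is the behaviour the query stage relies on when the estimates are only mildly noisy.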

The attention stage: The HD attribute representations required by the reasoning backend involve the entity-level attributes Type, Size, and Color, as well as the layout-level attributes Number and Position (Table III). For the numerical attribute $attr\in\left\{type,size,color\right\}$ , the corresponding updated HD representation $\boldsymbol{v}_{j}^{attr}$ can be obtained through the weighted summation on the numerical backend codebook $C_{Num}^{Back}$ as follows:

$$
\boldsymbol{v}_{j}^{attr}=\sum_{r\in\left\{0,...,9,null\right\}}{W_{j}^{attr}\left(r\right)\cdot\boldsymbol{v}\left(r\right)},\quad\boldsymbol{v}\left(r\right)\in C_{Num}^{Back} \tag{16}
$$

For the logical existence attribute $attr\in\left\{exist\right\}$ , its updated HD representations $\boldsymbol{v}_{j}^{exist,RV}$ and $\boldsymbol{v}_{j}^{exist,BV}$ are obtained through the weighted summation on the backend codebooks $C_{Lgc,RV}^{Back}$ and $C_{Lgc,BV}^{Back}$ , respectively, as follows:

$$
\boldsymbol{v}_{j}^{exist,VT}=\sum_{r\in\left\{0,1\right\}}{W_{j}^{exist}\left(r\right)\cdot\boldsymbol{e}\left(r\right)},\quad\boldsymbol{e}\left(r\right)\in C_{Lgc,VT}^{Back} \tag{17}
$$

where $VT\in\{RV,BV\}$ represents the type of atomic HD vectors.
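The weighted summations in Equations 16 and 17 amount to a single matrix-vector product per attribute. The sketch below uses placeholder backend codebooks (Gaussian rows for the numerical and RV codebooks, bipolar rows for the BV codebook); these stand-ins are assumptions and do not reproduce how the paper constructs NVs, RVs, or BVs.

```python
import numpy as np

def attend(weights, backend_codebook):
    """Weighted sum of backend codebook rows (K, d) with attention weights (K,)."""
    return np.asarray(weights) @ np.asarray(backend_codebook)

rng = np.random.default_rng(1)
d = 512  # hypothetical hypervector dimension

# Placeholder backend codebooks (assumed values, for illustration only).
back_num = rng.standard_normal((11, d))             # v(0..9) and v_null
back_lgc = {
    "RV": rng.standard_normal((2, d)),              # e(0), e(1) as random vectors
    "BV": rng.choice([-1.0, 1.0], size=(2, d)),     # e(0), e(1) as bipolar stand-ins
}

# Eq. 16: attention weights over the 11 numerical entries.
w_attr = np.zeros(11)
w_attr[2], w_attr[3] = 0.25, 0.75
v_attr = attend(w_attr, back_num)

# Eq. 17: the same existence weights applied to each logical codebook.
w_exist = np.array([0.1, 0.9])
v_exist = {vt: attend(w_exist, cb) for vt, cb in back_lgc.items()}
```

Because the weights come from querying the frontend codebooks while the summed vectors come from the backend codebooks, the result inherits whatever arithmetic or logical structure the backend vectors carry.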

Additionally, we introduce the overall HD attribute representations for the Type, Size, and Color attributes within the nxn Grid. These representations $\boldsymbol{v}_{\mathrm{nxn}}^{attr}$ ( $attr\in\left\{type,size,color\right\}$ ) can be obtained by bundling corresponding HD attribute representations of all objects in the Grid with their attention weights of existence as follows:

$$
\boldsymbol{v}_{\mathrm{nxn}}^{attr}=\sum_{j=1}^{n^{2}}{W_{j}^{exist}\left(1\right)\cdot\boldsymbol{v}_{j}^{attr}} \tag{18}
$$
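As a small illustration of the grid-level bundling above, the sketch below weights each slot's attribute vector by its presence weight $W_{j}^{exist}(1)$ and sums over the $n^{2}$ slots. The grid size, dimension, and random per-slot vectors are assumptions made for the example.

```python
import numpy as np

def bundle_grid(w_present, v_slots):
    """Sum per-slot attribute vectors (n*n, d), each scaled by its presence weight."""
    return np.asarray(w_present) @ np.asarray(v_slots)

rng = np.random.default_rng(3)
n, d = 2, 256                              # hypothetical 2x2 grid and dimension
v_slots = rng.standard_normal((n * n, d))  # stand-ins for v_j^attr
w_present = np.array([1.0, 0.0, 0.0, 1.0])  # objects in slots 0 and 3 only

v_grid = bundle_grid(w_present, v_slots)
```

With hard presence weights, empty slots contribute nothing, so `v_grid` here is simply the sum of the vectors in slots 0 and 3; with soft weights, uncertain slots contribute proportionally.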

For the layout-level attribute Number, its HD attribute representation $\boldsymbol{v}^{number}$ is obtained by projecting the sum of the attention weights of presence to FPE-VSA as follows:

$$

\boldsymbol{v}^{number}=\boldsymbol{v}^{\left(\otimes\sum_{j=1}^{n^{2}}{W_{j}^

{exist}\left(1\right)}\right)} \tag{1}

$$

where $\boldsymbol{v}$ is the base vector of the numerical backend codebook $C_{Num}^{Back}$ .
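The fractional power in this projection can be sketched with a common FPE construction: a real base vector whose Fourier coefficients all have unit magnitude, raised to a real exponent by scaling its phases. The sketch assumes circular convolution as the binding operation and a uniform phase distribution; both are assumptions about the FPE-VSA setup, and the names `random_phases`, `fpe`, and `bind` are hypothetical.

```python
import numpy as np

def random_phases(d, rng):
    """Phases of the base vector's half spectrum; DC and Nyquist stay real."""
    phases = rng.uniform(-np.pi, np.pi, d // 2 + 1)
    phases[0] = 0.0
    if d % 2 == 0:
        phases[-1] = 0.0
    return phases

def fpe(phases, x, d):
    """Base vector raised to the (possibly fractional) power x."""
    return np.fft.irfft(np.exp(1j * phases * x), n=d)

def bind(a, b):
    """Circular convolution, the binding operation assumed in this sketch."""
    return np.fft.irfft(np.fft.rfft(a) * np.fft.rfft(b), n=a.shape[-1])

def cos(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

rng = np.random.default_rng(2)
d = 1024                                   # hypothetical hypervector dimension
phases = random_phases(d, rng)

# Soft presence weights W_j^exist(1) for a hypothetical 2x2 grid with three objects.
w_present = np.array([0.98, 0.03, 0.97, 0.95])
count = w_present.sum()                    # soft object count, about 2.93
v_number = fpe(phases, count, d)
```

Under these assumptions, binding adds exponents, so `bind(fpe(phases, 1, d), fpe(phases, 2, d))` matches `fpe(phases, 3, d)`, and the soft count of about 2.93 lands much closer to the vector for 3 than to the vector for 5.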

The layout-level attribute Position within the nxn Grid involves both numerical and logical rules (Table III). Therefore, its HD attribute representations correspond to two distinct rules: the logical representation of each individual object $\boldsymbol{v}_{j}^{position}$ and the overall HD position representation $\mathcal{C}^{position}$ of the entire nxn Grid. The former is an HD existence representation with logical computational properties, that is, $\boldsymbol{v}_{j}^{position}=\boldsymbol{v}_{j}^{exist,BV}$ . Inspired by the SHDR for the nxn Grid in Equation 12, the overall HD position representation $\mathcal{C}^{position}$ can be obtained as follows:

$$

\mathcal{C}^{position}_{\mathrm{nxn}}=\sum_{j=1}^{n\times n}{W_{j}^{exist}

\left(1\right)\cdot\boldsymbol{p}_{j}\circ\boldsymbol{v}_{j}^{exist,RV}}\,,

\boldsymbol{p}_{j}\in C_{Pos,\mathrm{nxn}}^{Back} \tag{1}

$$
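The overall position representation can be sketched as a presence-weighted bundle of position-existence bindings. This example assumes that $\circ$ denotes circular convolution and uses random vectors in place of the CV position codebook and the RV existence codebook; these choices, along with the helper names, are illustrative assumptions rather than the paper's construction.

```python
import numpy as np

def bind(a, b):
    """Circular convolution, assumed here as the binding operation."""
    return np.fft.irfft(np.fft.rfft(a) * np.fft.rfft(b), n=a.shape[-1])

def cos(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def position_repr(w_present, pos_codebook, v_exist_rv):
    """Presence-weighted sum over slots of p_j bound with v_j^{exist,RV}."""
    bound = np.stack([bind(p, v) for p, v in zip(pos_codebook, v_exist_rv)])
    return np.asarray(w_present) @ bound

rng = np.random.default_rng(4)
n, d = 2, 1024                                            # hypothetical grid and dimension
pos_codebook = rng.standard_normal((n * n, d)) / np.sqrt(d)  # stand-ins for p_j
e = rng.standard_normal((2, d)) / np.sqrt(d)                 # stand-ins for e(0), e(1)

# Hard existence weights: objects in slots 0, 2 and 3; slot 1 is empty.
w_exist = np.array([[0.0, 1.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])
v_exist_rv = w_exist @ e          # per-slot existence vectors via weighted summation
w_present = w_exist[:, 1]         # W_j^exist(1)

c_position = position_repr(w_present, pos_codebook, v_exist_rv)

# Probe each slot with "position j bound with present".
probes = [cos(c_position, bind(p, e[1])) for p in pos_codebook]
```

In this sketch the probes for occupied slots are clearly positive while the probe for the empty slot stays near zero, which shows how a single vector can encode the occupancy pattern of the whole grid.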

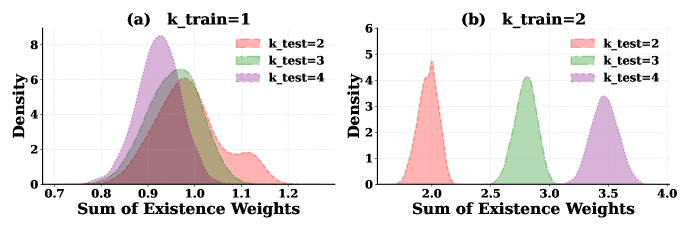

#### IV-E 2 Reasoning backend