# Kimi k1.5: Scaling Reinforcement Learning with LLMs

**Authors**: Kimi Team

## Abstract

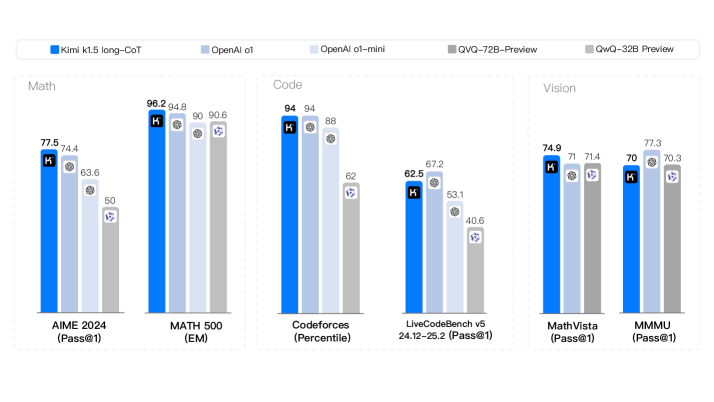

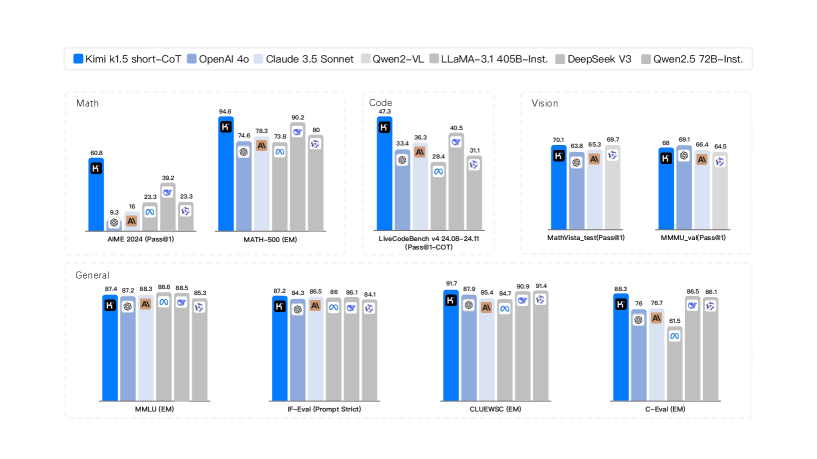

Language model pretraining with next-token prediction has proved effective for scaling compute but is limited by the amount of available training data. Scaling reinforcement learning (RL) unlocks a new axis for the continued improvement of artificial intelligence, with the promise that large language models (LLMs) can scale their training data by learning to explore with rewards. However, prior published work has not produced competitive results. In light of this, we report on the training practice of Kimi k1.5, our latest multi-modal LLM trained with RL, including its RL training techniques, multi-modal data recipes, and infrastructure optimization. Long context scaling and improved policy optimization methods are key ingredients of our approach, which establishes a simplistic, effective RL framework without relying on more complex techniques such as Monte Carlo tree search, value functions, and process reward models. Notably, our system achieves state-of-the-art reasoning performance across multiple benchmarks and modalities (e.g., 77.5 on AIME, 96.2 on MATH 500, 94th percentile on Codeforces, 74.9 on MathVista), matching OpenAI’s o1. Moreover, we present effective long2short methods that use long-CoT techniques to improve short-CoT models, yielding state-of-the-art short-CoT reasoning results (e.g., 60.8 on AIME, 94.6 on MATH 500, 47.3 on LiveCodeBench) that outperform existing short-CoT models such as GPT-4o and Claude Sonnet 3.5 by a large margin (up to +550%).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Grouped Bar Chart: AI Model Performance Comparison

### Overview

The image displays a grouped bar chart comparing the performance of five different AI models across six distinct benchmarks. The benchmarks are categorized into three domains: Math, Code, and Vision. The chart uses a consistent color scheme to represent each model, with numerical performance scores displayed atop each bar.

### Components/Axes

* **Legend (Top Center):** A horizontal legend identifies the five models by color:

* **Dark Blue:** Kimi k1.5 long-CoT

* **Light Blue:** OpenAI o1

* **Very Light Blue:** OpenAI o1-mini

* **Medium Gray:** QVQ-72B-Preview

* **Light Gray:** QwQ-32B-Preview

* **Chart Structure:** The chart is divided into three vertical panels, each representing a domain:

1. **Left Panel - Math:** Contains two benchmark groups.

2. **Center Panel - Code:** Contains two benchmark groups.

3. **Right Panel - Vision:** Contains two benchmark groups.

* **X-Axis (Bottom):** Lists the six specific benchmarks, grouped by domain:

* **Math:** `AIME 2024 (Pass@1)` and `MATH 500 (EM)`

* **Code:** `Codeforces (Percentile)` and `LiveCodeBench v5 24.12-25.2 (Pass@1)`

* **Vision:** `MathVista (Pass@1)` and `MMMU (Pass@1)`

* **Y-Axis:** Not explicitly labeled with a scale or title. Performance is indicated by the height of the bars and the numerical labels on top of them. The metric varies by benchmark (e.g., Pass@1, EM, Percentile).

### Detailed Analysis

Performance scores for each model on each benchmark are as follows:

**1. Math Domain**

* **AIME 2024 (Pass@1):**

* Kimi k1.5 long-CoT: 77.5

* OpenAI o1: 74.4

* OpenAI o1-mini: 63.6

* QVQ-72B-Preview: 50

* QwQ-32B-Preview: (Bar present but no numerical label visible)

* **MATH 500 (EM):**

* Kimi k1.5 long-CoT: 96.2

* OpenAI o1: 94.8

* OpenAI o1-mini: 90

* QVQ-72B-Preview: 90.6

* QwQ-32B-Preview: (Bar present but no numerical label visible)

**2. Code Domain**

* **Codeforces (Percentile):**

* Kimi k1.5 long-CoT: 94

* OpenAI o1: 94

* OpenAI o1-mini: 88

* QVQ-72B-Preview: 62

* QwQ-32B-Preview: (Bar present but no numerical label visible)

* **LiveCodeBench v5 24.12-25.2 (Pass@1):**

* Kimi k1.5 long-CoT: 62.5

* OpenAI o1: 67.2

* OpenAI o1-mini: 53.1

* QVQ-72B-Preview: 40.6

* QwQ-32B-Preview: (Bar present but no numerical label visible)

**3. Vision Domain**

* **MathVista (Pass@1):**

* Kimi k1.5 long-CoT: 74.9

* OpenAI o1: 71

* OpenAI o1-mini: 71.4

* QVQ-72B-Preview: (Bar present but no numerical label visible)

* QwQ-32B-Preview: (Bar present but no numerical label visible)

* **MMMU (Pass@1):**

* Kimi k1.5 long-CoT: 70

* OpenAI o1: 77.3

* OpenAI o1-mini: 70.3

* QVQ-72B-Preview: (Bar present but no numerical label visible)

* QwQ-32B-Preview: (Bar present but no numerical label visible)

**Note on Missing Labels:** Several bars, primarily for the `QwQ-32B-Preview` model (light gray) and some for `QVQ-72B-Preview` (medium gray), do not have numerical scores displayed on top. Their relative heights can be inferred visually.

### Key Observations

1. **Model Leadership:** The `Kimi k1.5 long-CoT` (dark blue) model is the top performer or tied for top in 4 out of the 6 benchmarks (AIME 2024, MATH 500, Codeforces, MathVista).

2. **Strong Contender:** The `OpenAI o1` (light blue) model is highly competitive, leading in `LiveCodeBench v5` and `MMMU`, and tying for first in `Codeforces`.

3. **Domain Strengths:**

* **Math:** `Kimi k1.5 long-CoT` shows a clear lead on the AIME benchmark but is closely matched by others on MATH 500.

* **Code:** `Kimi k1.5 long-CoT` and `OpenAI o1` are virtually identical on the Codeforces percentile metric, but `OpenAI o1` has a noticeable lead on the LiveCodeBench pass@1 metric.

* **Vision:** Performance is more tightly clustered. `OpenAI o1` leads on MMMU, while `Kimi k1.5 long-CoT` leads on MathVista.

4. **Performance Drop-off:** The `OpenAI o1-mini` (very light blue) and the two "Preview" models (gray bars) generally score lower than the top two models across most benchmarks, with the `QwQ-32B-Preview` appearing to be the lowest-performing model where its bar height is visible.

### Interpretation

This chart provides a comparative snapshot of frontier AI model capabilities as of the data's collection date (likely early 2025, given the benchmark names). The data suggests a competitive landscape where no single model dominates all categories.

* **Specialization vs. Generalization:** `Kimi k1.5 long-CoT` demonstrates exceptional strength in mathematical reasoning and certain coding tasks, while `OpenAI o1` shows superior performance in other coding benchmarks and complex visual question answering (MMMU). This indicates potential specialization in model training or architecture.

* **Benchmark Sensitivity:** The varying rankings across benchmarks (e.g., the flip between Kimi and OpenAI o1 on the two Code benchmarks) highlight that model evaluation is highly sensitive to the specific task and metric used. A model's "capability" is not a single number but a profile across diverse challenges.

* **The "Preview" Gap:** The significant performance gap between the established models (Kimi, OpenAI o1) and the "Preview" models (QVQ, QwQ) suggests these are either less mature, smaller, or differently optimized models, possibly representing a different tier of capability or a work in progress.

* **Implication for Users:** The choice of model would depend heavily on the primary use case. For math-heavy applications, Kimi k1.5 long-CoT appears strongest. For a mix of coding and visual reasoning, OpenAI o1 is a very strong contender. The chart argues against a one-size-fits-all approach to model selection.

</details>

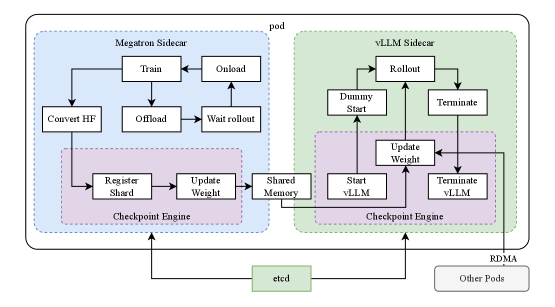

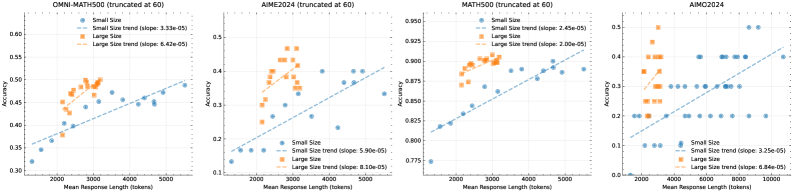

Figure 1: Kimi k1.5 long-CoT results.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart Comparison: AI Model Performance Across Multiple Benchmarks

### Overview

The image is a composite bar chart comparing the performance of seven different large language models (LLMs) across nine distinct evaluation benchmarks. The benchmarks are grouped into four categories: Math, Code, Vision, and General. The chart uses a consistent color-coding scheme for each model, as defined in the legend at the top.

### Components/Axes

* **Legend (Top Center):** A horizontal legend identifies seven models with associated color codes:

* **Kimi k1.5 short-CoT:** Dark Blue

* **OpenAI 4o:** Light Blue

* **Claude 3.5 Sonnet:** Light Gray

* **Qwen2-VL:** Medium Gray

* **LLaMA-3.1 405B-Inst.:** Dark Gray

* **DeepSeek V3:** Very Light Gray

* **Qwen2.5 72B-Inst.:** Lightest Gray/White

* **Chart Structure:** The image is divided into four main rectangular panels, each containing one or two bar charts.

* **Top Left Panel (Math):** Contains two bar charts.

* **Top Center Panel (Code):** Contains one bar chart.

* **Top Right Panel (Vision):** Contains two bar charts.

* **Bottom Row (General):** Contains four bar charts.

* **Axes:** Each individual bar chart has:

* **Y-axis:** Represents the performance score (percentage or metric-specific value). The scale is not explicitly numbered, but values are annotated on top of each bar.

* **X-axis:** Lists the specific benchmark name for each group of bars.

### Detailed Analysis

#### **Math Category (Top Left Panel)**

1. **Benchmark: AIME 2024 (Pass@1)**

* **Kimi k1.5 short-CoT (Dark Blue):** 60.8

* **OpenAI 4o (Light Blue):** 9.3

* **Claude 3.5 Sonnet (Light Gray):** 16

* **Qwen2-VL (Medium Gray):** 23.3

* **LLaMA-3.1 405B-Inst. (Dark Gray):** 39.2

* **DeepSeek V3 (Very Light Gray):** 33.3

* **Qwen2.5 72B-Inst. (Lightest Gray):** 33.3

* **Trend:** Kimi k1.5 and LLaMA-3.1 are the top performers, significantly ahead of others. OpenAI 4o has the lowest score.

2. **Benchmark: MATH-500 (EM)**

* **Kimi k1.5 short-CoT (Dark Blue):** 94.6

* **OpenAI 4o (Light Blue):** 74.6

* **Claude 3.5 Sonnet (Light Gray):** 78.3

* **Qwen2-VL (Medium Gray):** 73.8

* **LLaMA-3.1 405B-Inst. (Dark Gray):** 86.2

* **DeepSeek V3 (Very Light Gray):** 80

* **Qwen2.5 72B-Inst. (Lightest Gray):** 80

* **Trend:** Kimi k1.5 shows a dominant lead. LLaMA-3.1 is second. The remaining models cluster in the 73-80 range.

#### **Code Category (Top Center Panel)**

1. **Benchmark: LiveCodeBench v4 24.08-24.11 (Pass@1-COT)**

* **Kimi k1.5 short-CoT (Dark Blue):** 47.3

* **OpenAI 4o (Light Blue):** 33.4

* **Claude 3.5 Sonnet (Light Gray):** 36.3

* **Qwen2-VL (Medium Gray):** 29.4

* **LLaMA-3.1 405B-Inst. (Dark Gray):** 40.5

* **DeepSeek V3 (Very Light Gray):** 31.1

* **Qwen2.5 72B-Inst. (Lightest Gray):** (Bar present but value not clearly visible, appears to be the lowest)

* **Trend:** Kimi k1.5 leads, followed by LLaMA-3.1 and Claude 3.5 Sonnet. Qwen2-VL and DeepSeek V3 are at the lower end.

#### **Vision Category (Top Right Panel)**

1. **Benchmark: MathVista_test (Pass@1)**

* **Kimi k1.5 short-CoT (Dark Blue):** 70.1

* **OpenAI 4o (Light Blue):** 63.6

* **Claude 3.5 Sonnet (Light Gray):** 65.3

* **Qwen2-VL (Medium Gray):** 69.7

* **LLaMA-3.1 405B-Inst. (Dark Gray):** (Bar present but value not clearly visible)

* **DeepSeek V3 (Very Light Gray):** (Bar present but value not clearly visible)

* **Qwen2.5 72B-Inst. (Lightest Gray):** (Bar present but value not clearly visible)

* **Trend:** Kimi k1.5 and Qwen2-VL are the top performers, very close in score. OpenAI 4o is the lowest among the clearly labeled scores.

2. **Benchmark: MMMU_val (Pass@1)**

* **Kimi k1.5 short-CoT (Dark Blue):** 68

* **OpenAI 4o (Light Blue):** 69.1

* **Claude 3.5 Sonnet (Light Gray):** 66.4

* **Qwen2-VL (Medium Gray):** 64.5

* **LLaMA-3.1 405B-Inst. (Dark Gray):** (Bar present but value not clearly visible)

* **DeepSeek V3 (Very Light Gray):** (Bar present but value not clearly visible)

* **Qwen2.5 72B-Inst. (Lightest Gray):** (Bar present but value not clearly visible)

* **Trend:** OpenAI 4o has a slight lead over Kimi k1.5. Claude 3.5 Sonnet and Qwen2-VL follow closely.

#### **General Category (Bottom Row)**

1. **Benchmark: MMLU (EM)**

* **Kimi k1.5 short-CoT (Dark Blue):** 87.4

* **OpenAI 4o (Light Blue):** 87.2

* **Claude 3.5 Sonnet (Light Gray):** 88.3

* **Qwen2-VL (Medium Gray):** 88.6

* **LLaMA-3.1 405B-Inst. (Dark Gray):** 88.5

* **DeepSeek V3 (Very Light Gray):** 88.3

* **Qwen2.5 72B-Inst. (Lightest Gray):** (Bar present but value not clearly visible)

* **Trend:** Extremely tight clustering. Qwen2-VL has a marginal lead. All models score between approximately 87.2 and 88.6.

2. **Benchmark: IFEval (Prompt Strict)**

* **Kimi k1.5 short-CoT (Dark Blue):** 87.2

* **OpenAI 4o (Light Blue):** 84.3

* **Claude 3.5 Sonnet (Light Gray):** 86.5

* **Qwen2-VL (Medium Gray):** 86

* **LLaMA-3.1 405B-Inst. (Dark Gray):** 86.1

* **DeepSeek V3 (Very Light Gray):** 84.1

* **Qwen2.5 72B-Inst. (Lightest Gray):** (Bar present but value not clearly visible)

* **Trend:** Kimi k1.5 leads. Claude, Qwen2-VL, and LLaMA-3.1 are tightly grouped in the mid-86s. OpenAI 4o and DeepSeek V3 are slightly lower.

3. **Benchmark: CLUEWSC (EM)**

* **Kimi k1.5 short-CoT (Dark Blue):** 91.7

* **OpenAI 4o (Light Blue):** 87.9

* **Claude 3.5 Sonnet (Light Gray):** 85.4

* **Qwen2-VL (Medium Gray):** 86.7

* **LLaMA-3.1 405B-Inst. (Dark Gray):** 90.8

* **DeepSeek V3 (Very Light Gray):** 91.4

* **Qwen2.5 72B-Inst. (Lightest Gray):** (Bar present but value not clearly visible)

* **Trend:** Kimi k1.5 and DeepSeek V3 are the top performers, both above 91. LLaMA-3.1 is also strong at 90.8. Claude 3.5 Sonnet is the lowest.

4. **Benchmark: C-Eval (EM)**

* **Kimi k1.5 short-CoT (Dark Blue):** 88.2

* **OpenAI 4o (Light Blue):** 76

* **Claude 3.5 Sonnet (Light Gray):** 76.7

* **Qwen2-VL (Medium Gray):** 81.5

* **LLaMA-3.1 405B-Inst. (Dark Gray):** 86.5

* **DeepSeek V3 (Very Light Gray):** 86.1

* **Qwen2.5 72B-Inst. (Lightest Gray):** (Bar present but value not clearly visible)

* **Trend:** Kimi k1.5 has a clear lead. LLaMA-3.1 and DeepSeek V3 are strong seconds. OpenAI 4o and Claude 3.5 Sonnet are notably lower.

### Key Observations

1. **Model Dominance:** The **Kimi k1.5 short-CoT** model (dark blue bar) is the top performer in 7 of the 9 benchmarks shown (AIME 2024, MATH-500, LiveCodeBench, MathVista_test, IFEval, CLUEWSC, C-Eval). It shows particular strength in mathematical and coding reasoning tasks.

2. **Competitive Tiers:** Performance is not uniform. In benchmarks like **MMLU**, all models are extremely competitive (within ~1.4 points). In others like **AIME 2024** or **MATH-500**, there is a significant performance gap between the leader and the rest.

3. **Vision Benchmark Split:** In the Vision category, the leadership changes. **Qwen2-VL** is very competitive with Kimi k1.5 on MathVista, and **OpenAI 4o** takes a slight lead on MMMU_val.

4. **Language-Specific Benchmark:** The **C-Eval** benchmark (likely Chinese-language focused) shows a different ranking, with Kimi k1.5 leading, followed by LLaMA-3.1 and DeepSeek V3, while OpenAI 4o and Claude 3.5 Sonnet score significantly lower.

5. **Data Gaps:** For several models (particularly Qwen2.5 72B-Inst., and some bars for LLaMA-3.1 and DeepSeek V3 in the Vision section), the exact numerical score is not clearly legible on the chart, though the bar height is visible.

### Interpretation

This chart provides a snapshot of the competitive landscape among leading LLMs as of the evaluation period (likely late 2024/early 2025 based on benchmark names). The data suggests that **Kimi k1.5 short-CoT** is a highly capable model, especially in tasks requiring complex reasoning (math, code, logic). Its consistent high performance across diverse domains indicates strong generalization.

The tight clustering in general knowledge benchmarks like **MMLU** suggests that top-tier models have reached a similar plateau of broad knowledge. Differentiation now occurs in specialized, harder tasks (e.g., competition-level math, live coding) and in specific domains like vision-language understanding or instruction following (**IFEval**).

The variation in rankings across benchmarks underscores that no single model is universally "best." The optimal choice depends on the specific application: **Qwen2-VL** for certain vision tasks, **OpenAI 4o** for MMMU, **DeepSeek V3** for CLUEWSC, etc. The strong showing of **LLaMA-3.1 405B-Inst.**, an open-weights model, across many benchmarks is notable, demonstrating that open models can compete closely with proprietary ones.

The chart effectively communicates that the field is highly competitive, with rapid iteration leading to frequent changes in the state-of-the-art across different evaluation axes.

</details>

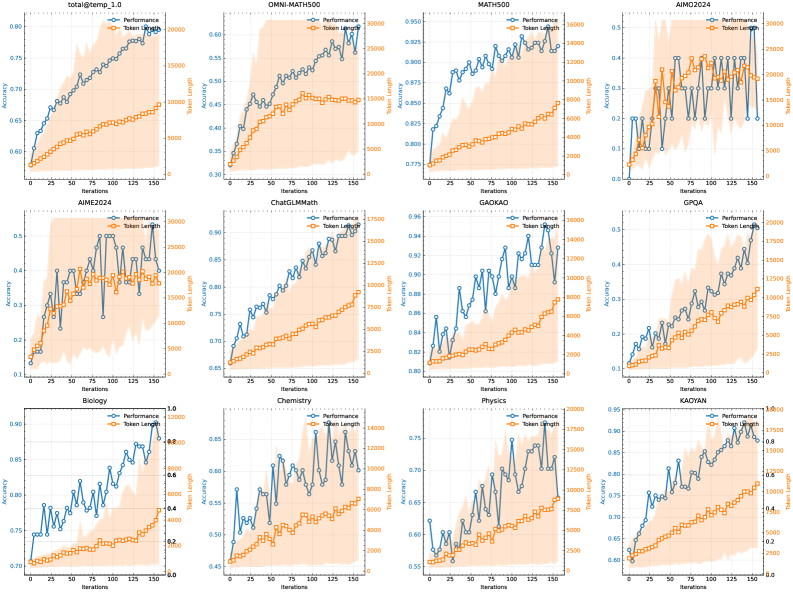

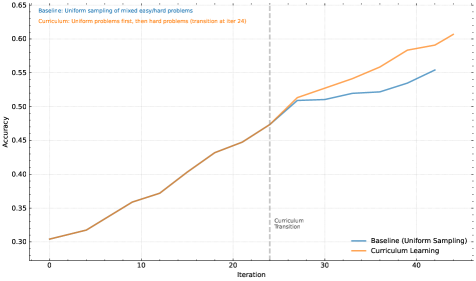

Figure 2: Kimi k1.5 short-CoT results.

## 1 Introduction

Language model pretraining with next-token prediction has been studied in the context of scaling laws, where proportionally scaling model parameters and data sizes leads to continued improvement of intelligence [19, 14]. However, this approach is limited by the amount of available high-quality training data [50, 32]. In this report, we present the training recipe of Kimi k1.5, our latest multi-modal LLM trained with reinforcement learning (RL). The goal is to explore a possible new axis for continued scaling. Using RL with LLMs, the model learns to explore with rewards and thus is not limited to a pre-existing static dataset.

There are a few key ingredients in the design and training of k1.5.

- Long context scaling. We scale the context window of RL to 128k and observe continued improvement of performance with increased context length. A key idea behind our approach is to use partial rollouts to improve training efficiency, i.e., sampling new trajectories by reusing a large chunk of previous trajectories, which avoids the cost of regenerating new trajectories from scratch. Our observations identify context length as a key dimension of the continued scaling of RL with LLMs.

- Improved policy optimization. We derive a formulation of RL with long-CoT and employ a variant of online mirror descent for robust policy optimization. This algorithm is further improved by our effective sampling strategy, length penalty, and optimization of the data recipe.

- Simplistic Framework. Long context scaling, combined with the improved policy optimization methods, establishes a simplistic RL framework for learning with LLMs. Since we are able to scale the context length, the learned CoTs exhibit the properties of planning, reflection, and correction. An increased context length has the effect of increasing the number of search steps. As a result, we show that strong performance can be achieved without relying on more complex techniques such as Monte Carlo tree search, value functions, and process reward models.

- Multimodalities. Our model is jointly trained on text and vision data and is therefore capable of reasoning jointly over the two modalities.

Moreover, we present effective long2short methods that use long-CoT techniques to improve short-CoT models. Specifically, our approaches include applying length penalty with long-CoT activations and model merging.

Our long-CoT version achieves state-of-the-art reasoning performance across multiple benchmarks and modalities (e.g., 77.5 on AIME, 96.2 on MATH 500, 94th percentile on Codeforces, 74.9 on MathVista), matching OpenAI’s o1. Our model also achieves state-of-the-art short-CoT reasoning results (e.g., 60.8 on AIME, 94.6 on MATH 500, 47.3 on LiveCodeBench), outperforming existing short-CoT models such as GPT-4o and Claude Sonnet 3.5 by a large margin (up to +550%). Results are shown in Figures 1 and 2.
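As a rough check on the "up to +550%" figure, and assuming it refers to the AIME comparison against GPT-4o (reported as 9.3 in Figure 2), which the text does not state explicitly, the relative improvement works out to:

$$

\frac{60.8-9.3}{9.3}=\frac{51.5}{9.3}\approx 5.54\quad(\text{about }+554\%).

$$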

## 2 Approach: Reinforcement Learning with LLMs

The development of Kimi k1.5 consists of several stages: pretraining, vanilla supervised fine-tuning (SFT), long-CoT supervised fine-tuning, and reinforcement learning (RL). This report focuses on RL, beginning with an overview of the RL prompt set curation (Section 2.1) and long-CoT supervised fine-tuning (Section 2.2), followed by an in-depth discussion of RL training strategies in Section 2.3. Additional details on pretraining and vanilla supervised fine-tuning can be found in Section 2.5.

### 2.1 RL Prompt Set Curation

Through our preliminary experiments, we found that the quality and diversity of the RL prompt set play a critical role in ensuring the effectiveness of reinforcement learning. A well-constructed prompt set not only guides the model toward robust reasoning but also mitigates the risk of reward hacking and overfitting to superficial patterns. Specifically, three key properties define a high-quality RL prompt set:

- Diverse Coverage: Prompts should span a wide array of disciplines, such as STEM, coding, and general reasoning, to enhance the model’s adaptability and ensure broad applicability across different domains.

- Balanced Difficulty: The prompt set should include a well-distributed range of easy, moderate, and difficult questions to facilitate gradual learning and prevent overfitting to specific complexity levels.

- Accurate Evaluability: Prompts should allow objective and reliable assessment by verifiers, ensuring that model performance is measured based on correct reasoning rather than superficial patterns or random guessing.

To achieve diverse coverage in the prompt set, we employ automatic filters to select questions that require rich reasoning and are straightforward to evaluate. Our dataset includes problems from various domains, such as STEM fields, competitions, and general reasoning tasks, incorporating both text-only and image-text question-answering data. Furthermore, we developed a tagging system to categorize prompts by domain and discipline, ensuring balanced representation across different subject areas [24, 27].

We adopt a model-based approach that leverages the model’s own capacity to adaptively assess the difficulty of each prompt. Specifically, for every prompt, an SFT model generates answers ten times using a relatively high sampling temperature. The pass rate is then calculated and used as a proxy for the prompt’s difficulty—the lower the pass rate, the higher the difficulty. This approach allows difficulty evaluation to be aligned with the model’s intrinsic capabilities, making it highly effective for RL training. By leveraging this method, we can prefilter most trivial cases and easily explore different sampling strategies during RL training.
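The pass-rate proxy described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: `sample_answer`, `is_correct`, `make_toy_sampler`, and `exact_match` are hypothetical stand-ins for an SFT model sampled at high temperature and for a verifier.

```python
import random
from typing import Callable


def estimate_pass_rate(
    prompt: str,
    reference_answer: str,
    sample_answer: Callable[[str], str],
    is_correct: Callable[[str, str], bool],
    num_samples: int = 10,
) -> float:
    """Fraction of sampled answers that the verifier accepts."""
    passed = sum(
        is_correct(sample_answer(prompt), reference_answer)
        for _ in range(num_samples)
    )
    return passed / num_samples


def difficulty(pass_rate: float) -> float:
    """Lower pass rate means higher difficulty."""
    return 1.0 - pass_rate


def make_toy_sampler(p_correct: float, seed: int = 0) -> Callable[[str], str]:
    """Hypothetical model stub: answers '42' with probability p_correct."""
    rng = random.Random(seed)

    def sample(prompt: str) -> str:
        return "42" if rng.random() < p_correct else "wrong"

    return sample


def exact_match(answer: str, reference: str) -> bool:
    """Hypothetical verifier: whitespace-insensitive string equality."""
    return answer.strip() == reference.strip()


if __name__ == "__main__":
    rate = estimate_pass_rate(
        "What is 6 * 7?", "42", make_toy_sampler(0.3), exact_match
    )
    print(f"pass rate = {rate:.1f}, difficulty = {difficulty(rate):.1f}")
```

Prompts whose pass rate is close to 1.0 would be candidates for prefiltering as trivial; where to set the threshold is a design choice the text leaves open.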

To avoid potential reward hacking [9, 36], we need to ensure that both the reasoning process and the final answer of each prompt can be accurately verified. Empirical observations reveal that some complex reasoning problems may have relatively simple and easily guessable answers, leading to false positive verification, where the model reaches the correct answer through an incorrect reasoning process. To address this issue, we exclude questions that are prone to such errors, such as multiple-choice, true/false, and proof-based questions. Furthermore, for general question-answering tasks, we propose a simple yet effective method to identify and remove easy-to-hack prompts. Specifically, we prompt a model to guess potential answers without any CoT reasoning steps. If the model predicts the correct answer within $N$ attempts, the prompt is considered too easy to hack and is removed. We found that setting $N=8$ removes the majority of easy-to-hack prompts. Developing more advanced verification models remains an open direction for future research.
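The guess-based filter can be sketched as below. The guesser here is a hypothetical deterministic stub that cycles through a few short candidate answers; in practice it would be a model prompted to answer without CoT, and `is_correct` would be the verifier.

```python
from typing import Callable, List, Sequence, Tuple


def is_easy_to_hack(
    prompt: str,
    reference_answer: str,
    guess_without_cot: Callable[[str], str],
    is_correct: Callable[[str, str], bool],
    max_attempts: int = 8,
) -> bool:
    """True if any of the first max_attempts direct guesses is correct."""
    return any(
        is_correct(guess_without_cot(prompt), reference_answer)
        for _ in range(max_attempts)
    )


def filter_prompts(
    dataset: Sequence[Tuple[str, str]],
    guess_without_cot: Callable[[str], str],
    is_correct: Callable[[str, str], bool],
    max_attempts: int = 8,
) -> List[Tuple[str, str]]:
    """Keep only the (prompt, answer) pairs that are not easy to hack."""
    return [
        (prompt, answer)
        for prompt, answer in dataset
        if not is_easy_to_hack(
            prompt, answer, guess_without_cot, is_correct, max_attempts
        )
    ]


def make_cycling_guesser(candidates: Sequence[str]) -> Callable[[str], str]:
    """Hypothetical guesser stub: cycles through candidates for each prompt."""
    calls = {}

    def guess(prompt: str) -> str:
        i = calls.get(prompt, 0)
        calls[prompt] = i + 1
        return candidates[i % len(candidates)]

    return guess


if __name__ == "__main__":
    data = [
        ("How many real roots does x^2 - 1 have?", "2"),
        ("What is the smallest taxicab number?", "1729"),
    ]
    guesser = make_cycling_guesser(["0", "1", "2", "3"])
    kept = filter_prompts(data, guesser, lambda a, b: a == b)
    print(kept)  # only the prompt whose answer is hard to guess remains
```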

### 2.2 Long-CoT Supervised Fine-Tuning

With the refined RL prompt set, we employ prompt engineering to construct a small yet high-quality long-CoT warmup dataset, containing accurately verified reasoning paths for both text and image inputs. This approach resembles rejection sampling (RS) but focuses on generating long-CoT reasoning paths through prompt engineering. The resulting warmup dataset is designed to encapsulate key cognitive processes that are fundamental to human-like reasoning, such as planning, where the model systematically outlines steps before execution; evaluation, involving critical assessment of intermediate steps; reflection, enabling the model to reconsider and refine its approach; and exploration, encouraging consideration of alternative solutions. By performing a lightweight SFT on this warm-up dataset, we effectively prime the model to internalize these reasoning strategies. As a result, the fine-tuned long-CoT model demonstrates improved capability in generating more detailed and logically coherent responses, which enhances its performance across diverse reasoning tasks.

### 2.3 Reinforcement Learning

#### 2.3.1 Problem Setting

Given a training dataset $D=\{(x_i,y_i^*)\}_{i=1}^{n}$ of problems $x_i$ and corresponding ground-truth answers $y_i^*$, our goal is to train a policy model $\pi_\theta$ to accurately solve test problems. In the context of complex reasoning, the mapping of problem $x$ to solution $y$ is non-trivial. To tackle this challenge, the chain of thought (CoT) method proposes to use a sequence of intermediate steps $z=(z_1,z_2,\dots,z_m)$ to bridge $x$ and $y$, where each $z_i$ is a coherent sequence of tokens that acts as a significant intermediate step toward solving the problem [54]. When solving problem $x$, thoughts $z_t\sim\pi_\theta(\cdot\mid x,z_1,\dots,z_{t-1})$ are auto-regressively sampled, followed by the final answer $y\sim\pi_\theta(\cdot\mid x,z_1,\dots,z_m)$. We use $y,z\sim\pi_\theta$ to denote this sampling procedure. Note that both the thoughts and the final answer are sampled as language sequences.

To further enhance the model’s reasoning capabilities, planning algorithms are employed to explore various thought processes, generating improved CoT at inference time [58, 55, 44]. The core insight of these approaches is the explicit construction of a search tree of thoughts guided by value estimations. This allows the model to explore diverse continuations of a thought process or backtrack to investigate new directions when encountering dead ends. In more detail, let $T$ be a search tree where each node represents a partial solution $s=(x,z_{1:|s|})$. Here $s$ consists of the problem $x$ and a sequence of thoughts $z_{1:|s|}=(z_1,\dots,z_{|s|})$ leading up to that node, with $|s|$ denoting the number of thoughts in the sequence. The planning algorithm uses a critic model $v$ to provide feedback $v(x,z_{1:|s|})$, which helps evaluate the current progress towards solving the problem and identify any errors in the existing partial solution. We note that the feedback can be provided by either a discriminative score or a language sequence [61]. Guided by the feedback for all $s\in T$, the planning algorithm selects the most promising node for expansion, thereby growing the search tree. The above process repeats iteratively until a full solution is derived.
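To make the explicit search procedure concrete, here is a minimal best-first sketch of a critic-guided tree search. It is an illustrative assumption, not any cited method: `expand`, `critic`, and `is_solution` are hypothetical callbacks, and the toy task (reach a target integer from 1 using the thoughts `+1` and `*2`) merely stands in for a reasoning problem.

```python
import heapq
from typing import Callable, List, Optional, Tuple

Thoughts = Tuple[str, ...]


def best_first_search(
    x: int,
    expand: Callable[[int, Thoughts], List[str]],
    critic: Callable[[int, Thoughts], float],
    is_solution: Callable[[int, Thoughts], bool],
    max_expansions: int = 1000,
) -> Optional[Thoughts]:
    """Repeatedly expand the node s = (x, z_{1:|s|}) with the highest critic score."""
    counter = 0  # tie-breaker so the heap never compares thought tuples
    frontier = [(-critic(x, ()), counter, ())]
    while frontier and max_expansions > 0:
        _, _, thoughts = heapq.heappop(frontier)
        if is_solution(x, thoughts):
            return thoughts
        max_expansions -= 1
        for z in expand(x, thoughts):
            counter += 1
            child = thoughts + (z,)
            heapq.heappush(frontier, (-critic(x, child), counter, child))
    return None


def value_of(thoughts: Thoughts) -> int:
    """Toy semantics: start from 1 and apply each thought in order."""
    v = 1
    for z in thoughts:
        v = v + 1 if z == "+1" else v * 2
    return v


def toy_expand(x: int, thoughts: Thoughts) -> List[str]:
    # Stop expanding once the target is overshot (a dead end).
    return ["+1", "*2"] if value_of(thoughts) < x else []


def toy_critic(x: int, thoughts: Thoughts) -> float:
    # Discriminative score: closer to the target is better.
    return -abs(x - value_of(thoughts))


def toy_is_solution(x: int, thoughts: Thoughts) -> bool:
    return value_of(thoughts) == x


if __name__ == "__main__":
    path = best_first_search(10, toy_expand, toy_critic, toy_is_solution)
    print(path, value_of(path))
```

The frontier, the critic calls, and the node bookkeeping are exactly the machinery that an explicit planner must maintain at inference time.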

We can also approach planning algorithms from an algorithmic perspective. Given the past search history available at the $t$-th iteration $(s_1,v(s_1),\dots,s_{t-1},v(s_{t-1}))$, a planning algorithm $A$ iteratively determines the next search direction $A(s_t\mid s_1,v(s_1),\dots,s_{t-1},v(s_{t-1}))$ and provides feedback for the current search progress $A(v(s_t)\mid s_1,v(s_1),\dots,s_t)$. Since both thoughts and feedback can be viewed as intermediate reasoning steps, and these components can both be represented as sequences of language tokens, we use $z$ to replace $s$ and $v$ to simplify the notation. Accordingly, we view a planning algorithm as a mapping that directly acts on a sequence of reasoning steps $A(\cdot\mid z_1,z_2,\dots)$. In this framework, all information stored in the search tree used by the planning algorithm is flattened into the full context provided to the algorithm. This provides an intriguing perspective on generating high-quality CoT: Rather than explicitly constructing a search tree and implementing a planning algorithm, we could potentially train a model to approximate this process. Here, the number of thoughts (i.e., language tokens) serves as an analogy to the computational budget traditionally allocated to planning algorithms. Recent advancements in long context windows facilitate seamless scalability during both the training and testing phases. If feasible, this method enables the model to run an implicit search over the reasoning space directly via auto-regressive predictions. Consequently, the model not only learns to solve a set of training problems but also develops the ability to tackle individual problems effectively, leading to improved generalization to unseen test problems.

We thus consider training the model to generate CoT with reinforcement learning (RL) [34]. Let $r$ be a reward model that judges the correctness of the proposed answer $y$ for the given problem $x$ based on the ground truth $y^*$, by assigning a value $r(x,y,y^*)\in\{0,1\}$. For verifiable problems, the reward is directly determined by predefined criteria or rules. For example, in coding problems, we assess whether the answer passes the test cases. For problems with free-form ground truth, we train a reward model $r(x,y,y^*)$ that predicts whether the answer matches the ground truth. Given a problem $x$, the model $\pi_\theta$ generates a CoT and the final answer through the sampling procedure $z\sim\pi_\theta(\cdot\mid x)$, $y\sim\pi_\theta(\cdot\mid x,z)$. The quality of the generated CoT is evaluated by whether it leads to a correct final answer. In summary, we consider the following objective to optimize the policy:

$$

\max_{\theta}\ \mathbb{E}_{(x,y^*)\sim D,\ (y,z)\sim\pi_\theta}\left[r(x,y,y^*)\right]. \tag{1}

$$
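A minimal sketch of how objective (1) could be estimated by sampling is given below. It assumes a rule-based exact-match reward and a hypothetical `sample_response` callable standing in for $\pi_\theta$; neither is the authors' implementation. Note that the reward looks only at the final answer $y$, never at the thoughts $z$.

```python
from typing import Callable, Sequence, Tuple


def rule_based_reward(answer: str, reference: str) -> int:
    """r(x, y, y*) in {0, 1}: here, whitespace-insensitive exact match."""
    return int(answer.strip() == reference.strip())


def estimate_objective(
    dataset: Sequence[Tuple[str, str]],
    sample_response: Callable[[str], Tuple[str, str]],
    num_samples: int = 4,
) -> float:
    """Monte Carlo estimate of E_{(x,y*)~D, (y,z)~pi}[r(x, y, y*)]."""
    total = 0
    count = 0
    for x, y_star in dataset:
        for _ in range(num_samples):
            z, y = sample_response(x)  # thoughts z, then final answer y
            total += rule_based_reward(y, y_star)
            count += 1
    return total / count


def toy_policy(x: str) -> Tuple[str, str]:
    """Hypothetical policy stub that always answers '4'."""
    return ("some intermediate thoughts", "4")


if __name__ == "__main__":
    data = [("2 + 2 = ?", "4"), ("3 + 3 = ?", "6")]
    print(estimate_objective(data, toy_policy))
```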

By scaling up RL training, we aim to train a model that harnesses the strengths of both simple prompt-based CoT and planning-augmented CoT. The model still auto-regressively samples language sequences during inference, thereby circumventing the need for the complex parallelization required by advanced planning algorithms during deployment. However, a key distinction from simple prompt-based methods is that the model should not merely follow a series of reasoning steps. Instead, it should also learn critical planning skills, including error identification, backtracking, and solution refinement, by leveraging the entire set of explored thoughts as contextual information.

#### 2.3.2 Policy Optimization

We apply a variant of online policy mirror descent as our training algorithm [1, 31, 48]. The algorithm proceeds iteratively. At the $i$-th iteration, we use the current model $\pi_{\theta_i}$ as a reference model and optimize the following relative-entropy-regularized policy optimization problem,

$$

\max_{\theta}\ \mathbb{E}_{(x,y^*)\sim D}\left[\mathbb{E}_{(y,z)\sim\pi_\theta}\left[r(x,y,y^*)\right]-\tau\,\mathrm{KL}\left(\pi_\theta(x)\,\|\,\pi_{\theta_i}(x)\right)\right], \tag{2}

$$

where $τ>0$ is a parameter controlling the degree of regularization. This objective has a closed form solution

$$

\pi^*(y,z\mid x)=\pi_{\theta_i}(y,z\mid x)\exp\left(r(x,y,y^*)/\tau\right)/Z.

$$

Here $Z=\sum_{y',z'}\pi_{\theta_i}(y',z'\mid x)\exp\left(r(x,y',y^*)/\tau\right)$ is the normalization factor. Taking the logarithm of both sides, we find that for any $(y,z)$ the following constraint is satisfied, which allows us to leverage off-policy data during optimization:

$$

r(x,y,y^*)-\tau\log Z=\tau\log\frac{\pi^*(y,z\mid x)}{\pi_{\theta_i}(y,z\mid x)}.

$$
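As a quick numerical check of the closed-form solution and the log identity above, the following sketch uses a hypothetical toy problem with three candidate responses. The reference probabilities, rewards, and $\tau$ are made-up illustrative values.

```python
import math
from typing import List, Sequence, Tuple


def closed_form_policy(
    ref_probs: Sequence[float], rewards: Sequence[float], tau: float
) -> Tuple[List[float], float]:
    """Return (pi_star, Z) with pi_star proportional to pi_ref * exp(r / tau)."""
    weights = [p * math.exp(r / tau) for p, r in zip(ref_probs, rewards)]
    z = sum(weights)
    return [w / z for w in weights], z


def identity_residuals(
    ref_probs: Sequence[float], rewards: Sequence[float], tau: float
) -> List[float]:
    """Per-response gap between r - tau*log Z and tau*log(pi_star / pi_ref)."""
    pi_star, z = closed_form_policy(ref_probs, rewards, tau)
    return [
        (r - tau * math.log(z)) - tau * math.log(p_new / p_ref)
        for r, p_new, p_ref in zip(rewards, pi_star, ref_probs)
    ]


if __name__ == "__main__":
    ref = [0.5, 0.3, 0.2]  # pi_{theta_i} over three responses (assumed)
    rew = [0.0, 1.0, 0.0]  # only the second response is correct (assumed)
    pi_star, z = closed_form_policy(ref, rew, tau=0.5)
    print("pi* =", [round(p, 3) for p in pi_star])
    print("residuals =", identity_residuals(ref, rew, tau=0.5))
```

The residuals are zero up to floating-point error for every response, including the incorrect ones. That the identity holds for all responses, whatever policy generated them, is what permits the use of off-policy data.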

This motivates the following surrogate loss:

$$

L(\theta)=\mathbb{E}_{(x,y^*)\sim D}\left[\mathbb{E}_{(y,z)\sim\pi_{\theta_i}}\left[\left(r(x,y,y^*)-\tau\log Z-\tau\log\frac{\pi_\theta(y,z\mid x)}{\pi_{\theta_i}(y,z\mid x)}\right)^2\right]\right].

$$

To approximate $\tau\log Z$, we use samples $(y_1,z_1),\dots,(y_k,z_k)\sim\pi_{\theta_i}$: $\tau\log Z\approx\tau\log\frac{1}{k}\sum_{j=1}^{k}\exp\left(r(x,y_j,y^*)/\tau\right)$. We also find that using the empirical mean of sampled rewards $\overline{r}=\mathrm{mean}\left(r(x,y_1,y^*),\dots,r(x,y_k,y^*)\right)$ yields effective practical results. This is reasonable since $\tau\log Z$ approaches the expected reward under $\pi_{\theta_i}$ as $\tau\to\infty$. Finally, we conclude our learning algorithm by taking the gradient of the surrogate loss. For each problem $x$, $k$ responses are sampled using the reference policy $\pi_{\theta_i}$, and the gradient is given by

$$

\frac{1}{k}\sum_{j=1}^{k}\left(\nabla_\theta\log\pi_\theta(y_j,z_j\mid x)\left(r(x,y_j,y^*)-\overline{r}\right)-\frac{\tau}{2}\nabla_\theta\left(\log\frac{\pi_\theta(y_j,z_j\mid x)}{\pi_{\theta_i}(y_j,z_j\mid x)}\right)^2\right). \tag{3}

$$

To those familiar with policy gradient methods, this gradient resembles the policy gradient of (2) using the mean of sampled rewards as the baseline [20, 2]. The main differences are that the responses are sampled from $\pi_{\theta_i}$ rather than on-policy, and an $l_2$-regularization is applied. Thus we could see this as the natural extension of a usual on-policy regularized policy gradient algorithm to the off-policy case [33]. We sample a batch of problems from $\mathcal{D}$ and update the parameters to $\theta_{i+1}$, which subsequently serves as the reference policy for the next iteration. Since each iteration considers a different optimization problem due to the changing reference policy, we also reset the optimizer at the start of each iteration.
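As a rough illustration of Eq. (3), the sketch below computes, for one problem, the scalar weight that multiplies $\nabla_\theta\log\pi_\theta(y_j,z_j|x)$ for each of the $k$ sampled responses, using the identity $\frac{\tau}{2}\nabla_\theta(\log\rho)^2=\tau\log\rho\,\nabla_\theta\log\pi_\theta$ with $\rho=\pi_\theta/\pi_{\theta_i}$. It also shows the sample estimate of $\tau\log Z$ next to the mean-reward baseline. The function names and the plain-list representation of log-probabilities are assumptions made for illustration; they are not taken from the paper's implementation.

```python
import math

def soft_baseline(rewards, tau):
    """Sample estimate of tau * log Z = tau * log(mean(exp(r / tau)))."""
    m = max(rewards)  # factor out the max reward for numerical stability
    mean_exp = sum(math.exp((r - m) / tau) for r in rewards) / len(rewards)
    return m + tau * math.log(mean_exp)

def grad_coefficients(rewards, logp_new, logp_ref, tau):
    """Per-sample weight on grad log pi_theta(y_j, z_j | x) in Eq. (3).

    rewards[j]  : r(x, y_j, y*)
    logp_new[j] : log pi_theta(y_j, z_j | x)      (policy being updated)
    logp_ref[j] : log pi_theta_i(y_j, z_j | x)    (reference policy)
    The 1/k averaging is left to the caller.
    """
    r_bar = sum(rewards) / len(rewards)  # mean-reward baseline
    return [
        (r - r_bar) - tau * (ln - lr)
        for r, ln, lr in zip(rewards, logp_new, logp_ref)
    ]
```

When the updated policy still equals the reference policy, the regularization term vanishes and each weight reduces to $r-\overline{r}$; for large $\tau$, `soft_baseline` approaches the same mean reward.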

We exclude the value network from our training system, a design choice that has also been adopted in previous studies [2]. While this significantly improves training efficiency, we also hypothesize that the conventional use of value functions for credit assignment in classical RL may not be suitable for our context. Consider a scenario where the model has generated a partial CoT $(z_1,z_2,\dots,z_t)$ and there are two potential next reasoning steps: $z_{t+1}$ and $z'_{t+1}$. Assume that $z_{t+1}$ directly leads to the correct answer, while $z'_{t+1}$ contains some errors. If an oracle value function were accessible, it would indicate that $z_{t+1}$ has a higher value than $z'_{t+1}$. According to the standard credit assignment principle, selecting $z'_{t+1}$ would be penalized because it has a negative advantage relative to the current policy. However, exploring $z'_{t+1}$ is extremely valuable for training the model to generate long CoT. By using the justification of the final answer derived from a long CoT as the reward signal, the model can learn the pattern of trial and error from taking $z'_{t+1}$, as long as it successfully recovers and reaches the correct answer. The key takeaway from this example is that we should encourage the model to explore diverse reasoning paths to enhance its capability in solving complex problems. This exploratory approach generates a wealth of experience that supports the development of critical planning skills. Our primary goal is not confined to attaining high accuracy on training problems but focuses on equipping the model with effective problem-solving strategies, ultimately improving its performance on test problems.

#### 2.3.3 Length Penalty

We observe an overthinking phenomenon in which the model’s response length increases significantly during RL training. Although this leads to better performance, an excessively lengthy reasoning process is costly during training and inference, and overthinking is often not preferred by humans. To address this issue, we introduce a length reward to restrain the rapid growth of token length, thereby improving the model’s token efficiency. Given $k$ sampled responses $(y_1,z_1),\dots,(y_k,z_k)$ of problem $x$ with true answer $y^*$, let $\mathrm{len}(i)$ be the length of $(y_i,z_i)$, $\mathrm{min\_len}=\min_i \mathrm{len}(i)$ and $\mathrm{max\_len}=\max_i \mathrm{len}(i)$. If $\mathrm{max\_len}=\mathrm{min\_len}$, we set the length reward to zero for all responses, as they have the same length. Otherwise the length reward is given by

$$
\mathrm{len\_reward}(i)=\begin{cases}\lambda & \text{if } r(x,y_i,y^*)=1\\ \min(0,\lambda) & \text{if } r(x,y_i,y^*)=0\end{cases}, \quad\text{where } \lambda=0.5-\frac{\mathrm{len}(i)-\mathrm{min\_len}}{\mathrm{max\_len}-\mathrm{min\_len}} .
$$

In essence, we promote shorter responses and penalize longer responses among correct ones, while explicitly penalizing long responses with incorrect answers. This length-based reward is then added to the original reward with a weighting parameter.
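The length reward above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: rewards are binary (0 or 1), lengths are token counts, and the `weight` argument of `combined_rewards` stands in for the weighting parameter, whose value the text does not specify.

```python
def length_rewards(lengths, rewards):
    """Length reward for k sampled responses to the same problem.

    lengths[i] : token length of response i
    rewards[i] : correctness reward r(x, y_i, y*), assumed to be 0 or 1
    """
    lo, hi = min(lengths), max(lengths)
    if hi == lo:
        # All responses have the same length: no length signal.
        return [0.0] * len(lengths)
    out = []
    for n, r in zip(lengths, rewards):
        lam = 0.5 - (n - lo) / (hi - lo)  # ranges from +0.5 (shortest) to -0.5 (longest)
        # Correct answers receive lambda; incorrect ones are never rewarded for brevity.
        out.append(lam if r == 1 else min(0.0, lam))
    return out

def combined_rewards(lengths, rewards, weight):
    """Original reward plus weighted length reward (`weight` is a hypothetical parameter)."""
    return [r + weight * lr for r, lr in zip(rewards, length_rewards(lengths, rewards))]
```

For example, with lengths `[100, 200, 300]` and rewards `[1, 1, 0]`, the shortest correct response gets `0.5`, the medium correct response gets `0.0`, and the longest incorrect response gets `-0.5`.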

In our preliminary experiments, we found that the length penalty may slow down training during the initial phases. To alleviate this issue, we propose to gradually warm up the length penalty during training. Specifically, we employ standard policy optimization without length penalty, followed by a constant length penalty for the rest of training.

#### 2.3.4 Sampling Strategies

Although RL algorithms themselves have relatively good sampling properties (with more difficult problems providing larger gradients), their training efficiency is limited. Consequently, some well-defined prior sampling methods can yield potentially greater performance gains. We exploit multiple signals to further improve the sampling strategy. First, the RL training data we collect naturally come with different difficulty labels. For example, a math competition problem is more difficult than a primary school math problem. Second, because the RL training process samples the same problem multiple times, we can also track the success rate for each individual problem as a metric of difficulty. We propose two sampling methods to utilize these priors to improve training efficiency.

Curriculum Sampling

We start by training on easier tasks and gradually progress to more challenging ones. Since the initial RL model has limited performance, spending a restricted computation budget on very hard problems often yields few correct samples, resulting in lower training efficiency. Meanwhile, our collected data naturally includes grade and difficulty labels, making difficulty-based sampling an intuitive and effective way to improve training efficiency.

Prioritized Sampling

In addition to curriculum sampling, we use a prioritized sampling strategy to focus on problems where the model underperforms. We track the success rates $s_i$ for each problem $i$ and sample problems proportional to $1-s_i$ , so that problems with lower success rates receive higher sampling probabilities. This directs the model’s efforts toward its weakest areas, leading to faster learning and better overall performance.
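A minimal sketch of the prioritized sampling rule, assuming success rates are tracked as floats in $[0,1]$. The uniform fallback when every problem has success rate 1 is an assumption added here to keep the sketch well defined; the text does not describe that case.

```python
import random

def sampling_probs(success_rates):
    """Probability of sampling each problem, proportional to 1 - s_i."""
    weights = [1.0 - s for s in success_rates]
    total = sum(weights)
    if total == 0:
        # Assumption: if every problem is always solved, fall back to uniform sampling.
        return [1.0 / len(weights)] * len(weights)
    return [w / total for w in weights]

def sample_problems(success_rates, batch_size, rng=random):
    """Draw a batch of problem indices (with replacement) using the priorities above."""
    probs = sampling_probs(success_rates)
    return rng.choices(range(len(probs)), weights=probs, k=batch_size)
```

With success rates `[0.0, 0.5, 1.0]`, the first problem is sampled twice as often as the second, and the fully solved third problem is never sampled.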

#### 2.3.5 More Details on Training Recipe

Test Case Generation for Coding

Since test cases are not available for many coding problems from the web, we design a method to automatically generate test cases that serve as a reward to train our model with RL. Our focus is primarily on problems that do not require a special judge. We also assume that ground truth solutions are available for these problems so that we can leverage the solutions to generate higher quality test cases.

We utilize the widely recognized test case generation library CYaRon (https://github.com/luogu-dev/cyaron) to enhance our approach. We employ our base Kimi k1.5 to generate test cases based on problem statements. The usage statement of CYaRon and the problem description are provided as the input to the generator. For each problem, we first use the generator to produce 50 test cases and also randomly sample 10 ground truth submissions for each test case. We run the test cases against the submissions. A test case is deemed valid if at least 7 out of 10 submissions yield matching results. After this round of filtering, we obtain a set of selected test cases. A problem and its associated selected test cases are added to our training set if at least 9 out of 10 submissions pass the entire set of selected test cases.
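The two-stage filter described above can be sketched as follows. This is an illustrative reading, not the authors' code: it assumes "matching results" means that at least 7 submissions produce the same output, takes that majority output as the expected answer, and for simplicity uses the same 10 submissions for every test case. The function names and the `outputs` layout are hypothetical.

```python
from collections import Counter

def select_valid_tests(outputs, min_agree=7):
    """Keep generated tests on which enough submissions agree.

    outputs[t][s] is the output of submission s on generated test case t.
    Returns {test index: consensus output} for the selected tests.
    """
    selected = {}
    for t, outs in enumerate(outputs):
        value, count = Counter(outs).most_common(1)[0]
        if count >= min_agree:
            selected[t] = value
    return selected

def accept_problem(outputs, min_agree=7, min_pass=9):
    """Return (accepted, selected tests) for one problem."""
    selected = select_valid_tests(outputs, min_agree)
    if not selected:
        return False, selected
    n_submissions = len(outputs[0])
    # Count submissions that match the consensus output on every selected test.
    passing = sum(
        all(outputs[t][s] == expected for t, expected in selected.items())
        for s in range(n_submissions)
    )
    return passing >= min_pass, selected
```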

In terms of statistics, from a sample of 1,000 online contest problems, approximately 614 do not require a special judge. We developed 463 test case generators that produced at least 40 valid test cases, leading to the inclusion of 323 problems in our training set.

Reward Modeling for Math

One challenge in evaluating math solutions is that different written forms can represent the same underlying answer. For instance, $a^2-4$ and $(a+2)(a-2)$ may both be valid solutions to the same problem. We adopted two methods to improve the reward model’s scoring accuracy:

1. Classic RM: Drawing inspiration from the InstructGPT [35] methodology, we implemented a value-head based reward model and collected approximately 800k data points for fine-tuning. The model ultimately takes as input the “question,” the “reference answer,” and the “response,” and outputs a single scalar that indicates whether the response is correct.

1. Chain-of-Thought RM: Recent research [3, 30] suggests that reward models augmented with chain-of-thought (CoT) reasoning can significantly outperform classic approaches, particularly on tasks where nuanced correctness criteria matter—such as mathematics. Therefore, we collected an equally large dataset of about 800k CoT-labeled examples to fine-tune the Kimi model. Building on the same inputs as the Classic RM, the chain-of-thought approach explicitly generates a step-by-step reasoning process before providing a final correctness judgment in JSON format, enabling more robust and interpretable reward signals.

During our manual spot checks, the Classic RM achieved an accuracy of approximately 84.4%, while the Chain-of-Thought RM reached 98.5% accuracy. In the RL training process, we adopted the Chain-of-Thought RM to ensure more accurate feedback.

Vision Data

To improve the model’s real-world image reasoning capabilities and to achieve a more effective alignment between visual inputs and large language models (LLMs), our vision reinforcement learning (Vision RL) data is primarily sourced from three distinct categories: Real-world data, Synthetic visual reasoning data, and Text-rendered data.

1. The real-world data encompass a range of science questions across various grade levels that require graphical comprehension and reasoning, location guessing tasks that necessitate visual perception and inference, and data analysis that involves understanding complex charts, among other types of data. These datasets improve the model’s ability to perform visual reasoning in real-world scenarios.

1. Synthetic visual reasoning data is artificially generated, including procedurally created images and scenes aimed at improving specific visual reasoning skills, such as understanding spatial relationships, geometric patterns, and object interactions. These synthetic datasets offer a controlled environment for testing the model’s visual reasoning capabilities and provide an endless supply of training examples.

1. Text-rendered data is created by converting textual content into visual format, enabling the model to maintain consistency when handling text-based queries across different modalities. By transforming text documents, code snippets, and structured data into images, we ensure the model provides consistent responses regardless of whether the input is pure text or text rendered as images (like screenshots or photos). This also helps to enhance the model’s capability when dealing with text-heavy images.

Each type of data is essential in building a comprehensive visual language model that can effectively manage a wide range of real-world applications while ensuring consistent performance across various input modalities.

### 2.4 Long2short: Context Compression for Short-CoT Models

Though long-CoT models achieve strong performance, they consume more test-time tokens than standard short-CoT LLMs. However, it is possible to transfer the thinking priors from long-CoT models to short-CoT models so that performance can be improved even with limited test-time token budgets. We present several approaches for this long2short problem, including model merging [57], shortest rejection sampling, DPO [40], and long2short RL. Detailed descriptions of these methods are provided below:

Model Merging

Model merging has been found to be useful in maintaining generalization ability. We also discovered its effectiveness in improving token efficiency when merging a long-CoT model and a short-CoT model. This approach combines a long-CoT model with a short-CoT model to obtain a new one without training. Specifically, we merge the two models by simply averaging their weights.
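Weight averaging of two checkpoints can be sketched as below. The sketch assumes both models share the same architecture and represents each checkpoint as a plain dictionary from parameter name to a flat list of floats; a real checkpoint would hold tensors, and the names used here are hypothetical.

```python
def average_weights(long_cot, short_cot):
    """Merge two checkpoints by element-wise averaging of their parameters."""
    if long_cot.keys() != short_cot.keys():
        raise ValueError("models must share the same parameter names")
    merged = {}
    for name in long_cot:
        a, b = long_cot[name], short_cot[name]
        if len(a) != len(b):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        merged[name] = [(x + y) / 2 for x, y in zip(a, b)]
    return merged
```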

Shortest Rejection Sampling

We observed that our model generates responses with a large length variation for the same problem. Based on this, we designed the Shortest Rejection Sampling method. This method samples the same question $n$ times (in our experiments, $n=8$ ) and selects the shortest correct response for supervised fine-tuning.

DPO

Similar to Shortest Rejection Sampling, we utilize the long-CoT model to generate multiple response samples. The shortest correct solution is selected as the positive sample, while longer responses are treated as negative samples, including both wrong longer responses and correct longer responses (1.5 times longer than the chosen positive sample). These positive-negative pairs form the pairwise preference data used for DPO training.
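The selection rules for Shortest Rejection Sampling and for the DPO preference pairs can be sketched together. The sample layout (`"text"` and `"correct"` fields), the use of string length as a stand-in for token count, and the reading of "1.5 times longer" as "length at least 1.5 times that of the positive sample" are all assumptions made for this illustration.

```python
def shortest_correct(samples):
    """Pick the shortest correct response, or None if no sample is correct."""
    correct = [s for s in samples if s["correct"]]
    return min(correct, key=lambda s: len(s["text"])) if correct else None

def build_dpo_pairs(samples, ratio=1.5):
    """Return (chosen, rejected) pairs for one problem.

    Rejected samples are wrong responses longer than the chosen one, and
    correct responses whose length is at least `ratio` times the chosen length.
    """
    chosen = shortest_correct(samples)
    if chosen is None:
        return []
    n = len(chosen["text"])
    pairs = []
    for s in samples:
        if s is chosen:
            continue
        m = len(s["text"])
        wrong_longer = (not s["correct"]) and m > n
        correct_much_longer = s["correct"] and m >= ratio * n
        if wrong_longer or correct_much_longer:
            pairs.append((chosen, s))
    return pairs
```

For supervised fine-tuning with Shortest Rejection Sampling, only the output of `shortest_correct` would be used; the DPO variant additionally keeps the rejected side of each pair.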

Long2short RL

After a standard RL training phase, we select a model that offers the best balance between performance and token efficiency to serve as the base model, and conduct a separate long2short RL training phase. In this second phase, we apply the length penalty introduced in Section 2.3.3, and significantly reduce the maximum rollout length to further penalize responses that exceed the desired length even though they may be correct.

### 2.5 Other Training Details

#### 2.5.1 Pretraining

The Kimi k1.5 base model is trained on a diverse, high-quality multimodal corpus. The language data covers five domains: English, Chinese, Code, Mathematics Reasoning, and Knowledge. Multimodal data, including Captioning, Image-text Interleaving, OCR, Knowledge, and QA datasets, enables our model to acquire vision-language capabilities. Rigorous quality control ensures relevance, diversity, and balance in the overall pretrain dataset. Our pretraining proceeds in three stages: (1) Vision-language pretraining, where a strong language foundation is established, followed by gradual multimodal integration; (2) Cooldown, which consolidates capabilities using curated and synthetic data, particularly for reasoning and knowledge-based tasks; and (3) Long-context activation, extending sequence processing to 131,072 tokens. More details regarding our pretraining efforts can be found in Appendix B.

#### 2.5.2 Vanilla Supervised Finetuning

We create the vanilla SFT corpus covering multiple domains. For non-reasoning tasks, including question-answering, writing, and text processing, we initially construct a seed dataset through human annotation. This seed dataset is used to train a seed model. Subsequently, we collect a diverse set of prompts and employ the seed model to generate multiple responses to each prompt. Annotators then rank these responses and refine the top-ranked response to produce the final version. For reasoning tasks such as math and coding problems, where rule-based and reward-model-based verification is more accurate and efficient than human judgment, we utilize rejection sampling to expand the SFT dataset.

Our vanilla SFT dataset comprises approximately 1 million text examples. Specifically, 500k examples are for general question answering, 200k for coding, 200k for math and science, 5k for creative writing, and 20k for long-context tasks such as summarization, doc-qa, translation, and writing. In addition, we construct 1 million text-vision examples encompassing various categories including chart interpretation, OCR, image-grounded conversations, visual coding, visual reasoning, and math/science problems with visual aids.

We first train the model at a sequence length of 32k tokens for 1 epoch, followed by another epoch at a sequence length of 128k tokens. In the first stage (32k), the learning rate decays from $2\times 10^{-5}$ to $2\times 10^{-6}$, before it re-warms up to $1\times 10^{-5}$ in the second stage (128k) and finally decays to $1\times 10^{-6}$. To improve training efficiency, we pack multiple training examples into each single training sequence.
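The text does not say how examples are packed. As one plausible illustration, the sketch below uses a first-fit-decreasing heuristic to group examples into sequences of at most `max_len` tokens. The heuristic and the function name are assumptions, not the method used for Kimi k1.5.

```python
def pack_examples(lengths, max_len):
    """Group example indices into packed sequences of at most max_len tokens.

    Uses first-fit decreasing: longest examples first, each placed in the
    first packed sequence that still has room.
    """
    bins = []  # each entry: [remaining capacity, [example indices]]
    for idx in sorted(range(len(lengths)), key=lambda i: -lengths[i]):
        n = lengths[idx]
        if n > max_len:
            raise ValueError(f"example {idx} is longer than max_len")
        for b in bins:
            if b[0] >= n:
                b[0] -= n
                b[1].append(idx)
                break
        else:
            # No existing sequence has room: start a new one.
            bins.append([max_len - n, [idx]])
    return [b[1] for b in bins]
```

In practice, packed examples also need attention masking or position resets so that they do not attend to each other; that part is outside this sketch.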

### 2.6 RL Infrastructure

<details>

<summary>x3.png Details</summary>

### Visual Description


## System Architecture Diagram: Reinforcement Learning Training Pipeline

### Overview

The image displays a technical system architecture diagram illustrating a distributed reinforcement learning (RL) training pipeline. The diagram uses labeled boxes to represent system components and arrows to indicate the flow of data and model weights between them. The overall flow suggests an iterative training process where a policy model is improved using feedback from specialized reward models.

### Components/Axes

The diagram consists of five primary component boxes and a legend, connected by directional arrows.

**Primary Components (Boxes):**

1. **Rollout Workers** (Top-left, cream-colored box): A stack of boxes, indicating multiple instances.

2. **Trainer Workers** (Top-right, light blue box): A stack of boxes containing two sub-components:

* **Policy Model** (Left sub-box, light blue)

* **Reference Model** (Right sub-box, light purple)

3. **Master** (Center, light green box): The central coordinating component.

4. **Reward Models** (Bottom-left, light blue box): Contains four specialized sub-models:

* **Code** (Top-left sub-box)

* **Math** (Top-right sub-box)

* **K-12** (Bottom-left sub-box)

* **Vision** (Bottom-right sub-box)

5. **Replay Buffer** (Bottom-right, pink box): A storage component.

**Legend (Bottom-right corner):**

* **Solid Arrow (→):** Labeled "weight flow"

* **Dashed Arrow (⇢):** Labeled "data flow"

### Detailed Analysis

**Flow and Connections (Traced from Legend and Labels):**

1. **Weight Flow (Solid Arrows):**

* From **Trainer Workers** to **Rollout Workers**: Labeled "weight". This indicates the current policy model weights are sent to the rollout workers for action generation.

* Within **Trainer Workers**: A circular arrow labeled "gradient update" points from the "Policy Model" back to itself, indicating the model parameters are updated via gradient descent during training.

2. **Data Flow (Dashed Arrows):**

* From **Rollout Workers** to **Master**: Labeled "rollout trajectories". The workers send generated experience data (state-action-reward sequences) to the master.

* From **Master** to **Reward Models**: Labeled "eval request". The master sends data to be evaluated by the specialized reward models.

* From **Master** to **Trainer Workers**: Labeled "training data". The master sends processed data (likely trajectories paired with rewards) to the trainers for policy updates.

* Between **Master** and **Replay Buffer**: A bidirectional dashed arrow (no explicit label). This indicates the master can both store new experiences in and retrieve old experiences from the replay buffer.

**Spatial Grounding:**

* The **Legend** is positioned in the bottom-right corner of the diagram.

* The **Master** component is centrally located, acting as the hub for all data flows.

* The **Reward Models** are positioned in the bottom-left, receiving evaluation requests from the central Master.

* The **Replay Buffer** is positioned in the bottom-right, adjacent to the legend.

### Key Observations

* **Modular Reward System:** The "Reward Models" component is explicitly segmented into four distinct domains (Code, Math, K-12, Vision), suggesting the system is designed to train a generalist model or evaluate performance across diverse, specialized tasks.

* **Centralized Coordination:** The "Master" node is critical, managing the flow of trajectories, evaluation requests, training data, and interaction with the replay buffer. It decouples the rollout, reward evaluation, and training processes.

* **Standard RL Components:** The architecture includes classic RL elements: Rollout Workers (for environment interaction), a Replay Buffer (for experience storage), Trainer Workers (for policy optimization), and a Reward Model (for providing feedback).

* **Dual-Model Training:** The "Trainer Workers" contain both a "Policy Model" (being trained) and a "Reference Model." This is a common setup in algorithms like PPO (Proximal Policy Optimization) or RLHF (Reinforcement Learning from Human Feedback), where the reference model provides a stability baseline to prevent the policy from diverging too far.

### Interpretation

This diagram outlines a scalable, distributed reinforcement learning system, likely for training large language models or multi-modal agents. The architecture is designed for efficiency and specialization.

* **What it demonstrates:** The system separates the computationally intensive tasks of generating experience (Rollout Workers), evaluating that experience (Reward Models), and updating the model (Trainer Workers). The Master orchestrates this pipeline, while the Replay Buffer enables learning from past experiences, improving sample efficiency.

* **Relationships:** The flow is cyclical and iterative: Weights go out -> Trajectories come in -> Rewards are evaluated -> Training data is prepared -> The model is updated -> New weights go out. The specialized Reward Models imply the trained agent is intended to perform well across a broad set of intellectual and perceptual tasks (code, math, education, vision).

* **Notable Implications:** The presence of a "Reference Model" strongly suggests the use of a constrained optimization method (like PPO) or an RLHF-style approach, which is crucial for aligning model behavior and preventing reward hacking. The multi-domain reward structure indicates an ambition to create a robust, general-purpose model rather than a narrow specialist. The architecture is built for parallelism, allowing each component to scale independently based on computational demand.

</details>

(a) System overview

<details>

<summary>x4.png Details</summary>

### Visual Description

## Process Flow Diagram: Rollout Worker and Replay Buffer Interaction

### Overview

This image is a technical process flow diagram illustrating the data flow and control logic for a "rollout worker" during "iteration N" of a machine learning or reinforcement learning training process. The diagram shows how prompts are processed, under what conditions they stop, and how the resulting data is stored in a "Replay Buffer." It specifically highlights the mechanism for handling "partial rollouts."

### Components/Axes

The diagram is composed of the following labeled components and flow elements:

1. **Main Process Block (Top):** A large rectangle labeled **"rollout worker"**. Above it is the text **"iteration N"**.

2. **Input Source (Left):** The text **"from prompt set"** indicates the origin of the data streams entering the rollout worker.

3. **Output Destination (Bottom):** A rounded rectangle labeled **"Replay Buffer"**.

4. **Flow Lines & Symbols:** Three distinct horizontal lines enter the "rollout worker" from the left. Their paths and termination points within the worker block are defined by specific symbols, which are explained in a legend.

5. **Legend (Bottom-Right):** A key explaining the meaning of the line termination symbols:

* **Solid line ending in a filled circle (●):** "normal stop"

* **Solid line ending in an open diamond (◇):** "cut by length"

* **Solid line with an 'X' mark (✕):** "repeat, early stop"

6. **Secondary Flow Label (Right):** The text **"save for partial rollout"** is connected via dashed lines to the diamond symbols.

7. **Process Label (Left):** The text **"partial rollout"** is positioned near the lines descending to the Replay Buffer.

### Detailed Analysis

The diagram details three distinct data processing paths originating from the "prompt set":

1. **Path 1 (Top Line):**

* **Trajectory:** Enters the "rollout worker" from the left.

* **Termination:** Ends at a **filled circle (●)** on the right edge of the worker block.

* **Legend Meaning:** This represents a **"normal stop"**.

* **Flow:** A solid line descends directly from this termination point into the "Replay Buffer".

2. **Path 2 (Middle Line):**

* **Trajectory:** Enters the worker, travels right, then turns downward.

* **Termination:** Ends at an **open diamond (◇)** on the right edge of the worker block.

* **Legend Meaning:** This represents a process **"cut by length"**.

* **Associated Action:** A dashed line connects this diamond to the label **"save for partial rollout"**.

* **Flow:** A solid line descends from the diamond's position into the "Replay Buffer".

3. **Path 3 (Bottom Line):**

* **Trajectory:** Enters the worker and is immediately marked with an **'X' (✕)**.

* **Legend Meaning:** This indicates a **"repeat, early stop"** condition.

* **Flow:** After the 'X', the line continues, turns downward, and then splits. One branch goes to the "Replay Buffer". Another branch connects to the dashed line system associated with "save for partial rollout".

**Spatial Grounding:** The legend is positioned in the bottom-right corner. The "save for partial rollout" label is on the right side, aligned with the diamond symbols. The "partial rollout" label is on the left, near the vertical lines feeding the buffer. The "Replay Buffer" is centrally located at the bottom.

### Key Observations

* The diagram explicitly models different stopping conditions for a rollout process, which is critical for efficient training in reinforcement learning.

* The **"cut by length" (◇)** and **"repeat, early stop" (✕)** conditions are both linked to the concept of a **"partial rollout"**, suggesting these are non-standard termination points that require special handling.

* The dashed lines create a secondary data flow path specifically for saving information related to partial rollouts, separate from the main data stream to the replay buffer.

* All three processing paths ultimately result in data being sent to the **"Replay Buffer"**, indicating it is the central storage for all generated experience, regardless of how the rollout ended.

### Interpretation

This diagram illustrates a sophisticated data collection mechanism for iterative model training, likely in a reinforcement learning context. The "rollout worker" generates experience by interacting with an environment using a set of prompts.

The key insight is the system's ability to handle incomplete or aborted trajectories ("partial rollouts") gracefully. Instead of discarding this data, it is categorized and saved. A "normal stop" represents a complete, successful episode. A "cut by length" suggests the episode was truncated due to a maximum step limit. A "repeat, early stop" implies the process was halted early, possibly due to a detected failure state or a need to re-sample.

By storing all these outcomes in the Replay Buffer, the training algorithm can learn from both successful completions and various failure or truncation modes. The "save for partial rollout" mechanism may be used for techniques like importance sampling, trajectory stitching, or training value functions to handle incomplete episodes. This design promotes sample efficiency and robust learning by maximizing the utility of every interaction with the environment.

</details>

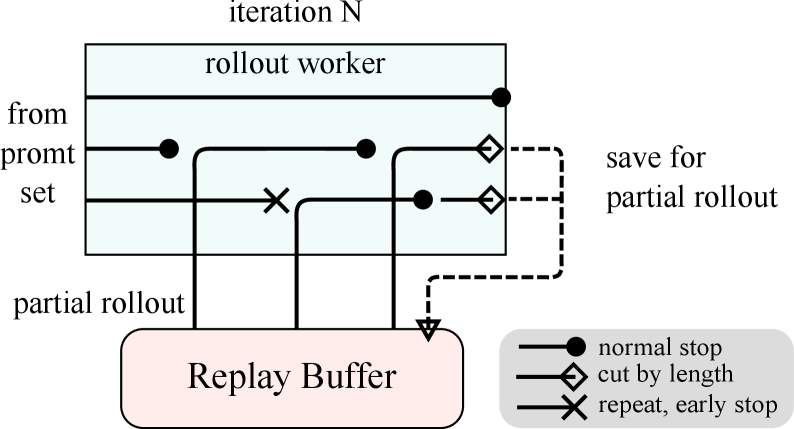

(b) Partial Rollout

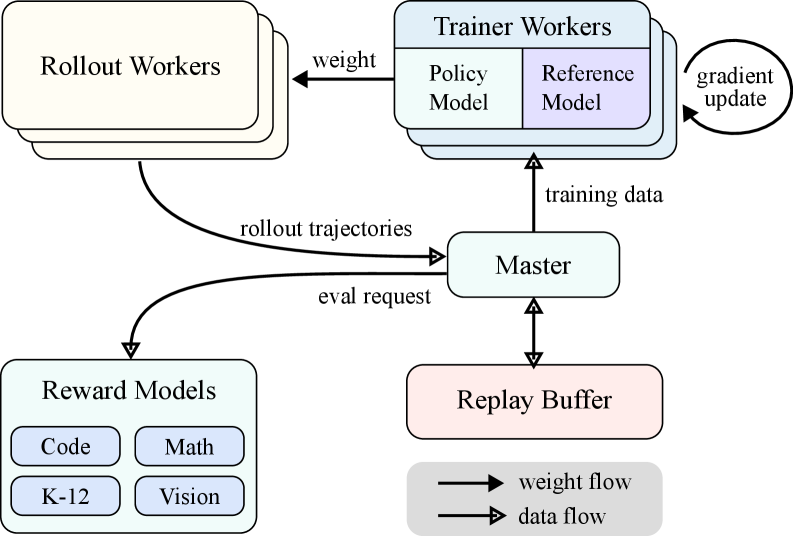

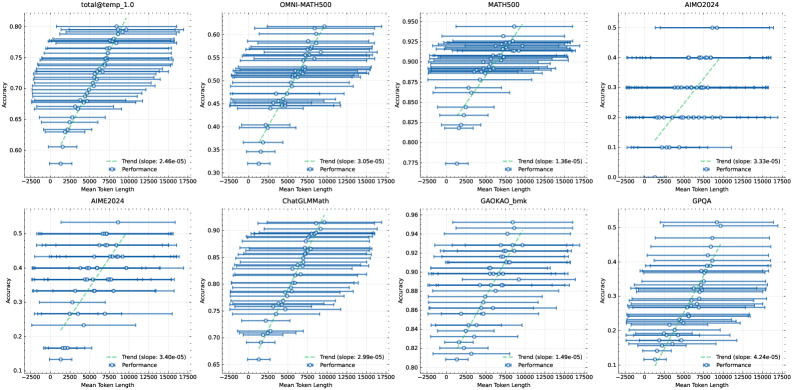

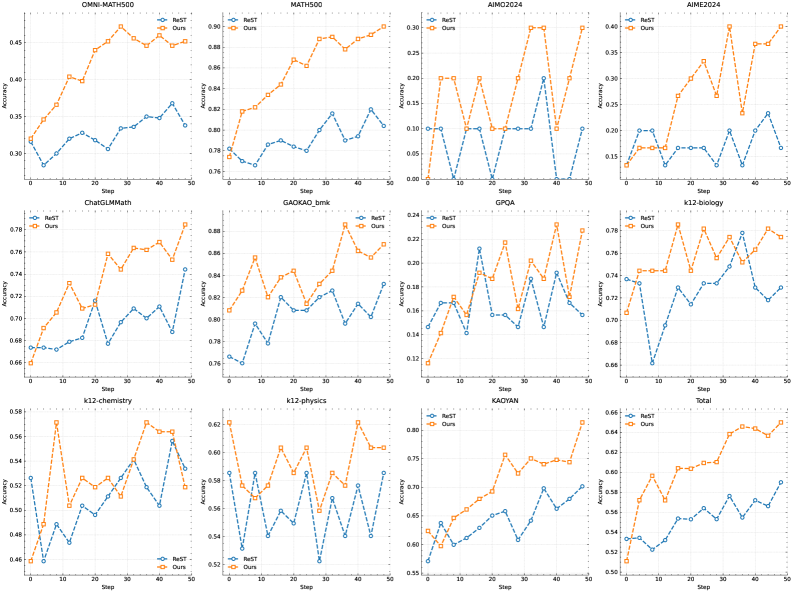

Figure 3: Large Scale Reinforcement Learning Training System for LLM

#### 2.6.1 Large Scale Reinforcement Learning Training System for LLM

In the realm of artificial intelligence, reinforcement learning (RL) has emerged as a pivotal training methodology for large language models (LLMs) [35, 16], drawing inspiration from its success in mastering complex games like Go, StarCraft II, and Dota 2 through systems such as AlphaGo [43], AlphaStar [51], and OpenAI Five [4]. Following in this tradition, the Kimi k1.5 system adopts an iterative synchronous RL framework, meticulously designed to bolster the model’s reasoning capabilities through persistent learning and adaptation. A key innovation in this system is the introduction of a Partial Rollout technique, designed to optimize the handling of complex reasoning trajectories.

The RL training system as illustrated in Figure 3(a) operates through an iterative synchronous approach, with each iteration encompassing a rollout phase and a training phase. During the rollout phase, rollout workers, coordinated by a central master, generate rollout trajectories by interacting with the model, producing sequences of responses to various inputs. These trajectories are then stored in a replay buffer, which ensures a diverse and unbiased dataset for training by disrupting temporal correlations. In the subsequent training phase, trainer workers access these experiences to update the model’s weights. This cyclical process allows the model to continuously learn from its actions, adjusting its strategies over time to enhance performance.

The central master acts as the conductor of the system, managing the flow of data and communication among the rollout workers, the trainer workers, reward model evaluation, and the replay buffer. It ensures that the system operates harmoniously, balancing the load and facilitating efficient data processing.

The trainer workers access these rollout trajectories, whether completed in a single iteration or divided across multiple iterations, to compute gradient updates that refine the model’s parameters and enhance its performance. This process is overseen by a reward model, which evaluates the quality of the model’s outputs and provides essential feedback to guide the training process. The reward model’s evaluations are particularly pivotal in determining the effectiveness of the model’s strategies and steering the model towards optimal performance.

Moreover, the system incorporates a code execution service, which is specifically designed to handle code-related problems and is integral to the reward model. This service evaluates the model’s outputs in practical coding scenarios, ensuring that the model’s learning is closely aligned with real-world programming challenges. By validating the model’s solutions against actual code executions, this feedback loop becomes essential for refining the model’s strategies and enhancing its performance in code-related tasks.

#### 2.6.2 Partial Rollouts for Long CoT RL

One of the primary ideas of our work is to scale long-context RL training. Partial rollouts is a key technique that effectively addresses the challenge of handling long-CoT features by managing the rollouts of both long and short trajectories. This technique establishes a fixed output token budget, capping the length of each rollout trajectory. If a trajectory exceeds the token limit during the rollout phase, the unfinished portion is saved to the replay buffer and continued in the next iteration. It ensures that no single lengthy trajectory monopolizes the system’s resources. Moreover, since the rollout workers operate asynchronously, when some are engaged with long trajectories, others can independently process new, shorter rollout tasks. The asynchronous operation maximizes computational efficiency by ensuring that all rollout workers are actively contributing to the training process, thereby optimizing the overall performance of the system.

As illustrated in Figure 3(b), the partial rollout system works by breaking down long responses into segments across iterations (from iter n-m to iter n). The Replay Buffer acts as a central storage mechanism that maintains these response segments, where only the current iteration (iter n) requires on-policy computation. Previous segments (iter n-m to n-1) can be efficiently reused from the buffer, eliminating the need for repeated rollouts. This segmented approach significantly reduces the computational overhead: instead of rolling out the entire response at once, the system processes and stores segments incrementally, allowing for the generation of much longer responses while maintaining fast iteration times. During training, certain segments can be excluded from loss computation to further optimize the learning process, making the entire system both efficient and scalable.
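The control flow of partial rollouts can be illustrated with a toy simulation. In this sketch, a `generate` callback produces at most `budget` new tokens per call and reports whether the response finished; unfinished trajectories go back into the buffer and resume from their saved prefix in the next iteration. All names, the callback signature, and the toy generator are hypothetical and only meant to show the bookkeeping.

```python
from collections import deque

def rollout_iteration(buffer, new_prompts, budget, generate):
    """Run one rollout phase with a fixed per-trajectory token budget.

    buffer      : deque of (prompt, partial_tokens) saved by earlier iterations
    new_prompts : prompts started in this iteration
    generate    : callable (prompt, prefix, max_new) -> (new_tokens, finished)
    Returns the list of (prompt, tokens) trajectories completed in this iteration.
    """
    work = list(buffer) + [(p, []) for p in new_prompts]
    buffer.clear()
    finished = []
    for prompt, prefix in work:
        # Earlier segments are reused from the buffer; only the new segment is generated.
        new_tokens, done = generate(prompt, prefix, budget)
        tokens = prefix + new_tokens
        if done:
            finished.append((prompt, tokens))
        else:
            buffer.append((prompt, tokens))  # continue in the next iteration
    return finished

def toy_generate(prompt, prefix, max_new):
    """Toy stand-in for a model: the prompt is the total response length to reach."""
    remaining = prompt - len(prefix)
    n = min(remaining, max_new)
    return ["tok"] * n, n == remaining
```

With a budget of 4 tokens, a response of length 3 finishes in its first iteration, while a response of length 10 is spread over three iterations.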

The implementation of partial rollouts also offers repeat detection. The system identifies repeated sequences in the generated content and terminates them early, reducing unnecessary computation while maintaining output quality. Detected repetitions can be assigned additional penalties, effectively discouraging redundant content generation in the prompt set.
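The text does not specify how repeated sequences are detected. One simple heuristic, shown here purely as an assumption, is to stop a rollout once the last `ngram` tokens have occurred `min_repeats` times back to back at the end of the generated sequence. The function name and default thresholds are hypothetical.

```python
def has_repetition(tokens, ngram=4, min_repeats=3):
    """Return True if the sequence ends with the same n-gram repeated min_repeats times."""
    span = ngram * min_repeats
    if len(tokens) < span:
        return False
    tail = tokens[-span:]
    unit = tail[:ngram]
    # Every consecutive n-gram in the tail must equal the first one.
    return all(tail[i:i + ngram] == unit for i in range(0, span, ngram))
```

A rollout worker could call such a check periodically during generation and terminate the trajectory early when it returns `True`.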

#### 2.6.3 Hybrid Deployment of Training and Inference

<details>

<summary>x5.png Details</summary>

### Visual Description


## System Architecture Diagram: Distributed LLM Training and Inference Pod

### Overview

The image is a technical system architecture diagram illustrating the components and data flow within a single computational "pod" designed for distributed large language model (LLM) training and inference. The diagram is divided into two primary subsystems—the Megatron Sidecar and the vLLM Sidecar—which coordinate through shared memory and external services.

### Components/Axes

The diagram is structured within a large, rounded rectangle labeled **"pod"** at the top center. Inside, two major colored regions define the subsystems:

1. **Megatron Sidecar (Left, Light Blue Background):**

* **Components (Boxes):** `Train`, `Onload`, `Offload`, `Wait rollout`, `Convert HF`, `Register Shard`, `Update Weight`, `Checkpoint Engine`.

* **Flow:** Arrows indicate a cyclical process between `Train`, `Onload`, and `Offload`. `Offload` connects to `Wait rollout`. `Convert HF` feeds into `Register Shard`, which connects to `Update Weight`. Both `Register Shard` and `Update Weight` are contained within a dashed purple box labeled `Checkpoint Engine`.

2. **vLLM Sidecar (Right, Light Green Background):**

* **Components (Boxes):** `Rollout`, `Dummy Start`, `Terminate`, `Update Weight`, `Start vLLM`, `Terminate vLLM`, `Checkpoint Engine`.

* **Flow:** `Rollout` connects to both `Dummy Start` and `Terminate`. `Dummy Start` and `Start vLLM` both feed into `Update Weight`. `Update Weight` also receives input from `Terminate vLLM`. `Terminate` connects to `Terminate vLLM`. The components `Start vLLM`, `Update Weight`, and `Terminate vLLM` are contained within a dashed purple box labeled `Checkpoint Engine`.

3. **Shared Components & External Interfaces:**

* **Shared Memory (Center, Purple Background):** A central box labeled `Shared Memory` sits between the two sidecars. It receives an arrow from the Megatron Sidecar's `Update Weight` and sends an arrow to the vLLM Sidecar's `Update Weight`.

* **etcd (Bottom Center, Light Green Box):** An external service labeled `etcd` has bidirectional arrows connecting to both the Megatron and vLLM `Checkpoint Engine` components.

* **Other Pods (Bottom Right, Gray Box):** A component labeled `Other Pods` is connected via a line labeled **"RDMA"** to the vLLM Sidecar's `Checkpoint Engine`.

### Detailed Analysis

**Spatial Layout & Connections:**

* The **Megatron Sidecar** occupies the left ~45% of the pod. Its internal `Checkpoint Engine` (purple dashed box) is positioned at the bottom of its region.

* The **vLLM Sidecar** occupies the right ~45% of the pod. Its internal `Checkpoint Engine` is also at the bottom of its region.

* The **Shared Memory** component is centrally located, acting as a bridge between the two sidecars' `Update Weight` processes.

* **etcd** is positioned centrally below the pod, indicating its role as a shared coordination service for both checkpoint engines.

* **Other Pods** are external, connected to the vLLM side via a high-speed **RDMA** (Remote Direct Memory Access) link.

**Process Flow (Inferred from Arrows):**

1. **Megatron Sidecar (Training Focus):** The core loop appears to be `Train` -> `Onload` -> `Offload` -> `Wait rollout` -> back to `Train`. Parallel to this, model conversion (`Convert HF`) leads to sharding (`Register Shard`) and weight updates (`Update Weight`) within its checkpoint engine.

2. **vLLM Sidecar (Inference/Rollout Focus):** The process involves initiating rollouts (`Rollout`), which can start via a `Dummy Start` or a full `Start vLLM`. Weight updates (`Update Weight`) are a central hub, receiving inputs from start processes and termination signals (`Terminate vLLM`). The process can be cleanly stopped via `Terminate`.

3. **Coordination:** Model weights are synchronized from the Megatron training side to the vLLM inference side via **Shared Memory**. Both sides persist state and coordinate with the external **etcd** service. The vLLM side can also communicate with other pods via **RDMA**.

### Key Observations

* **Asymmetric Design:** The two sidecars have distinct, specialized component sets. Megatron is oriented around a training loop and model sharding, while vLLM is oriented around managing inference instances (`vLLM`) and rollout processes.

* **Centralized Weight Update:** The `Update Weight` component is a critical junction in both sidecars, suggesting that synchronizing model parameters is a key operation.

* **Dual Checkpoint Engines:** Each sidecar has its own `Checkpoint Engine`, implying independent state management for training and inference processes, coordinated via `etcd`.

* **Explicit External Links:** The diagram explicitly shows integration points with external systems (`etcd` for coordination, `Other Pods` via `RDMA` for distributed communication).

### Interpretation

This diagram depicts a sophisticated architecture for decoupling LLM training from online inference/rollout within a single pod. The **Megatron Sidecar** likely handles the heavy computation of model training, while the **vLLM Sidecar** manages low-latency inference, possibly for reinforcement learning from human feedback (RLHF) or online serving.

The **Shared Memory** bridge is crucial for efficiently transferring updated model weights from the training engine to the inference engine without going through slower storage or network layers. The use of **etcd** suggests a need for strong consistency in managing distributed state (like checkpoint metadata) across the two subsystems. The **RDMA** link to **Other Pods** indicates this pod is part of a larger cluster, where high-speed, low-latency communication between inference instances on different nodes is required.

The architecture solves a key challenge in modern AI systems: how to continuously improve a model (training) while simultaneously serving it or using it to generate new data (inference/rollout) with minimal latency and data transfer overhead. The separation into "sidecars" within a pod allows for independent scaling and lifecycle management of these two workloads.

</details>

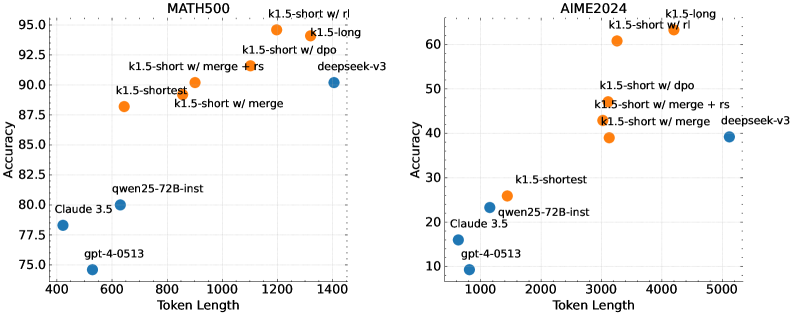

Figure 4: Hybrid Deployment Framework

The RL training process comprises the following phases:

- Training Phase: At the outset, Megatron [42] and vLLM [21] are executed within separate containers, encapsulated by a shim process known as checkpoint-engine (Section 2.6.3). Megatron commences the training procedure. After the training is completed, Megatron offloads the GPU memory and prepares to transfer current weights to vLLM.

- Inference Phase: Following Megatron’s offloading, vLLM starts with dummy model weights and updates them with the latest ones transferred from Megatron via Mooncake [39]. Upon completion of the rollout, the checkpoint-engine halts all vLLM processes.

- Subsequent Training Phase: Once the memory allocated to vLLM is released, Megatron onloads the memory and initiates another round of training.
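The phase ordering above can be written down as a small state sequence. The sketch below is illustrative only: the `Step` names paraphrase the phases in the text, and `validate` encodes the assumption that Megatron and vLLM should never hold GPU memory at the same time.

```python
from enum import Enum


class Step(Enum):
    TRAIN = "megatron: train"
    OFFLOAD = "megatron: offload GPU memory"
    START_DUMMY = "vllm: start with dummy weights"
    UPDATE_WEIGHTS = "vllm: load latest weights from megatron"
    ROLLOUT = "vllm: rollout"
    TERMINATE = "checkpoint-engine: terminate vllm"
    ONLOAD = "megatron: onload GPU memory"


# One RL iteration, in the order described in the text.
CYCLE = [Step.TRAIN, Step.OFFLOAD, Step.START_DUMMY, Step.UPDATE_WEIGHTS,
         Step.ROLLOUT, Step.TERMINATE, Step.ONLOAD]


def schedule(num_iterations):
    """Return the flat list of steps for `num_iterations` RL iterations."""
    return [step for _ in range(num_iterations) for step in CYCLE]


def validate(steps):
    """Check that Megatron and vLLM never occupy GPU memory simultaneously."""
    megatron_on_gpu, vllm_on_gpu = True, False
    for step in steps:
        if step is Step.OFFLOAD:
            megatron_on_gpu = False
        elif step is Step.ONLOAD:
            megatron_on_gpu = True
        elif step is Step.START_DUMMY:
            vllm_on_gpu = True
        elif step is Step.TERMINATE:
            vllm_on_gpu = False
        if megatron_on_gpu and vllm_on_gpu:
            return False
    return True
```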

We find it challenging for existing work to simultaneously support all of the following characteristics.

- Complex parallelism strategy: Megatron may use a different parallelism strategy from vLLM. Training weights distributed across several nodes in Megatron can be difficult to share with vLLM.

- Minimizing idle GPU resources: For on-policy RL, recent works such as SGLang [62] and vLLM might reserve some GPUs during the training process, which in turn could leave training GPUs idle. It would be more efficient to share the same devices between training and inference.

- Capability of dynamic scaling: In some cases, a significant acceleration can be achieved by increasing the number of inference nodes while keeping the training process constant. Our system enables the efficient utilization of idle GPU nodes when needed.

As illustrated in Figure 4, we implement this hybrid deployment framework (Section 2.6.3) on top of Megatron and vLLM. Switching from the training phase to the inference phase takes less than one minute, and switching back takes about ten seconds.

Hybrid Deployment Strategy

We propose a hybrid deployment strategy for training and inference tasks, which leverages Kubernetes Sidecar containers sharing all available GPUs to collocate both workloads in one pod. The primary advantages of this strategy are:

- It facilitates efficient resource sharing and management, preventing training nodes from idling while they wait for inference nodes, as happens when the two are deployed on separate nodes.

- By using distinct deployed images, training and inference can each iterate independently for better performance.

- The architecture is not limited to vLLM; other frameworks can be conveniently integrated.

Checkpoint Engine

Checkpoint Engine is responsible for managing the lifecycle of the vLLM process, exposing HTTP APIs that enable triggering various operations on vLLM. For overall consistency and reliability, we utilize a global metadata system managed by the etcd service to broadcast operations and statuses.

Fully releasing GPU memory through vLLM offloading can be difficult, primarily because of CUDA graphs, NCCL buffers, and NVIDIA drivers. To minimize modifications to vLLM, we terminate and restart it when needed for better GPU utilization and fault tolerance.

Each Megatron worker converts the checkpoints it owns into the Hugging Face format in shared memory. This conversion also takes Pipeline Parallelism and Expert Parallelism into account, so that only Tensor Parallelism remains in these checkpoints. The checkpoints in shared memory are subsequently divided into shards and registered in the global metadata system. We employ Mooncake to transfer checkpoints between peer nodes over RDMA. Some modifications to vLLM are needed to load weight files and perform tensor parallelism conversion.
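The shard-and-register step can be illustrated with a toy example. Everything here is an assumption made for illustration: a plain `dict` stands in for the etcd-backed metadata system, the key layout is invented, and shards are fixed-size byte ranges. The actual shard format and the Mooncake transfer are not shown.

```python
def plan_shards(total_bytes, shard_bytes):
    """Split the byte range [0, total_bytes) into contiguous (offset, length) shards."""
    if shard_bytes <= 0:
        raise ValueError("shard_bytes must be positive")
    return [(offset, min(shard_bytes, total_bytes - offset))
            for offset in range(0, total_bytes, shard_bytes)]


def register_shards(registry, node, checkpoint_name, total_bytes, shard_bytes):
    """Record each shard's location in `registry` (a dict standing in for etcd)."""
    for index, (offset, length) in enumerate(plan_shards(total_bytes, shard_bytes)):
        # Hypothetical key layout; the real metadata schema is not described.
        key = f"/checkpoints/{checkpoint_name}/{node}/{index}"
        registry[key] = {"node": node, "offset": offset, "length": length}
    return registry
```

A peer node could then look up the keys for a checkpoint and fetch each byte range from the node that registered it.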

#### 2.6.4 Code Sandbox

We developed the sandbox as a secure environment for executing user-submitted code, optimized for code execution and code benchmark evaluation. By dynamically switching container images, the sandbox supports different use cases through MultiPL-E [6], DMOJ Judge Server (https://github.com/DMOJ/judge-server), Lean, Jupyter Notebook, and other images.

For RL in coding tasks, the sandbox ensures the reliability of training data judgment by providing consistent and repeatable evaluation mechanisms. Its feedback system supports multi-stage assessments, such as code execution feedback and repo-level editing, while maintaining a uniform context to ensure fair and equitable benchmark comparisons across programming languages.

We deploy the service on Kubernetes for scalability and resilience, exposing it through HTTP endpoints for external integration. Kubernetes features like automatic restarts and rolling updates ensure availability and fault tolerance.

To optimize performance and support RL environments, we incorporate several techniques into the code execution service to enhance efficiency, speed, and reliability. These include:

- Using Crun: We utilize crun as the container runtime instead of Docker, significantly reducing container startup times.

- Cgroup Reusing: We pre-create cgroups for container use, which is crucial in scenarios with high concurrency where creating and destroying cgroups for each container can become a bottleneck.

- Disk Usage Optimization: An overlay filesystem with an upper layer mounted as tmpfs is used to control disk writes, providing a fixed-size, high-speed storage space. This approach is beneficial for ephemeral workloads.
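The cgroup-reuse idea amounts to a resource pool: identifiers are created once and handed out repeatedly instead of being created and destroyed per container. The sketch below shows only that pooling pattern. `CgroupPool` and the name prefix are hypothetical, and the code does not touch the real cgroup filesystem.

```python
import queue


class CgroupPool:
    """Pool of pre-created cgroup names that containers borrow and return."""

    def __init__(self, size, prefix="sandbox"):
        self._free = queue.Queue()
        for i in range(size):
            # In a real service the cgroup would be created here, once.
            self._free.put(f"{prefix}-{i}")

    def acquire(self, timeout=None):
        """Borrow a cgroup name; blocks until one is free (or raises queue.Empty)."""
        return self._free.get(timeout=timeout)

    def release(self, name):
        """Return a cgroup name to the pool for reuse by the next container."""
        self._free.put(name)
```

Because acquisition blocks when the pool is empty, the pool size also acts as a cap on the number of concurrently running containers.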

| Method | Time (s) |
| --- | --- |
| Docker | 0.12 |
| Sandbox | 0.04 |

(a) Container startup times

| Method | Containers/sec |
| --- | --- |
| Docker | 27 |
| Sandbox | 120 |

(b) Maximum containers started per second on a 16-core machine

These optimizations improve RL efficiency in code execution, providing a consistent and reliable environment for evaluating RL-generated code, essential for iterative training and model improvement.

## 3 Experiments

### 3.1 Evaluation

Since k1.5 is a multimodal model, we conducted comprehensive evaluation across various benchmarks for different modalities. The detailed evaluation setup can be found in Appendix C. Our benchmarks primarily consist of the following three categories:

- Text Benchmark: MMLU [13], IF-Eval [63], CLUEWSC [56], C-EVAL [15]

- Reasoning Benchmark: HumanEval-Mul, LiveCodeBench [17], Codeforces, AIME 2024, MATH-500 [26]

- Vision Benchmark: MMMU [59], MATH-Vision [52], MathVista [29]

### 3.2 Main Results

K1.5 long-CoT model

The performance of the Kimi k1.5 long-CoT model is presented in Table 2. Through long-CoT supervised fine-tuning (described in Section 2.2) and vision-text joint reinforcement learning (discussed in Section 2.3), the model’s long-term reasoning capabilities are enhanced significantly. The test-time computation scaling further strengthens its performance, enabling the model to achieve state-of-the-art results across a range of modalities. Our evaluation reveals marked improvements in the model’s capacity to reason, comprehend, and synthesize information over extended contexts, representing an advancement in multi-modal AI capabilities.

K1.5 short-CoT model