## DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

DeepSeek-AI

research@deepseek.com

## Abstract

General reasoning represents a long-standing and formidable challenge in artificial intelligence. Recent breakthroughs, exemplified by large language models (LLMs) (Brown et al., 2020; OpenAI, 2023) and chain-of-thought prompting (Wei et al., 2022b), have achieved considerable success on foundational reasoning tasks. However, this success is heavily contingent upon extensive human-annotated demonstrations, and models' capabilities are still insufficient for more complex problems. Here we show that the reasoning abilities of LLMs can be incentivized through pure reinforcement learning (RL), obviating the need for human-labeled reasoning trajectories. The proposed RL framework facilitates the emergent development of advanced reasoning patterns, such as self-reflection, verification, and dynamic strategy adaptation. Consequently, the trained model achieves superior performance on verifiable tasks such as mathematics, coding competitions, and STEM fields, surpassing its counterparts trained via conventional supervised learning on human demonstrations. Moreover, the emergent reasoning patterns exhibited by these large-scale models can be systematically harnessed to guide and enhance the reasoning capabilities of smaller models.

## 1. Introduction

Reasoning capability, the cornerstone of human intelligence, enables complex cognitive tasks ranging from mathematical problem-solving to logical deduction and programming. Recent advances in artificial intelligence have demonstrated that large language models (LLMs) can exhibit emergent behaviors, including reasoning abilities, when scaled to a sufficient size (Kaplan et al., 2020; Wei et al., 2022a). However, achieving such capabilities in pre-training typically demands substantial computational resources. In parallel, a complementary line of research has demonstrated that large language models can be effectively augmented through chain-of-thought (CoT) prompting. This technique, which involves either providing carefully designed few-shot examples or using minimalistic prompts such as 'Let's think step by step' (Kojima et al., 2022; Wei et al., 2022b), enables models to produce intermediate reasoning steps, thereby substantially enhancing their performance on complex tasks. Similarly, further performance gains have been observed when models learn high-quality, multi-step reasoning trajectories during the post-training phase (Chung et al., 2024; OpenAI, 2023). Despite their effectiveness, these approaches exhibit notable limitations. Their dependence on human-annotated reasoning traces hinders scalability and introduces cognitive biases. Furthermore, by constraining models to replicate human thought processes, their performance is inherently capped by the human-provided exemplars, which prevents the exploration of superior, non-human-like reasoning pathways.

To tackle these issues, we aim to explore the potential of LLMs for developing reasoning abilities through self-evolution in an RL framework, with minimal reliance on human labeling efforts. Specifically, we build upon DeepSeek-V3-Base (DeepSeek-AI, 2024b) and employ Group Relative Policy Optimization (GRPO) (Shao et al., 2024) as our RL framework. The reward signal is solely based on the correctness of final predictions against ground-truth answers, without imposing constraints on the reasoning process itself. Notably, we bypass the conventional supervised fine-tuning (SFT) phase before RL training. This design choice stems from our hypothesis that human-defined reasoning patterns may limit model exploration, whereas unrestricted RL training can better incentivize the emergence of novel reasoning capabilities in LLMs. Through this process, detailed in Section 2, our model (referred to as DeepSeek-R1-Zero) naturally developed diverse and sophisticated reasoning behaviors. In solving reasoning problems, the model exhibits a tendency to generate longer responses, incorporating verification, reflection, and the exploration of alternative approaches within each response. Although we do not explicitly teach the model how to reason, it successfully learns improved reasoning strategies through reinforcement learning.

Although DeepSeek-R1-Zero demonstrates excellent reasoning capabilities, it faces challenges such as poor readability and language mixing, occasionally combining English and Chinese within a single chain-of-thought response. Furthermore, the rule-based RL training stage of DeepSeek-R1-Zero is narrowly focused on reasoning tasks, resulting in limited performance in broader areas such as writing and open-domain question answering. To address these challenges, we introduce DeepSeek-R1, a model trained through a multi-stage learning framework that integrates rejection sampling, reinforcement learning, and supervised fine-tuning, detailed in Section 3. This training pipeline enables DeepSeek-R1 to inherit the reasoning capabilities of its predecessor, DeepSeek-R1-Zero, while aligning model behavior with human preferences through additional non-reasoning data.

To enable broader access to powerful AI at a lower energy cost, we have distilled several smaller models and made them publicly available. These distilled models exhibit strong reasoning capabilities, surpassing the performance of their original instruction-tuned counterparts. We believe that these instruction-tuned versions will also significantly contribute to the research community by providing a valuable resource for understanding the mechanisms underlying long chain-of-thought (CoT) reasoning models and for fostering the development of more powerful reasoning models. We release DeepSeek-R1 series models to the public at https://huggingface.co/deepseek-ai .

## 2. DeepSeek-R1-Zero

We begin by elaborating on the training of DeepSeek-R1-Zero, which relies exclusively on reinforcement learning without supervised fine-tuning. To facilitate large-scale RL efficiency, we adopt Group Relative Policy Optimization (GRPO) (Shao et al., 2024).

## 2.1. Group Relative Policy Optimization

GRPO (Shao et al., 2024) is the reinforcement learning algorithm that we adopt to train DeepSeek-R1-Zero and DeepSeek-R1. It was originally proposed to simplify the training process and reduce the resource consumption of Proximal Policy Optimization (PPO) (Schulman et al., 2017), which is widely used in the RL stage of LLMs (Ouyang et al., 2022).

For each question $q$, GRPO samples a group of outputs $\{o_1, o_2, \cdots, o_G\}$ from the old policy $\pi_{\theta_{old}}$ and then optimizes the policy model $\pi_{\theta}$ by maximizing the following objective:

$$\begin{aligned} \mathcal{J}_{GRPO}(\theta) &= \mathbb{E}\left[ q \sim P(Q), \{o_i\}_{i=1}^{G} \sim \pi_{\theta_{old}}(O|q) \right] \\ &\quad \frac{1}{G} \sum_{i=1}^{G} \left( \min\left( \frac{\pi_{\theta}(o_i|q)}{\pi_{\theta_{old}}(o_i|q)} A_i, \text{clip}\left( \frac{\pi_{\theta}(o_i|q)}{\pi_{\theta_{old}}(o_i|q)}, 1 - \varepsilon, 1 + \varepsilon \right) A_i \right) - \beta \mathbb{D}_{KL}\left( \pi_{\theta} || \pi_{ref} \right) \right), \end{aligned}$$

$$\mathbb{D}_{KL}\left( \pi_{\theta} || \pi_{ref} \right) = \frac{\pi_{ref}(o_i|q)}{\pi_{\theta}(o_i|q)} - \log \frac{\pi_{ref}(o_i|q)}{\pi_{\theta}(o_i|q)} - 1,$$

where $\pi_{ref}$ is a reference policy, $\varepsilon$ and $\beta$ are hyper-parameters, and $A_i$ is the advantage, computed using a group of rewards $\{r_1, r_2, \ldots, r_G\}$ corresponding to the outputs within each group:

$$A_i = \frac{r_i - \text{mean}(\{r_1, r_2, \cdots, r_G\})}{\text{std}(\{r_1, r_2, \cdots, r_G\})}.$$
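The three formulas above can be traced numerically with a minimal sketch. It is not the training implementation: it works on sequence-level log-probabilities exactly as the equations are written, and several details are assumptions made for illustration, namely the `eps` guard against a zero standard deviation, the use of the population standard deviation, the default `clip_eps=0.2`, and all function names.

```python
import numpy as np

def group_advantages(rewards, eps=1e-8):
    """A_i = (r_i - mean) / std over one group of G rewards.

    eps is an illustrative guard (assumption) for groups whose rewards are all equal.
    """
    r = np.asarray(rewards, dtype=np.float64)
    return (r - r.mean()) / (r.std() + eps)

def kl_estimate(logp_new, logp_ref):
    """pi_ref/pi_theta - log(pi_ref/pi_theta) - 1, computed from log-probabilities."""
    log_ratio = np.asarray(logp_ref, dtype=np.float64) - np.asarray(logp_new, dtype=np.float64)
    return np.exp(log_ratio) - log_ratio - 1.0

def grpo_objective(logp_new, logp_old, logp_ref, rewards, clip_eps=0.2, beta=0.001):
    """Group-averaged clipped surrogate minus the KL penalty (a quantity to maximize).

    clip_eps=0.2 is an illustrative value (assumption); beta=0.001 is the KL coefficient
    reported for DeepSeek-R1-Zero.
    """
    logp_new = np.asarray(logp_new, dtype=np.float64)
    logp_old = np.asarray(logp_old, dtype=np.float64)
    adv = group_advantages(rewards)
    ratio = np.exp(logp_new - logp_old)                    # pi_theta / pi_theta_old
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps)
    surrogate = np.minimum(ratio * adv, clipped * adv)
    return float(np.mean(surrogate - beta * kl_estimate(logp_new, logp_ref)))
```

Because the advantage is normalized within the group, correct outputs receive positive advantages and incorrect ones negative advantages, without any learned value function.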

We give a comparison of GRPO and PPO in Supplementary A.3. To train DeepSeek-R1-Zero, we set the learning rate to 3e-6, the KL coefficient to 0.001, and the sampling temperature to 1 for rollout. For each question, we sample 16 outputs with a maximum length of 32,768 tokens before the 8.2k step and 65,536 tokens afterward. As a result, both the performance and response length of DeepSeek-R1-Zero exhibit a significant jump at the 8.2k step, with training continuing for a total of 10,400 steps, corresponding to 1.6 training epochs. Each training step consists of 32 unique questions, resulting in a training batch size of 512. Every 400 steps, we replace the reference model with the latest policy model. To accelerate training, each rollout generates 8,192 outputs, which are randomly split into 16 mini-batches and trained for only a single inner epoch.
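The batch figures above fit together as follows. Reading each mini-batch as one training step is our interpretation of the numbers, not something stated explicitly in the text.

$$32 \text{ questions} \times 16 \text{ outputs per question} = 512 \text{ outputs per training step}, \qquad \frac{8{,}192 \text{ outputs per rollout}}{16 \text{ mini-batches}} = 512 \text{ outputs per mini-batch}.$$

Under that reading, one rollout covers $8{,}192 / 16 = 512$ questions and supplies data for 16 policy updates.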

Table 1 | Template for DeepSeek-R1-Zero. prompt will be replaced with the specific reasoning question during training.

A conversation between User and Assistant. The user asks a question, and the Assistant solves it. The assistant first thinks about the reasoning process in the mind and then provides the user with the answer. The reasoning process and answer are enclosed within <think>...</think> and <answer>...</answer> tags, respectively, i.e., <think> reasoning process here </think> <answer> answer here </answer> . User: prompt. Assistant:
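As a small illustration of how the template is instantiated, the sketch below substitutes a question for the prompt slot. The `{prompt}` placeholder syntax and the names `R1_ZERO_TEMPLATE` and `build_prompt` are hypothetical and chosen only for this example.

```python
# Template text copied from Table 1; "{prompt}" marks the slot (placeholder syntax is an assumption).
R1_ZERO_TEMPLATE = (
    "A conversation between User and Assistant. The user asks a question, and the "
    "Assistant solves it. The assistant first thinks about the reasoning process in "
    "the mind and then provides the user with the answer. The reasoning process and "
    "answer are enclosed within <think>...</think> and <answer>...</answer> tags, "
    "respectively, i.e., <think> reasoning process here </think> "
    "<answer> answer here </answer>. User: {prompt}. Assistant:"
)

def build_prompt(question: str) -> str:
    """Insert one reasoning question into the template."""
    return R1_ZERO_TEMPLATE.format(prompt=question)
```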

Our high-performance RL infrastructure is described in Supplementary B.1, ensuring scalable and efficient training.

## 2.2. Reward Design

The reward is the source of the training signal, which decides the direction of RL optimization. For DeepSeek-R1-Zero, we employ rule-based rewards to deliver precise feedback for data in mathematical, coding, and logical reasoning domains. Our rule-based reward system mainly consists of two types of rewards: accuracy rewards and format rewards.

Accuracy rewards evaluate whether the response is correct. For example, in the case of math problems with deterministic results, the model is required to provide the final answer in a specified format (e.g., within a box), enabling reliable rule-based verification of correctness. Similarly, for code competition prompts, a compiler can be utilized to evaluate the model's

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Chart: DeepSeek-R1-Zero Training Performance

### Overview

The image presents two line charts comparing the performance of a DeepSeek-R1-Zero model during training. The left chart displays the AIME (American Invitational Mathematics Examination) accuracy of two configurations: "r1-zero-pass@1" and "r1-zero-cons@16," along with a baseline for human participants. The right chart shows the average length per response during training for the same model.

### Components/Axes

**Left Chart (Accuracy):**

* **Title:** DeepSeek-R1-Zero AIME accuracy during training

* **Y-axis:** Accuracy (ranging from 0.2 to 0.9)

* **X-axis:** Steps (ranging from 0 to 10000)

* **Legend:** Located in the top-left corner.

* Blue line with circular markers: r1-zero-pass@1

* Red line with circular markers: r1-zero-cons@16

* Green dashed line: human participants

**Right Chart (Average Length):**

* **Title:** DeepSeek-R1-Zero average length per response during training

* **Y-axis:** Average length per response (ranging from 0 to 20000)

* **X-axis:** Steps (ranging from 0 to 10000)

* **Data:** A blue line with a shaded blue area around it, representing the average length per response.

### Detailed Analysis

**Left Chart (Accuracy):**

* **r1-zero-pass@1 (Blue):**

* Starts at approximately 0.2 accuracy at step 0.

* Increases steadily until around step 8000, reaching approximately 0.75 accuracy.

* Shows some fluctuation between steps 8000 and 10000.

* **r1-zero-cons@16 (Red):**

* Starts at approximately 0.25 accuracy at step 0.

* Increases rapidly until around step 4000, reaching approximately 0.8 accuracy.

* Remains relatively stable between steps 4000 and 10000, hovering around 0.8 accuracy.

* **human participants (Green):**

* Maintains a constant accuracy of approximately 0.38 across all steps.

**Right Chart (Average Length):**

* **Average Length (Blue):**

* Starts at a low average length, close to 0, at step 0.

* Gradually increases until around step 8000, reaching an average length of approximately 12500.

* Shows a steeper increase between steps 8000 and 10000, reaching an average length of approximately 15000.

* The shaded area indicates variability in the average length per response.

### Key Observations

* The "r1-zero-cons@16" configuration achieves a higher accuracy than "r1-zero-pass@1" throughout the training process.

* Both configurations outperform human participants in terms of AIME accuracy.

* The average length per response increases significantly as the training progresses.

* The accuracy of "r1-zero-cons@16" plateaus after approximately 4000 steps, while "r1-zero-pass@1" continues to improve until around 8000 steps.

### Interpretation

The data suggests that the "r1-zero-cons@16" configuration is more effective in terms of AIME accuracy compared to "r1-zero-pass@1." The increasing average length per response indicates that the model generates longer responses as it trains, potentially reflecting a more complex understanding of the task. The fact that both configurations surpass human accuracy highlights the potential of the DeepSeek-R1-Zero model for the given task. The plateau in accuracy for "r1-zero-cons@16" might indicate a point of diminishing returns, suggesting that further training may not significantly improve its performance.

</details>

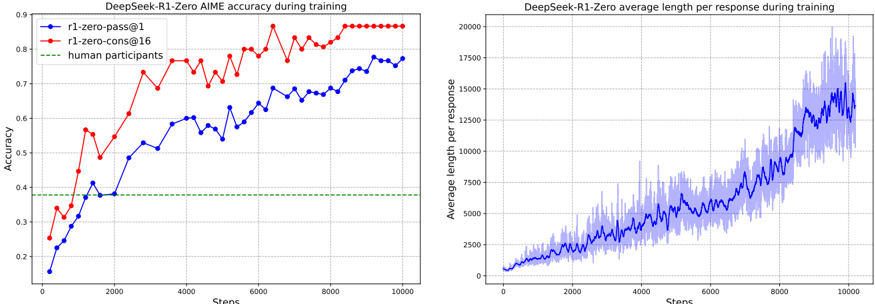

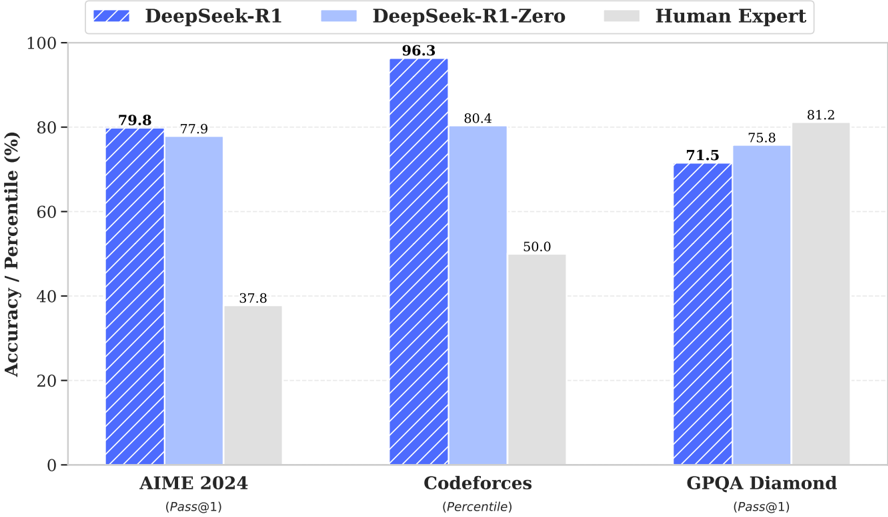

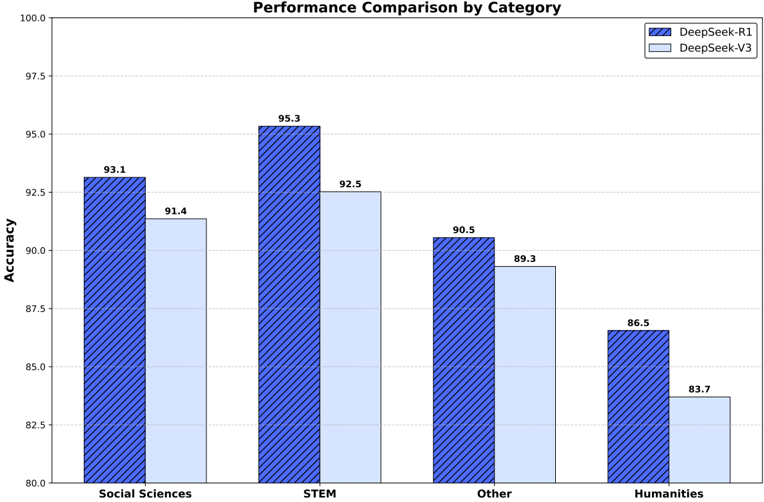

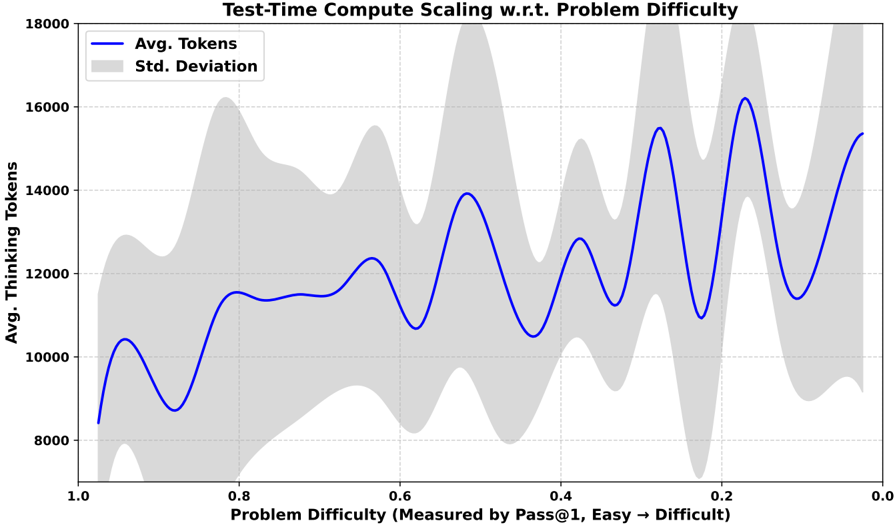

Figure 1 | (a) AIME accuracy of DeepSeek-R1-Zero during training. AIME takes a mathematical problem as input and a number as output, illustrated in Table 32. Pass@1 and Cons@16 are described in Supplementary D.1. The baseline is the average score achieved by human participants in the AIME competition. (b) The average response length of DeepSeek-R1-Zero on the training set during the RL process. DeepSeek-R1-Zero naturally learns to solve reasoning tasks with more thinking time. Note that a training step refers to a single policy update operation.

responses against a suite of predefined test cases, thereby generating objective feedback on correctness.

Format rewards complement the accuracy reward model by enforcing specific formatting requirements. In particular, the model is incentivized to encapsulate its reasoning process within designated tags, specifically '<think>' and '</think>'. This ensures that the model's thought process is explicitly delineated, enhancing interpretability and facilitating subsequent analysis.

$$Reward_{\text{rule}} = Reward_{\text{acc}} + Reward_{\text{format}}$$

The accuracy reward and format reward are combined with the same weight. Notably, we abstain from applying neural reward models, whether outcome-based or process-based, to reasoning tasks. This decision is predicated on our observation that neural reward models are susceptible to reward hacking during large-scale reinforcement learning. Moreover, retraining such models necessitates substantial computational resources and introduces additional complexity into the training pipeline, thereby complicating the overall optimization process.
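A rule-based reward of this kind can be sketched in a few lines for the math case. The sketch below is illustrative only: the `\boxed{...}` answer convention, the exact-string comparison, the 0/1 reward values, and the function names are assumptions, since the text specifies only that the answer appears "within a box" and that both rewards carry equal weight.

```python
import re

# Reasoning must appear inside <think>...</think> (format requirement from the text).
THINK_RE = re.compile(r"<think>.*?</think>", re.DOTALL)
# Final answer convention assumed here: \boxed{...} without nested braces.
BOXED_RE = re.compile(r"\\boxed\{([^{}]*)\}")

def format_reward(response: str) -> float:
    """1.0 if a complete <think> block is present, else 0.0 (values are assumptions)."""
    return 1.0 if THINK_RE.search(response) else 0.0

def accuracy_reward(response: str, ground_truth: str) -> float:
    """1.0 if the last boxed answer matches the ground truth exactly, else 0.0."""
    answers = BOXED_RE.findall(response)
    if not answers:
        return 0.0
    return 1.0 if answers[-1].strip() == ground_truth.strip() else 0.0

def rule_reward(response: str, ground_truth: str) -> float:
    """Reward_rule = Reward_acc + Reward_format, combined with the same weight."""
    return accuracy_reward(response, ground_truth) + format_reward(response)
```

A production verifier would need answer normalization (for example, treating `1/2` and `0.5` as equal) and, for code problems, execution against test cases; neither is shown here.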

## 2.3. Incentivize Reasoning Capability in LLMs

Specifically, we apply the RL technique to DeepSeek-V3-Base to train DeepSeek-R1-Zero. During training, we design a straightforward template that requires DeepSeek-R1-Zero to first produce a reasoning process, followed by the final answer. We intentionally limit our constraints to this structural format, avoiding any content-specific biases to ensure that we can accurately observe the model's natural progression during the RL process.

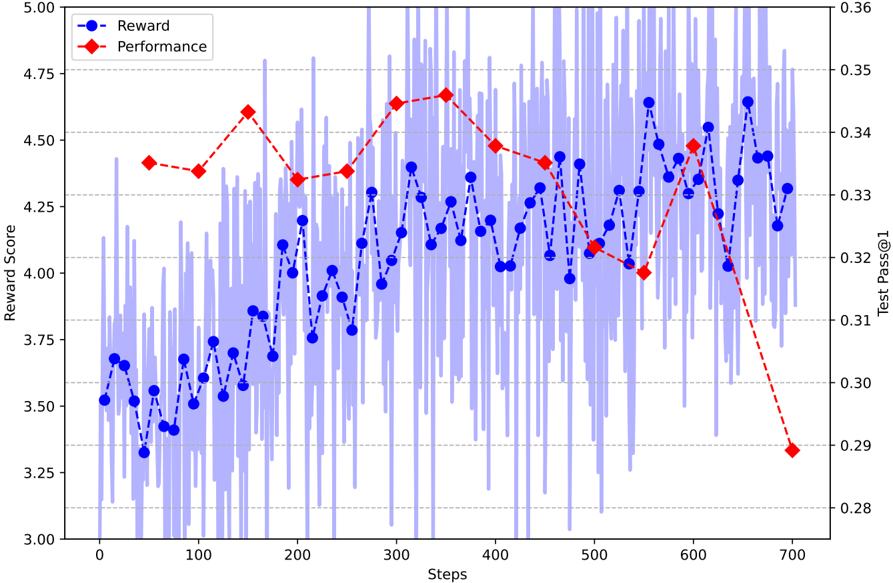

Figure 1(a) depicts the performance trajectory of DeepSeek-R1-Zero on the AIME 2024 benchmark throughout the RL training process, where the average pass@1 score on AIME 2024 shows a significant increase, jumping from an initial 15.6% to 77.9%. In addition, by leveraging the self-consistency decoding (Wang et al., 2023c), the model's performance can be

Table 2 | An interesting 'aha moment' of an intermediate version of DeepSeek-R1-Zero. The model learns to rethink using an anthropomorphic tone. This is also an aha moment for us, allowing us to witness the power and beauty of reinforcement learning.

```

Question: If a > 1, then the sum of the real solutions of \sqrt{a - \sqrt{a + x}} = x is equal to

Response: <think>

To solve the equation \sqrt{a - \sqrt{a + x}} = x, let's start by squaring both ...

(\sqrt{a - \sqrt{a + x}})^2 = x^2 \implies a - \sqrt{a + x} = x^2.

Rearrange to isolate the inner square root term:

(a - x^2)^2 = a + x \implies a^2 - 2ax^2 + (x^2)^2 = a + x \implies x^4 - 2ax^2 - x + (a^2 - a) = 0

...

Wait, wait. Wait. That's an aha moment I can flag here.

Let's reevaluate this step-by-step to identify if the correct sum can be ...

We started with the equation:

\sqrt{a - \sqrt{a + x}} = x

First, let's square both sides:

a - \sqrt{a + x} = x^2 \implies \sqrt{a + x} = a - x^2

Next, I could square both sides again, treating the equation: ...

...

```

further improved, achieving an accuracy of 86.7%. This performance significantly surpasses the average performance across all human competitors. Besides the math competitions, as shown in Figure 10, DeepSeek-R1-Zero also achieves remarkable performance in coding competitions and graduate-level biology, physics, and chemistry problems. These results underscore the effectiveness of RL in enhancing the reasoning capabilities of large language models.
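The two metrics quoted above can be illustrated with a short sketch. The precise definitions are given in Supplementary D.1, which is not reproduced here, so the code assumes the common readings: pass@1 as the fraction of sampled answers that are correct, and cons@k as the correctness of the majority-voted answer over k samples (self-consistency). Function names are hypothetical.

```python
from collections import Counter

def pass_at_1(answers, truth):
    """Fraction of sampled final answers that match the ground truth."""
    return sum(a == truth for a in answers) / len(answers)

def cons_at_k(answers, truth):
    """1.0 if the most frequent answer among the k samples is correct, else 0.0.

    Ties are broken by first occurrence, which is how Counter.most_common orders them.
    """
    majority, _count = Counter(answers).most_common(1)[0]
    return 1.0 if majority == truth else 0.0

samples = ["42", "41", "42", "7"]   # four hypothetical sampled answers to one problem
print(pass_at_1(samples, "42"), cons_at_k(samples, "42"))
```

Majority voting can score a problem as solved even when fewer than half of the individual samples are correct, which is why the cons@16 figure exceeds pass@1.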

The self-evolution of DeepSeek-R1-Zero exemplifies how RL can autonomously enhance a model's reasoning capabilities.

As shown in Figure 1(b), DeepSeek-R1-Zero exhibits a steady increase in thinking time throughout training, driven solely by intrinsic adaptation rather than external modifications. Leveraging long CoT, the model progressively refines its reasoning, generating hundreds to thousands of tokens to explore and improve its problem-solving strategies.

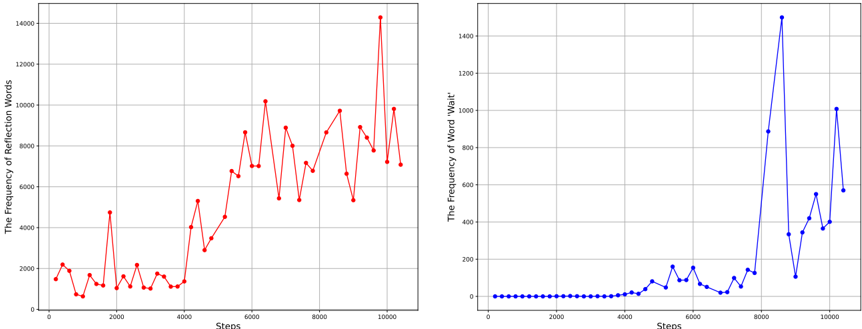

The increase in thinking time fosters the autonomous development of sophisticated behaviors. Specifically, DeepSeek-R1-Zero increasingly exhibits advanced reasoning strategies such as reflective reasoning and systematic exploration of alternative solutions (see Figure 9(a) in Supplementary C.2 for details), significantly boosting its performance on verifiable tasks like math and coding. Notably, during training, DeepSeek-R1-Zero exhibits an 'aha moment' (Table 2), characterized by a sudden increase in the use of the word 'wait' during reflections (see Figure 9(b) in Supplementary C.2 for details). This moment marks a distinct change in reasoning patterns and clearly shows the self-evolution process of DeepSeek-R1-Zero.

The self-evolution of DeepSeek-R1-Zero underscores the power and beauty of RL: rather than explicitly teaching the model how to solve a problem, we simply provide it with the right incentives, and it autonomously develops advanced problem-solving strategies. This serves as a reminder of the potential of RL to unlock higher levels of capabilities in LLMs, paving the way for more autonomous and adaptive models in the future.

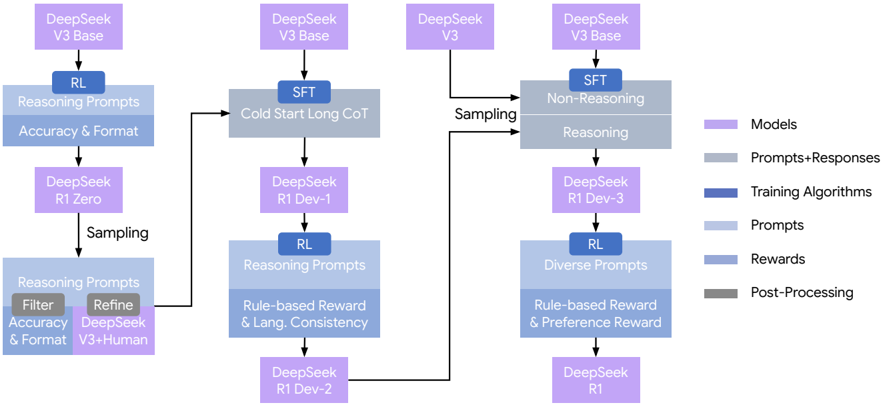

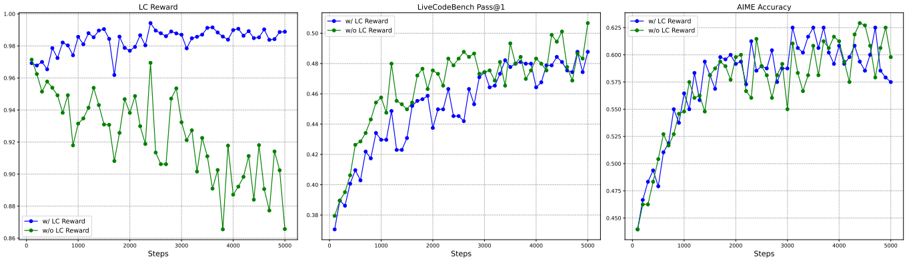

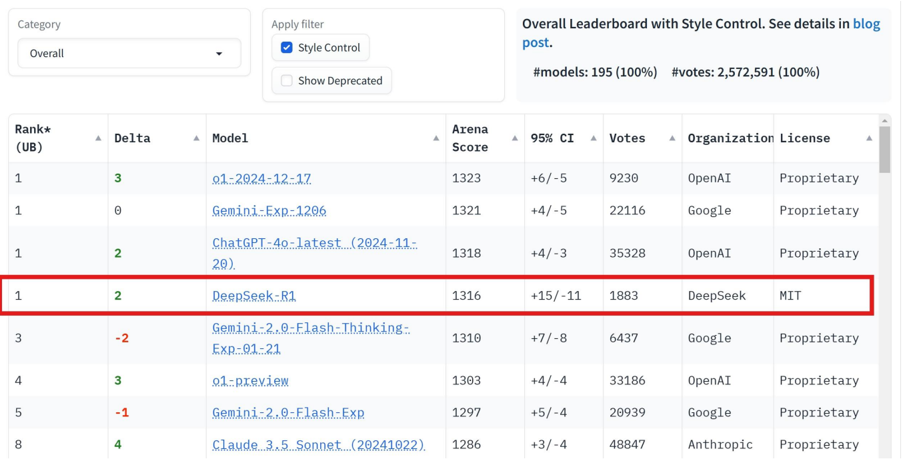

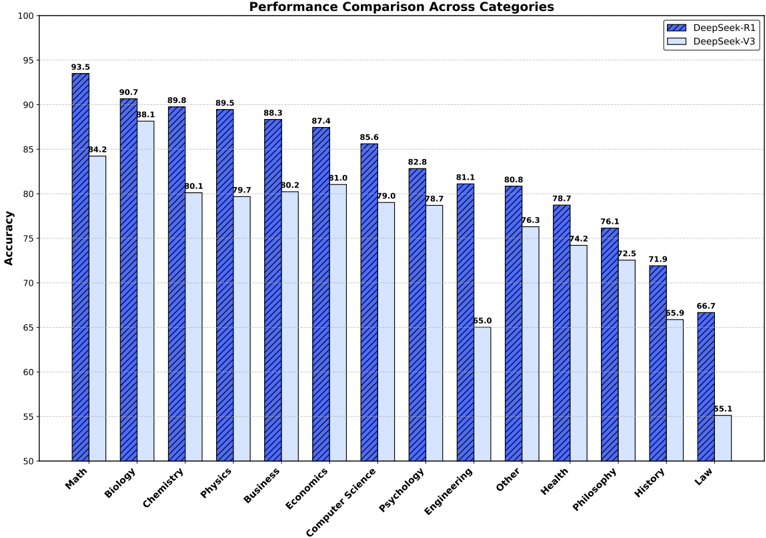

Figure 2 | The multi-stage pipeline of DeepSeek-R1. A detailed background on DeepSeek-V3 Base and DeepSeek-V3 is provided in Supplementary A.1. The models DeepSeek-R1 Dev1, Dev2, and Dev3 represent intermediate checkpoints within this pipeline.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: DeepSeek Model Training Flow

### Overview

The image presents a diagram illustrating the training flow of DeepSeek models. It outlines different training paths and components, including models, prompts, training algorithms, and rewards. The diagram shows three distinct training pathways, each starting with a DeepSeek model and progressing through various stages of prompting, reasoning, and reward mechanisms.

### Components/Axes

* **Models:** Represented by light purple rectangles. Examples include "DeepSeek V3 Base," "DeepSeek V3," "DeepSeek R1 Zero," "DeepSeek R1 Dev-1," "DeepSeek R1 Dev-2," "DeepSeek R1 Dev-3," and "DeepSeek R1."

* **Prompts+Responses:** Represented by light gray rectangles. Examples include "Reasoning," "Non-Reasoning," and "Cold Start Long CoT."

* **Training Algorithms:** Represented by dark blue rectangles. Examples include "RL" (Reinforcement Learning) and "SFT" (Supervised Fine-Tuning).

* **Prompts:** Represented by light blue rectangles. Examples include "Reasoning Prompts," "Diverse Prompts," and "Filter Accuracy & Format, Refine DeepSeek V3+Human."

* **Rewards:** Represented by dark gray rectangles. Examples include "Rule-based Reward & Lang. Consistency" and "Rule-based Reward & Preference Reward."

* **Post-Processing:** Represented by dark gray rectangles.

* **Arrows:** Indicate the flow of data and processes between components.

* **Sampling:** Indicates a branching point where data is sampled.

**Legend (Located on the right side of the diagram):**

* Models: Light Purple

* Prompts+Responses: Light Gray

* Training Algorithms: Dark Blue

* Prompts: Light Blue

* Rewards: Dark Gray

* Post-Processing: Dark Gray

### Detailed Analysis

**Pathway 1 (Leftmost):**

1. Starts with "DeepSeek V3 Base" (light purple).

2. Goes through "RL" (Reinforcement Learning - dark blue) applied to "Reasoning Prompts" and "Accuracy & Format" (light blue).

3. Proceeds to "DeepSeek R1 Zero" (light purple).

4. "Sampling" occurs.

5. The sampled data is processed through "Reasoning Prompts" which includes "Filter Accuracy & Format" and "Refine DeepSeek V3+Human" (light blue).

**Pathway 2 (Middle):**

1. Starts with "DeepSeek V3 Base" (light purple).

2. Goes through "SFT" (Supervised Fine-Tuning - dark blue) applied to "Cold Start Long CoT" (light gray).

3. Proceeds to "DeepSeek R1 Dev-1" (light purple).

4. Goes through "RL" (Reinforcement Learning - dark blue) applied to "Reasoning Prompts" and "Rule-based Reward & Lang. Consistency" (light blue).

5. Proceeds to "DeepSeek R1 Dev-2" (light purple).

6. A feedback loop connects "DeepSeek R1 Dev-2" back to the "Reasoning Prompts" stage of Pathway 1.

**Pathway 3 (Rightmost):**

1. Starts with "DeepSeek V3" (light purple) and "DeepSeek V3 Base" (light purple).

2. Both pathways are "Sampling".

3. Goes through "SFT" (Supervised Fine-Tuning - dark blue) applied to "Non-Reasoning" and "Reasoning" (light gray).

4. Proceeds to "DeepSeek R1 Dev-3" (light purple).

5. Goes through "RL" (Reinforcement Learning - dark blue) applied to "Diverse Prompts" and "Rule-based Reward & Preference Reward" (light blue).

6. Proceeds to "DeepSeek R1" (light purple).

### Key Observations

* The diagram illustrates three distinct training pathways for DeepSeek models.

* Reinforcement Learning (RL) and Supervised Fine-Tuning (SFT) are key training algorithms used.

* The models progress through stages of prompting, reasoning, and reward mechanisms.

* Sampling is used to branch the training process.

* There is a feedback loop from "DeepSeek R1 Dev-2" to the "Reasoning Prompts" stage of Pathway 1.

### Interpretation

The diagram provides a high-level overview of the training process for DeepSeek models. It highlights the use of different training algorithms, prompting strategies, and reward mechanisms to optimize model performance. The presence of multiple pathways and sampling suggests that different training approaches are being explored and compared. The feedback loop indicates an iterative refinement process where the model's performance is used to adjust the training process. The diagram suggests a complex and multifaceted approach to training DeepSeek models, incorporating both supervised and reinforcement learning techniques.

</details>

## 3. DeepSeek-R1

Although DeepSeek-R1-Zero exhibits strong reasoning capabilities, it faces several issues. It struggles with poor readability and language mixing, because DeepSeek-V3-Base is trained on multiple languages, especially English and Chinese. To address these issues, we develop DeepSeek-R1, whose pipeline is illustrated in Figure 2.

In the initial stage, we collect thousands of cold-start examples that exhibit a conversational, human-aligned thinking process. RL training is then applied to improve the model performance with the conversational thinking process and language consistency. Subsequently, we apply rejection sampling and SFT once more. This stage incorporates both reasoning and non-reasoning datasets into the SFT process, enabling the model to not only excel in reasoning tasks but also demonstrate advanced writing capabilities. To further align the model with human preferences, we implement a secondary RL stage designed to enhance the model's helpfulness and harmlessness while simultaneously refining its reasoning capabilities.

The remainder of this section details the key components of this pipeline: Section 3.1 introduces the reward models used in our RL stages, and Section 3.2 elaborates on the specific training methodologies and implementation details. The data used in this stage are detailed in Supplementary B.3.

## 3.1. Model-based Rewards

For general data, we resort to reward models to capture human preferences in complex and nuanced scenarios. We build upon the DeepSeek-V3 pipeline and adopt a similar distribution of preference pairs and training prompts. For helpfulness, we focus exclusively on the final summary, ensuring that the assessment emphasizes the utility and relevance of the response to the user while minimizing interference with the underlying reasoning process. For harmlessness, we evaluate the entire response of the model, including both the reasoning process and the summary, to identify and mitigate any potential risks, biases, or harmful content that may arise during the generation process.

**Helpful Reward Model.** To train the helpful reward model, we first generate preference pairs by prompting DeepSeek-V3 using the arena-hard prompt format, listed in Supplementary B.2, where each pair consists of a user query along with two candidate responses. For each preference pair, we query DeepSeek-V3 four times, randomly assigning the responses as either Response A or Response B to mitigate positional bias. The final preference score is determined by averaging the four independent judgments, and we retain only those pairs where the score difference (Δ) exceeds 1 to ensure meaningful distinctions. Additionally, to minimize length-related biases, we ensure that the chosen and rejected responses of the whole dataset have comparable lengths. In total, we curated 66,000 data pairs for training the reward model. The prompts used in this dataset are all non-reasoning questions and are sourced either from publicly available open-source datasets or from users who have explicitly consented to share their data for the purpose of model improvement. The architecture of our reward model is consistent with that of DeepSeek-R1, with the addition of a reward head designed to predict scalar preference scores.

$$Reward_{\text{helpful}} = RM_{\text{helpful}}(Response_A, Response_B)$$

The helpful reward models were trained with a batch size of 256, a learning rate of 6e-6, and for a single epoch over the training dataset. The maximum sequence length during training is set to 8192 tokens, whereas no explicit limit is imposed during reward model inference.
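The pair-construction procedure described for the helpful reward model (four judgments with randomized positions, averaging, and a Δ > 1 filter) can be sketched as below. The `judge` callable is a hypothetical stand-in for the DeepSeek-V3 judging prompt and is assumed to return one numeric score per position; the scoring scale, the seeded random generator, and the function name are also assumptions.

```python
import random
from statistics import mean

def build_preference_pair(judge, query, resp_1, resp_2, n_trials=4, min_delta=1.0, rng=None):
    """Return (chosen, rejected), or None when the averaged score gap is not above min_delta.

    judge(query, response_a, response_b) -> (score_a, score_b) is a hypothetical interface.
    """
    rng = rng or random.Random(0)
    scores_1, scores_2 = [], []
    for _ in range(n_trials):
        if rng.random() < 0.5:
            s1, s2 = judge(query, resp_1, resp_2)   # resp_1 shown as Response A
        else:
            s2, s1 = judge(query, resp_2, resp_1)   # positions swapped to reduce positional bias
        scores_1.append(s1)
        scores_2.append(s2)
    m1, m2 = mean(scores_1), mean(scores_2)
    if abs(m1 - m2) <= min_delta:
        return None                                  # gap too small to be a meaningful distinction
    return (resp_1, resp_2) if m1 > m2 else (resp_2, resp_1)
```

The length-balancing step mentioned in the text operates over the whole dataset and is therefore not part of this per-pair function.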

**Safety Reward Model.** To assess and improve model safety, we curated a dataset of 106,000 prompts with model-generated responses annotated as 'safe' or 'unsafe' according to predefined safety guidelines. Unlike the pairwise loss employed in the helpfulness reward model, the safety reward model was trained using a point-wise methodology to distinguish between safe and unsafe responses. The training hyperparameters are the same as those of the helpful reward model.

$$Reward_{\text{safety}} = RM_{\text{safety}}(Response)$$

For general queries, each instance is categorized as belonging to either the safety dataset or the helpfulness dataset. The general reward, $Reward_{\text{general}}$, assigned to each query corresponds to the respective reward defined within the associated dataset.

## 3.2. Training Details

## 3.2.1. Training Details of the First RL Stage

In the first stage of RL, we set the learning rate to 3e-6, the KL coefficient to 0.001, the GRPO clip ratio $\varepsilon$ to 10, and the sampling temperature to 1 for rollout. For each question, we sample 16 outputs with a maximum length of 32,768 tokens. Each training step consists of 32 unique questions, resulting in a training batch size of 512 per step. Every 400 steps, we replace the reference model with the latest policy model. To accelerate training, each rollout generates 8,192 outputs, which are randomly split into 16 mini-batches and trained for only a single inner epoch. In addition, to mitigate the issue of language mixing, we introduce a language consistency reward during RL training, which is calculated as the proportion of target-language words in the CoT.

$$Reward_{\text{language}} = \frac{Num(Words_{\text{target}})}{Num(Words)}$$

Although ablation experiments in Supplementary B.6 show that such alignment results in a slight degradation in the model's performance, this reward aligns with human preferences by making the output more readable. We apply the language consistency reward to both reasoning and non-reasoning data by directly adding it to the final reward.
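The language consistency reward is a simple ratio, and a toy version for the English/Chinese case is sketched below. How words are segmented and assigned to a language is not specified in the text; counting each CJK character as one Chinese word and each run of ASCII letters as one English word, as well as returning 0.0 for an empty CoT, are assumptions made only for this illustration.

```python
import re

# Assumed tokenization: one CJK character = one word; one run of ASCII letters = one word.
WORD_RE = re.compile(r"[\u4e00-\u9fff]|[A-Za-z]+")

def is_chinese(word: str) -> bool:
    return "\u4e00" <= word[0] <= "\u9fff"

def language_reward(cot: str, target: str = "en") -> float:
    """Num(Words_target) / Num(Words) for target in {"en", "zh"}."""
    words = WORD_RE.findall(cot)
    if not words:
        return 0.0  # assumption: an empty CoT earns no language reward
    if target == "zh":
        hits = sum(1 for w in words if is_chinese(w))
    else:
        hits = sum(1 for w in words if not is_chinese(w))
    return hits / len(words)
```

For the mixed string `"let us think 一下"`, this tokenization yields five words, three of them English, so the English-target reward is 3/5.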

Note that the clip ratio plays a crucial role in training. A lower value can lead to the truncation of gradients for a significant number of tokens, thereby degrading the model's performance, while a higher value may cause instability during training.

## 3.2.2. Training Details of the Second RL Stage

Specifically, we train the model using a combination of reward signals and diverse prompt distributions. For reasoning data, we follow the methodology outlined in DeepSeek-R1-Zero, which employs rule-based rewards to guide learning in mathematical, coding, and logical reasoning domains. During the training process, we observe that CoT often exhibits language mixing, particularly when RL prompts involve multiple languages. For general data, we utilize reward models to guide training. Ultimately, the integration of reward signals with diverse data distributions enables us to develop a model that not only excels in reasoning but also prioritizes helpfulness and harmlessness. Given a batch of data, the reward can be formulated as

$$\begin{aligned} Reward &= Reward_{\text{reasoning}} + Reward_{\text{general}} + Reward_{\text{language}} \\ \text{where } Reward_{\text{reasoning}} &= Reward_{\text{rule}} \\ Reward_{\text{general}} &= Reward_{\text{reward\_model}} + Reward_{\text{format}} \end{aligned}$$

The second stage of RL retains most of the parameters from the first stage, with the key difference being a reduced temperature of 0.7, as we find that higher temperatures in this stage lead to incoherent generation. The stage comprises a total of 1,700 training steps, during which general instruction data and preference-based rewards are incorporated exclusively in the final 400 steps. We find that more training steps with the model-based preference reward signal may lead to reward hacking, which is documented in Supplementary B.5. The total training cost is listed in Supplementary B.4.4.

## 4. Experiment

We evaluate our models on MMLU (Hendrycks et al., 2021), MMLU-Redux (Gema et al., 2025), MMLU-Pro (Wang et al., 2024), C-Eval (Huang et al., 2023), CMMLU (Li et al., 2024), IFEval (Zhou et al., 2023b), FRAMES (Krishna et al., 2024), GPQA Diamond (Rein et al., 2023), SimpleQA (OpenAI, 2024a), C-SimpleQA (He et al., 2024), SWE-Bench Verified (OpenAI, 2024b), Aider (Gauthier, 2025), LiveCodeBench (Jain et al., 2024) (2024-08 - 2025-01), Codeforces (Mirzayanov, 2025), Chinese National High School Mathematics Olympiad (CNMO 2024) (CMS, 2024), and American Invitational Mathematics Examination 2024 (AIME 2024) (MAA, 2024). The details of these benchmarks are listed in Supplementary D.

Table 3 summarizes the performance of DeepSeek-R1 across multiple developmental stages, as outlined in Figure 2. A comparison between DeepSeek-R1-Zero and DeepSeek-R1 Dev1 reveals substantial improvements in instruction-following, as evidenced by higher scores on the IF-Eval and ArenaHard benchmarks. However, due to the limited size of the cold-start dataset, Dev1 exhibits a partial degradation in reasoning performance compared to DeepSeek-R1-Zero, most notably on the AIME benchmark. In contrast, DeepSeek-R1 Dev2 demonstrates marked performance enhancements on benchmarks that require advanced reasoning skills, including those focused on code generation, mathematical problem solving, and STEM-related tasks. Benchmarks targeting general-purpose tasks, such as AlpacaEval 2.0, show marginal improvement. These results suggest that reasoning-oriented RL considerably enhances reasoning capabilities while exerting limited influence on user preference-oriented benchmarks.

Table 3 | Experimental results at each stage of DeepSeek-R1. Numbers in bold denote the performance is statistically significant (t-test with 𝑝 < 0.01).

| Category | Benchmark (Metric) | R1-Zero | R1-Dev1 | R1-Dev2 | R1-Dev3 | R1 |
|---------|----------------------------|-----------|-----------|-----------|-----------|--------|
| English | MMLU (EM) | 88.8 | 89.1 | 91.2 | 91 | 90.8 |
| English | MMLU-Redux (EM) | 85.6 | 90 | 93 | 93.1 | 92.9 |
| English | MMLU-Pro (EM) | 68.9 | 74.1 | 83.8 | 83.1 | 84 |
| English | DROP (3-shot F1) | 89.1 | 89.8 | 91.1 | 88.7 | 92.2 |
| English | IF-Eval (Prompt Strict) | 46.6 | 71.7 | 72 | 78.1 | 83.3 |
| English | GPQA Diamond (Pass@1) | 75.8 | 66.1 | 70.7 | 71.2 | 71.5 |
| English | SimpleQA (Correct) | 30.3 | 17.8 | 28.2 | 24.9 | 30.1 |
| English | FRAMES (Acc.) | 82.3 | 78.5 | 81.8 | 81.9 | 82.5 |
| English | AlpacaEval2.0 (LC-winrate) | 24.7 | 50.1 | 55.8 | 62.1 | 87.6 |
| English | ArenaHard (GPT-4-1106) | 53.6 | 77 | 73.2 | 75.6 | 92.3 |
| Code | LiveCodeBench (Pass@1-COT) | 50 | 57.5 | 63.5 | 64.6 | 65.9 |
| Code | Codeforces (Percentile) | 80.4 | 84.5 | 90.5 | 92.1 | 96.3 |
| Code | Codeforces (Rating) | 1444 | 1534 | 1687 | 1746 | 2029 |
| Code | SWE Verified (Resolved) | 43.2 | 39.6 | 44.6 | 45.6 | 49.2 |
| Code | Aider-Polyglot (Acc.) | 12.2 | 6.7 | 25.6 | 44.8 | 53.3 |
| Math | AIME 2024 (Pass@1) | 77.9 | 59 | 74 | 78.1 | 79.8 |
| Math | MATH-500 (Pass@1) | 95.9 | 94.2 | 95.9 | 95.4 | 97.3 |
| Math | CNMO2024 (Pass@1) | 88.1 | 58 | 73.9 | 77.3 | 78.8 |
| Chinese | CLUEWSC (EM) | 93.1 | 92.8 | 92.6 | 91.6 | 92.8 |
| Chinese | C-Eval (EM) | 92.8 | 85.7 | 91.9 | 86.4 | 91.8 |
| Chinese | C-SimpleQA (Correct) | 66.4 | 58.8 | 64.2 | 66.9 | 63.7 |

DeepSeek-R1 Dev3 integrates both reasoning and non-reasoning datasets into the SFT pipeline, thereby enhancing the model's proficiency in both reasoning and general language generation tasks. Compared to Dev2, DeepSeek-R1 Dev3 achieves notable performance improvements on AlpacaEval 2.0 and Aider-Polyglot, attributable to the inclusion of large-scale non-reasoning corpora and code engineering datasets. Finally, comprehensive RL training on DeepSeek-R1 Dev3 using mixed reasoning-focused and general-purpose data produced the final DeepSeek-R1. Only marginal improvements occurred in code and mathematics benchmarks, as substantial reasoning-specific RL was done in prior stages. The primary advancements in the final DeepSeek-R1 were in general instruction-following and user-preference benchmarks, with AlpacaEval 2.0 improving by about 25 percentage points and ArenaHard by about 17 percentage points.
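The size of these final-stage gains can be checked directly against the R1-Dev3 and R1 columns of Table 3. The short sketch below computes the absolute differences in benchmark score; the dictionary layout and the helper name `gains` are illustrative choices.

```python
# Scores copied from the R1-Dev3 and R1 columns of Table 3.
dev3 = {"AlpacaEval2.0 (LC-winrate)": 62.1, "ArenaHard (GPT-4-1106)": 75.6}
final = {"AlpacaEval2.0 (LC-winrate)": 87.6, "ArenaHard (GPT-4-1106)": 92.3}


def gains(before: dict, after: dict) -> dict:
    """Absolute score difference (in points) for each benchmark."""
    return {name: round(after[name] - before[name], 1) for name in before}


deltas = gains(dev3, final)
# deltas == {"AlpacaEval2.0 (LC-winrate)": 25.5, "ArenaHard (GPT-4-1106)": 16.7}
```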

In addition, we compare DeepSeek-R1 with other models in Supplementary D.2. Model safety evaluations are provided in Supplementary D.3. A comprehensive analysis is provided in Supplementary E, including a comparison with DeepSeek-V3, performance evaluations on both fresh test sets, a breakdown of mathematical capabilities by category, and an investigation of test-time scaling behavior. Supplementary F shows that the strong reasoning capability can be transferred to smaller models.

## 5. Ethics and Safety Statement

With the advancement in the reasoning capabilities of DeepSeek-R1, we deeply recognize the potential ethical risks. For example, R1 can be subject to jailbreak attacks, leading to the generation of dangerous content such as explosive manufacturing plans, while the enhanced reasoning capabilities enable the model to provide plans with better operational feasibility and executability. Besides, a public model is also vulnerable to further fine-tuning that could compromise inherent safety protections.

In Supplementary D.3, we present a comprehensive safety report from multiple perspectives, including performance on open-source and in-house safety evaluation benchmarks, and safety levels across multiple languages and against jailbreak attacks. These comprehensive safety analyses conclude that the inherent safety level of the DeepSeek-R1 model, compared to other state-of-the-art models, is generally at a moderate level (comparable to GPT-4o (2024-05-13)). Besides, when coupled with the risk control system, the model's safety level is elevated to a superior standard.

## 6. Conclusion, Limitation, and Future Work

We present DeepSeek-R1-Zero and DeepSeek-R1, which rely on large-scale RL to incentivize model reasoning behaviors. Our results demonstrate that pre-trained checkpoints inherently possess substantial potential for complex reasoning tasks. We believe that the key to unlocking this potential lies not in large-scale human annotation but in the provision of hard reasoning questions, a reliable verifier, and sufficient computational resources for reinforcement learning. Sophisticated reasoning behaviors, such as self-verification and reflection, appeared to emerge organically during the reinforcement learning process.

Although DeepSeek-R1 achieves frontier results on reasoning benchmarks, it still faces several capability limitations, as outlined below:

Structured Output and Tool Use: Currently, the structured output capabilities of DeepSeek-R1 remain suboptimal compared to existing models. Moreover, DeepSeek-R1 cannot leverage tools, such as search engines and calculators, to improve the quality of its output. However, because it is not hard to build an RL environment for structured output and tool use, we believe the issue will be addressed in the next version.

Token efficiency: Unlike conventional test-time computation scaling approaches, such as majority voting or Monte Carlo Tree Search (MCTS), DeepSeek-R1 dynamically allocates computational resources during inference according to the complexity of the problem at hand. Specifically, it uses fewer tokens to solve simple tasks, while generating more tokens for complex tasks. Nevertheless, there remains room for further optimization in terms of token efficiency, as instances of excessive reasoning, manifested as overthinking, are still observed in response to simpler questions.

Language Mixing: DeepSeek-R1 is currently optimized for Chinese and English, which may result in language mixing issues when handling queries in other languages. For instance, DeepSeek-R1 might use English for reasoning and responses, even if the query is in a language other than English or Chinese. We aim to address this limitation in future updates. The limitation may be related to the base checkpoint, DeepSeek-V3-Base, which was trained mainly on Chinese and English data and therefore achieves better reasoning results in these two languages.

Prompt Engineering: When evaluating DeepSeek-R1, we observe that it is sensitive to prompts. Few-shot prompting consistently degrades its performance. Therefore, we recommend users directly describe the problem and specify the output format using a zero-shot setting for optimal results.
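The recommendation above can be illustrated with a small prompt-building helper. This is only a sketch: the function name `build_zero_shot_prompt` and the "Output format:" wording are hypothetical and do not reproduce a template used in our evaluation. The point is that the prompt contains the problem statement and the desired output format, with no worked examples.

```python
def build_zero_shot_prompt(problem: str, output_format: str) -> str:
    """Assemble a zero-shot prompt: the problem plus the output format, no examples."""
    return f"{problem.strip()}\n\nOutput format: {output_format.strip()}"


prompt = build_zero_shot_prompt(
    "  What is 12 * 13? ",
    "give the final answer as a single integer.",
)
```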

Software Engineering Tasks: Due to the long evaluation times, which impact the efficiency of the RL process, large-scale RL has not been applied extensively in software engineering tasks. As a result, DeepSeek-R1 has not demonstrated a huge improvement over DeepSeek-V3 on software engineering benchmarks. Future versions will address this by implementing rejection sampling on software engineering data or incorporating asynchronous evaluations during the RL process to improve efficiency.

Beyond specific capability limitations, the pure RL methodology itself also presents inherent challenges:

Reward Hacking: The success of pure RL depends on reliable reward signals. In this study, we ensure reward reliability through a reasoning-domain rule-based reward model (RM). However, such dependable RMs are difficult to construct for certain tasks, such as writing. If the reward signal is assigned by a model instead of predefined rules, it becomes more susceptible to exploitation as training progresses, which means the policy model may find shortcuts to hack the reward model. Consequently, for complex tasks that cannot be effectively evaluated by a reliable reward model, scaling up pure RL methods remains an open challenge.

In this work, for tasks for which a reliable reward signal cannot be obtained, DeepSeek-R1 uses human annotation to create supervised data and conducts RL for only hundreds of steps. We hope that, in the future, a robust reward model can be obtained to address such issues.

With the advent of pure RL methods like DeepSeek-R1, the future holds immense potential for solving any task that can be effectively evaluated by a verifier, regardless of its complexity for humans. Machines equipped with such advanced RL techniques are poised to surpass human capabilities in these domains, driven by their ability to optimize performance iteratively through trial and error. However, challenges remain for tasks where constructing a reliable reward model is inherently difficult. In such cases, the lack of a robust feedback mechanism may hinder progress, suggesting that future research should focus on developing innovative approaches to define and refine reward structures for these complex, less verifiable problems.

Furthermore, leveraging tools during the reasoning process holds significant promise. Whether it is using tools such as compilers or search engines to retrieve or compute necessary information, or employing external tools, such as biological or chemical reagents, to validate final results in the real world, this integration of tool-augmented reasoning could dramatically enhance the scope and accuracy of machine-driven solutions.

## 7. Author List

The list of authors is organized by contribution role, with individuals listed alphabetically by their first name within each category. Authors marked with an asterisk (*) are no longer affiliated with our team.

Core Contributors: Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Peiyi Wang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, Xiaokang Zhang, Xingkai Yu, Yu Wu, Z.F. Wu, Zhibin Gou, Zhihong Shao, Zhuoshu Li, Ziyi Gao

Contributions of the Core Authors: Peiyi Wang and Daya Guo jointly demonstrated that outcome-based RL induces the emergence of long Chain-of-Thought patterns in LLMs, achieving breakthrough reasoning capabilities. They contributed equally to the creation of R1-Zero, and their work laid the foundation for R1. Daya Guo also contributed to the RL training stability of MoE models. Junxiao Song proposed the GRPO algorithm, implemented the initial version, and introduced rule-based rewards for math tasks. The GRPO algorithm was subsequently refined by Peiyi Wang and Runxin Xu. Zhibin Gou proposed a large PPO clipping strategy to enhance GRPO performance, demonstrating its significance alongside Zhihong Shao and Junxiao Song. Regarding data iteration, reward design, and evaluation, specific teams led efforts across different domains: Qihao Zhu, Z.F. Wu, and Dejian Yang focused on code tasks; Zhihong Shao, Zhibin Gou, and Junxiao Song focused on math tasks; and Peiyi Wang, Ruoyu Zhang, Runxin Xu, and Yu Wu led efforts for other reasoning and general tasks. Additionally, Qihao Zhu and Zhihong Shao contributed to the data selection strategy for RL training, while Zhuoshu Li and Yu Wu co-led the data labeling efforts for the entire project. On the system side, Xiao Bi, Xingkai Yu, Shirong Ma, Xiaokang Zhang, Haowei Zhang, and Ziyi Gao implemented the RL pipeline, optimizing system efficiency and addressing stability issues in large-scale training. Finally, Zhibin Gou, Daya Guo, and Ruoyu Zhang oversaw the final training phase and monitored the model training dynamics. Zhibin Gou led the development of the R1-distill series.

Contributors: Aixin Liu, Bing Xue, Bingxuan Wang, Bochao Wu, Bei Feng, Chengda Lu, Chenggang Zhao, Chengqi Deng, Chong Ruan, Damai Dai, Deli Chen, Dongjie Ji, Erhang Li, Fangyun Lin, Fucong Dai, Fuli Luo*, Guangbo Hao, Guanting Chen, Guowei Li, H. Zhang, Hanwei Xu, Honghui Ding, Huazuo Gao, Hui Qu, Hui Li, Jianzhong Guo, Jiashi Li, Jingchang Chen, Jingyang Yuan, Jinhao Tu, Junjie Qiu, Junlong Li, J.L. Cai, Jiaqi Ni, Jian Liang, Jin Chen, Kai Dong, Kai Hu*, Kaichao You, Kaige Gao, Kang Guan, Kexin Huang, Kuai Yu, Lean Wang, Lecong Zhang, Liang Zhao, Litong Wang, Liyue Zhang, Lei Xu, Leyi Xia, Mingchuan Zhang, Minghua Zhang, Minghui Tang, Mingxu Zhou, Meng Li, Miaojun Wang, Mingming Li, Ning Tian, Panpan Huang, Peng Zhang, Qiancheng Wang, Qinyu Chen, Qiushi Du, Ruiqi Ge, Ruisong Zhang, Ruizhe Pan, Runji Wang, R.J. Chen, R.L. Jin, Ruyi Chen, Shanghao Lu, Shangyan Zhou, Shanhuang Chen, Shengfeng Ye, Shiyu Wang, Shuiping Yu, Shunfeng Zhou, Shuting Pan, S.S. Li, Shuang Zhou, Shaoqing Wu, Shengfeng Ye, Tao Yun, Tian Pei, Tianyu Sun, T. Wang, Wangding Zeng, Wen Liu, Wenfeng Liang, Wenjun Gao, Wenqin Yu*, Wentao Zhang, W.L. Xiao, Wei An, Xiaodong Liu, Xiaohan Wang, Xiaokang Chen, Xiaotao Nie, Xin Cheng, Xin Liu, Xin Xie, Xingchao Liu, Xinyu Yang, Xinyuan Li, Xuecheng Su, Xuheng Lin, X.Q. Li, Xiangyue Jin, Xiaojin Shen, Xiaosha Chen, Xiaowen Sun, Xiaoxiang Wang, Xinnan Song, Xinyi Zhou, Xianzu Wang, Xinxia Shan, Y.K. Li, Y.Q. Wang, Y.X. Wei, Yang Zhang, Yanhong Xu, Yao Li, Yao Zhao, Yaofeng Sun, Yaohui Wang, Yi Yu, Yichao Zhang, Yifan Shi, Yiliang Xiong, Ying He, Yishi Piao, Yisong Wang, Yixuan Tan, Yiyang Ma*, Yiyuan Liu, Yongqiang Guo, Yuan Ou, Yuduan Wang, Yue Gong, Yuheng Zou, Yujia He, Yunfan Xiong, Yuxiang Luo, Yuxiang You, Yuxuan Liu, Yuyang Zhou, Y.X. Zhu, Yanping Huang, Yaohui Li, Yi Zheng, Yuchen Zhu, Yunxian Ma, Ying Tang, Yukun Zha, Yuting Yan, Z.Z. Ren, Zehui Ren, Zhangli Sha, Zhe Fu, Zhean Xu, Zhenda Xie, Zhengyan Zhang, Zhewen Hao, Zhicheng Ma, Zhigang Yan, Zhiyu Wu, Zihui Gu, Zijia Zhu, Zijun Liu*, Zilin Li, Ziwei Xie, Ziyang Song, Zizheng Pan, Zhen Huang, Zhipeng Xu, Zhongyu Zhang, Zhen Zhang

## Appendix

## A. Background

## A.1. DeepSeek-V3

DeepSeek-V3 (DeepSeek-AI, 2024b) is an advanced open-source LLM developed by DeepSeek. Released in December 2024, DeepSeek-V3 represents a significant leap forward in AI innovation, designed to rival leading models like OpenAI's GPT-4 and Meta's Llama 3.1, while maintaining remarkable cost efficiency and performance. Built on a Mixture-of-Experts (MoE) architecture, DeepSeek-V3 has 671 billion total parameters, with 37 billion activated per token, optimizing both efficiency and capability. It was pre-trained on an expansive dataset of 14.8 trillion high-quality, diverse tokens, followed by supervised fine-tuning and reinforcement learning to enhance its abilities across various domains. The model incorporates innovative features like Multi-head Latent Attention (MLA) (DeepSeek-AI, 2024a) for efficient inference, an auxiliary-loss-free load-balancing strategy, and Multi-Token Prediction (MTP) (Gloeckle et al., 2024) to boost performance, particularly in tasks like mathematics and coding.

For the training data of DeepSeek-V3-Base, we exclusively use plain web pages and e-books, without incorporating any synthetic data. However, we have observed that some web pages contain a significant number of OpenAI-model-generated answers, which may lead the base model to acquire knowledge from other powerful models indirectly. Nevertheless, we did not intentionally include synthetic data generated by OpenAI during the pre-training cooldown phase; all data used in this phase were naturally occurring and collected through web crawling. The pre-training dataset contains a substantial amount of mathematical and code-related content, indicating that DeepSeek-V3-Base has been exposed to a significant volume of reasoning trace data. This extensive exposure equips the model with the capability to generate plausible solution candidates, from which reinforcement learning can effectively identify and optimize high-quality outputs. We performed data decontamination in pre-training as described in Appendix D.1. The training data of DeepSeek-V3-Base are mostly Chinese and English, which might be the cause of DeepSeek-R1-Zero's language mixing when the language consistency reward is absent.

In this paper, we use the notation DeepSeek-V3-Base as the base model, DeepSeek-V3 as the instructed model. Notably, DeepSeek-R1 and DeepSeek-R1-Zero are trained on top of DeepSeek-V3-Base and DeepSeek-R1 leverages non-reasoning data from DeepSeek-V3 SFT data. DeepSeek-R1-Dev1, DeepSeek-R1-Dev2, DeepSeek-R1-Dev3 are intermediate checkpoints of DeepSeek-R1.

## A.2. Conventional Post-Training Paradigm

Post-training has emerged as an essential step in refining pre-trained LLMs to meet specific performance goals and align with human expectations. A widely adopted two-stage post-training framework is SFT followed by RL (Ouyang et al., 2022).

Supervised Fine-Tuning refines a pre-trained LLM by training it on a curated dataset of input-output pairs tailored to specific tasks. The process employs a supervised learning objective, typically minimizing cross-entropy loss between the model's predictions and labeled ground truth (Brown et al., 2020). For instance, in conversational applications, SFT might utilize dialogue datasets where desired responses are explicitly provided, enabling the model to adapt its outputs to predefined standards (Radford et al., 2019). SFT offers several compelling benefits. First, it achieves precise task alignment by leveraging high-quality examples, allowing the model to excel in domains such as customer support or technical documentation (Radford et al., 2019). Second, its reliance on pre-trained weights ensures computational efficiency, requiring fewer resources than training from scratch. Finally, the use of explicit input-output mappings enhances interpretability, as the model's learning process is directly tied to observable data, minimizing the risk of erratic behavior (Ouyang et al., 2022). Despite its strengths, the performance of SFT hinges on the quality and diversity of the training dataset; narrow or biased data can impair the model's ability to generalize to novel contexts (Brown et al., 2020). Additionally, SFT's static nature, which optimizes for fixed outputs, may fail to capture evolving human preferences or nuanced objectives. The labor-intensive process of curating high-quality datasets further complicates its scalability, as errors or inconsistencies in the data can propagate into the model's behavior (Ouyang et al., 2022).
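The cross-entropy objective mentioned above can be sketched in a few lines. The example below is a toy illustration using only the Python standard library: it assumes per-token logits over a small hypothetical vocabulary and averages the negative log-likelihood of the target tokens. Real SFT pipelines compute the same quantity in batches on accelerators.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one token's logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sft_cross_entropy(logits_per_token, target_ids):
    """Mean negative log-likelihood of the labeled target tokens."""
    losses = []
    for logits, target in zip(logits_per_token, target_ids):
        probs = softmax(logits)
        losses.append(-math.log(probs[target]))
    return sum(losses) / len(losses)


# Toy vocabulary of size 4 and a two-token target response (illustrative values).
uniform_loss = sft_cross_entropy([[0.0] * 4, [0.0] * 4], [1, 3])
confident_loss = sft_cross_entropy([[0.0, 5.0, 0.0, 0.0], [0.0, 0.0, 0.0, 5.0]], [1, 3])
```

A model that spreads probability uniformly over the four tokens incurs a loss of log 4, while one that concentrates probability on the labeled tokens incurs a smaller loss, which is what SFT optimizes for.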

Following SFT, Reinforcement Learning further refines the LLM by optimizing its outputs against a reward signal. In this stage, the model interacts with an environment, often a reward model trained on human feedback, and adjusts its behavior to maximize cumulative rewards. A prominent instantiation of this approach is Reinforcement Learning from Human Feedback (RLHF), where the reward function encodes human preferences (Christiano et al., 2017). RL thus shifts the focus from static supervision to dynamic optimization. Notably, RL reduces the need for extensive annotated resources; while SFT demands a fully labeled dataset for every input-output pair, RL can operate with a smaller set of human evaluations, a trained reward model, or even a rule-based reward model, significantly lowering the annotation burden.

The sequential application of SFT and RL combines their complementary strengths. SFT establishes a robust, task-specific baseline by grounding the model in curated examples, while RL refines this foundation to align with broader, human-centric objectives (Ouyang et al., 2022). For example, SFT might ensure grammatical accuracy in a dialogue system, while RL optimizes for engagement and brevity, as demonstrated in the development of InstructGPT (Ouyang et al., 2022). This hybrid approach has proven effective in producing models that are both precise and adaptable.

In this study, we demonstrate that the SFT stage may impede a model's ability to explore and develop effective reasoning strategies. This limitation arises because human-provided responses, which serve as targets during SFT, are not always optimal for model learning; they often omit critical reasoning components such as explicit reflection and verification steps. To address this, DeepSeek-R1-Zero enables direct exploration of reasoning patterns by the model itself, independent of human priors. The reasoning trajectories discovered through this self-exploration are subsequently distilled and used to train other models, thereby promoting the acquisition of more robust and generalizable reasoning capabilities.

## A.3. A Comparison of GRPO and PPO

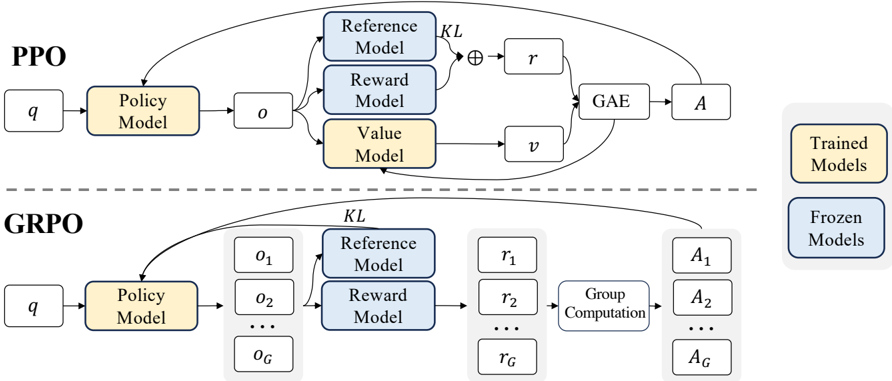

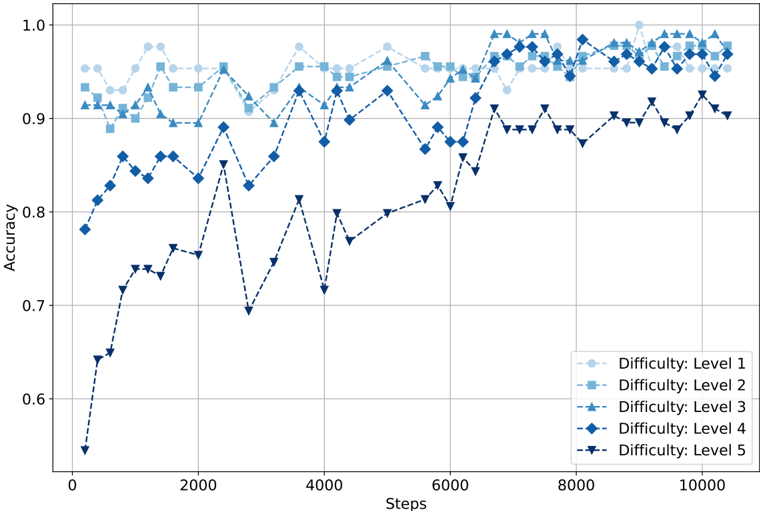

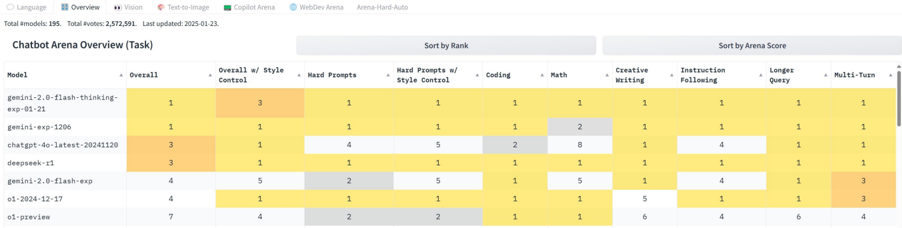

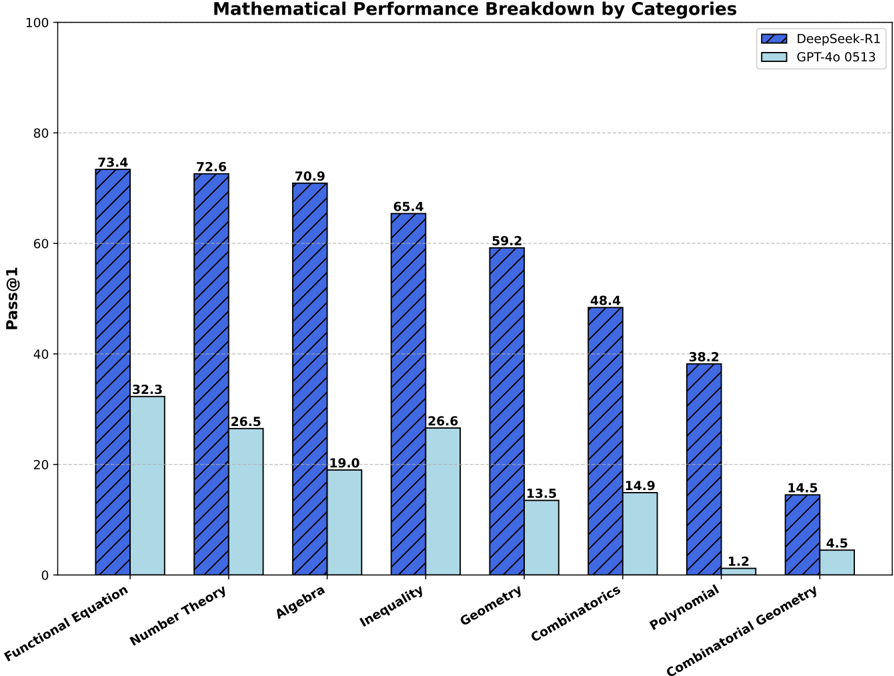

Group Relative Policy Optimization (GRPO) (Shao et al., 2024) is the reinforcement learning algorithm that we adopt to train DeepSeek-R1-Zero and DeepSeek-R1. It was originally proposed to simplify the training process and reduce the resource consumption of Proximal Policy Optimization (PPO) (Schulman et al., 2017), which is widely used in the RL stage of LLMs (Ouyang et al., 2022). For an overall comparison between GRPO and PPO, see Figure 3.

Figure 3 | Demonstration of PPO and our GRPO. GRPO foregoes the value model, instead estimating the advantages from group scores.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Algorithm Flow Diagram: PPO vs. GRPO

### Overview

The image presents a comparative diagram illustrating the flow of two reinforcement learning algorithms: Proximal Policy Optimization (PPO) and Group Relative Policy Optimization (GRPO). The diagram highlights the key components and data flow within each algorithm, emphasizing the differences in their architectures and processes.

### Components/Axes

* **Title:** PPO (top), GRPO (bottom)
* **Blocks:** Representing models, computations, and data.
* **Arrows:** Indicating the flow of data and processes.
* **Labels:**
  * `q`: Input to the Policy Model
  * `Policy Model`: A core component in both algorithms.
  * `o`: Output of the Policy Model in PPO. `o1`, `o2`, ..., `oG` in GRPO.
  * `Reference Model`: Used in both algorithms.
  * `Reward Model`: Used in both algorithms.
  * `Value Model`: Used in PPO.
  * `r`: Reward signal in PPO. `r1`, `r2`, ..., `rG` in GRPO.
  * `v`: Value function in PPO.
  * `GAE`: Generalized Advantage Estimation in PPO.
  * `Group Computation`: Process specific to GRPO.
  * `A`: Advantage function in PPO. `A1`, `A2`, ..., `AG` in GRPO.
  * `KL`: Kullback-Leibler divergence.
* **Legend (Right Side):**
  * Yellow: Trained Models
  * Blue: Frozen Models

### Detailed Analysis

**PPO (Top)**

1. **Input:** `q` feeds into the `Policy Model`.
2. **Policy Model:** The output `o` from the `Policy Model` is fed into the `Reference Model`, `Reward Model`, and `Value Model`. The `Policy Model` is colored yellow, indicating it is a "Trained Model".
3. **Reference and Reward Models:** The outputs of the `Reference Model` and `Reward Model` are combined using an operation denoted by a circle with a plus sign inside (⊕), along with a `KL` divergence term, to produce `r`. The `Reference Model` and `Reward Model` are colored blue, indicating they are "Frozen Models".
4. **Value Model:** The `Value Model` outputs `v`. The `Value Model` is colored yellow, indicating it is a "Trained Model".
5. **GAE:** `r` and `v` are fed into `GAE` (Generalized Advantage Estimation).
6. **Advantage:** The output of `GAE` is `A`.
7. **Feedback Loop:** There is a feedback loop from `A` back into the `GAE` and the `Policy Model`.

**GRPO (Bottom)**

1. **Input:** `q` feeds into the `Policy Model`.
2. **Policy Model:** The output of the `Policy Model` is a group of outputs `o1`, `o2`, ..., `oG`. The `Policy Model` is colored yellow, indicating it is a "Trained Model".
3. **Reference and Reward Models:** The outputs `o1`, `o2`, ..., `oG` are fed into the `Reference Model` and `Reward Model`. The `Reference Model` and `Reward Model` are colored blue, indicating they are "Frozen Models".
4. **Reward Signals:** The outputs of the `Reference Model` and `Reward Model`, along with a `KL` divergence term, produce a set of reward signals `r1`, `r2`, ..., `rG`.
5. **Group Computation:** The reward signals `r1`, `r2`, ..., `rG` are fed into a `Group Computation` block.
6. **Advantage:** The output of the `Group Computation` block is a set of advantages `A1`, `A2`, ..., `AG`.
7. **Feedback Loop:** There is a feedback loop from `A1`, `A2`, ..., `AG` back into the `Policy Model`.

### Key Observations

* **Model Types:** Both PPO and GRPO use a `Policy Model`, `Reference Model`, and `Reward Model`. PPO also uses a `Value Model`.
* **Frozen vs. Trained Models:** The `Reference Model` and `Reward Model` are "Frozen Models" (blue), while the `Policy Model` and `Value Model` (in PPO) are "Trained Models" (yellow).
* **Grouped Structure:** GRPO introduces a grouped structure for outputs, rewards, and advantages (`oG`, `rG`, `AG`), along with a `Group Computation` step.
* **KL Divergence:** Both algorithms incorporate a Kullback-Leibler (KL) divergence term.
* **Feedback Loops:** Both algorithms have feedback loops from the advantage(s) back to the `Policy Model`.

### Interpretation

The diagram illustrates the architectural differences between PPO and GRPO. GRPO samples a group of outputs for each input `q` and derives the advantages from the rewards of that group through the `Group Computation` step, which removes the need for the `Value Model` that PPO trains alongside the policy. The use of "Frozen Models" for the `Reference Model` and `Reward Model` in both algorithms implies that these models are fixed during the training process, providing a stable reference and reward signal. The feedback loops in both algorithms are characteristic of reinforcement learning, allowing the policy to be iteratively improved based on the computed advantages.

</details>

For each question $q$, GRPO samples a group of outputs $\{o_1, o_2, \cdots, o_G\}$ from the old policy $\pi_{\theta_{old}}$ and then optimizes the policy model $\pi_\theta$ by maximizing the following objective:

$$\begin{aligned}
\mathcal{J}_{GRPO}(\theta) &= \mathbb{E}\left[q \sim P(Q), \{o_i\}_{i=1}^{G} \sim \pi_{\theta_{old}}(O|q)\right] \\
&\quad \frac{1}{G} \sum_{i=1}^{G} \left( \min\left( \frac{\pi_\theta(o_i|q)}{\pi_{\theta_{old}}(o_i|q)} A_i, \text{clip}\left( \frac{\pi_\theta(o_i|q)}{\pi_{\theta_{old}}(o_i|q)}, 1-\varepsilon, 1+\varepsilon \right) A_i \right) - \beta \mathbb{D}_{KL}\left(\pi_\theta || \pi_{ref}\right) \right), && (11)
\end{aligned}$$

$$\mathbb { D } _ { K L } \left ( \pi _ { \theta } | | \pi _ { r e f } \right ) = \frac { \pi _ { r e f } ( o _ { i } | q ) } { \pi _ { \theta } ( o _ { i } | q ) } - \log \frac { \pi _ { r e f } ( o _ { i } | q ) } { \pi _ { \theta } ( o _ { i } | q ) } - 1 ,$$

where $\pi_{ref}$ is a reference policy, $\varepsilon$ and $\beta$ are hyper-parameters, and $A_i$ is the advantage, computed using a group of rewards $\{r_1, r_2, \ldots, r_G\}$ corresponding to the outputs within each group:

$$A _ { i } = \frac { r _ { i } - m e a n ( \{ r _ { 1 } , r _ { 2 } , \cdots , r _ { G } \} ) } { s t d ( \{ r _ { 1 } , r _ { 2 } , \cdots , r _ { G } \} ) } .$$
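The group-relative advantage and the KL estimator defined above can be sketched as follows. This is a minimal illustration rather than the production implementation: the use of the population standard deviation, the small constant `eps` that guards against division by zero when all rewards in a group are equal, and the example values are assumptions.

```python
import math
from statistics import mean, pstdev


def group_advantages(rewards, eps=1e-8):
    """A_i = (r_i - mean(r)) / std(r), computed within one group of outputs."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; the choice of estimator is an assumption
    return [(r - mu) / (sigma + eps) for r in rewards]


def kl_estimate(logp_theta: float, logp_ref: float) -> float:
    """Per-output estimator pi_ref/pi_theta - log(pi_ref/pi_theta) - 1 (always >= 0)."""
    log_ratio = logp_ref - logp_theta
    return math.exp(log_ratio) - log_ratio - 1.0


# Four sampled outputs for one question, scored by a rule-based reward (illustrative).
advantages = group_advantages([1.0, 0.0, 0.0, 1.0])  # approximately [1, -1, -1, 1]
same_policy_kl = kl_estimate(-2.0, -2.0)             # identical log-probs give 0.0
```

Because the advantage is normalized within the group, correct outputs receive positive advantages and incorrect ones negative advantages without any learned value model.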

In contrast, in PPO, the advantage is typically computed by applying the Generalized Advantage Estimation (GAE) (Schulman et al., 2015), based not only on the rewards but also on a learned value model. Since the value model is usually of similar size as the policy model, it introduces a significant memory and computational overhead. Additionally, the training objective of the value model is to predict the expected cumulative reward from the current position onward, based on the tokens generated from the beginning up to the current position. This is inherently difficult, especially when only the final outcome reward is available. The challenge becomes even more pronounced when training long chain-of-thought reasoning models. As the output length increases, the model is more likely to engage in behaviors such as reflection and revision during generation, meaning that the content initially generated may later be revised or contradicted, which makes it even less feasible to predict the final reward based on a partial response.
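For comparison, the sketch below shows a standard formulation of GAE for a single response. It is a generic illustration and not the PPO baseline used in our experiments: the discount `gamma=1.0`, the toy rewards, and the constant value predictions are assumptions chosen to mimic an outcome-only reward.

```python
def gae(rewards, values, gamma=1.0, lam=0.95):
    """Generalized Advantage Estimation over one trajectory.

    `values` must have len(rewards) + 1 entries; the last entry is the
    bootstrap value, which is 0.0 once the response has terminated.
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        # One-step temporal-difference error at position t.
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        # Exponentially weighted sum of future TD errors.
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages


# Outcome-only reward: zero at every token except the last (illustrative values).
rewards = [0.0, 0.0, 1.0]
values = [0.5, 0.5, 0.5, 0.0]  # hypothetical value-model predictions plus bootstrap

monte_carlo = gae(rewards, values, lam=1.0)  # [0.5, 0.5, 0.5]: final reward minus value
one_step = gae(rewards, values, lam=0.0)     # [0.0, 0.0, 0.5]: TD errors only
```

Every entry depends on the value predictions, so errors of the value model at intermediate positions propagate directly into the advantages, which is the difficulty discussed above for long chain-of-thought responses.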

Another key difference between GRPO and PPO is how Kullback-Leibler (KL) divergence between the trained policy and the reference policy is incorporated into the training process. In GRPO, an unbiased estimator of the KL divergence (Schulman, 2020) is directly added in the loss as in equation 11, while in PPO the per-token KL penalty is added as a dense reward at each token (Ouyang et al., 2022). Since the optimization goal of reinforcement learning is to

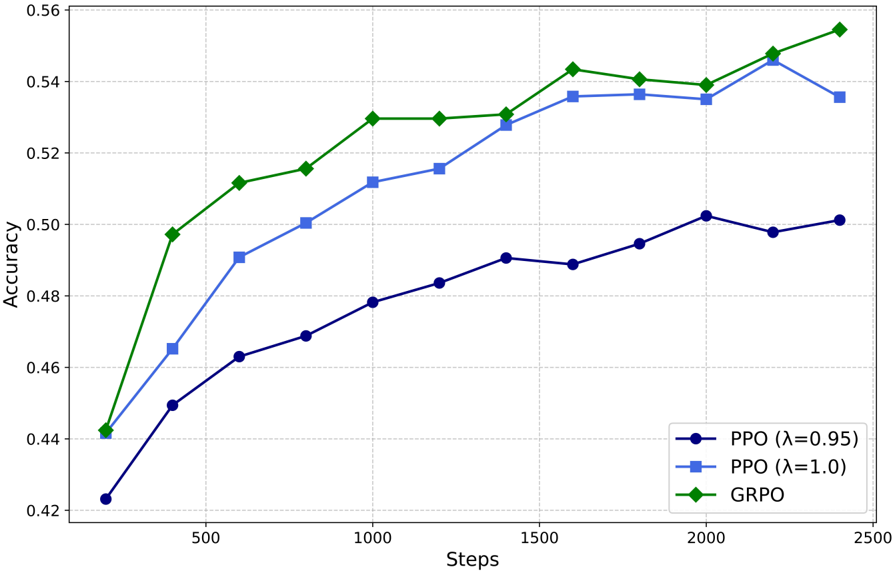

Figure 4 | Performance of PPO and GRPO on the MATH task.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Steps for Different Algorithms

### Overview

The image is a line chart comparing the accuracy of three different algorithms (PPO with λ=0.95, PPO with λ=1.0, and GRPO) over a range of steps. The chart displays accuracy on the y-axis and steps on the x-axis.

### Components/Axes

* **X-axis:** "Steps", ranging from 0 to 2500, with gridlines at intervals of 500.

* **Y-axis:** "Accuracy", ranging from 0.42 to 0.56, with gridlines at intervals of 0.02.

* **Legend:** Located in the bottom-right corner, it identifies the three algorithms:

* Dark Blue: PPO (λ=0.95)

* Light Blue: PPO (λ=1.0)

* Green: GRPO

### Detailed Analysis

* **PPO (λ=0.95) - Dark Blue Line:**

* Trend: Generally increasing, but plateaus and slightly decreases towards the end.

* Data Points:

* At 250 steps, Accuracy ≈ 0.45

* At 500 steps, Accuracy ≈ 0.47

* At 750 steps, Accuracy ≈ 0.475

* At 1000 steps, Accuracy ≈ 0.48

* At 1250 steps, Accuracy ≈ 0.485

* At 1500 steps, Accuracy ≈ 0.49

* At 1750 steps, Accuracy ≈ 0.495

* At 2000 steps, Accuracy ≈ 0.50

* At 2250 steps, Accuracy ≈ 0.498

* At 2500 steps, Accuracy ≈ 0.502

* **PPO (λ=1.0) - Light Blue Line:**

* Trend: Increasing, but plateaus towards the end.

* Data Points:

* At 250 steps, Accuracy ≈ 0.465

* At 500 steps, Accuracy ≈ 0.49

* At 750 steps, Accuracy ≈ 0.50

* At 1000 steps, Accuracy ≈ 0.515

* At 1250 steps, Accuracy ≈ 0.52

* At 1500 steps, Accuracy ≈ 0.535

* At 1750 steps, Accuracy ≈ 0.535

* At 2000 steps, Accuracy ≈ 0.535

* At 2250 steps, Accuracy ≈ 0.54

* At 2500 steps, Accuracy ≈ 0.535

* **GRPO - Green Line:**

* Trend: Increasing rapidly initially, then plateaus, and increases again slightly at the end.

* Data Points:

* At 250 steps, Accuracy ≈ 0.44

* At 500 steps, Accuracy ≈ 0.495

* At 750 steps, Accuracy ≈ 0.51

* At 1000 steps, Accuracy ≈ 0.53

* At 1250 steps, Accuracy ≈ 0.53

* At 1500 steps, Accuracy ≈ 0.53

* At 1750 steps, Accuracy ≈ 0.545

* At 2000 steps, Accuracy ≈ 0.54

* At 2250 steps, Accuracy ≈ 0.545

* At 2500 steps, Accuracy ≈ 0.55

### Key Observations

* GRPO achieves the highest accuracy overall.

* PPO (λ=1.0) performs better than PPO (λ=0.95).

* All algorithms show diminishing returns in accuracy as the number of steps increases.

### Interpretation

The chart demonstrates the performance of different reinforcement learning algorithms in terms of accuracy over a number of steps. GRPO appears to be the most effective algorithm among the three, achieving the highest accuracy. The PPO algorithm's performance is influenced by the lambda parameter, with λ=1.0 resulting in better accuracy than λ=0.95. The plateauing of the accuracy curves suggests that further training steps may not significantly improve the performance of these algorithms.

</details>

maximize cumulative rewards, PPO's approach penalizes the cumulative KL divergence, which may implicitly penalize the length of the response and thereby prevent the model's response length from increasing. In addition, because training long chain-of-thought reasoning models may run for thousands of steps, the trained policy can diverge significantly from the initial reference policy. To balance how far the trained policy can explore against the stability of training, we periodically update the reference policy to the latest policy during the actual training process.
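The KL estimator used by GRPO can be checked numerically on a toy example. The sketch below evaluates the expression "ratio minus log-ratio minus one" on two small categorical distributions and compares its expectation under the trained policy with the exact KL divergence. The distributions and the helper name `kl_estimate` are made up for illustration.

```python
import math


def kl_estimate(logp_theta, logp_ref):
    """Per-sample estimate: pi_ref/pi_theta - log(pi_ref/pi_theta) - 1."""
    log_ratio = logp_ref - logp_theta
    return math.exp(log_ratio) - log_ratio - 1.0


# Toy categorical policies over three possible outputs (illustrative values).
pi_theta = [0.7, 0.2, 0.1]
pi_ref = [0.5, 0.3, 0.2]

# Exact KL(pi_theta || pi_ref).
exact_kl = sum(p * math.log(p / q) for p, q in zip(pi_theta, pi_ref))

# Expectation of the estimator when outputs are sampled from pi_theta.
expected_estimate = sum(
    p * kl_estimate(math.log(p), math.log(q)) for p, q in zip(pi_theta, pi_ref)
)

print(exact_kl, expected_estimate)  # the two values agree
```

The two quantities match because the ratio has expectation one under the trained policy, so only the negative log-ratio term survives in expectation. Each per-sample value is also non-negative, unlike the plain log-ratio.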

Figure 4 compares the performance of PPO and GRPO on the MATH task using DeepSeek-Coder-V2-Lite (16B MoE with 2.4B active parameters). Unlike GRPO, PPO requires additional hyperparameter tuning, particularly of the $\lambda$ coefficient in GAE, and is highly sensitive to this parameter. When $\lambda$ is set to 0.95 (the default value in most open-source PPO implementations), PPO performs considerably worse than GRPO. However, with careful tuning (setting $\lambda$ to 1.0), PPO's performance improves substantially, nearing that of GRPO.
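One possible contributing factor to this sensitivity, offered here as an illustration rather than a claim made in the text, is how GAE propagates a single final-outcome reward back through a long response. The sketch below implements the standard GAE recursion and applies it to a 100-token response with reward only on the last token. The zero value estimates (an uninformative critic) and the discount of 1.0 are simplifying assumptions.

```python
def gae(rewards, values, gamma=1.0, lam=0.95):
    """Generalized Advantage Estimation over one trajectory.

    `values` has len(rewards) + 1 entries; the last is the value after the
    final token (0.0 for a finished response).
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages


T = 100
rewards = [0.0] * (T - 1) + [1.0]  # outcome reward on the final token only
values = [0.0] * (T + 1)           # assumption: critic predicts 0 everywhere

adv_095 = gae(rewards, values, lam=0.95)
adv_100 = gae(rewards, values, lam=1.0)

print(adv_095[0], adv_100[0])  # first-token advantage: ~0.95**99 versus 1.0
```

Under these assumptions, a coefficient of 1.0 gives every token the full outcome signal, while 0.95 shrinks it geometrically with distance from the end, so early tokens of a long response receive almost none of it.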

While PPO can achieve comparable performance when appropriately tuned, it demands additional computational cost for hyperparameter optimization. Moreover, considering the memory and computational overhead associated with training an additional value model, GRPO presents a more practical alternative, especially when training large-scale models with constrained resources.

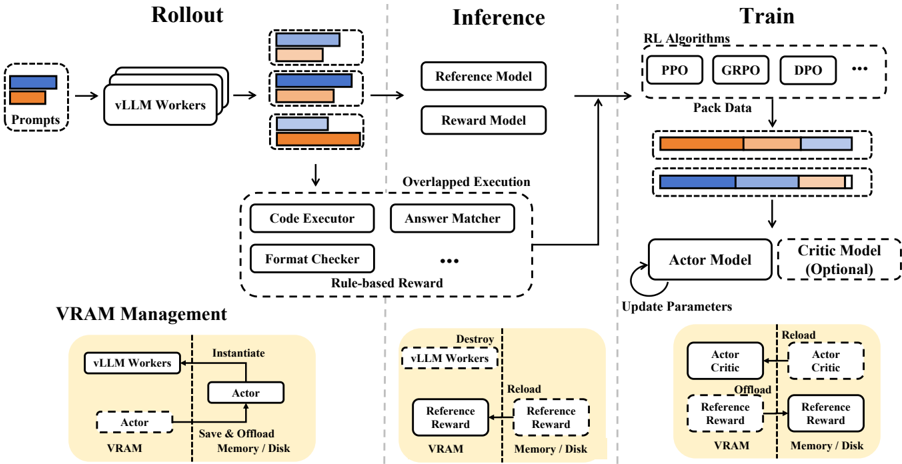

Figure 5 | Overview of our RL framework.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## System Diagram: Reinforcement Learning Workflow

### Overview

The image is a system diagram illustrating a reinforcement learning workflow, likely for training large language models (LLMs). It is divided into three main stages: Rollout, Inference, and Train, with a section on VRAM Management. The diagram shows the flow of data and processes between different components, including models, executors, and memory management.

### Components/Axes

* **Title:** Reinforcement Learning Workflow (implied)

* **Sections:**

* Rollout (top-left)

* Inference (top-center)

* Train (top-right)

* VRAM Management (bottom)

* **Nodes:**

* Prompts

* vLLM Workers

* Reference Model

* Reward Model

* Code Executor

* Answer Matcher

* Format Checker

* RL Algorithms (PPO, GRPO, DPO, ...)

* Actor Model

* Critic Model (Optional)

* Actor

* Reference Reward

* **Edges:** Arrows indicating the flow of data and control.

* **VRAM Management:** Shows instantiation, saving/offloading, destroying, and reloading of models between VRAM and Memory/Disk.

### Detailed Analysis

**1. Rollout Stage (Top-Left):**

* **Prompts:** A box labeled "Prompts" on the left. It contains two horizontal bars, one orange and one blue, representing data or information.

* **vLLM Workers:** "Prompts" feeds into a stack of three boxes labeled "vLLM Workers."

* **Output:** The output of "vLLM Workers" is a set of three boxes, each containing two horizontal bars (one orange, one blue), similar to the "Prompts" box. The relative lengths of the orange and blue bars vary slightly between the three output boxes.

**2. Inference Stage (Top-Center):**

* **Input:** Receives input from the "Rollout" stage.

* **Reference Model:** A box labeled "Reference Model."

* **Reward Model:** A box labeled "Reward Model."

* **Overlapped Execution:** A dashed box labeled "Overlapped Execution" containing:

* Code Executor

* Answer Matcher

* Format Checker

* An ellipsis (...) indicating more components.

* **Rule-based Reward:** The "Overlapped Execution" block is labeled "Rule-based Reward."

* **Output:** The output of the "Inference" stage feeds into the "Train" stage.

**3. Train Stage (Top-Right):**

* **Input:** Receives input from the "Inference" stage.

* **RL Algorithms:** A dashed box labeled "RL Algorithms" containing:

* PPO

* GRPO

* DPO

* An ellipsis (...) indicating more algorithms.

* **Pack Data:** The "RL Algorithms" block is labeled "Pack Data."

* **Actor Model:** A box labeled "Actor Model."

* **Critic Model (Optional):** A dashed box labeled "Critic Model (Optional)."

* **Update Parameters:** An arrow loops from the "Actor Model" back into itself, labeled "Update Parameters."

**4. VRAM Management (Bottom):**

* **Left Section:**

* "vLLM Workers" box.

* "Instantiate" arrow pointing to an "Actor" box.

* "Save & Offload Memory/Disk" arrow pointing from the "Actor" box to a box labeled "Actor" inside a "VRAM" box.

* **Middle Section:**

* "Destroy vLLM Workers" box.

* "Reload" arrow pointing to a "Reference Reward" box.

* "Reference Reward" box inside a "VRAM" box.

* "Memory/Disk" box with a "Reference Reward" box.

* **Right Section:**

* "Reload" arrow pointing to an "Actor Critic" box.

* "Actor Critic" box inside a "VRAM" box.

* "Offload" arrow pointing from the "Actor Critic" box to a "Reference Reward" box.

* "Memory/Disk" box with a "Reference Reward" box.

### Key Observations

* The diagram illustrates a pipeline for training LLMs using reinforcement learning.

* The "Rollout" stage generates data using "vLLM Workers" based on "Prompts."

* The "Inference" stage evaluates the generated data using "Reference" and "Reward" models.

* The "Train" stage updates the "Actor" model based on the rewards and uses a "Critic" model (optionally).

* "VRAM Management" shows how models are instantiated, saved/offloaded, destroyed, and reloaded between VRAM and Memory/Disk.

### Interpretation

The diagram depicts a sophisticated reinforcement learning workflow designed for training large language models. The separation into "Rollout," "Inference," and "Train" stages allows for modularity and optimization. The "VRAM Management" section highlights the importance of efficient memory utilization when dealing with large models. The use of "Reference" and "Reward" models in the "Inference" stage suggests a comparative evaluation process. The "Overlapped Execution" block indicates parallel processing for faster evaluation. The optional "Critic Model" suggests flexibility in the training approach. The diagram emphasizes the iterative nature of reinforcement learning through the "Update Parameters" loop. The presence of multiple RL algorithms (PPO, GRPO, DPO) indicates the potential for experimentation and optimization of the training process.

</details>

## B. Training Details

## B.1. RL Infrastructure

Conducting RL training on large models places high demands on the infrastructure. Our RL framework is architected with a decoupled and extensible structure to facilitate seamless integration of diverse models and algorithms. Within this framework, we have incorporated both intra-modular and inter-modular optimization techniques, to ensure training efficiency and scalability.

Specifically, as depicted in Figure 5, the framework is partitioned into four distinct modules, each corresponding to a specific phase of the RL pipeline:

- Rollout Module: Prompts are loaded from the training dataset and uniformly dispatched across multiple vLLM (Kwon et al., 2023) workers, each equipped with the actor model, to sample multiple responses. For the DeepSeek-V3 MoE architecture, we implement an expert parallelism strategy across nodes to reduce memory access overhead, and deploy redundant copies of hotspot experts to balance computational loads among different experts. The Multi-Token Prediction (MTP) component is also leveraged for self-speculative decoding, significantly accelerating the decoding speed and effectively minimizing the completion time for the longest samples.

- Inference Module: This module loads the reward model and the reference model to perform a forward pass on the samples generated during the rollout phase, thereby obtaining model-based rewards and other essential information.

- Rule-based Reward Module: This module computes rule-based rewards for the model-generated responses. A unified interface has been designed to accommodate diverse implementations (e.g., code executor, answer matcher, format checker, etc.). Although this module does not require loading models into GPU memory, its execution tends to be time-consuming. To tackle this issue, an asynchronous scheduling approach is employed to overlap its execution with the Rollout and Inference modules, effectively hiding the associated latency.

- Training Module: This module loads the actor model and the critic model (if required), to compute loss and update model parameters. It provides flexible support for a variety of RL algorithms (e.g., PPO, GRPO, DPO, etc.). To minimize computational waste caused by sequence padding and balance the workload across devices, we design the following data packing strategy: first, all data in a global batch is sorted by length and distributed across processes within the data parallel group; subsequently, within each process, the Best-Fit strategy is applied to pack the data into fixed-length chunks with minimal padding; finally, the number of chunks is adjusted to be equal across all processes. Additionally, we have integrated the DualPipe algorithm, utilized in DeepSeek-V3 training, to achieve efficient pipeline parallelism.
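The three-step data packing strategy described for the Training Module can be sketched as follows. This is a simplified illustration on sequence lengths only: the round-robin distribution across processes, the descending sort inside each process, and equalizing chunk counts by appending empty chunks are assumptions, since the text does not specify these details. All function names are hypothetical.

```python
def distribute(lengths, num_procs):
    """Step 1: sort the global batch by length and deal it across processes."""
    ordered = sorted(lengths, reverse=True)
    return [ordered[i::num_procs] for i in range(num_procs)]


def best_fit_pack(lengths, chunk_len):
    """Step 2: Best-Fit packing into fixed-length chunks.

    Each sequence goes into the open chunk with the least free space that
    still fits it; a new chunk is opened only when none fits.
    """
    chunks, free = [], []
    for n in sorted(lengths, reverse=True):
        if n > chunk_len:
            raise ValueError(f"sequence of length {n} exceeds chunk_len")
        fitting = [i for i, f in enumerate(free) if f >= n]
        if fitting:
            i = min(fitting, key=lambda j: free[j])
        else:
            chunks.append([])
            free.append(chunk_len)
            i = len(chunks) - 1
        chunks[i].append(n)
        free[i] -= n
    return chunks


def equalize(per_proc):
    """Step 3: give every process the same number of chunks (empty padding)."""
    target = max(len(chunks) for chunks in per_proc)
    return [chunks + [[] for _ in range(target - len(chunks))] for chunks in per_proc]


lengths = [7, 3, 5, 2, 6, 1, 4, 8]
packed = equalize([best_fit_pack(p, chunk_len=10) for p in distribute(lengths, 2)])
print(packed)  # [[[8, 2], [6, 4]], [[7, 3], [5, 1]]]
```

In this toy batch every chunk ends up with at most one padding token out of ten, and both processes run the same number of chunks, which keeps data-parallel steps in lockstep.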

Notably, upon completion of each module (excluding the Rule-based Reward module), the model instances utilized in that phase are automatically offloaded from VRAM to either system memory or disk storage, thereby freeing up VRAM for the subsequent phase.
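The overlap between the Rule-based Reward module and the model-based phases can be illustrated with a small scheduling sketch. It uses Python's standard `concurrent.futures` thread pool as a stand-in for the framework's asynchronous scheduler, which the text does not describe in detail. Both scoring functions are hypothetical placeholders, not the actual reward implementations.

```python
from concurrent.futures import ThreadPoolExecutor


def rule_based_reward(response: str) -> float:
    """Hypothetical format checker: 1.0 if a boxed answer is present."""
    return 1.0 if "\\boxed{" in response else 0.0


def model_based_scores(responses):
    """Placeholder for the reward/reference model forward pass."""
    return [len(r) / 100.0 for r in responses]


def score_batch(responses, max_workers=4):
    """Start rule-based checks in the background, then run the model pass."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Submitted tasks begin running immediately on worker threads.
        futures = [pool.submit(rule_based_reward, r) for r in responses]
        # The model-based pass proceeds while the checks are in flight.
        model_scores = model_based_scores(responses)
        # Collect rule-based results, preserving the input order.
        rule_scores = [f.result() for f in futures]
    return list(zip(rule_scores, model_scores))


batch = ["The answer is \\boxed{42}.", "I am not sure."]
print(score_batch(batch))
```

Since the rule-based checks need no GPU memory, running them concurrently costs nothing on the accelerator side; only their results must be ready before the training phase begins.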

## B.2. Reward Model Prompt

Please act as an impartial judge and evaluate the quality of the responses provided by two AI assistants to the user prompt displayed below. You will be given assistant A's answer and assistant B's answer. Your job is to evaluate which assistant's answer is better. Begin your evaluation by generating your own answer to the prompt. You must provide your answers before judging any answers.

When evaluating the assistants' answers, compare both assistants' answers with your answer. You must identify and correct any mistakes or inaccurate information.

Then consider if the assistant's answers are helpful, relevant, and concise. Helpful means the answer correctly responds to the prompt or follows the instructions. Note when user prompt has any ambiguity or more than one interpretation, it is more helpful and appropriate to ask for clarifications or more information from the user than providing an answer based on assumptions. Relevant means all parts of the response closely connect or are appropriate to what is being asked. Concise means the response is clear and not verbose or excessive.

Then consider the creativity and novelty of the assistant's answers when needed. Finally, identify any missing important information in the assistants' answers that would be beneficial to include when responding to the user prompt.

After providing your explanation, you must output only one of the following choices as your final verdict with a label: