# Does Functional Package Management Enable Reproducible Builds at Scale? Yes.

**Authors**: Julien Malka, Stefano Zacchiroli, Théo Zimmermann

> LTCI, Télécom Paris Institut Polytechnique de Paris Palaiseau, France

## Abstract

Reproducible Builds (R-B) guarantee that rebuilding a software package from source leads to bitwise identical artifacts. R-B is a promising approach to increase the integrity of the software supply chain, when installing open source software built by third parties. Unfortunately, despite success stories like high build reproducibility levels in Debian packages, uncertainty remains among field experts on the scalability of R-B to very large package repositories.

In this work, we perform the first large-scale study of bitwise reproducibility, in the context of the Nix functional package manager, rebuilding $709\,816$ packages from historical snapshots of the nixpkgs repository, the largest cross-ecosystem open source software distribution, sampled in the period 2017–2023.

We obtain very high bitwise reproducibility rates, between 69 and 91% with an upward trend, and even higher rebuildability rates, over 99%. We investigate unreproducibility causes, showing that about 15% of failures are due to embedded build dates. We release a novel dataset with all build statuses, logs, as well as full “diffoscopes”: recursive diffs of where unreproducible build artifacts differ.

Index Terms: reproducible builds, functional package management, software supply chain, reproducibility, security

## I Introduction

Free and open source software (FOSS) is a great asset to build trust in a computing system, because one can audit the source code of installed components to determine if their security is up to one’s standards. However, trusting the source code of the components making up a system is not enough to trust the system itself: before a program can be run on a user machine, it is typically built (a term that we will also use in this work for interpreted programs, where it is the runtime environment that has to be built) to obtain an executable artifact, and then distributed onto the target system, involving a set of processes and actors generally referred to as the software supply chain. In recent years, large scale attacks like SolarWinds [alkhadra_solar_2021] or the xz backdoor [noauthor_nvd_nodate] have specifically targeted the software supply chain, underlining the importance of measures to increase its security and also triggering policy response in the European Union and the United States of America [noauthor_cyber_2022, house_executive_2021]. The particular effectiveness of these attacks is due to the difficulty of analyzing binary artifacts in order to understand how they might act on the system, hence increasing the need for tooling that provides traceability from executable binaries to their source code.

Reproducible builds (R-B), the property of being able to obtain the same, bitwise identical, artifacts from two independent builds of a software component, is recognized as a promising way to increase trust in the distribution phase of binary artifacts [lamb_reproducible_2022]. Indeed, if a piece of software is bitwise reproducible, a user may require several independent parties to reach a consensus on the result of a compilation before downloading the built artifacts from one of them, effectively distributing the trust in these artifacts between those parties. For an attacker wanting to compromise the supply chain of that component, it is no longer sufficient to compromise only one of the involved parties. Build reproducibility is however not easy to obtain in general, due to non-determinism in the build processes, documented both by practitioners and researchers [lamb_reproducible_2022, bajaj_unreproducible_2023]. The Reproducible Builds [noauthor_reproducible_2023] project has since 2015 worked to increase bitwise reproducibility throughout the FOSS ecosystems, by coming up with fixes for compilers and other toolchain components, working closely with upstream projects to integrate them.

Unfortunately, a recent study [fourne_its_2023] which interviewed 24 R-B experts still concluded that there is “a perceived impracticality of fully reproducible builds due to workload, missing organizational buy-in, unhelpful communication with upstream projects, or the goal being perceived as only theoretically achievable” and that “much of the industry believes [R-B] is out of reach”. While there exist some successful examples of package sets with high reproducibility levels like Debian, which consistently achieves a reproducibility rate of more than 95% [noauthor_overview_nodate], those good performances should be put in perspective with the strict quality policies applied in Debian and the relatively limited size of the package set. Uncertainty remains among field experts about the scalability of this approach to larger software distributions.

Nixpkgs is the largest cross-ecosystem FOSS distribution (based on the Repology rankings, https://repology.org, accessed Oct. 2024), totaling as of October 2024 about $100\,000$ packages. It includes components from a large variety of software ecosystems, making it an interesting target to study bitwise reproducibility at scale. Nixpkgs is built upon Nix, the seminal implementation of the functional package management (FPM) model [dolstra_purely_2006]. It is generally believed that the FPM model is effective to obtain R-B: FPM packages are pure functions (in the mathematical sense) from build- and run-time dependencies to build artifacts, described as “build recipes” that can be executed locally by the package manager. Components are built in a sandboxed environment, disallowing access to unspecified dependencies, even if they are present on the system. Previous work has highlighted that this model allows reproducing build environments both in space and time [malka_reproducibility_2024], a necessary property for build reproducibility. Additionally, nixpkgs’ predefined build processes implement best practices to ensure build reproducibility, like setting the SOURCE_DATE_EPOCH environment variable [noauthor_source_date_epoch_nodate] or automatically verifying that the build path does not appear in the built artifacts [noauthor_build_nodate]. Despite the potential for insightful distribution-wide build reproducibility metrics, nixpkgs limits its monitoring to the narrow set of packages included in the minimal and gnome-based ISO images [noauthor_nixos_nodate], where a reproducibility rate higher than 95% is consistently reported.

Contributions

In this work, we perform the first ever large scale empirical study of bitwise reproducibility of FOSS going back in time, rebuilding historical packages from evenly spaced snapshots of the nixpkgs package repository taken every 4.1 months from 2017 to 2023. With this experiment, we answer the following research questions:

- RQ1: What is the evolution of bitwise reproducible packages in nixpkgs between 2017 and 2023? How does the reproducibility rate evolve over time? Are unreproducible packages eventually fixed? Do reproducible packages remain reproducible?

- RQ2: What are the unreproducible packages? Are they concentrated in specific ecosystems? Are critical packages more likely to be reproducible?

- RQ3: Why are packages unreproducible? Is large-scale identification of common causes possible?

- RQ4: How are unreproducibilities fixed? Are they fixed by specific patches or as part of larger package updates? Are the fixes intentional or accidental?

Besides, we use our experiment to replicate and extend previous results [malka_reproducibility_2024], leading to an additional research question:

- RQ0: Does Nix allow rebuilding past packages reliably (even if not bitwise reproducibly)?

Results

Thanks to this large-scale experiment, we are able to establish for the first time that bitwise reproducibility is achievable at scale, with reproducibility rates ranging from 69% to 91% over the period 2017–2023, despite a continuous increase in the number of packages in nixpkgs. We highlight the wide variability in reproducibility rates across ecosystems packaged in nixpkgs, and show the significant impact that some core packages can have on the overall reproducibility rate of an ecosystem.

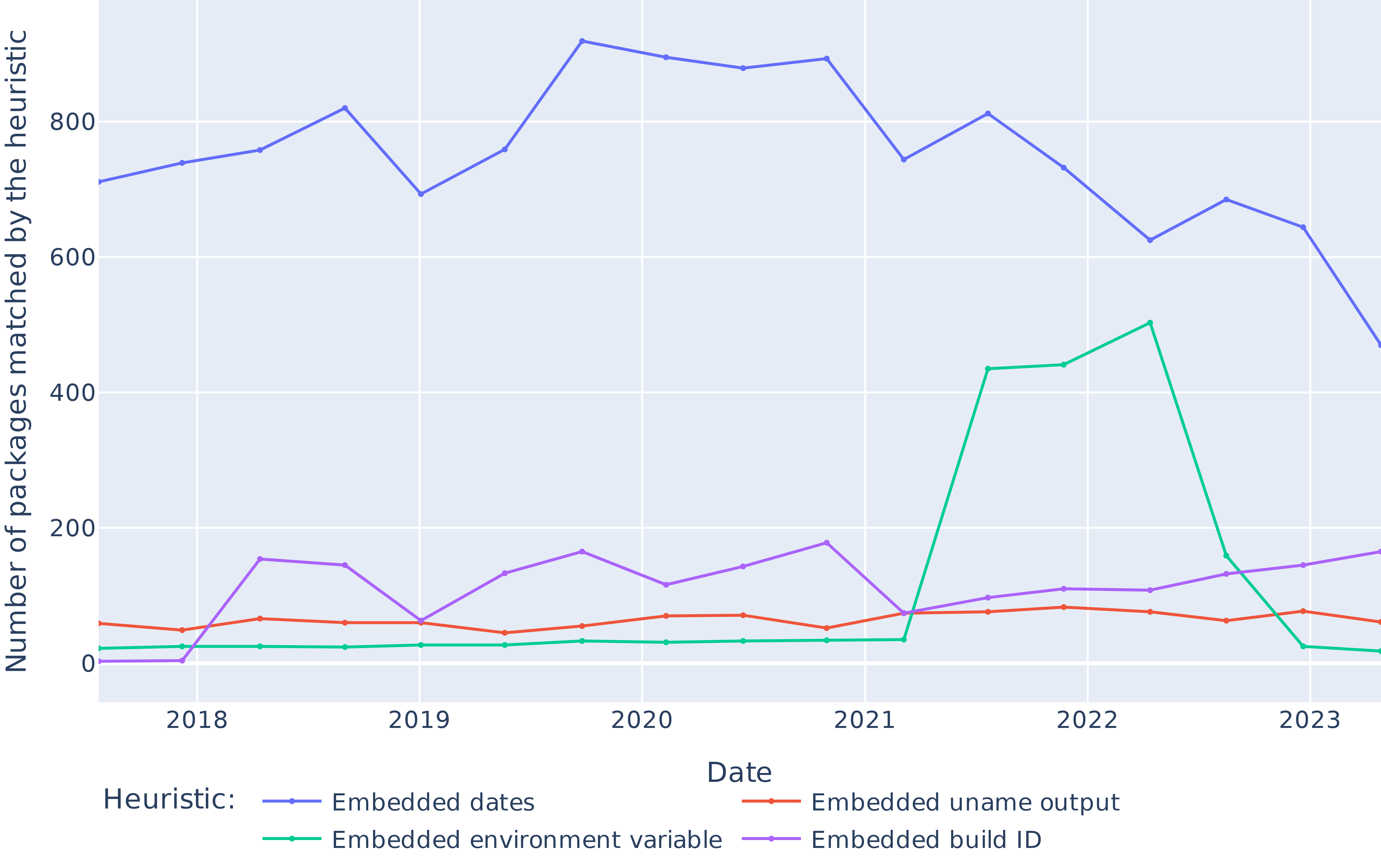

We estimate the prevalence of some common causes of non-reproducibility at a large scale for the first time, showing that about 15% of failures are due to embedded build dates.

As part of this work, we introduce a novel dataset containing build logs and metadata of over $709\,000$ package builds, and more than $114\,000$ occurrences of non-reproducibility with full artifacts including “diffoscopes”, i.e., recursive diffs of where unreproducible build artifacts differ. Ample room for further research is left open by the dataset, including exploiting the build logs, or applying more complex heuristics or qualitative research to the diffoscopes.

Paper structure

Section II presents the related work. Section III gives some background that is required to understand the experiment, whose methodology is then presented in Section IV. Some descriptive statistics about the dataset are presented in Section V, and the results to our RQs in Section VI. We discuss them in Section VII, and the threats to validity in Section VIII, concluding in Section IX.

## II Related work

### II-A Reproducible builds (R-B)

R-B are a relatively recent concept, which has been picked up and developed mostly by practitioners from Linux distributions and upstream maintainers. The Reproducible Builds project [noauthor_reproducible_2023] has been the main actor in the area. The project has produced a definition of R-B, best practices to achieve them, and tools to monitor the reproducibility of software distributions, and debug unreproducibilities (the diffoscope).

Besides, R-B have piqued the interest of the academic community, with a growing number of papers on the topic.

R-B for the security of the software supply chain

R-B are often seen as a way to increase the security of the software supply chain [lamb_reproducible_2022]. Torres-Arias et al. provide a framework to enforce the integrity of the software supply chain for which they demonstrate an application to enforce R-B [torres-arias_-toto_2019]. Our paper does not contribute directly to this line of research but, by demonstrating the feasibility of R-B at scale, it strengthens the case of the approach.

Techniques for build reproducibility

In a series of articles [ren_automated_2018, ren_root_2020, ren_automated_2022], Ren et al. devised a methodology to automate the localization of sources of non-reproducibility in build processes and to automatically fix them, using a database of common patches that are then automatically adapted and applied.

An alternative technique to achieve build reproducibility is proposed by Navarro et al. [navarro_leija_reproducible_2020]. They propose “reproducible containers” that are built in a way that makes the build process fully deterministic, at the expense of performance.

FPMs such as Nix [dolstra_purely_2006] and Guix [courtes_functional_2013] are also presented as a way to achieve R-B. Malka et al. [malka_reproducibility_2024] showed that Nix allows reproducing past build environments reliably, as well as rebuilding old packages with high confidence, but they do not address the question of bitwise reproducibility, which we do with this work.

Relaxing the bitwise reproducibility criterion

Because of the difficulty (real or perceived) of achieving bitwise reproducibility, some authors have proposed to relax the criterion to a more practical one. For instance, accountable builds [poll_analyzing_2021] aim to distinguish between differences that can be explained (accountable differences) or not (unaccountable differences). Our work highlights that bitwise reproducibility is achievable at scale in practice, and thus that relaxing the reproducibility criterion may not be necessary after all.

Empirical studies of R-B

Some other recent academic works have empirically studied R-B in the wild. Two papers from 2023 [butler_business_2023, fourne_its_2023] looked into business adoption of R-B and perceived effectiveness through interviews.

Bajaj et al. [bajaj_unreproducible_2023] mined historical results from R-B tracking in Debian to investigate causes, fix time, and other properties of unreproducibility in the distribution. Our work is similar, but instead of relying on historical R-B tracking, we actually rebuild packages and compare them bitwise to historical build results. When we report on packages being reproducible, it means they have stood the test of time. It also allows us to provide more detailed information on the causes of unreproducibility, in particular by generating diffoscopes and saving them for future research as part of our dataset, whereas diffoscopes from the Debian R-B tracking are not preserved in the long term. Finally, Bajaj et al. used issue tracker data from the R-B project to identify the most common causes of non-reproducibility, possibly introducing a sampling bias since only root causes that were identified by Debian developers are counted in their statistics. In our work, we try to avoid this bias by performing a large-scale automatic analysis of diffoscopes to automatically identify the prevalence of a selection of causes of non-reproducibility. While we present heuristics comparable to some of the causes identified in Bajaj et al.’s taxonomy, we derive them from empirical data rather than relying on pre-labeled data from the Debian issue tracker.

The only other work that performed an experimental study of R-B investigated the impact of configuration options [randrianaina_options_2024]. Contrary to them, we rebuild historical versions of packages in their default configuration. Combining the historical snapshot approach of our work with their approach of varying the configuration options could be an interesting future work.

### II-B Linux distributions and package ecosystems

Besides R-B, our work also relates to the literature on Linux distributions and package ecosystems. The nixpkgs repository being the largest cross-ecosystem software distribution, we are able to compare properties of packages across ecosystems. Several previous works have compared package ecosystems (e.g., [decan_empirical_2019]). For an overview of recent research on package ecosystems, see Mens and Decan [mens_overview_2024].

More specifically, nixpkgs is the basis of the NixOS Linux distribution. Linux distributions have a long history of being studied by the research community. Recently, Legay et al. [legay_quantitative_2021] measured the package freshness in Linux distributions. While this is not the topic of this work, our dataset could be used, e.g., to study how frequently packages are updated in nixpkgs.

## III Background

We provide in this section some background knowledge about Nix and R-B, which is required to understand the details of our experiments.

### III-A The FPM model and the Nix store

The FPM model applies the idea of functional programming to package management. Nix packages are viewed as pure functions from their inputs (source code, dependencies, build scripts) to their outputs (binaries, documentation, etc.). Any change to the inputs should produce a different package version. Nix allows multiple versions of the same package to be built and coexist on the same system. To that end, Nix stores build outputs in input-addressed directories (using a hashing function of the inputs) in the Nix store, usually located in the /nix/store directory on disk. Figure 1 shows an example of a Nix packaging expression (a Nix recipe) for the htop package.

```nix
{ stdenv, fetchFromGitHub, ncurses, autoreconfHook }:

stdenv.mkDerivation rec {
  pname = "htop";
  version = "3.2.1";

  # Source tarball fetched from GitHub and pinned by its hash.
  src = fetchFromGitHub {
    owner = "htop-dev";
    repo = "htop";
    rev = version;
    sha256 = "sha256-MwtsvdPHcUdegsYj9NGyded5XJQxXri1IM1j4gef1Xk=";
  };

  # Build-time tools and libraries, declared explicitly as inputs.
  nativeBuildInputs = [ autoreconfHook ];
  buildInputs = [ ncurses ];
}
```

Figure 1: Example Nix expression for the htop package.
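The idea of input addressing can be illustrated with a toy sketch. It is not Nix's actual store path algorithm, which hashes a serialized derivation and uses its own encoding; the function `toy_store_path`, the JSON canonicalization, the truncated SHA-256 digest, and the `htop_inputs` dictionary are all assumptions made for illustration.

```python
import hashlib
import json


def toy_store_path(name, inputs, store_dir="/nix/store"):
    """Toy input-addressed path: the hash depends on the inputs only,
    never on the bytes produced by the build (illustrative, not Nix's scheme)."""
    # Serialize the inputs deterministically so equal inputs hash equally.
    canonical = json.dumps(inputs, sort_keys=True).encode("utf-8")
    digest = hashlib.sha256(canonical).hexdigest()[:32]
    return f"{store_dir}/{digest}-{name}"


# Hypothetical description of the inputs of the htop recipe of Figure 1.
htop_inputs = {
    "version": "3.2.1",
    "src_rev": "3.2.1",
    "buildInputs": ["ncurses"],
    "nativeBuildInputs": ["autoreconfHook"],
}
```

Under this toy model, changing any input (for instance the version) yields a different path, so both variants can coexist in the store, while rebuilding with unchanged inputs targets the same path whether or not the produced bytes are identical. This is why bitwise reproducibility has to be tested separately.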

### III-B Nix evaluation-build pipeline

Building binary outputs from a Nix package recipe is a two-step process. First, Nix evaluates the expression and transforms it into a derivation, an intermediary representation in the Nix store containing all the necessary information to run the build process. In particular, the derivation contains ahead of time the (input-addressed) output path, that is the exact location in the Nix store where the build artifacts will be stored if that derivation were to be built.

Then, given the derivation file as input, the nix-build command performs the build, creating the pre-computed output path in the Nix store upon completion.

In the same fashion as other Linux distributions, Nix packages may produce multiple outputs (a main output with binaries, one with documentation, etc.). Each output has its own directory in the Nix store, and building the derivation from source systematically produces all its outputs.

### III-C Path substitution and binary caches

As an alternative to building from source, Nix offers the option to download prebuilt artifacts from third party binary caches, which are databases populated with build outputs generated by Nix. Binary caches are indexed by output paths, making it possible for Nix to check for the presence of a precompiled package in a configured cache after the evaluation phase. https://cache.nixos.org is the official cache for the Nix community and most Nix installations come configured to use it as a trusted cache.
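To make the indexing by output path concrete, here is a minimal sketch. It assumes the common binary cache layout in which the metadata of a store path is served at `<cache>/<hash>.narinfo`, where `<hash>` is the 32-character hash part of the output path. The helper name `narinfo_url` is ours, and the store path used in the usage note is a placeholder, not a real package hash.

```python
import posixpath


def narinfo_url(output_path, cache_url="https://cache.nixos.org"):
    """Map an output path such as /nix/store/<hash>-<name> to the URL
    where a binary cache is assumed to publish its metadata."""
    base = posixpath.basename(output_path.rstrip("/"))
    hash_part, sep, _name = base.partition("-")
    # The hash part of a store path is expected to be 32 characters long.
    if not sep or len(hash_part) != 32:
        raise ValueError(f"not a store path: {output_path!r}")
    return f"{cache_url.rstrip('/')}/{hash_part}.narinfo"
```

For example, `narinfo_url("/nix/store/0123456789abcdfghijklmnpqrsvwxyz-htop-3.2.1")` yields a URL that can be queried with an HTTP client to test whether the cache holds a prebuilt artifact for that output path, without building anything.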

### III-D The nixpkgs continuous integration

Hydra [dolstra_nix_nodate] is the continuous integration (CI) platform for the nixpkgs project. At regular intervals in time, it fetches the latest version of nixpkgs’ git master branch and evaluates the pkgs/top-level/release.nix file embedded in the repository. This evaluation yields a list of derivations (or jobs) that are then built by Hydra: one derivation for each of the $\approx 100\,000$ packages contained in nixpkgs nowadays. Upon success of a predefined subset of these jobs, the revision is deemed valid and all the built artifacts are uploaded to the official binary cache to be available to end users.

### III-E Testing bitwise reproducibility with Nix

Nix ships with some minimal tooling to test the reproducibility of a given derivation in the form of a --check flag passed to the nix-build command. To check for bitwise reproducibility, Nix needs a reference that it will try to acquire from one of the configured caches, or fail if not possible. Nix then acquires the build environment of the derivation under consideration, builds the derivation, and compares each of the outputs of the derivation against the local copy of the reference. The --keep-failed flag can be used to instruct Nix to keep the unreproducible outputs locally for further processing. For our experiment, we alter the behavior of nix-build --check to prevent it from failing early as soon as one unreproducible output is detected.

### III-F Diffoscope

Diffoscope [noauthor_diffoscope_nodate] is a tool developed and maintained by the Reproducible Builds project that aims to simplify the analysis of differences between software artifacts. It is able to recursively unpack binary archives and automatically use ecosystem specific diffing tools to allow for better understanding of what makes two software artifacts different. It generates HTML or JSON artifacts (also called diffoscopes) that can be either interpreted by humans or automatically processed.

## IV Methodology

<details>

<summary>Figure 2: textual description of the pipeline diagram</summary>

The diagram has two input nodes and three regions, connected by arrows indicating data and process flow.

**Inputs**

* **Nixpkgs git repository**: the source of package definitions. An arrow labeled "Sample 17 revisions" leads to the next node.

* **Nixpkgs revision**: a snapshot of the repository, which feeds both the historical CI builds and our rebuilds.

**Historical CI Builds**

1. Evaluation of release.nix by Hydra.

2. Build all packages using Hydra builders.

3. If the build succeeds, upload artifacts to nixpkgs' binary cache.

**Our Rebuilds**

1. Evaluation of release.nix by nix-eval-jobs.

2. Computation of the evaluation closure and filtering out of packages not in the cache.

3. Build all packages using our Buildbot infrastructure.

4. On success, store artifacts. On failure, the outcome is "Status: failure".

**Our Analysis**

1. Compare artifacts from nixpkgs' binary cache with our stored artifacts.

2. If they are identical, the outcome is "Status: reproducible". If they are different, the outcome is "Status: buildable but not reproducible".

3. From the "different" outcome, a decision point labeled "If the package is fixed later on" branches into two workflows:

* Bisect to find the fixing commit, then manually analyze the commit to infer intent.

* Generate diffoscopes, then run heuristics to categorize them.

</details>

Figure 2: Description of our build and analysis pipeline.

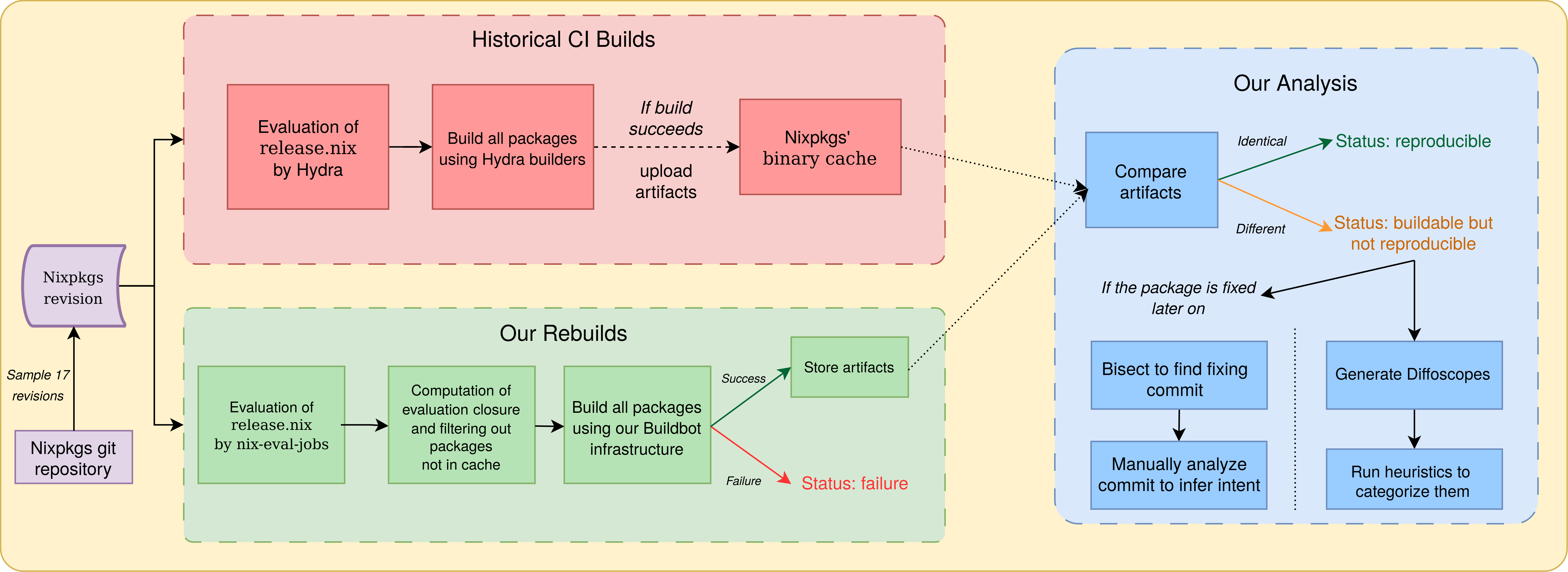

Our build and analysis pipeline is summarized in Figure 2.

### IV-A Reproducibility experiments

#### IV-A 1 Revision sampling

We start from the 200 nixpkgs revisions selected by Malka et al. [malka_reproducibility_2024] in the period July 2017–April 2023. Since building revisions, as opposed to just evaluating them, is very computationally intensive, it was not feasible to build all 200 revisions. Also, it was difficult to correctly estimate how many revisions we could build, due to the ever-growing number of packages in each revision. We hence applied dichotomic sampling: we first build the most recent revision, then the oldest one, then the one in the middle of them, and so on, always picking the revision in the middle of the largest remaining time interval. After building 17 revisions, we obtain a regularly spaced sample set of nixpkgs revisions, with one sampled revision every 4.1 months. On average, each revision corresponds to building more than 41 thousand packages (see Figure 3 for details, discussed later).
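The sampling order described above can be sketched as follows. This is a minimal illustration, not the code used in the experiment: the function name `dichotomic_sample`, the representation of revisions as a sorted list of numeric timestamps, and the tie-breaking rule (the earliest interval wins) are our assumptions.

```python
def dichotomic_sample(timestamps, budget):
    """Return indices into `timestamps` (sorted, oldest first) in build order:
    newest, oldest, then repeatedly the revision closest to the middle of the
    largest remaining time interval."""
    n = len(timestamps)
    if n == 0 or budget <= 0:
        return []
    chosen = [n - 1]                      # most recent revision first
    if n > 1 and budget > 1:
        chosen.append(0)                  # then the oldest one
    while len(chosen) < min(budget, n):
        picked = sorted(chosen)
        best = None
        for lo, hi in zip(picked, picked[1:]):
            if hi - lo < 2:
                continue                  # no unbuilt revision in between
            span = timestamps[hi] - timestamps[lo]
            if best is None or span > best[0]:
                best = (span, lo, hi)     # ties: keep the earliest interval
        if best is None:
            break
        _, lo, hi = best
        midpoint = (timestamps[lo] + timestamps[hi]) / 2
        chosen.append(min(range(lo + 1, hi),
                          key=lambda i: abs(timestamps[i] - midpoint)))
    return chosen
```

A useful property of this order is that the experiment can be stopped at any time and still leave a sample that covers the whole period. With 200 evenly spaced candidates, stopping after 17 builds leaves gaps of 12 or 13 candidates between consecutive samples.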

To perform our builds, we used a distributed infrastructure based on Buildbot [noauthor_buildbot_nodate], a Python CI pipeline framework. Our infrastructure has two types of machines: a coordinator and multiple builders. The coordinator is in charge of distributing the workload and storing data that must be persisted, while the builders are stateless and perform workloads sent by the coordinator. During the course of the experiment (from June to October 2024) the set of builders we used was composed of shared bare-metal machines running various versions of Ubuntu and Fedora and our coordinator was a virtual machine running Ubuntu. Note that to perform our bitwise reproducibility checks, we compare to the historical results coming from Hydra, that uses builders that, at the time, ran older versions of Nix than the ones we used on our builders. Further details on the operating systems, Nix versions and kernel versions that we used on builders can be found in the replication package.

#### IV-A 2 Evaluation and preprocessing

For each revision considered, the coordinator first evaluates pkgs/top-level/release.nix (containing the list of jobs built by Hydra for this revision) using nix-eval-jobs, a standalone and parallel Nix evaluator similar to the one used on Hydra. The outcome of this operation is a list of derivations. The release.nix file is human crafted and does not contain all the dependencies of the listed packages, even though they are built by Hydra along the way. Since we are interested in testing the reproducibility of the entire package graph built by Hydra, we post-process the list of jobs obtained after the evaluation phase to include all intermediary derivations by walking through the dependency graph of each derivation. During this post-processing, we also check that the derivation outputs are present in the official Nix binary cache. This is required to compare our build outputs with historical results for bitwise reproducibility. Derivations missing from the cache can indicate that they historically failed to build, although there can be other reasons for their absence.
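The post-processing step can be sketched as a graph traversal followed by a filter. This is a simplified illustration under stated assumptions: the dependency graph is given as a dictionary `deps` from derivation to direct dependencies, `outputs` maps each derivation to its output paths, and `in_cache` is a hypothetical predicate telling whether an output path is present in the binary cache. We also assume that a derivation is kept only if all of its outputs are cached.

```python
from collections import deque


def evaluation_closure(jobs, deps):
    """All derivations reachable from `jobs` through `deps`, jobs included."""
    seen = set(jobs)
    queue = deque(jobs)
    while queue:
        drv = queue.popleft()
        for dep in deps.get(drv, ()):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return seen


def keep_cached(derivations, outputs, in_cache):
    """Keep derivations whose outputs are all present in the cache, since a
    historical reference is needed for the bitwise comparison."""
    kept = set()
    for drv in derivations:
        outs = outputs.get(drv, ())
        if outs and all(in_cache(out) for out in outs):
            kept.add(drv)
    return kept
```

In this sketch, a job list containing only `htop.drv` would also bring in `ncurses.drv` and the derivations it depends on, and any derivation with a missing output would be dropped before the build phase.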

#### IV-A 3 Building

To build a job $A$ and test the reproducibility of its build outputs, the builder uses the nix-build --check command, as described in Section III-E. This means that we always assume that $A$’s build environment is buildable and always fetch it from the cache. This allows all the derivations in the sample set to be built and checked independently and in parallel, irrespective of where they are located in the package dependency graph. Note that the source code of packages to build is part of the build environment. Relying on the NixOS cache hence avoids running into issues such as source code disappearing from its original upstream location. Investigating how much of NixOS can be rebuilt without relying on the cache is an interesting research question, recently explored for Guix [courtes-2024-guix-archiving], but out of scope for this paper.

After each build, we classify the derivation as either building reproducibly, building but not reproducibly or not building. We save the build metadata and the logs, and when available we download and store the historical build logs from the nixpkgs binary cache. Finally, for every unreproducible output path, we store both the historical artifacts and our locally built ones, for comparison purposes.
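The classification step can be illustrated by the following sketch. It assumes that each output of a derivation is summarized by a content hash, and that a derivation counts as reproducible only when every output matches its historical reference. The `Status` enumeration, the `classify` function, and the dictionaries of hashes are hypothetical names introduced for the example.

```python
from enum import Enum


class Status(Enum):
    REPRODUCIBLE = "reproducible"
    UNREPRODUCIBLE = "buildable but not reproducible"
    FAILURE = "failure"


def classify(build_succeeded, reference_hashes, rebuilt_hashes):
    """Return (status, differing_outputs) for one derivation.

    `reference_hashes` and `rebuilt_hashes` map output names (e.g. "out",
    "doc") to content hashes of the historical and rebuilt artifacts."""
    if not build_succeeded:
        return Status.FAILURE, []
    differing = sorted(
        name for name, ref in reference_hashes.items()
        if rebuilt_hashes.get(name) != ref
    )
    status = Status.UNREPRODUCIBLE if differing else Status.REPRODUCIBLE
    return status, differing
```

Returning the list of differing outputs, rather than stopping at the first mismatch, mirrors the need to store both versions of every unreproducible output path for later comparison.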

### IV-B Ecosystem identification and package tracking

To answer RQ1, RQ2 and RQ4, we need to be able to discriminate packages by provenance ecosystem, and track them over time to follow their evolution. To categorize packages by ecosystem, we rely on the first component of the package name when it has several components (for example a package named haskellPackages.network is sorted into the Haskell ecosystem). Sometimes, there are several co-existing versions of an ecosystem in a given nixpkgs revision (for example python37Packages and python38Packages being present in the same revision), and sometimes the name of the ecosystem is modified between successive nixpkgs revisions. Therefore, some deduplication step is necessary. The first and last authors performed this step manually by inspecting the 144 ecosystems from the 17 nixpkgs revisions considered, ordered alphabetically, and deciding which ones to merge independently, then checking the consistency of their results, discussing the few differences (missed merges, or false positives) and reaching a consensus. For instance, the following ecosystems were merged into a single one: php56Packages, php70Packages, …, php82Packages, phpExtensions, phpPackages and phpPackages-unit.
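The naming rule can be sketched as below. The merge table is a hypothetical excerpt written for the example (the actual merges were decided manually, as described above), and the merged label `php` as well as the choice of returning `None` for single-component names are our assumptions.

```python
# Hypothetical excerpt of a manually curated merge table.
MERGES = {
    "php56Packages": "php",
    "php70Packages": "php",
    "php82Packages": "php",
    "phpExtensions": "php",
    "phpPackages": "php",
    "phpPackages-unit": "php",
}


def ecosystem_of(attr_name, merges=MERGES):
    """Ecosystem of a package, from the first component of its name.

    Names with a single component (e.g. "htop") get no ecosystem here."""
    head, sep, _rest = attr_name.partition(".")
    if not sep:
        return None
    # Apply the manual merge decision when one exists for this name.
    return merges.get(head, head)
```

For instance, `ecosystem_of("haskellPackages.network")` gives `"haskellPackages"`, while `ecosystem_of("php82Packages.redis")` and `ecosystem_of("phpExtensions.redis")` both map to the same merged label.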

To deduplicate packages appearing in several versions of the same ecosystem, we order by version (favoring the most recent one) and consider any package set without a version number as having a higher priority (since it is the default one in the considered revision, as chosen by the nixpkgs maintainers).
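A possible implementation of this priority rule is sketched below. It is a simplification: the regular expression `SET_RE`, the assumption that package sets are named `<family><version>Packages`, and the comparison of version digits as plain integers are ours, and real package set names may not follow this pattern.

```python
import re

# Assumed naming pattern: family, optional version digits, then "Packages".
SET_RE = re.compile(r"^([A-Za-z]+?)(\d+)?Packages$")


def priority(package_set):
    """Unversioned sets rank highest; otherwise a larger version wins."""
    match = SET_RE.match(package_set)
    version = match.group(2) if match else None
    if version is None:
        return (1, 0)
    return (0, int(version))


def deduplicate(attr_names):
    """Keep one attribute per (family, package), following `priority`."""
    best = {}
    for attr in attr_names:
        package_set, _, pkg = attr.partition(".")
        match = SET_RE.match(package_set)
        family = match.group(1) if match else package_set
        key = (family, pkg)
        current = best.get(key)
        if current is None or priority(package_set) > priority(current.partition(".")[0]):
            best[key] = attr
    return sorted(best.values())
```

With this sketch, `pythonPackages.numpy` is preferred over `python38Packages.numpy`, which is itself preferred over `python37Packages.numpy`.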

### IV-C Comparison with the minimal ISO image

As part of RQ2, we investigate the difference in reproducibility rate between critical packages whose reproducibility is monitored and the rest of the package set. We are also interested in knowing whether observing the reproducibility health of this subset of packages gives good enough information on the state of the rest of the project. The minimal and gnome-based ISO images are considered critical subsets of packages and benefit from community-maintained reproducibility monitoring. We study the minimal ISO image because it contains a limited number of core packages. We evaluate the Nix expression associated with the image, compute its runtime closure (the set of packages included in the image) and match it with the packages of our dataset to infer their reproducibility statuses.
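Conceptually, the closure computation is a graph traversal followed by a lookup in the dataset. The sketch below assumes the runtime references are already available as a plain dictionary; in practice they would come from Nix itself, and the function names and data shapes here are hypothetical.

```python
from typing import Dict, Iterable, List, Set


def runtime_closure(roots: Iterable[str],
                    references: Dict[str, List[str]]) -> Set[str]:
    """Return every package reachable from `roots` through runtime references."""
    seen: Set[str] = set()
    stack = list(roots)
    while stack:
        package = stack.pop()
        if package in seen:
            continue
        seen.add(package)
        stack.extend(references.get(package, ()))
    return seen


def statuses_in_closure(closure: Set[str],
                        statuses: Dict[str, str]) -> Dict[str, str]:
    """Keep only the dataset entries that belong to the closure."""
    return {name: status for name, status in statuses.items() if name in closure}
```

Packages in the closure that have no entry in the dataset are simply absent from the result, so the match rate can be checked by comparing the sizes of the two collections.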

### IV-D Analyzing causes of unreproducibility using diffoscopes

Analyzing causes of unreproducibility is a tricky debugging activity, usually carried out by practitioners (in particular, by Linux distribution maintainers and members of the Reproducible Builds project). Some automatic fault localization methods have been proposed [ren_root_2020], but they rely on instrumenting the build, while we have to run the Nix builds unchanged to avoid introducing biases.

For each unreproducible output, we run diffoscope with a 5-minute timeout, yielding a dataset of $86 476$ diffoscopes. We then investigate whether we can use our large dataset of diffoscopes for automatic detection of causes of non-reproducibility. The diffoscope tool was mainly designed to help human debugging, but it also supports producing a JSON output, which can then be machine processed.

We wish to explore heuristics that can be applied at the line level, so we recurse through diffoscope structures until leaf nodes, which are diffs in unified diff format. We randomly draw one added line from each of $10 000$ diffoscopes, sort them by similarity to ease visual inspection, and manually inspect them to derive relevant heuristics. We then run these heuristics on the full diffoscope dataset to determine the proportion of packages impacted by each cause (multiple causes can apply to the same package). The first and last authors then evaluate the precision of each of these heuristics by manually counting false positives in samples of matched lines for each heuristic.
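A minimal sketch of this kind of line-level processing is given below. It assumes the JSON produced by diffoscope is a tree in which each node may carry a `unified_diff` string and a `details` list of child nodes. The date pattern is a hypothetical example of a heuristic, not one of the heuristics actually derived in this study.

```python
import re
from typing import Iterator

# Hypothetical heuristic: an ISO-like date appearing in an added line.
DATE_PATTERN = re.compile(r"\b(?:19|20)\d{2}-\d{2}-\d{2}\b")


def added_lines(node: dict) -> Iterator[str]:
    """Yield every added line found in the unified diffs of a diffoscope tree."""
    diff = node.get("unified_diff")
    if diff:
        for line in diff.splitlines():
            # Keep '+' lines; skip a possible '+++' file header.
            if line.startswith("+") and not line.startswith("+++"):
                yield line[1:]
    for child in node.get("details", []):
        yield from added_lines(child)


def matches_date_heuristic(report: dict) -> bool:
    """True if at least one added line of the report contains a date."""
    return any(DATE_PATTERN.search(line) for line in added_lines(report))
```

Running each heuristic as a predicate over a whole report makes it straightforward to count, per cause, how many packages are matched, and a package can be matched by several predicates at once.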

### IV-E Automatic identification of reproducibility fixes

To investigate fixes to unreproducibilities, for each unreproducible package that becomes reproducible, we run an automatic bisection process to find the first commit that fixes the reproducibility. By looking into the corresponding pull request on GitHub, we check if the maintainers provide information on why this fixes a reproducibility issue, or link to a corresponding bug report. In particular, we are interested in checking how often the maintainers are aware that their commit is a reproducibility fix (as opposed to a routine package update that embeds a reproducibility fix crafted by the upstream maintainers).

We start from the set of packages from all revisions, and we look specifically at packages that change status from unreproducible to reproducible in two successive revisions. These are our candidate packages (and “old” and “new” commits) for the bisection process.
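The selection of candidates can be sketched as a scan over consecutive pairs of revisions. The data layout (a dictionary from revision to per-package status) and the status labels are assumptions made for illustration.

```python
from typing import Dict, Iterator, List, Tuple


def bisection_candidates(
    revisions: List[str],
    status: Dict[str, Dict[str, str]],
) -> Iterator[Tuple[str, str, str]]:
    """Yield (package, old_revision, new_revision) for every package that is
    unreproducible in one revision and reproducible in the next one.

    `revisions` must be in chronological order; `status[rev][package]` is
    one of "reproducible", "buildable" (builds, not reproducibly) or "failed".
    """
    for old, new in zip(revisions, revisions[1:]):
        for package, old_status in status[old].items():
            if old_status == "buildable" and status[new].get(package) == "reproducible":
                yield package, old, new
```

A package that flips status several times over the sampled period yields one candidate per unreproducible-to-reproducible transition.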

Since we perform the bisection process on a different runner and at a different time compared to the dataset creation, it can happen that we cannot reproduce the status (reproducible or unreproducible) of some builds. Therefore, before starting the bisection, we verify that we obtain consistent results on the “old” and the “new” revision. Then, for those which behave as expected, we start an automatic git bisect process.

The script used for the automatic git bisect checks for the reproducibility status of the build to mark the selected commit as “old” or “new”. Commits that fail to build or are not available in cache are marked as “skipped”. We use git bisect with the --first-parent flag because intermediate pull request commits are typically not in cache.
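One possible way to drive such a bisection is `git bisect run`, which interprets the exit code of a script: 0 marks the commit as old, 125 asks git to skip it, and any other code from 1 to 127 marks it as new. The mapping below is a sketch under that assumption. The status labels are hypothetical, and the check that produces them (rebuilding the package at the current commit) is left out.

```python
# Exit codes understood by `git bisect run`.
EXIT_OLD = 0     # commit still shows the old behavior (unreproducible)
EXIT_NEW = 1     # commit shows the new behavior (reproducible)
EXIT_SKIP = 125  # commit cannot be tested


def bisect_exit_code(status: str) -> int:
    """Map the outcome of checking one commit to a `git bisect run` exit code.

    `status` is a hypothetical label: "reproducible", "buildable"
    (builds but not reproducibly), "failed" or "not_in_cache".
    """
    if status == "reproducible":
        return EXIT_NEW
    if status == "buildable":
        return EXIT_OLD
    # Build failures and commits missing from the cache are skipped.
    return EXIT_SKIP
```

Because the search looks for a fix rather than a regression, the usual roles are inverted: the unreproducible state is the "old" one and the reproducible state is the "new" one.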

For the qualitative analysis, we first group packages by fixing commit, since seeing all packages fixed by a given commit gives valuable information that can help understand the reproducibility failure being fixed. We then randomly sample fixes and open their commit page on GitHub, locating the corresponding pull request. We manually inspect the pull request (description, commit log, code changes) to first confirm that the bisect phase successfully identified the commit that fixed the reproducibility failure. After careful inspection, the change may look unrelated to the package being fixed: the bisection process can give incoherent results in case of a flaky reproducibility issue, or because the package changed status several times between two data points. In that case, we discard it. Once we have confirmed that the identified commit is correct, we check whether the commit authors indicate that they are fixing a reproducibility issue and whether the commit is a package update or another change. We analyze 100 randomly sampled reproducibility fixes and report our findings. Additionally, we perform the same analysis on the 15 commits that fix the most packages (from $3052$ down to 27 packages fixed) to find potential differences in contributor behavior for those larger-scale fixes.

## V Dataset

The main result of running the pipeline of Figure 2 is a large-scale dataset of historical package rebuilds, including (re)build information, bitwise reproducibility status and, in case of non-reproducibility, generated diffoscopes. In this paper, we use the dataset to answer our stated research questions, but many other research questions could be addressed using the dataset, including more in-depth analysis of non-reproducibility causes. We make the dataset available to the research and technical community to foster further exploration on the topic. In the remainder of this section, we provide some descriptive statistics of the dataset.

<details>

<summary>extracted/6418685/paper-images/package-evolution.png Details</summary>

### Visual Description

## Stacked Area Chart: Nix Package Evaluation Growth (2018-2023)

### Overview

This is a stacked area chart illustrating the growth in the number of packages evaluated by three different methods within the Nix ecosystem from early 2018 to mid-2023. The chart shows a consistent upward trend for all metrics, with a particularly sharp increase in 2023.

### Components/Axes

* **Chart Type:** Stacked Area Chart.

* **X-Axis (Horizontal):** Labeled "Date". It displays years from 2018 to 2023, with tick marks at the start of each year. The data appears to be plotted at regular intervals (likely quarterly or bi-annually) within each year.

* **Y-Axis (Vertical):** Labeled "Number of packages". The scale is linear, ranging from 0 to over 70,000, with major gridlines at 10,000 increments (0, 10k, 20k, ..., 70k).

* **Legend:** Positioned at the bottom center of the chart, below the x-axis label. It defines three data series:

1. **Deduplicated evaluation:** Represented by a blue line and area.

2. **Evaluation of release.nix:** Represented by an orange/red line and area.

3. **Evaluation closure of release.nix:** Represented by a green/teal line and area.

* **Data Series Order (from bottom to top in the stack):** Blue (Deduplicated), then Orange (Evaluation of release.nix), then Green (Evaluation closure). The total height of the stacked areas represents the cumulative count for the top series (Green).

### Detailed Analysis

**Trend Verification & Data Points (Approximate Values):**

* **Deduplicated evaluation (Blue - Bottom Layer):**

* **Trend:** Shows a steady, near-linear increase throughout the period.

* **Data Points:**

* Early 2018: ~13,000

* Early 2019: ~19,000

* Early 2020: ~22,000

* Early 2021: ~26,000

* Early 2022: ~31,000

* Early 2023: ~45,000 (sharp increase)

* Mid-2023: ~43,000 (slight dip from peak)

* **Evaluation of release.nix (Orange - Middle Layer):**

* **Trend:** Increases steadily, with a slight plateau/dip around 2019, followed by consistent growth and a sharp rise in 2023.

* **Data Points (Cumulative, i.e., Blue + Orange):**

* Early 2018: ~18,000 (Orange layer adds ~5k)

* Early 2019: ~25,000 (Orange layer adds ~6k)

* Early 2020: ~31,000 (Orange layer adds ~9k)

* Early 2021: ~37,000 (Orange layer adds ~11k)

* Early 2022: ~41,000 (Orange layer adds ~10k)

* Early 2023: ~55,000 (Orange layer adds ~10k)

* Mid-2023: ~54,000 (Orange layer adds ~11k)

* **Evaluation closure of release.nix (Green - Top Layer):**

* **Trend:** Shows the most pronounced growth, especially after 2020. It exhibits a significant jump in early 2023.

* **Data Points (Cumulative Total, i.e., Blue + Orange + Green):**

* Early 2018: ~20,000 (Green layer adds ~2k)

* Early 2019: ~31,000 (Green layer adds ~6k)

* Early 2020: ~37,000 (Green layer adds ~6k)

* Early 2021: ~45,000 (Green layer adds ~8k)

* Early 2022: ~50,000 (Green layer adds ~9k)

* Early 2023: ~76,000 (Green layer adds ~21k - massive increase)

* Mid-2023: ~75,000 (Green layer adds ~21k)

### Key Observations

1. **Consistent Hierarchy:** The "Evaluation closure of release.nix" (Green) always represents the largest number of packages, followed by "Evaluation of release.nix" (Orange), with "Deduplicated evaluation" (Blue) being the smallest subset. This hierarchy is maintained throughout the entire timeline.

2. **Accelerated Growth in 2023:** All three metrics show a dramatic, simultaneous increase in early 2023. The slope of the green line is particularly steep, indicating a major expansion in the evaluation closure.

3. **Steady Underlying Growth:** Prior to 2023, the growth was more gradual and linear, especially for the deduplicated evaluation (blue).

4. **Proportional Relationship:** The gaps between the lines (representing the size of each layer) generally widen over time, suggesting that the more comprehensive evaluation methods (Orange and Green) are growing at a faster rate than the deduplicated core (Blue).

### Interpretation

This chart demonstrates the significant and accelerating growth of the Nix package ecosystem over a five-year period. The data suggests:

* **Ecosystem Expansion:** The total number of packages being evaluated (as shown by the top green line) has more than tripled from ~20,000 in 2018 to over 75,000 in 2023. This indicates a healthy, rapidly expanding repository.

* **Evaluation Complexity:** The gap between the "Deduplicated evaluation" and the "Evaluation of release.nix" series reflects packages that are present in several copies of the same ecosystem, while the gap between "Evaluation of release.nix" and its evaluation closure reflects dependencies that are not explicitly listed in release.nix.

* **Major Event in 2023:** The sharp, concurrent spike in all metrics in early 2023 points to a specific event—likely a major repository restructuring, a change in evaluation methodology, or the integration of a very large new set of packages or dependencies. The fact that the green "closure" layer saw the largest absolute increase suggests this event particularly impacted the broader dependency graph.

* **Structured growth:** The hierarchy between the three series is maintained throughout, which indicates that the relationship between these evaluation methods is stable even as the scale changes. The 2023 jump points to a significant underlying change in the package set being measured.

</details>

Figure 3: Evolution of the number of packages in each nixpkgs revision (as defined by release.nix), in their evaluation closure and after deduplicating ecosystem copies.

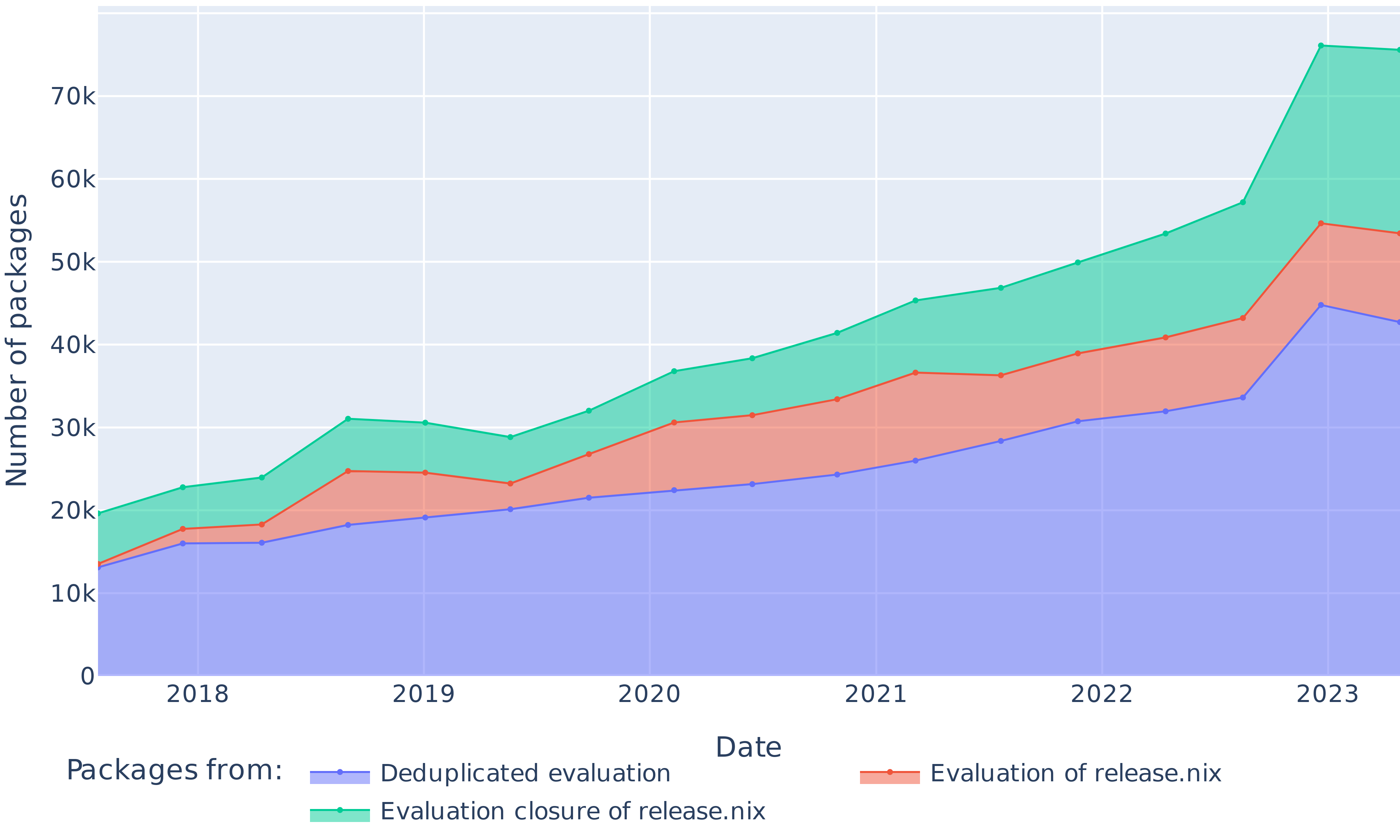

The dataset spans $709 816$ package builds coming from 17 nixpkgs revisions, built over a total of $14 296$ hours. Of those builds, $548 390$ come directly from the release.nix file and can be tracked by name. They correspond to $58 103$ unique packages that appear over the span of sampled revisions. As can be seen in Figure 3, the number of packages listed to be built by Hydra increased from $13 527$ in 2017 to $53 432$ in 2023. Ecosystems can be present in multiple versions. On average, deduplicating packages that appear in multiple ecosystem copies decreases their number by 21%, while adding the evaluation closure of the release.nix file increases the number of jobs by 29%.

<details>

<summary>extracted/6418685/paper-images/ecosystems.png Details</summary>

### Visual Description

## Stacked Area Chart: Growth of Job Numbers Across Software Ecosystems (2018-2023)

### Overview

This is a stacked area chart illustrating the growth in the "Number of jobs" associated with various software ecosystems over a period from early 2018 to mid-2023. The chart shows a consistent upward trend in total jobs, with a particularly sharp increase occurring in 2023. Each colored band represents the contribution of a specific ecosystem to the total job count.

### Components/Axes

* **Chart Type:** Stacked Area Chart.

* **X-Axis (Horizontal):** Labeled "Date". Major tick marks denote the start of each year: 2018, 2019, 2020, 2021, 2022, 2023. Data points appear to be plotted quarterly or bi-annually.

* **Y-Axis (Vertical):** Labeled "Number of jobs". Scale ranges from 0 to 45,000 (45k), with major gridlines at 5k increments (0, 5k, 10k, 15k, 20k, 25k, 30k, 35k, 40k, 45k).

* **Legend:** Positioned at the bottom center of the chart, below the x-axis label. It lists 11 ecosystems with corresponding color swatches. The order in the legend (left to right, top to bottom) is:

1. `other ecosystems` (Light blue-purple)

2. `base` (Salmon/Red-orange)

3. `haskell` (Teal/Green)

4. `python` (Light purple)

5. `perl` (Light orange)

6. `emacsPackages` (Cyan/Light blue)

7. `vimPlugins` (Pink)

8. `lisp` (Light green)

9. `gnome` (Light pink)

10. `xorg` (Yellow)

11. `linux` (Darker blue-purple)

### Detailed Analysis

The chart is stacked, meaning the height of each colored band at a given time represents its individual contribution, and the top line represents the cumulative total. The stacking order from bottom to top is: `other ecosystems`, `base`, `haskell`, `python`, `perl`, `emacsPackages`, `vimPlugins`, `lisp`, `gnome`, `xorg`, `linux`.

**Trend Verification & Data Points (Approximate):**

* **Total Jobs (Top Line):** Shows a steady, near-linear increase from ~13k in early 2018 to ~34k by late 2022. In 2023, there is a dramatic spike, peaking at approximately 45k before a slight decline to ~43k by the chart's end.

* **Ecosystem Breakdown (Bottom to Top):**

* **`other ecosystems` (Light blue-purple):** Forms the base layer. Grows slowly and steadily from ~1k (2018) to ~5k (2023).

* **`base` (Salmon):** The largest single contributor throughout. Grows consistently from ~6.5k (2018, total ~7.5k) to ~15k (2022, total ~20k). In 2023, its contribution expands sharply to ~17k (total ~22k).

* **`haskell` (Teal):** The second-largest contributor. Grows from ~5k (2018, total ~12.5k) to ~7k (2022, total ~27k). In 2023, it expands to ~8k (total ~30k).

* **`python` (Light purple):** Shows significant growth. Starts at ~2k (2018, total ~14.5k), grows to ~4k (2022, total ~31k), and expands to ~5k in the 2023 spike (total ~35k).

* **`perl` (Light orange):** Moderate, steady growth. Contributes ~1k in 2018, ~2k in 2022, and ~2.5k during the 2023 peak.

* **`emacsPackages` (Cyan):** Similar growth pattern to perl. Contributes ~0.5k in 2018, ~1.5k in 2022, and ~2k in the 2023 spike.

* **`vimPlugins` (Pink):** Shows a very sharp increase in 2023. Was a thin band (~0.5k) for years, but during the 2023 spike, its contribution expands dramatically to ~4k.

* **`lisp` (Light green):** Also shows a dramatic 2023 expansion. Was minimal (~0.2k) pre-2023, but during the spike, it becomes a major contributor, adding ~4k.

* **`gnome` (Light pink), `xorg` (Yellow), `linux` (Darker blue-purple):** These form the top three thin bands. Their individual contributions remain relatively small and stable (each <1k) throughout the period, with slight increases visible during the 2023 total spike.

### Key Observations

1. **Dominant Ecosystems:** The `base` and `haskell` ecosystems are the foundational and largest contributors to job numbers throughout the entire period.

2. **Steady Growth Phase (2018-2022):** All ecosystems show gradual, consistent growth, leading to a total job count that more than doubled from ~13k to ~34k.

3. **2023 Anomaly/Surge:** There is a pronounced, simultaneous expansion in the contribution of nearly all ecosystems in 2023, causing the total to jump from ~34k to a peak of ~45k. This surge is most visually dramatic for `vimPlugins` and `lisp`, which transition from minor to major contributors in this short period.

4. **Stacking Clarity:** The chart effectively shows both the total growth and the changing composition of that total over time.

### Interpretation

This chart visualizes the number of build jobs (packages listed in release.nix) in nixpkgs, broken down by software ecosystem.

* **What the data suggests:** The consistent growth from 2018-2022 indicates a healthy, expanding overall ecosystem. The dramatic 2023 spike is the most significant feature. It could represent:

* A major platform or repository update that reclassified or massively increased the count of certain package types (especially `vimPlugins` and `lisp`).

* A sudden, large influx of packages in those specific ecosystems.

* A change in data collection methodology.

* **Relationships:** The stacked nature shows that growth is broad-based; no single ecosystem is solely responsible for the overall trend. However, the `base` ecosystem acts as the stable core, while others like `python`, `vimPlugins`, and `lisp` show more variable, explosive growth potential.

* **Anomalies:** The 2023 spike is the primary anomaly. The near-simultaneous surge across disparate ecosystems (`vimPlugins`, `lisp`, `emacsPackages`) suggests a systemic cause rather than independent, coincidental growth. The slight decline after the peak in 2023 may indicate the initial surge stabilizing.

</details>

Figure 4: Evolution of the size of the nine most popular software ecosystems in nixpkgs, the packages whose ecosystem is undetermined (base), and the packages from other ecosystems (only packages listed in release.nix).

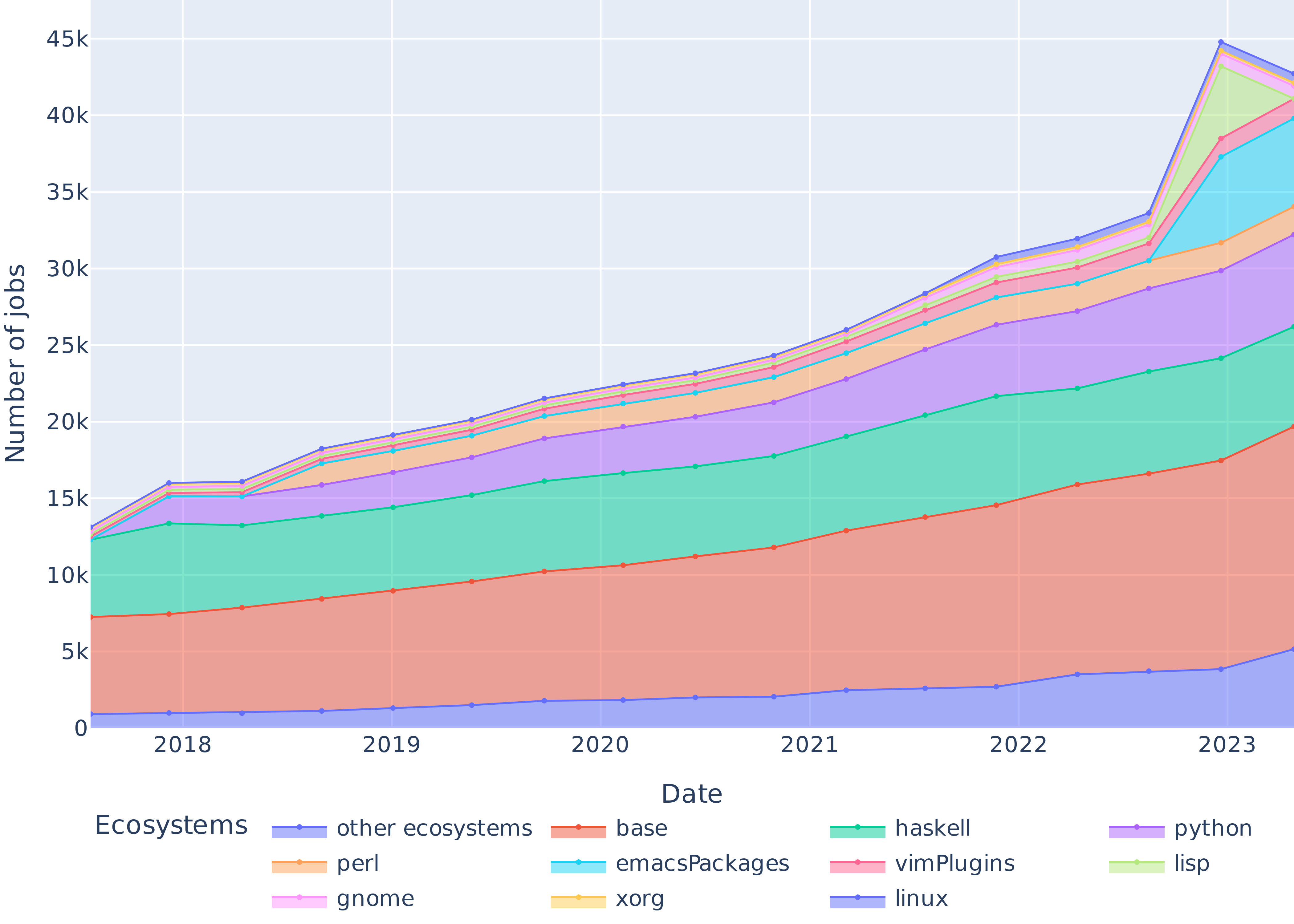

Figure 4 shows the evolution of the sizes of the nine largest ecosystems in nixpkgs, plus the base namespace. 61.7% of packages belong to an ecosystem, while the rest live in the nixpkgs base namespace. The three largest ecosystems are Haskell, Python and Perl, which together account for 42.4% of the packages.

<details>

<summary>extracted/6418685/paper-images/strates.png Details</summary>

### Visual Description

## Stacked Area Chart: Number of Jobs by Package Introduction Revision (2018-2023)

### Overview

This is a stacked area chart tracking the "Number of jobs" over time, from 2018 to 2023. The chart visualizes how the total volume of jobs is composed of contributions from various software packages, each introduced in a specific revision. The overall trend shows a steady increase in total jobs from 2018 to 2022, followed by a dramatic spike in 2023.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Date". Major tick marks represent years: 2018, 2019, 2020, 2021, 2022, 2023.

* **Y-Axis (Vertical):** Labeled "Number of jobs". Scale ranges from 0 to 45,000 (45k), with increments of 5,000 (5k).

* **Legend:** Positioned at the bottom of the chart, titled "Packages introduced in revision:". It lists 17 nixpkgs revisions, each associated with a unique color and identified by a revision number. The legend is organized into three columns.

* **Column 1:** 1377834 (light blue), 1476353 (light purple), 1544614 (pink), 1622813 (yellow), 1724521 (green), 1787891 (cyan).

* **Column 2:** 1416607 (salmon), 1498441 (light orange), 1569427 (light green), 1653283 (medium blue), 1755200 (medium purple), 1794205 (medium pink).

* **Column 3:** 1448353 (teal), 1520586 (light cyan), 1593430 (light pink), 1687868 (dark orange), 1776404 (peach).

### Detailed Analysis

The chart displays 17 stacked layers, each representing the job count attributable to a package from a specific revision. The layers are stacked from bottom to top. The approximate total job count and the trend for each package are as follows:

**Total Job Count Trend:**

* **2018:** ~16,000

* **2019:** ~19,000

* **2020:** ~22,000

* **2021:** ~26,000

* **2022:** ~31,000

* **2023:** ~45,000 (sharp increase)

**Package-Specific Trends (from bottom layer upward):**

1. **1377834 (Light Blue):** The foundational layer. Shows a steady decline from ~13,000 jobs in 2018 to ~9,000 in 2023.

2. **1476353 (Light Purple):** Sits above the blue layer. Also declines, from ~3,000 to ~2,000 jobs.

3. **1544614 (Pink):** Relatively stable band, contributing approximately 2,000 jobs throughout the period.

4. **1622813 (Yellow):** Shows moderate growth, increasing from ~1,000 to ~2,000 jobs.

5. **1724521 (Green):** Demonstrates clear growth, expanding from ~1,000 to ~3,000 jobs.

6. **1787891 (Cyan):** **The most significant layer.** Shows a dramatic, sharp spike in 2023. It grows from ~1,000 jobs in 2018 to an estimated ~4,000+ jobs in 2023, driving the overall total spike.

7. **1498441 (Light Orange) through 1776404 (Peach):** The remaining 11 layers (1498441, 1569427, 1653283, 1755200, 1794205, 1448353, 1520586, 1593430, 1687868, 1776404) collectively form the upper portion of the stack. Each individually contributes a smaller, growing share, typically increasing from a few hundred jobs in 2018 to approximately 1,000-2,000 jobs each by 2023. Their combined growth adds significantly to the total.

### Key Observations

1. **Dominant Growth Driver:** The package from revision **1787891 (cyan)** is the primary outlier, responsible for the disproportionate surge in total jobs in 2023.

2. **Base Layer Decline:** The two earliest packages (1377834, 1476353) show a consistent downward trend, suggesting they are being phased out or superseded.

3. **Broad-Based Growth:** From 2020 onward, nearly all packages introduced in later revisions (from 1569427 onward) show positive growth trends, indicating a diversifying and expanding ecosystem.

4. **Compositional Shift:** The chart illustrates a clear shift in composition. In 2018, the job count was dominated by the earliest packages (1377834, 1476353). By 2023, the contribution is much more distributed across a larger number of newer packages, with 1787891 becoming a major component.

### Interpretation

This chart tracks cohorts of packages in nixpkgs: each layer groups the packages that first appear in a given sampled revision, and its height over time shows how many of them are still present in later revisions. The "Number of jobs" refers to packages listed to be built.

The data suggests the following:

* **Continuous additions:** Every sampled revision introduces a new cohort of packages, so the total grows even as older cohorts shrink.

* **Gradual attrition:** The decline of the earliest layers reflects packages that are no longer present under the same name in later revisions, while most of the initial package set survives.

* **Large cohort in 2023:** The sharp increase in 2023 comes from an unusually large set of packages introduced in a late revision. The chart alone does not indicate the cause.

* **Overall growth:** The upward trend in total jobs reflects the expansion of the package set.

</details>

Figure 5: Number of packages introduced by every revision of the dataset and their survival in the package set over time.

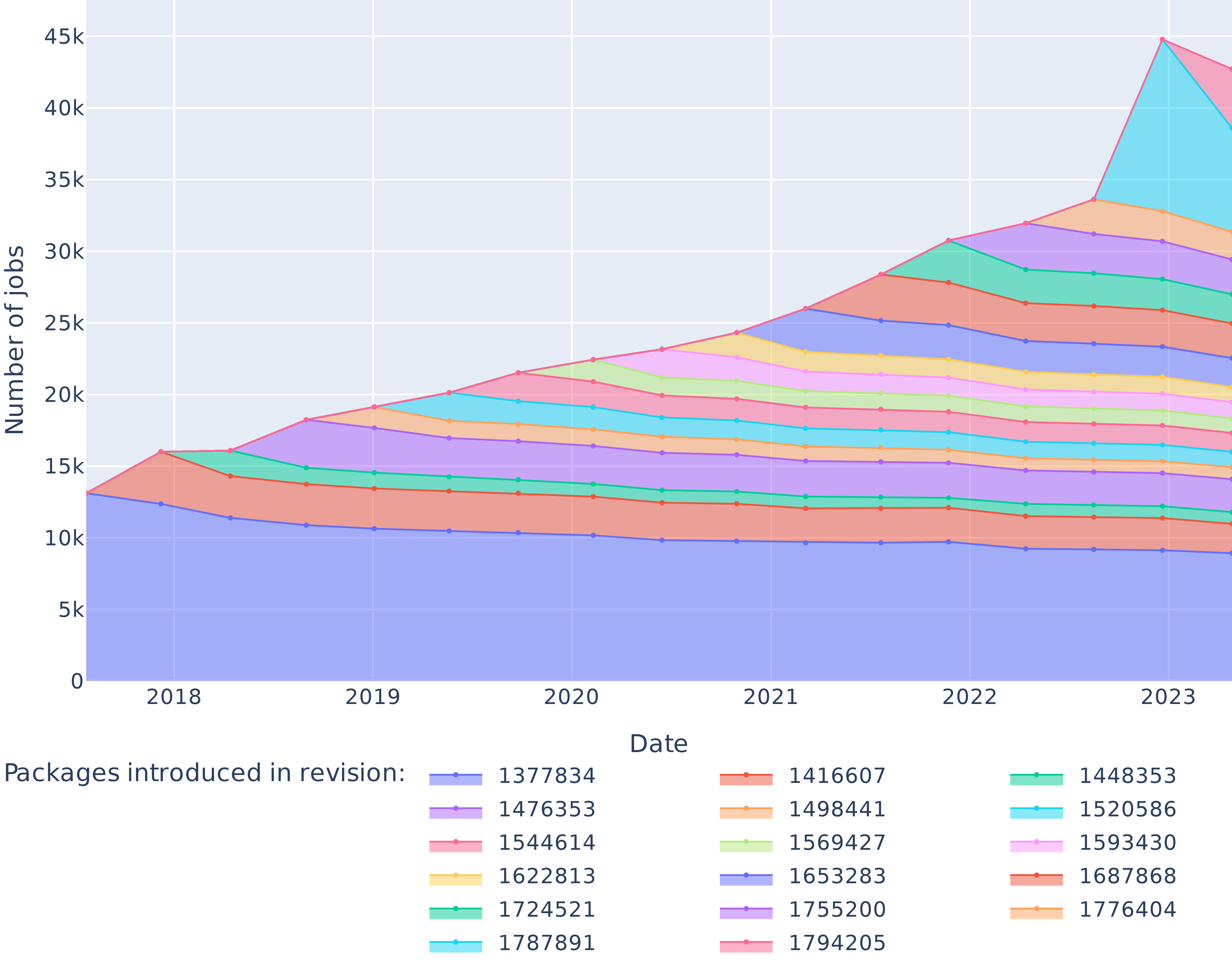

Figure 5 outlines the number of packages introduced in the dataset by each revision, and their survival over time. In particular, as of July 2017 the package set contained $13 114$ elements, $8929$ of which were still present in April 2023.

## VI Results

We present our experimental results below, organized by research question. Their discussion is provided later, in Section VII.

### VI-A RQ0: Does Nix allow rebuilding past packages reliably (even if not bitwise reproducibly)?

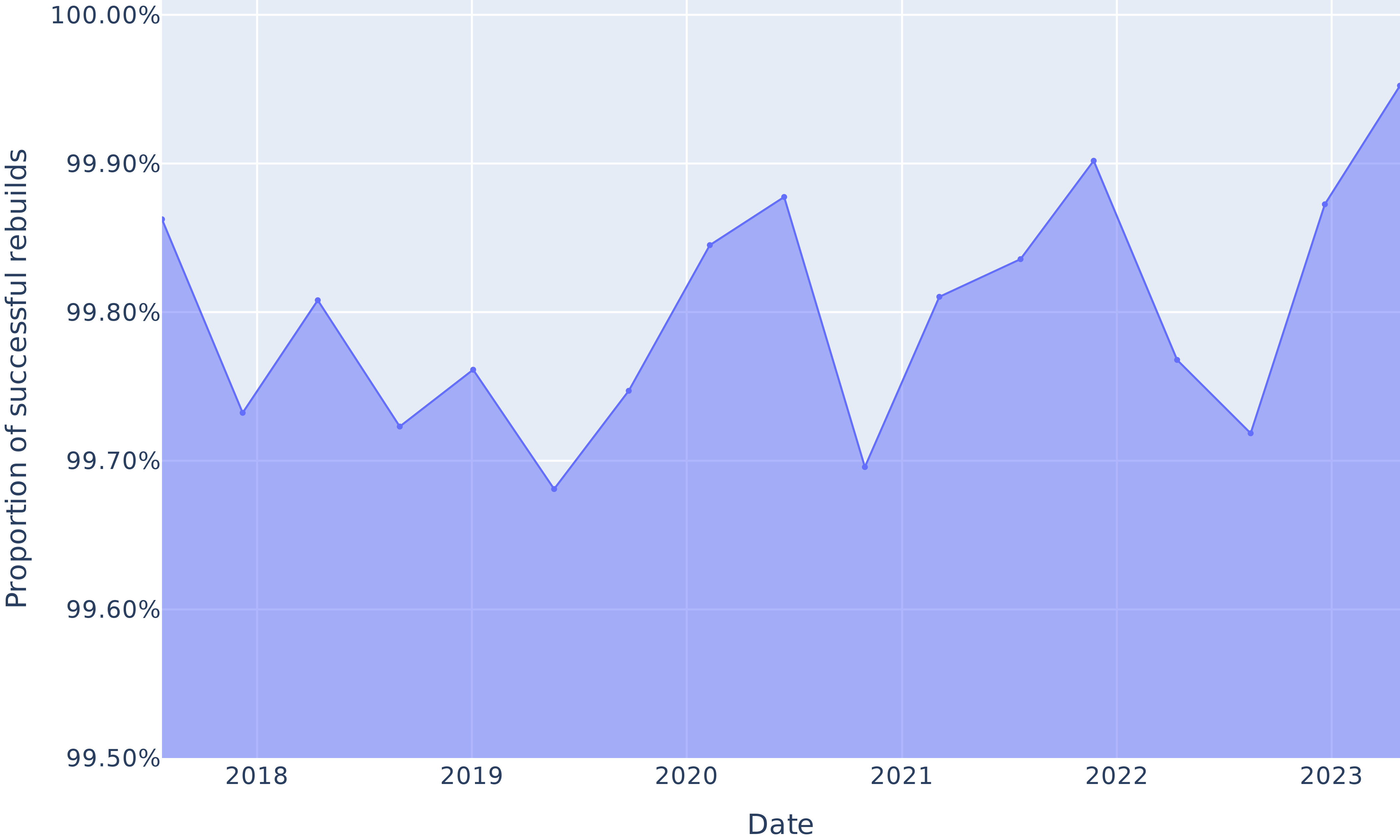

This research question aims to reproduce the results from Malka et al. [malka_reproducibility_2024], as a starting baseline. That earlier work only built one nixpkgs revision, the oldest in their dataset; in our case, we rebuilt that revision along with 16 others, evenly spaced over time to study trends. Figure 6 shows the proportion of packages between 2017 and 2023 that we successfully rebuilt (not necessarily in a bitwise reproducible manner, merely “successfully built” for this RQ).

This proportion varies between 99.68% and 99.95%, confirming previously reported findings: the reproducibility of build environments provided by Nix allows for a very high rebuildability rate over time. Note that this is not an exact replication of the revision in [malka_reproducibility_2024], because we also included packages that are not explicitly listed in release.nix but are present in the dependency graph, whereas they did not.

<details>

<summary>extracted/6418685/paper-images/rebuildability.png Details</summary>

### Visual Description

## Area Chart: Proportion of Successful Rebuilds Over Time

### Overview

This is a time-series area chart displaying the proportion of successful rebuilds from early 2018 to late 2023. The chart shows a single data series with a high overall success rate, fluctuating within a narrow band between approximately 99.68% and 99.95%. The area below the line is filled with a light blue color.

### Components/Axes

* **Chart Type:** Area Chart (Line chart with filled area below).

* **X-Axis (Horizontal):**

* **Label:** "Date"

* **Scale:** Time-based, with major tick marks labeled for the years: 2018, 2019, 2020, 2021, 2022, 2023.

* **Range:** Spans from before the "2018" label to after the "2023" label.

* **Y-Axis (Vertical):**

* **Label:** "Proportion of successful rebuilds"

* **Scale:** Percentage, with major grid lines and labels at: 99.50%, 99.60%, 99.70%, 99.80%, 99.90%, 100.00%.

* **Range:** 99.50% to 100.00%.

* **Data Series:**

* **Visual Representation:** A single, continuous blue line connecting data points, with the area beneath it filled in a lighter shade of blue.

* **Legend:** Not present, as there is only one data series.

* **Grid:** A light gray grid is present, with horizontal lines at each labeled Y-axis percentage and vertical lines at each labeled year.

### Detailed Analysis

**Trend Verification:** The line exhibits a volatile, saw-tooth pattern with no single sustained direction. It repeatedly rises and falls within its high-percentage range.

**Data Point Extraction (Approximate Values):**

* **Start of 2018:** ~99.86%

* **Mid-2018:** Dips to ~99.73%

* **Late 2018/Early 2019:** Peaks at ~99.81%

* **Mid-2019:** Dips to ~99.72%

* **Late 2019:** Rises to ~99.76%

* **Early 2020:** Reaches a local low of ~99.68%

* **Mid-2020:** Rises to ~99.75%

* **Late 2020:** Peaks at ~99.88%

* **Early 2021:** Drops sharply to a local low of ~99.70%

* **Mid-2021:** Recovers to ~99.81%

* **Late 2021:** Rises to ~99.84%

* **Early 2022:** Reaches a significant peak at ~99.90%

* **Mid-2022:** Falls to ~99.77%

* **Late 2022:** Dips to ~99.72%

* **Early 2023:** Rises sharply to ~99.88%

* **End of 2023 (Chart Edge):** Reaches the highest visible point, approximately ~99.95%.

### Key Observations

1. **High Baseline Performance:** The proportion of successful rebuilds never falls below ~99.68%, indicating an extremely reliable process.

2. **Cyclical Volatility:** The data shows a repeating pattern of peaks and troughs, suggesting periodic fluctuations in success rates, possibly due to seasonal factors, system updates, or changes in workload.

3. **Notable Peaks:** The highest points occur in late 2020 (~99.88%), early 2022 (~99.90%), and at the end of the series in late 2023 (~99.95%).

4. **Notable Troughs:** The lowest points are in early 2020 (~99.68%) and early 2021 (~99.70%).

5. **Recent Upward Trend:** The final segment from late 2022 to late 2023 shows a strong and sustained upward trajectory, culminating in the highest value on the chart.

### Interpretation

The chart shows that the proportion of packages that can be successfully rebuilt remains exceptionally high, consistently above 99.6%, across the sampled nixpkgs revisions.

The observed volatility is the most significant feature. While the baseline is excellent, the regular dips indicate that the process is not perfectly stable. These fluctuations could be investigated to identify root causes—such as specific software releases, infrastructure changes, or increased load periods—that temporarily reduce success rates. The strong recovery and new peak at the end of 2023 suggest that recent improvements or optimizations have been highly effective, pushing the process to its best performance level within the observed timeframe. The narrow Y-axis scale (99.5% to 100%) visually amplifies these small absolute changes, which is appropriate for monitoring a high-reliability system where even a 0.1% change is meaningful.

</details>

Figure 6: Proportion of rebuildable packages over time.

### VI-B RQ1: What is the evolution of bitwise reproducible packages in nixpkgs between 2017 and 2023?

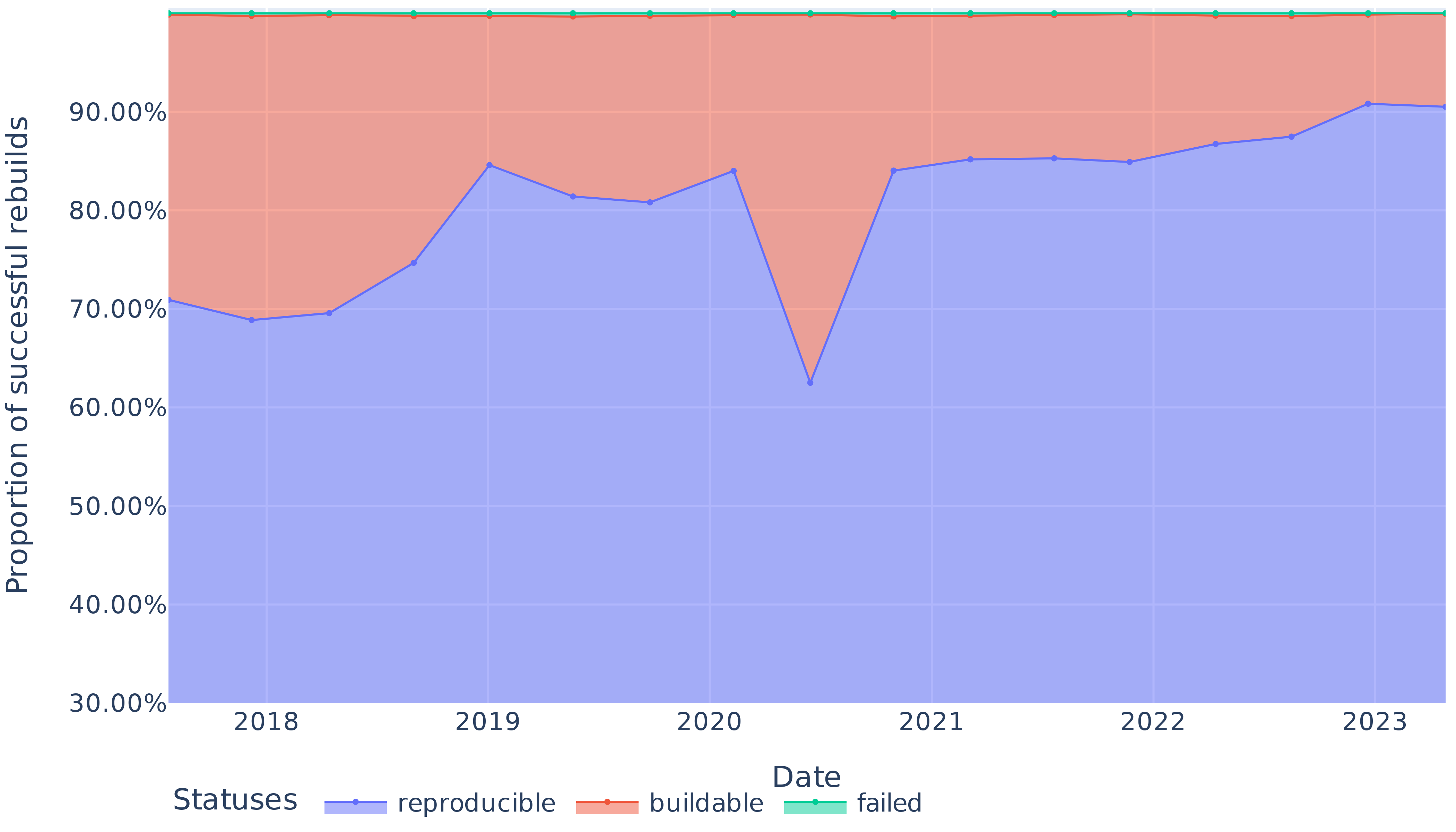

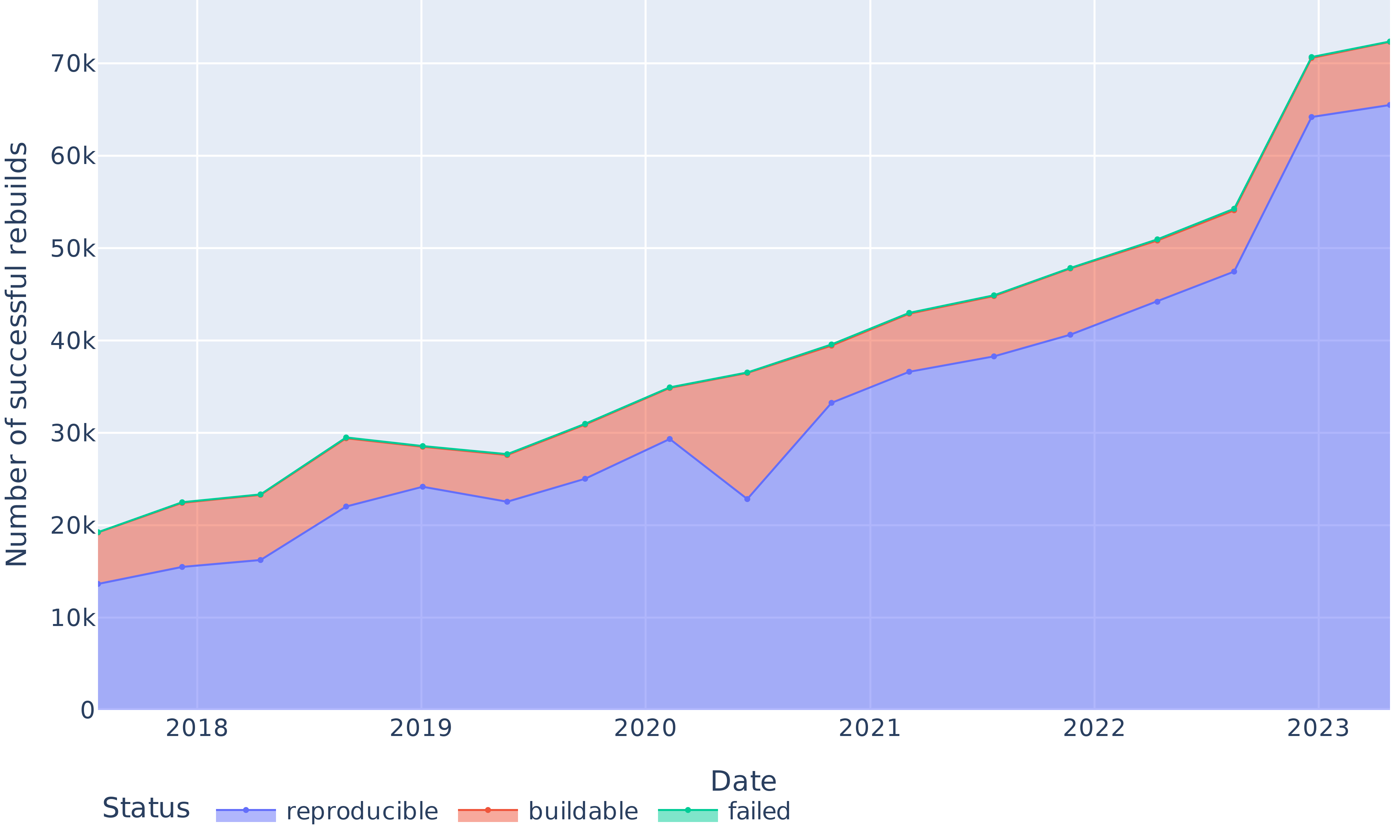

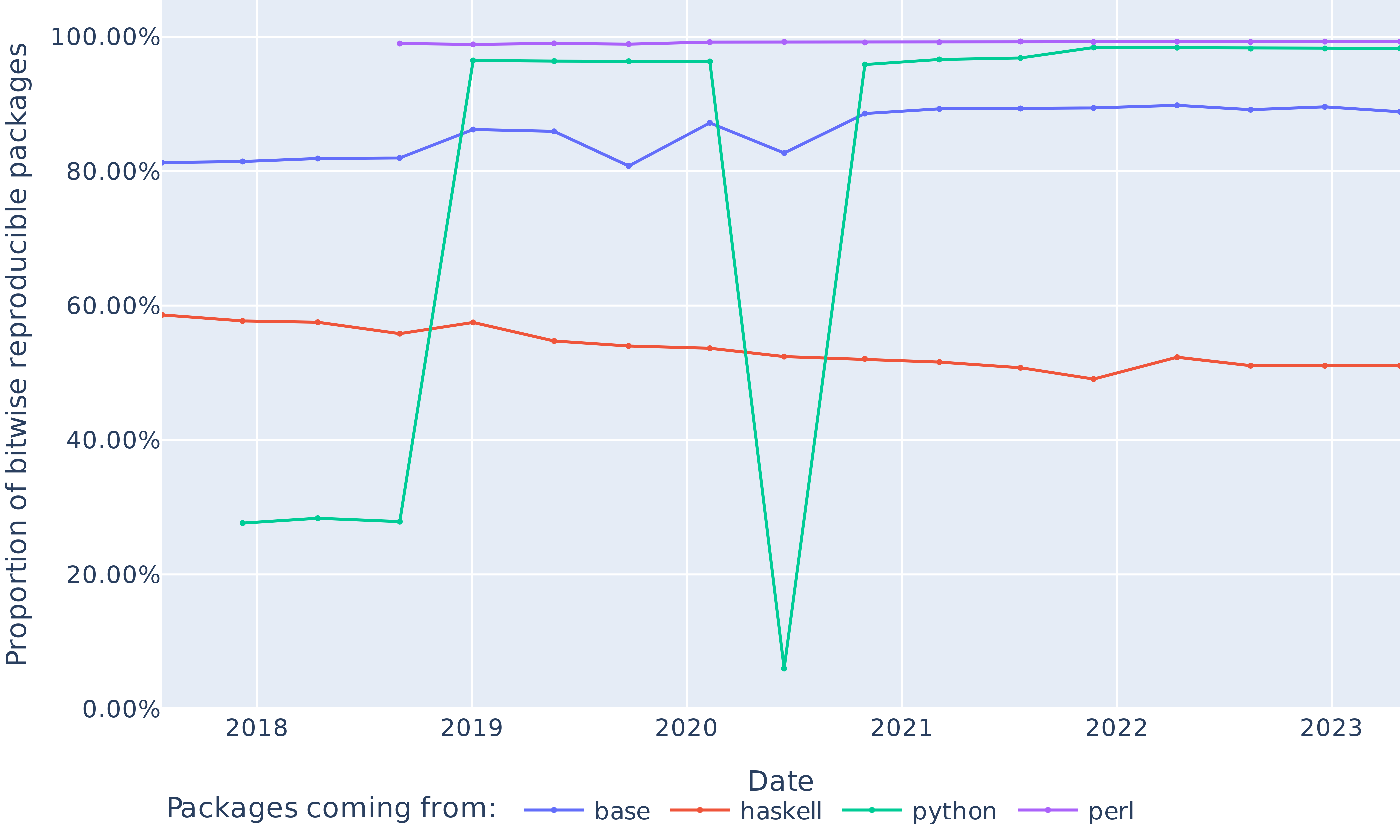

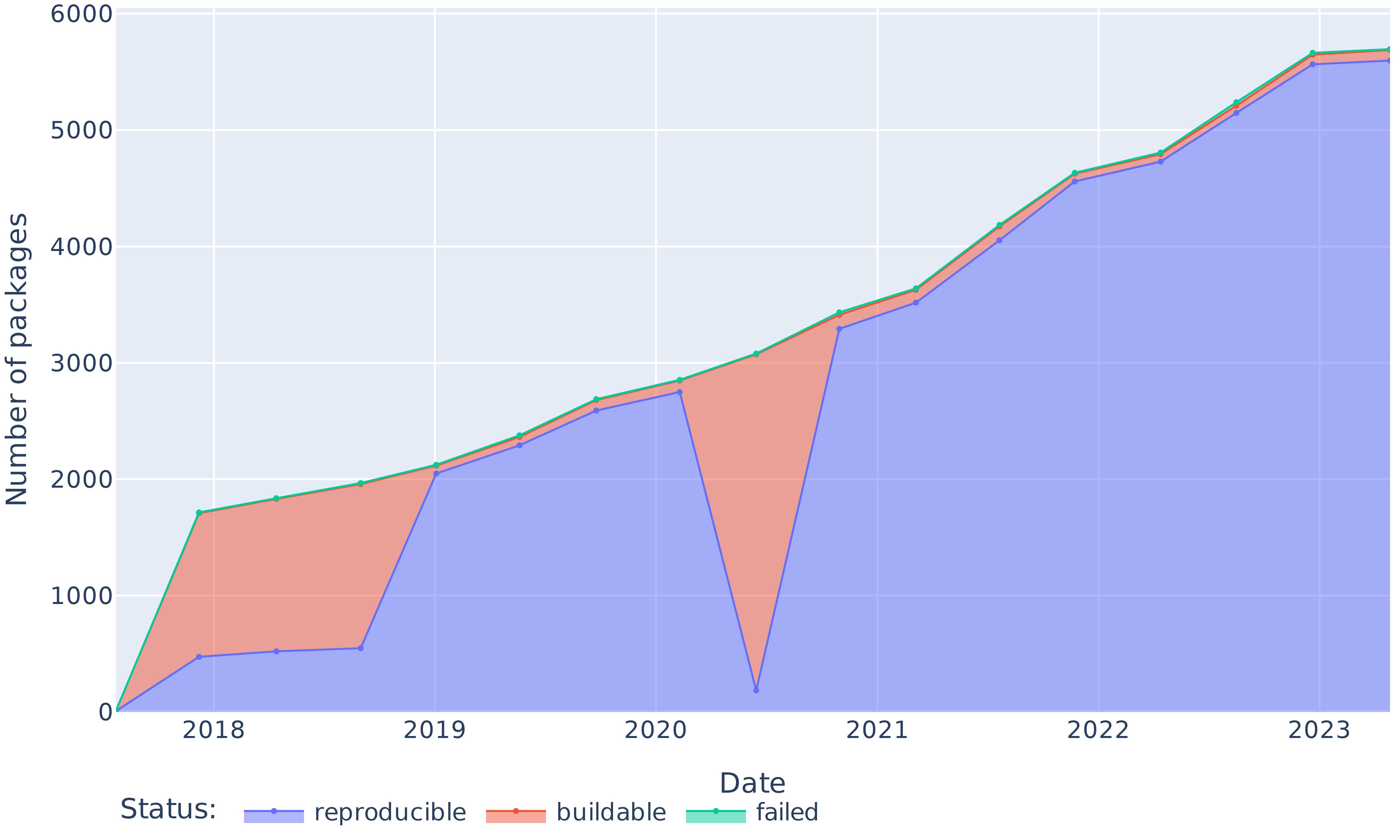

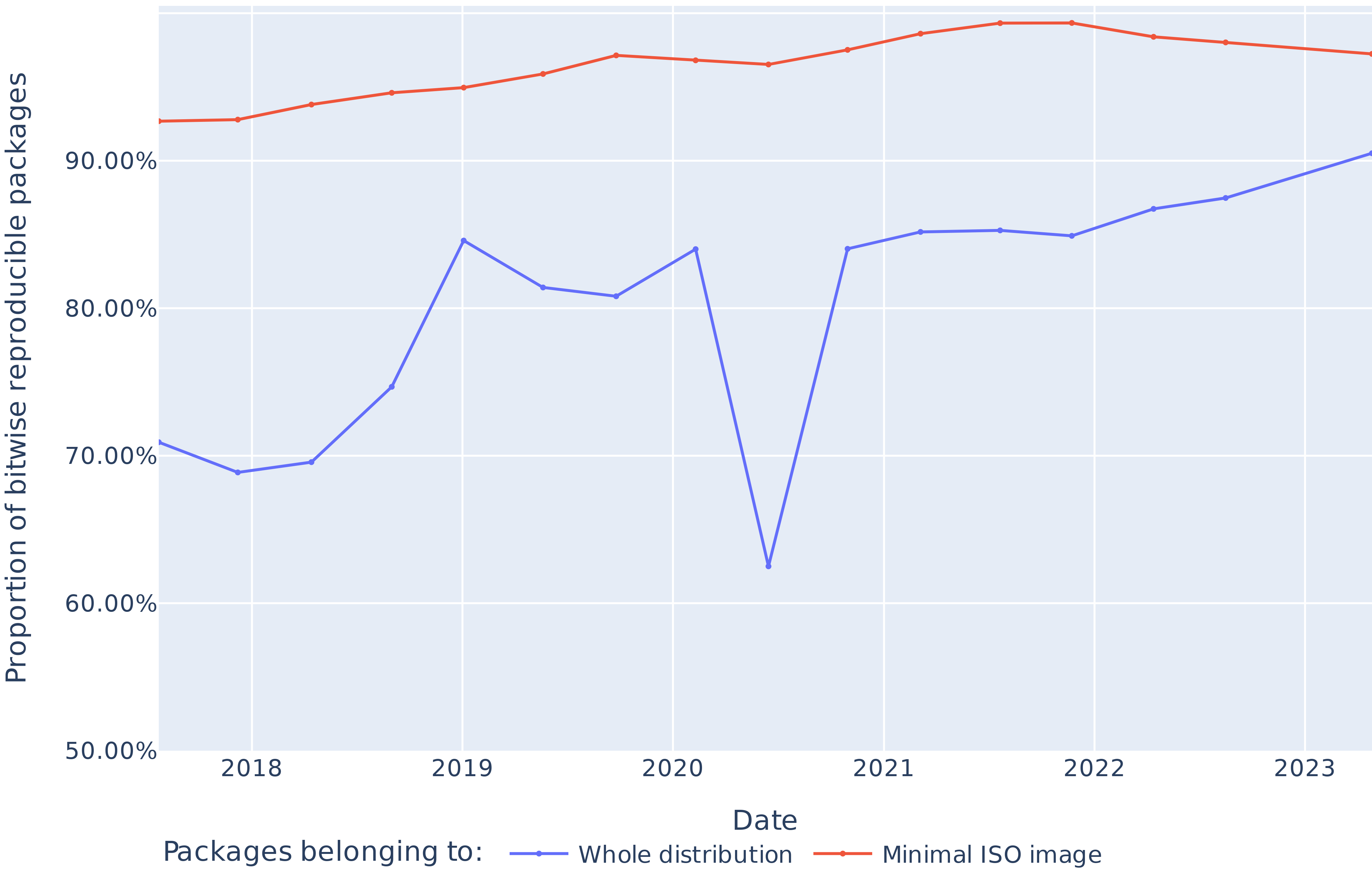

Apart from a significant regression in 2020, we obtain bitwise reproducibility levels between 69% and 91% (see Figure 7). The trends in Figure 8 show that the absolute number of bitwise reproducible packages has consistently gone up and followed the fast growth of the package set. The only exception is the data point for June 2020, where the number of reproducible packages dropped even though the total number of packages grew. We study and explain this reproducibility regression in Section VI-C below.

<details>

<summary>extracted/6418685/paper-images/reproducibility-overall-proportion.png Details</summary>

### Visual Description

## Stacked Area Chart: Proportion of Successful Rebuilds by Status (2018-2023)

### Overview

This is a stacked area chart visualizing the changing proportions of three different statuses for software or system rebuilds over a six-year period, from 2018 to 2023. The chart tracks the "Proportion of successful rebuilds" on the vertical axis against time on the horizontal axis. The three statuses are "reproducible," "buildable," and "failed," which are stacked to show their cumulative contribution to 100% of rebuild attempts.

### Components/Axes

* **Chart Type:** Stacked Area Chart.

* **Y-Axis (Vertical):**

* **Label:** "Proportion of successful rebuilds"

* **Scale:** Percentage, ranging from 30.00% to just above 90.00%. Major gridlines are at 10% intervals (30%, 40%, 50%, 60%, 70%, 80%, 90%).

* **X-Axis (Horizontal):**

* **Label:** "Date"

* **Scale:** Time in years, with markers for 2018, 2019, 2020, 2021, 2022, and 2023. Data points appear to be plotted at quarterly or semi-annual intervals within each year.

* **Legend:**

* **Position:** Bottom center of the chart.

* **Title:** "Statuses"

* **Categories & Colors:**

1. **reproducible:** Represented by a blue line and the blue-filled area at the bottom of the stack.

2. **buildable:** Represented by an orange line and the orange-filled area stacked on top of the blue area.

3. **failed:** Represented by a teal/green line at the very top of the stack. The area for "failed" is not visibly filled, suggesting its proportion is very small.

### Detailed Analysis

**Trend Verification & Data Points (Approximate Values):**

1. **"reproducible" (Blue Line/Area - Bottom Stack):**

* **Trend:** Shows significant volatility with an overall upward trend. It starts around 71%, dips slightly, rises to a peak in 2019, experiences a sharp decline in 2020, then recovers and climbs steadily to its highest point in 2023.

* **Key Points:**

* Start of 2018: ~71%

* Mid-2018: ~69% (local low)

* Early 2019: ~85% (local peak)

* Mid-2020: ~62% (significant trough)

* Early 2021: ~84%

* End of 2022: ~88%

* Mid-2023: ~91% (highest point)

2. **"buildable" (Orange Line/Area - Middle Stack):**

* **Trend:** This represents the proportion of rebuilds that were buildable but not reproducible. Its thickness (the vertical distance between the blue and orange lines) varies inversely with the "reproducible" line. It is thickest when "reproducible" is lowest (e.g., mid-2020) and thinnest when "reproducible" is highest (e.g., 2023).

* **Key Observation:** The orange line itself (the top of the "buildable" area) remains relatively stable near the 100% mark, indicating that the combined total of "reproducible" and "buildable" rebuilds consistently approaches 100%.

3. **"failed" (Teal Line - Top Stack):**

* **Trend:** The teal line is nearly flat and sits at the very top of the stack, at the 100% level (implied, since the three statuses sum to 100%). This indicates that the proportion of failed rebuilds is consistently very small throughout the entire period, in line with the rebuildability rates above 99% reported in the abstract.

* **Key Observation:** There is no visible filled area for "failed," confirming its minimal contribution to the total.

### Key Observations

* **The 2020 Dip:** The most prominent feature is the sharp decline in the "reproducible" proportion during 2020, dropping from over 80% to approximately 62%. This corresponds to a significant expansion of the "buildable" (orange) area.

* **Post-2020 Recovery and Growth:** Following the 2020 low, the "reproducible" proportion not only recovers to pre-dip levels but continues a steady upward trend through 2023, reaching its maximum value.

* **Stability of Total Success:** The sum of "reproducible" and "buildable" (the top of the orange area) remains consistently near 100%, suggesting that outright failures are rare. The primary dynamic is the trade-off between "reproducible" and "buildable" statuses.

* **Legend Placement:** The legend is clearly positioned at the bottom center, with labels directly adjacent to their corresponding colored line samples, ensuring unambiguous mapping.

### Interpretation

This chart tracks how the outcome of package rebuilds evolves over time. The "reproducible" status is the gold standard: the rebuild produces a bitwise identical artifact. "Buildable" means the rebuild succeeds but its output is not bitwise identical, for example because of non-deterministic elements in the build.

* **What the Data Suggests:** The overall upward trend in "reproducible" builds from 2021 onward indicates improving practices, better tooling, or increased emphasis on deterministic builds within the ecosystem. The system is becoming more reliable and predictable.

* **The 2020 Anomaly:** The sharp dip in 2020 is a critical event. It could correlate with a major ecosystem change (e.g., a widespread dependency update, a change in build tool defaults, or the introduction of a new, initially non-reproducible package format). The quick recovery suggests the issue was identified and addressed.

* **Relationship Between Elements:** The inverse relationship between the blue and orange areas highlights a key performance indicator: the conversion of "buildable" projects into "reproducible" ones. The shrinking orange area post-2020 is a positive sign of ecosystem stabilization.

* **The "Failed" Baseline:** The consistently negligible failure rate implies that basic build functionality is well-established. The focus for improvement has rightly shifted to the higher-quality goal of reproducibility.

**In summary, the chart tells a story of an ecosystem that encountered a significant reproducibility challenge around 2020 but has since made strong, sustained progress toward achieving more deterministic and reliable builds.**

</details>

Figure 7: Proportion of reproducible, rebuildable (but unreproducible) and non-rebuildable packages over time.
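The three statuses shown in Figure 7 can be illustrated with a minimal Python sketch. It assumes each package is summarized by the bytes of a reference artifact and of its rebuilt artifact (or `None` when the rebuild failed). The helper names and the toy data are hypothetical and do not reproduce the pipeline used in the study.

```python
import hashlib
from collections import Counter
from typing import Dict, List, Optional


def classify(reference: bytes, rebuilt: Optional[bytes]) -> str:
    """Return the status of one package rebuild (hypothetical helper)."""
    if rebuilt is None:
        return "failed"  # the rebuild did not complete
    # Bitwise reproducibility: both artifacts must hash to the same digest.
    same = hashlib.sha256(reference).digest() == hashlib.sha256(rebuilt).digest()
    return "reproducible" if same else "buildable"


def proportions(statuses: List[str]) -> Dict[str, float]:
    """Share of each status among all rebuild attempts."""
    counts = Counter(statuses)
    total = len(statuses)
    return {s: counts[s] / total for s in ("reproducible", "buildable", "failed")}


# Toy data, not taken from the paper.
rebuilds = [
    (b"hello-2.12", b"hello-2.12"),
    (b"built 2020-06-01", b"built 2023-04-01"),  # embedded build date differs
    (b"zlib", b"zlib"),
    (b"broken", None),                            # rebuild failed
]
statuses = [classify(ref, new) for ref, new in rebuilds]
shares = proportions(statuses)
print(shares)
```

Stacking the three shares for each revision yields a chart of the same shape as Figure 7.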

<details>

<summary>extracted/6418685/paper-images/reproducibility-overall-absolute.png Details</summary>

### Visual Description

## Stacked Area Chart: Successful Rebuilds by Status (2018-2023)

### Overview

This is a stacked area chart tracking the number of successful software or system rebuilds over a period from approximately early 2018 to mid-2023. The chart categorizes rebuilds by their final status: "reproducible," "buildable," and "failed." The data shows a general upward trend in total rebuilds, with a sharp jump between late 2022 and early 2023.

### Components/Axes

* **Chart Type:** Stacked Area Chart.

* **X-Axis (Horizontal):** Labeled "Date". It displays years from 2018 to 2023, with major tick marks at the start of each year. The data appears to be plotted at quarterly or semi-annual intervals.

* **Y-Axis (Vertical):** Labeled "Number of successful rebuilds". The scale is linear, ranging from 0 to 70,000 (70k), with major gridlines at intervals of 10,000.

* **Legend:** Located at the bottom center of the chart, titled "Status". It defines three data series:

* **reproducible:** Represented by a blue line and a semi-transparent blue filled area beneath it.

* **buildable:** Represented by a salmon/orange line and a semi-transparent salmon/orange filled area stacked on top of the "reproducible" area.

* **failed:** Represented by a teal/green line and a semi-transparent teal/green filled area stacked on top of the "buildable" area. The top edge of this green area represents the total number of rebuilds (reproducible + buildable + failed).

### Detailed Analysis

**Trend Verification:**

* **reproducible (Blue Line/Area):** Shows a general upward trend with some volatility. It dips notably in 2020 before recovering, then jumps sharply between late 2022 and early 2023.

* **buildable (Orange Line/Area):** The thickness of this band (the difference between the orange and blue lines) stays within a narrow range for most of the period, apart from a marked widening at the 2020 dip.

* **failed (Green Line/Area):** The top line (total) follows a similar upward trajectory to the "reproducible" line. The green band (failed builds) is too thin to distinguish visually, so failed rebuilds are a negligible share of the total throughout.

**Data Point Extraction (Approximate Values):**

Values are estimated based on the gridlines. The "reproducible" value is read directly from the blue line. The "buildable" value is the difference between the orange and blue lines. The "failed" value is the difference between the green and orange lines. The total is the value of the green line.

| Date (Approx.) | Total (Green Line) | reproducible (Blue) | buildable (Orange - Blue) | failed (Green - Orange) |
| :--- | :--- | :--- | :--- | :--- |
| Early 2018 | ~19,000 | ~14,000 | ~5,000 | ~0 (line starts here) |
| Mid 2018 | ~22,000 | ~15,500 | ~6,500 | ~0 |
| Late 2018 | ~23,000 | ~16,000 | ~7,000 | ~0 |
| Early 2019 | ~29,500 | ~22,000 | ~7,500 | ~0 |
| Mid 2019 | ~28,500 | ~24,000 | ~4,500 | ~0 |
| Late 2019 | ~27,500 | ~22,000 | ~5,500 | ~0 |
| Early 2020 | ~31,000 | ~25,000 | ~6,000 | ~0 |
| Mid 2020 | ~35,000 | ~29,500 | ~5,500 | ~0 |
| Late 2020 | ~36,500 | ~22,500 | ~14,000 | ~0 |
| Early 2021 | ~39,500 | ~33,000 | ~6,500 | ~0 |
| Mid 2021 | ~42,500 | ~36,500 | ~6,000 | ~0 |
| Late 2021 | ~45,000 | ~38,000 | ~7,000 | ~0 |
| Early 2022 | ~48,000 | ~40,500 | ~7,500 | ~0 |
| Mid 2022 | ~51,000 | ~44,000 | ~7,000 | ~0 |
| Late 2022 | ~54,000 | ~47,500 | ~6,500 | ~0 |
| Early 2023 | ~70,500 | ~64,000 | ~6,500 | ~0 |
| Mid 2023 | ~72,000 | ~65,500 | ~6,500 | ~0 |

**Note on "failed" data:** The legend includes "failed," but the green line appears to be the top boundary of the stacked area. The visual representation suggests the green line is the sum of all three categories. The "failed" category, as a distinct band, is not visibly present in the stack, implying its value is zero or negligible throughout the period shown. The green line's label may be misleading; it likely represents the "Total" of all statuses.

### Key Observations

1. **Overall Growth:** The total number of rebuilds (green line) increased from approximately 19,000 in early 2018 to over 72,000 by mid-2023, representing nearly a 280% increase.

2. **Sharp Acceleration:** The most dramatic growth occurs between late 2022 and early 2023, where the total jumps from ~54,000 to ~70,500.

3. **Volatility in "reproducible":** The "reproducible" category shows a significant dip in 2020 (down to ~22,500 from ~29,500 at the preceding data point; the main text dates this regression to June 2020) before beginning a sustained climb.

4. **Stable "buildable" Band:** The number of "buildable" (but not reproducible) rebuilds remains in a relatively narrow band (roughly 4,500 to 7,500) for most of the timeline, with a possible outlier spike in late 2020.

5. **Legend/Data Discrepancy:** The "failed" category listed in the legend does not correspond to a visible, separate data series in the chart. The green line is the cumulative total.

### Interpretation

This chart shows sustained growth in the number of rebuilt packages over roughly five and a half years, which the main text relates to the fast growth of the package set.

The relationship between the categories is key: "reproducible" builds form the vast majority of the total, indicating a high standard of build quality and determinism. The "buildable" category represents a smaller, stable subset of builds that succeed but lack full reproducibility. The absence of a visible "failed" band is a strong positive indicator, suggesting either an extremely low failure rate or that the chart's green line is mislabeled and actually represents the sum of "reproducible" and "buildable" only.

The notable dip in "reproducible" builds in 2020 could indicate a systemic issue or a period of transition in the underlying systems; the main text refers to it as a reproducibility regression and analyzes it in Section VI-C. The subsequent recovery and the sharp increase between late 2022 and early 2023 point to a resolution of that issue together with a substantial growth of the package set. The data suggests a system that has matured significantly, achieving both higher volume and a strong emphasis on reproducibility.

</details>

Figure 8: Absolute numbers of reproducible, rebuildable (but unreproducible) and non-rebuildable packages over time.
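As a rough consistency check between Figures 7 and 8, the sketch below recomputes reproducibility proportions and overall growth from three of the approximate values listed in the Figure 8 description above. These numbers are visual estimates taken from that description, not the paper's exact counts, and the variable names are illustrative.

```python
# (label, total, reproducible): approximate values read off Figure 8
estimates = [
    ("Early 2018", 19_000, 14_000),
    ("Late 2020", 36_500, 22_500),
    ("Mid 2023", 72_000, 65_500),
]

# Proportion of reproducible packages at each sampled point.
rates = {label: repro / total for label, total, repro in estimates}
for label, rate in rates.items():
    print(f"{label}: {rate:.1%} reproducible")

# Relative growth of the total between the first and last sampled points.
first_total = estimates[0][1]
last_total = estimates[-1][1]
growth = (last_total - first_total) / first_total
print(f"Growth in total packages: {growth:.0%}")
```

The resulting proportions (about 74%, 62%, and 91%) are broadly in line with the values read off Figure 7, and the total grows by roughly 279% between the first and last points.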

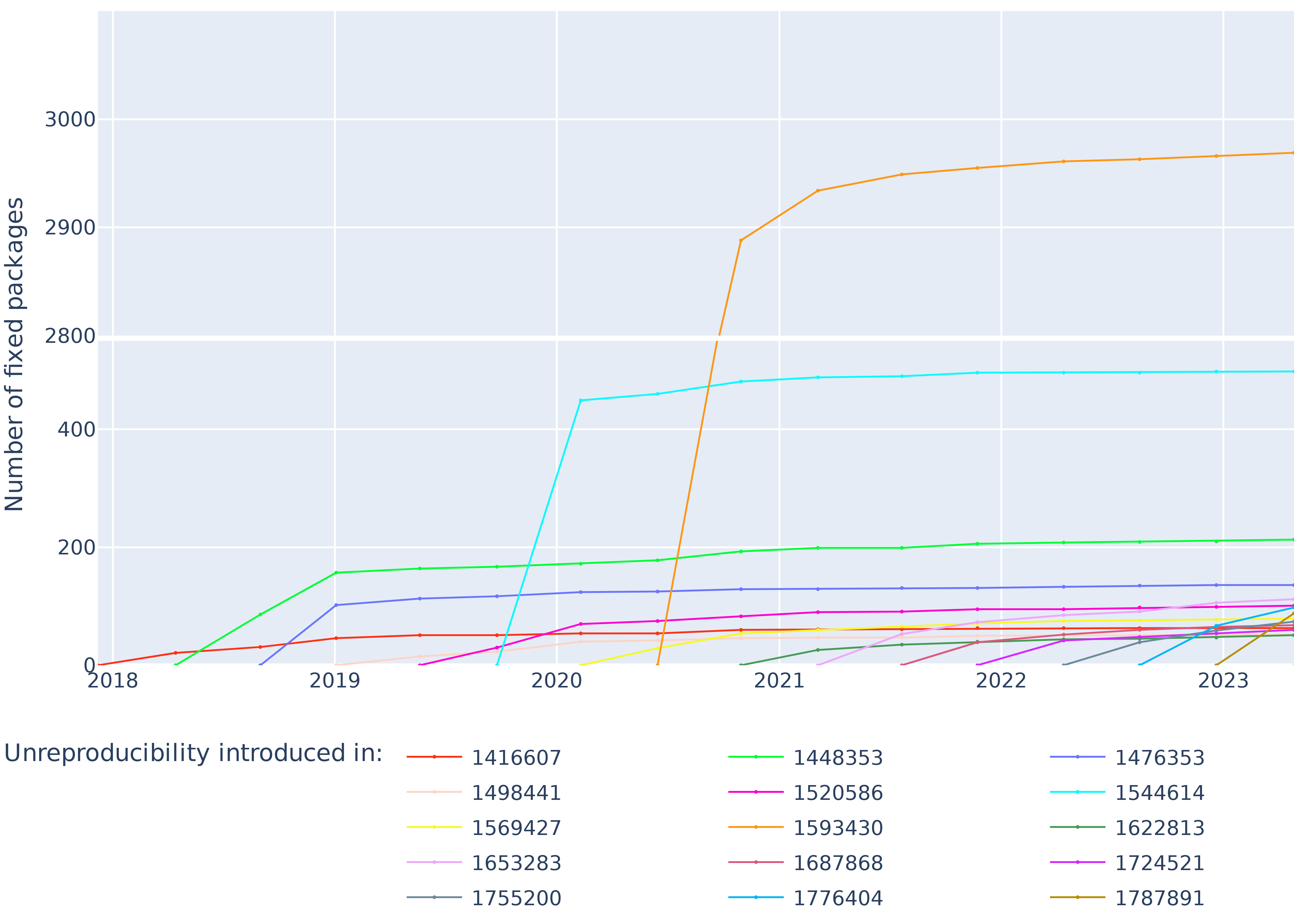

Figure 9 shows, for each revision, the cumulative number of unreproducibilities introduced by that revision that are fixed over time. The steep slope between the first two points of each plot indicates that most of the unreproducibilities introduced in a revision are fixed in the next revision: 62% of them on average (rising to 85% if we account for packages fixed after the 2020 reproducibility regression).
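The quantity plotted in Figure 9 can be sketched as follows. The example assumes a hypothetical mapping from each revision to the set of packages that are unreproducible in it. A package counts as introduced at a revision if it enters that set after being absent from the previous revision's set, and as fixed at the first later revision where it is absent again. The revision labels, package names, and these definitions are illustrative assumptions rather than the authors' exact procedure.

```python
from typing import Dict, List, Set

# Hypothetical toy data: revision -> packages that are unreproducible in it.
unreproducible: Dict[str, Set[str]] = {
    "rev1": {"a"},
    "rev2": {"a", "b", "c", "d"},
    "rev3": {"a", "d"},
    "rev4": {"a"},
}
revisions: List[str] = list(unreproducible)  # assumed to be in chronological order


def cumulative_fixes(introduced_at: int) -> List[int]:
    """Cumulative count of packages introduced at a revision and fixed later."""
    previous = unreproducible[revisions[introduced_at - 1]]
    current = unreproducible[revisions[introduced_at]]
    pending = current - previous  # newly unreproducible packages
    fixed_total = 0
    curve = []
    for rev in revisions[introduced_at + 1:]:
        fixed_now = pending - unreproducible[rev]
        fixed_total += len(fixed_now)
        pending -= fixed_now  # a fixed package is counted only once
        curve.append(fixed_total)
    return curve


curve = cumulative_fixes(1)  # unreproducibilities introduced in rev2
introduced = len(unreproducible["rev2"] - unreproducible["rev1"])
print(curve)                  # cumulative fixes at rev3 and rev4
print(curve[0] / introduced)  # share fixed by the next revision
```

In this toy example, two of the three unreproducibilities introduced in `rev2` are fixed by `rev3`, which corresponds to the steep initial slope described above.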

<details>

<summary>extracted/6418685/paper-images/fixes-per-revision.png Details</summary>

### Visual Description

## Line Chart: Number of Fixed Packages Over Time by Unreproducibility Introduction Event

### Overview

This is a line chart tracking the cumulative "Number of fixed packages" over time, from early 2018 to late 2023. The chart displays multiple data series, each representing a distinct "Unreproducibility introduced in:" event, identified by a unique numeric code. The data shows how many packages were fixed following the introduction of each specific unreproducibility issue.

### Components/Axes

* **Y-Axis (Vertical):** Labeled "Number of fixed packages". The scale is non-linear, with major gridlines at 0, 200, 400, 2000, 2800, 2900, and 3000. This indicates a significant jump in scale between 400 and 2000.

* **X-Axis (Horizontal):** Represents time, with major year markers for 2018, 2019, 2020, 2021, 2022, and 2023. Data points appear to be plotted at quarterly or semi-annual intervals.

* **Legend:** Located at the bottom center of the chart. It is titled "Unreproducibility introduced in:" and contains 15 entries, each pairing a colored line with a numeric identifier. The legend is organized in three columns.

### Detailed Analysis

**Legend Entries (Color to ID Mapping):**

1. **Red:** 1416607

2. **Light Pink:** 1498441

3. **Yellow:** 1569427

4. **Light Purple:** 1653283

5. **Dark Grey:** 1755200

6. **Bright Green:** 1448353

7. **Magenta:** 1520586

8. **Orange:** 1593430

9. **Dark Red/Brown:** 1687868

10. **Cyan:** 1776404

11. **Blue:** 1476353

12. **Light Blue/Cyan:** 1544614

13. **Dark Green:** 1622813

14. **Purple:** 1724521