# Rollout Roulette: A Probabilistic Inference Approach to Inference-Time Scaling of LLMs using Particle-Based Monte Carlo Methods

**Authors**:

- Isha Puri

- Shiv Sudalairaj

- GX Xu

- Kai Xu

- Akash Srivastava (MIT CSAIL RedHat AI Innovation)

Abstract

Large language models (LLMs) have achieved significant performance gains via scaling up model sizes and/or data. However, recent evidence suggests diminishing returns from such approaches, motivating a pivot to scaling test-time compute. Existing deterministic inference-time scaling methods, usually with reward models, cast the task as a search problem, but suffer from a key limitation: early pruning. Due to inherently imperfect reward models, promising trajectories may be discarded prematurely, leading to suboptimal performance. We propose a novel inference-time scaling approach by adapting particle-based Monte Carlo methods. Our method maintains a diverse set of candidates and robustly balances exploration and exploitation. Our empirical evaluation demonstrates that our particle filtering methods have a 4–16x better scaling rate over deterministic search counterparts on both various challenging mathematical and more general reasoning tasks. Using our approach, we show that Qwen2.5-Math-1.5B-Instruct surpasses GPT-4o accuracy in only 4 rollouts, while Qwen2.5-Math-7B-Instruct scales to o1 level accuracy in only 32 rollouts. Our work not only presents an effective method to inference-time scaling, but also connects rich literature in probabilistic inference with inference-time scaling of LLMs to develop more robust algorithms in future work. Code and further information is available at https://probabilistic-inference-scaling.github.io/

1 Introduction

Large language models (LLMs) continue to improve through larger architectures and massive training corpora (kaplan2020scalinglawsneurallanguage, ; snell2024scalingllm, ). In parallel, inference-time scaling (ITS)—allocating more computation at inference time—has emerged as a complementary approach to improve performance without increasing model size. Recent work has shown that ITS enables smaller models to match or exceed the accuracy of larger ones on complex reasoning tasks (beeching2024scalingtesttimecompute, ), with proprietary systems like OpenAI’s o1 and o3 explicitly incorporating multi-trial inference for better answers (openai2024openaio1card, ).

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: Mathematical Logic Flow Diagram

## 1. Overview

This image is a technical diagram illustrating two different logical paths (labeled "1" and "2") taken to solve a word problem. It evaluates the accuracy of each step in the reasoning process using numerical scores and visual indicators (checkmarks and crosses).

## 2. Component Isolation

### Region A: Problem Statement (Left)

* **Icon:** A calculator/math symbol sheet with a question mark.

* **Text Content:**

> "Jane has twice as many pencils as Mark. Together they have 9 pencils. How many pencils does Jane have?"

### Region B: Path Selection (Center-Left)

Two arrows originate from the problem statement, pointing to two distinct reasoning paths:

* **Path 1:** Represented by a thought bubble icon containing the number "1".

* **Path 2:** Represented by a thought bubble icon containing the number "2".

### Region C: Reasoning Path 1 (Top Right)

This path consists of three sequential steps leading to a correct conclusion.

| Step | Mathematical Reasoning Text | Score | Visual Indicator |

| :--- | :--- | :--- | :--- |

| **Step 1** | Total parts = 2 (Jane) + 1 (Mark) = 3 | 0.5156 | Red dotted box around score |

| **Step 2** | Each part = 9 / 3 = 3 pencils | 0.9375 | None |

| **Step 3** | Jane has 2 parts -> 2x3= 6. Final Answer: 6 pencils | 0.9883 | Green underline; Large green checkmark |

### Region D: Reasoning Path 2 (Bottom Right)

This path consists of two sequential steps leading to an incorrect conclusion.

| Step | Mathematical Reasoning Text | Score | Visual Indicator |

| :--- | :--- | :--- | :--- |

| **Step 1** | Total parts = 3 -> each part = 9/3 = 3 | 0.9453 | Green dotted box around score |

| **Step 2** | Jane gets 1 part -> 3. Final Answer: 3 pencils | 0.0133 | Red underline; Large red 'X' mark |

---

## 3. Data Analysis and Trends

### Logical Flow Comparison

* **Path 1 (Correct):** Correctly identifies the ratio (2:1), calculates the value of a single unit (3), and then multiplies by Jane's share (2) to reach the correct answer of 6. The scores increase as the logic progresses toward the final correct answer (0.5156 -> 0.9375 -> 0.9883).

* **Path 2 (Incorrect):** Correctly identifies the total parts (3) in Step 1, but fails in Step 2 by incorrectly assigning Jane only 1 part instead of 2. This results in a final answer of 3. The score drops significantly at the point of the logical error (0.9453 -> 0.0133).

### Visual Coding

* **Green Checkmark:** Indicates the successful completion of Path 1.

* **Red 'X':** Indicates the failure of Path 2.

* **Dotted Boxes:** A red dotted box highlights a lower confidence score in a correct path (Step 1 of Path 1), while a green dotted box highlights a high confidence score in the initial step of the incorrect path.

* **Underlines:** A green underline marks the correct final answer; a red underline marks the incorrect final answer.

## 4. Language Declaration

* **Primary Language:** English.

* **Other Languages:** None present.

</details>

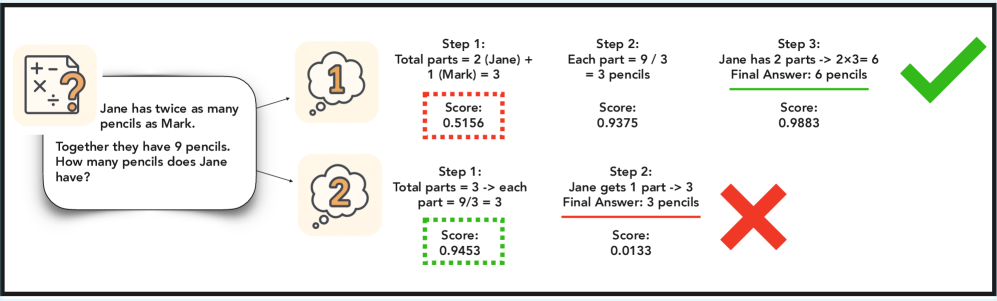

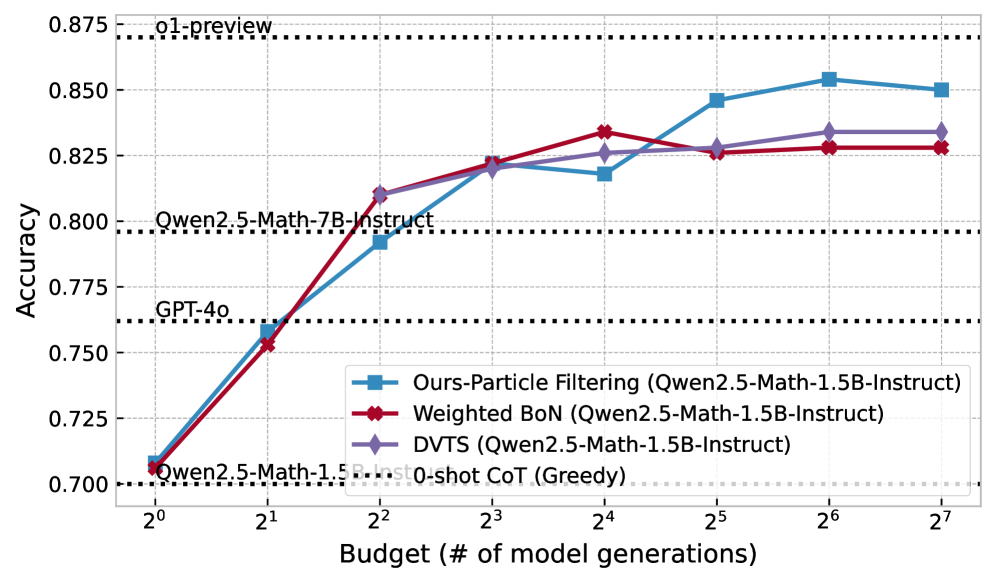

Figure 1: A true example of PRM assigning a lower score to the first step of a solution that turns out to be correct. In deterministic scaling methods, this solution would have been discarded in favor for one that had a higher initial PRM score but turned out to be incorrect.

Beyond answer-level scaling methods like self-consistency and best-of- $n$ sampling (brown2024largelanguage, ), a popular class of ITS methods formulates inference as search guided by a process reward model (PRM), which scores partial sequences step-by-step. This perspective has led to the adoption of algorithms like beam search (zhou2024languageagenttreesearch, ) and Monte Carlo tree search (guan2025rstarmathsmall, ). These methods refine LLM outputs by prioritizing trajectories that score highly according to the PRM. However, they also inherit a major limitation from classical search: early pruning. Because the PRM is an imperfect approximation of true correctness, these methods often eliminate promising candidates too early-based on noisy or miscalibrated partial scores. This failure mode is illustrated in Figure 1. The PRM assigns a higher initial score to Answer 2, which ultimately turns out to be incorrect, and a lower score to the correct Answer 1. A deterministic method like beam search would discard Answer 1 after the first step, never recovering it—even though it is the correct solution. Such brittleness is inherent to greedy search: once a path is pruned, it cannot be revisited.

To address this limitation, we instead maintain a distribution over the possible paths during generation to represent the uncertainty we would like to account for due to the imperfection in reward modeling and propagate it through sampling. We realize this approach in a probabilistic inference framework for inference-time scaling, in which we use particle filtering to sample from the posterior distribution over accepted trajectories. Our method maintains a weighted population of candidate sequences that evolves over time, balancing exploitation of high-PRM paths with stochastic exploration of alternatives. Unlike beam search, which targets the mode of an approximate distribution, particle filtering samples from the typical set, making it inherently more robust to noise and multi-modality in the reward landscape. High-scoring candidates are favored but not allowed to dominate, ensuring that low-probability (but potentially correct) paths remain in play.

We demonstrate that this simple shift-from deterministic search to sampling with uncertainty—produces strong empirical gains. On mathematical and reasoning tasks, our method significantly outperforms existing ITS approaches across multiple model families. For instance, using Qwen2.5-Math-1.5B-Instruct and a compute budget of 4, our method surpasses GPT-4o; with a budget of 32, the 7B model surpasses o1 accuracy.

Our key contributions are as follows.

1. We propose a particle filtering algorithm for inference-time scaling that mitigates early pruning by maintaining exploration across trajectories with uncertainty. We formulate ITS as posterior inference over a state space model defined by an LLM (transition model) and PRM (emission model), enabling principled application of probabilistic inference tools.

1. We present strong results on mathematical and out-of-domain reasoning tasks and study Particle Filtering’s scaling performance.

1. We ablate compute allocation strategies (parallel vs. iterative), PRM aggregation methods, and generation temperature, proposing a new model-based reward aggregation method that improves stability and performance.

1. We demonstrate that our proposed methods have 4–16x faster scaling speed than previous methods based on a search formulation on the MATH500 and AIME 2024 datasets, with small language models in the Llama and Qwen families. We show that PF can scale Qwen2.5-Math-1.5B-Instruct to surpasses GPT-4o accuracy with only a budget of 4 and scale Qwen2.5-Math-7B-Instruct to o1 accuracy with a budget of 32.

2 Background

State space models describe sequential systems that evolve stepwise, typically over time (Särkkä_2013, ). They consist of a sequence of latent states $\{x_{t}\}_{t=1}^{T}$ and corresponding observations $\{o_{t}\}_{t=1}^{T}$ The evolution of states is governed by a transition model $p(x_{t}\mid x_{<t-1})$ , and the observations are governed by the emission model $p(o_{t}|x_{t})$ . The joint distribution of states and observations is given by: $p(x_{1:T},o_{1:T})=p(x_{1}){\prod}_{t=2}^{T}p(x_{t}\mid x_{<t-1}){\prod}_{t=1}^{T}p(o_{t}\mid x_{t})$ , where $p(x_{1})$ is the prior.

Probabilistic inference in SSMs involves estimating the posterior distribution of the hidden states given the observations, $p(x_{1:T}|o_{1:T})$ (Särkkä_2013, ), which is generally intractable due to the high dimensionality of the state space and the dependencies in the model. Common approaches approximate the posterior through sampling-based methods or variational approaches (mackay2003information, ). Particle filtering (PF) is a sequential Monte Carlo method to approximate the posterior distribution in SSMs (nonlinearfiltering, ; sequentialmonte, ). PF represents the posterior using a set of $N$ weighted particles $\{x_{t}^{(i)},w_{t}^{(i)}\}_{i=1}^{N}$ , where $x_{t}^{(i)}$ denotes the $i^{\text{th}}$ particle at time $t$ , and $w_{t}^{(i)}$ is its associated weight. The algorithm iteratively propagates particles using the transition model and updates weights based on the emission model: $w_{t}^{(i)}\propto w_{t-1}^{(i)}p(o_{t}\mid x_{t}^{(i)})$ .

3 Method

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Document Extraction: Particle Filtering for Mathematical Reasoning

This document describes a flowchart illustrating a multi-step reasoning process using a "particle filtering" approach to solve a word problem. The process involves generating steps, scoring them, and resampling based on those scores to reach a final answer.

## 1. Input Problem (Header/Left Region)

The process begins with a mathematical word problem contained in a speech bubble:

> "Jane has twice as many pencils as Mark. Together they have 9 pencils. How many pencils does Jane have?"

---

## 2. Process Flow Components

The diagram is organized into three main stages of generation and two intermediate resampling phases.

### Stage 1: Generate a 1st step / score (Pink Header)

Three initial "particles" (hypotheses) are generated:

| Particle | Content | Score |

| :--- | :--- | :--- |

| **Particle 1** | Step 1: Total parts = 2 (Jane) + 1 (Mark) = 3 | 0.3198 |

| **Particle 2** | Step 1: Total parts = 3 -> each part = 9/3 = 3 | 0.9453 |

| **Particle 3** | Step 1: Let J = x, M = 2x | 0.5898 |

### Phase 1: Resampling (Orange Header)

Based on the scores in Stage 1, the particles are redistributed:

* **Particle 2** (highest score) is resampled twice (indicated by black dotted lines leading to two different nodes).

* **Particle 1** is resampled once (indicated by a green dotted line).

* **Particle 3** is discarded (no lines lead from it).

---

### Stage 2: Generate a next step / score (Pink Header)

The resampled particles continue their reasoning:

| Particle | Content | Score | Notes |

| :--- | :--- | :--- | :--- |

| **Particle 1** | Step 2: Each part = 9 / 3 = 3 pencils | 0.9375 | Derived from previous Particle 1 |

| **Particle 2** | Step 2: Jane gets 1 part -> 3. Final Answer: 3 pencils. | 0.0133 | Completed its answer |

| **Particle 3** | Step 2: Mark = 1 part | 0.0392 | Derived from previous Particle 2 |

### Phase 2: Resampling (Orange Header)

* **Particle 1** (highest score in this stage) is resampled twice (indicated by a black dotted line and a green dotted line).

* The other particles are discarded or reach a terminal state with low scores.

---

### Stage 3: Generate a next step / score (Pink Header)

The final reasoning steps are generated:

| Particle | Content | Score | Notes |

| :--- | :--- | :--- | :--- |

| **Particle 1** | Step 3: Mark has 1 part = 3 pencils. Jane has other 6. Final Answer: 6 Pencils. | 0.8133 | Completed its answer |

| **Particle 3** | Step 3: Jane has 2 parts -> 2x3 = 6. Final Answer: 6 pencils. | 0.9883 | Completed its answer |

---

## 3. Final Selection (Green Region)

**Header:** "Select particle with highest reward as final answer"

The system selects the path with the highest final score (0.9883), which is highlighted by a green dotted line leading to the final consolidated solution block.

**Final Answer Content:**

* **Step 1:** Total parts = 2 (Jane) + 1 (Mark) = 3

* **Step 2:** Each part = 9 / 3 = 3 pencils

* **Step 3:** Jane has 2 parts -> 2x3 = 6

* **Final Answer:** 6 pencils

---

## 4. Visual Legend & Logic Summary

* **Color Coding:**

* **Pink Labels:** Action steps (Generation).

* **Orange Labels:** Selection steps (Resampling).

* **Blue Boxes:** Active reasoning particles. The saturation of blue roughly correlates with the confidence/score.

* **Green Box/Lines:** The "Winning" path and final output.

* **Trend Analysis:** The process filters out incorrect logic (like Particle 3's algebraic setup or Particle 2's incorrect Step 2 conclusion) by favoring paths with higher numerical scores during the resampling phases. The green dotted line traces the "optimal" path from Step 1 through to the final result.

</details>

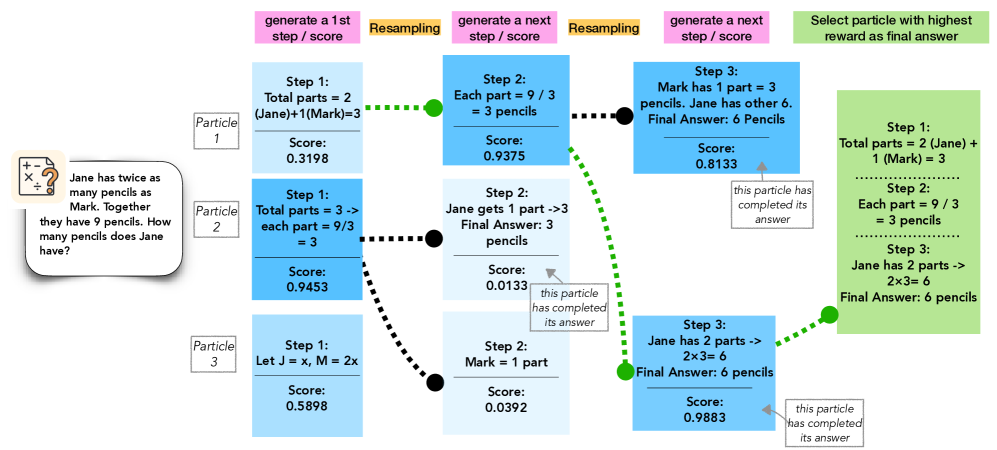

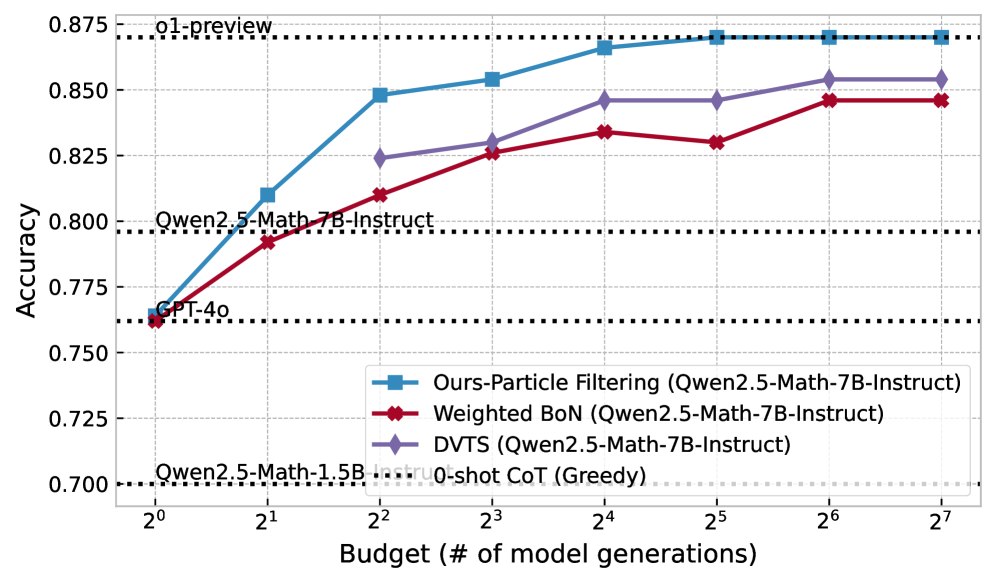

Figure 2: Inference-time scaling with particle filtering: initialize $n$ particles, generate a step for each, score with the PRM, resample via softmax-weighted scores, and repeat until full solutions are formed.

We formulate inference-time scaling for a language model $p_{M}$ as approximate posterior inference in a state space model (SSM) defined over token sequences. Let $x_{1:T}$ denote the sequence of generated tokens (or chunks, such as steps in a math problem), and let $o_{1:T}$ denote binary observations indicating whether the steps so far are accepted, given a prompt $c$ .

The forward model defines the joint distribution over latent states and observations as:

$$

\displaystyle p_{M}(x_{1:T},o_{1:T}\mid c)\propto\prod_{t=1}^{T}p_{M}(x_{t}\mid c,x_{<t-1})\prod_{t=1}^{T}p(o_{t}\mid c,x_{<t}), \tag{1}

$$

where the transition model $p_{M}(x_{t}\mid c,x_{<t-1})$ is given by the language model $M$ , and the emission model $p(o_{t}\mid c,x_{<t})$ is a Bernoulli distribution: $p(o_{t}\mid c,x_{<t})=\mathcal{B}(o_{t};r(c,x_{t}))$ , with reward function $r(c,x_{t})$ encoding the acceptability of the step $x_{t}$ . Figure 3 illustrates this SSM.

Given this model, our goal is to infer the posterior distribution over latent trajectories that yield fully accepted sequences, i.e., $p_{M}(x_{1:T}\mid c,o_{1:T}=\mathbf{1})$ . This formulation makes particle filtering a natural and theoretically grounded choice for inference.

In practice, the true reward function $r$ is often unavailable. Following prior work, we approximate it using a pre-trained preference or reward model (PRM) $\hat{r}$ suited to the target domain (e.g., math reasoning). This results in an approximate emission model: $\hat{p}(o_{t}\mid c,x_{<t})=\mathcal{B}(o_{t};\hat{r}(c,x_{<t}))$ . Substituting this into the forward model, we obtain the approximate joint distribution:

$$

\displaystyle\hat{p}_{M}(x{1:T},o_{1:T}\mid c)\propto\prod_{t=1}^{T}p_{M}(x_{t}\mid c,x_{<t-1})\prod_{t=1}^{T}\hat{p}(o_{t}\mid c,x_{<t}), \tag{2}

$$

and correspondingly aim to estimate the posterior $\hat{p}_{M}(x{1:T}\mid c,o_{1:T}=\mathbf{1})$ . $x_{1}$ $x_{2}$ $·s$ $x_{T}$ $c$ $o_{1}$ $o_{2}$ $·s$ $o_{T}$ $p_{M}(x_{1}\mid c)$ $p_{M}(x_{2}\mid c,x_{1})$ $p_{M}(x_{T}\mid c,x_{<T})$ $\hat{p}(o_{1}\mid c,x_{1})$ $\hat{p}(o_{2}\mid c,x_{2})$ $\hat{p}(o_{t}\mid c,x_{<t})$ language model reward model

Figure 3: State-space model for inference-time scaling. $c$ is a prompt, $x_{1},...,x_{T}$ are LLM outputs, and $o_{1},...,o_{T}$ are “observed” acceptances from a reward model. We estimate the latent states conditioned on $o_{t}=1$ for all $t$ .

3.1 Particle Filtering for Estimating the Posterior

We now apply particle filtering (PF) to approximate the posterior over accepted sequences $x_{1:T}$ under the model in (2). Each particle represents a partial trajectory, and inference proceeds by iteratively generating, scoring, and resampling these particles based on their reward-induced likelihood. At each time step $t$ , PF maintains a population of $N$ weighted particles. The algorithm proceeds as follows:

- Initialization ( $t=1$ ): Each particle is initialized by sampling the first token from the LLM: $x_{1}^{(i)}\sim p_{M}(x_{1}\mid c)$ .

- Propagation ( $t>1$ ): Each particle is extended by sampling the next token from the LLM conditioned on its history: $x_{t}^{(i)}\sim p_{M}(x_{t}\mid c,x_{<t-1}^{(i)})$ .

- Weight Update: Using a reward model $\hat{r}$ , each particle is assigned an unnormalized weight that reflects the likelihood of acceptance: $w_{t}^{(i)}\propto w_{t-1}^{(i)}·\hat{r}(c,x_{<t}^{(i)})$ .

- Resampling: To focus computation on promising trajectories, we sample a new population of particles from the current set using a softmax distribution over weights: $\mathbb{P}_{t}(j=i)={\exp(w_{t}^{(i)})}/{\sum{i\textquoteright=1}^{N}\exp(w_{t}^{(i\textquoteright)})}$ .

The next generation of particles is formed by sampling indices $j_{t}^{(1)},...,j_{t}^{(N)}\sim\mathbb{P}_{t}$ and retaining the corresponding sequences.

This procedure balances exploitation of high-reward partial generations with stochastic exploration, increasing the chances of recovering correct completions even when early steps are uncertain.

The algorithm can be implemented efficiently: both token sampling and reward computation can be parallelized across particles. With prefix caching, the total runtime is comparable to generating $N$ full completions independently.

Final Answer Selection

Particle filtering yields a weighted set of samples approximating the posterior, enabling principled answer selection strategies. While selecting the highest-weighted particle or using (weighted) majority voting better reflects the typical set, we adopt the standard practice of scoring all final outputs with an outcome reward model (ORM) and selecting the top-scoring one for fair comparison with prior work. Additionally, the posterior samples allow richer evaluation—for instance, estimating the expected correctness of the model under its own distribution, rather than relying solely on point estimates. Notably, samples from Algorithm 1 can be used to construct unbiased estimates of any expectation over (2). In the context of math and reasoning, it guarantees the expected accuracy is unbiased, which we formulate in Theorem 2 (proof in Appendix C).

**Theorem 1 (Unbiasedness of Expected Accuracy)**

*Let $\{(w^{(i)},x^{(i)})\}$ be weighted particles from Algorithm 1 and $\mathrm{is\_correct}(x)$ is a function to check the correctness of response $x$ . We have

$$

\mathbb{E}\left\{\sum_{i}\left[w^{(i)}\;\mathrm{is\_correct}(x^{(i)})\right]\right\}=\sum_{x}\left[\hat{p}_{M}(x_{1:T}\mid c,o_{1:T}=\mathbf{1})\;\mathrm{is\_correct}(x^{(i)})\right], \tag{3}

$$

where the expectation is over the randomness of the algorithm itself.*

Reward Aggregation with PRMs

To compute particle weights during generation, we aggregate per-step scores from the process reward model (PRM) $\hat{r}$ . Our default uses a product of step-level rewards to align with the factorized likelihood structure, but alternative aggregation strategies (e.g., min, last-step, or model-based) may offer different trade-offs. We describe and compare these strategies in detail in Appendix A and report ablation results in 4.5.

Sampling v.s. deterministic search

An alternative to our sampling-based approach is to treat inference-time scaling as an optimization problem under the approximate posterior (2), reducing to deterministic search methods like beam search or MCTS. However, these methods assume the reward model $\hat{r}$ is accurate at every step and prune aggressively based on early scores. In practice, PRMs are noisy and their preferences often shift during unrolling. As a result, deterministic search can irreversibly discard trajectories that may have low early scores but high posterior mass overall. Once pruned, such paths cannot be recovered.

In contrast, particle filtering maintains a distribution over partial sequences and uses stochastic resampling to balance exploration and exploitation. This allows recovery from early errors and robustness to reward noise. While beam search targets the mode of the approximate posterior-making it sensitive to local errors-PF samples from the typical set, smoothing over inconsistencies in $\hat{r}$ . Unlike search heuristics, PF provides consistent, unbiased estimators under mild assumptions, and naturally captures multi-modal solutions—critical when multiple valid completions exist. We validate these advantages empirically in Section 4.5.

Multiple iterations and parallel chains

The PF approach to inference-time scaling can be used to define a MCMC kernel that enables two new types of scaling: multiple iterations of complete answers inspired by PG and parallel simulations inspired by parallel tempering. We detail the methodology and results for both extensions in sections B.1 and B.2 in the appendix.

4 Evaluation

We evaluate our proposed methods in this section. We detail our experimental setup in Section 4.1 and start with highlighted results comparing against closed-source models and competitive inference-time scaling methods with open-source models (Section 4.2). We then study how the main algorithm, particle filtering, scales with more computation and compare it with competitors (Section 4.4). We further perform an extensive ablation study on key algorithmic choices like reward models, reward aggregation, final answer aggregation, and LLM temperatures (Section 4.5). Finally, we study different allocations of the compute budget through iterative and parallel extensions (Section 4.5).

4.1 Setup

Models

We consider two types of open-source small language models (SLMs) as our policy models for generating solutions. The first is general models, for which we evaluate Qwen2.5-1.5B-Instruct (qwen_2_5, ), Qwen2.5-7B-Instruct, Llama-3.2-1B-Instruct, and Llama-3.1-8B-Instruct (llama3, ). The second is math models, using Qwen2.5-Math-1.5B-Instruct and Qwen2.5-Math-7B-Instruct. These small models are well-suited for inference-time scaling, enabling efficient search of multiple trajectories.

Process Reward Models

To guide our policy models, we utilized Qwen2.5-Math-PRM-7B (prmlessons, ), a 7B process reward model. We selected this model for its superior performance over other PRMs we tested, including Math-Shepherd-mistral-7b-prm (wang2024mathshepherdverifyreinforcellms, ), Llama3.1-8B-PRM-Deepseek-Data (xiong2024rlhflowmath, ), and EurusPRM-Stage2 (primerm, ). This result as an ablation study is provided in Section 4.5, where we also study the different ways to aggregate step-level rewards from PRMs discussed in Section 3.1.

Baselines

- Greedy: single greedy generation from the model, serving as the “bottom-line” performance.

- Self Consistency (wang2023selfconsistencyimproveschainthought, ): simplest scaling method, majority voting across candidates

- BoN/WBoN (brown2024largelanguage, ): simple RM-based scaling method, scores outputs with outcome reward models

- Beam Search (snell2024scalingllmtesttimecompute, ): structured search procedure that incrementally builds sequences by keeping the top- $k$ highest-scoring partial completions at each generation step.

- DVTS (beeching2024scalingtesttimecompute, ): a parallel extension of beam search that improves the exploration and performance.

Datasets

We evaluate our methods and baselines across a wide variety of tasks that span difficulty level and domain to test basic and advanced problem-solving and reasoning.

- MATH500 (math500, ): 500 competition-level problems from various mathematical domains.

- AIME 2024 (ai_mo_validation_aime, ): 30 high difficulty problems from the American Invitational Mathematics Examination (AIME I and II) 2024.

- NumGLUE Task 2 (Chemistry) (mishra2022numglue, ): 325 questions that test advanced reasoning across real-world tasks, mostly centered on the chemistry domain.

- FinanceBench (islam2023financebenchnewbenchmarkfinancial, ): 150 open-book financial question answering tasks grounded in real-world financial documents and analysis.

Parsing and scoring

Details on parsing and scoring functions across datasets in the Appendix H

4.2 Results on Mathematical Reasoning Datasets

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Data Extraction: Model Accuracy vs. Generation Budget

## 1. Image Overview

This image is a line graph comparing the performance (Accuracy) of different inference methods applied to the **Llama-3.2-1B-Instruct** model against various baseline models. The performance is measured relative to a computational "Budget," defined as the number of model generations.

## 2. Axis Definitions

* **Y-Axis (Vertical):** Accuracy.

* **Range:** 0.2 to 0.7+ (labeled increments of 0.1).

* **X-Axis (Horizontal):** Budget (# of model generations).

* **Scale:** Logarithmic (base 2).

* **Markers:** $2^0, 2^1, 2^2, 2^3, 2^4, 2^5, 2^6, 2^7$ (representing 1 to 128 generations).

## 3. Baseline Reference Lines (Horizontal Dotted Lines)

These lines represent "0-shot CoT (Greedy)" performance for specific models, acting as static benchmarks.

* **GPT-4o:** Accuracy $\approx$ 0.76

* **Llama-3.1-70B-Instruct:** Accuracy $\approx$ 0.66

* **Llama-3.1-8B-Instruct:** Accuracy $\approx$ 0.42

* **Llama-3.2-1B-Instruct:** Accuracy $\approx$ 0.225

## 4. Legend and Data Series Analysis

The legend is located in the bottom-right quadrant of the chart area.

### Series 1: Ours-Particle Filtering (Llama-3.2-1B-Instruct)

* **Visual Identifier:** Blue line with square markers.

* **Trend:** Steepest upward slope in the early stages ($2^0$ to $2^3$), maintaining the highest accuracy among the 1B model methods across all budget levels.

* **Key Data Points (Approximate):**

* $2^0$: 0.21

* $2^2$: 0.43 (Surpasses Llama-3.1-8B-Instruct baseline)

* $2^7$: 0.60 (Approaching Llama-3.1-70B-Instruct baseline)

### Series 2: DVTS (Llama-3.2-1B-Instruct)

* **Visual Identifier:** Purple line with diamond markers.

* **Trend:** Steady upward slope. Starts at $2^2$ budget. Consistently performs between the Particle Filtering and Weighted BoN methods.

* **Key Data Points (Approximate):**

* $2^2$: 0.40

* $2^4$: 0.49

* $2^7$: 0.55

### Series 3: Weighted BoN (Llama-3.2-1B-Instruct)

* **Visual Identifier:** Red line with diamond markers.

* **Trend:** Upward slope, but the shallowest of the three experimental methods.

* **Key Data Points (Approximate):**

* $2^0$: 0.20

* $2^3$: 0.40

* $2^7$: 0.505

## 5. Summary Table of Extracted Data (Estimated Values)

| Budget ($2^n$) | Weighted BoN (Red) | Ours-Particle Filtering (Blue) | DVTS (Purple) |

| :--- | :--- | :--- | :--- |

| **$2^0$ (1)** | 0.20 | 0.21 | - |

| **$2^1$ (2)** | 0.27 | 0.31 | - |

| **$2^2$ (4)** | 0.34 | 0.43 | 0.40 |

| **$2^3$ (8)** | 0.40 | 0.50 | 0.44 |

| **$2^4$ (16)** | 0.43 | 0.53 | 0.49 |

| **$2^5$ (32)** | 0.47 | 0.57 | 0.50 |

| **$2^6$ (64)** | 0.48 | 0.60 | 0.53 |

| **$2^7$ (128)** | 0.51 | 0.60 | 0.55 |

## 6. Key Findings

1. **Scaling Efficiency:** The "Ours-Particle Filtering" method applied to a 1B model achieves the accuracy of a 0-shot 8B model with a budget of only 4 generations ($2^2$).

2. **Closing the Gap:** With a budget of 128 generations ($2^7$), the 1B model using Particle Filtering reaches ~60% accuracy, significantly narrowing the gap toward the 70B model's 66% baseline.

3. **Method Superiority:** Particle Filtering consistently outperforms both Weighted Best-of-N (BoN) and DVTS across the entire tested budget range.

</details>

(a) Llama-3.2-1B-Instruct

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Document Extraction: Model Accuracy vs. Generation Budget

## 1. Image Overview

This image is a line graph comparing the performance (Accuracy) of different inference-time scaling methods against a computational budget (number of model generations). The chart specifically evaluates these methods using the **Llama-3.1-8B-Instruct** model as a base, while providing horizontal baselines for other models.

---

## 2. Component Isolation

### A. Header / Baselines (Top & Middle Regions)

The chart contains four horizontal black dotted lines representing "0-shot CoT (Greedy)" performance for various models. These serve as static benchmarks.

| Model | Approximate Accuracy |

| :--- | :--- |

| GPT-4o | 0.76 |

| Llama-3.1-70B-Instruct | 0.66 |

| Llama-3.1-8B-Instruct | 0.42 |

| Llama-3.2-1B-Instruct | 0.225 |

### B. Main Chart Area (Data Series)

The x-axis is logarithmic (base 2), and the y-axis is linear. There are three primary data series plotted.

#### Legend

* **Red Line with Diamond Markers**: `Weighted BoN (Llama-3.1-8B-Instruct)`

* **Blue Line with Square Markers**: `Ours-Particle Filtering (Llama-3.1-8B-Instruct)`

* **Purple Line with Diamond Markers**: `DVTS (Llama-3.1-8B-Instruct)`

* **Black Dotted Line**: `0-shot CoT (Greedy)` (Reference for the baselines mentioned in section A).

---

## 3. Trend Verification and Data Extraction

### Series 1: Ours-Particle Filtering (Blue Square)

* **Trend**: This is the highest-performing method. It shows a steep logarithmic growth from $2^0$ to $2^4$, then begins to plateau as it approaches the GPT-4o baseline.

* **Data Points (Approximate):**

* $2^0$ (1): 0.41

* $2^1$ (2): 0.52

* $2^2$ (4): 0.62

* $2^3$ (8): 0.69

* $2^4$ (16): 0.72

* $2^5$ (32): 0.74

* $2^6$ (64): 0.745

* $2^7$ (128): 0.75

### Series 2: DVTS (Purple Diamond)

* **Trend**: This series starts at a higher budget ($2^2$). It shows a steady upward slope, consistently performing better than Weighted BoN but significantly lower than Particle Filtering. It surpasses the Llama-3.1-70B-Instruct baseline at a budget of $2^6$.

* **Data Points (Approximate):**

* $2^2$ (4): 0.54

* $2^3$ (8): 0.59

* $2^4$ (16): 0.62

* $2^5$ (32): 0.63

* $2^6$ (64): 0.66

* $2^7$ (128): 0.67

### Series 3: Weighted BoN (Red Diamond)

* **Trend**: The lowest performing of the three active methods. It shows a steady but slower increase in accuracy, failing to reach the Llama-3.1-70B-Instruct baseline even at the maximum budget shown.

* **Data Points (Approximate):**

* $2^0$ (1): 0.39

* $2^1$ (2): 0.44

* $2^2$ (4): 0.49

* $2^3$ (8): 0.54

* $2^4$ (16): 0.575

* $2^5$ (32): 0.585

* $2^6$ (64): 0.59

* $2^7$ (128): 0.595

---

## 4. Axis and Labels

* **Y-Axis Title**: `Accuracy`

* **Y-Axis Markers**: 0.2, 0.3, 0.4, 0.5, 0.6, 0.7

* **X-Axis Title**: `Budget (# of model generations)`

* **X-Axis Markers (Log Scale)**: $2^0, 2^1, 2^2, 2^3, 2^4, 2^5, 2^6, 2^7$ (representing 1 to 128 generations).

---

## 5. Key Technical Insights

1. **Efficiency**: The "Ours-Particle Filtering" method using an 8B model achieves GPT-4o level performance (approx. 0.75) with a budget of 128 generations ($2^7$).

2. **Scaling**: All methods show diminishing returns as the budget increases, evidenced by the flattening of the curves at higher x-values.

3. **Model Comparison**: The 8B model using Particle Filtering outperforms the 70B model's greedy baseline at a budget of only 8 generations ($2^3$).

</details>

(b) Llama-3.1-8B-Instruct

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Model Accuracy vs. Generation Budget

## 1. Image Overview

This image is a line graph illustrating the relationship between computational budget (measured in the number of model generations) and the accuracy of various Large Language Model (LLM) inference strategies. The chart compares three dynamic sampling/filtering methods against several static baselines.

## 2. Component Isolation

### A. Header / Reference Baselines

The top and middle sections of the chart contain horizontal black dotted lines representing fixed performance benchmarks for specific models:

* **o1-preview**: Positioned at approximately **0.870** accuracy.

* **Qwen2.5-Math-7B-Instruct**: Positioned at approximately **0.796** accuracy.

* **GPT-4o**: Positioned at approximately **0.762** accuracy.

* **Qwen2.5-Math-1.5B-Instruct 0-shot CoT (Greedy)**: Positioned at the baseline of **0.700** accuracy.

### B. Main Chart Area (Data Series)

The chart plots three primary data series using a logarithmic scale for the X-axis. All three series use **Qwen2.5-Math-1.5B-Instruct** as the base model.

**Legend:**

* **Blue Line with Square Markers (■):** Ours-Particle Filtering

* **Red Line with Cross/Diamond Markers (✖/◆):** Weighted BoN (Best-of-N)

* **Purple Line with Diamond Markers (◆):** DVTS

### C. Axes and Scale

* **Y-Axis (Vertical):** Labeled **"Accuracy"**. Scale ranges from **0.700 to 0.875** with increments of 0.025.

* **X-Axis (Horizontal):** Labeled **"Budget (# of model generations)"**. It uses a base-2 logarithmic scale: $2^0, 2^1, 2^2, 2^3, 2^4, 2^5, 2^6, 2^7$ (representing 1 to 128 generations).

## 3. Trend Verification and Data Extraction

| Budget ($2^n$) | Ours-Particle Filtering (Blue ■) | Weighted BoN (Red ✖) | DVTS (Purple ◆) |

| :--- | :--- | :--- | :--- |

| $2^0$ (1) | 0.708 | 0.706 | 0.706 |

| $2^1$ (2) | 0.759 | 0.753 | 0.753 |

| $2^2$ (4) | 0.792 | 0.810 | 0.810 |

| $2^3$ (8) | 0.822 | 0.822 | 0.821 |

| $2^4$ (16) | 0.818 | 0.834 | 0.826 |

| $2^5$ (32) | 0.846 | 0.826 | 0.828 |

| $2^6$ (64) | 0.854 | 0.828 | 0.834 |

| $2^7$ (128) | 0.850 | 0.828 | 0.834 |

## 4. Key Findings and Observations

1. **Scaling Efficiency:** All three methods significantly improve the performance of the 1.5B model. By a budget of $2^2$ (4 generations), the 1.5B model using any of these methods outperforms the much larger **GPT-4o** baseline.

2. **Surpassing Larger Models:** By a budget of $2^3$ (8 generations), all three methods outperform the **Qwen2.5-Math-7B-Instruct** model.

3. **Particle Filtering Superiority:** While Weighted BoN and DVTS perform similarly, the "Ours-Particle Filtering" method shows superior scaling at higher budgets (32+ generations), approaching the performance of the **o1-preview** model.

4. **Diminishing Returns:** The Weighted BoN method appears to plateau or slightly regress after 16 generations ($2^4$), whereas Particle Filtering and DVTS continue to show gains or stability.

</details>

(c) Qwen2.5-Math-1.5B-Instruct

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Document Extraction: Model Accuracy vs. Generation Budget

## 1. Image Overview

This image is a line graph comparing the performance (Accuracy) of different inference strategies for Large Language Models (LLMs) against a computational budget (number of model generations). The chart uses a logarithmic scale for the x-axis and includes several horizontal reference lines for baseline models.

## 2. Component Isolation

### A. Header / Reference Baselines

The top of the chart contains horizontal black dotted lines representing fixed performance benchmarks.

* **o1-preview**: Positioned at approximately **0.870** accuracy.

* **Qwen2.5-Math-7B-Instruct**: Positioned at approximately **0.796** accuracy.

* **GPT-4o**: Positioned at approximately **0.762** accuracy.

* **Qwen2.5-Math-1.5B-Instruct**: Positioned at approximately **0.700** accuracy.

### B. Main Chart Area (Data Series)

The chart plots four primary data series against a budget ranging from $2^0$ to $2^7$.

**Axis Labels:**

* **Y-Axis**: "Accuracy" (Range: 0.700 to 0.875, increments of 0.025).

* **X-Axis**: "Budget (# of model generations)" (Logarithmic scale: $2^0, 2^1, 2^2, 2^3, 2^4, 2^5, 2^6, 2^7$).

**Legend:**

1. **Blue line with square markers (■)**: `Ours-Particle Filtering (Qwen2.5-Math-7B-Instruct)`

2. **Red line with diamond markers (◆)**: `Weighted BoN (Qwen2.5-Math-7B-Instruct)`

3. **Purple line with diamond markers (◆)**: `DVTS (Qwen2.5-Math-7B-Instruct)`

4. **Black dotted line (---)**: `0-shot CoT (Greedy)` (Note: This serves as the baseline for the 7B model).

---

## 3. Trend Verification and Data Extraction

### Series 1: Ours-Particle Filtering (Blue, Square Markers)

* **Trend**: Steep upward slope from $2^0$ to $2^4$, then plateaus/saturates as it reaches the `o1-preview` baseline at $2^5$. This is the highest-performing method shown.

* **Data Points (Approximate):**

* $2^0$: 0.765

* $2^1$: 0.810

* $2^2$: 0.848

* $2^3$: 0.854

* $2^4$: 0.866

* $2^5$: 0.870 (Matches `o1-preview`)

* $2^6$: 0.870

* $2^7$: 0.870

### Series 2: DVTS (Purple, Diamond Markers)

* **Trend**: Steady upward slope, consistently performing between the Particle Filtering and Weighted BoN methods. It shows a significant jump between $2^1$ and $2^2$.

* **Data Points (Approximate):**

* $2^0$: 0.762 (Starts at GPT-4o level)

* $2^1$: 0.792

* $2^2$: 0.824

* $2^3$: 0.830

* $2^4$: 0.846

* $2^5$: 0.846

* $2^6$: 0.854

* $2^7$: 0.854

### Series 3: Weighted BoN (Red, Diamond Markers)

* **Trend**: Upward slope but with lower efficiency than the other two methods. It shows a slight dip/stagnation at $2^5$ before rising again at $2^6$.

* **Data Points (Approximate):**

* $2^0$: 0.762

* $2^1$: 0.792

* $2^2$: 0.810

* $2^3$: 0.826

* $2^4$: 0.834

* $2^5$: 0.830 (Slight decrease)

* $2^6$: 0.846

* $2^7$: 0.846

---

## 4. Summary Table of Extracted Data

| Budget ($2^n$) | Ours-Particle Filtering | DVTS | Weighted BoN |

| :--- | :--- | :--- | :--- |

| **$2^0$ (1)** | 0.765 | 0.762 | 0.762 |

| **$2^1$ (2)** | 0.810 | 0.792 | 0.792 |

| **$2^2$ (4)** | 0.848 | 0.824 | 0.810 |

| **$2^3$ (8)** | 0.854 | 0.830 | 0.826 |

| **$2^4$ (16)** | 0.866 | 0.846 | 0.834 |

| **$2^5$ (32)** | 0.870 | 0.846 | 0.830 |

| **$2^6$ (64)** | 0.870 | 0.854 | 0.846 |

| **$2^7$ (128)** | 0.870 | 0.854 | 0.846 |

## 5. Key Observations

* **Efficiency**: The "Ours-Particle Filtering" method reaches the performance level of `o1-preview` (the highest baseline) with a budget of $2^5$ (32 generations).

* **Baseline Comparison**: All three tested methods (Particle Filtering, DVTS, Weighted BoN) using the Qwen2.5-Math-7B-Instruct model eventually exceed the performance of GPT-4o and the base 7B-Instruct model's greedy decoding.

* **Saturation**: All methods appear to reach a performance plateau between $2^6$ and $2^7$ generations.

</details>

(d) Qwen2.5-Math-7B-Instruct

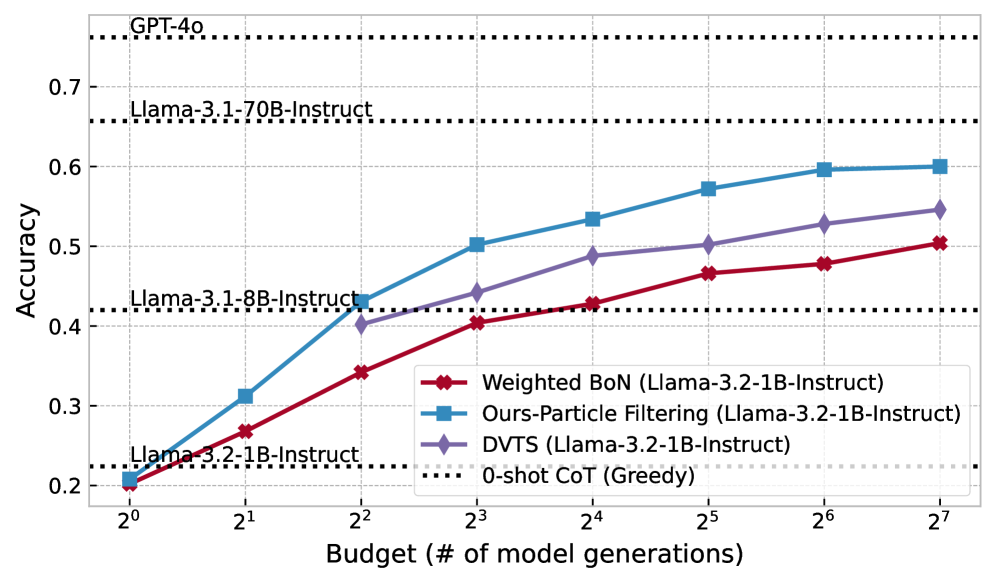

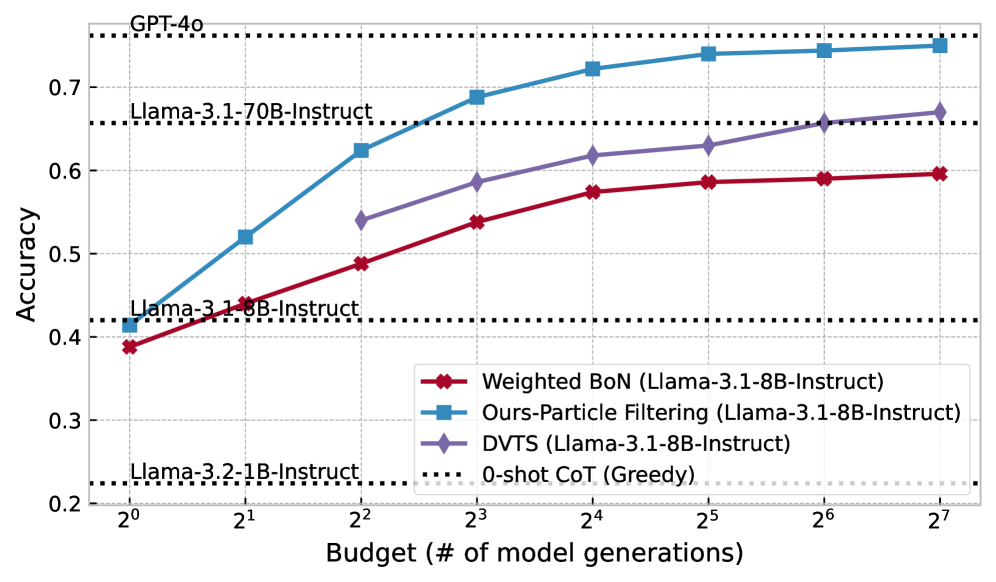

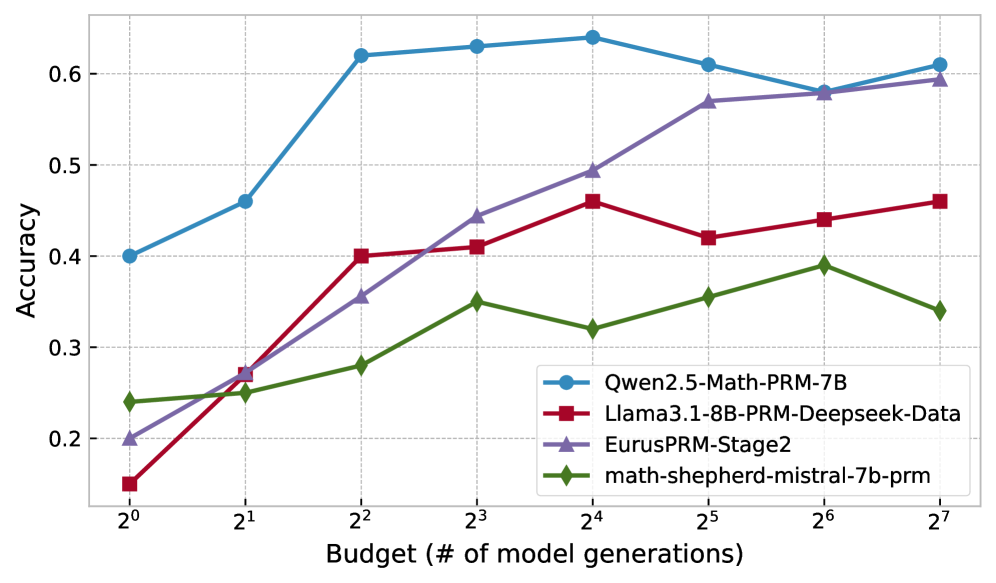

Figure 4: Accuracy vs. Generation Budget across models using different inference-time strategies.

We present our main results in Table 1, comparing Particle Filtering (PF) with a suite of strong inference-time scaling baselines on two challenging mathematical reasoning tasks: MATH500 and AIME 2024. All inference-time scaling methods are evaluated under a fixed compute budget of 32 generations per instance, using Qwen2.5-Math-PRM-7B as the reward model. Specifically, it serves as the PRM for PF, Beam Search, and DVTS, and as the ORM in WBoN.

- PF consistently achieves the best performance across all model sizes. Among all inference-time scaling methods, PF delivers the highest accuracy on both benchmarks, often outperforming alternatives by a significant margin.

- PF unlocks competitive performance even for small models. For instance, Qwen2.5-1.5B-Instruct, when scaled using PF, surpasses the much larger GPT-4o on both MATH500 and AIME 2024. This showcases the ability of inference-time compute to significantly improve performance without increasing model size.

- PF on Qwen2.5-Math-7B-Instruct outperforms o1-preview on MATH500. Scaling Qwen2.5-Math-7B with PF results in a new state-of-the-art among open models: 87.7% on MATH500 and 10/30 on AIME. This surpasses the o1-preview model and highlights the potential of inference-time scaling to close the gap with—or even exceed—the performance of proprietary frontier LLMs using smaller, open models.

For results on additional model families and broader ablations, see Appendix F.

| Model Closed Source LLMs GPT-4o | Method – | MATH500 76.2 | AIME 2024 4/30 |

| --- | --- | --- | --- |

| o1-preview | – | 87.0 | 12/30 |

| Claude 3.5 Sonnet | – | 78.2 | 5/30 |

| Open Source LLMs | | | |

| Llama-3.1 70B Instruct | – | 65.6 | 5/30 |

| Qwen-2.5 Math 72B Instruct | – | 82.0 | 9/30 |

| Open Source General SLMs | | | |

| Qwen-2.5 1.5B Instruct | Greedy | 54.4 | 1/30 |

| Self Consistency | 61.0 | 2/30 | |

| BoN | 67.8 | 1/30 | |

| WBoN | 69.2 | 2/30 | |

| Beam Search | 76.2 | 5/30 | |

| DVTS | 76.6 | 4/30 | |

| \rowcolor [HTML]C1DEB8 | \cellcolor [HTML]C1DEB8 Particle Filtering (Ours) | 79.3 | 6/30 |

| Open Source Math SLMs | | | |

| Qwen-2.5 Math 1.5B Instruct | Greedy | 70.0 | 3/30 |

| Self Consistency | 79.6 | 6/30 | |

| BoN | 81.8 | 4/30 | |

| WBoN | 82.6 | 4/30 | |

| Beam Search | 83.0 | 4/30 | |

| DVTS | 82.8 | 5/30 | |

| \rowcolor [HTML]C1DEB8 | \cellcolor [HTML]C1DEB8 Particle Filtering (Ours) | 84.6 | 7/30 |

| Qwen-2.5 Math 7B Instruct | Greedy | 79.6 | 5/30 |

| Self Consistency | 84.0 | 4/30 | |

| BoN | 82.6 | 5/30 | |

| WBoN | 83.0 | 5/30 | |

| Beam Search | 86.9 | 7/30 | |

| DVTS | 84.6 | 6/30 | |

| \rowcolor [HTML]C1DEB8 | \cellcolor [HTML]C1DEB8 Particle Filtering (Ours) | 87.7 | 10/30 |

Table 1: Results of various LLMs on MATH500 and AIME 2024, highlighting particle filtering performance. All methods used a compute budget of 32 generations with Qwen2.5-Math-PRM-7B as the reward model. Notably, Qwen2.5-Math-7B, with just 32 particles, matches o1-preview on MATH500, demonstrating PF’s effectiveness.

4.3 Results on Generalized Reasoning Datasets

To evaluate whether our inference-time scaling method generalizes beyond mathematical reasoning, we test Particle Filtering on two diverse instruction-following benchmarks: FinanceBench (islam2023financebenchnewbenchmarkfinancial, ), which evaluates financial QA over real-world documents, and NumGLUE Task 2 (Chemistry) (mishra2022numglue, ), which targets numerical reasoning in scientific contexts.

| Greedy | 62.67 | 71.69 |

| --- | --- | --- |

| BoN | 68.00 | 80.92 |

| Self Consistency | 68.67 | 79.32 |

| Beam Search | 67.33 | 80.47 |

| \rowcolor [HTML]C1DEB8 Particle Filtering (Ours) | 70.33 | 84.22 |

Table 2: Results on Non-Math Datasets

As shown in Table 2, Particle Filtering consistently outperforms all other inference-time scaling baselines, achieving the highest accuracy on both datasets. This shows that our method is effective not only for mathematical reasoning but also for broader instruction-following and domain-specific tasks. We use Llama 3.1-8B-Instruct as the policy model and Qwen2.5-Math-PRM-7B as the reward model, with 8 particles for FinanceBench and 32 for NumGLUE.

We note that although we use Qwen2.5-Math-PRM-7B - a reward model trained primarily for mathematical process evaluation - it performs surprisingly well as a reward model on these non-math domains. We hypothesize that such RMs implicitly learn broader reasoning evaluation capabilities during their training, not limited strictly to mathematical content. We leave deeper exploration of this hypothesis and the development of domain-specific or generalized PRMs for future work.

4.4 Scaling with inference-time compute

We now zoom in on how PF scales with inference-time compute. Figure 4 shows the change of performance (in terms of accuracy) with an increasing computation budget ( $N=1,2,4,8,16,32,64,128$ ) across Math SLMs and Non-Math SLMs. As we can see, PF scales 4–16x faster than the next best competitor DVTS, e.g. DVTS requires a budget of 32 to reach the same performance of PF with a budget of 8 with Llama-3.2-1B-Instruct and requires a budget of 128 to reach the performance of PF with a budget of 8 with LLama-3.1-8B-Instruct.

4.5 Ablation study

<details>

<summary>x7.png Details</summary>

### Visual Description

# Technical Data Extraction: Model Accuracy vs. Generation Budget

## 1. Image Overview

This image is a line graph plotting the **Accuracy** of four different Process Reward Models (PRMs) against a varying **Budget (# of model generations)**. The chart uses a logarithmic scale for the x-axis and a linear scale for the y-axis.

## 2. Axis and Legend Specifications

### Axis Labels

* **Y-Axis:** "Accuracy" (Linear scale ranging from approximately 0.15 to 0.65).

* **X-Axis:** "Budget (# of model generations)" (Logarithmic scale base 2, ranging from $2^0$ to $2^7$).

### Axis Markers

* **Y-Axis Markers:** 0.2, 0.3, 0.4, 0.5, 0.6.

* **X-Axis Markers:** $2^0$ (1), $2^1$ (2), $2^2$ (4), $2^3$ (8), $2^4$ (16), $2^5$ (32), $2^6$ (64), $2^7$ (128).

### Legend

| Color | Marker Shape | Label |

| :--- | :--- | :--- |

| **Blue** | Circle (●) | Qwen2.5-Math-PRM-7B |

| **Red** | Square (■) | Llama3.1-8B-PRM-Deepseek-Data |

| **Purple** | Triangle (▲) | EurusPRM-Stage2 |

| **Green** | Diamond (◆) | math-shepherd-mistral-7b-prm |

---

## 3. Data Series Analysis and Trends

### Series 1: Qwen2.5-Math-PRM-7B (Blue, Circle)

* **Trend:** This model consistently maintains the highest accuracy across almost all budget levels. It shows a sharp upward slope from $2^0$ to $2^2$, plateaus/peaks between $2^3$ and $2^4$, experiences a slight dip at $2^6$, and recovers at $2^7$.

* **Estimated Data Points:**

* $2^0$: 0.40

* $2^1$: 0.46

* $2^2$: 0.62

* $2^3$: 0.63

* $2^4$: 0.64 (Peak)

* $2^5$: 0.61

* $2^6$: 0.58

* $2^7$: 0.61

### Series 2: EurusPRM-Stage2 (Purple, Triangle)

* **Trend:** Shows the most consistent and steepest positive linear growth relative to the log-scale budget. It starts as the second-lowest performer and ends as the second-highest, nearly converging with the Qwen model at the highest budget.

* **Estimated Data Points:**

* $2^0$: 0.20

* $2^1$: 0.27

* $2^2$: 0.35

* $2^3$: 0.44

* $2^4$: 0.49

* $2^5$: 0.57

* $2^6$: 0.58

* $2^7$: 0.59

### Series 3: Llama3.1-8B-PRM-Deepseek-Data (Red, Square)

* **Trend:** Starts with the lowest accuracy at $2^0$. It shows a significant jump at $2^2$, followed by a generally upward but volatile trend, including a notable dip at $2^5$.

* **Estimated Data Points:**

* $2^0$: 0.15

* $2^1$: 0.27

* $2^2$: 0.40

* $2^3$: 0.41

* $2^4$: 0.46

* $2^5$: 0.42

* $2^6$: 0.44

* $2^7$: 0.46

### Series 4: math-shepherd-mistral-7b-prm (Green, Diamond)

* **Trend:** This is the lowest-performing model overall for budgets $> 2^1$. The trend is generally upward but very shallow compared to the others, with a peak at $2^6$ followed by a decline at $2^7$.

* **Estimated Data Points:**

* $2^0$: 0.24

* $2^1$: 0.25

* $2^2$: 0.28

* $2^3$: 0.35

* $2^4$: 0.32

* $2^5$: 0.35

* $2^6$: 0.39

* $2^7$: 0.34

---

## 4. Summary Table of Extracted Values (Approximate)

| Budget ($2^x$) | Qwen2.5 (Blue) | EurusPRM (Purple) | Llama3.1 (Red) | Math-Shepherd (Green) |

| :--- | :--- | :--- | :--- | :--- |

| **1 ($2^0$)** | 0.40 | 0.20 | 0.15 | 0.24 |

| **2 ($2^1$)** | 0.46 | 0.27 | 0.27 | 0.25 |

| **4 ($2^2$)** | 0.62 | 0.35 | 0.40 | 0.28 |

| **8 ($2^3$)** | 0.63 | 0.44 | 0.41 | 0.35 |

| **16 ($2^4$)** | 0.64 | 0.49 | 0.46 | 0.32 |

| **32 ($2^5$)** | 0.61 | 0.57 | 0.42 | 0.35 |

| **64 ($2^6$)** | 0.58 | 0.58 | 0.44 | 0.39 |

| **128 ($2^7$)** | 0.61 | 0.59 | 0.46 | 0.34 |

</details>

(a) Results of ablation comparing the performance of PF across PRMs.

<details>

<summary>x8.png Details</summary>

### Visual Description

# Technical Data Extraction: Model Accuracy vs. Budget

## 1. Image Overview

This image is a line graph illustrating the relationship between a computational budget (measured in the number of model generations) and the resulting accuracy of four different methodologies. The chart uses a logarithmic scale for the x-axis and a linear scale for the y-axis.

## 2. Component Isolation

### A. Header/Axes

* **Y-Axis Label:** "Accuracy" (Vertical, left-aligned).

* **Y-Axis Scale:** Linear, ranging from 0.2 to 0.6 with major ticks at intervals of 0.1.

* **X-Axis Label:** "Budget (# of model generations)" (Horizontal, bottom-centered).

* **X-Axis Scale:** Logarithmic (base 2), ranging from $2^0$ to $2^7$.

* **Grid:** Light gray dashed grid lines corresponding to both x and y axis markers.

### B. Legend (Spatial Grounding: Bottom Right)

The legend is located in the lower-right quadrant of the plot area.

* **Blue line with Circle markers ($\bullet$):** Model Aggregation

* **Red line with Square markers ($\blacksquare$):** Last

* **Purple line with Triangle markers ($\blacktriangle$):** Min

* **Green line with Diamond markers ($\blacksquare$):** Product

---

## 3. Data Series Analysis and Trend Verification

### Series 1: Model Aggregation (Blue, Circle)

* **Trend:** Shows a consistent upward trajectory with high stability. It starts at the lowest point and ends as the highest-performing method at the maximum budget.

* **Data Points (Approximate):**

* $2^0$: 0.22

* $2^1$: 0.31

* $2^2$: 0.41

* $2^3$: 0.52

* $2^4$: 0.55

* $2^5$: 0.60

* $2^6$: 0.57

* $2^7$: 0.60

### Series 2: Last (Red, Square)

* **Trend:** Generally upward but exhibits significant volatility at higher budgets. It reaches the absolute peak accuracy of the chart at $2^6$ before dropping sharply.

* **Data Points (Approximate):**

* $2^0$: 0.22

* $2^1$: 0.30

* $2^2$: 0.41

* $2^3$: 0.51

* $2^4$: 0.52

* $2^5$: 0.59

* $2^6$: 0.62 (Peak)

* $2^7$: 0.52

### Series 3: Min (Purple, Triangle)

* **Trend:** The lowest-performing series overall. It shows an initial jump at $2^1$, plateaus/dips at $2^2$, and then follows a slow upward trend that tapers off after $2^5$.

* **Data Points (Approximate):**

* $2^0$: 0.22

* $2^1$: 0.35

* $2^2$: 0.34

* $2^3$: 0.43

* $2^4$: 0.47

* $2^5$: 0.51

* $2^6$: 0.49

* $2^7$: 0.48

### Series 4: Product (Green, Diamond)

* **Trend:** Closely tracks the "Last" and "Model Aggregation" series in the early stages ($2^0$ to $2^3$). It underperforms compared to the top two series in the later stages, showing a peak at $2^5$ followed by a decline and slight recovery.

* **Data Points (Approximate):**

* $2^0$: 0.22

* $2^1$: 0.30

* $2^2$: 0.40

* $2^3$: 0.51

* $2^4$: 0.49

* $2^5$: 0.54

* $2^6$: 0.49

* $2^7$: 0.53

---

## 4. Reconstructed Data Table (Estimated Values)

| Budget ($2^n$) | Model Aggregation (Blue) | Last (Red) | Min (Purple) | Product (Green) |

| :--- | :--- | :--- | :--- | :--- |

| **$2^0$ (1)** | 0.22 | 0.22 | 0.22 | 0.22 |

| **$2^1$ (2)** | 0.31 | 0.30 | 0.35 | 0.30 |

| **$2^2$ (4)** | 0.41 | 0.41 | 0.34 | 0.40 |

| **$2^3$ (8)** | 0.52 | 0.51 | 0.43 | 0.51 |

| **$2^4$ (16)** | 0.55 | 0.52 | 0.47 | 0.49 |

| **$2^5$ (32)** | 0.60 | 0.59 | 0.51 | 0.54 |

| **$2^6$ (64)** | 0.57 | 0.62 | 0.49 | 0.49 |

| **$2^7$ (128)** | 0.60 | 0.52 | 0.48 | 0.53 |

## 5. Key Observations

* **Convergence:** All methods start at the same accuracy (~0.22) when the budget is $2^0$.

* **Top Performer:** "Model Aggregation" appears to be the most robust method, ending with the highest accuracy at the largest budget and showing less volatility than "Last".

* **Volatility:** The "Last" method shows the highest peak accuracy but suffers from a significant performance drop-off after $2^6$ generations.

* **Scaling:** Accuracy for all methods improves significantly as the budget increases from $2^0$ to $2^3$, after which the gains become more marginal or inconsistent.

</details>

(b) Effect of different aggregation strategies for Qwen2.5-Math-PRM-7B.

<details>

<summary>x9.png Details</summary>

### Visual Description

# Technical Document Extraction: Accuracy vs. Model Generation Budget

## 1. Image Overview

This image is a line graph illustrating the relationship between the computational budget (defined as the number of model generations) and the resulting accuracy of a system. The data is categorized by different "Temperature" (Temp) settings, ranging from 0.4 to 1.4.

## 2. Component Isolation

### A. Header/Axes

* **Y-Axis Label:** Accuracy

* **Y-Axis Scale:** Linear, ranging from 0.1 to 0.6 (with major ticks at 0.2, 0.3, 0.4, 0.5, 0.6).

* **X-Axis Label:** Budget (# of model generations)

* **X-Axis Scale:** Logarithmic (base 2), ranging from $2^0$ (1) to $2^7$ (128). Specific markers: $2^0, 2^1, 2^2, 2^3, 2^4, 2^5, 2^6, 2^7$.

### B. Legend (Spatial Grounding: Bottom Right [x≈0.8, y≈0.3])

The legend identifies six distinct data series based on color and marker:

* **Blue (●):** Temp=0.4

* **Dark Red (●):** Temp=0.6

* **Purple (●):** Temp=0.8

* **Green (●):** Temp=1.0

* **Orange (●):** Temp=1.2

* **Pink (●):** Temp=1.4

## 3. Trend Verification and Data Extraction

All series exhibit a general upward trend: as the budget (number of generations) increases, accuracy improves. However, the rate of improvement and the final saturation point vary by temperature.

### Data Table (Estimated Values)

| Budget ($2^n$) | Temp=0.4 (Blue) | Temp=0.6 (Red) | Temp=0.8 (Purple) | Temp=1.0 (Green) | Temp=1.2 (Orange) | Temp=1.4 (Pink) |

| :--- | :--- | :--- | :--- | :--- | :--- | :--- |

| **$2^0$ (1)** | 0.23 | 0.18 | 0.17 | 0.22 | 0.20 | 0.13 |

| **$2^1$ (2)** | 0.35 | 0.33 | 0.30 | 0.31 | 0.29 | 0.23 |

| **$2^2$ (4)** | 0.43 | 0.40 | 0.41 | 0.41 | 0.44 | 0.34 |

| **$2^3$ (8)** | 0.51 | 0.47 | 0.51 | 0.46 | 0.46 | 0.50 |

| **$2^4$ (16)** | 0.50 | 0.55 | 0.55 | 0.52 | 0.55 | 0.55 |

| **$2^5$ (32)** | 0.54 | 0.59 | 0.59 | 0.53 | 0.56 | 0.59 |

| **$2^6$ (64)** | 0.58 | 0.55 | 0.56 | 0.57 | 0.59 | 0.52 |

| **$2^7$ (128)** | 0.64 | 0.52 | 0.58 | 0.58 | 0.59 | 0.55 |

## 4. Key Observations and Analysis

* **Low Budget Performance ($2^0$ to $2^2$):** Lower temperatures (specifically Temp=0.4) generally start with higher accuracy. Temp=1.4 (Pink) is the clear underperformer at low budgets, starting significantly lower than the others.

* **Mid-Range Convergence ($2^3$ to $2^5$):** Between 8 and 32 generations, the performance of all temperature settings converges significantly, with most values falling between 0.45 and 0.60.

* **High Budget Performance ($2^6$ to $2^7$):**

* **Temp=0.4 (Blue)** shows the most consistent late-stage growth, achieving the highest overall accuracy of approximately 0.64 at the $2^7$ budget.

* **Temp=0.6 (Red)** and **Temp=1.4 (Pink)** exhibit "peaking" behavior, where accuracy actually declines or plateaus after $2^5$.

* **Temp=1.2 (Orange)** and **Temp=1.0 (Green)** show stable, high performance at the maximum budget, plateauing around 0.58-0.59.

* **Optimal Temperature:** While lower temperatures perform better at the extremes (very low and very high budgets), a mid-range temperature like 0.8 or 1.2 provides more consistent, competitive results across the middle of the budget spectrum.

</details>

(c) Results of ablation comparing the effect of temperature across different particle budget.

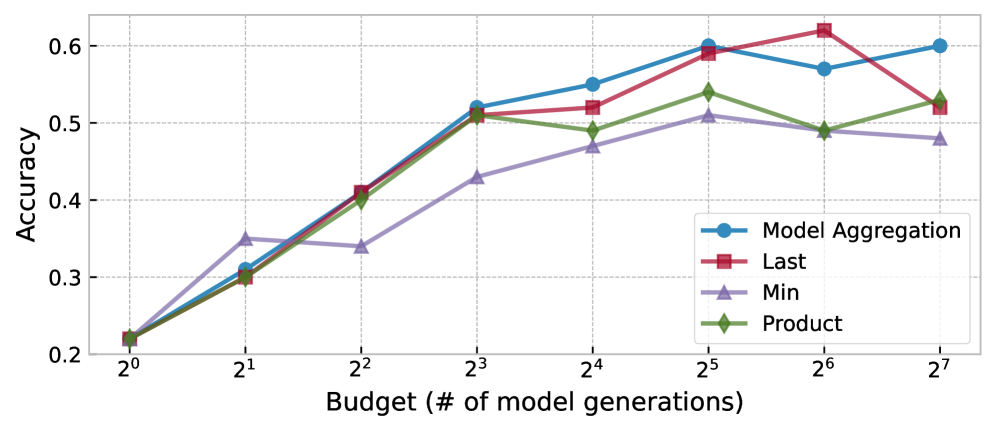

Performance of different PRMs

Figure 5(a) shows an ablation on 100 MATH500 questions, comparing our method’s accuracy across reward functions as the number of particles increases.

Qwen2.5-Math-PRM-7B consistently outperforms other models, making it the natural choice for our main results. Interestingly, while EurusPRM-Stage2 performs poorly with smaller budgets, it improves and eventually matches Qwen2.5-Math-PRM-7B at higher budgets.

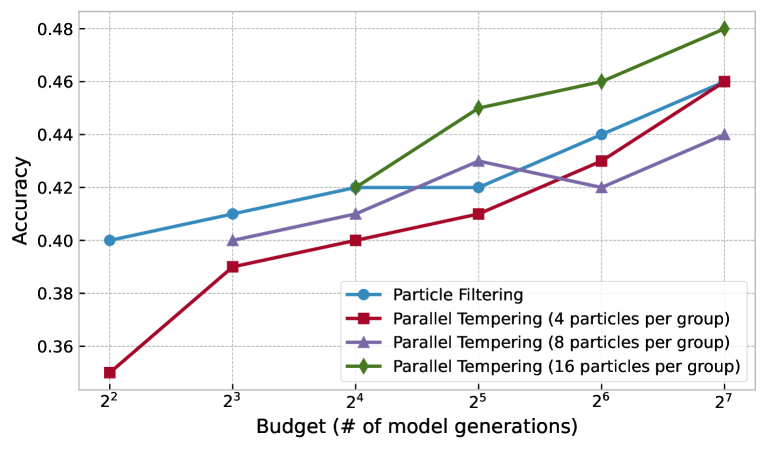

Reward aggregation within PRMs

As mentioned in Section 3.1 / Appendix A and reported by prior works (zhang2025lessonsdeveloping, ), there are multiple ways to use PRMs to calculate reward scores, which can significantly impact final performance. Figure 5(b) studies three existing methods for combining PRM scores—using the last reward, the minimum reward, and the product of all rewards. We also study “Model Aggregation," where the PRM is used as an ORM with partial answers.

As we can see, using Model Aggregation—in essence, feeding into a PRM the entire partial answer alongside the question - scales the best with an increasing budget.

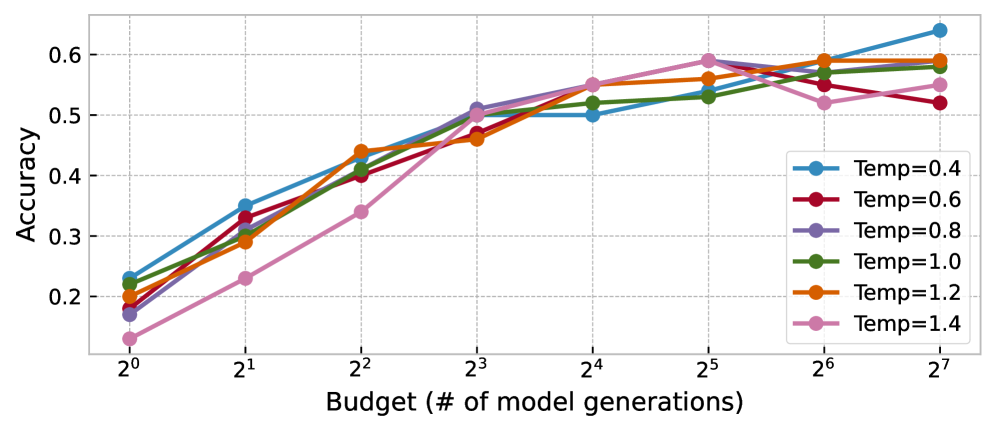

Controlling the state transition—temperatures in LLM generation

We investigate the effect of different LM sampling temperatures on the scaling of our method across varying numbers of particles. The results of our ablation study on a 100-question subset of MATH questions are shown in Figure 5(c).

Our findings show that the common LLM temperature range of 0.4–1.0 performs well, with minimal accuracy variation across budgets. Following (beeching2024scalingtesttimecompute, ), we use temperature 0.8 for all experiments.

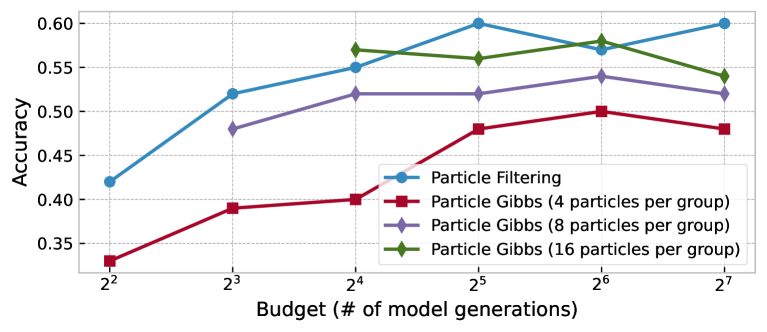

Budget allocation over iterations and parallelism

The multi-iteration and parallel-chain extensions introduced in Section 3.1 / Appendix B provide two more axes to spend computation beyond the number of particles. We explore how different budget allocations affect performance in the appendix.

5 Related Work

Process reward models (PRMs) aim to provide more granular feedback by evaluating intermediate steps rather than only final outputs. They are trained via process supervision, a training approach where models receive feedback on each intermediate step of their reasoning process rather than only on the final outcome. (lightman2023letsverify, ) propose a step-by-step verification approach to PRMs, improving the reliability of reinforcement learning. DeepSeek PRM wang2024mathshepherdverifyreinforcellms uses Mistral to annotate training data for PRMs . (zhang2025lessonsdeveloping, ) introduces Qwen-PRM, which combines both Monte Carlo estimation and model/human annotation approach to prepare training data for a PRM. PRIME (cui2024process, ) proposes to train an outcome reward model (ORM) using an implicit reward objective. The paper shows that implicit reward objective directly learns a Q-function that provides rewards for each token, which can be leveraged to create process-level reward signal. This process eliminates the need for any process labels, and reaches competitive performance on PRM benchmarks.

Inference-time scaling is a key training-free strategy for enhancing LLM performance. (brown2024largelanguage, ) investigates best-of-N (BoN) decoding, showing quality gains via selective refinement. (snell2024scalingllm, ) analyzes how scaling compute improves inference efficiency from a compute-optimality view. While not implementing full Monte Carlo tree search (MCTS), (zhou2024languageagenttreesearch, ) explores a tree-search-inspired approach within language models. (guan2025rstarmathsmall, ) introduces rSTAR, which combines MCTS for data generation and training to improve mathematical reasoning. (beeching2024scalingtesttimecompute, ) discusses beam search and dynamic variable-time search (DVTS) as inference-time scaling methods for open-source LLMs. DVTS runs multiple subtrees in parallel to avoid all leaves getting stuck in local minima.

Particle-based Monte Carlo methods are powerful tools for probabilistic inference. Sequential Monte Carlo (sequentialmonte, ) or particle filtering (nonlinearfiltering, ) has been a classical way to approximate complex posterior distributions over state-space models. Particle Gibbs (PG) sampling (andrieu2010particlemarkov, ) extends these approaches by integrating MCMC techniques for improved inference. (lew2023sequentialmontecarlosteering, ) and (loula2025syntactic, ) use token-based SMC within probabilistic programs to steer LLMs, while (grand2025selfsteeringlanguagemodels, ) apply token-based SMC for self-constrained generation. zhao2024probabilisticinferencelanguagemodels and feng2024stepbystepreasoningmathproblems introduce Twisted SMC for inference in language models. We note that our method differs from feng2024stepbystepreasoningmathproblems in several key ways. First, TSMC relies on a ground-truth verifier and requires joint training of a generator and value function, whereas our approach uses an off-the-shelf generator and a noisy pretrained reward model (PRM), requiring no additional training. Second, our method generalizes to domains lacking ground-truth verifiers, such as instruction and broader language tasks. Finally, we demonstrate significantly stronger empirical results. The authors of TSMC did not release code, and their method requires additional training. We therefore compare our method—using the same generator model (DeepSeekMath7B) and dataset (MATH500) as in their results table—and find it achieves 75.4% accuracy with 128 samples, outperforming TSMC by 14.6 points using fewer than half the samples and no fine-tuning.

Decision making with uncertainty The way of representing uncertainty using softmax is often referred as Boltzmann exploration in the multi-armed bandit (MAB) literature (kuleshov2014algorithms, ). While formulating it as a MAB problem allows it to use a scheduling on the softmax temperature and to derive regret bounds (cesa2017boltzmann, ), we no longer have the same unbiasedness from the particle filtering / SMC formulation.

6 Conclusion

In this paper, we introduce a particle filtering algorithm for inference-time scaling. To address the limitations of deterministic inference-time scaling—namely, early pruning from imperfect reward models—we adapt particle-based Monte Carlo methods to maintain a diverse population of candidate sequences and balance exploration and exploitation. This probabilistic framing enables more resilient generation and opens a principled path for integrating uncertainty into LLM inference. Our evaluation shows these algorithms consistently outperform search-based approaches by a significant margin.

However, inference-time scaling comes with computational challenges. Hosting and running a reward model often introduces high latency, making the process more resource-intensive. For smaller models, prompt engineering is often required to ensure outputs adhere to the desired format. Finally, hyperparameters like budget are problem-dependent and may require tuning across domains.

We hope that the formal connection of inference scaling to probabilistic modeling established in this work will lead to systematic solutions for current limitations of these methods and pave the way for bringing advanced probabilistic inference algorithms into LLM inference-time scaling in future work.

References

- (1) AI-MO. Aimo validation aime dataset. https://huggingface.co/datasets/AI-MO/aimo-validation-aime, 2023. Accessed: 2025-01-24.

- (2) Christophe Andrieu, Arnaud Doucet, and Roman Holenstein. Particle Markov Chain Monte Carlo Methods. Journal of the Royal Statistical Society Series B: Statistical Methodology, 72(3):269–342, June 2010.

- (3) Edward Beeching, Lewis Tunstall, and Sasha Rush. Scaling test-time compute with open models, 2024.

- (4) Bradley Brown, Jordan Juravsky, Ryan Ehrlich, Ronald Clark, Quoc V. Le, Christopher Ré, and Azalia Mirhoseini. Large Language Monkeys: Scaling Inference Compute with Repeated Sampling, July 2024.

- (5) Nicolò Cesa-Bianchi, Claudio Gentile, Gábor Lugosi, and Gergely Neu. Boltzmann exploration done right. Advances in neural information processing systems, 30, 2017.

- (6) Ganqu Cui, Lifan Yuan, Zefan Wang, Hanbin Wang, Wendi Li, Bingxiang He, Yuchen Fan, Tianyu Yu, Qixin Xu, Weize Chen, Jiarui Yuan, Huayu Chen, Kaiyan Zhang, Xingtai Lv, Shuo Wang, Yuan Yao, Hao Peng, Yu Cheng, Zhiyuan Liu, Maosong Sun, Bowen Zhou, and Ning Ding. Process reinforcement through implicit rewards, 2025.

- (7) Pierre Del Moral. Sequential Monte Carlo Methods for Dynamic Systems: Journal of the American Statistical Association: Vol 93, No 443. https://www.tandfonline.com/doi/abs/10.1080/01621459.1998.10473765, 1997.

- (8) Shengyu Feng, Xiang Kong, Shuang Ma, Aonan Zhang, Dong Yin, Chong Wang, Ruoming Pang, and Yiming Yang. Step-by-step reasoning for math problems via twisted sequential monte carlo, 2024.

- (9) Gabriel Grand, Joshua B. Tenenbaum, Vikash K. Mansinghka, Alexander K. Lew, and Jacob Andreas. Self-steering language models, 2025.

- (10) Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, Amy Yang, Angela Fan, Anirudh Goyal, Anthony Hartshorn, Aobo Yang, Archi Mitra, Archie Sravankumar, Artem Korenev, Arthur Hinsvark, Arun Rao, Aston Zhang, Aurelien Rodriguez, Austen Gregerson, Ava Spataru, Baptiste Roziere, Bethany Biron, Binh Tang, Bobbie Chern, Charlotte Caucheteux, Chaya Nayak, Chloe Bi, Chris Marra, Chris McConnell, Christian Keller, Christophe Touret, Chunyang Wu, Corinne Wong, Cristian Canton Ferrer, Cyrus Nikolaidis, Damien Allonsius, Daniel Song, Danielle Pintz, Danny Livshits, Danny Wyatt, David Esiobu, Dhruv Choudhary, Dhruv Mahajan, Diego Garcia-Olano, Diego Perino, Dieuwke Hupkes, Egor Lakomkin, Ehab AlBadawy, Elina Lobanova, Emily Dinan, Eric Michael Smith, Filip Radenovic, Francisco Guzmán, Frank Zhang, Gabriel Synnaeve, Gabrielle Lee, Georgia Lewis Anderson, Govind Thattai, Graeme Nail, Gregoire Mialon, Guan Pang, Guillem Cucurell, Hailey Nguyen, Hannah Korevaar, Hu Xu, Hugo Touvron, Iliyan Zarov, Imanol Arrieta Ibarra, Isabel Kloumann, Ishan Misra, Ivan Evtimov, Jack Zhang, Jade Copet, Jaewon Lee, Jan Geffert, Jana Vranes, Jason Park, Jay Mahadeokar, Jeet Shah, Jelmer van der Linde, Jennifer Billock, Jenny Hong, Jenya Lee, Jeremy Fu, Jianfeng Chi, Jianyu Huang, Jiawen Liu, Jie Wang, Jiecao Yu, Joanna Bitton, Joe Spisak, Jongsoo Park, Joseph Rocca, Joshua Johnstun, Joshua Saxe, Junteng Jia, Kalyan Vasuden Alwala, Karthik Prasad, Kartikeya Upasani, Kate Plawiak, Ke Li, Kenneth Heafield, Kevin Stone, Khalid El-Arini, Krithika Iyer, Kshitiz Malik, Kuenley Chiu, Kunal Bhalla, Kushal Lakhotia, Lauren Rantala-Yeary, Laurens van der Maaten, Lawrence Chen, Liang Tan, Liz Jenkins, Louis Martin, Lovish Madaan, Lubo Malo, Lukas Blecher, Lukas Landzaat, Luke de Oliveira, Madeline Muzzi, Mahesh Pasupuleti, Mannat Singh, Manohar Paluri, Marcin Kardas, Maria Tsimpoukelli, Mathew Oldham, Mathieu Rita, Maya Pavlova, Melanie Kambadur, Mike Lewis, Min Si, Mitesh Kumar Singh, Mona Hassan, Naman Goyal, Narjes Torabi, Nikolay Bashlykov, Nikolay Bogoychev, Niladri Chatterji, Ning Zhang, Olivier Duchenne, Onur Çelebi, Patrick Alrassy, Pengchuan Zhang, Pengwei Li, Petar Vasic, Peter Weng, Prajjwal Bhargava, Pratik Dubal, Praveen Krishnan, Punit Singh Koura, Puxin Xu, Qing He, Qingxiao Dong, Ragavan Srinivasan, Raj Ganapathy, Ramon Calderer, Ricardo Silveira Cabral, Robert Stojnic, Roberta Raileanu, Rohan Maheswari, Rohit Girdhar, Rohit Patel, Romain Sauvestre, Ronnie Polidoro, Roshan Sumbaly, Ross Taylor, Ruan Silva, Rui Hou, Rui Wang, Saghar Hosseini, Sahana Chennabasappa, Sanjay Singh, Sean Bell, Seohyun Sonia Kim, Sergey Edunov, Shaoliang Nie, Sharan Narang, Sharath Raparthy, Sheng Shen, Shengye Wan, Shruti Bhosale, Shun Zhang, Simon Vandenhende, Soumya Batra, Spencer Whitman, Sten Sootla, Stephane Collot, Suchin Gururangan, Sydney Borodinsky, Tamar Herman, Tara Fowler, Tarek Sheasha, Thomas Georgiou, Thomas Scialom, Tobias Speckbacher, Todor Mihaylov, Tong Xiao, Ujjwal Karn, Vedanuj Goswami, Vibhor Gupta, Vignesh Ramanathan, Viktor Kerkez, Vincent Gonguet, Virginie Do, Vish Vogeti, Vítor Albiero, Vladan Petrovic, Weiwei Chu, Wenhan Xiong, Wenyin Fu, Whitney Meers, Xavier Martinet, Xiaodong Wang, Xiaofang Wang, Xiaoqing Ellen Tan, Xide Xia, Xinfeng Xie, Xuchao Jia, Xuewei Wang, Yaelle Goldschlag, Yashesh Gaur, Yasmine Babaei, Yi Wen, Yiwen Song, Yuchen Zhang, Yue Li, Yuning Mao, Zacharie Delpierre Coudert, Zheng Yan, Zhengxing Chen, Zoe Papakipos, Aaditya Singh, Aayushi Srivastava, Abha Jain, Adam Kelsey, Adam Shajnfeld, Adithya Gangidi, Adolfo Victoria, Ahuva Goldstand, Ajay Menon, Ajay Sharma, Alex Boesenberg, Alexei Baevski, Allie Feinstein, Amanda Kallet, Amit Sangani, Amos Teo, Anam Yunus, Andrei Lupu, Andres Alvarado, Andrew Caples, Andrew Gu, Andrew Ho, Andrew Poulton, Andrew Ryan, Ankit Ramchandani, Annie Dong, Annie Franco, Anuj Goyal, Aparajita Saraf, Arkabandhu Chowdhury, Ashley Gabriel, Ashwin Bharambe, Assaf Eisenman, Azadeh Yazdan, Beau James, Ben Maurer, Benjamin Leonhardi, Bernie Huang, Beth Loyd, Beto De Paola, Bhargavi Paranjape, Bing Liu, Bo Wu, Boyu Ni, Braden Hancock, Bram Wasti, Brandon Spence, Brani Stojkovic, Brian Gamido, Britt Montalvo, Carl Parker, Carly Burton, Catalina Mejia, Ce Liu, Changhan Wang, Changkyu Kim, Chao Zhou, Chester Hu, Ching-Hsiang Chu, Chris Cai, Chris Tindal, Christoph Feichtenhofer, Cynthia Gao, Damon Civin, Dana Beaty, Daniel Kreymer, Daniel Li, David Adkins, David Xu, Davide Testuggine, Delia David, Devi Parikh, Diana Liskovich, Didem Foss, Dingkang Wang, Duc Le, Dustin Holland, Edward Dowling, Eissa Jamil, Elaine Montgomery, Eleonora Presani, Emily Hahn, Emily Wood, Eric-Tuan Le, Erik Brinkman, Esteban Arcaute, Evan Dunbar, Evan Smothers, Fei Sun, Felix Kreuk, Feng Tian, Filippos Kokkinos, Firat Ozgenel, Francesco Caggioni, Frank Kanayet, Frank Seide, Gabriela Medina Florez, Gabriella Schwarz, Gada Badeer, Georgia Swee, Gil Halpern, Grant Herman, Grigory Sizov, Guangyi, Zhang, Guna Lakshminarayanan, Hakan Inan, Hamid Shojanazeri, Han Zou, Hannah Wang, Hanwen Zha, Haroun Habeeb, Harrison Rudolph, Helen Suk, Henry Aspegren, Hunter Goldman, Hongyuan Zhan, Ibrahim Damlaj, Igor Molybog, Igor Tufanov, Ilias Leontiadis, Irina-Elena Veliche, Itai Gat, Jake Weissman, James Geboski, James Kohli, Janice Lam, Japhet Asher, Jean-Baptiste Gaya, Jeff Marcus, Jeff Tang, Jennifer Chan, Jenny Zhen, Jeremy Reizenstein, Jeremy Teboul, Jessica Zhong, Jian Jin, Jingyi Yang, Joe Cummings, Jon Carvill, Jon Shepard, Jonathan McPhie, Jonathan Torres, Josh Ginsburg, Junjie Wang, Kai Wu, Kam Hou U, Karan Saxena, Kartikay Khandelwal, Katayoun Zand, Kathy Matosich, Kaushik Veeraraghavan, Kelly Michelena, Keqian Li, Kiran Jagadeesh, Kun Huang, Kunal Chawla, Kyle Huang, Lailin Chen, Lakshya Garg, Lavender A, Leandro Silva, Lee Bell, Lei Zhang, Liangpeng Guo, Licheng Yu, Liron Moshkovich, Luca Wehrstedt, Madian Khabsa, Manav Avalani, Manish Bhatt, Martynas Mankus, Matan Hasson, Matthew Lennie, Matthias Reso, Maxim Groshev, Maxim Naumov, Maya Lathi, Meghan Keneally, Miao Liu, Michael L. Seltzer, Michal Valko, Michelle Restrepo, Mihir Patel, Mik Vyatskov, Mikayel Samvelyan, Mike Clark, Mike Macey, Mike Wang, Miquel Jubert Hermoso, Mo Metanat, Mohammad Rastegari, Munish Bansal, Nandhini Santhanam, Natascha Parks, Natasha White, Navyata Bawa, Nayan Singhal, Nick Egebo, Nicolas Usunier, Nikhil Mehta, Nikolay Pavlovich Laptev, Ning Dong, Norman Cheng, Oleg Chernoguz, Olivia Hart, Omkar Salpekar, Ozlem Kalinli, Parkin Kent, Parth Parekh, Paul Saab, Pavan Balaji, Pedro Rittner, Philip Bontrager, Pierre Roux, Piotr Dollar, Polina Zvyagina, Prashant Ratanchandani, Pritish Yuvraj, Qian Liang, Rachad Alao, Rachel Rodriguez, Rafi Ayub, Raghotham Murthy, Raghu Nayani, Rahul Mitra, Rangaprabhu Parthasarathy, Raymond Li, Rebekkah Hogan, Robin Battey, Rocky Wang, Russ Howes, Ruty Rinott, Sachin Mehta, Sachin Siby, Sai Jayesh Bondu, Samyak Datta, Sara Chugh, Sara Hunt, Sargun Dhillon, Sasha Sidorov, Satadru Pan, Saurabh Mahajan, Saurabh Verma, Seiji Yamamoto, Sharadh Ramaswamy, Shaun Lindsay, Shaun Lindsay, Sheng Feng, Shenghao Lin, Shengxin Cindy Zha, Shishir Patil, Shiva Shankar, Shuqiang Zhang, Shuqiang Zhang, Sinong Wang, Sneha Agarwal, Soji Sajuyigbe, Soumith Chintala, Stephanie Max, Stephen Chen, Steve Kehoe, Steve Satterfield, Sudarshan Govindaprasad, Sumit Gupta, Summer Deng, Sungmin Cho, Sunny Virk, Suraj Subramanian, Sy Choudhury, Sydney Goldman, Tal Remez, Tamar Glaser, Tamara Best, Thilo Koehler, Thomas Robinson, Tianhe Li, Tianjun Zhang, Tim Matthews, Timothy Chou, Tzook Shaked, Varun Vontimitta, Victoria Ajayi, Victoria Montanez, Vijai Mohan, Vinay Satish Kumar, Vishal Mangla, Vlad Ionescu, Vlad Poenaru, Vlad Tiberiu Mihailescu, Vladimir Ivanov, Wei Li, Wenchen Wang, Wenwen Jiang, Wes Bouaziz, Will Constable, Xiaocheng Tang, Xiaojian Wu, Xiaolan Wang, Xilun Wu, Xinbo Gao, Yaniv Kleinman, Yanjun Chen, Ye Hu, Ye Jia, Ye Qi, Yenda Li, Yilin Zhang, Ying Zhang, Yossi Adi, Youngjin Nam, Yu, Wang, Yu Zhao, Yuchen Hao, Yundi Qian, Yunlu Li, Yuzi He, Zach Rait, Zachary DeVito, Zef Rosnbrick, Zhaoduo Wen, Zhenyu Yang, Zhiwei Zhao, and Zhiyu Ma. The llama 3 herd of models, 2024.

- (11) Xinyu Guan, Li Lyna Zhang, Yifei Liu, Ning Shang, Youran Sun, Yi Zhu, Fan Yang, and Mao Yang. rStar-Math: Small LLMs Can Master Math Reasoning with Self-Evolved Deep Thinking, January 2025.

- (12) Pranab Islam, Anand Kannappan, Douwe Kiela, Rebecca Qian, Nino Scherrer, and Bertie Vidgen. Financebench: A new benchmark for financial question answering, 2023.

- (13) Jared Kaplan, Sam McCandlish, Tom Henighan, Tom B. Brown, Benjamin Chess, Rewon Child, Scott Gray, Alec Radford, Jeffrey Wu, and Dario Amodei. Scaling laws for neural language models, 2020.

- (14) Volodymyr Kuleshov and Doina Precup. Algorithms for multi-armed bandit problems. arXiv preprint arXiv:1402.6028, 2014.

- (15) Alexander K. Lew, Tan Zhi-Xuan, Gabriel Grand, and Vikash K. Mansinghka. Sequential monte carlo steering of large language models using probabilistic programs, 2023.

- (16) Hunter Lightman, Vineet Kosaraju, Yura Burda, Harri Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s verify step by step, 2023.

- (17) Hunter Lightman, Vineet Kosaraju, Yura Burda, Harri Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s Verify Step by Step, May 2023.

- (18) João Loula, Benjamin LeBrun, Li Du, Ben Lipkin, Clemente Pasti, Gabriel Grand, Tianyu Liu, Yahya Emara, Marjorie Freedman, Jason Eisner, Ryan Cotterell, Vikash Mansinghka, Alexander K. Lew, Tim Vieira, and Timothy J. O’Donnell. Syntactic and semantic control of large language models via sequential monte carlo. In The Thirteenth International Conference on Learning Representations, 2025.

- (19) David JC MacKay. Information theory, inference and learning algorithms. Cambridge university press, 2003.

- (20) Swaroop Mishra, Arindam Mitra, Neeraj Varshney, Bhavdeep Sachdeva, Peter Clark, Chitta Baral, and Ashwin Kalyan. Numglue: A suite of fundamental yet challenging mathematical reasoning tasks. ACL, 2022.