# Token Assorted: Mixing Latent and Text Tokens for Improved Language Model Reasoning

**Authors**: DiJia Su, Hanlin Zhu, Yingchen Xu, Jiantao Jiao, Yuandong Tian, Qinqing Zheng

## Abstract

Large Language Models (LLMs) excel at reasoning and planning when trained on chain-of-thought (CoT) data, where the step-by-step thought process is explicitly outlined by text tokens. However, this results in lengthy inputs where many words support textual coherence rather than core reasoning information, and processing these inputs consumes substantial computational resources. In this work, we propose a hybrid representation of the reasoning process, where we partially abstract away the initial reasoning steps using latent discrete tokens generated by VQ-VAE, significantly reducing the length of reasoning traces. We explore the use of latent trace abstractions in two scenarios: 1) training the model from scratch for the Keys-Finding Maze problem, 2) fine-tuning LLMs on this hybrid data with an extended vocabulary including unseen latent tokens, for both logical and mathematical reasoning problems. To facilitate effective learning, we introduce a simple training procedure that randomly mixes latent and text tokens, which enables fast adaptation to new latent tokens. Our approach consistently outperforms the baseline methods in various benchmarks, such as Math (+4.2%, Llama-3.2-1B), GSM8K (+4.1%, Llama-3.2-3B), and Fresh-Gaokao-Math-2023 (+13.3%, Llama-3.1-8B), with an average reduction of 17% in reasoning trace length.

Machine Learning, ICML

## 1 Introduction

Reasoning capabilities are increasingly recognized as a critical component of Artificial General Intelligence (AGI) systems. Recent research has demonstrated that Large Language Models (LLMs) can exhibit sophisticated reasoning and planning abilities using chain-of-thought (CoT) methodologies, including prompting LLMs with examples where complex problems are broken down into explicit reasoning steps (Wei et al., 2022b; Chen et al., 2022a; Yao et al., 2024). More recently, a number of studies have further shown that when models are trained to articulate the intermediate steps of a reasoning process (Nye et al., 2021b; Lehnert et al., 2024), they achieve significantly higher accuracy. The effectiveness of this approach has been demonstrated across multiple domains, including mathematical problem-solving (Yue et al., 2023; Gandhi et al., 2024; Yu et al., 2023; Su et al., 2025; Tong et al., 2024), logical inference (Lin et al., 2024; Dziri et al., 2024), multistep planning tasks (Lehnert et al., 2024; Su et al., 2024), etc.

However, training with explicit reasoning traces in text space comes with notable computational costs (Deng et al., 2023, 2024), as the models must process lengthy input sequences. In fact, much of the text serves primarily to maintain linguistic coherence, rather than conveying core reasoning information. Several works have attempted to mitigate this issue. For example, Hao et al. (2024) investigate reasoning in continuous latent space as a means of compressing the reasoning trace, and Deng et al. (2024) explore internalizing the intermediate steps through iterative CoT eliminations (see Section 2 for more examples). Nonetheless, these approaches rely on multi-stage training procedures that resemble curriculum learning, which still incur significant computational costs, and their final performance falls behind that of models trained with complete reasoning traces.

To tackle this challenge, we propose to use discrete latent tokens to abstract the initial steps of the reasoning traces. These latent tokens, obtained through a vector-quantized variational autoencoder (VQ-VAE), provide a compressed representation of the reasoning process by condensing surface-level details. More precisely, we replace the text tokens with their corresponding latent abstractions from left to right until a pre-set location, leaving the remaining tokens unchanged. We then fine-tune LLMs with reasoning traces with such assorted tokens, allowing the models to learn from both abstract representations of the thinking process and detailed textual descriptions. One technical challenge posed for the fine-tuning is that the vocabulary is now extended and contains unseen latent tokens. To facilitate quick adaptation to those new tokens, we employ a randomized replacement strategy: during training, we randomly vary the number of text tokens being substituted by latent tokens for each sample. Our experiments confirm that this simple strategy leads to straightforward accommodation of unseen latent tokens.

We conduct a comprehensive evaluation of our approach on a diverse range of benchmarks spanning multiple domains. Specifically, we assess its performance on multistep planning tasks (Keys-Finding Maze) and logical reasoning benchmarks (ProntoQA (Saparov & He, 2022), ProsQA (Hao et al., 2024)) for training T5 or GPT-2 models from scratch. In addition, we fine-tune different sizes of Llama-3.1 and Llama-3.2 models using our approach and evaluate them on a number of mathematical reasoning benchmarks, including GSM8K (Cobbe et al., 2021a), Math (Hendrycks et al., 2021), and OlympiadBench-Math (He et al., 2024); see Section 4.2 for more details. Across all these tasks and model architectures, our models consistently outperform baseline models trained with text-only reasoning traces, demonstrating the effectiveness of compressing the reasoning process with assorted tokens.

## 2 Related Work

Explicit Chain-of-Thought Prompting.

The first line of work on Chain-of-Thought (CoT) relies on conventional prompting with text tokens (Wei et al., 2022a; Nye et al., 2021a). These works demonstrated that adding few-shot examples to the input prompt, or even prompting zero-shot, improves the model's question-answering performance (Chen et al., 2022b; Kojima et al., 2022; Chung et al., 2024). To further improve reasoning performance, self-consistency prompting (Wang et al., 2022) has the model generate multiple responses and selects the best one by majority voting. In addition, research has shown that top-$k$ alternative tokens at the beginning of the prompt can also improve the model's reasoning capability (Wang & Zhou, 2024). On top of these empirical results, there has been research on the theoretical understanding of why CoT improves the model's performance, through the lens of expressivity (Feng et al., 2024; Li et al., 2024) or training dynamics (Zhu et al., 2024). In a nutshell, CoT improves the model's effective depth because the generated output is fed back as input. CoT is also important for LLMs to perform multi-hop reasoning according to the analysis of training dynamics (Zhu et al., 2024).

Learning with CoT Data.

In addition to the success of CoT prompting, an emerging line of work has explored training LLMs on data with high-quality reasoning traces, for example, the works of Nye et al. (2021b); Azerbayev et al. (2023); Lehnert et al. (2024); Su et al. (2024); Yu et al. (2024); Yang et al. (2024); Deng et al. (2023, 2024). There is also a surge of interest in synthesizing datasets with diverse intermediate steps for solving problems in various domains, see, e.g., the works of Kim et al. (2023); Tong et al. (2024); Yu et al. (2023); Yue et al. (2023); Lozhkov et al. (2024). Wen et al. (2024) also theoretically study how training with reasoning traces can improve the sample complexity of certain tasks.

LLM Reasoning in Latent Space.

There has been research investigating LLM reasoning in the latent space. Hao et al. (2024) have proposed to use the last hidden state of a language model as the next input embedding, allowing the model to continue reasoning within a continuous latent space. The authors show that this approach effectively captures multiple reasoning paths simultaneously, mimicking a breadth-first-search strategy. Goyal et al. (2023) propose to insert learnable pause tokens into the original text in order to delay the generation. As a result, the model can leverage additional computation before providing the final answer. Parallel to this, Pfau et al. (2024) have explored filler tokens, which are used to solve computational tasks that are otherwise unattainable without intermediate token generation. In addition, Liu et al. (2024) propose a latent coprocessor method that operates on the transformer's key-value cache to improve LLM performance. Nevertheless, none of these methods have shown good performance when integrated into modern-sized LLMs and tested on real-world LLM datasets instead of synthetic ones. Also, Wang et al. (2023) proposed to use a planning token at the start of generation. Orthogonal to these works, Pagnoni et al. (2024) propose a tokenization-free architecture that encodes input bytes into continuous patch representations, which are then used to train a latent Transformer, and Barrault et al. (2024) perform autoregressive sentence prediction in an embedding space. While these two works both leverage continuous latent spaces, our work focuses on the direct use of discrete latent tokens.

## 3 Methodology

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Chain-of-Thought (CoT) with Latent Token Compression

### Overview

The image is a technical schematic diagram illustrating a process for compressing or replacing a segment of a textual Chain-of-Thought (CoT) reasoning sequence with discrete latent tokens. It contrasts a standard textual sequence (X) with a modified sequence (X̃) that incorporates a latent representation.

### Components/Axes

The diagram is structured into three main horizontal sections:

1. **Top Row (Sequence X):** Represents a standard input/output sequence for a language model.

* **Components (left to right):**

* A green box labeled `Prompt`.

* A dashed-line box enclosing a series of blue boxes: `CoT 1`, `CoT 2 ...`, `CoT 32`.

* A series of blue boxes outside the dashed box: `CoT 33`, `...`, `CoT N`.

* A final pink box labeled `Solution`.

* **Spatial Grounding:** The dashed box is positioned centrally, encompassing the first 32 CoT tokens.

2. **Middle Row (Sequence X̃):** Represents the modified sequence with latent compression.

* **Components (left to right):**

* A green box labeled `Prompt`.

* A dashed-line box (aligned vertically with the one above) enclosing:

* An orange box labeled `[boLatent]`.

* Two purple boxes labeled `z1` and `z2`.

* An orange box labeled `[eoLatent]`.

* A red text label `Text` with an arrow pointing from the `[eoLatent]` box to the next component.

* A series of blue boxes: `CoT 33`, `...`, `CoT N`.

* A final pink box labeled `Solution`.

* **Spatial Grounding:** The dashed box in this row is in the same horizontal position as the one above, indicating a direct replacement. The `Text` label is positioned to the right of the dashed box.

3. **Bottom Legend:** Explains the meaning of the colored boxes.

* **Orange Boxes:** `[boLatent]` and `[eoLatent]` - Labeled as "Special delimiters that encode the start / end of the latent tokens".

* **Purple Box:** `z` - Labeled as "Discrete latent tokens".

* **Blue Box:** `CoT N` - Labeled as "The n-th CoT textual tokens".

### Detailed Analysis

The diagram explicitly maps the transformation from sequence X to sequence X̃:

* **Transformation:** The segment of the sequence from `CoT 1` to `CoT 32` in the original sequence (X) is replaced in the modified sequence (X̃).

* **Replacement Content:** The replacement consists of four elements enclosed by special delimiters:

1. `[boLatent]` (begin latent)

2. `z1` (first discrete latent token)

3. `z2` (second discrete latent token)

4. `[eoLatent]` (end latent)

* **Flow Continuity:** After the `[eoLatent]` delimiter, the sequence resumes with the original textual tokens starting from `CoT 33` and continues to the `Solution`. The red `Text` label emphasizes the transition back to the textual domain.

* **Key Relationship:** The dashed boxes and their vertical alignment visually assert that the latent block (`[boLatent] z1 z2 [eoLatent]`) is a functional substitute for the 32 textual CoT tokens.

### Key Observations

1. **Fixed Compression Ratio:** The diagram specifies a compression of exactly 32 textual CoT tokens into 2 discrete latent tokens (`z1`, `z2`), framed by 2 delimiter tokens. This suggests a fixed, predefined compression scheme.

2. **Hybrid Sequence:** The final sequence (X̃) is a hybrid, containing both latent representations (`z1`, `z2`) and explicit textual reasoning steps (`CoT 33` to `CoT N`).

3. **Delimiter Necessity:** The process requires explicit start (`[boLatent]`) and end (`[eoLatent]`) markers to signal the model to switch between processing latent and textual tokens.

4. **No Data Values:** This is a conceptual diagram, not a data chart. It contains no numerical data points, trends, or statistical information. Its purpose is to illustrate an architectural or methodological concept.

### Interpretation

This diagram illustrates a technique for **reasoning compression** in language models. The core idea is to replace a potentially lengthy, explicit chain-of-thought (here, 32 steps) with a compact, learned latent representation (`z1`, `z2`). This could serve several purposes:

* **Efficiency:** Reducing the sequence length to save computational resources during inference.

* **Abstraction:** Encapsulating a complex reasoning subroutine into a dense, symbolic form.

* **Modularity:** Allowing a system to "call" a pre-computed or specialized reasoning module (represented by the latent tokens) and then continue with textual reasoning.

The retention of the later CoT steps (`CoT 33` onward) indicates that the latent compression is applied only to a specific segment of the reasoning process, not the entire chain. This suggests a selective application where only certain reasoning phases are deemed compressible or suitable for latent abstraction. The explicit delimiters are crucial for the model to correctly parse and integrate the hybrid sequence. The diagram effectively argues for a method that blends the interpretability of textual reasoning with the potential efficiency of latent variable models.

</details>

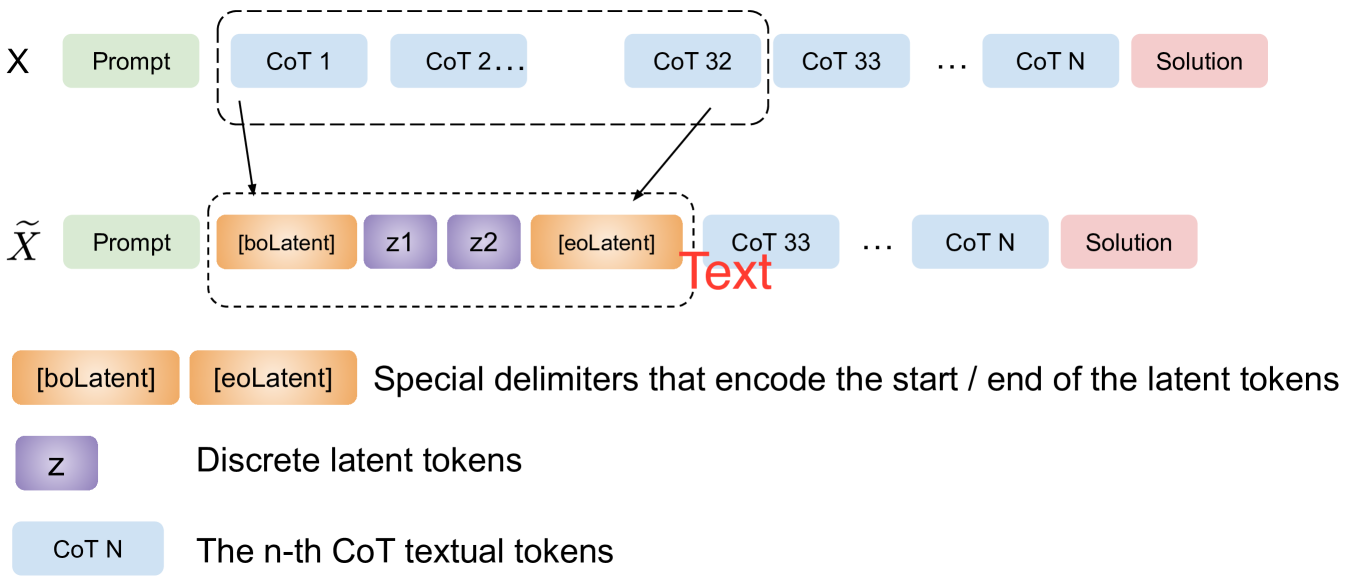

Figure 3.1: An example illustrating our replacement strategy. With chunk size $L=16$ and compression rate $r=16$ , we encode 32 textual CoT tokens into 2 discrete latent tokens from left to right. The other CoT tokens will remain in their original forms.

In this section, we describe our methodology to enable LLMs to reason with discrete latent tokens. The notations are summarized in Appendix B. Let $X = P \oplus C \oplus S$ denote a sample input, where $P=(p_1,p_2,\ldots,p_{t_p})$ are the prompt tokens, $C=(c_1,c_2,\ldots,c_{t_c})$ are the reasoning step (chain-of-thought) tokens, $S=(s_1,s_2,\ldots,s_{t_s})$ are the solution tokens, and $\oplus$ denotes concatenation. Our training procedure consists of two stages:

1. Learning latent discrete tokens to abstract the reasoning steps, where we train a model to convert $C$ into a sequence of latent tokens $Z=(z_1,z_2,\ldots,z_{t_z})$ such that $t_z<t_c$. The compression rate $r=t_c/t_z$ controls the level of abstraction.

1. Training the LLM with a partial and high-level abstract of the reasoning steps, where we construct a modified input ${\widetilde{X}}$ by replacing the first $m$ tokens of $C$ by the corresponding latent abstractions:

$$
\widetilde{X} = P \oplus [z_1, \ldots, z_{\frac{m}{r}}, c_{m+1}, \ldots, c_{t_c}] \oplus S. \tag{1}
$$

Figure 3.1 illustrates this replacement strategy. We randomize the value of $m$ during training.
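The construction in Equation 1 can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the function name `build_hybrid_sequence`, the use of string tokens, and the choice to omit the delimiters when $m=0$ are assumptions made here; the delimiter names follow Figure 3.1 and Section 3.2.

```python
from typing import List, Sequence

# Delimiter tokens that wrap the latent block (names as used in Section 3.2).
BO_LATENT = "<boLatent>"
EO_LATENT = "<eoLatent>"


def build_hybrid_sequence(
    prompt: Sequence[str],
    cot: Sequence[str],
    solution: Sequence[str],
    latents: Sequence[str],
    m: int,
    r: int = 16,
) -> List[str]:
    """Replace the first m CoT tokens with their m // r latent tokens.

    `latents` holds the latent tokens for the CoT, one per r text tokens,
    in left-to-right order (assumed to be precomputed by the VQ-VAE).
    """
    if m % r != 0:
        raise ValueError("m must be a multiple of the compression rate r")
    if not 0 <= m <= len(cot):
        raise ValueError("m must lie between 0 and the CoT length")
    n_latent = m // r
    if n_latent > len(latents):
        raise ValueError("not enough latent tokens for the requested m")

    # Assumption: with m == 0 the sample is left as plain text, no delimiters.
    latent_block = (
        [BO_LATENT, *latents[:n_latent], EO_LATENT] if n_latent > 0 else []
    )
    return [*prompt, *latent_block, *cot[m:], *solution]
```

With $r=16$ and $m=32$, the first 32 CoT tokens are replaced by two latent tokens plus the two delimiters, matching the example in Figure 3.1.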

### 3.1 Learning Latent Abstractions

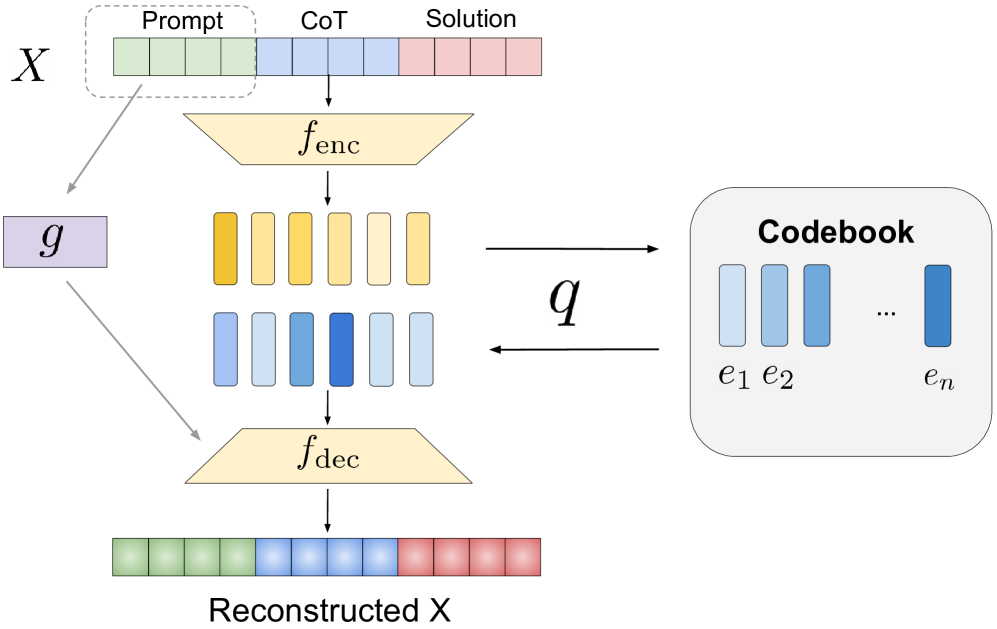

We employ a vector-quantized variational autoencoder (VQ-VAE) (Van Den Oord et al., 2017) type of architecture to map CoT tokens $C$ into discrete latent tokens $Z$. To enhance abstraction performance, our VQ-VAE is trained on the whole input sequence $X$, but only applied to $C$ in the next stage. Following Jiang et al. (2022, 2023), we split $X$ into chunks of length $L$ and encode each chunk into $\frac{L}{r}$ latent codes, where $r$ is a preset compression rate. More precisely, our architecture consists of the following five components:

- $E$: a codebook containing $|E|$ vectors in $\mathbb{R}^d$.

- $f_{\text{enc}}: V^L \mapsto \mathbb{R}^{d\times\frac{L}{r}}$ that encodes a sequence of $L$ text tokens to $\frac{L}{r}$ latent embedding vectors $\bar{X}=\bar{x}_1,\ldots,\bar{x}_{\frac{L}{r}}$, where $V$ is the vocabulary of text tokens.

- $q: \mathbb{R}^d \mapsto E$: the quantization operator that replaces the encoded embedding $\bar{x}$ by the nearest neighbor in $E$: $q(\bar{x})=\operatorname*{argmin}_{e_i\in E}\left\|e_i-\bar{x}\right\|^2_2$.

- $g: V^K \mapsto \mathbb{R}^d$ that maps $K$ text tokens to a $d$-dimensional embedding vector. We use $g$ to generate a continuous embedding of the prompt $P$.

- $f_{\text{dec}}: \mathbb{R}^{d\times\frac{L}{r}} \times \mathbb{R}^k \mapsto V^L$ that decodes latent embeddings back to text tokens, conditioned on the prompt embedding.

In particular, each continuous vector $e\in E$ in the codebook has an associated latent token $z$, which we use to construct the latent reasoning steps $Z$. To decode a latent token $z$, we look up the corresponding embedding $e\in E$ and feed it to $f_{\text{dec}}$.
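The quantization operator $q$ and the mapping from codebook vectors to latent tokens can be illustrated with a short NumPy sketch. The function name `quantize` and the array layout (one embedding per row) are assumptions made for this example; the returned indices play the role of the latent tokens $z$.

```python
import numpy as np


def quantize(x_bar: np.ndarray, codebook: np.ndarray):
    """Nearest-neighbor quantization against a codebook.

    x_bar:    (n, d) array of encoder outputs, one embedding per row.
    codebook: (|E|, d) array of codebook vectors.
    Returns (indices, quantized), where indices[i] is the row of the
    codebook closest to x_bar[i] in squared Euclidean distance and
    quantized[i] is that codebook vector.
    """
    # Pairwise squared distances, shape (n, |E|).
    diff = x_bar[:, None, :] - codebook[None, :, :]
    dists = np.sum(diff ** 2, axis=-1)
    indices = np.argmin(dists, axis=1)
    return indices, codebook[indices]


def lookup(indices: np.ndarray, codebook: np.ndarray) -> np.ndarray:
    """Decode-side lookup: map latent token indices back to embeddings."""
    return codebook[indices]
```

The lookup step is all that is needed to turn latent tokens back into decoder inputs, since each token is simply an index into the codebook.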

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Neural Network Architecture with Vector Quantization

### Overview

The image displays a technical diagram of a neural network architecture designed for sequence processing and reconstruction. It illustrates a flow from an input sequence `X` through encoding, quantization via a codebook, and decoding to produce a "Reconstructed X". The architecture incorporates a parallel processing path via function `g`.

### Components/Axes

The diagram is composed of several interconnected blocks and labels:

1. **Input Sequence (`X`)**: Located at the top-left. It is a segmented bar divided into three colored sections:

* **Prompt**: Green segments (leftmost).

* **CoT** (Chain-of-Thought): Blue segments (middle).

* **Solution**: Red segments (rightmost).

* A dashed box encloses the "Prompt" section, with an arrow pointing from it to a block labeled `g`.

2. **Encoder (`f_enc`)**: A yellow trapezoid (wider at top) positioned below the input sequence. It receives the full input sequence `X` as indicated by a downward arrow.

3. **Latent Representations**: Below the encoder are two rows of vertical bars representing encoded features:

* **Top Row**: Six yellow bars of varying shades.

* **Bottom Row**: Six blue bars of varying shades, with one bar (fourth from left) being a distinctly darker blue.

4. **Quantization (`q`)**: A bidirectional arrow labeled `q` connects the latent representations to the "Codebook". This indicates a quantization mapping process.

5. **Codebook**: A rounded rectangle on the right side. It contains:

* The title **"Codebook"**.

* A series of blue vertical bars labeled `e₁`, `e₂`, ..., `eₙ`, representing discrete code vectors or embeddings.

6. **Decoder (`f_dec`)**: A yellow trapezoid (wider at bottom) positioned below the latent representations. It receives the quantized latent features.

7. **Output Sequence ("Reconstructed X")**: A segmented bar at the bottom, mirroring the structure of the input `X`:

* Green segments (left).

* Blue segments (middle).

* Red segments (right).

* Labeled **"Reconstructed X"** below it.

8. **Parallel Function (`g`)**: A purple block on the left. It receives input from the "Prompt" section of `X` (via a gray arrow) and its output feeds into the decoder `f_dec` (via another gray arrow), bypassing the main encoder-quantization path.

### Detailed Analysis

The diagram depicts a specific data flow and transformation process:

* **Primary Encoding Path**: The entire input sequence `X` (Prompt + CoT + Solution) is processed by the encoder `f_enc` to produce continuous latent representations (the yellow and blue bars).

* **Quantization Process**: The continuous latent representations are mapped to discrete codes from the **Codebook** via the quantization function `q`. The bidirectional arrow suggests this involves finding the nearest codebook entry (`e₁` to `eₙ`) for each latent vector. The darker blue bar in the latent row likely represents a selected or quantized code.

* **Decoding and Reconstruction**: The quantized latent codes are fed into the decoder `f_dec`. The decoder also receives a direct signal from the input's "Prompt" section via function `g`. The decoder's output is the "Reconstructed X", which aims to replicate the original input's structure (Prompt, CoT, Solution).

* **Parallel Path (`g`)**: This creates a skip-connection or auxiliary pathway, allowing the decoder direct access to the original prompt information, potentially to preserve details or stabilize training.

### Key Observations

1. **Structured Input/Output**: The model explicitly handles sequences with a defined semantic structure (Prompt, Chain-of-Thought, Solution), suggesting it's designed for tasks like reasoning or step-by-step problem-solving.

2. **Vector Quantization (VQ) Core**: The central role of the **Codebook** and quantization `q` identifies this as a Vector Quantized (VQ) model, likely a VQ-VAE or similar, which learns discrete latent representations.

3. **Dual Latent Representation**: The two rows of bars (yellow and blue) after encoding may represent different feature channels or a split in the latent space before quantization.

4. **Asymmetric Encoder/Decoder**: The encoder (`f_enc`) and decoder (`f_dec`) are depicted as trapezoids of opposite orientation, a common visual metaphor for compression (encoding) and reconstruction (decoding).

5. **Color Consistency**: Colors are used consistently to track data types: Green=Prompt, Blue=CoT/Latent Codes, Red=Solution, Yellow=Encoder/Decoder operations.

### Interpretation

This diagram illustrates a **Vector-Quantized Encoder-Decoder architecture with a prompt-conditioned skip connection**.

* **Purpose**: The model is designed to learn a compressed, discrete representation (via the codebook) of structured sequences that involve a prompt, a reasoning chain (CoT), and a final solution. This is highly relevant for generative AI tasks requiring step-by-step reasoning, such as mathematical problem-solving or complex question answering.

* **Mechanism**: The encoder compresses the full sequence into a latent space. The quantization step forces this representation into a discrete set of codes (`e₁...eₙ`), which can improve sample efficiency and enable discrete manipulation. The decoder must then reconstruct the original sequence from these discrete codes.

* **Role of `g`**: The parallel function `g` acting on the prompt suggests a mechanism to prevent the loss of critical initial information during the compression-reconstruction cycle. It ensures the decoder has direct access to the original task specification (the prompt), which could be crucial for generating a coherent and correct solution. This acts as a form of "memory" or "attention" to the input condition.

* **Significance**: This architecture combines the benefits of discrete representation learning (VQ) with the need to preserve and reason over structured textual data. It represents a sophisticated approach to building models that can not only generate text but also internalize and manipulate the reasoning process in a compressed, discrete latent space. The reconstruction goal implies the model is trained in a self-supervised manner to faithfully reproduce its input, learning useful representations in the process.

</details>

Figure 3.2: A graphical illustration of our VQ-VAE. $f_{\text{enc}}$ encodes the text tokens into latent embeddings, which are quantized by finding their nearest neighbors in the codebook. $f_{\text{dec}}$ decodes those quantized embeddings back to text tokens. When applying the VQ-VAE to compress the text tokens, the discrete latent tokens $Z$ are essentially the indices of the corresponding embeddings in the codebook.

For simplicity, we assume the lengths of the input $X$ and the prompt $P$ are exactly $L$ and $K$, respectively. Similar to Van Den Oord et al. (2017), we use an objective $\mathcal{L}$ composed of three terms:

$$
\mathcal{L}(X)=\underbrace{\log p\big(X \mid f_{\text{dec}}(q(\bar{X}) \mid g(P))\big)}_{\text{reconstruction loss}}+\sum_{i=1}^{L}\underbrace{\big\|\texttt{sg}[\bar{X}_i]-q(\bar{X}_i)\big\|_2^2}_{\text{VQ loss}}+\underbrace{\beta\big\|\bar{X}_i-\texttt{sg}[q(\bar{X}_i)]\big\|_2^2}_{\text{commitment loss}}, \tag{2}
$$

where $\bar{X}=f_{\text{enc}}(X)$, $\texttt{sg}[\cdot]$ is the stop-gradient operator, and $\beta$ is a hyperparameter controlling the strength of the commitment loss. The VQ loss and the commitment loss ensure that the encoder outputs remain close to the codebook, while the reconstruction loss concerns the decoding efficacy. As is standard for VQ-VAE, we pass the gradient $\nabla_{f_{\text{dec}}}(\mathcal{L})$ unaltered to $f_{\text{enc}}$ directly, as the quantization operator $q(\cdot)$ is non-differentiable. Figure 3.2 illustrates our architecture. In practice, we use a causal Transformer for both $f_{\text{enc}}$ and $f_{\text{dec}}$; the model details are discussed in Appendix A.
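The gradient pass-through around the quantizer is often written as the identity below. This is the common straight-through formulation for VQ-VAE rather than something stated in the text, and the symbol $\hat{X}$ for the decoder input is introduced here only for illustration.

$$
\hat{X} = \bar{X} + \texttt{sg}\big[q(\bar{X}) - \bar{X}\big], \qquad \frac{\partial \hat{X}}{\partial \bar{X}} = I.
$$

In the forward pass $\hat{X}$ equals $q(\bar{X})$, so the decoder sees quantized embeddings. In the backward pass the stop-gradient term contributes nothing, so the gradient with respect to $\hat{X}$ reaches the encoder output $\bar{X}$ unchanged.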

Thus far we obtain a latent representation both semantically meaningful and conducive to reconstruction, setting the stage for the subsequent training phase where the LLM is trained to perform reasoning with abstractions.

### 3.2 Reasoning with Discrete Latent Tokens

In this second stage, we apply the obtained VQ-VAE to form modified samples ${\widetilde{X}}$ with latent abstractions as in Equation 1, then train an LLM to perform next token prediction. Below, we outline the major design choices that are key to our model's performance, and ablate them in Section 4.3.

Partial Replacement. Unlike previous planning works (Jiang et al., 2022, 2023) that project the whole input sequence onto a compact latent space, we only replace $m<t_c$ CoT tokens with their latent abstractions, leaving the remaining tokens unchanged. We delimit the latent tokens by wrapping them in the special tokens `<boLatent>` and `<eoLatent>`.

Left-to-Right (AR) Replacement. We replace the leftmost $m$ tokens of $C$ , rather than subsampling tokens at different locations.

Mixing Samples with Varying Values of $m$. For fine-tuning an existing LLM on the reasoning dataset with latent tokens, one notable challenge is handling the extended vocabulary. As the LLM is pretrained on trillions of tokens, it is very hard for it to adapt quickly to tokens (and corresponding embeddings) beyond the original vocabulary. Previous works that aim to replace or eliminate CoT tokens (Deng et al., 2024; Hao et al., 2024) employ a multistage curriculum training approach, where those operations are gradually applied to the entire input sequence. In the context of our approach, this would mean increasing the value of $m$ in each stage until it reaches a preset cap. However, such a training procedure is complex and computationally inefficient, and it requires dedicated optimization tuning. In this work, we employ a simple single-stage training approach where the value of $m$ is randomly set for each sample. Surprisingly, this not only makes our training more efficient, but also leads to enhanced performance.

Note that we use a VQ-VAE with 50M parameters, adding minimal parameter overhead. In addition, it is used only once during data preparation (to convert training data into discrete latent codes), not during LLM training or inference. During inference, the LLM directly generates latent tokens without any use of the VQ-VAE.

## 4 Experiments

We empirically evaluate our approach on two categories of benchmarks:

1. Synthetic datasets including the Keys-Finding Maze, ProntoQA (Saparov & He, 2022), and ProsQA (Hao et al., 2024), where we pretrain T5 or GPT-2 models from scratch using the method in Section 3;

1. Real-world mathematical reasoning problems, where we fine-tune Llama models (Dubey et al., 2024) on the MetaMathQA (Yu et al., 2023) or the Dart-MATH (Tong et al., 2024) dataset, and then test on the in-domain datasets Math and GSM8K, along with out-of-domain datasets including Fresh-Gaokao-Math-2023, DeepMind-Math, College-Math, OlympiadBench-Math, and TheoremQA.

The detailed setup is introduced in Section 4.1.

We compare our approach to the following baselines:

1. Sol-Only: the model is trained with samples that contain only questions and solutions, without any reasoning steps;

1. CoT: the model is trained with samples with complete CoT tokens;

1. iCoT (Deng et al., 2024): a method that utilizes curriculum learning to gradually eliminate the need for CoT tokens in reasoning;

1. Pause Token (Goyal et al., 2023): a method that injects a learnable pause token into the sample during training, in order to offer extra computation before giving out the final answer.

### 4.1 Benchmarks

#### 4.1.1 Synthetic Benchmarks

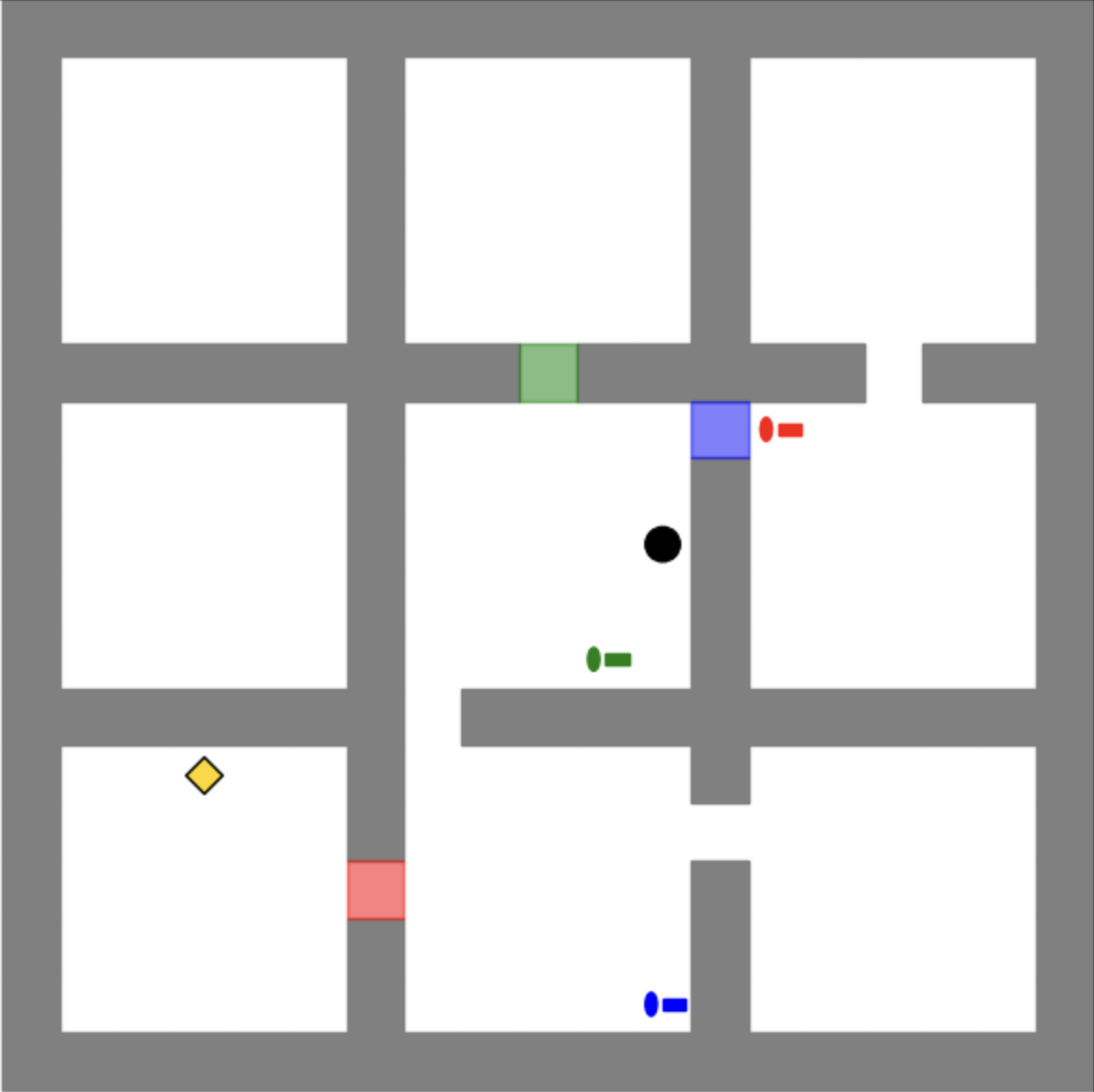

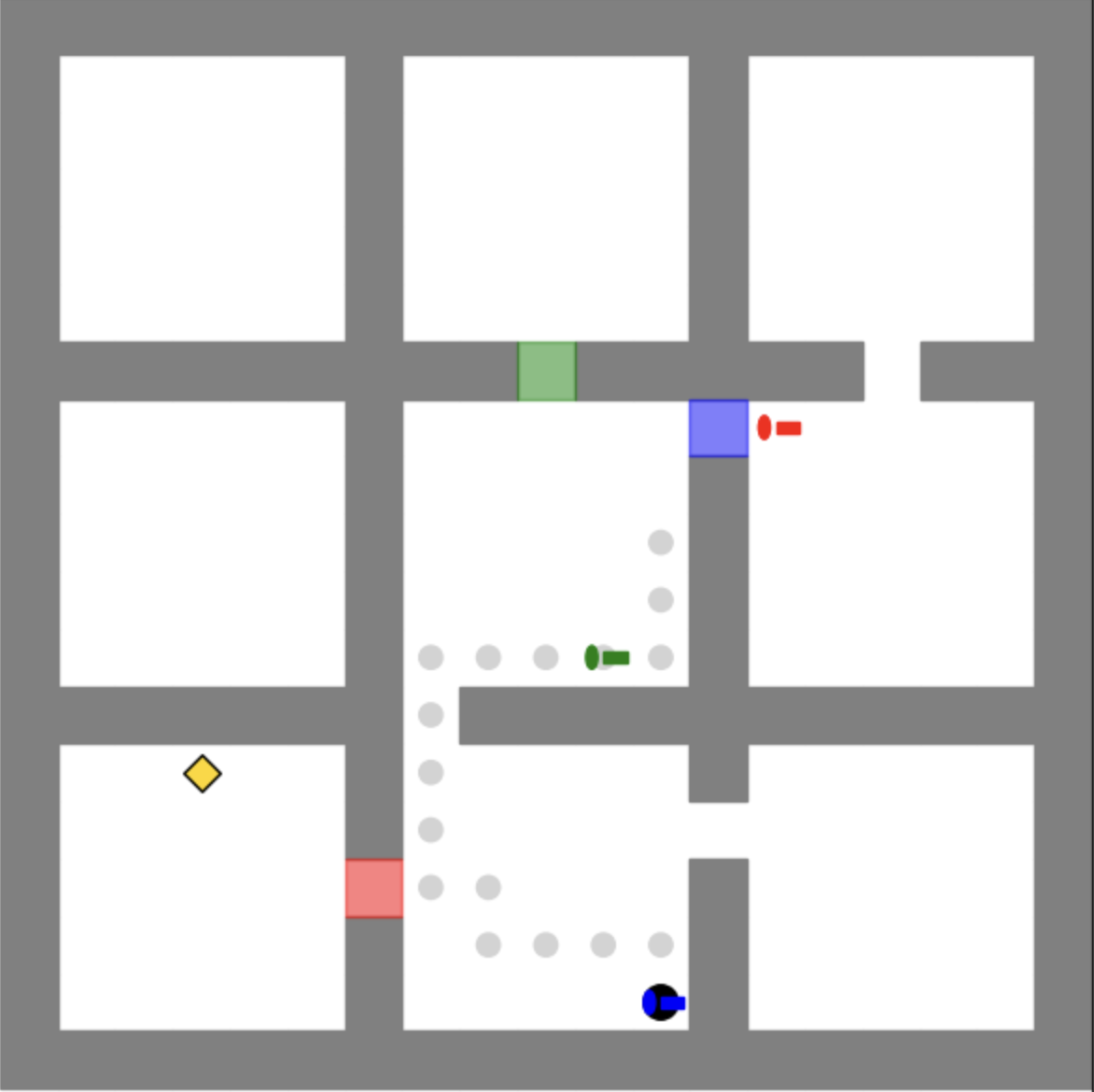

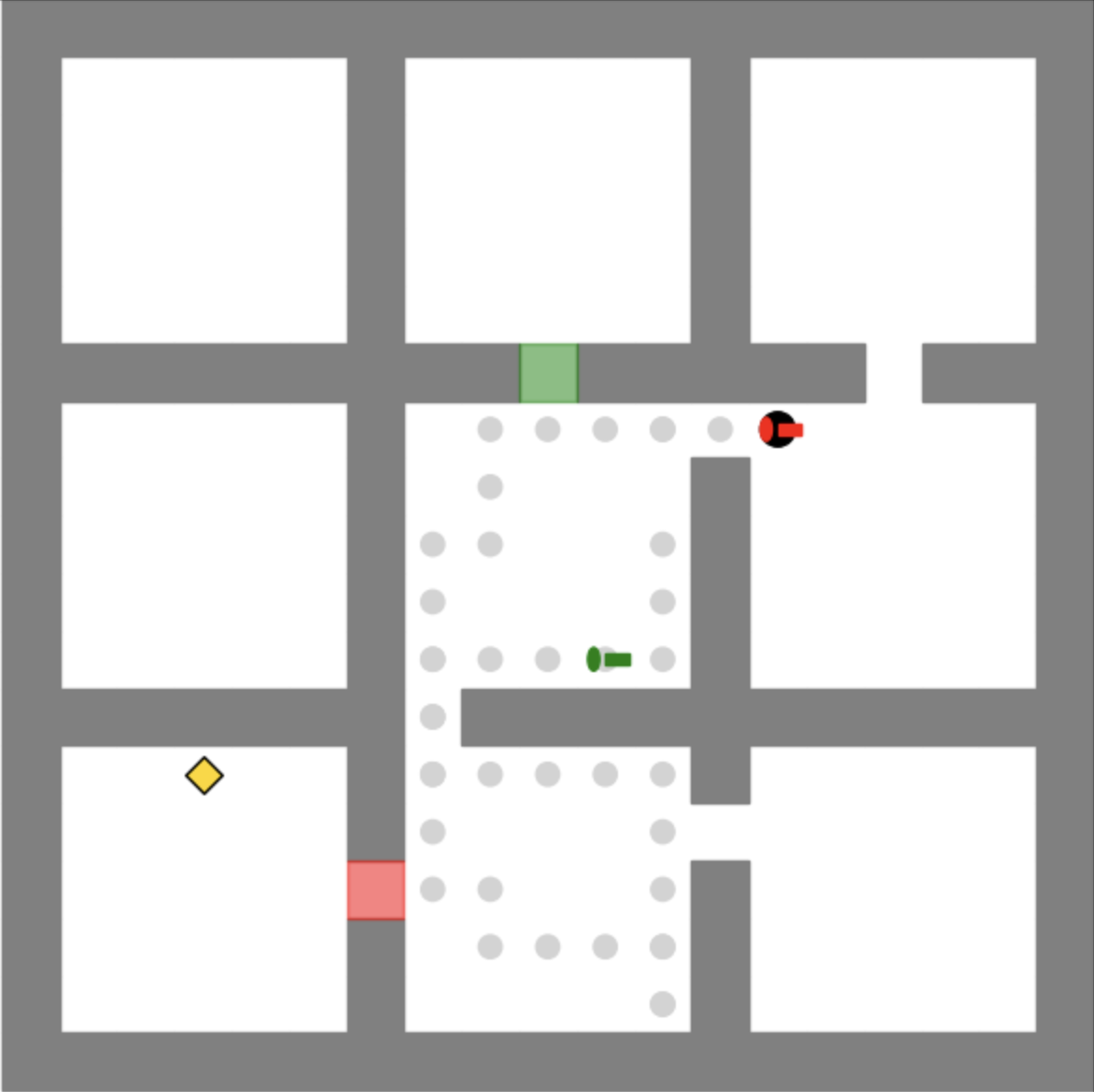

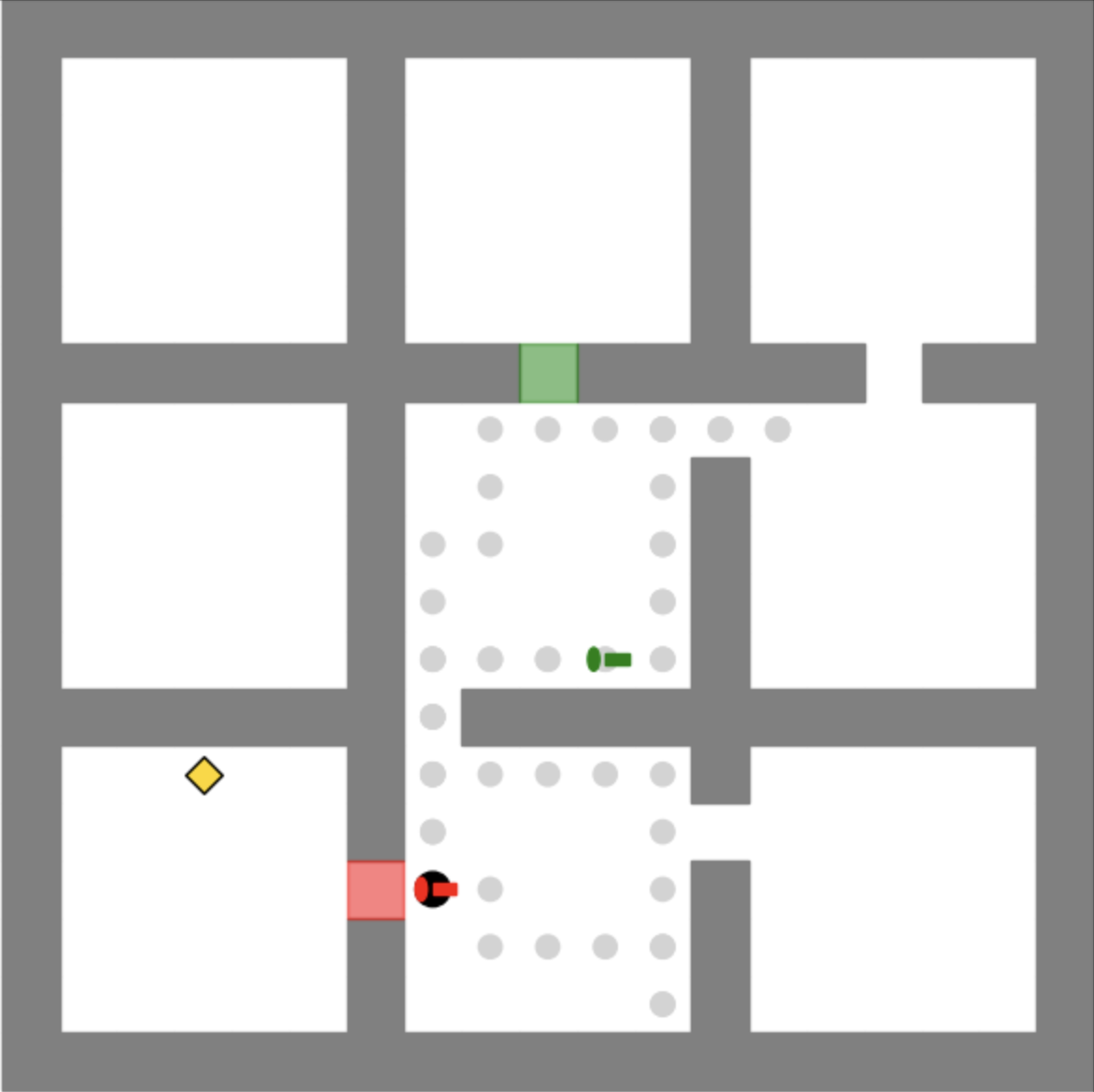

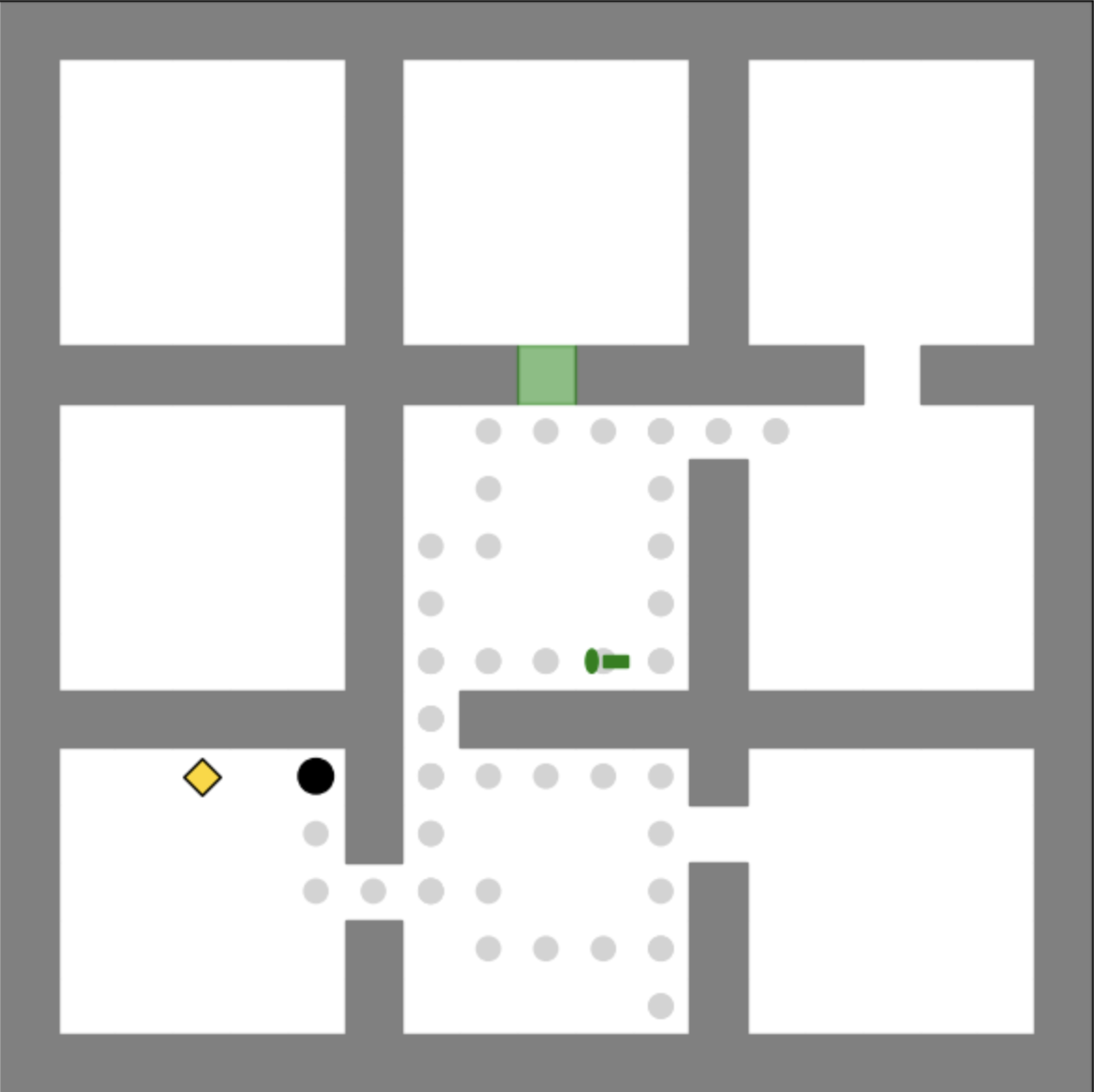

Keys-Finding Maze is a complex navigation environment designed to evaluate an agent's planning capabilities. The agent is randomly positioned within a maze comprising four $3\times 3$ interconnected rooms, with the objective of reaching a randomly placed goal destination. To successfully reach the destination, the agent must collect keys (designated with green, red, and blue colors) that correspond to matching colored doors. These keys are randomly distributed among the rooms, requiring the agent to develop sophisticated planning strategies for key acquisition and door traversal. The agent is only allowed to carry one key at a time. This environment poses a substantial cognitive challenge, as the agent must identify which keys are necessary for reaching the destination, and optimize the order of key collection and door unlocking to establish the most efficient path to the goal. Following Lehnert et al. (2024); Su et al. (2024), we generate intermediate search traces using the nondeterministic A* algorithm (Hart et al., 1968). The dataset contains 100k training samples. See Section A.2 for more information and graphical illustrations.

ProntoQA (Saparov & He, 2022) is a dataset consisting of $9000$ logical reasoning problems derived from ontologies, which are formal representations of relationships between concepts. Each problem in the dataset is constructed to have exactly one correct proof or reasoning path. One distinctive feature of this dataset is its consistent grammatical and logical structure, which enables researchers to systematically analyze and evaluate how LLMs approach reasoning tasks.

ProsQA (Hao et al., 2024) is a more difficult benchmark built on top of ProntoQA. It contains 17,886 logical problems curated from randomly generated directed acyclic graphs. It has a larger number of distracting reasoning paths in the ontology, and thus requires more complex reasoning and planning capabilities.

#### 4.1.2 Mathematical Reasoning

We fine-tune pretrained LLMs using the MetaMathQA (Yu et al., 2023) or the Dart-MATH (Tong et al., 2024) dataset. MetaMathQA is a curated dataset that augments the existing Math (Hendrycks et al., ) and GSM8K (Cobbe et al., 2021b) datasets through various forms of question bootstrapping, such as (i) rephrasing the question and generating the reasoning path; (ii) generating backward questions, self-verification questions, FOBAR questions (Jiang et al., 2024), etc. This dataset contains 395k samples in total, where 155k samples are bootstrapped from Math and the remaining 240k come from GSM8K. We rerun the MetaMath data pipeline using Llama-3.1-405B-Inst to generate the responses. Dart-MATH (Tong et al., 2024) also synthesizes responses for questions in Math and GSM8K, with a focus on difficult questions via difficulty-aware rejection tuning. For evaluation, we test the models on the original Math and GSM8K datasets, which are in-domain, and also on the following out-of-domain benchmarks:

- College-Math (Tang et al., 2024) consists of 2818 college-level math problems taken from 9 textbooks. These problems cover over 7 different areas such as linear algebra, differential equations, and so on. They are designed to evaluate how well the language model can handle complicated mathematical reasoning problems in different field of study.

- DeepMind-Math (Saxton et al., 2019) consists of 1000 problems based on the national school math curriculum for students up to 16 years old. It examines the basic mathematics and reasoning skills across different topics.

- OlympiaBench-Math (He et al., 2024) is a text-only English subset of Olympiad-Bench focusing on advanced level mathematical reasoning. It contains 675 highly difficult math problems from competitions.

- TheoremQA (Chen et al., 2023) contains 800 problems that focus on solving problems in STEM fields (such as math, physics, and engineering) using mathematical theorems.

- Fresh-Gaokao-Math-2023 (Tang et al., 2024) contains 30 math questions coming from Gaokao, or the National College Entrance Examination, which is a national standardized test that plays a crucial role in the college admissions process.

### 4.2 Main Results

We employ a consistent strategy for training VQ-VAE and replacing CoT tokens with latent discrete codes across all our experiments, as outlined below. The specific model architecture and key hyperparameters used for LLM training are presented alongside the results for each category of benchmarks. All the other details are deferred to Appendix A.

VQ-VAE Training

For each benchmark, we train a VQ-VAE for 100k steps using the Adam optimizer, with learning rate $10^{-5}$ and batch size 32. We use a codebook of size $1024$ and compress every chunk of $L=16$ tokens into a single latent token (i.e., the compression rate is $r=16$).
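To make the compression step concrete, the following is a minimal sketch of the nearest-codebook quantization used by VQ-VAE-style models, with the codebook size and chunk length stated above. It is not the authors' implementation: the mean pooling that stands in for the learned encoder, the embedding dimension `EMBED_DIM`, and the function name `quantize_chunks` are all assumptions made for illustration.

```python
import numpy as np

CODEBOOK_SIZE = 1024  # codebook size from the text
CHUNK_LEN = 16        # L = 16 tokens per latent token (r = 16)
EMBED_DIM = 64        # hypothetical latent dimension, not given in the text


def quantize_chunks(token_embeddings, codebook, chunk_len=CHUNK_LEN):
    """Map every chunk of `chunk_len` token embeddings to one codebook index.

    Mean pooling is a stand-in for the learned VQ-VAE encoder (an assumption).
    """
    num_tokens, dim = token_embeddings.shape
    if num_tokens % chunk_len != 0:
        raise ValueError("sequence length must be a multiple of chunk_len")
    # (num_chunks, chunk_len, dim) -> one vector per chunk
    chunk_vectors = token_embeddings.reshape(-1, chunk_len, dim).mean(axis=1)
    # Squared Euclidean distance from every chunk vector to every code
    diffs = chunk_vectors[:, None, :] - codebook[None, :, :]
    distances = (diffs ** 2).sum(axis=-1)
    # The latent token is the index of the nearest code
    return distances.argmin(axis=1)


rng = np.random.default_rng(0)
codebook = rng.normal(size=(CODEBOOK_SIZE, EMBED_DIM))
embeddings = rng.normal(size=(64, EMBED_DIM))  # 64 CoT token embeddings
latent_ids = quantize_chunks(embeddings, codebook)
print(latent_ids.shape)  # 64 text tokens are summarized by 4 latent tokens
```

With $r=16$, a trace prefix of 64 text tokens is represented by 4 discrete codes, each an index into the 1024-entry codebook.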

Randomized Latent Code Replacement

We introduce a stochastic procedure for partially replacing CoT tokens with latent codes. Specifically, we define a set of predetermined numbers $M=\{0,72,128,160,192,224,256\}$, which are all multiples of $L=16$. For each training example, we first sample $m_{\max}\in M$ and then sample an integer $m\in\{0,16,32,\dots,m_{\max}\}$ uniformly at random. The first $m$ CoT tokens are replaced by their corresponding latent discrete codes, while the remaining ones stay as raw text. This stochastic replacement mechanism exposes the model to a wide range of latent-text mixtures, enabling it to learn effectively from varying degrees of latent abstraction.
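The sampling procedure above can be sketched as follows. This is an illustrative reading rather than the authors' code: the helper names, the uniform choice of $m_{\max}$, and the capping of $m$ at the trace length are assumptions. The latent codes are assumed to be precomputed, one per 16-token chunk.

```python
import random

CHUNK_LEN = 16
M_MAX_CHOICES = [0, 72, 128, 160, 192, 224, 256]  # the set M from the text


def sample_num_replaced(rng, cot_len):
    """Sample m, the number of leading CoT tokens to replace."""
    m_max = rng.choice(M_MAX_CHOICES)  # assumed uniform over M
    # Candidates 0, 16, 32, ...; if m_max is not a multiple of 16,
    # the range stops at the largest multiple below it.
    m = rng.choice(range(0, m_max + 1, CHUNK_LEN))
    # Assumption: only whole chunks that exist in the trace are replaced.
    return min(m, cot_len - cot_len % CHUNK_LEN)


def mix_latent_and_text(cot_tokens, latent_codes, m):
    """Replace the first m text tokens by their m // CHUNK_LEN latent codes."""
    return latent_codes[: m // CHUNK_LEN] + cot_tokens[m:]


cot_tokens = [f"t{i}" for i in range(80)]       # toy 80-token CoT trace
latent_codes = [f"<z{j}>" for j in range(5)]    # one code per 16-token chunk
mixed = mix_latent_and_text(cot_tokens, latent_codes, m=32)
print(len(cot_tokens), "->", len(mixed))        # 2 codes + 48 text tokens
```

Because a fresh $m$ is drawn per training example, the same trace can appear with different latent prefixes across epochs.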

| Method | Keys-Finding Maze: 1-Feasible-10 (%) | Keys-Finding Maze: # of tokens | ProntoQA: accuracy (%) | ProntoQA: # of tokens | ProsQA: accuracy (%) | ProsQA: # of tokens |
| --- | --- | --- | --- | --- | --- | --- |
| Sol-Only | 3 | 645 | 93.8 | 3.0 | 76.7 | 8.2 |
| CoT | 43 | 1312.0 | 98.8 | 92.5 | 77.5 | 49.4 |
| Latent (ours) | 62.8 ( $↑$ +19.8) | 374.6 | 100 ( $↑$ +1.2) | 7.7 | 96.2 ( $↑$ +18.7) | 10.9 |

Table 4.1: Our latent approach surpasses the other baselines on Keys-Finding Maze, ProntoQA and ProsQA by a large margin. We use top- $k$ ( $k=10$ ) decoding for Keys-Finding Maze and greedy decoding for ProntoQA and ProsQA. In terms of token efficiency, our latent approach also generates much shorter reasoning traces than the CoT baseline, closely tracking or even outperforming the Sol-Only approach. Bold: best results. Underline: second best results. ( $↑$ +: Performance gain compared with the second best result.)

| Model | Method | In-Domain: Math | In-Domain: GSM8K | Out-of-Domain: Gaokao-Math-2023 | Out-of-Domain: DM-Math | Out-of-Domain: College-Math | Out-of-Domain: Olympia-Math | Out-of-Domain: TheoremQA | Average: All Datasets |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Llama-3.2-1B | Sol-Only | 4.7 | 6.8 | 0.0 | 10.4 | 5.3 | 1.3 | 3.9 | 4.6 |
| Llama-3.2-1B | CoT | 10.5 | 42.7 | 10.0 | 3.4 | 17.1 | 1.5 | 9.8 | 14.1 |
| Llama-3.2-1B | iCoT | 8.2 | 10.5 | 3.3 | 11.3 | 7.6 | 2.1 | 10.7 | 7.7 |
| Llama-3.2-1B | Pause Token | 5.1 | 5.3 | 2.0 | 1.4 | 0.5 | 0.0 | 0.6 | 2.1 |
| Llama-3.2-1B | Latent (ours) | 14.7 ( $↑$ +4.2) | 48.7 ( $↑$ +6) | 10.0 | 14.6 ( $↑$ +3.3) | 20.5 ( $↑$ +3.4) | 1.8 | 11.3 ( $↑$ +0.6) | 17.8 ( $↑$ +3.7) |
| Llama-3.2-3B | Sol-Only | 6.1 | 8.1 | 3.3 | 14.0 | 7.0 | 1.8 | 6.8 | 6.7 |
| Llama-3.2-3B | CoT | 21.9 | 69.7 | 16.7 | 27.3 | 30.9 | 2.2 | 11.6 | 25.2 |
| Llama-3.2-3B | iCoT | 12.6 | 17.3 | 3.3 | 16.0 | 14.2 | 4.9 | 13.9 | 11.7 |
| Llama-3.2-3B | Pause Token | 25.2 | 53.7 | 4.1 | 7.4 | 11.8 | 0.7 | 1.0 | 14.8 |
| Llama-3.2-3B | Latent (ours) | 26.1 ( $↑$ +4.2) | 73.8 ( $↑$ +4.1) | 23.3 ( $↑$ +6.6) | 27.1 | 32.9 ( $↑$ +2) | 4.2 | 13.5 | 28.1 ( $↑$ +2.9) |
| Llama-3.1-8B | Sol-Only | 11.5 | 11.8 | 3.3 | 17.4 | 13.0 | 3.8 | 6.7 | 9.6 |
| Llama-3.1-8B | CoT | 32.9 | 80.1 | 16.7 | 39.3 | 41.9 | 7.3 | 15.8 | 33.4 |
| Llama-3.1-8B | iCoT | 17.8 | 29.6 | 16.7 | 20.3 | 21.3 | 7.6 | 14.8 | 18.3 |
| Llama-3.1-8B | Pause Token | 39.6 | 79.5 | 6.1 | 25.4 | 25.1 | 1.3 | 4.0 | 25.9 |
| Llama-3.1-8B | Latent (ours) | 37.2 | 84.1 ( $↑$ +4.0) | 30.0 ( $↑$ +13.3) | 41.3 ( $↑$ +2) | 44.0 ( $↑$ +2.1) | 10.2 ( $↑$ +2.6) | 18.4 ( $↑$ +2.6) | 37.9 ( $↑$ +4.5) |

Table 4.2: Our latent approach outperforms the baselines on various types of mathematical reasoning benchmarks. The models are fine-tuned on the MetaMathQA (Yu et al., 2023) dataset. Math and GSM8K are in-domain datasets since they are used to generate MetaMathQA, while the others are out-of-domain. Bold: best results. Underline: second best results. $↑$ +: Performance gain compared with the second best result.

| Model | Method | In-Domain: Math | In-Domain: GSM8K | Out-of-Domain: Gaokao-Math-2023 | Out-of-Domain: DM-Math | Out-of-Domain: College-Math | Out-of-Domain: Olympia-Math | Out-of-Domain: TheoremQA | Average: All Datasets |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Llama-3.2-1B | Sol-Only | 4.7 | 6.8 | 0.0 | 10.4 | 5.3 | 1.3 | 3.9 | 4.6 |
| Llama-3.2-1B | CoT | 646.1 | 190.3 | 842.3 | 578.7 | 505.6 | 1087.0 | 736.5 | 655.2 |
| Llama-3.2-1B | iCoT | 328.4 | 39.8 | 354.0 | 170.8 | 278.7 | 839.4 | 575.4 | 369.5 |
| Llama-3.2-1B | Pause Token | 638.8 | 176.4 | 416.1 | 579.9 | 193.8 | 471.9 | 988.1 | 495 |
| Llama-3.2-1B | Latent (ours) | 501.6 ( $↓$ -22%) | 181.3 ( $↓$ -5%) | 760.5 ( $↓$ -11%) | 380.1 ( $↓$ -34%) | 387.3 ( $↓$ -23%) | 840.0 ( $↓$ -22%) | 575.5 ( $↓$ -22%) | 518 ( $↓$ -21%) |
| Llama-3.2-3B | Sol-Only | 6.1 | 8.1 | 3.3 | 14.0 | 7.0 | 1.8 | 6.8 | 6.7 |
| Llama-3.2-3B | CoT | 649.9 | 212.1 | 823.3 | 392.8 | 495.9 | 1166.7 | 759.6 | 642.9 |
| Llama-3.2-3B | iCoT | 344.4 | 60.7 | 564.0 | 154.3 | 224.9 | 697.6 | 363.6 | 344.2 |
| Llama-3.2-3B | Pause Token | 307.9 | 162.3 | 108.9 | 251.5 | 500.96 | 959.5 | 212.8 | 354.7 |
| Llama-3.2-3B | Latent (ours) | 516.7 ( $↓$ -20%) | 198.8 ( $↓$ -6%) | 618.5 ( $↓$ -25%) | 340.0 ( $↓$ -13%) | 418.0 ( $↓$ -16%) | 832.8 ( $↓$ -29%) | 670.2 ( $↓$ -12%) | 513.6 ( $↓$ -20%) |
| Llama-3.1-8B | Sol-Only | 11.5 | 11.8 | 3.3 | 17.4 | 13.0 | 3.8 | 6.7 | 9.6 |
| Llama-3.1-8B | CoT | 624.3 | 209.5 | 555.9 | 321.8 | 474.3 | 1103.3 | 760.1 | 578.5 |
| Llama-3.1-8B | iCoT | 403.5 | 67.3 | 444.8 | 137.0 | 257.1 | 797.1 | 430.9 | 362.5 |
| Llama-3.1-8B | Pause Token | 469.4 | 119.0 | 752.6 | 413.4 | 357.3 | 648.2 | 600.1 | 480 |
| Llama-3.1-8B | Latent (ours) | 571.9 ( $↓$ -9%) | 193.9 ( $↓$ -8%) | 545.8 ( $↓$ -2%) | 292.1 ( $↓$ -10%) | 440.3 ( $↓$ -8%) | 913.7 ( $↓$ -17%) | 637.2 ( $↓$ -16%) | 513.7 ( $↓$ -10%) |

Table 4.3: The average number of tokens in the generated responses. Compared with the CoT baseline, our latent approach achieves a $17\%$ reduction in response length on average, while surpassing it in final performance according to Table 4.2. The iCoT method generates shorter responses than our approach, yet performs significantly worse; see Table 4.2. $↓$ -: Trace length reduction rate compared with CoT.

#### 4.2.1 Synthetic Benchmarks

Hyperparameters and Evaluation Metric

For our experiments on the ProntoQA and ProsQA datasets, we fine-tune the pretrained GPT-2 model (Radford et al., 2019) for 16k steps, using a learning rate of $10^{-4}$ with linear warmup for 100 steps and a batch size of 128. To evaluate the models, we use greedy decoding and check for an exact match with the ground truth.

For Keys-Finding Maze, due to its specific vocabulary, we train a T5 model (Raffel et al., 2020) from scratch for 100k steps with a learning rate of $7.5\times 10^{-4}$ and a batch size of 1024. We evaluate the models with the 1-Feasible-10 metric. Namely, for each evaluation task, we randomly sample 10 responses with top- $k$ ( $k=10$ ) decoding and check whether any of them is feasible and reaches the goal location.
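The 1-Feasible-10 metric described above can be sketched as a small helper. The callables `sample_plan` and `is_feasible` are hypothetical placeholders for the model's top-$k$ sampler and the maze's plan checker, neither of which is specified here; the toy sampler below only exercises the counting logic.

```python
import itertools


def one_feasible_k(tasks, sample_plan, is_feasible, k=10):
    """Percentage of tasks for which at least one of k sampled plans succeeds."""
    solved = 0
    for task in tasks:
        # any() stops sampling as soon as one feasible plan is found
        if any(is_feasible(task, sample_plan(task)) for _ in range(k)):
            solved += 1
    return 100.0 * solved / len(tasks)


# Toy stand-ins (assumptions): a "plan" is an integer and a task is solved
# when the sampled integer equals the task id.
_counter = itertools.count()


def toy_sampler(task):
    return next(_counter) % 4  # cycles through 0, 1, 2, 3


def toy_check(task, plan):
    return plan == task


score = one_feasible_k([0, 1, 2, 7], toy_sampler, toy_check, k=10)
print(f"1-Feasible-10: {score:.1f}%")
```

A task counts as solved if any single sample is feasible, so the metric rewards coverage across samples rather than the quality of one greedy decode.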

Results

As shown in Table 4.1, our latent approach performs better than the baselines on both the Keys-Finding Maze and ProntoQA tasks. Notably, the absolute improvement is 19.8 points on the Keys-Finding Maze problem, and we reach 100% accuracy on the relatively easy ProntoQA dataset. On the more difficult ProsQA, the CoT baseline obtains only 77.5% accuracy, whereas the latent approach achieves a gain of 18.7 points.

| Model | Method | In-Domain: Math | In-Domain: GSM8K | Out-of-Domain: Fresh-Gaokao-Math-2023 | Out-of-Domain: DeepMind-Mathematics | Out-of-Domain: College-Math | Out-of-Domain: Olympia-Math | Out-of-Domain: TheoremQA | Average: All Datasets |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Llama-3.2-1B | All-Replace | 6.7 | 4.2 | 0.0 | 11.8 | 6.0 | 2.1 | 8.5 | 5.6 |
| Llama-3.2-1B | Curriculum-Replace | 7.1 | 9.8 | 3.3 | 13.0 | 7.9 | 2.4 | 10.5 | 7.8 |
| Llama-3.2-1B | Poisson-Replace | 13.9 | 49.5 | 10.0 | 12.2 | 18.9 | 2.3 | 9.0 | 15.1 |
| Llama-3.2-1B | Latent-AR (ours) | 14.7 | 48.7 | 10.0 | 14.6 | 20.5 | 1.8 | 11.3 | 17.8 |
| Llama-3.2-3B | All-Replace | 10.7 | 12.8 | 10.0 | 19.4 | 12.8 | 5.3 | 11.8 | 11.8 |
| Llama-3.2-3B | Curriculum-Replace | 10.2 | 14.9 | 3.3 | 16.8 | 12.9 | 3.9 | 14.4 | 10.9 |
| Llama-3.2-3B | Poisson-Replace | 23.6 | 65.9 | 13.3 | 17.9 | 28.9 | 2.9 | 11.2 | 20.5 |
| Llama-3.2-3B | Latent (ours) | 26.1 | 73.8 | 23.3 | 27.1 | 32.9 | 4.2 | 13.5 | 28.1 |
| Llama-3.1-8B | All-Replace | 15.7 | 19.9 | 6.7 | 21.1 | 19.5 | 5.0 | 17.5 | 15.0 |
| Llama-3.1-8B | Curriculum-Replace | 14.6 | 23.1 | 13.3 | 20.3 | 18.7 | 3.9 | 16.6 | 15.8 |
| Llama-3.1-8B | Poisson-Replace | 37.9 | 83.6 | 16.6 | 42.7 | 44.7 | 9.9 | 19.1 | 36.3 |
| Llama-3.1-8B | Latent (ours) | 37.2 | 84.1 | 30.0 | 41.3 | 44.0 | 10.2 | 18.4 | 37.9 |

Table 4.4: Our latent token replacement strategy significantly outperforms the alternative choices: All-Replace (where all the textual CoT tokens are replaced by latent tokens at once), Curriculum-Replace (where we gradually replace the text tokens for the entire CoT subsequence by latent tokens over the course of training) and Poisson-Replace (where individual chunks of text tokens are replaced with probability 0.5).

#### 4.2.2 Mathematical Reasoning

Hyperparameters and Evaluation Metrics

We consider 3 different sizes of LLMs from the Llama herd: Llama-3.2-1B, Llama-3.2-3B and Llama-3.1-8B. We fine-tune all models on the MetaMathQA dataset for 1 epoch. To maximize training efficiency, we use a batch size of 32 with sequence packing to a length of 4096. We experiment with learning rates $10^{-5}, 2.5\times 10^{-5}, 5\times 10^{-5}, 10^{-4}$ and select the one with the lowest validation error. The final choices are $10^{-5}$ for the 8B model and $2.5\times 10^{-5}$ for the others. For all the experiments, we use greedy decoding for evaluation.

Accuracy Comparison

Table 4.2 presents the results. Our latent approach outperforms the baselines on nearly all tasks, for models of different sizes. On the tasks where we do not observe an improvement, our approach remains comparable to the best-performing method. The gains are more pronounced on specific datasets such as Gaokao-Math-2023. On average, we observe a $+4.5$ point improvement for the 8B model, a $+2.9$ point improvement for the 3B model, and a $+3.7$ point improvement for the 1B model.

Token Efficiency Comparison

Alongside the accuracy, we also report the number of tokens in the generated responses in Table 4.3, which is the dominant factor in inference efficiency. Our first observation is that, for all the approaches, the model size has little influence on the length of the generated responses. Overall, the CoT method outputs the longest responses, while the Sol-Only method outputs the fewest tokens, since it is trained to generate the answer directly. The iCoT method generates short responses as well (a $42.8\%$ reduction compared to CoT), as the CoT data is iteratively eliminated during its training procedure. However, this comes at the cost of significantly degraded model performance compared with CoT, as shown in Table 4.2. Our latent approach shows an average $17\%$ reduction in the number of tokens compared with CoT while surpassing it in prediction accuracy.
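As a quick arithmetic check, the reduction rates can be recomputed from the "All Datasets" averages in Table 4.3. How the reported figures were aggregated is not stated, so averaging the per-model rates below is an assumption; it yields about 17% for the latent approach and a value close to, but not exactly, the reported 42.8% for iCoT.

```python
# Average response lengths ("All Datasets" column of Table 4.3)
cot_tokens = {"Llama-3.2-1B": 655.2, "Llama-3.2-3B": 642.9, "Llama-3.1-8B": 578.5}
latent_tokens = {"Llama-3.2-1B": 518.0, "Llama-3.2-3B": 513.6, "Llama-3.1-8B": 513.7}
icot_tokens = {"Llama-3.2-1B": 369.5, "Llama-3.2-3B": 344.2, "Llama-3.1-8B": 362.5}


def mean_reduction(method_tokens, baseline_tokens):
    """Mean over models of the relative length reduction versus the baseline."""
    rates = [1.0 - method_tokens[m] / baseline_tokens[m] for m in baseline_tokens]
    return sum(rates) / len(rates)


latent_reduction = mean_reduction(latent_tokens, cot_tokens)
icot_reduction = mean_reduction(icot_tokens, cot_tokens)
print(f"Latent vs. CoT: {100 * latent_reduction:.1f}% fewer tokens")
print(f"iCoT vs. CoT:   {100 * icot_reduction:.1f}% fewer tokens")
```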

### 4.3 Ablation & Understanding Studies

Replacement Strategies

Our latent approach replaces the leftmost $m$ CoT tokens, where the value of $m$ varies for each sample. We call this replacement strategy AR-Replace. Here we consider three alternative strategies:

1. All-Replace: all the text CoT tokens are replaced by the latent tokens.

2. Curriculum-Replace: the entire CoT subsequence is gradually replaced over the course of training, similar to the training procedure used by iCoT and COCONUT (Hao et al., 2024). We train the model for 8 epochs. Starting from the original dataset, in each epoch we construct a new training dataset where we further replace the leftmost 16 textual CoT tokens by a discrete latent token.

3. Poisson-Replace: instead of replacing tokens from left to right, we conduct a Poisson sampling process to select the CoT tokens to be replaced: we split the reasoning traces into chunks of 16 consecutive text tokens, and each chunk is independently replaced by a latent token with probability 0.5.
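The strategies listed above differ only in which 16-token chunks are swapped for latent tokens. The sketch below expresses each one as a boolean mask over chunks (`True` means the chunk becomes a latent token). The function names and the epoch indexing of the curriculum (epoch 0 is the original data) are assumptions for illustration.

```python
import random

CHUNK_LEN = 16


def ar_replace_mask(num_chunks, m):
    """AR-Replace: the leftmost m // CHUNK_LEN chunks become latent tokens."""
    k = min(m // CHUNK_LEN, num_chunks)
    return [i < k for i in range(num_chunks)]


def all_replace_mask(num_chunks):
    """All-Replace: every chunk becomes a latent token."""
    return [True] * num_chunks


def curriculum_replace_mask(num_chunks, epoch):
    """Curriculum-Replace: one more leftmost chunk is replaced per epoch."""
    return ar_replace_mask(num_chunks, epoch * CHUNK_LEN)


def poisson_replace_mask(num_chunks, rng, p=0.5):
    """Poisson-Replace: each chunk is replaced independently with probability p."""
    return [rng.random() < p for _ in range(num_chunks)]


rng = random.Random(0)
print("AR (m=32):     ", ar_replace_mask(4, 32))
print("All:           ", all_replace_mask(4))
print("Curriculum e=3:", curriculum_replace_mask(4, 3))
print("Poisson:       ", poisson_replace_mask(4, rng))
```

Only Poisson-Replace can interleave latent and text chunks; the other three always produce a contiguous latent prefix.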

Table 4.4 reports the results. Our AR-Replace strategy demonstrates strong performance, outperforming All-Replace and Curriculum-Replace by a large margin. Our intuition is as follows. When all the textual tokens are removed, the model struggles to align the latent tokens with the linguistic and semantic structures it learned during pretraining.

In contrast, partial replacement offers the model a bridge connecting the text and latent spaces: the remaining text tokens serve as anchors, helping the model interpret and integrate the latent representations more effectively. Interestingly, the curriculum learning strategy does not bridge the two spaces well: All-Replace and Curriculum-Replace exhibit similar performance. This is consistent with our observation that iCoT performs remarkably worse than CoT on mathematical reasoning problems. Poisson-Replace performs marginally worse than our AR-Replace strategy on the 1B and 8B models, but significantly worse on the 3B model. Our intuition is that a fixed replacement pattern (starting from the beginning and proceeding left to right) is easier for the model to learn. This might be due to the limited fine-tuning dataset size and model capacity.

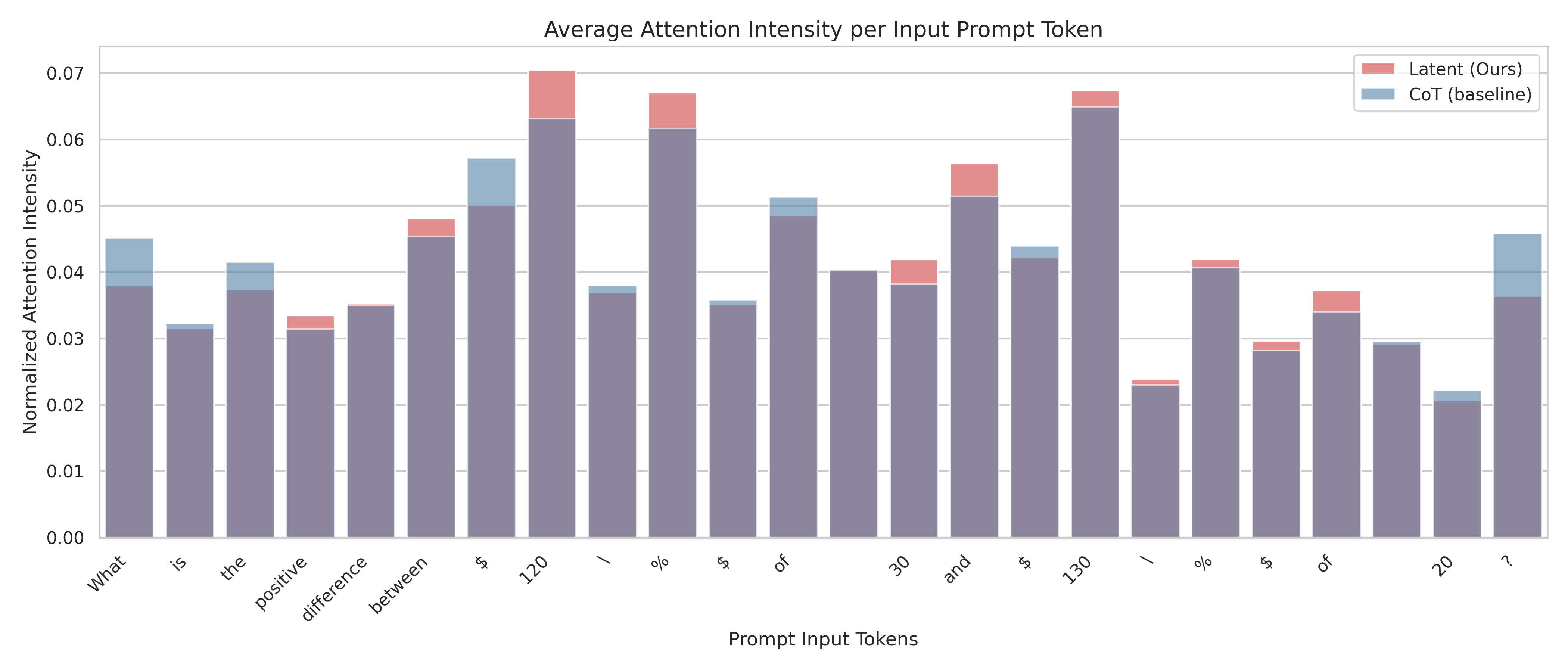

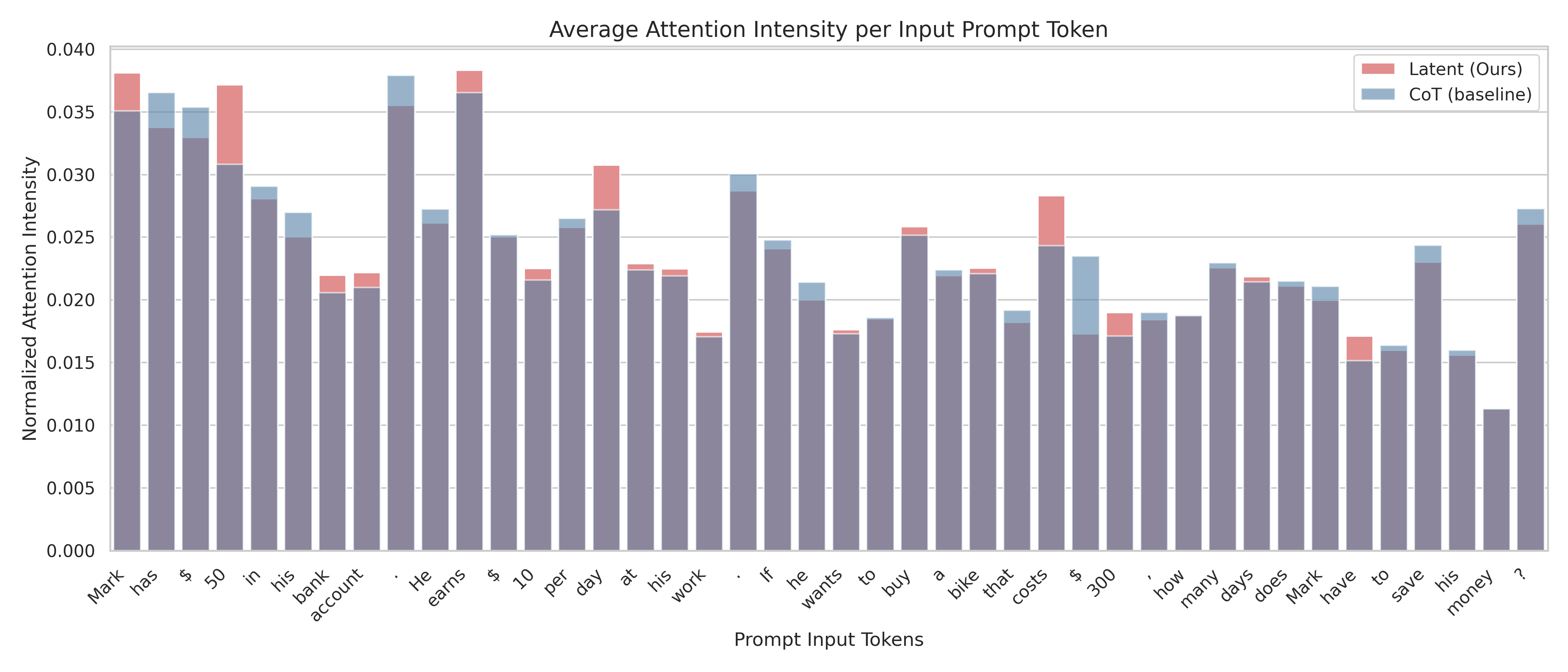

Attention Weights Analysis

To understand why injecting latent tokens enhances the model’s reasoning performance, we randomly select two questions from the Math and College-Math datasets, generate responses, and then analyze the attention weights over the input prompt tokens:

1. What is the positive difference between $120\%$ of 30 and $130\%$ of 20?

2. Mark has \$50 in his bank account. He earns \$10 per day at his work. If he wants to buy a bike that costs \$300, how many days does Mark have to save his money?

Specifically, we take the last attention layer, compute the average attention weights over the attention heads, and show the relative intensity over the prompt tokens. In detail, we first average the attention weights across heads, which gives a single lower-triangular matrix. We then take the column sums of this matrix to obtain an aggregated attention weight for each token. Finally, we normalize the weights by their average to obtain the relative intensity. In one line of pseudocode: `column_sum(avg(attention_matrices)) / avg(column_sum(avg(attention_matrices)))`. We compare the averaged attention weights of our model with those of the CoT model in Figure 4.1. Interestingly, our model learns to attend more strongly to numbers and to words representing mathematical operations. Both Figure 4.1(a) and Figure 4.1(b) show that the latent model focuses more on the numbers, such as 120, 30, and 130 for the first question. For the second question, our latent model assigns larger attention weights to numbers, including 50, 10, and 300, and also to tokens semantically related to mathematical operations, such as earns (which implies addition) and costs (which implies subtraction). This suggests that, by partially compressing the reasoning trace into a mix of latent and text tokens, we allow the model to focus on the important tokens that build the internal logical flow. See Section C.1 for the exact responses generated by our approach and the CoT baseline.
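The aggregation described above can be written out in NumPy as a minimal sketch. The input is assumed to be the last layer's attention weights as an array of shape `(num_heads, seq_len, seq_len)`; the random causal attention used here is synthetic, and normalizing over all columns before slicing out the prompt tokens is one reading of the pseudocode, not a confirmed detail.

```python
import numpy as np


def relative_attention_intensity(attention, prompt_len):
    """column_sum(avg(A)) / avg(column_sum(avg(A))), restricted to the prompt.

    attention: array of shape (num_heads, seq_len, seq_len) whose rows are
    causal attention distributions (lower triangular, each row sums to 1).
    """
    head_avg = attention.mean(axis=0)      # average over heads -> (seq, seq)
    col_sum = head_avg.sum(axis=0)         # attention received by each token
    intensity = col_sum / col_sum.mean()   # normalize by the average weight
    return intensity[:prompt_len]          # keep only the prompt tokens


# Synthetic causal attention for illustration (not model output)
rng = np.random.default_rng(0)
num_heads, seq_len, prompt_len = 4, 12, 8
raw = np.tril(rng.random((num_heads, seq_len, seq_len)))
attention = raw / raw.sum(axis=-1, keepdims=True)

intensity = relative_attention_intensity(attention, prompt_len)
print(np.round(intensity, 3))
```

With a Hugging Face style model, one way to obtain such an array would be to request attention outputs and take the last layer for a single sequence; that wiring is left out here because it depends on the model interface.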

<details>

<summary>plots/entry_1.png Details</summary>

### Visual Description

## Bar Chart: Average Attention Intensity per Input Prompt Token

### Overview

This is a stacked bar chart comparing the "Normalized Attention Intensity" assigned to each token in a specific input prompt by two different methods: "Latent (Ours)" and "CoT (baseline)". The chart visualizes how attention is distributed across the words and symbols of the prompt.

### Components/Axes

* **Chart Title:** "Average Attention Intensity per Input Prompt Token"

* **Y-Axis:**

* **Label:** "Normalized Attention Intensity"

* **Scale:** Linear, ranging from 0.00 to 0.07, with major gridlines at intervals of 0.01.

* **X-Axis:**

* **Label:** "Prompt Input Tokens"

* **Categories (Tokens):** The tokens, in order, are: `What`, `is`, `the`, `positive`, `difference`, `between`, `$`, `120`, `/`, `%`, `$`, `of`, `30`, `and`, `$`, `130`, `/`, `%`, `$`, `of`, `20`, `?`. These tokens form the question: "What is the positive difference between $ 120 / % $ of 30 and $ 130 / % $ of 20 ?"

* **Legend:**

* **Location:** Top-right corner of the chart area.

* **Series 1:** "Latent (Ours)" - Represented by a light red/salmon color.

* **Series 2:** "CoT (baseline)" - Represented by a light blue/steel blue color.

* **Bar Structure:** Each bar is stacked. The blue "CoT (baseline)" segment forms the base, and the red "Latent (Ours)" segment is placed on top. The total height of the bar represents the combined normalized attention intensity for that token from both methods.

### Detailed Analysis

The following table reconstructs the approximate data points for each token. Values are estimated from the chart's gridlines. The "Total" is the sum of the two series.

| Prompt Token | CoT (baseline) [Blue, Base] | Latent (Ours) [Red, Top] | Total Height (Approx.) |

| :--- | :--- | :--- | :--- |

| What | ~0.038 | ~0.007 | ~0.045 |

| is | ~0.032 | ~0.000 | ~0.032 |

| the | ~0.037 | ~0.004 | ~0.041 |

| positive | ~0.031 | ~0.002 | ~0.033 |

| difference | ~0.035 | ~0.000 | ~0.035 |

| between | ~0.045 | ~0.003 | ~0.048 |

| $ | ~0.050 | ~0.007 | ~0.057 |

| **120** | **~0.063** | **~0.007** | **~0.070** |

| / | ~0.037 | ~0.001 | ~0.038 |

| % | ~0.062 | ~0.005 | ~0.067 |

| $ | ~0.035 | ~0.001 | ~0.036 |

| of | ~0.049 | ~0.002 | ~0.051 |

| 30 | ~0.040 | ~0.000 | ~0.040 |

| and | ~0.038 | ~0.004 | ~0.042 |

| $ | ~0.051 | ~0.005 | ~0.056 |

| **130** | **~0.065** | **~0.002** | **~0.067** |

| / | ~0.042 | ~0.002 | ~0.044 |

| % | ~0.023 | ~0.001 | ~0.024 |

| $ | ~0.041 | ~0.001 | ~0.042 |

| of | ~0.028 | ~0.002 | ~0.030 |

| 20 | ~0.034 | ~0.003 | ~0.037 |

| ? | ~0.021 | ~0.001 | ~0.022 |

**Trend Verification:**

* The attention intensity is not uniform across tokens.

* Numerical tokens (`120`, `130`, `30`, `20`) and certain symbols (`$`, `%`) generally receive higher attention than common words (`is`, `the`, `and`).

* The token `120` has the highest total attention intensity (~0.070), closely followed by `130` (~0.067) and `%` (~0.067).

* The lowest attention is given to the final question mark `?` (~0.022).

### Key Observations

1. **Peak Attention on Numerical Values:** The tokens `120` and `130` are the clear peaks in the chart, indicating these specific numbers are the primary focus of the model's attention in this prompt.

2. **High Attention on Financial/Percentage Symbols:** The `$` and `%` symbols consistently show elevated attention levels, especially the first `$` and the first `%`.

3. **Variable Contribution of "Latent (Ours)":** The red "Latent" segment adds a noticeable amount of attention on top of the blue "CoT" baseline for several tokens (e.g., `What`, `$`, `120`, `%`, `and`, `$`). However, for many other tokens (e.g., `is`, `difference`, `30`), its contribution is minimal or zero.

4. **Baseline Dominance:** The "CoT (baseline)" method accounts for the vast majority of the attention intensity for every single token. The "Latent" method provides only a small incremental addition.

### Interpretation

This chart demonstrates how two different processing methods ("CoT" and "Latent") allocate attention to the components of a mathematical word problem. The data suggests:

* **The model's "focus" is on the operands and operators.** The highest attention is on the numbers (`120`, `130`) and the percentage/financial symbols (`%`, `$`), which are the core quantitative elements of the question. Function words (`is`, `the`, `of`) receive significantly less attention.

* **The "Latent" method appears to be a refinement technique.** It does not redistribute attention broadly but instead seems to selectively boost the attention on tokens that are already important according to the baseline CoT method (like the numbers and key symbols). This could imply it's designed to sharpen focus on critical information rather than discover new important tokens.

* **The prompt structure is mirrored in the attention pattern.** The two main clauses of the question ("...$ 120 / % $ of 30" and "...$ 130 / % $ of 20") create a parallel structure in the attention peaks, with `120` and `130` being the twin focal points. This indicates the model successfully parses the comparative structure of the query.

In essence, the visualization confirms that the model's attention mechanism, under both methods, correctly identifies the numerical values and financial symbols as the most salient parts of this specific arithmetic prompt. The "Latent" method's role is to marginally intensify this pre-existing focus.

</details>

(a) Prompt: What is the positive difference between $120\%$ of 30 and $130\%$ of 20?

<details>

<summary>plots/entry_7746.png Details</summary>

### Visual Description

## Bar Chart: Average Attention Intensity per Input Prompt Token

### Overview

This is a grouped bar chart comparing the "Normalized Attention Intensity" assigned to each token in a specific input prompt by two different methods: "Latent (Ours)" and "CoT (baseline)". The chart visualizes how attention is distributed across the words and symbols of a math word problem.

### Components/Axes

* **Chart Title:** "Average Attention Intensity per Input Prompt Token"

* **Y-Axis:** Labeled "Normalized Attention Intensity". Scale ranges from 0.000 to 0.040, with major gridlines at intervals of 0.005.

* **X-Axis:** Labeled "Prompt Input Tokens". It displays the individual tokens (words and punctuation) of the input prompt in sequence.

* **Legend:** Located in the top-right corner.

* **Red/Pink Bar:** "Latent (Ours)"

* **Blue/Grey Bar:** "CoT (baseline)"

* **Input Prompt Tokens (X-axis labels, in order):**

`Mark`, `has`, `$`, `50`, `in`, `his`, `bank`, `account`, `.`, `He`, `earns`, `$`, `10`, `per`, `day`, `at`, `his`, `work`, `.`, `If`, `he`, `wants`, `to`, `buy`, `a`, `bike`, `that`, `costs`, `$`, `300`, `,`, `how`, `many`, `days`, `does`, `Mark`, `have`, `to`, `save`, `his`, `money`, `?`

### Detailed Analysis

The chart displays two bars for each token, representing the attention intensity from each method. The bars are often overlapping or stacked, with the "Latent (Ours)" bar appearing in front of the "CoT (baseline)" bar where they overlap.

**Trend Verification & Data Point Extraction (Approximate Values):**

* **General Trend:** Attention intensity varies significantly across tokens. Both methods show higher attention on key numerical values and entities, but the distribution differs.

* **Token-by-Token Analysis (Approximate Y-values):**

* `Mark`: Latent ~0.038, CoT ~0.035

* `has`: Latent ~0.036, CoT ~0.034

* `$`: Latent ~0.035, CoT ~0.033

* `50`: Latent ~0.037, CoT ~0.031

* `in`: Latent ~0.029, CoT ~0.028

* `his`: Latent ~0.027, CoT ~0.025

* `bank`: Latent ~0.022, CoT ~0.021

* `account`: Latent ~0.022, CoT ~0.021

* `.`: Latent ~0.038, CoT ~0.035

* `He`: Latent ~0.027, CoT ~0.026

* `earns`: Latent ~0.038, CoT ~0.036

* `$`: Latent ~0.025, CoT ~0.025

* `10`: Latent ~0.022, CoT ~0.022

* `per`: Latent ~0.026, CoT ~0.026

* `day`: Latent ~0.031, CoT ~0.027

* `at`: Latent ~0.023, CoT ~0.022

* `his`: Latent ~0.022, CoT ~0.022

* `work`: Latent ~0.017, CoT ~0.017

* `.`: Latent ~0.030, CoT ~0.029

* `If`: Latent ~0.025, CoT ~0.024

* `he`: Latent ~0.021, CoT ~0.020

* `wants`: Latent ~0.017, CoT ~0.017

* `to`: Latent ~0.018, CoT ~0.018

* `buy`: Latent ~0.026, CoT ~0.025

* `a`: Latent ~0.022, CoT ~0.022

* `bike`: Latent ~0.022, CoT ~0.022

* `that`: Latent ~0.019, CoT ~0.018

* `costs`: Latent ~0.028, CoT ~0.024

* `$`: Latent ~0.023, CoT ~0.017

* `300`: Latent ~0.019, CoT ~0.017

* `,`: Latent ~0.019, CoT ~0.018

* `how`: Latent ~0.019, CoT ~0.019

* `many`: Latent ~0.023, CoT ~0.023

* `days`: Latent ~0.022, CoT ~0.021

* `does`: Latent ~0.021, CoT ~0.021

* `Mark`: Latent ~0.021, CoT ~0.020

* `have`: Latent ~0.017, CoT ~0.015

* `to`: Latent ~0.016, CoT ~0.016

* `save`: Latent ~0.024, CoT ~0.023

* `his`: Latent ~0.016, CoT ~0.015

* `money`: Latent ~0.011, CoT ~0.011

* `?`: Latent ~0.027, CoT ~0.026

### Key Observations

1. **Highest Attention Points:** Both methods assign the highest attention to the initial token "`Mark`" and the period "`.`" following the first sentence. The "Latent" method also shows a very high peak on "`earns`".

2. **Numerical Values:** The "Latent" method shows notably higher attention on the first dollar amount "`$`" (preceding 50) and the cost "`$`" (preceding 300) compared to the CoT baseline.

3. **Question Focus:** The final question mark "`?`" receives relatively high attention from both methods, with "Latent" being slightly higher.

4. **Lowest Attention:** The token "`money`" receives the lowest attention intensity from both methods (approx. 0.011).

5. **Method Comparison:** The "Latent (Ours)" method generally exhibits higher attention peaks on semantically critical tokens (like "`Mark`", "`earns`", "`$`", "`day`", "`costs`") compared to the "CoT (baseline)", which has a slightly more uniform distribution. The CoT baseline shows a distinct, isolated higher bar on the token "`$`" (the one before 300) where the Latent bar is lower.

### Interpretation

This chart demonstrates how two different language model processing techniques ("Latent" vs. "Chain-of-Thought baseline") allocate their internal attention when solving a math word problem. The data suggests:

* **Differential Focus:** The "Latent" method appears to place greater emphasis on the core entities (`Mark`), actions (`earns`), and numerical values (`$`, `50`, `10`, `300`) that are crucial for setting up and solving the arithmetic problem. This could indicate a more targeted, problem-solving-oriented attention mechanism.

* **Structural vs. Semantic Attention:** The CoT baseline's relatively higher attention on structural words like the second "`$`" (before 300) and its more even distribution might reflect a process that attends more broadly to the sentence structure as part of its reasoning chain generation.

* **Anomaly:** The significant dip in attention for the final content word "`money`" is notable. It suggests that by the end of the prompt, the models have already extracted the necessary financial context, and the word itself carries less new information for the task of calculating days.

* **Implication:** The differences in attention patterns could correlate with differences in reasoning efficiency or accuracy between the two methods. The "Latent" method's sharper focus on key problem elements might lead to more direct solutions, while the CoT baseline's pattern might be associated with its step-by-step verbal reasoning process. This visualization provides empirical insight into the "black box" of model attention, showing that architectural or methodological changes can significantly alter how a model processes the same input.

</details>

(b) Prompt: Mark has \$50 in his bank account. He earns \$10 per day at his work. If he wants to buy a bike that costs \$300, how many days does Mark have to save his money?

Figure 4.1: Compared with the CoT model, our latent approach has higher attention weights on numbers and on text tokens representing mathematical operations.

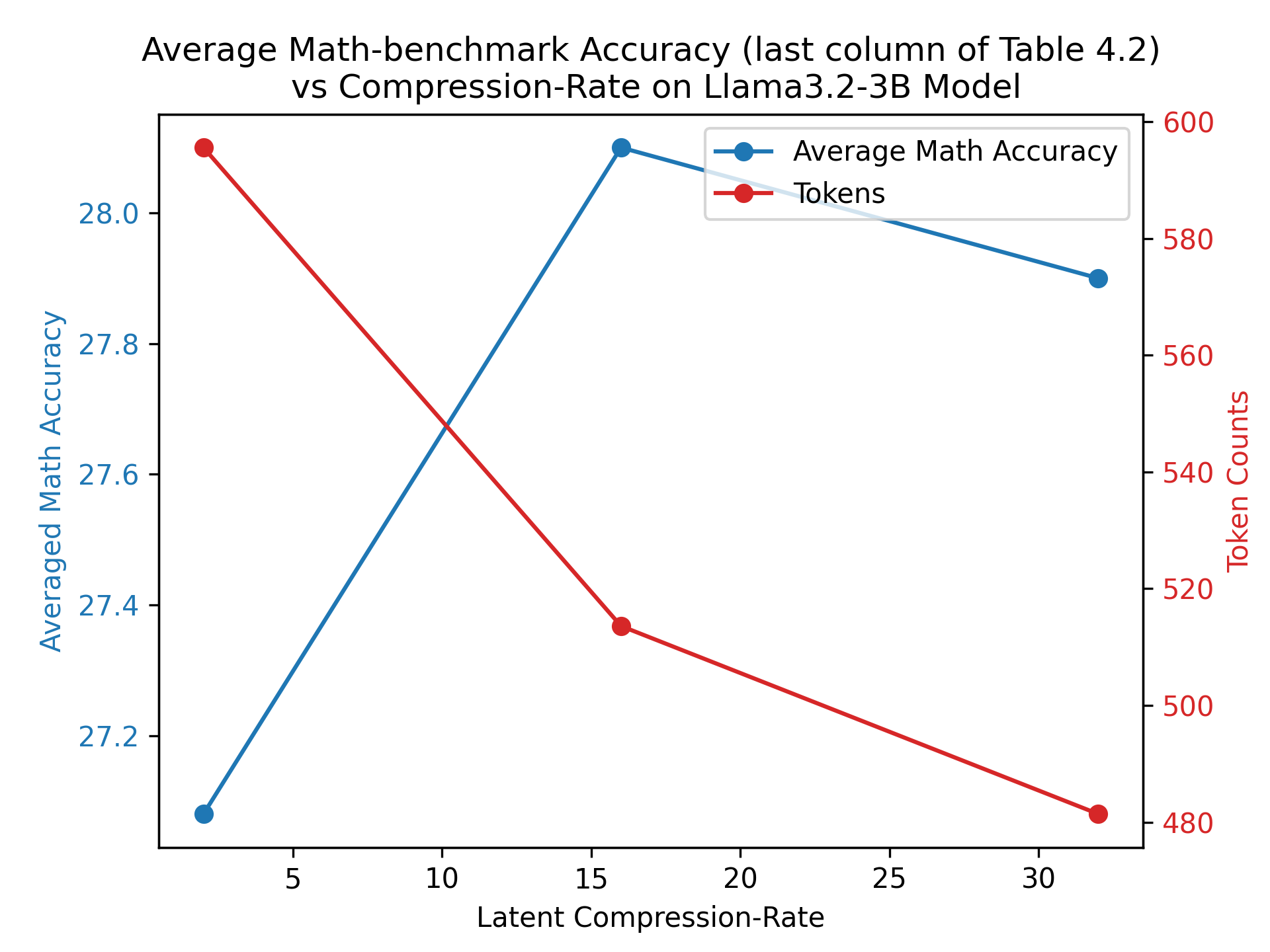

Table 4.5: The table below illustrates a clear trend on the Llama-3.2-3B model: increasing the compression rate reduces the number of generated tokens due to higher data compression. Notably, even a compression rate of 2 shows some improvement over the CoT baseline. Furthermore, there appears to be a "sweet spot" where the data is neither overly compressed (rate = 32) nor minimally compressed (rate = 2), balancing efficiency and accuracy.

| Method | Metric | In-Domain: Math | In-Domain: GSM8K | Out-of-Domain: Fresh-Gaokao-Math-2023 | Out-of-Domain: DeepMind-Mathematics | Out-of-Domain: College-Math | Out-of-Domain: Olympia-Math | Out-of-Domain: TheoremQA | Average: All Datasets |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| CoT (baseline) | Acc. | 21.9 | 69.7 | 16.7 | 27.3 | 30.9 | 2.2 | 11.6 | 25.2 |
| CoT (baseline) | # of Tokens | 649.9 | 212.1 | 823.3 | 392.8 | 495.9 | 1166.7 | 759.6 | 642.9 |
| Latent $r=2$ | Acc. | 24.3 | 71.7 | 16.7 | 25.4 | 32.0 | 4.7 | 14.8 | 27.08 |
| Latent $r=2$ | # of Tokens | 586.0 | 207.6 | 739.6 | 415.3 | 471 | 1036 | 714 | 595.6 |
| Latent $r=16$ | Acc. | 26.1 | 73.8 | 23.3 | 27.1 | 32.9 | 4.2 | 13.5 | 28.1 |
| Latent $r=16$ | # of Tokens | 516.7 | 198.8 | 618.5 | 340.0 | 418.0 | 832.8 | 670.2 | 513.6 |
| Latent $r=32$ | Acc. | 25.2 | 71.5 | 23.3 | 26.3 | 33.3 | 4.9 | 14.1 | 27.9 |
| Latent $r=32$ | # of Tokens | 496.5 | 183.3 | 577.3 | 311.0 | 395.2 | 821.0 | 585.6 | 481.4 |

### 4.4 Ablations on the Latent $r$ parameters

Throughout this paper we have used a compression rate of $r=16$. In this section, we ablate how varying $r$ affects performance on the downstream math tasks.

To this end, we vary $r$ on the Llama-3.2-3B model. The results are summarized in Table 4.5 and illustrated in Figure 4.2. A key takeaway is that, on average, our latent approach comes out ahead of the CoT baseline for all settings of $r$, with fewer tokens (better efficiency) and higher accuracy. This is a strong signal that the shift to a latent representation is itself beneficial. As $r$ increases, each latent token encodes more information (higher compression), so the average number of generated tokens decreases. The average accuracy, however, first increases from 25.2 for the CoT baseline to 27.1 at $r=2$, rises further to 28.1 at $r=16$, and then drops slightly to 27.9 at $r=32$. This indicates a sweet spot at $r=16$: the trace is neither over-compressed (which implies information loss) nor under-compressed (which implies that information is not encoded abstractly enough). The study thus reveals a trade-off between accuracy and token efficiency in our latent approach, and $r=16$ appears to strike the best balance, among the tested settings, between compact representation and preservation of task-critical information.
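The trade-off can be tabulated directly from the "All Datasets" averages in Table 4.5. The snippet below is only a convenience for reading those numbers relative to the CoT baseline; the variable names are illustrative.

```python
# r -> (average accuracy, average # of tokens), from Table 4.5 (Llama-3.2-3B)
latent_results = {2: (27.08, 595.6), 16: (28.1, 513.6), 32: (27.9, 481.4)}
cot_accuracy, cot_tokens = 25.2, 642.9

for r, (accuracy, tokens) in sorted(latent_results.items()):
    accuracy_gain = accuracy - cot_accuracy
    token_change = 100.0 * (tokens / cot_tokens - 1.0)
    print(f"r={r:>2}: accuracy {accuracy_gain:+.2f} points, tokens {token_change:+.1f}%")

# Compression rate with the highest average accuracy among the tested values
best_r = max(latent_results, key=lambda r: latent_results[r][0])
print("best r by average accuracy:", best_r)
```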

<details>

<summary>plots/plot_r.png Details</summary>

### Visual Description

## Dual-Axis Line Chart: Average Math-benchmark Accuracy vs. Compression-Rate on Llama3.2-3B Model

### Overview

This image is a dual-axis line chart plotting two different metrics against a common independent variable. The chart visualizes the relationship between the "Latent Compression-Rate" of a Llama3.2-3B model and two dependent variables: "Averaged Math Accuracy" and "Token Counts." The data suggests an investigation into the trade-offs between model compression, performance (accuracy), and output length (tokens).

### Components/Axes

* **Chart Title:** "Average Math-benchmark Accuracy (last column of Table 4.2) vs Compression-Rate on Llama3.2-3B Model"

* **X-Axis (Bottom):**

* **Label:** "Latent Compression-Rate"

* **Scale:** Linear scale with major tick marks at 5, 10, 15, 20, 25, and 30.

* **Primary Y-Axis (Left):**

* **Label:** "Averaged Math Accuracy"

* **Scale:** Linear scale with major tick marks at 27.2, 27.4, 27.6, 27.8, and 28.0.

* **Color:** Blue, corresponding to the "Average Math Accuracy" data series.

* **Secondary Y-Axis (Right):**

* **Label:** "Token Counts"

* **Scale:** Linear scale with major tick marks at 480, 500, 520, 540, 560, 580, and 600.

* **Color:** Red, corresponding to the "Tokens" data series.

* **Legend:**

* **Position:** Top-right corner of the plot area.

* **Series 1:** A blue line with a circular marker labeled "Average Math Accuracy."

* **Series 2:** A red line with a circular marker labeled "Tokens."

### Detailed Analysis

The chart displays three data points for each series, connected by straight lines.

**Data Series 1: Average Math Accuracy (Blue Line, Left Y-Axis)**

* **Trend Verification:** The blue line shows a sharp increase followed by a slight decrease. It slopes steeply upward from the first to the second point, then slopes gently downward to the third point.

* **Data Points (Approximate):**

1. At a Latent Compression-Rate of **~2**, the Averaged Math Accuracy is **~27.1**.

2. At a Latent Compression-Rate of **~16**, the Averaged Math Accuracy peaks at **~28.1**.

3. At a Latent Compression-Rate of **~32**, the Averaged Math Accuracy decreases to **~27.9**.

**Data Series 2: Tokens (Red Line, Right Y-Axis)**

* **Trend Verification:** The red line shows a consistent, steep downward trend across all data points.

* **Data Points (Approximate):**

1. At a Latent Compression-Rate of **~2**, the Token Count is **~595**.

2. At a Latent Compression-Rate of **~16**, the Token Count is **~515**.

3. At a Latent Compression-Rate of **~32**, the Token Count is **~480**.

### Key Observations

1. **Diverging Trends:** The two plotted metrics do not move in lockstep. As the Latent Compression-Rate increases, the Token Count consistently decreases, while the Math Accuracy first increases and then slightly decreases.

2. **Peak Performance:** The highest average math accuracy (~28.1) is achieved at a moderate compression rate (~16), not at the lowest or highest rate shown.

3. **Non-Linear Accuracy Response:** The model's accuracy does not degrade linearly with compression. It improves significantly from a very low compression rate to a moderate one before beginning to decline.

4. **Monotonic Token Reduction:** Token counts fall monotonically as the latent compression rate increases, with a larger drop from ~2 to ~16 (about 80 tokens) than from ~16 to ~32 (about 35 tokens).

### Interpretation

This chart demonstrates a critical engineering trade-off in model optimization. The data suggests that applying a moderate amount of latent compression (around a rate of 16) to the Llama3.2-3B model yields a "sweet spot": it significantly improves performance on math benchmarks compared to minimal compression, while also reducing the number of tokens generated (which implies greater efficiency). However, pushing compression further (to a rate of 32) begins to harm accuracy, even as it continues to reduce token counts.

The findings imply that latent compression of the reasoning trace is not merely a method for reducing inference cost (as proxied by token count) but can also act as a form of regularization or optimization that enhances certain capabilities, up to a point. The peak in accuracy suggests an optimal balance where the compressed representation retains or even focuses on the most salient features for mathematical reasoning. The subsequent decline indicates that excessive compression starts to discard information necessary for maintaining peak performance. This visualization would be crucial for a researcher or engineer deciding on the operational parameters for deploying this model, balancing the need for accuracy against computational efficiency.

</details>

Figure 4.2: A graphical illustration of the compression rate $r$ trade-off between the accuracy and the token efficiency on the Llama-3.2-3B model.
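The trade-off discussed in this subsection can be made concrete with a small sketch. It is only an illustration under stated assumptions, not the procedure used in the experiments: the helper names, the 600-token trace, and the 128-token compressed prefix are hypothetical, and the value 25.2 is assumed to be the CoT reference point. Only the accuracy numbers are taken from the text above.

```python
import math

# Accuracy values quoted in the ablation text for Llama-3.2-3B, keyed by r.
REPORTED_ACCURACY = {2: 27.1, 16: 28.1, 32: 27.9}
# Starting accuracy quoted in the text (assumed here to be the CoT baseline).
REFERENCE_ACCURACY = 25.2


def hybrid_trace_length(num_text_tokens: int, num_compressed: int, r: int) -> int:
    """Length of a trace whose first `num_compressed` text tokens are
    replaced by latent tokens at compression rate `r` (hypothetical helper)."""
    if r < 1:
        raise ValueError("compression rate r must be >= 1")
    if not 0 <= num_compressed <= num_text_tokens:
        raise ValueError("num_compressed must lie in [0, num_text_tokens]")
    # Each latent token stands in for up to r text tokens.
    num_latent = math.ceil(num_compressed / r)
    return num_latent + (num_text_tokens - num_compressed)


def best_compression_rate(accuracy_by_r: dict) -> int:
    """Return the compression rate with the highest reported accuracy."""
    return max(accuracy_by_r, key=accuracy_by_r.get)


if __name__ == "__main__":
    # Illustrative trace: 600 text tokens, of which the first 128 are compressed.
    for r in sorted(REPORTED_ACCURACY):
        length = hybrid_trace_length(600, 128, r)
        gain = REPORTED_ACCURACY[r] - REFERENCE_ACCURACY
        print(f"r={r:>2}  trace length={length}  accuracy gain={gain:+.1f}")
    print("best r:", best_compression_rate(REPORTED_ACCURACY))
```

In this toy setting the trace keeps shrinking as $r$ grows, while the reported accuracy peaks at $r=16$, which mirrors the shape of the curves in Figure 4.2.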

### 4.5 Additional Examples and Interpretability Result

We provide 4 additional example responses for questions in the Math and TheoremQA datasets in Appendix D. In Appendix F, we compare all the approaches when the model is trained on the DART-MATH (Tong et al., 2024) dataset, where similar trends are observed.

We also provide interpretable examples in Appendix E.

## 5 Conclusion

We present a novel approach to improve the reasoning capabilities of LLMs by compressing the initial steps of the reasoning traces using discrete latent tokens obtained from a VQ-VAE. By integrating both abstract representations and textual details of the reasoning process into training, our approach enables LLMs to capture essential reasoning information with improved token efficiency. Furthermore, by randomizing the number of text tokens to be compressed during training, we unlock fast adaptation to unseen latent tokens. Our comprehensive evaluation demonstrates the effectiveness of our approach across multiple domains, where it outperforms standard methods that rely on complete textual reasoning traces.

## Impact Statement

This paper presents a method to enhance the reasoning capability of Large Language Models (LLMs) by combining latent and text tokens in the reasoning trace. In terms of societal impact, while reasoning with (opaque) latent tokens may raise safety concerns, our approach provides a VQ-VAE decoder that can decode the latent tokens into a human-readable format, mitigating such concerns.

## References

- Azerbayev et al. (2023) Azerbayev, Z., Schoelkopf, H., Paster, K., Santos, M. D., McAleer, S., Jiang, A. Q., Deng, J., Biderman, S., and Welleck, S. Llemma: An open language model for mathematics. arXiv preprint arXiv:2310.10631, 2023.

- Barrault et al. (2024) Barrault, L., Duquenne, P.-A., Elbayad, M., Kozhevnikov, A., Alastruey, B., Andrews, P., Coria, M., Couairon, G., Costa-jussà, M. R., Dale, D., et al. Large concept models: Language modeling in a sentence representation space. arXiv e-prints, pp. arXiv–2412, 2024.

- Chen et al. (2022a) Chen, W., Ma, X., Wang, X., and Cohen, W. W. Program of thoughts prompting: Disentangling computation from reasoning for numerical reasoning tasks. arXiv preprint arXiv:2211.12588, 2022a.

- Chen et al. (2022b) Chen, W., Ma, X., Wang, X., and Cohen, W. W. Program of thoughts prompting: Disentangling computation from reasoning for numerical reasoning tasks. arXiv preprint arXiv:2211.12588, 2022b.

- Chen et al. (2023) Chen, W., Yin, M., Ku, M., Lu, P., Wan, Y., Ma, X., Xu, J., Wang, X., and Xia, T. TheoremQA: A theorem-driven question answering dataset. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pp. 7889–7901, 2023.

- Chung et al. (2024) Chung, H. W., Hou, L., Longpre, S., Zoph, B., Tay, Y., Fedus, W., Li, Y., Wang, X., Dehghani, M., Brahma, S., et al. Scaling instruction-finetuned language models. Journal of Machine Learning Research, 25(70):1–53, 2024.

- Cobbe et al. (2021a) Cobbe, K., Kosaraju, V., Bavarian, M., Chen, M., Jun, H., Kaiser, L., Plappert, M., Tworek, J., Hilton, J., Nakano, R., et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021a.

- Cobbe et al. (2021b) Cobbe, K., Kosaraju, V., Bavarian, M., Chen, M., Jun, H., Kaiser, L., Plappert, M., Tworek, J., Hilton, J., Nakano, R., et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021b.

- Deng et al. (2023) Deng, Y., Prasad, K., Fernandez, R., Smolensky, P., Chaudhary, V., and Shieber, S. Implicit chain of thought reasoning via knowledge distillation. arXiv preprint arXiv:2311.01460, 2023.

- Deng et al. (2024) Deng, Y., Choi, Y., and Shieber, S. From explicit CoT to implicit CoT: Learning to internalize CoT step by step. arXiv preprint arXiv:2405.14838, 2024.

- Dubey et al. (2024) Dubey, A., Jauhri, A., Pandey, A., Kadian, A., Al-Dahle, A., Letman, A., Mathur, A., Schelten, A., Yang, A., Fan, A., et al. The Llama 3 herd of models. arXiv preprint arXiv:2407.21783, 2024.

- Dziri et al. (2024) Dziri, N., Lu, X., Sclar, M., Li, X. L., Jian, L., Lin, B. Y., West, P., Bhagavatula, C., Bras, R. L., Hwang, J. D., Sanyal, S., Welleck, S., Ren, X., Ettinger, A., Harchaoui, Z., and Choi, Y. Faith and fate: Limits of transformers on compositionality. Advances in Neural Information Processing Systems, 36, 2024.

- Feng et al. (2024) Feng, G., Zhang, B., Gu, Y., Ye, H., He, D., and Wang, L. Towards revealing the mystery behind chain of thought: a theoretical perspective. Advances in Neural Information Processing Systems, 36, 2024.

- Gandhi et al. (2024) Gandhi, K., Lee, D., Grand, G., Liu, M., Cheng, W., Sharma, A., and Goodman, N. D. Stream of search (SoS): Learning to search in language. arXiv preprint arXiv:2404.03683, 2024.

- Goyal et al. (2023) Goyal, S., Ji, Z., Rawat, A. S., Menon, A. K., Kumar, S., and Nagarajan, V. Think before you speak: Training language models with pause tokens. arXiv preprint arXiv:2310.02226, 2023.

- Hao et al. (2024) Hao, S., Sukhbaatar, S., Su, D., Li, X., Hu, Z., Weston, J., and Tian, Y. Training large language models to reason in a continuous latent space. arXiv preprint arXiv:2412.06769, 2024.

- Hart et al. (1968) Hart, P. E., Nilsson, N. J., and Raphael, B. A formal basis for the heuristic determination of minimum cost paths. IEEE Transactions on Systems Science and Cybernetics, 4(2):100–107, 1968.

- He et al. (2024) He, C., Luo, R., Bai, Y., Hu, S., Thai, Z. L., Shen, J., Hu, J., Han, X., Huang, Y., Zhang, Y., et al. OlympiadBench: A challenging benchmark for promoting AGI with olympiad-level bilingual multimodal scientific problems. arXiv preprint arXiv:2402.14008, 2024.

- (19) Hendrycks, D., Burns, C., Kadavath, S., Arora, A., Basart, S., Tang, E., Song, D., and Steinhardt, J. Measuring mathematical problem solving with the MATH dataset. In Thirty-fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track (Round 2).

- Hendrycks et al. (2021) Hendrycks, D., Burns, C., Kadavath, S., Arora, A., Basart, S., Tang, E., Song, D., and Steinhardt, J. Measuring mathematical problem solving with the MATH dataset. NeurIPS, 2021.

- Jiang et al. (2024) Jiang, W., Shi, H., Yu, L., Liu, Z., Zhang, Y., Li, Z., and Kwok, J. Forward-backward reasoning in large language models for mathematical verification. In Findings of the Association for Computational Linguistics ACL 2024, pp. 6647–6661, 2024.

- Jiang et al. (2022) Jiang, Z., Zhang, T., Janner, M., Li, Y., Rocktäschel, T., Grefenstette, E., and Tian, Y. Efficient planning in a compact latent action space. arXiv preprint arXiv:2208.10291, 2022.

- Jiang et al. (2023) Jiang, Z., Xu, Y., Wagener, N., Luo, Y., Janner, M., Grefenstette, E., Rocktäschel, T., and Tian, Y. H-GAP: Humanoid control with a generalist planner. arXiv preprint arXiv:2312.02682, 2023.

- Kim et al. (2023) Kim, S., Joo, S. J., Kim, D., Jang, J., Ye, S., Shin, J., and Seo, M. The CoT collection: Improving zero-shot and few-shot learning of language models via chain-of-thought fine-tuning. arXiv preprint arXiv:2305.14045, 2023.

- Kojima et al. (2022) Kojima, T., Gu, S. S., Reid, M., Matsuo, Y., and Iwasawa, Y. Large language models are zero-shot reasoners. Advances in Neural Information Processing Systems, 35:22199–22213, 2022.

- Lehnert et al. (2024) Lehnert, L., Sukhbaatar, S., Su, D., Zheng, Q., McVay, P., Rabbat, M., and Tian, Y. Beyond A*: Better planning with transformers via search dynamics bootstrapping. In First Conference on Language Modeling, 2024. URL https://openreview.net/forum?id=SGoVIC0u0f.

- Li et al. (2024) Li, Z., Liu, H., Zhou, D., and Ma, T. Chain of thought empowers transformers to solve inherently serial problems, 2024. URL https://arxiv.org/abs/2402.12875.

- Lin et al. (2024) Lin, B. Y., Bras, R. L., and Choi, Y. ZebraLogic: Benchmarking the logical reasoning ability of language models, 2024. URL https://huggingface.co/spaces/allenai/ZebraLogic.

- Liu et al. (2024) Liu, L., Pfeiffer, J., Wu, J., Xie, J., and Szlam, A. Deliberation in latent space via differentiable cache augmentation. 2024. URL https://arxiv.org/abs/2412.17747.

- Lozhkov et al. (2024) Lozhkov, A., Ben Allal, L., Bakouch, E., von Werra, L., and Wolf, T. FineMath: the finest collection of mathematical content, 2024. URL https://huggingface.co/datasets/HuggingFaceTB/finemath.

- Nye et al. (2021a) Nye, M., Andreassen, A. J., Gur-Ari, G., Michalewski, H., Austin, J., Bieber, D., Dohan, D., Lewkowycz, A., Bosma, M., Luan, D., et al. Show your work: Scratchpads for intermediate computation with language models. arXiv preprint arXiv:2112.00114, 2021a.

- Nye et al. (2021b) Nye, M., Andreassen, A. J., Gur-Ari, G., Michalewski, H., Austin, J., Bieber, D., Dohan, D., Lewkowycz, A., Bosma, M., Luan, D., et al. Show your work: Scratchpads for intermediate computation with language models. arXiv preprint arXiv:2112.00114, 2021b.

- Pagnoni et al. (2024) Pagnoni, A., Pasunuru, R., Rodriguez, P., Nguyen, J., Muller, B., Li, M., Zhou, C., Yu, L., Weston, J., Zettlemoyer, L., Ghosh, G., Lewis, M., Holtzman, A., and Iyer, S. Byte latent transformer: Patches scale better than tokens. 2024. URL https://arxiv.org/abs/2412.09871.

- Pfau et al. (2024) Pfau, J., Merrill, W., and Bowman, S. R. Let’s think dot by dot: Hidden computation in transformer language models. arXiv preprint arXiv:2404.15758, 2024.

- Radford et al. (2019) Radford, A., Wu, J., Child, R., Luan, D., Amodei, D., Sutskever, I., et al. Language models are unsupervised multitask learners. OpenAI blog, 1(8):9, 2019.

- Raffel et al. (2020) Raffel, C., Shazeer, N., Roberts, A., Lee, K., Narang, S., Matena, M., Zhou, Y., Li, W., and Liu, P. J. Exploring the limits of transfer learning with a unified text-to-text transformer. Journal of Machine Learning Research, 21(140):1–67, 2020. URL http://jmlr.org/papers/v21/20-074.html.

- Saparov & He (2022) Saparov, A. and He, H. Language models are greedy reasoners: A systematic formal analysis of chain-of-thought. arXiv preprint arXiv:2210.01240, 2022.

- Saxton et al. (2019) Saxton, D., Grefenstette, E., Hill, F., and Kohli, P. Analysing mathematical reasoning abilities of neural models. arXiv preprint arXiv:1904.01557, 2019.

- Su et al. (2024) Su, D., Sukhbaatar, S., Rabbat, M., Tian, Y., and Zheng, Q. Dualformer: Controllable fast and slow thinking by learning with randomized reasoning traces. arXiv preprint arXiv:2410.09918, 2024.

- Su et al. (2025) Su, D., Gu, A., Xu, J., Tian, Y., and Zhao, J. GaLore 2: Large-scale LLM pre-training by gradient low-rank projection. arXiv preprint arXiv:2504.20437, 2025.

- Tang et al. (2024) Tang, Z., Zhang, X., Wang, B., and Wei, F. MathScale: Scaling instruction tuning for mathematical reasoning. arXiv preprint arXiv:2403.02884, 2024.

- Tong et al. (2024) Tong, Y., Zhang, X., Wang, R., Wu, R., and He, J. DART-Math: Difficulty-aware rejection tuning for mathematical problem-solving. arXiv preprint arXiv:2407.13690, 2024.